Infinera introduces flexible grid 500G super-channel ROADM

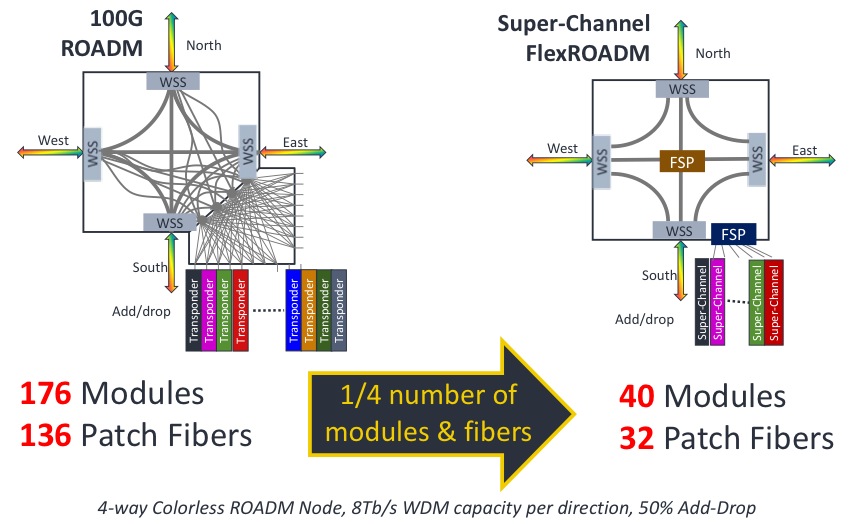

An example showing the impact of a 500G super-channel ROADM node. Source: Infinera

"The FlexROADM will open up the Tier-1 operators in a way Infinera has not been able to do before," says Dana Cooperson, vice president, network infrastructure at market research firm Ovum. "The DTN-X was necessary but not sufficient; the ROADM is the last piece."

The FlexROADM is claimed to deliver two industry firsts: it can add and drop flexible-grid 500 Gig super-channels, and it uses the Internet Engineering Task Force’s (IETF) spectrum switched optical networks (SSON) control plane.

"SSON is the next generation of WSON [Wavelength Switched Optical Network control plane], except it manages spectrum," says Ron Kline, principal analyst, network infrastructure, also at Ovum.

The DTN-X platform combines Infinera's 500 Gig photonic integrated circuits and OTN (Optical Transport Network) switching. With the FlexROADM, Infinera has added switching at the optical layer in 500 Gig increments. Infinera can now offer enhanced multi-layer network optimisation with the combination of electrical and optical switching.

"Optical bypass before was manual using patch cords, now operators can reconfigure with the FlexROADM," says Kline. "It also provides new optical restoration capabilities that Infinera did not have."

The FlexROADM supports up to nine degrees, and is available in colourless, colourless and directionless, and full colourless, directionless and contentionless (CDC) versions.

"The debate about contentionless continues," says Kline. "It is safe to assume that for the majority of applications flexible grid, colourless and directionless will be the high runner." Contentionless will be used by the big carriers, he says, but in certain locations only.

Infinera says the line system announced will support up to 24 Terabit-per-second (Tbps) when it ships in September. The maximum long-haul capacity using its current PM-QPSK super-channels is 9.5Tbps per fibre pair.

"In the future when we enable metro-reach super-channels using PM-16-QAM, they will support 24 Terabit-per-second per fibre pair using the line system we are announcing," says Geoff Bennett, director, solutions and technology at Infinera.

Bennett says the data rate and the spectral efficiency for a given sub-carrier can be varied depending on the reach required. The spacing between sub-carriers that make up a super-channel also can be varied depending on reach. Many different transmission possibilities exist, says Bennett, but to explain the concept, he cites two examples.

The 24Tbps capacity with PM-16-QAM modulation uses pulse shaping at the transmitter to achieve 'Nyquist DWDM' channel spacing, where the spacing between channels approximates the baud rate, says Bennett.

"At this time we are not disclosing the details of the channel spacing, or the number of sub-carriers used by our future line modules," says Bennett. "But the total super-channel spectral width is the equivalent of 200GHz if you are transmitting a one Terabit super-channel, for example." This equates to a spectral efficiency of 5b/s/Hz, and using 16-QAM, the reach achieved will be 600-700km.

"The system we have just launched is designed to operate in long-haul networks and uses PM-QPSK," says Bennett. "For an ultra long-haul reach requirement of 4,500km, the super-channel comprises ten sub-carriers; a total of 500 Gbps over a spectral width of 250 GHz." These line cards are available now, he says.
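The two examples Bennett cites reduce to simple arithmetic: spectral efficiency is the bit rate divided by the spectral width occupied. A minimal sketch follows; the function name is illustrative, and the per-sub-carrier figures for the PM-QPSK case are derived from the quoted totals rather than stated by Infinera.

```python
def spectral_efficiency(bit_rate_gbps: float, spectral_width_ghz: float) -> float:
    """Return spectral efficiency in b/s/Hz (Gb/s divided by GHz)."""
    if spectral_width_ghz <= 0:
        raise ValueError("spectral width must be positive")
    return bit_rate_gbps / spectral_width_ghz


# Metro-reach example: a 1 Tb/s PM-16-QAM super-channel in 200 GHz.
pm_16qam = spectral_efficiency(1000, 200)

# Long-haul example: a 500 Gb/s PM-QPSK super-channel in 250 GHz.
pm_qpsk = spectral_efficiency(500, 250)

# Derived, not quoted: ten equal sub-carriers would each carry
# 50 Gb/s in 25 GHz of spectrum.
sub_carriers = 10
rate_per_sub_carrier_gbps = 500 / sub_carriers
width_per_sub_carrier_ghz = 250 / sub_carriers

print(f"PM-16-QAM: {pm_16qam} b/s/Hz, PM-QPSK: {pm_qpsk} b/s/Hz")
```

The 16-QAM case gives the 5b/s/Hz quoted above, while the long-haul PM-QPSK case works out at 2b/s/Hz, which is the trade of spectral efficiency for reach that Bennett describes.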

Infinera continues to make steady market progress, according to Ovum. The company is in the top 10 system vendors globally, while in backbone and 100 Gigabit, Infinera is fourth.

Books in 2013 - Part 2

Steve Alexander, CTO of Ciena

David and Goliath: Underdogs, Misfits, and the Art of Battling Giants by Malcolm Gladwell.

I’ve enjoyed some of Gladwell’s earlier works such as The Tipping Point and Outliers: The Story of Success. You often have to read his material with a bit of a skeptic's eye since he usually deals with people and events that are at least a standard deviation or two away from whatever is usually termed “normal.” In this case he makes the point that overcoming an adversity (and it can be in many forms) is helpful in achieving extraordinary results. It also reminded me of the many people who were skeptical about Ciena’s initial prospects back in the middle '90s when we first came to market as a “David” in a land of giant competitors. We clearly managed to prosper and have now outlived some of the giants of the day.

Overconnected: The Promise and Threat of the Internet by William Davidow.

I downloaded this to my iPad a while back and finally got to read it on a flight back from South America. On my trip what had I been discussing with customers? Improving network connections of course. I enjoyed it quite a bit because I see some of his observations within my own family. The desire to “connect” whenever something happens and the “positive feedback” that can result from an over-rich set of connections can be both quite amusing as well as a little scary! I don’t believe that all of the events that the author attributes to being overconnected are really as cause-and-effect driven as he may portray, but I found the possibilities for fads, market bubbles, and market collapses entertaining.

For another insight into such extremes see Extraordinary Popular Delusions and the Madness of Crowds by Charles Mackay, first published in the 1840s. We, as a species, have been a bit wacky for a long time.

Shadow Divers: The True Adventure of Two Americans Who Risked Everything to Solve One of the Last Mysteries of World War II by Robert Kurson.

Having grown up in the New York / New Jersey area, and having listened to stories from my parents about the fear of sabotage in World War II (Google Operation Pastorius for some background) and from my grandparents, who had experienced the Black Tom Explosion during WW1, this book was a “don’t put it down till done” for me. I found it by accident when browsing a used book store. It’s available on Kindle and is apparently somewhat controversial because another diver has written a rebuttal to at least some of what was described. It is a great example of what it takes to both dive deep and solve a mystery.

David Welch, President, Infinera

Here is my cut. The first three books offer a perspective on how people think and I apply it to business.

- The Talent Code: Greatness Isn't Born. It's Grown. Here's How by Daniel Coyle.

- Mindset: The New Psychology of Success by Carol Dweck

- Moneyball: The Art of Winning an Unfair Game by Michael Lewis.

My non-work related book is Unbroken: A World War II Story of Survival, Resilience, and Redemption by Laura Hillenbrand.

Unfortunately, I rarely get time to read books, so the picking can be thin at times.

Marcus Weldon, President of Bell Labs and CTO, Alcatel-Lucent

I am currently re-reading Jon Gertner's history of Bell Labs, The Idea Factory: Bell Labs and the Great Age of American Innovation, which should be no surprise as I have just inherited the leadership of this phenomenal place. Much of what he observes is still highly relevant today and will inform the future that I am planning.

I joined Bell Labs in 1995 as a post-doctoral researcher in the famous, Nobel-prize winning Physics Division (Div111, as it was known) and so experienced much of this first hand. In particular, I recall being surrounded by the most brilliant, opinionated, odd, inspired, collaborative, competitive, driven, relaxed, set of people I had ever met. And with the shared goal of solving the biggest problems in information and telecommunications.

Having recently returned to the 'bosom of Bell', I find that, remarkably, much of that environment and pool of talent still remains. And that is hugely exciting as it means that we still have the raw ingredients for the next great era of Bell Labs. My hope is that 10 years from now Gertner will write a second edition or updated version of the tale that includes the renewed success of Bell Labs, and not just the historical successes.

On the personal front, I am reading whatever my kids ask me to read them. Two of the current favourites are: Turkey Claus, about a turkey trying to avoid becoming the centrepiece of a Christmas feast by adapting and trying various guises, and Pete the Cat Saves Christmas, about a world of an ailing feline Claus, requiring average cat, Pete, to save the big day.

I am not sure there is a big message here, but perhaps it is that 'any one of us can be called to perform great acts, and can achieve them, and that adaptability is key to success'. And of course, there is some connection in this to the Bell Labs story above, so I will leave it there!

SDN starts to fulfill its network optimisation promise

Infinera, Brocade and ESnet demonstrate the use of software-defined networking to provision and optimise traffic across several networking layers.

Infinera, Brocade and network operator ESnet are claiming a first in demonstrating software-defined networking (SDN) performing network provisioning and optimisation using platforms from more than one vendor.

Mike Capuano, Infinera

The latest collaboration is one of several involving optical vendors that are working to extend SDN to the WAN. ADVA Optical Networking and IBM are working to use SDN to connect data centres, while Ciena and partners have created a test bed to develop SDN technology for the WAN.

The latest lab-based demonstration uses ESnet's circuit reservation platform that requests network resources via an SDN controller. ESnet, the US Department of Energy's Energy Sciences Network, conducts networking R&D and operates a large 100 Gigabit network linking research centres and universities. The SDN controller, the open source Floodlight Project design, oversees the network comprising Brocade's 100 Gigabit MLXe IP router and Infinera's DTN-X platform.

The goal of provisioning and optimising traffic across the routing, switching and optical layers has been a work in progress for over a decade. System vendors have undertaken initiatives such as External Network-Network Interface (ENNI) and multi-domain GMPLS but with limited success. "They have been talked about, experimented with, but have never really made it out of the labs," says Mike Capuano, vice president of corporate marketing at Infinera. "SDN has the opportunity to solve this problem for real."

"SDN, and technologies like the OpenFlow protocol, allow all of the resources of the entire network to be abstracted to this higher level control," says Daniel Williams, director of product marketing for data center and service provider routing at Brocade.

Daniel Williams, Brocade

Infinera and ESnet demonstrated OpenFlow provisioning of transport resources a year ago. This latest demonstration has OpenFlow provisioning at the packet and optical layers and performing network optimisation. "We have added more carrier-grade capabilities," says Capuano. "Not just provisioning, but now we have topology discovery and network configuration."

“The demonstration is a positive step in the development of SDN because it showcases the multi-layer transport provisioning and management that many operators consider the prime use case for transport SDN,” says Rick Talbot, principal analyst, optical infrastructure at Current Analysis. "The demonstration’s real-time network optimisation is an excellent example of the potential benefits of transport SDN, leveraging SDN to minimise transit traffic carried at the router layer, saving both CapEx and OpEx."

Using such an SDN setup, service providers can request high-bandwidth links to meet specific networking requirements. "There can be a request from a [software] app: 'I need a 80 Gigabit flow for two days from Switzerland to California with a 95ms latency and zero packet loss'," says Capuano. "The fact that the network has the facility to set that service up and deliver on those parameters automatically is a huge saving."

Such a link can be established on the same day the request is made, even within minutes. Traditionally, such requests involving the IP and optical layers - and different organisations within a service provider - can take weeks to fulfill, says Infinera.

Current Analysis also highlights another potential benefit of the demonstration: how the control of separate domains - the Infinera wavelength and TDM domain and the Brocade layer 2/3 domain - with a common controller illustrates how SDN can provide end-to-end multi-operator, multi-vendor control of connections.

What next

The Open Networking Foundation (ONF) has an Optical Transport Working Group that is tasked with developing OpenFlow extensions to enable SDN control beyond the packet layer to include optical.

How is the optical layer in the demonstration controlled given the ONF work is unfinished?

"Our solution leverages Web 2.0 protocols like RESTful and JSON integrated into the Open Transport Switch [application] that runs on the DTN-X," says Capuano. "In the world of Web 2.0, the general approach is not to sit and wait till standards are done, but to prototype, test, find the gaps, report back, and do it again."
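To make the idea concrete, the application request Capuano quotes earlier (an 80 Gigabit flow for two days from Switzerland to California with 95ms latency and zero packet loss) could be expressed as a JSON document of the kind a RESTful interface accepts. The sketch below is purely illustrative: the `CircuitRequest` class and every field name are hypothetical and are not taken from the Open Transport Switch, ESnet's reservation platform or Floodlight.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class CircuitRequest:
    """Hypothetical service request; all field names are assumptions."""
    source: str
    destination: str
    bandwidth_gbps: int
    duration_days: int
    max_latency_ms: int
    max_packet_loss: float

    def to_json(self) -> str:
        # Serialise to the JSON body a client might send to a controller.
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# The example request quoted in the article.
request = CircuitRequest(
    source="Switzerland",
    destination="California",
    bandwidth_gbps=80,
    duration_days=2,
    max_latency_ms=95,
    max_packet_loss=0.0,
)

body = request.to_json()
print(body)
```

The point of such an abstraction is that the application states what it needs, and the controller decides how the packet and optical layers deliver it.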

Further work is needed before the demonstration system is robust enough for commercial deployment.

"This is going to take some time: 2014 is the year of test and trials in the carrier WAN while 2015 is when you will see production deployment," says Capuano. "If service providers are making decisions on what platforms they want to deploy, it is important to choose ones that are going to position them well to move to SDN when the time comes."

Infinera speeds up network restoration

- Claimed to be the only hardware implementation of the Shared Mesh Protection protocol

- Provides network-wide protection against multiple network failures

- The chip is already within the DTN-X system; protocol will be activated this year

Pravin Mahajan, Infinera

Infinera has developed a chip to speed up network restoration following faults.

The chip implements the Shared Mesh Protection (SMP) protocol being developed by the International Telecommunication Union (ITU) and the Internet Engineering Task Force (IETF). Infinera believes it is the only vendor with hardware acceleration of the protocol.

The SMP standard is still being worked on and will be completed this year. Infinera demonstrated its hardware SMP implementation at OFC/NFOEC 2013 and will activate the scheme in operators' networks using a platform software upgrade this year.

The chip, dubbed Fast Shared Mesh Protection (FastSMP), is sprinkled across cards within Infinera's DTN-X platform and will be linked to other FastSMP ICs across the network. The FastSMP chips exchange signalling information and use internal look-up tables with pre-calculated routing data to determine the required protection action when one or more network failures occur.

Network faults

The causes of network faults range from fibre cuts caused by construction work to natural disasters such as Hurricane Sandy and the Asia Pacific tsunami. Level 3 Communications said in 2011 that squirrels were the second most common cause of fibre cuts after construction work. The squirrels, chewing through fibre, accounted for 17 percent of all cuts. Meanwhile, one Indian service provider says it experiences 100 fibre cuts nationwide each day, according to Infinera.

Operators are also having to share their network maps with enterprises that want to assess the risk based on geography before choosing a service provider. "End customers no longer necessarily trust the service level agreements they have with operators," says Pravin Mahajan, director, corporate marketing and messaging at Infinera. In riskier regions, for example those prone to earthquakes, enterprises may choose two operators. "A form of 1+1 protection," says Mahajan.

Operators want resilient networks that adapt to faults quickly, ideally within 50ms, without adding extra cost.

Traditional resiliency schemes include SONET/SDH’s 1+1 protection. This meets the sub-50ms requirement but addresses single faults only and requires dedicated back-up for each circuit. At the IP/MPLS (Internet Protocol/Multiprotocol Label Switching) layer, the MPLS Fast Re-Route scheme caters for multiple failures and is sub-50ms. But it only addresses local faults, not the full network. And being packet-based - at a higher layer of the network - the scheme is costlier to implement.

Infinera's protection scheme uses its digital optical networking approach based on its photonic integrated circuits (PICs) coupled with Optical Transport Networking (OTN). OTN resides between the packet and optical layers, and using a mesh network topology, it can handle multiple failures. By sharing bandwidth at the transport layer, the approach is cheaper than at the packet layer. But being software-based, restoration takes seconds.

Infinera has speeded up the scheme by implementing SMP with its chip such that it meets the 50ms goal.

FastSMP chip

Infinera plans for multiple failures using the Generalized Multiprotocol Label Switching (GMPLS) control plane. “The same intelligence is now implemented in hardware [using the FastSMP processor],” says Mahajan.

The chip is on each 500 Gigabit-per-second (Gbps) line card, within the platform's OTN switch fabric, on the client side and as part of the controller. The FastSMP, described as a co-processor to the CPU, hosts look-up tables with rules as to what should happen with each failure. The chips, located in the platform and across the network, then adjust to the back-up plan for each service failure.

Infinera says that the protection is at the service level not at the link level. "It does this at ODU [OTN's optical data unit] granularity," says Mahajan; each circuit can hold different sized services, 2.5 Gigabit-per-second (Gbps) or 10Gbps for example, all carried within a 100Gbps light path. "By defining failure scenarios on a per-service basis, you now need to put all these entries in hardware," says Mahajan.

To program the chip, network failures are simulated using Infinera's network planning tool to determine the required back-up schemes. These can be chosen based on shortest path or lowest latency, for example.

The GMPLS control plane protocol determines the rules as to how the network should be adapted and these are written on-chip. When a failure occurs, the chip detects the failure and performs the required actions.
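The pre-computed look-up approach described above can be sketched in software as a table keyed by service and failure scenario. This is an illustration of the general idea only, not Infinera's implementation: the `ProtectionTable` class, the service identifier and the node and link names are all hypothetical.

```python
from typing import Dict, FrozenSet, Iterable, List, Optional, Tuple

Path = List[str]
RuleKey = Tuple[str, FrozenSet[str]]


class ProtectionTable:
    """Per-service backup paths, pre-computed for known failure scenarios."""

    def __init__(self) -> None:
        self._rules: Dict[RuleKey, Path] = {}

    def program(self, service: str, failed_links: Iterable[str], backup: Path) -> None:
        # Written ahead of time, e.g. from planning-tool simulations.
        self._rules[(service, frozenset(failed_links))] = backup

    def lookup(self, service: str, failed_links: Iterable[str]) -> Optional[Path]:
        # A constant-time look-up at failure time; None means no entry
        # exists and the control plane must compute a new plan.
        return self._rules.get((service, frozenset(failed_links)))


table = ProtectionTable()
# Hypothetical ODU service whose working path is A-B-C.
table.program("odu2-service-1", ["A-B"], ["A", "D", "C"])
table.program("odu2-service-1", ["A-B", "A-D"], ["A", "E", "C"])

single_failure = table.lookup("odu2-service-1", ["A-B"])
double_failure = table.lookup("odu2-service-1", ["A-D", "A-B"])
unplanned = table.lookup("odu2-service-1", ["A-B", "A-D", "A-E"])
print(single_failure, double_failure, unplanned)
```

Keeping the decision to a table look-up is what makes a hardware implementation plausible: the expensive path computation happens before the failure, not during it.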

The FastSMP chip is already on all the DTN-X line cards Infinera has shipped and will be enabled using a software upgrade.

The GMPLS control plane recomputes backup paths after a failure has occurred. Typically no action is required but if several failures occur, the new GMPLS backup paths will be distributed to update the FastSMPs' tables. "Only on the third or fourth failure typically will a new backup plan be needed," says Mahajan.

In effect, the more meshed the network topology, the greater the number of failures that can be tolerated. "When you have three or four failures, you need to have new computation at the GMPLS control plane and then it can repopulate the backups for failures 3, 4, and 5," he says.

Instant bandwidth and FastSMP

Infinera is able to turn up bandwidth in real-time using its 500Gbps super-channel PIC. "We slice up the 500 Gig capacity available per line card into 100 Gig chunks," says Mahajan.

This feature, combined with FastSMP, aids operators dealing with failures once traffic is rerouted. The next backup route, if it is close to its full capacity, can have an extra 100 Gigabit of capacity added in case the link is called into use.

A study based on an example 80-node network by ACG Research estimates that the Shared Mesh Protection scheme uses 30 percent fewer line-side ports compared to an equivalent network implementing the 1+1 protection scheme.

Space-division multiplexing: the final frontier

System vendors continue to trumpet their achievements in long-haul optical transmission speeds and overall data carried over fibre.

Alcatel-Lucent announced earlier this month that France Telecom-Orange is using the industry's first 400 Gigabit link, connecting Paris and Lyon, while Infinera has detailed a trial demonstrating 8 Terabit-per-second (Tbps) of capacity over 1,175km and using 500 Gigabit-per-second (Gbps) super-channels.

"Integration always comes at the cost of crosstalk"

Peter Winzer, Bell Labs

Yet vendors already recognise that capacity in the frequency domain will only scale so far and that other approaches are required. One is space-division multiplexing: using multiple channels separated in space, implemented for example using multi-core fibre with each core supporting several modes.

"We want a technology that scales by a factor of 10 to 100," says Peter Winzer, director of optical transmission systems and networks research at Bell Labs. "As an example, a fibre with 10 cores with each core supporting 10 modes, then you have the factor of 100."

Space-division multiplexing

Alcatel-Lucent's research arm, Bell Labs, has demonstrated the transmission of 3.8Tbps using several data channels and an advanced signal processing technique known as multiple-input, multiple-output (MIMO).

In particular, 40 Gigabit quadrature phase-shift keying (QPSK) signals were sent over a six-spatial mode fibre using two polarisation modes and eight wavelengths to achieve 3.8Tbps. The overall transmission uses only 400GHz of spectrum.
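The 3.8Tbps figure is the product of the parallel dimensions listed above. A short sketch of the arithmetic follows; the variable names are illustrative, and the aggregate spectral efficiency is derived here from the quoted numbers rather than stated by Bell Labs.

```python
# Figures quoted for the Bell Labs demonstration.
signal_rate_gbps = 40      # per QPSK signal
spatial_modes = 6
polarisations = 2
wavelengths = 8
spectrum_ghz = 400

# Every combination of mode, polarisation and wavelength carries one signal.
parallel_signals = spatial_modes * polarisations * wavelengths
total_gbps = signal_rate_gbps * parallel_signals
total_tbps = total_gbps / 1000

# Derived: aggregate bits per second per hertz of spectrum used.
aggregate_efficiency = total_gbps / spectrum_ghz

print(f"{parallel_signals} signals, {total_tbps} Tbps, {aggregate_efficiency} b/s/Hz")
```

The 96 parallel signals give 3.84Tbps, which rounds to the quoted 3.8Tbps. The same eight wavelengths over a single spatial mode would carry one sixth of that.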

Alcatel-Lucent stresses that the commercial deployment of space-division multiplexing remains years off. Moreover operators will likely first use already-deployed parallel strands of single-mode fibre, needing the advanced signal processing techniques only later.

"You might say that is trivial [using parallel strands of fibre], but bringing down the cost of that solution is not," says Winzer.

First, cost-effective integrated amplifiers will be needed. "We need to work on a single amplifier that can amplify, say, ten existing strands of single-mode fibre at the cost of two single-mode amplifiers," says Winzer. An integrated transponder will also be needed: one transponder that couples to 10 individual fibres at a much lower cost than 10 individual transponders.

With a super-channel transponder, several wavelengths are used, each with its own laser, modulator and detector. "In a spatial super-channel you have the same thing, but not, say, three different frequencies but three different spatial paths," says Winzer. Here photonic integration is the challenge to achieve a cost-effective transponder.

Once such integrated transponders and amplifiers become available, it will make sense to couple them to multi-core fibre. But operators will only likely start deploying new fibre once they exhaust their parallel strands of single-mode fibre.

Such integrated amplifiers and integrated transponders will present challenges. "The more and more you integrate, the more and more crosstalk you will have," says Winzer. "That is fundamental: integration always comes at the cost of crosstalk."

Winzer says there are several areas where crosstalk may arise. An integrated amplifier serving ten single-mode fibres will share a multi-core erbium-doped fibre instead of ten individual strands. Crosstalk between those closely-spaced cores is likely.

The transponder will be based on a large integrated circuit giving rise to electrical crosstalk. One way to tackle crosstalk is to develop components to a higher specification but that is more costly. Alternatively, signal processing on the received signal can be used to undo the crosstalk. Using electronics to counter crosstalk is attractive especially when it is the optics that dominate the design cost. This is where MIMO signal processing plays a role. "MIMO is the most advanced version of spatial multiplexing," says Winzer.

To address crosstalk caused by spatial multiplexing in the Bell Labs demo, 12x12 MIMO was used. Bell Labs says that using MIMO does not add significantly to the overall computation. Existing 100 Gigabit coherent ASICs effectively use a 2x2 MIMO scheme, says Winzer: "We are extending the 2x2 MIMO to 2Nx2N MIMO."
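The 12x12 figure follows from the 2Nx2N rule with N equal to the six spatial modes of the demo. The sketch below shows that count, then a toy 2x2 case of undoing crosstalk by inverting a known mixing matrix (zero-forcing). It is a simplified illustration with made-up real-valued coefficients; practical coherent receivers work on complex samples and estimate the channel adaptively, and nothing here represents the Bell Labs algorithm.

```python
from typing import List

Matrix = List[List[float]]


def mimo_dimension(spatial_modes: int, polarisations: int = 2) -> int:
    """Size of the square MIMO matrix: 2N for N spatial modes."""
    return spatial_modes * polarisations


def invert_2x2(h: Matrix) -> Matrix:
    (a, b), (c, d) = h
    det = a * d - b * c
    if det == 0:
        raise ValueError("channel matrix is singular")
    return [[d / det, -b / det], [-c / det, a / det]]


def apply(matrix: Matrix, vector: List[float]) -> List[float]:
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


dimension = mimo_dimension(spatial_modes=6)

# Hypothetical mixing: each output picks up a fraction of the other channel.
channel = [[1.0, 0.2], [0.1, 1.0]]
sent = [1.0, -1.0]
received = apply(channel, sent)                   # signals with crosstalk
recovered = apply(invert_2x2(channel), received)  # crosstalk undone

print(dimension, received, recovered)
```

The off-diagonal terms of `channel` are the crosstalk. A larger matrix has more of them to estimate and cancel, which is why the processing grows with the number of modes.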

Only one portion of the current signal processing chain is impacted, he adds; a portion that consumes 10 percent of the power will need to increase by a certain factor. The resulting design will be more complex and expensive but not dramatically so, he says.

Winzer says such mitigation techniques need to be investigated now since crosstalk in future systems is inevitable. Even if the technology's deployment is at least a decade away, developing techniques to tackle crosstalk now means vendors have a clear path forward.

Parallelism

Winzer points out that optical transmission continues to embrace parallelism. "With super-channels we go parallel with multiple carriers because a single carrier can’t handle the traffic anymore," he says. This is similar to parallelism in microprocessors where multi-core designs are now used due to the diminishing return in continually increasing a single core's clock speed.

For 400Gbps or 1 Terabit over a single-mode fibre, the super-channel approach is the near term evolution.

Over the next decade, the benefit of frequency parallelism will diminish since it will no longer increase spectral efficiency. "Then you need to resort to another physical dimension for parallelism and that would be space," says Winzer.

MIMO will be needed when crosstalk arises and that will occur with multiple mode fibre.

"For multiple strands of single mode fibre it will depend on how much crosstalk the integrated optical amplifiers and transponders introduce," says Winzer.

Part 1: Terabit optical transmission

Infinera adds software to its PIC for instant bandwidth

Infinera has enabled its DTN-X platform to deliver 100 Gigabit services rapidly. The ability to fulfill capacity demand quickly is seen as a competitive advantage by operators. Gazettabyte spoke with Infinera and TeliaSonera International Carrier, a DTN-X customer, about the merits of its 'instant bandwidth' and asked several industry analysts for their views.

Infinera has added a WDM line card hosting its 500 Gigabit super-channel photonic integrated circuit to its DTN-X platform

Pravin Mahajan, Infinera.

Infinera is claiming an industry first with the software-enablement of 100 Gigabit capacity increments. The company's DTN-X platform's 'instant bandwidth' feature shortens the time to add new capacity in the network, from weeks as is common today to less than a day.

The ability to add bandwidth as required is increasingly valued by operators. TeliaSonera International Carrier points out that its traffic demands are increasingly variable, making capacity requirements harder to forecast and manage.

"It [the DTN-X's instant bandwidth] enables us to activate 100 Gig services between network spans to manage our own IP traffic which is growing rapidly," says Ivo Pascucci, head of sales, Americas at TeliaSonera International Carrier. "We will also be able to sell in the market 100 Gig services and activate the capacity much more rapidly."

What has been done

Infinera has added three elements to allow its DTN-X platform to deliver 100 Gigabit services.

One is a new wavelength division multiplexing (WDM) line card that features its 500 Gigabit-per-second (Gbps) super-channel photonic integrated circuit (PIC). Infinera says the line card has 500Gbps of capacity enabled, of which only 100Gbps is activated. "The remaining 400Gbps is latent, waiting to be activated," says Pravin Mahajan, director of corporate marketing and messaging at Infinera.

Infinera uses the DTN-X's Optical Transport Network (OTN) switch fabric to pack the client side signals onto any of the 100Gbps channels activated on the line side. This capacity pool of up to 500 Gbps, says Infinera, results in better usage of backbone capacity compared to traditional optical networking equipment based on individual 100Gbps 'siloed' channels.
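The activation model described above, with 500Gbps on the card and capacity switched on in 100Gbps steps, can be sketched as a small state machine. The `LineCard` class and its method names are hypothetical and do not reflect Infinera's management software.

```python
class LineCard:
    """Hypothetical model of a 500G line card activated in 100G increments."""

    INSTALLED_GBPS = 500
    INCREMENT_GBPS = 100

    def __init__(self) -> None:
        # The article says 100 Gbps is active when the card is deployed.
        self.activated_gbps = self.INCREMENT_GBPS

    @property
    def latent_gbps(self) -> int:
        # Capacity present in hardware but not yet switched on.
        return self.INSTALLED_GBPS - self.activated_gbps

    def activate_increment(self) -> int:
        """Turn up one more 100G slice by software; return the new total."""
        if self.latent_gbps < self.INCREMENT_GBPS:
            raise RuntimeError("no latent capacity left on this card")
        self.activated_gbps += self.INCREMENT_GBPS
        return self.activated_gbps


card = LineCard()
initial_latent = card.latent_gbps
after_one_upgrade = card.activate_increment()
print(initial_latent, after_one_upgrade, card.latent_gbps)
```

Because the hardware is already in place, adding capacity is a software state change rather than a line-card installation, which is where the claimed reduction from weeks to less than a day comes from.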

A software application has also been added to Infinera's network management system, the digital network administrator (DNA), to activate the 100Gbps capacity increments.

Lastly, Infinera has in place a just-in-time system that enables client-side 10 Gigabit Ethernet optical transceivers to be delivered to customers within 10 days, if they are out of stock. Infinera says it is achieving a 6-day delivery time in 95% of the cases.

Advantages

TeliaSonera International Carrier confirms the advantages of having 100 Gigabit capacities pre-provisioned and ready for use.

"Having the ability to turn up large bandwidth is critical to our business, especially as the [traffic] numbers continue to grow"

Ivo Pascucci, TeliaSonera International Carrier

"If it is individual line cards across the network when you have as many PoPs as we do, it does get tricky," says Pascucci. "If we have 500 Gig channels pre-provisioned with the ability to activate 100 Gig segments as needed, that gives us an advantage versus having to figure out how many line cards to have deployed in which nodes, and forecasting which nodes should have the line cards in the first place."

The operator is already seeing demand for 100 Gigabit services, from the carrier market and large content providers. The operator already provides 10x10Gbps and 20x10Gbps services to customers. "With that there are all the challenges of provisioning ten or 20 10 Gig circuits and 10 or 20 cross-connects for each site," says Pascucci. The operator also manages one and two Terabits of network capacity for certain customers.

"Having the ability to turn up large bandwidth is critical to our business, especially as the [traffic] numbers continue to grow," says Pascucci.

Analysts' comments

Gazettabyte asked several industry analysts about the significance of Infinera's announcement, in particular the uniqueness of the offering, the claim to cut bandwidth enablement times and its importance for operators.

Infonetics Research

Andrew Schmitt, directing analyst for optical

Schmitt believes Infinera's announcement is significant as it is the first announced North American win. It also shows the company has a solution for carriers that want to roll out only a single 100Gbps channel and don't want to buy 500Gbps.

More importantly, it should allow some carriers to deploy extra capacity for future use at no cost to them and that opens up interesting possibilities for automatically switched optical network (ASON) management or even software-defined networking (SDN).

"As to the claim that it reduces capacity enablement from weeks to potentially minutes, to some degree, yes," says Schmitt.

Certainly Ciena, Alcatel-Lucent or Cisco could ship extra line cards into customers and not charge the customer until they are used and that would effectively achieve the same result. "But if the PIC truly has better economics than the discrete solutions from these vendors then Infinera can ship hardware up front and then recognise the profits on the back end," he says.

In turn, if customers get free inventory management out of the deal and Infinera equipment can support that arrangement more economically, that is a significant advantage for Infinera.

"This instant bandwidth is unique to Infinera. As I said, anyone could do this deal. But you need a hardware cost structure that can support it or it gets expensive quickly," says Schmitt. "Everyone is working on super-channels but it is clear from the legacy of the way the 10 Gig DTN hardware and software worked that Infinera gets it."

Schmitt believes the term super-channel is abused. He prefers the term virtualised bandwidth - optical capacity that can be allocated the same way server or storage resources are assigned through virtualization.

"The SDN hype is hitting strong in this business but Infinera is really one of the only companies that have a history of a hardware and software architecture that lends itself well to this concept," he says. This is validated with its customer list which is loaded heavily with service providers that are not just talking about SDN but actively doing something, he says.

"It [turning capacity up quickly] is important for SDN as well as more advanced protection arrangements. You simply can't predict where the best places to put bandwidth will be," says Schmitt. "If you can have spare capacity in the network that is lit on demand but not paid for if you don't need it, it is the cheapest approach for avoiding overbuilding a network for corner-case requirements.

"I think the accounting for this product will be interesting, it is likely that we will know in a year how successful this concept was just by a careful examination of the company's financials," he concludes.

ACG Research

Eve Griliches, vice president of optical networking

Infinera delivered this year the DTN-X with 500 Gig super-channels based on PIC technology. Now, a new 500 Gig line card has been added that can operate at 100 Gig and the remaining 400 Gig can be lit in 100 Gig increments using software. This allows customers to purchase 100 Gig at a time, and turn up subsequent bandwidth via software when they require it.
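The purchasing model described above can be illustrated with a minimal sketch. It is not Infinera's software: the class name `SuperChannelCard`, its fields and its method are hypothetical, and the defaults simply mirror the figures in the text (500 Gig installed, 100 Gig lit at first, further 100 Gig increments enabled in software).

```python
from dataclasses import dataclass


@dataclass
class SuperChannelCard:
    """Hypothetical model of a pre-provisioned line card (not a vendor API)."""
    installed_gbps: int = 500   # capacity physically deployed up front
    step_gbps: int = 100        # size of each software-activated increment
    active_gbps: int = 100      # capacity purchased and lit on day one

    def activate(self, steps: int = 1) -> int:
        """Light further increments in software; return the new active capacity."""
        if steps < 1:
            raise ValueError("steps must be a positive integer")
        requested = self.active_gbps + steps * self.step_gbps
        if requested > self.installed_gbps:
            raise ValueError("request exceeds the installed capacity")
        self.active_gbps = requested
        return self.active_gbps

    @property
    def dormant_gbps(self) -> int:
        """Capacity that is deployed but not yet activated."""
        return self.installed_gbps - self.active_gbps


card = SuperChannelCard()
card.activate()  # turn up a second 100 Gig segment
print(card.active_gbps, card.dormant_gbps)
```

The point of the sketch is that turning up capacity becomes a state change on hardware already in place, rather than the shipping and installation of a new line card.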

“No other vendor has a software-based solution, and no one else is delivering 500 Gig yet either,” says Griliches.

With this solution, ACG Research says in its research note, operators can start to develop a flexible infrastructure where bandwidth can grow and move around the network instantly. This is useful to address varying demands in bandwidth, triggered by incidents such as natural disasters or sporting events.

Rapid bandwidth enablement has always been important and takes way too long, so this development is key, says Griliches: “Also, it enables Infinera to enter markets which only need one 100 Gig wavelength for now, which they could not do before.”

Looking forward, ACG Research expects this software and hardware-based instant bandwidth utility model will enable Infinera to widen its potential market base and increase its global market share in 2013 and 2014.

Ovum

Ron Kline, principal analyst, and Dana Cooperson, vice president, of the network infrastructure practice

Ovum also thinks Infinera's announcement is significant. It brings essentially the same value proposition Infinera had with 10 Gigabit to the 100 Gigabit market - low operational expenditure (opex) and quick time-to-market. “Remember 10 Gig in 10 days?” says Kline.

It also fixes an issue for customers: with the 10x10Gbps approach, they essentially had to pay for the full 100Gbps up front, after which they could be very efficient with turn-up and opex. In effect, customers traded higher up-front capital expenditure (capex) for efficient opex. “With instant bandwidth, they don't have to make the upfront capex-versus-opex tradeoff; they can be most efficient with both,” says Cooperson.

Kline says operators have different processes for turning up services and in many cases it is these processes, and not the equipment directly, that cause the additional provisioning time. “For example, the operator may not use the DNA system or may have a very complex OSS/BSS used in the process,” says Kline.

Nevertheless, the capability to have really short provisioning is there, if an operator wants to take advantage. In the TeliaSonera case, Infinera is managing the network so the quick time to market will be there, says Kline.

Cooperson adds that there can be many factors that impede the capacity enablement process, based on Ovum's own research. “But it is clear from talking to Infinera's customers that its system design and approach is a big benefit to those carriers, often the competitive carriers, in competing in the market,” she says. “Multiple carriers told us that with the Infinera system, they were able to win business from competitors.”

Any vendor can shorten capacity enablement times if they can convince the operator to pre-position bandwidth in the network that is ready to be turned on at a moment's notice. However, what is unique to Infinera is that its system is deployed 500Gbps at a time and all the switching is done electrically by the OTN switch at each node. Others are working on super-channels but none are close to deploying, says Ovum.

The ability to turn on bandwidth rapidly is becoming increasingly important. From a wholesale operator perspective it is very important and a key differentiator.

"It's particularly relevant to wholesale applications where large bandwidth chunks are required and the customer is another carrier," says Cooperson. "Whether you view a Google or a Facebook as a carrier or a very large enterprise, it would apply to them as well as a more traditional carrier."

Briefing: Flexible elastic-bandwidth networks

Vendors and service providers are implementing the first examples of flexible, elastic-bandwidth networks. Infinera and Microsoft detailed one such network at the Layer123 Terabit Optical and Data Networking conference held earlier this year.

Optical networking expert Ioannis Tomkos of the Athens Information Technology Center explains what flexible, elastic bandwidth is.

Part 1: Flexible elastic bandwidth

Several developments are driving the evolution of optical networking. One is the incessant demand for bandwidth to cope with the 30+% annual growth in IP traffic. Another is the changing nature of the traffic due to new services such as video, mobile broadband and cloud computing.

"The characteristics of traffic are changing: A higher peak-to-average ratio during the day, more symmetric traffic, and the need to support higher quality-of-service traffic than in the past," says Professor Ioannis Tomkos of the Athens Information Technology Center.

Operators want a more flexible infrastructure that can adapt to meet these changes, hence their interest in flexible elastic-bandwidth networks. The operators also want to grow bandwidth as required while making best use of the fibre's spectrum. They also require more advanced control plane technology to restore the network elegantly and promptly following a fault, and to simplify the provisioning of bandwidth.

The growth of internet traffic will require core network interfaces to migrate from the current 10, 40 and 100Gbps to 1 Terabit by 2018-2020, says Tomkos. Such bit-rates must be supported with very high spectral efficiencies, which according to the latest demonstrations are only a factor of two away from the Shannon limit. Simply put, optical fibre is rapidly approaching its capacity limit.

"We cannot design anymore optical networks assuming that the available fibre capacity is abundant," says Tomkos. "As is the case in wireless networks where the available wireless spectrum/bandwidth is a scarce resource, the future optical communication systems and networks should become flexible in order to accommodate more efficiently the envisioned shortage of available bandwidth."

Elastic elements

Optical systems providers now realise they can no longer keep increasing a light path's data rate while expecting the signal to still fit in the standard International Telecommunication Union (ITU)-defined 50GHz band.

It may still be possible to fit a 200 Gigabit-per-second (Gbps) light path in a 50GHz channel but not a 400Gbps or 1 Terabit signal. At 400Gbps, 80GHz is needed and at 1 Terabit it rises to 170GHz, says Tomkos. This requires networks to move away from the standard fixed ITU grid to a flexible one, especially if operators want to achieve the highest possible spectral efficiency.
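A short calculation shows why these widths sit awkwardly on a fixed 50GHz grid. The sketch below takes the rates and widths quoted above and works out the spectral efficiency of each signal, along with how many slices it would occupy on a flexible grid. The 12.5GHz slice width is an assumption brought in for illustration (it is the slot-width granularity commonly associated with the ITU flexible grid, not a figure from this article), and the function names are hypothetical.

```python
import math

SLICE_GHZ = 12.5  # assumed flexible-grid slot-width granularity (not from the article)

# (data rate in Gbps, spectrum needed in GHz), as quoted in the text
signals = {"200G": (200, 50), "400G": (400, 80), "1T": (1000, 170)}


def slices_needed(width_ghz: float) -> int:
    """Number of whole grid slices required to cover the signal's width."""
    return math.ceil(width_ghz / SLICE_GHZ)


def spectral_efficiency(rate_gbps: float, width_ghz: float) -> float:
    """Bits per second carried per Hertz of occupied spectrum."""
    return rate_gbps / width_ghz


for name, (rate, width) in signals.items():
    n = slices_needed(width)
    print(f"{name}: {n} slices ({n * SLICE_GHZ} GHz allocated), "
          f"{spectral_efficiency(rate, width):.2f} bit/s/Hz")
```

Under these assumptions an 80GHz signal would be allocated seven slices (87.5GHz) rather than two full 50GHz channels, which is where the spectral saving of a flexible grid comes from.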

Vendors can increase the data rate of a carrier signal by using more advanced modulation schemes than dual polarisation, quadrature phase-shift keying (DP-QPSK), the de facto 100Gbps standard. Such schemes include quadrature amplitude modulation (QAM) at 16-QAM, 64-QAM and 256-QAM, but the more amplitude levels used, and hence the higher the data rate, the shorter the resulting reach.

Another technique vendors are using to achieve 400Gbps and 1Tbps data rates is to move from a single carrier to multiple carriers or 'super-channels'. Such an approach boosts the data rate by encoding data on more than one carrier and avoids the loss in reach associated with higher order QAM. But this comes at a cost: using multiple carriers consumes more of the fibre's precious spectrum.

As a result, vendors are looking at schemes to pack the carriers closely together. One is spectral shaping. Tomkos also details the growing interest in such schemes as optical orthogonal frequency division multiplexing (OFDM) and Nyquist WDM. For Nyquist WDM, the subcarriers are spectrally shaped so that they occupy a bandwidth close to or equal to the Nyquist limit to avoid inter-symbol interference and crosstalk during transmission.

Both approaches have their pros and cons, says Tomkos, but they promise optimum spectral efficiency of 2N bits-per-second-per-Hertz (2N bits/s/Hz), where N is the number of bits per symbol, that is, the base-2 logarithm of the number of constellation points.
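The 2N figure can be checked with a few lines of code. The sketch below reads the factor of two as the two polarisations and takes N as the bits carried per symbol, log2 of the constellation size; that reading is an interpretation rather than something the article spells out, and the function name is illustrative.

```python
import math


def ideal_spectral_efficiency(points: int, polarisations: int = 2) -> float:
    """Ideal bit/s/Hz at Nyquist spacing: polarisations x bits per symbol."""
    return polarisations * math.log2(points)


for name, points in [("QPSK", 4), ("16-QAM", 16), ("64-QAM", 64), ("256-QAM", 256)]:
    print(f"DP-{name}: {ideal_spectral_efficiency(points):.0f} bit/s/Hz")
```

These are upper bounds for ideally packed carriers; practical systems fall short of them once guard bands and error-correction overhead are included.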

The attraction of these techniques - multi-carrier schemes and advanced modulation formats - is the prospect of operators modifying capacity in a flexible and elastic way based on varying traffic demands, while maintaining cost-effective transport.

"With flexible networks, we are not just talking about the introduction of super-channels, and with it the flexible grid," says Tomkos. "We are also talking about the possibility to change either dynamically."

According to Tomkos, vendors such as Infinera with its 5x100Gbps super-channel photonic integrated circuit (PIC) are making an important first step towards flexible, elastic-bandwidth networks. But for true elastic networks, a flexible grid is needed as is the ability to change the number of carriers on-the-fly.

"Once we have those introduced, in order to get to 1 Terabit, then you can think about playing with such parameters as modulation levels and the number of carriers, to make the bandwidth really elastic, according to the connections' requirements," he says.

Meanwhile, there are still technology advances needed before an elastic-bandwidth network is achieved, such as software-defined transponders and a new advanced control plane.

Tomkos says that operators are now using control plane technology that co-ordinates between layer three and the optical layer to reduce network restoration time from minutes to seconds. Microsoft and Infinera say they have gone from tens of minutes down to a few seconds using the more advanced optical infrastructure. "They [Microsoft] are very happy with it," says Tomkos.

But to provision new capacity at the optical layer, operators are talking about requirements in the tens of minutes; something they do not expect will change in the coming years. "Cloud services could speed up this timeframe," says Tomkos.

"There is usually a big lag between what operators and vendors do and what academics do," says Tomkos. "But for the topic of flexible, elastic networking, the lag between academics and the vendors has become very small."

60-second interview with .... Sterling Perrin

Heavy Reading has published a report, Photonic Integration, Super Channels & the March to Terabit Networks. In this 60-second interview, Sterling Perrin, senior analyst at the market research company, talks about the report's findings and the technology's importance for telecom and datacom.

Heavy Reading's previous report on optical integration was published in 2008. What has changed?

The biggest change has been the rise of coherent detection, bringing electronics to prominence in the world of optics. This is a big shift - and it has taken some of the burden off photonic integration. Simply put, electronics has taken some of the job away from optics.

How important is optical integration, for optical component players and for system vendors?

Until now, photonic integration has not been a ‘must have’ item for systems suppliers. For the most part, there have been other ways to get at lower costs and footprint reductions.

I think we are starting to see photonic integration move into the must-have category for systems suppliers, in certain applications, which means that it becomes a must-have item for the components companies that supply them.

How should one view silicon photonics and what importance does Heavy Reading attach to Cisco Systems' acquisition of silicon photonics startup Lightwire?

When we published the last [2008] report, silicon photonics was definitely within the hype cycle. We’ve seen the hype fade quite a bit – it’s now understood that just because a component is made with silicon, it’s not automatically going to be cheaper. Also, few in the industry continue to talk about a Moore’s Law for optics today. That said, there are applications for silicon photonics, particularly in data centre and short-reach applications, and the technology has moved forward.

Cisco’s acquisition of Lightwire is a good testament to how far the technology has come. This is a strategic acquisition, aimed at long-term differentiation, and Cisco believes that silicon photonics will help them get there.

What are the main optical integration market opportunities?

In long haul, we already see applications for photonic integrated circuits (PICs). Certainly, Infinera’s PIC-based DTN and DTN-X systems stand out. But also, the OIF has specified photonic integration in its 100 Gigabit long haul, DWDM (dense wavelength division multiplexing) MSA (multi-source agreement) – it was needed to get the necessary size reduction.

Moving forward, there is opportunity for PICs in client-side modules as PICs are the best way to reduce module sizes and improve system density. Then, beyond 100G, to super-channel-based long-haul systems, PICs will play a big role here, as parallel photonic integration will be used to build these super-channels.

Were you surprised by any of the report's findings?

When I start researching a report, I am always hopeful for big black and white kinds of findings – this is the biggest thing for the industry or this is a dud. With photonic integration, we found such a wide array of opinions and viewpoints that, in the end, we had to place photonic integration somewhere in the middle.

It’s clear that system vendors are going to need PICs but it’s also clear that PICs alone won’t solve all the industry’s challenges. PICs will be an important part of an ensemble cast, but will not have the starring role. Some may dismiss PICs for this reason, but that would be a mistake – we still need them.

What optical integration trends/developments should be watched over the next two years?

The year started with two major system suppliers buying PIC companies: Cisco and Lightwire and Huawei and CIP Technologies. With Alcatel-Lucent having in-house abilities, and, of course, Infinera, this should put pressure on other optical suppliers to have a PIC strategy.

It will be interesting to watch what other M&A activity occurs, and how this activity affects the components players.

The editor of Gazettabyte worked with Heavy Reading in researching photonic integration for the report.

OFC/NFOEC 2012 industry reflections - Part 1

The recent OFC/NFOEC show, held in Los Angeles, had a strong vendor presence. Gazettabyte spoke with Infinera's Dave Welch, chief strategy officer and executive vice president, about his impressions of the show, capacity challenges facing the industry, and the importance of the company's photonic integrated circuit technology in light of recent competitor announcements.

OFC/NFOEC reflections: Part 1

Dave Welch values shows such as OFC/NFOEC: "I view the show's benefit as everyone getting together in one place and hearing the same chatter." This helps identify areas of consensus and subjects where there is less agreement.

And while there were no significant surprises at the show, it did highlight several shifts in how the network is evolving, he says.

"The first [shift] is the realisation that the layers are going to physically converge; the architectural layers may still exist but they are going to sit within a box as opposed to multiple boxes," says Welch.

The implementation of this started with the convergence of the Optical Transport Network (OTN) and dense wavelength division multiplexing (DWDM) layers, and the efficiencies that brings to the network.

That is a big deal, says Welch.

Optical designers have long been making transponders for optical transport. But now the transponder isn't an element in the integrated OTN-DWDM layer, rather it is the transceiver. "Even that subtlety means quite a bit," says Welch. "It means that my metrics are no longer 'gray optics in, long-haul optics out', it is 'switch-fabric to fibre'."

Infinera has its own OTN-DWDM platform convergence with the DTN-X platform, and the trend was reaffirmed at the show by the likes of Huawei and Ciena, says Welch: "Everyone is talking about that integration."

The second layer integration stage involves multi-protocol label switching (MPLS). Instead of transponder point-to-point technology, what is being considered is a common platform with an optical management layer, an OTN layer and, in future, an MPLS layer.

"The drive for that box is that you can't continue to scale the network in terms of bandwidth, power and cost by taking each layer as a silo and reducing it down," says Welch. "You have to gain benefits across silos for the scaling to keep up with bandwidth and economic demands."

Super-channels

Optical transport has always been about increasing the data rates carried over wavelengths. At 100 Gigabit-per-second (Gbps), however, companies now use one or two wavelengths - carriers - onto which data is encoded. As vendors look to the next generation of line-side optical transport, what follows 100Gbps, the use of multiple carriers - super-channels - will continue and this was another show trend.

Infinera's technology uses a 500Gbps super-channel based on dual polarisation, quadrature phase-shift keying (DP-QPSK). The company's transmit and receive photonic integrated circuit pair comprises 10 wavelengths (two 50Gbps carriers per 50GHz band).

Ciena and Alcatel-Lucent detailed their next-generation ASICs at OFC. These chips, to appear later this year, include higher-order modulation schemes such as 16-QAM (quadrature amplitude modulation) which can be carried over multiple wavelengths. Going from DP-QPSK to 16-QAM doubles the data rate of a carrier from 100Gbps to 200Gbps; using two carriers, each at 16-QAM, enables the two vendors to deliver 400Gbps.
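The arithmetic behind these figures can be sketched as follows. The sketch assumes the symbol rate per carrier stays fixed, so the data rate scales with the number of bits per symbol; the function names are illustrative only and the carrier counts are those quoted in the text.

```python
import math


def scaled_carrier_gbps(base_gbps: float, base_points: int, new_points: int) -> float:
    """Scale a carrier's rate by bits per symbol, assuming a fixed symbol rate."""
    return base_gbps * math.log2(new_points) / math.log2(base_points)


def super_channel_gbps(carriers: int, gbps_per_carrier: float) -> float:
    """Aggregate rate of a multi-carrier super-channel."""
    return carriers * gbps_per_carrier


# QPSK has 4 constellation points (2 bits/symbol); 16-QAM has 16 (4 bits/symbol)
qam16_carrier = scaled_carrier_gbps(100, base_points=4, new_points=16)
print(qam16_carrier)                         # one 16-QAM carrier
print(super_channel_gbps(2, qam16_carrier))  # dual-carrier 16-QAM
print(super_channel_gbps(10, 50))            # ten 50Gbps carriers
```

Both routes reach several hundred Gigabits per second; the difference is whether the rate comes from denser modulation on each carrier or from a larger number of carriers.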

"The concept of this all having to sit on one wavelength is going by the wayside," says Welch.

Capacity challenges

"Over the next five years there are some difficult trends we are going to have to deal with, where there aren't technical solutions," says Welch.

The industry is already talking about fibre capacities of 24 Terabit using coherent technology. Greater capacity is also starting to be traded with reach. "A lot of the higher QAM rate coherent doesn't go very far," says Welch. "16-QAM in true applications is probably a 500km technology."

This is new for the industry. In the past a 10Gbps service could be scaled to an 800 Gigabit system using 80 DWDM wavelengths. The same applies to 100Gbps which scales to 8 Terabit.
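The scaling rule in this comparison is a simple product of wavelength count and per-wavelength rate. The sketch below reproduces the two figures quoted and, purely as an extrapolation, applies the same rule to a 1 Terabit service; the function name is illustrative.

```python
def system_capacity_gbps(wavelengths: int, gbps_per_wavelength: int) -> int:
    """Total fibre capacity when every wavelength carries the same rate."""
    return wavelengths * gbps_per_wavelength


# 10Gbps and 100Gbps are from the text; 1000Gbps is an extrapolation
for rate in (10, 100, 1000):
    total = system_capacity_gbps(80, rate)
    print(f"80 x {rate}Gbps = {total / 1000:g} Terabit")
```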

"I'm used to having high-capacity services and I'm used to having 80 of them, maybe 50 of them," says Welch. "When I get to a Terabit service - not that far out - we haven't come up with a technology that allows the fibre plant to go to 50-100 Terabit."

This issue is already leading to fundamental research looking at techniques to boost the capacity of fibre.

PICs

However, in the shorter term, the smarts to enable high-speed transmission and higher capacity over the fibre are coming from the next-generation DSP-ASICs.

Is Infinera's monolithic integration expertise, with its 500 Gigabit PIC, becoming a less important element of system design?

"PICs have a greater differentiation now than they did then," says Welch.

Unlike Infinera's 500Gbps super-channel, the recently announced ASICs use two carriers and 16-QAM to deliver 400Gbps. But the issue is the reach that can be achieved with 16-QAM: "The difference is 16-QAM doesn't satisfy any long-haul applications," says Welch.

Infinera argues that a fairer comparison with its 500Gbps PIC is dual-carrier QPSK, each carrier at 100Gbps. Once the ASIC and optics deliver 400Gbps using 16-QAM, it is no longer a valid comparison because of reach, he says.

Three parameters must be considered here, says Welch: dollars/Gigabit, reach and fibre capacity. "I have to satisfy all three for my application," he says.

Long-haul operators are extremely sensitive to fibre capacity. "I need as much fibre capacity as I can get," he says. "But I also need reach."

In data centre applications, for example, reach is becoming an issue. "For the data centre there are fewer on and off ramps and I need to ship truly massive amounts of data from one end of the country to the other, or one end of Europe to the other."

The lower reach of 16-QAM is suited to the metro but Welch argues that is one segment that doesn't need the highest capacity but rather lower cost. Here 16-QAM does reduce cost by delivering more bandwidth from the same hardware.

Meanwhile, Infinera is working on its next-generation PIC that will deliver a Terabit super-channel using DP-QPSK, says Welch. The PIC and the accompanying next-generation ASIC will likely appear in the next two years.

Such a 1 Terabit PIC will reduce the cost of optics further but it remains to be seen how Infinera will increase the overall fibre capacity beyond its current 80x100Gbps. The integrated PIC will double the 100Gbps wavelengths that will make up the super-channel, increasing the long-haul line card density and benefiting the dollars/Gigabit and reach metrics.

In part two, ADVA Optical Networking, Ciena, Cisco Systems and market research firm Ovum reflect on OFC/NFOEC.

The post-100 Gigabit era

Feature: Beyond 100G - Part 4

The latest coherent ASICs from Ciena and Alcatel-Lucent, coupled with announcements from Cisco and Huawei, highlight where the industry is heading with regard to high-speed optical transport. But the announcements also raise questions.

Source: Gazettabyte

Observations and queries

- Optical transport has had a clear roadmap: 10 to 40 to 100 Gigabit-per-second (Gbps). 100Gbps optical transport will be the last of the fixed line-side speeds.

- After 100Gbps will come flexible speed-reach deployments. Line-side optics will be able to implement 50Gbps, 100Gbps, 200Gbps or even faster speeds with super-channels, tailored to the particular link.

- Variable speed-reach designs will blur the lines between metro and ultra long-haul. Does a traditional metro platform become a trans-Pacific submarine system simply by adding a new line card with the latest coherent ASIC boasting transmit and receive digital signal processors (DSPs), flexible modulation and soft-decision forward error correction?

Source: Gazettabyte

- The cleverness of optical transport has shifted towards electronics and digital signal processing and away from photonics. Optical system engineers are being taxed as never before as they try to extend the reach of 100, 200 and 400Gbps to match that of 10 and 40Gbps but what is key for platform differentiation is the DSP algorithms and ASIC design.

- Optical is the new radio. This is evident with the addition of a coherent transmit DSP that supports the various modulation schemes and allows spectral shaping, bunching carriers closer to make best use of the fibre's bandwidth.

- The radio analogy is fitting because fibre bandwidth is becoming a scarce resource. Usable fibre capacity has more than doubled with these latest ASIC announcements. Moving to 400Gbps doubles overall capacity to some 18 Terabits. Spectral shaping boosts that even further to over 23 Terabits. Last week 8.8 Terabits (88x100Gbps) was impressive.

- Maximising fibre capacity is why implementing single-carrier 100Gbps signals in 50GHz channels is now important.

- Super-channels, combining multiple carriers, have a lot of operational merits (see the super-channel section in the Cisco story). Infinera announced its 500Gbps super-channel over 250GHz last year. Now Ciena and Alcatel-Lucent highlight how a dual-carrier, dual-polarisation 16-QAM approach in 100GHz implements a 400Gbps signal.

- Despite all the talk of 16-QAM and 400Gbps wavelengths, 100Gbps is still in its infancy and will remain a key technology for years to come. Alcatel-Lucent, one of the early leaders in 100Gbps, has deployed 1,450 100 Gig line units since it launched its system in June 2010.

- Photonic integration for coherent will remain of key importance. Not so much in making yet more complex optical structures than at 100Gbps but shrinking what has already been done.

- Is there a next speed after 100Gbps? Is it 200Gbps until 400Gbps becomes established? Is it 500Gbps as Infinera argues? The answer is that it no longer matters. But then what exactly will operators use to assess the merits of the different vendors' platforms? Reach, power, platform density, spectral efficiency and line speeds are all key performance parameters but assessing each vendor's platform has clearly got harder.

- It is the system vendors not the merchant chip makers that are driving coherent ASIC innovation. The market for 100Gbps coherent merchant chips will remain an important opportunity given the early status of the market but how will coherent merchant chip vendors, several of them startups, compete with the system vendors' deeper pockets and sophisticated ASIC designs?

- Optical transponder vendors at least have more scope for differentiation but it is now also harder. Will one or two of the larger module makers even acquire a coherent ASSP maker?

- Infinera announced its 100G coherent system last year. Clearly it is already working on its next-generation ASIC. And while its DTN-X platform boasts a 500Gbps super-channel photonic chip, its overall system capacity is 8 Terabit (160x50Gbps, each in 25GHz channels). How will Infinera respond, not only with its next ASIC but also its next-generation PIC, to these latest announcements from Ciena and Alcatel-Lucent?
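The capacity figures quoted in the observations above can be reproduced with a short calculation. The sketch assumes a usable band of 4,400GHz, inferred from the 88x100Gbps figure with 50GHz channels. It also assumes that spectral shaping lets a 400Gbps signal fit in 75GHz, an illustrative value chosen because it lands close to the "over 23 Terabits" claim; neither the 75GHz width nor the function name comes from the article.

```python
BAND_GHZ = 88 * 50  # 4,400 GHz, inferred from 88 channels at 50GHz spacing


def fibre_capacity_tbps(rate_gbps: int, width_ghz: int, band_ghz: int = BAND_GHZ) -> float:
    """Capacity in Tbps when the band is filled with whole channels of one width."""
    channels = band_ghz // width_ghz
    return channels * rate_gbps / 1000


print(fibre_capacity_tbps(100, 50))    # single-carrier 100Gbps in 50GHz
print(fibre_capacity_tbps(400, 100))   # dual-carrier 16-QAM 400Gbps in 100GHz
print(fibre_capacity_tbps(400, 75))    # 400Gbps with shaping, 75GHz assumed
print(160 * 50 / 1000)                 # DTN-X: 160 carriers at 50Gbps each
```

Under these assumptions the results are 8.8, 17.6 and 23.2 Terabits for the three coherent cases and 8 Terabits for the DTN-X, in line with the rounded figures in the list.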