Real-time visibility makes optical networking smarter

Systems vendors are making optical networks smarter. Their latest equipment, combining intelligent silicon and software, can measure the status of the network and enable dynamic network management.

Ciena recently announced its Liquid Spectrum networking product while Infinera has launched its Instant Network. Both vendors exploit the capabilities of their latest-generation coherent DSPs to allow greater network automation and efficiency. The vendors even talk about their products being an important step towards autonomous or cognitive networks.

"Operators need to do things more efficiently," says Helen Xenos, director, portfolio solutions marketing at Ciena. "There is a lot of unpredictability in how traffic needs to be connected over the network." Moreover, demands on the network are set to increase with 5G and the billions of devices to be connected with the advent of the Internet of Things.

Existing optical networks are designed to meet worst-case conditions. Margins are built into links based on the fibre used and assumptions are made about the equipment's end-of-life performance and the traffic to be carried. Now, with Ciena's latest WaveLogic Ai coherent DSP-ASIC, not only is the performance of the network measured but the coherent DSP can be used to exploit the network's state rather than use the worst-case end-of-life conditions. "With Liquid Spectrum, you now don't need to operate the network in a static mode," says Xenos.

Software applications

Ciena has announced the first four software applications as part of Liquid Spectrum. The first, Performance Meter, uses measured signal-to-noise ratio data from the coherent DSP-ASICs to gauge the network's state to determine how efficiently the network is operating.

Bandwidth Optimiser acts on the network planner's request for bandwidth. The app recommends the optimum capacity that can be run on the link, based on exploiting baud rate and the reach, and also where to place the wavelengths within the C-band spectrum. Moreover, if service demands change, the network engineer can decide to reduce the built-in margins. "I may decide I don't need to reserve a 3dB margin right now and drop it down to 1dB," says Xenos. Bandwidth Optimiser can then be rerun to see how the new service demand can be met.

This approach contrasts with the existing way end points are connected, where all the wavelengths used are at the same capacity, a user decides their wavelengths and no changes are made once the wavelengths are deployed. "It is much simpler, it [the app] takes away complexity from the user," says Xenos.
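The margin trade-off Xenos describes can be pictured as a simple lookup. The sketch below is illustrative only: the SNR thresholds, the line rates and the function name are hypothetical, and it does not represent Ciena's algorithm.

```python
# Hypothetical required SNR (dB) for each line rate (Gbps); illustrative values only.
REQUIRED_SNR_DB = {100: 9.0, 200: 13.0, 300: 16.0, 400: 19.0}

def best_rate_gbps(measured_snr_db: float, margin_db: float) -> int:
    """Return the highest rate whose required SNR still fits once the margin is reserved."""
    usable_snr_db = measured_snr_db - margin_db
    feasible = [rate for rate, need in REQUIRED_SNR_DB.items() if need <= usable_snr_db]
    return max(feasible, default=0)  # 0 means no rate fits

measured = 17.5  # hypothetical SNR reported by the coherent DSP
print(best_rate_gbps(measured, margin_db=3.0))  # 200
print(best_rate_gbps(measured, margin_db=1.0))  # 300
```

With these made-up numbers, dropping the reserved margin from 3dB to 1dB is enough to move the link up one rate step.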

The Liquid Restoration app ensuring alternative capacity in response to the loss of a 300-gigabit route due to a fault. Source: Ciena

The two remaining apps launched are Liquid Restoration and Wave-Line Synchroniser. Liquid Restoration looks at all the available options if a particular path fails. "It will borrow against margin to get as much capacity as possible," says Xenos. Wave-Line Synchroniser is a tool that helps with settings so that Ciena's optics can work with another vendor's line system or optics from another vendor work with Ciena's line system.

Liquid Spectrum will be offered as a bundle as part of Ciena's latest BluePlanet Manage, Control and Plan tool that combines service and network management, resource control and planning.

Xenos says Liquid Spectrum represents the latest, significant remaining piece towards the industry's goal of developing an agile optical infrastructure. Sophisticated reconfigurable optical add-drop multiplexers (ROADMs) and flexible coherent DSPs have existed for a while but how such flexible technology has been employed has been limited because of the lack of knowledge of the real-time state of the network. Moreover, with these latest Liquid Spectrum software tools, much of the manual link engineering, and the complexity regarding what capacity can be supported and where in the spectrum it should be placed, is removed, says Xenos.

"We are at the beginning of this new world of operating networks," says Xenos. "Going forward, there will be an increasing level of sophistication that will be built into the software."

Ciena demonstrated Liquid Spectrum at the OFC show held in Los Angeles last month.

Infinera goes multi-terabit with its latest photonic IC

In his new book, The Great Acceleration, Robert Colvile discusses how things we do are speeding up.

In 1845 it took U.S. President James Polk six months to send a message to California. Just 15 years later Abraham Lincoln's inaugural address could travel the same distance in under eight days, using the Pony Express. But the use of ponies for transcontinental communications was short-lived once the electrical telegraph took hold. [1]

The relentless progress in information transfer, enabled by chip advances and Moore's law, is taken largely for granted. Less noticed is the progress being made in integrated photonic chips, most notably by Infinera.

In 2000, optical transport sent data over long-haul links at 10 gigabit-per-second (Gbps), with 80 such channels supported in a platform. Fifteen years later, Infinera demonstrated its latest-generation photonic integrated circuit (PIC) and FlexCoherent DSP-ASIC that can transmit data at 600Gbps over 12,000km, and up to 2.4 terabit-per-second (Tbps) - three times the data capacity of a state-of-the-art dense wavelength-division multiplexing (DWDM) platform back in 2000 - over 1,150km.

Infinite Capacity Engine

Infinera dubs its latest optoelectronic subsystem the Infinite Capacity Engine. The subsystem comprises a pair of indium-phosphide PICs - a transmitter and a receiver - and the FlexCoherent DSP-ASIC. The performance capabilities that the Infinite Capacity Engine enables were unveiled by Infinera in January with its Advanced Coherent Toolkit announcement. Now, to coincide with OFC 2016, Infinera has detailed the underlying chips that enable the toolkit. And company product announcements using the new hardware will be made later this year, says Pravin Mahajan, the company's director of product and corporate marketing.

The claimed advantages of the Infinite Capacity Engine include an 82 percent reduction in power consumption compared to a system using discrete optical components and a dozen 100-gigabit coherent DSP-ASICs, and a 53 percent reduction in total-cost-of-ownership compared to competing dense WDM platforms. The FlexCoherent chip also features line rate data encryption.

"The Infinite Capacity Engine is the industry's first multi-terabit super-channel," says Mahajan. "It also delivers the industry's first multi-terabit layer one encryption."

Multi-terabit PIC

Infinera's first transmitter and receiver PIC pair, launched in 2005, supported 10, 10-gigabit channels and implemented non-coherent optical transmission.

In 2011 Infinera introduced a 500-gigabit super-channel coherent PIC pair used with Infinera's DTN-X platforms and also its Cloud Xpress data centre interconnect platform launched in 2014. The 500-gigabit design implemented 10, 50-gigabit channels using polarisation-multiplexed, quadrature phase-shift keying (PM-QPSK) modulation. The accompanying FlexCoherent DSP-ASIC was implemented using a 40nm CMOS process node and supports a symbol rate of 16 gigabaud.

The PIC design has since been enhanced to also support additional modulation schemes such as polarisation-multiplexed, binary phase-shift keying (PM-BPSK) and 3-quadrature amplitude modulation (PM-3QAM) that extend the DTN-X's ultra long-haul performance.

In 2015 Infinera also launched the oPIC-100, a 100-gigabit PIC for metro applications that enables Infinera to exploit the concept of sliceable bandwidth by pairing oPIC-100s with a 500 gigabit PIC. Here the full 500 gigabit super-channel capacity can be pre-deployed even if not all of the capacity is used. Using Infinera's time-based instant bandwidth feature, part of that 500 gigabit capacity can be added between nodes in a few hours based on a request for greater bandwidth.

Now, with the Infinite Capacity Engine PIC, the effective number of channels has been expanded to 12, each capable of supporting a range of modulation techniques (see table below) and data rates. In fact, Infinera uses multiple Nyquist sub-carriers spread across each of the 12 channels. By encoding the data across multiple sub-carriers a lower-baud rate can be used, increasing the tolerance to non-linear channel impairments during optical transmission.

Mahajan says the latest PIC has a power consumption similar to its current 500-gigabit super-channel PIC but because the photonic design supports up to 2.4 terabit, the power consumption per gigabit is reduced by 70 percent.

FlexCoherent encryption

The latest FlexCoherent DSP-ASIC is Infinera's most complex yet. The 1.6 billion transistor 28nm CMOS IC can process two channels, and supports a 33 gigabaud symbol rate. As a result, six DSP-ASICs are used with the 12-channel PIC.
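The arithmetic behind these figures can be checked with a short sketch. The function names are illustrative. The raw-rate calculation uses the standard four bits per symbol for 16-QAM on each of two polarisations; reading the gap between raw and net rate as error-correction and framing overhead is an assumption, not something Infinera has detailed.

```python
PIC_CHANNELS = 12          # channels on the Infinite Capacity Engine PIC
CHANNELS_PER_DSP = 2       # each FlexCoherent DSP-ASIC processes two channels
SYMBOL_RATE_GBAUD = 33     # symbol rate quoted for the DSP-ASIC
SUPER_CHANNEL_GBPS = 2400  # 2.4 terabit when using PM-16QAM

def dsp_count(channels: int, per_dsp: int) -> int:
    """DSP-ASICs needed, rounding up if the channels do not divide evenly."""
    return -(-channels // per_dsp)

def raw_channel_gbps(gbaud: float, bits_per_symbol: int, polarisations: int = 2) -> float:
    """Raw line rate of one channel before any overhead is removed."""
    return gbaud * bits_per_symbol * polarisations

print(dsp_count(PIC_CHANNELS, CHANNELS_PER_DSP))  # 6
print(SUPER_CHANNEL_GBPS / PIC_CHANNELS)          # 200.0 net Gbps per channel
print(raw_channel_gbps(SYMBOL_RATE_GBAUD, 4))     # 264 raw Gbps per channel
```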

It is the DSP-ASIC that enables the various elements of the advanced coherent toolkit that includes improved soft-decision forward error correction. "The net coding gain is 11.9dB, up 0.9 dB, which improves the capacity-reach," says Mahajan. Infinera says the ultra long-haul performance has also been improved from 9,500km to over 12,000km.

Source: Infinera

The DSP also features layer one encryption implementing the 256-bit Advanced Encryption Standard (AES-256). Infinera says the request for encryption is being led by the Internet content providers but wholesale operators and co-location providers also want to secure transmissions between sites.

Infinera introduced layer two MACsec encryption with its Cloud Xpress platform. This encrypts the Ethernet payload but not the header. With layer one encryption, it is the OTN frames that are encoded. "When we get down to the OTN level, everything is encrypted," says Mahajan. An operator can choose to encrypt the entire super-channel or encrypt at the service level, down to the ODU0 (1.244 Gbps) level.

System benefits

Using the Infinite Capacity Engine, the transmission capacity over a fibre increases from 9.5 terabit to up to 26.4 terabit.

And with the newest PIC, Infinera can expand the sliceable transponder concept for metro-regional applications. The 2.4 terabits of capacity can be pre-deployed and new capacity turned up between nodes. "You can suddenly turn up 200 gigabit for a month or two, rent and then return it," says Mahajan. However, to support the full 2.4 terabits of capacity, the PIC at the other end of the link would also need to support 16-QAM.

Infinera does say there will be other Infinite Capacity Engine variants. "There will be specific engines for specific markets, and we would choose a subset of the modulations," says Mahajan.

One obvious platform that will benefit from the first Infinite Capacity Engine is the DTN-X. Another that will likely use an ICE variant is Infinera's Cloud Xpress. At present Infinera integrates its 500-gigabit PIC in a 2 rack-unit box for data centre interconnect applications. By using the new PIC and implementing PM-16QAM, the line-side capacity per rack unit of a second-generation Cloud Xpress would rise from 250 gigabit to 1.2 terabit. And with layer one encryption, the MACsec IC may no longer be needed.

Mahajan says the Infinite Capacity Engine has already been tested in the Telstra trial detailed in January. "We have already proven its viability but it is not deployed and carrying live traffic," he says.

Next-generation coherent adds sub-carriers to capabilities

Part 2: Infinera's coherent toolkit

Source: Infinera

Infinera has detailed coherent technology enhancements implemented using its latest-generation optical transmission technology. The system vendor is still to launch its newest photonic integrated circuit (PIC) and FlexCoherent DSP-ASIC but has detailed features the CMOS and indium phosphide ICs support.

The techniques highlight the increasing sophistication of coherent technology and an ever tighter coupling between electronics and photonics.

The company has demonstrated the technology, dubbed the Advanced Coherent Toolkit, on a Telstra 9,000km submarine link spanning the Pacific. In particular, the demonstration used matrix-enhanced polarisation-multiplexed, binary phase-shift keying (PM-BPSK) that enabled the 9,000km span without optical signal regeneration.

Using the ACT is expected to extend the capacity-reach product for links by roughly 60 percent. Indeed the latest coherent technology with transmitter-based digital signal processing delivers 25x the capacity-reach of 10-gigabit wavelengths using direct-detection, the company says.

Infinera’s latest PIC technology includes polarisation-multiplexed, 8-quadrature amplitude modulation (PM-8QAM) and PM-16QAM schemes. Its current 500-gigabit PIC supports PM-BPSK, PM-3QAM and PM-QPSK. The PIC is expected to support a 1.2-terabit super-channel and using PM-16QAM could deliver 2.4 terabit.

“This [the latest PIC] is beyond 500 gigabit,” confirms Pravin Mahajan, Infinera’s director of product and corporate marketing. “We are talking terabits now.”

Sterling Perrin, senior analyst at Heavy Reading, sees the Infinera announcement as less PIC related and more an indication of the expertise Infinera has been accumulating in areas such as digital signal processing.

Nyquist sub-carriers

Infinera is the first to announce the use of sub-carriers. Instead of modulating the data onto a single carrier, Infinera is using multiple Nyquist sub-carriers spread across a channel.

Using a flexible grid, the sub-carriers span a 37.5GHz-wide channel. In the example shown above, six are used although the number is variable depending on the link. The sub-carriers occupy 35GHz of the band while 2.5GHz is used as a guard band.

“Information you were carrying across one carrier can now be carried over multiple sub-carriers,” says Mahajan. “The benefit is that you can drive this as a lower-baud rate.”

Lowering the baud rate increases the tolerance to non-linear channel impairments experienced during optical transmission. “The electronic compensation is also much less than what you would be doing at a much higher baud rate,” says Abhijit Chitambar, Infinera’s principal product and technology marketing manager.
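A rough sketch of the channel arithmetic follows. It assumes the occupied band is split equally between the sub-carriers and that, with ideal Nyquist shaping, each sub-carrier's symbol rate is about equal to its spectral width. Infinera has not published either detail, so the function name and the equal split are illustrative.

```python
CHANNEL_GHZ = 37.5  # flexible-grid channel width
GUARD_GHZ = 2.5     # guard band within the channel

def subcarrier_width_ghz(n_subcarriers: int) -> float:
    """Spectral width per sub-carrier, assuming an equal split of the occupied band."""
    occupied_ghz = CHANNEL_GHZ - GUARD_GHZ  # 35GHz carries the sub-carriers
    return occupied_ghz / n_subcarriers

print(subcarrier_width_ghz(1))            # 35.0, a single carrier filling the band
print(round(subcarrier_width_ghz(6), 2))  # 5.83, each of six sub-carriers
```

Under those assumptions, six sub-carriers each run at roughly one sixth of the symbol rate a single carrier would need to fill the same band.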

While the industry is looking to increase overall baud rate to increase capacity carried and reduce cost, the introduction of sub-carriers benefits overall link performance. “You end up with a better Q value,” says Mahajan. The ‘Q’ refers to the Quality Factor, a measure of the transmission’s performance. The Q Factor combines the optical signal-to-noise ratio (OSNR) and the optical bandwidth of the photo-detector, providing a more practical performance measure, says Infinera.

Infinera has not detailed how it implements the sub-carriers. But it appears to be a combination of the transmitter PIC and the digital-to-analogue converter of the coherent DSP-ASIC.

It is not clear what the hardware implications of adopting sub-carriers are and whether the overall DSP processing is reduced, lowering the ASIC’s power consumption. But using sub-carriers promotes parallel processing and that promises chip architectural benefits.

“Without this [sub-carrier] approach you are talking about upping baud rate,” says Mahajan. “We are not going to stop increasing the baud rate, it is more a question of how much you can squeeze with what is available today.”

SD-FEC enhancements

The FlexCoherent DSP also supports enhanced soft-decision forward-error correction (SD-FEC) including the processing of two channels that need not be contiguous.

SD-FEC delivers enhanced performance compared to conventional hard-decision FEC. Hard-decision FEC decides whether a received bit is a 1 or a 0; SD-FEC also uses a confidence measure as to the likelihood of the bit being a 1 or 0. This additional information results in a net coding gain of 2dB compared to hard-decision FEC, benefiting reach and extending the life of submarine links.
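The difference between the two approaches can be shown with a toy example. The sketch below sends one bit three times (a repetition code, used here purely as a stand-in and not the code in Infinera's DSP) with bit 0 mapped to +1 and bit 1 to -1. The noise variance and the received samples are made-up values.

```python
def hard_decision(samples):
    """Slice each sample to a bit first, then take a majority vote."""
    bits = [0 if y >= 0 else 1 for y in samples]
    return 1 if sum(bits) > len(bits) / 2 else 0

def soft_decision(samples, noise_var=0.5):
    """Sum per-sample log-likelihood ratios (2*y/sigma^2 for BPSK in Gaussian noise)."""
    llr = sum(2.0 * y / noise_var for y in samples)
    return 0 if llr >= 0 else 1

# Two weak samples just above zero and one strong sample well below it.
received = [0.1, 0.2, -1.1]
print(hard_decision(received))  # 0: two of the three sliced bits say 0
print(soft_decision(received))  # 1: the one confident sample outweighs the two weak ones
```

The hard decoder discards how close the first two samples were to the decision threshold, whereas the soft decoder weighs each sample by its confidence.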

By pairing two channels, Infinera shares the FEC codes. By pairing a strong channel with a weak one and sharing the codes, some of the strength of the strong signal can be traded to bolster the weaker one, extending its reach or even allowing for a more advanced modulation scheme to be used.

The SD-FEC can also trade performance with latency. SD-FEC uses as much as a 35 percent overhead and this adds to latency. Trading the two supports those routes where low latency is a priority.

Matrix-enhanced PSK

Infinera has implemented a technique that enhances the performance of PM-BPSK used for the longest transmission distances such as sub-sea links. The matrix-enhancement uses a form of averaging that adds about a decibel of gain. “Any innovation that adds gain to a link, the margin that you give to operators is always welcome,” says Mahajan.

The toolkit also supports the fine-tuning of channel widths. This fine-tuning allows the channel spacing to be tailored for a given link as well as better accommodating the Nyquist sub-carriers.

Product launch

The company has not said when it will launch its terabit PIC and FlexCoherent DSP.

“Infinera is saying it is the first announcing Nyquist sub-carriers, which is true, but they don’t give a roadmap when the product is coming out,” says Heavy Reading’s Perrin. “I suspect that Nokia [Alcatel-Lucent], Ciena and Huawei are all innovating on the same lines.”

There could be a slew of announcements around the time of the OFC show in March, says Perrin: “So Infinera could be first to announce but not necessarily first to market.”

Ovum Q&A: Infinera as an end-to-end systems vendor

Infinera hosted an Insight analyst day on October 6th to highlight its plans now that it has acquired metro equipment player, Transmode. Gazettabyte interviewed Ron Kline, principal analyst, intelligent networks at market research firm, Ovum, who attended the event.

Q. Infinera’s CEO Tom Fallon referred to this period as a once-in-a-decade transition as metro moves from 10 Gig to 100 Gig. The growth is attributed mainly to the uptake of cloud services and he expects this transition to last for a while. Is this Ovum’s take?

RK: It is a transition but it is more about coherent technology rather than 10 Gig to 100 Gig. Coherent enables that higher-speed change which is required because of the level of bandwidth going on in the metro.

We are going to see metro change from 10 Gig to 100 Gig, much like we saw it change from 2.5 Gig to 10 Gig. Economically, it is going to be more feasible for operators to deploy 100 Gig and get more bang for their buck.

Ten years is always a good number for any transition. If you look at SONET/SDH, it began in the early 1990s and by 2000 was mainstream.

If you look at transitions, you had a ten-year time lag to get from 2.5 Gig to 10 Gig and you had another ten years for the development of 40 Gig, although that was impacted by the optical bubble and the [2008] financial crisis. But when coherent came around, you had a three-year cycle for 100 gigabit. Now you are in the same three-year cycle for 200 and 400 gigabit.

Is 100 Gig the unit of currency? I think all logic tells us it is. But I’m not sure that ends up being the story here.

Infinera’s CEO asserted that technology differentiation has never been more important in this industry. Is this true or only for certain platforms such as for optical networking and core routers?

If you look at Infinera, you would say their chief differentiator is the PIC (photonic integrated circuit) as it has enabled them to do very well. But other players really have not tried it. Huawei does a little but only in the metro and access.

It is true that you need differentiation, particularly for something as specialised as optical networking. The edge has always gone to the company that can innovate quickest. That is how Nortel did it; they were first with 10 gigabit for long haul and dominated the market.

When you look at coherent, the edge has gone to the quickest: Ciena, Alcatel-Lucent, Huawei and to a certain extent Infinera. Then you throw in the PIC and that gives Infinera an edge.

But then, on the flip side, there is this notion of disaggregation. Nobody likes to say it but it is the commoditisation of the technology; that is certainly the way the content providers are going.

If you get line systems that are truly open then optical networking becomes commodity-based transponders - the white box phenomenon - then where is the differentiation? It moves into the software realm and that becomes a much more important differentiator.

I do think differentiation is important; it always is. But I’m not sure how long your advantage is these days.

Infinera argues that the acquisition of Transmode will triple the total available market it can address.

Infinera definitely increases its total available market. They only had an addressable market related to long haul and submarine line terminating equipment. Now this [acquisition of Transmode] really opens the door. They can do metro, access, mobile backhaul; they can do a lot of different things.

We don’t necessarily agree with the numbers, though; it is more a doubling of the addressable market.

The rolling annual long-haul backbone global market (3Q 2014 to 2Q 2015) and the submarine line terminating equipment market where they play [pre-Transmode] was $5.2 billion. If you assume the total market of $14.2 billion is addressable then yes, it is nearly a tripling, but that includes the legacy SONET/SDH and Bandwidth Management segments which are rapidly declining. Nevertheless, Tom’s point is well-taken: adding a further $5.8 billion for the metro and access WDM markets to their total addressable market is significant.
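As a quick check of the figures quoted above, the two growth multiples can be worked out directly. The variable names are illustrative; the dollar values are those given in the answer.

```python
pre_transmode_bn = 5.2     # long haul plus submarine line terminating equipment
metro_access_wdm_bn = 5.8  # metro and access WDM added through Transmode
total_market_bn = 14.2     # includes the declining SONET/SDH and Bandwidth Management segments

expanded_bn = pre_transmode_bn + metro_access_wdm_bn
print(round(expanded_bn, 1))                         # 11.0
print(round(expanded_bn / pre_transmode_bn, 2))      # 2.12, roughly a doubling
print(round(total_market_bn / pre_transmode_bn, 2))  # 2.73, nearly a tripling
```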

Tom Fallon also said vendor consolidation will continue, and companies will need to have scale because of the very large amounts of R&D needed to drive differentiation. Is scale needed for a greater R&D spend to stay ahead of the competition?

When you respond to an operator’s request-for-proposal, that is where having end-to-end scale helps Infinera; being able to be a one-stop shop for the metro and long haul.

If I’m an operator, I don’t have to get products from several vendors and be the systems integrator.

Infinera announced a new platform for long haul, the XT-500, which is described as a telecom version of its data centre interconnect Cloud Xpress platform. Why do service providers want such a platform, and how does it differ from Cloud Xpress?

Infinera’s DTN-X long haul platform is very high capacity and there are applications where you don’t need such a large platform. That is one application.

The other is where you lease space [to house your equipment]. If I am going to lease space, if I have a box that is 2 RU (rack unit) high and can do 500 gigabit point-to-point and I don’t need any cross-connect, then this smaller shelf size makes a lot of sense. I’m just transporting bandwidth.

Cloud Xpress is a scaled-down product for the metro. The XT-500 is carrier-class, e.g. NEBS [Network Equipment-Building System] compliant and can span long-haul distances.

Infinera has also announced the XTC-2. What is the main purpose of this platform?

The platform is a smaller DTN-X variant to serve smaller regions. For example you can take a 500 gigabit PIC super-channel and slice it up. That enables you to do a hub-and-spoke virtual ring and drop 100 Gig wavelengths at appropriate places. The system uses the new metro PICs introduced in March. At the hub location you use an ePIC that slices up the 500G into individually routable 100G channels and at the spoke location, where the XTC-2 is, you use an oPIC-100.

Does the oPIC-100 offer any advantage compared to existing 100 Gig optics?

I don’t think it has a huge edge other than the differentiation you get from a PIC. In fact it might be a deterrent: you have to buy it from Infinera. It is also anti-trend, where the trend is pluggables.

But the hub and spoke architecture is innovative and it will be interesting to see what they do with the integration of PIC technology in Transmode’s gear.

Acquiring Transmode provides Infinera with an end-to-end networking portfolio. Does it still lack important elements? For example, Ciena acquired Cyan and gained its Blue Planet SDN software.

Transmode has a lot of different technologies required in the metro: mobile back-haul, synchronisation, they are also working on mobile front-hauling, and their hardware is low power.

Transmode has pretty much everything you need in these smaller platforms. But it is the software piece that they don’t have. Infinera has a strategy that says: we are not going to do this; we are going to be open and others can come in through an interface essentially and run our equipment.

That will certainly work.

But if you take a long view that says that in future technology will be commoditised, then you are in a bad spot because all the value moves to the software and you, as a company, are not investing and driving that software. So, this could be a huge problem going forward.

What are the main challenges Infinera faces?

One challenge, as mentioned, is hardware commoditisation and the issue of software.

Hardware commodity can play in Infinera’s favour. Infinera should have the lowest-cost solution given its integrated solution, so large hardware volumes are good for them. But if pluggable optics is a requirement, then they could be in trouble with this strategy.

The other is keeping up with the Joneses.

I think the 500 Gig in 100 Gig channels is now not that exciting. The 500 Gig PIC is not creating as much advantage as it did before. Where is the 1.2 terabit PIC? Where is the next version that drives Infinera forward?

And is it still going to be 100 Gig? They are leading me to believe it won’t just be. Are they going to have a PIC that is 12 channels that are tunable in modulation formats to go from 100 to 200 to 400 Gig?

They need to if they want to stay competitive with everyone else because the market is moving to 200 Gig and 400 Gig. Our figures show that over 2,000 multi-rate (QPSK and 16-QAM) ports have been shipped in the last year (3Q 2014 to 2Q 2015). And now you have 8-QAM coming. Infinera’s PIC is going to have to support this.

Infinera’s edge is the PIC but if you don’t keep progressing the PIC, it is no longer an edge.

These are the challenges facing Infinera and it is not that easy to do these things.

ECOC 2015: Reflections

Valery Tolstikhin, head of a design consultancy, Intengent

ECOC was a big show and included a number of satellite events, such as the 6th European Forum on Photonic Integration, the 3rd Optical Interconnect in Data Center Symposium and Market Focus, all of which I attended. So, lots of information to digest.

My focus was mainly on data centre optical interconnects and photonic integration.

Data centre interconnects

What became evident at ECOC is that 50 Gig modulation and the PAM-4 modulation format will be the basis of the next generation (after 100 Gig) data centre interconnect. This is in contrast to the current 100 Gig non-return-to-zero (NRZ) modulation using 25 Gig lanes.

This paves the way towards 200 Gig (4 x PAM-4 lanes at 25 Gig) and 400 Gig (4 x PAM-4 lanes at 50 Gig) as a continuation of quads of 4 x NRZ lanes at 25 Gig, the state-of-the-art data centre interconnect still to take off in terms of practical deployment.
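The lane arithmetic can be sketched as follows, assuming the "Gig" figures quoted for each lane are nominal symbol rates in gigabaud and ignoring line-coding and error-correction overhead. The function name is illustrative.

```python
import math

def aggregate_gbps(lanes: int, symbol_rate_gbaud: float, levels: int) -> float:
    """Nominal aggregate rate: lanes x symbol rate x bits carried per symbol."""
    bits_per_symbol = math.log2(levels)  # NRZ has 2 levels (1 bit), PAM-4 has 4 (2 bits)
    return lanes * symbol_rate_gbaud * bits_per_symbol

print(aggregate_gbps(4, 25, 2))  # 100.0: today's 4 x 25 Gig NRZ
print(aggregate_gbps(4, 25, 4))  # 200.0: 4 PAM-4 lanes at 25 gigabaud
print(aggregate_gbps(4, 50, 4))  # 400.0: 4 PAM-4 lanes at 50 gigabaud
```

PAM-4 doubles the bits per symbol relative to NRZ, which is why the same four-lane structure scales from 100 Gig to 200 Gig and then 400 Gig.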

The transition from 100 Gig to 400 Gig seems to be happening much faster than from 40Gig to 100 Gig. And 40 Gig serial finally seems to have gone; who needs 40 Gig when 50 Gig is available?

Another observation is that despite the common agreement that future new deployments should use single-mode fibre rather than multi-mode fibre, given the latter’s severe reach limitation that worsens with modulation speed, the multi-mode fibre camp does not give up easily.

That is because of the tons of multi-mode fibre interconnects already deployed, and the low cost of gallium arsenide 850 nm VCSELs these links use. However, the spectral efficiency of such interconnects is low, resulting in high multi-mode fibre count and the associated cost. This is a strong argument against such fibre.

Now, a short-wave WDM (SWDM) initiative is emerging as a partial solution to this problem, led by Finisar. Both OM3 and OM4 multi-mode fibre can be used, extending link spans to 100m at 25 Gig speeds.

The SWDM Alliance was announced just before ECOC 2015, with major players like Finisar and Corning on board, suggesting this is a serious effort not to be ignored by the single mode fibre camp.

Lastly, single mode fibre 4 x 25 Gig QSFP28 pluggables with a reach of up to 2 km, which a year ago were announced with some fanfare, seem to have become more of a commodity. Two major varieties – PSM and WDM – are claimed, and probably shipping, by a growing number of vendors.

Since these are pluggables with fixed specs, the only difference from the customer viewpoint is price. That suggests a price war is looming, as happens in all massive markets. Since current prices are still an order of magnitude or more above the target $1/Gig set by Facebook and the like, there is still a long way to go, but the trend is clear.

This reminds me of what I’ve experienced in the PON market: a massive market addressed by a standardised product that can be assembled, at a certain time, using off-the-shelf components. Such a market creates intense competition where low-cost labour eventually wins over technology innovation.

Photonic integration

Two trends regarding photonic integration for telecom and datacom became clear at ECOC 2015.

One positive development is an emerging fabless ecosystem for photonic integrated circuits (PICs), or at least an understanding of a need for such. These activities are driven by silicon photonics which is based on the fabless model since its major idea is to leverage existing silicon manufacturing infrastructure. For example, Luxtera, the most visible silicon component vendor, is a fabless company.

There are also signs of the fabless ecosystem building up in the area of III-V photonics, primarily indium-phosphide based. The European JePPIX programme is one example. Here you see companies providing foundry and design house services emerging, while the programme itself supports access to PIC prototyping through multi-project wafer (MPW) runs for a limited fee. That’s how the ASIC business began 30 to 40 years ago.

A link to OEM customers is still a weak point, but I see this being fixed in the near future. Of course, Intengent, my design house company, does just that: links OEM customers and the foundries for customised photonic chip and PIC development.

The second, less positive development, is that photonic integration continues to struggle to find applications and markets where it will become a winner. Apart from devices like the 100 Gig coherent receiver, where phase control requirements are difficult to meet using discretes, there are few examples where photonic integration provides an edge.

Even a 4 x 25 Gig assembly using discrete components for today’s 100 Gig client side and data centre interconnect has been demonstrated by several vendors. It then becomes a matter of economies of scale and cheap labour, leaving little space for photonic integration to play. This is what happened in the PON market despite photonic integrated products being developed by my previous company, OneChip Photonics.

On the flip side, the example of Infinera shows where the power of photonic integration lies: its ability to create more complicated PICs as needed without changing the technology.

The one-terabit receiver and transmitter chips developed by Infinera are examples of complex photonic circuits that simply cannot be built as an optical sub-assembly. As soon as PICs give a system advantage, which Infinera’s chips do, they become a system solution enabler, not merely ordinary components made a different way.

However, most of the photonic integration players - silicon photonics and indium phosphide alike - still try to do the same as what an optical sub-assembly can do, but more cheaply. This does not seem to be a winning strategy.

And a comment on silicon photonics. At ECOC 2015, I was pleased to see that, finally, there is a consensus that silicon photonics needs to aim at applications with a certain level of complexity if it is to provide any advantage to the customer.

Silicon photonics must look for more complex things, maybe 400 Gig or beyond, but the market is not there yet

For simpler circuits, there is little advantage using photonic integration, least of all silicon photonics-based ones. Where people disagree is what this threshold level of complexity is. Some suggest that 100 Gig optics for data centres is the starting point but I’m unsure. There are discrete optical sub-assemblies already on the market that will become only cheaper and cheaper. Silicon photonics must look for more complex things, maybe 400 Gig or beyond, but the market is not there yet.

One show highlight was the clear roadmap to 400 Gig and beyond, based on a very high modulation speed (50 Gig) and the PAM-4 modulation format, as discussed. These were supported at previous events, but never before have I seen the trend so clearly and universally accepted.

What surprised me, in a positive way, is that people have started to understand that silicon photonics does not automatically solve their problems, just because it has the word silicon in its name. Rather, it creates new challenges, cost efficiency being an important one. The conditions for cost-efficient silicon photonics are yet to be found, but it is refreshing that only a few now believe that silicon photonics can be superior by virtue of just being ‘silicon’.

I wouldn’t highlight one thing that I learned at the show. Basically, ECOC is an excellent opportunity to check on the course of technology development and people’s thoughts about it. And this is often better seen and felt on the exhibition floor than by attending the conference’s technical sessions.

For the coming year, I will continue to track data centre interconnect optics, in all its flavours, and photonic integration, especially through a prism of the emerging fabless ecosystem.

Vishnu Shukla, distinguished member technical staff in Verizon’s network planning group.

There were more contributions related to software-defined networking (SDN) and multi-layer transport at ECOC. There were no new technology breakthroughs so much as many incremental evolutions to high-speed optical networking technologies like modulation, digital signal processors and filtering.

I intend to track technologies and test results related to transport layer virtualisation and similar efforts for 400 Gig-and-beyond transport.

Vladimir Kozlov, CEO and founder of LightCounting

I had not attended ECOC since 2000. It is a good event, a scaled down version of OFC but just as productive. What surprised me is how small this industry is even 15 years after the bubble. Everything is bigger in the US, including cars, homes and tradeshows. Looking at our industry on the European scale helps to grasp how small it really is.

What is the next market opportunity for optics? The data centre market is pretty clear now, but what next?

Listening to the plenary talk of Sir David Payne, it struck me how infinite technology is. It is so easy to get overexcited with the possibilities, but very few of the technological advances lead to commercial success.

The market is very selective and it takes a lot of determination to get things done. How do start-ups handle this risk? Do people get delusional with their ideas and impact on the world? I suspect that some degree of delusion is necessary to deal with the risks.

As for issues to track in the coming year, what is the next market opportunity for optics? The data centre market is pretty clear now, but what next?

Verizon tips silicon photonics as a key systems enabler

Part 3: An operator view

Glenn Wellbrock is upbeat about silicon photonics’ prospects. Challenges remain, he says, but the industry is making progress. “Fundamentally, we believe silicon photonics is a real enabler,” he says. “It is the only way to get to the densities that we want.”

Glenn Wellbrock

Wellbrock adds that indium phosphide-based photonic integrated circuits (PICs) can also achieve such densities.

But there are many potential silicon photonics suppliers because of its relatively low barrier to entry, unlike indium phosphide. "To date, Infinera has been the only real [indium phosphide] PIC company and they build only for their own platform,” says Wellbrock.

That an operator must delve into emerging photonics technologies may at first glance seem surprising. But Verizon needs to understand the issues and performance of such technologies. “If we understand what the component-level capabilities are, we can help drive that with requirements,” says Wellbrock. “We also have a better appreciation for what the system guys can and cannot do.”

Verizon can’t be an expert in the subject, he says, but it can certainly be involved. “To the point where we understand the timelines, the cost points, the value-add and the risk factors,” he says. “There are risk factors that we also want to understand, independent of what the system suppliers might tell us.”

The cost saving is real, but it is also the space savings and power saving that are just as important

All the silicon photonics players must add a laser in one form or another to the silicon substrate since silicon itself cannot lase, but pretty much all the other optical functions can be done on the silicon substrate, says Wellbrock: “The cost saving is real, but it is also the space savings and power saving that are just as important.”

The big achievement of silicon photonics, which Wellbrock describes as a breakthrough, is getting rid of the gold boxes around the discrete optical components. “How do I get to the point where I don’t have fibre connecting all these discrete components, where the traces are built into the silicon, the modulator is built in, even the detector is built right in.” The resulting design is then easier to package. “Eventually I get to the point where the packaging is glass over the top of that.”

So what has silicon photonics demonstrated that gives Verizon confidence about its prospects?

Wellbrock points to several achievements, the first being Infinera’s PICs. Yes, he says, Infinera’s designs are indium phosphide-based and not silicon photonics, but the company makes really dense, low-power and highly reliable components.

He also cites Cisco’s silicon photonics-based CPAK 100 Gig optical modules, and Acacia, which is applying silicon photonics and its in-house DSP-ASICs to get a lower power consumption than other, high-end line-side transmitters.

Verizon believes the technology will also be used in CFP4 and QSFP28 optical modules, and at the next level of integration that avoids pluggable modules on the equipment's faceplate altogether.

But challenges remain. Scale is one issue that concerns Verizon. What makes silicon chips cheap is the fact that they are made in high volumes. “It [silicon photonics] couldn’t survive on just the 100 gigabit modules that the telecom world are buying,” says Wellbrock.

If these issues are not resolved, then indium phosphide continues to win for a long time because that is where the volumes are today

When Verizon asks the silicon photonics players about how such scale will be achieved, the response it gets is data centre interconnect. “Inside the data centre, the optics is growing so rapidly," says Wellbrock. "We can leverage that in telecom."

The other issue is device packaging, for silicon photonics and for indium phosphide. Making a silicon-photonics die cheaply is fine, but unless the packaging costs can be reduced, the overall cost saving is lost. “How to make it reliable and mainstream so that everyone is using the same packaging to get cost down,” says Wellbrock.

All these issues - volumes, packaging, increasing the number of applications a single part can be applied to - need to be resolved and almost simultaneously. Otherwise, the technology will not realise its full potential and the start-ups will dwindle before the problems are fixed.

“If these issues are not resolved, then indium phosphide continues to win for a long time because that is where the volumes are today,” he says.

Verizon, however, is optimistic. “We are making enough progress here to where it should all pan out,” says Wellbrock.

Optical networking: The next 10 years

Predicting the future is a foolhardy endeavour; at best one can make educated guesses.

Ioannis Tomkos is better placed than most to comment on the future course of optical networking. Tomkos, a Fellow of the OSA and the IET at the Athens Information Technology Centre (AIT), is involved in several European research projects that are tackling head-on the challenges set to keep optical engineers busy for the next decade.

“We are reaching the total capacity limit of deployed single-mode, single-core fibre,” says Tomkos. “We can’t just scale capacity because there are limits now to the capacity of point-to-point connections.”

Source: Infinera

The industry consensus is to develop flexible optical networking techniques that make best use of the existing deployed fibre. These techniques include using spectral super-channels, moving to a flexible grid, and introducing ‘sliceable’ transponders whose total capacity can be split and sent to different locations based on the traffic requirements.

Once these flexible networking techniques have exhausted the last Hertz of a fibre’s C-band, additional spectral bands of the fibre will likely be exploited such as the L-band and S-band.

After that, spatial-division multiplexing (SDM) of transmission systems will be used, first using already deployed single-mode fibre and then new types of optical transmission systems that use SDM within the same optical fibre. For this, operators will need to put novel fibre in the ground that has multiple modes and multiple cores.

SDM systems will bring about change not only with the fibre and terminal end points, but also the amplification and optical switching along the transmission path. SDM optical switching will be more complex but it also promises huge capacities and overall dollar-per-bit cost savings.

Tomkos is heading three European research projects - FOX-C, ASTRON & INSPACE.

FOX-C involves all-optically adding and dropping sub-channels from different types of spectral super-channels. ASTRON is undertaking the development of a one terabit transceiver photonic integrated circuit (PIC). The third, INSPACE, will undertake the development of new optical switch architectures for SDM-based networks.

FOX-C

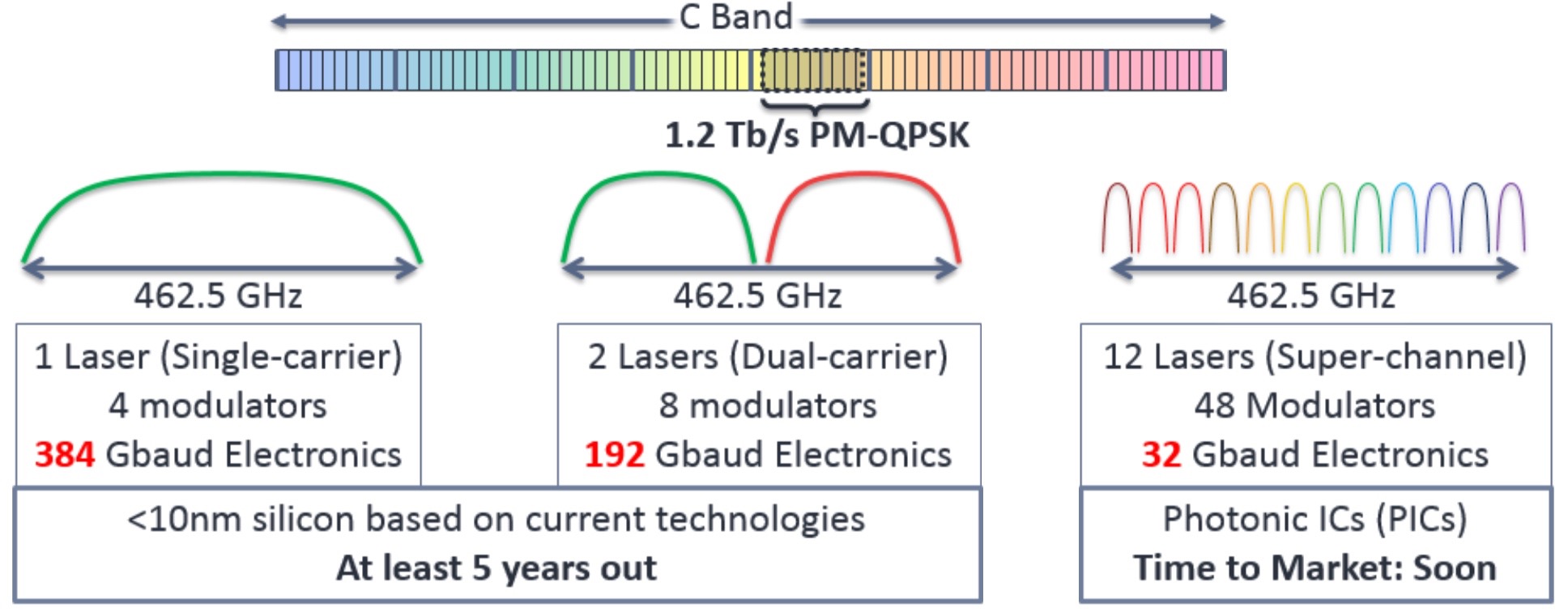

Spectral super-channels are used to create high bit-rate signals - 400 Gigabit and greater - by combining a number of sub-channels. Combining sub-channels is necessary since existing electronics can’t create such high bit rates using a single carrier.

Infinera points out that a 1.2 Terabit-per-second (Tbps) signal implemented using a single carrier would require 462.5 GHz of spectrum while the accompanying electronics to achieve the 384 Gigabaud (Gbaud) symbol rate would require a sub-10nm CMOS process, a technology at least five years away.
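The 384 Gbaud figure can be sanity-checked with simple arithmetic. The sketch below is one way to arrive at it, under two assumptions that are not stated in the text: that the single carrier uses polarisation-multiplexed QPSK (4 bits per symbol) and that overhead inflates the symbol rate by the 32-to-25 ratio common in 100 Gigabit coherent designs. The function name is hypothetical.

```python
def symbol_rate_gbaud(bit_rate_gbps, bits_per_symbol, overhead_ratio):
    """Symbol rate needed to carry a payload bit rate plus overhead."""
    payload_gbaud = bit_rate_gbps / bits_per_symbol
    return payload_gbaud * overhead_ratio


# Assumptions (illustrative, not from the article):
#   - PM-QPSK: 2 bits per symbol on each of 2 polarisations = 4 bits/symbol
#   - overhead ratio of 32/25 for forward error correction and framing
rate = symbol_rate_gbaud(bit_rate_gbps=1200, bits_per_symbol=4, overhead_ratio=32 / 25)
print(f"Single-carrier symbol rate: {rate:.0f} Gbaud")
```

Under these assumptions the result matches the symbol rate quoted by Infinera, which is why sub-channels running at a few tens of Gbaud each are combined instead.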

There are two types of spectral super-channel:

- Those that use non-overlapping sub-channels implemented using what is called Nyquist multiplexing.

- And those with overlapping sub-channels using orthogonal frequency division multiplexing (OFDM).

Heading off the capacity crunch

Improving optical transmission capacity to keep pace with the growth in IP traffic is getting trickier.

Engineers are being taxed in the design decisions they must make to support a growing list of speeds and data modulation schemes. There is also a fissure emerging in the equipment and components needed to address the diverging needs of long-haul and metro networks. As a result, far greater flexibility is needed, with designers looking to elastic or flexible optical networking where data rates and reach can be adapted as required.

Figure 1: The green line is the non-linear Shannon limit, above which transmission is not possible. The chart shows how more bits can be sent in a 50 GHz channel as the optical signal to noise ratio (OSNR) is increased. The blue dots closest to the green line represent the performance of the WaveLogic 3, Ciena's latest DSP-ASIC family. Source: Ciena.

But perhaps the biggest challenge is only just looming. Because optical networking engineers have been so successful in squeezing information down a fibre, their scope to send additional data in future is diminishing. Simply put, it is becoming harder to put more information on the fibre as the Shannon limit, as defined by information theory, is approached.

"Our [lab] experiments are within a factor of two of the non-linear Shannon limit, while our products are within a factor of three to six of the Shannon limit," says Peter Winzer, head of the optical transmission systems and networks research department at Bell Laboratories, Alcatel-Lucent. The non-linear Shannon limit dictates how much information can be sent across a wavelength-division multiplexing (WDM) channel as a function of the optical signal-to-noise ratio.

A factor of two may sound a lot, says Winzer, but it is not. "To exhaust that last factor of two, a lot of imperfections need to be compensated and the ASIC needs to become a lot more complex," he says. The ASIC is the digital signal processor (DSP), used for pulse shaping at the transmitter and coherent detection at the receiver.
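For orientation, the linear Shannon bound gives a feel for how spectral efficiency scales with signal-to-noise ratio. The sketch below is a simplification: it uses the textbook additive-noise formula and ignores the fibre non-linearities that the non-linear Shannon limit accounts for, so it overestimates what is achievable at high launch powers. The function name and the SNR values are illustrative.

```python
import math


def shannon_se(snr_db, polarisations=2):
    """Linear Shannon spectral efficiency in bit/s/Hz.

    Simplified model: additive Gaussian noise only, no fibre non-linearity.
    """
    snr_linear = 10 ** (snr_db / 10)
    return polarisations * math.log2(1 + snr_linear)


# Illustrative SNR values in dB, not taken from the article.
for snr_db in (5, 10, 15, 20):
    print(f"SNR {snr_db:>2} dB -> {shannon_se(snr_db):.2f} bit/s/Hz")
```

Because capacity grows only logarithmically with SNR, each further gain in spectral efficiency demands a disproportionately cleaner signal.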

Our [lab] experiments are within a factor of two of the non-linear Shannon limit, while our products are within a factor of three to six of the Shannon limit - Peter Winzer

At the recent OFC 2015 conference and exhibition, there were plenty of announcements pointing to industry progress. Several companies announced 100 Gigabit coherent optics in the pluggable, compact CFP2 form factor, while Acacia detailed a flexible-rate 5x7 inch MSA capable of 200, 300 and 400 Gigabit rates. And research results were reported on the topics of elastic optical networking and spatial division multiplexing, work designed to ensure that networking capacity continues to scale.

Trade-offs

There are several performance issues that engineers must consider when designing optical transmission systems. Clearly, for submarine systems, maximising reach and the traffic carried by a fibre are key. For metro, more data can be carried on a single carrier to improve overall capacity but at the expense of reach.

Such varied requirements are met using several design levers:

- Baud or symbol rate

- The modulation scheme which determines the number of bits carried by each symbol

- Multiple carriers, if needed, to carry the overall service as a super-channel

The baud rate used is dictated by the performance limits of the electronics. Today that is 32 Gbaud: 25 Gbaud for the data payload and up to 7 Gbaud for forward error correction and other overhead bits.

Doubling the symbol rate from 32 Gbaud used for 100 Gigabit coherent to 64 Gbaud is a significant challenge for the component makers. The speed hike requires a performance overhaul of the electronics and the optics: the analogue-to-digital and digital-to-analogue converters and the drivers through to the modulators and photo-detectors.

"Increasing the baud rate gives more interface speed for the transponder," says Winzer. But the overall fibre capacity stays the same, as the signal spectrum doubles with a doubling in symbol rate.
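Winzer's point can be illustrated with a rough calculation. The sketch below assumes an idealised case in which each channel occupies a width equal to its symbol rate, a usable band of 4,000 GHz, and a 25/32 payload fraction. These numbers and the function name are assumptions chosen for illustration, not figures from the article.

```python
def fibre_capacity_tbps(band_ghz, baud_gbaud, bits_per_symbol, payload_fraction):
    """Total payload capacity of a band filled with identical channels."""
    channel_width_ghz = baud_gbaud            # assumption: ideal Nyquist spacing
    channels = band_ghz / channel_width_ghz   # fractional channels allowed for simplicity
    per_channel_gbps = baud_gbaud * bits_per_symbol * payload_fraction
    return channels * per_channel_gbps / 1000


# Illustrative values: 4,000 GHz band, PM-QPSK (4 bits/symbol), 25/32 payload.
at_32 = fibre_capacity_tbps(4000, 32, 4, 25 / 32)
at_64 = fibre_capacity_tbps(4000, 64, 4, 25 / 32)
print(f"32 Gbaud: {at_32} Tbps, 64 Gbaud: {at_64} Tbps")
```

Doubling the symbol rate doubles the rate per channel but halves the number of channels that fit, so the total is unchanged in this model.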

However, increasing the symbol rate brings cost and size benefits. "You get more bits through, and so you are sharing the cost of the electronics across more bits," says Kim Roberts, senior manager, optical signal processing at Ciena. It also implies a denser platform by doubling the speed per line card slot.

As you try to encode more bits in a constellation, so your noise tolerance goes down - Kim Roberts

Modulation schemes

The modulation used determines the number of bits encoded on each symbol. Optical networking equipment already uses binary phase-shift keying (BPSK, or 2-quadrature amplitude modulation, 2-QAM) for the most demanding, longest-reach submarine spans; the workhorse quadrature phase-shift keying (QPSK, or 4-QAM) for 100 Gigabit-per-second (Gbps) transmission; and the 200 Gbps 16-QAM for distances up to 1,000 km.

Moving to a higher QAM scheme increases WDM capacity but at the expense of reach. That is because as more bits are encoded on a symbol, the separation between them is smaller. "As you try to encode more bits in a constellation, so your noise tolerance goes down," says Roberts.

One recent development among system vendors has been to add more modulation schemes to enrich the transmission options available.

From QPSK to 16-QAM, you get a factor of two increase in capacity but your reach decreases of the order of 80 percent - Steve Grubb

Besides BPSK, QPSK and 16-QAM, vendors are adding 8-QAM, an intermediate scheme between QPSK and 16-QAM. These include Acacia with its AC-400 MSA, Coriant, and Infinera. Infinera has tested 8-QAM as well as 3-QAM, a scheme between BPSK and QPSK, as part of submarine trials with Telstra.

"From QPSK to 16-QAM, you get a factor of two increase in capacity but your reach decreases of the order of 80 percent," says Steve Grubb, an Infinera Fellow. Using 8-QAM boosts capacity by half compared to QPSK, while delivering more signal margin than 16-QAM. Having the option to use the intermediate formats of 3-QAM and 8-QAM enriches the capacity tradeoff options available between two fixed end-points, says Grubb.
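The capacity ratios quoted above follow from the number of constellation points: a format with M points carries log2(M) bits per symbol. A minimal sketch, with hypothetical names, comparing each format with QPSK at the same symbol rate:

```python
import math

# Constellation points per format (per polarisation).
FORMATS = {"BPSK": 2, "QPSK": 4, "8-QAM": 8, "16-QAM": 16}


def bits_per_symbol(points):
    """Bits carried by one symbol of an M-point constellation."""
    return math.log2(points)


def capacity_vs_qpsk(name):
    """Capacity relative to QPSK at the same symbol rate."""
    return bits_per_symbol(FORMATS[name]) / bits_per_symbol(FORMATS["QPSK"])


for name in FORMATS:
    print(f"{name}: {bits_per_symbol(FORMATS[name]):.0f} bits/symbol, "
          f"{capacity_vs_qpsk(name):.1f}x QPSK")
```

The sketch covers capacity only. The accompanying loss of reach depends on noise tolerance and is not modelled here.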

Ciena has added two chips to its WaveLogic 3 DSP-ASIC family of devices: the WaveLogic 3 Extreme and the WaveLogic 3 Nano for metro.

WaveLogic 3 Extreme uses a proprietary modulation format that Ciena calls 8D-2QAM, a tweak on BPSK that uses longer-duration signalling to enhance span distances by up to 20 percent. 8D-2QAM is aimed at legacy dispersion-compensated fibre that carries 10 Gbps wavelengths and offers up to 40 percent additional upgrade capacity compared to BPSK.

Ciena has also added 4-amplitude-shift-keying (4-ASK) modulation alongside QPSK to its WaveLogic 3 Nano chip. The 4-ASK scheme is also designed for use alongside 10 Gbps wavelengths that introduce phase noise, to which 4-ASK has greater tolerance than QPSK. Ciena's 4-ASK design also generates less heat and is less costly than BPSK.

According to Roberts, a designer’s goal is to use the fastest symbol rate possible, and then add the richest constellation possible "to carry as many bits as you can, given the noise and distance you can go".

After that, the remaining issue is whether a service can be fitted on a single optical carrier or whether several carriers are needed, forming a super-channel. Packing a super-channel's carriers tightly benefits overall fibre spectrum usage and reduces the spectrum wasted on the guard bands needed when a signal is optically switched.

Can symbol rate be doubled to 64 Gbaud? "It looks impossibly hard but people are going to solve that," says Roberts. It is also possible to use a hybrid approach in which both the symbol rate and the modulation scheme are changed. The table shows how different baud rate and modulation scheme combinations can be used to achieve a 400 Gigabit single-carrier signal.

Note how using polarisation for coherent transmission doubles the overall data rate. Source: Gazettabyte
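The trade-off between symbol rate and modulation for a 400 Gigabit single carrier can be sketched numerically. The example below does not reproduce the table. It assumes the 32-to-25 overhead ratio mentioned earlier for 100 Gigabit coherent, and the function name and the choice of formats are illustrative.

```python
def required_baud(bit_rate_gbps, bits_per_symbol_per_pol,
                  polarisations=2, overhead_ratio=32 / 25):
    """Symbol rate (Gbaud) needed for a given payload rate and modulation."""
    bits_per_symbol = bits_per_symbol_per_pol * polarisations
    return bit_rate_gbps * overhead_ratio / bits_per_symbol


# Bits per symbol on one polarisation for a few formats (illustrative choice).
formats = {"QPSK": 2, "16-QAM": 4, "64-QAM": 6, "256-QAM": 8}

for name, bits in formats.items():
    dual = required_baud(400, bits)
    single = required_baud(400, bits, polarisations=1)
    print(f"{name:>7}: {dual:6.1f} Gbaud dual-polarisation, "
          f"{single:6.1f} Gbaud single-polarisation")
```

Under these assumptions, using both polarisations halves the symbol rate needed for a given data rate, and each step up in modulation order lowers it further at the cost of noise tolerance.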

But industry views differ as to how much scope there is to improve overall capacity of a fibre and the optical performance.

Roberts stresses that his job is to develop commercial systems rather than conduct lab 'hero' experiments. Such systems need to work in networks for 15 years and must be cost competitive. "It is not over yet," says Roberts.

He says we are still some way off from when all that remains are minor design tweaks only. "I don't have fun changing the colour of the paint or reducing the cost of the washers by 10 cents,” he says. “And I am having a lot of fun with the next-generation design [being developed by Ciena].”

"We are nearing the point of diminishing returns in terms of spectrum efficiency, and the same is true with DSP-ASIC development," says Winzer. Work will continue to develop higher speeds per wavelength, to increase capacity per fibre, and to achieve higher densities and lower costs. In parallel, work continues in software and networking architectures. Examples include flexible multi-rate transponders used for elastic optical networking, and software-defined networking that will be able to adapt the optical layer.

After that, designers are looking at using more amplification bands, such as the L-band and S-band alongside the current C-band to increase fibre capacity. But it will be a challenge to match the optical performance of the C-band across all bands used.

"I would believe in a doubling or maybe a tripling of bandwidth but absolutely not more than that," says Winzer. "This is a stop-gap solution that allows me to get to the next level without running into desperation."

The designers' 'next level' is spatial division multiplexing. Here, signals are launched down multiple channels, such as multiple fibres, multi-mode fibre and multi-core fibre. "That is what people will have to do on a five-year to 10-year horizon," concludes Winzer.

For Part 2, click here

See also:

- Scaling Optical Fiber Networks: Challenges and Solutions by Peter Winzer

- High Capacity Transport - 100G and Beyond, Journal of Lightwave Technology, Vol 33, No. 3, February 2015.

A version of this article first appeared in an OFC 2015 show preview

Cyan's stackable optical rack for data centre interconnect

"The drivers for these [data centre] guys every day of the week is lowest cost-per-gigabit"

Joe Cumello

The amount of traffic moved between data centres can be huge. According to ACG Research, certain cloud-based applications shared between data centres can require between 40 and 500 terabits of capacity. This could be to link adjacent data centre buildings to appear as one large logical one, or connect data centres across a metro, 20 km to 200 km apart. For data centres separated across greater distances, traditional long-haul links are typically sufficient.

Cyan says it developed the N-series platform following conversations conducted with internet content providers over the last two years. "We realised that the white box movement would make its way into the data centre interconnect space," says Cumello.

White box servers and white box switches, manufactured by original design manufacturers (ODMs), are already being used in the data centre due to their lower cost. Cyan is using a similar approach for its N-Series, using commercial-off-the-shelf hardware and open software.

"The drivers for these [data centre] guys every day of the week is lowest cost-per-gigabit," says Cumello.

N-Series platform

Cyan's N-Series N11 is a 1-rack-unit (1RU) box that has a total capacity of 800 Gigabit-per-second (Gbps). The 1RU shelf comprises two units, each using two client-side 100 Gbps QSFP28s and a line-side interface that supports 100 Gbps coherent transmission using PM-QPSK, or 200 Gbps coherent using PM-16QAM. The transmission capacity can be traded with reach: using 100 Gbps, optical transmission up to 2,000 km is possible, while capacity can be doubled using 200 Gbps lightpaths for links up to 600 km. Cyan is using Clariphy's CL20010 coherent transceiver/ framer chip. Stacking 42 of the 1RUs within a chassis results in an overall capacity - client side and line side - of 33.6 terabits.
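The capacity figures quoted for the N11 can be checked with a short calculation. The sketch below uses only the numbers given in the paragraph above and counts client-side and line-side capacity together, as the article does. The constant and function names are hypothetical.

```python
UNITS_PER_SHELF = 2          # two half-width units per 1RU shelf
CLIENT_PORTS_PER_UNIT = 2    # QSFP28 client ports per unit
CLIENT_PORT_GBPS = 100
LINE_GBPS_MAX = 200          # PM-16QAM line rate per unit
SHELVES_PER_CHASSIS = 42


def shelf_capacity_gbps():
    """Client-side plus line-side capacity of one 1RU shelf."""
    client_gbps = CLIENT_PORTS_PER_UNIT * CLIENT_PORT_GBPS
    return UNITS_PER_SHELF * (client_gbps + LINE_GBPS_MAX)


def chassis_capacity_tbps():
    """Combined capacity of a fully stacked chassis, in terabits per second."""
    return SHELVES_PER_CHASSIS * shelf_capacity_gbps() / 1000


print(f"Per shelf: {shelf_capacity_gbps()} Gbps")
print(f"Per chassis: {chassis_capacity_tbps()} Tbps")
```

Counted this way, the line-side capacity alone is half of each total.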

There is a whole ecosystem of companies competing to drive better capacity and scale

The N-Series N11 uses a custom line-side design but Cyan says that by adopting commercial-off-the-shelf design, it will benefit from the pluggable line-side optical module roadmap. The roadmap includes 200 Gbps and 400 Gbps coherent MSA modules, pluggable CFP2 and CFP4 analogue coherent optics, and the CFP2 digital coherent optics that also integrates the DSP-ASIC.

"There is a whole ecosystem of companies competing to drive better capacity and scale," says Cumello. "By using commercial-off-the-shelf technology, we are going to get to better scale, better density, better energy efficiency and better capacity."

To support these various options, Cyan has designed the chassis to support 1RU shelves with several front plate options including a single full-width unit, two half-width ones as used for the N11, or four quarter-width units.

Open software

For software, the N-series platform uses a Linux networking operating system. Using Linux enables third-party applications to run on the N-series, and enables IT staff to use open source tools they already know. "The data centre guys use Linux and know how to run servers and switches so we have provided that kind of software through Cyan's Linux," says Cumello. Cyan has also developed its own networking applications for configuration management, protocol handling and statistics management that run on the Linux operating system.

The open software architecture of the N-Series. Also shown are the two units that make up a rack. Source: Cyan.

"We have essentially disaggregated the software from the hardware," says Cumello. Should a data centre operator choose a future, cheaper white box interconnect product, he says, Cyan's applications and Linux networking operating system will still run on that platform.

The N-series will be available for customer trials in the second quarter and will be available commercially from the third quarter of 2015.

Photonics and optics: interchangeable yet different

Many terms in telecom are used interchangeably. Terms gain credibility with use but over time things evolve. For example, people understand what is meant by the term carrier [of traffic] or operator [of a network] and even the term incumbent [operator] even though markets are now competitive and 'telephony' is no longer state-run.

"For me, optics is the equivalent of electrical, and photonics is the equivalent of electronics - LSI, VLSI chips and the like" - Mehdi Asghari

Operators - ex-incumbents or otherwise - also do more than oversee the network and now provide complex services. But of course they differ from service providers such as the over-the-top players [third-party providers delivering services over an operator's infrastructure, rather than any theatrical behaviour] or internet content providers.

Google is an internet content provider but, with the gigabit broadband service it is rolling out in the US, it is also an operator/ carrier/ communications service provider. And Google may soon become a mobile virtual network operator.

So having multiple terms can be helpful, adding variety especially when writing, but the trouble is it is also confusing.

Recent discussions, including an interview with silicon photonics pioneer Richard Soref, raised the question of whether the terms photonics and optics are the same. I decided to ask several industry experts, starting with The Optical Society (OSA).

Tom Hausken, the OSA's senior engineering & applications advisor, says that after many years of thought he concludes the following:

- People have different definitions for them [optics and photonics] that range all over the map.

- I find it confusing and unhelpful to distinguish them.

- The National Academies's report is on record saying there is no difference as far as that study is concerned.

- That works for me.

Michael Duncan, the OSA's senior science advisor, puts the difference down to one of cultural usage. "Photonics leans more towards the fibre optics, integrated optics, waveguide optics, and the systems they are used in - mostly for communication - while optics is everything else, especially the propagation and modification of coherent and incoherent light," says Duncan. "But I could easily go with Tom's third bullet point."

"Photonics does include the quantum nature, and sort of by convention, the term optics is seen to mean classical" - Richard Soref

Duncan also cites Wikipedia, with its discussion of classical optics that embraces the wave nature of light, and modern optics that also includes light's particle nature. And this distinction is at the core of the difference, without leading to an industry consensus.

"Photonics does include the quantum nature, and sort of by convention, the term optics is seen to mean classical," says Richard Soref. He points out that the website Arxiv.org categorises optics as a subset of physics, while the OSA Newsletter is called Optics & Photonics News, covering all bases.

"Photonics is the larger category, and I might have been a bit off base when throwing around the optics term," says Soref. If only everyone was as off base as Professor Soref.

"We need to remember that there is no canonical definition of these terms, and there is no recognised authority that would write or maintain such a definition," says Geoff Bennett, director, solutions and technology at Infinera. For Bennett, this is a common issue, not confined to the terms optics and photonics: "We see this all the time in the telecoms industry, and in every other industry that combines rapid innovation with aggressive marketing."

That said, he also says that optics refers to classical optics, in which light is treated as a wave, whereas photonics is where light meets active semiconductors and so the quantum nature of light tends to dominate. Examples of the latter would be photonic integrated circuits (PICs). "These contain active laser components, semiconductor optical amplifiers and photo-detectors," says Bennett. "All of these rely on quantum effects to do their job."

"We need to remember that there is no canonical definition of these terms, and there is no recognised authority that would write or maintain such a definition" - Geoff Bennett

Bennett says that the person who invented the term semiconductor optical amplifier (SOA) was not aware of the definition because the optical amplifier works on quantum principles, the same way a laser does. "So really it should be a semiconductor photonic amplifier," he says.

"At Infinera, we seem for the most part to have abided to the definitions in terminology that we use, but I can’t say that this was a conscious decision," says Bennett. "I am sure that if our marketing department thought that photonic sounded better than optical in a given situation they would have used it."

Mehdi Asghari, vice president, silicon photonics research & development at Mellanox, says optics refers to the classical use and application of light, with light as a ray. He describes optics as taking a system-level approach.

"We create a system of lenses to make a microscope or telescope to make an optical instrument using classical optics models or we use optical components to create an optical communication system," he says. This classical or system-level perspective makes it optics or optical, a term he prefers. "We are not concerned with the nature - particle versus wave - of light, rather its classical behaviour, be it in an instrument or a system," he says.

But once things are viewed more closely, at the device level, especially with devices comparable in size to photons, a system-level approach no longer works and is replaced with a quantum approach. "Here we look at photons and the quantum behaviour they exhibit," says Asghari.

In a waveguide, be it silicon photonics (integrated devices based on silicon), a planar lightwave circuit (glass-based integrated devices), or a PIC based on III-V or active devices, the size of the structure or device used is often comparable to, or even smaller than, the size of the photons it is manipulating, he says: "This is where we very much feel the quantum nature of light, and this is where light becomes photons - photonics - and not optics."

ADVA Optical Networking's senior principal engineer, Klaus Grobe, held a discussion with the company's physicists, and both, independently, had the same opinion.

"Both [photonics and optics] are not strictly defined," he says. "Optics clearly also includes classic school-book ray optics and the like. Photonics already deals with photons, the wave-particle dualism, and hence, at least indirectly, with quantum mechanics, and possibly also quantum electro-dynamics (QED)."

Ray-propagation models can no longer be used for fibre-optics in transport, and such systems rely on quantum-mechanical behaviour, for example in diode receivers, so fibre-optics is better filed under photonics, says Grobe: "But they are not called fibre-photonics."

So, the industry view seems to be that the two terms are interchangeable but optics implies the classical nature of light while photonics suggests light as particles. Which term includes both seems to be down to opinion. Some believe optics covers both, others believe photonics is the more encompassing term.

Mellanox's Asghari once famously compared photons and electrons to cats and dogs. Electrons are like dogs: they behave, stick by you and are loyal; they do exactly as you tell them, he said, whereas cats are their own animals and do what they like. Just like photons. So what is his take?

He believes optics is more general than photonics. He uses the analogy of electrical versus electronics to make his point. An electronics system or chip is still an electrical device, but the term often refers to the integrated chip, while an electrical system is often seen as global and larger, made up of classical devices.

"For me, optics is the equivalent of electrical, and photonics is the equivalent of electronics - LSI, VLSI chips and the like," says Asghari. "One is a subset or specialised version of the other due to the need to get specific on the quantum nature of light and the challenges associated with integration."

To back up his point, Asghari says to take a look at older books and publications that use the term optics. The term photonics started to be used once integration and size reduction became important, just as electrical devices were replaced by electronic devices.

Indeed, this rings true in the semiconductor industry: microelectronics has now become nanoelectronics as CMOS feature sizes have moved from micron to nanometre dimensions.

And this is why terms such as optical fibre and semiconductor optical amplifier are used: they were coined when the industry was primarily engaged with the use of light at a system level, away from the quantum limits and challenges of integration.

"In short, photonics is used when we acknowledge that light is made of photons with all the fun and challenges that photons bring to us and optics is when we deal with light at a system level or a classical approach is sufficient," says Asghari.

Happily, cats and dogs feature here too.

"Optics refers to all types of cats, be it the tiger or the lion or the domestic pet," says Asghari. "Photonics refers to the so called domestic cat that has domesticated and slaved us to look after it."

Last word to Infinera's Bennett: "I suppose the moral is: be aware of the different meanings, but don’t let it bug you when people misuse them."