NeoPhotonics showcases a CFP2-ACO roadmap to 400G

The company demonstrated the CFP2-ACO module transmitting at 100 gigabit using polarisation-multiplexed, quadrature phase-shift keying (PM-QPSK) modulation at the recent OFC show. The line-side module can transmit over 1,000km and also supports PM-16QAM, which doubles capacity over metro network distances.

At OFC, NeoPhotonics also demonstrated single-wavelength 400-gigabit transmission using more advanced modulation and a higher symbol rate, and a short-reach 100-gigabit link for inside the data centre using 4-level pulse-amplitude modulation (PAM4) signalling.

Roadmap to 400 gigabit

One benefit of the CFP2-ACO is that the pluggable module can be deployed only when needed. Another is that the optics will work with coherent DSP-ASICs from different systems vendors and merchant chip suppliers.

“After a lot of technology-bragging about the CFP2-ACO, this is the year it is commercial,” says Ferris Lipscomb, vice president of marketing at NeoPhotonics.

Also demonstrated were the components needed for a next-generation CFP2-ACO: NeoPhotonics’ narrow line-width tunable laser and its higher-bandwidth integrated coherent receiver. To achieve 400 gigabit, 32-QAM and a 45 gigabaud symbol rate were used.

Traditional 100-gigabit coherent uses a 32-gigabaud symbol rate. That combined with QPSK and the two polarisations results in a total bit rate of 2 polarisations x 2bits/symbol x 32 gigabaud or 128 gigabits: a 100-gigabit payload and the rest overhead bits. Using 32-QAM instead of QPSK increases the number of bits encoded per symbol from 2 to 5, while increasing the baud rate from 32 to 45 gigabaud adds a speed-up factor of 1.4. Combining the two, the resulting bit rate is 45 gigabaud x 5bits/symbol x 2 polarisations or 450 gigabit overall.
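The arithmetic above can be checked with a short sketch. The function and dictionary names are illustrative only, and the figures are raw line rates that still include the overhead bits.

```python
# Bits carried per symbol, per polarisation, for the formats named in the article.
BITS_PER_SYMBOL = {"QPSK": 2, "8-QAM": 3, "16-QAM": 4, "32-QAM": 5}


def raw_line_rate_gbps(baud_gbaud, modulation, polarisations=2):
    """Raw line rate in Gbit/s: symbol rate x bits per symbol x polarisations."""
    return baud_gbaud * BITS_PER_SYMBOL[modulation] * polarisations


def overhead_gbps(raw_gbps, payload_gbps):
    """Capacity left for overhead bits once the payload is carried."""
    return raw_gbps - payload_gbps


print(raw_line_rate_gbps(32, "QPSK"))    # traditional 100-gigabit coherent
print(raw_line_rate_gbps(45, "32-QAM"))  # the 400-gigabit demonstration
print(overhead_gbps(raw_line_rate_gbps(32, "QPSK"), 100))
```

Swapping in other entries from the table shows how each modulation format trades bits per symbol against the symbol rate.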

Using 32-QAM curtails the transmission distance to 100km due to the denser constellation but such distances are suited for data centre interconnect applications.

“That was the demo [at OFC] but the product is also suitable for metro distances of 500km using 16-QAM and long-haul of 1,000km using 200 gigabit and 8-QAM,” says Lipscomb.

PAM4

The PAM4 demonstration highlighted NeoPhotonics’ laser and receiver technology. The company showcased a single-wavelength link running at 112 gigabits-per-second using its 56Gbaud externally modulated laser (EML) with an integrated driver. The PAM4 link can span 2km in a data centre.

“What is not quite ready for building into modules is the [56Gbaud to 112 gigabit PAM4] DSP, which is expected to be out in the middle to the second half of the year,” says Lipscomb. The company will develop its own PAM4-based optical modules while selling its laser to other module makers.

Lipscomb says four lanes at 56 gigabaud using PAM4 will deliver a cheaper 400-gigabit solution than eight lanes, each at 25 gigabaud.
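As a rough sketch of the lane arithmetic, PAM4 carries two bits per symbol, so a lane's raw rate is twice its symbol rate. The helper names below are hypothetical. The sketch assumes that the eight-lane alternative also uses PAM4 and that the results are raw rates including any coding overhead.

```python
import math


def pam_lane_rate_gbps(baud_gbaud, levels=4):
    """Raw rate of one PAM lane: symbol rate x log2(number of amplitude levels)."""
    return baud_gbaud * math.log2(levels)


def aggregate_rate_gbps(lanes, baud_gbaud, levels=4):
    """Raw aggregate rate of several identical PAM lanes."""
    return lanes * pam_lane_rate_gbps(baud_gbaud, levels)


print(pam_lane_rate_gbps(56))      # the single-wavelength demonstration
print(aggregate_rate_gbps(4, 56))  # four lanes at 56 gigabaud
print(aggregate_rate_gbps(8, 25))  # eight lanes at 25 gigabaud (assumed PAM4)
```

Both lane counts reach the 400-gigabit class; the four-lane design simply needs half as many lasers and receivers.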

Silicon Photonics

NeoPhotonics revealed that it is supplying new 1310nm and 1550nm distributed feedback (DFB) lasers to optical module players that are using silicon photonics for their 100-gigabit mid-reach transceivers. These include the 500m PSM-4, and the 2km CWDM4 and CLR4.

Lipscomb says the benefits of its lasers for silicon photonics include their relatively high output power - 40 to 60mW - and the fact that the company also makes laser arrays which are useful for certain silicon photonics applications.

“Our main products have been for 100-gigabit modules for the longer reaches of 2km to 10km,” says Lipscomb. “Silicon photonics is usually used for shorter reaches of a few hundred meters, and this new [laser] product is our first one aimed at the short reach data centre market segment.”

The company says it has multiple customer engagements spanning various wavelength plans and approaches for Nx100-gigabit data centre transceiver designs. Mellanox Technologies is one vendor using silicon photonics that NeoPhotonics is supplying.

The white box concept gets embraced at the optical layer

White boxes have arisen to satisfy the data centre operators’ need for simple building-block functions in large numbers that they can direct themselves.

“They [data centre operators] started using very simple white boxes - rather simple functionality, much simpler than the large router companies were providing - which they controlled themselves using software-defined networking orchestrators,” says Brandon Collings, CTO of Lumentum.

Such platforms have since evolved to deliver high-performance switching, controlled by third-party SDN orchestrators, and optimised for the simple needs of the data centre, he says. Now this trend is moving to the optical layer where the same flexibility of function is desired. Operators would like to better pair the functionality that they are going to buy with the exact functionality they need for their network, says Collings.

“There is no plan to build networks with different architectures to what is built today,” he says. “It is really about how do we disaggregate conventional platforms to something more flexible to deploy, to control, and which you can integrate with control planes that also manage higher layers of the network, like OTN and the packet layer.”

White box products

Lumentum has a background in integrating optical functions such as reconfigurable optical add/drop multiplexers (ROADMs) and amplifiers onto line cards, known as its TrueFlex products. “That same general element is now the element being demanded by these white box strategies, so we are putting them in pizza boxes,” says Collings.

At OFC, Lumentum announced several white box designs for linking data centres and for metro applications. Such designs are for large-scale data centre operators that use data centre interconnect platforms. But several such operators also have more complex, metro-like optical networking requirements. Traditional telcos such as AT&T are also interested in pursuing the approach.

The first Lumentum white box products include terminal and line amplifiers, a dense WDM multiplexer/demultiplexer and a ROADM. These hardware boxes come with open interfaces so that they can be controlled by an SDN orchestrator and are being made available to interested parties.

OpenFlow, which is used for electrical switches in the data centre, could be used with such optical white boxes. More likely candidates are the RESTCONF and NETCONF protocols. “They are just protocols that are being defined to interface the orchestrator with a collection of white boxes,” says Collings.
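To give a flavour of what such an orchestrator-to-box exchange looks like, here is a minimal sketch that builds a generic NETCONF `<get-config>` request using only the Python standard library. It is not Lumentum's interface: the function name is hypothetical, only the base NETCONF namespace and operation are standard, and the SSH transport and any device-specific data models are left out.

```python
import xml.etree.ElementTree as ET

# Base NETCONF XML namespace defined by the IETF.
NETCONF_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"


def build_get_config(message_id, source="running"):
    """Return a serialised <rpc><get-config> request for the given datastore."""
    rpc = ET.Element(f"{{{NETCONF_NS}}}rpc", {"message-id": message_id})
    get_config = ET.SubElement(rpc, f"{{{NETCONF_NS}}}get-config")
    src = ET.SubElement(get_config, f"{{{NETCONF_NS}}}source")
    # The datastore is named by an empty child element, e.g. <running/>.
    ET.SubElement(src, f"{{{NETCONF_NS}}}{source}")
    return ET.tostring(rpc)


print(build_get_config("101").decode())
```

An orchestrator would send a request of this shape to each white box and parse the reply against whatever data model the box exposes.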

Lumentum’s mux-demux is defined as a white box even though it is passive and has no power or monitoring requirements. That is because the mux-demux is a distinct element that is not part of a platform.

AT&T is exploring the concept of a disaggregated ROADM. Collings says a disaggregated ROADM has two defining characteristics. One is that the hardware isn’t required to come with a full network control management system. “You can buy it and operate it without buying that same vendor’s control system,” he says. The second characteristic is that the ROADM is physically disaggregated - it comes in a pizza box rather than a custom, proprietary chassis.

Lumentum: a systems vendor?

The optical layer white box ecosystem continues to develop, says Collings, with many players having different approaches and different levels of ‘aggressiveness’ in pursuing the concept. There is also the issue of the orchestrators and who will provide them. Such a network control system could be written by the hyper-scale data centre operators or be developed by the classical network equipment manufacturers, says Collings.

Collings says selling pizza boxes does not make Lumentum a systems vendor. “There is a lot of value-add that has to happen between us delivering a piece of hardware with simple open northbound control interfaces and a complete deployed, qualified, engineered system.”

Control software is needed, as is network engineering: value that traditional system vendors have been adding. “That is not our expertise; we are not trying to step into that space,” says Collings. There remains a large amount of value between encompassing optical hardware in a pizza box and delivering an operating network, he says.

This value and how it is going to be provided is also at the core of an ongoing industry debate. “Is it the network provider or the people that are very good at it: the network equipment makers, and how that plays out.”

Lumentum’s white box ROADM was part of an Open Networking Lab proof-of-concept demonstration at OFC.

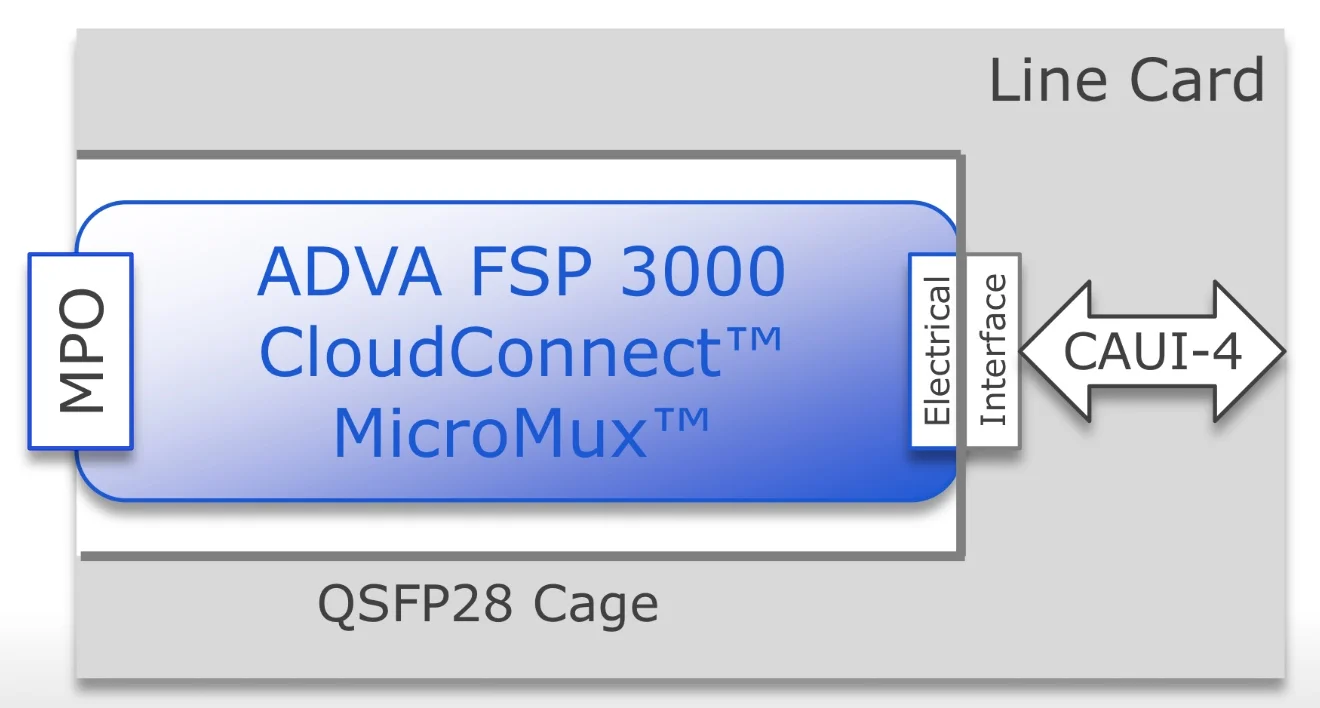

QSFP28 MicroMux expands 10 & 40 Gig faceplate capacity

- ADVA Optical Networking's MicroMux aggregates lower rate 10 and 40 gigabit client signals in a pluggable QSFP28 module

- ADVA is also claiming an industry first in implementing the Open Optical Line System concept that is backed by Microsoft

The need for terabits of capacity to link Internet content providers’ mega-scale data centres has given rise to a new class of optical transport platform, known as data centre interconnect.

Source: ADVA Optical Networking

Such platforms are designed to be power efficient, compact and support a variety of client-side signal rates spanning 10, 40 and 100 gigabit. But this poses a challenge for design engineers as the front panel of such platforms can only fit so many lower-rate client-side signals. This can lead to the aggregate data fed to the platform falling short of its full line-side transport capability.

ADVA Optical Networking has tackled the problem by developing the MicroMux, a multiplexer placed within a QSFP28 module. The MicroMux module plugs into the front panel of the CloudConnect, ADVA’s data centre interconnect platform, and funnels either 10, 10-gigabit ports or two, 40-gigabit ports into a front panel’s 100-gigabit port.

"The MicroMux allows you to support legacy client rates without impacting the panel density of the product," says Jim Theodoras, vice president of global business development at ADVA Optical Networking.

Using the MicroMux, lower-speed client interfaces can be added to a higher-speed product without stranding line-side bandwidth. An alternative approach to avoid wasting capacity is to install a lower-speed platform, says Theodoras, but then you can't scale.

ADVA Optical Networking offers four MicroMux pluggables for its CloudConnect data centre interconnect platform: short-reach and long-reach 10-by-10 gigabit QSFP28s, and short-reach and intermediate-reach 2-by-40 gigabit QSFP28 modules.

The MicroMux features an MPO connector. For the 10-gigabit products, the MPO connector supports 20 fibres, while for the 40-gigabit products, it supports four fibres. At the other end of the QSFP28, the end that plugs into the platform, sits a CAUI-4 4x25-gigabit electrical interface (see diagram above).

“The key thing is the CAUI-4 interface; this is what makes it all work," says Theodoras.

Inside the MicroMux, signals are converted between the optical and electrical domains while a gearbox IC translates between 10- or 40-gigabit signals and the CAUI-4 format.

Theodoras stresses that the 10-gigabit inputs are not the old 100 Gigabit Ethernet 10x10 MSA but independent 10 Gigabit Ethernet streams. "They can come from different routers, different ports and different timing domains," he says. "It is no different than if you had 10, 10 Gigabit Ethernet ports on the front face plate."

Using the pluggables, a 5-terabit CloudConnect configuration can support up to 520, 10 Gigabit Ethernet ports, according to ADVA Optical Networking.
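The port count can be sanity-checked with a small sketch. The names are illustrative, and the figure of 52 QSFP28 ports is inferred from 520 divided by 10 rather than stated by ADVA.

```python
# Client ports presented by one MicroMux module and their rate in Gbit/s.
MICROMUX_MODES = {
    "10x10G": (10, 10),
    "2x40G": (2, 40),
}
QSFP28_PORT_GBPS = 100


def client_ports(qsfp28_ports, mode):
    """Total client ports when every QSFP28 cage holds a MicroMux of this mode."""
    ports_per_module, _ = MICROMUX_MODES[mode]
    return qsfp28_ports * ports_per_module


def fill_ratio(mode):
    """Fraction of a 100-gigabit port that the client signals can fill."""
    ports_per_module, rate_gbps = MICROMUX_MODES[mode]
    return ports_per_module * rate_gbps / QSFP28_PORT_GBPS


print(client_ports(52, "10x10G"))  # 52 cages is an inferred figure
print(fill_ratio("10x10G"), fill_ratio("2x40G"))
```

The fill ratio shows that the 10-by-10 gigabit module can fill the 100-gigabit port completely, whereas the 2-by-40 gigabit module carries at most 80 gigabit.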

The first products will ship in the third quarter to preferred customers that helped in their development, with general availability at the year-end.

ADVA Optical Networking unveiled the MicroMux at OFC 2016, held in Anaheim, California in March. ADVA also used the show to detail its Open Optical Line System demonstration with switch vendor, Arista Networks.

Open Optical Line System

The Open Optical Line System is a concept being promoted by the Internet content providers to afford them greater control of their optical networking requirements.

Data centre players typically update their servers and top-of-rack switches every three years yet the optical transport functions such as the amplifiers, multiplexers and ROADMs have an upgrade cycle closer to 15 years.

“When the transponding function is stuck in with something that is replaced every 15 years and they want to replace it every three years, there is a mismatch,” says Theodoras.

Data centre interconnect line cards can be replaced more frequently with newer cards while retaining the chassis. And the CloudConnect product is also designed such that its optical line shelf can take external wavelengths from other products by supporting the Open Optical Line System. This adds flexibility and is done in a way that matches the work practices of the data centre players.

“The key part of the Open Optical Line System is the software,” says Theodoras. “The software lets that optical line shelf be its own separate node; an individual network element.”

The data centre operator can then manage the standalone CloudConnect Open Optical Line System product. Such a product can take coloured wavelength inputs and even provide feedback to the source platform, so that the wavelength is tuned to the correct channel. “It’s an orchestration and a management level thing,” says Theodoras.

Arista recently added a coherent line card to its 7500 spine switch family.

The card supports six CFP2-ACOs that have a reach of up to 2,000km, sufficient for most data centre interconnect applications, says Theodoras. The 7500 also supports the layer-two MACsec security protocol. However, it does not support flexible modulation formats. The CloudConnect does, supporting 100-, 150- and 200-gigabit formats. CloudConnect also has a 3,000km reach.

Source: ADVA Optical Networking

In the Open Optical Line System demonstration, ADVA Optical Networking squeezed the Arista 100-gigabit wavelength into a narrower 37.5GHz channel, sandwiched between two 100 gigabit wavelengths from legacy equipment and two 200 gigabit (PM-16QAM) wavelengths from the CloudConnect Quadplex card. All five wavelengths were sent over a 2,000km link.

Implementing the Open Optical Line System expands a data centre manager’s options. A coherent card can be added to the Arista 7500 and wavelengths sent directly using the CFP2-ACOs, or wavelengths can be sent over more demanding links, or ones that require greater spectral efficiency, by using the CloudConnect. The 7500 chassis could also be used solely for switching and its traffic routed to the CloudConnect platform for off-site transmission.

Spectral efficiency is important for the large-scale data centre players. “The data centre interconnect guys are fibre-poor; they typically only have a single fibre pair going around the country and that is their network,” says Theodoras.

The joint demo shows that the Open Optical Line System concept works, he says: “Two years after Microsoft first talked about the concept at OFC, here we are today fully supporting it.”

Infinera goes multi-terabit with its latest photonic IC

In his new book, The Great Acceleration, Robert Colvile discusses how things we do are speeding up.

In 1845 it took U.S. President James Polk six months to send a message to California. Just 15 years later Abraham Lincoln's inaugural address could travel the same distance in under eight days, using the Pony Express. But the use of ponies for transcontinental communications was short-lived once the electrical telegraph took hold. [1]

The relentless progress in information transfer, enabled by chip advances and Moore's law, is taken largely for granted. Less noticed is the progress being made in integrated photonic chips, most notably by Infinera.

In 2000, optical transport sent data over long-haul links at 10 gigabit-per-second (Gbps), with 80 such channels supported in a platform. Fifteen years later, Infinera demonstrated its latest-generation photonic integrated circuit (PIC) and FlexCoherent DSP-ASIC that can transmit data at 600Gbps over 12,000km, and up to 2.4 terabit-per-second (Tbps) - three times the data capacity of a state-of-the-art dense wavelength-division multiplexing (DWDM) platform back in 2000 - over 1,150km.

Infinite Capacity Engine

Infinera dubs its latest optoelectronic subsystem the Infinite Capacity Engine. The subsystem comprises a pair of indium-phosphide PICs - a transmitter and a receiver - and the FlexCoherent DSP-ASIC. The performance capabilities that the Infinite Capacity Engine enables were unveiled by Infinera in January with its Advanced Coherent Toolkit announcement. Now, to coincide with OFC 2016, Infinera has detailed the underlying chips that enable the toolkit. And company product announcements using the new hardware will be made later this year, says Pravin Mahajan, the company's director of product and corporate marketing.

The claimed advantages of the Infinite Capacity Engine include an 82 percent reduction in power consumption compared to a system using discrete optical components and a dozen 100-gigabit coherent DSP-ASICs, and a 53 percent reduction in total-cost-of-ownership compared to competing dense WDM platforms. The FlexCoherent chip also features line rate data encryption.

"The Infinite Capacity Engine is the industry's first multi-terabit super-channel," says Mahajan. "It also delivers the industry's first multi-terabit layer one encryption."

Multi-terabit PIC

Infinera's first transmitter and receiver PIC pair, launched in 2005, supported 10, 10-gigabit channels and implemented non-coherent optical transmission.

In 2011 Infinera introduced a 500-gigabit super-channel coherent PIC pair used with Infinera's DTN-X platforms and also its Cloud Xpress data centre interconnect platform launched in 2014. The 500-gigabit design implemented 10, 50-gigabit channels using polarisation-multiplexed, quadrature phase-shift keying (PM-QPSK) modulation. The accompanying FlexCoherent DSP-ASIC was made using a 40nm CMOS process node and supported a symbol rate of 16 gigabaud.

The PIC design has since been enhanced to support additional modulation schemes such as polarisation-multiplexed, binary phase-shift keying (PM-BPSK) and polarisation-multiplexed, 3 quadrature amplitude modulation (PM-3QAM) that extend the DTN-X's ultra long-haul performance.

In 2015 Infinera also launched the oPIC-100, a 100-gigabit PIC for metro applications that enables Infinera to exploit the concept of sliceable bandwidth by pairing oPIC-100s with a 500 gigabit PIC. Here the full 500 gigabit super-channel capacity can be pre-deployed even if not all of the capacity is used. Using Infinera's time-based instant bandwidth feature, part of that 500 gigabit capacity can be added between nodes in a few hours based on a request for greater bandwidth.

Now, with the Infinite Capacity Engine PIC, the effective number of channels has been expanded to 12, each capable of supporting a range of modulation techniques (see table below) and data rates. In fact, Infinera uses multiple Nyquist sub-carriers spread across each of the 12 channels. By encoding the data across multiple sub-carriers a lower-baud rate can be used, increasing the tolerance to non-linear channel impairments during optical transmission.

Mahajan says the latest PIC has a power consumption similar to its current 500 Gigabit super-channel PIC but because the photonic design supports up to 2.4 terabit, the power consumed per gigabit is reduced by 70 percent.

FlexCoherent encryption

The latest FlexCoherent DSP-ASIC is Infinera's most complex yet. The 1.6 billion transistor 28nm CMOS IC can process two channels, and supports a 33 gigabaud symbol rate. As a result, six DSP-ASICs are used with the 12-channel PIC.
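The chip count follows from simple division, as the sketch below shows. The helper names are hypothetical, and the per-channel figure assumes the 2.4-terabit maximum is spread evenly across the 12 channels.

```python
import math


def dsp_asics_needed(pic_channels, channels_per_asic):
    """Number of DSP-ASICs required to serve every channel of the PIC."""
    return math.ceil(pic_channels / channels_per_asic)


def payload_per_channel_gbps(total_gbps, channels):
    """Payload each channel carries if the total is spread evenly (an assumption)."""
    return total_gbps / channels


print(dsp_asics_needed(12, 2))
print(payload_per_channel_gbps(2400, 12))
```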

It is the DSP-ASIC that enables the various elements of the advanced coherent toolkit that includes improved soft-decision forward error correction. "The net coding gain is 11.9dB, up 0.9 dB, which improves the capacity-reach," says Mahajan. Infinera says the ultra long-haul performance has also been improved from 9,500km to over 12,000km.

Source: Infinera

The DSP also features layer one encryption implementing the 256-bit Advanced Encryption Standard (AES-256). Infinera says the request for encryption is being led by the Internet content providers but wholesale operators and co-location providers also want to secure transmissions between sites.

Infinera introduced layer two MACsec encryption with its Cloud Xpress platform. This encrypts the Ethernet payload but not the header. With layer one encryption, it is the OTN frames that are encrypted. "When we get down to the OTN level, everything is encrypted," says Mahajan. An operator can choose to encrypt the entire super-channel or encrypt at the service level, down to the ODU0 (1.244 Gbps) level.

System benefits

Using the Infinite Capacity Engine, the transmission capacity over a fibre increases from 9.5 terabit to up to 26.4 terabit.

And with the newest PIC, Infinera can expand the sliceable transponder concept for metro-regional applications. The 2.4 terabits of capacity can be pre-deployed and new capacity turned up between nodes. "You can suddenly turn up 200 gigabit for a month or two, rent and then return it," says Mahajan. However, to support the full 2.4 terabits of capacity, the PIC at the other end of the link would also need to support 16-QAM.

Infinera does say there will be other Infinite Capacity Engine variants. "There will be specific engines for specific markets, and we would choose a subset of the modulations," says Mahajan.

One obvious platform that will benefit from the first Infinite Capacity Engine is the DTN-X. Another that will likely use an ICE variant is Infinera's Cloud Xpress. At present Infinera integrates its 500-gigabit PIC in a 2 rack-unit box for data centre interconnect applications. By using the new PIC and implementing PM-16QAM, the line-side capacity per rack unit of a second-generation Cloud Xpress would rise from 250 gigabit to 1.2 terabit. And with layer one encryption, the MACsec IC may no longer be needed.
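The per-rack-unit figures can be reproduced with a one-line calculation. The function name is illustrative, and the sketch assumes a second-generation Cloud Xpress keeps the same 2 rack-unit form factor, which the article implies but does not state.

```python
def line_capacity_per_ru_gbps(pic_capacity_gbps, rack_units):
    """Line-side capacity per rack unit for a box built around one PIC pair."""
    return pic_capacity_gbps / rack_units


print(line_capacity_per_ru_gbps(500, 2))   # current Cloud Xpress
print(line_capacity_per_ru_gbps(2400, 2))  # new PIC with PM-16QAM, same 2RU assumed
```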

Mahajan says the Infinite Capacity Engine has already been tested in the Telstra trial detailed in January. "We have already proven its viability but it is not deployed and carrying live traffic," he says.

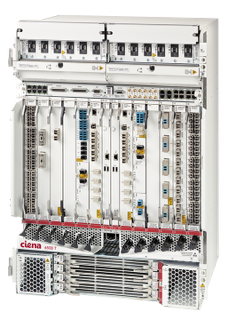

Ciena enhances its 6500 packet-optical transport family

"The 6500 T-Series is a big deal as Ciena can offer two different systems depending on what the customer is looking for," says Andrew Schmitt, founder and principal analyst of market research firm, Cignal AI.

If customers want straightforward transport and the ability to reach a number of different distances, there is the existing 6500 S-series, says Schmitt. The T-series is a system specifically for metro-regional networks that can accommodate multiple traffic types – OTN or packet.

"It has very high density for a packet-optical system and offers pay-as-you-grow with CFP2-ACO [coherent pluggable] modules," says Schmitt.

Ciena says the T-series has been developed to address new connectivity requirements service providers face. Content is being shifted to the metro to improve the quality of experience for end users and reduce capacity on backbone networks. Such user consumption of content is one factor accounting for the strong annual 40 percent growth in metro traffic.

According to Ciena, service providers have to deploy multiple overlays of network elements to scale capacity, including at the photonic switch layer, because they need more than 8-degree reconfigurable optical add/drop multiplexers (ROADMs).

But overlays add complexity to the metro network and slow the turn-up times of services, says Helen Xenos, director, product and technology marketing at Ciena: "Operators are looking for a next-generation platform for these very high-capacity switching locations to efficiently distribute content."

U.S. service provider Verizon is the first to announce the adoption of the 6500 T-series to modernise its metro and is now deploying the platform. "Verizon is dealing with a heterogeneous network in the metro with many competing requirements," says Schmitt. "They don’t have the luxury of starting over or specialising like some of the hyper-scale transport architectures."

The T-series, once deployed, will handle the evolving requirements of Verizon's network. "Sure, it comes with additional costs compared with bare-bones transport but my conversation with folks at Verizon would indicate flexibility is worth the price," says Schmitt.

Ciena has over 500 customers in 50 countries for its existing 6500 S-series. Customers include 18 of the top 25 communications service providers and three of the top five content providers.

Xenos says an increasing number of service providers are interested in its latest platform. The T-series is part of six requests for proposals (RFPs) and is being evaluated in several service providers' labs. The 6500 T-series will be generally available this month.

6500 T-series

The existing 6500 S-series family comprises four platforms, from the 2 rack-unit (RU) 6500-D2 chassis to the 22RU 6500-S32 that supports Ethernet, time-division multiplexed traffic and wavelength division multiplexing, and 3.2 terabit-per-second (Tbps) packet/Optical Transport Network (OTN) switching.

The two T-series platforms are the half rack 6500-12T and the full rack 6500-24T. The cards have been upgraded from 100-gigabit switching per slot to 500-gigabit per slot.

The 6500-T12 has 12 service slots which house either service interfaces or photonic modules. There are also 2 control modules. Shown at the base of the chassis are four 500 Gig switching modules. Source: Ciena

The 500 gigabit switching per slot means the 6500-12T supports 6 terabits of switching capacity while the -24T will support 12 terabits by year end. The platforms have been tested and will support 1 terabit per slot, such that the -24T will deliver the full 24 terabit. Over 100 terabit of switching capacity will be possible in a multiple-chassis configuration, managed as a single switching node.
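The shelf capacities quoted above are the slot count multiplied by the per-slot switching rate. A minimal sketch, with an illustrative function name:

```python
def shelf_switching_tbps(service_slots, gbps_per_slot):
    """Switching capacity of one shelf in Tbit/s."""
    return service_slots * gbps_per_slot / 1000


print(shelf_switching_tbps(12, 500))   # half-rack shelf at 500 gigabit per slot
print(shelf_switching_tbps(24, 500))   # full-rack shelf at 500 gigabit per slot
print(shelf_switching_tbps(24, 1000))  # full-rack shelf at 1 terabit per slot
```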

The latest platforms can use Ciena's existing coherent line cards that support two 100 gigabit wavelengths. The T-Series also supports a 500-gigabit coherent line card with five CFP2-ACOs coupled with Ciena's WaveLogic 3 Nano DSP-ASIC.

"We will support higher-capacity wavelengths in a muxponder configuration using our existing S-series," says Xenos. "But for switching applications, switching lower-speed traffic across the shelf onto a very high-capacity wavelength, this is something that the T-series would be used for."

The T-series also adds a denser, larger-degree ROADM, from an existing 6500 S-series 8-degree to a 16-degree flexible grid, colourless, directionless and contentionless (CDC) design. Xenos says the ROADM design is also more compact such that the line amplifiers fit on the same card.

"The requirements of this platform is that it has full integration of layer 0, layer 1 and layer 2 functions," says Xenos.

The 6500 T-series supports open application programming interfaces (APIs) and is being incorporated as part of Ciena's Emulation Cloud. The Emulation Cloud enables customers to test software on simulated network configurations without requiring 6500 hardware, and is being demonstrated at OFC 2016.

The 6500 is also being integrated as part of Ciena's Blue Planet orchestration and management architecture.

OFC 2016: 20-plus technical paper highlights

Daryl Inniss reflects on a career in market research

Daryl Inniss

Rocky beginnings

I jumped ship in 2001, joining RHK, a market research firm, knowing nothing about the craft. I had been a technical manager and loved research and development, but work was 500 miles from my family and the weekly commute was gruelling.

Back then, the telecom market was crashing and I believed my job was at risk. Moving to a small market research firm could hardly be described as good planning, but it turned out to be a godsend.

I had no idea what I was getting into and my first months did not help. My mother passed away within a month of my joining and I was absent for half of my first 40 days. But my boss was very supportive. Meanwhile, work consisted of unintelligible, endless conference calls. And while in this daze, September 11th occurred.

The first report - getting the job done

Completing my first market research report helped ground me in the art. I wrote about optical dispersion compensators. After interviewing many companies, I wrote a long and complicated piece, an exercise that I found difficult. I also struggled with who would read the report and what would be done with the data.

The report aimed to explain technical issues simply and included a market forecast. Completing it proved hard because there was always more information to include, a better explanation, and better forecast data to be gathered.

I felt unsatisfied but the report received kudos. Internally I was told that I was the second or third analyst to tackle that topic and the first to complete the work. And an optical company complimented me on the report. But I felt dissatisfied and wished I had done better. I wanted to understand the subject more, wished I could provide clearer, simpler explanations and also provide a better forecast.

Nonetheless, I learned the importance of completing assignments as they can go on forever.

A market researcher's role

An analyst tries to identify market opportunities and winning strategies. Looking at new products, for example, the goal is to explain what they are, why they are being introduced, who will use them, their value, and the competitive landscape. The issues must be explained to novices and experts alike. The technical novice may get a glimpse of what the technology means and how it works, while a technical expert may understand the ecosystem more deeply.

An analyst must strive to prepare simple messages that are steeped in facts. You need to have a story—say why something is happening and explain it in the context of the bigger picture.

Forecasts, market share, rankings, prices and volumes are all important. Everyone loves numbers. But the story underpinning the numbers is far more important and most people do not take the time to determine the causes behind the numbers.

Where is the industry going?

I have spent the last 15 years analysing the optical components market. Sustainable profitability is the biggest topic, and consolidation is viewed as providing the best approach. Notwithstanding the mergers and acquisitions, the market is fragmented, margins remain low, and there is still no evidence of true consolidation.

Independent of all the change, optical component suppliers post gross margins below 40 percent and most are below 30 percent while semiconductor companies are routinely above 50 percent. There is a force keeping the industry stuck at this level, in part because there is little product differentiation.

Avago Technologies’ divestiture of its optical module business to Foxconn Interconnect Technology Group points to one high-margin path. Discrete components, particularly lasers and modulators and to a lesser extent photodiodes and receivers, command higher margins. Vendors can offer differentiated products at this level. Total revenues are lower, so the challenge is to win enough business to fill the factory because these are fixed-cost-intensive businesses.

Subsystems offer another high-margin path, particularly for vertically-integrated companies. Here vendors are challenged with a long time-to-market, requiring a strong design team to support customer requests. Also business can be lumpy because solutions are customer-specific.

Acacia Communications' coherent 100 gigabit transponders are an example of a solution that has the basis to win broad-based business and high margins. The products offer a one-stop-shop solution including optics, electronics, and software. Acacia is developing silicon photonics so it controls most of the bill of materials, keeping down product cost. And its solution is differentiated in that it helps customers get their products to market while achieving a high level of performance.

Market research: even more important now

The communications industry is going through extensive change, making market research more important than ever. The Web 2.0 companies are the new optical communication mindshare leaders, driving technology and business practices.

Simultaneously China is the biggest consumer of optical gear, both for long-haul and access networks. Optical component suppliers need to understand how to compete in this new environment. What are the new rules? How are they evolving? How can companies best position themselves to win more business?

Just as when I started, I ask how a market researcher can help component companies navigate this new world. No doubt, this is a challenge, but market researchers provide the collective market voice. They are the market mirror that shows the beauty spots and the warts. They are given license to say what everyone is thinking. They can raise market consciousness so participants may act fearlessly.

But market researchers need to understand the story from top to bottom, from end customer to suppliers. They must communicate well, which includes not only delivering the story but also being humble, admitting mistakes, keeping sources and information confidential, and taking corrective actions.

This is indeed a challenge and I feel honoured to have had the opportunity to participate. I could not have done the job without the help of wonderful people from all over the world. Their generosity, warmth, and kindness made all the difference. At bottom, it is these relationships that mattered as we tried to help each other navigate.

Biography

Dr. Daryl Inniss is Director, New Business Development at OFS Fitel, the designer, manufacturer and provider of optical fibre, fibre optic cable, connectivity, fibre-to-the-subscriber and specialty photonics products.

He was formerly Components Practice Leader at market research firm Ovum and RHK. Daryl was Technical Manager at JDSU and Lucent Technologies, Bell Laboratories, and started his career as a Member of the Technical Staff, AT&T Bell Labs.

OFC 2016: a sample of the technical paper highlights

Here is a small sample of the technical paper highlights being presented at the conference.

Doubling core network capacity

Microsoft has conducted a study measuring the performance of its North American core backbone network to determine how the use of bandwidth-variable transceivers (BVTs) could boost capacity.

The highest capacity modulation scheme suited for each link from the choice of polarisation-multiplexed, quadrature phase-shift keying (PM-QPSK), polarisation-multiplexed, 8 quadrature amplitude modulation (PM-8QAM) and PM-16QAM can then be used.

By measuring the signal quality (Q-factor) of all its PM-QPSK-based 100 gigabit links, Microsoft's study found that network capacity could be increased by 70 percent using BVTs. In addition, having the ability to increase capacity in 25-gigabit increments would increase capacity by a further 16 percent, while a finer resolution of 1 gigabit would add an extra 13 percent.

Microsoft says such tuning of links need not be done in real time but rather when a link is commissioned or undergoing maintenance.
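The per-link selection described above can be sketched in a few lines of Python. This is only an illustration: the Q-factor thresholds and the example link measurements are hypothetical placeholders, not figures from the Microsoft paper. The rates of 100, 150 and 200 gigabit assume a fixed symbol rate, with PM-QPSK, PM-8QAM and PM-16QAM carrying 2, 3 and 4 bits per symbol per polarisation.

```python
# Sketch: choose the highest-rate modulation format each link can support.
# The Q-factor thresholds are hypothetical, not taken from the paper.
FORMATS = [
    # (name, rate in gigabit, assumed minimum PM-QPSK Q-factor in dB)
    ("PM-16QAM", 200, 12.0),
    ("PM-8QAM", 150, 9.5),
    ("PM-QPSK", 100, 6.5),
]


def pick_format(q_db):
    """Return (name, rate) of the fastest format whose threshold the link meets."""
    for name, rate, min_q_db in FORMATS:
        if q_db >= min_q_db:
            return name, rate
    raise ValueError(f"Q-factor {q_db} dB is below every assumed threshold")


def capacity_gain(link_q_db, baseline_rate=100):
    """Fractional capacity gain over running every link at the baseline rate."""
    upgraded = sum(pick_format(q)[1] for q in link_q_db)
    return upgraded / (baseline_rate * len(link_q_db)) - 1.0


# Made-up Q-factor measurements for four links.
example_links = [7.0, 10.0, 13.5, 12.0]
print(f"Capacity gain: {capacity_gain(example_links):.1%}")
```

Because the choice is made per link from a stored measurement, it fits Microsoft's point that the tuning can happen at commissioning or during maintenance rather than in real time.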

[paper M2J.2]

Architecting a new metro

How can operators redesign their metro network to enable rapid service innovation? This is the subject of a joint paper from AT&T, the Open Networking Lab and Stanford University. The work is part of a programme dubbed CORD to redesign the central office as a data centre using commodity hardware and open software to enable the rapid scaling of services. In particular, OpenFlow-enabled white boxes, the Open Network Operating System (ONOS), which is a software-defined networking (SDN) operating system, and network control and management applications are used.

As part of CORD, three legacy telecom devices - optical line termination (OLT), customer premises equipment (CPE), and broadband network gateways (BNG) - have been virtualised and implemented on servers.

The paper details how a single SDN control plane based on ONOS is used to create a converged packet-optical metro network and how its support for bandwidth on-demand and automatic restoration at the optical level is used for enterprise connectivity and video distribution services.

The paper also discusses how the metro architecture supports 'disaggregated' reconfigurable optical add/drop multiplexers (ROADMs). By disaggregating a chassis-based ROADM into commodity components, an operator can size its infrastructure as required and grow it with demand, the paper says.

[paper Th1A.7]

400 gigabit single-carrier transmission

Nokia Bell Labs reports work on 400 gigabit-per-second (Gbps) single-carrier optical transmission over submarine distances. The attraction of adopting 400 gigabit single-carrier transmission is that it is the most efficient way to reduce the cost-per-bit of optical transmission systems.

The Bell Labs' paper reviews state-of-the-art 400 gigabit single-channel transmissions over 6,000km and greater distances, and discusses the tradeoffs between such variables as symbol rate, modulation and forward error correction (FEC) schemes.

PM-16QAM is proposed as a promising modulation scheme to achieve beyond 6,000km distances and a spectral efficiency exceeding 5b/s/Hz. But this requires a symbol rate of greater than 60 gigabaud to accommodate the 20 percent overhead FEC. Pulse-shaping at the transmitter is also used.

Exploring the receiver performance with varying symbol rate and FEC overhead, Bell Labs reports that the best tradeoff between coding gain and implementation penalties is 64 gigabaud and a 27.3 percent overhead. It concludes that single-carrier 400Gbps submarine transmission is likely in the next few years.
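The symbol rates quoted above can be checked with some simple arithmetic. The sketch below assumes that the FEC overhead is expressed relative to the payload and that no other framing or pilot overhead is carried; both are simplifying assumptions rather than details from the paper. PM-16QAM encodes 4 bits per symbol on each of two polarisations.

```python
def net_rate_gbps(symbol_rate_gbaud, bits_per_symbol, fec_overhead, polarisations=2):
    """Payload rate after removing FEC overhead from the gross line rate."""
    gross = symbol_rate_gbaud * bits_per_symbol * polarisations
    return gross / (1.0 + fec_overhead)


# PM-16QAM at 60 gigabaud with a 20 percent FEC overhead
print(round(net_rate_gbps(60, 4, 0.20), 1))

# PM-16QAM at 64 gigabaud with a 27.3 percent FEC overhead
print(round(net_rate_gbps(64, 4, 0.273), 1))
```

Under these assumptions both operating points yield a payload of roughly 400 gigabit, which is why a stronger FEC has to be paired with a higher symbol rate.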

[paper Th1B.4]

Silicon modulator for CFP2-ACOs

Cisco has developed a compact flip-chip assembly that combines a silicon photonics modulator and a silicon germanium BiCMOS Mach-Zehnder modulator driver. Such an assembly forms the basis for low-cost advanced coherent optical transceivers such as the CFP2-ACO.

Cisco has demonstrated the assembly operating at 128.7Gbps using PM-QPSK and 257.3Gbps using PM-16QAM. Cisco believes this is the first demonstration of transmission at 257.3Gbps using PM-16QAM over 1,200km of standard single-mode fibre using a silicon photonics-based device.

The modulator has also been demonstrated operating at 321.4Gbps using PM-16QAM transmission and a 20 percent FEC overhead, the highest bit rate yet achieved using a silicon-photonics based transmitter, claims Cisco.
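As a rough cross-check, the symbol rates implied by Cisco's quoted bit rates can be backed out by dividing each line rate by the number of bits carried per symbol across both polarisations. This is a sketch of the arithmetic only; the function names are illustrative, and the payload calculation assumes the 20 percent FEC overhead is expressed relative to the payload.

```python
BITS_PER_SYMBOL = {"PM-QPSK": 2, "PM-16QAM": 4}  # per polarisation


def implied_symbol_rate_gbaud(line_rate_gbps, modulation, polarisations=2):
    """Symbol rate needed to carry the given line rate with this modulation."""
    return line_rate_gbps / (BITS_PER_SYMBOL[modulation] * polarisations)


def payload_gbps(line_rate_gbps, fec_overhead):
    """Line rate with the FEC overhead stripped out."""
    return line_rate_gbps / (1.0 + fec_overhead)


for rate, modulation in [(128.7, "PM-QPSK"), (257.3, "PM-16QAM"), (321.4, "PM-16QAM")]:
    print(modulation, rate, round(implied_symbol_rate_gbaud(rate, modulation), 1))

print(round(payload_gbps(321.4, 0.20), 1))
```

The first two results sit close to the 32 gigabaud of existing 100-gigabit coherent systems, while the 321.4Gbps result implies a symbol rate of about 40 gigabaud.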

Cisco is already using CFP2-ACO modules in its NCS 1002 data centre interconnect platform. The modules implement PM-16QAM and deliver 250 gigabit by using a higher baud rate than the 32 gigabaud used for existing 100-gigabit coherent systems.

[paper Th1F.2]

Flexible Ethernet to exploit line-side efficiencies

Given how the optical network is starting to use adaptive-rate interfaces, a paper from Google asks how the client side can benefit from such line-side flexibility.

The paper points out that traditional DWDM transport equipment typically multiplexes lower-rate client ports but that this doesn't apply to network operators that manage their own data centres. Here, traffic is exclusively packet-based from IP routers and typically matches the line rate. This is why data centre interconnect platforms have become popular as they require limited grooming capability.

Google highlights how Flexible Ethernet (FlexE), for which the Optical Internetworking Forum has just defined an Implementation Agreement, combined with data centre interconnect equipment is an extremely effective combination.

FlexE supports Ethernet MAC rates independent of the Ethernet physical layer rate being used. Google shows examples of how, using FlexE, sub client rates can match the line-side rate, as well as how multiple client ports can support a higher-speed router logical port.
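One way to picture the decoupling of MAC rate from physical layer rate is as slot allocation. The sketch below assumes the calendar model of the OIF's FlexE 1.0 Implementation Agreement, in which each 100-gigabit PHY contributes 20 slots of 5 gigabit; the function names and example client rates are illustrative and are not taken from Google's paper.

```python
SLOT_GBPS = 5        # assumed calendar slot granularity
SLOTS_PER_PHY = 20   # assumed slots contributed by each 100-gigabit PHY


def slots_needed(client_gbps):
    """Number of calendar slots a client MAC of the given rate occupies."""
    if client_gbps <= 0 or client_gbps % SLOT_GBPS:
        raise ValueError("client rate must be a positive multiple of the slot size")
    return client_gbps // SLOT_GBPS


def fits(client_rates_gbps, phy_count):
    """True if all clients fit in the slots offered by a group of 100G PHYs."""
    used = sum(slots_needed(rate) for rate in client_rates_gbps)
    return used <= phy_count * SLOTS_PER_PHY


# Sub-rating: a 150-gigabit client on two PHYs, matching a 150-gigabit line side.
print(slots_needed(150), fits([150], phy_count=2))

# Bonding: one 400-gigabit logical router port spread across four PHYs.
print(slots_needed(400), fits([400], phy_count=4))
```

In both cases the client rate is set by how many slots it is given, not by the rate of any single physical port.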

The paper concludes that combining FlexE with data centre interconnect results in a low cost, low power, compact design that will enable Internet content providers to scale their networks.

[paper W4G.4]

100 gigabit the next stop on PON's roadmap

Source: Huawei

Q. What are the various ways the industry is considering implementing 100 Gigabit?

FE: The work happening now is to do a 25 gigabit-per-second wavelength, and then multiple wavelengths will be combined in some way to create 50-gigabit or 100-gigabit speed offerings.

Q. The IEEE 802.3ca is developing 100 gigabit EPON whereas the ITU-T/FSAN has defined NG-PON2. What does the development of 100 gigabit EPON mean for the future of NG-PON2?

The ITU NG-PON2 was a future PON project that was started in 2011 with the goal of reaching at least 40 Gigabit per PON system. This was achieved in 2015 when the whole series of standards was completed. During this time, the highest economical speed was 10 gigabit.

Now that time has passed, 25 gigabit-per-lambda is becoming more reasonable, and so the ITU plans to study 25 gigabit in both the multi-channel setting - that is NG-PON2, and in a single-channel setting that would be a follow-on to the XGS-PON system. These PON systems should not be seen as a single-point development but part of an evolution.

The IEEE 802.3ca work is complementary to this work, and many of the same people go to both standards groups - certainly Huawei does. It is in everybody’s interest to reuse components and technologies, and I’m confident that this will happen for the 25-gigabit generation just as it has in previous PON generations.

Q. In a press release, Huawei mentioned it is demonstrating a 100-Gigabit PON prototype. What is Huawei demonstrating and how has it been implemented?

The 100 gigabit PON prototype is an early look at the 25 gigabit-per-wavelength technology. Given that the standardisation is only beginning, we had to make many assumptions, but such prototypes give us a chance to find any issues early.

The details of the prototype will be more fully explained at the OFC conference, but in brief, what we have is a system that implements 25 gigabit downstream and 10 gigabit upstream per wavelength pair. A real PON MAC is operating in this system, and full PON operations: activation, ranging, dynamic bandwidth allocation etc. are taking place. The 25-gigabit transmission is done using either conventional non-return-to-zero (NRZ) or optical duobinary line coding.

Q. How is 100 gigabit PON envisaged to work with existing systems?

100 gigabit PON will co-exist with previous PON generations, most likely using a WDM-style of interworking. This is the same method used to allow G-PON, XG-PON, and TWDM-PON to co-exist on a single-fibre network.

Q. What in Huawei’s opinion are the main challenges to be overcome regarding 100 gigabit PON?

Frank Effenberger

There are two technical challenges to this system.

First, 25 gigabit-per-wavelength transmission over 20km of standard fibre that meets the approximate 30dB loss budget and the aggressive cost targets of access is difficult. This will take time to solve.

Second, combining multiple PON channels into a single virtual channel is not trivial because it requires some level of coordination between the channels. There are solutions, but they need more study to confirm their operation.

Q. What will the advent of Nx25 gigabit do to reduce the cost of PON?

That is a loaded question, because you presuppose that 25 gigabit will lower the cost. To first order, our hope is to keep the cost-per-endpoint increase to a reasonable level. Obviously, the cost-per-bit will decline significantly, but the access business is not so tied to this factor. The key factor in higher-speed PON is to increase the system and user speed while trying to hold costs steady.

Q. Besides showing operators an extended roadmap beyond 40 gigabit, what applications will require 100 gigabit PON?

In reality, while 100 gigabit PON makes for a nice headline, the story is more complex. There are two separate applications where we see the technology.

The first is a single-channel 25-gigabit system that would be useful for residential applications. This would serve as a follow-on to the XG-PON or 10GEPON deployments, and it could be at a price point that allows that.

The second is the full 100 gigabit PON with four channels. This would be useful for business services, for mobile backhaul and fronthaul, and fibre-deep architectures like G.fast (fibre-to-the-distribution point) and DOCSIS3.1 (D-CMTS).

Q. Huawei has also detailed a 10 and 40 gigabit NG-PON. Is this a combination of time- and wavelength-division multiplexing that in effect supports a traditional time-division multiplexed PON per wavelength i.e. TWDM-PON with rates that include 10 gigabit symmetrical, 10 gigabit downstream and 2.5 gigabit upstream, and 2.5 gigabit symmetrical?

You describe it correctly. The NG-PON2 system’s major solution is the TWDM-PON, which defines four or eight wavelength pairs that can be used simultaneously, each running at 10 gigabit or 2.5 gigabit. So, it can reach a total capacity of 80 gigabit per PON.

In addition, NG-PON2 also defines a WDM overlay component, which supports eight or more channels of 10 gigabit per wavelength (not TDMed). All in all, the system capacity could reach over 160 gigabit.
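The capacity figures in this answer follow from multiplying channel count by per-channel rate. The short sketch below reproduces them; the function name is illustrative, and the overlay is taken at its minimum of eight channels, since the answer only says "eight or more".

```python
def capacity_gbps(channels, rate_gbps):
    """Aggregate capacity of a set of equal-rate wavelength channels."""
    return channels * rate_gbps


twdm_max = capacity_gbps(8, 10)       # eight TWDM wavelength pairs at 10 gigabit
twdm_four = capacity_gbps(4, 10)      # four pairs, the 40-gigabit case
overlay_min = capacity_gbps(8, 10)    # WDM overlay, assuming exactly eight channels

print(twdm_four, twdm_max, twdm_max + overlay_min)
```

Any overlay channel beyond the eighth pushes the total past 160 gigabit, which matches the "over 160 gigabit" wording.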

Q. Is this system commercially deployed?

So far, there have been only small trial deployments. Huawei has deployed its early TWDM systems in many operators' networks in Europe and in Asia, but we are not at liberty to disclose these collaborations other than what has been announced. The industrial readiness of TWDM-PON is not really at a mass deployment stage; furthermore, the 'killer app' that just absolutely demands TWDM’s capacity is not immediately at hand.

When it will be deployed is a hard question.

Keep in mind that 10 gigabit PONs were standardised and prototyped by 2010, and yet it is 2016 and we still don’t see huge deployment; the first small-scale movements are starting to happen in China. That’s a six-year lag!

If NG-PON2 is true to that, then it predicts 2021 as the start of significant deployment. Barring the introduction of some super bandwidth-hungry service, I think it will still be some time before we deploy any next-generation system.

Q. What will be the first applications for 10G-40G NG-PON systems?

The envisioned applications for TWDM-PON were primarily residential and small business, with the simple assumption that bandwidth demand will continue to grow to 1 gigabit-per-second. A 40-gigabit PON, typically serving 32 customers, fits this Gigabit FTTH picture well. But that seems far away for now. Early deployments will likely be business services and wireless backhaul.
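The fit between a 40-gigabit PON and gigabit-per-customer service is easy to check. The sketch below assumes the capacity is shared evenly among all customers at once, a worst-case simplification; in practice dynamic bandwidth allocation lets an active user burst well above this share.

```python
def share_per_customer_gbps(pon_capacity_gbps, customers):
    """Even split of PON capacity when every customer is active at once."""
    return pon_capacity_gbps / customers


print(share_per_customer_gbps(40, 32))   # 40-gigabit PON, 32 customers
print(share_per_customer_gbps(100, 32))  # 100-gigabit PON, same split
```

At 32 customers, the 40-gigabit system leaves a little headroom over 1 gigabit-per-second each.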

Silicon photonics adds off-chip comms to a RISC-V processor

"For the first time a system - a microprocessor - has been able to communicate with the external world using something other than electronics," says Vladimir Stojanovic, associate professor of electrical engineering and computer science at the University of California, Berkeley.

Vladimir Stojanovic

The microprocessor is the result of work that started at MIT nearly a decade ago as part of a project sponsored by the US Defense Advanced Research Projects Agency (DARPA) to investigate the integration of photonics and electronics for off-chip and even intra-chip communications.

The chip features a dual-core 1.65GHz RISC-V open instruction set processor and 1 megabyte of static RAM and integrates 70 million transistors and 850 optical components.

The work is also notable in that the optical components were developed without making any changes to an IBM 45nm CMOS process used to fabricate the processor. The researchers have demonstrated two of the processors communicating optically, with the RISC core on one chip reading and writing to the memory of the second device and executing programs such as image rendering.

This CMOS process approach to silicon photonics, dubbed 'zero-change' by the researchers, differs from that of the optical industry. So far silicon photonics players have customised CMOS processes to improve the optical components' performance. Many companies also develop the silicon photonics separately, using a trailing-edge 130nm or 90nm CMOS process while implementing the driver electronics on a separate chip using more advanced CMOS. That is because photonic devices such as a Mach-Zehnder modulator are relatively large and waste expensive silicon real-estate if implemented using a leading-edge process.

IBM is one player that has developed the electronics and optics on one chip using a 90nm CMOS process. However, the company says that the electronics use feature sizes closer to 65nm to achieve electrical speeds of 25 gigabit-per-second (Gbps), and being a custom process, it will only be possible to implement 50-gigabit rates using 4-level pulse amplitude modulation (PAM-4).

"Our approach is that photonics is sort of like a second-class citizen to transistors but it is still good enough," says Stojanovic. This way, photonics can be part of an advanced CMOS process.

Pursuing a zero-change process was first met with skepticism and involved significant work by the researchers to develop. "People thought that making no changes to the process would be super-restrictive and lead to very poor [optical] device performance," says Stojanovic. Indeed, the first designs produced didn't work. "We didn't understand the IBM process and the masks enough, or it [the etching] would strip off certain stuff we'd put on to block certain steps."

But the team slowly mastered the process, making simple optical devices before moving on to more complex designs. Now the team believes its building-block components such as its vertical grating couplers have leading-edge performance while its ring-resonator modulator is close to matching the optical performance of designs using custom CMOS processes.

"We are now reaping the benefits of this very precise process which others cannot do because they are operating at larger process nodes," says Stojanovic.

Silicon photonics design

The researchers use a micro ring-resonator for their modulator design. The ring-resonator is much smaller than a Mach-Zehnder design and is 10 microns in diameter. Stojanovic says the dimensions of the vertical grating couplers are 10 to 20 microns while the silicon waveguides are 0.5 microns.

Photonic components are big relative to transistors, but for the links, it is the transistors that occupy more area than the photonics. "You can pack a lot of utilisation in a very small chip area," he says.

A key challenge with a micro ring-resonator is ensuring its stability. As the name implies, modulation of light occurs when the device is in resonance but this drifts with temperature, greatly impairing its performance.

Stojanovic cites how even the bit sequence can affect the modulator's temperature. "Given the microprocessor data is uncoded, you can have random bursts of zeros," he says. "When it [the modulator] drops the light, it self-heats: if it is modulating a [binary] zero it gets heated more than letting a one go through."

The researchers have had to develop circuitry that senses the bit-sequence pattern and counteracts the ring's self-heating. But the example also illustrates the advantage of combining photonics and electronics. "If you have a lot of transistors next to the modulator, it is much easier to tune it and make it work," says Stojanovic.

A prototype set-up of the chip-to-chip interconnect using silicon photonics. Source: Vladimir Stojanovic

Demonstration

The team used two microprocessors - one CPU talking to the memory of the second chip 4m away. Two chips were used rather than one - going off-chip before returning - to prove that the communication was indeed optical since there is also an internal electrical bus on-chip linking the CPU and memory. "We wanted to demonstrate chip-to-chip because that is where we think the biggest bang for the buck is," says Stojanovic.

In the demonstration, a single laser operating at 1,183nm feeds the two paths linking the memory and processor. Each link is 2.5Gbps for a total bandwidth of 5Gbps. However, the microprocessor was clocked at one-eightieth of its 1.65GHz clock speed because only one wavelength was used to carry data. The microprocessor design can support 11 wavelengths for a total bandwidth of 55Gbps while the silicon photonics technology itself will support between 16 and 32 wavelengths overall.
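The bandwidth and clock figures above are consistent with each wavelength carrying 2.5Gbps on each of the two links. The sketch below works through the numbers under that assumption, which is an inference from the quoted totals rather than something the researchers state.

```python
LINK_RATE_GBPS = 2.5   # per link, per wavelength, as demonstrated
LINKS = 2              # the two paths between processor and memory


def total_bandwidth_gbps(wavelengths):
    """Aggregate bandwidth, assuming every wavelength runs at the demonstrated rate."""
    return wavelengths * LINKS * LINK_RATE_GBPS


def demo_clock_mhz(full_clock_ghz=1.65, divider=80):
    """Processor clock used in the demonstration, in MHz."""
    return full_clock_ghz * 1000 / divider


print(total_bandwidth_gbps(1))    # the single-wavelength demonstration
print(total_bandwidth_gbps(11))   # the 11 wavelengths the design supports
print(round(demo_clock_mhz(), 3))
```

The same function gives 80Gbps to 160Gbps for the 16 to 32 wavelengths the technology is said to support, again assuming the per-wavelength rate stays at 2.5Gbps.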

The group is already lab-testing a new iteration of the chip that promises to run the processor at full speed. The latest chip also features improved optical functions. "It has better devices all over the place: better modulators, photo-detectors and gratings; it keeps evolving," says Stojanovic.

Ayar Labs

Ayar Labs is a start-up still in stealth mode that has been established to use the zero-change silicon photonics to make interconnect chips for platforms in the data centre.

Stojanovic says the microprocessor demonstrator is an example of a product that is two generations beyond existing pluggable modules. Ayar Labs will focus on on-board optics, what he describes as the next generation of product. On-board optics sit on a card, close to the chip. Optics integrated within the chip will eventually be needed, he says, but only once applications require greater bandwidth and denser interfaces.

"One of the nice things is that this technology is malleable; it can be put in various form factors to satisfy different connectivity applications," says Stojanovic.

What Ayar Labs aims to do is replace the QSFP pluggable modules on the face plate of a switch with one chip next to the switch silicon that can have a capacity of 3.2 terabits. "We can ship that kind of bandwidth from a single chip," says Stojanovic.

Such a chip promises cost reduction given how a large part of the cost in optical design is in the packaging. Here, the packaging of 32 separate 100 Gigabit Ethernet QSFP modules can be replaced with a single optical module using the chip. "That cost reduction is the key to enabling deeper penetration of photonics, and has been a barrier for silicon photonics [volumes] to ramp," says Stojanovic.

There is also the issue of how to couple the laser to the silicon photonics chip. Stojanovic says such high-bandwidth interface ICs require multiple lasers: "You definitely don't want hundreds of lasers flip-chipped on top [of the optical chip], you have to have a different approach".

Ayar Labs has not detailed what it is doing but Stojanovic says that its approach is more radical than simply sharing one laser across a few links. "Think about the laser as the power supply to the box, or maybe a few racks," he says.

The start-up is also exploring using standard polycrystalline silicon rather than the more specialist silicon-on-insulator wafers.

"Poly-silicon is much more lossy, so we have had to do special tricks in that process to make it less so," says Stojanovic. The result is that changes need to be made to the process; this will not be a zero-change process. But Stojanovic says the changes are few in number and relatively simple, and that the approach has already been shown to work.

Having such a process available would allow photonics to be added to transistors made using the most advanced CMOS processes - 16nm and even 7nm. "Then silicon-on-insulator becomes redundant; that is our end goal," says Stojanovic.

Further information

Single-chip microprocessor that communicates directly using light, Nature, Volume 528, 24-31 December 2015