Imec gears up for the Internet of Things economy

It is the imec CEO's first trip to Israel, and around us the room is being prepared for an afternoon of presentations that the Belgian nanoelectronics research centre will give on its work in areas such as the Internet of Things and 5G wireless to an audience of Israeli start-ups and entrepreneurs.

Luc Van den hove

iMinds merger

Imec announced in February its plan to merge with iMinds, a Belgian research centre specialising in systems software and security, a move that will add 1,000 staff to imec's 2,500 researchers.

At first glance, the world-renowned semiconductor process technology R&D centre joining forces with a systems house is a surprising move. But for Van den hove, it is a natural development as the company continues to grow from its technology origins to include systems-based research.

"Over the last 15 years we have built up more activities at the system level," he says. "These include everything related to the Internet of Things - our wireless and sensor programmes; we have a very strong programme on biomedical applications, which we sometimes refer to as the Internet of Healthy Things - wearable and diagnostics devices, but always leveraging our core competency in process technology."

Imec is also active in energy research: solar cells, power devices and now battery technology.

For many of these systems R&D programmes, an increasing challenge is managing data. "If we think about wearable devices, they collect data all the time, so we need to build up expertise in data fusion and data science topics," says Van den hove. There is also the issue of data security, especially regarding personal medical data. Many security solutions are embedded in software, says Van den hove, but hardware also plays a role.

"It just so happens that next to imec we have iMinds, a research centre that has top expertise in these areas [data and security]," says Van den hove. "Rather than compete with them, we felt it made more sense to just merge."

The merger also reflects the emergence of the Internet of Things economy, he says, where not only will there be software development but also hardware innovation: "You need much more hardware-software co-development". The merger is expected to be completed in the summer.

Internet of Things

Imec expects the Internet of Things to generate massive amounts of data, and more and more intelligence will need to be embedded at different levels in the network.

"Some people refer to it as the fog - you have the cloud and then the fog, which brings more data processing into the lower parts of the network," says Van den hove. "We refer to it as the Intuitive Internet of Things with intelligence being built into the sensor nodes, and these nodes will understand what the user needs; it is more than just measuring and sending everything to the cloud."

Van den hove says some in the industry believe that these sensors will be made in cheap, older-generation chip technologies and that processing will be performed in data centres. "We don't think so," he says. "And as we build in more intelligence, the sensors will need more sophisticated semiconductors."

Imec's belief is that the Internet of Things will be a driver for the full spectrum of semiconductor technologies. "This includes the high-end [process] nodes, not only for servers but for sophisticated sensors," he says.

"In the previous waves of innovation, you had the big companies dominating everything," he says. "With the Internet of Things, we are going to address so many different markets - all the industrial sectors will get innovation from the Internet of Things." There will be opportunities for the big players but there will also be many niche markets addressed by start-ups and small to medium enterprises.

Imec's trip to Israel is in response to the country's many start-ups and its entrepreneurship. "Especially now with our wish to be more active in the Internet of Things, we are going to work more with start-ups and support them," he says. "I believe Israel is an extremely interesting area for us in the broad scope of the Internet of Things: in wireless and all these new applications."

Herzliya

Semiconductor roadmap

Van den hove's background is in semiconductor process technology. He highlights the consolidation going on in the chip industry due, in part, to the CMOS feature nodes becoming more complex and requiring greater R&D expenditure to develop, but this is a story he has heard throughout his career.

"It always becomes more difficult - that is Moore's law - and [chip] volumes compensate for those challenges," says Van den hove. When he started his career 30 years ago the outlook was that Moore's law would end in 10 years' time. "If I talk to my core CMOS experts, the outlook is still 10 years," he says.

Imec is working on 7nm, 5nm and 3nm feature-size CMOS process technologies. "We see a clear roadmap to get there," he says. He expects the third dimension and stacking will be used more extensively, but he does not foresee new materials such as graphene or carbon nanotubes being needed for the 3nm process node.

Imec is pursuing finFET transistor technology and this could be turned 90 degrees to become a vertical nanowire, he says. "But this is going to be based on silicon and maybe some compound semiconductors like germanium and III-V materials added on top of silicon." The imec CEO believes carbon-based materials will appear only after 3nm.

"The one thing that has to happen is that we have a cost-effective lithography technique and so EUV [extreme ultraviolet lithography] needs to make progress," he says. Here too he is upbeat, pointing to the significant progress made in this area in the last year. "I think we are now very close to real introduction and manufacturing," he says.

Silicon Photonics

Silicon photonics is another active research area with some 200 staff at imec and at its associated laboratory at Ghent university. "We see strong opportunities for optical interconnect and that is one of our biggest activities, but also sensor technology, particularly in the medical domain," he says.

Imec views silicon photonics as an evolutionary technology. "Photonics is being used at a certain level of a system now and, step by step, it will get closer to the chip," he says. "We are focussing more on when it will be on the board and on the chip."

Van den hove talks about integrating the photonics on a silicon interposer platform to create a cost-effective solution for the printed circuit board and chip levels. For him, first applications of such technology will be at the highest-end technologies of the data centre.

For biomedical sensors, silicon photonics is a very good detector technology. "You can grow molecules on top of the photonic components and by shining light through them you can perform spectroscopy; the solution is extremely sensitive and we are using it for many biomedical applications," he says.

Looking forward, what most excites Van den hove is the opportunity semiconductor technology has to bring innovation to so many industrial sectors: "Semiconductors have created a fantastic revolution in the way we communicate and compute but now we have an opportunity to bring innovation to nearly all segments of industry".

He cites medical applications as one example. "We all know people that have suffered from cancer in our family, if we can make a device that would detect cancer at a very early stage, it would have an enormous impact on our lives."

Van den hove says that while semiconductor technology is mature, it will now miniaturise some diagnostic devices, just as happened with the cellular phone.

"We are developing a single chip that will allow us to do a full blood analysis in 10 minutes," he says. DNA sequencing will also become a routine procedure when visiting a doctor. "That is all going to be enabled by semiconductor technology."

Such developments also reflect how various technologies are coming together: the combination of photonics with semiconductors, and the computing now available.

Imec is developing a disposable chip designed to find tumour cells in the blood that requires the analysis of thousands of images per second. "The chip is disposable but the calculations will be done on a computer, but it is only with the most advanced technology that you can do that," says Van den hove.

OIF document aims to spur line-side innovation

The CFP2-ACO. Source: OIF

The pluggable CFP2-ACO houses the coherent optics, known as the analogue front end. The components include the tuneable lasers, modulation, coherent receiver, and the associated electronics - the drivers and the trans-impedance amplifier. The Implementation Agreement also includes the CFP2-ACO's high-speed electrical interface connecting the optics to the coherent DSP chip that sits on the line card.

One historical issue involving the design of innovative optical components into systems has been their long development time, says Ian Betty of Ciena, an OIF board member and editor of the CFP2-ACO Implementation Agreement. The lengthy development time made it risky for systems vendors to adopt such components as part of their optical transport designs. Now, with the CFP2-ACO, much of that risk is removed; system vendors can choose from a range of CFP2-ACO suppliers based on the module's performance and price.

Implementation Agreement

Much of the two-year effort developing the Implementation Agreement involved defining the management interface to the optical module. "This is different from our historical management interfaces," says Betty. "This is much more bare metal control of components."

For 7x5-inch and 4x5-inch MSA transponders, the management interface is focused on system-level parameters, whereas for the CFP2-ACO, lower-level optical parameters are accessible given the module's analogue transmission and receive signals.

"A lot of the management is to interrogate information about power levels, or adjusting transfer functions with pre-emphasis, or adjusting drive levels on drivers internal to the device, or asking for information: 'Have you received my RF signal?'," says Betty. "It is very much a lower-level interface because you have separated between the DSP and the optical interface."

The Implementation Agreement's definitions for the CFP2-ACO are also deliberately abstract. The optical technology used is not stated, nor is the module's data rate. "The module has no information associated with the system level - if it is 16-QAM or QPSK [modulation] or what the dispersion is," says Betty.

This is a strength, he says, as it makes the module independent of a data rate and gives it a larger market because any coherent ASIC can make use of this analogue front end. "It lets the optics guys innovate, which is what they are good at," says Betty.

Innovation

The CFP2-ACO is starting to be adopted in a variety of platforms. Arista Networks has added a CFP2-ACO line card to its 7500 data centre switches while several optical transport vendors are using the module for their data centre interconnect platforms.

One obvious way optical designers can innovate is by adding flexible modulation formats to the CFP2-ACO. Coriant's Groove G30 data centre interconnect platform uses CFP2-ACOs that support polarisation-multiplexed, quadrature phase-shift keying (PM-QPSK), polarisation-multiplexed, 8-state quadrature amplitude modulation (PM-8QAM) and PM-16QAM, delivering 100, 150 and 200 gigabit-per-second transmission, respectively. Coriant says the CFP2-ACOs it uses are silicon photonics and indium phosphide based.

Cisco Systems uses CFP2-ACO modules for its first data centre interconnect product, the NCS 1002. The system can use a CFP2-ACO with a higher baud rate to deliver 250 gigabit-per-second using a single carrier and PM-16QAM.
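The line rates quoted above follow from the bits each constellation carries per symbol, doubled for the two polarisations. A minimal sketch, assuming a net (payload) symbol rate of 25 gigabaud for the 100, 150 and 200 gigabit cases and 31.25 gigabaud for the 250 gigabit case; neither figure is given in the article, and real modules run at a higher line symbol rate to carry forward-error correction and framing overhead:

```python
import math

POLARISATIONS = 2

# Assumed net (payload) symbol rate in gigabaud; not stated in the article.
ASSUMED_NET_GBAUD = 25.0


def net_rate_gbps(constellation_points, net_gbaud=ASSUMED_NET_GBAUD):
    """Payload rate of a polarisation-multiplexed carrier in gigabit/s."""
    bits_per_symbol = math.log2(constellation_points)
    return POLARISATIONS * bits_per_symbol * net_gbaud


for name, points in [("PM-QPSK", 4), ("PM-8QAM", 8), ("PM-16QAM", 16)]:
    print(name, net_rate_gbps(points), "Gbit/s")

# A higher (assumed) net symbol rate lifts PM-16QAM to 250 Gbit/s.
print("PM-16QAM at 31.25 Gbaud:", net_rate_gbps(16, 31.25), "Gbit/s")
```

The same module can therefore serve several rates because the format and symbol rate are set by the DSP on the line card, not by the analogue front end.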

The CFP2-ACO enables a much higher density line-side solution than other available form factors. The Groove G30, for example, fits eight such modules on one rack-unit line card. "That is the key enabler that -ACOs give," says Betty.

And being agnostic to a particular DSP, the CFP2-ACO enlarges the total addressable market. Betty hopes that by being able to sell the CFP2-ACO to multiple systems vendors, the line-side optical module players can drive down cost.

Roadmap

Betty says that the CFP2-ACO may offer the best route to greater overall line side capacity rather than moving to a smaller form factor module in future. He points out that in the last decade, the power consumption of the optics has gone down from some 16W to 12W. He does not foresee the power consumption coming down further to the 6W region that would be needed to enable a CFP4-ACO. "The size [of the CFP4] with all the technology available is very doable," says Betty. "But there is not an obvious way to make it [the optics] 6W."

The key issue is the analogue interface which determines what baud rate and what modulation or level of constellation can be put through a module. "The easiest way to lump all that together is with an implementation penalty for the optical front end," says Betty. "If you make the module smaller, you might have a higher implementation penalty, and with this penalty, you might not be able to put a higher constellation through it."

In other words, there are design trade-offs: the data rates supported by the CFP2 modules may achieve a higher overall line-side rate than more, smaller modules, each supporting a lower maximum data rate due to a higher implementation penalty.

"What gets you the ultimate maximum density of data rate through a given volume?" says Betty. "It is not necessarily obvious that making it smaller is better."

Could a CFP2-ACO support 32-QAM and 64-QAM? "The technical answer is what is the implementation penalty of the module?" says Betty. This is something that the industry will answer in time.

"This isn't the same as client optics where there is a spec, you do the spec and there are no brownie points for doing better than the spec, so all you can compete on is cost," says Betty. "Here, you can take your optical innovation and compete on cost, and you can also compete by having lower implementation penalties."

Start-up Sicoya targets chip-to-chip interfaces

“The trend we are seeing is the optics moving very close to the processor,” says Sven Otte, Sicoya’s CEO.

Sicoya was founded last year and raised €3.5 million ($3.9 million) towards the end of 2015. Many of the company’s dozen staff previously worked at the Technical University of Berlin. Sicoya expects to grow the company’s staff to 20 by the year end.

Otte says a general goal shared by silicon photonics developers is to combine the optics with the processor but that the industry is not there yet. “Both are different chip technologies and they are not necessarily compatible,” he says. “Instead we want the ASPIC very close to the processor or even co-packaged in a system-in-package design.”

Vertical-cavity surface-emitting lasers (VCSELs) are used for embedded optics placed alongside chips. VCSELs are inexpensive to make, says Otte, but they need to be packaged with driver chips. A VCSEL also needs to be efficiently coupled to the fibre, which requires separate lenses. “These are hand-made transceivers with someone using a microscope to assemble,” says Otte. “But this is not scalable if you are talking about hundreds of thousands or millions of parts.”

He cites the huge numbers of Intel processors used in servers. “If you want to put an optical transceiver next to each of those processors, imagine doing that with manual assembly,” says Otte. “It just does not work; not if you want to hit the price points.”

In contrast, using silicon photonics requires two separate chips. The photonics is made using an older CMOS process with 130nm or 90nm feature sizes due to the relatively large dimensions of the optical functions, while a more advanced CMOS process is used to implement the electronics - the control loops, high-speed drivers and the amplifiers - associated with the optical transceiver. If an advanced CMOS process is used to implement both the electronics and optics on the one chip, the photonics dominates the chip area.

“If you use a sophisticated CMOS process then you pay all the money for the electronics but you are really using it for the optics,” says Otte. “This is why recently the two have been split: a sophisticated CMOS process for the electronics and a legacy, older process for the optics.”

Sicoya is adopting a single-chip approach, using a 130nm silicon germanium BiCMOS process technology for the electronics and photonics, due to its tiny silicon photonics modulator. “Really it is an electronics chip with a little bit of optics,” says Otte.

Modulation

The start-up does not use a traditional Mach-Zehnder modulator or the much smaller ring-resonator modulator. The basic concept of the ring resonator is that by varying the refractive index of the ring waveguide, it can build up a large intensity of light, starving light in an adjacent coupled waveguide. This blocking and passing of light is what is needed for modulation.

The size of the ring resonator is a big plus but its operation is highly temperature dependent. “One of its issues is temperature control,” says Otte. “Each degree change impacts the resonant frequency [of the modulator].” Moreover, the smaller the ring-resonator design, the more sensitive it becomes. “You may shrink the device but then you need to add a lot more [controlling] circuitry,” he says.

Stefan Meister, Sicoya’s CTO, explains that there needs to be a diode with a ring resonator to change the refractive index to perform the modulation. The diode must be efficient; otherwise the resonance region is narrow and hence more sensitive to temperature change.

Sicoya has developed its own modulator which it refers to as a node-matched diode modulator. The modulator uses a photonic crystal, a device with a periodic structure which blocks certain frequencies of light. Sicoya’s modulator acts like a Fabry-Perot resonator and uses an inverse spectrum approach. “It has a really efficient diode inside so that the Q factor of the resonator can be really low,” says Meister. “So the issue of temperature is much more relaxed.” The Q factor refers to the narrowness of the resonance region.

Operating based on the inverse spectrum also results in Sicoya’s modulator having a much lower loss, says Meister.

Sicoya is working with the German foundry IHP to develop its technology and claims its modulator has been demonstrated operating at 25 gigabit and at 50 gigabit. But the start-up is not yet ready to detail its ASPIC designs nor when it expects to launch its first product.

5G wireless

However, the CEO believes such technology will be needed with the advent of 5G wireless. The 10x increase in broadband bandwidth that the 5G cellular standard promises, coupled with the continual growth of mobile subscribers globally, will hugely impact data centres.

“You can’t make a data centre ten times larger, and data centres can’t become ten times more expensive,” says Otte. “You need to do something new.”

This is where Sicoya believes its ASPICs can play a role.

“You can forward or process ten times the data and you are not paying more for it,” says Otte. “The transceiver chip is not really more expensive than the driver chip.”

Ciena shops for photonic technology for line-side edge

Part 3: Acquisitions and silicon photonics

Ciena is to acquire the high-speed photonics components division of Teraxion for $32 million. The deal includes 35 employees and Teraxion’s indium phosphide and silicon photonics technologies. The systems vendor is making the acquisition to benefit its coherent-based packet-optical transmission systems in metro and long-haul networks.

Sterling Perrin

“Historically Ciena has been a step ahead of others in introducing new coherent capabilities to the market,” says Ron Kline, principal analyst, intelligent networks at market research company, Ovum. “The technology is critical to own if they want to maintain their edge.”

“Bringing in-house not everything, just piece parts, are becoming differentiators,” says Sterling Perrin, senior analyst at Heavy Reading.

Ciena designs its own WaveLogic coherent DSP-ASICs but buys its optical components. Having its own photonics design team with expertise in indium-phosphide and silicon photonics will allow Ciena to develop complete line-side systems, optimising the photonics and electronics to benefit system performance.

Owning both the photonics and electronics also promises to reduce power consumption and improve line-side port density.

“These assets will give us greater control of a critical roadmap component for the advancement of those coherent solutions,” a Ciena spokesperson told Gazettabyte. “These assets will give us greater control of a critical enabling technology to accelerate the pace of our innovation and speed our time-to-market for key packet-optical solutions.”

The OME 6500 packet optical platform remains a critical system for Ciena in terms of revenues, according to a recent report from the financial analyst firm, Jefferies.

Ciena have always been do-it-yourself when it comes to optics, and it is an area where they have a huge heritage, says Perrin: “So it is an interesting admission that they need somebody else to help them.” It is the silicon photonics technology not just photonic integration that is of importance to Ciena, he says.

Coherent competition

Infinera, which designs its own photonic integrated circuits (PICs) and coherent DSP-ASIC, recently detailed its next-generation coherent toolkit prior to the launch of its terabit PIC and coherent DSP-ASIC. The toolkit uses sub-carriers, parallel processing soft-decision forward-error correction (SD-FEC) and enhanced modulation techniques. These improvements reflect the tighter integration between photonics and electronics for optical transport.

Cisco Systems is another system vendor that develops its own coherent ASICs and has silicon photonics expertise with its Lightwire acquisition in 2012, as does Coriant which works with strategic partners while using merchant coherent processors. Huawei has photonic integration expertise with its acquisitions of indium phosphide UK specialist CIP Technologies in 2012 and Belgian silicon photonics start-up Caliopa in 2013.

Cisco may have started the ball rolling when they acquired silicon photonics start-up Lightwire, and at the time they were criticised for doing so, says Perrin: “This [Ciena move] seems to be partially a response, at least a validation, to what Cisco did, bringing that in-house.”

Optical module maker Acacia also has silicon photonics and DSP-ASIC expertise. Acacia has launched 100 gigabit and 200-400 gigabit CFP optical modules that use silicon photonics.

Companies like Coriant and lots of mid-tier players can use Acacia and rely on the expertise the start-up is driving in photonic integration on the line side, says Perrin. “Now Ciena wants to own the whole thing which, to me, means they need to move more rapidly, probably driven by the Acacia development.”

Teraxion

Ciena has been working with Canadian firm Teraxion for a long time and the two have a co-development agreement, says Perrin.

Teraxion was founded in 2000 during the optical boom, specialising in dispersion compensation modules and fibre Bragg gratings. In recent years, it has added indium-phosphide and silicon photonics expertise and in 2013 acquired Cogo Optronics, adding indium-phosphide modulator technology.

Teraxion detailed an indium phosphide modulator suited to 400 gigabit at ECOC 2015. Teraxion said at the time that it had demonstrated a 400-gigabit single-wavelength transmission over 500km using polarisation-multiplexed, 16-QAM (PM-16QAM), operating at a symbol rate of 56 gigabaud.

It also has a coherent receiver technology implemented using silicon photonics.

The remaining business of Teraxion, which covers fibre-optic communication, fibre lasers and optical-sensing applications and employs 120 staff, will continue in Québec City.

Next-generation coherent adds sub-carriers to capabilities

Part 2: Infinera's coherent toolkit

Source: Infinera

Infinera has detailed coherent technology enhancements implemented using its latest-generation optical transmission technology. The system vendor is still to launch its newest photonic integrated circuit (PIC) and FlexCoherent DSP-ASIC but has detailed features the CMOS and indium phosphide ICs support.

The techniques highlight the increasing sophistication of coherent technology and an ever tighter coupling between electronics and photonics.

The company has demonstrated the technology, dubbed the Advanced Coherent Toolkit, on a Telstra 9,000km submarine link spanning the Pacific. In particular, the demonstration used matrix-enhanced polarisation-multiplexed, binary phase-shift keying (PM-BPSK) that enabled the 9,000km span without optical signal regeneration.

Using the ACT is expected to extend the capacity-reach product for links by the order of 60 percent. Indeed the latest coherent technology with transmitter-based digital signal processing delivers 25x the capacity-reach of 10-gigabit wavelengths using direct-detection, the company says.

Infinera’s latest PIC technology includes polarisation-multiplexed, 8-quadrature amplitude modulation (PM-8QAM) and PM-16QAM schemes. Its current 500-gigabit PIC supports PM-BPSK, PM-3QAM and PM-QPSK. The PIC is expected to support a 1.2-terabit super-channel and using PM-16QAM could deliver 2.4 terabit.

“This [the latest PIC] is beyond 500 gigabit,” confirms Pravin Mahajan, Infinera’s director of product and corporate marketing. “We are talking terabits now.”

Sterling Perrin, senior analyst at Heavy Reading, sees the Infinera announcement as less PIC related and more an indication of the expertise Infinera has been accumulating in areas such as digital signal processing.

Nyquist sub-carriers

Infinera is the first to announce the use of sub-carriers. Instead of modulating the data onto a single carrier, Infinera is using multiple Nyquist sub-carriers spread across a channel.

Using a flexible grid, the sub-carriers span a 37.5GHz-wide channel. In the example shown above, six are used although the number is variable depending on the link. The sub-carriers occupy 35GHz of the band while 2.5GHz is used as a guard band.

“Information you were carrying across one carrier can now be carried over multiple sub-carriers,” says Mahajan. “The benefit is that you can drive this as a lower-baud rate.”
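The channel arithmetic can be sketched as follows. The 37.5GHz channel, the 2.5GHz guard band and the six sub-carriers come from the text; the equal split between sub-carriers and the 33 gigabaud aggregate symbol rate are illustrative assumptions, since Infinera has not detailed its implementation:

```python
CHANNEL_GHZ = 37.5   # flexible-grid channel width (from the article)
GUARD_GHZ = 2.5      # guard band within the channel (from the article)


def subcarrier_width_ghz(count, channel_ghz=CHANNEL_GHZ, guard_ghz=GUARD_GHZ):
    """Spectrum per sub-carrier, assuming the usable band is split equally."""
    if count < 1:
        raise ValueError("at least one sub-carrier is required")
    return (channel_ghz - guard_ghz) / count


def subcarrier_gbaud(aggregate_gbaud, count):
    """Symbol rate per sub-carrier for a given aggregate symbol rate."""
    if count < 1:
        raise ValueError("at least one sub-carrier is required")
    return aggregate_gbaud / count


# Hypothetical aggregate symbol rate, chosen only for illustration.
ASSUMED_AGGREGATE_GBAUD = 33.0

print(subcarrier_width_ghz(6))                       # GHz per sub-carrier
print(subcarrier_gbaud(ASSUMED_AGGREGATE_GBAUD, 6))  # Gbaud per sub-carrier
```

With six sub-carriers, each one runs at a sixth of the symbol rate a single carrier would need, which is the lower baud rate Mahajan refers to.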

Lowering the baud rate increases the tolerance to non-linear channel impairments experienced during optical transmission. “The electronic compensation is also much less than what you would be doing at a much higher baud rate,” says Abhijit Chitambar, Infinera’s principal product and technology marketing manager.

While the industry is looking to increase overall baud rate to increase capacity carried and reduce cost, the introduction of sub-carriers benefits overall link performance. “You end up with a better Q value,” says Mahajan. The ‘Q’ refers to the Quality Factor, a measure of the transmission’s performance. The Q Factor combines the optical signal-to-noise ratio (OSNR) and the optical bandwidth of the photo-detector, providing a more practical performance measure, says Infinera.

Infinera has not detailed how it implements the sub-carriers. But it appears to be a combination of the transmitter PIC and the digital-to-analogue converter of the coherent DSP-ASIC.

It is not clear what the hardware implications of adopting sub-carriers are and whether the overall DSP processing is reduced, lowering the ASIC’s power consumption. But using sub-carriers promotes parallel processing and that promises chip architectural benefits.

“Without this [sub-carrier] approach you are talking about upping baud rate,” says Mahajan. “We are not going to stop increasing the baud rate, it is more a question of how much you can squeeze with what is available today.”

SD-FEC enhancements

The FlexCoherent DSP also supports enhanced soft-decision forward-error correction (SD-FEC) including the processing of two channels that need not be contiguous.

SD-FEC delivers enhanced performance compared to conventional hard-decision FEC. Hard-decision FEC decides whether a received bit is a 1 or a 0; SD-FEC also uses a confidence measure as to the likelihood of the bit being a 1 or 0. This additional information results in a net coding gain of 2dB compared to hard-decision FEC, benefiting reach and extending the life of submarine links.
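The difference between the two decision types can be illustrated with a textbook case. This is a minimal sketch, assuming BPSK over an additive white Gaussian noise channel with bit 0 sent as +1 and bit 1 as -1; it is not a description of Infinera's decoder. The hard decision keeps only the sign of the received sample, while the soft decision keeps a log-likelihood ratio (LLR) whose magnitude is the confidence measure:

```python
def hard_decision(sample):
    """Return the most likely bit: 0 for a non-negative sample, else 1."""
    return 0 if sample >= 0 else 1


def log_likelihood_ratio(sample, noise_variance):
    """LLR ln(P(bit=0 | sample) / P(bit=1 | sample)) for BPSK over AWGN.

    Assumes equally likely bits and the mapping 0 -> +1, 1 -> -1.
    """
    if noise_variance <= 0:
        raise ValueError("noise variance must be positive")
    return 2.0 * sample / noise_variance


# Two received samples that a hard decision treats identically.
for sample in (0.05, 1.2):
    print(sample, hard_decision(sample), log_likelihood_ratio(sample, 0.5))
```

Both samples decode to bit 0, but the second carries a far larger LLR, so a soft-decision decoder will trust it more when correcting errors.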

By pairing two channels, Infinera shares the FEC codes. By pairing a strong channel with a weak one and sharing the codes, some of the strength of the strong signal can be traded to bolster the weaker one, extending its reach or even allowing for a more advanced modulation scheme to be used.

The SD-FEC can also trade performance with latency. SD-FEC uses as much as a 35 percent overhead and this adds to latency. Trading the two supports those routes where low latency is a priority.

Matrix-enhanced PSK

Infinera has implemented a technique that enhances the performance of PM-BPSK used for the longest transmission distances such as sub-sea links. The matrix-enhancement uses a form of averaging that adds about a decibel of gain. “Any innovation that adds gain to a link, the margin that you give to operators is always welcome,” says Mahajan.

The toolkit also supports the fine-tuning of channel widths. This fine-tuning allows the channel spacing to be tailored for a given link as well as better accommodating the Nyquist sub-carriers.

Product launch

The company has not said when it will launch its terabit PIC and FlexCoherent DSP.

“Infinera is saying it is the first announcing Nyquist sub-carriers, which is true, but they don’t give a roadmap when the product is coming out,” says Heavy Reading’s Perrin. “I suspect that Nokia [Alcatel-Lucent], Ciena and Huawei are all innovating on the same lines.”

There could be a slew of announcements around the time of the OFC show in March, says Perrin: “So Infinera could be first to announce but not necessarily first to market.”

BT makes plans for continued traffic growth in its core

Part 1

Kevin Smith: “A lot of the work we are doing with the trials have demonstrated we can scale our networks gracefully rather than there being a brick wall of a problem.”

BT is confident that its core network will accommodate the expected IP traffic growth for the next decade. Traffic in BT’s core is growing at between 35 and 40 percent annually, compared to the global average growth rate of 20 to 30 percent. BT attributes the higher growth to the rollout of fibre-based broadband across the UK.

The telco is deploying 100-gigabit wavelengths in high-traffic areas of its network. “These are key sites where we're running out of wavelengths such that we need to implement higher-speed ones,” says Kevin Smith, research leader for BT’s transport networks. The operator is now trialling 200-gigabit wavelengths using polarisation multiplexing, 16-quadrature amplitude modulation (PM-16QAM).

Adopting higher-order modulation increases capacity and spectral efficiency but at the expense of a loss in system performance which can be significant.

Systems vendors use polarisation-multiplexed, quadrature phase-shift keying (PM-QPSK) for 100-gigabit wavelengths. Moving to PM-16QAM doubles the bits on the wavelength but the received data has less tolerance to noise. The result is a 6-decibel loss compared to PM-QPSK, such that the transmission distance drops to a quarter. If PM-QPSK spans a 4,000km link, using PM-16QAM the reach on the same link is only 1,000km.
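The jump from a 6-decibel penalty to a quarter of the reach can be checked with a few lines of arithmetic. The following is a rough sketch only, assuming reach scales inversely with the required signal-to-noise ratio (a simplification of real link engineering); the function names are illustrative and not from any vendor tool.

```python
def reach_factor(penalty_db: float) -> float:
    """Fraction of the original reach that remains after a penalty in dB.

    ASSUMPTION: reach is inversely proportional to the required
    signal-to-noise ratio, so a dB penalty converts directly to a ratio.
    """
    return 10 ** (-penalty_db / 10)


def reduced_reach_km(base_reach_km: float, penalty_db: float) -> float:
    """Reach of the higher-order format, given the reach of the baseline."""
    return base_reach_km * reach_factor(penalty_db)


if __name__ == "__main__":
    # PM-QPSK reach of 4,000km and a 6dB penalty for PM-16QAM, as in the text.
    print(reach_factor(6.0))
    print(reduced_reach_km(4000, 6.0))
```

Under this assumption a 6dB penalty leaves about a quarter of the reach, so a 4,000km PM-QPSK link shrinks to roughly 1,000km with PM-16QAM.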

The transmitted capacity can also be increased by using pulse-shaping at the transmitter to cram a wavelength into a narrower channel. BT’s existing optical network uses fixed 50GHz-wide channels. But in a recent network trial with Huawei, a 3 terabit super-channel was transmitted over a 360km link using a flexible grid.

The super-channel comprised 15 channels, each carrying 200 gigabit using PM-16QAM. Using the flexible grid, each carrier occupied a 33.5GHz channel, increasing fibre capacity by a factor of 1.5 compared to a 50GHz fixed-grid. “For 16-QAM, it [33.5GHz] is pretty close to the limit,” says Smith.
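The trial figures can be reproduced with simple arithmetic. This is a minimal sketch using only the numbers quoted above; the helper names are hypothetical.

```python
def superchannel_capacity_gbps(carriers: int, rate_gbps: int) -> int:
    """Total capacity of a super-channel made of equal-rate carriers."""
    return carriers * rate_gbps


def superchannel_width_ghz(carriers: int, spacing_ghz: float) -> float:
    """Spectrum occupied when carriers sit side by side at a given spacing."""
    return carriers * spacing_ghz


def grid_gain(fixed_ghz: float, flex_ghz: float) -> float:
    """Capacity gain from packing the same carriers into narrower channels."""
    return fixed_ghz / flex_ghz


if __name__ == "__main__":
    print(superchannel_capacity_gbps(15, 200))  # gigabit per second
    print(superchannel_width_ghz(15, 33.5))     # GHz of spectrum used
    print(grid_gain(50, 33.5))                  # gain over a 50GHz fixed grid
```

Fifteen 200-gigabit carriers give the 3 terabit total, and 50GHz divided by 33.5GHz gives the factor of about 1.5.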

Another way to boost the carrier’s data as well as reduce system cost is to up the signalling rate. Current optical transport systems use a 30Gbaud symbol rate. Here, two carriers each using PM-16QAM are needed to deliver 400 gigabit. Doubling the symbol rate to 60Gbaud enables a single 400 gigabit wavelength. Doubling the baud rate also halves a platform’s transponder count, reducing the overall cost-per-bit, and increases platform density.
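The relationship between symbol rate, modulation format and carrier count can be sketched as follows. The 20 percent overhead for forward error correction and framing is an assumption chosen so that 30Gbaud PM-16QAM yields the 200 gigabit per carrier quoted in the text; actual overheads vary by vendor. All names are illustrative.

```python
import math

# Bits carried per symbol, per polarisation, for each modulation format.
BITS_PER_SYMBOL = {"QPSK": 2, "16QAM": 4}


def raw_rate_gbps(baud_gbaud: int, modulation: str, polarisations: int = 2) -> int:
    """Line rate before overhead: symbol rate x bits per symbol x polarisations."""
    return baud_gbaud * BITS_PER_SYMBOL[modulation] * polarisations


def net_rate_gbps(baud_gbaud: int, modulation: str, overhead_pct: int = 20) -> float:
    """Payload rate after removing overhead.

    ASSUMPTION: overhead_pct=20 is a round figure, not a value from the article.
    """
    return raw_rate_gbps(baud_gbaud, modulation) * 100 / (100 + overhead_pct)


def carriers_needed(target_gbps: int, baud_gbaud: int, modulation: str) -> int:
    """Number of carriers (and hence transponders) needed for a target rate."""
    return math.ceil(target_gbps / net_rate_gbps(baud_gbaud, modulation))


if __name__ == "__main__":
    for baud in (30, 60):
        print(baud, net_rate_gbps(baud, "16QAM"), carriers_needed(400, baud, "16QAM"))
```

With these assumptions, 400 gigabit needs two PM-16QAM carriers at 30Gbaud but only one at 60Gbaud, which is the halving of transponder count described above.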

“Increasing the baud rate is the most structurally-efficient way to accommodate the high speed,” says Smith. Going to 16QAM increases the data that is carried but at the expense of reach. By increasing the baud rate, reach can be extended while also keeping the modulation order at a lower level, he says.

BT says it is seeing signs of such ‘flexrate’ transponders that can adapt modulation format and baud rate. “This is a very interesting area we can mine,” says Smith. The fundamental driver is about reducing cost but also giving BT more flexibility in its network, he says.

Traffic growth

Coping with traffic growth is a constant challenge, says BT.

“I’m not worried about a capacity crunch,” says Smith. “A lot of the work we are doing with the trials have demonstrated we can scale our networks gracefully rather than there being a brick wall of a problem.”

The operator is confident that 25 to 30 terabit of traffic can be squeezed into the C-band using flexgrid and narrower bands. Beyond that, BT says broadening the spectral window using additional spectral bands such as the L-band could boost a fibre’s capacity to 100 terabit. Vendors are already looking at extending the spectral window, says BT.

Sliceable transponders

BT is also part of longer-term research exploring an extension to the ‘flexrate’ transponder, dubbed the sliceable bit rate variable transponder (S-BVT).

“It is very much early days but the idea is to put multiple modulators on the same big super transponder so that it can kick out super-channels that can be provisioned on demand,” says Andrew Lord, head of optical research at BT.

The large multi-terabit super-channel would be sent out and sliced further down the network by flexible grid wavelength-selective switches such that parts of the super-channel would end up at different destinations. “You don’t need all that capacity to go to one other node but you might need it to go to multiple nodes,” says Lord.

Such a sliceable transponder promises several benefits. One is an ability to keep repartitioning the multi-terabit slice based on demand. “It is a good thing if we see that kind of dynamics happening, but not fast dynamics,” says Lord. The repartitioning would more likely be occasional, adding extra capacity between nodes based on demand. Accordingly, the sliced multi-terabit super-channel would end up at fewer destinations over time.

The sliceable transponder concept also promises cost reduction through greater component integration.

BT stresses this is still early research but such a transponder could end up in the network in five years’ time.

Space-division multiplexing

Another research area that promises to increase significantly the overall capacity of a fibre is space-division multiplexing (SDM).

SDM promises to boost the capacity by a factor of between 10 and 100 through the adoption of parallel transmission paths. The simplest way to create such parallel paths is to bundle several standard single-mode fibres in a cable. But speciality fibre could also be used, either multi-core or multi-mode.

BT says it is not researching spatial multiplexing.

“I’m very much more interested in how we use the fibre we have already got,” says Lord. The priority is pushing channels together as close as possible and getting the 25 terabit figure higher, as well as exploring the L-band. “That is a much more practical way to go forward,” says Lord.

However, BT welcomes the research into SDM. “What it [SDM] is pushing into the industry is a knowledge about how to do integration and the expertise that comes out of that is still really valid,” says Lord. “As it is, I don’t see how it fits.”

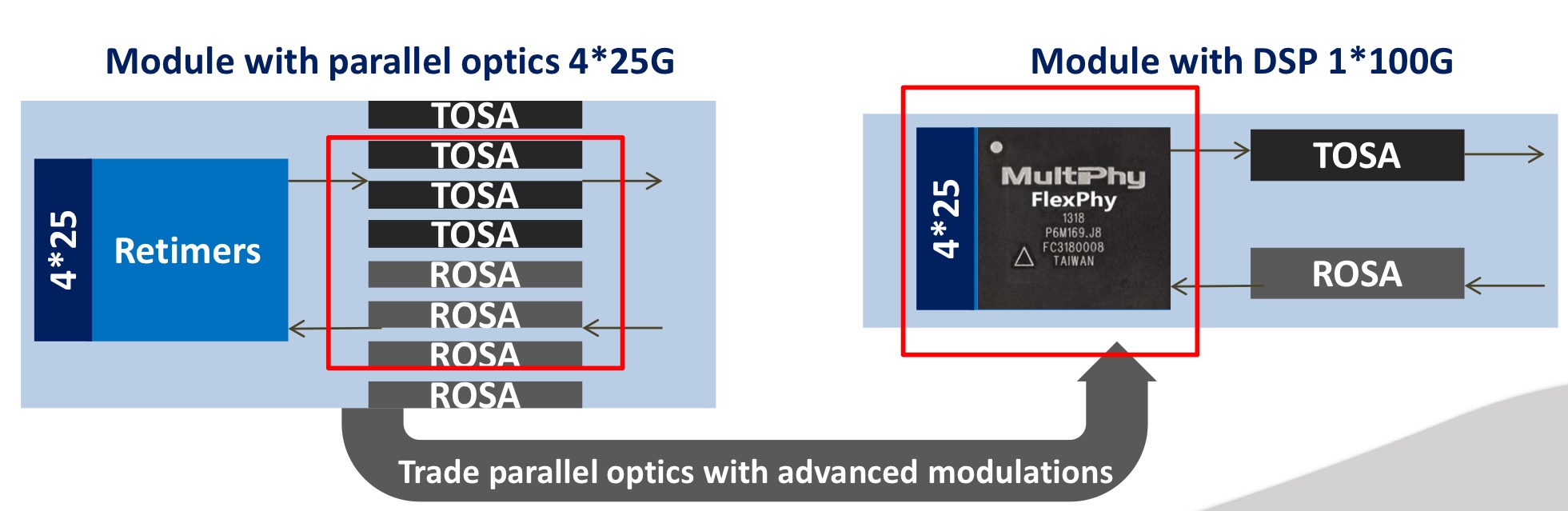

MultiPhy raises $17M to develop 100G serial interfaces

MultiPhy is developing chips to support serial 100-gigabit-per-second transmission using 25-gigabit optical components. The design will enable short reach links within the data centre and up to 80km point-to-point links for data centre interconnect.

Source: MultiPhy

“It is not the same chip [for the two applications] but the same technology core,” says Avi Shabtai, the CEO of MultiPhy. The funding will be used to bring products to market as well as expand the company’s marketing arm.

100 gigabit serial

The IEEE has specified 100-gigabit lanes as part of its ongoing 400 Gigabit Ethernet standardisation work. “It is the first time the IEEE has accepted 100 gigabit on a single wavelength as a baseline for a standard,” says Shabtai.

The IEEE work has defined 4-by-100 gigabit with a reach of 500 metres using four-level pulse-amplitude modulation (PAM-4) that encodes 2 bits per symbol. This means that optics and electronics operating at 50 gigabaud can be used. However, MultiPhy has developed digital signal processing technology that allows the optics to be overdriven such that 25-gigabit optics can be used to deliver the 50 gigabaud required.

“There is a huge benefit in moving to a single-wavelength technology,” says Shabtai. “You throw out pretty much three-quarters of the optics.”

The chip MultiPhy is developing, dubbed FlexPhy, supports the CAUI-4 (4-by-28 gigabit) interface, a 4:1 multiplexer and 1:4 demultiplexer, PAM-4 operating at 56 gigabaud and the digital signal processing.
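The symbol rates quoted for PAM-4 follow directly from the 2 bits carried per symbol. The sketch below checks the arithmetic using only figures from the text; the function names are hypothetical.

```python
import math


def bits_per_symbol(levels: int) -> int:
    """Bits encoded by one symbol of an amplitude scheme with `levels` levels."""
    return int(math.log2(levels))


def symbol_rate_gbaud(bit_rate_gbps: float, levels: int) -> float:
    """Symbol rate needed to carry a given bit rate."""
    return bit_rate_gbps / bits_per_symbol(levels)


if __name__ == "__main__":
    # A 100-gigabit lane using PAM-4.
    print(symbol_rate_gbaud(100, 4))
    # The CAUI-4 electrical side: four lanes of 28 gigabit, multiplexed 4:1.
    caui4_gbps = 4 * 28
    print(caui4_gbps, symbol_rate_gbaud(caui4_gbps, 4))
```

A 100-gigabit payload needs 50 gigabaud with PAM-4, while the full 112 gigabit from the CAUI-4 interface corresponds to the 56 gigabaud quoted for FlexPhy.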

The optics - a single transmitter optical sub-assembly (TOSA) and a single receiver optical sub-assembly (ROSA) - and the FlexPhy chip will fit within a QSFP28 module. “Taking into account that you have one chip, one laser and one photo-diode, these are pretty much the components you already have in an SFP module,” says Shabtai. “Moving from a QSFP form factor to an SFP is not that far.”

MultiPhy says new-generation switches will support 128 SFP28 ports, each at 100 gigabit, equating to 12.8 terabits of switching capacity.

Using digital signal processing also benefits silicon photonics. “Integration is much denser using CMOS devices with silicon photonics,” says Shabtai. DSP also improves the performance of silicon photonics-based designs, addressing issues such as linearity and sensitivity. “A lot of these things can be solved using signal processing,” he says.

FlexPhy will be available for customers this year but MultiPhy would not say whether it already has working samples.

MultiPhy raised $7.2 million venture capital funding in 2010.

Books in 2015 - Final Part

Sterling Perrin, senior analyst, Heavy Reading

My ambitions to read far exceed my actual reading output, and because I have such a backlog of books on my reading list, I generally don’t read the latest.

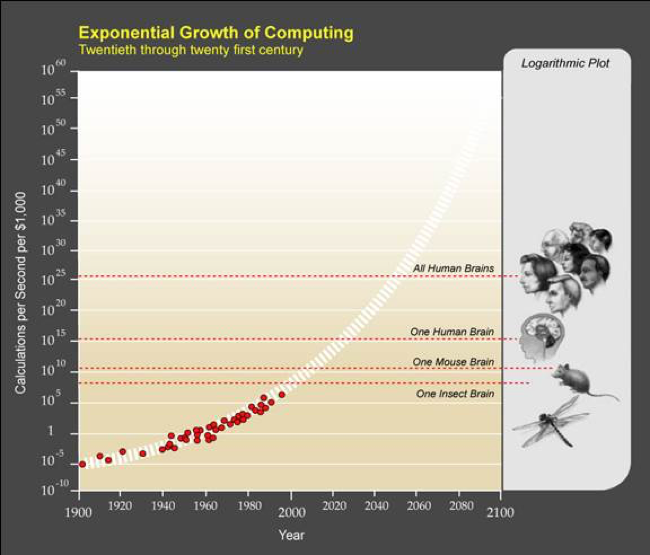

Source: The Age of Spiritual Machines

I have long been fascinated by a graphic from futurist Ray Kurzweil which depicts the exponential growth of computing and plots it against living intelligence. The graphic is from Kurzweil’s 1999 book on artificial intelligence The Age of Spiritual Machines: When Computers Exceed Human Intelligence, which I read in 2015.

The book contains several predictions, but this one about computer intelligence vastly exceeding collective human intelligence in our own lifetimes interested me most. Kurzweil translates the brain power of living things into computational speeds and storage capacity and plots them against exponentially growing computing power, based on Moore’s law and its expected successors.

He writes that by 2020, a $1,000 personal computer will have enough speed and memory to match the human brain. But science fiction (and beyond) becomes reality quickly because computational power continues to grow exponentially while collective human intelligence continues on its plodding linear progression. The inevitable future, in Kurzweil’s scenario, blends human intelligence and AI to the point where by the end of this century, it’s no longer possible or relevant to distinguish between the two.

There have been many criticisms of Kurzweil’s theory and methodologies on AI evolution, but reading a futures book 15 years after publication gives you the ability to validate its predictions. On this, Kurzweil has been quite amazing, including self-driving cars, headset-based virtual reality gaming (which I experienced this year at the mall), tablet computing coming of age in 2009, and the coming end of Moore’s law, to name a few in this book that struck me as astoundingly accurate.

Of newer books, I read Yuval Noah Harari’s Sapiens: A Brief History of Humankind (originally published in Hebrew in 2011 but first published in English in 2014). I was attracted to this book because it provides a succinct summary of millions of years of human history and, from its high-level vantage point, is able to draw fascinating conclusions about why our human species of sapiens has been so successful.

Harari’s thesis is that it’s not our thumbs, or the invention of fire, or even our languages that led to our dominance over all animals and other humans but rather the creation of fictional constructs – enabled by our languages – that unified sapiens in collective groups vastly larger than otherwise achievable.

Here, the book can strike some nerves because all religions qualify as fictional constructs, but he’s really talking about all intangible constructs under which humans can massively align themselves, including nations, empires, corporations, money and even capitalism. Without fictional constructs, he writes, it’s hard for humans to form meaningful social organizations beyond 150 people – a number also famously cited by Malcolm Gladwell in The Tipping Point.

In fiction, I completed the fifth and final published installment of George RR Martin’s A Song of Ice and Fire series, A Dance with Dragons. I’ve been drawn to this series in large part, I think, because the simpler medieval setting is such a stark contrast to the ultra-high-tech world in which we live and work.

I thought I had timed the reading to coincide with the release of the 6th book, The Winds of Winter, but I’ve heard that the book is delayed again. Fortunately, I’m still two seasons behind on the HBO series.

Aaron Zilkie, vice president of engineering at Rockley Photonics

I recommend the risk assessment principles in the book, Projects at Warp-Speed with QRPD: The Definitive Guidebook to Quality Rapid Product Development by Adam Josephs, Cinda Voegtli, and Orion Moshe Kopelman.

These principles provide valuable one-stop teaching of fundamental principles for the often under-utilised and taken-for-granted engineering practice of technology risk management and prioritisation. This is an important subject for technology and R&D managers in small-to-medium size technology companies to include in their thinking as they perform the challenging task of selecting new technologies to make next-generation products and product improvements.

The book Who: The A Method for Hiring by Geoff Smart and Randy Street teaches good practices for focused hiring, to build A-teams in technology companies, a topic of critical importance for the rapid success of start-up companies that is not taught in schools.

Tom Foremski, SiliconValleyWatcher

Return of a King: The Battle for Afghanistan, 1839-42 by William Dalrymple. This is one of the best reads, an amazing story! Only one survivor on an old donkey.

Books in 2015 - Part 2

Yuriy Babenko, senior network architect, Deutsche Telekom

The books I particularly enjoyed in 2015 dealt with creativity, strategy, and social and organisational development.

People working in IT are often right-brained people; we try to make our decisions rationally, verifying hypotheses and building scenarios and strategies. An alternative that challenges this status quo and looks at issues from a different perspective is Thinkertoys by Michael Michalko.

Thinkertoys develops creativity using helpful tools and techniques that show problems in a different light that can help a person stumble unexpectedly on a better solution.

Some of the methods are well known, such as mind-mapping and "what if" techniques, but there are a number of intriguing new approaches. One of my favourites this year, dubbed Clever Trevor, is that specialisation limits our options, whereas many breakthrough ideas come from non-experts in a particular field. It is thus essential to talk to people outside your field and bounce ideas off them. It leads to the surprising realisation that many problems are common across fields.

The book offers a range of practical exercises, so grab them and apply.

I found From Third World to First: The Singapore Story - 1965-2000 by Lee Kuan Yew, the founder of modern Singapore, inspiring.

Over 700 pages, Mr. Lee describes the country’s journey to ‘create a First World oasis in a Third World region’. He never tired of learning, benchmarking and optimising. The book offers perspectives on how to stay confident no matter what happens, and how to focus on and execute the set strategy; on the importance of reputation and established ties; and on fact-based reasoning and argumentation.

Lessons can be drawn here for either organisational development or business development in general. You need to know your strengths, focus on them, not rush and become world class in them. To me, there is a direct link to a resource-based approach, or strategic capability analysis here.

The massive Strategy: A History by Lawrence Freedman promises to be the reference book on strategy, strategic history and strategic thinking.

Starting with the origins of strategy including sources such as The Bible, the Greeks and Sun Tzu, the author covers systematically, and with a distinct English touch, the development of strategic thinking. There are no mathematics or decision matrices here, but one is offered comprehensive coverage of relevant authors, thinkers and methods in a historical context.

Thus, for instance, Chapter 30 (yes, there are a lot of chapters) offers an account of the main thinkers of strategic management of the 20th century including Peter Drucker, Kenneth Andrews, Igor Ansoff and Henry Mintzberg.

The book offers a reference for any strategy-related questions, in both personal and business life, with at least 100 pages of annotated, detailed footnotes. I will keep this book alive on my table for months to come.

The last book to highlight is Continuous Delivery by Jez Humble and David Farley.

The book is a complete resource for software delivery in a continuous fashion. Describing the whole lifecycle from initial development, prototyping, testing and finally releasing and operations, the book is a helpful reference in understanding how companies as diverse as Facebook, Google, Netflix, Tesla or Etsy develop and deliver software.

With roots in the Toyota Production System, continuous delivery emphasises empowerment of small teams, the creation of feedback processes, continuous practice, the highest level of automation and repeatability.

Perhaps the most important recommendation is that for a product to be successful, ‘the team succeeds or fails’. Given the levels of ever-rising complexity and specialisation, the recommendation should be taken seriously.

Roy Rubenstein, Gazettabyte

I asked an academic friend to suggest a textbook that he recommends to his students on a subject of interest. Students don’t really read textbooks anymore, he said, they get most of their information from the Internet.

How can this be? Textbooks are the go-to resource to uncover a new topic. But then I was at university before the age of the Internet. His comment also made me wonder if I could do better finding information online.

Two textbooks I got in 2015 concerned silicon photonics. The first, entitled Handbook of Silicon Photonics, provides a comprehensive survey of the subject from noted academics involved in this emerging technology. At 800-pages-plus, the volume packs a huge amount of detail. My one complaint with such compilation books is that they tend to promote the work and viewpoints of the contributors. That said, the editors Laurent Vivien and Lorenzo Pavesi have done a good job and while the chapters are specialist, effort is made to retain the reader.

The second silicon photonics book I’d recommend, especially for someone interested in circuit design, is Silicon Photonics Design: From Devices to Systems by Lukas Chrostowski and Michael Hochberg. The book looks at the design and modelling of the key silicon photonics building blocks and assumes the reader is familiar with Matlab and EDA tools. More emphasis is given to the building blocks than systems but the book is important for two reasons: it is neither a textbook nor a compendium of the latest research, and is written for engineers to get them designing. [1]

I also got round to reading a reflective essay by Robert W. Lucky included in a special 100th anniversary edition of the Proceedings of the IEEE magazine, published in 2012. Lucky started his career as an electrical engineer at Bell Labs in 1961. In his piece he talks about the idea of exponential progress and cites Moore’s law. “When I look back on my frame of reference in 1962, I realise that I had no concept of the inevitability of constant change,” he says.

1962 was fertile with potential. Can we say the same about technology today? Lucky doesn’t think so but accepts that maybe such fertility is only evident in retrospect: “We took the low-hanging fruit. I have no idea what is growing further up the tree.”

A common theme of some of the books I read in the last year is storytelling.

I read journalist Barry Newman’s book News to Me: Finding and Writing Colorful Feature Stories that gives advice on writing. Newman has been writing colour pieces for the Wall Street Journal for over four decades: “I’m a machine operator. I bang keys to make words.”

I also recommend Storytelling with Data: A Data Visualization Guide for Business Professionals by Cole Nussbaumer Knaflic about how best to present one’s data.

I discovered Abigail Thomas’s memoirs A Three Dog Life: A Memoir and What Comes Next and How to Like It. She writes beautifully and a chapter of hers may only be a paragraph. Storytelling need not be long.

Three other books I hugely enjoyed were Atul Gawande's Being Mortal: Medicine and What Matters in the End, Roger Cohen’s The Girl from Human Street: A Jewish Family Odyssey and the late Oliver Sacks’ autobiography On the Move: A Life. Sacks was a compulsive writer and made sure he was never far away from a notebook and pen, even when going swimming. A great habit to embrace.

Lastly, if I had to choose one book - a profound work and a book of our age - it is One of Us: The Story of Anders Breivik and the Massacre in Norway by Åsne Seierstad.

Further Information

[1] There is an online course that includes silicon photonics design, fabrication and data analysis and which uses the book.

Arista adds coherent CFP2 modules to its 7500 switch

Martin Hull

Several optical equipment makers have announced ‘stackable’ platforms specifically to link data centres in the last year.

Infinera’s Cloud Xpress was the first while Coriant recently detailed its Groove G30 platform. Arista’s announcement offers data centre managers an alternative to such data centre interconnect platforms by adding dense wavelength-division multiplexing (DWDM) optics directly onto its switch.

Customers investing in an optical solution now have an all-in-one alternative to an optical transport chassis or the newer stackable data centre interconnect products, says Martin Hull, senior director product management at Arista Networks. Insert two such line cards into the 7500 and you have 12 ports of 100 gigabit coherent optics, eliminating the need for the separate optical transport platform, he says.

The larger 11RU 7500 chassis has eight card slots such that the likely maximum number of coherent cards used in one chassis is four or five - 24 or 30 wavelengths - given that 40 or 100 Gigabit Ethernet client-side interfaces are also needed. The 7500 can support up to 96 interfaces at 100 Gigabit Ethernet (GbE).
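The wavelength counts follow from the six coherent ports per line card implied by "two such line cards ... 12 ports". A minimal sketch of the arithmetic, with hypothetical helper names:

```python
PORTS_PER_COHERENT_CARD = 6  # implied by the text: two cards give 12 ports
CHASSIS_SLOTS = 8            # the larger 11RU 7500 chassis


def coherent_wavelengths(cards: int) -> int:
    """Number of 100-gigabit coherent wavelengths for a given card count."""
    return cards * PORTS_PER_COHERENT_CARD


def coherent_capacity_gbps(cards: int, rate_gbps: int = 100) -> int:
    """Aggregate line-side capacity of the coherent cards."""
    return coherent_wavelengths(cards) * rate_gbps


def client_slots_left(cards: int) -> int:
    """Slots remaining for 40 or 100 Gigabit Ethernet client-side cards."""
    return CHASSIS_SLOTS - cards


if __name__ == "__main__":
    for cards in (2, 4, 5):
        print(cards, coherent_wavelengths(cards),
              coherent_capacity_gbps(cards), client_slots_left(cards))
```

Four or five coherent cards give the 24 or 30 wavelengths quoted, leaving four or three slots for client-side interfaces.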

Arista says the coherent line card meets a variety of customer needs. Large enterprises such as financial companies may want two to four 100 gigabit wavelengths to connect their sites in a metro region. In contrast, cloud providers require a dozen or more wavelengths. “They talk about terabit bandwidth,” says Hull.

As well as the CFP2-ACO modules, the card also features six coherent DSP-ASICs. The DSPs support 100 gigabit dual-polarisation, quadrature phase-shift keying (DP-QPSK) modulation but do not support the more advanced quadrature amplitude modulation (QAM) schemes that carry more bits per wavelength. The CFP2-ACO line card has a spectral efficiency that enables up to 96 wavelengths across the fibre's C-band.

Did Arista consider using CFP coherent optical modules that support 200 gigabit, and even 300 and 400 gigabit line rates using 8- and 16-QAM? “With the CFP2-ACO, the DSP is outside the module,” says Hull. “That allows us to multi-source the optics.”

The line card also includes 256-bit MACsec encryption. “Enterprises and cloud providers would love to encrypt everything - it is a requirement,” says Hull. “The problem is getting hold of 100-gigabit encryptors.” The MACsec silicon encrypts each packet sent, avoiding having to use a separate encryption platform.

CFP4-ACO and COBO

As for denser CFP4-ACO coherent modules, the next development after the CFP2-ACO, Hull says it is still too early, as it is for the 400 gigabit on-board optics being developed by COBO, which are also intended to support coherent. “There is a lot of potential but it is still very early for COBO,” he says.

“Where we are today, we think we are on the cutting edge of what can be delivered on a line card,” says Hull. “Getting everything onto that line card is an engineering achievement.”

Future developments

Arista does not make its own custom ASICs or develop optics for its switch platforms. Instead, the company uses merchant switch silicon from the likes of Broadcom and Intel.

According to Hull, such merchant silicon continues to improve, adding capabilities to Arista’s top-of-rack ‘leaf’ switches and its more powerful ‘spine’ switches such as the 7500. This allows the company to make denser, higher-performance platforms that also scale when coupled with software and networking protocol developments.

Arista claims many of the roles performed by traditional routers can now be fulfilled by the 7500 such as peering, the exchange of large routing table information between routers using the Border Gateway Protocol (BGP). “[With the 7500], we can have that peering session; we can exchange a full set of routes with that other device,” says Hull.

The company uses what it calls selective route download, where the long list of routes is filtered such that the switch hardware is only programmed with the routes it needs to reach. Hull cites as an example a content delivery site that sends content to subscribers. The subscribers are typically confined to a known geographic region. “I don’t need to have every single Internet route in my hardware, I just need the routes to reach that state or metro region,” says Hull.

By having merchant silicon that supports large routing tables coupled with software such as selective route download, customers can use a switch to do the router’s job, he says.
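The idea of filtering a full routing table down to the routes that matter can be illustrated with a short sketch. This is not Arista's implementation: the function name, the use of covering prefixes as the filter, and the documentation address ranges are all assumptions made for illustration.

```python
import ipaddress


def select_routes(received: list[str], allowed: list[str]) -> list[str]:
    """Keep only received prefixes that fall inside an allowed covering prefix.

    ASSUMPTION: the region of interest is described by a list of covering
    prefixes; a real switch would apply its own filtering policy.
    """
    allowed_nets = [ipaddress.ip_network(a) for a in allowed]
    selected = []
    for prefix in received:
        net = ipaddress.ip_network(prefix)
        # Compare only networks of the same IP version, then test containment.
        if any(net.version == a.version and net.subnet_of(a) for a in allowed_nets):
            selected.append(prefix)
    return selected


if __name__ == "__main__":
    # The full table learned from the peer (documentation prefixes only).
    full_table = ["198.51.100.0/25", "198.51.100.128/25",
                  "203.0.113.0/24", "192.0.2.0/24"]
    # Prefixes standing in for the subscribers' metro region.
    region = ["198.51.100.0/24"]
    print(select_routes(full_table, region))
```

All routes are still exchanged with the peer in this sketch; only the selected subset would be programmed into hardware, which is the point Hull makes about not needing every Internet route in the switch.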

Arista says that in 2016 and 2017 it will continue to introduce leaf and spine switches that enable data centre customers to further scale their networks. In September Arista launched Broadcom Tomahawk-based switches that enable the transition from 10 gigabit server interfaces to 25 gigabit and the transition from 40 to 100 gigabit uplinks.

Longer term, there will be 50 GbE and iterations of 400 and one terabit Ethernet, says Hull. And all this relates to the switch silicon. At present 3.2 terabit switch chips are common and already there is a roadmap to 6.4 and even 12.8 terabits by both increasing the chip’s pin count and using PAM-4 alongside the 25 gigabit signalling to double input/output again. A 12.8 terabit switch may be a single chip, says Hull, or it could be multiple 3.2 terabit building blocks integrated together.
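The two doublings on the roadmap can be worked through numerically. The sketch assumes a 3.2 terabit chip is built from 128 serial lanes at 25 gigabaud, which is a common arrangement but is not stated in the article; the names are illustrative.

```python
def switch_capacity_gbps(lanes: int, lane_gbaud: int, bits_per_symbol: int) -> int:
    """Aggregate input/output of a switch chip in gigabit per second."""
    return lanes * lane_gbaud * bits_per_symbol


if __name__ == "__main__":
    base_lanes = 128  # ASSUMPTION: lane count of a 3.2 terabit chip
    nrz, pam4 = 1, 2  # bits per symbol for two-level and four-level signalling

    print(switch_capacity_gbps(base_lanes, 25, nrz))       # today's chip
    print(switch_capacity_gbps(base_lanes * 2, 25, nrz))   # double the pin count
    print(switch_capacity_gbps(base_lanes * 2, 25, pam4))  # add PAM-4 as well
```

Doubling the lane count takes 3.2 terabit to 6.4, and applying PAM-4 at the same 25 gigabaud signalling rate doubles it again to 12.8.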

“It is not just a case of more ports on a box,” says Hull. “The boxes have to be more capable from a hardware perspective so that the software can harness that.”