High fives: 5 Terabit OTN switching and 500 Gig super-channels.

Infinera has announced a core network platform that combines Optical Transport Network (OTN) switching with dense wavelength division multiplexing (DWDM) transport. "We are looking at a system that integrates two layers of the network," says Mike Capuano, vice president of corporate marketing at Infinera.

"This is 100Tbps of non-blocking switching, all functioning as one system. You just can't do that with merchant silicon."

Mike Capuano, Infinera

The DTN-X platform is based on Infinera's third-generation photonic integrated circuit (PIC) that supports five, 100Gbps coherent channels.

Each DTN-X platform can deliver 5 Terabits-per-second (Tbps) of non-blocking OTN switching using an Infinera-designed ASIC. Ten DTN-X platforms can be combined to scale the OTN switching and transport capacity to 50Tbps currently.

Infinera also plans to add Multiprotocol Label Switching (MPLS) to turn the DTN-X into a hybrid OTN/ MPLS switch. With the next upgrades to the PIC and the switching, the ten DTN-X platforms will scale to 100Tbps optical transport and 100Tbps OTN and MPLS switching capacity.

The platform is being promoted by Infinera as a way for operators to tackle network traffic growth and support developments such as cloud computing where applications and content increasingly reside in the network. "What that means [for cloud-based services to work] is a network with huge capacity and very low latency," says Capuano.

Platform details

The 5x100Gbps PIC supports what Infinera calls a 500Gbps 'super-channel'. Each super-channel is a multi-carrier implementation comprising five, 100Gbps wavelengths. Combined with OTN, the 500Gbps super-channel can be filled with 1, 10, 40 and 100 Gigabit streams (SONET/SDH, Ethernet, video etc). Moreover, there is no spectral efficiency penalty: the super-channel uses 250GHz of fibre spectrum, provisioning five 50GHz-wide, 100Gbps wavelengths at a time.
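The spectral efficiency claim can be checked with simple arithmetic. The sketch below is only illustrative: the function names and the client mix are hypothetical, and client rates are treated as nominal figures with OTN framing overhead ignored.

```python
# Back-of-envelope check of the super-channel figures quoted in the article.
# Function and variable names are illustrative, not Infinera terminology.

def super_channel(carriers: int, rate_gbps: float, width_ghz: float):
    """Return (capacity in Gbps, spectrum in GHz, spectral efficiency in b/s/Hz)."""
    capacity_gbps = carriers * rate_gbps
    spectrum_ghz = carriers * width_ghz
    return capacity_gbps, spectrum_ghz, capacity_gbps / spectrum_ghz


def nominal_fill_gbps(client_counts: dict) -> float:
    """Sum nominal client rates in Gbps; OTN framing overhead is ignored (assumption)."""
    return sum(rate * count for rate, count in client_counts.items())


capacity, spectrum, efficiency = super_channel(carriers=5, rate_gbps=100, width_ghz=50)
single_carrier_efficiency = 100 / 50  # one 100Gbps wavelength in a 50GHz slot

# Hypothetical client mix: 2 x 100G + 5 x 40G + 9 x 10G + 10 x 1G
mix = {100: 2, 40: 5, 10: 9, 1: 10}

print(capacity, spectrum, efficiency)             # capacity, spectrum, b/s/Hz
print(efficiency == single_carrier_efficiency)    # no penalty versus a single carrier
print(nominal_fill_gbps(mix) <= capacity)         # the mix fits the super-channel
```

Under these assumptions the super-channel carries the same bits per hertz as a single 100Gbps wavelength in a 50GHz slot, which is the sense in which there is no spectral efficiency penalty.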

"We have seen 40 and 100Gbps come on the market and they are definitely helping with fibre capacity issues," says Capuano. "But they are more expensive from a cost-per-bit perspective than 10Gbps." By introducing the 500Gbps PIC, Infinera says it is reducing the cost-per-bit of high-speed optical transport.

DTN-X: shown are 5 line and tributary cards top and bottom with switching cards in the centre of the chassis. Source: Infinera

Integrating OTN switching within the platform results in the lowest cost solution and is more efficient when compared to multiplexed transponders (muxponders) configured manually, or an external OTN switch which must be optically connected to the transport platform.

The DTN-X also employs Generalised MPLS (GMPLS) software. "GMPLS makes it easy to deploy networks and services with point-and-click provisioning," says Capuano.

Each DTN-X line card supports a 500Gbps PIC but the chassis backplane is specified at 1Tbps, ready for Infinera's next-generation 10x100Gbps PIC that will upgrade the DTN-X to a 10Tbps system. "We have already presented our test results for our 1Tbps PIC back in March," says Capuano. The fourth-generation PIC, estimated around 2014 (based on a company slide although Infinera has made no public comment), will support a 1Tbps super-channel.
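The scaling figures quoted in the article follow from multiplying the PIC capacity by the number of line-card slots and the number of chassis. The following is a rough sketch; the slot count of ten is an assumption inferred from 5Tbps divided by 500Gbps per line card, and the function name is hypothetical.

```python
# Scaling arithmetic for the DTN-X figures in the article.
# LINE_CARD_SLOTS is an assumption (5Tbps / 500Gbps per card), not a quoted spec.
LINE_CARD_SLOTS = 10
MAX_CHASSIS = 10  # the article says ten platforms can be combined


def system_capacity_tbps(pic_gbps: float, slots: int = LINE_CARD_SLOTS, chassis: int = 1) -> float:
    """Capacity in Tbps for a given per-card PIC rate, slot count and chassis count."""
    return pic_gbps * slots * chassis / 1000


for label, pic_gbps in (("500Gbps PIC", 500), ("1Tbps PIC", 1000)):
    single = system_capacity_tbps(pic_gbps)
    combined = system_capacity_tbps(pic_gbps, chassis=MAX_CHASSIS)
    print(f"{label}: {single} Tbps per chassis, {combined} Tbps across {MAX_CHASSIS} chassis")
```

With these assumptions the 500Gbps PIC gives 5Tbps per chassis and 50Tbps across ten, and the 1Tbps PIC gives 10Tbps and 100Tbps, matching the numbers Infinera quotes.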

Adding MPLS will add the transport capability of the protocol to the DTN-X. "You will have MPLS transport, OTN switching and DWDM all in one platform," says Capuano.

OTN switching is the priority of the tier-one operators to carry and process their SONET/SDH traffic; adding MPLS will bring extra traffic-processing capabilities to the system, he says.

Infinera says that by eventually integrating MPLS switching into the optical transport network, operators will be able to bypass expensive router ports and simplify their network operation.

Performance

Infinera says that the DTN-X 5Tbps performance does not dip however the system is configured: whether solely as a switch (all line card slots filled with tributary modules), mixed DWDM/ switching (half DWDM/ half tributaries, for example) or solely as a DWDM platform. Depending on the cards in the DTN-X platform, the transport/ switching configuration can be varied but the 5Tbps I/O capacity is retained. Infinera says other switches on the market do lose I/O capacity as the interface mix is varied.

Overall, Infinera claims the platform requires half the power of competing solutions and takes up a third less space.

The DTN-X will be available in the first half of 2012.

Analysis

Gazettabyte asked several market research firms about the significance of the DTN-X announcement and the importance of combining OTN, DWDM and soon MPLS within one platform.

Ovum

Ron Kline, principal analyst, and Dana Cooperson, vice president, of the network infrastructure practice

"MPLS switching is setting up a very interesting competitive dynamic among vendors"

Dana Cooperson, Ovum

The DTN-X is a platform for the largest service providers and their largest sites, says Ovum.

It sees the DTN-X in the same light as other integrated OTN/ WDM platforms such as Huawei's OSN 8800, Nokia Siemens Networks' hiT 7100, Alcatel-Lucent's 1830 PSS and Tellabs' 7100 OTS.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added," says Kline. "NSN is also claiming it will add MPLS to the 7100. Once MPLS is added, then you have the big packet optical transport box that Verizon wants."

The DTN-X platform will boost the business case for 100 Gig in a similar way to how Infinera's current PIC has done at 10 Gig. "The others will be forced to lower price," says Kline.

Having GMPLS is important, especially if there is a need for dynamic bandwidth allocation, but how much it matters depends on the customer. "When you start digging, it's hard to find large-scale implementations of GMPLS," says Kline.

The Ovum analysts stress that the need for OTN in the core depends on the customer. Content service providers like Google couldn't care less about OTN. "It's really an issue for multi-service providers like BT and AT&T," says Cooperson.

There is a consensus about the need for MPLS in the core. "Different service providers are likely to take different approaches - some might prefer an integrated box and others might not, it depends on their business," she says. "I think MPLS switching is setting up a very interesting competitive dynamic among vendors that focus on IP/MPLS, those that focus on optical, and those that are trying to do both [optical and IP/MPLS]."

Ovum highlights several aspects regarding the DTN-X's claimed performance.

"Assuming it performs as advertised, this should finally give Infinera what it needs to be of real interest to the tier-ones," says Cooperson. "The message of scalability, simplicity, efficiency, and profitability is just what service providers want to hear."

Cooperson also highlights Infinera's approach to optical-electrical-optical conversion and the benefit this could deliver at line speeds greater than 100Gbps.

At present ROADMs are being upgraded to support flexible spectrum channel configurations, also known as gridless. This is to enable future line speeds that will use more spectrum than current 50GHz DWDM channels. Operators want ROADMs that support flexible spectrum requirements but managing the network to support these variable width channels is still to be resolved.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added"

Ron Kline, Ovum

Infinera's approach is based on conversion to the electrical domain when dropping and regenerating wavelengths such that the issue of flexible channels does not arise or is at least forestalled. This, says Cooperson, could be Infinera's biggest point of differentiation.

"What impresses me is the 500Gbps super-channel using five, 100Gbps carriers and the size of the switch fabric," adds Kline. The 5Tbps switching performance also exceeds that of everyone else: "Alcatel-Lucent is closest with 4Tbps but most range from 1-3Tbps and top out at 3Tbps."

The ease of use is also a big deal. Infinera did very well in marketing rapid turn up: 10 Gig in 10 days for example, says Kline: "It looks like they will be able to do the same here with 100 Gig."

Infonetics Research

Andrew Schmitt, directing analyst, optical

"GMPLS isn't that important, yet."

The DTN-X is a WDM platform which optionally includes a switch fabric for carriers that want it integrated with the transport equipment, says Schmitt. Once MPLS is added, it has the potential to be a full-blown packet-optical system.

"[The announcement is] pretty significant though not unexpected," says Schmitt. "I think the key question is what it costs, and whether the 500G PIC translates into compelling savings."

Having MPLS support is important for some carriers such as XO Communications and Google but not for others.

Schmitt also says GMPLS isn't that important, yet. "Infinera's implementation of regen-rich networks should make their GMPLS implementation workable," he says. "It has been building networks like that for a while."

OTN in the core is still an open debate but any carrier that doesn't have the luxury of a homogenous data network needs it, he says.

Schmitt has yet to speak with carriers who have used the DTN-X: "I can't comment on claimed performance but like I said, cost is important."

ACG Research

Eve Griliches, managing partner

"Infinera has already introduced the 500G PIC, but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today"

The DTN-X is an OTN/ WDM platform awaiting label switch router (LSR) functionality, says Griliches: "With the LSR functionality it will be able to do statistical multiplexing for direct router connections."

Infinera has already introduced the 500 Gig PIC but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today. An OTN survey conducted last year by ACG Research found that the switch capacity sweet spot is between 4 and 8Tbps.

Griliches says that LSR-based products are taking time to incorporate WDM and OTN technologies, while it is unclear when the DTN-X will support MPLS to add LSR capabilities. The race is on as to who can integrate everything first, but DWDM and OTN before MPLS is the right direction for most tier-one operators, she says.

Infinera has over eight thousand of its existing DTNs deployed at 85 customers in 50 countries. The scale of the DTN-X will likely broaden Infinera's customer base to include tier-one operators, says Griliches.

ACG Research has heard positive feedback from operators it has spoken to. One stressed that the decreased port count due to the larger OTN cross-connect significantly improves efficiencies. Another operator said it would pick Infinera and said the beta version of the 500Gbps PIC is "working beautifully".

Rational and innovative times: JDSU's CTO Q&A Part II

"What happens after 100 Gig is going to be very interesting"

Brandon Collings, JDSU

How has JDS Uniphase (JDSU) adapted its R&D following the changes in the optical component industry over the last decade?

JDSU has been a public company for both periods [the optical boom of 1999-2000 and now]. The challenge JDSU faced in those times, when there was a lot of venture capital (VC) money flowing into the system, was that the money was sort of free money for these companies. It created an imbalance in that the money was not tied to revenue which was a challenge for companies like JDSU that tie R&D spend to revenue. You also have much more flexibility [as a start-up] in setting different price points if you are operating on VC terms.

The situation now is very straightforward, rational and predictable.

There is not a huge army of R&D going on. That lack of R&D does not speed up the industry but what it does do is allow those companies doing R&D - and there is still a significant number - a lot of focus and clarity. It also requires a lot of partnership between us, our customers [equipment makers] and operators. The people above us can't just sit back and pick and choose what they like today from myriad start-ups doing all sorts of crazy things.

We very much appreciate this rational time. Visions can be more easily discussed, things are more predictable and everyone is playing from a similar set of rules.

Given the changes at the research labs of system vendors and operators, is there a risk that insufficient R&D is being done, impeding optical networking's progress?

It is hard to say absolutely not, as fewer people doing things can slow things down. But the work those labs did covered a wide space, including outside of telecom.

There is still a sufficient critical mass of research at places like Alcatel-Lucent Bell Labs, AT&T and BT; there is increasingly work going on in new regions like Asia Pacific, and a lot more in and across Europe. It is also much more focussed - the volume of workers may have decreased but the task still remains in hand.

"There are now design tradeoffs [at speeds higher than 100Gbps] whereas before we went faster for the same distance"

How does JDSU foster innovation and ensure it is focussing on the right areas?

I can't say that we have at JDSU a process that ensures innovation. Innovation is fleeting and mysterious.

We stay very connected to our key customers who are more on the cutting edge. We have very good personal and professional relationships with their key people. We have the same type of relationship with the operators.

I and my team very regularly canvass and have open discussions about what is coming. What does JDSU see? What do you see? What technologies are blossoming? We talk through those sort of things.

That isn't where innovation comes from. But what that can do is sow the seeds for the opportunity for innovation to happen.

We take that information and cycle it through all our technology teams. The guys in the trenches - the material scientists, the free-space optics design guys - we try to educate them with as much of an understanding of the higher-level problems that ultimately their products, or the products they design into, will address.

What we find is that these guys are pretty smart. If you arm them with a wider understanding, you get a much more succinct and powerful innovation than if you try to dictate to a material scientist here is what we need, come back when you are done.

It is a loose approach, there isn't a process, but we have found that the more we educate our keys [key guys] to the wider set of problems and the wider scope of their product segments, the more they understand and the more they can connect their sphere of influence from a technology point of view to a new capability. We grab that and run with it when it makes sense.

It is all about communicating with our customers and understanding the environment and the problem, then spreading that as wide as we can so that the opportunity for innovation is always there. We then nurse it back into our customers.

Turning to technology, you recently announced the integration of a tunable laser into an SFP+, a product you expect to ship in a year. What platforms will want a tunable laser in this smallest pluggable form factor?

The XFP has been on routers and OTN (Optical Transport Network) boxes - anything that has 10 Gig - and those interfaces have been migrated over to SFP+ for compactness and face plate space. There are already packet and OTN devices that use SFP+, and DWDM formats of the SFP+, to do backhaul and metro ring applications. The expectation is that while there are more XFP ports today, the next round of equipment will move to SFP+.

Certainly the Ciscos, Junipers and the packet guys are using tunable XFPs in great volume for IP over DWDM and access networks, but the more telecom-centric players riding OTN links or maybe native Ethernet links over their metro rings are probably the larger volume.

What distance can the tunable SFP+ achieve?

The distances will be pretty much the same as the tunable XFP. We produce that in a number of flavours, whether it is metro or long-haul. The initial SFP+ will likely be the metro reaches, 80km and things like that.

What is the upper limit of the tunable XFP?

We produce a negative chirp version which can do 80km of uncompensated dispersion, and then we produce a zero chirp which is more indicative of long-haul devices.

In that case the upper limit is more defined by the link engineering and the optical signal-to-noise ratio (OSNR), the extent of the dispersion compensation accuracy and the fibre type. It starts to look and smell like a long-haul lithium niobate transceiver where the distances are limited by link design as much as by the transceiver itself. As for the upper limit, you can push 1000km.

An XFP module can accommodate 3.5W while an SFP+ is about 1.5W. How have you reduced the power to fit the design into an SFP+?

It may be a generation before we get to that MSA level so we are working with our customers to see what level they can tolerate. We'll have to hit a lot less than 3.5W but it is not clear that we have to hit the SFP+ MSA specification. We are already closer now to 1.5W than 3.5W.

"I can't say that we have at JDSU a process that ensures innovation. Innovation is fleeting and mysterious."

Semiconductors now play a key role in high-speed optical transmission. Will semiconductors take over more roles and become a bigger part of what you do?

Coherent transmission [that uses an ASIC incorporating a digital signal processor (DSP)] is not going away. There is a lot of differentiation at the moment in what happens in that DSP, but I think overall it is going to be a tool the system houses use to get the job done.

If you look at 10 Gig, the big advancement there was FEC [forward error correction] and advanced FEC. In 2003 the situation was a lot like it is today: who has the best FEC was something that was touted.

If you look at coherent technology, it is certainly a different animal but it is a similar situation: that is, the big enabler for 40 and 100 Gig. Coherent is advanced technology, enhanced FEC was advanced technology back then, and over time it turned into a standardised, commoditised piece that is central and ubiquitously used for network links.

Coherent has more diversity in what it can do but you'll see some convergence and commoditisation of the technology. It is not going to replace or overtake the importance of photonics. In my mind they play together intimately; you can't replace the functions of photonics with electronics any time soon.

From a JDSU perspective, we have a lot of work to do because the bulk of the cost, the power and the size is still in the photonics components. The ASIC will come down in power, it will follow Moore's Law, but we will still need to work on all that photonics stuff because it is a significant portion of the power consumption and it is still the highest portion of the cost.

JDSU has made acquisitions in the area of parallel optics. Given there is now more industry activity here, why isn't JDSU more involved in this area?

We have been intermittently active in the parallel optics market.

The reality is that it is a fairly fragmented market: there are a lot of applications, each one with its own requirements and customer base. It is tough to spread one platform product around these applications. That said, parallel optics is now a mainstay for 40 and 100 Gig client [interfaces] and we are extremely active in that area: the 4x10, 4x25 and 12x10G [interfaces]. So that other parallel optics capability is finding its way into the telecom transceivers.

We do stay active in the interconnect space but we are more selective in what we get engaged in. Some of the rules there are very different: the critical characteristics for chip interconnect are very different to transceivers, for example. It may be much better to have on-chip optics versus off-chip optics. Obviously that drives completely different technologies so it is a much more cloudy, fragmented space at the moment.

We are very tied into it and are looking for those proper opportunities where we do have the technologies to fit into the application.

How does JDSU view the issues of 200, 400 Gigs and 1 Terabit optical transmission?

What happens after 100 Gig is going to be very interesting.

Several things have happened. We have used up the 50GHz [DWDM] channel, we can't go faster in the 50GHz channel - that is the first barrier we are bumping into.

Second, we're finding there is a challenge to do electronics well beyond 40 Gigabit. You start to get into electronics that have to operate at much higher rates - analogue-to-digital converters, modulator drivers - you get into a whole different class of devices.

Third, we have used all of our tools: we have used FEC, we are using soft-decision FEC and coherent detection. We are bumping into the OSNR problem and we don't have any more tools to run line rates that have less power to noise yet somehow recover that with some magic technology like FEC at 10 Gig, and soft-decision FEC and coherent at 40 and 100 Gig.

This is driving us into a new space where we have to do multi-carrier and bigger channels. It is opening up a lot of flexibility because, well, how wide is that channel? How many carriers do you use? What type of modulation format do you use?

What format you use may dictate the distance you go and inversely the width of the channel. We have all these new knobs to play with and they are all tradeoffs: distance versus spectral efficiency in the C-band. The number of carriers will drive potentially the cost because you have to build parallel devices. There are now design tradeoffs whereas before we went faster for the same distance.

We will be seeing a lot of devices and approaches from us and our customers that provide those tradeoffs flexibly so the carriers can do the best they can with what mother nature will allow at this point.

That means transponders that do four carriers: two of them do 200 Gig nicely packed together but they only achieve a few hundred kilometers, but a couple of other carriers right next door go a lot further but they are a little bit wider so that density versus reach tradeoff is in play. That is what is going to be necessary to get the best of what we can do with the technology.

That is the transmission side. The transport side - the ROADMs and amplifiers - has to accommodate these quirky new formats and reach requirements.

We need to get amplifiers to get the noise down. So this is introducing new concepts like Raman and flex[ible] spectrum to get the best we can do with these really challenging requirements like trying to get the most reach with the greatest spectral efficiency.

How do you keep abreast of all these subject areas besides conversations with customers?

It is a challenge, there aren't many companies in this space that are broader than JDSU's optical comms portfolio.

We do have a team and the team has its area of focus, whether it is ROADMs, modulators, transmission gear or optical amplifiers. We segment it that way but it is a loose segmentation so we don't lose ideas crossing boundaries. We try to deal with the breadth that way.

Beyond that, it is about staying connected with the right people at the customer level, having personal relationships so that you can have open discussions.

And then it is knowing your own organisation, knowing who to pull into a nebulous situation that can engage the customer, think on their feet and whiteboard there and then rather than [bringing in] intelligent people that tend to require more of a recipe to do what they are doing.

It is all about how to get the most from each team member and creating those situations where the right things can happen.

Q&A: Ciena’s CTO on networking and technology

In Part 2 of the Q&A, Steve Alexander, CTO of Ciena, shares his thoughts about the network and technology trends.

Part 2: Networking and technology

"The network must be a lot more dynamic and responsive"

Steve Alexander, Ciena CTO

Q. In the 1990s dense wavelength division multiplexing (DWDM) was the main optical development while in the '00s it was coherent transmission. What's next?

A couple of perspectives.

First, the platforms that we have in place today: III-V semiconductors for photonics and collections of quasi-discrete components around them - ASICs, FPGAs and pluggables - that is the technology we have. We can debate, based on your standpoint, how much indium phosphide integration you have versus how much silicon integration.

Second, the way that networks built in the next three to five years will differentiate themselves will be based on the applications that the carriers, service providers and large enterprises can run on them.

This will be in addition to capacity - capacity is going to make a difference for the end user and you are going to have to have adequate capacity with low enough latency and the right bandwidth attributes to keep your customers. Otherwise they migrate [to other operators], we know that happens.

You are going to start to differentiate based on the applications that the service providers and enterprises can run on those networks. I see the value of networking changing from a hardware-based problem-set to one largely software-based.

I'll give you an analogy: You bought your iPhone, I'll claim, not so much because it is a cool hardware box - which it is - but because of the applications that you can run on it.

The same thing will happen with infrastructure. You will see the convergence of the photonics piece and the Ethernet piece, and you will be able to run applications on top of that network that will do things such as move large amounts of data, encrypt large amounts of data, set up transfers for the cloud, assemble bandwidth together so you can have a good cloud experience for the time you need all that bandwidth and then that bandwidth will go back out, like a fluid, for other people to use.

That is the way the network is going to have to operate in future. The network must be a lot more dynamic and responsive.

How does Ciena view 40 and 100 Gig and in particular the role of coherent and alternative transmission schemes (direct detection, DQPSK)? Nortel Metro Ethernet Networks (MEN) was a strong coherent adherent yet Ciena was developing 100Gbps non-coherent solutions before it acquired MEN.

If you put the clock back a couple of years, where were the classic Ciena bets and what were the classic MEN bets?

We were looking at metro, edge of network, Ethernet, scalable switches, lots of software integration and lots of software intelligence in the way the network operates. We did not bet heavily on the long distance, submarine space and ultra long-haul. We were not very active in 40 Gig, we were going straight from 10 to 100 Gig.

Now look at the bets the MEN folks placed: very strong on coherent and applying it to 40 and 100 Gig, strong programme at 100 Gig, and they were focussed on the long-haul. Well, to do long-haul when you are running into things like polarisation mode dispersion (PMD), you've got to have coherent. That is how you get all those problems out of the network.

Our [Ciena's] first 100 Gig was not focussed on long-haul; it was focussed on how you get across a river to connect data centres.

When you look at putting things together, we ended up stopping our developments that were targeted at competing with MEN's long-haul solutions. They, in many cases, stopped developments coming after our switching, carrier Ethernet and software integration solutions. The integration worked very well because the intent of both companies was the same.

Today, do we have a position? Coherent is the right answer for anything that has to do with physical propagation because it simplifies networks. There are a whole bunch of reasons why coherent is such a game changer.

The reason why first 40 Gig implementations didn't go so well was cost. When we went from 10 to 40 Gig, the only tool was cranking up the clock rate.

At that time, once you got to 20GHz you were into the world of microwave. You leave printed circuit boards and normal manufacturing and move into a world more like radar. There are machined boxes, micro-coax and a very expensive manufacturing process. That frustrated the desires of the 40 Gig guys to be able to say: Hey, we've got a better cost point than the 10 Gig guys.

Well, with coherent the fact that I can unlock the bit rate from the baud rate, the signalling rate from the symbol rate, that is fantastic. I can stay at 10GHz clocks and send four-bits per symbol - that is 40Gbps.

My basic clock rate, which determines manufacturing complexity, fabrication complexity and the basic technology, stays with CMOS, which everyone knows is a great place to play. Apply that same magic to 100 Gig. I can send 100Gbps but stay at a 25GHz clock - that is tremendous, that is a huge economic win.
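The relationship described here is that bit rate equals symbol rate multiplied by bits per symbol. The short sketch below illustrates it with the figures from the interview; the function names are hypothetical, and forward error correction and framing overhead are ignored, so real symbol rates would be somewhat higher. Four bits per symbol could be obtained, for instance, with QPSK (two bits) on each of two polarisations.

```python
# Bit rate = symbol rate x bits per symbol (FEC and framing overhead ignored).
# Function names are illustrative only.

def bit_rate_gbps(symbol_rate_gbaud: float, bits_per_symbol: int) -> float:
    """Line rate in Gbps for a given symbol rate and modulation order."""
    return symbol_rate_gbaud * bits_per_symbol


def required_symbol_rate_gbaud(target_gbps: float, bits_per_symbol: int) -> float:
    """Symbol rate in Gbaud needed to reach a target line rate."""
    return target_gbps / bits_per_symbol


# Coherent, four bits per symbol, as described in the interview
print(bit_rate_gbps(10, 4))   # 10 Gbaud signalling for 40 Gig
print(bit_rate_gbps(25, 4))   # 25 Gbaud signalling for 100 Gig

# One bit per symbol (simple on-off keying): the clock must run at the full bit rate
print(required_symbol_rate_gbaud(40, 1))
```

The comparison shows why the early 40 Gig approach of raising the clock rate pushed designs into microwave-style manufacturing, while four bits per symbol keeps the signalling rate at 10 Gbaud.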

Coherent lets you continue to use the commercial merchant silicon technology base, which is where you want to be. You leverage the year-on-year cost reduction, a world unto itself that is driving the economics and we can leverage that.

So you get economics with coherent. You get improvement in performance because you simplify the line system - you can pop out the dispersion compensation, and you solve PMD with maths. You also get tunability. I'm using a laser - a local oscillator at the receiver - to measure the incoming laser. I have a tunable receiver that has a great economic cost point and makes the line system simpler.

Coherent is this triple win. It is just a fantastic change in technology.

What is Ciena’s thinking regarding bringing in-house sub-systems/ components (vertical integration), or the idea of partnerships to guarantee supply? One example is Infinera that makes photonic integrated circuits around which it builds systems. Another is Huawei that makes its own PON silicon.

The two examples are good ones.

With Huawei you have to treat them somewhat separately as they have some national intent to build a technology base in China. So they are going to make decisions about where they source components from that are outside the normal economic model.

Anybody in the systems business that has a supply chain periodically goes through the classic make-versus-buy analysis. If I'm buying a module, should I buy the piece-parts and make it? You go through that portion of it. Then you look within the sub-system modules and the piece-parts I'm buying and say: What if I made this myself? It is frequently very hard to say if I had this component fully vertically integrated I'd be better off.

A good question to ask about this is: Could the PC industry have been better if Microsoft owned Intel? Not at all.

You have to step back and say: Where does value get delivered with all these things? A lot of the semiconductor and component pieces were pushed out [by system vendors] because there was no way to get volume, scale and leverage. Unless you corner the market, that is frequently still true. But that doesn't mean you don't go through the make-versus-buy analysis periodically.

Call that the tactical bucket.

The strategic one is much different. It says: There is something out there that is unique and so differentiated, it would change my way of thinking about a system, or an approach or I can solve a problem differently.

"Coherent is this triple win. It is just a fantastic change in technology"

If it is truly strategic and can make a real difference in the marketplace - not a 10% or 20% difference but a 10x improvement - then I think any company is obligated to take a really close look at whether it would be better being brought inside or entering into a good strategic partnership arrangement.

Certainly Ciena evaluates its relationships along these lines.

Can you cite a Ciena example?

Early when Ciena started, there was a technology at the time that was differentiated and that was Fibre Bragg Gratings. We made them ourselves. Today you would buy them.

You look at it at points in time. Does it give me differentiation? Or source-of-supply control? Am I at risk? Is the supplier capable of meeting my needs? There are all those pieces to it.

Optical Transport Network (OTN) integrated versus standalone products. Ciena has a standalone model but plans to evolve to an integrated solution. Others have an integrated product, while others still launched a standalone box and have since integrated. Analysts say such strategies confuse the marketplace. Why does Ciena believe its strategy is right?

Some of this gets caught up in semantics.

Why I say that is because we today have boxes that you would say are switches but you can put pluggable coloured optics in. Whether you would call that integrated probably depends more on what the competition calls it.

The place where there is most divergence of opinion is in the network core.

Normally people look at it and say: one big box that does everything would be great - that is the classic God-Box problem. When we look at it - and we have been looking at it on and off for 15 years now - if you try to combine every possible technology, there are always compromises.

The simplest one we can point to now: If you put the highest performance optics into a switch, you sacrifice switch density.

You can build switches today that because of the density of the switching ASICs, are I/O-port constrained: you can't get enough connectors on the face plate to talk to the switch fabric. That will change with time, there is always ebb and flow. In the past that would not have been true.

If I make those I/O ports datacom pluggables, that is about as dense as I'm going to get. If I make them long-distance coherent optics, I'm not going to get as many because coherent optics take up more space. In some cases, you can end up cutting by half your port density on the switch fabric. That may not be the right answer for your network depending on how you are using that switch.

We have both technologies in-house, and in certain applications we will do that. Years ago we put coloured optics on CoreDirector to talk to CoreStream; that was specific for certain applications. The reason is that in most networks, people try to optimise switch density and transport capacity and these are different levers. If you bolt those levers together you don't often get the right optimal point.

Any business books you have read that have been particularly useful for your job?

The Innovator's Dilemma (by Clayton Christensen). What is good about it is that it has a couple of constructs that you can use with people so they will understand the problem. I've used some of those concepts and ideas to explain where various industries are, where product lines are, and what is needed to describe things as innovation.

The second one is called: Fad Surfing in the Boardroom (by Eileen Shapiro). It is a history of the various approaches that have been used for managing companies. That is an interesting read as well.

Click here for Part 1 of the Q&A

MultiPhy eyes 40 and 100 Gigabit direct-detect and coherent schemes

MultiPhy's Avi Shabtai (left) and Ronen Weinberg

MultiPhy is developing transceiver designs to boost the transmission performance of metro and long-haul 40 and 100 Gigabit-per-second (Gbps) links. The start-up is aiming its advanced digital signal processing (DSP) chips at direct detection and coherent-based modulation schemes.

“We are the only company, as far as we know, who is doing DSP-based semiconductors for the 40G and 100G direct-detect world,” says Avi Shabtai, CEO of MultiPhy.

At 40Gbps the main direct-detection schemes are differential phase-shift keying (DPSK) and differential quadrature phase-shift keying (DQPSK), while at 100Gbps several direct-detect modulation schemes are being considered. “The fact that we are doing DSP at 40G and 100G enables us to achieve much better performance than regular hard-detection technology,” says Shabtai.

Established in 2007, the fabless semiconductor start-up raised US$7.2m in its latest funding round in May. MultiPhy is targeting its physical layer chips at module makers and system vendors. “While there is a clear ecosystem involving optical module companies and systems vendors, there is a lot of overlap,” says Shabtai. “You can find module companies that develop components; you can find system companies that skip the module companies, buying components to make their own line cards.”

MultiPhy’s CMOS chips include high-speed analogue-to-digital converters (ADC) and hardware to implement the maximum-likelihood sequence estimation (MLSE) algorithm. The company is operating the MLSE algorithm at “tens of gigasymbols-per-second”, says Shabtai. “We believe we are the only company implementing MLSE at these speeds.”

MultiPhy's office is alongside Finisar's Israeli headquarters

MultiPhy will not disclose the exact sampling rate but says it is sampling at the symbol rate rather than at the Nyquist sampling theorem rate of double the symbol rate. Since commercial ADCs for 100Gbps have been announced that sample at 65Gsample/s, it suggests MultiPhy is sampling at up to half that rate.
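As a rough sketch of that arithmetic, assume the announced 65Gsample/s ADCs run at the conventional two samples per symbol. That assumption, like the function name, is illustrative and not a figure MultiPhy has disclosed:

```python
def sample_rate_gsps(reference_adc_gsps: float, samples_per_symbol: float) -> float:
    """Scale a two-samples-per-symbol ADC rate to another oversampling ratio."""
    # Assumption: the reference ADC samples at exactly twice the symbol rate.
    symbol_rate_gbaud = reference_adc_gsps / 2.0
    return symbol_rate_gbaud * samples_per_symbol


# One sample per symbol, starting from a 65Gsample/s reference design.
print(sample_rate_gsps(65.0, 1.0))
```

Under that assumption, symbol-rate sampling works out to 32.5Gsample/s, which is the "up to half that rate" upper bound.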

MLSE is used to compensate for the non-linear impairments of fibre transmission, to improve overall transmission performance. “We implement an anti-aliasing filter at the input to the ADC and we use the MLSE engine to compensate for impairments due to the low-bandwidth sampling,” says Shabtai.

“There is a good chance that 100Gbps will leapfrog 40Gbps coherent deployments”

Avi Shabtai, MultiPhy

MultiPhy benefits from using one-sample-per-symbol in terms of simplifying the chip design and its power consumption but the MLSE algorithm must counter the resulting distortion. Shabtai claims the result is a significant reduction in power consumption compared to the traditional two-samples-per-symbol approach: “Tens of percent – I won’t say the exact number but it is not 10 percent.”
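To illustrate how sequence estimation can undo distortion at one sample per symbol, here is a minimal sketch of MLSE using the Viterbi algorithm. It is not MultiPhy's design: the two-level symbols, the linear two-tap channel and all function names are assumptions chosen for brevity, whereas the real chips target optical impairments at tens of gigasymbols-per-second.

```python
from itertools import product


def channel(symbols, taps):
    """Toy distortion model: each sample mixes the current and earlier symbols."""
    out = []
    for k in range(len(symbols)):
        out.append(sum(t * symbols[k - i] for i, t in enumerate(taps) if k - i >= 0))
    return out


def mlse_detect(received, taps, alphabet=(-1.0, 1.0)):
    """Return the symbol sequence that minimises squared error (Viterbi search)."""
    memory = len(taps) - 1
    # A state is the tuple of the `memory` most recent symbols, newest first.
    states = list(product(alphabet, repeat=memory))
    # The symbol before the first sample is unknown, so all states start equal.
    metric = {s: 0.0 for s in states}
    paths = {s: [] for s in states}
    for r in received:
        new_metric, new_paths = {}, {}
        for state in states:
            for sym in alphabet:
                # Sample the channel would produce for this hypothesis.
                expected = taps[0] * sym + sum(t * p for t, p in zip(taps[1:], state))
                cost = metric[state] + (r - expected) ** 2
                nxt = ((sym,) + state[:-1]) if memory else ()
                # Keep only the cheapest path into each next state.
                if nxt not in new_metric or cost < new_metric[nxt]:
                    new_metric[nxt] = cost
                    new_paths[nxt] = paths[state] + [sym]
        metric, paths = new_metric, new_paths
    best = min(metric, key=metric.get)
    return paths[best]


sent = [1.0, -1.0, -1.0, 1.0, 1.0, -1.0, 1.0]
taps = [1.0, 0.6]  # hypothetical channel: 60% of the previous symbol leaks in
print(mlse_detect(channel(sent, taps), taps) == sent)
```

The detector weighs whole sequences rather than deciding each sample in isolation, which is why it can tolerate the inter-symbol interference that a simple threshold (hard-detection) receiver cannot.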

Other chip companies implementing MLSE designs for optical transmission include CoreOptics, which was acquired by Cisco in May, and ClariPhy. (See Oclaro and ClariPhy)

Does using MLSE make sense for 40Gbps DPSK and DQPSK?

“If you use DSP for DQPSK at 40Gbps you can significantly improve polarisation mode dispersion tolerance, the limiting factor today of DQPSK transceivers,” says Shabtai. MultiPhy expects the 40 Gigabit direct-detect market to shift towards DQPSK, accounting for the bulk of deployments in two years’ time.

Market applications

MultiPhy is delivering two solutions: for 40 and 100Gbps direct-detect, and 40 and 100Gbps coherent designs. The company has not said when it will deliver products but hinted that first it will address the direct-detect market and that chip samples will be available in 2011.

Not only will the samples enhance the reach of DQPSK-modulation-based links, they will also allow the optical component specifications to be relaxed. For example, cheaper 10Gbps optical components can be used which, says MultiPhy, will reduce total design cost by “tens of percent”.

This is noteworthy, says Shabtai, as the direct-detect markets are increasingly cost-sensitive. “Coherent is being positioned as the high-end solution, and there will be pressure on the direct-detect market to show lower cost solutions,” he says.

MultiPhy is eyeing two 100Gbps spaces

MultiPhy’s view is that direct-detect modulation schemes will be deployed for quite some time due to their price and power advantage compared to coherent detection.

Another factor against 40Gbps coherent technology will be the price difference between 40Gbps and 100Gbps coherent schemes. “There is a good chance that 100Gbps will leapfrog 40Gbps coherent deployments,” he says. “The 40Gbps coherent modules will need to go a long way to get to the right price.” MultiPhy says it is hearing about the expense of coherent modules from system vendors and module makers, as well as industry analysts.

Metro and long-haul

The company says it has received several requests for 40Gbps and 100Gbps direct-detect schemes for the metro due to its sensitivity to cost and power consumption. “We are getting to the point in optical communications where one solution does not fit all – that the same solution for long-haul will also suit metro,” says Shabtai.

He believes 100Gbps coherent will become a mainstream solution but it will take time for the technology to mature and its costs to come down. It will thus take time before 100Gbps coherent expands beyond long-haul and into the metro. He also expects a different 100Gbps coherent solution to be used in the metro. “The requirements are different – in reach, in power constraints,” he says. “The metro will increasingly become a segment, not only for direct-detect but also for coherent.”

Coherent: Already a crowded market

There are at least a dozen companies actively developing silicon for coherent transmission, while half-a-dozen leading system vendors are developing designs in-house. In addition, no-one really knows when the 100Gbps market will take off. So how does MultiPhy expect to fare given the fierce competition and uncertain time-to-revenues?

“It is very hard to predict the exact ramp up to high volumes,” says Shabtai. “At the end of the day, 100Gbps will come instead of 10Gbps and when people look back in five and six years’ time, they will say: ‘Gee, who would have expected so much capacity would have been needed?’.”

The big question mark is when coherent technology will ramp, and this explains why MultiPhy is also targeting next-generation direct-detect schemes with its technology. “We cannot come to market doing the same thing as everyone else,” says Shabtai. “Having a solution that addresses power consumption based on one-sample-per-symbol gives us a significant edge.”

MultiPhy admits it has received greater market interest following Cisco’s acquisition of CoreOptics. “While Cisco said it would fulfill all previous commitments, still it worried some of CoreOptics’ customers,” says Shabtai. The acquisition also says something else to Shabtai: 100Gbps coherent is a strategic technology.

Did Cisco consider MultiPhy as a potential acquisition target? “First, I can’t comment, and I wasn’t at the company at the time,” says Shabtai.

As for design wins, Shabtai says MultiPhy is in “advanced discussion” with several leading module and system vendor companies concerning its 40Gbps and 100Gbps direct-detect and coherent technologies.

Further reading

See Opnext's multiplexer IC plays its part in 100Gbps trial

To efficiency and beyond

Part 3: ROADM and control plane developments

ROADMs and control plane technology look set to finally deliver reconfigurable optical networks but challenges remain.

Operators are assessing how best to architect their networks - from the router to the optical layer - to boost efficiencies and reduce costs. It is developments at the photonic layer that promise to make the most telling contribution to lowering the cost of transport, a necessity given how the revenue-per-bit that carriers receive continues to dwindle.

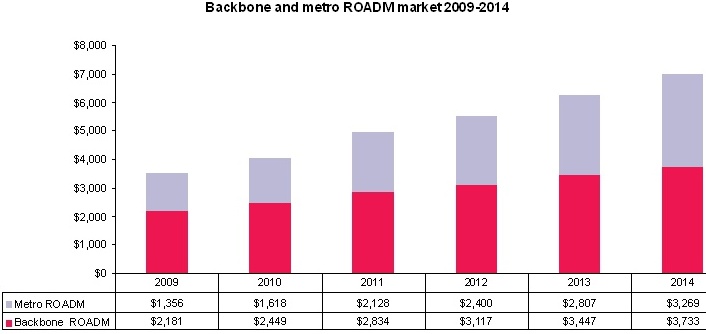

Global ROADM forecast 2009-14 in US$ millions. Source: Ovum

“The challenge of most service providers largely hasn’t changed for some time: dealing with growth in demand economically,” says Drew Perkins, CTO of Infinera. “How can operators grow the capacity on each route and switch it, largely on a packet-by-packet basis, without increasing the numbers of dollars going into the network.”

Until now, dynamic optical networking has been conducted at the electrical layer. Electrical switches set up connections within a second, support shared mesh restoration in under 100 milliseconds (ms), and have a proven control plane that oversees networks of up to 1,000 nodes. This is the baseline performance that a photonic layer scheme will be compared to, says Joe Berthold, vice president of network architecture at Ciena.

AT&T’s Optical Mesh is one such electrically-enabled service. Using Ciena’s CoreDirector electrical switches, customers can change their access circuits in SONET STS-1 (50 Megabits-per-second) increments via a web interface. AT&T wants to expand further the capacity increments it places in customers’ hands.

“The real problem with operators today is that it takes way too long to set up a new connection with existing optical infrastructure.”

Tom McDermott, Fujitsu Network Communications

Developments at the photonic layer such as advances in reconfigurable optical add-drop multiplexers (ROADMs) as well as control plane and management software complement the electrical layer control. ROADMs enable the redirection of large bandwidths while the electrical layer, with its sub-wavelength grooming and switching at the packet level, accommodates more rapid traffic changes. Operators will benefit as the two layers are used more efficiently.

“The photonic layer is the cheapest per bit per function, cheaper than the transport layer – OTN (Optical Transport Network) or the SONET layer – and the packet layer,” says Brandon Collings, CTO of JDS Uniphase’s consumer and commercial optical products division. “The more efficient, functional and powerful the control plane, the better off operators will be.”

ROADM evolution

ROADMs sit at the core of the network and largely define its properties. “The network’s wavelengths may be 10, 40 or 100 Gig; that is just bolting something on the edge. The ROADM sits in the middle, it’s there, and it has to handle whatever you throw at it,” says Simon Poole, director new business ventures at Finisar.

Operators have gone from using fixed optical add-drop multiplexers (OADMs) to ROADMs with fixed add-drop ports to now colourless and directionless ROADMs. Each step increases the flexibility of the switching devices while reducing the manual intervention required when setting up new lightpaths.

“There has been a much greater drive in the US [for ROADMs] but it is now picking up in Europe”

Ulf Persson, Transmode Systems

Network architectures also reflect these advances. First ROADMs were typically two-dimensional nodes that enabled metro rings. Now optical mesh networks are possible using the ROADMs’ greater number of interfaces, or degrees.

Transmode Systems has many of its customers – smaller tier one and tier two operators - in Europe, and focusses on the access, metro and metro-regional markets.

“It is not just the type and size of the operators, there are also regional differences in how all-optical and ROADMs are used,” says Ulf Persson, director of network architecture at Transmode. “There has been a much greater drive in the US [for ROADMs] but it is now picking up in Europe.” One reason for limited ROADM demand in Europe, says Persson, is that for smaller networks it is easier to design and predict growth.

Operators with fixed OADMs must plan their networks carefully. When provisioning a service, engineers have to visit and reconfigure the nodes needed to support the new route. In contrast, with fixed add-drop port ROADMs, engineers only need visit the end points.

The end points require manual intervention since the ROADM restricts the lightpath’s direction and wavelength. ROADMs at nodes along the route can at least change the direction but not the lightpath’s wavelength. “You can do the express routing efficiently, it is the [ROADM] drop side that is not automated at this point,” says Poole.

This still benefits operators even if it doesn’t meet all their optical layer requirements. “Where ROADMs have helped is that while service technicians must visit the end points - connecting the transponder card and client equipment - they save on the intermediate site visits,” says Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking. “Just by a mouse click, you can set up all the nodes in the right configurations without the hassle of doing this manually.”

The result is largely static networks that once set up are seldom changed. “The real problem with operators today is that it takes way too long to set up a new connection with existing optical infrastructure,” says Tom McDermott, distinguished strategic planner, Fujitsu Network Communications.

Colourless and directionless ROADMs aim to solve this. A tunable transponder can now be pointed to any of the ROADM’s network interfaces while exploiting its tunability for the lightpath’s wavelength or colour.

The ROADM percentage of the total metro WDM market. The market comprises Coarse WDM, fixed and reconfigurable OADMs. Source: Ovum

Such colourless and directionless ROADMs offer several benefits. An operator can have several transponders ready for provisioning to meet new service demand. This arrangement for ‘deployment velocity’ has yet to take hold since operators are reluctant to have costly transponders idle, especially if they are 40 and 100 Gigabit-per-second (Gbps) ones.

Colourless and directionless ROADMs will more likely be used for network restoration during maintenance or a fibre cut. This is slow restoration, nowhere near the 100ms associated with electrical signalling; optical signals are analogue and each lightpath must be turned up carefully. “When you re-route an optical signal going more than 1,000km, taking it off one route affects all the other signals on that route and they need to be rebalanced; then putting it on another route, they too need to be rebalanced,” says Infinera’s Perkins. “It is very difficult to manage.”

ADVA Optical Networking cites the example of operators using 1+1 route protection. When one route is down for maintenance, the remaining route is left unprotected. Colourless and directionless ROADMs can be used to set up a spare route during the maintenance. In developing countries, where fibre cuts are more common, 1+N protection can provide operators with redundancy to survive multiple failures. Such a restoration strategy is especially needed if getting to a fault in a remote area may take days.

The extra flexibility the newer ROADMs provide will also aid operators with load balancing, moving traffic away from hotspots as the network grows.

WSSs at the core

The building block at the core of the ROADM is the wavelength-selective switch (WSS). Such switch building-blocks are implemented using light-switching technologies such as liquid crystal, liquid crystal on silicon and MEMS. The WSS routes a lightpath to a particular fibre, with the WSS’s degree in several configurations: 1x2, 1x4, 1x9 and, under development, a 1x23. The ROADM’s degree relates to the network interfaces it supports - a 2-degree ROADM supports two fibre pairs, pointing east and west. A WSS is used for each ROADM degree and hence with each fibre pair.

A 1x9 WSS supports an 8-degree ROADM with the remaining two ports used for local multiplexing and demultiplexing. So far, eight fibre pairs have been sufficient. A 1x23 WSS is being developed to support yet more degrees at a node. For example, more than one fibre pair can be sent in the same direction (doubling a route’s capacity), or extra add-drop banks can be added. JDS Uniphase is one vendor developing a 1x23 WSS.
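The port budget behind those figures can be sketched as follows. The model is an assumption inferred from the numbers quoted here: each degree's WSS needs one port for every other degree, and whatever is left over is available for local add-drop. The function name is hypothetical.

```python
def spare_wss_ports(wss_ports: int, roadm_degree: int) -> int:
    """Ports left on a 1xN WSS once it is connected to every other degree."""
    # Assumption: one WSS port per other degree at the node.
    needed = roadm_degree - 1
    if needed > wss_ports:
        raise ValueError("WSS has too few ports for this ROADM degree")
    return wss_ports - needed


# A 1x9 WSS in an 8-degree node: 7 ports face the other degrees.
print(spare_wss_ports(9, 8))
# The same 8-degree node built with a 1x23 WSS.
print(spare_wss_ports(23, 8))
```

With a 1x9 device the model leaves the two local ports described above; a 1x23 device leaves sixteen, which is the headroom that parallel fibre pairs or extra add-drop banks would consume.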

"Where I’m seeing first interest beyond 1x9 is business service or edge applications - where at a given node point operators need a lot of attachments to different enterprise networks at high capacity multi-wavelength levels,” says Tom Rarick, principal engineer, transport, at Tellabs. “Within infrastructure applications, a 1x9 provides the degree of fibre connectivity necessary; I rarely see beyond a 1x6.”

“The next big thing [after colourless and directionless] is what people call contentionless and gridless, adding yet more flexibility to the optical infrastructure.”

Jörg-Peter Elbers, ADVA Optical Networking

For a WSS, the common port connects to the outbound fibre of any given direction, whereas the 9 ports [for a 1x9] face inwards to the centre of the node, says JDSU’s Collings (click here for a JDSU presentation). He describes the WSS as a gatekeeper that determines which lightpaths from which fibres leave a node. As for the incoming fibre, the lightpaths carried are sent to each of the other ROADM node WSSs, one for each direction. “Each WSS selects which channel from which port leaves the node,” says Collings.

To make a ROADM colourless and directionless, extra add-drop port hardware must be added. These route lightpaths to any node and on any wavelength, or drop any lightpath from any of the other nodes. The add-drop node is built using further WSSs.

One issue still to be resolved is whether WSS sub-system vendors provide all the elements that are added to the WSS to make the ROADM colourless and directionless. “It is not clear whether we should be developing the whole thing or providing the modules for the customers’ line cards and systems,” says Poole.

“The next big thing [after colourless and directionless] is what people call contentionless and gridless, adding yet more flexibility to the optical infrastructure,” says Elbers. (Click here for an ADVA Optical Networking ROADM presentation)

Contentionless refers to avoiding same-wavelength contention. With a colourless and directionless ROADM, only one copy of a particular wavelength can be dropped. “With a four-degree node you can have a 96-channel fibre on each,” says Krishna Bala, executive vice president, WSS division at Oclaro. “You may want to drop at the node lambda1 from the east and the same lambda1 coming from the west.” To drop both copies of the same wavelength, an extra add-drop block is required.

To make the ROADM fully contentionless, as many add-drop blocks as ROADM degrees are needed. This requires more WSSs, or alternatively 1:N splitters, as well as N:1 selection switches. This way any of the dropped wavelengths from any of the incoming fibres can be routed to any one of the add-drop’s transponders.
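The contention rule can be expressed as a small counting exercise. This sketch assumes, as described above, that one add-drop block can drop only one copy of any given wavelength; the function name and the direction and wavelength labels are illustrative.

```python
from collections import Counter


def add_drop_blocks_needed(drops):
    """Minimum add-drop blocks for a set of (direction, wavelength) drops."""
    # Assumption: a block can carry each wavelength only once, so the busiest
    # wavelength (dropped from the most directions) sets the block count.
    per_wavelength = Counter(wavelength for _, wavelength in set(drops))
    return max(per_wavelength.values(), default=0)


# Bala's example: lambda1 arrives from both east and west.
requested = [("east", "lambda1"), ("west", "lambda1"), ("north", "lambda2")]
print(add_drop_blocks_needed(requested))
```

In the worst case the same wavelength is dropped from every direction, which is why a fully contentionless node needs as many add-drop blocks as it has degrees.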

“The building blocks for colourless and directionless ROADMs are there; we sell them as a product,” says Elbers, who stresses that the cost of the WSS building block is coming down. But the question remains whether an operator values such network functionality sufficiently to pay. “Without naming names, big carriers are looking at these – they want to have a future-proof, simple-to-plan network,” says Elbers.

“It is strictly economics,” agrees Ciena’s Berthold. “We’ve offered a colourless-directionless ROADM for some time. Some buy that but more often they are going for lower cost.”

Gridless

A further ROADM attribute being added to the WSS is gridless even though it will be several years before it is needed. WSS vendors are keen to add the feature – adaptive channel widths - now so that operators’ ROADM deployments will be future-proofed.

“They [WSS vendors] have got to be in a tough spot. They have to invest all that [R&D] money while they [carriers/ system vendors] ask for the world.”

Ron Kline, Ovum.

Channel bandwidths wider than 50GHz will be needed for line speeds above 100Gbps. Gridless refers to the ability to accommodate lightpaths that do not just fit on the International Telecommunication Union’s (ITU) 50 or 100GHz grid. WSS makers are developing fine pass-band filters that when combined in integer increments form variable channel widths.

“There is a great deal of concern from operators about how they can efficiently use the spectrum to maximize fibre capacity,” says Poole. What operators want is the ability to generate channel bandwidth with much finer granularity and to move away from fixed channel widths.

According to Poole, NTT has demonstrated a ROADM with 12.5GHz increments, while others are considering 25GHz or even 37.5GHz. Finisar says this issue has gained much operator attention in the last six months and that there is urgency for WSS vendors to implement gridless so that any ROADM deployed will be able to support future transmission rates beyond 100Gbps.
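How fine filter slices combine into a variable channel width can be sketched in a few lines. The 12.5GHz default is the increment cited for NTT's demonstration; the function name and the example channel widths are assumptions for illustration only.

```python
import math


def slices_for_channel(width_ghz: float, granularity_ghz: float = 12.5):
    """Return (slice count, allocated GHz) for a requested channel width."""
    # Channels are built from whole slices, so round up to the next slice.
    count = math.ceil(width_ghz / granularity_ghz)
    return count, count * granularity_ghz


print(slices_for_channel(50.0))        # a channel on today's 50GHz grid
print(slices_for_channel(75.0))        # a hypothetical wider channel
print(slices_for_channel(75.0, 50.0))  # the same channel on fixed 50GHz slots
```

On the hypothetical 75GHz channel, 12.5GHz slices allocate exactly 75GHz whereas fixed 50GHz slots would consume 100GHz, which illustrates the spectral-efficiency concern operators are raising.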

“They [WSS vendors] have got to be in a tough spot,” says Ron Kline, principal analyst for network infrastructure at Ovum. “They have to invest all that [R&D] money while they [carriers/ system vendors] ask for the world.”

Coherent receiver technology used for 100Gbps optical transmission will also help enable dynamic optical networking by overcoming technical issues when rerouting paths.

Optical signal distortion in the form of chromatic dispersion and polarisation mode dispersion (PMD) is much worse at 40Gbps and 100Gbps. Even on 10Gbps routes, where tolerance to dispersion is greater, compensation can be an issue when redirecting a lightpath during network restoration. That is because the alternative route is likely to be longer. Unless the dispersion compensation is correct, there is uncertainty as to whether the alternative link will work, says Ciena’s Berthold.

“With a coherent receiver, you are now independent of dispersion since you can adaptively compensate for dispersion using the [receiver’s] DSP ASIC,” says Berthold. “You no longer have to worry if you have it [the compensation tuning] just right.”

The ASIC can also deliver real-time latency, chromatic dispersion and PMD network measurements at path set-up. This avoids first testing the link, and possible errors when entering measurements in the planning-path network set-up tools. “Coherent technology for 40 Gig and 100 Gig is potentially a game changer in making ROADMs work,” says Berthold.

Coherent digital transponders at 40 and 100Gbps will also drive the deployment of more advanced ROADMs, argues Oclaro. “The need to extract value from the bank of [40 and 100G coherent] transponders in a colourless-directionless sense becomes a lot more important,” says Peter Wigley, director, marketing and technology strategy at Oclaro.

Control plane

Tunable lasers, flexible ROADMs and even coherent technology may be prerequisites for agile optical networks, but another key component is the control plane software. “Many of the networks today have some of the hardware components to make them agile but lack the software,” says Andrew Schmitt, directing analyst, optical, at Infonetics Research. (See ROADM Q&A with Andrew Schmitt.)

The network can be split into the data plane, used to transport traffic, the control plane that uses routing and signalling protocols to set up connections between nodes, and the management plane that oversees the control plane.

“What is deployed mostly today is a SONET/SDH control plane,” says Tellabs’ Rarick. “This is to manage SONET/SDH ring or mesh networks, using standalone cross-connects or partnered with ROADMs, with the switching primarily done electrically.”

Three industry bodies are advancing control plane technology in several areas including the optical level.

The Internet Engineering Task Force (IETF) is standardising Generalized Multiprotocol Label Switching (GMPLS) while the ITU is developing control plane requirements and architecture dubbed Automatically Switched Optical Networks (ASON). The third body, the Optical Internetworking Forum (OIF) oversees the implementation efforts.

“The [GMPLS/ASON] control plane comprises a common part and technology-specific part,” says Hans-Martin Foisel, OIF president. The specific technologies include SONET/SDH, OTN, MPLS Transport Profile (MPLS-TP) and the all-optical layer.

"The more efficient, functional and powerful, the control plane, the better off operators will be"

Brandon Collings, JDS Uniphase.

“Using a control plane with all-optical is a challenge,” says Foisel. “The control plane has to have a very simplified knowledge of the optical parameters.” The photonic layer has numerous optical parameters that can be used. Any protocol needs to streamline the process such that simple rules are used by operators to decide whether a route can be completed or whether signal regeneration is needed.

The IETF is working on wavelength switched optical networks (WSON), the all-optical component of GMPLS, to enable such simplified rules within a single network domain. “GMPLS cannot control wavelengths today using ROADMs and that is what is being standardised in WSON,” says Persson.

What is beyond the scope of WSON is routing transparently between vendors, says Foisel. “It is almost impossible to identify all the optical parameters in an inter-vendor way for operators to fully use,” says Fujitsu’s McDermott. “You end up with a huge parameter set.”

So what will the photonic control plane look like?

“The whole architecture of control will be different than what is done in the electrical domain,” says Berthold. It will combine three main functions. One is embedded intelligence that will learn fibre-route characteristics and optical parameters from the network, data which will be used by each vendor in a proprietary way. Another is a propagation modelling planning tool that will process data offline to determine the viable network paths. These paths will then be preloaded into network elements, together with recommendations as to the preferred ones to use to avoid contention. Finally, use will be made of the signalling to turn these paths up as rapidly as possible. “This is certainly not the same model as electrical,” says Berthold.

By combining electrical and optical switching, operators will be able to continually optimise their networks. “They can devolve their networks to the lowest cost and most power-efficient solution,” says Berthold.

Ciena, for example, is adding colourless-directionless ROADMs to its 3.6 terabit-per-second 5430 electrical switch. “When you start growing traffic from a low level you need electrical switches in many places in order to efficiently fill wavelengths,” says Berthold. “But as traffic grows there is more opportunity to bypass intermediate nodes with an optical path.” By tying the ROADM with the electrical switch, traffic can be regroomed and electrical paths set up on-demand to continually optimise the network.

Challenges

Despite progress in ROADM hardware and control plane management, challenges remain before a remotely controlled all-ROADM mesh network will be achieved.

One is handling customer application rates at 1, 10 and 40Gbps on 100Gbps infrastructure. This will use the OTN protocol and will require electrical switch and control plane support.

Interoperability between vendors’ equipment must also be demonstrated. “Interlayer management – it is not enough just to do optical,” says Kline. “And it is not only between layers but interoperability between vendors’ equipment.” Thus, even if Verizon Business is correct that colourless, directionless ROADMs will become generally available in 2012, the vision of a dynamic optical network will take longer.

“Coherent technology for 40 Gig and 100 Gig is potentially a game changer in making ROADMs work”

Joe Berthold, Ciena

“GMPLS/ASON are still years out and some operators may never deploy them,” says Schmitt at Infonetics. But Kline highlights the vendors Huawei and Alcatel-Lucent as keen promoters of control-plane-enabled dynamic optical networking.

“Huawei has 250 ASON applications with over 80 carriers, and 30-plus OTN WDM ASON applications,” says Kline. Here, an ASON application is described as a ring or nodes that use a control plane for automated networking. “These are small and self-contained; not AT&T’s and Verizon’s [sized] meshed networks,” says Kline, who adds that Alcatel-Lucent also has such ASON deployments.

There are also business-case hurdles associated with photonic switching to be overcome.

Doing things on demand may be compelling but needs to be proven, says Jim King, executive director of new technology product development and engineering at AT&T Labs. This is easier to prove deeper in the network. “In the middle of the network it is easy because the law of large numbers means I know I need lots of capacity out of Chicago, say; I just don’t know whether it needs to go north, east or south,” says King. “But when a just-in-time delivery requirement extends to the end of the network, the financials are much more challenging based on how close you need to get to customer premises, cell towers or critical customer data centres.”

What next?

Oclaro too believes that it will be another two years before colourless, directionless and contentionless ROADMs start to be deployed in volume. The challenge thereafter is driving down their cost.

Another development that is likely to emerge after gridless is faster switching speeds to reduce network latency. Operators are using their mesh networks for restoration but there is a growing interest in protection and restoration at the optical layer, says Bala. “We were seeing RFPs (request-for-proposals) where a WSS of below 2s was ok,” he says. “Now it is: ‘How fast can you switch?’ and ‘Can you switch below 100ms?’”

This is driving interest in optical channel monitoring. “Ultimately it will require the ability to monitor the ROADM ports for signal power and to detect contention, and you’ll need to do this quickly,” says Wigley. “It is not very useful having a fast WSS unless you know quickly where the traffic is going.”

Technology will continue to provide incremental enhancements. The cost-per-bit-per-kilometer has come down six or seven orders of magnitude in the last two decades, says Finisar’s Poole. Apart from the erbium-doped fibre amplifier (EDFA), no single technology has made such a sizable contribution. Rather it has been a sequence of multiple incremental optimisations. “Coherent technology is one way of getting more data down a pipe; gridless is another to get a 2x improvement down a pipe,” says Poole. “Each of these is incremental, but you have to keep doing these steps to drive the cost-per-bit down.”

Meanwhile operators will look to further efficiencies to keep driving down transport costs. “Operators are looking at tradeoffs of router versus optical switching,” says McDermott. “They are going through various tradeoffs, the new services they might offer, and what is a flexible but cost effective solution.” As yet there is no universal agreement, he says.

“The balance between the two [the optical and electrical layer] is the key,” says Infinera’s Perkins. “There is a balance you have to reach to achieve the best economics: the lowest cost network supporting the highest capacity possible at a cost you can afford, and operate it with the fewest people.”

And ROADMs will be deployed more widely. “In 3-5 years’ time everything will have a ROADM in it – it better have a ROADM in it,” says Kline. At the electrical layer it will be Ethernet and at the optical it will be OTN and lightpaths. “It is all about simplification and saving costs.”

40 and 100Gbps: Growth assured yet uncertainty remains

Part 2: 40 and 100Gbps optical transmission

The market for 40 and 100 Gigabit-per-second optical transmission is set to grow over the next five years at a rate unmatched by any other optical networking segment. Such growth may excite the industry but vendors have tough decisions to make as to how best to pursue the opportunity.

Market research firm Ovum forecasts that the wide area network (WAN) dense wavelength division multiplexing (DWDM) market for 40 and 100 Gigabit-per-second (Gbps) linecards will have a 79% compound annual growth rate (CAGR) till 2014.

In turn, 40 and 100Gbps transponder volumes will grow even faster, at 100% CAGR till 2015, while revenues from 40 and 100Gbps transponder sales will have a 65% CAGR during the same period.

Yet with such rude growth comes uncertainty.

“We upgraded to 40Gbps because we believe – we are certain, in fact – that across the router and backbone it [40Gbps technology] is cheaper.”

Jim King, AT&T Labs

Systems, transponder and component vendors all have to decide what next-generation modulation schemes to pursue for 40Gbps to complement the now established differential phase-shift keying (DPSK). There are also questions regarding the cost of the different modulation options, while vendors must assess what impact 100Gbps will have on the 40Gbps market and when the 100Gbps market will take off.

“What is clear to us is how muddled the picture is,” says Matt Traverso, senior manager, technical marketing at Opnext.

Economics

Despite two weak quarters in the second half of 2009, the 40Gbps market continues to grow.

One explanation for the slowdown was that AT&T, a dominant deployer of 40Gbps, had completed the upgrade of its IP backbone network.

Andreas Umbach, CEO of u2t Photonics, argues that the slowdown is part of an annual cycle that the company also experienced in 2008: strong 40Gbps sales in the first half followed by a weaker second half. “In the first quarter of 2010 it seems to be repeating with the market heating up,” says Umbach.

This is also the view of Simon Warren, Oclaro’s director of product line management, transmission product line. “We are seeing US metro demand coming,” he says. “And it is very similar with European long-haul.”

BT, still to deploy 40Gbps, sees the economics of higher-speed transmission shifting in the operator’s favour. “The 40Gbps wavelengths on WDM transmission systems have just started to cost in for us and we are likely to start using it in the near future,” says Russell Davey, core transport Layer 1 design manager at BT.

What dictates an operator upgrade from 10Gbps to 40Gbps, and now also to 100Gbps, is economics.

The transition from 2.5Gbps to 10Gbps lightpaths that began in 1999 occurred when 10Gbps approached 2.5x the cost of 2.5Gbps. This rule-of-thumb has always been assumed to apply to 40Gbps, yet thousands of wavelengths have been deployed while 40Gbps remains more than 4x the cost of 10Gbps. Now the latest rule-of-thumb for 100Gbps is that operators will make the transition once 100Gbps reaches 2x the cost of 40Gbps, i.e. gaining 25% extra bandwidth for free.
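The arithmetic behind these rules of thumb can be sketched as follows. This is an illustrative calculation only: the function names are hypothetical, and the 4x figure for 40Gbps is simply the cost-per-bit parity point, whereas the article says actual 40Gbps prices are above it.

```python
def relative_cost_per_bit(rate_multiple: float, cost_multiple: float) -> float:
    """Cost per bit of the faster rate relative to the slower one (1.0 = parity)."""
    return cost_multiple / rate_multiple


def extra_bandwidth_at_equal_spend(rate_multiple: float, cost_multiple: float) -> float:
    """Fractional capacity gained for the same money (0.25 = 25% more)."""
    return rate_multiple / cost_multiple - 1.0


# (label, rate multiple, cost multiple) using the multipliers quoted in the text.
TRANSITIONS = [
    ("2.5G to 10G at 2.5x cost", 10 / 2.5, 2.5),
    ("10G to 40G at 4x cost (parity point)", 40 / 10, 4.0),
    ("40G to 100G at 2x cost", 100 / 40, 2.0),
]

if __name__ == "__main__":
    for label, rate, cost in TRANSITIONS:
        per_bit = relative_cost_per_bit(rate, cost)
        bonus = extra_bandwidth_at_equal_spend(rate, cost)
        print(f"{label}: cost per bit x{per_bit:.3f}, extra bandwidth {bonus:+.0%}")
```

At 2x the cost of 40Gbps, a 100Gbps wavelength carries 2.5x the traffic, which is where the 25% figure comes from. Any 40Gbps price above 4x the 10Gbps price puts the relative cost per bit above 1.0, so deployments at that price are being justified on grounds other than cost-per-bit alone.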

The economics is further complicated by the continuing price decline of 10Gbps. “Our biggest competitor is 10Gbps,” says Niall Robinson, vice president of product marketing at 40Gbps module maker Mintera.

“The traditional multiplier of 2.5x for the transition to 10Gbps is completely irrelevant for the 10 to 40 Gigabit and 10 to 100 Gigabit transitions,” says Andrew Schmitt, directing analyst of optical at Infonetics Research. “The transition point is at a higher level; even higher than cost-per-bit parity.”

So far two classes of operators adopting 40Gbps have emerged: those such as AT&T, China Telecom and cable operator Comcast, which have made, or plan, significant network upgrades to 40Gbps, and those such as Verizon Business and Qwest that have used 40Gbps more strategically for selective routes. For Schmitt there is no difference between the two: “These are economic decisions.”

AT&T is in no doubt about the cost benefits of moving to higher speed transmission. “We upgraded to 40Gbps because we believe – we are certain, in fact – that across the router and backbone it [40Gbps technology] is cheaper,” says Jim King, executive director of new technology product development and engineering, AT&T Labs.

King stresses that 40Gbps is cheaper than 10Gbps in terms of capital expenditure and operational expense. IP efficiencies result and there are fewer larger pipes to manage whereas at lower rates “multiple WDM in parallel” are required, he says.

“We see 100Gbps wavelengths on transmission systems available within a year or so, but we think the cost may be prohibitive for a while yet, especially given we are seeing large reductions in 10Gbps,” says Davey. BT is designing the line-side of new WDM systems to be compatible with 40Gbps – and later 100Gbps – even though it will not always use the faster line-cards immediately.

Even when an operator has ample fibre, the case for adopting 40Gbps on existing routes is compelling. That’s because lighting up new fibre is “enormously costly”, says Joe Berthold, Ciena’s vice president of network architecture. By adding 40Gbps to existing 10Gbps lightpaths at 50GHz channel spacing, capacity on an existing link is boosted and the cost of lighting up a separate fibre is forestalled.

According to Berthold, lighting a new fibre costs about the same as 80 DWDM channels at 10Gbps. “The fibre may be free but there is the cost of the amplifiers and all the WDM terminals,” he says. “If you have filled up a line and have plenty of fibre, the 81st channel costs you as much as 80 channels.”
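Berthold’s point is that channel cost is a step function, which a small sketch makes concrete. The model below is an assumption for illustration: costs are expressed in units of one 10Gbps channel, the line system (amplifiers and WDM terminals) is taken to cost 80 such units as he suggests, and the 5x price for a 40Gbps channel is a hypothetical figure chosen only because the article says 40Gbps costs more than 4x 10Gbps.

```python
def incremental_channel_cost(
    n: int,
    channels_per_fibre: int = 80,
    line_cost: float = 80.0,
    channel_cost: float = 1.0,
) -> float:
    """Cost of adding the n-th 10Gbps channel, in units of one 10Gbps channel.

    A new line system is needed whenever the previous fibre is full,
    i.e. for channels 1, 81, 161, ... with the default of 80 per fibre.
    """
    if n < 1:
        raise ValueError("channel numbers start at 1")
    needs_new_fibre = (n - 1) % channels_per_fibre == 0
    return channel_cost + (line_cost if needs_new_fibre else 0.0)


if __name__ == "__main__":
    HYPOTHETICAL_40G_CHANNEL_COST = 5.0  # assumed: "more than 4x" a 10Gbps channel

    print("80th 10G channel:", incremental_channel_cost(80))
    print("81st 10G channel:", incremental_channel_cost(81))
    print("One 40G channel on the existing fibre:", HYPOTHETICAL_40G_CHANNEL_COST)
```

Under these assumptions the 81st channel costs roughly 81 units, against a handful of units for a 40Gbps channel on the fibre already lit, so a per-channel premium well above 4x can still be the cheaper option once a fibre is full.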

The same consideration applies to metropolitan (metro) networks when a fibre with 40, 10Gbps channels is close to being filled. “The 41st channel also means six ROADMs (reconfigurable optical add/drop multiplexers) and amps which are not cheap compared to [40Gbps] transceivers,” says Berthold.

Alcatel-Lucent segments 40Gbps transmission into two categories: multiplexing of lower speed signals into a higher speed 40Gbps line-side trunk link – ‘muxing to trunk’ – and native 40Gbps transmission where the client-side signal is at 40Gbps.

“The economics of the two are somewhat different,” says Sam Bucci, vice president, optical portfolio management at Alcatel-Lucent. The economics favour moving to higher capacity trunks. That said, Alcatel-Lucent is seeing native 40Gbps interfaces coming down in price and believes 100GbE interfaces will be ‘quite economical’ compared to 10x10Gbps in the next two years.

Further evidence regarding the relative expense of router interfaces is given by Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking, who notes that, in overall numbers, only 20% currently go into 40Gbps router interfaces, with the remaining 80% going into muxponders.

Modulation Technologies

While economics dictate when the transition to the next-generation transmission speed occurs, what is complicating matters is the wide choice of modulation schemes. Four modulation technologies are now being used at 40Gbps with operators having the additional option of going to 100Gbps.

The 40Gbps market has already experienced one false start, back in 2002/03. The market kicked off in 2005; at least, that is when the first 40Gbps core router interfaces from Cisco Systems and Juniper Networks were launched.

“There is an inability for guys like us to do what we do best: take an existing interface and shedding cost by driving volumes and driving the economics.”

Rafik Ward, Finisar

Since then, four 40Gbps modulation schemes have begun shipping: optical duobinary, DPSK, differential quadrature phase-shift keying (DQPSK) and polarisation multiplexing quadrature phase-shift keying (PM-QPSK). PM-QPSK is also referred to as dual-polarisation QPSK or DP-QPSK.

“40Gbps is actually a real mess,” says Rafik Ward, vice president of marketing at Finisar.

The lack of standardisation can be viewed as a positive in that it promotes system vendor differentiation but with so many modulation formats available the lack of consensus has resulted in market confusion, says Ward: “There is an inability for guys like us to do what we do best: take an existing interface and shedding cost by driving volumes and driving the economics.”

DPSK is the dominant modulation scheme deployed on line cards and as transponders. DPSK uses relatively simple transmitter and receiver circuitry although the electronics must operate at 40Gbps. DPSK also has to be modified to cope with tighter 50GHz channel spacing.

“DPSK’s advantage is relatively simple,” says Loi Nguyen, founder, vice president of networking, communications, and multi-markets at Inphi. “For 1200km it works fine; the drawback is it requires good fibre.”

The DQPSK and DP-QPSK modulation formats being pursued at 40Gbps offer greater transmission performance but are less mature.

DQPSK has a greater tolerance to polarisation mode dispersion (PMD) and is more resilient when passing through cascaded 50GHz channels compared to DPSK. However, DQPSK uses more complex transmitter and receiver circuitry, although it operates at half the symbol rate – 20Gbaud – which simplifies the electronics.
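The relationship between bit rate and symbol rate for these formats can be sketched as below. The bits-per-symbol values are the textbook ones (one for DPSK, two for DQPSK, and four for PM-QPSK, which carries two bits on each of two polarisations); the function name is hypothetical, and the calculation uses nominal rates, ignoring the framing and forward error correction overhead that real line rates include.

```python
# Nominal bits carried per transmitted symbol for each modulation format.
BITS_PER_SYMBOL = {
    "DPSK": 1,     # one bit per symbol
    "DQPSK": 2,    # two bits per symbol
    "PM-QPSK": 4,  # two bits per symbol on each of two polarisations
}


def symbol_rate_gbaud(bit_rate_gbps: float, scheme: str) -> float:
    """Nominal symbol rate in Gbaud for a given bit rate and modulation format."""
    return bit_rate_gbps / BITS_PER_SYMBOL[scheme]


if __name__ == "__main__":
    for scheme in BITS_PER_SYMBOL:
        print(f"40Gbps {scheme}: {symbol_rate_gbaud(40, scheme):g} Gbaud")
    print(f"100Gbps PM-QPSK: {symbol_rate_gbaud(100, 'PM-QPSK'):g} Gbaud")
```

The lower the symbol rate, the slower the electronics can run, which is the trade-off against the more complex optics that the higher-order formats require.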