ECOC 2024 industry reflections - Part II

Gazettabyte is asking industry figures for their thoughts after attending the recent 50th-anniversary ECOC show in Frankfurt. Here are contributions from Nubis Communications' Dan Harding, imec's Peter Ossieur, and Chris Cole.

Dan Harding, CEO, Nubis Communications

Our biggest takeaway from ECOC is the increased confidence not just in 200-gigabit electrical and optical interfaces but also in 400 gigabit. It is becoming clear that in 2025 and 2026, the industry will broadly launch platforms using a 200 gigabit per lane serdes [serialiser/deserialiser interfaces] that will connect to 200 gigabit per lane optics.

At ECOC, we were shown demonstrations of 400-gigabit serdes. We had several discussions with industry leaders who expressed confidence that serdes can scale to 400 gigabit per lane and that the industry will need optics to support this in the next few years. Different optics approaches were shown at ECOC, but the main takeaway was that serdes speeds continue to advance, and optics needs to figure out a way to keep up.

Our second takeaway is that advancements in materials have significantly reduced loss across printed circuit boards (PCBs), so linear pluggable optics (LPO) at 200 gigabit looks increasingly feasible with vertical line cards and even with traditional ones.

Generally, the 200 gigabit per lane generation will be more similar to the 100-gigabit generation than we thought a year ago. That said, the transition to each new service speed is becoming fuzzy, such that 100 gigabits per lane will have years of overlap with 200 gigabits per lane. The data centre operators and system vendors remain committed to copper for short-reach links, even at 200 gigabit per lane. However, there will be more “active” copper links, so the mix between passive and active copper will shift to more active at 200 gigabit per lane.

As a supplier of optics for AI/machine-learning networks, the third big takeaway for us is that the speed at which new architectures are being deployed puts an extreme focus on delivering a solution that can quickly move from first samples into volume production. That means we must constantly consider our entire development flow to support this.

Lastly, we were encouraged to see progress on a new form factor for pluggable optics to eliminate the “gold fingers.” This will help the optics industry take advantage of silicon photonics and the density it can deliver. Let’s see how quickly this form factor work progresses, but this is the right direction for the optics industry.

Peter Ossieur, Program Manager, High-Speed Transceivers at imec-IDLab

I noted the speed at which the industry has embraced the concept of linear pluggable optics (LPO). But I’m still unsure how linear pluggable optics will play out.

At ECOC, it was evident that linear pluggable optics are now driving rapid adoption of new materials, notably thin-film lithium niobate. This is excellent news for imec, which is putting significant effort into integrating lithium niobate on its 200mm silicon photonics platform.

As for surprises, one is that co-packaged optics continues to struggle. Another is that the industry’s focus is already turning to 400 gigabit per lane.

Chris Cole, Optical Communications Advisor

The overall ECOC impression was like this year’s OFC show; optics are back with a vengeance. The excitement, buzz, and optimism were infectious. Also uplifting was the focus of the technical conference and the exhibition on solving tough engineering challenges and going after new markets rather than eking out a living. This period may yet be a bubble, but it is a fun ride while it lasts.

There were two important technical trends of note. First, parallelism will become increasingly important, including more fibre and wavelengths. Second, reliability must be approached holistically, and today’s data centre paradigm of swapping failed modules is inadequate to support AI/machine-learning growth, especially for training.

Also necessary are significantly lower Failures in Time (FIT) for all parts of an optical link, along with system-level redundancy schemes. Achieving this will likely require a shift to fab process-based integration, replacing current discrete assembly methods. This transition is not just a suggestion but a crucial step towards ensuring the reliability and efficiency of optical systems.

ECOC 2024 industry reflections

Gazettabyte is asking industry figures for their thoughts after attending the recent 50th-anniversary ECOC show in Frankfurt. Here are the first contributions from Huawei's Maxim Kuschnerov, Coherent's Vipul Bhatt, and Broadcom's Rajiv Pancholy.

Maxim Kuschnerov, Director R&D, Optical & Quantum Communication Laboratory at Huawei.

At ECOC, my main interest concerned the evolution of data centre networking to 400 gigabits per lane for optics and electronics. Historically, the adoption of new optical line rates always preceded the serdes electrical interconnects, but now copper cables are likely to drive much of the leading development work at 400 gigabit per lane.

Arista Networks argued that 448G-PAM6 works better for copper, while 448G-PAM4 is the better choice for optics – a recurring argument. While PAM6 signalling is certainly more suitable for longer copper cables, it will face even tougher challenges on the optical side with increasing reflection requirements in newly built, dusty data centres. Also, a linear drive option for future Ethernet will be imperative, given the DSP’s increasing share of the power consumption in pluggable modules. Here, a native 448G-PAM4 format for the serdes (the attachment unit interface or AUI) and optics looks more practical.

My most important takeaway regarding components was the initial feasibility of electro-absorption modulated lasers (EMLs) with a greater than 100GHz analogue bandwidth, presented by Lumentum and Mitsubishi publicly and other companies privately. Along with thin-film lithium niobate (TFLN) Mach–Zehnder modulators suited for Direct Reach (DR) applications with shared lasers, EMLs have historically offered low cost, small size and native laser integration.

For 1.6-terabit modules, everyone is waiting on the system availability of 224-gigabit serdes at a switch and network interface card (NIC) level. The power consumption of 1.6-terabit optical modules will improve with 3nm CMOS DSPs and native 200 gigabit per lane. Still, it gets into an unhealthy region where the network cable power consumption is in the same ballpark as the system function of switching. Here, the bet on LPO certainly didn’t pay off at 100 gigabits per lane and will not pay off at 200 gigabits per lane at scale. The question is whether linear receive optics (LRO)/ half-retimed approaches will enter the market. Technically, it’s feasible. So, it might take one big market player with enough vertical integration capability and a need to reduce power consumption to move the needle into this more proprietary, closed-system direction. Nvidia showcased their PAM4 DSP at the show. Just saying…

212G VCSELs are still uncertain. There is a tight initial deployment window to be hit if these high-speed VCSELs are to displace single-mode fibre-based optics at the major operators. Coherent’s results of 34GHz bandwidth are not sufficient and don’t look like something that could yet be produced at scale. Claims by some companies that a 400 gigabit per lane VCSEL is feasible sound hollow for now, with the industry crawling around the 30GHz bandwidth window.

Last but not least, co-packaged optics. For years, this technology couldn’t escape gimmick status. Certainly, reliability, serviceability, and testability of co-packaged optics using today’s methodology would make a deployment impractical. However, the big prize at 400 gigabit per lane is saving power – a significant operational expense for operators – something that is too attractive to ignore.

The targets of improving optics diagnostics, developing higher-performance dust-reflection DSP algorithms to deal with multi-path interference, adopting more resiliency to failure in the network, and introducing a higher degree of laser sparing are not insurmountable tasks if the industry sets its mind to them. Given the ludicrous goals of the AI industry, which is reactivating and rebranding nuclear power plants, a significant reduction in network power might finally serve a higher purpose than just building a plumber’s pipe.

Vipul Bhatt, Vice President of Marketing, Datacom Vertical, Coherent

ECOC 2024 was the most convincing testimony that the optical transceiver industry has risen to the challenge of AI’s explosive growth. There was hype, but I saw more solid work than hype. I saw demonstrations and presentations affirming that the 800-gigabit generation was maturing quickly, while preparations are underway for the next leap to 1.6 terabit and then 3.2 terabit.

This is no small feat, because the optics for AI is more demanding in three ways. I call them the three P’s of AI optics: performance, proliferation, and pace.

Performance because 200 gigabit PAM4 optical lanes must work with a low error rate at higher bandwidth. Proliferation because the drive to reduce power consumption has added new transceiver variants like linear pluggable optics (LPO) and linear receive optics (LRO). And pace because the specifications of AI optics are evolving at a faster pace than traditional IEEE standards.

Rajiv Pancholy, Director of Hyperscale Strategy and Products, Optical Systems Division, Broadcom

As generative AI systems move to unsupervised, transformer-based parallel architectures, there is less time for resending packets due to data transmission errors. Improved bit error rates are thus required to reduce training times while higher interconnect bandwidth and data rates are needed to support larger GPU clusters. These compute networks are already moving to 224 gigabit PAM4 well before the previous generation at 112 gigabit PAM4 was allowed to reach hyperscale deployment volumes.

The problem is scalability with a high-radix supporting all-to-all connectivity. The power for a single rack of 72 GPUs is 120kW, and even with liquid cooling, this becomes challenging. Interconnecting larger scale-up and scale-out AI computing clusters requires more switching layers which increases latency.

Furthermore, after 224 gigabit PAM4, the losses through copper at 448 gigabit PAM4 make link distances from the ASIC too short. Moving to modulation schemes like PAM-6 or PAM-8 presents a problem for the optics, which would need to stay at 448 gigabit PAM4 to minimize crosstalk and insertion losses.

Supporting 448 gigabit PAM4 with optics then potentially requires new materials to be integrated into silicon, like thin-film lithium niobate (TFLN) and Barium Titanate (BaTiO3), electro-optic (EO) polymers, and III-V materials like Indium Phosphide (InP) and Gallium Arsenide (GaAs). So now we have a gearbox and, potentially, a higher forward error correction (FEC) coding gain is required, adding more power and latency before the signal even gets to the transmit-side optics.

There were 1.6-terabit OSFP transceivers operating with eight lanes of 212.5 gigabit PAM4 while vendors continue to work towards a 3.2-terabit OSFP-XD. With 32 x 3.2Tbps pluggables operating at 40W each, the optical interconnect power would be 1.3kW for a 102.4Tbps switch. And if you use 64 x 1.6Tbps OSFP at 25W each, the optical interconnect power will be even higher, at 1.6kW. I wonder how linear pluggable optics can compensate for all the path impairments and reflections at high data rates from pluggable solutions. Perhaps you can relax link budgets, temperature requirements, and interoperability compliance.
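The power figures quoted above can be checked with a short calculation. This is only a back-of-envelope sketch: the function name and structure are illustrative, and the module rates and wattages are the ones given in the text.

```python
# Back-of-envelope check of the optical interconnect power for a 102.4Tbps switch.
# Module rates and per-module power are the figures quoted in the text.
SWITCH_CAPACITY_TBPS = 102.4

def interconnect_power(module_rate_tbps, module_power_w):
    """Return (number of modules, total optical power in watts)."""
    modules = round(SWITCH_CAPACITY_TBPS / module_rate_tbps)
    return modules, modules * module_power_w

osfp_xd = interconnect_power(3.2, 40)  # 3.2Tbps pluggables at 40W each
osfp = interconnect_power(1.6, 25)     # 1.6Tbps OSFP modules at 25W each

print(f"32 x 3.2Tbps: {osfp_xd[1] / 1000:.2f} kW")
print(f"64 x 1.6Tbps: {osfp[1] / 1000:.2f} kW")
```

The first case comes to 1,280W, which the text rounds to 1.3kW, and the second to 1,600W.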

The best session this year was the last ECOC Market Focus panel on the Tuesday, which kept everyone a bit longer before they could figure out where in Frankfurt Oktoberfest beer was on tap. The panel addressed “Next-Gen Networking Optics like 1.6T or 3.2T”. All but one of the participants discussed the need and a migration to co-packaged optics, which we at Broadcom first demonstrated in March 2022.

It was great to also present at the ECOC Market Focus forum. My presentation was titled “Will you need CPO in 3 years?” Last year in Glasgow, I gave a similar presentation: “Will you need CPO in 5 years?”

Pluggable optics in need of a makeover

Current pluggable optics have stunted optical innovation for the last decade. So argues Chris Cole, industry veteran and an advisor at start-up Quintessent.

Cole calls for a new form factor supporting hundreds of electrical and optical channels. In a workshop on massively parallel optics held at the recent ECOC conference and exhibition in Frankfurt, he outlined other important specifications such a module should have.

Cole, working with other interested parties in the new form factor, will present their proposal to the OIF industry body at its next meeting in November.

“I’m very optimistic it will be approved,” says Cole.

Limitations

Pluggable optics require improvement in several areas.

One limitation is the limited number of large gold-fingered interconnects on the edge of the printed circuit board (PCB) that fits inside the pluggable module. “This technology goes back 30 or 40 years,” says Cole.

The high-speed OSFP (Octal Small Form-factor Pluggable) module has a row of eight transmit-receive pairs of gold-fingered edge interconnects. The OSFP interface supports 800 gigabits per second (Gbps), and 1.6 terabits per second (Tbps) if 200Gbps signalling is used. The industry can also double capacity to 3.2-terabit with 8x400Gbps signals.

In turn, the OSFP-XD has 16 such pairs arranged in two rows. That promises 3.2Tbps capacity using 16x200Gbps signals and 6.4Tbps with 16x400Gbps signals. However, Cole expects huge signal integrity issues using such a design.
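The capacities quoted for the two edge-connector designs are simply the lane count multiplied by the per-lane signalling rate. A minimal sketch, using only the lane counts and rates mentioned above (the dictionary labels are illustrative):

```python
# Module capacity = electrical lanes x per-lane rate (Gbps), using figures from the text.
def module_capacity_gbps(lanes, lane_rate_gbps):
    return lanes * lane_rate_gbps

configs = {
    "8 lanes x 100G": (8, 100),    # OSFP at 800Gbps
    "8 lanes x 200G": (8, 200),    # OSFP at 1.6Tbps
    "8 lanes x 400G": (8, 400),    # OSFP at 3.2Tbps
    "16 lanes x 200G": (16, 200),  # two-row, 16-pair module at 3.2Tbps
    "16 lanes x 400G": (16, 400),  # two-row, 16-pair module at 6.4Tbps
}

capacities = {name: module_capacity_gbps(*cfg) for name, cfg in configs.items()}
for name, gbps in capacities.items():
    print(f"{name}: {gbps / 1000:.1f} Tbps")
```

Even the densest option here tops out at 6.4Tbps, which is the gap the proposed form factor is meant to close.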

Heat dissipation is another challenge with pluggables. Heat is extracted from a pluggable using a metal plate on the top, which Cole says limits power consumption to 50W.

It is the low channel count, however, that is the biggest restriction, says Cole.

Meanwhile, yield and reliability have yet to advance. Cole cites the significant reliability performance achieved by Intel with its integrated laser technology. “It doesn’t do any good because who cares?” he says. “You have a four-channel module, and something’s wrong; you throw it away.”

Proposed form factor

The high-capacity form factor proposal calls for a dense, high-bandwidth design. Significant numbers of electrical and optical lanes are needed for that: hundreds rather than eight or 16. Moreover, hundreds of electrical interfaces is not a new concept: Cole cites the large 300-pin MSA used for early embedded coherent modems.

The new form factor would have 2D electrical connections with a pitch of 0.5mm or lower. A count of 256 high-speed lanes is envisaged, which would also enable many optical lanes and wavelengths. Each electrical lane should have a bandwidth of 200GHz to support 448Gbps signalling. If implemented, the package’s capacity would be 114Tbps.
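The 114Tbps figure follows from the lane count and the per-lane rate. A quick check, using only the numbers above (variable names are illustrative):

```python
# Aggregate electrical capacity of the proposed package, from the figures in the text.
ELECTRICAL_LANES = 256
LANE_RATE_GBPS = 448

package_capacity_gbps = ELECTRICAL_LANES * LANE_RATE_GBPS
print(f"{package_capacity_gbps / 1000:.3f} Tbps")
```

The product is 114,688Gbps, or roughly 114.7Tbps, which the article quotes as 114Tbps.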

“[200GHz lanes] is not very hard to do if you have a connection that is almost negligible height,” says Cole.

Many optical connections are also required, says Cole. He suggests 512, where individual links are supported to ensure a high radix. “We can quibble about the correct number, but it’s not eight or 16,” says Cole. The design should also support liquid cooling to ensure a 100W power consumption.

Optical options

Cole says the design must be optics-agnostic. Nobody can predict the future, he says.

To support 12.8Tbps, for example, it could use 32 optical lanes each at 400Gbps or 16 lanes at 800Gbps both using a thin-film lithium niobate modulator. However, the design should also support many more slower optical channels.

One such 12.8Tbps optical transmission example is 256 channels, each a 50Gbps non-return-to-zero signal, making use of compact ring-resonator modulators. It could even be 3,200x4Gbps MicroLED channels using an approach favoured by Avicena. It is not out of the question, says Cole.
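To see why the design can stay optics-agnostic, it helps to confirm that each of the optical options mentioned reaches the same 12.8Tbps aggregate. The sketch below uses only the channel counts and rates quoted above; the labels are shorthand, not product names.

```python
# Four ways to reach 12.8Tbps, as (optical channels, per-channel rate in Gbps).
options = {
    "thin-film lithium niobate, 400G lanes": (32, 400),
    "thin-film lithium niobate, 800G lanes": (16, 800),
    "ring-resonator modulators, 50G NRZ": (256, 50),
    "MicroLED, 4G channels": (3200, 4),
}

totals_gbps = {name: count * rate for name, (count, rate) in options.items()}
for name, total in totals_gbps.items():
    print(f"{name}: {total / 1000:.1f} Tbps")
```

All four products equal 12,800Gbps; what changes is the trade between channel count and per-channel speed.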

Cole stresses that while 12.8-terabit and 25.6-terabit capacities may sound high compared to existing pluggables, the numbers should be viewed as aggregate package capacities. “You would be breaking them out into many channels,” he says.

Retaining features that work

Cole argues that the benefits of pluggable modules must be retained. These include front-plate access, testing, and easy replacement. Equally, the proposed form factor should preserve existing industry business models.

He is also adamant that conventional assembly must be replaced with process-based photonic integration to improve reliability. “The consistency you get in a fab versus what you get in a discrete assembly, there’s an order of magnitude difference there,” he says.

ECOC 2024: AI and Optics

An interview with Gareth Spence of Adtran at the recent ECOC 2024 conference and exhibition

Users embrace OpenLight's silicon photonics platform

OpenLight, the open silicon photonics platform provider, can point to a successful 2024 signing up new customers.

In 2023, OpenLight had three customers bringing photonic integrated circuit (PIC) designs to market. OpenLight has since added 14 more.

OpenLight was formed in 2022 when Juniper Networks carved out its silicon photonics arm. Synopsys acquired a three-quarters stake in OpenLight, while Juniper retained a quarter.

“In the past, the company hadn’t really done any revenue, including when they were in Juniper,” says Adam Carter, OpenLight’s CEO (pictured). “We’ve seen a ten times increase and shown that we can be very profitable.”

The start-up has been creating industry partnerships to better serve its customers’ circuit design, chip manufacturing, and packaging needs.

Silicon photonics foundry partner

Tower Semiconductor was the silicon photonic design team’s chosen foundry long before OpenLight was spun out of Juniper.

Tower now has three dedicated process design kits (PDKs) that take an OpenLight circuit design and run it through the foundry’s wafer manufacturing process line.

The PDKs cover a standard silicon photonics process, one tailored for light detection and ranging (Lidar) applications and one offering distributed feedback (DFB) lasers for artificial intelligence (AI) and high-bandwidth data centre PIC applications.

“You have different specified components in a Lidar process than the data centre one,” says Carter.

For example, the DFB laser requires a diffraction grating to be built into the silicon. The DFBs are used for optical engines supporting coarse wavelength division multiplexing (CWDM) and will support 200-gigabit optical lanes.

OpenLight, working with Riga Technical University and Keysight Technologies, published a post-deadline paper at OFC earlier this year, showing a DFB laser design path for 400-gigabit optical lanes. Future 3.2-terabit transceiver optical engines will need such DFBs.

More partnerships

OpenLight also works with several electronic design automation (EDA) tool environments.

Unsurprisingly, OpenLight works closely with photonic EDA tool specialist Synopsys. “We help them with some of their optical simulation models, and they use us for anything new they’re rolling out,” says Carter.

However, OpenLight also works with other tool vendors to support its customers’ needs and has a partnership with Luceda Photonics, a provider of layout tools.

The start-up has also signed deals with PIC specialists VLC Photonics and Spark Photonics. Since OpenLight has only so many design engineers, it has partnered with the two firms to provide additional design support.

“We have a chip design that we give them, and we ensure their work follows the design rules and guidelines,” says Carter. “Once the design looks good, we sign it off.” The two photonic design firms also develop photonic components for OpenLight to grow its PDK library offerings.

OpenLight has a partnership with manufacturing firm Jabil to provide its customers with services such as assembly, packaging, burn-in, and testing. “As a customer, you take a wafer from Tower, give it to Jabil, and what comes out is a packaged product,” says Carter.

Jabil bought Intel’s optical module unit last year, and Carter says there is an opportunity to supply PICs to that unit, too.

The start-up is also exploring other packaging partners based in Asia to support its customers there.

Lidar opportunities

Two styles of Lidar systems are used for such applications as automotive, autonomous vehicles, and machine vision systems.

One approach, known as time-of-flight, uses VCSELs as the light source. Such systems have limited reach, and the weather can curtail their performance. The second approach is frequency-modulated continuous wave (FMCW) Lidar, which achieves superior range and resolution performance.

But FMCW requires signal amplification, says Carter, something OpenLight can offer with its monolithic process technology, which can integrate semiconductor optical amplifiers.

“Much of the work we’re doing with potential Lidar customers is around the fact that we can amplify the signal,” he says.

Carter says time-of-flight systems are the dominant approach for automotive applications in China, but players there are considering alternative architectures. “We are working with Chinese customers on this type of application,” says Carter.

800-gigabit reference designs

OpenLight also offers two 800-gigabit PIC reference designs: an 8×100-gigabit (800G-DR8) design and an 800-gigabit 2xFR4 CWDM one.

The reference designs help OpenLight’s work with sub-assembly companies and their fibre attach units. OpenLight is also using them in its work with electronics suppliers providing PAM-4 (4-level pulse amplitude modulation) digital signal processing (DSP) chips.

“And we are also expanding to an 8×200-gigabit part – a 1.6-terabit DR8, and we’re also doing a [1.6-terabit] CWDM variant,” says Carter. “These will be the first parts to employ the DFB as the laser source.”

At 200 gigabit, the waveguide optical losses are higher. “To meet the specifications, we can add amplification with a semiconductor optical amplifier to boost the output power, compensating for the losses,” he says.

Carter became OpenLight’s CEO at the start of 2023 and is pleased with what the start-up has achieved.

“The team is seeing that what they’ve been working on for several years is becoming a business that can stand on its own,” says Carter.

Ciena sends a 1.6T optical lambda over a 470km link

- Ciena has detailed the first live field trial using its WaveLogic 6 Extreme coherent modem.

- The WaveLogic 6 modem will be generally available from the end of the month.

Ciena’s newest coherent optical modem has sent 1.6 terabits of data over a 470km link. The field trial used Ciena’s WaveLogic 6 Extreme coherent modem in telecom operator Arelion’s live network.

The link connects an Equinix data centre in Ashburn to a Telxius submarine cable landing station, both sites in the state of Virginia.

“The fact that we are achieving 1.6-terabit wavelengths across close to 500 kilometres is a testament to the performance and integrity of the design,” says Helen Xenos, senior director of portfolio marketing at Ciena.

Ciena has won over 20 orders for the WaveLogic 6 Extreme from telecom operators and hyperscalers.

In Ciena’s latest quarterly results, CEO Gary Smith mentioned how Ciena had won business with significant cloud providers covering terrestrial, submarine, and coherent pluggable applications. “The majority [of these are] driven by expected growth in AI and cloud traffic,” said Smith.

WaveLogic 6 Extreme DSP

Ciena announced its WaveLogic 6 Extreme digital signal processor (DSP) early in 2023.

The WaveLogic 6 Extreme is the industry’s first 3nm CMOS process coherent DSP with a maximum symbol rate of 200 gigabaud (GBd). Current leading coherent DSPs deployed use symbol rates ranging from 120 to 150GBd and support up to 1.2-terabit wavelengths.

The Ciena DSP can execute 1,600 trillion (1.6 x 10^15) operations per second and uses the equivalent of 4km of on-chip copper interconnect.

In contrast, Ciena’s leading deployed DSP device—the WaveLogic 5 Extreme, announced in 2019—is a 7nm CMOS DSP. Over 300 customers have ordered the WaveLogic 5 Extreme.

When it was first announced, the WaveLogic 5 had a baud rate ranging from 60 to 95GBd. Now, its baud rate ranges from 71.0 to 106.9GBd. This highlights how the coherent modem performance has been improved over the years and the same should be expected for the WaveLogic 6 Extreme.

Arelion trial

The Arelion trial is the first Ciena has announced.

“It’s a high bandwidth route connecting a submarine landing station to the data centre capital of the world – Ashburn, Virginia,” says Xenos. “It’s an ideal link to show how WaveLogic 6 can support this massive data volume transmission at today’s fastest speeds.”

The optical wavelength sent 1.6 terabits per second (Tbps) of data over a reach of nearly 500km. The WaveLogic 5 Extreme, when first announced, sent 800Gbps some 200km.

“The performance that we can achieve at the highest line rate with WaveLogic 6 is better than what we were able to achieve at the highest line rate with WaveLogic 5 Extreme,” says Xenos. This is because a 3nm CMOS process can cram more digital logic on-chip, enabling the execution of advanced digital signal processing algorithms.

The WaveLogic 6 Extreme improves spectral efficiency by 15 per cent over existing links. The device also delivers 0.25-1 decibel (dB) of signal performance improvement by better tackling nonlinearities introduced by the communication channel during transmission.

The DSP also uses multi-dimensional coding technology to tackle noisy and nonlinear impaired fibre links.

Ciena expects to be able to send 1.6-terabit wavelengths several hundred kilometres over metro networks that feature reconfigurable optical add-drop multiplexers (ROADMs).

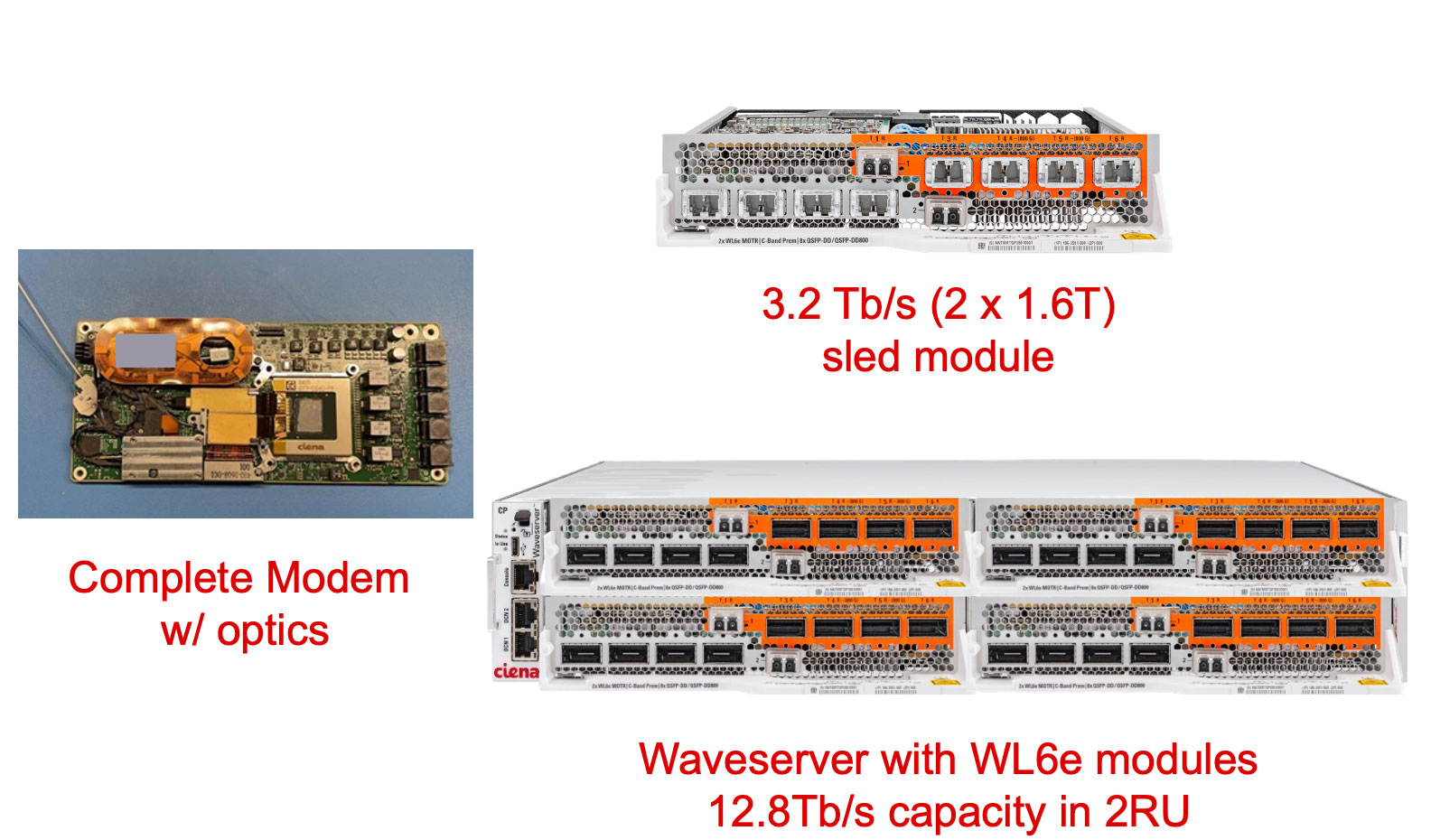

The Arelion trial used Ciena’s Waveserver, a two-rack-unit (2RU) optical transport chassis and Ciena’s open 6500 reconfigurable line system (see image below).

The Waveserver chassis uses insertable sleds, each sled hosting two WaveLogic 6 Extreme coherent modems. Four sleds fit into the Waveserver chassis for a total of eight optical wavelengths and 12.8Tbps transmission capacity.

Overall, 24 1.6Tbps wavelengths, each occupying a 200GHz channel, fit into a fibre’s C-band spectrum, giving a total capacity of 38.4Tbps. The same applies to the fibre’s L-band.

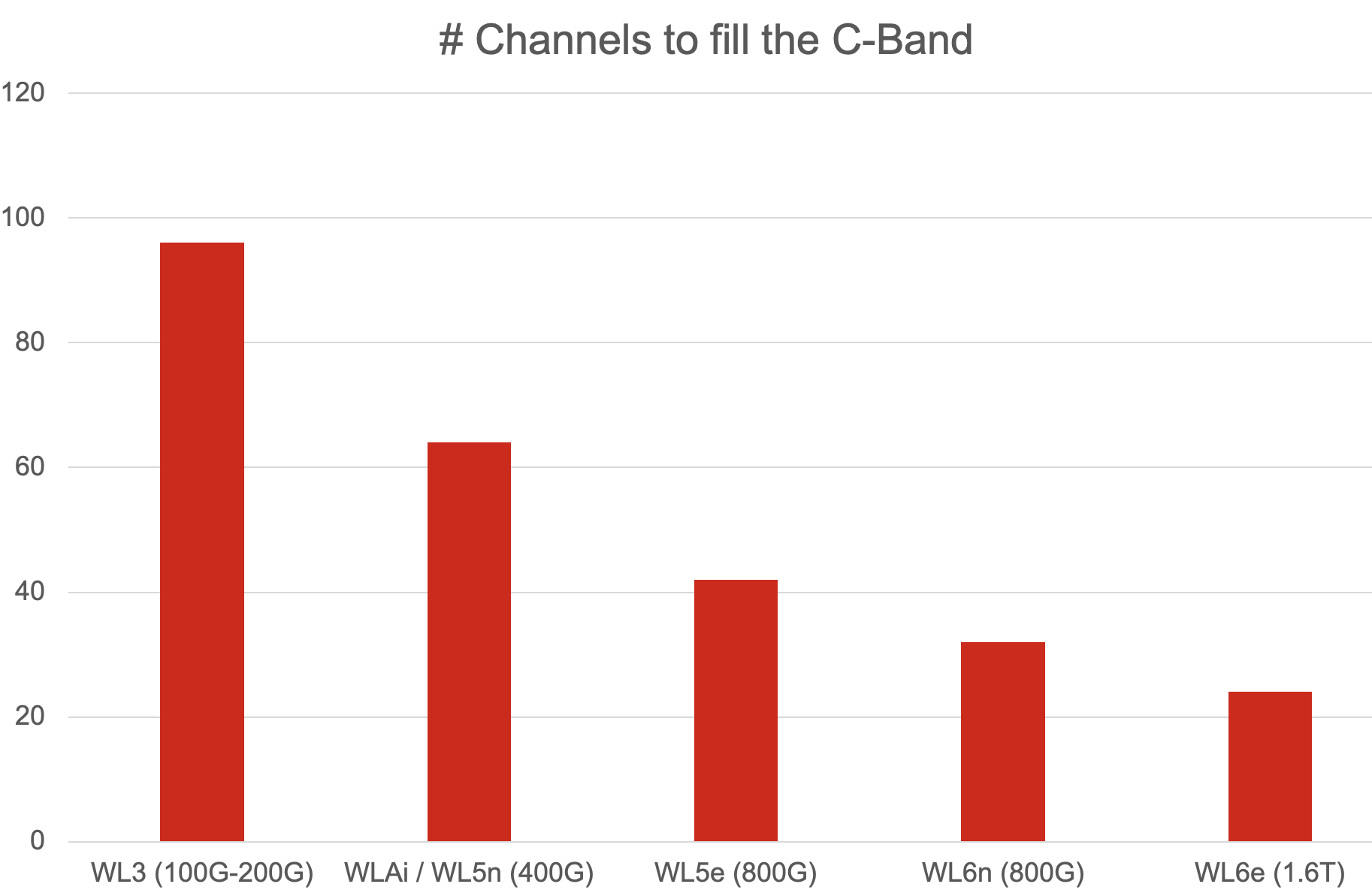

“You are filling up the full C-band with only 24 wavelengths,” says Xenos. “It wasn’t that long ago when we filled the C-band with 96, 100-gigabit channels or 64, 400-gigabit channels.” (See chart.)
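The chassis and C-band figures in this section are straightforward products, and a few lines of arithmetic make the comparison with earlier generations explicit. This is a sketch using only the wavelength counts and rates quoted in the text; the channel widths of the earlier 100-gigabit and 400-gigabit generations are not given in the article, so only their capacities are computed.

```python
# Capacity arithmetic for the Waveserver chassis and a full C-band (figures from the text).
WAVELENGTH_GBPS = 1600    # one WaveLogic 6 Extreme wavelength
CHANNEL_GHZ = 200         # spectrum occupied per 1.6Tbps wavelength
C_BAND_WAVELENGTHS = 24

# Chassis: four sleds, two coherent modems per sled.
chassis_wavelengths = 4 * 2
chassis_capacity_gbps = chassis_wavelengths * WAVELENGTH_GBPS

# C-band fill with 1.6Tbps wavelengths.
c_band_capacity_gbps = C_BAND_WAVELENGTHS * WAVELENGTH_GBPS
c_band_spectrum_ghz = C_BAND_WAVELENGTHS * CHANNEL_GHZ

# Earlier C-band fills mentioned by Xenos.
earlier_fills_gbps = {"96 x 100G": 96 * 100, "64 x 400G": 64 * 400}

print(f"Chassis: {chassis_wavelengths} wavelengths, {chassis_capacity_gbps / 1000} Tbps")
print(f"C-band: {c_band_capacity_gbps / 1000} Tbps in {c_band_spectrum_ghz / 1000} THz")
for name, gbps in earlier_fills_gbps.items():
    print(f"{name}: {gbps / 1000} Tbps")
```

The 24 wavelengths occupy 4.8THz and carry 38.4Tbps, compared with 9.6Tbps and 25.6Tbps for the two earlier fills.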

Ciena says other field trials are planned. Based on its simulation work, Ciena expects its latest coherent modem to send 1.2Tbps across the Atlantic Ocean, 1Tbps across the Pacific Ocean, and 800Gbps over 3,000km. “We expect to see performance improvements because we are still tweaking,” says Xenos.

Embedded and pluggable modules

Coherent pluggable modules continue to advance, driven by hyperscalers’ data centre interconnect demands. So, is the requirement for the highest-performance embedded modules diminishing?

Ciena believes that both classes of devices are needed: performance-optimised (embedded) and pluggable coherent optical modules.

“There are more capabilities that are becoming available in pluggables, with the 800-gigabit generation extending to 1,000km and beyond and with L-band offerings coming in the near future,” says Xenos. “If you can integrate the pluggable into a router platform, that allows you to save on space and power.”

However, the pluggable can’t match the spectral efficiency of an embedded coherent module. An 800-gigabit pluggable uses a 150GHz channel spacing, which is the same spectral efficiency as the earlier generation Ciena WaveLogic AI.

The latest embedded modules, in contrast, can be significantly more spectrally efficient than equivalent line rate pluggables: from 1.3x to 2x. This is an important consideration in environments where fibre is scarce.
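Spectral efficiency here is the line rate divided by the channel spacing. As a rough illustration, the sketch below compares the 800-gigabit pluggable in a 150GHz channel with the 1.6Tbps wavelength in a 200GHz channel described earlier in the article. It is not the like-for-like line rate comparison behind the 1.3x to 2x range, and it ignores reach and link conditions.

```python
# Spectral efficiency = line rate / channel spacing (Gbps per GHz is b/s/Hz).
def spectral_efficiency(line_rate_gbps, channel_ghz):
    return line_rate_gbps / channel_ghz

pluggable_se = spectral_efficiency(800, 150)   # 800-gigabit pluggable, 150GHz spacing
embedded_se = spectral_efficiency(1600, 200)   # 1.6Tbps wavelength, 200GHz channel
ratio = embedded_se / pluggable_se

print(f"Pluggable: {pluggable_se:.2f} b/s/Hz")
print(f"Embedded:  {embedded_se:.2f} b/s/Hz")
print(f"Ratio:     {ratio:.2f}x")
```

With these figures the pluggable delivers about 5.3 b/s/Hz and the embedded wavelength 8 b/s/Hz, a ratio of 1.5x.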

Another point Xenos makes is the nature of the optical network and its total cost, not just the optics (embedded or pluggable) but also the optical line system. If a link has many amplification stages and ROADMs, the optical line makes a more significant contribution to the overall cost. Here embedded optics is needed to span the more complex optical route while being one part of the overall cost.

“You have to look at the total overall cost of building the network,” says Xenos. “It is the optics and the photonic line system.”

General availability

The WaveLogic 6 Extreme will be generally available at the end of September 2024.

When Ciena first announced the coherent DSP, the company expected the device to be generally available in the first half of 2024. In March at OFC 2024, Ciena gave an update saying trials would start this summer.

Xenos says the delivery date did slip by several months. But she stresses the achievement of delivering such a complex coherent modem system. As well as designing the chip in a 3nm CMOS process, the DSP also includes very high-speed mixed-signal analogue-to-digital and digital-to-analogue converters.

“When you target a certain availability, it is the best date that is also realistic,” says Xenos. “The fact that we’re only a few months off means that the team has executed to deliver a working product.”

Ciena is also integrating WaveLogic 6 into its 6500 optical transport platform and will make a coherent WaveLogic 6 module available for OEMs or developers that have custom equipment requirements.

Meanwhile, Ciena continues to develop the Nano, the second WaveLogic 6 device designed for the coherent pluggable market. The WaveLogic 6 Nano is expected to be sampled at the end of the year.

Market consolidation

In June, Nokia announced its intention to acquire Infinera. If the deal passes regulatory approval, it will reduce the number of companies capable of developing high-end coherent DSPs.

More players mean greater competition which pushes the marketplace. But developing coherent DSPs using shrinking CMOS nodes is getting more expensive. One fewer player can be viewed as good news for the rest. Xenos says Ciena has expected fewer high-end coherent players going forward.

“Those who are vertically integrated have an advantage of improved cost efficiencies that they can offer to their end-customers,” she says. “And we’ve been investing in vertical integration because it allows us to control our destiny and come to market with new technology at an earlier time.”

This is what the company has done with the WaveLogic 6 Extreme, where Ciena had to develop its own 100GHz analogue bandwidth coherent optics to achieve the 200GBd symbol rate.

Future developments

The roadmap for coherent pluggable modules is well defined, thanks to the 1600ZR and 1600ZR+ 1.6Tbps coherent pluggable modules of the OIF industry organisation, which are expected from 2028. For embedded modules, the roadmap is less clear.

“There is nothing to announce at this time, but we know that the next step has to be bold enough to provide a meaningful benefit for its adoption into the network,” says Xenos.

ECOC celebrates its 50th anniversary

- The European Conference on Optical Communications (ECOC) is celebrating its 50th anniversary.

- The conference and exhibition will take place in Frankfurt, Germany, from September 22-26.

- Key themes at the show include satellite optical communication, artificial intelligence, networking for AI within the data centre, photonic integration, quantum and Green ICT.

ECOC will celebrate its 50th anniversary this month.

The event will include a special session highlighting the progress made in photonics over the last half century and will feature luminary speakers.

There will also be a celebratory event with food stalls from different countries.

“There will be an opportunity to mingle, and we also plan an exhibition to look back at what has been achieved combined with what directions we need to take for the future,” says Jörg-Peter Elbers, an ECOC General Chair this year.

“Photonics is now very important for digitalising different protocols,” adds Ronald Freund, another of the General Chairs. “It is not just telecommunications but also photonic networks for the industrial production of almost anything you can imagine.”

Conference themes

The use of photonics for communications has become so diverse that no one theme captures the show. Rather, the General Chairs highlight several themes at this year’s event.

“There are hot topics that we have tried to cover, while also recognising that we have a broad audience,” says Elbers. The audience ranges from communications service providers to component makers and chip foundry operators.

“One topic is satellite network integration in the optical domain,” says Professor Carmen Mas Machuca, the third ECOC General Chair. “Satellite networks using all this optical technology is one of the new topics.”

There is ongoing research work to combine terrestrial and space networks to address capacity gaps and bolster networking resiliency. Satellite can take over for any failures in the terrestrial core network. Satellite networks also offer latency benefits which can benefit niche applications like high-frequency trading.

Combining satellite and terrestrial networks represents a tremendous opportunity but has its challenges, says Elbers. An optical satellite symposium will be hosted at ECOC.

Another topic, sensing, will be addressed in multiple sessions. Examples range from using optical fibre networks for sensing to wearables such as brain-computer interfaces (see Conference keynotes, next section).

Freund highlights other networking symposia addressing the data centre, and Green ICT. “How photonics can contribute to reach net zero targets,” says Freund.

“We wanted to highlight the breadth of the topic, how many different problems we can solve with optical technologies,” says Elbers.

Conference keynotes

ECOC will feature four keynote speakers.

This year, the topics are AI interconnect requirements in the data centre; optical networking for telecom, past and future; silicon photonics; and using photonics for computation.

- Andy Bechtolsheim, co-founder and Chief Architect at Arista Networks, will address whether interconnects can keep up with the demands of AI. Bechtolsheim will outline ways to improve the power, cost, and reliability of optics for interconnects needed for AI, the boundaries between copper and optics, and packaging innovations.

- Thomas van Briel is the Senior Vice President of Architecture and Strategy at the communications service provider Deutsche Telekom. He will address the evolution of IP and optical networks and the technologies that have driven and will drive optical networking.

- Roel Baets is an Emeritus Professor at Ghent University and former Group Leader at imec. His talk is on ‘Silicon Photonics 4.0’. If the title puzzles you, then it is well chosen, quips Professor Baets. “What I mean is that it will be important for silicon photonics to make use of smart and agile manufacturing, a notion associated with Industry 4.0,” says Baets. “Silicon photonics can be expected to be an enabling technology for Industry 4.0 since it can enable more performant AI, sensors for IoT, and more.”

- The final speaker is Joyce Poon, Head of Photonics Architecture at Lightmatter. She will address future computing examples involving integrated photonics. Lightmatter believes the most significant opportunities and challenges in computing lie at the extremes in size and scale: large data centres and personal devices. Poon will detail how advanced integrated photonics can transform computing, from making large-scale AI systems more efficient to enabling wearables and human brain-computer interfaces.

Rump Session

This year’s Rump Session is titled: Quantum Technologies: Research hype or on track to commercial success?

The Rump Session will look at the funding Quantum players are attracting and address what financial return, if any, has been achieved. The topics to be tackled include quantum secure communications and quantum computing.

“Quantum companies are mushrooming everywhere, with Europe maybe an extreme case,” says Elbers. “It is interesting to explore what is behind this and what happens if the funding dries up?”

The session will address what is real and ready for near-term commercial deployment and what remains longer term.

Individual interests

Each Chair has their own ECOC interests regarding the latest research.

“My interest is to see how the energy bottleneck in the data centre will be solved concerning the demands of the AI processors,” says Freund. “One interesting approach is to move forward with integration, for instance, co-packaged optics and whether there are other possibilities.”

Professor Mas Machuca is interested in network security. “How can we use all these entanglement properties [to detect eavesdropping] so they can be applied in real networks?” she says. “For now, they are far from deployment, and it will be interesting to see how far it can go.”

The challenges with entanglement are the limited distances and durations achieved, measured in milliseconds, says Professor Mas Machuca.

Elbers’s hot topic is photonic integration: “The progress here has been tremendous.” What interests him is how to bring the functional blocks closer and build new functions using chiplets, for example.

Another of his interests at the show is how generative AI can be used to enable customers to operate their networks more efficiently.

Industry issues

What are the Chairs’ concerns and observations regarding the photonics industry?

For Europe, there is a gap between research and forming of companies, says Freund. There is progress but more momentum is needed for start-ups to transfer innovation from research into applications so that smaller companies can provide solutions for larger ones.

“There’s a lot of money around, but there also has to be the people – entrepreneurs – to do that,” says Freund.

Professor Mas Machuca highlights sovereignty issues such that telecom operators in Europe have the suppliers to guarantee working networks. “How to design your network so that if one manufacturer is banned from your country, you still have an operating network,” says Professor Mas Machuca.

It requires standardisation efforts that the equipment makers must follow to ensure interoperability. “This is always a challenge,” she says.

Elbers overall is positive but does highlight challenges. The fundamental drivers remain: traffic growth is continuing while there are government investments to promote broadband in rural areas.

But there are also geopolitical issues that are forcing the telecom operators to choose their suppliers carefully. These geopolitical issues are also forcing equipment vendors to focus on particular markets.

There is also equipment-maker consolidation taking place, the recent Nokia-Infinera announcement being an example.

“That certainly brings challenges for some people, but I think it also brings opportunities for others,” says Elbers.


Carmen Mas Machuca is the Professor of Communication Networks at the Department of Electrical Engineering and Information Technology at the University of the Bundeswehr, Munich. Her interests include network planning, resource allocation, and resiliency of telecom networks, not just core but also access networks.

Jörg-Peter Elbers is Senior VP, Advanced Technology, Standards and IPR, at Adtran Networks. His responsibilities cover optical packet networks, optical access, and residential gateways.

As passive optical network speeds rise to 50 gigabit-per-second and beyond, there is a blurring between access, aggregation, and metro regional networks, says Elbers. There is also the growing importance of photonic integration as part of system design and this is also impacting his work interests.

Ronald Freund heads the telecommunications systems group at the Fraunhofer Heinrich Hertz Institute (HHI), Germany. The HHI is the largest applied research organization in Europe.

Freund’s group research interests span the full spectrum of networks – from in-house, access, and metro to wide area networks. A key focus of research is fibre-based communications to advance reach, capacity, and data rates, as well as network security and power consumption issues. The group has also started to address optical satellite and optical free-space communications.

Making best use of data at the network's edge

Moshe Shadmon has always been interested in data, the type that is spread out and requires scrutiny.

He read law at university but was also fascinated by maths and computers.

By the time Shadmon graduated with a law degree, he had set up a software company. He never practiced law.

“I think that part [not having an engineering degree] has always allowed me to look at things differently,” he says.

More recently, Shadmon’s interest in data has focussed on the network edge. Here, the data is typically across locations and too plentiful to fit within one machine.

“If the data needs to be managed across many machines, it is a problem,” says Shadmon. “Suddenly, solutions become complicated and expensive.”

Distributed edge data

Edge data refers to data generated by sensors or Internet of Things (IoT) devices located at several sites. Extracting insights from such edge data is challenging.

Shadmon refers to this as a ‘big data’ problem, ‘big’ being a relative term. Data keeps growing, proportional to the hardware used. Data generated two decades ago is now tiny compared to today’s data. The evolution of IoT devices, with billions now deployed, is a testament to such growth.

The real challenge with edge data lies in its management. There is currently no efficient technology to manage such distributed data – the data is raw and has no universal format. It is an issue many players in the industry can relate to.

Adding management software to the endpoints is also a challenge as edge hardware typically has limited resources. Alternatively, moving the data to the cloud, where there are software tools and ample computing, is expensive: it means renting processing and storage, and it requires networking to upload the data.

“Companies move the data to the cloud or into centralized databases, not because it’s a great way to deal with the data, but because they don’t have a choice,” says Shadmon.

It is these edge data challenges that Shadmon’s start-up company, AnyLog, is addressing.

Shadmon founded AnyLog six years ago. AnyLog spent its first five years developing the edge data management platform. In the last year, AnyLog has been demonstrating its working product and is collaborating with large companies, such as IBM, that are building offerings using its technology.

AnyLog has also contributed an open-source version of its edge data technology to the Linux Foundation, a project known as EdgeLake.

The technology's workings

The hardware at the edge typically comprises one or more sensors, a programmable logic controller—an industrial computer interfaced to sensors—and an edge ‘node’ that extracts the data. The node may be a switch, a gateway, or a server next to the sensors and is typically connected to a network.

AnyLog has developed software that resides at the node. “You plug it on the node, and it’s a stack of services that manages the data in an automated way,” says Shadmon. “You could think of it as the equivalent of the data services you have in the cloud.”

The software does two clever things.

It adds a virtual layer that makes all the data across the nodes of interest appear centralised. This means that any node in the set can be queried, and the software will identify the locations where the relevant data resides to satisfy the query first sent to one of the nodes. The outcome is identical to a setup where all the data is stored in the cloud except that here, the data remains at the edge.

Blockchain technology is used to locate and manage the distributed data. According to Shadmon, this is transparent to the end user, but it oversees the ‘metadata’ – data about the data – and serves as a directory to identify where the needed data is located.

Shadmon cites a smart city example where the query is to quantify the electricity usage in San Francisco in the last hour. There may be thousands of nodes hosting the data. The technology identifies the nodes with the relevant data of electricity usage in San Francisco. These nodes are accessed and they return their data to the first node which then performs data aggregation.
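The query flow in this example can be sketched in a few lines. This is a simplified illustration, not AnyLog's or EdgeLake's API: the `EdgeNode` and `Directory` classes, the node names and the readings are all hypothetical, and a plain in-memory dictionary stands in for the blockchain-backed metadata layer.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class EdgeNode:
    """A hypothetical edge node holding its own local readings."""
    name: str
    city: str
    readings_kwh: List[float] = field(default_factory=list)

    def local_sum(self) -> float:
        # Only the partial result leaves the node, not the raw data.
        return sum(self.readings_kwh)


class Directory:
    """Stand-in for the shared metadata layer: maps a city to its nodes."""

    def __init__(self, nodes: List[EdgeNode]) -> None:
        self.nodes: Dict[str, EdgeNode] = {n.name: n for n in nodes}
        self.by_city: Dict[str, List[str]] = {}
        for n in nodes:
            self.by_city.setdefault(n.city, []).append(n.name)

    def locate(self, city: str) -> List[EdgeNode]:
        return [self.nodes[name] for name in self.by_city.get(city, [])]


def query_usage(directory: Directory, city: str) -> float:
    """Runs on whichever node receives the query: fan out, then aggregate."""
    return sum(node.local_sum() for node in directory.locate(city))


nodes = [
    EdgeNode("n1", "San Francisco", [1.5, 2.0]),
    EdgeNode("n2", "San Francisco", [3.0]),
    EdgeNode("n3", "Oakland", [10.0]),
]
directory = Directory(nodes)
total = query_usage(directory, "San Francisco")
print(total)
```

Only the two San Francisco nodes are contacted; the Oakland node's data is never read or moved.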

Data may also be more substantial than time-stamped electricity usage numbers. For example, the data could be video streams from high-definition cameras across multiple locations.

The key benefit of AnyLog’s approach is that only the needed data is read wherever it is stored. This avoids moving and processing all the data from multiple locations into the cloud. Moreover, any of the nodes can be queried to satisfy a request.

“If you don’t get the performance you need, you can add more nodes and increase the data distribution,” says Shadmon. “Now you will have a higher degree of parallelism and less data on each node; it’s a very scalable model.”

AnyLog’s technology can also be used for machine learning at the edge, a market opportunity that excites Shadmon.

AI at the edge

Engineers must decide how they apply machine learning to data at the edge.

The necessary AI training and inferencing hardware can be deployed at the edge, but only if the application justifies such a solution. More commonly, the data is first moved to the cloud, especially when the edge data is spread across locations. Once the data is in the cloud, AI hardware and software can be applied.

“What companies want to do is to enable AI in real-time in a simple and cost-effective way,” says Shadmon. Cloud is used not because it’s a great solution but because the alternative – building and trying to deal with the data at the edge – is much more complicated, he says.

AnyLog’s proprietary solution — and the Linux Foundation open-source EdgeLake equivalent — enables the training of an AI model using federated learning without having to move the local data.

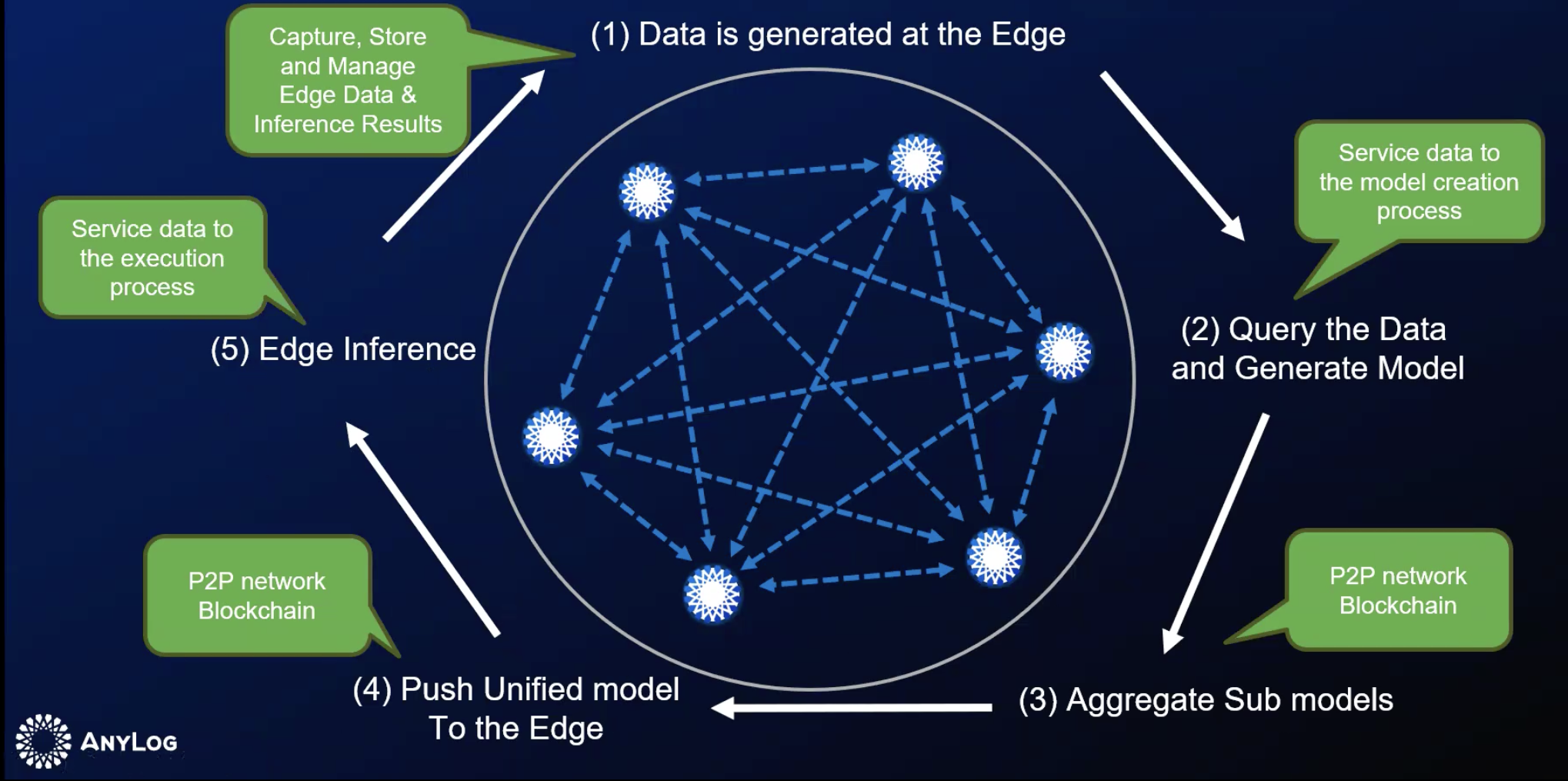

The data at each node is used for local training, creating a ‘sub’ model. The AnyLog software can locate and aggregate all the sub-models to form the complete training model, which is then pushed to each node for AI inferencing at the network edge. The AI learning cycle is repeated – see diagram – to incorporate new data as it is generated.
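One common way to combine sub-models is federated averaging, where each node's weights are averaged in proportion to how much data it trained on. The article does not say which aggregation method AnyLog uses, so the sketch below is an assumption for illustration only: a one-parameter model, made-up data, and hypothetical function names.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y) pair


def local_train(w: float, data: List[Sample], lr: float = 0.05, steps: int = 20) -> float:
    """Fit y = w * x on one node's data with a few gradient-descent steps."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def aggregate(sub_models: List[Tuple[float, int]]) -> float:
    """Average the sub-models, weighted by each node's sample count."""
    total = sum(n for _, n in sub_models)
    return sum(w * n for w, n in sub_models) / total


def federated_round(w_global: float, node_data: List[List[Sample]]) -> float:
    """One learning cycle: train locally, aggregate, return the new model."""
    sub_models = [(local_train(w_global, d), len(d)) for d in node_data]
    return aggregate(sub_models)


# Made-up data on three nodes, all following y = 3x. The raw samples stay put.
node_data = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0)],
    [(0.5, 1.5), (1.5, 4.5), (2.5, 7.5)],
]
w = 0.0
for _ in range(5):  # repeat the cycle as new data arrives
    w = federated_round(w, node_data)
print(round(w, 3))
```

Only the model weights travel between nodes; after a few rounds the shared weight settles close to 3, the slope underlying the sample data.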

“All of this is automated,” says Shadmon.

Bypassing the cloud players

Today, the telcos are the leading connectivity providers uploading data from the edge to the cloud.

“But they are not just moving the data; the telcos are also moving the business from the edge to the cloud,” says Shadmon. It is the cloud computing players, not the telcos, that benefit from data hosting and data processing.

However, by virtualizing the data, a telco’s network also serves the end-user’s data requirements; the cloud players are bypassed. Here is an edge opportunity for the telcos. For once, they can take business away from the cloud providers, says Shadmon: “Every per cent of data that remains at the edge and doesn’t go to the cloud is a multi-billion-dollar opportunity for the telcos.”

AnyLog is in discussion with several telcos.

Boosting copper’s reach in the data centre

Marvell has unveiled a chip that enables copper cables to send 1.6 terabits-per-second (Tbps) of data between equipment in the data centre.

Copper cabling, also referred to as direct attach copper, is the standard interconnect used to connect compute nodes in a server, and between servers when building larger computing systems.

Data centre operators prefer to use passive copper cables. A copper cable costs less than an optical cable, a critical consideration when tens of thousands may be used in a large data centre.

Compute servers using the latest processors and AI accelerator chips have increasing input-output (I/O) requirements. This is causing interface speeds between servers, and between servers and switches, to keep doubling—from 400 gigabits to 800 gigabits and soon 1.6Tbps.

Moreover, with each speed hike, the copper cable’s reach shrinks. A copper cable sending 25 gigabits of data has a reach of 7m, but it is only 2m at 100Gbps and is only 1m at 200Gbps.

The solution is to add a digital signal processing (DSP) chip to the passive copper cabling to create an ’active’ electrical cable. The chip boosts the signal thereby extending the reach. (See diagram below.)

“As speeds go up and the physical distance remains the same, the interconnects have to become active,” says Venu Balasubramonian, vice president of product marketing, connectivity business unit at Marvell.

Marvell says its Alaska chip is the industry’s first to enable 1.6Tbps active electrical cables.

Data centre networking

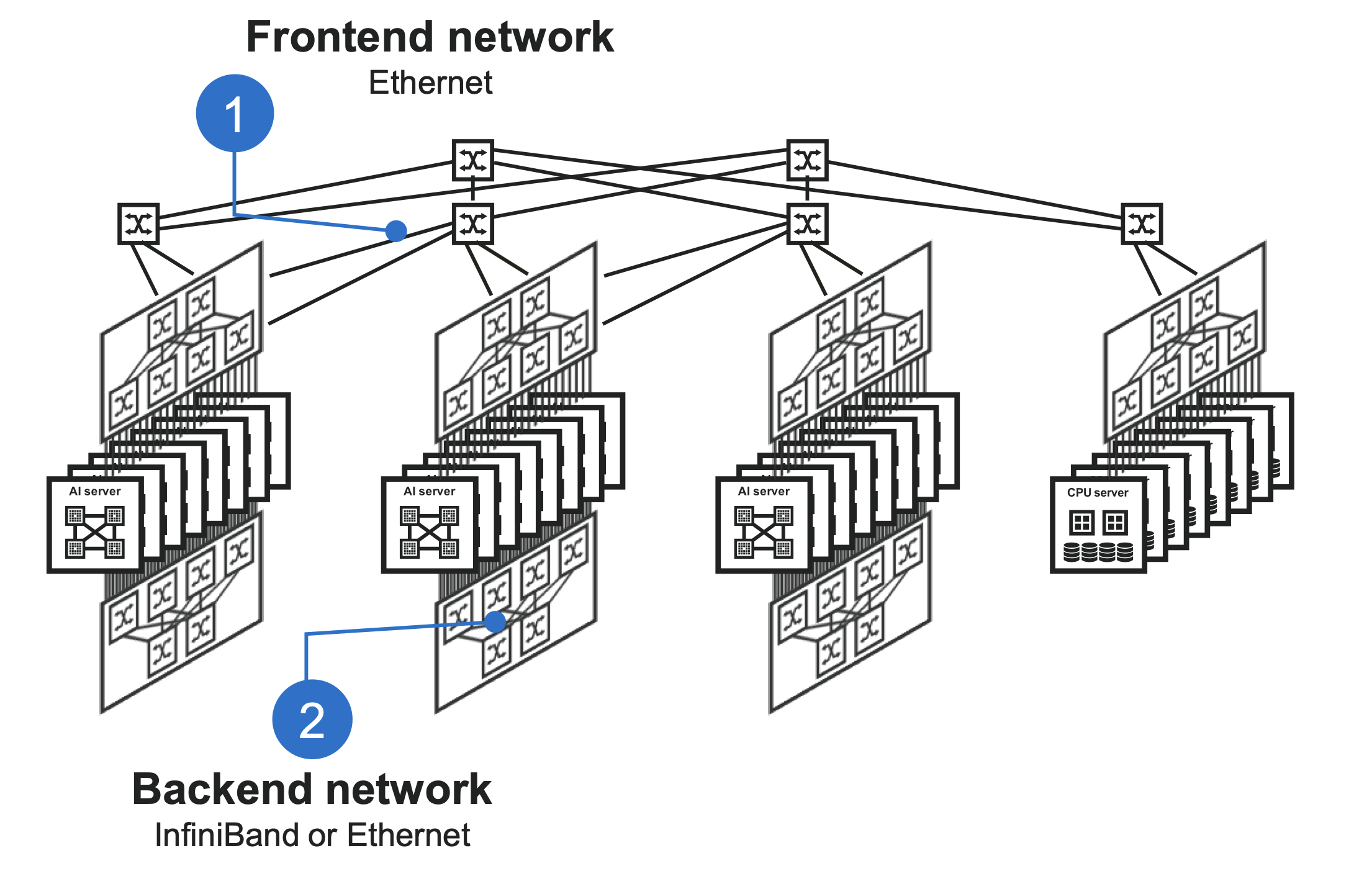

Two main networks – the front-end and backend – are used in data centres supporting AI workloads.

The front-end network, using a traditional Clos network, interfaces servers with the outside world. The Clos network uses a hierarchy of Ethernet switches, with the top-of-rack switches connecting a rack’s servers to leaf and spine switches. The network enables any server to communicate with any other server.
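The any-to-any property can be illustrated with a small model of the fabric. The sketch below is a simplification that treats the top-of-rack switch as the leaf tier in a two-tier leaf-spine arrangement; the sizes and node names are hypothetical.

```python
from collections import deque
from typing import Dict, List


def build_leaf_spine(spines: int, leaves: int, servers_per_leaf: int) -> Dict[str, List[str]]:
    """Adjacency map for a two-tier leaf-spine (folded Clos) fabric."""
    links: Dict[str, List[str]] = {}

    def connect(a: str, b: str) -> None:
        links.setdefault(a, []).append(b)
        links.setdefault(b, []).append(a)

    for leaf in range(leaves):
        for spine in range(spines):
            connect(f"leaf{leaf}", f"spine{spine}")  # every leaf reaches every spine
        for s in range(servers_per_leaf):
            connect(f"server{leaf}-{s}", f"leaf{leaf}")
    return links


def switch_hops(links: Dict[str, List[str]], src: str, dst: str) -> int:
    """Breadth-first search; returns the number of switches on the shortest path."""
    seen = {src}
    queue = deque([(src, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == dst:
            return dist - 1  # links on the path minus one
        for nxt in links[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    raise ValueError("no path between the two nodes")


fabric = build_leaf_spine(spines=2, leaves=4, servers_per_leaf=2)
print(switch_hops(fabric, "server0-0", "server0-1"))  # same rack
print(switch_hops(fabric, "server0-0", "server3-1"))  # different racks
```

In this model, servers in the same rack are one switch apart and servers in different racks are three switches apart, whichever pair is chosen.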

The second, backend network, is optimised to meet the networking requirements of AI. When training an AI model, the servers’ accelerator chips perform intensive calculations before exchanging their results. These steps are repeated many times. The goal is to keep the AI accelerators occupied while ensuring minimal delay when data is exchanged. The backend network’s protocol used to meet these traffic requirements is either Infiniband or Ethernet.

The diagram below shows the typical reaches connecting the compute nodes in a rack and between racks.

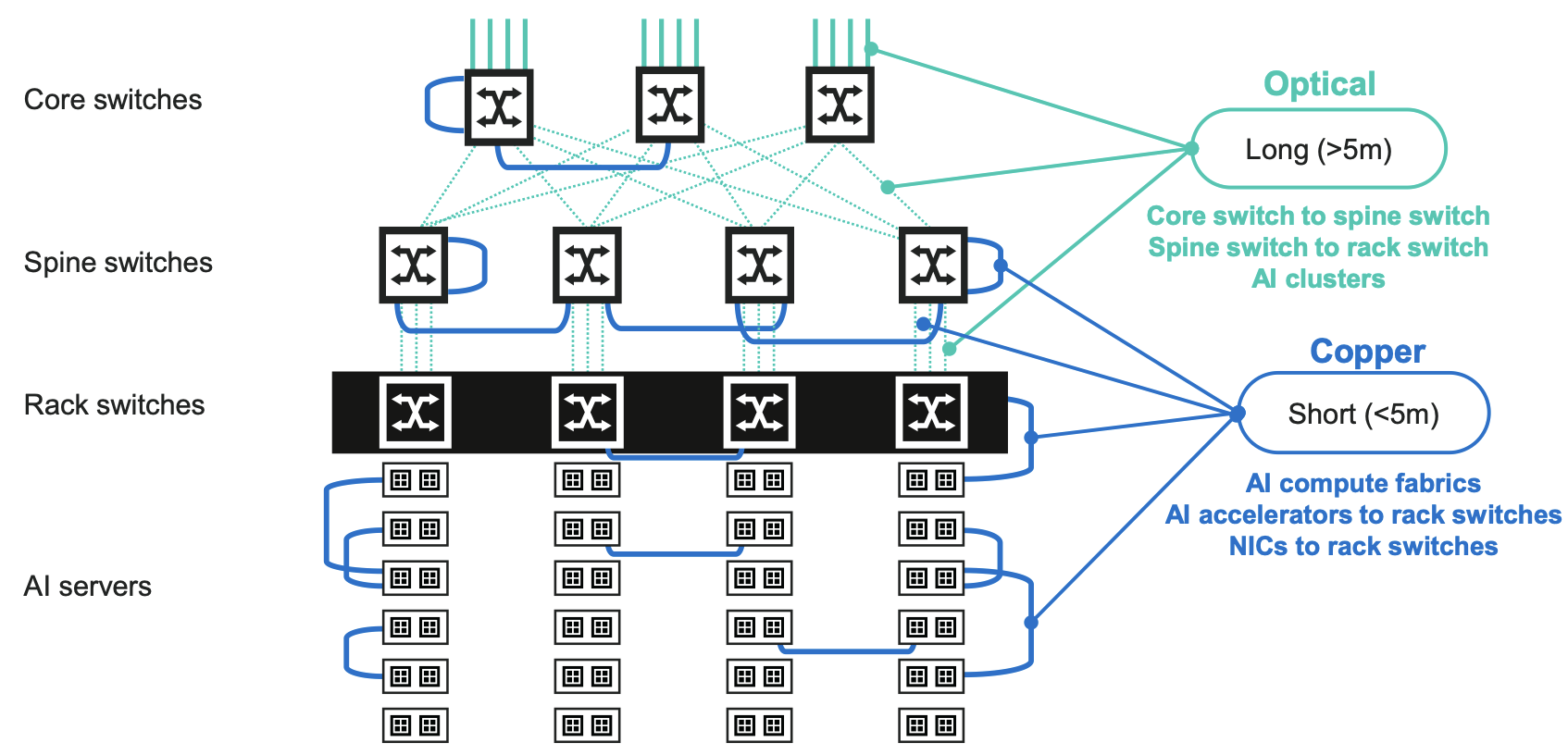

Copper links are preferred for all the links within reach. These are the point-to-point links in a rack and the connections between servers and the top-of-rack switches. Links between adjacent racks or switches are also within copper’s reach. But optical connections must be used for distances 5m and greater.

“Up to five meters, and previously seven meters, you could connect with passive copper, that has been the interconnect all along,” says Balasubramonian. “Now those links are getting replaced with active copper, and if copper can do it, that is what customers prefer.”

AI accelerator chips’ continual processing performance advancement is also reflected in their I/O requirements.

Nvidia’s latest Blackwell graphics processing unit (GPU) uses 200 gigabit-per-second serialiser-deserialisers (serdes) while AI accelerator designs from other vendors are also adopting 200-gigabit serdes, says Balasubramonian.

The drastic shortening of the reach of passive copper cabling at 200Gbps is driving active electrical cabling usage.

The Alaska DSP

The Alaska chip is implemented using a 5nm CMOS process. To achieve 1.6Tbps, the DSP supports eight channels at 200Gbps, each implementing 4-level pulse amplitude modulation (PAM-4) signalling.

The DSP device amplifies, equalises, and reshapes the signals to achieve extended link distances. The Alaska chip also has a ‘gearbox’ feature that translates between signal speeds. This enables end users to adopt new servers with AI chips that support 200Gbps while using existing switches which may only have 100Gbps ports.
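The lane arithmetic behind these figures is straightforward. The sketch below uses hypothetical helper names and ignores coding overhead, so the symbol rate is nominal; how the gearbox actually maps lanes inside the chip is not described here, so the lane count is simply what is needed to carry the same total rate.

```python
def aggregate_gbps(lanes: int, lane_gbps: int) -> int:
    """Total cable rate from the number of lanes and the per-lane rate."""
    return lanes * lane_gbps


def pam4_symbol_rate_gbd(lane_gbps: float) -> float:
    """PAM-4 carries 2 bits per symbol (coding overhead ignored)."""
    return lane_gbps / 2


def gearbox_lanes(lanes: int, lane_gbps: int, target_lane_gbps: int) -> int:
    """Lanes needed at the other speed to carry the same total rate."""
    total = lanes * lane_gbps
    if total % target_lane_gbps:
        raise ValueError("total rate does not divide evenly into target lanes")
    return total // target_lane_gbps


total = aggregate_gbps(8, 200)            # eight 200Gbps channels
baud = pam4_symbol_rate_gbd(200)          # nominal symbol rate per channel
slow_side = gearbox_lanes(8, 200, 100)    # same traffic over 100Gbps lanes
print(total, baud, slow_side)
```

Eight 200Gbps channels give 1,600Gbps, or 1.6Tbps, and carrying the same traffic over 100Gbps ports takes twice as many lanes.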

The Alaska chip also includes telemetry and debug features so that data centre operators can note the status of traffic flows and any networking issues.

The chip measures 12mm x 14mm, to occupy as little space as possible inside a QSFP-DD or OSFP module, says Balasubramonian.

Using the Alaska device for active electrical cabling means 50Gbps signals can span over 7m, 100Gbps signals over 5m, and 200Gbps signals over 3m.
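Taken together with the passive reaches quoted earlier, these figures suggest a simple selection rule: use passive copper if it reaches, active copper if that reaches, and optics otherwise. The sketch below encodes only the numbers given in this article and treats each as a maximum reach, which is a simplifying assumption; real deployments depend on the cable gauge and the channel loss budget.

```python
from typing import Dict

# Reach in metres per lane speed in Gbps, as quoted in the article.
PASSIVE_REACH_M: Dict[int, float] = {25: 7.0, 100: 2.0, 200: 1.0}
ACTIVE_REACH_M: Dict[int, float] = {50: 7.0, 100: 5.0, 200: 3.0}


def choose_interconnect(lane_gbps: int, distance_m: float) -> str:
    """Prefer passive copper, then active copper, then optics."""
    passive = PASSIVE_REACH_M.get(lane_gbps)
    if passive is not None and distance_m <= passive:
        return "passive copper"
    active = ACTIVE_REACH_M.get(lane_gbps)
    if active is not None and distance_m <= active:
        return "active electrical cable"
    return "optical"


for distance in (1.0, 2.5, 4.0):
    print(distance, choose_interconnect(200, distance))
```

At 200Gbps per lane, a 1m link stays passive, a 2.5m link needs an active cable, and a 4m link falls to optics.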

The 1.6Tbps active electrical cables using the Alaska device also use thinner gauge copper wire. The thinner wiring makes connecting systems easier as the cabling can be bent more tightly. The cabling also improves the air flow, helping equipment cooling.

Marvell says it is working with such active electrical cabling specialists as Amphenol, Molex and TE Connectivity.

Future trends

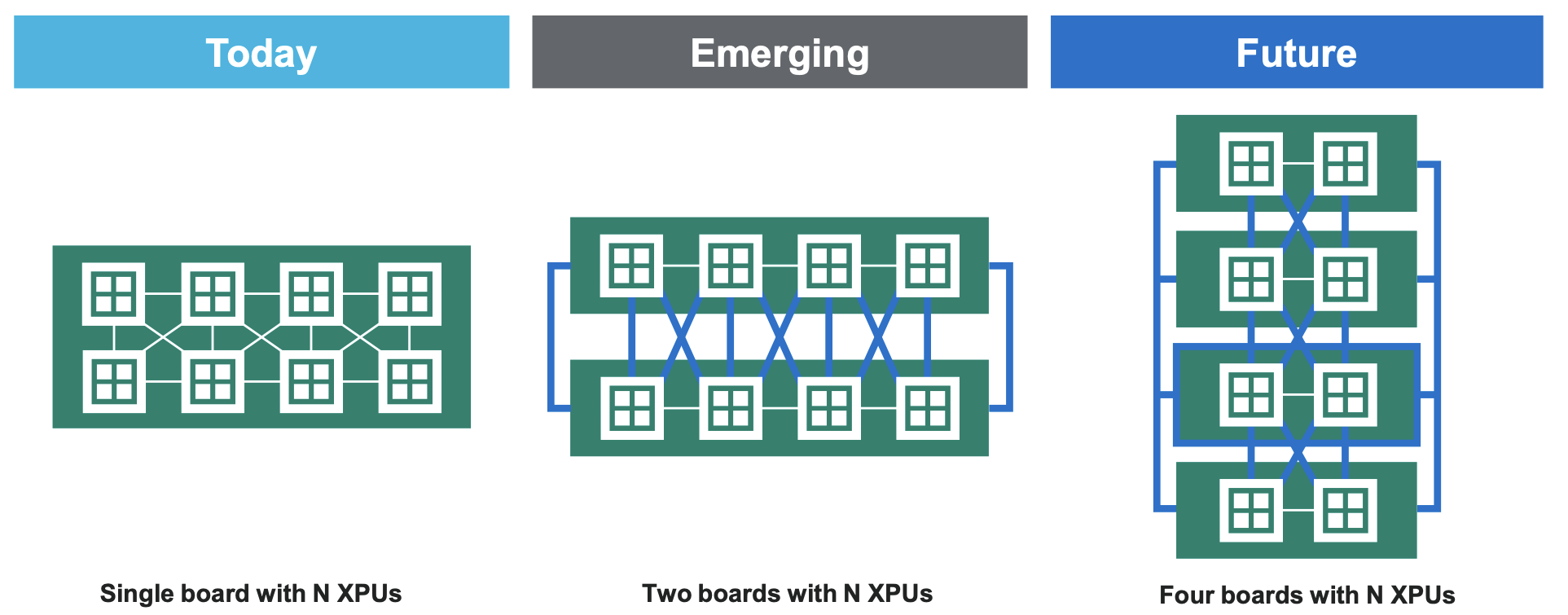

Marvell points out that AI servers are becoming increasingly distributed. The trend is that a board holding N GPUs will become two boards and in future four boards to host the same number of GPUs.

This will require even more copper interconnects. Passive copper cabling will be used where possible. “If you can do it with direct attached copper you would do because there will be no power and latency impact [as no DSP chip need be added],” says Balasubramonian.

Marvell expects a combination of passive and active copper cabling to be used in the data centre with the percentage of the links served with passive cabling shrinking as speeds increase.

Marvell typically develops two generations of chip at each speed, with the second released some two years after the first.

The next chip will likely support 1.6Tbps with half the number of channels. This implies 200-gigabaud serdes and PAM-4 to achieve 4x400Gbps links.

The cabling will require not just a new generation of serdes but also connectors and cables for a 1.6Tbps active electrical cable implemented using 4x400Gbps channels.

The goal at 400Gbps would be to achieve a reach of 2m. “We don’t yet know [if that is possible],” says Balasubramonian. “It is early.”

Is network traffic growth dwindling to a trickle?

“Network capacities are sufficient, and with data usage expected to plateau in the coming years, further capacity expansion is not needed. We have reached the end of history for communications.”

– William Webb, The End of Telecoms History

William Webb has pedigree when it comes to foreseeing telecoms trends.

Webb wrote The 5G Myth in 2016, warning that 5G would be a flop.

In the book, he argued that the wireless standard’s features would create limited interest and fail to grow revenues for mobile operators.

The next seven years saw the telcos promoting 5G and its capabilities. Now, they admit their considerable investments in 5G have delivered underwhelming returns.

His latest book, The End of Telecoms History, argues that telecoms has reached a maturity that satisfies the link speeds needed and that traffic growth is slowing.

“There will be no end of new applications,” says Webb. “But they won’t result in material growth in data requirements or in data speeds.”

What then remains for the telcos is filling in the gaps to provide connectivity everywhere.

Traffic growth slowdown

Earlier this year, AT&T’s CEO, John Stankey, mentioned that its traffic had grown 30 per cent year over year, the third consecutive year of such growth for the telco. The 30 per cent annual figure is the typical traffic growth rate that has been reported for years.

“My take is that we are at about 20 per cent a year annual growth rate worldwide, and it’s falling consistently by about 5 per cent a year,” says Webb.

In 2022, yearly traffic growth was 30 per cent; last year, it was 25 per cent. These are the average growth rates, notes Webb, and there are enormous differences worldwide.

“I was just looking at some data and Greece grew 45 per cent whereas Bahrain declined 10 per cent,” says Webb. “Clearly, there will be big differences between operators.”

He also cites mobile data growth numbers from systems vendor Ericsson. In North America, the growth between 2022 and 2024 was 24 per cent, 17 per cent, and 26 per cent.

“So it is fluctuating around the 20 per cent mark,” says Webb.
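Webb's numbers can be checked with some simple arithmetic. The sketch below assumes that the fall of "about 5 per cent a year" means five percentage points (consistent with the 30, 25 and 20 per cent sequence) and extrapolates it linearly until growth reaches zero. That extrapolation is an illustration, not Webb's own forecast.

```python
from statistics import mean
from typing import List


def projected_growth_rates(start_pct: float, decline_pts: float, years: int) -> List[float]:
    """Yearly growth rates falling by a fixed number of points, floored at zero."""
    return [max(start_pct - decline_pts * i, 0.0) for i in range(years)]


def cumulative_traffic(rates_pct: List[float], start: float = 1.0) -> float:
    """Traffic level after compounding each year's growth rate."""
    level = start
    for rate in rates_pct:
        level *= 1 + rate / 100
    return level


# Start from this year's roughly 20 per cent and drop 5 points each year.
rates = projected_growth_rates(20.0, 5.0, 6)
plateau = cumulative_traffic(rates)
print(rates, round(plateau, 2))

# Ericsson's North American mobile data growth figures, 2022 to 2024.
ericsson_avg = mean([24, 17, 26])
print(round(ericsson_avg, 1))
```

Under these assumptions, growth stops after four more years, with traffic levelling off at about 1.6 times today's volume. The three Ericsson figures average just over 22 per cent.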

Other developments

What about trends like the ever-greater use of digital technologies experienced by many industries, including telecoms? Or the advent of artificial intelligence (AI), which is leading to significant data centre builds, and how AI is expected to change traffic?

“If you look at all non-personal data use, such as the Internet of Things and so on, traffic levels are tiny,” says Webb. There are exceptions, such as security cameras generating video streams. “I don’t see that trend materially changing overall data rates,” says Webb.

He also doesn’t see AI meaningfully growing overall traffic. AI is useful for improving the running of networks but not changing the amount of wireless traffic. “If anything, it might reduce it because you can be more intelligent about what you need to send,” he says.

While Webb admits that AI data centre builds will require extra fixed networking capacity, as will sharing workloads over distributed data centres in a metropolitan area, he argues that this represents a tiny part of the overall network.

He does not see any new devices emerging that will replace the smartphone, dramatically changing how we consume and interact with data.

5G and 6G

Webb also has doubts about the emerging 6G wireless standard. The academic community is busy developing new capabilities for the next wireless standard. “The problem with that is that academics are generally not grounded in the reality of what will make money in the future,” says Webb. Instead, developers should challenge academics to develop the technologies needed for their applications to succeed.

Webb sees two 6G camps emerging. The first camp wants 6G to address all the shortfalls of 5G using terahertz frequencies and delivering speeds of hundreds of gigabits.

“Let’s max out on everything, and then surely, something wonderful must happen,” says Webb. “This strikes me as not learning the lessons of 5G.”

The second camp, including several telcos, does not want to spend any money on 6G but instead wants the technology, in the form of software updates, to address high operational costs and the difficulties in running different network types.

“In this case, 6G improves the operator’s economics rather than improve the end-user offering, which I think makes sense,” says Webb.

“We may end up in a situation where 6G has all this wondrous stuff, and the operators turn around and say they are not interested,” says Webb. “I see a significant risk for 6G, that it just isn’t ever really deployed anywhere.”

Webb’s career in telecoms spans 35 years. His PhD addressed modulation schemes for radio communications. He spent seven years at the UK regulator Ofcom addressing radio spectrum strategy, and he has also been President of the IET, the UK’s equivalent of the IEEE. Webb also co-founded an IoT startup that Huawei bought. For the last 15 years, he has been a consultant covering telecom strategy and technology.

Outlook

The dwindling growth in traffic will impact the telecom industry.

Webb believes the telcos’ revenues will remain the same resulting in somewhat profitable businesses. “They’re making more profit than utilities but less than technology companies,” says Webb.

He also expects there will be more mergers, an obvious reaction to a market flattening out. The aim is to improve profitability.

Given his regulatory background, is that likely? Regulators shun consolidation as they want to keep competition high. He expects it to happen indirectly, with telcos increasingly sharing networks. Each market will offer consumers three or four brands but fewer networks, with operators merging in all but name.

Will there even be a need for telecom consultants? “I have to say, as I’ve made these predictions, I’ve been thinking what am I needed for now?” says Webb, laughing.

If he is right, the industry will be going through a period of change.

But if the focus becomes extending connectivity everywhere, there is work to be done in understanding and addressing the regulatory considerations, and also how best to transition the industry.

“I do suspect that just as the rest of the industry is effectively more a utility, it will need fewer and fewer consultants,” he says.