OFC 2025: industry reflections

Gazettabyte is asking industry figures for their thoughts after attending the recent 50th-anniversary OFC show in San Francisco. Here are the first contributions from Huawei’s Maxim Kuschnerov, NLM Photonics’ Brad Booth, LightCounting’s Vladimir Kozlov, and Jürgen Hatheier, Chief Technology Officer, International, at Ciena.

Maxim Kuschnerov, Director of R&D, Huawei

The excitement of last year’s Nvidia Blackwell graphics processing unit (GPU) announcement has worn off, and there was a slight hangover at OFC from the market frenzy then.

The 224 gigabit-per-second (Gbps) opto-electronic signalling is reaching mainstream in the data centre. The last remaining question is how far VCSELs will go—30 m or perhaps even further. The clear focus of classical Ethernet data centre optics for scale-out architectures is on the step to 448Gbps-per-lane signalling, and it was great to see many feasibility demonstrations of optical signalling showing that PAM-4 and PAM-6 modulation schemes will be doable.

The show demonstrations either relied on thin-film lithium niobate (TFLN) or the more compact indium-phosphide-based electro-absorption modulated lasers (EMLs), with thin-film lithium niobate having the higher overall optical bandwidth.

Higher bandwidth pure silicon Mach-Zehnder modulators have also been shown to work at a 160-175 gigabaud symbol rate, sufficient to enable PAM-6 but not high enough for PAM-4 modulation, which the industry prefers for the optical domain.
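As a rough check on those figures, the sketch below computes the symbol rate needed to carry 448Gbps for each PAM order. It assumes an ideal log2(M) bits per symbol and ignores forward-error-correction and coding overhead. Both are simplifying assumptions rather than figures from the show, and the function name is purely illustrative.

```python
import math

def required_gigabaud(bit_rate_gbps: float, pam_levels: int) -> float:
    """Symbol rate (GBd) needed for a bit rate, assuming log2(M) bits per symbol."""
    return bit_rate_gbps / math.log2(pam_levels)

pam4_gbd = required_gigabaud(448, 4)   # 2 bits per symbol
pam6_gbd = required_gigabaud(448, 6)   # about 2.585 bits per symbol (idealised)

print(f"PAM-4: {pam4_gbd:.1f} GBd")
print(f"PAM-6: {pam6_gbd:.1f} GBd")
```

Under these assumptions PAM-4 needs 224 GBd, beyond the 160-175 gigabaud range quoted, while PAM-6 needs roughly 173 GBd, which falls inside it. Practical PAM-6 mappings carry slightly fewer bits per symbol, so the real requirement would be somewhat higher.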

Since silicon photonics has been the workhorse at 224 gigabits per lane for parallel single-mode transceivers, a move away to thin-film lithium niobate would affect the density of the optics and make co-packaged optics more challenging.

With PAM-6 being the preferred modulation option in the electrical channel for 448 gigabit, it begs the question of whether the industry should spend more effort on enabling PAM-6 optical to kill two birds with one stone: enabling native signalling in the optical and electrical domains would open the door to all linear drive architectures, and keep the compact pure-silicon platform in the technology mix for optical modulators. Just as people like to say, “Never bet against copper,” I’ll add, “Silicon photonics isn’t done until Chris Doerr says so.”

If there was one topic hotter than the classical Ethernet evolution, it was the scale-up domain for AI compute architectures. The industry has gone from scale-up in a server to a rack-level scale-up based on a copper backplane. But future growth will eventually require optics.

While the big data centre operators have yet to reach a conclusion about the specifications of density, reach, or power, it is clear that such optics must be disruptive to challenge the classical Ethernet layer, especially in terms of cost.

Silicon photonics appears to be the preferred platform for a potential scale-up, but some vendors are also considering VCSEL arrays. The challenge of merging optics onto the silicon interposer along with the xPU is a disadvantage for VCSELs in terms of tolerance to high-temperature environments.

Reliability always comes up when discussing integrated optics, and several studies were presented showing that optical chips hardly ever fail. After years of discussing how unreliable lasers seem, it’s time to shift the blame to electronics.

But before the market can reasonably attack optical input-output for scale-up, it has to be seen what the adoption speed of co-packaged optics will be. Until then, linear pluggable optics (LPO) or linear retimed optics (LRO) pluggables will be fair game in scaling up AI ‘pods’ stuffed with GPUs.

Brad Booth, CEO of NLM Photonics

At OFC, the current excitement in the photonics industry was evident due to the growth in AI and quantum technologies. Many of the industry’s companies were represented at the trade show, and attendance was excellent.

Nvidia’s jump on the co-packaged optics bandwagon has tipped the scales in favour of the industry rethinking networking and optics.

What surprised me at OFC was the hype around thin-film lithium niobate. I’m always concerned when I don’t understand why the hype is so large, yet I have still to see the material being adopted in the datacom industry.

Vladimir Kozlov, CEO of LightCounting

This year’s OFC was a turning point for the industry, a mix of excitement and concern for the future. The timing of the tariffs announced during the show made the event even more memorable.

This period might prove to be a peak of the economic boom enabled by several decades of globalisation. It may also be the peak in the power of global companies like Google and Meta and their impact on our industry.

More turbulence should be expected, but new technologies will find their way to the market.

Progress is like a flood. It flows around and over barriers, no matter what they are. The last 25 years of our industry is a great case study.

We are now off for another wild ride, but I look forward to OFC 2050.

Jürgen Hatheier, Chief Technology Officer, International, at Ciena

This was my first trip to OFC, and I was blown away. What an incredible showcase of the industry’s most innovative technology.

One takeaway is how AI is creating a transformative effect on our industry, much like the cloud did 10 years ago and smartphones did 20 years ago.

This is an unsurprising observation. However, many outside our industry do not realise the critical importance of optical technology and its role in the underlying communication network. While most of the buzz has been on new AI data centre builds and services, the underlying network has, until recently, been something of an afterthought.

All the advanced demonstrations and technical discussions at OFC emphasise that AI would not be possible without high-performance network infrastructure.

There is a massive opportunity for the optical industry, with innovation accelerating and networking capacity scaling up far beyond the confines of the data centre.

The nature of AI — its need for intensive training, real-time inferencing at the edge, and the constant movement of data across vast distances between data centres — means that networks are evolving at pace. We’re seeing a significant architectural shift toward more agile, scalable, and intelligent infrastructure with networks that can adapt dynamically to AI’s distributed, data-hungry nature.

The diversity of optical innovation presented at the conference ranged from futuristic quantum technologies to technology on the cusp of mainstream adoption, such as 448-gigabit electrical lanes.

The increased activity and development around high-speed pluggables also show how critical coherent optics has become for the world’s most prominent computing players.

oDAC: Boosting data centre speeds with less power

Academics have developed an optical digital-to-analogue converter (oDAC) that promises to rethink how high-speed optical transmission is done.

Conceived under the European Commission-funded Flex-Scale project for 6G front-haul, the oDAC also promises terabit links inside the data centre.

The oDAC is expected to deliver a 40 per cent power savings for a 1.6 terabit optical transmitter, the ‘send’ path of an optical module.

“It might not be 50 or 60 per cent, but in this field, even a 25 per cent power saving turns heads,” says Ioannis Tomkos, a professor at the Department of Electrical and Computer Engineering at the University of Patras, Greece, one of the researchers leading the work.

The first proof-of-concept oDAC photonic integrated circuit (PIC) has sent 250 gigabits per second (Gbps) over a single wavelength as part of the European Proteus programme.

The goal is to bring the oDAC to market in 2026.

High-speed optical transmission challenges

An optical interface acts as a gateway between the electrical and optical domains.

The main two classes of optical interfaces—pluggable modules for the data centre and coherent designs for longer-distance links—continue to grow in data rate.

The upcoming rate today is 1.6 terabits per second (Tbps), with 3.2Tbps optical links in development. But going faster adds design complexity and consumes extra power.

Faster electrical signalling must use encoding schemes such as 4-level pulse amplitude modulation (PAM-4). And in the optical domain, PAM-4 is used in the data centre while higher-order modulation schemes such as 16-ary quadrature amplitude modulation (16-QAM) are used for long-haul optical transmissions. Quadrature amplitude modulation uses both amplitude and phase modulation, which doubles transmission capacity.

Such schemes require fast analogue-to-digital converters (ADCs), digital-to-analogue converters (DACs), and digital signal processors (DSPs) to compensate for transmission impairments. But as speeds increase, so do the signalling complexity and sampling rates, driving up the overall cost and power consumption.

The trends are leading researchers to consider alternative approaches, such as signal processing in the optical domain, to lessen the demands placed on the DSP and its DACs and ADCs. Researchers are even wondering if such an approach could remove the DSP altogether.

“Step by step,” cautions Tomkos.

Tomkos is working with Professor Moshe Nazarathy, a founder of the oDAC work, at the Faculty of Electrical Engineering at Technion University, Israel.

And it is on developing the oDAC that they have first focussed their efforts.

Electronic DAC versus the oDAC

One way to view the oDAC is as a high-speed optical modulator. Another is as a multiplexer of multiple optical amplitude data streams.

The oDAC is a fundamental building block that trades extra optical components to simplify the electrical drivers for the high-speed transmitter. This is how the 40 per cent power saving is achieved.

The oDAC’s architecture is similar to that of a coherent optical transmitter but with notable differences.

In a coherent optical transmitter setup, the laser source is split evenly to feed the in-phase and quadrature Mach-Zehnder modulators (MZMs), with a 90-degree phase shifter in one of the modulator’s arms (see diagram above, left).

In contrast, the oDAC employs a variable splitter and a combiner at the input and output stages, paired with identical Mach-Zehnder modulators (no phase shifter is used in one of the modulator’s arms, see diagram, right). The oDACs can be used in a nested arrangement, as part of in-phase and quadrature arms, for coherent optical transmission.

Conventional electronic DACs (eDACs in the diagram) sample the data at a rate at least as high as the symbol rate and have a finite bit resolution, which limits the higher-order modulation schemes that can be used.

They are used to drive the optical Mach-Zehnder modulator, which has a non-linear sine-shaped response. The non-linearity forces the modulator to work only in the linear region of its transfer function. (See graph below.)

This curtailing of the driver saves power but results in ‘modulator loss’ – the full potential of the modulator is not being used, impacting signal recovery at the optical receiver.
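The trade-off between linearity and modulator loss can be illustrated with a short sketch. It assumes the textbook field transfer function of an idealised push-pull Mach-Zehnder modulator biased at its null point, E_out/E_in = sin(π/2 · v/Vπ), and four equally spaced drive levels such as a PAM-4 eDAC might produce. The 30 per cent back-off value and all function names are illustrative assumptions, not figures from the oDAC work.

```python
import math

def mzm_field_output(drive_fraction: float, swing: float) -> float:
    """Normalised field output of an idealised push-pull MZM biased at null.

    drive_fraction: electrical level in [-1, 1]
    swing: fraction of V_pi that the driver is allowed to use
    """
    return math.sin(0.5 * math.pi * swing * drive_fraction)

# Four equally spaced electrical levels (illustrative PAM-4 drive).
DRIVE_LEVELS = [-1.0, -1 / 3, 1 / 3, 1.0]

def output_levels(swing: float) -> list[float]:
    return [mzm_field_output(d, swing) for d in DRIVE_LEVELS]

def spacing_ratio(levels: list[float]) -> float:
    """Largest gap between adjacent levels divided by the smallest (1.0 = even)."""
    gaps = [b - a for a, b in zip(levels, levels[1:])]
    return max(gaps) / min(gaps)

full = output_levels(1.0)        # drive across the whole sine response
backed_off = output_levels(0.3)  # stay within the near-linear region

for name, levels in (("full swing", full), ("backed off", backed_off)):
    rounded = [round(x, 3) for x in levels]
    print(name, rounded, "spacing ratio", round(spacing_ratio(levels), 2))
```

At full swing the output levels are unevenly spaced, with the outer gaps half the size of the inner one. Backing off to 30 per cent of Vπ evens out the spacing but leaves the peak output below half of what the modulator can deliver, which is the modulator loss described above.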

In contrast, the oDAC can fully drive the modulator, thereby avoiding the modulator backoff loss.

Another key oDAC benefit is that each of its Mach-Zehnder modulators is driven using simpler PAM driver chips to produce higher-order PAM signals: two standard PAM-4 drivers can produce PAM-16, and two PAM-16 oDACs can be used to generate PAM-256 (each symbol carrying 4 or 8 bits, respectively).

No commercial electronic PAM-16 drivers exist, says Tomkos.

Scaling data rates using PAM-4 drivers

A PAM-4 driver for the oDAC’s Mach-Zehnder modulator arm produces a four-level “staircase” waveform. Adjusting the oDAC’s splitter ratio to 4:1 and summing the outputs yields 16 distinct levels (diagram, below).

In effect, two simple signals can be stacked in multiple combinations to mimic a complex one. For PAM-16, one Mach-Zehnder modulator handles levels 0, 1, 2, and 3, while the other one, scaled differently (e.g., 0, 4, 8, 12), ensures a sum from 0 to 15.
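The stacking of levels described above can be checked with a few lines of code. The sketch treats each arm as an ideal set of integer amplitude levels and the splitter ratio as a simple weighting. Extending the same idea to PAM-256 with a 16:1 weighting is an assumption made by analogy, and the function name is hypothetical.

```python
from itertools import product

def stack_pam(levels_per_arm: int, weight: int) -> list[int]:
    """Sum two identical PAM arms, with the second arm scaled by `weight`."""
    arm = range(levels_per_arm)
    return sorted({a + weight * b for a, b in product(arm, arm)})

pam16 = stack_pam(levels_per_arm=4, weight=4)     # two PAM-4 arms, 4:1 weighting
pam256 = stack_pam(levels_per_arm=16, weight=16)  # assumed 16:1 weighting of two PAM-16 stages

print(pam16)
print(len(pam256))
```

Every combination of the two arms lands on a different level, so the 16 input combinations map onto 16 evenly spaced outputs with no gaps or repeats.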

The catch? Achieving a smooth staircase signal requires precise in-phase combining and level controls so there are no differences between the two Mach-Zehnder arms, which requires careful circuit control.

“Every programmable photonic circuit in general, for whatever application, needs some parametric control of the actual circuit,” says Tomkos. “For our case, it is so that it will not deviate if you change the temperature, if you have vibrations or any other environmental changes.”

David Moor, a post-doctorate researcher at ETH-Zürich, part of the Flex-Scale project, and the director of photonic IC design at Emitera, the start-up tasked with bringing the oDAC to market, has been putting the prototype oDAC photonic integrated circuit through lab tests.

To send 500Gbps over a single wavelength, a two-arm oDAC is used, with each PAM-4-driven arm operating at a 120 gigabaud symbol rate, or 250Gbps. Using two oDACs to feed an in-phase and quadrature coherent structure doubles the data rate to 1Tbps.

Then, using a pair of PAM-16 oDACs (each driven by a pair of PAM-4 signals), in-phase and quadrature-combined in a coherent transmitter structure, further doubles the data rate to 1.6Tbps.

Transmissions at 3.2 terabits would need a symbol rate of 240GBd.
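The first two steps of that scaling can be sketched as raw line-rate arithmetic. The sketch assumes only that PAM-4 carries two bits per symbol and makes no assumption about framing or error-correction overhead, so its results sit close to, rather than exactly on, the 250Gbps, 500Gbps and 1Tbps figures quoted. The variable names are illustrative.

```python
SYMBOL_RATE_GBD = 120          # per-arm symbol rate quoted in the text
BITS_PER_PAM4_SYMBOL = 2       # PAM-4 carries log2(4) = 2 bits per symbol

per_arm_gbps = SYMBOL_RATE_GBD * BITS_PER_PAM4_SYMBOL   # one PAM-4-driven arm
two_arm_odac_gbps = 2 * per_arm_gbps                    # both arms combined (PAM-16)
iq_pair_gbps = 2 * two_arm_odac_gbps                    # one oDAC on I, one on Q

print(per_arm_gbps, two_arm_odac_gbps, iq_pair_gbps)
```

The same arithmetic shows why doubling the symbol rate to 240GBd would double whatever rate a given structure achieves at 120GBd.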

What next?

Professor Nazarathy, working with Professor Birbas and his team at the University of Patras, is developing an FPGA-based control system to ensure the device operates optimally in real-world conditions.

“In the lab, the device has been quite stable,” says Moor. But any environmental changes throw it off track. The oDAC device needs robust control to be a commercial product.

A second-generation oDAC photonic integrated circuit design and an FPGA-based control system are in the works and are expected to be up and running in six months.

Applications: Data centres and front-haul

“The higher-order the modulation format used, from 16-QAM to 256-QAM, the less the distance,” says Tomkos. “This is a law of information theory. You cannot do otherwise; nobody can.”

But the benefit of the design grows the higher the modulation order and the higher the bit rate. Thus, the oDAC comes into its own when using 16-QAM and higher-order signalling schemes.

Accordingly, the oDAC’s sweet spot is for links up to 20 or even 40km, where terabits of data can be pushed over an optical wavelength. This makes the oDAC concept ideal for “coherent-lite” spans between campuses and when used inside the data centre.

Crossing oceans: Loi Nguyen's engineering odyssey

Loi Nguyen arrived in the US with nothing but determination and went on to co-found Inphi, a semiconductor company acquired by Marvell for $10 billion. Now, the renowned high-speed semiconductor entrepreneur is ready for his next chapter.

“What is the timeline?”

It’s a question the CEO of Marvell, Matt Murphy, would pose to Loi Nguyen each year during their one-on-one meetings. “I’ve always thought of myself as a young guy; retirement seemed far away,” says Nguyen. “Then, in October, it seemed like the time is now.”

Nguyen will not, however, disappear. He will work on specific projects and take part in events, but this will no longer be a full-time role.

Early life and journey to the US

One of nine children, Nguyen grew up in Ho Chi Minh City, Vietnam. Mathematically inclined from an early age, he faced limited options when considering higher education.

“In the 1970s, you could only apply to one university, and you either passed or failed,” he says. “That decided your career.”

Study choices were also limited, either engineering or physics. Nguyen chose physics, believing entrance would be easier.

After just one year at university, he joined the thousands of ‘boat people’ who left Vietnam by sea following the end of the Vietnam War in 1975.

But that one year at university was pivotal. “It proved I could get into a very tough competitive environment,” he says. “I could compete with the best.”

Nguyen arrived in the US with limited English and no money. He found work in his first year before signing up at a community college. Here, he excelled and graduated with first-class honours.

Finding a mentor & purpose

Nguyen’s next achievement was to gain a full scholarship to study at Cornell University. At Cornell, Nguyen planned to earn his degree, find a job, and support his family in Vietnam. Then a Cornell academic changed everything.

The late Professor Lester Eastman was a pioneer researcher in high-speed semiconductor devices and circuits using materials such as gallium arsenide and indium phosphide. “Field-effect transistors (FETs), bipolar – any kind of high-speed devices,” says Nguyen. “I was just so inspired by how he talked about his research.”

In his senior year, Nguyen talked to his classmates about their plans. Most students sought industry jobs, but the best students were advancing to graduate school.

“What is graduate school?” Nguyen asked and was told about gaining a doctorate. “How one does that?” he asked and was told about the US Graduate Record Examination (GRE). “I hadn’t a clue,” he says.

The deadline to apply to top US universities was only a week away, and applying meant sitting the GRE exam. Nguyen passed. He could now pursue a doctorate at leading US universities, but he chose to stay at Cornell under Professor Eastman: “I wanted to do high-speed semiconductors.”

His PhD addressed gallium arsenide FETs, which became the basis for today’s satellite communications.

Early career breakthroughs

After graduating, he worked for a satellite company focussing on low-noise amplifiers. NASA used some of the work for a remote sensing satellite to study cosmic microwave background radiation. “We were making what was considered the most sensitive low-noise receivers ever,” says Nguyen.

However, the work concluded in the early 1990s, a period of defence and research budget cuts. “I got bored and wondered what to do next,” he says.

Nguyen’s expertise was in specialised compound semiconductor devices, whereas CMOS was the dominant process technology for chip designs. He decided to undertake an MBA, which led to his co-founding the high-speed communications chip company Inphi.

While studying for his MBA, he met Tim Semones, another Inphi co-founder. The third co-founder was Gopal Raghavan, whom Nguyen describes as a classic genius: “The guy could do anything.”

Building Inphi: innovation through persistence

The late 1990s internet boom created the perfect environment for a semiconductor start-up. Nguyen, Semones, and Raghavan raised $12 million to found Inphi, shorthand for indium phosphide.

The company’s first decade was focused on analogue and mixed-signal design. The market used 10-gigabit optics, so Inphi focused on 40 gigabits. But then the whole optical market collapsed, and the company had to repurpose.

Inphi went from designing indium phosphide chips at 40 gigabits-per-second (Gbps) to CMOS process circuits for memory working at 400 megabits-per-second (Mbps).

In 2007, AT&T started to deploy 40Gbps, indicating that the optical market was returning. Nguyen asked the chairman for a small team which subsequently developed components such as trans-impedance amplifiers and drivers. Inphi was too late for 40Gbps, so it focussed on chips for 100Gbps coherent optics.

Inphi also identified the emerging cloud data centre opportunity for optics. Initially, Nguyen considered whether 100Gbps coherent optics could be adopted within the data centre. However, coherent was too fast and costly compared to traditional non-return-to-zero (NRZ) signalling-based optics.

It led to Inphi developing a 4-level pulse-amplitude modulation (PAM4) chip. Nguyen says that, at the time, he didn’t know of PAM4 but understood that Inphi needed to develop technology that supported higher-order modulation schemes.

“We had no customer, so we had to spend our own money to develop the first PAM4 chip,” says Nguyen.

Nguyen also led another Inphi group in developing an in-house silicon photonics design capability.

These two core technologies – silicon photonics and PAM4 – would prove key in Inphi’s fortunes and gain the company a key design win with hyperscaler Microsoft with the COLORZ optical module.

Microsoft met Inphi staff at a show and described wanting a 100Gbps optical module that could operate over 80km to link data centre sites yet would consume under 3.5W. No design had done that before.

Inphi had PAM4 and silicon photonics by then and worked with Microsoft for a year to make it happen. “That’s how innovation happens; give engineers a good problem, and they figure out how to solve it,” says Nguyen.

Marvell transformation

The COVID-19 pandemic created unlikely opportunities. Marvell’s CEO, Matt Murphy, and then-Inphi CEO, Ford Tamer, served on the Semiconductor Industry Association (SIA) board together. It led to them discussing a potential acquisition during hikes in the summer of 2020 when offices were closed. By 2021, Marvell had acquired Inphi for $10 billion.

“Matt asked me to stay on to help with the transition,” says Nguyen. “I knew that for the transition to be successful, I could play a key role as an Inphi co-founder.”

Nguyen was promoted to manage most of the Inphi optical portfolio and Marvell’s copper physical layer portfolio.

“Matt runs a much bigger company, and he has very well thought-out measurement processes that he runs throughout the year,” he says. “It is one of those things that I needed to learn: how to do things differently.”

The change as part of Marvell was welcome. “It invigorated me and asked me to take stock of who I am and what skills I bring to the table,” says Nguyen.

AI and connectivity

After helping ensure a successful merger integration, Nguyen returned to his engineering roots, focusing on optical connectivity for AI. By studying how companies like Nvidia, Google, and Amazon architect their networks, he gained insights into future infrastructure needs.

“You can figure out roughly how many layers of switching they will need for this and the ratio between optical interconnect and the GPU, TPU or xPU,” he says. “Those are things that are super useful.”

Nguyen says there are two “buckets” to consider: scale-up and scale-out networks. Scale-out is needed when connecting tens of thousands, 100,000 and, in the future, 1 million xPUs via network interface cards. Scale-out networks use protocols such as InfiniBand or Ethernet that minimise and handle packet loss.

Scale-up refers to the interconnect between xPUs in a very high bandwidth, low latency network. This more local network allows the xPUs to share each other’s memory. Here, copper is used: it is cheap and reliable. “Everyone loves copper,” says Nguyen. But copper’s limitation is reach, which keeps shrinking as signalling speeds increase.

“At 200 gigabits, if you go outside the rack, optics is needed,” he says. “So next-gen scale-up represents a massive opportunity for optics.”

Nguyen notes that scale-up and scale-out grow in tandem. It was eight xPUs in a scale-up for up to a 25,000 xPU scale-out network cluster. Now, it is 72 xPUs scale-up for a 100,000 xPU cluster. This trend will continue.

Beyond Technology

Nguyen’s passion for wildlife photography is due to his wife. Some 30 years ago, he and his wife supported the reintroduction of wolves to Yellowstone National Park in the US.

After Inphi’s initial public offering (IPO) in 2010, Nguyen could donate money to defend wildlife, and he and his wife were invited to a VIP retreat there.

“I just fell in love with the place and started taking up photography,” he says. Though initially frustrated by elusive wolves, his characteristic determination took over. “The thing about me is that if I’m into something, I want to be the best at it. I don’t dabble in things,” he says, laughing. “I’m very obsessive about what I want to spend my time on.”

He has travelled widely to pursue his passion, taking what have proved to be award-winning photos.

Full Circle: becoming a role model

Perhaps most meaningful in Nguyen’s next chapter is his commitment to Vietnam, where he’s been embraced as a high-tech role model and a national hero.

He plans to encourage young people to pursue engineering careers and develop Vietnam’s high-speed semiconductor industry, completing a circle that began with his departure decades ago.

He also wants to spend time with his wife and family, including going on an African safari.

He won’t miss back-to-back Zoom calls and evenings away from home. In the last two years, he estimates that he has been away from home between 60 and 70 per cent of the time.

It seems retirement isn’t an ending but a new beginning.

How CPO enables disaggregated computing

A significant shift in cloud computing architecture is emerging as start-up Drut Technologies introduces its scalable computing platform. The platform is attracting attention from major banks, telecom providers, and hyperscalers.

At the heart of this innovation is a disaggregated computing system that can scale to 16,384 accelerator chips, enabled by pioneering use of co-packaged optics (CPO) technology.

“We have all the design work done on the product, and we are taking orders,” says Bill Koss, CEO of Drut (pictured).

System architecture

The start-up’s latest building block as part of its disaggregated computing portfolio is the Photonic Resource Unit 2500 (PRU 2500) chassis that hosts up to eight double-width accelerator chips. The chassis also features Drut’s interface cards that use co-packaged optics to link servers to the chassis, link between the chassis directly or, for larger systems, through optical or electrical switches.

The PRU 2500 chassis supports various vendors’ accelerator chips: graphics processing units (GPUs), chips that combine general processing (CPU) and machine learning engines, and field programmable gate arrays (FPGAs).

Drut has been using third-party designs for its first-generation disaggregated server products. More recently the start-up decided to develop its own PRU 2500 chassis as it wanted to have greater design flexibility and be able to support planned enhancements.

Koss says Drut designed its disaggregated computing architecture to be flexible. By adding photonic switching, the topologies linking the chassis, and the accelerator chips they hold, can be combined dynamically to accommodate changing computing workloads.

Up to 64 racks – each rack hosting eight PRU 2500 chassis or 64 accelerator chips – can be configured as a 4096-accelerator chip disaggregated compute cluster. Four such clusters can be networked together to achieve the full 16,384 chip cluster. Drut refers to its compute cluster concept as the DynamicXcelerator virtual POD architecture.
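The scaling figures above follow from simple multiplication, sketched here using only the numbers quoted in the text (the constant names are illustrative).

```python
ACCELERATORS_PER_CHASSIS = 8   # double-width accelerators per PRU 2500
CHASSIS_PER_RACK = 8
RACKS_PER_CLUSTER = 64
CLUSTERS = 4

per_rack = ACCELERATORS_PER_CHASSIS * CHASSIS_PER_RACK
per_cluster = per_rack * RACKS_PER_CLUSTER
total = per_cluster * CLUSTERS

print(per_rack, per_cluster, total)
```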

The architecture can also be interfaced to an enterprise’s existing IT resources such as InfiniBand or Ethernet switches. “This set-up has scaling limitations; it has certain performance characteristics that are different, but we can integrate existing networks to some degree into our infrastructure,” says Koss.

PRU-2500

The PRU 2500 chassis is designed to support the PCI Express 5.0 protocol. The chassis supports up to 12 PCIe 5.0 slots, including eight double-width slots to host PCIe 5.0-based accelerators. The chassis comes with two or four tFIC 2500 interface cards, discussed in the next section.

The remaining four of the 12 PCIe slots can be used for single-width PCIe 5.0 cards or Drut’s rFIC-2500 remote direct memory access (RDMA) network cards for optical-based accelerator-to-accelerator data transfers.

Also included in the PRU 2500 chassis are two large Broadcom PEX89144 PCIe 5.0 switch chips. Each PEX chip can switch 144 PCIe 5.0 lanes for a total bandwidth of 9.2 terabits-per-second (Tbps).
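The quoted switch bandwidth can be reproduced from the lane count, taking PCIe 5.0’s 32Gbps raw signalling rate per lane. The sketch assumes that the 9.2Tbps total counts both directions of each lane. That is an interpretation made here to match the figure, not something the source states, and encoding overhead is ignored.

```python
LANES = 144
GBPS_PER_LANE = 32     # PCIe 5.0 raw rate per lane, per direction
DIRECTIONS = 2         # assumption: the quoted total counts both directions

per_direction_tbps = LANES * GBPS_PER_LANE / 1000
total_tbps = per_direction_tbps * DIRECTIONS

print(f"{per_direction_tbps:.3f} Tbps per direction, {total_tbps:.3f} Tbps total")
```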

Co-packaged optics and photonic switching

The start-up is a trailblazer in adopting co-packaged optics. Due to the input-output requirements of its interface cards, Drut chose to use co-packaged optics since traditional pluggable modules are too bulky and cannot meet the bandwidth density requirements of the cards.

There are two types of interface cards. The iFIC 2500 is added to the host while the tFIC 2500 is part of the PRU 2500 chassis, as mentioned. Both are half-length PCIe Gen 5.0 cards and each has two variants: one with two 800-gigabit optical engines to support 1.6Tbps of I/O and one with four engines for 3.2Tbps I/O. It should be noted that these cards are used to carry PCIe 5.0 lanes, each lane operating at 32 gigabits-per-second (Gbps) using non-return-to-zero (NRZ) signalling.

The cards interface to the host server and connect to their counterparts in other PRU 2500 chassis. This way, the server can interface with accelerator resources across multiple PRU 2500s.

Drut uses co-packaged optics engines due to their compact size and superior bandwidth density compared to traditional pluggable optical modules. “Co-packaged optics give us a high amount of density endpoints in a tiny physical form factor,” says Koss.

The co-packaged optics engines include integrated lasers rather than using external laser sources. Drut has already sourced the engines from one supplier and is also waiting on sources from two others.

“The engines are straight pipes – 800 gigabits to 800 gigabits,” says Koss. “We can drop eight lasers anywhere, like endpoints on different resource modules.”

Drut also uses a third-party’s single-mode-fibre photonic switch. The switch can be configured from 32×32 up to 384×384 ports. Drut will talk more about the photonic switching aspect of its design later this year.

The final component that makes the whole system work is Drut’s management software, which oversees the system’s traffic requirements and the photonic switching. The complete system architecture is shown below.

More development

Koss says being an early adopter of co-packaged optics has proven to be a challenge.

The vendors are still at the stage of ramping up volume manufacturing and resolving quality and yield issues. “It’s hard, right?” he says.

Koss says WDM-based co-packaged optics are 18 to 24 months away. Further out, he still foresees photonic switching of individual wavelengths: “Ultimately, we will want to turn those into WDM links with lots of wavelengths and a massive increase in bandwidth in the fibre plant.”

Meanwhile, Drut is already looking at its next PRU chassis design to support the PCIe 6.0 standard, and that will also include custom features driven by customer needs.

The chassis could also feature heat extraction technologies such as water cooling or immersion cooling, says Koss. Drut could also offer a PRU filled with CPUs or a PRU stuffed with memory to offer a disaggregated memory pool.

“A huge design philosophy for us is the idea that you should be able to have pools of GPUs, pools of CPUs, and pools of other things such as memory,” says Koss. “Then you compose a node, selecting from the best hardware resources for you.”

This is still some way off, says Koss, but not too far out: “Give us a couple of years, and we’ll be there.”

The long game: Acacia's coherent vision

In 2007, Christian Rasmussen made a career-defining gamble. After attending a conference featuring presentations on coherent optical transmission, he returned home, consulted his family, and quit his job at Mintera, then an optical networking equipment maker.

The technology he’d seen discussed promised to solve the transmission impairments associated with direct-detection-based optical transmission – chromatic dispersion and polarisation mode dispersion – that had stymied optical transport from going beyond 40 gigabits-per-second (Gbps).

“We came back and were completely excited that there was a technology that addressed all the problems that we had experienced firsthand,” says Rasmussen, now Chief Technology Officer at Acacia.

His bet paid off. Acacia, which he co-founded in 2009, had a successful IPO in 2016 and was acquired by Cisco Systems for $4.5 billion in 2021.

Unfolding coherent optics

Increasing the baud rate has proved spectacularly successful in accommodating traffic growth in the network and reducing transport costs measured in dollar-per-bit.

In 2009, coherent modems operated around 32 gigabaud (GBd) for 100 gigabit-per-second (Gbps) wavelength transmissions. By 2024, the symbol rate has reached 200GBd, enabling 1.6 terabit-per-second (Tbps) wavelengths.

Is the priority still to keep upping the symbol rate of a single carrier when designing next-generation coherent modems?

“We are not just saying that increasing baud rate is right,” says Rasmussen. The fundamental goal is reducing optical transport’s cost and power consumption. “Increasing the baud rate is generally the right approach to achieve that goal but it’s always to a certain degree.”

Acacia’s focus from the beginning has been on integrating the components that make up the coherent modem. The resulting modem need not be expensive and can deliver higher speed and extra bandwidth economically while meeting the power consumption target, he says.

“Until now, we feel that increasing the baud rate has been the right approach,” says Rasmussen. “The question will be how frequently you can go up in baud rate, now that developments are expensive.”

Given the rising cost of developing coherent modems, upping the baud rate only makes sense if designers can double it with each new design, he says. Increasing the baud rate by 30 or 40 percent is too small a return, given the development effort and the costs involved.

That implies Acacia’s follow-on high-end coherent modem will have a symbol rate of around 280GBd.

Acacia’s coherent modules

Acacia’s Coherent Interconnect Module 8 (CIM 8), launched in 2021, was the industry’s first single-carrier 1.2Tbps pluggable module. The module operates at a 140GBd symbol rate.

At ECOC 2024, the company showcased its 800 gigabit ZR+ OSFP pluggable modules, featuring the Delphi coherent DSP implemented in a 4nm CMOS process.

The module supports up to 131GBd and implements interoperable probabilistic constellation shaping. The Acacia module has C-band and L-band variants and supports ultra-long-haul distances when sending 400Gbps over a single carrier (see Table).

Challenges and opportunities

The path forward presents challenges and opportunities. There are several design considerations when developing a coherent DSP ASIC.

One is choosing what CMOS process to use. Considerations include cost (the smaller the geometry, the more expensive the design), the transistors’ switching speed, whether the chip’s resulting power consumption is acceptable, and the CMOS process’s maturity. If the process is under development, what confidence is there that it will deliver the promised performance once the ASIC design is completed and ready for manufacturing?

The state-of-the-art CMOS process used for coherent DSPs is 3nm. Ciena’s 200GBd WaveLogic 6e is the first coherent DSP to ship using a 3nm CMOS process. Rasmussen is confident that a 3nm CMOS process can achieve at least a 250GBd symbol rate.

Another consideration is to ensure that the DSP’s analogue-to-digital converters (ADCs) and digital-to-analogue converters (DACs) can achieve the required sampling speed and quality. Typically, the ADCs sample at 1.1x-1.2x the baud rate, which, for a 250GBd symbol rate, equates to the order of 300 giga-samples a second (GS/s). Achieving such speeds is exceptionally challenging.
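As a rough check on that figure, the short Python sketch below multiplies the symbol rate by the quoted oversampling range. The function name is illustrative only, and the calculation assumes nothing beyond the numbers given above.

```python
def adc_sample_rate_gsps(baud_gbd, oversampling):
    """Return the converter sampling rate in GS/s for a symbol rate in GBd."""
    return baud_gbd * oversampling


# Oversampling ratios quoted in the text: 1.1x to 1.2x the baud rate.
low = adc_sample_rate_gsps(250, 1.1)
high = adc_sample_rate_gsps(250, 1.2)
print(f"{low:.0f} to {high:.0f} GS/s")  # prints "275 to 300 GS/s"
```

The upper end of the range is what gives the “order of 300GS/s” figure.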

Some research is exploring other ways to keep boosting converter sampling speed. One idea is to split the converter’s design between the DSP and a higher-bandwidth III-V material used for the driver or receiver circuitry.

Rasmussen stresses that the key is to keep the ADCs and DACs in CMOS as a part of the DSP. “Once you start going there [splitting the DAC and ADC designs], you start risking your cost and power advantage of the single-carrier approach,” he says.

Acacia timeline

- 2007: Rasmussen attends pivotal conference on coherent transmission

- 2009: Acacia founded; 32GBd coherent modems achieve 100Gbps

- 2014: Acacia is first to ship samples of a coherent pluggable 100G CFP module and announces the industry’s first 100G coherent transceiver in a single silicon photonics integrated circuit package

- 2021: Cisco acquires Acacia for $4.5 billion

- 2021: Launch of CIM 8 (140GBd, 1.2Tbps)

- 2024: Acacia showcases its 800ZR+ OSFP module

Team-oriented approach

As CTO, Rasmussen emphasises the importance of working with colleagues to make decisions. “I’m very passionate about this: team-oriented decision-making,” he says. His role involves extensive conversations with product managers and colleagues who interact with customers to understand market needs, alongside technical discussions and conference attendance to guide technology development.

This collaborative approach has shaped Acacia’s integration strategy as well as the company becoming more vertically integrated. “Owning the whole stack so you always have everything in control,” as Rasmussen puts it, has proven crucial to their success.

From Denmark to Cisco

Rasmussen’s journey began in Denmark, where he completed his electrical engineering degree and doctorate in optical communications before moving to Boston. There, he joined Benny Mikkelsen, now Acacia’s senior vice president and general manager, at Mintera, where they grappled with the limitations of pre-coherent optical systems.

The struggle with 40Gbps direct-detect optical transport systems ultimately led to that pivotal moment in 2007. “It did not make much commercial sense to struggle so much to get to 40 gigabits,” Rasmussen recalls. When coherent transmission emerged as a solution, he and his colleagues seized the opportunity, despite the industry’s post-dot-com bubble and the 2008 financial crisis.

He began working with Mikkelsen and Mehrdad Givehchi on business plans and developing the technology. “Digital signal processing was new to us, so there was a lot of stuff to learn,” he says.

After being turned down by numerous venture capital firms, one – Matrix Partners – backed the Acacia team, which also received corporate funding from OFS, part of Furukawa Electric.

Beyond Technology

Outside the lab, Rasmussen finds balance in gardening, appreciating its immediate rewards compared to the years-long cycle of DSP design. “It’s nice to do something where you can see the immediate result of your work,” he says.

His interests also extend to reading. He recommends “Right Hand, Left Hand” by Chris McManus, praising its exploration of symmetry in nature, and “The Magic of Silence” by Florian Illies, which examines the enduring relevance of painter Caspar David Friedrich.

Looking ahead, Rasmussen remains optimistic about the industry’s innovative capacity.

He says that semiconductor foundries do not tend to publicise their CMOS transistors’ switching frequency, but it is already above 500GHz and approaching 1,000GHz. This suggests that a DSP supporting a baud rate of 400GBd will be possible. And four to five years hence, two more generations of CMOS after 3nm are likely. This all suggests that a further doubling of baud rate to 500GBd is feasible.

“Just look at the record of innovation at Acacia and other companies in the industry; people keep coming up with solutions,” says Rasmussen.

Steve Alexander's 30-Year Journey at Ciena

After three decades of shaping optical networking technology, Steve Alexander is stepping down as Ciena’s Chief Technology Officer (CTO).

His journey, from working on early optical networking systems to helping to implement AI as part of Ciena’s products, mirrors the evolution of telecommunications itself.

The farewell

“As soon as you say, ‘Hey guys, you know, there’s an end date’, certain things start moving,” says Alexander, reflecting on his current transition period. “Some people want to say goodbye, others want more of your time.”

After 30 years of work, the bulk of it as CTO, Alexander is ready to reclaim his time, starting with the symbolic act of shutting down Microsoft Outlook.

“I don’t want to get up at six o’clock and look at my email and calendar to figure out my day,” he says.

His retirement plans blend the practical and the fun. The agenda includes long-delayed home projects and traveling with his wife. “My kids gave us dancing lessons for a Christmas present, that sort of thing,” he says with a smile.

Career journey

The emergence of the erbium-doped fibre amplifier shaped Alexander’s career.

The innovation sparked the US DARPA’s (Defense Advanced Research Projects Agency) interest in exploring all-optical networks, leading to a consortium of AT&T, Digital Equipment Corp., and MIT Lincoln Labs, where Alexander was making his mark.

“I did coherent in the late 80s and early 90s, way before coherent was cool,” he recalls. The consortium developed a 20-channel wavelength division multiplexing (WDM) test bed, though data rates were limited to around 1 gigabit-per-second due to technology constraints.

“It was all research with components built by PhD students, but the benefits for the optical network were pretty clear,” he says.

The question was how to scale the technology and make it commercial.

A venture capitalist’s tip about a start-up working on optical amplifiers for cable TV led Alexander to Ciena in 1994, where he became employee number 12.

His first role was to help build the optical amplifier. “I ended up doing what effectively was the first kind of end-to-end link budget system design,” says Alexander. “The company produced its first product, took it out into the industry, and it’s been a great result since.”

The CTO role

Alexander became the CTO at Ciena at the end of the 1990s.

A CTO needs to have a technology and architecture mindset, he says, and highlights three elements in particular.

The first includes such characteristics as education and experience, curiosity, and imagination. Education is essential, but over time, it is interchangeable with experience. “They are fungible,” says Alexander.

Another aspect is curiosity, the desire to know how things work and why things are the way they are. Imagination refers to the ability to envisage something different from what it is now.

“One of the nicest things about the engineering skill set, whatever the field of engineering you’re in, is that with the right tools and team of people, once you have the idea, you can make it happen,” says Alexander.

Other aspects of the CTO’s role are talking, travelling, trouble-making, and tantrum throwing. “Trouble-making comes from the imagination and curiosity, wanting to do things maybe a little bit different than the status quo,” says Alexander.

And tantrums? “When things get really bad, and you just have to make a change, and you stomp your foot and pound the table,” says Alexander.

The third aspect a CTO needs is being in the “crow’s nest”, the structure at the top of a ship’s mast: “The guy looking out to figure out what’s coming: is it an opportunity? A threat? And how do we navigate around it,” says Alexander.

Technology and business model evolution

Alexander’s technological scope has grown over time, coinciding with the company’s expanding reach to include optical access and its Blue Planet unit.

“One of the reasons I stayed at the company for 30 years is that it has required a constant refresh,” says Alexander. “It’s a challenge because technology expands and goes faster and faster.”

His tenure saw the transformation from single-channel Sonet/SDH to 16-channel WDM systems. But Alexander emphasizes that capacity wasn’t the only challenge.

“It’s not just delivering more capacity to more places, the business model of the service providers relies on more and more levels of intelligence to make it usable,” he says.

The gap between cloud operators’ agility and that of the traditional service providers became evident during Covid-19. “The reason we’re so interested in software and Blue Planet is changing that pretty big gap between the speed at which the cloud can operate and the speed at which the service provider can operate.”

Coherent optics

Ciena is shipping the highest symbol rate coherent modem, the WaveLogic 6 Extreme. This modem operates at up to 200 gigabaud and can send 1.6 terabits of data over a single carrier.

Alexander says coherent optics will continue to improve in terms of baud rate and optical performance. But he wonders about the desired direction the industry will take.

He marvels at the success of Ethernet, whereas optical communications still has much to do in terms of standardization and interoperability.

There’s been tremendous progress by the OIF and initiatives such as 400ZR, says Alexander: “We are way better off than we were 10 years ago, but we’re still not at the point where it’s as ubiquitous and standardised as Ethernet.”

Such standardisation is key because it drives down cost.

“People have discussed getting on those Ethernet cost curves from the photonic side for years. But that is still a big hurdle in front of us,” he says.

AI’s growing impact

It is still early days for AI, says Alexander, but there are already glimmers of success. Longer term, the impact will likely be huge.

AI is already having an impact on software development and on network operations.

Ciena’s customers have started by looking to do simple things with AI, such as reconciling databases. Service providers have many such data stores: an inventory database, a customer database, a sales database, and a trouble ticket database.

“Sometimes you have a phone number here, an email there, a name elsewhere, things like a component ID, all these different things,” he says. “If you can get all that reconciled into a consistent source of knowledge, that’s a huge benefit.”

Automation is another area, taking over tasks that typically require using multiple manual systems. There are also research papers appearing where AI is being used to design photonic components delivering novel optical performance.

AI will also impact the network. Humans may still be the drivers but it will be machines that do the bulk of the work and drive traffic.

“If you are going to centralize learning and distribute inferencing, it’s going to have to be closer to the end user,” says Alexander.

He uses a sports application as an example of what could happen.

“If you’re a big soccer/football fan, and you want to see every goal scored in every game that was broadcast anywhere in the world in the last 24 hours, ranked in a top-10 best goals listing, that’s an interesting task to give to a machine,” he says.

Such applications will demand unprecedented network capabilities. Data will need to be collected, and there will be a lot of machine-to-machine interactions to generate maybe a 10-minute video to watch.

“If you play those sorts of scenarios out, you can convince yourself that yes, networks are going to have lots of demand placed on them.”

Personal Reflection

While Alexander won’t miss his early morning Outlook checks, he’ll miss his colleagues and the laboratory environment.

A Ciena colleague, paying tribute to Alexander, describes him as being an important steward of Ciena’s culture. “He always has lived by the credo that if you care for your people, people will care for the company,” he says.

Alexander plans to keep up with technology developments, but he acknowledges that losing the inside view of innovation will be a significant change.

When people have asked him why he has stayed at Ciena, he has always answered the same way: “I joined Ciena for the technology but I stayed because of the people.”


OIF adds a short-reach design to its 1600ZR/ZR+ portfolio

The OIF (Optical Internetworking Forum) has broadened its 1600-gigabit coherent optics specification work to include a third project, complementing the 1600ZR and 1600ZR+ initiatives.

The latest project will add a short-reach ‘coherent-lite’ digital design to deliver a reach of 2km to 20km, and possibly 40km, with a low latency below 300ns.

The low latency will suit workloads and computing resources distributed across data centres.

“The coherent-lite is more than just the LR (long reach) work that we have done [at 400 gigabits and 800 gigabits],” says Karl Gass, optical vice chair of the OIF’s physical link layer (PLL) working group, adding that the 1600-gigabit coherent-lite will be a distinct digital design.

Doubling the data rate from 800 gigabits to 1600 gigabits is the latest battle line between direct-detect and coherent pluggable optics for reaches of 2km to 40km.

At 800 gigabits, the OIF members debated whether the same coherent digital signal processor would implement 800ZR and 800-gigabit LR. Certain OIF members argued that unless a distinct coherent DSP is developed, a coherent optics design will never be able to compete with direct-detect LR optics.

“We have that same acknowledgement that unless it’s a specific design for [1600 gigabit] coherent-lite, then it’s not going to compete with the direct detect,” says Gass.

OIF’s 1600-gigabit specification work

The OIF’s 1600-gigabit roadmap has evolved rapidly in the last year.

In September 2023, the OIF announced the 1600ZR project to develop 1.6-terabit coherent optics with a reach of 80km to 120km. In January 2024, the OIF announced it would undertake a 1600ZR+ specification, an enhanced version of 1600ZR with a reach of 1,000km.

The OIF’s taking the lead in ZR+ specification work is a significant shift in the industry, promising industry-wide interoperability compared to the previous 400ZR+ and 800ZR+ developments.

Now, the OIF has started a third 1600-gigabit project: the coherent-lite design.

1600ZR development status

Work remains to complete the 1600ZR Implementation Agreement, the OIF’s specification document. However, member companies have agreed upon the main elements, such as the framing schemes for the client side and the digital signal processing, and the use of oFEC as the forward error correction scheme.

oFEC is a robust forward error correction scheme but adds to the link’s latency. It has also been chosen as the forward error correction scheme for 1600ZR+. The OIF members want the ‘coherent-lite’ version to use a less powerful forward error correction to achieve lower latency.

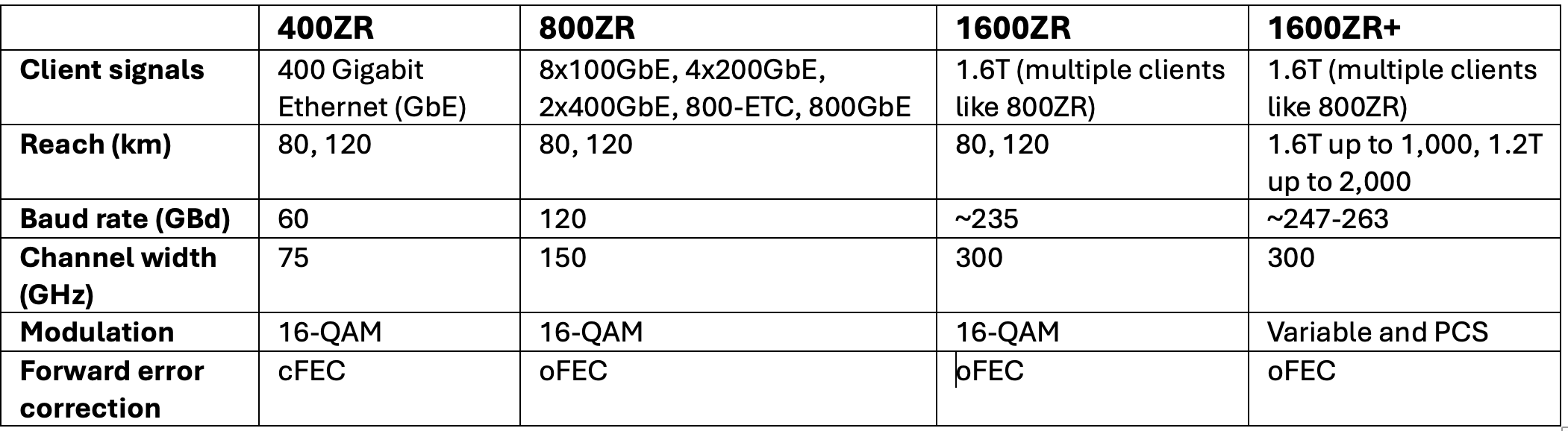

The 1600ZR symbol rate chosen is around 235 gigabaud (GBd), while the modulation scheme is 16-ary quadrature amplitude modulation (16-QAM). The specified reach will be 80km to 120km. (See table below.)
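To see how those two numbers relate to the 1600-gigabit payload, the Python sketch below works out the raw line rate. It assumes dual-polarisation transmission, which is standard for coherent optics but is not stated above, and it treats “around 235GBd” as exactly 235GBd, so the resulting overhead figure is indicative only.

```python
import math


def raw_line_rate_gbps(baud_gbd, constellation_points, polarisations=2):
    """Raw line rate in Gbps before FEC and framing overhead are removed."""
    bits_per_symbol = math.log2(constellation_points)  # 16-QAM carries 4 bits
    return baud_gbd * bits_per_symbol * polarisations


raw = raw_line_rate_gbps(235, 16)  # assumes two polarisations
overhead = raw / 1600 - 1          # share of the line rate beyond the payload
print(f"raw line rate: {raw:.0f} Gbps, overhead: {overhead:.1%}")
# prints "raw line rate: 1880 Gbps, overhead: 17.5%"
```

Under these assumptions, roughly a sixth of the transmitted bits are available for forward error correction and framing.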

The members will likely agree on the digital issues this quarter before starting the optical specification work. Before completing the Implementation Agreement, members must also spell out interoperability testing.

1600ZR+ development status

The 1600ZR+ work still has some open questions.

One is whether members choose a single carrier, two sub-carriers, or four to achieve the 1,000km reach. The issue is equalisation-enhanced phase noise (EEPN), which imposes tighter constraints on the receiver’s laser. Using sub-carriers, the laser constraints can be relaxed, enabling more suppliers. The single-carrier camp argues that sub-carriers complicate the design of the coherent digital signal processor (DSP).

The workgroup members must also choose which probabilistic constellation shaping to use. Probabilistic constellation shaping gain can extend the reach, but it can also reduce the symbol rate and, hence, the bandwidth specification of the coherent modem’s components.

The symbol rate of the 1600ZR+ is targeted in the range of 247GBd to 263GBd.

Power consumption

The 1600ZR design’s power consumption was hoped to be 26W, but it is now expected to be 30W or more. The 1600ZR+ design’s power consumption is expected to be even higher.
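One way to put the 1600ZR power figures in context is to express them as energy per bit. The Python sketch below does this for the 26W and 30W figures at a 1600Gbps data rate; the function name is illustrative, and the calculation ignores anything beyond the module’s headline power and data rate.

```python
def energy_per_bit_pj(power_w, data_rate_gbps):
    """Energy per bit in picojoules: W / (Gbit/s) gives nJ/bit, x1000 for pJ."""
    return power_w / data_rate_gbps * 1000


for power_w in (26, 30):
    print(f"{power_w} W -> {energy_per_bit_pj(power_w, 1600):.2f} pJ/bit")
# prints "26 W -> 16.25 pJ/bit" then "30 W -> 18.75 pJ/bit"
```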

The coherent pluggable’s power consumption will depend on the CMOS process that the coherent DSP developers choose for their 1600ZR and 1600ZR+ ASIC designs. Will they choose the state-of-the-art 3nm CMOS process or wait for 2nm or even 1.8nm to become available to gain a design advantage?

Timescales

The target remains to complete the 1600ZR Implementation Agreement document quickly. Gass says the 1600ZR and 1600ZR+ Implementation Agreements could be completed this year, paving the way for the first 1600ZR/ZR+ products in 2026.

“We are being pushed by customers, which isn’t a bad thing,” says Gass.

The coherent-lite design will be completed later given that it has only just started. At present, the OIF will specify the digital design and not the associated optics, but this may change, says Gass.

Books of 2024: Final Part

Gazettabyte has been asking industry figures to pick their reads of 2024. In the final part, Professor Polina Bayvel, Hojjat Salemi, Professor Laura Lechuga, and the editor of Gazettabyte share their selections.

Professor Polina Bayvel, Royal Society Research Professor & Head of the Optical Networks Group, Department of Electronic & Electrical Engineering, UCL

I recently attended a Royal Society Discussion Meeting where Leslie Valiant gave a brilliant talk on educability as a better definition than intelligence. A Harvard professor, he has developed many algorithms that underpin today’s networks, including Valiant’s load balancing. He is a profound thinker, and I wanted immediately to read his book, The Importance of Being Educable: A New Theory of Human Uniqueness.

Although written in a popular style, it argues that educability (a precisely defined computational model) is a better term than intelligence, for which no agreed definition exists. He explains how we, as a human race, have been able to create the technological civilisation that we have and argues that this civilisation enabler is educability. He also implies that current AI models are not educable. The book is masterful in its lucidity in explaining complex concepts in computation. I really could not put it down.

Another read which has taken my breath away is A. N. Tolstoy’s The Road to Calvary (Russian: Хождение по мукам, romanised: Khozhdeniye po mukam, lit. ’Walking Through Torments’), also translated as Ordeal. It is a trilogy set just before the Russian Revolution (starting in 1914) that follows the lives of two sisters and their lovers/husbands through the revolution and the Russian Civil War. It was a staple in Soviet schools, but leaving at age 12, I missed it and have only recently read it.

It’s a monument to history, and when one reads it, one realises that the well-to-do Russian liberals who argued for change and the removal of Czarist rule had no idea what fate would face them or how their lives would change forever.

It made me think of today’s parallel – do we always understand the consequences of wanting liberal changes? The Russian pre-Revolution liberals, the intelligentsia, wanted democracy and more power for the people. What they got was the opposite – totalitarian oppression.

I was also struck by the stark realisation that had WWI not occurred, there would not have been a revolution, and the lives of so many people, including that of my own family, would have followed a completely different course.

Hojjat Salemi, Chief Business Development Officer, Ranovus

Several years ago, I decided to avoid social media platforms like Instagram and TikTok, as well as the news channels Fox News and CNN. I found them to be major distractions and wasteful of time.

I used the time instead to read and listen to author interviews (podcasts) on YouTube, which often provide deeper insights into why they wrote their books and their key ideas. One of the best decisions I’ve made is controlling what I watch on YouTube—without ads! If you’re looking for good books about technology, here are my recommendations:

The book that won the Financial Times Business Book of the Year for 2024 is Supremacy: AI, ChatGPT, and the Race that Will Change the World by Parmy Olson.

It offers a fascinating narrative starting in 2012, focusing on how AI systems have developed, with a spotlight on two main figures: Demis Hassabis, co-founder of DeepMind, and Sam Altman, the co-founder of OpenAI.

The book explores three major themes:

- how AI could reshape society as it grows increasingly intelligent,

- the unintended consequences of the technologies we create,

- and the moral dilemmas and risks of pushing these innovations too far.

It’s a fast-paced, thought-provoking look at the future.

Another suggestion is Read Write Own: Building the Next Era of the Internet by Chris Dixon. The book is written clearly and engagingly and explains complex ideas like blockchain, NFTs, and decentralised networks. Dixon describes the evolution of the internet: the early days of reading information, the read-write era of social media where people shared but didn’t own content, and the emerging read-write-own era (Web3), where blockchain allows users to own digital assets.

While I’ve been thinking about decentralised networks a lot, I’m still not convinced they can take off, given our geopolitical challenges. Take Bitcoin, for example; if something goes wrong, who do you call? Moreover, Web3’s dominant players still rely on centralised computing power. It’s a thoughtful read, but only time will tell how Web3 unfolds.

Lastly, I recommend Ethics of Socially Disruptive Technologies: An Introduction. The book, available as a free PDF, is highly educational on how new technologies disrupt societal norms and ethical frameworks.

The book examines four specific technologies: social media, robots, climate engineering, and artificial wombs. For instance, social media was supposed to give everyone a voice and bring people together. Instead, it has often divided us, spread misinformation, and allowed foreign powers to interfere in elections. It challenges the idea of “government of the people, by the people, for the people” today. This book is perfect for anyone wanting to understand new technologies’ unintended consequences.

Professor Laura Lechuga, Head of the Nanobiosensors and Bioanalytical Application Group at the Catalan Institute of Nanoscience and Nanotechnology (ICN2)

I love reading and do it frequently, especially during the many work trips I take throughout the year.

My favourite reading of 2024 was Chip War: The Fight for the World’s Most Critical Technology by Chris Miller. It is an impressive book about the development of microelectronics and the pivotal role of chips in shaping the world powers.

Having a PhD focused on microelectronics, I enjoyed reading a book that will become a masterpiece. What I appreciated most were the personal stories of the brilliant scientists and engineers who overcame the technical obstacles to transform the semiconductor industry and who helped found some of the most influential companies in the world. This is a must-read book.

My second favourite book was The Maniac by Benjamin Labatut. The book is a combination of history and novel in which Labatut tells the story of brilliant physicists such as John von Neumann, a genius able to invent new fields. But the same prodigy whose work impacted future advances in computing terrified the people around him, and his personal life was miserable. The book describes the evolution of von Neumann’s work through to the battle between AI and a world champion player of the game Go. It is a book that reflects on the limits of technology, an original, addictive, and beautiful read.

Another book I loved in 2024 was Lessons in Chemistry by Bonnie Garmus. It is a feminist novel about how difficult a professional career was for women scientists in the 1960s. I felt totally reflected in it, as our position has not changed much. It is a book that mixes funny and sad situations, is easy to read, very enjoyable, and has a clear message.

My last recommendation is an old book, Atlas Shrugged by Ayn Rand. It isn’t easy to read due to its length, but it is a fascinating futuristic story about a dystopian United States and is now more relevant than ever. It is a story of how human stupidity gains a significant advantage over intelligence and the devastating consequences for the U.S. This could also be extended to the rest of the world, perhaps a prophecy to be fulfilled in the coming years.

Roy Rubenstein, Editor of Gazettabyte

I read many books in 2024 and will highlight three. One is Strength in What Remains by Tracy Kidder. I had read his most recent book, Rough Sleepers: Dr. Jim O’Connell’s Urgent Mission to Bring Healing to Homeless People, and this was my follow-up read. Kidder is a master storyteller who finds the most remarkable individuals to write about. I highly recommend both.

Dame Hilary Mantel is best known for her Wolf Hall trilogy. Last year, a book of her writings—articles for literary magazines, essays, film reviews, and her BBC Reith Lectures—was published. A Memoir of My Former Self: A Life in Writing is an excellent read by a fabulous writer.

Lastly, I recommend the 55-hour Audible version of Alexandre Dumas’s The Count of Monte Cristo. While listening, I walked past the local cinema and realised there was a 2024 film version being shown. I entered, showed the attendant the Audible version and asked if the film was shorter.

Books of 2024: Part 3

Gazettabyte is asking industry figures to pick their reads of the year. In the penultimate entry, Prof. Yosef Ben Ezra, Dave Welch, William Webb, and Abdul Rahim share their favourite reads.

Professor Yosef Ben Ezra, PhD, CTO, NewPhotonics

My reading in 2024 continued to augment my technical knowledge with insights on how to bring innovation to the market.

As part of our mission to shift the industry with innovative products, I have been focussing on decision-making as the key to transitioning from technology development to product-market impact and fit. Our company entered a new phase at the beginning of last year, moving from an early-stage technology start-up to a customer-centred growth company. In reading The Lean Startup: How Today’s Entrepreneurs Use Continuous Innovation to Create Radically Successful Businesses by Eric Ries, I better understood how we must apply evidence-based decision-making even as we establish a more agile environment where rapid experimentation and learning from customer input takes precedence over extensive planning and development cycles. This insight was critical as we moved from research to delivering a product that met market demand.

Another instrumental read in 2024 was Thinking, Fast and Slow by Daniel Kahneman. With our team growing quickly, the company leadership began facing significantly broader input and issues tied to decision-making that reached beyond engineering. Kahneman’s insights on the interplay between two systems of thinking—intuitive and deliberate—provided an expanded mindset for dealing with a range of cognitive perspectives and biases that influence contextual, practical, and effective decision-making, which is vital to our progress.

The final book I’ll reference has proven to be an essential follow-up to an earlier read that played an instrumental role in starting our company: Blue Ocean Strategy. We strongly identify with this, so Peter Thiel’s Zero to One: Notes on Startups, or How to Build the Future, was an excellent follow-up for me. Ultimately, it spotlights the importance of originality and boldness in innovation. It aligns strongly with our aim to avoid imitation and incremental improvements to connectivity and instead seek transformative advances that offer substantial, long-term value.

I identify strongly with the idea of pursuing a daring and groundbreaking product introduction that reaches new heights, like the distinction Thiel draws between horizontal and vertical innovation.

Dave Welch, CEO and Founder, AttoTude

One Summer: America, 1927, by Bill Bryson. A fun read about a similarly fascinating time of technology, politics, and human behavior.

William Webb, Independent Consultant, Board Member and Author

I much prefer fact to fiction and often read books about politics, economics and philosophy. But occasionally Amazon suggests something different and I give it a try. Two such random suggestions this year stood out.

The first is Ingrained: The Making of a Craftsman by Callum Robinson. It is the true story of a woodworker in Scotland whose small company has to suddenly change tack when a major client cancels a huge order. It’s beautifully written with a love for woodwork, craftsmanship and friends. It’s not normally my sort of thing, but this book is one that you won’t put down and will make you think again about what’s important in life.

My second suggestion is completely different – Why Machines Learn: The Elegant Maths Behind Modern AI by Anil Ananthaswamy. The book sets out the mathematics behind how large language models and other AI systems work. It is written for someone with fairly rudimentary mathematical skills. It isn’t a light read, but I found it valuable to understand just how models are trained and the compromises and choices behind it all. AI is so important for the future and now I feel that I’ve got a good handle on how it works.

Abdul Rahim, Ecosystem Manager, PhotonDelta

The book I enjoyed most this year is Overcrowded: Designing Meaningful Products in a World Awash with Ideas, by Roberto Verganti.

The book treats innovation as a gift to the beneficiaries of innovation and presents a framework for innovation of meaning. This framework is different from design thinking, which is geared towards finding solutions to a problem in an empathetic manner. Roberto’s framework requires a sparring partner who challenges, questions and criticises in the journey of innovation of meaning. The photonic integrated circuit (PIC) community can learn a lot from this book.

The other book I read – well, listened to – is How to Win Friends and Influence People, by Dale Carnegie. This one needs no explanation.

Books of 2024: Part 2

Gazettabyte asks industry figures to pick their reads of the year. In Part 2, Scott Wilkinson, Nigel Toon and Kailem Anderson select their best reads.

Scott Wilkinson, Lead Analyst, Networking Components, Cignal AI

I spent the year enjoying a poem a day from Brian Bilston’s Days Like These: An Alternative Guide to the Year in 366 Poems.

You may have seen his poems on social media, as he’s sometimes called The Poet Laureate of Twitter. It’s been a joy to end the day with one of his hilarious, occasionally poignant, and always topical poems.

I ended the year completing Andrew Roberts’ Napoleon: A Life. After the disappointing 2023 film, I wanted to know more about the person for whom an era of European history is named. At almost 1,000 pages, the author is remarkably thorough. Napoleon had a brilliant mind and his many achievements are lost in the legend of his military wins and losses. There’s no way I’ll remember all the details in the biography, but living in it for a few months was fascinating.

One book that I recommend to everyone is An Immense World: How Animal Senses Reveal the Hidden Realms Around Us by Ed Yong. In this amazing book, the author spends a chapter on each of the senses and looks at how animals experience the world around us. We have historically coloured the world based on our ability to perceive it, which is just a small fraction of the stimuli surrounding us. The author doesn’t just cover the five senses that humans rely on but investigates echolocation, the ability of seals to follow fish trails through water, magnetic navigation, and more.

I guarantee you won’t be able to read a chapter without relaying fascinating facts to anyone sitting nearby. The chapter on smell will change how you walk your dog. The chapter on sight will help you understand why we use RGB colour codes – and why they wouldn’t be the right choice for other animals. The chapters on senses humans don’t use will blow your mind and leave you wondering how much you’re missing on a casual walk through the park. It’s a book that any engineer, scientist, or curious mind will enjoy, and it is a great gift. And no, I don’t get residuals.

Every year at the holidays, I get a huge stack of books, a few of which I discovered through these articles. I didn’t get through my complete stack this year thanks to Mr. Bonaparte’s rich history, but that won’t stop me from picking up a few more again this holiday season. I look forward to seeing everyone else’s picks.

Nigel Toon, co-founder and CEO at Graphcore

My recommendation is On China by Henry Kissinger. The book offers amazing insights into the relationship between the USA and China, and it is as relevant today as when it was written in 2011.

Kailem Anderson, Vice President, Global Products & Delivery, Blue Planet

I love reading books on the history of technology. I’m currently reading Palo Alto: A History of Silicon Valley Capitalism and the World by Malcolm Harris. In our industry, it’s amazing how new technology can become old and then the old becomes new again. We forget the old and recycle many of the same issues from one technology transition to the next. I’m fascinated by learning from the past to see if solutions from our technology past can apply to the future.

I’m also reading Stephen Hawking’s Brief Answers to the Big Questions. This is a great book that stretches the mind on abstract concepts such as the universe, technology, predicting the future, and examining whether artificial intelligence will outsmart us. The book provides an insight into one of the most amazing minds, Stephen Hawking, and asks big-picture questions that we are afraid to ask ourselves.

Lastly, I would recommend Ali: A Life by Jonathan Eig. The book is an amazing read about Muhammad Ali’s life, how he took on the establishment, and how he broke down stereotypes and prejudices. Despite being rejected for his beliefs, Ali stood by his convictions to change people’s perceptions and become one of the greatest and most admired people of the 20th century.