Is the tunable laser market set for an upturn?

Part 2: Tunable laser market

"The tunable laser market requires a lot of patience to research." So claims Vladimir Kozlov, CEO of LightCounting Market Research. Kozlov should know; he has spent the last 15 years tracking and forecasting lasers and optical modules for the telecom and datacom markets.

Source: LightCounting, Gazettabyte

The tunable laser market is certainly sizeable; over half a million units will be shipped in 2014, says LightCounting. But the market requires care when forecasting. One subtlety is that certain optical component companies - Finisar, JDSU and Oclaro - are vertically integrated and use their own tunable lasers within the optical modules they sell. LightCounting counts these as module sales rather than tunable laser ones.

Another issue is that despite the development of advanced reconfigurable optical add/drop multiplexers (ROADMs) and tunable lasers, the uptake of agile optical networking has been limited.

"Verizon is bullish on getting the next generation of colourless, directionless and contentionless ROADMs to reconfigure the network on-the-fly," says Kozlov. "But I'm not so sure Verizon is going to be successful in convincing the industry that this is going to be a good market for [ROADM] suppliers to sell into."

Reconfigurability helps engineers at installations when determining which channels to add or drop, but there is little evidence of operators besides Verizon talking about using ROADMs to change bandwidth dynamically, first in one direction and then the other, he says.

Another indicator of the reduced status of tunable lasers is NeoPhotonics's intention to purchase Emcore's tunable external cavity laser as well as its module assets for US $17.5 million. Emcore acquired the laser when it bought Intel's optical platform division for $85 million in 2007, while Intel acquired it from New Focus in 2002 for $50 million. NeoPhotonics has also spent more in the past: it bought Santur's tunable laser for $39 million in 2011.

"There was so much excitement with so many players [during the optical bubble of 1999-2000], the market was way too competitive and eventually it drove vendors to the point where they would prefer to sell the business for pennies rather than keep it running," says Kozlov. "Emcore has been losing money, it is not a highly profitable business." Yet for Kozlov, Emcore's tunable laser is probably the best in the business with its very narrow line-width compared to other devices.

Tunable laser market

Tunable lasers have failed to get into the mainstream of the industry. "If you look at DWDM, I'm guessing that 70 percent of lasers sold are still fixed wavelength or temperature-tunable over a few wavelengths," says Kozlov. System vendors such as Huawei and ZTE advertise their systems with tunable lasers. "But when we asked them how they are using tunable lasers, they admitted that the bulk of their shipments are fixed-wavelength devices because whatever little they can save on cost, they will."

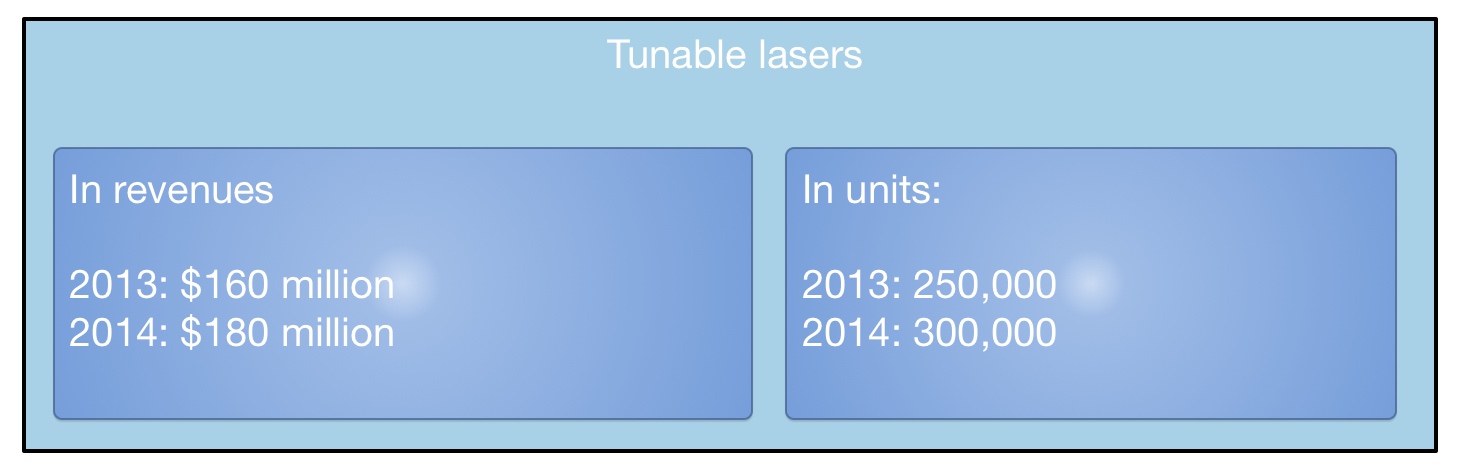

LightCounting valued the 2013 tunable laser market at $160 million, growing to $180 million in 2014. This equates to 250,000 units sold in 2013 and 300,000 units this year. "Most of these are for coherent systems," says Kozlov. The number of tunable lasers sold in modules - mainly XFPs but also SFPs and 300-pin modules - is 250,000 units. "Half a million units a year; if you look at actual shipments, it is quite a lot," says Kozlov.
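As a rough cross-check of the market values and unit shipments quoted above, dividing one by the other gives an implied average price per standalone tunable laser. The sketch below is illustrative arithmetic only: the function name is hypothetical and the resulting prices are derived here, not figures reported by LightCounting.

```python
def implied_average_price(market_value_usd: float, units: int) -> float:
    """Return market value divided by units shipped (an implied average price)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return market_value_usd / units


# Market values and unit counts as quoted in the article.
price_2013 = implied_average_price(160e6, 250_000)
price_2014 = implied_average_price(180e6, 300_000)

print(f"2013: ${price_2013:.0f} per unit, 2014: ${price_2014:.0f} per unit")
```

On these numbers the implied average price slips from about $640 in 2013 to about $600 in 2014, although the real price mix across laser types will differ.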

What next?

"I'm hoping we are reaching the low point in the tunable laser market as vendors are struggling and sales are at a very low valuation," says Kozlov.

The advent of more complex modulation schemes for 400 Gigabit and greater speed optical transmission, and the adoption of silicon photonics-based modulators for long haul will require higher powered lasers. But so much progress has been made by laser designers over the last 15 years, especially during the bubble, that it will last the industry for at least another decade or two, says Kozlov: "Incremental progress will continue and hopefully greater profitability."

For Part 1: NeoPhotonics to expand its tunable laser portfolio, click here

North American operators in an optical spending rethink

Optical transport spending by the North American operators dropped 13 percent year-on-year in the third quarter of 2014, according to market research firm Dell'Oro Group.

Operators are rethinking the optical vendors they buy equipment from as they consider their future networks. "Software-defined networking (SDN) and Network Functions Virtualisation (NFV) - all the futuristic next network developments, operators are considering what that entails," says Jimmy Yu, vice president of optical transport research at Dell’Oro. "Those decisions have pushed out spending."

NFV will not impact optical transport directly, says Yu, and could even benefit it with the greater signalling to central locations that it will generate. But software-defined networks will require Transport SDN. "You [as an operator] have to decide which vendors are going to commit to it [Transport SDN]," says Yu.

The result is that the North American tier-one operators reduced their spending in the third quarter of 2014. Yu highlights AT&T, which from 2013 through to mid-2014 undertook robust spending. "What we saw growing [in that period] was WDM metro equipment, and it is that spending that has dropped off in the third quarter," says Yu. For equipment vendors Ciena and Fujitsu that are part of AT&T's Domain 2.0 supplier programme, the reduced Q3 spending is unwelcome news. But Yu expects North American optical transport spending in 2015 to exceed 2014's. This is despite AT&T announcing that its capital expenditure in 2015 will dip to US $18 billion from $21 billion in 2014 now that its Project VIP network investment has peaked.

But Yu says AT&T has other developments that will require spending. "Even though AT&T may reduce spending on Project VIP, it is purchasing DirecTV and the Mexican mobile carrier, Iusacell," he says. "That type of stuff needs network integration." AT&T has also committed to passing two million homes with fibre once it acquires DirecTV.

Verizon is another potential reason for 2015 optical transport growth in North America. It has a request-for-proposal for metro DWDM equipment and the only issue is when the operator will start awarding contracts. Meanwhile, each year the large internet content providers grow their optical transport spending.

Asia Pacific remains one of the brighter regions for optical transport in 2014. "Partly this is because China is buying a lot of DWDM long-haul equipment, with China Mobile being one of the biggest buyers of 100 Gig," says Yu. EMEA continues to under-perform and Yu expects optical transport spending to decline in 2014. "But there seems to be a lot of activity and it's just a question of when that activity turns into revenue," he says.

Dell'Oro expects 2014 global optical transport spending to be flat compared to 2013, with 2015 forecast to experience three percent growth. "That growth is dependent on Europe starting to improve," says Yu.

One area driving optical transport growth that Yu highlights is interconnected data centres. "Whether enterprises or large companies interconnecting their data centres, internet content providers distributing their networks as they add more data centres, or telecom operators wanting to jump on the bandwagon and build their own data centres to offer services; that is one of the more interesting developments," he says.

NeoPhotonics to expand its tunable laser portfolio

Part 1: Tunable lasers

NeoPhotonics will become the industry's main supplier of narrow line-width tunable lasers for high-speed coherent systems once its US $17.5 million acquisition of Emcore's tunable laser business is completed. Gazettabyte spoke with Ferris Lipscomb of NeoPhotonics about Emcore's external cavity laser and the laser performance attributes needed for metro and long haul.

Key specifications and attributes of Emcore's external cavity laser and NeoPhotonics's DFB laser array. Source: NeoPhotonics.

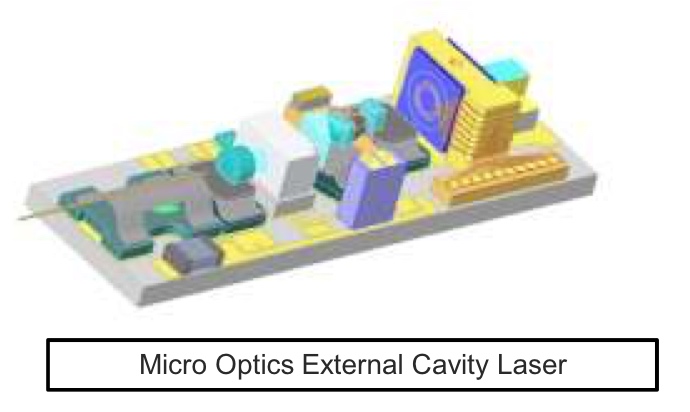

Emcore and NeoPhotonics are leading suppliers of tunable lasers for the 100 Gigabit coherent market, according to market research firm Ovum. NeoPhotonics will gain Emcore's external cavity laser (ECL) on the completion of the deal, expected in January. The company will also gain Emcore's integrable tunable laser assembly (ITLA), micro ITLA, tunable XFP transceiver, tunable optical sub-assemblies, and 10, 40, 100 and 400 Gig integrated coherent transmitter products.

Emcore's ECL has a long history. Emcore acquired the laser when it bought Intel's optical platform division for $85 million in 2007, while Intel acquired the laser from New Focus in 2002 in a $50 million deal. Meanwhile, NeoPhotonics bought Santur's distributed feedback (DFB) tunable laser array in 2011 in a $39 million deal.

The two lasers satisfy different needs: Emcore's is suited for high-speed long distance transmission while NeoPhotonics's benefits metro and intermediate distances.

The Emcore laser uses mirrors and optics external to the gain medium to create the laser's relatively long cavity. This aids high-performance coherent systems as it results in a laser with a narrow line-width. Coherent detection uses a mixing technique borrowed from radio where an incoming signal is recovered by comparing it with a local oscillator or tone. "The narrower the line-width, the more pure that tone is that you are comparing it to," says Lipscomb.

Source: NeoPhotonics

A narrower line-width also means less digital signal processing (DSP) is needed to resolve the ambiguity that results from that line-width, says Lipscomb: "And the more DSP power can be spent on either compensating fibre impairments or going further [distances], or compensating the higher-order modulation schemes which require more DSP power to disentangle."

The ECL has a narrow line-width that is specified at under 100kHz. "It is probably closer to 20kHz," says Lipscomb. One of the laser's drawbacks is that it uses a mechanical tuning mechanism that is relatively slow. It also has a lower output power of 16dBm compared to NeoPhotonics's DFB laser array that is up to 18dBm.
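To put the two output powers quoted above on a linear scale, the standard conversion from dBm to milliwatts is P(mW) = 10^(dBm/10). The snippet below is a minimal sketch of that conversion; the function and variable names are illustrative and not taken from either vendor.

```python
def dbm_to_mw(power_dbm: float) -> float:
    """Convert optical power in dBm to milliwatts: P(mW) = 10 ** (dBm / 10)."""
    return 10 ** (power_dbm / 10)


ecl_mw = dbm_to_mw(16.0)  # Emcore ECL output power quoted in the article
dfb_mw = dbm_to_mw(18.0)  # NeoPhotonics DFB array, upper figure quoted

print(f"ECL: {ecl_mw:.1f} mW, DFB array: {dfb_mw:.1f} mW, "
      f"ratio: {dfb_mw / ecl_mw:.2f}x")
```

The 2dB gap corresponds to roughly 1.6 times the optical power, about 63mW against about 40mW.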

In contrast, NeoPhotonics' DFB laser array, suited to metro and intermediate reach applications, has a wider line-width specified at 300kHz, although 200kHz is typical. The DFB design comprises multiple lasers integrated compactly. The laser design also uses a MEMS that results in efficient coupling and the higher - 18dBm - output power. "Using the MEMS structure, you can integrate the laser with other indium phosphide or silicon photonics devices," says Lipscomb. "That is a little bit harder to do with the Emcore device."

Source: NeoPhotonics

It is the compactness and higher power of the DFB laser array that make it suited to metro networks. The higher output power means that one laser can be used for both transmission and the local oscillator used to recover the received coherent signal. "More power can be good if you can live with the broader line-width," says Lipscomb. "It reduces overall system cost and can support higher-order modulation schemes over shorter distances."

Market opportunities

NeoPhotonics' focus is on narrow line-width lasers for coherent systems operating at 100 Gigabit and greater speeds. Lipscomb says the metro market for 100 Gig coherent will emerge in volume towards the end of 2015 or early 2016. "The distance here is less and therefore less compensation is needed and a little bit more line-width is tolerable," he says. "Also cost is an issue and a more integrated product can have potentially a lower cost."

For long haul, and especially at transmission rates of 200 and 400 Gig, the demands placed on the DSP are considerable. This is where Emcore's laser, with its narrow line-width, is most suited.

System vendors are already investigating 400 Gig and above transmission speeds. "For the high-end, line-width is going to be a critical factor," says Lipscomb. "Whatever modulation schemes there are to do the higher speeds, they are going to be the most demanding of laser performance."

For Part 2: Is the tunable laser market set for an upturn? click here

Alcatel-Lucent serves up x86-based IP edge routing

Alcatel-Lucent has re-architected its edge IP router functions - its service router operating system (SR OS) and applications - to run on Intel x86 instruction-set servers.

Shown is the VSR running on one server and distributed across several servers. Source: Alcatel-Lucent.

The company's Virtualized Service Router portfolio aims to reduce the time it takes operators to launch services and is the latest example of the industry trend of moving network functions from specialist equipment onto stackable servers, a development known as network function virtualisation (NFV).

"It is taking IP routing and moving it into the cloud," says Manish Gulyani, vice president product marketing for Alcatel-Lucent's IP routing and transport business.

IP edge routers are located at the edge of the network where services are introduced. By moving IP edge functions and applications on to servers, operators can trial services quickly and in a controlled way. Services can then be scaled according to demand. Operators can also reduce their operating costs by running applications on servers. "They don't have to spare every platform, and they don't need to learn its hardware operational environment," says Gulyani.

Alcatel-Lucent has been offering two IP applications running on servers since mid-year. The first is a route reflector control plane application used to deliver internet services and layer-2/layer-3 virtual private networks (VPNs). Gulyani says the application product has already been sold to two customers and over 20 are trialling it. The second application is a routing simulator used by customers for test and development work.

More applications are now being made available for trial: a provider edge function that delivers layer-2 and layer-3 VPNs, and an application assurance application that performs layer-4 to layer-7 deep-packet inspection. "It provides application level reporting and control," says Gulyani. Operators need to understand application signatures to make decisions based on which applications are going through the IP pipe, he says, and based on a customer's policy, the required treatment for an app.

Additional Virtualized Service Router (VSR) software products planned for 2015 include a broadband network gateway to deliver triple-play residential services, a carrier Wi-Fi solution and an IP security gateway.

Alcatel-Lucent claims a two rack unit high (2RU) server hosting two 10-core Haswell Intel processors achieves 160 Gigabit-per-second (Gbps) full-duplex throughput. The company has worked with Intel to determine how best to use the chipmaker's toolkit to maximise the processing performance on the cores.

"Using 16, 10 Gigabit ports, we can drive the full capacity with a router application," says Gulyani. "But as more and more [router] features are turned on - quality of service and security, for example - the performance goes below 100 Gigabit. We believe the sweet-spot is in the sub-100 Gig range from a single-server perspective."

In comparison, Alcatel-Lucent's own high-end network processor chipset, the FP3, that is used within its router platforms, achieves 400 Gigabit wireline performance even when all the features are turned on.
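The per-server figure quoted above follows from simple port arithmetic: 16 ports at 10 Gigabit each give 160 Gbps. The sketch below works that through and, as a purely hypothetical planning exercise, estimates how many servers a given aggregate target would need. The function names and the 400 Gbps target are assumptions for illustration, not Alcatel-Lucent sizing guidance.

```python
import math


def aggregate_gbps(ports: int, port_rate_gbps: float) -> float:
    """Total capacity of a server: number of ports times the per-port rate."""
    return ports * port_rate_gbps


def servers_needed(target_gbps: float, per_server_gbps: float) -> int:
    """Servers required to reach a target, rounding up to whole servers."""
    return math.ceil(target_gbps / per_server_gbps)


full_rate = aggregate_gbps(16, 10)  # 16 x 10 Gigabit ports, as quoted

# Hypothetical 400 Gbps target: at full rate, and at the 100 Gbps
# upper bound of the feature-rich "sweet spot" Gulyani describes.
at_full_rate = servers_needed(400, full_rate)
with_features = servers_needed(400, 100)

print(full_rate, at_full_rate, with_features)
```

Because throughput drops as router features are enabled, the number of servers needed for a given target depends heavily on the feature set in use.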

"With the VSR portfolio and the rest of our hardware platforms, we can offer the right combination to customers to build a performing network with the right economics," says Gulyani.

Alcatel-Lucent's server router portfolio split into virtual systems and IP platforms. Also shown (in grey) are two platforms that use merchant processors on which runs the company's SR OS router operating system i.e. the company has experience porting its OS onto hardware besides its own FPx devices before it tackled the x86. Source: Alcatel-Lucent.

Gazettabyte asked three market research analysts about the significance of the VSR announcement, the applications being offered, the benefits to operators, and what next for IP.

Glen Hunt, principal analyst, transport & routing infrastructure at Current Analysis

Alcatel-Lucent's full routing functionality available on an x86 platform enables operators to continue with their existing infrastructures - the 7750SR in Alcatel-Lucent's case - and expand that infrastructure to support additional services. This is on less expensive platforms which helps support new services that were previously not addressable due to capital expenditure and/or physical constraints.

The edge of the service provider network is where all the services live. By supporting all services in the cloud, operators can retain a seamless operational model, which includes everything they currently run. The applications being discussed here are network-type functions - Evolved Packet Core (EPC), broadband network gateway (BNG), wireless LAN gateways (WLGWs), for example - not the applications found in the application layer. These functions are critical to delivering a service.

Virtualisation expands the operator’s ability to launch capabilities without deploying dedicated routing/device platforms, not in itself a bad thing, but with the ability to spin up resources when and where needed. Using servers in a data centre, operators can leverage an on-demand model which can use distributed data centre resources to deliver the capacity and features.

Other vendors have launched, or are about to launch, virtual router functionality, and the top-level stories appear to be quite similar. But Alcatel-Lucent can claim one of the highest capacities per x86 blade, and can scale out to support Nx160Gbps in a seamless fashion; having the ability to scale the control plane to have multiple instances of the Virtualized Service Router (VSR) appear as one large router.

Furthermore, Alcatel-Lucent is shipping its VSR route reflector and the VSR simulator capabilities and is in trials with VSR provider edge and VSR application assurance – noting it has two contracts and 20-plus trials. This shows there is a market interest and possibly pent-up demand for the VSR capabilities.

It will be hard for an x86 platform to achieve the performance levels needed in the IP core to transit high volumes of packet data. Most of the core routers in the market today are pushing 16 Terabit-per-second of throughput across 100 Gigabit Ethernet ports and/or via direct DWDM interfaces into an optical transport core. This level of capability needs specialised silicon to meet demands.

Performance will remain a key metric moving forward; even though an x86 is less expensive than most dedicated high-performance platforms, it still has a cost basis. The efficiency with which an application uses resources will be important. In the VSR case, the more work a single blade can do, the better. Also of importance is the ability for multiple applications to work efficiently, otherwise the cost savings are limited to the reduction in hardware costs. If the management of virtual machines is made more efficient, the result is even greater efficiency in terms of end-to-end performance of a service which relies on multiple virtualised network functions.

Ultimately, more and more services will move to the cloud, but it will take a long time before everything, if ever, is fully virtualised. Creating a network that can adapt to changing service needs is a lengthy exercise. But the trend is moving rapidly to the cloud, a combination of physical and virtual resources.

Michael Howard, co-founder and principal analyst, Infonetics Research

There is overwhelming evidence from the global surveys we’ve done with operators that they plan to move functions off the physical IP edge routers and use software versions instead.

These routers have two main functions: to handle and deliver services, and to move packets. I’ve been prodding router vendors for the last two years to tell us how they plan to package their routing software for the NFV market. Finally, we hear the beginnings, and we’ll see lots more software routing options.

The routing options can be called software routers or vRouters. The services functions will be virtualised network functions (VNFs), like firewalls, intrusion detection systems and intrusion prevention systems, deep-packet inspection, and caching/content delivery networks that will be delivered without routing code. This is important for operators to see what routing functions they can buy and run in NFV environments on servers, so they can plan how to architect their new software-defined networking and NFV world.

It is important for router vendors to play in this world and not let newcomers or competitors take the business. Of course, there is a big advantage for operators in buying their vRouter software - route reflection, for example - from the same router vendor they are already using, since it obviously works with the router code running on physical routers, and the same software management tools can be used.

Juniper has just made its first announcement. We believe all router vendors are doing the same; we’ve been expecting announcements from all the router vendors, and finally they are beginning.

It will be interesting to see how the routing code is packaged into targeted use cases - we are just seeing the initial use cases now from Juniper and Alcatel-Lucent - like the route reflection control plane function, IP/MPLS VPNs and others.

Despite the packet-processing performance achieved by Alcatel-Lucent using x86 processors, it should be noted that some functions like the control plane route reflection example only need compute power, not packet processing or packet-moving power.

There already is, and there will always be, a need for high performance for certain places in the network or for serving certain customers. And then there are places and customers where traffic can be handled with less performance.

As for what next for IP, the next 10 to 15 years will be spent moving to SDN- and NFV-architected networks, just as service providers have spent over 10 years moving from time-division multiplexing-based networks to packet-based ones, a transition yet to be finished.

Ray Mota, chief strategist and founder, ACG Research

Carriers have infrastructure that is complex and inflexible, which means they have to be risk-averse. They need to start transitioning their architecture so that they just program the service, not re-architect the network each time they have a new service. Having edge applications becoming more nimble and flexible is a start in the right direction. Alcatel-Lucent has decided to create an NFV edge product with a carrier-grade operating system.

It appears, based on what the company has stated, that it achieves faster performance than competitors' announcements.

Alcatel-Lucent is addressing a few areas: this is great for testing and proof of concepts, and an area of the market that doesn't need high capacity for routing, but it also introduces the potential to expand new markets in the webscaler space (that includes the large internet content providers and the leading hosting/co-location companies).

You will see more and more IP domain products overlap into the IT domain; the organisations and operations are lagging behind the technology but once service providers figure it out, only then will they have a more agile network.

STMicro chooses PSM4 for first silicon photonics product

- Lowers the manufacturing cost of optical modules

- Improves link speeds

- Reduces power consumption

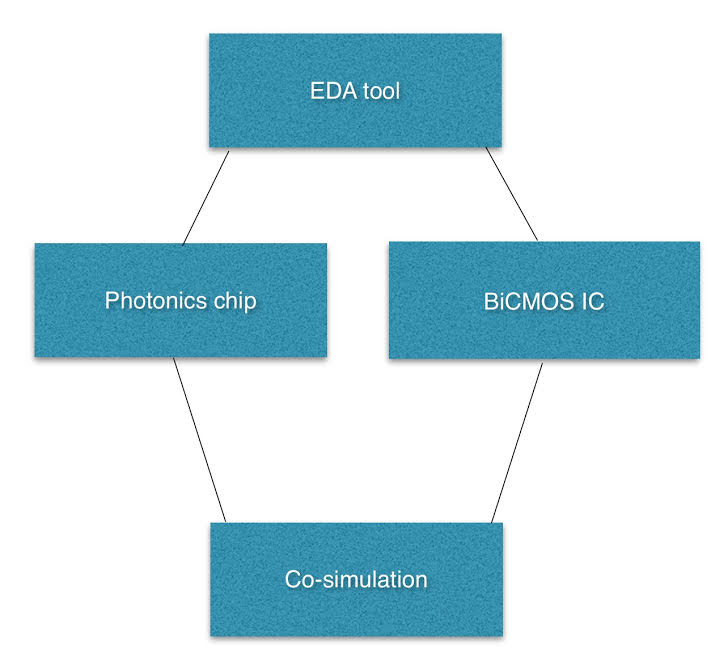

STMicro's in-house silicon photonics EDA. "We will develop the EDA tools to the level needed for the next generation products," says Flavio Benetti.

Silicon photonics book scheduled for early 2016

The work will provide an assessment of silicon photonics and its market impact over the next decade. The title will explore key trends and challenges facing the telecom and datacom industries, provide a history of silicon photonics, and detail its importance. The title will also pinpoint those applications that will benefit most from the technology.

ECOC reflections: final part

Gazettabyte asked several attendees at the recent ECOC show, held in Cannes, to comment on key developments and trends they noted, as well as the issues they will track in the coming year.

Dr. Ioannis Tomkos, Fellow of OSA & Fellow of IET, Athens Information Technology Center (AIT)

With ECOC 2014 celebrating its 40th anniversary, the technical programme committee did its best to mark the occasion. For example, at the anniversary symposium, notable speakers presented the history of optical communications. Actual breakthroughs discussed during the conference sessions were limited, however.

Ioannis Tomkos

It appears that after 2008 to 2012, a period of significant advancements, the industry is now more mainstream, and significant shifts in technologies are limited. It is clear that the original focus four decades ago on novel photonics technologies is long gone. Instead, there is more and more of a focus on high-speed electronics, signal processing algorithms, and networking. These have little to do with photonics even if they greatly improve the overall efficient operation of optical communication systems and networks.

Coherent detection technology is making its way in metro with commercial offerings becoming available, while in academia it is also discussed as a possible solution for future access network applications where long-reach, very-high power budgets and high-bit rates per customer are required. However, this will only happen if someone can come up with cost-effective implementations.

Advanced modulation formats and the associated digital signal processing are now well established for ultra-high capacity spectral-efficient transmission. The focus is now on forward-error-correction codes and their efficient implementations to deliver the required differentiation and competitive advantage of one offering versus another. This explains why so many of the relevant sessions and talks were so well attended.

There were several dedicated sessions covering flexible/elastic optical networking. It was also mentioned in the plenary session by operator Orange. It looks like a field that started only five years ago is maturing and people are now convinced about the significant short-term commercial potential of related solutions. Regarding the latest research efforts in this field, people have realised that flexible networking using spectral super-channels will offer the most benefit if it becomes possible to access the contents of the super-channels at intermediate network locations/nodes. To achieve that, besides traditional traffic grooming approaches such as those based on OTN, there were also several ground-breaking presentations proposing all-optical techniques to add/drop sub-channels out of the super-channel.

Progress made so far on long-haul high-capacity space-division-multiplexed systems, as reported in a tutorial, invited talks and some contributed presentations, is amazing, yet the potential for wide-scale deployment of such technology was discussed by many as being at least a decade away. Certainly, this research generates a lot of interesting know-how but the impact in the industry might come with a long delay, after flexible networking and terabit transmission become mainstream.

Much attention was also given at ECOC to the application of optical communications in data centre networks, from data-centre interconnection to chip-to-chip links. There were many dedicated sessions and all were well attended.

Besides short-term work on high-bit-rate transceivers, there is also much effort towards novel silicon photonic integration approaches for realising optical interconnects, space-division-multiplexing approaches that for sure will first find their way in data centres, and even efforts related to the application of optical switching in data centres.

At the networking sessions, the buzz was around software-defined networking (SDN) and network functions virtualisation (NFV) now at the top of the “hype-cycle”. Both technologies have great potential to disrupt the industry structure, but scientific breakthroughs are obviously limited.

As for my interests going forward, I intend to look for more developments in the field of mobile traffic front-haul/back-haul for the emerging 5G networks, as well as optical networking solutions for data centres since I feel that both markets present significant growth opportunities for the optical communications/networking industry and the ECOC scientific community.

Dr. Jörg-Peter Elbers, vice president advanced technology, CTO Office, ADVA Optical Networking

The top topics at ECOC 2014 for me were elastic networks covering flexible grid, super-channels and selectable higher-order modulation; transport SDN; 100-Gigabit-plus data centre interconnects; mobile back- and front-hauling; and next-generation access networks.

For elastic networks, an optical layer with a flexible wavelength grid has become the de-facto standard. Investigations on the transceiver side are not just focussed on increasing the spectral efficiency, but also on increasing the symbol rate as a prospect for lowering the number of carriers for 400-Gigabit-plus super-channels and cost while maintaining the reach.

Jörg-Peter Elbers

As we approach the Shannon limit, spectral efficiency gains are becoming limited. More papers were focussed on multi-core and/or few-mode fibres as a way to increase fibre capacity.

Transport SDN work is focussing on multi-tenancy network operation and multi-layer/multi-domain network optimisation as the main use cases. Due to a lack of a standard for north-bound interfaces and a commonly agreed information model, many published papers are relying on vendor-specific implementations and proprietary protocol extensions.

Direct detect technologies for 400 Gigabit data centre interconnects are a hot topic in the IEEE and the industry. Consequently, there were a multitude of presentations, discussions and demonstrations on this topic with non-return-to-zero (NRZ), pulse amplitude modulation (PAM) and discrete multi-tone (DMT) being considered as the main modulation options. 100 Gigabit per wavelength is a desirable target for 400 Gig interconnects, to limit the overall number of parallel wavelengths. The obtainable optical performance on long links, specifically between geographically-dispersed data centres, though, may require staying at 50 Gig wavelengths.
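The trade-off described above between per-wavelength rate and the number of parallel wavelengths is a simple division. A minimal sketch, with a hypothetical helper name, shows how the count changes between the 100 Gigabit and 50 Gigabit options mentioned:

```python
import math


def wavelengths_needed(total_gbps: float, per_wavelength_gbps: float) -> int:
    """Parallel wavelengths required to carry the total rate, rounded up."""
    return math.ceil(total_gbps / per_wavelength_gbps)


at_100g = wavelengths_needed(400, 100)  # desirable target per the text
at_50g = wavelengths_needed(400, 50)    # fallback for longer links

print(at_100g, at_50g)
```

Staying at 50 Gig per wavelength doubles the number of parallel wavelengths a 400 Gig interconnect needs, from four to eight.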

In mobile back- and front-hauling, people increasingly recognise the timing challenges associated with LTE-Advanced networks and are looking for WDM-based networks as solutions. In the next-generation access space, components and solutions around NG-PON2 and its evolution gained most interest. Low-cost tunable lasers are a prerequisite and several companies are working on such solutions with some of them presenting results at the conference.

Questions around the use of SDN and NFV in optical networks beyond transport SDN point to the access and aggregation networks as a primary application area. The capability to programme the forwarding behaviour of the networks, and place and chain software network functions where they best fit, is seen as a way of lowering operational costs, increasing network efficiency and providing service agility and elasticity.

What did I learn at the show/ conference? There is a lot of development in optical components, leading to innovation cycles not always compatible with those of routers and switches. In turn, the cost, density and power consumption of short-reach interconnects are continually improving, and these metrics are all better than what can be achieved with line interfaces. This raises the question of whether separating the photonic layer equipment from the electronic switching and routing equipment is a better approach than building integrated multi-layer god-boxes.

There were no notable new trends or surprises at ECOC this year. Most of the presented work continued and elaborated on topics already identified.

As for what we will track closely in the coming year, all of the above developments are of interest. Inter-data centre connectivity, WDM-PON and open programmable optical core networks are three to mention in particular.

Infinera targets the metro cloud

Infinera has styled Cloud Xpress, its latest product for connecting data centres, as a stackable platform, similar to how servers and storage systems are built. The development is another example of how the rise of the data centre is influencing telecoms.

"There is a drive in the industry that is coming from the data centre world that is starting to slam into the telecom world," says Stuart Elby, Infinera's senior vice president of cloud network strategy and technology.

Cloud Xpress is designed to link data centres up to 200km apart, a market Infinera calls the metro cloud. The two-rack-unit-high (2RU) stackable box features Infinera's 500 Gigabit photonic integrated circuit (PIC) for line side transmission and a total of 500 Gigabit of client side links made up of 10, 40 or 100 Gigabit interfaces. Typically, up to 16 units will be stacked in a rack, providing 8 Terabits of transmission capacity over a fibre.

Cloud Xpress has also been designed with the data centre's stringent power and space requirements in mind. The resulting platform has significantly improved power consumption and density metrics compared to traditional metro networking platforms, claims Infinera.

Metro split

Elby describes how the metro network is evolving into two distinct markets: metro aggregation and metro cloud. Metro aggregation, as the name implies, combines lower speed multi-service traffic from consumers' broadband links and from enterprises into a hub where it is switched onto a network backbone. Metro cloud, in contrast, concerns data centre interconnect: point-to-point links that, for the larger data centres, can total several terabits of capacity.

Cloud Xpress is Infinera's first metro platform that uses its PIC. "We have plans to offer it all the way out to ultra long haul," says Elby. "There are some data centres that need to get tied between continents."

Cloud Xpress is being aimed at several classes of customer: internet content providers (or webcos), enterprises, cloud operators and traditional service providers. The primary end users are webcos and enterprises, which is why the platform is designed as a rack-and-stack. "These are not networking companies, they are data centre ones; they think of equipment in the context of the data centre," says Elby.

But Infinera expects telcos will also adopt Cloud Xpress. They need to connect their data centres and link data centres to points-of-presence, especially now that increasing amounts of end-user traffic go to the cloud. Equally, a business customer may link to a cloud service provider through a colocation point, operated by companies such as Equinix, Rackspace and Verizon Terremark.

"There will be a bleed-over of the use of this product into all these metro segments," says Elby. "But the design point [of Cloud Xpress] was for those that operate data centres more than those that are network providers."

The Magnification Effect

Webcos' services generate significantly more internal traffic than the triggering event, what Elby calls the magnification effect.

Google has shared that a single internet search query travels on average 2,400km before being resolved, while Facebook has revealed that a single http request generates some 930 server-to-server interactions. These servers may be in one data centre or spread across centres.

"It is no longer one byte in, one byte out," says Elby. "The amount of traffic generated inside the network, between data centres, is much greater than the flow of traffic into or out of the data centre." This magnification effect is what is driving the significant bandwidth demand between data centres. "When we talk to the internet content providers, they talk about terabits," says Elby.

Cloud Xpress

Cloud Xpress is already being evaluated by customers and will be generally available from December.

The stackable platform will have three client-side faceplate options: 10 Gig, 40 Gig and 100 Gig. The 10 Gig SFP+ faceplate is the sweet spot, says Elby, and there is also a 40 Gig one, while the 100 Gig is in development. "In the data centre world, we are hearing that they [webcos] are much more interested in the QSFP28 [optical module]."

Infinera says that the Ethernet client signals connect to a simple mapping function IC before being placed onto 100 Gig tributaries. Elby says that Infinera has minimised the latency through the box, to achieve 4.4 microseconds. This is an important requirement for certain data centre operators.

The 500 Gig PIC supports Infinera's 'instant bandwidth' feature. Here, all the 500 Gig super-channel capacity is lit but a user can add 100 Gig increments as required. This avoids having to turn up wavelengths and simplifies adding more capacity when needed.

The Cloud Xpress rack can accommodate 21 stackable units but Elby says 16 will be used typically. On the line side, the 500 Gigabit super-channels are passively multiplexed onto a fibre to achieve 8 Terabits. The platform density of 500 Gig per rack unit (500 Gig client and 500 Gig line side per 2RU box) exceeds that of any competitor's metro platform, says Elby, saving important space in the data centre.

The worst-case power consumption is 130W-per-100 Gig, an improvement on the power consumption performance of competitors' platforms. This is despite the fact that coherent detection is always used, even for links as short as between a data centre's buildings. "We have different flavours of the optical engine for different reaches," says Elby. "It [coherent] is just used because it is there."
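
The capacity, density and power figures quoted above can be cross-checked with a short calculation. This is a rough sketch: the constant names are illustrative, and it assumes the 130W-per-100 Gig worst case scales linearly across a unit's 500 Gig of line capacity, which the article does not state.

```python
# Figures quoted in the article.
LINE_GBPS_PER_UNIT = 500      # line-side capacity of one 2RU unit
CLIENT_GBPS_PER_UNIT = 500    # client-side capacity of one 2RU unit
RACK_UNITS_PER_UNIT = 2       # each stackable box is 2RU high
TYPICAL_UNITS_PER_RACK = 16   # Elby's typical stack
WORST_CASE_W_PER_100G = 130   # worst-case power per 100 Gig

# Line capacity multiplexed onto one fibre, in Gigabit/s.
fibre_capacity_gbps = TYPICAL_UNITS_PER_RACK * LINE_GBPS_PER_UNIT

# Density as the article counts it: client plus line capacity per rack unit.
density_gbps_per_ru = (CLIENT_GBPS_PER_UNIT + LINE_GBPS_PER_UNIT) // RACK_UNITS_PER_UNIT

# ASSUMPTION (not from the article): power scales linearly with line capacity.
unit_power_w = WORST_CASE_W_PER_100G * LINE_GBPS_PER_UNIT // 100
stack_power_w = unit_power_w * TYPICAL_UNITS_PER_RACK

print(fibre_capacity_gbps, density_gbps_per_ru, unit_power_w, stack_power_w)
```

Under that assumption, a typical 16-unit stack carries 8 Terabits over a fibre at roughly 10.4kW worst case; the real figure depends on how Infinera defines the per-100 Gig metric.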

The reduced power consumption of Cloud Xpress is achieved partly because of Infinera's integrated PIC, and by scrapping Optical Transport Network (OTN) framing and switching, which are not required. "There are no extra bells and whistles for things that aren't needed for point-to-point applications," says Elby. The stackable nature of the design, adding units as needed, also helps.

The Cloud Xpress rack can be controlled using either Infinera's management system or software-defined networking (SDN) application programming interfaces (APIs). "It supports the sort of interfaces the SDN community wants: Web 2.0 interfaces, not traditional telco ones."

Infinera is also developing a metro aggregation platform that will support multi-service interfaces and aggregate flows to the hub, a market that it expects to ramp from 2016.

Ranovus readies its interfaces for deployment

- Products will be deployed in the first half of 2015

- Ranovus has raised US $24 million in a second funding round

- The start-up is a co-founder of the OpenOptics MSA; Oracle is now also an MSA member.

Ranovus says its interconnect products will be deployed in the first half of 2015. The start-up, which is developing WDM-based interfaces for use in and between data centres, has raised US $24 million in a second stage funding round. The company first raised $11 million in September 2013.

"There is a lot of excitement around technologies being developed for the data centre," says Saeid Aramideh, a Ranovus co-founder and chief marketing and sales officer. He highlights such technologies as switch ICs, software-defined networking (SDN), and components that deliver cost savings and power-consumption reductions. "Definitely, there is a lot of money available if you have the right team and value proposition," says Aramideh. "Not just in Silicon Valley is there interest, but in Canada and the EU."

The optical start-up's core technology is a quantum dot multi-wavelength laser which it is combining with silicon photonics and electronics to create WDM-based optical engines. With the laser, a single gain block provides several channels while Ranovus is using a ring resonator implemented in silicon photonics for modulation. The company is also designing the electronics that accompanies the optics.

Aramideh says the use of silicon photonics is a key part of the design. "How do you enable cost-effective WDM?" he says. "It is not possible without silicon photonics." The right cost points for key components such as the modulator can be achieved using the technology. "It would be ten times the cost if you didn't do it with silicon photonics," he says.

The firm has been working with several large internet content providers to turn its core technology into products. "We have partnered with leading data centre operators to make sure we develop the right products for what these folks are looking for," says Aramideh.

In the last year, the start-up has been developing variants of its laser technology - in terms of line width and output power - for the products it is planning. "A lot goes into getting a laser qualified," says Aramideh. The company has also opened a site in Nuremberg alongside its headquarters in Ottawa and its Silicon Valley office. The latest capital will be used to ready the company's technology for manufacturing and recruit more R&D staff, particularly at its Nuremberg site.

Ranovus is a founding member, along with Mellanox, of the 100 Gigabit OpenOptics multi-source agreement. Oracle, Vertilas and Ghiasi Quantum have since joined the MSA. The 4x25 Gig OpenOptics MSA has a reach of 2km-plus and will be implemented using a QSFP28 optical module. OpenOptics differs from the other mid-reach interfaces - the CWDM4, PSM4 and the CLR4 - in that it uses lasers at 1550nm and is dense wavelength-division multiplexed (DWDM) based.

Is it not a concern that there are as many as four competing mid-reach optical module developments? "It is never good that an industry is fragmented," says Aramideh. He also dismisses a concern that the other MSAs have established large optical module manufacturers as members whereas OpenOptics does not.

"We ran a module company [in the past - CoreOptics]; we have delivered module solutions to various OEMs that are running in some of the largest networks deployed today," says Aramideh. "Mellanox [the other MSA co-founder] is also a very capable solution provider."

Ranovus plans to use contract manufacturers in Asia Pacific to make its products, the same contract manufacturers the leading optical module makers use.

Table 1: The OpenOptics MSA

End markets

"I don't think as a business, anyone can ignore the big players upgrading data centres," says Aramideh. "The likes of Google, Facebook, Amazon, Apple and others that are switching from a three-tier architecture to a leaf and spine need longer-reach connectivity and much higher capacity." The capacity requirements are much beyond 10 Gig and 40 Gig, and even 100 Gig, he says.

Ranovus segments the adopters of interconnect into two: the mass market and the technology adopters. "Mass adoption today is all MSA-based," says Aramideh. "The -LR4 and -SR10, and the same thing is happening at 100 Gig with the QSFP28." The challenge for the optical module companies is who has the lowest cost.

Then there are the industry leaders such as the large internet content providers that want innovative products that address their needs now. "They are less concerned about multi-source standard-based solutions if you can show them you can deliver a product they need at the right cost," says Aramideh.

Ranovus will offer an optical engine as well as the QSFP28 optical module. "The notion of the integration of an optical engine with switch ICs and other piece parts in the data centre are more of an urgent need," he says.

Using WDM technology, the company has a scalable roadmap that includes 8x25 Gig and 16x25 Gig (400 Gig) designs. Also, by adding higher-order modulation, the technology will scale to 1.6 Terabit (16x100 Gig), says Aramideh.
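
The roadmap is lane count multiplied by per-lane rate. A minimal sketch, with illustrative variable names, that tabulates the configurations mentioned above alongside the 4x25 Gig OpenOptics starting point:

```python
# (lanes, Gigabit/s per lane) for each design mentioned in the article.
roadmap = [(4, 25), (8, 25), (16, 25), (16, 100)]

# Aggregate capacity of each design in Gigabit/s.
capacities_gbps = [lanes * rate for lanes, rate in roadmap]

for (lanes, rate), total in zip(roadmap, capacities_gbps):
    print(f"{lanes}x{rate} Gig = {total} Gig")
```

The first three steps add wavelengths at 25 Gig each; the jump to 1.6 Terabit keeps 16 wavelengths and raises the rate per wavelength to 100 Gig through higher-order modulation.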

Ranovus is also working on interfaces to link data centres.

"These are distances much shorter than metro/ regional networks," says Aramideh, with the bulk of the requirements being for links of 15 to 40km. For such relatively short distances, coherent detection technology has high power consumption and is expensive. "I don't see a roadmap for coherent to become cost-effective to address the smaller distances," says Aramideh.

Instead, the company believes that a direct-detection interconnect that supports 15 to 40km and which has a spectral efficiency that can scale to 9.6 Terabit is the right way to go. If that can be achieved, then switching from coherent to direct detection becomes a no-brainer, he says. "For inter-data-centres, we are really offering an alternative to coherent."

The start-up says its technology will be in product deployment with lead customers in the first half of 2015.

ECOC 2014: Industry reflections on the show

Gazettabyte asked several attendees at the recent ECOC show, held in Cannes, to comment on key developments and trends they noted, as well as the issues they will track in the coming year.

Daryl Inniss, practice leader, components at market research firm, Ovum

It took a while to unwrap what happened at ECOC 2014. There was no one defining event or moment that was the highlight of the conference.

The location was certainly beautiful and the weather lovely. Yet I felt the participants were engaged with critical technical and business issues, given how competitive the market has become.

Kaiam’s raising US $35 million, Ranovus raising $24 million, InnoLight Technology raising $38 million and being funded by Google Capital, and JDSU and Emcore each splitting into two companies, all are examples of the shifting industry structure.

On the technology and product development front, advances in 100 Gig metro coherent solutions were reported although products are coming to market later than first estimated. The client-side 100 Gig is transitioning to CFP2. Datacom participants agree that QSFP28 is the module but what goes inside will include both parallel single mode solutions and wavelength multiplexed ones.

Finisar’s 50 Gig transmission demonstration that used silicon photonics as the material choice surprised the market. Compared to last year, there were few multi-mode announcements. ECOC 2014 had little excitement and no one defining show event but there were many announcements showing the market’s direction.

There is one observation from the show, which while not particularly exciting or sexy, is important, and it seems to have gone unnoticed in my opinion. Source Photonics demonstrated the 100GBASE-LR4, the 10km 100 Gigabit Ethernet standard, in the QSFP28 form factor. This is not new as Source Photonics also demonstrated this module at OFC. What’s interesting is that no one else has duplicated this result.

There will be demand for a denser -LR4 solution that’s backward compatible with the CFP, CFP2, and CFP4 form factors. It is unlikely that the PSM4, CWDM4, or CLR4 will go 10km and they are not optically compatible with the -LR4. The market is on track to use the QSFP28 for all 100 Gig distances so it needs the supporting optics. The Source Photonics demonstration shows a path for 10km. We expect to see other solutions for longer distances over time.

One surprise at the show was Finisar's and STMicroelectronics's demonstration of 50 Gig non-return-to-zero transmission over 2.2km on standard single mode fiber. The transceiver was in the CFP4 form factor and uses heterogeneous silicon technologies inside. The results were presented in a post-deadline paper (PD.2.4). The work is exciting because it demonstrates a directly modulated laser operating above 28 Gig, the current state-of-the-art.

The use of silicon photonics is surprising because Finisar has been forced to defend its legacy technology against the threat of transceivers based on silicon photonics. These results point to one path forward for next-generation 100 Gig and 400 Gig solutions.

In the coming year, I’m looking for the dominant metro 100G solution to emerge. When will the CFP2 analogue coherent optical module become generally available? Multiple suppliers with this module will help unleash the 100 Gig line-side transmission market, drive revenue growth and spur the development of the next-generation solution.

Slow product development gives competing approaches like the digital CFP a chance to become the dominant solution. At present, there is one digital CFP vendor with a generally available product, Acacia Communications, with a second, Fujitsu Optical Components, having announced general availability in the first half of 2015.

Neal Neslusan, vice president of sales and marketing at fabless chip company, MultiPhy.

It was impressive to see Oclaro's analogue CFP2 for coherent applications on the show floor, albeit only in loopback mode. Equally impressive was seeing ClariPhy's DSP on the evaluation board behind the CFP2.

I saw a few of the motherboard-based optics solutions at the show. They looked very interesting and in questioning various folks in the business I learned that for certain data centre applications these optics are considered acceptable. Indeed, they represent an ability to extract much higher bandwidth from a given motherboard as compared to edge-of-the-board based optics, but they are not pluggable.

Traditionally, pluggable optics has been the mainstay of the datacom and enterprise segments and these motherboard-based optics have been relegated to supercomputing. This is just another example, in my opinion, of how the data centre market is becoming distinct from the datacom market.

Were there any surprises at the show? I was surprised and alarmed at the cost of the Martini drinks at the hotel across the street from the show, and they weren't even that good!

Regarding developments in the coming year, the 8x50 Gig versus 4x100 Gig fight in the IEEE is clearly a struggle I will follow. I think it will have a great impact on product development in our industry. If 8x50 Gig wins, it may be one of the few times in the history of our industry that a less advanced solution is chosen over a more advanced and future-proofed one.

The physical size of the next-generation Terabit Ethernet switch chips will have a much larger impact on the optics they connect to in the coming years, compared to the past. This work combined with the motherboard-based optics may create a significant change in the solutions brought to bear for high-performance communications.

John Lively, principal analyst at market research firm, LightCounting.

There were several developments that I noted at the show. ECOC helped cement the view that 100 Gig coherent is mainstream for metro networks. Also more and more system vendors are incorporating Raman/ remote optically pumped amplifier (ROPA) into their toolkit. ROPA is a Raman-based amplifier where the pump is located at one end of the link, not in some intermediate node. Another trend evident at ECOC is how the network boundary between terrestrial and submarine is blurring.

As for developments to watch, I intend to follow mobile fronthaul/ backhaul, higher speed transceiver developments, of course, and how the mega-data-centre operators are disrupting networks, equipment, and components.
