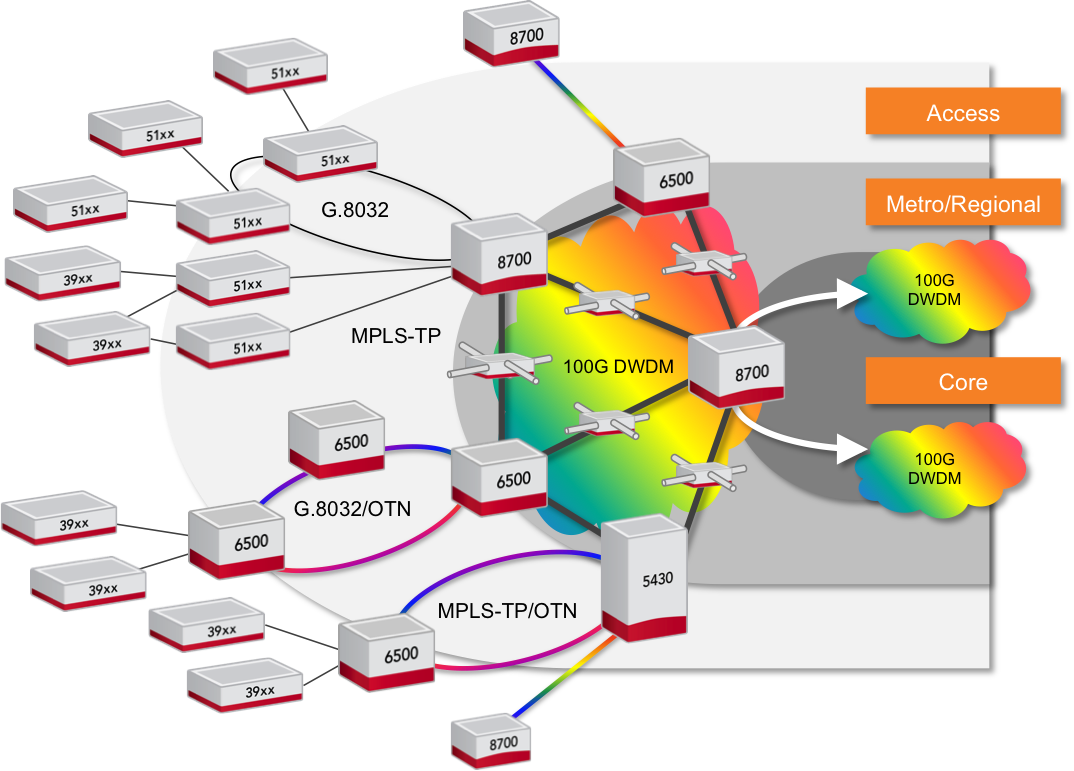

Ciena launches the 8700 metro Ethernet-over-WDM platform

- The 8700 Packetwave is an Ethernet-over-DWDM platform

- The 8700 is claimed to deliver double the Ethernet density while halving the power consumption and space required.

Source: Ciena

Ciena has launched the 8700 Packetwave platform that combines high-capacity Ethernet switching with 100 Gigabit coherent optical transmission.

The platform is designed to cater for the significant growth in metro traffic, estimated at between 30 and 50 percent each year, and the ongoing shift from legacy to Ethernet services.

Brian Lavallée

Analysts predict that by 2017, 75 percent of the overall bandwidth in the metro will be Ethernet-based.

"In the major markets we compete in, our customers are telling us that Ethernet has already surpassed 75 percent," says Brian Lavallée, director of technology & solutions marketing at Ciena.

"What you're seeing is a trend towards convergence," says Ray Mota, managing partner at ACG Research. "The economics make sense and it should have happened but organisational issues have caused the delay."

Many service providers have two separate groups, one for packet and another for transport. "Now, many service providers are merging the two groups, feeling the time is right, so you will see more and more converged products get more penetration," says Mota.

The 8700 can be viewed as a slimmed-down packet-optical transport system (P-OTS), tailored for Ethernet. The platform's packet features include Ethernet and MPLS-TP for connection-oriented Ethernet, while optically it has 100 Gigabit coherent WDM.

"This is a more specialised machine hitting this target spot in the aggregation networks," says Michael Howard, co-founder and principal analyst, carrier networks at Infonetics Research. "It doesn't need much MPLS, it doesn’t need OTN switching, and it doesn’t need SDH/TDM."

The main applications for the 8700 include aggregation of telcos' business services, data centre interconnect, wireless backhaul, and the distribution of cable operators' Ethernet traffic. "It [the 8700] is a good product for edge aggregation, where the bandwidth is getting cranked up," says Howard. "I see it as an Ethernet-over-DWDM platform, performing the aggregation on the customer side and the fan-in on the upstream side."

Ray Mota

Two Packetwave platforms have been announced: an 800 Gigabit full-duplex switching capacity platform and a 2-Terabit one. The platform's line cards support 10, 40 and 100 Gigabit client-side interfaces while a line-side card has two 100 Gigabit coherent interfaces based on Ciena's WaveLogic DSP-ASIC technology.

Ciena says the platform will support double the capacity when it introduces WaveLogic devices that deliver 100 and 200 Gig rates. "It has been tested," says Lavallée. "It is just a matter of changing the cards."

The 8700 is claimed to deliver double the Ethernet density compared to competing platforms, while halving the power consumption and space required. "Given it is a new category of product, we don't have a direct competitor," says Lavallée. "But when we say half the power and space, that is the average across these multiple products from competitors."

Lavallée would not detail the competitor platforms used in the comparison but Mota cites Alcatel-Lucent's 7450 and 7950 platforms, Juniper's MX and PTX platforms and Cisco's ASR 9000 as the ones likely used.

Using merchant silicon for the Ethernet switching has helped achieve greater density, as has using Ciena's own WaveLogic DSP-ASIC. "The further development we have done on our [WaveLogic] coherent optical processor does give us significant savings, not just in power but also real-estate," says Lavallée.

Being a layer-2 platform, the 8700 has none of the packet processing and specialist memory hardware requirements associated with layer-3 IP routers, also benefitting the platform's overall power consumption.

Michael Howard

Ciena stresses that P-OTS is not going away and that it will continue to deliver significant value for certain customers. "The biggest concern of customers is complexity," says Lavallée. "There are a lot of ways of reducing complexity in your network and some customers believe that is Ethernet-over-dense WDM."

ACG's Mota sees the launch of the 8700 as an important move by Ciena. "The metro is the hot area that needs transitioning," he says. "Many of the traditional core requirements are moving to the metro so the timing of Ciena playing in this space with a converged platform could be strategic, providing they partner well with companies like Ericsson and the network functions virtualisation software providers."

Lavallée says that with the advent of software-defined networking and the applications that make use of the technology, there is an underlying shift from the hardware towards software. But he dismisses the notion that hardware is becoming less important.

"What is lost in this whole discussion is that if you don't have a programmable piece of hardware below, you can't write these apps," says Lavallée. The 8700 hardware is programmable and there are open interfaces to access it, he says: "We have a lot of knobs and switches that the software can use."

Further reading

Paper: Ciena 8700 Packetwave platform, click here

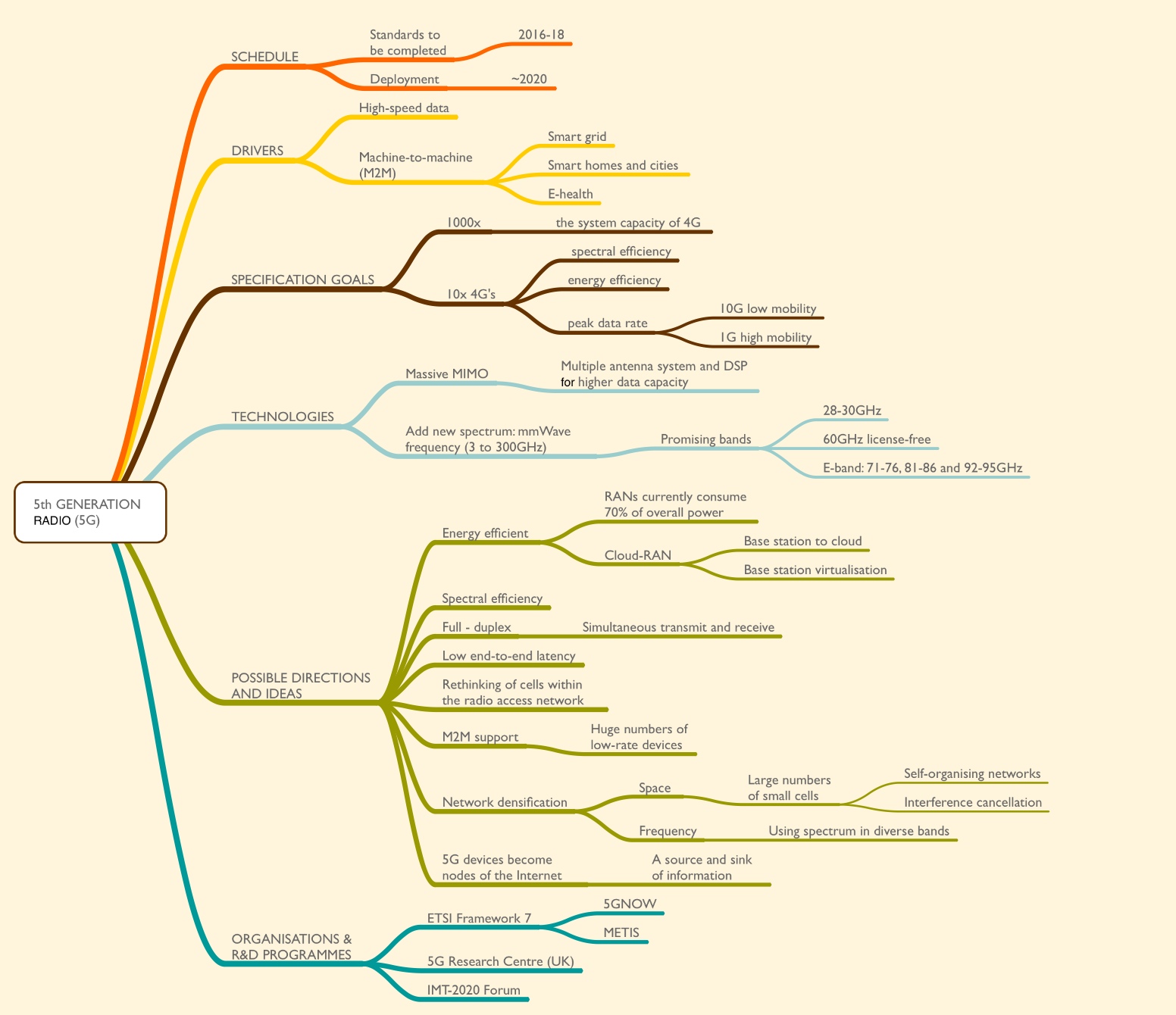

5th Generation radio: A summary

The IEEE Communications magazine published in its February and May 2014 issues a series of excellent articles on the early work and thoughts regarding 5th Generation radio. Here is a mind-map of some of the issues and thoughts raised in the articles in the February issue.

Click here for a .pdf of the mind-map.

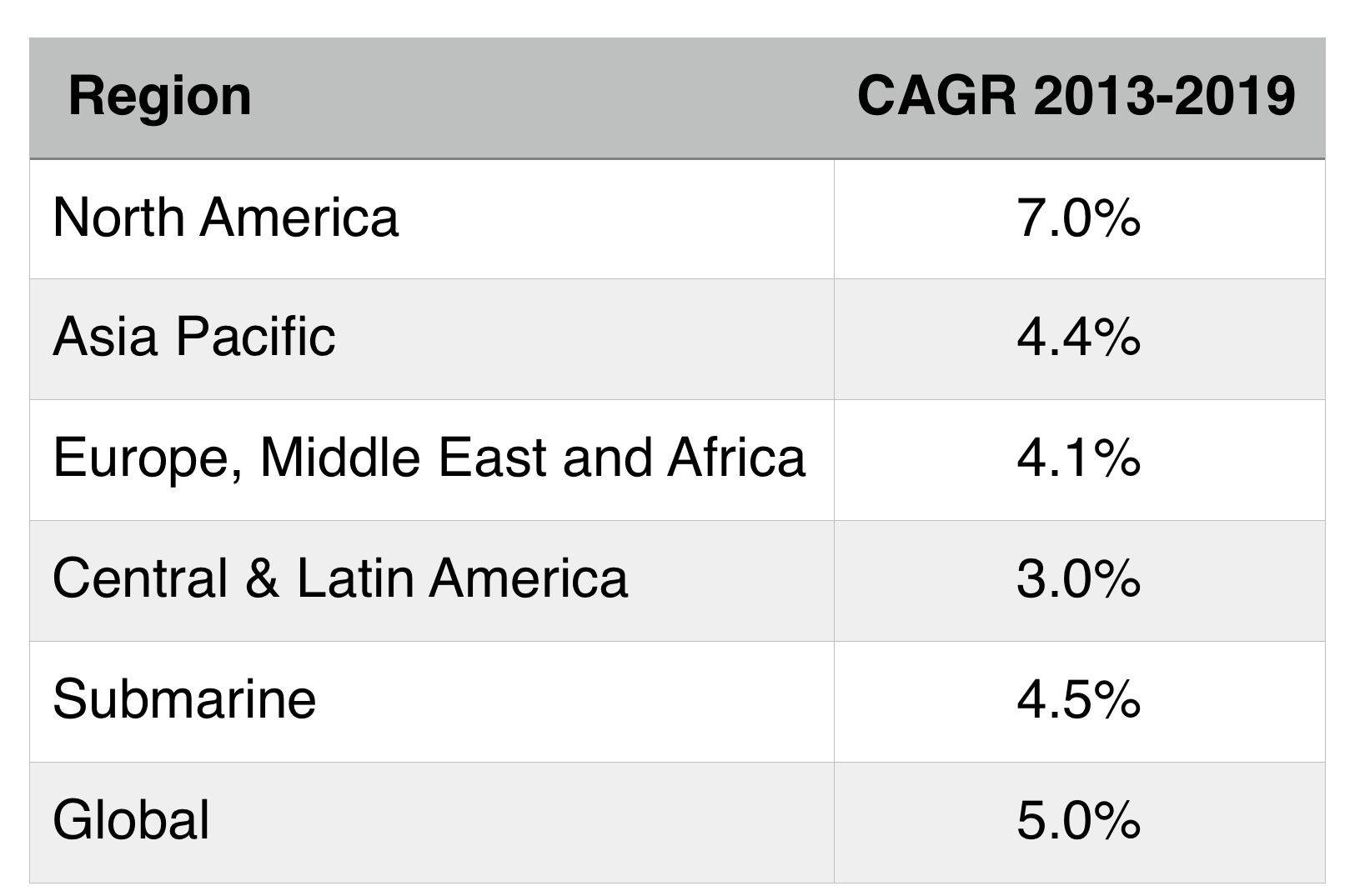

Global optical networking market set for solid growth

Source: Ovum

The global optical networking market will grow at a year-over-year rate of 5 percent through 2019. So claims market research firm, Ovum, in its optical networking forecast for 2013 to 2019. North America will lead the market growth, with data centre deployments and demand for 100 Gigabit being the main drivers.

The building of data centres drives demand for optical interconnect. "It [data centre operators] is almost a new category of buyer," says Ian Redpath, principal analyst, network infrastructure at Ovum. The segment is growing faster than telco spending on fixed and mobile networks.

"This whole phenomenon of the large data centre operators is more pronounced in North America, and we think that will continue throughout the forecast period," says Redpath.

Demand for 100 Gigabit is coming from several segments: large incumbent operators, cable operators and internet content service providers. "All these entities are buying a technology [100 Gig] that is prime time," says Redpath.

Asia Pacific will be the region with the second largest growth for the forecast period, at 4.4 percent compound annual growth rate (CAGR).

The deployment of optical equipment in China and Japan was down in 2013: China dipped 6 percent while Japan was down a huge 23 percent compared to 2012 market demand.

The underlying trend in China is one of growth, with the optical market valued at US $3 billion. "They just had to have a pause," says Redpath, who points out that the Chinese market has tripled in a relatively short period. "They are now retooling for the next big thing: LTE; it is just a matter of time," he says. The deployment of 100 Gig by the three large domestic operators may start by the year end or spill into 2015.

Japan's sharp decline in 2013 follows massive growth in 2012, the result of replacing networks lost following the 2011 earthquake and tsunami. "That was a one-time bump followed by a one-time reset, with the market now back to normal," says Redpath.

Meanwhile, the EMEA optical networking market will grow at 4.1 percent. "This is a pretty modest growth rate, with more upside coming in the latter period," says Redpath. "The operators have been neglecting their core for so long, they are going to have to come back and reinvest."
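To put the three regional rates side by side, the following minimal sketch compounds each one from an index of 100. It assumes the quoted figures behave as compound annual rates over six years (2013 as the base year, 2019 as the end point); the index value and the function name are illustrative and do not come from Ovum's forecast.

```python
def compound(index: float, rate: float, years: int) -> float:
    """Grow a starting index at a fixed annual rate for a number of years."""
    return index * (1 + rate) ** years


# Growth rates quoted in the article; the six-year span is an assumption.
RATES = {"Global": 0.05, "Asia Pacific": 0.044, "EMEA": 0.041}
YEARS = 6

projected = {region: compound(100.0, rate, YEARS) for region, rate in RATES.items()}

for region, value in projected.items():
    print(f"{region}: index {value:.1f} after {YEARS} years")
```

Small differences in annual rate compound into a visible gap by the end of the period, which is why a fraction of a percentage point matters in a multi-year forecast.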

Ovum says the weakness of the European market will run its course during the forecast period and expects Europe's northern countries - the UK and Germany - to lead the recovery, followed by the likes of Spain, Italy and Greece.

The market research firm singles out the UK market as being particularly dynamic, and an economy that will lead Europe out of recession. "It is probably closer to the North America market than any other country in terms of competitors and non-carrier spending," says Redpath. "The UK is also one of the leading data centre markets in the world."

Ovum remains upbeat about the long-term prospects of the global optical networking market. "Optics is the foundation of an industry that is growing," says Redpath.

He also points to recent developments in the net neutrality debate, and cites how over-the-top TV and film player, Netflix, has signed agreements with telecom and cable operators. "If over-the-top players realise that they can't keep free-riding on these networks, and to get performance they give a little money to the telcos, then that is a good thing for the ultimate food chain," says Redpath.

Further reading:

Global market soft in 1Q14; North America bucks trend, click here

Verizon readies its metro for next-generation P-OTS

Verizon is preparing its metro network to carry significant amounts of 100 Gigabit traffic and has detailed its next-generation packet-optical transport system (P-OTS) requirements. The operator says technological advances in 100 Gig transmission and new P-OTS platforms - some yet to be announced - will help bring large scale 100 Gig deployments in the metro in the next year or so.

Glenn Wellbrock

The operator says P-OTS will be used for its metro and regional networks for spans of 400-600km. "That is where we have very dense networks," says Glenn Wellbrock, director of optical transport network architecture and design at Verizon. "The amount of 100 Gig is going to be substantially higher than it was in long haul."

Verizon announced in April that it had selected Fujitsu and Coriant for a 100 Gig metro upgrade. The operator has already deployed Fujitsu's FlashWave 9500 and the Coriant 7100 (formerly Tellabs 7100) P-OTS platforms. "The announcement [in April] is to put 100 Gig channels in that embedded base," says Wellbrock.

The operator has 4,000 reconfigurable optical add/drop multiplexers (ROADMs) across its metro networks worldwide and all support 100 Gig channels. But the networks are not tailored for high-speed transmission and hence the cost of 100 Gig remains high. For example, the existing links use dispersion compensation fibre, and Erbium-doped fibre amplifiers (EDFAs) rather than hybrid EDFA-Raman amplification. "It [the network] is not optimised for 100 Gig but will support it, and we are using [100 Gig] on an as-needed basis," says Wellbrock.

The metro platform will be similar to those used for Verizon's 100 Gig long-haul in that it will be coherent-based and use advanced, colourless, directionless, contentionless and flexible-grid ROADMs. "But all in a package that fits in the metro, with a much lower cost, better density and not such a long reach," says Wellbrock.

One development that will reduce system cost is the advent of the CFP2-based line-side optical module; another is the emergence of third- or fourth-generation coherent DSP-ASICs. "We are getting to the point where we feel it is ready for the metro," says Wellbrock. "Can we get it to be cost-competitive? We feel that a lot of the platforms are coming along."

The latest P-OTS platforms feature enhanced packet capabilities, supporting carrier Ethernet, multi-protocol label switching - transport profile (MPLS-TP), and high-capacity packet and Optical Transport Network (OTN) switching. Recently announced P-OTS platforms suited to Verizon's metro request-for-proposal include Cisco Systems' Network Convergence System (NCS) 4000 and Coriant's mTera. Verizon says it expects other vendors to introduce platforms in the next year.

Verizon still has over 250,000 SONET elements in its network. Many are small and reside in the access network but SONET also exists in its metro and regional networks. The operator is keen to replace the legacy technology but with such a huge number of installed network elements, this will not happen overnight.

Verizon's strategy is to terminate the aggregated SONET traffic at its edge central offices so that it only has to deal with large Ethernet and OTN flows at the network node. "We plan to terminate the SONET, peel out the packets and send them in a packet-optimised fashion," says Wellbrock. In effect, SONET is to be stopped from an infrastructure point of view, he says, by converting the traffic for transport over OTN and Ethernet.

SDN and multi-layer optimisation

The P-OTS platform, with its integrated functionality spanning layer-0 to layer-2, will have a role in multi-layer optimisation. The goal of multi-layer optimisation is to transport services on the most suitable networking layer, typically the lowest, most economical layer possible. Software-defined networking (SDN) will be used to oversee such multi-layer optimisation.
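The "lowest suitable layer" rule can be sketched as a simple selection function. This is an illustrative model only: the capability names and the three-layer table are assumptions made for the example, not Verizon's or any vendor's actual decision logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    number: int
    name: str
    capabilities: frozenset


# Hypothetical capability sets; real platforms differ.
LAYERS = (
    Layer(0, "optical (WDM)", frozenset({"transport"})),
    Layer(1, "OTN", frozenset({"transport", "sub_wavelength_grooming"})),
    Layer(2, "packet", frozenset({"transport", "sub_wavelength_grooming",
                                  "statistical_multiplexing"})),
)


def lowest_suitable_layer(required: set) -> Layer:
    """Return the lowest-numbered layer whose capabilities cover the request."""
    for layer in sorted(LAYERS, key=lambda l: l.number):
        if required <= layer.capabilities:
            return layer
    raise ValueError(f"no layer supports {sorted(required)}")


wavelength = lowest_suitable_layer({"transport"})
groomed = lowest_suitable_layer({"transport", "sub_wavelength_grooming"})
bursty = lowest_suitable_layer({"statistical_multiplexing"})
print(wavelength.name, groomed.name, bursty.name, sep=" | ")
```

A service that only needs point-to-point transport stays on the optical layer, and traffic climbs to a higher, costlier layer only when it needs something the lower one cannot provide.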

However, P-OTS, unlike servers used in the data centre, are specialist rather than generic platforms. "Optical stuff is not generic hardware," says Wellbrock. Each P-OTS platform is vendor-proprietary. What can be done, he says, is to use 'domain controllers'. Each vendor's platform will have its own domain controller, above which will sit the SDN controller. Using this arrangement, the vendor's own portion of the network can be operated generically by an SDN controller, while benefitting from the particular attributes of each vendor's platform using the domain controller.

Verizon's view is that there will be a hierarchy of domain and SDN controllers. "We assume there are going to be multiple layers of abstraction for SDN," says Wellbrock. There will be no one, overriding controller with knowledge of all the networking layers: from layer-0 to layer-3. Even layer-0 - the optical layer - has become dynamic with the addition of colourless, directionless, contentionless and flexible-grid ROADM features, says Wellbrock.

Instead, as part of these abstraction layers, there will be one domain that will control all the transport, and another that is all-IP. Some software element above these controllers will then inform the optical and IP domains how best to implement service tasks such as interconnecting two data centres, for example. The transport controller will then inform each layer of its particular task. "Now I want layer-0 to do that, and that is my Ciena box; I need layer-1 to do this and that happens to be a Cyan box; and we need MPLS transport to do this, and that could be Juniper," says Wellbrock, pointing out that in this example, three vendor-domains are involved, each with its own domain controller.
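The controller hierarchy Wellbrock describes can be pictured with a small sketch. The class names, method names and task strings below are hypothetical and stand in for whatever interfaces the vendors actually expose; only the vendor-to-layer mapping is taken from his example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DomainController:
    """Hypothetical per-vendor controller that hides platform-specific detail."""
    vendor: str
    layer: str

    def apply(self, task: str) -> str:
        # A real controller would translate the task into vendor-specific commands.
        return f"{self.vendor} [{self.layer}]: {task}"


class TransportController:
    """Hypothetical parent controller that fans tasks out to one domain per layer."""

    def __init__(self, domains: list) -> None:
        self._by_layer = {d.layer: d for d in domains}

    def provision(self, tasks: dict) -> list:
        # Each layer's task goes to whichever vendor domain owns that layer.
        return [self._by_layer[layer].apply(task) for layer, task in tasks.items()]


transport = TransportController([
    DomainController("Ciena", "layer-0"),
    DomainController("Cyan", "layer-1"),
    DomainController("Juniper", "MPLS transport"),
])

# Illustrative request: interconnect two data centres.
results = transport.provision({
    "layer-0": "light a wavelength between the two sites",
    "layer-1": "set up an OTN circuit",
    "MPLS transport": "create a label-switched path",
})
for line in results:
    print(line)
```

The parent controller never needs to know how each vendor's equipment is configured; it only needs to know which domain owns which layer.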

Is Verizon happy with the SDN progress being made by the P-OTS vendors?

"There is always frustration; we always want to move faster than things are coming about," says Wellbrock. "The issue, though, is that there is nothing I see that is a showstopper."

The art of virtualised network function placement

Cyan is delivering its Blue Planet NFV orchestrator software that will make use of enhancements being made to OpenStack developed by Red Hat in close collaboration with Telefónica.

Gazettabyte asked Nirav Modi, director of software innovations at Cyan, about the work.

Q: The concept of deterministic placement of virtualised network functions. Why is this important?

NM: We are attempting to solve a fundamental technology challenge required to make NFV successful for carriers. Telefónica has been doing a lot of internal trials and its own NFV R&D and has found that the placement of virtualised network functions greatly affects their performance.

We are working with Telefónica and Red Hat to solve this problem, to ensure virtualised network functions perform consistently and at their peak from one instance to another. This is particularly important for composite or clustered virtualised network function architectures, where the placement of various components can affect performance and availability.

Also, you need to ensure that the virtualised network functions are located where the most suitable compute, storage or networking resources are located. Other important performance metrics that need to be considered include latency and bandwidth availability into the cloud environment.

What is involved in enabling deterministic virtualised network functions, and what is the impact on Cyan's orchestration platform?

Deterministic placement requires an orchestration platform, such as Cyan’s Blue Planet, to be aware of the resources available at various NFV points of presence (PoPs) and to map operator-provided placement policies, such as performance and high-availability requirements, into placement decisions. In other words, which servers should be used and which PoP should host the virtualised network function.

For the operator, interested in application service-level agreements (SLAs) and performance, Cyan’s orchestration platform provides the intelligence to translate those policies into a placement architecture.
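As a rough illustration of mapping an operator policy to a placement decision, the sketch below filters servers by resource and latency requirements and, for high availability, spreads instances across PoPs. It is not Blue Planet's algorithm: the data model, field names and selection order are assumptions made for the example. Sorting with a fixed tie-break keeps the result deterministic for the same inputs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Server:
    pop: str            # NFV point of presence hosting the server
    name: str
    free_vcpus: int
    latency_ms: float   # hypothetical latency from the PoP to the users served


@dataclass(frozen=True)
class Policy:
    vcpus: int
    max_latency_ms: float
    instances: int
    spread_across_pops: bool  # high availability: one instance per PoP


def place(servers: list, policy: Policy) -> list:
    """Pick servers that satisfy the policy, preferring the lowest latency."""
    candidates = sorted(
        (s for s in servers
         if s.free_vcpus >= policy.vcpus and s.latency_ms <= policy.max_latency_ms),
        key=lambda s: (s.latency_ms, s.pop, s.name),
    )
    chosen, used_pops = [], set()
    for server in candidates:
        if policy.spread_across_pops and server.pop in used_pops:
            continue
        chosen.append(server)
        used_pops.add(server.pop)
        if len(chosen) == policy.instances:
            return chosen
    raise ValueError("no placement satisfies the policy")


servers = [
    Server("madrid", "m1", 16, 5.0),
    Server("madrid", "m2", 32, 5.0),
    Server("barcelona", "b1", 8, 9.0),
    Server("seville", "s1", 2, 4.0),
]
policy = Policy(vcpus=4, max_latency_ms=10.0, instances=2, spread_across_pops=True)
placement = place(servers, policy)
print([f"{s.pop}/{s.name}" for s in placement])
```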

Does this Telefónica work also require Cyan's Z-Series packet-optical transport system (P-OTS)?

NFV is all about taking network functions currently deployed on purpose-built, vertically-integrated hardware platforms, and deploying them on industry-standard commercial off-the-shelf (COTS) servers, possibly in a virtualised environment running OpenStack, for example.

In such a set-up, Cyan’s Blue Planet orchestration platform is responsible for the deployment of the virtualised network functions into the NFV infrastructure or telco cloud. Cyan’s orchestration software is always deployed on COTS servers. There is no dependency on using Cyan’s P-OTS to use the Blue Planet software-defined networking (SDN) and NFV software.

The Z-Series platform can be used in the metro and wide area network to enable a scalable and programmable network. This can supplement the virtual network functions deployed in the cloud to replace existing hardware-based solutions, but the Z-Series is not involved in this joint effort with Telefónica and Red Hat.

WDM and 100G: A Q&A with Infonetics' Andrew Schmitt

The WDM optical networking market grew 8 percent year-on-year, with spending on 100 Gigabit now accounting for a fifth of the WDM market. So claims the first quarter 2014 optical networking report from market research firm, Infonetics Research. Overall, the optical networking market was down 2 percent, due to the continuing decline of legacy SONET/SDH.

In a Q&A with Gazettabyte, Andrew Schmitt, principal analyst for optical at Infonetics Research, talks about the report's findings.

Q: Overall WDM optical spending was up 8% year-on-year: Is that in line with expectations?

Andrew Schmitt: It is roughly in line with the figures I use for trend growth but what is surprising is how there is no longer a fourth quarter capital expenditure flush in North America followed by a down year in the first quarter. This still happens in EMEA but spending in North America, particularly by the Tier-1 operators, is now less tied to calendar spending and more towards specific project timelines.

This has always been the case at the more competitive carriers. A good example of this was the big order Infinera got in Q1, 2014.

You refer to the growth in 100G in 2013 as breathtaking. Is this growth not to be expected as a new market hits its stride? Or does the growth signify something else?

I got a lot of pushback for aggressive 100G forecasts in 2010 and 2011 when everyone was talking about, and investing in, 40G. You can read a White Paper I wrote in early 2011 which turned out to be pretty accurate.

My call was based on the fact that, fundamentally, coherent 100G shouldn’t cost more than 40G, and that service providers would move rapidly to 100G. This is exactly what has happened, outside AT&T, NTT and China which did go big with 40G. But even my aggressive 100G forecasts in 2012 and 2013 were too conservative.

I have just raised my 2014 100G forecast after meeting with Chinese carriers and understanding their plans. 100G will essentially take over almost all of the new installations in the core by 2016, worldwide, and that is when metro 100G will start. There is too much hype on metro 100G right now given the cost, but within two years the price will be right for volume deployment by service providers.

You say the lion's share of 100G revenue is going to five companies: Alcatel-Lucent, Ciena, Cisco, Huawei, and Infinera. Most of the companies are North American. Is the growth mainly due to the US market (besides Huawei, of course)? And if so, is it due to Verizon, AT&T and Sprint preparing for growing LTE traffic? Or is the picture more complex with cable operators, internet exchanges and large data centre players also a significant part of the 100G story, as Infinera claims?

It’s a lot more complex than the typical smartphone plus video-bandwidth-tsunami narrative. Many people like to attach the wireless metaphor to any possible trend because it is the only area perceived as having revenue and profitability growth, and it has a really high growth rate. But something big growing at 35 percent adds more in a year than something small growing at 70 percent.

The reality is that wireless bandwidth, as a percentage of all traffic, is still small. 100G is being used for the long lines of the network today as a more efficient replacement for 10G and while good quantitative measures don’t exist, my gut tells me it is inter-data-centre traffic and consumer/business to data centre traffic driving most of the network growth today.

I use cloud storage for my files. I’m a die-hard Quicken user with 15 years of data in my file. Every time I save that file, it is uploaded to the cloud – 100MB each time. The cloud provider probably shifts that around afterwards too. Apply this to a single enterprise user - think about how much data that is for just one person. There is so much 'blah blah blah' about video but 90 percent is cacheable. Cloud storage is not.
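Schmitt's point that something big growing at 35 percent adds more in a year than something small growing at 70 percent is simple arithmetic, sketched below. The base volumes are hypothetical and in arbitrary units; only the two growth rates come from the interview.

```python
def absolute_growth(base: float, rate: float) -> float:
    """Volume added in one year for a given starting volume and growth rate."""
    return base * rate


# Hypothetical starting volumes: the large segment is ten times the small one.
large_base, small_base = 100.0, 10.0

large_added = absolute_growth(large_base, 0.35)
small_added = absolute_growth(small_base, 0.70)

# The small segment adds more only once its base exceeds this share of the large one.
break_even_ratio = 0.35 / 0.70

print(f"large adds {large_added:.1f}, small adds {small_added:.1f}")
print(f"break-even base ratio: {break_even_ratio:.2f}")
```

At twice the growth rate, the smaller segment has to reach half the size of the larger one before it contributes as much new traffic.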

Cisco is in this list yet does not seek much media attention about its 100G. Why is it doing well in the growing 100G market?

Cisco has a slice of customers that are fibre-poor who are always seeking more spectral efficiency. I also believe Cisco won a contract with Amazon in Q4, 2013, but hey, it’s not Google or Facebook so it doesn’t get the big press. But no one will dispute Amazon is the real king of public cloud computing right now.

In the data centre world, there is a sense that the value of specialist hardware is diminishing as commodity platforms - servers and switches - take hold. The same trend is starting in telecoms with the advent of Network Functions Virtualisation (NFV) and software-defined networking (SDN). WDM is specialist hardware and will remain so. Can WDM vendors therefore expect healthy annual growth rates to continue for the rest of the decade?

I am not sure I agree.

There is no reason transport systems couldn’t be white-boxed just like other parts of the network. There is an over-reaction to the impact SDN will have on hardware but there have always been constant threats to the specialist.

Each morning a hardware specialist must wake up and prove to the world that they still need to exist. This is why you see continued hardware vertical integration by some optical companies; good examples are what Ciena has done with partners on intelligent Raman amplification or what Infinera has done building a tightly integrated offering around photonic-integrated circuits for cheap regeneration. Or Transmode which takes a hacker’s approach to optics to offer customers better solutions for specific category-killer applications like mobile backhaul. Or you swing to the other side of the barbell, and focus on software, which appears to be Cyan’s strategy.

You’ve got to do hard stuff that others can’t easily do or you are just a commodity provider. This is why Cisco and Intel are investing in silicon photonics – they can use this as an edge against commodity white-box assemblers and bare-metal suppliers.

NFV moves from the lab to the network

Dor Skuler

In October 2012, several of the world's leading telecom operators published a document to spur industry action. Entitled Network Functions Virtualisation - Introductory White Paper, the document stressed the many benefits such a telecom transformation would bring: reduced equipment costs, power consumption savings, portable applications, and nimbleness instead of ordeal when a service is launched.

Eighteen months on and much progress has been made. Operators and vendors have been identifying the networking functions to virtualise on servers, and the impact Network Functions Virtualisation (NFV) will have on the network.

A group within ETSI, the standards body behind NFV, is fleshing out the architectural layers of NFV: the virtual network functions layer that resides above the management and orchestration one that oversees the servers, distributed in data centres across the network.

In the lab, network functions have been put on servers and then onto servers in the cloud. "Now we are at the start of the execution phase: leaving the lab and moving into first deployments in the network," says Dor Skuler, vice president and general manager of CloudBand, the NFV spin-in of Alcatel-Lucent. Skuler views 2014 as the year of experimentation for NFV. By 2015, there will be pockets of deployments but none at scale; that will start in 2016.

Deploying NFV in the network and at scale will require software-defined networking (SDN). That is because network functions make unique requirements of the network, says Skuler. Because the network functions are distributed, each application must make connections to the different sites on demand. "SDN is a simple way for virtual network functions to get what they need from the network through different commands," he says.

CloudBand's customers include Deutsche Telekom, Telefónica and NTT. Overall, the company says it is involved in 14 customer projects.

CloudBand 2.0

CloudBand has developed a management and orchestration platform, and launched an 'ecosystem' that includes 25 companies. Companies such as Radware and Metaswitch Networks are developing virtual network functions that use the CloudBand platform.

More recently, CloudBand has upgraded its platform, what it calls CloudBand 2.0, and has launched its own virtualised network functions (VNFs) for the Long Term Evolution (LTE) cellular standard. In particular, VNFs for the Evolved Packet Core (EPC), IP Multimedia Subsystem (IMS) and the radio access network (RAN). "These are now virtualised and running in the cloud," says Skuler.

SDN technology from Nuage Networks, another Alcatel-Lucent spin-in, has been integrated into the CloudBand node that is set up in a data centre. The platform also has enhanced management systems. "How to manage the many nodes into a single logical cloud, with a lot of tools that help applications," says Skuler. CloudBand 2.0 has also added support for OpenStack alongside its existing support for CloudStack. OpenStack and CloudStack are open-source platforms supporting cloud.

For the EPC, the functions virtualised are on the network side of the basestation: the Mobility Management Entity (MME), the Serving Gateway and Packet Data Network Gateway (S- and P-Gateways) and the Policy and Charging Rules Function (PCRF).

IMS is used for Voice over LTE (VoLTE). "Operators are looking for more efficient ways of delivering VoLTE," says Skuler. This includes reducing deployment times and improving scalability, so that the service can grow as more users sign up.

The high-frequency parts of the radio access network, typically located in a remote radio head (RRH), cannot be virtualised. What can is the baseband processing unit (BBU). The BBUs run on off-the-shelf servers in pools up to 40km away from the radio heads. "This allows more flexible capacity allocation to different radio heads and easier scaling and upgrading," says Skuler.

Skuler points out that virtualising a function is not simply a case of putting a piece of code on a server running a platform such as CloudBand. "The VNF itself needs to go through a lot of change; a big monolithic application needs to be broken up into small components," he says.

"The VNF needs to use the development tools we offer in CloudBand so it can give rules so it can run in the cloud." The VNF also needs to know what key performance indicators to look at, and be able to request scaling, and inform the system when it is unhealthy and how to remedy the situation.

These LTE VNFs are designed to run on CloudBand and on other vendors' platforms. "CloudBand won't be run everywhere which is why we use open standards," says Skuler.

Pros and cons

The benefits of adopting NFV include prompt service deployment. "Today it can take 9-18 months for an operator to scale [a service]," says Skuler. The services, effectively software on servers, can scale more easily whereas today, typically, operators have to overprovision to ensure extra capacity is in place.

Less equipment also needs to be kept by operators for maintenance. "A typical North America mobile operator may have 450,000 spare parts," says Skuler; items such as line cards and power supplies. With automation and the use of dedicated servers, the number of spare parts held is typically reduced by a factor of ten.

Services can be scaled and healed, while functionality can be upgraded using software alone. "If I have a new version of IMS, I can test it in parallel and then migrate users; all behind my desk at the push of a button," says Skuler.

The NFV infrastructure - comprising compute, storage, and networking resources - resides at multiple locations - the operator's points-of-presence. These resources are designed to be shared by applications - VNFs - and it is this sharing of a common pool of resources that is one of the biggest advantages of NFV, says Skuler.

But there are challenges.

"Operating [existing] systems has been relatively simple; if there is a faulty line card, you simply replace it," says Skuler. "Now you have all these virtual functions sitting on virtual machines across data centres and that creates complexities."

An application needs to be aware of this and provide the required rules to the management and orchestration system such as CloudBand. Such systems need to provide the necessary operational tools to operators to enable automated upgrades and automated scaling as well as pinpoint causes of failures.

For example, an IMS core might have 12 tiers. In cloud-speak, a tier is one of a set of virtual machines making up a virtual network function. Examples of a tier include a load balancer, an application or a database server. Each tier consists of one or more virtual machines. Scaling of capacity is enabled by adding or removing virtual machines from a tier.
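The tier model can be illustrated with a minimal sketch in which a tier is a named list of virtual machines and scaling adds or removes entries. The class, the naming scheme for virtual machines and the load and capacity figures are hypothetical; this is not how CloudBand represents tiers internally.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Tier:
    """One tier of a virtual network function, e.g. a load balancer."""
    name: str
    vms: list = field(default_factory=list)

    def scale_to(self, count: int) -> None:
        if count < 1:
            raise ValueError("a tier needs at least one virtual machine")
        # Scale out: add virtual machines until the target is reached.
        while len(self.vms) < count:
            self.vms.append(f"{self.name}-vm{len(self.vms) + 1}")
        # Scale in: drop any virtual machines beyond the target.
        del self.vms[count:]


def vms_needed(load: float, capacity_per_vm: float) -> int:
    """Smallest number of virtual machines that can carry the load."""
    return max(1, math.ceil(load / capacity_per_vm))


balancer = Tier("load-balancer")
balancer.scale_to(vms_needed(load=2500, capacity_per_vm=1000))  # busy period
peak = list(balancer.vms)
balancer.scale_to(vms_needed(load=800, capacity_per_vm=1000))   # quiet period
print(peak, balancer.vms)
```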

In a cloud deployment, these linkages between tiers must be understood by the system to allow scaling. Two tiers may be placed in the same data centre to ensure low latency, but a duplicate of the tier pair may be placed at a separate site in case one pair goes down. SDN is used to connect the different sites, says Skuler: "All this needs to be explained simply to the system so that it understands it and executes it."

That, he says, is what CloudBand does.
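The tier model described above can be sketched in a few lines of Python. This is an illustrative data model only, not CloudBand's interface: the class names, the `max_vms` ceiling, the site labels and the `placement_ok` check are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Tier:
    """One tier of a VNF: a group of identical virtual machines."""
    name: str
    vm_count: int = 1
    min_vms: int = 1
    max_vms: int = 8  # hypothetical ceiling, chosen for illustration

    def scale(self, delta: int) -> int:
        """Add (delta > 0) or remove (delta < 0) VMs, staying within limits."""
        self.vm_count = max(self.min_vms, min(self.max_vms, self.vm_count + delta))
        return self.vm_count


@dataclass
class VirtualNetworkFunction:
    """A VNF made up of named tiers plus rules linking those tiers."""
    name: str
    tiers: dict = field(default_factory=dict)
    # Pairs of tier names that must share a data centre for low latency.
    colocated: list = field(default_factory=list)

    def add_tier(self, tier: Tier) -> None:
        self.tiers[tier.name] = tier

    def placement_ok(self, placement: dict) -> bool:
        """Check that every co-located tier pair is placed at the same site."""
        return all(placement[a] == placement[b] for a, b in self.colocated)


# Hypothetical two-tier slice of an IMS core.
ims = VirtualNetworkFunction("ims-core")
ims.add_tier(Tier("load-balancer", vm_count=2))
ims.add_tier(Tier("application", vm_count=4))
ims.colocated.append(("load-balancer", "application"))
```

Scaling a tier is then a call such as `ims.tiers["application"].scale(3)`, and an orchestrator would check `placement_ok` before committing a placement across sites.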

See also:

Telcos eye servers and software to meet networking needs, click here

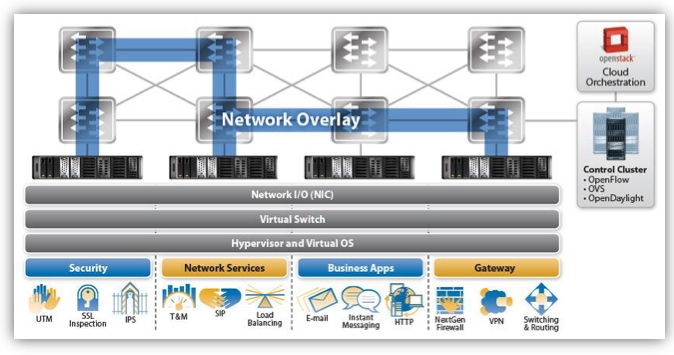

Netronome prepares cards for SDN acceleration

Source: Netronome

Netronome has unveiled 40 and 100 Gigabit Ethernet network interface cards (NICs) to accelerate data-plane tasks of software-defined networks.

Dubbed FlowNICs, the cards use Netronome's flagship NFP-6xxx network processor (NPU) and support open-source data centre technologies such as the OpenFlow protocol, the Open vSwitch virtual switch, and the OpenStack cloud computing platform.

Four FlowNIC cards have been announced with throughputs of 2 x 40 Gigabit Ethernet (GbE), 4 x 40GbE, 1 x 100GbE and 2 x 100GbE. The NICs use either two or four PCI Express 3.0 x8 lane interfaces to achieve the 100GbE and 200GbE throughputs.
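A rough calculation shows why several PCI Express interfaces are needed. The sketch below uses the published PCIe 3.0 figures of 8 GT/s per lane and 128b/130b encoding; it ignores PCIe protocol overhead, so real usable bandwidth is somewhat lower, and the function names are illustrative rather than anything from Netronome.

```python
import math

PCIE3_GT_PER_LANE = 8.0      # PCIe 3.0 signalling rate, gigatransfers/s per lane
PCIE3_ENCODING = 128 / 130   # 128b/130b line-encoding efficiency


def pcie3_gbps(lanes: int) -> float:
    """Raw one-direction bandwidth of a PCIe 3.0 link in Gbps."""
    return PCIE3_GT_PER_LANE * lanes * PCIE3_ENCODING


def interfaces_needed(port_gbps: float, lanes: int = 8) -> int:
    """Minimum number of PCIe 3.0 links needed to carry a given port rate."""
    return math.ceil(port_gbps / pcie3_gbps(lanes))


for total in (80, 160, 100, 200):
    print(f"{total}GbE of ports -> {interfaces_needed(total)} x8 interface(s)")
```

One x8 link carries about 63Gbps in each direction, so 100GbE needs at least two links and 200GbE at least four, which is consistent with the card configurations described.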

"We are the first to provide these interface densities on an intelligent network interface card, built for SDN use-cases," says Robert Truesdell, product manager, software at Netronome. "We have taken everything that has been done in Open vSwitch that would traditionally run on an [Intel] x86-based server, and run it on our NFP-6xxx-based FlowNICs at a much faster rate."

The cards have accompanying software that supports OpenFlow and Open vSwitch. There are also two additional software packages: cloud services, which performs such tasks as implementing the tunnelling protocols used for network virtualisation, and cyber-security.

Implementing the switch and packet processing functions on a FlowNIC card instead of using a virtual switch frees up valuable computation resources on a server's CPUs, enabling data centre operators to better use their servers for revenue-generating tasks. "They are trying to extract the maximum revenue out of the server and that is what this buys," says Truesdell.

Designing the FlowNICs around a programmable network processor has other advantages. "As standards evolve, that allows us to do a software revision rather than rely on a hardware or silicon revision," says Truesdell. The OpenFlow specification, for example, is revised every 6-12 months, he says, the most recent release being V1.4.

In the data centre, software-defined networking (SDN) uses a central controller to oversee the network connecting the servers. A server's NIC provides networking input/output, while the server runs the software-based virtualised switch and the hypervisor that supports virtual machines and operating systems on which the applications run. Applications include security tasks such as SSL inspection, intrusion detection and intrusion prevention systems; load balancing; business applications like email, the hypertext transfer protocol and instant messaging; and gateway functions such as firewalls and virtual private networks (VPNs).

With Open vSwitch running on a server, the SDN protocols such as OpenFlow pass communications between the data plane device - either the softswitch or the FlowNIC - and the controller. "By supporting Open vSwitch and OpenFlow, it allows us to inherit compatibility with the [SDN] controller and orchestration layers," says Truesdell. The FlowNIC is compatible with controllers from OpenDaylight, Ryu and VMware's NSX network virtualisation system, as well as orchestration platforms like OpenStack.

The virtual switch performs two main tasks. It inspects packet headers and passes the traffic to the appropriate virtual machines. The virtual switch also implements network virtualisation: setting up network overlays between servers for virtual machines to talk to each other.

Packet encapsulation and unwrapping performance required for network virtualisation tops out when implemented using the virtual switch, such that the throughput suffers. Moreover, packet header inspections can be nested if, for example, encryption and network virtualisation are used, further impacting performance. "The throughput rates can really suffer on a server because it is not optimised for this type of workload," says Truesdell.

Performing the tasks using the FlowNIC's network processor enables packet processing to keep up with the line rate including for the most demanding, shortest packet sizes. The issue with packet size is that the shorter the packet (the smaller the data payload), the more frequent the header inspections. "Data centre traffic is very bursty," says Truesdell. "These are not long-lived flows - they have high connection rates - and this drives the packet sizes down."
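The effect of packet size on the header-inspection rate can be made concrete with a short calculation. The sketch assumes standard Ethernet framing, where each frame carries an extra 20 bytes on the wire (preamble, start-of-frame delimiter and inter-frame gap); the function name is illustrative.

```python
ETHERNET_WIRE_OVERHEAD_BYTES = 20  # 8 bytes preamble/SFD + 12 bytes inter-frame gap


def packets_per_second(line_rate_bps: float, frame_bytes: int) -> float:
    """Frames per second a fully loaded link delivers at a given frame size."""
    bits_on_wire = (frame_bytes + ETHERNET_WIRE_OVERHEAD_BYTES) * 8
    return line_rate_bps / bits_on_wire


for size in (64, 400, 1500):
    rate = packets_per_second(10e9, size)
    print(f"{size:>5}-byte frames at 10Gbps: {rate / 1e6:.2f} million packets/s")
```

At 10Gbps, 64-byte frames arrive at close to 15 million per second, roughly 18 times the rate for 1,500-byte frames, and each one needs its headers inspected.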

For a 10 Gigabit stream performing the Network Virtualization using Generic Routing Encapsulation (NVGRE) protocol, the forwarding throughput is at line rate for all packet sizes using the company's existing FlowNIC acceleration cards, based on its previous generation NFP-3240 network processor.

In contrast, NVGRE performance using Open vSwitch on the server is at 9Gbps for lengthy 1,500-byte packets and drops continually to 0.5Gbps for 64-byte packets. The average packet length is around 400 bytes, says Truesdell.

Overall, Netronome claims that its FlowNICs boost the performance of virtualised networking functions and server applications more than twentyfold compared to using the virtual switch on the server's CPU.

Netronome's FlowNIC-32xx cards are already used by one Tier 1 operator to perform gateway functions. The gateway, overseen using a Ryu controller running OpenFlow, translates between multi-tenant IP virtual LANs in the data centre and MPLS-based VPNs that connect the operator's enterprise customers.

The NFP-6xxx-based FlowNICs will be available for early access partners later this quarter. FlowNIC customers include data centre equipment makers, original design manufacturers and the largest content service providers - the 'internet juggernauts' - that operate hyper-scale data centres.

For an article written for Fibre Systems on network virtualisation and data centre trends, click here

G.fast adds to the broadband options of the service providers

Feature: G.fast

Source: Alcatel-Lucent

Source: Alcatel-Lucent

Competition is commonly what motivates service providers to upgrade their access networks. And operators are being given every incentive to respond. Cable operators are offering faster broadband rates and then there are initiatives such as Google Fiber.

Internet giant Google is planning 1 Gigabit fibre rollouts in up to 34 US cities covering 9 metro areas. The initiative prompted AT&T to issue its own list of 21 cities where it is considering offering a 1 Gigabit fibre-to-the-home (FTTH) service.

But delivering fibre all the way to the home is costly, and then there is the engineering time required to connect the home gateway to the network. Hence the operator interest in the emerging G.fast standard, the latest digital subscriber line (DSL) development that promises Gigabit rates using the telephone wire.

"G.fast eliminates the need to run fibre for the last 250 meters [to the home]," says Dudi Baum, CEO of Sckipio, an Israeli start-up developing G.fast chipsets. "Providing 1 Gigabit over a copper pair is cheaper and faster to deploy, compared to running fibre all the way."

Until recently, operators faced a choice of whether to deploy FTTH or use fibre-to-the-node (FTTN) and VDSL to boost broadband rates. Now, such boundaries are disappearing, says Stefaan Vanhastel, marketing director for fixed networks, Alcatel-Lucent. Operators are more pragmatic in their deployments and are choosing the most suitable technology for a given deployment based on what is most cost effective and fastest to deploy.

"It is very much no longer black and white," agrees Julie Kunstler, principal analyst, components at market research firm, Ovum. "The same service providers will be supporting multiple access networks."

The advent of G.fast will enhance the operators' choice, boosting data rates while using existing copper to bridge the gap between the fibre and the home. But the technology is still some way off and views differ as to whether deployments will begin in 2015 or 2016.

"For G.fast, you need the fibre closer to your house to get the Gigabit and that is not available today with most carriers," says Arun Hiremath, director, marketing at DSL chip company, Ikanos Communications. It will likely start with some small scale deployments, he says, "but the carriers will wait a little more for things to mature".

G.fast

G.fast enables Gigabit rates over telephone wire by expanding the usable spectrum to 106MHz. This compares to the 17MHz spectrum used by VDSL2, the current most advanced deployed DSL standard. But adopting the wider spectrum exacerbates two local-loop characteristics that dictate DSL performance: signal attenuation and crosstalk.

Operating at higher frequencies increases signal attenuation, shortening the copper reach over which data can be sent. VDSL2 is typically deployed over 1,500m links, whereas G.fast distances will more likely be 200m or less.

Dudi Baum

Crosstalk refers to signal leakage between copper pairs in a cable bundle. A cable can be made up of tens or hundreds of copper twisted pairs. The leakage causes each twisted pair to carry not only the signal sent but also noise, the sum of the leakage components from neighbouring DSL pairs.

Crosstalk becomes more prominent the higher the frequency. "One reason why no one has developed G.fast technology until now is the challenge of handling crosstalk at the much higher frequencies," says Baum. Indeed, from G.fast field trials, observed crosstalk is so severe that from certain frequencies upwards, the interference is as strong as the received signal, says Paul Spruyt, DSL strategist for fixed networks at Alcatel-Lucent.

Vectoring

Vectoring is a technique used to tackle crosstalk and restore a line's data capacity. Vectoring uses digital signal processing to implement noise cancellation, and is already used for VDSL2. "Vectoring is considered a key aspect of G.fast, even more than for VDSL2," says Spruyt.

G.fast can be seen as a logical evolution of VDSL2 but there are also differences. Besides the wider 106MHz spectrum, G.fast has a different duplexing scheme. VDSL2 uses frequency-division duplexing (FDD) where the data transmission is continuous - upstream (from the home) and downstream - but on different frequency bands or tones. In contrast, G.fast uses time-division duplexing (TDD) where all the spectrum is used to either send data (upstream) or receive data.

If a cable carries both services to homes and businesses, G.fast is confined to the 17-106MHz band to avoid overlapping with VDSL2, since crosstalk cannot be cancelled between the two because of their differing duplexing schemes.

Paul Spruyt

Both DSL schemes use discrete multi-tone, where each tone carries data bits. But G.fast uses half the number of tones - 2,048 - with each tone 12 times the bandwidth of the tones used for VDSL2.
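The tone figures can be checked against the spectrum widths quoted earlier. The tone spacings used below, 4.3125kHz for VDSL2 and 51.75kHz for G.fast, are the commonly cited values for the 17MHz VDSL2 profile and the 106MHz G.fast profile; they are not given in the article and are included here as assumptions.

```python
VDSL2_TONES = 4096               # twice the G.fast count, as stated in the text
GFAST_TONES = 2048
VDSL2_TONE_SPACING_HZ = 4312.5   # assumed: 4.3125 kHz (17MHz profile)
GFAST_TONE_SPACING_HZ = 51750.0  # assumed: 51.75 kHz (106MHz profile)

vdsl2_spectrum_mhz = VDSL2_TONES * VDSL2_TONE_SPACING_HZ / 1e6
gfast_spectrum_mhz = GFAST_TONES * GFAST_TONE_SPACING_HZ / 1e6
spacing_ratio = GFAST_TONE_SPACING_HZ / VDSL2_TONE_SPACING_HZ

print(f"VDSL2 spectrum: {vdsl2_spectrum_mhz:.2f} MHz")
print(f"G.fast spectrum: {gfast_spectrum_mhz:.2f} MHz")
print(f"Tone bandwidth ratio: {spacing_ratio:.0f}x")
```

Half the tones at 12 times the spacing gives six times the spectrum, matching the step from roughly 17MHz to 106MHz.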

Operators can also configure the upstream and downstream ratio more easily using TDD. A split of 80 percent downstream and 20 percent upstream is common to the home, whereas businesses have symmetric data flows.

Only transmitting or only receiving also simplifies the G.fast analogue front-end circuitry since it is less susceptible to signal echo, whereas such an echo is an issue with VDSL2 due to the simultaneous sending and receiving of data.

Operators want G.fast to deliver 150 Megabit-per-second (Mbps) aggregate data rates over 250m, 200Mbps over 200m, 500Mbps over 100m and up to 1 Gigabit-per-second over shorter spans. This compares to VDSL2's 70Mbps (50Mbps downstream, 20Mbps upstream) over 400m. With vectoring, VDSL2 performance is doubled: 100Mbps downstream and 40Mbps upstream for the same span.

Vectoring works by measuring the crosstalk coupling on each line before the DSLAM - the platform at the cabinet, or the fibre distribution point unit for G.fast - generates anti-noise to null each line's crosstalk.

The crosstalk coupling between the pairs is estimated using special modulated ‘sync’ symbols that are sent between data transmissions. A user's DSL modem expects to see the modulated sync symbol, but in reality receives the symbol distorted with crosstalk from modulated sync symbols transmitted on the neighbouring lines.

The modem measures the error – the crosstalk – and sends it to the DSLAM. The DSLAM correlates the received error values on the ‘victim’ line with the pilot sequences transmitted on all the other ‘disturber’ lines. This way, the DSLAM measures the crosstalk coupling for every disturber–victim pair. Anti-noise is then generated using a vectoring chip in the DSLAM, and injected into the victim line on top of the transmitted signal to cancel the crosstalk picked up, a process repeated for each line.

Such an approach is known as pre-coding: in the downstream direction anti-noise signals are generated and injected in the DSLAM before the signal is transmitted on the line. For the upstream, post-coding is used: the DSLAM generates and adds the anti-noise after reception of the signal distorted with crosstalk. In this case, the DSL modem sends modulated sync symbols and the DSLAM measures the error signal and performs the correlations and anti-noise calculations.
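The downstream estimation and pre-coding steps can be illustrated with a toy model. The sketch below is a heavily simplified assumption, not the standardised algorithm: it uses a single tone, real-valued signals, no noise, a direct channel normalised to 1, rows of a Hadamard matrix as orthogonal pilot sequences, and made-up coupling values.

```python
def hadamard(n):
    """Return an n x n matrix of +1/-1 with mutually orthogonal rows (n a power of 2)."""
    h = [[1]]
    while len(h) < n:
        h = [row + row for row in h] + [row + [-v for v in row] for row in h]
    return h


def estimate_coupling(coupling, pilots):
    """Estimate the crosstalk coupling for every disturber-victim pair."""
    n, length = len(coupling), len(pilots[0])
    estimate = [[0.0] * n for _ in range(n)]
    for victim in range(n):
        # Error reported by the victim's modem on each sync symbol:
        # what it received minus the pilot it expected.
        errors = [
            sum(coupling[victim][d] * pilots[d][t] for d in range(n) if d != victim)
            for t in range(length)
        ]
        for disturber in range(n):
            if disturber != victim:
                # Correlating with the disturber's pilot isolates its contribution.
                corr = sum(e * p for e, p in zip(errors, pilots[disturber]))
                estimate[victim][disturber] = corr / length
    return estimate


def precode(symbols, estimate):
    """Add anti-noise to each line's symbol before transmission (first order)."""
    n = len(symbols)
    return [
        symbols[i] - sum(estimate[i][j] * symbols[j] for j in range(n) if j != i)
        for i in range(n)
    ]


def receive(sent, coupling):
    """What each modem sees: its own signal plus crosstalk from the other lines."""
    n = len(sent)
    return [
        sent[i] + sum(coupling[i][j] * sent[j] for j in range(n) if j != i)
        for i in range(n)
    ]


# Made-up coupling values for four lines; entry [victim][disturber].
coupling = [
    [0.00, 0.05, -0.03, 0.02],
    [0.04, 0.00, 0.06, -0.01],
    [-0.02, 0.03, 0.00, 0.05],
    [0.01, -0.04, 0.02, 0.00],
]
symbols = [1.0, -1.0, 1.0, 1.0]

estimate = estimate_coupling(coupling, hadamard(4))
raw_error = max(abs(r - s) for r, s in zip(receive(symbols, coupling), symbols))
residual = max(
    abs(r - s) for r, s in zip(receive(precode(symbols, estimate), coupling), symbols)
)
print(f"worst error without vectoring: {raw_error:.4f}")
print(f"worst error with pre-coding:   {residual:.4f}")
```

In this toy case the first-order pre-coder cuts the worst-case error by roughly an order of magnitude; what remains is the second-order crosstalk generated by the anti-noise itself.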

G.fast vectoring is more complex than vectoring for VDSL2.

Besides the strength of the crosstalk at higher frequencies, G.fast uses a power-saving mode that deactivates the line when no data is being sent. The vectoring algorithm must stop generating anti-noise each time the line is deactivated, and quickly resume generating it when transmission restarts. A VDSL2 modem line can also be deactivated but this is much less commonplace.

"The number of computations you need to do is proportional to the square of the number of lines," says Spruyt. For G.fast, the lines used are far fewer - 4 to 24 and even 48 in certain cases - because the G.fast mini-DSLAM is much closer to the home. For VDSL2, the number of lines can be 200 or 400.

However, the symbol rate of G.fast is related to the tone spacing and hence is 12 times faster than VDSL2. That requires faster calculation, but since G.fast has half the number of tones of VDSL2, and crosstalk cancellation is performed for each tone, the overall G.fast processing for a given number of lines is six times greater.

G.fast vectoring may thus be more complex but the overall computation - and power consumption - of the vectoring processor is lower than VDSL2 due to the fewer DSL lines.
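The trade-off can be put into numbers using only the factors quoted above: computation grows with the square of the line count, and G.fast needs six times the processing of VDSL2 for the same number of lines. The function below is a back-of-envelope sketch with an illustrative name, not a model of any vendor's vectoring processor.

```python
GFAST_PER_LINE_PAIR_FACTOR = 6  # 12x symbol rate x half the tones, from the text


def relative_vectoring_load(gfast_lines: int, vdsl2_lines: int) -> float:
    """G.fast vectoring computation as a fraction of the VDSL2 computation."""
    gfast_load = GFAST_PER_LINE_PAIR_FACTOR * gfast_lines ** 2
    vdsl2_load = vdsl2_lines ** 2
    return gfast_load / vdsl2_load


for gfast, vdsl2 in ((24, 200), (48, 400), (4, 200)):
    ratio = relative_vectoring_load(gfast, vdsl2)
    print(f"{gfast} G.fast lines vs {vdsl2} VDSL2 lines: {ratio:.2%} of the VDSL2 load")
```

With 24 G.fast lines against 200 VDSL2 lines, the G.fast load comes to under a tenth of the VDSL2 figure despite the sixfold per-line penalty.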

Chip developments

The G.fast analogue silicon requires much faster analogue-to-digital and digital-to-analogue converters due to the broader spectrum used, while the G.fast line drivers use a lower transmit power due to the shorter reach requirements. "We should expect the first generation of G.fast to consume more power than VDSL2 silicon," says Spruyt.

Stefaan Vanhastel

The main functional blocks for G.fast and VDSL2 include the baseband digital signal processor, vectoring, the analogue front end, and the line driver. The degree to which they are integrated in silicon - whether one chip or four if the home-gateway functions are included - depends on where they are used.

"The chipsets will be designed differently for the different segments where they are used," says Hiremath. For example, the G.fast modem could be implemented as a single chip that includes the baseband, home gateway, and even the line driver due to the short lengths involved, he says.

Moreover, while the G.fast standard does not require backward compatibility with VDSL2, there is nothing stopping chipmakers from supporting both. The same was true with VDSL2 yet the resulting chipsets also supported ADSL2.

Ikanos has yet to unveil its G.fast silicon but it has announced its Neos development platform for customers to test and trial the technology. Hiremath says its G.fast design is based on the Neos architecture and that it expects first samples later this year.

Start-up Sckipio has also yet to detail its G.fast silicon design but says it will provide more information in the coming months. G.fast has system requirements that are difficult to meet, says Baum: "The challenge is not to show the technology working but to meet the standard's boundary requirements with a small, efficient design that provides 1 Gigabit." By boundary requirements, Baum is referring to the performance the modem needs to achieve, such as certain speeds and distances with a given packet loss.

Sckipio already has first samples of its silicon. The company ported the RTL design of its silicon onto a Cadence Palladium system - a box with hundreds of FPGAs that allows the complete hardware design to be built. The company also has DSL models - bundles of twisted copper pairs measured at greater than 200MHz - to test the design's performance. "We use those models to see the expected performance running our protocol over those wires," says Baum.

Alcatel-Lucent has developed its own vectoring know-how for VDSL2 and has now added G.fast. "Having our own vectoring technology means that we have our own vectoring processing," says Alcatel-Lucent's Vanhastel.

Alcatel-Lucent has conducted G.fast trials with A1 Telecom Austria. "The good news is that we have been able to show that with vectoring, you can get really close to single-user capacity; the same capacity you have if there is only a single user active on the line," says Vanhastel. In the trial using over 100m of cable, G.fast achieved 60Mbps due to crosstalk. "Activating G.fast vectoring it rose to 500Mbps - almost a factor of 10," he says.

Much work remains before G.fast is deployed in the network, says Alcatel-Lucent. The International Telecommunication Union's G.9701 G.fast physical layer document is 300 pages long and while consent has been achieved, approving the standard is expected to take the rest of the year. Interoperability, test, functionality and performance specifications are still to be written by the Broadband Forum and then there are regulatory issues to be overcome: G.fast's 106MHz spectrum overlaps with FM radio, for example.

Sckipio is more upbeat about timescales, believing operators will start deployments in 2015 due to competition including the cable operators. The start-up says it has multiple field trials of its G.fast silicon this year.

Meanwhile, extending the spectrum to 212MHz is the next logical step in the development of G.fast. "Bonding is another concept that could be applied," says Spruyt.

There is life in the plain old telephone service yet.

This is an extended version of an article that first appeared in New Electronics, click here.

OFDM promises compact Terabit transceivers

Source: ECI Telecom

A one Terabit super-channel, crafted using orthogonal frequency-division multiplexing (OFDM), has been transmitted over a live network in Germany. The OFDM demonstration is the outcome of a three-year project conducted by the Tera Santa Consortium comprising Israeli companies and universities.

Current 100 Gig coherent networks use a single carrier for the optical transmission whereas OFDM imprints the transmitted data across multiple sub-carriers. OFDM is already used as a radio access technology, the Long Term Evolution (LTE) cellular standard being one example.

With OFDM, the sub-carriers are tightly packed with a spacing chosen to minimise the interference at the receiver. OFDM is being researched for optical transmission as it promises robustness to channel impairments as well as implementation benefits, especially as systems move to Terabit speeds.

"It is clear that the market has voted for single-carrier transmission for 400 Gig," says Shai Stein, chairman of the Tera Santa Consortium and CTO of system vendor, ECI Telecom. "But at higher rates, such as 1 Terabit, the challenge will be to achieve compact, low-power transceivers."

Shai Stein

One finding of the project is that the OFDM optical performance matches that of traditional coherent transmission but that the digital signal processing required is halved. "The real contribution [of OFDM] is implementation efficiency," says Stein.

For the trial, the 175GHz-wide 1 Terabit super-channel signal was transmitted through several reconfigurable optical add/drop multiplexer (ROADM) stages. The 175GHz spectrum comprises seven 25GHz bands. Two OFDM schemes were trialled: 128 sub-carriers and 1024 sub-carriers across each band.

To achieve 1 Terabit, the net data rate per band was 142 Gigabit-per-second (Gbps). Adding the overhead bits for forward error correction and pilot signals, the gross data rate per band was closer to 200Gbps.
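The super-channel figures can be cross-checked with some simple arithmetic. The sketch takes the approximate 200Gbps gross rate at face value and assumes, for illustration only, that the sub-carriers are spread evenly across the full 25GHz of each band; the variable names are not from the consortium.

```python
BANDS = 7
BAND_WIDTH_GHZ = 25.0
NET_GBPS_PER_BAND = 142.0
GROSS_GBPS_PER_BAND = 200.0  # approximate figure quoted in the text

net_total_gbps = BANDS * NET_GBPS_PER_BAND
total_width_ghz = BANDS * BAND_WIDTH_GHZ
overhead_fraction = GROSS_GBPS_PER_BAND / NET_GBPS_PER_BAND - 1
net_spectral_efficiency = net_total_gbps / total_width_ghz  # bit/s/Hz

print(f"Net super-channel rate: {net_total_gbps:.0f} Gbps over {total_width_ghz:.0f} GHz")
print(f"FEC and pilot overhead: about {overhead_fraction:.0%}")
print(f"Net spectral efficiency: {net_spectral_efficiency:.2f} bit/s/Hz")

# Assumed even spacing of sub-carriers across each band.
for subcarriers in (128, 1024):
    spacing_mhz = BAND_WIDTH_GHZ * 1000 / subcarriers
    print(f"{subcarriers} sub-carriers -> about {spacing_mhz:.1f} MHz spacing")
```

Seven bands at 142Gbps give 994Gbps, which the article rounds to 1 Terabit, with an overhead of roughly 40 percent on top of the net rate.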

The 128 or 1024 sub-carriers per band are modulated using either quadrature phase-shift keying (QPSK) or 16-quadrature amplitude modulation (16-QAM). One modulation scheme - QPSK or 16-QAM - was used across a band, although Stein points out that the modulation scheme can be chosen on a sub-carrier by sub-carrier basis, depending on the transmission conditions.

The trial took place at the Technische Universität Dresden, using the Deutsches Forschungsnetz e.V. X-WiN research network. The signal recovery was achieved offline using MATLAB computational software. "It [the trial] was in real conditions, just the processing was performed offline," says Stein. The MATLAB algorithms will be captured in FPGA silicon and added to the transceiver in the coming months.

Using a purpose-built simulator, the Tera Santa Consortium compared the OFDM results with traditional coherent super-channel transmission. "Both exhibited the same performance," says David Dahan, senior research engineer for optics at ECI Telecom. "You get a 1,000km reach without a problem." And with hybrid EDFA-Raman amplification, 2,000km is possible. The system also demonstrated robustness to chromatic dispersion. Using 1024 sub-carriers, the chromatic dispersion is sufficiently low that no compensation is needed, says ECI.

Stein says the project has been hugely beneficial to the Israeli optical industry: "There has been silicon photonics, transceiver and algorithmic developments, and benefits at the networking level." For ECI, it is important that there is a healthy local optical supply chain. "The giants have that in-house, we do not," says Stein.

One Terabit transmission will be realised in the marketplace in the next two years. Due to the project, the consortium companies are now well placed to understand the requirements, says Stein.

Set up in 2011, the Tera Santa Consortium includes ECI Telecom, Finisar, MultiPhy, Cello, Civcom, Bezeq International, the Technion Israel Institute of Technology, Ben-Gurion University, the Hebrew University in Jerusalem, Bar-Ilan University and Tel-Aviv University.