Ciena's stackable platform for data centre interconnect

Ciena is the latest system vendor to unveil its optical transport platform for the burgeoning data centre interconnect market. Data centre operators require scalable, power-efficient platforms that can carry significant amounts of traffic to link sites over metro and long-haul distances.

The Waveserver stackable interconnect system delivers 800 Gig traffic throughput in a 1 rack unit (1RU) form factor. The throughput comprises 400 Gigabit of client-side interfaces and 400 Gigabit coherent dense WDM transport.

For the Waveserver’s client-side interfaces, a mix of 10, 40 and 100 Gigabit interfaces can be used, with the platform supporting the latest 100 Gig QSFP28 optical module form factor. One prominent theme at the recent OFC 2015 show was the number of interface types now supported in a QSFP28.

On the line side, Ciena uses two of its latest WaveLogic 3 Extreme coherent DSP-ASICs. Each DSP-ASIC supports polarisation-multiplexed 16-quadrature amplitude modulation (PM-16-QAM), equating to 200 Gigabit transmission capacity.

The Extreme was chosen rather than Ciena’s more power-efficient WaveLogic 3 Nano DSP-ASIC to maximise capacity over a fibre. “The amount of fibre the internet content providers have tends to be limited so getting high capacity is key,” says Michael Adams, vice president of product and technical marketing at Ciena. The Nano DSP-ASIC does not support 16-QAM.

A rack can accommodate up to 44 Waveserver stackable units to deliver 88 wavelengths, each 50GHz wide, or 17.6 Terabit-per-second (Tbps) of capacity. And up to 96 wavelengths, or 19.2Tbps, is supported on a fibre pair.
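The rack and fibre-pair figures follow from simple arithmetic. The sketch below is illustrative only: the constant and function names are made up for this example, and it assumes every wavelength runs at the full 200 Gigabit PM-16-QAM rate.

```python
# Illustrative arithmetic only; names are hypothetical, figures are from the article.
GBPS_PER_WAVELENGTH = 200   # one WaveLogic 3 Extreme DSP-ASIC running PM-16-QAM
WAVELENGTHS_PER_UNIT = 2    # two DSP-ASICs per 1RU Waveserver


def capacity_tbps(wavelengths: int, gbps_per_wavelength: int = GBPS_PER_WAVELENGTH) -> float:
    """Total line-side capacity in Tbps for a given number of wavelengths."""
    return wavelengths * gbps_per_wavelength / 1000


rack_wavelengths = 44 * WAVELENGTHS_PER_UNIT   # 88 wavelengths from a full rack
print(capacity_tbps(rack_wavelengths))         # 17.6 (Tbps per rack)
print(capacity_tbps(96))                       # 19.2 (Tbps per fibre pair)
```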

"We are going down the path of opening the platform to automation"

“We could add flexible grid and probably get closer to 24 or 25 Tbps,” says Adams. Flexible grid refers to moving off the C-band's set ITU grid by using digital signal processing at the transmitter. By shaping the signal before it is sent, each carrier can be squeezed from a 50GHz channel into a 37.5GHz wide one, boosting overall capacity carried over the fibre.
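Adams's estimate can be bracketed with a back-of-the-envelope calculation. The sketch below is an idealised one, not Ciena's method: it assumes the spectrum occupied by the fixed 50GHz channels is re-divided into 37.5GHz channels with no guard bands, that each carrier still delivers 200 Gigabit, and that spectrum is allocated in 12.5GHz slots. The function names are hypothetical.

```python
SLOT_GHZ = 12.5  # slot granularity assumed for this sketch


def repacked_carriers(fixed_channels: int, old_width_ghz: float = 50.0,
                      new_width_ghz: float = 37.5) -> int:
    """Carriers that fit when the same spectrum is re-divided into narrower channels."""
    total_slots = fixed_channels * round(old_width_ghz / SLOT_GHZ)
    return total_slots // round(new_width_ghz / SLOT_GHZ)


def capacity_tbps(carriers: int, gbps_per_carrier: int = 200) -> float:
    """Total capacity in Tbps, assuming every carrier runs at the same rate."""
    return carriers * gbps_per_carrier / 1000


for channels in (88, 96):
    carriers = repacked_carriers(channels)
    print(channels, "x 50GHz ->", carriers, "x 37.5GHz,", capacity_tbps(carriers), "Tbps")
```

Under these assumptions, 88 fixed channels repack into 117 carriers (23.4Tbps) and 96 into 128 carriers (25.6Tbps), which brackets the 24 to 25 Tbps that Adams quotes.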

Adams says that it is not straightforward to compare the power consumption of different vendors’ data centre interconnect platforms but Ciena believes its platform is competitive. He estimates that the Waveserver consumes between 1W and 1.5W per Gigabit line side.

Ciena has stated that between five and 10 percent of its revenues come from web-scale customers, which account for a third of its total 100 Gig line-side port shipments.

Web-scale companies include Internet content providers, providers of data centre co-location and interconnect, and enterprises. Web-scale companies also drive the traditional telecom optical networking market as they use large amounts of the telcos' network capacity to link their sites.

The global data centre interconnect market grew 16 percent in 2014 to reach US$2.5 billion, according to market research firm Ovum. Almost half of the spending was by the communications service providers, whereas the Internet content providers' spending grew 64 percent last year.

Open software

Ciena also announced an open application development environment, dubbed emulation cloud, that allows applications to be developed without needing Waveserver hardware.

One obvious application is moving server virtual machines between data centres. But more novel applications can be developed by the data centre operators and third-party developers. Ciena cites what it calls an augmented reality application that allows a mobile phone to be pointed at a Waveserver to inform the user of the status of the machine: which ports are active and what type of bandwidth each port is consuming. “It can also show power and specific optical parameters of each line port,” says Adams. “Right there, you have all the data you need to know.”

The Waveserver platform also comes with software that allows data centre managers to engineer, plan, provision and operate links via a browser. More sophisticated users can benefit from Ciena’s OPn architecture and a set of open application programming interfaces (APIs).

“We are going down the path of opening the platform to automation,” says Adams. “We can foresee for the most sophisticated users, plugging into APIs and going to some very specific optical parameters and playing with them.”

Waveserver Status

Ciena is demonstrating its Waveserver platform to over 100 customers, as part of an annual event at the company’s Ottawa site.

“We are well engaged with a variety of Internet content providers,” says Adams. “We will be in trials with many of those folks this summer.” General availability is expected at the end of the third quarter.

In May, Ciena announced it had entered a definitive agreement to acquire Cyan. Cyan announced its own N-Series data centre interconnect platform earlier this year. Ciena says it is premature to comment on the future of the N-Series platform.

OIF moves to raise coherent transmission baud rate

"We want the two projects to look at those trade-offs and look at how we could build the particular components that could support higher individual channel rates,” says Karl Gass of Qorvo and the OIF physical and link layer working group vice chair, optical.

Karl Gass

The OIF members, which include operators, internet content providers, equipment makers, and optical component and chip players, want components that work over a wide bandwidth, says Gass. This will allow the modulator and receiver to be optimised for the new higher baud rate.

“Perhaps I tune it [the modulator] for 40 Gbaud and it works very linearly there, but because of the trade-off I make, it doesn’t work very well anywhere else,” says Gass. “But I’m willing to make the trade-off to get to that speed.” Gass uses 40 Gbaud as an example only, stressing that much work is required before the OIF members choose the next baud rate.

The modulator and receiver optimisations will also be chosen independent of technology since lithium niobate, indium phosphide and silicon photonics are all used for coherent modulation.

The OIF has not detailed timescales but Gass says projects usually take 18 months to two years.

Meanwhile, the OIF has completed two projects, the specification outputs of which are referred to as implementation agreements (IAs).

One is for integrated dual polarisation micro-intradyne coherent receivers (micro-ICR) for the CFP2. At OFC 2015, several companies detailed first designs for coherent line side optics using the CFP2 module.

The second completed IA is the 4x5-inch second-generation 100 Gig long-haul DWDM transmission module.

OFC 2015 digest: Part 2

- CFP4- and QSFP28-based 100GBASE-LR4 announced

- First mid-reach optics in the QSFP28

- SFP extended to 28 Gigabit

- 400 Gig precursors using DMT and PAM-4 modulations

- VCSEL roadmap promises higher speeds and greater reach

OFC 2015 digest: Part 1

- Several vendors announced CFP2 analogue coherent optics

- 5x7-inch coherent MSAs: from 40 Gig submarine and ultra-long haul to 400 Gig metro

- Dual micro-ITLAs, dual modulators and dual ICRs as vendors prepare for 400 Gig

- WDM-PON demonstration from ADVA Optical Networking and Oclaro

- More compact and modular ROADM building blocks

JDSU also showed a dual-carrier coherent lithium niobate modulator capable of 400 Gig for long-reach applications. The company is also sampling a dual 100 Gig micro-ICR for multiple sub-channel applications.

Avago announced a micro-ITLA device using its external cavity laser that has a line-width less than 100kHz. The micro-ITLA is suited for 100 Gig PM-QPSK and 200 Gig 16-QAM modulation formats and supports a flex-grid or gridless architecture.

Optical networking: The next 10 years

Predicting the future is a foolhardy endeavour; at best one can make educated guesses.

Ioannis Tomkos is better placed than most to comment on the future course of optical networking. Tomkos, a Fellow of the OSA and the IET at the Athens Information Technology Centre (AIT), is involved in several European research projects that are tackling head-on the challenges set to keep optical engineers busy for the next decade.

“We are reaching the total capacity limit of deployed single-mode, single-core fibre,” says Tomkos. “We can’t just scale capacity because there are limits now to the capacity of point-to-point connections.”

Source: Infinera

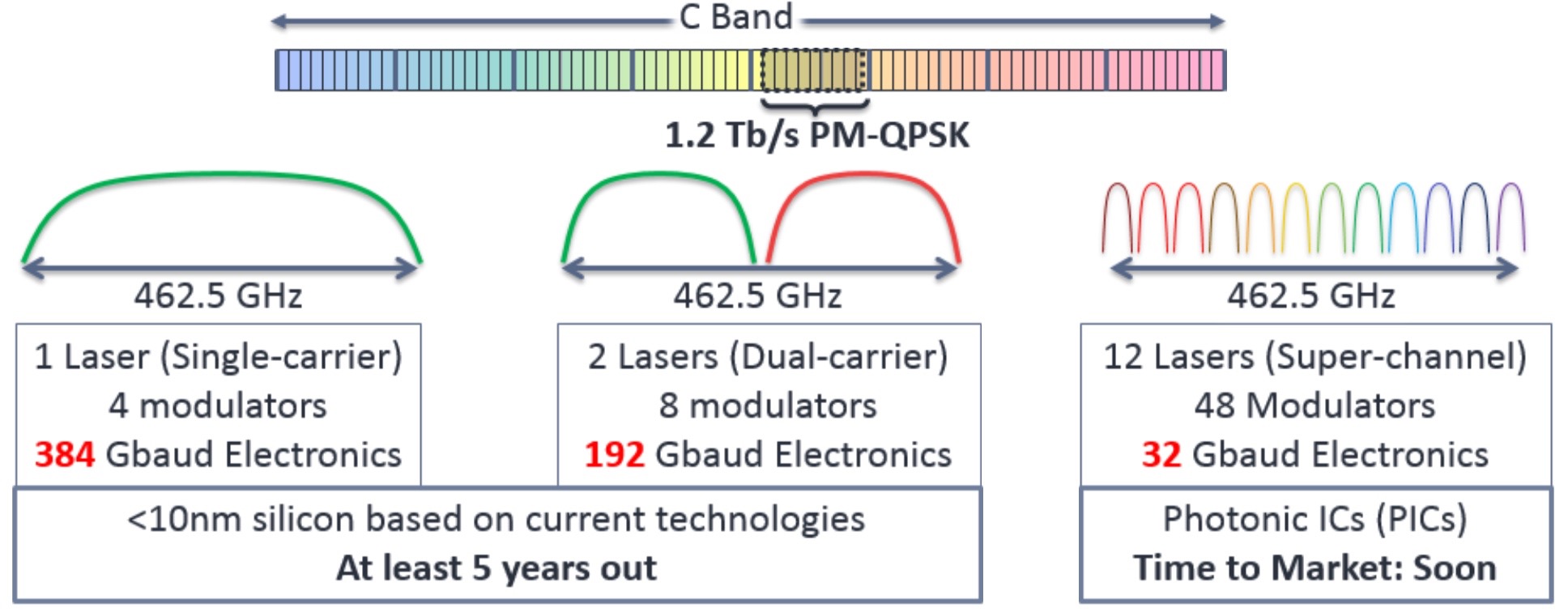

The industry consensus is to develop flexible optical networking techniques that make best use of the existing deployed fibre. These techniques include using spectral super-channels, moving to a flexible grid, and introducing ‘sliceable’ transponders whose total capacity can be split and sent to different locations based on the traffic requirements.

Once these flexible networking techniques have exhausted the last Hertz of a fibre’s C-band, additional spectral bands of the fibre will likely be exploited such as the L-band and S-band.

After that, spatial-division multiplexing (SDM) of transmission systems will be used, first using already deployed single-mode fibre and then new types of optical transmission systems that use SDM within the same optical fibre. For this, operators will need to put novel fibre in the ground that has multiple modes and multiple cores.

SDM systems will bring about change not only with the fibre and terminal end points, but also the amplification and optical switching along the transmission path. SDM optical switching will be more complex but it also promises huge capacities and overall dollar-per-bit cost savings.

Tomkos is heading three European research projects - FOX-C, ASTRON & INSPACE.

FOX-C involves all-optically adding and dropping sub-channels from different types of spectral super-channels. ASTRON is undertaking the development of a one terabit transceiver photonic integrated circuit (PIC). The third, INSPACE, will undertake the development of new optical switch architectures for SDM-based networks.

FOX-C

Spectral super-channels are used to create high bit-rate signals - 400 Gigabit and greater - by combining a number of sub-channels. Combining sub-channels is necessary since existing electronics can’t create such high bit rates using a single carrier.

Infinera points out that a 1.2 Terabit-per-second (Tbps) signal implemented using a single carrier would require 462.5 GHz of spectrum while the accompanying electronics to achieve the 384 Gigabaud (Gbaud) symbol rate would require a sub-10nm CMOS process, a technology at least five years away.
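One way to arrive at the 384 Gbaud figure is sketched below. The article does not state Infinera's assumptions, so the modulation format (dual-polarisation QPSK, two bits per symbol on each polarisation) and the 32/25 overhead ratio typical of 100 Gig coherent transmission are assumptions of this example, as is the function name.

```python
from fractions import Fraction


def symbol_rate_gbaud(bit_rate_gbps: int, bits_per_symbol_per_pol: int,
                      polarisations: int = 2,
                      overhead: Fraction = Fraction(32, 25)) -> Fraction:
    """Symbol rate needed to carry a bit rate on a single carrier.

    overhead is the ratio of line symbol rate to payload symbol rate
    (forward error correction and framing), assumed here to be 32/25.
    """
    payload_gbaud = Fraction(bit_rate_gbps, bits_per_symbol_per_pol * polarisations)
    return payload_gbaud * overhead


print(symbol_rate_gbaud(100, 2))    # 32  -> today's 100 Gig coherent carrier
print(symbol_rate_gbaud(1200, 2))   # 384 -> a single-carrier 1.2 Tbps signal
```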

- Those that use non-overlapping sub-channels implemented using what is called Nyquist multiplexing.

- And those with overlapping sub-channels using orthogonal frequency division multiplexing (OFDM).

COBO acts to bring optics closer to the chip

The goal of COBO, announced at the OFC 2015 show and backed by such companies as Microsoft, Cisco Systems, Finisar and Intel, is to develop a technology roadmap and common specifications for on-board optics to ensure interoperability.

“The Microsoft initiative is looking at the next wave of innovation as it relates to bringing optics closer to the CPU,” says Saeid Aramideh, co-founder and chief marketing and sales officer for start-up Ranovus, one of the founding members of COBO. “There are tremendous benefits for such an architecture in terms of reducing power dissipation and increasing the front panel density.”

On-board optics refers to optical engines or modules placed on the printed circuit board, close to a chip. The technology is not new; Avago Technologies and Finisar have been selling such products for years. But these products are custom and not interoperable.

Placing the on-board optics nearer the chip - an Ethernet switch, network processor or a microprocessor for example - shortens the length of the board’s copper traces linking the two. The fibre from the on-board optics bridges the remaining distance to the equipment’s face plate connector. Moving the optics onto the board reduces the overall power consumption, especially as 25 Gigabit-per-second electrical lanes start to be used. The fibre connector also uses far less face plate area compared to pluggable modules, whether the CFP2, CFP4, QSFP28 or even an SFP+.

“The [COBO] initiative is going to be around defining the electrical interface, the mechanical interface, the power budget, the heat-sinking constraints and the like,” says Aramideh.

To understand why such on-board optics will be needed, Aramideh cites Broadcom’s StrataXGS Tomahawk switch chips used for top-of-rack and aggregation switches. The Tomahawk is Broadcom’s first switch family that uses 25 Gbps serialiser/deserialiser (serdes) lanes and has an aggregate switch bandwidth of up to 3.2 terabit. And Broadcom is not alone. Cavium, through its Xpliant acquisition, has the CNX880xx line of Ethernet switch chips that also uses 25 Gbps lanes and has a switch capacity of up to 3.2 terabit.

“You have 1.6 terabit going to the front panel and 1.6 terabit going to the back panel; that is a lot of traces,” says Aramideh. “If you make this into opex [operation expense], and put the optics close to the switch ASIC, the overall power consumption is reduced and you have connectivity to the front and the back.”

This is the focus of Ranovus, with the OpenOptics MSA initiative. “Scaling into terabit connectivity over short distances and long distances,” he says.

OpenOptics MSA

At OFC, members of the OpenOptics MSA, of which Ranovus and Mellanox are founders, published their specification for an interoperable 100 Gbps WDM standard that will have a two-kilometre reach.

The 100 Gigabit standard uses 4x25 Gbps wavelengths but Aramideh says the standard scales to 8, 16 and 32 lanes. In turn, there will also be a 50 Gbps lane version that will provide a total connectivity of 1.6 terabit (32x50 Gbps).
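The scaling Aramideh describes is a product of lane count and lane rate. The short sketch below tabulates the combinations mentioned; the function name is hypothetical, and not every combination listed is necessarily part of the published specification.

```python
def aggregate_gbps(lanes: int, lane_rate_gbps: int) -> int:
    """Total connectivity for a number of WDM lanes at a given per-lane rate."""
    return lanes * lane_rate_gbps


for lane_rate in (25, 50):
    for lanes in (4, 8, 16, 32):
        print(f"{lanes:>2} x {lane_rate} Gbps = {aggregate_gbps(lanes, lane_rate)} Gbps")
```

Four 25 Gbps lanes give the 100 Gigabit standard, while 32 lanes at 50 Gbps give the 1.6 terabit figure.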

Ranovus has not detailed what modulation scheme it will use to achieve 50 Gbps lanes, but Aramideh says that PAM-4 is one of the options and an attractive one at that. “There are also a lot of chipsets [supporting PAM-4] becoming available,” he says.

Ranovus’s first products will be an OpenOptics MSA optical engine and a QSFP28 optical module. “We are not making any product announcements yet but there will be products available this year,” says Aramideh.

Meanwhile, Ciena has become the sixth member to join the OpenOptics MSA.

Calient uses its optical switch to boost data centre efficiency

The company had been selling its 320x320 non-blocking optical circuit switch (OCS) for applications such as submarine cable landing sites and for government intelligence. Then, five years ago, a large internet content provider contacted Calient, saying it had figured out exactly where Calient’s OCS could play a role in the data centre.

This solution could deliver a significant percentage-utilisation improvement

Daniel Tardent

But before the hyper-scale data centre operator would adopt Calient’s switch, it wanted the platform re-engineered. It viewed Calient’s then-product as power-hungry and had concerns about the switch’s reliability given it had not been deployed in volume. If Calient could make its switch cheaper, more power efficient and prove its reliability, the internet content provider would use it in its data centres.

Calient undertook the re-engineering challenge. The company did not change the 3D micro electromechanical system (MEMS) chip at the heart of the OCS, but it fundamentally redesigned the optics, electronics and control system surrounding the MEMS. The result is Calient’s S-Series switch family, the first product of which was launched three years ago.

“That switch family represented huge growth for us,” says Daniel Tardent, Calient’s vice president of marketing and product line manager. “We went from making one switch every two weeks to a large number of switches each week, just for this one customer.”

Calient has remained focussed on the data centre ever since, working to understand the key connectivity issues facing the hyper-scale data centre operators.

“All these big cloud facilities have very large engineering teams that work on customising their architectures for exactly the applications they are running,” says Tardent. “There isn’t one solution that fits all.”

There are commonalities among the players in how Calient’s OCS can be deployed but what differs is the dimensioning and the connectivity used by each.

Greater commonality will be needed by those customers that represent the tier below the largest data centre players, says Tardent: “These don’t have a lot of engineering resource and want a more packaged solution.”

LightConnect fabric manager

At the OFC 2015 show held in Los Angeles last month, Calient unveiled its LightConnect fabric manager software. The software, working with Calient’s S-Series switches, is designed to better share the data centre’s computing and storage resources.

The move to improve the utilisation of data centre resources is a new venture for the company. Initially, the company tackled how the OCS could improve data centre networking linking the servers and storage. The company explored using its OCS products to offload large packet flows - dubbed elephant flows - to improve overall efficiency.

Elephant flows are specific packet flows that need to be moved across the data centre. Examples include moving a virtual machine from one server to another, or replicating or relocating storage. Different data centre operators have different definitions as to what is an elephant flow but one data centre defines it as any piece of data greater than 20 Gbyte, says Calient.

If persistent elephant flows run through the network, they clog up the network buffers, impeding the shorter ‘mice’ flows that are just as important for the efficient working of the data centre. Congested buffers increase latency and adversely affect workloads. “If you are moving a large piece of data across the data centre, you want to move it quickly and efficiently,” says Tardent.

Calient’s OCS, by connecting top-of-rack switches, can be used to offload the elephant flows. In effect, the OCS acts as an optical expressway, bypassing the electrical switch fabric.
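The offload decision can be pictured as a simple size test. The sketch below is hypothetical and is not Calient's software: it uses the 20 Gbyte threshold quoted for one data centre, treats a Gbyte as 10^9 bytes, and assumes the size of a transfer is known before it starts.

```python
# One operator's definition of an elephant flow; others use different thresholds.
ELEPHANT_THRESHOLD_BYTES = 20 * 10**9   # 20 Gbyte, decimal units assumed


def is_elephant(flow_bytes: int, threshold: int = ELEPHANT_THRESHOLD_BYTES) -> bool:
    """True if the transfer is larger than the elephant-flow threshold."""
    return flow_bytes > threshold


def choose_path(flow_bytes: int) -> str:
    """Send elephants over the optical circuit; leave mice on the packet fabric."""
    return "optical circuit" if is_elephant(flow_bytes) else "packet fabric"


print(choose_path(150 * 10**9))   # a large virtual machine or storage move
print(choose_path(4 * 10**6))     # a short 'mice' flow
```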

Now, with the launch of the LightConnect fabric manager, Calient is tackling a bigger issue: how to benefit the overall economics of the data centre by improving server and storage utilisation.

“What really interests the big data players is how they can better utilise their compute and storage resources because that is where their major cost is,” says Tardent.

Today’s data centres run at up to 40 percent server utilisation. Given that the largest data centres can house 100,000 servers, just one percent improvement in usage has a significant impact on overall cost.

Calient claims that a 1.6 percent improvement in server utilisation covers the cost of introducing its OCS into the data centre. With an average utilisation improvement of nine to 14 percent, all the data centre’s networking costs are covered. “The nine to 14 percent is a range that depends on how ‘thin’ or ‘fat’ the network layer is,” says Tardent. “A thin network design has less bandwidth and is less expensive.” Both types exist depending on the particular functions of the data centre, he says.
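Calient's break-even claims can be expressed as thresholds. The sketch below is a rough illustration under stated assumptions: it reads each percentage as the share of the server fleet's capacity that is recovered, and it maps the lower end of the nine to 14 percent range to a thin network and the upper end to a fat one, which the article implies but does not state. All names are hypothetical.

```python
OCS_BREAK_EVEN_PCT = 1.6                               # covers the cost of the OCS
NETWORK_BREAK_EVEN_PCT = {"thin": 9.0, "fat": 14.0}    # covers all networking costs


def costs_covered(improvement_pct: float, network: str = "thin") -> list:
    """List which costs a given utilisation improvement would pay for."""
    covered = []
    if improvement_pct >= OCS_BREAK_EVEN_PCT:
        covered.append("OCS")
    if improvement_pct >= NETWORK_BREAK_EVEN_PCT[network]:
        covered.append("all networking")
    return covered


def servers_worth(total_servers: int, improvement_pct: float) -> float:
    """Capacity recovered, expressed as an equivalent number of servers."""
    return total_servers * improvement_pct / 100


print(costs_covered(1.6))                 # ['OCS']
print(costs_covered(10.0, "thin"))        # ['OCS', 'all networking']
print(costs_covered(10.0, "fat"))         # ['OCS']
print(servers_worth(100_000, 1.0))        # 1000.0
```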

Virtual pods

Data centres are typically organised into pods or clusters. A pod is a collection of servers, storage and networking. A pod varies with data centre operator but an example is 16 rows of 8 server racks plus storage.

Pods are popular among the large data centre players because they enable quick replication, whether to bring resources online or to allow pod maintenance by first switching in a replacement pod.

One issue data centre managers must grapple with is when one pod is heavily loaded while others have free resources. One approach is to move the workload to the other lightly-used pods. This is non-trivial, though; it requires policies, advanced planning and it is not something that can be done in real-time, says Tardent: “And when you move a big workload between pods, you create a series of elephant flows.”

An alternative approach is to move part of the workload to a less-used pod. But this runs the risk of increasing the latency between different parts of the workload. “In a big cloud facility with some big applications, they require very tightly-coupled resources,” he says.

Instead, data centre players favour over-provisioning: deliberately under-utilising their pods to leave headroom for worst-case workload expansion. “You spend a lot of money to over-provision every pod to allow for a theoretical worst-case,” says Tardent.

Calient proposes that its OCS switch fabric be used to effectively move platform resources to pods that are resource-constrained rather than move workloads to pods. Hence the term virtual pods or v-pods.

For example, some of the resources in two under-utilised pods can be connected to a third, heavily-loaded pod to create a virtual pod with effectively more rows of racks. “Because you are doing it at the physical layer as opposed to going through a layer-2 or layer-3 network, it truly is within the same physical pod,” says Tardent. “It is as if you have driven a forklift, picked up that row and moved it to the other pod.”
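The forklift analogy can be modelled as a mapping from rows of racks to pods that the optical layer is free to rewrite. The sketch below is a toy model, not the LightConnect software or its interfaces; the class, method and pod names are all hypothetical.

```python
from typing import Dict, List


class OpticalFabric:
    """Hypothetical model of an OCS layer that maps rows of racks to pods."""

    def __init__(self, home_pods: Dict[str, List[str]]) -> None:
        # Each row starts out attached to the pod it physically sits in.
        self.attached_to = {row: pod for pod, rows in home_pods.items() for row in rows}

    def lend_row(self, row: str, to_pod: str) -> None:
        """Re-point a row at another pod by changing the optical cross-connect."""
        if row not in self.attached_to:
            raise KeyError(f"unknown row: {row}")
        self.attached_to[row] = to_pod

    def virtual_pod(self, pod: str) -> List[str]:
        """Rows currently reachable as part of the given pod."""
        return sorted(row for row, owner in self.attached_to.items() if owner == pod)


fabric = OpticalFabric({"pod-a": ["a1", "a2"], "pod-b": ["b1", "b2"], "pod-c": ["c1", "c2"]})
fabric.lend_row("a2", "pod-c")   # borrow a row from each under-utilised pod
fabric.lend_row("b2", "pod-c")
print(fabric.virtual_pod("pod-c"))   # ['a2', 'b2', 'c1', 'c2']
```

No workload moves in this model; only the row-to-pod mapping changes, which is the point Tardent makes about working at the physical layer.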

In practice, data centre managers can pull resources from anywhere in the data centre, or they can allocate particular resources permanently to one pod by not going through the OCS optical layer.

The LightConnect fabric manager software can be used as a standalone system to control and monitor the OCS switch fabric. Or the fabric manager software can be integrated within the existing data centre management system using several application programming interfaces.

Calient has not quoted exact utilisation improvement figures that result from using its OCS switches and LightConnect software.

“We have had acknowledgement that this solution could deliver a significant percentage-utilisation improvement and we will be going into a proof-of-concept deployment with one of the large cloud data centres very soon,” says Tardent. Calient is also in discussion with several other cloud providers.

LightConnect will be a commercially deployable system starting mid-year.

PMC advances OTN with 400 Gigabit processor

Optical modules for the line-side are moving beyond 100 Gigabits to 200 Gigabit and now 400 Gigabit transmission rates. Such designs are possible thanks to compact photonics designs and coherent DSP-ASICs implemented using advanced CMOS processes.

An example switching application showing different configurations of the DIGI-G4 OTN processor on the line cards. Source: PMC

For engineers, the advent of higher-speed line-side interfaces sets new challenges when designing the line cards for optical networking equipment. In particular, the framer silicon that interfaces to the coherent DSP-ASIC, on the far side of the optics, must cope with a doubling and quadrupling of traffic.

Such line cards for metro network platforms are where PMC-Sierra is targeting its latest 400 Gigabit DIGI-G4 Optical Transport Network (OTN) processor.

The OTN standard, defined by the telecom standards body of the International Telecommunication Union (ITU-T), performs several roles in the network. It is a layer-one technology that packages packet and circuit-switched traffic. OTN wraps traffic in a variety of container sizes for transport, from 1.25 Gigabit (ODU0) to 100 Gigabit (ODU4). And now 100 Gigabit can be viewed as a sub-frame, multiples of which can be combined to create even larger frames, dubbed OTUCn, where n is the number of 100 Gig multiples.

Using OTN, container traffic can be broken up, switched and recombined within new containers before being transmitted optically. OTN also provides forward error correction and network management features.

PMC’s DIGI-G4 OTN processor is aimed at next-generation packet-optical transport systems (P-OTS) adopting 400 Gig line cards, and for platforms for the burgeoning data centre interconnect market.

“The amounts of traffic internet content providers need between their data centres is astonishing; they are talking hundreds of terabits of traffic,” says Hamish Dobson, director of strategic marketing at PMC. Hyper-scale data centre operators, unlike telcos, do not require OTN switching but they are keen on OTN as the DWDM management layer, he says: “I’m not aware of any of the hyper-scale players who are deploying their own networks who are not using OTN as the un-channelised digital wrapper on their systems.”

The DIGI-G4 does more than simply quadruple OTN traffic throughput compared to PMC’s existing DIGI 120G OTN processor. The chip also adds encryption hardware to secure links while supporting the emerging Transport Software-Defined Networking (Transport SDN).

DIGI-G4

The DIGI-G4 increases the traffic throughput fourfold while halving the power-per-port compared to PMC's DIGI 120G. System designers must control the total power consumption of the line card, given the greater interface density and given that metro equipment platforms’ power profile is already at 500W-per-slot, says Dobson. PMC has halved the power consumption-per-port by implementing the latest OTN processor in a 28 nm CMOS process and by using more power-efficient serialisers/deserialisers (serdes).

The use of distributed data centres by internet content providers is one reason for the device’s introduction of the Advanced Encryption Standard (AES-256). Another is the emergence of cloud services and the need to secure individual customers’ traffic.

“We have added a channelised hardware [encryption] engine,” says Dobson. “The encryption engine is capable of being applied to any OTN channel in the device.”

Other features of the DIGI-G4 include input/output (I/O) capable of 28 Gigabit-per-second (Gbps). This enables the DIGI-G4 to connect directly to CFP2 and CFP4 pluggable optics without the need for gearbox devices on the line card, reducing power and overall cost.

The OTN chip is a hybrid design capable of processing 400 Gigabit of packet traffic or 400 Gig of circuit (time-division multiplexed) traffic, or any combination of the two, with a granularity of one gigabit channels. “It can switch a full 400 Gig's worth of one Gigabit ODU0 channels,” says Dobson.

The DIGI-G4 also supports a pre-standard implementation of the OTUC2 and OTUC4 transport units, which are two and four multiples of 100 Gigabit, respectively. The OTUCn standard is not expected to be ratified before 2017.
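The relationship between the 100 Gigabit building block, the OTUCn transport units and the smallest ODU0 channels can be sketched numerically. The rates below are nominal and ignore overhead. The figure of 80 tributary slots of roughly 1.25 Gigabit per 100 Gigabit container is an assumption of this example, drawn from how OTN divides an ODU4 rather than from the article, and the function names are hypothetical.

```python
TRIB_SLOTS_PER_100G = 80   # ~1.25 Gbps slots per 100 Gig container (assumption)


def otucn_gbps(n: int) -> int:
    """Nominal capacity of an OTUCn transport unit: n multiples of 100 Gig."""
    return n * 100


def max_odu0_channels(capacity_gbps: int) -> int:
    """ODU0 channels that fit if each one occupies a single tributary slot."""
    return capacity_gbps // 100 * TRIB_SLOTS_PER_100G


print(otucn_gbps(2), otucn_gbps(4))   # 200 400
print(max_odu0_channels(400))         # 320
```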

We will see the capabilities of these new packet-optical systems coming together with SDN to enable interesting things to be done in the metro

Hamish Dobson

Transport SDN

SDN will have a significant effect on the transport network, says Dobson, in particular Transport SDN, where SDN is applied to the transport layers of the wide area network (WAN). As such, OTN plays an important role in multi-layer optimisation. Packet-optical transport systems, which support packet and optical within the same platform, are ideal for getting much more efficiency out of the optical spectrum, he says.

Using Transport SDN to co-ordinate packet, OTN and the optical layer, routing decisions can be made aware of available capacity in the optical domain. In turn, network protection decisions can also be based on optical capacity availability. “The DIGI-G4, being a hybrid processor to enable these multi-layer platforms, is an important element to bring this all together,” says Dobson.

OTN also aids the virtualisation of optical resources whereby individual enterprises can be given a simpler, subset view of the network. “We need more than just wavelength granularity in the network,” says Dobson. Since 100 Gigabit and, in future, 200 and 400 Gig lightwaves, are such large pipes, these are inevitably filled with multiple traffic flows. “Channelised OTN and OTN switching are how carriers are going to break down these massive amounts of optical capacity and partition them for various uses,” says Dobson.

A third element whereby OTN aids Transport SDN is the move to on-demand provisioning by adapting capacity at the OTN layer. Dobson cites the ITU-T G.7044/Y.1347 (G.HAO) standard, which the DIGI-G4 supports, whereby frame size can be adjusted using ODUflex without impacting existing network traffic.

“We will see the capabilities of these new packet-optical systems coming together with SDN to enable interesting things to be done in the metro,” says Dobson.

Samples of the DIGI-G4 are already with customers.

Further reading

White Paper: Benefits of OTN in Transport SDN

Heading off the capacity crunch

Improving optical transmission capacity to keep pace with the growth in IP traffic is getting trickier.

Engineers are being taxed in the design decisions they must make to support a growing list of speeds and data modulation schemes. There is also a fissure emerging in the equipment and components needed to address the diverging needs of long-haul and metro networks. As a result, far greater flexibility is needed, with designers looking to elastic or flexible optical networking where data rates and reach can be adapted as required.

Figure 1: The green line is the non-linear Shannon limit, above which transmission is not possible. The chart shows how more bits can be sent in a 50 GHz channel as the optical signal to noise ratio (OSNR) is increased. The blue dots closest to the green line represent the performance of the WaveLogic 3, Ciena's latest DSP-ASIC family. Source: Ciena.

But perhaps the biggest challenge is only just looming. Because optical networking engineers have been so successful in squeezing information down a fibre, their scope to send additional data in future is diminishing. Simply put, it is becoming harder to put more information on the fibre as the Shannon limit, as defined by information theory, is approached.

"Our [lab] experiments are within a factor of two of the non-linear Shannon limit, while our products are within a factor of three to six of the Shannon limit," says Peter Winzer, head of the optical transmission systems and networks research department at Bell Laboratories, Alcatel-Lucent. The non-linear Shannon limit dictates how much information can be sent across a wavelength-division multiplexing (WDM) channel as a function of the optical signal-to-noise ratio.

A factor of two may sound a lot, says Winzer, but it is not. "To exhaust that last factor of two, a lot of imperfections need to be compensated and the ASIC needs to become a lot more complex," he says. The ASIC is the digital signal processor (DSP), used for pulse shaping at the transmitter and coherent detection at the receiver.
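For orientation, the classic linear Shannon formula gives the capacity of a channel as its bandwidth multiplied by log2(1 + SNR). The sketch below applies it to a 50 GHz channel carrying two polarisations. It is not the non-linear Shannon limit that Winzer refers to, which also accounts for fibre non-linearity and is lower at high signal powers, and it takes a signal-to-noise ratio as input rather than the OSNR plotted in Figure 1. The function name is hypothetical.

```python
import math


def linear_shannon_gbps(bandwidth_ghz: float, snr_db: float, polarisations: int = 2) -> float:
    """Linear (additive-noise) Shannon capacity in Gbps for a channel of given bandwidth."""
    snr_linear = 10 ** (snr_db / 10)
    return polarisations * bandwidth_ghz * math.log2(1 + snr_linear)


for snr_db in (0, 10, 20):
    print(snr_db, "dB ->", round(linear_shannon_gbps(50, snr_db), 1), "Gbps in 50 GHz")
```

Capacity grows only logarithmically with signal-to-noise ratio, which is one reason each further gain is hard won.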

Our [lab] experiments are within a factor of two of the non-linear Shannon limit, while our products are within a factor of three to six of the Shannon limit - Peter Winzer

At the recent OFC 2015 conference and exhibition, there were plenty of announcements pointing to industry progress. Several companies announced 100 Gigabit coherent optics in the pluggable, compact CFP2 form factor, while Acacia detailed a flexible-rate 5x7 inch MSA capable of 200, 300 and 400 Gigabit rates. And research results were reported on the topics of elastic optical networking and spatial division multiplexing, work designed to ensure that networking capacity continues to scale.

Trade-offs

There are several performance issues that engineers must consider when designing optical transmission systems. Clearly, for submarine systems, maximising reach and the traffic carried by a fibre are key. For metro, more data can be carried on a single carrier to improve overall capacity but at the expense of reach.

Such varied requirements are met using several design levers:

- Baud or symbol rate

- The modulation scheme which determines the number of bits carried by each symbol

- Multiple carriers, if needed, to carry the overall service as a super-channel

The baud rate used is dictated by the performance limits of the electronics. Today that is 32 Gbaud: 25 Gbaud for the data payload and up to 7 Gbaud for forward error correction and other overhead bits.

Doubling the symbol rate from 32 Gbaud used for 100 Gigabit coherent to 64 Gbaud is a significant challenge for the component makers. The speed hike requires a performance overhaul of the electronics and the optics: the analogue-to-digital and digital-to-analogue converters and the drivers through to the modulators and photo-detectors.

"Increasing the baud rate gives more interface speed for the transponder," says Winzer. But the overall fibre capacity stays the same, as the signal spectrum doubles with a doubling in symbol rate.

However, increasing the symbol rate brings cost and size benefits. "You get more bits through, and so you are sharing the cost of the electronics across more bits," says Kim Roberts, senior manager, optical signal processing at Ciena. It also implies a denser platform by doubling the speed per line card slot.
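
Both points, more speed per transponder but no gain in fibre capacity, can be checked with simple arithmetic. The sketch below takes the article's split of 25 Gbaud payload in a 32 Gbaud signal, and additionally assumes that a carrier's channel width scales in proportion to its symbol rate (50 GHz for 32 Gbaud, hence 100 GHz for 64 Gbaud). The function names are illustrative.

```python
PAYLOAD_FRACTION = 25 / 32  # from the text: 25 Gbaud of a 32 Gbaud signal is payload
GHZ_PER_GBAUD = 50 / 32     # assumption: a 32 Gbaud carrier occupies a 50 GHz channel


def carrier_rate_gbps(gbaud: float, bits_per_symbol: int, polarisations: int = 2) -> float:
    """Payload data rate of one carrier in Gbps."""
    return gbaud * PAYLOAD_FRACTION * bits_per_symbol * polarisations


def spectral_efficiency(gbaud: float, bits_per_symbol: int, polarisations: int = 2) -> float:
    """Payload bits per second per hertz of occupied channel width."""
    channel_ghz = gbaud * GHZ_PER_GBAUD
    return carrier_rate_gbps(gbaud, bits_per_symbol, polarisations) / channel_ghz


if __name__ == "__main__":
    for gbaud in (32, 64):
        rate = carrier_rate_gbps(gbaud, bits_per_symbol=2)   # PM-QPSK: 2 bits per symbol
        eff = spectral_efficiency(gbaud, bits_per_symbol=2)
        print(f"{gbaud} Gbaud PM-QPSK: {rate:.0f} Gbps per carrier, {eff:.1f} b/s/Hz")
```

Under these assumptions, doubling the symbol rate doubles the rate per carrier while the bits carried per hertz of spectrum stay the same.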

Modulation schemes

The modulation used determines the number of bits encoded on each symbol. Optical networking equipment already uses binary phase-shift keying (BPSK or 2-quadrature amplitude modulation, 2-QAM) for the most demanding, longest-reach submarine spans; the workhorse quadrature phase-shift keying (QPSK or 4-QAM) for 100 Gigabit-per-second (Gbps) transmission, and the 200 Gbps 16-QAM for distances up to 1,000 km.

Moving to a higher QAM scheme increases WDM capacity but at the expense of reach. That is because as more bits are encoded on a symbol, the separation between them is smaller. "As you try to encode more bits in a constellation, so your noise tolerance goes down," says Roberts.
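
The shrinking separation can be made concrete. The sketch below builds idealised square QAM constellations, normalises each to the same average power, and reports the smallest distance between any two points. The square grid layout and the helper names are assumptions made for illustration, not a description of any vendor's implementation.

```python
import itertools
import math


def square_qam(levels_per_axis: int) -> list[complex]:
    """Square QAM constellation scaled to unit average power."""
    axis = [2 * i - (levels_per_axis - 1) for i in range(levels_per_axis)]  # e.g. -3, -1, 1, 3
    points = [complex(i, q) for i in axis for q in axis]
    mean_power = sum(abs(p) ** 2 for p in points) / len(points)
    scale = math.sqrt(mean_power)
    return [p / scale for p in points]


def min_distance(points: list[complex]) -> float:
    """Smallest Euclidean distance between any two constellation points."""
    return min(abs(a - b) for a, b in itertools.combinations(points, 2))


if __name__ == "__main__":
    for name, levels in (("QPSK (4-QAM)", 2), ("16-QAM", 4), ("64-QAM", 8)):
        bits = int(math.log2(levels * levels))
        d_min = min_distance(square_qam(levels))
        print(f"{name}: {bits} bits per symbol, minimum distance {d_min:.3f}")
```

At equal average power, the minimum distance falls from about 1.41 for QPSK to about 0.63 for 16-QAM, so the same amount of noise is far more likely to push a received symbol into a neighbour's decision region.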

One recent development among system vendors has been to add more modulation schemes to enrich the transmission options available.

Besides BPSK, QPSK and 16-QAM, vendors are adding 8-QAM, an intermediate scheme between QPSK and 16-QAM. These include Acacia with its AC-400 MSA, Coriant, and Infinera. Infinera has tested 8-QAM as well as 3-QAM, a scheme between BPSK and QPSK, as part of submarine trials with Telstra.

"From QPSK to 16-QAM, you get a factor of two increase in capacity but your reach decreases of the order of 80 percent," says Steve Grubb, an Infinera Fellow. Using 8-QAM boosts capacity by half compared to QPSK, while delivering more signal margin than 16-QAM. Having the option to use the intermediate formats of 3-QAM and 8-QAM enriches the capacity tradeoff options available between two fixed end-points, says Grubb.

Ciena has added two chips to its WaveLogic 3 DSP-ASIC family of devices: the WaveLogic 3 Extreme and the WaveLogic 3 Nano for metro.

WaveLogic 3 Extreme uses a proprietary modulation format that Ciena calls 8D-2QAM, a tweak on BPSK that uses longer-duration signalling to enhance span distances by up to 20 percent. 8D-2QAM is aimed at legacy dispersion-compensated fibres that carry 10 Gbps wavelengths and offers up to 40 percent additional upgrade capacity compared to BPSK.

Ciena has also added 4-amplitude-shift-keying (4-ASK) modulation alongside QPSK to its WaveLogic 3 Nano chip. The 4-ASK scheme is also designed for use alongside 10 Gbps wavelengths that introduce phase noise, to which 4-ASK has greater tolerance than QPSK. Ciena's 4-ASK design also generates less heat and is less costly than BPSK.

According to Roberts, a designer’s goal is to use the fastest symbol rate possible, and then add the richest constellation possible "to carry as many bits as you can, given the noise and distance you can go".

After that, the remaining issue is whether a carrier’s service can be fitted on one carrier or whether several carriers are needed, forming a super-channel. Packing a super-channel's carriers tightly benefits overall fibre spectrum usage and reduces the spectrum wasted for guard bands needed when a signal is optically switched.

Can symbol rate be doubled to 64 Gbaud? "It looks impossibly hard but people are going to solve that," says Roberts. It is also possible to use a hybrid approach that combines a higher symbol rate with a higher-order modulation scheme. The table shows how different baud rate/modulation scheme combinations can be used to achieve a 400 Gigabit single-carrier signal.

Note how using polarisation for coherent transmission doubles the overall data rate. Source: Gazettabyte
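
The trade-off between symbol rate and modulation can also be sketched numerically. The snippet below assumes the same ratio of payload to overhead as today's 32 Gbaud signals (25 Gbaud of payload) and works out the symbol rate a single dual-polarisation carrier would need to reach 400 Gigabit with several formats. The list of formats and the function name are illustrative and are not taken from the table.

```python
PAYLOAD_FRACTION = 25 / 32  # from the text: 25 Gbaud payload within a 32 Gbaud signal


def required_gbaud(target_gbps: float, bits_per_symbol: int, polarisations: int = 2) -> float:
    """Total symbol rate (payload plus overhead) needed to carry target_gbps on one carrier."""
    payload_gbaud = target_gbps / (bits_per_symbol * polarisations)
    return payload_gbaud / PAYLOAD_FRACTION


if __name__ == "__main__":
    formats = {"QPSK": 2, "16-QAM": 4, "64-QAM": 6, "256-QAM": 8}  # bits per symbol
    for name, bits in formats.items():
        print(f"PM-{name}: {required_gbaud(400, bits):.1f} Gbaud for 400 Gigabit")
```

Under these assumptions, 16-QAM needs 64 Gbaud while 256-QAM needs only 32 Gbaud, so the designer is trading faster electronics against a denser, less noise-tolerant constellation.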

But industry views differ as to how much scope there is to improve overall capacity of a fibre and the optical performance.

Roberts stresses that his job is to develop commercial systems rather than conduct lab 'hero' experiments. Such systems need to work in networks for 15 years and must be cost competitive. "It is not over yet," says Roberts.

He says we are still some way off from when all that remains are minor design tweaks only. "I don't have fun changing the colour of the paint or reducing the cost of the washers by 10 cents,” he says. “And I am having a lot of fun with the next-generation design [being developed by Ciena].”

"We are nearing the point of diminishing returns in terms of spectrum efficiency, and the same is true with DSP-ASIC development," says Winzer. Work will continue to develop higher speeds per wavelength, to increase capacity per fibre, and to achieve higher densities and lower costs. In parallel, work continues in software and networking architectures. For example, flexible multi-rate transponders used for elastic optical networking, and software-defined networking that will be able to adapt the optical layer.

After that, designers are looking at using more amplification bands, such as the L-band and S-band alongside the current C-band to increase fibre capacity. But it will be a challenge to match the optical performance of the C-band across all bands used.

"I would believe in a doubling or maybe a tripling of bandwidth but absolutely not more than that," says Winzer. "This is a stop-gap solution that allows me to get to the next level without running into desperation."

The designers' 'next level' is spatial division multiplexing. Here, signals are launched down multiple channels, such as multiple fibres, multi-mode fibre and multi-core fibre. "That is what people will have to do on a five-year to 10-year horizon," concludes Winzer.

See also:

- Scaling Optical Fiber Networks: Challenges and Solutions by Peter Winzer

- High Capacity Transport - 100G and Beyond, Journal of Lightwave Technology, Vol 33, No. 3, February 2015.

A version of this article first appeared in an OFC 2015 show preview

Acacia unveils 400 Gigabit coherent transceiver

- The AC-400 5x7 inch MSA transceiver is a dual-carrier design

- Modulation formats supported include PM-QPSK, PM-8-QAM and PM-16-QAM

- Acacia’s DSP-ASIC is a 1.3 billion transistor dual-core chip

Acacia Communications has unveiled the industry's first flexible rate transceiver in a 5x7-inch MSA form factor that is capable of up to 400 Gigabit transmission rates. The company made the announcement at the OFC show held in Los Angeles.

Dubbed the AC-400, the transceiver supports 200, 300 and 400 Gigabit rates and includes two silicon photonics chips, each implementing single-carrier optical transmission, and a coherent DSP-ASIC. Acacia designs its own silicon photonics and DSP-ASIC ICs.

"The ASIC continues to drive performance while the optics continues to drive cost leadership," says Raj Shanmugaraj, Acacia's president and CEO.

The AC-400 uses several modulation formats that offer various capacity-reach options. The dual-carrier transceiver supports 200 Gig using polarisation multiplexing, quadrature phase-shift keying (PM-QPSK) and 400 Gig using 16-quadrature amplitude modulation (PM-16-QAM). The 16-QAM option is used primarily for data centre interconnect for distances up to a few hundred kilometres, says Benny Mikkelsen, co-founder and CTO of Acacia: "16-QAM provides the lowest cost-per-bit but goes shorter distances than QPSK."

Acacia has also implemented a third, intermediate format - PM-8-QAM - that improves reach compared to 16-QAM but encodes three bits per symbol (a total of 300 Gig) instead of 16-QAM's four bits (400 Gig). "8-QAM is a great compromise between 16-QAM and QPSK," says Mikkelsen. "It supports regional and even long-haul distances but with 50 percent higher capacity than QPSK." Acacia says one of its customers will use PM-8-QAM for a 10,000 km submarine cable application.
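
The three rates follow from the bits each format encodes per symbol. The sketch below reproduces them under one assumption that Acacia has not stated here: that each carrier has a payload symbol rate of 25 Gbaud, as is typical for 100 Gigabit PM-QPSK. The names are illustrative.

```python
PAYLOAD_GBAUD = 25   # assumption: payload symbol rate per carrier, not an Acacia figure
POLARISATIONS = 2    # polarisation multiplexing (the "PM" in each format name)
CARRIERS = 2         # the AC-400 is a dual-carrier design

BITS_PER_SYMBOL = {"PM-QPSK": 2, "PM-8-QAM": 3, "PM-16-QAM": 4}


def module_rate_gbps(modulation: str) -> int:
    """Total payload rate of the dual-carrier module for a given format."""
    return PAYLOAD_GBAUD * BITS_PER_SYMBOL[modulation] * POLARISATIONS * CARRIERS


if __name__ == "__main__":
    for modulation in BITS_PER_SYMBOL:
        print(f"{modulation}: {module_rate_gbps(modulation)} Gig")
```

The ratio of three bits to two is also where Mikkelsen's "50 percent higher capacity than QPSK" comes from.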

Source: Gazettabyte

Other AC-400 transceiver features include OTN framing and forward error correction. The OTN framing can carry 100 Gigabit Ethernet and OTU4 signals as well as the newer OTUc1 format that allows client signals to be synchronised such that a 400 Gigabit flow from a router port can be carried, for example. The FEC options include a 15 percent overhead code for metro and a 25 percent overhead code for submarine applications.
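
To see what the two FEC options mean for the transmitted signal, the sketch below adds the overhead to a carrier's payload rate and converts the result into a symbol rate. It assumes a 100 Gigabit payload per carrier with PM-QPSK and ignores OTN framing overhead. Acacia has not published symbol rates here, so the outputs are indicative only.

```python
def line_rate_gbps(payload_gbps: float, fec_overhead: float) -> float:
    """Gross line rate after adding FEC overhead (0.15 means 15 percent)."""
    return payload_gbps * (1 + fec_overhead)


def symbol_rate_gbaud(payload_gbps: float, fec_overhead: float,
                      bits_per_symbol: int, polarisations: int = 2) -> float:
    """Symbol rate needed to carry the payload plus FEC; framing overhead is ignored."""
    return line_rate_gbps(payload_gbps, fec_overhead) / (bits_per_symbol * polarisations)


if __name__ == "__main__":
    # Assumption: 100 Gigabit payload per carrier, PM-QPSK (2 bits per symbol)
    for label, overhead in (("metro, 15 percent", 0.15), ("submarine, 25 percent", 0.25)):
        gross = line_rate_gbps(100, overhead)
        baud = symbol_rate_gbaud(100, overhead, bits_per_symbol=2)
        print(f"{label}: {gross:.0f} Gbps line rate, {baud:.2f} Gbaud")
```

The stronger code costs extra symbol rate, and therefore spectrum and power, in exchange for the added margin that submarine distances need.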

The 28 nm CMOS DSP-ASIC features two cores to process the dual-carrier signals. According to Acacia, its customers claim the DSP-ASIC has a power consumption less than half that of its competitors. The ASIC used for Acacia’s AC-100 CFP pluggable transceiver announced a year ago consumes 12-15W and is the basis of its latest DSP design, suggesting an overall power consumption of 25 to 30+ Watts. Acacia has not provided power consumption figures and points out that since the device implements multiple modes, the power consumption varies.

The AC-400 uses two silicon photonics chips, one for each carrier. The design, Acacia's second generation photonic integrated circuit (PIC), has a reduced insertion loss such that it can now achieve submarine transmission reaches. "Its performance is on a par with lithium niobate [modulators]," says Mikkelsen.

The PIC’s basic optical building blocks - the modulators and the photo-detectors - have not been changed from the first-generation design. What has been improved is how light enters and exits the PIC, thereby reducing the coupling loss. The latest PIC has the same pin-out and fits in the same gold box as the first-generation design. "It has been surprising to us, and probably even more surprising to our customers, how well silicon photonics is performing," says Mikkelsen.

Acacia has not tried to integrate the two wavelength circuits on one PIC. "At this point we don't see a lot of cost savings doing that," says Mikkelsen. "Will we do that at some point in future? I don't know." Since there needs to be an ASIC associated with each channel, there is little benefit in having a highly integrated PIC followed by several discrete DSP-ASICs, one per channel.

The start-up now offers several optical module products. Its original 5x7 inch AC-100 MSA for long-haul applications is used by over 10 customers, while its two 5x7 inch modules for submarine, operating at 40 Gig and 100 Gig, are used by two of the largest submarine network operators. Its more recent AC-100 CFP has been adopted by over 15 customers. These include most of the tier 1 carriers, says Acacia, and some content service providers. The AC-100 CFP has also been demonstrated working with Fujitsu Optical Components's CFP that uses NTT Electronics's DSP-ASIC. Acacia expects to ship 15,000 AC-100 coherent CFPs this year.

Each of the company's module products uses a custom DSP-ASIC such that Acacia has designed five coherent modems in as many years. "This is how we believe we out-compete the competition," says Shanmugaraj.

Meanwhile, Acacia’s coherent AC-400 MSA module is now sampling and will be generally available in the second quarter.