AI’s next wave

The spectacular rise in the capabilities of artificial intelligence (AI) is directly attributable to the scaling of the computing hardware used to train AI models.

“People discovered early on that if you increase the size of those models and the amount of data to train those models, you get a big step-up in accuracy and performance,” says Nigel Toon, CEO and chairman of AI processor firm Graphcore. “The results have been stunning.”

Toon cites research that shows that for large language models the size of the model and the data must be scaled equally.
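A rough way to see what scaling model size and data equally implies for compute is sketched below. It assumes the widely used rule of thumb that training cost is about 6 floating-point operations per parameter per training token. That approximation and the starting figures are assumptions for illustration and are not taken from the research Toon cites.

```python
import math


def training_flops(params, tokens):
    """Rule-of-thumb training cost: about 6 FLOPs per parameter per token (assumed)."""
    return 6 * params * tokens


def scale_equally(params, tokens, compute_multiplier):
    """Grow parameters and tokens by the same factor to use a larger compute budget."""
    factor = math.sqrt(compute_multiplier)
    return params * factor, tokens * factor


# Hypothetical starting point: 1 billion parameters, 20 billion tokens.
base_params, base_tokens = 1e9, 20e9

# With four times the compute, equal scaling doubles both quantities.
new_params, new_tokens = scale_equally(base_params, base_tokens, 4)
ratio = training_flops(new_params, new_tokens) / training_flops(base_params, base_tokens)
```

Under these assumptions, doubling both the model and the data costs four times the compute, which is one reason gains from scaling alone become expensive.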

However, AI developers have started to see a slowdown in the gains achieved solely by such scaling. This is leading to new thinking in how engineers build an AI model and how it generates its output when prompted. The result is a new wave of AI, says Toon.

Model changes

Toon introduces several concepts to explain the characteristics of AI’s latest wave.

Instead of a single model containing all the learned information, models can be combined, each with its own expertise, an approach known as a mixture of experts.

GPT-4 was the first time OpenAI started down this path with some eight experts, says Toon, while DeepSeek, a Chinese research company, has taken it much further by using many experts, he says.

“Rather than having one model that contains all the information, you end up with many more models, each of which is an expert in a particular area,” says Toon. “Then you find a way of working out which of those experts you will call upon at any particular moment.”
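The idea of choosing which experts to call can be sketched with a toy top-k router. This is a minimal illustration and not how GPT-4 or DeepSeek implement it: the eight experts, the router scores, and the function names are all hypothetical.

```python
import math


def softmax(scores):
    """Convert raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(router_scores, top_k=2):
    """Pick the top_k experts and renormalise their weights."""
    probs = softmax(router_scores)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    weight_sum = sum(probs[i] for i in chosen)
    return {i: probs[i] / weight_sum for i in chosen}


def mixture_output(x, experts, router_scores, top_k=2):
    """Run only the selected experts and blend their outputs."""
    weights = route(router_scores, top_k)
    return sum(w * experts[i](x) for i, w in weights.items())


# Eight toy "experts": each just scales its input by a different factor.
experts = [lambda x, k=k: (k + 1) * x for k in range(8)]

# Hypothetical router scores for one token; a real router learns these.
scores = [0.1, 2.0, -1.0, 0.3, 1.5, -0.5, 0.0, 0.2]

weights = route(scores, top_k=2)
result = mixture_output(3.0, experts, scores, top_k=2)
```

Only two of the eight experts run for this input, so the compute per token stays well below that of evaluating every expert.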

Another crucial development is how the model performs its reasoning, referred to as agentic AI. What is notable about agentic workflows is that instead of producing a one-shot output, the model performs what Toon calls a chain of thought. The model goes back and does some reflection, says Toon. The results are promising, delivering performance akin to using a much larger model.

We are thus on the cusp of a new wave of AI, says Toon, with OpenAI’s o1 release one of the first indications of this. DeepSeek also uses a reasoning approach coupled with its large mixture-of-experts model.

This ability to go back and apply reasoning is also important in terms of context, a concept that reflects how much of a view an AI model can keep track of.

Toon cites the example of using a large language model to generate a long piece of text or an AI model creating a video sequence. Maintaining context across a whole piece becomes more and more difficult, especially with video.

“In generating that, you want to go back, you want to try different trains of thought, you want to pull in different pieces of information, and you probably want to pull in different experts,” says Toon. “The complexity of the models you end up building and the inference process that you apply over those models are just increasing.”

AI system scaling

Toon stresses that the next wave of AI will require continual computing and networking system scaling.

“On the one hand, you can say the age of scaling is maybe over, but it is one-dimensional scaling that is over,” says Toon. “The models will still get bigger and will be much more complex.”

The next wave of models will need more computing power and their underlying structure will change. They will consist of multiple models working together, and there will be numerous steps before the model generates its output.

Toon expects clusters of AI accelerators such as graphics processing units (GPUs) to become larger still while the way the accelerators interact will also change: “It’s going to become more complex.” The way a GPU talks to memory will also change because of the need to store context. “You will want to pull pieces back and forth,” says Toon.

So not only will the model’s make-up change, but inferencing will become increasingly important.

“Rather than just producing a set of tokens, it’s going backwards and forwards, maybe producing multiple sets of tokens, working out which are the right ones, and changing things,” says Toon. “There’s a real imperative here [with inferencing], because that is cost to the user.”

Performing the inferencing promptly and computationally efficiently will thus be key.
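One simple form of producing several sets of tokens and working out which are the right ones is to sample several answers and keep the most common. The sketch below is a toy illustration of that idea only. The sample answers are hypothetical, and this is not presented as the method used by any model named in the article.

```python
from collections import Counter


def pick_by_majority(candidates):
    """Return the most common answer and the share of samples that agree."""
    counts = Counter(candidates)
    answer, votes = counts.most_common(1)[0]
    return answer, votes / len(candidates)


# Hypothetical final answers from five independent reasoning passes.
samples = ["42", "41", "42", "42", "40"]
answer, agreement = pick_by_majority(samples)

# Each extra pass costs roughly one more generation, which is the
# inference cost to the user that the quote refers to.
passes = len(samples)
```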

Open-source AI

Toon is a proponent of an open-source approach to AI.

“When you’re in a phase of dynamic innovation, which we’re still at, sharing that knowledge across different innovative groups will allow people to move forward much more quickly.”

Adopting an open-source approach will benefit more responsible AI. “The more eyeballs you have on it from clever people, the better it will be,” says Toon.

Graphcore

SoftBank Group acquired Toon’s company, Graphcore, in July 2024 as part of the group’s broader AI strategy.

“Distinct from the Vision Fund, [a huge technology investment fund managed by SoftBank], we are a SoftBank Group company,” says Toon. “We sit alongside ARM under the SoftBank Group, which is helping us build the next generation of products.”

SoftBank’s telecommunications arm, SoftBank Corp., is one of several Asian telcos that view AI as a crucial business opportunity.

In September, SoftBank Corp. announced that it is working with photonics chip specialist NewPhotonics to develop technology for linear pluggable optics, co-packaged optics, and an all-optics switch fabric for the AI-RAN initiative.

Toon notes a growing divide in AI strategy between Asia, and the US and Europe. “I’m not sure if it’s a good thing, but it is part of what is going on in the world,” he says. He is also a member of the UK Research and Innovation (UKRI) board, a non-government body sponsored by the UK’s Department of Science, Innovation and Technology. “It helps to steer £9 billion ($11.5 billion) from the UK government into universities and the research councils that fit within UKRI and Innovate UK,” says Toon.

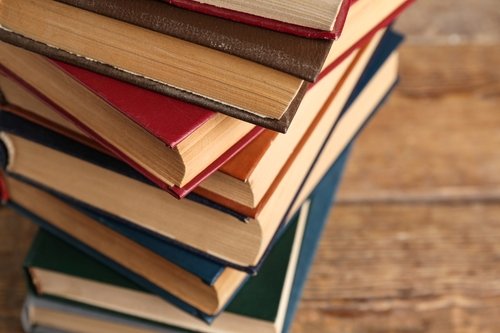

Toon is the author of the book How AI Thinks: How we built it, how it can help us, and how we can control it, published in 2024.

Books of 2024: Part 1

Gazettabyte asks industry figures to pick their notable reads during the year. Harald Bock, Jonathan Homa, and Maxim Kuschnerov kick off with their chosen books.

Harald Bock, Vice President Network Architecture, Infinera

I love reading but have not read as many books as I would have liked in recent years. I decided to change that in 2024.

My pick of fictional books this year was mainly classic science fiction after seeing the movie Dune Part 2 with my family. I read the book Dune by Frank Herbert, published in 1965, a while ago, and I wasn’t sure that the movies did the book justice.

My son advised me to launch myself into all five sequels of Dune, which kept me busy. While the sequels are for die-hard fans, I recommend the first of the books whether or not you’ve seen the movie. Frank Herbert’s modern and sophisticated thinking adds unconventional perspectives to up-to-date societal, environmental and political questions.

I went on to read Ray Bradbury’s The Martian Chronicles and H.G. Wells’ Time Machine, published in 1950 and 1895, respectively. The two books are fascinating as they are timeless and do not require any adaptation to modern times. They are classics of their genre.

I also found time for non-fictional books. I was looking for unconventional thoughts by unlikely authors to challenge my thinking.

One that adds to the discussions about sustainability is a book by Fred Vargas, a French author who normally writes crime fiction and is an archaeologist and historian. ‘L’humanité en péril: Virons de bord, toute !’ was published as a follow-up to an older, shorter text by the same author read on the occasion of the conference on climate change COP24 in Paris in 2018. Surprisingly, the book does not yet exist in English.

Another interesting author is a professor of computer science, Katharina Zweig. Her books: Awkward Intelligence: Where AI Goes Wrong, Why It Matters, and What We Can Do about It and Die KI war’s: Von absurd bis tödlich: Die Tücken der künstlichen Intelligenz (‘It was the AI: From absurd to deadly: The pitfalls of artificial intelligence’, in German only to date) do a good job exploring considerations, boundary conditions, and limits of using AI systems in practical decision-making.

Jonathan Homa, Senior Director of Solutions Marketing at Ribbon Communications

I recommend a book I re-read this year: The Name of the Rose by Umberto Eco. As my wife points out, re-reading a book is its own recommendation.

This is an intricate and beautifully written murder mystery novel set in late medieval Europe. Through the eyes of the protagonist, Brother William of Baskerville, we begin to see glimpses of enlightenment. I also recommend the 1986 movie by the same name, starring Sean Connery.

Maxim Kuschnerov, director of R&D at Huawei

I had a light year of reading. One book I did read was Nuclear War: A Scenario by Annie Jacobsen, which details how a nuclear war would go down if someone started it. The answer: a surprisingly quick annihilation of humankind.

I also read Angela Merkel’s recently published autobiography, Freedom: Memoirs 1954–2021. I was hoping for more insight into her thinking when dealing with the immigration crisis or with Vladimir Putin, but the book added nothing that I didn’t already know about her. The book clarified how Angela Merkel was profoundly shaped in her upbringing by East German communism and Russia.

The request to highlight my reads of 2024 made me think about what I have been reading this past year. Perhaps disappointingly, it turned out to be mostly not-noteworthy fiction.

Podcast: Is AI driving a new wave of photonic innovation?

AI is still in its infancy, but it’s already pushing the photonics and computing industries to rethink product roadmaps and drive new levels of innovation.

Adtran’s Gareth Spence talks with authors and analysts Daryl Inniss and the editor of Gazettabyte about the fast pace of AI development and the changes needed to unlock its full potential. They also discuss the upcoming sequel to their book on silicon photonics and its focus on AI.

To listen to the podcast, click here.

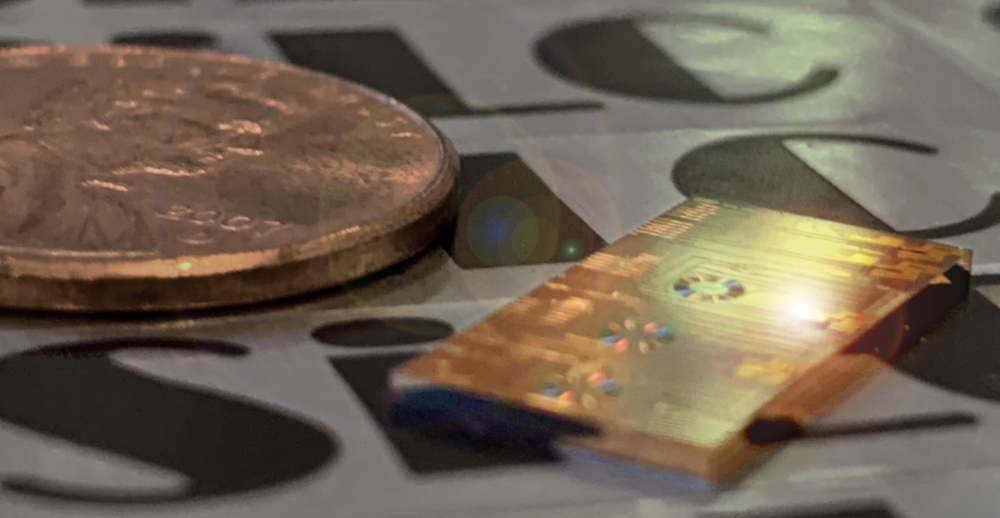

NextSilicon’s Maverick-2 locks onto bottleneck code

- NextSilicon has developed a novel chip that adapts its hardware to accelerate high-performance computing applications.

- The Maverick-2 is claimed to have up to 4x the processing performance per watt of graphics processing units (GPUs) and 20x that of high-performance general-purpose processors (CPUs).

After years of work, the start-up NextSilicon has detailed its Maverick-2, what it claims is a new class of accelerator chip.

A key complement to the chip is NextSilicon’s software, which parses the high-performance computing application before mapping it onto the Maverick-2.

“CPUs and GPUs treat all the code equally,” says Brandon Draeger, vice president of marketing at NextSilicon. “Our approach looks at the most important, critical part of the high-performance computing application and we focus on accelerating that.”

With the unveiling of the Maverick-2, NextSilicon has exited its secrecy period.

Founded in 2017, the start-up has raised $303 million in funding and has 300 staff. The company is opening two design centres—in Serbia and Switzerland—with a third planned for India. The bulk of the company’s staff is located in Israel.

High-performance computing and AI

High-performance computing simulates complex physical processes such as drug design and weather forecasting. Such computations require high-precision calculations and use 32-bit or 64-bit floating-point arithmetic. In contrast, artificial intelligence (AI) workloads have more defined computational needs, and can use floating-point formats of 16 bits or fewer. Using these shorter data formats results in greater parallelism per clock cycle.
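The trade-off between precision and parallelism can be illustrated with the standard IEEE 754 formats in Python's `struct` module. This is a small sketch: the test value and the 512-bit datapath width are arbitrary assumptions chosen for illustration, not figures from NextSilicon.

```python
import struct


def round_trip(value, fmt):
    """Store value in the given IEEE 754 format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


value = 0.1
as_fp16 = round_trip(value, "<e")   # 16-bit half precision
as_fp32 = round_trip(value, "<f")   # 32-bit single precision
as_fp64 = round_trip(value, "<d")   # 64-bit double precision

# Rounding error introduced by each format, relative to the 64-bit value.
errors = {
    "fp16": abs(as_fp16 - value),
    "fp32": abs(as_fp32 - value),
    "fp64": abs(as_fp64 - value),
}

# Bytes per value: narrower formats pack more values into the same width.
sizes = {name: struct.calcsize(fmt) for name, fmt in
         [("fp16", "<e"), ("fp32", "<f"), ("fp64", "<d")]}

# Values that fit in a hypothetical 512-bit (64-byte) datapath.
values_per_512_bits = {name: 64 // size for name, size in sizes.items()}
```

The 16-bit format fits four times as many values as the 64-bit format into the same width, at the cost of a much larger rounding error.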

Using NextSilicon’s software, a high-performance computing workload written using languages and programming models such as C/C++, Fortran, OpenMP, or Kokkos is profiled to identify critical flows. These are code sections that run most frequently and benefit from acceleration.

“We look at the most critical part of the high-performance computing application and focus on accelerating that,” says Draeger.

This is an example of the Pareto principle: a small subset of critical code (the principle’s 20 per cent) accounts for most (80 per cent) of the run time. The goal is to accelerate these most essential code segments.
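Identifying such a critical subset from a profile can be sketched as follows. This is an illustration of the Pareto idea only, not NextSilicon's software: the section names, timings, and the `hot_sections` helper are hypothetical.

```python
def hot_sections(runtime_by_section, target_share=0.8):
    """Return the fewest sections, hottest first, that cover target_share of runtime."""
    total = sum(runtime_by_section.values())
    ranked = sorted(runtime_by_section.items(), key=lambda kv: kv[1], reverse=True)
    picked, covered = [], 0.0
    for name, seconds in ranked:
        if covered >= target_share * total:
            break
        picked.append(name)
        covered += seconds
    return picked, covered / total


# Hypothetical profile: seconds spent in each of ten code sections.
profile = {
    "stencil_update": 620.0,
    "halo_exchange": 210.0,
    "io_checkpoint": 60.0,
    "setup": 40.0,
    "logging": 30.0,
    "misc_a": 20.0,
    "misc_b": 10.0,
    "misc_c": 5.0,
    "misc_d": 3.0,
    "misc_e": 2.0,
}

sections, share = hot_sections(profile)
```

In this made-up profile, two of the ten sections account for 83 per cent of the run time, so they would be the candidates for acceleration.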

The Maverick-2

These code flows are mapped onto the Maverick-2 processor and replicated hundreds or thousands of times, depending on their complexity and the on-chip resources available.

However, this is just the first step. “We run telemetry with the application,” says Draeger. “So, when the chip first runs, the telemetry helps us to size and identify the most likely codes.” The application’s mapping onto the hardware is then refined as more telemetry data is collected, further improving performance.

“In the blink of an eye, it can reconfigure what is being replicated and how many times,” says Draeger. “The more it runs, the better it gets.”

Source: NextSilicon

The time taken is a small fraction of the overall run time (see diagram). “A single high-performance computing simulation can run for weeks,” says Draeger. “And if something significant changes within the application, the software can help improve performance or power efficiency.”

NextSilicon says its software saves developers months of effort when porting applications onto a high-performance computing accelerator.

NextSilicon describes the Maverick-2 as a new processor class, which it calls an Intelligent Compute Accelerator (ICA). Unlike a CPU or GPU, it differentiates the code and decides what is best to speed up. The configurable hardware of the Maverick-2 is thus more akin to a field-programmable gate array (FPGA). But unlike an FPGA, the Maverick-2’s hardware adapts on the fly.

Functional blocks and specifications

The Maverick-2 is implemented using a 5nm CMOS process and is based on a dataflow architecture. Its input-output (I/O) includes 16 lanes of PCI Express (PCIe 5.0) and a 100 Gigabit Ethernet interface. The device features 32 embedded cores in addition to the main silicon logic onto which the flows are mapped. The chip’s die is surrounded by four stacks of high-bandwidth memory (HBM3E), providing 96 gigabytes (GB) of high-speed storage.

NextSilicon is also developing a dual-die design – two Maverick-2s combined – designed with the OCP Acceleration Module (OAM) packaged form factor in mind. The OAM variant, arriving in 2025, will use HBM3E memory for an overall store capacity of 192 gigabytes (GB) (see diagram).

Source: NextSilicon

The OCP, the open-source industry organisation, has developed an open-source Universal Base Board (UBB) specification that hosts up to eight such OAMs or, in this case, Maverick-2s. NextSilicon is aiming to use the OAM dual-die design for larger multi-rack platforms.
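The memory figures quoted above can be checked with simple arithmetic. The per-stack figure assumes the four stacks are of equal size, and the per-board total assumes a base board fully populated with eight dual-die OAMs. Neither is stated by NextSilicon.

```python
HBM_STACKS_PER_DIE = 4     # from the article
HBM_GB_PER_DIE = 96        # from the article
DIES_PER_OAM = 2           # dual-die OAM variant
OAMS_PER_BASE_BOARD = 8    # upper limit quoted for the base board

# Assumes equal-sized stacks.
gb_per_stack = HBM_GB_PER_DIE / HBM_STACKS_PER_DIE

# Matches the 192GB quoted for the OAM variant.
gb_per_oam = HBM_GB_PER_DIE * DIES_PER_OAM

# Assumes all eight OAM slots hold dual-die modules.
gb_per_full_board = gb_per_oam * OAMS_PER_BASE_BOARD
```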

The start-up says it will reveal the devices’ floating-point operations per second (FLOPS) processing performance and more details about the chip’s architecture in 2025.

Source: NextSilicon

Partners

NextSilicon has been working with vendor Penguin Solutions to deliver systems that integrate PCI Express modules based on its first silicon, the Maverick-1, a proof-of-concept design. Sandia National Laboratories led a consortium of US labs, including Lawrence Livermore National Laboratory and Los Alamos National Laboratory, in trialling the first design.

“We’re currently sampling dozens of customers across national labs and commercial environments. That’s been our focus,” says Draeger. “We have early-adopter programs that will be available at the start of 2025 with Dell Technologies and Penguin Solutions, where customers can get engaged with an evaluation system.”

Volume production is expected by mid-2025.

Next steps

AI and high-performance computing are seen as two disparate disciplines, but Draeger says AI is starting to interact with the latter in exciting ways.

Customers may pre-process data sets using machine-learning techniques before running a high-performance computing simulation. This is referred to as data cleansing.

A second approach is the application of machine-learning to the simulation’s results for post-processing analysis. Here, the simulation results are used to improve AI models that aim to approximate what a simulation is doing, to deliver results deemed ‘good enough’. Weather forecasting is one application example.

An emerging approach is to run small AI models in parallel with the high-performance simulation. “It offers a lot of promise for longer-running simulations that can take weeks, to ensure that the simulation is on track,” says Draeger.

Customers welcome anything that speeds up the results or provides guidance while the calculations are taking place.

NextSilicon is focussing on HPC but is eyeing data centre computing.

“We’re starting with HPC because that market has many unique requirements,” says Draeger. “If we can deliver performance benefits to high-performance computing customers then AI is quite a bit simpler.”

There is a need for alternative accelerator chips that are flexible, power efficient, and can adapt in whatever direction a customer’s applications or workloads take them, says Draeger.

NextSilicon is betting that its mix of software and self-optimising hardware will become increasingly important as computational needs evolve.

By invitation: Professor Roel Baets on Silicon Photonics 4.0

Roel Baets, Emeritus Professor at Ghent University and former Group Leader at imec, gave a plenary talk on ‘Silicon Photonics 4.0’ at the recent ECOC conference. “It will be important for silicon photonics to make use of smart and agile manufacturing, a notion associated with Industry 4.0,” said Professor Baets, explaining the title.

In a guest piece, he explains his thoughts and discusses what he saw at ECOC. He also has a request.

One of the things I discussed in my ECOC plenary talk was the large gap between research and product development for new applications of photonic integrated circuits (PICs) on the one hand, and product sales and new industrial process flows on the other.

Among many reasons for this gap, one stands out: the major barriers that fabless start-ups face when developing a product based on a still immature industrial supply chain.

This often implies that part of the start-up’s non-recurring engineering (NRE) budget needs to be spent on co-investment in a new process flow by a technology provider, which can easily be too expensive for a start-up. The growing diversity in materials added to silicon photonics process flows to meet the needs of new applications is a major compounding factor in this context.

I showed a slide that listed the companies that I am aware of that sell non-transceiver products based on PICs (silicon or other). I try to keep this list up to date with my Ghent University colleague, Prof. Wim Bogaerts, chair of ePIXfab. The slide showed only seven companies, while there are probably between 100 and 200 companies around the world that develop such products.

These companies are Genalyte (biosensors for diagnostics), Anello (optical gyroscope), Sentea and PhotonFirst (fibre Bragg grating readout), Quix (quantum processor), Thorlabs (>100GHz opto-electronic converter) and iPronics (originally a programmable photonic processor company now focussing on optical switching). These companies will likely sell only in modest numbers, but at least they sell a product.

After my talk, I eagerly went to the ECOC exhibition in the hope of spotting additional companies. I found two that I could add to the list: Chilas (tunable low-linewidth lasers) and SuperLight Photonics (supercontinuum lasers).

A few weeks later, I discovered yet another fledgling company ready to sell: hQphotonics (ultra-low-noise microwave oscillators). So the list is double-digit now! Perhaps this is an important milestone towards Silicon Photonics 4.0.

Interestingly, four of those ten companies use Silicon-on-Insulator (SOI) technology, four use silicon nitride PICs, one uses InP, and one uses thin-film Lithium Niobate (TFLN).

Undoubtedly, the list is incomplete. There may be other companies with a product (not just a prototype or a demo kit or a technology service) that we do not know.

So let me make a call to contact me if you know of any company not on the list of ten that sells a non-transceiver product based on PICs.

roel.baets@ugent.be

ECOC 2024 industry reflections - Final Part

In the final part, industry figures share their thoughts after attending the recent 50th-anniversary ECOC show in Frankfurt. Contributions are from Adtran’s Jörg-Peter Elbers, Lightwave Logic’s Michael Lebby, and Heavy Reading’s Sterling Perrin.

ECOC exhibition floor

Jörg-Peter Elbers, senior vice president, advanced technology, standards and IPR, Adtran, and a General Chair at this year’s ECOC.

ECOC celebrated its 50th anniversary this year. It was great to see scientists, engineers, and industry leaders from all around the globe at a vibrant gathering in Frankfurt.

ECOC dates to September 1975 when the inaugural event – dubbed the “European Conference on Optical Fiber Technology” – was held in London. In the early days, the focus was on megabit-per-second transmission for telephony applications. Now, we are advancing to petabit-per-second speeds to meet AI and cloud services demands.

This year’s ECOC explored various cutting-edge topics, including 1.6 and 3.2 terabit-per-second (Tb/s) transceivers, multi-band and spatial division multiplexing (SDM) transmission, and innovations in access and home networks. Other discussions centred on the merits of linear drive versus regenerated optics, pluggable modules versus co-packaged engines, and the latest IP-over-DWDM architectures and technologies for the coherent edge.

The 50 years of ECOC symposium celebrated the amazing progress of optical communications in the past and painted a promising picture for the future.

David Payne, one of the luminary speakers, stated that hollow-core fibre would enable a new generation of WDM transmission systems (“amplifier-less”) with simpler terminals and higher fibre capacity. In a post-deadline paper, Linfiber reported a hollow-core fibre deployment with a fibre loss lower than solid-core fibre and progress on manufacturing and deployment issues, critical for mass-market adoption.

In the ECOC plenary session, Arista’s Andy Bechtolsheim discussed the race to build AI clusters for generative AI learning and inference. He emphasized that the next generation of hyperscale AI data centres could contain a million AI nodes requiring more than 3GW of electrical power—comparable to the output of a vast nuclear plant. These data centres present opportunities for millions of cost-efficient, low-power terabit-per-second optical interconnects.

The theme of optics for AI was complemented by exploring AI for optics, with multiple contributions examining how generative AI and agent-based models could streamline network operations. The accuracy, predictability, and the explainability of results remain active research topics.

Another highlight was the optical satellite symposium, which discussed using 100 gigabit-per-second (Gbps) coherent optics for satellite communications. While inter-satellite links in commercial low-earth orbit (LEO) constellations use coherent transceiver technology, the use of optical ground links is still in its infancy. Panelists emphasised the challenges of maintaining cloud-free line-of-sight conditions and compensating for atmospheric turbulence to ensure continuous communication. They agreed that combining adaptive optics with time diversity (e.g., by interleaving) offers the best solution for turbulence mitigation, though it adds latency.

Other discussions covered fibre sensing for infrastructure and environmental monitoring and the commercial potential of quantum technologies, sparking much interest and heated debate in this year’s Rump Session.

As ECOC 2024 concluded, it was clear that the conference not only celebrated five decades of advancement in optical communications but also set the stage for future innovations and challenges.

Dr. Michael Lebby, CEO, Lightwave Logic

As the Chair of the Market Focus at ECOC’s Industry Exhibit, I can say that this year, we had probably the best sessions in ECOC’s 50-year history. For three days, each seat was taken at the Market Focus, which featured wall-to-wall programming on commercial trends, technologies, and roadmaps in optical communications.

Presentations at the market focus sessions supported the big-show exhibition themes. Many talks focused on modules and subsystems. Lightwave Logic showed polymer silicon slot modulators with reliability data operating at 200Gbps with less than 1V drive, with initial results of polymer-plasmonic modulators operating with open 400Gbps eyes. While 400Gbps lanes are still on the roadmap, there were many discussions on what technologies could reach this level of performance, especially modulators. Polymer-plasmonic-based modulators seem to be the leader, with optical bandwidths exceeding 500GHz.

While incumbent technologies are hard to displace, the emerging area of co-packaged pluggables is gaining interest among suppliers, especially for the terabit-per-second data rates sought. While progress was impressive, the reach of silicon photonics modulators for 200Gbps and beyond was a show floor concern.

NewPhotonics discussed how to double data rates using its integrated optical equaliser, while others, such as Pilot Photonics, conveyed the exciting progress with comb laser arrays. Several speakers discussed the metrics of standards that support the AI/machine learning trends for data centre operators and how optics can support the drive to higher data rates and lower power consumption.

Areas of power consumption driven in part by digital signal processor (DSP) evolution were discussed. The interesting perspective is that if coherent optics are to be developed to serve the edge of the network, then using electronics to help the optics may not be enough; the optics need to perform better so that the electronics can be scaled down to reduce power consumption. It is a trade-off at the heart of many approaches to bring coherent optics to compete with direct-detect solutions for pluggable transceivers.

The indication is that direct detection in data centre optics is not waning as quickly as the community once thought and looks to be a mainstay for pluggable transceiver solutions from 800Gbps, 1.6Tbps, 3.2Tbps, and even 6.4Tbps.

A fireside chat explored the opportunities for copper at super short interconnects where the direct-attach copper (DAC) cables dominate. This 1m to 3m range has been evolving to active electrical copper (AEC) interconnects using smart electronics in recent years. Those of us who are solidly in the optics camp, while acknowledging that copper has owned this segment forever, are still hoping that platforms such as silicon photonics could sneak in and take share in the next five years. However, displacing an incumbent technology such as copper will not be easy, especially when metrics such as economies of scale, cost, and reliability come into play.

Several talks looked at next-generation implementations, such as quantum-dot lasers and photonic wire bonding, and driving VCSELs to ever-increasing speeds. There were discussions about whether VCSELs have reached their limit in bandwidth and speed and whether electronics could help them push performance further. A common theme was the innovative ideas and concepts to address 224Gbps per lane with optical technologies. While it has been generally accepted that this metric is emerging, several companies are still deciding how to address this speed and 400Gbps per lane.

One big takeaway is that if you have a new and innovative platform to enable things like 3.2Tbps transceivers that is disruptive, think very carefully about whether that disruptive technology needs the infrastructure to be disruptive, too.

Sterling Perrin, Senior Principal Analyst, Heavy Reading

Although I’ve attended nearly every OFC show over the past 25 years, this was my first ECOC. Most of my meetings were centred around an IP-over-DWDM project I’ve worked on for several months, including video interviews conducted at the show with the partnering companies: the OIF, Ciena, Juniper, and Infinera. These are all posted on Light Reading.

Building on its work at OFC 2024, the OIF’s pluggables demo at ECOC spotlighted four applications:

- 400ZR and 800ZR,

- Open ZR+ at 400GbE,

- OpenROADM at 400GbE, and

- 100ZR

The expanding scope of coherent pluggables is impressive, and the interop work includes optics that the OIF is not directly defining, such as Open ZR+, OpenROADM, and 100ZR. ECOC 2024 marked OIF’s first interoperability demonstration of 100ZR modules, an application driven by telecom operators as opposed to hyperscalers.

Another key aspect of the OIF’s IP-over-DWDM work demonstrated at ECOC is the common management interface specification (CMIS) for plug-to-host interoperability between routers and pluggable optics. Plug-to-host interop is essential for wider IP-over-DWDM adoption among telecom operators, so the work is timely.

Related to pluggables management in IP-over-DWDM networks, I attended the Open XR Forum’s symposium on the show floor. The organisation is promoting a dual management approach to pluggables that includes host-independent management to support pluggables features that aren’t yet supported in the routers or in CMIS.

During a Q&A, Telefonica’s Oscar Gonzales de Dios acknowledged that host-independent management is controversial (including within Telefonica) but said it is the only way to add point-to-multipoint functions on pluggables for now.

Quantum-safe encryption is another area of research interest, particularly quantum key distribution (QKD), and ECOC 2024 was a great place to get up to date. I attended the rump session debate on quantum technologies, expertly hosted by Peter Winzer (Nubis), Rupert Ursin (QTlabs), and David Neilson (Nokia Bell Labs). It was standing-room-only, and I anticipated strong pro-QKD sentiment. I was wrong! The dominant view was that QKD is impractical, technically limited, too expensive, and needs more real customer demand. Several people argued that post-quantum cryptography (PQC) algorithms are sufficient to meet the market needs, without the complexity and costs that QKD brings.

For analysts, conferences like ECOC are the most efficient means of quickly learning what’s hot in the industry. Conferences are equally great places to know what is not hot. I didn’t hear the words “5G,” “xHaul,” “fronthaul,” “6G,” or even “mobility” uttered once during the four days I was in Frankfurt.

The markets for photonic integrated circuits in 2030

What will be the leading markets for photonic integrated circuits (PICs) by the decade’s end? And what are the challenges facing the PIC industry?

A panel session at the recent PIC Summit Europe event held in Eindhoven, The Netherlands, looked at what would be the markets for photonic integrated circuits by 2030.

The market for PICs is dominated by datacom and telecom. However, emerging applications include medical and wearable devices, optical computing, autonomous vehicles, and sensing applications for the oil, gas, water, and agriculture industries.

Taking part in the PIC Summit Europe panel on behalf of LightCounting Market Research, I shared two forecast charts. One showed LightCounting’s latest Ethernet module forecast, highlighting the rapid growth expected in the next five years, including the adoption of 1.6-terabit and 3.2-terabit pluggables. Also shown was how silicon photonics is gaining market share and will account for nearly half of all optical transceivers by 2029.

No surprise then that LightCounting’s view is that datacom and telecom will remain the dominant markets for PICs in 2030. Moreover, the challenges AI is posing to the optical industry mean that photonics developments will continue to drive the PIC market overall.

Before the PIC Summit Europe panel, Gazettabyte sought some industry views. What would help the PIC landscape, and what should the PIC industry be addressing? Also, what were the views regarding the PIC marketplace in 2030?

Those approached focus mainly on datacom and telecom. But Julie Eng, the CTO of Coherent, has a broader remit that includes emerging photonics markets, while Mehdi Asghari is CEO of SiLC Technologies, a silicon photonics start-up focused on the Lidar marketplace.

Emerging PIC markets

Dave Welch, founder of Infinera and now founder and CEO of stealth start-up AttoTude, says PICs for datacom and telecom are alive and thriving, while PICs for Lidar and sensing are burgeoning applications with real volume.

“Datacom and telecom will dominate for the foreseeable future,” says Welch. “I do not see where any other application of comparable size can come from.”

Maxim Kuschnerov, director of R&D, points out that 2030 is not as far out as it used to be: “That is like two bigger product cycles at most.” He, too, says datacom and telecom will remain the main markets for PICs.

Coherent’s Eng agrees: “The primary driver of PICs will be datacom and telecom, but if you’re looking for additional drivers, health monitoring is a possible one.”

Coherent experienced an uptick in demand for optical components for medical equipment during the COVID-19 pandemic. “The pandemic increased demand for PCR [polymerase chain reaction] testing, which grew the business for products we sell, such as optical filters and thermoelectric coolers,” says Eng. “The pandemic focused people more on health monitoring, and that, combined with advanced health-monitoring features in smartwatches, has grown interest in personal health monitoring.”

Eng notes that component sales into PCR testing declined post-COVID although interest remains high in personal health monitoring.

Companies are also addressing biosensing using silicon photonics and semiconductor lasers. “In some cases, a silicon photonics PIC for this application could be fairly large as it is often helpful to monitor many wavelengths,” says Eng. She also highlights potential volumes. “If biosensing in the watch takes off, that could be a higher volume than datacom transceivers, and the PICs may be large. So that is an application to watch.”

“The pandemic experience has pushed the point-of-care testing market, which include biosensors, exponentially,” says Professor Laura Lechuga, a leading biosensor researcher. “This is an increasing market every year with an intensive research and development at academic and industrial level. Point-of-care will be for sure the future of diagnostics.”

Kuschnerov also highlights the health-monitoring market. “There has been a lot of work on non-invasive glucose monitoring using optical sensing, but it is not clear if this could pass FDA [U.S. Food and Drug Administration] approval,” says Kuschnerov. “It could be a life changer for people with diabetes.”

Rafik Ward is a consultant working with companies on PIC developments including the point-of-care medical device marketplace. One start-up developing PIC technology realised it was competing with low-cost point-of-care diagnostics devices. Another challenge the start-up faced is the long development cycles and difficulty entering the medical marketplace. The start-up decided to refocus on communications.

“One thing that did come from COVID was the realisation of how fragile our distribution and logistics ecosystem was and how dependent it was on low-cost labour,” says SiLC’s Asghari. The labour shortage persisted after COVID-19 and has driven a push for warehouse and logistic automation. “For our business in Lidar, this has created a significant demand in robotics and automation,” says Asghari.

Another driver is the drop in the working-age population—about 1 per cent a year—caused by the drop in population growth in industrial countries over the past 20 years.

“This is a major issue and is becoming even more critical over time,” says Asghari. “If robots need to do the kind of work that people do, then they need to see the way we do, and cameras and even 3D imagers don’t cut it.”

Kuschnerov says gas, oil, water quality, and agriculture will eventually use optical sensing variants, but he does not expect high volumes.

Another wearable market is augmented reality/virtual reality (AR/VR) glasses. This volume market has been predicted for years and has yet to arrive, but work is taking place, such as the development of diffractive waveguides for such glasses. “Research is happening here, which can’t be ignored, but it’s a non-existent market today,” says Kuschnerov.

Infra-red sensors are set to grow for autonomous drone warfare. Military drones being used in Ukraine and the Middle East are changing modern warfare, he says, a development noted by the leading militaries.

VCSELs for 3D vision in robots will remain relevant. “The future is full of these (Tesla-like) robots. I’m sure they will need VCSEL arrays,” says Kuschnerov.

Challenges facing the PIC industry

Ward says the highest priority regarding PICs is for the fabrication plants [fabs] to reduce cycle times from tape-out to returned chips.

Indium phosphide fabs regularly turn around chips in six to eight weeks, whereas several of the big silicon photonics fabs take five months. Moreover, chip designs can often take two to three iterations to get to production. “We shouldn’t be surprised that indium phosphide has consistently been six or more months ahead of silicon photonics at each generation,” says Ward. “It’s simple maths.” Silicon photonics needs to be developed to launch new generations of communication devices at the same time as indium phosphide products.
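
Ward’s “simple maths” can be sketched as follows. The turnaround times and iteration counts are the ones quoted above; the assumption that each design iteration costs exactly one fab turnaround, with no design or test time in between, is ours and is purely illustrative.

```python
# Illustrative arithmetic only: assumes each design iteration costs exactly
# one fab turnaround and ignores design, test, and packaging time.
WEEKS_PER_MONTH = 52 / 12  # average number of weeks in a month


def fab_time_weeks(turnaround_weeks: float, iterations: int) -> float:
    """Total time spent waiting on the fab across all design iterations."""
    return turnaround_weeks * iterations


inp_turnaround = (6, 8)                # indium phosphide, weeks (quoted range)
siph_turnaround = 5 * WEEKS_PER_MONTH  # silicon photonics, five months in weeks

for iterations in (2, 3):
    inp_best = fab_time_weeks(inp_turnaround[0], iterations)
    inp_worst = fab_time_weeks(inp_turnaround[1], iterations)
    siph = fab_time_weeks(siph_turnaround, iterations)
    # Compare against the slowest indium phosphide case to get a lower bound.
    gap_months = (siph - inp_worst) / WEEKS_PER_MONTH
    print(f"{iterations} iterations: InP {inp_best:.0f}-{inp_worst:.0f} weeks, "
          f"SiPh {siph:.0f} weeks, gap of at least {gap_months:.1f} months")
```

Under these assumptions, two iterations already put silicon photonics a little over six months behind indium phosphide, which is consistent with Ward’s observation.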

Ward also highlights the need for improved process design kits (PDKs). “While this is improving, there is still too much redundant work by PIC customers because PDKs are immature,” he says.

AttoTude’s Welch notes that, in years past, the value of PICs has been in integrating optics, specifically lasers. The issue with lasers, however, is their environmental compatibility with silicon circuits. “This problem needs to be improved if we expect greater integration into the system needs,” says Welch.

Prof. Lechuga says that one of the main obstacles for biosensors is mass-fabrication at low cost. “It will be interesting to see if Europe could offer such fabrication,” she says.

Another issue is the benefits a PIC brings. For Eng, PICs must solve a problem and offer value at a lower cost than existing solutions. “That is a big ask,” says Eng. “Optical technologists must understand new markets with many established technologies.”

Getting help

Asghari suggests several ways the optics industry and governments can help, and not just for PICs. The industry and governments must be measured to avoid boom-and-bust cycles, or at least not feed them.

“I see the AI hype now, and it brings back bitter memories of the 2000 era,” says Asghari. “That did not help anyone and set back the industry in a major way.”

He also calls for fairer trade but not through tariffs. We need fairness, he says: “Our gates are wide open, and we hold ourselves to rules that do not allow governments to support industry.”

But China does whatever it likes, he says. “Our reaction is to add tariffs on imports on things that our industry needs to manufacture, and unfortunately, a lot of these are still from China.” It is, therefore, important to make it easier for companies to manufacture in the West and help bring back basic key capabilities. “We should enable investments and not tax them, and we should stimulate the venture capital communities to invest in hardware, which no one does anymore,” says Asghari.

The issue of population shrinkage and the need for automation is the photonics industry’s chance to lead. “But we are losing again due to lack of investment in the same way that we are losing the electric vehicle market,” he warns.

For Asghari, what is needed is a long-term vision, stability, and fairness.

ECOC 2024 industry reflections - Part III

Gazettabyte is asking industry figures for their thoughts after attending the recent 50th-anniversary ECOC show in Frankfurt. Here are contributions from Aloe Semiconductor’s Chris Doerr, Hacene Chaouch of Arista Networks, and Lumentum’s Marc Stiller.

Autumn morning near the ECOC congress centre in Frankfurt

If there was one overall message from ECOC 2024 this year, it is that incumbent technologies are winning in the communications market.

Copper is not giving up. It consumes less power and is cheaper than optics, and now, more electronics such as retimers are being applied to keep direct-attach copper (DAC) cables going. Also, 200-plus gigabaud (GBd) made a debut in coherent optics, but in intensity-modulation direct-detect (IMDD), 50GBd and 100GBd look like they are here to stay for several more years.

Pluggables are entrenching themselves more deeply. For large-scale co-packaged optics to unseat them seems further away than ever. The reason for the recent success of incumbent technologies is practicality. Large computing clusters and data centres need more bandwidth immediately, and there is not enough time to develop new technologies.

Probably the most significant practical constraint is power consumption. Communications is becoming a significant fraction of total power consumption, further driven by the desire to disaggregate to spread out the power consumption. Liquid cooling demonstrations are becoming commonplace.

Power consumption may limit the market as customers cannot obtain more power. This may mean the lowest power solution will win, making cost, complexity, and size secondary considerations.

Hacene Chaouch, Distinguished Engineer, Arista Networks

Unlike the 2023 edition, ECOC 2024 overwhelmingly and unanimously put power consumption on a pedestal.

Having sleepwalked through the last decade on incremental power-per-bit improvements, the optics industry has been caught off guard by the AI boom. Every extra watt wasted on optics and the associated cooling systems matters since that power is not available to the Graphics Processing Units (GPUs) that generate revenue.

In this context, seeing 30W 1.6-terabit digital signal processor (DSP) optical modules demonstrated on the show floor was disappointing. This is especially so when compared to 1.6-terabit linear pluggable optics (LPO), with prototypes consuming only 10W.

The industry must and can do better to address the power gap of 1.6-terabit DSP-based optics.

Marc Stiller, Lumentum’s Vice President of Product Line Management, Cloud and Networking

ECOC 2024 saw AI emerge as a focal point for many discussions and technology drivers, continuing trends we observed at OFC earlier this year. ECOC showcased numerous new technologies and steady progress on products addressing the insatiable appetite for bandwidth.

LPO was visible, with steady advancements in performance and interoperability and a new multi-source agreement (MSA) pending. There was an overall emphasis on power efficiency and cooling solutions, driven by the increasing scale of machine learning/ AI clusters and the power availability to cool them.

Another focus was 1.6-terabit interfaces with multiple suppliers showcasing their progress. Electro-absorption modulated laser (EML) and silicon photonics solutions continue to evolve, with EMLs showing an early lead.

Other notable demonstrations emphasised breakthroughs in higher data rates and energy-efficient solutions, addressing the critical challenge of increasing memory bandwidth. Nvidia signalled their commitment to driving the pace of optics with a newly developed PAM4 DSP.

From a networking perspective, 800-gigabit is becoming the new standard, particularly with C- and L-bands gaining traction as the industry approaches the Shannon limit. Integration is more critical than ever for achieving power and cost efficiencies, especially as 800-gigabit ZR and ZR+ solutions become more prominent.

Lumentum showcased high-performance transceivers and provided critical insights into the future of networking at ECOC, reinforcing our leadership in driving these innovations forward.

Is 6G’s fate to repeat the failings of 5G wireless?

Will the telecom industry embark on another costly wireless upgrade? Telecom consultant and author William Webb thinks so and warns that it risks repeating what happened with 5G.

William Webb published the book The 5G Myth in 2016. In it, he warned that the then-emerging 5G standard would prove costly and fail to deliver on the bold promises made for the emerging wireless technology.

Webb sees history repeating itself with 6G, the next wireless standard generation. In his latest book, The 6G Manifesto, he reflects on the emerging standard and outlines what the industry and its most significant stakeholder – the telecom operators – could do instead.

Developing a new-generation wireless standard every decade has proved beneficial, says Webb. However, the underlying benefits of each generation have diminished to the degree that, with 5G, it is questionable whether the latest generation was needed.

Wireless generations

There was no first-generation (1G) cellular standard. Instead, there was a mishmash of analogue cellular standards that were regional and manufacturer-specific.

The second-generation (2G) wireless standard brought technological alignment and, with it, economies of scale. Then, the 3G and the 4G standards advanced the wireless radio’s air interface. 5G was the first time the air interface didn’t change; an essential ingredient of generational change was no longer needed.

The issue now is that the wireless industry is used to new generations. But if the benefits being delivered are diminishing, who exactly is this huge undertaking serving? asks Webb. With 5G, certainly not the operators. The operators have invested heavily in rolling out 5G and may eventually see a return but that is far from their experience to date.

The wireless industry is unique in adopting a generation approach. There are no such generations for cars, aeroplanes, computers, and the internet, says Webb: “Ok, the internet went from IPv4 to IPv6, but that was an update, as and when needed.” With 5G, there was no apparent need for a new generation, he says. It wasn’t as if networks were failing, or there was a fundamental security issue, or that 4G suffered from a lack of bandwidth.

Instead, 5G was driven by academics and equipment vendors. “They postulated what some of the new applications might be,” says Webb. “Some of them were crazy guesses, the most obvious being remote surgery.” That implied a need for faster wireless links and more bandwidth. Extra bandwidth meant higher and wider radio frequency bands which came at a cost for the operators. Higher radio spectrum – above 3GHz – means greater radio signal attenuation requiring smaller-area radio cells and a greater network investment for the operators.
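
The link between higher frequencies and smaller cells can be illustrated with the standard free-space path loss formula, 20·log10(4πdf/c). This is a minimal sketch: the two frequencies and the 1km distance are illustrative choices of ours, not figures from Webb, and real networks also suffer building penetration and clutter losses that the free-space model ignores.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def free_space_path_loss_db(distance_m: float, frequency_hz: float) -> float:
    """Free-space path loss in dB: 20*log10(4*pi*d*f/c)."""
    return 20 * math.log10(4 * math.pi * distance_m * frequency_hz / SPEED_OF_LIGHT)


# Illustrative values only; not taken from the article.
low_band_hz = 1.8e9
mid_band_hz = 3.5e9
distance_m = 1_000.0

loss_low = free_space_path_loss_db(distance_m, low_band_hz)
loss_mid = free_space_path_loss_db(distance_m, mid_band_hz)
extra_loss_db = loss_mid - loss_low

# For a fixed loss budget, free-space range scales inversely with frequency.
range_ratio = low_band_hz / mid_band_hz

print(f"Extra loss at 3.5GHz versus 1.8GHz: {extra_loss_db:.1f} dB")
print(f"Relative cell radius for equal loss: {range_ratio:.2f}")
```

In this simplified model, moving from 1.8GHz to 3.5GHz roughly halves the cell radius for the same loss budget, which implies around four times as many cells to cover the same area.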

The industry has been working on 6G for several years. Yet, it is still early to discuss the likely outcome. Webb outlines three possible approaches for 6G: HetNets, 5G-on-steroids, and 6G in the form of software updates only.

HetNets

Webb is a proponent of operators collaborating on heterogeneous networks (HetNets).

He says the idea originated with 3G but has never been adopted. The concept requires service providers to collaborate to combine disparate networks — cellular, WiFi, and satellite — to improve connectivity and coverage and ultimately improve the end-user experience.

“Perhaps this is the time to do it,” says Webb, even if he is not optimistic: operators have never backed the idea because they favour their own networks.

In the book The 6G Manifesto, Webb explores the HetNets concept, how it could be implemented and the approach’s benefits. The implementation could also be done primarily in software, which the operators favour for 6G (see below).

“They would need to remove a few things like authentication and native provisioning of voice from their networks,” says Webb. There would also need to be some form of coordinator, essentially a database-switch that could run in the cloud.

5G on steroids

The approach adopted for 5G is an application-driven approach, whereby academics and equipment vendors identified applications and their requirements and developed the necessary technologies. Such an approach for 6G, says Webb, is yet more 5G on steroids. 6G will be faster than 5G, require higher frequency spectrum and be designed to address more sectors, each with their own requirements.

“The operators understand their economics, of course, and are closer to their customers,” says Webb. It is the operators not the manufacturers that should be driving 6G.

6G as software

The third approach is for 6G to be the first cellular generation that involves software only to avoid substantial and costly hardware upgrades.

Webb says the operators have not suggested what exactly these software upgrades would do, more that after their costly 5G network upgrades, they want to avoid another cycle of expensive network investment.

Backing a software approach allows operators to avoid being seen as dragging their feet. Rather, they could point to the existing industry organisation, the GSMA, and its releases that occur every 18 months that enhance the current generation and are largely software-based. This could become the new model in future.

5G could have been avoided and simply been an upgrade to 4.5G, says Webb. With periodic releases and software updates 6G could be avoided.

But the operators need to be more vocal. However, there is no consensus among operators globally. China will deploy 6G, whatever its form. But, warns Webb, if the operators don’t step up, 6G will be forced on them. “Hence my call to arms in the book, which says to the operators: ‘If you want an outcome that is different to 5G, you need to step up’.”

A manifesto

Webb argues that the pressure and expectation from 6G wireless are so great that the likely outcome is that it will repeat what happened with 5G.

The logic that 6G is not needed, and that its goals could be served with software upgrades, will not be enough to halt the momentum driving 6G. 6G will thus not help the operators reverse their fortunes and generate new growth. This is not good news given that service providers already operate in a utility market while facing fierce competition.

“If you look at most utilities – gas, electricity, water – you end up with a monopoly network supplier and then perhaps some competition around the edges,” says Webb. “Telecoms is now a utility in that each mobile operator is delivering something that towards every consumer looks indistinguishable.”

It is not good news for the equipment vendors either. Vendors may drive 6G and get one more generation of equipment sales, but it is just delaying the inevitable.

Webb believes the telcos’ revenues will remain the same, resulting in somewhat profitable businesses: “They’re making more profit than utilities but less than technology companies.”

Webb’s book ends with a manifesto for 6G.

Mobile technology underpins modern life, and always-present connectivity is increasingly important yet must also be affordable to all. He calls for operators to drive 6G standards and for governments to regulate in a way that benefits citizens’ connectivity services.

Users have not benefitted from 5G. If that is to change with 6G, there needs to be a clear voice that makes a wireless world better for everyone.

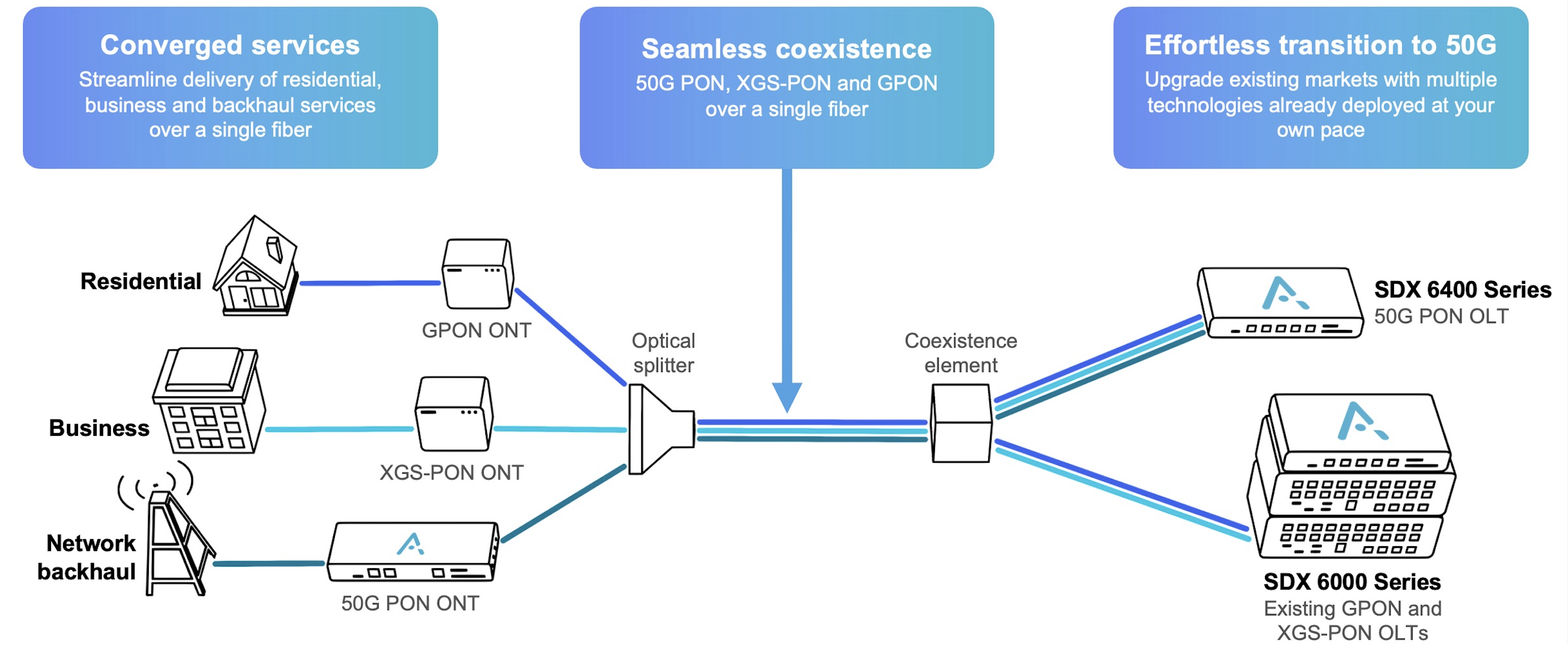

First 50G-PON merchant silicon spurs operator trials

Broadcom has unveiled the industry’s first merchant silicon for the 50-gigabit passive optical network (50G-PON) access standard. Until now, only access equipment players such as Huawei and ZTE had their own 50G-PON silicon.

Broadcom has announced two 50G-PON devices: an optical line terminal (OLT) chip and an optical network unit (ONU) chip that provides the 50G-PON port to the user. Both chips include custom hardware from Broadcom to run artificial intelligence (AI) machine-learning algorithms.

Jim Muth, senior manager of product marketing at Broadcom, says supporting AI benefits the operator and the quality of the end user’s broadband service.

High-speed PON

The 50G-PON is the ITU-T’s latest high-speed access standard.

50G-PON follows the organisation’s XGS-PON, a 10-gigabit PON standard, and before that, the original Gigabit PON (GPON) fibre-to-the-home scheme. GPON supports 2.5 gigabit-per-second (Gbps) downstream (to the user) and 1.25Gbps upstream. 50G-PON is backwards compatible with XGS-PON and GPON.

The leading three Chinese operators—China Mobile, China Telecom, and China Unicom—will deploy 50G-PON and have been trialling the technology. Working with Huawei, Orange has undertaken a 50G-PON field trial in Brittany. Meanwhile, European operators such as Altice, BT, Deutsche Telekom, Swisscom, and Telefonica plan to deploy the technology. “It’s too far into the future for them to make a public statement about their activity,” says Muth.

In a separate announcement, Adtran announced that it is working with alternative network (altnet) operator Netomnia to offer 50G-PON services to the UK market. Adtran is using Broadcom’s 50G-PON chips.

Netomnia, after merging with brsk, has the UK’s fourth-largest full-fibre network. Netomnia says adopting 50G-PON will enable it to scale. Its target is to serve one million customers by 2028.

“Many altnets in the US and the UK are interested in 50G-PON technology,” says Stephan Rettenberger, head of marketing and corporate communications at Adtran. “As they’re much smaller than Tier-1 providers, they’re more agile and generally able to deploy new technologies much faster.”

25GS-PON

A competing PON scheme is 25 Gigabit Symmetrical PON (25GS-PON). The 25GS-PON is not a standard but an industry multi-source agreement (MSA) whose members include operators AT&T, Cox Communications, and Chunghwa Telecom, and equipment makers Nokia and Ciena. The MSA uses work developed for the 25 gigabit Ethernet PON and XGS-PON standards.

Google Fiber offers customers in select US markets a 20-gigabit connectivity service, including Wi-Fi 7, for $250 a month. The high-bandwidth access service uses 25GS-PON.

Adtran favours 50G-PON because it is a standard. “The ITU-standardised generations of PON (GPON, XGS-PON and 50G PON) all co-exist and are the recommended evolutionary steps in the access network,” says Rettenberger.

Applications

Having 50 gigabits of access bandwidth enables other applications besides traditional connectivity services for businesses and residential users. Broadcom cites autonomous driving, where comprehensive cellular coverage is required. For that, many small-cell phone towers are needed along a highway. Instead of each having a backhaul fibre link, several cell towers could share the 50G-PON.

Autonomous driving is some years out, but Muth says infrastructure first needs to be in place. “It’s going to happen over the decade or more, so you have got to start deploying that sooner rather than later,” he says.

Having 50 gigabits of capacity also allows a service provider to offer businesses dedicated bandwidth links. In contrast, XGS-PON is more restricted with its total 10 gigabits of capacity.

Machine learning and PON

The training of AI/ machine-learning algorithms for access is performed by the operator, or by the operator working with a third party. Broadcom says it too works with interested operators.

The training results are weights that are downloaded to its 50G-PON chips to perform inferencing and run the machine-learning algorithms on the operator’s network data. “Most operators are already using AI machine-learning algorithms, but they are running them in the cloud,” says Muth. By adding inferencing hardware on-chip, data remains local to the user and the PON OLT without using precious bandwidth to upload the data for processing in the cloud.

The on-chip AI performs such tasks as intrusion detection and flow classification. With AI on-chip, a detected intrusion can be shut down more quickly. Flow classification identifies such traffic as video conferencing, voice calls, and bulk data.

PON hardware has packet processing and traffic management functions, but the issue is that packet headers are increasingly encrypted. Using a machine learning algorithm allows traffic to be identified based on a flow’s nature. “The number of packets over time identifies it,” says Muth. A voice call’s flow has regular if small amounts of data. A video conferencing flow is also regular but has more data. Video traffic can be prioritised ensuring a better experience for the user.
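
The idea of identifying a flow from its packet pattern alone can be sketched with a toy rule-based classifier. This is not Broadcom’s algorithm, which uses trained weights; the `Packet` type, the `classify_flow` function, and every threshold below are hypothetical and chosen only to illustrate classification that never reads a packet header.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Packet:
    timestamp_s: float  # arrival time in seconds
    size_bytes: int     # observed size; headers are assumed unreadable


def classify_flow(packets: list[Packet]) -> str:
    """Toy classifier using only timing and size statistics.

    All thresholds are hypothetical and chosen for illustration.
    """
    if len(packets) < 3:
        return "unknown"
    gaps = [b.timestamp_s - a.timestamp_s for a, b in zip(packets, packets[1:])]
    avg_gap = mean(gaps)
    if avg_gap <= 0:
        return "unknown"
    # Coefficient of variation: a low value means a steady packet rate.
    regularity = pstdev(gaps) / avg_gap
    duration_s = packets[-1].timestamp_s - packets[0].timestamp_s
    rate_bps = 8 * sum(p.size_bytes for p in packets) / duration_s
    if regularity < 0.2 and rate_bps < 200_000:
        return "voice"             # regular, small amounts of data
    if regularity < 0.2:
        return "video-conference"  # regular, but more data
    return "bulk-data"             # bursty or irregular


# Synthetic example flows (made-up numbers).
voice = [Packet(i * 0.02, 160) for i in range(50)]
video = [Packet(i * 0.01, 1200) for i in range(100)]
burst_times = [0.0, 0.001, 0.002, 0.5, 0.501, 0.502, 1.2, 1.201]
bulk = [Packet(t, 1500) for t in burst_times]

for name, flow in (("voice", voice), ("video", video), ("bulk", bulk)):
    print(name, "->", classify_flow(flow))
```

A trained model would replace the hand-set thresholds, but the inputs would be of the same kind: packet counts, sizes, and timing over a window.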

50G-PON chips

Broadcom uses a 7nm CMOS process for the 50G-PON OLT and ONU chips.

The BCM68660 OLT chip integrates what was previously three chips. The chip combines the switch function with packet and traffic processing and two media access controllers (MACs). This allows the chip to support 16 XGS-PON and 16 GPON ports. Each port supports up to 256 users, but a smaller split ratio of 1:128 or 1:64 is used in practice. “Now, if you’re going to do 50-gig, it’s a little bit smaller,” says Muth. “It’s eight ports of 50 gig, eight ports of XGS-PON, and eight ports of GPON so that you can do all three simultaneously.”

The chips support three 50G-PON rate options: 50-gigabit symmetrical (download and upload), 50Gbps downstream with 25Gbps upstream, and 50Gbps downstream with 12.5Gbps upstream. The capacity of the BCM68660 OLT chip totals 500 gigabits.
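
One way to arrive at the 500-gigabit figure is to sum the downstream line rates across the mixed-mode port configuration Muth describes. That reading is our assumption rather than something Broadcom states; the rates themselves are the nominal figures given earlier in the article.

```python
# Nominal downstream rates in Gbps, as given in the article.
DOWNSTREAM_GBPS = {"50G-PON": 50.0, "XGS-PON": 10.0, "GPON": 2.5}

# Mixed-mode port counts quoted by Muth for the BCM68660.
PORTS = {"50G-PON": 8, "XGS-PON": 8, "GPON": 8}

# Assumption: the chip's quoted capacity counts downstream traffic only.
total_gbps = sum(PORTS[name] * DOWNSTREAM_GBPS[name] for name in PORTS)

for name in PORTS:
    print(f"{PORTS[name]} x {name}: {PORTS[name] * DOWNSTREAM_GBPS[name]:.0f} Gbps")
print(f"Aggregate downstream capacity: {total_gbps:.0f} Gbps")
```

The three port groups contribute 400, 80, and 20 gigabits respectively, matching the stated total.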

Having an OLT-on-a-chip reduces power consumption. Muth says the switch chip consumes about 60W, and each PON MAC 15W, for an OLT total of 90W. In contrast, the 7nm CMOS single-chip PON OLT consumes 45-50W.
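
The power comparison works out as below. The wattages are Muth’s; the count of two MAC chips is inferred from the 90W total and the earlier mention of two MACs, and the percentage saving is our own arithmetic rather than a Broadcom claim.

```python
switch_w = 60.0  # previous-generation switch chip (quoted)
mac_w = 15.0     # each PON MAC chip (quoted)
mac_count = 2    # inferred: 60W + 2 x 15W gives the quoted 90W total

three_chip_w = switch_w + mac_count * mac_w
single_chip_w = (45.0, 50.0)  # quoted range for the single-chip OLT

# Fractional saving at the low and high ends of the single-chip range.
savings = [(three_chip_w - w) / three_chip_w for w in single_chip_w]

print(f"Three-chip OLT: {three_chip_w:.0f}W")
print(f"Saving: {min(savings):.0%} to {max(savings):.0%}")
```

On these numbers, integration cuts OLT silicon power by roughly 44 to 50 per cent.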

The ONU BCM55050 chip is an Ethernet bridge chip. “It terminates the 50-gigabit PON and provides Ethernet to something else, either a switch chip for distribution within a home or a company, or to a gateway chip.”

Meanwhile, Adtran says it is seeing growing interest in the latest PON standard. Now having silicon for a 50G-OLT line card, Adtran can start trialling work. Five customers will trial 50G-PON in the coming months, it says.