Open Eye MSA offers an alternative to PAM-4 DSPs

A group of companies, led by Macom and Semtech, has launched a multi-source agreement (MSA) to offer an alternative to using a digital signal processor (DSP) in high-speed client-side optical modules. The Open Eye MSA is developing a set of specifications for optical modules that use 50-gigabit 4-level pulse-amplitude modulation (PAM-4) signals whereby only analogue clock and data recovery (CDR) circuitry is required at the receiver.

By using the CDR instead of a PAM-4 DSP, the optical module will consume less power, have lower latency and be less costly to make, says the MSA.

To ensure interoperability, however, module makers using a PAM-4 DSP will need to meet the new MSA specification.

MSA motivation

The IEEE’s 50-gigabit PAM-4 specification was created several years ago before hardware existed, says Marek Tlalka, director of product marketing, high-performance analogue at Macom.

The IEEE specifications assumed that the received signal would be severely distorted such that the ‘eyes’ would be closed when sampled and displayed on an oscilloscope. Recovering the transmitted 4-level encoded signal would thus be a challenge.

“They had to plan for the worst-case transmitters and receivers which, in the end, led them to define the specifications such that a DSP would be required for signal recovery,” says Tlalka.

Marek Tlalka, Macom

But what has transpired is that the transmitters and receivers now used in high-speed modules have much better optical performance than originally anticipated. “You can get a nice eye on the receive side such that an analogue CDR is quite capable of the recovery,” says Tlalka.

Both Semtech and Macom, which already have such CDR solutions, went independently to their customers to promote such PAM-4 DSP-free module designs. The customers responded favourably to the claimed power, cost and latency savings, but the two companies were told that a standard was needed to generate industry backing and ensure wide availability of components and modules.

This led Macom and Semtech to found the MSA, which now has 19 members. And since announcing the MSA in May, some 20 companies have enquired about joining. The companies include systems vendors, module makers, and chip and test companies.

The MSA’s current focus is on 50, 100, 200 and 400-gigabit single-mode module designs that have a reach of 500m to 2km. A draft specification, which involves the optical transmitter and receiver, is almost done, and the final version will be published in the autumn.

The MSA work will then address multi-mode module designs with the final phase tackling 100-gigabit PAM-4 (see table above).

Coexistence

The Open Eye MSA expects PAM-4 DSP modules to co-exist with its own modules.

“We want to be clear that we are not excluding DSPs,” says Timothy Vang, vice president marketing and applications at Semtech. “In this MSA spec, the analogue solution may have a power and a latency advantage, that is all.”

Timothy Vang, Semtech

Moreover, there are interface applications where a PAM-4 DSP chip will be needed. One example is longer reaches, such as non-coherent data centre interconnect over distances of up to 80km.

Another is for applications where a ‘gearbox’ function is used to translate the electrical signal speeds, for example, from 25-gigabit non-return-to-zero (NRZ) to 50-gigabit PAM-4, or 50-gigabit PAM-4 to 100-gigabit PAM-4.
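
To make the gearbox idea concrete, the sketch below combines two NRZ bit streams into one PAM-4 symbol stream, so that each symbol carries two bits and a 50-gigabit PAM-4 lane runs at the same symbol rate as a 25-gigabit NRZ lane. It is a minimal illustration rather than how any vendor's gearbox is built: the function names are hypothetical, the assignment of lanes to bit positions is an assumption, and real devices also handle clocking, lane alignment and forward error correction. The Gray mapping used is the common convention in which neighbouring levels differ by a single bit.

```python
# Gray mapping of bit pairs (first lane, second lane) to PAM-4 levels 0..3.
# Adjacent levels differ in one bit, so a one-level decision error
# corrupts only one of the two bits carried by the symbol.
GRAY_PAM4 = {(0, 0): 0, (0, 1): 1, (1, 1): 2, (1, 0): 3}
INVERSE = {level: bits for bits, level in GRAY_PAM4.items()}


def nrz_to_pam4(lane_a, lane_b):
    """Combine two equal-length NRZ bit streams into one PAM-4 symbol stream."""
    if len(lane_a) != len(lane_b):
        raise ValueError("NRZ lanes must carry the same number of bits")
    return [GRAY_PAM4[(a, b)] for a, b in zip(lane_a, lane_b)]


def pam4_to_nrz(symbols):
    """Split a PAM-4 symbol stream back into its two NRZ bit streams."""
    pairs = [INVERSE[s] for s in symbols]
    return [a for a, _ in pairs], [b for _, b in pairs]


# Hypothetical example data: five bits on each NRZ lane.
lane_a = [0, 0, 1, 1, 0]
lane_b = [0, 1, 1, 0, 1]
symbols = nrz_to_pam4(lane_a, lane_b)
print(symbols)  # [0, 1, 2, 3, 1]
```

Splitting the symbols again with `pam4_to_nrz(symbols)` returns the two original lanes, which is the reverse direction of the same translation.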

Issue of interoperability

Tlalka stresses that what the Open Eye MSA is doing is tightening the specification of the module’s optical transmitter: “That open eye can be recovered with an analogue CDR, it can also be recovered with a DSP.”

Inphi, a maker of PAM-4 DSP chips, says it has no current plans to join the Open Eye MSA.

Eric Hayes, senior vice president, networking interconnect at Inphi, agrees that with a wide-open eye, a DSP-based optical module will be compliant with the MSA. He also points out that with an open eye, less signal processing will be required and hence the power consumption will be less.

Eric Hayes, Inphi

DSP-based modules using older laser technology, however, may be IEEE compliant but not pass the Open Eye specification. “That is where you potentially have interop issues,” says Tlalka.

“What we believe is going to happen is that the DSP module makers will just have to modify the test procedure for their modules and they will become compliant,” says Tlalka.

Inphi says the current testing procedure for DSP-based optical modules that are IEEE compliant is simple and cost-effective.

“TDECQ-compliance measurement is a highly effective yet simple quantitative procedure already supported by all test equipment,” says Hayes. TDECQ - the transmitter dispersion eye closure, quaternary - is the test used for PAM-4 rather than a traditional eye mask used for NRZ signals.

By removing the TDECQ requirement and using an eye mask to measure the PAM-4 received eye, the Open Eye MSA is forcing optical transceiver module makers to implement an extra layer of testing, adding cost, says Hayes.

Tlalka says the MSA does not expect the PAM-4 DSP module makers to adopt two test procedures, nor will two be necessary. “They will just have to test once [using the Open Eye test procedure],” he says.

Test companies are already part of the Open Eye consortium, and two more are among the 20 companies that have expressed an interest in joining the MSA.

Hyperscalers' preference

Inphi claims that the hyperscalers have a preference for PAM-4 DSP-based modules, a view shared by Dale Murray, principal analyst at market research firm LightCounting.

Dale Murray, LightCounting

“We do see that hyperscalers prefer to stay with DSP-based modules,” says Hayes. “With DSP-based modules, hyperscalers and their supply chains can take advantage of lower bandwidth optics, which is already in high volume production for several years.”

DSP-based solutions also provide value to customers, such as diagnostics, health and performance monitoring, and firmware upgrades in the field. “These features could lower the total cost of ownership for the hyperscalers and reduce the operational expenditure,” says Hayes.

“I think they are going to go with what is the cheapest and lowest power,” counters Tlalka. “A generic statement is easy to make that they will stay with what is out there, but if they start getting modules that are tens of per cent lower cost and tens of per cent lower power, I think they will pretty quickly change their minds.”

“We heard from the end users what they wanted the technology to enable in terms of latency, power and cost,” adds Semtech’s Vang. “We are hopefully being responsive as an ecosystem to the big data centre market.”

“At the end of the day, it will be up to the MSA players to make Open Eye a success,” says LightCounting's Murray.

ONF’s published reference designs start to be deployed

Operators are already deploying the first reference designs published by the Open Networking Foundation (ONF). Three of the ONF’s five reference designs have now been made public.

Just over a year ago, eight operators - AT&T, Comcast, China Unicom, Deutsche Telekom, Google, NTT Group, Telefónica and Turk Telekom - took the step of designing key components of their edge and access networks after becoming frustrated with what they perceived as foot-dragging by the systems vendors.

AT&T is deploying one of the reference designs - the SDN-enabled broadband access scheme (SEBA). Deutsche Telekom and Telefónica have also said they will deploy SEBA during 2019 and 2020.

Andre Fuetsch, president of AT&T Labs and CTO of AT&T, said in a keynote talk at the recent Open Networking Summit North America event that SEBA is resulting in more commoditised hardware solutions and that such open source solutions are bringing higher speeds and better services across AT&T’s wireline infrastructure.

Meanwhile, an undisclosed operator has deployed a second reference design - Trellis, a leaf-spine switch fabric that supports network functions virtualisation (NFV). Trellis can be deployed as part of SEBA although the operator is deploying it for a different application, says the ONF.

The last of the three published reference designs is the Open Disaggregated Transport Network (ODTN). However, unlike SEBA and Trellis, ODTN is not yet available as a deployable platform, what the ONF calls an exemplar platform.

Reference designs

The ONF started developing four reference designs as part of the open source organisation’s ongoing cloud optimised remote data centre work, known as CORD. In addition to SEBA, Trellis and ODTN, it is developing a next generation software-defined networking (NG-SDN) solution, previously known as Unified, Programmable & Automated Network (UPAN).

In February, the ONF announced a fifth reference design dubbed Converged Multi-Access and Core (COMAC) that combines 5G mobile and fixed access.

A reference design defines the assembly of components used for key platforms in the access and edge of the network. By creating reference designs, the operators are defining what they need while also committing to deploy the resulting solutions in their networks. This gives the vendors working alongside the operators confidence that the work will lead to sales.

The components making up a reference design may be ONF-developed but need not be. They may also be open-source or a vendor-specific solution.

In parallel, the ONF operators have been working with vendors to develop an exemplar platform that is a working implementation of a reference design. The ONF only publishes a reference design once the specification is complete and the associated exemplar platform is ready for trialling. However, the ODTN reference design work is an exception in being published while its exemplar platform is unfinished.

“The ONF board felt the work was substantial and mature enough that it was worth getting out there,” says Timon Sloane, the ONF’s vice president of marketing and ecosystem.

CORD evolution

The ONF is known for its software-defined networking (SDN) work - the OpenFlow protocol and ONOS open-source SDN controller - and for its CORD open networking access and edge projects.

Originally known as the Central Office Re-architected as a Data Centre, CORD comprises two key initiatives: a broadband residential CORD (R-CORD) and a mobile CORD (M-CORD). “Once we had the foundation [of SDN], we created R-CORD, the first incarnation, and then we did M-CORD for the mobile space,” says Sloane.

The ONF has since disaggregated both M-CORD and R-CORD into their access and core components. “We realised they both covered a pretty big space,” says Sloane.

R-CORD has been disaggregated into the SEBA reference design and VOLTHA, an open-source abstraction of broadband hardware. Meanwhile, M-CORD has been split into Open Radio Access Network (ORAN) and a newer project, the Open Mobile Evolved Core (OMEC).

The ONF has also upgraded Trellis to provide not only a leaf-spine switch fabric inside a data centre but also a multi-tier architecture that spans sites.

Meanwhile, the ODTN reference design is based on first a point-to-point and then a multi-point dense WDM (DWDM) scheme that connects the CORD edge to the network core.

The ONF has been working with the Telecom Infra Project’s (TIP) Open Optical Packet Transport group with a view to using its Cassini white box platform.

“CORD has grown in scope and we recognised that people want to consume the inner pieces in separate ways and that they have separate value,” says Sloane. “And everything of CORD rides on the SDN stack that is being gracefully migrated to the next generation SDN.”

The SDN software stack is based on the ONF’s Stratum project and the P4 language, which is now under the ONF’s stewardship. Stratum provides a software layer that supports various application programming interfaces (APIs) and the underlying hardware. The Stratum project started in 2018 and builds on software provided by Google. In addition, the ONF is developing a micro-service ONOS.

The newest reference design, COMAC, creates a unified converged access for the radio access network and broadband, says Sloane.

First, the access and core elements of the fixed and mobile networks are disaggregated; convergence then brings a common framework for both. For example, a single subscriber management system will be used for users whether they are connected over broadband or mobile. This is a sophisticated project and will be developed in line with the work of the 3GPP and Broadband Forum organisations, says the ONF.

“We expect this migration to [COMAC to] be smooth but to enable a whole new set of capabilities for all the applications that run above it,” says Sloane.

Each time a new reference design is created within the ONF, it means operators committing yet more staff from different parts of their organisation to fulfil the project, says Sloane: “Additional people to pursue yet another angle of attack to transform and push their networks forward.”

Next steps

The ONF has started working on version 2.0 releases for SEBA, Trellis and the ODTN reference designs.

“This will be the case for some time as the work matures,” says Sloane. “There will be versions under development for the foreseeable future.”

Operators have a ‘bunch of work’ they want to progress and drive via this work, he says: “We don’t see an end in sight right now.”

COBO brings operational benefits to the data centre

Brad Booth admits the hyperscalers have a problem.

“Our operational inefficiencies are massive and it is only going to get worse,” says Booth, principal network architect for Microsoft’s Azure Infrastructure and chair of the Consortium for On-Board Optics (COBO).

The issue, he says, is that when a switch arrives at the data centre, it comes without the optics installed. The operations staff must unpack the optical modules, plug them into the switch and verify that each is working; an exercise that is repeated thousands of times when they commission a new data centre.

“The time it takes for us to get the network up and running impacts how quickly we can monetise the data centre,” says Booth.

The COBO-enabled 12.8-terabit demonstrator switch. Source: COBO

Moreover, Microsoft wants to use optics to link its servers to the switches. “That is an order of magnitude more optics, which means an order of magnitude more hours,” says Booth. The sum of these hours approaches a year, just for the data centre’s optics.

Operational efficiencies

Booth admits that the focus of the COBO venture has been to overcome the technical challenges and publish the first release of the on-board optics specification. Only recently have the operational benefits of on-board optics become evident.

One of these benefits is that, by its very nature, the on-board optics is already installed and tested before the switch is delivered to the data centre. Booth compares on-board optics to the long-established RJ45 Ethernet connector: “You didn't plug the RJ45 PHY layer in and then plug in the cable; it was already built into the switch.”

COBO also uses new connector designs that simplify the cleaning required when connecting the fibre to the switch.

OFC demonstrator

Microsoft, working with electronics manufacturer Delta, module maker Applied Optoelectronics, and connector suppliers Sumitomo Electric, 3M and Senko, showcased at the OFC show a demonstrator switch using COBO modules.

The proof-of-concept four-rack-unit switch uses Broadcom’s 12.8-terabit Tomahawk 3 Ethernet switch chip (see picture). The top part of the demonstrator switch has been modified to incorporate four 8-lane COBO modules, four 16-lane COBO modules and the new connector types. The switch’s front panel also uses QSFP-DD and OSFP modules that support 400-gigabit pluggable interfaces.

As for the COBO modules, Applied Optoelectronics has developed a 400-gigabit COBO module that supports four 100-gigabit PSM4 interfaces. Other vendors are also developing COBO modules but they have yet to be disclosed. The proof-of-concept platform also offers a choice of COBO connectors from Sumitomo, 3M and Senko.

Sumitomo’s contactless connector has an air gap when it mates. The result is a more open connector that is far simpler to clean than traditional pluggable connectors. The 3M COBO connector has two polymer faces that slide over each other to create the mate. “You just squirt a can of air [onto the surface], insert it, and you are connected,” says Booth. Meanwhile, Senko's SN connector has also been designed with easy cleaning in mind.

Such operational simplification using the connectors promises significant time savings. “We have got to start doing things smarter,” says Booth. “And COBO is enabling that to happen.”

In a separate development, Petra has developed a 16x25-gigabit 400-gigabit COBO multi-mode module that has a 300m reach. Petra is using two such modules on an FPGA card for artificial intelligence tasks. “They have 800 gigabits of bandwidth coming off what looks like a standard network interface card,” says Booth. Microsoft is also exploring using Petra's module for the switch.

At OFC, the proof-of-concept platform was not operational. Delta only received the platform two weeks before the show, fitted the COBO modules and sent it straight back untested. Delta does have tested platforms up and running, however, says Booth.

Market status

COBO was founded in 2015 and only now is the first hardware appearing. At the time, developing 400-gigabit pluggables was seen as a significant technical challenge and there were even questions raised as to whether they would deliver the density needed for data centre switches. Now, a range of 400-gigabit interfaces in the QSFP-DD and OSFP form factors has been announced.

Meanwhile, the topic of co-packaged optics - which brings the optics from the faceplate to reside alongside the chip in the one package - is now receiving industry attention.

Do both developments limit the COBO opportunity?

“When we did this [COBO], we knew people would be able to do a faceplate with 400-gigabit [pluggable] optics,” says Booth. “And what I only got in the last few months is the operational impact [of using COBO].”

At a panel discussion at OFC, companies said they would have 800-gigabit QSFP-DD and OSFP modules by OFC 2020. “At ECOC [in September 2019], we are going to have 800 gigabits in one of these,” says Booth, pointing to a COBO module. “We are already ahead.”

COBO modules can also support 1.6 terabit interfaces once 100-gigabit PAM4 electrical interfaces become available.
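
The capacity figures quoted for COBO modules follow from multiplying the lane count by the per-lane electrical rate. The short sketch below tabulates this for the 8-lane and 16-lane module widths mentioned earlier. The function name is hypothetical, and the 50-gigabit row is an assumption about how an 800-gigabit module might be built; the article itself only gives the 16x25-gigabit and 100-gigabit PAM4 cases.

```python
def cobo_module_gbps(lanes: int, lane_rate_gbps: int) -> int:
    """Aggregate module capacity in Gbps: electrical lanes times per-lane rate."""
    return lanes * lane_rate_gbps


# Per-lane rates in Gbps; the 50G case is an assumed intermediate step.
for lane_rate in (25, 50, 100):
    eight = cobo_module_gbps(8, lane_rate)
    sixteen = cobo_module_gbps(16, lane_rate)
    print(f"{lane_rate}G lanes: 8-lane = {eight}G, 16-lane = {sixteen}G")
```

With 100-gigabit lanes, the 16-lane module reaches 1,600 gigabits, the 1.6-terabit figure cited above.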

InnoLight and Huawei recently joined COBO and during OFC, four other companies - systems vendors and module makers - told COBO they would be joining.

“By 2021, I expect Microsoft will be deploying COBO switches,” says Booth.

Paving the way

COBO is also paving the way to co-packaged optics, or as Booth puts it, COBO has opened up Pandora’s box.

When the idea of an interoperable on-board optics standard was first discussed, there was scepticism regarding how such modules would be serviced, how they couldn't be replaced in the field, and how the switch would need to be disabled when accessing the modules.

“All these things people were saying were correct if you had certain assumptions,” says Booth. “And that was part of the problem with our industry: we got ourselves trained to be lazy.”

But by pursuing COBO, there has been a realisation that things can be done differently, such as with the new style of connectors.

“By cracking that open, COBO has started a more serious discussion about co-packaged optics because now we have moved the optics away from the faceplate.”

Co-packaged optics to debut with 25.6 terabit switch chips

The second article in a series on co-packaged optics.

Part 2: Broadcom - a switch-chip vendor

Source: Gazettabyte

The hyperscalers require ever more switching capacity in their data centres to scale the applications they run. A hierarchy of connected switches fitted with optical interfaces is used to provide the pathways that link the tens of thousands of servers found in data centres.

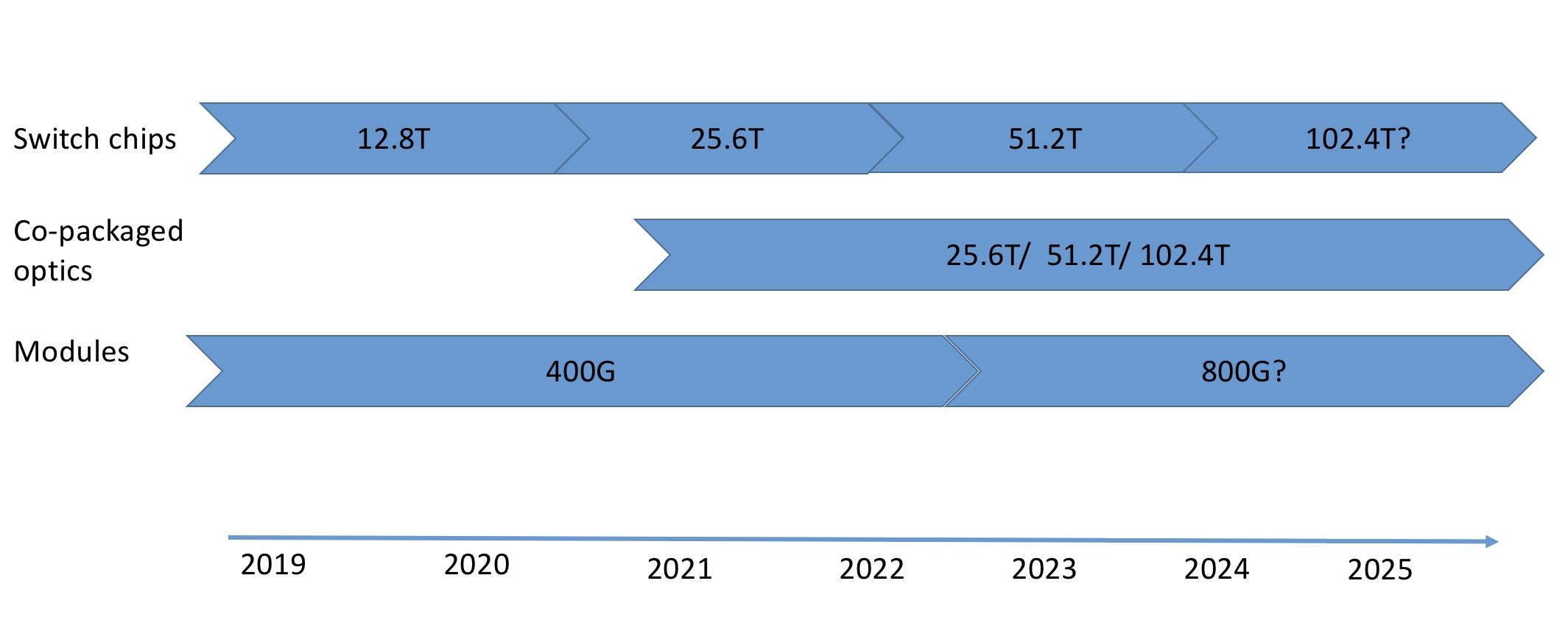

Silicon vendors are responding to this need by doubling the capacity of their switch chips every two years. The largest switch chips have a 12.8-terabit capacity and the first 25.6-terabit devices are expected next year. This relentless pace, however, is one that the optical module makers are struggling to match.

“It is a problem for the optics industry,” says Robert Stone, Distinguished Engineer at leading switch chip player, Broadcom. “The cadence at which we can evolve silicon generally moves a lot faster than the optics guys can monetise a generation of investment, and then reinvest it.”

Co-packaged optics

The result is a schism between the optics and the switch ICs, says Stone.

Data centre operators have had to use 100-gigabit interfaces across two generations of switching silicon. The lag between the two camps is also evident in how certain hyperscalers are adopting 200-gigabit pluggables as a stopgap measure before 400-gigabit modules become mainstream.

The doubling of chip capacity every two years will continue to challenge the optical module engineers. Can faster, power-hungry pluggable optical modules - 800-gigabit modules will follow 400-gigabit ones - fit on the faceplate of a switch at the density required? Currently, 32 400-gigabit optical modules fit on the front panel of a 1-rack-unit (1RU) switch. And if not, what are the alternatives to pluggable modules?
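
The faceplate arithmetic behind this question can be sketched in a few lines. The example assumes, for illustration only, that all of a switch chip's capacity is exposed through front-panel modules of a single speed, with no breakout or oversubscription; the function name is hypothetical.

```python
def modules_needed(switch_capacity_gbps: int, module_rate_gbps: int) -> int:
    """Modules required to expose the full switch capacity, rounded up."""
    return -(-switch_capacity_gbps // module_rate_gbps)


# (switch capacity in Gbps, module speed in Gbps)
cases = [(12_800, 400), (25_600, 400), (25_600, 800)]
for capacity, rate in cases:
    count = modules_needed(capacity, rate)
    print(f"{capacity / 1000:.1f}T switch with {rate}G modules: {count} modules")
```

Under these assumptions, a 12.8-terabit chip needs the 32 400-gigabit modules that fit on a 1RU faceplate today, while a 25.6-terabit chip needs either twice as many 400-gigabit modules or the same number of 800-gigabit ones.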

Two options are being pursued by the optical industry.

One is moving the optics from the switch’s front panel onto the motherboard. Such on-board optics shorten the length of the high-speed traces on the printed circuit board (PCB) linking the switch ASIC and the optics. In turn, the front-panel space freed up by ditching pluggables improves the ventilation and cooling of the switch.

The second option, co-packaged optics, places the optics with the ASIC in the same package.

Placing the optics next to the switch chip enables the high-speed serialiser-deserialiser (serdes), the circuit that gets data on and off the chip, to be simplified. No longer will the serdes have to drive very high-speed signals all the way to the front panel’s pluggables. This simplifies the PCB design, constrains the switch chip’s overall power consumption given how hundreds of serdes are used, and reduces the overall die area they consume.

At a recent panel session at the OFC show, held in San Diego in March, entitled Beyond 400G for Hyperscaler Data Centres, the consensus was that front-panel pluggable optics will continue for at least two more generations of switch chip: 25.6 terabits and 51.2 terabits.

“We can build such systems with conventional front-panel optics with a bit of hard work,” says Stone, a participant in the OFC panel discussion. For example, a 2RU-high 25.6-terabit switch platform will accommodate 64, 800-gigabit modules.

The ‘hard work’ refers to tackling the heat generated by the chip and, in particular, the power consumed by all the serdes. But these are engineering challenges, not fundamental physics issues, and they can be overcome, says Stone.

But there is an industry acceptance that continuing to increase the speed of client-side pluggables has a limited future and that change is coming.

Overlapping worlds

Stone’s belief is that co-packaged optics will first be deployed alongside pluggables, enabling the hyperscalers to deploy both technologies in their data centres. This will reduce the risk associated with introducing the new technology, such as a supply constraint or a reliability issue.

Indeed, this is the guidance Stone has been giving the silicon photonics players developing co-packaged optics. It is all well and good for a company to come up with a very low-power, dense wavelength grid optical interface design, he says, but the co-packaged device will need to interoperate with conventional front-panel pluggable optics.

“It may just be that operators hedge their bets by deploying half co-packaged optics and half conventional optics to make sure they don’t completely strand themselves,” says Stone.

Stone is confident that there will be co-packaged optics solutions with the advent of 25.6-terabit and 51.2-terabit switch chips.

“I don’t think they will be necessary,” he adds. “It will be more a cost and power optimisation in those generations.” But pursuing co-packaged optics provides a way for companies to differentiate themselves and innovate.

Stone also notes that certain switch chip vendors are separating the serdes input-output (I/O) circuitry, resulting in standalone dies that surround the packet-processing core. Such serdes ‘tiles’ lend themselves to a co-packaged design.

“Once you have split off the I/O, you may then switch the electrical I/O to optical I/O,” says Stone. “Obviously, there is a lot of detail to be worked out but the move away from a big monolithic-chip package makes it friendlier to do such integration.”

Challenges

But co-packaged optics presents its own challenges.

Stone says that it is still uncertain whether co-packaged optics will be a must for the switch chip generation after 51.2 terabits although he believes that is when such optics becomes compelling.

“Having said that, it is not a complete slam-dunk in that generation either because now you are aggregating a lot of heat in a very small space,” he says. “If you take a 100-terabit chip, or whatever the number will be, and you pack all that optics onto it, trying to cool that is going to be very hard.” That suggests water-cooling will be needed as air-cooling will not be sufficient.

Unless there is a need to solve a feasibility challenge, designers will continue to cling to what they have done historically. “It is comfortable, people know how to do it, and the supply chain is already built up,” he says. All these factors change with the embrace of co-packaged optics.

The industry has yet to run into that feasibility gap but what has changed is that the industry now acknowledges that it is coming.

Lumentum completes sale of certain datacom lines to CIG

Brandon Collings, CTO of Lumentum, talks CIG, 400ZR and 400ZR+, COBO, co-packaged optics and why silicon photonics is not going to change the world.

Lumentum has completed the sale of part of its datacom product lines to design and manufacturing company, Cambridge Industries Group.

The sale will lower the company's quarterly revenues by between $20 million and $25 million. Lumentum also said that it will stop selling datacom transceivers in the next year to 18 months.

The move highlights how fierce competition and diminishing margins from the sale of client-side modules are causing optical component companies to rethink their strategies.

Lumentum’s focus is now to supply its photonic chips to the module makers, including CIG. “From a value-add point of view, there is a lot more value in selling those chips than the modules,” says Brandon Collings, CTO of Lumentum.

400ZR and ZR+

Lumentum will continue to design and sell line-side coherent optical modules, however.

“With coherent, there is a lot of complexity and challenge in the module’s design and manufacture,” says Collings. “We believe we can extract the value we need to continue in that business.”

The emerging 400ZR and 400ZR+ are examples of such challenging coherent interfaces.

The 400ZR specification, developed by the Optical Internetworking Forum (OIF), is a 400-gigabit coherent interface with an 80km reach. The 400 gigabit-per-second (Gbps) line rate will be achieved using a 64-gigabaud symbol rate and a 16-QAM modulation scheme.
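
The relationship between symbol rate, modulation and line rate can be checked with a rough calculation. The sketch below assumes dual-polarisation transmission, which is standard for coherent interfaces but is not stated in the text above, and it uses the round 64-gigabaud figure quoted here rather than an exact specification value. The function name is hypothetical.

```python
import math


def raw_line_rate_gbps(symbol_rate_gbaud: float,
                       constellation_points: int,
                       polarisations: int = 2) -> float:
    """Raw bit rate: symbols/s x bits per symbol x number of polarisations."""
    bits_per_symbol = math.log2(constellation_points)  # 16-QAM carries 4 bits
    return symbol_rate_gbaud * bits_per_symbol * polarisations


raw = raw_line_rate_gbps(64, 16)  # 64 GBd, 16-QAM, two polarisations (assumed)
headroom = raw / 400 - 1          # fraction of raw rate above the 400G payload
print(f"raw rate: {raw:.0f} Gbps, headroom over 400G: {headroom:.0%}")
```

On these assumptions the raw rate comes out above 400Gbps; the difference is the room available for forward-error correction and framing overhead.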

Module makers will implement the 400ZR interface using client-side pluggable modules such as the QSFP-DD and the OSFP to enable data centre operators to add coherent interfaces directly to their switches.

But implementing 400ZR will be a challenge. “This is not client-side,” says Collings. “Sixty-four gigabaud is very hard to do in such an extremely compact form factor.”

First samples of 400ZR modules are expected by year-end.

The 400ZR+ interface, while not a specification, is a catch-all term for a 400-gigabit coherent interface that exceeds the 400ZR specification. The 400ZR+ will be a multi-rate design that will support additional line rates of 300, 200 and 100Gbps. Such rates coupled with more advanced forward-error correction (FEC) schemes will enable the 400ZR+ to span much greater distances than 80km.

The 400ZR+ interface helps the developers of next-generation coherent DSP chips to recoup their investment by boosting the overall market their devices can address. “It is basically a way of saying I’m going to spend $50 million developing a coherent DSP, and the 400ZR market alone is not big enough for that investment,” says Collings.

Lumentum says it will be possible to fit some additional functionality into a QSFP-DD such that at least one of the ZR+ modes will be supported. But given the QSFP-DD module’s compactness and power constraints, the ZR+ will also be implemented in the CFP2 form factor, which has the headroom needed to fully exploit the coherent DSP’s capabilities and so also address metro and regional networks.

400ZR+ modules are expected in volume by the end of 2020 or early 2021.

DSP economics

Lumentum will need to source a coherent DSP for its 400ZR/ZR+ designs as it does not have its own coherent chip. At the recent OFC show held in San Diego, the talk was of new coherent DSP players entering the marketplace to take advantage of the 400ZR/ZR+ opportunity. Collings says he is aware of five DSP players but did not cite names.

NEL and Inphi are the two established suppliers of merchant coherent DSPs. Lumentum (Oclaro) has partnered with Acacia Communications to use its Meru DSP for Lumentum’s CFP2-DCO design, although it is questionable whether Acacia will license its DSP for 400ZR/ZR+, at least initially.

Lumentum and Oclaro also partnered with Ciena to use its WaveLogic Ai for a long-haul module. That leaves room for at least one more provider of a coherent DSP that could be a new entrant or an established system vendor that will license an internal design.

Collings points out that it makes no sense economically to have more than five players. If it takes $50 million to tape out a 7nm CMOS coherent DSP, the five players will invest a total of $250 million. And if the investment cost for the module, photonics and everything else is a comparable amount, that equates to $500 million being spent on the 400-gigabit coherent generation.

As for the opportunity, Collings talks of a total of up to 500,000 ports a year by 2020. That equates to an investment return in the first year of $1,000 per device sold. “God forbid if 10 or more players are doing this as no matter how you slice it, people will be losing [money].”
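
Collings' back-of-the-envelope numbers can be reproduced as follows. The figures are those quoted above; the variable names are hypothetical, and the 10-player case is an extrapolation that simply reuses the same per-player cost and the same port volume.

```python
DSP_TAPEOUT_USD = 50_000_000  # quoted cost to tape out a 7nm coherent DSP
PORTS_PER_YEAR = 500_000      # quoted upper estimate of ports a year by 2020


def investment_per_port(players: int) -> float:
    """Industry-wide investment to recover per port sold in the first year."""
    dsp_total = players * DSP_TAPEOUT_USD
    # Module, photonics and everything else assumed comparable to the DSP spend.
    total_investment = 2 * dsp_total
    return total_investment / PORTS_PER_YEAR


print(investment_per_port(5))   # 1000.0
print(investment_per_port(10))  # 2000.0
```

Doubling the number of players while the port volume stays fixed doubles the amount each port sold must recover, which is the point behind Collings' warning.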

Beyond pluggables

The evolution of optics beyond pluggables was another topic under discussion at OFC.

The Consortium for On-Board Optics (COBO), the developer of an interoperable optical solution that embeds optics on the line card, had a stand at the show and a demonstration of its technology. In turn, co-packaged optics, the stage after COBO in the evolution of optical interfaces that will integrate the optics with the silicon in one package, is now also on companies' agenda.

Collings explains that COBO came about because the industry thought on-board optics would be needed given the challenge of 400-gigabit pluggables meeting the interface density needed for 12.8-terabit switches. “I shared that opinion four to five years ago,” he says, adding that Lumentum is a member of COBO.

But 400-gigabit optics has been engineered to meet the required faceplate density, including ZR for coherent. As a result, COBO is less applicable. “That need to break the paradigm is a lot less,” he says.

That said, Collings says COBO has driven valuable industry discussion given that the data centre is heading in a direction where 32 ports of 800-gigabit interfaces will be needed to get data in and out of next-generation, 25-terabit switches.

“That problem is real,” says Collings. “It is a matter of how far the current engineering can go before it becomes too painful.” Scaling indefinitely what is done today is not an option, he says.

It is possible with the next generation of switch chip to simply use a two-rack-unit box with twice as many 400-gigabit modules. “That has already been done at the 100-gigabit generation that lasted longer because it doubled up the 100-gigabit port count,” he says.

“In the generation after that, you are now asking for stuff that looks very challenging with today’s technology,” he says. “And that is where co-packaging is focused, the 50-terabit switch generation.” Switches using such capacity silicon are expected in the next four years.
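The faceplate arithmetic behind these switch generations can be sketched in a few lines of Python. The article rounds the later generations to 25 and 50 terabits; the exact capacities of 25.6 and 51.2 terabits used here are an assumption, as are the names in the code.

```python
import math

# Switch capacities in gigabits per second. The article rounds the later
# generations to 25 and 50 terabits; 25.6 and 51.2 are assumed here.
SWITCH_CAPACITY_GBPS = {"12.8T": 12_800, "25.6T": 25_600, "51.2T": 51_200}
PORT_SPEEDS_GBPS = (400, 800)


def ports_needed(switch_capacity_gbps: int, port_speed_gbps: int) -> int:
    """Ports required to carry the full switch capacity off the box."""
    return math.ceil(switch_capacity_gbps / port_speed_gbps)


for name, capacity in SWITCH_CAPACITY_GBPS.items():
    for speed in PORT_SPEEDS_GBPS:
        print(f"{name} switch, {speed}G ports: {ports_needed(capacity, speed)}")
```

Under these assumptions, a 25.6-terabit switch needs 32 ports at 800 gigabits or 64 ports at 400 gigabits, the doubling that a two-rack-unit box accommodates. A 51.2-terabit switch needs 64 ports even at 800 gigabits.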

But this is where it gets tricky, as co-packaging not only presents significant technical challenges but also will change the supply chain and business models.

Collings points out that hyperscalers do not like making big pioneering investments in new technology; rather, they favour buying commodity hardware. “They don’t like risk, they love competition, and they like a healthy ecosystem,” he says.

“There is a lot of talk from the technology direction of how we can solve this problem [using co-packaged optics] but I think on the business side, the risk side, the investment side is putting a lot of pressure on that actually happening,” says Collings. “Where it ends up I don’t honestly know.”

Silicon photonics

One trend evident at OFC was the growing adoption of silicon photonics by optical component companies.

Indeed, the market research firm, LightCounting, in a research note summarising OFC 2019, sees silicon photonics as a must-have technology given co-packaged optics is now clearly on the industry’s roadmap.

However, Collings stresses that Lumentum’s perspective remains unchanged regarding the technology.

“It’s a fabless exercise so we can participate in silicon photonics and, quite frankly, that is why a lot of other companies are participating because the barrier to entry is quite low,” says Collings. “Nevertheless, we look at silicon photonics as another tool in the toolbox: it has advantages in some areas, some significant disadvantages in others, and in some places, it is simply comparable.”

When looking at a design from a system perspective such as a module, other considerations come into play besides the cost of the silicon photonics chip itself. Collings cites the CFP2 coherent module. While the performance of its receiver is good using silicon photonics, the modulator is questionable. You also need a laser and a semiconductor optical amplifier to compensate for silicon photonics’ higher loss, he says.

The alternative is to use an indium phosphide-based design and that has its own design issues. “What we are finding when you look at the right level is that the two are the same or indium phosphide has the advantage,” says Collings. “And as we go faster, we are finding silicon is not really keeping up in bandwidth and performance.”

As a result, Lumentum is backing indium phosphide for coherent operating at 64 gigabaud.

“A lot of people are talking about silicon photonics because they can talk about it,” says Collings. “It’s not worthless, don’t get me wrong, but its success outside of Acacia has been niche, and Acacia is top notch at doing this stuff.”

Macom readies its silicon photonics platform for 400G

- Macom has announced a laser-integrated photonic integrated circuit (L-PIC) for the 400G-FR4 standard
- The company is also working with GlobalFoundries to use the semiconductor foundry’s 300mm wafer silicon photonics process

Vivek Rajgarhia (centre) being interviewed at OFC. Source: Macom.

Macom has detailed its latest silicon photonics chip to meet the upcoming demand for 400-gigabit interfaces within the data centre.

The chip, a laser-integrated photonic integrated circuit (L-PIC), was unveiled at the OFC show held last month in San Diego. The L-PIC implements the transmitter circuitry for the 400G FR4 2km interface standard.

Backing silicon photonics

“Five to six years ago, we saw that silicon photonics would have a key role to play in photonics and optical interconnect,” says Vivek Rajgarhia, senior vice president and general manager, lightwave at Macom.

Macom acquired several companies to gain the capabilities needed to become a silicon photonics player.

In 2014 the company paid $230 million for BinOptics which provided Macom with etched facet laser technology that plays a key role in how its L-PIC platform is assembled. Also acquired was the silicon photonics design company, Photonic Controls. In 2015 Macom added FiBest, a packaging specialist, for $60 million.

“We also have the electronics expertise to go alongside [the photonics] to provide chipset solutions,” says Rajgarhia.

Laser-integrated PIC

The biggest challenge in silicon photonics is integrating the laser, says Rajgarhia. Coupling and aligning the laser, especially when developing optical interfaces for the high-volume data centre market, needs to be done in a cost-effective and scalable way, he says.

The L-PIC, a coarse wavelength division multiplexing (CWDM) design, tackles this by having four cavities for the lasers. “Each laser is flip-chipped and inserted into a cavity without any lens or isolator, and without active alignment,” says Rajgarhia.

The self-alignment is possible by using the etched-facet laser technology from BinOptics. “When you cleave the laser facet, the dimensional control has a lot of play - the tolerance is very high - but with an etched facet, you lithographically define the mechanical dimensions,” he says. “We create a cavity in the silicon that matches the laser’s dimensions.” Macom has also incorporated multiple alignment structures as part of its L-PIC platform to enable the self-alignment.

Macom has already developed the L-PIC for the 100-gigabit CWDM4 standard. “We started with the CWDM4 because it had four wavelengths,” says Rajgarhia. “The CWDM4 is a more challenging design [than the 100-gigabit PSM4 interface] because it requires multiplexing.”

The L-PIC has now been extended to support 100-gigabit channels, to address the DR single channel and the four-channel 400-gigabit FR4 standards. The modulator bandwidth had to be extended and the laser power is different but the approach - the platform - remains the same, says Rajgarhia.

Macom refers to the L-PIC as a smart device. The electro-absorption modulated lasers (EMLs) used for the FR4 are uncooled. The L-PIC includes ‘structures’ in the silicon such as heaters for tuning the optical elements and photo-detectors that monitor the optical performance. Macom has developed an accompanying micro-controller that sets and controls the device using such structures.

“We have developed software which we give to customers,” says Rajgarhia. “You can type in what extinction ratio you want, what power you want and it sets that up.”

The company has also started the FR4 receiver development that will also be an integrated design with a demultiplexer and four optical receiver channels.

Macom is not saying when the L-PIC will be available. However, the company says 'meaningful demand' for 400-gigabit interfaces will start from 2021.

GlobalFoundries

Macom also announced at OFC that it is working with GlobalFoundries to use the chip maker’s 90nm silicon-on-insulator 300mm wafer processing line.

“Today, as a photonics company, if you don’t have a play in silicon photonics, you are legacy,” says Rajgarhia, adding that in order to make money, what is needed is a working solution that can scale.

“When we started developing [silicon photonics devices], we and others used research foundries to get our products ready,” says Rajgarhia. “Now, what we have announced is that we are scaling this up at GlobalFoundries.”

Macom has started the development at GlobalFoundries’ East Fishkill fab, the former IBM Microelectronics site that has undertaken a lot of research in silicon photonics, says Rajgarhia.

GlobalFoundries recently created a process development kit (PDK) for its silicon photonics line. Now Macom is an early user of the PDK.

Last year, silicon photonics start-up, Ayar Labs, entered into a strategic agreement with GlobalFoundries, providing the foundry with its optical input-output (I/O) technology while gaining access to its 45nm silicon photonics process.

Acacia bets on silicon as coherent enters its next phase

Gazettabyte interviewed Acacia Communications’ president and CEO, Murugesan ‘Raj’ Shanmugaraj, as the coherent technology company celebrates its 10th anniversary.

Raj Shanmugaraj

Acacia Communications has come a long way since Raj Shanmugaraj (pictured) first joined the company as CEO in early 2010. “It was just a few conference rooms and we didn't have enough chairs,” he says.

The company has since become a major optical coherent player with revenues of $340 million in 2018; revenues that would have been higher but for the four-month trade ban imposed by the US on Chinese equipment maker ZTE, an Acacia customer.

And as the market for coherent technology continues to grow, Acacia and other players are preparing for new opportunities.

“We are still in the early stages of the disruption,” says Shanmugaraj. “You will see higher performance [coherent systems] in some parts of the network but there is going to be growth as coherent moves closer to the network edge.”

Here, lower power, flexibility and more integrated coherent solutions will be needed as the technology moves inside the data centre and closer to the network edge with the advent of 5G, higher-speed access and the Internet of Things (IoT).

Competitive landscape

Shanmugaraj prefers to focus on Acacia’s own strengths and products when asked about the growing competition in the coherent marketplace. However, recent developments present challenges for the company.

Systems vendors such as Huawei and Ciena are becoming more vertically integrated, developing not only their own coherent digital signal processor (DSP) ASICs but also optics. Ciena has also made its WaveLogic Ai DSP available to optical module makers Lumentum and NeoPhotonics and will sell its own optical modules using its latest WaveLogic 5 coherent silicon.

New coherent digital signal processor (DSP) players are also expected to enter the marketplace alongside established competitors, NEL and Inphi. The entrance of new players developing coherent DSPs is motivated by the unit volumes promised by 400ZR, the emerging 80km data centre interconnect interface standard.

“We are proponents of the fact that the merchant market will continue to grow, driven by interoperability and standardisation,” says Shanmugaraj. Such growth will lead to multiple markets where coherent technology will play. “There are going to be a few winners, not just one or two,” he says.

Acacia’s revenues were hit in 2018 following the US Department of Commerce’s enforced trade ban imposed on ZTE. However, the company recorded a strong fourth quarter posting revenues of $107 million, up almost a quarter on the revenues a year earlier. This followed strong ZTE orders after the ban was revoked.

Shanmugaraj says diversification has always been a priority for the company, independent of the trade issues between the US and China. The company has also been working to diversify its Chinese customer base. “So we are well positioned as these trade issues get resolved,” he says.

Origins

Acacia was established in mid-2009 by a core team from Mintera, a sub-system supplier that provided 40-gigabit DPSK line cards to network equipment suppliers. But Mintera folded and was eventually sold to Oclaro in July 2010.

Before joining Acacia, Shanmugaraj was at systems vendor Alcatel-Lucent where he learned two lessons.

One is that the long-term success of a company is based on technology leadership. “You want to be driven by technology or you fall behind your competitors,” he says. The second lesson was that the largest systems companies build products internally before an ecosystem becomes established, after which they buy from merchant suppliers.

This matched the vision of Acacia’s founders that sought to exploit their optical expertise gained at Mintera to become a leading merchant supplier of coherent transmission technology.

Stealth years

Acacia remained in secrecy for nearly half its existence, only revealing its technology and products in 2014 with the launch of the AC-100 CFP coherent pluggable module. The AC-100 is aimed at metro networks delivering a transmission reach of 80km to 1,200km. However, Acacia had already been selling 5x7-inch modules for 100-gigabit long-haul and ultra-long-haul applications as well as a 40-gigabit ultra-long-haul module.

“In the early years, there were just a few companies working on coherent,” says Shanmugaraj. “We had to be careful in terms of what products we were developing and what customers we were going after.”

Shanmugaraj says Acacia secured multi-million dollar commitments from customers even before it had a product. “It was the expertise of the founding team as well as the product concepts they were proposing that got them the commitments,” he says.

The backing enabled the company to manage with only $53 million of venture funding prior to its successful initial public offering in 2016.

“This was a pretty significant feat,” says Shanmugaraj. “Hardware start-ups, whether semiconductor or systems companies, use significantly more cash; these are expensive technologies to get off the ground.”

Shanmugaraj describes the early years as intense, with staff working between 60 and 70 hours a week. The then start-up had to be prudent with funding, not growing too quickly yet having sufficient resources to meet orders from systems customers that had their own orders to fulfil.

Coherent technologies

Acacia’s founders chose silicon for its coherent solutions, to replace ‘exotic materials’ such as indium phosphide and lithium niobate used in traditional optical transmission systems.

The company backed silicon photonics for the coherent optics, an industry trailblazing decision. To this aim, Acacia recruited Chris Doerr, the renowned optical integration specialist and Bell Labs Fellow.

The company also decided to develop its own coherent DSPs. By developing the optics and the DSP, Acacia could use a co-design approach when designing the hardware, trading off the performance of the optics and the signal processing to achieve an optimal design.

Shanmugaraj explains that the company chose a silicon-based approach to exploit the huge investment made by the semiconductor industry in chips and their packaging. Basing the components on silicon would not only simplify high-speed networks, he says, but it would also lower their power consumption and enable products to be made more quickly and cheaply.

“The beauty of silicon photonics is that it can be placed right next to a heat source, in this case, the high-power coherent DSP ASIC that generates a lot of heat,” says Shanmugaraj. “This allows for smaller form-factor designs.” In contrast, indium phosphide-based optics need to be temperature controlled when placed next to a hot chip, he says.

“Five or six years ago, people were challenging whether silicon photonics was even going to work at 100 and 200 gigabits,” says Shanmugaraj. Acacia has now used silicon photonics in all its products, including its latest high-end 1.2-terabit AC1200 coherent module.

Shanmugaraj sees Acacia's portfolio of coherent products as the company's biggest achievement: "You see start-ups that come out with one product that is a bestseller but we have continued to innovate and today we have a broad portfolio."

AC1200

The AC1200 module supports two optical wavelengths, each capable of supporting 100 to 600-gigabit transmissions in increments of 50 gigabits.
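As a rough illustration of that flexibility, the following Python sketch lists the per-wavelength rates implied by the figures above and the resulting maximum module capacity. The constant and variable names are illustrative and are not taken from Acacia's documentation.

```python
# Figures from the text: two wavelengths, each 100 to 600 gigabits
# in 50-gigabit increments.
MIN_RATE_GBPS = 100
MAX_RATE_GBPS = 600
STEP_GBPS = 50
WAVELENGTHS = 2

# Every rate a single wavelength can be set to.
per_wavelength_rates = list(
    range(MIN_RATE_GBPS, MAX_RATE_GBPS + STEP_GBPS, STEP_GBPS)
)

# Capacity with both wavelengths running at the top rate.
max_module_capacity_gbps = WAVELENGTHS * MAX_RATE_GBPS

print(per_wavelength_rates)
print(f"Maximum capacity: {max_module_capacity_gbps} Gb/s")
```

Two wavelengths at the top rate of 600 gigabits give the 1.2 terabits from which the module takes its name.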

The AC1200 can be used for data centre interconnect links through to long distance submarine links. Acacia recently demonstrated the AC1200 transmitting a 400-gigabit signal over a 6,600km submarine cable.

“We are seeing strong interest in our AC1200 from network operators and expect our equipment customers to begin deployments this quarter,” says Shanmugaraj.

There are several reasons why network operators are choosing to deploy the AC1200, he says: “High capacity is important in data centre interconnect edge applications where we expect hyperscale operators may use the AC1200 in its full 1.2-terabit mode, but these applications are also sensitive to cost, power and density.”

The AC1200 also provides higher capacity in a smaller footprint than the 5x7-inch form factors currently available, he says, while for longer-reach applications, the AC1200 offers a combination of performance and flexibility that is setting the pace for the competition.

The data centre interconnect market represents a good opportunity for coherent interconnect suppliers because the operators drive and deploy technology at pace, says Shanmugaraj. Hyperscalers are continually looking to add more capacity in the same size and power constraints that exist today. Accordingly, this has been a priority development area for Acacia.

To increase capacity, companies have boosted the symbol rate from 32 gigabaud to 64 gigabaud while systems vendors Ciena and Infinera have recently detailed upcoming systems that support 800-gigabit wavelengths that use a symbol rate approaching 100 gigabaud.

The AC1200, which is due in systems in the coming quarter, demonstrates silicon photonics based modulation operating at up to 70 gigabaud while first indium-phosphide 800-gigabit per wavelength systems are due by the year-end.

“We don’t really see silicon photonics lagging behind indium phosphide,” says Shanmugaraj. “We think there is a path to even higher baud rates with silicon photonics, and 128 gigabaud is the next logical step up because it would double the data rate without needing to increase the modulation order.”

Higher modulation orders are also possible but the benefits must be weighed against increased complexity, he says.

400-gigabit coherent pluggables

Shanmugaraj says that the 400ZR pluggable module standard continues the trend to reduce the size and power consumption of optical transport systems in the data centre.

The current generation of data centre interconnect platforms, ranging from a 1 rack unit pizza box to a several rack-unit-sized chassis, were developed to be more compact than conventional optical transport platforms.

Now, with the advent of 400ZR that fits into a client-side QSFP-DD or OSFP module, data centre operators will be able to do away with such platforms for distances up to 80km by plugging the modules into the switch or router platforms and connecting them to open line systems.

“Costs come down because it [coherent] is getting down to the client-side form factors and that gives the hyperscalers more faceplate density,” says Shanmugaraj. “The hyperscalers also gain multi-vendor interoperability [with 400ZR] which is important as they want standardisation.”

Shanmugaraj admits that the advent of 400ZR will bring greater competition. But he points out that the 400ZR is a complicated product to build that will challenge companies. Those players that have both the optics and a low-power DSP will have an advantage. “As long as it opens up the market wider, it is good for Acacia as it is in our control how we can win in the market,” says Shanmugaraj.

The industry expectation is that the 400ZR will start to be deployed in the second half of 2020.

There is also industry talk about 400ZR+, an interface that will be able to go beyond 80km that will require more advanced dispersion compensation and forward error correction schemes.

Shanmugaraj says it will be the same DSP ASIC that will support both the 400ZR and 400ZR+. However, a 400ZR+ interface will consume more power and so will likely require a larger module form factor than the ZR.

Meanwhile, the 400-gigabit CFP2-DCO pluggable for metro networks is built along the same lines as the 400ZR, says Shanmugaraj.

“Here you have applications like the Open ROADM MSA where network operators are trying to drive the same interoperability and not be stuck with one vendor,” he says. “This is driving the 400-gigabit evolution in the metro network for some of the largest telcos.”

There is also the open networking packet-optical opportunity, white-box platforms such as the Voyager and Cassini being developed by the Telecom Infra Project (TIP). Shanmugaraj says such white boxes rely on software solutions that are a work-in-progress and that much work is still to be done.

“The first generation showed that there is more work required to standardise the software and how that can be used by the hyperscalers,” he says. “It is an opportunity but we view it as more of a longer-term one.”

Emerging opportunities

The markets that are growing today are the metro, long haul, sub-sea and data centre interconnect, says Shanmugaraj.

The coherent applications that are emerging will result in products within the data centre as well as for 5G, access, the Internet of Things (IoT) and even autonomous vehicles.

Ultimately, what will lead to coherent being adopted within the data centre is the speed of the interfaces. “As you go to higher speeds, direct detection technology gets constrained [due to dispersion and other impairments],” says Shanmugaraj.

But for this to happen certain conditions will need to be met: the speed of interfaces on switches will need to increase, not just to 400 gigabits but 800 gigabits and greater.

“Looking to higher data rates beyond 400 gigabits, it gets more challenging for direct detect to achieve the necessary link budgets cost-effectively,” says Shanmugaraj. “It may be necessary to move from four-lane solutions to eight lanes in order to support the desired reaches. At the same time, we are working to make coherent more cost-effective for these applications.”

The other two conditions are the challenge of what form factors the coherent technology can be squeezed into, and cost. Coherent optics is more expensive but its cost is driven by such factors as volumes, the level of automation that can be used to make the module, and the yield.

“There could be inflection points where coherent becomes cost-competitive for some applications in the data centre,” says Shanmugaraj.

Companies will continue to innovate in both direct detect and coherent technologies and the market will determine the transition points. “But we do believe that coherent can be adopted inside data centres in the future,” he says.

In turn, metro and long-haul networks are already being upgraded in anticipation of 5G and the access requirements. “4G networks have a lot of 1-gigabit and 10-gigabit links but 5G has an order of magnitude higher throughput requirement,” says Shanmugaraj.

That means more capacity is needed for backhaul and that will lead to a proliferation of low-cost 100-gigabit coherent. A similar story is unfolding in access with the likes of the cable operators moving fibre closer to the network edge. This too will need low-cost 100-gigabit coherent interfaces.

IoT is a longer term opportunity and will be dependent on dense deployments of devices before the traffic will require sufficient aggregation to justify coherent.

“I don’t know if your refrigerator will have a coherent interface,” concludes Shanmugaraj. “But as you aggregate these [devices] into aggregation points, that becomes a driver for coherent at the edge.”

Data centre interconnect drives coherent

- NeoPhotonics announced at OFC a high-speed modulator and intradyne coherent receiver (ICR) that support an 800-gigabit wavelength
- It also announced limited availability of its nano integrable tunable laser assembly (nano-ITLA) and demonstrated its pico-ITLA, an even more compact silicon photonics-based laser assembly
- The company also showcased a CFP2-DCO pluggable

NeoPhotonics unveiled several coherent optical transmission technologies at the OFC conference and exhibition held in San Diego last month.

“There are two [industry] thrusts going on right now: 400ZR and data centre interconnect pizza boxes going to even higher gigabits per wavelength,” says Ferris Lipscomb, vice president of marketing at NeoPhotonics.

Ferris Lipscomb

The 400ZR is an interoperable 400-gigabit coherent interface developed by the Optical Internetworking Forum (OIF).

Optical module makers are developing 400ZR solutions that fit within the client-side QSFP-DD and OSFP pluggable form factors, first samples of which are expected by year-end.

800-gigabit lambdas

Ciena and Infinera announced in the run-up to OFC their latest coherent systems - the WaveLogic 5 and ICE6, respectively - that will support 800-gigabit wavelengths. NeoPhotonics announced a micro intradyne coherent receiver (micro-ICR) and modulator components that are capable of supporting such 800-gigabit line-rate transmissions.

NeoPhotonics says its micro-ICR and coherent driver modulator are class 50 devices that support symbol rates of 85 to 90 gigabaud required for such a state-of-the-art line rate.

The OIF classification defines categories for devices based on their analogue bandwidth performance. “With class 20, the 3dB bandwidth of the receiver and the modulator is 20GHz,” says Lipscomb. “With tricks of the trade, you can make the symbol rate much higher than the 3dB bandwidth such that class 20 supports 32 gigabaud.” Thirty-two gigabaud is used for 100-gigabit and 200-gigabit coherent transmissions.

Class 50 refers to the highest component performance category where devices have an analogue bandwidth of 50GHz. This equates to a baud rate close to 100 gigabaud, fast enough to achieve data transmission rates exceeding a terabit. “But you have to allow for the overhead the forward-error correction takes, such that the usable data rate is less than the total,” says Lipscomb (see table).

Source: Gazettabyte, NeoPhotonics
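The relationship Lipscomb describes between symbol rate and line rate can be sketched as follows. This is a minimal illustration rather than anything from NeoPhotonics: the function names are hypothetical, and the modulation formats and the overhead fraction are assumptions. It takes dual-polarisation QPSK, 16-QAM and 64-QAM to carry 2, 4 and 6 bits per symbol per polarisation.

```python
def raw_line_rate_gbps(gigabaud: float, bits_per_symbol: int,
                       polarisations: int = 2) -> float:
    """Raw line rate: symbol rate x bits per symbol x polarisations."""
    return gigabaud * bits_per_symbol * polarisations


def usable_rate_gbps(raw_gbps: float, overhead_fraction: float) -> float:
    """Data rate left once forward-error correction and framing overhead
    is removed. The overhead fraction is an illustrative input."""
    return raw_gbps / (1 + overhead_fraction)


# 32 gigabaud with QPSK and 16-QAM (assumed formats).
raw_qpsk = raw_line_rate_gbps(32, bits_per_symbol=2)    # 128 Gb/s raw
raw_16qam = raw_line_rate_gbps(32, bits_per_symbol=4)   # 256 Gb/s raw

# Close to 100 gigabaud with 64-QAM (assumed format).
raw_64qam = raw_line_rate_gbps(100, bits_per_symbol=6)  # 1,200 Gb/s raw

# With a hypothetical 28 percent overhead, the 128 Gb/s raw rate
# leaves roughly 100 Gb/s of usable data.
print(round(usable_rate_gbps(raw_qpsk, overhead_fraction=0.28)))
```

Under these assumptions, 32 gigabaud yields raw rates a little above 100 and 200 gigabits, and a symbol rate near 100 gigabaud exceeds a terabit before the overhead is subtracted. Doubling the symbol rate doubles the line rate without changing the modulation order.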

Silicon photonics-based COSA

NeoPhotonics also announced a 64-gigabaud silicon photonics-based coherent optical subassembly (COSA). The COSA combines the receiver and modulator in a single package that is small enough to fit within a QSFP-DD or OSFP pluggable for applications such as 400ZR.

Last year, the company announced a similar COSA implemented in indium phosphide. In general, it is easier to do higher speed devices in indium phosphide, says Lipscomb, but while the performance in silicon photonics is not quite as good, it can be made good enough.

“It [silicon photonics] is now stretching certainly into the Class 40 [that supports 600-gigabit wavelengths] and there are indications, in certain circumstances, that you might be able to do it in the Class 50.”

Lipscomb says NeoPhotonics views silicon photonics as one more material that complements its indium phosphide, planar lightwave circuit and gallium arsenide technologies. “Our whole approach is that we use the material platform that is best for a certain application,” says Lipscomb.

In general, coherent products for telecom applications take time to ramp in volumes. “With the advent of data centre interconnect, the volume growth is much greater than it ever has been in the past,” says Lipscomb.

NeoPhotonics’ interest in silicon photonics is due to the manufacturing benefits it brings that help to scale volumes to meet the hyperscalers’ requirements. “Whereas indium phosphide has very good performance, the infrastructure is still limited and you can’t duplicate it overnight,” says Lipscomb. “That is what silicon photonics does, it gives you scale.”

NeoPhotonics also announced the limited availability of its nano integrable tunable laser assembly (nano-ITLA). “This is a version of our external cavity ITLA that has the narrowest line width in the industry,” says Lipscomb.

The nano-ITLA can be used as the source for Class 50, 800-gigabit systems and current Class 40 600 gigabit-per-wavelength systems. It is also small enough to fit within the QSFP-DD and OSFP client-side modules for 400ZR designs. “It is a new compact laser that can be used with all those speeds,” says Lipscomb.

NeoPhotonics also showed a silicon-photonics based pico-ITLA that is even smaller than the nano-ITLA. “The [nano-ITLA’s] optical cavity is now made using silicon photonics so that makes it a silicon photonics laser,” says Lipscomb.

With silicon photonics, the laser can be made as one piece instead of being assembled from piece parts. “It means you can integrate that into the same chip you put your modulator and receiver on,” says Lipscomb. “So you can now put all three in a single COSA, what is called the IC-TROSA.” The IC-TROSA refers to an integrated coherent transmit-receive optical subassembly, defined by the OIF, that fits within the QSFP-DD and OSFP.

Despite the data centre interconnect market with its larger volumes and much faster product uptakes, indium phosphide will still be used in many places that require higher optical performance. “But for bulk high-volume applications, there are lots of advantages to silicon photonics,” says Lipscomb.

400ZR and 400ZR+

A key theme at this year’s OFC was the 80km 400ZR. Also of industry interest is the 400ZR+, not an OIF specification but an interface that extends the coherent range to metro distances.

Lipscomb says that the initial market for the 400ZR+ will be smaller than the 400ZR, while the ZR+’s optical performance will depend on how much power is left after the optics is squeezed into a QSFP-DD or OSFP module.

“The next generation of DSP will be required to have a power consumption low enough to do more than ZR distances,” he says. “The further you go, the more work the DSP has to do to eliminate the fibre impairments and therefore the more power it will consume.”

Will not the ZR+ curtail the market opportunity for the 400-gigabit CFP2-DCO that is also aimed at the metro?

“It’s a matter of timing,” says Lipscomb. “The advantage of the 400-gigabit CFP2-DCO is that you can almost do it now, whereas the ZR+ won’t be in volume till the end of 2020 or early 2021.”

Meanwhile, NeoPhotonics demonstrated at the show a CFP2-DCO capable of 100-gigabit and 200-gigabit transmissions.

NeoPhotonics has not detailed the merchant DSP it is using for its CFP2-DCO except to say that it is working with ‘multiple ones’. This suggests it is using the merchant coherent DSPs from NEL and Inphi.

OFC interview regarding silicon photonics and our book

ADVA Optical Networking's Gareth Spence interviewed Daryl Inniss, director, new business development at OFS, and me at the OFC conference and exhibition held earlier this month in San Diego, California. We were interviewed regarding the status of silicon photonics and our book on the topic.

Inphi adds a laser driver to its 100-gigabit PAM-4 DSP

Inphi has detailed its second-generation Porrima chip family for 100-gigabit single-wavelength optical module designs.

The Porrima family of devices is targeted at the 400G DR4 and 400G FR4 specifications as well as 100-gigabit module designs that use 100-gigabit 4-level pulse-amplitude modulation (PAM-4). Indeed, the two module types can be combined when a 400-gigabit pluggable such as a QSFP-DD or an OSFP is used in breakout mode to feed four 100-gigabit modules using such form factors as the QSFP, uQSFP or SFP-DD.

The Gen2 family has been launched a year after the company first announced the Porrima. The original 400-gigabit and 100-gigabit Porrima designs each have three ICs: a PAM-4 digital signal processor (DSP), a trans-impedance amplifier (TIA) and a laser driver.

“With Gen2, the DSP and laser driver are integrated into a single monolithic CMOS chip, and there is a separate amplifier chip,” says Siddharth Sheth, senior vice president, networking interconnect at Inphi. The benefit of integrating the laser driver with the DSP is lower cost, says Sheth, as well as a power consumption saving.

The second-generation Porrima family is now sampling with general availability expected in mid-2019.

PAM-4 families

Inphi has three families of PAM-4 ICs targeting 400-gigabit interfaces: the Polaris, Vega and Porrima.

The Polaris, Inphi’s first product family, uses a 200-gigabit die and two are used within the same package for 400-gigabit module designs. As well as the PAM-4 DSP, the Polaris family also comprises two companion chips: a laser driver and an amplifier.

Inphi’s second family is the Vega, an 8x50-gigabit PAM-4 400-gigabit DSP chip that sits on a platform’s line card.

“The chip is used to drive backplanes and copper cables and can be used as a retimer chip,” says Sheth.

“For the Porrima family, you have a variant that does 4x100-gigabit and a variant that does 1x100-gigabit,” says Sheth. The Porrima can interface to a switch chip that uses either 4x25-gigabit non-return-to-zero (NRZ) or 2x50-gigabit PAM-4 electrical signals.
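The host-side options above can be checked with a little arithmetic. The sketch below uses nominal rates only and ignores coding and forward-error-correction overhead; the function names are illustrative and are not taken from any Inphi material.

```python
# Nominal figures only: coding and FEC overhead are ignored (assumption).
BITS_PER_SYMBOL = {"NRZ": 1, "PAM-4": 2}

def aggregate_gbps(lanes, lane_gbps):
    """Total nominal bit rate carried by a group of identical lanes."""
    return lanes * lane_gbps

def symbol_rate_gbaud(lane_gbps, modulation):
    """Nominal symbol rate of one lane for the given modulation."""
    return lane_gbps / BITS_PER_SYMBOL[modulation]

# The two electrical interfaces mentioned for the switch-chip side.
host_options = [(4, 25, "NRZ"), (2, 50, "PAM-4")]
for lanes, rate, mod in host_options:
    print(f"{lanes}x{rate}G {mod}: {aggregate_gbps(lanes, rate)}G total, "
          f"{symbol_rate_gbaud(rate, mod):g} GBd per lane")

# The optical side: a single 100-gigabit PAM-4 wavelength.
print(f"1x100G PAM-4: {symbol_rate_gbaud(100, 'PAM-4'):g} GBd")
```

Both electrical options deliver the same 100-gigabit payload. Because PAM-4 carries two bits per symbol, the 100-gigabit optical lane runs at nominally half the symbol rate that NRZ would need.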

Why come out with a Gen2 design only a year after the first Porrima? Sheth says there was already demand for 400-gigabit PAM-4 chips when the Porrima first became available in March 2018. Optical module makers needed such chips to come to market with 400-gigabit modules to meet the demand of an early hyperscale data centre operator.

“Now, the Gen2 solution is for the second wave of customers,” says Sheth. “There are going to be two or three hyperscalers coming online in 2020 but maybe not as aggressively as the first hyperscaler.” These hyperscalers will be assessing the next generation of 400-gigabit PAM-4 silicon available, he says.

The latest design, like the first-generation Porrima, is implemented using 16nm CMOS. The DSP itself has not been modified; what has been added is the laser-driver circuitry. Accordingly, it is the transmitter side that has been changed, not the receiver path where Inphi does the bulk of the signal processing. “We did not want to change a whole lot because that would require a change to the software,” he says.

A 400-gigabit optical module design using the first-generation Porrima consumes under 10W, whereas one using the Gen2 consumes only 9W. The power saving comes from the CMOS-based laser driver, which consumes only 400mW compared with the 1.6W to 2W of a gallium arsenide or silicon germanium-based driver IC, says Inphi.
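As a rough check on the quoted driver figures, the sketch below subtracts the CMOS driver's consumption from each end of the external-driver range. The article does not say how these driver figures map onto the module totals, so the result should be read as the driver-level difference only; the constant and function names are illustrative.

```python
# Figures as quoted by Inphi in the article.
CMOS_DRIVER_W = 0.4               # integrated CMOS laser driver
EXTERNAL_DRIVER_W = (1.6, 2.0)    # GaAs or SiGe driver IC, low and high end

def driver_saving_w(external_w, integrated_w=CMOS_DRIVER_W):
    """Power saved by replacing an external driver with the integrated one."""
    return external_w - integrated_w

low, high = (driver_saving_w(w) for w in EXTERNAL_DRIVER_W)
print(f"driver-level saving: {low:.1f}W to {high:.1f}W")
```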

The internal driver can achieve transmission distances of 500m while a standalone driver will still be needed for longer 2km spans.

Sheth says that the advent of mature low-swing-voltage lasers will mean that the DSP’s internal driver will also support 2km links.

PAM-4 DSP

The aim of the DSP chip is to recover the transmitted PAM-4 signal. Sheth says PAM-4 chip companies differ in how much signal processing they undertake at the transmitter and how much is performed at the receiver.

“It comes down to a tradeoff; we believe that we are better off putting the heavier signal processing on the receive side,” says Sheth.

Inphi performs some signal processing on the transmit side where transmit equalisation circuits are used in the digital domain, prior to the digital-to-analogue converter.

The goal of the transmitter is to emit a signal with the right amplitude and pre-emphasis, and with symmetrical rise and fall times. But even when such a signal is generated, the PAM-4 signal arriving at the receiver may look nothing like the signal sent due to degradations introduced by the channel. “So we have to do all kind of tricks,” he says.

Inphi uses a hybrid approach at the receiver where some of the signal processing is performed in the analogue domain and the rest digitally. A variable-gain amplifier is used up front to make sure the received signal is at the right amplitude and then feed-forward equalisation is performed. After the analogue-to-digital stage, post equalisation is performed digitally.
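The front end of such a receiver chain can be illustrated with a toy model. The sketch below is not Inphi's design: it assumes a channel with simple attenuation and a single post-cursor tap, models the variable-gain amplifier as a block RMS normalisation, and implements the feed-forward equaliser as a plain FIR filter whose taps are a truncated inverse of that assumed channel. The digital post-equalisation stage is not modelled, and all names and values are hypothetical.

```python
import math
import random

LEVELS = (-3, -1, 1, 3)            # nominal PAM-4 amplitudes
TARGET_RMS = math.sqrt(5)          # RMS of equiprobable +/-1, +/-3 symbols

def channel(symbols, attenuation=0.05, post_cursor=0.5):
    """Toy channel: attenuation plus one post-cursor ISI tap (assumed values)."""
    out, prev = [], 0
    for s in symbols:
        out.append(attenuation * (s + post_cursor * prev))
        prev = s
    return out

def vga(samples):
    """Scale the block so its RMS matches the nominal PAM-4 RMS."""
    rms = math.sqrt(sum(v * v for v in samples) / len(samples))
    return [v * TARGET_RMS / rms for v in samples]

def ffe(samples, taps):
    """Feed-forward equaliser: an FIR filter over current and past samples."""
    out = []
    for n in range(len(samples)):
        acc = 0.0
        for k, tap in enumerate(taps):
            if n - k >= 0:
                acc += tap * samples[n - k]
        out.append(acc)
    return out

def slicer(samples):
    """Decide each sample as the nearest PAM-4 level."""
    return [min(LEVELS, key=lambda lv: abs(v - lv)) for v in samples]

def symbol_errors(sent, decided):
    """Count positions where the decision differs from the sent symbol."""
    return sum(a != b for a, b in zip(sent, decided))

random.seed(1)
tx = [random.choice(LEVELS) for _ in range(2000)]
rx = vga(channel(tx))
taps = [1.0, -0.5, 0.25, -0.125]   # truncated inverse of the toy channel
print("errors without FFE:", symbol_errors(tx, slicer(rx)))
print("errors with FFE:   ", symbol_errors(tx, slicer(ffe(rx, taps))))
```

In this noise-free toy case the inter-symbol interference alone is enough to push some samples across a decision threshold, and the four-tap equaliser removes enough of it for the slicer to decide correctly. A real link adds noise, jitter and reflections, which is where the later digital stages come in.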

Sheth says that depending on the state of the received signal (its distortion, jitter and loss characteristics), different functions of the DSP may be employed.

One such DSP function is a reflection canceller that is turned on, depending on how much signal reflection and crosstalk occur. Another functional block that can be employed is a maximum likelihood sequence estimator (MLSE) used to recover a signal sent over longer distances. In addition, forward-error correction blocks can also be used to achieve longer spans.
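To give a flavour of what an MLSE does, the sketch below uses the textbook Viterbi formulation for a channel in which each received sample is the current symbol plus a fraction of the previous one. It is a minimal illustration under stated assumptions, not Inphi's implementation: the channel model, the coefficient `h1` (assumed known to the receiver) and the function name are all hypothetical.

```python
LEVELS = (-3, -1, 1, 3)   # nominal PAM-4 amplitudes

def mlse(received, h1):
    """Viterbi search for the PAM-4 sequence that best explains `received`,
    assuming y[n] = x[n] + h1 * x[n-1] with no symbol before the first."""
    # cost[s]: smallest accumulated squared error of any path ending in s.
    cost = {s: (received[0] - s) ** 2 for s in LEVELS}
    history = []              # one back-pointer table per later sample
    for y in received[1:]:
        new_cost, back = {}, {}
        for s in LEVELS:
            # Best previous symbol p if the current symbol is s.
            best_prev = min(LEVELS,
                            key=lambda p: cost[p] + (y - s - h1 * p) ** 2)
            back[s] = best_prev
            new_cost[s] = cost[best_prev] + (y - s - h1 * best_prev) ** 2
        history.append(back)
        cost = new_cost
    # Trace back from the cheapest final state.
    state = min(LEVELS, key=lambda s: cost[s])
    path = [state]
    for back in reversed(history):
        state = back[state]
        path.append(state)
    return path[::-1]

tx = [3, -1, 1, 1, -3, 3, -1, -3]
rx = [x + 0.9 * p for x, p in zip(tx, [0] + tx[:-1])]
print("recovered:", mlse(rx, h1=0.9))
```

Deciding each sample on its own would fail here because the interference term is nearly as large as the symbol itself. The sequence estimator instead scores whole candidate sequences against the channel model, at the cost of more computation per symbol.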

“We have all sorts of knobs built into the chip to get an error-free link with really good performance,” says Sheth. “At the end of the day, it is about closing the optical link with plenty of margin.”

What next?

Sheth says the next-generation PAM-4 design will likely use an improved DSP implemented using a more advanced CMOS process.

“We will take the learning from Gen1 and Gen2 and roll it into a ‘Gen3’,” says Sheth.

Such a design will be implemented using a 7nm CMOS process. “We are now done with 16nm CMOS,” concludes Sheth.