Infinera adds software to its PIC for instant bandwidth

Infinera has enabled its DTN-X platform to deliver 100 Gigabit services rapidly. Operators see the ability to fulfil capacity demand quickly as a competitive advantage. Gazettabyte spoke with Infinera and TeliaSonera International Carrier, a DTN-X customer, about the merits of the platform's 'instant bandwidth' feature, and asked several industry analysts for their views.

Infinera has added a WDM line card hosting its 500 Gigabit super-channel photonic integrated circuit to its DTN-X platform

Pravin Mahajan, Infinera.

Infinera is claiming an industry first with the software enablement of 100 Gigabit capacity increments. The 'instant bandwidth' feature of its DTN-X platform shortens the time to add new network capacity from the weeks common today to less than a day.

The ability to add bandwidth as required is increasingly valued by operators. TeliaSonera International Carrier points out that its traffic demands are increasingly variable, making capacity requirements harder to forecast and manage.

"It [the DTN-X's instant bandwidth] enables us to activate 100 Gig services between network spans to manage our own IP traffic which is growing rapidly," says Ivo Pascucci, head of sales, Americas at TeliaSonera International Carrier. "We will also be able to sell in the market 100 Gig services and activate the capacity much more rapidly."

What has been done

Infinera has added three elements to its DTN-X platform to enable 100 Gigabit services.

One is a new wavelength division multiplexing (WDM) line card that features Infinera's 500 Gigabit-per-second (Gbps) super-channel photonic integrated circuit (PIC). The line card has 500Gbps of capacity installed, of which only 100Gbps is activated, says Infinera. "The remaining 400Gbps is latent, waiting to be activated," says Pravin Mahajan, director of corporate marketing and messaging at Infinera.

Infinera uses the DTN-X's Optical Transport Network (OTN) switch fabric to pack client-side signals onto any of the activated 100Gbps line-side channels. This capacity pool of up to 500Gbps makes better use of backbone capacity, says Infinera, than traditional optical networking equipment based on individual, 'siloed' 100Gbps channels.

A software application has also been added to Infinera's network management system, the digital network administrator (DNA), to activate the 100Gbps capacity increments.
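The activation model can be illustrated with a toy sketch. It is hypothetical throughout: the `LineCard` class and its `activate` method are illustrative names, not part of Infinera's DNA software or any real API, and the only facts assumed are those stated above, a 500Gbps PIC shipped with 100Gbps active and the rest switched on in 100Gbps increments.

```python
from dataclasses import dataclass


@dataclass
class LineCard:
    """Hypothetical model of a super-channel line card with latent capacity.

    Assumes, per the article, 500Gbps of installed PIC capacity that is
    activated by software in 100Gbps increments.
    """
    installed_gbps: int = 500
    increment_gbps: int = 100
    active_gbps: int = 100  # the card ships with one 100Gbps increment active

    @property
    def latent_gbps(self) -> int:
        # Capacity that is physically installed but not yet switched on.
        return self.installed_gbps - self.active_gbps

    def activate(self, increments: int = 1) -> int:
        """Switch on further 100Gbps increments and return the new active total."""
        requested = increments * self.increment_gbps
        if increments < 1 or requested > self.latent_gbps:
            raise ValueError("not enough latent capacity on this card")
        self.active_gbps += requested
        return self.active_gbps


card = LineCard()
print(card.latent_gbps)
card.activate(2)
print(card.active_gbps, card.latent_gbps)
```

In this model, turning up capacity only changes a software counter; the installed hardware is untouched, which is the property the instant bandwidth approach relies on.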

Lastly, Infinera has in place a just-in-time system that delivers client-side 10 Gigabit Ethernet optical transceivers to customers within 10 days if they are out of stock. Infinera says it is achieving a 6-day delivery time in 95% of cases.

Advantages

TeliaSonera International Carrier confirms the advantages of having 100 Gigabit capacities pre-provisioned and ready for use.

"Having the ability to turn up large bandwidth is critical to our business, especially as the [traffic] numbers continue to grow"

Ivo Pascucci, TeliaSonera International Carrier

"If it is individual line cards across the network when you have as many PoPs as we do, it does get tricky," says Pascucci. "If we have 500 Gig channels pre-provisioned with the ability to activate 100 Gig segments as needed, that gives us an advantage versus having to figure out how many line cards to have deployed in which nodes, and forecasting which nodes should have the line cards in the first place."

The operator is already seeing demand for 100 Gigabit services from the carrier market and large content providers, and it provides 10x10Gbps and 20x10Gbps services to customers. "With that there are all the challenges of provisioning ten or 20 10 Gig circuits and 10 or 20 cross-connects for each site," says Pascucci. The operator also manages one to two Terabits of network capacity for certain customers.

"Having the ability to turn up large bandwidth is critical to our business, especially as the [traffic] numbers continue to grow," says Pascucci.

Analysts' comments

Gazettabyte asked several industry analysts about the significance of Infinera's announcement: in particular, the uniqueness of the offering, the claim that it sharply reduces bandwidth enablement times, and its importance for operators.

Infonetics Research

Andrew Schmitt, directing analyst for optical

Schmitt believes Infinera's announcement is significant as it is the first announced North American win. It also shows the company has a solution for carriers that want to roll out only a single 100Gbps channel and don't want to buy 500Gbps.

More importantly, it should allow some carriers to deploy extra capacity for future use at no cost to them and that opens up interesting possibilities for automatically switched optical network (ASON) management or even software-defined networking (SDN).

"As to the claim that it reduces capacity enablement from weeks to potential minutes, to some degree, yes," says Schmitt.

Certainly Ciena, Alcatel-Lucent or Cisco could ship extra line cards to customers and not charge them until the cards are used, which would effectively achieve the same result. "But if the PIC truly has better economics than the discrete solutions from these vendors then Infinera can ship hardware up front and then recognise the profits on the back end," he says.

"You simply can't predict where the best places to put bandwidth will be"

In turn, if customers get free inventory management out of the deal and Infinera equipment can support that arrangement more economically, that is a significant advantage for Infinera.

"This instant bandwidth is unique to Infinera. As I said, anyone could do this deal. But you need a hardware cost structure that can support it or it gets expensive quickly," says Schmitt. "Everyone is working on super-channels but it is clear from the legacy of the way the 10 Gig DTN hardware and software worked that Infinera gets it."

Schmitt believes the term super-channel is abused. He prefers the term virtualised bandwidth: optical capacity that can be allocated in the same way as server or storage resources are assigned through virtualisation.

"The SDN hype is hitting strong in this business but Infinera is really one of the only companies that have a history of a hardware and software architecture that lends itself well to this concept," he says. This is validated with its customer list which is loaded heavily with service providers that are not just talking about SDN but actively doing something, he says.

"It [turning capacity up quickly] is important for SDN as well as more advanced protection arrangements. You simply can't predict where the best places to put bandwidth will be," says Schmitt. "If you can have spare capacity in the network that is lit on demand but not paid for if you don't need it, it is the cheapest approach for avoiding overbuilding a network for corner-case requirements.

"I think the accounting for this product will be interesting, it is likely that we will know in a year how successful this concept was just by a careful examination of the company's financials," he concludes.

ACG Research

Eve Griliches, vice president of optical networking

This year Infinera delivered the DTN-X with 500 Gig super-channels based on its PIC technology. Now it has added a new 500 Gig line card that operates at 100 Gig, with the remaining 400 Gig lit in 100 Gig increments using software. This allows customers to purchase 100 Gig at a time and turn up further bandwidth via software when they require it.

“No other vendor has a software-based solution, and no one else is delivering 500 Gig yet either,” says Griliches.

With this solution, ACG Research says in its research note, operators can start to develop a flexible infrastructure where bandwidth can grow and move around the network instantly. This is useful to address varying demands in bandwidth, triggered by incidents such as natural disasters or sporting events.

Rapid bandwidth enablement has always been important and takes way too long, so this development is key, says Griliches: “Also, it enables Infinera to enter markets which only need one 100 Gig wavelength for now, which they could not do before.”

“No other vendor has a software-based solution, and no one else is delivering 500 Gig yet either”

Looking forward, ACG Research expects this software and hardware-based instant bandwidth utility model will enable Infinera to widen its potential market base and increase its global market share in 2013 and 2014.

Ovum

Ron Kline, principal analyst, and Dana Cooperson, vice president, of the network infrastructure practice

Ovum also thinks Infinera's announcement is significant. It brings essentially the same value proposition Infinera had with 10 Gigabit to the 100 Gigabit market: low operational expenditure (opex) and quick time-to-market. “Remember 10 Gig in 10 days?” says Kline.

It also fixes an issue for customers: with 10x10Gbps, they essentially had to pay for the full 100Gbps up front, after which they could be very efficient with turn-up and opex. Customers traded more up-front capital expenditure (capex) for efficient opex. “With instant bandwidth, they don't have to make the upfront capex-versus-opex tradeoff; they can be most efficient with both,” says Cooperson.

Any vendor can shorten capacity enablement times if they can convince the operator to pre-position bandwidth in the network that is ready to be turned on at a moment's notice.

Ron Kline

Kline says operators have different processes for turning up services, and in many cases it is these processes, not the equipment itself, that cause the additional provisioning time. “For example the operator may not use the DNA system or may have a very complex OSS/BSS used in the process,” says Kline.

Nevertheless, the capability to have really short provisioning is there, if an operator wants to take advantage. In the TeliaSonera case, Infinera is managing the network so the quick time to market will be there, says Kline.

Cooperson adds that there can be many factors that impede the capacity enablement process, based on Ovum's own research. “But it is clear from talking to Infinera's customers that its system design and approach is a big benefit to those carriers, often the competitive carriers, in competing in the market,” she says. “Multiple carriers told us that with the Infinera system, they were able to win business from competitors.”

Any vendor can shorten capacity enablement times if they can convince the operator to pre-position bandwidth in the network that is ready to be turned on at a moment's notice. However, what is unique to Infinera is that its system is deployed 500Gbps at a time and all the switching is done electrically by the OTN switch at each node. Others are working on super-channels but none is close to deploying, says Ovum.

“Multiple carriers told us that with the Infinera system, they were able to win business from competitors.”

Dana Cooperson

The ability to turn on bandwidth rapidly is becoming increasingly important. From a wholesale operator perspective it is very important and a key differentiator.

"It's particularly relevant to wholesale applications where large bandwidth chunks are required and the customer is another carrier," says Cooperson. "Whether you view a Google or a Facebook as a carrier or a very large enterprise, it would apply to them as well as a more traditional carrier."

AppliedMicro samples 100Gbps CMOS multiplexer

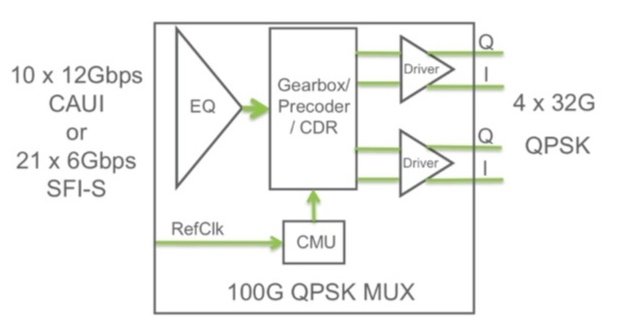

AppliedMicro has announced the first CMOS merchant multiplexer chip for 100Gbps coherent optical transmission. The S28032 device supports dual-polarisation quadrature phase-shift keying (DP-QPSK) and consumes 4W, half the power of current multiplexer chip designs implemented in BiCMOS.

The S28032 100 Gig multiplexer IC. Source: AppliedMicro

"CMOS has a very low gain-bandwidth product, typically 100GHz," says Tim Warland, product marketing manager, connectivity solutions at AppliedMicro. “Running at 32GHz, we have been able to achieve a very high bandwidth with CMOS."

Significance

The availability of a CMOS merchant device will be welcome news for optical transport suppliers and 100Gbps coherent module makers. CMOS has better economics than BiCMOS due to the larger silicon wafers used and the chip yields achieved. The reduced power consumption also supports a move to optical modules smaller than the current 5x7-inch multi-source agreement (MSA) module.

"By reducing the power and the size, we can get to a 4x6-inch next-generation module,” says Warland. “And perhaps if we go for a shorter [optical transmission] reach - 400-600km - we could get into a CFP; then you can get four modules on a card.”

"Coherent ultimately is the solution people want to go to [in the metro] but optical duo-binary will do just fine for now"

Tim Warland, AppliedMicro

Chip details

The S28032 has a CAUI interface: 10x12Gbps input lanes that are multiplexed into four lanes at 28Gbps to 32Gbps. The particular data rate depends on the forward error correction (FEC) scheme used. The four lanes are DQPSK-precoded before being fed to the polarisation multiplexer to create the DP-QPSK waveforms.

The device also supports the SFI-S interface - 21 input channels, each at 6Gbps. This is significant as it enables the S28032 to be interfaced to NTT Electronics' (NEL) DSP-ASIC coherent receiver chip that has been adopted by 100Gbps module makers Oclaro and Opnext (now merged) as well as system vendors including Fujitsu Optical Systems and NEC.

The mux IC within a 100Gbps coherent 5x7-inch optical module. Source: AppliedMicro

The AppliedMicro multiplexer IC, which is on the transmit path, interfaces with NEL's DSP-ASIC that is on the receiver path, because the FEC needs to be a closed loop to achieve the best efficiency, says Warland. "If you know what you are transmitting and receiving, you can improve the gain and modify the coherent receiver sampling points if you know what the transmit path looks like," he says.

The DSP-ASIC creates the transmission payloads and uses the S28032 to multiplex those into 28Gbps or greater speed signals.

The SFI-S interface is also suited to interface to FPGAs, for those system vendors that have their own custom FPGA-based FEC designs.

"Packet optical transport systems is more a potential growth engine as the OTN network evolves to become a real network like SONET used to be"

Francesco Caggioni, AppliedMicro

The multiplexer chip's lane rate is set by the strength of the FEC code used and its associated overhead. Using OTU4 frames with their 7% overhead FEC, the resulting lane rate is 27.95Gbps. With a stronger 15% hard-decision FEC, each of the four lanes runs at 30Gbps, while with soft-decision FEC the rate is 31.79Gbps.
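The lane rates above follow from spreading the FEC-encoded line rate evenly over the device's four output lanes. The sketch below derives the OTU4 case from the ITU-T G.709 OTU4 rate (255/227 × 99.5328Gbps, about 111.8Gbps) and takes the hard- and soft-decision figures from the text as given, since their exact frame formats are not stated. It then shows the implied aggregate rate and the headroom left below the device's 32Gbps ceiling.

```python
# Reference rate used by ITU-T G.709 to define the OTU4 bit rate (Gbps).
G709_BASE_GBPS = 99.5328
# OTU4 line rate including its standard ~7% overhead RS FEC.
OTU4_GBPS = 255 / 227 * G709_BASE_GBPS

LANES = 4           # S28032 output lanes feeding the DP-QPSK modulator
MAX_LANE_GBPS = 32  # upper lane rate quoted for the CMOS device


def lane_rate(aggregate_gbps: float, lanes: int = LANES) -> float:
    """Per-lane rate when the aggregate is split evenly across the lanes."""
    return aggregate_gbps / lanes


# Per-lane rates: OTU4 derived from G.709, the other two as quoted in the text.
cases = {
    "OTU4, 7% FEC": lane_rate(OTU4_GBPS),
    "15% hard-decision FEC": 30.0,
    "soft-decision FEC": 31.79,
}

for name, rate in cases.items():
    aggregate = rate * LANES          # implied total line rate
    headroom = MAX_LANE_GBPS - rate   # margin below the 32Gbps ceiling
    print(f"{name}: {rate:.2f} Gbps/lane, {aggregate:.2f} Gbps total, "
          f"{headroom:.2f} Gbps headroom")
```

The soft-decision case leaves little more than 0.2Gbps of lane headroom, consistent with Warland's point that stronger ultra-long-haul FEC codes are beyond the device.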

"It [the chip] has got sufficient headroom to accommodate everything that is available today and that we are considering in the OIF [Optical Internetworking Forum],” says Warland. The multiplexer is expected to be suitable for coherent designs that achieve a reach of up to 2,000-2,500km but the sweet spot is likely to be for metro networks with a reach of up to 1,000km, he says.

But while the CMOS device can achieve 32Gbps, it has its limitations. "For ultra long haul, we can't support a FEC rate higher than 20%," says Warland. "For that, a 25% to 30% FEC is needed."

AppliedMicro is sampling the device to lead customers and will start production in 1Q 2013.

What next

The S28032 joins AppliedMicro's existing S28010 IC suited for the 10km 100 Gigabit Ethernet 100GBASE-LR4 standard, and for optical duo-binary 100Gbps direct detection that has a reach of 200-1,000km.

"Our next step is to try and get a receiver to match this chip," says Warland. But it will be different to NEL's coherent receiver: "NEL's is long haul." Instead, AppliedMicro is eyeing the metro market where a smaller, less power-hungry chip is needed.

"Coherent ultimately is the solution people want to go to [in the metro] but optical duo-binary will do just fine for now," says Warland.

Two million 10Gbps OTN ports

AppliedMicro has also announced that it has shipped two million 10Gbps OTN silicon ports, 18 months after announcing that it had shipped its first million.

"OTN is showing similar growth to the 10 Gigabit Ethernet market but with a four-year lag," says Francesco Caggioni, strategic marketing director, connectivity solutions at AppliedMicro.

The company sees OTN growth in the IP edge router market and for transponder and muxponder designs, while packet optical transport systems (P-OTS) is an emerging market.

"Packet optical transport systems is more a potential growth engine as the OTN network evolves to become a real network like SONET used to be," says Caggioni. "We are seeing development but not a lot of deployment."

Further reading:

The OTN transport and switching market

Source: Infonetics Research

The OTN transport and switching market is forecast to grow at a 17% compound annual growth rate (CAGR) from 2011 to 2016, outpacing the 5.5% CAGR of the optical equipment market (WDM, SONET/SDH). So claims a recent study on the OTN equipment marketplace by Infonetics Research.

A Q&A with report author, Andrew Schmitt, principal analyst for optical at Infonetics.

How should OTN (Optical Transport Network) be viewed? As an intermediate technology bridging the legacy SONET/SDH and the packet world? Or is OTN performing another, more fundamental networking role?

There is a deep misconception that once the voyage to an all-packet nirvana is complete, there is no need for SONET/SDH or an equivalent technology. This isn’t true. Networks that are 100% packet still need an OSI layer 1 mechanism, and to date this is mostly SDH and increasingly OTN.

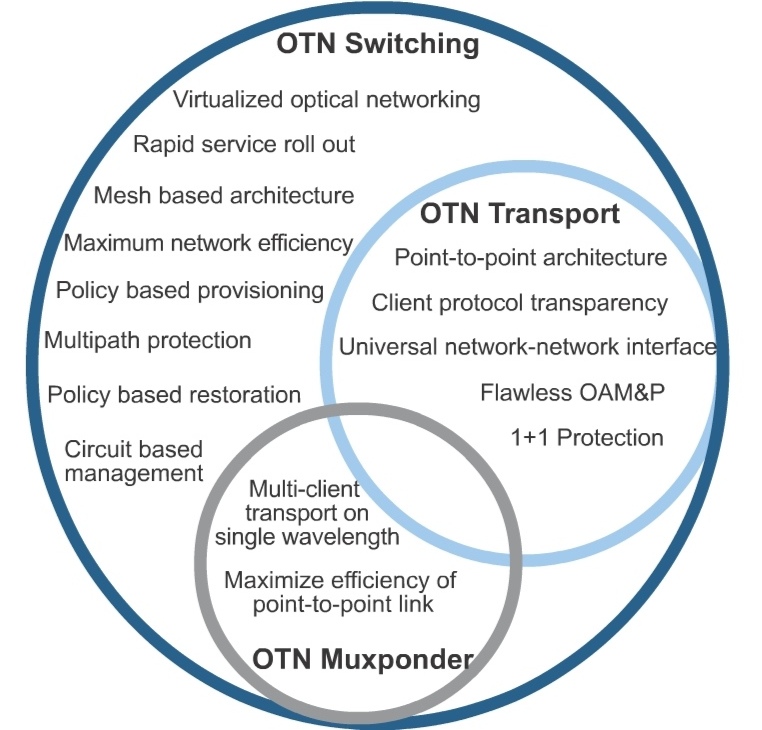

OTN should be viewed as the carrier transport protocol for the foreseeable future. For many carriers, OTN will be used not just for carrying a single packet client, but for interleaving multiple clients onto the same wavelength. This is OTN switching, and it is a superset of OTN transport functionality.

Most people talk about the OTN market but they fail to distinguish whether OTN is used as a point-to-point technology or as a switching technology that allows the creation of an electronic mesh network.

What is OTN doing within operators' networks that accounts for their strong investment in the technology?

OTN is the new physical layer protocol carrying out the OSI [Open Systems Interconnection] layer 1 functions. Carriers are investing in OTN as part of their continuing investments in WDM [wavelength division multiplexing] equipment, most of which supports OTN transport, a maturing market. The new market is that of OTN switching, which resembles the SONET/SDH multiplexing scheme, but with much better features and management.

OTN switching deployments are directly related to large scale deployments of 40G and 100G transport networks as part of what I like to call The Optical Reboot. As these new wavelength speeds are rolled out, often on unused fibre, other technologies are being introduced at the same time – things like OTN switching and new control plane methods.

"People are underestimating how hard it is to build this [OTN] hardware and combine it with control plane software"

Please explain the difference between the main platforms - OTN transport, OTN switching and P-OTS. And will they have the same relative importance by 2016?

OTN switching is a superset of OTN transport, and the differences are shown in a Venn diagram (chart above) from a recent whitepaper I wrote, Integrated OTN Switching Virtualizes Optical Networks. Somewhere between the two is the muxponder application, which is good for low-volume deployments but becomes expensive and tough to manage when used in quantity.

P-OTS (packet-optical transport systems) are boxes that combine layer 1 (SONET/SDH and/or OTN switching) and layer 2 (Ethernet, MPLS-TP, other circuit-oriented Ethernet (COE) protocols) in the same hardware and management platform.

Cisco was one of the early leaders in this space with some creative brute-force upgrades to the venerable 15454 platform. Since then, many legacy SONET/SDH multi-service provisioning platforms (MSPPs) have seen upgrades to carry Ethernet. Some of the best examples of this platform type are the Fujitsu 9500, Tellabs' 7100, and Alcatel-Lucent's 1850.

You say a big vendor battle is brewing in the P-OTS space: Cisco, Tellabs, and Alcatel-Lucent are the top 3 vendors, but Fujitsu, Ciena, and Huawei are gaining. What factors will determine a vendor's P-OTS success here?

It really depends. In the metro-regional applications of bigger boxes, things like 100G optics and OTN switching will be more important, as the layer 2 functions are handed off to dedicated layer 2/3 machines. As you get closer to the edge, though, OTN switching will have no importance and everything will depend on the layer 2 and layer zero features.

For layer 2, this means supporting a lightweight circuit-oriented Ethernet protocol with awareness of all the various service types that might be in play. For layer zero, it is all about cheap tunable optics (tunable XFP and SFP+), but particularly ROADMs. I think BTI Photonics, Cyan, Transmode, and ADVA Optical Networking are some of the smaller players to watch here. Mobile backhaul, data centre interconnect, and enterprise data services are the big engines of growth here.

Were there any surprises as part of your research for the report?

There just are not that many vendors shipping OTN switching systems today. I think people are underestimating how hard it is to build this hardware and combine it with control plane software. In 2011, only Ciena, Huawei, and ZTE shipped OTN switching for revenue. This year we should see Alcatel-Lucent, Infinera, Nokia Siemens, and maybe a few more.

Is there one OTN trend currently unclear that you'd highlight as worth watching?

Yes: It isn’t clear to what degree carriers want integrated WDM optics in OTN switches. In the past, big SONET/SDH switches like Ciena’s CoreDirector were always shipped with short-reach optics that connected it to standalone WDM systems. I think going forward, OTN switching and the WDM transport functions must be built into the same hardware in order to get the benefits of OTN switching at the best price, and that’s why I wrote the Integrated OTN Switching white paper – to try to communicate why this is important. It is a shift in the way carriers use this equipment, though, and as you know, some carrier habits are hard to break.

Further reading

OTN processors from the core to the network edge

OTN processors from the core to the network edge

The latest silicon design announcements from PMC and AppliedMicro reflect the ongoing network evolution of the Optical Transport Network (OTN) protocol.

"There is a clear march from carriers, led in particular by China, to adopt OTN in the metro"

Scott Wakelin, PMC

The OTN standard, defined by the telecom standards body of the International Telecommunication Union (ITU-T), has existed for a decade but only recently has it emerged as a key networking technology.

OTN's growing importance is due to the enhanced features being added to the protocol coupled with developments in the network. In particular, OTN enhances capabilities that operators have long been used to with SONET/SDH, while also supporting packet-based traffic. Moreover chip vendors are unveiling OTN designs that now span the core to the network edge.

"OTN switching is a foundational technology in the network"

Michael Adams, Ciena

OTN supports 1 Gigabit Ethernet (GbE) with ODU0 framing alongside ODU1 (2.5G), ODU2 (10G), ODU3 (40G) and ODU4 (100G). The standard efficiently packs client signals such as SONET/SDH, Ethernet, video and Fibre Channel, at various speed increments up to 100Gbps, prior to transmission over lightpaths. Meanwhile, the Optical Internetworking Forum (OIF) has recently developed the OTN-over-Packet-Fabric standard that allows OTN to be switched using packet fabrics.
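As an illustration of how the ODU hierarchy fits clients to containers, the sketch below picks the smallest fixed-rate ODU that can carry a client and reports how much of the container is used. The capacities are nominal round figures (ODU0 at about 1.25Gbps up to ODU4 at 100Gbps) rather than exact G.709 rates, ODUflex is left out, and clients such as 10GbE LAN PHY that in practice need variants like ODU2e are not modelled. Restricting the allowed containers shows what happens to Gigabit Ethernet, taken here at its 1.25Gbps line rate, when ODU0 is unavailable.

```python
from typing import Optional, Set, Tuple

# Nominal container capacities in Gbps, smallest first (dicts keep insertion order).
ODU_CAPACITY_GBPS = {
    "ODU0": 1.25,
    "ODU1": 2.5,
    "ODU2": 10.0,
    "ODU3": 40.0,
    "ODU4": 100.0,
}


def smallest_container(client_gbps: float,
                       allowed: Optional[Set[str]] = None) -> Tuple[str, float]:
    """Return the smallest allowed ODU that fits the client, and the fraction used."""
    for name, capacity in ODU_CAPACITY_GBPS.items():
        if allowed is not None and name not in allowed:
            continue
        if client_gbps <= capacity:
            return name, client_gbps / capacity
    raise ValueError(f"no single ODU container fits {client_gbps} Gbps")


# GbE with ODU0 available, then as if only ODU1 and above existed.
print(smallest_container(1.25))  # ('ODU0', 1.0)
print(smallest_container(1.25, allowed={"ODU1", "ODU2", "ODU3", "ODU4"}))  # ('ODU1', 0.5)
```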

"OTN switching is a foundational technology in the network," says Michael Adams, Ciena’s vice president of product & technology marketing.

Operator benefits

Whereas 10Gbps services matched 10Gbps lightpaths only a few years ago, transport speeds have now surged ahead. Common services are at 1 and 10 GbE while transport is now at 40Gbps and 100Gbps speeds. OTN switching allows client signals to be combined efficiently to fill the higher capacity lightpaths and avoid stranded bandwidth in the network.
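The stranded-bandwidth point can be made concrete with tributary-slot arithmetic. ITU-T G.709 divides an ODU4 into 80 tributary slots of roughly 1.25Gbps; an ODU0 takes one slot, an ODU1 two, an ODU2 eight and an ODU3 31. The sketch below uses simple first-fit-decreasing packing, an assumed heuristic rather than any vendor's algorithm, to count how many 100Gbps wavelengths a hypothetical mix of services needs once OTN switching can combine them.

```python
from typing import List

TRIBUTARY_SLOTS_PER_ODU4 = 80
SLOTS_NEEDED = {"ODU0": 1, "ODU1": 2, "ODU2": 8, "ODU3": 31}


def pack_into_odu4(clients: List[str]) -> List[int]:
    """First-fit-decreasing packing of client ODUs into ODU4 wavelengths.

    Returns the number of tributary slots used on each 100Gbps wavelength.
    """
    wavelengths: List[int] = []
    for odu in sorted(clients, key=SLOTS_NEEDED.get, reverse=True):
        need = SLOTS_NEEDED[odu]
        for i, used in enumerate(wavelengths):
            if used + need <= TRIBUTARY_SLOTS_PER_ODU4:
                wavelengths[i] += need
                break
        else:
            wavelengths.append(need)  # no room anywhere: light a new wavelength
    return wavelengths


# Hypothetical demand: six 10GbE services (ODU2) and twenty GbE services (ODU0).
demand = ["ODU2"] * 6 + ["ODU0"] * 20
print(pack_into_odu4(demand))  # [68]
```

Here all 26 services share a single wavelength with 12 slots to spare, whereas carrying each service on its own 100Gbps lightpath would leave most of 26 wavelengths idle.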

OTN also benefits network connectivity changes. With AT&T's Optical Mesh Service, for example, customers buy a total capacity and, using a web portal, can adapt connectivity between their sites as requirements change. "It [OTN] can manage GbE streams and switch them through the network in an efficient manner," says Adams.

The ability to adapt connectivity is also an important requirement for cloud computing, with OTN switching and a mesh control plane seen as a promising way to enable dynamic networking that provides guaranteed bandwidth when needed, says Ciena.

OTN also offers an alternative to IP-over-DWDM, argues Ciena. By adding a 100Gbps wavelength, service routers can exploit OTN to add 10G services as needed rather than keep adding a 10Gbps wavelength for each service using IP-over-DWDM. "To enable service creation quickly, why not put your router network on top of that network versus running it directly?" says Adams.

OTN hardware announcements

The latest OTN chip announcements from PMC and AppliedMicro offer enhanced capacity when aggregating and switching client signals, and support interfacing to various switch fabrics.

PMC has announced two metro OTN processors, dubbed the HyPHY 20Gflex and 10Gflex. The devices are targeted at compact "pizza boxes" that aggregate residential, enterprise and mobile backhaul traffic, as well as packet-optical and optical transport platforms.

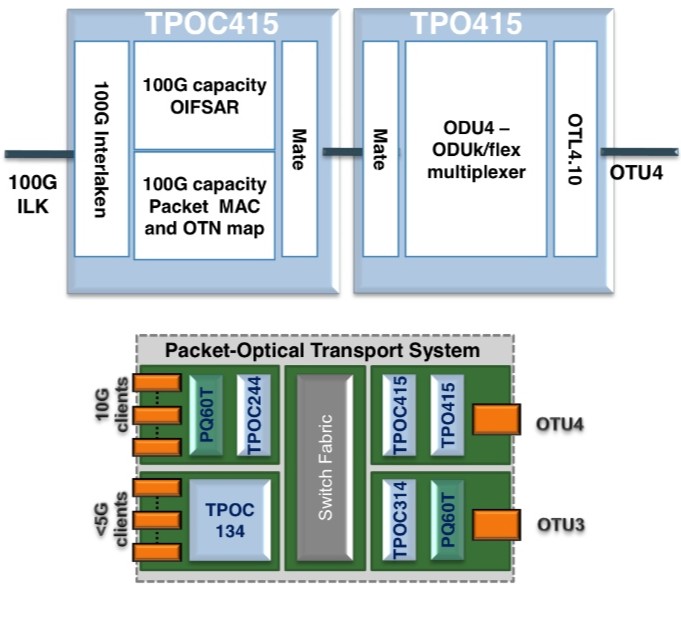

AppliedMicro's TPACK unit has unveiled two additions to its OTN designs: a 100Gbps chipset and the TPO134. The company also announced the general availability of its 100Gbps muxponder and transponder OTN design, now being deployed in the network.

Source: AppliedMicro

"OTN has long had a home in the core of the network," says Scott Wakelin, product manager for HyPHY flex at PMC. "But there is a clear march from carriers, led in particular by China, to adopt OTN in the metro, whether layer-zero or layer-one switched."

Using various market research forecasts, PMC expects the global OTN chip market to reach US $600 million in 2015, the bulk being metro.

PMC and AppliedMicro offer application-specific standard product (ASSP) OTN ICs while AppliedMicro also offers FPGA-based OTN designs.

The benefits of using an FPGA, says AppliedMicro, include time-to-market, the ability to reprogramme the design to accommodate standards’ tweaks, and enabling system vendors to add custom logic elements to differentiate their designs. PMC develops ASSPs only, arguing that such chips offer superior integration, power efficiency and price points.

Both companies, when developing an ASSP, know that the resulting design will be adopted by end customers. When PMC announced its original HyPhy family of devices, seven of the top nine OEMs were developing board designs based on the chip family.

PMC's metro OTN processors

The HyPHY 20Gflex has 16 SFP (up to 5Gbps) and two 10Gbps XFP/SFP+ interfaces, whose streams it can groom using the device's 100Gbps cross-connect. The cross-connect can manipulate streams down to SONET/SDH STS-1/STM-0 rates and ODU0 (1GbE) OTN channels.

Both ODU0 and ODUflex channels are supported. Before ODU0 was added, a Gigabit Ethernet signal could only sit in a 2.5Gbps (ODU1) container, wasting half the capacity. Similarly, ODUflex allows signals such as video to be mapped into frames made up of 1.25Gbps increments. "For efficient use of resources from the metro into the core, you need to start at the access," says Wakelin.

Source: PMC

The chip also supports the OTN-over-Packet-Fabric protocol. The devices can interface to OTN, SONET/SDH and packet switch fabrics.

The 20Gflex offers 40Gbps of OTN framing and a further 20Gbps of OTN mapping. The OTN mapping is used for those client signals to be fitted into ODU frames. With the additional 40Gbps interfaces that connect to the switch fabric, the total interface throughput is 100Gbps, matching the device's cross-connect capacity.

Other chip features include Fast Ethernet, Gigabit Ethernet and 10GbE MACs for carrier Ethernet transport, and support for timing over packet standards, including IEEE 1588v2 over OTN, used to carry mobile backhaul timing information.

The 10Gflex variant has similar functionality to the 20Gflex but with lower throughput.

PMC is now sampling the HyPHY Gflex devices to lead customers.

AppliedMicro's OTN designs

AppliedMicro's TPACK unit has unveiled two OTN designs: a TPO415/C415 OTN multiplexer chipset for use in 100Gbps packet optical transport line cards, and the TPO134 device used at the network edge.

The two devices combined - the TPO415 and TPOC415 - are implemented using FPGAs, what AppliedMicro dubs softsilicon. The two devices interface between the 100Gbps line side and the switch fabric. The TPO415 takes the OTU4 line side OTN signal and demultiplexes it to the various channel constituents. These can be ODU0, ODU1, ODU2, ODU3, ODU4 and ODUflex - capacity from 1Gbps to 100Gbps.

"The [100Gbps muxponder] design comes with an API that makes it look like one component"

Lars Pedersen, AppliedMicro

The TPOC415 has a 100Gbps, 80-channel segmentation and reassembly function (SAR) compliant with the OIF OTN-over-Packet-Fabric standard. The TPOC415 also has a 100Gbps, 80 channel packet mapper function for the transport of Ethernet and MPLS-TP over ODUk or ODUflex. The device's 100Gbps Interlaken interface is used to connect to the switch fabric for packet switching and ODU cross-connection. The devices can also be used in a standalone fashion for designs where the switch fabric does not use Interlaken, or when working with integrated switches and network processors.
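A segmentation and reassembly function chops a continuous ODU stream into packets that a packet fabric can switch, then restores the original byte stream at the egress. The sketch below shows only the general technique; the 64-byte payload size and the (channel, sequence) header are assumptions for illustration and do not reproduce the OIF OTN-over-Packet-Fabric format.

```python
import random
from typing import Dict, Iterable, List, NamedTuple

CELL_PAYLOAD_BYTES = 64  # assumed cell size for illustration, not the OIF value


class Cell(NamedTuple):
    channel: int    # ODU channel the cell belongs to (hypothetical header field)
    seq: int        # sequence number used to restore order after the fabric
    payload: bytes


def segment(channel: int, stream: bytes) -> List[Cell]:
    """Split one channel's byte stream into fixed-size cells."""
    return [
        Cell(channel, i, stream[off:off + CELL_PAYLOAD_BYTES])
        for i, off in enumerate(range(0, len(stream), CELL_PAYLOAD_BYTES))
    ]


def reassemble(cells: Iterable[Cell]) -> Dict[int, bytes]:
    """Group cells by channel, reorder them by sequence number and rejoin them."""
    by_channel: Dict[int, List[Cell]] = {}
    for cell in cells:
        by_channel.setdefault(cell.channel, []).append(cell)
    return {
        ch: b"".join(c.payload for c in sorted(group, key=lambda cell: cell.seq))
        for ch, group in by_channel.items()
    }


# Two channels interleaved and shuffled, as if cells left the fabric out of order.
a, b = bytes(range(200)), b"x" * 150
cells = segment(1, a) + segment(2, b)
random.shuffle(cells)
print(reassemble(cells) == {1: a, 2: b})  # True
```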

Source: AppliedMicro

"This is the first solution in the market for doing these hybrid functions at 100Gbps," says Lars Pedersen, CTO of AppliedMicro's TPACK.

The second design is the softsilicon TPO134, a 10Gbps add/drop multiplexer that can take in up to 16 client signals and has two OTU2 interfaces. Between them sits the cross-connect, which supports ODU0, ODU1 and ODUflex channels. Two devices can be combined to support 32 client channels and four OTU2 interfaces. Such a dual-device design in a pizza-box system would be used to combine multiple client streams.

Being softsilicon, the TPO134 can also be used for packet optical transport systems. Here by downloading a different FPGA image, the design can also implement the segmentation and reassembly function required for the OIF's OTN-over-Packet-Fabric standard. "The interface to the switch fabric is Interlaken again," says Pedersen.

The TPO134 design doubles the capacity of AppliedMicro's previous add/drop multiplexer designs and is the first to support the OIF standard.

AppliedMicro has also announced the general availability of its 100G muxponder design. The muxponder design is a three-device chipset based on two PQ60 ASSPs and a TPO404 softsilicon design.

The PQ60T devices map 10 and 40Gbps clients into OTN and the TPO404 performs the multiplexing to OTU4 with forward error correction. The client signals supported include SONET/SDH, Ethernet and Fibre Channel. On the line side the design also supports various FEC schemes including an enhanced FEC. The TPO404 differs from the TPOT414/424 devices, which link 100 Gigabit Ethernet to a 100Gbps line side.

"The [100Gbps muxponder] design comes with an API [application programming interface] that makes it look [from a software perspective] like one component with some client and line ports, similar to the TPO134 device," says Pedersen.

Further reading:

Transport processors now at 100 Gigabit

Huawei's novel Petabit switch

The Chinese equipment maker showcased a prototype optical switch at this year's OFC/NFOEC that can scale to 10 Petabit.

"Although the numbers [400,000 lasers] appear quite staggering, they point to a need for photonic integration"

Reg Wilcox, Huawei

Huawei has demonstrated a concept Petabit Packet Cross Connect (PPXC), a switching platform to meet future metro and data centre requirements. The demonstrator is not expected to be a commercial product before 2017.

Current platforms have switching capacities of several Terabits. Yet Huawei believes a one thousand-fold increase in switching capacity will be needed. Fibre capacity will be filled to 20 and eventually 50 Terabits using higher-order modulation schemes and flexible spectrum. Assuming 200 switched fibres at busy network exchanges, this adds up to Petabits (a Petabit is one million Gigabits) per site.
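
The site-level figure follows from simple multiplication. The sketch below uses only the numbers quoted above, 200 switched fibres at 20 or 50 Terabit each, and shows that the upper figure reaches the 10 Petabit scale the demonstrator is designed for.

```python
TBPS_PER_PBPS = 1000


def site_capacity_pbps(switched_fibres: int, tbps_per_fibre: float) -> float:
    """Aggregate switched capacity at one exchange, in Petabit/s."""
    return switched_fibres * tbps_per_fibre / TBPS_PER_PBPS


for per_fibre in (20, 50):
    # 200 switched fibres is the busy-exchange assumption quoted by Huawei.
    print(f"{per_fibre} Tbps per fibre: {site_capacity_pbps(200, per_fibre)} Pbps")
# 20 Tbps per fibre: 4.0 Pbps
# 50 Tbps per fibre: 10.0 Pbps
```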

"We are not saying we will introduce a 10 Petabit product in five years' time, although the technology is capable of that," says Reg Wilcox, vice president of network marketing and product management at Huawei. "We will size it to what we deem the market needs at that time."

Source: Huawei


The PPXC uses optical burst transmission to implement the switching. Such burst transmission uses ultra-fast switching lasers, each set to a particular wavelength in nanoseconds. Like Intune Networks’ Verisma iVX8000 optical packet switching and transport system, each wavelength is assigned to a particular destination port. As OTN traffic or packets arrive, they are assigned a wavelength before being sent to a destination port.
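
A toy model helps show what "each wavelength is assigned to a particular destination port" means for the sender. The sketch assumes a fixed one-to-one mapping of destination port to wavelength, 80 wavelengths to match the core described below, and simple batching of arriving traffic into one burst per destination. These are illustrative assumptions; Huawei has not published its burst scheduler, and the class and function names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Burst:
    wavelength: int       # channel the tunable laser is tuned to for this burst
    dest_port: int
    payloads: list = field(default_factory=list)


def wavelength_for(dest_port: int, num_wavelengths: int) -> int:
    """Hypothetical fixed 1:1 mapping of destination port to wavelength."""
    if not 0 <= dest_port < num_wavelengths:
        raise ValueError(f"no wavelength assigned to port {dest_port}")
    return dest_port


def build_bursts(arrivals, num_wavelengths: int = 80) -> list[Burst]:
    """Group (dest_port, payload) arrivals into one burst per destination.

    Each burst requires the source laser to retune to the destination's
    wavelength; in hardware that retuning takes nanoseconds.
    """
    queues = defaultdict(list)
    for dest_port, payload in arrivals:
        queues[dest_port].append(payload)
    return [Burst(wavelength_for(port, num_wavelengths), port, queued)
            for port, queued in sorted(queues.items())]


for burst in build_bursts([(3, "odu-a"), (7, "pkt-b"), (3, "odu-c")]):
    print(burst)
```

Because the wavelength alone identifies the destination, the core switch needs no per-packet lookup; the routing decision is made at the source by choosing the laser's wavelength.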

Huawei's switch demonstration linked two Huawei OSN8800 32-slot platforms, each with an Optical Transport Network (OTN) switching capacity of 2.56 Terabit-per-second (Tbps), to either side of the core optical switch, to implement what is known as a three-stage Clos switching matrix.

With each OSN8800, half the slots are for inter-machine trunks to the core optical switch, the middle stage of the Clos switch. "The other half [of the OSN8800] would be dedicated to whatever services you want to have: Gigabit Ethernet, 10 Gigabit Ethernet; whatever traffic you want riding over OTN," says Wilcox.

The core optical switch implements an 80x80 matrix using 80 wavelengths, each operating at 25Gbps. The 80x80 matrix is surrounded by MxM fast optical switches to implement a larger 320x320 matrix that has an 8 Terabit capacity. It is these larger matrices - 'switch planes' - that are stacked to achieve 10 Petabit. The PPXC grooms traffic starting at 1 Gigabit rates and can switch 100Gbps and even higher speed incoming wavelengths in future.

Oclaro provided Huawei with the ultra-fast lasers for the demonstrator. The laser, a digital supermode distributed Bragg reflector (DS-DBR), has an electro-optic tuning mechanism, says Robert Blum, director of product marketing for Oclaro's photonic components. Here, current is applied to the grating to set the laser's wavelength. The resulting tuning speed is in nanoseconds, although Oclaro will not say the exact switching speed specified for the switch.

Each switch plane uses 4x80, or 320, 25Gbps lasers. A 10 Petabit switch requires 400,000 (320x1250) lasers. "Although the numbers appear quite staggering, they point to a need for photonic integration," says Wilcox. Huawei recently acquired photonic integration specialist CIP Technologies.
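
The laser count follows directly from the plane dimensions. The sketch below reproduces the figures quoted above: a 320x320 plane of 25Gbps lasers carries 8 Terabit, so 10 Petabit needs 1,250 stacked planes and 400,000 lasers. It deliberately ignores any spare or protection capacity a real switch might add.

```python
import math

LASER_RATE_GBPS = 25        # per-wavelength rate in the demonstrator
PORTS_PER_PLANE = 4 * 80    # 80x80 core expanded to a 320x320 switch plane


def plane_capacity_tbps() -> float:
    """Capacity of one switch plane, in Terabit/s."""
    return PORTS_PER_PLANE * LASER_RATE_GBPS / 1000


def planes_needed(target_pbps: float) -> int:
    """Switch planes to stack for a target capacity, rounded up."""
    return math.ceil(target_pbps * 1000 / plane_capacity_tbps())


def lasers_needed(target_pbps: float) -> int:
    """One ultra-fast tunable laser per plane port."""
    return planes_needed(target_pbps) * PORTS_PER_PLANE


print(plane_capacity_tbps())   # 8.0
print(planes_needed(10))       # 1250
print(lasers_needed(10))       # 400000
```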

The demonstration highlighted the PPXC switching OTN traffic but Wilcox stresses that the architecture is cell-based and can support all packet types: "We are flexible in the technology as the world evolves to all-packet.” The design is therefore also suited to large data centres to switch traffic between servers and for linking aggregation routers. "It is applicable in the data centre as a flattened [switch] architecture," says Wilcox.

Huawei claims the Petabit switch will deliver other benefits besides scalability. "Rough estimates comparing this device to OTN switches, MPLS switches and routers yields savings of greater than 60% on power, anywhere from 15-80% on footprint and at least a halving of fibre interconnect," says Wilcox.

Meanwhile Oclaro says Huawei is not the only vendor interested in the technology. "We have seen quite some interest recently in this area [of optical burst transmission]," says Oclaro's Blum. "I wouldn't be surprised if other companies make announcements in this space."

Further reading:

- OFC/ NFOEC 2012 paper: An Optical Burst Switching Fabric of Multi-Granularity for Petabit/s Multi-Chassis Switches and Routers

OFC/NFOEC 2012 industry reflections - Part 1

The recent OFC/NFOEC show, held in Los Angeles, had a strong vendor presence. Gazettabyte spoke with Infinera's Dave Welch, chief strategy officer and executive vice president, about his impressions of the show, capacity challenges facing the industry, and the importance of the company's photonic integrated circuit technology in light of recent competitor announcements.

OFC/NFOEC reflections: Part 1

"I need as much fibre capacity as I can get, but I also need reach"

Dave Welch, Infinera

Dave Welch values shows such as OFC/NFOEC: "I view the show's benefit as everyone getting together in one place and hearing the same chatter." This helps identify areas of consensus and subjects where there is less agreement.

And while there were no significant surprises at the show, it did highlight several shifts in how the network is evolving, he says.

"The first [shift] is the realisation that the layers are going to physically converge; the architectural layers may still exist but they are going to sit within a box as opposed to multiple boxes," says Welch.

The implementation of this started with the convergence of the Optical Transport Network (OTN) and dense wavelength division multiplexing (DWDM) layers, and the efficiencies that brings to the network.

That is a big deal, says Welch.

Optical designers have long been making transponders for optical transport. But now the transponder isn't an element in the integrated OTN-DWDM layer, rather it is the transceiver. "Even that subtlety means quite a bit," says Welch. "It means that my metrics are no longer 'gray optics in, long-haul optics out', it is 'switch-fabric to fibre'."

Infinera has its own OTN-DWDM platform convergence with the DTN-X platform, and the trend was reaffirmed at the show by the likes of Huawei and Ciena, says Welch: "Everyone is talking about that integration."

The second layer integration stage involves multi-protocol label switching (MPLS). Instead of transponder point-to-point technology, what is being considered is a common platform with an optical management layer, an OTN layer and, in future, an MPLS layer.

"The drive for that box is that you can't continue to scale the network in terms of bandwidth, power and cost by taking each layer as a silo and reducing it down," says Welch. "You have to gain benefits across silos for the scaling to keep up with bandwidth and economic demands."

Super-channels

Optical transport has always been about increasing the data rates carried over wavelengths. At 100 Gigabit-per-second (Gbps), however, companies now use one or two wavelengths - carriers - onto which data is encoded. Another show trend was that, as vendors look to the next generation of line-side optical transport beyond 100Gbps, the use of multiple carriers, known as super-channels, will continue.

Infinera's technology uses a 500Gbps super-channel based on dual-polarisation quadrature phase-shift keying (DP-QPSK). The company's transmit and receive photonic integrated circuit pair comprises 10 wavelengths (two 50Gbps carriers per 50GHz band).

Ciena and Alcatel-Lucent detailed their next-generation ASICs at OFC. These chips, to appear later this year, include higher-order modulation schemes such as 16-QAM (quadrature amplitude modulation), which can be used across multiple wavelengths. Going from DP-QPSK to 16-QAM doubles the data rate of a carrier from 100Gbps to 200Gbps; using two 16-QAM carriers, the two vendors can deliver 400Gbps.
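
The doubling comes from the bits carried per symbol: QPSK encodes 2 bits per symbol on each polarisation, while 16-QAM encodes 4. The sketch assumes both schemes are dual-polarisation and that the symbol rate and FEC overhead stay the same when the modulation changes, which is a simplification. It does not model the reach penalty Welch describes below.

```python
import math

POLARISATIONS = 2  # assumed dual-polarisation for both QPSK and 16-QAM


def bits_per_symbol(constellation_points: int, polarisations: int = POLARISATIONS) -> int:
    """Bits carried per symbol period across all polarisations."""
    bits = int(math.log2(constellation_points))
    if 2 ** bits != constellation_points:
        raise ValueError("constellation size must be a power of two")
    return bits * polarisations


def carrier_rate_gbps(base_rate_gbps: float, base_points: int, new_points: int) -> float:
    """Carrier rate after changing only the constellation (same symbol rate)."""
    return base_rate_gbps * bits_per_symbol(new_points) / bits_per_symbol(base_points)


qpsk_to_16qam = carrier_rate_gbps(100, 4, 16)
print(qpsk_to_16qam)        # 200.0
print(2 * qpsk_to_16qam)    # 400.0, two 16-QAM carriers
```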

"The concept of this all having to sit on one wavelength is going by the wayside," says Welch.

Capacity challenges

"Over the next five years there are some difficult trends we are going to have to deal with, where there aren't technical solutions," says Welch.

The industry is already talking about fibre capacities of 24 Terabit using coherent technology. Greater capacity is also starting to be traded with reach. "A lot of the higher QAM rate coherent doesn't go very far," says Welch. "16-QAM in true applications is probably a 500km technology."

This is new for the industry. In the past a 10Gbps service could be scaled to an 800 Gigabit system using 80 DWDM wavelengths. The same applies to 100Gbps, which scales to 8 Terabit.

"I'm used to having high-capacity services and I'm used to having 80 of them, maybe 50 of them," says Welch. "When I get to a Terabit service - not that far out - we haven't come up with a technology that allows the fibre plant to go to 50-100 Terabit."
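
Welch's point is easiest to see by multiplying service rate by wavelength count for each generation. The sketch below compares the result with the 24 Terabit coherent fibre capacity figure quoted earlier; the 50 and 80 wavelength counts for Terabit services are taken from his remarks, and the comparison is illustrative only.

```python
QUOTED_COHERENT_FIBRE_TBPS = 24  # fibre capacity figure quoted earlier in the article


def fibre_capacity_tbps(service_gbps: float, wavelengths: int) -> float:
    """Total fibre capacity when every DWDM wavelength carries one service."""
    return service_gbps * wavelengths / 1000


for service_gbps, wavelengths in [(10, 80), (100, 80), (1000, 50), (1000, 80)]:
    total = fibre_capacity_tbps(service_gbps, wavelengths)
    verdict = "within" if total <= QUOTED_COHERENT_FIBRE_TBPS else "beyond"
    print(f"{service_gbps}G x {wavelengths} = {total} Tbps ({verdict} the quoted 24 Tbps)")
```

The first two generations sit comfortably within today's fibre, while 50 to 80 Terabit services would need two to three times more capacity than the quoted coherent figure.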

This issue is already leading to fundamental research looking at techniques to boost the capacity of fibre.

PICs

However, in the shorter term, the smarts to enable high-speed transmission and higher capacity over the fibre are coming from the next-generation DSP-ASICs.

Is Infinera's monolithic integration expertise, with its 500 Gigabit PIC, becoming a less important element of system design?

"PICs have a greater differentiation now than they did then," says Welch.

Unlike Infinera's 500Gbps super-channel, the recently announced ASICs use two carriers and 16-QAM to deliver 400Gbps. But the issue is the reach that can be achieved with 16-QAM: "The difference is 16-QAM doesn't satisfy any long-haul applications," says Welch.

Infinera argues that a fairer comparison with its 500Gbps PIC is dual-carrier QPSK, each carrier at 100Gbps. Once the ASIC and optics deliver 400Gbps using 16-QAM, it is no longer a valid comparison because of reach, he says.

Three parameters must be considered here, says Welch: dollars/Gigabit, reach and fibre capacity. "I have to satisfy all three for my application," he says.

Long-haul operators are extremely sensitive to fibre capacity. "I need as much fibre capacity as I can get," he says. "But I also need reach."

In data centre applications, for example, reach is becoming an issue. "For the data centre there are fewer on and off ramps and I need to ship truly massive amounts of data from one end of the country to the other, or one end of Europe to the other."

The lower reach of 16-QAM is suited to the metro but Welch argues that is one segment that doesn't need the highest capacity but rather lower cost. Here 16-QAM does reduce cost by delivering more bandwidth from the same hardware.

Meanwhile, Infinera is working on its next-generation PIC that will deliver a Terabit super-channel using DP-QPSK, says Welch. The PIC and the accompanying next-generation ASIC will likely appear in the next two years.

Such a 1 Terabit PIC will reduce the cost of optics further, but it remains to be seen how Infinera will increase overall fibre capacity beyond its current 80x100Gbps. The new PIC will double the number of 100Gbps wavelengths making up the super-channel, increasing long-haul line card density and benefiting the dollars/Gigabit and reach metrics.

In part two, ADVA Optical Networking, Ciena, Cisco Systems and market research firm Ovum reflect on OFC/NFOEC.

Transport processors now at 100 Gigabit

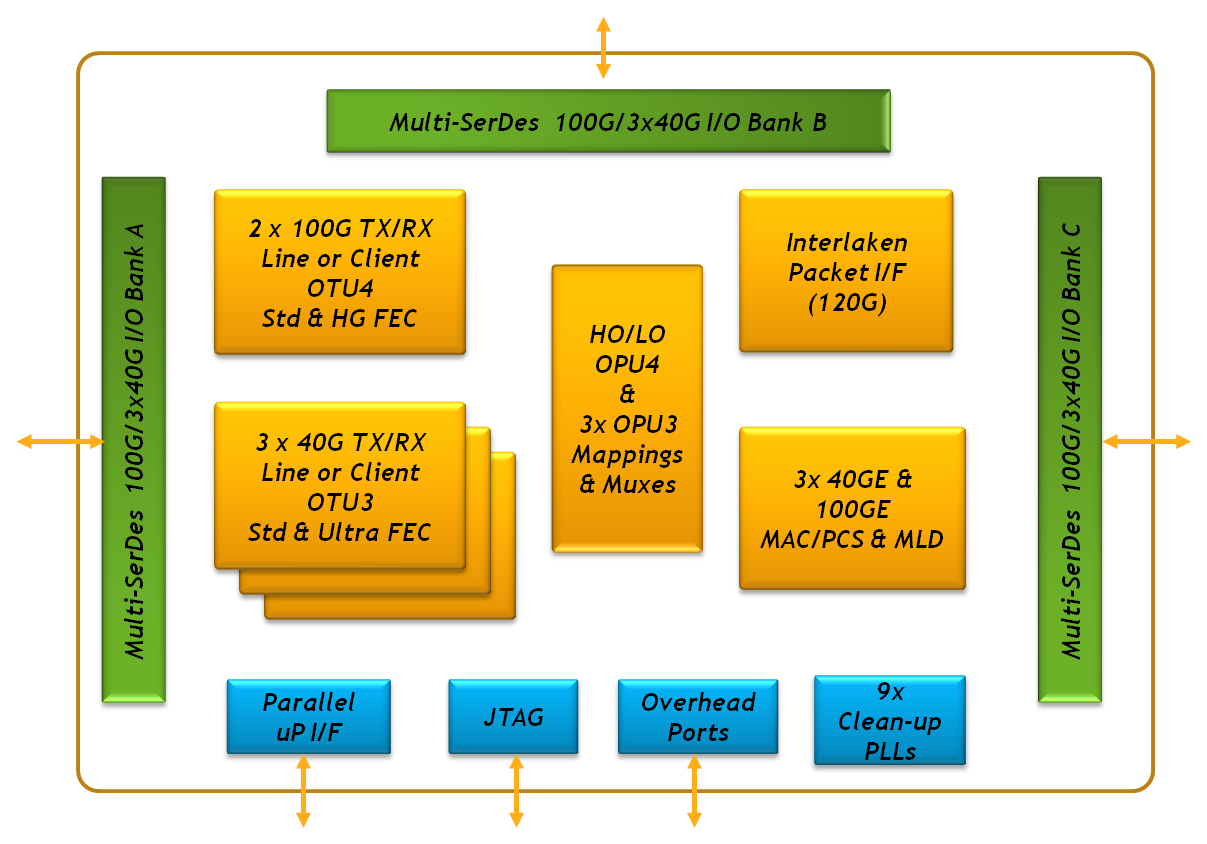

Cortina Systems has detailed its CS605x family of transport processors that support 100 Gigabit Ethernet and Optical Transport Network (OTN).

The CS6051 transport processor architecture. Source: Cortina Systems


The application-specific standard product (ASSP) family from Cortina Systems is aimed at dense wavelength division multiplexing (DWDM) platforms, packet optical transport systems, carrier Ethernet switch routers and Internet Protocol edge and core routers. The chip family can also be used in data centre top-of-rack Ethernet aggregation switches.

"Our traditional business in OTN has been in the WDM market," says Alex Afshar, product line manager, transport products at Cortina Systems. "What we see now is demand across all those platforms."

ASSP versus FPGA

Until now, equipment makers have used field programmable gate arrays (FPGAs) to implement 100 Gigabit-per-second (Gbps) designs. This is an important sector for FPGA vendors, with Altera and Xilinx making several acquisitions to bolster their IP offerings for the high-end sector. System vendors have also used FPGA board-based designs from specialist firm TPACK, acquired by AppliedMicro in 2010.

The advantage of an FPGA design is that it allows faster entry to market, while supporting relevant standards as they mature. FPGAs also enable equipment makers to use their proprietary intellectual property (IP); for example, advanced forward error correction (FEC) codes, to distinguish their designs.

However, once a market reaches a certain maturity, ASSPs become available. "ASSPs are more efficient in terms of cost, power and integration," says Afshar.

But industry analysts point out that ASSP vendors have a battle on their hands. "In this class of product, there is a lot of customisation and proprietary design and FPGAs are well suited for that," says Jag Bolaria, senior analyst at The Linley Group.

CS605x family

The CS605x extends Cortina's existing CS604x 40Gbps OTN transport processors launched in April 2011. The CS605x devices aggregate 40 Gigabit Ethernet or OTN streams into 100Gbps or map between 100 Gigabit Ethernet and OTN frames. Combining devices from the two families enables 10 and 40 Gigabit OTN/ Ethernet traffic to be aggregated into 100 Gigabit streams.

The CS6051 is the 100 Gigabit family's flagship device. The CS6051 can interface directly to three 40Gbps optical modules, a 100 Gigabit CFP or a 12x10Gbit/s CXP module. The device also supports the Interlaken interface to 120 Gigabit (10x12.5Gbps) to interface to devices such as network processors, traffic managers and FPGAs.
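
The 120 Gigabit Interlaken figure is consistent with the lane arithmetic once Interlaken's 64b/67b line coding is taken into account. The sketch below shows the calculation. It ignores the burst and idle control words, which reduce usable bandwidth somewhat further in practice.

```python
INTERLAKEN_CODE_EFFICIENCY = 64 / 67  # Interlaken's 64b/67b line code


def interlaken_bandwidth_gbps(lanes: int, lane_rate_gbps: float) -> tuple[float, float]:
    """Return (raw, post-coding) bandwidth; control-word overhead is ignored."""
    raw = lanes * lane_rate_gbps
    return raw, raw * INTERLAKEN_CODE_EFFICIENCY


raw, coded = interlaken_bandwidth_gbps(10, 12.5)
print(f"raw {raw} Gbps, after 64b/67b about {coded:.1f} Gbps")
# raw 125.0 Gbps, after 64b/67b about 119.4 Gbps
```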

The CS6051 supports several forward error correction (FEC) codes: the standard G.709 FEC, an FEC with 9.4dB coding gain and only 7% overhead, and an 'ultra-FEC' whose strength can be varied with overhead, from 7% to 20%.
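
To see what the overhead percentages mean on the line, the sketch below converts an FEC overhead into the line rate needed for a 100Gbps stream, and computes the overhead of the standard G.709 Reed-Solomon RS(255,239) code. It is a simplification: it treats the 100Gbps as the FEC-protected payload, ignores OTN framing overhead, and does not model coding gain.

```python
# Standard G.709 Reed-Solomon RS(255,239): 16 parity bytes per 239 data bytes.
RS_255_239_OVERHEAD = 255 / 239 - 1


def line_rate_gbps(protected_gbps: float, fec_overhead: float) -> float:
    """Line rate needed to carry a protected stream plus FEC parity."""
    return protected_gbps * (1 + fec_overhead)


print(f"G.709 RS overhead: {RS_255_239_OVERHEAD:.1%}")    # 6.7%
for overhead in (0.07, 0.20):                               # ultra-FEC range quoted above
    print(f"{overhead:.0%} overhead: {line_rate_gbps(100, overhead):.1f} Gbps")
```

A stronger code buys coding gain at the cost of a higher line rate, which is why a variable-overhead FEC lets a system trade reach against the optics' speed.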

The CS6053 is similar to the CS6051 but supports only the standard G.709 FEC, and is aimed at system vendors with their own powerful FECs such as the latest soft-decision FEC. The CS6052 supports Ethernet and OTN mapping but not aggregation, while the CS6054 supports Ethernet only. It is the CS6054 that is used for top-of-rack switches in the data centre.

The devices consume between 10 and 12W. Samples of the CS605x family have been available since October 2011 and will be in volume production in the first half of this year.

Further reading:

For a more detailed discussion of the CS605x family, see the article featured in New Electronics

OIF promotes uni-fabric switches & 100G transmitter

The OIF's OTN implementation agreement (IA) allows a packet fabric to also switch OTN traffic. The result is that operators can now use one switch for both traffic types, aiding IP/ Ethernet and OTN convergence. Source: OIF


Improving the switching capabilities of telecom platforms without redesigning the switch, and developing a smaller 100 Gigabit transmitter, are two recent initiatives of the Optical Internetworking Forum (OIF).

The OIF, the industry body tackling design issues not addressed by the IEEE and International Telecommunication Union (ITU) standards bodies, has just completed its OTN-over-Packet-Fabric protocol that enables optical transport network (OTN) traffic to be carried over a packet switch. The protocol works by modifying the line cards at the switch's input and output, leaving the switch itself untouched (see diagram above).

Separately, the OIF is starting a 100 Gigabit-per-second (Gbps) transmitter design project dubbed the integrated dual-polarisation quadrature modulated transmitter assembly (ITXA). The Working Group aims to expand the range of 100Gbps applications with a transmitter design half the size of the OIF's existing 100Gbps transmitter module.

The Working Group also wants greater involvement from the system vendors to ensure the resulting 100 Gig design is not conservative. "We joke about three types of people that attend these [working group] meetings," says Karl Gass, the OIF’s Physical and Link Layer Working Group vice-chair. "The first group has something they want to get done, the second group has something already and they don't want something to get done, and the third group want to watch." Quite often it is the system vendors that fall into this third group, he says.

OTN-over-Packet-Fabric protocol

The OTN-over-Packet-Fabric protocol enables a single switch fabric to be used for both traffic types - packets and time-division multiplexed (TDM) OTN - saving operators cost.

"OTN is out there while Ethernet is prevalent," says Winston Mok, technical author of the OTN implementation agreement. "What we would like to do is enable boxes to be built that can do both economically."

The existing arrangement where separate packet and OTN time-division multiplexing (TDM) switches are required. Source: OIF


Platforms using the protocol are coming to market. ECI Telecom says its recently announced Apollo family is one of the first OTN platforms to use the technique.

The protocol works by segmenting OTN traffic into a packet format that is then switched before being reconstructed at the output line card. To do this, the constant bit-rate OTN traffic is chopped up so that it can easily go through the switch as a packet. "We want to keep the [switch] fabric agnostic to this operation," says Mok. "Only the line cards need to do the adaptations."
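
The ingress and egress adaptation can be sketched in a few lines. This toy version uses the nominal 128-byte packet size described in the OIF agreement and assumes a sequence number is attached to each segment so the egress card can restore order; the real header format is defined in the implementation agreement and is not reproduced here. The function names are hypothetical. The switch in the middle only sees packets, which is the point of the scheme.

```python
import random

SEGMENT_BYTES = 128  # nominal segment size used by the OIF protocol


def segment(stream: bytes, size: int = SEGMENT_BYTES) -> list[tuple[int, bytes]]:
    """Ingress line card: chop a CBR byte stream into (sequence number, chunk) packets."""
    return [(seq, stream[offset:offset + size])
            for seq, offset in enumerate(range(0, len(stream), size))]


def reassemble(packets: list[tuple[int, bytes]]) -> bytes:
    """Egress line card: restore byte order using the sequence numbers."""
    return b"".join(chunk for _, chunk in sorted(packets))


odu_bytes = bytes(range(256)) * 5          # stand-in for 1,280 bytes of OTN traffic
packets = segment(odu_bytes)
random.Random(1).shuffle(packets)          # stands in for any reordering across the fabric
assert reassemble(packets) == odu_bytes
print(len(packets))                        # 10
```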

The OTN traffic also has timing information which the protocol must convey as it passes through the switch. The OIF's solution is to vary the size of the chopped-up OTN packets. The packet is nominally 128-bytes long. But the size will occasionally be varied to 127 and 126 bytes as required. These sequences are interpreted at the output of the switch as rate information and used to control a phase-locked loop.
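
One way to picture how packet sizes can carry timing is a toy model in which the ingress card emits one packet per fixed fabric slot and chooses 128, 127 or 126 bytes so that the bytes sent track the bytes the client has delivered. The egress card then averages the packet sizes to recover the client rate, which would steer its phase-locked loop. The slot-based framing and both function names are assumptions for illustration; the implementation agreement defines the actual mechanism.

```python
from fractions import Fraction

ALLOWED_SIZES = (128, 127, 126)  # nominal size and the two shortened sizes


def encode_sizes(bytes_per_slot: Fraction, slots: int) -> list[int]:
    """Hypothetical ingress: one packet per fabric slot, sized so the cumulative
    bytes sent track the cumulative bytes the client has delivered."""
    if not ALLOWED_SIZES[-1] <= bytes_per_slot <= ALLOWED_SIZES[0]:
        raise ValueError("client rate outside the range the sizes can express")
    arrived, sent, sizes = Fraction(0), 0, []
    for _ in range(slots):
        arrived += bytes_per_slot
        backlog = arrived - sent  # stays >= 126 thanks to the range check
        size = next(s for s in ALLOWED_SIZES if s <= backlog)
        sizes.append(size)
        sent += size
    return sizes


def recover_rate(sizes: list[int]) -> Fraction:
    """Hypothetical egress: average bytes per slot, the value a PLL would track."""
    return Fraction(sum(sizes), len(sizes))


print(encode_sizes(Fraction(255, 2), 6))                   # [127, 128, 127, 128, 127, 128]
print(recover_rate(encode_sizes(Fraction(255, 2), 1000)))  # 255/2
```

The leftover backlog never reaches a full byte, so the averaged size converges on the true client rate as more packets are observed.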

Mok says the implementation agreement document that describes the protocol is now available. The protocol does not define the physical layer interface connecting the line card to the switch, however. "Most people have their own physical layer," says Mok.

100 Gig ITXA

The ITXA project will add to the OIF's existing integrated transmitter document. The original document addresses the 100 Gigabit transmitter for dual-polarisation, quadrature phase-shift keying (DP-QPSK) for long-haul optical transmission. The OIF has also defined 100Gbps receiver assembly and tunable laser documents.

The latest ITXA Working Group has two goals: to shrink the size of the assembly to lower cost and increase the number of 100Gbps interfaces on a line card, and to expand the applications to include metro. The ITXA will still address 100Gbps coherent designs but will not be confined to DP-QPSK, says Gass.

"We started out with a 7x5-inch module and now there is interest from system vendors and module makers to go to smaller [optical module] form factors," says Gass. "There is also interest from other modulator vendors that want in on the game."

To reduce size, the ITXA will support modulator technologies other than the lithium niobate used for long-haul. These include indium phosphide, gallium arsenide and polymer-based modulators.

Gass stresses that the ITXA is not a replacement for the current transmitter implementation. "We are not going to get the 'quality' that we need for long-haul applications out of other modulator technologies," he says. "This is not a Gen II [design]."

The Working Group's aim is to determine the 'greatest common denominator' for this component. "We are trying to get the smallest form factor possible that several vendors can agree on," says Gass. "To come out with a common pin out, common control, common RF (radio frequency) interface, things like that."

Gass says the work's direction is still open for consideration. One option is integrating the laser with the modulator. "We can come up with a higher level of integration if we consider adding the laser, to have a more integrated transmitter module," says Gass.

As for wanting greater system-vendor input, the Working Group wants more of the system vendors' requirement insights to avoid the design becoming too restrictive.

"You end up with component vendors that do all the work and they want to be conservative," says Gass. "The component vendors don't want to push the boundaries as they want to hit the widest possible customer base."

Gass expects the ITXA work to take a year, with system demonstrations starting around mid-2013.

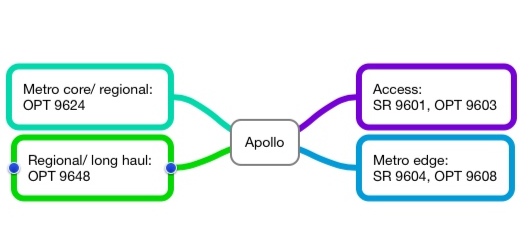

ECI Telecom's Apollo mission

The privately-owned system vendor has launched Apollo, a family of what it calls optimised multi-layer transport platforms.

Event

ECI Telecom has launched a family of platforms that combines optical transmission, Ethernet and optical transport network (OTN) switching and IP routing.

The 9600 series platforms, dubbed Apollo, combine the functionality that until now has required both a packet-optical transport system (P-OTS) and a carrier Ethernet switch router (CESR).

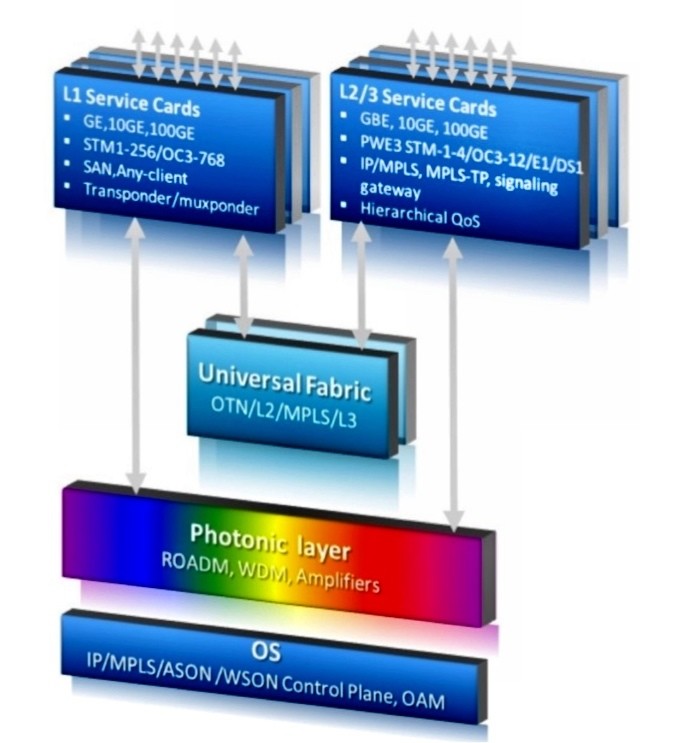

The Apollo 9600 series architecture. Source: ECI Telecom


ECI refers to the capabilities of such a combined platform as optimised multi-layer transport (OMLT). Analysts view the platform as a natural evolution of P-OTS rather than a new category of system.

Why is it important?

ECI's Apollo 9600 series is the first to combine dense wavelength-division multiplexing (DWDM) with carrier Ethernet switch routing. It is also one of the first platforms that bring OTN switching to the metro; until now OTN switching has been confined to the network core.

Apollo addresses a shortfall of packet optical transport, namely its limited layer 2 capabilities, says ECI. Apollo also adds layer 3 routing, another first.

“In the buying cycle, operators start with optical networking and add carrier Ethernet switch routing,” says Oren Marmur, head of optical networking & CESR lines of business at ECI Telecom. Now with Apollo, operators can simplify their networks: they don't have to provision, or maintain, two separate platforms.

ECI claims the Apollo platform, with 100 Gigabit-per-second (Gbps) transport and hybrid Ethernet and OTN cards, more than halves the equipment cost compared to using separate ROADM and CESR platforms. The company also says such an Apollo configuration reduces rack space by 38% and power consumption by some 60%.

What has been done

ECI has announced six Apollo platforms that span the access, metro and core networks. The platforms include the SR 9601 and OPT 9603 for metro access and the metro edge SR 9604 and OPT 9608 with four and eight input-output (I/O) cards respectively that support WDM or 100Gbps Ethernet MPLS packet switching. The final two platforms are the OPT 9624 for metro core and the OPT 9648 for regional and long haul, and both can accommodate a terabit universal switch.

Overall Apollo can support 44 or 88 light paths at 10, 40 and 100Gbps, 2-degree and multi-degree colourless, directionless and contentionless ROADMs, OTN and Ethernet switching, and IP/ MPLS and MPLS-TP. "MPLS-TP versus IP/ MPLS is almost a religious issue yet both are valid," says Marmur, who adds that at 40 Gig, ECI will use coherent and direct detection technologies but at 100 Gig it will use only coherent.

The universal fabric of the OPT 9624 and 9648 is cell-based and switches ODUs and packets, but not lower-order SONET/SDH traffic. An operator with any significant amount of SONET/SDH traffic needs ECI’s XDM platform or another aggregation box.

The platforms can be configured as CESR platforms, OTN switches, optical transport platforms or combinations of the three.

Analysis

Gazettabyte asked Sterling Perrin, senior analyst at Heavy Reading; Rick Talbot, senior analyst, optical infrastructure at Current Analysis and Dana Cooperson, vice president of the network infrastructure practice at Ovum for their views about the ECI announcement.

Sterling Perrin, Heavy Reading

Apollo has several noteworthy aspects, according to Heavy Reading.

“It is a big announcement for ECI and a big announcement for the industry," says Perrin. “They are doing with the technology some fundamental things that are new.” That said, it remains to be seen how quickly operators will embrace an OMLT-style platform, he says.

Apollo confirms one networking trend - moving the OTN switching fabric into the metro network. So far OTN has been confined largely to the core network. “I know operators are interested but they are still evaluating it,” says Perrin. “But OTN will migrate down from the core to the metro.” Others that have announced such a capability include Ciena and Huawei.

ECI has also combined DWDM transport with the CESR platform. “This is another trend we figured would happen,” he says. “This puts ECI very early, if not first, in doing that function.”

Perrin has his doubts about how quickly the layer 3 functionality added to the platform will be embraced by operators: “What I've seen from the industry is that MPLS-TP will give you that functionality over time as it matures, so this sort of platform may not need the full layer 3 functions.”

The modular nature of the design, which allows operators to add only the functionality they need, helps avoid one issue associated with integrated platforms: paying for functionality that is not needed. And there are cost savings by having a single integrated platform. “You do want to save capex and opex and this is definitely a way to get that done,” says Perrin.

In the network core, the question remains whether packet needs to be combined with the optics. “Metro lends itself more to the integration than the core does,” he says.

ECI’s biggest competitor is probably Huawei and over time also ZTE, says Perrin. ECI has done well in India and other emerging markets that many of the system vendors were ignoring. “Now they have Huawei in the mix, it is definitely tougher,” he says. “This [Apollo] announcement will definitely help them.”

Rick Talbot, Current Analysis

Current Analysis categorises the smaller members of the Apollo family as a packet-optical access (POA) portfolio, playing the same role as Ericsson’s SPO 1400 family and Cisco’s CPT series. The market research firm views the largest two Apollo platforms - the OPT 9624 and 9648 - as packet-optical transport systems.

The Apollo POAs bring protocol-agnostic packet switching to the aggregation network, says Talbot, a rarity in this part of the network. Several vendors offer P-OTS with universal switching fabrics but most do not extend that architecture into the aggregation network, Tellabs with the 7100 Nano OTS being the exception. Also the 9600 series IP/ MPLS and MPLS-TP options are very strong, providing what Cisco and Ericsson call unified MPLS, he says.

For Current Analysis, the significance of the portfolio is that the Apollo family delivers converged packet and time-division multiplexing (TDM) switching in a single switch fabric, and provides an infrastructure that extends from the network core to the access network edge.

The switching fabric provides the greatest efficiency for the ultimate traffic type - packets - while simplifying the network architecture and minimising equipment cost. In turn, the breadth of the portfolio provides a common set of capabilities across an operator’s network, minimising training costs and spares inventory.

As for the specification, the wide range of MPLS features integrated into this product family, its terabit universal switch and its 100Gbps DWDM transport capabilities are impressive, says Talbot.

“The primary gap in the portfolio, and it is hard to fault ECI for this, is that the highest capacity member of the family supports ’only’ 1 Terabit-per-second of switching capacity,” he says. “This is not large enough for a Tier 1 core optical switch.”

ECI must first execute on the production of the Apollo family, but if it does, Talbot believes that ECI will capture the interest of larger and more end-to-end operators in markets it already serves.

ECI will also have positioned itself to capture the attention of many European operators and, if it makes a push there, the North American market. However Talbot believes ECI will still be challenged to capture the attention of Tier 1 operators because of the family’s limited maximum scale.

Dana Cooperson, Ovum

Size and scale breeds specialisation, says Cooperson. “Large service providers, including the Tier 1s, won’t be so interested in the OMLT, but they aren’t the target anyway,” she says. Large service providers need plenty of scale when it comes to WDM and CESR functionality, while they also tend to have compartmentalised operations groups. “So an all-in-one product like the OMLT isn’t targeted at them,” she says.

ECI has always done well selling to the Tier 2 and Tier 3 carriers as well as enterprises such as utilities that have carrier-like networks. That is because ECI's modular, packet-based platforms are sized and priced to match such operators' and enterprises’ requirements. “I see the OMLT as a continuation of ECI's positioning of its XDM platform,” she says.

Cooperson says that it can be difficult to position vendors’ switch announcements and that they should do more to explain where they sit. But she stresses that the Apollo 9600 series is very different from Juniper's PTX, for example.

“The PTX is positioned in the core as a lower-cost alternative to core routers, while the OMLT as a CESR or even an OTN switch is meant more for smaller sites,” she says. Also the switch capacities of the smaller Apollo platforms fit with ECI's focus and positioning on smaller customers and smaller sites.

Cooperson also highlights the need for the XDM platform if an operator requires SONET/SDH support but says ECI has alluded to add/drop multiplexer blades as well as packet blades. "The [Apollo] focus is on the packet and photonic bits,” says Cooperson. “ECI did emphasize that the XDM isn’t going anywhere, but we’ll see what happens over time and how much SONET/SDH ECI builds in [if any to the Apollo].”


High fives: 5 Terabit OTN switching and 500 Gig super-channels.

Infinera has announced a core network platform that combines Optical Transport Network (OTN) switching with dense wavelength division multiplexing (DWDM) transport. "We are looking at a system that integrates two layers of the network," says Mike Capuano, vice president of corporate marketing at Infinera.

"This is 100Tbps of non-blocking switching, all functioning as one system. You just can't do that with merchant silicon."


Mike Capuano, Infinera

The DTN-X platform is based on Infinera's third-generation photonic integrated circuit (PIC), which supports five 100Gbps coherent channels.

Each DTN-X platform can deliver 5 Terabits-per-second (Tbps) of non-blocking OTN switching using an Infinera-designed ASIC. Ten DTN-X platforms can be combined to scale the OTN switching and transport capacity to 50Tbps currently.

Infinera also plans to add Multiprotocol Label Switching (MPLS) to turn the DTN-X into a hybrid OTN/ MPLS switch. With the next upgrades to the PIC and the switching, the ten DTN-X platforms will scale to 100Tbps optical transport and 100Tbps OTN and MPLS switching capacity.
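The scaling figures above are straightforward multiplication. A minimal Python sketch, assuming capacity simply adds across the ten interconnected chassis (the article does not describe how the multi-chassis configuration is built) and taking the 10Tbps per-chassis figure for the upgraded PIC from Infinera's roadmap described below:

```python
# Back-of-envelope scaling arithmetic for a multi-chassis DTN-X system,
# using the figures quoted in the article.
CHASSIS_COUNT = 10             # "Ten DTN-X platforms can be combined"
CURRENT_PER_CHASSIS_TBPS = 5   # 5Tbps of OTN switching per chassis today
NEXT_PER_CHASSIS_TBPS = 10     # per-chassis capacity after the PIC/switch upgrade


def system_capacity_tbps(chassis: int, per_chassis_tbps: float) -> float:
    """Aggregate capacity, assuming (hypothetically) linear scaling across chassis."""
    return chassis * per_chassis_tbps


current = system_capacity_tbps(CHASSIS_COUNT, CURRENT_PER_CHASSIS_TBPS)
upgraded = system_capacity_tbps(CHASSIS_COUNT, NEXT_PER_CHASSIS_TBPS)
print(f"Today: {current:g} Tbps; after upgrade: {upgraded:g} Tbps")
```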

The platform is being promoted by Infinera as a way for operators to tackle network traffic growth and support developments such as cloud computing where applications and content increasingly reside in the network. "What that means [for cloud-based services to work] is a network with huge capacity and very low latency," says Capuano.

Platform details

The 5x100Gbps PIC supports what Infinera calls a 500Gbps 'super-channel'. Each super-channel is a multi-carrier implementation comprising five 100Gbps wavelengths. Combined with OTN, the 500Gbps super-channel can be filled with 1, 10, 40 and 100 Gigabit streams (SONET/SDH, Ethernet, video etc.). Moreover, there is no spectral efficiency penalty: the super-channel uses 250GHz of fibre spectrum, provisioning five 50GHz-wide, 100Gbps wavelengths at a time.
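The 'no spectral efficiency penalty' claim can be checked with simple arithmetic. This sketch uses only the nominal rates and channel widths quoted above and ignores FEC and framing overhead:

```python
# Compare the spectral efficiency of a single 100Gbps wavelength on the 50GHz
# DWDM grid with the five-carrier 500Gbps super-channel occupying 250GHz.
def spectral_efficiency(capacity_gbps: float, spectrum_ghz: float) -> float:
    """Bits per second per hertz: Gbps divided by GHz gives b/s/Hz."""
    return capacity_gbps / spectrum_ghz


single_carrier = spectral_efficiency(100, 50)          # one 100G wavelength in 50GHz
super_channel = spectral_efficiency(5 * 100, 5 * 50)   # five 100G carriers in 250GHz

print(single_carrier, super_channel)  # 2.0 2.0
```

Both work out to 2b/s/Hz, which is why bundling the carriers into one super-channel costs nothing in spectral efficiency.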

"We have seen 40 and 100Gbps come on the market and they are definitely helping with fibre capacity issues," says Capuano. "But they are more expensive from a cost-per-bit perspective than 10Gbps." By introducing the 500Gbps PIC, Infinera says it is reducing the cost-per-bit of high-speed optical transport.

DTN-X: shown are 5 line and tributary cards top and bottom with switching cards in the centre of the chassis. Source: Infinera


Infinera says integrating OTN switching within the platform results in the lowest-cost solution and is more efficient than using manually configured multiplexing transponders (muxponders) or an external OTN switch that must be optically connected to the transport platform.
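To make the grooming idea concrete, the sketch below packs a mix of 1, 10, 40 and 100 Gigabit client streams into the super-channel's five 100Gbps carriers. It uses a generic first-fit decreasing bin-packing heuristic, not Infinera's algorithm, packs by nominal line rate only (real OTN mapping uses ODU containers and tributary slots), and the demand list is hypothetical:

```python
from typing import List

CARRIER_GBPS = 100  # each super-channel carrier is a 100Gbps wavelength
CARRIERS = 5        # five carriers make up the 500Gbps super-channel


def first_fit(streams_gbps: List[int]) -> List[List[int]]:
    """Assign client streams to carriers using first-fit decreasing.

    Simplification: streams are packed by nominal rate; OTN overhead and
    tributary-slot granularity are ignored.
    Raises ValueError if a stream cannot fit in any carrier.
    """
    carriers: List[List[int]] = [[] for _ in range(CARRIERS)]
    free = [CARRIER_GBPS] * CARRIERS
    for rate in sorted(streams_gbps, reverse=True):
        for i in range(CARRIERS):
            if rate <= free[i]:
                carriers[i].append(rate)
                free[i] -= rate
                break
        else:  # no carrier had room for this stream
            raise ValueError(f"no room for a {rate}Gbps stream")
    return carriers


# A hypothetical mix of 1, 10, 40 and 100 Gigabit client streams.
demand = [100, 40, 40, 10, 10, 10, 10, 10, 1, 1, 1, 1]
for n, load in enumerate(first_fit(demand), start=1):
    print(f"carrier {n}: {sum(load)}Gbps {load}")
```

The point of the example is that a switch can fill carriers from many small streams automatically; with fixed muxponders the same grouping has to be planned and cabled by hand.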

The DTN-X also employs Generalised MPLS (GMPLS) software. "GMPLS makes it easy to deploy networks and services with point-and-click provisioning," says Capuano.

Each DTN-X line card supports a 500Gbps PIC but the chassis backplane is specified at 1Tbps, ready for Infinera's next-generation 10x100Gbps PIC that will upgrade the DTN-X to a 10Tbps system. "We have already presented our test results for our 1Tbps PIC back in March," says Capuano. The fourth-generation PIC, expected around 2014 (based on a company slide, although Infinera has made no public comment), will support a 1Tbps super-channel.

Adding MPLS will add the transport capability of the protocol to the DTN-X. "You will have MPLS transport, OTN switching and DWDM all in one platform," says Capuano.

OTN switching is the priority for tier-one operators to carry and process their SONET/SDH traffic; adding MPLS will give the system extra traffic-processing capabilities, he says.

Infinera says that by eventually integrating MPLS switching into the optical transport network, operators will be able to bypass expensive router ports and simplify their network operation.

Performance

Infinera says that the DTN-X's 5Tbps performance does not dip however the system is configured: whether solely as a switch (all line card slots filled with tributary modules), mixed DWDM/ switching (half DWDM/ half tributaries, for example) or solely as a DWDM platform. Depending on the cards in the DTN-X platform, the transport/ switching configuration can be varied but the 5Tbps I/O capacity is retained. Infinera says other switches on the market do lose I/O capacity as the interface mix is varied.
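One way to picture the claim is a chassis whose slots all present the same capacity to the switch fabric regardless of card type. The sketch below assumes ten card slots at 500Gbps each, inferred from the 5Tbps figure, the 500Gbps PIC and the chassis photo showing five cards top and bottom; these slot figures are illustrative assumptions, not Infinera specifications:

```python
SLOTS = 10       # assumed: ten line/tributary card slots per chassis
SLOT_GBPS = 500  # assumed: each slot carries 500Gbps (one PIC's worth)


def io_capacity(dwdm_cards: int) -> dict:
    """Split a chassis between DWDM line cards and tributary (client) cards.

    Because every slot (hypothetically) contributes the same 500Gbps to the
    switch fabric whatever card fills it, total I/O is fixed as the mix changes.
    """
    if not 0 <= dwdm_cards <= SLOTS:
        raise ValueError("dwdm_cards must be between 0 and SLOTS")
    tributary_cards = SLOTS - dwdm_cards
    dwdm_gbps = dwdm_cards * SLOT_GBPS
    tributary_gbps = tributary_cards * SLOT_GBPS
    return {
        "dwdm_gbps": dwdm_gbps,
        "tributary_gbps": tributary_gbps,
        "total_gbps": dwdm_gbps + tributary_gbps,
    }


for mix in (0, 5, 10):  # pure switch, half-and-half, pure DWDM
    print(mix, io_capacity(mix))
```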

Overall, Infinera claims the platform requires half the power of competing solutions and takes up a third less space.

The DTN-X will be available in the first half of 2012.

Analysis

Gazettabyte asked several market research firms about the significance of the DTN-X announcement and the importance of combining OTN, DWDM and soon MPLS within one platform.

Ovum

Ron Kline, principal analyst, and Dana Cooperson, vice president, of the network infrastructure practice

"MPLS switching is setting up a very interesting competitive dynamic among vendors"

Dana Cooperson, Ovum

The DTN-X is a platform for the largest service providers and their largest sites, says Ovum.

It sees the DTN-X in the same light as other integrated OTN/ WDM platforms such as Huawei's OSN 8800, Nokia Siemens Networks' hiT 7100, Alcatel-Lucent's 1830 PSS and Tellabs' 7100 OTS.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added," says Kline. "NSN is also claiming it will add MPLS to the 7100. Once MPLS is added, then you have the big packet optical transport box that Verizon wants."

Kline says the DTN-X platform will boost the business case for 100 Gig much as Infinera's current PIC has done at 10 Gig. "The others will be forced to lower price," he says.

Having GMPLS is important, especially if there is a need for dynamic bandwidth allocation, but it is customer-dependent. "When you start digging, it's hard to find large-scale implementations of GMPLS," says Kline.

The Ovum analysts stress that the need for OTN in the core depends on the customer. Content service providers like Google couldn't care less about OTN. "It's really an issue for multi-service providers like BT and AT&T," says Cooperson.

There is a consensus about the need for MPLS in the core. "Different service providers are likely to take different approaches: some might prefer an integrated box and others might not; it depends on their business," she says. "I think MPLS switching is setting up a very interesting competitive dynamic among vendors that focus on IP/MPLS, those that focus on optical, and those that are trying to do both [optical and IP/MPLS]."

Ovum highlights several aspects regarding the DTN-X's claimed performance.

"Assuming it performs as advertised, this should finally give Infinera what it needs to be of real interest to the tier-ones," says Cooperson. "The message of scalability, simplicity, efficiency, and profitability is just what service providers want to hear."

Cooperson also highlights Infinera's approach to optical-electrical-optical conversion and the benefit this could deliver at line speeds greater than 100Gbps.

At present ROADMs are being upgraded to support flexible spectrum channel configurations, also known as gridless. This is to enable future line speeds that will use more spectrum than current 50GHz DWDM channels. Operators want ROADMs that support flexible spectrum requirements, but managing the network to support these variable-width channels is still to be resolved.
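For context, the flexible grid defined in ITU-T G.694.1 allocates spectrum in slot widths that are multiples of 12.5GHz. A minimal sketch, assuming a channel simply needs the smallest slot that covers its occupied spectrum (real network planning also allows for filter roll-off and guard bands), shows how differently sized channels map onto the grid:

```python
import math

SLOT_GRANULARITY_GHZ = 12.5  # ITU-T G.694.1 flexible-grid slot-width step


def slot_width_multiplier(occupied_ghz: float) -> int:
    """Smallest m such that m x 12.5GHz covers the requested spectrum."""
    return math.ceil(occupied_ghz / SLOT_GRANULARITY_GHZ)


for label, ghz in [("100G wavelength", 50), ("500G super-channel", 250)]:
    m = slot_width_multiplier(ghz)
    print(f"{label}: m = {m} ({m * SLOT_GRANULARITY_GHZ:g}GHz)")
```

A ROADM network carrying both would have to track slots of 4 and 20 units side by side, which is the management problem described above.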

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added"


Ron Kline, Ovum

Infinera's approach is based on conversion to the electrical domain when dropping and regenerating wavelengths such that the issue of flexible channels does not arise or is at least forestalled. This, says Cooperson, could be Infinera's biggest point of differentiation.

"What impresses me is the 500Gbps super-channel using five 100Gbps carriers and the size of the switch fabric," adds Kline. He says the 5Tbps switching capacity also exceeds that of every other vendor: "Alcatel-Lucent is closest with 4Tbps but most range from 1-3Tbps and top out at 3Tbps."

Ease of use is also a big deal, says Kline. Infinera did very well marketing rapid turn-up, such as 10 Gig in 10 days: "It looks like they will be able to do the same here with 100 Gig."

Infonetics Research

Andrew Schmitt, directing analyst, optical

"GMPLS isn't that important, yet."

The DTN-X is a WDM platform which optionally includes a switch fabric for carriers that want it integrated with the transport equipment, says Schmitt. Once MPLS is added, it has the potential to be a full-blown packet-optical system.

"[The announcement is] pretty significant though not unexpected," says Schmitt. "I think the key question is what it costs, and whether the 500G PIC translates into compelling savings."

Having MPLS support is important for some carriers such as XO Communications and Google but not for others.

Schmitt also says GMPLS isn't that important, yet. "Infinera's implementation of regen-rich networks should make their GMPLS implementation workable," he says. "It has been building networks like that for a while."

OTN in the core is still an open debate, but any carrier that doesn't have the luxury of a homogeneous data network needs it, he says.

Schmitt has yet to speak with carriers who have used the DTN-X: "I can't comment on claimed performance but like I said, cost is important."

ACG Research

Eve Griliches, managing partner

"Infinera has already introduced the 500G PIC, but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today"

The DTN-X is an OTN/ WDM platform awaiting label switch router (LSR) functionality, says Griliches: "With the LSR functionality it will be able to do statistical multiplexing for direct router connections."

Infinera has already introduced the 500 Gig PIC, but the DTN-X's OTN capability is significant in that the platform can be used as a standalone OTN switch, and it has the largest switching capacity out there today. An OTN survey conducted last year by ACG Research found that the switch capacity sweet spot is between 4 and 8Tbps.

Griliches says that LSR-based products are taking time to incorporate WDM and OTN technologies, while it is unclear when the DTN-X will support MPLS to add LSR capabilities. The race is on as to who can integrate everything first, but DWDM and OTN before MPLS is the right direction for most tier-one operators, she says.

Infinera has over eight thousand of its existing DTNs deployed at 85 customers in 50 countries. The scale of the DTN-X will likely broaden Infinera's customer base to include tier-one operators, says Griliches.

ACG Research has heard positive feedback from operators it has spoken to. One stressed that the decreased port count due to the larger OTN cross-connect significantly improves efficiency. Another operator said it would pick Infinera and described the beta version of the 500Gbps PIC as "working beautifully".