Briefing: Flexible elastic-bandwidth networks

Vendors and service providers are implementing the first examples of flexible, elastic-bandwidth networks. Infinera and Microsoft detailed one such network at the Layer123 Terabit Optical and Data Networking conference held earlier this year.

Optical networking expert Ioannis Tomkos of the Athens Information Technology Center explains what flexible, elastic bandwidth is.

Part 1: Flexible elastic bandwidth

"We cannot design anymore optical networks assuming that the available fibre capacity is abundant"

Prof. Tomkos

Several developments are driving the evolution of optical networking. One is the incessant demand for bandwidth to cope with the 30+% annual growth in IP traffic. Another is the changing nature of the traffic due to new services such as video, mobile broadband and cloud computing.

"The characteristics of traffic are changing: A higher peak-to-average ratio during the day, more symmetric traffic, and the need to support higher quality-of-service traffic than in the past," says Professor Ioannis Tomkos of the Athens Information Technology Center.

Operators want a more flexible infrastructure that can adapt to meet these changes, hence their interest in flexible elastic-bandwidth networks. The operators also want to grow bandwidth as required while making best use of the fibre's spectrum. They also require more advanced control plane technology to restore the network elegantly and promptly following a fault, and to simplify the provisioning of bandwidth.

The growth of internet traffic will require core network interfaces to migrate from the current 10, 40 and 100Gbps to 1 Terabit by 2018-2020, says Tomkos. Such bit-rates must be supported with very high spectral efficiencies, which, according to the latest demonstrations, are only a factor of two away from the Shannon limit. Simply put, optical fibre is rapidly approaching its capacity limit.

"We cannot design anymore optical networks assuming that the available fibre capacity is abundant," says Tomkos. "As is the case in wireless networks where the available wireless spectrum/bandwidth is a scarce resource, the future optical communication systems and networks should become flexible in order to accommodate more efficiently the envisioned shortage of available bandwidth."

Elastic elements

Optical systems providers now realise they can no longer keep increasing a light path's data rate while expecting the signal to still fit in the standard 50GHz band defined by the International Telecommunication Union (ITU).

It may still be possible to fit a 200 Gigabit-per-second (Gbps) light path in a 50GHz channel but not a 400Gbps or 1 Terabit signal. At 400Gbps, 80GHz is needed and at 1 Terabit it rises to 170GHz, says Tomkos. This requires networks to move away from the standard ITU grid to a flexible grid, especially if operators want to achieve the highest possible spectral efficiency.
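The figures Tomkos quotes can be checked with a few lines of arithmetic. The Python sketch below computes the spectral efficiency of each light path and the number of slots it would occupy on a flexible grid. The 12.5GHz slot width is an assumption taken from the ITU-T G.694.1 flexible grid rather than from the article, and the function names are purely illustrative.

```python
import math

# Channel widths quoted by Tomkos (data rate in Gbps -> spectrum in GHz).
LIGHT_PATHS = {200: 50.0, 400: 80.0, 1000: 170.0}

FIXED_GRID_GHZ = 50.0   # standard ITU grid spacing cited in the article
FLEX_SLOT_GHZ = 12.5    # ASSUMED flexible-grid slot width (ITU-T G.694.1)


def spectral_efficiency(rate_gbps, width_ghz):
    """Return spectral efficiency in bits/s/Hz."""
    return rate_gbps / width_ghz


def flex_slots(width_ghz, slot_ghz=FLEX_SLOT_GHZ):
    """Return the number of whole flexible-grid slots a channel needs."""
    return math.ceil(width_ghz / slot_ghz)


def fixed_channels(width_ghz, grid_ghz=FIXED_GRID_GHZ):
    """Return the number of whole fixed-grid channels a signal would block."""
    return math.ceil(width_ghz / grid_ghz)


for rate, width in LIGHT_PATHS.items():
    slots = flex_slots(width)
    print(f"{rate} Gbps in {width:.0f} GHz: "
          f"{spectral_efficiency(rate, width):.2f} bits/s/Hz, "
          f"{slots} flex slots ({slots * FLEX_SLOT_GHZ:.1f} GHz) vs "
          f"{fixed_channels(width) * FIXED_GRID_GHZ:.0f} GHz on the fixed grid")
```

Under these assumptions a 400Gbps light path occupies seven 12.5GHz slots (87.5GHz) rather than blocking two 50GHz channels (100GHz), which is the kind of spectrum saving a flexible grid is meant to deliver.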

Vendors can increase the data rate of a carrier signal by using more advanced modulation schemes than dual-polarisation, quadrature phase-shift keying (DP-QPSK), the de facto 100Gbps standard. Such schemes include quadrature amplitude modulation (QAM) in the form of 16-QAM, 64-QAM and 256-QAM, but the more amplitude levels used, and hence the higher the data rate, the shorter the resulting reach.

Another technique vendors are using to achieve 400Gbps and 1Tbps data rates is to move from a single carrier to multiple carriers or 'super-channels'. Such an approach boosts the data rate by encoding data on more than one carrier and avoids the loss in reach associated with higher-order QAM. But this comes at a cost: using multiple carriers consumes more precious spectrum.

As a result, vendors are looking at schemes to pack the carriers closely together. One is spectral shaping. Tomkos also details the growing interest in such schemes as optical orthogonal frequency division multiplexing (OFDM) and Nyquist WDM. For Nyquist WDM, the subcarriers are spectrally shaped so that they occupy a bandwidth close to or equal to the Nyquist limit to avoid inter-symbol interference and crosstalk during transmission.

Both approaches have their pros and cons, says Tomkos, but they promise an optimum spectral efficiency of 2N bits-per-second-per-Hertz (2N bits/s/Hz), where N is the number of bits encoded per symbol, set by the number of constellation points.

The attraction of these techniques - multi-carrier schemes and advanced modulation formats - is the prospect of operators modifying capacity in a flexible and elastic way based on varying traffic demands, while maintaining cost-effective transport.

"With flexible networks, we are not just talking about the introduction of super-channels, and with it the flexible grid," says Tomkos. "We are also talking about the possibility to change either dynamically."

According to Tomkos, vendors such as Infinera with its 5x100Gbps super-channel photonic integrated circuit (PIC) are making an important first step towards flexible, elastic-bandwidth networks. But for true elastic networks, a flexible grid is needed as is the ability to change the number of carriers on-the-fly.

"Once we have those introduced, in order to get to 1 Terabit, then you can think about playing with such parameters as modulation levels and the number of carriers, to make the bandwidth really elastic, according to the connections' requirements," he says.

Meanwhile, there are still technology advances needed before an elastic-bandwidth network is achieved, such as software-defined transponders and a new advanced control plane.

Tomkos says that operators are now using control plane technology that co-ordinates between layer three and the optical layer to reduce network restoration time from minutes to seconds. Microsoft and Infinera say they have gone from tens of minutes down to a few seconds using the more advanced optical infrastructure. "They [Microsoft] are very happy with it," says Tomkos.

But to provision new capacity at the optical layer, operators are talking about requirements in the tens of minutes; something they do not expect will change in the coming years. "Cloud services could speed up this timeframe," says Tomkos.

"There is usually a big lag between what operators and vendors do and what academics do," says Tomkos. "But for the topic of flexible, elastic networking, the lag between academics and the vendors has become very small."

Industry underestimating 25 Gigabit parallel optics challenge

Ten Gigabit-based parallel optics is set to dominate the marketplace for several years to come. So claims datacom module specialist, Avago Technologies.

"One customer told us it has to keep the interface speed below 20Gbps due to the cost of the SerDes"

Sharon Hall, Avago

"People are underestimating what is going to be involved in doing 25 Gigabit [channels]," says Sharon Hall, product line manager for embedded optics at Avago Technologies. "Ten Gigabit is going to last quite a bit longer because of the price point it can provide."

Eventually 25 Gigabit-based parallel optics, with its lower lane count, will be cheaper than 10 Gigabit - but it will take several years. One challenge is the cost of 25 Gigabit-per-second (Gbps) electrical interfaces, due to the large relative size of the circuitry. One customer told Avago that it has to keep the interface speed below 20Gbps for now due to the cost of the serialiser/deserialiser (SerDes).

Avago has announced that its 120 Gigabit aggregate bandwidth (12x10Gbps) MiniPod and CXP parallel optics products are now in volume production. The company first detailed the MiniPod and CXP technologies in late 2010, yet many equipment makers have still to launch their first designs.

The CXP is a pluggable optical transceiver while the MiniPod is Avago's packaged optical engine used for embedded designs. The 22x18mm MiniPod is based on Avago's 8x8mm MicroPod optical engine but uses a 9x9 electrical MegArray connector with its more relaxed pitch.

Equipment makers face a non-trivial decision as to whether to adopt copper or optical interfaces for their platform designs. "This is a major design decision with a lot of customers going back and forth deciding which way to go," says Hall. "They might do a mix with some short connections staying copper but if they need 10 Gig at anything longer than a few meters then they are going to go optical."

Having chosen parallel optics, the style of form factor - pluggable or embedded - is largely based on the interface density required. "Certain customers prefer field pluggability [of CXP] with its pay-as-you-go and ease of installation features, but are limited on port density due to the number of CXP transceivers that can physically fit on a 19-inch board," says Hall.

Up to 14 CXPs can fit onto a 19-inch board. In contrast, some 50-100 transmit and receive MiniPod pairs can fit on the 19-inch board. "You have the whole board space to work with," she says. The embedded optics sit closer to the board's ASICs, shortening the electrical path and solving signal integrity issues that can arise using edge-mounted pluggables. Thermal management - not having all the pluggable optics at the card edge furthest from the fans - is also simplified using embedded optics.
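The density argument can be put in numbers. The following Python sketch compares the aggregate bandwidth per direction of a 19-inch board populated with CXPs against one populated with MiniPod pairs, using the 12x10Gbps figure and the module counts given above. It assumes every module is fully populated and ignores power and routing constraints; the names are illustrative only.

```python
LANES_PER_MODULE = 12   # 12 channels per CXP or per MiniPod pair direction
LANE_RATE_GBPS = 10     # 10Gbps per channel


def board_bandwidth_tbps(modules):
    """Aggregate bandwidth per direction, in Tbps, for a given module count."""
    return modules * LANES_PER_MODULE * LANE_RATE_GBPS / 1000


# Module counts quoted in the article for a 19-inch board.
cxp_tbps = board_bandwidth_tbps(14)
minipod_low_tbps = board_bandwidth_tbps(50)
minipod_high_tbps = board_bandwidth_tbps(100)

print(f"14 CXPs:              {cxp_tbps:.2f} Tbps per direction")
print(f"50-100 MiniPod pairs: {minipod_low_tbps:.1f}-{minipod_high_tbps:.1f} "
      f"Tbps per direction")
```

On these assumptions the embedded approach offers roughly four to seven times the board bandwidth of the edge-mounted pluggables.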

Generally, connections to data centre top-of-rack switches and between chassis use the pluggable CXP while internal backplane and mid-plane designs use the MiniPod. The CXP is also used by core switches and routers; Alcatel-Lucent's recently announced 7950 core router has a four-port CXP-based card. But Avago stresses that there are no hard rules: It has customers that have chosen the CXP and others the MiniPod for the same class of platform.

Source: Gazettabyte

25 Gigabit parallel optics

Finisar recently demonstrated its board-mounted optical assembly that it says will support channel speeds of 10, 14, 25 and 28Gbps, while silicon photonics vendors Luxtera and Kotura have announced 4x25Gbps optical engines. OneChip Photonics has announced photonic integrated circuits for the 4x25Gbps, 100GBASE-LR4 10km standard that will also address short and mid-reach applications.

Avago has yet to make an announcement regarding higher speed parallel optics. "It is just a matter of time," says Hall. "We have done a demonstration of our 25Gbps VCSEL in an SFP+ package over a year ago, and we are developing parallel optics 25Gbps solutions."

But 25Gbps will take time before it gets to volume production, says Hall: "It is going to be a long, long design cycle for system companies - doing 25Gbps on their boards and their systems is a completely new design."

Supercomputers and system mid-plane and backplane applications could happen a lot earlier than 4x25GbE applications. "Some customers are interested in getting 4x25Gbps samples in the 2013 timeframe," says Hall. "But we expect that volume is going to take at least another year from that."

Meanwhile, Avago says it has already shipped 600,000 MicroPods, which have been generally available for over a year.

OneChip Photonics targets the data centre with its PICs

OneChip Photonics is developing integrated optical components for the IEEE 40GBASE-LR4 and 100GBASE-LR4 interface standards.

The company believes its photonic integrated circuits (PICs) will more than halve the cost of the 40 and 100 Gigabit 10km-reach interfaces, enough for LR4 to cost-competitively address shorter reach applications in the data centre.

"I think we can cut the price [of LR4 modules] by half or better”

Andy Weirich, OneChip Photonics

The products mark an expansion of the Canadian startup's offerings. Until now OneChip has concentrated on bringing PIC-based passive optical network (PON) transceivers to market.

LR4 PICs

The startup is developing separate LR4 transmitter and receiver PICs. The 40 and 100GBASE-LR4 receivers are due in the third quarter of 2012, while the transmitters are expected by the year end.

The 40GBASE-LR4 receiver comprises a wavelength demultiplexer - a 4-channel arrayed waveguide grating (AWG) - and four photo-detectors operating around 1300nm. A spot-size converter - an integrated lens - couples the receiver waveguide's mode field to the connecting fibre.

The 40GBASE-LR4 transmitter comprises four directly-modulated distributed feedback (DFB) lasers while the 100GBASE-LR4 transmitter uses four electro-absorption modulated DFB lasers. Different lasers for the two designs are required since the four wavelengths at 100 Gig, also around 1300nm, are more tightly spaced: 5nm versus 20nm. "They are much closer together than the 40 Gig version," says Andy Weirich, OneChip Photonics' vice president of product line management.

Another consequence of the wider wavelength spacings is that the 40 Gig transmitter uses four discrete lasers. "Because the 40 Gig wavelengths are much further apart, putting all the lasers on the one die is problematic," says Weirich. The 40GBASE-LR4 transmitter design thus uses five indium phosphide components - four lasers and the AWG - while the 40GBASE-LR4 receiver and the two 100GBASE-LR4 devices are all monolithic PICs.

Both LR4 transmitter designs also include monitor photo-diodes for laser control.

Lower size and cost

OneChip says the resulting PICs are tiny, measuring less than 3mm in length. "We think the PICs will enable the packaging of LR4 in a QSFP," says Weirich. 40GBASE-LR4 products already exist in the QSFP form factor but the 100GBASE-LR4 uses a CFP module.

The startup expects module makers to use its receiver chips once they become available rather than wait for the receiver-transmitter PIC pair. "Reducing the size of one half the solution is possibly good enough to fit the whole hybrid design - the PIC for the receive and discretes for the transmit - into a QSFP,” says Weirich.

The PICs are expected to reduce significantly the cost of LR4 modules. "I think we can cut the price by half or better,” says Weirich. “Right now the LR4 is far too expensive to be used for data centre interconnect.” OneChip expects its LR4 PICs to be cost-competitive with the 2km reach 10x10 MSA interface.

Meanwhile, short-reach 40 and 100 Gig interfaces use VCSEL technology and multi-mode fibre to address 100m reach requirements. In larger data centres this reach is limiting. Extended reach - 300-400m - multimode interfaces have emerged but so far these are at 40 Gig only.

"[Data centre operators] are saying that they are having to significantly bend out of shape their data centre architecture to accommodate even 300m reaches,” says Weirich. “They really want more than that.”

OneChip believes interface distances of 200m-2km are underserved and it is this market opportunity that it is seeking to address with its LR4 designs.

Roadmap

Will OneChip integrate the design further to produce a single-PIC LR4 transceiver?

"It can be put into one chip but it is not clear that there is an economic advantage,” says Weirich. Indeed one PIC might even be more costly than the two-PIC chipset.

Another factor is that at 100 Gig, the 25Gbps electronics present a considerable signal integrity design challenge. “It is very important to keep the electronics very close to the photo-detectors and the modulators,” he says. “That becomes more difficult if you put it all on the one chip.” The fabrication yield of a larger single PIC would also be reduced, impacting cost.

OneChip, meanwhile, has started limited production of its PON optical network unit (ONU) transceivers based on its EPON and GPON PICs. The company's EPON transceivers are becoming generally available while the GPON transceivers are due in two months’ time.

The company has yet to decide whether it will make its own LR4 optical modules. For now OneChip is solely an LR4 component supplier.

Further reading:

See OFC/ NFOEC 2012 highlights, the Kotura story in the Optical Engines section

2020 vision

In a panel discussion at the recent Layer123 Terabit Optical and Data Networking conference, Kim Roberts, senior director of coherent systems at Ciena, shared his thoughts about the future of optical transmission.

Final part: Optical transmission in 2020

"Four hundred Gigabit and one Terabit are not going to start in long-haul"

Kim Roberts, Ciena

Kim Roberts starts on a cautionary note, warning of the dangers when predicting the future. "It is always wrong," he says. But in his role as a developer of systems, he must consider what technologies are going to be useful in 2020.

The simple answer is cheap, flexible optical spectrum and coherent modems (DSP-ASICs).

Since DSP-ASICs will become cheaper and consume less power as they are implemented using the latest CMOS processes, they will migrate from their initial use in long-haul/ regional networks to the metro and even the campus. "Four hundred Gigabit and one Terabit are not going to start in long-haul," says Roberts.

Traditionally, the long-haul network has been where new technology is introduced since it is the part of the network where premium prices can first be justified. "It is not going to start there; it won't have that reach," he says. Instead 400 Gigabit-per-second (Gbps) and one Terabit wavelengths will start over medium reaches - 500-700km - once they become more economical.

One consequence is that when going distances beyond medium reach, more spectrum will be required. "You'll have to light up more fibres [for long-haul], whereas in metro-regional you can put more down one fibre," says Roberts.

The current trend of greater functionality and intelligence being encapsulated in an ASIC will continue but Roberts does not rule out a new kind of optical device delivering a useful function. "It can happen quite suddenly - optical amplifiers happened really suddenly." That said, he does not see any such candidate optical technology for now.

The technologies Roberts does not expect to prove economical by 2020 are as follows:

- Optical pulse shaping: Technologies such as optical regeneration and optical demultiplexing have existed in the labs. But such techniques are not spectrally efficient and are hot, large and expensive, he says. As a result, he does not expect them to become economical for commercial products by 2020.

- Photonic switching: Optical burst switching, optical label switching and optical packet switching will not prove themselves economical by 2020. "Optics is not the right answer in the medium term," says Roberts.

- Optical wavelength conversion, optical logic, optical CDMA and optical solitons are other technologies in Roberts' view that will not be economical by 2020.

What Roberts does identify as being useful through 2020 are:

- Low loss, high dispersion, low non-linearities fibre: "New fibres from the likes of Sumitomo and Corning allow the exploitation of coherent modems," says Roberts. "High dispersion is good, it is your friend: it helps minimise non-linearities." This was not an accepted view as recently as 2005, he says, but now it is well accepted.

- Low cost, heat and noise, high-powered optical amplifiers: "This is a fairly simple function, let's just make them better and better," he says.

- Low cost, frequency-selective switching: This refers to taking a wavelength-selective switch (WSS) and getting rid of the ITU grid; making the WSS more flexible while lowering its cost and size.

- Coherent modems: As mentioned, these will improve in efficiency, measured in bits/s/dollar, and in performance, measured in decibels (dB), reach and spectral efficiency. "Polishing these [metrics]," says Roberts.

Roberts admits that the useful items he lists are not exciting, radical breakthroughs: "I think we are in an interval of improving on the trends we already have until there is some breakthrough."

Part 1: The capacity limits facing optical networking

Part 2: Optical transmission's era of rapid capacity growth

Further reading on photonic switching:

Packet optical transport: Hollowing the network core

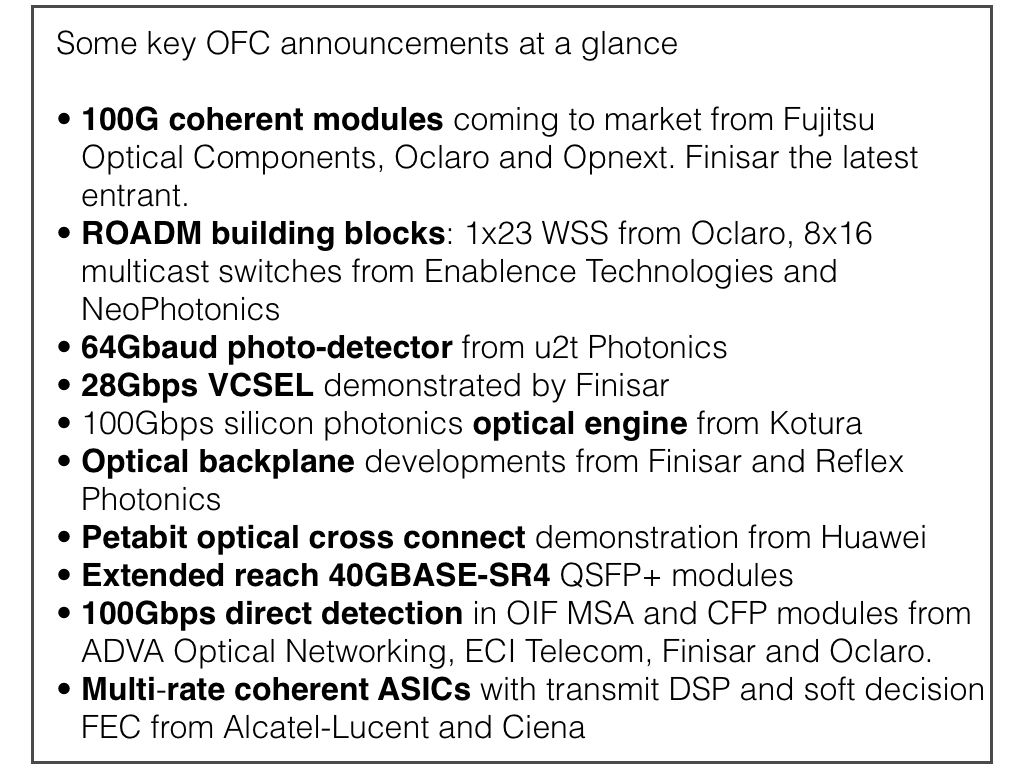

OFC/NFOEC 2012: Some of the exhibition highlights

A round-up of some of the main announcements and demonstrations at the recent OFC/NFOEC 2012 exhibition and conference.

100 Gigabit coherent

Finisar demonstrated its first 100 Gigabit coherent receiver transponder. The 5x7-inch dual-polarisation, quadrature phase-shift keying (DP-QPSK) module complies with the Optical Internetworking Forum's (OIF) multi-source specification. The company joins Fujitsu Optical Components, Opnext and Oclaro, which have already detailed their 100 Gigabit coherent modules. Since OFC/NFOEC, Oclaro and Opnext have announced their intention to merge.

"We can take off-the-shelf DSP technology and match it with vertically-integrated optics and come up with a module that is cost effective while enabling higher density for system vendors," says Rafik Ward, vice president of marketing at Finisar. "This will start the shift away from the system vendors' proprietary line cards."

Opnext announced it has demonstrated interoperability between its OTM-100 100 Gigabit-per-second (Gbps) coherent module and 100 Gigabit systems from Fujitsu Optical Systems and NEC. All three designs use NTT Electronics' (NEL) DSP-ASIC coherent receiver chip. "For those that use the same NEL modem chip, we can interoperate with each other," says Ross Saunders, general manager, next-generation transport for Opnext Subsystems.

"They come back to folks like us and say: 'If you can hit this price point, then we will use you'"

Ross Saunders, Opnext

Oclaro's MI 8000XM 100Gbps module also uses the NEL DSP-ASIC but was not part of the interoperability test sponsored by Japanese operator, NTT.

Oclaro announced its 100Gbps coherent module is now being manufactured using all its own optical components. These include its micro integrated tunable laser assembly (ITLA), built to the latest ratified MSA, which is more compact and has a higher output power, as well as its modulator and its coherent receiver module.

Using its components enables the company to control performance-cost tradeoffs, says Per Hansen, vice president of product marketing, optical networks solutions at Oclaro: "This [vertical integration] gives us a flexibility we didn’t have in the past."

Finisar is not saying which merchant DSP-ASIC it is using. But like the NEL device, the DSP-ASIC supports soft-decision forward error correction (SD-FEC) to achieve a reach of over 2,000km.

Meanwhile, the module makers' 100Gbps modules are starting to be shipped to customers.

"We shipped [samples] to four customers last quarter and we are probably going to ship to another four or five by the end of this quarter," says Opnext's Saunders.

Opnext says nearly all of its early customers do not have their own in-house 100Gbps developments. However, the systems vendors that have internal 100Gbps programmes have designed their line cards using the same 168-pin interface. This allows them to replace their own 100Gbps daughter cards with a merchant 5x7-inch module.

"This [vertical integration] gives us a flexibility we didn’t have in the past."

Per Hansen, Oclaro

Opnext also announced its OTS-100FLX 100Gbps muxponder, transponder and regenerator line cards, which use the OTM-100 module and slot into its OTS-4000 chassis. The chassis supports eight 100Gbps cards. Opnext's smaller 4RU OTS-mini platform hosts two 100Gbps line cards, mounted horizontally. Over half of Opnext's revenues come from subsystems, which it brands and sells to system vendors.

As for the other 100Gbps transponder makers, Oclaro is sending out its first module samples now. Finisar says its module will be generally available by the year-end, while Fujitsu Optical Components' module was released in April.

Optical components for 200Gbps DP-QPSK

u2t Photonics announced its latest 64Gbaud photo-detector, which points to the next speed shift in line-side transmission. The photo-detector is one key building block for the eventual development of a single-carrier DP-QPSK design capable of 200Gbps or, using 16-QAM, 400Gbps.

"We can already support the higher interface speed and data throughput"

Jens Fiedler, u2t Photonics

"System companies are looking for two things: to increase the baud rate and to use more complex modulation schemes," says Jens Fiedler, vice president sales and marketing at u2t Photonics. "[With this announcement] from the optical component perspective, we can already support the higher interface speed and data throughput."
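The link between baud rate, modulation scheme and line rate can be sketched as follows. The Python below multiplies the symbol rate by the bits carried per symbol and by the two polarisations to give the raw line rate. The 64Gbaud figure comes from the announcement; the observation that the gap between raw and net rate is taken up by overhead such as forward error correction and framing is a general one rather than a u2t figure, and the function name is illustrative.

```python
import math

POLARISATIONS = 2  # dual-polarisation transmission


def raw_line_rate_gbps(baud_gbaud, constellation_points):
    """Raw line rate in Gbps for a dual-polarisation signal."""
    bits_per_symbol = int(math.log2(constellation_points))
    return baud_gbaud * bits_per_symbol * POLARISATIONS


# QPSK has 4 constellation points (2 bits/symbol); 16-QAM has 16 (4 bits/symbol).
for name, points in (("DP-QPSK", 4), ("DP-16-QAM", 16)):
    print(f"64 Gbaud {name}: {raw_line_rate_gbps(64, points)} Gbps raw")
```

The raw rates of 256Gbps and 512Gbps leave room for overhead around net payloads of 200Gbps and 400Gbps respectively.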

1x23 Wavelength-selective switch

Oclaro announced a 1x23 wavelength selective switch at OFC. According to Oclaro, the 1x23 WSS has come about due to the operators' desire to support 12-degree nodes: an input port (1 degree) and through-connections on 11 other ports. The remaining 12 [of the WSS's 24 ports] are used as drop ports.

"If for each of those ports you have a fan-out that is steerable to 8 ports, you have 12x8 or 96 as the total channels you can support for a full add-drop," says Hansen. Such a 12-degree, 96-channel requirement was set by operators early on, or at least it was an industry desire, says Hansen.

Switching elements that address these drop requirements - multicast switches - were announced by NeoPhotonics and Enablence Technologies at OFC. The devices, planar lightwave circuit (PLC) hybrid integration designs, are 8x16 multicast switches.

"The multicast switch takes signals from eight different inputs - eight different directions in a ROADM node - and distributes those signals to up to 16 drop ports," says Ferris Lipscomb, vice president of marketing at NeoPhotonics.

Such PLC designs are complex, comprising power splitters, waveguide switching, variable optical attenuators and photo-detectors for channel monitoring.

According to NeoPhotonics, the number of optical functions used to implement the multicast switch is in the hundreds.

Enablence already has 8x8 and 8x12 multicast switches and has launched its 8x16 device. Although the company is a hybrid PIC specialist and has PLC technology, it uses polymer PLCs for the multicast designs, claiming they are lower power. NEL is another company offering 8x8 and 8x12 multicast switches.

Passive optical networking

Finisar also demonstrated a mini-PON network, highlighting its optical line terminal (OLT) transceivers, splitters and its latest GPON-stick, a GPON optical network unit (ONU) built into an SFP. The demo involved using the ONU SFP transceiver in an Ethernet switch port as part of a PON network to deliver high-definition video and audio from the OLT to a high-definition TV.

The company also introduced two splitter products: a 1:128 port splitter and a 2:64 splitter (used for redundancy). These high split ratios are being prepared for the advent of 10 Gigabit PON.

Enablence also demonstrated a WDM-PON 32-channel receiver module at OFC. "It takes 32 TO-can receivers and replaces them with a small module which includes the AWG (arrayed waveguide grating demultiplexer) and the 32 receivers," says Matt Pearson, vice president, technology, optical components division at Enablence Technologies. The design promises to increase system density by fitting two such receivers on a single blade.

Optical engines

Silicon photonics firm Kotura detailed its 100Gbps optical engine chip, implemented as a 4x25Gbps design. The optical engine consumes 5W and has a reach of at least 10km, making it suitable for requirements in the data centre including the 100 Gigabit Ethernet IEEE 100GBASE-LR4 standard.

"The 100Gbps chip - 5mmx6mm - is small enough to fit in the QSFP+ and emerging CFP4 optical modules"

Arlon Martin, Kotura

Kotura demonstrated to select customers its optical engine. "We are not announcing the product yet," says Arlon Martin, vice president of marketing at Kotura.

Optical engines are used in several applications: pluggable modules on a system's face-plate, the optics at each end of an active optical cable, and for board-mounted embedded applications.

For embedded applications, the optical engine is mounted deeper within the line card, close to high-speed chips, for example, with the signals routed over fibre to the face-plate connector. Using optics rather than high-speed copper traces simplifies the printed circuit board design. Embedded optical engines will also be used for optical backplane-based platforms.

Kotura's silicon photonics-based optical engine integrates all the functions needed for the transmitter and receiver on-chip. These include the 25Gbps optical modulators and drivers, the 4:1 multiplexer and 1:4 demultiplexer and four photo-detectors. To create the lasers, an array of four gain blocks is coupled to the chip. Each laser's wavelength, around 1550nm, is set using on-chip gratings.

The 100Gbps chip, measuring about 5mmx6mm, is small enough to fit in the QSFP+ and emerging CFP4 optical modules, says Martin. The QSFP+ is likely to be the first application for Kotura's 100Gbps optical engine, used to connect switches within the data centre.

Finisar demonstrated its own VCSEL-based board mount optical assembly - also an optical engine - to highlight the use of the technology for future optical backplanes.

The demonstration, involving Vario-optics and Huber + Suhner, included boards in a chassis. Each board includes the optical engine coupled to polymer waveguides from Vario-optics, which connect it to a backplane connector built by Huber + Suhner. "The idea is to show what an integrated optical chassis will look like," says Ward.

Finisar's optical backplane demo using board-mounted optics. Source: Finisar

The optical engine comprises 24 channels - 12 transmitters at 10Gbps and 12 receivers - in a single board-mounted package. The optics can operate at 10, 12, 14, 25 and 28Gbps, says Finisar. The connector allows the optical engines on different cards to interface via the waveguides. The advantage of polymer waveguides is that they are relatively easy to etch on printed circuit boards and, since they replace fibre, they remove fibre management issues. However, the technology needs to be proven before system vendors will use such waveguides as standard in their platforms.

Interconnect specialist Reflex Photonics demonstrated an 8.6Tbps optical backplane at OFC. The demonstrator uses Reflex's LightABLE optical engines to implement 864 point-to-point optical fibre links to achieve 8.6Tbps in a single chassis.

The optical fabric comprises six layers of 12x12 fully connected broadcast meshes. Each line card supports 720Gbps into the optical backplane and 60Gbps direct bandwidth between any two cards.
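These figures are consistent with one another if each point-to-point link runs at 10Gbps, which is an assumption inferred from the totals rather than a rate stated by Reflex. A short Python check, with an illustrative function name:

```python
def mesh_backplane(layers=6, cards=12, link_rate_gbps=10):
    """Derive backplane totals from the mesh dimensions.

    link_rate_gbps is an ASSUMED per-link rate, not a figure from the source.
    """
    links = layers * cards * cards          # one 12x12 mesh per layer
    return {
        "links": links,
        "total_tbps": links * link_rate_gbps / 1000,
        "per_card_gbps": layers * cards * link_rate_gbps,
        "per_pair_gbps": layers * link_rate_gbps,
    }


result = mesh_backplane()
print(f"{result['links']} links, {result['total_tbps']:.2f} Tbps in total")
print(f"{result['per_card_gbps']} Gbps per card, "
      f"{result['per_pair_gbps']} Gbps between any two cards")
```

Six layers of 12x12 links give the 864 links quoted, and at the assumed 10Gbps per link the 8.6Tbps, 720Gbps and 60Gbps figures all follow.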

32G Fibre Channel

Finisar also highlighted its 28Gbps VCSEL that will be used for the 32 Gigabit Fibre Channel standard. The actual line rate for 32Gbps Fibre Channel is 28.05Gbps. The VCSEL is packaged into a transmitter optical sub-assembly (TOSA) that fits inside an SFP+ module.

"We view 28Gbps VCSEL as strategic due to all the applications it will enable," says Ward.

Besides 32Gbps Fibre Channel, the high-speed VCSEL is suited to the next InfiniBand data rate - enhanced data rate (EDR) - at 4x25Gbps or 12x25Gbps. There is also standards work in the IEEE on a new 100Gbps Ethernet standard that can use 4x25Gbps VCSELs.

Further reading:

Gazettabyte's full OFC NFOEC 2012 coverage

LightCounting: Notes from OFC 2012: Onset of the Terabit Age

Ovum's OFC coverage

Huawei's novel Petabit switch

The Chinese equipment maker showcased a prototype optical switch at this year's OFC/NFOEC that can scale to 10 Petabit.

"Although the numbers [400,000 lasers] appear quite staggering, they point to a need for photonic integration"

Reg Wilcox, Huawei

Huawei has demonstrated a concept Petabit Packet Cross Connect (PPXC), a switching platform to meet future metro and data centre requirements. The demonstrator is not expected to be a commercial product before 2017.

Current platforms have switching capacities of several Terabits. Yet Huawei believes a one thousand-fold increase in switching capacity will be needed. Fibre capacity will be filled to 20 and eventually 50 Terabits using higher-order modulation schemes and flexible spectrum. This will add up to a Petabit (one million Gigabits) per site, assuming 200 switched fibres at busy network exchanges.

"We are not saying we will introduce a 10 Petabit product in five years' time, although the technology is capable of that," says Reg Wilcox, vice president of network marketing and product management at Huawei. "We will size it to what we deem the market needs at that time."

Source: Huawei

The PPXC uses optical burst transmission to implement the switching. Such burst transmission uses ultra-fast switching lasers, each set to a particular wavelength in nanoseconds. Like Intune Networks’ Verisma iVX8000 optical packet switching and transport system, each wavelength is assigned to a particular destination port. As OTN traffic or packets arrive, they are assigned a wavelength before being sent to a destination port.
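The wavelength-per-destination idea can be illustrated with a toy model. The sketch below is not Huawei's or Intune's design: the port count, the one-to-one port-to-wavelength mapping and the function names are all hypothetical, chosen only to show traffic being grouped into bursts by the wavelength that reaches its destination port.

```python
from collections import defaultdict

NUM_PORTS = 8  # illustrative port count, not a figure from the article


def wavelength_for_port(dest_port: int) -> int:
    """Hypothetical mapping: destination port n is reached on wavelength index n."""
    if not 0 <= dest_port < NUM_PORTS:
        raise ValueError(f"unknown destination port {dest_port}")
    return dest_port


def schedule_bursts(packets):
    """Group (dest_port, payload) pairs into per-wavelength bursts.

    A fast-tunable laser would then be set to each wavelength in turn
    and send that wavelength's burst.
    """
    bursts = defaultdict(list)
    for dest_port, payload in packets:
        bursts[wavelength_for_port(dest_port)].append(payload)
    return dict(bursts)


result = schedule_bursts([(3, "a"), (5, "b"), (3, "c")])
print(result)
```

Payloads bound for the same port end up in the same burst, so the laser only needs to retune between bursts rather than between packets.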

Huawei's switch demonstration linked two Huawei OSN8800 32-slot platforms, each with an Optical Transport Network (OTN) switching capacity of 2.56 Terabit-per-second (Tbps), to either side of the core optical switch, to implement what is known as a three-stage Clos switching matrix.

With each OSN8800, half the slots are for inter-machine trunks to the core optical switch, the middle stage of the Clos switch. "The other half [of the OSN8800] would be dedicated to whatever services you want to have: Gigabit Ethernet, 10 Gigabit Ethernet; whatever traffic you want riding over OTN," says Wilcox.

The core optical switch implements an 80x80 matrix using 80 wavelengths, each operating at 25Gbps. The 80x80 matrix is surrounded by MxM fast optical switches to implement a larger 320x320 matrix that has an 8 Terabit capacity. It is these larger matrices - 'switch planes' - that are stacked to achieve 10 Petabit. The PPXC grooms traffic starting at 1 Gigabit rates and can switch 100Gbps and even higher speed incoming wavelengths in future.

Oclaro provided Huawei with the ultra-fast lasers for the demonstrator. The laser - a digital supermode-distributed Bragg reflector (DS-DBR) - has an electro-optic tuning mechanism, says Robert Blum, director of product marketing for Oclaro's photonic components. Here current is applied to the grating to set the laser's wavelength. The resulting tuning speed is in nanoseconds although Oclaro will not say the exact switching speed specified for the switch.

Each switch plane uses 4x80 or 320, 25Gbps lasers. A 10 Petabit switch requires 400,000 (320x1250) lasers. "Although the numbers appear quite staggering, they point to a need for photonic integration," says Wilcox. Huawei recently acquired photonic integration specialist CIP Technologies.
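The laser count follows directly from the switch-plane figures given in the article. A short sketch of the arithmetic, using only numbers quoted above:

```python
# Figures taken from the article; integer arithmetic in Gbps throughout.
WAVELENGTHS = 80          # wavelengths in the 80x80 core matrix
LANE_RATE_GBPS = 25       # rate per wavelength
EXPANSION = 4             # 4 x 80 = 320 ports per switch plane
TARGET_GBPS = 10_000_000  # 10 Petabit expressed in Gbps

core_matrix_gbps = WAVELENGTHS * LANE_RATE_GBPS          # 80x80 matrix
lasers_per_plane = EXPANSION * WAVELENGTHS               # 320x320 matrix
plane_capacity_gbps = lasers_per_plane * LANE_RATE_GBPS  # one switch plane
planes_needed = TARGET_GBPS // plane_capacity_gbps
total_lasers = planes_needed * lasers_per_plane

print(core_matrix_gbps, plane_capacity_gbps, planes_needed, total_lasers)
```

Each 8 Terabit plane needs 320 lasers, and 1,250 planes are needed to reach 10 Petabit, which gives the 400,000 lasers Wilcox refers to.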

The demonstration highlighted the PPXC switching OTN traffic but Wilcox stresses that the architecture is cell-based and can support all packet types: "We are flexible in the technology as the world evolves to all-packet." The design is therefore also suited to large data centres to switch traffic between servers and for linking aggregation routers. "It is applicable in the data centre as a flattened [switch] architecture," says Wilcox.

Huawei claims the Petabit switch will deliver other benefits besides scalability. "Rough estimates comparing this device to OTN switches, MPLS switches and routers yields savings of greater than 60% on power, anywhere from 15-80% on footprint and at least a halving of fibre interconnect," says Wilcox.

Meanwhile Oclaro says Huawei is not the only vendor interested in the technology. "We have seen quite some interest recently in this area [of optical burst transmission]," says Oclaro's Blum. "I wouldn't be surprised if other companies make announcements in this space."

Further reading:

- OFC/NFOEC 2012 paper: An Optical Burst Switching Fabric of Multi-Granularity for Petabit/s Multi-Chassis Switches and Routers

OFC/NFOEC 2012 industry reflections - Part 1

The recent OFC/NFOEC show, held in Los Angeles, had a strong vendor presence. Gazettabyte spoke with Infinera's Dave Welch, chief strategy officer and executive vice president, about his impressions of the show, capacity challenges facing the industry, and the importance of the company's photonic integrated circuit technology in light of recent competitor announcements.

OFC/NFOEC reflections: Part 1

"I need as much fibre capacity as I can get, but I also need reach"

Dave Welch, Infinera

Dave Welch values shows such as OFC/NFOEC: "I view the show's benefit as everyone getting together in one place and hearing the same chatter." This helps identify areas of consensus and subjects where there is less agreement.

And while there were no significant surprises at the show, it did highlight several shifts in how the network is evolving, he says.

"The first [shift] is the realisation that the layers are going to physically converge; the architectural layers may still exist but they are going to sit within a box as opposed to multiple boxes," says Welch.

The implementation of this started with the convergence of the Optical Transport Network (OTN) and dense wavelength division multiplexing (DWDM) layers, and the efficiencies that brings to the network.

That is a big deal, says Welch.

Optical designers have long been making transponders for optical transport. But now the transponder isn't an element in the integrated OTN-DWDM layer, rather it is the transceiver. "Even that subtlety means quite a bit," says Welch. "It means that my metrics are no longer 'gray optics in, long-haul optics out', it is 'switch-fabric to fibre'."

Infinera has its own OTN-DWDM platform convergence with the DTN-X platform, and the trend was reaffirmed at the show by the likes of Huawei and Ciena, says Welch: "Everyone is talking about that integration."

The second layer integration stage involves multi-protocol label switching (MPLS). Instead of transponder point-to-point technology, what is being considered is a common platform with an optical management layer, an OTN layer and, in future, an MPLS layer.

"The drive for that box is that you can't continue to scale the network in terms of bandwidth, power and cost by taking each layer as a silo and reducing it down," says Welch. "You have to gain benefits across silos for the scaling to keep up with bandwidth and economic demands."

Super-channels

Optical transport has always been about increasing the data rates carried over wavelengths. At 100 Gigabit-per-second (Gbps), however, companies now use one or two wavelengths - carriers - onto which data is encoded. As vendors look to the next generation of line-side optical transport, what follows 100Gbps, the use of multiple carriers - super-channels - will continue and this was another show trend.

Infinera's technology uses a 500Gbps super-channel based on dual polarisation, quadrature phase-shift keying (DP-QPSK). The company's transmit and receive photonic integrated circuit pair comprise 10 wavelengths (two 50Gbps carriers per 50GHz band).

Ciena and Alcatel-Lucent detailed their next-generation ASICs at OFC. These chips, to appear later this year, include higher-order modulation schemes such as 16-QAM (quadrature amplitude modulation) which can be carried over multiple wavelengths. Going from DP-QPSK to 16-QAM doubles the data rate of a carrier from 100Gbps to 200Gbps; using two carriers, each at 16-QAM, enables the two vendors to deliver 400Gbps.
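The doubling comes from the number of bits each symbol carries: QPSK has 4 constellation points (2 bits per symbol) and 16-QAM has 16 (4 bits per symbol). A minimal sketch, assuming the symbol rate, dual-polarisation multiplexing and coding overhead stay the same when the modulation changes (the function name is illustrative):

```python
import math


def carrier_rate_gbps(constellation_points, base_rate_gbps=100, base_points=4):
    """Scale a carrier's rate by bits per symbol relative to a QPSK baseline.

    ASSUMPTION: symbol rate, polarisation multiplexing and overhead are unchanged.
    """
    bits_per_symbol = math.log2(constellation_points)
    base_bits_per_symbol = math.log2(base_points)
    return base_rate_gbps * bits_per_symbol / base_bits_per_symbol


qpsk_carrier = carrier_rate_gbps(4)    # DP-QPSK baseline carrier
qam16_carrier = carrier_rate_gbps(16)  # same carrier using 16-QAM
dual_carrier_16qam = 2 * qam16_carrier

print(qpsk_carrier, qam16_carrier, dual_carrier_16qam)
```

The sketch says nothing about reach, which is the cost of the denser constellation.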

"The concept of this all having to sit on one wavelength is going by the wayside," say Welch.

Capacity challenges

"Over the next five years there are some difficult trends we are going to have to deal with, where there aren't technical solutions," says Welch.

The industry is already talking about fibre capacities of 24 Terabit using coherent technology. Greater capacity is also starting to be traded with reach. "A lot of the higher QAM rate coherent doesn't go very far," says Welch. "16-QAM in true applications is probably a 500km technology."

This is new for the industry. In the past a 10Gbps service could be scaled to an 800 Gigabit system using 80 DWDM wavelengths. The same applies to 100Gbps which scales to 8 Terabit.

"I'm used to having high-capacity services and I'm used to having 80 of them, maybe 50 of them," says Welch. "When I get to a Terabit service - not that far out - we haven't come up with a technology that allows the fibre plant to go to 50-100 Terabit."

This issue is already leading to fundamental research looking at techniques to boost the capacity of fibre.
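The scaling Welch describes is a simple multiplication of service rate by wavelength count. The sketch below reproduces the 10Gbps and 100Gbps cases and then applies the same 50 to 80 wavelength counts to a 1 Terabit service; that last step is an extrapolation for illustration, not a system anyone has announced.

```python
def fibre_capacity_tbps(service_gbps, wavelengths=80):
    """Total fibre capacity in Tbps if each wavelength carries one service."""
    return service_gbps * wavelengths / 1000


cap_10g = fibre_capacity_tbps(10)     # 80 x 10Gbps
cap_100g = fibre_capacity_tbps(100)   # 80 x 100Gbps
cap_1t_50 = fibre_capacity_tbps(1000, wavelengths=50)  # hypothetical
cap_1t_80 = fibre_capacity_tbps(1000, wavelengths=80)  # hypothetical

print(cap_10g, cap_100g, cap_1t_50, cap_1t_80)
```

Keeping 50 to 80 Terabit-class services on one fibre would need a fibre capacity in the 50-100 Terabit range, well beyond the 24 Terabit the industry is discussing.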

PICs

However, in the shorter term, the smarts to enable high-speed transmission and higher capacity over the fibre are coming from the next-generation DSP-ASICs.

Is Infinera's monolithic integration expertise, with its 500 Gigabit PIC, becoming a less important element of system design?

"PICs have a greater differentiation now than they did then," says Welch.

Unlike Infinera's 500Gbps super-channel, the recently announced ASICs use two carriers and 16-QAM to deliver 400Gbps. But the issue is the reach that can be achieved with 16-QAM: "The difference is 16-QAM doesn't satisfy any long-haul applications," says Welch.

Infinera argues that a fairer comparison with its 500Gbps PIC is dual-carrier QPSK, each carrier at 100Gbps. Once the ASIC and optics deliver 400Gbps using 16-QAM, it is no longer a valid comparison because of reach, he says.

Three parameters must be considered here, says Welch: dollars/Gigabit, reach and fibre capacity. "I have to satisfy all three for my application," he says.

Long-haul operators are extremely sensitive to fibre capacity. "I need as much fibre capacity as I can get," he says. "But I also need reach."

In data centre applications, for example, reach is becoming an issue. "For the data centre there are fewer on and off ramps and I need to ship truly massive amounts of data from one end of the country to the other, or one end of Europe to the other."

The lower reach of 16-QAM is suited to the metro but Welch argues that is one segment that doesn't need the highest capacity but rather lower cost. Here 16-QAM does reduce cost by delivering more bandwidth from the same hardware.

Meanwhile, Infinera is working on its next-generation PIC that will deliver a Terabit super-channel using DP-QPSK, says Welch. The PIC and the accompanying next-generation ASIC will likely appear in the next two years.

Such a 1 Terabit PIC will reduce the cost of optics further but it remains to be seen how Infinera will increase the overall fibre capacity beyond its current 80x100Gbps. The integrated PIC will double the 100Gbps wavelengths that will make up the super-channel, increasing the long-haul line card density and benefiting the dollars/Gigabit and reach metrics.

In part two, ADVA Optical Networking, Ciena, Cisco Systems and market research firm Ovum reflect on OFC/NFOEC.

100 Gigabit direct detection gains wider backing

More vendors are coming to market with 100 Gigabit direct detection products for metro and private networks.

The emergence of a second de-facto 100 Gigabit standard, a complement to 100 Gigabit coherent, has gained credence with 4x28 Gigabit-per-second (Gbps) direct detection announcements from Finisar and Oclaro, as well as backing from system vendor, ECI Telecom.

"We believe that in some cases operators will prefer to go with this technology instead of coherent"

Shai Stein, CTO, ECI Telecom

ECI Telecom and chip vendor MultiPhy announced at OFC/NFOEC that they have been collaborating to develop a 168-pin MSA, 5x7-inch 100 Gigabit-per-second (Gbps) direct detection module. Finisar and Oclaro used the show held in Los Angeles to announce their market entry with 100Gbps direct detection CFP pluggable optical modules.

Late last year ADVA Optical Networking announced the industry's first 100Gbps direct detection product. At the same time, MultiPhy detailed its MP1100Q receiver chip designed for 100Gbps direct detection.

According to ECI, by having the 168-pin MSA interface, one line card can support a 100Gbps coherent transponder or the 100Gbps direct detection. "This is important as it enables us to fit the technology and price to the needs of end customers," says Shai Stein, CTO of ECI Telecom.

100 Gigabit transmission

Coherent technology has become the de-facto standard for 100Gbps long-haul transmission. Using dense wavelength division multiplexing (DWDM), system vendors can achieve 1,500km and greater reaches using a 50GHz channel.

But coherent designs are relatively costly and 100Gbps direct detection offers a cost-conscious alternative for metro networks and for linking data centres, achieving a reach of up to 800km.

"It [100 Gig direct detection] provides needed performance at an attractive cost, in particular when you are looking at private optical networks," says Per Hansen, vice president of product marketing, optical networks solutions at Oclaro.

Such networks need not be owned by private enterprises; they can belong to operators, says Hansen, but they are typically simple point-to-point connections or 3- to 4-node rings serving enterprises. "Bonding adjacent [4x28Gbps] wavelengths to create a 100Gbps channel that connects efficiently to your [IP] router is very attractive in such networks," says Hansen.

For more complex mesh metro networks, coherent is more attractive. "Simply because of the spectral resources being taken up through the mesh [with 4x28Gbps], and the operational aspect of routeing that," says Hansen.

ECI Telecom says that it has yet to decide whether it will adopt 100Gbps direct detection. But it does see a role for the technology in the metro since the 100Gbps technology works well alongside networks with 10 and 40 Gigabit on-off keying (OOK) channels. "We believe that in some cases operators will prefer to go with this technology instead of coherent," says Stein.

Some operators have chosen to deploy coherent over new overlay networks, to avoid the non-linear transmission effects that result from mixing old and new technologies on the one network. "With this technology, operators can stay with their existing networks yet benefit from 100 Gig high capacity links," says Stein.

Finisar says 100Gbps direct detection is also suited to low-latency applications. "The fact that it is not coherent means it doesn't include a DSP chip, enabling it to be used for low latency applications," says Rafik Ward, vice president of marketing at Finisar.

Implementation

The announced 100Gbps direct detection designs all use 4x28Gbps channels and optical duo-binary (ODB) modulation, although MultiPhy also promotes an 80km point-to-point OOK version (see Table).

Source: Gazettabyte

The module input is a 10x10Gbps electrical interface: a CFP interface or the 168-pin line side MSA. A 'gearbox' IC is used to translate between the 10x10Gbps electrical interface and the four 28Gbps channels feeding the optics.

"There are a few suppliers that are offering that [gearbox IC]," says Robert Blum, director of product marketing for Oclaro's photonic components. AppliedMicro recently announced a duplex multiplexer-demultiplexer IC.

MultiPhy's receiver chip has a digital signal processor (DSP) that implements the maximum likelihood sequence estimation (MLSE) algorithm, which it says enables 10 Gig opto-electronics to be used for each channel. The result is a 100Gbps module based on the cost of 4x10Gbps optics. However, over-driving the 10Gbps opto-electronics creates inter-symbol interference, where the energy of a transmitted bit leaks into neighbouring signals. MultiPhy's DSP using MLSE counters the inter-symbol interference.
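To show why sequence estimation helps against inter-symbol interference, here is a toy sketch in Python. It is not MultiPhy's implementation: the two-tap channel, the 0.6 leakage factor and the function names are assumptions chosen for illustration. MLSE is implemented with the Viterbi algorithm, which searches for the bit sequence whose predicted channel output is closest to the received samples.

```python
def channel(bits, isi=0.6):
    """Toy ISI channel: each sample is the current bit plus a leaked
    fraction of the previous bit (no noise added)."""
    prev, out = 0, []
    for b in bits:
        out.append(b + isi * prev)
        prev = b
    return out


def hard_decision(samples, threshold=0.5):
    """Symbol-by-symbol detection that ignores the interference."""
    return [1 if y > threshold else 0 for y in samples]


def mlse_detect(samples, isi=0.6):
    """Viterbi search over a 2-state trellis (state = previous bit).

    Returns the bit sequence minimising the squared error between the
    received samples and the channel model's prediction.
    """
    inf = float("inf")
    cost = {0: 0.0, 1: inf}   # the line is assumed idle (0) before the first bit
    paths = {0: [], 1: []}
    for y in samples:
        new_cost, new_paths = {}, {}
        for b in (0, 1):              # candidate current bit = next state
            best, best_prev = inf, 0
            for prev in (0, 1):
                c = cost[prev] + (y - (b + isi * prev)) ** 2
                if c < best:
                    best, best_prev = c, prev
            new_cost[b] = best
            new_paths[b] = paths[best_prev] + [b]
        cost, paths = new_cost, new_paths
    return paths[min(cost, key=cost.get)]


bits = [1, 0, 0, 1, 1, 0, 1, 0]
received = channel(bits)
print(hard_decision(received))  # a 0 following a 1 is misread as a 1
print(mlse_detect(received))    # the sequence detector recovers the bits
```

In this noiseless example the threshold detector misreads every 0 that follows a 1, because the leaked energy lifts the sample above the threshold, while the sequence detector recovers the transmitted bits. A real receiver must also cope with noise and a longer channel memory.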

100G direct detection module showing MultiPhy's MP1100Q chip. Source: MultiPhy

Oclaro and Finisar claim that using ODB alone enables the use of lower-speed opto-electronics. "This is irrespective of whether you use MLSE or hard decision," says Blum. "The advantage of using optical duo-binary modulation is that you can use 10G-type optics."

Finisar's Ward points out that by using ODB, the 100Gbps direct-detection module avoids the price/power penalty associated with a receiver DSP running MLSE to compensate for sub-optimal optical components.

Oclaro, however, has not ruled out using MLSE in future. The company endorsed MultiPhy's MLSE device when the product was first announced but its first 100G transceiver is not using the IC.

Finisar and Oclaro's modules require 200GHz to transmit the 100Gbps signal: 4x50GHz channels, each carrying the 28Gbps signal. "This architecture will enable 2.5x the spectral efficiency of tunable XFPs," says Ward. Using XFPs, ten would be needed for a 100Gbps throughput, each channel requiring 50GHz or 500GHz in total.

MultiPhy claims that it can implement the 100Gbps in a 100GHz channel, 5x the efficiency but still twice the spectrum used for 100Gbps coherent.
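The spectral-efficiency claims can be checked from the channel widths quoted in the article. The sketch below treats every option as carrying a 100Gbps payload and uses the 50GHz coherent channel mentioned earlier; the dictionary labels are just descriptive names.

```python
PAYLOAD_GBPS = 100

# Spectrum needed to carry 100Gbps, in GHz, from the figures in the article.
spectrum_ghz = {
    "10 x tunable XFP": 10 * 50,        # ten 10Gbps channels at 50GHz each
    "4 x 28Gbps ODB modules": 4 * 50,   # Finisar and Oclaro
    "MultiPhy (claimed)": 100,
    "100Gbps coherent": 50,
}

baseline = spectrum_ghz["10 x tunable XFP"]
gain_vs_xfp = {name: baseline / ghz for name, ghz in spectrum_ghz.items()}
bits_per_hz = {name: PAYLOAD_GBPS / ghz for name, ghz in spectrum_ghz.items()}

for name in spectrum_ghz:
    print(f"{name}: {spectrum_ghz[name]} GHz, "
          f"{bits_per_hz[name]:.1f} bit/s/Hz, {gain_vs_xfp[name]:.1f}x vs XFPs")
```

This reproduces the 2.5x and 5x figures and shows coherent using half the spectrum of MultiPhy's claimed 100GHz.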

Finisar demonstrated its 100Gbps CFP module with SpectraWave, a 1 rack unit (1U) DWDM transport chassis, at OFC/NFOEC. "It provides all the things you need in line to enable a metro Ethernet link: an optical multiplexer and demultiplexer, amplification and dispersion compensation," says Ward. Up to four CFPs can be plugged into the SpectraWave unit.

Operator interest

In a recent survey published by Infonetics Research, operators had yet to show interest in 100Gbps direct detection. Infonetics attributed the finding to the technology still being unavailable and to operators not yet having assessed its merits.

"Operators are aware of this technology," says ECI's Stein. "It is true they are waiting to get a proof-of-concept and to test it in their networks and see the value they can get.

"That is why ECI has not yet decided to go for a generally-available product: we will deliver to potential customers, get their feedback and then take a decision regarding a commercial product," says Stein.

However, MultiPhy claims that this is the first technology that enables 100Gbps in a pluggable module to achieve a reach beyond 40km. That fact, coupled with the technology's unmatched cost-performance, is what is getting the interest. "Every time you show a potential user some way they can save on cost, they are interested," says Neal Neslusan, vice president of sales and marketing at MultiPhy.

Direct detection roadmap

Recent announcements by Cisco Systems, Ciena, Alcatel-Lucent and Huawei highlight how the system vendors will use advanced modulation and super-channels to evolve coherent to speeds beyond 100Gbps. Does direct detection have a similar roadmap?

"I don't think that this on-off keying technology is coming instead of coherent," says Stein. "Once we move to super-channel and the spectral densities it can achieve, coherent technology is a must and will be used." But for 40Gbps and 100Gbps, what ECI calls intermediate rates, direct detection extends the life of OOK and existing network infrastructure.

ECI and MultiPhy are members of the Tera Santa Consortium developing 1 Terabit coherent technology, and MultiPhy stresses that as well as its direct detection DSP chips, it is also developing coherent ICs.

Further reading: 100 Gigabit: The coming metro opportunity

Altera optical FPGA in 100 Gigabit Ethernet traffic demo

Altera is demonstrating its optical FPGA at OFC/NFOEC, being held in Los Angeles this week. The FPGA, coupled to parallel optical interfaces, is being used to send and receive 100 Gigabit Ethernet packets of various sizes.

The technology demonstrator comprises an Altera Stratix IV FPGA with 28, 11.3Gbps electrical transceivers coupled to two Avago Technologies' MicroPod optical modules.

"FPGAs are now being used for full system level solutions"

"FPGAs are now being used for full system level solutions"

Kevin Cackovic, Altera

The MicroPods - a 12x10Gbps transmitter and a 12x10Gbps optical receiver - are co-packaged with the FPGA. "All the interconnect between the serdes and the optics are on the package, not on the board," says Steve Sharp, marketing program manager, fiber optic products division at Avago. Such a design benefits signal integrity and power consumption, he says: "It opens up a different world for FPGA users, and for system integration for optic users."

Both Altera and Avago stress that the optical FPGA has been designed deliberately using proven technologies. "We wanted to focus on demonstrating the integration of the optics, not pushing either of the process technologies to the absolute edge," says Sharp.

The nature of FPGA designs has changed in recent years, says Kevin Cackovic, senior strategic marketing manager of Altera's transmission business unit. Many designs no longer use FPGAs solely to interface application-specific standard products to ASICs, or as a co-processor. "FPGAs are now being used for full system level solutions, things like a framer or MAC technology, forward error correction at very high rates, mapper engines, packet processing and traffic management," he says.

Having its FPGAs in such designs has highlighted for Altera current and upcoming system bottlenecks. "This is what is driving our interest in looking at this technology and what is possible integrating the optics into the FPGA," says Cackovic. Applications requiring the higher bandwidth and the greater reach of optical - rack-to-rack rather than chip-to-module - include next-generation video, cloud computing and 3D gaming, he says.

Altera has still to announce its product plans regarding the optical FPGA design. Meanwhile Avago says it is looking at higher-speed versions of MicroPod.

"The request for higher line rates is obviously there," says Sharp. "Whether it goes all the way to 28 [Gigabit] or one of the steps in-between, we are not sure yet."

Challenges, progress & uncertainties facing the optical component industry

In recent years the industry has moved from direct detection to coherent transmission and has alighted on a flexible ROADM architecture. The result is a new level in optical networking sophistication. OFC/NFOEC 2012 will showcase the progress in these and other areas of industry consensus as well as shining a spotlight on issues less clear.

Optical component players may be forgiven for the odd envious glance towards the semiconductor industry and its well-defined industry dynamics.

The semiconductor industry has Moore’s Law that drives technological progress and the economics of chip-making. It also experiences semiconductor cycles - regular industry corrections caused by overcapacity and excess inventory. The semiconductor industry certainly has its challenges but it is well drilled in what to expect.

Optical challenges

The optical industry experienced its own version of a semiconductor cycle in 2010-11 - strong growth in 2010 followed by a correction in 2011. But such market dynamics are irregular and optical has no Moore's Law.

Optical players must therefore work harder to develop components to meet the rapid traffic growth while achieving cost efficiencies, denser designs and power savings.

Such efficiencies are even more important as the marketplace becomes more complex due to changes in the industry layers above components. The added applications layer above networks was highlighted in the OFC/NFOEC 2012 news analysis by Ovum’s Karen Liu. The analyst also pointed out that operators’ revenues and capex growth rates are set to halve in the years till 2017 compared to 2006-2010.

Such is the challenging backdrop facing optical component players.

Consensus

Coherent has become the de-facto standard for long-haul high-speed transmission. Optical system vendors have largely launched their 100Gbps systems and have set their design engineers on the next challenge: addressing designs for line rates beyond 100Gbps.

Infinera detailed its 500Gbps super-channel photonic integrated circuit last year. At OFC/NFOEC it will be interesting to learn how other equipment makers are tackling such designs and what activity and requests optical component vendors are seeing regarding the next line rates after 100Gbps.

Meanwhile new chip designs for transport and switching at 100Gbps are expected at the show. AppliedMicro is sampling its gearbox chip that supports 100 Gigabit Ethernet and OTU4 optical interfaces. More announcements should be expected regarding merchant 100Gbps digital signal processing ASIC designs.

An architectural consensus for wavelength-selective switches (WSSes) - the key building block of ROADMs - is taking shape, with the industry consolidating on a route-and-select architecture, according to analysts.

Gridless - the ROADM attribute that supports differing spectral widths expected for line rates above 100Gbps - is a key characteristic that WSSes must support, resulting in more vendors announcing liquid crystal on silicon designs.

Client-side 40 and 100 Gigabit Ethernet (GbE) interfaces have a clearer module roadmap than line-side transmission. After the CFP comes the CFP2 and CFP4 which promise denser interfaces and Terabit capacity blades. Module form factors such as the QSFP+ at 40GbE and in time 100GbE CFP4s require integrated photonic designs. This is a development to watch for at the show.

Other areas to note include tunable-laser XFPs and even tunable SFP+, work on which has already been announced by JDS Uniphase.

Lastly, short-link interfaces, and in particular optical engines, are another important segment that ultimately promises new system designs and the market opportunity that will unleash silicon photonics.

Optical engines can simplify high-speed backplane designs and printed circuit board electronics. Electrical interfaces moving to 25Gbps is seen as the threshold trigger when switch makers decide whether to move their next designs to an optical backplane.

The Optical Internetworking Forum will have a Physical and Link Layer (PLL) demonstration to showcase interoperability of the Forum’s Common Electrical Interface (CEI) 28Gbps Very Short Reach (VSR) chip-to-module electrical interfaces, as well as a demonstration of the CEI-25G-LR backplane interface.

Companies participating in the interop include Altera, Amphenol, Fujitsu Optical Components, Gennum, IBM, Inphi, Luxtera, Molex, TE Connectivity and Xilinx.

Altera has already unveiled an FPGA prototype that co-packages 12x10Gbps transmitter and receiver optical engines alongside its FPGA.

Uncertainties

OFC/NFOEC 2012 also provides an opportunity to assess progress in sectors and technology where there is less clarity. Two sectors of note are next-generation PON and the 100Gbps direct-detect market.

For next-generation PON, several ideas are being pursued: faster extensions of existing PON schemes, such as a 40Gbps version of the existing time division multiplexing PON schemes; 40G PON based on hybrid WDM and TDM schemes; WDM-PON; and even ultra-dense WDM-PON and OFDM-based PON schemes.

The upcoming show will not answer what the likely schemes will be but will provide an opportunity to test what the latest thinking is.

The same applies for 100 Gigabit direct detection.

There are significant cost advantages to this approach and there is an opportunity for the technology in the metro and for data centre connectivity. But so far announcements have been limited and operators are still to fully assess the technology. Further announcements at OFC/NFOEC will highlight the progress being made here.

The article has been written as a news analysis published by the organisers before this year's OFC/NFOEC event.