Business services and mobile revive WDM-PON interest

"WDM-PON is many things to many people" - Jon Baldry

It was in 2005 that Novera Optics, a pioneer of WDM-PON (wavelength-division multiplexing, passive optical networking), was working with Korea Telecom in a trial involving 50,000 residential lines. Yet, one decade later, WDM-PON remains an emerging technology. And when a WDM-PON deployment does occur, it is for business services and mobile backhaul rather than residential broadband.

WDM-PON delivers high-capacity, symmetrical links using a dedicated wavelength. The links are also secure, an important consideration for businesses. This contrasts with PON, where data is shared between all the end points, each selecting its addressed data.

One issue hindering the uptake of WDM-PON is the lack of a common specification. "WDM-PON is many things to many people," says Jon Baldry, technical marketing director at Transmode.

One view of WDM-PON is as the ultimate broadband technology; this was Novera's vision. Other vendors, such as Transmode, emphasise the WDM component of the technology, seeing it as a way to push metro-style networking towards the network edge, to increase bandwidth and for operational simplicity.

WDM-PON's uptake for residential access has not yet happened because the high bandwidth it offers is still not needed, while the system economics do not match those of PON.

Gigabit PON (GPON) and Ethernet PON (EPON) are now deployed in the tens of millions worldwide. And operators can turn to 10G-EPON and XG-PON when the bandwidth of GPON and EPON is insufficient. Beyond that, TWDM-PON (Time and Wavelength Division Multiplexing PON) is an emerging approach, promoted by the likes of Alcatel-Lucent and Huawei. TWDM-PON uses wavelength-division multiplexing as a way to scale PON, effectively supporting multiple 10 Gigabit PONs, each riding on a wavelength.

Carriers like the reassurance a technology roadmap such as PON's provides, but their broadband priority is wireless rather than wireline. The bigger portion of their spending is on rolling out LTE since wireless is their revenue earner.

As for fixed broadband, operators are being creative.

G.fast is one fixed broadband example. G.fast is the latest DSL standard that supports gigabit speeds over telephone wire. Using G.fast, operators can combine fibre and DSL to achieve gigabit rates and avoid the expense of taking fibre all the way to the home. BT is one operator backing G.fast, with pilot schemes scheduled for the summer. And if the trials are successful, G.fast deployments could start next year.

Deutsche Telekom is promoting a hybrid router to customers that combines fixed and wireless broadband, with LTE broadband kicking in when the DSL line becomes loaded.

Meanwhile, vendors with a WDM background see WDM-PON as a promising way to deliver high-volume business services, while also benefiting from the operator's cellular push by supporting mobile backhaul and mobile fronthaul. They don't dismiss WDM-PON for residential broadband but accept that the technology must first mature.

Transmode recently announced its first public customer, US operator RST Global Communications, which is using the vendor's iWDM-PON platform for business services.

"Our primary focus is business and mobile backhaul, and we are pushing WDM deeper into access networks," says Baldry. "We don't want a closed network where we treat WDM-PON differently to the way we treat the rest of the network." This means using the C-band wavelength grid for metro and WDM-PON. This avoids having to use optical-electrical-optical translation, as required between PON and WDM networks, says Baldry.

The iWDM-PON system showing the seeder light source at the central office (CO) optical line terminal (OLT), and the multiplexer (MDU) that selects the individual light band for the end point customer premise equipment (CPE). Source: Transmode.


Transmode's iWDM-PON

Several schemes are being pursued to implement WDM-PON. One approach is seeded or self-tuning, where a broadband light source is transmitted down the fibre from the central office. An optical multiplexer is then used to pick off narrow bands of the light, each a seeder source to set the individual wavelength of each end point optical transceiver. An alternative approach is to use a tunable laser transceiver to set the upstream wavelength. A third scheme combines the broadband light source concept with coherent technology that picks off each transceiver's wavelength. The coherent approach promises extremely dense, 1,000 wavelength WDM-PONs.

Transmode has chosen the seeded scheme for the iWDM-PON platform. The system delivers 40 wavelengths, each carrying 1 Gigabit-per-second (Gbps), spaced 50 GHz apart. The reach between the WDM-PON optical line terminal (OLT) and the optical network unit (ONU) end-points is 20 km without dispersion compensation fibre, or 30 km using such fibre. The platform uses WDM-PON SFP pluggable modules. The SFPs are MSA-compliant and use a Fabry-Perot laser and an avalanche photo-detector optimised for the injection-locked signal.
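To make the channel plan concrete, here is a minimal sketch of a 40-channel grid with 50 GHz spacing. The starting frequency of 193.1 THz is an assumption (it is the anchor frequency of the ITU-T DWDM grid); the article does not say which C-band channels the iWDM-PON actually uses, and `channel_grid` is a hypothetical helper name.

```python
# Sketch: an evenly spaced 40-channel, 50 GHz grid in the C-band.
# Assumption: the grid starts at 193.1 THz (the ITU-T grid anchor);
# the channels Transmode actually uses are not given in the article.
C_M_PER_S = 299_792_458  # speed of light in vacuum, metres per second


def channel_grid(n_channels=40, spacing_ghz=50.0, start_thz=193.1):
    """Return (frequency_thz, wavelength_nm) pairs for an evenly spaced grid."""
    grid = []
    for n in range(n_channels):
        f_thz = start_thz + n * spacing_ghz / 1000.0
        wavelength_nm = C_M_PER_S / (f_thz * 1e12) * 1e9
        grid.append((round(f_thz, 3), round(wavelength_nm, 2)))
    return grid


grid = channel_grid()
total_capacity_gbps = len(grid) * 1       # 40 wavelengths at 1 Gbps each
occupied_ghz = (len(grid) - 1) * 50       # first to last channel centre
```

Under these assumptions the 40 channels carry 40 Gbps in total and span a little under 2 THz between the first and last channel centres.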

"We use the C-band and pluggable optics, so the choice of using WDM-PON optics or not is up to the customer," says Baldry. "It should not be a complicated decision, and the system should work seamlessly with everything else you do, enabling a mix of WDM-PON and regular higher speed or longer reach WDM over the same access network, as needed."

Baldry claims the approach has economic advantages as well as operational benefits. While there is a need for a broadband light source, the end point SFP WDM-PON transceivers are cheaper than fixed or tunable optics. Also, setting the wavelengths is automated; the engineers do not need to set and lock the wavelength as they do using a tunable laser.

"The real advantage is operational simplicity," says Baldry, especially when an operator needs to scale optically connected end-points as they grow business and mobile backhaul services. "That is the intention of a PON-like network; if you are ramping up the end points then you have to think of the skill levels of the installation crews as you move to higher service volumes," he says.

RST Global Communications uses Transmode's Carrier Ethernet 2.0 as the service layer between the demarcation device (network interface device or NID) at the customer's premises and Transmode's packet-optical cards in the central office. WDM-PON provides the optical layer linking the two.

An early customer application for RST was upgrading a hotel's business connection from a few megabits to 1Gbps to carry Wi-Fi traffic in advance of a major conference it was hosting.

Overall, Transmode has a small number of operators deploying the iWDM-PON, with more testing or trialing it, says Baldry. The operators are interested in using the WDM-PON platform for mobile backhaul, mobile fronthaul and business services.

There are also operators that use installed access/customer premise equipment from other vendors, exploring whether Transmode's WDM-PON platform can simplify the optical layer in their access networks.

Further developments

Transmode's iWDM-PON upgrade plans include moving the system from a two fibre design - one for the downstream traffic and one for the upstream traffic - to a single fibre one. To do this, the vendor will segment the C-band into two: half the C-band for the uplink and half for the downlink.

Another system requirement is to increase the data rate carried by each wavelength beyond a gigabit. Mobile fronthaul uses the Common Public Radio Interface (CPRI) standard to connect the remote radio head unit that typically resides on the antenna and the baseband unit.

CPRI data rates are multiples of the basic rate of 614.4 Mbps. As such, 3 Gbps, 6 Gbps and rates over 10 Gbps are used. Baldry says the current iWDM-PON system can be extended beyond 1 Gbps to 2.5 Gbps and potentially 3 Gbps but because the system is noise-limited, the seeder light scheme will not stretch to 10 Gbps. A different optical scheme will be needed for 10 Gigabit. The iWDM-PON's passive infrastructure will allow for an in-service upgrade to 10 Gigabit WDM-PON technology once it becomes technically and economically viable.
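The relationship between CPRI rates and per-wavelength capacity can be sketched as follows. The multiplier list is an assumption based on the commonly cited CPRI line-rate options up to 9.8 Gbps; the article gives only the basic rate and rounded figures, and the rates above 10 Gbps that it mentions are not modelled here. The function names are hypothetical.

```python
# Sketch: CPRI line rates as multiples of the 614.4 Mbps basic rate.
# Assumption: these multipliers are the commonly cited CPRI options;
# the article itself only states the basic rate.
BASIC_RATE_MBPS = 614.4
MULTIPLIERS = [1, 2, 4, 5, 8, 10, 16]


def cpri_rates_mbps():
    """Line rates in Mbps, rounded to one decimal place."""
    return [round(BASIC_RATE_MBPS * m, 1) for m in MULTIPLIERS]


def rates_supported(max_wavelength_rate_mbps):
    """CPRI rates that fit on one wavelength of the given nominal capacity."""
    return [r for r in cpri_rates_mbps() if r <= max_wavelength_rate_mbps]


today = rates_supported(1000)      # current nominal 1 Gbps wavelengths
extended = rates_supported(2500)   # the 2.5 Gbps extension Baldry mentions
```

On this rough reading, a nominal 1 Gbps wavelength fits only the basic rate, while a 2.5 Gbps wavelength would also cover the 1.2 Gbps and 2.5 Gbps rates. The 3 Gbps, 6 Gbps and higher rates remain out of reach, which matches Baldry's point about needing a different optical scheme.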

Transmode has already conducted mobile fronthaul field trials in Russia and in Asia, and lab trials in Europe, using standard active and passive WDM and covering the necessary CPRI rates. "We are not mixing it with WDM-PON just yet; that is the next step," says Baldry.

Further information

WDM-PON Forum

Lightwave Magazine: WDM-PON is a key component in next generation access

Is the tunable laser market set for an upturn?

Part 2: Tunable laser market

"The tunable laser market requires a lot of patience to research." So claims Vladimir Kozlov, CEO of LightCounting Market Research. Kozlov should know; he has spent the last 15 years tracking and forecasting lasers and optical modules for the telecom and datacom markets.

Source: LightCounting, Gazettabyte


The tunable laser market is certainly sizeable; over half a million units will be shipped in 2014, says LightCounting. But the market requires care when forecasting. One subtlety is that certain optical component companies - Finisar, JDSU and Oclaro - are vertically integrated and use their own tunable lasers within the optical modules they sell. LightCounting counts these as module sales rather than tunable laser ones.

Another issue is that despite the development of advanced reconfigurable optical add/drop multiplexers (ROADMs) and tunable lasers, the uptake of agile optical networking has been limited.

"Verizon is bullish on getting the next generation of colourless, directionless and contentionless ROADMs to reconfigure the network on-the-fly," says Kozlov. "But I'm not so sure Verizon is going to be successful in convincing the industry that this is going to be a good market for [ROADM] suppliers to sell into."

Reconfigurability helps engineers at installations when determining which channels to add or drop, but there is little evidence of operators besides Verizon talking about using ROADMs to change bandwidth dynamically, first in one direction and then the other, he says.

Another indicator of the reduced status of tunable lasers is NeoPhotonics's intention to purchase Emcore's tunable external cavity laser as well as its module assets for US $17.5 million. Emcore acquired the laser when it bought Intel's optical platform division for $85 million in 2007, while Intel acquired it from New Focus in 2002 for $50 million. NeoPhotonics has also spent more in the past: it bought Santur's tunable laser for $39 million in 2011.

"There was so much excitement with so many players [during the optical bubble of 1999-2000], the market was way too competitive and eventually it drove vendors to the point where they would prefer to sell the business for pennies rather than keep it running," says Kozlov. "Emcore has been losing money, it is not a highly profitable business." Yet for Kozlov, Emcore's tunable laser is probably the best in the business with its very narrow line-width compared to other devices.

Tunable laser market

Tunable lasers have failed to get into the mainstream of the industry. "If you look at DWDM, I'm guessing that 70 percent of lasers sold are still fixed wavelength or temperature-tunable over a few wavelengths," says Kozlov. System vendors such as Huawei and ZTE advertise their systems with tunable lasers. "But when we asked them how they are using tunable lasers, they admitted that the bulk of their shipments are fixed-wavelength devices because whatever little they can save on cost, they will."

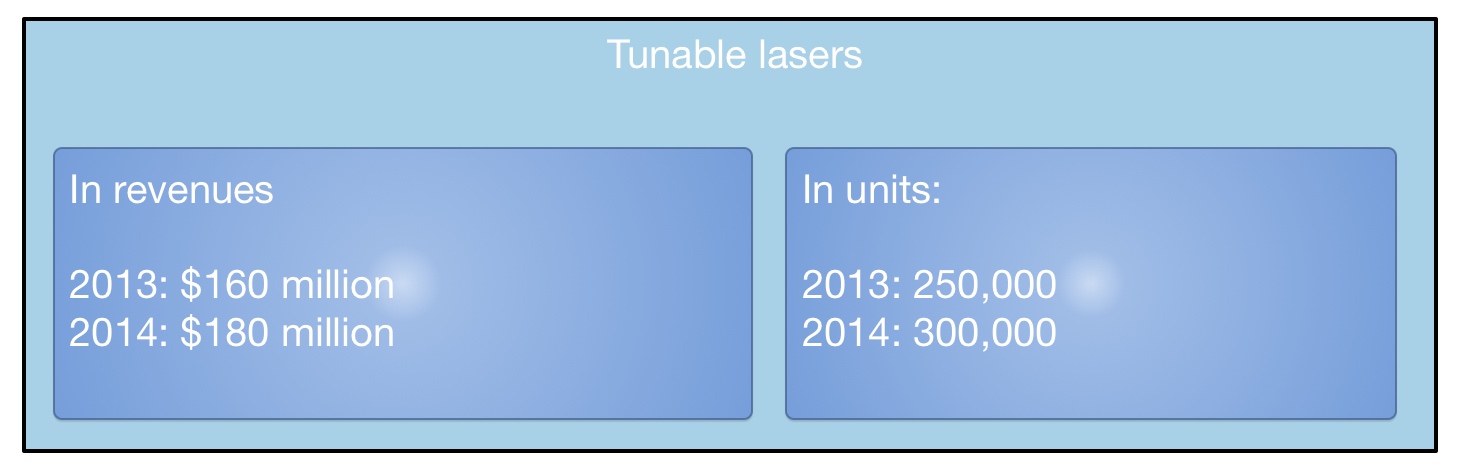

LightCounting valued the 2013 tunable laser market at $160 million, growing to $180 million in 2014. This equates to 250,000 units sold in 2013 and 300,000 units this year. "Most of these are for coherent systems," says Kozlov. The number of tunable lasers sold in modules - mainly XFPs but also SFPs and 300-pin modules - is 250,000 units. "Half a million units a year; if you look at actual shipments, it is quite a lot," says Kozlov.
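The figures above imply an average price per discrete tunable laser, which the short sketch below works out. It assumes the market values quoted cover only the discrete units (not the lasers shipped inside modules) and reads the module figure as 250,000 units; `implied_average_price` is a hypothetical helper, and the results are back-of-envelope numbers rather than LightCounting data.

```python
# Sketch: implied average selling price from the quoted market figures.
# Assumption: the dollar values cover only the discrete tunable lasers.
def implied_average_price(market_value_usd, units):
    """Average price per unit in US dollars."""
    return market_value_usd / units


asp_2013 = implied_average_price(160e6, 250_000)
asp_2014 = implied_average_price(180e6, 300_000)

# Discrete lasers plus lasers shipped inside modules, for the current year.
total_units = 300_000 + 250_000
```

On these assumptions the implied price falls from about $640 to about $600 per laser, and the combined total of 550,000 units is consistent with Kozlov's "half a million units a year".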

What next?

"I'm hoping we are reaching the low point in the tunable laser market as vendors are struggling and sales are at a very low valuation," says Kozlov.

The advent of more complex modulation schemes for 400 Gigabit and greater speed optical transmission, and the adoption of silicon photonics-based modulators for long haul will require higher powered lasers. But so much progress has been made by laser designers over the last 15 years, especially during the bubble, that it will last the industry for at least another decade or two, says Kozlov: "Incremental progress will continue and hopefully greater profitability."

Part 1: NeoPhotonics to expand its tunable laser portfolio

Infinera introduces flexible grid 500G super-channel ROADM

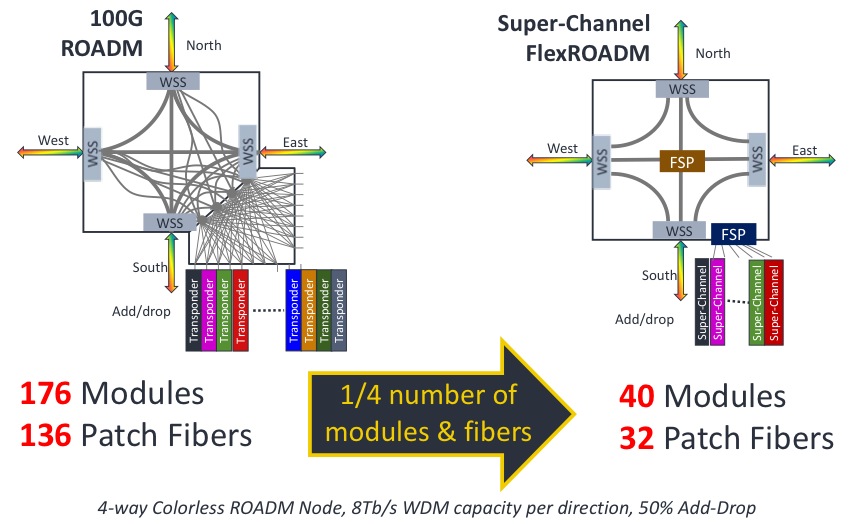

An example showing the impact of a 500G super-channel ROADM node. Source: Infinera


"The FlexROADM will open up the Tier-1 operators in a way Infinera has not been able to do before," says Dana Cooperson, vice president, network infrastructure at market research firm, Ovum. "The DTN-X was necessary but not sufficient; the ROADM is the last piece."

The FlexROADM is claimed to deliver two industry firsts: it can add and drop flexible-grid-based 500 Gig super-channels, and uses the Internet Engineering Task Force’s (IETF) spectrum switched optical networks (SSON).

"SSON is the next generation of WSON [Wavelength Switched Optical Network control plane], except it manages spectrum," says Ron Kline, principal analyst, network infrastructure also at Ovum.

The DTN-X platform combines Infinera's 500 Gig photonic integrated circuits and OTN (Optical Transport Network) switching. With the FlexROADM, Infinera has added switching at the optical layer in 500 Gig increments. Infinera can now offer enhanced multi-layer network optimisation with the combination of electrical and optical switching.

"Optical bypass before was manual using patch cords, now operators can reconfigure with the FlexROADM," says Kline. "It also provides new optical restoration capabilities that Infinera did not have."

The FlexROADM supports up to nine degrees, and is available in colourless, colourless and directionless, and full colourless, directionless and contentionless (CDC) versions.

"The debate about contentionless continues," says Kline. "It is safe to assume that for the majority of applications flexible grid, colourless and directionless will be the high runner." Contentionless will be used by the big carriers, he says, but in certain locations only.

Infinera says the line system announced will support up to 24 Terabit-per-second (Tbps) when it ships in September. The maximum long-haul capacity using its current PM-QPSK super-channels is 9.5Tbps per fibre pair.

"In the future when we enable metro-reach super-channels using PM-16-QAM, they will support 24 Terabit-per-second per fibre pair using the line system we are announcing," says Geoff Bennett, director, solutions and technology at Infinera.

Bennett says the data rate and the spectral efficiency for a given sub-carrier can be varied depending on the reach required. The spacing between sub-carriers that make up a super-channel also can be varied depending on reach. Many different transmission possibilities exist, says Bennett, but to explain the concept, he cites two examples.

The 24Tbps capacity with PM-16-QAM modulation uses pulse shaping at the transmitter to achieve 'Nyquist DWDM' channel spacing, a spacing between channels that approximates the baud rate, says Bennett.

"At this time we are not disclosing the details of the channel spacing, or the number of sub-carriers used by our future line modules," says Bennett. "But the total super-channel spectral width is the equivalent of 200GHz if you are transmitting a one Terabit super-channel, for example." This equates to a spectral efficiency of 5b/s/Hz, and using 16-QAM, the reach achieved will be 600-700km.

"The system we have just launched is designed to operate in long-haul networks and uses PM-QPSK," says Bennett. "For an ultra long-haul reach requirement of 4,500km, the super-channel comprises ten sub-carriers; a total of 500 Gbps over a spectral width of 250 GHz." These line cards are available now, he says.
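Bennett's two examples can be checked with simple arithmetic, sketched below. The helper names are hypothetical, and the second function rests on an assumption not stated in the article: that the quoted per-fibre capacity is simply spectral efficiency multiplied by usable spectrum.

```python
# Sketch: spectral efficiency and the spectrum implied by each capacity figure.
# Assumption: fibre capacity = spectral efficiency x usable spectrum.
def spectral_efficiency(bit_rate_gbps, spectral_width_ghz):
    """Bits per second per hertz (Gbps divided by GHz)."""
    return bit_rate_gbps / spectral_width_ghz


def spectrum_needed_ghz(fibre_capacity_tbps, efficiency_bps_per_hz):
    """Spectrum in GHz needed to carry the given capacity."""
    return fibre_capacity_tbps * 1000 / efficiency_bps_per_hz


qam16 = spectral_efficiency(1000, 200)   # one Terabit over 200 GHz
qpsk = spectral_efficiency(500, 250)     # 500 Gbps over 250 GHz

spectrum_16qam = spectrum_needed_ghz(24, qam16)
spectrum_qpsk = spectrum_needed_ghz(9.5, qpsk)
```

The 16-QAM case gives 5 b/s/Hz and the QPSK case 2 b/s/Hz. Both capacity figures then imply roughly 4.8 THz of spectrum, which is consistent with the same line system carrying either format.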

Infinera continues to make steady market progress, according to Ovum. The company is in the top 10 system vendors globally, while in backbone and 100 Gigabit, Infinera is fourth.

Ericsson and Ciena collaborate on IP-over-WDM and SDN

Jan Häglund


Ericsson and Ciena have signed a global strategic agreement that provides Ericsson with Ciena's optical networking technology, while Ciena benefits from Ericsson's broader service provider relationships.

In particular, Ciena's WaveLogic coherent optical processor will be integrated into a module and added to Ericsson's Smart Service IP routers, while Ericsson will resell Ciena's 6500 Packet-Optical Platform and 5400 Reconfigurable Switching Systems.

Both companies will also collaborate in developing SDN in the WAN, also known as service provider SDN or Transport SDN.


Ericsson says the IP market will reach US $15 billion and optical networking $10 billion in 2014. Jan Häglund, vice president, head of IP and broadband at Ericsson, says the two markets are not independent and that IP-over-WDM will grow rapidly, accounting for over 30 percent of the total market by 2020.

Ciena's motivation for the deal is somewhat different.

"We are focussed on packet optical convergence - Layer 2 down to Layer 0 - creating a scalable, cost effective WAN infrastructure for service providers," said James Frodsham, Ciena’s senior vice president and chief strategy officer. "We have been looking around our core value proposition, we have been looking to expand our distribution into geographies and customers where we lack presence." The deal with Ericsson clearly addresses that, he says.

There is now more to think about. It is a very interesting time.

James Frodsham, Ciena

The company also has a different view regarding IP-over-WDM. IP routers are a vital part of the network but for cost reasons they are better used in centralised locations, interconnected using packet optical networking, said Tom Mock, senior vice president, corporate communications at Ciena.

Working with Ericsson widens the network applications Ciena can address. "But our view of the prevalence of IP-over-WDM hasn't really changed," said Mock.

Tom Mock

Ericsson and Ciena both highlight the changes taking place in the network, namely Network Functions Virtualisation (NFV) and SDN, as another reason for the tie-up.

NFV is turning telecom functions that previously required dedicated platforms into software that is virtualised and executed on servers. NFV promises to bring to telecom the benefits of IT and cloud computing, enabling operators to introduce services more quickly and scale them according to demand.

SDN, meanwhile, not only oversees such virtualised services, but also the network layers over which they run. This is where IP-over-WDM plays a role and why the two companies are working to develop Transport SDN.


Ciena's optical infrastructure and Ericsson's service-provider SDN and IP portfolio will result in a competitive solution, said Ericsson. "Combining the two network layers, and jointly making sure that the control protocol optimises the traffic network, will lead to CapEx and OpEx savings," said Ericsson's Häglund, in a company webcast announcing the deal.

Other benefits of the agreement include growing Ciena's relationships with services providers, especially in wireless. "It also gives us exposure to the Evolved Packet Core that is going into new wireless installations," said Mock.

Ciena also highlights Ericsson's strengths in operations and business support systems (OSS/BSS). Ciena says the transition to SDN will be gradual. "That evolution is going to have to take into account OSS/BSS technologies and having a partner that is strong in that area will help us both," said Mock.

Ciena believes more such industry collaboration should be expected. "We see that with programs like AT&T's Domain 2.0 Program, such thinking is also happening in the marketplace," said Mock. For the Supplier Domain 2.0 Program, AT&T is selecting vendors to provide a modern, cloud-based architecture that includes NFV and SDN technologies.

The collaboration between Ciena and Ericsson should boost their position as possible Domain 2.0 suppliers. "Both of us are suppliers under AT&T's current domain program, and as with any relationship, incumbency has advantages," said Mock. "The fact that we are beginning to collaborate on SDN-oriented applications ought to help."

Industry collaboration between telecom vendors and IT equipment providers will also likely increase.

"The data centre is a very important piece of real-estate in the future infrastructure," said Frodsham. The data centre hosts the storage and servers that manage the bulk of applications that pass across the network. Greater collaboration will be needed between telco and IT vendors to optimise how the data centre interacts with the WAN.

"There is now more to think about," said Frodsham. "It is a very interesting time."

Transmode adopts 100 Gigabit coherent CFPs

Transmode has detailed line cards that bring 100 Gigabit coherent CFP optical modules to its packet optical transport platforms.

We can be quicker to market when newer DSP-based CFPs appear

Jon Baldry

"We believe we are the first to market with line-side coherent CFPs, bringing pluggable line-side optics to a WDM portfolio," says Jon Baldry, technical marketing director at Transmode. Baldry says that other system vendors already support non-coherent CFP modules on their line cards and that further vendor announcements using coherent CFPs are to be expected.

The Swedish system vendor announced three line cards: a 100 Gig transponder, a 100 Gig muxponder and what it calls its Ethernet muxponder (EMXP) card. The first two cards support wavelength division multiplexing (WDM) Layer 1 transport: the 100 Gig transponder card supports two 100 Gig CFP modules while the 100 Gig muxponder supports 10x10 Gig ports and a CFP.

The third card, the EMXP220/IIe, has a capacity of 220 Gig: 12x10 Gigabit Ethernet ports and the CFP, with all 13 ports supporting optional Optical Transport Network (OTN) framing. "You can think of it as a Layer 2 switch on a card with 13 embedded transponders," says Baldry. The three cards each take up two line card slots.

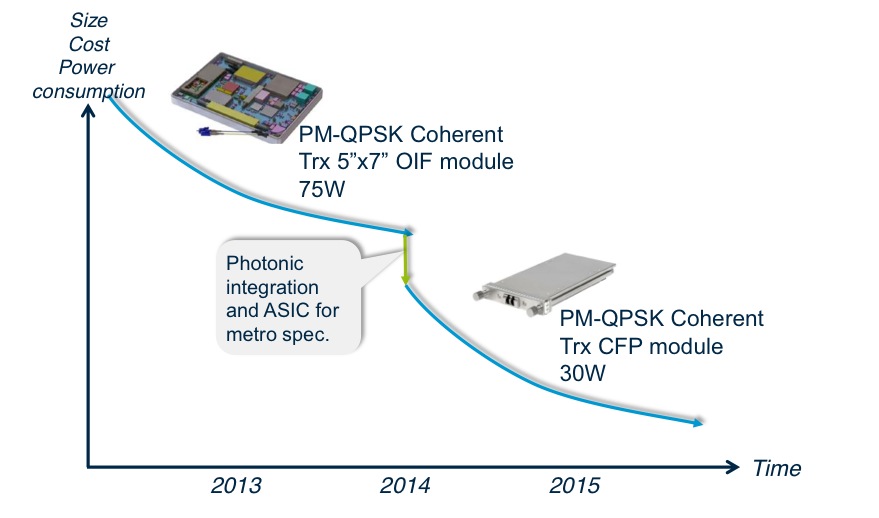

Transmode's platforms are used for metro and metro regional networks. Metro has more demanding cost, space and power efficiency requirements than long distance core networks. "The move that the whole industry is taking to CFP or pluggable-based optics is a big step forward [in meeting metro's requirements]," says Baldry.

The line cards will be used with Transmode's metro edge TM-Series packet optical family that is suited for applications such as mobile backhaul and business services. "Within packet-optical networks, we can do customer premise Gigabit Ethernet all the way through to 100 Gig handover to the core on the same family of cards running the same Layer 2 software," says Baldry. Transmode believes this capability is unique in the industry.

The TM-3000 chassis is 11 rack units (RU) high and can hold eight double-slot cards, for a total of 800 Gig CFP line side capacity.
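The card and chassis capacity figures follow from simple port arithmetic, sketched here. The function names are hypothetical, and the chassis figure assumes one 100 Gig line-side CFP per double-slot card, which is what the 800 Gig total implies but the article does not state outright.

```python
# Sketch: port arithmetic behind the quoted card and chassis capacities.
# Assumption: each double-slot card contributes one 100 Gig line-side CFP.
def emxp_capacity_gig(n_10g_ports=12, n_cfp_ports=1):
    """Total capacity of the Ethernet muxponder card in Gig."""
    return n_10g_ports * 10 + n_cfp_ports * 100


def chassis_line_capacity_gig(n_cards=8, line_cfps_per_card=1):
    """Line-side CFP capacity of a fully populated chassis in Gig."""
    return n_cards * line_cfps_per_card * 100


emxp_total = emxp_capacity_gig()          # 12 x 10 Gig plus one 100 Gig CFP
emxp_ports = 12 + 1                       # the 13 ports Baldry refers to
chassis_total = chassis_line_capacity_gig()
```

This reproduces the 220 Gig card capacity and the 800 Gig line-side total for eight cards.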

Source: Transmode


100 Gig coherent modules

Transmode is talking to several 100 Gig coherent CFP module makers. The company will use multiple suppliers but says it is currently working with one manufacturer whose product is closer to market.

Acacia Communications announced the first CFP module late last year and other module makers are expected to follow at the upcoming OFC show in March 2014.

The roadmap of the CFP modules envisages the CFP to be followed by the smaller CFP2 and smaller still CFP4. For the CFP2 and CFP4, the coherent digital signal processor (DSP) ASIC is expected to be external to the module's optics, residing on the line card instead. The 100 Gig CFP, however, integrates the DSP-ASIC within the module and this approach is favoured by Transmode.

"We can be quicker to market when newer DSP-based CFPs appear; today we can do 800km and in the future that will go to a longer reach or lower power consumption," says Baldry. "Also, the same port can take a coherent CFP or a 100GBASE-SR4 or -LR4 CFP without having the cost burden of a DSP on the card whether it is needed or not."

The company also points out that by using the integrated CFP it can choose from all the coherent designs available whereas modules that separate the optics and DSP-ASIC will inevitably offer a more limited choice.

There are also 100 Gigabit direct detection CFPs from the likes of Finisar and Oplink Communications and Transmode's cards would support such a CFP module. But for now the company says its main interest is in coherent.

"One key requirement for anything we deploy is that it works with the existing optical infrastructure," says Baldry. "One difference between 100 Gig metro and long haul is that a lot of the long distance 100 Gig is new build, whereas the metro will require 100 Gig over existing infrastructure and coherent works very nicely with the existing design rules and existing 10 Gig networks."

The 100 Gig transponder card consumes 75W, with the coherent CFP accounting for 30W of the total. The card can be used for signal regeneration, hosting two 100 Gig coherent CFPs rather than the more typical arrangement of a client-side and a line-side CFP. The card's power consumption exceeds 75W, however, when two coherent CFPs are used.

The 100 Gig transponder and Ethernet muxponder will be available in the second quarter of 2014, says Transmode, while the 100 Gig muxponder card will follow early in the third quarter of the year.

Reporting the optical component & module industry

LightCounting recently published its six-monthly optical market research covering telecom and datacom. Gazettabyte interviewed Vladimir Kozlov, CEO of LightCounting, about the findings.


Q: How would you summarise the state of the optical component and module industry?

VK: At a high level, the telecom market is flat, even hibernating, while datacom is exceeding our expectations. In datacom, it is not only 40 and 100 Gig but 10 Gig is growing faster than anticipated. Shipments of 10 Gigabit Ethernet (GbE) [modules] will exceed 1GbE this year.

The primary reason is data centre connectivity - the 'spine and leaf' switch architecture that requires a lot more connections between the racks and the aggregation switch - that is increasing demand. I suspect it is more than just data centres, however. I wouldn't be surprised if enterprises are adopting 10GbE because it is now inexpensive. Service providers offer Ethernet as an access line and use it for mobile backhaul.

Can you explain what is causing the flat telecom market?

Part of the telecom 'hibernation' story is the rapidly declining SONET/SDH market. The decline has been expected but in fact it had been growing up till as recently as two years ago. First, 40 Gigabit OC-768 declined and then the second nail in the coffin was the decline in 10 Gig sales: 10GbE is all SFP+ whereas OC-192 SONET/SDH is still in the XFP form factor.

The steady dense WDM module market and the growth in wireless backhaul are compensating for the decline in the SONET/SDH market as well as the sharp drop this year in FTTx transceiver and BOSA (bidirectional optical sub assembly) shipments. There is also a big shift from transceivers to BOSAs.

LightCounting highlights strong growth of 100G DWDM in 2013, with some 40,000 line card port shipments expected this year. Yet LightCounting is cautious about 100 Gig deployments. Why the caution?

We have to be cautious, given past history with 10 Gig and 40 Gig rollouts.

If you look at 10 Gig deployments, before the optical bubble (1999-2000) there was huge expected demand before the market returned to normality, supporting real traffic demand. Whatever 10 Gig was installed in 1999-2000 was more than enough till 2005. In 2006 and 2007 10 Gig picked up again, followed by 40 Gig which reached 20,000 ports in 2008. But then the financial crisis occurred and the 40 Gig story was interrupted in 2009, only picking up from 2010 to reach 70,000 ports this year.

So 40 Gig volumes are higher than 100 Gig but we haven't seen any 40 Gig in the metro. And now 100 Gig is messing up the 40G story.

The question in my mind is how much metro is a bottleneck today? There may be certain large cities which already require such deployments but equally there was so much fibre deployed in metropolitan areas back in the bubble. If fibre cost is not an issue, why go into 100 Gig? The operator will use fibre and 10 Gig to make more money.

CenturyLink recently announced its first customer purchasing 100 Gig connections - DigitalGlobe, a company specialising in high-definition mapping technology - which will use 100 Gig connectivity to transfer massive amounts of data between its data centers. This is still a special case, despite the increasing number of data centers around the world.

There is no doubt that 100 Gig will be a must-have technology in the metro and even metro-access networks once 1GbE broadband access lines become ubiquitous and 10 Gig is widely used in the access-aggregation layer. It is starting to happen.

So 100 Gigabit in the metro will happen; it is just a question of timing. Is it going to be two to three years or 10-15 years? When people forecast they always make a mistake on the timeline because they overestimate the impact of new technology in the short term and underestimate in the long term.

LightCounting highlights strong sales in 10 Gig and 40 Gig within the data centre but not at 100 Gig. Why?

If you look at the spine and leaf architecture, most of the connections are 10 Gig, broken out from 40 Gig optical modules. This will begin to change as native 40GbE ramps in the larger data centres.

If you go to super-spine that takes data from aggregation to the data centre's core switches, there 100GbE could be used and I'm sure some companies like Google are using 100GbE today. But the numbers are probably three orders of magnitude lower than in the spine and leaf layers. The demand for volume today for 100GbE is not that high, and it also relates to the high price of the modules.

Higher volumes reduce the price but then the complexity and size of the [100 Gig CFP] modules need to be reduced as well. With 10 Gig, the major [cost reduction] milestone was the transition to a 10 Gig electrical interface. It has to happen with 100 Gig and there will be the transition to a 4x25Gbps electrical interface but it is a big transition. Again, forget about it happening in two to three years but rather a five- to 10-year time frame.

You also point out the failure of the IEEE working group to come up with a 100 GbE solution for the 500m-reach sweet spot. What will be the consequence of this?

The IEEE is talking about 400GbE standards now. Go back to 40GbE, which was only approved some three years ago: the majority of the IEEE was against having 40GbE at all, the objective being to go to 100GbE and skip 40GbE altogether. At the last moment a couple of vendors pushed 40GbE. And look at 40GbE now, it is [deployed] all over the place: the industry is happy, suppliers are happy and customers are happy.

Again look at 40GbE which has a standard at 10km. If you look at what is being shipped today, only 10 percent of 40GBASE-LR4 modules are compliant with the standard. The rest of the volume is 2km parts - substandard devices that use Fabry-Perot instead of DFB (distributed feedback) lasers. The yields are higher and customers love them because they cost one tenth as much. The market has found its own solution.

The same thing could happen at 100 Gig. And then there is Cisco Systems with its own agenda. It has just announced a 40 Gig BiDi connection which is another example of what is possible.

What will LightCounting be watching in 2014?

One primary focus is what wireline revenues service providers will report, particularly additional revenues generated by FTTx services.

AT&T and Verizon reported very good results in Q3 [2013] and I'm wondering if this is the start of a longer trend. As wireline revenues from FTTx pick up, it will give carriers more of an incentive to invest in supporting those services.

AT&T and Verizon customers are willing to pay a little more for faster connectivity today, but it really takes new applications to develop for end-user spending on bandwidth to jump to the next level. Some of these applications are probably emerging, but we do not know what these are yet. I suspect that one reason for Google's offering of 1Gbps FTTH services to a few communities in the U.S. is to find out what these new applications are, by studying end-user demand.

A related issue is whether deployments of broadband services improve economic growth and by how much. The expectations are high but I would like to see more data on this in 2014.

Q&A with Jerry Rawls - Part 2

The concluding part of the interview with Finisar's executive chairman and company co-founder, Jerry Rawls, to mark the company's 25th anniversary.

Second and final part

Q: Over 25 years, what has been one of your better decisions?

Jerry Rawls: After the crash of 2001, we asked what are we going to do in the optics business? Are we going to stay in it? Is there a bright future? And if so, how are we going to respond to it?

We still believed that this was an attractive market and we had built an important brand. And, we knew we could make it more successful in the future, but we were going to have to change the way we did business.

Deciding to become vertically integrated was the key change. At that time, every other company was trying to sell their assets and remove their fixed costs. They were outsourcing manufacturing instead of bringing it in-house. Everyone wanted a variable cost business model, not a fixed cost model. We clearly went against the mainstream.

That is one of the better decisions we ever made.

Equally, with the benefit of hindsight, what do you regret?

A couple of acquisitions that we made in our early years turned out less than desirable. We were sold some technology for which we believed the probability of success was high. We bought the companies based on their technology, not necessarily on their business, and it did not pan out. One thing we learned from those experiences is that when we buy a company, we try to be much more careful about our due diligence.

Another one I regret, although I don't think it was a bad decision: We had created a division in the company called Network Tools that was the leading company in the SAN (storage area network) industry for protocol analysis.

Every company in the world that was creating SAN equipment bought our protocol analysers for Fibre Channel. That was about a $40 million-a-year business and nicely profitable. We sold it [to JDSU] in 2009 and I regret that because we started that business from scratch. It really helped create the SAN industry; it helped our customers prove their equipment interoperability.

We sold it because we had that $250 million in debt we had to pay off. We had borrowed the money and it was now due. It [2009] was still not a great time, we were trying to raise cash and one asset that had value was this division.

How would you describe the current state of the optical component industry and the main challenges it faces?

The optical component industry is in a pretty healthy place. For the most part, the larger companies are doing quite well. Our business is doing nicely. We have had four quarters in a row where revenues have grown, our profitability metrics are improving and our outlook is good. A lot of that has to do with our focus on the data centre market.

The speeds and feeds in data centres are increasing dramatically: data centres are becoming larger, the connections are faster - connections that used to be copper back in the days of Gigabit Ethernet are now at 10 Gigabits and mostly optical. That transformation of copper to optics that took place in the telephone world 35 years ago is now in full bloom in the data centres. So it is a great time to be in optics because the trends are rolling our way.

We are anticipating spending growth in the telecommunications world with an upgrade in global networks to deal with growing Internet traffic. These networks are changing to very sophisticated ROADM [reconfigurable optical add/drop multiplexer] architectures and 100 Gigabit transmission rates.

We anticipate increasing dollars spent worldwide by phone companies over the next five years. So that sector is going to become healthier and hopefully a larger percentage of our business.

I believe the optical component industry has a number of market opportunities that are going to keep it pretty healthy for some time.

It does not mean that we don't have challenges. The industry, and in particular telecommunications, is fragmented. There are a number of competitors that have very small market share. Many of these competitors are focussing their R&D efforts on the same products - the next generation of telecom equipment - and that is very inefficient. That is the main challenge that the optical industry has, that this fragmentation leads to inefficiency.

That limits the margins of the companies and the industry. It also means that pricing in the industry is at a lower level than component suppliers would like to see.

How that works out is not clear. You could say that in a fragmented industry, you would like to see more consolidation. There will be a little of that. But there are some parts of the industry where consolidation will be very slow.

For example, all of the Japanese optical suppliers are likely to stay in business for some time. Almost every big Japanese electronics company has an optical division, and they always have. None went out of business in the crash of '01 and none went out in the crash of '08 – ’09. That is because these optics divisions are small parts of giant conglomerates. This fragmentation problem is difficult to solve.

Datacom and the data centre appear to be a more interesting segment in terms of driving change than telecom. How do you view the two segments going forward?

I think both are interesting.

The data centre is interesting because of the increased density of Gigabits-per-square-inch on the faceplates of equipment, whether it is switches, storage or servers. Then there are the faster connection speeds between devices and the demand for low latency. The physical size of some of these data centres is demanding that certain connections become single mode - more like wiring a campus as opposed to the multi-mode historically used in single buildings.

The datacom market is also very interesting because of a number of connections changing from copper to optical as speeds get faster. Copper transmission demands too much power through big cables at these higher speeds.

In telecom, today what is really exciting is the advent of coherent transmission systems, in particular at 100 Gigabits moving to 400 Gigabit and 1 Terabit-per-second in the next decade.

Coherent transmission is revolutionary in that by using electronics rather than optics to do signal correction for long distance fibre transmission, these signals can be much more efficient, run faster and be much less costly than they have ever been in the past.

Coupled with that is the automation of these optical networks through the extensive use of sophisticated ROADMs. With the next generation of networks, truck rolls to do provisioning and reconfigurations will be almost eliminated.

So there is a lot of excitement for us just because of what is coming to telecom networks. We have been through a lull for the last couple of years but it is a cyclical industry that tends to follow technology waves. We are entering the 100 Gigabit transmission wave and the sophisticated use of many, many ROADMs in these networks for automation.

Silicon photonics is spoken of as a disruptive technology for datacom and telecom. It also promises to disrupt the component supply chain. What is Finisar's take on the technology?

As a company, we are very product focussed and we want to deliver transmission products and switching products, etc. that fulfill our customers' needs. We don't really care what the technology is. We are going to invest in technology that enables us to build the highest performing and most efficient devices that we can.

Silicon photonics is an interesting technology. We haven't used it in any of our products so far with the exception of a silicon waveguide in an integrated receiver. The most interesting thing about silicon photonics is not just to be able to make waveguides for multiplexers or demultiplexers, but to make modulators.

People have been speculating for years that we will have to use external modulators to achieve higher transmission speeds as we won’t be able to directly drive a laser fast enough.

We make VCSELs by the tens of millions. When we were making them at one Gigabit-per-second [Gbps], there were those in the industry that predicted that we would never be able to run at 2 Gbps as it would be impossible to modulate the lasers that fast. Then we did 2 Gbps, and then there were those that said it would be impossible to do 4, 8 or 10 Gigabits. Well, we are shipping devices today that are 25Gbps VCSELs that are directly modulated.

At every one of those steps there were people investing in silicon photonics companies because they could build modulators they thought would run that fast. I believe every one of those silicon photonics companies went broke.

We now have a new wave of silicon photonics companies. And because Cisco Systems happened to buy one [LightWire], there has been a lot of excitement about silicon photonics.

Well, the physics are such that it is always more efficient to directly modulate a laser - that is, to drive it with an injection of current - than it is to have a continuous wave laser where you externally modulate the light. The external modulation takes more power, more components and more cost.

Guys that are in the silicon photonics industry have a religion. It does not make any difference what the real economics are, what the real performance is, they talk with a religious fervor about what might be possible with silicon.

To date, no one has been able to make light out of silicon. That means one can make a silicon modulator and a silicon waveguide but still have to buy an indium phosphide laser to create light. Then they would have to bond that laser to the silicon substrate in a way that it efficiently launches light, is mechanically stable, and hermetic and that it will stand the rigours of all these networks. That means it can be deployed for 10 or 20 years over temperatures of 0 to 85 degrees C, and survive the qualification torture tests of high humidity, high heat and temperature cycling.

One of the issues in the silicon photonics industry to date has been that the packaging - and therefore the yields - has been so difficult that the costs have been very high.

I promise you today that for almost every application, silicon photonics costs are higher than using traditional indium phosphide and gallium arsenide lasers and direct modulation.

We don't ignore silicon photonics as a potential technology.

We have designed silicon photonic chips here at Finisar and have evaluations that are ongoing. There are many companies that now offer silicon photonics foundry services. You can lay out a chip and they will build it for you.

We can go to a foundry; we can use their design rules and libraries and design silicon modulators and waveguides and put together a chip with as many splits and Mach-Zehnders as we want. The problem is we haven't found a place where it can be as efficient, or offer the same performance, as using traditional lasers and free-space optics.

Our packaging has been more efficient and our output has been at a higher performance level. Remember that silicon is optically quite lossy. That means you have to launch a lot of light into it to get a little light out.

So far we just haven't found a product where we thought silicon photonics modulation was as efficient as we could build using some other technology. That is true today.

We may use silicon photonics one of these days. In fact, if we look back five or 10 years, when we predicted what we would need to build a 100 Gig transponder, silicon photonics was one of our alternatives, and one of the paths we went down in parallel in completing the design.

As it turns out, traditional optics and micro-optical components exceeded our own expectations.

I compare it to the disc drive industry. Twenty years ago people were predicting the demise of the disc drive industry because of solid state memory. It was thought impossible that disc drives would be around five years hence. Well, the guys in the disc industry learned how to increase the bit density and the resolution of the heads and look at the industry today. You can buy a Terabyte drive for less than a hundred dollars. The amazing technology advances they have made have kept them in the game.

What are the biggest challenges facing Finisar?

The biggest challenge we face is meeting the changes in the industry. The use of information is becoming so pervasive - video everywhere and 4G networks - that all the kids are going to be streaming HD video to some device in their hand. And there are going to be billions of them.

Also, another challenge is managing the expectations of our customers - the equipment companies - in terms of delivering the speeds, densities and the low power performance needed to provide all this information.

It is a daunting task.

We have customers today trying to design systems that will have Terabit-per-second optical links. We don't know how we are going to get there yet but I promise you we will.

The industry in 25 years' time: Still datacom & telecom or something else by then?

In 25 years' time, datacom and telecom will be much more converged.

The data center today is becoming more like wiring a campus network than it is wiring a building as the distances become larger and the speeds faster. Today in data centers we only use point-to-point connections; we use no multiple wavelengths on fibres.

In the telephone world, everything is WDM. Today we are using mostly 96 wavelengths on a single fibre. Those 96 channels can all run at 100 Gbps – a total of nearly 10 Terabit on a single fiber. In the data center world most connections are single wavelengths, point-to-point. But in 25 years, the data centers are going to be using many of the techniques that are used in the telecom networks today in terms of making efficient use of fibres, using multiple colors of light, and being able to switch those individual colours.
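As a rough check on the figure quoted above, the aggregate capacity of a WDM fibre is simply the channel count multiplied by the per-channel rate. The short Python sketch below works through it; the function name is purely illustrative, and the calculation ignores framing and error-correction overhead.

```python
def wdm_capacity_tbps(channels: int, rate_gbps: float) -> float:
    """Aggregate line rate of a WDM fibre, in Terabits per second.

    Illustrative helper only: multiplies the number of wavelengths by the
    per-wavelength rate and converts Gbps to Tbps.
    """
    return channels * rate_gbps / 1000.0


if __name__ == "__main__":
    # 96 wavelengths at 100 Gbps each, the figures quoted in the interview.
    total = wdm_capacity_tbps(96, 100)
    print(f"96 x 100 Gbps = {total:.1f} Tbps")  # 9.6 Tbps, i.e. nearly 10 Terabit
```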

Interview with Finisar's Jerry Rawls

Finisar is celebrating its 25th anniversary. Gazettabyte interviewed Finisar's executive chairman and company co-founder, Jerry Rawls, to mark the anniversary.

Part 1

Jerry Rawls, Finisar's executive chairman and co-founder

Q: How did you meet fellow Finisar co-founder Frank Levinson?

JR: I was a general manager of a division at Raychem, a company in Menlo Park, California. We were developing and manufacturing electric interconnect products; our markets were mostly defence electronics and the computer industry.

Our customers were starting to talk a lot about fibre optics and we had no products. It seemed like it was going to be a hole in our portfolio. So I started a fibre optics product development group and hired a bright young physicist from Bell Labs to be the principal technologist. His name was Frank Levinson.

What made you both decide to set up Finisar?

The division I was running was very successful: we were the fastest growing and the most profitable. Frank was lured away by our chairman to work on a fibre-optics start-up that was internally funded: Raynet.

Raynet lost almost a billion dollars over the next few years. It was the biggest venture loss in the history of Silicon Valley, and it may still be the biggest venture capital loss in Silicon Valley history.

As they were losing money, and it was sucking money from the rest of the company, our division was unable to fund a lot of projects we would have liked to have funded if we were to continue to grow. Frank was very frustrated as they were jousting at windmills.

We had lunch one day and talked about the possibility of starting a fibre-optics company. It was as simple as that: we could do better on our own. This was in 1987.

What convinced you both that high-speed fibre optics was a business to pursue?

Frank had some original patents from Bell Labs on wavelength division multiplexing (WDM) and the use of fibre optics in telephony. That is where fibre optics first had a major impact.

As we started a little company, the thing that was happening in 1988 was that the Mac OS had just been introduced and Windows was right behind it. This was the first time colour and graphics were introduced to the PC. As we watched the change to graphics and colour, we knew video was not going to be too far behind. It was clear that files would be larger, and the bandwidth between systems, and between storage and systems, would need to be greater.

And so we started to think about high-speed optics for data centres. And the corollary to that was low-cost, high-speed optics for data centres.

We did not think we were up to competing with the telecommunications industry because in those days AT&T Bell Labs (Lucent), Alcatel and Nortel dominated the world of fibre optics. They built their own components, they built their own sub-systems and we did not think there was any chance of a start-up competing with them.

But in the world of computer networks, there were no established suppliers as fibre optics was almost non-existent there. Our goal was to focus on Gigabit-per-second speeds and how we could build low-cost Gigabit optical links for data centres.

The reason low cost was so important was that to buy an OC-12 (622 Megabit-per-second SONET) link, the cost was thousands of dollars at each end. This was a telephony fibre link but there was no chance you could be successful in any sort of computer installation with an optical connection at such prices.

So the question was: How do you bring the cost down and the prices down to a level that networks could afford, and that were priced lower than the computers at each end?

So we looked for compromises. One was distance. OC-12s went 20km, 40km, 80km but data centres only needed a few hundred meters. Ok, if we can build a link that goes 500m, we have covered any data centre in the world.

The next thing was: What does that open up? And what can we do? It quickly led us to multi-mode transmission, and multi-mode transmission turned out for us to be much, much cheaper to build because the core of the fibre was either 50 or 62.5 microns versus 8 microns in telephony fibre. That means that the core is enormous compared to telephone fibres, and our job for alignment [with the laser] was that much easier.
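To put rough numbers on that comparison, the sketch below compares core cross-sectional areas for the diameters quoted. It is only an illustration: core area is a crude stand-in for alignment tolerance, which in practice also depends on the laser's spot size and the coupling optics, and the function names are hypothetical.

```python
import math


def core_area_um2(diameter_um: float) -> float:
    """Cross-sectional area of a circular fibre core, in square microns."""
    return math.pi * (diameter_um / 2) ** 2


def area_ratio(multimode_um: float, single_mode_um: float = 8.0) -> float:
    """How many times larger a multi-mode core is than a single-mode core.

    The 8 micron default is the telephony-fibre figure quoted in the interview.
    """
    return core_area_um2(multimode_um) / core_area_um2(single_mode_um)


if __name__ == "__main__":
    for diameter in (50.0, 62.5):
        print(f"{diameter} micron core: about {area_ratio(diameter):.0f}x "
              f"the area of an 8 micron core")
```

On these figures the multi-mode core presents roughly 39 to 61 times the target area of the single-mode core.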

We built some early samples. We went through several iterations to get there. We put together the components and ICs and we finally had a product that we thought was pretty good. We had a 1 Gigabit transmitter with 17 pins and a 1 Gigabit receiver with 17 pins, and we had a Gigabit transceiver with 28 pins.

Our first customers for these devices were the national laboratories. Lawrence Livermore National Lab was one of the pioneers in the world of Fibre Channel. They, working with IBM, had a big hand in the whole Fibre Channel protocol.

Our engagement with Lawrence Livermore led to other labs. All these physicists, building high-energy physics experiments, all of a sudden started buying these optical transceivers from us by the thousands. That was our first product.

Finisar's initial focus included consulting. What sort of things was the company doing during this period?

Consulting, we did a tiny bit. Mostly, what we did was contract design engineering.

Frank and I started the company with our own money. We had no outside investors. I took a second mortgage on my house and off we went to start a company. That meant we had to be able to support ourselves and our employees. We had to have customers that pay their bills.

Early optical transceiver product from Finisar

So one of the things we did in the early days is we found customers to do design work for. We designed fibre optic systems, we designed cable TV fibre optics systems, we designed special fibre interconnects, we did some special fibre testing - which you might call consulting. We designed a scuba-diver computer that calculated dive tables - whether you would get the bends or not, how long you could stay down, and what depth and pressure. We designed a swimming pool chlorination control system.

We did a lot of things along the way to generate revenue to support our simultaneous product development work to build the Gigabit optics devices.

We didn't start the company to be a contract design house; we started it to be a product company. But the financial reality was we had to have enough money coming in to support our employees and ourselves.

In the late 1990s, Finisar experienced the optical boom and then the crash. Do you recall when you first realised all was not well?

In November and December of 2000, we were about to acquire two companies. Both were component suppliers in the telecommunications industry. They both sold to big customers like Alcatel, Nortel and Lucent.

In the due-diligence process for one of the companies, I was on a phone call with Lucent, which had been a huge customer – maybe 40 percent of their business came from Lucent. Talking to the VP of procurement about his history with this company and what his company’s future prospects were - all the things you normally do in due diligence - he confirmed what his previous business had been and that he was satisfied with them as a supplier. They were a good company.

But, as we talked about future business, he went silent. And, then he came back with some devastating news: his firm had so much inventory of the products from that company that he didn’t think they would buy anything for the next three or four years. This fact was unknown to the company we were acquiring. That was my first signal that something bad was going on.

We did not acquire this company. We were in the late stages of the acquisition discussions – talking to their customers is usually one of the last things you do in due diligence – but there was obviously a material adverse change in the outlook of this company. So, we quickly terminated discussions.

A very similar thing happened with the other company only a couple of weeks later. This was late 2000, and it was clear the bell was ringing. Something bad was about to happen in the optics, telephony and networking industry.

In our January quarter of 2001, we could see the incoming order rate falling. And by our February-April quarter that year, our revenues had dropped something like 47 percent in two quarters. It rolled through the industry pretty fast.

How did Finisar navigate the turbulent aftermath?

We were a bit in shock, as most of the industry was.

To put it in perspective, our revenues dropped 47 percent in two quarters. Nortel’s High Performance Optical Components division, which had sales of $1.4 billion in one quarter during 2000, saw its revenues drop to something like $28 million. Some 98.5 percent of their revenue disappeared; it was that disastrous a time, particularly in telecom.

The issue with Finisar was that the business we built was predominantly about computer networks. We didn’t have that much business with telecom. We were selling optics for data centres and so our business didn’t decline as much as the Nortels, Alcatels and the Lucents. But it was still a precipitous decline and so we had to decide: Were we still going to stay in this business or were we going to open a hamburger stand or some other kind of a business? And our answer was we didn’t know much about the hamburger business or any other business.

We thought that, long term, fibre optics was going to be a good business. The use of information was only going to increase and that was a place where we had built a fundamental market position and we ought to continue.

To do that, we had to change our spots, that is, change our way of doing business. We were going to have to be more cost competitive. Enormous capacity had been created in the optics industry in the '90s and that capacity didn’t all evaporate [with the bust]. We knew we were going to have to be much more cost-competitive.

We decided that our strategy was to be a vertically-integrated company. In the ‘90s we were not vertically integrated: we bought lasers from the Japanese or Honeywell who made VCSELs, we bought photo-detectors from either US or Japanese suppliers, we bought ICs from merchant semiconductor companies, and we put it all together. We even outsourced all of our assembly and manufacturing. But in the future, we were convinced that we had to be more cost-competitive.

During this period Finisar had an IPO. How did it impact the company and this strategy?

We had previously had an IPO in 1999 that raised some money. The first thing we did after the crash was to buy a factory in Malaysia. This was around March 2001: business had started to crash, everyone was selling, and if you were buying, you could get a pretty good deal on almost anything. So we bought this factory from Seagate – 640,000 sq. ft. of almost brand new building, with 200,000 sq. ft. of clean room, 20 acres of land – we bought it for $10 million.

Then we decided we had to be vertically integrated with our ICs. We weren’t going to start an IC foundry but we had to start an IC design group. So we hired a senior IC design manager from National Semiconductor who had led their analogue design efforts and we started a semiconductor design group. Today we design almost all of the ICs that go into our datacom products. We have some 60 people worldwide who are involved in IC design, layout, testing and verification.

Next, we bought the Honeywell VCSEL fab. They were our big supplier, we were their largest customer. Honeywell decided that that business was not strategic and so we bought it.

We also bought a small laser fab in Fremont, California to make edge-emitting lasers. We could also make photo-detectors in both those fabs. So we were now in a position where we could make photo-detectors and lasers, and we could design ICs and go to foundry with them instead of buying them from merchant semiconductor companies and paying their margins.

We had a beautiful big factory we could build our products in, and expand for years to come. We are still expanding in that factory. Today we have over 5,000 employees in that plant in Malaysia.

To finance all the tomfoolery, we needed a lot more money than we were able to raise with our IPO. I went to New York and Boston and peddled a convertible bond issue for $250 million. So we raised enough cash that we could finance these acquisitions and also support the company through this crash and downturn.

It was great we were a public company because we couldn’t raise that much money if we had been a private company. It worked out well; and we eventually paid all that debt off.

Fast-forward to today, we are targeting more than a billion dollars in revenue this year, we are the largest company in our industry and I think we are the most profitable.

In 2006 IEEE Spectrum Magazine ranked Finisar top in terms of patent power among telecom equipment manufacturers. Is this still a key strategic goal of Finisar? And if so, how do you ensure innovation continues year after year?

I wouldn’t say patents are a strategic goal of ours. The IEEE Spectrum ranking was based on the number of patents you had, how many you had issued recently, but it also was importantly weighted by how many times your patents were referenced by other patent applications. A lot of ours were referenced by others who were filing patents. We ended up pretty high on the list.

We do have over 1,000 issued US patents, and we have about 500 issued international patents. We employ maybe as many as 1,300 engineers and almost 300 of them have Ph.Ds. We will continue to innovate. We have been a leader in this industry for years. Our goal is to try to be out in front, to deliver the products that meet the speeds, the power, the density that our customers need for high-speed transmission. That means we have to have a lot of talented people, we have to be focussed. And, I promise you that innovation is very important to our success.

It is not so much about how many patents we get issued. Patents are important many times for defensive purposes as much as anything else. People can’t come after us and sue us frivolously for patent infringement because we have so many patents that cover products they likely make. In the end, patents for defence are really important.

Is there something that you have learnt over the years that has proved successful regarding innovation?

First, we want to be an innovative company. When we hire, we look for innovative people, we look for clever people, smart people, but also people with good interpersonal skills, that is a part of our culture.

But one of the things that I think is really important here is that we allow people to make mistakes. We don’t encourage people to make mistakes but we allow people to make mistakes. If they are trying to do their job and they make a mistake, we don’t fire them. We try to learn from the mistakes.

Over time, we have had guys make what appeared to be pretty serious mistakes that I am sure people might have been fired for in many other companies. But, for us, we are supportive of our employees. As long as we know they are not being lazy or dishonest, we support them.

I think that environment where you can try to innovate, you can work on projects but you know the culture of the company is not vengeful and that we will tolerate mistakes is an important part of our innovative environment.

Ciena and partners build SDN testbed for carriers

Ciena, working with partners, is building a network to enable the development of software-defined networking (SDN) applied to the wide area network (WAN). The motivation in creating the testbed network is to boost carrier confidence in SDN while aiding its development.

"When you get very serious vice presidents in tier-one carriers saying, 'This [SDN] is the biggest change in my career', there is something to it."

Chris Janz, Ciena

Many software elements will be needed for SDN in the carrier environment, spanning the network through to the back office. Much is made of the benefits SDN will deliver, but it is difficult for operators to gauge SDN's full potential until they transform their networks. Carriers also want the confidence that the industry will deliver the SDN components needed.

To this end, Ciena, along with research and education partners CANARIE, Internet2 and StarLight, is developing the SDN test network. Carriers, research partners and Ciena's R&D team will use the network to experiment and validate SDN's benefits for packet and optical WANs.

Parts of the SDN network have already been demonstrated and Ciena expects the SDN test environment to be up and running in the next couple of months. Many of the components are in prototype form.

"The goal is to leap to the end point by providing the key parts of the future system for a carrier-style SDN-powered WAN, thereby demonstrating conclusively the macro SDN service cases that people imagine can be delivered," says Chris Janz, vice president of market development at Ciena.

Another aim is to help carriers determine how best to migrate their networks to the future SDN framework.

Testbed

The testbed conforms to the Open Networking Foundation's SDN architecture that comprises three layers. "Two of them are software: business applications talking to a network controller system which drives the physical network," says Janz.

The business application layer includes such systems as customer management, service creation and billing. "What we think of as OSS/BSS and cloud orchestration systems," says Janz. Components such as cloud orchestration systems and portals that simulate customer actions are being contributed by partners to exercise the testbed.

Ciena has chosen OpenFlow, the open standard, to drive the packet and transport layers. "This [SDN] is not the data centre, it is not all packet; it is a model carrier-style network," he says.

The SDN controller is designed to add flexibility and open up the design. "There is a clear spirit in SDN that customers want to take more affirmative control of their competitive destiny," says Janz. "They do not want to be locked into services, features and functions that their vendors deliver to them and their competitors."

Ciena is part of OpenDaylight, the Linux Foundation's open-source SDN controller industry initiative, and this will be included. "There is a modular structure with internal interfaces," says Janz. "There is leveraging of some early generation open source components for part of the structure."

The control system is designed to be the heart of what Janz refers to as 'autonomic intelligence' to deliver the sought-after benefits of SDN.

One such benefit is for carriers to contain their capital costs by better filling their networks with traffic - running them 'hotter'. "Can they move their networks from 35 percent average utilisation to 95 percent?" says Janz.
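As a rough illustration of what running a network 'hotter' means, the sketch below works out how much more traffic the same installed capacity would carry if average utilisation rose from 35 to 95 percent. The function name and the 10 Tbps capacity figure are assumptions chosen for illustration; they do not come from Ciena.

```python
def traffic_gain(current_util: float, target_util: float) -> float:
    """Return the factor by which carried traffic grows on unchanged capacity."""
    if not 0 < current_util <= 1 or not 0 < target_util <= 1:
        raise ValueError("utilisation must be in (0, 1]")
    return target_util / current_util


# Hypothetical installed capacity, chosen only to make the numbers concrete.
installed_capacity_gbps = 10_000

gain = traffic_gain(0.35, 0.95)
carried_now = installed_capacity_gbps * 0.35
carried_target = installed_capacity_gbps * 0.95

print(f"traffic gain: {gain:.2f}x")
print(f"carried traffic: {carried_now:.0f} -> {carried_target:.0f} Gbps")
```

On these assumptions the same equipment would carry roughly 2.7 times the traffic, which is the capital saving Janz is pointing to.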

Software-based intelligence as delivered by SDN can dynamically match demand with fulfilment. "You have all the service demands coming into the [SDN] control system, and you have control at that point of the entire configuration and state of the network," says Janz.

Ciena has added real-time analytics software to the controller prototype to aid such optimisation. "It is piece parts like this that prove the postulated benefits of SDN," he says.

The platforms used for the network include 4 Terabit core switches with 400 Gigabit packet blades, and optical and Optical Transport Network (OTN) transport using Ciena's 6500 and 5400 converged packet-optical product families, all configured using OpenFlow.

The 2500km network will connect Ciena’s headquarters in Hanover, Maryland with the company's R&D center in Ottawa. International connectivity is provided by Internet2 through the StarLight International/National Communications Exchange in Chicago and CANARIE, Canada's national optical fiber based advanced R&E network.

"If we look ten years down the road, the whole [software] stack - from the bottom of the network to the top of the back office - will look different to what it does today"

Testbed goals

The initial goal is to implement key SDN services and prove use cases. The open testbed will run indefinitely, says Janz: "SDN will unfold and we view the testbed as a standing platform that will change over time with new software and hardware." In effect, the testbed will implement an end-to-end infrastructure whose state is controlled in fine detail by a centralised controller.

Janz cites mass-customised network-as-a-service (NaaS) as one service SDN-in-the-WAN can enable.

Traditional Ethernet connectivity is a static service where the customer requests a given bandwidth and specifies the end points. "It is a very limited template and once it is locked in, the customer generally can't change it," says Janz.

SDN promises more sophisticated connection services. "Instead of defining just the end points, you can define virtual end points," says Janz. All sorts of parameters can then be specified: bandwidth, latency, availability and the restoration required, and these can be changed with time. Moreover, all can be ordered using an application programming interface (API) to the orchestration system at the customer's site.
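A minimal sketch of what such an API-ordered service might look like as a data structure follows. The schema, field names, site labels and restoration labels are all hypothetical; the article does not describe the testbed's actual interface.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ServiceRequest:
    """One virtual connection with the parameters Janz lists (hypothetical schema)."""
    endpoint_a: str
    endpoint_z: str
    bandwidth_mbps: int
    max_latency_ms: float
    availability_pct: float
    restoration: str  # e.g. "protected" or "best-effort"; labels are illustrative

    def validate(self) -> None:
        if self.bandwidth_mbps <= 0:
            raise ValueError("bandwidth must be positive")
        if self.max_latency_ms <= 0:
            raise ValueError("latency bound must be positive")
        if not 0 < self.availability_pct <= 100:
            raise ValueError("availability must be in (0, 100]")


def modify(request: ServiceRequest, **changes) -> ServiceRequest:
    """Return an updated copy, mimicking a customer changing a live service."""
    updated = replace(request, **changes)
    updated.validate()
    return updated


# Two service pipes between the same sites, each matched to a different application.
backup = ServiceRequest("site-a", "site-b", 1000, 50.0, 99.9, "best-effort")
trading = ServiceRequest("site-a", "site-b", 100, 5.0, 99.999, "protected")
for pipe in (backup, trading):
    pipe.validate()

# Later, the backup pipe is resized without touching the low-latency one.
backup_night = modify(backup, bandwidth_mbps=4000)
print(backup_night)
```

Treating each request as an immutable record means a change produces a new, validated request instead of editing a live one in place, which is one simple way to model parameters that vary with time.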

"It would enable the customer to have many effective service pipes rather than one big one, and resolve and match each of them to a specific application need or flow," says Janz. The customer can then optimise them as the needs of each changes with time.

The benefits to the operator include better meeting the customer's needs, and an ability to charge across multiple service parameters, not just two. "That should be the path to greater revenue," says Janz.

The trick is managing such a system. "Can you price it effectively and know that you are targeting maximum revenue? Can you co-manage all these customer changes while respecting the changing service parameters of each?" says Janz. "You need the critical mass of piece parts to show that such a situation is workable and that, hey, I can make more money with a service like that."

Ovum on Infinera's Intelligent Transport Network strategy

Infinera announced that TeliaSonera International Carrier (TSIC) is extending the use of its DTN-X to its European network, having already adopted the platform in the US. Infinera has also outlined the next evolution in its networking strategy, dubbed the Intelligent Transport Network.

Dana Cooperson

Gazettabyte asked Dana Cooperson, vice president and practice leader, and Ron Kline, principal analyst, both in the network infrastructure group at market research firm, Ovum, about the announcement and Infinera's outlined strategy.

What has been announced

TSIC is adding Infinera's DTN-X to boost network capacity in Europe and accommodate its own growing IP traffic. TSIC already has deployed 100 Gig technology in its European network, using a Coriant product. The wholesale operator will sell 100 Gig services, activating capacity using the DTN-X's 'instant bandwidth' feature based on already-lit 100 Gig light paths that make up its 500 Gigabit super-channels.

Meanwhile, Infinera has detailed its Intelligent Transport Network strategy. This extends its digital optical network, which performs optical-electrical-optical (OEO) conversion using its 500 Gig photonic integrated circuits (PICs) coupled with OTN (Optical Transport Network) switching, to include additional features. These include multi-layer switching, in the form of reconfigurable optical add/drop multiplexers (ROADMs) and MPLS (Multi-Protocol Label Switching), and PICs with terabit capacity.

Q&A with Dana Cooperson and Ron Kline

Q. What is significant about Infinera's Intelligent Transport Network strategy?

Dana C: Infinera is being more public about its longer-term strategy - to 2020 - which includes evolving from its digital optical network messaging to a network that includes multiple layers and types of switching, and more automation. Infinera is not announcing the availability of any new functionality now.

Infinera makes much play about its 500 Gig super-channels. More recently it has detailed such platform features as instant bandwidth and Fast Shared Mesh Protection supported in hardware. Are these features giving operators something new and is Infinera gaining market share as a result?

Dana C: Instant Bandwidth provides a way for Infinera’s operator customers to have their cake and eat it. They can install 500 Gig super-channels ahead of demand, and not pay for each 100 Gig sub-channel until they have a need for that bandwidth. It is a simple process at that point to 'turn on' the next 100 Gig worth of bandwidth within the super-channel.

By installing all five 100 Gig channels at once, the operator can simplify operations - lower opex - and allow quicker time-to-revenue without having to take the capex hit until the bandwidth needs materialise. This is an improvement over the DTN platform, which gave customers the 10x10 Gig architecture to let them pre-position bandwidth before the need for it materialised and save on opex, but at the cost of higher up-front capex than was ideal.
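The pay-as-you-grow idea can be sketched as a simple model: five 100 Gig sub-channels are installed up front and switched on one at a time as demand grows. The class, method names and demand figures below are hypothetical and do not represent Infinera's software; pricing is left out.

```python
from dataclasses import dataclass


@dataclass
class SuperChannel:
    """A 500 Gig super-channel made of five pre-installed 100 Gig sub-channels."""
    sub_channels: int = 5
    sub_channel_gbps: int = 100
    activated: int = 0  # sub-channels the operator has paid for and turned on

    @property
    def installed_gbps(self) -> int:
        return self.sub_channels * self.sub_channel_gbps

    @property
    def active_gbps(self) -> int:
        return self.activated * self.sub_channel_gbps

    def activate_next(self) -> int:
        """Turn on one more 100 Gig sub-channel; return the new active capacity."""
        if self.activated >= self.sub_channels:
            raise RuntimeError("all sub-channels are already active")
        self.activated += 1
        return self.active_gbps


def sub_channels_needed(demand_gbps: int, sub_channel_gbps: int = 100) -> int:
    """Smallest number of sub-channels that covers the demand (ceiling division)."""
    return -(-demand_gbps // sub_channel_gbps)


link = SuperChannel()
for demand in (80, 150, 320):  # hypothetical demand growth in Gbps
    while link.activated < sub_channels_needed(demand):
        link.activate_next()
    print(f"demand {demand}G -> active {link.active_gbps}G of {link.installed_gbps}G")
```

In this model the installed capacity stays at 500 Gig throughout, while the activated (and paid-for) capacity tracks demand in 100 Gig steps.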

Talking to TSIC confirms that the added flexibility the DTN-X provides has allowed it to win wholesale business from competitors while tying capex more directly to revenue.

Ron K: Although pay-as-you-go capability is available, analysis of 100 Gig shipments to date indicates most customers are paying for all five up front.

Dana C: I have not directly talked with an Infinera customer that has confirmed the benefit of Fast Shared Mesh Protection, but the feature certainly seems to be of value to customers and prospects. Our research indicates the continued search for better, more efficient mesh protection. Hardware-enabled protection should provide better latency (higher speed).

Ron K: Resiliency and mesh protection are critical requirements if you want to participate in the market. Shared mesh assumes that you have idle protection capacity available in case there is a failure. That is expensive. However, with Infinera’s technology - the PIC and Instant Bandwidth - it is not as difficult.

Restoration is all about speed: how fast can you get the network back up. It is not always milliseconds, sometimes it is half a minute. But during catastrophic failure events such as an earthquake, where a user can lose entire nodes, 30 seconds may not be so bad. Infinera has implemented the switch in hardware, based on a pre-planned map, so it is quicker.

Dana C: As for what impact these capabilities are having on market share, Infinera has climbed to the No.3 player in 100 Gig DWDM in the three quarters since the DTN-X became available.

They’ve jumped back up to No.4 globally in backbone WDM/CPO-T (converged packet optical transport) after sinking to sixth when they were losing share because they were without a viable 40 Gig solution. They made the right call at that time to focus on 100 Gig systems based on the 500 Gig PIC rather than chase 40 Gig. They are both keeping and expanding with existing DTN customers, TSIC being one, and picking up new customers.

Ron Kline

Ron K: They are definitely picking up share. However, I’m not sure if they can sustain it. The reason for the share jump is they are selling 100 Gig, five at a time. Remember, most customers elect to pay for all five. That means future sales will lag because customers have pre-positioned the bandwidth.

Looking at the customers is probably a better indicator: Infinera has some 27 customers, maybe 30 by now, which provide a good embedded base. Still, 27 customers is low compared to Ciena, Alcatel-Lucent, Huawei and even Cisco.

When Infinera first announced the DTN-X in 2011 it talked about how it would add MPLS support. Now outlining its Intelligent Transport Network strategy it has still to announce MPLS support. Do operators not need this network feature yet in such platforms and if not, why?

Dana C: The market is still sorting out exactly what is needed for sub-wavelength switching and where it is needed. Cisco’s and Juniper’s approaches are very different in the routing world: essentially, a lower-cost MPLS blade for the CRS versus a whole new box in the PTX; there is no right way there.

Within packet-aware optical products, the same is true: What is the right level of integration of OTN versus MPLS? It depends on where you are in the network, what that carrier’s service mix is, and how fast the mix is changing.