u2t Photonics pushes balanced detectors to 70GHz

- u2t's 70GHz balanced detector supports 64Gbaud for test and measurement and R&D

- The company's gallium arsenide modulator and next-generation receiver will enable 100 Gigabit long-haul in a CFP2

"The performance [of gallium arsenide] is very similar to the lithium niobate modulator"

Jens Fiedler, u2t Photonics

u2t Photonics has announced a balanced detector that operates at 70GHz. Such a bandwidth supports 64 Gigabaud (Gbaud), twice the symbol rate of existing 100 Gigabit coherent optical transmission systems.

The German company announced a coherent photo-detector capable of 64Gbaud in 2012 but that had an operating bandwidth of 40GHz. The latest product uses two 70GHz photo-detectors and different packaging to meet the higher bandwidth requirements.

"The achieved performance is a result of R&D work using our experience with 100GHz single photo-detectors and balanced detector technology at a lower speed," says Jens Fiedler, executive vice president sales and marketing at u2t Photonics.

The monolithically-integrated balanced detector has been sampling since March. The markets for the device are test and measurement systems and research and development (R&D). "It will enable engineers to work on higher-speed interface rates for system development," says Fiedler.

The balanced detector could be used in next-generation transmission systems operating at 64 Gbaud, doubling the current 100 Gigabit-per-second (Gbps) data rate while using the same dual-polarisation, quadrature phase-shift keying (DP-QPSK) architecture.

A 64Gbaud DP-QPSK coherent system would halve the number of channels needed in the super-channels used for 400Gbps and 1 Terabit transmissions. In turn, using 16-QAM instead of QPSK would further halve the channel count - a single dual-polarisation, 16-QAM channel at 64Gbaud would deliver 400Gbps, while three channels would deliver 1.2Tbps.
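The channel-count arithmetic can be sketched by scaling from the one reference point the article gives, namely that 32Gbaud DP-QPSK carries 100Gbps. The function names and the assumption that net rate scales linearly with symbol rate and bits per symbol (that is, the same overhead at every rate) are illustrative and do not come from u2t.

```python
import math

# Reference point from the article: 32 Gbaud DP-QPSK carries 100 Gbps.
REF_BAUD_G = 32
REF_BITS_PER_SYMBOL = 2   # QPSK: 2 bits per symbol per polarisation
REF_NET_GBPS = 100

def net_rate_gbps(baud_g, bits_per_symbol):
    """Net rate, ASSUMING linear scaling with baud and bits per symbol."""
    return (REF_NET_GBPS
            * (baud_g / REF_BAUD_G)
            * (bits_per_symbol / REF_BITS_PER_SYMBOL))

def channels_needed(target_gbps, baud_g, bits_per_symbol):
    """Number of optical channels needed to reach the target rate."""
    return math.ceil(target_gbps / net_rate_gbps(baud_g, bits_per_symbol))

for name, baud, bits in [("32Gbaud DP-QPSK", 32, 2),
                         ("64Gbaud DP-QPSK", 64, 2),
                         ("64Gbaud DP-16QAM", 64, 4)]:
    print(name,
          net_rate_gbps(baud, bits), "Gbps per channel;",
          channels_needed(400, baud, bits), "channel(s) for 400Gbps;",
          channels_needed(1000, baud, bits), "channel(s) for 1 Terabit")
```

Under these assumptions, three 64Gbaud DP-16QAM channels are needed for 1 Terabit, which matches the 1.2Tbps figure quoted above.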

However, for such a system to be deployed commercially the remaining components - the modulator, device drivers and the DSP-ASIC - would need to be able to operate at twice the 32Gbaud rate; something that is still several years out. That said, Fiedler points out that the industry is also investigating baud rates in between 32 Gig and 64 Gig.

Gallium arsenide modulator

u2t acquired gallium arsenide modulator technology in June 2009, enabling the company to offer coherent transmitter as well as receiver components.

At OFC/NFOEC 2013, u2t Photonics published a paper on its high-speed gallium arsenide coherent modulator. The company's design is based on the Mach-Zehnder modulator specification of the Optical Internetworking Forum (OIF) for 100 Gigabit DP-QPSK applications.

The DP-QPSK optical modulation includes a rotator on one arm and a polarisation beam combiner at the output. u2t has decided to support an OIF compatible design with a passive polarisation rotator and combiner which could also be integrated on chip. The resulting coherent modulator is now being tested before being integrated with the free space optics to create a working design.

"The performance [of gallium arsenide] is very similar to the lithium niobate modulator," says Fiedler. "Major system vendors have considered the technology for their use and that is still ongoing."

The gallium arsenide modulator is considerably smaller than the equivalent lithium niobate design. Indeed u2t expects the technology's power and size requirements, along with the company's coherent receiver, to fit within the CFP2 optical module. Such a pluggable 100 Gigabit coherent module would meet long-haul requirements, says Fiedler.

The gallium arsenide modulator can also be used within the existing line-side 100 Gigabit 5x7-inch MSA coherent transponder. Fiedler points out that by meeting the OIF specification, there is no space saving benefit using gallium arsenide since both modulator technologies fit within the same dimensioned package. However, the more integrated gallium arsenide modulator may deliver a cost advantage, he says.

Another benefit of using a gallium arsenide modulator is its optical performance stability with temperature. "It requires some [temperature] control but it is stable," says Fiedler.

Coherent receiver

u2t's current 100Gbps coherent receiver product uses two chips, each comprising the 90-degree hybrid and a balanced detector. "That is our current design and it is selling in volume," says Fiedler. "We are now working on the next version, according to the OIF specification, which is size-reduced."

The resulting single-chip design will cost less and fit within a CFP2 pluggable module.

The receiver might be small enough to fit within the even smaller CFP4 module, concludes Fiedler.

ROADMs and their evolving amplification needs

Technology briefing: ROADMs and amplifiers

Oclaro announced an add/drop routing platform at the recent OFC/NFOEC show. The company explains how the platform is driving new arrayed amplifier and pumping requirements.

A ROADM comprising amplification, line-interfaces, add/drop routing and transponders. Source: Oclaro

Agile optical networking is at least a decade-old aspiration of the telcos. Such networks promise operational flexibility and must be scalable to accommodate the relentless annual growth in network traffic. Now, technologies such as coherent optical transmission and reconfigurable optical add/drop multiplexers (ROADMs) have reached a maturity to enable the agile, mesh vision.

Coherent optical transmission at 100 Gigabit-per-second (Gbps) has become the base currency for long-haul networks and is moving to the metro. Meanwhile, ROADMs now have such attributes as colourless, directionless and contentionless (CDC). ROADMs are also being future-proofed to support flexible grid, where wavelengths of varying bandwidths are placed across the fibre's spectrum without adhering to a rigid grid.

Colourless and directionless refer to the ROADM's ability to transmit or drop any light path from any direction or degree at any network interface port. Contentionless adds further flexibility by supporting same-colour light paths at an add or a drop.

"You can't add and drop in existing architectures the same colour [light paths at the same wavelength] in different directions, or add the same colour from a given transponder bank," says Bimal Nayar, director, product marketing at Oclaro's optical network solutions business unit. "This is prompting interest in contentionless functionality."

The challenge for optical component makers is to develop cost-effective coherent and CDC-flexgrid ROADM technologies for agile networks. Operators want a core infrastructure with components and functionality that provide an upgrade path beyond 100 Gigabit coherent yet are sufficiently compact and low-power to minimise their operational expenditure.

ROADM architectures

ROADMs sit at the nodes of a mesh network. Four-degree nodes - the node's degree defined as the number of connections or fibre pairs it supports - are common while eight-degree is considered large.

The ROADM passes through light paths destined for other nodes - known as optical bypass - as well as adds or drops wavelengths at the node. Such add/drops can be rerouted traffic or provisioned new services.

Several components make up a ROADM: amplification, line-interfaces, add/drop routing and transponders (see diagram, above).

"With the move to high bit-rate systems, there is a need for low-noise amplification," says Nayar. "This is driving interest in Raman and Raman-EDFA (Erbium-doped fibre amplifier) hybrid amplification."

The line interface cards are used for incoming and outgoing signals in the different directions. Two architectures can be used: broadcast-and-select and route-and-select.

With broadcast-and-select, incoming channels are routed in the various directions using a passive splitter that in effect makes copies of the incoming signal. To route signals in the outgoing direction, a 1xN wavelength-selective switch (WSS) is used. "This configuration works best for low node-degree applications, when you have fewer connections, because the splitter losses are manageable," says Nayar.

For higher-degree node applications, the optical loss using splitters is a barrier. As a result, a WSS is also used for the incoming signals, resulting in the route-and-select architecture.

Signals from the line interface cards connect to the routing platform for the add/drop operations. "Because you have signals from any direction, you need not a 1xN WSS but an LxM one," says Nayar. "But these are complex to design because you need more than one switching plane." Such large LxM WSSes are in development but remain at the R&D stage.

Instead, a multicast switch can be used. These typically are sized 8x12 or 8x16 and are constructed using splitters and switches, either spliced or planar lightwave circuit (PLC) based.

"Because the multicast switch is using splitters, it has high loss," says Nayar. "That loss drives the need for amplification."
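Why splitter loss grows with node degree, and why a 16-way multicast switch needs amplification, can be illustrated with the ideal loss of an even 1xN power split. This is a minimal sketch: the function name is illustrative, and real devices add excess loss on top of the ideal figure, which Oclaro does not quantify here.

```python
import math

def ideal_split_loss_db(n_ports):
    """Ideal loss of an even 1xN power split, ignoring any excess loss."""
    return 10 * math.log10(n_ports)

# Low-degree versus high-degree line splitters, and the 16-way split
# inside an 8x16 multicast switch.
for n in (2, 4, 8, 16):
    print(f"1x{n} split: {ideal_split_loss_db(n):.1f} dB")
```

Each doubling of the port count adds roughly 3dB, so the loss that is manageable at a four-degree node becomes a barrier at higher degrees.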

Add/drop platform

With an 8-degree-node CDC ROADM design, signals enter and exit from eight different directions. Some of these signals pass through the ROADM in transit to other nodes while others have channels added or dropped.

In the Oclaro design, an 8x16 multicast switch is used. "Using this [multicast switch] approach you are sharing the transponder bank [between the directions]," says Nayar.

The 8-degree node showing the add/drop with two 8x16 multicast switches and the 16-transponder bank. Source: Oclaro

A particular channel is dropped at one of the switch's eight input ports and is amplified before being broadcast to all 16, 1x8 switches interfaced to the 16 transponders.

It is the 16, 1x8 switches that enable contentionless operation where the same 'coloured' channel is dropped to more than one coherent transponder. "In a traditional architecture there would only be one 'red' channel for example dropped as otherwise there would be [wavelength] contention," says Nayar.
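The contentionless drop can be sketched as a toy model. Everything here is hypothetical: the function and variable names are invented for illustration, and the model assumes each coherent transponder picks out its own colour from the light its 1x8 switch selects.

```python
# Hypothetical model of the drop side of an 8x16 multicast switch:
# each input (direction) is broadcast to all 16 outputs, and each
# output has a 1x8 switch that selects one direction for its transponder.
N_DIRECTIONS = 8
N_TRANSPONDERS = 16

def drop(selected_directions, tuned_colours, incoming):
    """Return the (direction, colour) pair each transponder receives.

    selected_directions: direction chosen by each transponder's 1x8 switch
    tuned_colours: colour each coherent transponder is tuned to (assumption)
    incoming: dict mapping direction -> set of colours arriving from it
    """
    received = []
    for direction, colour in zip(selected_directions, tuned_colours):
        if colour in incoming[direction]:
            received.append((direction, colour))
        else:
            received.append(None)  # that colour is not present on that direction
    return received

# Every direction carries a 'red' and a 'green' channel.
incoming = {d: {"red", "green"} for d in range(N_DIRECTIONS)}

# Two transponders drop 'red' at the same time, from directions 0 and 3.
result = drop([0, 3], ["red", "red"], incoming)
print(result)  # [(0, 'red'), (3, 'red')]
```

Because each transponder has its own 1x8 switch, the two 'red' channels never share a path, which is the property Nayar contrasts with a traditional architecture.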

The issue, says Oclaro, is that as more and more directions are supported, greater amplification is needed. "This is a concern for some, as amplifiers are associated with extra cost," says Nayar.

The amplifiers for the add/drop thus need to be compact and ideally uncooled. By not needing a thermo-electrical cooler, for example, the design is cheaper and consumes less power.

The design also needs to be future-proofed. The 8x16 add/drop architecture supports 16 channels. If a 50GHz grid is used, the amplifier needs to deliver the pump power for a 16x50GHz or 800GHz bandwidth. But with the adoption of flexible grid and super-channels, the channel bandwidths will be wider. "The amplifier pumps should be scalable," says Nayar. "As you move to super-channels, you want pumps that are able to deliver the pump power you need to amplify, say, 16 super-channels."
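The bandwidth figure is simple to reproduce, and the same sum shows how the requirement scales with super-channels. The 150GHz super-channel width below is a purely hypothetical value chosen for illustration; the article does not give one.

```python
def amplified_bandwidth_ghz(n_channels, channel_width_ghz):
    """Total optical bandwidth the add/drop amplifier has to cover."""
    return n_channels * channel_width_ghz

# Fixed 50GHz grid, as in the text.
print(amplified_bandwidth_ghz(16, 50))    # 800

# HYPOTHETICAL 150GHz-wide super-channels (width is an assumption).
print(amplified_bandwidth_ghz(16, 150))   # 2400
```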

This has resulted in an industry debate among vendors as to the best amplifier pumping scheme for add/drop designs that support CDC and flexible grid.

EDFA pump approaches

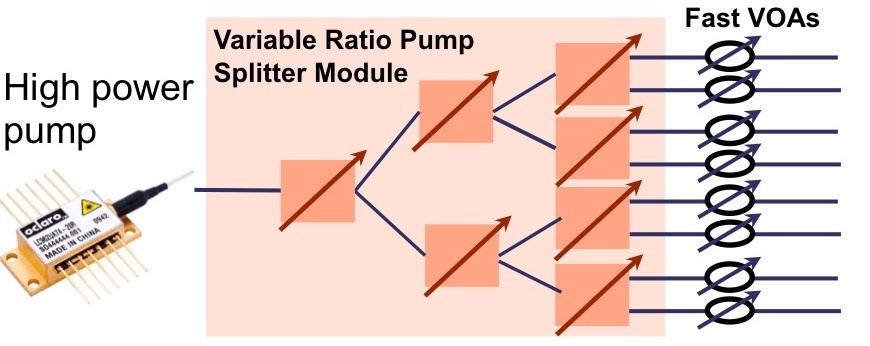

Two schemes are being considered. One option is to use one high-power pump coupled to variable pump splitters that provides the required pumping to all the amplifiers. The other proposal is to use discrete, multiple pumps with a pump used for each EDFA.

Source: Oclaro

In the first arrangement, the high-powered pump is followed by a variable ratio pump splitter module. The need to set different power levels at each amplifier is due to the different possible drop scenarios; one drop port may include all the channels that are fed to the 16 transponders, or each of the eight amplifiers may have two only. In the first case, all the pump power needs to go to the one amplifier; in the second the power is divided equally across all eight.

Oclaro says that while the high-power pump/pump-splitter architecture looks more elegant, it has drawbacks. One is that the pump splitter introduces an insertion loss of 2-3dB, so the pump needs up to twice the power solely to overcome the insertion loss.
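The link between a 2-3dB insertion loss and a doubling of pump power, and the two drop scenarios described above, can be sketched as follows. The function names and the 250mW figure are illustrative assumptions, not Oclaro's numbers.

```python
def pump_power_needed_mw(power_at_amps_mw, insertion_loss_db):
    """Pump output needed so that power_at_amps_mw survives the splitter loss."""
    return power_at_amps_mw * 10 ** (insertion_loss_db / 10)

def per_amp_power_mw(total_mw, split_ratios):
    """Power delivered to each amplifier for a given variable split."""
    assert abs(sum(split_ratios) - 1.0) < 1e-9, "split ratios must sum to 1"
    return [total_mw * ratio for ratio in split_ratios]

# A 3dB loss means roughly twice the pump power; 2dB is about 1.6x.
for loss_db in (2, 3):
    factor = pump_power_needed_mw(1.0, loss_db)
    print(f"{loss_db}dB insertion loss -> {factor:.2f}x pump power")

TOTAL_MW = 250  # HYPOTHETICAL power available after the splitter

# Scenario 1: one drop port carries all the channels.
all_to_one = per_amp_power_mw(TOTAL_MW, [1.0] + [0.0] * 7)

# Scenario 2: the channels are spread evenly over the eight amplifiers.
equal_split = per_amp_power_mw(TOTAL_MW, [1 / 8] * 8)

print(all_to_one[0], equal_split[0])
```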

The pump splitter is also controlled using a complex algorithm to set the required individual amp power levels. The splitter, being PLC-based, has a relatively slow switching time - some 1 millisecond. Yet transients that need to be suppressed can have durations of around 50 to 100 microseconds. This requires the addition of fast variable optical attenuators (VOAs) to the design that introduce their own insertion losses.

"This means that you need pumps in excess of 500mW, maybe even 750mW," says Nayar. "And these high-power pumps need to be temperature controlled." The PLC switches of the pump splitter are also temperature controlled.

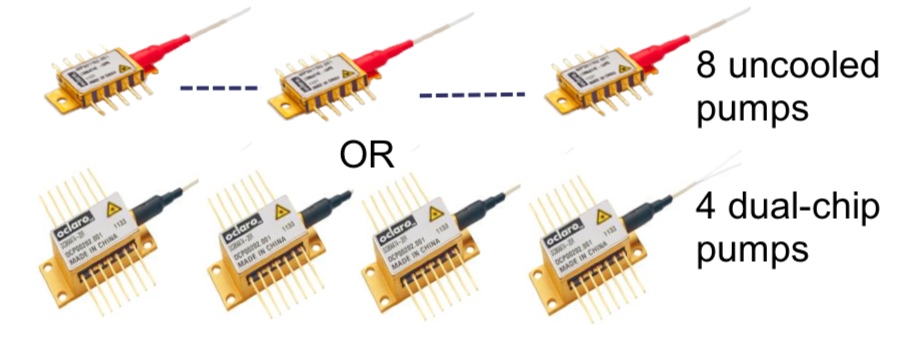

The individual pump-per-amp approach, in contrast, in the form of arrayed amplifiers, is more appealing to implement and is the approach Oclaro is pursuing. These can be eight discrete pumps or four uncooled dual-chip pumps, for the 8-degree 8x16 multicast add/drop example, with each power level individually controlled.

Source: Oclaro

Oclaro says that the economics favour the pump-per-amp architecture. Pumps are coming down in price due to the dramatic price erosion associated with growing volumes. In contrast, the pump split module is a specialist, lower volume device.

"We have been looking at the cost, the reliability and the form factor and have come to the conclusion that a discrete pumping solution is the better approach," says Nayar. "We have looked at some line card examples and we find that we can do, depending on a customer’s requirements, an amplified multicast switch that could be in a single slot."

Achieving 56 Gigabit VCSELs

A Q&A with Finisar's Jim Tatum, director of new product development. Tatum talks about the merits of the vertical-cavity surface-emitting laser (VCSEL) and the challenges to get VCSELs to work at 56 Gigabit.

Briefing: VCSELs

VCSELs galore! A wafer of 28 Gig devices Source: Finisar

Q. What are the merits of VCSELs compared to other laser technologies?

A: VCSELs have been a workhorse for the datacom industry for some 15 years. In that time there have been some 500 million devices deployed for data infrastructure links, with Finisar being a major producer of these VCSELs.

The competition is copper which means you need to be at a cost that makes such [optical] links attractive. This is where VCSELs have value: operating at 850nm which means running on multi-mode fibre.

When coupling VCSELs to multi-mode fibre, the alignment tolerance [set by the core diameter] is in the tens of microns, whereas it is about one micron for single-mode fibre, and that is where the cost is. Also with VCSELs and multi-mode fibre, we don't need optical isolators which add significant cost to the assemblies. It is not the cost of the laser die itself; the difference in terms of the link [approaches] is the cost of the optics and getting light in and out of the fibre.

There are also advantages to the VCSEL itself: wafer-level testing that allows rapid testing of the die before you commit to further packaging costs. This becomes more important as the VCSEL speed gets higher.

What are the differences with 850nm VCSELs compared to longer wavelength (1300nm and 1550nm) VCSELs?

At 850nm you are growing devices that are all epitaxial - the laser mirrors are grown epitaxially and the quantum wells are grown in one shot. At the other wavelengths, it is much harder.

People have managed it at 1300nm but it is not yet proven to be a reliable material system for getting high-speed operation. When you go to 1550nm, you are doing wafer bonding of the mirrors and active regions or you are doing more complex epitaxial processing.

That is where 850nm VCSELs have a nice advantage in that the whole thing is done in one shot; the epitaxy and the fabrication are relatively simple. You don't have the complex manufacturing of chip parts that you do at 1550nm.

What link distances are served by 850nm VCSELs?

The longest standards are for 500m. As we venture to higher speeds - 28 Gigabit-per-second (Gbps) - 100m is more the maximum. And this trend will continue: at 56Gbps I would anticipate less than 50m and maybe 25m.

The good news is that the number of links that become economically viable at those speeds grows exponentially at these shorter distances. Put another way, copper is very challenged at 56Gbps lane rates and we'll see optics and VCSEL technology move inside the chassis for board-to-board and even chip-to-chip interconnects. Such applications will deliver much higher volumes.

"Taking that next step - turning the 28Gbps VCSEL into a product - is where all the traps lie"

What are the shortest distances?

There are the edge-mounted connections and those are typically 1-5m. There is also a lot of demonstrated work with VCSELs on boards doing chip-to-chip interconnect. That is a big potential market for these devices as well.

The 28Gbps VCSEL has been demonstrated but commercial products are not yet available. It is difficult to sense whether such a device is relatively straightforward to develop or a challenge.

Achieving a 28Gbps VCSEL is hard. Certainly there have been many companies that have demonstrated a modulation capability at that speed. However, it is one thing to do it one time, another to put a reliable VCSEL product into a transceiver with everything around it.

Taking that next step - turning the 28Gbps VCSEL into a product - is where all the traps lie. That is where the bulk of the work is being done today. Certainly this year there will be 25Gbps/28Gbps products out in customers' hands.

"With a VCSEL, you have to fill up a volume of active region with enough carriers to generate photons and you can only put in so many, so fast. The smaller you can make that volume, the faster you can lase."

What are the issues that dictate a VCSEL's speed?

When you think about going to the next VCSEL speed, it helps to think about where we came from.

All the devices shipped, from 1 to 10 Gig, had gallium arsenide active regions. It has lots of wonderful attributes but one of its less favourable ones is that it is not the highest speed. Going to 14Gbps and 28Gbps we had to change the active region from gallium arsenide to indium gallium arsenide and that gives us an enhancement of the differential gain, a key parameter for controlling speed.

What you really want to do when you are dealing with speed is that for every incremental bit of current I give the [VCSEL] device, how much more does that translate into gain, or more photons coming out? If you can make that happen more efficiently, then the edge speed of the device increases. In other words, you don't have to deal with other parasitics - carriers going into non-recombination centres and that sort of thing; everything is going into the production of photons rather than other parasitic things.

With a VCSEL, you have to fill up a volume of active region with enough carriers to generate photons and you can only put in so many, so fast. The smaller you can make that volume, the faster you can lase.

Differential gain is a measure of the efficiency in terms of the number of photons generated by a particular carrier. If I can increase that efficiency of making photons, then my transition speed and my edge speed of the laser increases.

In the chart shown, the y-axis is the differential gain and the x-axis is the current density going into the part. The decay tells you that if I'm running really high currents, the differential gain is worse for indium gallium arsenide parts. So you want to operate your device with a carrier density that maximises the differential gain.

Part of that maximisation is using fewer carriers in smaller quantum wells so that it ramps up the curve. You want to operate at a lower current density while also doing a better job of each carrier transitioning into photons.

What else besides differential gain dictates VCSEL performance?

The speed of the laser increases above threshold as the square root of the current. That gives you a return-on-investment in terms of how much current you put into the device.

However, the reliability of the part degrades with the cube of the current you put into it. So you get to a boundary condition where speed varies as the square root of the current and you have the reliability which is degrading with the cube of the current. The intersection of those two points is where you are willing to live in terms of reliability.

That is the trade-off we constantly have to deal with when designing lasers for high speed communications.
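The trade-off Tatum describes can be put in numbers. This is a minimal sketch under loud assumptions: "degrades with the cube of the current" is read here as lifetime falling as 1/I^3, the same current ratio is used for both scaling laws, and the function names are illustrative rather than Finisar's model.

```python
def relative_speed(current_ratio):
    """Speed scales as the square root of the current above threshold."""
    return current_ratio ** 0.5

def relative_lifetime(current_ratio):
    """ASSUMED reading: lifetime falls with the cube of the current."""
    return current_ratio ** -3

# Effect of driving the laser harder, relative to a baseline of 1.0.
for ratio in (1.0, 1.5, 2.0):
    print(f"current x{ratio}: speed x{relative_speed(ratio):.2f}, "
          f"lifetime x{relative_lifetime(ratio):.3f}")
```

Under these assumptions, doubling the current buys about 41 percent more speed at the cost of an eightfold drop in lifetime, which is why drive current alone cannot deliver the next speed step.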

Having explained the importance of this region of operation, what changes in terms of the laser when operating at 28Gbps and at 56Gbps?

At 14Gbps and even at 28Gbps the lasers are directly modulated with little analogue trickery. That said, 28Gbps Fibre Channel does allow you to use equalisation at the receiver.

My feeling today is that at 56Gbps, direct modulation of the laser is going to be pretty tricky. At that speed there is going to have to be dispersion compensation or equalisation built into the optical system.

There are a lot of ways to incorporate some analogue or even digital methods to reduce the effective bandwidth of the device from 56Gbps to running less. One of these is a little bit of pre-emphasis and equalisation. Another way is to use analogue modulation levels. Alternatively, you can start borrowing a whole lot more from the digital communication world and look at sub-carrier multiplexing or other more advanced modulation schemes. In other words pull the bandwidth of the laser down instead of doing 1, 0 on-off stuff. At 56 Gig those things are going to be a requirement.

The bottom line is that a 28Gbps VCSEL design may be something pretty similar to a 56 Gig part with the addition of bandwidth enhancement techniques.
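The idea of using analogue modulation levels to pull the laser's bandwidth down can be illustrated with the relationship between bit rate, number of levels and symbol rate. This is a sketch only: the four-level example is an assumption chosen for illustration, as Tatum does not name a specific scheme.

```python
import math

def symbol_rate_gbaud(bit_rate_gbps, levels):
    """Symbol rate needed when each symbol takes one of `levels` amplitudes."""
    return bit_rate_gbps / math.log2(levels)

# Simple on-off (two-level) signalling at 56Gbps.
print(symbol_rate_gbaud(56, 2))   # 56.0

# ASSUMED four-level scheme: two bits per symbol, so half the symbol rate.
print(symbol_rate_gbaud(56, 4))   # 28.0
```

With four levels the laser switches at the same symbol rate as a 28Gbps on-off part, which is consistent with the remark that a 56 Gig design may look much like a 28Gbps one.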

"I can see [VCSEL] modulation rates going to 100Gbps"

So has VCSEL technology already reached its peak?

In terms of direct modulation of a VCSEL - pushing current into it and generating photons - 28 Gig is a reasonable place. And 56 Gig or 40 Gig VCSELs may happen with some electronic trickery around it.

For the next step - and even at 56Gbps - there is a fair amount of investigation into alternate modulation techniques for VCSELs.

Instead of modulating the current in the active region, you can do passive modulation of an external absorber inside the epitaxial structure. That starts to look like a modulated laser you would see in the telecom industry but it is all grown epitaxially. Once you are modulating a passive component, the modulation speed can get significantly higher. I can see modulation rates going to 100Gbps, for example.

The VCSEL roadmap isn't running out then, but it is getting more complicated. Will it take longer to achieve each device transition: from 28 to 56Gbps, and from 56Gbps to 112Gbps?

A question that is difficult to answer.

The time line will probably scale out every time you try to scale the bandwidth. But maybe not if you are able to do things like combine other technologies at 56Gbps or you do things that are more package related. For example, one way to achieve a 56 Gig link is to multiplex two lasers together on a multi-core fibre. That is a significantly less challenging thing to do from a technology development point of view than lasers fundamentally capable of 56Gbps. Is such a solution cost optimised? Well, it is hard to say at this point but it may be time-to-market optimised, at least for the first generation.

Multi-core fibre is one way, another is wavelength-division multiplexing. In other words, coarse WDM, making lasers at 850nm, 980nm, 1040nm - a whole bunch of different colours and multiplexing them.

There is more than one way to achieve a total aggregate throughput.

Does all this make your job more interesting, more stressful, or both?

It means I have options in my job which is always a good thing.

Optical transceiver market to grow 50 percent by 2017

- The optical transceiver market will grow to US $5.1bn in 2017

- The fierce price declines of 2012 will lessen during the forecast period

- Stronger traffic growth could have a significant positive effect on transceiver market growth

"The price declines in 2012 were brutal but they will not happen again [during the forecast period]"

Vladimir Kozlov, LightCounting

The global optical transceiver market will grow strongly over the next five years to $5.1bn in 2017, from $3.4bn in 2012. So claims market research company, LightCounting, in its latest telecom and datacom forecast.
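The headline 50 percent figure follows directly from the two market values, and the same numbers give the implied annual growth rate. The compound-growth calculation is added here for illustration and is not a figure quoted by LightCounting.

```python
def total_growth(start, end):
    """Overall fractional growth between two values."""
    return end / start - 1

def cagr(start, end, years):
    """Compound annual growth rate over the period."""
    return (end / start) ** (1 / years) - 1

START_BN, END_BN, YEARS = 3.4, 5.1, 5   # 2012 to 2017, in US $bn

print(f"Total growth: {total_growth(START_BN, END_BN):.0%}")
print(f"Implied compound annual growth: {cagr(START_BN, END_BN, YEARS):.1%}")
```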

"That [market value] does not include tunable lasers, wavelength-selective switches, pump lasers and amplifiers which will add some $1bn or $2bn more [in 2017]," says Vladimir Kozlov, CEO of LightCounting.

One key assumption underpinning the forecast is that competitive pressures will ease. "The price declines in 2012 were brutal but they will not happen again [during the forecast period]," says Kozlov.

Optical transceivers

The optical transceiver market saw price declines as high as 30 percent last year. These were not new products ramping in volume where sharp price declines are to be expected, says Kozlov. Last year also saw fierce competition among the service providers while the steepest price declines were experienced by the telecom equipment makers.

One optical transceiver sector that performed well last year is high-speed optical transceivers and in particular Ethernet.

The 100 Gigabit Ethernet (GbE) market saw revenue growth due to strong demand for the 100GBASE-LR4 10km transceiver even though its unit price declined 30 percent. This is a sector the Chinese optical transceiver players are eyeing as they look to broaden the markets they address.

One unheralded market that did well was 40 Gigabit transceivers for telecoms and the data centre. "This is 40 Gig short reach mostly - up to 100m - but also 10km reach transceivers did well in the data centre," says Kozlov.

LightCounting expects the steady growth of 40GbE to continue; 40GbE transceivers use 10 Gig technology co-packaged into one module, offer improved port density and have a lower power and cost compared to four 10GbE transceivers.

Even the veteran 10GbE market continues to grow. Some 7-8M 10GbE short reach and long reach units were sold in 2012, growing to 10M units this year.

Meanwhile, the 100 Gigabit coherent long-haul transponder market was small in 2012. The optical vendors only started selling in volume last year and most of the system vendors manufacture their own 100 Gigabit-per-second (Gbps) designs using discrete components. "Those companies that sell modulators and receivers for 100 Gig did really well in 2012," says Kozlov.

LightCounting expects the 100Gbps coherent transponder market will grow in 2013 as system vendors embrace more third-party 100 Gig transponders. "We estimate that the optical transceiver vendors captured 10-15 percent of the 40 and 100 Gig market and this will grow to 18-20 percent in 2013," says Kozlov.

Other markets that grew in 2012 include optical access. The fibre-to-the-x (FTTx) continues to grow in terms of units shipped, with transceivers and board optical sub-assembly (BOSA) designs sharing the volumes.

LightCounting says that the number of optical network units (ONUs) shipped was more than double the number of FTTx subscribers added in 2012: 35-40M ONU transceivers and BOSAs compared to 15M new subscribers.

The result was a market value of $700M in 2012 compared to $300M in 2009. But because of the excess in shipments compared to new subscribers, Kozlov expects the FTTx market to slow down. "That is probably a sure sign that it is going to grow again," he quips.

Market expectations

Kozlov will be watching how the optical interconnect market does this year. The active optical cable market did well in 2012 and this is likely to continue. Kozlov is interested to see if silicon photonics starts to make its mark in the transceiver market, citing as an example Cisco's in-house silicon photonics-based CPAK transceiver. He also expects the 40Gbps and 100Gbps module makers to do well.

LightCounting stresses the wide discrepancy between video traffic growth through 2017 as forecast by Bell Labs and by Cisco Systems. This is important because the optical transceiver forecast model developed by LightCounting is sensitive to traffic growth. LightCounting has averaged the two forecasts but if video traffic grows more quickly, the overall transceiver market will exceed the market research company's 2017 forecast.

Another reason why Kozlov is upbeat about the market's prospects is that while the system vendors suffered the sharpest price declines - up to 35 percent in 2012 - this will not continue.

The sharp falls in equipment prices were due largely to the fierce competition provided by the Chinese giants Huawei and ZTE. But relief is expected with government initiatives in Europe and the United States to limit the influence of Huawei and ZTE, says Kozlov.

The U.S. government has effectively restricted sales of Huawei and ZTE networking equipment to major U.S. carriers due to cyber security concerns, while the European Commission has determined that Huawei and ZTE are both inflicting damage on European equipment vendors by dumping products onto the European market.

Transmode's evolving packet optical technology mix

- Transmode adds MPLS-TP, Carrier Ethernet 2.0 and OTN

- The three protocols make packet transport more mesh-like and service-aware

- The 'native' in Native Packet Optical 2.0 refers to native Ethernet

Transmode has enhanced its metro and regional network equipment to address the operators' need for more efficient and cost-effective packet transport.

“Native Packet Optical 2.0 extends what the infrastructure can do, with operators having the option to use MPLS-TP, Carrier Ethernet 2.0 and OTN, making the network much more service-aware”

Jon Baldry, Transmode

Three new technologies have been added to create what Transmode calls Native Packet Optical 2.0 (NPO2.0). Multiprotocol Label Switching - Transport Profile (MPLS-TP) was launched in June 2012, and the Metro Ethernet Forum's (MEF) latest Carrier Ethernet 2.0 (CE2.0) standard has now been added. The company will also have line cards that support Optical Transport Network (OTN) functionality from April 2013.

Until several years ago operators had distinct layer 2 and layer 1 networks. “The first stage of the evolution was to collapse those two layers together,” says Jon Baldry, technical marketing director at Transmode. “NPO2.0 extends what the infrastructure can do, with operators having the option to use MPLS-TP, CE2.0 and OTN, making the network much more service-aware.”

By adopting the enhanced capabilities of NPO2.0, operators can use the same network for multiple services. “A ROADM based optical layer with native packet optical at the wavelength layer,” says Baldry. “That could be a switched video distribution network or a mobile backhaul network; doing many different things but all based on the same stuff.”

Transmode uses native Ethernet in the metro and OTN for efficient traffic aggregation. “We are using native Ethernet frames as the payload in the metro,” says Baldry. “A 10 Gig LAN PHY frame that is moved from node to node, once it is aggregated from Gigabit Ethernet to 10 Gig Ethernet; we are not doing Ethernet over SONET/SDH or Ethernet over OTN.”

Shown are the options as to how layer 2 services can be transported and interfaced to multiple core networks. The Ethernet muxponder supports MPLS-TP, native Ethernet and the option for OTN, all over a ROADM-based optical layer. “It is not just a case of interfacing to three core network types, we can be aware of what is going on in these networks and switch traffic between types,” says Transmode's Jon Baldry. Note: EXMP is the Ethernet muxponder. Source: Transmode.

Once the operator no longer needs to touch the Ethernet traffic, it is then wrapped in an OTN frame for aggregation and transport. This, says Baldry, means that unnecessary wrapping and unwrapping of OTN frames is avoided, with OTN being used only where needed.

There are economic advantages in adopting NPO2.0 for an operator delivering layer 2 services. There are also considerable operational advantages in terms of the way the network can be run using MPLS-TP, the service types offered with CE2.0, and how the metro network interworks with the core network, says Baldry.

MPLS-TP and Carrier Ethernet 2.0

Introducing MPLS-TP and the latest CE2.0 standard benefits transport and services in several ways, says Baldry.

MPLS-TP provides better traffic engineering as well as working practices similar to SONET/SDH that operators are familiar with. “MPLS-TP creates a transport-like way of dealing with Ethernet which is good for operators having to move from a layer-1-only world to a packet world,” says Baldry. MPLS-TP is also claimed to have a lower total cost of ownership compared to IP/MPLS when used in the metro.

The protocol is also more suited to the underlying infrastructure. “Quite a lot of the networks we are deploying have MPLS-TP running on top of a ROADM network, which is naturally mesh-like,” says Baldry.

In contrast, Ethernet provides mainly point-to-point and ring-based network protection mechanisms; there is no support for mesh-based restoration. MPLS-TP provides this resiliency option through its mesh-styled ‘tunnelling’. An MPLS-TP tunnel creates a service layer path over which traffic is sent.

“You can build tunnels and restoration paths through a network in a way that is more suited to the underlying [ROADM-based] infrastructure, thereby adding resiliency when a fibre cut occurs,” says Baldry.

MPLS-TP also benefits service scalability. It is much easier to create a tunnel and its protection scheme and define the services at the end points than to create many individual circuits across the network, each time defining the route and the protection scheme.

“Because MPLS-TP is software-based, we can mix and match MPLS-TP and Ethernet on any port,” says Baldry. “You can use MPLS-TP as much or as little as you like over particular parts of the network.”

The second new technology, the MEF’s Carrier Ethernet 2.0, benefits services. The MEF has extended the range of services available, from three to eight with CE2.0, while improving class-of-service handling and management features.

Transmode says its equipment is CE2.0 compliant and suggests its systems will become CE2.0-certified in the new year.

Hardware

The packet-optical products of Transmode comprise the TM-Series transport platforms and Ethernet demarcation units.

The company's single and double slot cards - Ethernet muxponders – fit into the TM-Series transport platforms. The single-slot Ethernet muxponder has ten, 1 Gigabit Ethernet (GbE) and 2x10GbE interfaces while the double-slot card supports 22, 1GbE and 2x10GbE interfaces. Transmode also offers 10GbE only cards: the single slot is 4x10GbE and the double-slot has 8x10GbE interfaces. These cards are software upgradable to support MPLS-TP and the MEF’s CE2.0.

“In early 2013, we are introducing a couple of new cards – enhanced Ethernet muxponders – with more gutsy processors and optional hardware support for OTN on 10 Gigabit lines,” says Baldry.

The Ethernet demarcation unit, also known as a network interface device (NID), is a relatively small unit that resides for example at a cell site. The unit undertakes such tasks as defining an Ethernet service and performance monitoring. The box or rack mounted units have Gigabit Ethernet uplinks and interface to Transmode’s platforms.

Baldry cites the UK mobile operator, Virgin Media, which is using its platforms for mobile backhaul. Here, the Ethernet demarcation units reside at the cell sites, and at the first aggregation point the 10- or 22-port GbE card is used. These Ethernet muxponder cards then feed 10GbE pipes to the 4- or 8-port 10GbE cards.

“For the first few thousand cell sites there are hundreds of these aggregation points,” says Baldry. “And those aggregation points go back to Virgin Media’s 50-odd main sites and it is at those points we put the 8x 10GbE cards.” Thus the traffic is backhauled from the edge of the network and aggregated before being handed over as a 10GbE circuit to Virgin Media’s various radio network controller (RNC) sites.

Transmode says that half of its customers use its existing native packet optical cards in their networks. Since MPLS-TP and CE2.0 are software options, these customers can embrace these features once they are required.

However, operators are only likely to start deploying CE2.0-based services once Transmode’s offering becomes certified.

ECI Telecom’s next-generation metro packet transport family

- The Native Packet Transport (NPT) family targets the cost-conscious metro network

- Supports Ethernet, MPLS-TP and TDM

- ECI claims a 65% lower total cost of ownership using MPLS-TP and native TDM

NPT's positioning as part of the overall network. Source: ECI Telecom

ECI Telecom has announced a product line for packet transport in the metro. The Native Packet Transport (NPT) family aims to reduce the cost of operating packet networks while supporting traditional time division multiplexing (TDM) traffic.

“Eventually, in terms of market segments, it [NPT] is going to replace the multi-service provisioning platform,” says Gil Epshtein, product market manager at ECI Telecom. “The metro is moving to packet and so it is moving to new equipment to support this shift.”

The NPT is ECI’s latest optimised multi-layer transport (OMLT) architecture, and is the feeder or aggregator platform to the optical backbone, addressed by the company's Apollo OMLT product family announced in 2011.

“The whole point of shifting to packet is to lower the [transport] cost-per-bit”

Gil Epshtein, ECI Telecom

Packet transport issues

“Building carrier-grade packet transport is proving more costly than anticipated,” says Epshtein. “Yet the whole point of shifting to packet is to lower the [transport] cost-per-bit.”

Several packet control plane schemes can be used for the metro, a network that can be divided further into the metro core and metro access/aggregation. Both metro segments can use either IP/MPLS (Internet Protocol/Multiprotocol Label Switching) or MPLS-TP (Multiprotocol Label Switching Transport Profile). Alternatively, the two metro segments can use different schemes: IP/MPLS for the metro core and MPLS-TP for metro access, or MPLS-TP for the core and Ethernet for metro access.

Based on total cost of ownership (TCO) analysis, ECI argues that the most cost-effective packet control plane scheme is MPLS-TP. “The NPT product line is based on MPLS-TP, designed to simplify and make MPLS affordable for transport networks,” says Epshtein.

Three issues contribute to the cost of building and operating packet-based transport. The first is capital expenditure (capex) – the cost of the equipment and what is needed to make the network carrier grade such as redundancy and availability.

The second is operational expenditure or opex. Factors include the training and expertise needed by the staff, and their number and salaries. Other issues include network availability, equipment footprint and power consumption requirements.

“More and more operators view opex as a key factor in their TCO considerations,” says Epshtein. Operators look at the entire network and want to know what its cost of operation will be.

A third cost factor is the existence of both TDM and packet data in the operators’ networks. “When you look at the overall TCO, you need to take this into consideration,” says Epshtein. For some operators it [TDM] is more significant but it is always there, he says.

The NPT family is being aimed at various customers. One is operators that want to extend MPLS from the core to the metro network. “Here, TDM is not a factor,” says Epshtein. “We find this in wireless backhaul, in triple-play, carriers-of-carriers and business applications.” The second class of operators is those with legacy TDM traffic. Also being targeted are utilities. “Here reliability and security are key.”

Analysis

The choice of packet control plane - whether to use IP/MPLS or MPLS-TP - impacts both capex and opex. How the TDM traffic is handled, whether using circuit emulation over packets or native TDM, also impacts overall costs.

According to ECI, the number of network elements grows some tenfold with each segment transition towards the network edge. In the network core there are 100s of network elements, 1000s in the metro core and 10,000s in the metro access. The choice of packet control plane for these network elements clearly impacts the overall cost, especially in the cost-conscious metro as the number of platforms grows. “A network element based on MPLS-TP is lower cost than IP/MPLS,” says Epshtein. “The main reason being it is a lot less complex.”

He stresses that MPLS-TP is not a competing standard to IP/MPLS; IP/MPLS is the de facto standard in the network core. Rather, MPLS-TP is a derivative designed for transport. The debate here, says Epshtein, is what is best for metro.

“The main difference between the two standards is the control plane, not the data plane,” says Epshtein. MPLS-TP removes unnecessary control plane functions supported by IP/MPLS leading to simpler metro platform functionality, and simpler management and operation of the equipment. “We believe MPLS-TP is more suited to the metro due to its simplicity, scalability and capex benefits.”

A TCO analysis (opex and capex) carried out with market research company ACG Research found that, over five years, using MPLS-TP cost 55% less than using IP/MPLS for metro packet transport (with no TDM traffic).

The cost savings were even greater with both packet and some TDM traffic.

Using the NPT, capex goes up 5% due to the line cards needed to support native TDM traffic. But for IP/MPLS using circuit emulation capex increases 37%, resulting in the NPT having a 66% lower capex overall. The resulting opex is also 64% lower. Overall TCO is lowered by 65% using MPLS-TP and native TDM compared to IP/MPLS and circuit emulation.
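
The capex percentages above can be related with a little arithmetic. The sketch below is an interpretation rather than ECI's or ACG Research's model: it assumes the 5% and 37% increases are each measured against that option's own packet-only capex, and that the 66% figure compares the two resulting totals. The function name is hypothetical.

```python
def implied_packet_only_ratio(npt_increase, ipmpls_increase, overall_saving):
    """Return NPT packet-only capex as a fraction of IP/MPLS packet-only capex.

    Assumption: (1 + npt_increase) * npt_base
                == (1 - overall_saving) * (1 + ipmpls_increase) * ipmpls_base
    """
    return (1 - overall_saving) * (1 + ipmpls_increase) / (1 + npt_increase)


# Figures quoted in the article: +5% (native TDM), +37% (circuit emulation), 66% lower overall.
ratio = implied_packet_only_ratio(0.05, 0.37, 0.66)
print(f"Implied packet-only capex ratio (NPT vs IP/MPLS): {ratio:.2f}")
```

Under these assumptions the packet-only capex gap works out at roughly 56%, which is broadly in line with the 55% TCO saving quoted for the case with no TDM traffic.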

NPT portfolio

ECI says its NPT supports circuit emulation and native TDM. Having circuit emulation enables the network to converge to packet only. But native TDM simplifies the interfacing to legacy networks and also has lower latency than circuit emulation.

The NPT packet switch and TDM switch fabrics and the traffic types carried over each. Source: ECI Telecom

There are five NPT platforms ranging from the NPT-1020 for metro access to the NPT-1800 for the metro core. The NPT-1020 has a 10 or 50 Gigabit packet switch capacity option and a TDM capacity of 2.5 Gigabit. The NPT-1800 has a packet switching capacity of 320 or 640 Gigabit and 120 Gigabit for TDM.

The metro aggregation NPT-1600 and 1600c (160 Gig packet/120 Gig TDM capacity) platforms are available now. The remaining platforms will be available in the first half of 2013.

ECI says it has already completed several trials with existing and new customers. "We have already won a few deals," says Epshtein.

The platforms are managed using ECI’s LightSoft software, the same network management system used for the Apollo. ECI has added software specifically for packet transport including service provisioning, performance management and troubleshooting.

Infinera adds software to its PIC for instant bandwidth

Infinera has enabled its DTN-X platform to rapidly deliver 100 Gigabit services. The ability to fulfill capacity demand quickly is seen as a competitive advantage by operators. Gazettabyte spoke with Infinera and TeliaSonera International Carrier, a DTN-X customer, about the merits of its 'instant bandwidth' and asked several industry analysts for their views.

Infinera has added a WDM line card hosting its 500 Gigabit super-channel photonic integrated circuit to its DTN-X platform

Pravin Mahajan, Infinera.

Infinera is claiming an industry first with the software-enablement of 100 Gigabit capacity increments. The company's DTN-X platform's 'instant bandwidth' feature shortens the time to add new capacity in the network, from weeks as is common today to less than a day.

The ability to add bandwidth as required is increasingly valued by operators. TeliaSonera International Carrier points out that its traffic demands are increasingly variable, making capacity requirements harder to forecast and manage.

"It [the DTN-X's instant bandwidth] enables us to activate 100 Gig services between network spans to manage our own IP traffic which is growing rapidly," says Ivo Pascucci, head of sales, Americas at TeliaSonera International Carrier. "We will also be able to sell in the market 100 Gig services and activate the capacity much more rapidly."

What has been done

Infinera has added three elements to enable its DTN-X platform to deliver 100 Gigabit services.

One is a new wavelength division multiplexing (WDM) line card that features its 500 Gigabit-per-second (Gbps) super-channel photonic integrated circuit (PIC). Infinera says the line card has 500Gbps of capacity enabled, of which only 100Gbps is activated. "The remaining 400Gbps is latent, waiting to be activated," says Pravin Mahajan, director of corporate marketing and messaging at Infinera.

Infinera uses the DTN-X's Optical Transport Network (OTN) switch fabric to pack the client side signals onto any of the 100Gbps channels activated on the line side. This capacity pool of up to 500 Gbps, says Infinera, results in better usage of backbone capacity compared to traditional optical networking equipment based on individual 100Gbps 'siloed' channels.

A software application has also been added to Infinera's network management system, the digital network administrator (DNA), to activate the 100Gbps capacity increments.
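
The licensing model described above, a card with 500Gbps installed of which 100Gbps increments are switched on in software, can be sketched as follows. This is an illustrative model only: the class and method names are hypothetical and do not represent Infinera's DNA software or any real API.

```python
class SuperChannelLineCard:
    """Hypothetical model of a line card with latent, software-activated capacity."""

    def __init__(self, total_gbps=500, increment_gbps=100, initially_active=1):
        self.total_gbps = total_gbps
        self.increment_gbps = increment_gbps
        self.active_increments = initially_active

    @property
    def active_gbps(self):
        # Capacity that has been activated and can carry traffic.
        return self.active_increments * self.increment_gbps

    @property
    def latent_gbps(self):
        # Capacity installed in hardware but not yet activated.
        return self.total_gbps - self.active_gbps

    def activate_increment(self):
        """Enable one more increment in software; fail if none is left."""
        if self.latent_gbps < self.increment_gbps:
            raise ValueError("no latent capacity left on this card")
        self.active_increments += 1
        return self.active_gbps


card = SuperChannelLineCard()
print(card.active_gbps, card.latent_gbps)  # 100 400
card.activate_increment()
print(card.active_gbps, card.latent_gbps)  # 200 300
```

The point of the model is that adding capacity changes only a counter, not the installed hardware, which is why activation can take hours rather than the weeks needed to ship and fit a new line card.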

Lastly, Infinera has in place a just-in-time system that enables client-side 10 Gigabit Ethernet optical transceivers to be delivered to customers within 10 days if they are out of stock. Infinera says it is achieving a 6-day delivery time in 95% of the cases.

Advantages

TeliaSonera International Carrier confirms the advantages to having 100 Gigabit capacities pre-provisioned and ready for use.

"Having the ability to turn up large bandwidth is critical to our business, especially as the [traffic] numbers continue to grow"

Ivo Pascucci, TeliaSonera International Carrier

"If it is individual line cards across the network when you have as many PoPs as we do, it does get tricky," says Pascucci. "If we have 500 Gig channels pre-provisioned with the ability to activate 100 Gig segments as needed, that gives us an advantage versus having to figure out how many line cards to have deployed in which nodes, and forecasting which nodes should have the line cards in the first place."

The operator is already seeing demand for 100 Gigabit services, from the carrier market and large content providers. The operator already provides 10x10Gbps and 20x10Gbps services to customers. "With that there are all the challenges of provisioning ten or 20 10 Gig circuits and 10 or 20 cross-connects for each site," says Pascucci. The operator also manages one and two Terabits of network capacity for certain customers.

"Having the ability to turn up large bandwidth is critical to our business, especially as the [traffic] numbers continue to grow," says Pascucci.

Analysts' comments

Gazettabyte asked several industry analysts about the significance of Infinera's announcement, in particular the uniqueness of the offering, the claim that it sharply reduces bandwidth enablement times, and its importance for operators.

Infonetics Research

Andrew Schmitt, directing analyst for optical

Schmitt believes Infinera's announcement is significant as it is the first announced North American win. It also shows the company has a solution for carriers that only want to roll out a single 100 Gbps but don't want to buy 500Gbps.

More importantly, it should allow some carriers to deploy extra capacity for future use at no cost to them and that opens up interesting possibilities for automatically switched optical network (ASON) management or even software-defined networking (SDN).

"As to the claim that it reduces capacity enablement from weeks to potential minutes, to some degree, yes," says Schmitt.

Certainly Ciena, Alcatel-Lucent or Cisco could ship extra line cards into customers and not charge the customer until they are used and that would effectively achieve the same result. "But if the PIC truly has better economics than the discrete solutions from these vendors then Infinera can ship hardware up front and then recognise the profits on the back end," he says.

"You simply can't predict where the best places to put bandwidth will be"

In turn, if customers get free inventory management out of the deal and Infinera equipment can support that arrangement more economically, that is a significant advantage for Infinera.

"This instant bandwidth is unique to Infinera. As I said, anyone could do this deal. But you need a hardware cost structure that can support it or it gets expensive quickly," says Schmitt. "Everyone is working on super-channels but it is clear from the legacy of the way the 10 Gig DTN hardware and software worked that Infinera gets it."

Schmitt believes the term super-channel is abused. He prefers the term virtualised bandwidth - optical capacity that can be allocated the same way server or storage resources are assigned through virtualisation.

"The SDN hype is hitting strong in this business but Infinera is really one of the only companies that have a history of a hardware and software architecture that lends itself well to this concept," he says. This is validated with its customer list which is loaded heavily with service providers that are not just talking about SDN but actively doing something, he says.

"It [turning capacity up quickly] is important for SDN as well as more advanced protection arrangements. You simply can't predict where the best places to put bandwidth will be," says Schmitt. "If you can have spare capacity in the network that is lit on demand but not paid for if you don't need it, it is the cheapest approach for avoiding overbuilding a network for corner-case requirements.

"I think the accounting for this product will be interesting, it is likely that we will know in a year how successful this concept was just by a careful examination of the company's financials," he concludes.

ACG Research

Eve Griliches, vice president of optical networking

Infinera delivered this year the DTN-X with 500 Gig super-channels based on PIC technology. Now, a new 500 Gig line card has been added that can operate at 100 Gig and the remaining 400 Gig can be lit in 100 Gig increments using software. This allows customers to purchase 100 Gig at a time, and turn up subsequent bandwidth via software when they require it.

“No other vendor has a software-based solution, and no one else is delivering 500 Gig yet either,” says Griliches.

With this solution, ACG Research says in its research note, operators can start to develop a flexible infrastructure where bandwidth can grow and move around the network instantly. This is useful to address varying demands in bandwidth, triggered by incidents such as natural disasters or sporting events.

Rapid bandwidth enablement has always been important and takes way too long, so this development is key, says Griliches: “Also, it enables Infinera to enter markets which only need one 100 Gig wavelength for now, which they could not do before.”

“No other vendor has a software-based solution, and no one else is delivering 500 Gig yet either”

Looking forward, ACG Research expects this software and hardware-based instant bandwidth utility model will enable Infinera to widen its potential market base and increase its global market share in 2013 and 2014.

Ovum

Ron Kline, principal analyst, and Dana Cooperson, vice president, of the network infrastructure practice

Ovum also thinks Infinera's announcement is significant. It brings essentially the same value proposition Infinera had with 10 Gigabit to the 100 Gigabit market - low operational expenditure (opex) and quick time-to-market. “Remember 10 Gig in 10 days?” says Kline.

It further fixes an issue for customers: with the 10x10Gbps, they essentially had to pay for the full 100Gbps up front, after which they could be very efficient with turn-up and opex. Customers traded more up-front capital expenditure (capex) for efficient opex. "With instant bandwidth, they don't have to make the upfront capex-versus-opex tradeoff; they can be most efficient with both," says Cooperson.

Any vendor can shorten capacity enablement times if they can convince the operator to pre-position bandwidth in the network that is ready to be turned on at a moment's notice.

Ron Kline

Kline says operators have different processes for turning up services and in many cases it is these processes, and not the equipment directly, that cause the additional provisioning time. “For example the operator may not use the DNA system or may have a very complex OSS/BSS used in the process,” says Kline.

Nevertheless, the capability to have really short provisioning is there, if an operator wants to take advantage. In the TeliaSonera case, Infinera is managing the network so the quick time to market will be there, says Kline.

Cooperson adds that there can be many factors that impede the capacity enablement process, based on Ovum's own research. “But it is clear from talking to Infinera's customers that its system design and approach is a big benefit to those carriers, often the competitive carriers, in competing in the market,” she says. “Multiple carriers told us that with the Infinera system, they were able to win business from competitors.”

Any vendor can shorten capacity enablement times if they can convince the operator to pre-position bandwidth in the network that is ready to be turned on at a moment's notice. However, what is unique to Infinera is that its system is deployed 500Gbps at a time and all the switching is done electrically by the OTN switch at each node. Others are working on super-channels but none is close to deploying, says Ovum.

“Multiple carriers told us that with the Infinera system, they were able to win business from competitors.”

Dana Cooperson

The ability to turn on bandwidth rapidly is becoming increasingly important. From a wholesale operator perspective it is very important and a key differentiator.

"It's particularly relevant to wholesale applications where large bandwidth chunks are required and the customer is another carrier," says Cooperson. "Whether you view a Google or a Facebook as a carrier or a very large enterprise, it would apply to them as well as a more traditional carrier."

OpenFlow extends its control to the optical layer

"We see OpenFlow as an additional solution to tackle the problem of network control"

Jörg-Peter Elbers, ADVA Optical Networking

The largest data centre players have a single-mindedness when it comes to service delivery. Players such as Google, Facebook and Amazon do not think twice about embracing and even spurring hardware and software developments if they will help them better meet their service requirements.

Such developments are also having a wider impact, interesting traditional telecom operators that have their own service challenges.

The latest development causing waves is the OpenFlow protocol. An open standard, OpenFlow is being developed by the Open Networking Foundation, an industry body that includes Google, Facebook and Microsoft, telecom operators Verizon, NTT and Deutsche Telekom, and various equipment makers.

OpenFlow is already being used by Google, and falls under the more general topic of software-defined networking (SDN). A key principle underpinning SDN is the separation of the data and control planes to enable more centralised and simplified management of the network.

OpenFlow is being used in the management of packet switches for cloud services. "The promise of software-defined networking and OpenFlow is to give [data centre operators] a virtualised network infrastructure," says Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking.

The growing interest in OpenFlow is reflected in the activities of the telecom system vendors that have extended the protocol to embrace the optical layer. But whereas the content service provider giants need only worry about tailoring their networks to optimise their particular services, telecom operators must consider legacy equipment and issues of interoperability.

OFELIA

ADVA Optical Networking has started the ball rolling by running an experiment to show OpenFlow controlling both the optical and packet layers of the network. Until now the protocol, which provides a software-programmable interface, has been used to manage packet switches; the adding of the optical layer control is an industry first, the company claims.

The OpenFlow demonstration is part of the European “OpenFlow in Europe, Linking Infrastructure and Applications” (OFELIA) research project involving ADVA Optical Networking and the University of Essex. A test bed has been set up that uses the ADVA FSP 3000 to implement a colourless and directionless ROADM-based optical network.

"We have put a network together such that people can run the optical layer through an OpenFlow interface, as they do the packet switching layer, under one uniform control umbrella," says Elbers. "The purpose of this project is to set up an experimental facility to give researchers access to, and have them play with, the capabilities of an OpenFlow-enabled network."

"The fact that Google is doing it [SDN] is not a strong indication that service providers are going to do it tomorrow"

Mark Lutkowitz, Telecom Pragmatics

Remote researchers can access the test bed via GÉANT, a high-bandwidth pan-European backbone connecting national research and education networks.

ADVA Optical Networking hopes the project will act as a catalyst to gain useful feedback and ideas from the users, leading to further developments to meet emerging requirements.

OpenFlow and GMPLS

A key principle of SDN, as mentioned, is the separation of the data plane from the control plane. "The aim is to have a more unified control of what your network is doing rather than running a distributed specialised protocol in the switches," says Elbers.

That is not much different from Generalized Multi-Protocol Label Switching (GMPLS), he says: "With GMPLS in an optical network you effectively have a data plane - a wavelength switched data plane - and then you have a unified control plane implementation running on top, decoupled from the data plane."

But clearly there are differences. OpenFlow is being used by data centre operators to control their packet switches and generate packet flows. The goal is for their networks to gain flexibility and agility: "A virtualised network that can be run as you, the user, want it," says Elbers.

But the protocol only gives a user the capability to manage the forwarding behavior of a switch: an incoming packet's header is inspected and the user can program the forwarding table to determine how the packet stream is treated and the port it goes out on.
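
The match-then-forward behaviour described above can be illustrated with a toy flow table. This is a simplified sketch, not the OpenFlow wire protocol or any controller's API: the field names, the action strings and the first-match rule are assumptions chosen for illustration, and real OpenFlow tables also carry priorities, counters and table-miss handling.

```python
def forward(flow_table, packet, default_action="drop"):
    """Return the action of the first entry whose match fields all equal the packet's header fields."""
    for match, action in flow_table:
        if all(packet.get(field) == value for field, value in match.items()):
            return action
    # No entry matched: fall back to the default action.
    return default_action


# The "user-programmed" forwarding table: (match fields, action) pairs.
flow_table = [
    ({"in_port": 1, "vlan_id": 100}, "output:3"),
    ({"eth_dst": "00:11:22:33:44:55"}, "output:2"),
]

packet = {"in_port": 1, "vlan_id": 100, "eth_dst": "aa:bb:cc:dd:ee:ff"}
print(forward(flow_table, packet))  # output:3
```

Nothing in such a table describes optical power levels or blocking constraints, which is the gap at the optical layer that the following paragraphs discuss.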

And while OpenFlow has since been extended to cater for circuit switches as well as wavelength circuits, there are aspects at the optical layer which OpenFlow is not designed to address - issues that GMPLS does.

To run end-to-end, the control plane needs to be aware of the blocking constraints of an optical switch, while when provisioning it must also be aware of such aspects as the optical power levels and optical performance constraints. "The management of optical is different from managing a packet switch or a TDM [circuit switched] platform," says Elbers. “We need to deal with transmission impairments and constraints that simply do not exist inside a packet switch.”

That said, having GMPLS expertise, it is relatively simple for a vendor to provide an OpenFlow interface to an optically controlled network, he says: "We see OpenFlow as an additional solution to tackle the problem of network control."

Operators want mature and proven interoperable standards for network control, that incorporate all the different network layers and that use GMPLS.

"We are seeing that in the data centre space, the players think that they may not have to have that level of complexity in their protocols and can run something lower level and streamlined for their applications," says Elbers.

While operators see the benefit of OpenFlow for their own data centres and managed service offerings, they also are eyeing other applications such as for access and aggregation to allow faster service mobility and for content management, says Elbers.

ADVA Optical Networking sees the adding of optical to OpenFlow as a complementary approach: the integration of optical networking into an existing framework to run it in a more dynamic fashion, an approach that benefits the data centre operators and the telcos.

"If you have one common framework, when you give server and compute jobs then you know what kind of connectivity and latency needs to go with this and request these resources and reconfigure the network accordingly," says Elbers.

But longer term the impact of OpenFlow and SDN will likely be more far-reaching: applications themselves could program the network, or it could be used to enable dial-up bandwidth services in a more dynamic fashion. "By providing software programmability into a network, you can develop your own networking applications on top of this - what we see as the heart of the SDN concept," says Elbers. “The long term vision is that the network will also become a virtualised resource, driven by applications that require certain types of connectivity.”

Providing the interface is the first step; the value-add will be the things that players do with the added network flexibility, whether the vendors working with operators, the operators' customers or third-party developers.

"This is a pretty significant development that addresses the software side of things," says Elbers, adding that software is becoming increasingly important, with OpenFlow being an interesting step in that direction.

Cisco Systems' 100 Gigabit spans metro to ultra long-haul

Cisco Systems has demonstrated 100 Gigabit transmission over a 3,000km span. The coherent-based system uses a single carrier in a 50GHz channel to transmit at 100 Gigabit-per-second (Gbps). According to Cisco, no Raman amplification or signal regeneration is needed to achieve the 3,000km reach.

Feature: Beyond 100G - Part 2

"The days of a single modulation scheme on a part are probably going to come to an end in the next two to three years"

Greg Nehib, Cisco

The 100Gbps design is also suited to metro networks. Cisco's design is compact to meet the more stringent price and power requirements of metro. The company says it can fit 42, 100Gbps transponders in its ONS 15454 Multi-service Transport Platform (MSTP), which is a 7-foot rack. "We think that is double the density of our nearest competitor today," claims Greg Nehib, product manager, marketing at Cisco Systems.

Also shown as part of the Cisco demonstration was the use of super-channels, multiple carriers that are combined to achieve 400 Gigabit or 1 Terabit signals.

Single-carrier 100 Gigabit

Several of the first-generation 100Gbps systems from equipment makers use two carriers (each carrying 50Gbps) in a 50GHz channel, and while such equipment requires lower-speed electronics, twice as many coherent transmitters and receivers are needed overall.

Alcatel-Lucent and Huawei both have single-carrier 50GHz systems. Ciena, via its Nortel acquisition, offers a dual-carrier 100Gbps system, as does Infinera. With the announcement of its WaveLogic 3 chipset, Ciena is now moving to a single-carrier solution, and Cisco is entering the market with a single-carrier system of its own.

"When you have a single carrier, you can get upwards of 96 channels of 100Gbps in the C-band," says Nehib. "The equation here is about price, performance, density and power."
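As a rough check on what Nehib's figure implies, the sketch below multiplies out the channel count, per-channel rate and channel width. The 96 channels, 100Gbps and 50GHz values come from the article; the function name is purely illustrative.

```python
def c_band_totals(channels, rate_gbps, spacing_ghz):
    """Return (total capacity in Tbps, occupied spectrum in THz)."""
    capacity_tbps = channels * rate_gbps / 1000
    spectrum_thz = channels * spacing_ghz / 1000
    return capacity_tbps, spectrum_thz


# 96 single-carrier channels, each 100Gbps in a 50GHz slot
capacity_tbps, spectrum_thz = c_band_totals(96, 100, 50)
print(f"{capacity_tbps} Tbps over {spectrum_thz} THz of spectrum")
```

A dual-carrier design would fill the same spectrum with the same capacity, but with two transmitter and receiver pairs per 50GHz slot instead of one.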

What has been done

Cisco's 100Gbps design fits on a 1RU (rack unit) card and uses the first 100Gbps coherent receiver ASIC designed by the CoreOptics team acquired by Cisco in May 2010.

The demonstrated 3,000km reach was made using low-loss fibre. "This is to some degree a hero experiment," says Nehib. "We have achieved 3,000km with SMF ULL fibre from Corning; the LL is low loss." Normal fibre has a loss of 0.20-0.25dB/km while for ULL fibre it is in the 0.17dB/km range.

"You can do the maths and calculate the loss we are overcoming over 3,000km. We just want to signal that we have very good performance for ultra long-haul," says Nehib, who admits that results will vary in networks, depending on the fibre.
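The maths Nehib refers to can be sketched as follows, using only the attenuation figures quoted above. This is a simplified illustration: it counts fibre attenuation alone and ignores splices, connectors and the amplifier gain that compensates for the loss along the route. The names used are hypothetical.

```python
SPAN_KM = 3000  # reach quoted for the demonstration

# Attenuation figures quoted in the article, in dB/km
FIBRE_LOSS_DB_PER_KM = {
    "ULL fibre": 0.17,
    "normal fibre (low end)": 0.20,
    "normal fibre (high end)": 0.25,
}


def span_loss_db(length_km, loss_db_per_km):
    """Total fibre attenuation over the route, in dB."""
    return length_km * loss_db_per_km


losses = {
    name: span_loss_db(SPAN_KM, loss)
    for name, loss in FIBRE_LOSS_DB_PER_KM.items()
}
for name, loss in losses.items():
    print(f"{name}: {loss:.0f} dB")
```

On these figures the ULL fibre accumulates roughly 510dB over 3,000km, against 600dB to 750dB for normal fibre, which is why results will vary with the fibre installed.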

Nehib says Cisco's coherent receiver achieves a chromatic dispersion tolerance of 70,000 ps/nm and 100ps differential group delay. Differential group delay is a non-linear effect, says Nehib, that is overcome using the DSP-ASIC. The greater the group delay tolerance, the better the distance performance. These metrics, claims Cisco, are currently unmatched in the industry.

The company has not said what CMOS process it is using for its ASIC design. But this is not the main issue, says Nehib: "We are trying to develop a part that is small so that it fits in many different platforms, and we can now use a single part number to go from metro performance all the way to ultra long-haul."

Another factor that impacts span performance is the number of lit channels. Cisco, in the test performed by independent test lab EANTC, the European Advanced Network Test Center, used 70 wavelengths. "With 70 channels the performance would have been very close to what we would have achieved with [a full complement of] 80 channels," says Nehib.

Super-channels

A super-channel refers to a signal made up of several wavelengths. Infinera, with its DTN-X, uses a 500Gbps super-channel, comprising five 100Gbps wavelengths.

Using a super-channel, an operator can turn up multiple 100Gbps channels at once. If an operator wants to add a 100Gbps wavelength, a client interface is simply added to a spare 100Gbps wavelength making up the super-channel. In contrast, turning up a 100Gbps wavelength in current systems usually requires several days of testing to ensure it can carry live traffic alongside existing links.

Another benefit of super-channels is scale, since multiple wavelengths are turned up simultaneously. As traffic grows, so does the workload on operators' engineering teams, and super-channels aid efficiency.

"There is one other point that we hear quite often," says Nehib. "One other attraction of super-channels is overall spectral efficiency." The carriers that make up the signal can be packed more closely, expanding overall fibre capacity.

"Just like with 10 Gig, we think at some point in the future the 100 Gig network will be depleted, especially in the largest networks, and operators will be interested in 400 Gig and Terabit interfaces," says Nehib. "If that wavelength can further benefit from advanced modulation schemes and super-channels through flex[ible] spectrum deployment then you can get more total bandwidth on the fibre and better utilisation of your amplifiers."

Cisco's 100Gbps lab demonstration also showed 400 Gigabit and 1 Terabit super-channels, part of its research work with the Politecnico di Torino. "We are going to move on to other advanced modulation techniques and deliver 400 Gigabit and Terabit interfaces in future," says Nehib.

Existing 100Gbps systems use dual-polarisation, quadrature phase-shift keying (DP-QPSK). Using 16-QAM (quadrature amplitude modulation) at the same baud rate doubles the data rate. Using 16-QAM also benefits spectral utilisation. If the more intelligent modulation format is used in a super-channel format, and the signal is fitted in the most appropriate channel spacing using flexible spectrum ROADMs, overall capacity is increased. However, the spectral efficiency of 16-QAM comes at the expense of overall reach.
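The doubling follows from the number of bits each symbol carries. The sketch below is illustrative only: the 32Gbaud symbol rate is an assumed example value, the resulting figures are raw line rates that include coding overhead, and the function name is hypothetical.

```python
from math import log2


def raw_line_rate_gbps(symbol_rate_gbaud, constellation_points, polarisations=2):
    """Raw line rate: symbols per second times bits carried per symbol."""
    bits_per_symbol = log2(constellation_points) * polarisations
    return symbol_rate_gbaud * bits_per_symbol


SYMBOL_RATE_GBAUD = 32  # assumed example value, not a figure from Cisco

qpsk_rate = raw_line_rate_gbps(SYMBOL_RATE_GBAUD, 4)    # QPSK: 4 constellation points
qam16_rate = raw_line_rate_gbps(SYMBOL_RATE_GBAUD, 16)  # 16-QAM: 16 constellation points

print(f"DP-QPSK:   {qpsk_rate:.0f} Gbps raw")
print(f"DP-16-QAM: {qam16_rate:.0f} Gbps raw")
print(f"Ratio:     {qam16_rate / qpsk_rate:.0f}x")
```

QPSK carries two bits per symbol per polarisation and 16-QAM four, so the ratio is two whatever symbol rate is chosen. Because the symbol rate is unchanged, the signal occupies about the same spectrum, which is where the gain in spectral utilisation comes from.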

"You are able to best match the rate to the reach to the spectrum," says Nehib. "The days of a single modulation scheme on a part are probably going to come to an end in the next two to three years."

Cisco has yet to discuss the addition of a coherent transmitter DSP which through spectral shaping can bunch wavelengths. Such an approach has just been detailed by Ciena with its WaveLogic 3 and Alcatel-Lucent with its 400 Gig photonic service engine.

For the Terabit super-channel demonstration, Cisco used 16-QAM and a flexible spectrum multiplexer. "The demo that we showed is not necessarily indicative of the part we will bring to market," says Nehib, pointing out that it is still early in the development cycle. "We are looking at the spectral efficiency of super-channels, different modulation schemes, flex-spectrum multiplexer, availability, quality, loss etc.," says Nehib. "We have not made firm technology choices yet."

Cisco's 100Gbps system is in trials with some 40 customers and can be ordered now. The product will be generally available in the near future, it says.

Further reading:

Light Reading: EANTC's independent test of Cisco's CloudVerse architecture. Part 4: Long-haul optical transport

u2t Photonics: Adapting to a changing marketplace

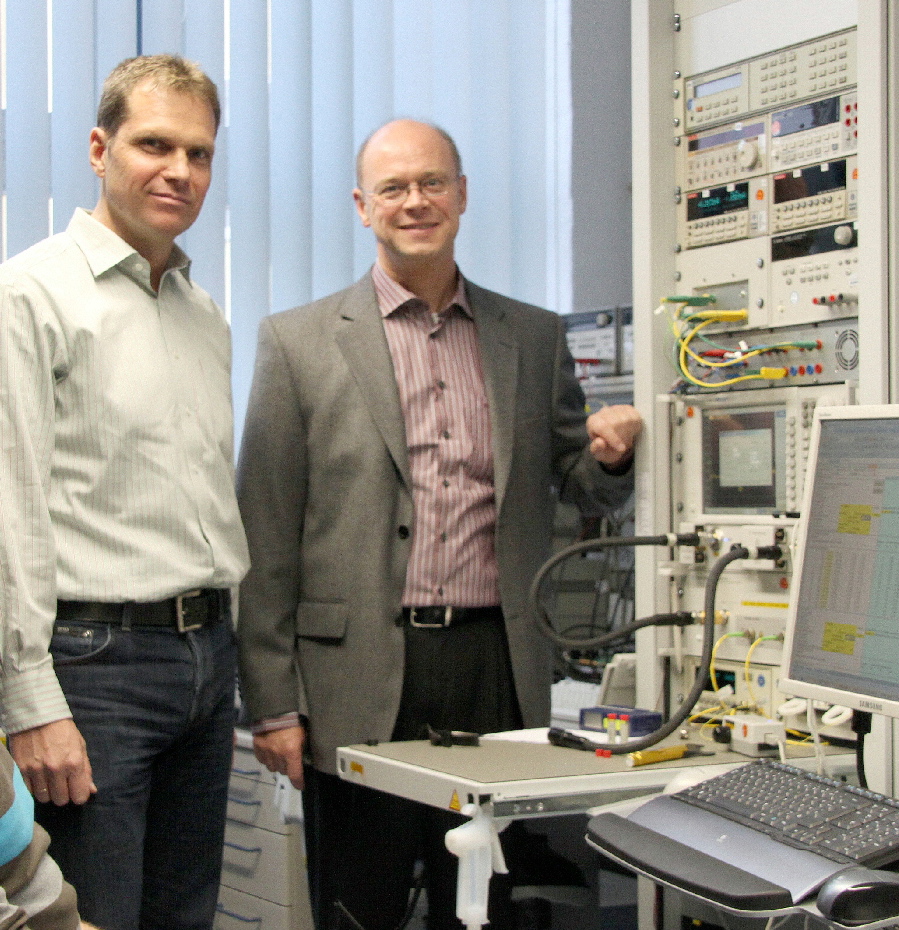

u2t Photonics' Jens Fiedler, vice president sales and marketing (left), and CEO Andreas Umbach.

u2t Photonics has begun sampling its second-generation coherent receiver module. The dual-polarisation, quadrature phase-shift keying (DP-QPSK) coherent transmission receiver adds polarisation diversity to the company’s first-generation design – an indium-phosphide 90° hybrid design that includes balanced photo-detectors – all within an integrated module. u2t Photonics has developed two such coherent receiver designs, to address the 40 Gigabit-per-second (Gbps) and the 100Gbps markets, adding to the company’s first-generation design now available in small volumes.

“We can explore [next-gen coherent] solutions before we need them and we learn a lot from these partnerships” Andreas Umbach

The latest coherent receiver design represents what CEO Andreas Umbach believes u2t Photonics does best: using its radio frequency (RF) and optical component expertise to design high-speed integrated optical receiver modules.

Differentiation at 100 Gigabit

u2t Photonics is a leading component supplier for the 40 Gigabit market with its photo-detectors and more recently differential phase-shift keying (DPSK) integrated receiver designs that combine a delay-line interferometer with a balanced receiver. Such receivers are used for optical transponder and line card designs. Now, with its latest integrated coherent receiver, the German company aims to exploit the emerging 100 Gigabit market.

“The 40 Gig market will be strong for a while yet, but 100 Gig is coming and will start to squeeze 40 Gig,” says Umbach. “Right now we do not see 100 Gig cannibalising 40 Gig,” says Jens Fiedler, vice president sales and marketing at u2t Photonics. “For DP-QPSK, 100 Gig might cannibalise 40 Gig since the technology for 40 Gig does not offer a big cost benefit for the customer.”

The emergence of 100 Gigabit optical links and its use of more advanced modulation have changed component requirements. Whereas the DPSK modulation scheme for 40Gbps requires photo-detectors with bandwidths that match the data rate, 100Gbps coherent requires photo-detectors with bandwidths of 28GHz only.
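The lower bandwidth requirement reflects the symbol rate rather than the bit rate. The sketch below is a simplified illustration: it assumes detector bandwidth roughly tracks symbol rate, and the 12 per cent overhead is an assumed example value chosen to land near the 28GHz figure quoted above, not a number from u2t Photonics. The function name is hypothetical.

```python
def symbol_rate_gbaud(bit_rate_gbps, bits_per_symbol, overhead=0.0):
    """Symbol rate needed to carry a payload bit rate plus fractional overhead."""
    return bit_rate_gbps * (1 + overhead) / bits_per_symbol


# 40Gbps DPSK: one bit per symbol on a single polarisation
dpsk_40g = symbol_rate_gbaud(40, bits_per_symbol=1)

# 100Gbps DP-QPSK: two bits per symbol on each of two polarisations
dp_qpsk_100g = symbol_rate_gbaud(100, bits_per_symbol=4)

# Same signal with an assumed 12 per cent overhead for framing and error correction
dp_qpsk_100g_with_overhead = symbol_rate_gbaud(100, bits_per_symbol=4, overhead=0.12)

print(f"40G DPSK:                {dpsk_40g:.0f} Gbaud")
print(f"100G DP-QPSK, payload:   {dp_qpsk_100g:.0f} Gbaud")
print(f"100G DP-QPSK, overhead:  {dp_qpsk_100g_with_overhead:.0f} Gbaud")
```

Although the 100Gbps signal carries two and a half times the data, each symbol holds four bits, so the detector sees a slower symbol stream than it does with 40Gbps DPSK.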

“In principle, not having the requirement of a very high-speed photo-detector makes it [100Gbps] a little bit easier, yet having 40 Gig serial is not unique anymore; there are differences in performance but it is not a limiting factor,” says Umbach.

Instead what matters for optical component players is to understand the 100Gbps functional requirements and deliver a design that meets them as early as possible, says Umbach. The challenges after that are scaling volume production and driving down cost. “We are the first company with a second generation design, offering the highest integration based on the [100G OIF] standard,” says Fiedler. “No doubt our competition is tough, but so far we are doing pretty well.”

u2t Photonics dismisses the view that the advent of high-speed CMOS ASICs that execute digital signal processing algorithms at the DP-QPSK receiver is eroding the need for the company’s expertise by enabling less specialist optics to be used. The optical specification requirements the company faces are challenging enough because customers still want to get the best performance from the links, it says.

"u2t Photonics has grown its revenue tenfold in the last five years" Jens Fiedler

“The challenge for us now is not just a photo-detector with a higher bandwidth but a coherent receiver that can detect the polarisation, phase and amplitude of the optical signal,” says Umbach. “That puts a much higher challenge on the components – not just high speed and efficiency but linearity in all these parameters.”

EC Galactico and Mirthe projects

To keep on top of next-generation coherent optical transmission schemes, u2t Photonics is a member of two European Commission (EC) Framework 7 projects dubbed Galactico (Blending diverse photonics and electronics on silicon for integrated and fully functional coherent Tb Ethernet) and Mirthe (Monolithic InP-based dual polarization QPSK integrated receiver and transmitter for coherent 100-400Gb Ethernet).

The Galactico project, which includes Nokia Siemens Networks, will develop photonic integrated circuits (PICs) that will implement a 100Gbps DP-QPSK coherent transmitter and receiver, a 600Gbps dense wavelength division multiplexing (DWDM) DP-QPSK coherent transmitter and receiver and a 280Gbps DP-128 quadrature amplitude modulation (QAM) transmitter that will deliver 10bit/sec/Hz spectral efficiency. The second project, Mirthe, is tasked with developing multi-level coding schemes using QPSK and QAM.

“Both projects address next generation [high-speed optical transmission] and the next level of integration of coherent receivers and transmitters for complex coherent systems,” says Umbach.

u2t is working with the projects’ partners in defining the devices needed for next-generation networks. In particular it is helping define the specifications needed for the ICs to drive such optical devices as well as what the product should look like to aid integration and packaging. “We have partners in the Mirthe project such as the Heinrich Hertz Institute and [Alcatel Thales] III V Lab that are focusing on chip design according to our requirements and matching our packaging development efforts,” says Umbach. The Galactico project is similar but here u2t Photonics is also contributing its integrated modulator technology for more complex transmission formats compared to the current DP-QPSK.

The company says its involvement in these projects is less to do with the research funding made available. Rather, it is the chance to work with partners on the R&D side. “We can explore solutions before we need them and we learn a lot from these partnerships,” says Umbach.

Changing markets

The emergence of three or four dominant module makers as the optical market matures presents new challenges for u2t Photonics. These emerging leaders are increasingly vertically integrated, using their own in-house components within their modules.

“Vertical integration is something we have to face and are fully aware of,” says Fiedler. To remain a valid component supplier, what matters is delivering component performance, volume capability, and cost that meet customer targets and challenge their own developments. “That is what we need to – at least be the second source for these vertical integrated companies,” says Fiedler.

Umbach points out that many of the system vendors are developing their own 100Gbps systems on line cards, and this represents another market opportunity for u2t Photonics, independent of the module makers.

“u2t might not offer the best pricing and might have issues - technical challenges common when you have early, leading-edge components,” says Fiedler. “But finally u2t is chosen as the supplier. They know we deliver the products.” To prove his point, Fiedler claims u2t Photonics has grown its revenue tenfold in the last five years.

Europe’s optical vendors

The last few years have seen significant consolidation among European optical component firms. Whereas Europe has system vendors that include Alcatel-Lucent, Nokia Siemens Networks, Ericsson, ADVA Optical Networking and Transmode Systems, the number of component vendors has continued to shrink. Bookham became a US company before merging with Avanex to become Oclaro, MergeOptics folded and its assets were acquired by FCI, while CoreOptics was acquired by Cisco Systems in May 2010.