FSAN adds WDM for next-generation PON standard

The Full Service Access Network (FSAN) group has chosen wavelength division multiplexing (WDM) to complement PON's traditional time-sharing scheme for the NG-PON2 standard.

"The technology choice allows us to have a single platform supporting both business and residential services"

Vincent O'Byrne, Verizon

The TWDM-PON scheme for NG-PON2 will enable operators to run several services over one network: residential broadband access, business services and mobile back-hauling. In addition, NG-PON2 will support dedicated point-to-point links, via a WDM overlay, to meet more demanding service requirements.

FSAN will work through the International Telecommunication Union (ITU) to turn NG-PON2 into a standard. Standards-compliant NG-PON2 equipment is expected to become available by 2014 and be deployed by operators from 2015. But much work remains to flesh out the many details and ensure that the standard meets the operators’ varied requirements.

Significance

The choice of TWDM-PON represents a pragmatic approach by FSAN. TWDM-PON has been chosen to avoid having to make changes to the operators' outside plant. Instead, changes will be confined to the PON's end equipment: the central office's optical line terminal (OLT) and the home or building's optical networking unit (ONU).

Operators yet to adopt PON technology may use NG-PON2's extended reach to consolidate their network by reducing the number of central offices they manage. Operators that have already deployed PON may use NG-PON2 to boost broadband capacity while consolidating business and residential services onto one network.

US operator Verizon has deployed GPON and says the adoption of NG-PON2 will enable it to avoid the intermediate upgrade stage of XGPON (10Gbps GPON).

"The [NG-PON2] technology choice allows us to have a single platform supporting both business and residential services," says Vincent O'Byrne, director of technology, wireline access at Verizon. "With the TWDM wavelengths, we can split them: We could have a 10G/10G service or ten individual 1G/1G services and, in time, have also residential customers."

The technology choice for NG-PON2 is also good news for system vendors such as Huawei and Alcatel-Lucent that have already done detailed work on TWDM-PON systems.

Specification

NG-PON2's basic configuration will use four wavelengths, resulting in a 40Gbps PON. Support for eight (80G) and 16 wavelengths (160G) is also being considered.

Each wavelength will support 10Gbps downstream (from the central office to the end users) and 2.5Gbps upstream (XGPON) or 10Gbps symmetrical services for business users.
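The capacity figures above follow directly from multiplying the wavelength count by the per-wavelength rate. A minimal sketch of that arithmetic, in which the function and dictionary names are illustrative and not part of any NG-PON2 document:

```python
# Per-wavelength rates quoted in the article, in Gbps.
DOWNSTREAM_GBPS = 10
UPSTREAM_OPTIONS_GBPS = {"XGPON-style": 2.5, "symmetrical": 10}


def aggregate_capacity(wavelengths: int) -> dict:
    """Return aggregate downstream and upstream capacity for a TWDM-PON."""
    return {
        "downstream": wavelengths * DOWNSTREAM_GBPS,
        "upstream": {
            name: wavelengths * rate
            for name, rate in UPSTREAM_OPTIONS_GBPS.items()
        },
    }


# The three wavelength counts mentioned: 4 (basic), 8 and 16 (under consideration).
for n in (4, 8, 16):
    cap = aggregate_capacity(n)
    print(n, "wavelengths:", cap["downstream"], "Gbps down,", cap["upstream"], "Gbps up")
```

With four wavelengths this gives the 40Gbps downstream figure, and 10Gbps or 40Gbps upstream depending on which per-wavelength upstream rate is used.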

"The idea is to reuse as much as possible the XGPON protocol in TWDM-PON, and carry that protocol on multiple wavelengths," says Derek Nesset, co-chair of FSAN's NGPON task group.

The PON's OLT will support the 4, 8 or 16 wavelengths using lasers and photo-detectors as well as optical multiplexing, while the ONU will require a tunable laser and a tunable filter, to set the ONU to the PON's particular wavelengths.

Other NG-PON2 specifications include the support of at least 1Gbps services per ONU and a target reach of 40km. NG-PON2 will also support 60-100km links but that will require technologies such as optical amplification.

"The [NG-PON2] ONUs should be something like the cost of a VDSL or a GPON modem, so there is a challenge there for the [tunable] laser manufacturers"

Derek Nesset, co-chair of FSAN's NGPON task group

What next?

"The big challenge and the first challenge is the wavelength plan [for NG-PON2]," says O'Byrne.

One proposal is for TWDM-PON's wavelengths to replace XGPON's. Alternatively, new unallocated spectrum could be assigned to ensure co-existence with existing GPON, RF video and XGPON. However, such a scheme will leave little spectrum available for NG-PON2. Some element of spectral flexibility will be required to accommodate the various co-existence scenarios in operator networks. That said, Verizon expects that FSAN will look for fresh wavelengths for NG-PON2.

"FSAN is a sum of operators opinions and requirements, and it is getting hard," says O'Byrne. "Our preference would be to reuse XGPON wavelengths but, at the last meeting, some operators want to use XGPON in the coming years and aren't too favourable to recharacterising that band."

Another factor regarding spectrum is how widely the wavelengths will be spaced; 50GHz, 100GHz or the most relaxed 200GHz spacing are all being considered. The tradeoff here is hardware design complexity and cost versus spectral efficiency.
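To give a feel for the spacing tradeoff, the sketch below converts a frequency grid spacing into an approximate wavelength spacing using the standard relation Δλ ≈ λ²Δf/c. The 1550nm centre wavelength is purely an assumption for illustration, since the NG-PON2 wavelength plan had not been decided.

```python
C_M_PER_S = 299_792_458  # speed of light in vacuum


def spacing_nm(spacing_ghz: float, centre_nm: float = 1550.0) -> float:
    """Approximate channel spacing in nm for a given grid spacing in GHz.

    centre_nm is an assumed centre wavelength, not a value from the article.
    """
    centre_m = centre_nm * 1e-9
    delta_m = centre_m ** 2 * (spacing_ghz * 1e9) / C_M_PER_S
    return delta_m * 1e9


for ghz in (50, 100, 200):
    print(f"{ghz}GHz spacing is roughly {spacing_nm(ghz):.2f}nm near 1550nm")
```

Tighter spacing packs more channels into the same band but demands narrower filters and more accurate laser tuning in the ONU, which is the cost side of the tradeoff.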

There is still work to be done to define the 10Gbps symmetrical rate. "Some folks are also looking for slightly different rates and these are also under discussion," says O'Byrne.

Another challenge is that TWDM-PON will also require the development of tunable optical components. "The ONUs should be something like the cost of a VDSL or a GPON modem, so there is a challenge there for the [tunable] laser manufacturers," says Nesset.

Tunable laser technology is widely used in optical transport, and high access volumes will help the economics, but this is not the case for tunable filters, he says.

The size and power consumption of PON silicon pose further challenges. NG-PON2 will have at least four times the capacity, yet operators will want the OLT to be the same size as for GPON.

Meanwhile, FSAN has several documents in preparation to help progress ITU activities relating to NG-PON2's standardisation.

FSAN has an established record of working effectively through the ITU to define PON standards, starting with Broadband PON (BPON) and Gigabit PON (GPON) to XGPON that operators are now planning to deploy.

FSAN members have already submitted an NG-PON2 requirements document to the ITU. "This sets the framework: what is it this system needs to do?" says Nesset. "This includes what client services it needs to support - Gigabit Ethernet and 10 Gigabit Ethernet, mobile backhaul latency requirements - high level things that the specification will then meet."

In June 2012 a detailed requirements document was submitted as was a preliminary specification for the physical layer. These will be followed by documents covering the NG-PON2 protocol and how the management of the PON end points will be implemented.

If rapid progress continues to be made, the standard could be ratified as early as 2013, says O'Byrne.

60-second interview with .... Sterling Perrin

Heavy Reading has published a report Photonic Integration, Super Channels & the March to Terabit Networks. In this 60-second interview, Sterling Perrin, senior analyst at the market research company, talks about the report's findings and the technology's importance for telecom and datacom.

"PICs will be an important part of an ensemble cast, but will not have the starring role. Some may dismiss PICs for this reason, but that would be a mistake – we still need them."

Sterling Perrin, Heavy Reading

Heavy Reading's previous report on optical integration was published in 2008. What has changed?

The biggest change has been the rise of coherent detection, bringing electronics to prominence in the world of optics. This is a big shift - and it has taken some of the burden off photonic integration. Simply put, electronics has taken some of the job away from optics.

How important is optical integration, for optical component players and for system vendors?

Until now, photonic integration has not been a ‘must have’ item for systems suppliers. For the most part, there have been other ways to get at lower costs and footprint reductions.

I think we are starting to see photonic integration move into the must-have category for systems suppliers, in certain applications, which means that it becomes a must-have item for the components companies that supply them.

How should one view silicon photonics and what importance does Heavy Reading attach to Cisco Systems' acquisition of silicon photonics startup Lightwire?

When we published the last [2008] report, silicon photonics was definitely within the hype cycle. We’ve seen the hype fade quite a bit – it’s now understood that just because a component is made with silicon, it’s not automatically going to be cheaper. Also, few in the industry continue to talk about a Moore’s Law for optics today. That said, there are applications for silicon photonics, particularly in data centre and short-reach applications, and the technology has moved forward.

Cisco’s acquisition of Lightwire is a good testament for how far the technology has come. This is a strategic acquisition, aimed at long-term differentiation, and Cisco believes that silicon photonics will help them get there.

"It will be interesting to watch what other [optical integration] M&A activity occurs, and how this activity affects the components players"

What are the main optical integration market opportunities?

In long haul, we already see applications for photonic integrated circuits (PICs). Certainly, Infinera’s PIC-based DTN and DTN-X systems stand out. But also, the OIF has specified photonic integration in its 100 Gigabit long haul, DWDM (dense wavelength division multiplexing) MSA (multi-source agreement) – it was needed to get the necessary size reduction.

Moving forward, there is opportunity for PICs in client-side modules as PICs are the best way to reduce module sizes and improve system density. Then, beyond 100G, to super-channel-based long-haul systems, PICs will play a big role here, as parallel photonic integration will be used to build these super-channels.

Were you surprised by any of the report's findings?

When I start researching a report, I am always hopeful for big black and white kinds of findings – this is the biggest thing for the industry or this is a dud. With photonic integration, we found such a wide array of opinions and viewpoints that, in the end, we had to place photonic integration somewhere in the middle.

It’s clear that system vendors are going to need PICs but it’s also clear that PICs alone won’t solve all the industry’s challenges. PICs will be an important part of an ensemble cast, but will not have the starring role. Some may dismiss PICs for this reason, but that would be a mistake – we still need them.

What optical integration trends and developments should be watched over the next two years?

The year started with two major system suppliers buying PIC companies: Cisco and Lightwire and Huawei and CIP Technologies. With Alcatel-Lucent having in-house abilities, and, of course, Infinera, this should put pressure on other optical suppliers to have a PIC strategy.

It will be interesting to watch what other M&A activity occurs, and how this activity affects the components players.

The editor of Gazettabyte worked with Heavy Reading in researching photonic integration for the report.

Has the restructuring of the optical industry already started?

The view that consolidation in the optical networking industry is needed is not new. For a decade, ever since the end of the optical boom in 2001, consolidation has been called for and has been expected. And while the many optical startups funded then have long exited or been acquired, the optical industry continues to support numerous optical networking and component generalists and specialists.

Given the state of the telecom market, is a more fundamental industry restructuring finally on its way?

"The business model of the communication sector needs to change, and change in a relatively short order"

Larry Schwerin, CEO of Capella Intelligent Subsystems

Larry Schwerin, CEO of Capella Intelligent Subsystems, believes change is inevitable. He argues that the industry supply chain will change, especially as firms become more vertically integrated.

"This is not to say that the market and demand are not there," says Schwerin. But the industry is stuck with a decade-old structure even though the market has changed.

Optical market dynamics

Schwerin starts his argument by highlighting certain fundamental drivers. IP traffic continues to grow at over 30% a year, while the nature of the traffic is changing, especially with cloud computing and as users generate more digital media content.

“The current rate of bandwidth growth coupled with the rate of CapEx spend, the gap is widening and the revenue-per-bit is dropping,” he says. “Some argue that bandwidth growth will slow down as operators charge [users] more, but to date this hasn't been seen.”

These trends are welcome for the optical companies, says Schwerin, as operators adopt lower layer, optical switching as a cheaper alternative to IP routing. "The number of [wavelength-selective] switches per node is growing quite dramatically," he says. "We are now seeing deployments with, on average, 6-8 switches per node and people are projecting as many as 20 as people start deploying colourless, directionless, contentionless-based switching."

But such demand is coupled with fierce competition among numerous players at each layer of the optical industry's supply chain.

"Some 80% of the optics used by system vendors are bought. How do you differentiate on features above and beyond what you are buying?"

Supply chain

The annual global operator market for wireless and wireline equipment is valued at US $250bn, says Schwerin, using market research and financial analyst firms' data.

The global optical networking equipment market is $15bn. The Chinese vendors Huawei and ZTE now account for 30% of the market, while Alcatel-Lucent is the only other major vendor with double-digit share. The rest of the market is split among numerous optical vendors. "If you think about that, if you have 5% or less [optical networking] market share, that really is not a sustainable business given the [companies'] overhead expenses," says Schwerin.

The global optical component market is valued at $5bn. It is likely larger, anything up to $8bn, argues Schwerin, because of the Chinese optical companies supplying Huawei and ZTE.

"You have a $5-8bn market selling products into $15bn, and then the $15bn is trying to repurpose that material and resell it to the carriers - is that really what is going on?" says Schwerin. To this vendor hierarchy are added contract manufacturers, with different players serving the component and the system vendors.

The slim profits operators are making on their services are forcing them to place significant pricing pressure on the system companies that already face fierce competition. Meanwhile, the optical component and contract manufacturers are also trying to make money in this environment.

Looking at gross margin data from Morgan Stanley, Schwerin says that the system vendors' figures range from 35% for the low end to 40% at the high end. "What the figures highlight is a lack of differentiation," he says. "And, in part, it is because they are buying all the same technology."

Schwerin says that some 80% of the optics used by system vendors are bought. "How do you differentiate on features above and beyond what you are buying?"

The optical components vendors' gross margins of a year ago were 30%. More recent data shows these figures are down, with the only segment showing a rise being optical sub-systems.

What next?

Schwerin says one way to improve the health of the industry is greater vertical integration. How this will be done - which players get consumed and how - will only become clear in the next 2-3 years but he is confident it will happen. "There are just too many layers of the ecosystem and it is just too fragmented," he says.

Operator mergers and slower spending put pressure on vendors at each layer of the supply chain, inducing revenue stalls. "These swings seem to be more and more violent," says Schwerin. "It is difficult for companies to maintain themselves in these cycles, let alone innovate."

Schwerin highlights Cisco Systems' acquisition of silicon photonics start-up Lightwire earlier this year as an example of a system vendor embracing vertical integration while also acquiring innovation. Another example is Huawei's acquisition of optical integration specialist CIP Technologies.

"The business model of the communication sector needs to change, and change in a relatively short order," says Schwerin, who believes it has already started. He cites the merger between the two large optical component vendors, Oclaro and Opnext, and expects a similar deal among the system vendors: "One of those 5 percenters will be absorbed."

As the market further consolidates, and as system companies drive fundamental technologies, the components' market will start to shrink. "It is then like a chain reaction; it forces itself," he says.

Schwerin's take is that the existing optical component and contract manufacturing model is unlikely to continue. More likely, suppliers will provide basic optical components and differentiation will be driven by the system vendors.

The OTN transport and switching market

Source: Infonetics Research

The OTN transport and switching market is forecast to grow at a 17% compound annual growth rate (CAGR) from 2011 to 2016, outpacing the 5.5% CAGR of the optical equipment market (WDM, SONET/SDH). So claims a recent study on the OTN equipment marketplace by Infonetics Research.
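The gap between the two growth rates is easier to appreciate as a cumulative multiple. A minimal sketch, assuming five compounding periods between 2011 and 2016 and using only the two CAGR figures quoted above (no market sizes are assumed):

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Cumulative growth multiple for a compound annual growth rate."""
    return (1 + cagr) ** years


YEARS = 2016 - 2011  # assumed number of compounding periods

otn = growth_multiple(0.17, YEARS)       # OTN transport and switching
optical = growth_multiple(0.055, YEARS)  # optical equipment overall

print(f"OTN market multiple over {YEARS} years: {otn:.2f}x")
print(f"Optical equipment multiple over {YEARS} years: {optical:.2f}x")
```

On these assumptions the OTN segment roughly doubles over the forecast period, while the wider optical equipment market grows by about a third.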

A Q&A with report author, Andrew Schmitt, principal analyst for optical at Infonetics.

How should OTN (Optical Transport Network) be viewed? As an intermediate technology bridging the legacy SONET/SDH and the packet world? Or is OTN performing another, more fundamental networking role?

There is a deep misconception that once the voyage to an all-packet nirvana is complete, there is no need for SONET/SDH or an equivalent technology. This isn’t true. Networks that are 100% packet still need an OSI layer 1 mechanism, and to date this is mostly SDH and increasingly OTN.

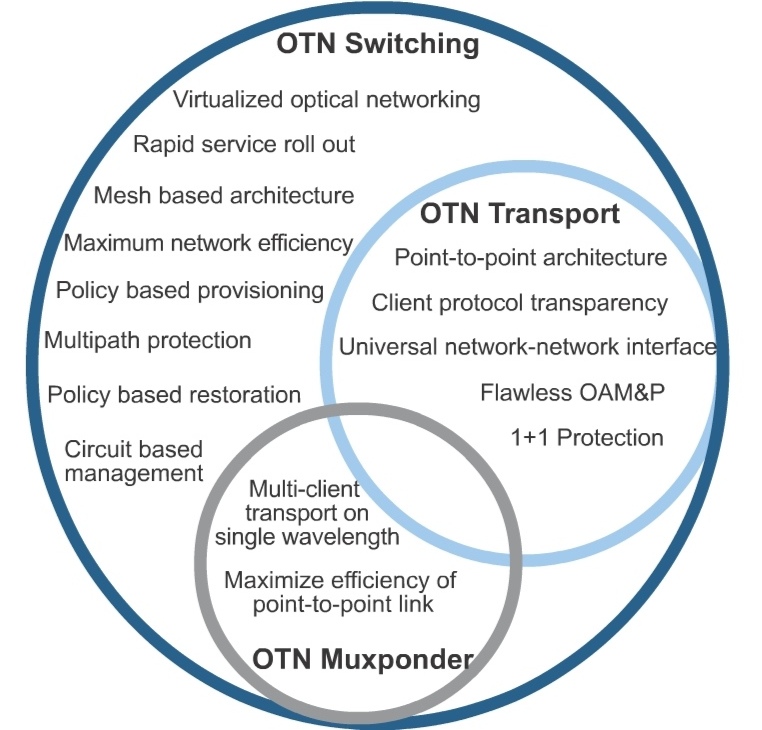

OTN should be viewed as the carrier transport protocol for the foreseeable future. For many carriers, OTN will be used not just for carrying a single packet client, but for interleaving multiple clients onto the same wavelength. This is OTN switching, and it is a superset of OTN transport functionality.

Most people talk about the OTN market but they fail to distinguish between whether OTN is used as a point-to-point technology or as a switching technology that allows the creation of an electronic mesh network.

What is OTN doing within operators' networks that accounts for their strong investment in the technology?

OTN is the new physical layer protocol carrying out the OSI [Open Systems Interconnection] layer 1 functions. Carriers are investing in OTN as part of their continuing investments in WDM [wavelength division multiplexing] equipment, most of which supports OTN transport, a maturing market. The new market is that of OTN switching, which resembles the SONET/SDH multiplexing scheme, but with much better features and management.

OTN switching deployments are directly related to large scale deployments of 40G and 100G transport networks as part of what I like to call The Optical Reboot. As these new wavelength speeds are rolled out, often on unused fibre, other technologies are being introduced at the same time – things like OTN switching and new control plane methods.

"People are underestimating how hard it is to build this [OTN] hardware and combine it with control plane software"

Please explain the difference between the main platforms - OTN transport, OTN switching and P-OTS. And will they have the same relative importance by 2016?

OTN switching is a superset of OTN transport, and the differences are shown in a Venn diagram (chart above) from a recent whitepaper I wrote, Integrated OTN Switching Virtualizes Optical Networks. Somewhere between the two is the muxponder application, which is good for low-volume deployments but becomes expensive and tough to manage when used in quantity.

P-OTS (packet-optical transport systems) are boxes that combine both layer 1 (SONET/SDH and/or OTN switching) with layer 2 (Ethernet, MPLS-TP, other circuit-oriented Ethernet (COE) protocols) in the same hardware and management platform.

Cisco was one of the early leaders in this space with some creative brute-force upgrades to the venerable 15454 platform. Since then, many legacy SONET/SDH multi-service provisioning platforms (MSPPs) have seen upgrades to carry Ethernet. Some of the best examples of this platform type are the Fujitsu 9500, Tellabs' 7100, and Alcatel-Lucent's 1850.

You say a big vendor battle is brewing in the P-OTS space: Cisco, Tellabs, and Alcatel-Lucent are the top 3 vendors, but Fujitsu, Ciena, and Huawei are gaining. What factors will determine a vendor's P-OTS success here?

It really depends. In the metro-regional applications of bigger boxes, things like 100G optics and OTN switching will be more important, as the layer 2 functions are handed off to dedicated layer 2/3 machines. As you get closer to the edge, though, OTN switching will have no importance and everything will depend on the layer 2 and layer zero features.

For layer 2, this means supporting a lightweight circuit-oriented Ethernet protocol with awareness of all the various service types that might be in play. For layer zero, it is all about cheap tunable optics (tunable XFP and SFP+), but particularly ROADMs. I think BTI Photonics, Cyan, Transmode, and ADVA Optical Networking are some of the smaller players to watch here. Mobile backhaul, data centre interconnect, and enterprise data services are the big engines of growth here.

Were there any surprises as part of your research for the report?

There just are not that many vendors shipping OTN switching systems today. I think people are underestimating how hard it is to build this hardware and combine it with control plane software. In 2011, only Ciena, Huawei, and ZTE shipped OTN switching for revenue. This year we should see Alcatel-Lucent, Infinera, Nokia Siemens, and maybe a few more.

Is there one OTN trend currently unclear that you'd highlight as worth watching?

Yes: It isn’t clear to what degree carriers want integrated WDM optics in OTN switches. In the past, big SONET/SDH switches like Ciena’s CoreDirector were always shipped with short-reach optics that connected it to standalone WDM systems. I think going forward, OTN switching and the WDM transport functions must be built into the same hardware in order to get the benefits of OTN switching at the best price, and that’s why I wrote the Integrated OTN Switching white paper – to try to communicate why this is important. It is a shift in the way carriers use this equipment, though, and as you know, some carrier habits are hard to break.

Further reading

OTN Processors from the core to the network edge

Industry underestimating 25 Gigabit parallel optics challenge

Ten Gigabit-based parallel optics is set to dominate the marketplace for several years to come. So claims datacom module specialist, Avago Technologies.

"One customer told us it has to keep the interface speed below 20Gbps due to the cost of the SerDes"

Sharon Hall, Avago

"People are underestimating what is going to be involved in doing 25 Gigabit [channels]," says Sharon Hall, product line manager for embedded optics at Avago Technologies. "Ten Gigabit is going to last quite a bit longer because of the price point it can provide."

Eventually 25 Gig-based parallel optics, with its lower lane count, will be cheaper than 10 Gigabit - but it will take several years. One challenge is the cost of 25 Gigabit-per-second (Gbps) electrical interfaces, due to the large relative size of the circuitry. One customer told Avago that it has to keep the interface speed below 20Gbps for now due to the cost of the serialiser/deserialiser (SerDes).

Avago has announced that its 120 Gigabit aggregate bandwidth (12x10Gbps) MiniPod and CXP parallel optics products are now in volume production. The company first detailed the MiniPod and CXP technologies in late 2010 yet many equipment makers are still to launch their first designs.

The CXP is a pluggable optical transceiver while the MiniPod is Avago's packaged optical engine used for embedded designs. The 22x18mm MiniPod is based on Avago's 8x8mm MicroPod optical engine but uses a 9x9 electrical MegArray connector with its more relaxed pitch.

Equipment makers face a non-trivial decision as to whether to adopt copper or optical interfaces for their platform designs. "This is a major design decision with a lot of customers going back and forth deciding which way to go," says Hall. "They might do a mix with some short connections staying copper but if they need 10 Gig at anything longer than a few meters then they are going to go optical."

Having chosen parallel optics, the style of form factor - pluggable or embedded - is largely based on the interface density required. "Certain customers prefer field pluggability [of CXP] with its pay-as-you-go and ease of installation features, but are limited on port density due to the number of CXP transceivers that can physically fit on a 19 inch board," says Hall.

Up to 14 CXPs can fit onto a 19-inch board. In contrast, some 50-100 transmit and receive MiniPod pairs can fit on the 19-inch board. "You have the whole board space to work with," she says. The embedded optics sit closer to the board's ASICs, shortening the electrical path and solving signal integrity issues that can arise using edge-mounted pluggables. Thermal management - not having all the pluggable optics at the card edge furthest from the fans - is also simplified using embedded optics.
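The density argument can be put in numbers. The sketch below assumes that each CXP and each MiniPod transmit/receive pair carries the 12x10Gbps quoted earlier in each direction; the function name is illustrative.

```python
LANES_PER_MODULE = 12
LANE_RATE_GBPS = 10
MODULE_GBPS = LANES_PER_MODULE * LANE_RATE_GBPS  # 120Gbps per direction


def board_bandwidth_gbps(modules: int) -> int:
    """Per-direction optical bandwidth for a board with the given module count."""
    return modules * MODULE_GBPS


cxp_board = board_bandwidth_gbps(14)       # pluggable, limited by the card edge
minipod_low = board_bandwidth_gbps(50)     # embedded, lower end of the quoted range
minipod_high = board_bandwidth_gbps(100)   # embedded, upper end of the quoted range

print(f"CXP board: {cxp_board}Gbps")
print(f"MiniPod board: {minipod_low}-{minipod_high}Gbps")
```

On these assumptions, embedded optics offer roughly four to seven times the optical bandwidth of an edge-mounted pluggable design on the same board.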

Generally, connections to data centre top-of-rack switches and between chassis use the pluggable CXP while internal backplane and mid-plane designs use the MiniPod. The CXP is also used by core switches and routers; Alcatel-Lucent's recently announced 7950 core router has a four-port CXP-based card. But Avago stresses that there are no hard rules: It has customers that have chosen the CXP and others the MiniPod for the same class of platform.

Source: Gazettabyte

25 Gigabit parallel optics

Finisar recently demonstrated its board-mounted optical assembly that it says will support channel speeds of 10, 14, 25 and 28Gbps, while silicon photonics vendors Luxtera and Kotura have announced 4x25Gbps optical engines. OneChip Photonics has announced photonic integrated circuits for the 4x25Gbps, 100GBase-LR4 10km standard that will also address short and mid-reach applications.

Avago has yet to make an announcement regarding higher speed parallel optics. "It is just a matter of time," says Hall. "We have done a demonstration of our 25Gbps VCSEL in an SFP+ package over a year ago, and we are developing parallel optics 25Gbps solutions."

But 25Gbps will take time before it gets to volume production, says Hall: "It is going to be a long, long design cycle for system companies - doing 25Gbps on their boards and their systems is a completely new design."

Supercomputers and system mid-plane and backplane applications could happen a lot earlier than 4x25GbE applications. "Some customers are interested in getting 4x25Gbps samples in the 2013 timeframe," says Hall. "But we expect that volume is going to take at least another year from that."

Meanwhile, Avago says it has already shipped 600,000 MicroPods, which have been generally available for over a year.

A Terabit network processor by 2015?

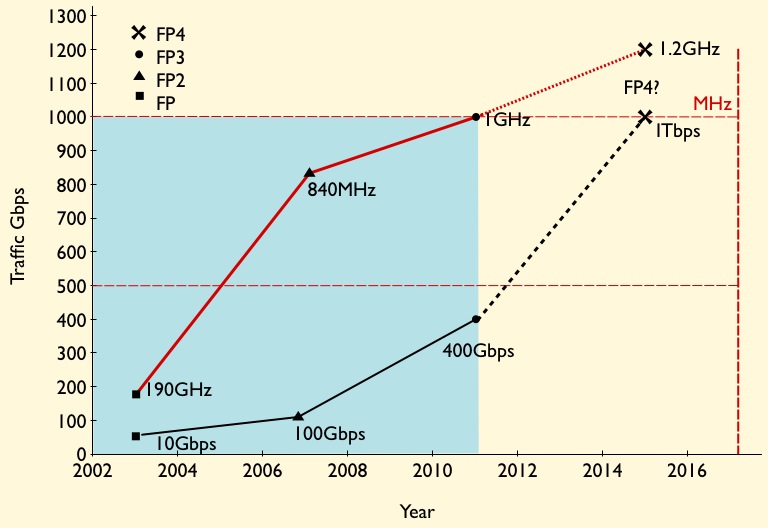

Given that 100 Gigabit merchant silicon network processors will appear only this year, it sounds premature to discuss Terabit devices. But Alcatel-Lucent's latest core router family uses the 400 Gigabit FP3 packet-processing chipset, and one Terabit is the next obvious development.

Source: Gazettabyte

Core routers achieved Terabit scale a while back. Alcatel-Lucent's recently announced IP core router family includes the high-end 32 Terabit 7950 XRS-40, expected in the first half of 2013. The platform has 40 slots and will support up to 160 interfaces at 100 Gigabit Ethernet.
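The slot and port counts can be checked against the headline capacity. The sketch below uses only the figures quoted above. Counting both traffic directions to reach 32 Terabit is an assumption about how the headline number is derived, since the article does not say.

```python
SLOTS = 40
PORTS_100GE = 160
PORT_RATE_GBPS = 100

ports_per_slot = PORTS_100GE // SLOTS
per_slot_gbps = ports_per_slot * PORT_RATE_GBPS

one_way_tbps = PORTS_100GE * PORT_RATE_GBPS / 1000
# Assumption: the 32 Terabit figure counts ingress and egress together.
both_ways_tbps = 2 * one_way_tbps

print(f"{ports_per_slot} x 100GbE per slot = {per_slot_gbps}Gbps per slot")
print(f"{one_way_tbps}Tbps one way, {both_ways_tbps}Tbps counting both directions")
```

The 400Gbps per slot that falls out of this matches the throughput of a single FP3 chipset.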

Its FP3 400 Gigabit network processor chipset, announced in 2011, is already used in Alcatel-Lucent's edge routers but the 7950 is its first router platform family to exploit fully the chipset's capability.

The 7950 family comes with a selection of 10, 40 and 100 Gigabit Ethernet interfaces. Alcatel-Lucent has designed the router hardware such that the card-level control functions are separate from the Ethernet interfaces and FP3 chipset that both sit on the line card. The re-design is to preserve the service provider's investment. Carrier modules can be upgraded independently of the media line cards, the bulk of the line card investment.

The 7950 XRS-20 platform, in trials now, has 20 slots which take the interface modules - dubbed XMAs (media adapters) - that house the various Ethernet interface options and the FP3 chipset. What Alcatel-Lucent calls the card-level control complex is the carrier module (XCM), of which there are up to 10 in the system. The XCM, which includes control processing, interfaces to the router's system fabric and holds up to two XMAs.

There are two XCM types used with the 7950 family router members. The 800 Gigabit-per-second (Gbps) XCM supports a pair of 400Gbps XMAs or 200Gbps XMAs, while the 400Gbps XCM supports a single 400Gbps XMA or a pair of 200Gbps XMAs.
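The pairing rules for carrier modules and media adapters can be expressed as a simple capacity check. This is an illustrative sketch only: the labels `XCM-800` and `XCM-400` are hypothetical names, and the check permits any mix of XMAs that fits within the XCM's capacity, which is more permissive than the combinations listed above.

```python
# Hypothetical labels for the two carrier module types and their capacities.
XCM_CAPACITY_GBPS = {"XCM-800": 800, "XCM-400": 400}
XMA_RATES_GBPS = (200, 400)
MAX_XMAS_PER_XCM = 2


def is_valid_load(xcm: str, xmas: list) -> bool:
    """Check that a set of XMAs fits an XCM by count, rate and total capacity."""
    if not 1 <= len(xmas) <= MAX_XMAS_PER_XCM:
        return False
    if any(rate not in XMA_RATES_GBPS for rate in xmas):
        return False
    return sum(xmas) <= XCM_CAPACITY_GBPS[xcm]


print(is_valid_load("XCM-800", [400, 400]))  # pair of 400Gbps XMAs
print(is_valid_load("XCM-400", [200, 200]))  # pair of 200Gbps XMAs
print(is_valid_load("XCM-400", [400, 400]))  # exceeds the 400Gbps XCM
```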

The slots that host the XCMs can scale to 2 Terabits, suggesting that the platforms are already designed with the next packet processor architecture in mind.

FP3 chipset

The FP3 chipset, like the previous generation 100Gbps FP2, comprises three devices: the P-chip network processor, a Q-chip traffic manager and the T-chip that interfaces to the router fabric.

The P-chip inspects packets and performs the look ups that determine where the packets should be forwarded. The P-chip determines a packet's class and the quality of service it requires and tells the Q-chip traffic manager in which queue the packet is to be placed. The Q-chip handles the packet flows and makes decisions as to how packets should be dealt with, especially when congestion occurs.

The basic metrics of the 100Gbps FP2 P-chip are that it is clocked at 840MHz and has 112 micro-coded programmable cores, arranged as 16 rows by 7 columns. To scale to 400Gbps, the FP3 P-chip is clocked at 1GHz (x1.2) and has 288 cores arranged as a 32x9 matrix (x2.6). The cores in the FP3 have also been re-architected such that two instructions can be executed per clock cycle. However, this achieves a 30-35% performance enhancement rather than 2x since there are data dependencies and it is not always possible to execute instructions in parallel. Collectively the FP3 enhancements provide the needed 4x improvement to achieve 400Gbps packet processing performance.
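The three scaling factors multiply out to roughly the required 4x. A minimal sketch of that arithmetic, taking the FP2 clock as 840MHz (the x1.2 ratio to the FP3's 1GHz implies megahertz) and treating the factors as independent, which is a simplification:

```python
FP2_CLOCK_MHZ = 840   # taken as MHz, consistent with the x1.2 ratio to 1GHz
FP3_CLOCK_MHZ = 1000
FP2_CORES = 16 * 7    # 112 cores
FP3_CORES = 32 * 9    # 288 cores

clock_gain = FP3_CLOCK_MHZ / FP2_CLOCK_MHZ
core_gain = FP3_CORES / FP2_CORES

# Dual-issue cores give a 30-35% gain rather than 2x because of data dependencies.
low = clock_gain * core_gain * 1.30
high = clock_gain * core_gain * 1.35

print(f"clock x{clock_gain:.2f}, cores x{core_gain:.2f}")
print(f"combined speed-up between x{low:.2f} and x{high:.2f}")
```

The product lands at about 4x, in line with the move from 100Gbps to 400Gbps.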

The FP3's traffic manager Q-chip retains the FP2's four RISC cores but the instruction set has been enhanced and the cores are now clocked at 900MHz.

Terabit packet processing

Alcatel-Lucent has kept the same line card configuration of using two P-chips with each Q-chip. The second P-chip is viewed as an inexpensive way to add spare processing in case operators need to support more complex service mixes in future. However, on FP2-based line cards the capability of the second P-chip has rarely been used, says Alcatel-Lucent.

Having the second P-chip certainly boosts the overall packet processing on the line card but at some point Alcatel-Lucent will develop the FP4 and the next obvious speed hike is 1 Terabit.

Moving to a 28nm or an even more advanced CMOS process will enable the clock speed of the P-chip to be increased but probably not by much. A 1.2GHz clock would still require a further more-than-doubling of the cores, assuming Alcatel-Lucent doesn't also boost processing performance elsewhere to achieve the overall 2.5x speed-up to a 1 Terabit FP4.
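The "more-than-doubling" claim follows from dividing the target speed-up by the clock gain. The sketch below is speculative by nature: the FP4 is unannounced, the 1.2GHz clock is the article's hypothetical, and the calculation assumes performance scales linearly with clock rate and core count.

```python
FP3_CORES = 288
FP3_CLOCK_GHZ = 1.0


def required_core_gain(target_speedup: float, new_clock_ghz: float,
                       old_clock_ghz: float = FP3_CLOCK_GHZ) -> float:
    """Core-count multiplier needed once the clock gain is accounted for."""
    clock_gain = new_clock_ghz / old_clock_ghz
    return target_speedup / clock_gain


gain = required_core_gain(target_speedup=2.5, new_clock_ghz=1.2)
cores = round(FP3_CORES * gain)

print(f"core count must grow x{gain:.2f}, to about {cores} cores")
```

Any further architectural gain per core would reduce the number of cores needed.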

However, there are two obvious hurdles to be overcome to achieve a Terabit network processor: electrical interface speeds and memory.

Alcatel-Lucent settled on 10Gbps SERDES to carry traffic between the chips and for the interfaces on the T-chip, believing the technology the most viable and sufficiently mature when the design was undertaken. A Terabit FP4 will likely adopt 25Gbps interfaces to provide the required 2.5x I/O boost.
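
The appeal of 25Gbps interfaces shows up in the lane count. The sketch below simply divides throughput by lane rate. It ignores line-coding overhead and any over-speed on the chip-to-chip links, so the figures are nominal assumptions rather than actual FP3 or FP4 pin counts.

```python
def lanes(throughput_gbps, lane_gbps):
    """Nominal number of SERDES lanes, ignoring coding overhead (ceiling division)."""
    return -(-throughput_gbps // lane_gbps)


fp3_lanes = lanes(400, 10)        # 400Gbps over 10Gbps SERDES
fp4_lanes_25g = lanes(1000, 25)   # 1 Terabit over 25Gbps SERDES
fp4_lanes_10g = lanes(1000, 10)   # 1 Terabit if 10Gbps SERDES were retained
print(fp3_lanes, fp4_lanes_25g, fp4_lanes_10g)
```

On these nominal figures, moving to 25Gbps lanes keeps the lane count flat while delivering the 2.5x I/O boost.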

Another even more significant challenge is the memory speed improvement needed for the look up tables and for packet buffering. Alcatel-Lucent worked with the leading memory vendors when designing the FP3 and will do the same for its next-generation design.

Alcatel-Lucent, not surprisingly, will not comment on devices it has yet to announce. But the company did say that none of the identified design challenges for the next chipset are insurmountable.

Further reading:

Network processors to support multiple 100 Gigabit flows

A more detailed look at the FP3 in New Electronics

OpenFlow extends its control to the optical layer

"We see OpenFlow as an additional solution to tackle the problem of network control"

Jörg-Peter Elbers, ADVA Optical Networking

The largest data centre players have a single-mindedness when it comes to service delivery. Players such as Google, Facebook and Amazon do not think twice about embracing and even spurring hardware and software developments if they will help them better meet their service requirements.

Such developments are also having a wider impact, interesting traditional telecom operators that have their own service challenges.

The latest development causing waves is the OpenFlow protocol. An open standard, OpenFlow is being developed by the Open Networking Foundation, an industry body that includes Google, Facebook and Microsoft, telecom operators Verizon, NTT and Deutsche Telekom, and various equipment makers.

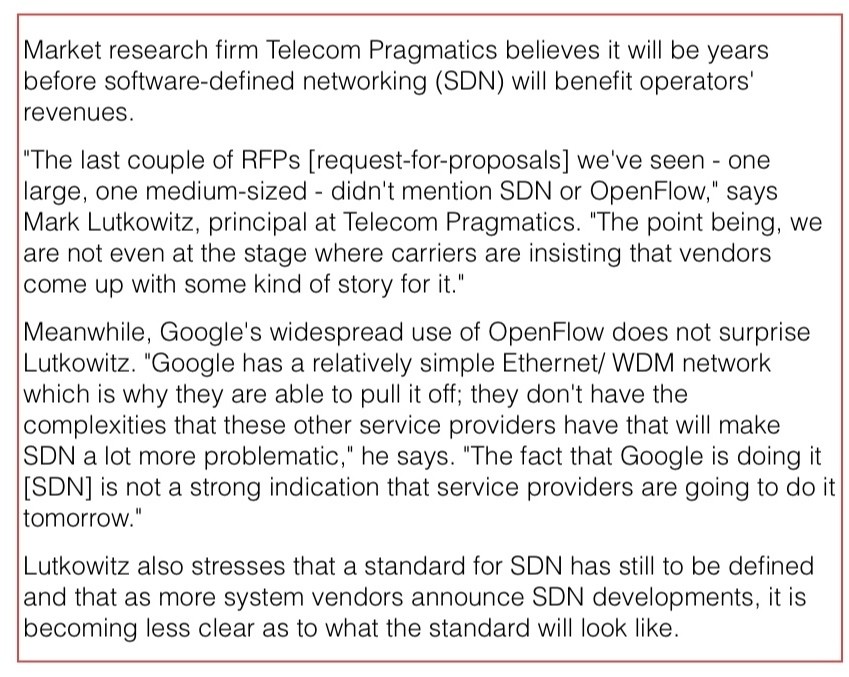

OpenFlow is already being used by Google, and falls under the more general topic of software-defined networking (SDN). A key principle underpinning SDN is the separation of the data and control planes to enable more centralised and simplified management of the network.

OpenFlow is being used in the management of packet switches for cloud services. "The promise of software-defined networking and OpenFlow is to give [data centre operators] a virtualised network infrastructure," says Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking.

The growing interest in OpenFlow is reflected in the activities of the telecom system vendors that have extended the protocol to embrace the optical layer. But whereas the content service provider giants need only worry about tailoring their networks to optimise their particular services, telecom operators must consider legacy equipment and issues of interoperability.

OFELIA

ADVA Optical Networking has started the ball rolling by running an experiment to show OpenFlow controlling both the optical and packet layers of the network. Until now the protocol, which provides a software-programmable interface, has been used to manage packet switches; the adding of the optical layer control is an industry first, the company claims.

The OpenFlow demonstration is part of the European “OpenFlow in Europe, Linking Infrastructure and Applications” (OFELIA) research project involving ADVA Optical Networking and the University of Essex. A test bed has been set up that uses the ADVA FSP 3000 to implement a colourless and directionless ROADM-based optical network.

"We have put a network together such that people can run the optical layer through an OpenFlow interface, as they do the packet switching layer, under one uniform control umbrella," says Elbers. "The purpose of this project is to set up an experimental facility to give researchers access to, and have them play with, the capabilities of an OpenFlow-enabled network."

"The fact that Google is doing it [SDN] is not a strong indication that service providers are going to do it tomorrow"

Mark Lutkowitz, Telecom Pragmatics

Remote researchers can access the test bed via GÉANT, a high-bandwidth pan-European backbone connecting national research and education networks.

ADVA Optical Networking hopes the project will act as a catalyst to gain useful feedback and ideas from the users, leading to further developments to meet emerging requirements.

OpenFlow and GMPLS

A key principle of SDN, as mentioned, is the separation of the data plane from the control plane. "The aim is to have a more unified control of what your network is doing rather than running a distributed specialised protocol in the switches," says Elbers.

That is not that much different from the Generalized Multi-Protocol Label Switching (GMPLS), he says: "With GMPLS in an optical network you effectively have a data plane - a wavelength switched data plane - and then you have a unified control plane implementation running on top, decoupled from the data plane."

But clearly there are differences. OpenFlow is being used by data centre operators to control their packet switches and generate packet flows. The goal is for their networks to gain flexibility and agility: "A virtualised network that can be run as you, the user, want it," said Elbers.

But the protocol only gives a user the capability to manage the forwarding behavior of a switch: an incoming packet's header is inspected and the user can program the forwarding table to determine how the packet stream is treated and the port it goes out on.
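
The match-then-forward behaviour described above can be illustrated with a toy model. This is not the OpenFlow wire protocol or any controller's API: the `FlowEntry` and `Switch` classes, their field names and the addresses are hypothetical, chosen only to show a programmable forwarding table.

```python
from dataclasses import dataclass, field


@dataclass
class FlowEntry:
    match: dict        # header fields that must match, e.g. {"ip_dst": "10.0.0.2"}
    out_port: int      # port the matching packets leave on
    priority: int = 0  # higher-priority entries are checked first


@dataclass
class Switch:
    table: list = field(default_factory=list)

    def add_flow(self, entry):
        """Called by the (hypothetical) controller to program the switch."""
        self.table.append(entry)
        self.table.sort(key=lambda e: e.priority, reverse=True)

    def forward(self, header):
        """Return the output port for a packet header, or None on a table miss."""
        for entry in self.table:
            if all(header.get(k) == v for k, v in entry.match.items()):
                return entry.out_port
        return None  # a real switch would typically consult the controller


switch = Switch()
switch.add_flow(FlowEntry(match={"ip_dst": "10.0.0.2"}, out_port=3, priority=10))
switch.add_flow(FlowEntry(match={}, out_port=1))  # empty match acts as a wildcard

port_a = switch.forward({"ip_src": "10.0.0.1", "ip_dst": "10.0.0.2"})
port_b = switch.forward({"ip_src": "10.0.0.1", "ip_dst": "10.0.0.9"})
print(port_a, port_b)
```

The switch itself holds no routing logic. Everything it does follows from entries written into the table from outside, which is the separation of control and data planes in miniature.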

And while OpenFlow has since been extended to cater for circuit switches as well as wavelength circuits, there are aspects at the optical layer which OpenFlow is not designed to address - issues that GMPLS does.

To run end-to-end, the control plane needs to be aware of the blocking constraints of an optical switch, while when provisioning it must also be aware of such aspects as the optical power levels and optical performance constraints. "The management of optical is different from managing a packet switch or a TDM [circuit switched] platform," says Elbers. “We need to deal with transmission impairments and constraints that simply do not exist inside a packet switch.”

That said, having GMPLS expertise, it is relatively simple for a vendor to provide an OpenFlow interface to an optical controlled network, he says: "We see OpenFlow as an additional solution to tackle the problem of network control."

Operators want mature and proven interoperable standards for network control, that incorporate all the different network layers and that use GMPLS.

"We are seeing that in the data centre space, the players think that they may not have to have that level of complexity in their protocols and can run something lower level and streamlined for their applications," says Elbers.

While operators see the benefit of OpenFlow for their own data centres and managed service offerings, they also are eyeing other applications such as for access and aggregation to allow faster service mobility and for content management, says Elbers.

ADVA Optical Networking sees the adding of optical to OpenFlow as a complementary approach: the integration of optical networking into an existing framework to run it in a more dynamic fashion, an approach that benefits the data centre operators and the telcos.

"If you have one common framework, when you give server and compute jobs then you know what kind of connectivity and latency needs to go with this and request these resources and reconfigure the network accordingly," says Elbers.

But longer term the impact of OpenFlow and SDN will likely be more far-reaching: applications themselves could program the network, or it could be used to enable dial-up bandwidth services in a more dynamic fashion. "By providing software programmability into a network, you can develop your own networking applications on top of this - what we see as the heart of the SDN concept," says Elbers. “The long term vision is that the network will also become a virtualised resource, driven by applications that require certain types of connectivity.”

Providing the interface is the first step. The value-add will be what players do with the added network flexibility, whether that is the vendors working with operators, the operators' customers or third-party developers.

"This is a pretty significant development that addresses the software side of things," says Elbers, adding that software is becoming increasingly important, with OpenFlow being an interesting step in that direction.

OTN processors from the core to the network edge

The latest silicon design announcements from PMC and AppliedMicro reflect the ongoing network evolution of the Optical Transport Network (OTN) protocol.

"There is a clear march from carriers, led in particular by China, to adopt OTN in the metro"

Scott Wakelin, PMC

The OTN standard, defined by the telecom standards body of the International Telecommunication Union (ITU-T), has existed for a decade but only recently has it emerged as a key networking technology.

OTN's growing importance is due to the enhanced features being added to the protocol coupled with developments in the network. In particular, OTN enhances capabilities that operators have long been used to with SONET/SDH, while also supporting packet-based traffic. Moreover chip vendors are unveiling OTN designs that now span the core to the network edge.

"OTN switching is a foundational technology in the network"

Michael Adams, Ciena

OTN supports 1 Gigabit Ethernet (GbE) with ODU0 framing alongside ODU1 (2.5G), ODU2 (10G), ODU3 (40G) and ODU4 (100G). The standard efficiently packs client signals such as SONET/SDH, Ethernet, video and Fibre Channel, at the various speed increments up to 100Gbps, prior to transmission over lightpaths. Meanwhile, the Optical Internetworking Forum (OIF) has recently developed the OTN-over-Packet-Fabric standard that allows OTN to be switched using packet fabrics.

"OTN switching is a foundational technology in the network," says Michael Adams, Ciena’s vice president of product & technology marketing.

Operator benefits

Whereas 10Gbps services matched 10Gbps lightpaths only a few years ago, transport speeds have now surged ahead. Common services are at 1 and 10 GbE while transport is now at 40Gbps and 100Gbps speeds. OTN switching allows client signals to be combined efficiently to fill the higher capacity lightpaths and avoid stranded bandwidth in the network.
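
The effect of grooming on stranded bandwidth can be sketched with a simple first-fit packing. The figures are nominal assumptions for illustration: a 100Gbps lightpath is treated as 80 slots of 1.25Gbps, a GbE client as one slot and a 10GbE client as eight. The service mix is hypothetical.

```python
SLOTS_PER_LIGHTPATH = 80                # nominal: 100Gbps / 1.25Gbps
CLIENT_SLOTS = {"GbE": 1, "10GbE": 8}   # nominal slots per client signal


def groom(clients):
    """First-fit packing of client signals into 100Gbps lightpaths.

    Returns the free slots left on each lightpath that had to be lit.
    """
    free = []
    for client in clients:
        need = CLIENT_SLOTS[client]
        for i, slots in enumerate(free):
            if slots >= need:
                free[i] -= need
                break
        else:
            # No existing lightpath has room, so light a new one.
            free.append(SLOTS_PER_LIGHTPATH - need)
    return free


clients = ["10GbE"] * 12 + ["GbE"] * 20   # hypothetical service mix
free = groom(clients)
print(len(free), "lightpaths, free slots per lightpath:", free)
```

In this example 32 client services fit onto two 100Gbps lightpaths, with the spare slots on the second lightpath left available for later services.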

OTN also benefits network connectivity changes. With AT&T's Optical Mesh Service, for example, customers buy a total capacity and, using a web portal, can adapt connectivity between their sites as requirements change. "It [OTN] can manage GbE streams and switch them through the network in an efficient manner," says Adams.

The ability to adapt connectivity is also an important requirement for cloud computing, with OTN switching and a mesh control plane seen as a promising way to enable dynamic networking that provides guaranteed bandwidth when needed, says Ciena.

OTN also offers an alternative to IP-over-DWDM, argues Ciena. By adding a 100Gbps wavelength, service routers can exploit OTN to add 10G services as needed rather than keep adding a 10Gbps wavelength for each service using IP-over-DWDM. "To enable service creation quickly, why not put your router network on top of that network versus running it directly?" says Adams.

OTN hardware announcements

The latest OTN chip announcements from PMC and AppliedMicro offer enhanced capacity when aggregating and switching client signals, while also supporting the interfacing to various switch fabrics.

PMC has announced two metro OTN processors, dubbed the HyPHY 20Gflex and 10Gflex. The devices are targeted at compact "pizza boxes" that aggregate residential, enterprise and mobile backhaul traffic, as well as packet-optical and optical transport platforms.

AppliedMicro's TPACK unit has unveiled two additions to its OTN designs: a 100Gbps chipset and the TPO134. The company also announced the general availability of its 100Gbps muxponder and transponder OTN design, now being deployed in the network.

Source: AppliedMicro

"OTN has long had a home in the core of the network," says Scott Wakelin, product manager for HyPHY flex at PMC. "But there is a clear march from carriers, led in particular by China, to adopt OTN in the metro, whether layer-zero or layer-one switched."

Using various market research forecasts, PMC expects the global OTN chip market to reach US $600 million in 2015, the bulk being metro.

PMC and AppliedMicro offer application-specific standard product (ASSP) OTN ICs while AppliedMicro also offers FPGA-based OTN designs.

The benefits of using an FPGA, says AppliedMicro, include time-to-market, the ability to reprogramme the design to accommodate standards’ tweaks, and enabling system vendors to add custom logic elements to differentiate their designs. PMC develops ASSPs only, arguing that such chips offer superior integration, power efficiency and price points.

Both companies, when developing an ASSP, know that the resulting design will be adopted by end customers. When PMC announced its original HyPHY family of devices, seven of the top nine OEMs were developing board designs based on the chip family.

PMC's metro OTN processors

The HyPHY 20Gflex has 16 SFP (up to 5Gbps) and two 10Gbps XFP/SFP+ interfaces, whose streams it can groom using the device's 100Gbps cross-connect. The cross-connect can manipulate streams down to SONET/SDH STS-1/STM-0 rates and ODU0 (1GbE) OTN channels.

Both ODU0 and ODUflex channels are supported. Before adding ODU0, a Gigabit Ethernet channel could only sit in a 2.5Gbps (ODU1) container, which wastes half the capacity. Similarly by supporting ODUflex, signals such as video can be mapped into frames made up of increments of 1.25Gbps. "For efficient use of resources from the metro into the core, you need to start at the access," said Wakelin.
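
The container arithmetic can be worked through at the level of 1.25Gbps slots. The sketch below uses nominal sizes (ODU0 as one slot, ODU1 as two, ODU2 as eight) and a hypothetical 3Gbps video signal. It counts wasted slots only and ignores unused capacity inside a partly filled slot.

```python
import math

SLOT_GBPS = 1.25  # nominal slot size, matching the ODUflex increment in the text
FIXED_CONTAINERS = {"ODU0": 1, "ODU1": 2, "ODU2": 8}  # nominal size in slots


def slots_needed(client_gbps):
    """Smallest number of 1.25Gbps slots that can carry the client signal."""
    # Round before the ceiling so floating-point noise cannot add a slot.
    return max(1, math.ceil(round(client_gbps / SLOT_GBPS, 6)))


def wasted_fraction(client_gbps, container_slots):
    """Fraction of a container's slots left unused by the client."""
    return 1 - slots_needed(client_gbps) / container_slots


gbe_in_odu1 = wasted_fraction(1.0, FIXED_CONTAINERS["ODU1"])
gbe_in_odu0 = wasted_fraction(1.0, FIXED_CONTAINERS["ODU0"])
video_slots = slots_needed(3.0)  # hypothetical 3Gbps video client
video_in_odu2 = wasted_fraction(3.0, FIXED_CONTAINERS["ODU2"])
print(gbe_in_odu1, gbe_in_odu0, video_slots, video_in_odu2)
```

A GbE client leaves half of an ODU1 unused but fills an ODU0. The hypothetical video signal needs three slots as ODUflex, where the next fixed container up would leave most of its slots idle.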

Source: PMC

The chip also supports the OTN-over-Packet-Fabric protocol. The devices can interface to OTN, SONET/SDH and packet switch fabrics.

The 20Gflex offers 40Gbps of OTN framing and a further 20Gbps of OTN mapping. The OTN mapping is used for those client signals to be fitted into ODU frames. With the additional 40Gbps interfaces that connect to the switch fabric, the total interface throughput is 100Gbps, matching the device's cross-connect capacity.

Other chip features include Fast Ethernet, Gigabit Ethernet and 10GbE MACs for carrier Ethernet transport, and support for timing over packet standards, including IEEE 1588v2 over OTN, used to carry mobile backhaul timing information.

The 10Gflex variant has similar functionality to the 20Gflex but with lower throughput.

PMC is now sampling the HyPHY Gflex devices to lead customers.

AppliedMicro's OTN designs

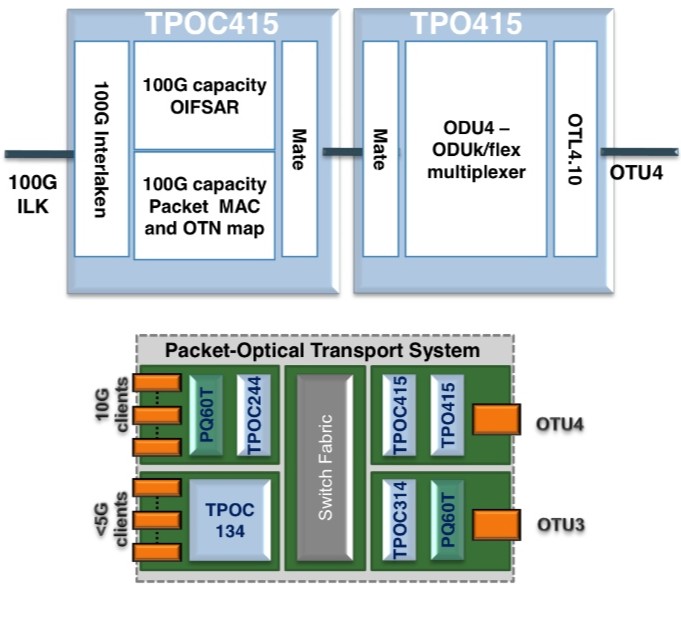

AppliedMicro's TPACK unit has unveiled two OTN designs: a TPO415/C415 OTN multiplexer chipset for use in 100Gbps packet optical transport line cards, and the TPO134 device used at the network edge.

The two devices combined - the TPO415 and TPOC415 - are implemented using FPGAs, what AppliedMicro dubs softsilicon. The two devices interface between the 100Gbps line side and the switch fabric. The TPO415 takes the OTU4 line side OTN signal and demultiplexes it to the various channel constituents. These can be ODU0, ODU1, ODU2, ODU3, ODU4 and ODUflex - capacity from 1Gbps to 100Gbps.

"The [100Gbps muxponder] design comes with an API that makes it look like one component"

Lars Pedersen, AppliedMicro

The TPOC415 has a 100Gbps, 80-channel segmentation and reassembly function (SAR) compliant with the OIF OTN-over-Packet-Fabric standard. The TPOC415 also has a 100Gbps, 80-channel packet mapper function for the transport of Ethernet and MPLS-TP over ODUk or ODUflex. The device's 100Gbps Interlaken interface is used to connect to the switch fabric for packet switching and ODU cross-connection. The devices can also be used in a standalone fashion for designs where the switch fabric does not use Interlaken, or when working with integrated switches and network processors.

Source: AppliedMicro

"This is the first solution in the market for doing these hybrid functions at 100Gbps," says Lars Pedersen, CTO of AppliedMicro's TPACK.

The second design is the softsilicon TPO134, a 10Gbps add/drop multiplexer that can take in up to 16 client signals and has two OTU2 interfaces. In between is the cross-connect that supports ODU0, ODU1 and ODUflex channels. Two devices can be combined to support 32 client channels and four OTU2 interfaces. Such a dual-device design in a pizza-box system would be used to combine multiple client streams.

Being softsilicon, the TPO134 can also be used for packet optical transport systems. Here by downloading a different FPGA image, the design can also implement the segmentation and reassembly function required for the OIF's OTN-over-Packet-Fabric standard. "The interface to the switch fabric is Interlaken again," says Pedersen.

The TPO134 design doubles the capacity of AppliedMicro's previous add/drop multiplexer designs and is the first to support the OIF standard.

AppliedMicro has also announced the general availability of its 100G muxponder design. The muxponder design is a three-device chipset based on two PQ60T ASSPs and a TPO404 softsilicon design.

The PQ60T devices map 10 and 40Gbps clients into OTN and the TPO404 performs the multiplexing to OTU4 with forward error correction. The client signals supported include SONET/SDH, Ethernet and Fibre Channel. On the line side the design also supports various FEC schemes including an enhanced FEC. The TPO404 differs from the TPOT414/424 devices that link 100GbE and 100Gbps line side.

"The [100Gbps muxponder] design comes with an API [application programming interface] that makes it look [from a software perspective] like one component with some client and line ports, similar to the TPO134 device," says Pedersen.

Further reading:

Transport processors now at 100 Gigabit

60-second interview with .... Vladimir Kozlov

A Q&A with Vladimir Kozlov, CEO of market research firm, LightCounting. The first of occasional, brief interviews with industry figures.

"You have to look over a longer time frame to understand the industry and appreciate the progress"

What exactly does your job entail?

As the founder and CEO my primary responsibility is to ensure that LightCounting's business grows smoothly. In practice this means managing a small team of industry experts to deliver quality market intelligence to our customers, co-ordinating sales and marketing and looking for new business opportunities.

What aspect of the job do you most enjoy?

I love talking with clients and industry experts. A good discussion is an essential element of market research, sales and business development. Not to mention that many of these people are my good friends by now.

How is the optical industry changing?

Slowly but steadily. If you look at it on a daily or quarterly time frame, it is full of problems and nothing is really changing. You have to look over a longer time frame to understand the industry and appreciate the progress.

What are you working on now?

I recently attended Optinet China held in Beijing. I am working on a report based on that exhibition and starting to work on a forecast report. China is a wild card, the economic systems of Europe are falling apart, and the U.S. is full of uncertainty. Someone needs to come up with a forecast for the next four years, despite this uncertainty. It is a big responsibility and a privilege at the same time.

You have been covering optical as an analyst for over a decade. What advice about market research would you give to someone starting now?

Doing a really good job in market research is much harder than it looks. You have to live on the edge to be able to do it right. Scanning the news and running forecast models is the easy part. Digging deep into the industry by finding the right questions and people who can answer them is much more challenging, but it is also a lot of fun.

Unless you can live on the edge and get excited by challenges, do not go into market research. Really good market research people are a bit crazy…I am not really good at it, but I am learning every day and I will certainly be crazy when it is time to retire.

LightCounting is a market research and consulting company focused on high-speed interconnects for the datacom, telecom and consumer communications markets.

OneChip Photonics targets the data centre with its PICs

OneChip Photonics is developing integrated optical components for the IEEE 40GBASE-LR4 and 100GBASE-LR4 interface standards.

The company believes its photonic integrated circuits (PICs) will more than halve the cost of the 40 and 100 Gigabit 10km-reach interfaces, enough for LR4 to cost-competitively address shorter reach applications in the data centre.

"I think we can cut the price [of LR4 modules] by half or better”

Andy Weirich, OneChip Photonics

The products mark an expansion of the Canadian startup's offerings. Until now OneChip has concentrated on bringing PIC-based passive optical network (PON) transceivers to market.

LR4 PICs

The startup is developing separate LR4 transmitter and receiver PICs. The 40 and 100GBASE-LR4 receivers are due in the third quarter of 2012, while the transmitters are expected by the year end.

The 40GBASE-LR4 receiver comprises a wavelength demultiplexer - a 4-channel arrayed waveguide grating (AWG) - and four photo-detectors operating around 1300nm. A spot-size converter - an integrated lens - couples the receiver's waveguide's mode field to the connecting fibre.

"[Data centre operators] are saying that they are having to significantly bend out of shape their data centre architecture to accommodate even 300m reaches”

The 40GBASE-LR4 transmitter PIC comprises four directly-modulated distributed feedback (DFB) lasers while the 100GBASE-LR4 uses four electro-absorption modulator DFB lasers. Different lasers for the two PICs are required since the four wavelengths at 100 Gig, also around 1300nm, are more tightly spaced: 5nm versus 20nm. "They are much closer together than the 40 Gig version,” says Andy Weirich, OneChip Photonics' vice president of product line management.
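
The difference between the two grids is easy to see from the nominal centre wavelengths. The values below are not from the article. They are the commonly quoted IEEE figures for the two interfaces and are included here as an assumption, to show where the roughly 5nm and 20nm spacings come from.

```python
# Nominal centre wavelengths in nm (assumed values, not taken from the article).
LR4_40G_NM = [1271.0, 1291.0, 1311.0, 1331.0]        # coarse WDM grid
LR4_100G_NM = [1295.56, 1300.05, 1304.58, 1309.14]   # LAN-WDM grid


def spacings(grid):
    """Gaps between adjacent channels, in nm, rounded to two decimals."""
    return [round(b - a, 2) for a, b in zip(grid, grid[1:])]


def span(grid):
    """Total wavelength range covered by the four channels, in nm."""
    return round(grid[-1] - grid[0], 2)


print("40G spacings:", spacings(LR4_40G_NM), "span:", span(LR4_40G_NM))
print("100G spacings:", spacings(LR4_100G_NM), "span:", span(LR4_100G_NM))
```

On these figures the four 40 Gig channels spread across 60nm while the 100 Gig channels sit within about 14nm.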

Another consequence of the wider wavelength spacings is that the 40 Gig transmitter uses four discrete lasers. “Because the 40 Gig wavelengths are much further apart, putting all the lasers on the one die is problematic," says Weirich. The 40GBASE-LR4 transmitter design thus uses five indium phosphide components, four lasers and the AWG, while the 40GBASE-LR4 receiver and the two 100GBASE-LR4 devices are all monolithic PICs.

Both LR4 transmitter designs also include monitor photo-diodes for laser control.

Lower size and cost

OneChip says the resulting PICs are tiny, measuring less than 3mm in length. “We think the PICs will enable the packaging of LR4 in a QSFP,” says Weirich. 40GBASE-LR4 products already exist in the QSFP form factor but the 100GBASE-LR4 uses a CFP module.

The startup expects module makers to use its receiver chips once they become available rather than wait for the receiver-transmitter PIC pair. "Reducing the size of one half the solution is possibly good enough to fit the whole hybrid design - the PIC for the receive and discretes for the transmit - into a QSFP,” says Weirich.

The PICs are expected to reduce significantly the cost of LR4 modules. "I think we can cut the price by half or better,” says Weirich. “Right now the LR4 is far too expensive to be used for data centre interconnect.” OneChip expects its LR4 PICs to be cost-competitive with the 2km reach 10x10 MSA interface.

Meanwhile, short-reach 40 and 100 Gig interfaces use VCSEL technology and multi-mode fibre to address 100m reach requirements. In larger data centres this reach is limiting. Extended reach - 300-400m - multimode interfaces have emerged but so far these are at 40 Gig only.

"[Data centre operators] are saying that they are having to significantly bend out of shape their data centre architecture to accommodate even 300m reaches,” says Weirich. “They really want more than that.”

OneChip believes interface distances of 200m-2km are underserved and it is this market opportunity that it is seeking to address with its LR4 designs.

Roadmap

Will OneChip integrate the design further to produce a single-PIC LR4 transceiver?

"It can be put into one chip but it is not clear that there is an economic advantage,” says Weirich. Indeed one PIC might even be more costly than the two-PIC chipset.

Another factor is that at 100 Gig, the 25Gbps electronics present a considerable signal integrity design challenge. “It is very important to keep the electronics very close to the photo-detectors and the modulators,” he says. “That becomes more difficult if you put it all on the one chip.” The fabrication yield of a larger single PIC would also be reduced, impacting cost.

OneChip, meanwhile, has started limited production of its PON optical network unit (ONU) transceivers based on its EPON and GPON PICs. The company's EPON transceivers are becoming generally available while the GPON transceivers are due in two months’ time.

The company has yet to decide whether it will make its own LR4 optical modules. For now OneChip is solely an LR4 component supplier.

Further reading:

See OFC/NFOEC 2012 highlights, the Kotura story in the Optical Engines section