OFC/NFOEC 2013 industry reflections - Part 2

Bill Gartner, vice president and general manager of the high-end routing and optical business unit at Cisco Systems.

There were several key themes during this year’s OFC conference, but what I found most compelling were the disruptive trends and technologies that stand to significantly impact the optical communications market in the coming years.

"SDN could be the single biggest disruptor in the transport industry and has the potential to transform network programmability and orchestration"

One of the hottest themes at this year’s OFC conference is the role of silicon photonics and the benefits it presents to service providers and carriers. Silicon photonics is truly one of the most interesting advancements taking place in the industry as it has the potential to drastically improve the density and lower the power and overall cost of ASICs.

Several carriers at the show, including CenturyLink and AT&T, presented their view that optics is becoming a larger portion of their spend and now exceeds the cost of packet switching technologies.

A second key trend coming out of the show is software-defined networking (SDN) and its impact on networking. There is tremendous industry interest around this topic and it extended to the Anaheim Convention Center.

With SDN, our customers can increase flexibility in terms of selecting the features and protocols that make sense for their network application – whether it is a data centre application, a service provider application or a large-scale enterprise application.

The last theme that resonated during OFC was around the convergence of packet and optical solutions. As service providers look for ways to decrease both CapEx and OpEx related to the network, incremental technology improvements will decrease costs. However, for many customers, their network capacity is growing far faster than their revenues, so incremental improvements will not yield required reductions.

"As an industry we have to evolve organisationally and technically. Those who fail to recognise that face extinction."

This shows us that we need to explore more fundamental shifts in architectures that have the potential to yield significant savings in OpEx and CapEx. Enter the convergence of IP and optical – this may take the form of converged platforms, but will also involve multi-layer control planes that allow the exchange of information between the packet and optical layers. This convergence helps answer questions like: How well is the network utilised? Can it be optimised? Are there multi-layer protection/ restoration schemes that make better use of the available resources?

During the conference, I had the opportunity to present at the OSA Executive Forum, which brought together more than 150 senior-level executives to discuss key themes, opportunities and challenges facing the next generation in optical communications.

What struck me is that this industry is constantly evolving, which presents challenges and opportunities. We are looking at an industry that is highly fragmented at the moment and requires further streamlining.

You have new players at every level of the value chain that bring exciting, unique perspectives and advanced technologies that increase efficiency and decrease costs. But none of this innovation comes without change; as an industry we have to evolve organisationally and technically. Those who fail to recognise that face extinction.

"This is like solving a simultaneous equation where the variables are power, cost and density – you need to solve for all three"

The key themes discussed at OFC are an indication of what is to come in optical transport and mirror our top priorities at Cisco.

In the coming year, we expect to see CMOS photonics technology enable lower power pluggables. This is the case with CPAK, but more broadly, we will see this technology find its way into low cost board-to-board interconnect and chassis-to-chassis interconnect.

As an industry, we have made great progress in reducing the cost of transmitting bits over a long distance but much more remains to be done. As bit rates increase to beyond 100 Gigabit, we must look for ways to drive this cost down faster, while decreasing both power and size. This is like solving a simultaneous equation where the variables are power, cost and density – you need to solve for all three.

During the next five years, I think that SDN could be the single biggest disruptor in the transport industry and has the potential to transform network programmability and orchestration.

We will see an entire software industry emerge around SDN, but it is important to note that this is really all about multilayer control – Layer 0 to Layer 3. SDN is not simply an optical transport problem to be solved. The advantage will go to those who are looking at this holistically.

Brandon Collings, CTO of the communications and commercial optical products group at JDSU

I found it interesting that the major network equipment manufacturers had a significantly increased presence on the exhibition floor.

"This year’s focus and buzz was all on silicon photonics with researchers leveraging it against nearly every function in telecom and datacom"

I learned a lot about SDN at levels above the photonic network. This is a very complex topic likely to take some time to fully mature within telecom networks; however, the potential values appear compelling.

This year’s focus and buzz was all on silicon photonics with researchers leveraging it against nearly every function in telecom and datacom. I expect it will be interesting for industry watchers to see how this promising technology evolves within the industry, where it achieves its promise and where it runs into practical roadblocks.

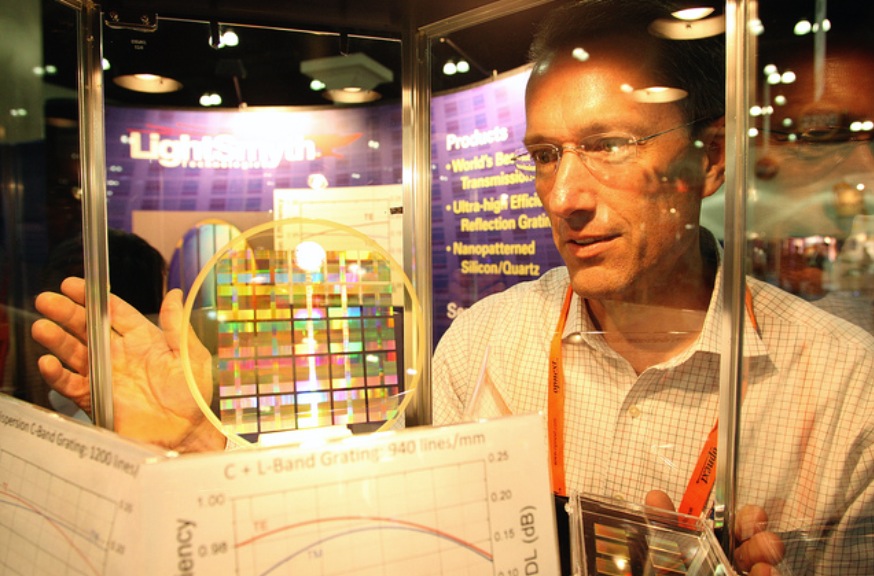

Vladimir Kozlov, CEO of LightCounting

This was the best OFC since 2000. The optical community is once again energised. Some attribute the improved mood to high-value acquisitions of companies LightWire and Nicira that were made last year, but this is just part of the story.

Yes, the potential of silicon photonics and software-defined networking (which LightWire and Nicira were focussed on, respectively) does broaden the horizon for optical technologies in communication networks and data centres. But the excitement is not limited to just these two ideas. All the new - and old or forgotten - ideas, technologies and products once again have a shot at making a difference. Demand for optics is strong and the customers are hungry for innovation.

"Demand for optics is strong and the customers are hungry for innovation"

In contrast to 2000, few people are getting carried away with the excitement. The mood is much more constructive this time and it makes me hope that most of this new energy will not be wasted.

I would not single out a specific technology or application to watch out for in the next few years. All of them have opportunities and challenges ahead. We will keep track of as many developments as we can and make sure that hype does not lead the industry off the tracks this time.

Effie Favreau, marketing, Sumitomo Electric

One hundred Gigabit technology is here. Last year there was a lot of hype about 100 Gigabit and now it is reality; vendors have products that are shipping.

Sumitomo and ClariPhy partnered on pluggable coherent modules. Together, we hosted an impressive demonstration with all the components to make pluggable coherent modules available next year.

"For the enterprise/ data centre, vendors requiring low cost, high density equipment really need the CFP4"

One thing I learned from the show is that vendors need to re-purpose their existing equipment. There was much discussion regarding software-enabled applications and passives to enhance the performance of networks and make them more intelligent.

There was the introduction of the CFP2 from several vendors as well as Cisco's CPAK. For the enterprise/ data centre, vendors requiring low cost, high density equipment really need the CFP4. At Sumitomo, we are concentrating our R&D efforts on the CFP4.


Software-defined networking: A network game-changer?

OFC/NFOEC reflections: Part 1

"We [operators] need to move faster"

Andrew Lord, BT

Q: What was your impression of the show?

A: Nothing out of the ordinary. I haven't come away clutching a whole bunch of results that I'm determined to go and check out, which I do sometimes.

I'm quite impressed by how the main equipment vendors have moved on to look seriously at post-100 Gigabit transmission. In fact we have some [equipment] in the labs [at BT]. That is moving on pretty quickly. I don't know if there is a need for it just yet but they are certainly getting out there, not with live chips but making serious noises on 400 Gig and beyond.

There was a talk on the CFP [module] and whether we are going to be moving to a coherent CFP at 100 Gig. So what is going to happen to those prices? Is there really going to be a role for non-coherent 100 Gig? That is still a question in my mind.

"Our dream future is that we would buy equipment from whomever we want and it works. Why can't we do that for the network?"

I was quite keen on that but I'm wondering if there is going to be a limited opportunity for the non-coherent 100 Gig variants. The coherent prices will drop and my feeling from this OFC is they are going to drop pretty quickly when people start putting these things [100 Gig coherent] in; we are putting them in. So I don't know quite what the scope is for people that are trying to push that [100 Gigabit direct detection].

What was noteworthy at the show?

There is much talk about software-defined networking (SDN), so much talk that a lot of people in my position have been describing it as hype. There is a robust debate internally [within BT] on the merits of SDN which is essentially a data centre activity. In a live network, can we make use of it? There is some skepticism.

I'm still fairly optimistic about SDN and the role it might have and the [OFC/NFOEC] conference helped that.

I'm expecting next year to be the SDN conference and I'd be surprised if SDN doesn't have a much greater impact then [OFC/NFOEC 2014] with more people demoing SDN use cases.

Why is there so much excitement about SDN?

Why now when it could have happened years ago? We could have all had GMPLS (Generalised Multi-Protocol Label Switching) control planes. We haven't got them. Control plane research has been around for a long time; we don't use it: we could but we don't. We are still sitting with heavy OpEx-centric networks, especially optical.

"The 'something different' this conference was spatial-division multiplexing"

So why are we getting excited? Getting the cost out of the operational side - the software-development side, and the ability to buy from whomever we want to.

For example, if we want to buy a new network, we put out a tender and have some 10 responses. It is hard to adjudicate them all equally when, with some of them, we'd have to start from scratch with software development, whereas with others we have a head start as our own management interface has already been developed. That shouldn't and doesn't need to be the case.

If we open the equipment's north-bound interface into our own OSS (operations support system) then in theory, and this is probably naive, any specific OSS we develop ought to work.

Our dream future is that we would buy equipment from whomever we want and it works. Why can't we do that for the network?

We want to as it means we can leverage competition but also we can get new network concepts and builds in quicker without having to suffer 18 months of writing new code to manage the thing. We used to do that but it is no longer acceptable. It is too expensive and time consuming; we need to move faster.

It [the interest in SDN] is just competition hotting up and costs getting harder to manage. This is an area that is now the focus and SDN possibly provides a way through that.

Another issue is the ability to quickly put new applications and services onto our networks. For example, a bank wants to do data backup but doesn't want to spend a year and resources developing something that it uses only occasionally. Is there a bandwidth-on-demand application we can put onto our basic network infrastructure? Why not?

SDN gives us a chance to do something like that, we could roll it out quickly for specific customers.

Anything else at OFC/NFOEC that struck you as noteworthy?

The core networks aspect of OFC is really my main interest.

You are taking the components, a big part of OFC, and then the transmission experiments and all the great results that they get - multiple Terabits and new modulation formats - and then in networks you are saying: What can I build?

The networks have always been the poor relation. It has not had the great exposure or the same excitement. Well, now, the network is becoming centre stage.

As you see components and transmission mature - and it is maturing as the capacity we are seeing on a fibre is almost hitting the natural limit - so the spectral efficiency, the amount of bits you can squeeze in a single Hertz, is hitting the limit of 3,4,5,6 [bit/s/Hz]. You can't get much more than that if you want to go a reasonable distance.

So the big buzz word - 70 to 80 percent of the OFC papers we reviewed - was flex-grid, turning the optical spectrum in fibre into a much more flexible commodity where you can have whatever spectrum you want between nodes dynamically. Very, very interesting; loads of papers on that. How do you manage that? What benefits does it give?

What did you learn from the show?

One area I don't get yet is spatial-division multiplexing. Fibre is filling up so where do we go? Well, we need to go somewhere because we are predicting our networks continuing to grow at 35 to 40 percent.
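To put the 35 to 40 percent figure in perspective, here is a minimal sketch of the compounding involved. It assumes the growth rate is annual and constant, which the interview does not state explicitly; the function names are illustrative.

```python
import math


def doubling_time_years(annual_growth: float) -> float:
    """Years for traffic to double at a constant compound annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth)


def growth_multiple(annual_growth: float, years: int) -> float:
    """Traffic multiple after the given number of years of compound growth."""
    return (1 + annual_growth) ** years


# Assumption: 35 and 40 percent are yearly growth rates.
for rate in (0.35, 0.40):
    print(
        f"{rate:.0%} a year: doubles in {doubling_time_years(rate):.1f} years, "
        f"x{growth_multiple(rate, 10):.0f} after 10 years"
    )
```

Under these assumptions traffic doubles roughly every two years or so, which is why simply adding fibre is described as layering solutions on top of each other.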

Now we are hitting a new era. Putting fibre in doesn't really solve the problem in terms of cost, energy and space. You are just layering solutions on top of each other and you don't get any more revenue from it. We are stuffed unless we do something different.

The 'something different' this conference was spatial-division multiplexing. You still have a single fibre but you put in multiple cores and that is the next way of increasing capacity. There is an awful lot of work being done in this area.

I gave a paper [pointing out the challenges]. I couldn't see how you would build the splicing equipment, or how you would get this fibre qualified. Given the 30-40 years of expertise of companies like Corning making single mode fibre, are we really going to go through all that again for this new fibre? How long is that going to take? How do you align these things?

"SDN for many people is data centres and I think we [operators] mean something a bit different."

I just presented the basic pitfalls from an operator's perspective of using this stuff. That is my skeptic side. But I could be proved wrong, it has happened before!

Anything you learned that got you excited?

One thing I saw is optics pushing out.

In the past we saw 100 Megabit and one Gigabit Ethernet (GbE) being king of a certain part of the network. People were talking about that becoming optics.

We are starting to see optics entering a new phase. Ten Gigabit Ethernet is a wavelength, a colour on a fibre. If the cost of those very simple 10GbE transceivers continues to drop, we will start to see optics enter a new phase where we could be seeing it all over the place: you have a GigE port, well, have a wavelength.

[When that happens] optics comes centre stage and then you have to address optical questions. This is exciting and Ericsson was talking a bit about that.

What will you be monitoring between now and the next OFC?

We are accelerating our SDN work. We see that as being game-changing in terms of networks. I've seen enough open standards emerging, enough will around the industry with the people I've spoken to, some of the vendors that want to do some work with us, that it is exciting. Things like 4k and 8k (ultra high definition) TV, providing the bandwidth to make this thing sensible.

"I don't think BT needs to be delving into the insides of an IP router trying to improve how it moves packets. That is not our job."

Think of a health application where you have a 4 or 8k TV camera giving an ultra high-res picture of a scan, piping that around the network at many many Gigabits. These types of applications are exciting and that is where we are going to be putting a bit more effort. Rather than the traditional just thinking about transmission, we are moving on to some solid networking; that is how we are migrating it in the group.

When you say open standards [for SDN], OpenFlow comes to mind.

OpenFlow is a lovely academic thing. It allows you to open a box for a university to try their own algorithms. But it doesn't really help us because we don't want to get down to that level.

I don't think BT needs to be delving into the insides of an IP router trying to improve how it moves packets. That is not our job.

What we need is the next level up: taking entire network functions and having them presented in an open way.

For example, something like OpenStack [the open source cloud computing software] that allows you to start to bring networking, and compute and memory resources in data centres together.

You can start to say: I have a data centre here, another here and some networking in between, how can I orchestrate all of that? I need to provide some backup or some protection, what gets all those diverse elements, in very different parts of the industry, what is it that will orchestrate that automatically?

That is the kind of open theme that operators are interested in.

That sounds different to what is being developed for SDN in the data centre. Are there two areas here: one networking and one the data centre?

You are quite right. SDN for many people is data centres and I think we mean something a bit different. We are trying to have multi-vendor leverage and as I've said, look at the software issues.

We also need to be a bit clearer as to what we mean by it [SDN].

Andrew Lord has been appointed technical chair at OFC/NFOEC


Luxtera's interconnect strategy

Part 1: Optical interconnect

Luxtera demonstrated a 100 Gigabit QSFP optical module at the OFC/NFOEC 2013 exhibition.

"We're in discussions with a lot of memory vendors, switch vendors and different ASIC providers"


Chris Bergey, Luxtera

The silicon photonics-based QSFP pluggable transceiver was part of the Optical Internetworking Forum's (OIF) multi-vendor demonstration of the 4x25 Gigabit chip-to-module interface, defined by the CEI-28G-VSR Implementation Agreement.

The OIF demonstration involved several optical module and chip companies and included CFP2 modules running the 100GBASE-LR4 10km standard alongside Luxtera's 4x28 Gigabit-per-second (Gbps) silicon photonics-based QSFP28.

Kotura also previewed a 100Gbps QSFP at OFC/NFOEC but its silicon photonics design uses two chips and wavelength-division multiplexing (WDM).

The Luxtera QSFP28 is being aimed at data centre applications and has a 500m reach although Luxtera says up to 2km is possible. The QSFP28 is sampling to initial customers and will be in production next year.

100 Gigabit modules

Current 100GBASE-LR4 client-side interfaces are available in the CFP form factor. OFC/NFOEC 2013 saw the announcement of two smaller pluggable form factors at 100Gbps: the CFP2, the next pluggable on the CFP MSA roadmap, and Cisco Systems' in-house CPAK.

Now silicon photonics player Luxtera is coming to market with a QSFP-based 100 Gigabit interface, more compact than the CFP2 and CPAK.

The QSFP is already available as a 40Gbps interface. The 40Gbps QSFP also supports four independent 10Gbps interfaces. The QSFP form factor, along with the SFP+, is widely used on the front panels of data centre switches.

"The QSFP is an inside-the-data-centre connector while the CFP/CFP2 is an edge of the data centre, and for telecom, an edge router connector," says Chris Bergey, vice president of marketing at Luxtera. "These are different markets in terms of their power consumption and cost."

Bergey says the big 'Web 2.0' data centre operators like the reach and density offered by the 100Gbps QSFP as their data centres are physically large and use flatter, less tiered switch architectures.

"If you are a big systems company and you are betting on your flagship chip, you better have multiple sources"

The content service providers also buy transceivers in large volumes and like that the Luxtera QSFP works over single-mode fibre which is cheaper than multi-mode fibre. "All these factors lead to where we think silicon photonics plays in a big way," says Bergey.

The 100Gbps QSFP must deliver a lower cost-per-bit compared to the 40Gbps QSFP if it is to be adopted widely. Luxtera estimates that the QSFP28 will cost less than US $1,000 and could be as low as $250.
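The cost-per-bit condition can be made concrete with a short calculation. This is only a sketch: the two 100Gbps prices are Luxtera's estimates quoted above, while the break-even 40Gbps price is derived arithmetic, not a figure from Luxtera.

```python
def cost_per_gbps(module_price_usd: float, rate_gbps: float) -> float:
    """Module price divided by its line rate."""
    return module_price_usd / rate_gbps


def break_even_40g_price(price_100g_usd: float) -> float:
    """Price of a 40Gbps module at which both modules cost the same per bit."""
    return price_100g_usd * 40 / 100


# Upper and lower QSFP28 price estimates quoted in the article.
for price in (1000, 250):
    print(
        f"${price} 100Gbps QSFP28: ${cost_per_gbps(price, 100):.2f} per Gbps; "
        f"cheaper per bit than any 40Gbps QSFP priced above "
        f"${break_even_40g_price(price):.0f}"
    )
```

In other words, the 100Gbps module wins on cost-per-bit whenever it costs less than 2.5 times the 40Gbps module it replaces.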

Optical interconnect

Luxtera says its focus is on low-cost, high-density interconnect rather than optical transceivers. "We want to be a chip company," says Bergey.

The company defines optical interconnect as covering active optical cable and transceivers, optical engines used as board-mounted optics placed next to chips, and ASICs with optical SerDes (serialiser/ deserialisers) rather than copper ones.

Optical interconnect, it argues, will have a three-stage evolution: starting with face-plate transceivers, moving to mid-board optics and then ASICs with optical interfaces. Such optical interconnect developments promise lower cost high-speed designs and new ways to architect systems.

Currently optics are largely confined to transceivers on a system's front panel. The exceptions are high-end supercomputer systems and emerging novel designs such as Compass-EOS's IP core router.

"The problem with the front panel is the density you can achieve is somewhat limited," says Bergey. Leading switch IC suppliers using a 40nm CMOS process are capable of a Terabit of switching. "That matches really well if you put a ton of QSFPs on the front panel," says Bergey.

But once switch IC vendors use the next CMOS process node, the switching capacity will rise to several Terabits. This becomes far more challenging to meet using front panel optics and will be more costly compared to putting board-mounted optics alongside the chip.

"When we build [silicon photonics] chips, we can package them in QSFPs for the front panel, or we can package them for mid-board optics," says Bergey.

"If it [silicon photonics] is viewed as exotic, it is never going to hit the volumes we aspire to."

The use of mid-board optics by system vendors is the second stage in the evolution of optical interconnect. "It [mid-board optics] is an intermediate step between how you move from copper I/O [input/output] to optical I/O," says Bergey.

The use of mid-board optics requires less power, especially when using 25Gbps signals, says Bergey: “You don't need as many [signal] retimers.” It also saves power consumed by the SerDes - from 2W for each SerDes to 1W, since the mid-board optics are closer and signals need not be driven all the way to the front panel. "You are saving 2W per 100 Gig and if you are doing several Terabits, that adds up," says Bergey.
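How quickly that "adds up" can be sketched by scaling Bergey's 2W-per-100-Gig figure to a whole switch. The switch capacities below are illustrative assumptions, not numbers from Luxtera.

```python
SAVING_W_PER_100G = 2.0  # Bergey's quoted SerDes saving per 100 Gigabit


def serdes_power_saving_w(switch_capacity_gbps: float) -> float:
    """Total SerDes power saved if every 100 Gigabit of capacity saves 2W."""
    return switch_capacity_gbps / 100 * SAVING_W_PER_100G


# Hypothetical switch capacities in Terabits per second.
for tbps in (1, 2, 4):
    saving = serdes_power_saving_w(tbps * 1000)
    print(f"{tbps} Tbps switch: about {saving:.0f}W saved")
```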

The end game is optical I/O. This will be required wherever there are dense I/O requirements and where a lot of traffic is aggregated.

Luxtera, as a silicon photonics player, is pursuing an approach to integrate optics with VLSI devices. "We're in discussions with a lot of memory vendors, switch vendors and different ASIC providers," says Bergey.

Silicon photonics fab

Last year STMicroelectronics (ST) and Luxtera announced they would create a 300mm wafer silicon photonics process at ST's facility in Crolles, France.

Luxtera expects that line to be qualified, ramped and in production in 2014. Before then, devices need to be built, qualified and tested for their reliability.

"If you are a big systems company and you are betting on your flagship chip, you better have multiple sources," says Bergey. "That is what we are doing with ST: it drastically expands the total available market of silicon photonics and it is something that ST and Luxtera can benefit from."

Having multiple sources is important, says Bergey: "If it [silicon photonics] is viewed as exotic, it is never going to hit the volumes we aspire to."


Kotura demonstrates a 100 Gigabit QSFP

“QSFP will be the long-term winner at 100 Gig; the same way QSFP has been a high volume winner at 40 Gig”


Arlon Martin, Kotura

The device is aimed at plugging the gap between vertical-cavity surface-emitting laser (VCSEL)-based 100GBASE-SR10 designs that span 100m, and the CFP-based 100GBASE-LR4 that has a 10km reach.

“It is aimed at the intermediate space, which the IEEE is looking at a new standard for," says Arlon Martin, vice president of marketing at Kotura.

The device is similar to Luxtera's 100 Gigabit-per-second (Gbps) QSFP, also detailed at the OFC/NFOEC 2013 exhibition, and is targeting the same switch applications in the data centre. “Where we differ is our ability to do wavelength-division multiplexing (WDM) on a chip,” says Martin. Kotura also uses third-party electronics such as laser drivers and transimpedance amplifiers (TIA) whereas Luxtera develops and integrates its own.

The Kotura QSFP uses four wavelengths, each at 25Gbps, that operate around 1550nm. “We picked 1550nm because that is where a lot of the WDM applications are," says Martin. “There are also some customers that want more than four channels.” The company says it is also doing development work at 1310nm.

Although Kotura's implementation doesn't adhere to an IEEE standard - the standard is still a work in progress - Martin points out that the 10x10 MSA is also not an IEEE standard, yet is probably the best selling client-side 100Gbps interface.

Optical component and module vendors including Avago Technologies, Finisar, Oclaro, Oplink, Fujitsu Optical Components and NeoPhotonics all announced CFP2 module products at OFC/NFOEC 2013. The CFP2 is the next pluggable form factor on the CFP MSA roadmap and is approximately half the size of the CFP.

The advent of the CFP2 enables eight 100Gbps pluggable modules on a system's front panel compared to four CFPs. But with the QSFP, up to 24 modules can be fitted while 48 are possible when mounted double-sided, or 'belly-to-belly', across the panel. “QSFP will be the long-term winner at 100 Gig; the same way QSFP has been a high volume winner at 40 Gig,” says Martin.
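The density argument translates directly into front-panel capacity. A minimal sketch using the module counts quoted above, and assuming every module runs at 100Gbps:

```python
MODULE_RATE_GBPS = 100  # each pluggable is a 100Gbps interface

# Modules per front panel, as quoted in the article.
MODULES_PER_PANEL = {
    "CFP": 4,
    "CFP2": 8,
    "QSFP": 24,
    "QSFP (belly-to-belly)": 48,
}


def panel_capacity_gbps(modules: int, rate_gbps: int = MODULE_RATE_GBPS) -> int:
    """Aggregate front-panel capacity for a given number of modules."""
    return modules * rate_gbps


for form_factor, count in MODULES_PER_PANEL.items():
    capacity_tbps = panel_capacity_gbps(count) / 1000
    print(f"{form_factor}: {count} modules, {capacity_tbps:.1f} Tbps per panel")
```

On these numbers only the QSFP takes a single front panel past a Terabit.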

A QSFP that uses 28Gbps pins is also called the QSFP28, but Kotura refers to its 100Gbps product as a QSFP. The design consumes 3.5W and uses two silicon photonic chips. Kotura says 80 percent of the total power consumption is due to the electronics.

One of the two chips is the silicon transmitter which houses the platform for the four lasers (gain chips) combined as a four-channel array. Each is an external cavity laser where part of the cavity is within the indium phosphide device and the rest in the silicon photonics waveguide. The gain chips are flip-chipped onto the silicon. The transmitter also includes a grating that sets each laser's wavelength, four modulators, and a WDM multiplexer to combine the four wavelengths before transmission on the fibre.

Kotura's 4x25 Gig transmitter and receiver chips. Source: Kotura


The receiver chip uses a four-channel demultiplexer with each channel fed to a germanium photo-detector. Two chips are used as it is easier to package each as a transmitter optical sub-assembly (TOSA) or receiver optical sub-assembly (ROSA), says Martin. The 100Gbps QSFP will be generally available in 2014.

Disruptive system design

The recent Compass-EOS IP router announcement is a welcome development, says Kotura, as it brings the optics inside the system - an example of mid-board optics - as opposed to the front panel. Compass-EOS refers to its novel icPhotonics chip combining a router chip and optics as silicon photonics but in practice it is an integrated optics design. The 168 VCSELs and 168 photodetectors per chip amount to a massively parallel interconnect, says Martin.

“The advantage, from our point of view of silicon photonics, is to do WDM on the same fibre in order to reduce the amount of cabling and interconnect needed,” he says. At 100 Gigabit this reduces the cabling by a factor of four, and the factor will grow to 10x or even 40x eventually as more 25Gbps wavelength channels are used.
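The cabling reduction follows from how many 25Gbps wavelengths share one fibre. A rough sketch, counting fibres in one direction only and assuming a parallel design carries a single 25Gbps lane per fibre; the function name is illustrative:

```python
import math

LANE_RATE_GBPS = 25  # each wavelength channel carries 25Gbps


def fibres_needed(total_gbps: int, wavelengths_per_fibre: int) -> int:
    """Fibres (one direction) needed to carry total_gbps in 25Gbps lanes."""
    lanes = math.ceil(total_gbps / LANE_RATE_GBPS)
    return math.ceil(lanes / wavelengths_per_fibre)


# 100 Gigabit: four parallel fibres versus one fibre with four wavelengths.
print(fibres_needed(100, 1), "fibres without WDM")
print(fibres_needed(100, 4), "fibre with 4-wavelength WDM")

# The same reasoning with 10 or 40 wavelengths per fibre.
for wavelengths in (10, 40):
    capacity = wavelengths * LANE_RATE_GBPS
    print(f"{wavelengths} wavelengths: {capacity}Gbps per fibre, "
          f"{wavelengths}x fewer fibres than one lane per fibre")
```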

“What we want to do is transition from the electronics to the optical domain as close to those large switching chips as possible,” says Martin. “Pioneers [like Compass-EOS] demonstrating that style of architecture are to be welcomed."

Kotura says that every company that is building large switching and routing ASICs is looking at various interface options. "We have talked to quite a few of them,” says Martin.

One solution suited to silicon photonics is to place the lasers on the front panel while putting the modulation, detection and WDM devices - packaged using silicon photonics - right next to the ASICs. This way the laser works at the cooler room temperature while the rest of the circuitry can be at the temperature of the chip, says Martin.

Infinera speeds up network restoration

- Claimed to be the only hardware implementation of the Shared Mesh Protection protocol

- Provides network-wide protection against multiple network failures

- The chip is already within the DTN-X system; protocol will be activated this year

Pravin Mahajan, Infinera


Infinera has developed a chip to speed up network restoration following faults.

The chip implements the Shared Mesh Protection (SMP) protocol being developed by the International Telecommunication Union (ITU) and the Internet Engineering Task Force (IETF) and Infinera believes it is the only vendor with hardware acceleration of the protocol.

The SMP standard is still being worked on and will be completed this year. Infinera demonstrated its hardware SMP implementation at OFC/NFOEC 2013 and will activate the scheme in operators' networks using a platform software upgrade this year.

The chip, dubbed Fast Shared Mesh Protection (FastSMP), is sprinkled across cards within Infinera's DTN-X platform and will be linked to other FastSMP ICs across the network. The FastSMP chips exchange signalling information and use internal look-up tables with pre-calculated routing data to determine the required protection action when one or more network failures occur.

Network faults

The causes of network faults range from fibre cuts from construction work to natural disasters such as Hurricane Sandy and the Asia Pacific tsunami. Level 3 Communications said in 2011 that squirrels were the second most common cause of fibre cuts after construction work. The squirrels, chewing through fibre, accounted for 17 percent of all cuts. Meanwhile, one Indian service provider says it experiences 100 fibre cuts nationwide each day, according to Infinera.

Operators are also having to share their network maps with enterprises that want to assess the risk based on geography before choosing a service provider. "End customers no longer necessarily trust the service level agreements they have with operators," says Pravin Mahajan, director, corporate marketing and messaging at Infinera. In riskier regions, for example those prone to earthquakes, enterprises may choose two operators. "A form of 1+1 protection," says Mahajan.

Operators want resilient networks that adapt to faults quickly, ideally within 50ms, without adding extra cost.

Traditional resiliency schemes include SONET/SDH’s 1+1 protection. This meets the sub-50ms requirement but addresses single faults only and requires dedicated back-up for each circuit. At the IP/MPLS (Internet Protocol/ Multiprotocol Label Switching) layer, the MPLS Fast Re-Route scheme caters for multiple failures and is sub-50ms. But it only addresses local faults, not the full network. And being packet-based - at a higher layer of the network - the scheme is costlier to implement.

"End customers no longer necessarily trust the service level agreements they have with operators"

Infinera's protection scheme uses its digital optical networking approach based on its photonic integrated circuits (PICs) coupled with Optical Transport Networking (OTN). OTN resides between the packet and optical layers, and using a mesh network topology, it can handle multiple failures. By sharing bandwidth at the transport layer, the approach is cheaper than at the packet layer. But being software-based, restoration takes seconds.

Infinera has sped up the scheme by implementing SMP with its chip such that it meets the 50ms goal.

FastSMP chip

Infinera plans for multiple failures using the Generalized Multiprotocol Label Switching (GMPLS) control plane. “The same intelligence is now implemented in hardware [using the FastSMP processor],” says Mahajan.

The chip is on each 500 Gigabit-per-second (Gbps) line card, within the platform's OTN switch fabric, the client side and as part of the controller. The FastSMP, described as a co-processor to the CPU, hosts look-up tables with rules as to what should happen with each failure. The chips, located in the platform and across the network, then adjust to the back-up plan for each service failure.

Infinera says that the protection is at the service level not at the link level. "It does this at ODU [OTN's optical data unit] granularity," says Mahajan; each circuit can hold different sized services, 2.5 Gigabit-per-second (Gbps) or 10Gbps for example, all carried within a 100Gbps light path. "By defining failure scenarios on a per-service basis, you now need to put all these entries in hardware," says Mahajan.

To program the chip, network failures are simulated using Infinera's network planning tool to determine the required back-up schemes. These can be chosen based on shortest path or lowest latency, for example.

The GMPLS control plane protocol determines the rules as to how the network should be adapted and these are written on-chip. When a failure occurs, the chip detects the failure and performs the required actions.
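The look-up idea described above can be sketched in a few lines of Python. This is only an illustration of a pre-computed failure-to-action table, not Infinera's implementation: the link names, path labels and the `lookup_action` helper are all hypothetical.

```python
from typing import Dict, FrozenSet, Iterable, Optional

# Hypothetical names throughout: links "L1".."L3" and the path labels are
# illustrative only and are not Infinera identifiers.
ProtectionTable = Dict[FrozenSet[str], str]

# Entries computed offline for one service: set of failed links -> path to use.
SERVICE_A_TABLE: ProtectionTable = {
    frozenset({"L1"}): "backup-via-L2",
    frozenset({"L3"}): "working-path",  # failure elsewhere, no switch needed
    frozenset({"L1", "L2"}): "backup-via-L3",
}


def lookup_action(table: ProtectionTable,
                  failed_links: Iterable[str]) -> Optional[str]:
    """Return the pre-computed path for this failure set, or None when the
    table has no entry and a new plan would have to be computed."""
    return table.get(frozenset(failed_links))
```

Because the decision is a single table read keyed on the current set of failures, no path computation happens at failure time. A result of `None` stands for a failure combination that was not planned for.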

The FastSMP chip is already on all the DTN-X line cards Infinera has shipped and will be enabled using a software upgrade.

The GMPLS control plane recomputes backup paths after a failure has occurred. Typically no action is required but if several failures occur, the new GMPLS backup paths will be distributed to update the FastSMPs' tables. "Only on the third or fourth failure typically will a new backup plan be needed," says Mahajan.

In effect, the more meshed the network topology, the greater the number of failures that can be tolerated. "When you have three or four failures, you need to have new computation at the GMPLS control plane and then it can repopulate the backups for failures 3, 4, and 5," he says.

Instant bandwidth and FastSMP

Infinera is able to turn up bandwidth in real-time using its 500Gbps super-channel PIC. "We slice up the 500 Gig capacity available per line card into 100 Gig chunks," says Mahajan.

This feature, combined with FastSMP, aids operators dealing with failures once traffic is rerouted. The next backup route, if it is close to its full capacity, can have an extra 100 Gigabit of capacity added in case the link is called into use.
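A rough sketch of the capacity check this implies is shown below. The 500 Gig line card and 100 Gig slices come from the article; the function name, its parameters and the decision rule are assumptions made for illustration.

```python
CHUNK_GBPS = 100      # slice size quoted in the article
LINE_CARD_GBPS = 500  # super-channel capacity per line card quoted in the article


def chunks_to_activate(active_gbps: int, used_gbps: int, rerouted_gbps: int) -> int:
    """Hypothetical rule: how many extra 100 Gig slices a backup route needs
    so that it can absorb rerouted traffic on top of what it already carries."""
    required = used_gbps + rerouted_gbps
    if required > LINE_CARD_GBPS:
        raise ValueError("rerouted traffic exceeds the line card's capacity")
    shortfall = max(0, required - active_gbps)
    # Integer ceiling division: round up to whole 100 Gig slices.
    return -(-shortfall // CHUNK_GBPS)
```

For example, a backup route with 200Gbps active and 180Gbps in use would need one more slice to take on a further 100Gbps of rerouted traffic.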

A study based on an example 80-node network by ACG Research estimates that the Shared Mesh Protection scheme uses 30 percent fewer line-side ports compared to an equivalent network implementing the 1+1 protection scheme.

Aurrion mixes datacom and telecom lasers on a wafer

"There is an inevitability of the co-mingling of electronics and optics and we are just at the beginning"

Eric Hall, Aurrion

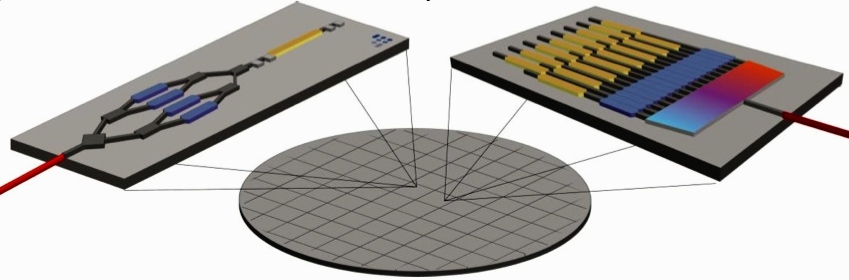

Aurrion's long-term vision for its heterogeneous integration approach to silicon photonics is to tackle all stages of a communication link: the high-bandwidth transmitter, switch and receiver. Heterogeneous integration refers to the introduction of III-V material - used for lasers, modulators and receivers - onto the silicon wafer where it is processed alongside the silicon using masks and lithography.

In a post-deadline paper given at OFC/NFOEC 2013, the fabless start-up detailed the making of various transmitters on a silicon wafer. These include tunable lasers for telecom that cover the C- and L-bands, and uncooled laser arrays for datacom.

The lasers are narrow-linewidth tunable devices for long-haul coherent applications. According to Aurrion, achieving a narrow-linewidth laser typically requires an external cavity whose size makes it difficult to produce a compact design when integrated with the modulator.

Having a tunable laser integrated with the modulator on the same silicon photonics platform will enable compact 100 Gigabit coherent pluggable modules. "The 100 Gig equivalent of the tunable XFP or SFP+," says Eric Hall, vice president of business development at Aurrion.

Hall admits that traditional indium-phosphide laser manufacturers will likely integrate tunable lasers with the modulator to produce compact narrow-linewidth designs. "There will be other approaches but it is exciting that we can now make this laser and modulator on this platform," says Hall. "And it becomes very exciting when you make these on the same wafer as high-volume datacom components."

Aurrion's vision of a coherent transmitter and a 16-laser array made on the same wafer. Source: Aurrion

The wafer's datacom devices include a 4-channel laser array for 100GBASE-LR4 10km reach applications and a 400 Gigabit transmitter design comprising 2x8 wavelength division multiplexing (WDM) arrays for a 16x25Gbps design, each laser spaced 200GHz apart. These could be for 10km or 40km applications depending on the modulator used. "These arrays are for uncooled applications," says Hall. "The idea is these don't have to be coarse WDM but tighter-spaced WDM that hold their wavelength across 20-80°C."
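The arithmetic behind the 400 Gigabit design can be checked quickly. The figures are those quoted above; treating the 200GHz spacing as applying within each 8-laser array is an assumption, since the article does not say how the two arrays are positioned relative to each other.

```python
ARRAYS = 2
LASERS_PER_ARRAY = 8
RATE_PER_LASER_GBPS = 25
SPACING_GHZ = 200

total_lasers = ARRAYS * LASERS_PER_ARRAY                # 16 lasers
aggregate_gbps = total_lasers * RATE_PER_LASER_GBPS     # 400 Gbps

# Assumption: within one array, lasers sit on a uniform 200GHz grid.
# Offsets are relative to the first laser in the array.
offsets_ghz = [i * SPACING_GHZ for i in range(LASERS_PER_ARRAY)]
array_span_ghz = offsets_ghz[-1]                        # first to last laser
```

Under that assumption each 8-laser array spans 1,400GHz from its first to its last laser.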

Coarse WDM-based laser arrays do not require a thermo-electric cooler (TEC) but the larger spacing of the wavelengths makes it harder to design beyond 100 Gigabit, says Hall: "Being able to pack in a bunch of wavelengths yet not need a TEC opens up a lot of applications."

Such lasers coupled with different modulators could also benefit 100 Gigabit shorter-reach interfaces currently being discussed in the IEEE, including the possibility of multi-level modulation schemes, says the company.

Aurrion says it is seeing the trend of photonics moving closer to the electronics due to emerging applications.

"Electronics never really noticed photonics because it was so far away and suddenly photonics has encroached into its personal space," says Hall. "There is an inevitability of the co-mingling of electronics and optics and we are just at the beginning."

Cisco Systems demonstrates 100 Gigabit technologies

* Announces 100 Gigabit transmission over 4,800km

"CPAK helps accelerate the feasibility and cost points of deploying 100Gbps"

Stephen Liu, Cisco

Cisco Systems has announced that its 100 Gigabit coherent module has achieved a reach of 4,800km without signal regeneration. The span was achieved in the lab and the system vendor intends to verify the span in a customer's network.

The optical transmission system achieved a reach of 3,000km over low-loss fibre when first announced in 2012. The extended reach is not a result of a design upgrade, rather the 100 Gigabit-per-second (Gbps) module is being used on a link with Raman amplification.

Cisco says it started shipping its 100Gbps coherent module in June 2012. "We have shipped over 2,000 100Gbps coherent dense WDM ports," says Sultan Dawood, marketing manager at Cisco. The 100Gbps ports include line-side 100Gbps interfaces integrated within Cisco's ONS 15454 multi-service transport platform and its CRS core router supporting its IP-over-DWDM elastic core architecture.

Cisco has also coupled the ASR 9922 series router to the ONS 15454. "We are extending what we have done for IP and optical convergence in the core," says Stephen Liu, director of market management at Cisco. "There is now a common solution to the [network] edge."

None of Cisco's customers has yet used 100Gbps over a 3,000km span, never mind 4,800km. But the reach achieved is an indicator of the optical transmission performance. "The [distance] performance is really a proxy for usefulness," says Liu. "If you take that 3,000km over low-loss fibre, what that buys you is essentially a greater degree of tolerance for existing fibre in the ground."

Much industry attention is being given to the next-generation transmission speeds of 400Gbps and one Terabit. This requires support for super-channels - multi-carrier signals to transmit 400Gbps and one Terabit as well as flexible spectrum to pack the multi-carrier signals efficiently across the fibre's spectrum. But Cisco argues that faster transmission is only one part of the engineering milestones to be achieved, especially when 100Gbps deployment is still in its infancy.

To benefit 100Gbps deployments, Cisco has officially announced its own CPAK 100Gbps client-side optical transceiver after discussing the technology over the last year. "CPAK helps accelerate the feasibility and cost points of deploying 100Gbps," says Liu.

CPAK

The CPAK is Cisco's first optical transceiver using silicon photonics technology following its acquisition of LightWire. The CPAK is a compact optical transceiver to replace the larger and more power-hungry 100Gbps CFP interfaces.

The CPAK is being launched at the same time as many companies are announcing CFP2 multi-source agreement (MSA) optical transceiver products. Cisco stresses that the CPAK conforms to the IEEE 100GBASE-LR4 and -SR10 100Gbps standards. Indeed at OFC/NFOEC it is demonstrating the CPAK interfacing with a CFP2.

The CPAK will be used across several Cisco platforms but the first implementation is for the ONS 15454.

The CPAK transceiver will be generally available in the summer of 2013.

OFC/NFOEC 2013 to highlight a period of change

Next week's OFC/NFOEC conference and exhibition, to be held in Anaheim, California, provides an opportunity to assess developments in the network and the data centre and get an update on emerging, potentially disruptive technologies.

Source: Gazettabyte

Several networking developments suggest a period of change and opportunity for the industry. Yet the impact on optical component players will be subtle, with players being spared the full effects of any disruption. Meanwhile, industry players must contend with the ongoing challenges of fierce competition and price erosion while also funding much needed innovation.

The last year has seen the rise of software-defined networking (SDN), the operator-backed Network Functions Virtualization (NFV) initiative and growing interest in silicon photonics.

SDN has already been deployed in the data centre. Large data centre adopters are using an open standard implementation of SDN, OpenFlow, to control and tackle changing traffic flow requirements and workloads.

Telcos are also interested in SDN. They view the emerging technology as providing a more fundamental way to optimise their all-IP networks in terms of processing, storage and transport.

Carrier requirements are broader than those of data centre operators; unsurprising given their more complex networks. It is also unclear how open and interoperable SDN will be, given that established vendors are less keen to enable their switches and IP routers to be externally controlled. But the consensus is that the telcos and large content service providers backing SDN are too important to ignore. If traditional switch and router vendors hamper the initiative with proprietary add-ons, newer players will willingly fulfil the requirements.

Optical component players must assess how SDN will impact the optical layer and perhaps even components, a topic the OIF is already investigating, while keeping an eye on whether SDN causes market share shifts among switch and router vendors.

The ETSI Network Functions Virtualization (NFV) is an operator-backed initiative that has received far less media attention than SDN. With NFV, telcos want to embrace IT server technology to replace the many specialist hardware boxes that take up valuable space, consume power and add to their already complex operations support systems (OSS) while requiring specialist staff. By moving functions such as firewalls, gateways, and deep packet inspection onto cheap servers scaled using Ethernet switches, operators want lower cost systems running virtualised implementations of these functions.

The two-year NFV initiative could prove disruptive for many specialist vendors albeit ones whose equipment operates at higher layers of the network, removed from the optical layer. But the takeaway for optical component players is how pervasive virtualisation technology is becoming and the continual rise of the data centre.

Silicon photonics is one technology set to impact the data centre. The technology is already being used in active optical cables and optical engines to connect data centre equipment, and soon will appear in optical transceivers such as Cisco Systems' own 100Gbps CPAK module.

Silicon photonics promises to enable designs that disrupt existing equipment. Start-up Compass-EOS has announced a compact IP core router that is already running live operator traffic. The router makes use of a scalable chip coupled to huge-bandwidth optical interfaces based on 168, 8 Gigabit-per-second (Gbps) vertical-cavity surface-emitting lasers (VCSELs) and photodetectors. The Terabit-plus bandwidth enables all the router chips to be connected in a mesh, doing away with the need for the router's midplane and switching fabric.
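The "Terabit-plus" figure follows directly from the numbers quoted, and the link count of a full mesh is a standard formula. The sketch below works both out; the article does not state how many router chips are meshed, so `full_mesh_links` takes the chip count as a hypothetical input.

```python
VCSEL_COUNT = 168
VCSEL_RATE_GBPS = 8

# Aggregate optical bandwidth per chip from the quoted figures.
aggregate_gbps = VCSEL_COUNT * VCSEL_RATE_GBPS   # 1,344 Gbps
aggregate_tbps = aggregate_gbps / 1000


def full_mesh_links(chips: int) -> int:
    """Number of point-to-point links needed to connect every chip to every
    other chip directly. The chip count is an illustrative input only."""
    return chips * (chips - 1) // 2
```

The link count grows quadratically with the number of chips, which is why a full mesh needs so much bandwidth per chip.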

The integrated silicon-optics design is not strictly silicon photonics - silicon used as a medium for light - but it shows how optics is starting to be used for short distance links to enable disruptive system designs.

Some financial analysts are beating the drum of silicon photonics. But integrated designs using VCSELs, traditional photonic integration and silicon photonics will all co-exist for years to come. And even though silicon photonics is expected to make a big impact in the data centre, the Compass-EOS router highlights how disruptive designs can occur in telecoms.

Market status

The optical component industry continues to contend with more immediate challenges after experiencing sharp price declines in 2012.

The good news is that market research companies do not expect a repeat of the harsh price declines anytime soon. They also forecast better market prospects: The Dell'Oro Group expects optical transport to grow through 2017 at a compound annual growth rate (CAGR) of 10 percent, while LightCounting expects the optical transceiver market to grow 50 percent, to US $5.1bn in 2017. Meanwhile Ovum estimates the optical component market will grow by a mid-single-digit percent in 2013 after a contraction in 2012.

In the last year it has become clear how high-speed optical transport will evolve. The equipment makers' latest generation coherent ASICs use advanced modulation techniques, add flexibility by trading transport speed with reach, and use super-channels to support 400 Gigabit and 1 Terabit transmissions. Vendors are also looking longer term to techniques such as spatial-division multiplexing as fibre spectrum usage starts to approach the theoretical limit.

Yet the emphasis on 400 Gigabit and even 1 Terabit is somewhat surprising given how 100 Gigabit deployment is still in its infancy. And if the high-speed optical transmission roadmap is now clear, issues remain.

OFC/NFOEC 2013 will highlight the progress in 100 Gigabit transponder form factors that follow the 5x7-inch MSA, 100 Gigabit pluggable coherent modules, and the uptake of 100 Gigabit direct-detection modules for shorter reach links - tens or hundreds of kilometers - to connect data centres, for example.

There is also an industry consensus regarding wavelength-selective switches (WSSes) - the key building block of ROADMs - with the industry choosing a route-and-select architecture, although that was already the case a year ago.

There will also be announcements at OFC/NFOEC regarding client-side 40 and 100 Gigabit Ethernet developments based on the CFP2 and CFP4 that promise denser interfaces and Terabit capacity blades. Oclaro has already detailed its 100GBASE-LR4 10km CFP2 while Avago Technologies has announced its 100GBASE-SR10 parallel fibre CFP2 with a reach of 150m over OM4 fibre.

The CFP2 and QSFP+ make use of integrated photonic designs. Progress in optical integration, as always, is one topic to watch for at the show.

PON and WDM-PON remain areas of interest. The focus is not so much on developments in state-of-the-art transceivers such as those for 10 Gigabit EPON and XG-PON1, though these are clearly of interest, but rather on enhancements of existing technologies that benefit the economics of deployment.

The article is based on a news analysis published by the organisers before this year's OFC/NFOEC event.

OFC/NFOEC 2013: Technical paper highlights

Source: The Optical Society

Network evolution strategies, state-of-the-art optical deployments, next-generation PON and data centre interconnect are just some of the technical paper highlights of the upcoming OFC/NFOEC conference and exhibition, to be held in Anaheim, California from March 17-21, 2013. Here is a selection of the papers.

Optical network applications and services

Fujitsu and AT&T Labs-Research (Paper Number: 1551236) present simulation results of shared mesh restoration in a backbone network. The simulation uses up to 27 percent fewer regenerators than dedicated protection while increasing capacity by some 40 percent.

KDDI R&D Laboratories and the Centre Tecnològic de Telecomunicacions de Catalunya (CTTC), Spain (Paper Number: 1553225) show results of an OpenFlow/stateless PCE integrated control plane that uses protocol extensions to enable end-to-end path provisioning and lightpath restoration in a transparent wavelength switched optical network (WSON).

In invited papers, Juniper highlights the benefits of multi-layer packet-optical transport, IBM discusses future high-performance computers and optical networking, while Verizon addresses multi-tenant data centre and cloud networking evolution.

Network technologies and applications

A paper by NEC (Paper Number: 1551818) highlights 400 Gigabit transmission using four parallel 100 Gigabit subcarriers over 3,600km. Using optical Nyquist shaping each carrier occupies 37.5GHz for a total bandwidth of 150GHz.
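The bandwidth figure, and the spectral efficiency it implies, can be worked out from the numbers quoted. This is a simple check on the 100 Gigabit payload rate per subcarrier; it ignores any overhead such as forward error correction, which the summary above does not detail.

```python
SUBCARRIERS = 4
RATE_PER_SUBCARRIER_GBPS = 100
WIDTH_PER_SUBCARRIER_GHZ = 37.5

total_rate_gbps = SUBCARRIERS * RATE_PER_SUBCARRIER_GBPS       # 400 Gbps
total_bandwidth_ghz = SUBCARRIERS * WIDTH_PER_SUBCARRIER_GHZ   # 150 GHz

# Gbps divided by GHz gives bits per second per hertz.
spectral_efficiency = total_rate_gbps / total_bandwidth_ghz
```

On these figures the superchannel carries roughly 2.67 bit/s/Hz.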

In an invited paper Andrea Bianco of the Politecnico di Torino, Italy details energy awareness in the design of optical core networks, while Verizon's Roman Egorov discusses next-generation ROADM architecture and design.

FTTx technologies, deployment and applications

In invited papers, operators share their analysis and experiences regarding optical access. Ralf Hülsermann of Deutsche Telekom evaluates the cost and performance of WDM-based access networks, while France Telecom's Philippe Chanclou shares the lessons learnt regarding its PON deployments and details its next steps.

Optical devices for switching, filtering and interconnects

In invited papers, MIT's Vladimir Stojanovic discusses chip and board scale integrated photonic networks for next-generation computers. Alcatel-Lucent's Bell Labs' Nicholas Fontaine gives an update on devices and components for space-division multiplexing in few-mode fibres, while Acacia's Long Chen discusses silicon photonic integrated circuits for WDM and optical switches.

Optoelectronic devices

Teraxion and McGill University (Paper Number: 1549579) detail a compact (6mmx8mm) silicon photonics-based coherent receiver. Using PM-QPSK modulation at 28 Gbaud, up to 4,800 km is achieved.

Meanwhile, Intel and UC Santa Barbara (Paper Number: 1552462) discuss a hybrid silicon DFB laser array emitting over 200nm integrated with EAMs (3dB bandwidth > 30GHz). Four bandgaps spread over greater than 100nm are realised using quantum well intermixing.

Transmission subsystems and network elements

In invited papers, David Plant of McGill University compares OFDM and Nyquist WDM, while AT&T's Sheryl Woodward addresses ROADM options in optical networks and whether to use a flexible grid or not.

Core networks

Orange Labs' Jean-Luc Auge asks whether flexible transponders can be used to reduce margins. In other invited papers, Rudiger Kunze of Deutsche Telekom details the operator's standardisation activities to achieve 100 Gig interoperability for metro applications, while Jeffrey He of Huawei discusses the impact of cloud, data centres and IT on transport networks.

Access networks

Roberto Gaudino of the Politecnico di Torino discusses the advantages of coherent detection in reflective PONs. In other invited papers, Hiroaki Mukai of Mitsubishi Electric details an energy efficient 10G-EPON system, Ronald Heron of Alcatel-Lucent Canada gives an update on FSAN's NG-PON2 while Norbert Keil of the Fraunhofer Heinrich-Hertz Institute highlights progress in polymer-based components for next-generation PON.

Optical interconnection networks for datacom and computercom

Use of orthogonal multipulse modulation for 64 Gigabit Fibre Channel is detailed by Avago Technologies and the University of Cambridge (Paper Number: 1551341).

IBM T.J. Watson (Paper Number: 1551747) has a paper on a 35Gbps VCSEL-based optical link using 32nm SOI CMOS circuits. IBM is claiming record optical link power efficiencies of 1pJ/b at 25Gbps and 2.7pJ/b at 35Gbps.
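Energy per bit converts to link power by multiplying by the bit rate. The short sketch below applies that to the two quoted operating points; the helper name is an illustrative choice and the result is the implied power, not a figure reported in the paper summary.

```python
def link_power_mw(energy_pj_per_bit: float, rate_gbps: float) -> float:
    """Power in milliwatts: pJ/bit (1e-12 J) times Gbit/s (1e9 bit/s)
    gives 1e-3 W, so the product is already in milliwatts."""
    return energy_pj_per_bit * rate_gbps


power_at_25g_mw = link_power_mw(1.0, 25)   # about 25 mW
power_at_35g_mw = link_power_mw(2.7, 35)   # about 94.5 mW
```

The higher rate therefore costs noticeably more than a proportional increase in power, since the energy per bit itself rises from 1pJ to 2.7pJ.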

Several companies detail activities for the data centre in the invited papers.

Oracle's Ola Torudbakken has a paper on a 50Tbps optically-cabled Infiniband data centre switch, HP's Mike Schlansker discusses configurable optical interconnects for scalable data centres, Fujitsu's Jun Matsui details a high-bandwidth optical interconnection for a densely integrated server while Brad Booth of Dell also looks at optical interconnect for volume servers.

In other papers, Mike Bennett of Lawrence Berkeley National Lab looks at network energy efficiency issues in the data centre. Lastly, Cisco's Erol Roberts addresses data centre architecture evolution and the role of optical interconnect.