Fulcrum's Alta switch chips add programmable pipeline to keep pace with standards

Part 2: Ethernet switch chips

Fulcrum Microsystems has announced its latest FocalPoint family of Ethernet switch chips. The Alta FM6000 series supports up to 72 10-Gigabit ports and can process over one billion packets a second.

“Instead of every top-of-rack switch having a CPU subsystem, you could put all the horsepower into a set of server blades”

Gary Lee, Fulcrum Microsystems

The company’s Alta FM6000 series is its third generation of FocalPoint Ethernet switches. Based on a 65nm CMOS process, the Alta switch architecture includes a programmable packet-processing pipeline that can support emerging standards for data centre networking. These include Data Center Bridging (DCB), Transparent Interconnection of Lots of Links (TRILL), and two server virtualisation protocols: the IEEE 802.1Qbg Edge Virtual Bridging and the IEEE 802.1Qbh Bridge Port Extension.

Why is this important?

Data centre networking is undergoing a period of upheaval due to server virtualisation. Data centre operators must cope with the changing nature of traffic flows, as the predominant traffic becomes server-to-server (east-west traffic) rather than between the servers and end users (north-south).

In turn, IT staff want to consolidate the multiple networks they must manage - for LAN, storage and high-performance computing - onto a single network based on DCB.

They also want to reduce the number of switch platforms they must manage. This is leading switch vendors to develop larger, flatter architectures; instead of the traditional three tiers of switches, vendors are developing sleeker two-tier and even a single-layer, logical switch architecture that spans the data centre.

“There are people out there that have enterprise gear where their data centre connection has to go through the access, aggregation and core [switches],” says Gary Lee, director of product marketing at Fulcrum Microsystems. “They may not want to swap out that gear so they are going to continue to have three tiers even if it is not that efficient.”

But other customers such as large cloud computing players do not require such a switch hierarchy and its associated software complexity, says Lee: “They are the ones that are driving to a ‘lean core’, made up of top-of-rack and the end-of-row switch that acts as a core switch.”

Switch vendor Voltaire, a customer of Fulcrum’s ICs, uses such an arrangement to create 288 10-Gigabit Ethernet ports based on two tiers of 24-port switch chips. With the latest 72-port FM6000 series, a two-tier architecture with over 2,500 10-Gigabit ports becomes possible. “The software can treat the entire structure of chips as a single large virtual switch,” says Lee.
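The port counts quoted above are consistent with a two-tier folded-Clos (leaf-spine) arrangement, although the article does not spell out the topology. The sketch below assumes each edge chip splits its ports evenly between external ports and uplinks; the function name is illustrative only.

```python
def two_tier_ports(radix: int) -> int:
    """External ports of a non-blocking two-tier fabric built from `radix`-port chips.

    Assumption (not stated in the article): a folded-Clos layout in which each
    edge chip uses half its ports externally and half as uplinks, with one
    uplink to each second-tier chip. A second-tier chip then has one port per
    edge chip, so the fabric holds at most `radix` edge chips.
    """
    edge_chips = radix
    external_ports_per_edge_chip = radix // 2
    return edge_chips * external_ports_per_edge_chip


print(two_tier_ports(24))  # 288, matching the Voltaire figure
print(two_tier_ports(72))  # 2592, i.e. "over 2,500"
```

Under this assumption the port count grows with the square of the chip radix, which is why tripling the radix from 24 to 72 yields nine times as many ports.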

Alta architecture

Fulcrum's Alta FM6000 series architecture. Source: Fulcrum Microsystems

Fulcrum’s FocalPoint 6000 series comprises nine devices with capacities from 160 to 720 Gigabit-per-second (Gbps). The Alta chip architecture has three main components:

- Input-output ports

- RapidArray shared memory and

- the FlexPipe array pipeline.

Like Fulcrum’s second-generation Bali architecture, the 6000 series has 96 serialiser/deserialiser (serdes) ports, but these have been upgraded from 3.125Gbps to 10Gbps. “We have very flexible port logic,” says Lee. “We can group four serdes to create a XAUI [10 Gigabit Ethernet] port or create an IEEE 40 Gigabit Ethernet port.”

RapidArray is a single shared memory that can be written to and read from at full line rate from all the ports simultaneously, says Fulcrum. Each memory output port has a set of eight class-of-service queues, while the shared memory can be partitioned to separate storage traffic from data traffic.

“The shared memory design is where we get the low latency, and good multicast performance which people in the broadband access market like for video distribution,” says Lee.

The architecture’s third main functional block is the FlexPipe array pipeline. The pipeline, new to Alta, is what enables up to a billion 64-byte packets to be processed each second. The packet-processing pipeline combines look-up tables and microcode-programmable functional blocks that process a packet’s fields. Being able to program the array pipeline means the device can accommodate changes to standards as they evolve, as well as switch vendors’ proprietary protocols.
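The billion-packets-a-second figure can be checked against the line rate of 72 10-Gigabit ports carrying minimum-size frames. The sketch below uses the standard Ethernet per-frame overhead of an 8-byte preamble and a 12-byte inter-frame gap; the function names are illustrative.

```python
PREAMBLE_BYTES = 8          # 7-byte preamble plus 1-byte start-of-frame delimiter
INTERFRAME_GAP_BYTES = 12   # minimum idle time between frames, in byte times


def line_rate_pps(port_gbps: float, frame_bytes: int = 64) -> float:
    """Maximum frames per second on one Ethernet port at the given frame size."""
    bits_on_wire = (frame_bytes + PREAMBLE_BYTES + INTERFRAME_GAP_BYTES) * 8
    return port_gbps * 1e9 / bits_on_wire


def chip_pps(ports: int, port_gbps: float, frame_bytes: int = 64) -> float:
    """Aggregate frames per second with every port running at line rate."""
    return ports * line_rate_pps(port_gbps, frame_bytes)


total = chip_pps(ports=72, port_gbps=10)
print(f"{total / 1e9:.2f} billion packets/s")  # 1.07 billion packets/s
```

Each 10-Gigabit port tops out at about 14.88 million 64-byte frames a second, so 72 ports together need just over one billion look-ups a second.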

OpenFlow

The FocalPoint software development kit that comes with the chips supports OpenFlow. OpenFlow is an academic initiative that allows networking protocols to be explored using existing hardware but it is of growing interest to data centre operators.

“It creates an industry-standard application programming interface (API) to the switches,” explains Lee. It would allow the likes of a Google or a Yahoo! to swap one vendor’s switch platform for another’s as long as both vendors supported OpenFlow.

OpenFlow also establishes the idea of a central controller that would run on a server to configure the network. “Instead of every top-of-rack switch having a CPU subsystem, you could put all the horsepower into a set of server blades,” says Lee. This promises to lower the cost of switches and more importantly enable operators to unshackle themselves from switch vendors’ software.

Lee points out that OpenFlow is still in its infancy. But Fulcrum has added an ‘OpenFlow vSwitch stub’ to its software that translates between OpenFlow APIs and FocalPoint APIs.

What next?

Fulcrum says it continues to monitor the various evolving standards such as DCB, TRILL and the virtualisation work. Fulcrum is also getting requests to support latency measurement on its chips using techniques such as synchronous Ethernet to ensure service level agreements are met.

As for future FocalPoint designs, these will have greater throughput, with larger table sizes, packet buffers and higher-speed 100 Gigabit Ethernet interfaces.

Meanwhile, all nine members of the FM6000 series will be available from the second quarter of 2011.

Packet optical transport: Hollowing the network core

The platform enables a fully-meshed metropolitan network with software support for web-based services, claims the Irish start-up.

Intune Networks' CEO, Tim Fritzley (right) and John Dunne, co-founder and CTO

“What we have designed allows for the sharing of the same fibre switching assets across multiple services in the metro,” says Tim Fritzley, Intune’s CEO.

The company is in talks with several operators about its OPST system, which is being used for a nationwide network in Ireland. The system is also part of an EC seventh framework project that includes Spanish operator Telefónica.

OPST architecture

Intune’s OPST system, dubbed the Verisma iVX8000, uses dense wavelength division multiplexing (DWDM) technology but with a twist. Each wavelength is assigned to a particular destination port, over which the data is transmitted in bursts. The result is an architecture that uses both wavelength-division and time-division multiplexing.

To enable the approach, Intune has developed a control algorithm that can switch and lock a tunable laser’s wavelength “in nanoseconds”. Such rapid laser switching enables wavelength addressing - assigning a dedicated wavelength to each destination port.

As packets arrive at the iVX8000, they are ‘coloured’ and queued before being sent on the required wavelength to their destination. In effect packets are routed at the optical layer, in contrast to traditional systems where traffic is packed onto a lightpath that has a fixed predefined point-to-point optical path.

The packets are sent in bursts based on their class-of-service. Intune uses a proprietary framing scheme for transmission, with Ethernet frames restored at the destination. At the input port, all the packets are queued based on their wavelength and class-of-service. The scheduler, which composes the bursts, picks bits to transmit from the queues based on their class, with the bits sent without having to be aligned with a frame’s boundaries.

“Instead of assigning an electrical address to a fixed wavelength, we are assigning electrical addresses to dynamic wavelengths”

Tim Fritzley, Intune Networks

Intune also uses dynamic bandwidth allocation: any bandwidth unused by the higher classes of service is assigned to lower priority traffic. This achieves over 80 percent utilisation of the Ethernet switching and the fibre, says Fritzley.
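Intune's scheduler is proprietary and the article gives no algorithm, so the following is only a minimal sketch of the idea: a strict-priority allocation in which whatever a higher class leaves unused falls through to the next class. The function name and byte-based units are assumptions.

```python
def allocate_burst(capacity_bytes: int, demand_by_class: list[int]) -> list[int]:
    """Share one burst's capacity across class-of-service queues.

    `demand_by_class[0]` is the highest-priority queue. Each class is granted
    what it asks for, up to whatever the higher classes have left unused.
    Grants are in bytes rather than whole frames, echoing the article's point
    that bursts need not align with frame boundaries.
    """
    grants = []
    remaining = capacity_bytes
    for demand in demand_by_class:
        grant = min(demand, remaining)
        grants.append(grant)
        remaining -= grant
    return grants


# The two top classes are served in full; the lowest class gets the leftover.
print(allocate_burst(1000, [300, 500, 600]))  # [300, 500, 200]
```

A real scheduler would also need to protect the lower classes from starvation, which this sketch does not attempt.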

“You are responding to the dynamic loading of the traffic as it comes in, on a destination-by-destination, colour-by-colour basis,” says Fritzley. “Instead of assigning an electrical address to a fixed wavelength [as with traditional systems], we are assigning electrical addresses to dynamic wavelengths.”

The result is a fully meshed architecture with any transponder able to talk to any other transponder on the network, says Fritzley.

System’s span

The network architecture is arranged as a ring with up to a 300km span. The ring connects up to 16 iVX8000 nodes each comprising four 10 Gigabit-per-second (Gbps) ports and switching hardware. Each port is assigned a particular wavelength, equating to a total switch capacity of 640Gbps.

Intune has an 80-wavelength design even though only 64 are used. Indeed it uses two optical rings in parallel. The two rings run in opposite directions, providing optical protection for each port and effectively doubling overall capacity.
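The figures above fit together as simple arithmetic. The constants below come from the article; the variable names are illustrative, and the sketch covers a single ring only.

```python
NODES_PER_RING = 16        # up to 16 iVX8000 nodes on a ring
PORTS_PER_NODE = 4         # four 10Gbps ports per node
PORT_RATE_GBPS = 10
DESIGN_WAVELENGTHS = 80    # Intune's stated design limit

# One wavelength is assigned per destination port.
wavelengths_used = NODES_PER_RING * PORTS_PER_NODE
ring_capacity_gbps = wavelengths_used * PORT_RATE_GBPS
spare_wavelengths = DESIGN_WAVELENGTHS - wavelengths_used

print(wavelengths_used)     # 64
print(ring_capacity_gbps)   # 640
print(spare_wavelengths)    # 16
```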

For the client side interfaces, the iVX8000 uses four 10 Gigabit Ethernet ports. Since transmissions are in bursts, multiple ports can transmit data to the same destination port even though they share the same wavelength.

The system’s 300km span is an artificial value set by Intune to guarantee “plug-and-play” performance. If the individual chassis are less than 65km apart and the total ring is 300km or under, Intune guarantees no DWDM engineering is required. “We auto-discover all the optical paths and nodes in the network; we automatically adjust all the amplification and set up the dispersion compensation,” says Fritzley. “This saves thousands of engineer-hours and truck rolls.”
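The plug-and-play rule reduces to a check on the fibre spans around the ring. The sketch below encodes the limits quoted in the article; the function name and the list-of-spans input are assumptions made for illustration.

```python
MAX_SPAN_KM = 65    # chassis must be less than this distance apart
MAX_RING_KM = 300   # total ring length must not exceed this
MAX_NODES = 16      # a ring connects up to 16 nodes


def is_plug_and_play(span_lengths_km: list[float]) -> bool:
    """Return True if a ring meets the limits quoted for engineering-free set-up.

    `span_lengths_km` holds the fibre distance between consecutive chassis,
    so a ring of n nodes has n spans.
    """
    return (
        0 < len(span_lengths_km) <= MAX_NODES
        and all(span < MAX_SPAN_KM for span in span_lengths_km)
        and sum(span_lengths_km) <= MAX_RING_KM
    )


print(is_plug_and_play([60, 60, 60, 60, 60]))  # True: 300km in total
print(is_plug_and_play([60] * 6))              # False: 360km exceeds the ring limit
print(is_plug_and_play([70, 10, 10]))          # False: one span is too long
```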

Intune points out that it has engineered a 700km network but claims that for distances beyond 1,000km, point-to-point links connecting regions make more sense.

John Dunne, co-founder and CTO of Intune, claims the metro architecture simplifies networking greatly when connecting the network edge to the IP core. “It is different to what is there today because there are no routeing decisions to be made,” says Dunne. “All of the routes pre-exist, and that is because the tunable lasers contain all the colours of all the ports on the ring.”

As a result, setting up a flow of packets between the edge and core involves using a single interface to the ring. “You don’t have to talk to all the [ring’s] elements, you just talk to the ring,” says Dunne. “The ring is pre-engineered so it knows it’s a ring; it also knows how to guarantee the latency, the bandwidth, the jitter of any flow.”

This is the system’s main merit, says Dunne: the pre-engineered ring hides all the difficulty of building a control layer on top of a dynamic optical and layer-two switching system.

Bringing the web into the network

Intune realised that traditional telecom software would struggle to make best use of its distributed optical packet switch architecture. The company has adopted the representational state transfer (REST) software approach for its architecture instead.

“REST is the heart of web services,” says Fritzley. “The reason we did this is that there are hundreds of thousands of programmers that understand how to program it, so you are not into the arcane telecom languages of SNMP and TL1.” Adopting a 'RESTful' approach, claims Intune, reduces code development by 70 percent.

Moreover, REST by its nature is distributed such that it lends itself to supporting distributed transactions across Intune’s switch. “We have put a mini-http server on every card; we do not centralise control inside a node,” says Fritzley. “Every card peers with all of its peer-functions on the ring.”

In terms of the switch's operation, high-level XML commands are used instead of sending low-level instructions to numerous elements. “For example you ask the ring - set up this flow of packets with this bandwidth, this jitter and this delay,” says Dunne. “The ring replies that it can set this up and it performs the low-level stuff internal to the ring.”
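The article does not publish Intune's XML schema, so the following is purely a hypothetical sketch of what such a high-level flow request might look like. Every element name, identifier and unit here is invented for illustration and should not be read as Intune's interface.

```python
import xml.etree.ElementTree as ET


def build_flow_request(source: str, destination: str, bandwidth_mbps: int,
                       max_jitter_us: int, max_delay_us: int) -> str:
    """Serialise one 'set up this flow' request as an XML string.

    All element names are hypothetical placeholders, not a real schema.
    """
    request = ET.Element("flowRequest")
    ET.SubElement(request, "source").text = source
    ET.SubElement(request, "destination").text = destination
    ET.SubElement(request, "bandwidthMbps").text = str(bandwidth_mbps)
    ET.SubElement(request, "maxJitterUs").text = str(max_jitter_us)
    ET.SubElement(request, "maxDelayUs").text = str(max_delay_us)
    return ET.tostring(request, encoding="unicode")


xml_body = build_flow_request("edge-1", "core-1", 500, 50, 2000)
print(xml_body)
```

In a RESTful design a document like this would typically be sent in the body of an HTTP request to the ring, which would accept or refuse the flow as a whole.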

Such a capability will ultimately enable a machine to provision bandwidth for services, and enable machine-to-machine communications, says Intune. It will also enable third-party application developers to use the switch for service provisioning. This isn’t possible today because there is a lack of control, says Dunne.

“We have a full suite of XML-based interface commands,” he says. “All [the interface commands] would go to the carrier, the carrier would expose a subset to the Googles, the Googles would expose a subset to their application writers, and the application writers would expose a subset to the consumer.” Were the consumer to send a command to request some bandwidth, the call would be passed through the various layers directly into the switch, all in a controlled manner.

Provisioning of bandwidth in such an automated fashion is possible because Intune’s underlying network is bounded and predictable, says Dunne, with the optical path pre-engineered to work with the data path.

Meanwhile until XML becomes more commonplace, Intune uses a code translator that converts the XML code to SNMP or TL1 to interface to existing systems.

“The ring is pre-engineered so it knows it’s a ring; it also knows how to guarantee the latency, the bandwidth, the jitter of any flow”

John Dunne, Intune Networks

Applications

The iVX8000 is being targeted at applications such as cloud computing services and the moving of virtualised environments between data centres. But the real target is using the platform to support multiple services – 3G and 4G wireless backhaul, on-demand IP TV as well as cloud. “No-one can do traffic planning anymore around such services,” says Fritzley.

The platform addresses what one large European operator calls ‘hollowing the core’. The operator wants to simplify its metro network by moving such networking elements as broadband remote access servers (BRASs) to the network edge. These will be connected using a simpler layer-two network that lessens the use of large, expensive IP core routers. “All the IP processing is on the edge and you go edge-to-edge on a flat layer two,” says Fritzley.

Market developments

Intune is using its system to enable the Exemplar network in Ireland. Backed by the Irish Government, the company’s systems will be used to build a nationwide network. The first phase involves a lab for application development and testing. So far 40 multi-nationals have signed up to use the network. Starting next year, a ring network will be up and running around Dublin to be followed with a nationwide roll-out in 2013.

The Irish start-up is also part of an EC Seventh Framework research project called MAINS. The project, which started in January, involves Telefónica which is using the iVX8000 to move virtualised resources between data centres depending on user demand and latency requirements. The project uses XML commands to call for bandwidth from the networking layer.

Meanwhile, Intune says that it is “deeply engaged” with four to five of the largest operators in North America and Europe.

Rafik Ward Q&A - final part

"Feedback we are getting from customers is that the current 100 Gig LR4 modules are too expensive"

Rafik Ward, Finisar

Q: Why has Finisar acquired Broadway Networks?

A: We spent quite some time talking to Broadway and understanding their business. We also talked to Broadway’s customers and the feedback we got on the technical team, the products and what this little start-up was able to accomplish was unanimously very positive.

We think what Broadway has done, for instance their EPON* stick product, is very interesting. With that product, an end user has the ability to make any SFP* port on a low-end Ethernet switch an EPON ONU* interface. This opens up a whole new set of potential customers and end users for EPON.

In reality, consumers will never have Ethernet switches with SFP ports in their house. Where we do see such Ethernet switches are in every major enterprise and many multi-dwelling units. It is an interesting technology that enables enterprises and multi-dwelling units to quickly tool-up for EPON.

* [EPON - Ethernet passive optical network, SFP - small form-factor pluggable optical transceiver, ONU - optical network unit]

Optical transceivers have been getting smaller and faster in the last decade yet laser and photo-detector manufacturing have hardly changed, except in terms of speed. Is this about to change?

Speed is one of the focus areas for the industry and will continue to be. Looking forward in a number of applications, though, we are going to hit the limit for these lasers and we are going to have to look more carefully outside of just raw laser speed to move up the data rate curve.

"We are going to hit the limit for these lasers"

A lot of this work has already started on the line side using different modulation formats and DSP* technology. Over time the question is: What happens on the client side? In future, do we look to other modulation formats on the client side? Eventually we will get there; it may take several years before we need to do things like that. But as an industry we would be foolish to think we won’t have to do this.

WDM* is going to be an increasingly important technology on the client side. We are already seeing this with the 40GBASE-LR4 and 100GBASE-LR4 standards.

* [DSP - digital signal processing, WDM - wavelength-division multiplexing]

Google gave a presentation at ECOC that argued for the need for another 100Gbps interface. What is Finisar’s view?

Feedback we are getting from customers is that the current 100 Gig LR4 modules are too expensive. We have spent a lot of time with customers helping them understand how the current LR4 standard, as is written, actually enables a very low cost optical interface, and the timeframes we believe are very quick in terms of how we can get cost down considerably on 100 Gig. That was part of the details that [Finisar’s] Chris Cole also presented at ECOC.

Rafik Ward (right) giving Glenn Wellbrock, director of backbone network design at Verizon Business, a tour of Finisar's labs

There has certainly been a lot of media attention on the two [ECOC] presentations between Finisar and Google. This really is not so much about the quote, ‘drama’, or two companies that have a disagreement about which optical interface makes more sense. It is more fundamental than that.

What it comes down to is that, as an industry, we have pretty limited resources. The best thing all of us can do is try to direct these resources – this limited pool we have combined throughout the industry - on a path that makes the most sense to reduce bandwidth cost most significantly.

The best way to do that, and that is already established, is through standards. The [IEEE] standard got it right that the path the industry is on is going to enable the lowest cost 100 Gig [interface]. Like everything, there is some investment required to get us there. The 25 Gig technology now [used as 4x25 Gig] is becoming mainstream and will soon enable the lowest cost solution. My view is that within 18 months to two years this will be a moot point.

If the technology was available 18 months sooner, we wouldn’t even be having this discussion. But that is the position that we, as an industry, are in. With that, it creates some tensions, some turmoil, where customers don’t like to pay more than they perceive they have to.

There is the CFP form factor that is relatively large. Is the point that if current technology was available 18 months ago, 100Gbps could have come out in a QSFP?

The heart of the debate is cost.

There are other elements that always play into a debate like this. Beyond the cost argument, how quickly can two optical interfaces, like a 4x25 Gig versus a 10x10 Gig, each enable a smaller form factor solution.

But I think that is secondary. Had we not had the cost problem that we have now between 4x25 Gig versus 10x10 Gig, I don’t think we would be talking about it.

So it’s the current cost of the 4x25 Gig that is the issue?

Correct.

In September, the ECOC conference and exhibition was held. What were your impressions and did you detect any interesting changes?

There wasn’t so much an overwhelming theme this year at ECOC. In ECOC 2009, it was the year of coherent detection. This year there wasn’t a theme that resonated strongly throughout.

The mood was relatively upbeat. From our perspective, ECOC seemed a little bit smaller in terms of the size of the floor. But all the key people you would expect to be at the show were there.

Maybe the strongest theme – and I wrote about this in my blog – was colourless, directionless, contentionless (CDC) [ROADMs]. I think what I said is that they should have renamed it not ECOC but the ECDC show.

"A blog ... enables a much more informal mechanism to communicate to a broad audience."

Do you read business books and is there one that is useful for your job?

Probably the book I think about the most in my job is Clayton Christensen's The Innovator’s Dilemma.

He talks about how, when you look at very successful technology companies that have failed, what causes them to fail is often new solutions that come from the very low end of the market.

A lot of companies, and he cites examples from the disk drive industry, prided themselves on focussing on the high end of the market but ultimately ended up failing because there was a surprise upstart, someone who came in at the market's low end – in terms of performance, cost etc. – that continued to innovate using their low-end architecture, making it suitable for the core market.

For these large, well-established companies, once they realised they had this competitor, it was too late.

I think about that business book probably more than others. It’s a very interesting take on technology and the threat that can be posed to people in high-tech companies.

Your job sounds intensive and demanding. What do you do outside work to relax?

I’m a big [ice] hockey fan. I’ve been a hockey fan for many years; it’s a pretty intense sport. These days I tend to watch more hockey than I play but I very much enjoy the sport.

The other thing I started up this year that I had never done before – a little side project – was vegetable gardening. Surprisingly, it ended up taking a lot of my attention and I think it was a good distraction for me.

It can be quite remarkable, when you have your own little vegetable garden, how often you go and look at its progress. I’d find often coming home from work, first thing I’d want to do is go see how things were progressing in my vegetable garden.

You are the face of Finisar’s blog. What have you learnt from the experience?

A blog is an interesting tool to get information out to a broad audience. For companies like Finisar, it serves as a very important communication vehicle that didn’t exist previously.

In the old days, if you wanted to get information out to a broad group of customers, you either had to meet and communicate that information face-to-face, or via email; very targeted, one customer-at-a-time communication.

Another way was the press release. A press release was a very easy way to broadcast that information. But the challenge is that not all information that you want to broadcast is suitable for a press release.

The reason why I really like the blog is that it enables a much more informal mechanism to communicate to a broad audience.

Has it helped your job in any tangible way?

We found some interesting customer opportunities. These have come in through the blog when we’ve talked about specific products. That hasn’t happened extremely frequently but we have had a few instances. So it’s probably the most tangible thing: we can point to enhanced business because of it.

But the strength of something like a blog goes much deeper than that, in terms of the communication vehicle it enables.

You have about a year’s experience running a blog. If an optical component company is thinking about starting a blog, what is your advice?

The best advice I can give to anybody looking to do a blog is that it is something you have to commit to up-front.

A blog where you don’t continue to refresh the content regularly becomes a tired blog very quickly. We have made a conscious effort to have updated postings as best we can, on a weekly basis or even more frequently. There are certainly periods where we have gone longer than that but if you look back, in general, we have a wide variety of content that has been refreshed regularly.

I have to give credit to others - guest bloggers - within the organisation that help to maintain the content. This is critical. I would struggle to keep up with the pace if it was just myself every week.

Is Broadcom’s chip powering Juniper’s Stratus?

Part 1: Single-layer switch architectures

Juniper Networks’ Stratus switch architecture, designed for next-generation data centres, is several months away from trials. First detailed in 2009, Stratus is being engineered as a single-layer switch with an architecture that will scale to support tens of thousands of 10 Gigabit-per-second (Gbps) ports.

Stratus will be in customer trials in early 2011.

Andy Ingram, Juniper Networks

Data centres use a switch hierarchy, commonly made up of three layers. Multiple servers are connected to access switches, such as top-of-rack designs, which are connected to aggregation switches whose role is to funnel traffic to large, core data centre switches.

Moving to a single-layer design promises several advantages. Not only does a single-layer architecture reduce the overall number of managed platforms, bringing capital and operational expense savings, it also reduces switch latency.

Broadcom’s IC for Stratus?

The Stratus architecture has yet to be detailed by Juniper. But the company has said that the design will be based on a 64x10Gbps ASIC building block dubbed a path-forwarding engine (PFE).

“The building block – the PFE – that can have that kind of density (64x10Gbps) gives us the ability to build out the network fabric in a very economical way,” says Andy Ingram, vice president of product marketing and business development of the fabric and switching technologies business group at Juniper Networks.

Stratus is being designed to provide any-to-any connectivity and operate at wire speed. “You have a very dense, very high-cross-sectional bandwidth fabric,” says Ingram. “The only way to make it economical is to use dense ASICs.”

Broadcom’s latest StrataXGS Ethernet switch family - the BCM56840 series - comprises three devices to date, the largest of which - the BCM56845 – also has 64x10Gbps ports.

Juniper will not disclose whether it is using its own ASIC or a third-party device for Stratus.

Broadcom, however, has said that its BCM56840 series is being used by vendors developing flat, single-layer switch architectures. “Anyone using merchant Ethernet switching silicon to build a single-stage environment is probably using our technology,” says Nick Ilyadis, chief technical officer for Broadcom’s infrastructure networking group.

Stratus will be in customer trials in early 2011. “In a lot less than six months,” says Ingram. “We have some customers that have some very difficult networking challenges that are signed up to be our early field trials and we will work with them extensively.”

The timeline aligns with Broadcom’s claim that samples of the BCM56840 ICs have been available for months and will be in production by year-end.

According to Broadcom, only a handful of switch vendors have the resources to design such a complex switch ASIC and also expect to recoup their investment. Moreover, a switch vendor using Broadcom's IC has plenty of scope to differentiate their design using software, and even FPGA hardware if needed. It is software that brings out the many features of the BCM56845, says Broadcom.

The BCM56845

Broadcom’s BCM56840 ICs share a common feature set but differ in their switching capacity. The largest, the BCM56845, has a switching capacity of 640Gbps. The device’s 64x10 Gigabit Ethernet (GbE) ports can also be configured as 16x40 GbE ports.

The BCM56845 supports data center bridging (DCB), the Ethernet protocol enhancement that enables lossless transmission of storage and high-performance computing traffic. It also supports the Fibre Channel over Ethernet (FCoE) protocol that frames Fibre Channel storage traffic over DCB-enhanced Ethernet.

Besides DCB Ethernet, the switch series includes layer 3 packet processing and routeing. There is also a multi-stage content-aware engine that allows higher-layer, more complex packet inspection (layers 4 to 7 of the Open Systems Interconnection model) and policy management.

The content-aware functional block can also be used for packet cut-through, a technique that reduces switch latency by inspecting the header information and starting to forward the packet while its payload is still arriving. Broadcom says the switch’s latency is less than one microsecond.
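A rough calculation shows why cut-through matters at these speeds. The sketch below compares how long a switch must wait before it can begin forwarding in each mode; the 64-byte header window is an assumption for illustration, not a Broadcom figure, and look-up and queuing delays are ignored.

```python
def serialisation_delay_ns(num_bytes: int, link_gbps: float) -> float:
    """Time for `num_bytes` to arrive on a link; bits divided by Gbit/s gives ns."""
    return num_bytes * 8 / link_gbps


FRAME_BYTES = 1500    # a full-size Ethernet payload, used as an example
HEADER_BYTES = 64     # assumed amount inspected before forwarding starts
LINK_GBPS = 10

# Store-and-forward waits for the whole frame before forwarding.
store_and_forward_ns = serialisation_delay_ns(FRAME_BYTES, LINK_GBPS)
# Cut-through waits only for the header.
cut_through_ns = serialisation_delay_ns(HEADER_BYTES, LINK_GBPS)

print(store_and_forward_ns)  # 1200.0
print(cut_through_ns)        # 51.2
```

On a 10Gbps link, receiving a 1,500-byte frame in full already takes 1.2 microseconds, so a sub-microsecond switch latency for large frames implies forwarding before the whole frame has arrived.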

Most importantly, the BCM56845 addresses the move to a flatter switching architecture in the data centre.

It supports the Transparent Interconnection of Lots of Links (TRILL) standard ratified by the Internet Engineering Task Force (IETF) in July. Ethernet uses a spanning tree technique to avoid the creation of loops within a network. However, the spanning tree becomes unwieldy as the Ethernet network size grows and works only at the expense of halving the available networking bandwidth. TRILL is designed to allow much larger Ethernet networks while using all available bandwidth.

Broadcom has its own protocol known as HiGig that adds tags to packets. Using HiGig, a very large logical switch can be created and managed, made up of multiple interconnected switches. Any port of the IC can be configured as a HiGig port.

So has Broadcom’s BCM56845 been chosen by Juniper Networks for Stratus? “I really can’t comment on which customers are using this,” says Ilyadis.

Q&A with Rafik Ward - Part 1

"This is probably the strongest growth we have seen since the last bubble of 1999-2000." Rafik Ward, Finisar

Q: How would you summarise the current state of the industry?

A: It’s a pretty fun time to be in the optical component business, and it’s some time since we last said that.

We are at an interesting inflexion point. In the past few years there has been a lot of emphasis on the migration from 1 to 2.5 Gig to 10 Gig. The [pluggable module] form factors for these speeds have been known, and involved executing on SFP, SFP+ and XFPs.

But in the last year there has been a significant breakthrough; now a lot of the discussion with customers is around 40 and 100 Gig, around form factors like QSFP and CFP - new form factors we haven’t discussed before, around new ways to handle data traffic at these data rates, and new schemes like coherent modulation.

It’s a very exciting time. Every new jump is challenging but this jump is particularly challenging in terms of what it takes to develop some of these modules.

From a business perspective, certainly at Finisar, this is probably the strongest growth we have seen since the last bubble of 1999-2000. It’s not equal to what it was then and I don’t think any of us believes it will be. But certainly the last five quarters have been the strongest growth we’ve seen in a decade.

What is this growth due to?

There are several factors.

There was a significant reduction in spending at the end of 2008 and part of 2009 where end users did not keep up with their networking demands. Due to the global financial crisis, they [service providers] significantly cut capex so some catch-up has been occurring. Keep in mind that during the global financial crisis, based on every metric we’ve seen, the rate of bandwidth growth has been unfazed.

From a Finisar perspective, we are well positioned in several markets. The WSS [wavelength-selective switch] ROADM market has been growing at a steady clip while other markets are growing quite significantly – at 10 Gig, 40 Gig and even now 100 Gig. The last point is that, based on all the metrics we’ve seen, we are picking up market share.

Your job title is very clear but can you explain what you do?

I love my job because no two days are the same. I come in and have certain things I expect to happen and get done yet it rarely shapes out how I envisaged it.

There are really three elements to my job. Product management is the significant majority of where I focus my efforts. It’s a broad role – we are very focussed on the products and on the core business to win market share. There is a pretty heavy execution focus in product management but there is also a strategic element as well.

The second element of my job is what we call strategic marketing. We spend time understanding new, potential markets where we as Finisar can use our core competencies, and a lot of the things we’ve built, to go after. This is not in line with existing markets but adjacent ones: Are there opportunities for optical transceivers in things like military and consumer applications?

One of the things I’m convinced of is that, as the price of optical components continues to come down, new markets will emerge. Some of those markets we may not even know today, and that is what we are finding. That’s a pretty interesting part of my job but candidly I spend quite a bit less time on it [strategic marketing] than product management.

The third area is corporate communications: talking to media and analysts, press releases, the website and blog, and trade shows.

"40Gbps DPSK and DQPSK compete with each other, while for 40 Gig coherent its biggest competitor isn’t DPSK and DQPSK but 100 Gig."

Some questions on markets and technology developments.

Is it becoming clearer how the various 40Gbps line side optics – DPSK, DQPSK and coherent – are going to play out?

The situation is becoming clearer but that doesn’t mean it is easier to explain.

The market is composed of customers and end users that will use all of the above modulation formats. When we talk to customers, every one has picked one, two or sometimes all three modulation formats. It is very hard to point to any trend in terms of picks, it is more on a case-by-case basis. Customers are, like us at the component level, very passionate about the modulation format that they have chosen and will have a variety of very good reasons why a particular modulation format makes sense.

Unlike certain markets where you see a level of convergence, I don’t think that there will be true convergence at 40 Gbps. Coherent – DP-QPSK - is a very powerful technology but the biggest challenge 40 Gig has with DP-QPSK is that you have the same modulation format at 100 Gig.

The more I look at the market, 40Gbps DPSK and DQPSK compete with each other, while for 40 Gig coherent its biggest competitor isn’t DPSK and DQPSK but 100 Gig.

Finisar has been quiet about its 100 Gig line side plans, what is its position?

We view these markets - 40 and 100 Gig line side – as potentially very large markets at the optical component level. Despite the fact that there are some customers that are doing vertically integrated solutions, we still see these markets as large ones. It would be foolish for us not to look at these markets very carefully. That is probably all I would say on the topic right now.

"Photonic integration is important and it becomes even more important as data rates increase."

Finisar has come out with an ‘optical engine’, a [240Gbps] parallel optics product. Why now?

This is a very exciting part of our business. We’ve been looking for some time at the future challenges we expect to see in networking equipment. If you look at fibre optics today, they are used on the front panel of equipment. Typically it is pluggable optics, sometimes it is fixed, but the intent is that the optics is the interface that brings data into and out of a chassis.

People have been using parallel optics within chassis – for backplane and other applications – but it has been niche. The reason it’s niche is that the need hasn’t been compelling for intra-chassis applications. We believe that need will change in the next decade. Parallel optics intra-chassis will be needed just to be able to drive the amount of bandwidth required from one printed circuit board to another or even from one chip to another.

The applications driving this right now are the very largest supercomputers and the very largest core routers. So it is a market focussed on the extreme high-end but what is the extreme high-end today will be mainstream a few years from now. You will see these things in mainstream servers, routers and switches etc.

Photonic integration – what’s happening here?

Photonic integration is something that the industry has been working on for several years in different forms; it continues to chug on in the background but that is not to understate its importance.

For vendors like Finisar, photonic integration is important and it becomes even more important as data rates increase. What we are seeing is that a lot of emerging standards are based around multiple lasers within a module. Examples are the 40GBASE-LR4 and the 100GBASE-LR4 (10km reach) standards, where you need four lasers and four photo-detectors and the corresponding mux-demux optics to make that work.

The higher the number of lasers required inside a given module, and the more complexity you see, the more room you have to cost-reduce with photonic integration.

Google and the optical component industry

According to a report by Pauline Rigby, Google wants something in between two existing IEEE interface standards. The 100GBase-SR10, which has 10 parallel channels and a 125m span, has too short a reach for Google.

“What is good for an 800-pound gorilla is not necessarily good for the industry. It [Google] should have been at the table when the IEEE was working on the standard."

Daryl Inniss, practice leader, components, Ovum

The second interface, the 100GBase-LR4, uses four channels that are multiplexed onto a single fibre and has a 10km reach. The issue here is that Google doesn’t need a 10km reach and while a single fibre is better than the multi-mode fibre based SR10, the interface is costly with its “gearbox” IC that translates between 10 lanes of 10Gbps and four lanes each at 25Gbps. Both IEEE interfaces are also implemented using a CFP form factor which is bulky.

What Google wants

Google wants optical component vendors to develop a new 100 Gigabit Ethernet multi-source agreement (MSA) that is based on a single-mode interface with a 2km reach, reports Rigby. Such a design would use a ten-channel laser array whose output is multiplexed onto a fibre, an arrangement similar to the laser array-multiplexer already developed by Santur. Using such a part, the new interface could be developed quickly and cheaply, says Google.

The proposed interface clearly has merits and Google, an important force with an appetite for optics, makes some valid points. But the industry is developing 4x25Gbps interfaces and while such interfaces may be challenging, no-one doubts they will come.

Google’s next moves

Google has a history of being contrarian if it believes it best serves its business. The way the internet giant designs data centres is one example, using massive numbers of cheap servers arranged in a fault-tolerant architecture.

But there is only so much it can do in-house and developing a new optical interface will require help from optical component players.

Google has the financial muscle to hire an optical component firm to engineer and manufacture a custom interface. A recent example of such a partnership is IBM's work with Avago Technologies to develop board-level optics – or an optical engine – for use within IBM’s POWER7 supercomputer systems.

According to Karen Liu, vice president, components and video technologies at market research firm Ovum, once such an interface is developed, Google could allow others to buy it to help reduce its price. “Remember the Lucent form factor which became a de facto standard but wasn’t originally intended to be?” says Liu. “This approach could work.”

Taking a longer term view, Google could also invest in optical component start-ups. The return may take years and as the experience of the last decade has shown, optical components is a risky business. But Google could encourage a supply of novel, leading-edge technologies over the next decade.

The optical component industry is right to push back with regard to Google’s request for a new 100 Gigabit Ethernet MSA, as Finisar has done. While Google may be an important player that can drive interface requirements, many players have helped frame the IEEE 100Gbps Ethernet standards work. In the last decade the optical industry has also seen other giant firms try to drive the industry only to eventually exit.

“The industry needs to move on,” says Daryl Inniss, practice leader, components at Ovum. “What is good for an 800-pound gorilla is not necessarily good for the industry.” Inniss also suggests a simple and effective way Google could have influenced the 100 Gigabit Ethernet MSA work: “It [Google] should have been at the table when the IEEE was working on the standard."

Cisco Systems' coherent power move

Cisco Systems announced its intent to acquire the optical transmission specialist CoreOptics back in May. CoreOptics has digital signal processing expertise used to enhance high-speed long-haul dense wavelength division multiplexing (DWDM) optical transmission. Cisco’s acquisition values the German company at US $99m.

"Let me be clear, we don’t believe 100Gbps serial will dominate the market for a long time, or 40Gbps for that matter"

Mark Lutkowitz, Telecom Pragmatics

“It has become clear that Cisco, with a few exceptions, has cornered the coherent market for 40 Gig and 100 Gig,” says Mark Lutkowitz, principal at market research firm, Telecom Pragmatics, which has published a report on Cisco's move.

Prior to Cisco’s move, several system vendors were working with CoreOptics for coherent transmission technology at 40 and 100 Gigabit-per-second (Gbps). Nokia Siemens Networks (NSN) was one and had invested in the company; another was Fujitsu Network Communications. Telecom Pragmatics believes other firms were also working with CoreOptics including Xtera and Ericsson (CoreOptics had worked with Marconi before it was acquired by Ericsson).

ACG Research in its May report Cisco/ CoreOptics Acquisition: What Does It Mean for the Packet Optical Transport Space? also claimed that the Cisco acquisition would set back NSN and Ericsson and listed other system vendors such as ADVA Optical Networking and Transmode that may have been considering using CoreOptics’ 100Gbps multi-source agreement (MSA) design.

“The mere fact that you have all these companies working with CoreOptics - and we don’t know all of them – says it all,” says Lutkowitz. “This was the company they were initially going to be depending on and Cisco made a power move that was brilliant.”

With Cisco bringing CoreOptics in-house, these system vendors will need to find a new coherent technology partner. “The next chance would be with a company like Opnext coming out with a sub-system,” says Lutkowitz. “There is no doubt about it – this was a major coup for Cisco.”

For Cisco, the deal is important for its router business more than its optical transmission business. “In terms of transceivers that go into routers and switches it was absolutely essential that Cisco comes up with coherent technology,” says Lutkowitz. Cisco views transport as a low-margin business unlike IP core routers. “This [acquisition] is about protecting Cisco’s bread and butter – the router business,” he says.

The acquisition also has consequences among the router vendors. Alcatel-Lucent has its own 100Gbps coherent technology which it could add to its router platforms. In contrast, the other main router player, Juniper Networks, must develop the technology internally or partner. Telecom Pragmatics claims Juniper has an internal coherent technology development programme.

40 and 100 Gig markets

Cisco kick-started the 40Gbps market when it added the high-speed interface on its IP core router and Lutkowitz expects Cisco to do the same at 100Gbps. “But let me be clear, we don’t believe 100Gbps serial will dominate the market for a long time, or 40Gbps for that matter.”

In Telecom Pragmatics’ view, multiple channels of 10Gbps will be the predominant approach. First, 10Gbps DWDM systems are widely deployed and their cost continues to come down. And while Alcatel-Lucent and Ciena already have 100Gbps systems, they remain expensive given the infancy of the technology.

But with business with large US operators to be won, systems vendors must have a 100Gbps optical transport offering. Verizon has an ultra-long-haul request for proposal (RFP), while AT&T has named Ciena as its first domain supplier for its optical and transport equipment but has still to announce a second partner. And according to ACG Research, Google also has DWDM business.

What next?

Besides Alcatel-Lucent, Ciena, Infinera, Huawei, and now Cisco developing coherent technology, several optical module players are also developing 100Gbps line-side optics. These include Opnext, Oclaro and JDS Uniphase. There are also players such as Finisar that have yet to detail their plans. Lutkowitz believes that if Finisar is holding off developing 100Gbps coherent modules, it may prove a wise move given the continuing strength of the 10Gbps DWDM market.

Opnext acquired subsystem vendor StrataLight Communications in January 2009 and one benefit was gaining StrataLight’s systems expertise and its direct access to operators. Oclaro made its own subsystem move in July, acquiring Mintera. Oclaro has also partnered with Clariphy, which is developing coherent receiver ASICs.

But Telecom Pragmatics questions the long-term prospects of high-end line-side module/ subsystem vendors. “This [technology] is the guts of systems and where the money is made,” says Lutkowitz. “Ultimately all the system vendors will look to develop their own subsystems.”

Lutkowitz highlights other challenges facing module firms. Since they are foremost optical component makers it is challenging for them to make significant investment in subsystems. He also questions when the 100Gbps market will take off. “Some of our [market research] competitors talk about 2014 but they don’t know,” says Lutkowitz.

But is not the trend that over time, 40Gbps and 100Gbps modules will gain increasing share of the line side systems optics, as has happened at 10Gbps?

That is certainly the view of LightCounting, which sees Cisco’s move as good news for component and transceiver vendors developing 40 and 100Gbps products. LightCounting argues that with Cisco’s commitment to the technology, other system vendors will have to follow suit, boosting demand for the higher-margin products.

“There will be all types of module vendors but it is possible that going higher in the food chain will not work out,” says Lutkowitz. “There will be more module and component vendors than we have now but all I question is: where are the examples of companies that have gone into subsystems that have done relatively well?”

Opnext is likely to be the next vendor with 100Gbps product, says Lutkowitz, and Oclaro could easily come out with its own offering. “All I’m saying is that there is a possibility that, in the final analysis, systems vendors take the technology and do it themselves.”

AT&T domain suppliers

| Date | Domain | Partners |
|---|---|---|
| Sept 2009 | Wireline Access | Ericsson |
| Feb 2010 | Radio Access Network | Alcatel-Lucent, Ericsson |
| April 2010 | Optical and transport equipment | Ciena |
| July 2010 | IP/MPLS/Ethernet/Evolved Packet Core | Alcatel-Lucent, Juniper, Cisco |

The table shows the selected players in AT&T's domain supplier programme announced to date.

AT&T has stated that there will likely be eight domain supplier categories overall so four more have still to be detailed.

Looking at the list, several thoughts arise:

- AT&T has already announced wireless and wireline infrastructure providers whose equipment spans the access network all the way to ultra long-haul. The networking technologies also address the photonic layer to IP or layer 3.

- Alcatel-Lucent and Ericsson already play in two domains while no Asian vendor has yet been selected.

- One or two more players may be added to the wireline access and optical and transport infrastructure domains but this part of the network is pretty much done.

So what domains are left? Peter Jarich, service director at market research firm Current Analysis, suggests the following:

- Datacentre

- OSS/BSS

- IP Service Layer (IP Multimedia Subsystem, subscriber data management, service delivery platform)

- Voice Core (circuit, softswitch)

- Content Delivery (IP TV, etc.)

AT&T was asked to comment but the operator said that it has not detailed any domains beyond those that have been announced.

Bringing WDM-PON to market

"We see just one way to bring down the cost, form-factor and energy consumption of the OLT’s multiple transceivers: high integration of transceiver arrays"

Klaus Grobe, ADVA Optical Networking

Considerable engineering effort will be needed to make next-generation optical access schemes using multiple wavelengths competitive with existing passive optical networks (PONs).

Such a multi-wavelength access scheme, known as a wavelength division multiplexing-passive optical network (WDM-PON), will need to embrace new architectures based on laser arrays and reflective optics, and use advanced photonic integration to meet the required size, power consumption and cost targets.

Current PON technology uses a single wavelength to deliver downstream traffic to end users. A separate wavelength is used for upstream data, with each user having an assigned time slot to transmit.

Gigabit PON (GPON) delivers 2.5 Gigabit-per-second (Gbps) to 32 or 64 users, while the next development, XG-PON, will extend GPON’s downstream data rate to 10 Gbps. The alternative PON scheme, Ethernet PON (EPON), already has a 10 Gbps variant. Vendors are also extending PON’s reach from 20km to 80km or more using signal amplification.

But the industry view is that after 10 Gigabit PON, the next step will be to introduce multiple wavelengths to extend the capacity beyond what a time-sharing approach can support. Extending the access network's reach to 100km will also be straightforward using WDM transport technology.
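The limit of the time-sharing approach is easy to see with some rough arithmetic. The sketch below divides the downstream line rates quoted above evenly among 32 or 64 users. It is a simplification: it ignores protocol overhead and the fact that a PON allocates bandwidth dynamically, so an individual user can burst well above the average figure.

```python
def average_share_mbps(line_rate_gbps: float, users: int) -> float:
    """Even split of a shared downstream rate, in Mbps per user."""
    return line_rate_gbps * 1000 / users


# Downstream rates taken from the text; the even split is an assumption.
for name, rate_gbps in (("GPON", 2.5), ("XG-PON", 10.0)):
    for users in (32, 64):
        share = average_share_mbps(rate_gbps, users)
        print(f"{name:7s} {users:2d} users: {share:8.2f} Mbps average per user")
```

With a WDM-PON, each user instead has a dedicated wavelength, so the per-user rate is set by the wavelength's line rate rather than by the number of subscribers sharing it.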

The advent of WDM-PON is also an opportunity for new entrants, traditional WDM optical transport vendors, to enter the access market. ADVA Optical Networking is one firm that has been vocal about its plans to develop next-generation access systems.

“We are seriously investigating and developing a next-generation access system and it is very likely that it will be a flavour of WDM-PON,” says Klaus Grobe, senior principal engineer at ADVA Optical Networking. “It [next-generation access] must be based on WDM simply because of bandwidth requirements.”

The system vendor views WDM-PON as addressing three main applications: wireless backhaul, enterprise connectivity and residential broadband. But despite WDM-PON’s potential to reduce operating costs significantly, the challenge facing vendors is reducing the cost of WDM-PON hardware. Indeed it is the expense of WDM-PON systems that has so far confined the technology to specialist applications.

A non-reflective tunable laser-based WDM-PON ONU. Source: ADVA Optical Networking

According to Grobe, cost reduction is needed at both ends of the WDM-PON: the client receiver equipment known as the optical networking unit (ONU) and the optical line terminal (OLT) housed within an operator’s central office.

ADVA Optical Networking plans to use low-cost tunable lasers rather than a broadband light source and reflective optics for the ONU transceivers. “For the OLT, we see just one way to bring down the cost, form-factor and energy consumption of the OLT’s multiple transceivers: high integration of transceiver arrays,” says Grobe.

This is a considerable photonic integration challenge: a 40- or 80-wavelength WDM-PON uses 40 or 80 bi-directional transceiver clients, equating to 80 or 160 wavelengths. If 80 SFP optical modules were used at the OLT, the resulting cost, size and power consumption would be prohibitive, says Grobe.

ADVA Optical Networking is working with several firms, one being CIP Technologies, to develop integrated transceiver arrays. ADVA Optical Networking and CIP Technologies are part of the EU-funded project, C-3PO, that includes the development of integrated transceiver arrays for WDM-PON.

Splitters versus filters

One issue with WDM-PON is that there is no industry-accepted definition. ADVA Optical Networking views WDM-PON as an architecture based on optical filters rather than splitters. Two consequences result once that choice is made, says Grobe.

One is insertion loss. Choosing filters implies arrayed waveguide gratings (AWGs), says Grobe. “No other filter technology is seriously considered for WDM-PON if filters are used,” he says.

With an AWG, the insertion loss is independent of the number of wavelengths supported. This differs from using a splitter-based architecture where every 1x2 device introduces a 3dB loss - “closer to 3.5dB”, he says. Using a 1x64 splitter, the insertion loss is 14 or 15dB whereas for a 40-channel AWG the loss can be as low as 4dB. “I just saw specs of a first 96-channel AWG, even that one isn’t much higher [than 4dB],” says Grobe. Thus using filters rather than splitters, the insertion loss is much lower for a comparable number of client ONUs.
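The scaling argument can be sketched by treating a 1xN splitter as a cascade of 1x2 stages, so that loss grows with log2(N), while the AWG loss is taken as a constant. This is an idealised model, not a device specification: under it a 1x64 split comes to 18dB at 3dB per stage and 21dB at 3.5dB per stage, somewhat above the 14 to 15dB quoted above. Real devices and exact figures will differ, but the contrast with an AWG of about 4dB holds either way.

```python
import math


def splitter_loss_db(ports: int, loss_per_stage_db: float) -> float:
    """Loss of a 1xN splitter modelled as log2(N) cascaded 1x2 stages."""
    stages = math.log2(ports)
    return stages * loss_per_stage_db


AWG_40CH_LOSS_DB = 4.0  # figure quoted in the text, independent of channel count

for ports in (2, 8, 32, 64):
    ideal = splitter_loss_db(ports, 3.0)       # theoretical 3dB per 1x2 stage
    practical = splitter_loss_db(ports, 3.5)   # "closer to 3.5dB" per stage
    print(f"1x{ports:<2d} splitter: {ideal:4.1f} to {practical:4.1f} dB "
          f"(AWG: about {AWG_40CH_LOSS_DB:.0f} dB)")
```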

There is also a cost benefit associated with a low insertion loss. To limit the cost of next-generation PON, the transceiver design must be constrained to a 25dB power budget associated with existing PON transceivers. “This is necessary to keep these things cheap, possibly dirt cheap,” says Grobe.

With the alternative, XG-PON’s sophisticated 10 Gbps burst-mode transceiver and its associated 35dB power budget, achieving low cost is simply not possible, he says. To live with transceivers with a 25dB power budget, the insertion loss of the passive distribution network must be minimised, explaining why filters are favoured.
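A rough link-budget calculation shows why the distribution loss matters so much under a 25dB budget. The sketch below subtracts the distribution loss and the fibre loss from the transceiver's power budget. The 20km span and the 0.25dB/km fibre attenuation are assumptions chosen for illustration, and connector and splice losses are ignored; the 4dB and 15dB distribution losses are the figures quoted in the text.

```python
def remaining_margin_db(power_budget_db: float,
                        distribution_loss_db: float,
                        fibre_km: float,
                        fibre_loss_db_per_km: float = 0.25) -> float:
    """Power budget left after distribution and fibre losses (assumed values)."""
    return power_budget_db - distribution_loss_db - fibre_km * fibre_loss_db_per_km


POWER_BUDGET_DB = 25.0   # budget associated with existing PON transceivers
SPAN_KM = 20.0           # assumed span

awg_margin = remaining_margin_db(POWER_BUDGET_DB, 4.0, SPAN_KM)        # filter-based
splitter_margin = remaining_margin_db(POWER_BUDGET_DB, 15.0, SPAN_KM)  # splitter-based

print(f"AWG-based margin:      {awg_margin:.1f} dB")
print(f"splitter-based margin: {splitter_margin:.1f} dB")
```

Under these assumptions the filter-based network leaves far more headroom for longer spans or cheaper optics than the splitter-based one.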

The other main benefit of using filters is security. With a filter-based PON, wavelength point-to-point connections result. “You are not doing broadcast,” says Grobe. “You immediately get rid of almost all security aspects.” This is an issue with PON where traffic is shared.

Low power

Achieving a low-power WDM-PON system is another key design consideration. “In next-gen access, it is absolutely vital,” says Grobe. “If the technology is deployed on a broad scale - that is millions of user lines – every single watt counts, otherwise you end up with differences in the approaches that go into the megawatts and even gigawatts.”

There is also a benchmarking issue, says Grobe: the WDM-PON OLT will be compared to XG-PON’s even if the two schemes differ. Since XG-PON uses time-division multiplexing, there will be only one transceiver at the OLT. But this is what a 40- or 80-channel WDM-PON OLT will be compared with, even if the comparison is apples to pears, says Grobe.

WDM-PON workings

There are two approaches to WDM-PON.

In a fully reflective architecture, the OLT array and the ONUs are seeded using multi-wavelength laser arrays; both ends use the laser arrays in combination with reflective optics for optical transmission.

ADVA Optical Networking is interested in using a reflective approach at the OLT but for the ONU it will use tunable lasers due to technical advantages. For example, using the same wavelength for the incoming and modulated streams in a reflective approach, Rayleigh crosstalk is an issue when the ONUs are 100km from the OLT. In contrast, Rayleigh crosstalk at the OLT is avoided because the multi-wavelength laser array is located only a few metres from the reflective electro-absorption modulators (REAMs).

REAMs are used rather than semiconductor optical amplifiers (SOAs) to modulate data at the OLT because they support higher bandwidth 10 Gbps wavelengths. Indeed the C-3PO project is likely to use a monolithically integrated SOA-REAM for this task. “The reflective SOA is narrower in bandwidth but has inherent gain while the REAM has loss rather than gain – it is just a modulator,” says Grobe. “The combination of the two is the ideal: giving high modulation bandwidth and high transmit power.”

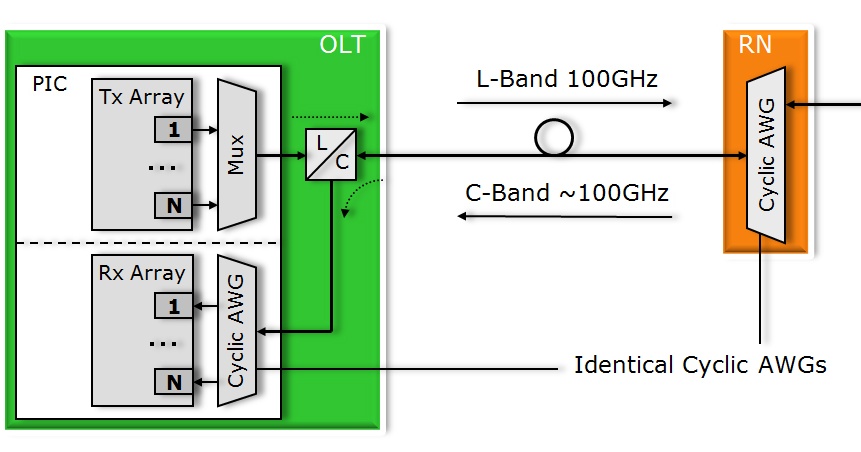

The integrated WDM-PON OLT. In practice the transmit array uses a reflective architecture based on SOA-REAMs and is fed with a multi-wavelength laser source. Source: ADVA Optical Networking

For the OLT, a multi-wavelength laser is fed via an AWG into an array of SOA-REAMs which modulate the wavelengths and return them through the AWG where they are multiplexed and transmitted to the ONUs via a demultiplexing AWG. An added benefit of this approach, says Grobe, is that the same multi-wavelength laser source can be used to feed several WDM-PON OLTs, further decreasing system cost.

For the upstream path, each ONU’s wavelength is separated by the OLT’s AWG and fed to the receiver array. In a WDM-PON system, the OLT transmit wavelengths and receive wavelengths (from the ONUs) operate in separate optical bands.

Grobe expects the resulting WDM-PON system to use 40 or 80 channels. And to best meet size, power and cost constraints, the OLT design will likely be implemented as a photonic integrated circuit. “We are after a single PIC solution,” he says. “It is clear that with the OLT, integration is the only way to meet requirements.” A photonically-integrated OLT design is one of the products expected from the C-3PO project, using CIP Technologies' hybrid integration technology.

ADVA Optical Networking has already said that its WDM-PON OLT will be implemented using its FSP 3000 platform.