OTN hardware gets the 100 Gigabit treatment

“The real market demand is for simple systems - the transponder and interfaces to the routers”


Lars Pedersen, AppliedMicro

Why is this significant?

The OTN standard, defined by the telecom standards body of the International Telecommunication Union (ITU-T), has existed for a decade but has emerged recently as a key networking technology.

“SONET/SDH is now legacy while packet optical is next-generation work,” says Sterling Perrin, senior analyst at Heavy Reading. “OTN has emerged as an interim step away from SONET/SDH that is able to handle packets.”

With the advent of 100 Gigabit-per-second (Gbps) optical transmission, OTN has been upgraded to handle 100Gbps signals and to multiplex existing 10Gbps and 40Gbps OTN signals within the 100 Gigabit framing format. AppliedMicro claims to be first to market with merchant 100Gbps OTN hardware.

AppliedMicro’s 100Gbps OTN designs are implemented using field-programmable gate arrays (FPGAs) and will become available to system vendors this quarter. Using FPGAs allows vendors to start their hardware designs early, adding AppliedMicro’s FPGA software as the OTN design is completed.

What has been done?

The TPOT414 and TPOT424 designs, implemented on a line card, perform mapping (taking a 100 Gigabit client-side signal and turning it into a 100Gbps line-side signal for transmission) and regeneration of a 100Gbps signal, respectively.

The 100Gbps OTN muxponder uses framing and mapping but adds multiplexing between 10, 40 and 100Gbps streams. One application is a router taking IP traffic at different rates and framing the streams before transmission over a 100Gbps dense wavelength division multiplexing (DWDM) network.
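As a rough illustration of the muxponder's bookkeeping, the sketch below checks whether a mix of 10G and 40G OTN tributaries fits in one 100G container. It assumes the ITU-T G.709 tributary-slot model: an ODU4 split into 80 slots, with an ODU2 taking 8 and an ODU3 taking 31. The function names are hypothetical. The sketch ignores framing, justification and client mapping, and it is not AppliedMicro's design.

```python
# Hypothetical sketch: tributary-slot accounting for a 100G OTN muxponder.
# Assumed G.709 figures: an ODU4 has 80 tributary slots (~1.25G each);
# an ODU2 (10G) occupies 8 of them and an ODU3 (40G) occupies 31.
ODU4_SLOTS = 80
SLOTS_PER_TRIBUTARY = {"ODU2": 8, "ODU3": 31}


def slots_used(mix: dict) -> int:
    """Total slots consumed by a mix such as {"ODU3": 2, "ODU2": 2}."""
    return sum(SLOTS_PER_TRIBUTARY[kind] * count for kind, count in mix.items())


def fits_in_odu4(mix: dict) -> bool:
    """True if the tributaries can share a single ODU4."""
    return slots_used(mix) <= ODU4_SLOTS


if __name__ == "__main__":
    for mix in ({"ODU2": 10}, {"ODU3": 2, "ODU2": 2}, {"ODU3": 3}):
        print(mix, slots_used(mix), "slots, fits:", fits_in_odu4(mix))
```

A mix of two 40G and two 10G tributaries uses 78 of the 80 slots. This is how a muxponder can combine rates instead of dedicating a whole 100G wavelength to one client.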

The 100Gbps muxponder comprises AppliedMicro’s PQ60 framer/mapper chip and multiplexing FPGA products, referred to by AppliedMicro as soft silicon. “It [soft silicon] is a combination of an FPGA and the programming image delivered as one unified component,” says Lars Pedersen, TPACK’s CTO. “There is still some uncertainty as to the specification and what is needed.”

Compared with an application-specific standard product (ASSP), the soft silicon approach has two benefits. The design can be reprogrammed as standards change, and system vendors can add new elements as they customise their designs.

AppliedMicro also provides an application programming interface (API) which simplifies control and maintenance when several of its designs are combined to implement a more complex function. “From a software perspective it looks like one combined function,” says Pedersen. The 100Gbps muxponder, for example, is controlled via the API. The API also allows software reuse were AppliedMicro to offer the functions as an ASSP chip.

The TPOT OTN architecture

The two functions – the TPOT414 and TPOT424 – are implemented on a common FPGA design.

The TPOT414. Source: AppliedMicro


The TPOT414 has a 100 Gigabit Ethernet (GbE) CAUI interface (10 x 11.2Gbps) and performs physical coding sub-layer (PCS) monitoring per lane before mapping the signal into OTU4, prior to long-haul transmission. The two signals - the 100GbE and the 100Gbps line side - have separate clocks and the role of the mapper is to place the 100GbE stream into the OTN format.
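The nominal rates in ITU-T G.709 show why a 100GbE stream fits inside OTU4. G.709 derives the OTU4, ODU4 and OPU4 payload rates from a common base of 99,532,800 kbit/s. The sketch below is simple arithmetic on those standard figures, not AppliedMicro's mapper. It shows that the OPU4 payload area is slightly larger than the 103.125Gbit/s 64b/66b-encoded 100GbE stream. A mapper can use that spare capacity to absorb the difference between the two independent clocks.

```python
from fractions import Fraction

# Nominal ITU-T G.709 rates, all scaled from a common base rate (kbit/s).
BASE_KBPS = 99_532_800
OTU4_KBPS = Fraction(255, 227) * BASE_KBPS          # full line-side frame, incl. FEC
ODU4_KBPS = Fraction(239, 227) * BASE_KBPS          # OTU4 without the FEC bytes
OPU4_PAYLOAD_KBPS = Fraction(238, 227) * BASE_KBPS  # capacity available to the client

# 100GbE after 64b/66b line coding: 100 Gbit/s * 66/64.
CLIENT_100GBE_KBPS = Fraction(100_000_000 * 66, 64)


def to_gbps(kbps: Fraction) -> float:
    """Convert kbit/s to Gbit/s."""
    return float(kbps) / 1e6


if __name__ == "__main__":
    for name, rate in (("OTU4", OTU4_KBPS), ("ODU4", ODU4_KBPS),
                       ("OPU4 payload", OPU4_PAYLOAD_KBPS),
                       ("100GbE client", CLIENT_100GBE_KBPS)):
        print(f"{name:14s} {to_gbps(rate):9.3f} Gbit/s")
    headroom = OPU4_PAYLOAD_KBPS - CLIENT_100GBE_KBPS
    print(f"Payload headroom {to_gbps(headroom):.3f} Gbit/s")
```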

The TPOT414 could be used to interface two optical modules on a line card: a CFP module that takes in a 100GbE client signal and an MSA-168 long-haul transponder whose electrical input is the OTN OTU4 signal.

The TPOT424. Source: AppliedMicro


The second design, the TPOT424, takes in an OTU4 signal made up of payload and overhead components. The overhead, which includes forward error correction (FEC), is terminated: errors are corrected and signal measurements are made. The payload is then put into a new OTU4 frame, and fresh overhead, including new FEC, is applied.

Both the TPOT414 and TPOT424 use standard FEC from the ITU-T G.709 standard. Separate devices in the optical module are needed if more powerful FECs are used. AppliedMicro says it will support more powerful FECs in future 100Gbps OTN devices.
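The standard G.709 FEC is a Reed-Solomon RS(255,239) code over 8-bit symbols. The short calculation below derives its error-correcting capability and overhead from the two code parameters. It is illustrative arithmetic only and says nothing about the more powerful FECs that would need separate devices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReedSolomon:
    n: int  # codeword length in 8-bit symbols
    k: int  # message (payload) symbols per codeword

    @property
    def parity(self) -> int:
        return self.n - self.k

    @property
    def correctable_symbols(self) -> int:
        # A Reed-Solomon code corrects up to (n - k) / 2 symbol errors per codeword.
        return self.parity // 2

    @property
    def overhead_pct(self) -> float:
        # Redundancy added on top of the protected data.
        return 100.0 * self.parity / self.k


G709_FEC = ReedSolomon(n=255, k=239)

if __name__ == "__main__":
    print(f"parity bytes: {G709_FEC.parity}, corrects up to "
          f"{G709_FEC.correctable_symbols} byte errors per codeword, "
          f"overhead {G709_FEC.overhead_pct:.2f}%")
```

The roughly 6.7% overhead matches the 255/239 ratio between the OTU and ODU rates.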

“These [the TPOT414 and TPOT424] are the bulk of the emerging market and are the most needed components to start with,” says Pedersen.

The 100G OTN muxponder also supports the multiplexing function, handling 10GbE and 40GbE, OC-192 and OC-768 SONET/SDH, and 8Gbps and 10Gbps Fibre Channel signals.

What next?

Pedersen says there is now significant demand for its 100Gbps OTN designs as vendors prepare to launch systems supporting 100Gbps interfaces in 2011 and 2012. These include packet optical transport platforms and 100Gbps IP router line cards.

“The real market demand is for simple systems - the transponder and interfaces to the routers,” says Pedersen. “But at the same time there are many vendors working on packet optical transport platforms.”

The company does not rule out developing ASSP designs that support 100Gbps OTN.

u2t Photonics: Adapting to a changing marketplace

u2t Photonics' Jens Fiedler, vice president sales and marketing (left), and CEO Andreas Umbach.


u2t Photonics has begun sampling its second-generation coherent receiver module. The dual-polarisation, quadrature phase-shift keying (DP-QPSK) coherent receiver adds polarisation diversity to the company’s first-generation design, an indium-phosphide 90° hybrid with balanced photo-detectors, all within an integrated module. u2t Photonics has developed two such coherent receiver designs, addressing the 40 Gigabit-per-second (Gbps) and 100Gbps markets. These add to the company’s first-generation design, which is now available in small volumes.

“We can explore [next-gen coherent] solutions before we need them and we learn a lot from these partnerships” Andreas Umbach

The latest coherent receiver design represents what CEO Andreas Umbach believes u2t Photonics does best: using its radio frequency (RF) and optical component expertise to design high-speed integrated optical receiver modules.

Differentiation at 100 Gigabit

u2t Photonics is a leading component supplier for the 40 Gigabit market with its photo-detectors and more recently differential phase-shift keying (DPSK) integrated receiver designs that combine a delay-line interferometer with a balanced receiver. Such receivers are used for optical transponder and line card designs. Now, with its latest integrated coherent receiver, the German company aims to exploit the emerging 100 Gigabit market.

“The 40 Gig market will be strong for a while yet, but 100 Gig is coming and will start to squeeze 40 Gig,” says Umbach. “Right now we do not see 100 Gig cannibalising 40 Gig,” says Jens Fiedler, vice president sales and marketing at u2t Photonics. “For DP-QPSK, 100 Gig might cannibalise 40 Gig since the technology for 40 Gig does not offer a big cost benefit for the customer.”

The emergence of 100 Gigabit optical links, and their use of more advanced modulation, has changed component requirements. The DPSK modulation scheme for 40Gbps requires photo-detectors with bandwidths that match the data rate. By contrast, 100Gbps coherent requires photo-detectors with bandwidths of only 28GHz.
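The 28GHz figure follows from the symbol rate. To first order, the photo-detector bandwidth needed tracks the symbol rate, which is the line rate divided by the bits carried per symbol across all polarisations. The sketch assumes line rates of about 43Gbps for 40G DPSK and about 112Gbps for 100G DP-QPSK, both including OTN and FEC overhead. These rates are assumptions for illustration.

```python
def symbol_rate_gbaud(line_rate_gbps: float, bits_per_symbol: int,
                      polarisations: int = 1) -> float:
    """Symbols per second (in Gbaud) needed to carry a given line rate."""
    return line_rate_gbps / (bits_per_symbol * polarisations)


# Assumed line rates, including OTN framing and FEC overhead.
DPSK_40G = symbol_rate_gbaud(43.0, bits_per_symbol=1)                       # 1 bit/symbol
DPQPSK_100G = symbol_rate_gbaud(112.0, bits_per_symbol=2, polarisations=2)  # 2 bits x 2 pols

if __name__ == "__main__":
    print(f"40G DPSK:     {DPSK_40G:.1f} Gbaud")
    print(f"100G DP-QPSK: {DPQPSK_100G:.1f} Gbaud")
```

Carrying four bits per symbol (two per polarisation) is what lets 100G coherent run at a lower symbol rate than 40G DPSK.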

“In principle, not having the requirement of a very high-speed photo-detector makes it [100Gbps] a little bit easier, yet having 40 Gig serial is not unique anymore; there are differences in performance but it is not a limiting factor,” says Umbach.

Instead what matters for optical component players is to understand the 100Gbps functional requirements and deliver a design that meets them as early as possible, says Umbach. The challenges after that are scaling volume production and driving down cost. “We are the first company with a second generation design, offering the highest integration based on the [100G OIF] standard,” says Fiedler. “No doubt our competition is tough, but so far we are doing pretty well.”

Some argue that high-speed CMOS ASICs, which execute digital signal processing algorithms at the DP-QPSK receiver, are eroding the need for the company’s expertise by enabling less specialised optics to be used. u2t Photonics dismisses this view. The optical specifications the company must meet remain challenging because customers still want the best performance from their links, it says.

"u2t Photonics has grown its revenue tenfold in the last five years" Jens Fiedler

“The challenge for us now is not just a photo-detector with a higher bandwidth but a coherent receiver that can detect the polarisation, phase and amplitude of the optical signal,” says Umbach. “That puts a much higher challenge on the components – not just high speed and efficiency but linearity in all these parameters.”

EC Galactico and Mirthe projects

To keep on top of next-generation coherent optical transmission schemes, u2t Photonics is a member of two European Commission (EC) Framework 7 projects dubbed Galactico (Blending diverse photonics and electronics on silicon for integrated and fully functional coherent Tb Ethernet) and Mirthe (Monolithic InP-based dual polarization QPSK integrated receiver and transmitter for coherent 100-400Gb Ethernet).

The Galactico project, which includes Nokia Siemens Networks, will develop photonic integrated circuits (PICs). These will implement a 100Gbps DP-QPSK coherent transmitter and receiver and a 600Gbps dense wavelength division multiplexing (DWDM) DP-QPSK coherent transmitter and receiver. Galactico will also develop a 280Gbps DP-128 quadrature amplitude modulation (DP-128QAM) transmitter that will deliver a spectral efficiency of 10bit/s/Hz. The second project, Mirthe, is tasked with developing multi-level coding schemes using QPSK and QAM.
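The 280Gbps DP-128QAM target can be unpacked with simple arithmetic. 128QAM carries log2(128) = 7 bits per symbol on each of two polarisations. The sketch below derives the implied symbol rate and, from the stated 10bit/s/Hz, the spectral width per channel. It ignores FEC and framing overhead. It is only arithmetic on the project's stated figures, not a Galactico result.

```python
def bits_per_symbol(constellation_points: int, polarisations: int = 2) -> int:
    """Bits per symbol for a power-of-two constellation, summed over polarisations."""
    if constellation_points < 2 or constellation_points & (constellation_points - 1):
        raise ValueError("constellation size must be a power of two")
    return (constellation_points.bit_length() - 1) * polarisations


def symbol_rate_gbaud(bit_rate_gbps: float, constellation_points: int,
                      polarisations: int = 2) -> float:
    """Symbol rate needed to carry bit_rate_gbps with the given format."""
    return bit_rate_gbps / bits_per_symbol(constellation_points, polarisations)


def channel_width_ghz(bit_rate_gbps: float, spectral_efficiency: float) -> float:
    """Spectrum occupied at a given spectral efficiency in bit/s/Hz."""
    return bit_rate_gbps / spectral_efficiency


if __name__ == "__main__":
    print("DP-128QAM bits/symbol:", bits_per_symbol(128))
    print("Symbol rate at 280Gbps:", symbol_rate_gbaud(280, 128), "Gbaud")
    print("Width at 10 bit/s/Hz:", channel_width_ghz(280, 10), "GHz")
    print("DP-QPSK at 100Gbps:", symbol_rate_gbaud(100, 4), "Gbaud")
```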

“Both projects address next generation [high-speed optical transmission] and the next level of integration of coherent receivers and transmitters for complex coherent systems,” says Umbach.

u2t is working with the projects’ partners to define the devices needed for next-generation networks. In particular, it is helping define the specifications for the ICs that drive such optical devices. It is also helping define what the product should look like to aid integration and packaging. “We have partners in the Mirthe project such as the Heinrich Hertz Institute and [Alcatel Thales] III-V Lab that are focusing on chip design according to our requirements and matching our packaging development efforts,” says Umbach. The Galactico project is similar. Here, however, u2t Photonics is also contributing its integrated modulator technology for transmission formats more complex than the current DP-QPSK.

The company says its involvement in these projects is less to do with the research funding made available. Rather, it is the chance to work with partners on the R&D side. “We can explore solutions before we need them and we learn a lot from these partnerships,” says Umbach.

Changing markets

The emergence of three or four dominant module makers as the optical market matures presents new challenges for u2t Photonics. These emerging leaders are increasingly vertically integrated, using their own in-house components within their modules.

“Vertical integration is something we have to face and are fully aware of,” says Fiedler. To remain a viable component supplier, the company must deliver component performance, volume capability and cost that meet customer targets and challenge the customers’ own developments. “That is what we need to [do]: at least be the second source for these vertically integrated companies,” says Fiedler.

Umbach points out that many of the system vendors are developing their own 100Gbps systems on line cards, and this represents another market opportunity for u2t Photonics, independent of the module makers.

“u2t might not offer the best pricing and might have issues - technical challenges common when you have early, leading-edge components,” says Fiedler. “But finally u2t is chosen as the supplier. They know we deliver the products.” To prove his point, Fiedler claims u2t Photonics has grown its revenue tenfold in the last five years.

Europe’s optical vendors

The last few years have seen significant consolidation among European optical component firms. Europe has system vendors that include Alcatel-Lucent, Nokia Siemens Networks, Ericsson, ADVA Optical Networking and Transmode Systems, yet the number of component vendors has continued to shrink. Bookham became a US company before merging with Avanex to become Oclaro. MergeOptics folded and its assets were acquired by FCI. CoreOptics was acquired by Cisco Systems in May 2010.

Oclaro may be a US-registered company but its main operations are in China and Europe, points out Umbach. And many companies with operations in the US and elsewhere have such large headcounts in the Far East that they could be viewed as Asian companies, he adds.

“I believe there is a lot of systems and components expertise in Europe - in Italy, the UK and Germany,” says Umbach. “Maybe they are just teams out of global players, like the CoreOptics team which will stay in Nuremberg although it is now a US company.” u2t Photonics itself has opened a unit in the UK. “We don’t feel too lonely,” he adds. “There is a lot of know-how we can look at here in these areas, not only other companies but academia in all the photonics fields.”

In turn, the market is a global one, says the firm. The Chinese market is particularly important, given its large 40Gbps DPSK deployments and the importance of Huawei as a leading system vendor. Fiedler says the Chinese market is moving rapidly and has the potential to be a huge market for high-speed optical transmission. Chinese optical component players are emerging. Even so, the likes of Huawei are no different from other system vendors in the criteria used when choosing optical components: performance capabilities and cost.

u2t Photonics remains open to all developments. “We have the opportunity to grow and expand our own business, and face the challenge with our bigger competitors,” says Umbach. “And if there is a reasonable path into consolidation, there is nothing that keeps us from going this way.”

Further information:

A presentation on Galactico

A presentation on Mirthe

MultiPhy eyes 40 and 100 Gigabit direct-detect and coherent schemes

MultiPhy's Avi Shabtai (left) and Ronen Weinberg


MultiPhy is developing transceiver designs to boost the transmission performance of metro and long-haul 40 and 100 Gigabit-per-second (Gbps) links. The start-up is aiming its advanced digital signal processing (DSP) chips at direct detection and coherent-based modulation schemes.

“We are the only company, as far as we know, who is doing DSP-based semiconductors for the 40G and 100G direct-detect world,” says Avi Shabtai, CEO of MultiPhy.

At 40Gbps the main direct-detection schemes are differential phase-shift keying (DPSK) and differential quadrature phase-shift keying (DQPSK), while at 100Gbps several direct-detect modulation schemes are being considered. “The fact that we are doing DSP at 40G and 100G enables us to achieve much better performance than regular hard-detection technology,” says Shabtai.

Established in 2007, the fabless semiconductor start-up raised US$7.2m in its latest funding round in May. MultiPhy is targeting its physical layer chips at module makers and system vendors. “While there is a clear ecosystem involving optical module companies and systems vendors, there is a lot of overlap,” says Shabtai. “You can find module companies that develop components; you can find system companies that skip the module companies, buying components to make their own line cards.”

MultiPhy’s CMOS chips include high-speed analogue-to-digital converters (ADC) and hardware to implement the maximum-likelihood sequence estimation (MLSE) algorithm. The company is operating the MLSE algorithm at “tens of gigasymbols-per-second”, says Shabtai. “We believe we are the only company implementing MLSE at these speeds.”

MultiPhy's office is alongside Finisar's Israeli headquarters

MultiPhy will not disclose the exact sampling rate. It does say it samples at the symbol rate rather than at the Nyquist rate of twice the symbol rate. Commercial ADCs announced for 100Gbps sample at 65Gsample/s, which suggests MultiPhy is sampling at up to half that rate.
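Sampling at the symbol rate saves a quantifiable amount. The sketch below compares ADC sample rates for one and two samples per symbol. The 28Gbaud symbol rate is an assumption, typical of 100Gbps DP-QPSK. The 65Gsample/s figure is the announced commercial ADC rate.

```python
def adc_rate_gsps(symbol_rate_gbaud: float, samples_per_symbol: int) -> float:
    """ADC sample rate needed for a given symbol rate and oversampling factor."""
    return symbol_rate_gbaud * samples_per_symbol


def max_symbol_rate_gbaud(adc_gsps: float, samples_per_symbol: int) -> float:
    """Highest symbol rate an ADC of a given speed can serve."""
    return adc_gsps / samples_per_symbol


SYMBOL_RATE = 28.0  # Gbaud, assumed (roughly 112 Gbit/s DP-QPSK)

if __name__ == "__main__":
    for sps in (2, 1):
        print(f"{sps} sample(s)/symbol: {adc_rate_gsps(SYMBOL_RATE, sps):.0f} Gsample/s")
    print("65 Gsample/s ADC at 2 samples/symbol covers up to",
          max_symbol_rate_gbaud(65.0, 2), "Gbaud")
```

Halving the sample rate also halves the number of samples per second the DSP must process. The cost is aliasing and distortion that the equaliser must then undo.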

MLSE is used to compensate for the non-linear impairments of fibre transmission, to improve overall transmission performance. “We implement an anti-aliasing filter at the input to the ADC and we use the MLSE engine to compensate for impairments due to the low-bandwidth sampling,” says Shabtai.
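MultiPhy has not disclosed how its MLSE engine works. For readers unfamiliar with the technique, the sketch below is a textbook Viterbi-algorithm MLSE. It handles binary symbols passing through a linear intersymbol-interference channel with known taps. The taps, noise level and function names are assumptions for illustration. Optical MLSE receivers generally have to estimate an often nonlinear channel response from the received signal, frequently using metrics built from measured statistics, rather than assuming fixed linear taps.

```python
import itertools
import random


def isi_channel(symbols, taps, initial=-1.0):
    """Noise-free intersymbol-interference channel: y[k] = sum_i taps[i] * x[k-i].

    Symbols before the start of the sequence are assumed equal to `initial`.
    """
    memory = len(taps) - 1
    history = [initial] * memory  # previous symbols, newest first
    out = []
    for x in symbols:
        out.append(taps[0] * x + sum(t * p for t, p in zip(taps[1:], history)))
        history = ([x] + history)[:memory]
    return out


def mlse_viterbi(received, taps, alphabet=(-1.0, 1.0)):
    """Return the symbol sequence that minimises total squared error (Viterbi MLSE).

    Each trellis state holds the previous len(taps) - 1 symbols, newest first,
    and the channel is assumed to start in the all-alphabet[0] state.
    """
    memory = len(taps) - 1
    states = list(itertools.product(alphabet, repeat=memory))
    start = tuple([alphabet[0]] * memory)
    cost = {s: (0.0 if s == start else float("inf")) for s in states}
    paths = {s: [] for s in states}
    for y in received:
        new_cost = {s: float("inf") for s in states}
        new_paths = {}
        for state in states:
            if cost[state] == float("inf"):
                continue  # state not reachable yet
            for x in alphabet:
                expected = taps[0] * x + sum(t * p for t, p in zip(taps[1:], state))
                metric = cost[state] + (y - expected) ** 2
                nxt = ((x,) + state)[:memory]
                if metric < new_cost[nxt]:  # keep only the survivor path
                    new_cost[nxt] = metric
                    new_paths[nxt] = paths[state] + [x]
        cost, paths = new_cost, new_paths
    best = min(cost, key=cost.get)
    return paths[best]


if __name__ == "__main__":
    rng = random.Random(1)
    taps = [1.0, 0.6, 0.3]  # assumed channel response
    sent = [rng.choice((-1.0, 1.0)) for _ in range(200)]
    noisy = [y + rng.gauss(0.0, 0.3) for y in isi_channel(sent, taps)]
    mlse = mlse_viterbi(noisy, taps)
    naive = [1.0 if y >= 0 else -1.0 for y in noisy]  # symbol-by-symbol slicer
    print("MLSE errors:  ", sum(a != b for a, b in zip(mlse, sent)))
    print("Slicer errors:", sum(a != b for a, b in zip(naive, sent)))
```

The trellis has 2^(number of taps - 1) states, so complexity grows exponentially with channel memory. Hardware designs also use a fixed-length traceback rather than storing whole survivor paths as this sketch does.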

“There is a good chance that 100Gbps will leapfrog 40Gbps coherent deployments”

Avi Shabtai, MultiPhy

Using one sample per symbol simplifies MultiPhy's chip design and reduces its power consumption, but the MLSE algorithm must counter the resulting distortion. Shabtai claims the result is a significant reduction in power consumption compared to the traditional two-samples-per-symbol approach: “Tens of percent – I won’t say the exact number but it is not 10 percent.”

Other chip companies implementing MLSE designs for optical transmission include CoreOptics, which was acquired by Cisco in May, and Clariphy. (See Oclaro and Clariphy)

Does using MLSE make sense for 40Gbps DPSK and DQPSK?

“If you use DSP for DQPSK at 40Gbps you can significantly improve polarisation mode dispersion tolerance, the limiting factor today of DQPSK transceivers,” says Shabtai. MultiPhy expects the 40 Gigabit direct-detect market to shift towards DQPSK, accounting for the bulk of deployments in two years’ time.

Market applications

MultiPhy is addressing two areas: 40 and 100Gbps direct-detect designs, and 40 and 100Gbps coherent designs. The company has not said when it will deliver products. It has hinted that it will address the direct-detect market first, with chip samples available in 2011.

Not only will the chips extend the reach of DQPSK-based links, they will also allow optical component specifications to be relaxed. For example, cheaper 10Gbps optical components can be used, which, says MultiPhy, will reduce total design cost by “tens of percent”.

This is noteworthy, says Shabtai, as the direct-detect markets are increasingly cost-sensitive. “Coherent is being positioned as the high-end solution, and there will be pressure on the direct-detect market to show lower cost solutions,” he says.

MultiPhy is eyeing two 100Gbps spaces


MultiPhy’s view is that direct-detect modulation schemes will be deployed for quite some time due to their price and power advantage compared to coherent detection.

Another factor against 40Gbps coherent technology will be the price difference between 40Gbps and 100Gbps coherent schemes. “There is a good chance that 100Gbps will leapfrog 40Gbps coherent deployments,” says Shabtai. “The 40Gbps coherent modules will need to go a long way to get to the right price.” MultiPhy says it is hearing about the expense of coherent modules from system vendors and module makers, as well as industry analysts.

Metro and long-haul

The company says it has received several requests for 40Gbps and 100Gbps direct-detect schemes for the metro, given the metro's sensitivity to cost and power consumption. “We are getting to the point in optical communications where one solution does not fit all – that the same solution for long-haul will also suit metro,” says Shabtai.

He believes 100Gbps coherent will become a mainstream solution, but that it will take time for the technology to mature and its costs to come down. It will thus be some time before 100Gbps coherent expands beyond long-haul into the metro. He also expects a different 100Gbps coherent solution to be used in the metro. “The requirements are different – in reach, in power constraints,” he says. “The metro will increasingly become a segment, not only for direct-detect but also for coherent.”

Coherent: Already a crowded market

There are at least a dozen companies actively developing silicon for coherent transmission, while half-a-dozen leading system vendors are developing designs in-house. In addition, no-one really knows when the 100Gbps market will take off. So how does MultiPhy expect to fare given the fierce competition and uncertain time-to-revenue?

“It is very hard to predict the exact ramp up to high volumes,” says Shabtai. “At the end of the day, 100Gbps will come instead of 10Gbps and when people look back in five or six years’ time, they will say: ‘Gee, who would have expected so much capacity would have been needed?’.”

The big question is when coherent technology will ramp. This uncertainty explains why MultiPhy is also targeting next-generation direct-detect schemes with its technology. “We cannot come to market doing the same thing as everyone else,” says Shabtai. “Having a solution that addresses power consumption based on one-sample-per-symbol gives us a significant edge.”

MultiPhy admits it has received greater market interest following Cisco’s acquisition of CoreOptics. “While Cisco said it would fulfill all previous commitments, still it worried some of CoreOptics’ customers,” says Shabtai. The acquisition also says something else to Shabtai: 100Gbps coherent is a strategic technology.

Did Cisco consider MultiPhy as a potential acquisition target? “First, I can’t comment, and I wasn’t at the company at the time,” says Shabtai.

As for design wins, Shabtai says MultiPhy is in “advanced discussion” with several leading module and system vendor companies concerning its 40Gbps and 100Gbps direct-detect and coherent technologies.

Further reading

See Opnext's multiplexer IC plays its part in 100Gbps trial

Cisco's P-OTS: Denser and distributed

Cisco claims its Carrier Packet Transport (CPT) family is its second-generation packet optical transport system (P-OTS), complementing the ONS 15454. But some analysts view the CPT as the vendor’s first true packet optical transport product.

"This announcement is an acknowledgement that P-OTS equipment is important and that operators are insisting on it"


Sterling Perrin, Heavy Reading

The CPT family comprises the CPT 200 and CPT 600 platforms, while the CPT 50 port extension shelf enables the CPT products to be implemented as a distributed switch architecture.

Gazettabyte spoke to Stephen Liu, manager, service provider marketing at Cisco Systems, about the announcement. It also asked three analysts about the significance of Cisco’s CPT, how the product family advances packet optical transport and how the platforms will benefit operators.

Carrier packet transport family

The CPT platforms are aimed at operators transitioning their metro networks from traditional SONET/SDH to packet-based transport.

Cisco says the CPT is its second-generation P-OTS. A first generation P-OTS supports dense wavelength division multiplexing (DWDM) with some Ethernet capability. “The truly integrated P-OTS that unites the simplicity of optical delivery with packet routing is in the second generation,” says Cisco’s Liu.

Market research firm Heavy Reading defines P-OTS as a platform that combines SONET/SDH, connection-oriented Ethernet and DWDM. Depending on where the platform is used within the network, it also includes optical transport network (OTN) switching and reconfigurable optical add-drop multiplexers (ROADMs). The global P-OTS market will total $870 million in 2010, says Heavy Reading.

The CPT combines DWDM, OTN, Ethernet, multi-protocol label switching – transport profile (MPLS-TP) and ROADMs. MPLS-TP is a stripped-down version of the multi-protocol label switching (MPLS) protocol and is used for point-to-point communication. According to Cisco, MPLS-TP can interoperate with IP-MPLS. This allows operators to combine packet-based technology with transport control in the access and aggregation parts of the network.

So what is new with the introduction of the CPT platforms? “The ability to do high-density packet optical transport with MPLS-TP,” says Liu.

Cisco has fitted 160 Gigabit-per-second (Gbps) of switching capacity into the two-rack-unit (2RU) CPT 200 platform and 480Gbps into the six-rack-unit (6RU) CPT 600. The platforms support 176 and 352 Gigabit Ethernet (GbE) ports respectively, says Liu.
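This sketch reads the platform sizes as rack units (RU), each 1.75 inches under the EIA-310 rack standard. On that assumption, the figures are internally consistent. Six rack units is exactly the 10.5 inches Cisco quotes for the condensed rack space, and both platforms work out to the same switching capacity per rack unit. The sketch is arithmetic on Cisco's stated numbers only.

```python
RACK_UNIT_INCHES = 1.75  # standard 19-inch rack unit height

# Assumed: (rack units, switching capacity in Gbit/s, GbE ports) from the stated figures.
PLATFORMS = {
    "CPT 200": (2, 160, 176),
    "CPT 600": (6, 480, 352),
}


def height_inches(rack_units: int) -> float:
    """Physical height of a chassis of the given rack units."""
    return rack_units * RACK_UNIT_INCHES


def gbps_per_ru(rack_units: int, capacity_gbps: int) -> float:
    """Switching capacity per rack unit."""
    return capacity_gbps / rack_units


if __name__ == "__main__":
    for name, (ru, gbps, ports) in PLATFORMS.items():
        print(f"{name}: {height_inches(ru):.2f} in, "
              f"{gbps_per_ru(ru, gbps):.0f} Gbit/s per RU, {ports} GbE ports")
    # Cisco's comparison: ~30 inches of separate equipment vs 10.5 inches.
    print(f"Space reduction: {30 / height_inches(6):.1f}x")
```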

Cisco also stresses the functionality integrated into the dense platforms. “We have ROADMs coming together with transponders that do the electrical-to-optical conversion, and the TDM/Ethernet switching functions,” says Liu. “It takes about 30 inches of ROADM/transponder and TDM/Ethernet switching functions on separate platforms; with the CPT it is condensed into 10.5 inches of rack space.”

The result, says Liu, is a 60% operational expense (OpEx) saving in power consumption, cooling and space. Cisco also claims that unifying the management of the optical and packet transport domains will result in a 20% OpEx saving.

The CPT 50 satellite shelf complements the CPT platforms. The CPT 50 has 44 GbE ports and four 10GbE uplink ports. “The shelf can be deployed locally next to a CPT platform or up to 80km away, but from a management point-of-view it all looks like a single box,” says Liu.
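The CPT 50's port mix implies only modest oversubscription. The sketch assumes every GbE port is fully loaded and that all traffic crosses the four 10GbE uplinks to the parent CPT. In practice, utilisation will be lower.

```python
def oversubscription(access_ports: int, access_gbps: float,
                     uplink_ports: int, uplink_gbps: float) -> float:
    """Ratio of total access bandwidth to total uplink bandwidth."""
    return (access_ports * access_gbps) / (uplink_ports * uplink_gbps)


if __name__ == "__main__":
    ratio = oversubscription(44, 1.0, 4, 10.0)
    print(f"CPT 50: 44 Gbit/s of access over 40 Gbit/s of uplink = {ratio:.2f}:1")
```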

The platforms do not support 40 or 100Gbps interfaces but that is part of the product roadmap, says Liu. Earlier this year, Cisco acquired 40 and 100 Gigabit transport specialist, CoreOptics. Nor will the platform family be limited to the metro. “Long-haul opportunities are certainly open to us,” says Liu.

Cisco says that the CPT platforms are being trialled and will be available from the first quarter of 2011. Several large operators, including Verizon, XO Communications and BT, are at various stages of platform evaluation.

Analysts’ comments

Sterling Perrin, senior analyst at Heavy Reading

We believe the CPT is Cisco’s most significant optical announcement since its acquisition spree at the beginning of the decade.

Cisco has always positioned its legacy product, the ONS 15454, as packet transport. But really it is a multi-service provisioning platform (MSPP), or, as Cisco calls it, a multi-service transport platform (MSTP), with SONET/SDH and DWDM. We have not counted that as a P-OTS. What it is doing now is entering the [P-OTS] market.

Cisco is an IP router and Ethernet switch company and is strong on IP-over-DWDM. It has pushed that story to operators for years. Meanwhile, the packet optical transport trend has been gaining steam. Vendors have either used P-OTS for next-generation networks or have had a dual strategy of switches and routers and P-OTS. Cisco has always been in the switch-router space. This announcement is an acknowledgement that P-OTS equipment is important and that operators are insisting on it.

Cisco will be competitive with the CPT based on its newness. The density looks impressive: 480Gbps for the 6RU platform and 160Gbps for the 2RU platform. But this is a generational thing; in time, as everyone else releases their next product, they will also have a dense platform. For now, though, it is a differentiator. The remote shelf is also interesting, but it is unclear to what degree that will resonate with operators.

As for the operators mentioned in the Cisco press release, Verizon has already picked Fujitsu and Tellabs as the P-OTS suppliers for its metro and regional networks. The big opportunity with Verizon is in the core, and the first two CPT platforms are not for core.

Mention of BT is also interesting as the operator is in favour of the opposite approach, based on switches and routers from Alcatel-Lucent and Juniper, and has moved away from P-OTS. XO is probably the most likely operator [of the three mentioned] to adopt the platform and already uses Cisco’s ONS 15454.

The opportunity for Cisco is protecting the ONS 15454 customer base that is looking to move from MSPPs to packet optical transport.

Heavy Reading believes the standalone DWDM and MSPP markets are declining, but will remain large markets for the next two years. Accordingly, it makes sense for Cisco to continue supporting the legacy product line.

Eve Griliches, managing partner, ACG Research

The CPT is more along the lines of a purpose-built P-OTS than some variations that have come to market. It has all the requirements a P-OTS should have, including a hybrid switch fabric that supports packet and OTN. I suspect the packet functionality is very good, and possibly better than other transport carriers have delivered, but the operators are still testing and they will speak soon. I do know that operators I've spoken with are already very impressed with what they’ve seen.

"Operators I've spoken with are already very impressed with what they’ve seen"

Eve Griliches, ACG Research

In terms of how the CPT will benefit operators, the CPT is a metro aggregation P-OTS box, and it will have to compete with Tellabs and Fujitsu who have been shipping equipment for the metro for two years. But Cisco will likely bring better packet functionality, which is what operators have been waiting for.

Rick Talbot, senior analyst, transport and routing infrastructure, Current Analysis

Cisco is introducing a product into a space recently defined by other vendors – packet-based access/aggregation devices for backhaul, currently mobile backhaul. Example devices are the Alcatel-Lucent 1850 TSS-100, ECI Telecom’s BG-64 and the Ericsson OMS 1410.

"The CPT will likely blur the line between metro P-OTS and packet-based access/aggregation devices"

Rick Talbot, Current Analysis

CPT brings quite a significant advantage in port density and packet-switching capacity. The CPT 200’s 160Gbps capacity is twice that of the OMS 1410, the current leader in that category. The CPT 600 boasts the capacity of a full metro P-OTS in a chassis the size of a small MSPP. From Cisco’s perspective, the CPT product line is not about introducing a new access/aggregation device but extending the metro architecture closer to cell towers and end-users.

The CPT will likely blur the line between metro P-OTS and packet-based access/aggregation devices. It has a modest size and power consumption. It also extends MPLS, in the form of MPLS-TP, to the very edge of the operator’s network, enabling a single end-to-end packet-forwarding method.

The high capacity and low-power consumption of the CPT will, of course, save operators OpEx and CapEx. In addition, the platform extends a single connection-oriented management view to the end-user site, minimising management expense.

The flexibility of the platform will further benefit the operator if and when the operator deploys cache content storage at the network edge. But such deployment of servers beyond the central office remains to be seen.

Related links:

See also Intune Networks' packet optical transport platform

Xilinx's 400 Gigabit Ethernet FPGA

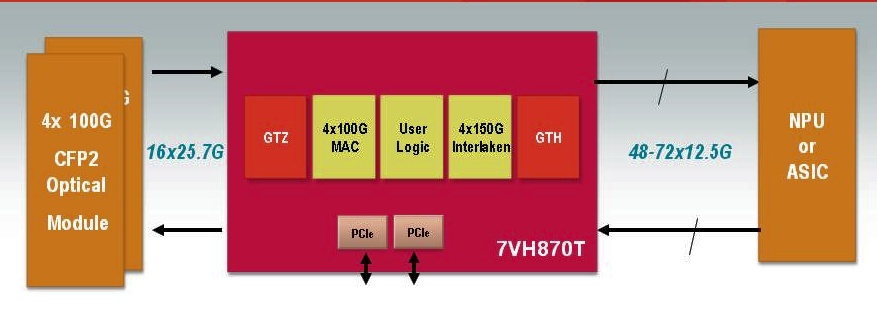

A single FPGA will support 400 Gigabit Ethernet duplex traffic. The FPGA can also support 4x100Gig MACs and 4x150Gbps Interlaken interfaces. Source: Xilinx


Why is it important?

Xilinx says its switch and router customers are more than doubling the traffic capacity of their platforms every three years. “They are looking for silicon that will support a doubling of capacity within the same form-factor and the same power budget,” says Giles Peckham, EMEA marketing director at Xilinx.

An FPGA has an advantage over an application-specific standard product (ASSP) or an ASIC. Because it is programmable and manufactured in volume, an FPGA design can more easily contend with changes in standards. It can also better absorb the escalating cost of implementing chip designs in ever-finer CMOS geometries.

The Virtex-7HT will support 28 Gigabit-per-second (Gbps) transceivers (serialisers/deserialisers, or serdes). Four such transceivers can implement a 100Gbps interface. Indeed, the largest member of the Virtex-7HT family, the XC7VH870T, will have 16 x 28.05Gbps transceivers, enabling 4x100Gbps interfaces or even a single 400 Gigabit Ethernet interface.
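A quick check of the lane arithmetic. The sketch below assumes 64b/66b line coding, as used by 100 Gigabit Ethernet, and applies the same coding to a 400 Gigabit port purely for illustration; the function names are hypothetical. It shows why four 28.05Gbps serdes suffice for one 100Gbps port (each lane carries about 25.8Gbps), how 16 transceivers map onto 4x100Gbps or one 400Gbps port, and why 13.1Gbps serdes cannot carry a four-lane 100Gbps port.

```python
LINE_CODING = 66 / 64  # assumption: 64b/66b coding overhead, as in 100 Gigabit Ethernet


def per_lane_gbps(mac_gbps: float, lanes: int) -> float:
    """Serial rate each lane must carry for a given MAC rate and lane count."""
    return mac_gbps * LINE_CODING / lanes


def ports_supported(transceivers: int, lanes_per_port: int,
                    mac_gbps: float, serdes_gbps: float) -> int:
    """How many ports of a given width fit, or 0 if each serdes is too slow."""
    if per_lane_gbps(mac_gbps, lanes_per_port) > serdes_gbps:
        return 0
    return transceivers // lanes_per_port


print(per_lane_gbps(100, 4))                # 25.78125 Gbps per lane
print(ports_supported(16, 4, 100, 28.05))   # 4 x 100Gbps ports
print(ports_supported(16, 16, 400, 28.05))  # or 1 x 400Gbps port
print(ports_supported(16, 4, 100, 13.1))    # 0: 13.1Gbps serdes are too slow for 4 lanes
```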

The 28Gbps transceivers will be used to interface to optical modules such as the emerging CFP2 pluggable form-factor. The CFP2 multi-source agreement is expected to be ratified in the second half of 2011 and start shipping in the second half of 2012, says Xilinx.

“Network processors and ASICs are typically a [CMOS] process node or two behind us"

Giles Peckham, Xilinx

And with the additional 72, 13.1Gbps transceivers on-chip, the XC7VH870T will have sufficient input-output (I/O) to support bi-directional 400 Gigabit Ethernet traffic. The FPGA's lower-speed 13.1Gbps serdes are included to interface to network processors (NPUs) or ASICs that only support the lower-speed transceivers. “Network processors and ASICs are typically a [CMOS] process node or two behind us – partly because of cost - such that they end up at a technology disadvantage, as in transceiver speed,” says Peckham.

The additional 13.1Gbps transceivers - only 40 of the 72 transceivers are needed for the 400 Gigabit Ethernet port – will enable the FPGA to interface to other chips.

Xilinx says it will be at least a year and possibly 18 months before samples of the Virtex-7HT FPGA family become available. But it is making the Virtex-7HT announcement now because it has successfully tested the 28Gbps transceiver design.

Front panel evolution from 48 SFP+ to 4 CFPs to 8 CFP2s. Source: Xilinx

What has been done?

There are three devices in the Virtex-7HT family, with four, eight and 16 transceivers at 28Gbps respectively. Xilinx claims this is four times the transceiver count of any competing 28nm FPGA detailed to date. But Peckham admits that additional announcements from competitors are inevitable before the Virtex-7HT devices become available in 2012.

In September Altera announced that it had successfully demonstrated a 25Gbps transceiver test chip. And in November, Intel and Achronix Semiconductor formed a strategic relationship that will allow the FPGA start-up to use Intel's leading-edge 22nm CMOS manufacturing process.

The three Virtex-7HT FPGAs also come with different amounts of programmable logic cells, memory blocks and Xilinx’s XtremeDSP building blocks tailored for digital signal processing.

Xilinx says meeting the CEI-28G electrical interface jitter specification has proved challenging. At 10 Gigabit the signal period is 100 picoseconds (ps) and the jitter allowance is 35ps, while the signal period at 28Gbps is 35ps. “When you realise the jitter spec on the 10 Gigabit interface is the same as the full period in the 28 Gigabit spec – 35 picoseconds – there is quite a lot of work to be done in reducing the jitter when migrating to 28 Gigabit,” says Peckham.
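To put these numbers in proportion, jitter is often expressed as a fraction of the bit period, or unit interval (UI). The sketch below simply converts the figures quoted above. The final line, which keeps the 10 Gigabit share of 0.35 UI at 28Gbps, is an illustrative assumption and not the CEI-28G budget.

```python
def unit_interval_ps(gbps: float) -> float:
    """Duration of one bit (the unit interval, UI) in picoseconds."""
    return 1e3 / gbps


def jitter_in_ui(jitter_ps: float, gbps: float) -> float:
    """Express an absolute jitter figure as a fraction of the bit period."""
    return jitter_ps / unit_interval_ps(gbps)


print(unit_interval_ps(10))             # 100.0 ps
print(round(unit_interval_ps(28), 1))   # 35.7 ps
print(jitter_in_ui(35, 10))             # 0.35 UI at 10 Gbps
print(round(jitter_in_ui(35, 28), 2))   # 0.98 UI: almost a whole bit at 28 Gbps
# Assumption for illustration: keep the same 0.35 UI share at 28 Gbps.
print(round(0.35 * unit_interval_ps(28), 1))  # 12.5 ps
```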

Xilinx uses pre-emphasis on the signals before they are transmitted across the printed circuit board, to compensate for the board's loss. In addition, the FPGA maker has enhanced the noise isolation between the FPGA's digital and analogue CMOS circuitry. “The short spiky current loads in the digital circuitry can impact the noise in the analogue circuitry and increase the jitter,” says Peckham.
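Pre-emphasis is commonly implemented as a short transmit FIR filter that boosts each bit transition relative to runs of identical bits, offsetting the board's greater loss at high frequencies. Below is a minimal two-tap sketch. It assumes NRZ symbols of +1/-1 and a hypothetical tap weight `alpha`. Xilinx has not described its own implementation at this level.

```python
def pre_emphasis(symbols, alpha):
    """Two-tap transmit FIR: y[n] = x[n] - alpha * x[n-1].

    A transition (x[n] != x[n-1]) is driven harder, while a repeated bit
    is driven more gently, pre-compensating the channel's low-pass loss."""
    out, prev = [], 0.0
    for x in symbols:
        out.append(x - alpha * prev)
        prev = x
    return out


print(pre_emphasis([1, 1, 1, -1, -1, 1], alpha=0.25))
# [1.0, 0.75, 0.75, -1.25, -0.75, 1.25]
```

The transitions come out at 1.25 in magnitude and the repeated bits at 0.75, which is the high-frequency boost the technique relies on.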

What next?

Xilinx has created a 28Gbps transceiver test vehicle, which allows it to validate and fine-tune the design. The rest of the FPGA design still needs to be completed, and another design iteration of the 28Gbps test vehicle is likely. “We have a lot of things to do yet,” says Peckham.

Meanwhile system vendors can start to design their systems based on the FPGA family in advance of samples that are expected in the first half of 2012.

- For a video demonstration of the 28Gbps test vehicle, click here.

Virtualisation set to transform data centre networking

Part 3: Networking developments

The adoption of virtualisation techniques is causing an upheaval in the data centre. Virtualisation is being used to boost server performance, but its introduction is changing how switching equipment is networked.

“This is the most critical period of data centre transformation seen in decades,” says Raju Rajan, global system networking evangelist at IBM.

“We are on a long hard path – it is going to be a really challenging transition”

Stephen Garrison, Force10 Networks

Data centre managers want to accommodate variable workloads, and that requires moving virtualised workloads between servers and even between data centres. That is leading to new protocol developments and network consolidation, while also making IT management more demanding and hence requiring greater automation.

“We used to share the mainframe, now we share a pool of resources,” says Andy Ingram, vice president of product marketing and business development at the fabric and switching technologies business group at Juniper Networks. “What we are trying to get to is better utilisation of resources for the purpose of driving applications - the data centre is about applications.”

Networking challenges

New standards to meet the networking challenges created by virtualisation are close to completion and are already appearing in equipment. In turn, equipment makers are developing switch architectures that will scale to support tens of thousands of 10-Gigabit-per-second (Gbps) Ethernet ports. But industry experts expect these developments will take up to a decade to become commonplace due to the significant hurdles to be overcome.

“We are all marketing to a very distant future where most of the users are still trying to get their arms around eight virtual machines on a server,” says Stephen Garrison, vice president marketing at Force10 Networks. “We are on a long hard path – it is going to be a really challenging transition.”

IBM points out that its customers are used to working in IT silos, selecting subsystems independently. New work practices across divisions will be needed if the networking challenges are to be addressed. “For the first time, you cannot make a networking choice without understanding the server, virtualisation, storage, security and the operations support strategies,” says Rajan.

A lot of the future value of these various developments will be based on enabling IT automation. “That is a big hurdle for IT to get over: allowing systems to manage themselves,” says Zeus Kerravala, senior vice president, global enterprise and consumer research at market research firm, Yankee Group. “Do I think this vision will happen? Sure I do, but it will take a lot longer than people think.” Yankee expects it will be closer to ten years before these developments become commonplace.

Networking provides the foundation for servers and storage and, ultimately, the data centre’s applications. “Fifty percent of the data centre spend is servers, 35% is storage and 15% networking,” says Ingram. “The key resources I want to be efficient are the servers and the storage; what interconnects them is the network.”

Traditionally, applications have resided on dedicated servers, but equipment usage has been low, commonly around 10%. Given the huge numbers of servers deployed in data centres, this is no longer acceptable.

“From an IT perspective, the cost of computing should fall quite dramatically; if it doesn’t fall by half we will have failed”

Zeus Kerravala, Yankee Group

Virtualisation splits a server’s processing into time-slots to support 10, 100 and even 1,000 virtual machines, each with its own application. The server usage improvement that results ranges from 20% to as high as 70%.

That could result in significant efficiencies when you consider the growth of server virtualisation: in 2010, deployed virtual machines will outnumber physical servers for the first time, claims market research firm IDC. And Kash Shaikh, Cisco’s group manager, data center product marketing, cites Gartner’s forecast that half of all workloads will be virtualised by 2012.

In enterprise and hosting data centres, servers are typically connected using three tiers of switching. The servers are linked to access switches which in turn connect to aggregation switches whose role is to funnel traffic to the large, core switches.

An access switch typically sits on top of the server rack, which is why it is also known as a top-of-rack switch. Servers are now moving from 1Gbps to 10Gbps interfaces, with a top-of-rack switch typically connecting up to 40 servers.

Broadcom’s latest BCM56845 switch chip has 64x10Gbps ports. The BCM56845 can link 40 servers, each with a 10Gbps interface, to an aggregation switch via four high-capacity 40Gbps links. Each aggregation switch will likely have between six and 12 ports at 40Gbps per line card, and between eight and 16 cards per chassis. In turn, the aggregation switches connect to the core switches. The result is a three-tier architecture that can link thousands, even tens of thousands, of servers in the data centre.
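The port budget above can be tallied in a few lines. This sketch assumes each 40Gbps uplink gangs four of the chip's 10Gbps ports, and that every top-of-rack switch lands all four uplinks on a single aggregation switch; both are illustrative assumptions, as are the function names. Under them the top-of-rack switch is oversubscribed 2.5:1, and a fully populated aggregation chassis could serve about 1,920 servers.

```python
def tor_oversubscription(servers: int, server_gbps: float,
                         uplinks: int, uplink_gbps: float) -> float:
    """Ratio of server-facing bandwidth to uplink bandwidth at the top-of-rack switch."""
    return (servers * server_gbps) / (uplinks * uplink_gbps)


def tor_ports_used(servers: int, uplinks: int, ports_per_uplink: int = 4) -> int:
    """10Gbps ports consumed, assuming each 40Gbps uplink gangs four 10Gbps ports."""
    return servers + uplinks * ports_per_uplink


ratio = tor_oversubscription(40, 10, 4, 40)   # 400 / 160 = 2.5
used = tor_ports_used(40, 4)                  # 56 of the chip's 64 ports

# Aggregation switch: 6 to 12 x 40Gbps ports per card, 8 to 16 cards per chassis.
agg_ports_min, agg_ports_max = 6 * 8, 12 * 16      # 48 to 192 x 40Gbps ports
# Assumption: each top-of-rack switch uses four ports on one aggregation switch.
servers_per_agg_max = (agg_ports_max // 4) * 40    # 1,920 servers
print(ratio, used, agg_ports_min, agg_ports_max, servers_per_agg_max)
```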

The rise of virtualisation impacts data centre networking profoundly. Applications are no longer confined to single machines but are shared across multiple servers for scaling. Nor is the predominant traffic any longer ‘north-south’, up and down this three-layer switch hierarchy. Instead, virtualisation promotes greater ‘east-west’ traffic between servers, which must still cross the same tiered equipment.

“The network has to support these changes and it can’t be the bottleneck,” says Cindy Borovick, vice president, enterprise communications infrastructure and data centre networks, at IDC. The result is networking change on several fronts.

“The [IT] resource needs to scale to one large pool and it needs to be dynamic, to allow workloads to be moved around,” says Ingram. But that is the challenge: “The inherent complexity of the network prevents it from scaling and prevents it from being dynamic.”

Data Center Bridging

Currently, IT staff manage several separate networks: Ethernet for the LAN, Fibre Channel for storage and InfiniBand for high-performance computing. To migrate the traffic types onto a common network, the IEEE is developing the Data Center Bridging (DCB) Ethernet standard. A separate Fibre Channel over Ethernet (FCoE) standard, developed by the International Committee for Information Technology Standards, enables Fibre Channel to be encapsulated over DCB.

“No-one is coming to the market with 10-Gigabit [Ethernet ports] without DCB bundled in”

Raju Rajan, IBM

DCB is designed to enable the consolidation of many networks to just one within the data centre. A single server typically has multiple networks connected to it, including Fibre Channel and several separate 1Gbps Ethernet networks.

The DCB standard has several components: Priority Flow Control, which applies flow control separately to each of eight traffic classes; Enhanced Transmission Selection, which manages the bandwidth allocated to the different classes; and Congestion Notification, which, if a port begins to fill up, can signal back to the traffic source to back off from sending traffic.
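A rough illustration of what Enhanced Transmission Selection achieves. This is a fluid approximation, not the standard's scheduler, which in a switch runs frame by frame. Each class is guaranteed its configured share of the link, and bandwidth a class leaves unused is handed to the classes that still want more, in proportion to their shares. The class names, shares and demands are hypothetical.

```python
def ets_allocate(link_gbps, shares, demand):
    """Fluid model of ETS-style sharing: guaranteed shares, with unused bandwidth
    redistributed in proportion to the shares of still-hungry classes.
    Shares must be positive."""
    alloc = {c: 0.0 for c in shares}
    remaining = link_gbps
    active = {c for c in shares if demand.get(c, 0) > 0}
    while remaining > 1e-9 and active:
        total_share = sum(shares[c] for c in active)
        granted = 0.0
        for c in sorted(active):
            offer = remaining * shares[c] / total_share
            take = min(offer, demand[c] - alloc[c])
            alloc[c] += take
            granted += take
            if demand[c] - alloc[c] <= 1e-9:      # class fully served
                active.discard(c)
        remaining -= granted
    return alloc


shares = {"fcoe": 40, "lan": 40, "hpc": 20}   # percent of a 10Gbps link
demand = {"fcoe": 3, "lan": 8, "hpc": 5}      # offered load in Gbps
print(ets_allocate(10, shares, demand))
```

Storage uses only 3Gbps of its 4Gbps guarantee, so the spare 1Gbps is split 2:1 between the LAN and HPC classes (about 4.67Gbps and 2.33Gbps).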

“These standards - Priority Flow Control, Congestion Notification and Enhanced Transmission Selection – are some 98% complete, waiting for procedural things,” says Nick Ilyadis, chief technical officer for Broadcom’s infrastructure networking group. DCB is sufficiently stable to be implemented in silicon and is being offered on an increasing number of platforms.

With these components DCB can transport Fibre Channel in a lossless way. Fibre Channel is intolerant of loss and can require minutes to recover from a lost packet. Now with DCB, critical storage traffic such as FCoE, iSCSI and network-attached storage is supported over Ethernet.
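A toy model of why per-priority pause keeps a storage class lossless. It assumes a single priority queue on a switch port, a sender that holds frames while paused, and hypothetical rates and thresholds (`xoff`, `xon`). Real Priority Flow Control (IEEE 802.1Qbb) uses per-priority pause frames with timers. The key design choice is headroom: `xoff` plus one tick of in-flight traffic must still fit in the buffer.

```python
def simulate(arrivals, link_rate, drain, capacity, xoff=None, xon=None):
    """Return (frames dropped at the switch, pause signals sent) for one
    priority queue. Without xoff/xon the queue behaves like lossy Ethernet."""
    depth = pending = dropped = pauses = 0
    paused = False
    for offered in arrivals:
        pending += offered                        # frames queued at the sender
        sent = 0 if paused else min(pending, link_rate)
        pending -= sent
        accepted = min(sent, capacity - depth)
        dropped += sent - accepted                # buffer overflow means loss
        depth = max(0, depth + accepted - drain)
        if xoff is not None:
            if not paused and depth >= xoff:
                paused, pauses = True, pauses + 1  # tell the sender to stop this class
            elif paused and depth <= xon:
                paused = False                     # buffer has drained: resume
    return dropped, pauses


print(simulate([8] * 10, link_rate=8, drain=5, capacity=20))                  # (15, 0)
print(simulate([8] * 10, link_rate=8, drain=5, capacity=20, xoff=12, xon=6))  # (0, 2)
```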

Network convergence may be the primary driver for DCB, but its adoption also benefits virtualisation. Since higher server usage results in extra port traffic, virtualisation promotes the transition from 1-Gigabit to 10-Gigabit Ethernet ports. “No-one is coming to the market with 10-Gigabit [Ethernet ports] without DCB bundled in,” says IBM’s Rajan.

The uptake is also being helped by the significant reduction in the cost of 10-Gigabit ports with DCB. “This year we will see 10-Gigabit DCB at about $350 per port, down from over $800 last year,” says Rajan. The upgrade is attractive when the alternative is using several 1-Gigabit Ethernet ports for server virtualisation, each port costing $50-$75.
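The break-even point implied by those prices is easy to work out. This sketch compares port prices only. It ignores adapters, cabling and switch capacity, and the function name is hypothetical. It also leaves aside that one 10-Gigabit port carries ten times the bandwidth of a 1-Gigabit port.

```python
import math


def gige_ports_to_exceed(ten_gig_port_price: float, gige_port_price: float) -> int:
    """Smallest number of 1GbE ports whose combined price exceeds one 10GbE DCB port."""
    return math.floor(ten_gig_port_price / gige_port_price) + 1


print(gige_ports_to_exceed(350, 75))  # 5 ports at $75 ($375)
print(gige_ports_to_exceed(350, 50))  # 8 ports at $50 ($400)
print(gige_ports_to_exceed(800, 75))  # at last year's $800: 11 ports
```

At this year's prices, a server needing more than four to seven 1-Gigabit ports is already cheaper to connect with a single 10-Gigabit DCB port.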

Yet while DCB may be starting to be deployed, networking convergence remains in its infancy.

“FCoE seems to be lagging behind general industry expectations,” says Rajan. “For many of our data centre owners, virtualisation is the overriding concern.” Network convergence may be a welcome cost-reducing step, but it introduces risk. “So the net gain [of convergence] is not very clear, yet, to our end customers,” says Rajan. “But the net gain of virtualisation and cloud is absolutely clear to everybody.”

Global Crossing has some 20 data centres globally, including 14 in Latin America. These serve government and enterprise customers with storage, connectivity and firewall managed services.

“We are not using lossless Ethernet,” says Mike Benjamin, vice president at Global Crossing. “The big push for us to move to that [DCB] would be doing storage as a standard across the Ethernet LAN. We today maintain a separate Fibre Channel fabric for storage. We are not prepared to make the leap to iSCSI or FCoE just yet.”

TRILL

Another networking protocol under development is the Internet Engineering Task Force’s (IETF) Transparent Interconnection of Lots of Links (TRILL), which promotes large-scale Ethernet networks. TRILL’s primary role is to replace the spanning tree algorithm, which was never designed to address the latest data centre requirements.

Spanning tree disables links in a layer-two network to avoid loops, ensuring traffic has only one path to any port. But disabling links can remove up to half the available network bandwidth. TRILL enables large layer-two networks of linked switches that avoid loops without turning off precious bandwidth.
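A small sketch of the contrast, assuming a hypothetical two-tier fabric of two spine and four leaf switches. The tree-building function is a simplified stand-in for spanning tree: a breadth-first tree from a root switch, with ties broken by name to echo the lowest-bridge-ID rule. It is not an 802.1D implementation. It blocks three of the eight links. Counting equal-cost shortest paths shows what a link-state scheme such as TRILL, which uses IS-IS routing, could load-balance across instead.

```python
from collections import deque


def adjacency(links):
    adj = {}
    for a, b in links:
        adj.setdefault(a, []).append(b)
        adj.setdefault(b, []).append(a)
    return adj


def spanning_tree(links, root):
    """Links left forwarding by a loop-free tree grown outward from `root`."""
    adj = adjacency(links)
    visited, active, queue = {root}, set(), deque([root])
    while queue:
        node = queue.popleft()
        for nbr in sorted(adj[node]):          # lowest name wins ties
            if nbr not in visited:
                visited.add(nbr)
                active.add(frozenset((node, nbr)))
                queue.append(nbr)
    return active


def equal_cost_paths(links, src, dst):
    """Number of shortest (hop-count) paths between two switches."""
    adj = adjacency(links)
    dist, count, queue = {src: 0}, {src: 1}, deque([src])
    while queue:
        node = queue.popleft()
        for nbr in adj[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                count[nbr] = count[node]
                queue.append(nbr)
            elif dist[nbr] == dist[node] + 1:
                count[nbr] += count[node]
    return count.get(dst, 0)


spines, leaves = ["S1", "S2"], ["L1", "L2", "L3", "L4"]
links = [(s, leaf) for s in spines for leaf in leaves]     # 8 links
tree = spanning_tree(links, root="S1")
print(len(links) - len(tree), "of", len(links), "links blocked")   # 3 of 8
print(equal_cost_paths(links, "L1", "L2"), "equal-cost paths")    # 2
```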

“TRILL treats the Ethernet network as the complex network it really is,” says Benjamin. “If you think of the complexity and topologies of IP networks today, TRILL will have similar abilities in terms of truly understanding a topology to forward across, and permit us to use load balancing which is a huge step forward.”

"Data centre operators are cognizant of the fact that they are sitting in the middle of the battle of [IT vendor] giants and they want to make the right decisions”

Cindy Borovick, IDC

Collapsing tiers

Switch vendors are also developing flatter switch architectures that reduce the switching tiers from three to two, and ultimately to one large, logical switch. This promises to reduce the overall number of platforms and their associated management, as well as switch latency.

Global Crossing’s default data centre switch design is a two-tier switch. “Unless that top tier starts to hit scaling problems, at which time we move into a three-tier,” says Benjamin. “A two-tier switch architecture really does have benefits in terms of cost and low-latency switching.”

Juniper Networks is developing a single-layer logical switch architecture. Dubbed Stratus, the architecture will support tens of thousands of 10Gbps ports and span the data centre. While Stratus has still to be detailed, Juniper has said the design will be based on a 64x10-Gbps building block chip. Stratus will be in customer trials by early 2011. “We have some customers that have some very difficult networking challenges that are signed up to be our early field trials,” says Ingram.

Brocade is about to launch its virtual cluster switching (VCS) architecture. “There will be 10 switches within a cluster and they will be managed as if it is one chassis,” says Simon Pamplin, systems engineering pre-sales manager for Brocade UK and Ireland. VCS supports TRILL and DCB.

“We have the ability to make much larger flat layer two networks which ease management and the mobility of [servers’] virtual machines, whereas previously you were restricted to the size of the spanning tree layer-two domain you were happy to manage, which typically wasn’t that big,” says Pamplin.

Cisco’s Shaikh argues multi-tiered switching is still needed, for system scaling and separation of workloads: “Sometimes [switch] tiers are used for logical separation, to separate enterprise departments and their applications.” However, Cisco itself is moving to fewer tiers with the introduction of its FabricPath technology within its Nexus switches that support TRILL.

“There are reasons why you want a multi-tier,” agrees Force10 Networks’ Garrison. “You may want a core and a top-of-rack switch that denotes the server type, or there are some [enterprises] that just like a top-of-rack as you never really touch the core [switches]; with a single-tier you are always touching the core.”

Garrison argues that a flat network should not be equated with single tier: “What flat means is: Can I create a manageable domain that still looks like it is layer two to the packet even if it is a multi-tier?”

Global Crossing has been briefed by vendors such as Juniper and Brocade on their planned logical switch architectures and the operator sees much merit in these developments. But its main concern is what happens once such an architecture is deployed.

“Two years down the road, not only are we forced back to the same vendor no matter what other technology advancements another vendor has made, we also risk that they have phased out that generation of switch we installed,” says Benjamin. If the vendor does not remain backwards compatible, the risk is that a complete replacement of the switches may be necessary.

Benjamin points out that while it is the proprietary implementations that enable the single virtual architectures, the switches also support the network standards. Accordingly, a data centre operator can always switch off the proprietary elements that enable the single virtual layer and revert to a traditional switched architecture.

Edge Virtual Bridging and Bridge Port Extension

A networking challenge caused by virtualisation is switching virtual machines and moving them between servers. A server’s software-based hypervisor that oversees the virtual machines comes with a virtual switch. But the industry consensus is that hardware rather than software running on a server is best for switching.

There are two standards under development to handle virtualisation requirements: the IEEE 802.1Qbg Edge Virtual Bridging (EVB) and the IEEE 802.1Qbh Bridge Port Extension. The 802.1Qbg camp is backed by many of the leading switch and network interface card vendors, while 802.1Qbh is based on Cisco Systems’ VN-Tag technology.

Virtual Ethernet Port Aggregation (VEPA), a proprietary element used as part of 802.1Qbg, is the transport mechanism used. In terms of networking, VEPA allows traffic to exit and re-enter the same server physical port to enable switching between virtual ports. EVB’s role is to provide the required virtual machine configuration and management.
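The reason VEPA needs support from the adjacent switch is a basic bridging rule: a conventional bridge never forwards a frame back out of the port it arrived on. VEPA depends on the switch relaxing that rule, a mode usually called reflective relay or hairpin mode. The sketch below shows the decision with a hypothetical MAC table; the port numbers and names are illustrative only.

```python
def egress_port(mac_table, dst_mac, in_port, reflective_relay=False):
    """Pick the output port for a frame at the adjacent (edge) switch.

    Returns None when a conventional bridge would filter the frame because
    its destination sits behind the same port it arrived on."""
    out = mac_table.get(dst_mac)
    if out is None:
        return "flood"                     # unknown destination
    if out == in_port and not reflective_relay:
        return None                        # same ingress and egress port: filtered
    return out                             # with reflective relay, hairpin back


# Hypothetical table: two virtual machines behind server port 7, a host on port 3.
table = {"vm-a": 7, "vm-b": 7, "host-c": 3}
print(egress_port(table, "vm-b", in_port=7))                         # None
print(egress_port(table, "vm-b", in_port=7, reflective_relay=True))  # 7
print(egress_port(table, "host-c", in_port=7))                       # 3
```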

“The network has to recognise the virtual machine appearing on the virtual interfaces and provision the network accordingly,” says Broadcom’s Ilyadis. “That is where EVB comes in, to recognise the virtual machine and use its credentials for the configuration.”

Brocade supports EVB and VEPA as part of its converged network adaptor (CNA) card, which also supports DCB and FCoE. “You have software switches within the hypervisor, you have some capabilities in the CNA and some in the edge switch,” says Pamplin. “We don’t see the soft-switch as too beneficial, as some CPU cycles are stolen to support it.”

Instead Brocade does the switching within the CNA. When a virtual machine within a server needs to talk to another virtual machine, the switching takes place at the adaptor. “We vastly reduce what needs to go out on the core network,” says Pamplin. “If we do have traffic that we need to monitor and put more security around, we can take that traffic out through the adaptor to the switch and switch it back – ‘hairpin’ it - into the server.”

The 802.1Qbh Bridge Port Extension uses a tag that is added to an Ethernet frame. The tag is used to control and identify the virtual machine traffic, and enable port extension. According to Cisco, the port extension allows the aggregation of a large number of ports through hierarchical switches. “This provides a way of doing a large fan-out while maintaining smaller management tiering,” says Prashant Gandhi, technical leader internet business systems unit at Cisco.
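To make the tagging idea concrete, here is a sketch that inserts and removes a tag carrying destination and source virtual-interface IDs after an Ethernet frame's MAC addresses. Only the EtherType, 0x8926, is taken from Cisco's VN-Tag. The 6-byte layout with two 16-bit ID fields is a simplified, hypothetical format and not the 802.1Qbh or VN-Tag bit layout.

```python
import struct

TAG_ETHERTYPE = 0x8926   # EtherType used by Cisco's VN-Tag
MAC_BYTES = 12           # destination + source MAC addresses


def add_port_tag(frame: bytes, dst_vif: int, src_vif: int) -> bytes:
    """Insert a simplified 6-byte tag (EtherType, destination and source
    virtual-interface IDs) after the MAC addresses. Layout is illustrative."""
    if not (0 <= dst_vif <= 0xFFFF and 0 <= src_vif <= 0xFFFF):
        raise ValueError("virtual interface ID out of range")
    tag = struct.pack("!HHH", TAG_ETHERTYPE, dst_vif, src_vif)
    return frame[:MAC_BYTES] + tag + frame[MAC_BYTES:]


def strip_port_tag(frame: bytes):
    """Return (dst_vif, src_vif, untagged_frame); raise if no tag is present."""
    ethertype, dst_vif, src_vif = struct.unpack("!HHH", frame[MAC_BYTES:MAC_BYTES + 6])
    if ethertype != TAG_ETHERTYPE:
        raise ValueError("frame is not tagged")
    return dst_vif, src_vif, frame[:MAC_BYTES] + frame[MAC_BYTES + 6:]


frame = bytes(6) + bytes.fromhex("020000000001") + b"\x08\x00" + b"payload"
tagged = add_port_tag(frame, dst_vif=5, src_vif=12)
print(len(tagged) - len(frame))      # 6 extra bytes
print(strip_port_tag(tagged)[:2])    # (5, 12)
```

In a port-extender design, the controlling switch would use the source ID to tell which virtual port a frame came from, and the destination ID to tell the extender where to deliver it.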

For example, top-of-rack switches could be port-extenders and be managed by the next-tier switch. “This would significantly simplify provisioning and management of a large number of physical and virtual Ethernet ports,” says Gandhi.

“The common goal of both [802.1Qbg and 802.1Qbh] standards is to help us with configuration management, to allow virtual machines to move with their entire configuration and not require us to apply and keep that configuration in sync across every single switch,” says Global Crossing’s Benjamin. “That is a huge step for us as an operator.”

“Our view is that VEPA will be needed,” says Gary Lee, director of product marketing at Fulcrum Microsystems, which has just announced its first Alta family switch chip that supports 72x10-Gigabit ports and can process over one billion packets per second.
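The packet-rate figure is consistent with wire-speed forwarding of minimum-size frames. The sketch below assumes 64-byte frames plus the 20 bytes of preamble, start delimiter and minimum inter-frame gap that each frame occupies on the wire. Fulcrum has not said it derived its figure this way.

```python
PREAMBLE_AND_GAP_BYTES = 8 + 12   # preamble/start delimiter plus minimum inter-frame gap


def line_rate_pps(port_gbps: float, frame_bytes: int = 64) -> float:
    """Packets per second on a fully loaded Ethernet port at a given frame size."""
    return port_gbps * 1e9 / ((frame_bytes + PREAMBLE_AND_GAP_BYTES) * 8)


per_port = line_rate_pps(10)      # about 14.88 million packets per second
chip_total = 72 * per_port        # about 1.07 billion packets per second
print(f"{per_port:,.0f} pps per port, {chip_total:,.0f} pps for 72 ports")
```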

Benjamin hopes both standards will be adopted by the industry: “I don’t think it’s a bad thing if they both evolve and you get the option to do the switching in software as well as in hardware based on the application or the technology that a certain data centre provider requires.” Broadcom and Fulcrum are supporting both standards to ensure their silicon will work in both environments.

“This [the Edge Virtual Bridging and Bridge Port Extension standards’ work] is still in flux,” says Ilyadis. “At the moment there are a lot of proprietary implementations but it is coming together and will be ready next year.”

The big picture

For Global Crossing, it will be economics or reliability considerations that determine which technologies are introduced first. DCB may come first, once its economics and reliability for storage can no longer be ignored, says Benjamin. Or it may be the networking redundancy and reliability offered by the likes of TRILL that will be needed as the operator uses virtualisation more.

And whether it is five or ten years, what will be the benefit of all these new protocols and switch architectures?

Malcolm Mason, EMEA hosting product manager at Global Crossing, says there will be less equipment doing more, which will save power and require less cabling. The new technologies will also enable more stringent service level agreements to be met.

“The end-user won’t notice a lot of difference, but what they should notice is more consistent application performance,” says Yankee’s Kerravala. “From an IT perspective, the cost of computing should fall quite dramatically; if it doesn’t fall by half we will have failed.”

Meanwhile data centre operators are working to understand these new technologies. “I get a lot of questions about end-to-end architectures,” says Borovick. “They are cognizant of the fact that they are sitting in the middle of the battle of [IT vendor] giants and they want to make the right decisions.”

Click here for Part 1: Single-layer switch architectures

Click here for Part 2: Ethernet switch chips

LightReading Market Spotlight: ROADMs

Click here for the market spotlight ROADM article written for LightReading. See also the comment discussions.

Fujitsu Labs adds processing to boost optical reach

“That is one of the virtues of the technology; it is not dependent on the modulation format or the bit rate”

Takeshi Hoshida, Fujitsu Labs

Why is it important?

Much progress has been made in developing digital signal processing techniques for 100Gbps coherent receivers to compensate for undesirable fibre transmission effects such as polarisation mode dispersion and chromatic dispersion (See Performance of Dual-Polarization QPSK for Optical Transport Systems). Both dispersions are linear in nature and are compensated for using linear digital filtering. What Fujitsu Labs has announced is the next step: a digital filter design that compensates for non-linear effects.

A key challenge facing optical-transmission designers is extending the reach of 100Gbps transmissions to match that of 10Gbps systems. In the simplest sense, reach falls with increased transmission speed because the shorter-pulsed signals contain fewer photons. Channel impairments also become more prominent the higher the transmission speed.

Engineers can increase system reach by boosting the launch power to raise the optical signal-to-noise ratio, but higher power gives rise to non-linear effects in the fibre. “When the signal power is higher, the refractive index of the fibre changes and that distorts the phase of the optical signal,” says Takeshi Hoshida, a senior researcher at Fujitsu Labs.

The non-linear effect, combined with polarisation mode dispersion and chromatic dispersion, interacts with the signal in a complicated way. “The linear and non-linear effects combine to result in a very complex distortion of the received signal,” says Hoshida.

Fujitsu has developed a non-linear distortion compensation technique that recovers 2dB of the transmitted optical signal. Moreover, the compensation technique will equally benefit 400 Gigabit or 1 Terabit channels, says Hoshida: “That is one of the virtues of the technology; it is not dependent on the modulation format or the bit rate.”

Fujitsu plans to extend the reach of its long-haul optical transmission systems using the technique. The 2dB equates to a 1.6x distance improvement. But, as Hoshida points out, this is the theoretical benefit. In practice, the benefit is less since a greater transmission distance means the signal passes through more amplifier and optical add-drop stages that introduce their own signal impairments.
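The 1.6x figure follows from a standard rule of thumb. If amplifier noise accumulates linearly with the number of identical amplified spans, OSNR falls in proportion to 1/spans, so a 2dB gain allows 10^(2/10), or about 1.58, times as many spans. The sketch encodes that assumption only. As Hoshida notes, the practical gain is smaller.

```python
import math


def reach_multiplier(osnr_gain_db: float) -> float:
    """Extra distance allowed by an OSNR gain, assuming amplifier noise grows
    linearly with the number of identical spans (OSNR proportional to 1/spans)."""
    return 10 ** (osnr_gain_db / 10)


gain = reach_multiplier(2.0)
print(round(gain, 2))          # 1.58, i.e. roughly the quoted 1.6x
print(math.floor(20 * gain))   # a 20-span link could in theory stretch to 31 spans
```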

Method used

Fujitsu Labs has implemented a two-stage filtering block. The first filter stage is linear and compensates for chromatic dispersion, while the second unit counteracts the fibre's non-linear effect on the optical signal. To achieve the required compensation, Fujitsu Labs uses multiple filter-stage blocks in cascade.

According to Hoshida, optical phase is rotated according to the optical power: “If the power is higher, the more phase rotation occurs – that is the non-linear effect in the fibre.” The effect is distributed, occurring along the length of the fibre, and is also coupled with chromatic dispersion. “Chromatic dispersion changes the optical intensity waveform, and that intensity waveform induces the non-linear effect,” says Hoshida. “Those two problems are coupled to each other so you have to solve both.”

Fujitsu tackles the problem by applying a filter stage to compensate for each optical span, the fibre segment between repeaters. For a terrestrial transmission system there can be as many as 20 or 30 such spans. “But [using a filter stage per span] is rather inefficient,” says Hoshida. By inserting a weighted-average technique, Fujitsu has reduced the number of filter stages needed by a factor of four.
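The per-span idea can be sketched with a toy link model, often called digital back-propagation. Each span is modelled as a power-dependent phase rotation followed by dispersion (an all-pass quadratic-phase filter). The receiver runs one two-stage block per span in reverse order: a linear stage that undoes dispersion, then a non-linear stage that undoes the phase rotation. The model, its parameters (`beta`, `gamma`) and the plain DFT are illustrative assumptions, not Fujitsu's filters. In the model, compensating dispersion alone leaves the symbols distorted, while the cascade recovers them.

```python
import cmath
import math
import random


def dft(x, sign):
    """Plain O(n^2) discrete Fourier transform; sign=-1 forward, +1 inverse (unscaled)."""
    n = len(x)
    return [sum(x[k] * cmath.exp(sign * 2j * math.pi * k * m / n) for k in range(n))
            for m in range(n)]


def dispersion(x, beta):
    """Linear stage: quadratic phase in frequency, a simple chromatic-dispersion model."""
    n = len(x)
    spectrum = dft(x, -1)
    for m in range(n):
        f = m if m < n // 2 else m - n          # signed frequency index
        spectrum[m] *= cmath.exp(1j * beta * (2 * math.pi * f / n) ** 2)
    return [v / n for v in dft(spectrum, +1)]


def kerr_phase(x, gamma):
    """Non-linear stage: rotate each sample's phase in proportion to its power."""
    return [v * cmath.exp(1j * gamma * abs(v) ** 2) for v in x]


def fibre(x, spans, beta, gamma):
    """Toy link: per span, a non-linear phase kick followed by dispersion."""
    for _ in range(spans):
        x = dispersion(kerr_phase(x, gamma), beta)
    return x


def backpropagate(y, spans, beta, gamma):
    """One two-stage block per span, in reverse: undo dispersion, then the phase."""
    for _ in range(spans):
        y = kerr_phase(dispersion(y, -beta), -gamma)
    return y


def max_error(a, b):
    return max(abs(u - v) for u, v in zip(a, b))


rng = random.Random(0)
tx = [complex(rng.choice((-1, 1)), rng.choice((-1, 1))) / math.sqrt(2) for _ in range(32)]
rx = fibre(tx, spans=20, beta=3.0, gamma=0.05)
linear_only = dispersion(rx, -20 * 3.0)                  # compensate dispersion alone
recovered = backpropagate(rx, spans=20, beta=3.0, gamma=0.05)
print(max_error(linear_only, tx), max_error(recovered, tx))
```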

Weighted averaging is a filtering operation that smooths the signal in the time domain. “It is not necessary to change the weights [of the filter] symbol-by-symbol; it is almost static,” says Hoshida. Changes do occur but infrequently, depending on the fibre’s condition, such as changes in temperature.
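One way to picture the weighted averaging is to drive the non-linear stage with a smoothed power estimate rather than each sample's instantaneous power, using fixed weights. Fujitsu has not published its exact weighting. The 3-tap weights below are hypothetical and only show the mechanics: neighbouring samples share the phase correction, and the weights stay fixed rather than being updated symbol by symbol.

```python
import cmath


def smoothed_power(x, weights):
    """Weighted moving average of |x|^2 (circular, centred); weights sum to 1."""
    n, half = len(x), len(weights) // 2
    power = [abs(v) ** 2 for v in x]
    return [sum(w * power[(i + k - half) % n] for k, w in enumerate(weights))
            for i in range(n)]


def averaged_kerr_phase(x, gamma, weights):
    """Non-linear stage driven by the smoothed power; the weights are static."""
    return [v * cmath.exp(1j * gamma * p) for v, p in zip(x, smoothed_power(x, weights))]


print(smoothed_power([0, 0, 2, 0, 0], [0.25, 0.5, 0.25]))  # [0.0, 1.0, 2.0, 1.0, 0.0]
```

With a single weight of 1.0 the stage reduces to the per-sample phase rotation; wider weights spread each sample's power over its neighbours.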

Fujitsu has been surprised that the weighted-averaging technique is so effective. The technique’s use and the subsequent 4x reduction in filter stages reduce by 70% the hardware needed to implement the compensation. The reason it is not the full 75% is that extra hardware for the weighted averaging must be added to each stage.
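A simple cost model reconciles the two figures. Assume every filter stage costs the same. Cutting the stage count by four alone would save 75%. A 70% saving then implies that each remaining stage grows by about 20% to accommodate the weighted averaging. That 20% is inferred from the quoted numbers, not a figure Fujitsu has given. The same factor of four takes a 20 to 30 span link from 20 to 30 stages down to 5 to 8.

```python
import math


def hardware_fraction_saved(stage_reduction: float, overhead_per_stage: float) -> float:
    """Fraction of compensation hardware saved when the number of filter stages
    falls by `stage_reduction` but each remaining stage grows by `overhead_per_stage`."""
    return 1 - (1 + overhead_per_stage) / stage_reduction


def implied_overhead(stage_reduction: float, fraction_saved: float) -> float:
    """Per-stage overhead consistent with a quoted overall saving."""
    return (1 - fraction_saved) * stage_reduction - 1


print(hardware_fraction_saved(4, 0.0))           # 0.75 with no extra hardware
print(round(implied_overhead(4, 0.70), 3))       # 0.2, i.e. about 20% per stage
print({spans: math.ceil(spans / 4) for spans in (20, 30)})  # {20: 5, 30: 8}
```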

What next?

Fujitsu has demonstrated that the technique is technically feasible but practical issues remain such as power consumption. According to Hoshida, the power consumption is too high even using an advanced 40nm CMOS process, and will likely require a 28nm process. Fujitsu thus expects the technique to be deployed in commercial systems by 2015 at the latest.

There are also further optical performance improvements to be claimed, says Hoshida, by addressing cross-phase modulation. This is another non-linear effect where one lightpath affects the phase of another.

Fujitsu Labs has developed two algorithms to address cross-phase modulation, which is a more challenging problem since it is modulation-dependent.

For a copy of Fujitsu’s ECOC 2010 slides, please click here.

Oclaro points its laser diodes at new markets

“To succeed in any market ... you need to be the best at something, to have that sustainable differentiator”

Yves LeMaitre, Oclaro

Now LeMaitre is executive vice president at Oclaro, managing the company’s advanced photonics solutions (APS) arm. The APS division is tasked with developing non-telecom opportunities based on Oclaro’s high-power laser diode portfolio, and accounts for 10%-15% of the company’s revenues.

“The goal is not to create a separate business,” says LeMaitre. “Our goal is to use the infrastructure and the technologies we have, find those niche markets that need these technologies and grow off them.”

Recently Oclaro opened a design centre in Tucson, Arizona that adds packaging expertise to its existing high-power laser diode chip business. The company bolstered its laser diode product line in June 2009 when Oclaro gained the Newport Spectra Physics division in a business swap. “We became the largest merchant vendor for high-power laser diodes,” says LeMaitre.

The products include single laser chips, laser arrays and stacked arrays that deliver hundreds of watts of output power. “We had all that fundamental chip technology,” says LeMaitre. “What we have been less good at is packaging those chips - managing the thermals as well as coupling that raw chip output power into fibre.”

The new design centre is focussed on packaging which typically must be tailored for each product.

Laser diodes

There are three laser types that use laser diodes, either directly or as ‘pumps’:

- Solid-state lasers, known as diode-pumped solid-state (DPSS) lasers.

- Fibre lasers, where the fibre is the medium that amplifies light.

- Direct diode lasers, where the semiconductor diode itself generates the light.

All three types use laser diodes that operate in the 800-980nm range. Oclaro has much experience in gallium arsenide pump-diode designs for telecom that operate at 920nm wavelengths and above.

Laser diode designs for non-telecom applications are also gallium arsenide-based but operate at 800nm and above. They are also scaled-up designs, says LeMaitre: “If you can get 1W on a single mode fibre for telecom, you can get 10W on a multi-mode fibre.” Combining the lasers in an array allows 100-200W outputs. And by stacking the arrays while inserting cooling between the layers, several hundreds of watts of output power are possible.

The lasers are typically sold as packaged and cooled designs, rather than as raw chips. The laser beam can be collimated to deliver the light precisely, or it may be coupled into fibre when fibre is the preferred delivery medium.

“The laser beam is used to heat, to weld, to burn, to mark and to engrave,” says LeMaitre. “That beam may be coming directly from the laser [diode], or from another medium that is pumped by the laser [diode].” Such designs require specialist packaging, says LeMaitre, and this is what Oclaro secured when it acquired the Spectra Physics division.

Applications

Laser diodes are used in four main markets, which together Oclaro values at US$800 million a year.

One is the mature industrial market. Here lasers are used for manufacturing tasks such as metal welding and cutting, marking and welding of plastics, and scribing semiconductor wafers.

Another is high-quality printing where the lasers are used to mark large printing plates. This, says LeMaitre, is a small specialist market.

Healthcare is a growing market, with lasers used for surgery, although the largest segment is now skin and hair treatment.

The final main market is consumer, where vertical-cavity surface-emitting lasers (VCSELs) are used. These VCSELs have output powers of only tens or hundreds of milliwatts and are used in computer mouse interfaces and for cursor navigation in smartphones.

“These are simple applications that use lasers because they provide reliable, high-quality optical control of the device,” says LeMaitre. “We are talking tens of millions of [VCSEL] devices [a year] that we are shipping right now for these types of applications.”

Oclaro is a supplier of VCSELs for Light Peak, Intel’s high-speed optical cable technology to link electronic devices. “There will be adoptions of the initial Light Peak starting the end of this year or early next year, and we are starting to ramp up production for that,” says LeMaitre. “In the meantime, there are many alternative [designs] happening – the market is extremely active – and we are talking to a lot of players.” Oclaro sells the laser chips for such interface designs; it does not sell optical engines or the cables.

Is Oclaro pursuing optical engines for datacom applications, linking large switch and IP router systems? “We are actively looking at that but we haven’t made any public announcements,” he says.

Market status

LeMaitre has been at Oclaro since 2008, when Avanex merged with Bookham to become Oclaro. Before that, he was CEO of the optical component start-up LightConnect.

How does the industry now compare with that of a decade ago?

“At that time [of the downturn] the feeling was that it was going to be tough for maybe a year or two but that by 2002 or 2003 the market would be back to normal,” says LeMaitre. “Certainly no-one expected the downturn would last five years.” Since then, nearly all of the start-ups have been acquired or have exited the market; Oclaro itself is the product of mergers involving some 15 companies.

“People were talking about the need for consolidation, well, it has happened,” he says. Oclaro’s main market, optical components for metro and long-haul transmission, now has around four main players. “The consolidation has allowed these companies, including Oclaro, to reach a level of profitability which has not been possible until the last two years,” says LeMaitre.

Demand for bandwidth has held up even through the recent economic downturn, and this has helped the financial performance of the optical component companies.

“The need for bandwidth has still sustained some reasonable level of investment even in the dark times,” he says. “The market is not as sexy as it was in those [boom] days but it is much more healthy; a sign of the industry maturing.”

Industry maturity also brings corporate stability, which LeMaitre says provides a healthy backdrop for developing new business opportunities.

The industrial, healthcare and printing markets require greater customisation than optical components for telecom, he says, whereas the consumer market is the opposite, characterised by far greater unit volumes.

“To succeed in any market – this is true for this market and for the telecom market – you need to be the best at something, to have that sustainable differentiator,” says LeMaitre. For Oclaro, its differentiator is its semiconductor laser chip expertise. “If you don’t have a sustainable differentiator, it just doesn’t work.”