Deutsche Telekom's Access 4.0 transforms the network edge

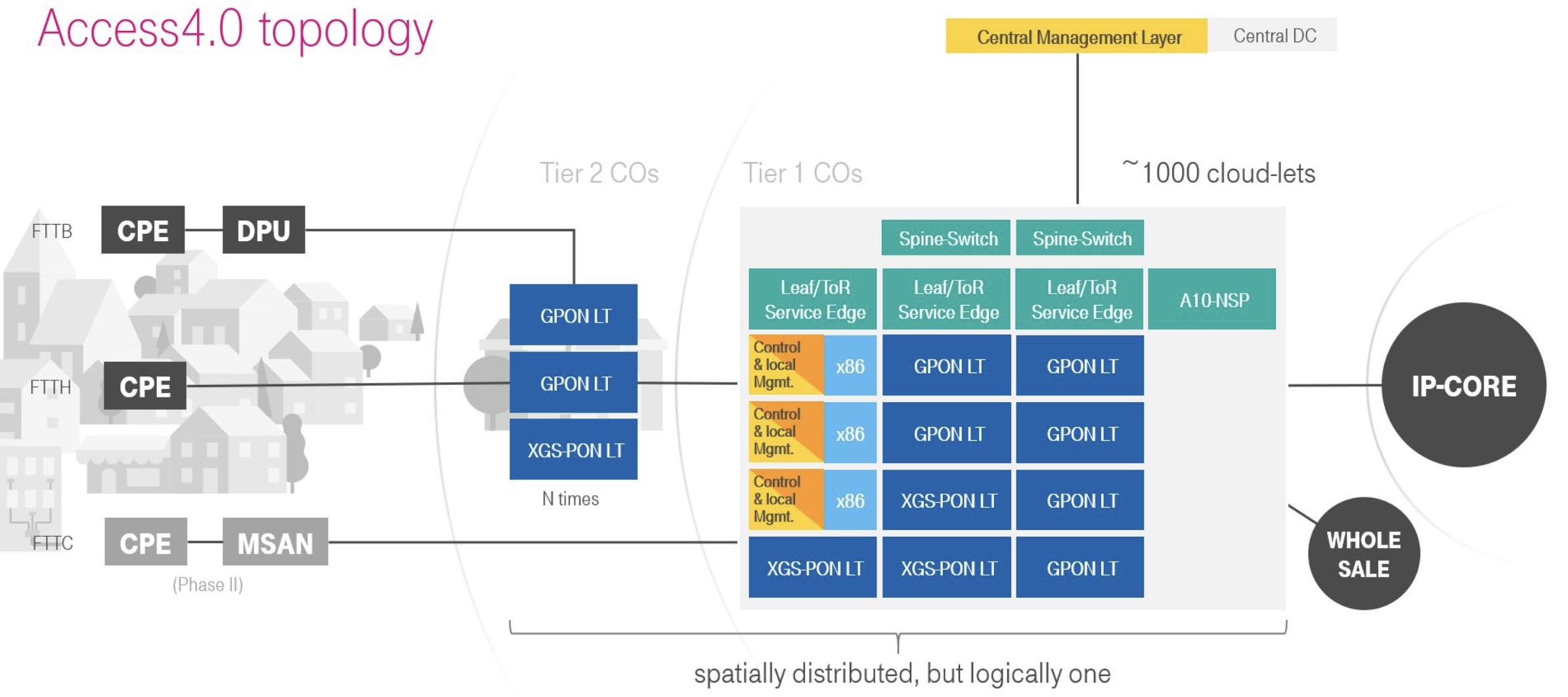

Deutsche Telekom has a working software platform for its Access 4.0 architecture that will start delivering passive optical network (PON) services to German customers later this year. The architecture will also serve as a blueprint for future edge services.

Access 4.0 is a disaggregated design comprising open-source software and platforms that use merchant chips – ‘white-boxes’ – to deliver fibre-to-the-home (FTTH) and fibre-to-the-building (FTTB) services.

“One year ago we had it all as prototypes plugged together to see if it works,” says Hans-Jörg Kolbe, chief engineer and head of SuperSquad Access 4.0. “Since the end of 2019, our target software platform – a first end-to-end system – is up and running.”

Deutsche Telekom has about 1,000 central office sites in Germany, several of which will be upgraded this year to the Access 4.0 architecture.

“Once you have a handful of sites up and running and you have proven the principle, building another 995 is rather easy,” says Robert Soukup, senior program manager at Deutsche Telekom, and another of the co-founders of the Access 4.0 programme.

Origins

The Access 4.0 programme emerged with the confluence of two developments: a detailed internal study of the costs involved in building networks and the advent of the Central Office Re-architected as a Datacentre (CORD) industry initiative.

Deutsche Telekom was scrutinising the costs involved in building its networks. “Not like removing screws here and there but looking at the end-to-end costs,” says Kolbe.

Separately, the operator took an interest in CORD, which was at the time overseen by ON.Lab.

At first, Kolbe thought CORD was an academic exercise but, on closer examination, he and his colleague, Thomas Haag, the chief architect and the final co-founder of Access 4.0, decided the activity needed to be investigated internally. In particular, they wanted to assess the feasibility of CORD, understand how bringing together cloud technologies and access hardware would work, and quantify the cost benefits.

“The first goal was to drive down cost in our future network,” says Kolbe. “And that was proven in the first month by a decent cost model. Then, building a prototype and looking into it, we found more [cost savings].”

Given the cost focus, the operator hadn’t considered the far-reaching changes involved in adopting white boxes and disaggregating software from hardware, nor how moving to a mainly software-based architecture could shorten the time needed to introduce new services.

“I knew both these arguments were used when people started to build up Network Functions Virtualisation (NFV) but we didn’t have this in mind; it was a plain cost calculation,” says Kolbe. “Once we started doing it, however, we found both these things.”

Cost engineering

Deutsche Telekom says it has learnt a lot from the German automotive industry when it comes to cost engineering. At some companies, cost is part of the engineering process; at others, it is part of procurement.

“The issue is not talking to a vendor and asking for a five percent discount on what we want it to deliver,” says Soukup, adding that what the operator seeks is fair prices for everybody.

“Everyone needs to make a margin to stay in business but the margin needs to be fair,” says Soukup. “If we make with our customers a margin of ‘X’, it is totally out of the blue that our vendors get a margin of ‘10X’.”

The operator’s goal with Access 4.0 has been to determine how best to deploy broadband internet access on a large scale and with carrier-grade quality. Access is an application suited to cost reduction since “the closer you come to the customer, the more capex [capital expenditure] you have to spend,” says Soukup, adding that since capex is always less than what you’d like, creativity is required.

“When you eat soup, you always grasp a spoon,” says Soukup. “But we asked ourselves: ‘Is a spoon the right thing to use?’”

Software and White Boxes

Access 4.0 uses two components from the Open Networking Foundation (ONF): Voltha and the Software Defined Networking (SDN) Enabled Broadband Access (SEBA) reference design.

Voltha provides a common control and management system for PON white boxes, while making the PON network appear as a programmable switch to the SDN controller above it. “It abstracts away the [PON] optical line terminal (OLT) so we can treat it as a switch,” says Soukup.
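To make the idea of “treating the OLT as a switch” concrete, here is a minimal Python sketch of the abstraction. It is a simplified illustration, not Voltha's actual API: the classes `Olt` and `LogicalSwitch`, the function `abstract_olt` and the port-naming scheme are all hypothetical. Each ONU behind a PON port is exposed as a logical switch port, the OLT uplink as another, and the controller only programs flows between ports.

```python
from dataclasses import dataclass, field


@dataclass
class Olt:
    """Hypothetical PON OLT: one uplink plus PON ports, each feeding several ONUs."""
    name: str
    pon_ports: dict[int, list[str]]  # PON port number -> ONU serial numbers
    uplink: str = "nni-0"


@dataclass
class LogicalSwitch:
    """Hypothetical switch view presented to the SDN controller."""
    ports: list[str] = field(default_factory=list)
    flows: list[tuple[str, str]] = field(default_factory=list)

    def add_flow(self, in_port: str, out_port: str) -> None:
        # The controller can only reference ports the abstraction exposes.
        if in_port not in self.ports or out_port not in self.ports:
            raise ValueError(f"unknown port: {in_port} or {out_port}")
        self.flows.append((in_port, out_port))


def abstract_olt(olt: Olt) -> LogicalSwitch:
    """Expose the OLT uplink and every ONU as ports of one logical switch.

    PON-specific details (splitters, ONU activation, ranging) stay hidden
    below this layer; the controller sees only ports and flows.
    """
    ports = [olt.uplink]
    for pon_port, onus in sorted(olt.pon_ports.items()):
        ports.extend(f"pon{pon_port}-{onu}" for onu in onus)
    return LogicalSwitch(ports=ports)


if __name__ == "__main__":
    olt = Olt("olt-1", {0: ["ONU-A", "ONU-B"], 1: ["ONU-C"]})
    switch = abstract_olt(olt)
    switch.add_flow("pon0-ONU-A", "nni-0")
    print(switch.ports)  # ['nni-0', 'pon0-ONU-A', 'pon0-ONU-B', 'pon1-ONU-C']
    print(switch.flows)  # [('pon0-ONU-A', 'nni-0')]
```

The design choice being illustrated is that the controller's vocabulary shrinks to ports and flows, which is what lets a generic SDN controller manage PON hardware from different white-box vendors.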

SEBA supports a range of fixed broadband technologies that include GPON and XGS-PON. “SEBA 2.0 is a design we are using and are compliant [with],” says Soukup.

“We are bringing our technology to geographically-distributed locations – central offices – very close to the customer,” says Kolbe. Some aspects are common with the cloud technology used in large data centres but there are also differences.

For example, cloud technologies such as Kubernetes container orchestration are common to both, whereas large data centres also use OpenStack, which Access 4.0 does not need. A leaf-spine switching architecture is likewise common to both, as is the use of SDN technology.

“One thing we have learned is that you can’t just take the big data centre technology and put it in distributed locations and try to run heavy-throughput access networks on them,” says Kolbe. “This is not going to work and it led us to the white box approach.”

The issue is that certain workloads cannot be tackled efficiently using x86-based server processors. An example is the Broadband Network Gateway (BNG). “You need to do significant enhancements to either run on the x86 or you offload it to a different type of hardware,” says Kolbe.

Deutsche Telekom started by running a commercial vendor’s BNG on servers. “In parallel, we did the cost calculation and it was horrible because of the throughput-per-Euro and the power-per-Euro,” says Kolbe. And this is where cost engineering comes in: looking at the system, the biggest cost driver was the servers.

“We looked at the design and in the data path there are three programmable ASICs,” says Kolbe. “And this is what we did; it is not a product yet but it is working in our lab and we have done trials.” The result is that the operator has created an opportunity for a white-box design.

There are also differences in the use of switching between large data centres and access. In large data centres, the switching supports the huge east-west traffic flows while in carrier networks, especially close to the edge, this is not required.

Instead, for Access 4.0, traffic from PON trees arrives at the OLT where it is aggregated by a chipset before being passed on to a top-of-rack switch where aggregation and packet processing occur.

The leaf-and-spine architecture can also be used to provide a ‘breakout’ to support edge-cloud services such as gaming and local services. “There is a traffic capability there but we currently don’t use it,” says Kolbe. “But we are thinking that in the future we will.”

Deutsche Telekom has been public about working with such companies as Reply, RtBrick and Broadcom. Reply is a key partner while RtBrick contributes a major element of the BNG software, a specialist domain.

Kolbe points out that there is no standard for using network processor chips: “They are all specific which is why we need a strong partnership with Broadcom and others and build a common abstraction layer.”

Deutsche Telekom also works closely with Intel, incumbent network vendors such as ADTRAN and original design manufacturers (ODMs) including EdgeCore Networks.

Challenges

About 80 percent of the design effort for Access 4.0 is software and this has been a major undertaking for Deutsche Telekom.

“The challenge is to get up to speed with software; that is not a thing that you just do,” says Kolbe. “We can’t just pretend we are all software engineers.”

Deutsche Telekom says the new players it works with – the software specialists – also have to better understand telecom. “We need to meet in the middle,” says Kolbe.

Soukup adds that mastering software takes time – years rather than weeks or months – and this is only to be expected given the network transformation operators are undertaking.

But once that is achieved, operators can expect all the benefits of software: the ability to work in an agile manner, continuous integration/continuous delivery (CI/CD), and the more rapid introduction of services and ideas.

“This is what we have discovered besides cost-savings: becoming more agile and transforming an organisation which can have an idea and realise it in days or weeks,” says Soukup. The means are there, he says: “We have just copied them from the large-scale web-service providers.”

Status

The first Access 4.0 services will be FTTH delivered from a handful of central offices in Germany later this year. FTTB services will then follow in early 2021.

“Once we are out there and we have proven that it works and it is carrier-grade, then I think we are very fast in onboarding other things,” says Soukup. “But they are [for now] not part of our case.”

Infinera buying Coriant will bring welcome consolidation

Infinera is to purchase privately-held Coriant for $430 million. The deal will effectively double Infinera’s revenues, add 100 new customers and expand the systems vendor’s product portfolio.

But industry analysts, while welcoming the consolidation among optical systems suppliers, highlight the challenges Infinera faces in making the Coriant acquisition a success.


“The low price reflects that this isn't the best asset on the market,” says Sterling Perrin, principal analyst, optical networking and transport at Heavy Reading. “They are buying $1 of revenue for 50 cents; the price reflects the challenges.”

Benefits

According to Perrin, there are still too many vendors facing "brutal price pressures" despite the optical industry being mature. Removing one vendor that has been cutting prices to win business is good news for the rest.

For Infinera, the acquisition of Coriant promises three main benefits, as outlined by its CEO, Tom Fallon, during a briefing addressing the acquisition.

The first is expanding its vertically-integrated business model across a wider portfolio of products. Infinera develops its own optical technology: its indium-phosphide photonic integrated circuits (PICs) and accompanying coherent DSPs that power its platforms. Having its own technology differentiates the optical performance of its platforms and helps it achieve leading gross margins of over 40 percent, said Fallon.

Exploiting the vertical integration model will be a central part of the Coriant acquisition. Indeed, the company mentioned vertical integration 21 times in as many minutes during its briefing outlining the deal. Infinera expects to deliver industry-leading growth and operating margins once it exploits the benefits of vertical integration across an expanded portfolio of platforms, said Fallon.


Buying Coriant also gives Infinera much-needed scale. Not only will Infinera double its revenues - Coriant’s revenues were about $750 million in 2017 while Infinera’s were $741 million for the same period - but it will expand its customer base including key tier-one service providers and webscale players. According to Fallon, the newly combined company will include nine of the top 10 global tier-one service providers and the six leading global internet content providers.
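The revenue claims, together with Perrin's price-to-revenue remark earlier, can be checked with a few lines of arithmetic. This minimal Python sketch uses only figures stated in this article: 2017 revenues of about $750 million (Coriant) and $741 million (Infinera), and the $430 million purchase price.

```python
# Figures from the article, in millions of US dollars.
CORIANT_REVENUE_2017 = 750   # "about $750 million"
INFINERA_REVENUE_2017 = 741
PURCHASE_PRICE = 430

combined = CORIANT_REVENUE_2017 + INFINERA_REVENUE_2017
growth_factor = combined / INFINERA_REVENUE_2017           # "double its revenues"
price_per_revenue_dollar = PURCHASE_PRICE / CORIANT_REVENUE_2017

print(f"Combined 2017 revenue: ${combined}M")                       # $1491M
print(f"Revenue multiple vs Infinera alone: {growth_factor:.2f}x")  # 2.01x
print(f"Price per $1 of Coriant revenue: ${price_per_revenue_dollar:.2f}")  # $0.57
```

On these figures, the headline price works out to roughly 57 cents per dollar of Coriant's 2017 revenue.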

Infinera admits it has struggled to break into the tier-one operators and points out that trying to enter is an expensive and time-consuming process, estimated at between $10 million and $20 million each time. “[Now, with Coriant,] having a seat at the table with the largest global service providers to strategise about where their business is going will be invaluable,” said Fallon.

The third benefit Infinera gains is an expanded product portfolio. Coriant has expertise in layer-3 networking, in the metro core with its mTera universal transport platform, and in SDN orchestration and white-box technologies. Heavy Reading’s Perrin says Coriant has started development of a layer-3 router white box for edge applications.


Combining the two companies also results in a leading player in data centre interconnect.

“Coriant expands our portfolio, particularly in packet and automation where significant network investment is expected over the next decade,” said Fallon. The deal is happening at the right time, he said, as operators ramp spending as they undertake network transformation.

Infinera will pay $230 million in cash - $150 million up front and the rest in increments - and a further $200 million in shares for Coriant. The company expects to achieve cost savings of $250 million between 2019 and 2021 by combining the two firms, $100 million in 2019 alone. The deal is expected to close in the third quarter of 2018.
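The deal terms above are internally consistent, as a quick Python check using only the reported figures shows. The variable names are illustrative.

```python
# Deal terms as reported, in millions of US dollars.
CASH_TOTAL = 230
CASH_UPFRONT = 150
SHARES = 200
SAVINGS_2019_2021 = 250
SAVINGS_2019 = 100

cash_in_increments = CASH_TOTAL - CASH_UPFRONT        # deferred cash payments
total_consideration = CASH_TOTAL + SHARES             # cash plus shares
savings_2020_2021 = SAVINGS_2019_2021 - SAVINGS_2019  # left for 2020 and 2021 combined

# The components should add up to the $430 million headline price.
assert total_consideration == 430
print(cash_in_increments, total_consideration, savings_2020_2021)  # 80 430 150
```

So $80 million of the cash is deferred, and $150 million of the targeted savings falls in 2020 and 2021 combined.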


Challenges

Industry analysts, while seeing positives for Infinera, have concerns regarding the deal.

A much-needed consolidation of weaker vendors is how George Notter, an analyst at the investment bank, Jefferies, describes the deal. For Infinera, however, continuing as before was not an option. Heavy Reading’s Perrin agrees: “Infinera has been under a lot of pressure; their core business of long-haul has slowed.”

The deal brings benefits to Infinera: scale, complementary product sets, and the promise of being able to invest more in R&D to benefit its PIC technology, says Notter in a research note.

Gaining customers is also a key positive. “Infinera is really excited about getting the new set of customers and that is what they are paying for,” says Vladimir Kozlov, CEO of LightCounting Market Research. “However, these customers were gained by pricing products at steep discounts.”

What is vital for Infinera is that it delivers its upcoming 2.4-terabit Infinite Capacity Engine 5 (ICE5) optical engine on time. The ICE5 is expected to ship in early 2019. In parallel, Infinera is developing its ICE6 due two years later. Infinera is developing two generations of ICE designs in parallel after being late to market with its current 1.2-terabit optical engine.


But even if the ICE5 is delivered on time, upgrading Coriant's platforms will be a major undertaking. “It sounds like they are going to fit their optical engines in all of Coriant’s gear; I don’t see how that is going to happen anytime quickly,” says Perrin.

Customers bought Coriant's equipment for a reason. Once upgraded with Infinera’s PICs, these will be new products that have to undergo extensive lab testing and full evaluations.

Perrin questions how customers can be moved from legacy platforms to the new ones without the service providers triggering a new request-for-proposal (RFP) process. “If a company is going to put that integrated product into their network, it’s a full-blown RFP process which Infinera may or may not win,” says Perrin. “Infinera talked a lot about the benefits of vertical integration but they didn’t really address the challenges and the specific steps they would take to make that work.”

LightCounting’s Kozlov also questions how this will work.


“The story about vertical integration and scaling up PIC production is compelling, but how will they support Coriant products with the PIC?” he says. “Will they start making pluggable modules internally? Will Coriant’s customers be willing to move away from the pluggables and get locked into Infinera’s PICs? Do they know something that we don’t?”

While Infinera is a top-five optical platform supplier globally, it hasn’t dominated the market with its PIC technologies, says Perrin. “Even if they technically pull off the vertical integration with the Coriant products, how much is that going to win business for them?” he says. “It is one architecture in a mix that has largely gone to pluggables.”

Transmode

Infinera already has experience acquiring a systems vendor: in 2015 it bought metro-access player Transmode. Strategically, this was a very solid acquisition, says Perrin, but the jury is still out as to its success.

“The integration, making it work, how Transmode has performed within Infinera hasn’t gone as well as they wanted,” says Perrin. “That said, there are some good opportunities going forward for the Transmode group.”

Infinera also had planned to integrate its PIC technology within Transmode’s products but it didn't make economic sense for the metro market. There may also have been pushback from customers that liked the Transmode products, says Perrin: “With Coriant it looks like they really are going to force the vertical integration.”

Infinera acknowledges the challenges ahead and the importance of overcoming them if it is to secure its future.

“Given the comparable sizes of each company’s revenues and workforce, we recognise that integration will be challenging and is vital for our ultimate success,” said Fallon.

Will white boxes predominate in telecom networks?

Will future operator networks be built using software, servers and white boxes or will traditional systems vendors with years of network integration and differentiation expertise continue to be needed?

AT&T’s announcement that it will deploy 60,000 white boxes as part of its rollout of 5G in the U.S. is a clear move to break away from the operator pack.

The service provider has long championed network transformation, moving from proprietary hardware and software to a software-controlled network based on virtual network functions running on servers and software-defined networking (SDN) to control switches and routers.

Now, AT&T is going a stage further by embracing open hardware platforms - white boxes - to replace traditional telecom hardware used for data-path tasks that are beyond the capabilities of software on servers.


For the 5G deployment, AT&T will, over several years, replace traditional routers at cell and tower sites with white boxes, built using open standards and merchant silicon.

“White box represents a radical realignment of the traditional service provider model,” says Andre Fuetsch, chief technology officer and president, AT&T Labs. “We’re no longer constrained by the capabilities of proprietary silicon and feature roadmaps of traditional vendors.”

But other operators have reservations about white boxes. “We are all for open source and open [platforms],” says Glenn Wellbrock, director, optical transport network - architecture, design and planning at Verizon. “But it can’t just be open, it has to be open and standardised.”

Wellbrock also highlights the challenge of managing networks built using white boxes from multiple vendors. Who will be responsible for integrating them, and who will fix a fault when one occurs? These are concerns SK Telecom has expressed regarding the virtualisation of the radio access network (RAN), as reported by Light Reading.

“These are the things we need to resolve in order to make this valuable to the industry,” says Wellbrock. “And if we don’t, why are we spending so much time and effort on this?”

Gilles Garcia, communications business lead director at programmable device company, Xilinx, says the systems vendors and operators he talks to still seek functionalities that today’s white boxes cannot deliver. “That’s because there are no off-the-shelf chips doing it all,” says Garcia.


White boxes

AT&T defines a white box as an open hardware platform that is not made by an original equipment manufacturer (OEM).

A white box is a sparse design, built using commercial off-the-shelf hardware and merchant silicon, typically a fast router or switch chip, on which runs an operating system. The platform usually takes the form of a pizza box which can be stacked for scaling, while application programming interfaces (APIs) are used for software to control and manage the platform.

As AT&T’s Fuetsch explains, white boxes deliver several advantages. By using open hardware specifications for white boxes, they can be made by a wider community of manufacturers, shortening hardware design cycles. And using open-source software to run on such platforms ensures rapid software upgrades.

Disaggregation can also be part of an open hardware design. Here, different elements are combined to build the system. The elements may come from a single vendor such that the platform allows the operator to mix and match the functions needed. But the full potential of disaggregation comes from an open system that can be built using elements from different vendors. This promises cost reductions but requires integration, and operators do not want the responsibility and cost of both integrating the elements to build an open system and integrating the many systems from various vendors.

Meanwhile, AT&T plans to orchestrate its white boxes using the Open Network Automation Platform (ONAP) - the ‘operating system’ for its entire network, made up of millions of lines of code.

ONAP is an open software initiative, managed by The Linux Foundation, that was created by merging a large portion of AT&T’s original ECOMP software developed to power its software-defined network and the OPEN-Orchestrator (OPEN-O) project, set up by several companies including China Mobile and China Telecom.

AT&T has also launched several initiatives to spur white-box adoption. One is an open operating system for white boxes, known as the disaggregated network operating system (dNOS). This too will be passed to The Linux Foundation.

The operator is also a key driver of the open reconfigurable optical add/drop multiplexer (ROADM) multi-source agreement, the OpenROADM MSA. Recently, the operator announced it will roll out OpenROADM hardware across its network. AT&T has also unveiled the Akraino open source project, again under the auspices of the Linux Foundation, to develop edge computing-based infrastructure.

At the recent OFC show, AT&T said it would limit its white box deployments in 2018 as issues are still to be resolved but that come 2019, white boxes will form its main platform deployments.

Xilinx highlights how certain data-intensive tasks - in-line security performed on a per-flow basis, routing exceptions, telemetry data, and deep packet inspection - are beyond the capabilities of white boxes. “White boxes will have their place in the network but there will be a requirement, somewhere else in the network for something else, to do what the white boxes are missing,” says Garcia.


AT&T also said at OFC that it expects considerable capital expenditure savings - as much as a halving - from using white boxes, and talked about adopting quarterly reverse auctions to buy its equipment in future.

Niall Robinson, vice president, global business development at ADVA Optical Networking, questions where such cost savings will come from: “Transport has been so bare-bones for so long, there isn’t room to get that kind of cost reduction.” He also says that some markets already use reverse auctioning, but typically for items such as components. “For a carrier the size of AT&T to be talking about that, that is a big shift,” says Robinson.

Layer optimisation

Verizon’s Wellbrock first aired reservations about open hardware at Lightwave’s Open Optical Conference last November.

In his talk, Wellbrock detailed the complexity of Verizon’s wide area network (WAN) that encompasses several network layers. At layer-0 are the optical line systems - terminal and transmission equipment - onto which the various layers are added: layer-1 Optical Transport Network (OTN), layer-2 Ethernet and layer-2.5 Multiprotocol Label Switching (MPLS). According to Verizon, the WAN takes years to design and a decade to fully exploit the fibre.

“You get a significant saving - total cost of ownership - from combining the layers,” says Wellbrock. “By collapsing those functions into one platform, there is a very real saving.” But there is a tradeoff: encapsulating the various layers’ functions into one box makes it more complex.

“The way to get round that complexity is going to a Cisco, a Ciena, or a Fujitsu and saying: ‘Please help us with this problem’,” says Wellbrock. “We will buy all these individual piece-parts from you but you have got to help us build this very complex, dynamic network and make it work for a decade.”

Next-generation metro

Verizon has over 4,000 nodes in its network, each with at least one ROADM - a Coriant 7100 packet optical transport system or a Fujitsu Flashwave 9500. Certain nodes employ more than one ROADM; once one is filled, a second is added.

“Verizon was the first to take advantage of ROADMs and we have grown that network to a very large scale,” says Wellbrock.

The operator is now upgrading the nodes with more sophisticated ROADMs as part of its next-generation metro, so that each node will need only one ROADM that can be scaled. Verizon began ramping up in 2017, upgrading several hundred ROADM nodes; this year it says it will hit its stride, before completing the upgrades in 2019.

“We need a lot of automation and software control to hide the complexity of what we have built,” says Wellbrock. This is part of Verizon’s own network transformation project. Instead of having engineers and operational groups in charge of particular network layers, each overseeing a pocket of the network known as a ‘domain’, Verizon is moving to a system where all the network layers, including ROADMs, are managed and orchestrated using a single system.

The resulting software-defined network comprises a ‘domain controller’ that handles the lower layers within a domain and an automation system that co-ordinates between domains.
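The two-tier structure can be sketched in Python. This is a hypothetical model, not Verizon's software: it assumes each domain controller can provision a segment between two of its own nodes, and that the automation system only stitches per-domain segments together in path order. All class, method and domain names are illustrative.

```python
from dataclasses import dataclass, field


@dataclass
class DomainController:
    """Hypothetical controller for one domain: only it configures the
    lower-layer equipment (ROADMs, OTN, Ethernet) inside that domain."""
    domain: str
    nodes: set[str]
    provisioned: list[tuple[str, str]] = field(default_factory=list)

    def provision_segment(self, a: str, z: str) -> str:
        if a not in self.nodes or z not in self.nodes:
            raise ValueError(f"{a}->{z} is outside domain {self.domain}")
        self.provisioned.append((a, z))
        return f"{self.domain}:{a}->{z}"


class AutomationSystem:
    """Hypothetical multi-domain layer: it never touches equipment directly,
    it asks each domain controller for its piece of an end-to-end path."""

    def __init__(self, controllers: list[DomainController]):
        self.controllers = {c.domain: c for c in controllers}

    def provision_path(self, hops: list[tuple[str, str, str]]) -> list[str]:
        # Each hop is (domain, ingress node, egress node), in path order.
        # Simplification: no rollback if a later segment fails.
        return [self.controllers[d].provision_segment(a, z) for d, a, z in hops]


if __name__ == "__main__":
    metro = DomainController("metro-east", {"A", "B"})
    core = DomainController("core", {"B", "C"})
    automation = AutomationSystem([metro, core])
    print(automation.provision_path([("metro-east", "A", "B"), ("core", "B", "C")]))
    # ['metro-east:A->B', 'core:B->C']
```

Keeping equipment-specific knowledge inside each domain controller is what lets the upper layer coordinate across domains without modelling every vendor's hardware.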

“Going forward, all of the network will be dynamic and in order to take advantage of that, we have to have analytics and automation,” says Wellbrock.


Open design is an important element here, he says, but the bigger return comes from analytics and automation of the layers and from the equipment.

This is why Wellbrock questions what white boxes will bring: “What are we getting that is brand new? What are we doing that we can’t do today?”

He points out that the building blocks for ROADMs - the wavelength-selective switches and multicast switches - originate from the same sub-system vendors, such that the cost points are the same whether a white box or a system vendor’s platform is used. And using white boxes does nothing to make the growing network complexity go away, he says.

“Mixing your suppliers may avoid vendor lock-in,” says Wellbrock. “But what we are saying is vendor lock-in is not as serious as managing the complexity of these intelligent networks.”

Wellbrock admits that network transformation with its use of analytics and orchestration poses new challenges. “I loved the old world - it was physics and therefore there was a wrong and a right answer; hardware, physics and fibre and you can work towards the right answer,” he says. “In this new world, there are lots of right answers and you have to figure what the best one is.”

Evolution

If white boxes can’t perform all the data-intensive tasks, then they will have to be performed elsewhere. This could take the form of accelerator cards for servers using devices such as Xilinx’s FPGAs.

Adding such functionality to the white box, however, is not straightforward. “This is the dichotomy the white box designers are struggling to address,” says Garcia. A white box is light and simple, so adding extra functionality requires customising its operating system to run these applications. And this runs counter to the white box concept, he says.


Yet this is exactly what he sees traditional systems vendors doing: developing designs that add differentiation to their platforms to counter the white-box trend.

One recent example that fits this description is Ciena’s two-rack-unit 8180 coherent network platform. The 8180 has a 6.4-terabit packet fabric, supports 100-gigabit and 400-gigabit client-side interfaces and can be used solely as a switch or, more typically, as a transport platform with client-side and coherent line-side interfaces.

The 8180 is not a white box but has a suite of open APIs and has a higher specification than the Voyager and Cassini white-box platforms developed by the Telecom Infra Project.

“We are going through a set of white-box evolutions,” says Garcia. “We will see more and more functionalities that were not planned for the white box that customers will realise are mandatory to have.”

Whether FPGAs will find their way into white boxes, Garcia will not say. What he will say is that Xilinx is engaged with some of these players to have a good view as to what is required and by when.

It appears inevitable that white boxes will become more capable, handling more and more of the data-plane tasks, partly in response to competition from traditional systems vendors and their more sophisticated designs.

AT&T’s white-box vision is clear. What is less certain is whether the rest of the operator pack will move to close the gap.

ON2020 rallies industry to address networking concerns

Source: ON2020


The slide shows how router-blade client interfaces are scaling at 40% annually compared to the 20% growth rate of general single-wavelength interfaces (see chart).

Extrapolating the trend to 2024, router blades will support 20 terabits while client interfaces will only be at one terabit. Each blade will thus require 20 one-terabit Ethernet interfaces. “That is science fiction if you go off today’s technology,” says Peter Winzer, director of optical transmission subsystems research at Nokia Bell Labs and a member of the ON2020 steering committee.
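The mismatch follows from compounding the two growth rates. This short Python sketch takes only the 40 percent and 20 percent annual rates and the 2024 end points (20 terabits per blade, one-terabit interfaces) described above; the function and variable names are illustrative.

```python
import math

BLADE_GROWTH = 0.40      # router-blade capacity growth per year
INTERFACE_GROWTH = 0.20  # single-wavelength client interface growth per year


def interfaces_per_blade(blade_tbps: float, interface_tbps: float) -> int:
    """Number of client interfaces needed to fill one router blade."""
    return math.ceil(blade_tbps / interface_tbps)


# The blade-to-interface ratio grows by this factor every year...
gap_growth = (1 + BLADE_GROWTH) / (1 + INTERFACE_GROWTH)
# ...so the number of interfaces per blade doubles roughly every this many years.
doubling_years = math.log(2) / math.log(gap_growth)

print(interfaces_per_blade(20.0, 1.0))  # 20
print(f"{gap_growth:.3f}")              # 1.167
print(f"{doubling_years:.1f}")          # 4.5
```

In other words, at these rates the interface count per blade roughly doubles every four and a half years unless single-wavelength interfaces speed up.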

This is where ON2020 comes in, he says, to flag up such disparities and focus industry efforts so they are addressed.

ON2020

Established in 2016, the companies driving ON2020 are Fujitsu, Huawei, Nokia, Finisar, and Lumentum.

The reference to 2020 signifies how the group looks ahead four to five years, while the name is also a play on 20/20 vision, says Brandon Collings, CTO of Lumentum and also a member of the steering committee.

ON2020 addresses a void in the industry, says Collings. The Optical Internetworking Forum (OIF) may have a similar conceptual mission but it is more hands-on, focussing on components and near-term implementations. ON2020 looks further out.

“Maybe you could argue it is a two-step process,” says Collings. “First, ON2020 is longer term followed by the OIF’s definition in the near term.”

To build a longer-term view, ON2020 surveyed network operators worldwide, including the largest internet content providers and leading communications service providers.

ON2020 reported its findings at the recent ECOC show under three broad headings: traffic growth and the impact on fibre capacity and interfaces, interconnect requirements, and network management and operations.

Network management

One key survey finding is the importance network operators attach to software-defined networking (SDN). Yet the operators are frustrated with the lack of available SDN solutions, which is forcing them to work with vendors to address their needs.

The network operators also see value in white boxes and disaggregation, to lower hardware costs and avoid vendor lock-in. But as with SDN, there are challenges with white boxes and disaggregation.

“Let’s not forget that SDN comes from the big webscales,” says Winzer, companies with abundant software and control experience. Telecom companies don’t have such sophisticated resources.

“This produces a big conundrum for the telecom operators: they want to get the benefits without spending what the webscales are spending,” says Winzer. The telcos also require higher network reliability, which makes their job harder still.

Responding to ON2020’s anonymous survey, the telecom players stress how SDN, disaggregation and the adoption of white boxes will require a change in practices and internal organisation and even the employment of system integrators.

“They are really honest. They say, nice, but we are just overwhelmed,” says Winzer. “It highlights the very important organisational challenges operators are facing.”

Capacity and connectivity

The webscales and telecom operators were also surveyed about capacity and connectivity issues.

Both classes of operator use 10-terabit links or more and this will soon rise to 40 terabits. The consensus is that the C-band alone is insufficient given their capacity needs.

Operators with limited fibre want to grow capacity by using the L-band alongside the C-band, while operators with plenty of fibre want to combine fibre pairs, a form of spatial division multiplexing, and use both the C and L bands. The implication, says ON2020, is that there is an opportunity for hardware integration.
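The two routes to more capacity can be compared with a deliberately simple model: link capacity equals the capacity of each band in use, summed, multiplied by the number of fibre pairs. The sketch below assumes that linear model, and the per-band figure of 20 terabits is a hypothetical placeholder, not a value from the ON2020 survey. The 40-terabit target is the link capacity the article says operators will soon need.

```python
import math
from typing import Mapping, Sequence

# Hypothetical per-band capacities in Tb/s, for illustration only.
PER_BAND: Mapping[str, float] = {"C": 20.0, "L": 20.0}


def link_capacity_tbps(per_band: Mapping[str, float],
                       bands: Sequence[str], fibre_pairs: int) -> float:
    """Capacity of one fibre pair (sum of bands used) times the pair count."""
    return fibre_pairs * sum(per_band[b] for b in bands)


def fibre_pairs_needed(target_tbps: float, per_band: Mapping[str, float],
                       bands: Sequence[str]) -> int:
    """Smallest number of fibre pairs that meets the target capacity."""
    per_pair = sum(per_band[b] for b in bands)
    return math.ceil(target_tbps / per_pair)


if __name__ == "__main__":
    target = 40.0  # the link capacity operators expect to need soon
    # Fibre-limited operator: stay on one pair and add the L-band.
    print(link_capacity_tbps(PER_BAND, ["C"], 1))       # 20.0
    print(link_capacity_tbps(PER_BAND, ["C", "L"], 1))  # 40.0
    # Fibre-rich operator: count the pairs needed with and without the L-band.
    print(fibre_pairs_needed(target, PER_BAND, ["C"]))       # 2
    print(fibre_pairs_needed(target, PER_BAND, ["C", "L"]))  # 1
```

In this toy model, adding a band and adding a fibre pair are interchangeable multipliers. The hardware-integration opportunity ON2020 points to arises because both routes multiply the number of amplifiers, transceivers and line-system components that must be deployed.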

Network operators use backbone wavelengths at 100, 200 and 400 gigabits. As for service feeds, what ON2020 refers to as granularity, webscale players favour 25 gigabits-per-second (Gbps), whereas telecom operators continue to deal with much slower feeds of 10 megabits-per-second (Mbps), 100Mbps and 1Gbps.

What can ON2020 do to address the demanding client-interface requirements of IP router blades, referred to in the chart?

Xiang Liu, distinguished scientist, transmission product line at Huawei and a key instigator in the creation of ON2020, says photonic integration and a tighter coupling between photonics and CMOS will be essential to reduce the cost-per-bit and power-per-bit of future client interfaces.

“As the investment for developing routers with such throughputs could be unprecedentedly high, it makes sense for our industry to collectively define the specifications and interfaces,” says Liu. “ON2020 can facilitate such an industry-wide effort.”

Another survey finding is that network operators favour super-channels once client interfaces reach 400 gigabits and higher rates. Super-channels are more efficient in their use of the fibre’s spectrum while also delivering operations, administration, and management (OAM) benefits.
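The spectral saving of a super-channel can be illustrated with simple bookkeeping. Individually routed carriers each need a guard band so that ROADM filters can separate them, whereas a super-channel packs its carriers side by side in one contiguous slot. The sketch below assumes a simplified model in which the super-channel needs just one guard band for the whole group; the carrier count and the 35 GHz and 15 GHz widths are hypothetical values chosen for illustration.

```python
def spectrum_individual_ghz(n_carriers: int, carrier_ghz: float,
                            guard_ghz: float) -> float:
    """Each carrier occupies its own slot, including its own guard band."""
    return n_carriers * (carrier_ghz + guard_ghz)


def spectrum_superchannel_ghz(n_carriers: int, carrier_ghz: float,
                              guard_ghz: float) -> float:
    """Carriers packed together; a single guard band for the whole group."""
    return n_carriers * carrier_ghz + guard_ghz


if __name__ == "__main__":
    # Hypothetical example: four carriers of 35 GHz, 15 GHz guard band.
    n, carrier, guard = 4, 35.0, 15.0
    individual = spectrum_individual_ghz(n, carrier, guard)  # 200.0
    packed = spectrum_superchannel_ghz(n, carrier, guard)    # 155.0
    saving = 1 - packed / individual
    print(f"individual: {individual} GHz, super-channel: {packed} GHz, "
          f"saving {saving:.1%}")
```

The saving grows with the number of carriers in the group, which is consistent with operators favouring super-channels as client rates climb to 400 gigabits and beyond. The OAM benefit comes from managing the group as a single entity rather than as many separate wavelengths.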

The network operators were also asked about their node connectivity needs. While they welcome the features of advanced reconfigurable optical add-drop multiplexers (ROADMs), they don’t necessarily need them all. A typical response was that they would adopt such features if they came practically for free.

This, says Winzer, is typical of carriers. “Things will have to get cheaper; that is the way things are.”

Future plans

ON2020 is still seeking feedback from additional network operators, and the survey questionnaire is available for download on its website. “The more anonymous input we get, the better the results will be,” says Winzer.

Huawei’s Liu says the published findings are just the start of the group’s activities.

ON2020 will conduct in-depth studies on such topics as next-generation ROADMs and optical cross-connects; transport SDN for resource optimisation and multi-vendor interoperability; 5G-oriented optical networking that delivers low latency, accurate synchronisation and network slicing; new wavelength-division multiplexing line rates beyond 200 gigabits; and optical link technologies beyond just the C-band, as well as new fibre types.

ON2020 will publish a series of white papers to stimulate and guide the industry, says Liu.

The group also plans to provide input to standardisation organisations to enhance existing standards and start new ones, create proof-of-concept technology demonstrators, and enable multi-vendor interoperable tests and field trials.

Discussions have started for ON2020 to become an IEEE Industry Connections programme. “We don’t want this to be an exclusive club of five [companies],” says Winzer. “We want broad participation.”