Nokia’s PSE-2s delivers 400 gigabit on a wavelength

Four hundred gigabit transmission over a single carrier is enabled using Nokia’s second-generation programmable Photonic Service Engine coherent processor, the PSE2, part of several upgrades to Nokia’s flagship 1830 PSS family of packet-optical transport platforms.

“One thing that is clear is that performance will have a key role to play in optics for a long time to come, including distance, capacity per fiber, and density,” says Sterling Perrin, senior analyst at Heavy Reading.

This limits the appeal of the so-called “white box” trend for many applications in optics, he says: “We will continue to see proprietary advances that boost performance in specific ways and which gain market traction with operators as a result”.

The 1830 Photonic Service Switch

The 1830 PSS family comprises dense wavelength-division multiplexing (DWDM) platforms and packet-OTN (Optical Transport Network) switches.

The DWDM platform includes line amplifiers, reconfigurable optical add-drop multiplexers (ROADMs), transponder and muxponder cards. The 1830 platforms span the PSS-4, -8, -16 and the largest and original -32, while the 1830 PSS packet-OTN switches include the PSS-36 and the PSS-64 platforms. The switches include their own coherent uplinks but can be linked to the 1830 DWDM platforms for their line amps and ROADMs.

The 1830 PSS upgrades include a 500-gigabit muxponder card for the DWDM platforms that features the PSE2, new ROADMs and line amplifiers that will support the L-band alongside the C-band to double fibre capacity, and the PSS-24x that complements the two existing OTN switch platforms.

100-gigabit as a service

In DWDM transmissions, 100-gigabit wavelengths are commonly used to transport multiplexed 10-gigabit signals. Nokia says it is now seeing increasing demand to transport 100-gigabit client signals.

“One hundred gigabit is becoming the new currency,” says Kyle Hollasch, director, optical marketing at Nokia. “No longer is the thinking of 100 gigabit just as a DWDM line rate but 100 gigabit as a service, being handed from a customer for transport over the network.”

Current 1830 PSS platform line cards support 50-gigabit, 100-gigabit and 200-gigabit coherent transmission using polarisation-multiplexed, binary phase-shift keying (PM-BPSK), quadrature phase-shift keying (PM-QPSK) and 16 quadrature amplitude modulation (PM-16QAM), respectively. Nokia now offers a 500-gigabit muxponder card that aggregates and transports 100-gigabit client signals. The card has been available since the first quarter and several hundred have already shipped.

“The challenge is not just to crank up capacity but to do so profitably,” says Hollasch. “Keeping the cost-per-bit down, the power consumption down while pushing towards the Shannon limit [of fibre] to carry more capacity.”

Source: Nokia

Modulation formats

The PSE2 family of coherent processors comprises two designs: the high-end super-coherent PSE-2s and the compact low-power PSE-2c.

Nokia joins the likes of Ciena and Infinera in developing several coherent ASICs, highlighting how optical transport requirements are best met using custom silicon. Infinera also announced its latest generation photonic integrated circuit that supports up to 2.4 terabits.

The high-end PSE-2s is a significant enhancement on the PSE coherent chipset first announced in 2012. Implemented using 28nm CMOS, the PSE-2s has a power consumption similar to the original PSE yet halves the power consumption-per-bit given its higher throughput.

The PSE-2s adds four modulation formats to the PSE’s existing three and supports two symbol rates: 32.5 gigabaud and 44.5 gigabaud. The modulation schemes and distances they enable are shown in the chart.

The 1.4 billion transistor PSE-2s has sufficient processing performance to support two coherent channels. Each channel can implement a different modulation format if desired, or the two can be tightly coupled to form a super-channel. The only exception is the 400-gigabit single wavelength format. Here the PSE-2s supports only one channel implemented using a 45 gigabaud symbol rate and PM-64QAM. The 400-gigabit wavelength has a relatively short 100-150km reach, but this suits data centre interconnect applications where links are short and maximising capacity is key.

In a recent lab experiment, Nokia sent 31.2 terabits of data over 90km of standard single-mode fibre using 78 400-gigabit channels spaced 50GHz apart across the C-band. "We were only limited by the available hardware from reaching 35 terabits," says Hollasch.
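The arithmetic behind the lab result is easy to check. The short Python sketch below uses only the figures quoted above (78 channels of 400 gigabit each, and 35 terabits as the stated ceiling). The function name is illustrative, and the channel count for 35 terabits is an inference rather than a Nokia figure.

```python
import math


def fibre_capacity_tbit(channels: int, rate_gbit: float) -> float:
    """Total capacity in terabits for a number of equal-rate channels."""
    return channels * rate_gbit / 1000


# Lab experiment as described in the text: 78 channels of 400 gigabit each.
lab_capacity = fibre_capacity_tbit(78, 400)

# Inference only: how many 400-gigabit channels 35 terabits would require.
channels_for_35 = math.ceil(35_000 / 400)

print(f"Lab capacity: {lab_capacity:.1f} terabits")
print(f"Channels needed for 35 terabits: {channels_for_35}")
```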

Using the 45-gigabaud rate and PM-16QAM enables two 250-gigabit channels. This is how the 500-gigabit muxponder card is achieved. The 250-gigabit wavelength has a reach of 900km, and this can be extended to 1,000km but at 200 gigabit by dropping to the 32-gigabaud symbol rate, as implemented with the current PSE chipset.

Nokia also offers 200 gigabit implemented using 45 gigabaud and 8-QAM. “The extra baud rate gets us [from 150 gigabit] to 200 gigabit; this is very valuable,” says Hollasch. The resulting reach is 2,000km and he expects this format to gain the most market traction.

The PSE-2s, like the PSE, also implements PM-QPSK and PM-BPSK but with reaches of 3,000-5,000km and 10,000km, respectively.
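One way to relate the symbol rates and modulation formats above is to compute the raw bit rate each combination can carry. The sketch below assumes the usual relation (raw rate equals symbol rate times bits per symbol times two polarisations) and compares it with the net rates quoted in the article. The 44.5 and 32.5 gigabaud values are the two rates stated earlier, which the text rounds to 45 and 32. The article does not break down the gap between raw and net rate; reading it as headroom for overhead such as forward error correction is an assumption.

```python
# Each entry: (symbol rate in gigabaud,
#              bits per symbol per polarisation,
#              net rate in gigabit as quoted in the article)
FORMATS = {
    "PM-64QAM": (44.5, 6, 400),
    "PM-16QAM": (44.5, 4, 250),
    "PM-8QAM": (44.5, 3, 200),
    "PM-16QAM (lower baud)": (32.5, 4, 200),
}


def raw_rate_gbit(gbaud: float, bits_per_symbol: int, polarisations: int = 2) -> float:
    """Raw line rate before any overhead is removed."""
    return gbaud * bits_per_symbol * polarisations


for name, (gbaud, bits, net) in FORMATS.items():
    raw = raw_rate_gbit(gbaud, bits)
    print(f"{name}: raw {raw:.0f} gigabit, net {net} gigabit, net/raw {net / raw:.2f}")
```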

The PSE-2s introduces a fourth modulation format dubbed set-partition QPSK (SP-QPSK).

Standard QPSK uses amplitude and phase modulation resulting in a 4-point constellation. With SP-QPSK, only three out of the possible four constellation points are used for any given symbol. The downside of the approach is that a third fewer constellation points are used and hence less data is transported but the lost third can be restored using the higher 45-gigabaud symbol rate.

The benefit of SP-QPSK is its extended reach. “By properly mapping the sequence of symbols in time, you create a greater Euclidean distance between the symbol points,” says Hollasch. “What that gives you is gain.” This 2.5dB extra gain compared to PM-QPSK equates to a reach beyond 5,000km. “That is the territory most implementations are using BPSK and also addresses a lot of sub-sea applications,” says Hollasch. “Using SP-QPSK [at 100 gigabit] also means fewer carriers and hence, it is more spectrally efficient than [50-gigabit] BPSK.”

The PSE-2c

The second coherent DSP-ASIC in the new family is the PSE-2c compact, also implemented in 28nm CMOS, designed for smaller, low-power metro platforms and metro-regional reaches.

The PSE-2c supports a 100-gigabit line rate using PM-QPSK and will be used alongside the CFP2-ACO line-side pluggable module. The PSE-2c consumes a third of the power of the current PSE operating at 100 gigabit.

“We are putting the PSE2 [processors] in multiple form factors and multiple products,” says Hollasch.

The recent Infinera and Nokia announcements highlight the electronic processing versus photonic integration innovation dynamics, says Heavy Reading's Perrin. He notes how innovations in electronics are driving transmission across greater distances and greater capacities per fibre and finding applications in both long haul and metro networks as a result.

“Parallel photonic integration is a density play, but even Infinera’s ICE announcement is a combination of photonic integration and electronic processing advancements,” says Perrin. “In our view, electronic processing has taken a front seat in importance for addressing fibre capacity and transmission distance, which is why the need for parallel photonic integration in transport has not really spread beyond Infinera so far.”

The PSS-24x showing the 24, 400 gigabit line cards and 3 switch fabric cards, 2 that are used and one for redundancy. Source: Nokia

PSS-24x OTN switch

Nokia has also unveiled its latest 28nm CMOS Transport Switch Engine, a 2.4-terabit non-blocking OTN switch chip that is central to its latest PSS-24x switch platform. Two such chips are used on a fabric card to achieve 4.8 terabits, and three such cards are used in the PSS-24x, two active cards and a third for redundancy. The result is 9.6 terabits of switching capacity instead of the current platforms' 4 terabits, while power consumption is halved.

Nokia says it already has a roadmap to 48 terabits of switching capacity. “The current generation [24x] shipping in just a few months is 400-gigabit per slot,” says Hollasch. The 24 slots that fit within the half chassis result in 9.6 terabits of switching capacity. However, Nokia's platform roadmap will achieve 1 terabit-per-slot by 2018-19. The backplane is already designed to support such higher speeds, says Hollasch. This would enable 24 terabits of switching capacity per shelf and, with two shelves in a bay, a total switching capacity of 48 terabits.
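The switching figures quoted for the PSS-24x can be cross-checked from two directions: from the fabric (chips per card, active cards) and from the slots (per-slot rate times slot count). The Python sketch below does both using only numbers from the text; the constant and function names are illustrative.

```python
CHIP_GBIT = 2400            # 2.4-terabit Transport Switch Engine chip
CHIPS_PER_FABRIC_CARD = 2
ACTIVE_FABRIC_CARDS = 2     # the third fabric card is for redundancy
SLOTS_PER_SHELF = 24
SHELVES_PER_BAY = 2


def fabric_capacity_gbit() -> int:
    """Switching capacity provided by the active fabric cards."""
    return CHIP_GBIT * CHIPS_PER_FABRIC_CARD * ACTIVE_FABRIC_CARDS


def shelf_capacity_gbit(per_slot_gbit: int) -> int:
    """Switching capacity implied by the per-slot rate."""
    return SLOTS_PER_SHELF * per_slot_gbit


print(fabric_capacity_gbit() / 1000)                        # fabric view, terabits
print(shelf_capacity_gbit(400) / 1000)                      # 400 gigabit per slot
print(shelf_capacity_gbit(1000) * SHELVES_PER_BAY / 1000)   # roadmap, per bay
```

Both views give 9.6 terabits for the current generation, and the 1-terabit-per-slot roadmap gives 48 terabits per two-shelf bay.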

The transport switch engine chip switches OTN only. It is not designed as a packet and OTN switch. “A cell-based agnostic switching architecture comes with a power and density penalty,” explains Hollasch, adding that customers prefer the lowest possible power consumption and highest possible density.

The result is a centralised OTN switch fabric with line-card packet switching. Nokia will introduce packet switching line cards next year that will support 300 gigabit per card. Two such cards will be ‘pair-able’ to boost capacity to 600 gigabit but Hollasch stresses that the PSS-24x will not switch packets through its central fabric.

Doubling capacity with the L-band

By extending the 1830 PSS platform to include the L-band, up to 70 terabits of data can be supported on a fibre, says Hollasch.

Nokia has developed a line card that supports both C-band and L-band amplification that will be available around the fourth quarter of this year. The ROADM and 500-gigabit muxponder card for the L-band will be launched in 2017.

Once the amplification is available, operators can start future-proofing their networks. Then, when the L-band ROADMs and muxponder cards become available, operators can pay as they grow, extending wavelengths into the L-band once all 96 channels of the C-band are used, says Hollasch.

BT makes plans for continued traffic growth in its core

Part 1

Kevin Smith: “A lot of the work we are doing with the trials have demonstrated we can scale our networks gracefully rather than there being a brick wall of a problem.”

BT is confident that its core network will accommodate the expected IP traffic growth for the next decade. Traffic in BT’s core is growing at between 35 and 40 percent annually, compared to the global average growth rate of 20 to 30 percent. BT attributes the higher growth to the rollout of fibre-based broadband across the UK.

The telco is deploying 100-gigabit wavelengths in high-traffic areas of its network. “These are key sites where we're running out of wavelengths such that we need to implement higher-speed ones,” says Kevin Smith, research leader for BT’s transport networks. The operator is now trialling 200-gigabit wavelengths using polarisation multiplexing, 16-quadrature amplitude modulation (PM-16QAM).

Adopting higher-order modulation increases capacity and spectral efficiency but at the expense of a loss in system performance which can be significant.

Systems vendors use polarisation-multiplexed, quadrature phase-shift keying (PM-QPSK) for 100-gigabit wavelengths. Moving to PM-16QAM doubles the bits on the wavelength but the received data has less tolerance to noise. The result is a 6-decibel loss compared to PM-QPSK, such that the transmission distance drops to a quarter. If PM-QPSK spans a 4,000km link, using PM-16QAM the reach on the same link is only 1,000km.
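The step from a 6-decibel loss to a quarter of the reach follows from converting decibels to a linear ratio. The Python sketch below makes that explicit. It assumes, as a simplification not stated in the article, that achievable reach is inversely proportional to the signal-to-noise ratio the receiver requires; the function name is illustrative.

```python
def reach_after_penalty(reach_km: float, penalty_db: float) -> float:
    """Scale a reach figure by the linear equivalent of a dB penalty.

    Simplifying assumption: reach is inversely proportional to the
    required signal-to-noise ratio, so a penalty of x dB divides the
    reach by 10 ** (x / 10).
    """
    return reach_km * 10 ** (-penalty_db / 10)


# 6 dB corresponds to a linear factor of roughly 0.25, i.e. a quarter.
pm16qam_reach = reach_after_penalty(4000, 6)
print(f"PM-16QAM reach on a 4,000km PM-QPSK link: about {pm16qam_reach:.0f}km")
```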

The transmitted capacity can also be increased by using pulse-shaping at the transmitter to cram a wavelength into a narrower channel. BT’s existing optical network uses fixed 50GHz-wide channels. But in a recent network trial with Huawei, a 3 terabit super-channel was transmitted over a 360km link using a flexible grid.

The super-channel comprised 15 channels, each carrying 200 gigabit using PM-16QAM. Using the flexible grid, each carrier occupied a 33.5GHz channel, increasing fibre capacity by a factor of 1.5 compared to a 50GHz fixed-grid. “For 16-QAM, it [33.5GHz] is pretty close to the limit,” says Smith.
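The super-channel figures can be reproduced with a few lines of Python. The sketch uses only the numbers in the text (15 carriers, 200 gigabit each, 33.5GHz versus 50GHz per carrier); the variable names are illustrative.

```python
CARRIERS = 15
RATE_GBIT = 200          # per carrier, PM-16QAM
FLEX_WIDTH_GHZ = 33.5    # per carrier on the flexible grid
FIXED_WIDTH_GHZ = 50.0   # per carrier on the fixed grid

superchannel_gbit = CARRIERS * RATE_GBIT
flex_spectrum_ghz = CARRIERS * FLEX_WIDTH_GHZ
fixed_spectrum_ghz = CARRIERS * FIXED_WIDTH_GHZ

# Narrower channels mean more carriers fit into the same spectrum.
capacity_gain = FIXED_WIDTH_GHZ / FLEX_WIDTH_GHZ

print(f"Super-channel: {superchannel_gbit / 1000:.0f} terabit")
print(f"Spectrum used: {flex_spectrum_ghz} GHz flexible grid, {fixed_spectrum_ghz} GHz fixed grid")
print(f"Capacity gain: {capacity_gain:.2f}x")
```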

Another way to boost the carrier’s data as well as reduce system cost is to up the signalling rate. Current optical transport systems use a 30Gbaud symbol rate. Here, two carriers each using PM-16QAM are needed to deliver 400 gigabit. Doubling the symbol rate to 60Gbaud enables a single 400 gigabit wavelength. Doubling the baud rate also halves a platform’s transponder count, reducing the overall cost-per-bit, and increases platform density.
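The effect of the symbol rate on carrier count can be sketched in Python. The example assumes that, for a fixed modulation format, the rate a carrier delivers scales linearly with the baud rate; the 200 gigabit per PM-16QAM carrier at 30Gbaud is implied by the text (two carriers for 400 gigabit). The names are illustrative.

```python
import math

BASE_GBAUD = 30
BASE_RATE_GBIT = 200  # per PM-16QAM carrier at 30Gbaud, implied by the text


def carriers_needed(target_gbit: float, gbaud: float) -> int:
    """Carriers (and hence transponders) needed for a target rate.

    Assumes the per-carrier rate scales linearly with the symbol rate
    while the modulation format stays at PM-16QAM.
    """
    per_carrier_gbit = BASE_RATE_GBIT * gbaud / BASE_GBAUD
    return math.ceil(target_gbit / per_carrier_gbit)


for gbaud in (30, 60):
    print(f"{gbaud}Gbaud: {carriers_needed(400, gbaud)} carrier(s) for 400 gigabit")
```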

“Increasing the baud rate is the most structurally-efficient way to accommodate the high speed,” says Smith. Going to 16QAM increases the data that is carried but at the expense of reach. By increasing the baud rate, reach can be extended while also keeping the modulation rate at a lower level, he says.

BT says it is seeing signs of such ‘flexrate’ transponders that can adapt modulation format and baud rate. “This is a very interesting area we can mine,” says Smith. The fundamental driver is about reducing cost but also giving BT more flexibility in its network, he says.

Traffic growth

Coping with traffic growth is a constant challenge, says BT.

“I’m not worried about a capacity crunch,” says Smith. “A lot of the work we are doing with the trials have demonstrated we can scale our networks gracefully rather than there being a brick wall of a problem.”

The operator is confident that 25 to 30 terabit of traffic can be squeezed into the C-band using flexgrid and narrower bands. Beyond that, BT says broadening the spectral window using additional spectral bands such as the L-band could boost a fibre’s capacity to 100 terabit. Vendors are already looking at extending the spectral window, says BT.

Sliceable transponders

BT is also part of longer-term research exploring an extension to the ‘flexrate’ transponder, dubbed the sliceable bit rate variable transponder (S-BVT).

“It is very much early days but the idea is to put multiple modulators on the same big super transponder so that it can kick out super-channels that can be provisioned on demand,” says Andrew Lord, head of optical research at BT.

The large multi-terabit super-channel would be sent out and sliced further down the network by flexible grid wavelength-selective switches such that parts of the super-channel would end up at different destinations. “You don’t need all that capacity to go to one other node but you might need it to go to multiple nodes,” says Lord.

Such a sliceable transponder promises several benefits. One is an ability to keep repartitioning the multi-terabit slice based on demand. “It is a good thing if we see that kind of dynamics happening, but not fast dynamics,” says Lord. The repartitioning would more likely be occasional, adding extra capacity between nodes based on demand. Accordingly, the sliced multi-terabit super-channel would end up at fewer destinations over time.

The sliceable transponder concept also promises cost reduction through greater component integration.

BT stresses this is still early research but such a transponder could end up in the network in five years’ time.

Space-division multiplexing

Another research area that promises to increase significantly the overall capacity of a fibre is space-division multiplexing (SDM).

SDM promises to boost the capacity by a factor of between 10 and 100 through the adoption of parallel transmission paths. The simplest way to create such parallel paths is to bundle several standard single-mode fibres in a cable. But speciality fibre could also be used, either multi-core or multi-mode.

BT says it is not researching spatial multiplexing.

“I’m very much more interested in how we use the fibre we have already got,” says Lord. The priority is pushing channels together as close as possible and getting the 25 terabit figure higher, as well as exploring the L-band. “That is a much more practical way to go forward,” says Lord.

However, BT welcomes the research into SDM. “What it [SDM] is pushing into the industry is a knowledge about how to do integration and the expertise that comes out of that is still really valid,” says Lord. “As it is, I don’t see how it fits.”

Infinera targets the metro cloud

Infinera has styled its latest Cloud Xpress product used to connect data centres as a stackable platform, similar to how servers and storage systems are built. The development is another example of how the rise of the data centre is influencing telecoms.

"There is a drive in the industry that is coming from the data centre world that is starting to slam into the telecom world," says Stuart Elby, Infinera's senior vice president of cloud network strategy and technology.

Cloud Xpress is designed to link data centres up to 200km apart, a market Infinera calls the metro cloud. The two-rack-unit-high (2RU) stackable box features Infinera's 500 Gigabit photonic integrated circuit (PIC) for line side transmission and a total of 500 Gigabit of client side links made up of 10, 40 or 100 Gigabit interfaces. Typically, up to 16 units will be stacked in a rack, providing 8 Terabits of transmission capacity over a fibre.

Cloud Xpress has also been designed with the data centre's stringent power and space requirements in mind. The resulting platform has significantly improved power consumption and density metrics compared to traditional metro networking platforms, claims Infinera.

Metro split

Elby describes how the metro network is evolving into two distinct markets: metro aggregation and metro cloud. Metro aggregation, as the name implies, combines lower speed multi-service traffic from consumers' broadband links and from enterprises into a hub where it is switched onto a network backbone. Metro cloud, in contrast, concerns data centre interconnect: point-to-point links that, for the larger data centres, can total several terabits of capacity.

Cloud Xpress is Infinera's first metro platform that uses its PIC. "We have plans to offer it all the way out to ultra long haul," says Elby. "There are some data centres that need to get tied between continents."

Cloud Xpress is being aimed at several classes of customer: internet content providers (or webcos), enterprises, cloud operators and traditional service providers. The primary end users are webcos and enterprises, which is why the platform is designed as a rack-and-stack. "These are not networking companies, they are data centre ones; they think of equipment in the context of the data centre," says Elby.

But Infinera expects telcos will also adopt Cloud Xpress. They need to connect their data centres and link data centres to points-of-presence, especially now that increasing amounts of traffic from end users go to the cloud. Equally, a business customer may link to a cloud service provider through a colocation point, operated by companies such as Equinix, Rackspace and Verizon Terremark.

"There will be a bleed-over of the use of this product into all these metro segments," says Elby. "But the design point [of Cloud Xpress] was for those that operate data centres more than those that are network providers."

The Magnification Effect

Webcos' services generate significantly more internal traffic than the triggering event, what Elby calls the magnification effect.

Google has shared that a single internet search query travels on average 2,400km before being resolved, while Facebook has revealed that a single http request generates some 930 server-to-server interactions. These servers may be in one data centre or spread across centres.

"It is no longer one byte in, one byte out," says Elby. "The amount of traffic generated inside the network, between data centres, is much greater than the flow of traffic into or out of the data centre." This magnification effect is what is driving the significant bandwidth demand between data centres. "When we talk to the internet content providers, they talk about terabits," says Elby.

Cloud Xpress

Cloud Xpress is already being evaluated by customers and will be generally available from December.

The stackable platform will have three client-side faceplate options: 10 Gig, 40 Gig and 100 Gig. The 10 Gig SFP+ faceplate is the sweet spot, says Elby, and there is also a 40 Gig one, while the 100 Gig is in development. "In the data centre world, we are hearing that they [webcos] are much more interested in the QSFP28 [optical module]."

Infinera says that the Ethernet client signals connect to a simple mapping function IC before being placed onto 100 Gig tributaries. Elby says that Infinera has minimised the latency through the box, to achieve 4.4 microseconds. This is an important requirement for certain data centre operators.

The 500 Gig PIC supports Infinera's 'instant bandwidth' feature. Here, all the 500 Gig super-channel capacity is lit but a user can add 100 Gig increments as required. This avoids having to turn up wavelengths and simplifies adding more capacity when needed.

The Cloud Xpress rack can accommodate 21 stackable units but Elby says 16 will be used typically. On the line side, the 500 Gigabit super-channels are passively multiplexed onto a fibre to achieve 8 Terabits. The platform density of 500 Gig per rack unit (500 Gig client and 500 Gig line side per 2RU box), exceeds any competitor's metro platform, says Elby, saving important space in the data centre.

The worst-case power consumption is 130W-per-100 Gig, an improvement on the power consumption performance of competitors' platforms. This is despite the fact that coherent detection is always used, even for links as short as between a data centre's buildings. "We have different flavours of the optical engine for different reaches," says Elby. "It [coherent] is just used because it is there."
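The rack-level figures follow from the per-unit numbers. The Python sketch below works them through using the values in the text. The per-unit and per-rack power figures are extrapolations that assume the 130W-per-100 Gig worst case applies to line-side capacity and scales linearly; Infinera does not quote them. The names are illustrative.

```python
UNIT_LINE_GBIT = 500      # line-side capacity per 2RU unit
UNIT_CLIENT_GBIT = 500    # client-side capacity per 2RU unit
UNIT_HEIGHT_RU = 2
WATTS_PER_100G = 130      # worst case, as quoted
TYPICAL_UNITS = 16


def rack_line_capacity_gbit(units: int) -> int:
    """Line-side capacity multiplexed onto the fibre."""
    return units * UNIT_LINE_GBIT


def density_gbit_per_ru() -> int:
    """Density as the article counts it: client plus line side per rack unit."""
    return (UNIT_LINE_GBIT + UNIT_CLIENT_GBIT) // UNIT_HEIGHT_RU


def worst_case_unit_power_w() -> int:
    """Extrapolation: assumes power scales linearly with line-side capacity."""
    return UNIT_LINE_GBIT // 100 * WATTS_PER_100G


print(rack_line_capacity_gbit(TYPICAL_UNITS) / 1000, "terabits per fibre")
print(density_gbit_per_ru(), "gigabit per rack unit")
print(worst_case_unit_power_w() * TYPICAL_UNITS, "watts per 16-unit rack (extrapolated)")
```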

The reduced power consumption of Cloud Xpress is achieved partly because of Infinera's integrated PIC, and by scrapping Optical Transport Network (OTN) framing and switching which is not required. "There are no extra bells and whistles for things that aren't needed for point-to-point applications," says Elby. The stackable nature of the design, adding units as needed, also helps.

The Cloud Xpress rack can be controlled using either Infinera's management system or software-defined networking (SDN) application programming interfaces (APIs). "It supports the sort of interfaces the SDN community wants: Web 2.0 interfaces, not traditional telco ones."

Infinera is also developing a metro aggregation platform that will support multi-service interfaces and aggregate flows to the hub, a market that it expects to ramp from 2016.

Infinera introduces flexible grid 500G super-channel ROADM

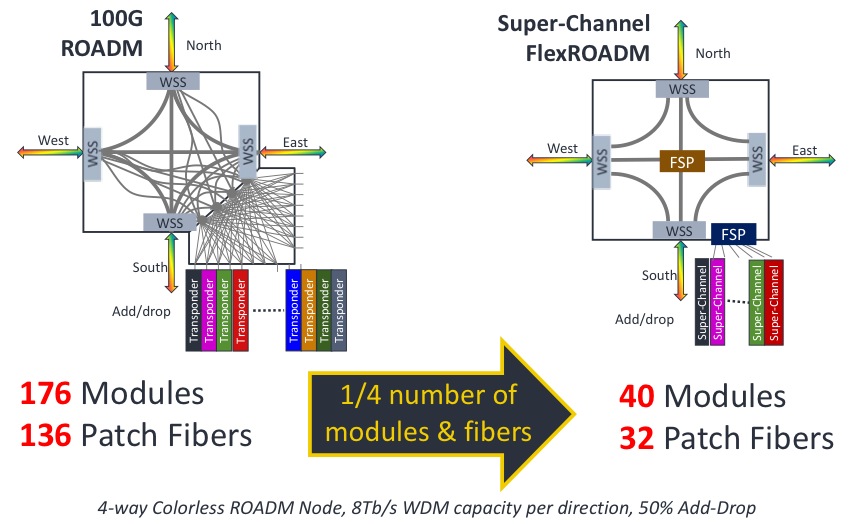

An example showing the impact of a 500G super-channel ROADM node. Source: Infinera

"The FlexROADM will open up the Tier-1 operators in a way Infinera has not been able to do before," says Dana Cooperson, vice president, network infrastructure at market research firm, Ovum. "The DTN-X was necessary but not sufficient; the ROADM is the last piece."

The FlexROADM is claimed to deliver two industry firsts: it can add and drop flexible-grid-based 500 Gig super-channels, and uses the Internet Engineering Task Force’s (IETF) spectrum switched optical networks (SSON).

"SSON is the next generation of WSON [Wavelength Switched Optical Network control plane], except it manages spectrum," says Ron Kline, principal analyst, network infrastructure also at Ovum.

The DTN-X platform combines Infinera's 500 Gig photonic integrated circuits and OTN (Optical Transport Network) switching. With the FlexROADM, Infinera has added switching at the optical layer in 500 Gig increments. Infinera can now offer enhanced multi-layer network optimisation with the combination of electrical and optical switching.

"Optical bypass before was manual using patch cords, now operators can reconfigure with the FlexROADM," says Kline. "It also provides new optical restoration capabilities that Infinera did not have."

The FlexROADM supports up to nine degrees, and is available in colourless, colourless and directionless, and full colourless, directionless and contentionless (CDC) versions.

"The debate about contentionless continues," says Kline. "It is safe to assume that for the majority of applications flexible grid, colourless and directionless will be the high runner." Contentionless will be used by the big carriers, he says, but in certain locations only.

Infinera says the line system announced will support up to 24 Terabit-per-second (Tbps) when it ships in September. The maximum long-haul capacity using its current PM-QPSK super-channels is 9.5Tbps per fibre pair.

"In the future when we enable metro-reach super-channels using PM-16-QAM, they will support 24 Terabit-per-second per fibre pair using the line system we are announcing," says Geoff Bennett, director, solutions and technology at Infinera.

Bennett says the data rate and the spectral efficiency for a given sub-carrier can be varied depending on the reach required. The spacing between sub-carriers that make up a super-channel also can be varied depending on reach. Many different transmission possibilities exist, says Bennett, but to explain the concept, he cites two examples.

The 24Tbps capacity with PM-16-QAM modulation uses pulse shaping at the transmitter to achieve 'Nyquist DWDM' channel spacing, the spacing between channels that approximates the baud rate, says Bennett.

"At this time we are not disclosing the details of the channel spacing, or the number of sub-carriers used by our future line modules," says Bennett. "But the total super-channel spectral width is the equivalent of 200GHz if you are transmitting a one Terabit super-channel, for example." This equates to a spectral efficiency of 5b/s/Hz, and using 16-QAM, the reach achieved will be 600-700km.

"The system we have just launched is designed to operate in long-haul networks and uses PM-QPSK," says Bennett. "For an ultra long-haul reach requirement of 4,500km, the super-channel comprises ten sub-carriers; a total of 500 Gbps over a spectral width of 250 GHz." These line cards are available now, he says.

Infinera continues to make steady market progress, according to Ovum. The company is in the top 10 system vendors globally, while in backbone and 100 Gigabit, Infinera is fourth.
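As a check on the two super-channel examples Bennett cites, spectral efficiency is simply the data rate divided by the spectral width. The Python sketch below reproduces the 5b/s/Hz figure and applies the same calculation to the long-haul case. The final step, backing out the spectrum each fibre capacity implies, is an inference and not an Infinera figure; the function names are illustrative.

```python
def spectral_efficiency(rate_gbit: float, width_ghz: float) -> float:
    """Spectral efficiency in b/s/Hz (gigabit per GHz is the same ratio)."""
    return rate_gbit / width_ghz


def spectrum_needed_ghz(capacity_gbit: float, efficiency: float) -> float:
    """Spectrum implied by a fibre capacity at a given spectral efficiency."""
    return capacity_gbit / efficiency


metro = spectral_efficiency(1000, 200)      # 1 Terabit in 200GHz, PM-16-QAM
long_haul = spectral_efficiency(500, 250)   # 500 Gbps in 250GHz, PM-QPSK

print(f"PM-16-QAM: {metro} b/s/Hz, PM-QPSK: {long_haul} b/s/Hz")

# Inference: both quoted fibre capacities imply a similar amount of spectrum.
print(spectrum_needed_ghz(24_000, metro), "GHz for 24Tbps")
print(spectrum_needed_ghz(9_500, long_haul), "GHz for 9.5Tbps")
```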

OFC/NFOEC 2012 industry reflections - Part 1

The recent OFC/NFOEC show, held in Los Angeles, had a strong vendor presence. Gazettabyte spoke with Infinera's Dave Welch, chief strategy officer and executive vice president, about his impressions of the show, capacity challenges facing the industry, and the importance of the company's photonic integrated circuit technology in light of recent competitor announcements.

"I need as much fibre capacity as I can get, but I also need reach"

Dave Welch, Infinera

Dave Welch values shows such as OFC/NFOEC: "I view the show's benefit as everyone getting together in one place and hearing the same chatter." This helps identify areas of consensus and subjects where there is less agreement.

And while there were no significant surprises at the show, it did highlight several shifts in how the network is evolving, he says.

"The first [shift] is the realisation that the layers are going to physically converge; the architectural layers may still exist but they are going to sit within a box as opposed to multiple boxes," says Welch.

The implementation of this started with the convergence of the Optical Transport Network (OTN) and dense wavelength division multiplexing (DWDM) layers, and the efficiencies that brings to the network.

That is a big deal, says Welch.

Optical designers have long been making transponders for optical transport. But now the transponder isn't an element in the integrated OTN-DWDM layer, rather it is the transceiver. "Even that subtlety means quite a bit," says Welch. "It means that my metrics are no longer 'gray optics in, long-haul optics out', it is 'switch-fabric to fibre'."

Infinera has its own OTN-DWDM platform convergence with the DTN-X platform, and the trend was reaffirmed at the show by the likes of Huawei and Ciena, says Welch: "Everyone is talking about that integration."

The second layer integration stage involves multi-protocol label switching (MPLS). Instead of transponder point-to-point technology, what is being considered is a common platform with an optical management layer, an OTN layer and, in future, an MPLS layer.

"The drive for that box is that you can't continue to scale the network in terms of bandwidth, power and cost by taking each layer as a silo and reducing it down," says Welch. "You have to gain benefits across silos for the scaling to keep up with bandwidth and economic demands."

Super-channels

Optical transport has always been about increasing the data rates carried over wavelengths. At 100 Gigabit-per-second (Gbps), however, companies now use one or two wavelengths - carriers - onto which data is encoded. As vendors look to the next generation of line-side optical transport, what follows 100Gbps, the use of multiple carriers - super-channels - will continue and this was another show trend.

Infinera's technology uses a 500Gbps super-channel based on dual polarisation, quadrature phase-shift keying (DP-QPSK). The company's transmit and receive photonic integrated circuit pair comprise 10 wavelengths (two 50Gbps carriers per 50GHz band).

Ciena and Alcatel-Lucent detailed their next-generation ASICs at OFC. These chips, to appear later this year, include higher-order modulation schemes such as 16-QAM (quadrature amplitude modulation) which can be carried over multiple wavelengths. Going from DP-QPSK to 16-QAM doubles the data rate of a carrier from 100Gbps to 200Gbps; using two carriers, each at 16-QAM, enables the two vendors to deliver 400Gbps.

"The concept of this all having to sit on one wavelength is going by the wayside," says Welch.

Capacity challenges

"Over the next five years there are some difficult trends we are going to have to deal with, where there aren't technical solutions," says Welch.

The industry is already talking about fibre capacities of 24 Terabit using coherent technology. Greater capacity is also starting to be traded with reach. "A lot of the higher QAM rate coherent doesn't go very far," says Welch. "16-QAM in true applications is probably a 500km technology."

This is new for the industry. In the past a 10Gbps service could be scaled to an 800 Gigabit system using 80 DWDM wavelengths. The same applies to 100Gbps, which scales to 8 Terabit.
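The scaling described here is a simple multiplication, sketched below in Python. The 10Gbps and 100Gbps cases come from the text; extending the same 80-wavelength assumption to a Terabit service is an extrapolation to show the scale of fibre capacity that would be required. The names are illustrative.

```python
WAVELENGTHS = 80


def system_capacity_gbit(service_gbit: int, wavelengths: int = WAVELENGTHS) -> int:
    """Fibre capacity if every wavelength carries one service of the given rate."""
    return service_gbit * wavelengths


for service in (10, 100, 1000):
    capacity = system_capacity_gbit(service)
    print(f"{service}Gbps service x {WAVELENGTHS} wavelengths = {capacity / 1000} Terabit")
```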

"I'm used to having high-capacity services and I'm used to having 80 of them, maybe 50 of them," says Welch. "When I get to a Terabit service - not that far out - we haven't come up with a technology that allows the fibre plant to go to 50-100 Terabit."

This issue is already leading to fundamental research looking at techniques to boost the capacity of fibre.

PICs

However, in the shorter term, the smarts to enable high-speed transmission and higher capacity over the fibre are coming from the next-generation DSP-ASICs.

Is Infinera's monolithic integration expertise, with its 500 Gigabit PIC, becoming a less important element of system design?

"PICs have a greater differentiation now than they did then," says Welch.

Unlike Infinera's 500Gbps super-channel, the recently announced ASICs use two carriers and 16-QAM to deliver 400Gbps. But the issue is the reach that can be achieved with 16-QAM: "The difference is 16-QAM doesn't satisfy any long-haul applications," says Welch.

Infinera argues that a fairer comparison with its 500Gbps PIC is dual-carrier QPSK, each carrier at 100Gbps. Once the ASIC and optics deliver 400Gbps using 16-QAM, it is no longer a valid comparison because of reach, he says.
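The rates in this comparison follow from the number of bits each modulation format carries per symbol. A rough sketch, assuming the symbol rate per carrier stays fixed and ignoring coding overhead; the helper names are illustrative:

```python
import math

POLARISATIONS = 2  # dual polarisation (DP)


def bits_per_symbol(constellation_points: int) -> int:
    """Bits per symbol summed across both polarisations."""
    return int(math.log2(constellation_points)) * POLARISATIONS


def carrier_rate_gbps(constellation_points: int, qpsk_rate_gbps: float = 100.0) -> float:
    """Carrier rate relative to a 100Gbps DP-QPSK carrier at the same symbol rate."""
    return qpsk_rate_gbps * bits_per_symbol(constellation_points) / bits_per_symbol(4)


dual_carrier_qpsk = 2 * carrier_rate_gbps(4)    # two DP-QPSK carriers
dual_carrier_16qam = 2 * carrier_rate_gbps(16)  # two DP-16-QAM carriers
print(dual_carrier_qpsk, dual_carrier_16qam)
```

The sketch covers rate only. It says nothing about reach, which is the basis of Welch's objection to the comparison.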

Three parameters must be considered here, says Welch: dollars/Gigabit, reach and fibre capacity. "I have to satisfy all three for my application," he says.

Long-haul operators are extremely sensitive to fibre capacity. "I need as much fibre capacity as I can get," he says. "But I also need reach."

In data centre applications, for example, reach is becoming an issue. "For the data centre there are fewer on and off ramps and I need to ship truly massive amounts of data from one end of the country to the other, or one end of Europe to the other."

The lower reach of 16-QAM is suited to the metro but Welch argues that is one segment that doesn't need the highest capacity but rather lower cost. Here 16-QAM does reduce cost by delivering more bandwidth from the same hardware.

Meanwhile, Infinera is working on its next-generation PIC that will deliver a Terabit super-channel using DP-QPSK, says Welch. The PIC and the accompanying next-generation ASIC will likely appear in the next two years.

Such a 1 Terabit PIC will reduce the cost of optics further but it remains to be seen how Infinera will increase the overall fibre capacity beyond its current 80x100Gbps. The integrated PIC will double the 100Gbps wavelengths that will make up the super-channel, increasing the long-haul line card density and benefiting the dollars/ Gigabit and reach metrics.

In part two, ADVA Optical Networking, Ciena, Cisco Systems and market research firm Ovum reflect on OFC/NFOEC.

Reflections and predictions: 2011 & 2012 - Part 1

"For 2012, the macroeconomy is likely to dominate any other developments"

Martin Geddes, telecom consultant @martingeddes

Sometimes the important stuff is slow-burning: we're seeing a continued decline in the traditional network equipment providers, and the rise in Genband, Acme, Sonus and Metaswitch in their place. Smaller, leaner, and more used to serving Tier 2 and Tier 3 operators and enterprise players and their lower cost structures.

The recognition of the decline of SMS and telephony became mainstream in 2011 -- maybe I can close down my Telepocalypse blog as what I foresaw is reality.

We've seen absolute declines in revenue and usage of telco voice and messaging in leading markets like Norway and Netherlands. The creation of Telefonica Digital is a landmark reorganisation around new markets. No longer are those initiatives endlessly parked in business development whilst marketing dream up a new price plan for minutes, messages and megabytes.

If I had to pick one thing to characterise 2011, it was the year of the App.

For 2012, the macroeconomy is likely to dominate any other developments. The scenarios are "distress", "meltdown" and "collapse".

Telecoms is well-placed to weather the storm. Even £600 smartphones may remain in vogue as people defer purchases like cars and holidays, and hide their fiscal distress with status symbols hewn out of pure blocks of profit.

Voice will be much more prominent, after decades of languishing, as LTE sets up a complex dynamic of service innovation driven by over-the-top applications - which will increasingly come from telcos as well as telecoms outsiders. Microsoft's purchase of Skype is the one to watch - if they get it right, it joins Windows and Office in the hall of fame; get it wrong, and Microsoft is probably out of the smartphone game due to a lack of competitive differentiation and advantage.

So 2012 is the year when (mobile) voice gets vocal again - because we're going to have a lot to talk about, and want to do it much cheaper and better.

Brandon Collings, CTO for communications and commercial optical products at JDS Uniphase

Over the course of 2011, the tunable XFP shipped in volume and it rather quickly supplanted the 300-pin transceiver. On the service/ market trend, over-the-top consumer video (Netflix) grew rapidly to be the dominant traffic on the internet.

"Solutions for the next generation ROADM networks - self aware networks - are now firm"

I expect the maturation of 100 Gigabit to continue through 2012 with the introduction of a number of new 100 Gigabit solutions, both from network equipment makers and at the transceiver level.

Also, the percentage of consumers using over-the-top video still seems to be relatively small, yet it is growing strongly and is already the dominant traffic on the internet. It will be interesting to see how this trend continues, as it strongly drives bandwidth yet with potentially unfavorable revenue models for the network operators who need to deliver it.

Lastly, I expect that as the solutions for the next generation ROADM networks - self aware networks - are now firm, the practical assessment of the value and advantages of these networks can quantitatively take place.

Eve Griliches, managing partner, ACG Research @EveGr

The Juniper PTX announcement really caught the market by surprise. I'm not so sure why but clearly it rocked some folks back on their heels. Momentum for the product has been good as well. I think you can count this as a success story.

Another one is the Infinera 500Gbps release with super-channels. A pretty impressive technology and service providers are waiting for final product to test.

The death of Steve Jobs rattled us all. I think it struck a note for everyone in how different he was and how he touched us all.

"Content providers ask for simple, scalable and low-featured products. Those who deliver will be rewarded for listening."

I continue to be amazed at how much optical equipment content providers [the Googles, Facebooks, MSNs of this world] are deploying and how few folks at the vendor level are doing anything about getting into their networks. Maybe that is a 2012 thing, I don't know.

As for 2012, we'll definitely see some mergers and acquisitions - expect low acquisition prices too - and some companies exiting this market. I love optics and it really pains me to say that, but there are just more companies out there who can't support the declining margins. I think margin erosion will be key to who survives.

Cisco and Infinera should be bringing some cool products to market in the next six months. We hope the products are good because it will generate debate for the final vendor choices for operators such as AT&T and Verizon.

Again, content providers ask for simple, scalable and low-featured products. Those who deliver will be rewarded for listening. Some don't listen, and will wonder what happened.

Peter Jarich, service director, service provider infrastructure, mobile ecosystem, Current Analysis @pnjarich

2012 is going to be the year for LTE-Advanced (LTE-A). Why? One, vendors always like to talk up what’s next, and LTE-A is what follows LTE (Long Term Evolution).

At the same time, operators who haven’t yet deployed LTE will want to look to start with the latest and greatest. Of course, LTE-A brings real advances for operators: carrier aggregation for dealing with fragmented spectrum assets; heterogeneous networks for dealing with the interaction of small cell and macrocell networks; relaying for improved cell edge performance.

Avi Shabtai, CEO of MultiPhy

The most significant development of 2011 was the availability of CMOS technology that allows next-generation optical transport solutions for 100 Gigabit. And specifically, metro-focused solutions that hit the cost and power numbers required by this industry.

On top of that, optical communication has entered the era of digital signal processing receivers. We have also seen the potential segmentation in 100 Gigabit of metro versus long-haul, each with its specific set of solutions.

"We will see a huge growth in video consumption. This has already started but it is just the tip of the iceberg."

The transition of the telecom and datacom market to 100 Gigabit has also begun - from the transport optical network all the way to copper backplanes - it's all a 4x25Gbps architecture. This year has also seen consolidation in the ecosystem, especially among module companies.

This consolidation will continue at all industry levels in 2012: semiconductors, subsystems, systems and the carriers. The consolidation will coincide with an across-the-board price reduction in emerging technologies like 100 Gigabit transport.

The increase in capacity demand will also force an increase in requirements for various solutions supporting 100 Gigabit. I expect to see more CMOS-based devices introduced.

From a services point of view, we will see a huge growth in video consumption. This has already started but it is just the tip of the iceberg. Video will have a tremendous influence on network evolution.

Gilles Garcia, director, wired communication at Xilinx @gllsgarcia

The CFP2 and CFP4 optical modules are arriving a lot faster than it took for the CFP to follow the XFP optical module.

The CFP standard took 3-4 years to complete while the standard for the CFP2 just closed after two years. Now the CFP4 standard has been launched and is expected to take only 18 months. The new form factors are being driven by the cost-per-port of 100 Gigabit and how to reduce it. The CFP2 doubles the density when compared to the CFP while the CFP4 doubles it again.

"Programmability is becoming the key trend among telecom system vendors as operators look to react faster to standards, new feature requests and deployment of new services."

Telecom application-specific standard product (ASSP) players have been relatively quiet in 2011. Word from customers is that such vendors are pushing out their roadmap/ product availability because of too much flux in the various IEEE and ITU-T telecom standards and difficulty justifying the return-on-investment. This is proving a perfect opportunity for FPGAs.

Large system vendors are growing their network services as operators continue to outsource their network management and maintenance. As reported in their financial reports, this is an important source of business for the likes of Ericsson, Huawei and Alcatel-Lucent.

It is leading the vendors to push more of their own hardware, as they look to add value-add services and integrate the services using their own platforms. Some equipment vendors realise they do not have a full portfolio and have established partnerships for the missing platforms. They are also starting to develop platforms to generate more revenue.

In 2012, I’m not expecting a telecom revolution but I do expect accelerated evolution. And I foresee big disruptions in the ASSP market as it continues to consolidate: I expect several mergers and acquisitions among the top 20 ASSP suppliers.

Programmability is becoming the key trend among telecom system vendors as operators look to react faster to standards, new feature requests and deployment of new services. Programmability also improves time-to-market to deliver these services and reduce time-to-revenue.

Mobile backhaul will be a market driver in 2012. The growth in mobile data terminals will lead to a new generation of mobile backhaul networks. This will drive the move from 1 to 10 Gigabit Ethernet, higher-feature packet processing, and traffic management integration into mobile infrastructure to better control and bill bandwidth usage i.e. pay for what you use.

The 'God box' - packet optical transport systems and the like - is back, but really it is network needs that are driving this.

And one topic to watch that will become clearer in 2012 is how cloud computing impacts the networking market with regard to such issues as security, caching and higher speed links.

Google is becoming an important internal - for its own usage - networking equipment player. And Google will be joined by others - Facebook, Amazon etc. What impact will this have on the traditional system networking vendors? Such new players are defining and building network platforms tailored for their needs. This is competition to the traditional system vendors who are not getting this piece of the business. Semiconductors, including FPGAs, could serve those companies directly.

Other issues to note: What will Intel do in the networking space? Intel acquired Fulcrum in 2011 and has invested in several networking companies.

There are also technology issues.

What will happen to ternary content addressable memory (TCAM)? Broadcom's acquisition of NetLogic Microsystems has created a hole in the TCAM market. Will Broadcom continue with TCAM? Will customers want to give their TCAM business to Broadcom?

Xilinx has added network search engine IP to its FPGA solution portfolio as multi-core ‘search engines’ face increasing difficulty in sustaining the performance required.

And of course there is the continual issue of power optimisation.

High fives: 5 Terabit OTN switching and 500 Gig super-channels.

Infinera has announced a core network platform that combines Optical Transport Network (OTN) switching with dense wavelength division multiplexing (DWDM) transport. "We are looking at a system that integrates two layers of the network," says Mike Capuano, vice president of corporate marketing at Infinera.

"This is 100Tbps of non-blocking switching, all functioning as one system. You just can't do that with merchant silicon."

Mike Capuano, Infinera

The DTN-X platform is based on Infinera's third-generation photonic integrated circuit (PIC) that supports five, 100Gbps coherent channels.

Each DTN-X platform can deliver 5 Terabits-per-second (Tbps) of non-blocking OTN switching using an Infinera-designed ASIC. Ten DTN-X platforms can be combined to scale the OTN switching and transport capacity to 50Tbps currently.

Infinera also plans to add Multiprotocol Label Switching (MPLS) to turn the DTN-X into a hybrid OTN/ MPLS switch. With the next upgrades to the PIC and the switching, the ten DTN-X platforms will scale to 100Tbps optical transport and 100Tbps OTN and MPLS switching capacity.

The platform is being promoted by Infinera as a way for operators to tackle network traffic growth and support developments such as cloud computing where applications and content increasingly reside in the network. "What that means [for cloud-based services to work] is a network with huge capacity and very low latency," says Capuano.

Platform details

The 5x100Gbps PIC supports what Infinera calls a 500Gbps 'super-channel'. Each super-channel is a multi-carrier implementation comprising five, 100Gbps wavelengths. Combined with OTN, the 500Gbps super-channel can be filled with 1, 10, 40 and 100 Gigabit streams (SONET/SDH, Ethernet, video etc). Moreover, there is no spectral efficiency penalty: the super-channel uses 250GHz of fibre spectrum, provisioning five 50GHz-wide, 100Gbps wavelengths at a time.
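The claim of no spectral efficiency penalty can be checked with simple arithmetic. A small sketch using only the figures in the paragraph above; the function name is illustrative:

```python
def spectral_efficiency(rate_gbps: float, spectrum_ghz: float) -> float:
    """Spectral efficiency in bit/s/Hz (Gbps divided by GHz)."""
    return rate_gbps / spectrum_ghz


single_channel = spectral_efficiency(100, 50)         # one 100Gbps wavelength in 50GHz
super_channel = spectral_efficiency(5 * 100, 5 * 50)  # five wavelengths provisioned together
print(single_channel, super_channel)
```

Both cases work out to the same bit/s/Hz figure, since the super-channel simply groups five standard 50GHz channels and provisions them as one unit.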

"We have seen 40 and 100Gbps come on the market and they are definitely helping with fibre capacity issues," says Capuano. "But they are more expensive from a cost-per-bit perspective than 10Gbps." By introducing the 500Gbps PIC, Infinera says it is reducing the cost-per-bit performance of high speed optical transport.

DTN-X: shown are 5 line and tributary cards top and bottom with switching cards in the centre of the chassis. Source: Infinera

Integrating OTN switching within the platform results in the lowest cost solution and is more efficient when compared to multiplexed transponders (muxponder) configured manually, or an external OTN switch which must be optically connected to the transport platform.

The DTN-X also employs Generalised MPLS (GMPLS) software. "GMPLS makes it easy to deploy networks and services with point-and-click provisioning," says Capuano.

Each DTN-X line card supports a 500Gbps PIC but the chassis backplane is specified at 1Tbps per slot, ready for Infinera's next-generation 10x100Gbps PIC that will upgrade the DTN-X to a 10Tbps system. "We have already presented our test results for our 1Tbps PIC back in March," says Capuano. The fourth-generation PIC, estimated around 2014 (based on a company slide although Infinera has made no public comment), will support a 1Tbps super-channel.
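The platform capacities quoted in this article follow from the PIC rate per line card. A hedged sketch, assuming 10 line card slots per chassis (inferred from 5Tbps divided by 500Gbps per card, not stated by Infinera) and the ten-chassis configuration mentioned earlier; all names are illustrative:

```python
LINE_CARD_SLOTS = 10  # assumption: 5Tbps chassis / 500Gbps per line card


def chassis_capacity_tbps(pic_rate_gbps: int, slots: int = LINE_CARD_SLOTS) -> float:
    """Capacity of one chassis with every slot carrying one PIC-based line card."""
    return pic_rate_gbps * slots / 1000


def multi_chassis_capacity_tbps(pic_rate_gbps: int, chassis: int = 10) -> float:
    """Capacity when several chassis are combined into one system."""
    return chassis_capacity_tbps(pic_rate_gbps) * chassis


# Current 500Gbps PIC and the planned 1Tbps (10x100Gbps) PIC.
for pic_rate in (500, 1000):
    print(pic_rate, chassis_capacity_tbps(pic_rate), multi_chassis_capacity_tbps(pic_rate))
```

Under these assumptions the 500Gbps PIC gives 5Tbps per chassis and 50Tbps across ten chassis, and the 1Tbps PIC doubles both figures.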

Adding MPLS will add the transport capability of the protocol to the DTN-X. "You will have MPLS transport, OTN switching and DWDM all in one platform," says Capuano.

OTN switching is the priority of the tier-one operators to carry and process their SONET/SDH traffic; adding MPLS will bring extra traffic processing capabilities to the system, he says.

Infinera says that by eventually integrating MPLS switching into the optical transport network, operators will be able to bypass expensive router ports and simplify their network operation.

Performance

Infinera says that the DTN-X's 5Tbps performance does not dip however the system is configured: whether solely as a switch (all line card slots filled with tributary modules), mixed DWDM/ switching (half DWDM/ half tributaries, for example) or solely as a DWDM platform. Depending on the cards in the DTN-X platform, the transport/ switching configuration can be varied but the 5Tbps I/O capacity is retained. Infinera says other switches on the market do lose I/O capacity as the interface mix is varied.

Overall, Infinera claims the platform requires half the power of competing solutions and takes up a third less space.

The DTN-X will be available in the first half of 2012.

Analysis

Gazettabyte asked several market research firms about the significance of the DTN-X announcement and the importance of combining OTN, DWDM and soon MPLS within one platform.

Ovum

Ron Kline, principal analyst, and Dana Cooperson, vice president, of the network infrastructure practice

"MPLS switching is setting up a very interesting competitive dynamic among vendors"

Dana Cooperson, Ovum

The DTN-X is a platform for the largest service providers and their largest sites, says Ovum.

It sees the DTN-X in the same light as other integrated OTN/ WDM platforms such as Huawei's OSN 8800, Nokia Siemens Networks' hiT 7100, Alcatel-Lucent's 1830 PSS and Tellabs' 7100 OTS.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added," says Kline. "NSN is also claiming it will add MPLS to the 7100. Once MPLS is added, then you have the big packet optical transport box that Verizon wants."

The DTN-X platform will boost the business case for 100 Gig in a similar way to how Infinera's current PIC has done at 10 Gig. "The others will be forced to lower price," says Kline.

Having GMPLS is important, especially if there is a need to do dynamic bandwidth allocation; however, it is customer-dependent. "When you start digging, it's hard to find large-scale implementations of GMPLS," says Kline.

The Ovum analysts stress that the need for OTN in the core depends on the customer. Content service providers like Google couldn't care less about OTN. "It's really an issue for multi-service providers like BT and AT&T," says Cooperson.

There is a consensus about the need for MPLS in the core. "Different service providers are likely to take different approaches: some might prefer an integrated box and others might not, it depends on their business," she says. "I think MPLS switching is setting up a very interesting competitive dynamic among vendors that focus on IP/MPLS, those that focus on optical, and those that are trying to do both [optical and IP/MPLS]."

Ovum highlights several aspects regarding the DTN-X's claimed performance.

"Assuming it performs as advertised, this should finally give Infinera what it needs to be of real interest to the tier-ones," says Cooperson. "The message of scalability, simplicity, efficiency, and profitability is just what service providers want to hear."

Cooperson also highlights Infinera's approach to optical-electrical-optical conversion and the benefit this could deliver at line speeds greater than 100Gbps.

At present ROADMs are being upgraded to support flexible spectrum channel configurations, also known as gridless. This is to enable future line speeds that will use more spectrum than current 50GHz DWDM channels. Operators want ROADMs that support flexible spectrum requirements but how to manage the network to support these variable-width channels is still to be resolved.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added"

Ron Kline, Ovum

Infinera's approach is based on conversion to the electrical domain when dropping and regenerating wavelengths such that the issue of flexible channels does not arise or is at least forestalled. This, says Cooperson, could be Infinera's biggest point of differentiation.

"What impresses me is the 500Gbps super-channel using five, 100Gbps carriers and the size of the switch fabric," adds Kline. The 5Tbps switching performance also exceeds that of everyone else: "Alcatel-Lucent is closest with 4Tbps but most range from 1-3Tbps and top out at 3Tbps."

The ease of use is also a big deal. Infinera did very well in marketing rapid turn up: 10 Gig in 10 days for example, says Kline: "It looks like they will be able to do the same here with 100 Gig."

Infonetics Research

Andrew Schmitt, directing analyst, optical

"GMPLS isn't that important, yet."

The DTN-X is a WDM platform which optionally includes a switch fabric for carriers that want it integrated with the transport equipment, says Schmitt. Once MPLS is added, it has the potential to be a full-blown packet-optical system.

"[The announcement is] pretty significant though not unexpected," says Schmitt. "I think the key question is what it costs, and whether the 500G PIC translates into compelling savings."

Having MPLS support is important for some carriers such as XO Communications and Google but not for others.

Schmitt also says GMPLS isn't that important, yet. "Infinera's implementation of regen-rich networks should make their GMPLS implementation workable," he says. "It has been building networks like that for a while."

OTN in the core is still an open debate but any carrier that doesn't have the luxury of a homogenous data network needs it, he says.

Schmitt has yet to speak with carriers who have used the DTN-X: "I can't comment on claimed performance but like I said, cost is important."

ACG Research

Eve Griliches, managing partner

"Infinera has already introduced the 500G PIC, but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today"

The DTN-X is an OTN/ WDM platform awaiting label switch router (LSR) functionality, says Griliches: "With the LSR functionality it will be able to do statistical multiplexing for direct router connections."

Infinera has already introduced the 500 Gig PIC but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today. An OTN survey conducted last year by ACG Research found that the switch capacity sweet spot is between 4 and 8Tbps.

Griliches says that LSR-based products are taking time to incorporate WDM and OTN technologies, while it is unclear when the DTN-X will support MPLS to add LSR capabilities. The race is on as to who can integrate everything first, but DWDM and OTN before MPLS is the right direction for most tier-one operators, she says.

Infinera has over eight thousand of its existing DTNs deployed at 85 customers in 50 countries. The scale of the DTN-X will likely broaden Infinera's customer base to include tier-one operators, says Griliches.

ACG Research has heard positive feedback from operators it has spoken to. One stressed that the decreased port count due to the larger OTN cross-connect significantly improves efficiencies. Another operator said it would pick Infinera and said the beta version of the 500Gbps PIC is "working beautifully".