Ciena offers enterprises vNF pick and choose

Ciena, working with partners, has developed a platform for service providers to offer enterprises network functions they can select and configure with the click of a button.

Dubbed Agility Matrix, the product enables enterprises to choose their IT and connectivity services using software running on servers. It also promises to benefit service providers' revenues, enabling more adventurous service offerings due to the flexibility and new business models the virtual network functions (vNFs) enable. Currently, managed services require specialist equipment and on-site engineering visits for their set-up and management, while the contracts tend to be lengthy and inflexible.

"It offers an ecosystem of vNF vendors with a licensing structure that can give operators flexibility and vendors a revenue stream," says Eric Hanselman, chief analyst at 451 Research. "There are others who have addressed the different pieces of the puzzle, but Ciena has wrapped the products with the business tools to make it attractive to all of the players involved."

Ciena has created an internal division, dubbed Ciena Agility, to promote the venture. The unit has 100 staff. Its technology, Agility Matrix, is being trialled by service providers, although Ciena has declined to say how many.

"Why a separate division? To move fast in a market that is moving rapidly," says Kevin Sheehan, vice president and general manager of Ciena Agility.

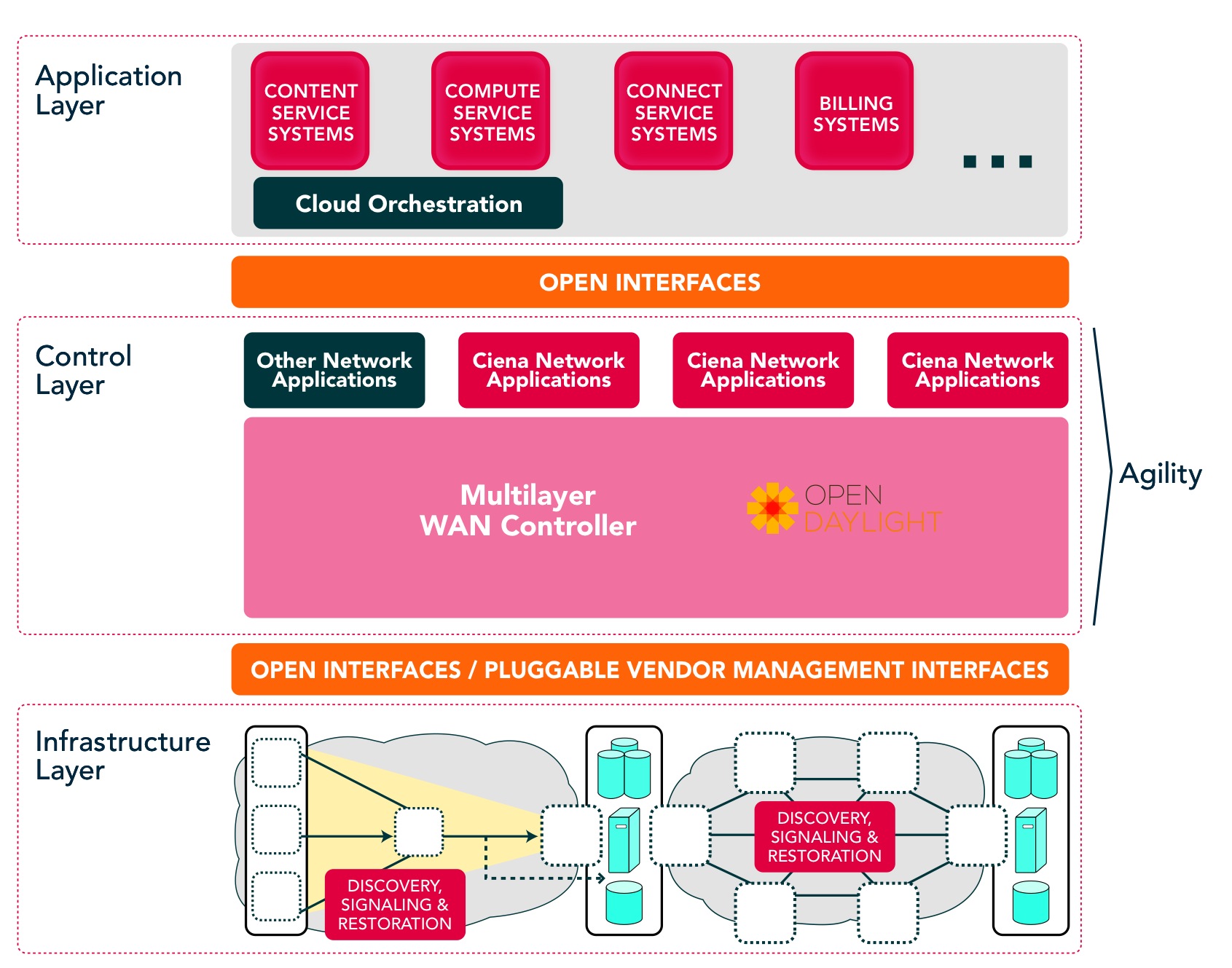

The unit inherits Agility products previously announced by Ciena. These include the multi-layer WAN controller that Ciena is co-developing with Ericsson, and certain applications that run on the software-defined networking (SDN) controller.

"The unique aspect of Ciena’s offering is the comprehensive approach to virtualised functions," says Hanselman. "It tackles everything from service orchestration out to monetisation."

Source: Ciena


What has been done

Agility Matrix comprises three elements: the vNF Market, Director and the host. The vNF Market is cloud-based and enables a service provider to offer a library of vNFs that its enterprise customers can choose from. An enterprise IT manager can select the vNFs required using a secure portal.

The Director, the second element, does the rest. The Director, built using OpenStack software, delivers the vNFs to the host, an x86 instruction set-based server located at the enterprise's premises or in the service provider's central office or data centre.

The Director generates a software licence and, once the enterprise customer confirms the vNFs are working, produces post-payment charging data records. The vNF Market then invoices the service provider and pays the selected vNF vendors.
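The ordering and billing sequence described above can be sketched in a few lines of Python. This is an illustrative model only, not Ciena's software: the class names, field names, licence format and the price are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Order:
    """One enterprise request for a vNF (all field names are hypothetical)."""
    enterprise: str
    vnf: str
    vendor: str
    licence_id: Optional[str] = None
    confirmed: bool = False


@dataclass
class Director:
    """Stands in for the Director: delivers vNFs, issues licences, logs usage."""
    charging_records: list = field(default_factory=list)
    _next_licence: int = 1

    def deliver(self, order: Order) -> None:
        # Step 1: push the vNF to the host and generate a software licence.
        order.licence_id = f"LIC-{self._next_licence:04d}"
        self._next_licence += 1

    def confirm(self, order: Order) -> None:
        # Step 2: the enterprise confirms the vNF works, which triggers
        # a post-payment charging data record.
        if order.licence_id is None:
            raise ValueError("vNF must be delivered before it is confirmed")
        order.confirmed = True
        self.charging_records.append(
            {"licence": order.licence_id, "vnf": order.vnf, "vendor": order.vendor}
        )


def invoice(records: list, price_list: dict) -> dict:
    """Step 3: the vNF Market totals what is owed to each vNF vendor."""
    owed = {}
    for rec in records:
        owed[rec["vendor"]] = owed.get(rec["vendor"], 0.0) + price_list[rec["vnf"]]
    return owed


# Hypothetical usage: one enterprise orders one virtual firewall.
director = Director()
order = Order("Acme Ltd", "virtual-firewall", "VendorA")
director.deliver(order)
director.confirm(order)
owed = invoice(director.charging_records, {"virtual-firewall": 100.0})
```

The point of the sketch is the ordering: no charging record exists until the enterprise has confirmed the vNF, so nothing is invoiced for a licence that is not in use.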

"Agility Matrix enables a pay-as-you-earn model for the service provider, much different from today's managed services providers' experiences," says Sheehan, who points out that a service provider currently buys custom hardware in bulk based on its enterprise-demand forecast, shipping products one by one. Now, with Agility Matrix, the service provider pays for a licence only after its enterprise customer has purchased one.

Ciena has launched Agility Matrix with five vNF partners. The partners and their vNF products are shown in the table.

Source: Gazettabyte


AT&T Domain 2.0 programme

Ciena is one of the vendors selected by AT&T for its Supplier Domain 2.0 programme. Does AT&T's programme influence this development?

"We are always working with our customers on addressing their current and future problems," says Sheehan. "When we bring something like Agility Matrix to the market, it is created by working with our partners and customers to develop a solution that is designed to meet everyone’s needs."

"Ciena has application programming interfaces that can support integration at several levels, but it is not clear that Agility is part of the deployment within Domain 2.0," says Hanselman. "The interesting things in Domain 2.0 are the automation and virtualisation pieces; Ciena can handle the automation part with its existing products."

Meanwhile, AT&T has announced its 'Network on Demand' that enables businesses to add and change network services in 'near real-time' using a self-service online portal.

Ciena adds software to enhance network control

Engineers at Ciena have developed software to provide service providers with greater control over their networks. The operators' customers will also benefit from the software control, using a web portal to meet their own networking needs.

Source: Ciena


"Networks can become more dynamic," says Tom Mock, senior vice president, corporate communications at Ciena. "Operators can now offer more on-demand services." If much work has been done in recent years to make the network's lower layers dynamic, attention is turning to software to make the networks programmable, he says.

Ciena's announced Agility software portfolio, which resides in the network management centre running on standard computing hardware, includes:

- A multi-layer software-defined networking (SDN) controller

- Three networking applications: Navigate, Protect and Optimize. Navigate is used to determine the ideal route for a connection, Protect is a restoration path calculator used to protect against network failures, while Optimize frees up stranded bandwidth across the network's layers.

- Enhancements to Ciena's existing V-WAN network services module.
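The article does not say how Navigate or Protect compute their paths. As a rough illustration of the two tasks, the sketch below uses Dijkstra's algorithm to find a working route and then reruns it with the working route's links excluded to find a link-disjoint restoration route. The four-node topology and its link weights are hypothetical.

```python
import heapq


def shortest_path(graph, src, dst, banned=frozenset()):
    """Dijkstra over an undirected weighted graph; skips links in `banned`."""
    dist = {src: 0}
    prev = {}
    heap = [(0, src)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == dst:
            break
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry
        for nbr, cost in graph[node].items():
            if frozenset((node, nbr)) in banned:
                continue
            nd = d + cost
            if nd < dist.get(nbr, float("inf")):
                dist[nbr] = nd
                prev[nbr] = node
                heapq.heappush(heap, (nd, nbr))
    if dst not in dist:
        return None  # no route available
    path = [dst]
    while path[-1] != src:
        path.append(prev[path[-1]])
    return path[::-1]


def links_of(path):
    """Return the set of undirected links used by a path."""
    return {frozenset(pair) for pair in zip(path, path[1:])}


# Hypothetical topology; weights could stand for distance or latency.
TOPOLOGY = {
    "A": {"B": 1, "C": 4},
    "B": {"A": 1, "C": 1, "D": 1},
    "C": {"A": 4, "B": 1, "D": 1},
    "D": {"B": 1, "C": 1},
}

working = shortest_path(TOPOLOGY, "A", "D")
restoration = shortest_path(TOPOLOGY, "A", "D", banned=links_of(working))
```

Removing the working path's links and rerunning the search is the simplest approach but does not always find the cheapest disjoint pair; Suurballe's algorithm is the standard technique when that matters.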

Ciena chose to implement the SDN controller using the OpenDaylight framework to ensure it will work with other vendors' equipment, while third-party developers writing software using the open source framework will benefit from Ciena's apps and platforms.

"We think the market is evolving so quickly that there isn't any one company that can deal with all the things end users will require," says Mock. "This idea of openness is not so much a nice thing as a requirement; it is going to require the cooperation of multiple vendors to build the kind of network that service providers are going to require."

At the top of the SDN architecture is the application layer, which resides above the control layer that, in turn, oversees the underlying infrastructure layer where the equipment resides. Agility's three network applications sit above the SDN controller while still being part of the control layer (see diagram).


Ciena has added monitoring and control interfaces to enhance V-WAN, so end users can now control their networking requirements through the V-WAN orchestrator using a web portal. The operator and the end user also have improved visibility of the network's health thanks to the performance monitoring. More plug-in adapters have been added to interface the platform to more equipment, while service providers can use V-WAN to set up VPNs for multiple users.

"[V-WAN] provides for an outside application to control the network directly," says Mock. "A service provider doesn't have to change the connectivity map, or establish or take down a connection."

V-WAN sits between the SDN's upper two layers, allowing applications in the applications layer to access the SDN controller. Ciena has already detailed work with Brocade that allows the vendor's data centre orchestrator - the Application Resource Broker (ARB) used to set up storage and compute resources - to request cloud resources in a remote data centre when demand can no longer be fulfilled in the existing one. Ciena has provided a plug-in adapter between Brocade's orchestrator and V-WAN to establish a connection between the data centres to allow workload transfers as required.

V-WAN will also be used by Equinix to allow end users to connect its data centres with other cloud computing providers. "If an Equinix end user today wants to run part of their applications on Amazon, they can do that, and if tomorrow they have a different set of applications that they want to run on Microsoft, they can do that as well, without changing a real lot of their physical infrastructure," says Mock.

The Agility software portfolio is Ciena's own work, developed prior to its strategic partnership with Ericsson that was announced earlier this year. However, the two companies are now working to add Ericsson's layer-3 capability to the OpenDaylight SDN controller. Mock says the enhanced SDN controller will be available in 2015.

Meanwhile, the V-WAN product is available now. The SDN controller and the three network applications are being trialled and will be available later this year.

Colt's network transformation

Colt's technology and architecture specialist, Mirko Voltolini, talks to Gazettabyte about how the service provider has transformed its network from one based on custom platforms to an open, modular design.

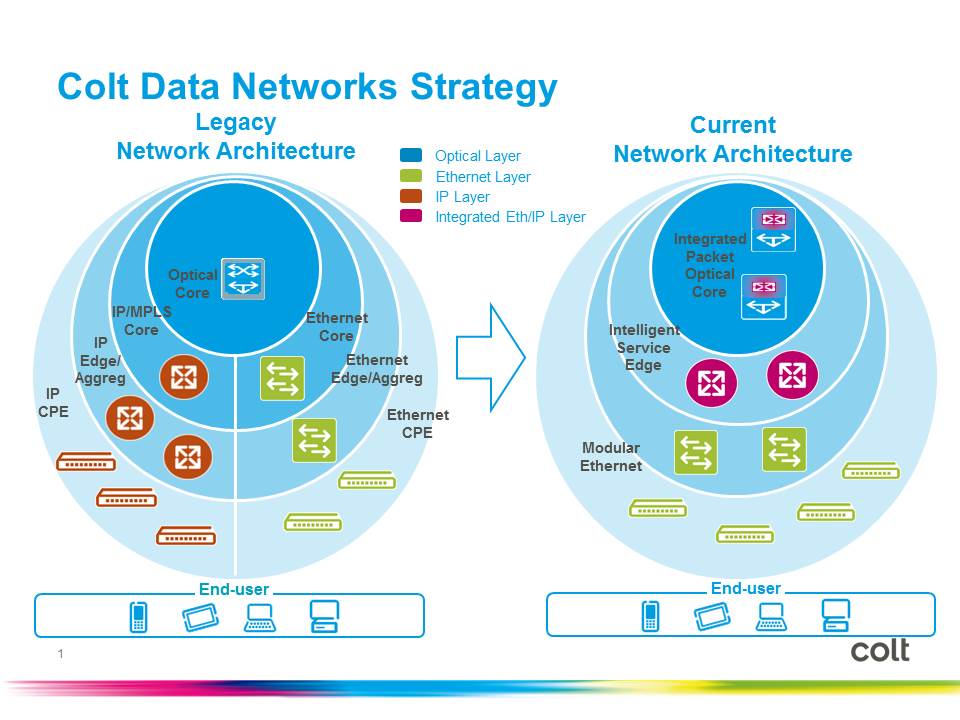

It was obvious to Colt that something had to change. Its network architecture based on proprietary platforms running custom software was not sustainable; the highly customised network was cumbersome, resistant to change and expensive to run. The network also required a platform to be replaced - or at least a new platform added alongside an existing one - every five to seven years.

Mirko Voltolini


"The cost of this approach is enormous," says Mirko Voltolini, vice president technology and architecture at Colt Technology Services. "Not just in money but the time it takes to roll out a new platform."

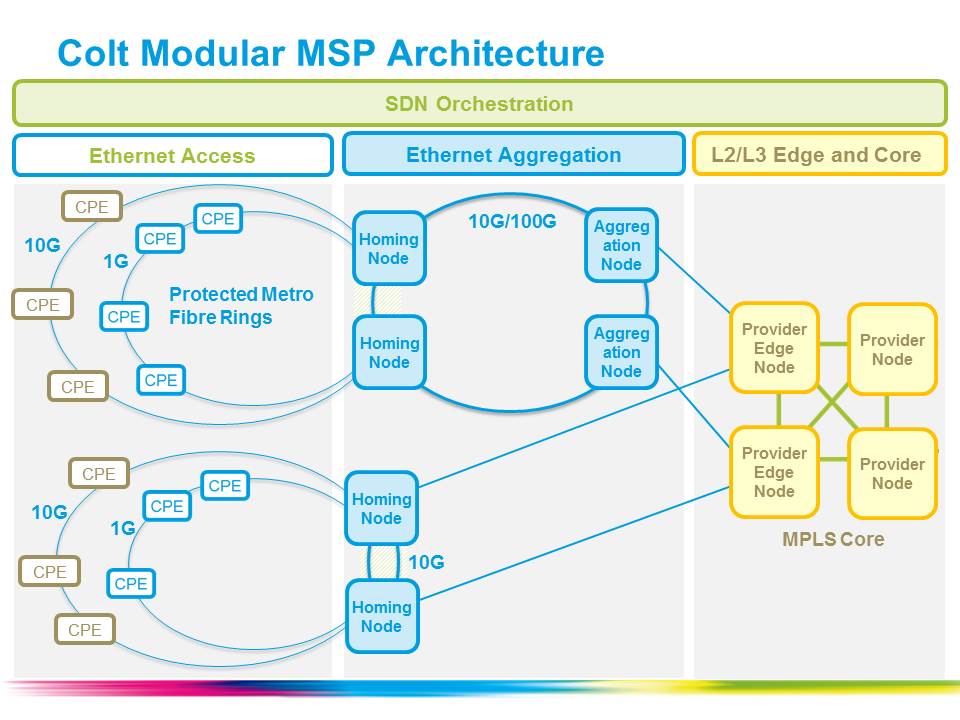

Instead, the service provider has sought a modular approach to network design using standardised platforms that are separated from each other. That way, a new platform with a better feature set or improved economics can be slotted in without impacting the other platforms. Colt calls its resulting network a modular multi-service platform (MSP).

The MSP now delivers the majority of Colt's data networking and all-IP services. These include Carrier Ethernet point-to-point, hub-and-spoke and private network services, as well as internet access, IP VPNs and VoIP IP-based services.

The vendors chosen for the MSP include Cyan with its Z-Series packet-optical transport system (P-OTS) and Blue Planet software-defined networking (SDN) platform and Accedian Networks' customer premises equipment (CPE). Cyan's Z-Series does not support IP, so Colt uses Juniper Networks' and Alcatel-Lucent's IP edge platforms. Colt also has a legacy 20-year-old SDH network but despite using a P-OTS platform, it has decided to leave the SDH platform alone, with the modular MSP running alongside it.

Colt chose its vendors based on certain design goals. "The key was openness," says Voltolini. "We didn't want to have a closed system." It was Cyan's management system, the Blue Planet platform, that led Colt to choose Cyan.

Associated with Blue Planet is an ecosystem that allows the management software to control other vendors' platforms. Cyan uses 'element adapters' that mediate between its SDN interface software and the proprietary interfaces of its vendor partners. Cyan says that its Z-Series P-OTS appears as a third-party piece of equipment to its Blue Planet software in the same way as the other vendors' equipment does, a view confirmed by Colt. "Because of its openness, we have been able to integrate other vendors to use the same management system as if they were Cyan components," says Voltolini.

"Cyan was probably the best option available and we decided to go with it," says Voltolini. The company was looking at what was available two years ago and Voltolini points out that the market has evolved significantly since then. "In the end, if you want to move ahead, you need to make decisions," he says. "We are quite happy with what we have picked and we continue to improve it."

Colt says that as well as SDN, network functions virtualisation (NFV) is also important. "With the same modular platform we have created a virtual component which is a layer-3 CPE," says Voltolini. The company is issuing a request-for-information (RFI) regarding other CPE functions like firewalls, load-balancers and other networking components.

Benefits and lessons learned

Adopting the MSP has speeded up Colt's service delivery. Before the modular network, it would take between 30 and 45 days for Colt to fulfil a customer's request for a three-month-long Ethernet link upgrade, from 100 Megabit to 200 Megabit. Now, such a request can be fulfilled in seconds. "We didn't need any more layer-3 CPE and we can upgrade remotely the bandwidth," says Voltolini.

Colt also estimates that it will halve its operational costs once the new network is fully deployed; the network went live in November 2013 and has not been deployed in all locations. The operational expense improvement and the greater service flexibility both benefit Colt's bottom line, says Voltolini.

A key lesson learned from the network transformation is the importance of leading staff through change rather than any technological issues. "The technology has been a challenge but in the end, with the suppliers, you can design anything you want if you have the right level of collaboration," says Voltolini. "But when you completely transform the way you deliver services, you are touching everything that is part of the engine of the company."

Colt cites aspects such as engineering solutions, service delivery, service operations, systems and processes, and the sales process. "You need to lead the transition in such a way that everybody is going to follow you," says Voltolini.

Colt encountered obstacles created because of the staff's natural resistance to change. "Certain things took longer," says Voltolini. "We had to overcome obstacles that weren't really obstacles, just people's fear of change."

OIF prepares for virtual network services

The Optical Internetworking Forum has begun specification work for virtual network services (VNS) that will enable customers of telcos to define their own networks. VNS will enable a user to define a multi-layer network (layer-1 and layer-2, for now) more flexibly than existing schemes such as virtual private networks.

Vishnu Shukla

"Here, we are talking about service, and a simple way to describe it [VNS] is network slicing," says OIF president, Vishnu Shukla. "With transport SDN [software-defined networking], such value-added services become available."

The OIF work will identify what carriers and system vendors must do to implement VNS. Shukla says the OIF already has experience working across multiple networking layers, and is undertaking transport SDN work. "VNS is a really valuable extension of the transport SDN work," says Shukla.

The OIF expects to complete its VNS Implementation Agreement work by year-end 2015.

Meanwhile, the OIF's Carrier Working Group has published its recommendations document, entitled OIF Carrier WG Requirements for Intermediate Reach 100G DWDM for Metro Type Applications, that provides input for the OIF's Physical Link Layer (PLL) Working Group.

The PLL Working Group is defining the requirements needed for a compact, low-cost and low-power 100 Gig interface for metro and regional networks. This is similar to the OIF work that successfully defined the first 100 Gig coherent modules in a 5x7-inch MSA.

The Carrier Working Group report highlights key metro issues facing operators. One is the rapid growth of metro traffic which, according to Cisco Systems, will surpass long-haul traffic in 2014. Another is the change metro networks are undergoing. The metro is moving from a traditional ring to a mesh architecture with the increasing use of reconfigurable optical add/drop multiplexers (ROADMs). As a result, optical wavelengths have further to travel and must contend with more ROADM stages and more fibre-induced signal impairments.

Shukla stresses there are differences among operators as to what is considered a metro network. For example, metro networks in North America span 400-600km typically and can be as much as 1,000km. In Europe such spans are considered regional or even long-haul networks. Metro networks also vary greatly in their characteristics. "Because of these variations, the requirements on optical modules varies so much, from unit to unit and area to area," says Shukla.

Given these challenges, operators want a module with sufficient optical performance to contend with the ROADM stages, and variable distances and network conditions encountered. "Sometimes we feel that the requirements [between metro and long-haul] won't be that much [different]," says Shukla. Indeed, the Carrier Working Group report discusses how the boundaries between metro and long-haul networks are blurring.

Yet operators also want such robust optical module performance at a greatly reduced price. One of the report's listed requirements is the need for the 100 Gig intermediate-reach interfaces to cost 'significantly' less than the cheapest long-haul 100 Gig.

To this end, the report recommends that 100 Gig pluggable optical modules such as the CFP or CFP2 be used. Standardising on industry-accepted pluggable MSAs will drive down cost, as happened with the introduction of 100 Gig long-haul 5x7-inch MSA modules.

Metro and regional coherent interfaces will also allow the specifications to be relaxed in terms of the DSP-ASIC requirements and the modulation schemes used. "When we come to the metro area, chances are that some of the technologies can be done more simply, and the cost will go down," says Shukla. Using pluggables will also increase 100 Gig line card densities, further reducing cost, while the report also favours the DSP-ASIC being integrated into the pluggable module, where possible.

Contributors to the Carrier Working Group report include representatives from China Telecom, Deutsche Telekom, Orange, Telus and Verizon, as well as module maker Acacia.

WDM and 100G: A Q&A with Infonetics' Andrew Schmitt

The WDM optical networking market grew 8 percent year-on-year, with spending on 100 Gigabit now accounting for a fifth of the WDM market. So claims the first quarter 2014 optical networking report from market research firm, Infonetics Research. Overall, the optical networking market was down 2 percent, due to the continuing decline of legacy SONET/SDH.

In a Q&A with Gazettabyte, Andrew Schmitt, principal analyst for optical at Infonetics Research, talks about the report's findings.

Q: Overall WDM optical spending was up 8% year-on-year: Is that in line with expectations?

Andrew Schmitt: It is roughly in line with the figures I use for trend growth but what is surprising is how there is no longer a fourth quarter capital expenditure flush in North America followed by a down year in the first quarter. This still happens in EMEA but spending in North America, particularly by the Tier-1 operators, is now less tied to calendar spending and more towards specific project timelines.

This has always been the case at the more competitive carriers. A good example of this was the big order Infinera got in Q1, 2014.

You refer to the growth in 100G in 2013 as breathtaking. Is this growth not to be expected as a new market hits its stride? Or does the growth signify something else?

I got a lot of pushback for aggressive 100G forecasts in 2010 and 2011 when everyone was talking about, and investing in, 40G. You can read a White Paper I wrote in early 2011 which turned out to be pretty accurate.

My call was based on the fact that, fundamentally, coherent 100G shouldn’t cost more than 40G, and that service providers would move rapidly to 100G. This is exactly what has happened, outside AT&T, NTT and China which did go big with 40G. But even my aggressive 100G forecasts in 2012 and 2013 were too conservative.

I have just raised my 2014 100G forecast after meeting with Chinese carriers and understanding their plans. 100G will essentially take over almost all of the new installations in the core by 2016, worldwide, and that is when metro 100G will start. But there is too much hype on metro 100G right now given the cost, but within two years the price will be right for volume deployment by service providers.


You say the lion's share of 100G revenue is going to five companies: Alcatel-Lucent, Ciena, Cisco, Huawei, and Infinera. Most of the companies are North American. Is the growth mainly due to the US market (besides Huawei, of course)? And if so, is it due to Verizon, AT&T and Sprint preparing for growing LTE traffic? Or is the picture more complex with cable operators, internet exchanges and large data centre players also a significant part of the 100G story, as Infinera claims?

It’s a lot more complex than the typical smartphone plus video-bandwidth-tsunami narrative. Many people like to attach the wireless metaphor to any possible trend because it is the only area perceived as having revenue and profitability growth, and it has a really high growth rate. But something big growing at 35 percent adds more in a year than something small growing at 70 percent.

The reality is that wireless bandwidth, as a percentage of all traffic, is still small. 100G is being used for the long lines of the network today as a more efficient replacement for 10G and while good quantitative measures don’t exist, my gut tells me it is inter-data-centre traffic and consumer/business to data centre traffic driving most of the network growth today.

I use cloud storage for my files. I’m a die-hard Quicken user with 15 years of data in my file. Every time I save that file, it is uploaded to the cloud – 100MB each time. The cloud provider probably shifts that around afterwards too. Apply this to a single enterprise user - think about how much data that is for just one person. There is so much 'blah blah blah' about video but 90 percent is cacheable. Cloud storage is not.


Cisco is in this list yet does not seek much media attention about its 100G. Why is it doing well in the growing 100G market?

Cisco has a slice of customers that are fibre-poor who are always seeking more spectral efficiency. I also believe Cisco won a contract with Amazon in Q4, 2013, but hey, it’s not Google or Facebook so it doesn’t get the big press. But no one will dispute Amazon is the real king of public cloud computing right now.


In the data centre world, there is a sense that the value of specialist hardware is diminishing as commodity platforms - servers and switches - take hold. The same trend is starting in telecoms with the advent of Network Functions Virtualisation (NFV) and software-defined networking (SDN). WDM is specialist hardware and will remain so. Can WDM vendors therefore expect healthy annual growth rates to continue for the rest of the decade?

I am not sure I agree.

There is no reason transport systems couldn’t be white-boxed just like other parts of the network. There is an over-reaction to the impact SDN will have on hardware but there have always been constant threats to the specialist.

Each morning a hardware specialist must wake up and prove to the world that they still need to exist. This is why you see continued hardware vertical integration by some optical companies; good examples are what Ciena has done with partners on intelligent Raman amplification or what Infinera has done building a tightly integrated offering around photonic-integrated circuits for cheap regeneration. Or Transmode which takes a hacker’s approach to optics to offer customers better solutions for specific category-killer applications like mobile backhaul. Or you swing to the other side of the barbell, and focus on software, which appears to be Cyan’s strategy.

You’ve got to do hard stuff that others can’t easily do or you are just a commodity provider. This is why Cisco and Intel are investing in silicon photonics – they can use this as an edge against commodity white-box assemblers and bare-metal suppliers.

NFV moves from the lab to the network

Dor Skuler


In October 2012, several of the world's leading telecom operators published a document to spur industry action. Entitled Network Functions Virtualisation - Introductory White Paper, the document stressed the many benefits such a telecom transformation would bring: reduced equipment costs, power consumption savings, portable applications, and nimbleness instead of ordeal when a service is launched.

Eighteen months on and much progress has been made. Operators and vendors have been identifying the networking functions to virtualise on servers, and the impact Network Functions Virtualisation (NFV) will have on the network.

A group within ETSI, the standards body behind NFV, is fleshing out the architectural layers of NFV: the virtual network functions layer that resides above the management and orchestration one that oversees the servers, distributed in data centres across the network.

In the lab, network functions have been put on servers and then onto servers in the cloud. "Now we are at the start of the execution phase: leaving the lab and moving into first deployments in the network," says Dor Skuler, vice president and general manager of CloudBand, the NFV spin-in of Alcatel-Lucent. Skuler views 2014 as the year of experimentation for NFV. By 2015, there will be pockets of deployments but none at scale; that will start in 2016.


Deploying NFV in the network and at scale will require software-defined networking (SDN). That is because network functions make unique requirements of the network, says Skuler. Because the network functions are distributed, each application must make connections to the different sites on demand. "SDN is a simple way for virtual network functions to get what they need from the network through different commands," he says.

CloudBand's customers include Deutsche Telekom, Telefonica and NTT. Overall, the company says it is involved in 14 customer projects.

CloudBand 2.0

CloudBand has developed a management and orchestration platform, and launched an 'ecosystem' that includes 25 companies. Companies such as Radware and Metaswitch Networks are developing virtual network functions that use the CloudBand platform.

More recently, CloudBand has upgraded its platform, what it calls CloudBand 2.0, and has launched its own virtualised network functions (VNFs) for the Long Term Evolution (LTE) cellular standard. In particular, VNFs for the Evolved Packet Core (EPC), IP Multimedia Subsystem (IMS) and the radio access network (RAN). "These are now virtualised and running in the cloud," says Skuler.

SDN technology from Nuage Networks, another Alcatel-Lucent spin-in, has been integrated into the CloudBand node that is set up in a data centre. The platform also has enhanced management systems. "How to manage the many nodes into a single logical cloud, with a lot of tools that help applications," says Skuler. CloudBand 2.0 has also added support for OpenStack alongside its existing support for CloudStack. OpenStack and CloudStack are open-source platforms supporting cloud.

For the EPC, the functions virtualised are on the network side of the basestation: the Mobility Management Entity (MME), the Serving Gateway and Packet Data Network Gateway (S- and P-Gateways) and the Policy and Charging Rules Function (PCRF).

IMS is used for Voice over LTE (VoLTE). "Operators are looking for more efficient ways of delivering VoLTE," says Skuler. This includes reducing deployment times and improving scalability, so that the service can grow as more users sign up.

The high-frequency parts of the radio access network, typically located in a remote radio head (RRH), cannot be virtualised. What can is the baseband processing unit (BBU). The BBUs run on off-the-shelf servers in pools up to 40km away from the radio heads. "This allows more flexible capacity allocation to different radio heads and easier scaling and upgrading," says Skuler.

Skuler points out that virtualising a function is not simply a case of putting a piece of code on a server running a platform such as CloudBand. "The VNF itself needs to go through a lot of change; a big monolithic application needs to be broken up into small components," he says.

"The VNF needs to use the development tools we offer in CloudBand so it can give rules so it can run in the cloud." The VNF also needs to know what key performance indicators to look at, and be able to request scaling, and inform the system when it is unhealthy and how to remedy the situation.

These LTE VNFs are designed to run on CloudBand and on other vendors' platforms. "CloudBand won't be run everywhere which is why we use open standards," says Skuler.

Pros and cons

The benefits from adopting NFV include prompt service deployment. "Today it can take 9-18 months for an operator to scale [a service]," says Skuler. The services, effectively software on servers, can scale more easily whereas today, typically, operators have to overprovision to ensure extra capacity is in place.

Less equipment also needs to be kept by operators for maintenance. "A typical North America mobile operator may have 450,000 spare parts," says Skuler; items such as line cards and power supplies. With automation and the use of dedicated servers, the number of spare parts held is typically reduced by a factor of ten.

Services can be scaled and healed, while functionality can be upgraded using software alone. "If I have a new version of IMS, I can test it in parallel and then migrate users; all behind my desk at the push of a button," says Skuler.

The NFV infrastructure, comprising compute, storage and networking resources, resides at multiple locations: the operator's points-of-presence. These resources are designed to be shared by applications (VNFs), and it is this sharing of a common pool of resources that is one of the biggest advantages of NFV, says Skuler.

But there are challenges.

"Operating [existing] systems has been relatively simple; if there is a faulty line card, you simply replace it," says Skuler. "Now you have all these virtual functions sitting on virtual machines across data centres and that creates complexities."

An application needs to be aware of this and provide the required rules to the management and orchestration system such as CloudBand. Such systems need to provide the necessary operational tools to operators to enable automated upgrades and automated scaling as well as pinpoint causes of failures.

For example, an IMS core might have 12 tiers. In cloud-speak, a tier is one of a set of virtual machines making up a virtual network function. Examples of a tier include a load balancer, an application or a database server. Each tier consists of one or more virtual machines. Scaling of capacity is enabled by adding or removing virtual machines from a tier.
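The tier model lends itself to a small sketch. The one below is a simplified illustration, not CloudBand's interface: the `Tier` class, the load thresholds and the VM limits are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    """A set of identical virtual machines within one VNF (hypothetical model)."""
    name: str
    vms: int
    min_vms: int = 1
    max_vms: int = 10

    def scale(self, load_per_vm: float, high: float = 0.8, low: float = 0.3) -> int:
        """Add a VM above the `high` load threshold, remove one below `low`."""
        if load_per_vm > high and self.vms < self.max_vms:
            self.vms += 1
        elif load_per_vm < low and self.vms > self.min_vms:
            self.vms -= 1
        return self.vms


# A three-tier VNF for illustration; an IMS core might have 12 such tiers.
vnf = [Tier("load-balancer", 2), Tier("application", 4), Tier("database", 2)]

app_vms = vnf[1].scale(load_per_vm=0.9)   # busy tier gains a virtual machine
db_vms = vnf[2].scale(load_per_vm=0.1)    # idle tier gives one back
lb_vms = vnf[0].scale(load_per_vm=0.5)    # within thresholds, unchanged
```

Each tier scales independently here, which is the simplification: in practice a change to one tier may require its neighbours to scale too.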

In a cloud deployment, the linkages between tiers must be understood by the system to allow scaling. Two tiers may be placed in the same data centre to ensure low latency, while a duplicate of the tier pair may be placed at a separate site in case the first pair goes down. SDN is used to connect the different sites, says Skuler: "All this needs to be explained simply to the system so that it understands it and execute it."

That, he says, is what CloudBand does.

See also:

Telcos eye servers and software to meet networking needs

Netronome prepares cards for SDN acceleration

Source: Netronome

Netronome has unveiled 40 and 100 Gigabit Ethernet network interface cards (NICs) to accelerate data-plane tasks of software-defined networks.

Dubbed FlowNICs, the cards use Netronome's flagship NFP-6xxx network processor (NPU) and support open-source data centre technologies such as the OpenFlow protocol, the Open vSwitch virtual switch, and the OpenStack cloud computing platform.

Four FlowNIC cards have been announced with throughputs of 2 x 40 Gigabit Ethernet (GbE), 4 x 40GbE, 1 x 100GbE and 2 x 100GbE. The NICs use either two or four PCI Express 3.0 x8 lane interfaces to achieve the 100GbE and 200GbE throughputs.
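A back-of-the-envelope check shows why multiple x8 interfaces are needed. The sketch below uses the published PCI Express 3.0 figures of 8 GT/s per lane and 128b/130b encoding; it ignores transaction-layer overhead, so real usable bandwidth is somewhat lower. Pairing two interfaces with 100GbE and four with 200GbE is an assumption about how the cards are configured.

```python
PCIE3_GT_PER_S = 8.0        # PCIe 3.0 raw signalling rate per lane, in GT/s
PCIE3_ENCODING = 128 / 130  # 128b/130b line encoding efficiency


def pcie3_gbps(lanes: int, interfaces: int = 1) -> float:
    """Raw PCIe 3.0 bandwidth per direction in Gbps, before protocol overhead."""
    return PCIE3_GT_PER_S * PCIE3_ENCODING * lanes * interfaces


# Assumed pairing of host interfaces to Ethernet throughput.
for interfaces, ethernet_gbps in ((2, 100), (4, 200)):
    host_gbps = pcie3_gbps(lanes=8, interfaces=interfaces)
    print(f"{interfaces} x8 interfaces: {host_gbps:.1f} Gbps to the host "
          f"for {ethernet_gbps} Gbps of Ethernet")
```

A single x8 interface offers roughly 63 Gbps, which is short of 100GbE, whereas two or four interfaces leave headroom over 100 and 200 Gbps respectively.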

"We are the first to provide these interface densities on an intelligent network interface card, built for SDN use-cases," says Robert Truesdell, product manager, software at Netronome. "We have taken everything that has been done in Open vSwitch that would traditionally run on an [Intel] x86-based server, and run it on our NFP-6xxx-based FlowNICs at a much faster rate."

The cards have accompanying software that supports OpenFlow and Open vSwitch. There are also two additional software packages: cloud services, which performs such tasks as implementing the tunnelling protocols used for network virtualisation, and cyber-security.

Implementing the switch and packet processing functions on a FlowNIC card instead of using a virtual switch frees up valuable computation resources on a server's CPUs, enabling data centre operators to better use their servers for revenue-generating tasks. "They are trying to extract the maximum revenue out of the server and that is what this buys," says Truesdell.

Designing the FlowNICs around a programmable network processor has other advantages. "As standards evolve, that allows us to do a software revision rather than rely on a hardware or silicon revision," says Truesdell. The OpenFlow specification, for example, is revised every 6-12 months, he says, the most recent release being V1.4.

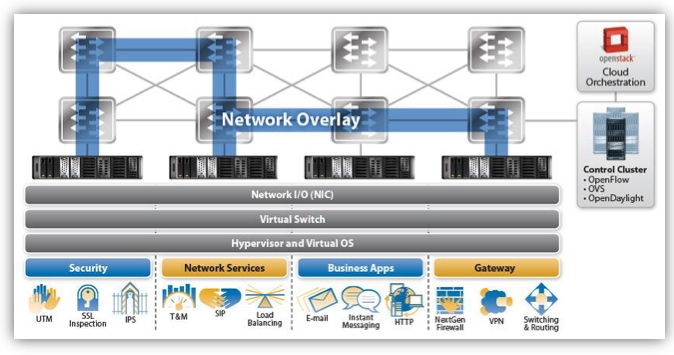

In the data centre, software-defined networking (SDN) uses a central controller to oversee the network connecting the servers. A server's NIC provides networking input/output, while the server runs the software-based virtualised switch and the hypervisor that supports virtual machines and operating systems on which the applications run. Applications include security tasks such as SSL inspection, intrusion detection and intrusion prevention systems; load balancing; business applications like email, the hypertext transfer protocol and instant messaging; and gateway functions such as firewalls and virtual private networks (VPNs).

With Open vSwitch running on a server, SDN protocols such as OpenFlow pass communications between the data plane device (either the softswitch or the FlowNIC) and the controller. "By supporting Open vSwitch and OpenFlow, it allows us to inherit compatibility with the [SDN] controller and orchestration layers," says Truesdell. The FlowNIC is compatible with controllers from OpenDaylight, Ryu and VMware's NSX network virtualisation system, as well as orchestration platforms like OpenStack.

The virtual switch performs two main tasks. It inspects packet headers and passes the traffic to the appropriate virtual machines. The virtual switch also implements network virtualisation: setting up network overlays between servers for virtual machines to talk to each other.
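The first of these tasks amounts to a table lookup on header fields. The toy switch below is a hedged sketch of that idea only: it is not the Open vSwitch API, and the `tenant_id` field, the class names and the port labels are invented for illustration. Keying the table on a tenant identifier as well as the MAC address hints at how overlays keep tenants separate.

```python
from typing import NamedTuple, Optional


class Packet(NamedTuple):
    tenant_id: int  # hypothetical overlay identifier taken from the tunnel header
    dst_mac: str    # destination MAC address of the inner frame
    payload: bytes


class ToyVirtualSwitch:
    """Exact-match flow table: (tenant, destination MAC) -> virtual machine port."""

    def __init__(self) -> None:
        self.flow_table: dict[tuple[int, str], str] = {}

    def add_flow(self, tenant_id: int, dst_mac: str, vm_port: str) -> None:
        self.flow_table[(tenant_id, dst_mac)] = vm_port

    def forward(self, packet: Packet) -> Optional[str]:
        """Inspect the headers and return the matching VM port, or None on a miss."""
        return self.flow_table.get((packet.tenant_id, packet.dst_mac))


switch = ToyVirtualSwitch()
# Two tenants may reuse the same MAC address without clashing.
switch.add_flow(tenant_id=10, dst_mac="02:00:00:00:00:01", vm_port="vm-a")
switch.add_flow(tenant_id=20, dst_mac="02:00:00:00:00:01", vm_port="vm-b")

print(switch.forward(Packet(10, "02:00:00:00:00:01", b"hello")))
print(switch.forward(Packet(20, "02:00:00:00:00:01", b"hello")))
```

Every packet incurs at least one such lookup, which is the per-packet cost that matters when the work is done on a server's CPU.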

The packet encapsulation and unwrapping required for network virtualisation hit a performance ceiling when implemented in the virtual switch, so throughput suffers. Moreover, packet header inspections can be nested if, for example, encryption and network virtualisation are both used, further reducing performance. "The throughput rates can really suffer on a server because it is not optimised for this type of workload," says Truesdell.

Performing the tasks using the FlowNIC's network processor enables packet processing to keep up with the line rate, including for the most demanding, shortest packet sizes. The issue with packet size is that the shorter the packet (the smaller the data payload), the more frequent the header inspections. "Data centre traffic is very bursty," says Truesdell. "These are not long-lived flows - they have high connection rates - and this drives the packet sizes down."
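The effect of packet size on workload can be worked through with standard Ethernet arithmetic. The sketch below assumes the quoted sizes are Ethernet frame lengths and adds the 20 bytes of preamble and inter-frame gap that each frame occupies on the wire; the function name is an illustrative choice.

```python
ETHERNET_OVERHEAD_BYTES = 20  # 8-byte preamble/SFD plus 12-byte inter-frame gap


def packets_per_second(link_gbps: float, frame_bytes: int) -> float:
    """Maximum frame rate on an Ethernet link for a given frame size."""
    bits_on_wire = (frame_bytes + ETHERNET_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_on_wire


for frame_bytes in (64, 400, 1500):
    rate = packets_per_second(10, frame_bytes)
    print(f"{frame_bytes:>5}-byte frames at 10 Gbps: {rate / 1e6:.2f} million packets/s")
```

At 10 Gbps, 64-byte frames arrive at about 14.88 million per second against roughly 0.82 million for 1,500-byte frames, so the header-inspection workload is some 18 times higher for the shortest packets.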

For a 10 Gigabit stream performing the Network Virtualization using Generic Routing Encapsulation (NVGRE) protocol, the forwarding throughput is at line rate for all packet sizes using the company's existing FlowNIC acceleration cards, based on its previous generation NFP-3240 network processor.

In contrast, NVGRE performance using Open vSwitch on the server is 9Gbps for lengthy 1,500-byte packets and falls steadily to 0.5Gbps for 64-byte packets. The average packet length is around 400 bytes, says Truesdell.

Overall, Netronome claims that its FlowNICs boost virtualised networking functions and server applications more than twentyfold compared with using the virtual switch on the server's CPU.

Netronome's FlowNIC-32xx cards are already used by one Tier 1 operator to perform gateway functions. The gateway, overseen using a Ryu controller running OpenFlow, translates between multi-tenant IP virtual LANs in the data centre and MPLS-based VPNs that connect the operator's enterprise customers.

The NFP-6xxx-based FlowNICs will be available for early access partners later this quarter. FlowNIC customers include data centre equipment makers, original design manufacturers and the largest content service providers - the 'internet juggernauts' - that operate hyper-scale data centres.

For an article written for Fibre Systems on network virtualisation and data centre trends, click here

OFC 2014 industry reflections - Part 2

Joe Berthold, vice president of network architecture at Ciena.

OFC 2014 was another great event, with interesting programmes, demonstrations and papers presented. A few topics that really grabbed my interest were discussions around silicon photonics, software-defined networking (SDN) and 400 Gigabit Ethernet (GbE).

The intense interest we saw at last year’s OFC around silicon photonics grew this year with lots of good papers and standing-room-only sessions. I look forward to future product announcements that deliver on the potential of this technology to significantly reduce the cost of interconnecting systems over modest distances. The high cost of 100GbE client modules has been a major disappointment to me as it has slowed adoption.

Another area of interest at this year’s show was the great deal of experimental work around SDN, some more practical than others.

I particularly liked the reviews of the latest work under the DARPA-sponsored CORONET programme, whose Phase 3 focused on SDN control of multi-layer, multi-vendor, multi-data centre cloud networking across wide area networks.

I noted talks from three companies in particular: Anne Von Lehman of Applied Communication Sciences, the prime contractor, provided a good programme overview; Bob Doverspike of AT&T described a very extensive testbed using equipment of the type currently deployed in AT&T’s network, as well as two different processing and storage virtualisation platforms; and Doug Freimuth of IBM described its contributions to CORONET including an OpenStack virtualisation environment, as well as other IBM distributed cloud networking research.

One thing I enjoyed about these talks was that they described an approach to SDN for distributed data centre networking that is pragmatic and could be realised soon.

I also really liked a workshop held on the Sunday on the question of whether SDN will kill GMPLS. While there was broad consensus that GMPLS has failed in delivering on its original turn-of-the-century vision of IP router control of multi-layer, multi-domain networks, most speakers recognised the value distributed control planes have in simplifying and speeding the control of single layer, single domain networks.

What I took away was that single layer distributed control planes are here to stay as important network control functions, but will work under the direction of an SDN network controller.

As we all know, 400 Gigabit dense wavelength division multiplexing (DWDM) is here from the technology perspective, but awaiting standardisation of the 400 Gig Ethernet signal from the IEEE, and follow-on work by the ITU-T on signal mapping to OTN. In fact, from the perspective of DWDM transmission systems, 1 Terabit-per-second systems can be had for the asking.

All the action on rates above 100 Gig lies with the selection of client signals. 400 Gig seems to have the major mindshare but there are still calls for flexible rate clients and Terabit clients.

One area that received a lot of attention, with many differing points of view, was the question of the 400GbE client. As the 400GbE project begins soon in the IEEE, it is time to take a lesson from the history of the 100 Gig client modules and do better.

The first 100 Gig DWDM transceivers were introduced in 2009. It is now 2014 and 100 Gig is the transmission rate of choice for virtually all high capacity DWDM network applications, with a strong economic value proposition versus 10 Gig. Yet the industry has not yet managed to achieve cost/bit parity between 100 Gig and 10 Gig clients - far from it!

At last year's OFC, we saw many show floor demonstrations of CFP2 modules. They promise lower costs, but evidence of their presence in shipping products is still lacking. At the exhibit this year we saw 100 Gig QSFP28 modules. Progress is slow, and the cost of the 100 Gig client module continues to lead many operators to favour 10 Gig handoffs to their 100 Gig optical networking systems.

Let us all agree that we don’t need 400 Gig clients until they can do better in cost, face plate density, and power dissipation than the best 100 Gig modules that will exist then. At this juncture the 100 Gig benchmark we should be comparing 400 Gig to is a QSFP28 package.

Lastly, last year we heard about the launch of an OIF project to create a pluggable analogue coherent optical module. There were several talks that referenced this project, and discussed its implications for shrinking size and supporting higher transceiver card density.

Broad adoption of this component will help drive down costs of coherent transceivers, so I look forward to hearing about its progress at OFC 2015.

Daryl Inniss, vice president and practice leader, Ovum.

There was no shortage of client-side announcements at OFC and I’ve spent time since the conference trying to organise them and understand what it all means.

I’m tempted to say that the market is once again developing too many options and not quickly agreeing on a common solution. But I’m reminded that this market works collaboratively and the client-side uncertainty we’re seeing today is a reflection of a lack of market clarity.

Let me describe three forces affecting suppliers:

The IEEE 100GBASE-xxx standards represent the best collective information that suppliers have. Not surprisingly, most vendors brought solutions to OFC supporting these standards. Vendors sharpened their products and focused on delivering solutions with smaller form factors and lower power consumption. Advances in optical components (lasers, TOSAs and ROSAs), integrated circuits (CDRs, TIAs, drivers), transceivers, active optical cables, and optical engines were all presented. A promising and robust supply base is emerging that should serve the market well.

A second driver is that hyperscale service providers want a cost-effective solution today that supports 500m to 2km. This is non-standard and suppliers have not agreed on the best approach. This is where the market becomes fragmented. The same vendors supporting the IEEE standard are also pushing non-standard solutions. There are at least four different approaches to support the hyperscale request:

- Parallel single mode (PSM4) where an MSA was established in January 2014

- Coarse wavelength division multiplexing—using uncooled directly modulated lasers and single mode fibre

- Dense wavelength division multiplexing—this one just emerged on the scene at OFC with Ranovus and Mellanox introducing the OpenOptics MSA

- Complex modulation—PAM-8 for example and carrier multi-tone.

Admittedly, the presence of this demand disrupts the traditional process. But I believe the suppliers’ behavior reflects their unhappiness with the standardisation solution.

The good news is these approaches are using established form factors like the QSFP. And silicon photonic products are starting to emerge. Suppliers will continue to innovate.

The third issue lurking in the background is the knowledge that 400 Gig and one Terabit will soon be needed. The best-case scenario is to use 100 Gig as a platform to support the next generation. Some argue for complex modulation as it reduces the number of optical components, thereby lowering cost. That’s good but part of the price is higher power consumption, an issue that is to be determined.

Part of today’s uncertainty is whether the standard solution is suitable to support the market to the next generation. Sixteen channels at 25 Gig is doable but feels more like a stopgap measure than a long-term solution.

These forces leave suppliers innovating in search of the best path forward. The approaches and solutions differ for each vendor. Timing is an issue too with hyperscale looking for solutions today while the mass market may be years away.

We believe that servers with 25 Gig and/or 40 Gig ports will be one of the catalysts to drive the mass market and this will not start until about 2016. Meanwhile, each vendor and the market will battle for the apparent best solution to meet the varying demands. Ambiguity will persist but we believe that clarity will ultimately prevail.

OFC 2014 industry reflections - Part 1

T.J. Xia, distinguished member of technical staff at Verizon

The CFP2 form factor pluggable - analogue coherent optics (CFP2-ACO) at 100 and 200 Gig will become the main choice for metro core networks in the near future.

I learnt that the discrete multitone (DMT) modulation format seems the right choice for a low-cost, single-wavelength direct-detection 100 Gigabit Ethernet (GbE) interface for data ports, and a 4xDMT for 400GbE ports.

As for developments to watch, photonic switches will play a much more important role for intra-data centre connections. As the port capacity of top-of-rack switches gets larger, photonic switches have more cost advantages over middle stage electrical switches.

Don McCullough, Ericsson's director of strategic communications at group function technology

The biggest trend in networking right now is software-defined networking (SDN) and Network Function Virtualisation (NFV), and both were on display at OFC. We see that the combination of SDN and NFV in the control and software domains will directly impact optical networks. The Ericsson-Ciena partnership embodies this trend with its agreement to develop joint transport solutions for IP-optical convergence and service provider SDN.

We learnt that network transformation, both at the control layer (SDN and NFV) and at the data plane layer, including optical, is happening at the network operators. Related to that, we also saw interest at OFC in the announcement that AT&T made at Mobile World Congress about their User-Defined Network Cloud and Domain 2.0 strategy where AT&T has selected to work with Ericsson on integration and transformation services.

We will continue to watch the on-going deployment of SDN and NFV to control wide area networks including optical. We expect more joint development agreements to connect SDN and NFV with optical networking, like the Ericsson-Ciena one.

One new thing for 2014 is that we expect to see open source projects like OpenStack and OpenDaylight play increasingly important roles in the transformation of networks.

Brandon Collings, JDSU's CTO for communications and commercial optical products

The announcements of integrated photonics for coherent CFP2s were an important development in the 100 Gig progression. While JDSU did not make an announcement at OFC, we are similarly engaged with our customers on pluggable approaches for coherent 100 Gig.

There is a lack of appreciation of the data centre operators who aren’t big household names. While the mega data centre operators have significant influence and visibility, the needs of the numerous, smaller-sized operators are largely under-represented.

I would like to see convergence around 400 Gig client interface standards. Lots of complex technology here, challenges to solve and options to do so. But ambiguity in these areas is typically detrimental to the overall industry.

Mike Freiberger, principal member of technical staff, Verizon

The emergence of 100 Gig for metro, access, and data centre reach optics generated a lot of contentious debate. Maybe the best way forward as an industry isn’t really solidified just yet.

What did I learn? Verizon is a leader in wireless backhaul and is growing its options at a rate faster than the industry.

The two developments that caught my attention are 100 Gig short-reach and above-100-Gig research. 100 Gig short-reach because this will set the trigger point for the timing of 100 Gig interfaces really starting to sell in volume. Research on data rates faster than 100 Gig because price-per-bit always has to come downward.

Ciena uses software to dip into the photonic layer

Ciena has enhanced its control plane and line elements to enable software to control the optical networking layer. The additions are part of Ciena's OPn network architecture evolution to enable greater visibility and automation. "It is about putting software into a system to allow you to program the photonic line," says Michael Adams, vice president of product & technology marketing at Ciena.

Dubbed WaveLogic Photonics, the enhancements address the optical line system, made up of Ciena's WaveLogic coherent module, amplifier and reconfigurable optical add/drop multiplexer (ROADM) elements. Ciena's ROADM is colourless and directionless and supports flexible-grid lightpaths, while the contentionless attribute will be added in the second half of the year.

Making the optical layer programmable is tricky. The OTN, Ethernet and IP networking layers above the line system are digital, lending themselves to software control. The optical layer, however, is not. Its performance is determined by linear and non-linear fibre transmission effects and parameters such as the optical signal-to-noise ratio.

"We believe the photonic line is equally important to be programmed, but the challenge has been that it is an analogue domain," says Adams.

To this aim, Ciena's WaveLogic Photonics introduces three changes:

- The OneConnect Intelligent Control Plane has been extended to include the photonic layer.

- Software-based line monitoring has been added to Raman to simplify amplifier deployment.

- Network analytics has been introduced to identify faults and optical signal loss.

By extending the OneConnect Control Plane to the photonic level, service providers can offer customers more tailored service-level agreements (SLAs). Customers that want protection against double fibre cuts can add automated optical restoration. After the first cut, the 50-millisecond OTN layer restoration kicks in. If a second cut occurs, OneConnect will restore the network in tens of seconds. At present, a truck roll and manual repair are needed after the second cut, which can take hours. "The combination of the two [OTN and optical restoration] gives you a much more flexible system of SLAs that can be offered," says Adams.

The second line system enhancement, dubbed Smart Raman, adds a software-based optical time-domain reflectometer (OTDR) to Ciena's hybrid Raman/EDFA amplifiers to simplify their deployment. The OTDR enables the amp to monitor and characterise the line.

"The Raman provides simple and controlled turn-up and will not turn on until it has checked that the surrounding fibre does not have any high losses," says Adams. Such automation replaces the careful manual configuration otherwise required when deploying high-powered Raman amps.

Ciena is also using the line data collected by the OTDR to provide network analytics. The line's condition can be plotted over time, helping identify any degradation in line elements. The analytics will also locate faults across fibre spans without requiring a truck roll. "Now from the NOC [network operations centre], that [fault] visibility is within 3m," says Adams.
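The general principle by which an OTDR locates a fault is time of flight: a reflection seen after a round-trip time t lies at a distance d = c·t / (2n) along the fibre. The sketch below applies that textbook relation; it is not a description of Ciena's implementation, and the group index of 1.468 is an assumed typical value for standard single-mode fibre.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # speed of light in vacuum
GROUP_INDEX = 1.468                   # assumed typical value for single-mode fibre


def fault_distance_m(round_trip_s: float, group_index: float = GROUP_INDEX) -> float:
    """Distance to a reflection from its round-trip time: d = c * t / (2 * n)."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / (2 * group_index)


def round_trip_time_s(distance_m: float, group_index: float = GROUP_INDEX) -> float:
    """Inverse relation: the round-trip time for a reflection at a given distance."""
    return 2 * group_index * distance_m / SPEED_OF_LIGHT_M_PER_S


# Timing precision needed to resolve the 3m visibility quoted above.
print(f"{round_trip_time_s(3.0) * 1e9:.1f} ns")
```

Under these assumptions, a 3m resolution corresponds to distinguishing round-trip times that differ by a little under 30 nanoseconds.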

Comcast has already used Smart Raman and the analytics as part of a Terabit trial conducted with Ciena. The cable operator located signal loss points on the line. "Comcast was able to recover several dBs of margin on that fibre," says Adams. "With 16-QAM used for the Terabit trial, they were able to go much farther; they achieved 1,000km even on marginal fibre." Ciena will also introduce advanced 16-QAM signaling in the second half of the year.

Ciena says WaveLogic Photonics should be viewed as enhancing the OneConnect control plane at the OTN and optical levels, while paving the way for software-defined networking (SDN) and applications-driven automation.

"For an SDN controller to control a photonic line, we need to present it as a programmable layer," says Adams. "The infrastructure is now there to be programmed."

The Smart Raman and analytics software is available and shipping in volume, says Ciena, while the photonic additions to the control plane are being trialled by customers and will be available in several weeks as part of the Release 10.0 software for Ciena's 6500 platform.

See also:

Ovum: Ciena launches WaveLogic Photonics