100 Gigabit 'unstoppable'

A Q&A with Andrew Schmitt (@aschmitt), directing analyst for optical at Infonetics Research.

"40Gbps has even less value in the metro than in the core"

Andrew Schmitt, Infonetics Research

A study from market research firm Infonetics Research has found that operators have a strong preference for deploying 100 Gigabit-per-second (Gbps) technology as they upgrade their networks.

Infonetics interviewed 21 incumbent service providers, competitive operators and mobile operators that have 40Gbps wavelengths, 100Gbps wavelengths or both installed in their networks, or that plan to install them by next year (2013).

The operators surveyed, from all the major regions, account for over a quarter (28%) of worldwide telecom carrier revenue and capital expenditure.

The study's findings include:

- A strong preference by the carriers for 100Gbps transport in both Brownfield and Greenfield installations. Carriers will use 40 and 100Gbps to the same degree in existing Brownfield networks while favouring 100Gbps for new, Greenfield builds.

- The reasons to deploy 40Gbps and 100Gbps optical transport equipment include lowering the cost per bit, taking advantage of the superior dispersion performance of coherent optics, and lowering incremental common equipment costs due to the increased spectral efficiency.

- Most respondents indicate that 40Gbps is only a short-term solution and that they will move the majority of installations to 100Gbps once those products become widely available.

- Non-coherent 100Gbps is not yet viewed as an important technology.

- Colourless and directionless ROADMs and Optical Transport Network (OTN) switching are important components of Greenfield builds; gridless and contentionless ROADMs are much less so.

Q&A with Andrew Schmitt

Q: A key finding is that 40Gbps and 100Gbps are equally favoured for Brownfield routes. Is it correct that Brownfield refers to existing routes carrying 10Gbps and maybe 40Gbps wavelengths, while Greenfield involves new 100Gbps wavelengths? What is it about Brownfield that gives 40Gbps and 100Gbps equal footing? Equally, for Greenfield, is the thinking: "If we are deploying a new lit fibre, we might as well start with the newest and fastest"?

A: The assumptions on Brownfield versus Greenfield are correct. The definitions in the survey and the report are more detailed, but that is right.

It is more an issue that they [carriers] are building with 40Gbps now but will transition to 100Gbps where it can be used. Where it can't be used they stick with 40Gbps. There are many reasons why 100Gbps may not work in existing networks.

Q: Another finding is that 40Gbps is seen as a short-term solution. What is short term? And will that also be true for the metro or does metro have its own dynamic?

A: We didn't test timing explicitly for Greenfield versus Brownfield networks. It [40Gbps] doesn't necessarily peak; it is just not growing at the same rate as 100Gbps. And 40Gbps has even less value in the metro than in the core, particularly in Greenfield builds. With Greenfield, 100Gbps combined with soft-decision forward error correction (SD-FEC) is almost as good as 40Gbps.

Q: The study found that non-coherent 100Gbps isn't yet viewed as an important technology. Why do you think that is so? And what is your take on the non-coherent 100Gbps opportunity?

A: The jury is still out.

The large customers I spoke with haven't looked at it and therefore can't form an opinion. A lot of promises and marketing at this point but that doesn't mean it won't work. Module vendors are pretty excited about it and they aren't stupid.

Q: You say colourless and directionless are seen as important ROADM attributes, gridless and contentionless much less so. If operators are building 100Gbps Greenfield overlays, isn't gridless a must to future-proof the network investment?

A: The gridless requirement is completely overblown and folks positioning it as a requirement today haven't done the work to understand the issues trying to use it today. This survey was even more negative than I expected.

ECI Telecom's Apollo mission

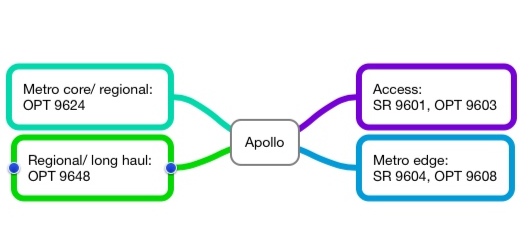

The privately-owned system vendor has launched Apollo, a family of what it calls optimised multi-layer transport platforms.

Event

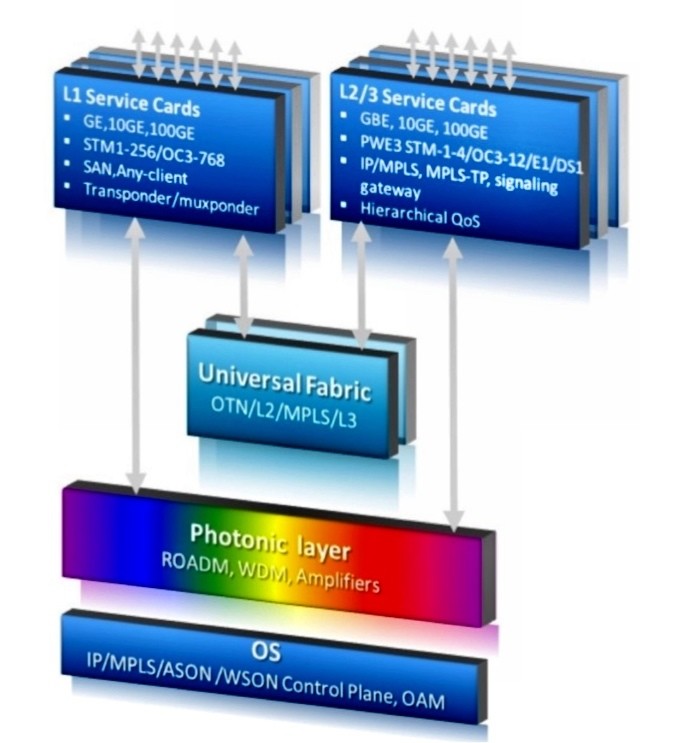

ECI Telecom has launched a family of platforms that combines optical transmission, Ethernet and optical transport network (OTN) switching and IP routing.

The 9600 series platforms, dubbed Apollo, combine the functionality of what until now has required a packet-optical transport system (P-OTS) and a carrier Ethernet switch router (CESR).

The Apollo 9600 series architecture. Source: ECI Telecom

ECI refers to the capabilities of such a combined platform as optimised multi-layer transport (OMLT). Analysts view the platform as a natural evolution of P-OTS rather than a new category of system.

Why is it important?

ECI's Apollo 9600 series is the first to combine dense wavelength-division multiplexing (DWDM) with carrier Ethernet switch routing. It is also one of the first platforms to bring OTN switching to the metro; until now OTN switching has been confined to the network core.

Apollo addresses a shortfall of packet optical transport, namely its limited layer 2 capabilities, says ECI. Apollo also adds layer 3 routing, another first.

“In the buying cycle, operators start with optical networking and add carrier Ethernet switch routing,” says Oren Marmur, head of optical networking & CESR lines of business at ECI Telecom. Now with Apollo, operators can simplify their networks: they don't have to provision, or maintain, two separate platforms.

ECI claims the Apollo platform, with 100 Gigabit-per-second (Gbps) transport and hybrid Ethernet and OTN cards, more than halves the equipment cost compared to using separate ROADM and CESR platforms. The company also says such an Apollo configuration reduces rack space by 38% and power consumption by some 60%.

What has been done

ECI has announced six Apollo platforms that span the access, metro and core networks. The platforms include the SR 9601 and OPT 9603 for metro access and the metro edge SR 9604 and OPT 9608 with four and eight input-output (I/O) cards respectively that support WDM or 100Gbps Ethernet MPLS packet switching. The final two platforms are the OPT 9624 for metro core and the OPT 9648 for regional and long haul, and both can accommodate a terabit universal switch.

Overall Apollo can support 44 or 88 light paths at 10, 40 and 100Gbps, 2-degree and multi-degree colourless, directionless and contentionless ROADMs, OTN and Ethernet switching, and IP/ MPLS and MPLS-TP. "MPLS-TP versus IP/ MPLS is almost a religious issue yet both are valid," says Marmur, who adds that at 40 Gig, ECI will use coherent and direct detection technologies but at 100 Gig it will use only coherent.

The universal fabric of the OPT 9624 and 9648 is cell-based and switches ODUs and packets, not lower-order SONET/SDH traffic. If an operator has any significant amount of SONET/SDH traffic, ECI’s XDM platform or another aggregation box is needed.

The platforms can be configured as CESR platforms, OTN switches, optical transport platforms or combinations of the three.

Analysis

Gazettabyte asked Sterling Perrin, senior analyst at Heavy Reading; Rick Talbot, senior analyst, optical infrastructure at Current Analysis and Dana Cooperson, vice president of the network infrastructure practice at Ovum for their views about the ECI announcement.

Sterling Perrin, Heavy Reading

Apollo has several noteworthy aspects, according to Heavy Reading.

“It is a big announcement for ECI and a big announcement for the industry," says Perrin. “They are doing with the technology some fundamental things that are new.” That said, it remains to be seen how quickly operators will embrace an OMLT-style platform, he says.

Apollo confirms one networking trend - moving the OTN switching fabric into the metro network. So far OTN has been confined largely to the core network. “I know operators are interested but they are still evaluating it,” says Perrin. “But OTN will migrate down from the core to the metro.” Others that have announced such a capability include Ciena and Huawei.

ECI has also put the DWDM transport with the CESR platform. “This is another trend we figured would happen,” he says. “This puts ECI very early, if not first, in doing that function.”

Perrin has his doubts about how quickly the layer 3 functionality added to the platform will be embraced by operators: “What I've seen from the industry is that MPLS-TP will give you that functionality over time as it matures, so this sort of platform may not need the full layer 3 functions.”

The modular design, which allows operators to add only the functionality they need, helps avoid one issue associated with integrated platforms: paying for functionality that is not needed. And there are cost savings by having a single integrated platform. “You do want to save capex and opex and this is definitely a way to get that done,” says Perrin.

In the network core, the question remains whether packet needs to be combined with the optics. “Metro lends itself more to the integration than the core does,” he says.

ECI’s biggest competitor is probably Huawei and over time also ZTE, says Perrin. ECI has done well in India and other emerging markets that many of the system vendors were ignoring. “Now they have Huawei in the mix, it is definitely tougher,” he says. “This [Apollo] announcement will definitely help them.”

Rick Talbot, Current Analysis

Current Analysis categorises the smaller members of the Apollo family as a packet-optical access (POA) portfolio, playing the same role as Ericsson’s SPO 1400 family and Cisco’s CPT series. The market research firm views the largest two Apollo platforms, the OPT 9624 and 9648, as packet-optical transport systems.

The Apollo POAs bring protocol-agnostic packet switching to the aggregation network, says Talbot, a rarity in this part of the network. Several vendors offer P-OTS with universal switching fabrics but most do not extend that architecture into the aggregation network, Tellabs with the 7100 Nano OTS being the exception. Also the 9600 series IP/ MPLS and MPLS-TP options are very strong, providing what Cisco and Ericsson call unified MPLS, he says.

For Current Analysis, the significance of the portfolio is that the Apollo family delivers converged packet and time-division multiplexing (TDM) switching in a single switch fabric, and provides an infrastructure that extends from the network core to the access network edge.

The switching fabric provides the greatest efficiency for the ultimate traffic type - packets - while simplifying the network architecture and minimising equipment cost. In turn, the breadth of the portfolio provides a common set of capabilities across an operator’s network, minimising training costs and spares inventory.

As for the specification, the wide range of MPLS features integrated into this product family, its terabit universal switch and its 100Gbps DWDM transport capabilities are impressive, says Talbot.

“The primary gap in the portfolio, and it is hard to fault ECI for this, is that the highest capacity member of the family supports ’only’ 1 Terabit-per-second of switching capacity,” he says. “This is not large enough for a Tier 1 core optical switch.”

ECI must first execute on the production of the Apollo family, but if it does, Talbot believes that ECI will capture the interest of larger and more end-to-end operators in markets it already serves.

ECI will also have positioned itself to capture the attention of many European operators and, if it makes a push there, the North American market. However Talbot believes ECI will still be challenged to capture the attention of Tier 1 operators because of the family’s limited maximum scale.

Dana Cooperson, Ovum

Size and scale breed specialisation, says Cooperson. “Large service providers, including the Tier 1s, won’t be so interested in the OMLT, but they aren’t the target anyway,” she says. Large service providers need plenty of scale when it comes to WDM and CESR functionality, while they also tend to have compartmentalised operations groups. “So an all-in-one product like the OMLT isn’t targeted at them,” she says.

ECI has always done well selling to the Tier 2 and Tier 3 carriers as well as enterprises such as utilities that have carrier-like networks. That is because ECI's modular, packet-based platforms are sized and priced to match such operators' and enterprises’ requirements. “I see the OMLT as a continuation of ECI's positioning of its XDM platform,” she says.

Cooperson says that it can be difficult to position vendors’ switch announcements and that they should do more to explain where they sit. But she stresses that the Apollo 9600 series is very different from Juniper's PTX, for example.

“The PTX is positioned in the core as a lower-cost alternative to core routers, while the OMLT as a CESR or even an OTN switch is meant more for smaller sites,” she says. Also the switch capacities of the smaller Apollo platforms fit with ECI's focus and positioning on smaller customers and smaller sites.

Cooperson also highlights the need for the XDM platform if an operator requires SONET/SDH support but says ECI has alluded to add/drop multiplexer blades as well as packet blades. "The [Apollo] focus is on the packet and photonic bits,” says Cooperson. “ECI did emphasize that the XDM isn’t going anywhere, but we’ll see what happens over time and how much SONET/SDH ECI builds in [if any to the Apollo].”

Transmode chooses coherent for 100 Gigabit metro

Transmode has detailed its 100 Gigabit metro strategy based on a stackable rack, a concept borrowed from the datacom world.

The Swedish system vendor has adopted coherent detection technology for 100 Gigabit-per-second (Gbps) optical transmission, unlike other recent metro announcements from ADVA Optical Networking and MultiPhy based on 100Gbps direct-detection.

"Metro is a little bit diverse. You see different requirements that you have to adapt to."

Sten Nordell, Transmode

"We are getting requests for this and we think 2012 is when people are going to put in a low number of [100Gbps] links into the metro," says Sten Nordell, CTO at Transmode.

The 100Gbps requirements Transmode is seeing include connecting data centres over various distances. The data centres can be close - tens of kilometers - or hundreds of kilometers apart.

"They [data centre operators] want to get more capacity over longer distances over the fibre they have rented," says Nordell. "That is why we are going down the standards path of coherent technology that gives you that boost in power and distance."

Nordell says that customers typically only want one or two 100Gbps light paths to expand fibre capacity or to connect IP routers over a link already carrying multiple 10Gbps light paths. "Metro is a little bit diverse," he says. "You see different requirements that you have to adapt to."

Rack system approach

Transmode has adopted a stackable approach to its 100Gbps TM-series of chassis. The TM-2000 is a 4U-high dual 100Gbps rack that implements transponder, muxponder or regeneration functions. "We have borrowed from Ethernet switches - you add as you grow," says Nordell.

Up to four TM-2000 are used with one TM-301 or TM-3000 master rack, with the architecture supporting up to 80, 100Gbps wavelengths overall.

"If you have too many ROADMs in the way it is going to hurt you. We have seen that with 40 Gig."

The system also uses daughter boards that support various client-side interfaces while keeping the 100Gbps line-side interface - the most expensive system component - intact. "You can install a muxponder of 10x10Gig modules,” says Nordell. "When an IP router upgrades to a 100 Gig interface, you take out the daughter board and put in a 100 Gig transponder."

Transmode will offer two line-side coherent options, with a reach of 750km or 1,500km. "We want to make sure that customers' metro and long-haul requirements will be covered," says Nordell.

The reach of various 100Gbps technologies for the metro edge, core and regional networks. Source: Gazettabyte

The company chose coherent technology because it is an industry-backed standard. "We can benefit from coherent technology," he says. "If the industry aligns, the volumes of the components come down in price."

Coherent also simplifies the setting up and commissioning of agile photonic networks, especially as more ROADMs are introduced in the metro. "Coherent will help simplify this. All the others are more complex," he says. "Beforehand metro was more point-to-point, now we are seeing more flexibility."

Transmode recently announced it is supplying its systems to Virgin Mobile for mobile backhaul. "That is a metro network with all ROADMs in it," says Nordell. Such networks support multiple paths and that translates to a need for greater reach. "The power budget we need to have in the metro is going up a little bit."

Direct-detection technology was considered by Transmode but it chose coherent as it gives customers a better networking design capability.

Direct detection is also not as spectrally efficient as coherent: it needs 200GHz- or 100GHz-wide channels for a 100Gbps signal rather than coherent's 50GHz. "If you have too many ROADMs in the way it is going to hurt you," says Nordell. "We have seen that with 40 Gig."
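To put rough numbers on the spectral-efficiency argument, the sketch below compares the channel widths cited for a 100Gbps signal. It assumes about 4,000GHz of usable C-band spectrum, which is consistent with the 80 x 50GHz figure quoted for 100Gbps coherent systems. It also ignores guard bands. The function names are illustrative only.

```python
# Rough comparison of the 100Gbps channel widths quoted for direct detection
# (200GHz or 100GHz) and coherent detection (50GHz).
# Assumption: ~4,000GHz of usable C-band spectrum, guard bands ignored.
C_BAND_GHZ = 4000
LINE_RATE_GBPS = 100

def spectral_efficiency(rate_gbps: float, width_ghz: float) -> float:
    """Bits per second per hertz carried by one channel."""
    return rate_gbps / width_ghz

def channels_in_band(width_ghz: int, band_ghz: int = C_BAND_GHZ) -> int:
    """Whole channels of the given width that fit in the band."""
    return band_ghz // width_ghz

for label, width in [("direct detection, 200GHz", 200),
                     ("direct detection, 100GHz", 100),
                     ("coherent, 50GHz", 50)]:
    n = channels_in_band(width)
    print(f"{label}: {spectral_efficiency(LINE_RATE_GBPS, width):.1f} b/s/Hz, "
          f"{n} channels, {n * LINE_RATE_GBPS / 1000:.1f}Tbps per fibre")
```

Under these assumptions, halving the channel width doubles the number of 100Gbps wavelengths a fibre can carry. The same channel count can still be hard to reach in practice if many ROADM filters sit in the path.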

The TM-2000 rack will begin testing in customers' networks at the start of 2012, with limited availability from mid-2012. The platform and daughter boards will be available in volume by year-end 2012.

High fives: 5 Terabit OTN switching and 500 Gig super-channels

Infinera has announced a core network platform that combines Optical Transport Network (OTN) switching with dense wavelength division multiplexing (DWDM) transport. "We are looking at a system that integrates two layers of the network," says Mike Capuano, vice president of corporate marketing at Infinera.

"This is 100Tbps of non-blocking switching, all functioning as one system. You just can't do that with merchant silicon."

Mike Capuano, Infinera

The DTN-X platform is based on Infinera's third-generation photonic integrated circuit (PIC) that supports five, 100Gbps coherent channels.

Each DTN-X platform can deliver 5 Terabits-per-second (Tbps) of non-blocking OTN switching using an Infinera-designed ASIC. Ten DTN-X platforms can be combined to scale the OTN switching and transport capacity to 50Tbps currently.

Infinera also plans to add Multiprotocol Label Switching (MPLS) to turn the DTN-X into a hybrid OTN/ MPLS switch. With the next upgrades to the PIC and the switching, the ten DTN-X platforms will scale to 100Tbps optical transport and 100Tbps OTN and MPLS switching capacity.

The platform is being promoted by Infinera as a way for operators to tackle network traffic growth and support developments such as cloud computing where applications and content increasingly reside in the network. "What that means [for cloud-based services to work] is a network with huge capacity and very low latency," says Capuano.

Platform details

The 5x100Gbps PIC supports what Infinera calls a 500Gbps 'super-channel'. Each super-channel is a multi-carrier implementation comprising five, 100Gbps wavelengths. Combined with OTN, the 500Gbps super-channel can be filled with 1, 10, 40 and 100 Gigabit streams (SONET/SDH, Ethernet, video etc). Moreover, there is no spectral efficiency penalty: the super-channel uses 250GHz of fibre spectrum, provisioning five 50GHz-wide, 100Gbps wavelengths at a time.
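The sketch below offers a simplified way to picture how OTN lets mixed-rate clients share the super-channel. The model is our own assumption, not Infinera's implementation. Each 100Gbps carrier is treated as an ODU4 with 80 tributary slots of roughly 1.25Gbps. Each client takes the slot count we understand ITU-T G.709 to assign: 1 slot for a 1Gbps (ODU0) client, 8 for 10Gbps, 31 for 40Gbps (ODU3) and all 80 for 100Gbps. Clients are placed first-fit.

```python
# Hypothetical tributary-slot model of a 5 x 100Gbps super-channel filled via OTN.
# Assumptions: ODU4-style carriers, G.709-style slot counts, first-fit placement.
SLOTS_PER_CARRIER = 80                          # ~1.25Gbps tributary slots per 100Gbps carrier
CARRIERS = 5                                    # five carriers = 500Gbps super-channel
SLOTS_NEEDED = {1: 1, 10: 8, 40: 31, 100: 80}   # client rate (Gbps) -> tributary slots

def fill_super_channel(clients_gbps):
    """Place each client in the first carrier with enough free slots.

    Returns (carrier index per client, free slots left per carrier)."""
    free = [SLOTS_PER_CARRIER] * CARRIERS
    placement = []
    for rate in clients_gbps:
        need = SLOTS_NEEDED[rate]
        for i, slots in enumerate(free):
            if slots >= need:
                free[i] -= need
                placement.append(i)
                break
        else:  # no carrier had room for this client
            raise ValueError(f"no room left for a {rate}Gbps client")
    return placement, free

placement, free = fill_super_channel([100, 100, 40, 40, 10, 10, 10, 1, 1])
print("carrier per client:", placement)
print("free slots per carrier:", free)
```

A real OTN switch would also weigh protection, ODUflex and where each client is dropped. The point here is only that electrical multiplexing lets 1, 10, 40 and 100Gbps streams share the five carriers.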

"We have seen 40 and 100Gbps come on the market and they are definitely helping with fibre capacity issues," says Capuano. "But they are more expensive from a cost-per-bit perspective than 10Gbps." By introducing the 500Gbps PIC, Infinera says it is reducing the cost per bit of high-speed optical transport.

DTN-X: shown are 5 line and tributary cards top and bottom with switching cards in the centre of the chassis. Source: Infinera

Infinera says integrating OTN switching within the platform results in the lowest-cost solution. It also says this is more efficient than either manually configured multiplexed transponders (muxponders) or an external OTN switch that must be optically connected to the transport platform.

The DTN-X also employs Generalised MPLS (GMPLS) software. "GMPLS makes it easy to deploy networks and services with point-and-click provisioning," says Capuano.

Each DTN-X line card supports a 500Gbps PIC, but the chassis backplane is specified at 1Tbps, ready for Infinera's next-generation 10x100Gbps PIC that will upgrade the DTN-X to a 10Tbps system. "We have already presented our test results for our 1Tbps PIC back in March," says Capuano. The fourth-generation PIC will support a 1Tbps super-channel. It is estimated to arrive around 2014, based on a company slide, although Infinera has made no public comment.
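The DTN-X scaling figures can be checked with simple arithmetic. The text does not give the line-card slot count. The sketch infers it by dividing the 5Tbps chassis figure by the 500Gbps per-card PIC, so treat that number as an assumption.

```python
# Back-of-envelope DTN-X scaling using the figures quoted in the article.
# Assumption: slot count inferred as 5Tbps / 500Gbps; not an Infinera figure.
CARRIER_GBPS = 100

def pic_capacity_gbps(carriers: int) -> int:
    """Capacity of a PIC carrying the given number of 100Gbps carriers."""
    return carriers * CARRIER_GBPS

current_pic = pic_capacity_gbps(5)          # third-generation PIC: 500Gbps
next_pic = pic_capacity_gbps(10)            # planned 10 x 100Gbps PIC: 1Tbps
slots = 5000 // current_pic                 # inferred line-card slots per chassis

chassis_now = slots * current_pic / 1000    # Tbps per DTN-X today
chassis_next = slots * next_pic / 1000      # Tbps per DTN-X with the 1Tbps PIC
print(chassis_now, chassis_now * 10)        # ten combined systems today
print(chassis_next, chassis_next * 10)      # ten combined systems after the upgrade
```

Ten chassis give 50Tbps today and 100Tbps once the 1Tbps backplane is populated with the next PIC. These totals match the transport figures quoted for the platform.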

Adding MPLS will add the transport capability of the protocol to the DTN-X. "You will have MPLS transport, OTN switching and DWDM all in one platform," says Capuano.

OTN switching is the priority of the tier-one operators to carry and process their SONET/SDH traffic; adding MPLS will bring extra traffic processing capabilities to the system, he says.

Infinera says that by eventually integrating MPLS switching into the optical transport network, operators will be able to bypass expensive router ports and simplify their network operation.

Performance

Infinera says that the DTN-X's 5Tbps performance does not dip, however the system is configured: whether solely as a switch (all line card slots filled with tributary modules), mixed DWDM/ switching (half DWDM/ half tributaries, for example) or solely as a DWDM platform. Depending on the cards in the DTN-X platform, the transport/ switching configuration can be varied but the 5Tbps I/O capacity is retained. Infinera says other switches on the market do lose I/O capacity as the interface mix is varied.

Overall, Infinera claims the platform requires half the power of competing solutions and takes up a third less space.

The DTN-X will be available in the first half of 2012.

Analysis

Gazettabyte asked several market research firms about the significance of the DTN-X announcement and the importance of combining OTN, DWDM and soon MPLS within one platform.

Ovum

Ron Kline, principal analyst, and Dana Cooperson, vice president, of the network infrastructure practice

"MPLS switching is setting up a very interesting competitive dynamic among vendors"

Dana Cooperson, Ovum

The DTN-X is a platform for the largest service providers and their largest sites, says Ovum.

It sees the DTN-X in the same light as other integrated OTN/ WDM platforms such as Huawei's OSN 8800, Nokia Siemens Networks' hiT 7100, Alcatel-Lucent's 1830 PSS and Tellabs' 7100 OTS.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added," says Kline. "NSN is also claiming it will add MPLS to the 7100. Once MPLS is added, then you have the big packet optical transport box that Verizon wants."

The DTN-X platform will boost the business case for 100 Gig in a similar way to how Infinera's current PIC has done at 10 Gig. "The others will be forced to lower price," says Kline.

Having GMPLS is important, especially if there is a need to do dynamic bandwidth allocation, however it is customer-dependent. "When you start digging, it's hard to find large-scale implementations of GMPLS," says Kline.

The Ovum analysts stress that the need for OTN in the core depends on the customer. Content service providers like Google couldn't care less about OTN. "It's really an issue for multi-service providers like BT and AT&T," says Cooperson.

There is a consensus about the need for MPLS in the core. "Different service providers are likely to take different approaches: some might prefer an integrated box and others might not; it depends on their business," she says. "I think MPLS switching is setting up a very interesting competitive dynamic among vendors that focus on IP/MPLS, those that focus on optical, and those that are trying to do both [optical and IP/MPLS]."

Ovum highlights several aspects regarding the DTN-X's claimed performance.

"Assuming it performs as advertised, this should finally give Infinera what it needs to be of real interest to the tier-ones," says Cooperson. "The message of scalability, simplicity, efficiency, and profitability is just what service providers want to hear."

Cooperson also highlights Infinera's approach to optical-electrical-optical conversion and the benefit this could deliver at line speeds greater than 100Gbps.

At present, ROADMs are being upgraded to support flexible spectrum channel configurations, also known as gridless. This is to enable future line speeds that will use more spectrum than current 50GHz DWDM channels. Operators want ROADMs that support flexible spectrum requirements, but how to manage the network to support these variable-width channels is still to be resolved.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added"

Ron Kline, Ovum

Infinera's approach is based on conversion to the electrical domain when dropping and regenerating wavelengths such that the issue of flexible channels does not arise or is at least forestalled. This, says Cooperson, could be Infinera's biggest point of differentiation.

"What impresses me is the 500Gbps super-channel using five, 100Gbps carriers and the size of the switch fabric," adds Kline. The 5Tbps switching performance also exceeds that of everyone else: "Alcatel-Lucent is closest with 4Tbps but most range from 1-3Tbps and top out at 3Tbps."

The ease of use is also a big deal. Infinera did very well in marketing rapid turn up: 10 Gig in 10 days for example, says Kline: "It looks like they will be able to do the same here with 100 Gig."

Infonetics Research

Andrew Schmitt, directing analyst, optical

"GMPLS isn't that important, yet."

The DTN-X is a WDM platform which optionally includes a switch fabric for carriers that want it integrated with the transport equipment, says Schmitt. Once MPLS is added, it has the potential to be a full-blown packet-optical system.

"[The announcement is] pretty significant though not unexpected," says Schmitt. "I think the key question is what it costs, and whether the 500G PIC translates into compelling savings."

Having MPLS support is important for some carriers such as XO Communications and Google but not for others.

Schmitt also says GMPLS isn't that important, yet. "Infinera's implementation of regen-rich networks should make their GMPLS implementation workable," he says. "It has been building networks like that for a while."

OTN in the core is still an open debate, but any carrier that doesn't have the luxury of a homogeneous data network needs it, he says.

Schmitt has yet to speak with carriers who have used the DTN-X: "I can't comment on claimed performance but like I said, cost is important."

ACG Research

Eve Griliches, managing partner

"Infinera has already introduced the 500G PIC, but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today"

The DTN-X is an OTN/ WDM platform awaiting label switch router (LSR) functionality, says Griliches: "With the LSR functionality it will be able to do statistical multiplexing for direct router connections."

Infinera has already introduced the 500 Gig PIC but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today. An OTN survey conducted last year by ACG Research found that the switch capacity sweet spot is between 4 and 8Tbps.

Griliches says that LSR-based products are taking time to incorporate WDM and OTN technologies, while it is unclear when the DTN-X will support MPLS to add LSR capabilities. The race is on as to who can integrate everything first, but DWDM and OTN before MPLS is the right direction for most tier-one operators, she says.

Infinera has over eight thousand of its existing DTNs deployed at 85 customers in 50 countries. The scale of the DTN-X will likely broaden Infinera's customer base to include tier-one operators, says Griliches.

ACG Research has heard positive feedback from operators it has spoken to. One stressed that the decreased port count due to the larger OTN cross-connect significantly improves efficiencies. Another operator said it would pick Infinera and said the beta version of the 500Gbps PIC is "working beautifully".

Terabit Consortium embraces OFDM

“This project is very challenging and very important”

Shai Stein, Tera Santa Consortium

Given the continual growth in IP traffic, higher-speed light paths are going to be needed, says Shai Stein, chairman of the Tera Santa Consortium and ECI Telecom’s CTO: “If 100 Gigabit is starting to be deployed, within five years we’ll start to see links with tenfold that capacity, meaning one Terabit.”

The project is funded by the seven participating firms and the Israeli Government. According to Stein, the Government has invested little in optical projects in recent years. “When we look at the [Israeli] academies and industry capabilities in optical, there is no justification for this,” says Stein. “We went with this initiative in order to get Government funding for something very challenging that will position us in a totally different place worldwide.”

Orthogonal frequency division multiplexing

OFDM differs from traditional dense wavelength division multiplexing (DWDM) technology in how fibre bandwidth is used. Rather than sending all the information on a lightpath within a single 50 or 100GHz channel – dubbed single-carrier transmission – OFDM uses multiple narrow carriers. “Instead of using the whole bandwidth in one bulk and transmitting the information over it, [with OFDM] you divide the spectrum into pieces and on each you transmit a portion of the data,” says Stein. “Each sub-carrier is very narrow and the summation of all of them is the transmission.”
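The division Stein describes, splitting the spectrum into narrow sub-carriers and summing them into one transmission, is commonly implemented with an inverse discrete Fourier transform at the transmitter and a forward transform at the receiver. The sketch below shows that textbook round trip in plain Python. Its assumptions are illustrative: 16 sub-carriers, QPSK on every sub-carrier, an ideal channel, and a slow direct DFT in place of an FFT. It is not the Tera Santa Consortium's design.

```python
import cmath
import random

N_SUBCARRIERS = 16  # illustrative; practical systems use many more

def idft(freq):
    """Inverse DFT: sum every sub-carrier into one time-domain sample stream."""
    n = len(freq)
    return [sum(freq[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

def dft(samples):
    """Forward DFT: separate the time-domain stream back into sub-carriers."""
    n = len(samples)
    return [sum(samples[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def qpsk(b0, b1):
    """Map two bits to one unit-energy QPSK symbol."""
    return complex(1 - 2 * b0, 1 - 2 * b1) / 2 ** 0.5

def qpsk_decide(symbol):
    """Hard decision back to two bits."""
    return int(symbol.real < 0), int(symbol.imag < 0)

random.seed(1)
tx_bits = [(random.randint(0, 1), random.randint(0, 1)) for _ in range(N_SUBCARRIERS)]
tx_symbols = [qpsk(b0, b1) for b0, b1 in tx_bits]   # a slice of the data per sub-carrier

samples = idft(tx_symbols)                           # the summed transmission
rx_symbols = dft(samples)                            # recover each narrow sub-carrier
rx_bits = [qpsk_decide(s) for s in rx_symbols]
print("bit errors:", sum(a != b for a, b in zip(tx_bits, rx_bits)))
```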

“Each time there is a new arena in telecom we find that there is a battle between single carrier modulation and OFDM; VDSL began as single carrier and later moved to OFDM,” says Amitai Melamed, involved in the project and a member of ECI’s CTO office. “In the optical domain, before running to [use] single-carrier modulation as is currently done at 100 Gigabit, it is better to look at the OFDM domain in detail rather than jump at single-carrier modulation and question whether this was the right choice in future.”

OFDM delivers several benefits, says Stein, especially in the flexibility it brings in managing spectrum. OFDM allows a fibre’s spectrum band to be used right up to its edge. Indeed Melamed is confident that by adopting OFDM for optical, the spectrum efficiency achieved will eventually match that of wireless.

“OFDM is very tolerant to rate adaptation.”

Amitai Melamed, ECI Telecom

The technology also lends itself to parallel processing. “Each of the sub-carriers is orthogonal and in a way independent,” says Stein. “You can use multiple small machines to process the whole traffic instead of a single engine that processes it all.” With OFDM, the impact of chromatic dispersion is also reduced because each sub-carrier is narrow in the frequency domain.
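A worked example makes it easier to see why narrow, orthogonal sub-carriers can be handled by "multiple small machines". In the sketch below, a cyclic prefix longer than the channel's memory turns a dispersive channel into a single complex gain per sub-carrier. Each sub-carrier, or group of sub-carriers, can then be equalised with one division, independently of the rest. The three-tap channel is a hypothetical stand-in for fibre impairments; real chromatic dispersion is a phase-only response. The sizes and the two-way split are also illustrative assumptions.

```python
import cmath
import random

N, CP_LEN = 16, 4
CHANNEL = [1.0, 0.4 - 0.3j, 0.1j]   # hypothetical 3-tap dispersive channel, memory < CP_LEN

def idft(x):
    n = len(x)
    return [sum(x[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]

def dft(x):
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]

def convolve(signal, taps):
    """Linear convolution: the channel smears each sample into the following ones."""
    out = [0j] * (len(signal) + len(taps) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(taps):
            out[i + j] += s * h
    return out

random.seed(2)
tx = [complex(random.choice((-1, 1)), random.choice((-1, 1))) for _ in range(N)]
frame = idft(tx)
frame = frame[-CP_LEN:] + frame                         # cyclic prefix absorbs the smearing
received = convolve(frame, CHANNEL)[CP_LEN:CP_LEN + N]  # drop prefix (and channel tail)
rx = dft(received)

# Each sub-carrier now sees only one complex gain H[k], the channel's DFT,
# so equalisation is a single division per sub-carrier with no cross-talk.
H = dft(CHANNEL + [0j] * (N - len(CHANNEL)))

def equalise(block):
    """Equalise one independent group of sub-carriers (could run on its own engine)."""
    return [rx[k] / H[k] for k in block]

halves = [range(0, N // 2), range(N // 2, N)]           # two hypothetical "small machines"
eq = [s for block in halves for s in equalise(block)]
print("max error after equalisation:", max(abs(a - b) for a, b in zip(eq, tx)))
```

Because no block needs data from another, the groups could be processed in parallel. A single-carrier receiver would instead have to undo the channel smearing across the whole signal.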

Using OFDM, the modulation scheme used per sub-carrier can vary depending on channel conditions. This delivers a flexibility absent from existing single-carrier modulation schemes such as quadrature phase-shift keying (QPSK) that is used across all the channel bandwidth at 100 Gigabit-per-second (Gbps). “With OFDM, some of the bins [sub-carriers] could be QPSK but others could be 16-QAM or even more,” says Melamed.

The approach enables the concept of an adaptive transponder. “I don’t always need to handle fibre as a time-division multiplexed link – either you have all the capacity or nothing,” says Melamed. “We are trying to push this resource to be more tolerant to the media: We can sense the channels and adapt the receiver to the real capacity.” Such an approach better suits the characteristics of packet traffic in general, he says: “OFDM is very tolerant to rate adaptation.”
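Per-sub-carrier format selection of the kind Melamed describes is often called bit loading. The sketch below assumes the transponder has already measured an SNR for each sub-carrier; the SNR thresholds are hypothetical placeholders, since real values depend on the target error rate, the forward error correction and the hardware.

```python
# Hypothetical minimum SNR (dB) each format needs on a sub-carrier; real
# thresholds depend on the target error rate, FEC and transponder design.
FORMATS = [  # (name, bits per symbol, assumed minimum SNR in dB), densest first
    ("16-QAM", 4, 17.0),
    ("QPSK", 2, 10.0),
]

def load_bits(snr_db_per_subcarrier):
    """Assign each sub-carrier the densest format its measured SNR supports."""
    plan = []
    for snr in snr_db_per_subcarrier:
        choice = ("off", 0)  # too noisy: leave this bin unused
        for name, bits, min_snr in FORMATS:
            if snr >= min_snr:
                choice = (name, bits)
                break
        plan.append(choice)
    return plan

def bits_per_ofdm_symbol(plan):
    """Total bits carried by one OFDM symbol across all sub-carriers."""
    return sum(bits for _, bits in plan)

# Example: sensed per-sub-carrier SNRs (hypothetical values).
plan = load_bits([20.0, 18.5, 12.0, 5.0])
print(plan, bits_per_ofdm_symbol(plan))
```

If a sub-carrier's SNR degrades, re-running the loading lowers only that bin's bit count, so the link's capacity falls gradually rather than all at once.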

The Consortium’s goal is to deliver a 1 Terabit light path in a 175GHz channel. At present, 160 wavelengths at 40Gbps can be crammed within a fibre's C-band, equating to 6.4Tbps using 25GHz channels. At 100Gbps, 80 channels, or 8Tbps, is possible using 50GHz channels. A 175GHz channel spacing at 1Tbps would result in an overall capacity of around 23Tbps. However, this figure is likely to be reduced in practice since frequency guard-bands between channels are needed. The spectrum spacings at speeds greater than 100Gbps are still being worked out as part of ITU work on "gridless" channels (see OFC announcements and market trends story).
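The capacity figures follow from simple arithmetic. The sketch assumes roughly 4THz of usable C-band, which is what both 80 x 50GHz and 160 x 25GHz imply; the 25GHz guard-band in the last line is a hypothetical value chosen only to show its effect. Counting only whole 175GHz channels gives 22 channels, or 22Tbps; the ~23Tbps figure corresponds to 4,000/175 ≈ 22.9 without rounding down.

```python
C_BAND_GHZ = 4000.0  # assumed usable C-band, consistent with 80 x 50GHz or 160 x 25GHz

def fibre_capacity(band_ghz, spacing_ghz, rate_gbps, guard_ghz=0.0):
    """Return (whole channels that fit, total capacity in Gbps)."""
    channels = int(band_ghz // (spacing_ghz + guard_ghz))
    return channels, channels * rate_gbps

print(fibre_capacity(C_BAND_GHZ, 25, 40))                   # (160, 6400)
print(fibre_capacity(C_BAND_GHZ, 50, 100))                  # (80, 8000)
print(fibre_capacity(C_BAND_GHZ, 175, 1000))                # (22, 22000)
print(fibre_capacity(C_BAND_GHZ, 175, 1000, guard_ghz=25))  # (20, 20000)
```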

ECI stresses that fibre capacity is only one aspect of performance, however, and that at 1Tbps the optical reach achieved is reduced compared to transmissions at 100Gbps. “It is not just about having more Gigabit-per-second-per-Hertz but how we utilize the resource,” says Melamed. “A system with an adaptive rate optimises the resource in terms of how capacity is managed.” For example, if there is no need for a 1Tbps link at a certain time of the day, the system can revert to a lower speed and use the spectrum freed up for other services. Such a concept will enable the DWDM system to be adaptive in capacity, time and reach.

Project focus

The project is split between digital and analogue (optical) development work. The digital part concerns OFDM and how the signals are processed in a modular way.

The analogue work involves overcoming several challenges, says Stern. One is designing and building the optical functions needed for modulation and demodulation with the accuracy required for OFDM. Another is achieving a compact design that fits within an optical transceiver. Dividing the 1Tbps signal into several sub-bands will require optical components to be implemented as a photonic integrated circuit (PIC). The PIC will integrate arrays of components for sub-band processing and will be needed to achieve the required cost, space and power consumption targets.

Taking part in the project are seven Israeli companies (ECI Telecom, the Israeli subsidiary of Finisar, MultiPhy, Civcom, Orckit-Corrigent, Elisra-Elbit and Optiway) as well as five Israeli universities.


“There are three types of companies,” says Stern. “Companies at the component level – digital components like digital signal processors and analogue optical components, sub-systems such as transceivers, and system companies that have platforms and a network view of the whole concept.”

The project goal is to provide the technology enablers to build a terabit-enabled optical network. A simple prototype will be built to check the concepts and the algorithms before proceeding to the full 1 Terabit proof-of-concept, says Stern. The five Israeli universities will provide a dozen research groups covering issues such as PIC design and digital signal processing algorithms.

Any intellectual property resulting from the project is owned by the company that generates it although it will be made available to any other interested Consortium partner for licensing.

Project definition work, architectures and simulation work have already started. The project will take between three and five years, but it has a deadline after three years when the Consortium will need to demonstrate the project's achievements. “If the achievements justify continuation I believe we will get it [a funding extension],” says Stern. “But we have a lot to do to get to this milestone after three years.”

Project funding for the three years is around US $25M, with the Israeli Office of the Chief Scientist (OCS) providing 50 million NIS (US $14.5M) via the Magnet programme, which ECI says is “over half” of the overall funding.


Infinera details Terabit PICs, 5x100G devices set for 2012

Infinera has given first detail of its terabit coherent detection photonic integrated circuits (PICs). The pair - a transmitter and a receiver PIC – implement a ten-channel 100 Gigabit-per-second (Gbps) link using polarisation multiplexing quadrature phase-shift keying (PM-QPSK). The Infinera development work was detailed at OFC/NFOEC held in Los Angeles between March 6-10.

Infinera has recently demonstrated its 5x100Gbps PIC carrying traffic between Amsterdam and London within Interoute Communications’ pan-European network. The 5x100Gbps PIC-based system will be available commercially in 2012.

“We think we can drive the system from where it is today – 8 Terabits-per-fibre - to around 25 Terabits-per-fibre”

Dave Welch, Infinera

Why is this significant?

The widespread adoption of 100Gbps optical transport technology will be driven by how quickly its cost can be reduced to compete with existing 40Gbps and 10Gbps technologies.

Whereas the industry is developing 100Gbps line cards and optical modules, Infinera has demonstrated a 5x100Gbps coherent PIC based on 50GHz channel spacing while its terabit PICs are in the lab.

If Infinera meets its manufacturing plans, it will have a compelling 100Gbps offering as it takes on established 100Gbps players such as Ciena. Infinera has been late in the 40Gbps market, competing with its 10x10Gbps PIC technology instead.

40 and 100 Gigabit

Infinera views 40Gbps and 100Gbps optical transport in terms of the dynamics of the high-capacity fibre market: in particular, what is the right technology to get the most capacity out of a fibre, and what is the best dollar-per-Gigabit technology at a given moment.

For the long-haul market, Dave Welch, chief strategy officer at Infinera, says 100Gbps provides 8 Terabits (Tb) of capacity using 80 channels versus 3.2Tb using 40Gbps (80x40Gbps). The 40Gbps total capacity can be doubled to 6.4Tb (160x40Gbps) if 25GHz-spaced channels are used, which is Infinera’s approach.

“The economics of 100 Gigabit appear to be able to drive the dollar-per-gigabit down faster than 40 Gigabit technology,” says Welch. If operators need additional capacity now, they will adopt 40Gbps, he says, but if they have spare capacity and can wait till 2012 they can use 100Gbps. “The belief is that they [operators] will get more capacity out of their fibre and at least the same if not better economics per gigabit [using 100Gbps],” says Welch. Indeed Welch argues that by 2012, 100Gbps economics will be superior to 40Gbps coherent leading to its “rapid adoption”.

For metro applications, achieving terabits of capacity in fibre is less of a concern. What matters is matching speeds with services while achieving the lowest dollar-per-gigabit. And it is here – for sub-1000km networks – where 40Gbps technology is being mostly deployed. “Not for the benefit of maximum fibre capacity but to protect against service interfaces,” says Welch, who adds that 40 Gigabit Ethernet (GbE) rather than 100GbE is the preferred interface within data centres.

Shorter-reach 100Gbps

Companies such as ADVA Optical Networking and chip company MultiPhy highlight the merits of a second 100Gbps technology, alongside coherent, based on direct-detection modulation for metro applications. Direct detection is suited to distances from 80km up to 1000km, to connect data centres for example.

Is this market of interest to Infinera? “This is a great opportunity for us,” says Welch.

The company’s existing 10x10Gbps PIC can address this segment, being at least 4x cheaper than emerging 100Gbps coherent solutions over the next 18 months, says Welch, who claims that the company’s 10x10Gbps PIC is making ‘great headway’ in the metro.

“If the market is not trying to get the maximum capacity but best dollar-per-gigabit, it is not clear that full coherent, at least in discrete form, is the right answer,” says Welch. But the cost reduction delivered by coherent PIC technology does make it more competitive for cost-sensitive markets like metro.

A 100Gbps coherent discrete design is relatively costly since it requires two lasers (one acting as the local oscillator (LO) at the receiver; see Figure 1), sophisticated optics and a power-hungry digital signal processor (DSP). “Once you go to photonic integration the extra lasers and extra optics, while a significant engineering task, are not inhibitors in terms of the optics’ cost,” says Welch.

Coherent PICs can be used ‘deeper in the network’ (closer to the edge) while shifting the trade-offs between coherent and on-off keying. However even if the advent of a PIC makes coherent more economical, the DSP’s power dissipation remains a factor regarding the tradeoff at 100Gbps line rates between on-off keying and coherent.

Welch does not dismiss the idea of Infinera developing a metro-centric PIC to reduce costs further. He points out that while such a solution may be of particular interest to internet content companies, their networks are relatively simple point-to-point ones. As such their needs differ greatly from cable operators and telcos, in terms of the services carried and traffic routing.

PIC challenges

Figure 1: Infinera's terabit PM-QPSK coherent receiver PIC architecture


There are several challenges when developing multi-channel 100Gbps PICs. “The most difficult thing going to a coherent technology is you are now dealing with optical phase,” says Welch. This requires highly accurate control of the PIC’s optical path lengths.

The laser wavelength is 1.5 micron, and within the PIC's indium phosphide waveguides this is reduced to about a third, or 0.5 micron. Fine control of the optical path lengths is thus required to tenths of a wavelength, or tens of nanometres (nm).
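The numbers can be checked with a short calculation. The sketch assumes a refractive index of about 3.2 for the indium phosphide waveguide (bulk InP is roughly 3.17 at 1550nm; a real waveguide's effective index differs somewhat) and a 1550nm laser. It also converts a path-length error into an optical phase error, which is what matters in a coherent PIC.

```python
N_INP = 3.2  # assumed effective refractive index of an InP waveguide (approximate)

def guided_wavelength_nm(free_space_nm, index=N_INP):
    """Wavelength of the light inside the waveguide."""
    return free_space_nm / index

def phase_error_deg(path_error_nm, free_space_nm, index=N_INP):
    """Optical phase error caused by a path-length error inside the waveguide."""
    return 360.0 * path_error_nm / guided_wavelength_nm(free_space_nm, index)

lam = guided_wavelength_nm(1550.0)  # 484.375nm, roughly 0.5 micron
tenth = lam / 10                    # ~48nm: the "tens of nanometres" tolerance
print(lam, tenth, phase_error_deg(tenth, 1550.0))  # a tenth of a wavelength is ~36 degrees
```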

Achieving a high manufacturing yield of such complex PICs is another challenge. The terabit receiver PIC detailed in the OFC paper integrates 150 optical components, while the 5x100Gbps transmit and receive PIC pair integrate the equivalent of 600 optical components.

Moving from a five-channel (500Gbps) to a ten-channel (terabit) PIC is also a challenge. There are unwanted interactions in terms of the optics and the electronics. “If I turn one laser on adjacent to another laser it has a distortion, while the light going through the waveguides has potential for polarisation scattering,” says Welch. “It is very hard.”

But what the PICs show, he says, is that Infinera’s manufacturing process is like a silicon fab’s. “We know what is predictable and the [engineering] guys can design to that,” says Welch. “Once you have got that design capability, you can envision we are going to do 500Gbps, a terabit, two terabits, four terabits – you can keep on marching as far as the gigabits-per-unit [device] can be accomplished by this technology.”

The OFC post-deadline paper details Infinera's 10-channel transmitter PIC which operates at 10x112Gbps or 1.12Tbps.

Power dissipation

It is not the optical PIC that dictates the overall achievable bandwidth but rather the total power dissipation of the DSPs on a line card. This is determined by the CMOS process used to make the DSP ASICs, whether 65nm, 40nm or potentially 28nm.

Infinera has not said what CMOS process it is using. What Infinera has chosen is a compromise between “being aggressive in the industry and what is achievable”, says Welch. Yet Infinera also claims that its coherent solution consumes less power than existing 100Gbps coherent designs, partly because the company has implemented the DSP in a more advanced CMOS node than what is currently being deployed. This suggests that Infinera is using a 40nm process for its coherent receiver ASICs. And power consumption is a key reason why Infinera is entering the market with a 5x100Gbps PIC line card. For the terabit PIC, Infinera will need to move its ASICs to the next-generation process node, he says.

Having an integrated design saves power in terms of the speeds at which Infinera runs its serdes (serialiser/deserialiser) circuitry and the interfaces between blocks. “For someone else to accumulate 500Gbps of bandwidth and get it to a switch, this needs to go over feet of copper cable, and over a backplane when one 100Gbps line card talks to a second one,” says Welch. “That takes power - we don’t; it is all right there within inches of each other.”

Infinera can also trade analogue-to-digital (A/D) sampling speed of its ASIC with wavelength count depending on the capacity required. “Now you have a PIC with a bank of lasers, and FlexCoherent allows me to turn a knob in software so I can go up in spectral efficiency,” he says, trading optical reach with capacity. FlexCoherent is Infinera’s technology that will allow operators to choose what coherent optical modulation format to use on particular routes. The modulation formats supported are polarisation multiplexed binary phase-shift keying (PM-BPSK) and PM-QPSK.
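The capacity side of the FlexCoherent trade-off comes down to bits per symbol. The sketch takes the 25Gbaud symbol rate shown in the constellation diagrams and ignores forward-error-correction and framing overhead, which in practice push the symbol rate higher. The 8-QAM and 16-QAM rows are the formats Infinera says FlexCoherent will support in future; the reach each format achieves is not modelled.

```python
BITS_PER_SYMBOL = {"PM-BPSK": 1, "PM-QPSK": 2, "PM-8QAM": 3, "PM-16QAM": 4}
POLARISATIONS = 2  # polarisation multiplexing doubles the bits per symbol period

def line_rate_gbps(fmt, baud_gbd=25.0):
    """Raw line rate: symbol rate x bits per symbol x two polarisations."""
    return baud_gbd * BITS_PER_SYMBOL[fmt] * POLARISATIONS

def pic_capacity_gbps(fmt, lasers=5, baud_gbd=25.0):
    """Aggregate raw capacity of a multi-laser PIC using one format on every laser."""
    return lasers * line_rate_gbps(fmt, baud_gbd)

for fmt in BITS_PER_SYMBOL:
    print(fmt, line_rate_gbps(fmt), pic_capacity_gbps(fmt))
```

With five lasers, PM-QPSK gives the 500Gbps of the 5x100Gbps PIC, while dropping to PM-BPSK halves the capacity in exchange for a more robust signal.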

Dual polarisation 25Gbaud constellation diagrams


What next?

Infinera says it is a proponent of higher-order quadrature amplitude modulation (QAM) to increase the data rate per channel beyond 100Gbps. As a result, FlexCoherent will in future enable the selection of higher-speed modulation schemes such as 8-QAM and 16-QAM. “We think we can drive the system from where it is today, 8 Terabits-per-fibre, to around 25 Terabits-per-fibre.”

But Welch stresses that with 16-QAM and higher-order formats, speed must be traded against optical reach. Fibre is different to radio, he says. Whereas radio compensates for higher-order QAM by increasing the launch power, there is a limit with fibre. “The nonlinearity of the fibre inhibits higher and higher optical power,” says Welch. “The network will have to figure out how to accommodate that, although there is still significant value in getting to that [25Tbps per fibre],” he says.
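One common way to reason about why launch power cannot simply be raised is the Gaussian-noise (GN) model, in which amplifier (ASE) noise is fixed while nonlinear interference grows with the cube of launch power. The sketch below is that generic textbook model, not Infinera's; the noise power and nonlinear coefficient are hypothetical values in linear units. Setting the derivative of the SNR to zero gives an optimum launch power beyond which more power makes the signal worse.

```python
import math

def snr(p_mw, p_ase_mw, eta):
    """Linear SNR with fixed ASE noise plus nonlinear interference growing as P^3."""
    return p_mw / (p_ase_mw + eta * p_mw ** 3)

def optimum_launch_mw(p_ase_mw, eta):
    """Launch power that maximises snr(): d(snr)/dP = 0 gives P^3 = p_ase / (2*eta)."""
    return (p_ase_mw / (2 * eta)) ** (1 / 3)

P_ASE, ETA = 0.01, 0.001  # hypothetical noise power (mW) and NLI coefficient (1/mW^2)
p_opt = optimum_launch_mw(P_ASE, ETA)
for p in (0.5 * p_opt, p_opt, 2 * p_opt):
    print(round(p, 3), "mW ->", round(10 * math.log10(snr(p, P_ASE, ETA)), 2), "dB")
```

Because the optimum SNR is capped in this way, denser formats such as 16-QAM, which need more SNR, can only be supported over shorter distances.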

The company has said that its 500 Gigabit PIC will move to volume manufacturing in 2012. Infinera is also validating the system platform that will use the PIC and has said that it has a five terabit switching capacity.

Infinera is also offering a 40Gbps coherent (non-PIC-based) design this year. “We are working with third-party support to make a module that will have unique performance for Infinera,” says Welch.

The next challenge is getting the terabit PIC onto the line card. Based on the gap between previous OFC papers to volume manufacturing, the 10x100Gbps PIC can be expected in volume by 2014 if all goes to plan.

Fujitsu Labs adds processing to boost optical reach

“That is one of the virtues of the technology; it is not dependent on the modulation format or the bit rate”

Takeshi Hoshida, Fujitsu Labs

Why is it important?

Much progress has been made in developing digital signal processing techniques for 100Gbps coherent receivers to compensate for undesirable fibre transmission effects such as polarisation mode dispersion and chromatic dispersion (See Performance of Dual-Polarization QPSK for Optical Transport Systems). Both dispersions are linear in nature and are compensated for using linear digital filtering. What Fujitsu Labs has announced is the next step: a digital filter design that compensates for non-linear effects.

A key challenge facing optical-transmission designers is extending the reach of 100Gbps transmissions to match that of 10Gbps systems. In the simplest sense, reach falls with increased transmission speed because the shorter-pulsed signals contain fewer photons. Channel impairments also become more prominent the higher the transmission speed.

Engineers can increase system reach by raising the launch power to boost the optical signal-to-noise ratio, but this gives rise to non-linear effects in the fibre. “When the signal power is higher, the refractive index of the fibre changes and that distorts the phase of the optical signal,” says Takeshi Hoshida, a senior researcher at Fujitsu Labs.

The non-linear effect, combined with polarisation mode dispersion and chromatic dispersion, interacts with the signal in a complicated way. “The linear and non-linear effects combine to result in a very complex distortion of the received signal,” says Hoshida.

Fujitsu has developed a non-linear distortion compensation technique that recovers 2dB of the transmitted optical signal. Moreover, the compensation technique will equally benefit 400 Gigabit or 1 Terabit channels, says Hoshida: “That is one of the virtues of the technology; it is not dependent on the modulation format or the bit rate.”

Fujitsu plans to extend the reach of its long-haul optical transmission systems using the technique. The 2dB equates to a 1.6x distance improvement. But, as Hoshida points out, this is the theoretical benefit. In practice, the benefit is less since a greater transmission distance means the signal passes through more amplifier and optical add-drop stages that introduce their own signal impairments.
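The 1.6x figure follows if the 2dB is read as extra optical signal-to-noise margin in a link whose noise grows in proportion to the number of identical amplified spans. That is an idealised assumption, and it ignores the extra amplifier and add-drop impairments Hoshida mentions. Under it, 2dB buys a factor of 10^(2/10) in span count.

```python
import math

def reach_multiplier(margin_gain_db):
    """Extra distance allowed by an SNR gain, assuming noise grows linearly with span count."""
    return 10 ** (margin_gain_db / 10)

def spans_supported(base_spans, margin_gain_db):
    """Whole spans reachable after the gain, starting from base_spans."""
    return math.floor(base_spans * reach_multiplier(margin_gain_db))

print(round(reach_multiplier(2.0), 2))  # 1.58, i.e. roughly 1.6x
print(spans_supported(20, 2.0))         # 31 spans instead of 20
```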

Method used

Fujitsu Labs has implemented a two-stage filtering block. The first filter stage is linear and compensates for chromatic dispersion, while the second unit counteracts the fibre's non-linear effect on the optical signal. To achieve the required compensation, Fujitsu Labs uses multiple filter-stage blocks in cascade.

According to Hoshida, optical phase is rotated according to the optical power: “If the power is higher, the more phase rotation occurs – that is the non-linear effect in the fibre.” The effect is distributed, occurring along the length of the fibre, and is also coupled with chromatic dispersion. “Chromatic dispersion changes the optical intensity waveform, and that intensity waveform induces the non-linear effect,” says Hoshida. “Those two problems are coupled to each other so you have to solve both.”

Fujitsu tackles the problem by applying a filter stage to compensate for each optical span, the fibre segment between repeaters. For a terrestrial transmission system there can be as many as 20 or 30 such spans. “But [using a filter stage per span] is rather inefficient,” says Hoshida. By adding a weighted-averaging technique, Fujitsu has reduced the number of filter stages needed by a factor of four.

Weighted averaging is a filtering operation that smooths the signal in the time domain. “It is not necessary to change the weights [of the filter] symbol-by-symbol; it is almost static,” says Hoshida. Changes do occur but infrequently, depending on the fibre’s condition, such as changes in temperature.
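The non-linear half of such a filter stage can be sketched as a power-dependent phase de-rotation, where the power driving the rotation is a weighted average over neighbouring samples rather than each sample's own power. This is a generic illustration of the idea, not Fujitsu's implementation: the linear chromatic-dispersion filter is omitted, and the weights and coefficient are hypothetical values.

```python
import cmath

def nonlinear_stage(samples, weights, coeff):
    """Undo a power-dependent phase rotation on a block of complex samples.

    The phase correction uses a weighted average of the power of neighbouring
    samples. `weights` are static, odd-length taps centred on the current
    sample; `coeff` stands in for the fibre's nonlinear coefficient scaled to
    the span(s) this stage covers (a hypothetical value here).
    """
    half = len(weights) // 2
    out = []
    for i, x in enumerate(samples):
        avg_power = 0.0
        for k, w in enumerate(weights):
            j = i + k - half
            if 0 <= j < len(samples):
                avg_power += w * abs(samples[j]) ** 2
        out.append(x * cmath.exp(-1j * coeff * avg_power))
    return out

tx = [1 + 0j, 0.5j, -2 + 0j]
rx = [x * cmath.exp(1j * 0.1 * abs(x) ** 2) for x in tx]  # toy self-phase rotation
print(nonlinear_stage(rx, [1.0], 0.1))                     # a single tap undoes it exactly
smoothed = nonlinear_stage(rx, [0.25, 0.5, 0.25], 0.1)     # static weighted average
```

A single tap reduces to plain per-sample de-rotation; spreading the weights lets one stage approximate the combined effect of dispersion and nonlinearity over several spans.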

Fujitsu has been surprised that the weighted-averaging technique is so effective. The technique’s use and the subsequent 4x reduction in filter stages reduce by 70% the hardware needed to implement the compensation. The reason it is not the full 75% is that extra hardware for the weighted averaging must be added to each stage.
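The 70% versus 75% figures allow a rough estimate of the cost of the weighting. Assuming every original stage costs the same and the weighting adds a fixed fraction to each remaining stage (a simplifying assumption, not a figure from Fujitsu), a 4x cut in stages with a 70% saving implies about 20% extra hardware per remaining stage.

```python
def hardware_saving(stage_reduction, per_stage_overhead):
    """Fractional saving when stages drop by `stage_reduction` times but each
    remaining stage grows by `per_stage_overhead` (fraction of one original stage)."""
    return 1 - (1 + per_stage_overhead) / stage_reduction

def implied_overhead(stage_reduction, saving):
    """Per-stage overhead consistent with an observed saving."""
    return stage_reduction * (1 - saving) - 1

print(hardware_saving(4, 0.0))    # 0.75: the ideal saving with no extra hardware
print(implied_overhead(4, 0.70))  # ~0.2: about 20% extra hardware per remaining stage
```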

What next?

Fujitsu has demonstrated that the technique is technically feasible but practical issues remain such as power consumption. According to Hoshida, the power consumption is too high even using an advanced 40nm CMOS process, and will likely require a 28nm process. Fujitsu thus expects the technique to be deployed in commercial systems by 2015 at the latest.

There are also further optical performance improvements to be claimed, says Hoshida, by addressing cross-phase modulation. This is another non-linear effect where one lightpath affects the phase of another.

Fujitsu Labs has developed two algorithms to address cross-phase modulation, which is a more challenging problem since it is modulation-dependent.

Fujitsu presented the work at ECOC 2010.

Packet optical transport: Hollowing the network core

The platform enables a fully-meshed metropolitan network with software support for web-based services, claims the Irish start-up.

Intune Networks' CEO, Tim Fritzley (right), and John Dunne, co-founder and CTO

“What we have designed allows for the sharing of the same fibre switching assets across multiple services in the metro,” says Tim Fritzley, Intune’s CEO.

The company is in talks with several operators about its optical packet switch and transport (OPST) system, which is being used for a nationwide network in Ireland. The system is also part of an EC seventh framework project that includes Spanish operator Telefónica.

OPST architecture

Intune’s OPST system, dubbed the Verisma iVX8000, uses dense wavelength division multiplexing (DWDM) technology but with a twist. Each wavelength is assigned to a particular destination port, over which the data is transmitted in bursts. The result is an architecture that uses both wavelength-division and time-division multiplexing.

To enable the approach, Intune has developed a control algorithm that can switch and lock a tunable laser’s wavelength “in nanoseconds”. Such rapid laser switching enables wavelength addressing - assigning a dedicated wavelength to each destination port.

As packets arrive at the iVX8000, they are ‘coloured’ and queued before being sent on the required wavelength to their destination. In effect packets are routed at the optical layer, in contrast to traditional systems where traffic is packed onto a lightpath that has a fixed predefined point-to-point optical path.

The packets are sent in bursts based on their class-of-service. Intune uses a proprietary framing scheme for transmission, with Ethernet frames restored at the destination. At the input port, all the packets are queued based on their wavelength and class-of-service. The scheduler, which composes the bursts, picks bits to transmit from the queues based on their class, with the bits sent without having to be aligned with a frame’s boundaries.
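The queueing and burst composition described above can be sketched as follows. Everything here is hypothetical: the port names, channel numbers and burst size are illustrative, and Intune's proprietary framing, needed to restore Ethernet frames at the destination, is not modelled. The point is that traffic is keyed by destination wavelength and class of service, and a burst is filled from the highest class first without respecting frame boundaries.

```python
from collections import deque

class BurstScheduler:
    """Hypothetical sketch of wavelength-addressed, class-of-service burst composition.

    Class 0 is the highest priority. Bytes are drained without regard to frame
    boundaries, so a burst may end part-way through a frame.
    """

    def __init__(self, port_to_wavelength, num_classes):
        self.port_to_wavelength = port_to_wavelength  # destination port -> wavelength channel
        self.num_classes = num_classes
        self.queues = {}  # (wavelength channel, class) -> deque of bytes

    def enqueue(self, dest_port, cos, frame):
        """'Colour' the frame by its destination and queue it by class of service."""
        wavelength = self.port_to_wavelength[dest_port]
        self.queues.setdefault((wavelength, cos), deque()).extend(frame)

    def compose_burst(self, wavelength, burst_bytes):
        """Fill one burst for a wavelength, draining higher classes first."""
        burst = bytearray()
        for cos in range(self.num_classes):
            q = self.queues.get((wavelength, cos))
            while q and len(burst) < burst_bytes:
                burst.append(q.popleft())
        return bytes(burst)

sched = BurstScheduler({"node3-port1": 12, "node7-port2": 40}, num_classes=2)
sched.enqueue("node3-port1", 1, b"lowlow")
sched.enqueue("node3-port1", 0, b"HIGH")
print(sched.compose_burst(12, 6))  # b'HIGHlo'
print(sched.compose_burst(12, 6))  # b'wlow'
```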

“Instead of assigning an electrical address to a fixed wavelength, we are assigning electrical addresses to dynamic wavelengths”


Tim Fritzley, Intune Networks

Intune also uses dynamic bandwidth allocation: any bandwidth unused by the higher classes of service is assigned to lower priority traffic. This achieves over 80 percent utilisation of the Ethernet switching and the fibre, says Fritzley.
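Dynamic bandwidth allocation of this kind can be sketched as a two-pass rule: each class first receives up to a guaranteed share, then any capacity left unused is offered to classes in priority order. The guarantees, demands and 10Gbps port below are made-up illustrative values, not Intune's algorithm.

```python
def allocate(capacity, demands, guarantees):
    """Hypothetical dynamic bandwidth allocation across classes of service.

    Each class first gets up to its guaranteed share; capacity left unused is
    then offered to classes in priority order (index 0 = highest).
    Assumes sum(guarantees) <= capacity.
    """
    grants = [min(d, g) for d, g in zip(demands, guarantees)]
    spare = capacity - sum(grants)
    for i, demand in enumerate(demands):
        extra = min(demand - grants[i], spare)
        grants[i] += extra
        spare -= extra
    return grants

# 10Gbps port; demands and guarantees in Gbps (illustrative values).
print(allocate(10, demands=[1, 2, 9], guarantees=[4, 3, 3]))  # [1, 2, 7]
```

Without reallocation, the lowest class would be capped at its 3Gbps guarantee and the port would run at 60% utilisation; with it, the 4Gbps left idle by the higher classes is put to use.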

“You are responding to the dynamic loading of the traffic as it comes in, on a destination-by-destination, colour-by-colour basis,” says Fritzley. “Instead of assigning an electrical address to a fixed wavelength [as with traditional systems], we are assigning electrical addresses to dynamic wavelengths.”

The result is a fully meshed architecture with any transponder able to talk to any other transponder on the network, says Fritzley.

System’s span

The network architecture is arranged as a ring with up to a 300km span. The ring connects up to 16 iVX8000 nodes each comprising four 10 Gigabit-per-second (Gbps) ports and switching hardware. Each port is assigned a particular wavelength, equating to a total switch capacity of 640Gbps.

Intune has an 80-wavelength design even though only 64 are used. It also uses two optical rings in parallel. The two rings run in opposite directions, providing optical protection for each port and effectively doubling overall capacity.

For the client side interfaces, the iVX8000 uses four 10 Gigabit Ethernet ports. Since transmissions are in bursts, multiple ports can transmit data to the same destination port even though they share the same wavelength.

The system’s 300km span is an artificial value set by Intune to guarantee “plug-and-play” performance. If the individual chassis are less than 65km apart and the total ring is 300km or under, Intune guarantees no DWDM engineering is required. “We auto-discover all the optical paths and nodes in the network; we automatically adjust all the amplification and set up the dispersion compensation,” says Fritzley. “This saves thousands of engineer-hours and truck rolls.”
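The stated plug-and-play envelope is simple enough to express as a check. The function below is a hypothetical planning aid, not an Intune tool (Intune's system auto-discovers the ring itself); it encodes the 65km chassis spacing, the 300km ring limit and the 16-node maximum described for the system.

```python
MAX_NODES = 16       # nodes per ring, per the system description
MAX_HOP_KM = 65.0    # adjacent chassis must be less than 65km apart
MAX_RING_KM = 300.0  # total ring length of 300km or under

def is_plug_and_play(hop_lengths_km):
    """True if a ring falls inside the stated no-DWDM-engineering envelope.

    hop_lengths_km lists the fibre distance between each pair of adjacent nodes,
    including the hop that closes the ring, so its length equals the node count.
    """
    return (len(hop_lengths_km) <= MAX_NODES
            and all(hop < MAX_HOP_KM for hop in hop_lengths_km)
            and sum(hop_lengths_km) <= MAX_RING_KM)

print(is_plug_and_play([50.0] * 6))          # True: 300km ring, 50km hops
print(is_plug_and_play([70.0, 20.0, 20.0]))  # False: one hop is too long
print(is_plug_and_play([40.0] * 8))          # False: 320km ring
```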

Intune points out that it has engineered a 700km network but claims that for distances beyond 1,000km, point-to-point links connecting regions make more sense.

John Dunne, co-founder and CTO of Intune, claims the metro architecture simplifies networking greatly when connecting the network edge to the IP core. “It is different to what is there today because there are no routeing decisions to be made,” says Dunne. “All of the routes pre-exist, and that is because the tunable lasers contain all the colours of all the ports on the ring.”

As a result, setting up a flow of packets between the edge and core involves using a single interface to the ring. “You don’t have to talk to all the [ring’s] elements, you just talk to the ring,” says Dunne. “The ring is pre-engineered so it knows it’s a ring; it also knows how to guarantee the latency, the bandwidth, the jitter of any flow.”

This is the system’s main merit, says Dunne: the pre-engineered ring hides all the difficulty of building a control layer on top of a dynamic optical and layer-two switching system.

Bringing the web into the network

Intune realised that traditional telecom software would struggle to make best use of its distributed optical packet switch architecture. The company has adopted the representational state transfer (REST) software approach for its architecture instead.

“REST is the heart of web services,” says Fritzley. “The reason we did this is that there are hundreds of thousands of programmers that understand how to program it, so you are not into the arcane telecom languages of SNMP and TL1.” Adopting a 'RESTful' approach, claims Intune, reduces code development by 70 percent.

Moreover, REST by its nature is distributed such that it lends itself to supporting distributed transactions across Intune’s switch. “We have put a mini-http server on every card; we do not centralise control inside a node,” says Fritzley. “Every card peers with all of its peer-functions on the ring.”

In terms of the switch's operation, high-level XML commands are used instead of sending low-level instructions to numerous elements. “For example you ask the ring - set up this flow of packets with this bandwidth, this jitter and this delay,” says Dunne. “The ring replies that it can set this up and it performs the low-level stuff internal to the ring."
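A high-level request of the kind Dunne describes might look like the XML built below. Intune's schema is not public, so the element names, units and port identifiers are illustrative assumptions. In a RESTful design, such a document would typically be sent with an HTTP POST to a collection resource on one node's embedded web server (a hypothetical `/flows`), and the ring would answer whether it can honour the request.

```python
import xml.etree.ElementTree as ET

def flow_request(src_port, dst_port, bandwidth_mbps, max_jitter_us, max_delay_us):
    """Build a hypothetical 'set up this flow' XML document for the ring.

    Element names and units are illustrative assumptions, not Intune's schema.
    """
    req = ET.Element("flowRequest")
    ET.SubElement(req, "source").text = src_port
    ET.SubElement(req, "destination").text = dst_port
    sla = ET.SubElement(req, "sla")
    ET.SubElement(sla, "bandwidth", unit="Mbps").text = str(bandwidth_mbps)
    ET.SubElement(sla, "jitter", unit="us").text = str(max_jitter_us)
    ET.SubElement(sla, "delay", unit="us").text = str(max_delay_us)
    return ET.tostring(req, encoding="unicode")

print(flow_request("node3-port1", "node7-port2", 500, 100, 2000))
```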

Such a capability will ultimately enable a machine to provision bandwidth for services, and enable machine-to-machine communications, says Intune. It will also enable third-party application developers to use the switch for service provisioning. This isn’t possible today because there is a lack of control, says Dunne.

“We have a full suite of XML-based interface commands,” he says. “All [the interface commands] would go to the carrier, the carrier would expose a subset to the Googles, the Googles would expose a subset to their application writers, and the application writers would expose a subset to the consumer.” Were the consumer to send a command to request some bandwidth, the call would be passed through the various layers directly into the switch, all in a controlled manner.

Provisioning of bandwidth in such an automated fashion is possible because Intune’s underlying network is bounded and predictable, says Dunne, with the optical path pre-engineered to work with the data path.

Meanwhile, until XML becomes more commonplace, Intune uses a code translator that converts the XML code to SNMP or TL1 to interface to existing systems.

“The ring is pre-engineered so it knows it’s a ring; it also knows how to guarantee the latency, the bandwidth, the jitter of any flow”


John Dunne, Intune Networks

Applications

The iVX8000 is being targeted at applications such as cloud computing services and the moving of virtualised environments between data centres. But the real target is using the platform to support multiple services – 3G and 4G wireless backhaul, on-demand IP TV as well as cloud. “No-one can do traffic planning anymore around such services,” says Fritzley.

The platform addresses what one large European operator calls ‘hollowing the core’. The operator wants to simplify its metro network by moving such networking elements as broadband remote access servers (BRASs) to the network edge. These will be connected using a simpler layer-two network that lessens the use of large, expensive IP core routers. “All the IP processing is on the edge and you go edge-to-edge on a flat layer two,” says Fritzley.

Market developments

Intune is using its system to enable the Exemplar network in Ireland. Backed by the Irish Government, the company’s systems will be used to build a nationwide network. The first phase involves a lab for application development and testing. So far 40 multi-nationals have signed up to use the network. Starting next year, a ring network will be up and running around Dublin to be followed with a nationwide roll-out in 2013.

The Irish start-up is also part of an EC Seventh Framework research project called MAINS. The project, which started in January, involves Telefónica which is using the iVX8000 to move virtualised resources between data centres depending on user demand and latency requirements. The project uses XML commands to call for bandwidth from the networking layer.

Meanwhile, Intune says that it is “deeply engaged” with four to five of the largest operators in North America and Europe.

ROADMs: reconfigurable but still not agile

Part 2: Wavelength provisioning and network restoration

How are operators using reconfigurable optical add-drop multiplexers (ROADMs) in their networks? And just how often are their networks reconfigured? gazettabyte spoke to AT&T and Verizon Business.

Operators rarely make grand statements about new developments or talk in terms that could be mistaken for hyperbole.

“You create new paths; the network is never finished”


Glenn Wellbrock, Verizon Business

AT&T’s Jim King certainly does not. When questioned about the impact of new technologies, his answers are thoughtful and measured. Yet when it comes to developments at the photonic layer, and in particular next-generation reconfigurable optical add-drop multiplexers (ROADMs), his tone is unmistakable.

“We really are at the cusp of dramatic changes in the way transport is built and architected,” says King, executive director of new technology product development and engineering at AT&T Labs.

ROADMs are now deployed widely in operators’ networks.

AT&T’s ultra-long-haul network is all ROADM-based as are the operator’s various regional networks that bring traffic to its backbone network.

Verizon Business has over 2,000 ROADMs in its medium-haul metropolitan networks. “Everywhere we deploy FiOS [Verizon’s optical access broadband service] we put a ROADM node,” says Glenn Wellbrock, director of backbone network design at Verizon Business.

“FiOS requires a lot of bandwidth to a lot of central offices,” says Wellbrock. Whereas before, one or two OC-48 links may have been sufficient, now several 10 Gigabit-per-second (Gbps) links are needed, for redundancy and to meet broadcast video and video-on-demand requirements.

According to Infonetics Research, the optical networking equipment market has been growing at a compound annual rate of 8% since 2002, while ROADMs have grown at 46% annually between 2005 and 2009. Ovum, meanwhile, forecasts that the global ROADM market will reach US$7 billion in 2014.
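To show how far apart the two rates are once compounded, here is a rough worked comparison. It assumes, for illustration only, that both rates applied over the same four-year span, 2005 to 2009; the overall-market figure is not a reported number.

```latex
\text{ROADMs: } (1 + 0.46)^{4} \approx 4.54
\qquad
\text{Overall optical market: } (1 + 0.08)^{4} \approx 1.36
```

In other words, 46% compounded over four years multiplies a market roughly 4.5-fold, while 8% over the same span adds only about a third.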

While lacking a rigid definition, a ROADM refers to a telecom rack comprising optical switching blocks (wavelength-selective switches, or WSSs, that connect lightpaths to fibres), optical amplifiers, optical channel monitors, and control plane and management software. Some vendors also include optical transponders.

ROADMs benefit the operators’ networks by allowing wavelengths to remain in the optical domain, passing through intermediate locations without requiring the use of transponders and hence costly optical-electrical conversions. ROADMs also replace the previous arrangement of fixed optical add-drop multiplexers (OADMs), external optical patch panels and cabling.

Wellbrock estimates that with FiOS, ROADMs have halved costs. “Beforehand we used OADMs and SONET boxes,” he says. “Using ROADMs you can bypass any intermediate node; there is no SONET box and you save on back-to-back transponders.”
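The saving Wellbrock describes can be illustrated with a simplified transponder count. This is a hypothetical sketch: the function name and the model (one transponder at each end of a wavelength, plus a back-to-back pair at every intermediate node that terminates the signal electrically) are assumptions, and it ignores amplifiers, regeneration needed for reach, and common equipment.

```python
def transponders_needed(intermediate_nodes: int, optical_bypass: bool) -> int:
    """Count transponders for one point-to-point wavelength (simplified model).

    Assumption for illustration: every path needs one transponder at each
    end. Without optical bypass, each intermediate node converts the signal
    to the electrical domain and back, which takes a back-to-back pair.
    """
    if intermediate_nodes < 0:
        raise ValueError("intermediate_nodes must be non-negative")
    end_points = 2
    if optical_bypass:
        # ROADM pass-through: the wavelength stays in the optical domain.
        return end_points
    # OADM/SONET-style: optical-electrical-optical conversion at every hop.
    return end_points + 2 * intermediate_nodes


for hops in (0, 3, 10):
    print(hops, transponders_needed(hops, False), transponders_needed(hops, True))
```

For a wavelength crossing three intermediate sites, the model gives eight transponders without bypass and two with it; real cost ratios depend on much more than the transponder count.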

Verizon has deployed ROADMs from Tellabs, with its 7100 optical transport series, and from Fujitsu, with its 9500 packet optical networking platform. The first-generation ROADMs on Verizon's platforms are degree-4 with 100GHz dense wavelength division multiplexing (DWDM) channel spacing, while the second-generation platforms are degree-8 with 50GHz spacing. The degree of a WSS-enabled ROADM refers to the number of directions in which an optical lightpath can be routed.
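Halving the channel spacing from 100GHz to 50GHz doubles the number of wavelengths that fit in a given slice of spectrum. A minimal sketch, assuming the ITU-T G.694.1 DWDM grid anchored at 193.1THz and a hypothetical 4THz window from 192.0THz to 196.0THz (real systems use somewhat different band edges); `grid_channels` is an illustrative helper, not a vendor API.

```python
from typing import List

# ITU-T G.694.1 anchors the DWDM frequency grid at 193.1 THz; channel
# centres sit at 193.1 THz + n * spacing. Frequencies are kept in integer
# GHz to avoid floating-point drift.
ANCHOR_GHZ = 193_100


def grid_channels(spacing_ghz: int, band_start_ghz: int, band_stop_ghz: int) -> List[int]:
    """Return grid centre frequencies (GHz) in the window [start, stop)."""
    # Smallest n whose channel is at or above band_start_ghz (ceiling division).
    n = -((ANCHOR_GHZ - band_start_ghz) // spacing_ghz)
    channels = []
    while ANCHOR_GHZ + n * spacing_ghz < band_stop_ghz:
        channels.append(ANCHOR_GHZ + n * spacing_ghz)
        n += 1
    return channels


# Hypothetical 4 THz window (192.0 to 196.0 THz), for illustration only.
BAND = (192_000, 196_000)
print(len(grid_channels(100, *BAND)))  # 40 channels at 100GHz spacing
print(len(grid_channels(50, *BAND)))   # 80 channels at 50GHz spacing
```

Working in integer GHz keeps every grid point exact, so the channel count does not depend on floating-point rounding at the band edges.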

Network adaptation

Wavelength provisioning and network restoration are the two main events requiring changes at the photonic layer.

Provisioning is used to deliver new bandwidth to a site or to accommodate changing traffic patterns in the network. Operators try to forecast demand in advance, but inevitably lightpaths need to be moved to achieve more efficient network routing. “You create new paths; the network is never finished,” says Wellbrock.

“We want to move around those wavelengths just like we move around channels or customer VPN circuits in today’s world”

Jim King, AT&T Labs

In contrast, network restoration is all about resuming services after a transport fault occurs, such as a site going offline after a fibre cut. Restoration differs from network protection, which restores service much faster, in under 50 milliseconds, and is handled at the electrical layer.

If the fault can be fixed within a few hours without breaching the operator’s service level agreement with the customer, engineers are sent to fix the problem. If the fault is at a remote site and fixing it will take days, a restoration event is initiated to reroute the wavelength at the physical layer. But this is a highly manual process: a new wavelength and a new direction need to be programmed, and engineers are required at both ends of the route. As a result, established lightpaths are changed infrequently, says Wellbrock.
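The choice between sending engineers and triggering a restoration can be written as a simple rule. This is a hypothetical sketch: the `Fault` type, the `choose_response` function and the single SLA threshold are illustrative assumptions, and a real operator's process weighs many more factors.

```python
from dataclasses import dataclass


@dataclass
class Fault:
    site: str
    estimated_repair_hours: float


def choose_response(fault: Fault, sla_max_outage_hours: float) -> str:
    """Return 'repair' or 'restore' for a transport fault (hypothetical rule).

    If engineers can fix the fault in place within the SLA window, send them;
    otherwise start a restoration event that reroutes the wavelength at the
    photonic layer, which today is a manual job with staff at both route ends.
    """
    if fault.estimated_repair_hours <= sla_max_outage_hours:
        return "repair"
    return "restore"


# Example with an assumed 4-hour SLA limit.
print(choose_response(Fault("metro-hub", 3), sla_max_outage_hours=4))     # repair
print(choose_response(Fault("remote-site", 72), sla_max_outage_hours=4))  # restore
```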

At first sight this appears perplexing given the ‘R’ in ROADMs. Operators have also switched to using tunable transponders, another core component needed for dynamic optical networking.

But the issue is that when a tunable transponder is plugged into a ROADM, its tunability is lost because the ROADM restricts the operating wavelength. The lightpath’s direction is also fixed. “If you take a tunable transponder that can go anywhere and plug it into Port 2 facing west, say, that is the only place it can go at that [network] ingress point,” says Wellbrock.

When the wavelength passes through intermediate ROADM stages (in the metro, for example, 10 to 20 ROADM stages can be encountered), the lightpath’s direction can at least be switched, but the wavelength remains fixed. “At the intermediate points there is more flexibility, you can come in on the east and send it out west but you can’t change the wavelength; at the access point you can’t change either,” says Wellbrock.
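Because the wavelength cannot change at intermediate ROADMs, any new or rerouted lightpath must find one channel that is free on every link it crosses, known as the wavelength-continuity constraint. A common textbook heuristic for this is first-fit wavelength assignment. The sketch below is illustrative only: the link names and channel numbering are hypothetical, and it is not presented as how AT&T or Verizon assign wavelengths.

```python
from typing import Dict, List, Optional, Set


def first_fit_wavelength(route: List[str], in_use: Dict[str, Set[int]],
                         num_channels: int) -> Optional[int]:
    """Return the lowest channel index free on every link of the route.

    With no wavelength conversion at intermediate ROADMs, the lightpath must
    occupy the same channel end to end (wavelength continuity).
    """
    for channel in range(num_channels):
        if all(channel not in in_use.get(link, set()) for link in route):
            return channel
    return None  # blocked: no single channel is free along the whole route


in_use = {"A-B": {0, 1}, "B-C": {0, 2}, "C-D": {1}}
print(first_fit_wavelength(["A-B", "B-C", "C-D"], in_use, num_channels=4))  # 3
print(first_fit_wavelength(["C-D"], in_use, num_channels=4))                # 0
```

Note that channel 2 is free on A-B and C-D, and channel 1 is free on B-C, yet only channel 3 works for the full route: spare capacity on individual links does not guarantee a usable end-to-end wavelength.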

“Should you not be able to move a wavelength deployed on one route onto another more efficiently? Heck, yes,” says King. “We want to move around those wavelengths just like we move around channels or customer VPN circuits in today’s world.”

Moving to a dynamic photonic layer is also a prerequisite for more advanced customer services. “If you want to do cloud computing but the infrastructure is [made up of] fixed ‘hard-wired’ connections, that is basically incompatible,” says King. “The Layer 1 cloud should be flexible and dynamic in order to enable a much richer set of customer applications.”

To this end, operators are looking to next-generation WSS technology that will enable ROADMs to change a signal’s direction and wavelength. Known as colourless and directionless, these ROADMs will help enable automatic wavelength provisioning and automatic network restoration, removing the need for manual intervention. To exploit such ROADMs, advances in control plane technology will be needed (to be discussed in Part 3), but the resulting capabilities will be significant.
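The difference between today’s add/drop ports and colourless, directionless ones can be captured in a small model. A hedged sketch: the `AddPort` class, the channel numbers and the direction labels are hypothetical, and the model ignores contention and the control plane that would drive such changes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AddPort:
    """A ROADM add/drop port (hypothetical model).

    fixed_channel is None for a colourless port; fixed_direction is None
    for a directionless port.
    """
    fixed_channel: Optional[int] = None
    fixed_direction: Optional[str] = None

    def accepts(self, channel: int, direction: str) -> bool:
        channel_ok = self.fixed_channel is None or self.fixed_channel == channel
        direction_ok = self.fixed_direction is None or self.fixed_direction == direction
        return channel_ok and direction_ok


# Coloured, directional port: one channel, one direction ("Port 2 facing west").
legacy = AddPort(fixed_channel=17, fixed_direction="west")
# Colourless and directionless port: any channel, any direction.
cd = AddPort()

# Could each port carry a lightpath retuned to channel 23 and sent east?
print(legacy.accepts(23, "east"))  # False
print(cd.accepts(23, "east"))      # True
```

Modelling each property as an optional constraint also covers the intermediate cases, such as a port that is colourless but still directional.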

“The ability to deploy an all-ROADM mesh network and remotely control it, to build what we need as we need it, and reconfigure it when needed, is a tremendously powerful vision,” says King.

When?

Verizon’s Wellbrock expects such next-generation ROADMs to be available by 2012. “That is when we will see next-generation long-haul systems,” he says, adding that the technology is available now but it is still to be integrated.

King is less willing to commit to a date and is cautious about some of the vendors’ claims. “People tell me 100Gbps is ready today,” he quipped.

Other sections of this briefing

Part 1: Still some way to go

Part 3: To efficiency and beyond

Still some way to go

Part 1: The vision... back in 2000

I came across this article (below) on the intelligent all-optical network. I wrote it in 2000 while working at the EMAP magazine, Communications Week International, later to become Total Telecom.

What is striking is just how much of the vision of a dynamic photonic layer is still to be realised. Even then it had been discussed for over a decade. And bandwidth management, as in 2000, is still largely handled at the electrical layer.

And yet much progress has been made in networking technology. But the way the network has evolved means that a more flexible photonic layer, while wanted by operators, is only one aspect of the network optimisation they seek to reduce the cost of transporting bits.

The second and third parts of the dynamic optical networking briefing will discuss how often operators reconfigure their networks and what is required, as well as developments in reconfigurable optical add-drop multiplexer (ROADM) and control plane technologies that promise to increase the flexibility of the photonic layer.

--+++--

Seeing the light (April 17th, 2000)

The next generation of networks is coming, with abundant bandwidth and flexible services available on-demand, and intelligent management and provisioning at the optical layer. Roy Rubenstein finds out what's in store and who's set fair in this optical future.

For all its air of novelty, all-optical networking is actually a mature idea. Discussed for the best part of a decade, all-optical networks have perennially promised to deliver the next generation of “intelligent” services, yet besides the stir caused by the arrival of dense wavelength division multiplexing (DWDM), forcing greater capacity over fiber networks, there has been little in the way of tangible development.

Now, with limited ceremony, optical networking is reasserting itself, and the signs are that you could reap the benefits sooner than you think.

What excites operators most is the prospect of bandwidth on demand: high-speed links set up with little more than a few mouse clicks. But the technology is creating dilemmas as well as opportunities. On the one hand, the newer operators can enter the market with a sleeker network - fewer layers and fewer nodes - accompanied by the latest billing and management software. On the other hand, incumbent operators are facing the dilemma of when to embrace the technology and how to integrate it with their legacy equipment.

“Most of the network planners agree this is the way to go,” says Barry Flanigan, senior consultant at Ovum Ltd., of London. "The question is the precise technology and timing."

Flexible bandwidth

Flexible bandwidth provisioning will enable a range of services that have not been practicable until now. For example, network planners in corporations will no longer have to guess - and live with the consequences - each time they budget their capacity requirements and agree horribly rigid contract terms.

In fact, all manner of on-tap services become possible when bandwidth is set up and collapsed on an hourly or minute-by-minute basis. One example is bandwidth trading between carriers, enabling operators with their own networks to grab business such as voice services while demand is there, and offload capacity when it is not.

A further example is the broadcasting of sporting events. Instead of using satellite coverage, a TV company could set up a cheaper terrestrial network link to each sporting venue, provisioning capacity only for the duration of the event. Content providers can also offer services locally: by opening pipes, a provider can download and store video on demand on a country-by-country basis, ready for delivery, before closing the links.

“That way the service seems a lot quicker,” says Andy Wood, chief technology officer at Storm Telecommunications Ltd., based in London.

Adding intelligence

The key to this flexible bandwidth provisioning is optical switches, which introduce “intelligence” to the optical layer. An optical switch, whether electrically based or all-optical, routes complete wavelengths of light, each carrying up to 10 gigabits of data per second.

“The scenario today is that bandwidth management is at the electrical layer,” says Richard Dade, director for industry liaison, optical networking group, at Lucent Technologies Inc., Murray Hill, New Jersey. “By the end of this year or in 2001, it will transition into the optical layer.”

This is also the view of Nick Critchell, product marketing manager for core optical internetworking products at San Jose, California-based Cisco Systems Inc. “Looking forward two years to the core routing, it will provide intelligent switching and intelligent restoration,” he says.

But others question the impact such technologies are having on the awareness of the underlying optical network. “Intelligence may be too strong a word for it,” says Dr. David Huber, chief executive of Corvis Corp., the Columbia, Maryland-based optical networking technology start-up.

What interests him are the sheer traffic-handling capabilities, transporting terabits of data, and the network efficiencies that all-optical switches promise. For example, Huber predicts network utilization will exceed 80% using all-optical switches, compared with current network utilization figures below 50%.

When it comes to the operators, it seems the newer breed is keenest to embrace the technology. For them, adopting intelligent optical switching provides a simpler network, removing the need for Sonet/Synchronous Digital Hierarchy (SDH) transmission equipment. They also gain in reduced operating costs and system efficiencies through the use of the latest network operating system, billing and management systems.

Established operators, in contrast, have an enormous legacy of network equipment. “Different telecoms operators have different levels of awareness [in adopting intelligent optical networks],” is the view of Margaret Hopkins, principal analyst at Cambridge, England-based consultancy Analysys Ltd.

And Hopkins is quick to stress that whatever the merits of the latest optical switching, it will not cause more established technologies to disappear any time soon. “Sonet gives you very fast reconfiguration [if a fiber is cut],” says Hopkins, pointing out that optical networks have some way to go before assuming this role. “For other users, SDH performs functions such as mixing different types of traffic - pulse coded modulated voice and IP - on the one wavelength. This is important, because a wavelength is an awful lot of capacity."

Some way to go

The future of Sonet/SDH is also secure while voice-over-Internet protocol (VoIP) traffic remains low, particularly as a proportion of all voice traffic. “With voice on packet networks, growth has been modest from a European perspective,” says Eric Owen, London-based senior director for European telecommunications at International Data Corp., Framingham, Massachusetts. “When asked about VoIP - medium-to-large enterprises across Europe - only 4% to 5% are doing it,” he says.

But operators are aware that they cannot afford to ignore intelligent optical switches, and several are already trialing the technology including MCI WorldCom Inc. and Williams Communications Inc.

One next-generation carrier has been bolder still. “The first indication of an optical switching network is the announcement from Storm,” says Chris Lewis, managing director of research and consulting at the Yankee Group Europe, of Watford, England. “Storm is basing its case on being able to switch in bandwidth pretty quickly,” he adds.

Storm, a carriers’ carrier, announced last month that it has acquired $100 million-worth of dark fiber to which it will connect optical equipment from Chelmsford, Massachusetts-based Sycamore Networks Inc. “It's the first example we've come across of concrete plans,” says Lewis.

The significance of Storm's announcement is the promise of bandwidth on demand. Mark Stewart, Storm Telecom's business development director, says its network users (carriers, large corporations and Internet service providers) can have the bandwidth they require, with costs based on usage. “A customer may need five STM-1, 155-megabit-per-second links one week and nine the next,” says Stewart.