Infinera’s ICE6 sends 800 gigabits over a 950km link

Infinera has demonstrated the coherent transmission of an 800-gigabit signal across a 950km span of an operational network.

Infinera used its Infinite Capacity Engine 6 (ICE6), comprising an indium-phosphide photonic integrated circuit (PIC) and its FlexCoherent 6 coherent digital signal processor (DSP).

The ICE6 supports 1.6 terabits of traffic: two channels, each carrying up to 800 gigabits of data.

The trial, conducted over an unnamed operator’s network in North America, sent the 800-gigabit signal as an alien wavelength over a third-party line system carrying live traffic.

“We have proved not only the state of our 800-gigabit with ICE6 but also the distances it can achieve,” says Robert Shore, senior vice president of marketing at Infinera.

800G trials

Several systems vendors have undertaken 800-gigabit optical trials.

Ciena detailed two demonstrations using its WaveLogic 5 Extreme (WL5e). One was an interoperability trial involving Verizon and Juniper Networks while the second connected two data centres belonging to the operator, Southern Cross Cable, to confirm the deployment of the WL5e cards in a live network environment.

Neither Ciena trial was designed to demonstrate the limit of WL5e’s optical performance. Accordingly, no distances were quoted, although both links were sub-100km, according to Ciena.

Meanwhile, Huawei has trialled its 800-gigabit technology in the networks of operators Turkcell and China Mobile.

The motivation for vendors to increase the speed of line-side optical transceivers is to reduce the cost of data transport. “One laser generating more data,” says Shore. “But it is not just high-speed transmissions, it is high-speed transmissions over distance.”

Infinera’s first 800-gigabit demonstration involved the ICE6 sending the signal over 800km of Corning’s TXF low-loss fibre.

“We did the demo on that fibre and we realised we had a ton of margin left over after completing the 800-gigabit circuit,” says Shore. The company then looked for a suitable network trial using standard optical fibre.

Infinera used a third-party’s optical line system to highlight that the 950km reach wasn’t due to a combination of the ICE6 module and the company’s own line system.

“What we have shown is that you can take any link anywhere, use anyone’s line system, carrying any kind of traffic, drop in the ICE6 and get 800-gigabit connections over 950km,” says Shore.

ICE 6

Infinera attributes the ICE6’s optical performance to its advanced coherent toolkit and the fact that the company has both photonics and coherent DSP technology, enabling their co-design to optimise the system’s performance.

One toolkit technique is Nyquist sub-carriers. Here, data is sent using several Nyquist sub-carriers across the channel instead of modulating the data onto a single carrier. The ICE6 is Infinera’s second-generation sub-carrier design, following the ICE4, and doubles the number of sub-carriers from four to eight.

The benefit of using sub-carriers is that high data rates can be achieved while the baud rate used for each one is much lower. And a lower baud rate is more tolerant to non-linear channel impairments during optical transmission.

Sub-carriers also improve spectral efficiency as the channels have sharper edges and can be packed tightly.

Infinera applies probabilistic constellation shaping to each sub-carrier, allowing fine-tuning of the data each carries. As a result, more data can be sent on the inner sub-carriers and less on the two outer sub-carriers, where signal recovery is harder.
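The shaping idea can be illustrated with a short Python sketch. It assumes each sub-carrier carries 64QAM built from two 8-level PAM dimensions and that shaping follows a Maxwell-Boltzmann distribution. Infinera has not disclosed its constellations or shaping parameters, so the `lambda` values below are hypothetical. A larger `lambda` concentrates probability on the low-energy inner constellation points, which lowers the entropy, that is, the information carried per symbol.

```python
import math

# ASSUMPTIONS: 64QAM per sub-carrier (two independent 8-PAM dimensions) and
# Maxwell-Boltzmann shaping. The lambda values are hypothetical.
PAM8_LEVELS = [-7, -5, -3, -1, 1, 3, 5, 7]


def mb_distribution(lam: float, levels=PAM8_LEVELS) -> list[float]:
    """Maxwell-Boltzmann probabilities: P(a) proportional to exp(-lam * a^2)."""
    weights = [math.exp(-lam * a * a) for a in levels]
    total = sum(weights)
    return [w / total for w in weights]


def entropy_bits(probs: list[float]) -> float:
    """Shannon entropy of a discrete distribution, in bits."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def bits_per_qam_symbol(lam: float) -> float:
    """Entropy of a shaped 64QAM symbol, with I and Q shaped independently."""
    return 2 * entropy_bits(mb_distribution(lam))


# Hypothetical: shaping applied only to the two outer sub-carriers.
HYPOTHETICAL_LAMBDAS = [0.05, 0.0, 0.0, 0.0, 0.0, 0.0, 0.0, 0.05]

for idx, lam in enumerate(HYPOTHETICAL_LAMBDAS):
    print(f"sub-carrier {idx}: lambda={lam:.2f} -> {bits_per_qam_symbol(lam):.2f} bits/symbol")
```

In a real transceiver the shaped distribution is produced by a distribution matcher and the net rate also depends on FEC overhead; the sketch only shows how one shaping parameter sets how much information each sub-carrier carries.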

The sweet spot for sub-carriers is a symbol rate of 8-11 gigabaud (GBd). For the Infinera trial, eight sub-carriers were used, each at 12GBd, for an overall symbol rate of 96GBd.
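The trial figures can be checked with a few lines of Python. The sub-carrier count, per-sub-carrier symbol rate, channel rate and channel count come from the article. Dual-polarisation transmission and the absence of FEC and framing overhead are assumptions, so the bits-per-symbol value is only a rough net figure.

```python
# Sketch of the ICE6 trial's symbol-rate arithmetic.
# From the article: 8 sub-carriers at 12GBd, 800Gbps per channel, 2 channels.
# ASSUMPTIONS: dual-polarisation transmission; FEC/framing overhead ignored.

NUM_SUBCARRIERS = 8
SUBCARRIER_BAUD_GBD = 12.0
CHANNEL_RATE_GBPS = 800.0
CHANNELS_PER_ICE6 = 2
POLARISATIONS = 2  # assumed, not stated in the article


def aggregate_baud(num_subcarriers: int, subcarrier_baud_gbd: float) -> float:
    """Total channel symbol rate (GBd) from the individual sub-carriers."""
    return num_subcarriers * subcarrier_baud_gbd


def net_bits_per_symbol_per_pol(rate_gbps: float, baud_gbd: float, pols: int) -> float:
    """Net bits each symbol carries per polarisation, ignoring FEC overhead."""
    return rate_gbps / (baud_gbd * pols)


total_baud = aggregate_baud(NUM_SUBCARRIERS, SUBCARRIER_BAUD_GBD)
print(f"Channel symbol rate: {total_baud:.0f} GBd")
print(f"Average rate per sub-carrier: {CHANNEL_RATE_GBPS / NUM_SUBCARRIERS:.0f} Gbps")
print(f"Net bits/symbol/polarisation: "
      f"{net_bits_per_symbol_per_pol(CHANNEL_RATE_GBPS, total_baud, POLARISATIONS):.2f}")
print(f"ICE6 total: {CHANNELS_PER_ICE6 * CHANNEL_RATE_GBPS / 1000:.1f} Tbps")
```

Because probabilistic shaping loads the sub-carriers unequally, the 100Gbps per sub-carrier is only an average.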

“While it is best to stay as close to 8-11GBd, the coding gain you get as you go from 11GBd to 12GBd per sub-carrier is greater than the increased non-linear penalties,” says Shore.

Another feature of the coherent DSP is soft-decision forward-error correction (SD-FEC) gain sharing. By sharing the FEC codes, processing resources can be shifted to whichever of the PIC’s two optical channels needs them most.

The result is that some of the strength of the stronger signal can be traded to bolster the weaker one, extending its reach or potentially allowing a higher modulation scheme to be used.
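A deliberately simplified model helps show why sharing helps. It assumes each FEC codeword is interleaved across both channels, so the decoder sees the average pre-FEC bit error rate (BER) of the two, and that decoding succeeds at or below a hypothetical BER threshold. Real SD-FEC performance depends on more than the average BER, and the threshold and channel BERs below are illustrative values, not Infinera figures.

```python
# Simplified model of SD-FEC gain sharing between two optical channels.
# ASSUMPTIONS: codewords are interleaved across both channels, and a codeword
# decodes when its average pre-FEC BER is at or below a hypothetical threshold.

FEC_THRESHOLD = 2.0e-2  # hypothetical pre-FEC BER limit


def decodable(pre_fec_ber: float, threshold: float = FEC_THRESHOLD) -> bool:
    """True if the decoder can correct a stream with this pre-FEC BER."""
    return pre_fec_ber <= threshold


def shared_ber(ber_a: float, ber_b: float, share_a: float = 0.5) -> float:
    """Average pre-FEC BER of a codeword with `share_a` of its bits on channel A."""
    if not 0.0 <= share_a <= 1.0:
        raise ValueError("share_a must be between 0 and 1")
    return share_a * ber_a + (1.0 - share_a) * ber_b


strong_ber, weak_ber = 0.8e-2, 2.6e-2  # hypothetical channel conditions

print("Independent FEC:", decodable(strong_ber), decodable(weak_ber))
print("Shared FEC:", decodable(shared_ber(strong_ber, weak_ber)))
```

Decoded independently, the weaker channel fails; with its codewords spread across both channels, the spare margin of the stronger channel pulls the shared stream below the threshold.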

Applications

Linking data centres is one application where the ICE6 will be used. Another is sub-sea optical transmission, where spans can be thousands of kilometres long, requiring lower-order modulation schemes and lower data rates.

“It’s not just cost-per-bit and power-per-bit, it is also spectral efficiency,” says Shore. “And a higher-performing optical signal can maintain a higher modulation rate over longer distances as well.”

Infinera says that at 600 gigabits-per-second (Gbps), link distances will be “significantly better” than 1,600km. The company is exploring suitable links to quantify ICE6’s reach at 600Gbps.

The ICE6 is packaged in a 5×7-inch optical module. Infinera’s Groove series will first adopt the ICE6 followed by the XTC platforms, part of the DTN-X series. First network deployments will occur in the second half of this year.

Infinera is also selling the ICE6 5×7-inch module to interested parties.

XR Optics

Infinera is not addressing the 400ZR coherent pluggable module market. The 400ZR is the OIF-defined 400-gigabit coherent standard developed to connect equipment in data centres up to 120km apart.

Infinera is, however, eyeing the emerging ZR+ opportunity using XR Optics. ZR+ is not a standard but it extends the features of 400ZR.

XR Optics is Infinera’s brainchild and is based on coherent sub-carriers. All the sub-carriers can be sent to the same destination for point-to-point links, but they can also be sent to different locations for point-to-multipoint communications. Such an arrangement allows for traffic aggregation.

“You can steer all the sub-carriers coming out of an XR transceiver to the same destination to get a 400-gigabit point-to-point link to compete with ZR+,” says Shore. “And because we are using sub-carriers instead of a single carrier, we expect to get significantly better performance.”
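Sub-carrier steering can be pictured as giving each sub-carrier a destination. The sketch below assumes a 400Gbps transceiver made of 16 sub-carriers at 25Gbps each; the article gives neither figure, and the function and site names are hypothetical.

```python
from collections import Counter

# ASSUMPTION: 16 sub-carriers x 25Gbps = 400Gbps; not figures from the article.
NUM_SUBCARRIERS = 16
SUBCARRIER_GBPS = 25


def capacity_by_destination(assignment: list[str]) -> dict[str, int]:
    """Map each destination to the capacity (Gbps) of the sub-carriers steered to it.

    `assignment` holds one destination name per sub-carrier.
    """
    if len(assignment) != NUM_SUBCARRIERS:
        raise ValueError(f"expected {NUM_SUBCARRIERS} sub-carrier assignments")
    return {dest: n * SUBCARRIER_GBPS for dest, n in Counter(assignment).items()}


# Point-to-point: every sub-carrier goes to the same hub.
point_to_point = ["hub"] * 16

# Point-to-multipoint: sub-carriers split between three hypothetical leaf sites.
point_to_multipoint = ["leaf-a"] * 4 + ["leaf-b"] * 4 + ["leaf-c"] * 8

print(capacity_by_destination(point_to_point))
print(capacity_by_destination(point_to_multipoint))
```

The same transceiver either delivers its full capacity to one site or aggregates traffic from several smaller sites, depending only on how the sub-carriers are assigned.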

Infinera is developing the coherent DSPs for XR Optics and has teamed up with optical module makers Lumentum and II-VI.

Other unnamed partners have joined Infinera to bring the technology to market. Shore says that the partners include network operators that have contributed to the technology’s development.

Infinera planned to showcase XR Optics at the OFC conference and exhibition held recently in San Diego.

Shore says to expect XR Optics announcements in late summer, from Infinera and perhaps others. These will detail the XR Optics form factors and how they function as well as the products’ schedules.

Ciena launches the 8700 metro Ethernet-over-WDM platform

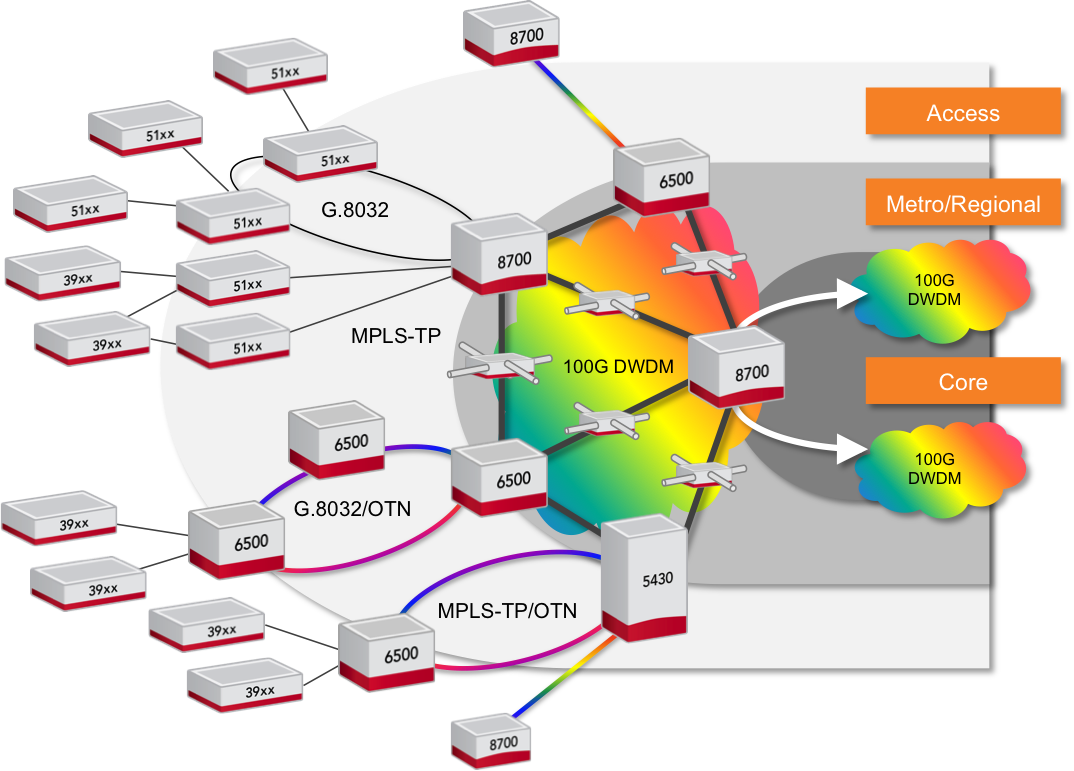

- The 8700 Packetwave is an Ethernet-over-DWDM platform

- The 8700 is claimed to deliver double the Ethernet density while halving the power consumption and space required.

Source: Ciena


Ciena has launched the 8700 Packetwave platform that combines high-capacity Ethernet switching with 100 Gigabit coherent optical transmission.

The platform is designed to cater for the significant growth in metro traffic, estimated at between 30 and 50 percent each year, and the ongoing shift from legacy to Ethernet services.


Analysts predict that by 2017, 75 percent of the overall bandwidth in the metro will be Ethernet-based.

"In the major markets we compete in, our customers are telling us that Ethernet has already surpassed 75 percent," says Brian Lavallée, director of technology & solutions marketing at Ciena.

"What you're seeing is a trend towards convergence," says Ray Mota, managing partner at ACG Research. "The economics make sense and it should have happened but organisational issues have caused the delay."

Many service providers have two separate groups, one for packet and another for transport. "Now, many service providers are merging the two groups, feeling the time is right, so you will see more and more converged products get more penetration," says Mota.

The 8700 can be viewed as a slimmed-down packet-optical transport system (P-OTS), tailored for Ethernet. The platform's packet features include Ethernet and MPLS-TP for connection-oriented Ethernet, while optically it has 100 Gigabit coherent WDM.

"This is a more specialised machine hitting this target spot in the aggregation networks," says Michael Howard, co-founder and principal analyst, carrier networks at Infonetics Research. "It doesn't need much MPLS, it doesn’t need OTN switching, and it doesn’t need SDH/TDM."

The main applications for the 8700 include aggregation of telcos' business services, data centre interconnect, wireless backhaul, and the distribution of cable operators' Ethernet traffic. "It [the 8700] is a good product for edge aggregation, where the bandwidth is getting cranked up," says Howard. "I see it as an Ethernet-over-DWDM platform, performing the aggregation on the customer side and the fan-in on the upstream side."

Two Packetwave platforms have been announced: an 800 Gigabit full-duplex switching capacity platform and a 2-Terabit one. The platform's line cards support 10, 40 and 100 Gigabit client-side interfaces while a line-side card has two 100 Gigabit coherent interfaces based on Ciena's WaveLogic DSP-ASIC technology.

Ciena says the platform will support double the capacity when it introduces WaveLogic devices that deliver 100 and 200 Gig rates. "It has been tested," says Lavallée. "It is just a matter of changing the cards."

The 8700 is claimed to deliver double the Ethernet density compared to competing platforms, while halving the power consumption and space required. "Given it is a new category of product, we don't have a direct competitor," says Lavallée. "But when we say half the power and space, that is the average across these multiple products from competitors."

Lavallée would not detail the competitor platforms used in the comparison but Mota cites Alcatel-Lucent's 7450 and 7950 platforms, Juniper's MX and PTX platforms and Cisco's ASR 9000 as the ones likely used.

Using merchant silicon for the Ethernet switching has helped achieve greater density, as has using Ciena's own WaveLogic DSP-ASIC. "The further development we have done on our [WaveLogic] coherent optical processor does give us significant savings, not just in power but also real-estate," says Lavallée.

Being a layer-2 platform, the 8700 has none of the packet processing and specialist memory hardware requirements associated with layer-3 IP routers, also benefitting the platform's overall power consumption.

Ciena stresses that P-OTS is not going away and that it will continue to deliver significant value for certain customers. "The biggest concern of customers is complexity," says Lavallée. "There are a lot of ways of reducing complexity in your network and some customers believe that is Ethernet-over-dense WDM."

ACG's Mota sees the launch of the 8700 as an important move by Ciena. "The metro is the hot area that needs transitioning," he says. "Many of the traditional core requirements are moving to the metro so the timing of Ciena playing in this space with a converged platform could be strategic, providing they partner well with companies like Ericsson and the network functions virtualisation software providers."

Lavallée says that with the advent of software-defined networking and the applications that make use of the technology, there is an underlying shift from the hardware towards software. But he dismisses the notion that hardware is becoming less important.

"What is lost in this whole discussion is that if you don't have a programmable piece of hardware below, you can't write these apps," says Lavallée. The 8700 hardware is programmable and there are open interfaces to access it, he says: "We have a lot of knobs and switches that the software can use."

Further reading

Paper: Ciena 8700 Packetwave platform

WDM and 100G: A Q&A with Infonetics' Andrew Schmitt

The WDM optical networking market grew 8 percent year-on-year, with spending on 100 Gigabit now accounting for a fifth of the WDM market. So claims the first quarter 2014 optical networking report from market research firm, Infonetics Research. Overall, the optical networking market was down 2 percent, due to the continuing decline of legacy SONET/SDH.

In a Q&A with Gazettabyte, Andrew Schmitt, principal analyst for optical at Infonetics Research, talks about the report's findings.

Q: Overall WDM optical spending was up 8% year-on-year: Is that in line with expectations?

Andrew Schmitt: It is roughly in line with the figures I use for trend growth but what is surprising is how there is no longer a fourth quarter capital expenditure flush in North America followed by a down year in the first quarter. This still happens in EMEA but spending in North America, particularly by the Tier-1 operators, is now less tied to calendar spending and more towards specific project timelines.

This has always been the case at the more competitive carriers. A good example of this was the big order Infinera got in Q1, 2014.

You refer to the growth in 100G in 2013 as breathtaking. Is this growth not to be expected as a new market hits its stride? Or does the growth signify something else?

I got a lot of pushback for aggressive 100G forecasts in 2010 and 2011 when everyone was talking about, and investing in, 40G. You can read a White Paper I wrote in early 2011 which turned out to be pretty accurate.

My call was based on the fact that, fundamentally, coherent 100G shouldn’t cost more than 40G, and that service providers would move rapidly to 100G. This is exactly what has happened, outside AT&T, NTT and China which did go big with 40G. But even my aggressive 100G forecasts in 2012 and 2013 were too conservative.

I have just raised my 2014 100G forecast after meeting with Chinese carriers and understanding their plans. 100G will essentially take over almost all of the new installations in the core by 2016, worldwide, and that is when metro 100G will start. There is too much hype about metro 100G right now given the cost, but within two years the price will be right for volume deployment by service providers.


You say the lion's share of 100G revenue is going to five companies: Alcatel-Lucent, Ciena, Cisco, Huawei, and Infinera. Most of the companies are North American. Is the growth mainly due to the US market (besides Huawei, of course)? And if so, is it due to Verizon, AT&T and Sprint preparing for growing LTE traffic? Or is the picture more complex, with cable operators, internet exchanges and large data centre players also a significant part of the 100G story, as Infinera claims?

It’s a lot more complex than the typical smartphone plus video-bandwidth-tsunami narrative. Many people like to attach the wireless metaphor to any possible trend because it is the only area perceived as having revenue and profitability growth, and it has a really high growth rate. But something big growing at 35 percent adds more in a year than something small growing at 70 percent.
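Schmitt's point about growth rates is simple arithmetic, sketched below with hypothetical market sizes in arbitrary units. The larger, slower-growing market adds more in absolute terms whenever it is more than the ratio of the two growth rates (here 70/35, so twice) the size of the smaller one.

```python
# Absolute growth depends on the base, not just the rate.
# Market sizes are hypothetical, in arbitrary units.


def absolute_increase(base: float, growth_rate: float) -> float:
    """Amount added in one year at the given fractional growth rate."""
    return base * growth_rate


def breakeven_ratio(fast_rate: float, slow_rate: float) -> float:
    """Size ratio (big/small) above which the slower-growing market adds more."""
    return fast_rate / slow_rate


big, small = 100.0, 20.0
print(f"Big market at 35%:   +{absolute_increase(big, 0.35):.1f}")
print(f"Small market at 70%: +{absolute_increase(small, 0.70):.1f}")
print(f"Break-even size ratio: {breakeven_ratio(0.70, 0.35):.1f}x")
```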

The reality is that wireless bandwidth, as a percentage of all traffic, is still small. 100G is being used for the long lines of the network today as a more efficient replacement for 10G and while good quantitative measures don’t exist, my gut tells me it is inter-data-centre traffic and consumer/ business to data centre traffic driving most of the network growth today.

I use cloud storage for my files. I’m a die-hard Quicken user with 15 years of data in my file. Every time I save that file, it is uploaded to the cloud – 100MB each time. The cloud provider probably shifts that around afterwards too. Apply this to a single enterprise user - think about how much data that is for just one person. There is so much 'blah blah blah' about video but 90 percent is cacheable. Cloud storage is not.


Cisco is in this list yet does not seek much media attention about its 100G. Why is it doing well in the growing 100G market?

Cisco has a slice of customers that are fibre-poor who are always seeking more spectral efficiency. I also believe Cisco won a contract with Amazon in Q4, 2013, but hey, it’s not Google or Facebook so it doesn’t get the big press. But no one will dispute Amazon is the real king of public cloud computing right now.


In the data centre world, there is a sense that the value of specialist hardware is diminishing as commodity platforms - servers and switches - take hold. The same trend is starting in telecoms with the advent of Network Functions Virtualisation (NFV) and software-defined networking (SDN). WDM is specialist hardware and will remain so. Can WDM vendors therefore expect healthy annual growth rates to continue for the rest of the decade?

I am not sure I agree.

There is no reason transport systems couldn’t be white-boxed just like other parts of the network. There is an over-reaction to the impact SDN will have on hardware but there have always been constant threats to the specialist.

Each morning a hardware specialist must wake up and prove to the world that they still need to exist. This is why you see continued hardware vertical integration by some optical companies; good examples are what Ciena has done with partners on intelligent Raman amplification or what Infinera has done building a tightly integrated offering around photonic-integrated circuits for cheap regeneration. Or Transmode which takes a hacker’s approach to optics to offer customers better solutions for specific category-killer applications like mobile backhaul. Or you swing to the other side of the barbell, and focus on software, which appears to be Cyan’s strategy.

You’ve got to do hard stuff that others can’t easily do or you are just a commodity provider. This is why Cisco and Intel are investing in silicon photonics – they can use this as an edge against commodity white-box assemblers and bare-metal suppliers.

Apps over packet-optical: Ciena boosts 6500's packet handling

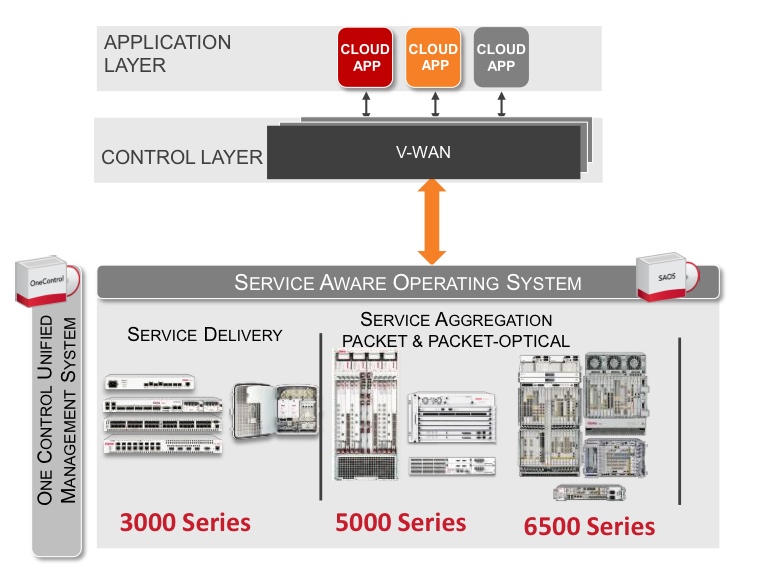

Source: Ciena


Ciena has enhanced its packet-optical equipment portfolio by adding packet support to its flagship 6500 platform.

Cards and software from Ciena's established Carrier Ethernet packet platforms have been added to the 6500, a packet-optical platform that features reconfigurable optical add-drop multiplexing (ROADM), WaveLogic3 coherent transponders, Optical Transport Network (OTN) switching and SONET/SDH aggregation. The system vendor has also developed packet aggregation and switch fabric cards for the 6500.

"You can now use the 6500 for 100 percent packet switching, 100 percent OTN switching, or any mix in between," says Michael Adams, vice president of product and technical marketing at Ciena.

The development is part of a general trend to combine optical and packet to create scalable, manageable networks. It also addresses the operators' growing need for programmable networks to deliver cloud-based services and dynamic bandwidth.

Applications

Ciena has a virtual wide-area network (VWAN) control layer that sits above the network layer, abstracting the hardware, and through which software applications can be executed (see chart).

"We have a scheduler 'app' through the control layer VWAN that allows bandwidth to change between sites, for example," says Adams. "Every night I want to do a backup between these times and I want this much bandwidth as I do it."
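The article does not describe the scheduler app's interface, so the following is only a sketch of the idea Adams outlines: a recurring nightly window during which extra bandwidth is reserved between two sites. The `Reservation` class, the site names and the 10Gbps baseline are all hypothetical.

```python
from dataclasses import dataclass
from datetime import time

BASELINE_GBPS = 10.0  # hypothetical always-on bandwidth between the two sites


@dataclass(frozen=True)
class Reservation:
    """A recurring daily bandwidth boost between two sites (hypothetical model)."""
    site_a: str
    site_b: str
    start: time
    end: time
    gbps: float

    def active_at(self, t: time) -> bool:
        if self.start <= self.end:
            return self.start <= t < self.end
        # Window wraps past midnight, e.g. 23:00 to 03:00.
        return t >= self.start or t < self.end


def bandwidth_between(site_a: str, site_b: str, t: time, reservations: list[Reservation]) -> float:
    """Baseline plus any reservations active at time t, in either direction."""
    extra = sum(r.gbps for r in reservations
                if {r.site_a, r.site_b} == {site_a, site_b} and r.active_at(t))
    return BASELINE_GBPS + extra


nightly_backup = Reservation("dc-east", "dc-west", time(1, 0), time(4, 0), 90.0)
print(bandwidth_between("dc-east", "dc-west", time(2, 0), [nightly_backup]))
print(bandwidth_between("dc-east", "dc-west", time(12, 0), [nightly_backup]))
```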

Another application is machine-to-machine communication that can be used to link data centres. "If you can virtualise within a data centre, why not virtualise across data centres?" says Adams.

As [servers'] virtual machines move between data centres, the performance of the network becomes key. Ciena has an application programming interface (API) that links to the server's hypervisor, allowing machine-to-machine communication to be intercepted so that bandwidth can be made available for the virtual machine traffic. "We are not doing it today but we have the software to link between two data centres," says Adams.

6500 enhancements

Until now it has been difficult to combine packet with packet optical, requiring different platforms, each with their own management system, says Adams. "It has been hard to take a base station that needs only packet, put the Carrier Ethernet traffic onto a ring [network] and then onto a 100 Gigabit wavelength," he says. "You either built pure packet or used a form of packet optical but it was hard to mix."

Ciena has added hardware and software to the 6500 from its existing packet platforms. The packet platforms are used to deliver Ethernet services and infrastructure and are a $40 million-a-quarter business for Ciena, with over 300,000 network elements deployed.

The service-aware operating system (SAOS), developed for the Ethernet packet platforms, has also been ported onto the 6500's new packet and fabric cards.

With the 6500 running the same software as its packet platforms, service management across the network becomes simpler. "Now, one system can deploy services, and look at performance visualisation between the layers," says Adams.

Ciena's latest hardware cards include blades with 1 and 10 Gigabit-per-second (Gbps) aggregation that operate independently of the 6500's switch fabric. "You don't touch the fabric, just run [them] over a WDM wavelength," says Adams. The stackable blades support 120Gbps to 300Gbps of packet traffic.

Meanwhile, the 6500 switch fabric cards add 600 Gigabit or 1.2 Terabit packet switching capacity, which will be increased further in future.

"We have got these blades that can be stacked beside each other for resiliency or scale," says Adams. "And if you want to scale those up, there is a [switch] fabric solution."

Further reading:

100 Gigabit and packet optical loom large in the metro

P-OTS 2.0: 60-second interview with Heavy Reading's Sterling Perrin

ECI Telecom demos 100 Gigabit over 4,600km

- 4,600km optical transmission over submarine cable

- The Tera Santa Consortium, chaired by ECI, will show a 400 Gigabit/ 1 Terabit transceiver prototype in the summer

- 100 Gigabit direct-detection module on hold as the company eyes new technology developments


ECI Telecom has transmitted a 100 Gigabit signal over 4,600km without signal regeneration. Using Bezeq International's submarine cable between Israel and Italy, ECI sent the 100 Gigabit-per-second (Gbps) signal alongside live traffic. The Apollo optimised multi-layer transport (OMLT) platform was used, featuring a 5x7-inch MSA 100Gbps coherent module with soft-decision forward error correction (SD-FEC).

"We set a target for the expected [optical] performance with our [module] partner and it was developed accordingly," says Jimmy Mizrahi, head of the optical networking line of business at ECI Telecom. "The [100Gbps] transceiver has superior performance; we have heard that from operators that have tested the module's capabilities and performance."

One geography that ECI serves is the former Soviet Union which has large-span networks and regions of older fibre.

Tera Santa Consortium

ECI used the Bezeq trial to also perform tests as part of the Tera Santa Consortium project involving Israeli optical companies and universities. The project is developing a transponder capable of 400 Gigabit and 1 Terabit rates. The project is funded by seven participating firms and the Israeli Government.

"When we started the project it was not clear whether the market would go for 400 Gig or 1 Terabit,” says Mizrahi. “Now it seems that the market will start with 400 Gig."

The Tera Santa Consortium expects to demonstrate a 1 Terabit prototype in August and is looking to extend the project a further three years.

100 Gigabit direct detection

In 2012 ECI announced it was working with chip company, MultiPhy, to develop a 100 Gigabit direct-detection module. The 100 Gigabit direct detection technology uses 4x28Gbps wavelengths and is a cheaper solution than 100Gbps coherent. The technology is aimed at short reach (up to 80km) links used to connect data centres, for example, and for metro applications.

“We have changed our priorities to speed up the [100Gbps] coherent solution,” says Mizrahi. “It [100Gbps direct detection] is still planned but has a lower priority.”

ECI says it is monitoring alternative technologies coming to market in the next year. “We are taking it slowly because we might jump to new technologies,” says Mizrahi. “The line cards will be ready, the decision will be whether to go for new technologies or for direct detection."

Mizrahi would not list the technologies but hinted they may enable cheaper coherent solutions. Such coherent modules would not need SD-FEC to meet shorter-reach metro requirements. They could also be pluggable, in the CFP or even the CFP2 form factor, and use indium phosphide-based modulators.

“For certain customers pricing will always be the major issue,” says Mizrahi. “If you have a solution at half the price, they will take it.”

Cisco Systems demonstrates 100 Gigabit technologies

* Announces 100 Gigabit transmission over 4,800km


Cisco Systems has announced that its 100 Gigabit coherent module has achieved a reach of 4,800km without signal regeneration. The span was achieved in the lab and the system vendor intends to verify the span in a customer's network.

The optical transmission system achieved a reach of 3,000km over low-loss fibre when first announced in 2012. The extended reach is not the result of a design upgrade; rather, the 100 Gigabit-per-second (Gbps) module is being used on a link with Raman amplification.

Cisco says it started shipping its 100Gbps coherent module in June 2012. "We have shipped over 2,000 100Gbps coherent dense WDM ports," says Sultan Dawood, marketing manager at Cisco. The 100Gbps ports include line-side 100Gbps interfaces integrated within Cisco's ONS 15454 multi-service transport platform and its CRS core router supporting its IP-over-DWDM elastic core architecture.

Cisco has also coupled the ASR 9922 series router to the ONS 15454. "We are extending what we have done for IP and optical convergence in the core," says Stephen Liu, director of market management at Cisco. "There is now a common solution to the [network] edge."

None of Cisco's customers has yet used 100Gbps over a 3,000km span, never mind 4,800km. But the reach achieved is an indicator of the optical transmission performance. "The [distance] performance is really a proxy for usefulness," says Liu. "If you take that 3,000km over low-loss fibre, what that buys you is essentially a greater degree of tolerance for existing fibre in the ground."

Much industry attention is being given to the next-generation transmission speeds of 400Gbps and one Terabit. These require support for super-channels, multi-carrier signals that transmit 400Gbps and one Terabit, as well as flexible spectrum to pack the multi-carrier signals efficiently across the fibre's spectrum. But Cisco argues that faster transmission is only one of the engineering milestones to be achieved, especially when 100Gbps deployment is still in its infancy.

To benefit 100Gbps deployments, Cisco has officially announced its own CPAK 100Gbps client-side optical transceiver after discussing the technology over the last year. "CPAK helps accelerate the feasibility and cost points of deploying 100Gbps," says Liu.

CPAK

The CPAK is Cisco's first optical transceiver using silicon photonics technology following its acquisition of LightWire. The CPAK is a compact optical transceiver designed to replace the larger and more power-hungry 100Gbps CFP interfaces.

The CPAK is being launched at the same time as many companies are announcing CFP2 multi-source agreement (MSA) optical transceiver products. Cisco stresses that the CPAK conforms to the IEEE 100GBASE-LR4 and -SR10 100Gbps standards. Indeed at OFC/NFOEC it is demonstrating the CPAK interfacing with a CFP2.

The CPAK will be used across several Cisco platforms but the first implementation is for the ONS 15454.

The CPAK transceiver will be generally available in the summer of 2013.

Fibre-to-the-NPU: optics reshapes the IP core router

Start-up Compass Electro-Optical Systems has announced an IP core router based on a chip with a Terabit-plus optical interface.

Asaf Somekh, vice president of marketing, showing Gazettabyte Compass-EOS's novel icPhotonics chip


Having an optical interface linking directly to the chip, which includes a merchant network processor, simplifies the system design and enables router features such as real output queuing. The r10004 IP router is in production and is already deployed in an operator's network.

The company's icPhotonics chip integrates 168 VCSELs, each operating at 8 Gigabit-per-second, and 168 photodetectors for a bandwidth of 1.344 Terabit-per-second (Tbps) in each direction. Eight of the chips are connected in a full mesh, doing away with the need for the switch fabric and mid-plane normally used to interconnect router cards.
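The quoted bandwidth follows directly from the lane count, and the full mesh's link count from the number of chips. How Compass-EOS divides the 168 lanes among the seven peer chips is not stated, so the even split below is an assumption made only for illustration.

```python
# Arithmetic behind the icPhotonics figures.
# From the article: 168 VCSELs at 8Gbps, 8 chips in a full mesh.
# ASSUMPTION: lanes split evenly across peer chips (not stated by Compass-EOS).

NUM_LANES = 168
LANE_GBPS = 8
NUM_CHIPS = 8


def per_direction_tbps(lanes: int = NUM_LANES, lane_gbps: int = LANE_GBPS) -> float:
    """Optical bandwidth per direction, in Tbps."""
    return lanes * lane_gbps / 1000


def full_mesh_links(n: int) -> int:
    """Number of point-to-point links needed to connect n chips in a full mesh."""
    return n * (n - 1) // 2


peers = NUM_CHIPS - 1
lanes_per_peer = NUM_LANES // peers  # assumed even split

print(f"Per-direction bandwidth: {per_direction_tbps():.3f} Tbps")
print(f"Links in an {NUM_CHIPS}-chip full mesh: {full_mesh_links(NUM_CHIPS)}")
print(f"Lanes per peer (if split evenly): {lanes_per_peer}, "
      f"i.e. {lanes_per_peer * LANE_GBPS} Gbps per peer")
```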

The resulting architecture saves power, space and cost, says Asaf Somekh, vice president of marketing at Compass-EOS. The start-up estimates that its platform's total cost of ownership over five years is a quarter to a third of existing IP core routers.

The high-bandwidth optical links will also be used to connect multiple platforms, enabling operators to add routing resources as required. Compass-EOS is coming to market with a 6U-high standalone platform but says it will scale up to 21 platforms to appear as one logical router.

The 800Gbps-capacity r10004 comes with 2x100 Gigabit-per-second (Gbps) and 20x10Gbps line card options. The platform has real output queuing, where packets from all the input ports are queued, with quality of service applied, prior to the exit port. The router also supports software-defined networking (SDN) that enables external control of traffic routing.
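Compass-EOS does not describe its scheduler, so the following is a toy model of output queuing in general rather than the r10004's design. Every packet is placed directly in a queue at its exit port, one queue per QoS class, and the exit port decides what leaves next. Strict-priority scheduling, three QoS classes, and the class and method names are all assumptions.

```python
from collections import deque


class OutputQueuedSwitch:
    """Toy output-queued switch: each exit port has one FIFO per QoS class.

    ASSUMPTIONS: strict-priority scheduling, class 0 is the highest priority.
    """

    def __init__(self, num_ports: int, num_classes: int = 3):
        self.queues = [[deque() for _ in range(num_classes)] for _ in range(num_ports)]

    def enqueue(self, in_port: int, out_port: int, qos_class: int, payload: str) -> None:
        # The packet goes straight to its exit port's queue; there is no
        # input-side buffering waiting for a path through a switch fabric.
        self.queues[out_port][qos_class].append((in_port, payload))

    def dequeue(self, out_port: int):
        # Serve the highest-priority non-empty queue at this exit port.
        for queue in self.queues[out_port]:
            if queue:
                return queue.popleft()
        return None


switch = OutputQueuedSwitch(num_ports=4)
switch.enqueue(in_port=0, out_port=2, qos_class=2, payload="bulk")
switch.enqueue(in_port=1, out_port=2, qos_class=0, payload="voice")
print(switch.dequeue(2))
print(switch.dequeue(2))
```

Because all packets for a port meet in that port's queues, QoS decisions can be made with full knowledge of everything contending for the port, which is the property output queuing offers.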

The company has its own clean room where it makes its optical interface. Compass-EOS has also developed its own ASICs and the router software for the r10004.

Somekh says developing the optical interface has been challenging, requiring years of development working with the Fraunhofer Institute and Tel-Aviv University. One challenge was developing a glue to fix the VCSELs on top of the silicon.

The start-up has raised US $120M with investors such as Cisco Systems, Deutsche Telekom and Comcast as well as several venture capital firms.

icPhotonics technology

Compass-EOS refers to its optical interface IC as silicon photonics but a more accurate description is integrated silicon-optics; silicon itself is not used as a medium for light. Even so, embedding the optics in the chip package has produced a disruptive system.

The optical-interconnect addresses two chip design challenges: signal integrity for long transmission lengths and chip input/output (I/O).

With high-speed interfaces, achieving signal integrity across a line card and between boards is challenging. Routers use a midplane and switch fabric to connect the router cards within a platform, and parallel optics to connect chassis.

Compass-EOS has taken board-mounted optics one step further by integrating VCSELs and photodetectors into the chip package. This simplifies the platform, since cards are connected using a mesh architecture, and allows scaling by linking systems.

The chip window shows the VCSELs and photodetectors. Source: Compass-EOS

The design also addresses chip I/O issues. "The I/O density is about 30x higher than traditional solutions and the gap will grow in future," says Somekh.

Directly attaching the optical interconnect to the CMOS chip overcomes limitations imposed by ball grid array and printed circuit board (PCB) technologies.

Typically, data is routed from the host PCB to an ASIC via a ball grid array with a ball pitch of 0.8mm. Shrinking this pitch further is non-trivial given PCB signal-integrity issues. Moreover, each electrical serdes (serialiser/deserialiser) for data I/O uses at least eight bumps (transmit, receive, signal and ground), occupying a cell of 3.2×1.6mm. For a 10Gbps device the resulting duplex data density is 2Gbps/mm², increasing to 5Gbps/mm² with a 25Gbps device, according to Compass-EOS.
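These electrical figures can be reproduced with simple arithmetic. The sketch assumes the eight bumps sit in a 4 x 2 arrangement at the 0.8mm pitch, which gives the 3.2×1.6mm cell. It also reads the quoted duplex density as the lane rate divided by the cell area. That reading is our interpretation, chosen because it matches Compass-EOS's numbers.

```python
BALL_PITCH_MM = 0.8

# ASSUMPTION: eight bumps laid out 4 x 2 at the ball pitch.
cell_w_mm = 4 * BALL_PITCH_MM           # 3.2 mm
cell_h_mm = 2 * BALL_PITCH_MM           # 1.6 mm
cell_area_mm2 = cell_w_mm * cell_h_mm   # 5.12 mm^2


def serdes_density(lane_gbps: float) -> float:
    """Gbps per mm^2 for one serdes lane occupying one bump cell."""
    return lane_gbps / cell_area_mm2


for rate in (10, 25):
    print(f"{rate} Gbps serdes: {serdes_density(rate):.2f} Gbps/mm^2")
```

This prints 1.95 and 4.88Gbps/mm², which round to the 2 and 5Gbps/mm² quoted.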

The start-up says its optical interconnect achieves a chip I/O density of 61Gbps/mm². "This will increase to 243Gbps/mm² once we move to 32Gbps."
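If the optical channel layout, and hence the area per channel, stays the same when the lane rate rises from 8Gbps to 32Gbps, the density simply scales with the lane rate. The sketch below checks that scaling and the roughly 30x advantage over the 10Gbps ball grid array figure. The fixed-layout assumption is ours.

```python
OPTICAL_DENSITY_8G = 61.0      # Gbps/mm^2 at 8 Gbps per channel (quoted)
ELECTRICAL_DENSITY_10G = 2.0   # Gbps/mm^2 for a 10 Gbps BGA serdes (quoted)


def optical_density(lane_gbps: float) -> float:
    # ASSUMPTION: same optical layout, so density is proportional to lane rate.
    return OPTICAL_DENSITY_8G * lane_gbps / 8


advantage = OPTICAL_DENSITY_8G / ELECTRICAL_DENSITY_10G

print(f"At 32 Gbps per channel: {optical_density(32):.0f} Gbps/mm^2")
print(f"Advantage over 10 Gbps BGA: {advantage:.1f}x")
```

The result, 244Gbps/mm², is within rounding of the 243Gbps/mm² quoted, and the 30.5x ratio matches Somekh's "about 30x" claim.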

The resulting design uses 10 percent of the total CMOS area for I/O. "This is a more efficient chip design," says Somekh. "Most of the silicon is used for logic tasks."

The serdes on chip still need to interface to hundreds of 8Gbps channels. And moving to 32Gbps will present a greater challenge. In comparison, silicon photonics promises to simplify the coupling of optics and electronics.

Another design challenge is that the VCSELs are co-packaged with a large chip consuming 30-50W and generating heat. The design needs to make sure that the operating temperature of the VCSELs is not affected by the heat from the chip.

This is another promised advantage of silicon photonics, where the operating temperatures of the optics and the silicon are matched.

Analysts' perspective

Gazettabyte asked two analysts - IDC's Vernon Turner and ACG Research's Eve Griliches - about the significance of Compass-EOS's announcement. The analysts were also asked for their views on the router's modularity, the total cost of ownership claims, the support for SDN and real output queueing, and whether the platform will gain market share from the IP core router incumbents.

IDC

Vernon Turner, senior vice president and general manager of enterprise computing, network, telecom, storage, consumer and infrastructure.

One of the hardest places to innovate in the ICT (information and communications technology) world is at or around the speed of light. Whenever you make things run faster, the last hurdle tends to be the speed at which things travel over an optical network.

Therefore, something that changes the form factor of a network router and innovates at interconnect speed may be able to disrupt a significant part of the network industry.

"Separating the interconnect with the physical building block is huge. It means that you scale the pieces that you need, when and where you want them; this is not just a repackaging announcement"

First, building the capacity of a router as needed is great for service providers and large enterprises, since you deploy capacity only as you need it. Second, by using a photonics interconnect, the speed of the link and the distance over which two devices can sit apart are greatly enhanced, changing the way one builds network infrastructures.

Separating the interconnect with the physical building block is huge. It means that you scale the pieces that you need, when and where you want them; this is not just a repackaging announcement.

Regarding the total-cost-of-ownership claims, if these are valid, they are of a magnitude that fits the 'disruptive innovation' class: delivering network services to an underserved market and creating new network services markets.

SDN is the latest buzzword [regarding the router's support for SDN]. But it is the last part of the virtualised data centre, as compute and I/O have already been figured out. SDN is not new, but separating the data plane from the control plane means service providers can begin to create network services through virtualisation without impacting network performance, something that already happens with servers and storage.

Existing core router vendors use their own ASIC designs as the last point of differentiation, so doing this [as Compass-EOS has done] on merchant silicon could have wide implications for router commoditisation, or at least speed it up beyond current trends.

ACG Research

Eve Griliches, vice president of optical networking

As to the significance of the announcement, it is not huge in the scheme of things, but it does bring the use of optical components to replace a backplane to market earlier than the timescales quoted to ACG Research.

"Virtual output queueing is a smart way to do quality of service"

In theory, the optical modules should give the router a smaller footprint, resulting in a better total cost of ownership. The advantage of this optical patch-panel approach is that it allows much higher bandwidth to cross the backplane, which is now an optical interconnect. That means you don't have to do as much flow control, drop as many packets or keep the utilisation of the router so low. You can raise the utilisation rate from, let's say, 15 percent to maybe 25 percent or higher. All that results in a lower total cost of ownership, in theory.
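The utilisation point can be made concrete with a toy calculation. It assumes the carried traffic stays fixed and that the capacity an operator must install is simply traffic divided by average utilisation. The 150Gbps load is a made-up figure for illustration, not ACG Research data.

```python
def installed_capacity(traffic_gbps: float, utilisation: float) -> float:
    """Capacity needed to carry a traffic load at a given average utilisation."""
    return traffic_gbps / utilisation


traffic = 150.0                          # hypothetical average load, Gbps
low = installed_capacity(traffic, 0.15)   # about 1,000 Gbps installed
high = installed_capacity(traffic, 0.25)  # 600 Gbps installed
saving = 1 - high / low

print(f"At 15%: {low:.0f} Gbps installed; at 25%: {high:.0f} Gbps installed")
print(f"Capacity saving: {saving:.0%}")
```

Raising utilisation from 15 to 25 percent cuts the installed capacity needed for the same traffic by 40 percent, whatever the load. That is the mechanism behind the total-cost-of-ownership argument.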

SDN is a bit nebulous. Virtual output queueing is a smart way to do quality of service, but there are key software features to consider: how many BGP (border gateway protocol) peers are supported, multicast capability and signalling for MPLS (multiprotocol label switching). Do they support RSVP-TE (resource reservation protocol - traffic engineering) or LDP (label distribution protocol)? Or both? Building a real router still takes years of work.

Faster interconnects are the way to go across routing and optical platforms, period. This [Compass-EOS platform] can help. Do I see this optical piece fitting nicely into an already existing router? Yes. I think if that doesn't happen, they will have a bit of an uphill battle nudging the incumbents.

On the other hand, if full router functionality is not needed at some junctures, as we've seen with LSR (label switch router) technology, then they may have a place in the network. But operators don't like to play around with their routed networks too much, so it may be greenfield applications that are mostly available to them [Compass-EOS] initially.

Cisco Systems' intelligent light

Network optimisation continues to exercise operators and content service providers as their requirements evolve with the growth of services such as cloud computing. Cisco Systems' newly announced elastic core architecture aims to improve networking efficiency and address particular service provider requirements.

“The core [network] needs to be more robust, agile and programmable”

Sultan Dawood, Cisco

“The core [network] needs to be more robust, agile and programmable – especially with the advent of cloud,” says Sultan Dawood, senior manager, service provider marketing at Cisco. “As service providers look at next-generation infrastructure, convergence of IP and optical is going to have a big play.”

Cisco's elastic core architecture combines several developments. One is the integration of Cisco's 100 Gigabit-per-second (Gbps) dense wavelength division multiplexing (DWDM) coherent transponder, first introduced on its ROADM platform, onto its router to enable IP-over-DWDM.

This is part of what Cisco calls nLight (intelligent light), which itself has three components: its 100Gbps coherent ASIC hardware, the nLight control plane and nLight colourless and contentionless ROADMs. “As packet and optical networks converge, intelligence between the layers is needed,” says Dawood. “Today how the ROADM and the router communicate is limited.”

There is the GMPLS [Generalized Multi-Protocol Label Switching] control plane working at the IP layer, and WSON [Wavelength Switched Optical Network] working at the optical layer. These two protocols perform control-plane functions at their respective layers. "What nLight is doing is communicating between these two layers [using existing parameters] and providing the interaction," says Dawood.

Ron Kline, principal analyst for network infrastructure at Ovum, describes nLight more generally as Cisco’s strategy for software-defined networking: "Interworking control planes to share info across platforms and add the dynamic capabilities."

The second component of Cisco's announcement is an upgrade of its carrier-grade services engine from 20Gbps to 80Gbps. The engine fits within Cisco's CRS-3 core router and will be available from May 2013. It enables services such as IPv6 and 'cloud routing': network positioning that determines the most suitable resource for a customer’s request based on the content’s location and the data centre's loading.
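The cloud-routing idea, choosing a resource from the content's location and the data centre's load, can be sketched as a filter-then-score selection. Everything here is hypothetical: the DataCentre fields, the 0.8 load ceiling and the load weighting are illustrative assumptions, not Cisco's algorithm.

```python
from dataclasses import dataclass


@dataclass
class DataCentre:
    name: str
    network_cost: float   # hypothetical metric, e.g. latency to the user
    has_content: bool     # is the requested content held here?
    load: float           # current utilisation, 0.0 to 1.0


def pick_data_centre(sites, max_load=0.8, load_weight=10.0):
    """Hypothetical selection: among sites that hold the content and are not
    overloaded, minimise network cost plus a penalty proportional to load."""
    candidates = [s for s in sites if s.has_content and s.load < max_load]
    if not candidates:
        return None
    return min(candidates, key=lambda s: s.network_cost + load_weight * s.load)


sites = [
    DataCentre("dc-east", network_cost=2, has_content=True, load=0.9),
    DataCentre("dc-west", network_cost=5, has_content=True, load=0.3),
    DataCentre("dc-north", network_cost=3, has_content=False, load=0.1),
    DataCentre("dc-south", network_cost=4, has_content=True, load=0.6),
]
print(pick_data_centre(sites).name)  # dc-west
```

Here dc-east is skipped because it is overloaded and dc-north because it lacks the content. dc-west then wins on combined cost (5 + 3) over dc-south (4 + 6).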

Cisco has also added anti-distributed denial of service (anti-DDoS) software to counter cyber threats. “We have licensed software that we have put into our CRS-3 so that with our VPN services we can provide threat mitigation and scrub any traffic liable to hurt our customers,” says Dawood.

nLight

According to Cisco, several issues need to be addressed between the IP and optical layers. One is how the router and the optical infrastructure exchange information such as circuit IDs, path identifiers and real-time information, so as to avoid the manual intervention used currently.

“With this intelligent data that is extracted due to these layers communicating, I can now make better, faster decisions that result in rapid service provisioning and service delivery,” says Dawood.

Cisco cites as an example a financial customer requesting a low-latency path. In this case, the optical network comes back through this nLight extraction process and highlights the most appropriate path. That path has a circuit ID that is assigned to the customer. If the customer then comes back to request a second identical circuit, the network can make use of the existing intelligence to deliver a similar-specification circuit.
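The financial-customer example can be modelled as an exchange between an IP/service layer and an optical layer that exposes path data. This is a hypothetical sketch: the class names, path IDs, latency values and the CKT numbering scheme are invented for illustration. nLight's actual interfaces are not described in this article.

```python
from dataclasses import dataclass
from itertools import count


@dataclass(frozen=True)
class OpticalPath:
    path_id: str
    latency_ms: float


class OpticalLayer:
    """Hypothetical stand-in for the path data the optical layer exposes."""

    def __init__(self, paths):
        self.paths = list(paths)

    def best_path(self, max_latency_ms, exclude=frozenset()):
        # Lowest-latency path that meets the requirement and is not in use.
        options = [p for p in self.paths
                   if p.latency_ms <= max_latency_ms and p.path_id not in exclude]
        return min(options, key=lambda p: p.latency_ms, default=None)


class ServiceLayer:
    """Hypothetical IP-layer client that keeps what it learned per circuit."""

    def __init__(self, optical):
        self.optical = optical
        self.circuits = {}   # circuit ID -> (customer, max_latency_ms, path)
        self._ids = count(1)

    def request(self, customer, max_latency_ms):
        in_use = {path.path_id for _, _, path in self.circuits.values()}
        path = self.optical.best_path(max_latency_ms, exclude=in_use)
        if path is None:
            return None
        circuit_id = f"CKT-{next(self._ids):03d}"
        self.circuits[circuit_id] = (customer, max_latency_ms, path)
        return circuit_id

    def request_identical(self, circuit_id):
        # Reuse the stored requirement instead of gathering it again manually.
        customer, max_latency_ms, _ = self.circuits[circuit_id]
        return self.request(customer, max_latency_ms)


optical = OpticalLayer([OpticalPath("P1", 4.2), OpticalPath("P2", 3.8),
                        OpticalPath("P3", 6.5)])
svc = ServiceLayer(optical)
first = svc.request("bank-a", max_latency_ms=5.0)
second = svc.request_identical(first)
print(first, svc.circuits[first][2].path_id)    # CKT-001 P2
print(second, svc.circuits[second][2].path_id)  # CKT-002 P1
```

The second request reuses the stored latency requirement and lands on the next-best path that still meets it. A third identical request would return None because no remaining path is fast enough.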

Such a framework avoids the lengthy manual interactions between an operator's IP and transport departments that are required when, for example, setting up an IP VPN. By exchanging data between layers, service providers can understand and improve their network topology in real time, and be more dynamic in how they shift resources and do capacity planning in their network.

Service providers can also improve their protection and restoration schemes, as well as how they configure and provision services. Such capabilities will enable operators to be more efficient in the introduction and delivery of cloud and mobile services.

Total cost of ownership

Market research firm ACG Research has done a total cost of ownership (TCO) analysis of Cisco's elastic core architecture. It claims that nLight reduces the TCO of the optical and packet core networks by up to half in designs using protected wavelengths. nLight also avoids a 10% overestimation of required capacity.

Meanwhile, ACG claims an 18-month payback and a 156% return on investment from a CRS carrier-grade services engine (CGSE) module with its anti-DDoS service, and a 24% TCO saving from demand engineering through improved route placement and cloud service workload location.

Cisco says it is promoting its framework architecture within the Internet Engineering Task Force (IETF). The company is also liaising with the International Telecommunication Union (ITU) and the Optical Internetworking Forum (OIF) where relevant.

Latest coherent ASICs set the bar for the optical industry

Feature: Beyond 100G - Part 3

Alcatel-Lucent has detailed its next-generation coherent ASIC, which supports multiple modulation schemes and allows signals to scale to 400 Gigabit-per-second (Gbps).

The announcement follows Ciena's WaveLogic 3 coherent chipset, which also trades capacity against reach by changing the modulation scheme.

"They [Ciena and Alcatel-Lucent] have set the bar for the rest of the industry," says Ron Kline, principal analyst for Ovum’s network infrastructure group.

"We will employ [the PSE] for all new solutions on 100 Gigabit"

Kevin Drury, Alcatel-Lucent

Photonic service engine

Dubbed the photonic service engine (PSE), Alcatel-Lucent's latest ASIC will be used in 100Gbps line cards that will come to market in the second half of 2012.

The PSE comprises coherent transmitter and receiver digital signal processors (DSPs) as well as soft-decision forward error correction (SD-FEC). The transmit DSP generates the various modulation schemes and can perform waveform shaping to improve spectral efficiency. The coherent receiver DSP compensates for fibre distortions and recovers the signal.
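The receiver's compensation of fibre distortion can be illustrated for one impairment, chromatic dispersion. Dispersion adds a phase that grows with the square of frequency and so spreads pulses in time. A standard way to undo it is to multiply the received spectrum by the conjugate phase. The sketch below applies that textbook technique in plain Python and is not Alcatel-Lucent's PSE design. The normalised `strength` parameter, the Gaussian test pulse and the naive DFT (in place of an FFT) are simplifying assumptions.

```python
import cmath
import math


def dft(x):
    """Plain O(n^2) discrete Fourier transform (an FFT would be used in practice)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]


def idft(spectrum):
    """Inverse of dft()."""
    n = len(spectrum)
    return [sum(spectrum[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)) / n
            for t in range(n)]


def dispersion_response(n, strength):
    """All-pass filter whose phase grows with frequency squared.
    `strength` is a normalised stand-in for beta2 * L / 2 (an assumption)."""
    response = []
    for k in range(n):
        f = k if k < n // 2 else k - n   # signed frequency index
        response.append(cmath.exp(1j * strength * f * f))
    return response


def apply_filter(signal, response):
    spectrum = dft(signal)
    return idft([s * h for s, h in zip(spectrum, response)])


N = 64
# Gaussian test pulse centred in the window.
pulse = [complex(math.exp(-((t - N / 2) / 3) ** 2)) for t in range(N)]
fibre = dispersion_response(N, strength=0.05)
equaliser = [h.conjugate() for h in fibre]    # inverse of an all-pass filter

received = apply_filter(pulse, fibre)          # dispersion spreads the pulse
recovered = apply_filter(received, equaliser)  # receiver DSP undoes it

peak_sent = max(abs(v) for v in pulse)
peak_received = max(abs(v) for v in received)
residual = max(abs(a - b) for a, b in zip(pulse, recovered))
print(f"peak sent {peak_sent:.3f}, peak received {peak_received:.3f}, "
      f"residual after equalisation {residual:.1e}")
```

Because the dispersion filter only changes phase, its conjugate is an exact inverse. The broadened, lower-peak pulse is restored to within floating-point error.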

The PSE follows Alcatel-Lucent's extended reach (XR) line card announced in December 2011 that extends its 100Gbps reach from 1,500 to 2,000km. "This [PSE] will be the chipset we will employ for all new solutions on 100 Gigabit," says Kevin Drury, director of optical marketing at Alcatel-Lucent. The PSE will extend 100Gbps reach to over 3,000km.

Ciena's WaveLogic 3 is a two-device chipset. Alcatel-Lucent has crammed the functionality onto a single device. But while the device is referred to as the 400 Gigabit PSE, two PSE ASICs are needed to implement a 400Gbps signal.

"They [Ciena and Alcatel-Lucent] have set the bar for the rest of the industry"

Ron Kline, Ovum

"There are customers that are curious and interested in trialling 400Gbps but we see equal, if not higher, importance in pushing 100Gbps limits," says Manish Gulyani, vice president, product marketing for Alcatel-Lucent's networks group.

In particular, the equipment maker has improved 100Gbps system density with a card that requires two slots instead of three, and extends reach by 1.5x using the PSE.

Performance

Alcatel-Lucent makes several claims about the performance enhancements using the PSE:

- Reach: The reach is extended by 1.5x.

- Line card density: At 100Gbps the improvement is 1.5x. The current 100Gbps muxponder (10x10Gbps client input) and transponder (100Gbps client) line card designs occupy three slots whereas the PSE design will occupy two slots only. Density will be improved by 4x by adopting a 400Gbps muxponder that occupies three slots.

- Power consumption: By going to a more advanced CMOS process and by enhancing the design of the chip architecture, the PSE consumes a third less power per Gigabit of transport: from 650mW/Gbps to 425mW/Gbps. Alcatel-Lucent is not saying what CMOS process technology is used for the PSE. The company's current 100Gbps silicon uses a 65nm process and analysts believe the PSE uses a 40nm process.

- System capacity: The channel width occupied by the signal can be reduced by a quarter: a 50GHz 100Gbps wavelength can be compressed to occupy a 37.5GHz channel. This would improve overall 100Gbps system capacity by a third, from 8.8 Terabit-per-second (Tbps) to 11.7Tbps. Moving from 88 ports at 100Gbps to 44 interfaces at 400Gbps doubles system capacity to 17.6Tbps. Using waveform shaping, this is improved by a further third, to greater than 23Tbps.
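The density, power and capacity claims above can be cross-checked with a few lines of arithmetic. The one assumption, ours, is that the usable spectrum is 4.4THz, the figure implied by 88 channels at 50GHz. Channel counts at narrower spacings are the whole channels that fit in that band.

```python
import math

USABLE_SPECTRUM_GHZ = 4400   # ASSUMPTION: implied by 88 x 50 GHz channels


def channels(spacing_ghz: float) -> int:
    # Whole channels that fit in the usable spectrum at this spacing.
    return math.floor(USABLE_SPECTRUM_GHZ / spacing_ghz)


def capacity_tbps(spacing_ghz: float, gbps_per_channel: int) -> float:
    return channels(spacing_ghz) * gbps_per_channel / 1000


# Line-card density: Gbps per slot relative to today's three-slot 100G card.
BASELINE_GBPS_PER_SLOT = 100 / 3
density_100g_pse = (100 / 2) / BASELINE_GBPS_PER_SLOT   # 1.5x
density_400g = (400 / 3) / BASELINE_GBPS_PER_SLOT       # 4x

# Power per Gbps: current silicon (650 mW/Gbps) versus the PSE (425 mW/Gbps).
power_saving = 1 - 425 / 650                            # about 35 percent

# System capacity for each configuration quoted.
capacity = {
    "100G at 50 GHz": capacity_tbps(50, 100),
    "100G at 37.5 GHz": capacity_tbps(37.5, 100),
    "400G at 100 GHz": capacity_tbps(100, 400),
    "400G at 75 GHz": capacity_tbps(75, 400),
}

print(f"Density gain: {density_100g_pse:.1f}x (100G PSE), {density_400g:.1f}x (400G)")
print(f"Power saving per Gbps: {power_saving:.0%}")
for config, tbps in capacity.items():
    print(f"{config}: {tbps:.1f} Tbps")
```

The 37.5GHz case fits 117 channels rather than 88: a third more capacity from a quarter less spectrum per channel. The 75GHz 400Gbps case gives 23.2Tbps, consistent with the "greater than 23Tbps" claim.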

"We are not saying we are breaking the 50GHz channel spacing today and going to a flexible grid, super-channel-type construct," says Drury. "But this chip is capable of doing just that." Alcatel-Lucent will at least double network capacity when its system adopts 44 wavelengths, each at 400Gbps.

400 Gigabit

To implement a 400Gbps signal, a dual-carrier, dual-polarisation 16-QAM coherent wavelength is used that occupies 100GHz (two 50GHz channels). Alcatel-Lucent says that should it commercialise 400Gbps using waveform shaping, the channel spacing would reduce to 75GHz. But this more efficient grid spacing only works alongside a flexible grid colourless, directionless and contentionless (CDC) ROADM architecture.
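A rough lower bound on the symbol rate follows from this description: two carriers, two polarisations and 4 bits per 16-QAM symbol. The sketch ignores FEC and framing overhead, an explicit simplification, so real symbol rates would be higher than the figure it prints.

```python
import math

PAYLOAD_GBPS = 400
CARRIERS = 2
POLARISATIONS = 2
BITS_PER_SYMBOL = int(math.log2(16))   # 16-QAM carries 4 bits per symbol

# Symbol rate each carrier needs, ignoring FEC and framing overhead.
gbaud_per_carrier = PAYLOAD_GBPS / (CARRIERS * POLARISATIONS * BITS_PER_SYMBOL)


def spectral_efficiency(spacing_ghz: float) -> float:
    """Payload bits per second per hertz of occupied spectrum."""
    return PAYLOAD_GBPS / spacing_ghz


print(f"Minimum symbol rate per carrier: {gbaud_per_carrier:.0f} Gbaud")
for spacing in (100, 75):
    print(f"{spacing} GHz channel: {spectral_efficiency(spacing):.2f} b/s/Hz")
```

Squeezing the same 400Gbps from 100GHz into 75GHz raises spectral efficiency from 4 to about 5.3b/s/Hz. A channel of that width no longer sits on the fixed 50GHz grid, which is why it needs the flexible-grid ROADMs mentioned above.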

A 400Gbps PSE card showing four 100 Gigabit Ethernet client signals going out as a 400Gbps wavelength. The three-slot card comprises three daughter boards. Source: Alcatel-Lucent.

Alcatel-Lucent is not ready to disclose the reach performance it can achieve with the PSE using the various modulation schemes. But it does say the PSE supports dual-polarisation binary phase-shift keying (DP-BPSK) for the longest reach spans, as well as dual-polarisation quadrature phase-shift keying (DP-QPSK) and 16-QAM (quadrature amplitude modulation).
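The capacity-for-reach trade comes from how many bits each symbol carries. The sketch below counts bits per dual-polarisation symbol for the three formats and the resulting raw rate at one fixed symbol rate. The 32Gbaud figure is an illustrative assumption, not a PSE specification.

```python
import math

# Constellation size for each format the PSE supports.
FORMATS = {"DP-BPSK": 2, "DP-QPSK": 4, "DP-16QAM": 16}
GBAUD = 32   # ASSUMPTION: illustrative symbol rate only


def bits_per_symbol(points: int, polarisations: int = 2) -> int:
    """log2(points) bits per polarisation, times the number of polarisations."""
    return polarisations * int(math.log2(points))


def raw_rate_gbps(points: int, gbaud: float = GBAUD) -> float:
    return bits_per_symbol(points) * gbaud


for name, points in FORMATS.items():
    print(f"{name}: {bits_per_symbol(points)} bits/symbol, "
          f"{raw_rate_gbps(points):.0f} Gbps raw at {GBAUD} Gbaud")
```

Each step doubles the bits per symbol. However, the constellation points move closer together, so the signal tolerates less noise and reach falls. This is the trade Kline describes next.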

"[This ability] to go distances or to sacrifice reach to increase bandwidth, to go from 400km metro to trans-Pacific by tuning software, that is a big advantage," says Ovum's Kline. "You don't then need as many line cards and that reduces inventory."

Market status

Alcatel-Lucent says that it has 55 customers that have deployed over 1,450 100Gbps transponders.

A software release later this year for Alcatel-Lucent's 1830 Photonic Service Switch will enable the platform to support 100Gbps PSE cards.

A 400Gbps card will also be available this year for operators to trial.

Cisco Systems' 100 Gigabit spans metro to ultra long-haul

Cisco Systems has demonstrated 100 Gigabit transmission over a 3,000km span. The coherent-based system uses a single carrier in a 50GHz channel to transmit at 100 Gigabit-per-second (Gbps). According to Cisco, no Raman amplification or signal regeneration is needed to achieve the 3,000km reach.

Feature: Beyond 100G - Part 2

"The days of a single modulation scheme on a part are probably going to come to an end in the next two to three years"

Greg Nehib, Cisco

The 100Gbps design is also suited to metro networks: it is compact, to meet the more stringent price and power requirements of metro. The company says it can fit 42 transponders at 100Gbps into a 7-foot rack of its ONS 15454 Multi-service Transport Platform (MSTP). "We think that is double the density of our nearest competitor today," claims Greg Nehib, product manager, marketing at Cisco Systems.

Also shown as part of the Cisco demonstration was the use of super-channels, multiple carriers that are combined to achieve 400 Gigabit or 1 Terabit signals.

Single-carrier 100 Gigabit

Several of the first-generation 100Gbps systems from equipment makers use two carriers (each carrying 50Gbps) in a 50GHz channel, and while such equipment requires lower-speed electronics, twice as many coherent transmitters and receivers are needed overall.

Alcatel-Lucent is one vendor with a single-carrier 50GHz system, as is Huawei. Ciena, via its Nortel acquisition, offers a dual-carrier 100Gbps system, as does Infinera. With its WaveLogic 3 chipset, Ciena is now moving to a single-carrier solution. Now Cisco is entering the market with a single-carrier system.

"When you have a single carrier, you can get upwards of 96 channels of 100Gbps in the C-band," says Nehib. "The equation here is about price, performance, density and power."

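Nehib's 96-channel figure and the single- versus dual-carrier comparison reduce to simple counting. The sketch assumes a 50GHz grid across roughly 4.8THz of C-band spectrum; that figure is our assumption, and band edges vary by system. It also assumes each carrier needs its own coherent transmitter and receiver.

```python
C_BAND_GHZ = 4800      # ASSUMPTION: usable C-band spectrum
CHANNEL_GHZ = 50       # channel spacing used in the article
GBPS_PER_CHANNEL = 100

channels = C_BAND_GHZ // CHANNEL_GHZ               # 96
fibre_capacity_tbps = channels * GBPS_PER_CHANNEL / 1000


def coherent_pairs(carriers_per_channel: int) -> int:
    """Transmitter/receiver pairs needed to light every channel."""
    return channels * carriers_per_channel


print(f"{channels} channels, {fibre_capacity_tbps:.1f} Tbps per fibre")
print(f"Single-carrier: {coherent_pairs(1)} Tx/Rx pairs at 100 Gbps each")
print(f"Dual-carrier: {coherent_pairs(2)} Tx/Rx pairs at 50 Gbps each")
```

Both approaches reach the same 9.6Tbps on a 50GHz grid. The single-carrier design halves the number of coherent optics, at the cost of faster electronics per carrier.
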
What has been done

Cisco's 100Gbps design fits on a 1RU (rack unit) card and uses the first 100Gbps coherent receiver ASIC designed by the CoreOptics team acquired by Cisco in May 2010.

The demonstrated 3,000km reach was made using low-loss fibre. "This is to some degree a hero experiment," says Nehib. "We have achieved 3,000km with SMF ULL fibre from Corning; the LL is low loss." Normal fibre has a loss of 0.20-0.25dB/km while for ULL fibre it is in the 0.17dB/km range.
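The attenuation figures can be turned into totals for the 3,000km distance by multiplying loss per kilometre by length. The 100km amplifier spacing used for the per-span figure is our illustrative assumption. The article does not give Cisco's span lengths.

```python
DISTANCE_KM = 3000
SPAN_KM = 100   # ASSUMPTION: illustrative amplifier spacing

FIBRES = {
    "standard fibre (0.20 dB/km)": 0.20,
    "standard fibre (0.25 dB/km)": 0.25,
    "ULL fibre (0.17 dB/km)": 0.17,
}


def total_loss_db(km: float, db_per_km: float) -> float:
    """Fibre attenuation accumulated over a given length."""
    return km * db_per_km


for name, alpha in FIBRES.items():
    print(f"{name}: {total_loss_db(DISTANCE_KM, alpha):.0f} dB over {DISTANCE_KM} km, "
          f"{total_loss_db(SPAN_KM, alpha):.0f} dB per {SPAN_KM} km span")
```

The total is never faced in one go, because inline amplifiers restore the signal after each span. On these assumptions the ultra-low-loss fibre saves about 3dB per 100km span, or 90dB of accumulated attenuation over the full distance compared with 0.20dB/km fibre.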

"You can do the maths and calculate the loss we are overcoming over 3,000km. We just want to signal that we have very good performance for ultra long-haul," says Nehib, who admits that results will vary in networks, depending on the fibre.

Nehib says Cisco's coherent receiver achieves a chromatic dispersion tolerance of 70,000 ps/nm and a differential group delay tolerance of 100ps. Differential group delay, a polarisation-related impairment, is compensated using the DSP-ASIC, says Nehib. The greater the group delay tolerance, the better the distance performance. These metrics, claims Cisco, are currently unmatched in the industry.
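To put the 70,000ps/nm figure in context, divide it by a fibre's dispersion coefficient. The sketch assumes about 17ps/nm/km, a typical value for standard single-mode fibre near 1550nm. The actual value depends on the fibre and the wavelength.

```python
CD_TOLERANCE_PS_PER_NM = 70_000   # Cisco's quoted receiver tolerance
D_SMF_PS_PER_NM_KM = 17.0         # ASSUMPTION: typical standard SMF near 1550 nm


def max_uncompensated_km(tolerance_ps_nm: float, d_ps_nm_km: float) -> float:
    """Distance at which accumulated dispersion reaches the DSP's tolerance."""
    return tolerance_ps_nm / d_ps_nm_km


reach_km = max_uncompensated_km(CD_TOLERANCE_PS_PER_NM, D_SMF_PS_PER_NM_KM)
print(f"Dispersion-limited distance: about {reach_km:.0f} km of standard fibre")
```

On that assumption the DSP can absorb the dispersion accumulated over roughly 4,100km of uncompensated standard fibre. Dispersion alone would then not limit a 3,000km span, leaving other impairments such as accumulated noise to set the reach.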

The company has not said what CMOS process it is using for its ASIC design. But this is not the main issue, says Nehib: "We are trying to develop a part that is small so that it fits in many different platforms, and we can now use a single part number to go from metro performance all the way to ultra long-haul."

Another factor that affects span performance is the number of lit channels. In the test performed by the independent test lab EANTC (the European Advanced Network Test Center), Cisco used 70 wavelengths. "With 70 channels the performance would have been very close to what we would have achieved with [a full complement of] 80 channels," says Nehib.

Super-channels

A super-channel refers to a signal made up of several wavelengths. Infinera, with its DTN-X, uses a 500Gbps super-channel, comprising five 100Gbps wavelengths.

Using a super-channel, an operator can turn up multiple 100Gbps channels at once. If an operator wants to add a 100Gbps wavelength, a client interface is simply added to a spare 100Gbps wavelength making up the super-channel. In contrast, turning up a 100Gbps wavelength in current systems usually requires several days of testing to ensure it can carry live traffic alongside existing links.

Another benefit of super-channels is scale: multiple wavelengths can be turned up simultaneously. As traffic grows so does the workload on operators' engineering teams, and super-channels aid efficiency.

"There is one other point that we hear quite often," says Nehib. "One other attraction of super-channels is overall spectral efficiency." The carriers that make up the signal can be packed more closely, expanding overall fibre capacity.

"Just like with 10 Gig, we think at some point in the future the 100 Gig network will be depleted, especially in the largest networks, and operators will be interested in 400 Gig and Terabit interfaces," says Nehib. "If that wavelength can further benefit from advanced modulation schemes and super-channels through flex[ible] spectrum deployment then you can get more total bandwidth on the fibre and better utilisation of your amplifiers."

Cisco's 100Gbps lab demonstration also showed 400 Gigabit and 1 Terabit super-channels, part of its research work with the Politecnico di Torino. "We are going to move on to other advanced modulation techniques and deliver 400 Gigabit and Terabit interfaces in future," says Nehib.

Existing 100Gbps systems use dual-polarisation quadrature phase-shift keying (DP-QPSK). Using 16-QAM (quadrature amplitude modulation) at the same baud rate doubles the data rate and also improves spectral utilisation. If the more advanced modulation format is used in a super-channel, and the signal is fitted in the most appropriate channel spacing using flexible-spectrum ROADMs, overall capacity is increased. However, the spectral efficiency of 16-QAM comes at the expense of overall reach.

"You are able to best match the rate to the reach to the spectrum," says Nehib. "The days of a single modulation scheme on a part are probably going to come to an end in the next two to three years."

Cisco has yet to discuss adding a coherent transmitter DSP which, through spectral shaping, can pack wavelengths more closely. Such an approach has just been detailed by Ciena with its WaveLogic 3 and by Alcatel-Lucent with its 400 Gig photonic service engine.

For the Terabit super-channel demonstration, Cisco used 16-QAM and a flexible spectrum multiplexer. "The demo that we showed is not necessarily indicative of the part we will bring to market," says Nehib, pointing out that it is still early in the development cycle. "We are looking at the spectral efficiency of super-channels, different modulation schemes, flex-spectrum multiplexer, availability, quality, loss etc.," says Nehib. "We have not made firm technology choices yet."

Cisco's 100Gbps system is in trials with some 40 customers and can be ordered now. The product will be generally available in the near future, it says.

Further reading:

Light Reading: EANTC's independent test of Cisco's CloudVerse architecture. Part 4: Long-haul optical transport