Nuage uses SDN to aid enterprise connectivity needs

"Across the WAN and out to the branch, the context is increasingly complicated, with the need to deliver legacy and cloud applications to users - and sometimes customers - that are increasingly mobile, spanning several networks," says Brad Casemore, research director, data centre networks at IDC. These networks can include MPLS, Metro Ethernet, broadband and 3G and 4G wireless.

The data centre is a great microcosm of the network - Houman Modarres


At present, remote offices use custom equipment that requires a visit from an engineer. In contrast, VNS uses SDN technology to deliver enterprise services to a generic box, or software that runs on the enterprise's server. The goal is to speed up the time it takes an enterprise to set up or change its business services at a remote site, while also simplifying the service provider's operations.

What has been done

Nuage designed its SDN-enabled connectivity products from the start for use in the data centre and beyond. "The data centre is a great microcosm of the network," says Modarres. "But we designed it in such a way that the end points could be flexible, within and across data centres but also anywhere."

Nuage uses open protocols like OpenFlow to enable the control plane to talk to any device, while its software agents that run on a server can work with any hypervisor. The control plane-based policies are downloaded to the end points via its SDN controller.

Using VNS, services can be installed without a visit from a specialist engineer. A user powers up the generic hardware or server and connects it to the network, whereupon policies are downloaded. The user then enters a code they have been sent, which enables their privileges as defined by the enterprise's policies.

"Just as in the data centre, there is a real need for greater agility through automation, programmability, and orchestration," says IDC's Casemore. "One could even contend that for many enterprises, the pain is more acutely felt on the WAN, especially as they grapple with how to adapt to cloud and mobility."

Extending the connectivity end points beyond the data centre has required Nuage to bolster security and authentication procedures. Modarres points out that data centres and service provider central offices are secured environments; a remote office that could be a worker's home is not.

"You need to do authentication differently and IPsec connections are needed for security, but what if you unplug it? What if it is stolen?" he says. "If someone goes to the bank and steals a router, are they a bank branch now?"

To address this, once a remote office device is unplugged for a set time - typically several minutes - its configuration is reset. Equally, when a router is deliberately unplugged, for example during an office move, if notification is given, the user receives a new authentication code on the move's completion and the policies are restored.
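The reset-and-restore behaviour described above can be sketched as a small state machine. This is only an illustration: the class, its method names and the 300-second threshold are assumptions, since the article says only that the reset typically happens after several minutes.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed threshold; the article says only "typically several minutes".
RESET_AFTER_SECONDS = 300


@dataclass
class RemoteDevice:
    """Hypothetical model of a remote-office end point."""
    configured: bool = True
    unplugged_at: Optional[float] = None
    move_notified: bool = False

    def unplug(self, now: float, move_notified: bool = False) -> None:
        # Record when the device went offline and whether a move was announced.
        self.unplugged_at = now
        self.move_notified = move_notified

    def reconnect(self, now: float, auth_code_ok: bool) -> bool:
        # Wipe the configuration if the device was offline for too long.
        if self.unplugged_at is not None:
            if now - self.unplugged_at >= RESET_AFTER_SECONDS:
                self.configured = False
        self.unplugged_at = None
        # Policies are restored only after a notified move and a valid new code.
        if not self.configured and self.move_notified and auth_code_ok:
            self.configured = True
        self.move_notified = False
        return self.configured


stolen = RemoteDevice()
stolen.unplug(now=0)
print(stolen.reconnect(now=3600, auth_code_ok=True))   # no notification given

moved = RemoteDevice()
moved.unplug(now=0, move_notified=True)
print(moved.reconnect(now=3600, auth_code_ok=True))    # notified office move
```

A device that reappears after a long, unannounced outage stays unconfigured, which is the stolen-router case Modarres describes.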

Nuage's virtualised services platform comprises three elements: the virtualised services directory (VSD), virtualised services controller (VSC) - the SDN controller - and the virtual routing and switching module (VR&S).

"The only thing we are changing is the bottom layer, the network end point, which used to be in the data centre as the VR&S, and is now broken out of the data centre, as in the network services gateway, to be anywhere," says Modarres. "The network services gateway has physical and virtual form factors based on standard open compute."

Nuage is finding that businesses are benefitting from an SDN approach in surprising ways.

The company cites the example of banks, which are required by regulation to ensure that there are no security holes at their remote locations. One bank with 400 branches periodically sends individuals to each to check the configuration and ensure that no human error in its set-up could lead to a security flaw. With 400 branches, this procedure takes months and is costly.

With SDN and its policy-level view of all locations - what each site and what each group can do - there are predefined policy templates. There may be 10, 20 or 30 templates but they are finite, says Modarres: "At the push of a button, an organisation can check the templates, daily if needed".
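A template-based audit of this kind can be illustrated in a few lines. The data layout, template names and settings below are hypothetical and not taken from Nuage's product; the sketch only shows why checking a finite set of templates is cheap compared with visiting every branch.

```python
def audit(sites: dict, templates: dict) -> list:
    """Return the names of sites whose deployed settings differ from their template."""
    drifted = []
    for name, site in sites.items():
        expected = templates[site["template"]]
        if site["deployed"] != expected:
            drifted.append(name)
    return sorted(drifted)


# Hypothetical templates and branch data, for illustration only.
templates = {
    "branch-standard": {"ipsec": True, "guest_wifi": False},
    "branch-atm-only": {"ipsec": True, "guest_wifi": False, "atm_vlan": 40},
}
sites = {
    "branch-001": {"template": "branch-standard",
                   "deployed": {"ipsec": True, "guest_wifi": False}},
    "branch-002": {"template": "branch-standard",
                   "deployed": {"ipsec": False, "guest_wifi": False}},
}

print(audit(sites, templates))
```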

This is not why a bank will adopt SDN, says Modarres, but the compliance department will strongly encourage its use, especially when it saves the department millions of dollars in ensuring regulatory compliance.

Nuage Networks says it has 15 customer wins and 60 ongoing trials globally for its products. Customers that have been identified include healthcare provider UPMC, financial services provider BBVA, cloud provider Numergy, hosting provider OVH, infrastructure providers IDC Frontier and Evonet, and telecom providers TELUS and NTT Communications.

Netronome prepares cards for SDN acceleration

Source: Netronome


Netronome has unveiled 40 and 100 Gigabit Ethernet network interface cards (NICs) to accelerate data-plane tasks of software-defined networks.

Dubbed FlowNICs, the cards use Netronome's flagship NFP-6xxx network processor (NPU) and support open-source data centre technologies such as the OpenFlow protocol, the Open vSwitch virtual switch, and the OpenStack cloud computing platform.

Four FlowNIC cards have been announced with throughputs of 2 x 40 Gigabit Ethernet (GbE), 4 x 40GbE, 1 x 100GbE and 2 x 100GbE. The NICs use either two or four PCI Express 3.0 x8 lane interfaces to achieve the 100GbE and 200GbE throughputs.
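A rough calculation shows why two and four x8 interfaces are needed. It assumes the standard PCI Express 3.0 figures of 8 gigatransfers per second per lane with 128b/130b encoding, and ignores packet-level protocol overhead, so usable throughput will be somewhat lower than the numbers printed.

```python
GT_PER_SECOND_PER_LANE = 8.0   # PCIe 3.0 signalling rate per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def pcie3_gbps(lanes: int, interfaces: int = 1) -> float:
    """Raw one-direction bandwidth in Gbps, before protocol overhead."""
    return GT_PER_SECOND_PER_LANE * ENCODING_EFFICIENCY * lanes * interfaces


for interfaces, target in ((2, 100), (4, 200)):
    raw = pcie3_gbps(lanes=8, interfaces=interfaces)
    print(f"{interfaces} x PCIe 3.0 x8: {raw:.1f} Gbps raw for {target}GbE")
```

A single x8 interface offers roughly 63 Gbps, which is why it cannot feed 100GbE on its own.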

"We are the first to provide these interface densities on an intelligent network interface card, built for SDN use-cases," says Robert Truesdell, product manager, software at Netronome. "We have taken everything that has been done in Open vSwitch that would traditionally run on an [Intel] x86-based server, and run it on our NFP-6xxx-based FlowNICs at a much faster rate."

The cards have accompanying software that supports OpenFlow and Open vSwitch. There are also two additional software packages: cloud services, which performs such tasks as implementing the tunnelling protocols used for network virtualisation, and cyber-security.

Implementing the switch and packet processing functions on a FlowNIC card instead of using a virtual switch frees up valuable computation resources on a server's CPUs, enabling data centre operators to better use their servers for revenue-generating tasks. "They are trying to extract the maximum revenue out of the server and that is what this buys," says Truesdell.

Designing the FlowNICs around a programmable network processor has other advantages. "As standards evolve, that allows us to do a software revision rather than rely on a hardware or silicon revision," says Truesdell. The OpenFlow specification, for example, is revised every 6-12 months, he says, the most recent release being V1.4.

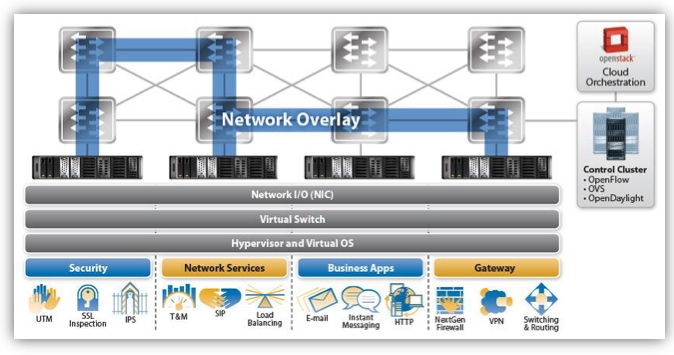

In the data centre, software-defined networking (SDN) uses a central controller to oversee the network connecting the servers. A server's NIC provides networking input/output, while the server runs the software-based virtualised switch and the hypervisor that supports virtual machines and operating systems on which the applications run. Applications include security tasks such as SSL inspection, intrusion detection and intrusion prevention systems; load balancing; business applications like email, the hypertext transfer protocol and instant messaging; and gateway functions such as firewalls and virtual private networks (VPNs).

We have taken everything that has been done in Open vSwitch that would traditionally run on an x86-based server, and run it on our NFP-6xxx-based FlowNICs at a much faster rate

With Open vSwitch running on a server, the SDN protocols such as OpenFlow pass communications between the data plane device - either the softswitch or the FlowNIC - and the controller. "By supporting Open vSwitch and OpenFlow, it allows us to inherit compatibility with the [SDN] controller and orchestration layers," says Truesdell. The FlowNIC is compatible with controllers from OpenDaylight, Ryu and VMware's NSX network virtualisation system, as well as orchestration platforms like OpenStack.

The virtual switch performs two main tasks. It inspects packet headers and passes the traffic to the appropriate virtual machines. The virtual switch also implements network virtualisation: setting up network overlays between servers for virtual machines to talk to each other.

Packet encapsulation and unwrapping performance required for network virtualisation tops out when implemented using the virtual switch, such that the throughput suffers. Moreover, packet header inspections can be nested if, for example, encryption and network virtualisation are used, further impacting performance. "The throughput rates can really suffer on a server because it is not optimised for this type of workload," says Truesdell.

Performing the tasks using the FlowNIC's network processor enables packet processing to keep up with the line rate including for the most demanding, shortest packet sizes. The issue with packet size is that the shorter the packet (the smaller the data payload), the more frequent the header inspections. "Data centre traffic is very bursty," says Truesdell. "These are not long-lived flows - they have high connection rates - and this drives the packet sizes down."
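The effect of packet size on the number of header inspections can be made concrete with a line-rate calculation. The sketch assumes standard Ethernet framing, where each frame carries 20 extra bytes on the wire (preamble, start delimiter and inter-frame gap); the function name is illustrative.

```python
# 7-byte preamble + 1-byte start delimiter + 12-byte inter-frame gap
ETHERNET_OVERHEAD_BYTES = 20


def line_rate_pps(link_bps: float, frame_bytes: int) -> float:
    """Frames per second needed to fill a link with frames of one size."""
    return link_bps / ((frame_bytes + ETHERNET_OVERHEAD_BYTES) * 8)


for size in (64, 400, 1518):
    mpps = line_rate_pps(10e9, size) / 1e6
    print(f"{size:>5}-byte frames at 10 Gbps: {mpps:.2f} million per second")
```

At 10 Gbps, minimum-size frames arrive roughly 18 times more often than maximum-size ones, and each arrival needs its own header inspection.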

For a 10 Gigabit stream performing the Network Virtualization using Generic Routing Encapsulation (NVGRE) protocol, the forwarding throughput is at line rate for all packet sizes using the company's existing FlowNIC acceleration cards, based on its previous generation NFP-3240 network processor.

In contrast, NVGRE performance using Open vSwitch on the server is at 9Gbps for lengthy 1,500-byte packets and drops continually to 0.5Gbps for 64-byte packets. The average packet length is around 400 bytes, says Truesdell.
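Converting the quoted throughput figures into packet rates suggests what limits the software switch. This is an inference from the two numbers above rather than something Netronome states, and it ignores framing overhead for simplicity.

```python
def implied_pps(throughput_bps: float, packet_bytes: int) -> float:
    """Packets per second implied by a throughput at a given packet size."""
    return throughput_bps / (packet_bytes * 8)


large = implied_pps(9e9, 1500)    # 9 Gbps with 1,500-byte packets
small = implied_pps(0.5e9, 64)    # 0.5 Gbps with 64-byte packets

print(f"1,500-byte packets: {large:,.0f} packets per second")
print(f"   64-byte packets: {small:,.0f} packets per second")
```

Both cases work out at under a million packets per second, so on these figures the server-based switch appears to be bounded by packets processed rather than by bits moved.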

Overall, Netronome claims that using its FlowNICs, virtualised networking functions and server applications are boosted by over twentyfold compared to using the virtual switch on the server's CPU.

Netronome's FlowNIC-32xx cards are already used by one Tier 1 operator to perform gateway functions. The gateway, overseen using a Ryu controller running OpenFlow, translates between multi-tenant IP virtual LANs in the data centre and MPLS-based VPNs that connect the operator's enterprise customers.

The NFP-6xxx-based FlowNICs will be available for early access partners later this quarter. FlowNIC customers include data centre equipment makers, original design manufacturers and the largest content service providers - the 'internet juggernauts' - that operate hyper-scale data centres.


SDN starts to fulfill its network optimisation promise

Infinera, Brocade and ESnet demonstrate the use of software-defined networking to provision and optimise traffic across several networking layers.

Infinera, Brocade and network operator ESnet are claiming a first in demonstrating software-defined networking (SDN) performing network provisioning and optimisation using platforms from more than one vendor.

Mike Capuano, Infinera


The latest collaboration is one of several involving optical vendors that are working to extend SDN to the WAN. ADVA Optical Networking and IBM are working to use SDN to connect data centres, while Ciena and partners have created a test bed to develop SDN technology for the WAN.

The latest lab-based demonstration uses ESnet's circuit reservation platform that requests network resources via an SDN controller. ESnet, the US Department of Energy's Energy Sciences Network, conducts networking R&D and operates a large 100 Gigabit network linking research centres and universities. The SDN controller, the open source Floodlight Project design, oversees the network comprising Brocade's 100 Gigabit MLXe IP router and Infinera's DTN-X platform.

The goal of provisioning and optimising traffic across the routing, switching and optical layers has been a work in progress for over a decade. System vendors have undertaken initiatives such as External Network-Network Interface (ENNI) and multi-domain GMPLS but with limited success. "They have been talked about, experimented with, but have never really made it out of the labs," says Mike Capuano, vice president of corporate marketing at Infinera. "SDN has the opportunity to solve this problem for real."

"In the world of Web 2.0, the general approach is not to sit and wait till standards are done, but to prototype, test, find the gaps, report back, and do it again"

"SDN, and technologies like the OpenFlow protocol, allow all of the resources of the entire network to be abstracted to this higher level control," says Daniel Williams, director of product marketing for data center and service provider routing at Brocade.

Daniel Williams, Brocade

Infinera and ESnet demonstrated OpenFlow provisioning transport resources a year ago. This latest demonstration has OpenFlow provisioning at the packet and optical layers and performing network optimisation. "We have added more carrier-grade capabilities," says Capuano. "Not just provisioning, but now we have topology discovery and network configuration."

“The demonstration is a positive step in the development of SDN because it showcases the multi-layer transport provisioning and management that many operators consider the prime use case for transport SDN,” says Rick Talbot, principal analyst, optical infrastructure at Current Analysis. "The demonstration’s real-time network optimisation is an excellent example of the potential benefits of transport SDN, leveraging SDN to minimise transit traffic carried at the router layer, saving both CapEx and OpEx."

Using such an SDN setup, service providers can request high-bandwidth links to meet specific networking requirements. "There can be a request from a [software] app: 'I need an 80 Gigabit flow for two days from Switzerland to California with a 95ms latency and zero packet loss'," says Capuano. "The fact that the network has the facility to set that service up and deliver on those parameters automatically is a huge saving."

Such a link can be established on the same day the request is made, even within minutes. Traditionally, such requests involving the IP and optical layers - and different organisations within a service provider - can take weeks to fulfill, says Infinera.

Current Analysis also highlights another potential benefit of the demonstration: how the control of separate domains - the Infinera wavelength and TDM domain and the Brocade layer 2/3 domain - with a common controller illustrates how SDN can provide end-to-end multi-operator, multi-vendor control of connections.

What next

The Open Networking Foundation (ONF) has an Optical Transport Working Group that is tasked with developing OpenFlow extensions to enable SDN control beyond the packet layer to include optical.

How is the optical layer in the demonstration controlled given the ONF work is unfinished?

"Our solution leverages Web 2.0 protocols like RESTful and JSON integrated into the Open Transport Switch [application] that runs on the DTN-X," says Capuano. "In the world of Web 2.0, the general approach is not to sit and wait till standards are done, but to prototype, test, find the gaps, report back, and do it again."
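To make the REST-and-JSON approach concrete, the request Capuano gives as an example (80 Gigabit, two days, Switzerland to California, 95ms latency, zero packet loss) could be serialised as a JSON body along the following lines. The class and every field name are hypothetical; the actual Open Transport Switch interface is not described in the article.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class CircuitRequest:
    """Hypothetical request body; field names are illustrative only."""
    source: str
    destination: str
    bandwidth_gbps: int
    duration_hours: int
    max_latency_ms: int
    max_packet_loss: float

    def to_json(self) -> str:
        # Serialise the request so it could be sent as the body of an HTTP POST.
        return json.dumps(asdict(self), sort_keys=True)


request = CircuitRequest(
    source="Switzerland",
    destination="California",
    bandwidth_gbps=80,
    duration_hours=48,
    max_latency_ms=95,
    max_packet_loss=0.0,
)
body = request.to_json()
print(body)
```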

Further work is needed before the demonstration system is robust enough for commercial deployment.

"This is going to take some time: 2014 is the year of test and trials in the carrier WAN while 2015 is when you will see production deployment," says Capuano. "If service providers are making decisions on what platforms they want to deploy, it is important to choose ones that are going to position them well to move to SDN when the time comes."

OIF defines carrier requirements for SDN

The Optical Internetworking Forum (OIF) has achieved its first milestone in defining the carrier requirements for software-defined networking (SDN).

The orchestration layer will coordinate the data centre and transport network activities and give easy access to new applications

Hans-Martin Foisel, OIF

The OIF's Carrier Working Group has begun the next stage, a framework document, to identify missing functionalities required to fulfill the carriers' SDN requirements. "The framework document should define the gaps we have to bridge with new specifications," says Hans-Martin Foisel of Deutsche Telekom, and chair of the OIF working group.

There are three main reasons why operators are interested in SDN, says Foisel. SDN offers a way for carriers to optimise their networks more comprehensively than before; not just the network but also processing and storage within the data centre.

"IP-based services and networks are making intensive use of applications and functionalities residing in the data centre - they are determining our traffic matrix," says Foisel. The data centre and transport network need to be coordinated and SDN can determine how best to distribute processing, storage and networking functionality, he says.

SDN also promises to simplify operators' operational support systems (OSS) software, and separate the network's management, control and data planes to achieve new efficiencies.

SDN architecture

The OIF's focus is on Transport SDN, involving the management, control and data plane layers of the network. Also included is an orchestration layer that will sit above the data centre and transport network, overseeing the two domains. Applications then reside on top of the orchestration layer, communicating with it and the underlying infrastructure via a programmable interface.

"Aligning the thinking among different people is quite an educational exercise, and we will have to get to a new understanding"

"The orchestration layer will coordinate the data centre and transport network activities and give, northbound, easy access to new applications," says Foisel.

A key SDN concept is programmability and application awareness, he says. The orchestration layer will require specified interfaces to ease the adding of applications independent of whether they impact the data centre, transport network or both.

Foisel says the OIF work has already highlighted the breadth of vision within the industry regarding how SDN should look. "Aligning the thinking among different people is quite an educational exercise, and we will have to get to a new understanding," he says.

Having equipment prototypes is also helping in understanding SDN. "Implementations that show part of this big picture - it is doable, it is working and how it is working - is quite helpful," says Foisel.

The OIF Carrier Working Group is working closely with the Open Networking Foundation's (ONF) Optical Transport Working Group to ensure that the two groups are aligned. The ONF's Optical Transport Group is developing optical extensions to the OpenFlow standard.

Nuage Networks uses SDN to tackle data centre networking bottlenecks

Three planes of the network that host Nuage's Virtualised Services Platform (VSP). Source: Nuage Networks


Alcatel-Lucent has set up Nuage Networks, a business venture addressing networking bottlenecks within and between data centres.

The internal start-up combines staff with networking and IT skills, including web-scale services. "You can't solve new problems with old thinking," says Houman Modarres, senior director product marketing at Nuage Networks. Another benefit of the adopted business model is that Nuage benefits from Alcatel-Lucent's software intellectual property.

"It [the Nuage platform] is a good approach. It should scale well, integrate with the wide area network (WAN) and provide agility"

Joe Skorupa, Gartner

Network bottlenecks

Networking in the data centre connects computing and storage resources. Servers and storage have already largely adopted virtualisation such that networking has now become the bottleneck. Virtual machines on servers running applications can be enabled within seconds or minutes but may have to wait days before network connectivity is established, says Modarres.

Nuage has developed its Virtualised Services Platform (VSP) software, designed to solve two networking constraints.

"We are making the network instantiation automated and instantaneous rather than slow, cumbersome, complex and manual," says Modarres. "And rather than optimise locally, such as parts of the data centre like zones or clusters, we are making it boundless."

"It [the Nuage platform] is a good approach," says Joe Skorupa, vice president distinguished analyst, data centre convergence, data centre, at Gartner. "It should scale well, integrate with the wide area network (WAN) and provide agility."

Resources to be connected can now reside anywhere: within the data centre, and between data centres, including connecting the public cloud to an enterprise's own private data centre. Moreover, removing restrictions as to where the resources are located boosts efficiency.

"Even in cloud data centres, server utilisation is 30 percent or less," says Modarres. "And these guys spend about 60 percent of their capital expenditure on servers."

It is not that the hypervisor, used for server virtualisation, is inefficient, stresses Modarres: "It is just that when the network gets in the way, it is not worthwhile to wait for stuff; you become more wasteful in your placement of workloads as their mobility is limited."

"A lot of money is wasted on servers and networking infrastructure because the network is getting in the way"

Houman Modarres, Nuage Networks

SDN and the Virtualised Services Platform

Nuage's Virtualised Services Platform (VSP) uses software-defined networking (SDN) to optimise network connectivity and instantiation for cloud applications.

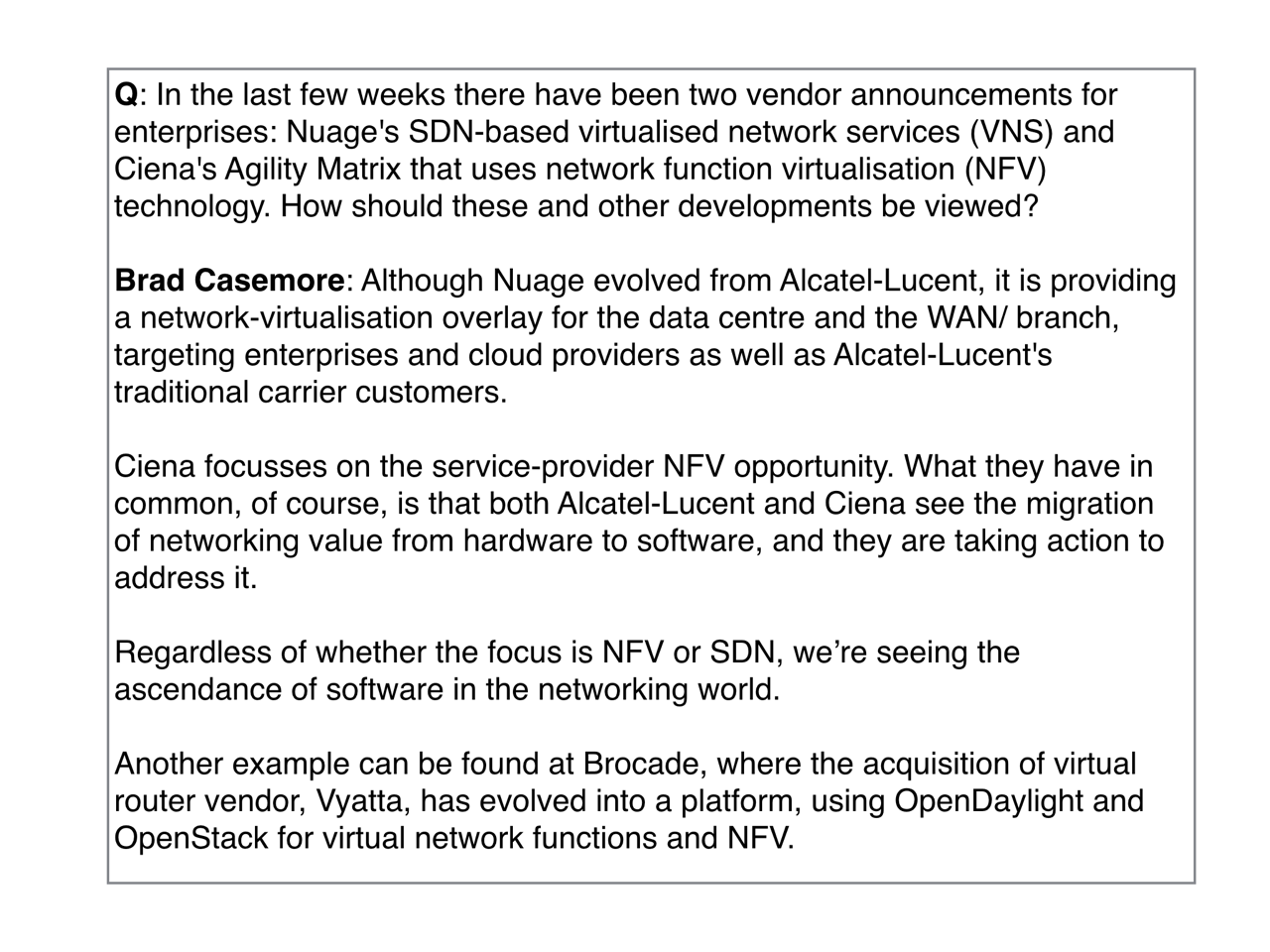

The VSP comprises three elements:

- the Virtualised Services Directory,

- the Virtualised Services Controller,

- and the Virtual Routing & Switching module.

The elements each reside at a different network layer, as shown (see chart, top).

The top layer, the cloud services management plane, houses the Virtualised Services Directory (VSD). The VSD is a policy and analytics engine that allows the cloud service provider to partition the network for each customer or group of tenants.

"Each of them get their zones for which they can place their applications and put [rules-based] permissions as to whom can use what, and who can talk to whom," says Modarres. "They do that in user-friendly terms like application containers, domains and zones for the different groups."

Domains and zones are how an IT administrator views the data centre, explains Modarres: "They don't need to worry about VLANs, IP addresses, Quality of Service policies and access control lists; the network maps that through its abstraction." The policies defined and implemented by the VSD are then adopted automatically when new users join.

The layer below the cloud services management plane is the data centre control plane. This is where the second platform element, the Virtualised Services Controller (VSC), sits. The VSC is the SDN controller: the control element that communicates with the data plane using the OpenFlow open standard.

The third element, the Virtual Routing & Switching module (VRS), sits in the data path, enabling virtual machines to communicate so that applications can be brought up rapidly. The VRS sits on the hypervisor of each server. When a virtual machine is instantiated, it is detected by the VRS, which polls the SDN controller to see if a policy has already been set up for the tenant and the particular application. If a policy has been set up, the connectivity is immediate. Moreover, this connectivity is not confined to a single data centre zone but extends across the whole data centre and even across data centres.
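The lookup sequence described here can be sketched as follows. The classes, method names and policy contents are hypothetical stand-ins for the VSC and VRS, not Nuage's actual interfaces; in the real product the two communicate over OpenFlow rather than through a direct method call.

```python
from typing import Optional


class Controller:
    """Stand-in for the SDN controller holding per-tenant policies."""

    def __init__(self) -> None:
        self._policies: dict = {}

    def set_policy(self, tenant: str, app: str, policy: dict) -> None:
        self._policies[(tenant, app)] = policy

    def lookup(self, tenant: str, app: str) -> Optional[dict]:
        return self._policies.get((tenant, app))


class VirtualSwitch:
    """Stand-in for the switching module running on a server's hypervisor."""

    def __init__(self, controller: Controller) -> None:
        self.controller = controller
        self.connected: dict = {}

    def on_vm_started(self, vm_id: str, tenant: str, app: str) -> bool:
        # Ask the controller whether a policy already exists for this workload.
        policy = self.controller.lookup(tenant, app)
        if policy is None:
            return False  # no policy yet, so no connectivity is set up
        self.connected[vm_id] = policy
        return True  # policy found: connectivity is immediate


controller = Controller()
controller.set_policy("acme", "web", {"zone": "frontend", "allow": ["db"]})
vswitch = VirtualSwitch(controller)

print(vswitch.on_vm_started("vm-1", "acme", "web"))
print(vswitch.on_vm_started("vm-2", "acme", "batch"))
```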

More than one data centre is involved in disaster recovery scenarios, for example. Using several data centres can also boost overall efficiency, by allowing applications to use spare resources in other data centres as appropriate.

Meanwhile, the linking to an enterprise's own data centre is done using a virtual private network (VPN), bridging a private data centre with the public cloud. "We are the first to do this," says Modarres.

The VSP works with whatever server, hypervisor, networking equipment and cloud management platform is used in a data centre. The SDN controller is based on the same operating system that is used in Alcatel-Lucent's IP routers that supports a wealth of protocols. Meanwhile, the virtual switch in the VRS integrates with various hypervisors on the market, ensuring interoperability.

Dimitri Stiliadis, chief architect at Nuage Networks, describes its VSP architecture as a distributed implementation of the functions performed by its router products.

The control plane of the router is effectively moved to the SDN controller. The router's 'line cards' become the virtual switches in the hypervisors. "OpenFlow is the protocol that allows our controller to talk to the line cards," says Stiliadis. "While the border gateway protocol (BGP) is the protocol that allows our controller to talk to other controllers in the rest of the network."

Michael Howard, principal analyst, carrier networks at Infonetics Research, says there are several noteworthy aspects to Nuage's product including the fact that operators participated at the company's launch and that the software is not tied to Alcatel-Lucent's routers but will run over other vendors' equipment.

"It also uses BGP, as other vendors are proposing, to tie together data centres and the carrier WAN," says Howard. "Several big operators say BGP is a good approach to integrate data centres and carrier WANs, including AT&T and Orange."

Nuage says that trials of its VSP began in April. The European and North American trial partners include UK cloud service provider Exponential-e, French telecoms service provider SFR, Canadian telecoms service provider TELUS and US healthcare provider, the University of Pittsburgh Medical Center (UPMC). The product will be generally available from mid-2013.

"There are other key use cases targeted for SDN that are not data centre related: content delivery networks, Evolved Packet Core, IP Multimedia Subsystem, service-chaining and cloudbox"

Michael Howard, Infonetics Research

Challenges

The industry analysts highlight that this market is still in its infancy and that challenges remain.

Gartner's Skorupa points out that the data centre orchestration systems still need to be integrated and that there is a need for cheaper, simpler hardware.

"Many vendors have proposed solutions but the market is in its infancy and customer acceptance and adoption is still unknown," says Skorupa.

Infonetics highlights dynamic bandwidth as a key use case for SDNs, in particular between data centres.

"There are other key use cases targeted for SDN that are not data centre related: content delivery networks, Evolved Packet Core, IP Multimedia Subsystem, service-chaining and cloudbox," says Howard.

Cloudbox is a concept being developed by operators where an intelligent general purpose box is placed at a customer's location. The box works in conjunction with server-based network functions delivered via the network, although some application software will also run on the box.

Customers will sign up for different service packages out of firewall, intrusion detection system (IDS), parental control, turbo button bandwidth bursting etc., says Howard. Each customer's traffic is guided by the SDNs and uses Network Functions Virtualisation - those network functions such as a firewall or IDS formerly in individual equipment - such that the services subscribed to by a user are 'chained' using SDN software.
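Service chaining of this kind can be pictured as an ordered list of functions applied to each customer's traffic. The sketch below is a toy model: the packet representation, the two functions and their block lists are all invented for illustration, and a real deployment would steer traffic between virtualised functions in the network rather than call them in one process.

```python
from typing import Callable, List, Optional

Packet = dict
NetworkFunction = Callable[[Packet], Optional[Packet]]

# Illustrative block lists, not taken from any real service.
BLOCKED_PORTS = {23}
BLOCKED_HOSTS = {"blocked.example"}


def firewall(pkt: Packet) -> Optional[Packet]:
    return None if pkt.get("port") in BLOCKED_PORTS else pkt


def parental_control(pkt: Packet) -> Optional[Packet]:
    return None if pkt.get("host") in BLOCKED_HOSTS else pkt


SERVICES = {"firewall": firewall, "parental_control": parental_control}


def build_chain(subscribed: List[str]) -> List[NetworkFunction]:
    """Chain only the functions this customer has signed up for."""
    return [SERVICES[name] for name in subscribed]


def process(pkt: Packet, chain: List[NetworkFunction]) -> Optional[Packet]:
    for function in chain:
        result = function(pkt)
        if result is None:
            return None  # dropped by one of the chained functions
        pkt = result
    return pkt


basic = build_chain(["firewall"])
family = build_chain(["firewall", "parental_control"])
packet = {"host": "blocked.example", "port": 443}

print(process(packet, basic))
print(process(packet, family))
```

The same packet is forwarded for the customer with the basic package and dropped for the one who also subscribes to parental control.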

OFC/NFOEC 2013 industry reflections - Final part

Gazettabyte spoke with Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking about the state of the optical industry following the recent OFC/NFOEC exhibition.

"There were many people in the OFC workshops talking about getting rid of pluggability and the cages and getting the stuff mounted on the printed circuit board instead, as a cheaper, more scalable approach"

Jörg-Peter Elbers, ADVA Optical Networking

Q: What was noteworthy at the show?

A: There were three big themes and a couple of additional ones that were evolutionary. The headlines I heard most were software-defined networking (SDN), Network Functions Virtualisation (NFV) and silicon photonics.

Other themes include what needs to be done for next-generation data centres to drive greater capacity interconnect and switching, how we go beyond 100 Gig, and whether flexible grid is required or not.

The consensus is that flex grid is needed if we want to go to 400 Gig and one Terabit. Flex grid gives us the capability to form bigger pipes and get those chunks of signals through the network. But equally it allows not only one interface to transport 400 Gig or 1 Terabit as one chunk of spectrum, but also the possibility to slice and dice the signal so that it can use holes in the network, similar to what radio does.

With the radio spectrum, you allocate slices to establish a communication link. In optics, you have the optical fibre spectrum and you want to get the capacity between Point A and Point B. You look at the spectrum, where the holes [spectrum gaps] are, and then shape the signal - think of it as software-defined optics - to fit into those holes.
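Fitting a signal into spectrum holes can be sketched as a search over frequency slots. The model below is a simplification with assumed names: the fibre spectrum is a list of slots marked occupied or free, a signal needs a given number of slots, and it is either placed whole in the first hole that is wide enough or sliced across several holes.

```python
from typing import List, Optional, Tuple


def find_holes(occupied: List[bool]) -> List[Tuple[int, int]]:
    """Return (start, length) for each run of free slots."""
    holes = []
    start = None
    for i, used in enumerate(occupied):
        if not used and start is None:
            start = i
        elif used and start is not None:
            holes.append((start, i - start))
            start = None
    if start is not None:
        holes.append((start, len(occupied) - start))
    return holes


def first_fit(occupied: List[bool], needed: int) -> Optional[int]:
    """Start slot of the first hole wide enough for the whole signal."""
    for start, length in find_holes(occupied):
        if length >= needed:
            return start
    return None


def slice_across(occupied: List[bool], needed: int) -> Optional[List[Tuple[int, int]]]:
    """Split the signal over several holes when no single hole is wide enough."""
    pieces = []
    for start, length in find_holes(occupied):
        take = min(length, needed)
        pieces.append((start, take))
        needed -= take
        if needed == 0:
            return pieces
    return None


spectrum = [True, False, False, True, False, False, False, False, True, False]
print(find_holes(spectrum))
print(first_fit(spectrum, 4))
print(slice_across(spectrum, 5))
```

A five-slot signal has no single hole to fit into here, but it can be carried as a two-slot piece and a three-slot piece.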

There is a lot of SDN activity. People are thinking about what it means, and there were lots of announcements, experiments and demonstrations.

At the same time as OFC/NFOEC, the Open Networking Foundation agreed to found an optical transport work group to come up with OpenFlow extensions for optical transport connectivity. At the show, people were looking into use cases, the respective technology and what is required to make this happen.

SDN starts at the packet layer but there is value in providing big pipes for bandwidth-on-demand. Clearly with cloud computing and cloud data centres, people are moving from a localised model to a cloud one, and this adds merit to the bandwidth-on-demand scenario.

This is probably the biggest use case for extending SDN into the optical domain through an interface that can be virtualised and shared by multiple tenants.

"This is not the end of III-V photonics. There are many III-V players, vertically integrated, that have shown that they can integrate and get compact, high-quality circuits"

Network Functions Virtualisation: Why was that discussed at OFC?

At first glance, it was not obvious. But looking at it in more detail, much of the infrastructure over which those network functions run is optical.

Just take one Network Functions Virtualisation example: the mobile backhaul space. If you look at LTE/LTE Advanced, there is clearly a push to put in more fibre and more optical infrastructure.

At the same time, you still have a bandwidth crunch. It is very difficult to have enough bandwidth to the antenna to support all the users and give them the quality of experience they expect.

Putting networking functions such as caching at a cell site, deeper within the network, and managing a virtualised session there, is an interesting trend that operators are looking at, and which we, with our partnership with Saguna Networks, have shown a solution for.

Virtualising network functions such as cacheing, firewalling and wide area network (WAN) optimisation are higher layer functions. But as you do that, the network infrastructure needs to adapt dynamically.

You need orchestration that combines the control and the co-ordination of the networking functions. This is more IT infrastructure - server-based blades and open-source software.

Then you have SDN underneath, supporting changes in the traffic flow with reconfiguration of the network infrastructure.

There was much discussion about the CFP2 and Cisco's own silicon photonics-based CPAK. Was this the main silicon photonics story at the show?

There is much interest in silicon photonics not only for short reach optical interconnects but more generally, as an alternative to III-V photonics for integrated optical functions.

For light sources and amplification, you still need indium phosphide and you need to think about how to combine the two. But people have shown that even in the core network you can get decent performance at 100 Gig coherent using silicon photonics.

This is an interesting development because such a solution could potentially lower cost, simplify thermal management, and from a fab access and manufacturing perspective, it could be simpler going to a global foundry.

But a word of caution: there is big hype here too. This is not the end of III-V photonics. There are many III-V players, vertically integrated, that have shown that they can integrate and get compact, high-quality circuits.

You mentioned interconnect in the data centre as one evolving theme. What did you mean?

The capacities inside the data centre are growing much faster than the WAN interconnects. That is not surprising because people are trying to do as much as possible in the data centre because WAN interconnect is expensive.

People are looking increasingly at how to integrate the optics and the server hardware more closely. This is moving beyond the concept of pluggables all the way to mounted optics on the board or even on-chip to achieve more density, less power and less cost.

There were many people in the OFC workshops talking about getting rid of pluggability and the cages and getting the stuff mounted on the printed circuit board instead, as a cheaper, more scalable approach.

What did you learn at the show?

There wasn't anything that was radically new. But there were some significant silicon photonics demonstrations. That was the most exciting part for me although I'm not sure I can discuss the demos [due to confidentiality].

Another area we are interested in revolves around the ongoing IEEE work on short reach 100 Gigabit serial interfaces. The original objective was 2km but they have now homed in on 500m.

PAM-8 - pulse amplitude modulation with eight levels - is one of the proposed solutions; another is discrete multi-tone (DMT). [With DMT] using a set of electrical sub-carriers and doing adaptive bit loading means that even with bandwidth-limited components, you can transmit over the required distances. There was a demo at the exhibition from Fujitsu Labs showing DMT over 2km using a 10 Gig transmitter and receiver.
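Adaptive bit loading can be illustrated with the textbook gap approximation, in which each sub-carrier carries floor(log2(1 + SNR/gap)) bits. The sketch below is not the scheme used in the Fujitsu Labs demo: the SNR values, the 6dB gap and the 8-bit cap are assumptions chosen only to show how a bandwidth-limited channel still carries data on its weaker sub-carriers.

```python
import math
from typing import List


def bit_loading(snr_db: List[float], gap_db: float = 6.0,
                max_bits: int = 8) -> List[int]:
    """Bits per sub-carrier using the gap approximation (values are assumptions)."""
    gap = 10 ** (gap_db / 10)
    bits = []
    for s in snr_db:
        snr = 10 ** (s / 10)
        b = math.floor(math.log2(1 + snr / gap))
        # Clamp to the range the (hypothetical) modulator supports.
        bits.append(max(0, min(max_bits, b)))
    return bits


# Hypothetical per-sub-carrier SNR that rolls off with frequency,
# as it would with bandwidth-limited components.
snr_profile_db = [31.0, 26.0, 20.0, 14.0, 8.0, 2.0]
loading = bit_loading(snr_profile_db)
bits_per_dmt_symbol = sum(loading)
```

For comparison, PAM-8 carries a fixed log2(8) = 3 bits per symbol regardless of how the channel varies with frequency.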

This is of interest to us as we have a 100 Gigabit direct detection dense WDM solution today and are working on the product evolution.

We use the existing [component/module] ecosystem for our current direct detect solution. These developments bring up some interesting new thoughts for our next generation.

So you can go beyond 100 Gigabit direct detection?

Right now we are running 28 Gig on a single wavelength. Clearly with speeds increasing and with these kinds of developments [PAM-8, DMT], you see that this is not the end.

Software-defined networking: A network game-changer?

OFC/NFOEC reflections: Part 1

"We [operators] need to move faster"

Andrew Lord, BT

Q: What was your impression of the show?

A: Nothing out of the ordinary. I haven't come away clutching a whole bunch of results that I'm determined to go and check out, which I do sometimes.

I'm quite impressed by how the main equipment vendors have moved on to look seriously at post-100 Gigabit transmission. In fact we have some [equipment] in the labs [at BT]. That is moving on pretty quickly. I don't know if there is a need for it just yet but they are certainly getting out there, not with live chips but making serious noises on 400 Gig and beyond.

There was a talk on the CFP [module] and whether we are going to be moving to a coherent CFP at 100 Gig. So what is going to happen to those prices? Is there really going to be a role for non-coherent 100 Gig? That is still a question in my mind.

I was quite keen on that but I'm wondering if there is going to be a limited opportunity for the non-coherent 100 Gig variants. The coherent prices will drop and my feeling from this OFC is they are going to drop pretty quickly when people start putting these things [100 Gig coherent] in; we are putting them in. So I don't know quite what the scope is for people that are trying to push that [100 Gigabit direct detection].

What was noteworthy at the show?

There is much talk about software-defined networking (SDN), so much talk that a lot of people in my position have been describing it as hype. There is a robust debate internally [within BT] on the merits of SDN which is essentially a data centre activity. In a live network, can we make use of it? There is some skepticism.

I'm still fairly optimistic about SDN and the role it might have and the [OFC/NFOEC] conference helped that.

I'm expecting next year to be the SDN conference and I'd be surprised if SDN doesn't have a much greater impact then [OFC/NFOEC 2014] with more people demoing SDN use cases.

Why is there so much excitement about SDN?

Why now when it could have happened years ago? We could have all had GMPLS (Generalised Multi-Protocol Label Switching) control planes. We haven't got them. Control plane research has been around for a long time; we don't use it: we could but we don't. We are still sitting with heavy OpEx-centric networks, especially optical.

So why are we getting excited? Getting the cost out of the operational side - the software-development side, and the ability to buy from whomever we want to.

For example, if we want to buy a new network, we put out a tender and have some 10 responses. It is hard to adjudicate them all equally when, with some of them, we'd have to start from scratch with software development, whereas with others we have a head start as our own management interface has already been developed. That shouldn't and doesn't need to be the case.

If we open the equipment's northbound interface into our own OSS (operations support system) then in theory, and this is probably naive, any specific OSS we develop ought to work.

Our dream future is that we would buy equipment from whomever we want and it works. Why can't we do that for the network?

We want to as it means we can leverage competition but also we can get new network concepts and builds in quicker without having to suffer 18 months of writing new code to manage the thing. We used to do that but it is no longer acceptable. It is too expensive and time consuming; we need to move faster.

It [the interest in SDN] is just competition hotting up and costs getting harder to manage. This is an area that is now the focus and SDN possibly provides a way through that.

Another issue is the ability to quickly put new applications and services onto our networks. For example, a bank wants to do data backup but doesn't want to spend a year and resources developing something that it uses only occasionally. Is there a bandwidth-on-demand application we can put onto our basic network infrastructure? Why not?

SDN gives us a chance to do something like that; we could roll it out quickly for specific customers.

Anything else at OFC/NFOEC that struck you as noteworthy?

The core networks aspect of OFC is really my main interest.

You are taking the components, a big part of OFC, and then the transmission experiments and all the great results that they get - multiple Terabits and new modulation formats - and then in networks you are saying: What can I build?

Networking has always been the poor relation. It has not had the great exposure or the same excitement. Well, now, the network is taking centre stage.

As you see components and transmission mature - and they are maturing, as the capacity we are seeing on a fibre is almost hitting the natural limit - the spectral efficiency, the number of bits per second you can squeeze into a single Hertz, is hitting the limit of 3, 4, 5, 6 [bit/s/Hz]. You can't get much more than that if you want to go a reasonable distance.

So the big buzz word - 70 to 80 percent of the OFC papers we reviewed - was flex-grid, turning the optical spectrum in fibre into a much more flexible commodity where you can have whatever spectrum you want between nodes dynamically. Very, very interesting; loads of papers on that. How do you manage that? What benefits does it give?

What did you learn from the show?

One area I don't get yet is spatial-division multiplexing. Fibre is filling up so where do we go? Well, we need to go somewhere because we are predicting our networks continuing to grow at 35 to 40 percent.

Now we are hitting a new era. Putting fibre in doesn't really solve the problem in terms of cost, energy and space. You are just layering solutions on top of each other and you don't get any more revenue from it. We are stuffed unless we do something different.
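To put the 35 to 40 percent growth figure in perspective, the compound arithmetic can be worked through in a few lines. The sketch assumes the figure is an annual rate, which the interview does not state explicitly, and the function names are illustrative only.

```python
import math


def doubling_time_years(annual_growth: float) -> float:
    """Years for traffic to double at a constant compound annual growth rate."""
    return math.log(2) / math.log(1 + annual_growth)


def growth_factor(annual_growth: float, years: int) -> float:
    """Total traffic multiple after `years` of compound growth."""
    return (1 + annual_growth) ** years


# Assumed annual rates taken from the range quoted in the interview.
for rate in (0.35, 0.40):
    print(f"{rate:.0%}: doubles in {doubling_time_years(rate):.1f} years, "
          f"x{growth_factor(rate, 10):.0f} after 10 years")
```

Under that assumption traffic doubles in a little over two years, which is why simply lighting more fibre is presented as a stopgap.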

The 'something different' this conference was spatial-division multiplexing. You still have a single fibre but you put in multiple cores and that is the next way of increasing capacity. There is an awful lot of work being done in this area.

I gave a paper [pointing out the challenges]. I couldn't see how you would build the splicing equipment, or how you would get this fibre qualified. Given the 30-40 years of expertise of companies like Corning making single mode fibre, are we really going to go through all that again for this new fibre? How long is that going to take? How do you align these things?

I just presented the basic pitfalls from an operator's perspective of using this stuff. That is my skeptic side. But I could be proved wrong; it has happened before!

Anything you learned that got you excited?

One thing I saw is optics pushing out.

In the past we saw 100 Megabit and one Gigabit Ethernet (GbE) being king of a certain part of the network. People were talking about that becoming optics.

We are starting to see optics entering a new phase. Ten Gigabit Ethernet is a wavelength, a colour on a fibre. If the cost of those very simple 10GbE transceivers continues to drop, we will start to see optics enter a new phase where we could be seeing it all over the place: you have a GigE port, well, have a wavelength.

[When that happens] optics comes centre stage and then you have to address optical questions. This is exciting and Ericsson was talking a bit about that.

What will you be monitoring between now and the next OFC?

We are accelerating our SDN work. We see that as being game-changing in terms of networks. I've seen enough open standards emerging, enough will around the industry with the people I've spoken to, some of the vendors that want to do some work with us, that it is exciting. Things like 4k and 8k (ultra high definition) TV, providing the bandwidth to make this thing sensible.

Think of a health application where you have a 4k or 8k TV camera giving an ultra high-res picture of a scan, piping that around the network at many, many Gigabits. These types of applications are exciting and that is where we are going to be putting a bit more effort. Rather than just thinking about transmission in the traditional way, we are moving on to some solid networking; that is how we are migrating it in the group.

When you say open standards [for SDN], OpenFlow comes to mind.

OpenFlow is a lovely academic thing. It allows you to open a box for a university to try their own algorithms. But it doesn't really help us because we don't want to get down to that level.

I don't think BT needs to be delving into the insides of an IP router trying to improve how it moves packets. That is not our job.

What we need is the next level up: taking entire network functions and having them presented in an open way.

For example, something like OpenStack [the open source cloud computing software] that allows you to start to bring networking, and compute and memory resources in data centres together.

You can start to say: I have a data centre here, another here and some networking in between, how can I orchestrate all of that? I need to provide some backup or some protection, what gets all those diverse elements, in very different parts of the industry, what is it that will orchestrate that automatically?

That is the kind of open theme that operators are interested in.

That sounds different to what is being developed for SDN in the data centre. Are there two areas here: one networking and one the data centre?

You are quite right. SDN for many people is data centres and I think we mean something a bit different. We are trying to have multi-vendor leverage and as I've said, look at the software issues.

We also need to be a bit clearer as to what we mean by it [SDN].

Andrew Lord has been appointed technical chair at OFC/NFOEC

Netronome uses its network flow processor for OpenFlow

Part 2: Hardware for SDN

Netronome has demonstrated its flow processor chip implementing the OpenFlow protocol, an open standard implementation of software-defined networking (SDN).

"What OpenFlow does is let you control the hardware that is handling the traffic in the network. The value to the end customer is what they can do with that"

David Wells, Netronome

The reference design demonstration, which took place at an Open Networking User Group meeting, used the fabless semiconductor player's NFP-3240 network flow processor. The NFP-3240 was running the latest 1.3.0 version of the OpenFlow protocol.

Last year Netronome announced its next-generation flow processor family, the NFP-6xxx. The OpenFlow demonstration hints at what the newest flow processor will enable once first samples become available at the year end.

Netronome believes its flow processor architecture is well placed to tackle emerging intelligent networking applications such as SDN due to its emphasis on packet flows.

"In security, mobile and other spaces, increasingly there needs to be equipment in the network that is looking at content of packets and states of a flow - where you are looking at content across multiple packets - to figure out what is going on," says David Wells, co-founder of Netronome and vice president of technology. "That is what we term flow processing."

This requires equipment able to process all the traffic on network links at 10 and 40 Gigabit-per-second (Gbps), and with next-generation equipment at 100Gbps. "This is where you do more than look at the packet header and make a switching decision," says Wells.
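Keeping state across the packets of a flow, rather than deciding on each header in isolation, can be illustrated with a toy stateful firewall. This is a sketch and not Netronome's implementation: the class, the string-prefix test for "inside" addresses and the example addresses are all assumptions made for brevity.

```python
from typing import Set, Tuple

# (src_ip, src_port, dst_ip, dst_port): a simplified flow identifier.
Flow = Tuple[str, int, str, int]


class StatefulFirewall:
    """Forwards inbound packets only when they answer a flow opened from inside."""

    def __init__(self, inside_prefix: str) -> None:
        # Crude assumption: an address is "inside" if it starts with this prefix.
        self.inside_prefix = inside_prefix
        self.established: Set[Flow] = set()

    def handle(self, src_ip: str, src_port: int,
               dst_ip: str, dst_port: int) -> str:
        if src_ip.startswith(self.inside_prefix):
            # Outbound packet: remember the flow so replies can be matched.
            self.established.add((src_ip, src_port, dst_ip, dst_port))
            return "forward"
        # Inbound packet: the decision depends on state left by earlier packets.
        reverse = (dst_ip, dst_port, src_ip, src_port)
        return "forward" if reverse in self.established else "drop"


fw = StatefulFirewall("10.0.0.")
outbound = fw.handle("10.0.0.5", 40000, "198.51.100.7", 443)
reply = fw.handle("198.51.100.7", 443, "10.0.0.5", 40000)
unsolicited = fw.handle("198.51.100.7", 443, "10.0.0.5", 40001)
```

The reply and the unsolicited packet have near-identical headers; only the stored flow state tells them apart, which is the distinction from a header-only switching decision.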

Software-defined networking

Operators and content service providers are interested in SDN due to its promise to deliver greater efficiencies and control in how they use their switches and routers in the data centre and network. With SDN, operators can add their own intelligence to tailor how traffic is routed in their networks.

In the data centre, a provider may be managing a huge number of servers running virtualised applications. "The management of the servers and applications is clever enough to optimise where it moves virtual machines and where it puts particular applications," says Wells. "You want to be able to optimise how the traffic flows through the network to get to those servers in the same way you are optimising the rest of the infrastructure."

Without OpenFlow, operators depend on routing protocols that come with existing switches and routers. "It works but it won't necessarily take the most efficient route through the network," says Wells.

OpenFlow lets operators orchestrate from the highest level of the infrastructure where applications reside, map the flows that go to them, determine their encapsulation and the capacity they have. "The service can be put in a tunnel, for example, and have resource allocated to it so that you know it is not going to be contended with," says Wells, guaranteeing services to customers.

"What OpenFlow does is let you control the hardware that is handling the traffic in the network," says Wells. "The value to the end customer is what they can do with that, in conjunction with other things they are doing."

Operators are also interested in using OpenFlow in the wide area network. "The attraction of OpenFlow is in the core and the edge [of the network] but it is the edge that is the starting point," says Wells.

OpenFlow demonstration

Netronome's OpenFlow demonstration used an NFP-3240 on a PCI Express (PCIe) card to run OpenFlow while other Netronome software runs on the host server in which the card resides.

The NFP-3240 classifies the traffic and implements the actions to be taken on the flows. The software on the host exposes the OpenFlow application programming interface (API) enabling the OpenFlow controller, the equipment that oversees how traffic is handled, to address the NFP device and influence how flows are processed.

Early OpenFlow implementations are based on Ethernet switch chips that interface to a CPU that provides the OpenFlow API. However, the Ethernet chips support the OpenFlow 1.1.0 specification and have limited-sized look-up tables with 98, 64k or 100k entries, says Wells.

The OpenFlow controller can write to the table and dictate how traffic is handled, but its size is limited. "That is a starting point and is useful," says Wells. "But to really do SDN, you need hardware platforms that can handle many more flows than these switches."
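The effect of a size-limited look-up table can be sketched with a toy flow table that refuses new entries once it is full. The class, the three-field match key and the tiny capacity are hypothetical; they stand in for a switch chip's table, not for any vendor's API or the OpenFlow wire protocol.

```python
from typing import Dict, Optional, Tuple

# (src_ip, dst_ip, dst_port): a deliberately simplified exact-match key.
FlowKey = Tuple[str, str, int]


class FlowTable:
    """Exact-match flow table with a fixed number of entries."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.entries: Dict[FlowKey, str] = {}

    def install(self, key: FlowKey, action: str) -> bool:
        """Controller-side write; fails when the table has no room left."""
        if key not in self.entries and len(self.entries) >= self.capacity:
            return False
        self.entries[key] = action
        return True

    def lookup(self, key: FlowKey) -> Optional[str]:
        """Data-path read; None means no rule matched (a table miss)."""
        return self.entries.get(key)


table = FlowTable(capacity=2)  # tiny capacity to make the limit visible
results = [
    table.install(("10.0.0.1", "10.0.1.1", 80), "output:1"),
    table.install(("10.0.0.2", "10.0.1.1", 80), "output:2"),
    table.install(("10.0.0.3", "10.0.1.1", 80), "output:3"),
]
miss = table.lookup(("10.0.0.3", "10.0.1.1", 80))
```

The third flow is rejected and later misses in the table, which is the constraint Wells points to when flows outnumber table entries.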

This is where the NFP processor is being targeted: it is programmable with capabilities driven by software rather than the hardware architecture, says Wells.

NFP-6xxx architecture

The NFP-6xxx is Netronome's latest network flow processor (NFP) family, rated at 40 to 200Gbps. No particular devices have yet been detailed but the highest-end NFP-6xxx device will comprise 216 processors: 120 flow processors (see chart - Netronome's sixth generation device) and, new to its NFP devices, 96 packet processors.

The architecture is made up of 'islands', units that comprise a dozen flow processors. Netronome will combine different numbers of islands to create the various NFP-6xxx devices.

The input-output bandwidth of the device is 800Gbps while the on-chip memory totals 30 Megabytes. The device also interfaces directly to QSFP, SFP+ and CFP optical transceivers.

The 120 flow processors tackle the more complex, higher-layer tasks. Netronome has added packet processors to the NFP-6xxx to free the flow processors from tasks such as taking packets from the input stream and passing them on to where they are processed. The packet processors are programmable and perform tasks such as header classification before packets are passed to the flow processors.

The NFP-6xxx devices will include some 100 hardware accelerator engines for tasks such as traffic management, encryption and deep packet inspection.

The device will be implemented using Intel's latest 22nm 3D Tri-Gate CMOS process and is designed to work with high-end general purpose CPUs such as Intel's x86 devices, Broadcom's XLP and Freescale's PowerPC.

Markets

The data centre, where SDN is already being used, is one promising market for the device as customers look to enhance their existing capabilities.

There are requirements for intelligent gateways now but this is a market that is a year or two out, says Wells. Use of OpenFlow to control large IP core routers or core optical switches is a longer term application. "Those areas will come but it will be further out," says Wells.

For other markets such as security, there is a need for knowledge about the state of flows. This is a more sophisticated treatment of packets than simply looking up the action required based on a packet's header. Netronome believes that OpenFlow will develop to not only forward or terminate traffic at a certain destination but also to send traffic to a service before it is returned.

"You could insert a service in an OpenFlow environment and what it would do is guide packets to that service and return it but inside that service you may do something that is stateful," says Wells. This is just the sort of task security performs on flows, for example an intrusion prevention system as a service or a firewall function. Such a function could be run on a dedicated platform or as a virtual application running on Netronome's flow processor.

The role of software-defined networking for telcos

The OIF's Carrier Working Group is assessing how software-defined networking (SDN) will impact transport. Hans-Martin Foisel, chair of the OIF working group, explains SDN's importance for operators.

Briefing: Software-defined networking

Part 1: Operator interest in SDN

"Using SDN use cases, we are trying to derive whether the transport network is ready or if there is some missing functionality"

Hans-Martin Foisel, OIF

Hans-Martin Foisel, of Deutsche Telekom and chair of the OIF Carrier Working Group, says SDN is of great interest to operators that view the emerging technology as a way of optimising all-IP networks that increasingly make use of data centres.

"Software-defined networking is an approach for optimising the network in a much larger sense than in the past," says Foisel whose OIF working group is tasked with determining how SDN's requirements will impact the transport network.

Network optimisation remains an ongoing process for operators. Work continues to improve the interworking between the network's layers to gain efficiencies and reduce operating costs (see Cisco Systems' intelligent light).

With SDN, the scope is far broader. "It [SDN] is optimising the network in terms of processing, storage and transport," says Foisel. SDN takes the data centre environment and includes it as part of the overall optimisation. For example, content allocation becomes a new parameter for network optimisation.

Other reasons for operator interest in SDN, says Foisel, include optimising operation support systems (OSS) software, and the characteristic most commonly associated with SDN, making more efficient use of the network's switches and routers.

"A lot of carriers are struggling with their OSSes - these are quite complex beasts," he says. "With data centres involved, you now have a chance to simplify your IT as all carriers are struggling with their IT."

The Network Functions Virtualisation (NFV) industry specification group is a carrier-led initiative set up in January by the European Telecommunications Standards Institute (ETSI). The group is tasked with optimising the software components, the OSSes, involved in processing, storage and transport.

The initiative aims to make use of standard servers, storage and Ethernet switches to reduce the varied equipment making up current carrier networks to improve service innovation and reduce the operators' capital and operational expenditure.

NFV and SDN are separate developments that will benefit each other. The ETSI group will develop requirements and architecture specifications for the hardware and software infrastructure needed for the virtualised functions, as well as guidelines for developing network functions.

The third reason for operator interest in SDN - separating management, control and data planes - promises greater efficiencies, enabling network segmentation irrespective of the switch and router deployments. This allows flexible use of the network, with resources shifted based on particular user requirements.

"Optimising the network as a whole - including the data centre services and applications - is a concept, a big architecture," says Foisel. "OpenFlow and the separation of data, management and control planes are tools to achieve them."

OpenFlow is an open standard implementation of the SDN concept. The OpenFlow protocol is being developed by the Open Networking Foundation, an industry body that includes Google, Facebook and Microsoft, telecom operators Verizon, NTT, Deutsche Telekom, and various equipment makers.

Transport SDN

The OIF Working Group will identify how SDN impacts the transport network including layers one and two, networking platforms and even components. By undertaking this work, the operators' goal is to make SDN "carrier-grade".

Foisel admits that the working group does not yet know whether the transport layer will be impacted by SDN. To answer the question, SDN applications will be used to identify required transport SDN functionalities. Once identified, a set of requirements will be drafted.

"Using SDN use cases, we are trying to derive whether the transport network is ready or if there is some missing functionality," says Foisel.

The work will also highlight any areas that require standardisation, for the OIF and for other standards bodies, to ensure future SDN interworking between vendors' solutions. The OIF expects to have a first draft of the requirements by July 2013.

"In the transport network we are pushed by the mobile operators but also by the over-the-top applications to be faster and be more application-aware," says Foisel. "With SDN we have a chance to do so."

OpenFlow extends its control to the optical layer

"We see OpenFlow as an additional solution to tackle the problem of network control"

Jörg-Peter Elbers, ADVA Optical Networking

The largest data centre players have a single-mindedness when it comes to service delivery. Players such as Google, Facebook and Amazon do not think twice about embracing and even spurring hardware and software developments if they will help them better meet their service requirements.

Such developments are also having a wider impact, interesting traditional telecom operators that have their own service challenges.

The latest development causing waves is the OpenFlow protocol. An open standard, OpenFlow is being developed by the Open Networking Foundation, an industry body that includes Google, Facebook and Microsoft, telecom operators Verizon, NTT and Deutsche Telekom, and various equipment makers.

OpenFlow is already being used by Google, and falls under the more general topic of software-defined networking (SDN). A key principle underpinning SDN is the separation of the data and control planes to enable more centralised and simplified management of the network.

OpenFlow is being used in the management of packet switches for cloud services. "The promise of software-defined networking and OpenFlow is to give [data centre operators] a virtualised network infrastructure," says Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking.

The growing interest in OpenFlow is reflected in the activities of the telecom system vendors that have extended the protocol to embrace the optical layer. But whereas the content service provider giants need only worry about tailoring their networks to optimise their particular services, telecom operators must consider legacy equipment and issues of interoperability.

OFELIA

ADVA Optical Networking has started the ball rolling by running an experiment to show OpenFlow controlling both the optical and packet layers of the network. Until now the protocol, which provides a software-programmable interface, has been used to manage packet switches; the adding of the optical layer control is an industry first, the company claims.

The OpenFlow demonstration is part of the European “OpenFlow in Europe, Linking Infrastructure and Applications” (OFELIA) research project involving ADVA Optical Networking and the University of Essex. A test bed has been set up that uses the ADVA FSP 3000 to implement a colourless and directionless ROADM-based optical network.

"We have put a network together such that people can run the optical layer through an OpenFlow interface, as they do the packet switching layer, under one uniform control umbrella," says Elbers. "The purpose of this project is to set up an experimental facility to give researchers access to, and have them play with, the capabilities of an OpenFlow-enabled network."

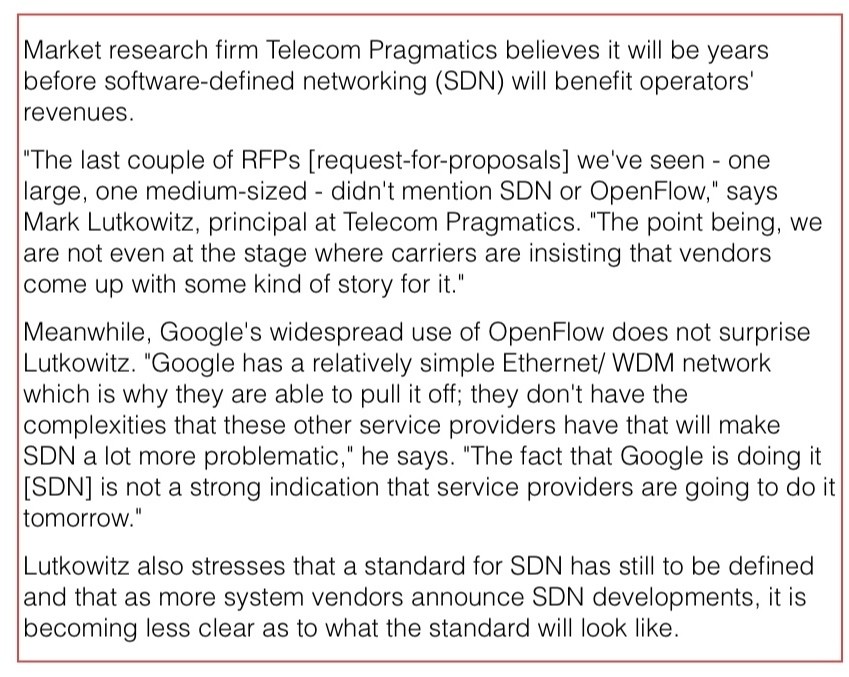

"The fact that Google is doing it [SDN] is not a strong indication that service providers are going to do it tomorrow"

Mark Lutkowitz, Telecom Pragmatics

Remote researchers can access the test bed via GÉANT, a high-bandwidth pan-European backbone connecting national research and education networks.

ADVA Optical Networking hopes the project will act as a catalyst to gain useful feedback and ideas from the users, leading to further developments to meet emerging requirements.

OpenFlow and GMPLS

A key principle of SDN, as mentioned, is the separation of the data plane from the control plane. "The aim is to have a more unified control of what your network is doing rather than running a distributed specialised protocol in the switches," says Elbers.

That is not much different from Generalised Multi-Protocol Label Switching (GMPLS), he says: "With GMPLS in an optical network you effectively have a data plane - a wavelength switched data plane - and then you have a unified control plane implementation running on top, decoupled from the data plane."

But clearly there are differences. OpenFlow is being used by data centre operators to control their packet switches and generate packet flows. The goal is for their networks to gain flexibility and agility: "A virtualised network that can be run as you, the user, want it," says Elbers.

But the protocol only gives a user the capability to manage the forwarding behavior of a switch: an incoming packet's header is inspected and the user can program the forwarding table to determine how the packet stream is treated and the port it goes out on.
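The forwarding behaviour described here, in which header fields are matched against a programmed table and the winning rule picks the output port, can be sketched as a small match-action lookup. The `Rule` structure, the field names and the priority scheme are simplifying assumptions for illustration, not the OpenFlow message format.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Rule:
    priority: int
    match: Dict[str, str]  # header field -> required value; absent fields are wildcards
    out_port: int


def forward(rules: List[Rule], header: Dict[str, str]) -> Optional[int]:
    """Return the output port of the highest-priority matching rule, or None."""
    for rule in sorted(rules, key=lambda r: r.priority, reverse=True):
        if all(header.get(field) == value for field, value in rule.match.items()):
            return rule.out_port
    return None  # table miss: a real switch would typically consult the controller


# Hypothetical forwarding table as programmed by a controller.
rules = [
    Rule(priority=10, match={"ip_dst": "10.0.1.1"}, out_port=1),
    Rule(priority=20, match={"ip_dst": "10.0.1.1", "tcp_dst": "443"}, out_port=2),
]
web = forward(rules, {"ip_dst": "10.0.1.1", "tcp_dst": "443"})
other = forward(rules, {"ip_dst": "10.0.1.1", "tcp_dst": "22"})
unknown = forward(rules, {"ip_dst": "10.0.9.9", "tcp_dst": "22"})
```

Nothing in this model knows about optical power or reach, which is the gap the following paragraphs describe.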

And while OpenFlow has since been extended to cater for circuit switches as well as wavelength circuits, there are aspects at the optical layer which OpenFlow is not designed to address - issues that GMPLS does.

To run end-to-end, the control plane needs to be aware of the blocking constraints of an optical switch, while when provisioning it must also be aware of such aspects as the optical power levels and optical performance constraints. "The management of optical is different from managing a packet switch or a TDM [circuit switched] platform," says Elbers. “We need to deal with transmission impairments and constraints that simply do not exist inside a packet switch.”
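Two of the optical constraints mentioned here can be sketched as checks a controller would run before provisioning a lightpath: the same wavelength must be free on every hop, and the accumulated loss must stay within a budget. The sketch assumes no wavelength conversion and a single additive loss figure per link; the topology, numbers and function names are all hypothetical, and real systems model many more impairments.

```python
from typing import Dict, List, Optional, Set, Tuple

Link = Tuple[str, str]


def hops(path: List[str]) -> List[Link]:
    """Consecutive node pairs along a path."""
    return list(zip(path, path[1:]))


def common_wavelengths(path: List[str], free: Dict[Link, Set[int]]) -> Set[int]:
    """Wavelengths free on every hop (continuity, assuming no conversion)."""
    links = hops(path)
    available = set(free[links[0]])
    for link in links[1:]:
        available &= free[link]
    return available


def provision(path: List[str], free: Dict[Link, Set[int]],
              loss_db: Dict[Link, float], budget_db: float) -> Optional[int]:
    """Pick the lowest usable wavelength, or None if a constraint blocks the path."""
    if sum(loss_db[link] for link in hops(path)) > budget_db:
        return None  # fails the (simplified) optical power budget
    candidates = common_wavelengths(path, free)
    return min(candidates) if candidates else None


# Hypothetical three-link network.
free = {("A", "B"): {1, 2, 3}, ("B", "C"): {2, 3}, ("B", "D"): {4}}
loss_db = {("A", "B"): 8.0, ("B", "C"): 9.0, ("B", "D"): 5.0}

ok = provision(["A", "B", "C"], free, loss_db, budget_db=20.0)
blocked = provision(["A", "B", "D"], free, loss_db, budget_db=20.0)
too_lossy = provision(["A", "B", "C"], free, loss_db, budget_db=15.0)
```

A packet switch has no equivalent of either check, which is why a controller that only programs forwarding tables needs extra information to manage the optical layer.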

That said, for a vendor with GMPLS expertise it is relatively simple to provide an OpenFlow interface to an optical controlled network, he says: "We see OpenFlow as an additional solution to tackle the problem of network control."

Operators want mature and proven interoperable standards for network control that incorporate all the different network layers and that use GMPLS.

"We are seeing that in the data centre space, the players think that they may not have to have that level of complexity in their protocols and can run something lower level and streamlined for their applications," says Elbers.

While operators see the benefit of OpenFlow for their own data centres and managed service offerings, they are also eyeing other applications, such as access and aggregation to allow faster service mobility, and content management, says Elbers.

ADVA Optical Networking sees adding optical to OpenFlow as a complementary approach: integrating optical networking into an existing framework so that it can be run in a more dynamic fashion, an approach that benefits both data centre operators and telcos.

"If you have one common framework, when you give server and compute jobs then you know what kind of connectivity and latency needs to go with this and request these resources and reconfigure the network accordingly," says Elbers.

But longer term the impact of OpenFlow and SDN will likely be more far-reaching: applications themselves could program the network, or it could be used to enable dial-up bandwidth services in a more dynamic fashion. "By providing software programmability into a network, you can develop your own networking applications on top of this - what we see as the heart of the SDN concept," says Elbers. "The long term vision is that the network will also become a virtualised resource, driven by applications that require certain types of connectivity."

Providing the interface is the first step; the value-add will come from what players do with the added network flexibility, whether that is vendors working with operators, the operators' customers or third-party developers.

"This is a pretty significant development that addresses the software side of things," says Elbers, adding that software is becoming increasingly important, with OpenFlow being an interesting step in that direction.