T-API taps into the transport layer

The Optical Internetworking Forum (OIF), in collaboration with the Open Networking Foundation (ONF) and the Metro Ethernet Forum (MEF), has tested the second-generation transport application programming interface (T-API 2.0).

SK Telecom's Park Jin-hyo

T-API 2.0 is a standardised interface, released in late 2017 by the ONF, that enables the dynamic allocation of transport resources using software-defined networking (SDN) technology.

The interface has been created so that when a service provider, or one of its customers, requests a service, the required resources including the underlying transport are configured promptly.

The OIF-led interoperability demonstration tested T-API 2.0 in dynamic use cases involving equipment from several systems vendors. Four service providers - CenturyLink, Telefonica, China Telecom and SK Telecom - provided their networking labs, located on three continents, for the testing.

Packets and transport

SDN technology is generally associated with the packet layer but there is also a need for transport links, from fibre and wavelength-division multiplexing technology at Layer 0 through to Layer 2 Ethernet.

Transport SDN differs from packet-based SDN in several ways. Transport SDN sets up dedicated pipes, whereas with packet SDN a path is only established when packets flow. “When you order a 100-gigabit connection in the transport network, you get 100 gigabits,” says Jonathan Sadler, the OIF’s vice president and Networking Interoperability Working Group chair. “You are not sharing it with anyone else.”

Another difference is that the packet layer, with its manipulation of packet headers, is a digital domain whereas the photonic layer is analogue. “A lot of the details of how a signal interacts with a fibre, with the wavelength-selective switches, and with the different componentry that is used at Layer 0, are important in order to characterise whether the signal makes it through the network,” says Sadler.

Prior to SDN, control functions resided on a platform as part of a network’s distributed control plane. Each vendor had their own interface between the control and the optical domain embedded within their platforms. T-API has been created to expose and standardise that interface such that applications can request transport resources independent of the underlying vendor equipment.

NBI refers to a northbound interface while SBI stands for a southbound interface. Source: OIF.

Fulfilling a connection across an operator’s network involves a hierarchy of SDN controllers. An application’s request is first handled by a multi-domain SDN controller that decomposes the request for the various domain controllers associated with the vendor-specific platforms. T-API 2.0’s role is to link the multi-domain controller to the application layer’s orchestrator and also to connect the individual domain controllers to the multi-domain SDN controller (see diagram above). T-API is an example of a northbound interface.

The same T-API 2.0 interface is used at both SDN controller levels; what differs is the information each handles. Sadler compares the upper T-API 2.0 interface to a high-level map whereas the individual T-API 2.0 domain interfaces can be seen as maps with detailed ‘local’ data. “Both [interfaces] work on topology information and both direct the setting-up of connections,” says Sadler. “But the way they are doing it is with different abstractions of the information.”
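The decomposition step performed by the multi-domain controller can be illustrated with a minimal Python sketch. It is not T-API itself: the `ConnectivityRequest` class, the domain names, the port labels and the `DOMAIN_PATHS` and `HANDOFFS` tables are all hypothetical and stand in for the high-level map that the upper controller holds.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConnectivityRequest:
    src: str   # service end point written as "domain:port" (hypothetical format)
    dst: str
    gbps: int


# Hypothetical high-level map: which domains an end-to-end path crosses,
# and which pair of ports forms the hand-off between neighbouring domains.
DOMAIN_PATHS = {
    ("domain-a", "domain-c"): ["domain-a", "domain-b", "domain-c"],
}
HANDOFFS = {
    ("domain-a", "domain-b"): ("domain-a:p9", "domain-b:p1"),
    ("domain-b", "domain-c"): ("domain-b:p7", "domain-c:p2"),
}


def decompose(req):
    """Split an end-to-end request into one request per domain."""
    src_domain = req.src.split(":")[0]
    dst_domain = req.dst.split(":")[0]
    if src_domain == dst_domain:
        return [req]
    domains = DOMAIN_PATHS[(src_domain, dst_domain)]
    segments = []
    ingress = req.src
    for here, nxt in zip(domains, domains[1:]):
        egress, next_ingress = HANDOFFS[(here, nxt)]
        segments.append(ConnectivityRequest(ingress, egress, req.gbps))
        ingress = next_ingress
    segments.append(ConnectivityRequest(ingress, req.dst, req.gbps))
    return segments


segments = decompose(ConnectivityRequest("domain-a:p1", "domain-c:p5", 100))
for seg in segments:
    print(seg.src, "->", seg.dst, seg.gbps)
```

Each segment would then be passed to the relevant domain controller, which works from its own detailed ‘local’ map to set up the connection inside its domain.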

T-API 2.0

The ONF developed the first T-API interface as part of its Common Information Model (CIM) work. The interface was tested in 2016 as part of a previous interoperability demonstration involving the OIF and the ONF.

One important shortfall revealed during the 2016 demonstrations, and one that has slowed its deployment, is that the T-API 1.0 interface didn't fully define how to notify an upper controller of events in the lower domains. For example, if a link was congested or, worse, lost, the lower domain couldn’t inform the upper controller so that traffic could be re-routed. This has been put right with T-API 2.0.

“T-API 1.0 is a configure and step-away deployment, T-API 2.0 is where the dynamic reactions to things happening in the network become possible,” says Sadler.
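The difference between ‘configure and step away’ and reacting to events can be sketched in a few lines of Python. This is only an illustration of the notification pattern, not the T-API notification model: the class names, the `"DOWN"`, `"CONGESTED"` and `"UP"` states and the primary/backup bookkeeping are assumptions made for the example.

```python
class DomainController:
    """Stand-in for a lower-level controller that pushes events upwards."""

    def __init__(self, name):
        self.name = name
        self._subscribers = []

    def subscribe(self, callback):
        self._subscribers.append(callback)

    def report(self, link, state):
        # Push the event to every subscribed upper controller.
        for callback in self._subscribers:
            callback(self.name, link, state)


class UpperController:
    """Stand-in for the multi-domain controller or orchestrator."""

    def __init__(self):
        self.services = {}      # service name -> (primary link, backup link)
        self.active_link = {}   # service name -> link currently in use
        self.log = []

    def add_service(self, name, primary, backup):
        self.services[name] = (primary, backup)
        self.active_link[name] = primary

    def on_event(self, domain, link, state):
        self.log.append((domain, link, state))
        if state == "UP":
            return
        # Move any service riding on the affected primary link to its backup.
        for name, (primary, backup) in self.services.items():
            if self.active_link[name] == primary == link:
                self.active_link[name] = backup


domain = DomainController("domain-a")
upper = UpperController()
domain.subscribe(upper.on_event)
upper.add_service("svc-1", primary="link-1", backup="link-2")

domain.report("link-1", "DOWN")
print(upper.active_link["svc-1"])  # link-2
```

Without the `subscribe` and `report` path, the upper controller in this sketch would have no way of learning that `link-1` had failed, which is the gap Sadler describes in T-API 1.0.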

Interoperability demonstration

In addition to the four service providers, six systems vendors took part in the recent interoperability demonstration: ADVA Optical Networking, Coriant, Infinera, NEC/Netcracker, Nokia and SM Optics.

The recent tests focussed on the performance of the T-API 2.0 interface under dynamic network conditions. Another change since the 2016 tests was the involvement of the MEF. The MEF has adopted and extended T-API as part of its Network Resource Modeling (NRM) and Network Resource Provisioning (NRP) projects, elements of the MEF’s Lifecycle Service Orchestration (LSO) architecture. The LSO allows for service provisioning using T-API extensions that support the MEF’s Carrier Ethernet services.

Three aspects of the T-API 2.0 interface were tested as part of the use cases: connectivity, topology and notification.

Setting up a service requires both connectivity and topology. Topology refers to how a service is represented in terms of the node edge points and the links. Notification refers to the northbound aspect of the interface, pushing information upwards to the orchestrator at the application layer. This allows the orchestrator in a multi-domain network to re-route connectivity services across domains.
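How topology and connectivity work together can be shown with a small Python sketch. The topology below is invented for illustration: each link joins two node edge points written as `"node/port"`, and a breadth-first search stands in for whatever path computation a real controller would use.

```python
from collections import deque

# Hypothetical topology: each link joins two node edge points ("node/port").
LINKS = [
    ("A/1", "B/1"),
    ("B/2", "C/1"),
    ("A/2", "D/1"),
    ("D/2", "C/2"),
    ("C/3", "E/1"),
]


def node_of(edge_point):
    return edge_point.split("/")[0]


def build_adjacency(links):
    """Map each node to its neighbours and the link that reaches them."""
    adjacency = {}
    for a, b in links:
        adjacency.setdefault(node_of(a), []).append((node_of(b), (a, b)))
        adjacency.setdefault(node_of(b), []).append((node_of(a), (a, b)))
    return adjacency


def find_path(links, src, dst):
    """Breadth-first search: returns the links of a fewest-hop path, or None."""
    adjacency = build_adjacency(links)
    queue = deque([(src, [])])
    seen = {src}
    while queue:
        node, path = queue.popleft()
        if node == dst:
            return path
        for neighbour, link in adjacency.get(node, []):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, path + [link]))
    return None


path = find_path(LINKS, "A", "E")
print(path)

# If a notification reports that the A-B link is lost, recompute without it.
remaining = [link for link in LINKS if link != ("A/1", "B/1")]
print(find_path(remaining, "A", "E"))
```

The second call shows why notification matters: once the controller learns that a link has gone, the same topology data lets it compute an alternative route.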

The four use cases tested included multi-layer network connections whereby topology information is retrieved from a multi-domain network with services provisioned across domains.

T-API 2.0 was also used to show the successful re-routing of traffic when network conditions change, for example because of a fault or congestion, or to accommodate maintenance work. Re-routing can be performed within a single layer such as the IP, Ethernet or optical layer or, more optimally, across two or more layers. Such a capability promises operators the ability to automate re-routing using SDN technology.

The two other use cases tested during the recent demonstration were the orchestrator performing network restoration across two or more domains, and the linking of data centres’ network functions virtualisation infrastructure (NFVI). Such NFVI interconnect is a complex use case involving SDN controllers using T-API to create a set of wide area networks connecting the NFV sites. The use case set up is shown in the diagram below.

Source: OIF

SK Telecom, one of the operators that participated in the interoperability demonstration, welcomes the advent of T-API 2.0 and says such APIs will allow operators to enable services more promptly.

“It has been difficult to provide services such as bandwidth-on-demand and networking services for enterprise customers enabled using a portal,” says Park Jin-hyo, executive vice president of the ICT R&D Centre at SK Telecom. “These services will be provided within minutes, according to the needs, using the graphical user interface of SK Telecom’s network-as-service platform.”

SK Telecom stresses the importance of open APIs in general as part of its network transformation plans. As well as implementing a 5G Standalone (SA) Core, SK Telecom aims to provide NFV and SDN-based services across its network infrastructure including optical transport, IP, data centres, wired access as well as networks for enterprise customers.

“Our final goal is to open the network itself to enterprise customers via an open API,” says Park. “Our mission is to create 5G-enabled network-slicing-based business models and services for vertical markets.”

Takeaways

The OIF says the use cases have shown that T-API 2.0 enables real-time orchestration and that the main shortcomings identified with the first T-API interface have been addressed with T-API 2.0.

The OIF recognises that while T-API may not be the sole approach available for the industry - the IETF has a separate activity - the successful tests and the broad involvement of organisations such as the ONF and MEF make a strong case for T-API 2.0 as the approach for operators as they seek to automate their networks.

“When it comes to the orchestrator tying into the transport network, we do believe T-API will be one of the main approaches for these APIs,” says Sadler.

SK Telecom said participating in the interop demonstrations enabled it to test and verify, at a global level, APIs that the operators and equipment manufacturers have been working on. And from a business perspective, the demonstration work confirmed to SK Telecom the potential of the ‘global network-as-a-service’ concept.

Editor note: Added input from SK Telecom on September 1st.

ONF advances its vision for the network edge

The Open Networking Foundation’s (ONF) goal to create software-driven architectures for the network edge has advanced with the announcement of its first reference designs.

In March, eight leading service providers within the ONF - AT&T, Comcast, China Unicom, Deutsche Telekom, Google, NTT Group, Telefonica and Turk Telekom - published their strategic plan whereby they would take a hands-on approach to the design of their networks after becoming frustrated with what they perceived as foot-dragging by the systems vendors.

Timon Sloane

Three months on, the service providers have initial drafts of the first four reference designs: a broadband access architecture, a spine-leaf switch for network functions virtualisation (NFV), a more general networking fabric that uses the P4 packet forwarding programming language, and the open disaggregated transport network (ODTN).

The ONF also announced that four systems vendors - Adtran, Dell EMC, Edgecore Networks, and Juniper Networks - have joined to work with the operators on the reference design programmes.

“We are disaggregating the supply chain as well as disaggregating the technology,” says Timon Sloane, the ONF’s vice president of marketing and ecosystem. “It used to be that you’d buy a complete solution from one vendor. Now operators want to buy individual pieces and put them together, or pay somebody to do it for them.”

CORD and Exemplars

The ONF is known for various open-source initiatives such as its ONOS software-defined networking (SDN) controller and CORD. CORD is the ONF’s cloud optimised remote data centre work, also known as the central office re-architected as a data centre. That said, the ONF points out that CORD can be used in places other than the central office.

“CORD is a hardware architecture but it is really about software,” says Sloane. “It is a landscape of all our different software projects.”

However, the ONF received feedback last year that service providers were putting the CORD elements together slightly differently. “Vendors were using that as an excuse to say that CORD was too complicated and that there was no critical mass: ‘We don’t know how every operator is going to do this and so we are not going to do anything’,” says Sloane.

It led to the ONF’s service providers agreeing to define the assemblies of common components for various network platforms so that vendors would know what the operators want and intend to deploy. The result is the reference designs.

The reference designs offer operators some flexibility in terms of the components they can use. The components may be from the ONF but need not be; they can also be open-source or a vendor’s own solution.

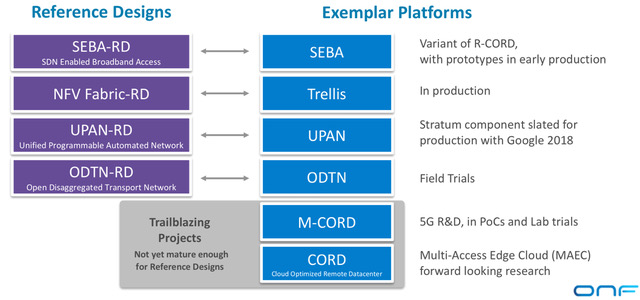

Source: ONF

The ONF has also announced the exemplar platforms aligned with the reference designs (see diagram). An exemplar platform is an assembly of open-source components that builds an example platform based on a reference design. “The exemplar platforms are the open source projects that pull all the pieces together,” says Sloane. “They are easy to download, trial and deploy.”

The ONF admits that it is much more experienced with open source projects and exemplar platforms than it is with reference designs. The operators are adopting an iterative process involving all three - open source components, exemplar designs and reference designs - before settling on the solutions that will lead to deployments.

Two of the ONF exemplar platforms announced are new: the SDN-enabled broadband access (SEBA) and the universal programmable automated network (UPAN).

Reference designs

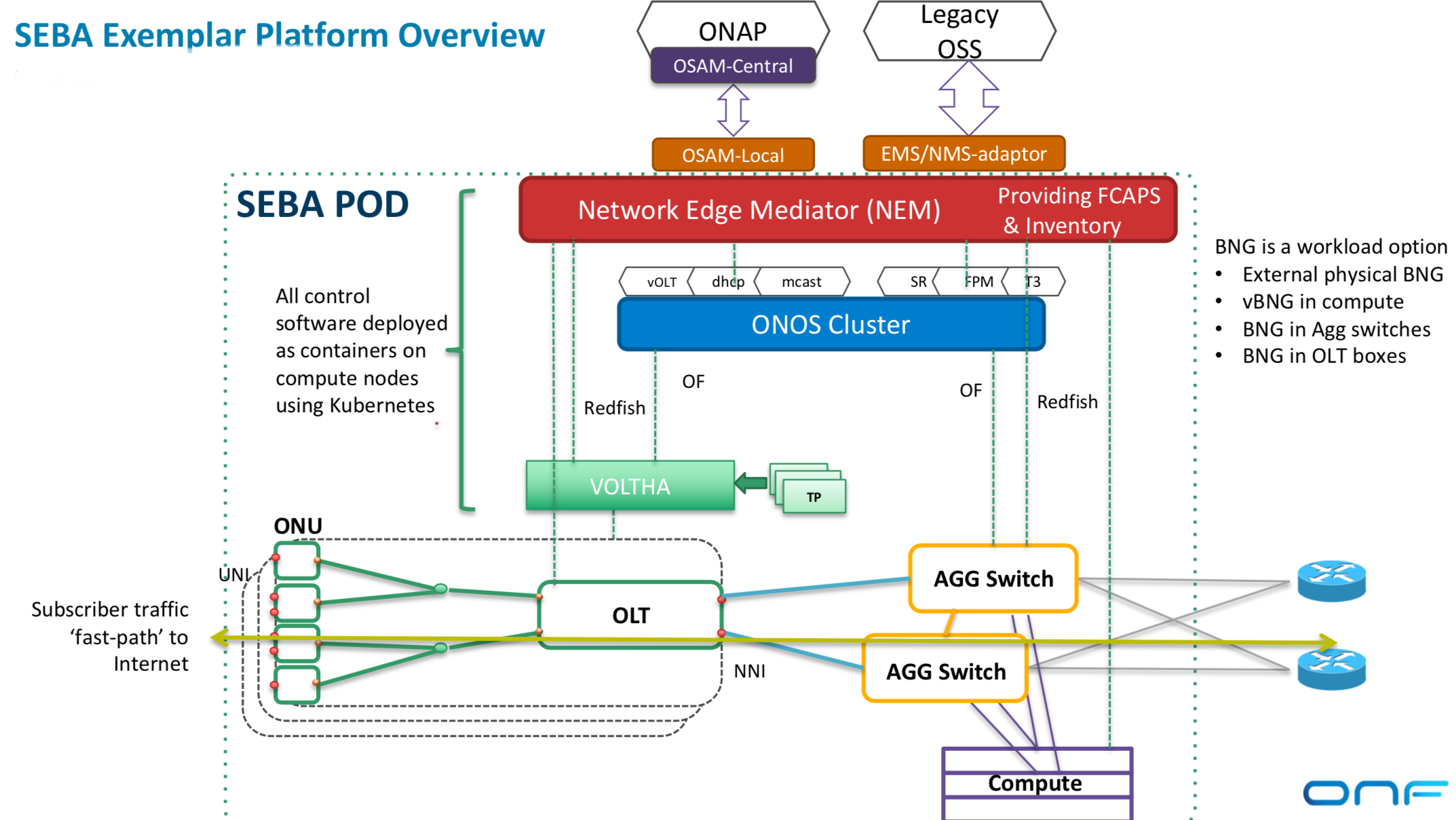

The SEBA reference design is a broadband variant of the ONF’s CORD work and addresses residential and backhauling applications. The design uses Kubernetes, the cloud-native orchestration system that automates the deployment, scaling and management of container-based applications, while the use of the OpenStack platform is optional. “OpenStack is only used if you want to support a virtual machine-based virtual network function,” says Sloane.

Source: ONF

SEBA uses VOLTHA, the open-source virtual passive optical networking (PON) optical line terminal (OLT) developed by AT&T and contributed to the ONF, and provides interfaces to both legacy operational support systems (OSS) and the Linux Foundation’s Open Networking Automation Platform (ONAP).

SEBA also features FCAPS and mediation. FCAPS is an established telecom capability for network management that can identify faults while the mediation presents information from FCAPS in a way the OSS understands.

“In its slimmest implementation, SEBA doesn’t need CORD switches, just a pair of aggregation switches,” says Sloane. The architecture can place sophisticated forwarding rules onto the optical line terminal and the aggregation switches such that servers and OpenStack are not required. “That has tremendous performance and scale implications,” says Sloane. “No other NFV architecture does this kind of thing.”

The second reference design - the NFV Fabric - ties together two ONF projects - Trellis and ONOS - to create a spine-leaf data centre fabric for edge services and applications.

The two remaining reference designs are UPAN and ODTN.

UPAN can be viewed as an extension of the NFV fabric that adds the P4 data plane programming language. P4 brings programmability to the data plane while the SDN controller enables developers to specify particular forwarding behaviour. “The controller can pull in P4 programs and do intelligent things with them,” says Sloane. “This is a new world where you can write custom apps that will push intelligence into the switch.”
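The division of labour described here, with a programmable data plane applying rules that a controller installs, can be sketched with a toy longest-prefix-match table. The example is written in Python rather than P4 and does not use any ONOS or P4 API: the `ForwardingTable` class, its methods and the action tuples are assumptions for illustration only.

```python
import ipaddress


class ForwardingTable:
    """Toy match-action table: the 'switch' only looks up and applies rules."""

    def __init__(self, default_action=("drop",)):
        self.entries = []
        self.default_action = default_action

    def install(self, prefix, action):
        """Called by the 'controller' to push a forwarding rule."""
        self.entries.append((ipaddress.ip_network(prefix), action))
        # Keep the longest prefixes first so the most specific rule wins.
        self.entries.sort(key=lambda entry: entry[0].prefixlen, reverse=True)

    def lookup(self, dst):
        """Called per packet by the 'data plane'."""
        addr = ipaddress.ip_address(dst)
        for network, action in self.entries:
            if addr in network:
                return action
        return self.default_action


table = ForwardingTable()
table.install("10.0.0.0/8", ("forward", 1))
table.install("10.1.0.0/16", ("forward", 2))

print(table.lookup("10.1.2.3"))     # ('forward', 2)
print(table.lookup("10.9.9.9"))     # ('forward', 1)
print(table.lookup("192.168.0.1"))  # ('drop',)
```

In a P4-based fabric the shape of the table and the available actions would themselves be defined by the P4 program, which is the extra degree of freedom Sloane refers to.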

Meanwhile, the ODTN reference design is used to add optical capabilities including reconfigurable optical add-drop multiplexers (ROADMs) and wide-area-network support.

There are also what the ONF calls two trailblazer projects - Mobile CORD (M-CORD) and CORD - that are not ready to become reference designs as they depend on 5G developments that are still taking place.

CORD represents the ONF’s unifying project that brings all the various elements together to address multi-access edge cloud. Also included as part of CORD is an edge cloud services platform. “This is the ultimate vision: what is the app store for edge applications?” says Sloane. “If you write a latency-sensitive application for eyeglasses, for example, how does that get deployed across multiple operators and multiple geographies?”

The ONF says it has already achieved a ‘critical mass’ of vendors to work on the development of the reference designs three months after announcing its strategic plan. The supply chain for each of the reference designs is shown in the table.

Source: ONF

“We boldly stated that we were going to reconstitute the supply chain as part of this work and bring in partners more aligned to embrace enthusiastically open source and help this ecosystem form and thrive,” says Sloane. “It is a whole new approach and to be able to rally the ecosystem in a short timeframe is notable.”

Next steps

It is the partner operators that are involved in the development of the reference designs. For example, the partners working on ODTN are China Unicom, Comcast and NTT. Once the reference designs are ready, they will be released to ONF members and then publicly.

However, the ONF has yet to give timescales as to when that will happen. “Our expectation is that at least two of these reference designs will go through this transition this year,” says Sloane. “This is not a multi-year process.”

ONF’s operators seize control of their networking needs

- The eight ONF service providers will develop reference designs addressing the network edge.

- The service providers want to spur the deployment of open-source designs after becoming frustrated with the systems vendors failing to deliver what they need.

- The reference designs will be up and running before year-end.

- New partners have committed to join since the consortium announced its strategic plan.

The service providers leading the Open Networking Foundation (ONF) will publish open designs to address next-generation networking needs.

Timon Sloane

The ONF service providers - NTT Group, AT&T, Telefonica, Deutsche Telekom, Comcast, China Unicom, Turk Telekom and Google - are taking a hands-on approach to the design of their networks after becoming frustrated with what they perceive as foot-dragging by the systems vendors.

“All eight [operators] have come together to say in unison that they are going to work inside the ONF to craft explicit plans - blueprints - for the industry for how to deploy open-source-based solutions,” says Timon Sloane, vice president of marketing and ecosystem at the ONF.

The open-source organisation will develop ‘reference designs’ based on open-source components for the network edge. The reference designs will address developments such as 5G and multi-access edge and will be implemented using cloud, white box, network functions virtualisation (NFV) and software-defined networking (SDN) technologies.

By issuing the designs and committing to deploy them, the operators want to attract select systems vendors that will work with them to fulfil their networking needs.

Remit

The ONF is known for such open-source projects as the Central Office Rearchitected as a Datacenter (CORD) and the Open Networking Operating System (ONOS) SDN controller.

The ONF’s scope has broadened over the years, reflecting the evolving needs of its operator members. The organisation’s remit is to reinvent the network edge. “To apply the best of SDN, NFV and cloud technologies to enable not just raw connectivity but also the delivery of services and applications at the edge,” says Sloane.

The network edge spans from the central office to the cellular tower and includes the emerging edge cloud that extends the ‘edge’ to such developments as the connected car and drones.

“The edge cloud is called a lot of different things right now: multi-access edge computing, fog computing, far edge and distributed cloud,” says Sloane. “It hasn’t solidified yet.”

One ONF open-source project is the Open and Disaggregated Transport Network (ODTN), led by NTT. “ODTN is edge related but not exclusively so,” says Sloane. “It is starting off with a data centre interconnect focus but you should think of it as CORD-to-WAN connectivity.”

The ONF’s operators spent months formulating the initiative, dubbed the Strategic Plan, after growing frustrated with a supply chain that has failed to deliver the open-source solutions they need. “The operators have been hopeful the whole vendor community would step up and start building solutions and embracing this approach but it is not happening at the speed operators want, demand and need,” says Sloane.

The ONF’s initiative signals to the industry that the operators are shifting their spending to open-source solutions and basing their procurement decisions on the reference designs they produce.

“It is a clear sign to the industry that things are shifting,” says Sloane. “The longer you sit on the sidelines and wait and see what happens, the more likely you are to lose your position in the industry.”

If operators adopt open-source software and use white boxes based on merchant silicon, how will systems vendors produce differentiated solutions?

“All this goes to show why this is disruptive and creating turbulence in the industry,” says Sloane.

Open-source design equates to industry collaboration to develop shared, non-differentiated infrastructure, he says. That means system vendors can focus their R&D on tackling new issues such as running and automating networks, developing applications and solving challenges such as next-generation radio access and radio spectrum management.

“We want people to move with the mark,” says Sloane. “It is not just building a legacy business based on what used to be unique and expecting to build that into the future.”

Reference designs

The operators have identified five reference designs: fixed and mobile broadband, multi-access edge, leaf-and-spine architectures, 5G at the edge, and next-generation SDN.

The ONF has already done much work in fixed and mobile broadband with its residential and mobile CORD projects. Multi-access edge refers to developing one network to serve all types of customers simultaneously, using cloud techniques to shift networking resources dynamically as needed.

At first glance, it is unclear what the ONF can contribute to leaf-and-spine architectures. But the ONF is developing an SDN-controlled switch fabric that can perform advanced packet processing, not just packet forwarding.

Sloane says that many virtualised tasks today are run on server blades using processors based on the x86 instruction set. But offloading packet processing tasks to programmable switch chips - referred to as networking fabric - can significantly benefit the price-performance achieved.

“We can leverage [the] P4 [programming language for data forwarding] and start to do things people never envisaged being done in a fabric,” says Sloane, adding that the organisation overseeing P4 is going to merge with the ONF.

The 5G reference design is one application where such a switch fabric will play a role. The ONF is working on implementing 5G network core functions and features such as network slicing, using the P4 language to run core tasks on intelligent fabric.

The ONF has already done work separating the radio access network (RAN) controller from radio frequency equipment and aims to use SDN to control a pool of resources and make intelligent decisions about the placement of subscribers, workloads and how the available radio spectrum can best be used.

The ONF’s fifth reference design addresses next-generation SDN and will use work that Google has developed and is contributing to the ONF.

The ONF manages the OpenFlow protocol, used to define the separation between the control and data forwarding planes. But the ONF is the first to admit that OpenFlow overlooked issues such as equipment configuration and operations.

The ONF is now engaged in a next-generation SDN initiative. “We are taking a step back and looking at the whole problem, to address all the pieces that didn’t get resolved in the past,” says Sloane.

Google has also contributed two interfaces that allow device management and the ONF has started its Stratum project that will develop an open-source solution for white boxes to expose these interfaces. This software residing on the white box has no control intelligence and does not make any packet-forwarding decisions. That will be done by the SDN controller that talks to the white box via these interfaces. Accordingly, the ONF is updating its ONOS controller to use these new interfaces.

Source: ONF

From reference designs to deployment

The ONF has a clear process to transition its reference designs to solutions ready for network deployment.

The reference designs will be produced by the eight operators working with other ONF partners. “The reference design is to help others in the industry to understand where you might choose to swap in another open source piece or put in a commercial piece,” says Sloane.

This explains how the components are linked to the reference design (see diagram above). The ONF also includes the concept of the exemplar platform, the specific implementation of the reference design. “We have seen that there is tremendous value in having an open platform, something like Residential CORD,” says Sloane. “That really is what the exemplar platform is.”

The ONF says there will be one exemplar platform for each reference design but operators will be able to pick particular components for their implementations. The exemplar platform will inevitably also need to interface to a network management and orchestration platform such as the Linux Foundation’s Open Network Automation Platform (ONAP) or ETSI’s Open Source MANO (OSM).

The process of refining the reference design and honing the exemplar platform built using specific components is inevitably iterative but once completed, the operators will have a solution to test, trial and, ultimately, deploy.

The ONF says that since announcing the strategic plan a month ago, several new partners - as yet unannounced - have committed to join.

“The intention is to have the reference designs up and running before the end of the year,” says Sloane.

SDN starts to fulfill its network optimisation promise

Infinera, Brocade and ESnet demonstrate the use of software-defined networking to provision and optimise traffic across several networking layers.

Infinera, Brocade and network operator ESnet are claiming a first in demonstrating software-defined networking (SDN) performing network provisioning and optimisation using platforms from more than one vendor.

Mike Capuano, Infinera

The latest collaboration is one of several involving optical vendors that are working to extend SDN to the WAN. ADVA Optical Networking and IBM are working to use SDN to connect data centres, while Ciena and partners have created a test bed to develop SDN technology for the WAN.

The latest lab-based demonstration uses ESnet's circuit reservation platform that requests network resources via an SDN controller. ESnet, the US Department of Energy's Energy Sciences Network, conducts networking R&D and operates a large 100 Gigabit network linking research centres and universities. The SDN controller, the open source Floodlight Project design, oversees the network comprising Brocade's 100 Gigabit MLXe IP router and Infinera's DTN-X platform.

The goal of provisioning and optimising traffic across the routing, switching and optical layers has been a work in progress for over a decade. System vendors have undertaken initiatives such as External Network-Network Interface (ENNI) and multi-domain GMPLS but with limited success. "They have been talked about, experimented with, but have never really made it out of the labs," says Mike Capuano, vice president of corporate marketing at Infinera. "SDN has the opportunity to solve this problem for real."

"SDN, and technologies like the OpenFlow protocol, allow all of the resources of the entire network to be abstracted to this higher level control," says Daniel Williams, director of product marketing for data center and service provider routing at Brocade.

Daniel Williams, Brocade

Infinera and ESnet demonstrated OpenFlow provisioning of transport resources a year ago. This latest demonstration has OpenFlow provisioning at the packet and optical layers and performing network optimisation. "We have added more carrier-grade capabilities," says Capuano. "Not just provisioning, but now we have topology discovery and network configuration."

“The demonstration is a positive step in the development of SDN because it showcases the multi-layer transport provisioning and management that many operators consider the prime use case for transport SDN,” says Rick Talbot, principal analyst, optical infrastructure at Current Analysis. "The demonstration’s real-time network optimisation is an excellent example of the potential benefits of transport SDN, leveraging SDN to minimise transit traffic carried at the router layer, saving both CapEx and OpEx."

Using such an SDN setup, service providers can request high-bandwidth links to meet specific networking requirements. "There can be a request from a [software] app: 'I need an 80 Gigabit flow for two days from Switzerland to California with a 95ms latency and zero packet loss'," says Capuano. "The fact that the network has the facility to set that service up and deliver on those parameters automatically is a huge saving."
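To make the request in Capuano's example concrete, here is a hedged Python sketch of how an application might express it as structured data and how a controller might check it against a candidate path. The `FlowRequest` fields, the `admit` function and the measured values passed to it are hypothetical; the article does not describe the actual message format used in the demonstration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class FlowRequest:
    src: str
    dst: str
    gbps: int
    duration_hours: int
    max_latency_ms: float
    max_packet_loss: float


def admit(req, path_latency_ms, free_gbps):
    """Accept the request only if a candidate path meets its constraints."""
    return path_latency_ms <= req.max_latency_ms and free_gbps >= req.gbps


# The request quoted above: 80 Gigabit, two days, 95ms latency, zero loss.
request = FlowRequest(
    src="Switzerland",
    dst="California",
    gbps=80,
    duration_hours=48,
    max_latency_ms=95.0,
    max_packet_loss=0.0,
)

# Serialised form that an application could send to a controller's API.
body = json.dumps(asdict(request), sort_keys=True)
print(body)

# Hypothetical figures for one candidate path through the network.
print(admit(request, path_latency_ms=82.0, free_gbps=100))
```

Capturing the constraints as data is what allows the network, rather than several separate teams, to decide whether and how the service can be delivered.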

Such a link can be established on the same day the request is made, even within minutes. Traditionally, such requests involving the IP and optical layers - and different organisations within a service provider - can take weeks to fulfill, says Infinera.

Current Analysis also highlights another potential benefit of the demonstration: how the control of separate domains - the Infinera wavelength and TDM domain and the Brocade layer 2/3 domain - with a common controller illustrates how SDN can provide end-to-end multi-operator, multi-vendor control of connections.

What next

The Open Networking Foundation (ONF) has an Optical Transport Working Group that is tasked with developing OpenFlow extensions to enable SDN control beyond the packet layer to include optical.

How is the optical layer in the demonstration controlled given the ONF work is unfinished?

"Our solution leverages Web 2.0 protocols like RESTful and JSON integrated into the Open Transport Switch [application] that runs on the DTN-X," says Capuano. "In the world of Web 2.0, the general approach is not to sit and wait till standards are done, but to prototype, test, find the gaps, report back, and do it again."

Further work is needed before the demonstration system is robust enough for commercial deployment.

"This is going to take some time: 2014 is the year of test and trials in the carrier WAN while 2015 is when you will see production deployment," says Capuano. "If service providers are making decisions on what platforms they want to deploy, it is important to choose ones that are going to position them well to move to SDN when the time comes."

Coriant adds optical control to SDN framework

Coriant's CTO, Uwe Fischer, explains its Intelligent Optical Control and how the system will complement Transport SDN.

"You either master all that complexity at once, or you find the right entry point and provide value for each concrete challenge, and extend step-by-step from there"

Uwe Fischer, CTO of Coriant

Coriant has deployed a networking framework that it says will comply with Transport SDN, the software-defined networking (SDN) implementation for the wide area network (WAN).

The company's Intelligent Optical Control system is already deployed with one large North American operator while Coriant is working to install the system with other Tier 1 customers.

Work to extend SDN technology beyond the data centre to work across operators' transport networks has just begun. The Open Networking Foundation (ONF), for example, has established an Optical Transport Working Group to define the extensions needed to enable SDN control of the transport layer and not just packet.

"SDN and optical networking go together nicely; they are not decoupled but make up an end-to-end overall framework," says Uwe Fischer, CTO at Coriant.

The Intelligent Optical Control is designed to tackle immediate networking issues as Transport SDN is developed. Coriant says its system complies with the ONF's three networking layer SDN model. The top, application layer interfaces with the middle, control layer. And it is at the control layer where the SDN controller oversees the network elements found in the third, infrastructure layer.

Intelligent Optical Control adds two other components to the model. The first is an extra intelligence component in the control layer that sits between the SDN controller and the infrastructure layer. This intelligence is designed to exploit the intricacies of the optical layer.

Coriant has also added an application at the topmost layer to automate operational procedures. "SDN at the application layer is centered around service creation," says Fischer. "We see a complete set of other applications which automate operational workflows."

Optical intelligence

One key benefit of SDN is the central view it has of the network and its resources. Such centralised control works well in the data centre and packet networking. Operators' networks are more complex, however, housing multiple vendors' equipment and multiple networking layers and protocols.

The ONF's Optical Transport Working Group is investigating two approaches - direct and abstract models - to enable the OpenFlow standard to extend its control across all the transport layers.

With the direct model, an SDN controller will talk to each network element, controlling its forwarding behaviour and port characteristics. The abstract model, in contrast, will enable the controller to talk to a network element or an intermediate controller or 'mediation'. This mediation performs a translator role, enacting requests from the SDN controller.

The direct model interests certain ONF members due to its potential to reduce the cost of networking equipment by moving much of the software from each element to the SDN controller. The abstract model, in contrast, has the benefit of limiting how much the controller needs to be exposed to the underlying network's details.
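The difference between the two models can be sketched in a few lines of code. This is a toy illustration, not the ONF's design: the class names, the port-mapping representation and the pre-computed route table are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class NetworkElement:
    """A hypothetical network element with a simple in-port to out-port map."""
    name: str
    forwarding: dict = field(default_factory=dict)

    def set_forwarding(self, in_port, out_port):
        self.forwarding[in_port] = out_port


class Mediation:
    """Intermediate controller: translates an abstract request into
    per-element settings, hiding the hop details from the SDN controller."""

    def __init__(self, elements, routes):
        self.elements = {e.name: e for e in elements}
        # routes: (a_end, z_end) -> list of (element_name, in_port, out_port)
        self.routes = routes

    def request_connection(self, a_end, z_end):
        hops = self.routes[(a_end, z_end)]
        for name, in_port, out_port in hops:
            self.elements[name].set_forwarding(in_port, out_port)
        return len(hops)


class Controller:
    def configure_direct(self, element, in_port, out_port):
        # Direct model: the controller sets forwarding on the element itself.
        element.set_forwarding(in_port, out_port)

    def configure_abstract(self, mediation, a_end, z_end):
        # Abstract model: the controller only names the end points.
        return mediation.request_connection(a_end, z_end)


roadm_a = NetworkElement("roadm-a")
roadm_b = NetworkElement("roadm-b")
controller = Controller()

controller.configure_direct(roadm_a, 1, 2)

mediation = Mediation(
    [roadm_a, roadm_b],
    {("site-1", "site-2"): [("roadm-a", 3, 4), ("roadm-b", 1, 2)]},
)
hops = controller.configure_abstract(mediation, "site-1", "site-2")
```

In the direct call the controller must know the element and its ports; in the abstract call it knows only the two sites, and the mediation decides which elements to touch.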

Coriant says it has yet to form a view as to the benefits of the direct and abstract ONF models. That said, Fischer does not see any mechanisms being discussed in the ONF that will fully exploit the potential of the photonic network. Accordingly, Coriant has added its own intelligence that sits between the SDN controller and the photonic layer.

“We fully comply with the approach of an SDN controller, however, we put another layer in between the control layer and the infrastructure layer,” says Fischer. “We consider it a part of the control layer, but adding the planning and routing intelligence to leverage the full performance of the infrastructure layer underneath."

Fischer says there is a role for abstraction at the photonic layer but perhaps only for metro networks. "We currently don't think this will really extend to the wide area photonic layer," he says.

"The added intelligence can leverage the full performance of the WDM network because it knows all the planning rules in detail," says Fischer. It does multi-layer optimisation across the transport layers. Coriant has added the intelligence because it does not think the transport-network-specific aspects can be centralised in a generic way.

Automated operations

Coriant's Intelligent Optical Control also adds an application to automate operational procedures. Fischer cites how the application layer component benefits the workflow when a service is activated in the network.

With each service request, the Intelligent Optical Control details whether the new service can be squeezed onto existing infrastructure and details the service performance parameters to be expected, such as latency and the guaranteed bandwidth. "The operator can immediately judge the service level they would get," says Fischer.
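A heavily simplified version of such a check might look like the sketch below. It assumes a single candidate path whose links each have a known capacity, current load and latency; the data model and function name are hypothetical, and a real planning tool would also apply the optical planning rules Fischer refers to.

```python
from dataclasses import dataclass


@dataclass
class Link:
    capacity_gbps: float
    used_gbps: float
    latency_ms: float


def assess_service(path, requested_gbps):
    """Report whether a request fits on a path and what service level to expect.

    Spare capacity is limited by the most heavily loaded link;
    latency is the sum over all links on the path.
    """
    spare = min(link.capacity_gbps - link.used_gbps for link in path)
    latency = sum(link.latency_ms for link in path)
    return {
        "fits": spare >= requested_gbps,
        "guaranteed_gbps": min(spare, requested_gbps),
        "latency_ms": latency,
    }


path = [Link(100, 60, 4.0), Link(100, 85, 6.5)]
report = assess_service(path, 10)
print(report)
```

Running the same function against a hypothetical path with extra capacity added gives the kind of before-and-after comparison that the second planning mode provides.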

Another planning mode supports the adding of equipment at the infrastructure layer. This enables a comparison to be made as to how the service level would improve with extra equipment in place.

If the operator can justify the business case for new hardware, the workflow is then automated. The tool creates the bill of materials, the electronic order, and the configuration and planning data needed to implement the hardware in the network.

Coriant says equipment and services can be time-tagged. If an engineer is known to be visiting a site once the hardware becomes available, the card can be pre-assigned and automatically used once it is plugged in. "There is a full consistency as to how the hardware is managed and optimised towards service creation," says Fischer.

Coriant is working with its major customers to create a testbed to demonstrate an SDN implementation of IP-over-DWDM. "It will involve interworking with third-party routers, and using SDN controllers to control the packet part of the network with OpenFlow and other mechanisms, and then connected to the Intelligent Optical Controller."

The goal is to demonstrate that Coriant's approach complies with this use case while better exploiting the optical network's capabilities.

Fischer says optical networking is moving to a new phase as transmission speeds move beyond 100 Gigabit.

"We are entering an interesting phase as capacity and reach hit the limits of practical networks," he says. "This means we are talking about flexible modulation formats and variously composed super-channels for 400 Gigabit and 1 Terabit."

In effect, a virtualisation of bandwidth is taking place at the photonic layer. "This fits nicely into the SDN principle as on the one hand it virtualises capacity, which very much fits in the model of virtualising infrastructure."

But it also brings challenges.

"There is currently not a good practical means to manage such flexible capacity at the photonic layer," says Fischer. This, says Coriant, is what its customers are saying. It also explains Coriant's decision to add the optical controller. "You either master all that complexity at once, or you find the right entry point and provide value for each concrete challenge, and extend step-by-step from there," says Fischer.

OIF defines carrier requirements for SDN

The Optical Internetworking Forum (OIF) has achieved its first milestone in defining the carrier requirements for software-defined networking (SDN).

The orchestration layer will coordinate the data centre and transport network activities and give easy access to new applications

Hans-Martin Foisel, OIF

The OIF's Carrier Working Group has begun the next stage, a framework document, to identify missing functionalities required to fulfill the carriers' SDN requirements. "The framework document should define the gaps we have to bridge with new specifications," says Hans-Martin Foisel of Deutsche Telekom, and chair of the OIF working group.

There are three main reasons why operators are interested in SDN, says Foisel. SDN offers a way for carriers to optimise their networks more comprehensively than before; not just the network but also processing and storage within the data centre.

"IP-based services and networks are making intensive use of applications and functionalities residing in the data centre - they are determining our traffic matrix," says Foisel. The data centre and transport network need to be coordinated and SDN can determine how best to distribute processing, storage and networking functionality, he says.

SDN also promises to simplify operators' operational support systems (OSS) software, and separate the network's management, control and data planes to achieve new efficiencies.

SDN architecture

The OIF's focus is on Transport SDN, involving the management, control and data plane layers of the network. Also included is an orchestration layer that will sit above the data centre and transport network, overseeing the two domains. Applications then reside on top of the orchestration layer, communicating with it and the underlying infrastructure via a programmable interface.

"The orchestration layer will coordinate the data centre and transport network activities and give, northbound, easy access to new applications," says Foisel.

A key SDN concept is programmability and application awareness, he says. The orchestration layer will require specified interfaces to ease the adding of applications independent of whether they impact the data centre, transport network or both.

Foisel says the OIF work has already highlighted the breadth of vision within the industry regarding how SDN should look. "Aligning the thinking among different people is quite an educational exercise, and we will have to get to a new understanding," he says.

Having equipment prototypes is also helping in understanding SDN. "Implementations that show part of this big picture - it is doable, it is working and how it is working - is quite helpful," says Foisel.

The OIF Carrier Working Group is working closely with the Open Networking Foundation's (ONF) Optical Transport Working Group to ensure that the two groups are aligned. The ONF's Optical Transport Working Group is developing optical extensions to the OpenFlow standard.

Ciena and partners build SDN testbed for carriers

Ciena, working with partners, is building a network to enable the development of software-defined networking (SDN) applied to the wide area network (WAN). The motivation in creating the testbed network is to boost carrier confidence in SDN while aiding its development.

"When you get very serious vice presidents in tier-one carriers saying, 'This [SDN] is the biggest change in my career', there is something to it."

Chris Janz, Ciena

Many software elements will be needed for SDN in the carrier environment, spanning the network through to the back office. Much is made of the benefits SDN will deliver, but it is difficult for operators to gauge SDN's full potential until they transform their networks. Carriers also want the confidence that the industry will deliver the SDN components needed.

To this end, Ciena, along with research and education partners CANARIE, Internet2 and StarLight, is developing the SDN test network. Carriers, research partners and Ciena's R&D team will use the network to experiment and validate SDN's benefits for packet and optical WANs.

Parts of the SDN network have already been demonstrated and Ciena expects the SDN test environment to be up and running in the next couple of months. Many of the components are in prototype form.

"The goal is to leap to the end point by providing the key parts of the future system for a carrier-style SDN-powered WAN, thereby demonstrating conclusively the macro SDN service cases that people imagine can be delivered," says Chris Janz, vice president of market development at Ciena.

Another aim is to help carriers determine how best to migrate their networks to the future SDN framework.

Testbed

The testbed conforms to the Open Networking Foundation's SDN architecture that comprises three layers. "Two of them are software: business applications talking to a network controller system which drives the physical network," says Janz.

The business application layer includes such systems as customer management, service creation and billing. "What we think of as OSS/ BSS and cloud orchestration systems," says Janz. Components such as cloud orchestration systems and portals that simulate customer actions are being contributed by partners to exercise the testbed.

Ciena has chosen OpenFlow, the open standard, to drive the packet and transport layers. "This [SDN] is not the data centre, it is not all packet; it is a model carrier-style network," he says.

The SDN controller is designed to add flexibility and open up the design. "There is a clear spirit in SDN that customers want to take more affirmative control of their competitive destiny," says Janz. "They do not want to be locked into services, features and functions that their vendors deliver to them and their competitors."

Ciena is part of OpenDaylight, the Linux Foundation-hosted SDN controller industry initiative, and this will be included. "There is a modular structure with internal interfaces," says Janz. "There is leveraging of some early generation open source components for part of the structure."

The control system is designed to be the heart of what Janz refers to as 'autonomic intelligence' to deliver the sought-after benefits of SDN.

One such benefit is for carriers to contain their capital costs by better filling their networks with traffic - running them 'hotter'. "Can they move their networks from 35 percent average utilisation to 95 percent?" says Janz.
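The headroom implied by Janz's figures can be worked through with simple arithmetic. The sketch below assumes, as a simplification not made in the article, that installed capacity stays fixed and that carried traffic scales linearly with average utilisation; the function name is illustrative.

```python
def traffic_gain(current_utilisation: float, target_utilisation: float) -> float:
    """Ratio of traffic carried at the target utilisation to traffic carried
    today, assuming the installed capacity does not change."""
    for value in (current_utilisation, target_utilisation):
        if not 0 < value <= 1:
            raise ValueError("utilisation must be in the range (0, 1]")
    return target_utilisation / current_utilisation


gain = traffic_gain(0.35, 0.95)
print(f"Traffic carried on the same capacity: {gain:.2f}x")
```

Under these assumptions, moving from 35 to 95 percent average utilisation would let the same network carry roughly 2.7 times the traffic before new capacity is needed.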

Software-based intelligence as delivered by SDN can dynamically match demand with fulfillment. "You have all the service demands coming into the [SDN] control system, and you have control at that point of the entire configuration and state of the network," says Janz.

Ciena has added real-time analytics software to the controller prototype to aid such optimisation. "It is piece parts like this that prove the postulated benefits of SDN," he says.

The platforms used for the network include 4 Terabit core switches with 400 Gigabit packet blades and optical and Optical Transport Network (OTN) transport using Ciena's 6500 and 5400 converged packet-optical product families, all configured using OpenFlow.

The 2500km network will connect Ciena’s headquarters in Hanover, Maryland with the company's R&D center in Ottawa. International connectivity is provided by Internet2 through the StarLight International/National Communications Exchange in Chicago and CANARIE, Canada's national optical fiber based advanced R&E network.

"If we look ten years down the road, the whole [software] stack - from the bottom of the network to the top of the back office - will look different to what it does today"

Testbed goals

The initial goal is to implement key SDN services and prove use cases. The open testbed will run indefinitely, says Janz: "SDN will unfold and we view the testbed as a standing platform that will change over time with new software and hardware." In effect, the testbed will implement an end-to-end infrastructure whose state is controlled in fine detail by a centralised controller.

Janz cites mass-customised network-as-a-service (NaaS) as one service SDN-in-the-WAN can enable.

Traditional Ethernet connectivity is a static service where the customer requests a given bandwidth and specifies the end points. "It is a very limited template and once it is locked in, the customer generally can't change it," says Janz.

SDN promises more sophisticated connection services. "Instead of defining just the end points, you can define virtual end points," says Janz. All sorts of parameters can then be specified: bandwidth, latency, availability and the restoration required, and these can be changed with time. Moreover, all can be ordered using an application programming interface (API) to the orchestration system at the customer's site.

"It would enable the customer to have many effective service pipes rather than one big one, and resolve and match each of them to a specific application need or flow," says Janz. The customer can then optimise them as the needs of each changes with time.

The benefits to the operator include better meeting the customer's needs, and an ability to charge across multiple service parameters, not just two. "That should be the path to greater revenue," says Janz.

The trick is managing such a system. "Can you price it effectively and know that you are targeting maximum revenue? Can you co-manage all these customer changes while respecting the changing service parameters of each?" says Janz. "You need the critical mass of piece parts to show that such a situation is workable and that, hey, I can make more money with a service like that."

60-second interview with Michael Howard

Infonetics Research has interviewed global service providers regarding their plans for software-defined networking (SDN) and network functions virtualisation (NFV). Gazettabyte asked Michael Howard, co-founder and principal analyst, carrier networks, about Infonetics' findings.

"Data centres are simple when compared to carrier networks"

Michael Howard, Infonetics Research

What is it about SDN and NFV - technologies still in their infancy - that already convinces 86 percent of the operators to deploy the technologies in their optical transport networks?

Michael H: Operators have a universal draw to SDN and NFV for two basic reasons:

1. They want to accelerate revenue by reducing the time to new services and applications.

2. They have operational drivers, of which there are also two parts:

- Carriers expect software-defined networks to give them a single view across multiple vendor equipment, network layers and equipment types for mobile backhaul, consumer digital subscriber line (DSL), passive optical network (PON), optical transport, routers, mobile core and Ethernet access. This global view will allow them to provision, monitor and deliver service-level agreements while controlling services, virtual networks and traffic flows in an easier, more flexible and automated way.

- An additional function possible with such a global view across the multi-vendor network is that traffic can be monitored and re-distributed along pathways to make best use of the network. In this way, the network can run 'hotter' and thereby require less equipment, saving capital expenditure (CapEx).

Optical transport networks have a history of being engineered to effect predictable flows on transport arteries and backbones. Many operators have deployed, or have been experimenting with, GMPLS (Generalized Multi-Protocol Label-Switching) and vendor control planes. So it is natural for them to want to bring this industry standard method of deploying an SDN control plane over the usually multi-vendor transport network.

In our conversations - independent of our survey - we find that several operators believe the biggest bang for the SDN buck is to use SDN for a single control plane over multi-layer data - router, Ethernet - and the optical transport network.

Early use of SDN has been in the data centre. How will the technologies benefit networks more generally and optical transport in particular?

SDNs were developed initially to solve the operational problems of un-automated networks. That is to say, slow human labour-intensive network changes required by the automated hypervisor as it moves, adds and changes virtual machines across servers that may be in the same data centre or in multiple data centres.

The virtualisation of data centre networks has inspired operators who want to apply the same general principles to their oh-so-much-more complex networks. Data centres are simple when compared to carrier networks. Data centres are basically large numbers of servers connected by Ethernet LANs and virtual LANs with some router separations of the LANs connecting servers.

Service provider networks are a set of many different types of networks including consumer broadband, business virtual private networks, optical transport, access/ aggregation Ethernet and router networks, mobile core and mobile backhaul. Each of these comprises multiple layers and almost certainly involves multiple vendor equipment. This explains why operators are starting their SDN-NFV investigations with small network segments which we call 'contained domains'. It will be many years before SDN-NFV will be deployed in major parts of a carrier network.

You mention small SDN and NFV deployments. What will these early applications look like?

Our survey respondents indicated that intra-datacentre, inter-datacentre, cloud services, and content delivery networks (CDNs) will be the first to be deployed by the end of 2014. Other areas targeted longer term are optical transport, mobile packet core, IP Multimedia Subsystem, and more.

Was there a finding that struck you as significant or surprising?

Yes. A lot of current industry buzz is about optical transport networks, making me think that we'd see SDNs deployed soon. But what we heard from operators is that optical transport networks are further out in their deployment plans. This makes sense in that the Open Networking Foundation working group for transport networks has just recently got their standardisation efforts going, which usually takes a couple of years.

You say that it will be years before large parts or a whole network will be SDN-controlled. What are the main challenges here regarding SDN and will they ever control a whole network?

As I said earlier, carrier networks are complex beasts, and they are carrying revenue-generating services that cannot be risked by deployment of a new set of technologies that make fundamental changes to the way networks operate.

A major problem yet to be resolved or even addressed much by the industry is how to add SDN control planes to the router-controlled network that uses the MPLS control plane. SDN and MPLS control planes must cooperate or be coordinated in some way since they both control the same network equipment - not an easy problem, and probably the thorniest of all challenges to deploy SDNs and NFV.

The study participants rated CDNs, IP multimedia subsystem (IMS), and virtual routers/ security gateways as the main NFV applications. At least two of these segments already use servers so just how impactful will NFV be for operators?

Many operators see that they can deploy NFV in a much simpler way than deploying control plane changes involved with SDNs.

Many network functions have already been virtualised, that is software-only versions are available, and many more are under development. But these are individual vendor developments, not done according to any industry standards. This means that NFV - network functions run on servers rather than on specialised network equipment like firewalls, intrusion prevention/ intrusion detection systems, Evolved Packet Core hardware - is already in motion.

The formalisation of NFV by the carrier-driven ETSI standards group is underway, developing recommendations and standards so that these virtualised network functions can be deployed in a standardised way.

Infonetics interviewed purchase-decision makers at 21 incumbent, competitive and independent wireless operators from EMEA (Europe, Middle East, Africa), Asia Pacific and North America that have evaluated SDN projects or plan to do so. The carriers represent over half (53 percent) of the world's telecom revenue and CapEx.

OFC/NFOEC 2013 industry reflections - Final part

Gazettabyte spoke with Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking about the state of the optical industry following the recent OFC/NFOEC exhibition.

"There were many people in the OFC workshops talking about getting rid of pluggability and the cages and getting the stuff mounted on the printed circuit board instead, as a cheaper, more scalable approach"

Jörg-Peter Elbers, ADVA Optical Networking

Q: What was noteworthy at the show?

A: There were three big themes and a couple of additional ones that were evolutionary. The headlines I heard most were software-defined networking (SDN), Network Functions Virtualisation (NFV) and silicon photonics.

Other themes included what needs to be done for next-generation data centres to drive greater capacity interconnect and switching, how to go beyond 100 Gig, and whether flexible grid is required.

The consensus is that flex grid is needed if we want to go to 400 Gig and one Terabit. Flex grid gives us the capability to form bigger pipes and get those chunks of signals through the network. But equally it allows not only one interface to transport 400 Gig or 1 Terabit as one chunk of spectrum, but also the possibility to slice and dice the signal so that it can use holes in the network, similar to what radio does.

With the radio spectrum, you allocate slices to establish a communication link. In optics, you have the optical fibre spectrum and you want to get the capacity between Point A and Point B. You look at the spectrum, where the holes [spectrum gaps] are, and then shape the signal - think of it as software-defined optics - to fit into those holes.
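The hole-finding step Elbers describes can be illustrated with a first-fit search over a slotted spectrum. This is a minimal sketch under stated assumptions: the fibre spectrum is modelled as a list of equal-width slots marked busy or free, the signal needs a contiguous run of slots, and the function name is hypothetical. Real flex-grid planning also has to consider reach, filtering and guard bands.

```python
def find_first_fit(occupied, slots_needed):
    """Return the start index of the first run of `slots_needed` contiguous
    free slots, or None if no such gap exists.

    `occupied` is a list of booleans (True means the slot is in use);
    `slots_needed` is assumed to be at least 1.
    """
    run_start, run_len = None, 0
    for i, busy in enumerate(occupied):
        if busy:
            # A busy slot ends any gap being tracked.
            run_start, run_len = None, 0
            continue
        if run_start is None:
            run_start = i
        run_len += 1
        if run_len == slots_needed:
            return run_start
    return None


# True = slot already carrying traffic, False = hole in the spectrum.
spectrum = [True, False, False, True, False, False, False, False, True]
start = find_first_fit(spectrum, 3)
```

When no single gap is wide enough, the slice-and-dice option mentioned above would correspond to calling the search several times with smaller slot counts, one per sub-signal.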

There is a lot of SDN activity. People are thinking about what it means, and there were lots of announcements, experiments and demonstrations.

At the same time as OFC/NFOEC, the Open Networking Foundation agreed to found an optical transport work group to come up with OpenFlow extensions for optical transport connectivity. At the show, people were looking into use cases, the respective technology and what is required to make this happen.

SDN starts at the packet layer but there is value in providing big pipes for bandwidth-on-demand. Clearly with cloud computing and cloud data centres, people are moving from a localised model to a cloud one, and this adds merit to the bandwidth-on-demand scenario.

This is probably the biggest use case for extending SDN into the optical domain through an interface that can be virtualised and shared by multiple tenants.

Network Functions Virtualisation: Why was that discussed at OFC?

At first glance, it was not obvious. But looking at it in more detail, much of the infrastructure over which those network functions run is optical.

Just take one Network Functions Virtualisation example: the mobile backhaul space. If you look at LTE/ LTE Advanced, there is clearly a push to put in more fibre and more optical infrastructure.

At the same time, you still have a bandwidth crunch. It is very difficult to have enough bandwidth to the antenna to support all the users and give them the quality of experience they expect.

Putting networking functions such as caching at a cell site, deeper within the network, and managing a virtualised session there, is an interesting trend that operators are looking at, and which we, with our partnership with Saguna Networks, have shown a solution for.

Network functions such as caching, firewalling and wide area network (WAN) optimisation are higher layer functions. But as you virtualise them, the network infrastructure needs to adapt dynamically.

You need orchestration that combines the control and the co-ordination of the networking functions. This is more IT infrastructure - server-based blades and open-source software.

Then you have SDN underneath, supporting changes in the traffic flow with reconfiguration of the network infrastructure.

There was much discussion about the CFP2 and Cisco's own silicon photonics-based CPAK. Was this the main silicon photonics story at the show?

There is much interest in silicon photonics not only for short reach optical interconnects but more generally, as an alternative to III-V photonics for integrated optical functions.

For light sources and amplification, you still need indium phosphide and you need to think about how to combine the two. But people have shown that even in the core network you can get decent performance at 100 Gig coherent using silicon photonics.

This is an interesting development because such a solution could potentially lower cost, simplify thermal management, and from a fab access and manufacturing perspective, it could be simpler going to a global foundry.

But a word of caution: there is big hype here too. This is not the end of III-V photonics. There are many III-V players, vertically integrated, that have shown that they can integrate and get compact, high-quality circuits.

You mentioned interconnect in the data centre as one evolving theme. What did you mean?

The capacities inside the data centre are growing much faster than the WAN interconnects. That is not surprising because people are trying to do as much as possible in the data centre because WAN interconnect is expensive.

People are looking increasingly at how to integrate the optics and the server hardware more closely. This is moving beyond the concept of pluggables all the way to mounted optics on the board or even on-chip to achieve more density, less power and less cost.

There were many people in the OFC workshops talking about getting rid of pluggability and the cages and getting the stuff mounted on the printed circuit board instead, as a cheaper, more scalable approach.

What did you learn at the show?

There wasn't anything that was radically new. But there were some significant silicon photonics demonstrations. That was the most exciting part for me although I'm not sure I can discuss the demos [due to confidentiality].

Another area we are interested in revolves around the ongoing IEEE work on short reach 100 Gigabit serial interfaces. The original objective was 2km but they have now homed in on 500m.

PAM-8 - pulse amplitude modulation with eight levels - is one of the proposed solutions; another is discrete multi-tone (DMT). [With DMT] using a set of electrical sub-carriers and doing adaptive bit loading means that even with bandwidth-limited components, you can transmit over the required distances. There was a demo at the exhibition from Fujitsu Labs showing DMT over 2km using a 10 Gig transmitter and receiver.
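Adaptive bit loading can be sketched with the textbook gap approximation, in which each sub-carrier carries floor(log2(1 + SNR/gap)) bits. This is a generic illustration, not the scheme used in the Fujitsu Labs demo or in the IEEE proposals: the SNR values, the gap of 0 dB and the cap of 8 bits per sub-carrier are all assumed for the example.

```python
import math


def bit_loading(snr_db_per_subcarrier, gap_db=0.0, max_bits=8):
    """Assign bits per sub-carrier using the gap approximation:
    bits = floor(log2(1 + SNR / gap)), capped at `max_bits`.

    Sub-carriers with a high signal-to-noise ratio carry more bits;
    bandwidth-limited ones near the edge of the band carry fewer.
    """
    bits = []
    for snr_db in snr_db_per_subcarrier:
        effective_snr = 10 ** ((snr_db - gap_db) / 10)  # dB to linear
        b = int(math.floor(math.log2(1 + effective_snr)))
        bits.append(min(b, max_bits))
    return bits


# Assumed SNR profile: strong at low frequencies, rolling off with frequency.
snrs_db = [30, 24, 18, 12, 6, 0]
loading = bit_loading(snrs_db)
total_bits_per_symbol = sum(loading)
```

For comparison, PAM-8 applies a fixed three bits per symbol (log2 of 8 levels) to a single carrier, which is why it depends more heavily on the bandwidth of the components.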

This is of interest to us as we have a 100 Gigabit direct detection dense WDM solution today and are working on the product evolution.

We use the existing [component/ module] ecosystem for our current direct detect solution. These developments bring up some interesting new thoughts for our next generation.

So you can go beyond 100 Gigabit direct detection?

Right now we are running 28 Gig on a single wavelength. Clearly with speeds increasing and with these kinds of developments [PAM-8, DMT], you see that this is not the end.
