ECOC 2023 industry reflections - Part 3

Gazettabyte is asking industry figures for their thoughts after attending the recent ECOC show in Glasgow. In particular, what developments and trends they noted, what they learned and what, if anything, surprised them. Here are responses from Coherent, Ciena, Marvell, Pilot Photonics, and Broadcom.

Julie Eng, CTO of Coherent

It had been several years since I’d been to ECOC. Because of my background in the industry, with the majority of my career in data communications, I was pleasantly surprised to see that ECOC had transitioned from primarily telecommunications, and largely academic, into more industry participation, a much bigger exhibition, and a focus on datacom and telecom. There were many exciting talks and demos, but I don’t think there were too many surprises.

In datacom, the focus, not surprisingly, was on architectures and implementations to support artificial intelligence (AI). The dramatic growth of AI, the massive computing time, and the network interconnect required to train models are driving innovation in fibre optic transceivers and components.

There was significant discussion about using Ethernet for AI compared to protocols such as InfiniBand and NVLink. For us as a transceiver vendor, the distinction doesn’t have a significant impact, as there is little, if any, difference between the transceivers we make for Ethernet and those we make for InfiniBand/NVLink. However, the impact on the switch chip market and the broader industry is significant, and it will be interesting to see how this evolves.

Linear pluggable optics (LPO) was a hot topic, as it was at OFC 2023, and multiple companies, including Coherent, demonstrated 100 gigabit-per-lane LPO. The implementation has pros and cons, and we may find ourselves in a split ecosystem, with some customers preferring LPO and others preferring traditional pluggable optics with DSP inside the module. The discussion is now moving to the feasibility of 200 gigabit-per-lane LPO.

Discussion and demonstrations of co-packaged optics also continued, with switch vendors starting to show Ethernet switches with co-packaged optics. Interestingly, the success of LPO may push out the implementation of co-packaged optics, as LPO realizes some of the advantages of co-packaged optics with a much less dramatic architectural change.

One telecom trend was the transition to 800-gigabit digital coherent optical modules, as customers and suppliers plan for and demonstrate the capability to make this next step. There was also significant interest in and discussion about 100G ZR. We demonstrated a new version with a high, 0dBm optical output power at ECOC 2023, while other companies showed components to support it. This is interesting for cable providers and potentially for data centre interconnect and mobile fronthaul and backhaul.

I was very proud that our 200 gigabit-per-lane InP-based DFB-MZ laser won the 2023 ECOC Exhibition Industry Award for Most Innovative Product in the category of Innovative Photonics Component.

ECOC was a vibrant conference and exhibition, and I was pleased to attend and participate again.

Loudon Blair, senior director, corporate strategy, Ciena

ECOC 2023 in Glasgow gave me an excellent perspective on the future of optical technology. In the exhibition, integrated photonic solutions, high-speed coherent pluggable optical modules, and an array of testing and interoperability solutions were on display.

I was especially impressed by how high-bandwidth optics is being considered beyond traditional networking. Evolving use cases include optical cabling, the radio access network (RAN), broadband access, data centre fabrics, and quantum solutions. The role of optical connectivity is expanding.

In the conference, questions and conversations revolved around how we solve challenges created by the expanding use cases. How do we accommodate continued exponential traffic growth on our fibre infrastructure? Coherent optics supports 1.6Tbps today. How many more generations of coherent can we build before we move on to a different paradigm? How do we maximize density and continue to minimize cost and power? How do we solve the power consumption problem? How do we address the evolving needs of data centre fabrics in support of AI and machine learning? What is the role of optical switching in future architectures? How can we enhance the optical layer to secure our information traversing the network?

As I revisited my home city and stood on the banks of the river Clyde – at a location once the shipbuilding centre of the world – I remembered visiting my grandfather’s workshop where he built ships’ compasses and clocks out of brass.

It struck me how much the area had changed from my childhood and how modern satellite communications had disrupted the nautical instrumentation industry. In the same place where my grandfather serviced ships’ compasses, the optical industry leaders were now gathering to discuss how advances in optical technology will transform how we communicate.

It is a good time to be in the optical business, and based on the pace of progress witnessed at ECOC, I look forward to visiting San Diego next March for OFC 2024.

Dr Loi Nguyen, executive vice president and general manager of the cloud optics business group, Marvell

What was the biggest story at ECOC? That the story never changes! After 40 years, we’re still collectively trying to meet the insatiable demand for bandwidth while minimizing power, space, heat, and cost. The difference is that the stakes get higher each year.

The public debut of 800G ZR/ZR+ pluggable optics and a merchant coherent DSP marked a key milestone at ECOC 2023. For the first time, small-form-factor coherent optics deliver this performance at a fraction of the cost, power, and space of traditional transponders. Now, cloud and service providers can deploy a single type of coherent optics across their metro, regional, and backbone networks without needing a separate transport box. 800ZR/ZR+ can save billions of dollars for large-scale deployments over the programme’s life.

Another big topic at the show was 800G linear drive pluggable optics (LPO). The multi-vendor live demo at the OIF booth highlighted some of the progress being made. Many hurdles, however, remain. Open standards still need to be developed, which may prove difficult due to the challenges of standardizing analogue interfaces among multiple vendors. Many questions remain about whether LPO can be scaled beyond limited vendor selection and bookend use cases.

Frank Smyth, CTO and founder of Pilot Photonics

ECOC 2023’s location in Glasgow brought me back to the place of my first photonics conference, LEOS 2002, which I attended as a postgrad from Dublin City University. It was great to have the show close to home again, and the proximity to Dublin allowed us to bring most of the Pilot team.

Two things caught my eye. One was 100G ZR. We noted several companies working on their 100G ZR implementations beyond Coherent and Adtran (formerly Adva), which announced the product as a joint development over a year ago.

100G ZR has attracted much interest for scaling and aggregation in the edge network. Its 5W power dissipation is disruptive, and we believe it could find use in other network segments, potentially driving significant volume. Our interest in 100G ZR is in supplying the light source, and we had a working demo of our low linewidth tunable laser and mechanical samples of our nano-iTLA at the booth.

Another topic was carrier and spatial division multiplexing. Brian Smith from Lumentum gave a Market Focus talk on carrier and spatial division multiplexing (CSDM), which Lumentum believes will define the sixth generation of optical networking.

Highlighting the approaching technological limitation on baud rate scaling, the ‘carrier’ part of CSDM refers to interfaces built from multiple closely-spaced wavelengths. We know that several system vendors have products with interfaces based on two wavelengths, but it was interesting to see this from a component/module vendor.

We argue that comb lasers come into their own when you go beyond two to four or eight wavelengths and offer significant benefits over independent lasers. So CSDM aligns well with Pilot’s vision and roadmap, and our integrated comb laser assembly (iCLA) will add value to this sixth-generation optical networking.

Speaking of comb lasers, I attended an enjoyable workshop on comb lasers on the Sunday before the meetings got too hectic. The title was ‘Frequency Combs for Optical Communications – Hype or Hope’. It was a lively session featuring a technology push team and a market pull team presenting views from academia and industry.

Eric Bernier of HiSilicon offered an important observation. He pointed to a technology gap between what the market needs and what most comb lasers provide in terms of power per wavelength, number of wavelengths, and data rate per lane. Pilot Photonics agrees and spotted the same gap several years ago. Our iCLA bridges it, providing a straightforward upgrade path to multi-wavelength transceivers with the added benefits that comb lasers bring over independent lasers.

The workshop closed with an audience participation survey in which attendees were asked: Will frequency combs play a major role in short-reach communications? And will they play a major role in long-reach communications?

Unsurprisingly, given an audience interested in comb lasers, the majority’s response to both questions was yes. However, what surprised me was that the short-reach application had a much larger majority on the yes side: 78% to 22%. For long-reach applications the majority was slim: 54% to 46%.

Having looked at this problem for many years, I believe the technology gap mentioned is easier to bridge and delivers greater benefits for long-reach applications than for short-reach, at least in the near term.

Natarajan Ramachandran, director of product marketing, physical layer products division, Broadcom

Retimed pluggables have repeatedly shown resiliency due to their standards-based approach, offering reliable solutions, manufacturing scale, and balancing metrics around latency, cost and power.

At ECOC this year, multiple module vendors demonstrated 800G DR4 and 1.6T DR8 solutions with 200 gigabit-per-lane optics. As the IEEE works towards ratifying the specs around 200 gigabit per lane, one thing was clear at ECOC: the ecosystem, comprising DSP vendors, driver and transimpedance amplifier (TIA) vendors, and VCSEL/EML/silicon photonics vendors, is ready and can deliver.

Several vendors had module demonstrations using 200 gigabit-per-lane DSPs. What was also apparent at ECOC was that the application space and use cases, be it within traditional data centre networks, AI and machine learning clusters, or telecom, continue to grow. Multiple technologies will find the space to co-exist.

Marvell kickstarts the 800G coherent pluggable era

Marvell has become the first company to provide an 800-gigabit coherent digital signal processor (DSP) for use in pluggable optical modules.

The 5nm CMOS Orion chip supports a symbol rate of over 130 gigabaud (GBd), more than double that of the coherent DSPs for the OIF’s 400ZR standard and 400ZR+.

Meanwhile, a CFP2-DCO pluggable module using the Orion can transmit a 400-gigabit data payload over 2,000km using the quadrature phase-shift keying (QPSK) modulation scheme.

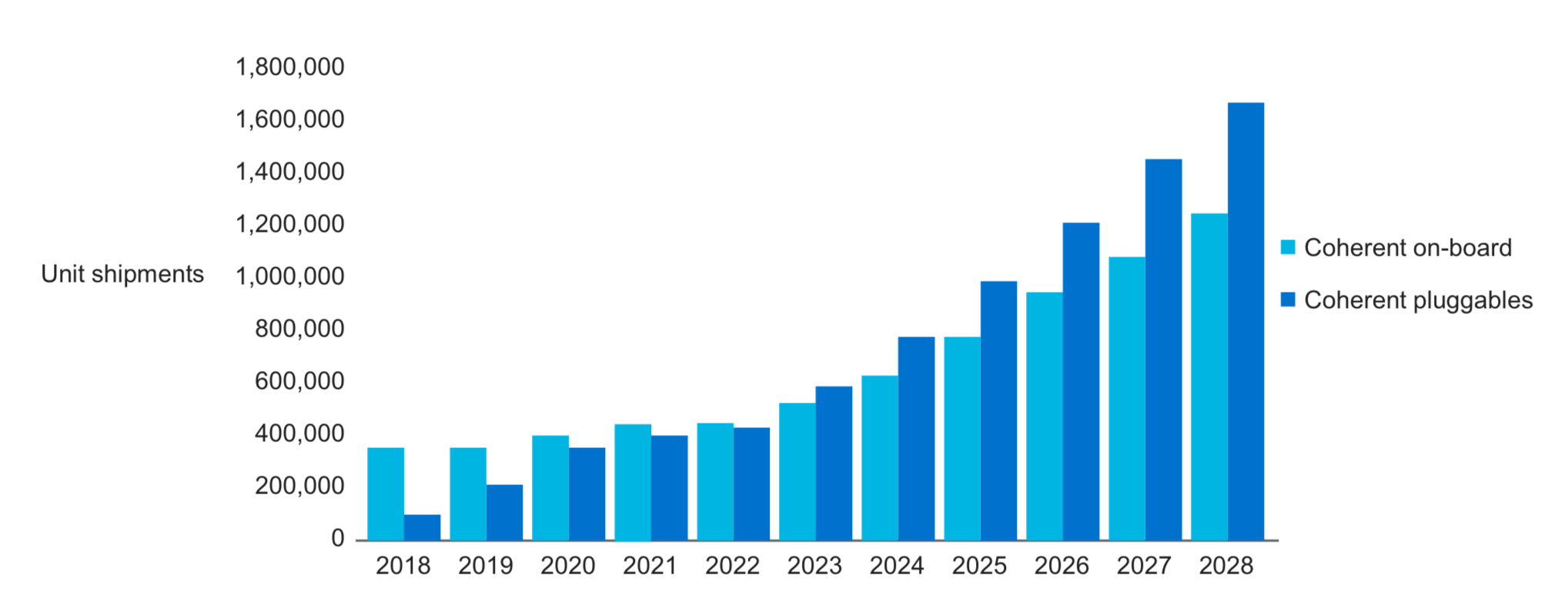

The Orion DSP announcement is timely, given how this year will be the first when coherent pluggables exceed embedded coherent module port shipments.

“We strongly believe that pluggable coherent modules will cover most network use cases, including carrier and cloud data centre interconnect,” says Samuel Liu, senior director of coherent DSP marketing at Marvell.

Marvell also announced its third-generation ColorZ pluggable module for hyperscalers to link equipment between data centres. The Orion-based ColorZ 800-gigabit module supports the OIF’s 800ZR standard and 800ZR+.

Fifth-generation DSP

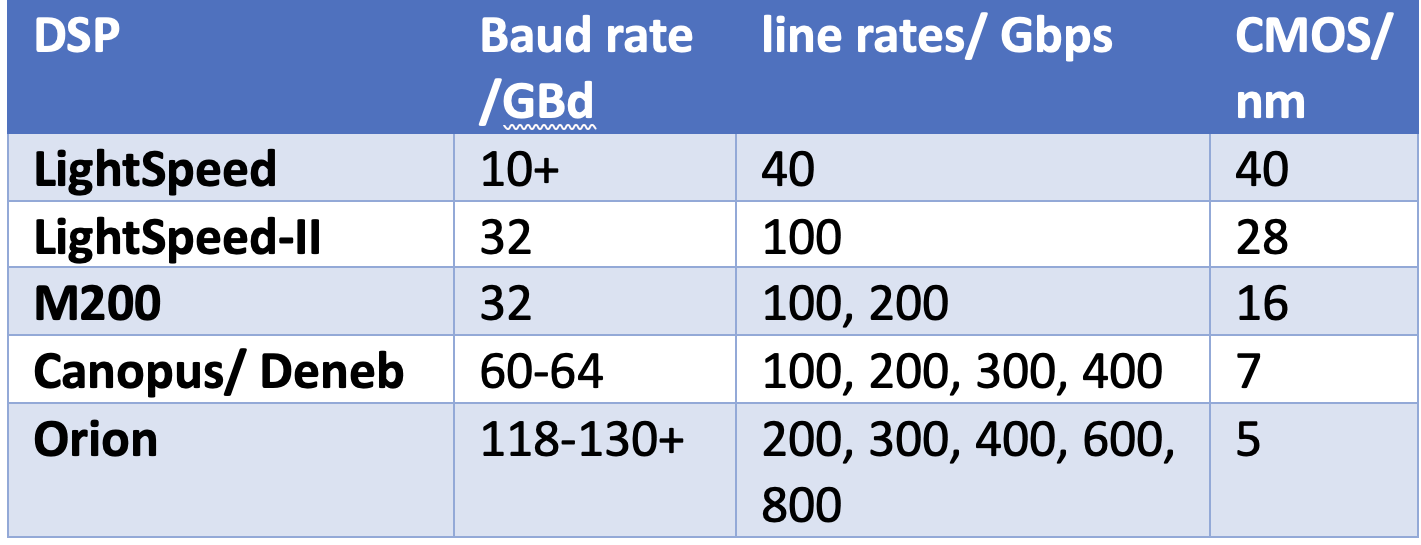

The Orion chip is a fifth-generation design yet Marvell’s first. First ClariPhy and then Inphi developed the previous four generations.

Inphi bought ClariPhy for $275 million in 2016, gaining the first two generations of devices: the 40nm CMOS 40-gigabit LightSpeed chip and the 28nm CMOS 100- and 200-gigabit LightSpeed-II coherent DSP. The 28nm CMOS DSP is now coming to the end of its life, says Liu.

Inphi added two more coherent DSPs before Marvell bought the company in 2021 for $10 billion. Inphi’s first DSP was the 16nm CMOS M200. Until then, Acacia (now Cisco-owned) had been the sole merchant supplier of coherent DSPs for CFP2-DCO pluggable modules.

Inphi then delivered the 7nm 400-gigabit Canopus for the 400ZR market, followed a year later by the Deneb DSP that supports several 400-gigabit standards. These include 400ZR, 400ZR+, and standards such as OpenZR+, which also has 100-, 200-, and 300-gigabit line rates and supports the OpenROADM MSA specifications. “The cash cow [for Marvell] is [the] 7nm [DSPs],” says Liu.

The Inphi team’s first task after the acquisition was to convince Marvell’s CEO and chief financial officer to make the most significant investment in a coherent DSP. Developing Orion cost between $100 million and $300 million.

“We have been quiet for the last two years, not making any coherent DSP announcements,” says Liu. “This [the Orion] is the one.”

Marvell views being first to market with a 130GBd-plus generation coherent DSP as critical given how pluggables, including the QSFP-DD and the OSFP form factors, account for over half of all coherent ports shipped.

“It is very significant to be first to market with an 800ZR plug and DSP,” says Jimmy Yu, vice president at market research firm Dell’Oro Group. “I expect Cisco/Acacia to have one available in 2024. So, for now, Marvell is the only supplier of this product.”

Yu notes that vendors such as Ciena and Infinera have had 800 Gigabit-per-second (Gbps) coherent available for some time, but they are for metro and long-haul networks and use embedded line cards.

Use cases

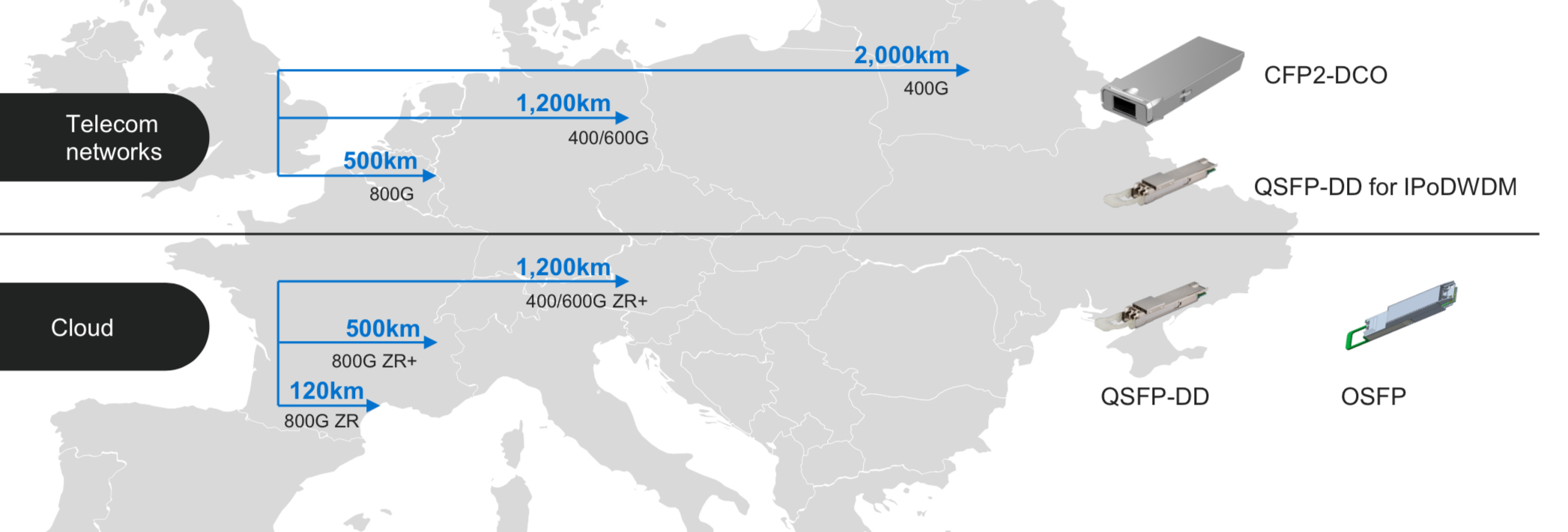

The Orion DSP addresses hyperscalers’ and telecom operators’ coherent needs. The DSP also implements various coherent standards to ensure that the vendors’ pluggable modules work with each other.

Liu says a DSP’s highest speed is what always gets the focus, but the Orion also supports lower line rates such as 600, 400 and 200Gbps for longer spans.

The baud rate, modulation scheme, and the probabilistic constellation shaping (PCS) technique are control levers that can be varied depending on the application. For example, 800ZR uses a symbol rate of only 118GBd and the 16-QAM modulation scheme to achieve the 120km specification while minimising power consumption. When performance is essential, such as sending 400Gbps over 2,000km, the highest baud rate of 130GBd is used along with QPSK modulation.
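To show how the two levers trade off, the sketch below computes the raw line rate of a coherent signal from its symbol rate and modulation format. It assumes dual-polarisation transmission, which is standard for coherent optics. It also assumes the gap between the raw rate and the payload is FEC and framing overhead. It ignores probabilistic constellation shaping, which makes the bits per symbol non-integer. The function names are illustrative only.

```python
import math

def bits_per_symbol(constellation_points: int) -> int:
    """Bits per symbol per polarisation, e.g. QPSK (4 points) -> 2, 16-QAM -> 4."""
    return int(math.log2(constellation_points))

def raw_line_rate_gbps(baud_gbd: float, constellation_points: int,
                       polarisations: int = 2) -> float:
    """Raw line rate: symbol rate x bits per symbol x polarisations (assumed dual-pol)."""
    return baud_gbd * bits_per_symbol(constellation_points) * polarisations

# 800ZR-style operation: 118GBd with 16-QAM carrying an 800G payload
zr_raw = raw_line_rate_gbps(118, 16)   # 944.0 Gbps
# Long-reach operation: 130GBd with QPSK carrying a 400G payload
lh_raw = raw_line_rate_gbps(130, 4)    # 520.0 Gbps

for name, raw, payload in [("800ZR, 16-QAM", zr_raw, 800), ("2,000km, QPSK", lh_raw, 400)]:
    # The overhead fraction is what remains for FEC and framing under this model
    print(f"{name}: raw {raw:.0f} Gbps, payload {payload} Gbps, "
          f"overhead {raw / payload - 1:.0%}")
```

Under this model, QPSK carries half the bits per symbol of 16-QAM. That is why the long-reach mode halves the payload even at a slightly higher baud rate.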

China is one market where Marvell’s current 7nm CFP2-DCOs are used to transport wavelengths at 100Gbps and 200Gbps.

Using the Orion for 200-gigabit wavelengths delivers an extra 1dB (decibel) of optical signal-to-noise ratio performance. The additional 1dB benefits the end user, says Liu: they can increase the engineering margin or extend the transmission distance. Meanwhile, probabilistic constellation shaping is used when spectral efficiency is essential, such as fitting a transmission within a 100GHz-width channel.
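Decibels are logarithmic, so it is worth converting the extra 1dB of OSNR into a linear ratio. The minimal sketch below uses the standard power-ratio definition, 10^(dB/10). The function name is illustrative.

```python
def db_to_linear(db: float) -> float:
    """Convert a power ratio in decibels to a linear ratio."""
    return 10 ** (db / 10)

gain = db_to_linear(1.0)
# A 1dB OSNR improvement is roughly a 26 per cent higher signal-to-noise power ratio
print(f"1dB extra OSNR = {gain:.3f}x the signal-to-noise power ratio")
```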

Liu notes that the leading Chinese telecom operators are open to using coherent pluggables to help reduce costs. In contrast, large telcos in North America and Europe use pluggables for their regional networks but prefer embedded coherent modems from leading systems vendors for long-haul distances greater than 1,000km.

Marvell believes the optical performance enabled by its 130GBd-plus 800-gigabit pluggable module will change this. However, the leading system vendors have all announced their latest-generation embedded coherent modems with baud rates of 130GBd to 150GBd, while Ciena’s 200GBd 1.6-terabit WaveLogic 6 coherent modem will be available next year.

The advent of 800-gigabit coherent will also promote IP over DWDM. 400ZR+ is already enabling the addition of coherent modules directly to IP routers for metro and metro regional applications. An 800ZR and 800ZR+ in a pluggable module will continue this trend beyond 400 gigabit to 800 gigabits.

The advent of an 800-gigabit pluggable also benefits the hyperscalers as they upgrade their data centre switches from 12.8 terabits to 25.6 and 51.2 terabits. The hyperscalers already use 400ZR and ZR+ modules, and 800-gigabit modules are the next obvious step. Liu says this will serve the market for the next four years.

Fujitsu Optical Components, InnoLight, and Lumentum are three module makers that all endorsed the Orion DSP announcement.

ColorZ 800 module

In addition to selling its coherent DSPs to pluggable module and equipment makers, Marvell will sell to the hyperscalers its latest ColorZ module for data centre interconnect.

Marvell’s first-generation product was the 100-gigabit coherent ColorZ in 2016 and in 2021 it produced its 400ZR ColorZ. Now, it is offering an 800-gigabit version – ColorZ 800 – to address 800ZR and 800ZR+, which include OpenZR+ and support for lower speeds that extend the reach to metro regional and beyond.

“We are first to market on this module, and it is now sampling,” says Josef Berger, associate vice president of marketing optics at Marvell.

Targeting its module at the hyperscaler market rather than telecoms makes sense for Marvell, says Yu, as it is the most significant opportunity.

“Most communications service providers’ interest is in having optical plugs with longer reach performance,” says Dell’Oro’s Yu. “So, they are more interested in ZR+ optical variants with high launch power of 0dBm or greater.”

Marvell notes a 30 per cent cost and power consumption reduction for each generation of ColorZ pluggable coherent module.
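The sketch below shows how a fixed per-generation reduction compounds. It assumes the 30 per cent applies multiplicatively to the previous generation. The article does not say whether the figure is per module or per bit, so treat this as illustrative arithmetic only. The function name is hypothetical.

```python
def relative_after(generations: int, reduction: float = 0.30) -> float:
    """Cost or power relative to a baseline after `generations` steps,
    assuming (hypothetically) the same fractional reduction each step."""
    return (1 - reduction) ** generations

for n in range(1, 4):
    print(f"after {n} generation(s): {relative_after(n):.2f} of the baseline")
```

Under this assumption, two generations roughly halve cost and power.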

Liu concludes by saying that designing the Orion DSP was challenging. It is a highly complicated chip comprising over a billion logic gates. An early test chip of the Orion was used as part of a Lumentum demonstration at the OFC show in March.

The ColorZ 800 module will start being sampled this quarter.

What follows the Orion will likely be a 1.6-terabit DSP operating at 240GBd. The OIF has already begun defining the next standard, 1600ZR.

Working at the limit of optical transmission performance

- Expect to see new optical transmission records at the upcoming ECOC 2023 conference.

- Keysight Technologies’ chart plots the record-setting optical transmission systems of recent years.

- The chart reveals optical transmission performance issues and the importance of the high-speed converters between the analogue and digital domains for test equipment and, by implication, for coherent digital signal processors (DSPs).

Engineers keep advancing optical systems to send more data across an optical fibre.

It requires advances in optical and electronic components that can process faster, higher-bandwidth signals, and that includes the most essential electronics part of all: the coherent DSP chip.

Coherent DSPs use state-of-the-art 5nm and 3nm CMOS chip manufacturing processes. The chips support symbol rates from 130-200 gigabaud (GBd). At 200GBd, the coherent DSP’s digital-to-analogue converters (DACs) and analogue-to-digital converters (ADCs) must operate at a minimum of 200 gigasamples per second (GSps) and likely closer to 250GSps. DACs drive the optical modulator in the transmit path, while the ADCs are used at the optical receiver to recover the signal.
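The relationship between symbol rate and converter speed is a simple multiplication by an oversampling ratio. In the sketch below, the ratios 1.0 and 1.25 are taken from the "at least 200GSps" and "closer to 250GSps" figures at 200GBd. Applying the same ratios at 130GBd is an assumption. The function name is illustrative.

```python
def required_sample_rate_gsps(baud_gbd: float, oversampling: float) -> float:
    """Converter sample rate needed for a symbol rate at a given oversampling ratio."""
    return baud_gbd * oversampling

for baud in (130, 200):
    low = required_sample_rate_gsps(baud, 1.0)    # one sample per symbol (minimum)
    high = required_sample_rate_gsps(baud, 1.25)  # assumed practical oversampling
    print(f"{baud}GBd: {low:g}-{high:g} GSps")
```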

Spare a thought for the makers of the lab test equipment used to drive such coherent optical transmission systems. The designers must push their equipment’s DACs and ADCs to the limit to generate and sample the waveforms of these prototype next-generation optical transmission systems.

Optical transmission records

The recent history of record-setting optical transmission systems reveals the design challenges of coherent components and how ADC and DAC designs are evolving.

It is helpful to see how test equipment designers tackle ADC and DAC design, given that the devices are a critical element of the coherent DSP and that chip vendors are reluctant to detail how they achieve 200GBd baud rates using on-chip CMOS-based ADCs and DACs.

Nokia and Keysight Technologies published a post-deadline paper at the ECOC 2022 conference detailing the transmission of a 260GBd single-wavelength signal over 100km of fibre.

The system achieved the high baud rate using a thin-film lithium niobate modulator driven by Keysight’s M8199B arbitrary waveform generator. The M8199B uses a design consisting of two interleaved DACs to generate signals at 260GSps.

A second post-deadline ECOC 2022 paper, published by NTT, detailed the sending of over two terabits-per-second (Tbps) on a single wavelength. This, too, used Keysight’s M8199B arbitrary waveform generator.

The chart above highlights optical transmission records since 2015, plotting the systems’ net bit rate – from 800 gigabits to 2.2 Tbps – against a symbol rate measured in GBd.

As with commercial coherent optical transport systems, the goal is to keep increasing the symbol rate. A higher symbol rate sends more data over the same fibre spans. For example, the 400ZR coherent transmission standard uses a symbol rate of some 60GBd to send a 400Gbps wavelength, while 800ZR doubles the baud rate to some 120GBd to transmit 800Gbps over similar distances.
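At a fixed modulation format and overhead, payload rate scales linearly with symbol rate. The sketch below uses the 400ZR reference point from the text, about 60GBd for 400Gbps, to project the symbol rate needed for higher payloads. Keeping the same format and overhead is an assumption, and real standards need not follow it.

```python
def baud_for_payload(target_gbps: float, ref_gbps: float = 400,
                     ref_gbd: float = 60) -> float:
    """Symbol rate for a target payload, scaling linearly from a reference point
    (assumes the modulation format and overhead stay the same)."""
    return ref_gbd * target_gbps / ref_gbps

for rate in (400, 800, 1600):
    print(f"{rate}G -> ~{baud_for_payload(rate):.0f}GBd")
```

The 1600G projection lands at about 240GBd. That is consistent with the symbol rate expected for a 1.6-terabit coherent DSP.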

“With the 1600ZR project just started by the OIF, this trend will likely continue,” says Fabio Pittalà, product planner, broadband and photonic center of excellence at Keysight.

The signal generator test equipment options include the use of different materials – CMOS and silicon germanium – and moving from one DAC to a parallel multiplexed DAC design.

Single DACs

In 2017, Nokia achieved a 1Tbps transmission using a 100GBd symbol rate. Nokia used a Micram 6-bit 100GSps DAC in silicon germanium for the modulation.

For its next advancement in transmission performance, in 2019, Nokia used the same DAC but a faster ADC at the receiver, moving from a Tektronix instrument using a 70GHz ADC to the Keysight UXR oscilloscope with a 110GHz bandwidth ADC. The resulting net bit rate was nearly 1.4 terabits.

Keysight also developed the M8194A arbitrary waveform generator based on a CMOS-based DAC. The higher sampling rate of this arbitrary waveform generator increased the baud rate to 105GBd, but because of the bandwidth limitation, the net bit rate was lower.

The bandwidth of CMOS DACs can be improved, but it tops out in the region of 50-60GHz. “It’s very difficult to scale to a higher baud rate using this technology,” says Pittalà. Silicon germanium, by contrast, supports much higher bandwidths but has a higher power consumption.

In 2020, Nokia reached 1.6Tbps at 128GBd using the Micram DAC5, an 8-bit 128GSps DAC based on silicon germanium. A year later, Keysight released the M8199A arbitrary waveform generator. “This was also based on 8-bit silicon germanium DACs operating at 128GSps, but the signal-to-noise ratio was greatly improved, allowing the generation of higher-order quadrature amplitude modulation formats with more than sixteen levels,” says Pittalà.
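Moving from a 6-bit to an 8-bit DAC raises the ceiling on signal-to-noise ratio. The sketch below uses the textbook ideal quantisation SNR for an N-bit converter driven by a full-scale sine wave, 6.02N + 1.76 dB. Real DACs at these speeds fall well short of this bound because their effective number of bits is lower. The M8199A's improvement over an earlier 8-bit DAC also shows that bit count is not the whole story. The function name is illustrative.

```python
def ideal_sqnr_db(bits: int) -> float:
    """Ideal quantisation SNR (dB) of an N-bit converter, full-scale sine input.
    An upper bound only: real high-speed DACs have a lower effective number of bits."""
    return 6.02 * bits + 1.76

for bits in (6, 8):
    print(f"{bits}-bit: {ideal_sqnr_db(bits):.2f} dB")
print(f"two extra bits: +{ideal_sqnr_db(8) - ideal_sqnr_db(6):.2f} dB")
```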

This arbitrary waveform generator was used in systems that, coupled with advanced equalisation schemes, pushed the net bit rate to almost 2Tbps.

Going parallel

For the subsequent advances in baud rate, parallel DAC designs, multiplexing two or more DACs together, were implemented by different research labs.

In 2015, NTT multiplexed two DACs that advanced the symbol rate from 105GBd to 120GBd. In 2019, NTT moved to a different type of multiplexer, which, used with the same DAC, increased the baud rate to around 170GBd. Nokia also demonstrated a multiplexed design concept, which, together with a novel thin-film lithium niobate modulator, extended the symbol rate to 200GBd, achieving a 1.6Tbps net bit rate.

Last year, Keysight introduced its latest arbitrary waveform generator, the M8199B. The design also adopted a multiplexed DAC design.

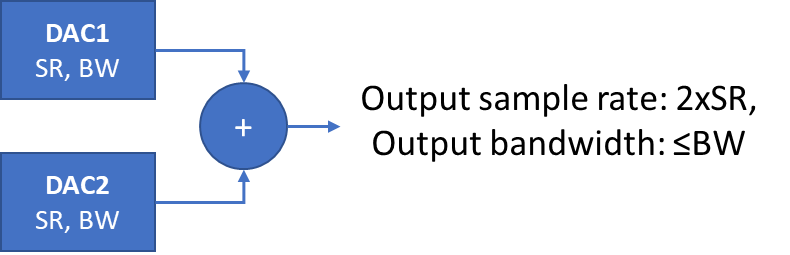

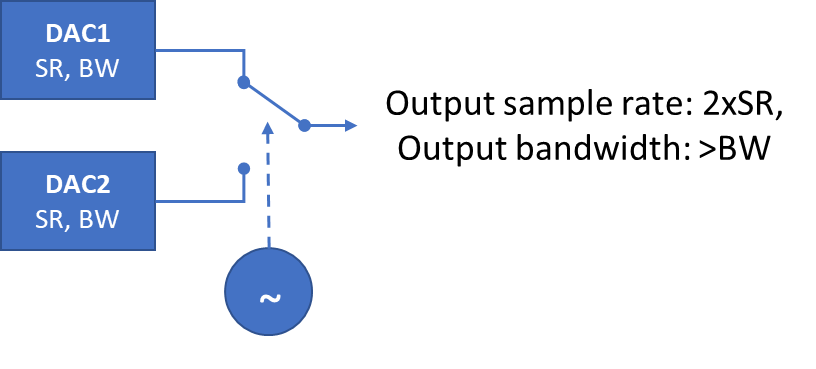

Multiplexing two DACs. SR refers to sample rate, BW refers to bandwidth. Source: Keysight.

“There are two 128GSps 8-bit silicon germanium DACs that are time-interleaved to get a higher speed signal per dimension,” says Pittalà. If the two DACs are shifted in time and added together, the result is a higher sampling rate overall. However, Pittalà points out that while the sample rate is effectively doubled, the overall bandwidth is defined by the individual DACs (see diagram above).
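The time-interleaving idea can be sketched in a few lines. The model is idealised, with no timing skew, gain mismatch or analogue bandwidth limit. Each sub-DAC runs at the 128GSps quoted, and the second is offset by half a sample period, so the combined stream has samples twice as often. The function names are hypothetical. Note what the sketch does not model: each sub-DAC's analogue bandwidth, which still caps the bandwidth of the combined signal.

```python
from fractions import Fraction

def split_for_interleave(samples):
    """Even-indexed samples go to DAC A, odd-indexed samples to DAC B."""
    return samples[0::2], samples[1::2]

def interleave(dac_a, dac_b, fs_gsps):
    """Combine two sub-DAC outputs; DAC B is delayed by half a sample period.
    Returns (time_ns, value) pairs sorted by time."""
    period = Fraction(1, fs_gsps)  # sub-DAC sample period in nanoseconds
    out = [(k * period, v) for k, v in enumerate(dac_a)]
    out += [(k * period + period / 2, v) for k, v in enumerate(dac_b)]
    return sorted(out)

waveform = [0, 3, -1, 2, 1, -3, 0, 1]  # target samples at the doubled rate
a, b = split_for_interleave(waveform)
combined = interleave(a, b, fs_gsps=128)
step = combined[1][0] - combined[0][0]  # spacing between combined samples (ns)
print([v for _, v in combined])
print(f"effective sample rate: {1 / step} GSps")
```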

Pittalà also mentions another technique, based on active clocking, that does increase the bandwidth of the system. The multiplexer is clocked and acts like a fast switch between the two DAC channels. “In principle, you can double the bandwidth,” he says. (See diagram below.)

Keysight’s M8199B’s improved performance, combined with advances in components such as NTT’s 130GHz indium phosphide amplifier, resulted in over 2Tbps transmission, as detailed in the ECOC 2022 paper. As the baud rate was increased, the order of the modulation scheme, and with it the net bit rate, decreased (shown by the red dots on the chart).

In parallel, Keysight worked with Nokia, which used a thin-film lithium niobate modulator for its set-up, a different modulator from NTT’s. The test equipment directly drove the thin-film modulator; no external modulator driver was needed. The system operated at up to 260GBd, achieving a net bit rate of 800Gbps.

Pittalà notes that while the NTT system differs from Nokia’s, Nokia’s two red points on the extreme right of the chart continue the trajectory of NTT’s six red points as the baud rate increases.

OFC’23 O-band record

The post-deadline papers at the OFC 2023 conference earlier this year did not improve the transmission performances of the ECOC papers.

A post-deadline paper published at OFC 2023 showed a record for coherent transmission in the O-band. Working with Keysight, McGill University showed 1.6Tbps coherent transmission over 10km using a thin-film lithium niobate modulator. The system operated at 167GBd, used a 64-QAM modulation scheme, and used the Keysight M8199B.

Pittalà expects that at ECOC 2023, to be held in Glasgow in October, new record-breaking transmissions will be announced.

His chart will need updating.


The market opportunity for linear drive optics

A key theme at OFC earlier this year that surprised many was linear drive optics. The attention it received at the optical communications and networking event was intriguing because linear drive, which uses remote silicon to drive the photonics, is not new.

“I spoke to one company that had a [linear drive] demo on the show floor,” says Scott Wilkinson, lead analyst for networking components at Cignal AI. “They had been working on the technology for four years and were taken aback; they weren’t expecting people to come by and ask about it.”

The cause of the buzz? Andy Bechtolsheim, famed investor, co-founder and chief development officer of network switching firm Arista Networks and, before that, a co-founder of Sun Microsystems.

“Andy came out and said this is a big deal, and that got many people talking about it,” says Wilkinson, author of a recent linear drive market research report.

Linear Drive

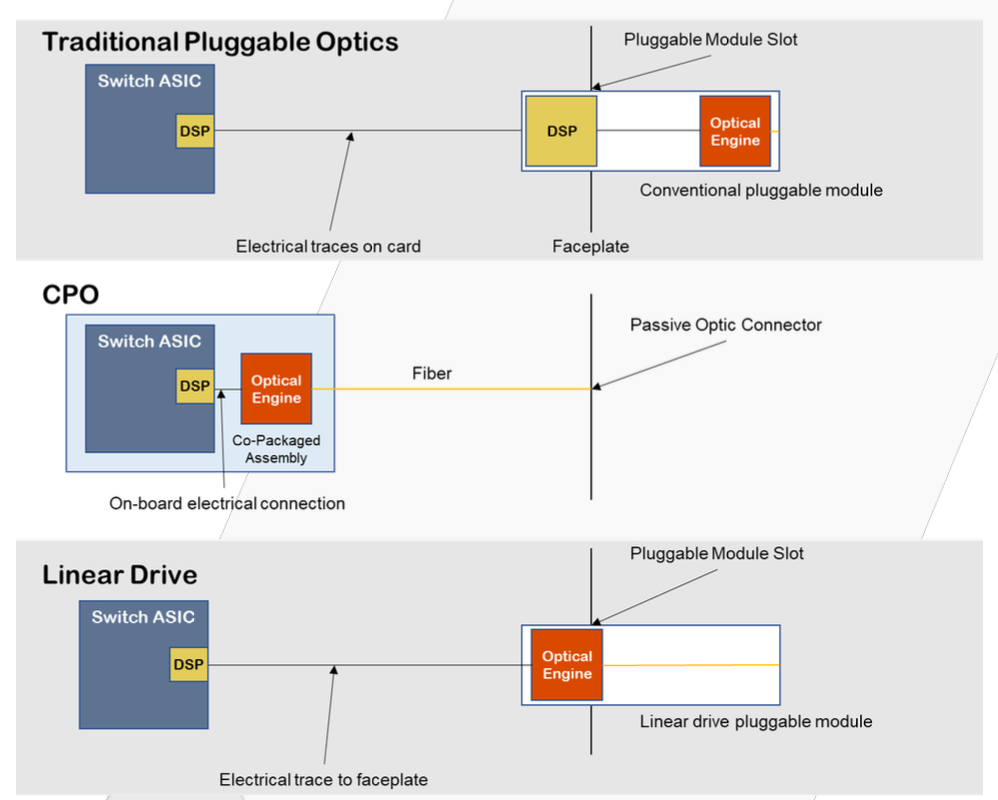

A data centre’s switch chip links to the platform’s pluggable optics via an electrical link. The switch chip’s serialiser-deserialiser (serdes) circuitry drives the signal across the printed circuit board to the pluggable optical module. A digital signal processor (DSP) chip inside the pluggable module cleans and regenerates the received signal before sending it on optically.

With linear drive optics, the switch ASIC’s serdes directly drives the module optics, removing the need for the module’s DSP chip. This cuts the module’s power consumption by half.
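To show why proponents focus on power, the sketch below scales a per-module saving up to a fully populated switch. The 64-port count follows from 51.2 terabits divided by 800 gigabits. The 16W module power is a hypothetical placeholder, not a figure from the article. Substitute a real module's power to use it.

```python
MODULE_POWER_W = 16.0          # HYPOTHETICAL power of one DSP-based 800G module
SWITCH_CAPACITY_GBPS = 51_200  # a 51.2-terabit switch chip
PORT_RATE_GBPS = 800           # one 800-gigabit pluggable per port

ports = SWITCH_CAPACITY_GBPS // PORT_RATE_GBPS  # number of 800G ports
# Linear drive is said to halve module power by removing the module DSP
saving_w = ports * MODULE_POWER_W * 0.5
print(f"{ports} ports, ~{saving_w:.0f} W saved per fully populated switch")
```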

The diagram above contrasts linear drive optics with traditional pluggables and with the emerging technology of co-packaged optics, where the optics sit adjacent to the switch chip and are packaged together. Linear drive optics can be viewed as a long-distance variant of co-packaged optics that continues to advance pluggable modules.

Proponents of linear drive claim that the power savings are a huge deal. “There will probably also be some cost savings, but it is not entirely clear how big they will be,” says Wilkinson. “But the only thing people want to discuss is the power savings.”

Misgivings

If linear drive’s main benefit is reducing power consumption, the technology’s sceptics counter with several technical and business issues.

One shortfall is that a module’s electrical and optical lanes must match in number and hence data rate. If there is a mismatch, the signal speeds must be translated between the electrical and optical lane rates, known as gearboxing. This task requires a DSP. Linear drive optics is thus confined to 800-gigabit optical modules: 800GBASE-DR8 and 800-gigabit 2xFR4. “There are people who think that at least 800 Gig – eight lanes in and eight lanes out – will continue to exist for a long time,” says Wilkinson.
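The lane-matching rule reduces to a simple check: linear drive needs the electrical and optical lanes to match one-to-one in number and rate, and any mismatch requires a gearboxing DSP. In the sketch below, the class and field names are hypothetical. The 800G DR4 entry assumes it is paired with 100-gigabit host serdes.

```python
from dataclasses import dataclass

@dataclass
class ModuleLanes:
    name: str
    electrical_lanes: int
    electrical_gbps: int
    optical_lanes: int
    optical_gbps: int

    def needs_gearbox(self) -> bool:
        """True if lane count or lane rate differs between electrical and optical sides."""
        return ((self.electrical_lanes, self.electrical_gbps)
                != (self.optical_lanes, self.optical_gbps))

modules = [
    ModuleLanes("800GBASE-DR8", 8, 100, 8, 100),   # eight lanes in, eight lanes out
    ModuleLanes("800G 2xFR4", 8, 100, 8, 100),     # two FR4 groups of four 100G wavelengths
    ModuleLanes("800G DR4", 8, 100, 4, 200),       # assumes 100G host serdes
]
for m in modules:
    verdict = "DSP gearbox needed" if m.needs_gearbox() else "linear drive possible"
    print(f"{m.name}: {verdict}")
```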

Another question mark concerns the use of optics for artificial intelligence workloads. Adopters of AI will be early users of 200 gigabit-per-lane optics, requiring a gearbox-performing DSP.

Moreover, the advent of 200-gigabit electrical lanes will challenge serdes developers and, hence, linear drive designs. “It will be a technical challenge, the distances will be shorter, and some think it may never work,” says Wilkinson. “No matter how good the serdes is, it will not be easy.”

Co-packaged optics will also hit its stride once 200-gigabit serdes-based switch chips become available.

Another argument is that there are many ways to save power in the data centre; if linear drive introduces complications, why make it a priority?

Linear drive optics requires the switch chip vendors to develop high-quality serdes. Wilkinson says the leading switch vendors remain agnostic to linear drive, which is not a ringing endorsement. And while hyperscalers are investing time and resources in linear drive technology, none has endorsed it, so each can withdraw at any stage without penalty.

“There is one story for linear drive and many stories against it,” admits Wilkinson. “When you compile them, it’s a pretty big story.”

Market opportunity

Cignal AI believes linear-drive optics will prove a niche market, with 800-gigabit linear-drive modules capturing 10 per cent of overall 800-gigabit pluggable shipments in 2027.

Wilkinson says the most promising application of the technology is active optical cables, where the modules and cables are a closed design. And while many companies have invested in the technology and it will be successful, the opportunity will not be as significant as its proponents hope.

Broadcom's first Jericho3 takes on AI's networking challenge


Broadcom’s Jericho silicon has taken an exciting turn.

The Jericho devices are used for edge and core routers.

But the first chip of Broadcom’s next-generation Jericho is aimed at artificial intelligence (AI); another indicator, if one is needed, of AI’s predominance.

Dubbed the Jericho3-AI, the device networks AI accelerator chips that run massive machine-learning workloads.

AI supercomputers

AI workloads continue to grow at a remarkable rate.

The most common accelerator chip used to tackle such demanding computations is the graphics processor unit (GPU).

GPUs are expensive, so scaling them efficiently is critical, especially when AI workloads can take days to complete.

“For AI, the network is the bottleneck,” says Oozie Parizer (pictured), senior director of product management, core switching group at Broadcom.

Squeezing more out of the network equates to shorter workload completion times.

“This is everything for the hyperscalers,” says Parizer. “How quickly can they finish the job.”

Broadcom shares a chart from Meta (below) showing how much of the run time for its four AI recommender workloads is spent on networking, moving the data between the GPUs.

In the worst case, networking accounts for nearly three-fifths (57 per cent) of the run time, time during which the GPUs are idle, waiting for data.
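Why that 57 per cent matters can be shown with back-of-the-envelope arithmetic. The sketch below is an illustration, not Meta's or Broadcom's model: it assumes network time is fully exposed (no overlap with computation) and that only the networking share of the run time gets faster.

```python
def job_speedup(network_fraction: float, network_speedup: float) -> float:
    """Overall job speed-up when only the networking share of run time gets faster.

    Assumes network time is fully exposed (GPUs sit idle while waiting), so
    new run time = compute share + network share / network_speedup.
    """
    if not 0.0 <= network_fraction <= 1.0:
        raise ValueError("network_fraction must be between 0 and 1")
    if network_speedup <= 0:
        raise ValueError("network_speedup must be positive")
    compute_fraction = 1.0 - network_fraction
    new_run_time = compute_fraction + network_fraction / network_speedup
    return 1.0 / new_run_time


# Worst case from the Meta chart: 57 per cent of run time is networking.
for factor in (1.5, 2.0, 4.0):
    print(f"network {factor}x faster -> job {job_speedup(0.57, factor):.2f}x faster")
```

Under these assumptions, halving the networking time alone makes the whole job about 1.4 times faster.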

Scaling

Parizer highlights two trends driving networking for AI supercomputers.

One is the GPU’s growing input-output (I/O), causing a doubling of the interface speed of network interface cards (NICs). The NIC links the GPU to the top-of-rack switch.

The NIC interface speeds have progressed from 100 to 200 to now 400 gigabits and soon 800 gigabits, with 1.6 terabits to follow.

The second trend is the number of GPUs used in an AI cluster.

Until recently, the largest clusters used 64 or 256 GPUs, which limited the networking needs. But now machine-learning tasks require clusters ranging from 1,000 and 2,000 GPUs up to 16,000 and even 32,000.

Meta’s Research SuperCluster (RSC), one of the largest AI supercomputers, uses 16,000 Nvidia A100 GPUs: 2,000 Nvidia DGX A100 systems each with eight A100 GPUs. The RSC also uses 200-gigabit NICs.

“The number of GPUs participating in an all-to-all exchange [of data] is growing super fast,” says Parizer.

The Jericho3-AI is used in the top-of-rack switch that connects a rack’s GPUs to other racks in the cluster.

The Jericho3-AI enables clusters of up to 32,000 GPUs, each served with an 800-gigabit link.

An AI supercomputer can use all its GPUs to tackle one large training job or split them into pools that run AI workloads concurrently.

Either way, the cluster’s network must be ‘flat’, with all the GPU-to-GPU communications having the same latency.

Because the GPUs exchange machine-learning training data in an all-to-all manner, the computation can move on to the next stage only when the last GPU receives its data.
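The importance of the last GPU can be illustrated with a small simulation: an all-to-all step finishes at the maximum of the per-GPU arrival times, not the average. Drawing per-GPU delays from an exponential distribution is an arbitrary assumption for illustration, and the base and jitter values are made up.

```python
import random


def step_time(arrival_times_us: list[float]) -> float:
    """An all-to-all step finishes only when the last GPU has its data."""
    return max(arrival_times_us)


def simulate(num_gpus: int, base_us: float, jitter_us: float,
             seed: int = 1) -> tuple[float, float]:
    """Return (mean arrival, step completion) for one step with random per-GPU delay.

    The extra delay per GPU is exponential with mean jitter_us (illustrative only).
    """
    rng = random.Random(seed)
    arrivals = [base_us + rng.expovariate(1.0 / jitter_us) for _ in range(num_gpus)]
    return sum(arrivals) / num_gpus, step_time(arrivals)


for n in (64, 1_000, 32_000):
    mean_us, tail_us = simulate(n, base_us=10.0, jitter_us=2.0)
    print(f"{n:>6} GPUs: mean arrival {mean_us:5.1f} us, step completes at {tail_us:5.1f} us")
```

With these assumed parameters the mean barely moves as the GPU count grows, while the completion time keeps creeping up with the slowest arrival, which is why tail latency, rather than average latency, sets job-completion time.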

“The primary benefit of Jericho3-AI versus traditional Ethernet is predictable tail latency,” says Bob Wheeler, principal analyst at Wheeler’s Network. “This metric is very important for AI training, as it determines job-completion time.”

Data spraying

“We realised in the last year that the premium traffic capabilities of the Jericho solution are a perfect fit for AI,” says Parizer.

The Jericho3-AI helps maximise GPU processing performance by using the full network capacity while traffic routing mechanisms help nip congestion in the bud.

The Jericho also adapts the network when a link fails. Such adaptation must avoid heavy packet loss; otherwise, the workload must be restarted, potentially losing days of work.

AI workloads use large packet streams known as ‘elephant’ flows. Such flows tie up their assigned networking path, causing congestion when another flow also needs that path.

“If traffic follows the concept of assigned paths, there is no way you get close to 100 per cent network efficiency,” says Parizer.

The Jericho3-AI, used in a top-of-rack switch, has a different approach.

Of the device’s 28.8 terabits of capacity, half connects to the NICs of the rack’s GPUs and half to the ‘fabric’ that links them to all the other GPUs in the cluster.
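The split implies how many GPUs one top-of-rack switch can serve. The sketch assumes every NIC-facing port runs at 800 gigabits with no oversubscription; the resulting switch count for a 32,000-GPU cluster is a derived illustration, not a Broadcom figure.

```python
import math

SWITCH_CAPACITY_GBPS = 28_800  # Jericho3-AI total capacity
NIC_SPEED_GBPS = 800           # one 800-gigabit link per GPU (assumed for every port)


def gpus_per_switch(capacity_gbps: int, nic_gbps: int) -> int:
    """GPU NICs one switch can serve when half its capacity faces the NICs."""
    nic_facing_gbps = capacity_gbps // 2  # the other half connects to the fabric
    return nic_facing_gbps // nic_gbps


def switches_needed(num_gpus: int, per_switch: int) -> int:
    """Top-of-rack switches needed to attach every GPU, rounding up."""
    return math.ceil(num_gpus / per_switch)


per_switch = gpus_per_switch(SWITCH_CAPACITY_GBPS, NIC_SPEED_GBPS)
print(per_switch)                           # 18
print(switches_needed(32_000, per_switch))  # 1778
```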

Broadcom uses the 14.4-terabit fabric link as one huge logical pipe over which traffic is evenly spread. Each destination Jericho3-AI top-of-rack switch then reassembles the ‘sprayed’ traffic.
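The difference between assigned paths and spraying can be sketched in a few lines. Broadcom has not published its spraying or reassembly algorithms; the toy hash (flow ID modulo path count), round-robin spraying and sort-by-sequence-number reassembly below are stand-ins chosen for clarity.

```python
NUM_PATHS = 4            # fabric paths between two top-of-rack switches (illustrative)
PACKETS_PER_FLOW = 1000  # 'elephant' flows: long runs of packets


def flow_hashed_load(flow_ids: list[int], num_paths: int) -> dict[int, int]:
    """Assigned paths: every packet of a flow takes the path its ID hashes to.

    A toy hash (flow_id % num_paths) stands in for a real packet-header hash.
    """
    load = {p: 0 for p in range(num_paths)}
    for flow in flow_ids:
        load[flow % num_paths] += PACKETS_PER_FLOW
    return load


def sprayed_load(flow_ids: list[int], num_paths: int) -> dict[int, int]:
    """Spraying: packets are dealt round-robin over all paths, whatever their flow."""
    load = {p: 0 for p in range(num_paths)}
    total_packets = len(flow_ids) * PACKETS_PER_FLOW
    for packet_index in range(total_packets):
        load[packet_index % num_paths] += 1
    return load


def reassemble(arrivals: list[tuple[int, int]]) -> dict[int, list[int]]:
    """Destination side: regroup (flow, sequence) packets by flow and restore order."""
    flows: dict[int, list[int]] = {}
    for flow, seq in arrivals:
        flows.setdefault(flow, []).append(seq)
    return {flow: sorted(seqs) for flow, seqs in flows.items()}


flows = [1, 2, 3, 5]  # flows 1 and 5 collide on path 1 while path 0 sits idle
print(flow_hashed_load(flows, NUM_PATHS))  # {0: 0, 1: 2000, 2: 1000, 3: 1000}
print(sprayed_load(flows, NUM_PATHS))      # {0: 1000, 1: 1000, 2: 1000, 3: 1000}
print(reassemble([(5, 2), (1, 0), (5, 0), (1, 1), (5, 1)]))  # {5: [0, 1, 2], 1: [0, 1]}
```

Hashing leaves one path doubly loaded and another empty; spraying loads every path equally, at the cost of the receiving switch having to put packets back in order.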

“From the GPU’s perspective, it is unaware that we are spraying the data,” says Parizer.

Receiver-based flow control

Spraying may ensure full use of the network’s capacity, but congestion can still occur. The sprayed traffic may be spread across the fabric to all the spine switches, but for short periods, several GPUs may send data to the same GPU, known as incast (see diagram).

The Jericho copes with this many-to-one GPU traffic using receiver-based flow control.

Traffic does not leave the receiving Jericho chip just because it has arrived, says Parizer. Instead, the receiving Jericho notifies the GPUs that have traffic to send and schedules a portion of the traffic from each.

“Traffic ends up queueing nearer the sender GPUs, notifying each of them to send a little bit now, and now,” says Parizer, who stresses this many-to-one condition is temporary.
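A receiver-driven scheme can be sketched as a round-robin grant loop. Broadcom has not detailed its scheduler, so the grant size, the per-round egress budget and the abstract data units below are hypothetical; the point is that the receiver never admits more than it can drain, so the excess waits at the senders.

```python
from collections import deque


def receiver_schedule(pending: dict[str, int], grant_size: int,
                      egress_per_round: int) -> list[dict[str, int]]:
    """Hypothetical grant-based incast scheduling driven by the receiving switch.

    pending: units of data each sender GPU has queued for this destination.
    Each round the receiver grants at most grant_size units per sender, in
    round-robin order, and never more in total than its egress can drain.
    """
    if grant_size <= 0 or egress_per_round <= 0:
        raise ValueError("grant_size and egress_per_round must be positive")
    remaining = dict(pending)
    queue = deque(s for s, amount in remaining.items() if amount > 0)
    rounds: list[dict[str, int]] = []
    while queue:
        budget = egress_per_round
        granted: dict[str, int] = {}
        for _ in range(len(queue)):  # visit each waiting sender at most once per round
            if budget == 0:
                break
            sender = queue.popleft()
            amount = min(grant_size, remaining[sender], budget)
            granted[sender] = amount
            remaining[sender] -= amount
            budget -= amount
            if remaining[sender] > 0:
                queue.append(sender)  # still has data: back of the line
        rounds.append(granted)
    return rounds


# Three GPUs each have 3 units for one destination that drains 2 units per round.
for i, grants in enumerate(receiver_schedule({"gpu_a": 3, "gpu_b": 3, "gpu_c": 3}, 1, 2)):
    print(f"round {i}: {grants}")
```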

Ethernet flow control is used when the Jericho chip senses that too much traffic is being sent.

“There is a temporary stop in data transmission to avoid packet loss in network congestion,” says Parizer. “And it is only that GPU that needs to slow down; it doesn’t impact any adjacent GPUs.”

Fault control

At Optica’s Executive Forum event, held alongside the OFC show in March, Google discussed using a system of 6,000 tensor processing unit (TPU) accelerators to run large language models.

One Google concern is scaling such clusters while ensuring overall reliability and availability, given the frailty of large-scale accelerator clusters.

“With a huge network having thousands of GPUs, there is a lot of fibre,” says Parizer. “And because it is not negligible, faults happen.”

In a network that uses flows and assigned paths, new paths must be calculated when an optical link goes down, and significant traffic loss is likely.

“With a job that has been running for days, significant packet loss means you must do a job restart,” says Parizer.

Broadcom’s solution, which does not rely on flows and assigned paths, uses load balancing to spread the data over the remaining paths, one fewer than before.
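With spraying, a failed link simply drops out of the set the traffic is spread over; no per-flow path needs recalculating. The fabric below (144 links of 100 Gb/s carrying 12 Tb/s) is hypothetical, chosen only to show that losing one of n links costs 1/n of the capacity and nudges up the load on the survivors.

```python
def per_link_load(offered_gbps: float, links_up: int) -> float:
    """Load on each fabric link when traffic is sprayed evenly over the live links."""
    if links_up <= 0:
        raise ValueError("no live fabric links")
    return offered_gbps / links_up


def capacity_left(total_links: int, failed: int, link_gbps: float) -> float:
    """Fabric capacity remaining after failures; spraying just uses what is left."""
    return max(total_links - failed, 0) * link_gbps


# Hypothetical fabric: 144 links of 100 Gb/s (14.4 Tb/s) carrying 12 Tb/s.
LINKS, LINK_GBPS, OFFERED_GBPS = 144, 100.0, 12_000.0
before = per_link_load(OFFERED_GBPS, LINKS)
after = per_link_load(OFFERED_GBPS, LINKS - 1)
print(f"per-link load {before:.1f} -> {after:.1f} Gb/s after one failure")
print(f"capacity {capacity_left(LINKS, 0, LINK_GBPS):.0f} -> "
      f"{capacity_left(LINKS, 1, LINK_GBPS):.0f} Gb/s")
```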

Using the Jericho2C+, Broadcom has shown fault detection and recovery in microseconds such that the packet loss is low and no job restart is needed.

The Jericho portfolio of devices

Broadcom’s existing Jericho2 architecture combines an enhanced packet-processing pipeline with a central modular database and a vast memory holding look-up tables.

Look-up tables determine how a packet is treated: where to send it, whether to wrap it in another packet (tunnel encapsulation) or extract it (tunnel termination), and which access control lists (ACLs) apply.
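A toy version of such a pipeline shows the kind of decisions the tables drive: an ACL check, a longest-prefix-match forwarding lookup and an encapsulation lookup keyed by egress port. The table contents, port names and tunnel label are hypothetical, tunnel termination is omitted, and this says nothing about how Jericho2 actually organises its database.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional

# Hypothetical tables; the real chip holds these in its central modular database.
FORWARDING = {
    ipaddress.ip_network("0.0.0.0/0"): "uplink",
    ipaddress.ip_network("10.0.0.0/8"): "port1",
    ipaddress.ip_network("10.1.0.0/16"): "port2",
}
ACL_DENY = [ipaddress.ip_network("192.0.2.0/24")]  # sources that must be dropped
ENCAP = {"uplink": "vxlan-to-core"}                 # egress ports that need a tunnel


@dataclass
class Decision:
    action: str                   # "forward" or "drop"
    port: Optional[str] = None    # where to send the packet
    tunnel: Optional[str] = None  # outer header to add, if any


def process(src: str, dst: str) -> Decision:
    src_ip, dst_ip = ipaddress.ip_address(src), ipaddress.ip_address(dst)
    # Stage 1: access control list lookup.
    if any(src_ip in net for net in ACL_DENY):
        return Decision("drop")
    # Stage 2: longest-prefix match; the default route guarantees a match.
    best = max((net for net in FORWARDING if dst_ip in net), key=lambda net: net.prefixlen)
    port = FORWARDING[best]
    # Stage 3: tunnel encapsulation lookup keyed by egress port.
    return Decision("forward", port, ENCAP.get(port))


print(process("10.9.9.9", "10.1.2.3"))     # most specific route wins: port2
print(process("10.9.9.9", "203.0.113.5"))  # default route, encapsulated
print(process("192.0.2.7", "10.1.2.3"))    # denied by the ACL
```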

Different stages in the pipeline can access the central modular database, and the store can be split flexibly without changing the packet-processing code.

Jericho2 was the first device in the family, with 4.8 terabits of capacity and 8 gigabytes of high bandwidth memory (HBM) for deep buffering.

The Jericho2C followed, targeting the edge and service router market. Here, streams have lower bandwidth – 1 and 10 gigabits typically – but need richer support in the form of queues, counters and metering, used for controlling packets and flows.

Parizer says the disaggregated OpenBNG initiative supported by Deutsche Telekom uses the Jericho2C.

Broadcom followed with a third Jericho2 family device, the Jericho2C+, which combines the attributes of Jericho2 and Jericho2C.

Jericho2C+ has 14.4 terabits of capacity and 144 100-gigabit interfaces: 7.2 terabits of network-interface bandwidth and 7.2 terabits for the fabric interface.

“The Jericho2C+ is a device that can target everything,” says Parizer.

Applications include data centre interconnect, edge and core network routing, and even tiered switching in the data centre.

Hardware design

The Jericho3-AI, made up of tens of billions of transistors in a 5nm CMOS process, is now sampling.

Broadcom says it designed the chip to be cost-competitive for AI.

For example, the packet processing pipeline is simpler than the one used for core and edge routing Jericho.

“This also translates to lower latency which is something hyperscalers also care about,” says Parizer.

The cost and power savings from these optimisations will be relatively minor, says Wheeler.

Broadcom also highlights the electrical performance of the Jericho3-AI’s input-output serialiser-deserialiser (serdes) interfaces.

The serdes allows the Jericho3-AI to be used with 4m-reach copper cables linking the GPUs to the top-of-rack switch.

The serdes performance also enables linear-drive pluggables, which have no digital signal processor (DSP) for retiming; instead, the serdes drives the pluggable directly. Linear drive saves cost and power.

Broadcom’s Ram Valega, senior vice president and general manager of the core switching group, speaking at the Open Compute Project’s regional event held in Prague in April, said 32,000-GPU AI clusters cost around $1 billion, with 10 per cent of that being the network cost.

Valega showed Ethernet outperforming InfiniBand by 10 per cent on a set of networking benchmarks (see diagram above).

“If I can make a $1 billion system ten per cent more efficient, the network pays for itself,” says Valega.
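Valega's payback argument is simple arithmetic. The sketch treats a fractional efficiency gain as worth that fraction of the system's cost, which is the framing of his remark rather than a rigorous valuation.

```python
def network_payback(system_cost: float, network_share: float,
                    efficiency_gain: float) -> tuple[float, float]:
    """Return (network cost, value of the efficiency gain) for a cluster.

    Assumes a fractional gain in useful work is worth that fraction of the
    system cost.
    """
    network_cost = system_cost * network_share
    value_of_gain = system_cost * efficiency_gain
    return network_cost, value_of_gain


network_cost, value_of_gain = network_payback(1e9, 0.10, 0.10)
print(f"network cost ${network_cost / 1e6:.0f}M, value of a 10% gain ${value_of_gain / 1e6:.0f}M")
```

Both come to $100 million, which is why a 10 per cent performance edge would, on this reasoning, pay for the whole network.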

Wheeler says the comparison predates the recently announced NVLink Network, which will first appear in Nvidia’s DGX GH200 platform.

“It [NVLink Network] should deliver superior performance for training models that won’t fit on a single GPU, like large language models,” says Wheeler.

Brandon Collings

There are certain news items on media sites that nothing can prepare you for.

A post by Lumentum on LinkedIn paid tribute to the passing of chief technology officer (CTO) Brandon Collings, aged 51; the unfolding words revealing the magnitude of the company’s loss.

Brandon Collings was a wonderful person and a joy to know. He had that rarest gift of being able to explain complex technologies and make sense of trends with answers of extraordinary clarity.

Who else could explain the intricacies of a colourless, directionless, contentionless, flexible, reconfigurable optical add-drop multiplexer (ROADM) while describing the ROADM market as “glacially slow”?

It was a joy to meet him at shows and interview him by phone.

Early in my interviews with him, I misspelt his name in a printed article. This was a rookie mistake. His response was generous, as if to say it was a most straightforward error.

I once asked Brandon to discuss recent books he had read and rated. He didn’t have much time to read, he said, but he loved reading to his children.

One favourite book in his household was “ish” by Peter Reynolds.

It is about a young boy who loves to draw everywhere. His elder brother sees his work and mocks it.

The boy continues, striving to draw better, but the results are ‘ish’ pictures – for example, a ‘vase-ish’ drawing rather than a vase. Frustrated, he stops.

But his sister loves his ‘ish’-like sketches, giving him the confidence to return to drawing and develop his unique style, which he then extends to his life.

Brandon’s summary: “A cute story about viewing the one’s self and the world through one’s own eyes rather than through others.”

After interviewing the CTO of Ciena late last year, I decided to make that interview the opening of a series of CTO interviews. Brandon Collings was first on the list.

I last met with him at the OFC show in March. After meeting with him, I was to meet Verizon’s Glenn Wellbrock, and we decided Glenn would come to the Lumentum stand as a meeting place.

After finishing the interview with Brandon, I went looking for Glenn only to spot he was already with Brandon. I watched how the two warmly embraced, talked animatedly and were delighted to share a moment.

The last time I saw Brandon was on the evening of OFC’s penultimate day.

I was in a restaurant, and we spotted Brandon and his Lumentum colleagues at a nearby table. At some point, Brandon got up, went round the table and said goodbye to his colleagues.

My impulse was to try and catch his eye and say goodbye. But he was getting a red-eye flight; he grabbed his backpack and was gone.

It is hard to imagine the void felt among his colleagues at Lumentum or by his beloved family.

The optical industry has many great, kind, and wonderful people. But this is a loss, an industry subtraction.

For me, his passing divides the industry into a before and an after.

OFC 2023 show preview

- Sunday, March 5 marks the start of the Optical Fiber Communication (OFC) conference in San Diego, California

- The three General Chairs – Ramon Casellas, Chris Cole, and Ming-Jun Li – discuss the upcoming conference

OFC 2023 will be a show of multiple themes. That, at least, is the view of the team overseeing and coordinating this year’s conference and exhibition.

General Chair Ming-Jun Li of Corning, who is also the recipient of the 2023 John Tyndall Award (see profiles, bottom), begins by highlighting the 1,000 paper submissions, suggesting that OFC has returned to pre-pandemic levels.

Ramon Casellas, another General Chair, highlights this year’s emphasis on the social aspects of technology. “We are trying not to forget what we are doing and why we are doing it,” he says.

Casellas highlights the OFC’s Plenary Session speakers (see section, below), an invited talk by Professor Dimitra Simeonidou of the University of Bristol, entitled: Human-Centric Networking and the Road to 6G, and a special event on sustainability.

This year’s OFC has received more submissions than before on quantum communications, totalling 66 papers.

In the past, papers on quantum communications were submitted across OFC’s tracks addressing networking, subsystems and systems, and devices. However, evaluating them was challenging given that only some reviewers are quantum experts, says Chris Cole, the third General Chair. Now, OFC has a subcommittee dedicated to quantum.

Another first is OFCnet, a production network that will run during the show.

Themes and topics

Machine learning is one notable topic this year. The subject is familiar at OFC, says Casellas, but people are discussing it more.

Casellas highlights one session at OFC 2021 that addressed machine learning for optics and optics for machine learning. “It showed the duality of how you can use photonic components to do machine learning and apply machine learning to optimise networking,” says Casellas.

This year there will be additional aspects of machine learning for networks, transmission, and operations, says Casellas.

Other subjects highlighted by the General Chairs include point-to-multipoint coherent transmission, non-terrestrial and satellite networks, and optical switching and how it benefits networking in the data centre.

Google, for example, is presenting a paper detailing its use of optical switching in its data centres, something the hyperscaler disclosed at the ACM Sigcomm conference in August 2022.

There is also more interest in fibre sensors used in communications networks.

“We see an increasing trend because now if you want smart networks, you need sensors everywhere,” says Li.

“That is another theme that goes across all the tracks, which is a non-traditional optical fibre communication area that we’ve been embracing,” adds Cole.

As examples, Cole cites lidar, radio over fibre, free-space communications, microwave fibre sensing, and optical processing.

OFC has had contributions in these areas, he says, but now these topics have dedicated subcommittee titles.

Plenary session

This year’s three Plenary Session speakers are:

- Patricia Obo-Nai, CEO of Vodafone Ghana, who will discuss Harnessing Digitalization for Effective Social Change,

- Jayshree V. Ullal, president and CEO of Arista Networks, addressing The Road to Petascale Cloud Networking,

- and Wendell P. Weeks, chairman and CEO of Corning, whose talk is entitled Capacity to Transform.

“We thought that having someone who could explain how technology improves society would be very positive,” says Casellas. “I’m proud to have someone who can talk on the benefits of digitisation from the point of view of society, in addition to more technical topics.”

Li highlights how OFC celebrated the 50th anniversary of low-loss fibre two years ago and that last year, OFC celebrated the year of glass, displaying information on panels.

Corning has played an important role in both technologies. “Having a speaker [Wendell Weeks] from a glass company talking about both will be interesting to the OFC audience,” says Li.

Cole highlights the third speaker, Jayshree Ullal, the CEO of Arista. The successful networking company competes in what he describes as a very tough field.

Rump session

This year’s Rump Session tackles silicon photonics, a session moderated by Daniel Kuchta of IBM TJ Watson Research Center and Michael Hochberg of Luminous Computing.

Cole says silicon photonics has received tremendous attention, and the Rump Session is asking some tough questions: “Is silicon photonics for real now? Is it just one of the guys in the toolbox? Or is it being sunsetted or supplemented?”

Cole expects a lively session, not just challenging conventional thinking but having people representing exciting alternatives which are commercially successful alongside silicon photonics.

Show interests

The Chairs also highlight their interests and what they hope to learn from the show.

For Li, it is high-density fibre and cable trends.

Work on space division multiplexing (SDM) – multicore and multimode – fibre has been an OFC topic for over 15 years. One question Li has is whether systems will use SDM.

“It looks like multicore fibre is close, but we want to learn more from customers,” says Li.

Another interest is an alternative development: reduced-coating-diameter fibres that promise greater cable density. “I always think this is probably the short-term solution, but we’ll see what people think,” says Li.

AI drives interest in fibre density and latency issues in the data centre. Low latency is attracting interest in hollow-core fibre. Microsoft acquired Lumenisity, a UK hollow-core fibre specialist, late last year.
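The latency appeal of hollow-core fibre comes down to propagation speed: light in a solid glass core travels at roughly c/1.47, while in a hollow core it travels mostly through air, close to c. The group indices below are approximate typical values assumed for illustration, not figures from the chairs or from Lumenisity.

```python
C_KM_PER_US = 0.299792458  # speed of light in vacuum, kilometres per microsecond

# Approximate group indices (assumed typical values, not vendor figures).
SOLID_CORE = 1.47   # standard single-mode fibre: light travels in glass
HOLLOW_CORE = 1.0   # hollow-core fibre: light travels mostly in air


def one_way_latency_us(length_km: float, group_index: float) -> float:
    """Propagation delay: distance divided by the light's speed in the medium."""
    return length_km * group_index / C_KM_PER_US


for km in (1, 10, 100):
    solid = one_way_latency_us(km, SOLID_CORE)
    hollow = one_way_latency_us(km, HOLLOW_CORE)
    print(f"{km:>3} km: solid {solid:7.1f} us, hollow {hollow:7.1f} us, "
          f"saving {1 - hollow / solid:.0%}")
```

On these assumptions, hollow-core fibre cuts propagation delay by about 32 per cent, roughly 1.6 microseconds per kilometre.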

Li is keen to learn more about quantum communications. “We want to understand, from a fibre component point of view, what to do in this area.”

Until now, the industry’s focus has been on quantum key distribution (QKD), but Li wants to learn about other applications of quantum in telecoms.

The bandwidth challenge facing datacom is Cole’s interest.

As the Rump Session shows, there has been an explosion of technologies to address data challenges, particularly in the data centre. “So I’m looking forward to continuing to see all the great ideas and all the different directions,” says Cole.

Another show interest for Cole is start-ups in components, subsystems and systems, and networking.

At Optica’s Executive Forum, held on Monday, March 6, a session is dedicated to start-ups. Casellas is looking forward to the talks on optical network automation.

Much work has applied machine learning to optical transmission and amplifier optimisation. Casellas wants to see how reinforcement learning is applied to optical network controllers. Telemetry and its use for network monitoring are another of his interests.

“Maybe because I’m an academic and idealistic, but I like everything related to disaggregation and the opening of interfaces,” says Casellas, who also wants to learn more about quantum.

“I have a basic understanding of this, but maybe it is hard to get into something new,” says Casellas. Non-terrestrial and satellite networks are other topics of interest.

Cole concludes with a big-picture view of photonics.

“It’s a great time to be in optics,” he says. “We’re seeing an explosion of creativity in different areas to solve problems.”

Ramon Casellas works at the Centre Tecnològic de Telecomunicacions de Catalunya (CTTC) research institution in Barcelona, Spain. His research focuses on networks – particularly the control plane, operations and management – rather than optical systems and devices.

Ming-Jun Li is a Corporate Fellow at Corning, where he has worked for 32 years.

Li is also this year’s winner of the John Tyndall Award, presented by Optica and the IEEE Photonics Society. The award is for Li’s ‘seminal contributions to advances in optical fibre technology.’

“It was a surprise to me and a great honour,” says Li. “The work is not only for myself but for many people working with me at Corning; I cannot achieve without working with meaningful colleagues.”

Chris Cole is a consultant whose background is in datacom optics. He will be representing Coherent at OFC.

Nokia jumps a class with its PSE-6s coherent modem

- The 130 gigabaud (GBd) PSE-6s coherent modem is Nokia’s first in-house design for high-end optical transport systems

- The PSE-6s can send an 800 gigabit Ethernet (800GbE) payload over 2,000km and 1.2 terabits of data over 100km.

- Two PSE-6s DSPs can send three 800GbE signals over two 1.2-terabit wavelengths

Nokia has unveiled its latest coherent modem, the super coherent Photonic Service Engine 6s (PSE-6s) that will power its optical transport platforms in the coming years.

The PSE-6s comes three years after Nokia announced its current generation of coherent digital signal processors (DSPs): the PSE-Vs DSP for the long-haul and the compact PSE-Vc for the coherent pluggable market.

Nokia is only detailing the PSE-6s; its next-generation coherent modem for pluggables will be a future announcement.

Nokia will demonstrate the PSE-6s at the upcoming OFC show in March, while field trials involving systems using the PSE-6s will start in the second half of the year.

Reducing cost per bit

In 2020, Nokia bought Elenion, a silicon photonics company specialising in coherent optics.

The PSE-6s is Nokia’s first in-house coherent modem – the coherent DSP and associated optics – targeting the most demanding optical transport applications.

Nokia points out that coherent systems started approaching the Shannon limit two generations ago.

In the past, operators could reduce the cost of optical transport by sending more data down a fibre; upgrading the optical signal from 100 to 200 to 400 gigabit required only a 50GHz channel.

“You were getting more fibre capacity with each generation,” says Serge Melle, director of product marketing, optical networks at Nokia. And this helped the continual reduction of the cost-per-bit metric.

But with more advanced DSPs, implemented using 16nm, 7nm, and now 5nm CMOS, going to a higher symbol rate and hence data rate requires more spectrum, says Melle.

Increasing the symbol rate is still beneficial. It allows more data to be sent using the same modulation scheme or transmitting the same data payload over longer distances.

“So one of the things we are looking to do with the PSE-6s is how do we still enable a lower total cost of ownership even though you don’t get more capacity per wavelength or fibre,” says Melle.

Symbol rate classes

Coherent optics from the leading vendors use a symbol rate of 90-107 gigabaud (GBd), while Cisco-owned Acacia’s latest 1.2-terabit coherent modem in a CIM-8 module operates at 140GBd.

Acacia uses a classification system based on symbol rate. First-generation coherent systems operating at 30-34GBd are deemed Class 1. Class 2 doubles the baud rate to 60-68GBd, the symbol-rate window used for 400ZR coherent optics, which hyperscalers use to connect equipment across data centres up to 120km apart.

The DSPs from the leading optical transport systems vendors operating at 90-107GBd are an intermediate step between Class 2 and Class 3 using Acacia’s classification. In contrast, Acacia has jumped directly from Class 2 to Class 3 with its 140GBd CIM-8 coherent modem.

Competitors view Acacia’s classification scheme as a marketing exercise and counter that their 90-107GBd optical transport systems have benefited customers for over two years.

Nokia’s 90GBd PSE-Vs can send 400 gigabits using quadrature phase-shift keying (QPSK) over 3,000km. This contrasts with its earlier 67GBd PSE-3s that sends 400GbE up to 1,000km using 16-QAM.

However, with the PSE-Vs, Nokia, unlike its optical transport competitors, Infinera, Ciena and Huawei, decided not to support 800-gigabit wavelengths.

Nokia argued that 7nm CMOS, 90-100GBd coherent optics top out at 600 gigabits when used for distances of several hundred kilometres, while metro-regional distances are more economically served using 400-gigabit pluggable optics such as the CFP2 implementing 400ZR+.

With the 130GBd PSE-6s, Nokia has a Class 3 coherent modem capable of sending 800 gigabits more than 2,000km.

The PSE-6s also doubles the maximum data rate of the PSE-Vs to 1.2 terabits per wavelength. However, at 1.2 terabits, the reach is 100-plus km, valuable for very high capacity metro transport and data centre interconnect.

Scale, reach and power consumption per bit

Nokia highlights three main performance improvements of the PSE-6s.

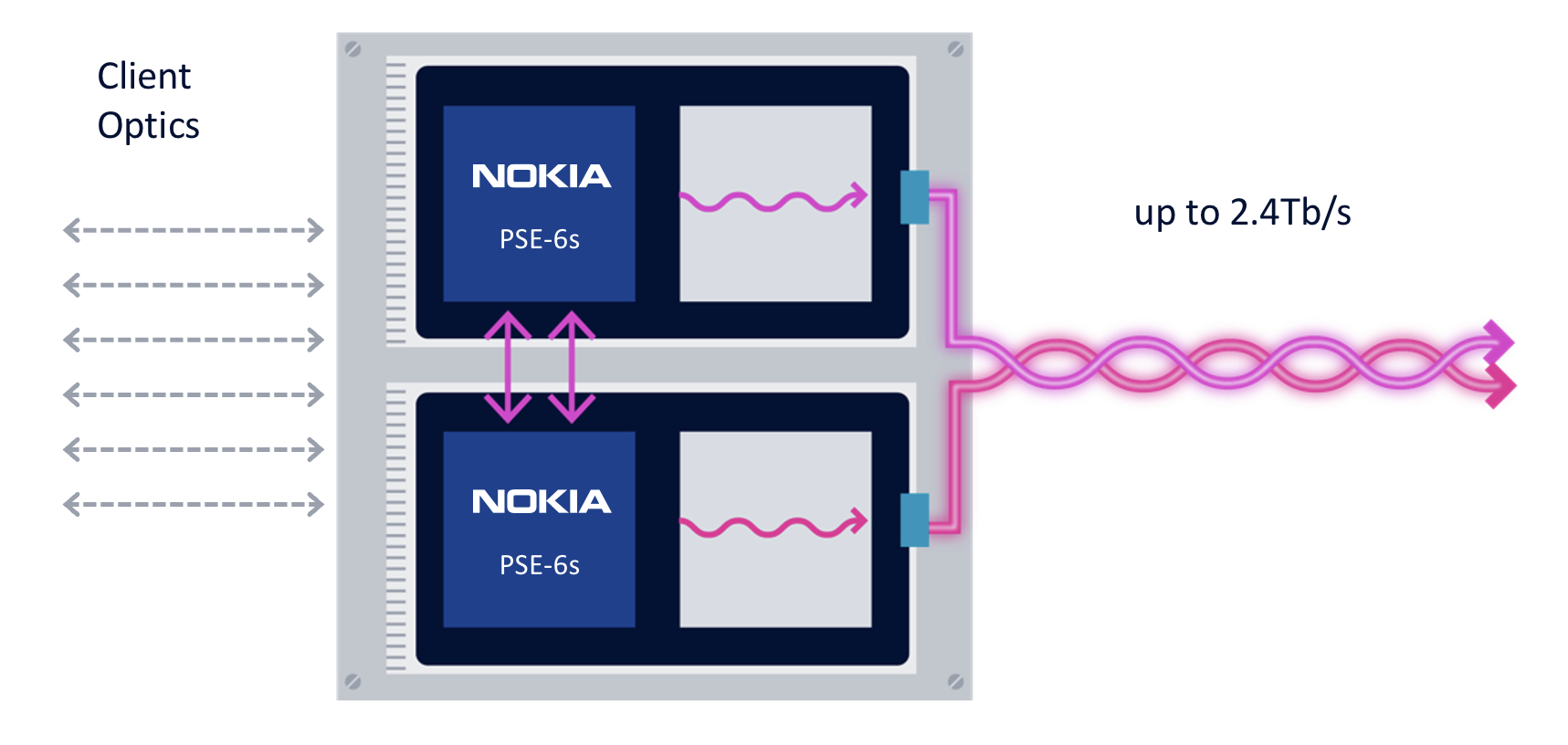

First, the coherent modem delivers scaling: two coherent optical engines fit on a line card to deliver 2.4 terabits to transport emerging high-speed services such as 800GbE.

The two PSE-6s are linked using a dedicated interface to share the client-side signals (see diagram).

“We are not the only ones introducing a 5nm solution, but I think we are the only ones that allow two DSPs to work together,” says Melle.

Without the interface, each DSP’s 1.2-terabit wavelength can carry a single 800GbE client plus either up to four 100GbE clients or one 400GbE client. With the interface, an operator can send three 800GbE clients, the interface splitting the third 800GbE client between the two DSPs.

“In a single line card, you can stripe the three 800-gigabit services rather than have to deploy three separate line cards in the network,” says Melle.
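Nokia is not detailing how the interface divides the client bandwidth, so what follows is a hypothetical sketch of the striping idea: place each client whole on the first DSP with room, then split any client that does not fit across both DSPs, the role the DSP-to-DSP link plays.

```python
WAVELENGTH_GBPS = 1200  # each PSE-6s DSP drives one 1.2-terabit wavelength


def stripe_clients(clients: list[int], capacity: int = WAVELENGTH_GBPS,
                   num_dsps: int = 2,
                   allow_split: bool = True) -> list[list[tuple[int, int]]]:
    """Place Ethernet clients (Gb/s) on DSP wavelengths; return (client, Gb/s) per DSP.

    Pass 1 puts each client whole on the first DSP with room. Pass 2 splits any
    client that did not fit across the DSPs (hypothetical allocation policy).
    """
    free = [capacity] * num_dsps
    plan: list[list[tuple[int, int]]] = [[] for _ in range(num_dsps)]
    leftovers: list[tuple[int, int]] = []
    for idx, rate in enumerate(clients):
        for dsp in range(num_dsps):
            if free[dsp] >= rate:
                plan[dsp].append((idx, rate))
                free[dsp] -= rate
                break
        else:  # no single DSP had room for this client
            leftovers.append((idx, rate))
    for idx, rate in leftovers:
        if not allow_split:
            raise ValueError(f"client {idx} ({rate}G) needs splitting across DSPs")
        for dsp in range(num_dsps):
            share = min(rate, free[dsp])
            if share > 0:
                plan[dsp].append((idx, share))
                free[dsp] -= share
                rate -= share
        if rate > 0:
            raise ValueError(f"not enough line capacity for client {idx}")
    return plan


print(stripe_clients([800, 800, 800]))  # [[(0, 800), (2, 400)], [(1, 800), (2, 400)]]
```

With splitting disabled, the third 800GbE client raises an error, mirroring the case where the two DSPs cannot share client bandwidth.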

Nokia is not detailing the interface used to link the DSPs but said that the interface is used for data only and not to share signal processing resources between the ASICs.

“There is an extra amount of circuitry to share the client bandwidth across the two DSPs, but it is not high power consuming, and most transponders have some circuitry between the clients and the DSP,” says Melle. “So the incremental ‘power tax’ is marginal; it doesn’t add any significant power overhead.”

The resulting 2.4-terabit transmission is sent as two 1.2-terabit wavelengths, each occupying a 150GHz-wide channel. Existing systems that operate at 90-107GBd typically use a 112.5GHz channel for an 800-gigabit transmission, so the PSE-6s delivers a fibre capacity benefit.
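The fibre capacity benefit can be checked by comparing spectral efficiency, the data rate per unit of channel width. The usable C-band width of 4.8THz is an assumption for illustration; real systems differ in usable spectrum and channel plans.

```python
import math

C_BAND_GHZ = 4_800  # assumed usable C-band spectrum, for illustration only


def spectral_efficiency(rate_gbps: float, channel_ghz: float) -> float:
    """Net bits per second carried per hertz of channel spectrum."""
    return rate_gbps / channel_ghz


def fibre_capacity_tbps(rate_gbps: float, channel_ghz: float) -> float:
    """Capacity if the assumed band is filled with identical channels."""
    channels = math.floor(C_BAND_GHZ / channel_ghz)
    return channels * rate_gbps / 1000


for label, rate, width in [
    ("400G in 50GHz", 400, 50),
    ("800G in 112.5GHz", 800, 112.5),
    ("1.2T in 150GHz (PSE-6s)", 1200, 150),
]:
    print(f"{label:<24} {spectral_efficiency(rate, width):.2f} b/s/Hz, "
          f"{fibre_capacity_tbps(rate, width):.1f} Tb/s per fibre")
```

1.2 terabits in 150GHz works out at 8 b/s/Hz versus about 7.1 b/s/Hz for 800 gigabits in 112.5GHz, a 12.5 per cent gain, yet no better than 400 gigabits in 50GHz, consistent with Melle's point that capacity per fibre is no longer rising.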

The two wavelengths can be bonded, as in a two-channel ‘super-channel’, or sent to separate locations.

The second improvement is optical performance. For example, an 800-gigabit payload can travel over 2,000km. Nokia claims this is 3x the reach of existing commercial optical transport systems.

The improved transmission performance is achieved using a combination of the 130GBd symbol rate, probabilistic constellation shaping (PCS), and improved forward error correction (FEC). Melle attributes 90 per cent of the improvement to the baud rate and 10 per cent to coherent modem algorithm tweaks.

“Baud rate is king; that is what really drives this improved performance,” says Melle.

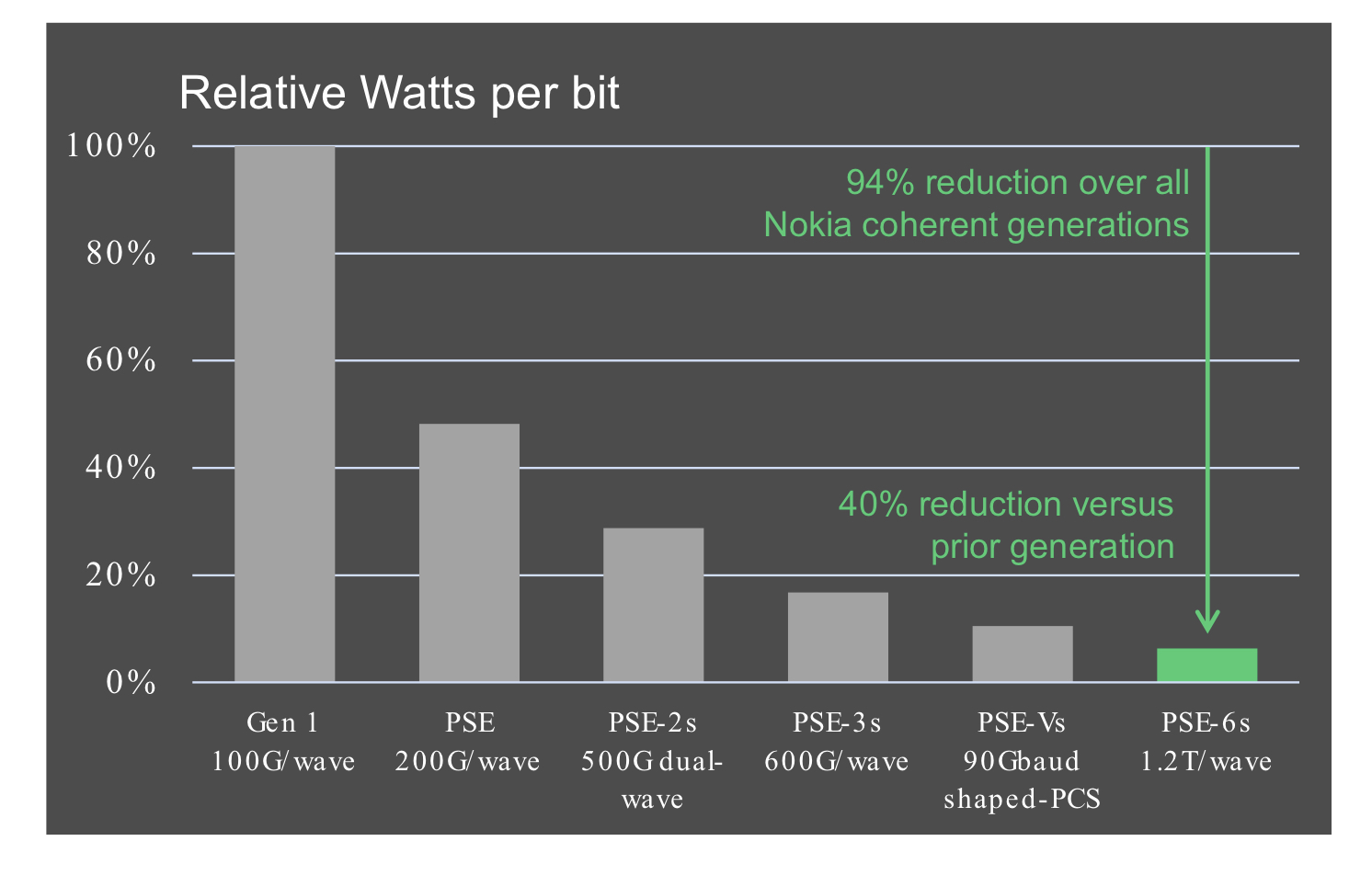

The third benefit is reduced power consumption at the device and system (networking) levels.

Using a 5nm finFET CMOS process to make the PSE-6s DSP ASIC and developing denser line cards (two modems per card) means systems will consume 60 per cent less power than Nokia’s existing coherent technology.

According to Nokia, the PSE-6s optical engine consumes 40 per cent less power per bit than the PSE-Vs.

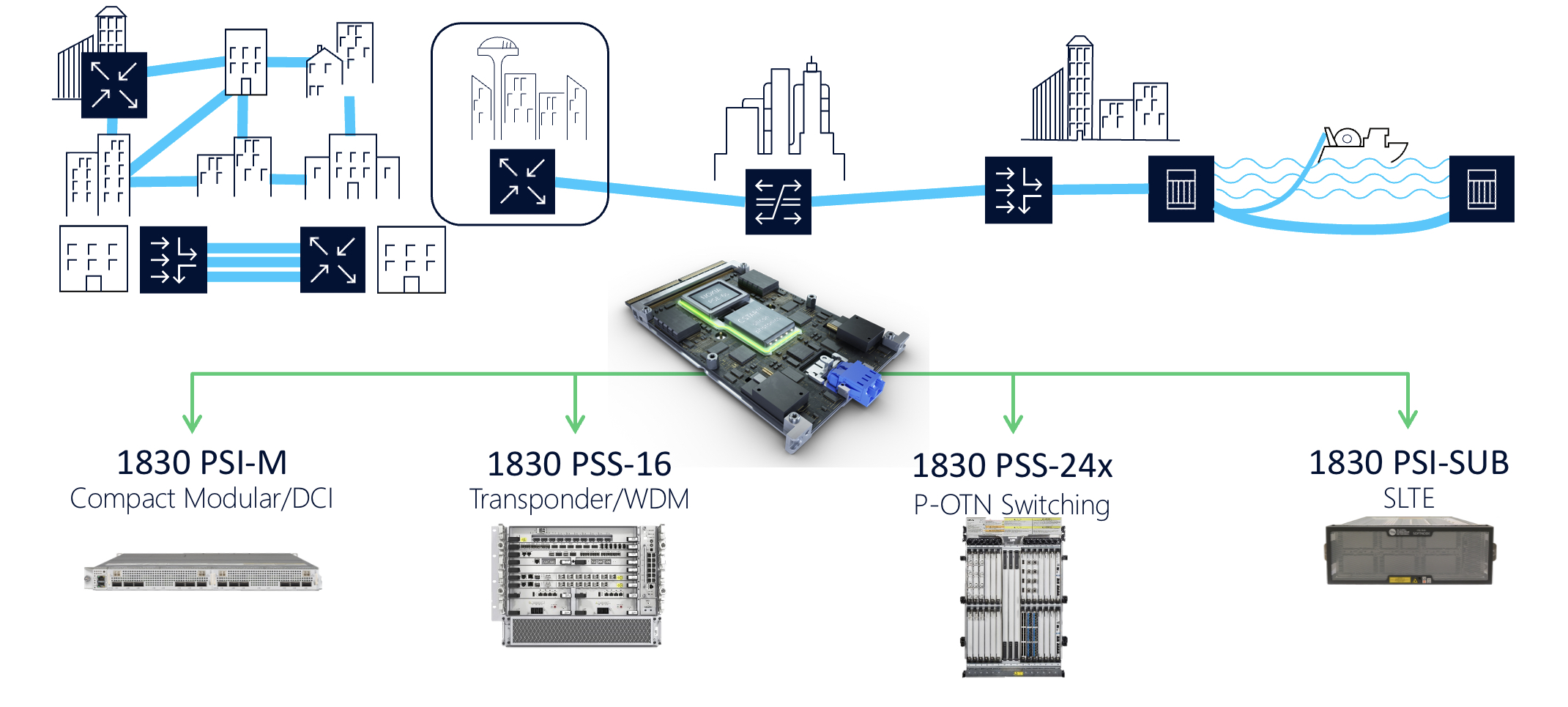

Nokia 1830 transport systems

The PSE-6s line cards fit into Nokia’s existing range of 1830 transport platforms.

These include the 1830 PSI-M compact modular data centre interconnect, the 1830 PSS-16 transponder and WDM line system, the 1830 PSS-24x P-OTN and switching chassis, and the 1830 PSI-SUB subsea line-terminating equipment.

For example, the PSI-M platform can hold two line cards, each with two PSE-6s.

ECOC '22 Reflections - Part 3

Gazettabyte is asking industry and academic figures for their thoughts after attending ECOC 2022, held in Basel, Switzerland. In particular, what developments and trends they noted, what they learned, and what, if anything, surprised them.

In Part 3, BT’s Professor Andrew Lord, Scintil Photonics’ Sylvie Menezo, Intel’s Scott Schube, and Quintessent’s Alan Liu share their thoughts.

Professor Andrew Lord, Senior Manager of Optical Networks Research, BT

There was strong attendance and a real buzz about this year’s show. It was great to meet face-to-face with fellow researchers and learn about the exciting innovations across the optical communications industry.

The clear standouts of the conference were photonic integrated circuits (PICs) and ZR+ optics.

PICs are an exciting piece of technology, but they need a killer use case. There was a lot of progress and discussion on the topic, including an energetic Rump session hosted by Jose Pozo, CTO at Optica.

However, there is still an open question about what use cases will command volumes approaching 100,000 units, a critical milestone for mass adoption. PICs will be a key area to watch for me.

We’re also getting more clarity on the benefits of ZR+ for carriers, with transport through existing reconfigurable optical add-drop multiplexer (ROADM) infrastructures. Well done to the industry for getting to this point.

All in all, ECOC 2022 was a great success. As one of the Technical Programme Committee (TPC) Chairs for ECOC 2023 in Glasgow, I can say we are already building on the great show in Basel. I look forward to seeing everyone again in Glasgow next year.

Sylvie Menezo, CEO of Scintil Photonics

What developments and trends did I note at ECOC? There is a lot of development work on emergent hybrid modulators.

Scott Schube, Senior Director of Strategic Marketing and Business Development, Silicon Photonics Products Division at Intel

There were not a huge number of disruptive announcements at the show. I expect the OFC 2023 event will have more, particularly around 200 gigabit-per-lane direct-detect optics.

Several optics vendors showed progress on 800-gigabit/2×400-gigabit optical transceiver development. There are now more vendors, more flavours and more components.

Generalising a bit, 800 gigabit seems to be one case where the optics are ready ahead of time, certainly ahead of the market volume ramp.

There may be common-sense lessons from this, such as the benefits of technology reuse, that the industry can take into discussions about the next generation of optics.

Alan Liu, CEO of Quintessent

Several talks focused on the need for high wavelength count dense wavelength division multiplexing (DWDM) optics in emerging use cases such as artificial intelligence/machine learning interconnects.

Intel and Nvidia shared their vision for DWDM silicon photonics-based optical I/O. Chris Cole discussed the CW-WDM MSA on the show floor, looking past the current Ethernet roadmap at finer DWDM wavelength grids for such applications. Ayar Labs/Sivers had a DFB array DWDM light source demo, and we saw impressive research from Professor Keren Bergman’s group.

An ecosystem is coalescing around this area, with a healthy portfolio and pipeline of solutions being innovated on by multiple parties, including Quintessent.

The heterogeneous integration workshop was standing room only despite being the first session on a Sunday morning.

In particular, heterogeneously integrated silicon photonics at the foundry level was an emergent theme as we heard updates from Tower, Intel, imec, and X-Celeprint, among other great talks. DARPA has played – and plays – a key role in seeding the technology development and was also present to review such efforts.

Fibre-attach solutions are an area to watch, in particular for dense applications requiring a high number of fibres per chip. There is some interesting innovation in this area, such as from Teramount and Suss Micro-Optics among others.

Shortly after ECOC, Intel also showcased a pluggable fibre attach solution for co-packaged optics.

Reducing the fibre packaging challenge is another reason to employ higher wavelength count architectures and DWDM to reduce the number of fibres needed for a given aggregate bandwidth.
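The fibre-count argument is straightforward arithmetic: fibres needed equal aggregate bandwidth divided by the capacity of one fibre, which scales with the wavelength count. The 6.4 terabits per chip, 100 gigabits per wavelength and separate transmit and receive fibres below are hypothetical values chosen for illustration.

```python
import math


def fibres_needed(aggregate_gbps: float, wavelengths_per_fibre: int,
                  gbps_per_wavelength: float, duplex: bool = True) -> int:
    """Fibres required for a given bandwidth; duplex assumes separate Tx and Rx fibres."""
    per_fibre_gbps = wavelengths_per_fibre * gbps_per_wavelength
    count = math.ceil(aggregate_gbps / per_fibre_gbps)
    return count * 2 if duplex else count


# Hypothetical chip with 6.4 Tb/s of optical I/O at 100 Gb/s per wavelength.
for wavelengths in (1, 4, 8, 16):
    print(f"{wavelengths:>2} wavelengths per fibre -> {fibres_needed(6_400, wavelengths, 100)} fibres")
```

Going from one to 16 wavelengths per fibre cuts the fibre count from 128 to 8 in this example, easing the fibre-attach problem.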