The quiet progress of Network Functions Virtualisation

Network Functions Virtualisation (NFV) is a term less often heard these days.

Yet the technology framework that kickstarted a decade of network transformation by the telecom operators continues to progress.

The working body specifying NFV, the European Telecommunications Standards Institute’s (ETSI) Industry Specification Group (ISG) Network Functions Virtualisation (NFV), is working on the latest releases of the architecture.

The releases add AI and machine learning, intent-based management, power savings, and virtual radio access network (VRAN) support.

ETSI is also shortening the time between NFV releases.

“NFV is quite a simple concept but turning the concept into reality in service providers’ networks is challenging,” says Bruno Chatras, ETSI’s ISG NFV Chairman and senior standardisation manager at Orange Innovation. “There are many hidden issues, and the more you deploy NFV solutions, the more issues you find that need to be addressed via standardisation.”

NFV’s goal

A decade ago, thirteen leading telecom operators published the ETSI NFV White Paper.

The operators were frustrated. They saw how the IT industry and hyperscalers innovated using software running on servers while they had cumbersome networks that couldn’t take advantage of new opportunities.

Each network service introduced by an operator required specialised kit that had to be housed, powered, and maintained by skilled staff that were increasingly hard to find. And any service upgrade required the equipment vendor to write a new release, a time-consuming, costly process.

The telcos viewed NFV as a way of turning network functions into software. Such network functions – constituents of services – could then be combined and deployed.

“We believe Network Functions Virtualisation is applicable to any data plane packet processing and control plane function in fixed and mobile network infrastructures,” claimed the authors in the seminal NFV White Paper.

A decade on

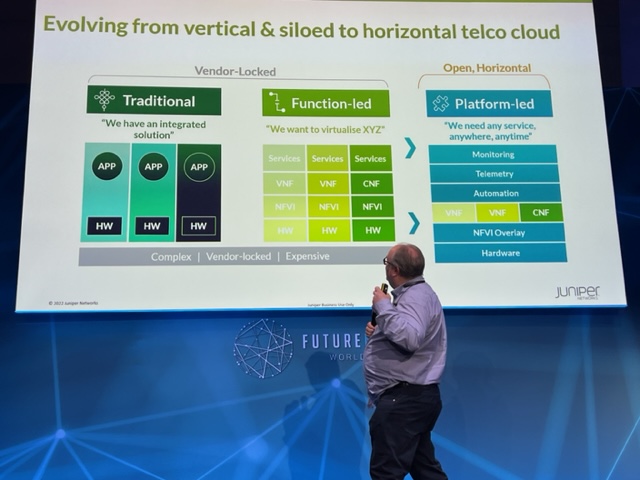

Virtual network functions (VNFs) now run across the network, and the transformation buzz has moved from NFV to such topics as 5G, Open RAN, automation and cloud-native.

Yet NFV changed the operators’ practices by introducing virtualisation, disaggregation, and open software practices.

A decade of network transformation has given rise to new challenges while technologies such as 5G and Open RAN have emerged.

Meanwhile, the hyperscalers and cloud have advanced significantly in the last decade.

“When we coined the term NFV in the summer of 2012, we never expected the cloud technologies we wanted to leverage to stand still,” says Don Clarke, one of the BT authors of the original White Paper. “Indeed, that was the point.”

NFV releases

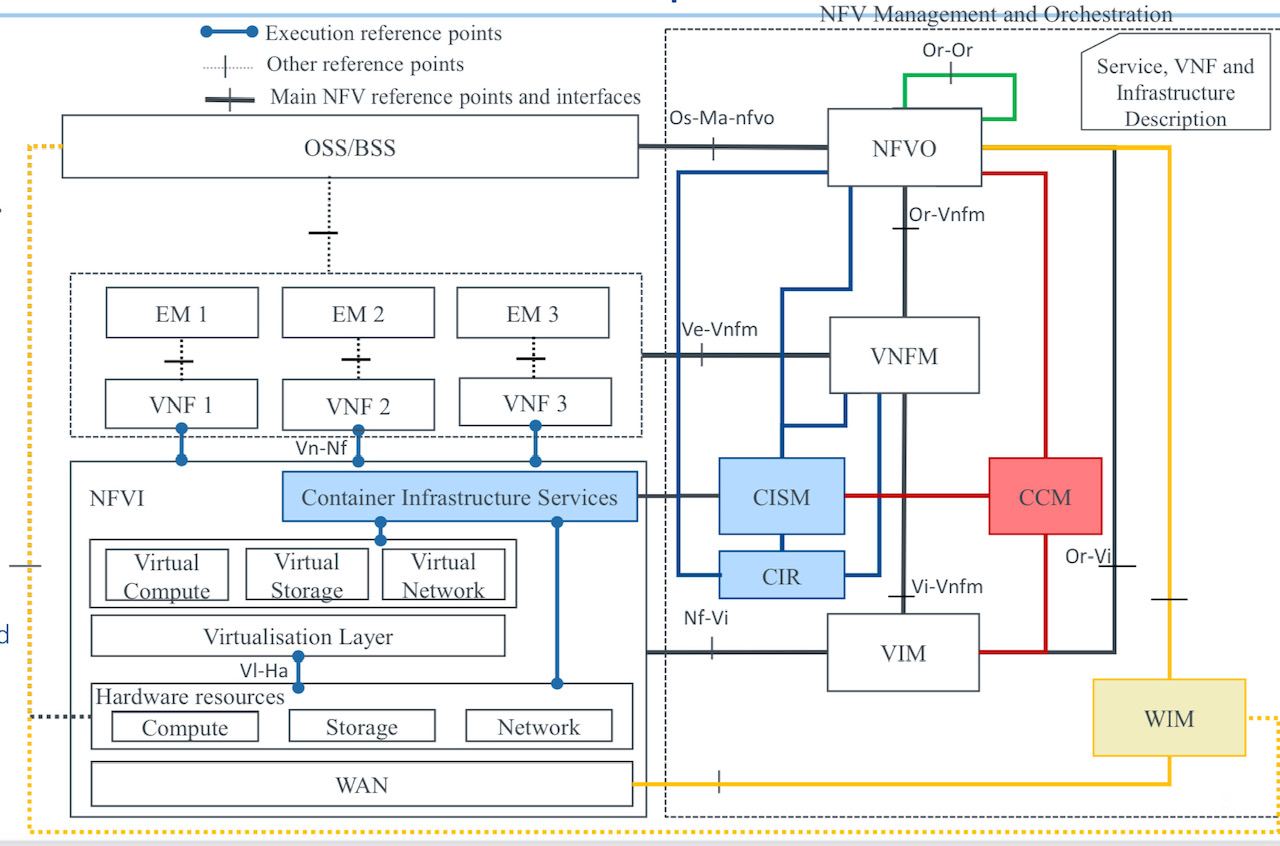

The ISG NFV’s work began with a study to confirm NFV’s feasibility, and the definition of the NFV architecture and terminology.

Release 2 tackled interoperability. The working group specified application programming interfaces (APIs) between the NFV management and orchestration (MANO) functions using REST interfaces, and also added ‘descriptors’.

A VNF descriptor is a file that contains the information needed by the VNFM, an NFV-MANO functional block, to deploy and manage a VNF.
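As a rough illustration of the idea, a descriptor can be pictured as structured data that a manager reads before deciding whether and how to deploy a function. The sketch below is hypothetical: the class name and fields such as `vnf_name`, `vcpus` and `memory_gb` are assumptions made for illustration and do not follow the ETSI descriptor schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class VnfDescriptor:
    """Hypothetical, heavily simplified stand-in for a VNF descriptor."""
    vnf_name: str
    software_image: str
    vcpus: int
    memory_gb: int
    connection_points: List[str] = field(default_factory=list)


def can_deploy(descriptor: VnfDescriptor, free_vcpus: int, free_memory_gb: int) -> bool:
    """Return True if a host with the given free resources could run the VNF."""
    return descriptor.vcpus <= free_vcpus and descriptor.memory_gb <= free_memory_gb


# Example values are invented for the sketch.
firewall = VnfDescriptor(
    vnf_name="example-firewall",
    software_image="fw-image-1.0",
    vcpus=4,
    memory_gb=8,
    connection_points=["wan", "lan"],
)
```

Here the manager only checks resources; a real descriptor carries far more, but the principle of reading deployment needs from a file is the same.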

Release 3, whose technical content is now complete, added a policy framework. Policy rules given to the NFV orchestrator determine where best to place the VNFs in a distributed infrastructure.
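A minimal sketch of policy-driven placement might look as follows. It assumes a made-up policy (enough free capacity, latency under a ceiling, then prefer the lowest latency) and invented site data; none of the names come from the NFV specifications.

```python
from typing import Dict, List, Optional

# Hypothetical sites with made-up attributes.
SITES: List[Dict] = [
    {"name": "site-a", "latency_ms": 5, "free_vcpus": 2},
    {"name": "site-b", "latency_ms": 12, "free_vcpus": 16},
    {"name": "site-c", "latency_ms": 30, "free_vcpus": 64},
]


def place_vnf(required_vcpus: int, max_latency_ms: int, sites: List[Dict]) -> Optional[str]:
    """Filter sites on capacity and latency, then pick the lowest-latency one."""
    candidates = [
        site for site in sites
        if site["free_vcpus"] >= required_vcpus and site["latency_ms"] <= max_latency_ms
    ]
    if not candidates:
        return None  # no site satisfies the policy
    return min(candidates, key=lambda site: site["latency_ms"])["name"]
```

Changing the rule, for instance to prefer the site with the most spare capacity, changes the placement without touching the VNF itself, which is the point of keeping policy separate.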

Other features include VNF snapshotting for troubleshooting and MANO functions to manage the VNFs and network services.

Release 3 also addressed multi-site deployment. “If you have two VNFs, one is in a data centre in Paris, and another in a data centre in southern France, interconnected via a wide area network, how does that work?” says Chatras.

Implementing a VNF using containers was always part of the NFV vision, says ETSI.

Initial specifications concentrated on virtual machines, but Release 3 marked NFV’s first container VNF work.

Releases 4 and 5

The ISG NFV working group is now working on Releases 4 and 5 in parallel.

Each new release item starts with a study phase and, based on the result, is turned into specifications.

The study phase is now limited to six months to speed up the NFV releases. The project is earmarked for a later release if the work takes longer than expected.

Two additions in NFV Release 4 are support for container management frameworks such as Kubernetes, a well-advanced project, and network automation, which adds AI and machine learning and intent management techniques.

Network automation

NFV MANO functions already provide automation using policy rules.

“Here, we are going a step further; we are adding a management data analytics function to help the NFV orchestrator make decisions,” says Chatras. “Similarly, we are adding an intent management function above the NFV orchestrator to simplify interfacing to the operations support systems (OSS).”

Intent management is an essential element of the operators’ goal of end-to-end network automation.

Without intent management, if the OSS wants to deploy a network service, it sends a detailed request using a REST API to the NFV orchestrator on how to proceed. For example, the OSS details the VNFs needed for the network service, their interconnections, the bandwidth required, and whether IPv4 or IPv6 is used.

“With an intent-based approach, that request sent to the intent management function will be simpler,” says Chatras. “It will just set out the network service the operator wants, and the intent management function will derive the technical details.”

The intent management function, in effect, knows what is technically possible and what VNFs are available to do the work.
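The contrast between a detailed request and an intent can be sketched in a few lines. Everything here is an assumption for illustration: the catalogue, the service name and the field names are invented and are not part of any ETSI API.

```python
from typing import Dict, List

# Hypothetical catalogue: the technical details an OSS would otherwise
# have to spell out in its request to the orchestrator.
SERVICE_CATALOGUE: Dict[str, Dict] = {
    "secure-internet-access": {
        "vnfs": ["router", "firewall"],
        "bandwidth_mbps": 100,
        "ip_version": "IPv4",
    },
}


def expand_intent(intent: Dict) -> Dict:
    """Turn a short intent into a detailed request for an orchestrator."""
    template = SERVICE_CATALOGUE[intent["service"]]
    vnfs: List[str] = template["vnfs"]
    # Interconnect the VNFs in catalogue order: router -> firewall, and so on.
    links = list(zip(vnfs, vnfs[1:]))
    return {
        "vnfs": vnfs,
        "links": links,
        # The intent may override the default bandwidth, nothing else.
        "bandwidth_mbps": intent.get("bandwidth_mbps", template["bandwidth_mbps"]),
        "ip_version": template["ip_version"],
    }


detailed_request = expand_intent({"service": "secure-internet-access"})
```

The caller states only the service it wants; the function derives the VNFs, their interconnections, the bandwidth and the IP version.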

The work on intent management and management data analytics has just started.

“We have spent quite a lot of time on a study phase to identify what is feasible,” says Chatras.

Release 5 work started a year ago with the ETSI group asking its members what was needed.

The aim is to consolidate and close functional gaps identified by the industry. But two features are being added: Green NFV and support for VRAN.

Green NFV and VRAN

Energy efficiency was one of the expected benefits listed in the original White Paper.

ETSI has a Technical Committee for Environmental Engineering (TC EE) with a working group to reduce energy consumption in telecommunications.

The energy-saving work of Release 5 is solely for NFV, one small factor in the overall picture, says Chatras.

Just using the orchestration capabilities of NFV can reduce energy costs.

“You can consolidate workloads on fewer servers during off-peak hours,” says Chatras. “You can also optimise the location of the VNF where the cost of energy happens to be lower at that time.”
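Consolidation of this kind resembles a bin-packing problem. The sketch below uses first-fit decreasing, a common heuristic chosen here for illustration rather than anything the NFV specifications mandate; load figures and the server capacity are invented.

```python
from typing import List


def consolidate(loads: List[int], server_capacity: int) -> List[List[int]]:
    """Pack workloads onto servers using the first-fit decreasing heuristic."""
    servers: List[List[int]] = []
    for load in sorted(loads, reverse=True):
        if load > server_capacity:
            raise ValueError("workload is larger than a single server")
        for server in servers:
            if sum(server) + load <= server_capacity:
                server.append(load)
                break
        else:
            # No existing server has room, so power on another one.
            servers.append([load])
    return servers


# Invented CPU loads (percent of one server) for the same four VNFs.
peak_hours = consolidate([60, 50, 70, 40], server_capacity=100)
off_peak_hours = consolidate([20, 15, 30, 10], server_capacity=100)
```

With these made-up numbers, the four workloads need three servers at peak but fit on one off-peak, so two servers could in principle be powered down.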

Release 5 goes deeper by controlling the energy consumption of a VNF dynamically using the power management features of servers.

The server can change the CPU’s clock frequency. Release 5 will address whether the VNF management or orchestration does this. There is also a tradeoff between lowering the clock speed and maintaining acceptable performance.
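The tradeoff can be made concrete with a toy model. The sketch assumes that throughput scales linearly with clock frequency, which is a simplification, and the frequency steps and packet rates are invented.

```python
from typing import List, Optional


def lowest_sufficient_frequency(
    available_ghz: List[float],
    packets_per_ghz: float,
    required_pps: float,
) -> Optional[float]:
    """Pick the slowest clock that still meets the required packet rate.

    Assumes throughput scales linearly with frequency (a simplification).
    """
    for freq in sorted(available_ghz):
        if freq * packets_per_ghz >= required_pps:
            return freq
    return None  # even the fastest clock cannot meet the requirement
```

Whichever entity runs such a decision, it needs both the server's available frequency steps and the VNF's performance requirement, which hints at why the split between VNF management and orchestration needs study.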

“So, many things to study,” says Chatras.

The Green NFV study will provide guidelines for designing an energy-efficient VNF by reducing execution time and memory consumption, and by deciding whether to use hardware accelerators, depending on the processor available.

“We are collecting use cases of what operators would like to do, and we hope that we can complete that by mid-2022,” says Chatras.

The VRAN work involves checking the work done in the O-RAN Alliance to verify whether the NFV framework supports all the requirements. If not, the group will evaluate proposed solutions before changing specifications.

“We are doing that because we heard from various people that things are missing in the ETSI ISG NFV specifications to support VRAN properly,” says Chatras.

Is the bulk of the NFV work already done? Chatras thinks before answering: “It is hard to say.”

The overall ecosystem is evolving, and NFV must remain aligned, he says, and this creates work.

The group will complete the study phases of Green NFV and NFV for VRAN this summer before starting the specification work.

NFV deployments

ETSI ISG NFV has a group known as the Network Operator Council, comprising operators only.

The group creates occasional surveys to assess where NFV technology is being used.

“What we see is confidential, but roughly there are NFV deployments across nearly all network segments: mobile core, fixed networks, RAN and enterprise customer premises equipment,” says Chatras.

VNFs to CNFs

Now there is a broad industry interest in cloud-native network functions. But the ISG NFV group believes that there is a general misconception regarding NFV.

“In ETSI, we do not consider that cloud-native network functions are something different from VNFs,” says Chatras. “For us, a cloud-native function is a VNF with a particular software design, which happens to be cloud-native, and which in most cases is hosted in containers.”

The NFV framework’s goal, says ETSI, is to deliver a generic solution to manage network functions regardless of the technology used to deploy them.

Chatras is not surprised that NFV is mentioned less: NFV is 10 years old, and this happens to many technologies as they mature. But from a technical standpoint, the specifications being developed by ETSI NFV comply with the cloud model.

Most operators will admit that NFV has proved very complex to deploy.

Running VNFs on third-party infrastructure is complicated, says Chatras. That will not change whether an NFV specification is used or something else based on Kubernetes.

Chatras is also candid about the overall progress of network transformation. “Is it all happening sufficiently rapidly? Of course, the answer is no,” he says.

Network transformation has many elements, not just standards. The standardisation work is doing its part; whenever an issue arises, it is tackled.

“The hallmark of good standardisation is that it evolves to accommodate unexpected twists and turns of technology evolution,” agrees Clarke. “We have seen the growth of open source and so-called cloud-native technologies; ETSI NFV has adapted accordingly and figured out new and exciting possibilities.”

Many issues remain for the operators: skills transformation, organisational change, and each determining what it means to become a ‘digital’ service provider.

In other words, the difficulties of network transformation will not magically disappear, however elegantly the network is architected as it transitions increasingly to software and cloud.

BT’s Open RAN trial: A mix of excitement and pragmatism

“We in telecoms, we don’t do complexity very well.” So says Neil McRae, BT’s managing director and chief architect.

He was talking about the trend of making network architectures open and in particular the Open Radio Access Network (Open RAN), an approach that BT is trialling.

“In networking, we are naturally sceptical because these networks are very important and every day become more important,” says McRae.

Whether it is Open RAN or any other technology, it is key for BT to understand its aims and how it helps. “And most importantly, what it means for customers,” says McRae. “I would argue we don’t do enough of that in our industry.”

Open RAN

Open RAN has become a key focus in the development of 5G. Backed by leading operators, Open RAN promises greater vendor choice and helps counter the dependency on the handful of key RAN vendors such as Nokia and Ericsson. There are also geopolitical considerations given that Huawei is no longer a network supplier in certain countries.

“Huawei and China, once they were the flavour of the month and now they no longer are,” says McRae. “That has driven a lot of concern – there are only Nokia and Ericsson as scaled players – and I think that is a thing we need to worry about.”

McRae points out that Open RAN is an interface standard rather than a technology.

“Those creating Open RAN solutions, the only part that is open is that interface side,” he says. “If you think of Nokia, Ericsson, Mavenir, Rakuten and Altiostar – any of the guys building this technology – none of their technology is specifically open but you can talk to it via this open interface.”

Opportunity

McRae is upbeat about Open RAN but much work is needed to realise its potential.

“Open RAN, and I would probably say the same about NFV (network functions virtualisation), has got a lot of momentum and a lot of hype well before I think it deserves it,” he says.

BT favours open architectures and interoperability. “Why wouldn’t we want to be part of that, part of Open RAN,” says McRae. “But what we are seeing here is people excited about the potential – we are hugely excited about the potential – but are we there yet? Absolutely not.”

BT views Open RAN as a way to support the small-cell neutral host model whereby a company can offer operators coverage, one way Open RAN can augment macro cell coverage.

Open RAN can also be used to provide indoor coverage such as in stadiums and shopping centres. McRae says Open RAN could also be used for transportation but there are still some challenges there.

“We see Open RAN and the Open RAN interface specifications as a great way for building innovation into the radio network,” he says. “If there is one part that we are hugely excited about, it is that.”

BT’s Open RAN trial

BT is conducting an Open RAN trial with Nokia in Hull, UK.

“We haven’t just been working with Nokia on this piece of work, other similar experiments are going on with others,” says McRae.

McRae equates Open RAN with software-defined networking (SDN). SDN uses several devices that are largely unintelligent while a central controller – ‘the big brain’ – controls the devices and in the process makes them more valuable.

“SDN has this notion of a controller and devices and the Open RAN solution is no different: it uses a different interface but it is largely the same concept,” says McRae.

This central controller in Open RAN is the RAN Intelligent Controller (RIC) and it is this component that is at the core of the Nokia trial.

“That controller allows us to deploy solutions and applications into the network in a really simple and manageable way,” says McRae.

The RIC architecture is composed of a near-real-time RIC that is very close to the radio and that makes almost instantaneous changes based on the current situation.

There is also a non-real-time controller that is used for such tasks as setting policy, the overall run cycle for the network, configuration and troubleshooting.

“You kind of create and deploy it, adjust it or add or remove things, not in real-time,” says McRae. “It is like with a train track, you change the signalling from red to green long before the train arrives.”
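The split between the two control loops can be sketched as a slow component that sets policy in advance and a fast component that applies it to each measurement. The class names, the `max_cell_load` threshold and the "offload" action are hypothetical and are not taken from the Open RAN interface specifications.

```python
class NearRealTimeRic:
    """Hypothetical fast loop: reacts to each measurement using the current policy."""

    def __init__(self) -> None:
        self.max_cell_load = 1.0  # default policy: never offload

    def apply_policy(self, max_cell_load: float) -> None:
        self.max_cell_load = max_cell_load

    def on_measurement(self, cell_load: float) -> str:
        # Decision made per measurement, close to the radio.
        return "offload" if cell_load > self.max_cell_load else "keep"


class NonRealTimeRic:
    """Hypothetical slow loop: sets policy ahead of time, not per measurement."""

    def configure(self, ric: NearRealTimeRic, max_cell_load: float) -> None:
        ric.apply_policy(max_cell_load)


near_rt = NearRealTimeRic()
NonRealTimeRic().configure(near_rt, max_cell_load=0.8)
```

In McRae's train-track analogy, `configure` is the signalling change made long before the train arrives, while `on_measurement` is what happens as it passes.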

BT views the non-real-time aspect of the RIC as a new way for telcos to automate and optimise the core aspects of radio networking.

McRae says the South Bank, London is one of the busiest parts of BT’s network and the operator has had to keep adding spectrum to the macrocells there.

“It is getting to the point where the macro isn’t going to be precise enough to continue to build a great experience in a location like that,” he says.

One solution is to add small cells and BT has looked at that but has concluded that making macros and small cells work together well is not straightforward. This is where the RIC can optimise the macro and small cells in a way that improves the experience for customers even when the macro equipment is from one vendor and the small cells from another.

“The RIC allows us to build solutions that take the demand and the requirements of the network a huge step forward,” he says. “The RIC makes a massive step – one of the biggest steps in the last decade, probably since massive MIMO – in ensuring we can get the most out of our radio network.”

BT is focussed on the non-real-time RIC for the initial use cases it is trialling. It is using Nokia’s equipment for the Hull trial.

BT is also testing applications such as load optimisation between different layers of the network and between neighbouring sites. And where there is a failure in the network, it is using ‘xApps’ to reroute traffic or re-optimise the network.

Nokia also has AI and machine learning software which BT is trialling. BT sees AI and machine learning-based solutions as a must as ultimately human operators are too slow.

Trial goals

BT wants to understand how Open RAN works in deployment. One example is how to manage a cell that is part of a RIC cluster.

In a national network, there will likely be multiple RICs used.

“We expect that this will be a distributed architecture,” says McRae. “How do you control that? Well, that’s where the non-real-time RIC has a job to do, effectively to configure the near-real-time RIC, or RICs as we understand more about how many of them we need.”

Another aspect of the trial is to see if, by using Open RAN, the network performance KPIs can be improved. These include time on 4G versus time on 5G, and the number of handovers and dropped calls.
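How such KPIs might be derived from raw counters is sketched below. The KPI names and formulas are assumptions for illustration, not BT's definitions, and the input figures in the usage note are invented.

```python
from typing import Dict


def summarise_kpis(
    seconds_on_4g: float,
    seconds_on_5g: float,
    calls: int,
    dropped_calls: int,
    handovers: int,
) -> Dict[str, float]:
    """Derive simple ratios from raw counters, guarding against division by zero."""
    total_seconds = seconds_on_4g + seconds_on_5g
    return {
        "share_of_time_on_5g": seconds_on_5g / total_seconds if total_seconds else 0.0,
        "dropped_call_rate": dropped_calls / calls if calls else 0.0,
        "handovers_per_call": handovers / calls if calls else 0.0,
    }
```

Comparing the output for the same area before and after a change, for example `summarise_kpis(300, 100, 50, 1, 25)` against a later sample, is one simple way to check whether the numbers move in the hoped-for direction.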

“Our hope and we expect that all of these get better; the early signs in our labs are that they should all get better, the network should perform more effectively,” he says.

BT will also do coverage testing which, with some of the newer radios it is deploying, it expects to improve.

“We’ve done a lot of learning in the lab,” says McRae. “Our experience suggests that translating that into operational knowledge is not perfect. So we’re doing this to learn more about how this will work and how it will benefit customers at the end of the day.”

Openness and diversity

Given that Open RAN aims to open vendor choice, some have questioned whether BT’s trial with Nokia is in the spirit of the initiative.

“We are using the Open RAN architecture and the Open RAN interface specs,” says McRae. “Now, for a lot of people, Open RAN means you have got to have 12 vendors in the network. Let me tell you, good luck to everyone that tries that.”

BT says a set of Open RAN flavours is appearing. One is Rakuten and Symphony, another is Mavenir. These are end-to-end solutions being built that can be offered to operators.

“Service providers are terrible at integrating things; it is not our core competency,” says McRae. “We have got better over the years but we want to buy a solution that is tested, that has a set of KPIs around how it operates, that has all the security features we need.”

This is key for a platform that in BT’s case serves 30 million users. As McRae puts it, if Open RAN becomes too complicated, it is not going to get off the ground: “So we welcome partnerships, or ecosystems that are forming because we think that is going to make Open RAN more accessible.”

McRae says some of the reaction to its working with Nokia is about driving vendor diversity.

BT wants diverse vendors that can provide it with greater choice and benefit from competition. But McRae points out that much of the vendors’ equipment uses the same key components from a handful of chip companies, and that the chips are made in two key locations.

“What we want to see is those underlying components, we want to see dozens of companies building them all over the world,” he says. “They are so crucial to everything we do in life today, not just in the network, but in your car, smartphone, TV and the microwave.”

And while more of the network is being implemented in software – BT’s 5G core is all software – hardware is still key where there are packet or traffic flows.

“The challenge in some of these components, particularly in the radio ecosystem, is you need strong parallel processing,” says McRae. “In software that is really difficult to do.”

“Intel, AMD, Broadcom and Qualcomm are all great partners,” says McRae. “But if any one of these guys, for some reason, doesn’t move the innovation curve in the way we need it to move, then we run into real problems of how to grow and develop the network.”

What BT wants is as much IC choice as possible, but McRae is less certain how that will be achieved. Still, operators rightly have to be concerned about it, he says.

Telecoms' innovation problem and its wider cost

Imagine how useful 3D video calls would have been this last year.

The technologies needed – a light field display and digital compression techniques to send the vast data generated across a network – do exist but practical holographic systems for communication remain years off.

But this is just the sort of application that telcos should be pursuing to benefit their businesses.

A call for innovation

“Innovation in our industry has always been problematic,” says Don Clarke, formerly of BT and CableLabs and co-author of a recent position paper outlining why telecoms needs to be more innovative.

Entitled Accelerating Innovation in the Telecommunications Arena, the paper’s co-authors include representatives from communications service providers (CSPs), Telefonica and Deutsche Telekom.

In an era of accelerating and disruptive change, CSPs are proving to be an impediment, argues the paper.

The CSPs’ networking infrastructure has its own inertia; the networks are complex, vast in scale and costly. The operators also require a solid business case before undertaking expensive network upgrades.

Such inertia is costly, not only for the CSPs but for the many industries that depend on connectivity.

But if the telecom operators are to boost innovation, practices must change. This is what the position paper looks to tackle.

NFV White Paper

Clarke was one of the authors of the original Network Functions Virtualisation (NFV) White Paper, published by ETSI in 2012.

The paper set out a blueprint as to how the telecom industry could adopt IT practices and move away from specialist telecom platforms running custom software. Such proprietary platforms made the CSPs beholden to systems vendors when it came to service upgrades.

The NFV paper also highlighted a need to attract new innovative players to telecoms.

“I see that paper as a catalyst,” says Clarke. “The ripple effect it has had has been enormous; everywhere you look, you see its influence.”

Clarke cites how the Linux Foundation has re-engineered its open-source activities around networking while Amazon Web Services now offers a cloud-native 5G core. Certain application programming interfaces (APIs) cited by Amazon as part of its 5G core originated in the NFV paper, says Clarke.

Software-based networking would have happened without the ETSI NFV white paper, stresses Clarke, but its backing by leading CSPs spurred the industry.

However, building a software-based network is hard, as the subsequent experiences of the CSPs have shown.

“You need to be a master of cloud technology, and telcos are not,” says Clarke. “But guess what? Riding to the rescue are the cloud operators; they are going to do what the telcos set out to do.”

For example, as well as hosting a 5G core, AWS is active at the network edge including its Internet of Things (IoT) Greengrass service. Microsoft, having acquired telecom vendors Metaswitch and Affirmed Networks, has launched ‘Azure for Operators’ to offer 5G, cloud and edge services. Meanwhile, Google has signed agreements with several leading CSPs to advance 5G mobile edge computing services.

“They [the hyperscalers] are creating the infrastructure within a cloud environment that will be carrier-grade and cloud-native, and they are competitive,” says Clarke.

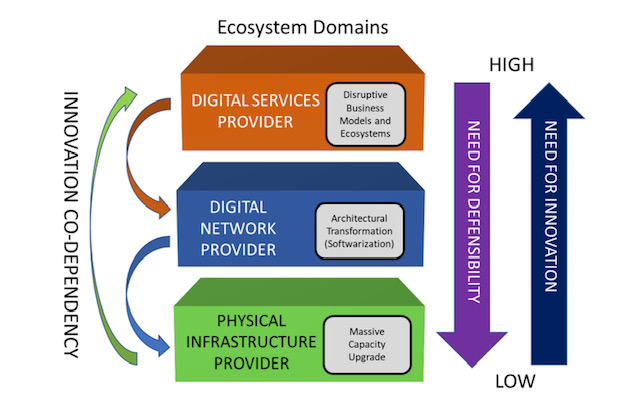

The new ecosystem

The position paper describes the telecommunications ecosystem in three layers (see diagram).

The CSPs are examples of the physical infrastructure providers (bottom layer) that have fixed and wireless infrastructure providing connectivity. The physical infrastructure layer is where the telcos have their value – their ‘centre of gravity’ – and this won’t change, says Clarke.

The infrastructure layer also includes the access network which is the CSPs’ crown jewels.

“The telcos will always defend and upgrade that asset,” says Clarke, adding that the CSPs have never cut access R&D budgets. Access is the part of the network that accounts for the bulk of their spending. “Innovation in access is happening all the time but it is never fast enough.”

The middle, digital network layer is where the nodes responsible for switching and routing reside, as do the NFV and software-defined networking (SDN) functions. It is here where innovation is needed most.

Clarke points out that the middle and upper layers are blurring; they are shown separately in the diagram for historical reasons since the CSPs own the big switching centres and the fibre that connects them.

But the hyperscalers – with their data centres, fibre backbones, and NFV and SDN expertise – play in the middle layer too even if they are predominantly known as digital service providers, the uppermost layer.

The position paper’s goal is to address how CSPs can better address the upper two network layers while also attracting smaller players and start-ups to fuel innovation across all three.

Paper proposal

The paper identifies several key issues that curtail innovation in telecoms.

One is the difficulty for start-ups and small companies to play a role in telecoms and build a business.

Just how difficult it can be is highlighted by the closure of SDN-controller specialist, Lumina Networks, which was already engaged with two leading CSPs.

In a Telecom TV panel discussion about innovation in telecoms that accompanied the paper’s publication, Andrew Coward, the then CEO of Lumina Networks, pointed out how start-ups require not just financial backing but assistance from the CSPs due to their limited resources compared to the established systems vendors.

It is hard for a start-up to respond to an operator’s request-for-proposals that can be thousands of pages long. And when they do, will the CSPs’ procurement departments consider them due to their size?

Coward argues that a portion of the CSPs’ capital expenditure should be committed to start-ups. That, in turn, would instill greater venture capital confidence in telecoms.

The CSPs also have ‘organisational inertia’ in contrast to the hyperscalers, says Clarke.

“Big companies tend towards monocultures and that works very well if you are not doing anything from one year to the next,” he says.

The hyperscalers’ edge is their intellectual capital and they work continually to produce new capabilities. “They consume innovative brains far faster and with more reward than telcos do, and have the inverse mindset of the telcos,” says Clarke.

The goal of the innovation initiative is to get CSPs and the hyperscalers – the key digital service providers – to work more closely.

“The digital service providers need to articulate the importance of telecoms to their future business model instead of working around it,” says Clarke.

Clarke hopes the digital service providers will step up and help the telecom industry be more dynamic, given that the future of their businesses depends on the infrastructure improving.

In turn, the CSPs need to stand up and articulate their value. This will attract investors and encourage start-ups to become engaged. It will also force the telcos to be more innovative and overcome some of the procurement barriers, he says.

Ultimately, new types of collaboration need to emerge that will address the issue of innovation.

Next steps

Work has advanced since the paper was published in June and additional players have joined the initiative, to be detailed soon.

“This is the beginning of what we hope will be a much more interesting dialogue, because of the diversity of players we have in the room,” says Clarke. “It is time to wake up, not only because of the need for innovation in our industry but because we are an innovation retardant everywhere else.”

Further information:

Telecom TV’s panel discussion: Part 2

Tom Nolle’s response to the Accelerating Innovation in the Telecommunications Arena paper

The key elements of NFV usage: A guide

Orchestration, service assurance, service fulfilment, automation and closed-loop automation. These are important concepts associated with network functions virtualisation (NFV) technology being adopted by telecom operators as they transition their networks to become software-driven and cloud-based.

Prayson Pate, CTO of the Ensemble division at ADVA Optical Networking, explains the technologies and their role and gives each a status update.

Orchestration

Network functions virtualisation (NFV) is based on the idea of replacing physical appliances – telecom boxes – with software running on servers performing the same networking role.

Using NFV speeds up service development and deployment while reducing equipment and operational costs.

It also allows operators to work with multiple vendors rather than be dependent on a single vendor providing the platform and associated custom software.

Operators want to adopt software-based virtual network functions (VNFs) running on standard servers, storage and networking, referred to as NFV infrastructure (NFVI).

In such an NFV world, the term orchestration refers to the control and management of virtualised services, composed of virtual network functions and executed on the NFV infrastructure.

The use of virtualised services has created the need for a new orchestration layer that sits between the existing operations support system-billing support system (OSS-BSS) and the NFV infrastructure (see diagram below). This orchestration layer performs the following tasks:

- Manages the catalogue of virtual network functions developed by vendors and by the open-source communities.

- Translates incoming service requests to create the virtualised implementation using the underlying infrastructure.

- Links the virtual network functions as required to create a service, referred to as a service chain. This service chain may be on one server or it may be distributed across the network.

- Performs the various management tasks for the virtual network functions: setting them up, scaling them up and down, updating and upgrading them, and terminating them – the ‘lifecycle management’ of virtual network functions. The orchestrator also ensures their resiliency.
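Two of the tasks listed above, chaining functions into a service and managing their lifecycle, can be sketched as follows. The state names and allowed transitions are a hypothetical simplification and do not reproduce the ETSI lifecycle model.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical lifecycle: which states may follow which.
ALLOWED_TRANSITIONS: Dict[str, Set[str]] = {
    "instantiated": {"scaled", "upgraded", "terminated"},
    "scaled": {"scaled", "upgraded", "terminated"},
    "upgraded": {"scaled", "upgraded", "terminated"},
    "terminated": set(),  # a terminated VNF cannot be revived
}


class ManagedVnf:
    """A VNF whose state changes are checked by the orchestrator."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.state = "instantiated"

    def transition(self, new_state: str) -> None:
        if new_state not in ALLOWED_TRANSITIONS[self.state]:
            raise ValueError(f"{self.name}: cannot go from {self.state} to {new_state}")
        self.state = new_state


def build_service_chain(vnf_names: List[str]) -> List[Tuple[str, str]]:
    """Link each VNF to the next one to form a service chain."""
    return list(zip(vnf_names, vnf_names[1:]))


chain = build_service_chain(["router", "firewall", "nat"])
firewall = ManagedVnf("firewall")
firewall.transition("scaled")
firewall.transition("terminated")
```

Keeping the allowed transitions in one table means the orchestrator rejects nonsensical requests, such as scaling a function that has already been terminated.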

The NFV Industry Specification Group of the ETSI standards body (ETSI NFV ISG) leads the industry effort to define the architecture for NFV, including orchestration.

Several companies are providing proprietary and pre-standard NFV orchestration solutions, including ADVA Optical Networking, Amdocs, Ciena, Ericsson, IBM, Netcracker and others. In addition, there are open source initiatives such as the Linux Foundation Networking Fund’s Open Network Automation Platform (ONAP), ETSI NFV ISG’s Open Source MANO (OSM) and OpenStack’s Tacker initiative.

Source: ETSI GS NFV 002

Service assurance

Service providers promise that their service will meet a certain level of performance defined in the service level agreement (SLA).

Service assurance refers to the measurement of parameters such as packet loss and latency associated with a service; parameters which are compared against the SLA. Service assurance also remedies any SLA shortfalls. More sophisticated parameters can also be measured such as privacy and responses to distributed denial-of-service (DDoS) attacks.
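The comparison step can be sketched as a check of measured values against SLA limits. The parameter names and thresholds are invented, and the sketch assumes every parameter is of the "lower is better" kind.

```python
from typing import Dict, List


def sla_violations(measured: Dict[str, float], sla_limits: Dict[str, float]) -> List[str]:
    """Return the names of parameters whose measured value exceeds the SLA limit.

    Assumes every parameter is 'lower is better', as with packet loss and latency.
    """
    return sorted(
        name for name, limit in sla_limits.items()
        if measured.get(name, 0.0) > limit
    )


# Invented SLA and measurements for illustration.
SLA = {"packet_loss_pct": 0.1, "latency_ms": 50.0}
violations = sla_violations({"packet_loss_pct": 0.5, "latency_ms": 40.0}, SLA)
```

A non-empty result is what would trigger the remedial side of service assurance.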

NFV enables telcos to create and launch services more quickly and economically. But an end customer only cares about the service, not the underlying technology. Customers will not stand for a less reliable service or a service with inferior performance just because it is implemented as a virtual function on a server.

Service assurance is not a new concept, but the nature of a virtualised implementation means a new approach is required. No longer is there a one-to-one association between services and network elements, so the linkages between services, the building-block virtual network functions, and the underlying virtual infrastructure need to be understood. Just as the services are virtualised, so the service assurance process needs virtualised components such as virtual probes and test heads.

The telcos’ operations groups are concerned about how to deploy and support virtualised services. Innovations in service assurance will make their job easier and enable them to do what they could not do before.

EXFO, Ixia, Spirent, and Viavi supply virtual probes and test heads. These may be used for initial service verification, ongoing monitoring, and active troubleshooting. Active troubleshooting is a powerful concept as it enables an operator to diagnose issues without dispatching a technician and equipment.

Service fulfilment

Service fulfilment refers to the configuration and delivery of a service to a customer at one or more locations.

Service fulfilment is essential for an operator because it is how orders are turned into revenue. The more quickly and accurately a service is fulfilled, the sooner the operator gets paid. Prompt fulfilment also leads to greater customer satisfaction and reduced churn.

Early-adopter operators see NFV as a way to improve service fulfilment. Verizon is using its NFV-based service offering to speed up service fulfilment. When a customer orders a service, Verizon instructs the manufacturer to ship a server to the customer. Once connected and powered at the customer’s site, the server calls home and is configured. Combined with optional LTE, a customer can get a service on demand without waiting for a technician. This significantly improves the traditional model where a customer may wait weeks before being able to use the telco’s service.

Network automation

Network automation uses machines instead of trained staff to operate the network. For NFV, the automated software tasks include configuration, operation and monitoring of network elements.

The benefits of network automation include speed and accuracy of service fulfilment - humans can err - along with reduced operational costs.

Telcos have been using network automation for high-volume services and to manage complexity. That said, many operators include manual steps in their process. Such a hands-on approach doesn’t work with cloud technologies such as NFV. Cloud customers can acquire, deploy and operate services without any manual interaction from the webscale players. Likewise, NFV must be automated if telecom operators are to benefit from its potential.

Network automation is closely tied to orchestration. Commercial suppliers and open-source groups are working to ensure that service orders flow automatically from high-level systems down to implementation, dubbed flow-through provisioning, and that ‘zero-touch’ provisioning, which removes all manual steps, becomes a reality. But for this to happen, open and standard interfaces are needed.

Closed-loop automation

Closed-loop automation adds a feedback loop to network automation. The feedback enables the automation to take into account changing network conditions such as loading and network failures, as well as dynamic service demands such as bandwidth changes or services wanted by users.

Closed-loop automation compares the network’s state against rules and policies, replacing what were previously staff decisions. These systems are sometimes referred to as intent-based, as they focus on the desired intent or result rather than on the inputs to the network controls.

Service providers are also investigating adding artificial intelligence and machine learning to closed-loop automation. Artificial intelligence and machine learning can replace the hard-coded rules with adaptive and dynamic pattern recognition, allowing anomalies to be detected, adapted to, and even predicted.

Closed-loop automation offloads operational teams not only from manual control but also from manual management processes. Human decisions and planning are replaced by policy-driven control, while human reasoning is replaced by artificial intelligence and machine learning algorithms.

Policy systems or ‘engines’ have existed for a while for functions such as network and file access, but these engines were not closed-loop; there was no feedback. These policy concepts have now been updated to include desired network state, such that a feedback loop is needed to compare the current status with the desired one.

A closed-loop automation system makes dynamic changes to ensure a targeted operational state is reached even when network or service conditions change. This approach enables service providers to match capacity with demand, solve traffic management and network quality issues, and manage 5G and Internet of Things upgrades.
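The feedback idea can be sketched in a few lines. The example below assumes a single resource whose capacity is adjusted one unit at a time to keep utilisation inside a target band; the function names, thresholds and demand figures are hypothetical and stand in for whatever state and policies a real system would use.

```python
def control_step(capacity, demand, low=0.3, high=0.8, max_capacity=10):
    """One pass of the loop: observe utilisation, compare with the target band, act."""
    utilisation = demand / capacity
    if utilisation > high and capacity < max_capacity:
        return capacity + 1  # overloaded: add a unit of capacity
    if utilisation < low and capacity > 1:
        return capacity - 1  # under-used: release a unit of capacity
    return capacity          # within the desired state: no action


def run_closed_loop(demand_samples, capacity=1):
    """Feed each new demand reading back into the next capacity decision."""
    history = []
    for demand in demand_samples:
        capacity = control_step(capacity, demand)
        history.append(capacity)
    return history


# Demand rises, holds, then falls; capacity follows it up and back down.
print(run_closed_loop([0.5, 1.0, 2.0, 2.0, 0.4, 0.4]))  # [1, 2, 3, 3, 2, 1]
```

Each decision depends on the capacity chosen in the previous step, which is what separates a closed loop from a policy engine that acts without feedback.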

Closed-loop automation is complex. Employing artificial intelligence and machine learning will require interfaces to be defined that allow network data into the intelligent systems and enable the outputs to be used.

Several suppliers have announced products supporting closed-loop automation or intent-based networking, including Apstra, Cisco Systems, Forward Networks, Juniper Networks, Nokia, and Veriflow Systems. The open source ONAP project is also pursuing work in this area.

ONF’s operators seize control of their networking needs

- The eight ONF service providers will develop reference designs addressing the network edge.

- The service providers want to spur the deployment of open-source designs after becoming frustrated with the systems vendors failing to deliver what they need.

- The reference designs will be up and running before year-end.

- New partners have committed to join since the consortium announced its strategic plan.

The service providers leading the Open Networking Foundation (ONF) will publish open designs to address next-generation networking needs.

The ONF service providers - NTT Group, AT&T, Telefonica, Deutsche Telekom, Comcast, China Unicom, Turk Telekom and Google - are taking a hands-on approach to the design of their networks after becoming frustrated with what they perceive as foot-dragging by the systems vendors.


“All eight [operators] have come together to say in unison that they are going to work inside the ONF to craft explicit plans - blueprints - for the industry for how to deploy open-source-based solutions,” says Timon Sloane, vice president of marketing and ecosystem at the ONF.

The open-source organisation will develop ‘reference designs’ based on open-source components for the network edge. The reference designs will address developments such as 5G and multi-access edge and will be implemented using cloud, white box, network functions virtualisation (NFV) and software-defined networking (SDN) technologies.

By issuing the designs and committing to deploy them, the operators want to attract select systems vendors that will work with them to fulfil their networking needs.

Remit

The ONF is known for such open-source projects as the Central Office Rearchitected as a Datacenter (CORD) and the Open Networking Operating System (ONOS) SDN controller.

The ONF’s scope has broadened over the years, reflecting the evolving needs of its operator members. The organisation’s remit is to reinvent the network edge. “To apply the best of SDN, NFV and cloud technologies to enable not just raw connectivity but also the delivery of services and applications at the edge,” says Sloane.

The network edge spans from the central office to the cellular tower and includes the emerging edge cloud that extends the ‘edge’ to such developments as the connected car and drones.


“The edge cloud is called a lot of different things right now: multi-access edge computing, fog computing, far edge and distributed cloud,” says Sloane. “It hasn’t solidified yet.”

One ONF open-source project is the Open and Disaggregated Transport Network (ODTN), led by NTT. “ODTN is edge related but not exclusively so,” says Sloane. “It is starting off with a data centre interconnect focus but you should think of it as CORD-to-WAN connectivity.”

The ONF’s operators spent months formulating the initiative, dubbed the Strategic Plan, after growing frustrated with a supply chain that has failed to deliver the open-source solutions they need. “The operators have been hopeful the whole vendor community would step up and start building solutions and embracing this approach but it is not happening at the speed operators want, demand and need,” says Sloane.

The ONF’s initiative signals to the industry that the operators are shifting their spending to open-source solutions and basing their procurement decisions on the reference designs they produce.

“It is a clear sign to the industry that things are shifting,” says Sloane. “The longer you sit on the sidelines and wait and see what happens, the more likely you are to lose your position in the industry.”

If operators adopt open-source software and use white boxes based on merchant silicon, how will systems vendors produce differentiated solutions?

“All this goes to show why this is disruptive and creating turbulence in the industry,” says Sloane.

Open-source design equates to industry collaboration to develop shared, non-differentiated infrastructure, he says. That means systems vendors can focus their R&D on tackling new issues such as running and automating networks, developing applications and solving challenges such as next-generation radio access and radio spectrum management.

“We want people to move with the mark[et],” says Sloane. “It is not just building a legacy business based on what used to be unique and expecting to build that into the future.”

Reference designs

The operators have identified five reference designs: fixed and mobile broadband, multi-access edge, leaf-and-spine architectures, 5G at the edge, and next-generation SDN.

The ONF has already done much work in fixed and mobile broadband with its residential and mobile CORD projects. Multi-access edge refers to developing one network to serve all types of customers simultaneously, using cloud techniques to shift networking resources dynamically as needed.

At first glance, it is unclear what the ONF can contribute to leaf-and-spine architectures. But the ONF is developing an SDN-controlled switch fabric that can perform advanced packet processing, not just packet forwarding.


Sloane says that many virtualised tasks today are run on server blades using processors based on the x86 instruction set. But offloading packet processing tasks to programmable switch chips - referred to as networking fabric - can significantly benefit the price-performance achieved.

“We can leverage [the] P4 [programming language for data forwarding] and start to do things people never envisaged being done in a fabric,” says Sloane, adding that the organisation overseeing P4 is going to merge with the ONF.

The 5G reference design is one application where such a switch fabric will play a role. The ONF is working on implementing 5G network core functions and features such as network slicing, using the P4 language to run core tasks on intelligent fabric.

The ONF has already done work separating the radio access network (RAN) controller from radio frequency equipment and aims to use SDN to control a pool of resources and make intelligent decisions about the placement of subscribers, workloads and how the available radio spectrum can best be used.

The ONF’s fifth reference design addresses next-generation SDN and will use work that Google has developed and is contributing to the ONF.

The ONF manages the OpenFlow protocol, used to define the separation between the control and data forwarding planes. But the ONF is the first to admit that OpenFlow overlooked such matters as equipment configuration and operations.

The ONF is now engaged in a next-generation SDN initiative. “We are taking a step back and looking at the whole problem, to address all the pieces that didn’t get resolved in the past,” says Sloane.

Google has also contributed two interfaces that allow device management and the ONF has started its Stratum project that will develop an open-source solution for white boxes to expose these interfaces. This software residing on the white box has no control intelligence and does not make any packet-forwarding decisions. That will be done by the SDN controller that talks to the white box via these interfaces. Accordingly, the ONF is updating its ONOS controller to use these new interfaces.

Source: ONF


From reference designs to deployment

The ONF has a clear process to transition its reference designs to solutions ready for network deployment.

The reference designs will be produced by the eight operators working with other ONF partners. “The reference design is to help others in the industry to understand where you might choose to swap in another open source piece or put in a commercial piece,” says Sloane.

This explains how the components are linked to the reference design (see diagram above). The ONF also includes the concept of the exemplar platform, the specific implementation of the reference design. “We have seen that there is tremendous value in having an open platform, something like Residential CORD,” says Sloane. “That really is what the exemplar platform is.”

The ONF says there will be one exemplar platform for each reference design but operators will be able to pick particular components for their implementations. The exemplar platform will inevitably also need to interface to a network management and orchestration platform such as the Linux Foundation’s Open Network Automation Platform (ONAP) or ETSI’s Open Source MANO (OSM).

The process of refining the reference design and honing the exemplar platform built using specific components is inevitably iterative but once completed, the operators will have a solution to test, trial and, ultimately, deploy.

The ONF says that since announcing the strategic plan a month ago, several new partners - as yet unannounced - have committed to join.

“The intention is to have the reference designs up and running before the end of the year,” says Sloane.

Ciena picks ONAP’s policy code to enhance Blue Planet

Operators want to use automation to help tackle the growing complexity and cost of operating their networks.

“Policy plays a key role in this goal by enabling the creation and administration of rules that automatically modify the network’s behaviour,” says Kevin Wade, senior director of solutions, Ciena’s Blue Planet.


Incorporating ONAP code to enhance Blue Planet’s policy engine also advances Ciena’s own vision of the adaptive network.

Automation platforms

ONAP and Ciena’s Blue Planet are examples of network automation platforms.

ONAP is an open software initiative created by merging two projects: a large portion of AT&T’s original Enhanced Control, Orchestration, Management and Policy (ECOMP) software, developed to power its own software-defined network, and the OPEN-Orchestrator (OPEN-O) project, set up by several companies including China Mobile, China Telecom and Huawei.

ONAP’s goal is to become the default automation platform for service providers as they move to a software-driven network using such technologies as network functions virtualisation (NFV) and software-defined networking (SDN).

Blue Planet is Ciena’s own open automation platform for SDN and NFV-based networks. The platform can be used to manage Ciena’s own platforms and has open interfaces to manage software-defined networks and third-party equipment.

Ciena gained the Blue Planet platform with the acquisition of Cyan in 2015. Since then Ciena has added two main elements.

One is the Manage, Control and Plan (MCP) component that oversees Ciena's own telecom equipment. Ciena’s Liquid Spectrum that adds intelligence to its optical layer is part of MCP.

The second platform component added is analytics software to collect and process telemetry data to detect trends and patterns in the network to enable optimisation.

“We have 20-plus [Blue Planet] customers primarily on the orchestration side,” says Wade. These include Windstream, CenturyLink and Dark Fibre Africa of South Africa. Out of these 20 or so customers, one fifth do not use Ciena’s equipment in their networks. One such operator is Orange, another Blue Planet user Ciena has named.

A further five service providers are trialling an upgraded version of MCP, says Wade, while two operators are using Blue Planet’s analytics software.


Policy

Ciena has been a member of the ONAP open source initiative for one year. By integrating ONAP’s policy components into Blue Planet, the platform will support more advanced closed-loop network automation use cases, enabling smarter adaptation.

“In a closed-loop automation process, the policy subsystem guides the orchestration or the SDN controller, or both, to take actions,” says Wade. Such actions include scaling capacity, restoring the network following failure, and automatic placement of a virtual network function to meet changing service requirements.
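One way to picture a policy subsystem guiding the orchestrator and the SDN controller is as a table of rules, each pairing a condition on telemetry with a target subsystem and an action. The sketch below is purely illustrative: the rule conditions, telemetry keys and action names are assumptions, and it does not reflect the ONAP policy framework or Blue Planet’s actual interfaces.

```python
# Each rule: (condition on telemetry, target subsystem, action to request).
# All names and thresholds here are hypothetical.
POLICIES = [
    (lambda t: t.get("link_down", False),
     "sdn_controller", "restore_path"),
    (lambda t: t.get("utilisation", 0.0) > 0.8,
     "orchestrator", "scale_capacity"),
    (lambda t: t.get("latency_ms", 0.0) > t.get("latency_target_ms", float("inf")),
     "orchestrator", "relocate_vnf"),
]


def evaluate(telemetry):
    """Return the (target, action) pairs whose conditions match the telemetry."""
    return [
        (target, action)
        for condition, target, action in POLICIES
        if condition(telemetry)
    ]


# A failed link plus a missed latency target yields actions for both subsystems.
print(evaluate({"link_down": True, "latency_ms": 30, "latency_target_ms": 20}))
```

Keeping the rules as data rather than code paths is what lets operators create and administer policies without changing the platform itself.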

In return for using the code, Ciena will contribute bug fixes back to the open source venture and will continue the development of the policy engine.

The enhanced policy subsystem’s functionalities will be incorporated over several Blue Planet releases, with the first release being made available later this year. “Support for the ONAP virtual network function descriptors and packaging specifications are available now,” says Wade.

The adaptive network

Software control and automation, in which policy plays an important role, is one key component of Ciena's envisaged adaptive network.

A second component is network analytics and intelligence. Here, real-time data collected from the network is fed to intelligent systems to uncover the required network actions.

The final element needed for an adaptive network is a programmable infrastructure. This enables network tuning in response to changing demands.

What operators want, says Wade, is automation, guided by analytics and intent-based policies, to scale, configure, and optimise the network based on a continual reading to detect changing demands.

China Mobile plots 400 Gigabit trials in 2017

China Mobile is preparing to trial 400-gigabit transmission in the backbone of its optical network in 2017. The planned trials were detailed during a keynote talk given by Jiajin Gao, deputy general manager at China Mobile Technology, at the OIDA Executive Forum, an OSA event hosted at OFC, held in Los Angeles last week.

The world's largest operator will trial two 400-gigabit variants: polarisation-multiplexed, quadrature phase-shift keying (PM-QPSK) and 16-ary quadrature amplitude modulation (PM-16QAM).

The 400-gigabit PM-16QAM will achieve a total transmission capacity of 22 terabits and a reach of 1,500km using ultra-low-loss fibre and Raman amplification, while with Nyquist PM-QPSK, the capacity will be 13.6 terabits and the reach 2,000km. China Mobile started to deploy 100 gigabits in its backbone in 2013. It expects to deploy 400 gigabits in its metro and provincial networks from 2018.

Gao also detailed the growth in the different parts of China Mobile's network. Packet transport networking ports grew by 200,000 in 2016 to 1.2 million. The operator also grew its fixed broadband market share, adding over 20 million GPON subscribers to reach 80 million in 2016 while its optical line terminals (OLTs) grew from 89,000 in 2015 to 113,000 in 2016. Indeed, China Mobile has now overtaken China Unicom as China's second largest fixed broadband provider. Meanwhile, the fibre in its metro networks grew from 1.26 million kilometres in 2015 to 1.41 million in 2016.

The Chinese operator is also planning to adopt a hybrid OTN-reconfigurable optical add-drop multiplexer (OTN-ROADM) architecture which it trialled in the second half of 2016, linking several cities. The operator currently uses electrical cross-connect switches which were first deployed in 2011.

The ROADM is a colourless, directionless and contentionless design that also supports a flexible grid, and the operator is interested in using the hybrid OTN-ROADM in its provincial backbone and metro networks. Using the OTN-ROADM architecture is expected to deliver power savings of between 13% and 50%, says Gao.

XG-PON was also first deployed in 2016. China Mobile says 95% of its GPON optical network units deployed connect single families. The operator detailed an advanced home gateway that it has designed which six vendors are now developing. The home gateway features application programming interfaces to enable applications to be run on the platform.

For the XG-PON OLTs, China Mobile is using four vendors - Fiberhome, Huawei, ZTE and Nokia Shanghai Bell. The OLTs support 8 ports per card with three of the designs using an ASIC and one an FPGA. "Our conclusion is that 10-gigabit PON is mature for commercialisation," says Gao.

Gao also talked about China Mobile's NovoNet 2020, the vision for its network which was first outlined in a White Paper in 2015. NovoNet will be based on such cloud technologies as software-defined networking (SDN) and network functions virtualisation (NFV) and is a hierarchical arrangement of Telecom Integrated Clouds (TICs) that span the core through to access. He outlined how for private cloud services, a data centre will typically have 3,000 servers while for public cloud 4,000 servers per node will be used.

China Mobile has said the first applications on NovoNet will be for residential services, with LTE, 5G enhanced packet core and multi-access edge computing also added to the TICs.

The operator said that it will trial SDN and NFV in its network this year and also mentioned how it had developed its own main SDN controller that oversees the network.

China Mobile reported 854 million mobile subscribers at the end of February, of which 559 million are LTE users, while its wireline broadband users now exceed 83 million.

The art of virtualised network function placement

Cyan is delivering its Blue Planet NFV orchestrator software that will make use of enhancements being made to OpenStack developed by Red Hat in close collaboration with Telefónica.

Gazettabyte asked Nirav Modi, director of software innovations at Cyan, about the work.

Q: The concept of deterministic placement of virtualised network functions. Why is this important?

NM: We are attempting to solve a fundamental technology challenge required to make NFV successful for carriers. Telefónica has been doing a lot of internal trials and its own NFV R&D and has found that the placement of virtualised network functions greatly affects their performance.

We are working with Telefónica and Red Hat to solve this problem, to ensure virtualised network functions perform consistently and at their peak from one instance to another. This is particularly important for composite or clustered virtualised network function architectures, where the placement of various components can affect performance and availability.

Also, you need to ensure that the virtualised network functions are located where the most suitable compute, storage or networking resources are located. Other important performance metrics that need to be considered include latency and bandwidth availability into the cloud environment.

Q: What is involved in enabling deterministic virtualised network functions, and what is the impact on Cyan's orchestration platform?

Deterministic placement requires an orchestration platform, such as Cyan’s Blue Planet, to be aware of the resources available at various NFV points of presence (PoPs) and to map operator-provided placement policies, such as performance and high-availability requirements, into placement decisions. In other words, which servers should be used and which PoP should host the virtualised network function.
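A minimal sketch of such a placement decision follows. It assumes the orchestrator has an inventory of servers per PoP and that the operator’s policy reduces to three inputs: vCPUs required, a latency ceiling, and a replica count with each replica in a different PoP for high availability. The `Server` fields, the `place` function and the inventory values are hypothetical and are not Blue Planet’s actual logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Server:
    """Hypothetical inventory entry for one server at an NFV PoP."""
    pop: str
    name: str
    free_vcpus: int
    latency_ms: float


def place(servers, vcpus, max_latency_ms, replicas=1):
    """Pick one server per replica, each in a different PoP.

    Candidates are sorted by (latency, name), so the same inventory and
    policy always produce the same placement.
    """
    candidates = sorted(
        (s for s in servers
         if s.free_vcpus >= vcpus and s.latency_ms <= max_latency_ms),
        key=lambda s: (s.latency_ms, s.name),
    )
    chosen, used_pops = [], set()
    for server in candidates:
        if server.pop in used_pops:
            continue  # anti-affinity: at most one replica per PoP
        chosen.append(server)
        used_pops.add(server.pop)
        if len(chosen) == replicas:
            return chosen
    raise ValueError("no placement satisfies the policy")


inventory = [
    Server("pop-a", "a1", free_vcpus=8, latency_ms=5.0),
    Server("pop-a", "a2", free_vcpus=16, latency_ms=4.0),
    Server("pop-b", "b1", free_vcpus=4, latency_ms=9.0),
    Server("pop-b", "b2", free_vcpus=16, latency_ms=12.0),
]
picked = place(inventory, vcpus=8, max_latency_ms=15.0, replicas=2)
print([s.name for s in picked])  # ['a2', 'b2']
```

A real placement engine would weigh many more resources, such as storage, network bandwidth and CPU topology, but the shape is the same: filter by policy, rank, then select.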

For the operator, interested in application service-level agreements (SLAs) and performance, Cyan’s orchestration platform provides the intelligence to translate those policies into a placement architecture.

Q: Does this Telefónica work also require Cyan's Z-Series packet-optical transport system (P-OTS)?

NFV is all about taking network functions currently deployed on purpose-built, vertically-integrated hardware platforms, and deploying them on industry-standard commercial off-the-shelf (COTS) servers, possibly in a virtualised environment running OpenStack, for example.

In such a set-up, Cyan’s Blue Planet orchestration platform is responsible for the deployment of the virtualised network functions into the NFV infrastructure or telco cloud. Cyan’s orchestration software is always deployed on COTS servers. There is no dependency on using Cyan’s P-OTS to use the Blue Planet software-defined networking (SDN) and NFV software.

The Z-Series platform can be used in the metro and wide area network to enable a scalable and programmable network. This can supplement the virtual network functions deployed in the cloud to replace existing hardware-based solutions, but the Z-Series is not involved in this joint effort with Telefónica and Red Hat.