Access drives a need for 10G compact aggregation boxes

Infinera has unveiled a platform to aggregate multiple 10-gigabit traffic streams originating in the access network.

The 1.6-terabit HDEA 1600G platform is designed to aggregate 80 10-gigabit wavelengths. The use of 10-gigabit wavelengths in access continues to grow with the advent of 5G mobile backhaul and developments in cable and passive optical networking (PON).

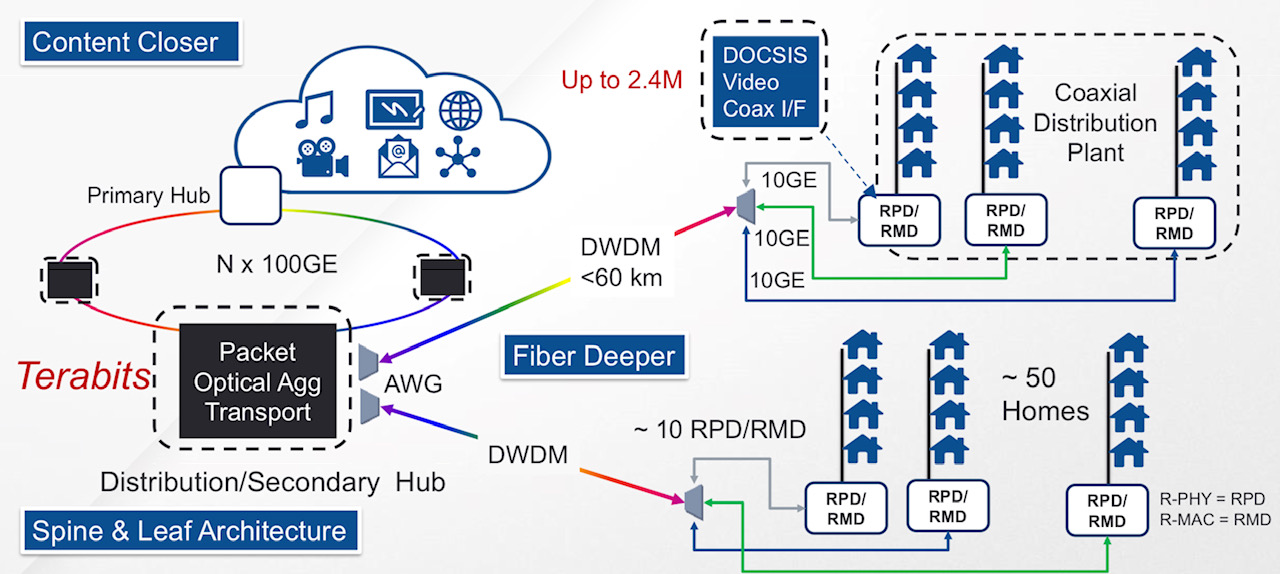

A distributed access architecture being embraced by cable operators. Shown are the remote PHY devices (RPD) or remote MAC-PHY devices (RMD), functionality moved out of the secondary hub and closer to the end user. Also shown is how DWDM technology is moved closer to the edge of the network. Source: Infinera.

Infinera has adopted a novel mechanical design for its 1 rack unit (1RU) HDEA 1600G that uses the sides of the platform to fit 80 SFP+ optical modules.

The platform also features a 1.6-terabit Ethernet switch chip that aggregates the traffic from the 10-gigabit streams to fill 100-gigabit wavelengths that are passed to other switching or transport platforms for transmission into the network.

Distributed access architecture

Jon Baldry, metro marketing director at Infinera, cites the adoption of a distributed access architecture (DAA) by cable operators as an example of 10-gigabit links that are set to proliferate in the access network.

DAA is being adopted by cable operators to compete with the telecom operators’ rollout of fibre-to-the-home (FTTH) broadband access technology.

A recent report by market research firm, Ovum, addressing DAA in the North American market, discusses how the architectural approach will free up space in cable headends, reduce the operators’ operational costs, and allow the delivery of greater bandwidth to subscribers.

Implementing DAA involves bringing fibre as well as cable network functionality closer to the user. Such functionality includes remote PHY devices and remote MAC-PHY devices. It is these devices that will use a 10-gigabit interface, says Baldry: “The traffic they will be running at first will be two or three gigabits over that 10-gigabit link.”

Julie Kunstler, principal analyst at Ovum’s Network Infrastructure and Software group, says the choice of whether to deploy a remote PHY or a remote MAC-PHY architecture is an issue of an operator's ‘religion’. What is important, she says, is that both options exploit the existing hybrid fibre coax (HFC) architecture to boost the speed tiers delivered to users.

The current, pre-DAA, cable network architecture. Source: Infinera.

In the current pre-DAA architecture, the cable network comprises cable headends and secondary distribution hubs (see diagram above). It is at the secondary hub that the dense wavelength-division multiplexing (DWDM) network terminates. From there, RF over fibre is carried over the hybrid fibre-coax (HFC) plant. The HFC plant also requires amplifier chains to overcome cable attenuation and the losses resulting from the cable splits that deliver the RF signals to the homes.

Typically, an HFC node in the cable network serves up to 500 homes. With the adoption of DAA and the use of remote PHYs, the amplifier chains can be removed with each PHY serving 50 homes (see diagram top).

“Basically DWDM is being pushed out to the remote PHY devices,” says Baldry. The remote PHYs can be as far as 60km from the secondary hub.

“DAA is a classic example where you will have dense 10-gigabit links all coming together at one location,” says Baldry. “Worst case, you can have 600-700 remote PHY devices terminating at a secondary hub.”

The same applies to cellular.

At present 4G networks use 1-gigabit links for mobile backhaul but 5G will use 10-gigabit and 25-gigabit links in a year or two. “So the edge of the WDM network has really jumped from 1 gigabit to 10 gigabit,” says Baldry.

It is the aggregation of large numbers of 10-gigabit links that the HDEA 1600G platform is designed to address.

HDEA 1600G

Only a certain number of pluggable interfaces can fit on the front panel of a 1RU box. To accommodate 80 10-gigabit streams, the two sides of the platform are used for the interfaces. Using the HDEA’s sides creates much more space for the 1RU’s input-output (I/O) compared to traditional transport kit, says Baldry.

The 40 SFP+ modules on each side of the platform are accessed by pulling the shelf out and this can be done while it is operational (see photo below). Such an approach is used for supercomputing but Baldry believes Infinera is the first to adopt it for a transport product.

Infinera has also adopted MPO connectors to simplify the fibre management involved in connecting 80 SFP+ modules, each of which requires a fibre pair.

The HDEA 1600G has two groups of four MPO connectors on the front panel. Each group serves the 40 modules on one side of the platform, with each MPO cable carrying 20 fibres to connect 10 SFP+ modules.

A site terminating 400 remote PHYs, for example, requires the connection of 40 MPO cables instead of 800 individual fibres, says Baldry, simplifying installation greatly.
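The cabling arithmetic behind Baldry's example can be sketched as follows. This is a minimal illustration using only the figures quoted above (a fibre pair per SFP+ module, 10 modules per 20-fibre MPO cable); the function and constant names are hypothetical.

```python
import math

FIBRES_PER_MODULE = 2        # each SFP+ module needs a fibre pair
MODULES_PER_MPO_CABLE = 10   # one 20-fibre MPO cable serves 10 SFP+ modules


def cabling_for(remote_phys: int) -> dict:
    """Return the cable counts needed to terminate `remote_phys` 10-gigabit links."""
    return {
        "individual_fibres": remote_phys * FIBRES_PER_MODULE,
        "mpo_cables": math.ceil(remote_phys / MODULES_PER_MPO_CABLE),
    }


print(cabling_for(400))  # {'individual_fibres': 800, 'mpo_cables': 40}
```

The same function gives eight MPO cables for a single fully populated 80-port HDEA 1600G, matching the eight MPO connectors on its front panel.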

The other end of the MPO cable connects to a dense multiplexer-demultiplexer (mux-demux) unit that separates the individual 10-gigabit access wavelengths received over the DWDM link.

Each mux-demux unit uses an arrayed waveguide grating (AWG) that is tailored to the cable operators’ wavelength needs. The 24-channel mux-demux design supports 20 100GHz-wide channels for the 10-gigabit wavelengths and four wavelengths reserved for business services. Business services have become an important part of the cable operators’ revenues.

Infinera says the HDEA platform supports the extended C-band for a total of 96 wavelengths.

The company says it will develop different AWG configurations tailored for the wavelengths and channel count required for the different access applications.

In the rack, the HDEA aggregation platform takes up one shelf, while eight mux-demux units take up another 1RU. Space is left in between to house the cabling between the two.

The HDEA 1600G pulled out of the rack, showing the MPO connectors and the space to house the cabling between the HDEA and the rack of compact AWGs. Source: Infinera.

Baldry points out that the four business service wavelengths are not touched by the HDEA platform. Rather, these are routed to separate Ethernet switches dedicated to business customers. "We break those wavelengths out and hand them over to whatever system the operator is using," he says.

The HDEA 1600G also features eight 100-gigabit line-side interfaces that carry the aggregated cable access streams. Infinera is not revealing the supplier of the 1.6 terabit switch silicon - 800-gigabit for client-side capacity and 800-gigabit for line-side capacity - it is using for the HDEA platform.
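As a rough check on these figures, the sketch below adds up the client-side and line-side capacity and estimates how full the line side would be at the initial traffic levels Baldry mentions (two to three gigabits per 10-gigabit link). It is an illustrative calculation only; the function name and the per-link load parameter are assumptions, not part of Infinera's specification.

```python
CLIENT_PORTS = 80       # SFP+ client interfaces
CLIENT_RATE_GBPS = 10
LINE_PORTS = 8          # 100-gigabit line-side interfaces
LINE_RATE_GBPS = 100


def capacity_summary(offered_per_link_gbps: float) -> dict:
    """Summarise switch capacity and line-side load for a given per-link traffic level."""
    client = CLIENT_PORTS * CLIENT_RATE_GBPS
    line = LINE_PORTS * LINE_RATE_GBPS
    offered = CLIENT_PORTS * offered_per_link_gbps
    return {
        "client_gbps": client,
        "line_gbps": line,
        "switch_gbps": client + line,
        "offered_gbps": offered,
        "line_utilisation": offered / line,
    }


summary = capacity_summary(3)  # every link carrying 3 Gbps
print(summary["switch_gbps"], summary["offered_gbps"])  # 1600 240
```

At 3 gigabits per link, the 80 client ports would offer 240 gigabits in total, well under the 800 gigabits of line-side capacity.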

The platform supports all the software Infinera uses for its EMXP, a packet-optical switch tailored for access and aggregation that is part of Infinera’s XTM family of products. Features include multi-chassis link aggregation group (MC-LAG), ring protection, all the Metro Ethernet Forum services, and synchronisation for mobile networks, says Baldry.

Auto-Lambda

Infinera has developed what it calls its Auto-Lambda technology to simplify the wavelength management of the remote PHY devices.

Here, the optics set up the connection instead of a field engineer using a spreadsheet to determine which wavelength to use for a particular remote PHY. Tunable SFP+ modules can be used at the remote PHY devices only with fixed-wavelength (grey) SFP+ modules used by the HDEA platform to save on costs, or both ends can use tunable optics. Using tunable SFP+ modules at each end may be more expensive but the operator gains flexibility and sparing benefits.

To establish a link when fixed optics are used within the HDEA platform, the platform's SFP+ is first operated in a listening mode only. When a tunable SFP+ transceiver is plugged in at a remote PHY, which could be days later, it cycles through each wavelength. The blocking nature of the AWG means that such cycling does not disturb other wavelengths already in use.

Once the tunable SFP+ reaches the required wavelength, the transmitted signal is passed through the AWG to reach the listening transceiver at the switch. On receipt of the signal, the switch SFP+ turns on its transmitter and talks to the remote transceiver to establish the link.
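The single-ended handshake described above can be modelled with a toy simulation. This is not Infinera's implementation, whose details are not public; it is a sketch that assumes an AWG port passes exactly one channel and that the remote module steps through the channels in order. All names are hypothetical.

```python
from typing import Optional


def awg_passes(port_channel: int, tx_channel: int) -> bool:
    """An AWG port passes only its own channel; every other channel is blocked."""
    return port_channel == tx_channel


def establish_link(port_channel: int, num_channels: int) -> Optional[int]:
    """Cycle the remote tunable SFP+ until the listening hub-side SFP+ hears it.

    Returns the number of channels tried before the link came up,
    or None if no channel reached the listener.
    """
    for tries, channel in enumerate(range(1, num_channels + 1), start=1):
        if awg_passes(port_channel, channel):
            # The listener has received light on its wavelength, so it would now
            # turn on its transmitter and complete the link with the remote end.
            return tries
        # Blocked by the AWG: nothing reaches the hub and other links are undisturbed.
    return None


print(establish_link(port_channel=7, num_channels=20))  # 7
```

Because the AWG blocks every wavelength but one at each port, the remote module can hunt freely without the hub-side SFP+ needing to tune at all.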

For the four business wavelengths, both ends of the link use auto-tunable SFP+ modules, what is referred to as a dual-ended solution. That is because both end-point systems may not be Infinera platforms and may have no knowledge as to how to manage WDM wavelengths, says Baldry.

In this more complex scenario, the time taken to establish a link is theoretically much longer. The remote end module has to cycle through all the wavelengths and if no connection is made, the near end transceiver changes its transmit wavelength and the remote end’s wavelength cycling is repeated.

Given that a sweep can take two minutes or more, an 80-wavelength system could take close to three hours in the worst case to establish the link; an unacceptable delay.
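The worst-case figure follows from simple multiplication, as this sketch shows. It assumes, as one reading of the description above, that the near end may have to step through every wavelength and that the remote end performs one full sweep for each; the function name is hypothetical.

```python
def worst_case_minutes(num_wavelengths: int, sweep_minutes: float) -> float:
    """Worst case: one full remote-end sweep for every near-end wavelength."""
    return num_wavelengths * sweep_minutes


minutes = worst_case_minutes(num_wavelengths=80, sweep_minutes=2)
hours, remainder = divmod(minutes, 60)
print(f"{minutes} minutes = {int(hours)}h {int(remainder)}m")  # 160 minutes = 2h 40m
```

With sweeps longer than two minutes the total moves closer to the three hours cited.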

Infinera is not detailing how its dual-ended scheme works but a combination of scanning and communications is used between the two ends. Infinera has shown such a dual-ended scheme setting up a link in 4 minutes and believes it can halve that time.

Finisar detailed its own Flextune fast-tuning technology at ECOC 2018. However, Infinera stresses its technology is different.

Infinera says it is talking to several pluggable optical module makers. “They are working on 25-gigabit optics which we are going to need for 5G,” says Baldry. “As soon as they come along, with the same firmware, we then have auto-tunable for 5G.”

System benefits

Infinera says its HDEA design delivers several benefits. Using the sides of the box means that the platform supports 80 SFP+ interfaces, twice the capacity of competing designs. In turn, using MPO connectors simplifies the fibre management, benefiting operational costs.

Infinera also believes that the platform’s overall power consumption has a competitive edge. Baldry says Infinera incorporates only the features and hardware needed. “We have deliberately not done a lot of stuff in Layer 2 to get better transport performance,” he says. The result is a more power-efficient and lower latency design. The lower latency is achieved using ‘thin buffers’ as part of the switch’s output-buffered queueing architecture, he says.

The platform supports open application programming interfaces (APIs) such that cable operators can make use of such open framework developments as the Central Office Re-architected as a Datacenter (CORD) initiative being developed by the Open Networking Foundation. CORD uses open-source software-defined networking (SDN) technology such as ONOS and the OpenFlow protocol to control the box.

An operator can also choose to use Infinera’s Digital Network Administrator (DNA) management software, SDN controller, and orchestration software that it has gained following the Coriant acquisition.

The HDEA 1600G is generally available and in the hands of several customers.

Transmode adopts 100 Gigabit coherent CFPs

Transmode has detailed line cards that bring 100 Gigabit coherent CFP optical modules to its packet optical transport platforms.

"We believe we are the first to market with line-side coherent CFPs, bringing pluggable line-side optics to a WDM portfolio," says Jon Baldry, technical marketing director at Transmode. Baldry says that other system vendors already support non-coherent CFP modules on their line cards and that further vendor announcements using coherent CFPs are to be expected.

The Swedish system vendor announced three line cards: a 100 Gig transponder, a 100 Gig muxponder and what it calls its Ethernet muxponder (EMXP) card. The first two cards support wavelength division multiplexing (WDM) Layer 1 transport: the 100 Gig transponder card supports two 100 Gig CFP modules while the 100 Gig muxponder supports 10x10 Gig ports and a CFP.

The third card, the EMXP220/IIe, has a capacity of 220 Gig: 12x10 Gigabit Ethernet ports and the CFP, with all 13 ports supporting optional Optical Transport Network (OTN) framing. "You can think of it as a Layer 2 switch on a card with 13 embedded transponders," says Baldry. The three cards each take up two line card slots.

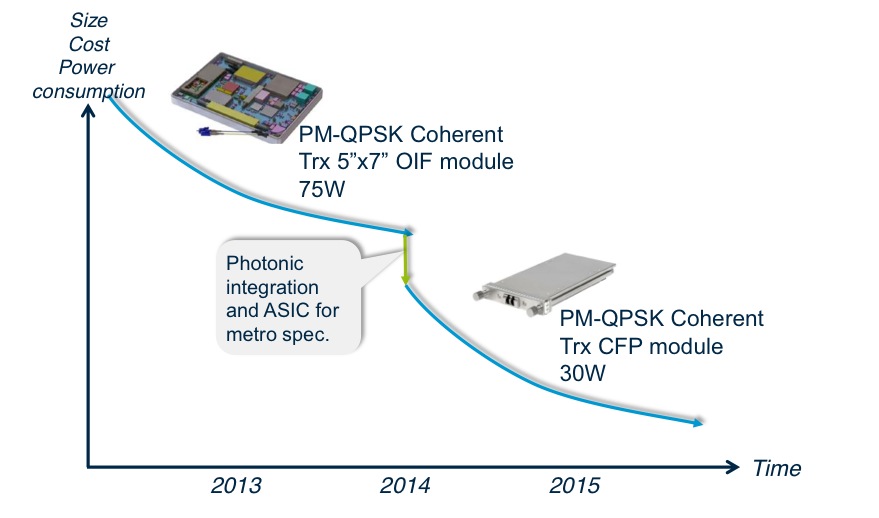

Transmode's platforms are used for metro and metro regional networks. Metro has more demanding cost, space and power efficiency requirements than long distance core networks. "The move that the whole industry is taking to CFP or pluggable-based optics is a big step forward [in meeting metro's requirements]," says Baldry.

The line cards will be used with Transmode's metro edge TM-Series packet optical family that is suited for applications such as mobile backhaul and business services. "Within packet-optical networks, we can do customer premise Gigabit Ethernet all the way through to 100 Gig handover to the core on the same family of cards running the same Layer 2 software," says Baldry. Transmode believes this capability is unique in the industry.

The TM-3000 chassis is 11 rack units (RU) high and can hold eight double-slot cards, for a total of 800 Gig CFP line side capacity.

Source: Transmode

100 Gig coherent modules

Transmode is talking to several 100 Gig coherent CFP module makers. The company will use multiple suppliers but says it is currently working with one manufacturer whose product is closer to market.

Acacia Communications announced the first CFP module late last year and other module makers are expected to follow at the upcoming OFC show in March 2014.

The roadmap of the CFP modules envisages the CFP to be followed by the smaller CFP2 and smaller still CFP4. For the CFP2 and CFP4, the coherent digital signal processor (DSP) ASIC is expected to be external to the module's optics, residing on the line card instead. The 100 Gig CFP, however, integrates the DSP-ASIC within the module and this approach is favoured by Transmode.

"We can be quicker to market when newer DSP-based CFPs appear; today we can do 800km and in the future that will go to a longer reach or lower power consumption," says Baldry. "Also, the same port can take a coherent CFP or a 100GBASE-SR4 or -LR4 CFP without having the cost burden of a DSP on the card whether it is needed or not."

The company also points out that by using the integrated CFP it can choose from all the coherent designs available whereas modules that separate the optics and DSP-ASIC will inevitably offer a more limited choice.

There are also 100 Gigabit direct detection CFPs from the likes of Finisar and Oplink Communications and Transmode's cards would support such a CFP module. But for now the company says its main interest is in coherent.

"One key requirement for anything we deploy is that it works with the existing optical infrastructure," says Baldry. "One difference between 100 Gig metro and long haul is that a lot of the long distance 100 Gig is new build, whereas the metro will require 100 Gig over existing infrastructure and coherent works very nicely with the existing design rules and existing 10 Gig networks."

The 100 Gig transponder card consumes 75W, with the coherent CFP accounting for 30W of the total. The card can be used for signal regeneration, hosting two 100 Gig coherent CFPs rather than the more typical arrangement of a client-side and a line-side CFP. The card's power consumption exceeds 75W, however, when two coherent CFPs are used.

The 100 Gig transponder and Ethernet muxponder will be available in the second quarter of 2014, says Transmode, while the 100 Gig muxponder card will follow early in the third quarter of the year.