Choosing paths to future Gigabit Ethernet speeds

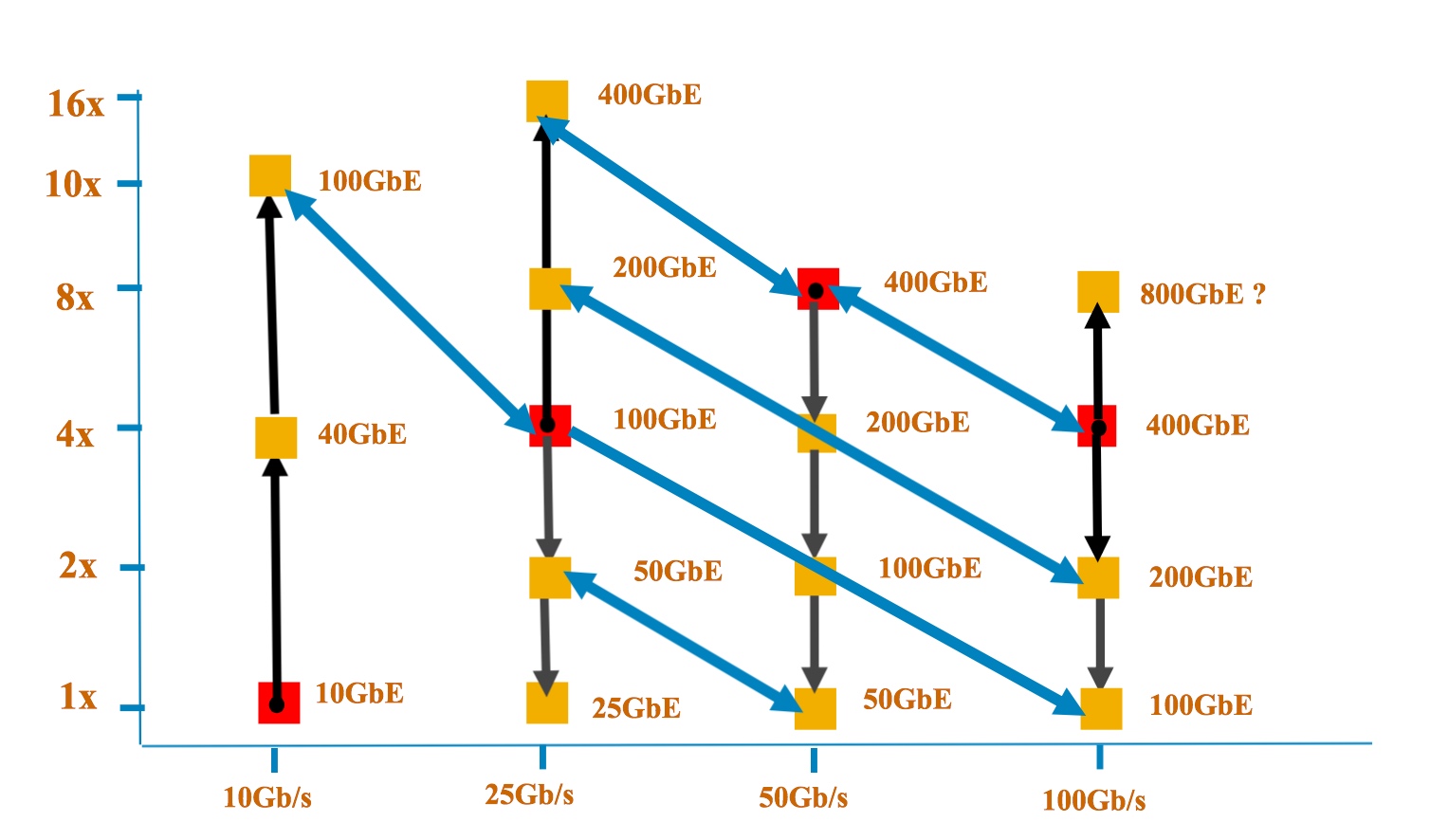

The y-axis shows the number of lanes while the x-axis is the speed per lane. Each red dot shows the Ethernet rate at which the signalling (optical or electrical) was introduced. One challenge that John D'Ambrosia highlights is handling overlapping speeds. "What do we do about 100 Gig based on 4x25, 2x50 and 1x100 and ensure interoperability, and do that for every multiple where you have a crossover?" Source: Dell

One catalyst for these discussions has been the progress made in the emerging 400 Gigabit Ethernet (GbE) standard which is now at the first specification draft stage.

“If you look at what is happening at 400 Gig, the decisions that were made there do have potential repercussions for new speeds as well as new signalling rates and technologies,” says John D’Ambrosia, chairman of the Ethernet Alliance.

Before the IEEE P802.3bs 400 Gigabit Ethernet Task Force met in July, two electrical signalling schemes had already been chosen for the emerging standard: 16 channels of 25 gigabit non-return-to-zero (NRZ) and eight lanes of 50 gigabit using PAM-4 signalling.

For the different reaches, three of the four optical interfaces had also been chosen, with the July meeting resolving the fourth - 2km - interface. The final optical interfaces for the four different reaches are shown in the Table.

The adoption of 50 gigabit electrical and optical interfaces at the July meeting has led some industry players to call for a new 50 gigabit Ethernet family to be created, says D’Ambrosia.

Certain players want the 50 GbE standard to include a four-lane 200 GbE version, just as 100 GbE uses 4 x 25 Gig channels, while others want 50 GbE to be broader, with one, two, four and eight lane variants to deliver 50, 100, 200 and 400 GbE rates.
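The lane arithmetic behind this debate, and behind the crossover problem D'Ambrosia raises, can be sketched in a few lines of Python. This is only an illustration: the per-lane rates and lane counts are those mentioned in the article, while the function name and data layout are assumptions made for the example.

```python
from collections import defaultdict

# Per-lane signalling rates (Gbit/s) and lane counts mentioned in the article.
LANE_RATES = [25, 50, 100]
LANE_COUNTS = [1, 2, 4, 8, 16]


def lane_configurations(lane_rates, lane_counts):
    """Map each aggregate rate to the (lanes, rate-per-lane) pairs that reach it."""
    configs = defaultdict(list)
    for rate in lane_rates:
        for lanes in lane_counts:
            configs[lanes * rate].append((lanes, rate))
    return dict(configs)


configs = lane_configurations(LANE_RATES, LANE_COUNTS)

# Every aggregate rate with more than one entry is a crossover that
# needs an interoperability answer.
for total in (50, 100, 200, 400):
    print(f"{total} GbE:", configs[total])
```

For 100 GbE the sketch lists the 4x25, 2x50 and 1x100 options quoted in the caption, and the same three-way overlap appears at 200 GbE and 400 GbE.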

The 400 GbE standard’s adoption of 100 GbE channels that use PAM-4 signalling has also raised questions as to whether 100 GbE PAM-4 should be added to the existing 100 GbE standard or a new 100 GbE activity be initiated.

“Those decisions have snowballed into a lot of activity and a lot of discussion,” says D’Ambrosia, who is organising an activity to address these issues and to determine where the industry consensus is as to how to proceed.

“These are all industry debates that are going to happen over the next few months,” he says, with the goal being to better meet industry needs by evolving Ethernet more quickly.

Ethernet continues to change, notes D’Ambrosia. The 40 GbE standard exploited the investment made in 10 gigabit signalling, and the same is happening with 25 gigabit signalling and 100 gigabit.

With 50 Gig electrical signalling now starting as part of the 400 GbE work, some industry voices wonder whether, instead of developing one Ethernet family around a rate, it is not better to develop a family of rates around the signalling speed, such as is being proposed with 50 Gig and the use of 1, 2, 4 and 8 lane configurations.

“If you buy into the idea of more lanes based around a single signalling speed, then applying that to the next signalling speed at 100 Gigabit Ethernet, does that mean the next speed will be 800 Gigabit Ethernet?” says D’Ambrosia.

The 400 GbE Task Force is having its latest meeting this week. A key goal is to get the first draft of the standard - Version 1.0 - approved. “To make sure all the baselines have been interpreted correctly,” says D’Ambrosia. What then follows is filling in the detail, turning the draft into a technically-complete document.

Further reading:

LightCounting: 25GbE almost done but more new Ethernet options are coming

An interview with John D'Ambrosia

The chairman of the Ethernet Alliance talks to Gazettabyte about the many ways Ethernet is evolving due to industry requirements.

"We are witnessing the evolution of Ethernet in ways that many of us never planned because there are markets that are demanding different things from it."

John D'Ambrosia describes the industry as feeling like nuts right now. "There is just so much stuff going on in terms of Ethernet," he says.

Besides the development of 400 Gigabit Ethernet (GbE) - the specification work for the emerging Ethernet standard being well underway - new applications are creating requirements that the existing Ethernet specifications cannot meet. These requirements include additional Ethernet speeds; the IEEE 802.3 Ethernet Working Group has created a Study Group to develop single-lane 25GbE for server interconnect.

One busy Ethernet activity involves 100 Gig mid-reach interfaces. Mid-reach covers distances from 500m to 2km. The interfaces are needed in the data centre to connect switches, such as in a leaf-spine switch architecture, and to connect switches to the data centre's edge router. The existing IEEE 802.3 Ethernet 100 Gig multi-mode standards - the 100GBASE-SR4 and the 100GBASE-SR10 - span 100m only (150m over OM4 fibre), too short for certain data centre applications.

"As we go faster, multimode's reach capabilities are coming down," says D'Ambrosia. "It has got to do with those pesky laws of physics." The next IEEE 802.3 100 Gig interface option, 100GBASE-LR4, has a 10km span, too much for many data centre applications. The 100GBASE-LR4 is also expensive, seven times the cost of the 100GBASE-SR4 interface, according to market research firm, LightCounting.

One of the reasons the IEEE 802.3 Ethernet Working Group created the 802.3bm Task Force was to develop an inexpensive 500m-reach specification. Four proposals resulted: parallel single mode (PSM4), coarse WDM (CWDM), pulse amplitude modulation and discrete multi-tone. None were adopted since each failed to muster sufficient backing. The optical industry then pursued a multi-source agreement (MSA) approach, and since January 2014, four single-mode mid-reach interfaces have emerged: the CLR4 Alliance, the CWDM4, the PSM4 and OpenOptics.

D'Ambrosia says the mid-reach optics debate first arose in 2007 when the IEEE 802.3ba group, developing 40 GbE and 100 GbE standards, discussed whether a 3-4km 100 Gig reach interface was required. "There was still enough people that needed 10km," says D'Ambrosia, and if 3-4km had been chosen then the 10km requirement would have been addressed with an even more complex 40km interface. "In hindsight, I'm not sure that was the right decision but it was the right decision at the time," says D'Ambrosia.

The PSM4 100 GbE mid-reach MSA uses four individual fibres in each direction, each fibre operating at 25 Gig. The other three mid-reach interfaces have a 2km reach and use 4x25 Gig wavelengths over duplex fibre, a single fibre in each direction.

The decision to use the ribbon fibre PSM4 or one of the other three WDM-based schemes depends on the existing fibre plant used in a data centre, and the link distance required. The PSM4 module may prove to be less costly than the other three module types but its ribbon fibre is more expensive than duplex fibre of similar length; the longer the link, the more significant the fibre becomes as part of the overall link cost. "What someone really wants is the lowest cost solution for their application," says D'Ambrosia.

The PSM4 has other, secondary uses that are part of its appeal. "With a breakout solution, even in copper, you can get to lower speeds," says D'Ambrosia. For example, a 40 GbE QSFP optical module using parallel fibre can be viewed as a 40 Gig interface or as a dense 4x10 Gig interface, with each fibre a 10 Gig interface. Such a 'breakout' solution is likely to be attractive earlier on, as applications transition to higher speeds.

Does it serve the industry to have four mid-reach solutions? D'Ambrosia says opinion varies. "My own personal belief is that it would be better for the industry overall if we didn't have so many choices," he says. "But the reality is there are a lot of different applications out there."

25 Gigabit Ethernet

Work has also started on a 25 GbE standard. An IEEE 802.3 Study Group has been created to investigate a copper-based and a multi-mode server interconnect at 25 Gig. In July, Google, Microsoft, Arista, Mellanox and Broadcom also announced the 25G Ethernet Consortium, which is backing 25 GbE for server interconnect.

"There are a lot of people who are worried that 25 GbE will go everywhere; you just don't introduce a new rate of Ethernet," says D'Ambrosia. And as with 100 Gig mid-reach with its proliferation of MSAs, now there is a concern about a proliferation of Ethernet speeds, he says.

But if there is one thing that D'Ambrosia has learned in his years active in Ethernet standards, it is not to second-guess the market. "If there is a cool application out there that will help save money, the market will figure it out and it [the solution] will become popular."

For now, the IEEE 802.3 25G Study Group has chosen to focus on single lane server interconnects. "That is what the charter is," says D'Ambrosia. "But that doesn't mean 25 Gigabit Ethernet will end there; there is never a single rate project."

400 Gigabit Ethernet

D'Ambrosia, who is also chair of the IEEE 802.3 400G Ethernet Task Force, also highlights the latest developments of the next Ethernet speed increment. There is a multi-mode 400 GbE fibre standard being worked on as well as three single mode fibre objectives.

The multi-mode solution will have a reach of 100m while the single mode options will span 500m, 2km and 10km. "For 500m, that is where everyone thinks parallel fibre can be used," says D'Ambrosia. At 10km, not surprisingly, it will be duplex fibre, while at 2km it is likely to be duplex simply because of the cost of long spans of parallel fibre.

In November, at the next Task Force meeting, proposals will be made as to how best to implement these differing requirements. For the multi-mode, talk is of a 16x25 Gig implementation. "I believe that is what we will see in the proposals in November," says D'Ambrosia. The Task Force is also looking at 50 Gig electrical interfaces for the longer 400 Gig reaches. Such an interface is likely to be ready by the time the 400G Task Force work is completed in 2017.

No one has suggested a 16x25 Gig single mode fibre optical interface, he says: "Do we do it as 50 Gig or 100 Gig?" Non-return-to-zero [NRZ], PAM4 and discrete multi-tone modulation schemes are all being considered. "For NRZ, we might see 8x50 Gig though that is not solidifying yet," he says. "For 500m there is talk of a x4 bundle and also pulse amplitude modulation for a single 100 Gig wavelength."

The November meeting is the last one for new proposals and in January 2015 decisions will be made.

The Ethernet Alliance is sponsoring an industry event this month entitled: "The Rate Debate" at the TEF 2014 event in Santa Clara, CA, on October 16th. The event will look at whether 40 Gig or 50 Gig Ethernet makes more sense, and the likely evolution. And if 50 Gig is adopted, will 100 GbE based on 4 channels evolve to 200 Gigabit? There is also interest in extending Category 5 cable from 1 Gig to 2.5 Gig and even 5 Gig to extend the useful life of campus cabling, and that will also be addressed. More recently, there have been two Calls-For-Interest: for a Next Generation Enterprise Access BASE-T PHY and a 25GBASE-T and these will also likely be discussed.

Ethernet speeds used to evolve by a factor of 10, then by a factor of 4 and now 2.5. In future, with 50 Gig, it might also double. "With 40 Gig and 50 Gig, which one will dominate?" says D'Ambrosia. "But they are so close, why can't we come up with a solution that shares technology at both [speeds]?" These are just some of the issues to be discussed at the event.

"We are witnessing the evolution of Ethernet in ways that many of us never planned because there are markets that are demanding different things from it," says D'Ambrosia.

The uphill battle to keep pace with bandwidth demand

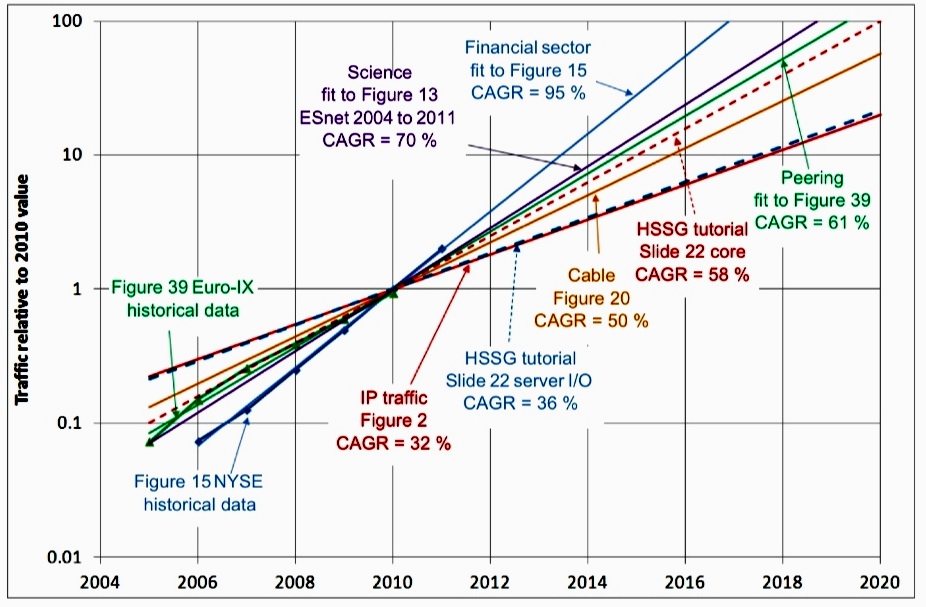

Relative traffic increase normalised to 2010 Source: IEEE

Optical component and system vendors will be increasingly challenged to meet the expected growth in bandwidth demand.

According to a recent comprehensive study by the IEEE (The IEEE 802.3 Industry Connections Ethernet Bandwidth Assessment report), bandwidth requirements are set to grow 10x by 2015 compared to demand in 2010, and a further 10x between 2015 and 2020. Meanwhile, the technical challenges are growing for the vendors developing optical transmission equipment and short-reach high-speed optical interfaces.

Fibre bandwidth is becoming a scarce commodity and various techniques will be required to scale capacity in metro and long-haul networks. The IEEE is not expected to complete the next higher-speed Ethernet standard, the successor to 100 Gigabit Ethernet (GbE), until 2017. The IEEE is only talking about capacities and not interface speeds. Yet, at this early stage, 400 Gigabit Ethernet looks the most likely interface.

"The various end-user markets need technology that scales with their bandwidth demands and does so economically. The fact that vendors must work harder to keep scaling bandwidth is not what they want to hear"

A 400GbE interface will comprise multiple parallel lanes, requiring the use of optical integration. A 400GbE interface may also embrace modulation techniques, further adding to the size, complexity and cost of such an interface. And to achieve a Terabit, three such interfaces will be needed.

All these factors are conspiring against what the various end-user bandwidth sectors require: line-side and client-side interfaces that scale economically with bandwidth demand. Instead, optical components, optical module and systems suppliers will have to invest heavily to develop more complex solutions in the hope of matching the relentless bandwidth demand.

The IEEE 802.3 Bandwidth Assessment Ad Hoc group, which produced the report that highlights the hundredfold growth in bandwidth demand between 2010 and 2020, studied several sectors besides core networking and data centre equipment such as servers. These include Internet exchanges, high-performance computing, cable operators (MSOs) and the scientific community.

The different growth rates in bandwidth demand it found for the various sectors are shown in the chart above.

Optical transport

A key challenge for optical transport is that fibre spectrum is becoming a precious commodity. Scaling capacity will require much more efficient use of spectrum.

To this aim, vendors are embracing advanced modulation schemes, signal processing and complex ASIC designs. The use of such technologies also raises new challenges such as moving away from a rigid spectrum grid, requiring the introduction of flexible-grid switching elements within the network.

And it does not stop there.

Already considerable development work is underway to use multi-carriers - super-channels - whose carrier count can be adapted on-the-fly depending on demand, and which can be crammed together to save spectrum. This requires advanced waveform shaping based on either coherent orthogonal frequency division multiplexing (OFDM) or Nyquist WDM, adding further complexity to the ASIC design.

At present, a single light path can be increased from 100 Gigabit-per-second (Gbps) to 200Gbps using 16-QAM (quadrature amplitude modulation). Two such light paths give a 400Gbps data rate. But 400Gbps requires more spectrum than the standard 50GHz band used for 100Gbps transmission. And using QAM reduces the overall optical transmission reach achieved.

The shorter resulting reach using 16-QAM or 64-QAM may be sufficient for metro networks (~1000km) but to achieve long-haul and ultra-long-haul spans will require super-channels based on multiple dual-polarisation, quadrature phase-shift keying (DP-QPSK) modulated carriers, each occupying 50GHz. Building up a 400Gbps or 1 Terabit signal this way uses 4 or 10 such carriers, respectively - a lot of spectrum. Some 8Tbps to 8.8Tbps of long-haul capacity results using this approach.

The main 100Gbps system vendors have demonstrated 400Gbps using 16-QAM and two carriers. This doubles system capacity to 16-17.6Tbps. A further 30% saving in bandwidth using spectral shaping at the transmitter crams the carriers closer together, raising the capacity to some 23Tbps. The eventual adoption of coherent OFDM or Nyquist WDM will further boost overall fibre capacity across the C-band. But the overall tradeoff of capacity versus reach still remains.
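The capacity figures quoted above can be reproduced with some simple arithmetic. The Python sketch below is a rough check rather than a vendor's calculation. It assumes the C-band holds 80 to 88 slots of 50GHz, a figure not given in the article but consistent with the 8Tbps to 8.8Tbps quoted for 100Gbps carriers, and it treats the 30% spectral saving as shrinking each carrier's spectrum to 70% of a slot. The function name and parameters are hypothetical.

```python
def fibre_capacity_tbps(channels, gbps_per_carrier, spectrum_saving=0.0):
    """Return total fibre capacity in Tbit/s.

    channels: number of 50GHz slots across the band (assumed, not from the article)
    gbps_per_carrier: data rate carried by each carrier
    spectrum_saving: fraction of a slot saved per carrier by spectral shaping
    """
    # Shrinking each carrier lets proportionally more carriers fit in the band.
    carriers = channels / (1.0 - spectrum_saving)
    return carriers * gbps_per_carrier / 1000.0


for channels in (80, 88):
    qpsk = fibre_capacity_tbps(channels, 100)        # 100Gbps DP-QPSK carriers
    qam16 = fibre_capacity_tbps(channels, 200)       # 200Gbps 16-QAM carriers
    shaped = fibre_capacity_tbps(channels, 200, spectrum_saving=0.3)
    print(f"{channels} slots: {qpsk:.1f} / {qam16:.1f} / {shaped:.1f} Tbit/s")
```

Under these assumptions the 80-slot case gives 8, 16 and roughly 22.9 Tbit/s, in line with the "some 23Tbps" quoted.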

Optical transport thus has a set of techniques to improve the amount of traffic it can carry. But it is not at a pace that matches the relentless exponential growth in bandwidth demand.

After spectral shaping, even more complex solutions will be needed. These include extending transmission beyond the C-band, and developing exotic fibres. But these are developments for the next decade or two and will require considerable investment.

The various end-user markets need technology that scales with their bandwidth demands and does so economically. The fact that vendors must work harder to keep scaling bandwidth is not what they want to hear.

"No-one is talking about a potential bandwidth crunch but if it is to be avoided, greater investment in the key technologies will be needed. This will raise its own industry challenges. But nothing like those to be expected if the gap between bandwidth demand and available solutions grows"

Higher-speed Ethernet

The IEEE's Bandwidth Assessment study lays the groundwork for the development of the next higher-speed Ethernet standard.

Since the standard work has not yet started, the IEEE stresses that it is premature to discuss interface speeds. But based on the state of the industry, 400GbE already looks the most likely solution as the next speed hike after 100GbE. Adopting 400GbE, several approaches could be pursued:

- 16 lanes at 25Gbps: 100GbE is moving to a 4x25Gbps electrical interface and 400GbE could exploit such technology for a 16-lane solution, made up of four, 4x25Gbps interfaces. "If I was a betting man, I'd probably put better odds on that [25Gbps lanes] because it is in the realm of what everyone is developing," John D'Ambrosia, chair of the IEEE 802.3 Industry Connections Higher Speed Ethernet Consensus group and chair of the IEEE 802.3 Bandwidth Assessment Ad Hoc group, told Gazettabyte.

- 10 lanes at 40Gbps: The Optical Internetworking Forum (OIF) has started work on an electrical interface operating between 39 and 56Gbps (Common Electrical Interface - 56G-Close Proximity Reach). This could lead to 40Gbps lanes and a 10x40Gbps implementation for a 400Gbps Ethernet design.

- Modulation: For the 100Gbps backplane initiative, the IEEE is working on pulse-amplitude modulation (PAM), says D'Ambrosia. Such modulation could be used for 400GbE. Modulation is also being considered by the IEEE to create a single-lane 100Gbps interface. Such a solution could lead to a 4-lane 400GbE solution. But adopting modulation comes at a cost: more sophisticated electronics, greater size and power consumption.

As with any emerging standard, first designs will be large, power-hungry and expensive. The industry will have to work hard to produce more integrated 16-lane or 10-lane designs. Size and cost will also be important given that three 400GbE modules will be needed to implement a Terabit interface.
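To put numbers on the integration challenge, the short Python sketch below works out how many 400GbE modules a Terabit interface needs and how many lanes that implies for each of the three approaches listed above. The lane options come from the article; the names and the dictionary layout are assumptions made for the example.

```python
import math

TARGET_GBPS = 1000  # a Terabit interface

# The three 400GbE approaches listed above: (lanes, Gbit/s per lane).
LANE_OPTIONS = {
    "16 x 25Gbps": (16, 25),
    "10 x 40Gbps": (10, 40),
    "4 x 100Gbps": (4, 100),
}


def modules_needed(target_gbps, module_gbps):
    """Whole modules required to reach at least the target rate."""
    return math.ceil(target_gbps / module_gbps)


def terabit_lane_budget(options, target_gbps=TARGET_GBPS):
    """Return {option: (modules, total lanes)} for the target rate."""
    budget = {}
    for name, (lanes, lane_gbps) in options.items():
        modules = modules_needed(target_gbps, lanes * lane_gbps)
        budget[name] = (modules, modules * lanes)
    return budget


for name, (modules, lanes) in terabit_lane_budget(LANE_OPTIONS).items():
    print(f"{name}: {modules} modules, {lanes} lanes in total")
```

Each option needs three modules, but the lane total ranges from 48 for the 25Gbps design down to 12 for 100Gbps lanes, which is why lane speed matters so much for size and cost.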

The challenge for component and module vendors is to develop such multi-lane designs yet do so economically. This will require design ingenuity and optical integration expertise.

Timescales

Super-channels exist now - Infinera is shipping its 5x100Gbps photonic integrated circuit. Ciena and Alcatel-Lucent are introducing their latest generation DSP-ASICs that promise 400Gbps signals and spectral shaping while other vendors have demonstrated such capabilities in the lab.

The next Ethernet standard is set for completion in 2017. If it is indeed based on a 400GbE interface, it will likely use 4x25Gbps components for the first design, benefiting from emerging 100GbE CFP2 and CFP4 modules and their more integrated designs. But given the standard will only be completed in five years' time, new developments should also be expected.

No-one is talking about a potential bandwidth crunch but if it is to be avoided, greater investment in the key technologies will be needed. This will raise its own industry challenges. But nothing like those to be expected if the gap between bandwidth demand and available solutions grows.

The next high-speed Ethernet standard starts to take shape

Source: Gazettabyte

The IEEE has begun work to develop the next-speed Ethernet standard beyond 100 Gigabit to address significant predicted growth in bandwidth demand.

The standards body has set up the IEEE 802.3 Industry Connections Higher Speed Ethernet Consensus group, chaired by John D’Ambrosia, who previously chaired the IEEE P802.3ba Task Force that produced the 40 and 100 Gigabit Ethernet standards, ratified in June 2010. "I guess I’m a glutton for punishment,” quips D'Ambrosia.

The Higher Speed Ethernet standard could be completed by early 2017.

The group has been set up after an extensive one-year study by the IEEE 802.3 Bandwidth Assessment Ad Hoc group investigating networking capacity growth trends in various markets. The study looked beyond core networking and data centres - the focus of the 40 and 100 Gigabit Ethernet (GbE) study work - to include high-performance computing, financial markets, Internet exchanges and the scientific community.

One of the resulting report's conclusions (IEEE 802.3 Industry Connections Ethernet Bandwidth Assessment report) is that Terabit capacity will likely be required by 2015, growing a further tenfold by 2020.

“By 2015 core networks on average will need ten times the bandwidth of 2010, and one hundred times [the bandwidth] by 2020,” says D’Ambrosia, who is also the chair of the IEEE 802.3 Ethernet Bandwidth Assessment Ad Hoc group, as well as chief Ethernet evangelist, CTO office at Dell. “If you look at Ethernet in 2010, it was at 100 Gigabit, so ten times 100 Gigabit in 2015 is a Terabit and a hundred times 2010 is 10 Terabit by 2020.”
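D'Ambrosia's arithmetic can be written out as a small Python sketch. It assumes smooth exponential growth between the quoted data points, which is a simplification introduced here and not a claim made in the report; the function and constant names are hypothetical.

```python
BASE_YEAR = 2010
BASE_RATE_GBPS = 100  # top Ethernet rate in 2010, per the quote above


def required_rate_gbps(year, tenfold_every_years=5):
    """Rate needed in a given year if demand grows tenfold every five years."""
    return BASE_RATE_GBPS * 10 ** ((year - BASE_YEAR) / tenfold_every_years)


def annual_growth(tenfold_every_years=5):
    """Compound annual growth rate implied by a tenfold rise over the period."""
    return 10 ** (1 / tenfold_every_years) - 1


for year in (2015, 2020):
    print(year, f"{required_rate_gbps(year) / 1000:.0f} Tbit/s")
print(f"Implied annual growth: {annual_growth():.0%}")
```

Tenfold growth every five years works out to a compound rate of roughly 58% a year.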

"We have got to the point where the pesky laws of physics are challenging us"

John D'Ambrosia, chair of the IEEE 802.3 Industry Connections Higher Speed Ethernet Consensus group

D'Ambrosia stresses that the Ad Hoc group's role is to talk about capacity requirements, not interface speeds. The technical details of any interface implementation will only become clear once the standardisation effort is well under way.

A second Ethernet Bandwidth Assessment study finding is that network aggregation nodes are growing faster, and hence require greater capacity earlier, than the network's end points.

"There is also a growing deviation between the big guys and the rest of the market," says D'Ambrosia. He has heard individuals from the largest internet content providers say they need Terabit connections by 2013, while others claim it will be 2020 before a mass market develops for such an interconnect.

D'Ambrosia says the main findings are not necessarily surprising but there were two 'aha' moments during the study.

One was that the core networking growth rates predicted in 2007 by the 40 and 100 Gig High-speed Study Group are still valid five years on.

The other concerned the New York Stock Exchange that had forecast that it would need to install four 100Gbps links in its data centre yet ended up using 13. "If there is any company that has a lot of money on the line and would have the best chance of nailing down their needs, I would put the New York Stock Exchange up there," says D'Ambrosia. "That tells you something about bandwidth growth and that you can still underestimate what is going to happen."

"The reality is that I can't give you any solutions right now that are attractive to do a Terabit"

What next

The IEEE standardisation work for the next speed Ethernet has not started but the completed Ethernet Bandwidth Assessment study will likely form an important input for the Industry Connections Higher Speed Ethernet Consensus group.

The start of the standardisation work is expected in either March or July 2013 with the Study Group phase then taking a further eight months. This compares to 18 months for the IEEE 40GbE and 100GbE Study Group work (see chart above). The Task Force's work - writing the specification - is then expected to take a further two and a half years, completing the standard in early 2017 if all goes to plan.

Technology options

While stressing that the IEEE is talking about capacities and not yet interface speeds, Terabit capacity could be solved using multiple 400 Gigabit Ethernet interfaces, says D'Ambrosia.

At present there is no 400GbE project underway. However, the industry does believe that 400GbE is "doable" economically and technically. "Much of the supply base, when we are talking about Ethernet, is looking at 400 Gigabit," says D'Ambrosia.

Achieving a 1TbE interface looks much more distant. "People pushing for 1 Terabit tend to be the people looking at it from the bandwidth perspective and then looking at upgrading their networks and making multiple investments," he says. "But the reality is that I can't give you any solutions right now that are attractive to do a Terabit."

All agree that the technical challenges facing the industry to meet growing bandwidth demands are starting to mount. "We have got to the point where the pesky laws of physics are challenging us," says D'Ambrosia.

Further reading:

IEEE 802.3 Industry Connections Higher Speed Ethernet Ad Hoc

Next-gen 100 Gigabit optics

Briefing: 100 Gigabit

Part 2: Interview

Gazettabyte spoke to John D'Ambrosia about 100 Gigabit technology

John D'Ambrosia, chair of the IEEE 100 Gig backplane and copper cabling task force

John D'Ambrosia laughs when he says he is the 'father of 100 Gig'.

He spent five years as chair of the IEEE 802.3ba group that created the 40 and 100 Gigabit Ethernet (GbE) standards. Now he is the chair of the IEEE task force looking at 100 Gig backplane and copper cabling. D'Ambrosia is also chair of the Ethernet Alliance and chief Ethernet evangelist in the CTO office of Dell's Force10 Networks.

“People are also starting to talk about moving data operations around the network based on where electricity is cheapest”

"Part of the reason why 100 Gig backplane technology is important is that I don't know anybody that wants a single 100 Gig port off whatever their card is," says D'Ambrosia. "Whether it is a router, line card, whatever you want to call it, they want multiple 100 Gig [interfaces]: 2, 4, 8 - as many as they can."

Earlier this year, there was a call for interest for next-generation 100 Gig optical interfaces, with the goal of reducing the cost and power consumption of 100 Gig interfaces while increasing their port density. "This [next-generation 100 Gig optical interfaces] is going to become very interesting in relation to what is going on in the industry,” he said.

Next-gen 100 Gig

The 10x10 MSA is an industry initiative that is an alternative 100 Gig interface to the IEEE 100 Gigabit Ethernet standards. Members of the 10x10 MSA include Google, Brocade, JDSU, NeoPhotonics (Santur), Enablence, CyOptics, AFOP, MRV, Oplink and Hitachi Cable America.

"Unfortunately, that [10x10 MSA] looks like it could cause potential interop issues,” says D'Ambrosia. That is because the 10x10 MSA has a 10-channel 10 Gigabit-per-second (Gbps) optical interface while the IEEE 100GbE uses a 4x25Gbps optical interface.

The 10x10 interface has a 2km reach and the MSA has since added a 10km variant as well as 4x10x10Gbps and 8x10x10Gbps versions over 40km.

The advent of the 10x10 MSA has led to an industry discussion about shorter-reach IEEE interfaces. "Do we need something below 10km?” says D’Ambrosia.

Reach is always a contentious issue, he says. When the IEEE 802.3ba was choosing the 10km 100GBASE-LR4, there was much debate as to whether it should be 3 or 4km. "I won’t be surprised if you have people looking to see what they can do with the current 100GBASE-LR4 spec: There are things you can do to reduce the power and the cost," he says.

One obvious development to reduce size, cost and power is to remove the gearbox chip. The gearbox IC translates between 10x10Gbps and the 4x25Gbps channels. The chip consumes several watts each way (transmit to receive and vice versa). By adopting a 4x25Gbps input electrical interface, the gearbox chip is no longer needed - the electrical and optical channels will then be matched in speed and channel count. The result is that the 100GbE designs can be put into the upcoming, smaller CFP2 and even smaller CFP4 form factors.

As for other next-gen 100Gbps developments, these will likely include a 4x25Gbps multi-mode fibre specification and a 100 Gig, 2km serial interface, similar to the 40GBASE-FR.

The industry focus, he says, is to reduce the cost, power and size of 100Gbps interfaces rather than develop multiple 100 Gig link interfaces or expand the reach beyond 40km. "We are going to see new systems introduced over the next few years not based on 10 Gig but designed for 25 Gig,” says D’Ambrosia. The ASIC and chip designers are also keen to adopt 25Gbps signalling because they need to increase input-output (I/O) yet have only so many pins on a chip, he says.

D’Ambrosia is also part of an Ethernet bandwidth assessment ad-hoc committee that is part of the IEEE 802.3 work. The group is working with the industry to quantify bandwidth demand. “What you see is a lot of end users talking about needing terabit and a lot of suppliers talking about 400 Gig,” he says. Ultimately, what will determine the next step is what technologies are going to be available and at what cost.

Backplane I/O and switching

Many of the systems D'Ambrosia is seeing use a single 100Gbps port per card. "A single port is a cool thing but is not that useful,” he says. “Frankly, four ports is where things start to become interesting.”

This is where 25Gbps electrical interfaces come into play. "It is not just 25 Gig for chip-to-chip, it is 25 Gig chip-to-module and 25 Gig to the backplane."

Moreover, modules, backplane speeds and switching capacity are all interrelated when designing systems. When designing a 10 Terabit switch, for example, the goal is to reduce the number of traces on a board and the number that pass through the backplane to the switch fabric and other line cards.

Using 10Gbps electrical signals, between 1,200 and 2,000 signals are needed depending on the architecture, says D'Ambrosia. With 25Gbps, the count drops to 500-750. “The electrical signal has an impact on the switch capacity,” he says.
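As a rough sanity check on these figures, the Python sketch below scales the 10Gbps signal counts to 25Gbps while keeping the total bandwidth constant. This straight scaling is an assumption made here; the quoted 500-750 range depends on the architecture and is not derived this way in the interview. The function name is hypothetical.

```python
def scaled_signal_count(signals_at_old_rate, old_gbps, new_gbps):
    """Signals needed at the new lane rate to carry the same total bandwidth."""
    total_gbps = signals_at_old_rate * old_gbps
    return -(-total_gbps // new_gbps)  # ceiling division on integers


for signals in (1200, 2000):
    print(signals, "signals at 10Gbps ->",
          scaled_signal_count(signals, 10, 25), "at 25Gbps")
```

Straight scaling gives 480 to 800 signals, the same ballpark as the 500-750 that D'Ambrosia cites.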

100 Gig in the data centre

D’Ambrosia stresses that care is needed when discussing data centres as the internet data centres (IDC) of a Google or a Facebook differ greatly from those of enterprises. “In the case of IDCs, those people were saying they needed 100 Gig back in 2006,” he says.

Such mega data centres use tens of thousands of servers connected across a flat switching architecture, unlike traditional data centres that use three layers of aggregated switching. According to D'Ambrosia, such flat architectures can justify using 100Gbps interfaces even when the servers each have only a 1 Gig Ethernet interface. And now servers are transitioning to 10 GbE interfaces.

“You are going to have to worry about the architecture, you are going to have to worry about the style of data centre and also what the server applications are,” says D'Ambrosia. “People are also starting to talk about moving data operations around the network based on where electricity is cheapest.” Such an approach will require a truly wide, flat architecture, he says.

D'Ambrosia cites the Amsterdam Internet exchange that announced in May its first customer using a 100 Gig service. "We are starting to see this happen,” he says.

One lesson D'Ambrosia has learnt is that there is no clear relationship between what comes in and out of the cloud and what happens within the cloud. Data centres themselves are one such example.

100 Gig direct detection

In recent months lower power, 200km to 800km reach, 100Gbps direct detection interfaces that are cheaper than coherent transmission have been announced by ADVA Optical Networking and MultiPhy. Such interfaces have a role in the network and are of varying interest to telco operators. But these are vendor-specific solutions.

D’Ambrosia stresses the importance of standards such as those of the IEEE, and of the work of the Optical Internetworking Forum (OIF), which has adopted coherent. “I still see customers that want a standards-based solution,” says D'Ambrosia, who adds that while the OIF work is not a standard, it is an interoperability agreement. “It allows everyone to develop the same thing," he says.

There are also other considerations regarding 100 Gig direct-detection besides cost, power and a pluggable form factor. Vendors and operators want to know how many people will be able to source this, he says.

D'Ambrosia says that new systems being developed now will likely be deployed in 2013. Vendors must assess the attractiveness of any alternative technology relative to where industry-backed technologies, such as coherent and the IEEE standards, will be by then.

The industry will adopt a variety of 100Gbps solutions, he says, with particular decisions based on a customer’s cost model, its long term strategy and its network.

Part 1 - 100 Gig: An operator view