Telecoms' innovation problem and its wider cost

Imagine how useful 3D video calls would have been this last year.

The technologies needed – a light field display and digital compression techniques to send the vast data generated across a network – do exist but practical holographic systems for communication remain years off.

But this is just the sort of application that telcos should be pursuing to benefit their businesses.

A call for innovation

“Innovation in our industry has always been problematic,” says Don Clarke, formerly of BT and CableLabs and co-author of a recent position paper outlining why telecoms needs to be more innovative.

Entitled Accelerating Innovation in the Telecommunications Arena, the paper’s co-authors include representatives from communications service providers (CSPs), Telefonica and Deutsche Telekom.

In an era of accelerating and disruptive change, CSPs are proving to be an impediment, argues the paper.

The CSPs’ networking infrastructure has its own inertia; the networks are complex, vast in scale and costly. The operators also require a solid business case before undertaking expensive network upgrades.

Such inertia is costly, not only for the CSPs but for the many industries that depend on connectivity.

But if the telecom operators are to boost innovation, practices must change. This is what the position paper looks to tackle.

NFV White Paper

Clarke was one of the authors of the original Network Functions Virtualisation (NFV) White Paper, published by ETSI in 2012.

The paper set out a blueprint as to how the telecom industry could adopt IT practices and move away from specialist telecom platforms running custom software. Such proprietary platforms made the CSPs beholden to systems vendors when it came to service upgrades.

The NFV paper also highlighted a need to attract new innovative players to telecoms.

“I see that paper as a catalyst,” says Clarke. “The ripple effect it has had has been enormous; everywhere you look, you see its influence.”

Clarke cites how the Linux Foundation has re-engineered its open-source activities around networking while Amazon Web Services now offers a cloud-native 5G core. Certain application programming interfaces (APIs) cited by Amazon as part of its 5G core originated in the NFV paper, says Clarke.

Software-based networking would have happened without the ETSI NFV white paper, stresses Clarke, but its backing by leading CSPs spurred the industry.

However, building a software-based network is hard, as the subsequent experiences of the CSPs have shown.

“You need to be a master of cloud technology, and telcos are not,” says Clarke. “But guess what? Riding to the rescue are the cloud operators; they are going to do what the telcos set out to do.”

For example, as well as hosting a 5G core, AWS is active at the network edge including its Internet of Things (IoT) Greengrass service. Microsoft, having acquired telecom vendors Metaswitch and Affirmed Networks, has launched ‘Azure for Operators’ to offer 5G, cloud and edge services. Meanwhile, Google has signed agreements with several leading CSPs to advance 5G mobile edge computing services.

“They [the hyperscalers] are creating the infrastructure within a cloud environment that will be carrier-grade and cloud-native, and they are competitive,” says Clarke.

The new ecosystem

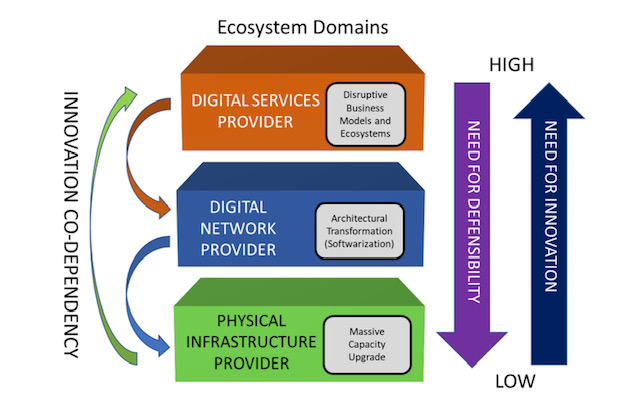

The position paper describes the telecommunications ecosystem in three layers (see diagram).

The CSPs are examples of the physical infrastructure providers (bottom layer) that have fixed and wireless infrastructure providing connectivity. The physical infrastructure layer is where the telcos have their value – their ‘centre of gravity’ – and this won’t change, says Clarke.

The infrastructure layer also includes the access network which is the CSPs’ crown jewels.

“The telcos will always defend and upgrade that asset,” says Clarke, adding that the CSPs have never cut access R&D budgets. Access is the part of the network that accounts for the bulk of their spending. “Innovation in access is happening all the time but it is never fast enough.”

The middle, digital network layer is where the nodes responsible for switching and routing reside, as do the NFV and software-defined networking (SDN) functions. It is here where innovation is needed most.

Clarke points out that the middle and upper layers are blurring; they are shown separately in the diagram for historical reasons since the CSPs own the big switching centres and the fibre that connects them.

But the hyperscalers – with their data centres, fibre backbones, and NFV and SDN expertise – play in the middle layer too even if they are predominantly known as digital service providers, the uppermost layer.

The position paper’s goal is to show how CSPs can better address the upper two network layers while also attracting smaller players and start-ups to fuel innovation across all three.

Paper proposal

The paper identifies several key issues that curtail innovation in telecoms.

One is the difficulty for start-ups and small companies to play a role in telecoms and build a business.

Just how difficult it can be is highlighted by the closure of SDN-controller specialist, Lumina Networks, which was already engaged with two leading CSPs.

In a Telecom TV panel discussion about innovation in telecoms that accompanied the paper’s publication, Andrew Coward, then CEO of Lumina Networks, pointed out that start-ups require not just financial backing but also assistance from the CSPs, given their limited resources compared with the established systems vendors.

It is hard for a start-up to respond to an operator’s request-for-proposals that can be thousands of pages long. And when they do, will the CSPs’ procurement departments consider them due to their size?

Coward argues that a portion of the CSPs’ capital expenditure should be committed to start-ups. That, in turn, would instill greater venture capital confidence in telecoms.

The CSPs also have ‘organisational inertia’ in contrast to the hyperscalers, says Clarke.

“Big companies tend towards monocultures and that works very well if you are not doing anything from one year to the next,” he says.

The hyperscalers’ edge is their intellectual capital and they work continually to produce new capabilities. “They consume innovative brains far faster and with more reward than telcos do, and have the inverse mindset of the telcos,” says Clarke.

The goal of the innovation initiative is to get CSPs and the hyperscalers – the key digital service providers – to work more closely together.

“The digital service providers need to articulate the importance of telecoms to their future business model instead of working around it,” says Clarke.

Clarke hopes the digital service providers will step up and help the telecom industry be more dynamic, given that the future of their businesses depends on the infrastructure improving.

In turn, the CSPs need to stand up and articulate their value. This will attract investors and encourage start-ups to become engaged. It will also force the telcos to be more innovative and overcome some of the procurement barriers, he says.

Ultimately, new types of collaboration need to emerge that will address the issue of innovation.

Next steps

Work has advanced since the paper was published in June and additional players have joined the initiative, to be detailed soon.

“This is the beginning of what we hope will be a much more interesting dialogue, because of the diversity of players we have in the room,” says Clarke. “It is time to wake up, not only because of the need for innovation in our industry but because we are an innovation retardant everywhere else.”

Further information:

Telecom TV’s panel discussion: Part 2

Tom Nolle’s response to the Accelerating Innovation in the Telecommunications Arena paper

Silicon photonics: concerns but viable and still evolving

Blaine Bateman set himself an ambitious goal when he started researching the topic of silicon photonics. The president of the management consultancy, EAF LLC, wanted to answer some key questions for a broad audience, not just academics and researchers developing silicon photonics but executives working in data centres, telecom and IT.

The result is a 192-page report entitled Silicon Photonics: Business Situation Report, of which 59 pages are references. In contrast to traditional market research reports, it contains no forecasts or company profiles.

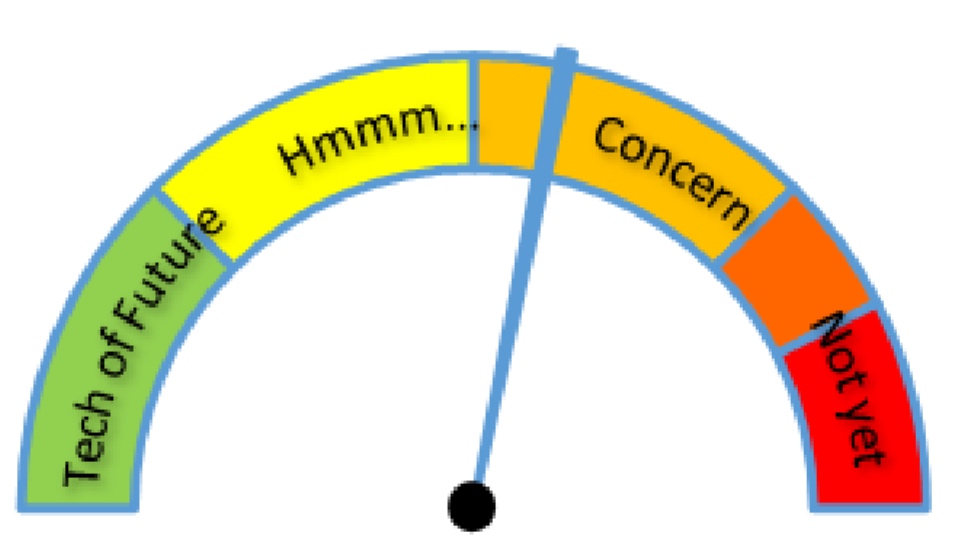

Blaine Bateman's risk meter for silicon photonics. Eleven key elements needed to deploy a silicon photonics solution were considered. And these were assessed from the perspective of various communities involved or impacted by the technology, from silicon providers to cloud-computing users. Source: EAF LLC.

“I thought it would be helpful to give people a business view,” says Bateman.

Bateman works with companies on strategy in such areas as antennas, wireless technologies and more recently analytics and machine learning. But a growing awareness of photonics made him want to research the topic. “I could see a convergence between the evolution of telecom switching centres to become more like data centres, and data centres starting to look more like telecoms,” he says.

The attraction of silicon photonics is that it is an emerging technology with wide applicability in communications.

“Silicon Photonics is a good topic to research and publish to help a broader community because it is highly technical,” says Bateman. “It is also a great case study, just watching entirely new technologies emerge and become commercially viable in the span of ten years; it is astonishing.”

Bateman spent two years conducting interviews and reading a vast number of academic papers and trade-press articles before publishing the report earlier this year.

The main near-term opportunity for silicon photonics he investigated is the data centre. This covers not just large-scale data centre players with an obvious need for cheaper optics to interconnect servers but also enterprises facing important decisions regarding their cloud-computing strategy.

“The view that I developed is that it is still very early,” he says. “The price points for a given performance [of optics] are significantly higher than a Facebook thinks they need to meet their long-term business perspectives.”

The price-performance figure commonly floated is one dollar per gigabit but current 100-gigabit pluggable modules, whether using indium phosphide or silicon photonics, are several times more costly than that.
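To make the gap concrete, the arithmetic can be sketched as follows. The one-dollar-per-gigabit target and the 100-gigabit rate come from the text above; the module price used is a hypothetical placeholder chosen only for illustration, not market data.

```python
def cost_per_gigabit(module_price_usd: float, rate_gbps: float) -> float:
    """Return a module's price per gigabit per second of capacity."""
    if rate_gbps <= 0:
        raise ValueError("rate_gbps must be positive")
    return module_price_usd / rate_gbps


def target_module_price(target_usd_per_gbps: float, rate_gbps: float) -> float:
    """Return the module price implied by a per-gigabit price target."""
    return target_usd_per_gbps * rate_gbps


# Target cited in the text: one dollar per gigabit, for a 100-gigabit module.
TARGET_USD_PER_GBPS = 1.0
RATE_GBPS = 100.0
implied_price = target_module_price(TARGET_USD_PER_GBPS, RATE_GBPS)

# Hypothetical module price (an assumption, for illustration only).
example_price = 400.0
multiple_of_target = cost_per_gigabit(example_price, RATE_GBPS) / TARGET_USD_PER_GBPS
```

Under these assumptions the target implies a 100-dollar module, and the illustrative 400-dollar module sits at four times the target.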

This is an important issue for cloud providers and for enterprises determining their cloud strategy.

Do cloud providers invest money in silicon photonics technologies for their data centres or do they let others be early adopters and come in later when prices have dropped? Equally, an enterprise considering moving its business operations to the cloud is in a precarious position, says Bateman. “If you pick the wrong horse, you could be boxed into a level of price and performance, while you will have competitors starting with cloud providers that have a 30 to 50 percent price-performance advantage,” he says. “In my view, it will trickle all the way to the large consumers of cloud resources.”

Longer term, the market will resolve the relative success of silicon photonics versus traditional optics but, near term, companies have some expensive decisions to make. “The price curve is still in the early phase,” says Bateman. “It just hasn’t come down enough that it is an easy decision.”

Bateman’s advice to enterprises considering a potential cloud provider is to ask about its roadmap plans regarding the deployment of photonics.

Findings

To help understand the technology and business risks associated with silicon photonics, Bateman has created risk meters. These are intuitive graphics that show the status of the different elements making up silicon photonics and the issues involved when making silicon photonics devices. These include the light source, modulation method, formation of the waveguides, fibering the chip and fabrication plants.

“The reason the fab is such a high risk is that even though the idea was to leverage existing foundries, in truth it is very much new processes,” says Bateman. “There is also a limited number of fabs that can build these things.”

The report also includes a risk meter summarising the overall status of silicon photonics (see above).

Bateman says there are concerns regarding silicon photonics which people need to be aware of but stresses that it is a viable technology.

This is one of two main conclusions he highlights. Silicon photonics is not mature enough to be at a commodity price. Accordingly, taking a non-commodity or early adopter technology could damage a company’s business plan in terms of cost and performance.

The second takeaway is that for every single aspect of silicon photonics, much is still open. “One of the reasons I made all these lists in the report - and I studied research from all over the globe - is that I wanted to show the management level that silicon photonics is still emerging,” says Bateman.

This surprised him. When a new technology comes to market, it typically uses R&D developed decades earlier. “In this area, I was shocked by the huge amount of basic research that is still ongoing and more and more is being done every day,” says Bateman. “It is daunting; it is moving so fast.”

Another aspect that surprised him was the amount of research coming out of Asia and in particular China. “This is also something new, seeing original work in China and other parts of the world,” he says.

The stereotypical view that China is a source of cheap manufacturing but little in terms of innovation must change, he says. In the US, in particular, there is still a large body of people that think this way, says Bateman: “I feel they have their head in the sand - China is focused on innovation now, and has formidable resources.”

The connected vehicle - driving in the cloud

Cars are already more silicon than steel. As makers add LTE high-speed broadband, they are destined to become more app than automobile. The possibilities that come with connecting your car to the cloud are scintillating. No wonder Gil Golan, director at General Motors' Advanced Technical Center in Israel, says the automotive industry is at an 'inflection point'.

"If you put LTE to the vehicle ... you are going to open a very wide pipe and you can send to the cloud and get results with almost no latency"

Gil Golan, General Motors

After a century continually improving the engine, suspension and transmission, car makers are now busy embracing technologies outside their traditional skill sets. The result is a period of unprecedented change and innovation.

Golan cites the use of in-car camera systems to aid driving and parking as an example. "Five years ago almost no vehicle used a camera whereas now increasing numbers have at least one, a fish-eye camera facing backwards." Vehicles offering 360-degree views using five cameras are taking to the road and such numbers will become the norm in the next five years.

The result is that the automotive industry is hiring people with optics and lens expertise, as well as image processing skills to analyse the images and video the cameras produce. "This is just the camera; the vehicle is going to be loaded with electronics," says Golan.

Moore's Law

Semiconductor advances driven by Moore's Law have already changed the automotive industry. "In 2004 the [automotive] industry crossed the point where, on average, we spend more on silicon than on steel," says Golan.

Moore's Law continues to improve processor and memory performance while driving down cost. "Every small system can now be managed or controlled in a better way," says Golan. "With a processor and memory, everything can be more accurate, more functionality can be built in, and it doesn't matter if it is a windscreen wiper or a sophisticated suspension system."

Current high-end vehicles have over 100 microprocessors. In turn, chip makers are developing 100 Megabit and 1 Gigabit Ethernet physical devices, media access controllers and switching silicon for in-vehicle networking to link the car's various electronic control units (ECUs).

The growing number of on-board microprocessors is also reflected in the software within vehicles. According to Golan, the Chevrolet Volt has over 10 million lines of code while the latest Lockheed Martin F-35 fighter has 8.7 million. "These are software vehicles on four wheels," says Golan. Moreover, the design of the Chevy Volt started nearly a decade ago.

Car makers must keep vehicles, crammed with electronics and software, updated despite their far longer life cycles compared to consumer devices such as smartphones.

According to General Motors, each car model has its content sealed every four or five years. A car design sealed today may only come on sale in 2016 after which it will be manufactured for five years and remain on the road for a further decade. "A vehicle sealed today is supposed to be updated and relevant through to 2030," says Golan. "This, in an era where things are changing at an unprecedented pace."

As a result car makers work on ways to keep vehicles updated after the design is complete, during its manufacturing phase, and then when the vehicle is on the road, says Golan.

Industry trends

Two key trends are driving the automotive industry:

- The development of autonomous vehicles

- The connected vehicle

Leading car makers are all working towards the self-driving car. Such cars promise far greater safety and more efficient and economical driving. Such a vehicle will also turn the driver into a passenger, free to do other things. Automated vehicles will need multiple sensors coupled to on-board algorithms and systems that can guide the vehicle in real-time.

Golan says camera sensors are now available that see at night, yet some sensors can perform poorly in certain weather conditions and can be confused by electromagnetic fields - the car is a 'noisy' environment. As a result, multiple sensor types will be needed and their outputs fused to ensure key information is not missed.
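As a rough illustration of what fusing sensor outputs can mean, the sketch below combines redundant readings by inverse-variance weighting, so that a sensor degraded by current conditions counts for less. This is one common textbook technique, not a method the article attributes to General Motors, and the sensor names and numbers are hypothetical.

```python
from typing import Sequence, Tuple


def fuse_estimates(readings: Sequence[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance. Readings with a lower
    variance (more trusted in the current conditions) get more weight.
    """
    if not readings:
        raise ValueError("at least one reading is required")
    weights = []
    for _, variance in readings:
        if variance <= 0:
            raise ValueError("variances must be positive")
        weights.append(1.0 / variance)
    total = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, readings)) / total
    return fused_value, 1.0 / total


# Hypothetical distance-to-obstacle readings in metres: (value, variance).
camera = (20.0, 4.0)  # camera assumed degraded by weather, so high variance
radar = (22.0, 1.0)   # second sensor type assumed less affected, so low variance
distance, variance = fuse_estimates([camera, radar])
```

With these made-up inputs the fused distance lands closer to the more trusted reading, and the fused variance is lower than that of either sensor alone, which is the point of combining several sensor types.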

"Remember, we are talking about life; this is not computers or mobile handsets," says Golan. "If you put more active safety systems on-board, it means you have to have a very solid read on what is going on around you."

Wireless

Wireless communications will play a key role in vehicles. The most significant development is the advent of the Long Term Evolution (LTE) cellular standard that will bring broadband to the vehicle.

Golan says there are different perimeters within and around the car where wireless will play a role. The first is within the vehicle for wireless communication between devices such as a user's smart phone or tablet and the vehicle's main infotainment unit.

Wireless will also enable ECUs to talk, eliminating wiring inside the vehicle. "Wires are expensive, are heavy and impact the fuel economy, and can be a source for different problems: in the connectors and the wires themselves," says Golan.

A second, wider sphere of communication involves linking the vehicle with the immediate surroundings. This could be other vehicles or the infrastructure such as traffic lights, signs, and buildings. The communication could even be with cyclists and pedestrians carrying cellphones. Such immediate environment communication would use short-range communications, not the cellular network.

Wide-area communication will be performed using LTE. Such communication could also be performed over wireline. "If it is an electric vehicle, you can exchange data while you charge the vehicle," says Golan.

This ability to communicate across the network and connect to the cloud is what excites the car makers.

Cloud and Big Data

"If you put LTE to the vehicle, you are showing your customers that you are committed to bringing the best technology to the vehicle, you are going to open a very wide pipe and you can send to the cloud and get results with almost no latency," says Golan.

LTE also raises the interesting prospect of enabling some of the current processing embedded in the vehicle to be offloaded onto servers. "I can control the vehicle from the cloud," says Golan. "You can talk to the vehicle and the processing can be performed in the cloud."

The processing and capabilities offered in the cloud are orders of magnitude greater than what can be done on the vehicle, says Golan: "The results are going to be by far better than what we are familiar with today."

Clearly, pooling and processing information centrally will offer a broader view than any one vehicle can provide, but just which car processing functions can be offloaded is less clear, especially when a broadband link will always depend on the quality of the cellular coverage.

Safety critical systems will remain onboard, stresses Golan, but some of the infotainment and some of the extra value creation will come wirelessly.

Choosing the LTE operator to use is a key decision for an automotive company. "We have to make sure you [the driver] are on a very good network," says Golan. "The service provider has to show us, prove to us [their network], and in some cases we run basic and sporadic tests with our operator to make sure that we do have the network in place."

Automotive companies see opportunity here.

"When you get into a vehicle, there is a new type of behaviour that we know," says Golan. "We know a lot about your vehicle, we know your behaviour while you are driving: your driving style, what coffee you like to drink and your favourite coffee store, and that you typically fill up when you have a half tank and you go to a certain station."

This knowledge - about the car and the driver's preferences when driving - when combined with the cloud, is a powerful tool, says Golan. Car companies can offer an ecosystem that supports the driver. "We can have everything that you need while in the vehicle, served by General Motors," says Golan. "Let your imagination think about the services because I'm not going to tell you; we have a long list of stuff that we work on."

General Motors already owns a 'huge' data centre and, being a global company with a local footprint, will use cloud service providers as required.

So automotive is part of the Big Data story? "Oh, big time," says Golan. "Business analytics is critical for any industry including the automotive industry."

Innovation

Given the opportunities new technologies such as sensors, computing, communication and cloud enable, how do automotive companies remain focussed?

"If we don't see that what we work on creates tremendous value, we drop it," says Golan. "We have no time or resources to spend on spinning wheels."

General Motors has its own venture capital arm to invest in promising companies and spends a lot of time talking to start-ups. "We talk to every possible start-up; if you see them for the first time you would say: 'where is the connection to the automotive industry?'," says Golan. "We talk to everybody on everything."

The company says it will always back ideas. "If some team member comes up with a great idea, it does not matter how thin the company is spread, we will find the resources to support that," says Golan.

General Motors set up its research centre in Israel a decade ago and is the only automotive company to have an advanced development centre there, says Golan. "The management had the foresight to understand that the industry is undergoing mega trends and an entrepreneurial culture - an innovation culture - is critically important for the future of the auto industry."

The company also has development sites in Silicon Valley and several other locations. "This is the pipe that is going to feed you innovation, and to do the critical steps needed towards securing the future of the company," says Golan. "You have to go after the technology."

Further reading:

Google's Original X-Man

Rational and innovative times: JDSU's CTO Q&A Part II

"What happens after 100 Gig is going to be very interesting"

Brandon Collings (right), JDSU

How has JDS Uniphase (JDSU) adapted its R&D following the changes in the optical component industry over the last decade?

JDSU has been a public company for both periods [the optical boom of 1999-2000 and now]. The challenge JDSU faced in those times, when there was a lot of venture capital (VC) money flowing into the system, was that the money was sort of free money for these companies. It created an imbalance in that the money was not tied to revenue which was a challenge for companies like JDSU that ties R&D spend to revenue. You also have much more flexibility [as a start-up] in setting different price points if you are operating on VC terms.

The situation now is very straightforward, rational and predictable.

There is not a huge army of R&D going on. That lack of R&D does not speed up the industry but what it does do is allow those companies doing R&D - and there is still a significant number - a lot of focus and clarity. It also requires a lot of partnership between us, our customers [equipment makers] and operators. The people above us can't just sit back and pick and choose what they like today from myriad start-ups doing all sorts of crazy things.

We very much appreciate this rational time. Visions can be more easily discussed, things are more predictable and everyone is playing from a similar set of rules.

Given the changes at the research labs of system vendors and operators, is there a risk that insufficient R&D is being done, impeding optical networking's progress?

It is hard to say absolutely not, as fewer people doing things can slow things down. But the work those labs did covered a wide space, including outside of telecom.

There is still a sufficient critical mass of research at places like Alcatel-Lucent Bell Labs, AT&T and BT; there is increasingly work going on in new regions like Asia Pacific, and a lot more in and across Europe. It is also much more focussed - the volume of workers may have decreased but the task still remains in hand.

"There are now design tradeoffs [at speeds higher than 100Gbps] whereas before we went faster for the same distance"

How does JDSU foster innovation and ensure it is focussing on the right areas?

I can't say that we have at JDSU a process that ensures innovation. Innovation is fleeting and mysterious.

We stay very connected to our key customers who are more on the cutting edge. We have very good personal and professional relationships with their key people. We have the same type of relationship with the operators.

I and my team very regularly canvass and have open discussions about what is coming. What does JDSU see? What do you see? What technologies are blossoming? We talk through those sort of things.

That isn't where innovation comes from. But what that can do is sow the seeds for the opportunity for innovation to happen.

We take that information and cycle it through all our technology teams. The guys in the trenches - the material scientists, the free-space optics design guys - we try to educate them with as much of an understanding of the higher-level problems that ultimately their products, or the products they design into, will address.

What we find is that these guys are pretty smart. If you arm them with a wider understanding, you get a much more succinct and powerful innovation than if you try to dictate to a material scientist here is what we need, come back when you are done.

It is a loose approach, there isn't a process, but we have found that the more we educate our keys [key guys] to the wider set of problems and the wider scope of their product segments, the more they understand and the more they can connect their sphere of influence from a technology point of view to a new capability. We grab that and run with it when it makes sense.

It is all about communicating with our customers and understanding the environment and the problem, then spreading that as wide as we can so that the opportunity for innovation is always there. We then nurse it back into our customers.

Turning to technology, you recently announced the integration of a tunable laser into an SFP+, a product you expect to ship in a year. What platforms will want a tunable laser in this smallest pluggable form factor?

The XFP has been on routers and OTN (Optical Transport Network) boxes - anything that has 10 Gig - and those interfaces have been migrated over to SFP+ for compactness and face plate space. There are already packet and OTN devices that use SFP+, and DWDM formats of the SFP+, to do backhaul and metro ring applications. The expectation is that while there are more XFP ports today, the next round of equipment will move to SFP+.

Certainly the Ciscos, Junipers and the packet guys are using tunable XFPs in great volume for IP over DWDM and access networks, but the more telecom-centric players riding OTN links or maybe native Ethernet links over their metro rings are probably the larger volume.

What distance can the tunable SFP+ achieve?

The distances will be pretty much the same as the tunable XFP. We produce that in a number of flavours, whether it is metro or long-haul. The initial SFP+ will likely be the metro reaches, 80km and things like that.

What is the upper limit of the tunable XFP?

We produce a negative chirp version which can do 80km of uncompensated dispersion, and then we produce a zero chirp which is more indicative of long-haul devices.

In that case the upper limit is more defined by the link engineering and the optical signal-to-noise ratio (OSNR), the extent of the dispersion compensation accuracy and the fibre type. It starts to look and smell like a long-haul lithium niobate transceiver where the distances are limited by link design as much as by the transceiver itself. As for the upper limit, you can push 1000km.

An XFP module can accommodate 3.5W while an SFP+ is about 1.5W. How have you reduced the power to fit the design into an SFP+?

It may be a generation before we get to that MSA level so we are working with our customers to see what level they can tolerate. We'll have to hit a lot less than 3.5W but it is not clear that we have to hit the SFP+ MSA specification. We are already closer now to 1.5W than 3.5W.

Semiconductors now play a key role in high-speed optical transmission. Will semiconductors take over more roles and become a bigger part of what you do?

Coherent transmission [that uses an ASIC incorporating a digital signal processor (DSP)] is not going away. There is a lot of differentiation at the moment in what happens in that DSP, but I think overall it is going to be a tool the system houses use to get the job done.

If you look at 10 Gig, the big advancement there was FEC [forward error correction] and advanced FEC. In 2003 the situation was a lot like it is today: who has the best FEC was something that was touted.

If you look at coherent technology, it is certainly a different animal but it is a similar situation: it is the big enabler for 40 and 100 Gig. Coherent is advanced technology, enhanced FEC was advanced technology back then, and over time it turned into a standardised, commoditised piece that is central and ubiquitously used for network links.

Coherent has more diversity in what it can do but you'll see some convergence and commoditisation of the technology. It is not going to replace or overtake the importance of photonics. In my mind they play together intimately; you can't replace the functions of photonics with electronics any time soon.

From a JDSU perspective, we have a lot of work to do because the bulk of the cost, the power and the size is still in the photonics components. The ASIC will come down in power, it will follow Moore's Law, but we will still need to work on all that photonics stuff because it is a significant portion of the power consumption and it is still the highest portion of the cost.

JDSU has made acquisitions in the area of parallel optics. Given there is now more industry activity here, why isn't JDSU more involved in this area?

We have been intermittently active in the parallel optics market.

The reality is that it is a fairly fragmented market: there are a lot of applications, each one with its own requirements and customer base. It is tough to spread one platform product around these applications. That said, parallel optics is now a mainstay for 40 and 100 Gig client [interfaces] and we are extremely active in that area: the 4x10, 4x25 and 12x10G [interfaces]. So that other parallel optics capability is finding its way into the telecom transceivers.

We do stay active in the interconnect space but we are more selective in what we get engaged in. Some of the rules there are very different: the critical characteristics for chip interconnect are very different to transceivers, for example. It may be much better to have on-chip optics versus off-chip optics. Obviously that drives completely different technologies so it is a much more cloudy, fragmented space at the moment.

We are very tied into it and are looking for those proper opportunities where we do have the technologies to fit into the application.

How does JDSU view the issues of 200, 400 Gigs and 1 Terabit optical transmission?

What happens after 100 Gig is going to be very interesting.

Several things have happened. We have used up the 50GHz [DWDM] channel, we can't go faster in the 50GHz channel - that is the first barrier we are bumping into.

Second, we're finding there is a challenge to do electronics well beyond 40 Gigabit. You start to get into electronics that have to operate at much higher rates - analogue-to-digital converters, modulator drivers - you get into a whole different class of devices.

Third, we have used all of our tools: we have used FEC, we are using soft-decision FEC and coherent detection. We are bumping into the OSNR problem and we don't have any more tools to run line rates that have less power to noise yet somehow recover that with some magic technology like FEC at 10 Gig, and soft-decision FEC and coherent at 40 and 100 Gig.

This is driving us into a new space where we have to do multi-carrier and bigger channels. It is opening up a lot of flexibility because, well, how wide is that channel? How many carriers do you use? What type of modulation format do you use?

What format you use may dictate the distance you go and inversely the width of the channel. We have all these new knobs to play with and they are all tradeoffs: distance versus spectral efficiency in the C-band. The number of carriers will drive potentially the cost because you have to build parallel devices. There are now design tradeoffs whereas before we went faster for the same distance.

We will be seeing a lot of devices and approaches from us and our customers that provide those tradeoffs flexibly so the carriers can do the best they can with what mother nature will allow at this point.

That means transponders that do four carriers: two of them do 200 Gig nicely packed together but only achieve a few hundred kilometres, while a couple of other carriers right next door go a lot further but are a little bit wider, so that density-versus-reach tradeoff is in play. That is what is going to be necessary to get the best of what we can do with the technology.
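The density-versus-reach tradeoff can be made concrete with a rough spectral-efficiency calculation. The sketch below is a hedged illustration: the only figure drawn from the interview is 100 Gig filling a 50GHz channel. The carrier widths (50GHz and 75GHz), the assumption that all four carriers run at 200 Gig, and the names `Carrier` and `aggregate` are hypothetical choices made for the example.

```python
from dataclasses import dataclass


@dataclass
class Carrier:
    rate_gbps: float   # line rate carried by this optical carrier
    width_ghz: float   # spectrum the carrier occupies

    @property
    def spectral_efficiency(self):
        """Spectral efficiency in bit/s/Hz (Gbit/s divided by GHz)."""
        return self.rate_gbps / self.width_ghz


def aggregate(carriers):
    """Return (total rate in Gbit/s, total width in GHz, overall bit/s/Hz)."""
    total_rate = sum(c.rate_gbps for c in carriers)
    total_width = sum(c.width_ghz for c in carriers)
    return total_rate, total_width, total_rate / total_width


# Baseline from the interview: 100 Gig in a 50GHz DWDM channel.
baseline = Carrier(rate_gbps=100.0, width_ghz=50.0)

# Hypothetical four-carrier transponder: two tightly packed, shorter-reach
# carriers and two wider, longer-reach carriers. Widths are assumed values.
dense = [Carrier(200.0, 50.0), Carrier(200.0, 50.0)]
long_reach = [Carrier(200.0, 75.0), Carrier(200.0, 75.0)]

print(f"baseline: {baseline.spectral_efficiency:.2f} bit/s/Hz")
rate, width, efficiency = aggregate(dense + long_reach)
print(f"transponder: {rate:.0f} Gbit/s in {width:.0f} GHz = {efficiency:.2f} bit/s/Hz")
```

With these assumed numbers the dense carriers are more spectrally efficient than the wider ones, so each extra gigahertz spent on reach lowers the capacity that fits in the C-band.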

That is the transmission side. On the transport side, the ROADMs and amplifiers have to accommodate these quirky new formats and reach requirements.

We need to get amplifiers to get the noise down. So this is introducing new concepts like Raman and flex[ible] spectrum to get the best we can do with these really challenging requirements like trying to get the most reach with the greatest spectral efficiency.

How do you keep abreast of all these subject areas besides conversations with customers?

It is a challenge; there aren't many companies in this space with a portfolio broader than JDSU's optical comms portfolio.

We do have a team and the team has its area of focus, whether it is ROADMs, modulators, transmission gear or optical amplifiers. We segment it that way but it is a loose segmentation so we don't lose ideas crossing boundaries. We try to deal with the breadth that way.

Beyond that, it is about staying connected with the right people at the customer level, having personal relationships so that you can have open discussions.

And then it is knowing your own organisation, knowing who to pull into a nebulous situation that can engage the customer, think on their feet and whiteboard there and then rather than [bringing in] intelligent people that tend to require more of a recipe to do what they are doing.

It is all about how to get the most from each team member and creating those situations where the right things can happen.

For Part I of the Q&A, click here