Giving telecom networks a computing edge

But a subtler approach is taking hold as networks evolve whereby what a user does will change depending on their location. And what will enable this is edge computing.

Source: Senza Fili Consulting

Edge computing

“This is an entirely new concept,” says Monica Paolini, president and founder at Senza Fili Consulting. “It is a way to think about service which is going to have a profound impact.”

Edge computing has emerged as a consequence of operators virtualising their networks. Virtualisation of network functions hosted in the cloud has promoted a trend to move telecom functionality to the network core. Functionality does not need to be centralised but initially, that has been the trend, says Paolini, especially given how virtualisation promotes the idea that network location no longer matters.

“That is a good story, it delivers a lot of cost savings,” says Paolini, who recently published a report on edge computing. *

But a realisation has emerged across the industry that location does matter; centralisation may save the operator some costs but it can impact performance. Depending on the application, it makes sense to move servers and storage closer to the network edge.

The result has been several industry initiatives. One is Mobile Edge Computing (MEC), being developed by the European Telecommunications Standards Institute (ETSI). In March, ETSI renamed the Industry Specification Group undertaking the work to Multi-access Edge Computing to reflect the operators' requirements beyond just cellular.

“What Multi-access Edge Computing does is move some of the core functionality from a central location to the edge,” says Paolini.

Another initiative is M-CORD, the mobile component of the Central Office Re-architected as a Datacenter initiative, overseen by the non-profit Open Networking Lab (ON.Lab). Other initiatives Paolini highlights include the Open Compute Project, Open Edge Computing and the Telecom Infra Project.

Location

The exact location of the ‘edge’ where the servers and storage reside is not straightforward.

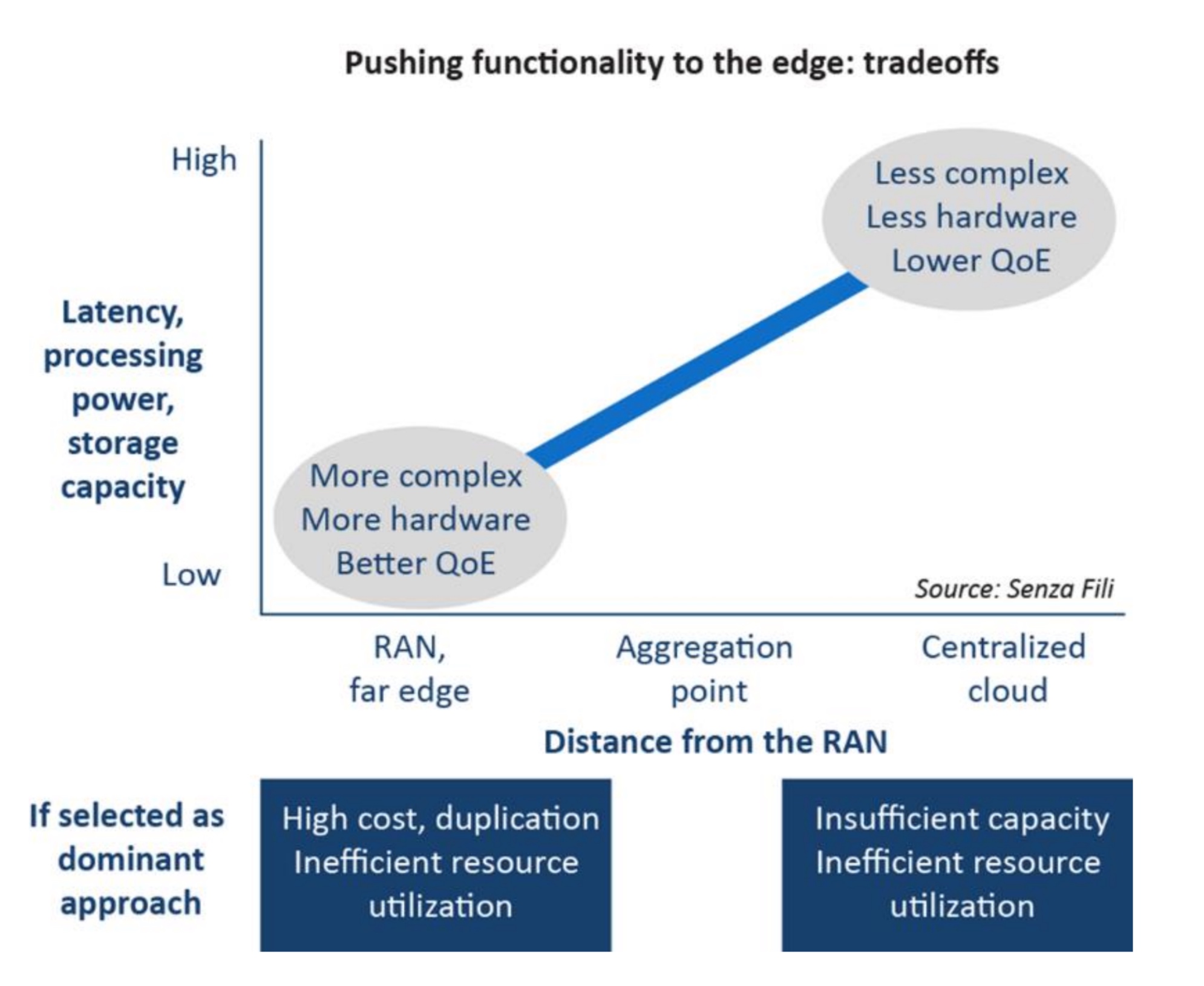

In general, edge computing is located somewhere between the radio access network (RAN) and the network core. Putting everything at the RAN is one extreme but that would lead to huge duplication of hardware and exceed what RAN locations can support. Equally, edge computing has arisen in response to the limitations of putting too much functionality in the core.

The matter of location is blurred further when one considers that the RAN itself is movable to the core using the Cloud RAN architecture.

Paolini cites another reason why the location of edge computing is not well defined: the industry does not yet know. Operators will only start trialling the technology in the next year or two. “There is going to be some trial and error by the operators,” she says.

Use cases

An enterprise located across a campus is one example use of edge computing, given how much of the content generated stays on-campus. If the bulk of voice calls and data stay local, sending traffic to the core and back makes little sense. There are also security benefits to keeping data local. An enterprise may also use edge computing to run services locally and share them across networks, for example using cellular or Wi-Fi for calls.

Another example is to install edge computing at a sports stadium, not only to store video of the game’s play locally - again avoiding going to the core and back with content - but also to cache video from games taking place elsewhere for viewing by attending fans.

Virtual reality and augmented reality are other applications that require low latency, which is another performance benefit of local computation.
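The latency argument can be illustrated with simple arithmetic. The sketch below counts propagation delay only, using the well-known figure of roughly 5 microseconds per kilometre of optical fibre; the 500 km and 10 km distances are hypothetical values chosen for illustration, not figures from Paolini's report, and real latency also includes queuing and processing time.

```python
# Light travels through optical fibre at roughly 200,000 km/s,
# which works out to about 5 microseconds per kilometre, one way.
FIBRE_DELAY_US_PER_KM = 5.0


def round_trip_ms(distance_km: float) -> float:
    """Propagation-only round-trip time in milliseconds.

    Ignores queuing, switching and server processing delays.
    """
    return 2 * distance_km * FIBRE_DELAY_US_PER_KM / 1000.0


# Hypothetical distances, chosen only for illustration.
core_rtt = round_trip_ms(500.0)  # server in a distant central data centre
edge_rtt = round_trip_ms(10.0)   # server at a nearby edge site

print(f"core: {core_rtt:.1f} ms, edge: {edge_rtt:.1f} ms")
```

Under these assumptions the fibre alone adds several milliseconds to every exchange with the core, a delay that no amount of server speed can recover.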

Paolini expects the uptake of edge computing to be gradual. She also points to its challenging business case, or at least to the fact that the way operators typically assess a business case may not tell the full story.

Operators view investing in edge computing as an extra cost but Paolini argues that operators need to look carefully at the financial benefits. Edge computing delivers better utilisation of the network and lower latency. “The initial cost for multi-access edge computing is compensated for by the improved utilisation of the existing network,” she says.

When Paolini started the report, her aim was to research low latency and the issues of distributed network design, reliability and redundancy. But she soon realised that multi-access edge computing was something broader, and that edge computing goes beyond what ETSI is doing.

This is not like an operator rolling out LTE and reporting to shareholders how much of the population now has coverage. “It is a very different business to learn how to use networks better,” says Paolini.

* The report is titled Power at the edge. MEC, edge computing, and the prominence of location

ETSI embraces AI to address rising network complexity

The growing complexity of networks is forcing telecom operators and systems vendors to turn to machine intelligence for help. It has led the European Telecommunications Standards Institute, ETSI, to set up an industry specification group to define how artificial intelligence (AI) can be applied to networking.

“With the advent of network functions virtualisation and software-defined networking, we can see the eventuality that network management is going to get very much more complicated,” says Ray Forbes, convenor of the ETSI Industry Specification Group, Experimental Network Intelligence (ISG-ENI).

Source: ETSI

The AI will not just help with network management, he says, but also with the introduction of services and the more efficient use of network resources.

Visibility of events at many locations in the network will be needed with the deployment of network functions virtualisation (NFV), says Forbes. In current networks, a large switch may serve hundreds of thousands of users but with NFV, virtual network functions will be at many locations. The ETSI group will look at how AI can be used to manage and control this distributed deployment of virtual network functions, says Forbes.

The group’s work has started by inviting interested parties to bring and discuss use cases from which a set of requirements will be generated. In parallel, the group is looking at AI techniques.

The aim is to use computing to derive data from across the network. The data will be analysed, and by having 'context awareness', the machine intelligence will compute various scenarios before presenting the most promising ones for consideration by the network management team. “The process is collecting data, analysing it, testing out various scenarios and then advising people on what would happen in the better scenarios,” says Forbes.
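The collect, analyse, test and advise loop that Forbes describes can be sketched in a few lines. This is only an illustration: the `Scenario` class, the link names, the utilisation figures and the ranking rule (lowest worst-case link utilisation) are all hypothetical, and none of them come from the ISG-ENI's work.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    """A candidate network configuration and its projected link loads."""
    name: str
    link_loads: dict  # link name -> projected utilisation, 0.0 to 1.0


def peak_load(scenario: Scenario) -> float:
    """Worst-case link utilisation in a scenario."""
    return max(scenario.link_loads.values())


def advise(scenarios, top_n=2):
    """Rank scenarios by lowest peak utilisation and return the best few.

    The result is advice for the network management team to consider,
    not an action applied automatically.
    """
    return sorted(scenarios, key=peak_load)[:top_n]


# Hypothetical projections, as if computed from collected network data.
scenarios = [
    Scenario("do nothing", {"A-B": 0.95, "B-C": 0.40, "A-C": 0.30}),
    Scenario("shift 30% A-B to A-C", {"A-B": 0.65, "B-C": 0.40, "A-C": 0.60}),
    Scenario("shift 50% A-B via C", {"A-B": 0.45, "B-C": 0.90, "A-C": 0.80}),
]

recommended = advise(scenarios)
for s in recommended:
    print(f"{s.name}: peak utilisation {peak_load(s):.2f}")
```

A real system would score scenarios on many more dimensions, such as cost, service-level agreements and risk; a single metric is used here to keep the ranking step visible.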

ETSI's goal is to make it easier for operators to deploy services quickly, reroute around networking faults, and make better use of networking resources. “In very large cities like Shanghai and Tokyo, where there are populations of 25 million, there is a need for this,” says Forbes. “In London, with about 12 million people, there is still a need but not quite so quickly.”

Operators and system vendors have some understanding of AI but there is a learning curve in bringing more and more AI experts on board, says Forbes: "Hence, we are trying to involve various universities in the research project."

Project schedule

The ISG-ENI's initial document work will be followed by defining the architecture and specifying the parameters needed to measure the network and the 'intelligence' of the scenarios.

“ETSI has a two-year project with the possibility of an extension,” says Forbes, with AI deployed in networks as early as 2019.

Forbes says open-source software to add AI to networks could be available as soon as 2018. Such open-source software will be developed by operators and systems vendors rather than ETSI.

60-second interview with Michael Howard

Infonetics Research has interviewed global service providers regarding their plans for software-defined networking (SDN) and network functions virtualisation (NFV). Gazettabyte asked Michael Howard, co-founder and principal analyst, carrier networks, about Infonetics' findings.

"Data centres are simple when compared to carrier networks"

Michael Howard, Infonetics Research

What is it about SDN and NFV - technologies still in their infancy - that already convinces 86 percent of the operators to deploy the technologies in their optical transport networks?

Michael H: Operators have a universal draw to SDN and NFV for two basic reasons:

1. They want to accelerate revenue by reducing the time to new services and applications.

2. They have operational drivers, of which there are also two parts:

- Carriers expect software-defined networks to give them a single view across multiple vendor equipment, network layers and equipment types for mobile backhaul, consumer digital subscriber line (DSL), passive optical network (PON), optical transport, routers, mobile core and Ethernet access. This global view will allow them to provision, monitor and deliver service-level agreements while controlling services, virtual networks and traffic flows in an easier, more flexible and automated way.

- An additional function possible with such a global view across the multi-vendor network is that traffic can be monitored and re-distributed along pathways to make best use of the network. In this way, the network can run 'hotter' and thereby require less equipment, saving capital expenditure (CapEx).
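The CapEx argument in the second point can be made concrete with a rough calculation. The sketch below compares sizing each path on its own with sizing for pooled traffic that a controller with a global view can spread freely; the 100 Gbps link size, the 80 percent utilisation ceiling and the demand figures are hypothetical, and perfect redistribution is an idealised assumption.

```python
import math

LINK_CAPACITY_GBPS = 100  # hypothetical link size
MAX_UTILISATION = 0.8     # hypothetical engineering ceiling per link


def links_sized_per_path(demands_gbps):
    """Each path is engineered in isolation for its own demand."""
    usable = LINK_CAPACITY_GBPS * MAX_UTILISATION
    return sum(math.ceil(d / usable) for d in demands_gbps)


def links_sized_pooled(demands_gbps):
    """Traffic can be redistributed across paths, so size for the total.

    Idealised: assumes any flow can be moved to any path.
    """
    usable = LINK_CAPACITY_GBPS * MAX_UTILISATION
    return math.ceil(sum(demands_gbps) / usable)


demands = [90, 50, 20]  # Gbps per path, illustrative only
isolated = links_sized_per_path(demands)
pooled = links_sized_pooled(demands)
print(f"links needed: {isolated} per-path vs {pooled} pooled")
```

With these numbers the pooled network carries the same traffic on half the links, each running closer to its ceiling, which is what running the network 'hotter' means.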

Optical transport networks have a history of being engineered to effect predictable flows on transport arteries and backbones. Many operators have deployed, or have been experimenting with, GMPLS (Generalized Multi-Protocol Label Switching) and vendor control planes. So it is natural for them to want to bring in an industry-standard method of deploying an SDN control plane over the usually multi-vendor transport network.

In our conversations - independent of our survey - we find that several operators believe the biggest bang for the SDN buck is to use SDN for a single control plane over the multi-layer data network - router, Ethernet - and the optical transport network.

Early use of SDN has been in the data centre. How will the technologies benefit networks more generally and optical transport in particular?

SDNs were developed initially to solve the operational problems of un-automated networks. That is to say, the slow, labour-intensive manual network changes required by the automated hypervisor as it moves, adds and changes virtual machines across servers that may be in the same data centre or in multiple data centres.

The virtualisation of data centre networks has inspired operators who want to apply the same general principles to their oh-so-much-more complex networks. Data centres are simple when compared to carrier networks. Data centres are basically large numbers of servers connected by Ethernet LANs and virtual LANs with some router separations of the LANs connecting servers.

Service provider networks are a set of many different types of networks including consumer broadband, business virtual private networks, optical transport, access/aggregation Ethernet and router networks, mobile core and mobile backhaul. Each of these comprises multiple layers and almost certainly involves equipment from multiple vendors. This explains why operators are starting their SDN-NFV investigations with small network segments which we call 'contained domains'. It will be many years before SDN-NFV will be deployed in major parts of a carrier network.

You mention small SDN and NFV deployments. What will these early applications look like?

Our survey respondents indicated that intra-datacentre, inter-datacentre, cloud services, and content delivery networks (CDNs) will be the first to be deployed by the end of 2014. Other areas targeted longer term are optical transport, mobile packet core, IP Multimedia Subsystem, and more.

Was there a finding that struck you as significant or surprising?

Yes. A lot of current industry buzz is about optical transport networks, making me think that we'd see SDNs deployed soon. But what we heard from operators is that optical transport networks are further out in their deployment plans. This makes sense in that the Open Networking Foundation working group for transport networks has only recently got its standardisation efforts going, and such work usually takes a couple of years.

You say that it will be years before large parts or a whole network will be SDN-controlled. What are the main challenges here regarding SDN and will they ever control a whole network?

As I said earlier, carrier networks are complex beasts, and they are carrying revenue-generating services that cannot be risked by deployment of a new set of technologies that make fundamental changes to the way networks operate.

A major problem yet to be resolved or even addressed much by the industry is how to add SDN control planes to the router-controlled network that uses the MPLS control plane. SDN and MPLS control planes must cooperate or be coordinated in some way since they both control the same network equipment - not an easy problem, and probably the thorniest of all the challenges in deploying SDNs and NFV.

The study participants rated CDNs, IP multimedia subsystem (IMS), and virtual routers/security gateways as the main NFV applications. At least two of these segments already use servers so just how impactful will NFV be for operators?

Many operators see that they can deploy NFV in a much simpler way than deploying control plane changes involved with SDNs.

Many network functions have already been virtualised, that is, software-only versions are available, and many more are under development. But these are individual vendor developments, not done according to any industry standards. This means that NFV - network functions run on servers rather than on specialised network equipment like firewalls, intrusion prevention/intrusion detection systems, Evolved Packet Core hardware - is already in motion.

The formalisation of NFV by the carrier-driven ETSI standards group is underway, developing recommendations and standards so that these virtualised network functions can be deployed in a standardised way.

Infonetics interviewed purchase-decision makers at 21 incumbent, competitive and independent wireless operators from EMEA (Europe, Middle East, Africa), Asia Pacific and North America that have evaluated SDN projects or plan to do so. The carriers represent over half (53 percent) of the world's telecom revenue and CapEx.

Telcos eye servers & software to meet networking needs

- The Network Functions Virtualisation (NFV) initiative aims to use common servers for networking functions

- The initiative promises to be industry disruptive

"The sheer massive [server] volumes is generating an innovation dynamic that is far beyond what we would expect to see in networking"

Don Clarke, NFV

Telcos want to embrace the rapid developments in IT to benefit their networks and operations.

The Network Functions Virtualisation (NFV) initiative, set up by the European Telecommunications Standards Institute (ETSI), has started work to use servers and virtualisation technology to replace the many specialist hardware boxes in their networks. Such boxes can be expensive to maintain, consume valuable floor space and power, and add to the operators' already complex operations support systems (OSS).

"Data centre technology has evolved to the point where the raw throughput of the compute resources is sufficient to do things in networking that previously could only be done with bespoke hardware and software," says Don Clarke, technical manager of the NFV industry specification group, who is also BT's head of network evolution innovation. "The data centre is commoditising server hardware, and enormous amounts of software innovation - in applications and operations - is being applied."

The operators have been exploring independently how IT technology can be applied to networking. Now they have joined forces via the NFV initiative.

"The most exciting thing about the technology is piggybacking on the innovation that is going on in the data centre," says Clarke. "The sheer massive volumes is generating an innovation dynamic that is far beyond what we would expect to see in networking."

Another key advantage is that once networks become software-based, they offer enormous flexibility for creating new services and bringing them to market quickly while also reducing costs.

NFV and SDN

The NFV initiative is being promoted as a complement to software-defined networking (SDN).

The complementary relationship between NFV and SDN. Source: NFV.

SDN is focussed on control mechanisms to reconfigure networks that separate the control plane and the data plane. The transport network can be seen as dumb pipes with the control mechanisms adding the intelligence.

“There are other areas of the network where there is intrinsic complexity of processing rather than raw throughput,” says Clarke.

These include firewalls, session border controllers, deep packet inspection boxes and gateways - all functions that can be ported onto servers. Indeed, once running as software on servers such networking functions can be virtualised.

"Everything above Layer 2 is in the compute domain and can be put on industry-standard servers,” says Clarke. This could even include core IP routers but clearly that is not the best use of general-purpose computing, and the initial focus will be equipment at the edge of the network.

Clarke describes how operators will virtualise network elements and interface them to their existing OSS systems. “We see SDN as a longer journey for us,” he says. “In the meantime we want to get the benefits of network virtualisation alongside existing networks and reusing our OSS where we can.”

NFV will first be applied to appliances that lend themselves to virtualisation and where the impact on the OSS will be minimal. Here the appliance will be loaded as software on a common server instead of current bespoke systems situated at the network's end points. “You [as an operator] can start to draw a list of target things as to what will be of most interest,” says Clarke.

Virtualised network appliances are not a new concept and examples are already available on the market. Vanu's software-based radio access network technology is one such example. “What has changed is the compute resources available in servers is now sufficient, and the volume of servers [made] is so massive compared to five years ago,” says Clarke.

The NFV forum aims to create an industry-wide understanding as to what the challenges are while ensuring that there are common tools for operators that will also increase the total available market.

Clarke stresses that the physical shape of operators' networks - such as local exchange numbers - will not change greatly with the uptake of NFV. “But the kind of equipment in those locations will change, and that equipment will be server-based," says Clarke.

One issue for operators is their telecom-specific requirements. Equipment is typically hardened and has strict reliability requirements. In turn, operators' central offices are not as well air conditioned as data centres. This may require innovation around reliability and resilience in software such that should a server fail, the system adapts and the server workload is moved elsewhere. The faulty server can then be replaced by an engineer on a scheduled service visit rather than an emergency one.
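The software resilience described above, where a failed server's workload is moved elsewhere, can be sketched as follows. This is a minimal illustration and not a method from the NFV initiative: the server names, capacities, workloads and the placement rule (largest workload first, onto the survivor with the most spare capacity) are all hypothetical.

```python
def reassign_on_failure(servers, failed):
    """Move a failed server's workloads onto the surviving servers.

    servers: dict of name -> {"capacity": int, "workloads": {name: load}}
    Returns a dict mapping each moved workload to its new server.
    """
    survivors = {n: s for n, s in servers.items() if n != failed}

    def spare(name):
        s = survivors[name]
        return s["capacity"] - sum(s["workloads"].values())

    placement = {}
    orphaned = servers[failed]["workloads"]
    # Place the largest workloads first so they are not squeezed out.
    for workload, load in sorted(orphaned.items(), key=lambda kv: -kv[1]):
        target = max(survivors, key=spare)
        if spare(target) < load:
            raise RuntimeError(f"no spare capacity for {workload}")
        survivors[target]["workloads"][workload] = load
        placement[workload] = target

    # The failed server is now empty and can await a scheduled visit.
    servers[failed]["workloads"] = {}
    return placement


# Hypothetical central-office servers running virtualised functions.
servers = {
    "s1": {"capacity": 10, "workloads": {"firewall": 4, "sbc": 3}},
    "s2": {"capacity": 10, "workloads": {"dpi": 5}},
    "s3": {"capacity": 10, "workloads": {"gateway": 2}},
}

moved = reassign_on_failure(servers, "s1")
print(moved)
```

Because the functions keep running on the surviving servers, the hardware repair is no longer urgent, which is the operational benefit the scheduled-visit point refers to.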

"Once you get into the software world, all kinds of interesting things that enhance resilience and reliability become possible," says Clarke.

Industry disruption

The NFV initiative could prove disruptive for many telecom vendors.

"This is potentially massively disruptive," says Clarke. "But what is so positive about this is that it is new." Moreover, this is a development that operators are flagging to vendors as something that they want.

Clarke admits that many vendors have existing product lines that they will want to protect. But these vendors have unique telecom networking expertise which no IT start-up entering the field can match.

"It is all about timing," says Clarke. "When do they [telecom vendors] decisively move their product portfolio to a software version is an internal battle that is happening right now. Yes, it is disruptive, but only if they sit on their hands and do nothing and their competitors move first."

Clarke is optimistic about the vendors' response to the initiative. "One of the things the software world has shown us is that if you sit on your hands, a player comes out of nowhere and takes your business," he says.

Once operators deploy software-based network elements, they will be able to do new things with regard to services. "Different kinds of service profiles, different kinds of capabilities and different billing arrangements become possible because it is software- not hardware-based."

Work status

The NFV initiative was unveiled late last year with the first meeting being held in January. The initiative includes operators such as AT&T, BT, Deutsche Telekom, Orange, Telecom Italia, Telefonica and Verizon as well as telecoms equipment vendors, IT vendors and technology providers.

One of the meeting's first tasks was to identify the issues to be addressed to enable the use of servers for telecom functions. Around 60 companies attended the meeting - including 20-odd operators - to create the organisational structure to address these issues.

Two expert groups - on security, and on performance and portability - were set up. “We see these issues as key for the four working groups,” says Clarke. These four working groups cover software architecture, infrastructure, reliability and resilience, and orchestration and management.

Work has started on the requirement specifications, with calls between the members taking place each day, says Clarke. The NFV work is expected to be completed by the end of 2014.

Further information:

White Paper: Network Functions Virtualisation: An Introduction, Benefits, Enablers, Challenges & Call for Action