OFC: After the aliens, a decade to rewire the Earth

At the OFC 2025 Rump Session, held in San Francisco, three teams were set a weighty challenge. If a catastrophic event (a visit by aliens) caused the destruction of the global telecommunications network, how would each team’s ‘superheroes’ go about designing the replacement network? What technologies would they use? And what issues must be considered?

The Rump Session tackled a provocative thought experiment. If the Earth’s entire communication infrastructure vanished overnight, how would the teams go about rebuilding it?

Twelve experts – eleven from industry and one academic – were split into three teams.

The teams were given ten years to build their vision network. A decade was chosen as it is a pragmatic timescale and would allow the teams to consider using emerging technologies.

The Rump Session had four rounds, greater detail being added after each.

The first round outlined the teams’ high-level visions, followed by a round of architectures. Then a segment detailed technology, the round where the differences in the teams’ proposals were most evident. In the final round (Round 4), each team gave a closing statement before the audience chose the winning proposal.

The Rump Session mixed deep thinking with levity and was enjoyed by the participants and audience alike.

Round 1: Network vision

The session began with each team highlighting their network replacement vision.

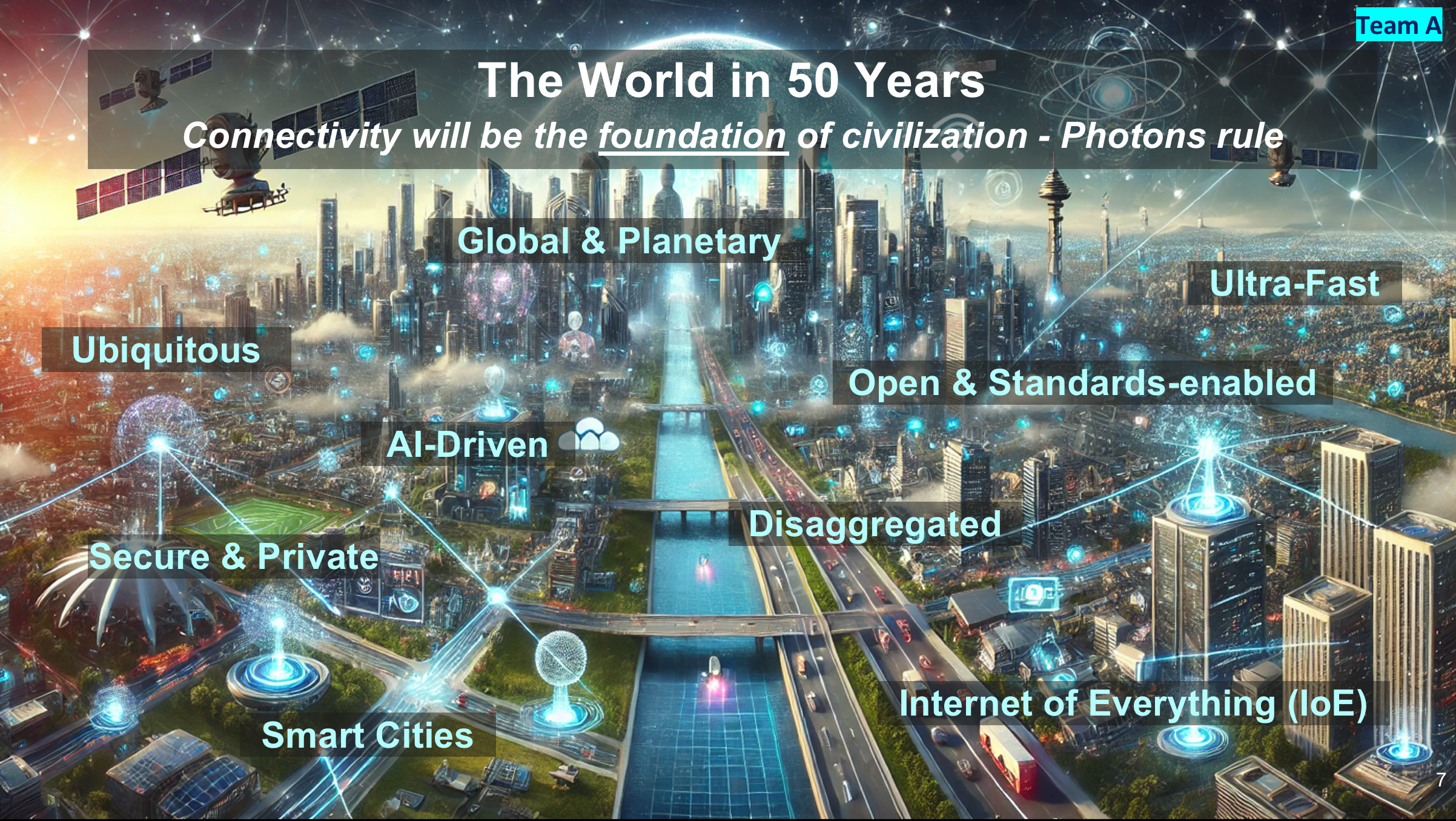

Team A’s Rebecca Schaevitz opened by looking across a hundred-year window. Looking back fifty years to 1975, networking and computing were all electrical, she said: telephone lines, mainframe computing, radio and satellite.

Schaevitz said that by 2075, fifty years hence, connectivity will be the foundation of civilisation. The key difference between the networks a century apart is the marked transition from electrons to photons.

In the future vision, everything will be connected—clothes, homes, roads, even human brains—using sensors and added intelligence. As for work, offices will be replaced with real-time interactive holograms (suggesting humanity will still be working in 2075).

Schaevitz then outlined what must be done in the coming decade to enable Team A’s Network 2075 vision.

The network’s backbone must be optical, supporting multiple wavelengths and quantum communications. Team A will complement the fixed infrastructure with terabit-speed wireless and satellite mega-constellations. And AI will enable the network to be self-healing and adaptive, ensuring no downtime.

Vijay Vusirikala outlined Team B’s network assumptions. Any new network will need to support the explosive growth in computing and communications while being energy constrained. “We must reinvent communications from the ground up for maximum energy savings,” said Vusirikala.

But scarcity—in this case energy—spurs creativity. The goal is to achieve 1000x more capacity for the same energy demand.

The network will have distributed computing based on mega data centres and edge client computing. Massive bandwidth will be made available to link humans and to link machines. Lastly, just enough standardisation will be used for streamlined networking.

Team C’s Katharine Schmidtke closed the network vision round. The goal is universal and cheap communications, with lots of fibre deployed to achieve this.

The emphasis will be on creating a unified fixed-mobile network to aid quick deployment and a unified fibre-radio spectrum for ample connectivity.

Team C stressed the importance of getting the network up and running by using a modular network node. It also argued for micro data centres to deliver computing close to end users.

Global funding will be needed for the infrastructure rebuild, and unlimited rights of way will be a must. Unconstrained equipment and labour will be used at all layers of the network.

Team C will also define the communication network using one infrastructure standard for interoperability. One audience member questioned the wisdom of a tiny committee alone specifying such a grand global project.

The network will also be sustainable by recycling the heat from data centres for crop production and supporting local communities.

Round 2: Architectures

Team A’s Tad Hofmeister opened Round 2 by saying what must change: the era of copper will end – no copper landlines will be installed. The network will also only use packet switching, no more circuit switch technology. And IPv4 will be retired (to great cheering from the audience).

Team A also proposed a staged deployment. First, a network of airborne balloons, connected to the ground using free-space optical links, will communicate with smartphones and laptops.

Stage 2 will add base stations complemented with satellite communications. Fibre will be deployed on a massive scale along roads, railways, and public infrastructure.

Hofmeister stressed the idea of the network being open and disaggregated with resiliency and security integral to the design.

There will be no single mega-telecom or hyperscaler; instead, multiple networks and providers will be encouraged. To ensure interoperability, the standards will be universal.

Security will be based on a user’s DNA key. What about twins? asked an audience member. Hofmeister had that covered: time-of-birth data will be included.

Professor Polina Bayvel detailed Team B’s architectural design. Here, both packet and circuit switching are proposed to minimise energy/bit/km. It will be a network with super high bandwidths, including spokes of capacity extending from massive data centres connecting population centres.

Bayvel argued the case for underwater data centres: 15 per cent of the population live near the coast, she said, and an upside would be that people could work from the beach.

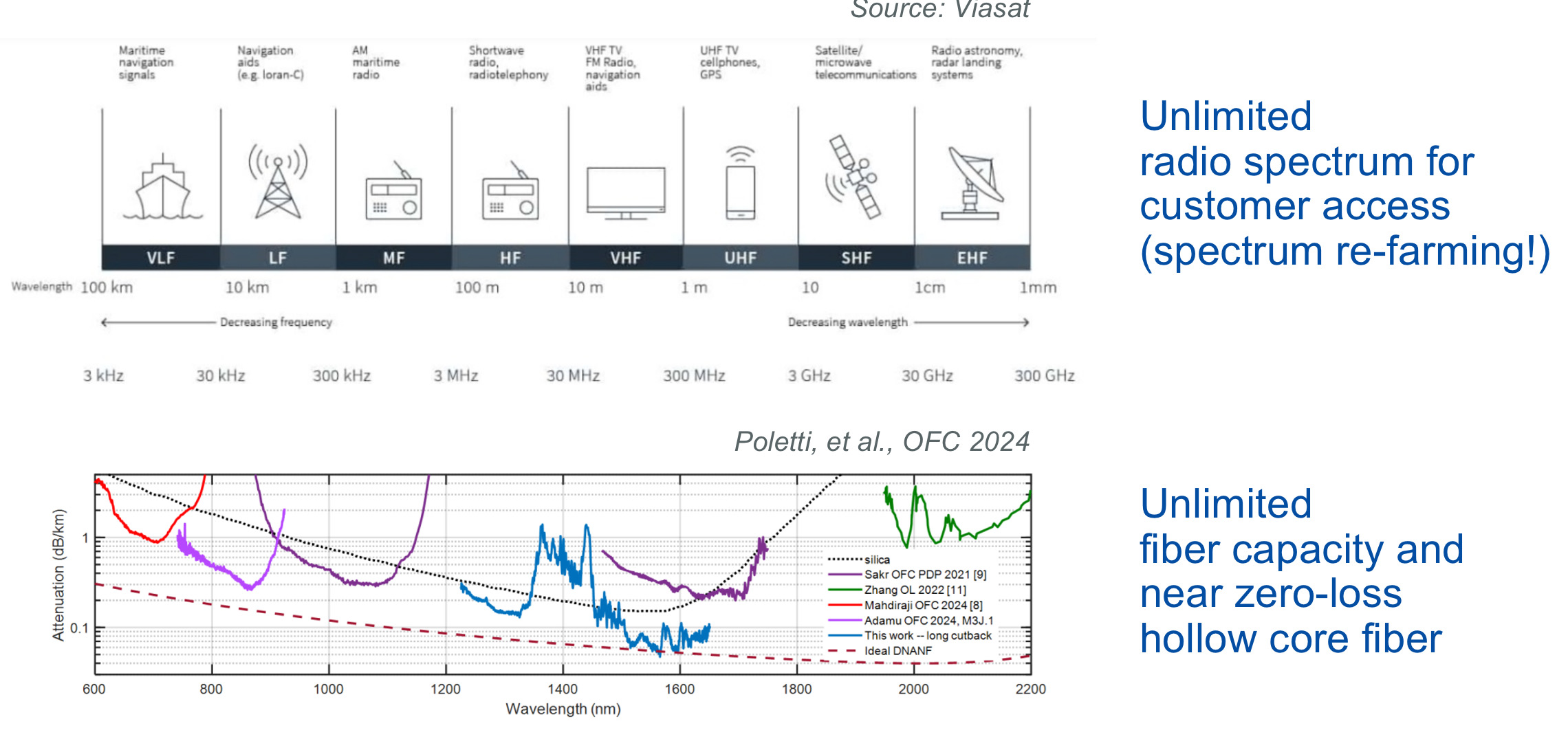

Team C’s Glenn Wellbrock proposed unleashing as much bandwidth as possible by freeing up the radio spectrum and laying hollow-core fibre to offer as much capacity as possible.

Wellbrock views hollow-core fibre as a key optical communications technology that promises years of development, just like first erbium-doped fibre amplifiers (EDFAs) and then coherent optics technology have done.

Team C showed a hierarchical networking diagram mapped onto the geography of the US – similar to today’s network – with 10s of nodes for the wide area network, 100s of metropolitan networks, and 10,000s of access nodes.

Wellbrock proposes self-contained edge nodes based on standardised hardware to deliver high-speed wireless (using the freed-up radio spectrum) and fibre access. There would also be shared communal hardware, though service providers could add their own infrastructure. Differentiation would be based on services.

AI would provide the brains for network operations, with expert staff providing the initial training.

Round 3: Technologies

Round 3, the enabling technologies for the new network, revealed the teams’ deeper thinking.

Team A’s Chris Doerr advocated streamlining and pragmatism to ensure rapid deployment. Silicon photonics will make a quick, massive-scale, and economic deployment of optics possible. Doerr also favours massive parallelism based on 200 gigabaud on-off keying (not PAM-4 signalling). With co-packaged optics added to chips, such parallel optical input-output and symbol rate will save significant power.
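The parallelism argument can be put in numbers. On-off keying carries one bit per symbol and PAM-4 carries two, so at the same 200 gigabaud symbol rate an on-off keyed interface needs twice as many lanes. The sketch below counts the lanes for a hypothetical 3.2-terabit interface; the target capacity and the function names are illustrative assumptions, not figures from the session, and coding overhead is ignored.

```python
import math

# Bits carried per symbol for each modulation format (standard values).
BITS_PER_SYMBOL = {"OOK": 1, "PAM-4": 2}


def lane_rate_gbps(symbol_rate_gbaud: float, modulation: str) -> float:
    """Raw bit rate of one lane, ignoring FEC and line-coding overhead."""
    return symbol_rate_gbaud * BITS_PER_SYMBOL[modulation]


def lanes_needed(target_gbps: float, symbol_rate_gbaud: float, modulation: str) -> int:
    """Smallest number of parallel lanes that reaches the target capacity."""
    per_lane = lane_rate_gbps(symbol_rate_gbaud, modulation)
    return math.ceil(target_gbps / per_lane)


TARGET_GBPS = 3200.0       # hypothetical interface capacity (assumption)
SYMBOL_RATE_GBAUD = 200.0  # symbol rate quoted by Doerr

for fmt in BITS_PER_SYMBOL:
    rate = lane_rate_gbps(SYMBOL_RATE_GBAUD, fmt)
    lanes = lanes_needed(TARGET_GBPS, SYMBOL_RATE_GBAUD, fmt)
    print(f"{fmt}: {rate:.0f} Gb/s per lane, {lanes} lanes")
```

On-off keying trades lane count for simpler signalling, which is the trade Doerr argued would save power once the optics are co-packaged with the chips.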

Standards for all aspects of networking will be designed first. Direct detection will be used inside the data centre; coherent digital signal processing will be used everywhere else. More radically, in the first five years, all generated intellectual property regarding serdes, converters, modems, and switch silicon will be made available to all competition. Chips will be assembled using chiplets.

For line systems, Team A proposed using the C-band only, followed by the deployment of Vibranium-doped optical amplifiers (Grok 3 gives a convincing list of the hypothetical benefits of VDFAs). Parallelism will also play a role here, with spatial division multiplexing preferred to combining a fibre’s O, S, C and L bands.

Like Team C, Doerr also wants vast amounts of hollow-core fibre. It may cost more, but the benefits will be long-term, he said.

Peter Winzer (Team B) also argued for parallelism and a rethink in optics: the best ‘optical’ network may not be ‘optical’ given that photons get more expensive the higher the carrier frequency. So, inside the data centre, using the terahertz band and guided-wave wire promises 100x energy per bit benefits compared to using O-band or C-band optics.

Winzer also argues for 1000x more energy-efficient backbone connectivity by moving to 10-micron wavelengths and ultra-wideband operation to compensate for the 10x spectral efficiency loss that results. But for this to work, lots of fibre will be needed. Here, hollow-core fibre is a possible option.
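Winzer’s remark that photons get more expensive at higher carrier frequencies follows from the photon energy relation E = hc/λ. The sketch below evaluates it for the carriers mentioned above. The nominal 1310 nm and 1550 nm wavelengths for the O-band and C-band, and the 1 THz carrier, are assumptions chosen for illustration; the talk named only the bands.

```python
PLANCK_J_S = 6.62607015e-34       # Planck constant, exact SI value
LIGHT_SPEED_M_S = 299_792_458.0   # speed of light in vacuum, exact SI value


def photon_energy_joules(wavelength_m: float) -> float:
    """Energy of one photon, E = h * c / wavelength."""
    return PLANCK_J_S * LIGHT_SPEED_M_S / wavelength_m


# Nominal wavelengths; the O-band, C-band and 1 THz values are assumptions.
CARRIERS_M = {
    "O-band, 1310 nm": 1310e-9,
    "C-band, 1550 nm": 1550e-9,
    "10 micron": 10e-6,
    "1 THz": LIGHT_SPEED_M_S / 1e12,
}

C_BAND_ENERGY_J = photon_energy_joules(CARRIERS_M["C-band, 1550 nm"])

for name, wavelength_m in CARRIERS_M.items():
    energy_j = photon_energy_joules(wavelength_m)
    ratio = C_BAND_ENERGY_J / energy_j
    print(f"{name}: {energy_j:.3e} J per photon "
          f"(a C-band photon carries {ratio:.2f}x this energy)")
```

Photon energy alone accounts for a factor of roughly six at 10 microns. The 100x and 1000x figures are Winzer’s system-level claims and are not derived here.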

Chris Cole brought the round to a close with radical ways to get the networking deployed. He mentioned Meta’s Bombyx, an installation machine that spins compact fibre cables along power lines.

Underground cabling would use nuclear fibre boring (including the patent number) which produces so much heat that it bores a tunnel while lining its walls with the molten material it produces. An egg-shaped portable nuclear reactor to power data centre containers was also proposed.

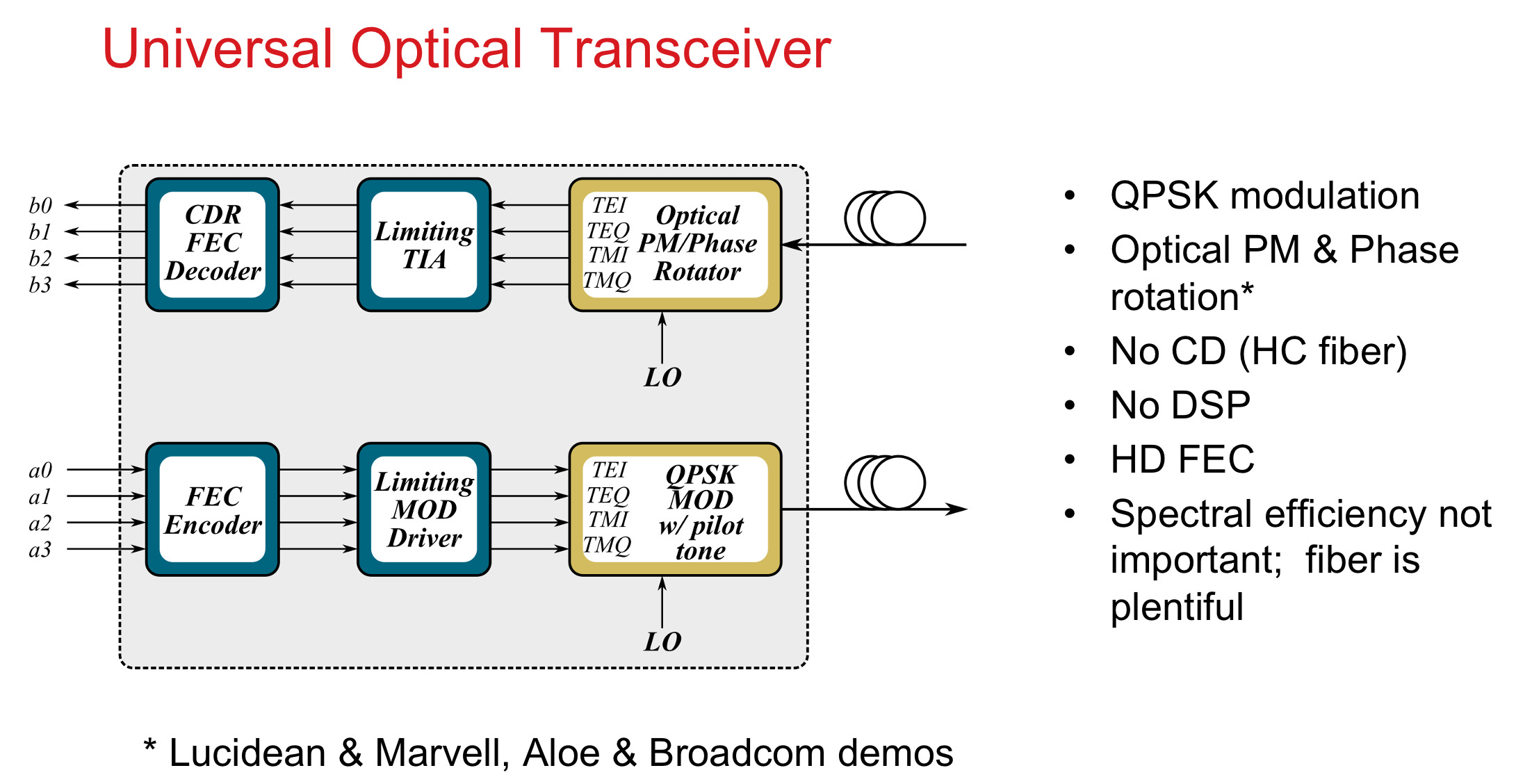

Cole defined a ‘universal’ transceiver with quadrature phase-shift keying (QPSK) modulation with no digital signal processing. “Spectral efficiency is not important as fibre will be plentiful,” says Cole.

Completing arguments

After each team had spent a total of some 14 minutes outlining their networks, they were given one more round for final statements.

Team A’s Maxim Kuschnerov expanded on the team’s first-round slide, which outlined the ingredients needed to enable its Network 2075 vision. He also argued that every network element and connected device should be part of a global AI network. And AI will help co-design the new access network.

The new network will enable a massive wave of intelligent devices. Data will be kept at the edge, and the network will enable low-latency communications and inferencing at the edge.

Team B’s Dave Welch outlined some key statements: fusion energy will power the data centres with 80 per cent of the energy recycled from the heat. Transistors will pass the 10THz barrier, there will be 1000x scaling for the same energy, and an era of atto-joules/bit will begin. “And human-to-human interactions will still make the world go round,” says Welch.

Team C’s Jörg-Peter Elbers ended the evening presentations by outlining schemes to enable the new network: high-altitude platforms in a mega constellation (20km up) trailing fibre to the ground.

Such fibres and free-space links would also act as a sensing early-warning system in case the aliens returned.

Lastly, Elbers suggested we all get a towel (an important multi-purpose tool as outlined in Douglas Adams’ The Hitchhiker’s Guide to the Galaxy). A towel can be used for hand-to-hand combat (when wet), to ward off noxious fumes, and to help avoid the gaze of the Ravenous Bugblatter Beast of Traal. And, in the spirit of the evening, if all else fails, a towel can be used for sending line-of-sight, low-bandwidth smoke signals.

Team C ended the presentations by throwing towels into the audience, like tennis stars after a match.

Common threads

All the teams agreed that fibre was necessary for the network backbone, with hollow-core fibre widely touted.

Two of the teams emphasised a staged rollout and all outlined ways to avoid the ills of existing legacy networks.

Differences included using satellites rather than fibre-fed high-altitude balloons, which are quicker and cheaper to deploy, and the idea of container edges rather than a more centralised service edge. All the teams were creative with their technological approaches.

What wasn’t discussed – it wasn’t in the remit – was the impact of a global disconnect on the world’s population. We would suddenly become broadband have-nots for several years, disconnected from smartphones and hours-per-day screen time.

The teams’ logical assumption was to get the network up and running with even greater bandwidth in the future. But would gaining online access after years offline change our habits? Would we be much more precious in using our upload and download bits? And what impact would a global comms disconnect have on society? Would we become more sociable? Would letter-writing become popular again? And would local communities be strengthened?

Maxim Kuschnerov came closest to this when, in his summary talk, he spoke about how the following iteration of network and communications should be designed to be a force for good for humanity and for its economic prospects.

Team winners

The audience chose Team B’s network proposal. However, the choice was controversial.

An online voting scheme, which would have allowed users to vote and change their vote as the session progressed, had worked perfectly beforehand but keeled over on the night.

The organisers’ fallback plan, measuring the decibel level of the audience’s cheers for each team, ended in controversy.

First, not all the Session attendees were present at the end. Second, a couple of the participants were seen self-cheering into a microphone. Evidence, if needed, of the seriousness with which the ‘superheroes’ embraced architecting a new global network.

“It has been an evening of pure creative chaos: the more time I spend reflecting on the generated ideas, the more their value increases to me,” says Antonio Tartaglia of Ericsson, one of the organisers. “The voting chaos has been an act of God, because all three teams deserved to win.”

Tartaglia came up with this year’s theme for the Rump Session.

“Rump sessions are all about creative debate, and this year’s event took that to its full potential,” says Dirk van den Borne of Juniper Networks, another of the organisers. “Micro data centres, fibre-tethered balloons, Terahertz waveguides, and communication by pigeon; the sheer breadth of ideas shows what an exciting and inventive industry we’re working in.”

The evening ended with a tribute to Team C’s Glenn Wellbrock. BT’s Professor Andrew Lord acknowledged Wellbrock’s career and contribution to optical communications.

Wellbrock officially retired days before the Rump Session.

Webinar: Scaling AI clusters with optical interconnects

A reminder that this Thursday, September 14th, 8:00-9:00 am PT, I will be taking part in a webcast as part of the OCP Educational Webinar Programme that explores the future of AI computing with optical interconnects.

Data and computation drive AI success, and the hyperscalers are racing to build massive AI accelerator-based compute clusters. The impact of large language models and ChatGPT has turbocharged this race. Scaling demands innovation in accelerator chips, node linkages, fabrics, and topology.

For this webinar, industry experts will discuss the challenge of scaling AI clusters. The other speakers include Cliff Grossner Ph.D., Yang Chen, and Bob Shine. To register, please click here

Silicon Photonics: Fueling the Next Information Revolution

New book to be published in December 2016

Silicon Photonics: Fueling the Next Information Revolution is the title of the book Daryl Inniss and I have just completed.

We started writing the book at the end of 2014. We felt the timing was right for a silicon photonics synthesis book that assesses the significant changes taking place in the datacom, telecom, and semiconductor industries, and explains the market opportunities that will result and the role silicon photonics will play.

Silicon photonics is coming to market at a time of momentous change. Internet content providers are driving new requirements as they scale their data centres. The chip industry is grappling with the end of Moore’s law. And the telecom community faces its own challenges as the bandwidth-carrying capacity of fibre starts to be approached.

Each of these changes – the data center, the end of Moore’s law, and a looming capacity crunch – is significant in its own right. But collectively they signify a need for new thinking regarding chips, optics, and systems. Such requirements will also give rise to new business opportunities and industry change. Silicon photonics is arriving at an opportune time.

Despite this, the optical industry still questions the significance of silicon photonics while, for the chip industry, optics remains a science peripheral to their daily concerns. This too will change.

The book discusses how silicon photonics is set to influence both industries. For the optical industry, the technology will allow designs to be tackled in new ways. For the chip industry, silicon photonics may be a peripheral if interesting technology, but it will impact chip design.

The focus of the book is the telecom and datacom industries; these are and will remain the primary markets for silicon photonics for the next decade at least. But we also note other developments where silicon photonics can play an important role.

Silicon photonics will be a key technology for a post–Moore’s law era, and it will be the chip industry, not the photonics industry, that will drive optics.

The book is being published by Elsevier’s Morgan Kaufmann and will be available from mid-December. To see the contents of the book, click here.

Verizon's move to become a digital service provider

Working with Dell, Big Switch Networks and Red Hat, the US telco announced in April it had already brought online five data centres. Since then it has deployed more sites but is not saying how many.

Source: Verizon

“We are laying the foundation of the programmable infrastructure that will allow us to do all the automation, virtualisation and the software-defining we want to do on top of that,” says Chris Emmons, director, network infrastructure planning at Verizon.

“This is the largest OpenStack NFV deployment in the marketplace,” says Darrell Jordan-Smith, vice president, worldwide service provider sales at Red Hat. “The largest in terms of the number of [server] nodes that it is capable of supporting and the fact that it is widely distributed across Verizon’s sites.”

OpenStack is an open-source set of software tools that enable the management of networking, storage and compute services in the cloud. “There are some basic levels of orchestration while, in parallel, there is a whole virtualised managed environment,” says Jordan-Smith.

“Verizon is joining some of the other largest communication service providers in deploying a platform onto which they will add applications over time,” says Dana Cooperson, a research director at Analysys Mason.

Most telcos start with a service-led approach so they can get some direct experience with the technology and one or more quick wins in the form of revenue in a new service arena while containing the risk of something going wrong, explains Cooperson. As they progress, they can still lead with specific services while deploying their platforms, and they can make decisions over time as to what to put on the platform as custom equipment reaches its end-of-life.

A second approach - a platform strategy - is a more sophisticated, longer-term one, but telcos need experience before they take that plunge.

“This announcement suggests that Verizon feels confident enough in its experience with its vendors and their technology to take the longer-term approach,” says Cooperson.

Applications

The Verizon data centres are located in core locations of its network. “We are focussing more on core applications but some of the tools we use to run the network - backend systems - are also targeted,” says Emmons.

The infrastructure is designed to support all of Verizon’s business units. For example, Verizon is working with its enterprise unit to see how it can use the technology to deliver virtual managed services to enterprise customers.

“Wherever we have a need to virtualise something - the Evolved Packet Core, IMS [IP Multimedia Subsystem] core, VoLTE [Voice over LTE] or our wireline side, our VoIP [Voice over IP] infrastructure - all these things are targeted to go on the platform,” says Emmons. Verizon plans to pool all these functions and network elements onto the platform over the next two years.

Red Hat’s Jordan-Smith talks about a two-stage process: virtualising functions and then making them stateless so that applications can run on servers independent of the location of the server and the data centre.

“Virtualising applications and running on virtual machines gives some economies of scale from a cost perspective and density perspective,” says Jordan-Smith. But there is a cost benefit as well as a level of performance and resiliency once such applications can run across data centres.

And by having a software-based layer, Verizon will be able to add devices and create associated applications and services. “With the Internet of Things, Verizon is looking at connecting many, many devices and adding scale to these types of environments,” says Jordan-Smith.

Architecture

Verizon is deploying a ‘pod and core architecture’ in its data centres. A pod contains racks of servers, top-of-rack or leaf switches, and higher-capacity spine switches and storage, while the core network is used to enable communications between pods in the same data centre and across sites (see diagram, top).

Dell is providing Verizon with servers, storage platforms and white box leaf and spine switches. Big Switch Networks is providing software that runs on the Dell switches and servers, while the OpenStack platform and Ceph storage software are provided by Red Hat.

Each Dell rack houses 22 servers - each server having 24 cores and supporting 48 hyper threads - and all 22 servers connect to the leaf switch. Each rack is teamed with a sister rack and the two are connected to two leaf switches, providing switch level redundancy.

“Each of the leaf switches is connected to however many spine switches are needed at that location and that gives connectivity to the outside world,” says Emmons. For the five data centres, a total of 8 pods have been deployed amounting to 1,000 servers and this has not changed since April.
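Multiplying out the figures quoted above gives a rough sense of the deployment’s scale. The sketch assumes every one of the 1,000 servers matches the 24-core, 48-thread configuration described and that racks are filled completely; the article states neither, so treat the totals as estimates.

```python
import math

# Figures quoted in the article.
SERVERS_PER_RACK = 22
CORES_PER_SERVER = 24
THREADS_PER_SERVER = 48
TOTAL_SERVERS = 1000
TOTAL_PODS = 8
DATA_CENTRES = 5

cores_per_rack = SERVERS_PER_RACK * CORES_PER_SERVER
threads_per_rack = SERVERS_PER_RACK * THREADS_PER_SERVER

# Assumption: all servers share the same core count.
total_cores = TOTAL_SERVERS * CORES_PER_SERVER

# Averages only; pods need not be the same size.
servers_per_pod = TOTAL_SERVERS / TOTAL_PODS
racks_per_pod = math.ceil(servers_per_pod / SERVERS_PER_RACK)
pods_per_data_centre = TOTAL_PODS / DATA_CENTRES

print(f"Cores per rack: {cores_per_rack}, threads per rack: {threads_per_rack}")
print(f"Total cores (uniform servers assumed): {total_cores}")
print(f"Average servers per pod: {servers_per_pod:.0f}, "
      f"about {racks_per_pod} full racks")
print(f"Average pods per data centre: {pods_per_data_centre:.1f}")
```

An average of about six racks per pod is consistent with racks being deployed in sister pairs, although the actual pod sizes are not given.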

Verizon has deliberately chosen to separate the pods from the core network so it can innovate at the pod level independently of the data centre’s network.

“We wanted the ability to innovate at the pod level and not be tied into any technology roadmap at the data centre level,” says Emmons who points out that there are several ways to evolve the data centre network. For example, in some cases, an SDN controller is used to control the whole data centre network.

“We don't want our pods - at least initially - to participate in that larger data centre SDN controller because we were concerned about the pace of innovation and things like that,” says Emmons. “We want the pod to be self-contained and we want the ability to innovate and iterate in those pods.”

Its first-generation pods contain equipment and software from Dell, Big Switch and Red Hat but Verizon may decide to swap out some or all of the vendors for its next-generation pod. “So we could have two generations of pod that could talk to each other through the core network,” says Emmons. “Or they could talk to things that aren't in other pods - other physical network functions that have not yet been virtualised.”

Verizon’s core networks are its existing networks in the data centres. “We didn't require any uplift and migration of the data centre networks,” says Emmons. However, Verizon has a project investigating data-centre interconnect platforms for core networking.

Benefits

Verizon expects capital expenditure and operational expense benefits from its programmable network but says it is too early to quantify them. What excites the operator more is the ability to get services up and running quickly, and to adapt and scale the network according to demand.

“You can reconfigure and reallocate your network once it is all software-defined,” says Emmons. There is still much work to be done, from the network core to the edge. “These are the first steps to that programmable infrastructure that we want to get to,” says Emmons.

Capital expenditure savings result from adopting standard hardware. “The more uniform you can keep the commodity hardware underneath, the better your volume purchase agreements are,” says Emmons. Operational savings also result from using standardised hardware. “Spares becomes easier, troubleshooting becomes easier as does the lifecycle management of the hardware,” he says.

Challenges

“We are tip-of-the-spear here,” admits Emmons. “What we have been doing with Red Hat and Big Switch is not a normal position for a telco where you test something to death; it is a lot different to what people are used to.”

Red Hat’s Jordan-Smith also talks about the accelerated development environment enabled by the software-enabled network. The OpenStack platform undergoes a new revision every six months.

“There are new services that are going to be enabled through major revisions in the not too distant future - the next 6 to 12 months,” says Jordan-Smith. “That is one of the key challenges that operators like Verizon have when they are moving at what is now a very fast pace.”

Verizon continues to deploy infrastructure across its network. The operator has completed most of the troubleshooting and performance testing at the cloud level and in parallel is working on the applications in several of its labs. “Now it is time to put it all together,” says Emmons.

One critical aspect of the move to become a digital service provider will be the operators' ability to offer new services more quickly - what people call service agility, says Cooperson. Only by changing their operations and their networks can operators create and, if needed, retire services quickly and easily.

"It will be evident that they are truly doing something new when they can launch services in weeks instead of months or years, and make changes to service parameters upon demand from a customer, as initiated by the customer," says Cooperson. “Another sign will be when we start seeing a whole new variety of services and where we see communications service providers building those businesses so that they are becoming a more significant part of their revenue streams."

She cites as examples cloud-based services and more machine-to-machine and Internet of Things-based services.

FPGAs with 56-gigabit transceivers set for 2017

Xilinx demonstrated a 56-gigabit transceiver using 4-level pulse-amplitude modulation (PAM-4) at the recent OFC show. The 56-gigabit transceiver, also referred to as a serialiser-deserialiser (serdes), was shown successfully working over a backplane specified for 25-gigabit signalling only.
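PAM-4 encodes two bits per symbol on four amplitude levels, so a 56-gigabit lane runs at 28 gigabaud, close to the symbol rate of a 25-gigabit two-level lane. That is a plausible reason the demonstration could work over a backplane specified for 25-gigabit signalling, though the article does not say so. The sketch below uses a Gray-coded level mapping, a common choice assumed here rather than a detail of Xilinx’s design.

```python
# Gray-coded PAM-4: neighbouring amplitude levels differ by one bit.
# This mapping is an assumption for illustration.
GRAY_PAM4 = {(0, 0): -3, (0, 1): -1, (1, 1): 1, (1, 0): 3}


def pam4_encode(bits):
    """Map a bit sequence to PAM-4 amplitude levels, two bits per symbol."""
    if len(bits) % 2:
        raise ValueError("PAM-4 needs an even number of bits")
    return [GRAY_PAM4[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


def symbol_rate_gbaud(bit_rate_gbps, bits_per_symbol=2):
    """Symbol rate needed to carry a given bit rate."""
    return bit_rate_gbps / bits_per_symbol


symbols = pam4_encode([0, 0, 0, 1, 1, 1, 1, 0])
print(symbols)
print(symbol_rate_gbaud(56), "GBd for a 56 Gb/s PAM-4 lane")
```

The price of halving the symbol rate is that the four levels sit closer together than the two levels of a conventional lane, which makes the receiver more sensitive to noise.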

“Optical module [makers] will take another year to make something decent using PAM-4,” says Garcia, Xilinx’s director of marketing and business development, wired communications. “Our 7nm FPGAs will follow very soon afterwards.”

The company is still to detail its next-generation FPGA family but says that it will include an FPGA capable of supporting 1.6 terabit of Optical Transport Network (OTN) using 56-gigabit serdes only. At first glance that implies at least 28 PAM-4 transceivers on a chip, but OTN is a complex design that is logic-limited rather than I/O-limited, suggesting that the FPGA will feature more than 28 56-gigabit serdes.

Applications

Xilinx’s Virtex UltraScale and its latest UltraScale+ FPGA families feature 16-gigabit and 25-gigabit transceivers. Managing power consumption and maximising reach of the high-speed serdes are key challenges for its design engineers. Xilinx says it has 150 engineers for serdes design.

“Power is always a key challenge because as soon as you talk about 400-gigabit to 1-terabit per line card, you need to be cautious about the power your serdes will use,” says Garcia. He says the serdes need to adapt to the quality of the traces for backplane applications. Customers want serdes that will support 25 gigabit on existing 10-gigabit backplane equipment.

Xilinx describes its Virtex UltraScale as a 400-gigabit capable single-chip system supporting up to 104 serdes: 52 at 16 gigabit and 52 at 25 gigabit.

The UltraScale+ is rated as a 500-gigabit to 600-gigabit capable system, depending on the application. For example, the FPGA could support three, 200-gigabit OTN wavelengths, says Garcia.

Xilinx says the UltraScale+ reduces power consumption by 35% to 50% compared to the same designs implemented on the UltraScale. The Virtex UltraScale+ devices also feature dedicated hardware to implement RS-FEC, freeing up programmable logic for other uses. RS-FEC is used with multi-mode fibre or copper interconnects for error correction, says Xilinx. Six UltraScale+ FPGAs are available and the VU13P, not yet out, will feature up to 128 serdes, each capable of up to 32 gigabit.
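Multiplying out the serdes counts quoted above gives each device’s raw aggregate transceiver bandwidth. These are simple upper bounds that assume every serdes runs at its top rate at once; they are not figures published by Xilinx.

```python
def aggregate_gbps(serdes_groups):
    """Sum raw bandwidth over (count, rate_gbps) groups of serdes."""
    return sum(count * rate_gbps for count, rate_gbps in serdes_groups)


# Serdes counts and rates as quoted in the article.
VIRTEX_ULTRASCALE = [(52, 16), (52, 25)]
VU13P = [(128, 32)]

ultrascale_total = aggregate_gbps(VIRTEX_ULTRASCALE)
vu13p_total = aggregate_gbps(VU13P)

print(f"Virtex UltraScale: {ultrascale_total} Gb/s raw serdes bandwidth")
print(f"VU13P: {vu13p_total} Gb/s raw serdes bandwidth")
```

Both totals sit well above the 400-gigabit to 600-gigabit system ratings quoted, which fits the earlier remark that OTN designs are limited by logic rather than I/O.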

The UltraScale and UltraScale+ FPGAs are being used in several telecom and datacom applications.

For telecom, 500-gigabit and 1-terabit OTN designs are an important market for the UltraScale FPGAs. Another use for the FPGA serdes is for backplane applications. “We don’t need retimers so customers can connect directly to the backplane at 25 gigabit, thereby saving space, power and cost,” says Garcia. Such backplane uses include OTN platforms and data centre interconnect systems.

The FPGA family’s 16-gigabit serdes are also being used in 10-gigabit PON and NG-PON2 systems. “When you have an 8-port or 16-port system, you need to have a dense serdes capability to drive the [PON optical line terminal’s] uplink,” says Garcia.

For data centre applications, the FPGAs are being employed in disaggregated storage systems that involve pooled storage devices. The result is many 16-gigabit and 25-gigabit streams accessing the storage while the links to the data centre and its servers are served using 100-gigabit links. The FPGA serdes are used to translate between the two domains (see diagram).

Source: Xilinx

For its next-generation 7nm FPGAs with 56-gigabit transceivers, Xilinx is already seeing demand for several applications.

Data centre uses include server-to-top-of-rack links as the large Internet providers look to move from 25 gigabit to 50- and 100-gigabit links. Another application is to connect adjacent buildings that make up a mega data centre, which can involve hundreds of 100-gigabit links. A third application is meeting the growing demands of disaggregated storage.

For telecom, the interest is being able to connect directly to new optical modules over 50-gigabit lanes, without the need for gearbox ICs.

Optical FPGAs

Altera, now part of Intel, developed an optical FPGA demonstrator that used co-packaged VCSELs for off-chip optical links. Since then Altera announced its Stratix 10 FPGAs that include connectivity tiles - transceiver logic co-packaged and linked with the FPGA using interposer technology.

Xilinx says it has studied the issue of optical I/O and that there is no technical reason why it can’t be done. But integrating optics in an FPGA raises a business issue, says Garcia: “Who is responsible for the yield? For the support?”

Garcia admits Xilinx could develop its own I/O designs using silicon photonics and then it would be responsible for the logic and the optics. “But this is not where we are seeing the business growing,” he says.

COBO looks inside and beyond the data centre

The Consortium of On-Board Optics is working on 400 gigabit optics for the data centre and also for longer-distance links. COBO is a Microsoft-led initiative tasked with standardising a form factor for embedded optics.

Established in March 2015, the consortium already has over 50 members and expects to have early specifications next year and first hardware by late 2017.

Brad Booth

Brad Booth, the chair of COBO and principal architect for Microsoft’s Azure Global Networking Services, says Microsoft plans to deploy 100 gigabit in its data centres next year and that when the company started looking at 400 gigabit, it became concerned about the size of the proposed pluggable modules, and the interface speeds needed between the switch silicon and the pluggable module.

“What jumped out at us is that we might be running into an issue here,” says Booth.

This led Microsoft to create the COBO industry consortium to look at moving optics onto the line card and away from the equipment’s face plate. Such embedded designs are already being used for high-performance computing, says Booth, while data centre switch vendors have done development work using the technology.

On-board optics delivers higher interface densities, and in many cases in the data centre, a pluggable module isn’t required. “We generally know the type of interconnect we are using, it is pretty structured,” says Booth. But the issue with on-board optics is that existing designs are proprietary; no standardised form factor exists.

“It occurred to us that maybe this is the problem that needs to be solved to create better equipment,” says Booth. Can the power consumed between switch silicon and the module be reduced? And can the interface be simplified by eliminating components such as re-timers?

“This is worth doing if you believe that in the long run - not the next five years, but maybe ten years out - optics needs to be really close to the chip, or potentially on-chip,” says Booth.

400 gigabit

COBO sees 400 gigabit as a crunch point. For 100 gigabit interconnect, the market is already well served by various standards and multi-source agreements so it makes no sense for COBO to go head-to-head here. But should COBO prove successful at 400 gigabit, Booth envisages the specification also being used for 100, 50, 25 and even 10 gigabit links, as well as future speeds beyond 400 gigabit.

The consortium is developing standardised footprints for the on-board optics. “If I want to deploy 100 gigabit, that footprint will be common no matter what the reach you are achieving with it,” says Booth. “And if I want a 400 gigabit module, it may be a slightly larger footprint because it has more pins but all the 400 gigabit modules would have a similar footprint.”

COBO plans to use existing interfaces defined by the industry. “We are also looking at other IEEE standards for optical interfaces and various multi-source agreements as necessary,” says Booth. COBO is also technology agnostic; companies will decide which technologies they use to implement the embedded optics for the different speeds and reaches.

Reliability

Another issue the consortium is focussing on is the reliability of on-board optics and whether to use socketed optics or solder the module onto the board. This is an important consideration given that it is the vendor’s responsibility to fix or replace a card should a module fail.

This has led COBO to analyse the causes of module failure. Largely, it is not the optics but the connections that are the cause. It can be poor alignment with the electrical connector or the cleanliness of the optical connection, whether a pigtail or the connectors linking the embedded module to the face plate. “The discussions are getting to the point where the system reliability is at a level that you have with pluggables, if not better,” says Booth.

Dropping below $1-per-gigabit

COBO expects the cost of its optical interconnect to go below the $1-per-gigabit industry target. “The group will focus on 400 gigabit with the perception that the module could be four modules on 100 gigabit in the same footprint,” says Booth. Using four 100 gigabit optics in one module saves on packaging and the printed circuit board traces needed.

Booth says that 100 gigabit optics is currently priced between $2 and $3-per-gigabit. “If I integrate that into a 400 gigabit module, the price-per-gig comes down significantly,” says Booth. “All the stuff I had to replicate four times suddenly is integrated into one, cutting costs significantly in a number of areas.” Significantly enough to dip below $1-per-gigabit, he says.
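To put rough numbers on that claim, the short sketch below works through the arithmetic using only the figures quoted above: $2 to $3-per-gigabit for 100 gigabit optics and the $1-per-gigabit target. The function name and the idea of expressing the gap as a "required saving" are illustrative assumptions, not figures given by Booth or COBO.

```python
# Illustrative arithmetic only: the prices are the $2-$3-per-gigabit range
# and the $1-per-gigabit target quoted in the article. Names are hypothetical.

def module_price_usd(price_per_gbps, capacity_gbps):
    """Price of one module given a price-per-gigabit and its capacity."""
    return price_per_gbps * capacity_gbps

# Four discrete 100 gigabit optics at today's quoted price range.
four_x_100g_low = 4 * module_price_usd(2, 100)   # 800 dollars
four_x_100g_high = 4 * module_price_usd(3, 100)  # 1200 dollars

# A 400 gigabit module priced at exactly the $1-per-gigabit target.
ceiling_400g = module_price_usd(1, 400)          # 400 dollars

# Fraction of cost that integration would need to remove to reach the target.
required_saving_low = 1 - ceiling_400g / four_x_100g_low    # 0.5
required_saving_high = 1 - ceiling_400g / four_x_100g_high  # about 0.667

print(f"4 x 100G today: ${four_x_100g_low} to ${four_x_100g_high}")
print(f"400G module at $1/Gb: ${ceiling_400g}")
print(f"Saving needed: {required_saving_low:.0%} to {required_saving_high:.0%}")
```

On these assumptions, a 400 gigabit module would have to cost no more than $400, which means integration would need to remove between a half and two thirds of the cost of four separate 100 gigabit optics.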

Power consumption and line-side optics

COBO has not specified power targets for the embedded optics in part because it has greater control of the thermal environment compared to a pluggable module where the optics is encased in a cage. “By working in the vertical dimension, we can get creative in how we build the heatsink,” says Booth. “We can use the same footprint no matter whether it is 100 gigabit inside or 100 gigabit outside the data centre, the only difference is I’ve got different thermal classifications, a different way to dissipate that power.”

The consortium is investigating whether its embedded optics can support 100 gigabit long-haul optics, given such optics has traditionally been implemented as an embedded design. “Bringing COBO back to that market is extremely powerful because you can better manage the thermal environment,” says Booth. And by moving the power-hungry modules away from the face plate, surface area is freed up that can be used for venting and improving air flow.

“We should be considering everything is possible, although we may not write the specification on Day One,” says Booth. “I’m hoping we may eventually be able to place coherent devices right next to the COBO module or potentially the optics and the coherent device built together.

“If you look at the hyper-scale data centre players, we have guys that focus just as much on inside the data centre as they do on how to connect the data centres within a metro area, national area and then sub-sea,” says Booth. “That is having an impact because when we start looking at what we want to do with those networks, we want to have some level of control on what we are doing there and on the cost.

“We buy gazillions of optical modules for inside the data centre. Why is it that we have to pay exorbitant prices for the ones that we are not using inside [the data centre],” he says.

Market opportunities

Having a common form factor for on-board optics will allow vendors to focus on what they do best: the optics. “We are buying you for the optics, we are not buying you for the footprint you have on the board,” he says.

Booth is sensitive to the reservations of optical component makers to such internet business-led initiatives. “It is very tough for these guys to extend themselves to do this type of work because they are putting a lot of their own IP on the line,” says Booth. “This is a very competitive space.”

But he stresses it is also fiercely competitive between the large internet businesses building data centres. “Let’s sit down and figure out what does it take to progress this industry. What does it take to make optics go everywhere?”

Booth also stresses the promising market opportunities COBO can serve such as server interconnect.

“When I look at this market, we are talking about doing optics down to our servers,” says Booth. “I can’t help paint a more rosier picture because when you have got 1.4 million servers, if I end up with optics down to all of those, that is a lot of interconnect.”

Reporting the optical component & module industry

LightCounting recently published its six-monthly optical market research covering telecom and datacom. Gazettabyte interviewed Vladimir Kozlov, CEO of LightCounting, about the findings.

Q: How would you summarise the state of the optical component and module industry?

VK: At a high level, the telecom market is flat, even hibernating, while datacom is exceeding our expectations. In datacom, it is not only 40 and 100 Gig but 10 Gig is growing faster than anticipated. Shipments of 10 Gigabit Ethernet (GbE) [modules] will exceed 1GbE this year.

The primary reason is data centre connectivity - the 'spine and leaf' switch architecture that requires a lot more connections between the racks and the aggregation switch - that is increasing demand. I suspect it is more than just data centres, however. I wouldn't be surprised if enterprises are adopting 10GbE because it is now inexpensive. Service providers offer Ethernet as an access line and use it for mobile backhaul.

Can you explain what is causing the flat telecom market?

Part of the telecom 'hibernation' story is the rapidly declining SONET/SDH market. The decline has been expected but in fact it had been growing up till as recently as two years ago. First, 40 Gigabit OC-768 declined and then the second nail in the coffin was the decline in 10 Gig sales: 10GbE is all SFP+ whereas OC-192 SONET/SDH is still in the XFP form factor.

The steady dense WDM module market and the growth in wireless backhaul are compensating for the decline in SONET/SDH market as well as the sharp drop this year in FTTx transceiver and BOSA (bidirectional optical sub assembly) shipments, and there is a big shift from transceivers to BOSAs.

LightCounting highlights strong growth of 100G DWDM in 2013, with some 40,000 line card port shipments expected this year. Yet LightCounting is cautious about 100 Gig deployments. Why the caution?

We have to be cautious, given past history with 10 Gig and 40 Gig rollouts.

If you look at 10 Gig deployments, before the optical bubble (1999-2000) there was huge expected demand before the market returned to normality, supporting real traffic demand. Whatever 10 Gig was installed in 1999-2000 was more than enough till 2005. In 2006 and 2007 10 Gig picked up again, followed by 40 Gig which reached 20,000 ports in 2008. But then the financial crisis occurred and the 40 Gig story was interrupted in 2009, only picking up from 2010 to reach 70,000 ports this year.

So 40 Gig volumes are higher than 100 Gig but we haven't seen any 40 Gig in the metro. And now 100 Gig is messing up the 40G story.

The question in my mind is how much metro is a bottleneck today? There may be certain large cities which already require such deployments but equally there was so much fibre deployed in metropolitan areas back in the bubble. If fibre cost is not an issue, why go into 100 Gig? The operator will use fibre and 10 Gig to make more money.

CenturyLink recently announced its first customer purchasing 100 Gig connections - DigitalGlobe, a company specialising in high-definition mapping technology - which will use 100 Gig connectivity to transfer massive amounts of data between its data centres. This is still a special case, despite the increasing number of data centres around the world.

There is no doubt that 100 Gig will be a must-have technology in the metro and even metro-access networks once 1GbE broadband access lines become ubiquitous and 10 Gig will be widely used in the access-aggregation layer. It is starting to happen.

So 100 Gigabit in the metro will happen; it is just a question of timing. Is it going to be two to three years or 10-15 years? When people forecast they always make a mistake on the timeline because they overestimate the impact of new technology in the short term and underestimate in the long term.

LightCounting highlights strong sales in 10 Gig and 40 Gig within the data centre but not at 100 Gig. Why?

If you look at the spine and leaf architecture, most of the connections are 10 Gig, broken out from 40 Gig optical modules. This will begin to change as native 40GbE ramps in the larger data centres.

If you go to the super-spine that takes data from aggregation to the data centre's core switches, there 100GbE could be used and I'm sure some companies like Google are using 100GbE today. But the numbers are probably three orders of magnitude lower than in the spine and leaf layers. The demand for volume today for 100GbE is not that high, and it also relates to the high price of the modules.

Higher volumes reduce the price but then the complexity and size of the [100 Gig CFP] modules needs to be reduced as well. With 10 Gig, the major [cost reduction] milestone was the transition to a 10 Gig electrical interface. It has to happen with 100 Gig and there will be the transition to a 4x25Gbps electrical interface but it is a big transition. Again, forget about it happening in two-three years but rather a five- to 10-year time frame.

You also point out the failure of the IEEE working group to come up with a 100 GbE solution for the 500m-reach sweet spot. What will be the consequence of this?

The IEEE is talking about 400GbE standards now. Go back to 40GbE, which was only approved some three years ago. The majority of the IEEE was against having 40GbE at all, the objective being to go to 100GbE and skip 40GbE altogether. At the last moment a couple of vendors pushed 40GbE. And look at 40GbE now, it is [deployed] all over the place: the industry is happy, suppliers are happy and customers are happy.

Again look at 40GbE which has a standard at 10km. If you look at what is being shipped today, only 10 percent of 40GBASE-LR4 modules are compliant with the standard. The rest of the volume is 2km parts - substandard devices that use Fabry-Perot instead of DFB (distributed feedback) lasers. The yields are higher and customers love them because they cost one tenth as much. The market has found its own solution.

The same thing could happen at 100 Gig. And then there is Cisco Systems with its own agenda. It has just announced a 40 Gig BiDi connection which is another example of what is possible.

What will LightCounting be watching in 2014?

One primary focus is what wireline revenues service providers will report, particularly additional revenues generated by FTTx services.

AT&T and Verizon reported very good results in Q3 [2013] and I'm wondering if this is the start of a longer trend. As wireline revenues from FTTx pick up, it will give carriers more of an incentive to invest in supporting those services.

AT&T and Verizon customers are willing to pay a little more for faster connectivity today, but it really takes new applications to develop for end-user spending on bandwidth to jump to the next level. Some of these applications are probably emerging, but we do not know what these are yet. I suspect that one reason for Google offerings of 1Gbps FTTH services to a few communities in the U.S. is to find out what these new applications are, by studying end-user demand.

A related issue is whether deployments of broadband services improve economic growth and by how much. The expectations are high but I would like to see more data on this in 2014.

Q&A with Jerry Rawls - Part 2

The concluding part of the interview with Finisar's executive chairman and company co-founder, Jerry Rawls, to mark the company's 25th anniversary.

Second and final part

Q: Over 25 years, what has been one of your better decisions?

Jerry Rawls: After the crash of 2001, we asked what are we going to do in the optics business? Are we going to stay in it? Is there a bright future? And if so, how are we going to respond to it?

We still believed that this was an attractive market and we had built an important brand. And, we knew we could make it more successful in the future, but we were going to have to change the way we did business.

Deciding to become vertically integrated was the key change. At that time, every other company was trying to sell their assets and remove their fixed costs. They were outsourcing manufacturing instead of bringing it in-house. Everyone wanted a variable cost business model, not a fixed cost model. We clearly went against the mainstream.

That is one of the better decisions we ever made.

Equally, with the benefit of hindsight, what do you regret?

A couple of acquisitions that we made in our early years turned out less than desirable. We were sold some technology for which we believed the probability of success was high. We bought the companies based on their technology, not necessarily on their business, and it did not pan out. One thing we learned from those experiences is that when we buy a company, we try to be much more careful about our due diligence.

Another one I regret, although I don't think it was a bad decision: We had created a division in the company called Network Tools that was the leading company in the SAN (storage area network) industry for protocol analysis.

Every company in the world that was creating SAN equipment bought our protocol analysers for Fibre Channel. That was about a $40 million-a-year business and nicely profitable. We sold it [to JDSU] in 2009 and I regret that because we started that business from scratch. It really helped create the SAN industry; it helped our customers prove their equipment interoperability.

We sold it because we had that $250 million in debt we had to pay off. We had borrowed the money and it was now due. It [2009] was still not a great time, we were trying to raise cash and one asset that had value was this division.

How would you describe the current state of the optical component industry and the main challenges it faces?

The optical component industry is in a pretty healthy place. For the most part, the larger companies are doing quite well. Our business is doing nicely. We have had four quarters in a row where revenues have grown, our profitability metrics are improving and our outlook is good. A lot of that has to do with our focus on the data centre market.

The speeds and feeds in data centres are increasing dramatically: data centres are becoming larger, the connections are faster - connections that used to be copper back in the days of Gigabit Ethernet are now at 10 Gigabits and mostly optical. That transformation of copper to optics that took place in the telephone world 35 years ago is now in full bloom in the data centres. So it is a great time to be in optics because the trends are rolling our way.

We are anticipating spending growth in the telecommunications world with an upgrade in global networks to deal with growing Internet traffic. These networks are changing to very sophisticated ROADM [reconfigurable optical add/drop multiplexer] architectures and 100 Gigabit transmission rates.

We anticipate increasing dollars spent worldwide by phone companies over the next five years. So that sector is going to become healthier and hopefully a larger percentage of our business.

I believe the optical component industry has a number of market opportunities that are going to keep it pretty healthy for some time.

It does not mean that we don't have challenges. The industry, and in particular telecommunications, is fragmented. There are a number of competitors that have very small market share. Many of these competitors are focussing their R&D efforts on the same products - the next generation of telecom equipment - and that is very inefficient. That is the main challenge that the optical industry has, that this fragmentation leads to inefficiency.

That limits the margins of the companies and the industry. It also means that pricing in the industry is at a lower level than component suppliers would like to see.

How that works out is not clear. You could say that in a fragmented industry, you would like to see more consolidation. There will be a little of that. But there are some parts of the industry where consolidation will be very slow.

For example, all of the Japanese optical suppliers are likely to stay in business for some time. Almost every big Japanese electronics company has an optical division, and they always have. None went out of business in the crash of '01 and none went out in the crash of '08-'09. That is because these optics divisions are small parts of giant conglomerates. This fragmentation problem is difficult to solve.

Datacom and the data centre appear to be a more interesting segment in terms of driving change than telecom. How do you view the two segments going forward?

I think both are interesting.

The data centre is interesting because of the increased density of Gigabits-per-square-inch on the faceplates of equipment, whether it is switches, storage or servers. Then there are the faster connection speeds between devices and the demand for low latency. The physical size of some of these data centres is demanding that certain connections become single mode - more like wiring a campus as opposed to the multi-mode historically used in single buildings.

The datacom market is also very interesting because of a number of connections changing from copper to optical as speeds get faster. Copper transmission demands too much power through big cables at these higher speeds.

In telecom, today what is really exciting is the advent of coherent transmission systems, in particular at 100 Gigabits moving to 400 Gigabit and 1 Terabit-per-second in the next decade.

Coherent transmission is revolutionary in that by using electronics rather than optics to do signal correction for long distance fibre transmission, these signals can be much more efficient, run faster and be much less costly than they have ever been in the past.

Coupled with that is the automation of these optical networks through the extensive use of sophisticated ROADMs. With the next generation of networks, truck rolls to do provisioning and reconfigurations will be almost eliminated.

So there is a lot of excitement for us just because of what is coming to telecom networks. We have been through a lull for the last couple of years but it is a cyclical industry that tends to follow technology waves. We are entering the 100 Gigabit transmission wave and the sophisticated use of many, many ROADMs in these networks for automation.

Silicon photonics is spoken of as a disruptive technology for datacom and telecom. It also promises to disrupt the component supply chain. What is Finisar's take on the technology?

As a company, we are very product focussed and we want to deliver transmission products and switching products, etc. that fulfill our customers' needs. We don't really care what the technology is. We are going to invest in technology that enables us to build the highest performing and most efficient devices that we can.

Silicon photonics is an interesting technology. We haven't used it in any of our products so far with the exception of a silicon waveguide in an integrated receiver. The most interesting thing about silicon photonics is not just to be able to make waveguides for multiplexers or demultiplexers, but to make modulators.

People have been speculating for years that we will have to use external modulators to achieve higher transmission speeds as we won’t be able to directly drive a laser fast enough.

We make VCSELs by the tens of millions. When we were making them at one Gigabit-per-second [Gbps], there were those in the industry that predicted that we would never be able to run at 2 Gbps as it would be impossible to modulate the lasers that fast. Then we did 2 Gbps, and then there were those that said it would be impossible to do 4, 8 or 10 Gigabits. Well, we are shipping devices today that are 25Gbps VCSELs that are directly modulated.

At every one of those steps there were people investing in silicon photonics companies because they could build modulators they thought would run that fast. I believe every one of those silicon photonics companies went broke.

We now have a new wave of silicon photonics companies. And because Cisco Systems happened to buy one [LightWire], there has been a lot of excitement about silicon photonics.

Well, the physics are such that it is always more efficient to directly modulate a laser - that is, to drive it with an injection of current - than it is to have a continuous wave laser where you externally modulate the light. The external modulation takes more power, more components and more cost.

Guys that are in the silicon photonics industry have a religion. It does not make any difference what the real economics are, what the real performance is, they talk with a religious fervor about what might be possible with silicon.

To date, no one has been able to make light out of silicon. That means one can make a silicon modulator and a silicon waveguide but still have to buy an indium phosphide laser to create light. Then they would have to bond that laser to the silicon substrate in a way that it efficiently launches light, is mechanically stable, and hermetic and that it will stand the rigours of all these networks. That means it can be deployed for 10 or 20 years over temperatures of 0 to 85 degrees C, and survive the qualification torture tests of high humidity, high heat and temperature cycling.

One of the things in the silicon photonics industry to date has been that the packaging - and therefore the yields - have been so difficult, such that the costs have been very high.

I promise you today that for almost every application, silicon photonics costs are higher than using traditional indium phosphide and gallium arsenide lasers and direct modulation.

We don't ignore silicon photonics as a potential technology.

We have designed silicon photonic chips here at Finisar and have evaluations that are ongoing. There are many companies that now offer silicon photonics foundry services. You can lay out a chip and they will build it for you.

We can go to a foundry; we can use their design rules and libraries and design silicon modulators and waveguides and put together a chip with as many splits and Mach-Zehnders as we want. The problem is we haven't found a place where it can be as efficient, or offer the same performance, as using traditional lasers and free-space optics.

Our packaging has been more efficient and our output has been at a higher performance level. Remember that silicon is optically quite lossy. That means you have to launch a lot of light into it to get a little light out.

So far we just haven't found a product where we thought silicon photonics modulation was as efficient as we could build using some other technology. That is true today.

We may use silicon photonics one of these days. In fact, if we look back five or 10 years ago, when we predicted what we would need to build a 100 Gig transponder, silicon photonics was one of our alternatives, and one of the paths we went down in parallel in completing the design.

As it turns out, traditional optics and micro-optical components exceeded our own expectations.

I compare it to the disc drive industry. Twenty years ago people were predicting the demise of the disc drive industry because of solid state memory. It was thought impossible that disc drives would be around five years hence. Well, the guys in the disc industry learned how to increase the bit density and the resolution of the heads and look at the industry today. You can buy a Terabyte drive for less than a hundred dollars. The amazing technology advances they have made have kept them in the game.

What are the biggest challenges facing Finisar?

The biggest challenge we face is meeting the changes in the industry. The use of information is becoming so pervasive - video everywhere and 4G networks - that means all the kids are going to be streaming HD video to some device in their hand. And there are going to be billions of them.

Also, another challenge is managing the expectations of our customers - the equipment companies - in terms of delivering the speeds, densities and the low power performance needed to provide all this information.

It is a daunting task.

We have customers today trying to design systems that will have Terabit-per-second optical links. We don't know how we are going to get there yet but I promise you we will.

The industry in 25 years' time: Still datacom & telecom or something else by then?

In 25 years' time, datacom and telecom will be much more converged.

The data centre today is becoming more like wiring a campus network than it is wiring a building as the distances become larger and the speeds faster. Today in data centres we only use point-to-point connections; we use no multiple wavelengths on fibres.

In the telephone world, everything is WDM. Today we are using mostly 96 wavelengths on a single fibre. Those 96 channels can all run at 100 Gbps – a total of nearly 10 Terabit on a single fibre. In the data centre world most connections are single wavelengths, point-to-point. But in 25 years, the data centres are going to be using many of the techniques that are used in the telecom networks today in terms of making efficient use of fibres, using multiple colours of light, and being able to switch those individual colours.
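As a quick check on the "nearly 10 Terabit" figure, the sketch below multiplies out the channel count and per-channel rate quoted above. The function name is an illustrative assumption, and the calculation ignores any overhead such as forward error correction.

```python
# Illustrative arithmetic using the figures quoted in the interview:
# 96 WDM wavelengths per fibre, each running at 100 Gbps.

def fibre_capacity_tbps(wavelengths, rate_gbps):
    """Aggregate capacity of one fibre in terabits-per-second."""
    return wavelengths * rate_gbps / 1000

wdm_capacity = fibre_capacity_tbps(96, 100)          # telecom-style WDM fibre
single_channel_capacity = fibre_capacity_tbps(1, 100)  # one wavelength, point-to-point

print(f"WDM fibre: {wdm_capacity} Tbps")
print(f"Single-wavelength fibre: {single_channel_capacity} Tbps")
print(f"Ratio: {wdm_capacity / single_channel_capacity:.0f}x")
```

The product is 9.6 Tbps per fibre, 96 times what the same fibre carries with a single 100 Gbps wavelength, which is the efficiency gap Rawls expects data centres to close.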

60-second interview with Michael Howard

Infonetics Research has interviewed global service providers regarding their plans for software-defined networking (SDN) and network functions virtualisation (NFV). Gazettabyte asked Michael Howard, co-founder and principal analyst, carrier networks, about Infonetics' findings.

"Data centres are simple when compared to carrier networks"

Michael Howard, Infonetics Research

What is it about SDN and NFV - technologies still in their infancy - that already convinces 86 percent of the operators to deploy the technologies in their optical transport networks?

Michael H: Operators have a universal draw to SDN and NFV for two basic reasons:

1. They want to accelerate revenue by reducing the time to new services and applications.

2. They have operational drivers, of which there are also two parts:

- Carriers expect software-defined networks to give them a single view across multiple vendor equipment, network layers and equipment types for mobile backhaul, consumer digital subscriber line (DSL), passive optical network (PON), optical transport, routers, mobile core and Ethernet access. This global view will allow them to provision, monitor and deliver service-level agreements while controlling services, virtual networks and traffic flows in an easier, more flexible and automated way.

- An additional function possible with such a global view across the multi-vendor network is that traffic can be monitored and re-distributed along pathways to make best use of the network. In this way, the network can run 'hotter' and thereby require less equipment, saving capital expenditure (CapEx).
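The idea of running the network 'hotter' by redistributing traffic can be illustrated with a toy example. The sketch below is not how any SDN controller works; the flow sizes, the two 100-gigabit paths and both placement functions are invented purely for illustration. It compares placing flows without regard to load (round-robin) against placing them with a global view of path load (largest flow first, onto the least-loaded path).

```python
# Toy illustration only: flows, capacities and both placement strategies
# are hypothetical, not taken from the survey or any real controller.

def round_robin(flows, n_paths):
    """Place flows on paths in turn, ignoring how loaded each path is."""
    loads = [0] * n_paths
    for i, flow in enumerate(flows):
        loads[i % n_paths] += flow
    return loads

def least_loaded(flows, n_paths):
    """Place the largest flows first, each on the currently least-loaded path."""
    loads = [0] * n_paths
    for flow in sorted(flows, reverse=True):
        target = loads.index(min(loads))  # global view of all path loads
        loads[target] += flow
    return loads

PATH_CAPACITY_GBPS = 100                 # assumed capacity of each path
flows_gbps = [40, 10, 40, 10, 40, 10]    # assumed traffic flows

blind = round_robin(flows_gbps, 2)
balanced = least_loaded(flows_gbps, 2)

print("Round-robin loads:", blind, "peak", max(blind) / PATH_CAPACITY_GBPS)
print("Least-loaded loads:", balanced, "peak", max(balanced) / PATH_CAPACITY_GBPS)
```

With these made-up numbers, the blind placement overloads one path at 120 percent while the other sits at 30 percent. The load-aware placement fits the same traffic onto the same two paths at 80 and 70 percent, which is the sense in which a global view can defer the purchase of extra equipment.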

Optical transport networks have a history of being engineered to effect predictable flows on transport arteries and backbones. Many operators have deployed, or have been experimenting with, GMPLS (Generalized Multi-Protocol Label Switching) and vendor control planes. So it is natural for them to want an industry-standard method of deploying an SDN control plane over the usually multi-vendor transport network.

In our conversations - independent of our survey - we find that several operators believe the biggest bang for the SDN buck is to use SDN as a single control plane over the multi-layer data network - router, Ethernet - and the optical transport network.

"The virtualisation of data centre networks has inspired operators who want to apply the same general principles to their oh-so-much-more complex networks"

Early use of SDN has been in the data centre. How will the technologies benefit networks more generally and optical transport in particular?

SDNs were developed initially to solve the operational problems of un-automated networks. That is to say, the slow, labour-intensive manual network changes required as the automated hypervisor moves, adds and changes virtual machines across servers that may be in the same data centre or in multiple data centres.

The virtualisation of data centre networks has inspired operators who want to apply the same general principles to their oh-so-much-more complex networks. Data centres are simple when compared to carrier networks. Data centres are basically large numbers of servers connected by Ethernet LANs and virtual LANs with some router separations of the LANs connecting servers.

"It will be many years before SDNs-NFV will be deployed in major parts of a carrier network"

Service provider networks are a set of many different types of networks including consumer broadband, business virtual private networks, optical transport, access/aggregation Ethernet and router networks, mobile core and mobile backhaul. Each of these comprises multiple layers and almost certainly involves multiple vendor equipment. This explains why operators are starting their SDN-NFV investigations with small network segments which we call 'contained domains'. It will be many years before SDNs-NFV will be deployed in major parts of a carrier network.

You mention small SDN and NFV deployments. What will these early applications look like?

Our survey respondents indicated that intra-datacentre, inter-datacentre, cloud services, and content delivery networks (CDNs) will be the first to be deployed by the end of 2014. Other areas targeted longer term are optical transport, mobile packet core, IP Multimedia Subsystem, and more.

Was there a finding that struck you as significant or surprising?

Yes. A lot of current industry buzz is about optical transport networks, making me think that we'd see SDNs deployed soon. But what we heard from operators is that optical transport networks are further out in their deployment plans. This makes sense in that the Open Networking Foundation working group for transport networks has just recently got their standardisation efforts going, which usually takes a couple of years.

You say that it will be years before large parts or a whole network will be SDN-controlled. What are the main challenges here regarding SDN and will they ever control a whole network?

As I said earlier, carrier networks are complex beasts, and they are carrying revenue-generating services that cannot be risked by deployment of a new set of technologies that make fundamental changes to the way networks operate.

A major problem yet to be resolved or even addressed much by the industry is how to add SDN control planes to the router-controlled network that uses the MPLS control plane. SDN and MPLS control planes must cooperate or be coordinated in some way since they both control the same network equipment - not an easy problem, and probably the thorniest of all the challenges in deploying SDNs and NFV.

The study participants rated CDNs, IP multimedia subsystem (IMS), and virtual routers/security gateways as the main NFV applications. At least two of these segments already use servers so just how impactful will NFV be for operators?

Many operators see that they can deploy NFV in a much simpler way than deploying control plane changes involved with SDNs.

Many network functions have already been virtualised, that is software-only versions are available, and many more are under development. But these are individual vendor developments, not done according to any industry standards. This means that NFV - network functions run on servers rather than on specialised network equipment such as firewalls, intrusion prevention/intrusion detection systems and Evolved Packet Core hardware - is already in motion.

The formalisation of NFV by the carrier-driven ETSI standards group is underway, developing recommendations and standards so that these virtualised network functions can be deployed in a standardised way.

Infonetics interviewed purchase-decision makers at 21 incumbent, competitive and independent wireless operators from EMEA (Europe, Middle East, Africa), Asia Pacific and North America that have evaluated SDN projects or plan to do so. The carriers represent over half (53 percent) of the world's telecom revenue and CapEx.

Dan Sadot on coherent's role in the metro and the data centre

Gazettabyte went to visit Professor Dan Sadot, academic, entrepreneur and founder of chip start-up MultiPhy, to discuss his involvement in start-ups, his research interests and why he believes coherent technology will not only play an important role in the metro but also the data centre.

"Moore's Law is probably the most dangerous enemy of optics"

Professor Dan Sadot

The Ben-Gurion University campus in Beer-Sheba, Israel, is a mixture of brightly lit, sharp-edged glass-fronted buildings and decades-old palm trees.

The first thing you notice on entering Dan Sadot's office is its tidiness; a paperless desk on which sits a MacBook Air. "For reading maybe the iPad could be better but I prefer a single device on which I can do everything," says Sadot, hinting at a need to be organised, unsurprising given his dual role as CTO of MultiPhy and chairman of Ben-Gurion University's Electrical and Computer Engineering Department.

The department, ranked in the country's top three, is multi-disciplinary. Just within the Electrical and Electronics Department there are eight tracks including signal processing, traditional communications and electro-optics. "That [system-oriented nature] is what gives you a clear advantage compared to experts in just optics," he says.

The same applies to optical companies: there are companies specialising in optics and ASIC companies that are expert in algorithms, but few have both. "Those that do are the giants: [Alcatel-Lucent's] Bell Labs, Nortel, Ciena," says Sadot. "But their business models don't necessarily fit that of start-ups so there is an opportunity here."

MultiPhy

MultiPhy is a fabless start-up that specialises in high-speed digital signal processing-based chips for optical transmission. In particular it is developing 100Gbps ICs for direct detection and coherent.

Sadot cites a rule of thumb that he adheres to religiously: "Everything you can do electronically, do not do optically. And vice versa: do optically only the things you can't do electronically." This is because using optics turns out to be more expensive.

And it is this that MultiPhy wants to exploit by being an ASIC-only company with specialist knowledge of the algorithms required for optical transmission.

"Electronics is catching up," says Sadot. "Moore's Law is probably the most dangerous enemy of optics."

Ben-Gurion University Source: Gazettabyte

Direct detection

Coherent transmission has been made possible not only by developments in electronics but also by advances in optical hardware. Coherent needs accurate retrieval of phase information, and that was not possible with available hardware until recently. In particular the phase noise of lasers was too high, says Sadot. Now optics is enabling coherent, and the issues that arise with coherent transmission can be solved electronically using DSP.

MultiPhy has entered the market with its MP1100Q chip for 100Gbps direct detection. According to Sadot, 100Gbps is the boundary data rate between direct detection and coherent. Below 100Gbps coherent is not really needed, he says, even though some operators are using the technology for superior long-haul optical transmission performance at 40Gbps.

"Beyond 100 Gig you need the spectral efficiency, you need to do denser [data] constellations so you must have coherent," says Sadot. "You are also much more vulnerable to distortions such as chromatic dispersion and you must have the coherent capability to do that."

But at 100 Gig the two - coherent and direct detection - will co-exist.

MultiPhy's first device runs the maximum likelihood sequence estimation (MLSE) algorithm that is used to counter fibre transmission distortions. "MLSE offers the best possible theoretical solution on a statistical basis without retrieving the exact phase," says Sadot. "That is the maximum you can squeeze out of direct detection."

The MLSE algorithm benefits optical performance by extending the link's reach while allowing lower cost, reduced-bandwidth optical components to be used. MultiPhy claims 10Gbps-class components (4x10Gbps) can be used in the transmit and the receive paths to implement the 4x28Gbps (100Gbps) design.

Sadot describes MLSE as a safety net in its ability to handle transmitter and/or receiver imperfections. "We have shown that performance is almost identical with a high quality transmitter and a lower quality transmitter; MLSE is an important addition," he says.
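To make the idea of MLSE concrete, here is a minimal sketch of sequence estimation using the Viterbi algorithm. It is not MultiPhy's implementation. It assumes a toy linear two-tap channel in which each received sample mixes the current bit with the previous one, which is one simple way to model the smearing caused by reduced-bandwidth components; real direct detection is square-law and more involved. The names `channel`, `threshold_detect` and `mlse_viterbi`, the tap values and the noise level are all hypothetical.

```python
import random


def channel(bits, taps, noise_std=0.0, rng=None):
    """Toy band-limited link: sample = taps[0]*bit + taps[1]*previous bit + noise."""
    rng = rng or random.Random(0)
    out, prev = [], 0
    for b in bits:
        noise = rng.gauss(0.0, noise_std) if noise_std > 0 else 0.0
        out.append(taps[0] * b + taps[1] * prev + noise)
        prev = b
    return out


def threshold_detect(received, threshold):
    """Symbol-by-symbol decision that ignores the channel memory."""
    return [1 if r > threshold else 0 for r in received]


def mlse_viterbi(received, taps):
    """Return the bit sequence with the smallest squared error against the samples.

    The trellis state is the previous bit; the bit before the first is assumed 0.
    """
    inf = float("inf")
    cost = [0.0, inf]          # accumulated cost of the best path ending in each state
    paths = [[], []]           # surviving path for each state
    for r in received:
        new_cost, new_paths = [inf, inf], [[], []]
        for prev in (0, 1):
            if cost[prev] == inf:
                continue
            for bit in (0, 1):
                expected = taps[0] * bit + taps[1] * prev
                c = cost[prev] + (r - expected) ** 2
                if c < new_cost[bit]:
                    new_cost[bit] = c
                    new_paths[bit] = paths[prev] + [bit]
        cost, paths = new_cost, new_paths
    return paths[0] if cost[0] <= cost[1] else paths[1]


# Compare the two detectors on a heavily smeared, noisy link (assumed values).
rng = random.Random(1)
taps = [1.0, 0.9]
bits = [rng.randint(0, 1) for _ in range(500)]
rx = channel(bits, taps, noise_std=0.15, rng=rng)
errors_threshold = sum(a != b for a, b in zip(bits, threshold_detect(rx, 0.95)))
errors_mlse = sum(a != b for a, b in zip(bits, mlse_viterbi(rx, taps)))
print("threshold errors:", errors_threshold, "MLSE errors:", errors_mlse)
```

With these taps, a 0 that follows a 1 arrives at 0.9 and a 1 that follows a 0 arrives at 1.0, so a per-sample threshold has almost no margin against noise. The sequence estimator uses the known channel memory to tell the two cases apart, which is the sense in which MLSE lets lower-bandwidth parts be used.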

Ben-Gurion University Source: Gazettabyte

Coherent metro

System vendors such as Ciena and Alcatel-Lucent have recently announced their latest generation coherent ASICs designed to deliver long-haul transmission performance. But this, argues Sadot, is overkill for most applications when ultra-long haul is not needed: metro alone accounts for 75% of all the line side ports.

He also says that the power consumption of long-haul solutions is over 3x what is required for metro: 75W versus the CFP pluggable module's 24W. Of the module's 24W, the power available solely for the ASIC would be 15W.
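The power figures can be checked with a few lines of arithmetic. This sketch assumes the 15W ASIC allowance is part of the CFP module's 24W, which the text implies but does not state outright; the function names are hypothetical.

```python
LONG_HAUL_W = 75.0   # long-haul coherent solution, figure quoted by Sadot
CFP_MODULE_W = 24.0  # CFP pluggable module, figure quoted by Sadot
ASIC_W = 15.0        # power available for the ASIC, figure quoted by Sadot


def power_ratio(long_haul_w: float, module_w: float) -> float:
    """How many times more power the long-haul design draws than the module."""
    return long_haul_w / module_w


def remaining_budget_w(module_w: float, asic_w: float) -> float:
    """Power left for everything other than the ASIC (assumes the ASIC share is inside the module budget)."""
    return module_w - asic_w


print(f"long-haul draws {power_ratio(LONG_HAUL_W, CFP_MODULE_W):.3f}x the CFP budget")
print(f"left for optics and other electronics: {remaining_budget_w(CFP_MODULE_W, ASIC_W):.0f}W")
```

Under that assumption, the optics and remaining electronics would share about 9W.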

"This is not fine-tuning; you really need to design the [coherent metro ASIC] from scratch," says Sadot. "This is what we are doing."

To achieve this, MultiPhy has developed patents that involve “sub-Nyquist” sampling. This allows the analogue-to-digital converters and the DSP to operate at half the sampling rate, saving power. Sampling at half the rate requires a low-pass anti-aliasing filter ahead of the converters, but the filter distorts the received signal. The MLSE algorithm is then used to counter the effects of the low-pass filtering. And because the signal is low-pass filtered anyway, reduced-bandwidth opto-electronics can be used, which reduces cost.

The result is a low power, cost-conscious design suited for metro networks.

Coherent elsewhere

Next-generation PON is also a likely user of coherent technology for such schemes as ultra-dense WDM-PON.

Sadot believes coherent will also find its way into the data centre. "Again you will have to optimise the technology to fit the environment - you will not find an over-design here," he says.

Why would coherent, a technology associated with metro and long-haul, be needed in the data centre?

"Even though there is the 10x10 MSA, eventually you will be limited by spectral efficiency," he says. Although there is a tremendous amount of fibre in the data centre, there will be a need to use this resource to the maximum. "Here it will be all about spectral efficiency, not reach and optical signal-to-noise," says Sadot.

Sadot's start-ups

Sadot had a research posting at the optical communications lab at Stanford University. The inter-disciplinary and systems-oriented nature of the lab was an influence on Sadot when he founded the optical communications lab at Ben-Gurion University around the time of the optical boom. "A pleasant time to come up with ideas," is how he describes that period - 1999-2000.

The lab's research focus is split between optical and signal processing topics. Work there resulted in two start-ups during the optical bubble which Sadot was involved in: Xlight Photonics and TeraCross.

Xlight focused on ultra-fast lasers as part of a tunable transponder. Xlight eventually merged with another Israeli start-up, Civcom, which in turn was acquired by Padtec.

The second start-up, TeraCross, looked at scheduling issues to improve throughput in Terabit routers. "The start-up led to a reference design that was plugged into routers in Cisco's Labs in Santa Clara [California]," says Sadot. "It was the first time a scheduler showed the capability to support a one Terabit data stream, and route in a sophisticated, global manner."

But with the downturn of the market, the need for terabit routers disappeared and the company folded.

Sadot's third and latest start-up, MultiPhy, also has its origins in Ben-Gurion's optical communications lab's work on enabling system upgrades without adding to system cost.

MultiPhy started as a PON company looking at how to upgrade GPON and EPON to 10 Gigabit PON without changing the hardware. "The magic was to use previous-generation hardware which introduces distortion as it doesn't really fit this upgrade speed, and then to compensate by signal processing," says Sadot.

After several rounds of venture funding the company shifted its focus from PON, applying the concept to 100 Gigabit optical transmission instead.