Intel adds multi-channel lasers to its silicon photonics toolbox

Intel has developed an 8-lane parallel-wavelength laser array to tackle the growing challenge of feeding data to integrated circuits (ICs).

Optical input-output (I/O) promises to solve the challenge of getting data into and out of high-end silicon devices.

These ICs include Ethernet switch chips and ‘XPUs’, shorthand for processors (CPUs), graphics processing units (GPUs) and data processor units (DPUs).

The laser array is Intel’s latest addition to its library of silicon photonics devices.

Power wall

A key challenge facing high-end chip design is the looming ‘power wall’. The electrical I/O power consumption of advanced ICs is rising faster than the power the chip consumes processing data.

James Jaussi, senior principal engineer and director, PHY research lab at Intel Labs, says if this trend continues, all the chip’s power will be used for communications and none will be left for processing, what is known as the power wall.

One way to arrest this trend is to use optical rather than electrical I/O by placing chiplets around the device to send and receive data optically.

Using optical I/O simplifies the electrical I/O needed since the chip only sends data a short distance to the adjacent chiplets. Once in the optical domain, the chiplet can send data at terabit-per-second (Tbps) speeds over tens of meters.

However, packaging optics with a chip is a significant design challenge and changes how computing and switching systems are designed and operated.

Laser array

Intel has been developing silicon photonics technology for two decades. The library of devices includes ring-resonators used for modulation and detection, photo-detectors, lasers, and semiconductor optical amplifiers.

Intel can integrate lasers and gain blocks because its manufacturing process allows III-V materials to be bonded to a 300mm silicon wafer, a technique known as heterogeneous integration.

The company has already shipped over 6 million silicon photonics-based optical modules – mainly its 100-gigabit PSM-4 and 100-gigabit CWDM-4 – since 2016.

Intel also ships such modules as the 100G LR4, 100G DR/FR, 200G FR4, 400G DR4 and 400G FR4. The company says it makes two million optical modules a year.

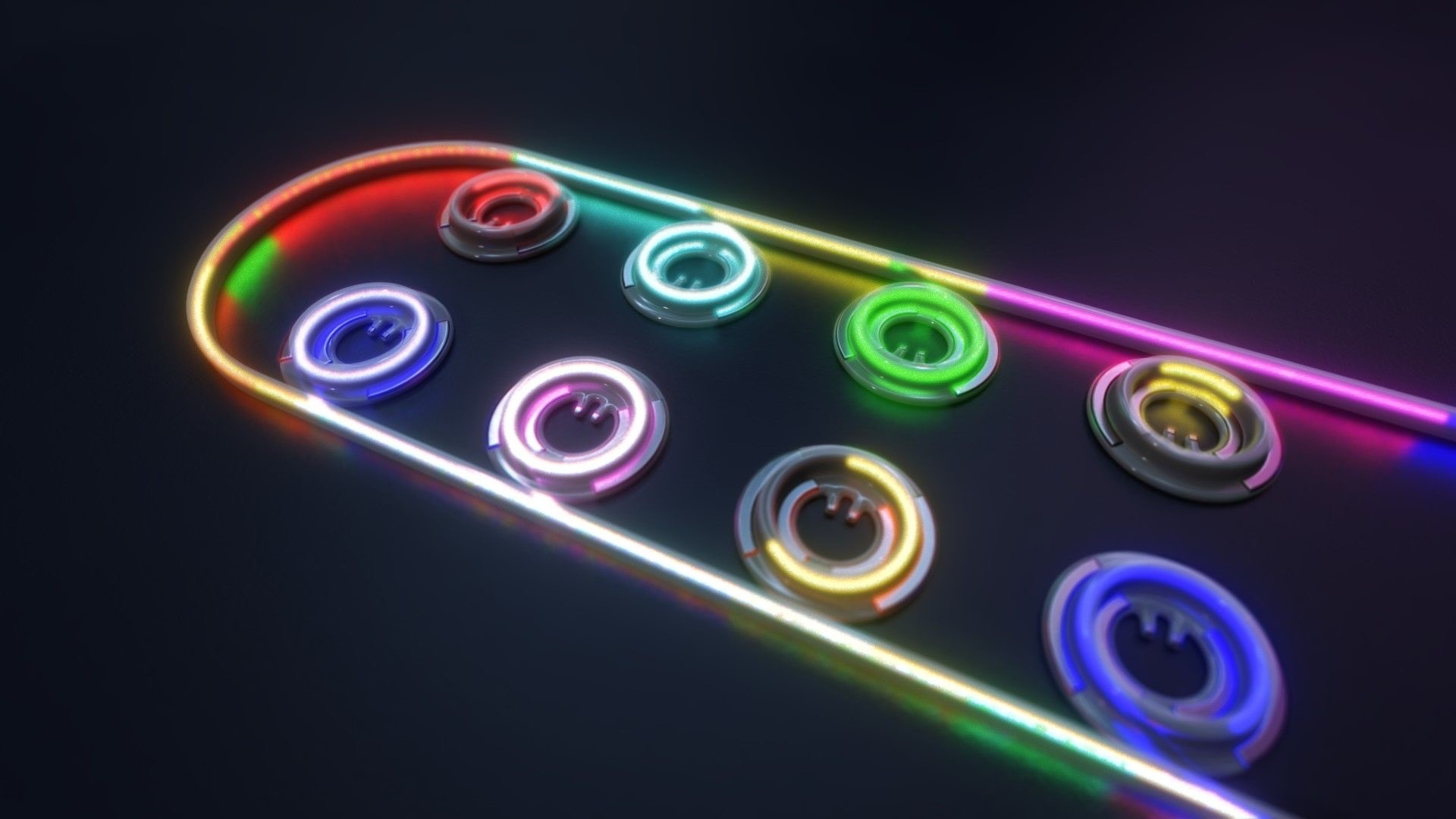

Now Intel Labs has demonstrated a laser array that integrates eight distributed feedback (DFB) lasers for wavelength-division multiplexing (WDM) transmissions. In addition, the laser array is compliant with the CW-WDM multi-source agreement.

“This is a much more difficult design,” says Haisheng Rong, senior principal engineer, photonics research at Intel Labs. “The challenge here is that you have a very small channel spacing of 200GHz.”

Each laser’s wavelength is defined by the structure of the silicon waveguide – less than 1 micron wide and tens of microns long – and the periodicity of a Bragg reflector grating.

The lasers in the array are almost identical, says Rong, their difference being defined by the Bragg grating’s period. There is a 0.2nm difference in the grating period of adjacent – 200GHz apart – lasers. For 100GHz spacing, the grating period difference will need to be 0.1nm.
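The relationship between channel spacing and grating period can be sanity-checked with the first-order Bragg condition, wavelength = 2 × effective index × grating period. The sketch below is illustrative only: the 1300nm centre wavelength and the effective index of 3.0 are assumptions rather than Intel figures, and dispersion is ignored.

```python
# Rough check of the grating-period step needed for a given channel spacing.
# Assumptions (not from Intel): first-order Bragg grating, 1300 nm centre
# wavelength, effective index of 3.0, no dispersion.
C = 299_792_458.0  # speed of light in vacuum, m/s


def wavelength_step_nm(spacing_ghz, centre_nm=1300.0):
    """Wavelength gap between adjacent channels for a given frequency spacing."""
    centre_m = centre_nm * 1e-9
    return (centre_m ** 2) * (spacing_ghz * 1e9) / C * 1e9


def grating_period_step_nm(spacing_ghz, centre_nm=1300.0, n_eff=3.0):
    """Period change between neighbouring lasers: d(period) = d(lambda) / (2 * n_eff)."""
    return wavelength_step_nm(spacing_ghz, centre_nm) / (2.0 * n_eff)


for spacing in (200, 100):
    print(f"{spacing} GHz: wavelength step {wavelength_step_nm(spacing):.2f} nm, "
          f"period step {grating_period_step_nm(spacing):.2f} nm")
```

Under these assumptions the period step comes out close to the 0.2nm and 0.1nm quoted by Rong, and halving the channel spacing halves the step.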

Specifications

The resulting eight wavelengths have uniform separation. Intel says each wavelength is 200GHz apart with a tolerance of plus or minus 13GHz, while the lasers’ output power varies by plus or minus 0.25dB.

Such performance is well inside the CW-WDM MSA specifications that call for a plus or minus 50GHz tolerance for 200GHz channel spacings and plus or minus 1dB variability in output power.
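The headroom against the MSA limits can be expressed as a simple comparison. This is a minimal sketch using only the figures quoted above; the dictionary keys and function names are made up for illustration.

```python
# Compare the reported laser-array figures with the CW-WDM MSA limits quoted
# in the text. Key and function names are illustrative, not from the MSA.
MSA_LIMITS = {"frequency_tolerance_ghz": 50.0, "power_variation_db": 1.0}
REPORTED = {"frequency_tolerance_ghz": 13.0, "power_variation_db": 0.25}


def is_compliant(reported, limits):
    """True when every reported value sits at or inside its limit."""
    return all(reported[name] <= limits[name] for name in limits)


def margins(reported, limits):
    """Headroom remaining below each limit, in the limit's own units."""
    return {name: limits[name] - reported[name] for name in limits}


print(is_compliant(REPORTED, MSA_LIMITS))
print(margins(REPORTED, MSA_LIMITS))
```

The array leaves 37GHz of frequency margin and 0.75dB of power margin relative to the quoted limits.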

Rong says that using a 200GHz channel enables a baud rate of 64 gigabaud (GBd) or 128GBd. Intel has already demonstrated its electronic and photonic ICs (EIC/PIC) operating at 50 gigabit-per-second (Gbps) and 112Gbps.

In future, higher wavelength counts – 16- and 32-channel designs – will be possible, as specified by the CW-WDM MSA.

The laser array’s wavelengths vary with temperature and bias current. For example, the laser array operates at 80°C, but Intel says it can work at 100°C.

Products

The working laser array is the work of Intel Labs, not Intel’s Silicon Photonics Products Division. Intel has yet to say when the laser array will be adopted in products.

But Intel says the technology will enable terabit-per-second (Tbps) transmissions over fibre and reach tens of meters. The laser array also promises 4x greater I/O density and energy efficiency of 0.25 picojoules-per-bit (pJ/b), two-thirds that of the PCI Express 6.0 standard.

Another benefit of optical I/O is low latency, under 10ns plus the signal’s time of flight, determined by the speed of light in the fibre and the fibre’s length.

An electrical IC is needed alongside the optical chiplet to drive the optics and control the ring-resonator modulators and lasers. The chip uses a 28nm CMOS process and Intel is investigating using a 22nm process.

Optical I/O goals

Intel announced in December 2021 that it was working with seven universities as part of its Integrated Photonics Research Center.

The goal is to create building-block circuits that will meet optical I/O needs for the next decade-plus, says Jaussi.

Intel aims to demonstrate by 2024 sending 4Tbps over a fibre while consuming 0.25pJ/b.
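To put the target in perspective, I/O power is simply the bit rate multiplied by the energy per bit. The sketch below applies that to the 4Tbps and 0.25pJ/b figures; the function name is illustrative.

```python
def io_power_watts(rate_tbps, energy_pj_per_bit):
    """I/O power in watts: bits per second multiplied by joules per bit."""
    bits_per_second = rate_tbps * 1e12
    joules_per_bit = energy_pj_per_bit * 1e-12
    return bits_per_second * joules_per_bit


# Intel's stated goal: 4 Tbps over a fibre at 0.25 pJ/b.
print(f"{io_power_watts(4, 0.25):.2f} W")
```

At those figures, the link would consume about 1W.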

The future of optical I/O is more parallel links

Chris Cole has a lofty vantage point regarding how optical interfaces will likely evolve.

As well as being an adviser to the firm II-VI, Cole is Chair of the Continuous Wave-Wavelength Division Multiplexing (CW-WDM) multi-source agreement (MSA).

The CW-WDM MSA recently published its first specification document defining the wavelength grids for emerging applications that require eight, 16 or even 32 optical channels.

And if that wasn’t enough, Cole is also the Co-Chair of the OSFP MSA, which will standardise the OSFP-XD (XD standing for extra dense) 1.6-terabit pluggable form factor that will initially use 16 electrical lanes, each running at 100 gigabits-per-second (Gbps). And when 200Gbps electrical input-output (I/O) technology is developed, OSFP-XD will become a 3.2-terabit module.

Directly interfacing with 100Gbps ASIC serialiser/deserialiser (serdes) lanes means the 1.6-terabit module can support 51.2-terabit single rack unit (1RU) Ethernet switches without needing the 200Gbps ASIC serdes required by eight-lane modules like the OSFP.
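The lane arithmetic behind that claim can be laid out in a few lines. This is a sketch with illustrative names; only the lane counts, lane rates and the 51.2-terabit switch capacity come from the text.

```python
SWITCH_CAPACITY_GBPS = 51_200  # 51.2-terabit switch


def modules_needed(module_lanes, lane_rate_gbps, switch_gbps=SWITCH_CAPACITY_GBPS):
    """Return (module count, module capacity in Gbps) needed to fill the switch."""
    module_gbps = module_lanes * lane_rate_gbps
    count, remainder = divmod(switch_gbps, module_gbps)
    if remainder:
        raise ValueError("switch capacity is not a whole number of modules")
    return count, module_gbps


print(modules_needed(16, 100))  # OSFP-XD, 16 lanes of 100Gbps
print(modules_needed(8, 200))   # eight-lane OSFP with 200Gbps serdes
print(modules_needed(8, 100))   # eight-lane OSFP with 100Gbps serdes
```

Sixteen 100Gbps lanes and eight 200Gbps lanes both give a 1.6-terabit module and 32 modules per switch, whereas an eight-lane module fed by 100Gbps serdes would need 64.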

“You might argue that it [the OSFP-XD] is just postponing what the CW-WDM MSA is doing,” says Cole. “But I’d argue the opposite: if you fundamentally want to solve problems, you have to go parallel.”

CW-WDM specification

The CW-WDM MSA is tasked with specifying laser sources and the wavelength grids for use by higher wavelength count optical interfaces.

The lasers will operate in a subset of the O-band (1280nm-1320nm) building on work already done by the ITU-T and IEEE standards bodies for datacom optics.

In just over a year since its launch, the MSA has published Revision 1.0 of its technical specification document that defines the eight, 16 and 32 channels.

The importance of specifying the wavelengths is that lasers are the longest lead items, says Cole: “You have to qualify them, and it is expensive to develop more colors.”

In the last year, the MSA has confirmed there is indeed industry consensus regarding the wavelength grids chosen. The MSA has 11 promoter members that helped write the specification document and 35 observer status members.

“The aim was to get as many people on board as possible to make sure we are not doing something stupid,” says Cole.

As well as the wavelengths, the document addresses such issues as total power and wavelength accuracy.

Another issue raised is four-wavelength mixing, more commonly known as four-wave mixing. As the channel count increases, the wavelengths are spaced closer together. Four-wave mixing is an undesirable nonlinear effect in which closely spaced wavelengths interact in the fibre to generate light at new frequencies, which can fall on other channels and degrade the link’s optical performance. It is a well-known effect in dense WDM transport systems where wavelengths are closely spaced but is less commonly encountered in datacom.

“The first standard is not a link budget specification, which would have included how much penalty you need to allocate, but we did flag the issue,” says Cole. “If we ever publish a link specification, it will include four-wavelength mixing penalty; it is one of those things that must be done correctly.”
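Why four-wavelength mixing (usually called four-wave mixing) becomes a bigger concern as the channel count grows can be illustrated by counting mixing products. Three channels at frequencies f_i, f_j and f_k generate a product at f_i + f_j - f_k, and on an equally spaced grid many of these products land exactly on another channel. The sketch below is a counting exercise only: it ignores phase matching, optical power and fibre dispersion, so it says nothing about the size of the penalty.

```python
from itertools import product


def fwm_products_on_grid(n_channels):
    """Count mixing products f_i + f_j - f_k that land on an occupied channel.

    Channels are modelled as integer slots 0..n-1 on an equally spaced grid,
    so a product's slot is simply i + j - k.
    """
    hits = [0] * n_channels
    for i, j, k in product(range(n_channels), repeat=3):
        if k == i or k == j or i > j:
            continue  # skip trivial terms and count each (i, j) pair once
        slot = i + j - k
        if 0 <= slot < n_channels:
            hits[slot] += 1
    return hits


for n in (8, 16, 32):
    hits = fwm_products_on_grid(n)
    print(f"{n} channels: {sum(hits)} in-band products, worst channel sees {max(hits)}")
```

The number of in-band products rises steeply with channel count, which is consistent with the MSA flagging the effect for the 16- and 32-channel grids.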

Innovation

The MSA’s specification work is incomplete, and this is deliberate, says Cole.

“We are at the beginning of the technology, there are a lot of great ideas, but we are going to resist the temptation to write a complete standard,” he says.

Instead, the MSA will wait to see how the industry develops the technology and how the specification is used. Once there is greater clarity, more specification work will follow.

“It is a tricky balance,” says Cole. “If you don’t do enough, what is the value of it? But if you do too much, you inhibit innovation.”

“The key aspect of the MSA is to help drive compliance in an emerging market,” says Matt Sysak of Ayar Labs and editor of the MSA’s technical specification. “This is not yet standardised, so it is important to have a standard for any new technology, even if it is a loose one.”

The MSA wants to see what people build. “See which one of the grids gain traction,” says Sysak.

Ayar Labs’ SuperNova remote light source for co-packaged optics is one of the first products that is compliant with the CW-WDM MSA.

Sysak notes that co-packaged optics is a hot topic at recent conferences, but it is evident that the subject is still more of a debate.

“The fact that the debate doesn’t seem to coagulate around particular specification definitions and industry standards is indicative of the fact that the entire industry is struggling here,” says Sysak.

This is why the CW-WDM MSA is important, to help promote economies of scale that will advance co-packaged optics.

Applications

Cole notes that, if anything, the industry has become more entrenched in the last year.

The Ethernet community is fixed on four-wavelength module designs. To be able to support such designs as module speeds increase, higher-order modulation schemes and more complex digital signal processors (DSPs) are needed.

“The problem right now is that all the money is going into signal processing: the analogue-to-digital converters and more powerful DSPs,” says Cole.

His belief is that parallelism is the right way to go, both in terms of more wavelengths and more fibers (physical channels).

“This won’t come from Ethernet but emerging applications like machine learning that are not tied to backward compatibility issues,” says Cole. “It is emerging applications that will drive innovation here.”

Cole adds that there is hyperscaler interest in optical channel parallelism. “There is absolutely a groundswell interest here,” says Cole. “This is not their main business right now, but they are looking at their long-term strategy.”

The likelihood is that laser companies will step in to develop the laser sources and then other companies will develop the communications gear.

“It will be driven by requirements of emerging applications,” says Cole. “This is where you will see the first deployments.”

Turning to optical I/O to open up computing pinch points

Getting data in and out of chips used for modern computing has become a key challenge for designers.

A chip may talk to a neighbouring device in the same platform or to a chip across the data centre.

The sheer quantity of data and the reaches involved – tens or hundreds of meters – is why the industry is turning to optical for a chip’s input-output (I/O).

It is this technology transition that excites Ayar Labs.

The US start-up showcased its latest TeraPHY optical I/O chiplet operating at 1 terabit-per-second (Tbps) during the OFC virtual conference and exhibition held in June.

Evolutionary and revolutionary change

Ayar Labs says two developments are driving optical I/O.

One is the exponential growth in the capacity of Ethernet switch chips used in the data centre. The emergence of 25.6-terabit and soon 51.2-terabit Ethernet switches continues to drive technologies and standards.

This, says Hugo Saleh, vice president of business development and marketing, and recently appointed as the managing director of Ayar Labs’ new UK subsidiary, is an example of evolutionary change.

But artificial intelligence (AI) and high-performance computing have networking needs independent of the Ethernet specification.

“Ethernet is here to stay,” says Saleh. “But we think there is a new class of communications that is required to drive these advanced applications that need low latency and low power.”

Manufacturing processes

Ayar Labs’ TeraPHY chiplet is manufactured using GlobalFoundries’ 45nm RF Silicon on Insulator (45RFSOI) process. But Ayar Labs is also developing TeraPHY silicon using GlobalFoundries’ emerging 45nm CMOS-silicon photonics CLO process (45CLO).

The 45RFSOI process is being used because Ayar Labs is already supplying TeraPHY devices to customers. “They have been going out quite some time,” says Saleh.

But the start-up’s volume production of its chiplets will use GlobalFoundries’ 45CLO silicon photonics process. Version 1.0 of the process design kit (PDK) is expected in early 2022, leading to qualified TeraPHY parts based on the process.

One notable difference between the two processes is that 45RFSOI uses a vertical grating coupler to connect the fibre to the chiplet, which requires active alignment. The 45CLO process uses a v-groove structure so that passive alignment can be used, simplifying and speeding up the fibre attachment.

“With high-volume manufacturing – millions and even tens of millions of parts – things like time-in-factory make a big difference,” says Saleh. Every second spent adds cost such that the faster the processes, the more cost-effective and scalable the manufacturing becomes.

Terabit TeraPHY

The TeraPHY chiplet demonstrated during OFC uses eight optical transceivers. Each transceiver comprises eight wavelength-division multiplexed (WDM) channels, each supporting 16 gigabit-per-second (Gbps) of data. The result is a total optical I/O bandwidth of 1.024Tbps operating in each direction (duplex link).

“The demonstration is at 16Gbps and we are going to be driving up to 25Gbps and 32Gbps next,” says Saleh.

The chiplet’s electrical I/O is slower and wider: 16 interfaces, each with 80, 2Gbps channels implementing Intel’s Advanced Interface Bus (AIB) technology.
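The optical and electrical totals follow from multiplying out the interface counts. A minimal sketch, using only the figures quoted above (the function name is illustrative):

```python
def aggregate_gbps(groups, channels_per_group, rate_gbps):
    """Total one-direction bandwidth: groups x channels per group x per-channel rate."""
    return groups * channels_per_group * rate_gbps


# Optical side: 8 transceivers x 8 WDM channels at the demonstrated and planned rates.
for rate in (16, 25, 32):
    print(f"optical at {rate}Gbps per channel: {aggregate_gbps(8, 8, rate)} Gbps")

# Electrical side: 16 AIB interfaces x 80 channels x 2Gbps.
print(f"electrical: {aggregate_gbps(16, 80, 2)} Gbps")
```

This gives 1,024Gbps optically at 16Gbps per channel, rising to 1,600Gbps and 2,048Gbps at 25Gbps and 32Gbps, against 2,560Gbps on the electrical side.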

Last December, Ayar Labs showcased advanced parts using the CLO process. The design was a direct-drive part – a prototype of a future-generation product, not the one demonstrated for OFC.

“The direct-drive part has a serial analogue interface that could come from the host ASIC directly into the ring resonators and modulate them whereas the part we have today is the productised version of an AIB interface with all the macros and all the bandwidth enabled,” says Saleh.

Ayar Labs also demonstrated its 8-laser light source, dubbed SuperNova, that drives the chiplet’s optics.

The eight distributed feedback (DFB) lasers are mixed using a planar lightwave circuit to produce eight channels, each comprising eight frequencies of light.

Saleh compares the SuperNova to a centralised power supply in a server that powers pools of CPUs and memory. “The SuperNova mimics that,” he says. “One SuperNova or a 1 rack-unit box of 16 SuperNovas distributing continuous-wave light just like distributed voltage [in a server].”

The current 64-channel SuperNova powers a single TeraPHY but future versions will be able to supply light to two or more.

Ayar Labs is using Macom as its volume supplier of DFB lasers.

Significance

Ayar Labs believes the 1-terabit chip-to-chip WDM link is an industry first.

The demo also highlights how the company is getting closer to a design that can be run in the field. The silicon was made less than a month before the demonstration and was assembled quickly. “It was not behind glass and was operating at room temperature,” says Saleh. “It’s not a lab setting but a production setting.”

The same applies to the SuperNova. The light source complies with the specification of the Continuous-Wave Wavelength Division Multiplexing (CW-WDM) Multi-Source Agreement (MSA) Group, which released its first specification revision to coincide with OFC. The specification covers 8-, 16- and 32-wavelength optical sources.

The CW-WDM MSA promoter and observer members include all the key laser makers as well as the leading ASIC vendors. “We hope to establish an ecosystem on the laser side but also on the optics,” says Saleh.

“Fundamentally, there is a change at the physical (PHY) level that is required to open up these bottlenecks,” says Saleh. “The CW-WDM MSA is key to doing that; without the MSA you will not get that standardisation.”

Saleh also points to the low power consumption of the TeraPHY’s optical I/O, which equates to 5pJ/bit for each link. This is about a tenth of the power consumed by electrical I/O, especially when retimers are used. Equally, the reach is up to 2km, not the tens of centimetres associated with electrical links.

Chiplet demand

At OFC, Arista Networks outlined how pluggable optics will be able to address 102.4 terabit Ethernet switches while Microsoft said it expects to deploy co-packaged optics by the second half of 2024.

Nvidia also discussed how it clusters its graphics processing units (GPUs) that are used for AI applications. However, when a GPU from one cluster needs to talk to a GPU in another cluster, a performance hit occurs.

Nvidia is looking for the optical industry to develop interfaces that will enable its GPU systems to scale while appearing as one tightly coupled cluster. This will require low latency links. Instead of microseconds and milliseconds depending on the number of hops, optical I/O reduces the latency to tens of nanoseconds.

“We spec our chiplet as sub-5ns plus the time of flight which is about 5ns per meter,” says Saleh. Accordingly, the transit time between two GPUs 1m apart is 15ns: up to 5ns in the chiplet at each end of the link plus 5ns of flight.
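The latency budget can be written as a one-line model. The sketch below assumes one chiplet at each end of the link, each contributing the 5ns upper bound Saleh quotes; that split is an interpretation of the 15ns figure rather than something Ayar Labs has spelled out, and the function name is illustrative.

```python
def link_latency_ns(distance_m, chiplet_ns=5.0, flight_ns_per_m=5.0, chiplets=2):
    """Upper-bound link latency: per-chiplet delay at each end plus fibre time of flight.

    Assumes two chiplets (transmit and receive), each at the quoted 5ns bound.
    """
    return chiplets * chiplet_ns + flight_ns_per_m * distance_m


for distance in (1, 10, 100):
    print(f"{distance} m: {link_latency_ns(distance):.0f} ns")
```

At 1m the chiplets dominate the 15ns total, while at 100m the time of flight accounts for nearly all of the 510ns.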

Ayar Labs says that after many conversations with switch vendors and cloud players, the consensus is that Ethernet switches will have to adopt co-packaged optics. There will be different introductory points for the technology but the industry direction is clear.

“You are going to see co-packaged optics for Ethernet by 2024 but you should see the first AI fabric system with co-packaged I/O in 2022,” says Saleh.

Intel published a paper at OFC involving its Stratix 10 FPGA using five Ayar Labs’ chiplets, each one operating at 1.6 terabits (each optical channel operating at 25Gbps, not 16Gbps). The resulting FPGA has an optical I/O capacity of 8Tbps, the design part of the US DARPA PIPES (Photonics in the Package for Extreme Scalability) project.

“A key point of the paper is that Intel is yielding functional units,” says Saleh. The paper also highlighted the packaging and assembly achievements and the custom cooling used.

Intel Capital is a strategic investor in Ayar Labs, as are GlobalFoundries, Lockheed Martin Ventures, and Applied Materials.