ADVA's 100 Terabit data centre interconnect platform

- The FSP 3000 CloudConnect comes in several configurations

- The data centre interconnect platform scales to 100 terabits of throughput

- The chassis use a thin 0.5 RU QuadFlex card with up to 400 Gig transport capacity

- The optical line system has been designed to be open and programmable

ADVA Optical Networking has unveiled its FSP 3000 CloudConnect, a data centre interconnect product designed to cater for the needs of the different data centre players. The company has developed platforms in several sizes to address the workloads and bandwidth needs of data centre operators such as Internet content providers, communications service providers, enterprises, cloud and colocation players.

Certain Internet content providers want to scale the performance of their computing clusters across their data centres. A cluster is a grouping of distributed computing comprising a defined number of virtual machines and processor cores (see Clusters, pods and recipes explained, bottom). Yet there are also data centre operators that only need to share limited data between their sites.

ADVA Optical Networking highlights two internet content providers - Google and Microsoft with its Azure cloud computing and services platform - that want their distributed clusters to act as one giant global cluster.

“The performance of the combined clusters is proportional to the bandwidth of the interconnect,” says Jim Theodoras, senior director, technical marketing at ADVA Optical Networking. “No matter how many CPU cores or servers, you are now limited by the interconnect bandwidth.”

ADVA Optical Networking cites a Google study that involved running an application on different cluster configurations, starting with a single cluster; then two, side-by-side; two clusters in separate buildings through to clusters across continents. Google claimed the distributed clusters only performed at 20 percent capacity due to the limited interconnect bandwidth. “The reason you are hearing these ridiculous amounts of connectivity, in the hundreds of terabits, is only for those customers that want their clusters to behave as a global cluster,” says Theodoras.

Yet other internet content providers have far more modest interconnect demands. ADVA cites one, as large as the two global cluster players, that wants only 1.2 terabit-per-second (Tbps) between its sites. “It is normal duplication/ replication between sites,” says Theodoras. “They want each campus to run as a cluster but they don’t want their networks to behave as a global cluster.”

FSP 3000 CloudConnect

The FSP 3000 CloudConnect has several configurations. The company stresses that it designed CloudConnect as a high-density, self-contained platform that is power-efficient and that comes with advanced data security features.

All the CloudConnect configurations use the QuadFlex card that has an 800 Gigabit throughput: up to 400 Gigabit client-side interfaces and 400 Gigabit line rates.

The QuadFlex card is thin, measuring only a half rack unit (RU). Up to seven can be fitted in ADVA’s four rack-unit (4 RU) platform, dubbed the SH4R, for a line side transport capacity of 2.8 Tbps. The SH4R’s remaining, eighth slot hosts either one or two management controllers.

The QuadFlex line-side interface supports various rates and reaches, from 100 Gigabit ultra long-haul to 400 Gigabit metro/ regional, in increments of 100 Gigabit. Two carriers, each using polarisation-multiplexing, 16 quadrature amplitude modulation (PM-16-QAM), are used to achieve the 400 Gbps line rate, whereas for 300 Gbps, 8-QAM is used on each of the two carriers.
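
The relationship between modulation format and line rate can be sketched with some simple arithmetic. The snippet below is only an illustration: the 25 Gbaud net symbol rate per carrier is an assumption chosen so that the figures match those quoted (real symbol rates are higher once forward error correction overhead is added), and the QPSK row is also an assumption, since the article does not name the format used for the lower rates.

```python
# Bits carried per symbol in one polarisation for each modulation format.
BITS_PER_SYMBOL = {"QPSK": 2, "8-QAM": 3, "16-QAM": 4}

POLARISATIONS = 2            # polarisation multiplexing doubles the bits per symbol
CARRIERS = 2                 # the QuadFlex uses two carriers (from the article)
NET_SYMBOL_RATE_GBAUD = 25   # ASSUMPTION: net payload symbol rate per carrier


def line_rate_gbps(modulation: str) -> int:
    """Net line rate across both carriers for a given modulation format."""
    bits = BITS_PER_SYMBOL[modulation] * POLARISATIONS
    return bits * NET_SYMBOL_RATE_GBAUD * CARRIERS


for fmt in BITS_PER_SYMBOL:
    print(f"{fmt}: {line_rate_gbps(fmt)} Gbps")
```

Under these assumptions, 16-QAM on both carriers gives 400 Gbps and 8-QAM gives 300 Gbps, in line with the rates cited above.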

The advantage of 8-QAM, says Theodoras, is that it is 'almost 400 Gigabit of capacity' yet it can span continents. ADVA is sourcing the line-side optics but uses its own code for the coherent DSP-ASIC and module firmware. The company has not confirmed the supplier but the design matches Acacia's 400 Gigabit coherent module that was announced at OFC 2015.

ADVA says the CloudConnect 4 RU chassis is designed for customers that want a terabit-capacity box. To achieve a terabit link, three QuadFlex cards and an Erbium-doped fibre amplifier (EDFA) can be used. The EDFA is a bidirectional amplifier design that includes an integrated communications channel and enables the 4 RU platform to achieve ultra long-haul reaches. “There is no need to fit into a [separate] big chassis with optical line equipment,” says Theodoras. Equally, data centre operators don’t want to be bothered with mid-stage amplifier sites.

Some data centre operators have already installed 40 dense WDM channels at 100GHz spacing across the C-band which they want to keep. ADVA Optical Networking offers a 14 RU configuration that uses three SH4R units, an EDFA and a DWDM multiplexer, that enables a capacity upgrade. The three SH4R units house a total of 20 QuadFlex cards that fit 200 Gigabit in each of the 40 channels for an overall transport capacity of 8 terabits.

ADVA CloudConnect configuration supporting 25.6 Tbps line side capacity. Source: ADVA Optical Networking

The last CloudConnect chassis configuration is for customers designing a global cluster. Here the chassis has 10 SH4R units housing 64 QuadFlex cards to achieve a total transport capacity of 25.6 Tbps and a throughput of 51.2 Tbps.

Also included are two EDFAs and a 128-channel multiplexer. Two EDFAs are needed because of the optical loss associated with the high number of channels, such that one EDFA is allocated to each group of 64 channels. “For the [14 RU] 40 channels [configuration], you need only one EDFA,” says Theodoras.

The vendor has also produced a similar-sized configuration for the L-band. Combining the two 40 RU chassis delivers 51.2 Tbps of transport and 102.4 Tbps of throughput. “This configuration was built specifically for a customer that needed that kind of throughput,” says Theodoras.
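
The capacity figures quoted for each configuration follow directly from the card count. A minimal sketch of the arithmetic is shown here; the class and configuration names are made up for illustration, while the card counts and the 400 Gigabit per-card line and client rates come from the article.

```python
from dataclasses import dataclass

CARD_LINE_GBPS = 400     # line-side capacity of one QuadFlex card
CARD_CLIENT_GBPS = 400   # client-side capacity of one QuadFlex card


@dataclass
class Config:
    name: str    # illustrative label, not an ADVA product name
    cards: int   # number of QuadFlex cards fitted

    @property
    def transport_tbps(self) -> float:
        """Line-side transport capacity in Tbps."""
        return self.cards * CARD_LINE_GBPS / 1000

    @property
    def throughput_tbps(self) -> float:
        """Client plus line capacity in Tbps."""
        return self.cards * (CARD_LINE_GBPS + CARD_CLIENT_GBPS) / 1000


configs = [
    Config("single SH4R, seven cards", 7),
    Config("14 RU, 40-channel upgrade", 20),
    Config("40 RU, C-band", 64),
    Config("two 40 RU chassis, C-band plus L-band", 128),
]

for c in configs:
    print(f"{c.name}: {c.transport_tbps} Tbps transport, "
          f"{c.throughput_tbps} Tbps throughput")
```

The four rows reproduce the 2.8, 8, 25.6 and 51.2 Tbps transport figures, and the 51.2 and 102.4 Tbps throughput figures for the two largest builds.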

Other platform features include bulk encryption. ADVA says the encryption does not impact the overall data throughput while adding only a very slight latency hit. “We encrypt the entire payload; just a few framing bytes are hidden in the existing overhead,” says Theodoras.

The security management is separate from the network management. “The security guys have complete control of the security of the data being managed; only they can encrypt and decrypt content,” says Theodoras.

CloudConnect consumes only 0.5W/ Gigabit. The platform does not use electrical multiplexing of data streams over the backplane. The issue with using such a switched backplane is that power is consumed independent of traffic. The CloudConnect designers have avoided this approach. “The reason we save power is that we don’t have all that switching going on over the backplane.” Instead all the connectivity comes from the front panel of the cards.

The downside of this approach is that the platform does not support any-port to any-port connectivity. “But for this customer set, it turns out that they don’t need or care about that.”

Open hardware and software

ADVA Optical Networking claims its 4 RU basic unit addresses a sweet spot in the marketplace. The CloudConnect also has fewer inventory items for the data centre operators to manage compared to competing designs based on 1 RU or 2 RU pizza boxes, it says.

Theodoras also highlights the system’s open hardware and software design.

“We will let anybody’s hardware or software control our network,” says Theodoras. “You don’t have to talk to our software-defined networking (SDN) controller to control our network.” ADVA was part of a demonstration last year whereby an NEC and a Fujitsu controller oversaw ADVA’s networking elements.

By open hardware, what is meant is that programmers can control the optical line system used to interconnect the data centres. “We have found a way of simplifying it so it can be programmed,” says Theodoras. “We have made it more digital so that they don’t have to do dispersion maps, polarisation mode dispersion maps or worry about [optical] link budgets.” The result is that data centre operators can now access all the line elements.

“At OFC 2015, Microsoft publicly said they will only buy an open optical line system,” says Theodoras. Meanwhile, Google is writing a specification for open optical line systems dubbed OpenConfig. “We will be compliant with Microsoft and Google in making every node completely open.”

General availability of the CloudConnect platforms is expected at the year-end. “The data centre interconnect platforms are now with key partners, companies that we have designed this with,” says Theodoras.

Clusters, pods and recipes explained

A cluster is made up of a number of virtual machines and CPU cores and is defined in software. A cluster is a virtual entity, says Theodoras, unrelated to the way data centre managers define their hardware architectures.

“Clusters vary a lot [between players],” says Theodoras. “That is why we have had to make scalability such a big part of CloudConnect.”

The hardware definition is known as a pod or recipe. “How these guys build the network is that they create recipes,” says Theodoras. “A pod with this number of servers, this number of top-of-rack switches, this amount of end-of-row router-switches and this transport node; that will be one recipe.”

Data centre players update their recipes every 18 months. “Every vendor is always under pressure to have the best thing because you are only designed in for 18 months,” says Theodoras.

Vendors are informed well in advance what the next hardware requirements will be, and by when they will be needed to meet the new recipe requirements.

In summary, pods and recipes refer to how the data centre architecture is built, whereas a cluster is defined at a higher, more abstract layer.

Photonics and optics: interchangeable yet different

Many terms in telecom are used interchangeably. Terms gain credibility with use but over time things evolve. For example, people understand what is meant by the term carrier [of traffic] or operator [of a network] and even the term incumbent [operator] even though markets are now competitive and 'telephony' is no longer state-run.

"For me, optics is the equivalent of electrical, and photonics is the equivalent of electronics - LSI, VLSI chips and the like" - Mehdi Asghari

Operators - ex-incumbents or otherwise - also do more than oversee the network and now provide complex services. But of course they differ from service providers such as the over-the-top players [third-party providers delivering services over an operator's infrastructure, rather than any theatrical behaviour] or internet content providers.

Google is an internet content provider but, with the gigabit broadband service it is rolling out in the US, it is also an operator/ carrier/ communications service provider. And Google may soon become a mobile virtual network operator.

So having multiple terms can be helpful, adding variety especially when writing, but the trouble is it is also confusing.

Recent discussions, including an interview with silicon photonics pioneer Richard Soref, raised the question of whether the terms photonics and optics mean the same thing. I decided to ask several industry experts, starting with The Optical Society (OSA).

Tom Hausken, the OSA's senior engineering & applications advisor, says that after many years of thought he concludes the following:

- People have different definitions for them [optics and photonics] that range all over the map.

- I find it confusing and unhelpful to distinguish them.

- The National Academies's report is on record saying there is no difference as far as that study is concerned.

- That works for me.

Michael Duncan, the OSA's senior science advisor, puts the difference down to one of cultural usage. "Photonics leans more towards the fibre optics, integrated optics, waveguide optics, and the systems they are used in - mostly for communication - while optics is everything else, especially the propagation and modification of coherent and incoherent light," says Duncan. "But I could easily go with Tom's third bullet point."

"Photonics does include the quantum nature, and sort of by convention, the term optics is seen to mean classical" - Richard Soref

Duncan also cites Wikipedia, with its discussion of classical optics that embraces the wave nature of light, and modern optics that also includes light's particle nature. And this distinction is at the core of the difference, without leading to an industry consensus.

"Photonics does include the quantum nature, and sort of by convention, the term optics is seen to mean classical," says Richard Soref. He points out that the website Arxiv.org categorises optics as a subset of physics, while the OSA Newsletter is called Optics & Photonics News, covering all bases.

"Photonics is the larger category, and I might have been a bit off base when throwing around the optics term," says Soref. If only everyone was as off base as Professor Soref.

"We need to remember that there is no canonical definition of these terms, and there is no recognised authority that would write or maintain such a definition," says Geoff Bennett, director, solutions and technology at Infinera. For Bennett, this is a common issue, not confined to the terms optics and photonics: "We see this all the time in the telecoms industry, and in every other industry that combines rapid innovation with aggressive marketing."

That said, he also says that optics refers to classical optics, in which light is treated as a wave, whereas photonics is where light meets active semiconductors and so the quantum nature of light tends to dominate. Examples of the latter would be photonic integrated circuits (PICs). "These contain active laser components, semiconductor optical amplifiers and photo-detectors," says Bennett. "All of these rely on quantum effects to do their job."

"We need to remember that there is no canonical definition of these terms, and there is no recognised authority that would write or maintain such a definition" - Geoff Bennett

Bennett says that the person who invented the term semiconductor optical amplifier (SOA) was not aware of the definition because the optical amplifier works on quantum principles, the same way a laser does. "So really it should be a semiconductor photonic amplifier," he says.

"At Infinera, we seem for the most part to have abided to the definitions in terminology that we use, but I can’t say that this was a conscious decision," says Bennett. "I am sure that if our marketing department thought that photonic sounded better than optical in a given situation they would have used it."

Mehdi Asghari, vice president, silicon photonics research & development at Mellanox, says optics refers to the classical use and application of light, with light as a ray. He describes optics as having a system-level approach to it.

"We create a system of lenses to make a microscope or telescope to make an optical instrument using classical optics models or we use optical components to create an optical communication system," he says. This classical or system-level perspective makes it optics or optical, a term he prefers. "We are not concerned with the nature - particle versus wave - of light, rather its classical behaviour, be it in an instrument or a system," he says.

But once things are viewed closer, at the device level, especially devices comparable in size to photons, then a system-level approach no longer works and is replaced with a quantum approach. "Here we look at photons and the quantum behaviour they exhibit," says Asghari.

In a waveguide, be it silicon photonics (integrated devices based on silicon), a planar lightwave circuit (glass-based integrated devices), or a PIC based on III-V or active devices, the size of the structure or device used is often comparable to or even smaller than the size of the photons it is manipulating, he says: "This is where we very much feel the quantum nature of light, and this is where light becomes photons - photonics - and not optics."

ADVA Optical Networking's senior principal engineer, Klaus Grobe, held a discussion with the company's physicists, and both, independently, had the same opinion.

"Both [photonics and optics] are not strictly defined," he says. "Optics clearly also includes classic school-book ray optics and the like. Photonics already deals with photons, the wave-particle dualism, and hence, at least indirectly, with quantum mechanics, and possibly also quantum electro-dynamics (QED)."

Since ray-propagation models can no longer be used for fibre-optic transport, and since such systems rely on quantum-mechanical behaviour, for example in diode receivers, fibre-optics is better filed under photonics, says Grobe: "But they are not called fibre-photonics".

So, the industry view seems to be that the two terms are interchangeable but optics implies the classical nature of light while photonics suggests light as particles. Which term includes both seems to be down to opinion. Some believe optics covers both, others believe photonics is the more encompassing term.

Mellanox's Asghari once famously compared photons and electrons to cats and dogs. Electrons are like dogs: they behave, stick by you and are loyal; they do exactly as you tell them, he said, whereas cats are their own animals and do what they like. Just like photons. So what is his take?

He believes optics is more general than photonics. He uses the analogy of electrical versus electronics to make his point. An electronics system or chip is still an electrical device but it often refers to the integrated chip, while an electrical system is often seen as global and larger, made up of classical devices.

"For me, optics is the equivalent of electrical, and photonics is the equivalent of electronics - LSI, VLSI chips and the like," says Asghari. "One is a subset or specialised version of the other due to the need to get specific on the quantum nature of light and the challenges associated with integration."

"Optics refers to all types of cats, be it the tiger or the lion or the domestic pet. Photonics refers to the so called domestic cat that has domesticated and slaved us to look after it" - Mehdi Asghari

To back up his point, Asghari says to take a look at older books and publications that use the term optics. The term photonics started to be used once integration and size reduction became important, just as electrical devices were replaced with electronic devices.

Indeed, this rings true in the semiconductor industry: microelectronics has now become nano-electronics as CMOS feature sizes have moved from microns to nanometer dimensions.

And this is why terms such as optical fibre and semiconductor optical amplifier are used: they were coined when the industry was primarily engaged with the use of light at a system level, away from the quantum limits and challenges of integration.

"In short, photonics is used when we acknowledge that light is made of photons with all the fun and challenges that photons bring to us and optics is when we deal with light at a system level or a classical approach is sufficient," says Asghari.

Happily, cats and dogs feature here too.

"Optics refers to all types of cats, be it the tiger or the lion or the domestic pet," says Asghari. "Photonics refers to the so called domestic cat that has domesticated and slaved us to look after it."

Last word to Infinera's Bennett: "I suppose the moral is: be aware of the different meanings, but don’t let it bug you when people misuse them."

MultiPhy eyeing 400 Gig after completing funding round

MultiPhy is developing a next-generation chip design to support 100 and 400 Gigabit direct-detection optical transmission. The start-up raised a new round of funding in 2013 but has neither disclosed the amount raised nor the backers except to say it includes venture capitalists and a 'strategic investor'.

The start-up is already selling its 100 Gig multiplexer and receiver chips to system vendors and module makers. The devices are being used for up to 80km point-to-point links and dense WDM metro/ regional networks spanning hundreds of kilometers. "In every engagement we have, the solutions are being sold in both data centre and telecom environments," says Avi Shabtai, CEO of MultiPhy.

The industry has settled on coherent technology for long-distance 100 Gig optical transmission but coherent is not necessarily a best fit for certain markets if such factors as power consumption, cost and compatibility with existing 10 Gig links are considered, says Shabtai.

The requirement to connect geographically-dispersed data centres has created a market for 100 Gig direct-detection technology. The types of data centre players include content service providers, financial institutions such as banks, and large enterprises that may operate their own networks.

MultiPhy's two chips are the MP1101Q, a 4x25 Gig multiplexer device, and the MP1100Q four-channel receiver IC that includes a digital signal processor implementing the MLSE algorithm.

The chipset enables 10 Gig opto-electronics to be used to implement the 25 Gig transmitter and receiver channels. This results in a cost advantage compared to other 4x25 Gig designs. A design using the chipset can achieve 100 Gig transmissions over a 200GHz-wide channel or a more spectrally efficient 100GHz one. The latter achieves a transmission capacity of 4 Terabits over a fibre.
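
The 4 Terabit figure can be checked with a short calculation. The sketch below assumes roughly 4 THz of usable C-band spectrum, which is consistent with the 40 channels at 100GHz spacing mentioned elsewhere in this collection; the constant and function names are illustrative only.

```python
USABLE_BAND_GHZ = 4000   # ASSUMPTION: about 4 THz of usable C-band spectrum


def fibre_capacity_tbps(channel_width_ghz: int, rate_gbps: int = 100) -> float:
    """Total capacity when every channel slot carries one 100 Gig signal."""
    channels = USABLE_BAND_GHZ // channel_width_ghz
    return channels * rate_gbps / 1000


print(fibre_capacity_tbps(200))  # 20 channels of 100 Gig
print(fibre_capacity_tbps(100))  # 40 channels of 100 Gig
```

Halving the channel width from 200GHz to 100GHz doubles the channel count and hence the fibre capacity, from 2 to 4 Terabits under this assumption.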

ADVA Optical Networking is one system vendor offering 100 Gig direct-detection technology while Finisar and Oplink Communications are making 100 Gigabit direct-detection optical modules. Oplink announced that it is using MultiPhy's chipset in 2013.

Overall, at least four system vendors are in advanced stages of developing 100 Gig direct-detection, and not all will necessarily announce their designs, says Shabtai. Whereas all the main optical transmission vendors have 100 Gig coherent technology, those backing 100 Gig direct detection may remain silent so as not to tip off their competitors, he says.

Meanwhile, the company is using the latest round of funding to develop its next-generation design. MultiPhy is focussed on high-speed direct-detection despite having coherent technology in-house. "Coherent is on our roadmap but direct detection is a very good opportunity over the next two years," says Shabtai. "You will see us come with solutions that also support 400 Gig."

A 400 Gigabit direct-detection design using its next-generation chipset will likely come to market in 2016 at the earliest, by which time 25 Gig components will be more mature and cheaper. Using existing 25 Gig technology, a 400 Gig design requires sixteen 25 Gig channels. However, the company will likely extend the performance of 25 Gig components to achieve even faster channel speeds, just as it does now with 10 Gig components to achieve 25 Gig speeds. The result will be a 400 Gig design with fewer than 16 channels. "We assume we can do more using those [25 Gig] optical components with our technology," says Shabtai.
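
The trade-off between per-channel speed and channel count is easy to tabulate. In this sketch only the 25 Gig row reflects a figure from the article; the 40 and 50 Gig rates are hypothetical values used to show how the count falls, not rates MultiPhy has announced.

```python
TARGET_GBPS = 400


def channels_needed(channel_rate_gbps: int, target_gbps: int = TARGET_GBPS) -> int:
    """Smallest number of channels whose combined rate reaches the target."""
    return -(-target_gbps // channel_rate_gbps)   # integer ceiling division


for rate in (25, 40, 50):   # 40 and 50 are hypothetical channel rates
    print(f"{rate} Gig per channel -> {channels_needed(rate)} channels")
```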

OpenFlow extends its control to the optical layer

"We see OpenFlow as an additional solution to tackle the problem of network control"

Jörg-Peter Elbers, ADVA Optical Networking

The largest data centre players have a single-mindedness when it comes to service delivery. Players such as Google, Facebook and Amazon do not think twice about embracing and even spurring hardware and software developments if they will help them better meet their service requirements.

Such developments are also having a wider impact, interesting traditional telecom operators that have their own service challenges.

The latest development causing waves is the OpenFlow protocol. An open standard, OpenFlow is being developed by the Open Networking Foundation, an industry body that includes Google, Facebook and Microsoft, telecom operators Verizon, NTT and Deutsche Telekom, and various equipment makers.

OpenFlow is already being used by Google, and falls under the more general topic of software-defined networking (SDN). A key principle underpinning SDN is the separation of the data and control planes to enable more centralised and simplified management of the network.

OpenFlow is being used in the management of packet switches for cloud services. "The promise of software-defined networking and OpenFlow is to give [data centre operators] a virtualised network infrastructure," says Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking.

The growing interest in OpenFlow is reflected in the activities of the telecom system vendors that have extended the protocol to embrace the optical layer. But whereas the content service provider giants need only worry about tailoring their networks to optimise their particular services, telecom operators must consider legacy equipment and issues of interoperability.

OFELIA

ADVA Optical Networking has started the ball rolling by running an experiment to show OpenFlow controlling both the optical and packet layers of the network. Until now the protocol, which provides a software-programmable interface, has been used to manage packet switches; the adding of the optical layer control is an industry first, the company claims.

The OpenFlow demonstration is part of the European “OpenFlow in Europe, Linking Infrastructure and Applications” (OFELIA) research project involving ADVA Optical Networking and the University of Essex. A test bed has been set up that uses the ADVA FSP 3000 to implement a colourless and directionless ROADM-based optical network.

"We have put a network together such that people can run the optical layer through an OpenFlow interface, as they do the packet switching layer, under one uniform control umbrella," says Elbers. "The purpose of this project is to set up an experimental facility to give researchers access to, and have them play with, the capabilities of an OpenFlow-enabled network."

"The fact that Google is doing it [SDN] is not a strong indication that service providers are going to do it tomorrow"

Mark Lutkowitz, Telecom Pragmatics

Remote researchers can access the test bed via GÉANT, a high-bandwidth pan-European backbone connecting national research and education networks.

ADVA Optical Networking hopes the project will act as a catalyst to gain useful feedback and ideas from the users, leading to further developments to meet emerging requirements.

OpenFlow and GMPLS

A key principle of SDN, as mentioned, is the separation of the data plane from the control plane. "The aim is to have a more unified control of what your network is doing rather than running a distributed specialised protocol in the switches," says Elbers.

That is not much different from Generalized Multi-Protocol Label Switching (GMPLS), he says: "With GMPLS in an optical network you effectively have a data plane - a wavelength switched data plane - and then you have a unified control plane implementation running on top, decoupled from the data plane."

But clearly there are differences. OpenFlow is being used by data centre operators to control their packet switches and generate packet flows. The goal is for their networks to gain flexibility and agility: "A virtualised network that can be run as you, the user, want it," says Elbers.

But the protocol only gives a user the capability to manage the forwarding behavior of a switch: an incoming packet's header is inspected and the user can program the forwarding table to determine how the packet stream is treated and the port it goes out on.
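
The match-and-forward idea described above can be illustrated with a toy flow table. This is a conceptual sketch only: it is not the OpenFlow wire protocol or the API of any controller, and the class names, header fields and port numbers are all invented for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FlowEntry:
    match: dict        # header fields that must equal the packet's fields
    out_port: int      # action: the port the packet is sent out on
    priority: int = 0  # higher-priority entries are checked first


class FlowTable:
    """A switch's forwarding table, programmed from outside the switch."""

    def __init__(self):
        self.entries = []

    def add(self, entry: FlowEntry) -> None:
        self.entries.append(entry)
        self.entries.sort(key=lambda e: e.priority, reverse=True)

    def lookup(self, header: dict) -> Optional[int]:
        """Return the output port of the first matching entry, if any."""
        for entry in self.entries:
            if all(header.get(k) == v for k, v in entry.match.items()):
                return entry.out_port
        return None  # table miss: a real switch would consult its controller


table = FlowTable()
table.add(FlowEntry({"dst_ip": "10.0.0.2"}, out_port=3, priority=10))
table.add(FlowEntry({"vlan": 7}, out_port=5, priority=5))

print(table.lookup({"dst_ip": "10.0.0.2", "vlan": 7}))  # higher priority wins
print(table.lookup({"dst_ip": "10.0.0.9", "vlan": 7}))
print(table.lookup({"dst_ip": "10.0.0.9"}))
```

The point of the sketch is the division of labour: the switch only performs lookups, while whoever calls `add` decides how traffic is treated.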

And while OpenFlow has since been extended to cater for circuit switches as well as wavelength circuits, there are aspects at the optical layer which OpenFlow is not designed to address - issues that GMPLS does address.

To run end-to-end, the control plane needs to be aware of the blocking constraints of an optical switch, while when provisioning it must also be aware of such aspects as the optical power levels and optical performance constraints. "The management of optical is different from managing a packet switch or a TDM [circuit switched] platform," says Elbers. “We need to deal with transmission impairments and constraints that simply do not exist inside a packet switch.”
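
To make the contrast with a packet switch concrete, here is a rough sketch of the kind of checks an optical control plane might run before setting up a light path. It is an illustration under stated assumptions, not ADVA's or GMPLS's actual procedure: it models blocking as a simple wavelength-continuity rule and optical performance as a single loss budget, and every name and number in it is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Link:
    loss_db: float          # optical loss across this span
    free_wavelengths: set   # channel numbers not yet in use on this span


def provision(path, launch_dbm: float, rx_sensitivity_dbm: float):
    """Return a usable channel number, or None if the request is blocked."""
    if not path:
        return None
    # Power constraint: the signal must still be detectable at the far end.
    received_dbm = launch_dbm - sum(link.loss_db for link in path)
    if received_dbm < rx_sensitivity_dbm:
        return None
    # Blocking constraint (ASSUMED: no wavelength conversion along the path),
    # so the same channel must be free on every link.
    common = set.intersection(*(link.free_wavelengths for link in path))
    return min(common) if common else None


path = [Link(10.0, {1, 2, 3}), Link(12.0, {2, 3})]
print(provision(path, launch_dbm=0.0, rx_sensitivity_dbm=-25.0))
print(provision(path, launch_dbm=0.0, rx_sensitivity_dbm=-20.0))
```

Neither check has an equivalent in a packet flow table, which is the gap Elbers describes.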

That said, having GMPLS expertise, it is relatively simple for a vendor to provide an OpenFlow interface to an optical controlled network, he says: "We see OpenFlow as an additional solution to tackle the problem of network control."

Operators want mature and proven interoperable standards for network control, that incorporate all the different network layers and that use GMPLS.

"We are seeing that in the data centre space, the players think that they may not have to have that level of complexity in their protocols and can run something lower level and streamlined for their applications," says Elbers.

While operators see the benefit of OpenFlow for their own data centres and managed service offerings, they also are eyeing other applications such as for access and aggregation to allow faster service mobility and for content management, says Elbers.

ADVA Optical Networking sees the adding of optical to OpenFlow as a complementary approach: the integration of optical networking into an existing framework to run it in a more dynamic fashion, an approach that benefits the data centre operators and the telcos.

"If you have one common framework, when you give server and compute jobs then you know what kind of connectivity and latency needs to go with this and request these resources and reconfigure the network accordingly," says Elbers.

But longer term the impact of OpenFlow and SDN will likely be more far-reaching: applications themselves could program the network, or it could be used to enable dial-up bandwidth services in a more dynamic fashion. "By providing software programmability into a network, you can develop your own networking applications on top of this - what we see as the heart of the SDN concept," says Elbers. “The long term vision is that the network will also become a virtualised resource, driven by applications that require certain types of connectivity.”

Providing the interface is the first step; the value-add will be what players do with the added network flexibility, whether vendors working with operators, the operators' customers or third-party developers.

"This is a pretty significant development that addresses the software side of things," says Elbers, adding that software is becoming increasingly important, with OpenFlow being an interesting step in that direction.

100 Gigabit for the metro

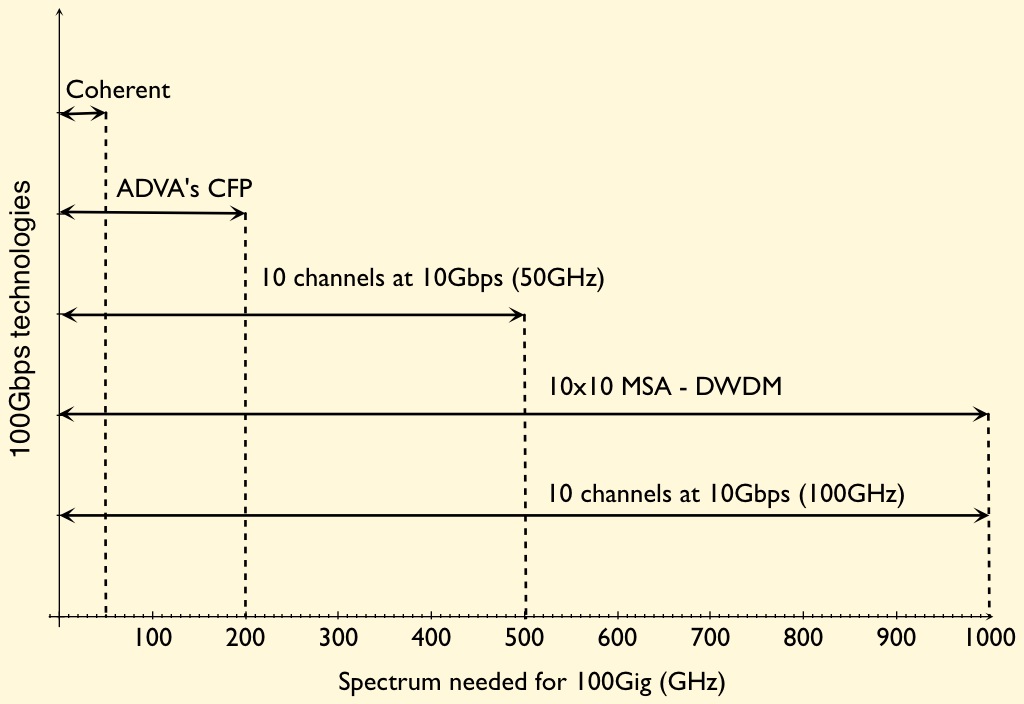

ADVA Optical Networking claims an industry first with its 100 Gigabit metro card: a direct-detection-based 100 Gigabit-per-second (Gbps) design using four 28Gbps channels rather than current 10x10Gbps schemes.

"Data centre operators want to make best use of the fibre infrastructure and get lower overall cost, footprint and power consumption"

Jörg-Peter Elbers, ADVA Optical Networking

The card, designed for the FSP 3000 platform, delivers 2.5x greater spectral efficiency than 10Gbps dense WDM (DWDM) systems. The 100Gbps metro card also has half the cost of a 100 Gigabit coherent design while requiring half the power and space.

ADVA Optical Networking is using a CFP optical module to implement the 100Gbps metro design. This allows the card to use other CFP-based interfaces such as the IEEE 100 Gigabit Ethernet (GbE) standards. The design also benefits from the economies of scale of the CFP as the module of choice for 100GbE, and from future smaller modules such as the CFP2 and CFP4 being developed as the 100GbE market evolves.

The 100Gbps metro CFP's four, 28Gbps signals are modulated using optical duo-binary. By choosing duo-binary, cheaper 10Gbps optics can be used akin to a 4x10Gbps design. Duo-binary is also more resilient to dispersion than standard on-off keying.

The CFP-based card requires 200GHz of spectrum for each 100Gbps light path. This is 2.5x more spectrally efficient than 10x10Gbps based on 50GHz channel spacings. However, while the design is cheaper, denser and less power hungry than 100Gbps coherent, it has only a quarter of the spectral efficiency of coherent (see chart).
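The spectral-efficiency comparison above can be checked with some simple arithmetic. The sketch below is illustrative only: the 200GHz, 50GHz-spaced 10x10Gbps and 100GHz figures come from the article, while the 50GHz channel width for 100Gbps coherent is an assumption inferred from the "quarter of the spectral efficiency" statement. The function and variable names are hypothetical.

```python
# Spectral-efficiency arithmetic for the 100Gbps metro comparison.
# The coherent channel width (50GHz) is an assumption, not a figure
# stated in the article.

def spectral_efficiency(bit_rate_gbps: float, spectrum_ghz: float) -> float:
    """Return spectral efficiency in bit/s/Hz (equivalently Gbps per GHz)."""
    return bit_rate_gbps / spectrum_ghz

# Ten 10Gbps wavelengths on a 50GHz grid occupy 500GHz to carry 100Gbps.
ten_by_ten = spectral_efficiency(100, 10 * 50)

# The direct-detection metro card: 100Gbps in 200GHz of spectrum.
metro = spectral_efficiency(100, 200)

# Assumed: 100Gbps coherent carried in a single 50GHz channel.
coherent = spectral_efficiency(100, 50)

# The possible future duo-binary design: 100Gbps in a 100GHz channel.
future_metro = spectral_efficiency(100, 100)

gain_over_10g = metro / ten_by_ten      # how much denser than 10x10Gbps
fraction_of_coherent = metro / coherent  # share of coherent's efficiency

print(f"10x10Gbps:     {ten_by_ten:.2f} bit/s/Hz")
print(f"Metro card:    {metro:.2f} bit/s/Hz")
print(f"Coherent:      {coherent:.2f} bit/s/Hz")
print(f"Future metro:  {future_metro:.2f} bit/s/Hz")
print(f"Metro vs 10G:  {gain_over_10g:.1f}x")
print(f"Metro vs coh.: {fraction_of_coherent:.2f}")
```

Under these assumptions the metro card works out at 0.5 bit/s/Hz, against 0.2 bit/s/Hz for 10x10Gbps and 2 bit/s/Hz for coherent, which matches the 2.5x and one-quarter figures quoted.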

Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking, says duo-binary delivers closer channel spacing such that a doubling in spectral density will be possible in a future design (100Gbps in a 100GHz channel). The 100Gbps metro card supports 500km links using dispersion-compensated fibre.

Non-coherent designs for the metro are starting to appear despite 100Gbps optical transport being in its infancy. Besides ADVA Optical Networking's design, a component vendor is promoting a 100Gbps direct detection DWDM design for the metro. The 10x10 MSA has also announced a DWDM extension that will support four and eight 100Gbps channels.

The 100G metro card showing the CFP. Source: ADVA Optical Networking

Metro direct-detection also faces competition from system vendors developing coherent designs tailored for the metro.

System vendors, module makers, optical and IC component companies all believe there is a market for lower cost 100Gbps metro transport. This is backed by keen interest from service providers and large content providers that want cheaper 100Gbps interfaces to connect data centres.

Elbers highlights two such applications that will likely be the first to use the 100 Gigabit metro card.

One is connecting the data centres of enterprises that use rented fibre. "They have a multitude of interfaces and services - 10GbE, 8 Gigabit Fibre Channel - and they often rent fibre," says Elbers. "They need to get as much capacity as possible to make the fibre rent worthwhile while being constrained on rack space and power."

The second application is to connect 100GbE-enabled IP routers across the metro. Here service providers may not have heavily loaded DWDM networks and can afford to use a 100Gbps metro link rather than the more spectrally efficient, if more expensive, 100Gbps coherent interface. Equally, such links may be less than 500km while coherent is designed for long-haul links, 1000km or greater.

Elbers says samples of the metro card are available now with volume production beginning at the end of 2011.

Introducing 100G Metro (ADVA Optical video)

Bringing WDM-PON to market

"We see just one way to bring down the cost, form-factor and energy consumption of the OLT’s multiple transceivers: high integration of transceiver arrays"

Klaus Grobe, ADVA Optical Networking

Considerable engineering effort will be needed to make next-generation optical access schemes using multiple wavelengths competitive with existing passive optical networks (PONs).

Such a multi-wavelength access scheme, known as a wavelength division multiplexing-passive optical network (WDM-PON), will need to embrace new architectures based on laser arrays and reflective optics, and use advanced photonic integration to meet the required size, power consumption and cost targets.

Current PON technology uses a single wavelength to deliver downstream traffic to end users. A separate wavelength is used for upstream data, with each user having an assigned time slot to transmit.

Gigabit PON (GPON) delivers 2.5 Gigabit-per-second (Gbps) to between 32 and 64 users, while the next development, XG-PON, will extend GPON’s downstream data rate to 10 Gbps. The alternative PON scheme, Ethernet PON (EPON), already has a 10 Gbps variant. Vendors are also extending PON’s reach from 20km to 80km or more using signal amplification.

But the industry view is that after 10 Gigabit PON, the next step will be to introduce multiple wavelengths to extend the capacity beyond what a time-sharing approach can support. Extending the access network's reach to 100km will also be straightforward using WDM transport technology.

The advent of WDM-PON is also an opportunity for new entrants, traditional WDM optical transport vendors, to enter the access market. ADVA Optical Networking is one firm that has been vocal about its plans to develop next-generation access systems.

“We are seriously investigating and developing a next-generation access system and it is very likely that it will be a flavour of WDM-PON,” says Klaus Grobe, senior principal engineer at ADVA Optical Networking. “It [next-generation access] must be based on WDM simply because of bandwidth requirements.”

The system vendor views WDM-PON as addressing three main applications: wireless backhaul, enterprise connectivity and residential broadband. But despite WDM-PON’s potential to reduce operating costs significantly, the challenge facing vendors is reducing the cost of WDM-PON hardware. Indeed it is the expense of WDM-PON systems that has so far confined the technology to specialist applications.

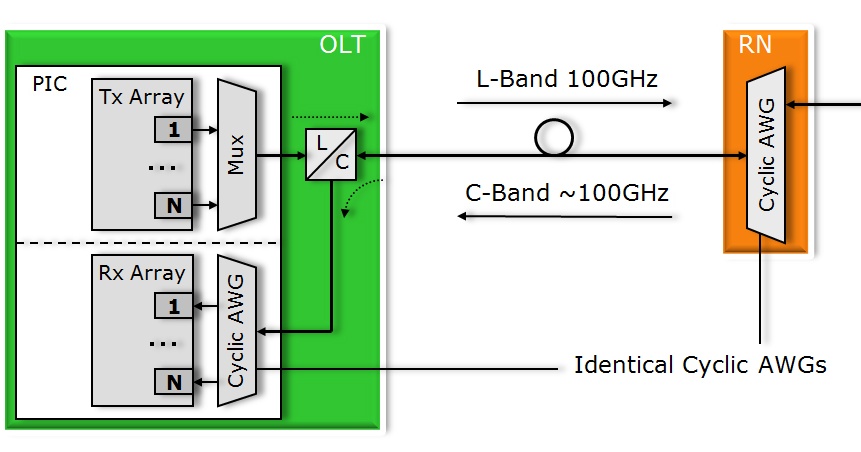

A non-reflective tunable laser-based WDM-PON ONU. Source: ADVA Optical Networking

According to Grobe, cost reduction is needed at both ends of the WDM-PON: the client receiver equipment known as the optical networking unit (ONU) and the optical line terminal (OLT) housed within an operator’s central office.

ADVA Optical Networking plans to use low-cost tunable lasers rather than a broadband light source and reflective optics for the ONU transceivers. “For the OLT, we see just one way to bring down the cost, form-factor and energy consumption of the OLT’s multiple transceivers: high integration of transceiver arrays,” says Grobe.

This is a considerable photonic integration challenge: a 40- or 80-wavelength WDM-PON uses 40 or 80 bi-directional transceiver clients, equating to 80 or 160 wavelengths. If 80 SFP optical modules were used at the OLT, the resulting cost, size and power consumption would be prohibitive, says Grobe.

ADVA Optical Networking is working with several firms, one being CIP Technologies, to develop integrated transceiver arrays. ADVA Optical Networking and CIP Technologies are part of the EU-funded project, C-3PO, that includes the development of integrated transceiver arrays for WDM-PON.

Splitters versus filters

One issue with WDM-PON is that there is no industry-accepted definition. ADVA Optical Networking views WDM-PON as an architecture based on optical filters rather than splitters. Two consequences result once that choice is made, says Grobe.

One is insertion loss. Choosing filters implies arrayed waveguide gratings (AWGs), says Grobe. “No other filter technology is seriously considered for WDM-PON if filters are used,” he says.

With an AWG, the insertion loss is independent of the number of wavelengths supported. This differs from using a splitter-based architecture where every 1x2 device introduces a 3dB loss - “closer to 3.5dB”, he says. Using a 1x64 splitter, the insertion loss is 14 or 15dB whereas for a 40-channel AWG the loss can be as low as 4dB. “I just saw specs of a first 96-channel AWG, even that one isn’t much higher [than 4dB],” says Grobe. Thus using filters rather than splitters, the insertion loss is much lower for a comparable number of client ONUs.

There is also a cost benefit associated with a low insertion loss. To limit the cost of next-generation PON, the transceiver design must be constrained to a 25dB power budget associated with existing PON transceivers. “This is necessary to keep these things cheap, possibly dirt cheap,” says Grobe.

With the alternative, XG-PON’s sophisticated 10 Gbps burst-mode transceiver and its associated 35dB power budget, achieving low cost is simply not possible, he says. To live with transceivers with a 25dB power budget, the insertion loss of the passive distribution network must be minimised, explaining why filters are favoured.
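The insertion-loss argument and the 25dB budget can be put into rough numbers. The sketch below is a simplified model, not ADVA's own calculation: it treats a 1xN splitter as a cascade of 1x2 stages at the 3dB and 3.5dB per-stage figures quoted above, and takes the 4dB AWG loss and 25dB budget from the text. The function and constant names are hypothetical.

```python
import math

# Figures taken from the article.
POWER_BUDGET_DB = 25.0   # budget of existing PON transceivers
AWG_LOSS_DB = 4.0        # 40-channel AWG, "as low as 4dB"

def splitter_loss_db(ports: int, loss_per_stage_db: float = 3.0) -> float:
    """Loss of a 1xN power splitter modelled as log2(N) cascaded 1x2 stages.

    Simplifying assumption: N is a power of two and every stage has the
    same loss. Real devices add excess loss on top of this.
    """
    stages = math.log2(ports)
    return stages * loss_per_stage_db

for ports in (32, 64):
    ideal = splitter_loss_db(ports, 3.0)
    practical = splitter_loss_db(ports, 3.5)
    print(f"1x{ports} splitter: {ideal:.1f}dB ideal, {practical:.1f}dB at 3.5dB/stage")

# Budget left over for fibre, connectors and margin after the passive device.
headroom_awg_db = POWER_BUDGET_DB - AWG_LOSS_DB
headroom_splitter_db = POWER_BUDGET_DB - splitter_loss_db(64)

print(f"Headroom with 40-channel AWG: {headroom_awg_db:.1f}dB")
print(f"Headroom with 1x64 splitter:  {headroom_splitter_db:.1f}dB")
```

Under this cascade model a 1x32 split comes to 15dB and a 1x64 split to about 18dB at 3dB per stage, so the 14 to 15dB quoted above sits nearer the 1x32 case. Either way, the gap to a 4dB AWG leaves far more of the 25dB budget for the fibre plant.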

The other main benefit of using filters is security. With a filter-based PON, wavelength point-to-point connections result. “You are not doing broadcast,” says Grobe. “You immediately get rid of almost all security aspects.” This is an issue with PON where traffic is shared.

Low power

Achieving a low-power WDM-PON system is another key design consideration. “In next-gen access, it is absolutely vital,” says Grobe. “If the technology is deployed on a broad scale - that is millions of user lines – every single watt counts, otherwise you end up with differences in the approaches that go into the megawatts and even gigawatts.”

There is also a benchmarking issue, says Grobe: the WDM-PON OLT will be compared to XG-PON’s even if the two schemes differ. Since XG-PON uses time-division multiplexing, there will be only one transceiver at the OLT. But this is what a 40- or 80-channel WDM-PON OLT will be compared with, even if the comparison is apples to pears, says Grobe.

WDM-PON workings

There are two approaches to WDM-PON.

In a fully reflective architecture, the OLT array and the ONUs are seeded using multi-wavelength laser arrays; both ends use the laser arrays in combination with reflective optics for optical transmission.

ADVA Optical Networking is interested in using a reflective approach at the OLT but for the ONU it will use tunable lasers due to technical advantages. For example, using the same wavelength for the incoming and modulated streams in a reflective approach, Rayleigh crosstalk is an issue when the ONUs are 100km from the OLT. In contrast, Rayleigh crosstalk at the OLT is avoided because the multi-wavelength laser array is located only a few metres from the reflective electro-absorption modulators (REAMs).

REAMs are used rather than semiconductor optical amplifiers (SOAs) to modulate data at the OLT because they support higher bandwidth 10 Gbps wavelengths. Indeed the C-3PO project is likely to use a monolithically integrated SOA-REAM for this task. “The reflective SOA is narrower in bandwidth but has inherent gain while the REAM has loss rather than gain – it is just a modulator,” says Grobe. “The combination of the two is the ideal: giving high modulation bandwidth and high transmit power.”

The integrated WDM-PON OLT. In practice the transmit array uses a reflective architecture based on SOA-REAMs and is fed with a multi-wavelength laser source. Source: ADVA Optical Networking

For the OLT, a multi-wavelength laser is fed via an AWG into an array of SOA-REAMs which modulate the wavelengths and return them through the AWG where they are multiplexed and transmitted to the ONUs via a demultiplexing AWG. An added benefit of this approach, says Grobe, is that the same multi-wavelength laser source can be used to feed several WDM-PON OLTs, further decreasing system cost.

For the upstream path, each ONU’s wavelength is separated by the OLT’s AWG and fed to the receiver array. In a WDM-PON system, the OLT transmit wavelengths and receive wavelengths (from the ONUs) operate in separate optical bands.

Grobe expects ADVA Optical Networking's resulting WDM-PON system to use 40 or 80 channels. And to best meet size, power and cost constraints, the OLT design will likely be implemented as a photonic integrated circuit. “We are after a single PIC solution,” he says. “It is clear that with the OLT, integration is the only way to meet requirements.” A photonically-integrated OLT design is one of the products expected from the C-3PO project, using CIP Technologies' hybrid integration technology.

ADVA Optical Networking has already said that its WDM-PON OLT will be implemented using its FSP 3000 platform.

Reflecting light to save power

System vendors will be held increasingly responsible for the power consumption of their telecom and datacom platforms. That’s because for each watt the equipment generates, up to six watts is required for cooling. It is a burden that will only get heavier given the relentless growth in network traffic.

"Enterprises are looking for huge capacity at low cost and are increasingly concerned about the overall impact on power consumption"

David Smith, CIP Technologies

No surprise, then, that the European 7th Framework Programme has kicked off a research project to tackle power consumption. The Colorless and Coolerless Components for Low-Power Optical Networks (C-3PO) project involves six partners that include component specialist CIP Technologies and system vendor ADVA Optical Networking.

CIP is the project’s sole opto-electronics provider while ADVA Optical Networking's role is as system integrator.

“It’s not the power consumption of the optics alone,” says David Smith, CTO of CIP Technologies. “The project is looking at component technology and architectural issues which can reduce overall power consumption.”

The data centre is an obvious culprit, requiring up to 5 megawatts. Power is consumed by IT and networking equipment within the data centre – not a C-3PO project focus – and by optical networking equipment that links the data centre to other sites. “Large enterprises have to transport huge amounts of capacity between data centres, and requirements are growing exponentially,” says Smith. “They [enterprises] are looking for huge capacity at low cost and are increasingly concerned about the overall impact on power consumption.”

One C-3PO goal is to explore how to scale traffic without impacting the data centre’s overall power consumption. Conventional dense wavelength division multiplexing (DWDM) equipment isn’t necessarily the most power-efficient given that DWDM tunable lasers require their own cooling. “There is the power that goes into cooling the transponder, and to get the heat away you need to multiply again by the power needed for air conditioning,” says Smith.
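Smith's multiplication effect can be illustrated with the "up to six watts of cooling per watt" figure quoted earlier. The sketch below is a rough illustration rather than a measured result: the six-to-one ratio is the article's upper bound, and the two transponder power figures are hypothetical values chosen only to show how a saving at the device scales at the facility.

```python
# Upper-bound cooling overhead quoted in the article: up to six watts of
# cooling for every watt of equipment power.
COOLING_W_PER_EQUIPMENT_W = 6.0

def facility_power_w(equipment_w: float,
                     cooling_ratio: float = COOLING_W_PER_EQUIPMENT_W) -> float:
    """Total power drawn once cooling is added to the equipment power."""
    return equipment_w * (1.0 + cooling_ratio)

# Hypothetical transponder figures, for illustration only.
cooled_transponder_w = 50.0      # design with actively cooled tunable lasers
coolerless_transponder_w = 30.0  # design using coolerless components

saving_at_device_w = cooled_transponder_w - coolerless_transponder_w
saving_at_facility_w = (facility_power_w(cooled_transponder_w)
                        - facility_power_w(coolerless_transponder_w))

print(f"Saving at the device:   {saving_at_device_w:.0f}W")
print(f"Saving at the facility: {saving_at_facility_w:.0f}W")
```

With the worst-case ratio, every watt removed from the equipment is worth up to seven watts at the facility level, which is why coolerless components are attractive even when the per-device saving looks small.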

Another idea gaining attention is operating data centres at higher ambient temperatures to reduce the air conditioning needed. This idea works with chips that have a wide operating temperature range, but the performance of optics - indium phosphide-based actives - degrades with temperature such that extra cooling is required. As such, power consumption could even be worse, says Smith.

A more controversial optical transport idea is changing how line-side transport is done. Adding transceivers directly to IP core routers saves on the overall DWDM equipment deployed. This is not a new idea, says Smith, and an argument against it is that it places tunable lasers and their cooling on an IP router which operates at a relatively high ambient temperature. The power reduction sought may not be achieved.

But by adopting a new transceiver design, using coolerless and colourless (reflective) components, operating at a wider temperature range without needing significant cooling is possible. “It is speculative but there is a good commercial argument that this could be effective,” says Smith.

C-3PO will also exploit material systems to extend devices’ temperature range - 75°C to 85°C - to eliminate as much cooling as possible. Such material systems expertise is the result of CIP’s involvement in other collaborative projects.

"If the [WDM-PON] technology is deployed on a broad scale - that is millions of user lines – every single watt counts"

Klaus Grobe, ADVA Optical Networking

Indeed a companion project, to be announced soon, will run alongside C-3PO based on what Smith describes as ‘revolutionary new material systems’. These systems will greatly improve the temperature performance of opto-electronics. “C-3PO is not dependent on this [project] but may benefit from it,” he says.

Colourless and coolerless

CIP’s role in the project will be to integrate modulators and arrays of lasers and detectors to make coolerless and colourless optical transmission technology. CIP has its own hybrid optical integration technology called HyBoard.

“Coolerless is something that will always be aspirational,” says Smith. C-3PO will develop technology to reduce and even eliminate cooling where possible to reduce overall power consumption. “Whether you can get all parts coolerless, that is something to be strived for,” he says.

Colourless implies wavelength independence. For light sources, one way to achieve colourless operation is by using tunable lasers, another is to use reflective optics.

CIP Technologies has been working on reflective optics as part of its work on wavelength division multiplexing passive optical networks (WDM-PON). Given that such reflective optics work over distances up to 100km for optical access, CIP has considered using the technology for metro and enterprise networking applications.

Smith expects the technology to work over 200-300km, at data rates from 10 to 28 Gigabit-per-second (Gbps) per channel. Four 28Gbps channels would enable low-cost 100Gbps DWDM interfaces.

Reflective transmission

CIP’s building-block components used for colourless transmission include a multi-wavelength laser, an arrayed waveguide grating (AWG), reflective modulators and receivers (see diagram).

Reflective DWDM architecture. Source: CIP Technologies

Smith describes the multi-wavelength laser as an integrated component, effectively an array of sources. This is more efficient for longer distances than using a broadband source that is sliced to create particular wavelengths. “Each line is very narrow, pure and controlled,” says Smith.

The laser source is passed through the AWG which feeds individual wavelengths to the reflective modulators where they are modulated and passed back through the AWG. The benefit of using a reflective modulator rather than a pass-through one is a simpler system. If the light source is passed through the modulator, a second AWG is needed to combine all the sources, as well as a second fibre. Single-ended fibre is also simpler to package.

For data rates of 1 or 2Gbps, the reflective modulator used can be a reflective semiconductor optical amplifier (RSOA). At speeds of 10Gbps and above, the complementary SOA-REAM (reflective electro-absorption modulator) is used; the REAM offers a broader bandwidth while the SOA offers gain.

The benefit of a reflective scheme is that the laser source, made athermal and coolerless, consumes far less power than tunable lasers. “It has to be at least half the cost and we think that is achievable,” says Smith.

Using the example of the IP router, the colourless SFP transceiver – made up of a modulator and detector - would be placed on each line card. And the multi-wavelength laser source would be fed to each card’s module.

Another part of the project is looking at using arrays of REAMs for WDM-PON. Such a modulator array would be used at the central office optical line terminal (OLT). “Here there are real space and cost savings using arrays of reflective electro-absorption modulators given their low power requirements,” says Smith. “If we can do this with little or no cooling required there will be significant savings compared to a tunable laser solution.”

ADVA Optical Networking points out that with an 80-channel WDM-PON system, there will be a total of 160 wavelengths (see the business case for WDM-PON). “If you consider 80 clients at the OLT being terminated with 80 SFPs, there will be a cost, energy consumption and form-factor overkill,” says Klaus Grobe, senior principal engineer at ADVA Optical Networking. “The only known solution for this is high integration of the transceiver arrays and that is exactly what C-3PO is about.”

The low-power aspect of C-3PO for WDM-PON is also key. “In next-gen access, it is absolutely vital,” says Grobe. “If the technology is deployed on a broad scale - that is millions of user lines – every single watt counts, otherwise you end up with differences in the approaches that go into the megawatts and even gigawatts.”

There is also a benchmarking issue: the WDM-PON OLT will be compared to the XG-PON standard, the next-generation 10Gbps Gigabit passive optical network (GPON) scheme. Since XG-PON will use time-division multiplexing, there will be only one transceiver at the OLT. But this is what a 40- or 80-channel WDM-PON OLT will be compared with.

CIP will also be working closely with C-3PO partner, IMEC, as part of the design of the low-power ICs to drive the modulators.

Project timescales

The C-3PO project started in June 2010 and will last three years. The total funding of the project is €2.6 million with the European Union contributing €1.99 million.

The project will start by defining system requirements for the WDM-PON and optical transmission designs.

At CIP the project will employ the equivalent of two full-time staff for the project’s duration though Smith estimates that 15 CIP staff will be involved overall.

ADVA Optical Networking plans to use the results of the project – the WDM-PON and possibly the high-speed transmission interfaces - as part of its FSP 3000 WDM platform.

CIP expects that the technology developed as part of C-3PO will be part of its advanced product offerings.