The connected vehicle - driving in the cloud

Cars are already more silicon than steel. As car makers add high-speed LTE broadband, cars are destined to become more app than automobile. The possibilities that come with connecting a car to the cloud are scintillating. No wonder Gil Golan, director at General Motors' Advanced Technical Center in Israel, says the automotive industry is at an 'inflection point'.

"If you put LTE to the vehicle ... you are going to open a very wide pipe and you can send to the cloud and get results with almost no latency"

Gil Golan, General Motors

After a century continually improving the engine, suspension and transmission, car makers are now busy embracing technologies outside their traditional skill sets. The result is a period of unprecedented change and innovation.

Golan cites the use of in-car camera systems to aid driving and parking as an example. "Five years ago almost no vehicle used a camera whereas now increasing numbers have at least one, a fish-eye camera facing backwards." Vehicles offering 360-degree views using five cameras are taking to the road, and such configurations will become the norm in the next five years.

As a result, the automotive industry is hiring people with optics and lens expertise, as well as image-processing skills to analyse the images and video the cameras produce. "This is just the camera; the vehicle is going to be loaded with electronics," says Golan.

In 2004 the [automotive] industry crossed the point where, on average, we spend more on silicon than on steel

Moore's Law

Semiconductor advances driven by Moore's Law have already changed the automotive industry. "In 2004 the [automotive] industry crossed the point where, on average, we spend more on silicon than on steel," says Golan.

Moore's Law continues to improve processor and memory performance while driving down cost. "Every small system can now be managed or controlled in a better way," says Golan. "With a processor and memory, everything can be more accurate, more functionality can be built in, and it doesn't matter if it is a windscreen wiper or a sophisticated suspension system."

Current high-end vehicles have over 100 microprocessors. Chip makers, in turn, are developing 100 Megabit and 1 Gigabit Ethernet physical-layer devices, media access controllers and switching silicon for in-vehicle networks that link the car's various electronic control units (ECUs).

The growing number of on-board microprocessors is also reflected in the software within vehicles. According to Golan, the Chevrolet Volt has over 10 million lines of code while the latest Lockheed Martin F-35 fighter has 8.7 million. "These are software vehicles on four wheels," says Golan. Moreover, the design of the Chevy Volt started nearly a decade ago.

Car makers must keep these vehicles, crammed with electronics and software, up to date even though their life cycles are far longer than those of consumer devices such as smartphones.

According to General Motors, each car model has its content sealed every four or five years. A car design sealed today may only come on sale in 2016 after which it will be manufactured for five years and remain on the road for a further decade. "A vehicle sealed today is supposed to be updated and relevant through to 2030," says Golan. "This, in an era where things are changing at an unprecedented pace."

As a result, car makers are working on ways to keep vehicles updated after the design is complete, during manufacturing, and once the vehicle is on the road, says Golan.

Industry trends

Two key trends are driving the automotive industry:

- The development of autonomous vehicles

- The connected vehicle

Leading car makers are all working towards the self-driving car. Such cars promise far greater safety and more efficient, economical driving. They will also turn the driver into a passenger, free to do other things. Automated vehicles will need multiple sensors coupled to on-board algorithms and systems that can guide the vehicle in real time.

Golan says camera sensors are now available that see at night, yet some sensors can perform poorly in certain weather conditions and can be confused by electromagnetic fields - the car is a 'noisy' environment. As a result, multiple sensor types will be needed and their outputs fused to ensure key information is not missed.
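Golan does not say how the sensor outputs are fused. As a minimal sketch of one textbook technique, inverse-variance weighting, the Python below combines independent distance estimates so that a sensor reporting more noise, such as a camera in poor weather, counts for less. The `Reading` type, the sensor names and all the numbers are hypothetical, and this is not presented as General Motors' method.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Reading:
    """One sensor's estimate of the distance to an obstacle (hypothetical)."""
    sensor: str
    distance_m: float
    variance: float  # measurement noise variance in m^2; larger = less trusted


def fuse(readings: list[Reading]) -> tuple[float, float]:
    """Inverse-variance weighted fusion of independent readings.

    Returns (fused distance, fused variance). Readings with a
    non-positive variance are treated as invalid and ignored.
    """
    usable = [r for r in readings if r.variance > 0]
    if not usable:
        raise ValueError("no usable readings")
    weights = [1.0 / r.variance for r in usable]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, usable)) / total
    return fused, 1.0 / total


# Illustrative values: a camera degraded by bad weather reports a large
# variance, so the radar and lidar readings dominate the fused estimate.
readings = [
    Reading("camera", 24.0, 4.0),
    Reading("radar", 20.0, 1.0),
    Reading("lidar", 20.5, 0.25),
]
distance, variance = fuse(readings)
print(f"{distance:.2f} m (variance {variance:.3f} m^2)")  # prints 20.57 m (variance 0.190 m^2)
```

The fused variance is smaller than that of any single sensor, which is the point of combining several sensor types rather than relying on one.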

"Remember, we are talking about life; this is not computers or mobile handsets," says Golan. "If you put more active safety systems on-board, it means you have to have a very solid read on what is going on around you."

The Chevrolet Volt has over 10 million lines of code while the latest Lockheed Martin F-35 fighter has 8.7 million

Wireless

Wireless communications will play a key role in vehicles. The most significant development is the advent of the Long Term Evolution (LTE) cellular standard that will bring broadband to the vehicle.

Golan says there are different zones within and around the car where wireless will play a role. The first is within the vehicle, for wireless communication between devices such as a user's smartphone or tablet and the vehicle's main infotainment unit.

Wireless will also let ECUs talk to each other, eliminating wiring inside the vehicle. "Wires are expensive, are heavy and impact the fuel economy, and can be a source for different problems: in the connectors and the wires themselves," says Golan.

A second, wider sphere of communication links the vehicle with its immediate surroundings: other vehicles, or infrastructure such as traffic lights, signs and buildings. The communication could even be with cyclists and pedestrians carrying cellphones. Such communication with the immediate environment would use short-range communications, not the cellular network.

Wide-area communication will use LTE, although it could also be performed over a wired link. "If it is an electric vehicle, you can exchange data while you charge the vehicle," says Golan.

This ability to communicate across the network and connect to the cloud is what excites the car makers.

You can talk to the vehicle and the processing can be performed in the cloud

Cloud and Big Data

"If you put LTE to the vehicle, you are showing your customers that you are committed to bringing the best technology to the vehicle, you are going to open a very wide pipe and you can send to the cloud and get results with almost no latency," says Golan.

LTE also raises the interesting prospect of offloading some of the processing currently embedded in the vehicle onto servers. "I can control the vehicle from the cloud," says Golan. "You can talk to the vehicle and the processing can be performed in the cloud."

The processing and capabilities offered in the cloud are orders of magnitude greater than what can be done on the vehicle, says Golan: "The results are going to be by far better than what we are familiar with today."

Clearly, pooling and processing information centrally will offer a broader view than any one vehicle can provide, but just which processing functions can be offloaded is less clear, especially as a broadband link will always depend on the quality of cellular coverage.

Safety-critical systems will remain on board, stresses Golan, but some infotainment and other value-added services will be delivered wirelessly.

Choosing the LTE operator to use is a key decision for an automotive company. "We have to make sure you [the driver] are on a very good network," says Golan. "The service provider has to show us, prove to us [their network], and in some cases we run basic and sporadic tests with our operator to make sure that we do have the network in place."

Automotive companies also see a business opportunity here.

"When you get into a vehicle, there is a new type of behaviour that we know," says Golan. "We know a lot about your vehicle, we know your behaviour while you are driving: your driving style, what coffee you like to drink and your favourite coffee store, and that you typically fill up when you have a half tank and you go to a certain station."

This knowledge - about the car and the driver's preferences when driving - when combined with the cloud, is a powerful tool, says Golan. Car companies can offer an ecosystem that supports the driver. "We can have everything that you need while in the vehicle, served by General Motors," says Golan. "Let your imagination think about the services because I'm not going to tell you; we have a long list of stuff that we work on."

If we don't see that what we work on creates tremendous value, we drop it

General Motors already owns a 'huge' data centre and, being a global company with a local footprint, will use cloud service providers as required.

So automotive is part of the Big Data story? "Oh, big time," says Golan. "Business analytics is critical for any industry including the automotive industry."

Innovation

Given the opportunities enabled by new technologies such as sensors, computing, communications and the cloud, how do automotive companies remain focussed?

"If we don't see that what we work on creates tremendous value, we drop it," says Golan. "We have no time or resources to spend on spinning wheels."

General Motors has its own venture capital arm to invest in promising companies and spends a lot of time talking to start-ups. "We talk to every possible start-up; if you see them for the first time you would say: 'where is the connection to the automotive industry?'," says Golan. "We talk to everybody on everything."

The company says it will always back ideas. "If some team member comes up with a great idea, it does not matter how thin the company is spread, we will find the resources to support that," says Golan.

General Motors set up its research centre in Israel a decade ago and is the only automotive company to have an advanced development centre there, says Golan. "The management had the foresight to understand that the industry is undergoing mega trends and an entrepreneurial culture - an innovation culture - is critically important for the future of the auto industry."

The company also has development sites in Silicon Valley and several other locations. "This is the pipe that is going to feed you innovation, and to do the critical steps needed towards securing the future of the company," says Golan. "You have to go after the technology."

SDN starts to fulfill its network optimisation promise

Infinera, Brocade and ESnet demonstrate the use of software-defined networking to provision and optimise traffic across several networking layers.

Infinera, Brocade and network operator ESnet are claiming a first in demonstrating software-defined networking (SDN) performing network provisioning and optimisation using platforms from more than one vendor.

Mike Capuano, Infinera

The latest collaboration is one of several involving optical vendors that are working to extend SDN to the WAN. ADVA Optical Networking and IBM are working to use SDN to connect data centres, while Ciena and partners have created a test bed to develop SDN technology for the WAN.

The latest lab-based demonstration uses ESnet's circuit reservation platform, which requests network resources via an SDN controller. ESnet, the US Department of Energy's Energy Sciences Network, conducts networking R&D and operates a large 100 Gigabit network linking research centres and universities. The SDN controller, based on the open-source Floodlight Project design, oversees a network comprising Brocade's 100 Gigabit MLXe IP router and Infinera's DTN-X platform.

The goal of provisioning and optimising traffic across the routing, switching and optical layers has been a work in progress for over a decade. System vendors have undertaken initiatives such as External Network-Network Interface (ENNI) and multi-domain GMPLS but with limited success. "They have been talked about, experimented with, but have never really made it out of the labs," says Mike Capuano, vice president of corporate marketing at Infinera. "SDN has the opportunity to solve this problem for real."

"In the world of Web 2.0, the general approach is not to sit and wait till standards are done, but to prototype, test, find the gaps, report back, and do it again"

"SDN, and technologies like the OpenFlow protocol, allow all of the resources of the entire network to be abstracted to this higher level control," says Daniel Williams, director of product marketing for data center and service provider routing at Brocade.

Daniel Williams, Brocade

Infinera and ESnet demonstrated OpenFlow provisioning of transport resources a year ago. This latest demonstration uses OpenFlow for provisioning at both the packet and optical layers, and adds network optimisation. "We have added more carrier-grade capabilities," says Capuano. "Not just provisioning, but now we have topology discovery and network configuration."

“The demonstration is a positive step in the development of SDN because it showcases the multi-layer transport provisioning and management that many operators consider the prime use case for transport SDN,” says Rick Talbot, principal analyst, optical infrastructure at Current Analysis. "The demonstration’s real-time network optimisation is an excellent example of the potential benefits of transport SDN, leveraging SDN to minimise transit traffic carried at the router layer, saving both CapEx and OpEx."

Using such an SDN setup, service providers can request high-bandwidth links to meet specific networking requirements. "There can be a request from a [software] app: 'I need an 80 Gigabit flow for two days from Switzerland to California with a 95ms latency and zero packet loss'," says Capuano. "The fact that the network has the facility to set that service up and deliver on those parameters automatically is a huge saving."

Such a link can be established on the same day the request is made, even within minutes. Traditionally, such requests, involving the IP and optical layers and different organisations within a service provider, can take weeks to fulfill, says Infinera.
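Capuano's example request can be pictured as structured data that an application hands to the controller. The sketch below assumes a hypothetical schema: the `BandwidthRequest` and `CandidatePath` field names are illustrative only and are not taken from ESnet's reservation platform, Floodlight or Infinera's Open Transport Switch. It serialises the request as JSON, a common format for REST-style interfaces, and checks a candidate path against every parameter, treating the 95ms figure as an upper bound.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class BandwidthRequest:
    """Hypothetical request an application sends to an SDN controller."""
    src: str
    dst: str
    bandwidth_gbps: float
    duration_hours: int
    max_latency_ms: float
    max_packet_loss: float  # fraction of packets; 0.0 means no loss tolerated


@dataclass
class CandidatePath:
    """Hypothetical properties of a path the controller has computed."""
    free_gbps: float
    latency_ms: float
    packet_loss: float


def satisfies(req: BandwidthRequest, path: CandidatePath) -> bool:
    """True only if the path meets every parameter of the request."""
    return (path.free_gbps >= req.bandwidth_gbps
            and path.latency_ms <= req.max_latency_ms
            and path.packet_loss <= req.max_packet_loss)


# Capuano's example: 80 Gigabit for two days, Switzerland to California,
# at most 95ms latency and zero packet loss.
request = BandwidthRequest("Switzerland", "California", 80.0, 48, 95.0, 0.0)
payload = json.dumps(asdict(request))  # e.g. the body of a REST call
print(payload)
print(satisfies(request, CandidatePath(free_gbps=100.0, latency_ms=90.0, packet_loss=0.0)))  # True
```

Encoding every requirement explicitly is what lets a controller accept or reject the request automatically, rather than routing it through several organisations.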

Current Analysis also highlights another aspect of the demonstration: using a common controller for separate domains, the Infinera wavelength and TDM domain and the Brocade layer 2/3 domain, illustrates how SDN can provide end-to-end, multi-operator, multi-vendor control of connections.

What next

The Open Networking Foundation (ONF) has an Optical Transport Working Group that is tasked with developing OpenFlow extensions to enable SDN control beyond the packet layer to include optical.

How is the optical layer in the demonstration controlled given the ONF work is unfinished?

"Our solution leverages Web 2.0 protocols like RESTful and JSON integrated into the Open Transport Switch [application] that runs on the DTN-X," says Capuano. "In the world of Web 2.0, the general approach is not to sit and wait till standards are done, but to prototype, test, find the gaps, report back, and do it again."

Further work is needed before the demonstration system is robust enough for commercial deployment.

"This is going to take some time: 2014 is the year of test and trials in the carrier WAN while 2015 is when you will see production deployment," says Capuano. "If service providers are making decisions on what platforms they want to deploy, it is important to choose ones that are going to position them well to move to SDN when the time comes."

Alcatel-Lucent dismisses Nokia rumours as it launches NFV ecosystem

Michel Combes, CEO. Photo: Kobi Kantor.

The CEO of Alcatel-Lucent, Michel Combes, has brushed off rumours of a tie-up with Nokia, after reports surfaced last week that Nokia's board was considering the move as a strategy option.

"You will have to ask Nokia," said Combes. "I'm fully focussed on the Shift Plan, it is the right plan [for the company]; I don't want to be distracted by anything else."

Combes was speaking at the opening of Alcatel-Lucent's cloud R&D centre in Kfar Saba, Israel, where the company's internal start-up CloudBand is developing cloud technology for carriers.

Network Functions Virtualisation

CloudBand used the site opening to unveil its CloudBand Ecosystem Program to spur adoption of Network Functions Virtualisation (NFV). NFV is a carrier-led initiative, set up within the European Telecommunications Standards Institute (ETSI), to benefit from the IT model of running applications on virtualised servers.

Carriers want to get away from vendor-specific platforms that are expensive to run and cumbersome to upgrade when new services are needed. Adding a service can take between 18 months and three years, said Dor Skuler, vice president and general manager of the CloudBand business unit. Moreover, such equipment can reside in the network for 15 years. "Most of the [telecom] software is running on CPUs that are 15 years old," said Skuler.

Instead, carriers want vendors to develop software 'network functions' that run on servers. NFV promises a common network infrastructure and reduced costs by exploiting the economies of scale associated with servers: server volumes dwarf those of dedicated networking equipment, and servers are regularly upgraded with new CPUs.

Applications running on servers can also be scaled up and down, according to demand, using virtualisation and cloud orchestration techniques already present in the data centre. "This is about to make the network scalable and automated," said Combes.
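Scaling with demand can be reduced to a simple rule. The sketch below is a hypothetical target-tracking rule, not Alcatel-Lucent's or any orchestrator's actual policy: run enough instances of a virtualised function to carry current demand plus some headroom, within fixed lower and upper limits. The function name, the 20 percent headroom and the limits are assumptions.

```python
import math


def instances_needed(demand: float, capacity_per_instance: float,
                     headroom: float = 0.2, min_instances: int = 1,
                     max_instances: int = 64) -> int:
    """How many instances of a virtualised network function to run.

    demand and capacity_per_instance use the same unit (e.g. active
    sessions). headroom keeps spare capacity so a traffic spike does not
    overload the running instances before new ones start.
    """
    if capacity_per_instance <= 0:
        raise ValueError("capacity_per_instance must be positive")
    target = math.ceil(demand * (1 + headroom) / capacity_per_instance)
    return max(min_instances, min(max_instances, target))


# Scale out at the busy hour, back in overnight (illustrative numbers).
print(instances_needed(demand=950, capacity_per_instance=200))  # 6
print(instances_needed(demand=100, capacity_per_instance=200))  # 1
```

An orchestrator would re-evaluate such a rule periodically and start or stop instances to match, which is the elasticity that dedicated hardware cannot offer.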

Alcatel-Lucent stresses that not all networking functions are suited for virtualisation. Optical transport is one example. Another is routing, which requires dedicated silicon for packet processing and traffic management.

CloudBand was set up in 2011. The unit is focussed on the orchestration and automation of distributed cloud computing for carriers. "How do you operationalise cloud which may be distributed across 20 to 30 locations?" said Skuler.

CloudBand says it can add a "cloud node" - IT equipment at an operator's site - and have it up and running three hours after power-up. This requires fully automated processes, said Skuler. CloudBand also uses algorithms developed at Alcatel-Lucent Bell Labs to determine the best location of distributed cloud resources for a given task. The algorithms load-balance the resources based on an application's requirements.
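The Bell Labs placement algorithms are not described. Purely as an illustration, the sketch below swaps in a simple greedy heuristic, an assumption rather than Alcatel-Lucent's method: keep only the cloud nodes that meet an application's CPU and latency requirements, then choose the one that will be least utilised after placement, which spreads load across sites. The node names and figures are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class CloudNode:
    """A hypothetical cloud node at an operator site."""
    name: str
    free_cpus: int
    total_cpus: int
    latency_ms: float  # latency from this node to the users being served


@dataclass
class AppRequirement:
    cpus: int
    max_latency_ms: float


def place(app: AppRequirement, nodes: list[CloudNode]) -> Optional[CloudNode]:
    """Greedy placement: among nodes meeting the app's CPU and latency needs,
    pick the one that will be least utilised afterwards, spreading load."""
    candidates = [n for n in nodes
                  if n.free_cpus >= app.cpus and n.latency_ms <= app.max_latency_ms]
    if not candidates:
        return None

    def utilisation_after(n: CloudNode) -> float:
        return (n.total_cpus - n.free_cpus + app.cpus) / n.total_cpus

    best = min(candidates, key=utilisation_after)
    best.free_cpus -= app.cpus  # reserve the capacity on the chosen node
    return best


nodes = [
    CloudNode("paris", free_cpus=10, total_cpus=32, latency_ms=8.0),
    CloudNode("lyon", free_cpus=20, total_cpus=32, latency_ms=12.0),
    CloudNode("madrid", free_cpus=30, total_cpus=32, latency_ms=25.0),
]
chosen = place(AppRequirement(cpus=4, max_latency_ms=15.0), nodes)
print(chosen.name if chosen else "no node fits")  # lyon
```

Madrid has the most spare capacity but is excluded by the latency bound; of the remaining nodes, Lyon ends up less loaded. A real placement engine would likely weigh further factors, such as network bandwidth between sites.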

The distributed cloud technology also benefits from software-defined networking (SDN) technology from Alcatel-Lucent's other internal venture, Nuage Networks, which automates the set-up of network connections between data centres. "Just as SDN makes use of virtualisation to give applications more memory and CPU resources in the data centre, Nuage does the same for the network," said Skuler.

Open interfaces are needed if NFV is to succeed and avoid proprietary solutions and vendor lock-in. Alcatel-Lucent's NFV solution must support third-party applications, while the company's own applications will have to run on other vendors' platforms. To this end, CloudBand has set up an NFV ecosystem for service providers, vendors and developers.

"We have opened up CloudBand to anyone in the industry to test network applications on top of the cloud," said Skuler. "We are the first to do that."

So far, 15 companies have signed up to the CloudBand Ecosystem Program including Deutsche Telekom, Telefonica, Intel and HP.

Technologies such as NFV promise operators a way to regain market traction and avoid the commoditisation of transport, said Combes. Operators can manage their networks more efficiently and create new business models. For example, operators can sell enterprises services such as infrastructure-as-a-service and platform-as-a-service.

Doesn't running software functions on servers undermine a telecom equipment vendor's core business? "We are still perceived as a hardware company yet 85 percent of systems are software-based," said Combes. Moreover, this is a carrier-driven initiative. "This is where our customers want to go," he said. "You either accept there will be a bit of cannibalisation or run the risk of being cannibalised by IT players or others."

The Shift Plan

Combes has been Alcatel-Lucent's CEO for four months. In that time he has launched the Shift Plan, which focusses the company's activities in three broad directions: IP infrastructure including routing and transport, cloud, and ultra-broadband access including wireless (LTE) and wireline (FTTx).

Combes says the aim is to regain the competitiveness Alcatel-Lucent has lost in recent years by improving product innovation, quality of execution and the company's cost structure. Combes has also tackled the balance sheet, refinancing company debt over the summer.

The Shift Plan's target is to get the company back on track by 2015: growing, profitable and industry-leading in the three areas of focus, he said.

Terabit interconnect to take hold in the data centre

Intel and Corning have further detailed their 1.6 Terabit interface technology for the data centre.

The collaboration combines Intel's silicon photonics technology, operating at 25 Gigabit-per-second per fibre, with Corning's ClearCurve LX multimode fibre and its latest MXC connector.

Silicon photonics wafer and the ClearCurve fibres. Source: Intel

The fibre has a 300m reach, triple that of existing multimode fibre at such speeds, and operates at a 1310nm wavelength. With the MXC connector supporting 64 fibres, each carrying 25Gbps, the overall capacity is 1.6 Terabits-per-second (Tbps).

"Each channel has a send and a receive fibre which are full duplex," says Victor Krutul, director of business development and marketing for silicon photonics at Intel. "You can send 0.8Tbps on one direction and 0.8Tbps in the other direction at the same time."
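The headline figures follow directly from the numbers given: the MXC connector carries 64 fibres, each at 25Gbps, split equally between send and receive.

```latex
\begin{aligned}
C_{\text{total}} &= 64 \times 25\ \text{Gbps} = 1600\ \text{Gbps} = 1.6\ \text{Tbps} \\
C_{\text{per direction}} &= \tfrac{64}{2} \times 25\ \text{Gbps} = 800\ \text{Gbps} = 0.8\ \text{Tbps}
\end{aligned}
```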

The link supports connections within a rack and between racks; for example, connecting a data centre's top-of-rack Ethernet switch with an end-of-row one.

James Kisner, an analyst at global investment bank Jefferies, views Intel's efforts as providing important validation for the fledgling silicon photonics market.

However, in a research note, he points out that it is unclear whether large data centre equipment buyers will be eager to adopt the multimode fibre solution, as it is more expensive than single-mode. Equally, large data centres have increasingly long span requirements, from 500m to 2km, further favouring the long-term use of single-mode fibre.

Rack Scale Architecture

The latest details of the silicon photonics/ClearCurve cabling were given as part of an Intel update on several data centre technologies, including its Atom C2000 processor family for microservers, the FM5224 72-port Ethernet switch chip, and Intel's Rack Scale Architecture (RSA), which uses the new cabling and connector.

Intel is a member of Facebook's Open Compute Project, which is based on a disaggregated system design that separates storage, computing and networking. "When I upgrade the microprocessors on the motherboard, I don't have to throw away the NICs [network interface controllers] and disc drives," says Krutul. The disaggregation can be within a rack or between rows of equipment. Intel's RSA is an example of a disaggregated design.

The chip company discussed an RSA design for Facebook. The rack has three 100Gbps silicon photonics modules per tray. Each module has four transmit and four receive fibres, giving 24 fibres per tray and per cable. "Different versions of RSA will have more or less modules depending on requirements," says Krutul. Intel has also demonstrated a 32-fibre MXC prototype connector.
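The tray arithmetic can be checked with a small helper. It assumes, as with the Intel links described earlier, that every fibre runs at 25Gbps, so four transmit fibres give each module its 100Gbps; the function name is hypothetical.

```python
from __future__ import annotations


def tray_budget(modules: int, tx_per_module: int = 4, rx_per_module: int = 4,
                gbps_per_fibre: float = 25.0) -> tuple[int, float]:
    """Fibre count and per-direction capacity for one tray (illustrative).

    Assumes every module uses the same number of transmit and receive
    fibres and that each fibre runs at 25Gbps.
    """
    fibres = modules * (tx_per_module + rx_per_module)
    capacity_gbps = modules * tx_per_module * gbps_per_fibre
    return fibres, capacity_gbps


print(tray_budget(3))  # (24, 300.0): three 100Gbps modules, 24 fibres per tray
```

By the same arithmetic, eight such modules would need 64 fibres, the capacity of the MXC connector.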

Corning says the ClearCurve fibre delivers several benefits. The fibre has a smaller bend radius of 7.5mm, enabling fibre routing on a line card. The light beam from the 50-micron multimode fibre is also expanded to 180 microns using a beam-expander lens. The lenses make connector alignment easier and the connection less sensitive to dust. Corning says the MXC connector comprises seven parts, fewer than other optical connectors.

Fibre and connector standardisation are key to ensure broad use, says Daryl Inniss, vice president and practice leader, components at Ovum.

"Intel is the only 1310nm multimode transmitter and receiver supplier, and expanding this optical link into other applications like enterprise data centres may require a broader supply base," says Inniss in a comment piece. But the fact that Corning is participating in the development signals a big market in the making, he says.

Intel has not said when the silicon photonics transceiver and fibre/connector will be generally available. "We are not discussing schedules or pricing at this time," says Krutul.

Silicon photonics: Intel's first lab venture

The chip company has been developing silicon photonics technology for a decade.

"As our microprocessors get faster, you need bigger and faster pipes in and around the servers," says Krutul. "That is our whole goal - feeding our microprocessors."

Intel is setting up what it calls 'lab ventures', with silicon photonics chosen to be the first.

"You have a research organisation that does not do productisation, and business units that just do products," says Krutul. "You need something in between so that technology can move from pure research to product; a lab venture is an organisational structure to allow that movement to happen."

The lab ventures will be discussed further in the coming year.

Coriant adds optical control to SDN framework

Coriant's CTO, Uwe Fischer, explains the company's Intelligent Optical Control system and how it will complement Transport SDN.

"You either master all that complexity at once, or you find the right entry point and provide value for each concrete challenge, and extend step-by-step from there"

Uwe Fischer, CTO of Coriant

Coriant has deployed a networking framework that it says will comply with Transport SDN, the software-defined networking (SDN) implementation for the wide area network (WAN).

The company's Intelligent Optical Control system is already deployed with one large North American operator while Coriant is working to install the system with other Tier 1 customers.

Work to extend SDN technology beyond the data centre to work across operators' transport networks has just begun. The Open Networking Foundation (ONF), for example, has established an Optical Transport Working Group to define the extensions needed to enable SDN control of the transport layer and not just the packet layer.

"SDN and optical networking go together nicely; they are not decoupled but make up an end-to-end overall framework," says Uwe Fischer, CTO at Coriant.

The Intelligent Optical Control is designed to tackle immediate networking issues while Transport SDN is developed. Coriant says its system complies with the ONF's three-layer SDN model: the top application layer interfaces with the middle control layer, and it is at the control layer that the SDN controller oversees the network elements in the third, infrastructure layer.

Intelligent Optical Control adds two components to the model. The first is an extra intelligence component in the control layer that sits between the SDN controller and the infrastructure layer. This intelligence is designed to exploit the intricacies of the optical layer.

Coriant has also added an application at the topmost layer to automate operational procedures. "SDN at the application layer is centered around service creation," says Fischer. "We see a complete set of other applications which automate operational workflows."

Optical intelligence

One key benefit of SDN is the central view it has of the network and its resources. Such centralised control works well in the data centre and packet networking. Operators' networks are more complex, however, housing multiple vendors' equipment and multiple networking layers and protocols.

The ONF's Optical Transport Working Group is investigating two approaches - direct and abstract models - to enable the OpenFlow standard to extend its control across all the transport layers.

With the direct model, an SDN controller will talk to each network element, controlling its forwarding behaviour and port characteristics. The abstract model, in contrast, will enable the controller to talk to a network element or an intermediate controller or 'mediation'. This mediation performs a translator role, enacting requests from the SDN controller.

The direct model interests certain ONF members due to its potential to reduce the cost of networking equipment by moving much of the software from each element to the SDN controller. The abstract model, in contrast, has the benefit of limiting how much of the underlying network's detail is exposed to the controller.
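The difference between the two models is largely about where the network detail lives. The Python sketch below is a simplified, hypothetical illustration and does not use OpenFlow or any ONF-defined interface: in the direct model the controller programs each element's cross-connects itself, while in the abstract model it asks a mediation layer for an endpoint-to-endpoint connection and the mediation works out the element settings. All class names, element names and ports are invented for the example.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class NetworkElement:
    """A hypothetical optical switch holding a table of cross-connects."""
    name: str
    cross_connects: dict[str, str] = field(default_factory=dict)

    def set_cross_connect(self, in_port: str, out_port: str) -> None:
        self.cross_connects[in_port] = out_port


class DirectController:
    """Direct model: the controller programs each element itself, so it
    must know every element's ports and forwarding behaviour."""

    def __init__(self, elements: dict[str, NetworkElement]) -> None:
        self.elements = elements

    def program(self, hops: list[tuple[str, str, str]]) -> None:
        # Each hop is (element name, input port, output port).
        for name, in_port, out_port in hops:
            self.elements[name].set_cross_connect(in_port, out_port)


class Mediation:
    """Abstract model: an intermediate controller that hides its domain's
    details and translates endpoint-level requests into element settings."""

    def __init__(self, domain: DirectController,
                 routes: dict[tuple[str, str], list[tuple[str, str, str]]]) -> None:
        self.domain = domain
        self.routes = routes  # assumed pre-computed hops per endpoint pair

    def connect(self, a_end: str, z_end: str) -> None:
        self.domain.program(self.routes[(a_end, z_end)])


class AbstractController:
    """Sees only endpoints; the mediation layer enacts the request."""

    def __init__(self, mediation: Mediation) -> None:
        self.mediation = mediation

    def connect(self, a_end: str, z_end: str) -> None:
        self.mediation.connect(a_end, z_end)


elements = {n: NetworkElement(n) for n in ("roadm-1", "roadm-2")}
hops = [("roadm-1", "add-1", "line-east"), ("roadm-2", "line-west", "drop-3")]

# Direct model: the SDN controller itself supplies every port-level detail.
DirectController(elements).program(hops)

# Abstract model: the SDN controller asks only for "site-a to site-b".
mediation = Mediation(DirectController(elements), {("site-a", "site-b"): hops})
AbstractController(mediation).connect("site-a", "site-b")
print(elements["roadm-2"].cross_connects)
```

Both paths end with the same element settings; what changes is how much of the network the top-level controller has to understand.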

Coriant says it has yet to form a view as to the benefits of the direct and abstract ONF models. That said, Fischer does not see any mechanisms being discussed in the ONF that will fully exploit the potential of the photonic network. Accordingly, Coriant has added its own intelligence that sits between the SDN controller and the photonic layer.

“We fully comply with the approach of an SDN controller; however, we put another layer in between the control layer and the infrastructure layer,” says Fischer. “We consider it a part of the control layer, but adding the planning and routing intelligence to leverage the full performance of the infrastructure layer underneath.”

Fischer says there is a role for abstraction at the photonic layer but perhaps only for metro networks. "We currently don't think this will really extend to the wide area photonic layer," he says.

"The added intelligence can leverage the full performance of the WDM network because it knows all the planning rules in detail," says Fischer. It does multi-layer optimisation across the transport layers. Coriant has added the intelligence because it does not think the transport-network-specific aspects can be centralised in a generic way.

Automated operations

Coriant's Intelligent Optical Control also adds an application to automate operational procedures. Fischer cites the example of the workflow when a service is activated in the network.

With each service request, the Intelligent Optical Control reports whether the new service can be squeezed onto existing infrastructure, and details the service performance to expect, such as latency and guaranteed bandwidth. "The operator can immediately judge the service level they would get," says Fischer.

A second planning mode models the addition of equipment at the infrastructure layer, so the operator can compare how the service level would improve with the extra equipment in place.

If the operator can justify the business case for new hardware, the workflow is then automated. The tool creates the bill of materials, the electronic order, and the configuration and planning data needed to deploy the hardware in the network.
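The service check and the what-if planning mode can be illustrated with a minimal sketch. It assumes a path made of links with known lengths and spare capacity, estimates latency from fibre propagation alone (roughly 5 microseconds per kilometre, ignoring equipment delays) and takes the guaranteed bandwidth from the bottleneck link. The names and figures are hypothetical; this is not Coriant's planning logic.

```python
from __future__ import annotations

from dataclasses import dataclass

# Light travels through optical fibre at roughly 200,000 km/s, about
# 5 microseconds per km. Equipment delays are ignored in this sketch.
FIBRE_DELAY_MS_PER_KM = 0.005


@dataclass
class Link:
    length_km: float
    free_gbps: float  # spare capacity on the link's existing equipment


@dataclass
class ServiceEstimate:
    fits: bool
    guaranteed_gbps: float  # bandwidth the path can guarantee, capped at the request
    latency_ms: float


def estimate_service(path: list[Link], requested_gbps: float) -> ServiceEstimate:
    """Check a requested service against a path of existing links."""
    bottleneck = min(link.free_gbps for link in path)
    latency = sum(link.length_km for link in path) * FIBRE_DELAY_MS_PER_KM
    return ServiceEstimate(fits=bottleneck >= requested_gbps,
                           guaranteed_gbps=min(bottleneck, requested_gbps),
                           latency_ms=latency)


# Existing infrastructure: the second link is the bottleneck.
path = [Link(400, 200), Link(600, 100)]
print(estimate_service(path, 150))

# What-if planning mode: add a hypothetical 100Gbps card on the bottleneck link.
upgraded = [Link(400, 200), Link(600, 200)]
print(estimate_service(upgraded, 150))
```

Comparing the two estimates is the kind of evidence an operator would weigh when deciding whether new hardware is justified.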

Coriant says equipment and services can be time-tagged. If an engineer is known to be visiting a site once the hardware becomes available, the card can be pre-assigned and automatically used once it is plugged in. "There is a full consistency as to how the hardware is managed and optimised towards service creation," says Fischer.

Coriant is working with its major customers to create a testbed to demonstrate an SDN implementation of IP-over-DWDM. "It will involve interworking with third-party routers, and using SDN controllers to control the packet part of the network with OpenFlow and other mechanisms, and then connected to the Intelligent Optical Controller."

The goal is to demonstrate that Coriant's approach complies with this use case while better exploiting the optical network's capabilities.

Fischer says optical networking is moving to a new phase as transmission speeds move beyond 100 Gigabit.

"We are entering an interesting phase as capacity and reach hit the limits of practical networks," he says. "This means we are talking about flexible modulation formats and variously composed super-channels for 400 Gigabit and 1 Terabit."

In effect, a virtualisation of bandwidth is taking place at the photonic layer. "This fits nicely into the SDN principle as on the one hand it virtualises capacity, which very much fits in the model of virtualising infrastructure."

But it also brings challenges.

"There is currently not a good practical means to manage such flexible capacity at the photonic layer," says Fischer. This, says Coriant, is what its customers are saying. It also explains Coriant's decision to add the optical controller. "You either master all that complexity at once, or you find the right entry point and provide value for each concrete challenge, and extend step-by-step from there," says Fischer.

OIF defines carrier requirements for SDN

The Optical Internetworking Forum (OIF) has achieved its first milestone in defining the carrier requirements for software-defined networking (SDN).

The orchestration layer will coordinate the data centre and transport network activities and give easy access to new applications

Hans-Martin Foisel, OIF

The OIF's Carrier Working Group has begun the next stage, a framework document, to identify missing functionalities required to fulfill the carriers' SDN requirements. "The framework document should define the gaps we have to bridge with new specifications," says Hans-Martin Foisel of Deutsche Telekom, and chair of the OIF working group.

There are three main reasons why operators are interested in SDN, says Foisel. SDN offers a way for carriers to optimise their networks more comprehensively than before; not just the network but also processing and storage within the data centre.

"IP-based services and networks are making intensive use of applications and functionalities residing in the data centre - they are determining our traffic matrix," says Foisel. The data centre and transport network need to be coordinated and SDN can determine how best to distribute processing, storage and networking functionality, he says.

SDN also promises to simplify operators' operational support systems (OSS) software, and separate the network's management, control and data planes to achieve new efficiencies.

SDN architecture

The OIF's focus is on Transport SDN, involving the management, control and data plane layers of the network. Also included is an orchestration layer that will sit above the data centre and transport network, overseeing the two domains. Applications then reside on top of the orchestration layer, communicating with it and the underlying infrastructure via a programmable interface.

"Aligning the thinking among different people is quite an educational exercise, and we will have to get to a new understanding"

"The orchestration layer will coordinate the data centre and transport network activities and give, northbound, easy access to new applications," says Foisel.

A key SDN concept is programmability and application awareness, he says. The orchestration layer will require specified interfaces to ease the adding of applications independent of whether they impact the data centre, transport network or both.

Foisel says the OIF work has already highlighted the breadth of vision within the industry regarding how SDN should look. "Aligning the thinking among different people is quite an educational exercise, and we will have to get to a new understanding," he says.

Having equipment prototypes is also helping in understanding SDN. "Implementations that show part of this big picture - it is doable, it is working and how it is working - is quite helpful," says Foisel.

The OIF Carrier Working Group is working closely with the Open Networking Foundation's (ONF) Optical Transport Working Group to ensure that the two groups are aligned. The ONF's Optical Transport Working Group is developing optical extensions to the OpenFlow standard.

Mobile backhaul chips rise to the LTE challenge

The Long Term Evolution (LTE) cellular standard has a demanding set of mobile backhaul requirements. Gazettabyte looks at two different chip designs for LTE mobile backhaul, from PMC-Sierra and from Broadcom.

"Each [LTE Advanced cell] sector will be over 1 Gig and there will be a need to migrate the backhaul to 10 Gig"

Liviu Pinchas, PMC-Sierra

LTE is placing new demands on the mobile backhaul network. The standard, with its use of macro and small cells, increases the number of network end points, while the more efficient bandwidth usage of LTE is driving strong mobile traffic growth. Smartphone mobile data traffic is forecast to grow by a factor of 19 globally from 2012 to 2017, a compound annual growth rate of 81 percent, according to Cisco's visual networking index global mobile data traffic forecast.
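The two figures in the forecast are consistent with each other, as a quick compound-growth calculation shows (the function names are illustrative):

```python
def cagr(growth_factor: float, years: int) -> float:
    """Compound annual growth rate implied by an overall growth factor."""
    return growth_factor ** (1 / years) - 1


def growth_factor(rate: float, years: int) -> float:
    """Overall growth factor implied by a compound annual growth rate."""
    return (1 + rate) ** years


print(f"{cagr(19, 5):.1%}")             # 19x over 2012-2017 (five years of growth)
print(f"{growth_factor(0.81, 5):.1f}")  # 81 percent a year, compounded for five years
```

A 19-fold rise over five years corresponds to roughly 80 percent a year, and 81 percent a year compounds to about 19.4 times.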

Mobile network backhaul links are typically 1 Gigabit. The advent of LTE does not require an automatic upgrade since each LTE cell sector is about 400Mbps, such that with several sectors, the 1 Gigabit Ethernet (GbE) link is sufficient. But as the standard evolves to LTE Advanced, the data rate will be 3x higher. "Each sector will be over 1 Gig and there will be a need to migrate the backhaul to 10 Gig," says Liviu Pinchas, director of technical marketing at PMC.
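A rough check of these figures, under the simplifying assumption that a single sector at its peak rate must fit on one Ethernet link. How several sectors share a link depends on statistical multiplexing, which this sketch does not model.

```python
LTE_SECTOR_MBPS = 400                     # per-sector figure quoted by PMC
LTE_ADVANCED_MULTIPLIER = 3               # "the data rate will be 3x higher"
BACKHAUL_OPTIONS_MBPS = [1_000, 10_000]   # 1GbE and 10GbE


def smallest_link(sector_mbps: float) -> int:
    """Smallest Ethernet backhaul rate that can carry one sector at its peak."""
    for rate in BACKHAUL_OPTIONS_MBPS:
        if sector_mbps <= rate:
            return rate
    raise ValueError("sector rate exceeds 10GbE")


print(smallest_link(LTE_SECTOR_MBPS))                            # LTE: 1GbE suffices
print(smallest_link(LTE_SECTOR_MBPS * LTE_ADVANCED_MULTIPLIER))  # LTE Advanced: 1,200Mbps needs 10GbE
```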

One example of LTE's more demanding networking requirements is the need for Layer 3 addressing and routing rather than just Layer 2 Ethernet. LTE base stations, known as eNodeBs, must be linked to their neighbours for call handover between radio cells. To do this efficiently requires IP (IPv6), according to PMC.

The chip makers must also take into account system design considerations.

Equipment manufacturers make several systems for the various backhaul media that are used: microwave, digital subscriber line (DSL) and fibre. The vendors would like common silicon and software that can be used for the various platforms.

Broadcom highlights how reducing the board space used is another important design goal, given that backhaul chips are now being deployed in small cells. An integrated design reduces the total integrated circuits (ICs) needed on a card. A power-efficient chip is also important due to thermal constraints and the limited power available at certain sites.

"Integration itself improves system-level power efficiency," says Nick Kucharewski, senior director for Broadcom’s infrastructure and networking group. "We have taken several external components and integrated them in one device."

WinPath4

PMC's WinPath4 supports existing 2G and 3G backhaul requirements, as well as LTE small and macro cells. A cell-side router that previously served one macrocell will now have to serve one macrocell and up to 10 small cells, says PMC. This means everything is scaled up: a larger routing table, more users and more services.

To support LTE and LTE Advanced, WinPath4 adds more programmable packet processors, known as WinGines, and hardware accelerators to meet new protocol requirements and the greater data throughput.

The previous-generation 10Gbps WinPath3 has up to 12 WinGines. WinGines are multi-threaded processors, with each thread processing a packet. Tasks performed include receiving, classifying, modifying, shaping and transmitting a packet.

The 40Gbps WinPath4 uses 48 WinGines and micro-programmable hardware accelerators for such tasks as packet parsing, packet header extraction and traffic matching, tasks too processing-intensive for the WinGines.
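The per-packet task sequence performed by a WinGine thread (receive, classify, modify, shape and transmit) can be illustrated with a toy pipeline. Everything here is an assumption for illustration: the classification rule, the DSCP marking and the strict-priority transmit order are invented, shaping is reduced to per-class queueing, and real WinGines run micro-code in parallel threads rather than a Python loop.

```python
import ipaddress
from dataclasses import dataclass, field

HIGH_PRIORITY_NET = ipaddress.ip_network("10.0.0.0/8")  # hypothetical rule


@dataclass
class Packet:
    dst_ip: str
    size_bytes: int
    headers: dict = field(default_factory=dict)


def classify(pkt: Packet) -> str:
    # Classify on destination address (illustrative rule only).
    in_net = ipaddress.ip_address(pkt.dst_ip) in HIGH_PRIORITY_NET
    return "high" if in_net else "best_effort"


def modify(pkt: Packet, cls: str) -> Packet:
    # Rewrite a header field: DSCP 46 (Expedited Forwarding) or 0.
    pkt.headers["dscp"] = 46 if cls == "high" else 0
    return pkt


def process(packets: list[Packet]) -> dict[str, list[Packet]]:
    """Receive -> classify -> modify, then queue per class for shaping."""
    queues: dict[str, list[Packet]] = {"high": [], "best_effort": []}
    for pkt in packets:                        # receive
        cls = classify(pkt)                    # classify
        queues[cls].append(modify(pkt, cls))   # modify, then enqueue
    return queues


def transmit(queues: dict[str, list[Packet]]) -> list[Packet]:
    # Strict priority: drain the high-priority queue first.
    return queues["high"] + queues["best_effort"]


sent = transmit(process([Packet("192.0.2.1", 1500), Packet("10.1.2.3", 64)]))
print([p.dst_ip for p in sent])
```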

WinPath4 also supports tables with up to two million IP destination addresses, up to 48,000 queues with four levels of hierarchical traffic shaping, encryption engines to implement the IP Security (IPsec) protocol, and the IEEE 1588v2 timing protocol.
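Hierarchical traffic shaping is commonly built from nested token buckets: a packet may leave only when it conforms to its own queue's rate and to every level above it. The sketch below shows that idea with four illustrative levels (port, service, subscriber, queue); the class names, rates and the all-levels-must-conform rule are assumptions, not a description of PMC's hardware scheduler.

```python
from typing import Iterator, Optional


class TokenBucket:
    def __init__(self, rate_bps: float, burst_bits: float):
        self.rate = rate_bps
        self.burst = burst_bits
        self.tokens = burst_bits  # start full
        self.last = 0.0           # time of last refill, in seconds

    def refill(self, now: float) -> None:
        self.tokens = min(self.burst, self.tokens + (now - self.last) * self.rate)
        self.last = now


class Shaper:
    """One level of a shaping hierarchy (e.g. queue, subscriber, service, port)."""

    def __init__(self, rate_bps: float, burst_bits: float,
                 parent: Optional["Shaper"] = None):
        self.bucket = TokenBucket(rate_bps, burst_bits)
        self.parent = parent

    def chain(self) -> Iterator["Shaper"]:
        # This level followed by all of its ancestors.
        node: Optional[Shaper] = self
        while node is not None:
            yield node
            node = node.parent

    def try_send(self, size_bits: float, now: float) -> bool:
        levels = list(self.chain())
        for level in levels:
            level.bucket.refill(now)
        # The packet conforms only if every level has enough tokens.
        if all(level.bucket.tokens >= size_bits for level in levels):
            for level in levels:
                level.bucket.tokens -= size_bits
            return True
        return False


port = Shaper(1e9, 1_000_000)
service = Shaper(100e6, 100_000, parent=port)
subscriber = Shaper(10e6, 24_000, parent=service)
queue = Shaper(5e6, 12_000, parent=subscriber)  # 12,000 bits = one 1,500-byte packet

print(queue.try_send(12_000, now=0.0))    # True: every level has tokens
print(queue.try_send(12_000, now=0.0))    # False: the queue's bucket is empty
print(queue.try_send(12_000, now=0.003))  # True: 3 ms at 5Mbps refills the queue
```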

Two MIPS processor cores are used for the control tasks, such as setting up and removing connections.

WinPath4 also supports software-defined networking (SDN), an emerging approach that aims to enhance network flexibility by making underlying switches and routers appear as virtual resources. For OpenFlow, the open standard used for SDN, the processor acts as a switching element, with the MIPS core used to decode the OpenFlow commands.
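The match-action model at the heart of OpenFlow can be sketched as a priority-ordered flow table: the controller installs entries, and each packet takes the actions of the highest-priority entry whose match fields it satisfies. This is a simplified, hypothetical model, not PMC's firmware: the field names, action strings and the send-to-controller default on a table miss are assumptions (OpenFlow 1.3, for example, handles misses through an explicit table-miss entry).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FlowEntry:
    priority: int
    match: dict         # header field -> required value; absent fields are wildcards
    actions: list[str]  # e.g. ["output:2"]


class FlowTable:
    def __init__(self, miss_actions: Optional[list[str]] = None):
        self.entries: list[FlowEntry] = []
        # Simplified default for packets that match no entry.
        self.miss_actions = miss_actions or ["controller"]

    def add_flow(self, entry: FlowEntry) -> None:
        """Install an entry, as a controller would with an OpenFlow flow-mod."""
        self.entries.append(entry)
        self.entries.sort(key=lambda e: e.priority, reverse=True)

    def lookup(self, headers: dict) -> list[str]:
        """Return the actions of the highest-priority matching entry."""
        for entry in self.entries:
            if all(headers.get(k) == v for k, v in entry.match.items()):
                return entry.actions
        return self.miss_actions


table = FlowTable()
table.add_flow(FlowEntry(10, {"in_port": 1}, ["output:2"]))
table.add_flow(FlowEntry(100, {"in_port": 1, "ipv4_dst": "10.0.0.1"}, ["output:3"]))
print(table.lookup({"in_port": 1, "ipv4_dst": "10.0.0.1"}))  # ['output:3']
print(table.lookup({"in_port": 1, "ipv4_dst": "10.0.0.2"}))  # ['output:2']
print(table.lookup({"in_port": 7}))                          # ['controller']
```

In the division of labour the article describes, the control processor would translate controller commands into entries like these, while the packet path performs the lookups.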

StrataXGS BCM56450

Broadcom says its latest device, the BCM56450, will support the transition from 1GbE to 10GbE backhaul links, and the greater number of cells needed for LTE.

The BCM56450 will be used in what Broadcom calls the pre-aggregation network. This is a first level of aggregation in the wireline network that connects the radio access network's macro and small cells.

Pre-aggregation connects to the aggregation network, defined by Broadcom as having 10GbE uplinks and 1GbE downlinks. The BCM56450 meets these requirements but is referred to as a pre-aggregation device since it also supports slower links such as microwave links or Fast Ethernet.

The BCM56450 is a follow-on to Broadcom's 56440 device announced two years ago. The BCM56450 upgrades the switching capacity to 100 Gigabit and doubles the size of the Layer 2 and Layer 3 forwarding tables.

The BCM56450 is one of a family of devices offering aggregation, from the edge through to 100GbE links deep in the network.

The network-edge BCM56240 has 1GbE links and is designed for small cell applications, microwave units and small outdoor units. The BCM56450 is next in terms of capacity, aggregating the uplinks from the BCM56240 or linking directly to the backhaul end points.

The uplinks of the 56450 are 10GbE interfaces and these can be interfaced to the third family member, the BCM56540. The 56540, announced half a year ago, supports 10GbE downlinks and up to 40GbE uplinks.

The largest device, the BCM56640, used in large aggregation platforms, takes 10GbE and 40GbE inputs and has the option of 100GbE uplinks for subsequent optical transport or routing. The BCM56640 is classed as a broadband aggregation device rather than one just for mobile.

Features of the BCM56450 include support for MPLS (Multiprotocol Label Switching), Ethernet OAM (operations, administration and maintenance), QoS and hardware protection switching. OAM performs such tasks as checking the link for faults, as well as measuring link delay and packet loss. This enables service providers to monitor link quality across the network. The device also supports the 1588 timing protocol used to synchronise the cell sites.
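The delay and timing measurements mentioned above rest on simple timestamp arithmetic. The sketch below uses the standard formulas for a two-way frame delay measurement (as in ITU-T Y.1731 Ethernet OAM) and for the IEEE 1588 clock offset and mean path delay, which assume the forward and return paths are symmetric. The function names and timestamps are illustrative, and nothing here reflects how the BCM56450 implements these functions in hardware.

```python
def two_way_frame_delay(t1: float, t2: float, t3: float, t4: float) -> float:
    """Round-trip time minus the responder's processing time.

    t1: request sent, t4: reply received (initiator's clock);
    t2: request received, t3: reply sent (responder's clock).
    The clocks need not be synchronised: each pair is subtracted on its own clock.
    """
    return (t4 - t1) - (t3 - t2)


def frame_loss_ratio(tx_frames: int, rx_frames: int) -> float:
    return (tx_frames - rx_frames) / tx_frames


def ptp_offset_and_delay(t1: float, t2: float, t3: float, t4: float) -> tuple[float, float]:
    """IEEE 1588 offset and mean path delay, assuming a symmetric path.

    t1: Sync sent (master clock), t2: Sync received (slave clock),
    t3: Delay_Req sent (slave clock), t4: Delay_Req received (master clock).
    """
    forward = t2 - t1    # path delay + offset
    backward = t4 - t3   # path delay - offset
    offset = (forward - backward) / 2  # slave time minus master time
    mean_path_delay = (forward + backward) / 2
    return offset, mean_path_delay


print(two_way_frame_delay(t1=0, t2=5_400, t3=5_600, t4=1_000))       # 800 (ns)
print(ptp_offset_and_delay(t1=1_000, t2=1_700, t3=2_000, t4=2_300))  # (200.0, 500.0)
```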

Another chip feature is sub-channelisation over Ethernet that allows the multiplexing of many end points into an Ethernet link. "We can support a higher number of downlinks than we have physical serdes on the device by multiplexing the ports in this way," says Kucharewski.

The on-chip traffic manager can also use additional, external memory if increasing the system's packet buffering size is needed. Additional buffering is typically required when a 10GbE interface's traffic is streamed to lower speed 1GbE or a Fast Ethernet port, or when the traffic manager is shaping multiple queues that are scheduled out of a lower speed port.
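A simple fluid model shows why a speed mismatch calls for extra buffering: while a burst arrives at the faster rate, the slower port drains only a fraction of it, and the rest must be held. The burst size below is hypothetical, and the model ignores any other traffic sharing the port.

```python
def backlog_bytes(burst_bytes: float, in_gbps: float, out_gbps: float) -> float:
    """Bytes left queued when a burst arrives at in_gbps and drains at out_gbps.

    The burst takes burst_bytes / in_rate seconds to arrive; in that time the
    port sends out_rate * that duration, i.e. a fraction out/in of the burst.
    """
    if out_gbps >= in_gbps:
        return 0.0
    return burst_bytes * (1 - out_gbps / in_gbps)


BURST = 1_000_000  # hypothetical 1MB burst arriving at 10GbE line rate
print(backlog_bytes(BURST, 10, 1))    # into a 1GbE port: about 900kB queued
print(backlog_bytes(BURST, 10, 0.1))  # into Fast Ethernet: about 990kB queued
```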

The BCM56450 integrates a dual-core ARM Cortex-A9 processor to configure and control the Ethernet switch and run the control plane software. The chip also has 10GbE serdes, enabling direct interfacing to optical transceivers.

Analysis

The differing nature of the two devices - the WinPath4 is a programmable chip whereas Broadcom's is a configurable Ethernet switch - means that the WinPath4 is more flexible. However, the greater throughput of the BCM56450 - at 100Gbps - makes it more suited to Carrier Ethernet switch router platforms. So says Jag Bolaria, a senior analyst at The Linley Group.

The WinPath4 also supports legacy T1/E1 TDM traffic whereas Broadcom's BCM56450 supports Ethernet backhaul only

The Linley Group also argues that the WinPath4 is more attractive for backhaul designers needing SDN OpenFlow support, given the chip's programmability and larger forwarding tables.

The WinPath4 and the BCM56450 are available in sample form. Both devices are expected to be generally available during the first half of 2014.

Further reading:

A more detailed piece on the WinPath4 and its protocol support is in New Electronics.

The Linley Group: Networking Report, "Broadcom focuses on mobile backhaul", July 22nd, 2013 (subscription is required).

60-second interview with Michael Howard

Infonetics Research has interviewed global service providers regarding their plans for software-defined networking (SDN) and network functions virtualisation (NFV). Gazettabyte asked Michael Howard, co-founder and principal analyst, carrier networks, about Infonetics' findings.

"Data centres are simple when compared to carrier networks"

Michael Howard, Infonetics Research

What is it about SDN and NFV - technologies still in their infancy - that already convinces 86 percent of the operators to deploy the technologies in their optical transport networks?

Michael H: Operators have a universal draw to SDN and NFV for two basic reasons:

1. They want to accelerate revenue by reducing the time to new services and applications.

2. They have operational drivers, of which there are also two parts:

- Carriers expect software-defined networks to give them a single view across multiple vendor equipment, network layers and equipment types for mobile backhaul, consumer digital subscriber line (DSL), passive optical network (PON), optical transport, routers, mobile core and Ethernet access. This global view will allow them to provision, monitor and deliver service-level agreements while controlling services, virtual networks and traffic flows in an easier, more flexible and automated way.

- An additional function possible with such a global view across the multi-vendor network is that traffic can be monitored and re-distributed along pathways to make best use of the network. In this way, the network can run 'hotter' and thereby require less equipment, saving capital expenditure (CapEx).

Optical transport networks have a history of being engineered to effect predictable flows on transport arteries and backbones. Many operators have deployed, or have been experimenting with, GMPLS (Generalized Multi-Protocol Label-Switching) and vendor control planes. So it is natural for them to want to bring this industry standard method of deploying an SDN control plane over the usually multi-vendor transport network.

In our conversations - independent of our survey - we find that several operators believe the biggest bang for the SDN buck is to use SDN for a single control plane over the multi-layer data network (router, Ethernet) and the optical transport network.

"The virtualisation of data centre networks has inspired operators who want to apply the same general principles to their oh-so-much-more complex networks"

Early use of SDN has been in the data centre. How will the technologies benefit networks more generally and optical transport in particular?

SDNs were developed initially to solve the operational problems of un-automated networks: the slow, labour-intensive manual network changes required whenever the automated hypervisor moves, adds and changes virtual machines across servers that may be in the same data centre or in multiple data centres.

The virtualisation of data centre networks has inspired operators who want to apply the same general principles to their oh-so-much-more complex networks. Data centres are simple when compared to carrier networks. Data centres are basically large numbers of servers connected by Ethernet LANs and virtual LANs with some router separations of the LANs connecting servers.

"It will be many years before SDNs-NFV will be deployed in major parts of a carrier network"

Service provider networks are a set of many different types of networks including consumer broadband, business virtual private networks, optical transport, access/ aggregation Ethernet and router networks, mobile core and mobile backhaul. Each of these comprises multiple layers and almost certainly involves multiple vendor equipment. This explains why operators are starting their SDN-NFV investigations with small network segments which we call 'contained domains'. It will be many years before SDNs-NFV will be deployed in major parts of a carrier network.

You mention small SDN and NFV deployments. What will these early applications look like?

Our survey respondents indicated that intra-datacentre, inter-datacentre, cloud services, and content delivery networks (CDNs) will be the first to be deployed by the end of 2014. Other areas targeted longer term are optical transport, mobile packet core, IP Multimedia Subsystem, and more.

Was there a finding that struck you as significant or surprising?

Yes. A lot of current industry buzz is about optical transport networks, making me think that we'd see SDNs deployed soon. But what we heard from operators is that optical transport networks are further out in their deployment plans. This makes sense in that the Open Networking Foundation working group for transport networks has just recently got their standardisation efforts going, which usually takes a couple of years.

You say that it will be years before large parts of a network, or a whole network, will be SDN-controlled. What are the main challenges here, and will SDN ever control a whole network?

As I said earlier, carrier networks are complex beasts, and they are carrying revenue-generating services that cannot be risked by deployment of a new set of technologies that make fundamental changes to the way networks operate.

A major problem yet to be resolved or even addressed much by the industry is how to add SDN control planes to the router-controlled network that uses the MPLS control plane. SDN and MPLS control planes must cooperate or be coordinated in some way since they both control the same network equipment - not an easy problem, and probably the thorniest of all challenges to deploy SDNs and NFV.

The study participants rated CDNs, IP multimedia subsystem (IMS), and virtual routers/ security gateways as the main NFV applications. At least two of these segments already use servers so just how impactful will NFV be for operators?

Many operators see that they can deploy NFV in a much simpler way than deploying control plane changes involved with SDNs.

Many network functions have already been virtualised, that is, software-only versions are available, and many more are under development. But these are individual vendor developments, not done according to any industry standards. This means that NFV - network functions run on servers rather than on specialised network equipment like firewalls, intrusion prevention/ intrusion detection systems, Evolved Packet Core hardware - is already in motion.

The formalisation of NFV by the carrier-driven ETSI standards group is underway, developing recommendations and standards so that these virtualised network functions can be deployed in a standardised way.

Infonetics interviewed purchase-decision makers at 21 incumbent, competitive and independent wireless operators from EMEA (Europe, Middle East, Africa), Asia Pacific and North America that have evaluated SDN projects or plan to do so. The carriers represent over half (53 percent) of the world's telecom revenue and CapEx.

Apps over packet-optical: Ciena boosts 6500's packet handling

Source: Ciena

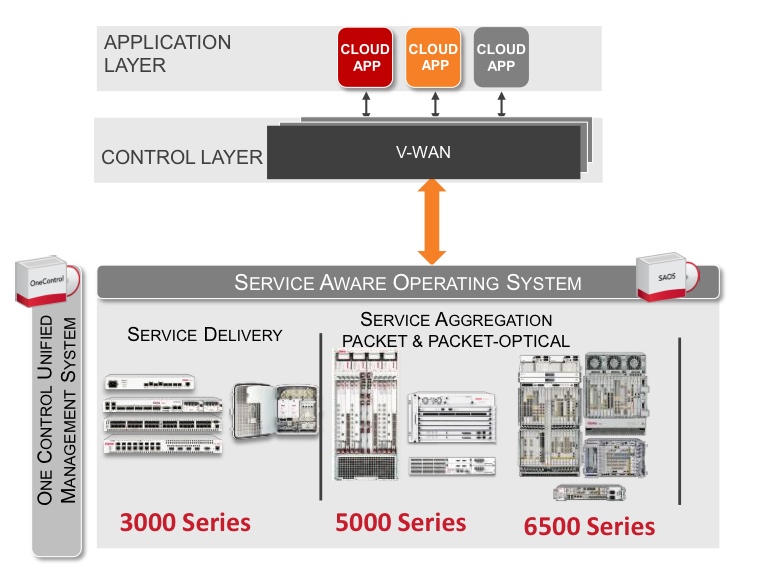

Ciena has enhanced its packet-optical equipment portfolio by adding packet support to its flagship 6500 platform.

Cards and software from Ciena's established Carrier Ethernet packet platforms have been added to the 6500, a packet-optical platform that features reconfigurable optical add-drop multiplexing (ROADM), WaveLogic3 coherent transponders, Optical Transport Network (OTN) switching and SONET/SDH aggregation. The system vendor has also developed packet aggregation and switch fabric cards for the 6500.

"You can now use the 6500 for 100 percent packet switching, 100 percent OTN switching, or any mix in between," says Michael Adams, vice president of product and technical marketing at Ciena.

The development is part of a general trend to combine optical and packet to create scalable, manageable networks. It also addresses the operators' growing need for programmable networks to deliver cloud-based services and dynamic bandwidth.

Applications

Ciena has a virtual wide-area network (VWAN) control layer that resides above the networking layer, abstracts the hardware, and through which software applications can be executed (see chart).

"We have a scheduler 'app' through the control layer VWAN that allows bandwidth to change between sites, for example," says Adams. "Every night I want to do a backup between these times and I want this much bandwidth as I do it."
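A scheduler app of the kind Adams describes can be sketched as a set of time-of-day reservations added on top of a base bandwidth between two sites. This is a hypothetical model, not Ciena's VWAN API: the `Reservation` class, the `bandwidth_at` function and the example rates are invented for illustration.

```python
from dataclasses import dataclass
from datetime import time


@dataclass
class Reservation:
    start: time
    end: time
    gbps: float


def bandwidth_at(now: time, base_gbps: float, reservations: list[Reservation]) -> float:
    """Bandwidth to provision between two sites at a given time of day."""
    total = base_gbps
    for r in reservations:
        if r.start <= r.end:
            active = r.start <= now < r.end
        else:  # window wraps past midnight, e.g. 23:00 to 03:00
            active = now >= r.start or now < r.end
        if active:
            total += r.gbps
    return total


nightly_backup = Reservation(start=time(23, 0), end=time(3, 0), gbps=10.0)
print(bandwidth_at(time(1, 30), 1.0, [nightly_backup]))  # during the backup window
print(bandwidth_at(time(12, 0), 1.0, [nightly_backup]))  # daytime baseline
```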

Another application is machine-to-machine communication that can be used to link data centres. "If you can virtualise within a data centre, why not virtualise across data centres?" says Adams.

As [servers'] virtual machines move between data centres, the performance of the network becomes key. Ciena has an application programming interface (API) that links to the server's hypervisor, allowing machine-to-machine communication to be intercepted so that bandwidth can be made available for the virtual machine traffic. "We are not doing it today but we have the software to link between two data centres," says Adams.

6500 enhancements

Until now it has been difficult to combine packet with packet optical, requiring different platforms, each with their own management system, says Adams. "It has been hard to take a base station that needs only packet, put the Carrier Ethernet traffic onto a ring [network] and then onto a 100 Gigabit wavelength," he says. "You either built pure packet or used a form of packet optical but it was hard to mix."

Ciena has added hardware and software to the 6500 from its existing packet platforms. The packet platforms are used to deliver Ethernet services and infrastructure and are a $40 million-a-quarter business for Ciena, with over 300,000 network elements deployed.

The service-aware operating system (SAOS), developed for the Ethernet packet platforms, has also been ported onto the 6500's new packet and fabric cards.

With the 6500 running the same software as its packet platforms, service management across the network becomes simpler. "Now, one system can deploy services, and look at performance visualisation between the layers," says Adams.

Ciena's latest hardware cards include blades with 1 and 10 Gigabit-per-second (Gbps) aggregation that operate independently of the 6500's switch fabric. "You don't touch the fabric, just run [them] over a WDM wavelength," says Adams. The stackable blades support 120Gbps to 300Gbps of packet traffic.

Meanwhile, the 6500 switch fabric cards add 600 Gigabit or 1.2 Terabit of packet switching capacity, which will be increased further in future.

"We have got these blades that can be stacked beside each other for resiliency or scale," says Adams. "And if you want to scale those up, there is a [switch] fabric solution."

Further reading:

100 Gigabit and packet optical loom large in the metro

P-OTS 2.0: 60-second interview with Heavy Reading's Sterling Perrin

Arista Networks embeds optics to boost 100 Gig port density

Arista Networks' latest 7500E switch is designed to improve the economics of building large-scale cloud networks.

The platform packs 30 Terabit-per-second (Tbps) of switching capacity in an 11 rack unit (RU) chassis, the same chassis as Arista's existing 7500 switch that, when launched in 2010, was described as capable of supporting several generations of switch design.

"The CFP2 is becoming available such that by the end of this year there might be supply for board vendors to think about releasing them in 2014. That is too far off."

Martin Hull, Arista Networks

The 7500E features new switch fabric and line cards. One of the line cards uses board-mounted optics instead of pluggable transceivers. Each of the line card's ports is 'triple speed', supporting 10, 40 or 100 Gigabit Ethernet (GbE). The 7500E platform can be configured with up to 1,152 10GbE, 288 40GbE or 96 100GbE interfaces.

The switch's Extensible Operating System (EOS) also plays a key role in enabling cloud networks. "The EOS software, run on all Arista's switches, enables customers to build, manage, provision and automate these large scale cloud networks," says Martin Hull, senior product manager at Arista Networks.

Applications

Arista, founded in 2004 and launched in 2008, has established itself as a leading switch player for the high-frequency trading market. Yet this is one market that its latest core switch is not being aimed at.

"With the exception of high-frequency trading, the 7500 is applicable to all data centre markets," says Hull. "That is not to say it couldn't be applicable to high-frequency trading but what you generally find is that their networks are not large, and are focussed purely on speed of execution of their transactions." Latency is a key networking performance parameter for trading.

The 7500E is being aimed at Web 2.0 companies and cloud service providers. The Web 2.0 players include large social networking and on-line search companies. Such players have huge data centres with up to 100,000 servers.

The same network architecture can also be scaled down to meet the requirements of large 'Fortune 500' enterprises. "Such companies are being challenged to deliver private cloud at the same competitive price points as the public cloud," says Hull.

The 7500 switches are typically used in a two-tier architecture. For the largest networks, 16 or 32 switches are used on the same switching tier in an arrangement known as a parallel spine.

A common switch architecture for traditional IT applications such as e-mail and e-commerce uses three tiers of switching. These include core switches linked to distribution switches, typically a pair of switches used in a given area, and top-of-rack or access switches connected to each distribution pair.

For newer data centre applications such as social networking, cloud services and search, the computation requirements result in far greater traffic shared on the same tier of switching, referred to as east-west traffic. "What has happened is that the single pair of distribution switches no longer has the capacity to handle all of the traffic in that distribution area," says Hull.

Customers address east-west traffic by throwing more platforms together. Eight or 16 distribution switches are used instead of a pair. "Every access switch is now connected to each one of those 16 distribution switches - we call them spine switches," says Hull.

The resulting two-tier design, comprising access switches and distribution switches, requires that each access switch has significant bandwidth between itself and any other access switch. As a result, many 7500 switches - 16 or 32 - can be used in parallel at the distribution layer.
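The two-tier design can be checked with a small model: if every access switch connects to every spine, any two access switches are exactly two hops apart and have one equal-cost path per spine, so east-west capacity grows as spines are added. The switch counts below are hypothetical, and the model ignores link speeds and oversubscription.

```python
from itertools import combinations


def build_leaf_spine(n_leaves: int, n_spines: int) -> dict[str, set[str]]:
    """Two-tier fabric: every access (leaf) switch links to every spine switch."""
    spines = {f"spine{s}" for s in range(n_spines)}
    return {f"leaf{i}": set(spines) for i in range(n_leaves)}


def paths_between(fabric: dict[str, set[str]], a: str, b: str) -> list[tuple[str, str, str]]:
    """Equal-cost two-hop paths leaf -> spine -> leaf; one per shared spine."""
    return [(a, spine, b) for spine in sorted(fabric[a] & fabric[b])]


fabric = build_leaf_spine(n_leaves=48, n_spines=16)
path_counts = {len(paths_between(fabric, a, b)) for a, b in combinations(fabric, 2)}
print(path_counts)  # {16}: every pair of access switches has 16 equal-cost paths
```

Scaling down to four or two spines, as Hull describes for a Fortune 500 network, simply reduces the number of parallel paths between access switches.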

Source: Arista Networks

"If I'm a Fortune 500 company, however, I don't need 16 of those switches," says Hull. "I can scale down, where four or maybe two [switches] are enough." Arista also offers a smaller 4-slot chassis as well as the 8 slot (11 RU) 7500E platform.

7500E specification

The switch has a capacity of 30Tbps. When the switch is fully configured with 1,152 10GbE ports, it equates to 23Tbps of duplex traffic. The system is designed with redundancy in place.

"We have six fabric cards in each chassis," says Hull, "If I lose one, I still have 25 Terabits [of switching fabric]; enough forwarding capacity to support the full line rates on all those ports." Redundancy also applies to the system's four power supplies. Supplies can fail and the switch will continue to work, says Hull.
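The redundancy claim can be checked with the figures quoted above. The sketch assumes, as the 25 Terabit figure implies, that the 30Tbps fabric capacity is shared evenly across the six fabric cards.

```python
PORTS_10GBE = 1_152  # fully configured 7500E
PORT_GBPS = 10
FABRIC_TBPS = 30     # quoted switch capacity
FABRIC_CARDS = 6


def duplex_tbps(ports: int, gbps: float) -> float:
    """Traffic counted in both directions (transmit plus receive)."""
    return ports * gbps * 2 / 1_000


def fabric_tbps_after_failures(failed_cards: int) -> float:
    """Remaining fabric capacity, assuming it is spread evenly over the cards."""
    return FABRIC_TBPS * (FABRIC_CARDS - failed_cards) / FABRIC_CARDS


demand = duplex_tbps(PORTS_10GBE, PORT_GBPS)
remaining = fabric_tbps_after_failures(1)
print(demand, remaining, remaining >= demand)
```

1,152 ports at 10Gbps in each direction give about 23Tbps, and five of the six fabric cards still provide 25Tbps, so losing one card leaves enough capacity for full line rate on every port.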

The switch can process 14.4 billion 64-byte packets a second. This, says Hull, is another way of stating the switch capacity while confirming it is non-blocking.

The 7500E comes with four line card options: three use pluggable optics while the fourth uses embedded optics, as mentioned, based on 12 10Gbps transmit and 12 10Gbps receive channels (see table).

Using line cards supporting pluggable optics provides the customer the flexibility of using transceivers with various reach options, based on requirements. "But at 100 Gigabit, the limiting factor for customers is the size of the pluggable module," says Hull.

Using a CFP optical module, each card supports four 100Gbps ports only. The newer CFP2 modules will double the number to eight. "The CFP2 is becoming available such that by the end of this year there might be supply for board vendors to think about releasing them in 2014," says Hull. "That is too far off."

Arista's board-mounted optics deliver 12 100GbE ports per line card.

The board-mounted triple-speed ports adhere to the IEEE 100GBASE-SR10 standard, with a reach of 150m over OM4 fibre. The channels can be used discretely for 10GbE or grouped in fours for 40GbE, while at 100GbE they are combined as a set of 10.

"At 100 Gig, the IEEE spec uses 20 out of 24 lanes (10 transmit and 10 receive); we are using all 24," says Hull. "We can do 12 10GbE, we can do three 40GbE, but we can still only do one 100Gbps because we have a little bit left over but not enough to make another whole 100GbE." In turn, the module can be configured as two 40GbE and four 10GbE ports, or 40GbE and eight 10GbE.
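The lane arithmetic behind these configurations can be checked mechanically: each module has 12 10Gbps channels per direction, and a 10GbE port uses one channel, a 40GbE port four, and a 100GbE port ten. The sketch only checks the lane budget; which mixes Arista's software actually allows (for example, whether the two channels left over in 100GbE mode can be used) is not stated.

```python
LANES = 12                               # 12 x 10Gbps channels per direction
LANES_PER_PORT = {10: 1, 40: 4, 100: 10}


def lanes_used(config: dict[int, int]) -> int:
    """config maps port speed in Gbps -> number of ports at that speed."""
    return sum(LANES_PER_PORT[speed] * count for speed, count in config.items())


def fits(config: dict[int, int]) -> bool:
    return lanes_used(config) <= LANES


quoted = [{10: 12}, {40: 3}, {100: 1}, {40: 2, 10: 4}, {40: 1, 10: 8}]
print([lanes_used(c) for c in quoted])  # lanes consumed by each quoted configuration
print(fits({100: 2}))                   # two 100GbE ports would need 20 lanes
```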

Using board-mounted optics reduces the cost of 100Gbps line card ports. A switch fully configured with 96 100GbE ports achieves a cost of $10k/port, whereas using existing CFP modules the cost is $30k-50k/port, claims Arista.

Arista quotes 10GbE as costing $550 per line card port, not including the pluggable transceiver. At 40GbE this scales to $2,200. For 100GbE, the $10k/port comprises the scaled-up port cost at 100GbE ($2.2k x 2.5) plus the cost of the optics. The power consumption is under 4W/port when the system is fully loaded.
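Working through Arista's pricing shows how the $10k figure breaks down. The optics share below is derived by subtraction from the quoted numbers; Arista is not quoted giving it directly.

```python
PORT_10G_USD = 550                  # per line-card port, optics excluded
port_40g_usd = PORT_10G_USD * 4     # quoted as $2,200
port_100g_usd = port_40g_usd * 2.5  # "$2.2k x 2.5"
TOTAL_100G_USD = 10_000             # quoted cost per 100GbE port, optics included
implied_optics_usd = TOTAL_100G_USD - port_100g_usd  # derived, not quoted

print(port_40g_usd, port_100g_usd, implied_optics_usd)
```

That leaves about $4.5k per 100GbE port for the board-mounted optics, on top of a $5.5k port cost.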

The company uses merchant chips rather than an in-house ASIC for its switch platform. Can't other vendors develop similar performance systems based on the same ICs? "They could, but it is not easy," says Hull.

The company points out that merchant chip switch vendors use a CMOS process node that is typically a generation ahead of state-of-the-art ASICs. "We have high-performance forwarding engines, six per line card, each a discrete system-on-chip solution," says Hull. "These have the technology to do all the Layer 2 and Layer 3 processing." All these devices on one board talk to all the other chips on the other cards through the fabric.

In the last year, equipment makers have decided to bring silicon photonics technology in-house: Cisco Systems has acquired Lightwire while Mellanox Technologies has announced its plan to acquire Kotura.

Arista says it is watching silicon photonics developments with keen interest. "Silicon photonics is very interesting and we are following that," says Hull. "You will see over the next few years that silicon photonics will enable us to continue to add density."

Existing photonics has its limits, and silicon photonics overcomes some of them, he says.

Extensible Operating System

Arista highlights several characteristics of its switch operating system, the Extensible Operating System (EOS): it is standards-compliant, self-healing, and supports network virtualisation and software-defined networking (SDN).

The operating system implements standard protocols such as Border Gateway Protocol (BGP) and spanning tree. "We don't have proprietary protocols," says Hull. "We support VXLAN [Virtual Extensible LAN], an open-standards way of doing a Layer 2 overlay over [Layer] 3."
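To make "Layer 2 overlay over Layer 3" concrete: VXLAN, as standardised in RFC 7348, wraps an entire Ethernet frame behind an 8-byte header carrying a 24-bit VXLAN Network Identifier (VNI), and sends the result in a UDP datagram across the routed network. The sketch below builds and parses only that header; the outer Ethernet, IP and UDP headers a switch would add are omitted, and the function names are illustrative.

```python
import struct

VXLAN_UDP_PORT = 4789   # IANA-assigned UDP destination port for VXLAN
I_FLAG = 0x08           # "VNI valid" flag in the first header byte


def vxlan_header(vni: int) -> bytes:
    """8-byte header: flags (8 bits), reserved (24), VNI (24), reserved (8)."""
    if not 0 <= vni < 1 << 24:
        raise ValueError("VNI must fit in 24 bits")
    return struct.pack("!II", I_FLAG << 24, vni << 8)


def encapsulate(ethernet_frame: bytes, vni: int) -> bytes:
    """UDP payload for a VXLAN packet: header followed by the original frame.

    The outer Ethernet/IP/UDP headers added by the sending switch are omitted.
    """
    return vxlan_header(vni) + ethernet_frame


def decapsulate(udp_payload: bytes) -> tuple[int, bytes]:
    """Return (VNI, inner Ethernet frame) from a VXLAN UDP payload."""
    if len(udp_payload) < 8:
        raise ValueError("too short for a VXLAN header")
    flags_word, vni_word = struct.unpack("!II", udp_payload[:8])
    if not (flags_word >> 24) & I_FLAG:
        raise ValueError("VNI-valid flag not set")
    return vni_word >> 8, udp_payload[8:]
```

Because the inner frame travels untouched inside ordinary UDP/IP, any Layer 3 network between two switches can carry the Layer 2 segment.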

EOS's self-healing stems from its modular design: the operating system is composed of multiple software processes, each referred to as an agent. "If you are running a software process and it is killed because it is misbehaving, when it comes back typically its work is lost," says Hull. EOS is self-healing in that an agent that has to be restarted can continue with its previous data.

"We have software logic in the system that monitors all the agents to make sure none are misbehaving," says Hull. "If it finds an agent doing stuff that it should not, it stops it, restarts it and the process comes back running with the same data." The data is not packet-related, says Hull, but rather the state of the network.
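The key idea is that an agent's state lives outside the agent's own process, so a restart does not lose it. The sketch below models this under stated assumptions: the `StateStore`, `Agent` and `Supervisor` classes are hypothetical, a `healthy` flag stands in for whatever misbehaviour checks EOS actually performs, and learned routes stand in for "the state of the network". It illustrates the pattern Hull describes, not Arista's implementation.

```python
class StateStore:
    """Hypothetical shared store that outlives any single agent."""

    def __init__(self):
        self._data = {}

    def get(self, agent_name):
        # Hand back a copy so an agent cannot corrupt stored state in place.
        return dict(self._data.get(agent_name, {}))

    def put(self, agent_name, state):
        self._data[agent_name] = dict(state)


class Agent:
    """Hypothetical agent that persists its network state after every change."""

    def __init__(self, name, store):
        self.name = name
        self.store = store
        self.state = store.get(name)  # resume from any previously saved state
        self.healthy = True           # stand-in for real misbehaviour detection

    def learn_route(self, prefix, next_hop):
        self.state[prefix] = next_hop
        self.store.put(self.name, self.state)


class Supervisor:
    """Monitors agents and restarts any that misbehave."""

    def __init__(self, store):
        self.store = store
        self.agents = {}

    def start(self, name):
        self.agents[name] = Agent(name, self.store)
        return self.agents[name]

    def check(self):
        """Restart unhealthy agents; return the names that were restarted."""
        restarted = []
        for name, agent in list(self.agents.items()):
            if not agent.healthy:
                self.start(name)  # new instance reloads state from the store
                restarted.append(name)
        return restarted


if __name__ == "__main__":
    sup = Supervisor(StateStore())
    bgp = sup.start("bgp")
    bgp.learn_route("10.0.0.0/8", "192.0.2.1")
    bgp.healthy = False               # simulate a misbehaving agent
    print(sup.check())                # the agent is restarted...
    print(sup.agents["bgp"].state)    # ...and comes back with the same data
```

Persisting after every update trades some write overhead for the guarantee that a restarted agent picks up exactly where the old one stopped.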

The operating system also supports network virtualisation, VXLAN being one example. VXLAN is one of the technologies that allow cloud providers to offer a customer server resources over a logical network even when the server hardware is distributed across several physical networks, says Hull. "Even a VLAN can be considered as network virtualisation but VXLAN is the most topical."
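One practical reason VXLAN suits cloud providers better than VLANs is the size of the identifier space: an IEEE 802.1Q VLAN ID is 12 bits, while a VXLAN VNI is 24 bits. The sketch below compares the two and hands each customer's logical network its own VNI. The `SegmentAllocator` class is hypothetical, and starting allocation at 1 is an arbitrary choice made here.

```python
VLAN_ID_BITS = 12   # IEEE 802.1Q VLAN ID field
VNI_BITS = 24       # VXLAN Network Identifier (RFC 7348)

usable_vlans = 2**VLAN_ID_BITS - 2   # IDs 0 and 4095 are reserved: 4,094 usable
vni_space = 2**VNI_BITS              # 16,777,216 identifiers


class SegmentAllocator:
    """Hypothetical allocator mapping each tenant network to a unique VNI."""

    def __init__(self, first: int = 1, last: int = 2**VNI_BITS - 1):
        self.next_vni = first
        self.last = last
        self.by_tenant: dict[str, int] = {}

    def assign(self, tenant_network: str) -> int:
        # Repeat requests return the same VNI, so servers anywhere in the
        # physical network join the same logical segment.
        if tenant_network in self.by_tenant:
            return self.by_tenant[tenant_network]
        if self.next_vni > self.last:
            raise RuntimeError("VNI space exhausted")
        self.by_tenant[tenant_network] = self.next_vni
        self.next_vni += 1
        return self.by_tenant[tenant_network]


if __name__ == "__main__":
    print(f"VLAN IDs: {usable_vlans:,}  VNIs: {vni_space:,}")
    alloc = SegmentAllocator()
    print(alloc.assign("customer-a/web"), alloc.assign("customer-b/db"))
```

A provider with more than about 4,000 isolated customer networks outgrows VLAN IDs, whereas the VNI space leaves ample headroom.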

Support for SDN has been an inherent part of EOS since its inception, says Hull. "EOS is open - the customers can write scripts, they can write their own C code, or they can install Linux packages; all can run on our switches." These agents can talk back to the customer's management systems. "They are able to automate the interactions between their systems and our switches using extensions to EOS," he says.
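As one illustration of the kind of scripting Hull describes, the sketch below builds a request for Arista's eAPI, a JSON-RPC 2.0 interface that EOS switches can expose over HTTP(S) at the `/command-api` path, using its `runCmds` method. Hull does not name eAPI, so treating it as the interface a customer script would use is an assumption, and the commands shown are just examples. The code only constructs the request body; it does not contact a switch.

```python
import json
from typing import Iterable


def run_cmds_request(cmds: Iterable[str], request_id: str = "1",
                     fmt: str = "json") -> bytes:
    """JSON-RPC 2.0 body for eAPI's runCmds method, ready to POST to /command-api."""
    body = {
        "jsonrpc": "2.0",
        "method": "runCmds",
        "params": {"version": 1, "cmds": list(cmds), "format": fmt},
        "id": request_id,
    }
    return json.dumps(body).encode("utf-8")


if __name__ == "__main__":
    # Only builds the request; sending it needs eAPI enabled and credentials.
    print(run_cmds_request(["show version", "show interfaces status"]).decode())
```

A management system would POST this body with `Content-Type: application/json` to a URL such as `https://switch1.example.net/command-api` (a hypothetical host) and parse the structured JSON reply, which is the kind of automated interaction between customer systems and switches that Hull describes.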

"We encompass most aspects of SDN," says Hull. "We will write new features and new extensions but we do not have to re-architect our OS to provide an SDN feature."

Arista is terse about its switch roadmap.

"Any future product would improve performance - capacity, table sizes, price-per-port and density," says Hull. "And there will be innovation in the platform's software."