The Open ROADM MSA adds new capabilities in Release 2.0

The Open ROADM MSA, set up by AT&T, Ciena, Fujitsu and Nokia, is promoting interoperability between vendors’ ROADMs by specifying open interfaces for their control using software-defined networking (SDN) technology. Now, one year on, the MSA has 10 members, equally split between operators and systems vendors.

Orange joined the Open ROADM MSA last July and says it shares AT&T’s view that optical networks lack openness given the proprietary features of the vendors’ systems.

“As service providers, we suffer from lock-in where our networks are composed of equipment from a single vendor,” says Xavier Pougnard, R&D manager for transport networks at Orange Labs. “When we want to introduce another vendor for innovation or economic reasons, it is nearly impossible.”

This is what the MSA group wants to tackle with its open specifications for the data and management planes. The goal is to enable an operator to swap equipment without having to change how the network is controlled, by using a common, open management interface. “Right now, for every new provider, we need IT development for the management of the [network] node,” says Pougnard.

MSA status

The Open ROADM MSA has published two sets of specifications as part of its Release 1.2. One set tackles 100-gigabit data plane interoperability by defining what is needed for two line-side transponders to talk to each other. The second set uses the YANG modelling language to allow the management of the transponders and ROADMs.

The group is now working on Release 2.0 that will enable longer reaches and exploit OTN switching. The specifications will also support flexgrid whereas Release 1.2 specifies 50GHz fixed channels only. Release 2.0 is expected to be completed in the second quarter of 2017. “Service providers would like it as soon as possible,” says Pougnard.
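The difference between fixed channels and flexgrid can be made concrete with the channel plans of ITU-T G.694.1, which flexgrid line systems generally follow: fixed-grid channels sit on a 50GHz spacing anchored at 193.1THz, while a flexible-grid slot has its centre on a 6.25GHz granularity and a width that is a multiple of 12.5GHz. The sketch below assumes those G.694.1 definitions; the article does not say which channel plan Release 2.0 adopts, and the function names are illustrative only.

```python
# Minimal sketch of the ITU-T G.694.1 DWDM grids (assumed; not taken from the
# Open ROADM specifications). All frequencies are in THz.
ANCHOR_THZ = 193.1               # grid anchor frequency
FLEX_CENTRE_STEP_THZ = 0.00625   # 6.25 GHz centre-frequency granularity
FLEX_WIDTH_STEP_THZ = 0.0125     # 12.5 GHz slot-width granularity


def fixed_grid_centre(n: int, spacing_ghz: float = 50.0) -> float:
    """Centre frequency of channel n on a fixed grid (50 GHz by default)."""
    return ANCHOR_THZ + n * spacing_ghz / 1000.0


def flexgrid_slot(n: int, m: int) -> tuple:
    """Flexible-grid slot: centre 193.1 + n*0.00625 THz, width m*12.5 GHz.

    Returns (lower edge, centre, upper edge) in THz.
    """
    if m < 1:
        raise ValueError("slot width multiplier m must be >= 1")
    centre = ANCHOR_THZ + n * FLEX_CENTRE_STEP_THZ
    half_width = m * FLEX_WIDTH_STEP_THZ / 2
    return centre - half_width, centre, centre + half_width


# A 37.5 GHz slot is m = 3; a 75 GHz slot is m = 6.
low, centre, high = flexgrid_slot(n=4, m=3)
print(f"{low:.5f} {centre:.5f} {high:.5f}")
```

A fixed grid only ever offers one channel width, whereas the (n, m) pair lets a controller size each slot to the signal it carries.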

Pougnard highlights the speed of development of an open MSA model, with new releases issued every few months, far quicker than traditional standardisation bodies. It was frustration with the slow pace of the standards bodies that led Orange to join the Open ROADM MSA.

Orange stresses that Open ROADM will not be used for all dense wavelength-division multiplexing (DWDM) cases. Some applications require extended performance, and for these a specific vendor's equipment will be used. “We do specify the use of an FEC [forward error correction] in the specification but there are more powerful FECs that extend the reach for 100-gigabit interfaces,” says Pougnard. But for Orange, the underlying flexibility offered by the MSA trumps performance.

Trials

In December, AT&T detailed a network demonstration of the Open ROADM technology. The operator used a 100-gigabit optical wavelength in its Dallas-area network to connect two IP-MPLS routers using transponders and ROADMs from Ciena and Fujitsu.

Orange is targeting its own lab trials in the first half of this year using a simplified OpenDaylight SDN controller working with ROADMs from three systems vendors. “We want to showcase the technology and prove the added value of an open ROADM,” says Pougnard.

Orange is also a member of the Telecom Infra Project (TIP), a venture that includes Facebook and 10 operators and that tackles telecom networks from the access to the core. The two groups have discussed areas of possible collaboration, but they differ on modelling: the Open ROADM MSA wants to promote a single YANG model that includes the amplifiers of the line system, whereas TIP expects there to be more than a single model. The two organisations also differ in their philosophies: the Open ROADM MSA concerns itself with the interfaces to the platforms, whereas TIP also tackles the internal design of platforms.

Coriant, which is a member of TIP and the Open ROADM MSA, is keen for alignment. "As an industry we should try to make sure that certain elements such as open API definitions are aligned between TIP and the Open ROADM MSA," says Uwe Fischer, CTO of Coriant.

Meanwhile, the Open ROADM MSA will announce another vendor member soon and says additional operators are watching the MSA’s progress with interest.

Pougnard stresses how open developments such as the Open ROADM MSA require WDM engineers to tackle new things. “We have a tremendous shift in skills,” he says. “Now they need to work on the automation capability, on YANG modelling and Netconf.” Netconf, the IETF’s network configuration protocol, uses YANG models to enable the management of network devices such as ROADMs.
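To make the Netconf and YANG point concrete, here is a minimal sketch that builds a Netconf edit-config request with Python's standard library. The rpc, edit-config, target and running elements and the base namespace come from the Netconf specification (RFC 6241); the urn:example:roadm namespace and the interface/name/target-power leaves are hypothetical stand-ins for a device's YANG model, not the Open ROADM model. Transport (Netconf runs over SSH) and session handling are omitted.

```python
import xml.etree.ElementTree as ET

NC_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"  # Netconf base namespace (RFC 6241)
DEV_NS = "urn:example:roadm"  # HYPOTHETICAL device YANG namespace, illustration only

ET.register_namespace("", NC_NS)        # serialise Netconf elements without a prefix
ET.register_namespace("roadm", DEV_NS)  # prefix for the hypothetical model


def build_edit_config(message_id: str, port: str, target_power_dbm: float) -> str:
    """Return a Netconf <edit-config> RPC that writes to the running datastore."""
    rpc = ET.Element(f"{{{NC_NS}}}rpc", {"message-id": message_id})
    edit = ET.SubElement(rpc, f"{{{NC_NS}}}edit-config")
    target = ET.SubElement(edit, f"{{{NC_NS}}}target")
    ET.SubElement(target, f"{{{NC_NS}}}running")
    config = ET.SubElement(edit, f"{{{NC_NS}}}config")
    # Hypothetical YANG-modelled data: an 'interface' list entry keyed by 'name'.
    iface = ET.SubElement(config, f"{{{DEV_NS}}}interface")
    ET.SubElement(iface, f"{{{DEV_NS}}}name").text = port
    ET.SubElement(iface, f"{{{DEV_NS}}}target-power").text = f"{target_power_dbm:.1f}"
    return ET.tostring(rpc, encoding="unicode")


print(build_edit_config("101", "port-1", -2.5))
```

Because the payload is shaped by a YANG model rather than a vendor CLI, the same request structure works for any device implementing that model, which is the interoperability argument the MSA makes.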

NeoPhotonics samples its first CFP-DCO products

“Our rationale [for entering the CFP-DCO market] is we have all the optical components and the [merchant coherent] DSPs are now becoming available,” says Ferris Lipscomb, vice president of marketing at NeoPhotonics. “It is possible to make this product without developing your own custom DSP, with all the expense that entails.”

-DCO versus -ACO

The pluggable transceiver line-side market is split between Digital Coherent Optics (DCO) and Analog Coherent Optics (ACO) modules.

Optical module makers are already supplying the more compact CFP2 Analog Coherent Optics (CFP2-ACO) transceivers. The CFP2-ACO integrates the optics only, with the accompanying coherent DSP-ASIC chip residing on the line card. The CFP2-ACO suits system vendors that have their own custom DSP-ASICs and can offer differentiated, higher-transmission performance while choosing the optics in a compact pluggable module from several suppliers.

In contrast, the CFP-DCO suits more standard deployments, and those end-customers that do not want to be locked into a single vendor and a proprietary DSP. The -DCO is also easier to deploy. In China, where large-scale 100-gigabit optical transport deployments are under way, operators want a module that can be deployed in the field by a relatively unskilled technician. Deploying an ACO requires an engineer to perform calibration because of the analogue interface between the module and the DSP, says NeoPhotonics.

The DCO also suits those systems vendors that do not have their own DSP and do not want to source a merchant coherent DSP and implement the analogue integration on the line card.

One platform, two products

The two announced ClearLight CFP-DCO products are a 100 gigabit-per-second (Gbps) module implemented using polarisation multiplexing, quadrature phase-shift keying modulation (PM-QPSK), and a module that supports both 100Gbps and 200Gbps using PM-QPSK and 16 quadrature amplitude modulation (PM-16QAM), respectively.
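The difference between the two formats comes down to bits per symbol: a polarisation-multiplexed format carries log2(M) bits on each of two polarisations, so PM-QPSK (M = 4) carries 4 bits per symbol and PM-16QAM (M = 16) carries 8. Doubling the bits per symbol at the same symbol rate doubles the raw line rate, which is how one optics and DSP platform can serve both rates. The sketch assumes a symbol rate of 32 gigabaud for illustration only; the article does not give the modules' baud rate, and the raw rate shown includes FEC and framing overhead above the 100Gbps or 200Gbps payload.

```python
import math


def bits_per_symbol(constellation_points: int, polarisations: int = 2) -> int:
    """Bits per symbol for a polarisation-multiplexed M-ary format."""
    bits = math.log2(constellation_points)
    if not bits.is_integer():
        raise ValueError("constellation size must be a power of two")
    return polarisations * int(bits)


def raw_line_rate_gbps(baud_gbd: float, constellation_points: int) -> float:
    """Raw bit rate (payload plus FEC/framing overhead) = symbol rate x bits/symbol."""
    return baud_gbd * bits_per_symbol(constellation_points)


ASSUMED_BAUD_GBD = 32.0  # illustrative symbol rate, not a NeoPhotonics figure

for name, points in (("PM-QPSK", 4), ("PM-16QAM", 16)):
    print(name, bits_per_symbol(points), raw_line_rate_gbps(ASSUMED_BAUD_GBD, points))
```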

The two modules share the same optics and DSP-ASIC. Where they differ is in the software loaded onto the DSP and the host interface used. The lower-speed module has a 4 by 25-gigabit interface whereas the 200-gigabit CFP-DCO uses an 8 by 25-gigabit-wide interface. “The 100-gigabit CFP-DCO plugs into existing client-side slots whereas the 200-gigabit CFPs have to plug into custom designed equipment slots,” says Lipscomb.

The 100-gigabit CFP-DCO has a reach of more than 1,000km and consumes under 24W. Lipscomb points out that the actual specifications, including the power consumption, are negotiated on a customer-by-customer basis. The 200-gigabit CFP-DCO has a reach of 500km.

NeoPhotonics says it is using a latest-generation 16nm CMOS merchant DSP. NTT Electronics (NEL) and Clariphy have both announced 16nm CMOS coherent DSPs.

“We are designing to be able to second-source the DSP,” says Lipscomb. “There are currently only two merchant suppliers but there are others that have developments but are not yet at the point where they would be in the market.”

The CFP-DCO modules also support a flexible grid, allowing a carrier to fit within a narrower 37.5GHz channel and so increasing the overall transmission capacity sent across a fibre’s C-band.
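The gain from a narrower channel can be estimated by counting how many channels fit in the band. The sketch assumes roughly 4.8THz of usable C-band spectrum and 100Gbps per carrier; both are illustrative assumptions rather than NeoPhotonics figures, and real systems lose some spectrum to band edges and filtering.

```python
C_BAND_GHZ = 4800.0  # assumed usable C-band width (~4.8 THz); varies by system


def channel_count(band_ghz: float, width_ghz: float) -> int:
    """Number of whole channels of a given width that fit in the band."""
    return int(band_ghz // width_ghz)


def fibre_capacity_tbps(rate_gbps: float, width_ghz: float, band_ghz: float = C_BAND_GHZ) -> float:
    """Total capacity if every channel carries rate_gbps."""
    return channel_count(band_ghz, width_ghz) * rate_gbps / 1000


for width in (50.0, 37.5):
    print(width, channel_count(C_BAND_GHZ, width), fibre_capacity_tbps(100, width))
```

Under these assumptions, moving from 50GHz to 37.5GHz channels raises the channel count by a third.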

NeoPhotonics’s 100Gbps CFP-DCO is already sampling and is expected to be generally available in mid-2017, while the 200Gbps CFP-DCO is expected to be available one quarter later.

“For 200-gigabit, you need to have customers building slots,” says Lipscomb. “For 100-gigabit, there are lots of slots available that you can plug into; 200-gigabits will take a little bit longer.”

NeoPhotonics’ CFP-DCO delivers the line rate used by the Voyager white box packet optical switch being developed as part of the Telecom Infra Project, backed by Facebook and ten operators including Deutsche Telekom and SK Telecom. But the one-rack-unit Voyager packet optical platform uses four 5x7-inch modules, not pluggable CFP-DCOs, to achieve its total line rate of 800Gbps.

Roadmap

NeoPhotonics is developing coherent module designs that will use higher baud rates than the standard 32-35 gigabaud (Gbaud), such as 45Gbaud and 64Gbaud.

The company also plans to develop a CFP2-DCO. Such a module is expected around 2018 once lower-power DSP-ASICs become available that can fit within the 12W power envelope of the CFP2. Such merchant DSP-ASICs will likely be implemented in a more advanced CMOS process such as 12nm or even 7nm.

Acacia Communications is already sampling a CFP2-DCO. Acacia designs its own silicon photonics-based optics and the coherent DSP-ASIC.

NeoPhotonics is also considering future -ACO designs beyond the CFP2, such as the CFP8, the 400-gigabit OSFP form factor and even the CFP4. “We are studying it but we don't know yet which directions things are going to go,” says Lipscomb.

Corrected on Dec 22nd. The Voyager box does not use pluggable CFP-DCO modules.

Infinera unveils first platforms using its latest PIC & DSP

- The Cloud Xpress 2 platform for data centre interconnects packs 1.2 terabits in a 1 rack unit (1RU) box.

- Infinera has also unveiled two DTN-X XT ‘meshponder’ platforms that aggregate client signals and offer sliceable transponder functionality that delivers wavelengths to multiple destinations.

- The company also announced an open flexible grid line system that supports the C and L bands.

- Three of the top four US internet content providers are Infinera customers.

Infinera has started to unveil its platform portfolio based on its Infinite Capacity Engine that combines the company’s latest-generation photonic integrated circuit (PIC) and coherent DSP-ASIC technology.

The first platform using the technology, the Cloud Xpress 2, Infinera’s second-generation data centre interconnect platform, was unveiled in September. More recently, the company has added two DTN-X meshponder platforms – the XT-3300 and the XT-3600 – as well as upgrading two of its existing DTN-X platforms.

“It is impressive that Infinera has turned its Infinite Capacity Engine into products fairly quickly,” says Sterling Perrin, senior analyst at Heavy Reading.

Cloud Xpress 2

The Cloud Xpress 2 uses a PIC that supports six wavelengths, each transmitting at 200 gigabits-per-second (Gbps) using polarisation-multiplexed, 16 quadrature amplitude modulation (PM-16QAM).

According to Infinera, the 1.2-terabit Cloud Xpress 2 is a 1RU box that can be stacked to deliver a total of 27.6 terabits of capacity using the C-band. The platform also consumes the equivalent of 0.57W-per-gigabit, half the power consumption of the Cloud Xpress that was launched in 2014.
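The stacking and power figures can be checked with simple arithmetic. The sketch uses only the numbers quoted above (1.2 terabits per box, 27.6 terabits in the C-band, 0.57W per gigabit); treating the per-gigabit figure as applying to the whole box, and deriving the original Cloud Xpress figure from "half the power", are assumptions about how Infinera normalises its numbers.

```python
BOX_CAPACITY_GBPS = 1_200    # one Cloud Xpress 2 (1RU)
C_BAND_TOTAL_GBPS = 27_600   # stacked capacity quoted for the C-band
WATTS_PER_GBPS = 0.57        # quoted power efficiency

# Whole 1RU boxes needed to reach the quoted C-band total.
boxes_needed = C_BAND_TOTAL_GBPS // BOX_CAPACITY_GBPS
# Assumes the W/Gb figure applies to the full box capacity.
power_per_box_w = WATTS_PER_GBPS * BOX_CAPACITY_GBPS
# Implied by "half the power consumption" of the first Cloud Xpress.
implied_previous_w_per_gbps = WATTS_PER_GBPS * 2

print(boxes_needed, round(power_per_box_w), round(implied_previous_w_per_gbps, 2))
```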

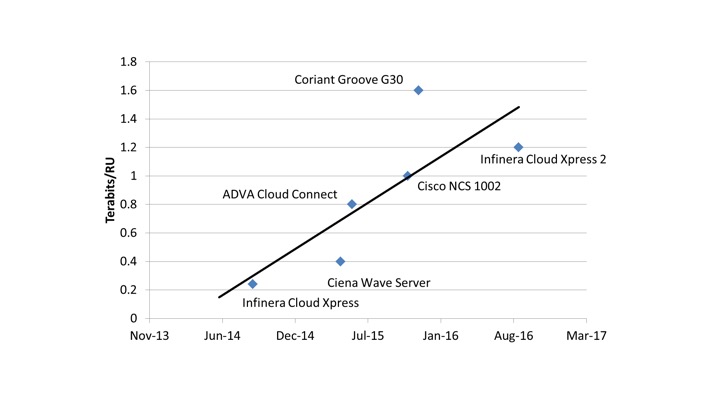

Infinera was the first vendor to unveil a specific product for data centre interconnect when it launched the Cloud Xpress, says Perrin. “Then everyone else came out with products that were not PIC-based, each one leapfrogging the others,” he says. “So Infinera had to do something with the Cloud Xpress because it was falling behind.”

Now, with the Cloud Xpress 2, Infinera is once again at the leading edge of platform performance for data centre interconnect.

The Cloud Xpress 2 also includes integrated multiplexing and amplification. Geoff Bennett, director, solutions and technology at Infinera, points out that competitor data centre interconnect platforms that use CFP2-ACO optical modules have wavelength outputs that need to be multiplexed onto fibre whereas Infinera’s PIC has a single fibre-pair output. The platform also integrates amplification to enable a reach of up to 130km without requiring external line amplification, an important requirement for data centre operators. Using line amplification, the Cloud Xpress 2 has a reach of some 600km.

Infinera’s Infinite Capacity Engine supports up to 12 wavelengths and a range of modulation schemes including PM-16QAM but the company has chosen to use six wavelengths rather than the full 12-wavelength PIC for the Cloud Xpress 2.

The Cloud Xpress 2 already delivers a 4.8x improvement in line-side capacity density compared to the Cloud Xpress, explains Bennett: it supports 1.2 terabits in a 1RU box compared to 500 gigabits in the 2RU Cloud Xpress. “That is already a big jump,” says Bennett.
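The 4.8x figure follows from normalising line-side capacity by rack units, as this short calculation using only the numbers above shows.

```python
def density_gbps_per_ru(capacity_gbps: float, rack_units: int) -> float:
    """Line-side capacity per rack unit."""
    return capacity_gbps / rack_units


cloud_xpress = density_gbps_per_ru(500, 2)      # 250 Gbps per RU
cloud_xpress_2 = density_gbps_per_ru(1_200, 1)  # 1,200 Gbps per RU
print(cloud_xpress_2 / cloud_xpress)            # 4.8
```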

There is also an economic consideration in terms of how much bandwidth the compact data centre interconnect platform delivers. Cloud Xpress 2 delivers 1.2 terabits which may not be fully used when installed, with Infinera only being paid for those wavelengths used. “There are breakeven points – granularity points – that are important with this platform,” says Bennett.

A third consideration is the power consumption. “2.4 terabits [a full 12-wavelength PIC] in a rack unit will probably go way beyond what can be powered and cooled,” says Bennett. “In a data centre, a key thing is the power drawn per rack unit.”

Financial analyst George Notter of Jefferies visited Infinera during a recent analyst day. In a research note, he commented that Infinera has been delivering new product offerings in four-year cycles. Notter said that while PIC development cycle times are still in the four-year range, Infinera is now working on two generations of PICs concurrently.

Infinera expects its fifth-generation PIC to be generally available in 2018. The PIC should provide up to 9.6 terabits, he says.

This suggests Infinera’s next-generation Cloud Xpress could be launched in two years’ time and would use the fifth-generation PIC rather than the existing Infinite Capacity Engine PIC using all 12 wavelengths.

Perrin says that when the Cloud Xpress was first announced, he thought it proved conclusively that a PIC is best suited for data centre interconnect. But given how rapidly the industry responded with platforms based on conventional optics, he is now unsure.

“We have to see what happens next year,” says Perrin. “Is everybody else going to come out with round-two products using conventional optics that go ahead of the Cloud Xpress 2? Or is the Infinera platform cemented in there at the leading edge?”

Cloud Xpress 2 is expected to be generally available early in 2017.

Web-scale transport

Infinera’s two new DTN-X meshponder platforms, the 1.2-terabit XT-3300 and the 2.4-terabit XT-3600, are designed for longer-reach mesh networks. In effect, the two meshponder platforms have enhanced telecom capabilities compared to the Cloud Xpress 2 designed for data centre interconnect, just as Infinera launched the DTN-X XT-500 muxponder after it first launched the Cloud Xpress.

However, the XT-3300 and XT-3600 platforms also reflect the growing share of data centre traffic in the overall traffic carried by networks, says Infinera, such that telecom equipment needs to accommodate both traffic types.

Data centre operators must cope with massive increases in capacity demand, says Bennett. Their traffic requirements are made up of single ‘linear’ traffic flows, each occupying the full capacity of an optical channel.

Such flows may be 10 gigabits or increasingly 100-gigabit and are typically point-to-point. “All the classical telco strategies for dealing with over-demand don’t work in such web-scale networks because they have ‘elephant’ flows that can’t be broken up,” says Bennett.
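The elephant-flow problem is essentially bin packing with items that cannot be split. The sketch uses first-fit decreasing, a standard packing heuristic chosen purely for illustration (it is not attributed to Infinera or any operator), to show that aggregate capacity is not enough: ten 10-gigabit channels can absorb a hundred 1-gigabit flows but not a single 100-gigabit flow.

```python
def first_fit_decreasing(flows_gbps, channel_gbps, n_channels):
    """Place unsplittable flows on channels, largest first.

    Returns (per-channel load, flows that could not be placed anywhere).
    """
    loads = [0] * n_channels
    unplaced = []
    for flow in sorted(flows_gbps, reverse=True):
        for i, load in enumerate(loads):
            if load + flow <= channel_gbps:
                loads[i] += flow
                break
        else:  # no channel had room and the flow cannot be split, so it is stranded
            unplaced.append(flow)
    return loads, unplaced


# Ten 10G channels offer 100G in aggregate...
print(first_fit_decreasing([1] * 100, 10, 10))  # many small flows all fit
print(first_fit_decreasing([100], 10, 10))      # ...but one 100G elephant flow does not
```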

In contrast, telcos must support combinations of many, smaller traffic flows from hundreds or thousands of locations. But as operators start to re-engineer their networks to support technologies such as network function virtualisation and cloud services, they increasingly face the same challenges the data centre operators face, says Infinera.

“This is the nub of the problem in how the intelligent transport layer must evolve to be cloud-scale,” says Bennett. “How do you allow telcos to take on cloud architectures but still solve telco problems? And how do you allow web-scale data centre operators to scale out to a network that is telco-grade?”

Meshponders

Infinera’s XT-3300 is styled on the Cloud Xpress 2. It is a stackable 1.2-terabit 1RU box that is telco grade: it is NEBS-compliant and is designed to work over a dense WDM open line system. The XT-3300 has a dozen 100 Gigabit Ethernet client-side ports and, like the Cloud Xpress 2, uses six wavelengths to deliver a total of 1.2 terabits of line-side capacity.

“The XT-3300 is more of a data centre product,” says Bennett. “With the Cloud Xpress, we tend to operate over fairly short distances; the XT-3300 is a souped-up version of that.”

In contrast, the XT-3600, at 4RU, is four times the height of the XT-3300 and, at 2.4 terabits, supports double the line-side capacity by using 12 wavelengths. The platform also supports electronic switching and multiplexing.

Both meshponders support all the advanced coherent toolkit features that Infinera detailed at the start of the year, such as sub-carriers, gain sharing and matrix-enhanced phase-shift keying for long-haul links. Both platforms have a maximum reach of 6,000km.

The XT-3600 works with two existing Infinera DTN-X platforms – the XTC-4 and the XTC-10. Accordingly, these platforms have been given a mid-life upgrade with new Infinite Capacity Engine line cards and higher capacity OTN switching to accommodate the traffic from the faster line cards. The XTC-4’s OTN switching capacity has been upgraded to 4.8 terabits, while the XTC-10 OTN switching capacity is upgraded to 12 terabits.

The two XT platforms are dubbed meshponders. With a traditional muxponder, multiple client signals are aggregated before being sent out on a single, higher-capacity optical channel. A meshponder extends the concept by allowing the client signals to be carried on optical channels that are sent to multiple destinations.

The meshponder is made possible because Infinera’s PIC is ‘sliceable’: the wavelengths supported by the PIC can be used in different optical channel combinations, single channels and super-channels, such that ROADMs within the network can split the optical channels and route them to their destinations.
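A rough way to see what slicing buys is to count wavelengths per destination. The hypothetical planner below uses the XT-3300 figures given earlier (12 x 100GbE clients, six wavelengths for 1.2 terabits, so 200Gbps each) and rounds each destination's demand up to whole wavelengths. It ignores guard bands, ROADM routing and any finer-grained slicing the PIC may support, so it sketches the idea rather than how Infinera provisions the box.

```python
CLIENT_GBPS = 100       # the XT-3300 has a dozen 100GbE client ports
WAVELENGTH_GBPS = 200   # six wavelengths deliver 1.2 terabits line-side
WAVELENGTHS = 6


def plan_wavelengths(clients_per_destination: dict) -> dict:
    """HYPOTHETICAL planner: whole wavelengths needed per destination."""
    plan = {
        dest: -(-n * CLIENT_GBPS // WAVELENGTH_GBPS)  # ceiling division
        for dest, n in clients_per_destination.items()
    }
    if sum(plan.values()) > WAVELENGTHS:
        raise ValueError(f"needs {sum(plan.values())} wavelengths, only {WAVELENGTHS} available")
    return plan


print(plan_wavelengths({"B": 12}))                 # muxponder-style: one destination
print(plan_wavelengths({"B": 6, "C": 4, "D": 2}))  # meshponder-style: three destinations
```

An uneven split such as five, five and two clients needs seven whole wavelengths, which shows why slicing granularity matters.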

“The PIC has been redesigned to allow it to be sliced,” says Bennett. To do this, guard bands are added on each side of a channel. As a result, a sliceable channel is less spectrally efficient than a super-channel, whose constituent wavelengths can be packed more closely together since all are sent to one destination.

The line side capacity, either a sliced super-channel or a single super-channel, can be terminated on one of Infinera’s core boxes such as the XTC-4 or XTC-10.

Heavy Reading’s Perrin views the meshponder products as an incremental announcement: using Infinera’s latest PIC, users now have the ability to go point-to-multipoint using a network of Infinera’s XT systems. The latest XT platforms will appeal to web-scale companies that have metro reaches or greater, says Perrin: “Infinera has got wholesale providers in Europe that transport big chunks of traffic across a greater distance than the Cloud Xpress 2 can do.”

Flexible-grid open line systems

Infinera has also announced the MTC-6 flexible grid open line system platform. Infinera’s current FlexILS line system is the 9-slot MTC-9. “The FlexILS is the industry’s most widely deployed flex-grid line system,” says Bennett.

Infinera has also been working with Lumentum to demonstrate its platforms working with Lumentum’s white box open line system. “We have a lot of experience working with open line systems,” says Bennett. Infinera and Lumentum are also members of the open packet optical transport group within the Telecom Infra Project (TIP), the industry initiative co-founded by Facebook, Deutsche Telekom and SK Telecom.

The MTC-6 flexible open line system occupies 6 slots and uses the same cards as its larger counterpart, the MTC-9. These boards include FlexROADM cards, EDFA amplifiers, Raman amplifiers and gain flatteners. The MTC-6 supports the C and L bands, effectively doubling overall transmission capacity from 25.6 terabits using Infinera’s platforms in the C-band to 51.2 terabits using both bands.

Infinera says three of the top four US internet content providers are using its platforms. “We are in the privileged position of the network architects in those companies telling us what they want,” says Bennett.

The MTC-6 FlexILS open line system is available now. The XT-3300 will be available in the first quarter of 2017 with the remaining platforms scheduled for the second quarter of 2017.

Ciena brings data analytics to optical networking

- Ciena's WaveLogic Ai coherent DSP-ASIC makes real-time measurements, enabling operators to analyse and adapt their networks.

- The DSP-ASIC supports 100-gigabit to 400-gigabit wavelengths in 50-gigabit increments.

- The WaveLogic Ai will be used in Ciena’s systems from 2Q 2017.

Ciena has unveiled its latest generation coherent DSP-ASIC. The device, dubbed WaveLogic Ai, follows Ciena’s WaveLogic 3 family of coherent chips which was first announced in 2012. The Ai naming scheme reflects the company's belief that its latest chipset represents a significant advancement in coherent DSP-ASIC functionality.

The WaveLogic Ai is Ciena's first DSP-ASIC to support two baud rates: 35 gigabaud for fixed-grid optical networks and 56 gigabaud for flexible-grid ones. The design also uses advanced modulation schemes to optimise the data transmission over a given link.

Perhaps the most significant development, however, is the real-time network monitoring offered by the coherent DSP-ASIC. The data will allow operators to fine-tune transmissions to adapt to changing networking conditions.

“We do believe we are taking that first step towards a more automated network and even laying the foundation for the vision of a self-driving network,” says Helen Xenos, director, portfolio solutions marketing at Ciena.

Network Analytics

Conservative margins are used when designing links due to a lack of accurate data regarding the optical network's status. This curtails the transmission capacity, since a relatively large link margin is reserved. Meanwhile, cloud services and new applications mean networks are being exercised in increasingly dynamic ways. “The business environment has changed a little bit,” says Joe Cumello, vice president, portfolio marketing at Ciena. “All those assumptions of the past [based on static traffic] aren't holding true anymore.”

Ciena is being asked by more and more operators to provide information as to what is happening within their networks. Operators want real-time data that they can feed to analytics software to make network optimisation decisions. "Imagine a network where, instead of those rigid assumptions in place, run on manual spreadsheets, the network is making decisions on its own," says Cumello.

The WaveLogic Ai performs real-time analysis, making network measurement data available every 10ms. The data can be fed through application programming interfaces (APIs) to analytics software, whose output operators use to adapt their networks.

The network parameters collected include the transmitter and receiver optical power, polarisation channel and chromatic dispersion conditions, error rates and transmission latency. In addition, the DSP-ASIC separates the linear and non-linear noise components of the signal-to-noise ratio. An operator can thus see the network margin and run links closer to the limit, increasing capacity by exploiting the WaveLogic Ai's 50-gigabit transmission increments.
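Separating the linear and non-linear noise matters because the two add up: under the common approximation that both behave as additive Gaussian noise, 1/SNR_total = 1/SNR_linear + 1/SNR_nonlinear in linear units. The sketch combines the two contributions and then picks the highest rate, in 50-gigabit steps, whose required SNR plus a safety margin is met. The required-SNR table is entirely hypothetical, chosen only to show the mechanism, and is not Ciena data; the 100 to 400Gbps range is the one the article gives for the 56 gigabaud mode.

```python
import math


def db_to_linear(db: float) -> float:
    return 10 ** (db / 10)


def linear_to_db(x: float) -> float:
    return 10 * math.log10(x)


def total_snr_db(snr_linear_noise_db: float, snr_nonlinear_noise_db: float) -> float:
    """Combine linear (ASE) and non-linear noise: 1/SNR = 1/SNR_lin + 1/SNR_nl."""
    inverse = 1 / db_to_linear(snr_linear_noise_db) + 1 / db_to_linear(snr_nonlinear_noise_db)
    return linear_to_db(1 / inverse)


# HYPOTHETICAL required SNR (dB) per line rate; illustrative only, not Ciena data.
REQUIRED_SNR_DB = {100: 8.0, 150: 10.0, 200: 12.0, 250: 14.0,
                   300: 16.0, 350: 18.0, 400: 20.0}


def select_rate_gbps(measured_snr_db: float, margin_db: float = 1.0):
    """Highest rate whose requirement is met with margin to spare, else None."""
    feasible = [rate for rate, need in REQUIRED_SNR_DB.items()
                if measured_snr_db - margin_db >= need]
    return max(feasible) if feasible else None


snr = total_snr_db(20.0, 20.0)  # equal contributions: about 3 dB worse than either
print(round(snr, 2), select_rate_gbps(snr))
```

With real-time measurements, the same selection could be re-run as conditions change, which is the kind of adjustment Xenos describes as wavelengths are added.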

"Maybe there are only a few wavelengths in the network such that the capacity can be cranked up to 300 gigabits. But as more and more wavelengths are added, if you have the tools, you can tell the operator to adjust,” says Xenos. “This helps them get to the next level; something that has not been available before.”

WaveLogic Ai

The WaveLogic Ai's lower baud rate - 35 gigabaud - is a common symbol rate used by optical transmission systems today. The baud rate is suited to existing fixed-grid networks based on 50GHz-wide channels. At 35 gigabaud, the WaveLogic Ai supports data rates from 100 to 250 gigabits-per-second (Gbps).

The second, higher 56 gigabaud rate enables 400Gbps single-wavelength transmissions and supports data rates of 100 to 400Gbps in increments of 50Gbps.

Using 35 gigabaud and polarisation-multiplexed, 16-ary quadrature amplitude modulation (PM-16QAM), a 200-gigabit wavelength has a reach of 1,000km.

With 35-gigabaud and 16-QAM, effectively 8 bits per symbol are sent.

In contrast, 5 bits per symbol are used at the faster 56 gigabaud symbol rate. Here, a more complex modulation scheme is used based on multi-dimensional coding. Multi-dimensional formats add dimensions beyond the four commonly used: the real and imaginary signal components of each of the two polarisations of light. The higher-dimension formats may use more than one time slot, or sub-carriers in the frequency domain, or both techniques.
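The bits-per-symbol figures explain why both modes can deliver 200 gigabits: the raw line rate is the symbol rate multiplied by the bits per symbol, and 35 x 8 and 56 x 5 both give 280Gbps. The sketch also spreads the bits over the four conventional dimensions (real and imaginary on two polarisations) to show how much less each dimension carries at 56 gigabaud, and computes the raw-rate range implied by the 4 to 10 bits per symbol Ciena quotes for that symbol rate. How much of the 280Gbps is FEC and framing overhead is not stated in the article.

```python
def raw_rate_gbps(baud_gbd: float, bits_per_symbol: float) -> float:
    """Raw line rate, including FEC/framing overhead."""
    return baud_gbd * bits_per_symbol


DIMENSIONS = 4  # real and imaginary components on each of two polarisations

for baud, bits in ((35, 8), (56, 5)):
    print(baud, bits, raw_rate_gbps(baud, bits), bits / DIMENSIONS)

# Raw-rate range for 4 to 10 bits per symbol at 56 gigabaud
print(raw_rate_gbps(56, 4), raw_rate_gbps(56, 10))
```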

For the WaveLogic Ai, the 200-gigabit wavelength at 56 gigabaud achieves a reach of 3,000km, a threefold improvement compared to using a 35 gigabaud symbol rate. The additional reach occurs because fewer constellation points are required at 56 gigabaud compared to 16-QAM at 35 gigabaud, resulting in a greater Euclidean distance between the constellation points. "That means there is a higher signal-to-noise ratio and you can go a farther distance," says Xenos. "The way of getting to these different types of constellations is using a higher complexity modulation and multi-dimensional coding."
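Ciena does not disclose its multi-dimensional constellations, so the sketch below uses ordinary square QAM as a stand-in to illustrate the principle Xenos describes: at equal average energy, a constellation with fewer points can space them further apart. It normalises QPSK and 16QAM to unit average energy and finds the minimum distance by brute force; the result matches the closed form sqrt(6/(M-1)) for square M-QAM.

```python
import itertools
import math


def square_qam(m: int) -> list:
    """Unit-average-energy square M-QAM constellation (M must be a perfect square)."""
    k = math.isqrt(m)
    if k * k != m:
        raise ValueError("M must be a perfect square")
    levels = [2 * i - (k - 1) for i in range(k)]  # e.g. -3, -1, 1, 3 for 16QAM
    points = [complex(a, b) for a in levels for b in levels]
    scale = math.sqrt(sum(abs(p) ** 2 for p in points) / len(points))
    return [p / scale for p in points]


def min_distance(points: list) -> float:
    """Smallest Euclidean distance between any two constellation points."""
    return min(abs(p - q) for p, q in itertools.combinations(points, 2))


d_qpsk = min_distance(square_qam(4))
d_16qam = min_distance(square_qam(16))
print(round(d_qpsk, 3), round(d_16qam, 3), round(20 * math.log10(d_qpsk / d_16qam), 2))  # roughly 7 dB
```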

The increasingly sophisticated schemes used at 56 gigabaud also mark a new development whereby Ciena no longer spells out the particular modulation scheme used for a given optical channel rate. At 56 gigabaud, the number of bits per symbol varies between 4 and 10, says Ciena.

The optical channel widths at 56 gigabaud are wider than the fixed grid 50GHz. "Any time you go over 35 gigabaud, you will not fit [a wavelength] in a 50GHz band," says Xenos.

The particular channel width at 56 gigabaud depends on whether a super-channel is being sent or a mesh architecture is used whereby channels of differing widths are added and dropped at network nodes. Since wavelengths making up a super-channel go to a single destination, the channels can be packed more closely, with each channel occupying 60GHz. For the mesh architecture, guard bands are required either side of the wavelength such that a 75GHz optical channel width is used.
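The channel widths can be related to the symbol rate: a raised-cosine (Nyquist-shaped) signal occupies roughly the baud rate multiplied by (1 + roll-off). The sketch assumes a roll-off of 0.05, an illustrative value rather than a Ciena specification. Under that assumption a 56 gigabaud carrier needs just under 60GHz, too much for a 50GHz slot but within the 60GHz super-channel spacing, while the 75GHz mesh channel adds guard bands. The estimate ignores the filter narrowing of cascaded ROADMs, which is one reason practical limits are tighter. The last line compares the spectral efficiency of 400Gbps in each slot.

```python
def occupied_bandwidth_ghz(baud_gbd: float, roll_off: float) -> float:
    """Approximate spectral width of a raised-cosine-shaped carrier."""
    return baud_gbd * (1 + roll_off)


def spectral_efficiency(rate_gbps: float, slot_ghz: float) -> float:
    """Bits per second per hertz of allocated spectrum."""
    return rate_gbps / slot_ghz


ROLL_OFF = 0.05  # assumed, illustrative only

for baud in (35, 56):
    width = occupied_bandwidth_ghz(baud, ROLL_OFF)
    print(baud, round(width, 2), "fits 50GHz" if width <= 50 else "needs a wider slot")

# 400Gbps in a packed super-channel slot (60GHz) vs a mesh slot with guard bands (75GHz)
print(round(spectral_efficiency(400, 60), 2), round(spectral_efficiency(400, 75), 2))
```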

The WaveLogic Ai enables submarine links of 14,000km at 100Gbps, 3,000km links at 200Gbps (as detailed), 1,000km at 300Gbps and 300km at 400Gbps.

Hardware details

The WaveLogic Ai is implemented using a 28nm semiconductor process known as fully-depleted silicon-on-insulator (FD-SOI). "This has much lower power than a 16nm or 18nm FinFET CMOS process," says Xenos. (See Fully-depleted SOI vs FinFET)

Using FD-SOI more than halves the power consumption compared to Ciena’s existing WaveLogic 3 coherent devices. "We did some network modelling using either the WaveLogic 3 Extreme or the WaveLogic 3 Nano, depending on what the network requirements were," says Xenos. "Overall, it [the WaveLogic Ai] was driving down [power consumption] more than 50 percent." The WaveLogic 3 Extreme is Ciena's current flagship coherent DSP-ASIC while the Nano is tailored for 100-gigabit metro rates.

Other Ai features include support for 400 Gigabit Ethernet and Flexible Ethernet formats. Flexible Ethernet is designed to support Ethernet MAC rates independent of the Ethernet physical layer rate being used. Flexible Ethernet will enable Ciena to match the client signals as required to fill up the variable line rates.
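Flexible Ethernet, as defined by the OIF, carries client MACs in a calendar of 5-gigabit slots, so matching clients to a variable line rate is largely a matter of slot accounting. The sketch only counts slots to check whether a set of clients fits a given line rate; it ignores the per-PHY calendar structure and overhead of the real protocol, and how Ciena maps Flexible Ethernet onto its line rates is not described in the article.

```python
SLOT_GBPS = 5  # Flexible Ethernet calendar slot granularity


def slots_needed(client_gbps: int) -> int:
    """Calendar slots for one client, rounded up to whole slots."""
    return -(-client_gbps // SLOT_GBPS)


def fits_line_rate(clients_gbps, line_rate_gbps: int):
    """Return (fits?, slots used, slots available) for a set of clients."""
    used = sum(slots_needed(c) for c in clients_gbps)
    available = line_rate_gbps // SLOT_GBPS
    return used <= available, used, available


# A 250Gbps wavelength (the top rate at 35 gigabaud) filled with client MACs
print(fits_line_rate([100, 100, 50], 250))   # exactly fills the line rate
print(fits_line_rate([100, 100, 100], 250))  # one client too many
```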

US invests $610 million to spur integrated photonics

Dubbed the American Institute for Manufacturing Integrated Photonics (AIM Photonics), the venture has attracted 124 partners, including 20 universities and over 50 companies.

The manufacturing innovation institute will be based in Rochester, New York, and will be led by the Research Foundation for the State University of New York. A key goal is that the manufacturing institute will continue after the initiative is completed in early 2021.

While the focus is on photonic integrated circuits, the expectation is that the venture will end up being broader. “NASA, the Department of Energy and the Department of Defense are all interested in using this as a vehicle for doing other work,” says Duncan Moore, professor of optics at the University of Rochester.

The venture will address such issues as design, on-chip manufacturing, packaging and assembly of PICs. “We are at the point in photonics where we were in electronics when we still had transistors, resistors and capacitors,” says Moore. “What we are trying to do now is the equivalent of the electronics IC.”

"It is an amazing public-private consortium utilizing an unprecedented $610 million investment in photonics," says Richard Soref, a silicon photonics pioneer and a Group IV photonics researcher. "The large and powerful team of world-class investigators is likely to make research-and-development progress of great importance for the US and the world.”

Project plans

The first six months are being used to fill in the project’s details. “There are overall budget numbers but individual projects are not well defined in the proposal,” says Moore, adding that many of the subfields, such as packaging and sensors, will be defined and requests for proposals issued.

An executive committee will then determine which projects are funded and to what degree. Project durations will vary from one-offs to the full five years.


Companies backing the project include indium phosphide specialist Infinera as well as silicon photonics players Acacia Communications, Aurrion and Intel. How the two technologies, as well as Group IV photonics, will be accommodated as part of the manufacturing base is still to be determined, says Prof. Moore. He expects all of them to be investigated before a ‘shakeout’ occurs as the venture progresses.

The focus will be on telecom wavelengths and the mid-wave 3 to 5 micron band. “There are a lot of applications in that [longer] wavelength band: remote sensing, environmental analysis, and for doing things on the battlefield,” says Moore.

A public document will be issued around the year-end describing the project’s organisation.

Further information:

The White House factsheet

A Photonics video interview with the chairman of the institute, Professor Robert Clark

Europe gets its first TWDM-PON field trial

Vodafone is conducting what is claimed to be the first European field trial of a multi-wavelength passive optical networking system using access equipment from Alcatel-Lucent.

Source: Alcatel-Lucent


The time- and wavelength-division multiplexed passive optical network (TWDM-PON) technology being used is a next-generation access scheme that follows on from 10 gigabit GPON (XG-PON1) and 10 gigabit EPON.

“There appears to be much more 'real' interest in TWDM-PON than in 10G GPON,” says Julie Kunstler, principal analyst, components at Ovum.

The TWDM-PON standard is close to completion in the Full Service Access Network (FSAN) Group and the ITU, and supports up to eight wavelengths, each capable of 10 gigabit symmetrical or 10/2.5 gigabit asymmetrical speeds.

“You can start building hardware solutions that are fully [standard] compliant,” says Stefaan Vanhastel, director of fixed access marketing at Alcatel-Lucent.

TWDM-PON’s support for additional functionality such as dynamic wavelength management, whereby subscribers could be moved between wavelengths, is still being standardised.

The combination of time- and wavelength-division multiplexing allows TWDM-PON to support multiple PONs, each sharing its capacity among 16, 32, 64 or even 128 end points, depending on the operator’s chosen split ratio.
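To put the split ratios in context, here is a rough average-bandwidth calculation. It is a sketch under stated assumptions: each wavelength runs at the 10 gigabit symmetrical rate and serves its own group of end points, and that capacity is shared evenly. Real PONs allocate bandwidth dynamically, so the figures are averages, not guarantees.

```python
# Rough TWDM-PON capacity arithmetic (illustrative sketch, not a vendor tool).
# Assumptions: each wavelength carries a 10 Gbps symmetrical PON, and each
# PON's capacity is shared evenly among the end points behind its splitter.

WAVELENGTH_RATE_GBPS = 10.0


def total_capacity_gbps(num_wavelengths: int) -> float:
    """Aggregate capacity of a TWDM-PON with one 10 Gbps PON per wavelength."""
    return num_wavelengths * WAVELENGTH_RATE_GBPS


def avg_share_mbps(split_ratio: int) -> float:
    """Average share of one wavelength's capacity per end point, in Mbps."""
    return WAVELENGTH_RATE_GBPS * 1000 / split_ratio


if __name__ == "__main__":
    print(f"Four wavelengths: {total_capacity_gbps(4):.0f} Gbps")
    for split in (16, 32, 64, 128):
        print(f"1:{split} split: {avg_share_mbps(split):.1f} Mbps per end point on average")
```

With the eight wavelengths the standard allows, `total_capacity_gbps(8)` gives 80 Gbps.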


Alcatel-Lucent first detailed its TWDM-PON technology last year. The system vendor introduced a four-wavelength TWDM-PON based on a 4-port line-card, each port supporting a 10 gigabit PON. The line card is used with Alcatel-Lucent’s 7360 Intelligent Services Access Manager FX platform, and supports fixed and tunable SFP optical modules.

“Several vendors also offer the possibility to use fixed-wavelength XG-PON1 or 10G EPON optics,” says Vanhastel. “This reduces the initial cost of a TWDM-PON deployment while allowing you to add tunable optics later.”

Operators can thus start with a 10 gigabit PON using fixed-wavelength optics and move to TWDM-PON and tunable modules as their capacity needs grow. “You won’t have to swap out legacy XG-PON1 hardware two years from now,” says Vanhastel.

Alcatel-Lucent has been involved in 16 customer TWDM-PON trials overall, half in Asia Pacific and the rest split between North America and EMEA. Besides Vodafone, Alcatel-Lucent has named two other TWDM-PON triallists: Telefonica and Energia, an energy utility in Japan.


Vanhastel says the company has been surprised that operators are also eyeing the technology for residential access. The high capacity and relative expense of tunable optics made the vendor think that early demand would be for business services and mobile backhaul only.

Source: Gazettabyte


There are several reasons for operators’ interest in TWDM-PON, says Vanhastel. One is its ample bandwidth - 40 gigabit symmetrical in a four-wavelength implementation. Another is that wavelengths can be assigned to different aggregation tasks such as backhaul, business and residential. Operators can also pay for wavelengths as needed.

TWDM-PON also allows wavelengths to be shared between operators as part of wholesale agreements. Operators deploying TWDM-PON can lease a wavelength to each other in their respective regions.
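One way to picture this flexibility is as a table that maps each wavelength to an owner and a service. The sketch below is purely hypothetical. The class, method and operator names are illustrative and do not correspond to any Alcatel-Lucent or standardised management interface.

```python
from dataclasses import dataclass, field


@dataclass
class TwdmPonPlan:
    """Hypothetical wavelength plan for a four-wavelength TWDM-PON (illustrative only)."""

    num_wavelengths: int = 4
    rate_gbps: float = 10.0
    # wavelength index -> (owning operator, service carried)
    assignments: dict[int, tuple[str, str]] = field(default_factory=dict)

    def assign(self, wavelength: int, owner: str, service: str) -> None:
        """Dedicate one wavelength to a service, e.g. backhaul or a wholesale lease."""
        if not 0 <= wavelength < self.num_wavelengths:
            raise ValueError(f"no such wavelength: {wavelength}")
        if wavelength in self.assignments:
            raise ValueError(f"wavelength {wavelength} is already assigned")
        self.assignments[wavelength] = (owner, service)

    def free_wavelengths(self) -> list[int]:
        """Wavelengths not yet assigned (capacity the operator has not paid for)."""
        return [w for w in range(self.num_wavelengths) if w not in self.assignments]

    def capacity_by_owner(self) -> dict[str, float]:
        """Total capacity each operator holds on this PON, in Gbps."""
        totals: dict[str, float] = {}
        for owner, _service in self.assignments.values():
            totals[owner] = totals.get(owner, 0.0) + self.rate_gbps
        return totals


if __name__ == "__main__":
    plan = TwdmPonPlan()
    plan.assign(0, "OperatorA", "residential")
    plan.assign(1, "OperatorA", "business")
    plan.assign(2, "OperatorA", "mobile backhaul")
    plan.assign(3, "OperatorB", "wholesale lease")  # wavelength leased to another operator
    print(plan.capacity_by_owner())
```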

Vodafone, for example, is building its own fibre network but is also expanding its overall fixed broadband coverage by developing wholesale agreements across Europe. Vodafone's European broadband network covers 62 million households: 26 million premises covered with its own network and 36 million through wholesale agreements.

First operator TWDM-PON pilot deployments will occur in 2016, says Alcatel-Lucent.

Further reading:

White Paper: TWDM PON is on the horizon: facilitating fast FTTx network monetization

OIF moves to raise coherent transmission baud rate

"We want the two projects to look at those trade-offs and look at how we could build the particular components that could support higher individual channel rates,” says Karl Gass of Qorvo and the OIF physical and link layer working group vice chair, optical.

Karl Gass


The OIF members, which include operators, internet content providers, equipment makers, and optical component and chip players, want components that work over a wide bandwidth, says Gass. This will allow the modulator and receiver to be optimised for the new higher baud rate.

“Perhaps I tune it [the modulator] for 40 Gbaud and it works very linearly there, but because of the trade-off I make, it doesn’t work very well anywhere else,” says Gass. “But I’m willing to make the trade-off to get to that speed.” Gass uses 40 Gbaud as an example only, stressing that much work is required before the OIF members choose the next baud rate.
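Why baud rate matters follows from the basic arithmetic of dual-polarisation coherent transmission: the raw line rate is the symbol rate multiplied by the bits carried per symbol on each polarisation, times two polarisations. The sketch below ignores FEC and framing overhead, so net payload rates are lower. The 32 Gbaud figure for 100 Gig PM-QPSK is a typical value rather than one from the OIF, and 40 Gbaud is simply Gass's example.

```python
# Raw line rate of a dual-polarisation (PM) coherent signal, ignoring FEC and
# framing overhead:
#   bit rate = symbol rate x bits per symbol per polarisation x polarisations

BITS_PER_SYMBOL = {"QPSK": 2, "8QAM": 3, "16QAM": 4}


def raw_line_rate_gbps(baud_gbaud: float, modulation: str, polarisations: int = 2) -> float:
    """Raw bit rate in Gbps for a given symbol rate (Gbaud) and modulation format."""
    return baud_gbaud * BITS_PER_SYMBOL[modulation] * polarisations


if __name__ == "__main__":
    # 32 Gbaud: typical of 100 Gig PM-QPSK today; 40 Gbaud: Gass's example only
    for baud in (32, 40):
        for mod in ("QPSK", "16QAM"):
            print(f"{baud} Gbaud PM-{mod}: {raw_line_rate_gbps(baud, mod):.0f} Gbps raw")
```

Raising the symbol rate from 32 to 40 Gbaud lifts a PM-QPSK channel's raw rate from 128 to 160 Gbps without moving to a denser modulation format, which is the attraction of a higher baud rate.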


The modulator and receiver optimisations will also be chosen independently of the technology used, since lithium niobate, indium phosphide and silicon photonics are all used for coherent modulation.

The OIF has not detailed timescales but Gass says projects usually take 18 months to two years.

Meanwhile, the OIF has completed two projects, the specification outputs of which are referred to as implementation agreements (IAs).

One is for integrated dual-polarisation micro-intradyne coherent receivers (micro-ICR) for the CFP2. At OFC 2015, several companies detailed first designs for coherent line-side optics using the CFP2 module.

The second completed IA is the 4x5-inch second-generation 100 Gig long-haul DWDM transmission module.

Cyan's stackable optical rack for data centre interconnect


Joe Cumello

The amount of traffic moved between data centres can be huge. According to ACG Research, certain cloud-based applications shared between data centres can require between 40 and 500 terabits of capacity. This could be to link adjacent data centre buildings so that they appear as one large logical data centre, or to connect data centres across a metro, 20 km to 200 km apart. For data centres separated by greater distances, traditional long-haul links are typically sufficient.

Cyan says it developed the N-series platform following conversations conducted with internet content providers over the last two years. "We realised that the white box movement would make its way into the data centre interconnect space," says Cumello.

White box servers and white box switches, manufactured by original design manufacturers (ODMs), are already being used in the data centre due to their lower cost. Cyan is using a similar approach for its N-Series, using commercial-off-the-shelf hardware and open software.

"The drivers for these [data centre] guys every day of the week is lowest cost-per-gigabit," says Cumello.

N-Series platform

Cyan's N-Series N11 is a 1-rack-unit (1RU) box that has a total capacity of 800 Gigabit-per-second (Gbps). The 1RU shelf comprises two units, each using two client-side 100Gbps QSFP28s and a line-side interface that supports 100 Gbps coherent transmission using PM-QPSK, or 200 Gbps coherent using PM-16QAM. The transmission capacity can be traded with reach: using 100 Gbps, optical transmission up to 2,000 km is possible, while capacity can be doubled using 200 Gbps lightpaths for links up to 600 km. Cyan is using Clariphy's CL20010 coherent transceiver/ framer chip. Stacking 42 of the 1RUs within a chassis results in an overall capacity - client side and line side - of 33.6 terabit.
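The capacity figures can be checked with a few lines of arithmetic. The sketch assumes, as the 800 Gbps figure implies, that the quoted shelf capacity counts both client-side and line-side interfaces, with each line side running at 200 Gbps. The reach limits are the ones Cyan quotes; the selection helper is a hypothetical illustration of the capacity-versus-reach trade-off, not Cyan software.

```python
from typing import Optional

# Back-of-envelope capacity arithmetic for the N-Series N11 as described above.
# Assumption: the 800 Gbps shelf figure counts client-side plus line-side ports,
# with each unit's line side running 200 Gbps PM-16QAM.

UNITS_PER_SHELF = 2
CLIENT_PORTS_PER_UNIT = 2   # 100 Gbps QSFP28s
CLIENT_PORT_GBPS = 100
SHELVES_PER_CHASSIS = 42

# line-side option -> (capacity in Gbps, quoted maximum reach in km)
LINE_OPTIONS = {"PM-QPSK": (100, 2000), "PM-16QAM": (200, 600)}


def shelf_capacity_gbps() -> int:
    """Client plus line capacity of one 1RU shelf with 200 Gbps line sides."""
    line_gbps = LINE_OPTIONS["PM-16QAM"][0]
    per_unit = CLIENT_PORTS_PER_UNIT * CLIENT_PORT_GBPS + line_gbps
    return UNITS_PER_SHELF * per_unit


def chassis_capacity_tbps() -> float:
    """Capacity of 42 stacked shelves, in terabits per second."""
    return SHELVES_PER_CHASSIS * shelf_capacity_gbps() / 1000


def best_line_option(distance_km: float) -> Optional[str]:
    """Hypothetical helper: highest-capacity option whose quoted reach covers the link."""
    fits = [(cap, name) for name, (cap, reach) in LINE_OPTIONS.items() if distance_km <= reach]
    return max(fits)[1] if fits else None


if __name__ == "__main__":
    print(f"Shelf: {shelf_capacity_gbps()} Gbps, chassis: {chassis_capacity_tbps()} Tbps")
    for km in (80, 1500, 2500):
        print(f"{km} km link: {best_line_option(km)}")
```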


The N-Series N11 uses a custom line-side design, but Cyan says that by adopting a commercial-off-the-shelf design, it will benefit from the pluggable line-side optical module roadmap. The roadmap includes 200 Gbps and 400 Gbps coherent MSA modules, pluggable CFP2 and CFP4 analogue coherent optics, and CFP2 digital coherent optics that also integrate the DSP-ASIC.

"There is a whole ecosystem of companies competing to drive better capacity and scale," says Cumello. "By using commercial-off-the-shelf technology, we are going to get to better scale, better density, better energy efficiency and better capacity."

To support these various options, Cyan has designed the chassis to support 1RU shelves with several front plate options including a single full-width unit, two half-width ones as used for the N11, or four quarter-width units.

Open software

For software, the N-series platform uses a Linux networking operating system. Using Linux enables third-party applications to run on the N-series, and enables IT staff to use open source tools they already know. "The data centre guys use Linux and know how to run servers and switches so we have provided that kind of software through Cyan's Linux," says Cumello. Cyan has also developed its own networking applications for configuration management, protocol handling and statistics management that run on the Linux operating system.

The open software architecture of the N-Series. Also shown are the two units that make up a rack. Source: Cyan.


"We have essentially disaggregated the software from the hardware," says Cumello. Should a data centre operator choose a future, cheaper white box interconnect product, he says, Cyan's applications and Linux networking operating system will still run on that platform.

The N-Series will be available for customer trials in the second quarter and commercially from the third quarter of 2015.

EZchip packs 100 ARM cores into one networking chip

The Tile-Mx100. Source: EZchip


- The industry's first detailed chip featuring 100, 64-bit ARM cores

- The Tile-Mx devices will perform control plane processing and data plane processing

- The 100-core chip will have 100 Gigabit Ethernet ports and support 200 Gigabit duplex traffic

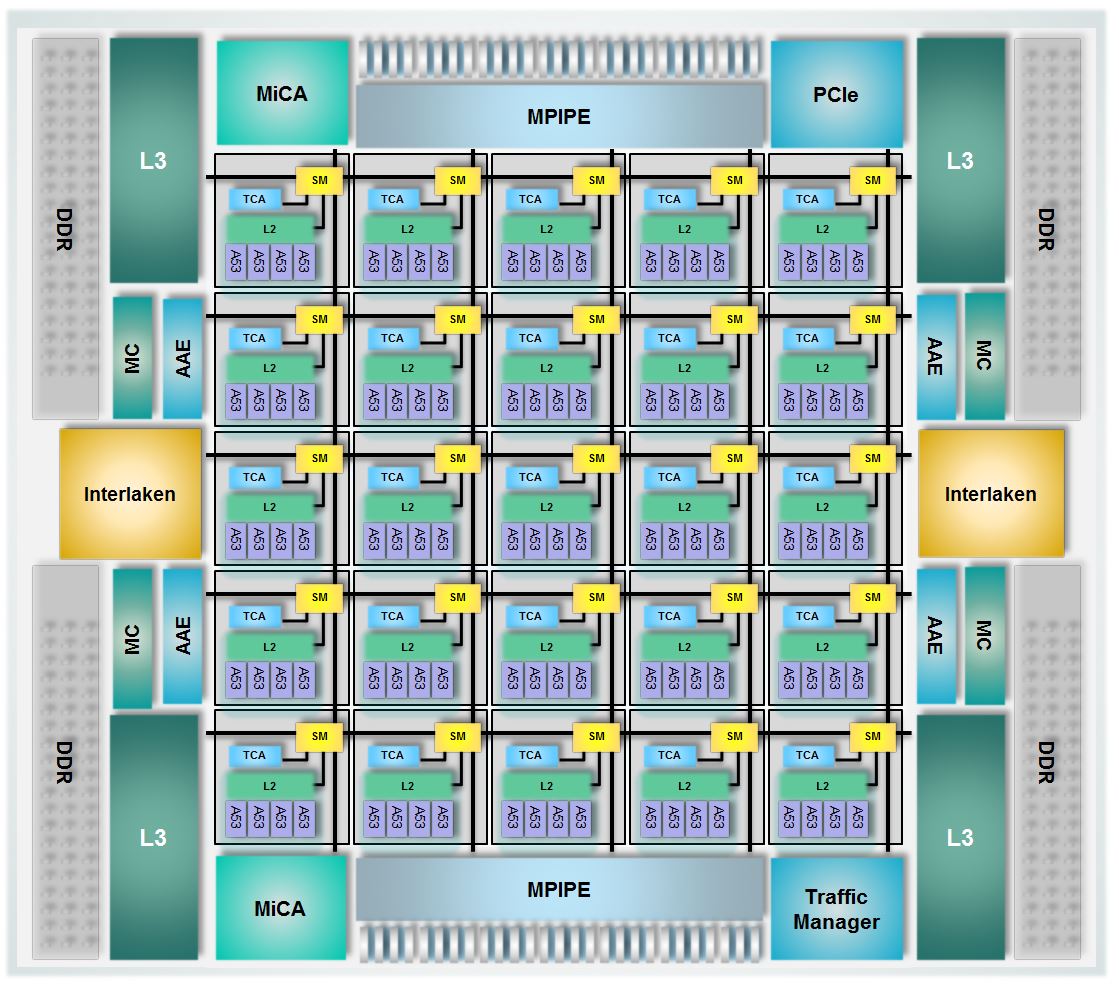

EZchip has detailed the industry's first 100-core processor. Dubbed the Tile-Mx100, the processor will be the most powerful of a family of devices aimed at such applications as software-defined networking (SDN), network function virtualisation (NFV), load-balancing and security. Other uses include video processing and application recognition, to identify applications riding over a carrier's network.

Known for its network processors, EZchip has branched out into general-purpose processors following its acquisition of multicore specialist Tilera. It now competes with such companies as Broadcom, Cavium and Intel.


EZchip's NPS network processor is a custom IC designed to maximise packet-processing performance. The Tile-Mx also targets networking but using standard ARM cores. Engineers will benefit from open source software, third-party applications and ARM development tools. "We believe the market needs a standard, open architecture," says Amir Eyal, vice president of business development at EZchip.

"A multicore standard processor tailored for networking is nothing new; numerous such processors have been available for years from several vendors," says Tom Halfhill, senior analyst at The Linley Group. "What's new about the EZchip Tile-Mx100 is that it is the first such processor with 100 cache-coherent programmable CPU cores and it is by far the largest 64-bit ARM processor yet announced."

EZchip has detailed three Tile-Mx devices, the most powerful being the Tile-Mx100 that uses 100 64-bit ARM Cortex-A53 cores. The Cortex-A53 is newer and smaller than the Cortex-A57, and has a relatively low power consumption. Handset and tablet designs also use the ARM Cortex-A53 core. Both the A53 and A57 cores use the ARMv8-A instruction set.

"We have taken the A53 in order to put more cores on the die," says Eyal. "The idea with networking applications is that the more packets you can process in parallel, the better." A chip hosting many, smaller cores helps meet this goal.

Tile-Mx architecture

The Tile-Mx100 device will process traffic at up to 200 Gigabit-per-second (Gbps), or 200 Gbps duplex. In contrast, EZchip's NPS family of devices has a roadmap with a traffic processing performance of 400 Gbps to 800 Gbps duplex.

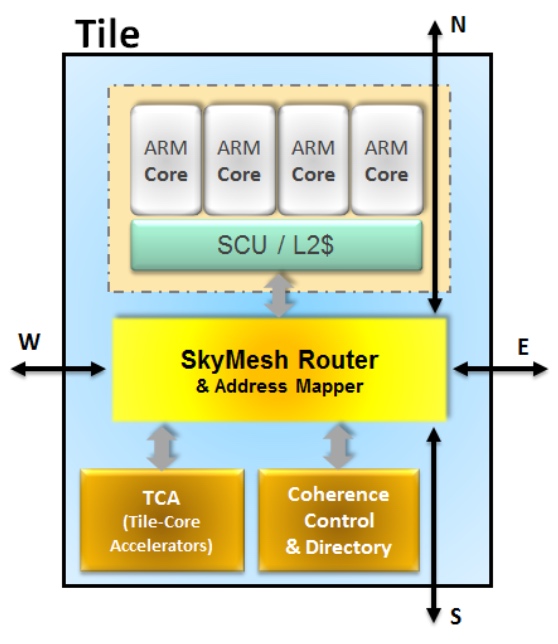

The Tile-Mx uses a two-level architecture. The 100 cores are partitioned into 25 processing clusters or tiles, each comprising four ARM cores that share network acceleration hardware and level-2 cache memory. Each tile also features router hardware, part of the chip's interconnect network that handles the tile's input/ output (I/O) requirements.
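The two-level arrangement can be sketched as a mapping from core number to tile, and from tile to a position on the mesh. EZchip has not said how the tiles are laid out, so the 5 x 5 grid, the core numbering and the hop count below (which assumes minimal, dimension-ordered routing between tile routers) are assumptions made for illustration only.

```python
# Illustrative model of the Tile-Mx100's two-level layout.
# Assumptions (not from EZchip): cores are numbered 0-99, four consecutive cores
# share a tile, and the 25 tiles sit on a 5 x 5 mesh of routers.

NUM_CORES = 100
CORES_PER_TILE = 4
MESH_WIDTH = 5


def tile_of(core: int) -> int:
    """Tile (cluster of four cores sharing L2 cache and acceleration) hosting a core."""
    if not 0 <= core < NUM_CORES:
        raise ValueError(f"no such core: {core}")
    return core // CORES_PER_TILE


def tile_position(tile: int) -> tuple[int, int]:
    """(row, column) of a tile's router on the assumed 5 x 5 mesh."""
    return divmod(tile, MESH_WIDTH)


def mesh_hops(src_core: int, dst_core: int) -> int:
    """Router-to-router hops with dimension-order (X then Y) routing.

    Cores on the same tile need no mesh hops; they share the tile's L2 cache.
    """
    r1, c1 = tile_position(tile_of(src_core))
    r2, c2 = tile_position(tile_of(dst_core))
    return abs(r1 - r2) + abs(c1 - c2)


if __name__ == "__main__":
    worst = max(mesh_hops(a, b) for a in range(NUM_CORES) for b in range(NUM_CORES))
    print(f"{NUM_CORES // CORES_PER_TILE} tiles, worst-case distance {worst} hops")
```

Grouping cores into tiles leaves the mesh with 25 router nodes rather than 100, the interconnect point The Linley Group's Bolaria makes below.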

Source: EZchip


"The key technology for the Tile-Mx architecture is the interconnect that enables 100 CPUs to be connected in a coherent manner," says Jag Bolaria, principal analyst at The Linley Group.

"There are five different networks [part of the mesh] that interconnect the 100 cores in parallel, preventing bottlenecks and contention," says Eyal. The mesh also ensures that each core can talk to the chip's I/O and to the memory. The mesh is a fifth iteration, having been improved with each generation of chip design, says Eyal, and has a total bandwidth of 25 Terabits.

The mesh also implements cache coherency, an important aspect of multi-processor design that keeps cached copies of shared data up to date whichever core accesses them, without needing to introduce idle states first.
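For readers unfamiliar with cache coherency, the toy model below shows one common textbook approach, write-invalidate: before a core writes, every other cached copy is discarded, and a later reader fetches the fresh value. It tracks a single memory location and is an illustration only; EZchip has not said which coherence protocol its mesh uses.

```python
class WriteInvalidateCaches:
    """Toy write-invalidate coherence for one shared memory location.

    Each core's cache line is None (invalid) or a (state, value) pair, where
    state is "M" (modified, the only valid copy) or "S" (shared, clean copy).
    """

    def __init__(self, num_cores: int, initial: int = 0) -> None:
        self.memory = initial
        self.lines: list = [None] * num_cores

    def read(self, core: int) -> int:
        line = self.lines[core]
        if line is not None:
            return line[1]  # cache hit: the copy is guaranteed current
        # Cache miss: if another core holds a modified copy, write it back
        # to memory and downgrade that copy to shared.
        for other, other_line in enumerate(self.lines):
            if other_line is not None and other_line[0] == "M":
                self.memory = other_line[1]
                self.lines[other] = ("S", other_line[1])
        self.lines[core] = ("S", self.memory)
        return self.memory

    def write(self, core: int, value: int) -> None:
        # Invalidate every other copy so no core can read a stale value.
        for other in range(len(self.lines)):
            if other != core:
                self.lines[other] = None
        self.lines[core] = ("M", value)


if __name__ == "__main__":
    caches = WriteInvalidateCaches(num_cores=4)
    caches.read(1)        # core 1 caches the initial value
    caches.write(0, 5)    # core 0 writes; core 1's copy is invalidated
    print(caches.read(1)) # core 1 misses and fetches the new value
```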

Other chip features include a traffic manager, essentially the one used for EZchip's NPUs, which prioritises traffic, allocates bandwidth and prevents packet loss. There are also hardware units (see MiCA blocks in main chip diagram), developed by Tilera, which do preliminary packet classification before presenting the packets to the cores.

The chip's I/O includes 1, 10, 25, 40, 50 and 100 Gigabit Ethernet interfaces, the Interlaken interface and PCI Express, used to connect the chip to a host processor such as an Intel x86 microprocessor.


EZchip is not detailing the device's interface mix or such metrics as the chip's pin-count, clock speed or power consumption. However, EZchip says the chip's power consumption will be under 100W.

When a packet is presented to the chip, it is assigned to a core, which processes it to completion before typically sending it to the I/O. For the programmer, the 100-core device appears as a single processor; the on-chip hardware handles the details, sending an incoming packet to the next free core.
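The run-to-completion model can be illustrated with a small scheduling simulation: each arriving packet goes to whichever core becomes free soonest and occupies it until processing finishes, with no hand-off between cores mid-packet. This is a simplified model with assumed arrival and service times; it does not describe how EZchip's hardware actually selects cores.

```python
import heapq


def dispatch(packets: list[tuple[float, float]], num_cores: int) -> list[tuple[int, float, float]]:
    """Assign each packet to the next free core and run it to completion.

    packets: (arrival_time, service_time) pairs, sorted by arrival_time.
    Returns (core, start, finish) for each packet, in arrival order.
    """
    # Min-heap of (time the core becomes free, core id); ties go to the lower id.
    free_at = [(0.0, core) for core in range(num_cores)]
    heapq.heapify(free_at)
    schedule = []
    for arrival, service in packets:
        ready, core = heapq.heappop(free_at)  # core that frees up soonest
        start = max(arrival, ready)           # wait if every core is busy
        finish = start + service              # no preemption: run to completion
        schedule.append((core, start, finish))
        heapq.heappush(free_at, (finish, core))
    return schedule


if __name__ == "__main__":
    arrivals = [(0, 5), (0, 5), (1, 1), (2, 3)]
    for core, start, finish in dispatch(arrivals, num_cores=2):
        print(f"core {core}: {start} -> {finish}")
```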

EZchip shows examples of possible platforms that could use the Tile-Mx.

One is a 1-rack-unit-high pizza box in the data centre used to deliver virtual network functions. Such an NFV server would benefit from the Tile-Mx's hardware-accelerated table look-ups, packet classification and packet flow management in and out of the device. Another design example is using the device for an intelligent network interface card (NIC) in a standard Intel x86-based server.

The two other Tile-Mx family devices will use 36 and 64 Cortex-A53 cores. First Tile-Mx samples are expected in the second half of 2016.

Multicore trends

The Linley Group says that despite the unprecedented 100 ARM cores, EZchip's family of devices faces competition. Moreover, the trend to increase core-count has its limits.

EZchip is already shipping a 72-core processor it acquired from Tilera, although the device is not ARM-based. And Cavium's largest processor has 48 cores, says Halfhill. Broadcom's largest processor has only 20 cores, but those CPUs are quad-threaded, so the processor can handle up to 80 packet streams. "Not quite as many as the Tile-Mx100, but it is in the same ballpark," says Halfhill.

"Keep in mind that Tile-Mx100 production is about two years out; a lot can happen in two years," adds Halfhill.

According to Bolaria, multicore designs are good for applications that are highly parallelised such as packet processing and deep packet processing. But NPUs are better if all that is being done is packet processing.

"Many cores is not particularly good for applications that need good single-thread performance," says Bolaria. "This is where [an Intel] Xeon will shine — for applications such as high-performance computing, simulations and algorithms."

Coherent interconnects also limit CPU scaling, says Bolaria. Tile-Mx gets around the interconnect limitation by clustering four ARM cores into a tile, so that effectively only 25 nodes are connected. "With more nodes, it becomes difficult to maintain cache coherency and performance," says Bolaria.

Another limitation is partitioning applications into smaller chunks for execution on 100 cores. Some tasks are serial by nature and cannot benefit from parallel processing. "Amdahl’s law limits performance gains from adding more CPUs," says Bolaria.
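Amdahl's law makes Bolaria's point concrete. If a fraction p of a task can run in parallel on N cores and the rest is serial, the best-case speedup is 1 / ((1 - p) + p / N). The sketch below evaluates it for a 100-core chip; the parallel fractions are illustrative values, not measurements of any workload.

```python
def amdahl_speedup(parallel_fraction: float, num_cores: int) -> float:
    """Best-case speedup under Amdahl's law for a given parallel fraction and core count."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if num_cores < 1:
        raise ValueError("num_cores must be at least 1")
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / num_cores)


if __name__ == "__main__":
    for p in (0.5, 0.9, 0.95, 0.99):
        print(f"p = {p:.2f}: {amdahl_speedup(p, 100):5.1f}x on 100 cores "
              f"(ceiling {1 / (1 - p):.0f}x with unlimited cores)")
```

Even a task that is 95 per cent parallel reaches only about a 17x speedup on 100 cores, which is why largely independent work such as packet processing suits such chips far better than serial workloads.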

Photonics and optics: interchangeable yet different

Many terms in telecom are used interchangeably. Terms gain credibility with use but over time things evolve. For example, people understand what is meant by the term carrier [of traffic] or operator [of a network] and even the term incumbent [operator] even though markets are now competitive and 'telephony' is no longer state-run.


Operators - ex-incumbents or otherwise - also do more than oversee the network; they now provide complex services. But of course they differ from service providers such as the over-the-top players [third-party providers delivering services over an operator's infrastructure, rather than any theatrical behaviour] or internet content providers.

Google is an internet content provider, but with the gigabit broadband service it is rolling out in the US, it is also an operator/carrier/communications service provider. And Google may soon become a mobile virtual network operator.

So having multiple terms can be helpful, adding variety, especially when writing, but the trouble is that it can also be confusing.

Recent discussions, including an interview with silicon photonics pioneer Richard Soref, raised the question of whether the terms photonics and optics are the same. I decided to ask several industry experts, starting with The Optical Society (OSA).

Tom Hausken, the OSA's senior engineering & applications advisor, says that after many years of thought he concludes the following:

- People have different definitions for them [optics and photonics] that range all over the map.

- I find it confusing and unhelpful to distinguish them.

- The National Academies' report is on record saying there is no difference as far as that study is concerned.

- That works for me.

Michael Duncan, the OSA's senior science advisor, puts the difference down to one of cultural usage. "Photonics leans more towards the fibre optics, integrated optics, waveguide optics, and the systems they are used in - mostly for communication - while optics is everything else, especially the propagation and modification of coherent and incoherent light," says Duncan. "But I could easily go with Tom's third bullet point."


Duncan also cites Wikipedia, with its discussion of classical optics, which embraces the wave nature of light, and modern optics, which also includes light's particle nature. This distinction lies at the core of the difference, though it has not led to an industry consensus.

"Photonics does include the quantum nature, and sort of by convention, the term optics is seen to mean classical," says Richard Soref. He points out that the website arXiv.org categorises optics as a subset of physics, while the OSA's newsletter is called Optics & Photonics News, covering all bases.

"Photonics is the larger category, and I might have been a bit off base when throwing around the optics term," says Soref. If only everyone was as off base as Professor Soref.

"We need to remember that there is no canonical definition of these terms, and there is no recognised authority that would write or maintain such a definition," says Geoff Bennett, director, solutions and technology at Infinera. For Bennett, this is a common issue, not confined to the terms optics and photonics: "We see this all the time in the telecoms industry, and in every other industry that combines rapid innovation with aggressive marketing."

That said, he also says that optics refers to classical optics, in which light is treated as a wave, whereas photonics is where light meets active semiconductors, so the quantum nature of light tends to dominate. Examples of the latter would be photonic integrated circuits (PICs). "These contain active laser components, semiconductor optical amplifiers and photodetectors," says Bennett. "All of these rely on quantum effects to do their job."


Bennett says that whoever coined the term semiconductor optical amplifier (SOA) was not following this definition, since the amplifier works on quantum principles, the same way a laser does. "So really it should be a semiconductor photonic amplifier," he says.

"At Infinera, we seem for the most part to have abided by the definitions in the terminology that we use, but I can’t say that this was a conscious decision," says Bennett. "I am sure that if our marketing department thought that photonic sounded better than optical in a given situation they would have used it."

Mehdi Asghari, vice president, silicon photonics research & development at Mellanox, says optics refers to the classical use and application of light, with light as a ray. He describes optics as having a system-level approach to it.

"We create a system of lenses to make a microscope or telescope to make an optical instrument using classical optics models or we use optical components to create an optical communication system," he says. This classical or system-level perspective makes it optics or optical, a term he prefers. "We are not concerned with the nature - particle versus wave - of light, rather its classical behaviour, be it in an instrument or a system," he says.

But once things are viewed more closely, at the device level, especially with devices comparable in size to photons, a system-level approach no longer works and is replaced with a quantum approach. "Here we look at photons and the quantum behaviour they exhibit," says Asghari.

In a waveguide, be it silicon photonics (integrated devices based on silicon), a planar lightwave circuit (glass-based integrated devices), or a PIC based on III-V or active devices, the size of the structure or device used is often comparable to, or even smaller than, the size of the photons it is manipulating, he says: "This is where we very much feel the quantum nature of light, and this is where light becomes photons - photonics - and not optics."

ADVA Optical Networking's senior principal engineer, Klaus Grobe, held a discussion with the company's physicists, and both, independently, had the same opinion.

"Both [photonics and optics] are not strictly defined," he says. "Optics clearly also includes classic school-book ray optics and the like. Photonics already deals with photons, the wave-particle dualism, and hence, at least indirectly, with quantum mechanics, and possibly also quantum electro-dynamics (QED)."

Since ray-propagation models can no longer be used in fibre optics for transport, and since fibre-optic systems rely on quantum-mechanical behaviour, for example that of diode receivers, fibre optics is better filed under photonics, says Grobe: "But they are not called fibre-photonics."

So, the industry view seems to be that the two terms are interchangeable but optics implies the classical nature of light while photonics suggests light as particles. Which term includes both seems to be down to opinion. Some believe optics covers both, others believe photonics is the more encompassing term.

Mellanox's Asghari once famously compared photons and electrons to cats and dogs. Electrons are like dogs: they behave, stick by you and are loyal; they do exactly as you tell them, he said, whereas cats are their own animals and do what they like. Just like photons. So what is his take?

He believes optics is more general than photonics. He uses the analogy of electrical versus electronics to make his point. An electronics system or chip is still an electrical device but it often refers to the integrated chip, while an electrical system is often seen as global and larger, made up of classical devices.

"For me, optics is the equivalent of electrical, and photonics is the equivalent of electronics - LSI, VLSI chips and the like," says Asghari. "One is a subset or specialised version of the other due to the need to get specific on the quantum nature of light and the challenges associated with integration."


To back up his point, Asghari suggests looking at older books and publications, which use the term optics. The term photonics started to be used once integration and size reduction became important, just as electrical devices were replaced by electronic ones.

Indeed, this rings true in the semiconductor industry: microelectronics has now become nano-electronics as CMOS feature sizes have moved from microns to nanometer dimensions.

This is why terms such as optical fibre and semiconductor optical amplifier are still used: they were coined when the industry was primarily engaged with the use of light at a system level, away from the quantum limits and challenges of integration.

"In short, photonics is used when we acknowledge that light is made of photons with all the fun and challenges that photons bring to us and optics is when we deal with light at a system level or a classical approach is sufficient," says Asghari.

Happily, cats and dogs feature here too.

"Optics refers to all types of cats, be it the tiger or the lion or the domestic pet," says Asghari. "Photonics refers to the so called domestic cat that has domesticated and slaved us to look after it."

Last word to Infinera's Bennett: "I suppose the moral is: be aware of the different meanings, but don’t let it bug you when people misuse them."