Books in 2019

Gazettabyte asks industry figures each year to cite the memorable books they have read. These include fiction, non-fiction and work-related titles.

Here are the choices of Cisco’s Bill Gartner, Sylvie Menezo of silicon photonics start-up Scintil Photonics, and Andrew Schmitt, directing analyst at Cignal AI.

Bill Gartner, Senior Vice President and General Manager, Cisco Optical Systems and Optics.

At the top of my list is The Gene: An Intimate History, by Siddhartha Mukherjee. Mukherjee does an amazing job of telling the story of the gene, providing historical context dating back to pre-Darwin times through to modern advances in gene therapy. The material is complex but he is great at describing the evolution of thinking about genes and progress in the genome project in layman’s terms.

The book leaves me in awe of how much has been accomplished, especially in the past 20 years, and yet how much more we have to learn about this fascinating topic, how progress in this area might be applied to solve some of medicine’s most challenging problems, and the moral dilemma that we confront as we think about altering nature’s work.

The Billionaire Who Wasn’t: How Chuck Feeney Secretly Made and Gave Away a Fortune by Conor O’Clery is an amazing story of a man who went from rags to riches, built one of the most profitable private businesses in history (Duty-Free Shops), and earned billions. He then gave it all away and did so anonymously. He lived frugally and was adamant that his contributions be kept secret. It is an inspiring story of an American hero who touched the lives of millions who will never know.

Longitude: The True Story of a Lone Genius Who Solved the Greatest Scientific Problem of His Time by Dava Sobel includes a foreword by Neil Armstrong. I am fascinated by stories that highlight how one individual persists in a vision and has a major impact on the world. In the 18th century, it was common for entire fleets of ships to run aground or get lost as navigation techniques were primitive.

Latitude was relatively straightforward, based on the angle of the sun relative to the horizon (and the date), but determining longitudinal position was often guesswork. After several disasters, including one where over 200 sailors were killed, the British government established a prize for the solution.

This is a fantastic story of a relatively unknown watchmaker who single-handedly solved the problem and then persuaded the sceptics that his chronometer was superior to any available method.

Lastly, I read Franklin and Winston: An Intimate Portrait of an Epic Friendship by Jon Meacham. This is a fantastic story of the intimate and at times stormy relationship between FDR and Winston Churchill. The story, unlike many WWII narratives, is told from the perspective of their interactions. FDR and Churchill were magnificent leaders, each of whom took a principled stand against Nazism and Fascism. It is also frightening to contemplate the course history may have taken had lesser leaders been in place.

Sylvie Menezo, CEO and CTO of Scintil Photonics.

The book I recommend is a novel I read this summer, La Tresse (The Braid) by Laetitia Colombani. It is a tale of three women, each from a different continent and experiencing different living conditions, yet their lives happen to be connected by something at the end of the book. To me, all three are very beautiful and strong women figures, moved by a ‘different something’ deep inside them, and that is what makes them beautiful!

Andrew Schmitt, founder and directing analyst at Cignal AI

It was a good reading year for me. Starting with fiction, my overall pick of the year is the Three-Body Problem series by Cixin Liu, a science fiction story of epic scale that stretches from the Cultural Revolution in China into the distant future.

It was written in Chinese and as a result, the style, prose and cultural perspective are different in a refreshing way. This series is right up there with Dune, Asimov and all the sci-fi greats. It is a must-read if that is your thing.

Martha Wells turned out more short novels to conclude the Murderbot Diaries, a series that I reviewed in 2018. I also read Neal Stephenson’s FALL; or, Dodge in Hell: A Novel this year. He’s maintained a steady production of books but I don’t think his latest books are as good as his archive (Snow Crash, Cryptonomicon, others). FALL was very disappointing, particularly the second half – I don’t recommend it. Read the archive instead.

It was an intense non-fiction year, so I’ll hit the good stuff that I strongly recommend.

I picked up Nobody Wants to Read Your Sh*t: And Other Tough-Love Truths to Make You a Better Writer by Steven Pressfield on a Twitter recommendation and it resonated with me. So much written market research lacks respect for, and appreciation of, the client’s time, and Pressfield shares simple, useful tips to make your reader care about what you are writing. Anyone who writes for others should read this, and it is quick.

This book led me to one of Pressfield’s big hits, Gates of Fire: An Epic Novel of the Battle of Thermopylae, a narrative history of the Spartans and the battle. As an engineer, I never had the time – and frankly, the interest – to study Ancient Greece. Pressfield vividly brings Sparta and Greece to life and recounts the events leading up to the battle of the famous “300”. A fantastic book.

My son had to read Midnight in Chernobyl: The Untold Story of the World’s Greatest Nuclear Disaster by Adam Higginbotham over the summer for High School.

We read it together; a highly recommended thing to do with your teenagers. Better yet, after the book, we were treated to the excellent “Chernobyl” drama on HBO. If you liked the HBO series, definitely read the book as it tells the story in a comprehensive and detailed way without artistic licence. The size, scale, and sacrifices endured by the Soviets to contain the disaster are incredible. The organisational ineptitude before and right after the event is horrifying. The same top-down decision hierarchy that caused the problem was paradoxically the only way to get it cleaned up.

My last recommendation is Shoe Dog: A Memoir – by the Creator of Nike, by Phil Knight. It recounts the genesis of the company as a supplier of track shoes made in Japan following WWII as the country rapidly emerged as an export powerhouse. It is a book about post-war Japan, raw entrepreneurship, and building what at the time was a new sales and marketing model combining athletics and fashion. One of the better business books I’ve read.


ECOC 2019 industry reflections II

Gazettabyte requested the thoughts of industry figures after attending the ECOC show, held in Dublin. In particular, what developments and trends they noted, what they learned and what, if anything, surprised them. Input from II-VI, Ciena, Fujitsu Optical Components and Acacia Communications. The second and final part.


Sanjai Parthasarathi, chief marketing officer at II-VI

One new theme at ECOC is the demand for lower-cost 100-gigabit coherent transceivers for deployment in optical access for wireless access and fibre-deep cable TV. Such demand would significantly expand the market.

It was noteworthy at the show how 5G has become a significant factor influencing the wireless access market, with the potential for wide deployment of dense wavelength-division multiplexing (DWDM) technology with wavelength switching and tuning functions, not only in traditional network architectures but interesting new ones too.

This could drive significant demand for low-cost wavelength-selective switch (WSS) modules, tunable transceivers and 100-gigabit coherent transceivers, which is exciting.

As for surprises at the show, ECOC validated the view that developments in digital signal processor (DSP) technology for transceivers have accelerated to the point of having caught up with the state-of-the-art in photolithography, previously the province of DSPs for consumer electronics, high-performance computing and processors.

DSPs for next-generation transceivers are increasingly leveraging 7nm CMOS.

Patricia Bower, senior manager of product marketing at Ciena

A key talking point at ECOC was the state of play for 400 Gigabit Ethernet (GbE). Form factors ‘right-sized’ for faceplate densities – QSFP-DD, for example – and developments in short-range optical signalling supporting 100 gigabit-per-lambda are enablers for this next-generation client rate.

Market projections indicate a faster ramp for 400GbE than 100GbE saw in previous years, with 400GbE client-side modules shipping in 2020 and broad, market-wide volumes ramping in 2021.

In parallel, 400-gigabit DWDM is projected to grow very strongly. Starting in early 2020, deployments of 800 gigabit-capacity DWDM systems will enable the industry to efficiently transport 400GbE anywhere in the network, including transoceanic propagation.

Following this, 400ZR will enable 400 gigabits-per-second over short point-to-point, single-span data centre interconnect links using coherent technology in the same compact QSFP-DD form factor, which will go hand-in-hand with the volume uptake of 400GbE.

Co-packaged optics

Discussions continued around approaches to package optics and electronics in switch-fabric ICs.

The consensus was that the approach will be mainstream in future 51.2 terabits-per-second (Tbps) switch chips, a couple of iterations from where we are today.

I learned more about the progress supporting wafer-scale manufacturability of co-packaged switch cores and optical input/outputs, including on-chip laser integration.

Consideration of the relative trade-offs among power dissipation, cost, thermal management, and reliability compared to off-chip lasers is key. Electrical signalling also remains key in this approach. Even when moving data off a chip package optically, electrical intra-chip signalling to the switching core is still needed for what effectively is a multi-chip module or modular system-on-chip.

Companies with key design skills in electrical and optical components will be best placed to address such designs.

I wasn’t surprised, but I was pleased, to see the progress by the industry on 400ZR demonstrated at the OIF booth. Various companies showed IC-TROSA electro-optic samples, a contributing element for a 400ZR solution.

Mechanical mock-ups of the intended module packages (QSFP-DD and OSFP) were also shown as well as a mock-up of a switch-router platform to highlight 400ZR integration.

This level of progress is in line with the expected ramp-up of 400ZR in 2021.

Yukiharu Fuse, chief marketing officer, vice president/general manager, business strategy division, Fujitsu Optical Components Limited

Several items were of interest at ECOC, but two I’d highlight are 400-gigabit coherent pluggable optics and XR Optics.

Vendors demonstrated the progress being made in the development of 400-gigabit coherent pluggable transceivers.

The key to their success is the development of a low-power coherent digital signal processor (DSP) that fits within a QSFP-DD or OSFP module, and this now seems feasible.

With this innovation, data centre operators will be able to install these modules in the slots used for client Ethernet, allowing the operators to support data centre interconnect without the need for transport gear.

The OIF-standardised 400ZR implementation will support linking data centres up to 120km apart using interoperable pluggable modules. The data centre operators also want longer reaches than ZR offers, even if the power consumption of the transceiver inevitably goes up.

To address this, NEL and Acacia together with Lumentum and Fujitsu Optical Components introduced OpenZR+ to support longer distance links for data centre interconnect and other applications.

This will act as a potential de-facto standard with multi-source transceivers to support distances beyond ZR.

Such a development will be a big step for the data centre operators, enabling wider coverage without the need for transport equipment.

XR Optics

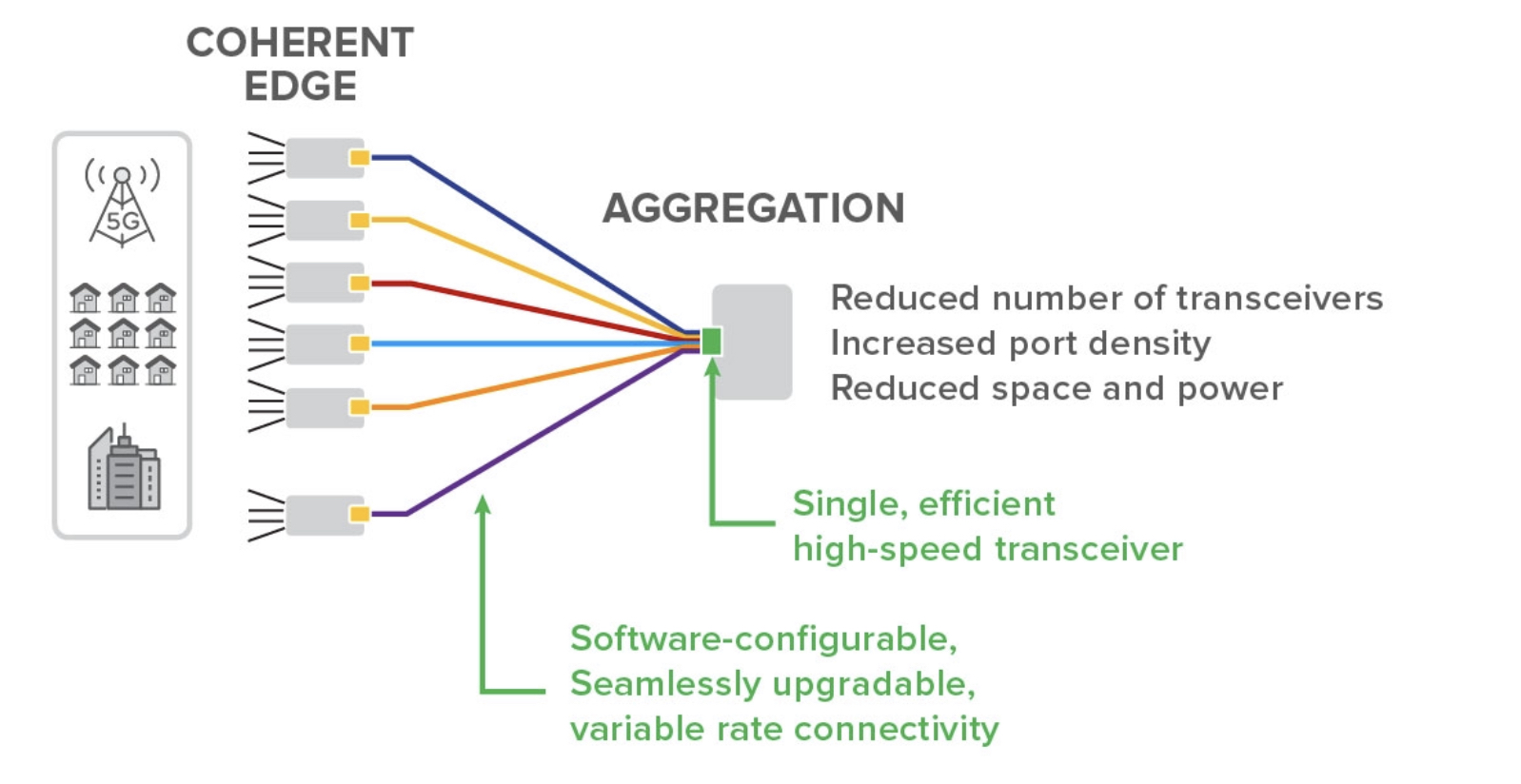

Infinera introduced at ECOC a new concept of point-to-multi-point communications for access and aggregation networks, dubbed XR Optics. Using Nyquist subcarriers, XR Optics can distribute capacity to up to 16 points according to the bandwidth requirements.

This concept may create a new market for coherent optics that until now has focussed on high-capacity, point-to-point applications.

Infinera introduced at ECOC a technology, not a product. It will be interesting to see how the technology evolves into products and the support it gets with the goal of creating a multi-source supply chain.

I’m curious about the concept, though, with the key being how to achieve low-cost coherent optics needed for access and aggregation networks. I will watch this development with interest.

Tom Williams, vice president of marketing, Acacia Communications

We are seeing a trend toward increasing use of silicon photonics in client and transport optics. There are multiple approaches in the industry to address the challenges of power, size and cost, but silicon photonics has become established as an important technology for a variety of applications.

We were also happy to see the positive feedback for the OpenZR+ solution that we, in collaboration with several other companies, defined at the show.

I’ve participated in the 400ZR effort and the CableLabs project to define a coherent interface in access networks, so I was interested to learn more about the Infinera XR optics proposal. I’m still trying to understand the details, but it’s always interesting to see a different approach to solving a technical challenge.

As for unexpected developments at the show, I was surprised how difficult it can be to get a taxi in Dublin when Ariana Grande is in town!

Deutsche Telekom’s edge for cloud gaming

Deutsche Telekom believes its network gives it an edge in the emerging game-streaming market.

The operator is trialling a cloud-based service similar to those of Google and Microsoft.

The operator already offers IP TV and music as part of its entertainment offerings and will decide if gaming will be the third component. The operator will launch its MagentaGaming cloud-based service in 2020.

“Since 2017, the biggest market in entertainment is gaming,” says Dominik Lauf, project lead, MagentaGaming at Deutsche Telekom.

Market research firms vary in their estimates but the global video gaming market was of the order of $138 billion in 2018 while the theatrical and home entertainment market totalled just under $100 billion for the same period.

Cloud Gaming

In Germany, half the population play video games with half of those being young adults. The gaming market represents a valuable opportunity to ‘renew the brand’ with a younger audience.

Until now, a user’s gaming experience has been determined by the video-processing capabilities of their gaming console or PC graphics card.

The advent of cloud-based gaming changes all that. Not only can a user access the latest game titles via the cloud, they no longer need to own state-of-the-art equipment for the ultimate gaming experience. Instead, video processing for gaming is performed in the cloud. All that the user needs is a display. Any display: a smartphone, tablet, PC or TV.

Lauf says hardcore gamers typically spend over €1,000 each year on equipment, while some 45 per cent of all gamers can’t play the latest games at the highest display quality because their hardware is not up to the task. “[With cloud gaming,] the entry barrier of hardware no longer exists for customers,” says Lauf.

However, for game-streaming to work, the onus is on the service provider to deploy hardware – dedicated servers hosting high-end graphics processing units (GPUs) – and ensure that the game-streaming traffic is delivered efficiently over the network.

Deutsche Telekom points out that while buffering is used for video or music streaming services, this isn’t an option with gaming given its real-time nature.

“Latency and bandwidth play a pivotal role within gaming,” says Lauf. “Connectivity counts here.”

Networking demands

Deutsche Telekom’s game-streaming service requires a 50 megabit-per-second (Mbps) broadband connection.

Gaming traffic requires between 30 and 40Mbps of capacity to ensure full graphics quality. This is over four times the bandwidth required for a video stream. “We can lower the bandwidth required [for gaming] but you will notice it when using a bigger screen,” says Lauf.

The operator is testing the bandwidth requirements its mobile network must deliver to ensure the required gaming quality.

“With 5G, the bandwidth is more or less there, but bandwidth is not the only point, maybe the more important topic is latency,” says Lauf. The operator has recently launched 5G in five cities in Germany.

An end-to-end latency of 50-80ms ensures a smooth gaming experience. A latency of 100ms degrades an individual’s gameplay while a latency of 120ms noticeably impacts responsiveness.

Deutsche Telekom’s fixed network delivers a sub-50ms latency. However, the home environment must also be factored in: the home’s wireless network and signal coverage, as well as other electronic devices in the home, all can influence gaming performance.

And it is not just latency that counts but jitter: the volatility of the latency. “The average may be below 50ms but if there are peaks at 100ms, it will impact your gameplay,” says Lauf.

Moreover, the latency and jitter performance should ideally be consistent across the network; otherwise, it can give an unfair advantage to select users in multi-player games.
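The distinction between average latency and jitter can be illustrated with a minimal Python sketch. It is not Deutsche Telekom’s method: the trace is made up, jitter is taken as the standard deviation of the samples (one of several possible definitions), and the "borderline" bucket between 80ms and 100ms is an assumption, since the figures above do not cover that range.

```python
from statistics import mean, pstdev

# Latency figures quoted in the article, in milliseconds.
SMOOTH_MAX_MS = 80     # 50-80ms end to end: smooth experience
DEGRADED_MS = 100      # 100ms: gameplay suffers
IMPAIRED_MS = 120      # 120ms: responsiveness noticeably impacted


def summarise(samples_ms):
    """Return average latency, jitter and worst-case peak for a trace."""
    return {
        "average_ms": mean(samples_ms),
        # Jitter is taken here as the population standard deviation;
        # other definitions (e.g. mean packet-to-packet delta) also exist.
        "jitter_ms": pstdev(samples_ms),
        "peak_ms": max(samples_ms),
    }


def rate(latency_ms):
    """Map a latency value to a rough experience label (illustrative buckets)."""
    if latency_ms <= SMOOTH_MAX_MS:
        return "smooth"
    if latency_ms < DEGRADED_MS:
        return "borderline"  # assumption: no figure is quoted for 80-100ms
    if latency_ms < IMPAIRED_MS:
        return "degraded"
    return "noticeably impaired"


# Hypothetical trace: mostly around 40ms with a single 100ms spike.
trace_ms = [40, 41, 39, 100, 40, 38, 41, 40, 39, 42]
summary = summarise(trace_ms)

print(summary)
print("rated on average:", rate(summary["average_ms"]))
print("rated on peak:   ", rate(summary["peak_ms"]))
```

With this trace the average sits below 50ms and rates as smooth, yet the single spike lands in the degraded bucket, which is the situation Lauf describes.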

5G and edge computing

The MagentaGaming trial is also being used to test how 5G and edge computing – where the servers and GPUs are hosted at the network edge – can deliver a sufficiently low jitter.

5G will provide more bandwidth than the operator’s existing LTE mobile network. This will not only benefit individual game players but also the size of group-gaming plays. At present, hundreds can play each other in a game but this number will grow, says Lauf.

5G will also enable new features, such as network slicing, that will benefit low jitter, says Lauf.

“‘Edge’ is a fuzzy term,” says Lauf. “But we will build our servers in a decentralised way to ensure latency does not affect gamers.”

MobiledgeX, a Deutsche Telekom spin-out that focusses on cloud infrastructure, operates four data centres in Germany and is also testing GPUs. However, for the test phase of MagentaGaming, Deutsche Telekom is deploying its servers and GPUs at the network edge.

Lauf says the complete architecture must be designed with latency in mind: “There are a lot of components that can increase latency.” Not only the network but the GPU run times and the storage run times.

Deploying servers and GPUs at the network edge requires investment. And given that cloud gaming is still being trialled, it is too early to assess gaming’s business success.

So how does Deutsche Telekom justify investing in edge infrastructure and will the edge be used for other tasks as well as gaming?

“This is also a focus of our trial, to see when are the server peak times in terms of usage,” says Lauf. “There are capabilities for other use cases on the same GPUs.”

The operator is considering using the GPUs for artificial intelligence tasks.

Cloud-gaming competition

Microsoft and Google are also pursuing game-streaming services.

Microsoft is about to launch a preview of xCloud – its Xbox cloud-based service – and has been accepting registrations in certain countries.

Microsoft, too, recognises the importance of network latency and is working with operators such as SK Telecom in South Korea and Vodafone UK. It has also signed an agreement with T-Mobile, the US operator arm of Deutsche Telekom.

Meanwhile, Google is preparing its Stadia service which will launch next month.

Lauf believes Deutsche Telekom has an edge despite such hyperscaler competition.

“We are sure that with our high-quality network – our edge and 5G latency capabilities, and our last mile to our customer – we have an advantage compared to the hyperscalers given how latency and bandwidth count,” he says.

Gaming content also matters and the operator says it is in discussions with gaming developers that welcome the fact that there are alternatives to the hyperscalers’ platforms.

“We are quite sure we can play a role,” concludes Lauf. “Even if we are not on the same global level of a Google, we will have a right to play in this business.”

Game on!

ECOC 2019 industry reflections

Gazettabyte is asking industry figures for their thoughts after attending the recent ECOC show, held in Dublin. In particular, what developments and trends they noted, what they learned and what, if anything, surprised them. Here are the first responses from Huawei, OFS Fitel and ADVA.

James Wangyin, senior product expert, access and transmission product line at Huawei

At ECOC, one technology that is becoming a hot topic is machine learning. There is much work going on to model devices and perform optimisation at the system level.

And while there was much discussion about 400-gigabit and 800-gigabit coherent optical transmissions, 200-gigabit will continue to be the mainstream speed for the coming three-to-five years.

That is because, despite the high-speed ports, most networks are not being run at the highest speed. More time is also needed for 400-gigabit interfaces to mature before massive deployment starts.

BT and China Telecom both showed excellent results running 200-gigabit transmissions in their networks for distances over 1,000km.

We are seeing this with our shipments; we are experiencing a threefold year-on-year growth in 200-gigabit ports.

Another topic confirmed at ECOC is that fibre is a must for 5G. People previously expressed concern that 5G would shrink the investment in fibre but many carriers and vendors now agree that 5G will boost the need for fibre networks.

As for surprises at the show, the main discussion seems to have shifted from high-speed optics to system-level or device-level optimisation using machine learning.

Many people are also exploring new applications based on the fibre network.

For example, at a workshop to discuss new applications beyond 5G, a speaker from Orange talked about extending fibre connections to each room, and even to desktops and other devices. Other operators and systems vendors expressed similar ideas.

Verizon discussed, in another market focus talk, its monitoring of traffic and the speed of cars using fibre deployed alongside roads. This is quite impressive.

We are also seeing the trend of using fibre and 5G to create a fully-connected world.

Such applications will likely bring new opportunities to the optical industry.

Two other items to note.

The Next Generation Optical Transport Network Forum (NGOF) presented updates on optical technologies in China. Such technologies include next-generation OTN standardisation, the transition to 200 gigabits, mobile transport and the deployment of ROADMs. The NGOF also seeks more interaction with the global community.

The 800G Pluggable MSA was also present at ECOC. The MSA is also keen for more companies to join.

Daryl Inniss, director, new business development at OFS Fitel

There were many discussions about co-packaged optics, regarding the growth trends in computing and the technology’s use in the communications market.

This is a story about high-bandwidth interfaces and not just about linking equipment but also the technology’s use for on-board optical interconnects and chip-to-chip communications such as linking graphics processing units (GPUs).

I learned that HPE has developed a memory-centric computing system that significantly improves processing speed and workload capacity. This may not be news but it was new to me. Moreover, HPE is using silicon photonics in its system including a quantum dot comb laser, a technology that others will come to adopt.

As for surprises, there was a notable growing interest in spatial-division multiplexing (SDM). The timescale may be long term but the conversations and debate were lively. Two areas to watch are proprietary applications, such as very short interconnects in a supercomputer, and undersea networks, where the hyperscalers quickly consume the capacity on any newly commissioned link.

Lastly, another topic of note was the use of spectrum outside the C-band and extending the C-band itself to increase the data-carrying capacity of the fibre.

Jörg-Peter Elbers, senior vice president, advanced technology, ADVA

Co-packaging optics with electronics is gaining momentum as the industry moves to higher and higher silicon throughput. The advent of 51.2 terabit-per-second (Tbps) top-of-rack switches looks like a good interception point. Microsoft and Facebook also have a co-packaged optics collaboration initiative.

As for coherent, quo vadis? Well, one direction is higher speeds and feeds. What will the next symbol rate be for coherent after 60-70 gigabaud (GBd)? A half-step or a full-step; incremental or leap-frogging? The growing consensus is a full-step: 120-140 GBd.

Another direction for coherent is new applications such as access/aggregation networks. Yet cost, power and footprint challenges will have to be solved.

Advanced optical packaging, an example being the OIF IC-TROSA project, as well as compact silicon photonics and next-gen coherent DSPs are all critical elements here.

A further issue arising from ECOC is whether optical networks need to deliver more than just bandwidth.

Latency is becoming increasingly important to address time-sensitive applications as well as for advanced radio technologies such as 5G and beyond.

Additional applications are the delivery of precise timing information (frequency, time of day, phase synchronisation) where the existing fibre infrastructure can be used to deliver additional services.

An interesting new field is the use of the communication infrastructure for sensing, with Glenn Wellbrock giving a presentation on Verizon’s work at the Market Focus.

Other topics of note include innovation in fibres and optics for 5G.

With spatial-division multiplexing, interest in multi-core and multi-mode fibre applications has weakened. Instead, more parallel fibres operating in the linear regime appear as an energy-efficient, space-division multiplexing alternative.

Hollow-core fibres are also making progress, offering not only lower latencies but lower nonlinearity compared to standard fibres.

As for optics for 5G, what is clear is that 5G requires more bandwidth and more intelligence at the edge. How network solutions will look will depend on fibre availability and the associated cost.

With eCPRI, Ethernet is becoming the convergence protocol for 5G transport. Grey and WDM (G.metro) optics, as well as next-generation PON, are all being discussed as optical underlay options. Grey and WDM optics offer an unbundling on the fibre/virtual fibre level whereas (TDM-)PON requires bitstream access.

Another observation is that radio “x-haul” [‘x’ being front, mid or back] will continue to play an important role for locations where fibre is nonexistent or uneconomical.

Lumentum on ROADM growth, ZR+, and 800G

CTO interview: Brandon Collings

- The ROADM market is experiencing a period of sustained growth

- The Open ROADM MSA continues to advance and expand its scope

- ZR+ coherent modules will support some interoperability to avoid becoming siloed but optical performance differentiation remains key


Brandon Collings gave a Market Focus talk at the recent ECOC show in Dublin, where he explained why it is a good time to be in the reconfigurable optical add-drop multiplexer (ROADM) business.

“Quantities are growing substantially and it is not one reason but a multitude of reasons,” says Collings. The CTO of Lumentum reckons the growth started some 18-24 months ago.

ROADM markets

Lumentum highlights three factors fuelling the demand for ROADM components.

The first is the emergence of markets such as China and India that previously did not use ROADMs.

“China has pretty universally adopted ROADMs going forward,” says Collings. Previously, Optical Transport Network (OTN) point-to-point links and large OTN switches have been used. But ongoing traffic growth means this solution alone is not sustainable, both in terms of the switch capacity and the number of optical transceivers required.

“The bandwidth needed for these OTN switches is scaling beyond the rational use of optical-electrical-optical (OEO) node configuration,” says Collings. “You need 50 to 300 terabits of OTN [switch capacity] surrounded by the equivalent amount of optical transceivers, and that is not economical.”

The Chinese service providers have adopted a hybrid ROADM and OTN network architecture. The ROADMs perform optical bypass – passing on lightpaths destined for other nodes in the network – to reduce the optical transceivers and OTN switch capacity needed.

The network operators in India, in contrast, are using ROADMs to cope with the many fibre cuts they experience. The ROADMs are used to restore the network by rerouting traffic around the faults.
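The saving from optical bypass can be sketched with some back-of-the-envelope arithmetic in Python. Everything here is illustrative rather than taken from Lumentum or the operators: the function name, the 200-gigabit line rate, and the 60 per cent share of express traffic are hypothetical, and the model ignores protection capacity and client-side ports.

```python
import math


def terminated_resources(traffic_gbps, line_rate_gbps, express_percent=0):
    """Estimate what a node must terminate electrically.

    express_percent is the share of traffic (0-100) that a ROADM passes
    through optically instead of handing to the OTN switch.
    Returns (line-side transceivers, OTN switch capacity in Gbps).
    """
    terminated_gbps = traffic_gbps * (100 - express_percent) // 100
    transceivers = math.ceil(terminated_gbps / line_rate_gbps)
    return transceivers, terminated_gbps


# Hypothetical node carrying 100 terabits, within the 50-300 terabit
# range Collings quotes, using assumed 200-gigabit wavelengths.
NODE_TRAFFIC_GBPS = 100_000
LINE_RATE_GBPS = 200

oeo_only = terminated_resources(NODE_TRAFFIC_GBPS, LINE_RATE_GBPS)
with_bypass = terminated_resources(NODE_TRAFFIC_GBPS, LINE_RATE_GBPS,
                                   express_percent=60)

print("OEO only:    transceivers=%d, switch=%d Gbps" % oeo_only)
print("With bypass: transceivers=%d, switch=%d Gbps" % with_bypass)
```

Under these assumed numbers, every wavelength that stays in the optical domain removes transceivers and switch capacity from the node, which is the economic argument for the hybrid ROADM and OTN architecture.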

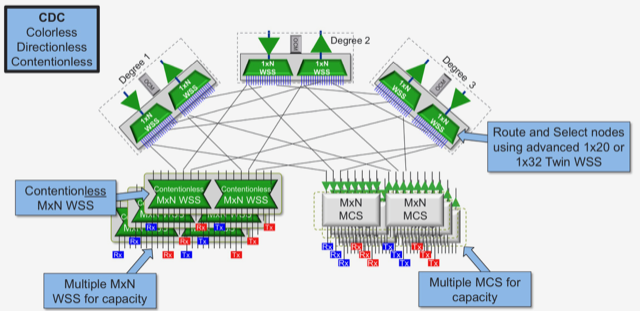

A second market magnifier is how modern ROADM networks use more wavelength-selective switches (WSSes). Both colourless and directionless (CD) ROADMs, and colourless, directionless and contentionless (CDC) ROADMs use more WSSes per node (see diagram above).

Such ROADMs also use more advanced WSS designs. Using an MxN WSS for the multicast switch in a route-and-select CDC ROADM, for example, delivers system benefits especially when adding and dropping wider optical channels that are starting to be used. Collings says Lumentum’s own MxN WSS is now close to volume manufacturing.

The third factor fuelling ROADM growth is the ongoing demand for more capacity. “Every time you fill a fibre, you typically use another degree in your [ROADM] node and light up a second fibre to grow capacity,” says Collings.

Operators with limited fibre are exploiting the fibre’s spectrum by using the C-band and L-band to grow capacity. This, too, requires more WSSes per node.

“All of these growth factors are happening simultaneously,” says Collings.

Open ROADM MSA

Lumentum is also a member of the Open ROADM multi-source agreement (MSA) that has created a disaggregated design to enable interoperability between systems vendors’ ROADMs.

AT&T is deploying Open ROADM systems in its metro networks while the MSA members have begun work on Revision 6.0 of the standard.

“Open ROADM is maturing and increasing its span of interest,” says Collings.

At first glance, Lumentum’s membership is surprising given it supplies ROADM building-blocks to vendors that make the ROADM systems. Moreover, the Open ROADM standard views a ROADM as an enclosed system.

“The Open ROADM has set certain boundaries where it defines interfaces so that vendor A can talk to vendor B,” says Collings. “And it has set that boundary pretty much at the complete ROADM node.”

Yet Lumentum is an MSA member because part of the software involved in controlling the ROADM is within the node. “It is not just a hardware solution, it is hardware and a significant software solution to supply into that,” says Collings.

Pluggable optics is also a part of the Open ROADM MSA, another reason for Lumentum’s interest. “There is a general discussion about potentially making a boundary condition around pluggable optics as well,” he says.

Collings says the MSA continues to build the ecosystem and the management system to help others use Open ROADM, not just AT&T.

400ZR, OpenZR+ and ZR+

As a supplier of coherent optics and line-side modules, Lumentum is interested in the OIF’s 400ZR standard and what is referred to as ZR+.

ZR+ offers an extended set of features and enhanced optical performance. Both 400ZR and ZR+ will be implemented using QSFP-DD and OSFP pluggable modules.

The 400ZR specification has been developed for a specific purpose: to deliver 400 Gigabit Ethernet for distances of at least 80km for data centre interconnect applications. But 400ZR is not suited for more demanding metro mesh and longer-distance metro-regional applications.

This is what ZR+ aims to address. However, ZR+, unlike 400ZR, is not a standard and is a broad term.

At ECOC, Acacia Communications and NTT Electronics detailed interoperability between their coherent DSPs using what they call ‘OpenZR+’. OpenZR+ uses Ethernet traffic like 400ZR but also supports the additional data rates of 100, 200 and 300 Gigabit Ethernet. OpenZR+ also borrows from the Open ROADM specification to enable module interoperability between vendors for data centre interconnect applications with reaches beyond 120km.

But ZR+ encompasses differentiated coherent designs that support 400 gigabits in a compact pluggable but also lower transmission rates that trade capacity for reach.

“So, yes, both classes of ‘ZR+’ are emerging,” says Collings.

OpenZR+ seeks interoperability in compact pluggables, as well as higher power, higher performance modes less focused on interoperability, while ZR+ includes proprietary, higher-power solutions. “That [ZR+] is an area where distance and capacity equal money, in terms of savings and value,” says Collings. “That is going to be an area of differentiation, as it has always been for coherent interfaces.”

Collings favours some standardisation around ZR+, to enable interchangeability among module vendors and avoid the creation of a siloed market.

“But I don’t think we are going to find ZR+ interfaces defined for interoperability because you will find yourself walking back on that differentiation in terms of value that the network operators are looking to extract,” says Collings. “They need every bit of distance they can get.”

Network operators want compact, cost-effective solutions that do ‘even more stuff’ than they are used to. “400ZR checks that box but for bigger, broader networks, operators want the same thing,” says Collings.

There is a continuum of possibilities here, he says: “It is high value from a network operator point of view and it’s a technology challenge for the likes of us and the [DSP] chip vendors.”

800G Pluggable MSA

Lumentum also recently joined the 800G Pluggable MSA that was announced at the CIOE show, held in Shenzhen in September.

“Like any client interface where Lumentum is a supplier of the underlying [laser] chips – whether DMLs, EMLs or VCSELs – we feel it is pretty important for us to be in the definition setting of the interface,” says Collings. “We want the interface to be aligned optimally to what the chip can do.”

Lumentum announced last year that it is exiting the client-side module business and therefore will be less involved in the module aspects of the interface work.

“Having moved out of the [client-side] module business, we’re finding an awful lot of customers interested in engaging with us on the chip level, much more than before,” says Collings.

Further information

For more on OpenZR+, see the Optical Connections article co-authored by Acacia, NTT Electronics, Lumentum, Juniper Networks and Fujitsu Optical Components.

Gazettabyte’s 10th anniversary

Gazettabyte’s 10th anniversary passed quietly sometime in August.

The work to create the website started earlier, as did the writing of the first stories to ensure there was content when the site went live in August 2009.

Gazettabyte has since published hundreds of stories and articles covering emerging technologies in the telecom and datacom industries.

The stories highlight the many changes that have taken place over the last decade.

Continual change

Many optical component firms, including industry leaders, have either folded or been acquired in the last decade.

For example, the first Gazettabyte story featured the start-up, OneChip Photonics, that made photonic integrated circuits (PICs) for fibre-to-the-x (FTTx). The company had just received $19.5m in funding.

The company’s technology was impressive but the FTTx market experienced ongoing cost reductions with companies pushing discretes such that the promised benefits of integration didn’t materialise. The start-up, with leading PIC expertise, folded.

There was also an interview with BT about 10G PON in 2009. This highlights another trend in telecoms: technology can take a long time to come to market.

The fastest optical interfaces at the time were 40 and 100 gigabit-per-second.

Fast-forward ten years and now the talk is of 800-gigabit client-side modules and terabit-plus coherent interfaces.

Acacia, an example of a leading player being acquired, recently announced its second-generation AC1200 coherent module that supports 1.2 terabits in a 150-gigahertz optical channel. Nokia has just given a hint about its next-generation 100-gigabaud coherent solution – the PSE-4? – with a 1.3-terabit single-wavelength trial over 93km. The total capacity transmitted over the fibre using Nokia’s technology was 50.8 terabits.

The last decade has also witnessed the continual rise of the internet giants that deliver double-digit yearly revenue growth. Such hyperscalers have become significant consumers of optics and drivers of technology.

Their rise has also stirred the telecoms industry, with the network operators embarking on a radical re-architecting of how they build and operate their networks.

The network operators have seen how the hyperscalers use software and commercial-off-the-shelf hardware and they too want the benefits of disaggregated designs and open networking.

The rise of China is a further key development of the last decade. China’s unbridled ambition has seen it become a huge driver, manufacturer and consumer of leading telecom and datacom technologies.

Change on this scale is unsettling. But it is also to be welcomed. It shows telecom and datacom as healthy industries despite being mature.

Typically, a mature industry is settled: two or three players dominate a segment, the barrier for entry for start-ups is excessively high, and little changes with time.

No close observer of telecom and datacom would describe them as plodding industries.

Past and present

Over the years, Gazettabyte has conducted several feature series. These include CEO and CTO interviews, and an acknowledgement of the silicon photonics pioneers and luminaries, including Professor Richard Soref, described by another silicon photonics luminary, Andrew Rickman, as the ‘founding father of silicon photonics’.

Gazettabyte also proved a valuable resource during the writing of a book on silicon photonics that was co-authored with OFS Fitel’s Daryl Inniss.

Gazettabyte will mark its 10th anniversary with a series of features and special interviews.

It will revisit the CTO interviews and will focus on some key topics: the network transformation being undertaken by the telcos, co-packaged optics, and certain other key emerging technologies. The first CTO interview, to be published next, is with Lumentum’s Brandon Collings, an ongoing insightful source for Gazettabyte.

This is also an opportunity to acknowledge the sponsors of the site, many of whom have supported Gazettabyte from the start.

Without Gazettabyte’s backers – ADVA, Ciena, Huawei, Infinera, Intel, LightCounting, Lumentum, Nokia, II-VI (Finisar) – the site would not exist.

Open ROADM gets deployed as work starts on Release 6.0

AT&T has deployed Open ROADM technology in its network and says all future reconfigurable optical add-drop multiplexer (ROADM) deployments will be based on the standard.

“At this point, it is in a single metro and we are working on a second large metro area,” says John Paggi, assistant vice president member of technical staff, network infrastructure and services at AT&T.

Shown are the various elements included in the disaggregated Open ROADM MSA. Also shown is the hierarchical SDN controller architecture with the federated controllers overseeing the optical layer and the multi-layer controller overseeing the path creation across the layers, from IP to optical. Source: Open ROADM MSA

Meanwhile, the Open ROADM multi-source agreement (MSA) continues to progress, with members working on Release 6.0 of the standard.

Motivation

AT&T is a founding member of the Open ROADM MSA along with system vendors Ciena, Fujitsu and Nokia. The organisation has since grown to 23 members, 13 of which operate networks. Besides AT&T, the communications service providers include Deutsche Telekom, Orange, KDDI, SK Telecom and Telecom Italia.

The initiative was created to promote a disaggregated ROADM standard that enables interoperability between vendors’ ROADMs.

The specification work includes the development of open interfaces to control the ROADMs using software-defined networking (SDN) technology. The scope of the disaggregated design has also been expanded beyond ROADMs to include optical transceivers, OTN switching to handle sub-wavelength traffic, and optical amplifiers.

AT&T viewed the MSA as a way to change the traditional model of assigning two ROADM system vendors for each of its metro regions.

“We had two suppliers to keep each other honest,” says Paggi. “But once we had committed a region to a supplier, we were more or less beholden to that supplier for additional ROADM and transponder purchases.”

AT&T wanted ‘true hyper-competition’ among ROADM and transponder suppliers and the Open ROADM MSA was the result.

The operator saw the MSA as a way to reduce costs and speed up innovation by using an open networking model. Opening up and standardising the design would also allow innovative start-up vendors to participate. With the traditional supply model, an operator would favour larger firms knowing it would be dependent on the suppliers for 5-10 years.

“Because you can mix and match different suppliers, Open ROADM allows us to introduce disrupters to our environment,” says Paggi.

Evolution

The first Open ROADM revision used 100-gigabit wavelengths and a 50GHz fixed grid. A flexible grid and in-line amplification that extended the reach of 100-gigabit wavelengths to 1,000km were then added with Revision 2.

“In Revision 3 we made Open ROADM applicable to more use cases,” says Martin Birk, director member of technical staff, network infrastructure and services, AT&T. “We started introducing things like OTUCn and FlexO in preparation for 400 gigabits.” The OTN ‘Beyond 100 gigabit’ OTUCn format comprises ‘n’ multiples of 100-gigabit OTUC frames, while FlexO refers to the Flexible OTN format.

Adopting OTN technologies is part of enabling Open ROADM to support 200-, 300- and 400-gigabit wavelengths.
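
The OTUCn arithmetic can be sketched in a few lines. The example below is only an illustration: it assumes nominal 100-gigabit OTUC slices, ignores framing overhead, and the function name is hypothetical rather than taken from the Open ROADM or OTN specifications.

```python
import math

OTUC_SLICE_GBPS = 100  # nominal rate of one OTUC slice, as described in the text


def otucn_order(wavelength_gbps: int) -> int:
    """Return the smallest n such that n x 100G slices cover the wavelength rate."""
    if wavelength_gbps <= 0:
        raise ValueError("wavelength rate must be positive")
    return math.ceil(wavelength_gbps / OTUC_SLICE_GBPS)


# The wavelength rates named in the article.
for rate in (200, 300, 400):
    print(f"{rate}-gigabit wavelength -> OTUC{otucn_order(rate)}")
```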

Revision 4 then added ODUFlex, 400-gigabit clients, and support for low-noise amplifiers to further extend reach, while the latest fifth revision adds streaming telemetry for network monitoring using work from the OpenConfig industry group.

“A lot of features that widen considerably the application of Open ROADM,” says Birk.

Revision 6.0

Open ROADM releases were initially issued once a year but the scope of each revision has since been curtailed to enable two releases a year. Members are polled as to what new features are required at the start of each standardisation process.

Now, the MSA members are working on Revision 6.0, which covers ‘all directions’ of the standard.

“We are improving the control plane interoperability with more features,” says Birk. “Right now you have a single network view; in future, you could have an idealised network plan and a network view with actual failures, and you could provision services across these network views.”

And with the advent of 600-gigabit, 800-gigabit and even 1.2-terabit coherent wavelengths, Open ROADM members may add support for speeds faster than 400 gigabits.

“Just as our suppliers continue to evolve their roadmaps, so does the Open ROADM MSA to stay relevant,” says Birk.

AT&T’s Open ROADM deployments support 100-gigabit wavelengths while the 400-gigabit technology is still in development.

“The ROADMs will not change; the only thing that will change is the software,” says Birk. “And in a disaggregated design, you can leave the ROADMs on version 2.0 and upgrade the transponders to 400 gigabits and version 5.0.”

This, says Birk, is why it is much easier to introduce new technology with an open design compared to monolithic platforms where an upgrade involves all the element management systems, ROADMs and transponders.

Status

The Open ROADM MSA says it is up to individual network operator members to declare the status of their Open ROADM network deployments. Accordingly, the status of overall Open ROADM deployments is unclear.

What AT&T will say is that it is being approached by vendors that want to demonstrate their Open ROADM technology to the operator.

“When we ask them why they have done this without any agreement that AT&T would purchase their solutions, they respond that they are seeing Open ROADM listed as a requirement in RFPs (Request For Proposals) from many other service providers,” says Paggi. “They have taken it upon themselves to develop Open ROADM-compliant products.”

At the OFC show earlier this year, an Open ROADM MSA demonstration showcased an SDN controller turning up a wavelength to send virtual machines between two data centres. The SDN controller then terminated the optical connection on completion of the transfer.

Operators AT&T and Orange were part of the demonstration as was the University of Texas, Dallas. “They [the University of Texas] are a supercomputing centre and they can create some nice applications on top of Open ROADM,” says Birk.

The system vendors involved in the OFC demonstration included Ciena, Fujitsu, ECI Telecom, Infinera and Juniper Networks.

Infinera rethinks aggregation with slices of light

An optical architecture for traffic aggregation that promises to deliver networking benefits and cost savings was unveiled by Infinera at this week’s ECOC show, held in Dublin.

Traffic aggregation is used widely in the network for applications such as fixed broadband, cellular networks, fibre-deep cable networks and business services.

Infinera has developed a class of optics, dubbed XR optics, that fits into pluggable modules for traffic aggregation. And while the company is focussing on the network edge for applications such as 5G, the technology could also be used in the data centre.

Optics is inherently a point-to-point communications technology, says Infinera. Yet optics is applied to traffic aggregation, a point-to-multipoint architecture, and that results in inefficiencies.

“The breakthrough here is that, for the first time in optics’ history, we have been able to make optics work to match the needs of an aggregation network,” says Dave Welch, founder and chief innovation officer at Infinera.

XR Optics

Infinera came up with the ‘XR’ label after borrowing from the naming scheme used for 400ZR, the 400-gigabit pluggable optics coherent standard.

“XR can do point-to-point like ZR optics,” says Welch. “But XR allows you to go beyond, to point-to-multipoint; ‘X’ being an ill-defined variable as to exactly how you want to set up your network.”

XR optics uses coherent technology and Nyquist sub-carriers. Instead of using a laser to generate a single carrier, pulse-shaping is used at the transmitter to generate multiple carriers, referred to as Nyquist sub-carriers.

The sub-carriers convey the same information as a single carrier but by using several sub-carriers, a lower symbol rate can be used for each. The lower symbol rate improves the tolerance to non-linear effects in a fibre and enables the use of lower-speed electronics.

Infinera first detailed Nyquist sub-carriers as part of its advanced coherent toolkit, and implemented the technology with its Infinite Capacity Engine 4 (ICE4) used for optical transport.

The company is bringing to market its second-generation Nyquist sub-carrier design with its ICE6 technology that supports 800-gigabit wavelengths.

Now Infinera is proposing coherent sub-carriers for a new class of problem: traffic aggregation. But XR optics will need backing and be multi-sourced if it is to be adopted widely.

Network operators will also need to be convinced of the technology’s merits. Infinera claims XR optics will halve the pluggable modules needed for aggregation and remove the need for intermediate digital aggregation platforms, reducing networking costs by 70 percent.

Aggregation optics

XR optics will be required at both ends of a link. The modules will need to understand a protocol that tells them the nature of the sub-carriers to use: their baud rate (and resulting spectral width) and modulation scheme.

Infinera cites as an example a 4GHz-wide sub-carrier modulated using 16-ary quadrature amplitude modulation (16-QAM) that can carry 25 gigabits per second.
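
A back-of-the-envelope check shows how such a sub-carrier could carry 25 gigabits. The sketch below rests on two assumptions not stated by Infinera: that the symbol rate roughly equals the 4GHz spectral width (ideal Nyquist shaping), and that the signal uses two polarisations, as coherent links typically do. The overhead figure it prints is merely what is left over under those assumptions, not a published number.

```python
def raw_bit_rate_gbps(baud_gbd: float, bits_per_symbol: int, polarisations: int = 2) -> float:
    """Raw line rate = symbol rate x bits per symbol x polarisations."""
    return baud_gbd * bits_per_symbol * polarisations


BITS_16QAM = 4           # log2(16) bits per symbol
ASSUMED_BAUD_GBD = 4.0   # assumption: symbol rate ~ 4GHz spectral width
PAYLOAD_GBPS = 25.0      # payload quoted in the article

raw = raw_bit_rate_gbps(ASSUMED_BAUD_GBD, BITS_16QAM)
overhead_fraction = (raw - PAYLOAD_GBPS) / raw

print(f"raw rate: {raw} Gb/s")
print(f"share left for FEC and framing: {overhead_fraction:.1%}")
```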

A larger capacity XR coherent module will be used at the aggregation hub and will talk directly with XR modules at the network edge, “casting out” its sub-carriers to the various pluggable modules at the network edge.

For example, the module at the hub may be a 400-gigabit QSFP-DD supporting 16, 25-gigabit sub-carriers, or an 800-gigabit QSFP-DD or OSFP module delivering 32 sub-carriers. A mix of lower-speed XR modules will be used at the edge: 100-gigabit QSFP28 XR modules based on four sub-carriers and single sub-carrier 25-gigabit SFP28s.

“As soon as you have defined that each one of these transceivers is some multiple of that 25-gigabit sub-carrier, they can all talk to each other,” says Welch.

The hub XR module and network-edge modules are linked using optical splitters such that all the sub-channels sent by the hub XR module are seen by each of the edge modules. The hub in effect broadcasts its sub-carriers to all the edge devices, says Welch.

A coding scheme is used such that each edge module’s coherent receiver can pick off its assigned sub-channel(s). In turn, an edge module will send its data using the same frequencies on a separate fibre.

Basing the communications on multiples of sub-carriers means any XR module can talk to any other, irrespective of their overall speeds.
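
The bookkeeping behind this can be sketched as follows. The example assumes the 25-gigabit sub-carrier granularity quoted above; the module names and the simple contiguous assignment are hypothetical, since Infinera has not detailed how sub-carriers are actually allocated.

```python
SUBCARRIER_GBPS = 25  # granularity quoted in the article


def subcarriers_for(module_gbps: int) -> int:
    """Number of 25-gigabit sub-carriers a module of the given speed uses."""
    if module_gbps <= 0 or module_gbps % SUBCARRIER_GBPS:
        raise ValueError("module speed must be a positive multiple of 25 gigabits")
    return module_gbps // SUBCARRIER_GBPS


def assign(hub_gbps: int, edge_modules: dict) -> dict:
    """Give each edge module a contiguous run of the hub's sub-carrier indices."""
    total = subcarriers_for(hub_gbps)
    plan = {}
    next_index = 0
    for name, gbps in edge_modules.items():
        need = subcarriers_for(gbps)
        if next_index + need > total:
            raise ValueError("hub module has too few sub-carriers")
        plan[name] = list(range(next_index, next_index + need))
        next_index += need
    return plan


# Hypothetical mix: three 100-gigabit QSFP28s and four 25-gigabit SFP28s.
edges = {"qsfp28-1": 100, "qsfp28-2": 100, "qsfp28-3": 100,
         "sfp28-1": 25, "sfp28-2": 25, "sfp28-3": 25, "sfp28-4": 25}
plan = assign(400, edges)
for name, indices in plan.items():
    print(name, indices)
```

In this example the edge modules exactly fill the 16 sub-carriers of a 400-gigabit hub module.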

Sub-carriers can also be reassigned.

“In that fashion, today you are a 25-gigabit client module and tomorrow you are 100-gigabit,” says Welch. Reassigning edge-module capacities will not happen often but when undertaken, no truck roll will be needed.

System benefits

In a conventional aggregation network, the edge transceivers send traffic to an intermediate electrical aggregation switch. The switch’s line-side-facing transceivers then send on the aggregated traffic to the hub.

Using XR optics, the intermediate aggregation switch becomes redundant since the higher-capacity XR coherent module aggregates the traffic from the edge. Removing the switch and its one-to-one edge-facing transceivers accounts for the halving of the overall transceiver count and the overall 70 percent network cost saving (see diagram below).
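
The halving of the transceiver count can be checked with a simple tally. The sketch assumes one transceiver per edge site, a matching edge-facing transceiver on the aggregation switch, and a single uplink with a transceiver at each end; the site count is arbitrary and the model says nothing about the 70 percent cost figure.

```python
def conventional_transceivers(edge_sites: int, uplinks: int = 1) -> int:
    """Edge modules, their switch-side partners, and both ends of each uplink."""
    return 2 * edge_sites + 2 * uplinks


def xr_transceivers(edge_sites: int, hub_modules: int = 1) -> int:
    """Edge modules plus the hub module(s); no intermediate switch."""
    return edge_sites + hub_modules


sites = 16  # e.g. sixteen 25-gigabit edge sites served by one 400-gigabit hub module
conventional = conventional_transceivers(sites)
xr = xr_transceivers(sites)
print(f"conventional: {conventional}, XR optics: {xr}, ratio: {xr / conventional}")
```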

The disadvantage of getting rid of the intermediate aggregation switch is minor in comparison to the plusses, says Infinera.

“In a network where all the traffic is going left to right, there is always an economic gain,” says Welch. And while a layer-2 aggregation switch enables statistical multiplexing to be applied to the traffic, it is insignificant when compared to the cost-savings XR optics brings, he says.

Challenges

XR transceivers will need to support sub-carriers and coherent signal processing as well as the language that defines the sub-carriers and their assignment codes. Accordingly, module makers will need to make a new class of XR pluggable modules.

“We are working with others,” says Welch. “The object is to bring the technology and a broad-base supply chain to the market.” The fastest way to achieve this, says Welch, is through a series of multi-source agreements (MSAs). Arista Networks and Lumentum were both quoted as part of Infinera’s XR Optics press release.

Another challenge is that a family of coherent digital signal processors (DSPs) will need to be designed that fit within the power constraints of the various slim client-side pluggable form factors.

Infinera stresses it is unveiling a technological development and not a product announcement. That will come later.

However, Welch says that XR optics will support a reach of hundreds of kilometres and even metro-regional distances of over 1,000km.

“We are comfortable we are working with partners to get this out,” says Welch. “We are comfortable we have some key technologies that will enhance these capabilities as well.”

Other applications

Infinera is focussing its XR optics on applications such as 5G. But it says the technology will benefit many network applications.

“If you look at the architecture in the data centre or look at core networks, they are all aggregation networks of one flavour or another,” says Welch. “Any type of power, cost, and operational savings of this magnitude should be evaluated across the board on all networks.”

Acacia heralds the era of terabit-plus optical channels

Acacia Communications has unveiled the AC1200-SC2 that delivers 1.2 terabits over a single optical channel.

The SC2 (single chip, single channel) is an upgrade of Acacia’s high-end AC1200 module. The AC1200 too is a 1.2-terabit module but uses two optical channels, each transmitting a 600-gigabit wavelength. The SC2 sends 1.2 terabits using two sub-carriers that fit within a single 150GHz-wide channel.

Each line is a data rate. Shown is the scope of how the baud rate and the modulation scheme can be varied and its impact on channel width, reach and data rate. Source: ADVA.

“In the SC2, we take care of everything so the user configures a single channel that is easier to manage in their network,” says Tom Williams, vice president of marketing at Acacia.

1.2-terabit channel

Acacia unveiled the AC1200 at the ECOC show in 2017. With its introduction, Acacia gained an advantage over its system-vendor rivals in bringing a 1.2-terabit coherent module to market using 600-gigabit wavelengths. The module supports up to 64-ary quadrature amplitude modulation (64-QAM) and a symbol rate of 69 gigabaud (GBd).

Systems vendors such as Ciena, with its WaveLogic 5, and Infinera, with its Infinite Capacity Engine 6 (ICE6), responded with their next-generation coherent designs that use symbol rates approaching 100GBd and support an 800-gigabit wavelength.

Sell-side research analysts interpreted the coherent developments as Acacia having a window of opportunity to exploit the AC1200 until the systems vendors’ coherent designs come to market in the coming year. The analysts also noted how 400 Gigabit Ethernet client signals better fit in an 800-gigabit wavelength compared to a 600-gigabit wavelength.

Then, in July, Acacia’s status as a merchant coherent technology supplier changed with the announcement that Cisco Systems is to acquire the company for $2.6 billion. Now, Acacia has detailed the SC2 as its acquisition awaits completion.

AC1200-SC2

The SC2 uses the same form factor and electrical connector as the AC1200 module, simplifying the upgrading of system designs using the AC1200. However, the SC2 module uses a single fibre pair for its optical output whereas the AC1200 uses two pairs, one for each channel.

The SC2 module shares the same Pico coherent digital signal processor (DSP) and baud rates as the AC1200. The Pico DSP uses fractional quadrature amplitude modulation (QAM) and an adjustable baud rate.

Fractional QAM allows the tuning of the transmitted data rate by using a mix of adjacent modulation formats. For example, 8-QAM and 16-QAM are alternated, with the percentage of time each is used determining the resulting data rate. In turn, the baud rate can be increased to widen the signal’s spectrum, if the optical channel permits, such that using a lower modulation scheme may become possible, improving the reach (see diagram above).
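
The trade-off can be illustrated numerically. The sketch below is not Acacia's implementation: it assumes a dual-polarisation signal, quotes raw rates before any error-correction overhead, and uses an arbitrary 46GBd starting point chosen only so that the result stays below the 69GBd ceiling mentioned earlier.

```python
def fractional_qam_bits(low_bits: int, high_bits: int, high_fraction: float) -> float:
    """Average bits per symbol when two formats are time-interleaved."""
    if not 0.0 <= high_fraction <= 1.0:
        raise ValueError("high_fraction must lie between 0 and 1")
    return high_fraction * high_bits + (1.0 - high_fraction) * low_bits


def raw_rate_gbps(baud_gbd: float, bits_per_symbol: float, polarisations: int = 2) -> float:
    """Raw line rate before overhead."""
    return baud_gbd * bits_per_symbol * polarisations


def baud_for_rate(raw_gbps: float, bits_per_symbol: float, polarisations: int = 2) -> float:
    """Symbol rate needed to carry a raw rate with a given format."""
    return raw_gbps / (bits_per_symbol * polarisations)


# Half 8-QAM (3 bits), half 16-QAM (4 bits) averages 3.5 bits per symbol.
mixed_bits = fractional_qam_bits(3, 4, 0.5)
print(f"mixed format: {mixed_bits} bits/symbol")

# Dropping from 16-QAM to 8-QAM at the same raw rate needs 4/3 the baud rate.
rate_16qam = raw_rate_gbps(46.0, 4)
baud_8qam = baud_for_rate(rate_16qam, 3)
print(f"{rate_16qam} Gb/s raw: 46.0 GBd with 16-QAM, {baud_8qam:.1f} GBd with 8-QAM")
```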

The AC1200 uses 50GHz- and 75GHz-wide channels while the SC2 uses 50-150GHz channels. For 600-gigabit and 1.2-terabit transmissions, the widest channels are used: 75GHz for the AC1200, and 150GHz for the SC2. “But as you go down in data rate, you can address the transmission in multiple ways,” says Williams. “You can run a higher modulation scheme in a narrow channel or, with a wider channel, run a lower modulation scheme to go further.”

The resulting optical performance means that the SC2 can be used for multiple applications: from short-span data centre interconnect where the full 1.2-terabit capacity is sent using 64-QAM, to metro-regional and long-haul distances using 800-gigabit and 16-QAM, all the way to ultra-long-haul terrestrial and subsea links with 400-gigabit and quadrature phase-shift keying (QPSK) modulation.

The AC1200 and the SC2 have comparable optical performance in terms of spectral efficiency and reach. This is unsurprising given how both modules use the same Pico DSP, baud rates and modulation schemes.

The AC1200 uses two 75GHz channels, each carrying 600 gigabits, to send 1.2 terabits, while the SC2 uses two sub-carriers in a 150GHz channel. However, the SC2 has a slight advantage since no guard band is needed between the two channels as is required with the AC1200 (unless the AC1200 is sending a two-channel ‘superchannel’ whereby no dead zone is needed between the channels).
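
The comparison is easy to verify from the figures quoted. In the sketch below the channel widths and capacities come from the article, while the 5GHz guard band is a purely hypothetical value used to show the direction of the effect.

```python
def spectral_efficiency(capacity_gbps: float, spectrum_ghz: float) -> float:
    """Bits per second per hertz of occupied spectrum."""
    return capacity_gbps / spectrum_ghz


ac1200 = spectral_efficiency(2 * 600, 2 * 75)   # two 600-gigabit, 75GHz channels
sc2 = spectral_efficiency(1200, 150)            # one 1.2-terabit, 150GHz channel

HYPOTHETICAL_GUARD_GHZ = 5  # assumption for illustration only
ac1200_with_guard = spectral_efficiency(2 * 600, 2 * 75 + HYPOTHETICAL_GUARD_GHZ)

print(f"AC1200: {ac1200} b/s/Hz, SC2: {sc2} b/s/Hz")
print(f"AC1200 with a {HYPOTHETICAL_GUARD_GHZ}GHz guard band: {ac1200_with_guard:.2f} b/s/Hz")
```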

Acacia is not detailing how it generates the optical sub-carriers besides saying the change stems from the interface between the Pico DSP and its silicon photonics-based photonic integrated circuit (PIC). The company will also not say if the SC2 uses a new PIC design.

Operational benefits

The fact that the SC2 and AC1200 deliver the same reach and capacity may explain why Acacia downplays the argument that the company has again leapfrogged its rivals with the advent of a module that sends 1.2 terabits over a single channel.

Instead, Acacia stresses the system and operational benefits resulting from doubling the data transmitted per channel.

“The SC2 module allows the entire capacity to be managed as a single channel,” says Williams. “The original [AC1200] module is well-suited to brownfield networks operating with 50GHz or 75GHz spacing, while the SC2 offers advantages in greenfield network architectures that can use channel plans up to 150GHz.”

Using a higher-capacity channel requires fewer optical components and reconfigurable optical add/drop multiplexer (ROADM) ports thereby reducing networking costs, says Williams.

Using 150GHz-wide channels also aligns with an emerging consensus among network operators regarding wavelength roadmaps. “Network operators want to operate on some standardised grid based on regular multiples [50GHz, 75GHz] because it avoids fragmentation,” says Williams.

Availability

Acacia is already providing the SC2 module to certain customers that are undertaking validation testing. The firm is ready to ramp production based on particular customer demand.

Acacia will also be demonstrating its latest module at this week’s ECOC show being held in Dublin.

Companies gear up to make 800 Gig modules a reality

Nine companies have established a multi-source agreement (MSA) to develop optical specifications for 800-gigabit pluggable modules.

The MSA has been created to address the continual demand for more networking capacity in the data centre, a need that is doubling roughly every two years. The largest switch chips deployed have a 12.8 terabit-per-second (Tbps) switching capacity while 25.6-terabit and 51.2-terabit chips are in development.

“The MSA members believe that for 25.6Tbps and 51.2Tbps switching silicon, 800-gigabit interconnects are required to deliver the required footprint and density,” says Maxim Kuschnerov, a spokesperson for the 800G Pluggable MSA.

A 1-rack-unit (1RU) 25.6-terabit switch platform will use 32, 800-gigabit modules while a 51.2-terabit 2RU platform will require 64.
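
The module counts follow directly from dividing switch capacity by module speed, as the short sketch below shows. Capacities are expressed in whole gigabits to avoid rounding problems, and the 400-gigabit comparison is included only for illustration.

```python
def modules_needed(switch_gbps: int, module_gbps: int = 800) -> int:
    """Front-panel modules required to expose the full switch capacity."""
    if switch_gbps <= 0 or module_gbps <= 0:
        raise ValueError("capacities must be positive")
    return -(-switch_gbps // module_gbps)  # ceiling division on integers


for switch_gbps in (25_600, 51_200):
    with_800g = modules_needed(switch_gbps)
    with_400g = modules_needed(switch_gbps, 400)
    print(f"{switch_gbps / 1000}T switch: {with_800g} x 800G or {with_400g} x 400G modules")
```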

Motivation

The founding members of the 800G MSA are Accelink, China Telecommunication Technology Labs, H3C, Hisense Broadband, Huawei Technology, Luxshare, Sumitomo Electric Industries, Tencent, and Yamaichi Electronics. Baidu, Inphi and Lumentum have since joined the MSA.

The MSA has been founded now to ensure that there will be optical and electrical components for 800-gigabit modules when 51.2-terabit platforms arrive in 2022.

And an 800-gigabit module will be needed rather than a dual 400-gigabit design since the latter will not be economical.

“Historically, the cost of optical short-reach interfaces has always scaled with laser count,” says Kuschnerov. “Pluggables with 8, 10 or 16 lasers have never been successful in the long run.”

He cites such examples as the first 100-gigabit module implemented using 10×10-gigabit channels, and the early wide-channel 400 Gigabit Ethernet designs such as the SR16 parallel fibre and the FR8 specifications. The yield for optics doesn’t scale in the same way as CMOS for parallel designs, he says.

That said, the MSA will investigate several designs for the different reaches. For 100m, 8-channel and 4-channel parallel fibre designs will be explored while for the longer reaches, single-fibre coarse wavelength division multiplexing (CWDM) technology will be used.

Shown from left to right are a PSM8 and a PSM4 module for 100m spans, and the CWDM4 design for 500m and 2km reaches. Source: 800G Pluggable MSA.

“Right now, we are discussing several technical options, so there’s no conclusion as to which design is best for which reach class,” says Kuschnerov.

The move to fewer channels is similar to how 400 Gigabit Ethernet modules have evolved: the 8-channel FR8 and LR8 module designs address early applications but, as demand ramps, they have made way for more economical four-channel FR4 and LR4 designs.

Specification work

The 800G Pluggable MSA will focus on several optical designs, all using 112Gbps electrical input signals.

The first MSA design, for applications up to 100m, will explore 8×100-gigabit optical channels as a fast-to-market solution. This is a parallel single-mode 8-channel (PSM8) design, with each 100-gigabit channel carried over a dedicated fibre. The module will use 16 fibres overall: eight for input and eight for output. The MSA will also explore a PSM4 design – ‘the real 800G’ – where each fibre carries 200 gigabits.

The CWDM designs, for 500m and 2km, will require a digital signal processor (DSP) to implement four-level pulse-amplitude modulation (PAM4) signalling that generates the 200-gigabit channels. An optical multiplexer and demultiplexer will also be needed for the two designs.
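
The three candidate designs can be summarised as data. In the sketch below, the lane counts and lane rates come from the MSA's description, as does the PSM8 fibre count; the fibre counts for the PSM4 and CWDM4 designs are inferred on the assumption of one fibre per lane for PSM and one duplex fibre pair for CWDM.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpticalDesign:
    name: str
    lanes: int                 # optical channels per direction
    lane_gbps: int             # rate carried by each channel
    fibres_per_direction: int  # inferred for PSM4 and CWDM4 (assumption)

    @property
    def total_gbps(self) -> int:
        return self.lanes * self.lane_gbps

    @property
    def total_fibres(self) -> int:
        return 2 * self.fibres_per_direction  # transmit plus receive


DESIGNS = [
    OpticalDesign("PSM8", lanes=8, lane_gbps=100, fibres_per_direction=8),
    OpticalDesign("PSM4", lanes=4, lane_gbps=200, fibres_per_direction=4),
    OpticalDesign("CWDM4", lanes=4, lane_gbps=200, fibres_per_direction=1),
]

for design in DESIGNS:
    print(f"{design.name}: {design.lanes} x {design.lane_gbps}G = "
          f"{design.total_gbps}G over {design.total_fibres} fibres")
```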

The MSA will explore the best technologies for each of the three spans. The modulation technologies to be investigated include silicon photonics, directly modulated lasers (DML) and externally modulated lasers (EML).

Challenges

The MSA foresees several technical challenges at 800 gigabits.

One challenge is developing the 100-gigabaud direct-detect optics needed to generate the four 200-gigabit channels using PAM4. Another is fitting the designs into a QSFP-DD or OSFP pluggable module while meeting their specified power consumption limitations. A third challenge is choosing a low-power forward error correction scheme and a PAM4 digital signal processor (DSP) that meet the MSA’s performance and latency requirements.

“We expect first conclusions in the fourth quarter of 2020 with the publication of the first specification,” says Kuschnerov.

The 800G Pluggable MSA is also following industry developments such as the IEEE proposal for the 8×100-gigabit SR8 over multi-mode fibre that uses VCSELs. But the MSA believes VCSELs represent a higher risk.

“Our biggest challenge is creating sufficient momentum for the 800-gigabit ecosystem, and getting key industry contributors involved in our activity,” says Kuschnerov.

Arista Networks, the switch vendor that has long promoted 800-gigabit modules, says it has no immediate plans to join the MSA.

“But as one of the supporters of the OSFP MSA, we are aligned in the need to develop an ecosystem of technology suppliers for components and test equipment for OSFP pluggable optics at 800 gigabits,” says Martin Hull, Arista’s associate vice president, systems engineering and platforms.

Hull points out that the OSFP pluggable module MSA was specified with 800 gigabits in mind.

Next-generation Ethernet

The fact that there is no 800 Gigabit Ethernet standard will not hamper the work, and the MSA cannot wait for the development of such a standard.

“The IEEE is in the bandwidth assessment stage for beyond 400-gigabit rates and we haven’t seen too many contributions,” says Kuschnerov. The IEEE would then need to start a Call For Interest and define an 800GbE Study Group to evaluate the technical feasibility of 800GbE. Only then will an 800GbE Task Force Phase start. “We don’t expect the work on 800GbE in IEEE to progress in line with our target for component sampling,” says Kuschnerov. First prototype 800G MSA modules are expected in the fourth quarter of 2021.

Arista’s Hull stresses that an 800GbE standard is not needed given that 800-gigabit modules support standardised rates based on 2×400-gigabit and 8×100-gigabit.

Moreover, speed increments for Ethernet are typically more than 2x, as was the case with the transitions from 10GbE to 40GbE (4x) and from 40GbE to 100GbE (2.5x). “That would suggest an expectation for 1 Terabit Ethernet (TbE) or 1.6TbE speeds,” says Hull.

“It would be unusual for Ethernet’s evolution to slow to a 2x rate and make 800 Gigabit Ethernet the next step,” says Hull. “The introduction of 112Gbps serdes allows for a doubling of input-output (I/O) on a per-physical interface but this is not the next Ethernet speed.”
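Hull's point about step sizes can be checked with simple arithmetic. The sketch below, in Python, computes the ratio for the historical transitions cited and for the three candidate next steps from 400GbE; the function name and the list of steps are illustrative, not taken from any standard.

```python
def speed_step(old_gbps: int, new_gbps: int) -> float:
    """Ratio between two successive Ethernet rates."""
    return new_gbps / old_gbps


# (old rate, new rate) in Gbps: two past transitions, then candidate next steps.
steps = [
    (10, 40),     # 10GbE -> 40GbE
    (40, 100),    # 40GbE -> 100GbE
    (400, 800),   # candidate: 800GbE
    (400, 1000),  # candidate: 1TbE
    (400, 1600),  # candidate: 1.6TbE
]

for old, new in steps:
    print(f"{old}G -> {new}G: {speed_step(old, new)}x")
```

An 800GbE step would be 2x, whereas 1TbE and 1.6TbE would be 2.5x and 4x, matching the sizes of the earlier transitions.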

Pluggable versus co-packaged optics

There is an ongoing industry debate as to when switch vendors will be forced to transition from pluggable optics on the front panel to photonics co-packaged with the switch ASIC.

The issue is that with each doubling of switch chip speed, it becomes harder to get the data on and off the chip at a reasonable cost and power consumption. Driving the ever faster signals from the chip to the front-panel optics is also becoming challenging.

Packaging the optics with the switch chip enables the high-speed serialiser-deserialiser (serdes), the circuitry that gets data on and off the chip, to be simplified; no longer will the serdes need to drive high-speed signals across the printed circuit board (PCB) to the front panel. Adopting co-packaged optics simplifies the PCB design, constrains the switch chip’s overall power consumption given how hundreds of serdes are used, and reduces the die area reserved for the serdes.
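To put "hundreds of serdes" into numbers, the Python sketch below counts the serdes lanes and 800-gigabit front-panel modules for switch chips of 25.6Tbps and 51.2Tbps. It assumes each 112Gbps serdes carries a nominal 100 gigabits of payload and that the full switch capacity is brought out to the front panel; the function name is hypothetical.

```python
def front_panel_counts(switch_gbps: int,
                       module_gbps: int = 800,
                       lane_gbps: int = 100) -> tuple[int, int]:
    """Return (pluggable modules, serdes lanes) needed for a switch chip.

    Assumes each serdes lane carries lane_gbps of payload and that all of
    the chip's capacity is exposed through front-panel modules.
    """
    modules = switch_gbps // module_gbps
    lanes = switch_gbps // lane_gbps
    return modules, lanes


for capacity_gbps in (25_600, 51_200):
    modules, lanes = front_panel_counts(capacity_gbps)
    print(f"{capacity_gbps / 1000}Tbps: {modules} x 800G modules, "
          f"{lanes} serdes lanes")
```

Under these assumptions a 25.6Tbps chip needs 256 serdes lanes and 32 modules, and a 51.2Tbps chip needs 512 lanes and 64 modules.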

But transitioning to co-packaged optics represents a significant industry shift.

The consensus at a panel discussion at the OFC show, held in March, entitled Beyond 400G for Hyperscaler Data Centres, was that the use of front-panel pluggable optics will continue for at least two more generations of switch chips: at 25.6Tbps and at 51.2Tbps.

It is a view shared by the 800G Pluggable MSA, and one that motivates its work.

“The MSA believes that 800-gigabit pluggables are technically feasible and offer clear benefits versus co-packaging,” says Kuschnerov. “As long as the industry can support pluggables, this will be the preferred choice of the data centre operators.”

It has always paid off to bet on the established technology for as long as it remains technically feasible, given the sheer amount of investment already made, says Kuschnerov.

Major shifts in interconnects, such as coherent replacing direct detect, PSM/CWDM pushing out VCSELs, or optics replacing copper, have happened only when legacy technologies approach limits that can’t be overcome easily, he says: “We don’t believe in such fundamental limitations for 800-gigabit pluggables.”

So when will the industry adopt co-packaged optics?

“We believe that beyond 51.2Tbps there is a very high risk surrounding the serdes and thus co-packaging might become necessary to overcome this limitation,” says Kuschnerov.

Switch-chip maker Broadcom has said that co-packaged optics will be adopted alongside pluggables, enabling the hyperscalers to lessen the risk of the new technology’s introduction. Broadcom believes that co-packaged optics solutions will appear as early as the advent of 25.6-terabit switch chips.

An earlier, transitional introduction is also the view of Hugo Saleh, vice president of marketing and business development at silicon photonics specialist Ayar Labs, which recently unveiled that its optical I/O chiplet technology is being co-packaged with Intel’s Stratix 10 FPGA.

Saleh says the consensus is that the node past 51.2Tbps must use in-package optics. But he also expects overlap before then, especially for high-end and custom solutions.

“It [co-packaged optics] is definitely coming, and it is coming sooner than some folks expect,” says Saleh.

Several companies have contacted the MSA since its 800-gigabit announcement. The 800G MSA is also in discussion with several component and module vendors that are about to join, from Asia and elsewhere. Inphi and Lumentum have joined since the MSA was announced.

Discussions have started with system vendors and hyperscale data centre operators; Baidu is one that has since signed up.