Nokia adds 400G coherent modules across its platforms

Nokia is now shipping its 400-gigabit coherent multi-haul CFP2-DCO. The module exceeds the optical performance of 400ZR and ZR+ coherent pluggables.

Nokia’s CFP2-DCO product follows its acquisition of silicon photonics specialist, Elenion Technologies, in 2020.

Nokia has combined Elenion’s coherent optical modulator and receiver with its low-power 64-gigabaud (GBd) PSE-Vc coherent digital signal processor (DSP).

Nokia is also adding coherent pluggables across its platform portfolio.

“Not just optical transport and transponder platforms but also our IP routing portfolio as well,” says Serge Melle, director of product marketing, IP-optical networking at Nokia.


Melle is an optical networking industry veteran. He joined Nokia two years ago after a 15-year career at Infinera. Melle started at Pirelli in 1995 when it was developing a 4×2.5-gigabit wavelength-division multiplexing (WDM) system. In between Pirelli and Infinera, Melle was at Nortel Networks during the optical boom.

400ZR, ZR+ and the multi-haul CFP2-DCO

The CFP2-DCO’s optical performance exceeds that of the QSFP-DD and OSFP form factors implementing 400ZR and ZR+ but is inferior to line-card coherent transponders used for the most demanding optical transport applications.

The 400ZR coherent OIF standard transmits a 400-gigabit wavelength up to 120km linking equipment across data centres. Being a standard, 400ZR modules are interoperable.

The ZR+ adds additional transmission speeds – 100, 200 and 300-gigabits – and has a greater reach than ZR. ZR+ is not a standard but there is the OpenZR+ multi-source agreement (MSA).

Implementing 400ZR and ZR+ coherent modules in a QSFP-DD or OSFP module means they can be inserted in client-side optics’ ports on switches and routers.

The OIF did not specify a form factor as part of the 400ZR standard, says Melle, with the industry choosing the QSFP-DD and OSFP. But with the modules’ limited power dissipation, certain modes of the coherent DSP are turned off, curtailing the feature set and the reach compared to a CFP2-DCO module.

The modules also have physical size restrictions.

“You don’t have enough thermal budget to put an optical amplifier inside the QSFP-DD package,” says Melle. “So you are left with whatever power the DWDM laser outputs through the modulator.” This is -7dBm to -10dBm for 400ZR and ZR+ optics, he says.

The CFP2-DCO is larger such that the DSP modes of encryption, OTN client encapsulation, LLDP snooping (used to gather data about attached equipment), and remote network monitoring (RMON) can be enabled.

The CFP2-DCO can also house an optical amplifier and tunable filter. The filter reduces out-of-band noise, improving the optical signal-to-noise ratio (OSNR) and thereby increasing the module’s sensitivity. “This [amplifier and filter] allows for much better optical performance,” says Melle. A 400-gigabit multi-haul module typically has a 0dBm optical output power.
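The output powers quoted here are in dBm, a logarithmic unit referenced to 1mW. As a rough illustration of the gap between the module types, the short sketch below converts the quoted figures to milliwatts using the standard relation P(mW) = 10^(P(dBm)/10). The function and dictionary names are illustrative only and do not come from Nokia.

```python
def dbm_to_mw(power_dbm: float) -> float:
    """Convert optical power from dBm to milliwatts: P_mW = 10 ** (P_dBm / 10)."""
    return 10 ** (power_dbm / 10)


# Output powers quoted in the article (labels are illustrative).
launch_powers_dbm = {
    "400ZR/ZR+ (upper figure)": -7.0,
    "400ZR/ZR+ (lower figure)": -10.0,
    "multi-haul CFP2-DCO (typical)": 0.0,
}

launch_powers_mw = {name: dbm_to_mw(p) for name, p in launch_powers_dbm.items()}

for name, power_mw in launch_powers_mw.items():
    print(f"{name}: {power_mw:.3f} mW")
```

On these figures, the multi-haul module launches roughly five to ten times the optical power of a 400ZR or ZR+ pluggable.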

The different transceiver types are shown in the table.

Nokia’s paper at the recent OFC virtual conference and exhibition detailed how its 400-gigabit multi-haul CFP2-DCO achieved a reach of 1,200km.

The paper details the transmission of 52, 400-gigabit signals, each occupying a 75GHz channel, for a total capacity of 20.8 terabits-per-second (Tbps).
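The capacity figure follows directly from the channel count and the per-channel rate. The sketch below reproduces that arithmetic and also derives the occupied spectrum and spectral efficiency, two quantities the article does not state but which follow from the same three numbers. Variable names are illustrative.

```python
# Figures taken from the article.
channels = 52
rate_per_channel_gbps = 400
channel_width_ghz = 75

# Total capacity in terabits per second.
total_capacity_tbps = channels * rate_per_channel_gbps / 1000

# Derived quantities (not stated in the article).
occupied_spectrum_thz = channels * channel_width_ghz / 1000
spectral_efficiency = rate_per_channel_gbps / channel_width_ghz  # bit/s/Hz

print(f"Total capacity: {total_capacity_tbps:.1f} Tbps")
print(f"Occupied spectrum: {occupied_spectrum_thz:.1f} THz")
print(f"Spectral efficiency: {spectral_efficiency:.2f} bit/s/Hz")
```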

Melle stresses that the demonstration was more a lab set-up than a live network where a signal goes through multiple reconfigurable optical add-drop multiplexers (ROADMs) and where amplifier stages may not be equally spaced.

That said, the CFP2-DCO’s reach in such networks is 750km, says Nokia.

IP-optical integration

Having coherent pluggables enables 400 Gigabit Ethernet (400GbE) payloads to be sent between routers over a wide area network, says Nokia.

“Given this convergence in form factor, with the QSFP-DD and ZR/ZR+, you can now do IP-optical integration, putting coherent optics on the router without sacrificing port density or having locked-in ports,” says Melle.

Nokia is upgrading its IP and optical portfolio with coherent pluggables.

“In the routers, ZR/ ZR+, and in transponders not only the high-performance coherent optics – the [Nokia] PSE-Vs [DSP] – but also the CFP2-DCO multi-haul,” says Melle. “The 400-gigabit multi-haul is also going to be supported in our routers.”

Accordingly, Nokia has developed two sets of input-output (I/O) router cards: one supporting QSFP-DDs suited for metro-access applications, and the second using CFP2-DCO ports for metro and regional networks.

The choice of cards adds flexibility for network operators; they no longer need to have fixed CFP2-DCO slots on their router faceplates, whether they are used or not. But being physically larger, there are fewer CFP2-DCO ports than QSFP-DD ports on the I/O cards.

While the QSFP-DD MSA initially defined the module with a maximum power dissipation of 14.5W, a coherent QSFP-DD module consumes 18-20W. Dissipating the heat generated by the modules is a challenge.

Nokia’s airflow cooling is simplified by placing a module on both sides of the line card rather than stacking two CFP2-DCOs, one on top of the other.

Nokia is adding its CFP2-DCO to its 1830 optical transport portfolio. This includes its PSI-M compact modular systems, the PSS transponder systems and also its PSS-x OTN switching systems.

The 400ZR/ZR+ module will be introduced with all its routing platforms this summer – the 7250 IXR, 7750 SR, 7750 SR-s, and the 7950 XRS, whereas the CFP2-DCO will be added to its 7750 and 7950 series later this year.

Nokia will source the 400ZR/ZR+ from third parties as well as from its optical networks division.

Its routers use the QSFP-DD form factor for all 400GbE ports, which is consistent with most router vendors in the industry. “Thus, our use and supply of 400ZR/ZR+ pluggable DCOs will focus on the QSFP-DD form-factor,” says Melle. However, the company says it can offer the OSFP form factor depending on demand.

Network planning study

Nokia published a paper at OFC on the ideal coherent solution for different applications.

For metro aggregation rings with 4-5 nodes and several ROADM pass-throughs, using ZR+ modules is sufficient. Moreover, using the ZR+ avoids any loss in router port density.

For metro-regional core applications, the ZR+’s optical performance is mostly insufficient. Here, the full 400-gigabit rate cannot be used; instead, the module must drop to 300 gigabits-per-second (Gbps) or even 200Gbps to meet the reach requirements.

Using a 400-gigabit multi-haul pluggable on a router might not match the density of the QSFP-DD but it enables a full 400-gigabit line rate.

For long-haul, the CFP2-DCO’s performance is “reasonable”, says Nokia, and this is where high-performance transponders are used.

What the OFC paper argues is that there is no one-size-fits-all solution, says Melle.

800-Gigabit coherent pluggables

Traditionally, the IEEE has defined short-reach client-side optics while the OIF defines coherent standards.

“If we want this IP-optical convergence continuing in the next generation of optics, those two worlds are going to have to collaborate more closely,” says Melle.

That’s because when a form-factor MSA is defined, it will need to accommodate both the short-reach requirements and the coherent optics. If this doesn’t happen, says Melle, there is a risk of a new split occurring around the IP and optical worlds.

The next generation of coherent pluggables will also be challenging.

All the vendors got together in 2019 and said that 400ZR was just around the corner yet the modules are only appearing now, says Melle.

The next jump in pluggable coherent optics will use a symbol rate of 90-130GBd.

“That is very much the cutting-edge so it brings back the optics as a critical enabling technology, and not just optics but the packaging,” concludes Melle.

SiDx's use of silicon photonics for blood testing

Part 4: Biosensor start-up, SiDx

A blood sample reveals much about a person’s health. But analysing the sample is complicated given its many constituents.

Identifying a user’s blood type is also non-trivial.

If a patient arriving at hospital needs a blood transfusion, the universal donor blood type, O negative, is administered. That’s because it takes too long – 45 minutes typically – to identify the patient’s blood type. This also explains the huge demand for O negative blood.

Identifying blood type promptly is what start-up SiDx set out to address with a platform based on a silicon photonics sensor. The resulting platform does more than just blood-type identification.

SiDx

The Seattle-based start-up was founded in 2017. By then, SiDx had a decade of research behind it, in silicon-photonics biosensors and the associated biochemistry.

SiDx had also started working with a blood centre in Seattle. Such centres source and sell blood to US hospitals.

“We were looking for an application that justified starting the company,” says Jonas Flueckiger, vice president of engineering at SiDx.

Flueckiger notes that silicon photonics is one of several ways to analyse biological materials. “It has advantages but there are alternatives,” he says. “You have to find an application where the advantages of silicon photonics can shine.”

Marketplace and challenges

Flueckiger splits the biosensor marketplace into three: centralised lab equipment, bedside and portable equipment, and home testing.

For centralised labs, what matters is the scale and the ability to perform parallel testing. Here, trained staff are required for sample preparation and operating the equipment.

The second category, bedside and portable systems, comprises compact and rugged platforms designed to deliver results quickly; SiDx’s testing system takes 12 minutes. Such platforms are newer and are the focus of SiDx and other silicon photonics biosensor start-ups.

“As for home tests, you don’t need a doctor’s office, you can do it yourself,” says Flueckiger.

But medical diagnostics is a challenging market to enter. “The biggest challenge is that it is very, very hard to bring something new into the medical space,” says Flueckiger.

Hospitals are conservative establishments with rigid protocols and test systems that doctors trust.

“Even though you show your system will be better, more efficient, faster, and the patient will be better served, it is still very hard to make a case to replace existing technology in a hospital,” says Flueckiger.

A new biosensor system must show it saves money, which is almost as important as demonstrating improved performance. “If your device is better but it costs more, that is not enough,” says Flueckiger.

Even if a start-up develops a system comparable in price, it must displace existing processes. And that raises a series of questions. Who does the testing? Where do the test results go? And who delivers the news to the patient?

“It is a complex picture and it is not just about technology,” says Flueckiger.

Ring resonators

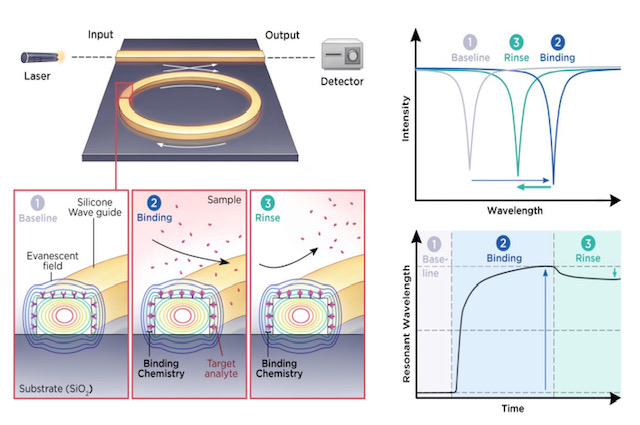

SiDx’s silicon photonics platform measures refractive index changes in light caused by blood sample components attaching to ‘receptors’ placed on the sensor’s surface.

Biochemistry is required to design the receptors for blood analysis and is a core expertise of SiDx.

SiDx uses a laser coupled to a ring resonator. When blood sample constituents attach to the receptors on the ring resonator’s surface, the wavelength at which the sensor resonates changes. This shift in refractive index is used to identify the constituents. (See diagram above.)
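SiDx is not detailing its design, so the following is only a generic textbook sketch of why binding events move a ring's resonance. To first order, a small change in the waveguide's effective index shifts the resonant wavelength by Δλ = λ·Δn_eff/n_g, where n_g is the group index. All numeric values below (1,550nm wavelength, a group index of 4.2, an index change of 1e-4) are assumptions chosen for illustration, not SiDx figures.

```python
def resonance_shift_nm(wavelength_nm: float, delta_n_eff: float, group_index: float) -> float:
    """First-order resonance shift of a ring resonator.

    delta_lambda = wavelength * delta_n_eff / group_index
    """
    return wavelength_nm * delta_n_eff / group_index


# Hypothetical values for illustration only (not from SiDx).
wavelength_nm = 1550.0   # assumed operating wavelength
group_index = 4.2        # assumed group index of a silicon waveguide
delta_n_eff = 1e-4       # assumed effective-index change caused by binding

shift_nm = resonance_shift_nm(wavelength_nm, delta_n_eff, group_index)
print(f"Resonance shift: {shift_nm * 1000:.1f} pm")
```

With these assumed numbers the shift is a few tens of picometres, which is why such sensors are read out with a finely tuned laser or, alternatively, by tuning the resonator itself.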

A key benefit of the ring resonator approach is its tiny size. Multiple sensors can be integrated into a compact area allowing tests to be performed in parallel. Or as Flueckiger puts it, there is an ability to ask more than one question.

SiDx says it uses ring resonators but it is not detailing its design.

Most emerging integrated-photonics biosensing companies use a laser and ring resonator to read refractive index changes.

One way of getting readings is to use a tunable laser, but alternative designs are possible, such as using a fixed laser and tuning the resonator.

That is possible, says Flueckiger, but in a multiplexed design where multiple ring resonators are used, the electrical input-output for all the resonators gets tricky.

“Even for a single test, a single marker, you will have a negative control, a positive control, usually one or two more to make sure you have what you think you have,” says Flueckiger. “With bodily fluids like blood, it is complex and includes stuff that can interfere.”

Silicon photonics also enables label-free detection.

Here, only receptors are used to catch a blood constituent of interest. There is no need for labels with fluorescent attachments designed to link to the constituent.

But labelling improves the probability of identifying what is being looked for. Blood is so complex a sample that doctors may not want label-free testing for just this reason, the risk that another biomarker gives a similar response to what is being sought.

SiDx says sample preparation is key here. Rather than simply squirting the blood sample into the device, additional steps are used such as dilution or separating red blood cells from the serum with testing performed on either. Reagents can also be added to remove all the cells’ membranes.

Such sample preparation steps before label-free testing are important and non-trivial. “Photonics, that is the easy part,” says Flueckiger.

The resulting biosensor comprises optics and biology, yet it requires a shelf life of 6-12 months. This is another reason why medical biosensor design – and the biochemistry in particular – is challenging.

Blood testing and disease screening

Most people understand major blood types such as A, B, AB or O negative, says Flueckiger. But it is more complicated than that in that there are many subgroups. If they are assessed wrongly, it can prove harmful to a patient.

SiDx’s platform can perform blood typing and also Rhesus tests during pregnancy. Rhesus disease is caused by a certain mix of blood types between a pregnant mother and the unborn child.

SiDx sees blood typing as an entry to the market: to prove its technology before branching out. “Once you have blood typing and a sample, you can expand the test portfolio,” says Flueckiger.

The aim is to group tests in an offering that makes sense. For example, testing for covid-19 but also testing for the common flu. Or, if a patient tests negative for covid-19, what else could it be? Testing that way and getting an answer avoids the need for a second test.

There are multiple ways to test for covid-19.

A PCR test looks for the genetic material of the virus. Analysing a blood sample determines if a body’s immune response has developed antibodies to the virus. If so, it means the person has, or has had, covid-19. SiDx’s biosensor will also be able to test a person’s immune system after a vaccine and determine if a booster jab is needed.

SiDx’s system can detect DNA, but an issue is that DNA needs ‘amplification’; its levels are too small otherwise.

Using integrated photonics coupled with the right capture molecules on the surface allows what is captured to be detected. A DNA molecule is much smaller so other tricks are needed to measure it. As a result, antibodies are more commonly tested for because it is much easier, says Flueckiger.

Prospects

SiDx and other silicon photonics biosensor companies such as Genalyte, SiPhox, Rockley Photonics and Antelope DX have all received funding recently. SiDx has raised a total of $3 million in funding and $2 million in research funding.

Is this not a vote of confidence in what is a tough market to crack?

There is venture money but it is hard to come by, says Flueckiger. Developing a medical device takes time, a minimum of five years before getting somewhere. If a company starts from scratch, the development time is longer than what venture capitalists are happy with.

Companies pursuing blood testing also can expect greater scrutiny given the story of private company, Theranos, whose claims about developing a breakthrough blood analysis system proved false.

Venture capitalists recognise the potential of benchtop devices but their concern is making money and how quickly a start-up can multiply their investment.

“Unless you can show hockey-stick growth, it’s a tough sell,” says Flueckiger. “These are long-term investments, not like a software company.”

That said, the covid-19 pandemic has helped. People now understand the important role such diagnostic equipment can play. They recognise how long it takes and that if money is thrown at the problem, device development can be accelerated.

Despite the challenges, Flueckiger is upbeat. “We have made lots of progress,” he says. “We have proven to ourselves that our technology works.” SiDx says there are developments that bring its platform closer to market that it cannot disclose at present.

The coronavirus pandemic also provided the company with a motivational boost to launch a product that is far easier to use.

SiDx did consider shifting its focus to address covid-19 testing but the pandemic occurred a year too early. “If it happened now, we would be in a lot better position to turn around very quickly with limited money and have a test ready,” says Flueckiger.

SiDx says that its conversations with investors generate excitement but they want proof of a return.

“You go into this knowing you have a long runway – the next five years will be hard – and then there is the question of whether you will be successful or not,” says Flueckiger.

II-VI expands its 400G and 800G transceiver portfolio

II-VI has showcased its latest high-speed optics. The need for such client-side modules is being driven by the emergence of next-generation Ethernet switches in the data centre.

The demonstrations, part of the OFC virtual conference and exhibition held last month, featured two 800-gigabit and two 400-gigabit optical transceivers.

“We have seen the mushrooming of a lot of datacom transceiver companies, primarily from China, and some have grown pretty big,” says Sanjai Parthasarathi, chief marketing officer at II-VI.

But a key enabler for next-generation modules is the laser. “Very few companies have these leading laser platforms – whether indium phosphide or gallium arsenide, we have all of that,” says Parthasarathi.

During OFC, II-VI also announced the sampling of a 100-gigabit directly modulated laser (DML) and detailed an optical channel monitoring platform.

“We have combined the optical channel monitoring – the channel presence monitoring, the channel performance monitoring – and the OTDR into a single integrated subsystem, essentially a disaggregated monitoring system,” says Parthasarathi.

An optical time-domain reflectometer (OTDR) is used to characterise fibre.

High-speed client-side transceivers

II-VI demonstrated two 800-gigabit datacom products.

One is an OSFP form factor implementing 800-gigabit DR8 (800G-DR8) and the other is a QSFP-DD800 module with dual 400-gigabit FR4s (2x400G-FR4). The DR8 uses eight fibres in each direction, each carrying a 100-gigabit signal. The QSFP-DD800 supports two FR4s, each carrying four, 100-gigabit wavelengths over single-mode fibre.

“These are standard IEEE-compliant reaches: 500m for the DR8 and 2km for the dual FR4 talking to individual FR4s,” says Vipul Bhatt, senior strategic marketing director, datacom at II-VI.

The 800G-DR8 module can be used as an 800-gigabit link or, when broken out, as two 400-gigabit DR4s or eight individual 100-gigabit DR optics.

II-VI chose to implement these two 800-gigabit interfaces based on the large-scale data centre players’ requirements. The latest switches use 25.6-terabit Ethernet chips that have 100-gigabit electrical interfaces while next-generation 51.2-terabit ICs are not far off. “Our optics is just keeping in phase with that rollout,” says Bhatt.
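The link between switch-chip capacity and module speed can be shown with simple division. The sketch below counts the 100-gigabit electrical lanes on each switch IC and the number of modules needed to expose the full capacity. It assumes, purely for illustration, that every lane is brought out through modules of a single type; real switch designs may mix port speeds. The function name is hypothetical.

```python
LANE_RATE_GBPS = 100  # electrical lane rate quoted in the article


def modules_needed(switch_capacity_gbps: int, module_rate_gbps: int) -> int:
    """Number of modules needed to expose a switch chip's full capacity."""
    return switch_capacity_gbps // module_rate_gbps


for capacity_gbps in (25_600, 51_200):
    lanes = capacity_gbps // LANE_RATE_GBPS
    n_800g = modules_needed(capacity_gbps, 800)
    n_400g = modules_needed(capacity_gbps, 400)
    print(f"{capacity_gbps / 1000:.1f}T switch: {lanes} lanes, "
          f"{n_800g} x 800G or {n_400g} x 400G modules")
```

Under that assumption, a 25.6-terabit chip fills 32 800-gigabit ports, and the 51.2-terabit generation doubles that to 64.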

During OFC, II-VI also showcased two 400-gigabit QSFP112 modules: a 400-gigabit FR4 (400G-FR4) and a multi-mode 400-gigabit SR4 (400G-SR4).

The SR4 consumes less power, is more cost-effective but has a shorter reach. “Not all large volume deployments of data centres are necessarily in huge campuses,” says Bhatt.

II-VI demonstrated its 800-gigabit dual FR4 module talking to two of its QSFP112 400-gigabit FR4s.

Bhatt says the IEEE 802.3db standard has two 400G-SR4 variants, one with a 50m reach and the second, a 100m reach. “We chose to demonstrate 100m because it is inclusive of the 50m capability,” says Bhatt.

II-VI stresses its breadth in supporting multi-mode, short-reach single-mode and medium-reach single-mode technologies.

The company says it was the electrical interface rather than the optics that was more challenging in developing its latest 400- and 800-gigabit modules.

The company has 100-gigabit multi-mode VCSELs, single-mode lasers, and optical assembly and packaging. “It was the maturity of the electrical interface [that was the challenge], for which we depend on other sources,” says Bhatt.

100-gigabit PAM-4 DML

II-VI revealed it is sampling a 100-gigabit PAM-4 directly modulated laser (DML).

Traditionally, client-side modules for the data centre come to market using a higher performance indium phosphide externally-modulated laser (EML). The EML may even undergo a design iteration before a same-speed indium phosphide DML emerges. The DML has simpler drive and control circuitry, is cheaper and has a lower power consumption.

“But as we go to higher speeds, I suspect we are going to see both [laser types] coexist, depending on the customer’s choice of worst-case dispersion and power tolerance,” says Bhatt. It is too early to say how the DML will rank with the various worst-case test specifications.

Parthasarathi adds that II-VI is developing 100-gigabit and 200-gigabit-per-lane laser designs. Indeed, the company had an OFC post-deadline paper detailing work on a 200-gigabit PAM-4 DML.

Optical monitoring system

Optical channel monitoring is commonly embedded in systems while coherent transceivers also provide performance metrics on the status of the optical network. So why has II-VI developed a standalone optical monitoring platform?

What optical channel monitors and coherent modules don’t reveal is when a connector is going bad or fibre is getting bent, says Parthasarathi: “The health and the integrity of the fibre plant, there are so many things that affect a transmission.”

Operators may have monitoring infrastructure in place but not necessarily the monitoring of the signal integrity or the physical infrastructure. “If you have an existing network, this is a very easy way to add a monitoring capability,” says Parthasarathi.

“As we can control all the parts – the optical channel monitoring and the OTDR – we can configure it [the platform] to meet the application,” adds Sara Gabba, manager, analysis, intelligence & strategic marcom at II-VI. “Coherent indeed provides a lot of information, but this kind of unit is also suitable for access network applications.”

The optical monitoring system features an optical switch so it can cycle and monitor up to 48 ports.

With operators adopting disaggregated designs, each element in the optical network is required to have more intelligence and more autonomy.

“If you can provide this kind of intelligent monitoring and provide information about a specific link, you create the possibility to be more flexible,” says Gabba.

Using the monitoring platform, intelligence can be more widely distributed in the optical network complementing systems operators may have already deployed, she adds.

Marvell's first Inphi chips following its acquisition

Marvell unveiled three new devices at the recent OFC virtual conference and show.

One chip is its latest coherent digital signal processor (DSP), dubbed Deneb. The other two chips, for use within the data centre, are a PAM-4 (4-level pulse-amplitude modulation) DSP, and a 1.6-terabit Ethernet physical layer device (PHY).

The chips are Marvell’s first announced Inphi products since it acquired the company in April. Inphi’s acquisition adds $0.7 billion to Marvell’s $3 billion annual revenues while the more than 1,000 staff brings the total number of employees to 6,000.

Marvell spends 30 per cent of its revenues on R&D.

Acquisitions

Inphi is the latest of a series of Marvell acquisitions as it focusses on data infrastructure.

Marvell acquired two custom ASIC companies in 2019: Avera Semiconductor, originally the ASIC group of IBM Microelectronics, and Aquantia that has multi-gigabit PHY expertise.

A year earlier Marvell acquired processing and security chip player, Cavium Networks. Cavium had acquired storage specialist, QLogic, in 2017.

These acquisitions have more than doubled Marvell’s staff. Inphi brings electro-optics expertise for the data centre and optical transport and helps Marvell address the cloud and on-premises data centre markets as well as the 5G carrier market.

Marvell is also targeting the enterprise/ campus market and what it highlights as a growth area, automotive. Nigel Alvares, vice president of solutions at Marvell, notes the growing importance of in-vehicle networking, what he calls a ‘data-centre-on-wheels’.

“Inphi’s technology could also help us in automotive as optical technologies are used for self-driving initiatives in future,” says Alvares.

Inphi also brings integration, co-packaging and multi-chip module expertise.

Merchant chip and custom ASIC offerings

Cloud operators and 5G equipment vendors are increasingly developing custom chip designs. Marvell says it is combining its portfolio with their intellectual property (IP) to develop and build custom ICs.

Accordingly, in addition to its merchant chips such as the three OFC-announced devices, Marvell partners with cloud players or 5G vendors, providing them with key IP blocks that are integrated with their custom IP. Marvell can also build the ASICs.

Another chip-design business model Marvell offers is the integration of different hardware in a multi-chip package. The components include a custom ASIC, merchant silicon, high-speed memory and third-party chiplets.

“We co-package and deliver it to a cloud hyperscaler or a 5G technical company,” says Alvares.

Marvell says this chip strategy serves two market sectors: the cloud hyperscalers and the telcos.

Cloud players are developing custom solutions as they become more vertically integrated. They also have deep pockets. But they can’t do everything because they are not chip experts so they partner with companies like Marvell.

“The five to 10 hyperscalers in the world, they are doing so much creative stuff to optimise applications that they need custom silicon,” says Alvares.

The telcos, in contrast, are struggling to grow their revenues and favour merchant ICs, given they no longer have the R&D budgets of the past. It is this split in the marketplace at which Marvell is targeting its various chip services.

OFC announcements

At OFC, Marvell announced the Deneb coherent DSP, used for optical transport including the linking of equipment between data centres.

The Deneb DSP is designed with open standards in mind and complements the 400-gigabit CMOS Canopus DSP announced by Inphi in 2019.

Deneb adds the oFEC forward error correction scheme to support open standards such as OpenZR+, 100-gigabit ZR, the 400-gigabit OpenROADM MSA and CableLabs’ 100-gigabit standard.

The 100-gigabit ZR is targeted at the 5G access market and mobile backhaul. Like the OIF 400G ZR, it supports reaches of 80-120km but uses quadrature phase-shift keying (QPSK) modulation.

“Not only do we support 100 gigabit [coherent] but we also have added the full industrial temperature range, from -40°C to 85°C,” says Michael Furlong, associate vice president, product marketing at Marvell.

The Deneb DSP is sampling now. Both the Deneb and Canopus DSPs will have a role in the marketplace, says Furlong.

Atlas PAM-4 DSP and the 1.6-terabit PHY

Marvell also announced at OFC the Atlas PAM-4 DSP and a dual 800-gigabit PHY device, both used within the data centre.

Atlas advances Marvell’s existing family of Polaris PAM-4 DSPs in that it integrates physical media devices. “We are integrating [in CMOS] the trans-impedance amplifier (TIA) and laser drivers,” says Alvares.

Using the 200-gigabit Atlas reduces an optical module design from three chips to two; the Atlas comprises a transmit chip and a receiver chip (see diagram below). Using the Atlas chips reduces the module’s bill of materials, while power consumption is reduced by a quarter.

The Atlas chips, now sampling, are not packaged but offered as bare die and will be used for 200-gigabit SR4 and FR4 modules. Meanwhile, Marvell’s 1.6-terabit PHY – the 88X93160 – is a dual 800-gigabit copper DSP that performs retimer and gearbox functions.

“We view this as the key data centre building block for the next decade,” says Alvares. “The world is just starting to design 100-gigabit serial for their infrastructure.”

The device, supporting 16 100-gigabit lanes, is the industry’s first 100-gigabit serial retimer, says Marvell. The device drives copper cable and backplanes and is being adopted for links between the server and the top-of-rack switch or to connect switches in the data centre.

The device is suitable for next-generation 400-gigabit and 800-gigabit Ethernet links that use 100-gigabit electrical serialisers-deserialisers (serdes).
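The "dual 800-gigabit" and "1.6-terabit" descriptions are two views of the same lane count. The sketch below works through the arithmetic, assuming each Ethernet link uses as many 100-gigabit lanes as its rate requires and that the 16 lanes can be grouped freely. The article does not describe the device's actual lane configuration options, so that grouping is an assumption.

```python
LANE_RATE_GBPS = 100  # per-lane serdes rate given in the article
PHY_LANES = 16        # lanes supported by the 1.6-terabit PHY


def links_supported(link_rate_gbps: int) -> int:
    """How many Ethernet links of a given rate fit across the PHY's lanes."""
    lanes_per_link = link_rate_gbps // LANE_RATE_GBPS
    return PHY_LANES // lanes_per_link


phy_capacity_gbps = PHY_LANES * LANE_RATE_GBPS
links_800g = links_supported(800)
links_400g = links_supported(400)

print(f"PHY capacity: {phy_capacity_gbps} Gbps")
print(f"800GbE links: {links_800g}, 400GbE links: {links_400g}")
```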

The 5nm CMOS device supports a link budget of over 38dB (decibel) and reduces I/O power by 40 per cent compared to a 50-gigabit PAM4-based PHY.

The 100-gigabit serdes design will also be used with Marvell’s Prestera switch portfolio.

imec’s research work to advance biosensors

Part 3: Biosensor developments

- Pol Van Dorpe discusses the institute’s use of photonics and silicon to develop new designs for medical diagnostics.

- imec has designed a breathalyser that detects the coronavirus with the accuracy of a polymerase chain reaction (PCR) test, a claimed world first.

Optics and photonics are advancing medical diagnostics in two notable ways.

The technologies are helping to shrink diagnostic systems to create new types of medical devices.

“Going from big lab equipment to something much smaller is a clear trend,” says Pol Van Dorpe, a Fellow at imec, the Belgian nanoelectronics and nanotechnology R&D institute.

Photonics and silicon also benefit central labs by creating more powerful test instruments. More functionality and detectors can be integrated in a given area enabling multiple tests in parallel, a technique dubbed multiplexing.

imec’s biosensor work and expertise

imec began its biosensor research in the 1990s, investigating electrical and surface plasmon-based devices. In more recent years, it has added the development of custom biosensor chips for companies.

As examples, imec worked with Panasonic to develop a chip for PCR, a testing technique now known to the public due to covid-19. The R&D institute also worked with Genalyte, a pioneering silicon photonics medical diagnostics company that uses optical waveguides, ring resonators, and a tunable laser for its multiplexing biosensor product.

imec has also developed in-house expertise across several disciplines needed for biosensor development.

Several groups at imec cover photonics, with Van Dorpe heading the group addressing biophotonics and single-molecule electrical devices.

Another group addresses biochemistry and surface chemistry used to coat and activate a sensor’s surface so that receptors can be attached. Receptors are biochemical materials that enable the sensor to trap and detect materials.

A further team covers microfluidics used to deliver liquid samples to the sensor or to mix solutions precisely.

Semiconductor process steps are used to create high-aspect-ratio structures that implement microfluidic structures. Such structures can also be used to sort cells, known as cytometry.

“There are many sensor types, and each has its own fluidic needs,” says Van Dorpe.

Spin-offs

imec has also spun off several biosensor companies.

One, miDiagnostics, raised $16.5 million in funding in 2020. miDiagnostics has a nanofluidic processor (nFP) that performs diagnostic tests on fluids guided through the system using capillary forces. The liquids can be redirected and can even have their flow reversed.

The nFP is configurable depending on the application. It combines nanofluidic processing and PCR for processing biomarkers: from cells and proteins to nucleic acids and small molecules.

Indigo is another spin-off that is developing a glucose monitoring system. A photonics sensor is embedded under the skin and communicates the user’s blood sugar level to a smartphone.

Market trends

The biosensor market is complex. Many actors – labs, doctors and users – in healthcare must be convinced before adopting a biosensor device. For a device to be successful, it must add value compared to existing equipment. Cost is also key, as are the use case and ease of use.

Portable equipment that delivers results promptly so that medical staff can make quick decisions is one example. Others include identifying whether a patient has suffered a heart attack or has a bacterial infection such as sepsis, or enabling a doctor’s office to determine whether a patient has a bacterial or viral infection. But no doctor will have 20 devices in their office, each performing a specific test, says Van Dorpe.

Such biosensor devices could also have played a key role during the current coronavirus pandemic.

“I can tell you we were working with companies and if they were several years ahead in their roadmaps, much of this would have been a lot easier,” says Van Dorpe.

Antigen-based quick tests for covid exist but people don’t trust them completely due to their limited sensitivity. It is also still not known when people become contagious. “If you take a nasal swab but are already recovering then you may not be as contagious,” says Van Dorpe.

imec has developed a coronavirus breathalyser. Blowing into a filter, aerosols and small droplets from a person’s lungs are collected. A 5-minute PCR analysis unit delivers a result, informing the person if their breath is infectious.

The goal is to use such systems at airports and large events, but it doesn’t guarantee that a person won’t get sick. “You could have been infected the previous day,” says Van Dorpe.

In clinical trials with UZ Leuven, the university hospital of Leuven, the system has tested viral RNA in exhaled air with high sensitivity.

“Our chip technology can detect quickly the virus particles with the same accuracy as classical PCR tests,” says Van Dorpe. “We are the first in the world to demonstrate this.”

imec is undertaking more clinical trials while improving the test’s robustness and ease of use. “We are discussing the commercialisation of our technology with different parties,” he says.

Biosensor technologies

imec’s toolbox of technologies includes silicon nitride optical waveguides, beam splitters, filters, spectrometers, and in-plane and out-of-plane lenses.

imec can deposit waveguides on CMOS and has exploited the technique with CMOS image sensors that have many detectors. “We can use commercial image-sensor wafers and deposit the waveguide technology and use those pixels as detectors,” says Van Dorpe.

Established diagnostic techniques used in medical labs include ELISA, a reference technique to detect proteins, and PCR, which detects nucleic acids (DNA/RNA).

The importance of both lab techniques will not change anytime soon, says Van Dorpe.

One reason why ELISA and PCR are so established is that they use ‘amplification’ to detect minute amounts of the material being tested for – the analyte – in complex samples.

For amplification, another label is used in addition to the receptors. The analyte is attached to an antibody within the label, and it is this second label that provides greater testing sensitivity. This, however, requires sample preparation by trained staff.

In contrast, newer biosensor technologies such as surface plasmon resonance (SPR) and silicon photonics use a label-free approach that does away with the second analyte-label stage.

But the label-free sensor is less sensitive; the technique measures when something attaches to the receptors but it can’t distinguish what it measures.

Van Dorpe stresses that amplification is chemistry-related and so it can be used with silicon photonics or SPR.

It is the overall diagnostic system that determines sensitivity, the combination of the transduction process and the chemistry, says Van Dorpe.

SPR and silicon photonics

SPR and silicon photonics biosensors work by measuring changes in light caused by passing a sample over the sensor which causes molecules to attach to the surface.

An SPR system comprises a laser, a prism attached to a gold surface, and a detector. Light is shone through the prism and is reflected from the gold layer before being detected. At a certain incident angle, the light causes electron resonance on the gold surface causing the reflected light intensity to dip.

Attaching biochemical receptors to the gold surface tailored to the analyte causes a shift in resonance angle and the angle change is a measure of the analyte’s presence.

In contrast, silicon photonic designs measure refractive index changes in the light caused by analytes attached to receptors on the sensor’s surface. Two sensor designs are used: a laser with either a Mach-Zehnder interferometer (MZI) or a ring resonator.

“Everything that changes the refractive index causes a signal,” says Van Dorpe.
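As a rough illustration of how a ring-resonator sensor turns a refractive-index change into a measurable signal, the sketch below uses the standard first-order relation in which the resonance wavelength shifts by roughly the wavelength times the change in effective index, divided by the group index. The function name and all numerical values are assumptions chosen for illustration; they are not figures from imec or any of the companies mentioned.

```python
def resonance_shift_nm(wavelength_nm: float, delta_n_eff: float, group_index: float) -> float:
    """First-order shift of a ring resonance for a small change in effective index."""
    if group_index <= 0:
        raise ValueError("group_index must be positive")
    return wavelength_nm * delta_n_eff / group_index


# Illustrative values only (assumptions, not taken from any product):
# a 1550nm resonance, an effective-index change of 1e-4 and a group index of 2.
shift_nm = resonance_shift_nm(wavelength_nm=1550.0, delta_n_eff=1e-4, group_index=2.0)
print(f"Resonance shift: {shift_nm * 1000:.1f} pm")
```

The relation is indifferent to what caused the index change, which is why a label-free sensor of this kind responds to anything that binds to its surface.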

imec’s biosensor developments

imec’s work with Genalyte a decade ago involved a biosensor that used a tunable laser and ring-resonator sensors.

More recently, the R&D institute has developed technologies not reflected in the silicon photonics designs used by biosensor start-ups such as Genalyte, SiDx, Antelope DX and SiPhox.

imec’s biosensor technologies have been developed to be less sensitive to non-specific binding. What is measured is fluorescence that occurs with the binding to the analyte.

“In blood or more complicated samples, there is a lot of stuff [besides what is being tested for],” says Van Dorpe.

One technology imec has developed performs rapid ELISA-style testing without needing the repeated wash stages required with ELISA systems.

ELISA uses an antibody receptor to detect the tested-for material while a second antibody uses an enzyme that produces colour. And it is the colour that is measured. In effect, both antibodies detect the analyte but the second, with its fluorescent label, helps determine how much analyte has bound.

With standard ELISA testing, repeated wash steps are required to remove what has not bound to the receptors and labels. These wash stages lengthen the testing time.

imec’s sensor is sensitive in the region very close to the surface. Measuring the fluorescence near the surface determines its build-up over time (see diagram).

The cleverness of the sensor is that the larger the concentration, the faster the surface fills up, reflected in the rate of change of fluorescence over time.

“You don’t need to wait until everything has stabilised to determine the concentration,” says Van Dorpe. “You can wait, say 2 minutes, measure the slope of the signal and that gives you a direct measure of the concentration.”
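The slope-based readout Van Dorpe describes can be sketched in a few lines. The example fits a straight line to the early part of a fluorescence trace and converts the slope to a concentration using a calibration constant. It assumes the early binding rate is proportional to concentration; the function names, the calibration value and the units are hypothetical and do not describe imec's actual processing.

```python
def initial_slope(times_s, signal):
    """Least-squares slope of signal versus time (signal units per second)."""
    n = len(times_s)
    if n < 2 or n != len(signal):
        raise ValueError("need at least two samples and equal-length inputs")
    mean_t = sum(times_s) / n
    mean_y = sum(signal) / n
    sxx = sum((t - mean_t) ** 2 for t in times_s)
    if sxx == 0:
        raise ValueError("time samples must not all be identical")
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_s, signal))
    return sxy / sxx


def concentration_from_slope(slope, slope_per_unit_concentration):
    """Assumes the early slope scales linearly with concentration (hypothetical calibration)."""
    return slope / slope_per_unit_concentration


# Synthetic two-minute trace: background of 1.0 plus a linear rise of 0.05 per second.
times_s = [0, 20, 40, 60, 80, 100, 120]
signal = [1.0 + 0.05 * t for t in times_s]

slope = initial_slope(times_s, signal)
# Hypothetical calibration: a slope of 0.01 per second corresponds to one concentration unit.
concentration = concentration_from_slope(slope, slope_per_unit_concentration=0.01)
print(f"slope={slope:.3f} per second, concentration={concentration:.1f} units")
```

Because only the slope is used, a constant background offset in the trace does not affect the estimate.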

The design can be used with blood samples, to measure protein production or proteins that shouldn’t be there.

The sensor allows the real-time measurement of biomarkers, and no wash stages are needed. It also enables a controlled process for the biological production of vaccines or cancer therapy.

The key here is that using waveguides and integrated photonics allows localised sensing.

“Also with waveguide technology, because you route light on a chip, you can address a lot of [sensing] sites at the same time,” says Van Dorpe. “That allows you to measure a lot of spots, what is called multiplexing.”

These are the advantages of integrated photonics: the ability to test in parallel and the precise quantification of concentrations, he says.

imec has developed a second fluorescence technique – called super-critical angled fluorescence – closely related to the first but that does away with the waveguide.

As with the first technique, two antibodies are used, one with a fluorescent label.

By exciting the fluorescent label, light is produced in all directions. If a high-angle beam is used, the light at the surface interface refracts within a critical angle.

A fluorescent molecule close to the surface – less than a wavelength away – emits light into a silicon-oxide material. This helps distinguish molecules far from the surface compared to closer ones.

imec’s compact system filters out fluorescence from labels floating further away while measuring those nearby. This is like what happens with the waveguide of the first technique, where it is routed to the detector. But here the detector is situated underneath to measure the fluorescence. The technique delivers rapid results.

The two imec techniques deliver selective sensing that doesn’t rely on refractive index changes or mass. With the latter techniques, all the signals are picked up: everything that binds, wanted and unwanted materials.

The imec techniques are not perfect. There is some degree of auto-fluorescence but it is low. Also, some antibodies with the label will bind to the surface but that is much smaller than the proteins, says Van Dorpe.

Cytometry

imec is working with Sarcura, a cell therapy firm, on a high-throughput cytometry solution for cell separation. Here photonic integration is used along with a microfluidic solution to measure the cells.

A standard cytometer has a flow of cells and, typically, a bank of lasers at multiple wavelengths. As the cells pass, they scatter the focused light from the lasers. The scattering is measured while the cells also fluoresce since they are labelled. This allows for cell categorisation.

With cell therapy for cancer treatment, immune cells are grown and need analysing. Another use is identifying tumour cells in the blood.

“There are lots of applications where you want to pick out specific cells, label them, look at their properties and classify,” he says.

Traditional equipment used for these tasks is large and complex, requiring skilled staff.

Silicon photonics and microfluidics allow the cells to be brought to a channel and, using waveguides, to be illuminated and detected.

The result, says Van Dorpe, is a high-throughput design with many adjacent channels.

Lumentum ships a 400G CFP2-DCO coherent module

Lumentum has started supplying customers with its CFP2-DCO coherent optical module. Operators use the pluggable to add an optical transport capability to equipment.

The company describes the CFP2-DCO as a workhorse; a multi-purpose pluggable for interface requirements ranging from connecting equipment in separate data centres to long-haul optical transmission. The module works at 100-, 200-, 300- and 400-gigabit line rates.

The pluggable also complies with the OpenROADM multi-source agreement. It thus supports the open Forward Error Correction (oFEC) standard, enabling interoperability with oFEC-compliant coherent modules from other vendors.

“Optical communications is getting more diverse and dynamic with the inclusion of the internet content providers (ICPs) alongside traditional telecom operators,” says Brandon Collings, CTO at Lumentum.

The CFP2-DCO module is being adopted by traditional network equipment makers and by the ICPs who favour more open networking.

CFP2-DCO modules from vendors support the OIF’s 400ZR standard, which links switching and routing equipment in data centres up to 120km apart, as well as more demanding custom optical transmission performance requirements, referred to as ZR+.

So what differentiates Lumentum’s CFP2-DCO from other coherent module makers?

Kevin Affolter, Lumentum’s vice president, strategic marketing for transmission, highlights the company’s experience in making coherent modules using the CFP form factor. Lumentum also makes the indium phosphide optical components used for its modules.

“We are by far the leading vendor of CFP2-ACO modules and that will go on for several years yet,” says Affolter.

Unlike the CFP2-DCO that integrates the optics and the digital signal processor (DSP), the earlier generation CFP2-ACO module includes optics only, with the coherent DSP residing on the line card.

The company also offers a 200-gigabit CFP2-DCO that has been shipping for over 18 months.

As a multi-purpose design, Affolter says some customers want to use the CFP2-DCO primarily at 200 gigabits for its long-haul reach while others want the improved performance of the proprietary 400-gigabit mode and its support of Ethernet and OTN clients.

“Each of the [merchant] DSPs has subtly different features,” says Affolter. “Some of those features are important to protect applications, especially for some of the hyperscalers’ applications.”

Higher baud rates

Lumentum did not make any announcements at the recent OFC virtual conference and show regarding indium phosphide-based coherent components operating at the next symbol rate of 128 gigabaud (GBd). But Collings says work continues in its lab: “This is a direction we are all headed.”

The latest coherent optical components operate at 100GBd, making possible 800-gigabit-per-wavelength transmissions. Moving to a 128GBd symbol rate enables a greater reach for the given transmission speed as well as the prospect of 1.2+ terabit wavelengths.

This means fewer coherent modules are needed to send a given traffic capacity, saving costs. But moving to a higher baud rate does not improve overall spectral density since a higher baud rate signal requires a wider channel.

“We are encountering a fundamental limit set by mother nature around spectral efficiency,” says Collings.
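The trade-off can be put in numbers with a simple model. The sketch below assumes dual-polarisation transmission, a fixed modulation format of four bits per symbol per polarisation, no FEC or framing overhead, and a channel width equal to the symbol rate plus a 15 per cent margin. These are simplifying assumptions made for illustration, not Lumentum figures.

```python
import math

POLARISATIONS = 2  # coherent systems transmit on two polarisations


def line_rate_gbps(baud_gbd: float, bits_per_symbol: int) -> float:
    """Raw line rate, ignoring FEC and framing overhead (a simplification)."""
    return baud_gbd * bits_per_symbol * POLARISATIONS


def channel_width_ghz(baud_gbd: float, width_factor: float = 1.15) -> float:
    """Assumed channel width: the symbol rate plus a hypothetical 15% margin."""
    return baud_gbd * width_factor


def summarise(baud_gbd: float, bits_per_symbol: int, traffic_gbps: float) -> dict:
    """Rate per wavelength, modules needed for the traffic, and spectral efficiency."""
    rate = line_rate_gbps(baud_gbd, bits_per_symbol)
    return {
        "rate_gbps": rate,
        "modules": math.ceil(traffic_gbps / rate),
        "bits_per_s_per_hz": rate / channel_width_ghz(baud_gbd),
    }


# Same modulation format and the same 6.4 terabits of traffic at both symbol rates.
at_100 = summarise(baud_gbd=100, bits_per_symbol=4, traffic_gbps=6400)
at_128 = summarise(baud_gbd=128, bits_per_symbol=4, traffic_gbps=6400)
print(at_100)
print(at_128)
```

Under these assumptions the higher symbol rate needs fewer modules for the same traffic, but the bits-per-second-per-hertz figure is unchanged because the channel widens in step with the symbol rate.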

Optical transmission technology continues to follow the familiar formula where the more challenging high-end, high-performance coherent systems start as a line-card technology and then, as it matures, transitions to a more compact pluggable format. This trend will continue, says Collings.

The industry goal remains to scale capacity and reduce the dollars-per-bit cost and that applies to high-end line cards and pluggables. This will be achieved using greater integration and increasing the current baud rate.

“Getting capacity up, driving dollars-per-bit down is now what the game is going to be about for a while,” says Collings.

Whether the industry will go significantly above 128GBd, to 256GBd for example, remains to be seen as doing so is viewed as highly challenging technically.

However, the industry continues to demand higher network capacity and lower cost-per-bit. So Collings sees a couple of possible approaches to continue satisfying this demand.

The first is to keep driving down the cost of the 128GBd generations of transceivers, satisfying lower cost-per-bit and expanding capacity by using more and more transceivers.

The second approach is to develop transceivers that integrate multiple optical carriers into a single ‘channel’. A channel here refers to a unit of optical spectrum managed through the ROADM network. This would increase capacity per transceiver and lower the cost-per-bit.

“Both approaches are technical and implementation challenges and it remains to be seen which, or both, will be realised across the industry,” says Collings.

100-gigabit PAM-4 directly modulated laser

At OFC Lumentum announced that its 100-gigabit PAM-4 directly modulated laser (DML), which is being used for 500m applications, now supports the 2km-reach FR single-channel and FR4 four-channel client-side module standards.

This is a normal progression of client-side modules for the data centre where the higher performance externally-modulated laser (EML) for a datacom transceiver is the one paving the way. As the technology matures, the EML is replaced by a DML which is cheaper and has simpler drive and control circuitry.

“We started this [trait] with the -LR4 which was dominated by EMLs,” says Mike Staskus, vice president, product line management, datacom at Lumentum. “The fundamental cost savings of a DML is its smaller chip size, more chips per wafer, and fewer processes, fewer regrowths.”

The company is working on a 200-gigabit EML and a next-generation 100-gigabit DML that promises to be lower cost and possibly uncooled.

Reconfigurable optical add-drop multiplexers (ROADMs)

Lumentum is working to expand its wavelength-selective switches (WSSes) to support the extended C-band, and C- and L-band options as a way to increase transmission capacity.

“We are expanding the overall ROADM portfolio to accommodate extended C-band and more efficient C-band and L-band opportunities to continue to build capacity into ROADM networks,” says Collings. “As spectral efficiency saturation sets in, we are going to need more amplified bandwidth and more fibres, and the C- and L-bands will double fibre capacity.”

The work includes colourless and directionless; colourless, directionless and contentionless; and higher-degree ROADM designs.

Marvell exploits 5nm CMOS to add Octeon 10 DPU smarts

The Octeon family has come a long way since the networking infrastructure chip was introduced by Cavium Networks in 2005.

Used for data centre switches and routers, the original chip family featured 1 to 16, 64-bit MIPS cores and hardware acceleration units for packet processing and encryption. The devices were implemented using foundry TSMC’s 130nm CMOS process.

Marvell, which acquired Cavium in 2018, has taped out the first two devices of its latest, seventh-generation Octeon 10 family.

The devices, coined data processing units (DPU), will feature up to 36 state-of-the-art ARM cores, support a 400-gigabit line rate, 1 terabit of switching capacity, and dedicated hardware for machine-learning and vector packet processing (VPP).

Marvell is using TSMC’s latest 5nm CMOS process to cram all these functions on the DPU system-on-chip.

The 5nm-implemented Octeon 10 coupled with the latest ARM cores and improved interconnect fabric will triple data processing performance while halving power consumption compared to the existing Octeon TX2 DPU.

DPUs join CPUs and GPUs

The DPU is not a new class of device but the term has become commonplace for a processor adept at computing and moving and processing packets.

Indeed, the DPU is being promoted as a core device in the data centre alongside central processing units (CPUs) and graphics processing units (GPUs).

As Marvell explains, a general-purpose CPU can perform any processing task but it doesn’t have the computational resources to meet all requirements. For certain computationally-intensive tasks like graphics and artificial intelligence, for example, the GPU is far more efficient.

The same applies to packet processing. The CPU can perform data-plane processing tasks but it is inefficient when it comes to intensive packet processing, giving rise to the DPU.

“The CPU is just not effective from a total cost of ownership, power and performance point of view,” says Nigel Alvares, vice president of solutions marketing at Marvell.

Data-centric tasks

The DPU is used for smart network interface controller (SmartNIC) cards found in computer servers. The DPU is also suited for standalone tasks at the network edge and for 5G.

Marvell says the Octeon DPU can be used for data centres, 5G wireless transport, SD-WAN, and fanless boxes for the network edge.

Data centre computation is moving from application-centric to more data-centric tasks, says Marvell. Server applications used to host all the data they needed when executing algorithms. Now applications gather data from various compute clusters and locations.

“The application doesn’t have all the data but there is a lot of data that needs to be pumped into the application from many points,” says Jeffrey Ho, senior product manager at Marvell. “So a lot of network overlay, a lot of East-West traffic.”

This explains the data-centric nature of tasks or, as Ho describes it, the data centre appearing as a mesh of networks: “It’s a core network, it is a router network, it is an enterprise network – all in one block.”

Octeon 10 architecture

The Octeon 10 family uses ARM’s latest core architecture, the Neoverse N2 processor, Arm’s first Armv9 Infrastructure CPU, for general-purpose computational tasks. Each ARM core has access to hierarchical cache memory and external DDR5 SDRAM memory.

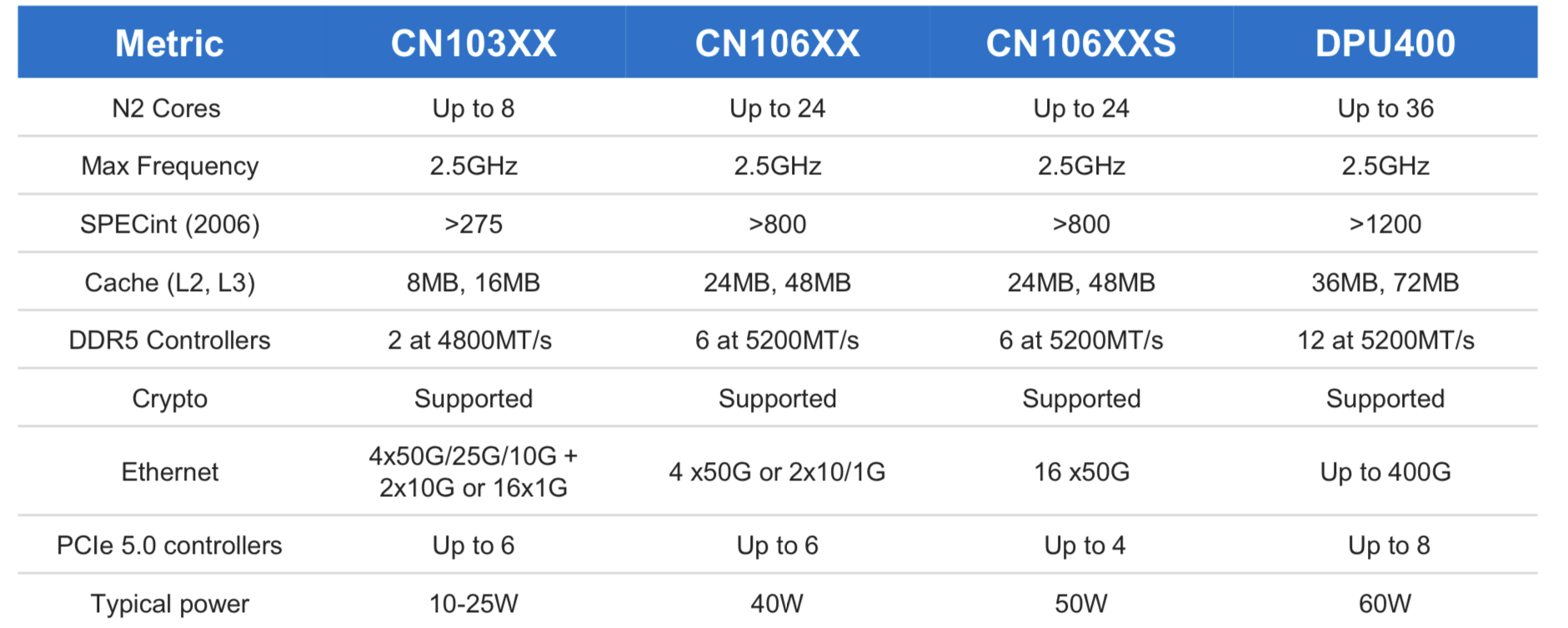

The initial Octeon 10 family members start with the CN103XX, which has up to eight ARM N2 cores, each with Level 1 and private Level 2 caches, plus shared Level 2 and Level 3 caches (8MB and 16MB, respectively).

The most powerful DPU of the Octeon 10 family is the DPU400 which will have up to 36 ARM cores and 36MB level 2 and 72MB level 3 caches.

“Then you have the acceleration hardware that is very friendly to this generic compute,” says Ho.

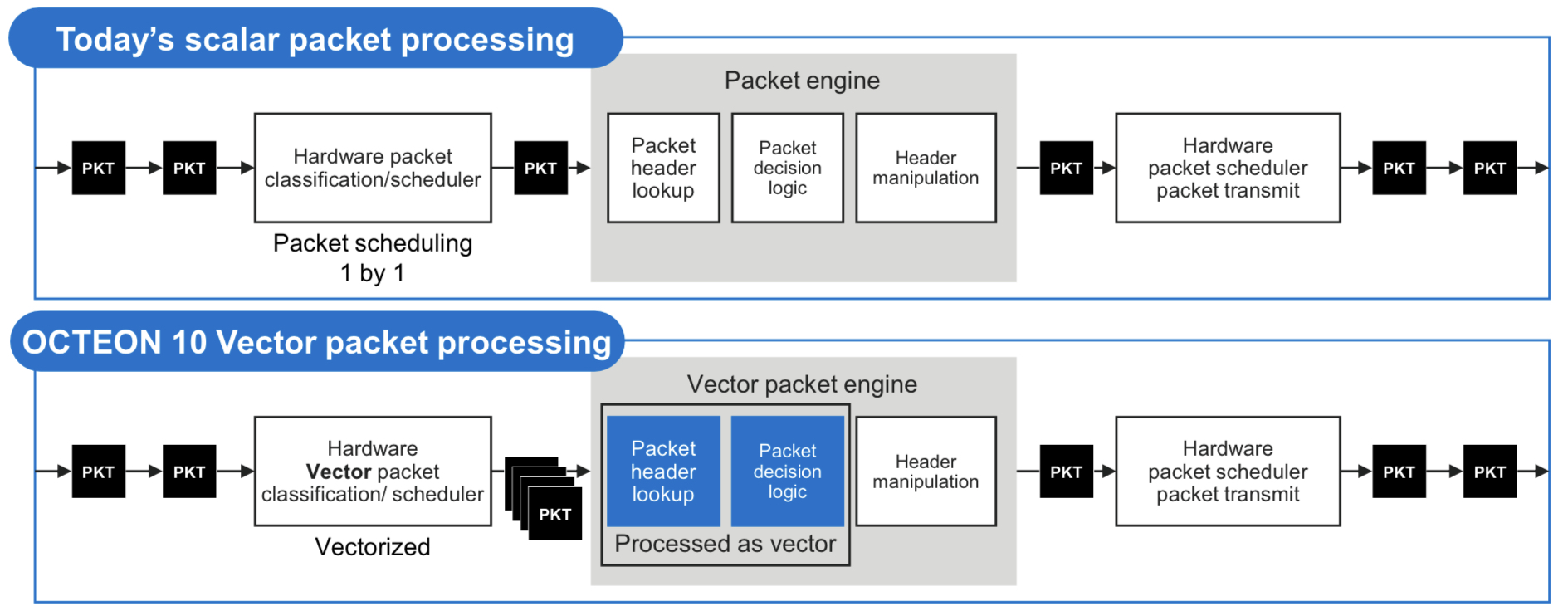

One custom IP block is for vector packet processing (VPP). VPP has become popular since becoming available as open-source software that batch-processes packets with similar attributes. Marvell says that until now, the hardware processed the packets one at a time such that the potential of VPP has not been fully realised.

The Octeon 10 is the first device family to feature hardware for VPP acceleration. Accordingly, only one look-up table operation and one logical decision may be required before header manipulation is performed for each of the grouped packets. The specialised hardware accelerates VPP by 3-5x.
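The idea of paying for one look-up per group of similar packets, rather than one per packet, can be shown with a toy model. This is a software sketch only: the packet fields, table contents and function names are invented for illustration, and it represents neither the open-source VPP code nor Marvell's hardware.

```python
from collections import defaultdict


def process_per_packet(packets, table):
    """Baseline: one table look-up for every packet."""
    lookups = 0
    results = []
    for pkt in packets:
        action = table[pkt["dst"]]
        lookups += 1
        results.append((pkt["id"], action))
    return results, lookups


def process_batched(packets, table):
    """Group packets sharing a destination, then look up once per group."""
    groups = defaultdict(list)
    for pkt in packets:
        groups[pkt["dst"]].append(pkt)
    lookups = 0
    results = []
    for dst, group in groups.items():
        action = table[dst]  # single look-up shared by the whole group
        lookups += 1
        results.extend((pkt["id"], action) for pkt in group)
    return results, lookups


# Hypothetical forwarding table and packet stream.
table = {"10.0.0.1": "port-1", "10.0.0.2": "port-2"}
destinations = ["10.0.0.1", "10.0.0.2", "10.0.0.1", "10.0.0.1", "10.0.0.2", "10.0.0.1"]
packets = [{"id": i, "dst": dst} for i, dst in enumerate(destinations)]

single, n_single = process_per_packet(packets, table)
batched, n_batched = process_batched(packets, table)
print(f"per-packet look-ups: {n_single}, batched look-ups: {n_batched}")
```

Both functions return the same action for every packet; only the number of look-ups differs, and the saving grows with the number of packets that share attributes.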

The DPU also integrates on-chip hardware for machine-learning inferencing tasks.

Machine learning can be applied to traffic patterns on a compute cluster such that every day or so, newly learned models can be downloaded onto the DPU for smart malware detection. This is learnt behaviour; no rules need be written in code, says Marvell. Machine learning can determine if a packet is malware to an accuracy of 80 per cent, says Ho.

The hardware can even identify suspect packets by learning application types even when the packets themselves are encrypted using the IPSec protocol.

The DPU’s machine learning inference hardware can also be used for other tasks such as beamforming optimisation in cellular networks.

As for the 400-gigabit rating of the Octeon DPU, this is the maximum input and output that a CPU can cope with if every packet needs processing. When each packet passes through the IPSec encryption engines, the maximum line rate is also 400 gigabits.

In turn, if a packet need not pass through the CPU or no cryptography is required, one terabit of Layer 2/ Layer 3 switching can be done on-chip.

“All these are separate accelerator capability of the platform,” says Ho. “The data path bandwidth of the DPU is 400Gbps+, IPSec throughput is 400Gbps+, and the switch capability is 1 terabit.”

Using software, the DPU accelerators are configured according to the data processing needs which may use all or some of the on-chip accelerators, he says.

4G and 5G cellular systems

For radio access networks, the radio head units talk to a distributed unit (DU) and a centralised unit (CU). (See diagram.)

The DU chassis houses six or eight line cards typically. The DU has a main controller that connects all the signals and backhauls them to the CU. This requires a two-chip solution with a switch chip next to each Octeon.

Using a 5nm process, the switch-integrated Octeon DPU reduces the DU’s overall power consumption and bill of materials. This Octeon DPU can be used for the DU, the fronthaul gateway and even the CU.

The DPU also exploits the 1-terabit switching capacity for the DU chassis example. Here, six Octeon Fusion-O chips, which perform Layer 1 processing, are connected to six radio units. Each of the six Fusion-O chips connects to the DPU via a 50-gigabit serialiser/ deserialiser (serdes).

Typical DU designs may use two Octeon DPUs, the second being a standby host DPU. This accounts for six line cards and two Octeon DPUs per DU chassis.

Market status

Marvell says that for 100-gigabit-throughput DPUs, up to 60 per cent of volumes shipped are used in the cloud and 40 per cent at the network edge.

Since throughput rates in the cloud are growing faster than the network edge, as reflected with the advent of 200- and 400-gigabit SmartNIC cards, the overall ratio of devices used for the cloud will rise.

The first two Octeon 10 devices taped out two months ago were the CN106XX and CN106XXS. These devices will sample in the second half of 2021.

The two will be followed by the CN103XX which is expected around spring 2022 and following that will be the DPU400.

Intel details its 800-gigabit DR8 optical module

The company earmarks 2023 for its first co-packaged optics product

Intel is sampling an 800-gigabit DR8 in an OSFP pluggable optical module, as announced at the recent OFC virtual conference and show.

“It is the first time we have done a pluggable module with 100-gigabit electrical serdes [serialisers/ deserialisers],” says Robert Blum, Intel’s senior director, marketing and new business. “The transition for the industry to 100-gigabit serdes is a big step.”

The 800-gigabit DR8 module has eight electrical 100-gigabit interfaces and eight single-mode 100-gigabit optical channels in each transmission direction.

The attraction of the single-module DR8 design, says Blum, is that it effectively comprises two 400-gigabit DR4 modules. “The optical interface allows you the flexibility that you can break it out into 400-gigabit DR4,” says Blum. “You can also do single 100-gigabit breakouts or you can do 800-gigabit-to-800-gigabit traffic.”
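The breakout options Blum lists follow from dividing the module's eight 100-gigabit lanes into equal groups. The sketch below enumerates them; the lane numbering and function name are hypothetical and do not reflect Intel's module management interface.

```python
LANE_RATE_GBPS = 100  # each optical channel carries 100 gigabits
LANE_COUNT = 8        # a DR8 has eight channels per direction


def breakout(lanes_per_port: int):
    """Split the eight lanes into equal ports; returns (port rate in Gbps, lane indices)."""
    if lanes_per_port <= 0 or LANE_COUNT % lanes_per_port != 0:
        raise ValueError("lanes_per_port must divide the lane count evenly")
    return [
        (lanes_per_port * LANE_RATE_GBPS, list(range(start, start + lanes_per_port)))
        for start in range(0, LANE_COUNT, lanes_per_port)
    ]


for lanes_per_port in (8, 4, 1):
    ports = breakout(lanes_per_port)
    print(f"{len(ports)} x {ports[0][0]}G")
```

Passing 8 gives a single 800-gigabit port, 4 gives the two 400-gigabit DR4 interfaces, and 1 gives eight 100-gigabit breakouts.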

Intel expects volume production of the DR8 in early 2022. Developing a DR8 in a QSFP-DD800 form factor will depend on customer demand, says Blum.

Intel will follow the 800-gigabit DR8 module with a dual 400G FR4, expected later in 2022. The company is also developing a 400-gigabit FR4 module that is expected then.

Meanwhile, Intel is ramping its 200-gigabit FR4 and 400-gigabit DR4 modules.

51.2-terabit co-packaged optics

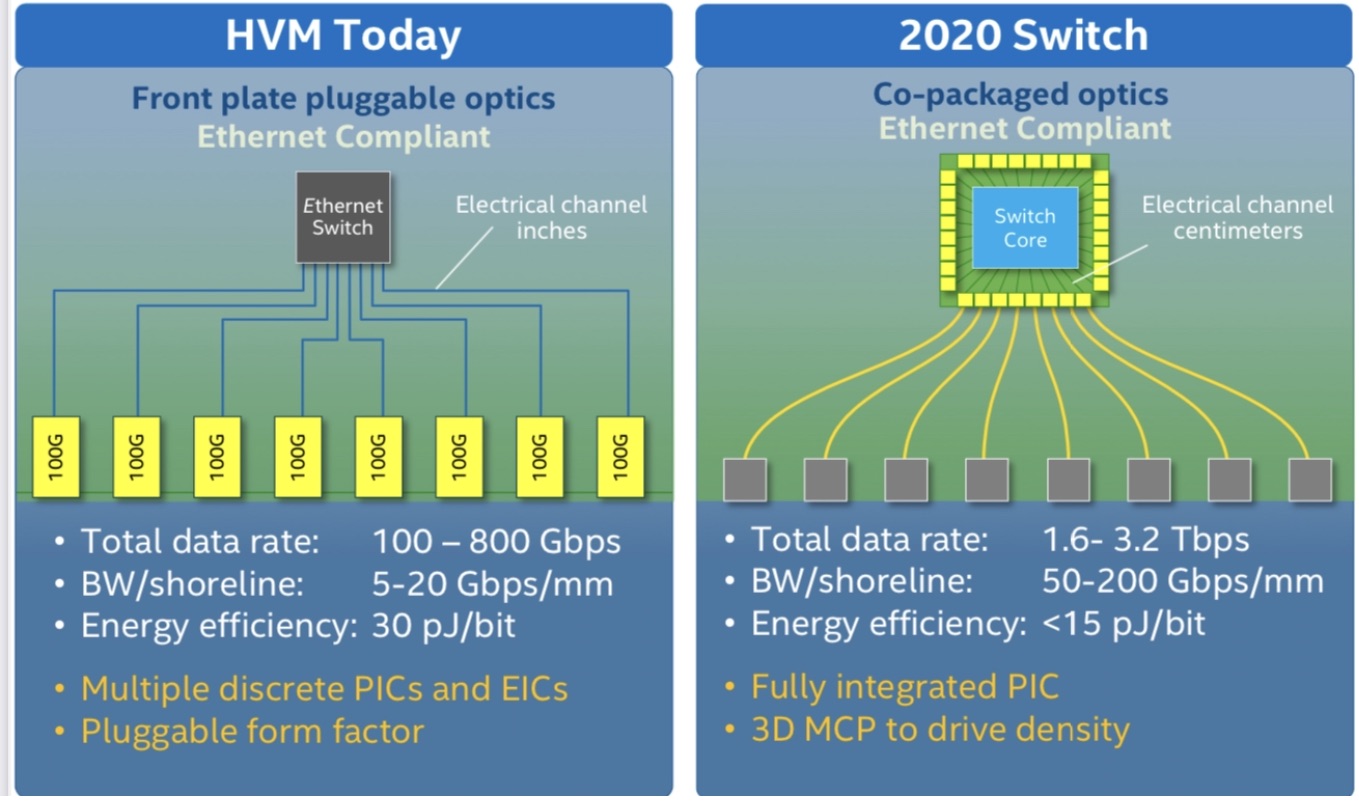

Intel demonstrated a prototype 12.8-terabit co-packaged optics design, where the optics is integrated alongside its Tofino 2 Ethernet switch chip, at last year’s OFC event.

The company says its first co-packaged optics design will be for 51.2-terabit switches and is scheduled in late 2023. “We see smaller-scale deployments at 51.2 terabits,” says Blum.

Moving the industry from pluggable optical modules to co-packaged optics is a big shift, says Intel. The technology brings clear system benefits such as 30 per cent power savings and lower cost but these must be balanced against the established benefits of using pluggable modules and the need to create industry partnerships for the production of co-packaged optics.

The emergence of 800-gigabit client-side pluggable modules such as Intel’s also means a lesser urgency for co-packaged optics. “You have something that works even if it is more expensive,” says Blum.

Thirty-two 800-gigabit modules can serve a 25.6-terabit switch in a one rack unit (1RU) platform.

However, for Intel, the crossover point occurs once 102.4-terabit switch chips and 200-gigabit electrical interfaces emerge.

“We see co-packaged optics as ubiquitous; we think pluggables will no longer make sense at that point,” says Blum.
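The module count quoted above is straightforward division, and the same sum shows how front-panel density scales with switch capacity. In the sketch below, the 51.2-terabit case with 800-gigabit modules is an extrapolation made for illustration, not a figure from Intel.

```python
def modules_needed(switch_gbps: int, module_gbps: int) -> int:
    """Number of pluggable modules needed to expose a switch's full capacity."""
    if switch_gbps <= 0 or module_gbps <= 0:
        raise ValueError("capacities must be positive")
    return -(-switch_gbps // module_gbps)  # ceiling division on integers


# 25.6 terabits is the case cited in the article; 51.2 terabits is an extrapolation.
for switch_gbps in (25_600, 51_200):
    count = modules_needed(switch_gbps, module_gbps=800)
    print(f"{switch_gbps / 1000:.1f}T switch: {count} x 800G modules")
```

Doubling switch capacity at a fixed module speed doubles the number of modules that must fit on the front panel.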

FPGA-based optical input-output

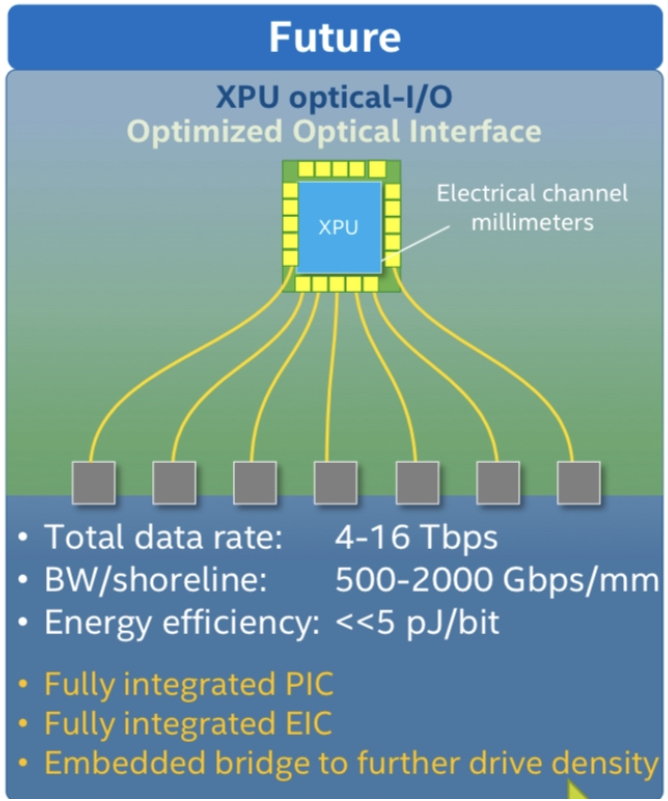

Intel published a paper at OFC 2021 highlighting its latest work as part of the U.S. DARPA PIPES programme.

The paper describes a co-packaged optics design that adds 8 terabits of optical input-output (I/O) to its Stratix 10 FPGA. The design uses Ayar Labs’ TeraPHY chiplet for the optical I/O.

The concept is to use optical I/O to connect compute nodes – in this case, FPGAs – that may be tens or hundreds of metres apart.

Intel detailed its first Stratix 10 with co-packaged optical I/O two years ago.

The latest multi-chip package also uses a Stratix 10 FPGA with Intel’s Advanced Interface Bus (AIB), a parallel electrical interface technology, as well as the Embedded Multi-die Interconnect Bridge (EMIB) technology which supports the dense I/O needed to interface the FPGA to the TeraPHY chiplet. The latest design integrates five TeraPHYs compared to the original one that used two. Each chiplet offers 1.6 terabits of capacity such that the FPGA-based co-package has 8 terabits of I/O in total.

Optically enabling Ethernet silicon or an FPGA is part of the industry’s vision to bring optics close to the silicon. Other devices include CPUs and GPUs and machine-learning devices used in computing clusters that require high-density interconnect (see diagram below).

“It is happening first with some of the highest bandwidth Ethernet switches but it is needed with other processors as well,” says Blum.

The Intel OFC 2021 paper concludes that co-packaged optics is inevitable.

Milestones, LiDAR and sensing

Intel has shipped a total of over 5 million 100-gigabit optical modules, generating over $1 billion of revenues.

Blum also mentioned Intel’s Mobileye unit which in January announced its LiDAR-on-a-chip design for autonomous vehicles.

“We have more than 6,000 individual components on this LiDAR photonic integrated circuit,” says Blum. The count includes building blocks such as waveguides, taps, and couplers.

“We have this mature [silicon photonics] platform and we are looking at where else it can be applied,” says Blum.

LiDAR is one obvious example: the chip has dozens of coherent receivers on a chip and dozens of semiconductor optical amplifiers that boost the output power into free space. “You really need to integrate the different functionalities for it to make sense,” says Blum.

Intel is also open to partnering with companies developing biosensors for healthcare and for other sensing applications.

Certain sensors use spectroscopy and Intel can provide a multi-wavelength optical source on a chip as well as ring-resonator technology.

“We are not yet at a point where we are a foundry and people can come but we could have a collaboration where they have an idea and we make it for them,” says Blum.

Making optical networking feel like cycling downhill

BT’s chief architect, Neil McRae, is a fervent believer in the internet, a technology built on the continual progress of optical networking. He discussed both topics during his invited talk at the recent OFC 2021 virtual conference and exhibition.

Neil McRae’s advocacy of the internet as an educational tool for individuals from disadvantaged backgrounds stems from his childhood experiences.

“When I was a kid, I lived in a deprived area and the only thing that I could do was go to the library,” says McRae, chief architect and managing director for architecture and technology strategy at BT.

His first thought on discovering the internet was just how much there was to read.

“If I’m honest, everything I’ve learnt in technology has been pretty much self-taught,” says McRae.

This is why he so values the internet. It has given him a career where he has travelled widely and worked with talented and creative people.

“Anyone who is out there in the world can do the same thing,” he says. “I strongly believe that the internet brings opportunities to people who are willing to spend the time to learn.”

Optical networking

McRae surveyed the last 20 years of optical networking in his OFC talk. He chose the period since it was only at the end of the last century that the internet started to have a global impact.

“The investment in networking [during this period] has been orders of magnitude bigger than prior years,” says McRae. “There has also been a lot of deregulation across the world, more telecoms companies, more vendors and ultimately more people getting connected.”

In 2000, networks used the SONET/SDH protocol and fixed wavelengths. “We have brought in many new technologies – coherent, coloured optics, programmable lasers and silicon photonics – and they have been responsible for pretty significant changes.”

McRae likens optical networking to the gears on a bike. “It powers the rest of what we do in the network and without those advances, we wouldn’t be the digitally connected society we are today,” says McRae. “If I think about the pandemic of the last year, can you imagine what the pandemic would have been like if it had happened in the year 2000?”

McRae says he spends a fifth of his time on optical networking. This is more than previously due to the relentless growth in network bandwidth.

“Ultimately, if you get optical wrong, it feels like you are in the wrong gear cycling uphill,” says McRae. “If you get it right, you are in the right gear, you are going as fast as you can go and it feels like a downhill ride.”

And it is not just about bandwidth but also cost, capability and customer experience. “We recognise the value that it brings to all the other layers right up to the application,” he says.

Research

BT Labs has an optical networking programme that is run by Professor Andrew Lord. The programme’s remit is to help BT address existing and future issues.

“There is a longer-term research aspect to what Andrew and his team do, but there are some here-and-now issues that they support me on like the hollow-core fibre work and some of the 400-gigabit [coherent] platforms we have been reviewing recently,” he says.

He cites as an example the work the programme did for BT’s next-generation optical platform, which was designed for growth and has indeed grown massively in the last decade. “We have launched optical services as a product because of the platform,” says McRae.

The programme has also helped Openreach, BT Group’s copper and fibre plant subsidiary, with its fibre-to-the-premises (FTTP) deployments that use such technologies as GPON and XGS-PON.

Reliable, dynamic, secure networks

McRae admits he is always nervous about predicting the future. But he is confident 400 gigabits will be a significant optical development over the next decade.

This includes inside the data centre, driven by servers, and in the network including long haul.

“The challenge will be around getting the volume and interoperability as quickly as we possibly can,” says McRae.

The other big opportunity is the increased integration of IP and optical using a control plane aligned to both.

“The biggest networking technology out there is IP,” says McRae. “And that will not change in the coming decade.”

IP’s Layer-3 capabilities include working around issues, but IP is bad at managing bandwidth. Optical is the opposite: great at managing bandwidth but less dynamic for working around problems. Merging the two promises significant benefits.

This idea, advocated as IP-over-DWDM, has long been spoken of but has not been deployed widely. The advent of 400-gigabit coherent implemented using client-side modules means that the line-side interface density can equal that of the host. And other developments such as software-defined networking and artificial intelligence also help.

Software-defined networking will make a big difference because it will enable the move to automation, which in turn will allow new technologies such as artificial intelligence (AI) and machine learning to be introduced.

McRae talks of a control plane capable of deciding which interface to send packets down and also determining what paths to create across the optical infrastructure.

“We have seen some of that but we have not seen enough,” says McRae. AI and machine-learning technologies will provide networks with almost autonomous control over which paths to use and enable for the various traffic types the network sees.

McRae stresses that it is getting harder to get the maximum out of the network: “If we maintain human intervention, the network will never see its full potential because of complexity, demands and scale.”

He predicts that once the human component is taken out of the network, some of the silos between the different layers will be removed. Indeed, he believes networks built by AI and aided by automation will look very different to today’s networks.

Another technology McRae highlights is hollow-core fibre which BT Labs has been researching.

“Increasingly, we are starting to reach some limits although many folks have said that before, but hollow-core fibre gives us some interesting and exciting opportunities around latency and the total use of a fibre,” says McRae.

There are still challenges to be overcome such as manufacturing the fibre at scale but he sees a path in many parts of the network where hollow-core fibre could be valuable to BT.

Network security, including quantum key distribution (QKD), is another area starting to gain momentum.

“We have gone from a world where people were scared to send an email rather than a fax to one where the network is controlling mission-critical use cases,” says McRae. “The more secure and reliable we make those networks, the more it will help us in our everyday lives.”

McRae believes this is the decade where the underlying optical network capability coupled with QKD security will take effect.

Making a difference

McRae has run several events involving children with autism, although these have not taken place during the pandemic. He uses gaming as a way to demonstrate how electronics works – switching things on and off – and then he introduces the concept of computer programming.

“I find that kids with autism get it really quickly,” he says. BT runs such events two or three times a year.

McRae also works with children who are learning to program but find it difficult. “Again, it is something self-taught for me,” he says, although he quips that his challenge is that he teaches them bad programming habits.

“I’m keen to find the next generation of fantastic engineers; Covid has shown us that we need them more than ever,” he says.

Sustainability for telecoms is a journey without end

ADVA became, in 2019, only the fourth company in Germany to achieve approval for its emissions target to limit global warming to 2°C above pre-industrial temperatures.

Last year ADVA adopted more stringent emissions targets to limit global warming to 1.5°C, with the Science-Based Targets initiative (SBTi) organisation approving its programme.

Trimming half a degree centigrade may sound minor but the resulting targets become far more challenging, says Klaus Grobe, director, sustainability at ADVA.

“Since there are massive non-linear physical processes in the background, that leads to massively more aggressive reduction targets,” he says.

If ADVA’s 2019 targets required a 20 per cent reduction in emissions from its car fleet and electricity needs, those emissions must now be cut to a third by 2032.

“It’s a huge step,” says Grobe.

Motivation

Some 500 companies worldwide have SBTi-approved targets and another 500 companies are committed to getting approval, says Grobe. ADVA is a relatively small firm but it is part of what he refers to as the green 'Fortune 1000'.

Meeting the targets has a cost but the company's motivation is simple: “The cost of doing nothing will be much, much higher,” says Grobe.

Global warming's impact on supply chain management is one example, given that certain of ADVA's suppliers are in regions susceptible to rising sea levels.

ADVA is obliged to perform materiality analysis as part of its non-financial reporting. This is a complement to the risk analysis that companies report. "We have been doing this for four years and there is a persistent outcome: global warming ends up being the most pressing and relevant thing," says Grobe.

The goal of meeting more demanding targets is backed by ADVA’s top management. “It is something we want to do,” says Grobe. “It is also something some of our customers want us to do.”

Targets and Scopes

The company has until 2032 to meet its emission targets. "The SBTi is in favour of targets with a long run-time," says Grobe. The company has metrics that it will track and that will be externally checked.

Grobe says it is likely that as ADVA progresses with the targets, newer targets will be set. These could be related to global warming or other issues such as water, resource depletion or social domain issues. Social domain issues include corporate social responsibility (CSR), aspects in sustainability-related supply-chain management, modern slavery and conflict minerals.

"If you ask me, I expect it will be global warming," says Grobe.

To meet its targets, ADVA needs to address the three categories of emissions defined by the Greenhouse Gas Protocol, known as scopes.

Scope 1 refers to the company's car fleet while Scope 2 addresses the emissions from the electricity the company purchases. Scope 1 can be reduced by encouraging less driving and purchasing hybrid and electric cars. For Scope 2, the strategy is to purchase more green electricity. Already ADVA's UK site in York uses renewable electricity only.

Scope 3 emissions refer to other activities associated with a company, such as the emissions attributed to ADVA's customers using its telecom equipment. Grobe says product emissions are by far its biggest Scope 3 contributor. He adds that the power consumed by its products - a Scope 3 emissions issue for ADVA - is a Scope 2 issue for its telco customers.

ADVA is addressing multiple aspects that contribute to its Scope 3 emissions. One example is the carbon footprint associated with the production of components used in its products. Others include transportation logistics, business travel and employee commuting.
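The scope split described above can be sketched as a simple lookup. This is an illustrative model only: the category labels are paraphrased from the article, and the names `Scope`, `ADVA_SOURCES`, `TELCO_SOURCES` and `sources_in` are hypothetical rather than part of any reporting tool.

```python
from enum import Enum


class Scope(Enum):
    SCOPE_1 = 1  # here: the company's car fleet
    SCOPE_2 = 2  # purchased electricity
    SCOPE_3 = 3  # other activities associated with the company


# Emission sources as seen from ADVA's side (labels paraphrased from the text).
ADVA_SOURCES = {
    "car fleet": Scope.SCOPE_1,
    "purchased electricity": Scope.SCOPE_2,
    "customer use of ADVA equipment": Scope.SCOPE_3,
    "component production": Scope.SCOPE_3,
    "transportation logistics": Scope.SCOPE_3,
    "business travel": Scope.SCOPE_3,
    "employee commuting": Scope.SCOPE_3,
}

# The same electricity, seen from the operator that runs the equipment.
TELCO_SOURCES = {
    "electricity powering ADVA equipment": Scope.SCOPE_2,
}


def sources_in(sources: dict, scope: Scope) -> list:
    """Return the alphabetically sorted source names that fall under a scope."""
    return sorted(name for name, s in sources.items() if s is scope)


if __name__ == "__main__":
    for scope in Scope:
        print(scope.name, sources_in(ADVA_SOURCES, scope))
```

The two dictionaries make Grobe's point concrete: the power drawn by a product sits in Scope 3 for the vendor but in Scope 2 for the operator that pays the electricity bill.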

"Almost no one else does this to this level of detail with trackable numbers," says Grobe.

Equipment emissions

The emissions of telecoms equipment are directly influenced by the huge traffic growth over the years.

"The capacity of WDM [wavelength-division multiplexing] equipment has increased massively, some 100x, over the last 20 years," says Grobe. "The energy increase has been perhaps between 2x and 4x, certainly not 100x, so in this case it is difficult to reduce emissions."
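As a rough check on the figures Grobe quotes, the ratio of capacity growth to energy growth gives the improvement in energy per transported bit. The sketch below uses only the numbers in the quote; the function name is hypothetical.

```python
def energy_per_bit_improvement(capacity_growth: float, energy_growth: float) -> float:
    """Factor by which the energy needed per transported bit has fallen."""
    return capacity_growth / energy_growth


if __name__ == "__main__":
    capacity_growth = 100.0  # "some 100x" over the last 20 years
    for energy_growth in (2.0, 4.0):  # "between 2x and 4x"
        gain = energy_per_bit_improvement(capacity_growth, energy_growth)
        print(f"energy x{energy_growth:g}: energy per bit improved {gain:g}x")
```

On these numbers the equipment has become 25 to 50 times more efficient per bit, yet its total energy use has still at least doubled, which is why absolute emissions are hard to cut.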

To counter this, the electricity emission factor can be lowered, for example by using renewable sources of electricity.

Grobe says ADVA's target for Scope 3 emissions reduction is 3 per cent only: "I say 'only'; it is a very, very aggressive target."

Grobe says he works with ADVA's CTO office to understand future component and product roadmap trends.

Looking out to 2032 requires a crystal ball, he says, but certain trends are clear. Moore's law is slowing down, as is the area shrinkage needed for bit storage.

"We are approaching quantum mechanics realms; we are some two orders of magnitude away from very definite physical limits," says Grobe.

This means that over the next two decades either the bit rate growth associated with the internet will slow down or the internet will become the biggest energy-consuming machine on earth. "The likelier case is the slowdown caused by higher energy cost," says Grobe.

However, ADVA stresses that its targets concern emissions, not energy, and it is confident they will be met. "If the internet used renewable energy exclusively, it may use a lot of energy but it would not produce emissions," says Grobe.
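The distinction between energy and emissions can be put as a one-line relation: emissions equal the energy consumed multiplied by an emission factor for the electricity source. The sketch below is illustrative only; the energy figure and the grid factor are hypothetical values, not ADVA data, and a factor of zero for renewables is the idealised case Grobe describes.

```python
def emissions_kg(energy_kwh: float, factor_kg_per_kwh: float) -> float:
    """Emissions attributed to electricity use: energy times emission factor."""
    return energy_kwh * factor_kg_per_kwh


if __name__ == "__main__":
    energy_kwh = 1000.0      # hypothetical consumption
    grid_factor = 0.5        # hypothetical kg CO2e per kWh for a fossil-heavy grid
    renewable_factor = 0.0   # idealised: renewable electricity with no emissions

    print("grid:", emissions_kg(energy_kwh, grid_factor), "kg CO2e")
    print("renewable:", emissions_kg(energy_kwh, renewable_factor), "kg CO2e")
```

The energy term is the same in both cases; only the factor changes, which is why an emissions target can be met even as energy use grows.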

ADVA applies an eco-design guide to its hardware and platform designs that offers guidance for energy-efficient designs and how to design products for a circular economy.

In 2020, ADVA saw its business travel decline by 85 per cent while employee commuting was down by 70 per cent. The company is also transitioning from air freight to sea freight, and in 2020 the resulting emissions dropped by a quarter. "That is massive," says Grobe.

Sustainability

Despite having fewer than 2,000 staff, ADVA believes it is one of the leading system vendors in terms of its sustainability policy.

Overall, though, sustainability in the telecoms industry is very much being led by the operators. "It seems that the incumbent network operators - the BTs and Deutsche Telekoms - are in the lead," says Grobe.

Grobe also cites the work of other systems vendors such as Ericsson and Nokia. Component vendors, however, are doing less. "The smaller component firms are reluctant to do more than they have to," says Grobe.