Books read in 2021: Part 4

In Part IV, two more industry figures pick their reads.

Michael Hochberg, a silicon photonics expert currently at a start-up in stealth mode, discusses classical Greek history, while Professor Laura Lechuga, a biosensor luminary, highlights Michael Lewis’s excellent book about the pandemic, among others.

Michael Hochberg, President of a stealth-mode start-up

One of the primary ways that I mis-spent my youth was by crawling through my father’s library of social science and history books. This activity generally occurred when I was supposed to be asleep, resting up for a full day of stark and abject boredom in school. This resulted in some perverse outcomes, like my tendency to fall asleep in class at an unusually young age.

It’s accepted practice for college students; certainly, many of the students in my classes during my time as an academic got in some excellent naps. I was always sad to see them leave the comfort of their warm beds to nap in a hard, wooden chair while I lectured; I feel like they would have slept better at home.

Of course, the true masters of napping were the faculty, for whom it was a key technique in committee meetings. But this sort of advanced napping is considerably less common in elementary and middle school, and I suspect that some of my teachers took it personally.

Perhaps the most compelling thing I read during those years was Thucydides’ History of the Peloponnesian War. It’s the history (arguably the first historical volume ever written) of the great conflict between Athens and Sparta. If you’ve never read Pericles’ Funeral Oration, I highly recommend it for anyone who has an interest in leadership; it’s quite possibly the greatest political speech ever given. And there’s a new-ish edition that includes necessary maps, references and explanations, which makes reading and understanding the context dramatically easier. This material makes the text accessible to people who aren’t experts and aren’t reading it as part of a course. It’s even out in paperback!

It’s a volume that I’ve returned to twice, because one of the things that amazes me every time I look at it is how little things have changed in the last 2,500 years. I found myself re-reading it this year and thinking about how much more I got out of it than I did ten years ago; the benefit of experience.

The motivations, actions, and behaviors of the people in Thucydides are instantly and intensely familiar. Given all the changes to technology, government, religion, our knowledge of the universe, access to information, communications, our ideas about ourselves, the triumphs of empiricism and the scientific method, et cetera, it’s amazing to see that the bones of how people behave, both as individuals and as groups, really haven’t changed.

People are still motivated by their desire for security, their interests, and their values (including that sometimes-forgotten motive: honour). Despite the panoply of innovations, we seem to be basically the same. As a technologist, it’s a thought that gives me both pause and comfort.

Thucydides’ History is told primarily from an Athenian perspective. As I dug deeper, I encountered several books over the years that were truly fascinating, and that gave great insight into the leadership and motivations of the Athenians; I’ve developed a keen interest, starting with this reading, in the circumstances under which democratic regimes can emerge and thrive, both historically and in the present day.

Here are a couple of my favorite further readings on the history of Athens:

- Donald Kagan’s biography of Pericles (Pericles of Athens and the Birth of Democracy); Kagan’s other works are also fascinating reading, as are his lectures from his Yale University history course on the history of Ancient Greece, which are on the web.

- John Hale’s biography of Themistocles and his history of the Athenian navy, The Lords of the Sea.

Because this history of Athens is the story of the rise and fall of a great maritime power, it provides only a limited treatment of Sparta, the foremost territorial power of Ancient Greece.

So, what of the Spartans? The world remembers Leonidas and the 300 at Thermopylae. Students of history remember Plataea, and the later alliance between the Spartans and the Persian Empire directed against Athens. But the Spartans, as a people, remained enigmatic after reading Thucydides, at least to me. What motivated them to create their peculiar society? What were the pressures that shaped their thinking? How did they come to be what they were? Why did they make the decisions that they did?

As Thucydides observed: The Athenians built and left behind immense public works. Temples that still stand today. An extensive literature: comedies, tragedies, and philosophies. Art and sculpture. All the trappings of a commercial, vibrant, creative society. They get to explain themselves to us in their own words. But what of the Spartans, who left behind almost nothing of the sort? We remember their military achievements. But they left behind very little that would allow us to understand them.

To quote Thucydides directly, courtesy of Lapham’s Quarterly (possibly my favourite periodical):

“Suppose that the city of Sparta were to become deserted and that only the temples and foundations of buildings remained: I think that future generations would, as time passed, find it very difficult to believe that the place had really been as powerful as it was represented to be. Yet the Spartans occupy two-fifths of the Peloponnese and stand at the head not only of the whole Peloponnese itself but also of numerous allies beyond its frontiers. Since, however, the city is not regularly planned and contains no temples or monuments of great magnificence, but is simply a collection of villages, in the ancient Hellenic way, its appearance would not come up to expectation. If, on the other hand, the same thing were to happen to Athens, one would conjecture from what met the eye that the city had been twice as powerful as in fact it is.”

To address the mystery of the Spartans, Paul Rahe, of Hillsdale College, has written four volumes (thus far) on the history of Spartan strategic thought, and the fifth one will go to press soon.

- The Grand Strategy of Classical Sparta

- The Spartan Regime

- Sparta’s First Attic War

- Sparta’s Second Attic War

These volumes are among the finest books I’ve read. They make the strategic dilemmas and choices of the Spartans as clear as the historical record seems to allow, and where the record is silent, Rahe fills in the blanks remarkably well, with speculation informed by a nuanced understanding of the politics and practices of the day. These books are also utterly readable even to someone like me, who is most decidedly a non-expert in the field.

One of my key takeaways from reading Rahe’s work was the importance of understanding, in detail, the capabilities, motivations, aspirations, and commitments of adversaries, and of having a grand strategy that is simple to articulate, understand and implement.

Only then can a wide range of individuals and organizations coordinate their actions in service of such a grand strategy, which is generally what is required for success, in both business and warfare. The conflict between Athens and Sparta was between a maritime society and a territorial power, and this finds echoes in the current conflicts between the United States and the People’s Republic of China.

In that vein, I’ve been reading Elbridge Colby’s fascinating book (I’m about a third through) on the Strategy of Denial, which I recommend as a thought-provoking work to anyone with an interest in US-China relations. My next reading in this area is Thucydides on Strategy: Grand Strategies in the Peloponnesian War and their Relevance Today.

In recent years, with the revolution in machine learning, we’ve started to develop tools that can do super-human things in areas where humans previously had an absolute monopoly. These tools will allow us to do amazing things that we can barely imagine today, and they will have world-changing economic, military, and social impacts. It’s an incredibly exciting time to be a technologist and to have a chance to participate in this revolution.

Many people believe that these tools will produce fundamental changes in how individual humans behave, in how we organise ourselves into polities, and in how we strategise to enhance our security. Perhaps they’re right. But I don’t think so. The community of technologists has believed that our innovations will change human nature before, and we’ve been wrong before.

I believe that the same motivations that Thucydides articulated so well – Fear, Honor, and Interest – will continue to dominate the landscape. I expect that reading Thucydides will still give deep insight into human nature 500 years from now (assuming of course that anyone is still around to read his work). And 2,500 years of western history seem to suggest, at least to me, that new technology doesn’t fundamentally change human nature, and that to think otherwise is arrogance.

Professor Laura Lechuga, Group Leader NanoBiosensors and Bioanalytical Applications Group, Catalan Institute of Nanoscience and Nanotechnology (ICN2), CSIC, BIST and CIBER-BBN.

I used to read a lot, but with our frantic way of living before the pandemic – travelling and working – it was hard to find the time. The pandemic has given me back this habit and I am enjoying it very much.

Here are my best readings of 2021:

The Premonition: A Pandemic Story, by Michael Lewis, is an excellent description of the chaotic US public health system and how fighting for political power has corrupted the organisations that should help society in any emergency. The result: the United States has been one of the worst countries in pandemic management. A must-read book.

Reina Roja, by Juan Gómez-Jurado, a renowned Spanish author. This is a thriller about the world’s smartest woman on the hunt for a serial killer. I especially like how the protagonist interprets, with a female scientific mind, the actions of the murderer and the staging of the crimes. The book grabs your attention from the first page and continues with a frenetic pace that ends up taking your breath away. I’m not going to disclose the identity of the serial killer …

Acoso by Angela Bernardo is the first book that reveals sexual harassment in Spanish science. The book includes testimonies, interviews with specialists in sexual harassment and compiles the scarce data available in Spain regarding this problem.

The author unravels how the structure of Spanish universities and research centres makes it very difficult for female scientists to report and find support when they are victims of sexual harassment or harassment due to gender discrimination.

The Man Who Counted: A Collection of Mathematical Adventures, by Malba Tahan (a pen name), is a well-known classic maths book by the Brazilian writer Júlio César de Mello e Souza. The book describes curious word problems, mathematical puzzles and curiosities. The protagonist is a thirteenth-century Persian scholar of the Islamic Empire. A must-read book!

My last recommendation is Nosotros, los octogésimos (una novela de Mundochenta), by Jesús Zamora Bonilla. This is a Spanish science fiction book describing “Mundochenta”, a planet 140,000 light years from Earth, and its inhabitants, the “eightieths”.

A police and scientific thriller, the book takes place in a very distant future but in a society not yet as technologically advanced as ours. “Mundochenta” is dominated by the Empire and the Church, which face the challenge of assimilating the increasing scientific advances.

The book is a parody of how power and religion have always tried to stop scientific advances to maintain their dominance.

Books read in 2021: Part 3

In Part III, two more industry figures pick their reads of the year: Dana Cooperson of Blue Heliotrope Research and ADVA’s Gareth Spence.

My reading traverses different ground from that of other invited analysts to this yearly section. In addition, my ‘avoid new releases’ approach means my picks are not from 2021. And before jumping straight into recommendations, I’ll preface my comments with an homage to communal aspects of reading that have meant so much to me, especially during these two Covid years.

My two book groups managed to meet steadily during the pandemic, sometimes while sitting outside in the snow, covered with blankets and sipping hot tea.

Beyond ensuring a steady stream of titles to read and discuss, the ladies in my book clubs have supported and encouraged each other through births and deaths and all the highs and lows in between. I tried a third, online alumni book club, this year, but meh: what it provided was not even close to the tight-knit book club experience I treasure.

I have also appreciated the annual August in-person ad hoc book club and reading recommendations sessions that grew out of my college experience, and which have been going strong for 40 years now. My daughters and I also exchanged books and discussed them this last year.

The books I most appreciated of the 20 or so I read in 2021 were those that offered interesting, deep, and well-written windows into people, places, cultures, and identities I didn’t know I needed to know more about. Here are my top picks:

My favourite 2021 read was the 2019 Booker Prize winner Girl, Woman, Other, by Bernardine Evaristo. This funny and touching novel spans space and time to weave the stories of twelve mostly female, mostly Black, and mostly British characters and their ancestors. The characters’ narratives intersect in surprising ways that don’t feel at all artificial or manipulative. The book’s unique style and structure add to the storytelling.

A Tree Grows in Brooklyn, by Betty Smith, is a fantastic autobiographical novel published in 1943. It details the hard yet full life of Frances Nolan, who grows up impoverished in Williamsburg to first-generation parents from immigrant families (one Irish, one Austrian) in the early 20th century. The descriptions are so vivid, and the main character so tenacious, determined, and smart, that the book is positive and affirming despite its often tough subject matter (alcoholism, abuse, poverty).

My daughter, who had taken an Asian-American literature class in college, suggested The Sympathizer, a 2016 Pulitzer winner by Viet Thanh Nguyen. As with A Tree Grows in Brooklyn, the subject matter (the fall of Saigon, spying, torture, betrayal, being a stranger in a strange land) does not make for a simple read. But the characters are again so vivid, the narrative so darkly comic and satirical, and the historic subject matter so relevant to today that I found the book riveting. (Note: Nguyen published a sequel in 2021 that I’ve yet to read.)

American Dirt, by Jeanine Cummins, tells the harrowing tale of a group of desperate migrants trying to complete the dangerous trip from Latin America to the US. As I started reading it, a friend who hadn’t read it noted the controversies swirling around the author (she’s not Latinx enough for some) and the plot (lambasted by some as ‘immigrant porn’). Whatever: I read the book and loved it. This gripping novel made the plight of desperate migrants more real to me than any news story had done.

Other book recommendations:

• The Vanishing Half: A Novel, by Brit Bennett, concerns two African American sisters from the US South who make very different choices (one passes as white) and how their futures and families are affected by those choices.

• Afterlife, by Julia Alvarez, concerns a retired English professor who is suddenly widowed and trying to figure out how to live her life and deal with her three sisters.

• The Great Believers, by Rebecca Makkai, is about the AIDS crisis in Chicago. It bounces between 1985 and 2015 as it follows a group of gay men and their born and made families. I found the plot (who lives, who dies) a tad manipulative, but the book shined a light on a pandemic and its victims that we should never forget.

• The Miniaturist, by Jessie Burton, which is set in 17th century Amsterdam, is an atmospheric, magical, and suspenseful novel that made the era of the Dutch East India Company come alive for me. You did not want to be poor, female, Black, or gay in 1686 in the Netherlands, so this book is dark.

• Midnight in the Garden of Good and Evil: A Savannah Story, a non-fiction novel by journalist John Berendt, describes a 1980s murder and trial in Savannah, Georgia. Readers will not easily forget the town’s many characters, especially The Lady Chablis.

It seems fitting to end my 2021 recommendations with a recent read, Oscar Wilde’s The Picture of Dorian Gray, about a young man whose moral decay and debauchery are recorded by his painted portrait even while his body retains its unsullied youth and beauty.

Wilde sure had a way with words: his descriptions of 19th century London high society are as sharp as any knife. For example, Lord Fermor was “a genial if somewhat rough-mannered old bachelor, whom the outside world called selfish because it derived no particular benefit from him, but who was considered generous by Society as he fed the people who amused him.”

I’ll close with Wilde’s musing on art from the last epigram in the novel’s preface: “We can forgive a man for making a useful thing as long as he does not admire it. The only excuse for making a useless thing is that one admires it intensely. All art is quite useless.”

Gareth Spence, Senior Director of Digital Marketing and Public Relations at ADVA.

It’s been a grey and wet holiday season in the UK. Ideal conditions for hunkering down in front of the fire and building a reading list for 2022. If you’re doing the same, here are two suggestions for your book pile.

Both recommendations can loosely be filed under the topic of the American Dream. The first one stretches the rules as it’s only available as an audiobook. It’s Miracle and Wonder: Conversations with Paul Simon, by Malcolm Gladwell and Bruce Headlam.

I was reluctant to listen to this book. I’ve grown tired of Gladwell’s writing style and his tendency to reduce human nature to a digestible catchphrase. Still, the opportunity to hear Simon talk about his career proved too compelling.

As a child, I was an avid listener of Simon. His work shaped my early notions of America and the American Dream. In the book, Simon talks extensively about his anthemic tunes. Where the ideas came from, how the songs were shaped and how his relationship with his music has changed during his long career.

It’s fascinating to hear Simon talk openly about his past. If you have any interest in his songs or the musical process, you’ll enjoy this book. Just try your best to overcome Gladwell’s gushing praise of Simon. The man could rob a bank and Gladwell would find artistic merit in it.

My second recommendation is Nomadland: Surviving America in the Twenty-First Century, by Jessica Bruder. This book is a powerful exploration of the flipside of the American Dream. It follows the lives of a growing community of people who have been cast aside by society and forced to find ways to live outside mainstream America.

Many of the people detailed are over 60 and have lost their homes and livelihoods. They now live in recreational vehicles, vans and even cars and spend their time in laborious, menial jobs. When they’re not working, they’re travelling the country, finding ways to embrace freedoms they never had before.

It’s sobering to read Bruder’s book as she spends over a year exploring this nomadic community. It’s hard to imagine that this group won’t continue to expand as life in America becomes ever more challenging.

But as difficult as it is to read, there’s also hope. The people show resourcefulness and resiliency in how they discover a new way to live and rediscover their country.

Compute vendors set to drive optical I/O innovation

Part 2: Data centre and high-performance computing trends

Professor Vladimir Stojanovic has an engaging mix of roles.

When he is not a professor of electrical engineering and computer science at the University of California, Berkeley, he is the chief architect at optical interconnect start-up, Ayar Labs.

Until recently Stojanovic spent four days each week at Ayar Labs. But last year, more of his week was spent at Berkeley.

Stojanovic is a co-author of a 2015 Nature paper that detailed a monolithic electronic-photonics technology. The paper described a technological first: how a RISC-V processor communicated with the outside world using optical rather than electronic interfaces.

It is this technology that led to the founding of Ayar Labs.

Research focus

“We [the paper’s co-authors] always thought we would use this technology in a much broader sense than just optical I/O [input-output],” says Stojanovic.

This is now Stojanovic’s focus as he investigates applications such as sensing and quantum computing. “All sorts of areas where you can use the same technology – the same photonic devices, the same circuits – arranged in different configurations to achieve different goals,” says Stojanovic.

Stojanovic is also looking at longer-term optical interconnect architectures beyond point-to-point links.

Ayar Labs’ chiplet technology provides optical I/O when co-packaged with chips such as an Ethernet switch or an “XPU” – an IC such as a CPU or a GPU (graphics processing unit). The optical I/O can be used to link sockets, each containing an XPU, or even racks of sockets, to form ever-larger compute nodes to achieve “scale-out”.

But Stojanovic is looking beyond that, including optical switching, so that tens of thousands or even hundreds of thousands of nodes can be connected while still maintaining low latency to boost certain computational workloads.

This, he says, will require not just different optical link technologies but also figuring out how applications can use the software protocol stack to manage these connections. “That is also part of my research,” he says.

Optical I/O

Optical I/O has now become a core industry focus given the challenge of meeting the data needs of the latest chip designs. “The more compute you put into silicon, the more data it needs,” says Stojanovic.

Within the packaged chip, there is efficient, dense, high-bandwidth and low-energy connectivity. But outside the package, there is a very sharp drop in performance, and outside the chassis, the performance hit is even greater.

Optical I/O promises a way to exploit that silicon bandwidth to the full, without dropping the data rate anywhere in a system, whether across a shelf or between racks.

This has the potential to build more advanced computing systems whose performance is already needed today.

Just five years ago, says Stojanovic, artificial intelligence (AI) and machine learning were still in their infancy and so were the associated massively parallel workloads that required all-to-all communications.

Fast forward to today and such requirements are pervasive in high-performance computing and cloud-based machine-learning systems. “These are workloads that require this strong scaling past the socket,” says Stojanovic.

He cites natural language processing that within 18 months has grown 1000x in terms of the memory required; from hosting a billion to a trillion parameters.
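To put the 1000x-in-18-months figure in perspective, the short sketch below converts it into an implied doubling time. It assumes smooth exponential growth over the whole period, which is a simplification and not something the article states; the function name is illustrative.

```python
import math


def doubling_time_months(growth_factor: float, period_months: float) -> float:
    """Months per doubling, assuming smooth exponential growth over the period."""
    return period_months / math.log2(growth_factor)


# Figures quoted in the text: 1,000x growth (a billion to a trillion
# parameters) within 18 months.
months = doubling_time_months(1000, 18)
print(f"Implied doubling time: {months:.1f} months")
```

Under that assumption, the memory requirement doubles roughly every 1.8 months.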

“AI is going through these phases: computer vision was hot, now it’s recommender models and natural language processing,” says Stojanovic. “Each generation of application is two to three orders of magnitude more complex than the previous one.”

Such computational requirements will only be met using massively parallel systems.

“You can’t develop the capability of a single node fast enough, cramming more transistors and using high-bandwidth memory,” he says. High-bandwidth memory (HBM) refers to stacked memory die that meet the needs of advanced devices such as GPUs.

Co-packaged optics

Yet, if you look at the headlines over the last year, it appears that it is business as usual.

For example, there has been a Multi Source Agreement (MSA) announcement for new 1.6-terabit pluggable optics. And while co-packaged optics for Ethernet switch chips continues to advance, it remains a challenging technology; Microsoft has said it will only be late 2023 when it starts using co-packaged optics in its data centres.

Stojanovic stresses there is no inconsistency here: it comes down to what kind of bandwidth barrier is being solved and for what kind of application.

In the data centre, it is clear where the memory fabric ends and where the networking – implemented using pluggable optics – starts. That said, this boundary is blurring: there is a need for transactions between many sockets and their shared memory. He cites Nvidia’s NVLink and AMD’s Infinity Fabric links as examples.

“These fabrics have very different bandwidth densities and latency needs than the traditional networks of Infiniband and Ethernet,” says Stojanovic. “That is where you look at what physical link hardware answers the bottleneck for each of these areas.”

Co-packaged optics is focussed on continuing the scaling of Ethernet switch chips. It is a more scalable solution than pluggables and even on-board optics because it eliminates long copper traces that need to be electrically driven. That electrical interface has to escape the switch package, and that gives rise to that package-bottleneck problem, he says.

There will be applications where pluggables and on-board optics will continue to be used. But they will still need power-consuming retimer chips and they won’t enable architectures where a chip can talk to any other chip as if they were sharing the same package.

“You can view this as several different generations, each trying to address something but the ultimate answer is optical I/O,” says Stojanovic.

How optical connectivity is used also depends on the application, and it is this diversity of workloads that is challenging the best of the system architects.

Application diversity

Stojanovic cites one machine learning approach for natural language processing that Google uses that scales across many compute nodes, referred to as the ‘mixture of experts’ (MoE) technique.

A processing pipeline is replicated across machines, each performing part of the learning. For the algorithm to work in parallel, each must exchange its data set – its learning – with every other processing pipeline, a stage referred to as all-to-all dispatch and combine.

“As you can imagine, all-to-all communications is very expensive,” says Stojanovic. “There is a lot of data from these complex, very large problems.”

Not surprisingly, as the number of parallel nodes used grows, a greater proportion of the overall time is spent exchanging the data.

Using 1,000 AI processors running 2,000 experts, a third of the time is required for data exchange. Scale the hardware to 3,000 to 4,000 AI processors and communications dominate the runtime.

This, says Stojanovic, is a very interesting problem to have: it’s an example where adding more compute simply does not help.

“It is always good to have problems like this,” he says. “You have to look at how you can introduce some new technology that will be able to resolve this to enable further scaling, to 10,000 or 100,000 machines.”
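The scaling behaviour described above can be illustrated with a toy model. The sketch assumes, purely for illustration, that compute time per step shrinks in proportion to the number of processors while the all-to-all exchange time per step stays constant; neither assumption comes from the article, and real systems will behave differently. The model is calibrated only to the one quoted data point (a third of the time on data exchange at 1,000 processors).

```python
def comm_fraction(n_processors: int,
                  ref_processors: int = 1000,
                  ref_fraction: float = 1 / 3) -> float:
    """Share of runtime spent exchanging data in a toy strong-scaling model.

    Assumptions (hypothetical, not from the article): compute time per step
    scales as 1/N, and the all-to-all exchange time per step is constant.
    """
    comm_time = 1.0  # arbitrary time unit; constant by assumption
    # Calibrate compute time so the reference point matches the quoted fraction.
    ref_compute_time = comm_time * (1 - ref_fraction) / ref_fraction
    compute_time = ref_compute_time * ref_processors / n_processors
    return comm_time / (comm_time + compute_time)


for n in (1000, 2000, 3000, 4000):
    print(f"{n} processors: {comm_fraction(n):.0%} of runtime on data exchange")
```

Even this simple model has data exchange taking more than half the runtime beyond 2,000 processors, which is in line with the observation that communications dominate at 3,000 to 4,000 processors.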

He says such examples highlight how optical engineers must also have an understanding of systems and their workloads and not just focus on ASIC specifications such as bandwidth density, latency and energy.

Because of the diverse workloads, what is needed is a mixture of circuit switching and packet switching interconnect.

Stojanovic says high-radix optical switching can connect up to a thousand nodes and, scaling to two hops, up to a million nodes with sub-microsecond latencies. This suits streamed traffic.

But an abundance of I/O bandwidth is also needed to attach to other types of packet switch fabrics. “So that you can also handle cache-line size messages,” says Stojanovic.

These are 64 bytes long and are found with processing tasks such as Graph AI where data searches are required, not just locally but across the whole memory space. Here, transmissions are shorter and involve more random addressing and this is where point-to-point optical I/O plays a role.

“It is an art to architect a machine,” says Stojanovic.
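The node counts quoted for high-radix optical switching follow from simple fan-out arithmetic, sketched below. The function is an idealised upper bound: it assumes every port at every hop fans out to new endpoints and ignores ports reserved for uplinks or redundancy, which a real topology would need.

```python
def reachable_nodes(radix: int, hops: int) -> int:
    """Idealised upper bound on endpoints reachable through `hops` switch
    stages, each fanning out across `radix` ports (an assumed simplification)."""
    return radix ** hops


print(reachable_nodes(1000, 1))  # one hop: a thousand nodes
print(reachable_nodes(1000, 2))  # two hops: a million nodes
```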

Disaggregation

Another data centre trend is server disaggregation which promises important advantages.

The only memory that meets the GPU requirements is HBM. But it is becoming difficult to realise taller and taller HBM stacks. Stojanovic cites as an example how Nvidia came out with its A100 GPU with 40GB of HBM that was followed, a year later, by an 80GB A100 version.

Some customers had to do a complete overhaul of their systems to upgrade to the newer A100 yet welcomed the doubling of memory because of the exponential growth in AI workloads.

By disaggregating a design – decoupling the compute and memory into separate pools – memory can be upgraded independently of the computing. In turn, pooling memory means multiple devices can share the memory and it avoids ‘stranded memory’ where a particular CPU is not using all its private memory. Having a lot of idle memory in a data centre is costly.
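A small sketch can make the ‘stranded memory’ idea concrete. The server sizes and usage figures below are hypothetical, chosen only for illustration, and the pool is assumed to be sized simply to the sum of current demand, with no headroom.

```python
from dataclasses import dataclass


@dataclass
class Server:
    installed_gb: int  # private memory fitted to this server (hypothetical)
    used_gb: int       # memory its workload actually needs (hypothetical)


def stranded_gb(servers: list[Server]) -> int:
    """Private memory sitting idle because it cannot be lent to other servers."""
    return sum(max(s.installed_gb - s.used_gb, 0) for s in servers)


def pooled_requirement_gb(servers: list[Server]) -> int:
    """Memory a shared pool would need to cover the same demand."""
    return sum(s.used_gb for s in servers)


# A hypothetical four-server fleet, each fitted with 512GB of private memory.
fleet = [Server(512, 128), Server(512, 480), Server(512, 256), Server(512, 64)]

installed = sum(s.installed_gb for s in fleet)
print(f"Installed privately: {installed} GB")
print(f"Stranded: {stranded_gb(fleet)} GB")
print(f"Needed if pooled: {pooled_requirement_gb(fleet)} GB")
```

In this made-up fleet, more than half of the installed memory is idle, whereas a shared pool would only need to cover the memory actually in use.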

If the I/O to the pooled memory can be made fast enough, it promises to allow GPUs and CPUs to access common DDR memory.

“This pooling, with the appropriate memory controller design, equalises the playing field of GPUs and CPUs being able to access jointly this resource,” says Stojanovic. “That allows you to provide way more capacity – several orders more capacity of memory – to the GPUs but still be within a DRAM read access time.”

Such access time is 50-60ns overall from the DRAM banks and through an optical I/O. The pooling also means that the CPUs no longer have stranded memory.

“Now something that is physically remote can be logically close to the application,” says Stojanovic.

Challenges

For optical I/O to enable such system advances, what is needed is an ecosystem of companies. Adding an optical chiplet alongside an ASIC is not the issue; chiplets are already used by the chip industry. Instead, the ecosystem is needed to address such practical matters as attaching fibres and producing the lasers needed. This requires collaboration among companies across the optical industry.

“That is why the CW-WDM MSA is so important,” says Stojanovic. The MSA defines the wavelength grids for parallel optical channels and is an example of what is needed to launch an ecosystem and enable what system integrators and ultimately the hyperscalers want to do.

Systems and networking

Stojanovic concludes by highlighting an important distinction.

The XPUs have their own design cycles and, with each generation, new features and interfaces are introduced. “These are the hearts of every platform,” says Stojanovic. Optical I/O needs to be aligned with these devices.

The same applies to switch chips that have their own development cycles. “Synchronising these and working across the ecosystem to be able to find these proper insertion points is key,” he says.

But this also implies that the attention given to the interconnects used within a system (or between several systems, i.e. to create a larger node) will be different to that given to the data centre network overall.

“The data centre network has its own bandwidth pace and needs, and co-packaged optics is a solution for that,” says Stojanovic. “But I think a lot more connections get made, and the rules of the game are different, within the node.”

Companies will start building very different machines to differentiate themselves and meet the huge scaling demands of applications.

“There is a lot of motivation from computing companies and accelerator companies to create node platforms, and they are freer to innovate and more quickly adopt new technology than in the broader data centre network environment,” he says.

When will this become evident? In the coming two years, says Stojanovic.

Books read in 2021: Part 2

In Part II, two more industry figures pick their reads of the year: Sara Gabba of II-VI and Ciena’s Joe Marsella.

Sara Gabba, Strategic Marketing, II-VI

I’ve always read a lot. I cannot fall asleep without the sweet or the exciting company of a good book!

In the last year, I’ve spent many evenings reading fairy tales to my young daughter and, on top of the traditional ones from Andersen or the Grimm brothers, I’ve surprisingly discovered that she really likes the Greek myths (in an adaptation for children), which are the archetypes of most of the ‘modern’ tales. Love, mystery, jealousy, fear, talent, heroism: all the instincts and passions of humankind are there and able to capture every reader.

Coming to the books that I enjoyed most this past year, I’ll mention three, beginning with L’infinito Tra Le Note: Il Mio Viaggio Nella Musica (My Journey into Music) by the famous orchestra director Riccardo Muti.

In simple words, he leads you through the history of music, disclosing the essence of the main composers and the secrets that are hidden among their notes and silences, all filtered by his sensitivity and his long experience as director of the world’s most important orchestras.

Galeotto fu il collier (A Gallehault was the Collier) is an amusing book from the prolific and always brilliant pen of Andrea Vitali, an Italian writer whose novels typically take place in Bellano, a nice village on the eastern shore of the Lake of Como where he was born and worked as a general practitioner. Bellano is indeed a charming village, in addition to the well-known Bellagio.

This book is a choral novel, able to recreate the atmosphere of common life in 1930s Italy. The comedy lies in the everyday routine of the many simple characters, in the plot full of anecdotes and of said-unsaid words: an amazing and wonderful comedy of errors!

Lastly, I really loved Liar Moon written by the Italian-American writer, Ben Pastor.

This novel is the second in the series featuring Martin Bora, the Major of the Wehrmacht whose character was inspired by Claus von Stauffenberg, the German colonel who attempted to assassinate Adolf Hitler in 1944 (maybe you remember the Tom Cruise movie Valkyrie, also inspired by von Stauffenberg’s brave acts).

This historical mystery novel takes place in the North-East region of Italy during the German occupation in the Second World War, where the skilled army officer Bora solves a complex murder case. Martin Bora is fighting for the wrong side in the world conflict, so he obviously has all the characteristics to be a villain. However, he is far from being a stereotype and you cannot help but love him for his torn sense of loyalty to his nation and his daring acts of disobedience to the criminal orders received from his commanders.

Joe Marsella, Vice President, Product Line Management, Routing and Switching at Ciena

As an evolving society, we often tend to look back on the ‘good old days’ and lament how difficult life has become, often forgetting that as a whole we are much better off than we have ever been.

History, for me, is a healthy way of not only reminding oneself of that simple fact but also serving as an opportunity to learn from past experiences to improve the journey ahead.

With that in mind, one book I found extremely interesting in 2021 is One Minute to Midnight: Kennedy, Khrushchev, and Castro on the Brink of Nuclear War by Michael Dobbs, which tells the story of the days leading up to the Cuban Missile Crisis of 1962 and how close the world came to nuclear annihilation.

The story focuses on how quickly a series of decisions can escalate over a 13-day time frame and the ability of two opposing leaders to reach a compromise for the greater good of not only their respective countries but the world.

As business leaders, we are required to make decisions and negotiate constantly, and while our negotiated outcomes rarely reach the magnitude of Kennedy and Khrushchev in the fall of 1962, it’s reassuring to know that even in the most difficult circumstances agreements can be reached with mutually beneficial results.

Data centre disaggregation with Gen-Z and CXL

Part 1: CXL and Gen-Z

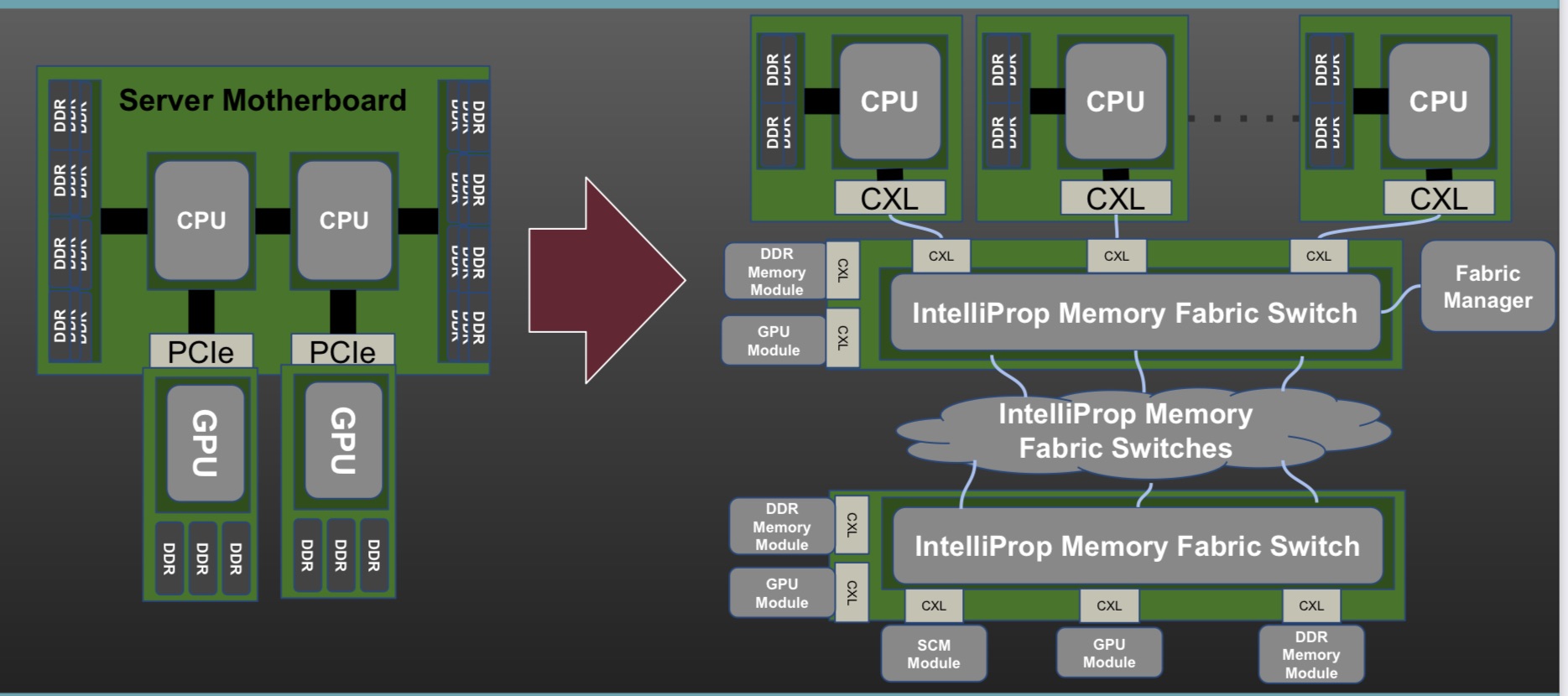

- The Gen-Z and Compute Express Link (CXL) protocols have been shown working in unison to implement a disaggregated processor and memory system at the recent Supercomputing 21 show.

- The Gen-Z Consortium’s assets are being subsumed within the CXL Consortium. CXL will become the sole industry standard moving forward.

- Microsoft and Meta are two data centre operators backing CXL.

Pity Hiren Patel, tasked with explaining the Gen-Z and CXL networking demonstration operating across several booths at the Supercomputing 21 (SC21) show held in St. Louis, Missouri in November.

Not only was Patel wearing a sanitary mask while describing the demo but he had to battle to be heard above cooling fans so loud, you could still be at St. Louis Lambert International Airport.

Gen-Z and CXL are key protocols supporting memory and server disaggregation in the data centre.

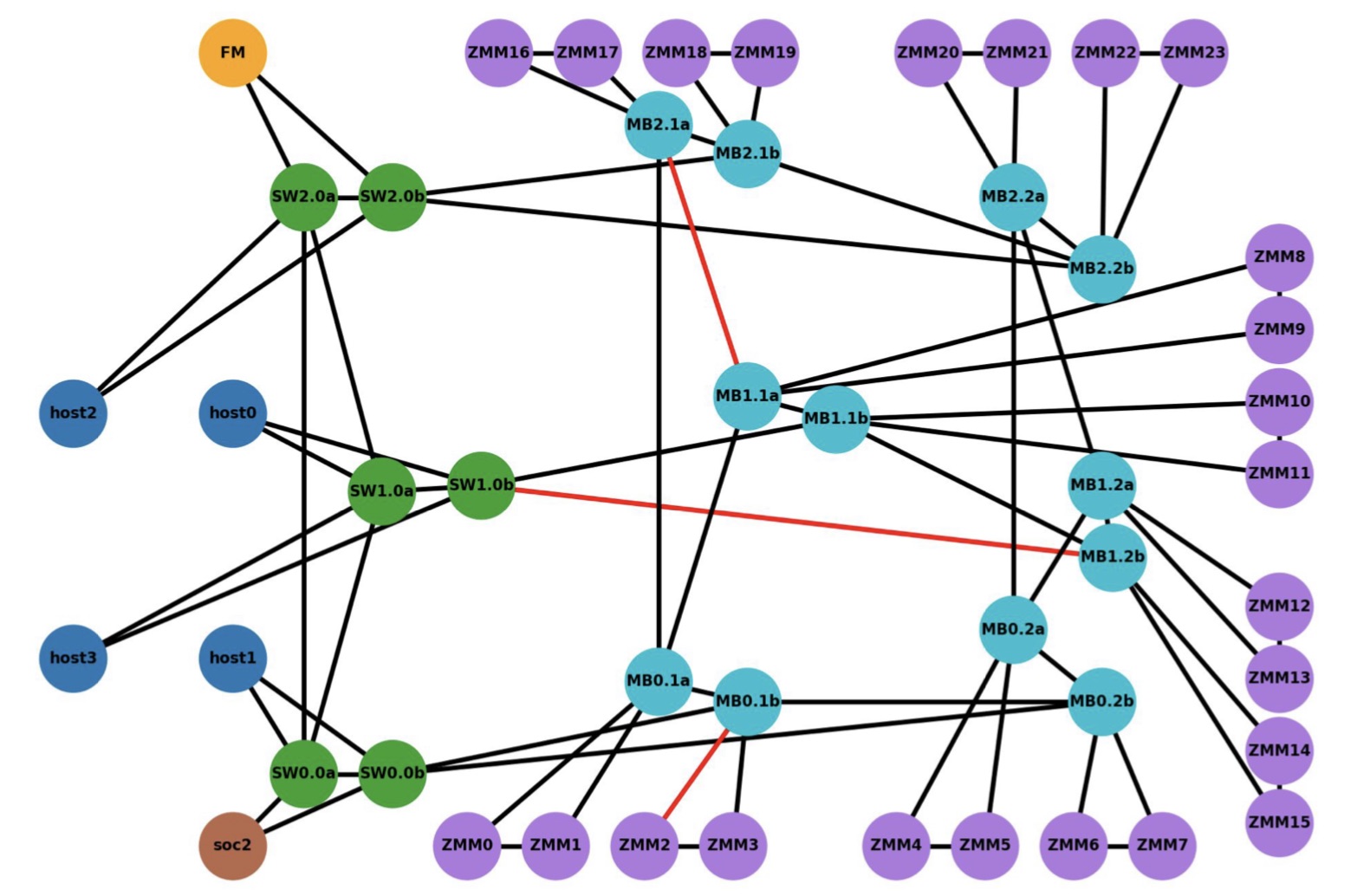

The SC21 demo showed Gen-Z and CXL linking compute nodes to remote ‘media boxes’ filled with memory in a distributed multi-node network (see diagram, bottom).

CXL was used as the host interface on the various nodes while Gen-Z created and oversaw the mesh network linking equipment up to tens of metres apart.

“What our demo showed is that it is finally coming to fruition, albeit with FPGAs,” says Patel, CEO of IP specialist, IntelliProp, and President of the Gen-Z Consortium.

Interconnects

Gen-Z and CXL are two of a class of interconnect schemes announced in recent years.

The interconnects came about to enable efficient ways to connect CPUs, accelerators and memory. They also address a desire among data centre operators to disaggregate servers so that key components such as memory can be pooled separately from the CPUs.

The idea of disaggregation is not new. The Gen-Z protocol emerged from HPE’s development of The Machine, a novel memory-centric computer architecture. The Gen-Z Consortium was formed in 2016, backed by HPE and Dell, another leading high-performance computing specialist. The CXL consortium was formed in 2019.

Other interconnects of recent years include the Open Coherent Accelerator Processor Interface (Open-CAPI), Intel’s own interconnect scheme Omni-Path which it subsequently sold off, Nvidia’s NVLink, and the Cache Coherent Interconnect for Accelerators (CCIX).

The emergence of the host buses was also a result of industry frustration with the prolonged delay in the release of the then PCI Express (PCIe) 4.0 specification.

All these interconnects are valuable, says Patel, but many are implemented in a proprietary manner whereas CXL and Gen-Z are open standards that have gained industry support.

“There is value moving away from proprietary to an industry standard,” says Patel.

Merits of pooling

Disaggregated designs with pooled memory deliver several advantages: memory can be upgraded at different stages to the CPUs, with extra memory added as required. “Memory growth is outstripping CPU core growth,” says Patel. “Now you need banks of memory outside of the server box.”

A disaggregated memory architecture also supports multiple compute nodes – CPUs and accelerators such as graphics processor units (GPUs) or FPGAs – collaborating on a common data set.

Such resources also become configurable: in artificial intelligence, training workloads require a hardware configuration different to inferencing. With disaggregation, resources can be requested for a workload and then released once a task is completed.

Memory disaggregation also helps data centre operators drive down the cost-per-bit of memory. “What data centres spend just on DRAM is extraordinarily high,” says Erich Hanke, senior principal engineer, storage and memory products, at IntelliProp.

Memory can be used more efficiently and need no longer be stranded. A server can be designed for average workloads, not worst-case ones as is done now. And when worst-case scenarios arise, extra memory can be requested.

“This allows the design of efficient data centres that are cost optimised while not losing out on the aggregate performance,” says Hanke.

Hanke also highlights another advantage, minimising data loss during downtimes. Given the huge number of servers in a data centre, reboots and kernel upgrades are a continual occurrence. With disaggregated memory, active memory resources need not be lost.

Gen-Z and CXL

The Gen-Z protocol allows for the allocation and deallocation of resources, whether memory, accelerators or networking. “It can be used to create a temporary or permanent binding of that resource to one or more CPU nodes,” says Hanke.

Gen-Z supports native peer-to-peer requests flowing in any direction through a fabric, says Hanke. This is different to PCIe which supports tree-type topologies.

Gen-Z and CXL are also memory-semantic protocols whereas PCIe is not.

With a memory-semantic protocol, a processor natively issues data loads and stores into fabric-attached components. “No layer of software or a driver is needed to DMA (direct memory access) data out of a storage device if you have a memory-semantic fabric,” says Hanke.

Gen-Z is also hugely scalable. It supports 4,096 nodes per subnet and 64,000 subnets, a total of 256 million nodes per fabric.
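The scaling figures can be checked with a few lines of arithmetic. The sketch below assumes the quoted numbers are rounded powers of two, that is, 4,096 = 2^12 nodes per subnet and "64,000" standing for 2^16 = 65,536 subnets; these bit widths are an assumption made for illustration rather than something stated above.

```python
# Assumed address widths (a hypothetical reading of the quoted, rounded figures).
NODE_BITS = 12     # 2**12 = 4,096 nodes per subnet
SUBNET_BITS = 16   # 2**16 = 65,536 subnets, quoted as "64,000"

nodes_per_subnet = 2 ** NODE_BITS
subnets = 2 ** SUBNET_BITS
nodes_per_fabric = nodes_per_subnet * subnets

# Counting in binary "millions" (units of 2**20) reproduces the quoted 256 million.
nodes_in_binary_millions = nodes_per_fabric // 2 ** 20

print(f"{nodes_per_subnet:,} nodes x {subnets:,} subnets = {nodes_per_fabric:,} nodes")
print(f"that is {nodes_in_binary_millions} x 2**20 nodes per fabric")
```

Under that assumption the total is 2^28 nodes, which is where a round "256 million" would come from.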

The Gen-Z specification is designed modularly, comprising a core specification and other components such as for the physical layer to accommodate changes in serialiser-deserialiser (serdes) speeds.

For example, the SC21 demo using an FPGA implemented 25 giga-transfers a second (25GT/s) but the standard will support 50 and 112GT/s rates. In effect, the Gen-Z specification is largely done.

What Gen-Z does not support is cache coherency but that is what CXL is designed to do. Version 2.0 of the CXL specification has already been published and version 3.0 is expected in the first half of 2022.

CXL 2.0 supports three protocols: CXL.io, which is similar to PCIe (CXL uses the physical layer of the PCIe bus); CXL.mem for host-memory accesses; and CXL.cache for coherent host-cache accesses.

“More and more processors will have CXL as their connect point,” says Patel. “You may not see Open-CAPI as a connect point, you may not see NVLink as a connect point, you won’t see Gen-Z as a connect point but you will see CXL on processors.”

SC21 demo

The demo’s goal was to show how computing nodes – hosts – could be connected to memory modules through a switched Gen-Z fabric.

The equipment included a server hosting the latest Intel Sapphire Rapids processor, a quad-core A53 ARM processor on a Xilinx FPGA implemented with a Bittware 250SoC FPGA card, as well as several media boxes housing memory modules.

The ARM processor was used as the Fabric Manager node which oversees the network to allow access to the storage endpoints. There is also a Fabric Adaptor that connects to the Intel processor’s CXL bus on one side and to the memory-semantic fabric on the other.

“CXL is in the hosts and everything outside that is Gen-Z,” says Patel.

The CXL V1.1 interface is used with four hosts (see diagram below). The V1.1 specification is point-to-point and as such can’t be used for any of the fabric implementations, says Patel. The 128Gbps CXL host interfaces were implemented as eight lanes of 16Gbps, using the PCIe 4.0 physical layer.

The Intel Sapphire Rapids processor supports a CXL Gen5x16 bus supporting 512Gbps (PCIe 5.0 x 16 lanes) but that is too fast for IntelliProp’s FPGA implementation. “An ASIC implementation of the IntelliProp CXL host fabric adapter would run at the 512Gbps full rate,” says Patel. With an ASIC, the Gen-Z port count could be increased from 12 to 48 ports while the latency of each hop would be only 35ns.
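The two link rates quoted for the demo follow from multiplying lane count by per-lane rate. The short sketch below reproduces that arithmetic; the function name is illustrative, and the figures are raw rates that ignore encoding and protocol overhead.

```python
def link_gbps(lanes: int, gbps_per_lane: float) -> float:
    """Raw link rate: lane count times per-lane rate, ignoring any overhead."""
    return lanes * gbps_per_lane

# FPGA demo: eight lanes at 16Gbps (PCIe 4.0 physical layer).
demo_link = link_gbps(lanes=8, gbps_per_lane=16)

# Full-rate host interface: sixteen lanes at 32Gbps (PCIe 5.0 physical layer).
full_link = link_gbps(lanes=16, gbps_per_lane=32)

print(f"demo link: {demo_link:.0f}Gbps")
print(f"full-rate link: {full_link:.0f}Gbps")
print(f"ratio: {full_link / demo_link:.0f}x")
```

On these numbers the FPGA-based host interface runs at a quarter of the rate the processor's bus could support.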

The media box is a two-rack-unit (2RU) server without a CPU but with fabric-attached memory modules. Each memory module has a switch that enables multipath accesses. A memory module of 256Gbytes could be partitioned across all four hosts, for example. Equally, memory can be shared among the hosts. In the SC21 demo, memory in a media box was accessed by a server 30m away.

IntelliProp implemented the Host Fabric Adapter which included integrated switching, a 12-port Gen-Z switch, and the memory modules featuring integrated switching. All of the SC21 demonstration, outside of the Intel host, was done using FPGAs.

For a data centre, the media boxes would connect to a top-of-rack switch and fan out to multiple servers. “The media box could be co-located in a rack with CPU servers, or adjacent racks or a pod,” says Hanke.

The distances of a Gen-Z network in a data centre would typically be row- or pod-scale, says Hanke. IntelliProp has had enquiries about going greater distances but, above 30m, fibre length starts to dictate latency. It’s a 10ns round trip for each metre of cable, says IntelliProp.
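To see why cable length comes to dominate, the quoted 10ns-per-metre round-trip figure can be turned into a small calculation. This is a minimal sketch that counts cable delay only; the example lengths other than 30m are hypothetical, and the comparison against the 35ns per-hop figure quoted for an ASIC is purely illustrative.

```python
ROUND_TRIP_NS_PER_METRE = 10   # figure quoted by IntelliProp
ASIC_HOP_LATENCY_NS = 35       # per-hop latency quoted for an ASIC implementation

def cable_round_trip_ns(length_m: float) -> float:
    """Round-trip delay contributed by the cable alone."""
    return length_m * ROUND_TRIP_NS_PER_METRE

for length_m in (1, 10, 30, 100):   # 30m matches the SC21 demo; others are examples
    delay = cable_round_trip_ns(length_m)
    hops_equivalent = delay / ASIC_HOP_LATENCY_NS
    print(f"{length_m:>4}m: {delay:>5.0f}ns round trip "
          f"(about {hops_equivalent:.1f} switch hops' worth)")
```

At 30m the cable alone adds 300ns per round trip, several times the latency of a single switch hop.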

What the demo also showed was how well the Gen-Z and CXL protocols combine. “Gen-Z converts the host physical address to a fabric address in a very low latency manner; this is how they will eventually blend,” says Hanke.

What next?

The CXL Consortium and the Gen-Z Consortium signed a memorandum of understanding in 2020 and now Gen-Z’s assets are being transferred to the CXL Consortium. Going forward, CXL will become the sole industry standard.

Meanwhile, Microsoft, speaking at SC21, expressed its interest in CXL to support disaggregated memory and to grow memory dynamically in real-time. Meta is also backing the standard. But both cloud companies need the standard to be easily manageable (software) and stress the importance that CXL and its evolutions have minimal impact on overall latency.

Marvell's 50G PAM-4 DSP for 5G optical fronthaul

- Marvell has announced the first 50-gigabit 4-level pulse-amplitude modulation (PAM-4) physical layer (PHY) for 5G fronthaul.

- The chip completes Marvell’s comprehensive portfolio for 5G radio access network (RAN) and x-haul (fronthaul, midhaul and backhaul).

Marvell has announced what it claims is an industry-first: a 50-gigabit PHY for the 5G fronthaul market.

Dubbed the AtlasOne, the PAM-4 PHY chip also integrates the laser driver. Marvell claims this is another first: implementing the directly modulated laser (DML) driver in CMOS.

“The common thinking in the industry has been that you couldn’t do a DML driver in CMOS due to the current requirements,” says Matt Bolig, director, product marketing, optical connectivity at Marvell. “What we have shown is that we can build that into CMOS.”

Marvell, through its Inphi acquisition, says it has shipped over 100 million ICs for the radio access network (RAN) and estimates that its silicon is in networks supporting 2 billion cellular users.

“We have been in this business for 15 years,” says Peter Carson, senior director, solutions marketing at Marvell. “We consider ourselves the number one merchant RAN silicon provider.”

Inphi started shipping its Polaris PHY for 5G midhaul and backhaul markets in 2019. “We have over a million ships into 5G,” says Bolig. Now Marvell is adding its AtlasOne PHY for 5G fronthaul.

Mobile traffic

Marvell says wireless data has been growing at a compound annual growth rate (CAGR) of over 60 per cent (2015-2021). Such relentless growth is forcing operators to upgrade their radio units and networks.
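To put the growth rate in perspective, a compound rate can be converted into an overall multiple. The sketch below assumes exactly 60 per cent a year (the source says "over 60 per cent") and treats 2015 to 2021 as six yearly steps; both are simplifying assumptions.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Overall growth factor after compounding `cagr` for `years` years."""
    return (1 + cagr) ** years

# Assumptions: exactly 60% a year, six compounding steps between 2015 and 2021.
multiple = growth_multiple(cagr=0.60, years=6)

print(f"traffic multiple over the period: about {multiple:.1f}x")
```

Under those assumptions traffic grows roughly 17-fold over the period.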

Stéphane Téral, chief analyst at market research firm, LightCounting, in its latest research note on Marvell’s RAN and x-haul silicon strategy, says that while 5G rollouts are “going gangbusters” around the world, they are traditional RAN implementations.

By that Téral means 5G radio units linked to a baseband unit that hosts both the distributed unit (DU) and centralised unit (CU).

But as 5G RAN architectures evolve, the baseband unit is being disaggregated, separating the distributed unit (DU) and centralised unit (CU). This is happening because the RAN is such an integral and costly part of the network and operators want to move away from vendor lock-in and expand their marketplace options.

For RAN, this means splitting the baseband functions and standardising interfaces that previously were hidden within custom equipment. Splitting the baseband unit also allows the functionality to be virtualised and be located separately, leading to the various x-haul options.

Approaches to disaggregating the RAN include virtualised RAN and Open RAN. Marvell says Open RAN is still in its infancy but is a key part of the operators’ desire to virtualise and disaggregate their networks.

“Every Open RAN operator that is doing trials or early-stage deployments is also virtualising and disaggregating,” says Carson.

RAN disaggregation is also occurring in the vertical domain: the baseband functions and how they interface to the higher layers of the network. Such vertical disaggregation is being undertaken by the likes of the ONF and the Open RAN Alliance.

The disaggregated RAN – a mixture of the radio, DU and CU units – can still be located at a common site but more likely will be spread across locations.

Fronthaul is used to link the radio unit and DU when they are at separate locations. In turn, the DU and CU may also be at separate locations with the CU implemented in software running on servers deep within the network. Separating the DU and the CU is leading to the emergence of a new link: midhaul, says Téral.

Fronthaul speeds

Marvell says that the first 5G radio deployments use 8 transmitter/ 8 receiver (8T/8R) multiple-input multiple-output (MIMO) systems.

MIMO is a signal processing technique for beamforming, allowing operators to localise the capacity offered to users. An operator may use tens of megahertz of radio spectrum in such a configuration with the result that the radio unit traffic requires a 10Gbps fronthaul link to the DU.

Leading operators are now deploying 100MHz of radio spectrum and massive MIMO – up to 32T/32R. Such a deployment requires 25Gbps fronthaul links.

“What we are seeing now is those leading operators, starting in the Asia Pacific, while the US operators have spectrum footprints at 3GHz and soon 5-6GHz, using 200MHz instantaneous bandwidth on the radio unit,” says Carson.

Here, an even higher-order 64T/64R massive MIMO will be used, driving the need for 50Gbps fronthaul links. Samsung has demonstrated the use of 64T/64R MIMO, enabling up to 16 spatial layers and boosting capacity by 7x.

“Not only do you have wider bandwidth, but you also have this capacity boost from spatial layering which carriers need in the ‘hot zones’ of their networks,” says Carson. “This is driving the need for 50-gigabit fronthaul.”

AtlasOne PHY

Marvell says its AtlasOne PAM-4 PHY chip for fronthaul supports an industrial temperature range and reduces power consumption by a quarter compared to its older PHYs. The power-saving is achieved by optimising the PHY’s digital signal processor and by integrating the DML driver.

Earlier this year Marvell announced its 50G PAM-4 Atlas quad-PHY design for the data centre. The AtlasOne uses the same architecture but differs in that it is integrated into a package for telecom and integrates the DML driver but not the trans-impedance amplifier (TIA).

“In a data centre module, you typically have the TIA and the photo-detector close to the PHY chip; in telecom, the photo-detector has to go into a ROSA (receiver optical sub-assembly),” says Bolig. “And since the photo-detector is in the ROSA, the TIA ends up having to be in the ROSA as well.”

The AtlasOne also supports 10-gigabit and 25-gigabit modes. Not all lines will need 50 gigabits but deploying the PHY future-proofs the link.

The device will start going into modules in early 2022 followed by field trials starting in the summer. Marvell expects the 50G fronthaul market to start in 2023.

RAN and x-haul IC portfolio

One of the challenges of virtualising the RAN is the layer-one processing, which requires significant computation, more than can be handled in software running on a general-purpose processor.

Marvell supplies two chips for this purpose: the Octeon Fusion and the Octeon 10 data processing unit (DPU) that provides programmability as well as the specialised hardware accelerator blocks needed for 4G and 5G. “You just can’t deploy 4G or 5G on a software-only architecture,” says Carson.

As well as these two ICs and its PHY families for the various x-haul links, Marvell also has a coherent DSP family for backhaul (see diagram). Indeed, LightCounting’s Téral notes how Marvell has all the key components for an all-RAN 5G architecture.

Marvell also offers a 5G virtual RAN (VRAN) DU card that uses the Octeon Fusion IC and says it already has five design wins with major cloud and OEM customers.

Books read in 2021: Part 1

Each year Gazettabyte asks industry figures to pick their reads of the year. Paul Brooks and Maxim Kuschnerov kick off this year’s recommended reads.

Dr. Paul Brooks, Optical Transport Director, VIAVI Solutions

Having spent a very happy time serving in the Royal Navy, I am always reading about all things connected with its history.

As a young midshipman, I managed to sleep through many of the history lessons at BRNC Dartmouth so I am using my spare time to catch up on the lessons I missed all those years ago.

One book which I have very much enjoyed this year has been Stephen Taylor’s Sons of the Waves: The Common Seaman in the Heroic Age of Sail.

While many books are written about major figures such as Nelson and Blake, the ordinary sailor with his robustness, loyalty and sense of duty was the key element in the success of the Royal Navy.

This well-researched book is a joy to read as it brings to life the heroic men. I must confess I did hum ‘Heart of Oak’ as I reached for my tot of rum as I read about the jolly Jack Tar on the Victory at Trafalgar!

For any student of history, and indeed anyone interested in social history, this is one for your Christmas list.

Dr. Maxim Kuschnerov, Director of the Optical & Quantum Communications Laboratory

No Rules Rules: Netflix and the Culture of Reinvention, by Reed Hastings and Erin Meyer, got good press last year, so when I saw it at the airport, it was a no brainer to get it.

The book offers a radical approach to management, focusing on totally open feedback and the removal of most controls, whether it’s the lack of vacation policy (take as much as you want) or the absence of higher approvals for most business dealings. Salary adjustments are governed by external market references and not internal processes, which is generally not a bad thing.

Naturally, looking at this corporate culture through the glasses of a German dependency of a Chinese company makes for a big contrast and it would be hard to imagine a German company functioning without any kind of rules. But what the book achieves is to shift the normal operational bias towards a more modern view of team management and it helped me to make adjustments in everyday work, changing the way that I interpreted my role within my team.

This brings me straight to another, older, book by Erin Meyer, The Culture Map: Breaking Through the Invisible Boundaries of Global Business. It reads like a compressed tutorial of inter-cultural communication and decision making, although I have to admit it was almost more fun to naively learn all of this in the field than to have all the findings confirmed at a later point by the conclusions in this book.

I found it particularly interesting to see the historical context for some present cultural behaviour, by which I don’t mean the obvious teaching of Confucius for Chinese people but also current social traits in Europe dating back to the Roman Empire.

So when a Chinese colleague, who recently moved to Germany, described the German personality as a coconut after the first weeks of adjusting to life in Munich, it made me think that we should be providing this book as a compulsory read within the company, just to soften the blow.

Lastly, looking at how big data and analytics started to change our lives in many domains and found their way into sport in the classic Moneyball book, I believe that no other sport has been changed as drastically by a statistical approach to analytics as basketball.

Kirk Goldsberry’s Sprawlball: A Visual Tour of the New Era of the NBA explains the dramatic change in the game by findings that, in hindsight, are so obvious that one can only wonder how we all didn’t see it coming in the 1990s when the GOAT Michael Jordan redefined the art of playing ball.

Goldsberry explains the historical context for modern-day greats like LeBron James, James Harden and Steph Curry, while also giving a shout-out to my other personal favourite, Dirk Nowitzki, whose 2011 finals run will stay at the top of my sporting moments.

I just wish I could have told my 14-year-old self to stop practising baby hooks and post ups and go straight to 3-point drills.

Waiting for buses: PCI Express 6.0 to arrive on time

- PCI Express 6.0 (PCIe 6.0) continues the trend of doubling the speed of the point-to-point bus every 3 years.

- PCIe 6.0 uses PAM-4 signalling for the first time to achieve 64 giga-transfers per second (GT/s).

- Given the importance of the bus for interconnect standards such as the Compute Express Link (CXL) that supports disaggregation, the new bus can’t come fast enough for server vendors.

The PCI Express 6.0 specification is expected to be completed early next year.

So says Richard Solomon, vice-chair of the PCI Special Interest Group (PCI-SIG), which oversees the long-established PCI Express (PCIe) standard and has nearly 900 member companies.

The first announced products will then follow later next year while IP blocks supporting the 6.0 standard exist now.

When the work to develop the point-to-point communications standard was announced in 2019, developing lanes capable of 64 giga-transfers per second (GT/s) in just two years was deemed ambitious, especially given 4-level pulse amplitude modulation (PAM-4) would be adopted for the first time.

But Solomon says the global pandemic may have benefitted development due to engineers working from home and spending more time on the standard. Demand from applications such as storage and artificial intelligence (AI)/ machine learning has also been a driving factor.

Applications

The PCIe standard uses a dual simplex scheme – serial transmissions in both directions – referred to as a lane. The bus can be configured in several lane configurations: x1, x2, x4, x8, x12, x16 and x32, although x2, x12 and x32 are rarely used in practice.

PCIe 6.0’s transfer rate of 64GT/s is double that of the PCIe 5.0 standard that is already being adopted in products.
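The effect of doubling the per-lane rate across lane widths can be tabulated in a few lines. This is a sketch of raw, per-direction figures only: it treats one transfer as one bit per lane and ignores encoding, forward-error correction and protocol overhead. The choice of widths reflects the configurations described above as commonly used.

```python
# Per-lane transfer rates (GT/s) for the three most recent generations.
RATE_GT_S = {"PCIe 4.0": 16, "PCIe 5.0": 32, "PCIe 6.0": 64}

# Widths in common use; x2, x12 and x32 are rarely seen in practice.
COMMON_WIDTHS = (1, 4, 8, 16)

def raw_gbps(generation: str, lanes: int) -> int:
    """Raw rate per direction, taking one transfer as one bit per lane."""
    return RATE_GT_S[generation] * lanes

for generation in RATE_GT_S:
    row = ", ".join(f"x{w}: {raw_gbps(generation, w)}Gbps" for w in COMMON_WIDTHS)
    print(f"{generation} -> {row}")
```

A x16 link, for example, goes from a raw 512Gbps per direction with PCIe 5.0 to 1,024Gbps with PCIe 6.0.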

The PCIe bus is used for storage, processors, AI, the Internet of Things (IoT), mobile, and automotive especially with the advent of advanced driver assistance systems (ADAS). “Advanced driver assistance systems use a lot of AI; there is a huge amount of vision processing going on,” says Solomon.

For cloud applications, the bus is used for servers and storage. For servers, PCIe has been adopted by general-purpose processors and more specialist devices such as FPGAs, graphics processor units (GPUs) and AI hardware.

IBM’s latest 7nm POWER10 16-core processor, for example, is an 18-billion transistor device. The chip uses the PCIe 5.0 bus as part of its input-output.

In contrast, IoT applications typically adopt older generation PCIe interfaces. “It will be PCIe at 8 gigabit when the industry is on 16 and 32 gigabit,” says Solomon.

PCIe is being used for IoT because it is a widely adopted interface and because PCIe devices interface like memory, using a load-store approach.

The CXL standard – an important technology for the data centre that interconnects processors, accelerator devices, memory, and switching – also makes use of PCIe, sitting on top of the PCIe physical layer.

PCIe roadmap

PCIe 4.0 came out relatively late but then PCI-SIG quickly followed with PCIe 5.0 and now the 6.0 specification.

The PCIe 6.0 specification built into the schedule an allowance for some slippage while still being ready for when the industry would need the technology. But even with the adoption of PAM-4, the standard has kept to the original ambitious schedule.

PCIe 4.0 incorporated an important change by extending the number of outstanding commands and data. Before the 4.0 specification, PCIe allowed for up to 256 commands to be outstanding. With PCIe 4.0 that was tripled to 768.

To understand why this is needed, a host CPU system may support several add-in cards. When a card makes a read request, it may take the host a while to service the request, especially if the memory system is remote.

A way around that is for the add-in card to issue more commands to hide the latency.

“As the bus goes faster and faster, the transfer time goes down and the systems are frankly busier,” says Solomon. “If you are busy, I need to give you more commands so I can cover that latency.”

The PCIe technical terms are tags, a tag identifying each command, and credits, which refer to how the bus takes care of flow control.

“You can think of tags as the sheer number of outstanding commands and credits as more as the amount of overall outstanding data,” says Solomon.

Both tags and credits had to be changed to support up to 768 outstanding commands. And this protocol change has been carried over into PCIe 5.0.
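The reasoning behind needing more outstanding commands as the bus speeds up can be illustrated with a bandwidth-delay calculation: to keep a link busy, the data in flight must cover the link rate multiplied by the round-trip latency. The sketch below is a rough model only. The 256-byte request size and the latency values are hypothetical, it uses raw link rates, and real systems are also bounded by credits.

```python
import math

def tags_needed(link_gbps: float, latency_ns: float, request_bytes: int) -> int:
    """Outstanding requests needed to cover the latency (bandwidth-delay product)."""
    bytes_per_ns = link_gbps / 8            # 1Gbps is 1/8 of a byte per nanosecond
    bytes_in_flight = bytes_per_ns * latency_ns
    return math.ceil(bytes_in_flight / request_bytes)

REQUEST_BYTES = 256   # hypothetical read size

# Raw x16 link rates for three generations, at two hypothetical latencies.
for name, gbps in (("PCIe 4.0 x16", 256), ("PCIe 5.0 x16", 512), ("PCIe 6.0 x16", 1024)):
    for latency_ns in (1000, 2000):
        n = tags_needed(gbps, latency_ns, REQUEST_BYTES)
        print(f"{name}, {latency_ns}ns latency: {n} outstanding requests")
```

With these illustrative numbers, each doubling of the link rate doubles the number of requests that must be in flight, which is the pressure that pushes tag limits upwards with each generation.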

In addition to the doubling in transfer rate to 32GT/s, PCIe 5.0 requires an enhanced link budget of 36dB, up from 28dB with PCIe 4.0. “As the frequency [of the signals] goes up, so does the loss,” says Solomon.

PCIe 6.0

Moving from 32GT/s to 64GT/s while still supporting the same typical distances requires PAM-4.

More sophisticated circuitry at each end of the link is needed as well as a forward-error correction scheme, which is a first for a PCI Express standard implementation.

One advantage is that PAM-4 is already widely used for 56 and 112 gigabit-per-second high-speed interfaces. “That is why it was reasonable to set an aggressive timescale because we are leveraging a technology that is out there,” says Solomon. Here, PAM-4 will be operated at 64Gbps.
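The benefit of PAM-4 is that each symbol carries two bits, so the data rate doubles without raising the symbol rate on the wire. A minimal sketch of that relationship follows; the function names are illustrative.

```python
def bits_per_symbol(levels: int) -> int:
    """Bits carried per symbol; `levels` must be a power of two (2 for NRZ, 4 for PAM-4)."""
    if levels < 2 or levels & (levels - 1):
        raise ValueError("levels must be a power of two, 2 or greater")
    return levels.bit_length() - 1

def symbol_rate_gbaud(bit_rate_gbps: float, levels: int) -> float:
    """Symbol rate needed on a lane to carry the given bit rate."""
    return bit_rate_gbps / bits_per_symbol(levels)

pcie5_nrz = symbol_rate_gbaud(32, levels=2)    # PCIe 5.0: two-level signalling
pcie6_pam4 = symbol_rate_gbaud(64, levels=4)   # PCIe 6.0: four-level signalling

print(f"PCIe 5.0 lane: {pcie5_nrz:.0f} GBd, PCIe 6.0 lane: {pcie6_pam4:.0f} GBd")
```

Both lanes run at the same symbol rate, which is why the move to PAM-4 helps keep reach comparable, at the cost of the closer-spaced signal levels that the added circuitry and forward-error correction have to cope with.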

The tags and credits have again been expanded for PCIe 6.0 to support 16,384 outstanding commands. “Hopefully, it will not be needed to be extended again,” says Solomon.

PCIe 6.0 also supports FLITs – a network packet scheme – that simplifies data transfers. FLITs are introduced with PCIe 6.0, but silicon designed for PCIe 6.0 could use FLITs at lower transfer speeds. Meanwhile, there are no signs of PCI Express needing to embrace optics as the interface speeds continue to advance.

“There is a ton of complexity and additional stuff we have to do to move to 6.0; optical would add to that,” says Solomon. “As long as people can do it on copper, they will keep doing it on copper.”

PCI-SIG is not yet talking about PCIe 7.0 but Solomon points out that every generation has doubled the transfer rate.

Acacia's single-wavelength terabit coherent module

- Acacia has developed a 140-gigabaud, 1.2-terabit coherent module

- The module, using 16-ary quadrature amplitude modulation (16-QAM), can deliver an 800-gigabit wavelength over 90 per cent of the links of a North American operator.

Acacia Communications, now part of Cisco, has announced the first 1.2-terabit single-wavelength coherent pluggable transceiver.

And the first vendor, ZTE, has already showcased a prototype using Acacia’s single-carrier 1.2 terabit-per-second (Tbps) design.

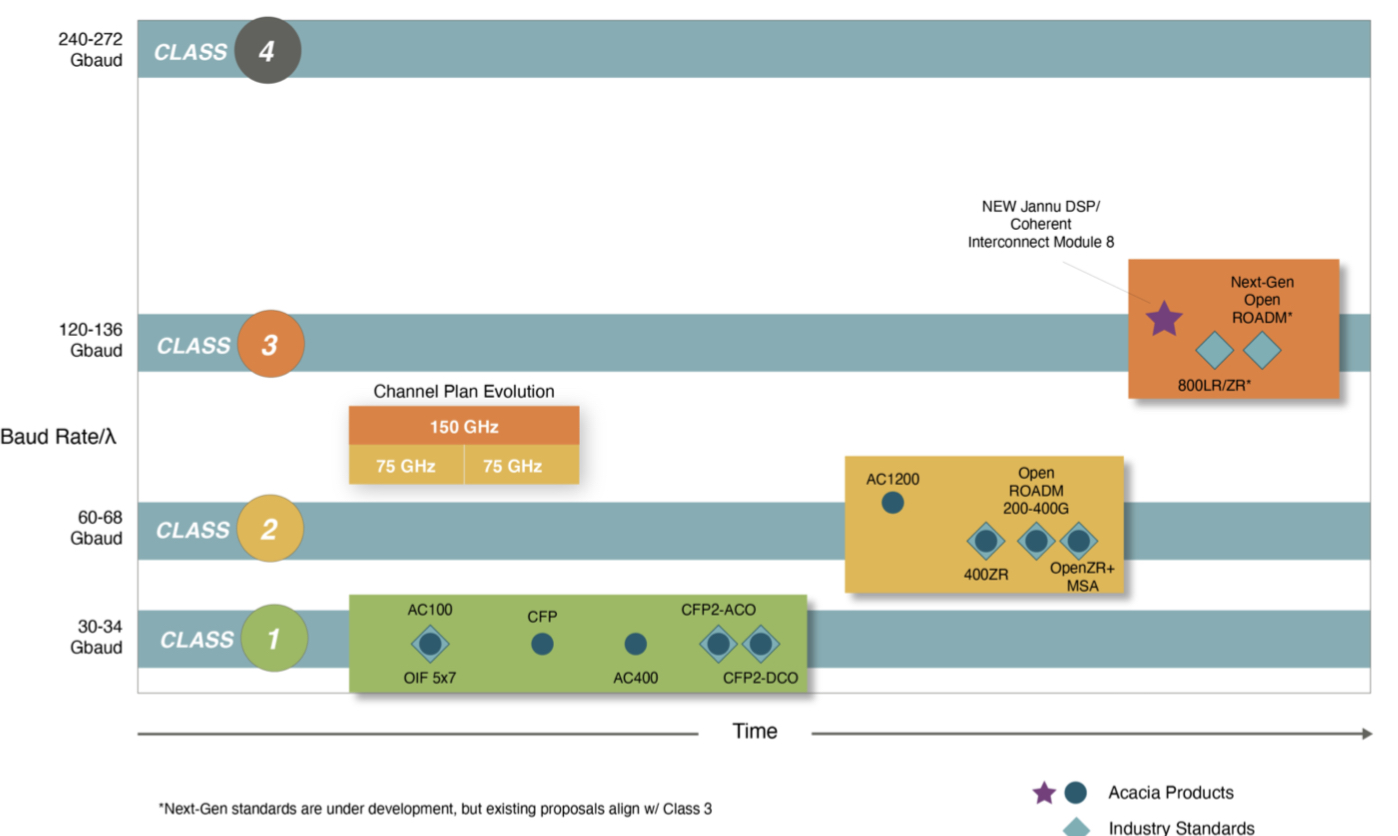

The coherent module operates at a symbol rate of up to 140 gigabaud (GBd) using silicon photonics technology. Until now, indium phosphide has always been the material at the forefront of each symbol rate hike.

The module uses Acacia’s latest Jannu coherent digital signal processor (DSP), implemented in 5nm CMOS. The coherent transceiver also uses a custom form-factor pluggable dubbed the Coherent Interconnect Module 8 (CIM-8).

Trends

Acacia refers to its 1.2-terabit coherent pluggable as a multi-haul design, a break from its product categorisation as either embedded or pluggable.

“We are introducing a pluggable module that supports what has traditionally been the embedded market,” says Tom Williams, senior director of marketing at Acacia. “It supports high-capacity edge applications all the way out to long-haul and submarine.”

Pluggables are the fastest-growing segment of the coherent market. The mix of custom embedded designs to interoperable pluggables is 2:1 today, but that is forecast to reverse, with coherent pluggables accounting for two-thirds of total ports.

Acacia highlights the growth of coherent pluggables with two examples.

Data centre operator Microsoft used Inphi’s (now Marvell’s) ColorZ direct-detect 100-gigabit modules for data centre interconnect over distances of up to 80km, whereas the industry is now moving to the 400ZR coherent MSA.

In turn, where proprietary embedded coherent solutions were once used for reconfigurable optical add-drop multiplexers (ROADMs), interoperable pluggable coherent modules are now being adopted with the OpenROADM MSA.

“There is still a significant need in the market for full-performance multi-haul solutions but we think their development needs to be informed and influenced by pluggables,” says Williams.

1.2-terabit capacity

As coherent technology matures, the optical transmission performance is approaching the theoretical limit as defined by Claude Shannon.

“There is still opportunity for improvement,” says Williams. “We still have performance enhancements with each generation but it is becoming more incremental.”

Williams highlights how Acacia’s latest design offers a 20–25 per cent spectral efficiency improvement compared to the company’s AC1200, which uses two wavelengths to deliver up to 1.2Tbps.

“As we increase baud rate, that alone does not give any improvement in spectral efficiency,” says Williams. It is the algorithmic enhancements that still boost performance.

Acacia is adopting an enhanced probabilistic constellation shaping (PCS) algorithm as well as an improved forward-error correction scheme. “There are also some benefits of a single carrier as opposed to using multiple carriers,” says Williams.

Design

The latest design is a natural extension of the AC1200 which can send 400 gigabits over ultra-long-haul distances, 800 gigabits using two wavelengths over most spans, and three 400-gigabit payloads over shorter, network-edge reaches. Now, this can all be done using a single wavelength.

A 150GHz channel is used when transmitting the module’s full rate of 1.2Tbps. And with the module’s adaptive baud rate feature, the rate can be reduced to fit a wavelength in a 75GHz-wide channel. Existing 800-gigabit transmissions use 112.5GHz channel widths and the multi-rate module also supports this spacing.
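
The channel widths quoted above imply a spectral efficiency for each mode, taken here simply as net data rate divided by channel width. This is a back-of-the-envelope sketch with hypothetical names, not Acacia’s own figure of merit. The data rate carried in the 75GHz channel is not stated, so that case is left out.

```python
def spectral_efficiency(rate_gbps: float, channel_ghz: float) -> float:
    """Net data rate per unit of channel width, in bit/s/Hz.

    Assumption: efficiency is measured against the full channel width,
    not the (narrower) bandwidth the signal itself occupies.
    """
    return rate_gbps / channel_ghz


# (data rate in Gbps, channel width in GHz) as quoted in the article
modes = {
    "1.2Tbps in 150GHz": (1200, 150),
    "800Gbps in 112.5GHz": (800, 112.5),
}

efficiency = {name: spectral_efficiency(*mode) for name, mode in modes.items()}

for name, value in efficiency.items():
    print(f"{name}: {value:.2f} bit/s/Hz")
```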

Williams says 16-QAM is the favoured signalling scheme used for transmission. This is what has been chosen for the 400ZR standard at 64GBd. Doubling the symbol rate means 800 gigabits can be sent using 16-QAM.
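
The arithmetic behind the doubling can be sketched as follows. The sketch assumes dual-polarisation transmission, which is standard for coherent optics but is not spelt out above, and it computes the raw line rate before forward-error correction and framing overhead are removed. The function names are hypothetical.

```python
import math


def raw_line_rate_gbps(symbol_rate_gbd: float,
                       constellation_points: int,
                       polarisations: int = 2) -> float:
    """Raw line rate: symbols/s x bits per symbol x polarisations.

    Assumption: dual polarisation by default; overhead is not modelled.
    """
    bits_per_symbol = math.log2(constellation_points)
    return symbol_rate_gbd * bits_per_symbol * polarisations


def min_net_bits_per_symbol(payload_gbps: float,
                            symbol_rate_gbd: float,
                            polarisations: int = 2) -> float:
    """Fewest payload bits each symbol must carry per polarisation."""
    return payload_gbps / (symbol_rate_gbd * polarisations)


raw_64 = raw_line_rate_gbps(64, 16)     # 16-QAM at the 400ZR rate cited above
raw_128 = raw_line_rate_gbps(128, 16)   # symbol rate doubled
raw_140 = raw_line_rate_gbps(140, 16)   # the module's maximum symbol rate
needed_for_1200 = min_net_bits_per_symbol(1200, 140)

print(raw_64, raw_128, raw_140)
print(f"{needed_for_1200:.2f} payload bits per symbol per polarisation")
```

On these assumptions, 16-QAM at 128GBd gives a raw rate of 1,024Gbps, leaving room for overhead around an 800-gigabit payload. Carrying 1.2Tbps at 140GBd needs more than the four bits per symbol per polarisation that 16-QAM provides.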

Acacia also highlights that future generation coherent designs, what it calls class 4 (see diagram above), will double the symbol rate again to some 240GBd. But the company is not saying whether the technology enabling such rates will be silicon photonics.

The company has long spoken of the benefits of using a silicon packaging approach for its coherent modules in terms of size, power and automated manufacturing. But as the symbol rate doubles, packaging also plays a key role in tackling challenging radio frequency (RF) design issues.

Acacia stacks the driver and trans-impedance amplifier (TIA) circuitry directly on top of its photonic integrated circuit (PIC) while its coherent DSP is also packaged as part of the design. “This gives us much better signal integrity than if we have the optics and DSP packaged separately,” says Williams.

The key to the design is getting the silicon photonics – the optical modulator, in particular – operating at 140GBd. “If you can, the packaging advantages of silicon are significant,” says Williams.

Acacia points out that with the migration of traffic from 100GbE to 400GbE it makes sense to offer a single-wavelength multi-rate design. And 400GbE will remain the mainstay traffic for a while. But once the transition to 800 gigabit occurs, the idea of supporting two coherent wavelengths – a future dual-wavelength “AC2400” – may make sense.

CIM-8

Acacia is using its own form factor and not a multi-source agreement (MSA) because the 1.2-terabit technology exceeds all existing client-side data rates.

In turn, the power consumption of the 1.2-terabit coherent module requires a custom form factor while launching an MSA based on the CIM-8 would have tipped off the competition, says Williams.

That said, Acacia has made no secret that its next high-end design following on from its 64GBd AC1200 would double the symbol rate and that the company would skip the 96GBd rate used by vendors such as Ciena, Huawei and Infinera already offering 800-gigabit wavelength systems.

For Acacia’s multi-rate design that needs to address submarine applications, the goal is to maximise transmission performance. In contrast, for a ZR+ coherent design that fits in a QSFP-DD, the limited power budget of the module constrains the design’s performance.

With its 5nm Jannu DSP, Acacia realised it could not fit the design in the QSFP-DD or OSFP. But it could produce a pluggable multi-haul design with its CIM-8 that is slightly larger than the CFP2 form factor. And pluggables are advantageous when four to eight can be fitted in a one-rack-unit (1RU) platform.

Acacia says its 140GBd module using 16-QAM will deliver an 800-gigabit wavelength over 90 per cent of the links of a North American operator. For the remaining, longest-distance links (the 10 per cent), it will revert to 400 gigabits.

In contrast, existing 800-gigabit systems operating at 96GBd cover up to 20 per cent of the links before having to revert to the slower speed, says Acacia.
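
To put the two coverage figures side by side, the sketch below computes the average wavelength rate across an operator’s links. It assumes, hypothetically, that every link is weighted equally, that each runs at either 800 or 400 gigabits, and that the 96GBd systems reach their quoted upper bound of 20 per cent. The function name is illustrative.

```python
def average_rate_gbps(percent_links_at_800: int) -> float:
    """Mean per-wavelength rate when the remaining links fall back to 400G.

    Assumption: all links count equally; real networks would weight by
    traffic or by the number of wavelengths per link.
    """
    percent_links_at_400 = 100 - percent_links_at_800
    return (percent_links_at_800 * 800 + percent_links_at_400 * 400) / 100


avg_140gbd = average_rate_gbps(90)  # 140GBd module, per Acacia
avg_96gbd = average_rate_gbps(20)   # existing 96GBd systems, upper bound

print(f"140GBd: {avg_140gbd} Gbps average")
print(f"96GBd:  {avg_96gbd} Gbps average")
```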

Applications

Hyperscaler data centre operators are the main drivers for 1.2Tbps interconnects. The interface would typically be used in the metro to link smaller data centres to a larger aggregation data centre.

“The 1.2-terabit interface is just trying to maximise cost per bit; pushing more bits over the same set of optics,” says Williams.

The communications service providers’ requirements, meanwhile, are focussed on 400 gigabits and at some point will migrate to 800 gigabits, says Williams.

Several system vendors are expected to announce products using the new module in the coming months.

Lumentum bulks up with NeoPhotonics buy

Lumentum is to acquire fellow component and module specialist, NeoPhotonics, for $918 million.

The deal will expand Lumentum’s optical transmission product line, broadening its component portfolio and boosting its high-end coherent line-side product offerings.

Gaining NeoPhotonics’ 400-gigabit coherent offerings will enable Lumentum to better compete with Cisco and Marvell. Lumentum will also gain a talented team of photonics experts as it looks to address new opportunities.

Alan Lowe, Lumentum’s president and CEO, stressed the importance of this collective optical expertise.

Speaking on the call announcing the agreement, Lowe said the expanded know-how would benefit Lumentum’s traditional markets and accelerate its entrance into other, newer markets.

Transaction details

Lumentum will pay $16 in cash for each share of NeoPhotonics, valuing the company at $918 million. Lumentum will also pay $50 million to NeoPhotonics “for growth capex and working capital.”

Cost savings of $50 million in annual run-rate are expected within two years of the deal closing, with 60 per cent of the savings coming from the cost of goods sold.

The deal is reminiscent of Lumentum’s acquisition of Oclaro for $1.8 billion in 2018. Oclaro was also focussed on transmission components and modules.

The acquisition is expected to close in the second half of 2022, subject to the approval of NeoPhotonics’ stockholders and regulatory bodies.

Background

Lumentum’s announcement follows its failed bid early this year for the laser company, Coherent. II-VI ended up winning the bid, paying $6.9 billion.

Coherent’s lasers are used in many markets and the deal would have diversified Lumentum’s business beyond communications and smartphones.

Now, the proposed acquisition of NeoPhotonics boosts Lumentum’s core communications business unit. NeoPhotonics’ focus is cloud and networking although the company has been using its coherent expertise to address LiDAR and medical markets.

Vladimir Kozlov, CEO of market research firm LightCounting, does not see any inconsistency in Lumentum’s strategy to first diversify and then strengthen its core business. “There are many directions to accelerate company growth,” he says.

Lumentum tried one way with Coherent and it didn’t work out; now it is trying another with NeoPhotonics. “You take opportunities as they come along,” says Kozlov.

NeoPhotonics has also been impacted by the trade restrictions on Huawei, a significant customer of the company. NeoPhotonics has had to adapt to on-off sales to Huawei in recent years. Huawei also has a long-term strategy to develop its own optical components, including tunable lasers, for which NeoPhotonics has been its leading supplier.

“That certainly added pressure on NeoPhotonics to be acquired,” says Kozlov.

Business opportunities

Lumentum’s business is split 60 per cent cloud and networking and 40 per cent 3D sensing, LiDAR, and commercial lasers for industrial applications.

Lumentum’s cloud and networking products include reconfigurable optical add-drop multiplexing (ROADM) sub-systems, optical components for high-speed client-side and line-side modules, and coherent optical modules.

NeoPhotonics brings ultra narrow-linewidth tunable lasers, silicon photonics-based components and transceivers, and high-speed coherent modules and components. NeoPhotonics also has passive and planar lightwave circuit components and an RF chip design capability using gallium arsenide and silicon germanium.

Tim Jenks, president, CEO and chairman of NeoPhotonics, said combining the two firms would accelerate its business developing high-speed optical communications.

In turn, their combined R&D and technology teams can address new markets such as the life sciences, industrial applications, and green markets such as energy efficiency, electric vehicles and green manufacturing prompted by climate change concerns.

But no detail was forthcoming on the call beyond Lowe saying the merger will expand the collective know-how and accelerate its entrance into these markets.

Lowe also highlighted the strong growth in high-speed ports due to the 30 per cent year-on-year growth in internet bandwidth.

LightCounting says the dense wavelength division multiplexing (DWDM) coherent market will experience a compound annual growth rate (CAGR) of 20 per cent over the next five years; the general optical market is growing at a 14 per cent CAGR.
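
The gap between the two growth rates compounds over the forecast period. The sketch below shows the multiple each market would reach after five years, assuming the quoted CAGRs hold steady every year. The function name is hypothetical and the starting market sizes are not given, so only relative growth is computed.

```python
def growth_multiple(cagr_percent: float, years: int) -> float:
    """Size of a market relative to its starting point after `years`."""
    return (1 + cagr_percent / 100) ** years


coherent_dwdm = growth_multiple(20, 5)    # DWDM coherent market
optical_overall = growth_multiple(14, 5)  # general optical market

print(f"Coherent DWDM:   {coherent_dwdm:.2f}x")
print(f"Optical overall: {optical_overall:.2f}x")
```

On these figures the coherent DWDM market would grow roughly 2.5-fold over five years, against a little under two-fold for the optical market as a whole.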

Both companies have indium-phosphide components for coherent systems while NeoPhotonics has pluggable 400ZR and ZR+ products as well as silicon photonics components for coherent. Gaining NeoPhotonics’ ultra-narrow linewidth lasers will make Lumentum an even stronger laser supplier.

LightCounting’s Kozlov notes the importance of scale, especially when target markets are not huge and the number of large customers is limited. This is the case with 400ZR/ZR+ coherent DWDM transceivers that NeoPhotonics started selling in 2021.

Amazon is the biggest buyer of such modules and it uses three suppliers. NeoPhotonics is a distant third in the race behind Acacia, now part of Cisco, and Inphi, part of Marvell. But unlike Acacia and Inphi, NeoPhotonics does not have its own coherent DSP.

Joining forces with Lumentum, NeoPhotonics is more likely to win a larger share of business at key customers, says LightCounting. The new Lumentum may still be third in the race, but it is no longer a distant third.

Recent announcements

Lumentum started shipping its 400-gigabit CFP2-DCO coherent module earlier this year. Its range of indium-phosphide coherent components operates at a 96-gigabaud (GBd) symbol rate that supports up to 800-gigabit wavelengths. Lumentum is developing components that will operate at 128GBd.

Lumentum also has a directly modulated laser (DML) supporting 100-gigabit wavelengths. Such a laser is used for 100-gigabit and 400-gigabit client-side pluggables. The company is also developing electro-absorption modulated laser (EML) technology that supports 200 gigabits and higher performance per lane.

Meanwhile, NeoPhotonics is shipping 400ZR coherent optical modules in QSFP-DD and OSFP form factors. NeoPhotonics also has a multi-rate CFP2-DCO module with a reach of 1,500km at 400 gigabits. And like Lumentum, the company has indium-phosphide technology that supports 130GBd coherent components.

Kozlov believes Lumentum is in a good position.

On the call announcing the deal, Lumentum also delivered its latest quarterly results. “They can hardly keep up with demand,” he says.

The issue of shortages is getting worse. This is not because the shortages themselves are getting worse but because demand is ramping faster than the shortage issue can be resolved. “It’s a good problem to have,” says Kozlov.

Industry consolidation

The Lumentum-NeoPhotonics deal follows the recently announced merger of two other mature optical players, the systems vendors ADTRAN and ADVA.