Transmode chooses coherent for 100 Gigabit metro

Transmode has detailed its 100 Gigabit metro strategy based on a stackable rack, a concept borrowed from the datacom world.

The Swedish system vendor has adopted coherent detection technology for 100 Gigabit-per-second (Gbps) optical transmission, unlike other recent metro announcements from ADVA Optical Networking and MultiPhy based on 100Gbps direct-detection.

"Metro is a little bit diverse. You see different requirements that you have to adapt to."

Sten Nordell, Transmode

"We are getting requests for this and we think 2012 is when people are going to put in a low number of [100Gbps] links into the metro," says Sten Nordell, CTO at Transmode.

The 100Gbps requirements Transmode is seeing include connecting data centres over various distances. The data centres can be close - tens of kilometers - or hundreds of kilometers apart.

"They [data centre operators] want to get more capacity over longer distances over the fibre they have rented," says Nordell. "That is why we are going down the standards path of coherent technology that gives you that boost in power and distance."

Nordell says that customers typically only want one or two 100Gbps light paths to expand fibre capacity or to connect IP routers over a link already carrying multiple 10Gbps light paths. "Metro is a little bit diverse," he says. "You see different requirements that you have to adapt to."

Rack system approach

Transmode has adopted a stackable approach to its 100Gbps TM-series of chassis. The TM-2000 is a 4U-high dual 100Gbps rack that implements transponder, muxponder or regeneration functions. "We have borrowed from Ethernet switches - you add as you grow," says Nordell.

Up to four TM-2000 are used with one TM-301 or TM-3000 master rack, with the architecture supporting up to 80, 100Gbps wavelengths overall.

"If you have too many ROADMs in the way it is going to hurt you. We have seen that with 40 Gig."

The system also uses daughter boards that support various client-side interfaces while keeping the 100Gbps line-side interface - the most expensive system component - intact. "You can install a muxponder of 10x10Gig modules," says Nordell. "When an IP router upgrades to a 100 Gig interface, you take out the daughter board and put in a 100 Gig transponder."

Transmode will offer two line-side coherent options, with a reach of 750km or 1,500km. "We want to make sure that customers' metro and long-haul requirements will be covered," says Nordell.

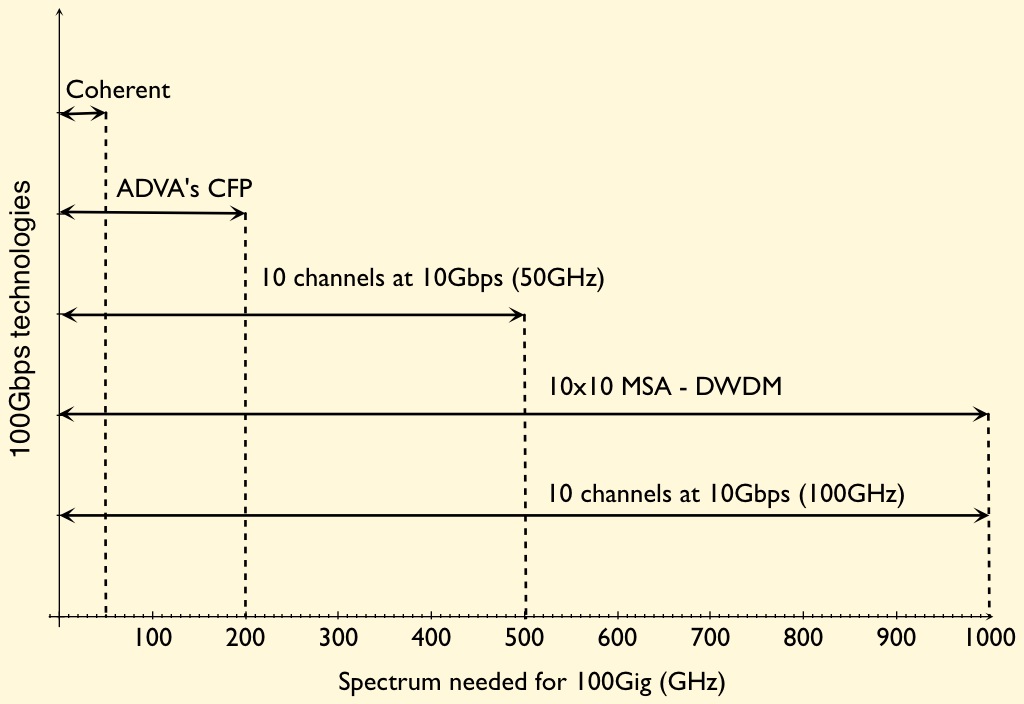

The reach of various 100Gbps technologies for the metro edge, core and regional networks. Source: Gazettabyte

The company chose coherent technology because it is an industry-backed standard. "We can benefit from coherent technology," he says. "If the industry aligns, the volumes of the components come down in price."

Coherent also simplifies the setting up and commissioning of agile photonic networks, especially as more ROADMs are introduced in the metro. "Coherent will help simplify this. All the others are more complex," he says. "Beforehand metro was more point-to-point, now we are seeing more flexibility."

Transmode recently announced it is supplying its systems to Virgin Mobile for mobile backhaul. "That is a metro network with all ROADMs in it," says Nordell. Such networks support multiple paths and that translates to a need for greater reach. "The power budget we need to have in the metro is going up a little bit."

Direct-detection technology was considered by Transmode but it chose coherent as it gives customers a better networking design capability.

Direct detection is also not as spectrally efficient as coherent: 200GHz or 100GHz-wide channels for a 100Gbps signal rather than coherent's 50GHz. "If you have too many ROADMs in the way it is going to hurt you," says Nordell. "We have seen that with 40 Gig."

The TM-2000 rack will begin testing in customers' networks at the start of 2012, with limited availability from mid-2012. The platform and daughter boards will be available in volume by year-end 2012.

MultiPhy boosts 100 Gig direct-detection using digital signal processing

The MP1100Q chip is being aimed at two cost-conscious metro networking requirements: 100 Gigabit point-to-point links and dense wavelength-division multiplexing (DWDM) metro networks.

The MP1100Q as part of a 100 Gig CFP module design. Source: MultiPhy

The 100 Gigabit market is still in its infancy and the technology has so far been used to carry traffic across operators’ core networks. Now 100 Gigabit metro applications are emerging.

Data centre operators want short links that go beyond the IEEE-specified 10km (100GBASE-LR4) and 40km (100GBASE-ER4) reach interfaces, while enterprises are looking to 100 Gigabit-per-second (Gbps) DWDM solutions to boost the capacity and reach of their rented fibre. Existing 100Gbps coherent technologies, designed for long-haul, are too expensive and bulky for the metro.

“There is long-haul and the [IEEE] client interfaces and a huge gap in between,” says Avishay Mor, vice president of product management at MultiPhy.

It is this metro 'gap' that MultiPhy is targeting with its MP1100Q chip. And the fabless chip company's announcement is one of several that have been made in recent weeks.

ADVA Optical Networking has launched a 100Gbps metro line card that uses a direct-detection CFP, while Transmode has detailed a 100Gbps coherent design tailored for the metro. The 10x10 MSA announced in August a 10km interface as well as a 40km WDM design alongside its existing 10x10Gbps MSA that has a 2km reach.

MultiPhy's MP1100Q IC will enable two CFP module designs: a point-to-point module to connect data centres with a reach of up to 80km, and a DWDM design for metro core and regional networks with a reach up to 800km.

"MLSE is recognised as the best solution for mitigating inter-symbol interference."

Design details

The MP1100Q uses a 4x28Gbps direct-detection design, the same approach announced by ADVA Optical Networking for its 100Gbps metro card. But MultiPhy claims that the 100Gbps DWDM CFP module will squeeze the four bands that make up the 100Gbps signal into a 100GHz-wide channel rather than 200GHz, while its IC implements the maximum likelihood sequence estimation (MLSE) algorithm to achieve the 800km reach.

The four optical channels received by a CFP are converted to electrical signals using four receiver optical subassemblies (ROSAs) and sampled using the MP1100Q’s four analogue-to-digital (a/d) converters operating at 28Gbps.

The CFP design using MultiPhy’s chip need only use 10Gbps opto-electronics for the transmit and receive paths. The result is a 100Gbps module with a cost structure based on 4x10Gbps optics.

The lower bill-of-materials impacts performance, however. “When you over-drive these 10Gbps opto-electronics - on the transmit and the receive side - you create what is called inter-symbol interference," says Neal Neslusan, vice president of sales and marketing at MultiPhy.

Inter-symbol interference is an unwanted effect where the energy of a transmitted bit leaks into neighboring signals. This increases the bit-error rate and makes the detector's task harder. "The way that we get around it is using MLSE, recognised as the best solution for mitigating inter-symbol interference," says Neslusan.

Unwanted channel effects introduced by the fibre, like chromatic dispersion, also induce inter-symbol interference and are also countered by the MLSE algorithm on the MP1100Q.
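To make the idea of sequence estimation concrete, here is a minimal sketch of MLSE implemented with the Viterbi algorithm. It is not MultiPhy's design: the two-tap channel model, the tap values, the on-off symbol levels and the function names are all assumptions chosen for illustration. The estimator picks the whole symbol sequence whose predicted samples are closest to what was received, rather than deciding each bit in isolation.

```python
def simulate_channel(symbols, taps):
    """Toy ISI channel (assumed model): r[k] = h0*s[k] + h1*s[k-1], with s[-1] = 0."""
    h0, h1 = taps
    previous = 0.0
    received = []
    for s in symbols:
        received.append(h0 * s + h1 * previous)
        previous = s
    return received


def mlse_viterbi(received, taps, levels=(0.0, 1.0)):
    """Return the symbol sequence that minimises the squared error to `received`."""
    h0, h1 = taps
    # Trellis state = previous symbol. The line is assumed idle (levels[0]) before the burst.
    cost = {s: (0.0 if s == levels[0] else float("inf")) for s in levels}
    paths = {s: [] for s in levels}
    for r in received:
        new_cost, new_paths = {}, {}
        for current in levels:
            best_prev, best_metric = levels[0], float("inf")
            for prev in levels:
                expected = h0 * current + h1 * prev
                metric = cost[prev] + (r - expected) ** 2
                if metric < best_metric:
                    best_prev, best_metric = prev, metric
            new_cost[current] = best_metric
            new_paths[current] = paths[best_prev] + [current]
        cost, paths = new_cost, new_paths
    best_final = min(cost, key=cost.get)
    return paths[best_final]


def threshold_detect(received, threshold=0.5):
    """Bit-by-bit detector that ignores ISI, for comparison."""
    return [1.0 if r > threshold else 0.0 for r in received]


# Hypothetical example: strong leakage (0.6) from the previous symbol.
TAPS = (1.0, 0.6)
SENT = [1.0, 0.0, 1.0, 1.0, 0.0, 0.0, 1.0, 0.0]
RECEIVED = simulate_channel(SENT, TAPS)
```

With these assumed taps, a zero that follows a one arrives at a level of 0.6, so the simple threshold detector misreads it, while the sequence estimator recovers the transmitted pattern because it accounts for the leakage explicitly.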

MultiPhy is proposing two CFP designs for its chip. One is based on on-off-keying modulation to achieve 80km point-to-point links and which will require a 200GHz channel to accommodate the 100Gbps signal. The second uses optical duo-binary modulation to achieve the longer reach and more spectrally efficient 100GHz spacings.

The company says the resulting direct-detection CFP using its IC will cost some US $10,000 compared to an estimated $50,000 for a coherent design. In turn the 100G metro CFP’s power consumption is estimated at 24W whereas a coherent design consumes 70W.

MP1100Q samples have been with the company since June, says Mor. First samples will be with customers in the fourth quarter of this year, with general availability starting in early 2012.

If all goes to plan, first CFP module designs using the chip will appear in the second half of 2012, claims MultiPhy.

High fives: 5 Terabit OTN switching and 500 Gig super-channels.

Infinera has announced a core network platform that combines Optical Transport Network (OTN) switching with dense wavelength division multiplexing (DWDM) transport. "We are looking at a system that integrates two layers of the network," says Mike Capuano, vice president of corporate marketing at Infinera.

"This is 100Tbps of non-blocking switching, all functioning as one system. You just can't do that with merchant silicon."

Mike Capuano, Infinera

The DTN-X platform is based on Infinera's third-generation photonic integrated circuit (PIC) that supports five, 100Gbps coherent channels.

Each DTN-X platform can deliver 5 Terabits-per-second (Tbps) of non-blocking OTN switching using an Infinera-designed ASIC. Ten DTN-X platforms can be combined to scale the OTN switching and transport capacity to 50Tbps currently.

Infinera also plans to add Multiprotocol Label Switching (MPLS) to turn the DTN-X into a hybrid OTN/ MPLS switch. With the next upgrades to the PIC and the switching, the ten DTN-X platforms will scale to 100Tbps optical transport and 100Tbps OTN and MPLS switching capacity.

The platform is being promoted by Infinera as a way for operators to tackle network traffic growth and support developments such as cloud computing where applications and content increasingly reside in the network. "What that means [for cloud-based services to work] is a network with huge capacity and very low latency," says Capuano.

Platform details

The 5x100Gbps PIC supports what Infinera calls a 500Gbps 'super-channel'. Each super-channel is a multi-carrier implementation comprising five, 100Gbps wavelengths. Combined with OTN, the 500Gbps super-channel can be filled with 1, 10, 40 and 100 Gigabit streams (SONET/SDH, Ethernet, video etc). Moreover, there is no spectral efficiency penalty: the super-channel uses 250GHz of fibre spectrum, provisioning five 50GHz-wide, 100Gbps wavelengths at a time.
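The arithmetic behind the "no spectral efficiency penalty" claim can be checked with a short sketch. The function names are hypothetical, and client rates are treated as nominal figures (1, 10, 40, 100Gbps) with OTN framing overhead ignored, which is a simplifying assumption.

```python
SUPER_CHANNEL_CARRIERS = 5
CARRIER_RATE_GBPS = 100
CARRIER_WIDTH_GHZ = 50

SUPER_CHANNEL_RATE_GBPS = SUPER_CHANNEL_CARRIERS * CARRIER_RATE_GBPS   # 500
SUPER_CHANNEL_WIDTH_GHZ = SUPER_CHANNEL_CARRIERS * CARRIER_WIDTH_GHZ   # 250


def spectral_efficiency(rate_gbps, width_ghz):
    """Gbps per GHz, which is numerically the same as bit/s/Hz."""
    return rate_gbps / width_ghz


def fits_in_super_channel(client_rates_gbps):
    """True if the nominal client rates sum to no more than one super-channel."""
    return sum(client_rates_gbps) <= SUPER_CHANNEL_RATE_GBPS


single_carrier = spectral_efficiency(CARRIER_RATE_GBPS, CARRIER_WIDTH_GHZ)
super_channel = spectral_efficiency(SUPER_CHANNEL_RATE_GBPS, SUPER_CHANNEL_WIDTH_GHZ)
```

Both figures come out at 2 bit/s/Hz: the super-channel provisions five carriers at once but occupies exactly the spectrum that five individual 50GHz channels would.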

"We have seen 40 and 100Gbps come on the market and they are definitely helping with fibre capacity issues," says Capuano. "But they are more expensive from a cost-per-bit perspective than 10Gbps." By introducing the 500Gbps PIC, Infinera says it is reducing the cost-per-bit performance of high speed optical transport.

DTN-X: shown are 5 line and tributary cards top and bottom with switching cards in the centre of the chassis. Source: Infinera

Integrating OTN switching within the platform results in the lowest cost solution and is more efficient when compared to multiplexed transponders (muxponder) configured manually, or an external OTN switch which must be optically connected to the transport platform.

The DTN-X also employs Generalised MPLS (GMPLS) software. "GMPLS makes it easy to deploy networks and services with point-and-click provisioning," says Capuano.

Each DTN-X line card supports a 500Gbps PIC but the chassis backplane is specified at 1Tbps, ready for Infinera's next-generation 10x100Gbps PIC that will upgrade the DTN-X to a 10Tbps system. "We have already presented our test results for our 1Tbps PIC back in March," says Capuano. The fourth-generation PIC, estimated around 2014 (based on a company slide although Infinera has made no public comment), will support a 1Tbps super-channel.

Adding MPLS will add the transport capability of the protocol to the DTN-X. "You will have MPLS transport, OTN switching and DWDM all in one platform," says Capuano.

OTN switching is the priority of the tier-one operators to carry and process their SONET/SDH traffic; adding MPLS will enable extra traffic processing capabilities to the system, he says.

Infinera says that by eventually integrating MPLS switching into the optical transport network, operators will be able to bypass expensive router ports and simplify their network operation.

Performance

Infinera says that the DTN-X's 5Tbps performance does not dip however the system is configured: whether solely as a switch (all line card slots filled with tributary modules), mixed DWDM/switching (half DWDM, half tributaries, for example) or solely as a DWDM platform. Depending on the cards in the DTN-X platform, the transport/switching configuration can be varied but the 5Tbps I/O capacity is retained. Infinera says other switches on the market do lose I/O capacity as the interface mix is varied.

Overall, Infinera claims the platform requires half the power of competing solutions and takes up a third less space.

The DTN-X will be available in the first half of 2012.

Analysis

Gazettabyte asked several market research firms about the significance of the DTN-X announcement and the importance of combining OTN, DWDM and soon MPLS within one platform.

Ovum

Ron Kline, principal analyst, and Dana Cooperson, vice president, of the network infrastructure practice

"MPLS switching is setting up a very interesting competitive dynamic among vendors"

Dana Cooperson, Ovum

The DTN-X is a platform for the largest service providers and their largest sites, says Ovum.

It sees the DTN-X in the same light as other integrated OTN/ WDM platforms such as Huawei's OSN 8800, Nokia Siemens Networks' hiT 7100, Alcatel-Lucent's 1830 PSS and Tellabs' 7100 OTS.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added," says Kline. "NSN is also claiming it will add MPLS to the 7100. Once MPLS is added, then you have the big packet optical transport box that Verizon wants."

The DTN-X platform will boost the business case for 100 Gig in a similar way to how Infinera's current PIC has done at 10 Gig. "The others will be forced to lower price," says Kline.

Having GMPLS is important, especially if there is a need to do dynamic bandwidth allocation, however it is customer-dependent. "When you start digging, it's hard to find large-scale implementations of GMPLS," says Kline.

The Ovum analysts stress that the need for OTN in the core depends on the customer. Content service providers like Google couldn't care less about OTN. "It's really an issue for multi-service providers like BT and AT&T," says Cooperson.

There is a consensus about the need for MPLS in the core. "Different service providers are likely to take different approaches — some might prefer an integrated box and others might not, it depends on their business," she says. "I think MPLS switching is setting up a very interesting competitive dynamic among vendors that focus on IP/MPLS, those that focus on optical, and those that are trying to do both [optical and IP/MPLS]."

Ovum highlights several aspects regarding the DTN-X's claimed performance.

"Assuming it performs as advertised, this should finally give Infinera what it needs to be of real interest to the tier-ones," says Cooperson. "The message of scalability, simplicity, efficiency, and profitability is just what service providers want to hear."

Cooperson also highlights Infinera's approach to optical-electrical-optical conversion and the benefit this could deliver at line speeds greater than 100Gbps.

At present ROADMs are being upgraded to support flexible spectrum channel configurations, also known as gridless. This is to enable future line speeds that will use more spectrum than current 50GHz DWDM channels. Operators want ROADMs that support flexible spectrum requirements but managing the network to support these variable-width channels is still to be resolved.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added"

Ron Kline, Ovum

Infinera's approach is based on conversion to the electrical domain when dropping and regenerating wavelengths such that the issue of flexible channels does not arise or is at least forestalled. This, says Cooperson, could be Infinera's biggest point of differentiation.

"What impresses me is the 500Gbps super-channel using five, 100Gbps carriers and the size of the switch fabric," adds Kline. The 5Tbps switching performance also exceeds that of everyone else: "Alcatel-Lucent is closest with 4Tbps but most range from 1-3Tbps and top out at 3Tbps."

The ease of use is also a big deal. Infinera did very well in marketing rapid turn up: 10 Gig in 10 days for example, says Kline: "It looks like they will be able to do the same here with 100 Gig."

Infonetics Research

Andrew Schmitt, directing analyst, optical

"GMPLS isn't that important, yet."

The DTN-X is a WDM platform which optionally includes a switch fabric for carriers that want it integrated with the transport equipment, says Schmitt. Once MPLS is added, it has the potential to be a full-blown packet-optical system.

"[The announcement is] pretty significant though not unexpected," says Schmitt. "I think the key question is what it costs, and whether the 500G PIC translates into compelling savings."

Having MPLS support is important for some carriers such as XO Communications and Google but not for others.

Schmitt also says GMPLS isn't that important, yet. "Infinera's implementation of regen-rich networks should make their GMPLS implementation workable," he says. "It has been building networks like that for a while."

OTN in the core is still an open debate but any carrier that doesn't have the luxury of a homogenous data network needs it, he says.

Schmitt has yet to speak with carriers who have used the DTN-X: "I can't comment on claimed performance but like I said, cost is important."

ACG Research

Eve Griliches, managing partner

"Infinera has already introduced the 500G PIC, but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today"

The DTN-X is an OTN/WDM platform awaiting label switch router (LSR) functionality, says Griliches: "With the LSR functionality it will be able to do statistical multiplexing for direct router connections."

Infinera has already introduced the 500 Gig PIC but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today. An OTN survey conducted last year by ACG Research found that the switch capacity sweet spot is between 4 and 8Tbps.

Griliches says that LSR-based products are taking time to incorporate WDM and OTN technologies, while it is unclear when the DTN-X will support MPLS to add LSR capabilities. The race is on as to who can integrate everything first, but DWDM and OTN before MPLS is the right direction for most tier-one operators, she says.

Infinera has over eight thousand of its existing DTNs deployed at 85 customers in 50 countries. The scale of the DTN-X will likely broaden Infinera's customer base to include tier-one operators, says Griliches.

ACG Research has heard positive feedback from operators it has spoken to. One stressed that the decreased port count due to the larger OTN cross-connect significantly improves efficiencies. Another operator said it would pick Infinera and said the beta version of the 500Gbps PIC is "working beautifully".

100 Gigabit for the metro

ADVA Optical Networking claims its 100Gbps metro card is an industry first: a direct-detection-based 100 Gigabit-per-second (Gbps) design using four, 28Gbps channels rather than current 10x10Gbps schemes.

"Data centre operators want to make best use of the fibre infrastructure and get lower overall cost, footprint and power consumption"

Jörg-Peter Elbers, ADVA Optical Networking

The card, designed for the FSP 3000 platform, delivers a 2.5x greater spectral efficiency compared to 10Gbps dense WDM (DWDM) systems. In turn, the 100Gbps metro card has half the cost of a 100 Gigabit coherent design while requiring half the power and space.

ADVA Optical Networking is using a CFP optical module to implement the 100Gbps metro design. This allows the card to use other CFP-based interfaces such as the IEEE 100 Gigabit Ethernet (GbE) standards. The design also benefits from the economies of scale of the CFP as the module of choice for 100GbE, and from future smaller modules such as the CFP2 and CFP4 being developed as the 100GbE market evolves.

The 100Gbps metro CFP's four, 28Gbps signals are modulated using optical duo-binary. By choosing duo-binary, cheaper 10Gbps optics can be used akin to a 4x10Gbps design. Duo-binary is also more resilient to dispersion than standard on-off keying.

The CFP-based card requires 200GHz of spectrum for each 100Gbps light path. This is 2.5x more spectrally efficient than 10x10Gbps based on 50GHz channel spacings. However, while the design is cheaper, denser and less power hungry than 100Gbps coherent, it has only a quarter of the spectral efficiency of coherent (see chart).
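The spectral-efficiency comparison above is simple arithmetic, sketched below. The spectrum widths are the ones quoted in the text (ten 50GHz channels for 10x10Gbps, 200GHz for the direct-detection card, 50GHz for coherent); the dictionary and function names are hypothetical.

```python
LINE_RATE_GBPS = 100

# Spectrum needed to carry 100Gbps with each approach, in GHz.
SPECTRUM_GHZ = {
    "10x10Gbps DWDM": 10 * 50,         # ten 10Gbps wavelengths on a 50GHz grid
    "4x28Gbps direct detection": 200,
    "coherent": 50,
}


def bits_per_second_per_hertz(scheme):
    """Spectral efficiency of a scheme; Gbps/GHz equals bit/s/Hz."""
    return LINE_RATE_GBPS / SPECTRUM_GHZ[scheme]


def relative_efficiency(scheme, reference):
    """How many times more spectrally efficient `scheme` is than `reference`."""
    return SPECTRUM_GHZ[reference] / SPECTRUM_GHZ[scheme]


vs_10g = relative_efficiency("4x28Gbps direct detection", "10x10Gbps DWDM")
vs_coherent = relative_efficiency("4x28Gbps direct detection", "coherent")
```

Here `vs_10g` evaluates to 2.5 and `vs_coherent` to 0.25, matching the 2.5x gain over 10Gbps DWDM and the one-quarter figure relative to coherent.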

Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking, says duo-binary delivers closer channel spacing such that a doubling in spectral density will be possible in a future design (100Gbps in a 100GHz channel). The 100Gbps metro card supports 500km links using dispersion-compensated fibre.

Non-coherent designs for the metro are starting to appear despite 100Gbps optical transport being in its infancy. Besides ADVA Optical Networking's design, a component vendor is promoting a 100Gbps direct detection DWDM design for the metro. The 10x10 MSA has also announced a DWDM extension that will support four and eight 100Gbps channels.

The 100G metro card showing the CFP. Source: ADVA Optical Networking

Metro direct-detection also faces competition from system vendors developing coherent designs tailored for the metro.

System vendors, module makers, optical and IC component companies all believe there is a market for lower cost 100Gbps metro transport. This is backed by keen interest from service providers and large content providers that want cheaper 100Gbps interfaces to connect data centres.

Elbers highlights two such applications that will first likely use the 100 Gigabit metro card.

One is connecting the data centres of enterprises that use rented fibre. "They have a multitude of interfaces and services - 10GbE, 8 Gigabit Fibre Channel - and they often rent fibre," says Elbers. "They need to get as much capacity as possible to make the fibre rent worthwhile while being constrained on rack space and power."

The second application is to connect 100GbE-enabled IP routers across the metro. Here service providers may not have heavily loaded DWDM networks and can afford to use a 100Gbps metro link rather than the more spectrally efficient, if more expensive, 100Gbps coherent interface. Equally, such links may be less than 500km while coherent is designed for long-haul links, 1000km or greater.

Elbers says samples of the metro card are available now with volume production beginning at the end of 2011.

Introducing 100G Metro (ADVA Optical video)

R&D: At home or abroad?

Omer Industrial Park in the Negev, Israel - the location of ECI Telecom's latest R&D centre.

Chaim Urbach likes working at the Omer Industrial Park site. Normally located at ECI’s headquarters in Petah Tikva, he visits the Omer site - some 100km away - once or twice a week and finds he is more productive there. Urbach employs an open door policy and has fewer interruptions at the Omer site since engineers are focussed solely on R&D work.

ECI set up its latest R&D centre in May 2010 with a staff of ten. “In 2009 we realised we needed more engineers,” says Urbach. One year on the site employs 150, by the end of the year it will be 200, and by year-end 2012 the company expects to employ 300. ECI has already taken one unit at the Industrial Park and its operations have already spilt over into a second building.

Urbach says that the decision to locate the new site in the south of Israel was not straightforward.

The company has 1,300 R&D staff, with research centres in the US, India and China. Having a second site in Israel helps in terms of issues of language and time zones but employing an R&D engineer in Israel is several times more costly than an engineer in India or China.

The photos on the wall are part of the winning entries in an ECI company-wide photo competition.

But the Israeli Government’s Office of the Chief Scientist (OCS) is keen to encourage local high-tech ventures and has helped with the funding of the site. In return the backed venture must undertake what is deemed innovative research, with the OCS guaranteed royalties from sales of future telecom systems developed at the site.

One difficulty Urbach highlights is recruiting experienced hardware and software engineers given that there are few local high-tech companies in the south of the country. Instead ECI has relocated experienced engineering managers from Petah Tikva, tasked with building core knowledge by training graduates from nearby Ben-Gurion University and from local colleges.

Work on the majority of ECI’s new projects is being done at the Omer site, says Urbach. Projects include developing GPON access technology for a BT tender as well as extending its successful XDM hybrid+ SDH to all-IP transport platform, which has over 30% market share in India. ECI is undertaking the research on one terabit transmission using OFDM technology, part of the Tera Santa Consortium, at its HQ.

“We realised we needed more engineers”

Chaim Urbach, ECI Telecom

Urbach admits it is a challenge to compete with leading Far Eastern system vendors on cost and given their R&D budgets. But he says the company is focussed on building innovative platforms delivered as part of a complete solution. “We do not just provide a box,” says Urbach. “And customers know if they have a problem, we go the extra mile to solve it.”

Omer Industrial Park

The company is highly business oriented, he says, delivering solutions that fit customers’ needs. “Over 95% of all systems ECI has developed have been sold,” he says.

Urbach also argues that Israeli engineers are suited to R&D. “Engineers don’t do everything by the book,” he says. “And they are dedicated and motivated to succeed.”

Terabit Consortium embraces OFDM

“This project is very challenging and very important”

Shai Stein, Tera Santa Consortium

Given the continual growth in IP traffic, higher-speed light paths are going to be needed, says Shai Stein, chairman of the Tera Santa Consortium and ECI Telecom’s CTO: “If 100 Gigabit is starting to be deployed, within five years we’ll start to see links with tenfold that capacity, meaning one Terabit.”

The project is funded by the seven participating firms and the Israeli Government. According to Stein, the Government has invested little in optical projects in recent years. “When we look at the [Israeli] academies and industry capabilities in optical, there is no justification for this,” says Stein. “We went with this initiative in order to get Government funding for something very challenging that will position us in a totally different place worldwide.”

Orthogonal frequency division multiplexing

OFDM differs from traditional dense wavelength division multiplexing (DWDM) technology in how fibre bandwidth is used. Rather than sending all the information on a lightpath within a single 50 or 100GHz channel – dubbed single-carrier transmission – OFDM uses multiple narrow carriers. “Instead of using the whole bandwidth in one bulk and transmitting the information over it, [with OFDM] you divide the spectrum into pieces and on each you transmit a portion of the data,” says Stein. “Each sub-carrier is very narrow and the summation of all of them is the transmission.”
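The principle Stein describes, splitting the data across many narrow sub-carriers whose sum forms the transmitted signal, can be sketched in a few lines. This is a toy baseband model, not the Consortium's design: QPSK on every sub-carrier, a plain discrete Fourier transform written out by hand, no cyclic prefix, no noise and no optical front end. All function names are hypothetical.

```python
import cmath


def idft(freq_bins):
    """Inverse DFT: sum the sub-carriers to form the time-domain signal."""
    n = len(freq_bins)
    return [
        sum(freq_bins[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in range(n)) / n
        for t in range(n)
    ]


def dft(samples):
    """Forward DFT: separate the received signal back into sub-carriers."""
    n = len(samples)
    return [
        sum(samples[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]


def ofdm_modulate(bits, n_subcarriers):
    """Map two bits onto each sub-carrier as a QPSK point, then sum the sub-carriers."""
    if len(bits) != 2 * n_subcarriers:
        raise ValueError("QPSK needs exactly two bits per sub-carrier")
    # Bit 0 selects the sign of the real part, bit 1 the sign of the imaginary part.
    points = [
        complex(1 - 2 * bits[2 * i], 1 - 2 * bits[2 * i + 1])
        for i in range(n_subcarriers)
    ]
    return idft(points)


def ofdm_demodulate(samples):
    """Recover the bits by reading the sign of each sub-carrier's real and imaginary part."""
    bits = []
    for point in dft(samples):
        bits.append(1 if point.real < 0 else 0)
        bits.append(1 if point.imag < 0 else 0)
    return bits


DATA = [0, 1, 1, 0, 1, 1, 0, 0, 1, 0, 0, 1, 0, 0, 1, 1]
SIGNAL = ofdm_modulate(DATA, n_subcarriers=8)
RECOVERED = ofdm_demodulate(SIGNAL)
```

Because the sub-carriers are orthogonal, the forward transform separates them cleanly and each one can be decoded on its own, which is the property that makes the parallel processing mentioned later in the article possible.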

“Each time there is a new arena in telecom we find that there is a battle between single carrier modulation and OFDM; VDSL began as single carrier and later moved to OFDM,” says Amitai Melamed, involved in the project and a member of ECI’s CTO office. “In the optical domain, before running to [use] single-carrier modulation as is currently done at 100 Gigabit, it is better to look at the OFDM domain in detail rather than jump at single-carrier modulation and question whether this was the right choice in future.”

OFDM delivers several benefits, says Stein, especially in the flexibility it brings in managing spectrum. OFDM allows a fibre’s spectrum band to be used right up to its edge. Indeed Melamed is confident that by adopting OFDM for optical, the spectrum efficiency achieved will eventually match that of wireless.

“OFDM is very tolerant to rate adaptation.”

Amitai Melamed, ECI Telecom

The technology also lends itself to parallel processing. “Each of the sub-carriers is orthogonal and in a way independent,” says Stein. “You can use multiple small machines to process the whole traffic instead of a single engine that processes it all.” With OFDM, chromatic dispersion is also reduced because each sub-carrier is narrow in the frequency domain.

Using OFDM, the modulation scheme used per sub-carrier can vary depending on channel conditions. This delivers a flexibility absent from existing single-carrier modulation schemes such as quadrature phase-shift keying (QPSK) that is used across all the channel bandwidth at 100 Gigabit-per-second (Gbps). “With OFDM, some of the bins [sub-carriers] could be QPSK but others could be 16-QAM or even more,” says Melamed.
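A per-sub-carrier choice of modulation can be sketched as a simple lookup. The signal-to-noise thresholds below are hypothetical placeholders picked only to make the example work; they are not figures from the Consortium, and a real system would derive them from its target error rate and coding.

```python
# (name, bits per symbol, minimum SNR in dB) - thresholds are illustrative assumptions.
MODULATIONS = [
    ("16-QAM", 4, 17.0),
    ("QPSK", 2, 10.0),
]


def choose_modulation(snr_db):
    """Pick the densest modulation whose assumed SNR threshold is met."""
    for name, bits, min_snr_db in MODULATIONS:
        if snr_db >= min_snr_db:
            return name, bits
    return "off", 0  # bin too noisy to carry data


def load_bins(snr_per_bin_db):
    """Assign a modulation to every sub-carrier (bin) from its measured SNR."""
    return [choose_modulation(snr) for snr in snr_per_bin_db]


def bits_per_ofdm_symbol(snr_per_bin_db):
    """Total bits carried by one OFDM symbol under the chosen loading."""
    return sum(bits for _, bits in load_bins(snr_per_bin_db))


EXAMPLE_SNRS_DB = [20.0, 12.0, 8.0, 18.0]
LOADING = [name for name, _ in load_bins(EXAMPLE_SNRS_DB)]
```

For the example values, the first and last bins carry 16-QAM, the second carries QPSK and the third is switched off, giving ten bits per OFDM symbol where uniform QPSK across the three usable bins would carry six.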

The approach enables the concept of an adaptive transponder. “I don’t always need to handle fibre as a time-division multiplexed link – either you have all the capacity or nothing,” says Melamed. “We are trying to push this resource to be more tolerant to the media: We can sense the channels and adapt the receiver to the real capacity.” Such an approach better suits the characteristics of packet traffic in general, he says: “OFDM is very tolerant to rate adaptation.”

The Consortium’s goal is to deliver a 1 Terabit light path in a 175GHz channel. At present 160, 40Gbps channels can be crammed within a fibre's C-band, equating to 6.4Tbps using 25GHz channels. At 100Gbps, 80 channels - or 8Tbps - is possible using 50GHz channels. A 175GHz channel spacing at 1Tbps would result in 23Tbps overall capacity. However this figure is likely to be reduced in practice since frequency guard-bands between channels are needed. The spectrum spacings at speeds greater than 100Gbps are still being worked out as part of ITU work on "gridless" channels (see OFC announcements and market trends story).
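The capacity figures in this paragraph follow from dividing the usable band by the channel spacing. The sketch below assumes a usable C-band of 4,000GHz, which is the value implied by both the 160 x 25GHz and the 80 x 50GHz cases; the function names are hypothetical.

```python
C_BAND_GHZ = 160 * 25  # 4,000GHz, implied by the channel counts quoted in the text


def fibre_capacity_tbps(channel_rate_gbps, spacing_ghz, band_ghz=C_BAND_GHZ):
    """Capacity if the band could be filled exactly, ignoring guard-bands."""
    return band_ghz / spacing_ghz * channel_rate_gbps / 1000


def whole_channels(spacing_ghz, band_ghz=C_BAND_GHZ):
    """Number of complete channels of the given spacing that fit in the band."""
    return band_ghz // spacing_ghz


capacity_40g = fibre_capacity_tbps(40, 25)       # 6.4Tbps
capacity_100g = fibre_capacity_tbps(100, 50)     # 8Tbps
capacity_1t = fibre_capacity_tbps(1000, 175)     # about 22.9Tbps, rounded to 23 in the text
```

Only 22 complete 175GHz channels fit in the assumed band, so even before guard-bands are considered the practical figure is 22Tbps rather than 23Tbps.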

ECI stresses that fibre capacity is only one aspect of performance, however, and that at 1Tbps the optical reach achieved is reduced compared to transmissions at 100Gbps. “It is not just about having more Gigabit-per-second-per-Hertz but how we utilize the resource,” says Melamed. “A system with an adaptive rate optimises the resource in terms of how capacity is managed.” For example if there is no need for a 1Tbps link at a certain time of the day, the system can revert to a lower speed and use the spectrum freed up for other services. Such a concept will enable the DWDM system to be adaptive in capacity, time and reach.

Project focus

The project is split between digital and analogue, optical development work. The digital part concerns OFDM and how the signals are processed in a modular way.

The analogue work involves overcoming several challenges, says Stern. One is designing and building the optical functions needed for modulation and demodulation with the accuracy required for OFDM. Another is achieving a compact design that fits within an optical transceiver. Dividing the 1Tbps signal into several sub-bands will require optical components to be implemented as a photonic integrated circuit (PIC). The PIC will integrate arrays of components for sub-band processing and will be needed to achieve the required cost, space and power consumption targets.

Taking part in the project are seven Israeli companies - ECI Telecom, the Israeli subsidiary of Finisar, MultiPhy, Civcom, Orckit-Corrigent, Elisra-Elbit and Optiway - as well as five Israeli universities.

Two of the companies in the Consortium

“There are three types of companies,” says Stern. “Companies at the component level – digital components like digital signal processors and analogue optical components, sub-systems such as transceivers, and system companies that have platforms and a network view of the whole concept.”

The project goal is to provide the technology enablers to build a terabit-enabled optical network. A simple prototype will be built to check the concepts and the algorithms before proceeding to the full 1 Terabit proof-of-concept, says Stern. The five Israeli universities will provide a dozen research groups covering issues such as PIC design and digital signal processing algorithms.

Any intellectual property resulting from the project is owned by the company that generates it although it will be made available to any other interested Consortium partner for licensing.

Project definition work, architectures and simulation work have already started. The project will take between three and five years, but it has a deadline after three years when the Consortium will need to demonstrate the project's achievements. “If the achievements justify continuation I believe we will get it [a funding extension],” says Stern. “But we have a lot to do to get to this milestone after three years.”

Project funding for the three years is around US $25M, with the Israeli Office of the Chief Scientist (OCS) providing 50 million NIS (US $14.5M) via the Magnet programme, which ECI says is “over half” of the overall funding.

Optical engines bring Terabit bandwidth on a card

Such a parallel optics design offers several advantages when used on a motherboard. It offers greater flexibility when cooling since traditional optics are normally in pluggable slots at the card edge, furthest away from the fans. Such optical engines also simplify high-speed signal routing and ease electromagnetic interference issues since fibre is used rather than copper traces.

Figure 1: Fourteen 120Gbps MiniPods on a board. Source: Avago Technologies

Avago has two designs – the 8x8mm MicroPod and the 22x18mm MiniPod. The 12x10.3125 Gigabit-per-second (Gbps) MicroPods are being used in IBM’s Blue Gene computer and Avago says it is already shipping tens of thousands of the devices a month.

“The [MicroPod’s] signal pins have a very tight pitch and some of our customers find that difficult to do,” says Victor Krutul, director of marketing for the fibre optics division at Avago Technologies. The MiniPod design tackles this by using the MicroPod optical engine but a more relaxed pitch. The MiniPod uses a 9x9 electrical MegArray connector and is now sampling, says Avago.

Figure 1 shows 14 MiniPod optical engines on a board, each operating at 12x10Gbps. “If you were trying to route all those signals electrically on the board, it would be impossible,” says Krutul. All 14 MiniPods go to one connector, equating to a 1.68Tbps interface.

Figure 2: Sixteen MicroPods in a 4x4 array. Source: Avago Technologies

Figure 2 shows 16 MicroPods in a 4x4 array. “Those [MicroPods] can get even closer,” says Krutul. Also shown are the connectors to the MicroPod array. Avago has worked with US Conec to design connectors whereby the flat ribbon fibres linking the MicroPods can stack on top of each other. In this example, there are four connections for each row of MicroPods.

OFC announcements and market trends

More compact transceiver designs at 10, 40 and 100 Gigabit, advancements in reconfigurable optical add-drop multiplexer (ROADM) technology and parallel optical engine developments were all in evidence at this year’s OFC/NFOEC show held in Los Angeles in March.

“MSAs are designed by committee, and when you have a committee you throw away innovation and you throw away time-to-market”

Victor Krutul, Avago Technologies

Finisar said that the show was one of the busiest in recent years. “There was an increasing system-vendor presence at OFC, and there was a lot more interest from investor analysts,” says Rafik Ward, vice president of marketing at Finisar.

Ethernet interfaces

Opnext demonstrated an IEEE 100GBASE-ER4 module design at the show, the 100 Gigabit Ethernet (GbE) standard with a 40km reach. Based on the company’s CFP-based 100GBASE-LR4 10km module, the design uses a semiconductor optical amplifier (SOA) on the receive path to achieve the extended reach. The IEEE standard calls for an SOA in front of the photo-detectors for the 100GBASE-ER4 interface.

“We don’t have that [SOA] integrated yet, we are just showing the [design] feasibility,” says Jon Anderson, director of technology programme at Opnext. The extended reach interface will be used to connect IP core routers to transport systems when the two platforms reside in separate facilities. Such a 40km requirement for a 100GbE interface is not common but is an important one to meet, says Anderson.

Opnext’s first-generation LR4, currently shipping, is a discrete design comprising four discrete transmitter optical sub-assemblies (TOSAs) and four receiver optical sub-assemblies (ROSAs) and an optical multiplexer and demultiplexer. The company’s next-generation design will integrate the four lasers and the optical multiplexer into a package and will be used in future more compact CFP2 and CFP4 modules.

The CFP2 module is half the size of the CFP module and the CFP4 is a quarter. In terms of maximum power, the CFP module is rated at 32W, the CFP2 12W and the CFP4 5W. “The CFP4 is a little bit wider and longer than the QSFP,” says Anderson. The first CFP2 modules are expected to become available in 2012 and the CFP4 in 2013.

System vendors are interested in the CFP4 as they want to support over one terabit of capacity on a 15-inch faceplate. Up to 16 ports - 1.6Tbps - can be supported on a faceplate using the CFP4, and using a “belly-to-belly” configuration two rows of 16 ports will be possible, says Anderson.

Finisar demonstrated a distributed feedback (DFB) laser-based CFP module at OFC that implements the 10km 100GBASE-LR4 standard. The adoption of DFB lasers promises significant advantages compared to existing first-generation -LR4 modules that use electro-absorption modulated lasers (EMLs). “If you look at current designs, ours included, not only do they use EMLs which are significantly more expensive, but each is in its own package and has its own thermo-electric cooler,” says Ward.

Finisar’s use of DFBs means an integrated array of the lasers can be packaged and cooled using a single thermo-electric cooler, significantly reducing cost and nearly halving the power to 12W. “Now that the power [of the DFB-based] LR4 is 12W, we can place it within a CFP2 with its 25-28 Gigabit-per-second (Gbps) electrical I/O,” says Ward.

Moving to the faster input/output (I/O) compared to the CFP’s 10Gbps I/O means that the serialiser/deserialiser (serdes) chipset can be replaced with simpler clock data recovery (CDR) circuitry. “By the time we move to the CFP4, we remove the CDRs completely,” says Ward. “It’s an un-retimed interface.” Finisar’s existing -LR4 design already uses an integrated four-photodetector array.

An early application of the 100GbE -LR4, as with the -ER4, is linking core routers with optical transport systems in operators’ central offices. Many Ethernet switch vendors have chosen to focus their early high-data-rate efforts at 40GbE but Finisar says the move to 100GbE has started.

Finisar argues that the adoption of DFBs will ultimately prove the cost-benefits of a 4-channel 100GbE design which faces competition from the emerging 10x10 multi-source agreement (MSA). “Everything we have heard about the 10x10 [MSA] has been around cost,” says Ward. “The simple view inside Finisar is that by the time the Gen2 100GbE module that we showed at OFC gets to market, this argument [4x25Gig vs. 10x10Gig] will be a moot point.”

“40Gig is definitely still strong and healthy”

Jon Anderson, Opnext

By then the second-generation -LR4 module design will be cost competitive if not even lower cost than the 10x10 MSA. “If you look at optoelectronic components, at the end of the day what really drives cost is yield,” says Ward. “If we can get our yields of 25Gig DFBs down to a level that is similar to 10Gig DFB yields - it doesn’t have to match, just in the ballpark - then we have a solution where the 4x25Gig looks like a 4x10Gig solution and then I believe everyone will agree that 4x25Gig is a less expensive architecture.” Finisar expects the Gen2 CFP -LR4 in production by the first half of 2012.

Opnext demonstrated the 40GBASE-LR4 (40Gbps, up to 10km) standard implemented in a QSFP+ module at OFC. Anderson says the company is seeing demand for such a design from data centre operators and from switch and transport vendors.

Avago Technologies announced a 40Gbps QSFP+ module at OFC that implements the 100m IEEE 40GBASE-SR4. “It will interoperate with Avago’s SFP+ modules,” says Victor Krutul, director of marketing for the fibre optics division at Avago Technologies. The QSFP+ can interface to another QSFP+ module or to four 10Gbps SFP+ modules.

Avago also announced a proprietary mini-SFP+ design, 30% smaller than the standard SFP+ but which is electrically compatible. According to Krutul, the design came about following a request from one of its customers: “What it allows is the ability to have 64 ports on the front [panel] rather than 48.”

Did Avago consider making the mini-SFP+ design an MSA? “What we found with MSAs is that they are designed by committee, and when you have a committee you throw away innovation and you throw away time-to-market,” says Krutul.

Krutul was previously a marketing manager for Intel’s LightPeak before joining Avago over half a year ago.

“There was an increasing system-vendor presence at OFC, and there was a lot more interest from investor analysts”

Rafik Ward, Finisar.

Line-side interfaces

Opnext will be providing select customers with its 100Gbps DP-QPSK coherent module for trialling this quarter. The module has a 5-inch by 7-inch footprint and uses a 168-pin connector. “We are working to try and meet the OIF spec [with regard to power consumption] which is 80W,” says Anderson. “It is challenging and it may not be met in the first generation [design].”

The company is also moving its 40Gbps 2km very short reach (VSR) transponder to support the IEEE 40GBASE-FR standard within a CFP module, dubbed the “tri-rate” design. “The 40GBASE-FR has been approved, with the specification building on the ITU’s 40Gig VSR,” says Anderson. “It continues to support the [OC-768] SONET/SDH rate, it will support the new OTN ODU3 40Gbps and the intermediate 40 Gigabit Ethernet.”

Opnext and Finisar are both watching with interest the emerging 100Gbps direct detection market, an alternative to 100 Gigabit coherent aimed at shorter-reach metro applications.

“We certainly are watching this segment and do have an interest, but we don’t have any product plans to share at this point,” says Anderson.

“The [100Gbps] direct-detection market is very interesting,” says Ward. Coherent is not going to be the only way people will deploy 100Gbps light paths. “There will be a market for shorter reach, lower performance 100 Gigabit DWDM that will be used primarily in datacentre-to-datacentre,” he says. Tier 2 and tier 3 carriers will also be interested in the technology for use in shorter metro reaches. “There is definitely a market for that,” says Ward.

Opnext also announced its small form-factor – 3.5-inch by 4.5-inch - 40Gbps DPSK module. “With a smaller form factor, the next generation could move to a CFP type pluggable,” says Anderson. “But that is if our customers are interested in migrating to a pluggable design for DPSK and DQPSK.”

Are there signs that the advent of 100 Gigabit is affecting 40Gbps uptake? “We are definitely not seeing that,” says Anderson. “We are continuing to see good solid demand for both 40G line side – DPSK and DQPSK – and a lot of pull to bring this tri-rate VSR.”

Such demand is not just from China but also North American carriers. “40 Gig is definitely still strong and healthy,” says Anderson. “But there are some operators that are waiting to see how 100G does and approved in for major build-outs.”

At 10Gbps, Opnext also had on show a tunable TOSA for use in an XFP module, while Finisar announced an 80km, 10Gbps SFP+ module. “SFP+ has become a very successful form factor at 10Gbps,” says Ward. “All the market data I see show SFP+ leads in overall volumes deployed by a significant margin.” Its success has been achieved despite it being a form factor that was not initially designed to achieve all the 10Gbps reaches required. This is some achievement, says Ward, since the XFP form factor used for 80km has a power rating of 3.5W while the 80km SFP+ has to work within an upper limit of less than 2W.

Parallel Optics

Avago detailed its main parallel optic designs: the CXP module and its two optical engine designs.

The company says it is seeing much interest from high-performance computing vendors such as IBM and Fujitsu for its CXP 120 Gigabit (12x10Gbps) parallel transceiver module. Avago is sampling the module and it will start shipping in the summer.

The company also announced the status of its embedded parallel optics devices (PODs). Such parallel optic designs offer several advantages, says Krutul. Embedding the optics on the motherboard offers greater flexibility in cooling since traditional optics are normally at the edge of the card, furthest away from the fans. Such optics also simplify high-speed signal routing on the printed circuit board since fibre is used.

Avago offers two designs – the 8x8mm MicroPod and the 22x18mm MiniPod. The 12x10Gbps MicroPods are being used in IBM’s Blue Gene computer and Avago says it is already shipping tens of thousands of the devices a month. “The [MicroPod’s] signal pins have a very tight pitch and some of our customers find that difficult to do,” says Krutul. The MiniPod design tackles this by using the MicroPod optical engine but a more relaxed pitch. At OFC, Avago said that the MiniPod is now sampling.

Gridless ROADMs

Finisar demonstrated what it claims is the first gridless wavelength-selective switch (WSS) module at the show. A gridless ROADM supports variable channel widths beyond the fixed International Telecommunication Union's (ITU) defined spacings. Such a capability enables ROADMs to support variable channel spacings that may be required for transmission rates beyond 100Gbps: 400Gbps, 1Tbps and beyond.

“We have an increasing amount of customer interest in this [FlexGrid], and from what we can tell, there is also an increasing amount of carrier interest as well,” says Ward, adding that the company is already shipping FlexGrid WSSs to customers.

Finisar is contributing to the ongoing ITU work to define what the grid spacings and the central channels should be for future ROADM deployments. Finisar demonstrated its FlexGrid design implementing integer increments of 12.5GHz spacing. “We could probably go down to 1GHz or even lower than that,” says Ward. “But the network management system required to manage such [fine] granularity would become incredibly complicated.” What is required for gridless is a balance between making good use of the fibre’s spectrum while ensuring the system is manageable, says Ward.
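The trade-off Ward describes can be illustrated by rounding a signal's bandwidth up to a whole number of 12.5GHz increments. The Python sketch below is only an illustration of that rounding; the function names are hypothetical and it is not a description of how Finisar's WSS or any management system allocates spectrum:

```python
import math

GRANULARITY_GHZ = 12.5  # increment demonstrated at the show, per the article


def slots_needed(signal_width_ghz: float,
                 granularity_ghz: float = GRANULARITY_GHZ) -> int:
    """Smallest whole number of grid increments that covers the signal."""
    if signal_width_ghz <= 0 or granularity_ghz <= 0:
        raise ValueError("widths must be positive")
    return math.ceil(signal_width_ghz / granularity_ghz)


def allocated_width_ghz(signal_width_ghz: float,
                        granularity_ghz: float = GRANULARITY_GHZ) -> float:
    """Spectrum actually reserved once the width is rounded up to the grid."""
    return slots_needed(signal_width_ghz, granularity_ghz) * granularity_ghz


if __name__ == "__main__":
    for width in (50.0, 80.0, 175.0):
        print(f"{width}GHz signal -> {slots_needed(width)} slots, "
              f"{allocated_width_ghz(width)}GHz reserved")
```

A finer granularity wastes less spectrum on rounding but gives the management system more possible channel widths to track, which is the balance described above.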

Infinera details Terabit PICs, 5x100G devices set for 2012

Infinera has given the first details of its terabit coherent detection photonic integrated circuits (PICs). The pair - a transmitter and a receiver PIC - implement a ten-channel 100 Gigabit-per-second (Gbps) link using polarisation multiplexing quadrature phase-shift keying (PM-QPSK). The Infinera development work was detailed at OFC/NFOEC, held in Los Angeles from March 6 to 10.

Infinera has recently demonstrated its 5x100Gbps PIC carrying traffic between Amsterdam and London within Interoute Communications’ pan-European network. The 5x100Gbps PIC-based system will be available commercially in 2012.

“We think we can drive the system from where it is today – 8 Terabits-per-fibre - to around 25 Terabits-per-fibre”

Dave Welch, Infinera

Why is this significant?

The widespread adoption of 100Gbps optical transport technology will be driven by how quickly its cost can be reduced to compete with existing 40Gbps and 10Gbps technologies.

Whereas the industry is developing 100Gbps line cards and optical modules, Infinera has demonstrated a 5x100Gbps coherent PIC based on 50GHz channel spacing while its terabit PICs are in the lab.

If Infinera meets its manufacturing plans, it will have a compelling 100Gbps offering as it takes on established 100Gbps players such as Ciena. Infinera has been late in the 40Gbps market, competing with its 10x10Gbps PIC technology instead.

40 and 100 Gigabit

Infinera views 40Gbps and 100Gbps optical transport in terms of the dynamics of the high-capacity fibre market. In particular, it asks which technology gets the most capacity out of a fibre and which offers the best dollar-per-gigabit at a given moment.

For the long-haul market, Dave Welch, chief strategy officer at Infinera, says 100Gbps provides 8 Terabits (Tb) of capacity using 80 channels versus 3.2Tb using 40Gbps (80x40Gbps). The 40Gbps total capacity can be doubled to 6.4Tb (160x40Gbps) if 25GHz-spaced channels are used, which is Infinera’s approach.

“The economics of 100 Gigabit appear to be able to drive the dollar-per-gigabit down faster than 40 Gigabit technology,” says Welch. If operators need additional capacity now, they will adopt 40Gbps, he says, but if they have spare capacity and can wait till 2012 they can use 100Gbps. “The belief is that they [operators] will get more capacity out of their fibre and at least the same if not better economics per gigabit [using 100Gbps],” says Welch. Indeed Welch argues that by 2012, 100Gbps economics will be superior to 40Gbps coherent leading to its “rapid adoption”.

For metro applications, achieving terabits of capacity in fibre is less of a concern. What matters is matching speeds with services while achieving the lowest dollar-per-gigabit. And it is here – for sub-1000km networks – where 40Gbps technology is being mostly deployed. “Not for the benefit of maximum fibre capacity but to protect against service interfaces,” says Welch, who adds that 40 Gigabit Ethernet (GbE) rather than 100GbE is the preferred interface within data centres.

Shorter-reach 100Gbps

Companies such as ADVA Optical Networking and chip company MultiPhy highlight the merits of an additional 100Gbps technology to coherent based on direct detection modulation for metro applications. Direct detection is suited to distances from 80km up to 1000km, to connect data centres for example.

Is this market of interest to Infinera? “This is a great opportunity for us,” says Welch.

The company’s existing 10x10Gbps PIC can address this segment in that it is at least 4x cheaper than emerging 100Gbps coherent solutions over the next 18 months, says Welch, who claims that the company’s 10x10Gbps PIC is making ‘great headway’ in the metro.

“If the market is not trying to get the maximum capacity but best dollar-per-gigabit, it is not clear that full coherent, at least in discrete form, is the right answer,” says Welch. But the cost reduction delivered by coherent PIC technology does make it more competitive for cost-sensitive markets like metro.

A 100Gbps coherent discrete design is relatively costly since it requires two lasers (one serving as a local oscillator, or LO, at the receiver; see Figure 1), sophisticated optics and a high power-consuming digital signal processor (DSP). “Once you go to photonic integration the extra lasers and extra optics, while a significant engineering task, are not inhibitors in terms of the optics’ cost,” says Welch.

Coherent PICs can be used ‘deeper in the network’ (closer to the edge) while shifting the trade-offs between coherent and on-off keying. However even if the advent of a PIC makes coherent more economical, the DSP’s power dissipation remains a factor regarding the tradeoff at 100Gbps line rates between on-off keying and coherent.

Welch does not dismiss the idea of Infinera developing a metro-centric PIC to reduce costs further. He points out that while such a solution may be of particular interest to internet content companies, their networks are relatively simple point-to-point ones. As such their needs differ greatly from cable operators and telcos, in terms of the services carried and traffic routing.

PIC challenges

Figure 1: Infinera's terabit PM-QPSK coherent receiver PIC architecture

There are several challenges when developing multi-channel 100Gbps PICs. “The most difficult thing going to a coherent technology is you are now dealing with optical phase,” says Welch. This requires highly accurate control of the PIC’s optical path lengths.

The laser wavelength is 1.5 micron and within the PIC's indium phosphide waveguides this is reduced to a third, or 0.5 micron. Fine control of the optical path lengths is thus required to tenths of a wavelength, or tens of nanometers (nm).
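The path-length tolerance can be checked with a short calculation. This Python sketch assumes an effective refractive index of 3, the value implied by the article's 1.5 micron and 0.5 micron figures; the index of a real waveguide will differ, and the function names are illustrative only:

```python
# Assumption: effective index of 3, implied by 1.5 micron -> 0.5 micron.
ASSUMED_INDEX = 3.0
VACUUM_WAVELENGTH_NM = 1500.0


def wavelength_in_guide_nm(vacuum_wavelength_nm: float = VACUUM_WAVELENGTH_NM,
                           index: float = ASSUMED_INDEX) -> float:
    """Wavelength inside the waveguide: vacuum wavelength divided by index."""
    return vacuum_wavelength_nm / index


def phase_error_deg(path_error_nm: float,
                    vacuum_wavelength_nm: float = VACUUM_WAVELENGTH_NM,
                    index: float = ASSUMED_INDEX) -> float:
    """Optical phase shift caused by a given physical path-length error."""
    guided = wavelength_in_guide_nm(vacuum_wavelength_nm, index)
    return 360.0 * path_error_nm / guided


if __name__ == "__main__":
    print(f"Guided wavelength: {wavelength_in_guide_nm():.0f} nm")
    for error_nm in (10.0, 50.0):
        print(f"{error_nm:.0f} nm path error -> "
              f"{phase_error_deg(error_nm):.1f} degrees of phase")
```

Under this assumption a tenth of the guided wavelength is 50nm, which already corresponds to 36 degrees of optical phase.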

Achieving a high manufacturing yield of such complex PICs is another challenge. The terabit receiver PIC detailed in the OFC paper integrates 150 optical components, while the 5x100Gbps transmit and receive PIC pair integrate the equivalent of 600 optical components.

Moving from a five-channel (500Gbps) to a ten-channel (terabit) PIC is also a challenge. There are unwanted interactions in terms of the optics and the electronics. “If I turn one laser on adjacent to another laser it has a distortion, while the light going through the waveguides has potential for polarisation scattering,” says Welch. “It is very hard.”

But what the PICs show, he says, is that Infinera’s manufacturing process is like a silicon fab’s. “We know what is predictable and the [engineering] guys can design to that,” says Welch. “Once you have got that design capability, you can envision we are going to do 500Gbps, a terabit, two terabits, four terabits – you can keep on marching as far as the gigabits-per-unit [device] can be accomplished by this technology.”

The OFC post-deadline paper details Infinera's 10-channel transmitter PIC which operates at 10x112Gbps or 1.12Tbps.

Power dissipation

The optical PIC is not what dictates overall bandwidth achievable but rather the total power dissipation of the DSPs on a line card. This is determined by the CMOS process used to make the DSP ASICs, whether 65nm, 40nm or potentially 28nm.

Infinera has not said what CMOS process it is using. What Infinera has chosen is a compromise between “being aggressive in the industry and what is achievable”, says Welch. Yet Infinera also claims that its coherent solution consumes less power than existing 100Gbps coherent designs, partly because the company has implemented the DSP in a more advanced CMOS node than what is currently being deployed. This suggests that Infinera is using a 40nm process for its coherent receiver ASICs. And power consumption is a key reason why Infinera is entering the market with a 5x100Gbps PIC line card. For the terabit PIC, Infinera will need to move its ASICs to the next-generation process node, he says.

Having an integrated design saves power in terms of the speeds that Infinera runs its serdes (serialiser/deserialiser) circuitry and the interfaces between blocks. “For someone else to accumulate 500Gbps of bandwidth and get it to a switch, this needs to go over feet of copper cable, and over a backplane when one 100Gbps line card talks to a second one,” says Welch. “That takes power - we don’t; it is all right there within inches of each other.”

Infinera can also trade analogue-to-digital (A/D) sampling speed of its ASIC with wavelength count depending on the capacity required. “Now you have a PIC with a bank of lasers, and FlexCoherent allows me to turn a knob in software so I can go up in spectral efficiency,” he says, trading optical reach with capacity. FlexCoherent is Infinera’s technology that will allow operators to choose what coherent optical modulation format to use on particular routes. The modulation formats supported are polarisation multiplexed binary phase-shift keying (PM-BPSK) and PM-QPSK.

Dual polarisation 25Gbaud constellation diagrams

What next?

Infinera says it is an adherent of higher quadrature amplitude modulation (QAM) rates to increase the data rate per channel beyond 100Gbps. As a result FlexCoherent in future will enable the selection of higher-speed modulation schemes such as 8-QAM and 16-QAM. “We think we can drive the system from where it is today – 8 Terabits-per-fibre - to around 25 Terabits-per-fibre,” says Welch.
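How the modulation format sets the per-channel rate can be sketched in a few lines of Python. The example assumes the dual-polarisation 25Gbaud symbol rate named in the constellation diagram caption and ignores any coding or framing overhead; the format table and function name are illustrative, not Infinera's:

```python
import math

# Constellation points per polarisation for each format named in the text.
FORMATS = {"PM-BPSK": 2, "PM-QPSK": 4, "PM-8QAM": 8, "PM-16QAM": 16}


def raw_rate_gbps(constellation_points: int, baud_gbaud: float = 25.0,
                  polarisations: int = 2) -> float:
    """Raw bit rate: polarisations x bits per symbol x symbol rate."""
    if constellation_points < 2:
        raise ValueError("a constellation needs at least two points")
    bits_per_symbol = math.log2(constellation_points)
    return polarisations * bits_per_symbol * baud_gbaud


if __name__ == "__main__":
    for name, points in FORMATS.items():
        print(f"{name}: {raw_rate_gbps(points):.0f} Gbps per channel")
```

Each step up in constellation size adds one bit per symbol per polarisation at the same symbol rate, but the points sit closer together, which is why the higher-order formats give up optical reach.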

But Welch stresses that at 16-QAM and higher-order schemes, speed must be traded against optical reach. Fibre is different to radio, he says. Radio uses higher QAM rates but compensates by increasing the launch power. In contrast, there is a limit with fibre. “The nonlinearity of the fibre inhibits higher and higher optical power,” says Welch. “The network will have to figure out how to accommodate that, although there is still significant value in getting to that [25Tbps per fibre],” he says.

The company has said that its 500 Gigabit PIC will move to volume manufacturing in 2012. Infinera is also validating the system platform that will use the PIC and has said that it has a five terabit switching capacity.

Infinera is also offering a 40Gbps coherent (non-PIC-based) design this year. “We are working with third-party support to make a module that will have unique performance for Infinera,” says Welch.

The next challenge is getting the terabit PIC onto the line card. Based on the gap between previous OFC papers to volume manufacturing, the 10x100Gbps PIC can be expected in volume by 2014 if all goes to plan.

10 Gigabit GPON gets broadband access support

Part 1: XG-PON1 goes commercial

Alcatel-Lucent is making available what it claims are the first broadband access platforms that support XG-PON1, the 10 Gigabit GPON standard. The company has developed an XG-PON1 line card for use in its latest ISAM-FX as well as its existing ISAM-FD access platforms. The ISAM platforms support copper and fibre-based broadband access.

“First [XG-PON1] deployments will likely be in Asia Pacific but we are seeing strong interest from other regions”

Stefaan Vanhastel, Alcatel-Lucent

Why is this significant?

System vendors and operators have been trialling 10 Gigabit GPON technology. Now Alcatel-Lucent has signalled that the technology is ready for commercial deployment. The vendor says operator deployments will start later this year, a claim backed by Infonetics Research. However, the market research firm forecasts 10 Gigabit GPON global deployments will only reach two million ports by 2014.

What has been done?

XG-PON1 is the asymmetrical version of the 10 Gigabit GPON standard delivering 10 Gigabit-per-second (Gbps) data rates downstream (to the user) and 2.5Gbps upstream. This compares to GPON, which delivers 2.5Gbps downstream and 1.25Gbps upstream.

The Alcatel-Lucent XG-PON1 line card has four 10 Gigabit GPON ports, and is available on the existing ISAM-FD products as well as the latest ISAM-FX high-capacity shelves.

There are three ISAM-FX shelves that accommodate four, eight and 16 line cards. The ISAM-FX shelves have a dual-100Gbps backplane capacity, compared to the ISAM-FD which has a 2x10Gbps capacity. The ISAM-FX shelves house up to two controllers, and the role of the backplane is to connect each line card to each controller. The 100Gbps is the capacity linking each line card to each of the two controllers. Since the XG-PON1 line card has four 10Gbps ports, the backplane will clearly support future denser line cards.

The controller acts as a central processing unit taking traffic from the line cards and packaging it for the network uplink. Each controller has a 480Gbps switching matrix, four 10 Gigabit Ethernet uplinks and service intelligence to handle the traffic flows. “You can have two controllers per shelf and then they work in a load sharing mode,” says Stefaan Vanhastel, marketing director wireline access at Alcatel-Lucent. “This gives you a total of eight uplinks and you can add more if needed.”

The PON architecture

The XG-PON1 standard allows operators a straightforward way to upgrade existing GPON networks. “The operator can put the two technologies on the same optical network, with some subscribers on GPON and others on 10 Gig GPON,” says Vanhastel.

Source: Alcatel-Lucent

Moving to XG-PON1 not only provides greater bandwidth but also supports more subscribers on the one fibre. According to Alcatel-Lucent the maximum number of PON end terminals or optical network units (ONUs) that GPON supports is 128, dubbed a split ratio of 1:128. In contrast, 1:128 is the starting split ratio for XG-PON1 while the maximum is 1:512.

Source: Alcatel-Lucent

What next?

Vanhastel admits that existing GPON provides more than enough bandwidth to subscribers. To ensure that a GPON subscriber gets sufficient bandwidth, the average split ratio operators use is 1:18. “With the higher-capacity XG-PON1, the average split ratio could go up significantly,” says Vanhastel.
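The effect of the split ratio on each subscriber's share can be estimated by dividing the downstream line rate by the number of ONUs. A rough Python sketch, assuming the nominal rates quoted earlier (2.5Gbps for GPON, 10Gbps for XG-PON1), an even share across all ONUs and no protocol overhead; the function name is illustrative:

```python
def avg_downstream_mbps(line_rate_gbps: float, split: int) -> float:
    """Average downstream share per ONU in Mbps for a 1:split PON."""
    if split < 1:
        raise ValueError("split ratio must be at least 1")
    return line_rate_gbps * 1000.0 / split


if __name__ == "__main__":
    cases = [("GPON", 2.5, 18), ("GPON", 2.5, 128),
             ("XG-PON1", 10.0, 128), ("XG-PON1", 10.0, 512)]
    for name, rate, split in cases:
        print(f"{name} at 1:{split}: "
              f"{avg_downstream_mbps(rate, split):.1f} Mbps per ONU")
```

On these assumptions, XG-PON1 at its maximum 1:512 split leaves each ONU the same average share as GPON at 1:128, which is the headroom that lets the average split ratio rise.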

Alcatel-Lucent says initial deployments of XG-PON1 will start in the second half of this year with more widespread deployments occurring in 2012. “The first deployments will likely be in Asia Pacific but we are seeing strong interest from other regions,” says Vanhastel.

Initial XG-PON1 deployments will likely be for backhauling traffic from fibre-to-the-building (FTTB) deployments. Here one fibre has a split ratio of 1:16 or 1:32 but each FTTB node supports 24 subscribers typically.

Meanwhile, the company announced in October 2010 trials with operators Verizon and Portugal Telecom involving the symmetrical (downstream and upstream) 10 Gigabit GPON variant known as XG-PON2. XG-PON2 has yet to become a standard.