Xtera demonstrates 40 Terabit using Raman amplification

- Xtera's Raman amplification boosts capacity and reach

- 40 Terabit optical transmission over 1,500km in Verizon trial

- 64 Terabit over 1,500km in 2015 using a Raman module operating over 100nm of spectrum

Herve Fevrier

System vendor Xtera is using all these techniques as part of its Nu-Wave Optima system but also uses Raman amplification to extend capacity and reach.

"We offer capacity and reach using a technology - Raman amplification - that we have been pioneering and working on for 15 years," says Herve Fevrier, executive vice president and chief strategy officer at Xtera.

The distributed amplification profile of Raman (blue) compared to an EDFA's point amplification. Source: Xtera

One way vendors are improving the amplification for 100 Gigabit and greater deployments is to use a hybrid EDFA/Raman design. This benefits the amplifier's power efficiency and the overall transmission reach, but the spectrum width is still limited by erbium to around 35nm. "And Raman only helps you have spans which are a bit longer," says Fevrier.

Meanwhile, Xtera is working on programmable cards that will support the various transmission options. Xtera will offer a 100nm amplifier module this year that extends its system capacity to 24 Terabit (240 channels at 100 Gig). Also planned this year is a super-channel PM-QPSK implementation that will extend transmissions to 32 Terabit using the 100nm amplifier module. In 2015 Xtera will offer PM-16-QAM, which will deliver 48 Terabit over 2,000km and 64 Terabit over 1,500km.
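A back-of-the-envelope calculation puts the 35nm and 100nm figures in context. The sketch below converts each wavelength span to optical bandwidth using Δf ≈ cΔλ/λ², assuming a 1550nm centre wavelength and a 50GHz channel grid; both are illustrative assumptions, not Xtera's stated design, and the helper names are hypothetical. The 100nm band works out at roughly 12.5THz, room for about 249 50GHz slots and so in line with the 240 channels quoted, while 35nm gives about 4.4THz. Dividing the quoted capacities by the 100nm bandwidth gives the implied spectral efficiency.

```python
# Assumptions (illustrative, not Xtera's stated design): 1550nm centre
# wavelength, 50GHz channel grid, payload rates only (FEC overhead ignored).
C = 299_792_458.0  # speed of light in vacuum, m/s


def bandwidth_hz(span_nm: float, centre_nm: float = 1550.0) -> float:
    """Optical bandwidth of a wavelength span, using df ~= c * dl / l**2."""
    return C * (span_nm * 1e-9) / (centre_nm * 1e-9) ** 2


def channels_on_grid(bw_hz: float, spacing_hz: float = 50e9) -> int:
    """Number of whole channels of the given spacing that fit in the band."""
    return int(bw_hz // spacing_hz)


edfa_hz = bandwidth_hz(35)    # erbium-limited band, about 35nm
raman_hz = bandwidth_hz(100)  # Xtera's 100nm Raman amplifier module

for name, bw in (("EDFA, 35nm", edfa_hz), ("Raman, 100nm", raman_hz)):
    print(f"{name}: {bw / 1e12:.2f} THz, ~{channels_on_grid(bw)} x 50GHz channels")

# Implied spectral efficiency of the capacities quoted for the 100nm module
capacities_bps = {
    "240 x 100G": 240 * 100e9,
    "PM-QPSK super-channel": 32e12,
    "PM-16-QAM": 64e12,
}
for label, bps in capacities_bps.items():
    print(f"{label}: {bps / 1e12:.0f} Tb/s, {bps / raman_hz:.2f} b/s/Hz")
```

The doubling from 32 to 64 Terabit is consistent with 16-QAM carrying four bits per symbol per polarisation against QPSK's two.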

Verizon on 100G+ optical transmission developments

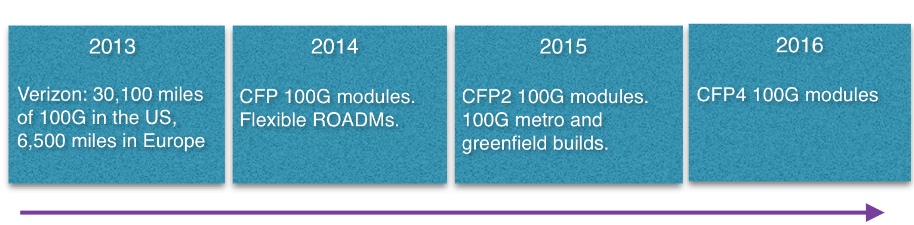

Source: Gazettabyte

Feature: 100 Gig and Beyond. Part 1:

Verizon's Glenn Wellbrock discusses 100 Gig deployments and higher speed optical channel developments for long haul and metro.

The number of 100 Gigabit wavelengths deployed in the network has continued to grow in 2013.

According to Ovum, 100 Gigabit has become the wavelength of choice for large wavelength-division multiplexing (WDM) systems, with spending on 100 Gigabit now exceeding 40 Gigabit spending. LightCounting forecasts that 40,000 100-Gigabit line cards will be shipped this year, 25,000 of them in the second half alone. Infonetics Research, meanwhile, points out that while 10 Gigabit will remain the highest-volume speed, the most dramatic growth is at 100 Gigabit. By 2016, the majority of spending in long-haul networks will be on 100 Gigabit, it says.

The market research firms' findings align with Verizon's own experience deploying 100 Gigabit. The US operator said in September that it had added 4,800 miles of 100 Gigabit to its global IP network during the first half of 2013, bringing the total to 21,400 miles in the US network and 5,100 miles in Europe. Verizon expects to deploy another 8,700 miles of 100 Gigabit in the US and 1,400 miles more in Europe by year end.

"We expect to hit the targets; we are getting close," says Glenn Wellbrock, director of optical transport network architecture and design at Verizon.

Verizon says several factors are driving the need for greater network capacity, including its FiOS bundled home communication services, Long Term Evolution (LTE) wireless and video traffic. But what triggered Verizon to upgrade its core network to 100 Gig was converging its IP networks and the resulting growth in traffic. "We didn't do a lot of 40 Gig [deployments] in our core MPLS [Multiprotocol Label Switching] network," says Wellbrock.

The cost of 100 Gigabit was another factor: a 100 Gigabit long-haul channel is now cheaper than ten 10 Gig channels. There are also operational benefits using 100 Gig, such as having fewer wavelengths to manage. "So it is the lower cost-per-bit plus you get all the advantages of having the higher trunk rates," says Wellbrock.
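The cost-per-bit argument reduces to simple arithmetic: a given demand needs one tenth as many 100 Gig wavelengths, so 100 Gig wins once one 100 Gig channel costs less than ten 10 Gig channels. The sketch below uses invented, normalised prices (not Verizon or vendor figures) and an illustrative 400 Gig demand; the `plan` and `crossover_price_100g` helpers are hypothetical. The second scenario mimics the metro case, where 100 Gig is still the dearer option.

```python
import math

# All prices are hypothetical, normalised units chosen to show the crossover;
# they are not Verizon or vendor figures.
PRICE_10G = 1.0  # price of one 10G wavelength


def plan(demand_gbps: float, rate_gbps: float, price: float):
    """Return (wavelengths needed, total cost) to carry the demand at one line rate."""
    n = math.ceil(demand_gbps / rate_gbps)
    return n, n * price


def crossover_price_100g() -> float:
    """A 100G wavelength wins on cost-per-bit once it costs less than ten 10G ones."""
    return 10 * PRICE_10G


demand = 400  # Gb/s between two sites, hypothetical
for label, price_100g in (("long haul", 7.0), ("metro today", 13.0)):
    n10, cost10 = plan(demand, 10, PRICE_10G)
    n100, cost100 = plan(demand, 100, price_100g)
    cheaper = "100G" if cost100 < cost10 else "10G"
    print(f"{label}: {n10} x 10G = {cost10:.0f}, {n100} x 100G = {cost100:.0f} -> {cheaper}")
print(f"Crossover: 100G price below {crossover_price_100g():.0f}x a 10G wavelength")
```

The wavelength count, 40 versus 4 in this example, is the operational benefit of fewer channels to manage, independent of price.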

Verizon expects to continue deploying 100 Gigabit. It has a large network, and much of the deployment will occur in 2014. "Eventually, we hope to get a bit ahead of the curve and have some [capacity] headroom," says Wellbrock.

Super-channel trials

Operators, working with optical vendors, are trialling super-channels and advanced modulation schemes such as 16-QAM (quadrature amplitude modulation). Such trials involve links carrying data in multiples of 100 Gig: 200 Gig, 400 Gig, even a Terabit.

Super-channels are already carrying live traffic. Infinera's DTN-X system delivers 500 Gig super-channels using quadrature phase-shift keying (QPSK) modulation. Orange has a 400 Gigabit super-channel link between Lyon and Paris. The 400 Gig super-channel comprises two carriers, each carrying 200 Gig using 16-QAM, implemented using Alcatel-Lucent's 1830 photonic service switch platform and its photonic service engine (PSE) DSP-ASIC.

"We could take advantage of 200 Gig or 400 Gig or 500 Gig today," says Wellbrock. "As soon as it is cost effective, you can use it because you can put multiple 100 Gig channels on there and multiplex them."

The issue with 16-QAM, however, is its limited reach using existing fibre and line systems - 500-700km - compared to QPSK's 2,500+ km before regeneration. "It [16-QAM] will only work in a handful of applications - 25 percent, something of this nature," says Wellbrock. This is good for a New York to Boston link, he says, but not New York to Chicago. "From our end it is pretty simple, it is lowest cost," says Wellbrock. "If we can reduce the cost, we will use it [16-QAM]." However, if the reach requirement cannot be met, the operator will not go to the expense of putting in signal regenerators to use 16-QAM, he says.

Earlier this year Verizon conducted a trial with Ciena using 16-QAM. The goals were to test 16-QAM alongside live traffic and determine whether the same line card would work at 100 Gig using QPSK and 200 Gig using 16-QAM. "The good thing is you can use the same hardware; it is a firmware setting," says Wellbrock.
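Wellbrock's rule of thumb, use 16-QAM where the reach allows and otherwise fall back to QPSK rather than pay for regenerators, can be sketched in a few lines. The payload rate is symbol rate × bits per symbol × two polarisations, so the same hardware doubles its rate by switching format. The 25GBaud payload symbol rate (FEC overhead ignored), the 600km 16-QAM reach (the middle of the quoted 500-700km) and the route lengths are assumptions for illustration, not Verizon or Ciena figures; `Format` and `choose_format` are hypothetical names.

```python
from dataclasses import dataclass

# Assumptions for illustration only: 25GBaud payload symbol rate (FEC overhead
# ignored) and a 600km 16-QAM reach, the middle of the quoted 500-700km range.
BAUD = 25e9
POLARISATIONS = 2  # polarisation multiplexing (the "PM" in PM-QPSK)


@dataclass(frozen=True)
class Format:
    name: str
    bits_per_symbol: int  # per polarisation
    reach_km: float       # unregenerated reach on existing fibre and line systems


QPSK = Format("PM-QPSK", 2, 2500)
QAM16 = Format("PM-16-QAM", 4, 600)


def line_rate_gbps(fmt: Format) -> float:
    """Payload rate: symbol rate x bits per symbol x polarisations."""
    return BAUD * fmt.bits_per_symbol * POLARISATIONS / 1e9


def choose_format(link_km: float) -> Format:
    """Highest-rate format whose reach covers the link without regenerators."""
    for fmt in sorted((QPSK, QAM16), key=line_rate_gbps, reverse=True):
        if link_km <= fmt.reach_km:
            return fmt
    raise ValueError(f"{link_km}km needs regeneration with either format")


for km in (350, 1300):  # hypothetical route lengths
    fmt = choose_format(km)
    print(f"{km}km link: {fmt.name} at {line_rate_gbps(fmt):.0f}G")

# Two 16-QAM carriers form a 400G super-channel, as in Orange's Lyon-Paris link
print(f"Two-carrier super-channel: {2 * line_rate_gbps(QAM16):.0f}G")
```

Only `bits_per_symbol` changes between the two formats, which mirrors the point that moving from 100 Gig to 200 Gig on the trial line card was a firmware setting.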

100 Gig in the metro

Verizon says there is already sufficient traffic pressure in its metro networks to justify 100 Gig deployments. Some of Verizon's bigger metro locations comprise up to 200 reconfigurable optical add/drop multiplexer (ROADM) nodes. Each node is typically a central office connected to the network via a ROADM, varying from a two-degree to an eight-degree design.

"Not all the 200 nodes would need multiple 100 Gig channels but in the core of the network, there is a significant amount of capacity that needs to be moved around," says Wellbrock. "100 Gig will be used as soon as it is cost-effective."

Unlike long-haul, 100 Gigabit in the metro remains costlier than ten 10 Gig channels. That said, Verizon has deployed metro 100 Gig where absolutely necessary, for example to link two router locations that require a 100 Gig connection. Here Verizon is willing to pay extra.

"By 2015 we are really hoping that the [metro] crossover point will be reached, that 100 Gig will be more cost effective in the metro than ten times 10 [Gig]," says Wellbrock. Rather than adding 100 Gig to existing networks based on conventional 10 Gig links, Verizon will build a new generation of metro networks based on 100 Gig, 200 Gig or 400 Gig using coherent receivers.

"We feel that 2015 is when we can justify a new, greenfield network and that 100 Gig or versions of that - 200 Gig or 400 Gig - will be cheap enough to make sense."

Data Centres

The build-out of data centres is not a significant factor driving 100 Gig demand. The largest content service providers do use tens of 100 Gigabit wavelengths to link their mega data centres but they typically have their own networks that connect relatively few sites.

"If you have lots of data centres, the traffic itself is more distributed, as are the bandwidth requirements," says Wellbrock.

Verizon has over 220 data centres, most being hosting centres. The data demand between many of the sites is relatively small and is served with 10 Gigabit links. "We are seeing the same thing with most of our customers," says Wellbrock.

Technologies

System vendors continue to develop cheaper line cards to meet the cost-conscious metro requirements. Module developments include smaller 100 Gig 4x5-inch MSA transponders, 100 Gig CFP modules and component developments for line side interfaces that fit within CFP2 and CFP4 modules.

"They are all good," says Wellbrock when asked which of these 100 Gigabit metro technologies are important for the operator. "We would like to get there as soon as possible."

The CFP4 may be available by late 2015 but more likely in 2016, and will significantly reduce the cost of 100 Gig. "We are assuming they are going to be there and basing our timelines on that," he says.

Greater line card port density is another benefit once 100 Gig CFP2 and CFP4 line side modules become available. "Lower power and greater density, which is allowing us to get more bandwidth on and off the card," says Wellbrock.

Existing switches and routers are bandwidth-constrained: they have more traffic capability than the faceplate can provide. "The CFPs, the way they are today, you can only get four on a card, and a lot of the cards will support twice that much capacity," says Wellbrock.

With the smaller form factor CFP2 and CFP4, 1.2 and 1.6 Terabit cards will become possible from 2015. Another possible development is a 400 Gigabit CFP, which would achieve a similar overall capacity gain.
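The faceplate arithmetic behind these card capacities is easy to check. The sketch below assumes every line-side port carries a single 100 Gig (or 400 Gig) signal; the helper names are hypothetical, and whether a given port count physically fits is a form-factor question the code does not model.

```python
# Assumption: every line-side port carries one 100G (or 400G) signal.
def card_capacity_gbps(ports: int, port_rate_gbps: int) -> int:
    return ports * port_rate_gbps


def ports_needed(card_gbps: int, port_rate_gbps: int) -> int:
    return -(-card_gbps // port_rate_gbps)  # ceiling division on integers


today = card_capacity_gbps(4, 100)  # four CFPs per card
print(f"Four 100G CFPs: {today} Gb/s; card can support about {2 * today} Gb/s")
for target in (1200, 1600):
    print(f"{target} Gb/s card: {ports_needed(target, 100)} x 100G ports "
          f"or {ports_needed(target, 400)} x 400G ports")
```

A 1.6 Terabit card needs sixteen 100 Gig ports but only four 400 Gig ones, the same count as today's CFP cards, which is why a 400 Gigabit CFP is an alternative route to a similar gain.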

Coherent, not just greater capacity

Verizon is looking for greater system integration and continues to encourage industry commonality in optical component building blocks to drive down cost and promote scale.

Indeed Verizon believes that industry developments such as MSAs and standards are working well. Wellbrock prefers standardisation to custom designs like 100 Gigabit direct detection modules or company-specific optical module designs.

Wellbrock stresses the importance of coherent receiver technology not only in enabling higher capacity links but also a dynamic optical layer. The coherent receiver adds value when it comes to colourless, directionless, contentionless (CDC) and flexible grid ROADMs.

"If you are going to have a very cost-effective 100 Gigabit because the ecosystem is working towards similar solutions, then you can say: 'Why don't I add in this agile photonic layer?' and then I can really start to do some next-generation networking things." This is only possible, says Wellbrock, because of the tunable filter offered by a coherent receiver, unlike direct detection technology with its fixed-filter design.

"Today, if you want to move from one channel to the next - wavelength 1 to wavelength 2 - you have to physically move the patch cord to another filter," says Wellbrock. "Now, the [coherent] receiver can simply tune the local oscillator to channel 2; the transmitter is full-band tunable, and now the receiver is full-band tunable as well." This tunability can be enabled remotely rather than requiring an on-site engineer.

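The contrast Wellbrock draws, between re-patching to a fixed filter and remotely retuning a local oscillator, can be modelled in a few lines. The sketch assumes a fixed 50GHz DWDM grid anchored at 193.1THz, as defined in ITU-T G.694.1, and maps "wavelength 1" and "wavelength 2" to grid channels 1 and 2 purely for illustration; the `LocalOscillator` class is a hypothetical model, not any vendor's API.

```python
C = 299_792_458.0          # speed of light in vacuum, m/s
GRID_ANCHOR_HZ = 193.1e12  # ITU-T G.694.1 reference frequency
SPACING_HZ = 50e9          # assumed fixed 50GHz grid


def grid_frequency_hz(n: int) -> float:
    """Centre frequency of grid channel n: 193.1 THz + n x spacing."""
    return GRID_ANCHOR_HZ + n * SPACING_HZ


def wavelength_nm(freq_hz: float) -> float:
    return C / freq_hz * 1e9


class LocalOscillator:
    """Hypothetical model of a full-band tunable LO; not a vendor API."""

    def __init__(self, channel: int) -> None:
        self.channel = channel

    def tune(self, channel: int) -> None:
        # A software setting that can be issued remotely: no patch cord to move
        self.channel = channel

    @property
    def frequency_hz(self) -> float:
        return grid_frequency_hz(self.channel)


lo = LocalOscillator(channel=1)  # receiving "wavelength 1"
lo.tune(2)                       # now selecting "wavelength 2"
print(f"Channel {lo.channel}: {lo.frequency_hz / 1e12:.2f} THz, "
      f"{wavelength_nm(lo.frequency_hz):.2f} nm")
```

With a direct detection receiver, the equivalent of `tune` is an engineer moving the fibre to a different fixed filter port.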
Such wavelength agility promises greater network optimisation.

"How do we perhaps change some of our sparing policy? How do we change some of our restoration policies so that we can take advantage of that agile photonic layer?" says Wellbrock. "That is something that is only becoming available because of the coherent 100 Gigabit receivers."

Is silicon photonics an industry game-changer?

Briefing: Silicon Photonics

Part 3: Merits, challenges and applications

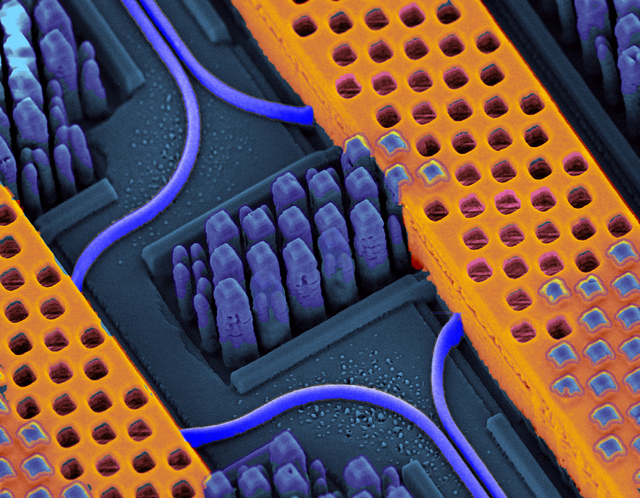

Shown in blue are the optical waveguides (and bend radius) while the copper wires carrying high-speed electrical signals are shown in orange. Source: IBM

System vendors have been on a silicon-photonics spending spree.

Cisco Systems started the ball rolling in 2012 when it acquired silicon photonics start-up, LightWire, for $272M. Mellanox Technologies more recently bought Kotura for $82M. Now Huawei has acquired Caliopa, a four-year-old Belgium-based start-up, for an undisclosed fee. The Chinese system vendor has said it is looking to further bolster its European R&D, and highlighted silicon photonics in particular.

Given that it was only just over a decade ago when systems companies were shedding their optical component units, the trend to acquire silicon photonics start-ups highlights the growing importance of the fledgling technology.

These system vendors view silicon photonics as a strategic technology. The equipment makers want to develop expertise and experience as they plan to incorporate the technology in upcoming, hopefully differentiated platforms.

"If I have a Terabit of capacity on the front panel, how am I going to manipulate that across the line card, a fabric or the backplane?" says Adam Carter, general manager and senior director of the transceiver modules group at Cisco Systems. "We saw silicon photonics as a technology that could potentially enable us to get there."

System vendors are already using embedded optics - mounted on boards close to the ICs instead of pluggable modules on the front panel - to create platforms with denser interfaces.

Arista Networks' 7500E switch has a line card with board-mounted optics rather than pluggable transceivers to increase 100 Gigabit port density. The company offers several line cards using pluggable modules, but it has designed one card with board-mounted optics that offers flexible interfaces - 10 Gig, 40 Gig and 100 Gig - and a higher port density. When Arista developed the design, the multi-source agreement (MSA) CFP2 pluggable module was not yet ready, the company says.

Compass-EOS, a core IP router start-up, has developed chip-mounted optics based on 168 lasers and 168 detectors. The novel Terabit-plus optical interface removes the need for a switch fabric and the mid-plane to interconnect the router cards within the platform. The interface also enables linking of platforms to scale the IP core router.

Both companies are using VCSELs, an established laser technology that silicon photonics competes with. Yet the two designs highlight how moving optics closer to chips enables system innovation, a development that plays to silicon photonics' strength.

Silicon photonics promises cost savings by enabling vendors to piggyback on the huge investments made by the semiconductor industry. The prospect of system vendors making their own products, such as optical transceivers, also promises to shake up the existing optical component supply chain.

Cisco Systems' first silicon photonics product is the proprietary 100 Gigabit optical CPAK transceiver that is undergoing qualification. By making its own optical module, Cisco avoids paying the optical module makers' margins. Cisco claims the CPAK's smaller size improves the faceplate density compared to the CFP2.

Pros and cons

Silicon photonics may be able to exploit the huge investment already made in the semiconductor industry, but it does differ from standard CMOS integrated circuits (ICs).

First, optics does not have the equivalent of Moore's Law. Whereas chip economics improve with greater integration, only a few optical functions can be cascaded due to the accumulated signal loss as light travels through the photonic circuit. This is true for optical integration in general, not just silicon photonics.
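The reason loss caps cascading is that insertion losses expressed in decibels add, so the optical power that survives falls exponentially with the number of elements; digital logic, by contrast, restores its signal levels at every gate. The sketch below assumes an illustrative 1dB loss per element, not a measured figure for any particular photonic circuit; `surviving_fraction` is a hypothetical helper.

```python
# Illustrative: the 1dB per-element insertion loss is an assumption, not a
# measured figure for any particular photonic circuit.
def surviving_fraction(n_elements: int, loss_db_each: float) -> float:
    """Fraction of input optical power left after n cascaded lossy elements."""
    total_db = n_elements * loss_db_each  # losses in dB add
    return 10 ** (-total_db / 10)         # convert dB back to a power ratio


for n in (1, 5, 10, 20):
    print(f"{n:>2} elements at 1dB each: {surviving_fraction(n, 1.0):.3f} of input power")
```

Under this assumption, ten elements leave a tenth of the light and twenty leave a hundredth.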

Another issue is that the size of an optical component - a laser or a modulator - is dictated by the laws of physics rather than lithography. "Photonics doesn't need the latest and greatest lithography," says Martin Zirngibl, domain leader for enabling physical technologies at Alcatel-Lucent's Bell Labs. "You can live with 100nm, 120nm [CMOS] components whereas for electronics you want to have 45nm."

This creates a dilemma when integrating electronics with photonics. "You either don't use the latest technology for electronics or you waste a lot of real estate with very expensive lithography for photonics," says Zirngibl.

Another disadvantage of silicon is that the material does not lase. As a result, either a III-V material needs to be bonded to the silicon wafer or an external laser must be coupled to the silicon photonics circuit.

Silicon also has relatively small waveguides, which make it tricky to couple light in and out of a chip.

The advantages of silicon photonics, however, are significant.

The technology benefits from advanced 8- and 12-inch wafers and mature manufacturing processes developed by the semiconductor industry. Using such CMOS processes promises high yields, manufacturing scale, and automation and testing associated with large scale IC manufacturing.

"This is probably the only advantage but it is very significant," says Valery Tolstikhin, founder and former CTO of indium phosphide specialist, OneChip Photonics, and now an independent consultant. "It takes silicon totally off the scale compared to any other photonics materials."

IBM's high-density silicon photonics optical engine is made using a 90nm CMOS process. "We can build the single-die optical engine in the same CMOS line where processors are built, where billions [of dollars] of investment has been done," says Yurii Vlasov, manager of the silicon nanophotonics department at IBM Research. "We are riding on top of that investment."

Extra processing may be introduced for the photonics, says IBM, but the point is that there is no additional capital investment. "It is the same tooling, the same process conditions; we are changing the way this tooling is used," says Vlasov. "We are changing the process a little bit; the capital investment is in place."

Stephen Krasulick, CEO of silicon photonics start-up, Skorpios Technologies, makes a similar point. "The real magic with our approach is the ability to integrate it with standard, commercial fabs," he says.

Skorpios is a proponent of heterogeneous integration, or what the company refers to as 'silicon photonics 2.0'. Here silicon and III-V are wafer-bonded and the optical components are created by etching the two materials. This avoids the need to couple external lasers and to use active alignment.

"We do it in a manner such that the CMOS foundry is comfortable letting the wafer back into the CMOS line," says Krasulick, who adds that Skorpios has been working with CMOS partners from the start to ensure that its approach suits their manufacturing flow.

Applications

The first applications adopting silicon photonics span datacom and telecom: from short-reach interconnect in the data centre to 100 Gigabit-per-second (Gbps) long-distance coherent transmission.

Intel is developing silicon photonics technology to help spur sales of its microprocessors. The chip giant is a member of Facebook's Open Compute Project based on a disaggregated system design that separates storage, computing and networking. "When I upgrade the microprocessors on the motherboard, I don't have to throw away the NICs [network interface controllers] and disc drives," says Victor Krutul, director of business development and marketing for silicon photonics at Intel. The disaggregation can be within a rack or span rows of equipment.

Intel has developed the Rack Scale Architecture (RSA) which implements a disaggregated design. One RSA implementation for Facebook uses three 100Gbps silicon photonics modules per tray. Each module comprises four transmit and four receive fibres, each at 25Gbps. Each tray uses a Corning-developed MXC connector and its ClearCurve fibre that support data rates up to 1.6Tbps. “Different versions of RSA will have more or less modules depending on requirements," says Krutul.
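The tray arithmetic follows directly from the figures above: 25Gbps per fibre, four transmit and four receive fibres per module, three modules per tray, and a 1.6Tbps connector rating. The one assumption, flagged in the code, is that the 1.6Tbps rating is counted as 25Gbps per fibre across both directions, which would make it 64 fibres; the variable names are illustrative.

```python
# Figures from the text: 25Gbps per fibre, four transmit plus four receive fibres
# per module, three modules per tray, 1.6Tbps connector rating. Assumption: the
# 1.6Tbps rating is counted as 25Gbps per fibre across both directions.
LANE_GBPS = 25
LANES_EACH_WAY = 4
MODULES_PER_TRAY = 3
CONNECTOR_GBPS = 1600

module_gbps = LANE_GBPS * LANES_EACH_WAY             # 100 Gb/s in each direction
fibres_per_module = 2 * LANES_EACH_WAY               # 4 transmit + 4 receive
tray_gbps = MODULES_PER_TRAY * module_gbps           # 300 Gb/s in each direction
tray_fibres = MODULES_PER_TRAY * fibres_per_module   # 24 fibres
connector_fibres = CONNECTOR_GBPS // LANE_GBPS       # 64 fibres at 25 Gb/s
max_modules = connector_fibres // fibres_per_module  # 8 modules

print(f"Per tray: {tray_gbps} Gb/s each way over {tray_fibres} fibres")
print(f"Connector rating allows {connector_fibres} fibres, i.e. up to {max_modules} modules")
```

On that reading, the three-module tray uses well under half the connector's capacity, leaving room for RSA versions with more modules.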

Luxtera, whose silicon photonics technology has been used for active optical cables, and Mellanox's Kotura, are each developing 100Gbps silicon photonics-based QSFPs to increase data centre reach and equipment face plate density.

One data centre requirement is the need for longer-reach links. VCSEL technology is an established solution but at 100Gbps its reach is limited to 100m. Intel's 100Gbps module, operating at 1310nm and combined with Corning's MXC connector and ClearCurve multi-mode fibre, enables up to 300m links. But for greater distances - 500m to 2,000m - a second technology is required. Data centre managers would like one technology that spans the data centre yet is cost competitive with VCSELs.

"Silicon photonics lends itself to that," says Cisco's Carter. "If we drive the cost lower, can we start looking at replacing or future proofing your network by going to single mode fibre?"

IBM's 25Gbps-per-channel optical engine has been designed for use within data centre equipment. "We are claiming we have density based on optical scaling which is the highest in the industry, and we have done it using monolithic integration: optical devices are built side-by-side with CMOS," says Vlasov.

What is important, says Vlasov, is not so much the size of the silicon waveguide but how sharp its bend radius is. The bend radius dictates how sharply the light can be guided while remaining confined within the integrated circuit. The higher the light confinement, the smaller the bend radius and hence the overall circuit area.

Much progress has been made in improving light confinement over the past two decades, resulting in the bend radius coming down from 1cm to a micron. IBM claims that with its technology, it can build systems comprising hundreds of devices occupying a millimeter. "That is a major difference in the density of optical integration," says Vlasov.
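To see why the bend radius, rather than the waveguide size, governs density, assume for illustration that the area a curved waveguide occupies scales with the square of its bend radius r (a simplification that ignores other design rules). The shrink from 1cm to a micron is then worth eight orders of magnitude in area:

```latex
% Assumption: footprint A scales with the square of the bend radius r
\frac{A_{\text{old}}}{A_{\text{new}}}
  = \left(\frac{r_{\text{old}}}{r_{\text{new}}}\right)^{2}
  = \left(\frac{10^{-2}\,\text{m}}{10^{-6}\,\text{m}}\right)^{2}
  = \left(10^{4}\right)^{2} = 10^{8}
```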

IBM does not use heterogeneous integration but couples lasers externally. "It is not complicated, it is a technical problem we are solving; we believe that is the way to go," says Vlasov. "The reason why we have gone down this path is very simple: we believe in monolithic integration where electrical circuitry sits side by side with optical components."

Such monolithic integration of the optics with the electronics, such as modulator drivers and clock recovery circuitry, reduces significantly the cost of packaging and testing. "We believe that even for shorter distances, silicon photonics does compete in terms of cost with VCSELs if all elements of the cost are taken care of: bill of materials, packaging and testing," says Vlasov.

But not everyone believes silicon photonics will replace VCSELs.

For example, Tolstikhin questions the merits of silicon photonics for transceiver designs, such as for 100 Gig modules in the data centre. "There are places where silicon photonics will definitely win, such as chip-to-chip optical interconnects, and there are places where there is still a question mark, like fibre-optics interconnects," he says.

Tolstikhin argues that silicon photonics offers little advantage for such applications: "Optical modules do not require state-of-the-art lithography or large scale photonic integration, but they do need to be coupled in and out of fibre and they need lasers - none of that silicon photonics has a good solution for."

Cisco says it was first attracted to LightWire's technology because of its suitability for optical transceivers. Six years ago, 1W SFP+ modules were limited to 10km. "Customers wanted 40km, 80km, even WDM," says Carter. "They [LightWire] did a 40km SFP+ using their modulator that consumed only 0.5W - a huge differentiator." Two years ago 100 Gig CFP modules were at 24W while LightWire demonstrated a module under 8W, says Carter.
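Normalising the quoted power figures to energy per bit makes the modules directly comparable: watts divided by bits per second gives joules per bit. The sketch uses only the numbers Carter cites; the 8W entry is an upper bound since the module was quoted as under 8W, and `pj_per_bit` is a hypothetical helper.

```python
def pj_per_bit(power_w: float, rate_gbps: float) -> float:
    """Energy per bit in picojoules: watts divided by bits per second gives J/bit."""
    return power_w / (rate_gbps * 1e9) * 1e12


modules = {
    "10G SFP+, 1W": (1.0, 10),
    "LightWire 40km SFP+, 0.5W": (0.5, 10),
    "100G CFP, 24W": (24.0, 100),
    "LightWire 100G demo, 8W": (8.0, 100),  # quoted as under 8W, so an upper bound
}
for name, (watts, gbps) in modules.items():
    print(f"{name}: {pj_per_bit(watts, gbps):.0f} pJ/bit")
```

The 40km SFP+ halves the energy per bit of the 1W part while quadrupling the reach, and the 100 Gig demonstration cuts it by at least a factor of three.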

Tolstikhin believes silicon photonics' great promise is for applications still to emerge. One example is chip-to-chip communication, which has modest optical requirements and does not have to be coupled in and out of fibre.

"Here you have very high requirements for density packaging and the tiny [silicon] waveguides are handy whereas indium phosphide is too big and too expensive here," says Tolstikhin. Longer term still, silicon photonics will be used for on-chip communication but that will likely be based on deep sub-wavelength scale optics such as surface plasmonics rather than classical dielectric waveguides.

Tolstikhin also argues that the economics of using indium phosphide compared to silicon photonics need not be all that gloomy.

Indium phosphide is associated with custom small-scale fabs and small volume markets. But indium phosphide can benefit from the economics of larger industries just as silicon photonics promises to do with the semiconductor industry.

Indium phosphide is used in higher volume for wireless ICs such as power amplifiers. "Quite significantly orders of magnitude higher," says Tolstikhin. The issue is that, conventionally, photonic circuits are fabricated by using multiple epitaxial growth steps, whereas the wireless ICs are made in a single-growth process, hence epitaxy and wafer processing are decoupled.

"If you can give up on regrowth and still preserve the desired photonic functionality, then you can go to commercial RF IC fabs," he says. "This is a huge change in the economic model." It is an approach that enables a fabless model for indium phosphide photonics, with the potential advantages not unlike those claimed by silicon photonics with respect to commercial CMOS fabs.

"That suggests indium phosphide - which has all kinds of physical advantages for those applications that require transmitters, receivers and fibre, plus readily available high-speed analogue electronics for trans-impedance amplifiers and laser or modulator drivers - may be quite a competitive contender," says Tolstikhin.

Customers don't care which technology is used inside a transceiver. "They care only about cost, power and package density," says Tolstikhin. "Indium phosphide can be competitive and on many occasions beat silicon photonics."

JDSU also believes that long-term, a perfect fit for silicon photonics may be relatively short-reach interconnects – chip-to-chip and board-to-board reaches. “You need to have very high speed and dense interconnects, I can see that as being a very strong value proposition long term," says Ed Murphy, senior director, communications and commercial optical products at JDSU.

Finisar and JDSU are open to the potential benefits of silicon photonics but remain strong proponents of traditional optical materials such as indium phosphide and gallium arsenide.

"We have designed silicon photonic chips here at Finisar and have evaluations that are ongoing. There are many companies that now offer silicon photonics foundry services. You can lay out a chip and they will build it for you," says Jerry Rawls, executive director of Finisar. "The problem is we haven't found a place where it can be as efficient or offer the performance as using traditional lasers and free-space optics."

"Silicon photonics has a certain capability but the hype around it has magnified that capability beyond reality,” says JDSU's Murphy. "Practitioners of silicon photonics would tell you that as well."

According to Murphy, each application, when looked at in detail, has its advantages and disadvantages when using either silicon photonics or indium phosphide. “Even in those applications where one or the other is better, the level of improvement is measured in a few tens of percent, not factors of ten,” he says. "I characterise silicon photonics as a technology that will compete in the right applications but won’t displace indium phosphide."

Silicon photonics for telecom

At the other extreme of the optical performance spectrum, silicon photonics is being developed for long-distance optical transmission. The technology promises to help shrink coherent designs to fit within the CFP2 module, albeit at the expense of reach. A CFP2 coherent module has extremely challenging cost, size and power requirements.

Teraxion is developing a coherent receiver for CFP2. "We believe silicon photonics is the material of choice to fulfill CFP2 requirements while allowing even smaller size reduction for future modules such as the CFP4," says Martin Guy, Teraxion's vice president of product management and technology.

u2t Photonics and Finisar recently licensed indium phosphide modulator technology to help shrink coherent designs into smaller form factor pluggables. So what benefit does silicon photonics offer here?

"In terms of size there will not be much difference between indium phosphide and silicon photonics technology," says Guy. "However, being on each side on the fence, we know that process repeatability and therefore yield is better with silicon photonics." Silicon photonics thus promises a lower chip cost.

"We have projects spanning everything from access all the way to long haul, and covering some datacom as well," says Rob Stone, vice president of marketing and program management at Skorpios. The start-up has developed a CMOS-based tunable laser with a narrow line width that is suitable for coherent applications.

"If you develop a library of macrocells, you can apply them to do different applications in a straightforward manner, provided all the individual macrocells are validated," says Stone. This is different to the traditional design approach.

With the traditional approach, adding a local oscillator to a coherent receiver requires a redesign and a new gold box. "What we've got, we can plug things together, lay it out differently and put it on a mask," says Stone. "This enables us to do a lot of tailoring of designs really quite quickly - and a quick time-to-market is important."

Perhaps the real change silicon photonics brings is a disruption of the supply chain, says Zirngibl.

An optical component maker typically sells its device to a packaging company that puts it in a transmitter or receiver optical sub-assembly (TOSA/ ROSA). In turn, the sub-assemblies are sold to a module company which then sells the optical transceiver to an equipment vendor. Each player in the supply chain adds its own profit.

Silicon photonics promises to break the model. A system company can design its own chip using design tools and libraries and go to a silicon foundry. It could then go to a packaging company to make the module or package the device directly on a card, bypassing the module maker altogether.
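Why bypassing tiers matters becomes clearer when the mark-ups are compounded. The sketch below is a hypothetical illustration: it assumes every tier applies the same 30% mark-up to a device that costs 10 units to make, and it ignores the system vendor's own design and packaging costs. Real margins vary widely; the point is only that each removed tier removes a multiplicative factor. `price_to_system_vendor` is a hypothetical helper.

```python
from math import prod
from typing import Sequence


# Hypothetical: every tier applies the same 30% mark-up and the device costs
# 10 units to make. Real margins vary widely; the point is how they compound.
def price_to_system_vendor(device_cost: float, markups: Sequence[float]) -> float:
    """Each tier in the chain multiplies its input cost by (1 + its mark-up)."""
    return device_cost * prod(1 + m for m in markups)


chains = {
    "component maker -> TOSA/ROSA packager -> module maker": [0.3, 0.3, 0.3],
    "silicon foundry -> packaging company": [0.3, 0.3],
    "silicon foundry, packaged on the card in-house": [0.3],
}
for name, markups in chains.items():
    print(f"{name}: {price_to_system_vendor(10.0, markups):.2f}")
```

Under these assumptions, the three-tier chain delivers the part at 21.97 units against 16.90 with the module maker bypassed and 13.00 when the device is packaged directly on the card.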

Yet the ASIC model can also benefit module makers.

IBM has developed its 25Gbps-per-channel silicon photonics technology for its platforms, for chip-to-chip and backplanes, less for data centre interconnect. But it is open to selling the engine to interested optical module players. "If this technology can be extended to 2km for big data centres, others can come in, the usual providers of transceivers," says Vlasov.

"There are companies with the potential to offer a [silicon photonics] design service or foundry service to others that would like to access this technology," says Cisco's Carter. "Five years ago there wasn't such an ecosystem but it is developing very fast."

The article is an extended version of one that appeared in the exhibition magazine published at ECOC 2013.

Part 1: Optical interconnect, click here

Part 2: Bell Labs on silicon photonics, click here

Bell Labs on silicon photonics

Briefing: Silicon Photonics

Part 2: A system vendor's perspective

- Silicon photonics as a technology has its challenges

- Its biggest impact could be to shake up the industry's optical component supply chain

- Silicon photonics will not displace VCSELs

An interview with Alcatel-Lucent Bell Labs' Martin Zirngibl, domain leader for enabling physical technologies, on the merits and potential impact of silicon photonics

Martin Zirngibl admits he is skeptical when it comes to silicon photonics. "There is a lot of hype around silicon photonics but there are also some real advantages," he says. "We have a strong silicon photonics programme inside Bell Labs and I tell my folks: If you prove me wrong, I'm going to be very happy."

The skepticism stems from the technology's limitations. "There is no Moore's Law in photonics, you cannot cascade many photonic elements," says Zirngibl.

Photonic components are also analogue. Once several devices are cascaded, the signal loss accumulates. This is true for photonic integration in general, not just silicon photonics.
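To make the point about cascading concrete, the short Python sketch below adds up per-element insertion losses in dB and converts the total into the fraction of optical power that survives. The 1dB-per-element figure is an assumption chosen purely for illustration, not a number from Bell Labs.

```python
"""Illustrative sketch: insertion losses accumulate along a photonic cascade.

The per-element loss used below is a hypothetical value, not a measured figure.
"""


def total_loss_db(element_losses_db):
    # Losses expressed in dB simply add along a cascade of elements.
    return sum(element_losses_db)


def power_fraction(loss_db):
    # Convert a loss in dB into the fraction of optical power that remains.
    return 10 ** (-loss_db / 10)


if __name__ == "__main__":
    for n in (1, 5, 10, 20):
        loss = total_loss_db([1.0] * n)  # assume 1 dB per element
        print(f"{n:2d} elements: {loss:4.1f} dB loss, "
              f"{power_fraction(loss):.1%} of the light remains")
```

With these assumed values, ten elements leave only about 10 percent of the light and twenty leave about 1 percent, unlike digital logic, where cascading gates does not degrade the signal.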

Another issue is that the size of an optical component such as a laser or a modulator is dictated by the laws of physics rather than by lithography, the process used to make ever-smaller transistors with each CMOS generation. Zirngibl compares optical transmitters and receivers to cars: they improve with time but their fundamental size does not change.


A consequence of shrinking feature size with semiconductors is that chip performance gets better with integration. Integration in photonics, in contrast, involves compromise and a tradeoff in optical performance.

However, the advantages of silicon photonics are significant. The technology can benefit from the huge investment made in the semiconductor industry. "CMOS foundries exist with 8- and 12-inch wafers," says Zirngibl. These mature processes are extremely well controlled, producing high-yielding devices. "If you match any component with that type of process, you have instant high volume and instant scalability," says Zirngibl.

Silicon photonics may require something different, but if it can use these CMOS processes, the result is a free ride on all this investment, he says: "That is the real advantage."

For Zirngibl, the impact of silicon photonics will more likely be on the industry supply chain. An optical component maker may sell its device to a packaging company that puts it in a transmitter or receiver optical sub-assembly (TOSA/ROSA). In turn, the sub-assemblies are sold to a module company which then sells the optical transceiver to an equipment vendor. Each player in the supply chain adds its own profit.

Silicon photonics promises to break the model. A system company can design its own chip and go to a silicon foundry. It could then go to a packaging company to make the module or package the device directly on a card, bypassing the module maker altogether.

"Silicon photonics could form an ASIC-like model and break the supply chain," says Zirngibl. "This worries the large module makers of the world."


Zirngibl stresses that such a change could also happen with traditional optical components. A system vendor could adopt a similar strategy with indium phosphide chips, for example. But the issue is that indium phosphide does not share the mature processes or the scale of the semiconductor industry, and as such an ASIC model is harder to achieve.

"If you can use CMOS processes for optical components then, all of a sudden, optical could become an ASIC-like supply chain," says Zirngibl. "It could cut out a lot of the module and package vendors."

That is what Cisco Systems has done with its CPAK module based on silicon photonics. "Cisco broke the supply chain model by doing an internal development of a module, they don't rely on anyone else," he says.

Challenges

Silicon photonics faces several challenges. One is that silicon has no optical source: "A regular CMOS process will not produce a light source," says Zirngibl. Companies are pursuing several approaches to coupling a III-V source to silicon.

Another issue is that the optical performance of a silicon photonics design must match that of alternative solutions. "At the end of the day in photonics it is always about performance," says Zirngibl.

A 1dB or 2dB worse insertion loss compared with an alternative photonic design may be acceptable but it has to roughly match. "If it does not, even if the device is for free, the fact that you have a performance degradation will make you pay somewhere else [in the system]," says Zirngibl.

"We once tried access; there is nothing more cost-sensitive than fibre-to-the-home (FTTH) and we wanted to push silicon photonics for access," says Zirngibl. FTTH is highly cost-sensitive and is a volume market. But the resulting design had a 5dB worse performance than a free space equivalent. "We didn't have the slightest chance to get in: a 5dB insertion loss in access means a split ratio of 1:16 instead of 1:32 and a 3-4km reach instead of 20km."
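The arithmetic behind this kind of trade-off can be sketched in Python. The example assumes an ideal 1:N splitter, whose loss is 10·log10(N) dB, and a fibre attenuation of 0.35dB/km. Both are simplifying assumptions: real splitters add excess loss and real budgets include connectors and margins, so the results indicate the order of magnitude rather than reproducing the quoted figures.

```python
import math

# Assumed value for illustration: single-mode fibre attenuation in dB/km.
FIBRE_LOSS_DB_PER_KM = 0.35


def ideal_split_loss_db(split_ratio: int) -> float:
    # An ideal 1:N splitter divides the power N ways: 10*log10(N) dB.
    return 10 * math.log10(split_ratio)


def fibre_km_equivalent(penalty_db: float,
                        loss_db_per_km: float = FIBRE_LOSS_DB_PER_KM) -> float:
    # Kilometres of fibre whose attenuation equals the given dB penalty.
    return penalty_db / loss_db_per_km


if __name__ == "__main__":
    recovered = ideal_split_loss_db(32) - ideal_split_loss_db(16)
    print(f"Halving the split from 1:32 to 1:16 recovers about {recovered:.1f} dB")
    print(f"A 5 dB penalty is worth about {fibre_km_equivalent(5):.0f} km of fibre")
```

Each halving of the split ratio buys back only about 3dB, so a 5dB device penalty has to be paid for in split ratio, reach, or both.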

One application where optical performance is key is long-distance transmission using coherent technology. Coherent offers significant benefits: 100 Gigabit per channel, reaches of several thousand kilometers, spectral efficiency, and the ability to correct in the digital domain for many of the transmission impairments.

"The problem with coherent is that it needs a lot of optical stuff," says Zirngibl. A coherent line card has high power consumption and uses a lot of expensive optical components. Companies are looking at silicon photonics as a way of reducing cost while shrinking the design to fit within a pluggable transceiver. The tradeoff is reach: instead of spans of 1,000km-plus, a few hundred kilometers would be more likely.


Companies such as Oclaro, Finisar and u2t Photonics have announced developments involving indium phosphide to achieve a compact-enough design to fit within a CFP2 pluggable module.

"Silicon photonics has a modulator that can be driven with a low voltage, and that could be driven using CMOS, a real advantage," says Zirngibl. "Unfortunately, the modulator has a lot of insertion loss, so you have to solve it elsewhere."

At OFC/NFOEC 2013, Alcatel-Lucent, working with the CEA-Leti foundry, presented a long-distance laser design using silicon photonics. "We do wafer bonding on silicon - you marry indium phosphide with silicon photonics," says Zirngibl. "If you match a process that allows you to do a light source with 8-inch or 12-inch wafers, you have something that could be a winning solution."

Short-reach connections

One important question that affects the size of the silicon photonics opportunity is at what point the crossover from electrical to optical occurs.

If the link distance is sufficiently short, it makes sense to stay in the electrical domain. This is because going optical inevitably requires electrical-to-optical and optical-to-electrical conversions over a link. "If it is very short distance, it will always be electrical," says Zirngibl. The issue with electrical is that as signal speeds increase to 25 Gig, losses accumulate very quickly with distance and the signal fades.

"We believe that this crossover from electrical to optical is 1 meter at 100Gbps," says Zirngibl, with the 100 Gigabit being four 25Gbps lanes.

Accordingly, for any distance above 1m, optical interconnect will be used for 100 Gig signals between boards and between systems. "The electrical I/O goes to the end of board where you have a VCSEL interconnect and goes to the next line card, where there is another VCSEL interconnect," says Zirngibl.

In such a design, getting the optics closer to the processor makes sense. "A good case for a processor with almost an optical I/O," says Zirngibl. Companies such as Arista Networks and Compass-EOS are already doing this. "The problem is that it is pretty ugly, cables coming out of the processor, and how do you slide in and out a card?" he says. "What would be really cool is a VCSEL and printed optical waveguides."

This is an area that still needs some work, he says, but there are companies developing optical PCBs such as Vario-optics.

Zirngibl believes one promising application for silicon photonics is for a coherent receiver at 100 Gig. "That is when you will see it [silicon photonics] first," he says. "There is demultiplexing, no light source is needed and you can do the detection on silicon photonics."

For short-reach interconnect, Zirngibl believes silicon photonics will not displace VCSELs.

"VCSELs are by nature an incredibly efficient, low-cost solution," he concludes. "For interconnect, VCSELs are not going to be displaced."

Part 1: Optical interconnect, click here

Part 3: Is silicon photonics an industry game-changer? click here

Luxtera's interconnect strategy

Part 1: Optical interconnect

Luxtera demonstrated a 100 Gigabit QSFP optical module at the OFC/NFOEC 2013 exhibition.

"We're in discussions with a lot of memory vendors, switch vendors and different ASIC providers"


Chris Bergey, Luxtera

The silicon photonics-based QSFP pluggable transceiver was part of the Optical Internetworking Forum's (OIF) multi-vendor demonstration of the 4x25 Gigabit chip-to-module interface, defined by the CEI-28G-VSR Implementation Agreement.

The OIF demonstration involved several optical module and chip companies and included CFP2 modules running the 100GBASE-LR4 10km standard alongside Luxtera's 4x28 Gigabit-per-second (Gbps) silicon photonics-based QSFP28.

Kotura also previewed a 100Gbps QSFP at OFC/NFOEC but its silicon photonics design uses two chips and wavelength-division multiplexing (WDM).

The Luxtera QSFP28 is being aimed at data centre applications and has a 500m reach although Luxtera says up to 2km is possible. The QSFP28 is sampling to initial customers and will be in production next year.

100 Gigabit modules

Current 100GBASE-LR4 client-side interfaces are available in the CFP form factor. OFC/NFOEC 2013 saw the announcement of two smaller pluggable form factors at 100Gbps: the CFP2, the next pluggable on the CFP MSA roadmap, and Cisco Systems' in-house CPAK.

Now silicon photonics player Luxtera is coming to market with a QSFP-based 100 Gigabit interface, more compact than the CFP2 and CPAK.

The QSFP is already available as a 40Gbps interface. The 40Gbps QSFP also supports four independent 10Gbps interfaces. The QSFP form factor, along with the SFP+, is widely used on the front panels of data centre switches.

"The QSFP is an inside-the-data-centre connector while the CFP/CFP2 is an edge of the data centre, and for telecom, an edge router connector," says Chris Bergey, vice president of marketing at Luxtera. "These are different markets in terms of their power consumption and cost."

Bergey says the big 'Web 2.0' data centre operators like the reach and density offered by the 100Gbps QSFP as their data centres are physically large and use flatter, less tiered switch architectures.


The content service providers also buy transceivers in large volumes and like that the Luxtera QSFP works over single-mode fibre which is cheaper than multi-mode fibre. "All these factors lead to where we think silicon photonics plays in a big way," says Bergey.

The 100Gbps QSFP must deliver a lower cost-per-bit compared to the 40Gbps QSFP if it is to be adopted widely. Luxtera estimates that the QSFP28 will cost less than US $1,000 and could be as low as $250.
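The cost-per-bit comparison can be worked through in a few lines of Python. The article gives Luxtera's QSFP28 price estimates but not a 40Gbps QSFP price, so the sketch instead computes the 40Gbps module price above which the 100Gbps module wins on cost per bit.

```python
# Luxtera's estimated price range for the 100 Gbps QSFP28, in US dollars.
QSFP28_PRICE_RANGE_USD = (250, 1000)


def cost_per_gbps(price_usd: float, rate_gbps: float) -> float:
    """Module price divided by its line rate."""
    return price_usd / rate_gbps


def breakeven_40g_price(price_100g_usd: float) -> float:
    """40 Gbps module price that matches a 100 Gbps module's cost per bit."""
    return cost_per_gbps(price_100g_usd, 100) * 40


if __name__ == "__main__":
    for price in QSFP28_PRICE_RANGE_USD:
        print(f"QSFP28 at ${price}: ${cost_per_gbps(price, 100):.2f}/Gbps, "
              f"cheaper per bit than any 40G QSFP priced above "
              f"${breakeven_40g_price(price):.0f}")
```

At $1,000 the QSFP28 works out to $10 per Gbps, matching a 40Gbps module priced at $400; at $250 it is $2.50 per Gbps, matching a $100 module.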

Optical interconnect

Luxtera says its focus is on low-cost, high-density interconnect rather than optical transceivers. "We want to be a chip company," says Bergey.

The company defines optical interconnect as covering active optical cable and transceivers, optical engines used as board-mounted optics placed next to chips, and ASICs with optical SerDes (serialiser/ deserialisers) rather than copper ones.

Optical interconnect, it argues, will evolve in three stages: starting with face-plate transceivers, moving to mid-board optics and then to ASICs with optical interfaces. Such optical interconnect developments promise lower-cost high-speed designs and new ways to architect systems.

Currently optics are largely confined to transceivers on a system's front panel. The exceptions are high-end supercomputer systems and emerging novel designs such as Compass-EOS's IP core router.

"The problem with the front panel is the density you can achieve is somewhat limited," says Bergey. Leading switch IC suppliers using a 40nm CMOS process are capable of a Terabit of switching. "That matches really well if you put a ton of QSFPs on the front panel," says Bergey.

But once switch IC vendors use the next CMOS process node, the switching capacity will rise to several Terabits. This becomes far more challenging to meet using front panel optics and will be more costly compared to putting board-mounted optics alongside the chip.

"When we build [silicon photonics] chips, we can package them in QSFPs for the front panel, or we can package them for mid-board optics," says Bergey.


The use of mid-board optics by system vendors is the second stage in the evolution of optical interconnect. "It [mid-board optics] is an intermediate step between how you move from copper I/O [input/output] to optical I/O," says Bergey.

The use of mid-board optics requires less power, especially when using 25Gbps signals, says Bergey: "You don't need as many [signal] retimers." It also saves power consumed by the SerDes, from 2W for each SerDes to 1W, since the mid-board optics are closer and signals need not be driven all the way to the front panel. "You are saving 2W per 100 Gig and if you are doing several Terabits, that adds up," says Bergey.
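Bergey's "that adds up" can be quantified with a small Python sketch that scales the quoted saving of 2W per 100 Gig to whole-switch capacities. The capacities in the example are illustrative and are not products named in the article.

```python
# Figure quoted by Luxtera: mid-board optics save about 2 W per 100 Gbps.
SAVING_W_PER_100G = 2.0


def midboard_saving_w(switch_capacity_gbps: float,
                      saving_per_100g_w: float = SAVING_W_PER_100G) -> float:
    """Power saved if every 100 Gbps of switch I/O uses mid-board optics."""
    return switch_capacity_gbps / 100 * saving_per_100g_w


if __name__ == "__main__":
    # Illustrative capacities only.
    for capacity_gbps in (1000, 3200, 6400):
        ports = capacity_gbps // 100
        print(f"{capacity_gbps / 1000:.1f} Tbps ({ports} x 100G): "
              f"about {midboard_saving_w(capacity_gbps):.0f} W saved")
```

Under this linear assumption a 1Tbps switch saves about 20W and a 6.4Tbps switch about 128W.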

The end game is optical I/O. This will be required wherever there are dense I/O requirements and where a lot of traffic is aggregated.

Luxtera, as a silicon photonics player, is pursuing an approach to integrate optics with VLSI devices. "We're in discussions with a lot of memory vendors, switch vendors and different ASIC providers," says Bergey.

Silicon photonics fab

Last year STMicroelectronics (ST) and Luxtera announced they would create a 300mm wafer silicon photonics process at ST's facility in Crolles, France.

Luxtera expects that line to be qualified, ramped and in production in 2014. Before then, devices need to be built, qualified and tested for their reliability.

"If you are a big systems company and you are betting on your flagship chip, you better have multiple sources," says Bergey. "That is what we are doing with ST: it drastically expands the total available market of silicon photonics and it is something that ST and Luxtera can benefit from."

Having multiple sources is important, says Bergey: "If it [silicon photonics] is viewed as exotic, it is never going to hit the volumes we aspire to."

Part 2: Bell Labs on silicon photonics click here

Part 3: Is silicon photonics an industry game-changer? click here

Netronome uses its network flow processor for OpenFlow

Part 2: Hardware for SDN

Netronome has demonstrated its flow processor chip implementing the OpenFlow protocol, an open standard implementation of software-defined networking (SDN).

"What OpenFlow does is let you control the hardware that is handling the traffic in the network. The value to the end customer is what they can do with that"

David Wells, Netronome

The reference design demonstration, which took place at an Open Networking User Group meeting, used the fabless semiconductor player's NFP-3240 network flow processor. The NFP-3240 was running the latest 1.3.0 version of the OpenFlow protocol.

Last year Netronome announced its next-generation flow processor family, the NFP-6xxx. The OpenFlow demonstration hints at what the newest flow processor will enable once first samples become available at the year end.

Netronome believes its flow processor architecture is well placed to tackle emerging intelligent networking applications such as SDN due to its emphasis on packet flows.

"In security, mobile and other spaces, increasingly there needs to be equipment in the network that is looking at content of packets and states of a flow - where you are looking at content across multiple packets - to figure out what is going on," says David Wells, co-founder of Netronome and vice president of technology. "That is what we term flow processing."

This requires equipment able to process all the traffic on network links at 10 and 40 Gigabit-per-second (Gbps), and with next-generation equipment at 100Gbps. "This is where you do more than look at the packet header and make a switching decision," says Wells.
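The difference between making a switching decision on one header and tracking the state of a flow can be illustrated with a minimal Python sketch. It keys each flow on its 5-tuple and keeps the tail of the previous payload, so a signature split across two packets is still found. The `FlowInspector` class and the signature are hypothetical and do not represent Netronome's software.

```python
class FlowInspector:
    """Hypothetical sketch of stateful, per-flow content inspection.

    A small tail of each flow's payload is kept so that a signature
    straddling a packet boundary is still detected.
    """

    def __init__(self, signature: bytes):
        self.signature = signature
        # Flow key (src_ip, dst_ip, src_port, dst_port, protocol) -> saved tail.
        self.tails: dict[tuple, bytes] = {}

    def inspect(self, flow_key: tuple, payload: bytes) -> bool:
        data = self.tails.get(flow_key, b"") + payload
        hit = self.signature in data
        # Keep just enough bytes to catch a match that spans the next packet.
        keep = len(self.signature) - 1
        self.tails[flow_key] = data[-keep:] if keep > 0 else b""
        return hit


if __name__ == "__main__":
    inspector = FlowInspector(b"ATTACK")
    flow = ("10.0.0.1", "10.0.0.2", 1234, 80, "tcp")
    print(inspector.inspect(flow, b"...ATT"))  # first half only: no match yet
    print(inspector.inspect(flow, b"ACK..."))  # completes the signature
```

Neither packet contains the signature on its own; only a device that remembers per-flow state sees it.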

Software-defined networking

Operators and content service providers are interested in SDN due to its promise to deliver greater efficiencies and control in how they use their switches and routers in the data centre and network. With SDN, operators can add their own intelligence to tailor how traffic is routed in their networks.

In the data centre, a provider may be managing a huge number of servers running virtualised applications. "The management of the servers and applications is clever enough to optimise where it moves virtual machines and where it puts particular applications," says Wells. "You want to be able to optimise how the traffic flows through the network to get to those servers in the same way you are optimising the rest of the infrastructure."

Without OpenFlow, operators depend on routing protocols that come with existing switches and routers. "It works but it won't necessarily take the most efficient route through the network," says Wells.

OpenFlow lets operators orchestrate from the highest level of the infrastructure where applications reside, map the flows that go to them, determine their encapsulation and the capacity they have. "The service can be put in a tunnel, for example, and have resource allocated to it so that you know it is not going to be contended with," says Wells, guaranteeing services to customers.

"What OpenFlow does is let you control the hardware that is handling the traffic in the network," says Wells. "The value to the end customer is what they can do with that, in conjunction with other things they are doing."

Operators are also interested in using OpenFlow in the wide area network. "The attraction of OpenFlow is in the core and the edge [of the network] but it is the edge that is the starting point," says Wells.

OpenFlow demonstration

Netronome's OpenFlow demonstration used an NFP-3240 on a PCI Express (PCIe) card to run OpenFlow while other Netronome software runs on the host server in which the card resides.

The NFP-3240 classifies the traffic and implements the actions to be taken on the flows. The software on the host exposes the OpenFlow application programming interface (API) enabling the OpenFlow controller, the equipment that oversees how traffic is handled, to address the NFP device and influence how flows are processed.

Early OpenFlow implementations are based on Ethernet switch chips that interface to a CPU that provides the OpenFlow API. However, the Ethernet chips support the OpenFlow 1.1.0 specification and have limited-sized look-up tables with 98, 64k or 100k entries, says Wells.

The OpenFlow controller can write to the table and dictate how traffic is handled, but its size is limited. "That is a starting point and is useful," says Wells. "But to really do SDN, you need hardware platforms that can handle many more flows than these switches."

This is where the NFP processor is being targeted: it is programmable with capabilities driven by software rather than the hardware architecture, says Wells.
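The table-size constraint Wells describes can be pictured with a toy match-action table in Python. This is a simplified, hypothetical model, not the OpenFlow wire protocol or any switch SDK: each entry has a priority, exact-match fields (absent fields act as wildcards) and an action; the table refuses new entries once full; and a miss falls back to the controller, a simplification of OpenFlow's table-miss handling.

```python
from dataclasses import dataclass


@dataclass
class FlowEntry:
    priority: int
    match: dict  # field name -> required value; absent fields are wildcards
    action: str


class FlowTable:
    """Hypothetical fixed-capacity match-action table."""

    def __init__(self, max_entries: int):
        self.max_entries = max_entries
        self.entries: list[FlowEntry] = []

    def add_flow(self, entry: FlowEntry) -> bool:
        if len(self.entries) >= self.max_entries:
            return False  # table full: the controller cannot install the rule
        self.entries.append(entry)
        # Highest priority first, so lookup returns the best match.
        self.entries.sort(key=lambda e: e.priority, reverse=True)
        return True

    def lookup(self, packet: dict) -> str:
        for entry in self.entries:
            if all(packet.get(f) == v for f, v in entry.match.items()):
                return entry.action
        return "send_to_controller"  # table miss (simplified)
```

A small `max_entries` models a switch chip's fixed look-up table; a platform able to hold many more flows simply raises that limit.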

NFP-6xxx architecture

The NFP-6xxx is Netronome's latest network flow processor (NFP) family, rated at 40 to 200Gbps. No particular devices have yet been detailed but the highest-end NFP-6xxx device will comprise 216 processors: 120 flow processors (see chart - Netronome's sixth generation device) and new to its NFP devices, 96 packet processors.

The architecture is made up of 'islands', units that comprise a dozen flow processors. Netronome will combine different numbers of islands to create the various NFP-6xxx devices.

The input-output bandwidth of the device is 800Gbps while the on-chip memory totals 30 Megabytes. The device also interfaces directly to QSFP, SFP+ and CFP optical transceivers.

The 120 flow processors tackle the more complex, higher-layer tasks. Netronome has added packet processors to the NFP-6xxx to free the flow processors from tasks such as taking packets from the input stream and passing them on to where they are processed. The packet processors are programmable and perform tasks such as header classification before packets are handled by the flow processors.

The NFP-6xxx devices will include some 100 hardware accelerator engines for tasks such as traffic management, encryption and deep packet inspection.

The device will be implemented using Intel's latest 22nm 3D Tri-Gate CMOS process and is designed to work with high-end general purpose CPUs such as Intel's x86 devices, Broadcom's XLP and Freescale's PowerPC.

Markets

The data centre, where SDN is already being used, is one promising market for the device as customers look to enhance their existing capabilities.

There are requirements for intelligent gateways now but this is a market that is a year or two out, says Wells. Use of OpenFlow to control large IP core routers or core optical switches is a longer term application. "Those areas will come but it will be further out," says Wells.

For other markets such as security, there is a need for knowledge about the state of flows. This is a more sophisticated treatment of packets than simply looking up the action required based on a packet's header. Netronome believes that OpenFlow will develop not only to forward or terminate traffic at a certain destination but also to send traffic to a service before it is returned.

"You could insert a service in an OpenFlow environment and what it would do is guide packets to that service and return it, but inside that service you may do something that is stateful," says Wells. This is just the sort of task security performs on flows: examples include an intrusion prevention system offered as a service, or a firewall function. Such a function could be run on a dedicated platform or as a virtual application running on Netronome's flow processor.
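The service-insertion idea can be sketched in Python: a forwarding rule steers selected traffic through a stateful firewall service, and the packet returns to normal forwarding if the service allows it. Everything here, including `StatefulFirewall`, `steer` and the packet fields, is hypothetical and only illustrates the pattern Wells describes.

```python
class StatefulFirewall:
    """Hypothetical service: inbound packets pass only for flows opened from inside."""

    def __init__(self):
        self.established: set[tuple] = set()

    def process(self, pkt: dict) -> bool:
        key = (pkt["src"], pkt["dst"], pkt["sport"], pkt["dport"])
        if pkt["direction"] == "outbound":
            self.established.add(key)  # remember the connection state
            return True
        # Inbound: allow only the reverse direction of an established flow.
        reverse = (pkt["dst"], pkt["src"], pkt["dport"], pkt["sport"])
        return reverse in self.established


def steer(pkt: dict, service: StatefulFirewall, needs_service) -> str:
    # The flow rule decides whether a packet detours via the service.
    if needs_service(pkt) and not service.process(pkt):
        return "drop"
    return "forward"
```

The switch itself stays stateless: it only matches and redirects, while the per-flow state lives inside the service.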

Further reading:

Part 1: The role of software defined networking for telcos

EZchip expands the role of the network processor, click here

The role of software-defined networking for telcos

The OIF's Carrier Working Group is assessing how software-defined networking (SDN) will impact transport. Hans-Martin Foisel, chair of the OIF working group, explains SDN's importance for operators.

Briefing: Software-defined networking

Part 1: Operator interest in SDN

"Using SDN use cases, we are trying to derive whether the transport network is ready or if there is some missing functionality"


Hans-Martin Foisel, OIF

Hans-Martin Foisel, of Deutsche Telekom and chair of the OIF Carrier Working Group, says SDN is of great interest to operators that view the emerging technology as a way of optimising all-IP networks that increasingly make use of data centres.

"Software-defined networking is an approach for optimising the network in a much larger sense than in the past," says Foisel, whose OIF working group is tasked with determining how SDN's requirements will impact the transport network.

Network optimisation remains an ongoing process for operators. Work continues to improve the interworking between the network's layers to gain efficiencies and reduce operating costs (see Cisco Systems' intelligent light).

With SDN, the scope is far broader. "It [SDN] is optimising the network in terms of processing, storage and transport," says Foisel. SDN takes the data centre environment and includes it as part of the overall optimisation. For example, content allocation becomes a new parameter for network optimisation.

Other reasons for operator interest in SDN, says Foisel, include optimising operation support systems (OSS) software, and the characteristic most commonly associated with SDN, making more efficient use of the network's switches and routers.

"A lot of carriers are struggling with their OSSes - these are quite complex beasts," he says. "With data centres involved, you now have a chance to simplify your IT as all carriers are struggling with their IT."

The Network Functions Virtualisation (NFV) industry specification group is a carrier-led initiative set up in January by the European Telecommunications Standards Institute (ETSI). The group is tasked with optimising the software components, the OSSes, involved in processing, storage and transport.

The initiative aims to make use of standard servers, storage and Ethernet switches to reduce the varied equipment making up current carrier networks to improve service innovation and reduce the operators' capital and operational expenditure.

NFV and SDN are separate developments that will benefit each other. The ETSI group will develop requirements and architecture specifications for the hardware and software infrastructure needed for the virtualised functions, as well as guidelines for developing network functions.

The third reason for operator interest in SDN, separating the management, control and data planes, promises greater efficiencies, enabling network segmentation irrespective of the switch and router deployments. This allows flexible use of the network, with resources shifted based on particular user requirements.

"Optimising the network as a whole - including the data centre services and applications - is a concept, a big architecture," says Foisel. "OpenFlow and the separation of data, management and control planes are tools to achieve them."

OpenFlow is an open standard implementation of the SDN concept. The OpenFlow protocol is being developed by the Open Networking Foundation, an industry body that includes Google, Facebook and Microsoft, telecom operators Verizon, NTT, Deutsche Telekom, and various equipment makers.

Transport SDN

The OIF Working Group will identify how SDN impacts the transport network including layers one and two, networking platforms and even components. By undertaking this work, the operators' goal is to make SDN "carrier-grade".

Foisel admits that the working group does not yet know whether the transport layer will be impacted by SDN. To answer the question, SDN applications will be used to identify required transport SDN functionalities. Once identified, a set of requirements will be drafted.

"Using SDN use cases, we are trying to derive whether the transport network is ready or if there is some missing functionality," says Foisel.

The work will also highlight any areas that require standardisation, for the OIF and for other standards bodies, to ensure future SDN interworking between vendors' solutions. The OIF expects to have a first draft of the requirements by July 2013.

"In the transport network we are pushed by the mobile operators but also by the over-the-top applications to be faster and be more application-aware," says Foisel. "With SDN we have a chance to do so."

Part 2: Hardware for SDN

FSAN adds WDM for next-generation PON standard

The Full Service Access Network (FSAN) group has chosen wavelength division multiplexing (WDM) to complement PON's traditional time-sharing scheme for the NG-PON2 standard.

"The technology choice allows us to have a single platform supporting both business and residential services"


Vincent O'Byrne, Verizon

The TWDM-PON scheme for NG-PON2 will enable operators to run several services over one network: residential broadband access, business services and mobile back-hauling. In addition, NG-PON2 will support dedicated point-to-point links – via a WDM overlay - to meet more demanding service requirements.

FSAN will work through the International Telecommunication Union (ITU) to turn NG-PON2 into a standard. Standards-compliant NG-PON2 equipment is expected to become available by 2014 and be deployed by operators from 2015. But much work remains to flesh out the many details and ensure that the standard meets the operators’ varied requirements.

Significance

The choice of TWDM-PON represents a pragmatic approach by FSAN. TWDM-PON has been chosen to avoid having to make changes to the operators' outside plant. Instead, changes will be confined to the PON's end equipment: the central office's optical line terminal (OLT) and the home or building's optical networking unit (ONU).

Operators yet to adopt PON technology may use NG-PON2's extended reach to consolidate their network by reducing the number of central offices they manage. Operators that have already deployed PON may use NG-PON2 to boost broadband capacity while consolidating business and residential services onto one network.

US operator Verizon has deployed GPON and says the adoption of NG-PON2 will enable it to avoid the intermediate upgrade stage of XGPON (10Gbps GPON).

"The [NG-PON2] technology choice allows us to have a single platform supporting both business and residential services," says Vincent O'Byrne, director of technology, wireline access at Verizon. "With the TWDM wavelengths, we can split them: We could have a 10G/10G service or ten individual 1G/1G services and, in time, have also residential customers."

The technology choice for NG-PON2 is also good news for system vendors such as Huawei and Alcatel-Lucent that have already done detailed work on TWDM-PON systems.

Specification

NG-PON2's basic configuration will use four wavelengths, resulting in a 40Gbps PON. Support for eight (80G) and 16 (160G) wavelengths is also being considered.

Each wavelength will support 10Gbps downstream (from the central office to the end users) and 2.5Gbps upstream (XGPON) or 10Gbps symmetrical services for business users.

"The idea is to reuse as much as possible the XGPON protocol in TWDM-PON, and carry that protocol on multiple wavelengths," says Derek Nesset, co-chair of FSAN's NGPON task group.

The PON's OLT will support the 4, 8 or 16 wavelengths using lasers and photo-detectors as well as optical multiplexing, while the ONU will require a tunable laser and a tunable filter, to set the ONU to the PON's particular wavelengths.

Other NG-PON2 specifications include the support of at least 1Gbps services per ONU and a target reach of 40km. NG-PON2 will also support 60-100km links but that will require technologies such as optical amplification.
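The per-wavelength rates above translate into aggregate PON capacity as in this Python sketch. The mode names and the mixed residential/business configuration are assumptions for illustration; only the 10Gbps downstream, 2.5Gbps upstream and 10Gbps symmetrical rates come from the specification described here.

```python
# Per-wavelength rates described for NG-PON2, in Gbps.
DOWNSTREAM_GBPS = 10.0
UPSTREAM_GBPS = {"asymmetric": 2.5, "symmetric": 10.0}


def pon_capacity(wavelength_modes: list[str]) -> tuple[float, float]:
    """Aggregate (downstream, upstream) capacity for per-wavelength modes."""
    downstream = DOWNSTREAM_GBPS * len(wavelength_modes)
    upstream = sum(UPSTREAM_GBPS[mode] for mode in wavelength_modes)
    return downstream, upstream


if __name__ == "__main__":
    for n in (4, 8, 16):
        print(n, "wavelengths, all asymmetric:", pon_capacity(["asymmetric"] * n))
    # Hypothetical mix: two residential and two symmetrical business wavelengths.
    print("mixed:", pon_capacity(["asymmetric"] * 2 + ["symmetric"] * 2))
```

Four asymmetric wavelengths give the 40Gbps downstream figure with 10Gbps upstream; converting two of them to symmetrical business wavelengths raises the upstream total to 25Gbps.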

"The [NG-PON2] ONUs should be something like the cost of a VDSL or a GPON modem, so there is a challenge there for the [tunable] laser manufacturers"


Derek Nesset, co-chair of FSAN's NGPON task group

What next?

"The big challenge and the first challenge is the wavelength plan [for NG-PON2]," says O'Byrne.

One proposal is for TWDM-PON's wavelengths to replace XGPON's. Alternatively, new unallocated spectrum could be assigned to ensure co-existence with existing GPON, RF video and XGPON. However, such a scheme will leave little spectrum available for NG-PON2. Some element of spectral flexibility will be required to accommodate the various co-existence scenarios in operator networks. That said, Verizon expects that FSAN will look for fresh wavelengths for NG-PON2.

"FSAN is a sum of operators' opinions and requirements, and it is getting hard," says O'Byrne. "Our preference would be to reuse XGPON wavelengths but, at the last meeting, some operators want to use XGPON in the coming years and aren't too favourable to recharacterising that band."

Another factor regarding spectrum is how widely the wavelengths will be spaced; 50GHz, 100GHz or the most relaxed 200GHz spacing are all being considered. The tradeoff here is hardware design complexity and cost versus spectral efficiency.
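The spectral-efficiency side of that trade-off can be made concrete by converting each spacing into the spectrum that four, eight or 16 wavelengths would reserve. A minimal Python sketch, assuming a centre wavelength of 1550nm (the NG-PON2 band had not been chosen) and the small-spacing approximation; the helper names are hypothetical:

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def ghz_to_nm(delta_f_ghz: float, centre_nm: float = 1550.0) -> float:
    """Small-spacing approximation: delta_lambda ~= lambda^2 * delta_f / c."""
    lam_m = centre_nm * 1e-9
    return lam_m**2 * (delta_f_ghz * 1e9) / C * 1e9


def occupied_spectrum(wavelengths: int, spacing_ghz: float) -> tuple[float, float]:
    """Spectrum (GHz, nm) reserved by `wavelengths` channels on a fixed grid."""
    width_ghz = wavelengths * spacing_ghz
    return width_ghz, ghz_to_nm(width_ghz)


for spacing in (50, 100, 200):
    for n in (4, 8, 16):
        ghz, nm = occupied_spectrum(n, spacing)
        print(f"{spacing:3d}GHz x {n:2d} wavelengths: {ghz:5.0f} GHz ~ {nm:4.1f} nm")
```

At 1550nm, 100GHz corresponds to roughly 0.8nm, so 16 wavelengths at the relaxed 200GHz spacing would reserve about 26nm, a large slice of an already crowded band.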

There is still work to be done to define the 10Gbps symmetrical rate. "Some folks are also looking for slightly different rates and these are also under discussion," says O'Byrne.

Another challenge is that TWDM-PON will also require the development of tunable optical components. "The ONUs should be something like the cost of a VDSL or a GPON modem, so there is a challenge there for the [tunable] laser manufacturers," says Nesset.

Tunable lasers are already widely used in optical transport, and high access volumes will help their economics, but tunable filters have no such established base, he says.

The size and power consumption of PON silicon pose further challenges. NG-PON2 will have at least four times the capacity, yet operators will want the OLT to be the same size as for GPON.

Meanwhile, FSAN has several documents in preparation to help progress ITU activities relating to NG-PON2's standardisation.

FSAN has an established record of working effectively through the ITU to define PON standards, from Broadband PON (BPON) and Gigabit PON (GPON) to the XGPON that operators are now planning to deploy.

FSAN members have already submitted an NG-PON2 requirements document to the ITU. "This sets the framework: what is it this system needs to do?" says Nesset. "This includes what client services it needs to support - Gigabit Ethernet and 10 Gigabit Ethernet, mobile backhaul latency requirements - high level things that the specification will then meet."

In June 2012 a detailed requirements document was submitted as was a preliminary specification for the physical layer. These will be followed by documents covering the NG-PON2 protocol and how the management of the PON end points will be implemented.

If rapid progress continues to be made, the standard could be ratified as early as 2013, says O'Byrne.

FSAN close to choosing the next generation of PON

Briefing: Next-gen PON

Part 1: NG-PON2

The next-generation passive optical network (PON) will mark a departure from existing PON technologies. Some operators want systems based on the emerging standard for deployment by 2015.

“One of the goals in FSAN is to converge on one solution that can serve all the markets"

Derek Nesset, co-chair of FSAN's NGPON task group

The Full Service Access Network (FSAN) industry group is close to finalising the next optical access technology that will follow on from 10 Gigabit GPON.

FSAN - the pre-standards forum consisting of telecommunications service providers, testing labs and equipment manufacturers - crafted what became the International Telecommunication Union's (ITU) standards for GPON (Gigabit PON) and 10 Gigabit GPON (XGPON1). In the past year FSAN has been working on NG-PON2, the PON technology that comes next.

“One of the goals in FSAN is to converge on one solution that can serve all the markets - residential users, enterprise and mobile backhaul," says Derek Nesset, co-chair of FSAN's NGPON task group.

Some mobile operators are talking about backhaul demands that will require multiple 10 Gigabit-per-second (Gbps) links to carry the common public radio interface (CPRI), for example. The key design goal, however, is that NG-PON2 retains the capability to serve residential users cost-effectively, stresses Nesset.

FSAN says it has a good description of each of the candidate technologies: what each system looks like and its associated power consumption. "We are trying to narrow down the solutions and the ideal is to get down to one,” says Nesset.

The power consumption of the proposed access scheme is of key interest for many operators, he says. Another consideration is the risk associated with moving to a novel architecture rather than adopting an approach that builds on existing PON schemes.

Operators such as NTT of Japan and Verizon in the USA have a huge installed base of PON and want to avoid having to amend their infrastructure for any next-generation PON scheme that cannot re-use power splitters. Other operators, such as former European incumbents, are in the early phases of their PON rollout and have greenfield sites that could use other passive infrastructure such as arrayed waveguide gratings (AWG).

"The ideal is we select a system that operates with both types of infrastructure," says Nesset. "Certain flavours of WDM-PON (wavelength division multiplexing PON) don't need the wavelength splitting device at the splitter node; some form of wavelength-tuning can be installed at the customer premises." That said, existing optical power splitters have a higher loss than AWGs, which reduces PON reach: one of several trade-offs that need to be considered.
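Why splitter loss matters for reach can be shown with a simple power-budget calculation. A minimal Python sketch under loudly stated assumptions: an ideal 1:N splitter loses 10·log10(N) dB, while the AWG loss, the 28 dB budget and the fibre attenuation below are illustrative values rather than NG-PON2 figures, and connector losses and margins are ignored:

```python
import math

# Illustrative assumptions only, not NG-PON2 specification values.
BUDGET_DB = 28.0        # assumed optical loss budget between OLT and ONU
FIBRE_DB_PER_KM = 0.35  # assumed worst-case fibre attenuation
AWG_LOSS_DB = 5.0       # assumed AWG insertion loss, roughly independent of port count


def splitter_loss_db(ports: int, excess_db: float = 0.0) -> float:
    """Ideal 1:N power-splitter loss, 10*log10(N), plus any excess loss."""
    return 10 * math.log10(ports) + excess_db


def reach_km(budget_db: float, component_loss_db: float, fibre_db_per_km: float) -> float:
    """Fibre length left once the passive component has taken its share of the budget."""
    return max(0.0, (budget_db - component_loss_db) / fibre_db_per_km)


for ports in (32, 64):
    s = reach_km(BUDGET_DB, splitter_loss_db(ports), FIBRE_DB_PER_KM)
    a = reach_km(BUDGET_DB, AWG_LOSS_DB, FIBRE_DB_PER_KM)
    print(f"1:{ports}: power splitter ~{s:.0f} km, AWG ~{a:.0f} km")
```

The splitter's loss grows by about 3 dB every time the split ratio doubles, whereas the assumed AWG loss stays flat, so the reach gap widens as more users share the fibre.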

Once FSAN has concluded its studies, member companies will generate 'contributions' for the ITU, intended for standardisation. The ITU has started work on defining high-level requirements for NG-PON2 through contributions from FSAN operators. Once the NG-PON2 technology is chosen, further contributions describing the physical layer, the media access controller and the management requirements of the customer premises equipment will follow.

Nesset says the target is to get such documents into the ITU by September 2012 but achieving wide consensus is the priority rather than meeting this deadline. "Once we select something in FSAN, we expect to see the industry ramp up its contributions based on that selected technology to the ITU," says Nesset. FSAN will select the NG-PON2 technology before September.

NG-PON2 technologies

Candidate technologies include an extension to the existing GPON and XGPON1 based on time-division multiplexing (TDM). Already vendors such as Huawei have demonstrated prototype 40 Gigabit capacity PON systems that also support hybrid TDM and WDM-PON (TWDM-PON). Other schemes include WDM-PON, ultra-dense WDM-PON and orthogonal frequency division multiplexing (OFDM).

Nesset says there are several OFDM variants being proposed. He views OFDM as 'DSL in the optical domain’: sub-carriers finely spaced in the frequency domain, each carrying low-bit-rate signals.

One advantage of OFDM, says Nesset, is that narrowband components can deliver a broadband signal: a narrowband 10Gbps transmitter and receiver can achieve 40Gbps using sub-carriers, each carrying quadrature amplitude modulation (QAM). "All the clever work is done in CMOS - the digital signal processing and the analogue-to-digital conversion," he says. The DSP executes the fast Fourier transform (FFT) and the inverse FFT.
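The division of labour Nesset describes, an inverse FFT at the transmitter and an FFT at the receiver, can be sketched in a few lines of NumPy. This is a noiseless, complex-baseband sketch assuming 16-QAM on every sub-carrier and a hypothetical FFT size of 64; a real intensity-modulated optical OFDM link would also need a cyclic prefix, a real-valued (Hermitian-symmetric) drive signal, clipping control and the DAC/ADC stages, all omitted here:

```python
import numpy as np

N_SUBCARRIERS = 64       # hypothetical FFT size
BITS_PER_SUBCARRIER = 4  # 16-QAM carries 4 bits per sub-carrier per OFDM symbol

# Gray-coded amplitude for each 2-bit value (00->-3, 01->-1, 10->+3, 11->+1),
# applied independently to the in-phase (I) and quadrature (Q) rails.
LEVELS = np.array([-3.0, -1.0, 3.0, 1.0])


def qam16_map(bits: np.ndarray) -> np.ndarray:
    b = bits.reshape(-1, 4)
    i_idx = 2 * b[:, 0] + b[:, 1]
    q_idx = 2 * b[:, 2] + b[:, 3]
    return LEVELS[i_idx] + 1j * LEVELS[q_idx]


def qam16_demap(symbols: np.ndarray) -> np.ndarray:
    # Nearest-level decision on each rail, then back to the 2-bit Gray labels.
    i_idx = np.argmin(np.abs(symbols.real[:, None] - LEVELS), axis=1)
    q_idx = np.argmin(np.abs(symbols.imag[:, None] - LEVELS), axis=1)
    return np.stack([i_idx // 2, i_idx % 2, q_idx // 2, q_idx % 2], axis=1).reshape(-1)


def ofdm_modulate(bits: np.ndarray) -> np.ndarray:
    # Each row is one OFDM symbol: N sub-carriers, each holding one 16-QAM point.
    grid = qam16_map(bits).reshape(-1, N_SUBCARRIERS)
    return np.fft.ifft(grid, axis=1)  # transmitter DSP: inverse FFT to time samples


def ofdm_demodulate(samples: np.ndarray) -> np.ndarray:
    grid = np.fft.fft(samples, axis=1)  # receiver DSP: FFT back to sub-carriers
    return qam16_demap(grid.reshape(-1))


rng = np.random.default_rng(0)
tx_bits = rng.integers(0, 2, size=N_SUBCARRIERS * BITS_PER_SUBCARRIER * 10)
rx_bits = ofdm_demodulate(ofdm_modulate(tx_bits))
print("bit errors (noiseless back-to-back):", int(np.sum(rx_bits != tx_bits)))
```

Each 16-QAM sub-carrier carries four bits per symbol where simple on-off keying carries one, which is one way to read how a 10G-class optical front end could support around 40Gbps; the cost moves into the CMOS DSP.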


Another technology candidate is WDM-PON including an ultra-dense variant that promises a reach of up to 100km and 1,000 wavelengths. Such a technology uses a coherent receiver to tune to the finely spaced wavelengths.

In addition to compatibility with existing infrastructure, FSAN is considering compatibility with existing PON standards, to avoid a wholesale upgrade of users. For example, with XGPON1, the optical line terminal (OLT) uses an additional pair of wavelengths - a wavelength overlay - and sits alongside the existing GPON OLT. "The same principle is desirable for NG-PON2," says Nesset.

However, spectrum is being gobbled up with each generation of PON. PON systems are designed to be low cost, so their transmit lasers are not wavelength-locked and drift with ambient temperature. As a result, each consumes a swathe of spectrum similar to a coarse WDM wavelength band. Some operators such as Verizon and NTT also have a large installed base of analogue video overlay at 1550nm.
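How much spectrum an unlocked laser sweeps can be estimated from its temperature coefficient. A minimal Python sketch, assuming an uncooled laser drifting about 0.1nm per degree Celsius over a -40 to 85 degree outdoor range; both figures are illustrative assumptions, not values from the article:

```python
C = 299_792_458.0  # speed of light in vacuum, m/s

# Assumptions for illustration only: an uncooled laser drifting ~0.1 nm per deg C,
# operating over an extended outdoor temperature range.
DRIFT_NM_PER_C = 0.1
T_MIN_C, T_MAX_C = -40.0, 85.0


def drift_nm(coeff_nm_per_c: float, t_min_c: float, t_max_c: float) -> float:
    """Total wavelength excursion of a laser over its operating temperature range."""
    return coeff_nm_per_c * (t_max_c - t_min_c)


def nm_to_ghz(delta_nm: float, centre_nm: float = 1550.0) -> float:
    """Small-spacing approximation: delta_f ~= c * delta_lambda / lambda^2."""
    return C * (delta_nm * 1e-9) / (centre_nm * 1e-9) ** 2 / 1e9


excursion = drift_nm(DRIFT_NM_PER_C, T_MIN_C, T_MAX_C)
print(f"drift ~{excursion:.1f} nm ~ {nm_to_ghz(excursion):.0f} GHz of spectrum")
print(f"that is ~{nm_to_ghz(excursion) / 100:.0f} slots on a 100GHz grid")
```

Under these assumptions one laser wanders over roughly 12.5nm, which fits within the 20nm channel pitch of the coarse WDM grid but would span many dense-WDM slots.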

"So in the 1500 band you've got 1490nm for GPON, 1550nm for RF (radio frequency) video, and 1577nm for XGPON; there are only a few small gaps," says Nesset. A technology that can exploit such gaps is both desirable and a challenge. "This is where ultra-dense WDM-PON could come into play," he says. This technology could fit tens of channels into the small remaining spectrum gaps.
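The attraction of ultra-dense WDM in a narrow gap comes down to how many channel slots fit at each spacing. A minimal Python sketch, assuming a hypothetical 2nm gap near 1560nm and ignoring guard bands and filter edges; the 12.5GHz and 6.25GHz spacings are illustrative assumptions for an ultra-dense grid:

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def channels_in_gap(start_nm: float, stop_nm: float, spacing_ghz: float) -> int:
    """Number of whole channel slots of `spacing_ghz` that fit between two wavelengths."""
    span_ghz = abs(C / (start_nm * 1e-9) - C / (stop_nm * 1e-9)) / 1e9
    return int(span_ghz // spacing_ghz)


# Hypothetical 2nm gap; guard bands and filter edges are ignored.
GAP = (1560.0, 1562.0)
for spacing in (100.0, 12.5, 6.25):
    print(f"{spacing:6.2f} GHz spacing: {channels_in_gap(*GAP, spacing)} channels")
```

A 2nm gap is only about 246GHz wide: two channels on a 100GHz grid, but several tens at the finer spacings, in line with Nesset's "tens of channels".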

The technological challenge of implementing advanced WDM-PON systems, which will likely require photonic integration, is also a concern for operators. "The message from the vendors is that 'when you tell us what to do, we have got the technology to do it'," says Nesset. "But they need to see the volume applications to justify the investment." Operators, however, must weigh up the technological risks of developing these new technologies and the possibility that the expected cost reductions are not realised.

Timetable

Nesset points out that each generation of PON has built on previous generations: GPON built on BPON and XGPON on GPON. But NG-PON2 will inevitably be based on new approaches. These include TWDM-PON, which is an evolution of XGPON into the wavelength domain; virtual point-to-point approaches such as WDM-PON, which may also use an AWG; and the use of digital signal processing with OFDM or coherent ultra-dense WDM-PON. "It is quite a challenge to weigh up such diverse technological approaches," says Nesset.

If all goes smoothly it will take two ITU plenary meetings, held every nine months, to finalise the bulk of the NG-PON2 standard. That could mean mid-2013 at the earliest.

FSAN's timetable is based on operators wanting systems deployable in 2015. That requires systems to be ready for testing in 2014.

“[Once deployed] we want NG-PON2 to last quite a while and be scalable and flexible enough to meet future applications and markets as they emerge,” says Nesset.

The post-100 Gigabit era

Feature: Beyond 100G - Part 4

The latest coherent ASICs from Ciena and Alcatel-Lucent, coupled with announcements from Cisco and Huawei, highlight where the industry is heading with regard to high-speed optical transport. But the announcements also raise questions.

Source: Gazettabyte


Observations and queries

- Optical transport has had a clear roadmap: 10 to 40 to 100 Gigabit-per-second (Gbps). 100Gbps optical transport will be the last of the fixed line-side speeds.

- After 100Gbps will come flexible speed-reach deployments. Line-side optics will be able to implement 50Gbps, 100Gbps, 200Gbps or even faster speeds with super-channels, tailored to the particular link.

- Variable speed-reach designs will blur the lines between metro and ultra long-haul. Does a traditional metro platform become a trans-Pacific submarine system simply by adding a new line card with the latest coherent ASIC boasting transmit and receive digital signal processors (DSPs), flexible modulation and soft-decision forward error correction?


- The cleverness of optical transport has shifted away from photonics towards electronics and digital signal processing. Optical system engineers are being taxed as never before as they try to extend the reach of 100, 200 and 400Gbps to match that of 10 and 40Gbps, but the key to platform differentiation is now the DSP algorithms and ASIC design.

- Optical is the new radio. This is evident in the addition of a coherent transmit DSP that supports the various modulation schemes and allows spectral shaping, packing carriers closer together to make best use of the fibre's bandwidth.

- The radio analogy is fitting because fibre bandwidth is becoming a scarce resource, and usable fibre capacity has more than doubled with these latest ASIC announcements. Moving to 400Gbps doubles overall capacity to some 18 Terabits, and spectral shaping boosts that further to over 23 Terabits. Only last week, 8.8 Terabits (88x100Gbps) was impressive.

- Maximising fibre capacity is why implementing single-carrier 100Gbps signals in 50GHz channels is now important.

- Super-channels, combining multiple carriers, have a lot of operational merits (see the super-channel section in the Cisco story). Infinera announced its 500Gbps super-channel over 250GHz last year. Now Ciena and Alcatel-Lucent highlight how a dual-carrier, dual-polarisation 16-QAM approach in 100GHz implements a 400Gbps signal.

- Despite all the talk of 16-QAM and 400Gbps wavelengths, 100Gbps is still in its infancy and will remain a key technology for years to come. Alcatel-Lucent, one of the early leaders in 100Gbps, has deployed 1,450 100 Gig line units since it launched its system in June 2010.

- Photonic integration for coherent will remain of key importance: not so much to make optical structures yet more complex than those at 100Gbps, but to shrink what has already been done.

- Is there a next speed after 100Gbps? Is it 200Gbps until 400Gbps becomes established? Is it 500Gbps as Infinera argues? The answer is that it no longer matters. But then what exactly will operators use to assess the merits of the different vendors' platforms? Reach, power, platform density, spectral efficiency and line speeds are all key performance parameters but assessing each vendor's platform has clearly got harder.
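The capacity figures in the observations above can be checked with simple arithmetic. A minimal Python sketch, assuming the usable band is the 4,400GHz implied by 88 channels at 50GHz spacing; the symbol rates it computes are net figures that exclude FEC and framing overhead, so real line rates run higher. The helper names are hypothetical:

```python
# The usable band implied by the text: 88 channels x 50GHz = 4,400 GHz.
USABLE_BAND_GHZ = 88 * 50


def fibre_capacity_tbps(rate_gbps: float, spacing_ghz: int, band_ghz: int = USABLE_BAND_GHZ) -> float:
    """Total capacity when the band is filled with fixed-spacing channels."""
    channels = band_ghz // spacing_ghz
    return channels * rate_gbps / 1000


def net_symbol_rate_gbd(rate_gbps: float, bits_per_symbol: int,
                        polarisations: int = 2, carriers: int = 1) -> float:
    """Symbol rate per carrier needed for a net bit rate, before FEC/framing overhead."""
    return rate_gbps / (bits_per_symbol * polarisations * carriers)


print(f"88 x 100G at 50GHz : {fibre_capacity_tbps(100, 50):.1f} Tb/s")
print(f"44 x 400G at 100GHz: {fibre_capacity_tbps(400, 100):.1f} Tb/s")
print(f"23 Tb/s over the same band: {23_000 / USABLE_BAND_GHZ:.1f} b/s/Hz")
print(f"PM-QPSK 100G, 1 carrier:   {net_symbol_rate_gbd(100, 2):.0f} GBd")
print(f"PM-16QAM 400G, 2 carriers: {net_symbol_rate_gbd(400, 4, carriers=2):.0f} GBd per carrier")
```

Both formats land on the same net symbol rate per carrier: doubling the bits per symbol (16-QAM versus QPSK) and adding a second carrier account for the fourfold increase from 100Gbps to 400Gbps, while spectral shaping is what pushes the average spectral efficiency above 5 b/s/Hz.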