COBO targets year-end to complete specification

Part 3: 400-gigabit on-board optics

- COBO will support 400-gigabit and 800-gigabit interfaces

- Three classes of module have been defined, the largest supporting at least 17.5W

The Consortium for On-board Optics (COBO) is scheduled to complete its module specification this year.

A draft specification defining the mechanical aspects of the embedded optics - the dimensions, connector and electrical interface - is already being reviewed by the consortium’s members.

“The draft specification encompasses what we will do inside the data centre and what will work for the coherent market,” says Brad Booth, chair of COBO and principal network architect for Microsoft’s Azure Infrastructure.

COBO was established in 2015 to create an embedded optics multi-source agreement (MSA). On-board optics have long been available but until now these have been proprietary solutions.

“Our goal [with COBO] was to get past that proprietary aspect,” says Booth. “That is its true value - it can be used for optical backplane or for optical interconnect and now designers will have a standard to build to.”

Specification

The COBO modules are designed to be interchangeable but, unlike front-panel optical modules, they are not ‘hot-pluggable’: they cannot be replaced while the card is powered.

The COBO design supports 400-gigabit multi-mode and single-mode optical interfaces. The electrical interface chosen is the IEEE-defined CDAUI-8: eight lanes, each at 50 gigabits, implemented using a 25-gigabaud symbol rate and 4-level pulse-amplitude modulation (PAM-4). COBO also supports an 800-gigabit interface using two tightly-coupled COBO modules.
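
The lane arithmetic behind the 400-gigabit and 800-gigabit figures can be checked with a short sketch. It uses the nominal rates quoted above and ignores encoding and forward error correction overhead; the function and variable names are illustrative and are not taken from the COBO or IEEE documents.

```python
import math

def lane_rate_gbps(symbol_rate_gbaud: int, pam_levels: int) -> int:
    """Nominal lane rate: symbol rate times bits carried per symbol."""
    bits_per_symbol = int(math.log2(pam_levels))  # PAM-4 carries 2 bits per symbol
    return symbol_rate_gbaud * bits_per_symbol

LANES = 8                      # CDAUI-8 uses eight electrical lanes
lane = lane_rate_gbps(25, 4)   # 25 gigabaud with PAM-4
module = LANES * lane          # one COBO module
pair = 2 * module              # two tightly-coupled COBO modules

print(f"lane: {lane}G, module: {module}G, module pair: {pair}G")
```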

The consortium has defined three module categories that vary in length. The module classes reflect the power envelope requirements; the shortest module supports multi-mode and the lower-power module designs while the longest format supports coherent designs. “The beauty of COBO is that the connectors and the connector spacings are the same no matter what length [of module] you use,” says Booth.

The COBO module is described as table-like, a very small printed circuit board that sits on two connectors. One connector is for the high-speed signals and the other for the power and control signals. “You don't have to have the cage [of a pluggable module] to hold it because of the two-structure support,” says Booth.

To be able to interchange classes of module, a ‘keep-out’ area is used. This area refers to board space that is deliberately left empty to ensure the largest COBO module form factor will fit. A module is inserted onto the board by first pushing it downwards and then sliding it along the board to fit the connection.

Booth points out that module failures are typically due to the optical and electrical connections rather than the optics themselves. This is why the repeatable accuracy of pick-and-place machines is favoured for the module’s insertion. “The thing you want to avoid is having touch points in the field,” he says.

Coherent

After the consortium was first established, a working group was set up to investigate using the MSA for coherent interfaces. This work has now been included in the draft specification. “We realised that leaving it [the coherent work] out was going to be a mistake,” says Booth.

The main coherent application envisaged is the 400ZR specification being developed by the Optical Internetworking Forum (OIF).

The OIF 400ZR interface is the result of Microsoft’s own Madison project specification work. Microsoft went to the industry with several module requirements for metro and data centre interconnect applications.

Madison 1.0 was a two-wavelength 100-gigabit module using PAM-4 that resulted in Inphi’s 80km ColorZ module that supports up to 4 terabits over a fibre. Madison 1.5 defines a single-wavelength 100-gigabit module to support 6.4 to 7.2 terabits on a fibre. “Madison 1.5 is probably not going to happen,” says Booth. “We have left it to the industry to see if they want to build it and we have not had anyone come forward yet.”

Madison 2.0 specified a 400-gigabit coherent-based design to support a total capacity of 38.4 terabits - 96 wavelengths of 400 gigabits.

Microsoft initially envisioned a 43-gigabaud 64-QAM module. However, the OIF's 400ZR project has since adopted a 60-gigabaud 16-QAM design, which will support either 48 wavelengths at 100GHz spacing or 64 wavelengths at 75GHz spacing, for fibre capacities of 19.2Tbps and 25.6Tbps, respectively.
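
The capacity figures for Madison 2.0 and the two 400ZR wavelength plans follow from simple multiplication, as the sketch below shows. The helper names are hypothetical, and the spectrum calculation simply multiplies channel count by spacing without allowing for guard bands.

```python
def fibre_capacity_tbps(wavelengths: int, rate_gbps: int = 400) -> float:
    """Total fibre capacity in terabits per second."""
    return wavelengths * rate_gbps / 1000

def occupied_spectrum_ghz(wavelengths: int, spacing_ghz: int) -> int:
    """Spectrum taken up by a plan: channel count times channel spacing."""
    return wavelengths * spacing_ghz

madison_2 = fibre_capacity_tbps(96)      # Microsoft's original target
zr_100ghz = fibre_capacity_tbps(48)      # 400ZR at 100GHz spacing
zr_75ghz = fibre_capacity_tbps(64)       # 400ZR at 75GHz spacing

print(madison_2, zr_100ghz, zr_75ghz)
# Both 400ZR plans fill the same amount of spectrum.
print(occupied_spectrum_ghz(48, 100), occupied_spectrum_ghz(64, 75))
```

Both 400ZR options occupy 4,800GHz of spectrum; the narrower 75GHz spacing is what yields the extra 16 wavelengths.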

Once Microsoft started talking about Madison 2.0, other large internet content providers came forward saying they had similar requirements, which led to the initiative being driven into the OIF. The result is the 400ZR MSA that the large-scale data centre players want to be built by as many module companies as possible.

Booth highlights the difference in Microsoft’s coherent interface volume requirements just in the last year. In 2017, the number of coherent metro links Microsoft will use will be 10x greater than the number of metro and long-haul coherent links it used in 2016.

“Because it is an order of magnitude more, we need to have some level of specification, some level of interop because now we're getting to the point where if I have an issue with any single supplier, I do not want my business impeded by it,” he says.

Regarding the COBO module, Booth stresses that it will be the optical designers that will determine the different coherent specifications possible. Thermal simulation work already shows that the module will support 17.5W and maybe more.

“There is a lot more capability in this module than there is in a standard pluggable only because we don't have the constraint of a cage,” says Booth. “We can always go up in height and we can always add more heat sink.”

Booth says the COBO specification will likely need a couple more members’ reviews before its completion. “Our target is still to have this done by the end of the year,” he says.

Amended on Sept 4th, added comment about the 400ZR wavelength plans and capacity options

Meeting the many needs of data centre interconnect

High capacity. Density. Power efficiency. Client-side optical interface choices. Coherent transmission. Direct detection. Open line system. Just some of the requirements vendors must offer to compete in the data centre interconnect market.

“A key lesson learned from all our interactions over the years is that there is no one-size-fits-all solution,” says Jörg-Peter Elbers, senior vice president of advanced technology, standards and IPR at ADVA Optical Networking. “What is important is that you have a portfolio to give customers what they need.”

Jörg-Peter Elbers

Teraflex

ADVA Optical Networking detailed its Teraflex, the latest addition to its CloudConnect family of data centre interconnect products, at the OFC show held in Los Angeles in March (see video).

The platform is designed to meet the demanding needs of the large-scale data centre operators that want high-capacity, compact platforms that are also power efficient.

Teraflex is a one-rack-unit (1RU) stackable chassis that supports three hot-pluggable 1.2-terabit modules or ‘sleds’. A sled supports two line-side wavelengths, each capable of coherent transmission at up to 600 gigabits-per-second (Gbps). Each sled’s front panel supports various client-side interface module options: 12 x 100-gigabit QSFPs, 3 x 400-gigabit QSFP-DDs and lower speed 10-gigabit and 40-gigabit modules using ADVA Optical Networking’s MicroMux technology.

“Building a product optimised only for 400-gigabit would not hit the market with the right feature set,” says Elbers. “We need to give customers the possibility to address all the different scenarios in one competitive platform.”

The Teraflex achieves 600Gbps wavelengths using a 64-gigabaud symbol rate and 64-ary quadrature-amplitude modulation (64-QAM). ADVA Optical Networking is using Acacia Communications’ latest Pico dual-core coherent digital signal processor (DSP) to implement the 600-gigabit wavelengths. ADVA Optical Networking would not confirm Acacia is its supplier but Acacia decided to detail the Pico DSP at OFC because it wanted to end speculation as to the source of the coherent DSP for the Teraflex. That said, ADVA Optical Networking points out that Teraflex’s modular nature means coherent DSPs from various suppliers can be used.
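
A rough sketch shows how the symbol rate and modulation order relate to the 600Gbps figure. It assumes dual-polarisation transmission, which is usual for coherent optics but is not stated in the article, and it treats the gap between the raw and net rates as headroom for overhead such as forward error correction and framing, which is also an assumption. The function name is illustrative.

```python
import math

def raw_rate_gbps(symbol_rate_gbaud: int, qam_order: int, polarisations: int = 2) -> int:
    """Raw line rate: symbol rate x bits per symbol x number of polarisations."""
    bits_per_symbol = int(math.log2(qam_order))  # 64-QAM carries 6 bits per symbol
    return symbol_rate_gbaud * bits_per_symbol * polarisations

NET_RATE_GBPS = 600                   # client payload per wavelength, from the article
raw = raw_rate_gbps(64, 64)           # 64 gigabaud, 64-QAM, assumed dual polarisation
headroom = raw - NET_RATE_GBPS        # assumed to cover FEC and framing overhead

print(f"raw {raw}G, net {NET_RATE_GBPS}G, headroom {headroom}G")
```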

The 1 rack unit Teraflex

The line-side optics support a variety of line speeds, from 600Gbps down to 100Gbps: the lower the speed, the longer the reach.

The resulting 3-sled 1RU Teraflex platform thus supports up to 3.6 terabits-per-second (Tbps) of duplex communications. This compares to a maximum 800Gbps per rack unit using the current densest CloudConnect 0.5RU Quadflex card.
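
The chassis capacity and the density comparison can be reproduced from the figures given for the sleds. This is a minimal sketch using only numbers quoted in the article; the constant names are illustrative.

```python
SLEDS_PER_CHASSIS = 3
WAVELENGTHS_PER_SLED = 2
WAVELENGTH_RATE_GBPS = 600
QUADFLEX_GBPS_PER_RU = 800      # densest current CloudConnect card, per rack unit

sled_line_gbps = WAVELENGTHS_PER_SLED * WAVELENGTH_RATE_GBPS
chassis_gbps = SLEDS_PER_CHASSIS * sled_line_gbps   # Teraflex occupies 1RU

# Client-side options quoted for one sled: both match the sled's line-side capacity.
client_qsfp_gbps = 12 * 100
client_qsfp_dd_gbps = 3 * 400

density_gain = chassis_gbps / QUADFLEX_GBPS_PER_RU

print(sled_line_gbps, chassis_gbps, client_qsfp_gbps, client_qsfp_dd_gbps, density_gain)
```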

Markets

The data centre interconnect market is commonly split into metro and long haul.

The metro data centre interconnect market requires high-capacity, short-haul, point-to-point links up to 80km. Large-scale data centre operators may have several sites spread across a city, given they must pick locations where they can find them. Sites are typically no further apart than 80km to ensure a low-enough latency such that, collectively, they appear as one large logical data centre.

“You are extending the fabric inside the data centre across the data-centre boundary, which means the whole bandwidth you have on the fabric needs to be fed across the fibre link,” says Elbers. “If not, then there are bottlenecks and you are restricted in the flexibility you have.”

Large enterprises also use metro data centre interconnect. The enterprises’ businesses involve processing customer data - airline bookings, for example - and they cannot afford disruption. As a result, they may use twin data centres to ensure business continuity.

Here, too, latency is an issue especially if synchronous mirroring of data using Fibre Channel takes place between sites. The storage protocol requires acknowledgement between the end points such that the round-trip time over the fibre is critical. “The average distance of these connections is 40km, and no one wants to go beyond 80 or 100km,” says Elbers, who stresses that this is not an application for Teraflex given it is aimed at massive Ethernet transport. Customers using Fibre Channel typically need lower capacities and use more tailored solutions for the application.
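
To give a feel for why distance matters, the sketch below estimates fibre propagation delay for the distances Elbers mentions. It assumes a group index of 1.468, a typical value for standard single-mode fibre that is not given in the article, and it ignores any latency added by equipment at either end.

```python
C_KM_PER_S = 299_792.458   # speed of light in a vacuum
GROUP_INDEX = 1.468        # assumed value for standard single-mode fibre

def one_way_delay_us(distance_km: float, group_index: float = GROUP_INDEX) -> float:
    """Propagation delay over the fibre in microseconds."""
    return distance_km * group_index / C_KM_PER_S * 1e6

for km in (40, 80, 100):
    one_way = one_way_delay_us(km)
    print(f"{km}km: one-way {one_way:.0f}us, round trip {2 * one_way:.0f}us")
```

Under these assumptions light takes roughly 5 microseconds per kilometre, so an 80km link adds a round trip of about 0.8 milliseconds to every acknowledged write.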

The second data centre interconnect market - long haul - has different requirements. The links are long distance and the data sent between sites is limited to what is needed. Data centres are distributed to ensure continual business operation and for quality-of-experience by delivering services closer to customers.

Hundreds of gigabits and even terabits are sent over the long-distance links between data centre sites but commonly it is about a tenth of the data sent for metro data centre interconnect, says Elbers.

Direct Detection

Given the variety of customer requirements, ADVA Optical Networking is pursuing direct-detection line-side interfaces as well as coherent-based transmission.

At OFC, the system vendor detailed work with two proponents of line-side direct-detection technology - Inphi and Ranovus - as well as its coherent-based Teraflex announcement.

Working with Microsoft, Arista and Inphi, ADVA detailed a metro data centre interconnect demonstration that involved sending 4Tbps of data over an 80km link. The link comprised 40 Inphi ColorZ QSFP modules. A ColorZ module uses two wavelengths, each carrying 56Gbps using PAM-4 signalling. This is where having an open line system is important.
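
The demonstration's numbers can be tallied as follows. The sketch takes each ColorZ QSFP as carrying a 100-gigabit payload, and it labels the difference between the 2 x 56Gbps line rate and that payload as overhead without saying what the overhead is used for. The constant names are illustrative.

```python
MODULES = 40
WAVELENGTHS_PER_MODULE = 2
LINE_RATE_PER_WAVELENGTH_GBPS = 56
PAYLOAD_PER_MODULE_GBPS = 100      # each ColorZ is a 100-gigabit QSFP

wavelengths_on_fibre = MODULES * WAVELENGTHS_PER_MODULE
payload_tbps = MODULES * PAYLOAD_PER_MODULE_GBPS / 1000
line_rate_per_module = WAVELENGTHS_PER_MODULE * LINE_RATE_PER_WAVELENGTH_GBPS
overhead_per_module = line_rate_per_module - PAYLOAD_PER_MODULE_GBPS

print(wavelengths_on_fibre, payload_tbps, line_rate_per_module, overhead_per_module)
```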

Microsoft wanted to use QSFPs directly in their switches rather than deploy additional transponders, says Elbers. But this still requires line amplification while the data centre operators want the same straightforward provisioning they expect with coherent technology. To this aim, ADVA demonstrated its SmartAmp technology that not only sets up the power levels of the wavelengths and provides optical amplification but also automatically measures and compensates for chromatic dispersion experienced over a link.

ADVA also detailed a 400Gbps metro transponder card based on PAM-4 implemented using two 200Gbps transmitter optical subassemblies (TOSAs) and two 200Gbps receiver optical subassemblies (ROSAs) from Ranovus.

Choices

The decision to use coherent or direct detection line-side optics boils down to a link’s requirements and the cost an end user is willing to pay, says Elbers.

As coherent-based optics has matured, it has migrated from long-haul to metro and now data centre interconnect. One way to cost-reduce coherent further is to cram more bits per transmission. “Teraflex is adding chunks of 1.2Tbps per sled which is great for people with very high capacities,” says Elbers, but small enterprises, for example, may only need a 100-gigabit link.

“For scenarios where you don’t need to have the highest spectral efficiency and the highest fibre capacity, you can get more cost-effective solutions,” says Elbers, explaining the system vendor’s interest in direct detection.

“We are seeing coherent penetrating more and more markets but still cost and power consumption are issues,” says Elbers. “Clearly there is also space for a direct-detection solution but that space will narrow down over time.”

Developments in silicon photonics that promise to reduce the cost of optics through greater integration and the adoption of packaging techniques from the CMOS industry will all help. “We are not there yet; this will require a couple of technology iterations,” says Elbers.

Until then, ADVA’s goal is for direct detection to cost half that of coherent.

“We want to have two technologies for the different areas; there needs to be a business justification [for using direct detection],” he says. “Having differentiated pricing between the two - coherent and direct detection - is clearly one element here.”

The OIF’s 400ZR coherent interface starts to take shape

Part 2: Coherent developments

The Optical Internetworking Forum’s (OIF) group tasked with developing two styles of 400-gigabit coherent interface is now concentrating its efforts on one of the two.

When first announced last November, the 400ZR project planned to define a dense wavelength-division multiplexing (DWDM) 400-gigabit interface and a single wavelength one. Now the work is concentrating on the DWDM interface, with the single-channel interface deemed secondary.

“It [the single channel] appears to be a very small percentage of what the fielded units would be,” says Karl Gass of Qorvo, vice chair, optical, of the OIF Physical and Link Layer working group, the group responsible for the 400ZR work.

The likelihood is that the resulting optical module will serve both applications. “Realistically, probably both [interfaces] will use a tunable laser because the goal is to have the same hardware,” says Gass.

The resulting module may also only have a reach of 80km, shorter than the original goal of up to 120km, due to the challenging optical link budget.

Origins and status

The 400ZR project began after Microsoft and other large-scale data centre players such as Google and Facebook approached the OIF to develop an interoperable 400-gigabit coherent interface they could then buy from multiple optical module makers.

The internet content providers’ interest in an 80km-plus link is to connect premises across the metro. “Eighty kilometres is the magic number from a latency standpoint so that multiple buildings can look like a single mega data centre,” says Nathan Tracy of TE Connectivity and the OIF’s vice president of marketing.

Since then, traditional service providers have shown an interest in 400ZR for their metro needs. The telcos’ requirements differ from those of the data centre players, causing the group to tweak the channel requirements. This is the current focus of the work, with the OIF collaborating with the ITU.

“The ITU does a lot of work on channels and they have a channel measurement methodology,” says Gass. “They are working with us as we try to do some division of labour.”

The group will choose a forward error correction (FEC) scheme once there is common agreement on the channel. “Imagine all those [coherent] DSP makers in the same room, each one recommending a different FEC,” says Gass. “We are all trying to figure out how to compare the FEC schemes on a level playing field.”

Meeting the link budget is challenging, says Gass, which is why the link might end up being 80km only. “The catch is how much can we strip everything down and still meet a large percentage of the use cases.”

400ZR form factors

Once the FEC is chosen, the power envelope will be fine-tuned and then the discussion will move to form factors. The OIF says it is still too early to discuss whether the project will select a particular form factor. Potential candidates include the OSFP MSA and the CFP8.

The industry assumption is that the 80km-plus 400ZR digital coherent optics module will consume around 15W, requiring a very low-power coherent DSP that will be made using 7nm CMOS.

“There is strong support across the industry for this project, evidenced by the fact that project calls are happening more frequently to make the progress happen,” says Tracy.

Why the urgency? “The cloud is the biggest voice in the universe,” says Tracy. To support the move of data and applications to the cloud, the infrastructure has to evolve, leading to the data centre players linking smaller locations spread across the metro.

“At the same time, the next-gen speed that is going to be used in these data centres - and therefore outside the data centres - is 400 gigabit,” says Tracy.

Talking markets: Oclaro on 100 gigabits and beyond

Oclaro’s chief commercial officer, Adam Carter, discusses the 100-gigabit market, optical module trends, silicon photonics, and why this is a good time to be an optical component maker.

Oclaro has started fiscal year 2017 as it ended fiscal year 2016: with another record quarter. The company reported revenues of $136 million in the quarter ending in September, 8 percent sequential growth and the company's fifth consecutive quarter of 7 percent or greater revenue growth.

A large part of Oclaro’s growth was due to strong demand for 100 gigabits across the company’s optical module and component portfolio.

The company has been supplying 100-gigabit client-side optics using the CFP, CFP2 and CFP4 pluggable form factors for a while. “What we saw in June was the first real production ramp of our CFP2-ACO [coherent] module,” says Adam Carter, chief commercial officer at Oclaro. “We have transferred all that manufacturing over to Asia now.”

The CFP2-ACO is being used predominantly for data centre interconnect applications. But Oclaro has also seen first orders from system vendors that are supplying US communications service provider Verizon for its metro buildout.

The company is also seeing strong demand for components from China. “The China market for 100 gigabits has really grown in the last year and we expect it to be pretty stable going forward,” says Carter. LightCounting Market Research in its latest optical market forecast report highlights the importance of China’s 100-gigabit market. China’s massive deployments of FTTx and wireless front haul optics fuelled growth in 2011 to 2015, says LightCounting, but this year it is demand for 100-gigabit dense wavelength-division multiplexing and 100 Gigabit Ethernet optics that is increasing China’s share of the global market.

QSFP28 modules

Oclaro is also providing 100-gigabit QSFP28 pluggables for the data centre, in particular, the 100-gigabit PSM4 parallel single-mode module and the 100-gigabit CWDM4 based on wavelength-division multiplexing technology.

2016 was expected to be the year these 100-gigabit optical modules for the data centre would take off. “It has not contributed a huge amount to date but it will start kicking in now,” says Carter. “We always signalled that it would pick up around June.”

One reason why it has taken time for the market for the 100-gigabit QSFP28 modules to take off is the investment needed to ramp manufacturing capacity to meet the demand. “The sheer volume of these modules that will be needed for one of these new big data centres is vast,” says Carter. “Everyone uses similar [manufacturing] equipment and goes to the same suppliers, so bringing in extra capacity has long lead times as well.”

Once a large-scale data centre is fully equipped and powered, it generates instant profit for an Internet content provider. “This is very rapid adoption; the instant monetisation of capital expenditure,” says Carter. “This is a very different scenario from where we were five to ten years ago with the telecom service providers.”

Data centre servers and their increasing interface speed to leaf switches are what will drive module rates beyond 100 gigabits, says Carter. Ten Gigabit Ethernet links will be followed by 25 and 50 Gigabit Ethernet. “The lifecycle you have seen at the lower speeds [1 Gigabit and 10 Gigabit] is definitely being shrunk,” says Carter.

Such new speeds will spur 400-gigabit links between the data centre's leaf and spine switches, and between the spine switches. “Two hundred Gigabit Ethernet may be an intermediate step but I’m not sure if that is going to be a big volume or a niche for first movers,” says Carter.

400 gigabit CFP8

Oclaro showed a prototype 400-gigabit module in the CFP8 form factor at the recent ECOC show in September. The demonstrator is an 8-by-50 gigabit design using 25-gigabaud optics and PAM-4 modulation. The module implements the 400GBASE-LR8 10km standard using eight 1310nm distributed feedback lasers, each with an integrated electro-absorption modulator. The design also uses two 4-wide photo-detector arrays.

“We are using the four lasers we use for the CWDM4 100-gigabit design and we can show we have the other four [wavelength] lasers as well,” says Carter.

Carter says IP core routers will be the main application for the 400GBASE-LR8 module. The company is not yet saying when the 400-gigabit CFP8 module will be generally available.

Coherent

Oclaro is already working with equipment customers to increase the line-side interface density on the front panel of their equipment.

The Optical Internetworking Forum (OIF) has already started work on the CFP8-ACO that will be able to support up to four wavelengths, each supporting up to 400 gigabits. But Carter says Oclaro is working with customers to see how the line-side capacity of the CFP2-ACO can be advanced. “We can definitely see the CFP2-ACO could support 400 gigabits and above,” says Carter. “We are working with customers as to what that looks like and what the schedule will be.”

And there are two other pluggable form factors smaller than the CFP2: the CFP4 and the QSFP28. “Will you get 400 gigabits in a QSFP28? Time will tell, although there is still more work to be done around the technology building blocks,” says Carter.

Vendors are seeking the highest aggregate front panel density, he says: “The higher aggregate bandwidth we are hearing about is 2 terabits but there is a need to potentially go to 3.2 and 4.8 terabits.”

Silicon photonics

Oclaro says it continues to watch silicon photonics closely and to question whether it is a technology that can be brought in-house. But issues remain. “This industry has always used different technologies and everything still needs light to work which means the basic III-V [compound semiconductor] lasers,” says Carter.

“Producing silicon photonics chips versus producing packaged products that meet various industry standards and specifications are still pretty challenging to do in high volume,” says Carter. And integration can be done using either silicon photonics or indium phosphide. “My feeling is that the technologies will co-exist,” says Carter.

Ranovus shows 200 gigabit direct detection at ECOC

Ranovus has announced its first direct-detection optical products for applications including data centre interconnect.

One product is a 200 gigabit-per-second (Gbps) dense wavelength-division multiplexing (WDM) CFP2 pluggable optical module that spans distances up to 130km. Ranovus will also sell the 200Gbps transmitter and receiver optical engines that can be integrated by vendors onto a host line card.

The dense WDM direct-detection solution from Ranovus is being positioned as a cheaper, lower-power alternative to coherent optics used for high-capacity metro and long-haul optical transport. Using such technology, service providers can link their data centre buildings distributed across a metro area.

“The power consumption [of the direct-detection design] is well within the envelope of what the CFP2 power budget is,” says Saeid Aramideh, a Ranovus co-founder and chief marketing. The CFP2 module's power envelope is rated at 12W and while there are pluggable CFP2-ACO modules now available, a coherent DSP-ASIC is required to work alongside the module.

“The cost [of the CFP2 direct detection] proves in much better than coherent does,” says Aramideh, although he points out that for distances greater than 120km, the economics change.

The 200Gbps CFP2 module uses four wavelengths, each at 50Gbps. Ranovus is using 25Gbps optics with 4-level pulse-amplitude modulation (PAM-4) technology provided by fabless chip company Broadcom to achieve the 50Gbps channels. Up to 96 such 50Gbps channels can be fitted in the C-band to achieve a total transmission bandwidth of 4.8 terabits.
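
The module and fibre capacities quoted here can be checked with a few lines. The number of modules needed to fill the C-band is derived from the article's figures rather than stated by Ranovus, and the constant names are illustrative.

```python
CHANNEL_RATE_GBPS = 50
CHANNELS_PER_MODULE = 4
C_BAND_CHANNELS = 96

module_rate_gbps = CHANNELS_PER_MODULE * CHANNEL_RATE_GBPS
fibre_capacity_tbps = C_BAND_CHANNELS * CHANNEL_RATE_GBPS / 1000
modules_to_fill_c_band = C_BAND_CHANNELS // CHANNELS_PER_MODULE   # derived figure

print(module_rate_gbps, fibre_capacity_tbps, modules_to_fill_c_band)
```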

Ranovus is demonstrating at ECOC eight wavelengths being sent over 100km of fibre. The link uses a standard erbium-doped fibre amplifier and the forward-error correction scheme built into PAM-4.

Technologies

Ranovus has developed several key technologies for its proprietary optical interconnect products. These include a multi-wavelength quantum dot laser, a silicon photonics based ring-resonator modulator, an optical receiver, and the associated driver and receiver electronics.

The quantum dot technology implements what is known as a comb laser, producing multiple laser outputs at wavelengths and grid spacings that are defined during fabrication. For the CFP2, the laser produces four wavelengths spaced 50GHz apart.

For the 200Gbps optical engine transmitter, the laser outputs are fed to four silicon photonics ring-resonator modulators to produce the four output wavelengths, while at the receiver there is an equivalent bank of tuned ring resonators that delivers the wavelengths to the photo-detectors. Ranovus has developed several receiver designs, with the lower channel count version being silicon photonics based.

The use of ring resonators - effectively filters - at the receiver means that no multiplexer or demultiplexer is needed within the optical module.

“At some point before you go to the fibre, there is a multiplexer because you are multiplexing up to 96 channels in the C-band,” says Aramideh. “But that multiplexer is not needed inside the module.”

Company plans

The start-up has raised $35 million in investment funding to date. Aramideh says the company is not seeking a further funding round but he does not rule it out.

The most recent funding round, for $24 million, was in 2014. At the time the company was planning to release its first product - a QSFP28 100-Gigabit OpenOptics module - in 2015. Ranovus and Mellanox Technologies are co-founders of the dense WDM OpenOptics multi-source agreement that supports client-side interfaces at 100Gbps, 400Gbps and terabit speeds.

However, the company realised that 100-gigabit links within the data centre were being served by the coarse WDM CWDM4 and CLR4 module standards, and it chose instead to focus on the data centre interconnect market using its direct detection technology.

Ranovus has also been working with ADVA Optical Networking on its data centre interconnect technology. Last year, ADVA Optical Networking announced its FSP 3000 CloudConnect data centre interconnect platform that can span both the C- and L-bands.

Also planned by Ranovus is a 400-gigabit CFP8 module - which could be a four or eight channel design - for the data centre interconnect market.

Meanwhile, the CFP2 direct-detection module and the optical engine will be generally available from December.

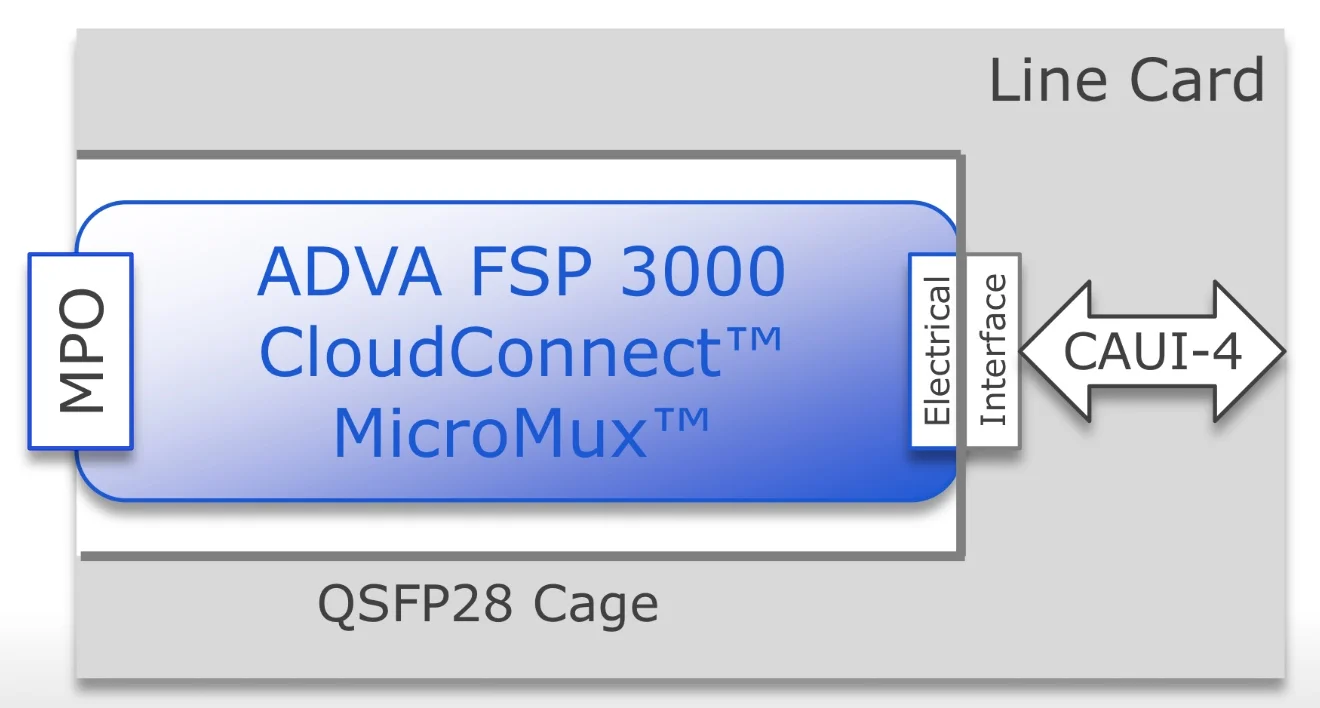

QSFP28 MicroMux expands 10 & 40 Gig faceplate capacity

- ADVA Optical Networking's MicroMux aggregates lower rate 10 and 40 gigabit client signals in a pluggable QSFP28 module

- ADVA is also claiming an industry first in implementing the Open Optical Line System concept that is backed by Microsoft

The need for terabits of capacity to link Internet content providers’ mega-scale data centres has given rise to a new class of optical transport platform, known as data centre interconnect.

Source: ADVA Optical Networking

Such platforms are designed to be power efficient, compact and support a variety of client-side signal rates spanning 10, 40 and 100 gigabit. But this poses a challenge for design engineers as the front panel of such platforms can only fit so many lower-rate client-side signals. This can lead to the aggregate data fed to the platform falling short of its full line-side transport capability.

ADVA Optical Networking has tackled the problem by developing the MicroMux, a multiplexer placed within a QSFP28 module. The MicroMux module plugs into the front panel of the CloudConnect, ADVA’s data centre interconnect platform, and funnels either ten 10-gigabit ports or two 40-gigabit ports into a front panel’s 100-gigabit port.

"The MicroMux allows you to support legacy client rates without impacting the panel density of the product," says Jim Theodoras, vice president of global business development at ADVA Optical Networking.

Using the MicroMux, lower-speed client interfaces can be added to a higher-speed product without stranding line-side bandwidth. An alternative approach to avoid wasting capacity is to install a lower-speed platform, says Theodoras, but then you can't scale.

ADVA Optical Networking offers four MicroMux pluggables for its CloudConnect data centre interconnect platform: short-reach and long-reach 10-by-10 gigabit QSFP28s, and short-reach and intermediate-reach 2-by-40 gigabit QSFP28 modules.

The MicroMux features an MPO connector. For the 10-gigabit products, the MPO connector supports 20 fibres, while for the 40-gigabit products it supports four fibres. At the other end of the QSFP28, the end that plugs into the platform, sits a CAUI-4 4x25-gigabit electrical interface (see diagram above).

“The key thing is the CAUI-4 interface; this is what makes it all work," says Theodoras.

Inside the MicroMux, signals are converted between the optical and electrical domains while a gearbox IC translates between 10- or 40-gigabit signals and the CAUI-4 format.

Theodoras stresses that the 10-gigabit inputs are not the old 100 Gigabit Ethernet 10x10 MSA but independent 10 Gigabit Ethernet streams. "They can come from different routers, different ports and different timing domains," he says. "It is no different than if you had 10, 10 Gigabit Ethernet ports on the front face plate."

Using the pluggables, a 5-terabit CloudConnect configuration can support up to 520, 10 Gigabit Ethernet ports, according to ADVA Optical Networking.

The first products will ship in the third quarter to preferred customers that helped with the development, while general availability is planned for the year-end.

ADVA Optical Networking unveiled the MicroMux at OFC 2016, held in Anaheim, California in March. ADVA also used the show to detail its Open Optical Line System demonstration with switch vendor, Arista Networks.

Two years after Microsoft first talked about the [Open Optical Line System] concept at OFC, here we are today fully supporting it

Open Optical Line System

The Open Optical Line System is a concept being promoted by the Internet content providers to afford them greater control of their optical networking requirements.

Data centre players typically update their servers and top-of-rack switches every three years yet the optical transport functions such as the amplifiers, multiplexers and ROADMs have an upgrade cycle closer to 15 years.

“When the transponding function is stuck in with something that is replaced every 15 years and they want to replace it every three years, there is a mismatch,” says Theodoras.

Data centre interconnect line cards can be replaced more frequently with newer cards while retaining the chassis. And the CloudConnect product is also designed such that its optical line shelf can take external wavelengths from other products by supporting the Open Optical Line System. This adds flexibility and is done in a way that matches the work practices of the data centre players.

“The key part of the Open Optical Line System is the software,” says Theodoras. “The software lets that optical line shelf be its own separate node; an individual network element.”

The data centre operator can then manage the standalone CloudConnect Open Optical Line System product. Such a product can take coloured wavelength inputs and even provide feedback to the source platform, so that the wavelength is tuned to the correct channel. “It’s an orchestration and a management level thing,” says Theodoras.

Arista recently added a coherent line card to its 7500 spine switch family.

The card supports six CFP2-ACOs that have a reach of up to 2,000km, sufficient for most data centre interconnect applications, says Theodoras. The 7500 also supports the layer-two MACsec security protocol. However, it does not support flexible modulation formats. The CloudConnect does, supporting 100-, 150- and 200-gigabit formats. CloudConnect also has a 3,000km reach.

Source: ADVA Optical Networking

In the Open Optical Line System demonstration, ADVA Optical Networking squeezed the Arista 100-gigabit wavelength into a narrower 37.5GHz channel, sandwiched between two 100 gigabit wavelengths from legacy equipment and two 200 gigabit (PM-16QAM) wavelengths from the CloudConnect Quadplex card. All five wavelengths were sent over a 2,000km link.

Implementing the Open Optical Line System expands a data centre manager’s options. A coherent card can be added to the Arista 7500 and wavelengths sent directly using the CFP2-ACOs, or wavelengths can be sent over more demanding links, or ones that require greater spectral efficiency, by using the CloudConnect. The 7500 chassis could also be used solely for switching and its traffic routed to the CloudConnect platform for off-site transmission.

Spectral efficiency is important for the large-scale data centre players. “The data centre interconnect guys are fibre-poor; they typically only have a single fibre pair going around the country and that is their network,” says Theodoras.

The joint demo shows that the Open Optical Line System concept works, he says: “Two years after Microsoft first talked about the concept at OFC, here we are today fully supporting it.”

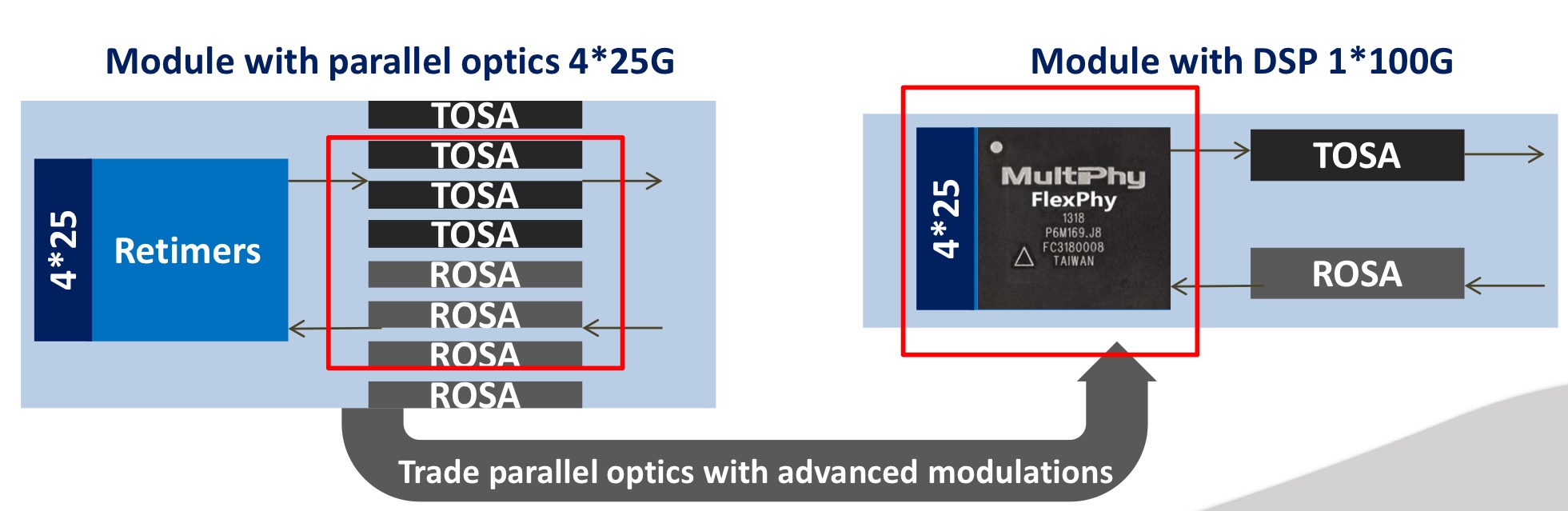

MultiPhy raises $17M to develop 100G serial interfaces

MultiPhy is developing chips to support serial 100-gigabit-per-second transmission using 25-gigabit optical components. The design will enable short reach links within the data centre and up to 80km point-to-point links for data centre interconnect.

Source: MultiPhy

“It is not the same chip [for the two applications] but the same technology core,” says Avi Shabtai, the CEO of MultiPhy. The funding will be used to bring products to market as well as expand the company’s marketing arm.

There is a huge benefit in moving to a single-wavelength technology; you throw out pretty much three-quarters of the optics

100 gigabit serial

The IEEE has specified 100-gigabit lanes as part of its ongoing 400 Gigabit Ethernet standardisation work. “It is the first time the IEEE has accepted 100 gigabit on a single wavelength as a baseline for a standard,” says Shabtai.

The IEEE work has defined 4-by-100 gigabit with a reach of 500 metres using four-level pulse-amplitude modulation (PAM-4) that encodes 2 bits-per-symbol. This means that optics and electronics operating at 50 gigabaud can be used. However, MultiPhy has developed digital signal processing technology that allows the optics to be overdriven such that 25-gigabit optics can be used to deliver the 50 gigabaud required.
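The link between bit rate, bits per symbol and symbol rate can be checked with a short Python sketch. The Gray-coded level mapping and the function names are assumptions made for illustration, and the figures are nominal: coding overhead, which lifts the real symbol rate above 50 gigabaud, is ignored.

```python
# Gray-coded PAM-4 mapping (assumed for illustration): two bits select one of four levels.
PAM4_LEVELS = {(0, 0): -3, (0, 1): -1, (1, 1): 1, (1, 0): 3}


def pam4_encode(bits):
    """Map an even-length bit sequence to PAM-4 amplitude levels."""
    if len(bits) % 2:
        raise ValueError("PAM-4 needs an even number of bits")
    return [PAM4_LEVELS[(bits[i], bits[i + 1])] for i in range(0, len(bits), 2)]


def symbol_rate_gbaud(bit_rate_gbps, bits_per_symbol=2):
    """Symbol rate needed to carry a given bit rate."""
    return bit_rate_gbps / bits_per_symbol


# Eight bits become four symbols, so the symbol rate is half the bit rate.
symbols = pam4_encode([0, 0, 0, 1, 1, 1, 1, 0])
baud = symbol_rate_gbaud(100)  # nominal 100 gigabit on a single wavelength
```

Halving the symbol rate relative to the bit rate is what lets a single wavelength carry 100 gigabit with 50-gigabaud signalling.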

“There is a huge benefit in moving to a single-wavelength technology,” says Shabtai. “You throw out pretty much three-quarters of the optics.”

The chip MultiPhy is developing, dubbed FlexPhy, supports the CAUI-4 (4-by-28 gigabit) interface, a 4:1 multiplexer and 1:4 demultiplexer, PAM-4 operating at 56 gigabaud and the digital signal processing.

The optics - a single transmitter optical sub-assembly (TOSA) and a single receiver optical sub-assembly (ROSA) - and the FlexPhy chip will fit within a QSFP28 module. “Taking into account that you have one chip, one laser and one photo-diode, these are pretty much the components you already have in an SFP module,” says Shabtai. “Moving from a QSFP form factor to an SFP is not that far.”

MultiPhy says new-generation switches will support 128 SFP28 ports, each at 100 gigabit, equating to 12.8 terabits of switching capacity.

Using digital signal processing also benefits silicon photonics. “Integration is much denser using CMOS devices with silicon photonics,” says Shabtai. DSP also improves the performance of silicon photonics-based designs, addressing issues such as linearity and sensitivity. “A lot of these things can be solved using signal processing,” he says.

FlexPhy will be available for customers this year but MultiPhy would not say whether it already has working samples.

MultiPhy raised $7.2 million in venture capital funding in 2010.

Arista adds coherent CFP2 modules to its 7500 switch

Martin Hull

In the last year, several optical equipment makers have announced ‘stackable’ platforms specifically to link data centres.

Infinera’s Cloud Xpress was the first while Coriant recently detailed its Groove G30 platform. Arista’s announcement offers data centre managers an alternative to such data centre interconnect platforms by adding dense wavelength-division multiplexing (DWDM) optics directly onto its switch.

Customers investing in an optical solution now have an all-in-one alternative to an optical transport chassis or the newer stackable data centre interconnect products, says Martin Hull, senior director of product management at Arista Networks. Insert two such line cards into the 7500 and you have 12 ports of 100 gigabit coherent optics, eliminating the need for the separate optical transport platform, he says.

The larger 11RU 7500 chassis has eight card slots such that the likely maximum number of coherent cards used in one chassis is four or five - 24 or 30 wavelengths - given that 40 or 100 Gigabit Ethernet client-side interfaces are also needed. The 7500 can support up to 96, 100 Gigabit Ethernet (GbE) interfaces.

Arista says the coherent line card meets a variety of customer needs. Large enterprises such as financial companies may want two to four 100 gigabit wavelengths to connect their sites in a metro region. In contrast, cloud providers require a dozen or more wavelengths. “They talk about terabit bandwidth,” says Hull.

With the CFP2-ACO, the DSP is outside the module. That allows us to multi-source the optics

As well as the CFP2-ACO modules, the card also features six coherent DSP-ASICs. The DSPs support 100 gigabit dual-polarisation, quadrature phase-shift keying (DP-QPSK) modulation but do not support the more advanced quadrature amplitude modulation (QAM) schemes that carry more bits per wavelength. The CFP2-ACO line card has a spectral efficiency that enables up to 96 wavelengths across the fibre's C-band.

Did Arista consider using CFP coherent optical modules that support 200 gigabit, and even 300 and 400 gigabit line rates using 8- and 16-QAM? “With the CFP2-ACO, the DSP is outside the module,” says Hull. “That allows us to multi-source the optics.”

The line card also includes 256-bit MACsec encryption. “Enterprises and cloud providers would love to encrypt everything - it is a requirement,” says Hull. “The problem is getting hold of 100-gigabit encryptors.” The MACsec silicon encrypts each packet sent, avoiding having to use a separate encryption platform.

CFP4-ACO and COBO

As for denser CFP4-ACO coherent modules, the next development after the CFP2-ACO, Hull says it is still too early, as it is for the 400-gigabit on-board optics being developed by COBO, which are also intended to support coherent. “There is a lot of potential but it is still very early for COBO,” he says.

“Where we are today, we think we are on the cutting edge of what can be delivered on a line card,” says Hull. “Getting everything onto that line card is an engineering achievement.”

Future developments

Arista does not make its own custom ASICs or develop optics for its switch platforms. Instead, the company uses merchant switch silicon from the likes of Broadcom and Intel.

According to Hull, such merchant silicon continues to improve, adding capabilities to Arista’s top-of-rack ‘leaf’ switches and its more powerful ‘spine’ switches such as the 7500. This allows the company to make denser, higher-performance platforms that also scale when coupled with software and networking protocol developments.

Arista claims many of the roles performed by traditional routers can now be fulfilled by the 7500 such as peering, the exchange of large routing table information between routers using the Border Gateway Protocol (BGP). “[With the 7500], we can have that peering session; we can exchange a full set of routes with that other device,” says Hull.

"We think we are on the cutting edge of what can be delivered on a line card”

The company uses what it calls selective route download where the long list of routes is filtered such that the switch hardware is only programmed with the routes to be communicated with. Hull cites as an example a content delivery site that sends content to subscribers. The subscribers are typically confined to a known geographic region. “I don’t need to have every single Internet route in my hardware, I just need the routes to reach that state or metro region,” says Hull.

By having merchant silicon that supports large routing tables coupled with software such as selective route download, customers can use a switch to do the router’s job, he says.
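The filtering idea behind selective route download can be illustrated with a minimal Python sketch. It is not Arista's implementation: the function name, the table layout and the next-hop labels are hypothetical, and the prefixes are documentation address ranges.

```python
import ipaddress


def select_routes(full_table, wanted_regions):
    """Keep only the routes that fall inside the prefixes of interest.

    full_table maps prefix strings to next hops; wanted_regions lists the
    prefixes that cover the subscribers a site actually serves.
    """
    wanted = [ipaddress.ip_network(p) for p in wanted_regions]
    selected = {}
    for prefix, next_hop in full_table.items():
        net = ipaddress.ip_network(prefix)
        if any(net.subnet_of(region) for region in wanted):
            selected[prefix] = next_hop
    return selected


# Hypothetical full routing table learned over a BGP peering session.
full_table = {
    "198.51.100.0/25": "peer-a",
    "198.51.100.128/25": "peer-b",
    "203.0.113.0/24": "peer-c",
    "192.0.2.0/24": "peer-a",
}

# Only routes towards the served region are programmed into hardware.
hardware_routes = select_routes(full_table, ["198.51.100.0/24"])
```

The full table is still exchanged with the peer; only the subset that matters is installed in the switch hardware.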

Arista says that in 2016 and 2017 it will continue to introduce leaf and spine switches that enable data centre customers to further scale their networks. In September Arista launched Broadcom Tomahawk-based switches that enable the transition from 10 gigabit server interfaces to 25 gigabit and the transition from 40 to 100 gigabit uplinks.

Longer term, there will be 50 GbE and iterations of 400 gigabit and one terabit Ethernet, says Hull. And all this relates to the switch silicon. At present 3.2 terabit switch chips are common and already there is a roadmap to 6.4 and even 12.8 terabits, by both increasing the chip’s pin count and using PAM-4 alongside the 25 gigabit signalling to double input/output again. A 12.8 terabit switch may be a single chip, says Hull, or it could be multiple 3.2 terabit building blocks integrated together.

“It is not just a case of more ports on a box,” says Hull. “The boxes have to be more capable from a hardware perspective so that the software can harness that.”

SDM and MIMO: An interview with Bell Labs

Part 2: The capacity crunch and the role of SDM

The argument for spatial-division multiplexing (SDM) - the sending of optical signals down parallel fibre paths, whether multiple modes, cores or fibres - is the coming ‘capacity crunch’. The information-carrying capacity limit of fibre, for so long described as limitless, is being approached due to the continued high annual growth in IP traffic. But if there is a looming capacity crunch, why are we not hearing about it from the world’s leading telcos?

“It depends on who you talk to,” says Peter Winzer, head of the optical transmission systems and networks research department at Bell Labs. The incumbent telcos have relatively low traffic growth - 20 to 30 percent annually. “I believe fully that it is not a problem for them - they have plenty of fibre and very low growth rates,” he says.

Twenty to 30 percent growth rates can only be described as ‘very low’ when you consider that cable operators are experiencing 60 percent year-on-year traffic growth while it is 80 to 100 percent for the web-scale players. “The whole industry is going through a tremendous shift right now,” says Winzer.

In a recent paper, Winzer and colleague Roland Ryf extrapolate wavelength-division multiplexing (WDM) trends, starting with 100-gigabit interfaces that were adopted in 2010. Assuming an annual traffic growth rate of 40 to 60 percent, 400-gigabit interfaces become required in 2013 to 2014, and the authors point out that 400-gigabit transponder deployments started in 2013. Terabit transponders are forecast in 2016 to 2017 while 10 terabit commercial interfaces are expected from 2020 to 2024.

In turn, while WDM system capacities have scaled a hundredfold since the late 1990s, this will not continue. That is because systems are approaching the Non-linear Shannon Limit which estimates the upper limit capacity of fibre at 75 terabit-per-second.

Starting with 10-terabit-capacity systems in 2010 and a 30 to 40 percent core network traffic annual growth rate, the authors forecast that 40 terabit systems will be required shortly. By 2021, 200 terabit systems will be needed - already exceeding one fibre’s capacity - while petabit-capacity systems will be required by 2028.
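The extrapolations above are compound-growth calculations and can be reproduced approximately in a few lines of Python. This is a sketch of the arithmetic only, not the authors' model; the function names are illustrative.

```python
import math


def years_to_reach(start, target, annual_growth):
    """Years of compound growth needed to go from start to target."""
    return math.log(target / start) / math.log(1 + annual_growth)


def capacity_after(start, annual_growth, years):
    """Capacity after a number of years of compound growth."""
    return start * (1 + annual_growth) ** years


# Interfaces: 100 gigabit in 2010, growing 40 to 60 percent a year.
year_400g_fast = 2010 + years_to_reach(100, 400, 0.60)
year_400g_slow = 2010 + years_to_reach(100, 400, 0.40)

# Systems: 10 terabit in 2010, growing 30 to 40 percent a year, evaluated for 2021.
tbps_2021_low = capacity_after(10, 0.30, 2021 - 2010)
tbps_2021_high = capacity_after(10, 0.40, 2021 - 2010)
```

The interface calculation lands in the 2013 to 2014 window quoted for 400 gigabit, and the 200 terabit system figure for 2021 sits between the low- and high-growth results.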

Even if I’m off by an order of magnitude, and it is 1000, 100-gigabit lines leaving the data centre; there is no way you can do that with a single WDM system

Parallel spatial paths are the only physical multiplexing dimension remaining to expand capacity, argue the authors, explaining Bell Labs’ interest in spatial-division multiplexing for optical networks.

If the telcos do not require SDM-based systems anytime soon, that is not the case for the web-scale data centre operators. They could deploy SDM as soon as 2018 to 2020, says Winzer.

The web-scale players are talking about 400,000-server data centres in the coming three to five years. “Each server will have a 25-gigabit network interface card and if you assume 10 percent of the traffic leaves the data centre, that is 10,000, 100-gigabit lines,” says Winzer. “Even if I’m off by an order of magnitude, and it is 1000, 100-gigabit lines leaving the data centre; there is no way you can do that with a single WDM system.”
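Winzer's back-of-the-envelope figure is easy to verify. The Python sketch below redoes the arithmetic; the 96-wavelength fibre capacity used to count fibres is an assumption (a typical C-band channel count), not a number Winzer gives.

```python
def egress_lines(servers, nic_gbps, egress_percent, line_gbps=100):
    """Number of line_gbps links needed for the traffic leaving the data centre."""
    total_gbps = servers * nic_gbps
    egress_gbps = total_gbps * egress_percent // 100
    return egress_gbps // line_gbps


lines = egress_lines(400_000, 25, 10)  # Winzer's figures
pessimistic_lines = lines // 10        # "off by an order of magnitude"

# Assumption: one WDM system carries 96 wavelengths of 100 gigabit on a fibre.
WAVELENGTHS_PER_FIBRE = 96
fibres_needed = -(-pessimistic_lines // WAVELENGTHS_PER_FIBRE)  # ceiling division
```

Even the pessimistic case needs more wavelengths than a single fibre's WDM system can carry under this assumption, which is the point of the quote.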

SDM and MIMO

SDM can be implemented in several ways. The simplest way to create parallel transmission paths is to bundle several single-mode fibres in a cable. But speciality fibre can also be used, either multi-core or multi-mode.

For the demo, Bell Labs used such a fibre, a coupled 3-core one, but Sebastian Randel, a member of technical staff, said its SDM receiver could also be used with a fibre supporting a few spatial modes. By increasing slightly the diameter of a single-mode fibre, not only is a single mode supported but also two second-order modes. “Our signal processing would cope with that fibre as well,” says Winzer.

The signal processing referred to, which restores the multiple transmissions at the receiver, implements multiple-input, multiple-output (MIMO) processing. MIMO is a well-known signal processing technique used for wireless and digital subscriber line (DSL).

They are garbled up, that is what the rotation is; undoing the rotation is called MIMO

Multi-mode fibre can support as many as 100 spatial modes. “But then you have a really big challenge to excite all 100 spatial modes individually and detect them individually,” says Randel. In turn, the digital signal processing computation required for the 100 modes is tremendous. “We can’t imagine we can get there anytime soon,” says Randel.

Instead, Bell Labs used 60 km of the 3-core coupled fibre for its real-time SDM demo. The transmission distance could have been much longer except the fibre sample was 60 km long. Bell Labs chose the coupled-core fibre for the real-time MIMO demonstration as it is the most demanding case, says Winzer.

The demonstration can be viewed as an extension of coherent detection used for long-distance 100 gigabit optical transmission. In a polarisation-multiplexed, quadrature phase-shift keying (PM-QPSK) system, coupling occurs between the two light polarisations. This is a 2x2 MIMO system, says Winzer, comprising two inputs and two outputs.

For PM-QPSK, one signal is sent on the x-polarisation and the other on the y-polarisation. The signals travel at different speeds while hugely coupling along the fibre, says Winzer: “The coherent receiver with the 2x2 MIMO processing is able to undo that coupling and undo the different speeds because you selectively excite them with unique signals.” This allows both polarisations to be recovered.

With the 3-core coupled fibre, strong coupling arises between the three signals and their individual two polarisations, resulting in a 6x6 MIMO system (six inputs and six outputs). The transmission rotates the six signals arbitrarily while the receiver, using 6x6 MIMO, rotates them back. “They are garbled up, that is what the rotation is; undoing the rotation is called MIMO.”
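The idea of rotating signals and rotating them back can be shown with a toy 2x2 example in Python, matching the two-polarisation case; the 6x6 case is the same operation with a larger matrix. This is a sketch under strong assumptions: the receiver is simply handed the channel matrix, whereas a real equaliser must estimate it adaptively, and the different propagation speeds are ignored. The angles are arbitrary.

```python
import cmath
import math


def rotation(theta, phi):
    """A 2x2 unitary matrix that mixes two polarisations."""
    cos_t, sin_t = math.cos(theta), math.sin(theta)
    return [[cos_t, -sin_t * cmath.exp(-1j * phi)],
            [sin_t * cmath.exp(1j * phi), cos_t]]


def conj_transpose(m):
    """Conjugate transpose, which is the inverse of a unitary matrix."""
    size = len(m)
    return [[m[r][c].conjugate() for r in range(size)] for c in range(size)]


def apply(m, vec):
    """Multiply a matrix by a column vector."""
    return [sum(m[r][k] * vec[k] for k in range(len(vec))) for r in range(len(m))]


QPSK = [1 + 1j, -1 + 1j, -1 - 1j, 1 - 1j]
sent = [QPSK[0], QPSK[2]]           # one symbol per polarisation

channel = rotation(0.7, 1.1)        # arbitrary mixing in the fibre
received = apply(channel, sent)     # garbled: each output mixes both inputs
recovered = apply(conj_transpose(channel), received)  # MIMO undoes the rotation
```

After the inverse rotation, `recovered` matches `sent` to within floating-point error, while `received` does not resemble either transmitted symbol.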

Demo details

For the demo, Bell Labs generated 12, 2.5-gigabit signals. These signals are modulated onto an optical carrier at 1550nm using three nested lithium niobate modulators. A ‘photonic lantern’ - an SDM multiplexer - couples the three signals orthogonally into the fibre’s three cores.

The photonic lantern comprises three single-mode fibre inputs fed by the three single-mode PM-QPSK transmitters while its output places the fibres closer and closer until the signals overlap. “The lantern combines the fibres to create three tiny spots that couple into a single fibre, either single mode or multi-mode,” says Winzer.

At the receiver, another photonic lantern demultiplexes the three signals which are detected using three integrated coherent receivers.

Don’t do MIMO for MIMO’s sake, do MIMO when it helps to bring the overall integrated system cost down

To implement the MIMO, Bell Labs built a 28-layer printed circuit board which connects the three integrated coherent receiver outputs to 12, 5-gigabit-per-second 10-bit analogue-to-digital converters. The result is a 600 gigabit-per-second aggregate output digital data stream. This huge data stream is fed to a Xilinx Virtex-7 XC7V2000T FPGA using 480 parallel lanes, each at 1.25 gigabit-per-second. It is the FPGA that implements the 6x6 MIMO algorithm in real time.
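The data rates in this paragraph are consistent with one another, as a quick Python check shows. The sketch reads the converters' "5-gigabit-per-second" rating as 5 gigasamples per second and assumes four electrical outputs per coherent receiver (in-phase and quadrature for each polarisation); both readings are assumptions.

```python
RECEIVERS = 3
OUTPUTS_PER_RECEIVER = 4   # assumed: I and Q for each of two polarisations
SAMPLE_RATE_GSPS = 5       # assumed reading of the ADC rating
RESOLUTION_BITS = 10
FPGA_LANES = 480
LANE_RATE_GBPS = 1.25
SYMBOL_RATE_GBAUD = 2.5

adc_count = RECEIVERS * OUTPUTS_PER_RECEIVER
adc_output_gbps = adc_count * SAMPLE_RATE_GSPS * RESOLUTION_BITS
fpga_input_gbps = FPGA_LANES * LANE_RATE_GBPS
samples_per_symbol = SAMPLE_RATE_GSPS / SYMBOL_RATE_GBAUD
```

Twelve converters producing 50 gigabit-per-second each give the 600 gigabit-per-second stream, which 480 lanes at 1.25 gigabit-per-second carry exactly; at 2.5 gigabaud the signal is sampled twice per symbol.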

“Computational complexity is certainly one big limitation and that is why we have chosen a relatively low symbol rate - 2.5 Gbaud, ten times less than commercial systems,” says Randel. “But this helps us fit the [MIMO] equaliser into a single FPGA.”

Future work

With the growth in IP traffic, optical engineers are going to have to use space and wavelengths. “But how are you going to slice the pie?” says Winzer.

With the example of 10,000, 100-gigabit wavelengths, will 100 WDM channels be sent over 100 spatial paths or 10 WDM channels over 1,000 spatial paths? “That is a techno-economic design optimisation,” says Winzer. “In those systems, to get the cost-per-bit down, you need integration.”

That is what Bell Labs’ engineers are working on: optical integration to reduce the overall spatial-division multiplexing system cost. “Integration will happen first across the transponders and amplifiers; fibre will come last,” says Winzer.

Winzer stresses that MIMO-SDM is not primarily about fibre, a point frequently misunderstood. The point is to enable systems with crosstalk, he says.

“So if some modulator manufacturer can build arrays with crosstalk and sell the modulator at half the price they were able to before, then we have done our job,” says Winzer. “Don’t do MIMO for MIMO’s sake, do MIMO when it helps to bring the overall integrated system cost down.”

Further Information:

Space-division Multiplexing: The Future of Fibre-Optics Communications

Coriant's 134 terabit data centre interconnect platform

“We have several customers that have either purpose-built data centre interconnect networks or have data centre interconnect as a key application riding on top of their metro or long-haul networks,” says Jean-Charles Fahmy, vice president of cloud and data centre at Coriant.

Jean-Charles Fahmy

Each card in the platform is one rack unit (1RU) high and has a total capacity of 3.2 terabit-per-second, while the full G30 rack supports 42 such cards for a total platform capacity of 134 terabits. The G30's power consumption equates to 0.45W-per-gigabit.

The card supports up to 1.6 terabit line-side capacity and up to 1.6 terabit of client side interfaces. The card can hold eight silicon photonics-based CFP2-ACO (analogue coherent optics) line-side pluggables. For the client-side optics, 16, 100 gigabit QSFP28 modules can be used or 20 QSFP+ modules that support 40 or 4x10 gigabit rates.

Silicon photonics

Each CFP2-ACO supports 100, 150 or 200 gigabit transmission depending on the modulation scheme used. For 100 gigabit line rates, dual-polarisation, quadrature phase-shift keying (DP-QPSK) is used, while dual-polarisation, 8 quadrature amplitude modulation (DP-8-QAM) is used for 150 gigabit, and DP-16-QAM for 200 gigabit.

A total of 128 wavelengths can be packed into the C-band equating to 25.6 terabit when using DP-16-QAM.
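The capacity figures quoted for the G30 follow from simple multiplication, sketched below in Python. The 25-gigabaud nominal symbol rate used to relate the three modulation formats is an assumption for illustration; it ignores the coding overhead that raises the real symbol rate.

```python
import math


def line_rate_gbps(constellation_points, baud_gbaud=25, polarisations=2):
    """Nominal line rate: polarisations x bits per symbol x symbol rate."""
    return polarisations * int(math.log2(constellation_points)) * baud_gbaud


rates = {
    "DP-QPSK": line_rate_gbps(4),
    "DP-8-QAM": line_rate_gbps(8),
    "DP-16-QAM": line_rate_gbps(16),
}

# Per-card and per-rack figures from the article, in gigabits.
line_side_gbps = 8 * rates["DP-16-QAM"]      # eight CFP2-ACOs
client_side_gbps = 16 * 100                  # sixteen QSFP28s
rack_gbps = 42 * (line_side_gbps + client_side_gbps)
c_band_gbps = 128 * rates["DP-16-QAM"]       # 128 wavelengths in the C-band
```

The results match the article: 1.6 terabit on each side of the card, 134.4 terabit per rack and 25.6 terabit across the C-band.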

It [the data centre interconnect] is a dynamic competitive market and in some ways customer categories are blurring. Cloud and content providers are becoming network operators, telcos have their own data centre assets, and all are competing for customer value

Coriant claims the platform can achieve 1,000 km using DP-16-QAM, 2,000 km using DP-8-QAM and up to 4,000 km using DP-QPSK. That said, the equipment maker points out that the bulk of applications require distances of a few hundred kilometres or less.

This is the first detailed CFP2-ACO module that supports all three modulation formats. Coriant says it has worked closely with its strategic partners and that it is using more than one CFP2-ACO supplier.

Acacia is one silicon photonics player that announced at OFC 2015 a chip that supports 100, 150 and 200 gigabit rates; however, it has not detailed a CFP2-ACO product yet. Acacia would not comment on whether it is supplying modules for the G30 or whether it has used its silicon photonics chip in a CFP2-ACO. The company did say it is providing its silicon photonics products to a variety of customers.

“Coriant has been active in engaging the evolving ecosystem of silicon photonics,” says Fahmy. “We have also built some in-house capability in this domain.” Silicon photonics technology as part of the Groove G30 is a combination of Coriant’s own in-house designs and its partnering with companies as part of this ecosystem, says Fahmy: “We feel that this is one of the key competitive advantages we have.”

The company would not disclose the degree to which the CFP2-ACO coherent transceiver is silicon photonics-based. And when asked if the different CFP2-ACOs supplied are all silicon photonics-based, Fahmy answered that Coriant’s supply chain offers a range of options.

Oclaro would not comment as to whether it is supplying Coriant but did say its indium-phosphide CFP2-ACO has a linear interface that supports such modulation formats as BPSK, QPSK, 8-QAM and 16-QAM.

So what exactly does silicon photonics contribute?

“Silicon photonics offers the opportunity to craft system architectures that perhaps would not have been possible before, at cost points that perhaps may not have been possible before,” says Fahmy.

Modular design

Coriant has used a modular design for its 1RU card, enabling data centre operators to grow their system based on demand and save on up-front costs. For example, Coriant uses ‘sleds’, trays that slide onto the card that host different combinations of CFP2-ACOs, coherent DSP functionality and client-side interface options.

“This modular architecture allows pay-as-you-grow and, as we like to say, power-as-you-grow,” says Fahmy. “It also allows a simple sparing strategy.”

The Groove G30 uses a merchant-supplied coherent DSP-ASIC. In 2011, NSN invested in ClariPhy, the DSP-ASIC supplier, and Coriant was founded from the optical networking arm of NSN. The company will not say the ratio of DSP-ASICs to CFP2-ACOs used but it is possible that four DSP-ASICs serve the eight CFP2-ACOs, equating to two CFP2-ACOs and a DSP-ASIC per sled.

“Web-scale customers will most probably start with a fully loaded system, while smaller cloud players or even telcos may want to start with a few 10 or 40 gigabit interfaces and grow [capacity] as required,” says Fahmy.

Open interfaces

Coriant has designed the G30 with two software environments in mind. “The platform has a full set of open interfaces allowing the product to be integrated into a data centre software-defined networking (SDN) environment,” says Bill Kautz, Coriant’s director of product solutions. “We have also integrated the G30 into Coriant’s network management and control software: the TNMS network management and the Transcend SDN controller.”

Coriant also describes the G30 as a disaggregated transponder/muxponder platform. The platform does not support dense WDM line functions such as optical multiplexing, ROADMs, amplifiers or dispersion compensation modules. Accordingly, Groove is designed to interoperate with Coriant’s line-system options.

Groove can also be used as a source of alien wavelengths over third-party line systems, says Fahmy. The latter is a key requirement of customers that want to use their existing line systems.

“It [the data centre interconnect] is a dynamic competitive market and in some ways customer categories are blurring,” says Fahmy. “Cloud and content providers are becoming network operators, telcos have their own data centre assets, and all are competing for customer value.”

Further information

IHS hosted a recent webinar with Coriant, Cisco and Oclaro on 100 gigabit metro evolution.