High fives: 5 Terabit OTN switching and 500 Gig super-channels.

Infinera has announced a core network platform that combines Optical Transport Network (OTN) switching with dense wavelength division multiplexing (DWDM) transport. "We are looking at a system that integrates two layers of the network," says Mike Capuano, vice president of corporate marketing at Infinera.

"This is 100Tbps of non-blocking switching, all functioning as one system. You just can't do that with merchant silicon."

Mike Capuano, Infinera

The DTN-X platform is based on Infinera's third-generation photonic integrated circuit (PIC) that supports five, 100Gbps coherent channels.

Each DTN-X platform can deliver 5 Terabits-per-second (Tbps) of non-blocking OTN switching using an Infinera-designed ASIC. Ten DTN-X platforms can be combined to scale the OTN switching and transport capacity to 50Tbps currently.

Infinera also plans to add Multiprotocol Label Switching (MPLS) to turn the DTN-X into a hybrid OTN/ MPLS switch. With the next upgrades to the PIC and the switching, the ten DTN-X platforms will scale to 100Tbps optical transport and 100Tbps OTN and MPLS switching capacity.

The platform is being promoted by Infinera as a way for operators to tackle network traffic growth and support developments such as cloud computing where applications and content increasingly reside in the network. "What that means [for cloud-based services to work] is a network with huge capacity and very low latency," says Capuano.

Platform details

The 5x100Gbps PIC supports what Infinera calls a 500Gbps 'super-channel'. Each super-channel is a multi-carrier implementation comprising five, 100Gbps wavelengths. Combined with OTN, the 500Gbps super-channel can be filled with 1, 10, 40 and 100 Gigabit streams (SONET/SDH, Ethernet, video etc). Moreover, there is no spectral efficiency penalty: the super-channel uses 250GHz of fibre spectrum, provisioning five 50GHz-wide, 100Gbps wavelengths at a time.
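The "no spectral efficiency penalty" claim can be checked with simple arithmetic. The minimal sketch below uses only the figures quoted above (five 100Gbps carriers, 50GHz per carrier) and compares the super-channel with a single 100Gbps wavelength. It says nothing about how Infinera actually packs the carriers.

```python
# Super-channel arithmetic from the figures quoted above:
# five 100Gbps carriers, each provisioned in a 50GHz-wide slot.
CARRIERS = 5
CARRIER_RATE_GBPS = 100
SLOT_WIDTH_GHZ = 50


def superchannel(carriers: int, rate_gbps: float, slot_ghz: float) -> tuple[float, float, float]:
    """Return (capacity in Gbps, spectrum in GHz, spectral efficiency in b/s/Hz)."""
    capacity = carriers * rate_gbps
    spectrum = carriers * slot_ghz
    return capacity, spectrum, capacity / spectrum


cap, spec, eff = superchannel(CARRIERS, CARRIER_RATE_GBPS, SLOT_WIDTH_GHZ)
single_eff = CARRIER_RATE_GBPS / SLOT_WIDTH_GHZ  # one 100Gbps wavelength in 50GHz

print(f"{cap:.0f}Gbps in {spec:.0f}GHz -> {eff:.1f} b/s/Hz")
print(f"single 100Gbps wavelength -> {single_eff:.1f} b/s/Hz")
```

Both come out at 2 b/s/Hz. The super-channel gains its value from provisioning five wavelengths together, not from packing bits more densely.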

"We have seen 40 and 100Gbps come on the market and they are definitely helping with fibre capacity issues," says Capuano. "But they are more expensive from a cost-per-bit perspective than 10Gbps." By introducing the 500Gbps PIC, Infinera says it is reducing the cost-per-bit performance of high speed optical transport.

DTN-X: shown are 5 line and tributary cards top and bottom with switching cards in the centre of the chassis. Source: Infinera

Infinera says integrating OTN switching within the platform results in the lowest-cost solution, and is more efficient than manually configured multiplexing transponders (muxponders) or an external OTN switch that must be optically connected to the transport platform.

The DTN-X also employs Generalised MPLS (GMPLS) software. "GMPLS makes it easy to deploy networks and services with point-and-click provisioning," says Capuano.

Each DTN-X line card supports a 500Gbps PIC but the chassis backplane is specified at 1Tbps, ready for Infinera's next-generation 10x100Gbps PIC that will upgrade the DTN-X to a 10Tbps system. "We have already presented our test results for our 1Tbps PIC back in March," says Capuano. The fourth-generation PIC, expected around 2014 according to a company slide (Infinera has made no public comment on timing), will support a 1Tbps super-channel.
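The scaling figures quoted for the DTN-X follow from simple multiplication. The sketch below assumes 10 line/tributary card slots per chassis. This is an inference from the figure caption (5 cards top and bottom), not a published specification. With 500Gbps per slot that gives the quoted 5Tbps per platform and 50Tbps across ten platforms. The 1Tbps PIC doubles both.

```python
# Hypothetical sketch: assumes 10 card slots per DTN-X chassis (inferred from
# the "5 cards top and bottom" figure caption, not a published specification).
SLOTS_PER_CHASSIS = 10
MAX_CHASSIS = 10


def system_capacity_tbps(slot_rate_gbps: int, slots: int = SLOTS_PER_CHASSIS) -> float:
    """Capacity of one chassis in Tbps for a given per-slot rate in Gbps."""
    return slot_rate_gbps * slots / 1000


for label, slot_rate in (("500Gbps PIC", 500), ("1Tbps PIC", 1000)):
    per_chassis = system_capacity_tbps(slot_rate)
    print(f"{label}: {per_chassis:.0f}Tbps per chassis, "
          f"{per_chassis * MAX_CHASSIS:.0f}Tbps across {MAX_CHASSIS} chassis")
```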

Adding MPLS will add the transport capability of the protocol to the DTN-X. "You will have MPLS transport, OTN switching and DWDM all in one platform," says Capuano.

OTN switching is the priority for tier-one operators to carry and process their SONET/SDH traffic; adding MPLS will give the system extra traffic-processing capabilities, he says.

Infinera says that by eventually integrating MPLS switching into the optical transport network, operators will be able to bypass expensive router ports and simplify their network operation.

Performance

Infinera says that the DTN-X 5Tbps performance does not dip however the system is configured: whether solely as a switch (all line card slots filled with tributary modules), mixed DWDM/ switching (half DWDM/ half tributaries, for example) or solely as a DWDM platform. Depending on the cards in the DTN-X platform, the transport/ switching configuration can be varied but the 5Tbps I/O capacity is retained. Infinera says other switches on the market do lose I/O capacity as the interface mix is varied.

Overall, Infinera claims the platform requires half the power of competing solutions and takes up a third less space.

The DTN-X will be available in the first half of 2012.

Analysis

Gazettabyte asked several market research firms about the significance of the DTN-X announcement and the importance of combining OTN, DWDM and soon MPLS within one platform.

Ovum

Ron Kline, principal analyst, and Dana Cooperson, vice president, of the network infrastructure practice

"MPLS switching is setting up a very interesting competitive dynamic among vendors"

Dana Cooperson, Ovum

The DTN-X is a platform for the largest service providers and their largest sites, says Ovum.

It sees the DTN-X in the same light as other integrated OTN/ WDM platforms such as Huawei's OSN 8800, Nokia Siemens Networks' hiT 7100, Alcatel-Lucent's 1830 PSS and Tellabs' 7100 OTS.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added," says Kline. "NSN is also claiming it will add MPLS to the 7100. Once MPLS is added, then you have the big packet optical transport box that Verizon wants."

The DTN-X platform will boost the business case for 100 Gig in a similar way to how Infinera's current PIC has done at 10 Gig. "The others will be forced to lower price," says Kline.

Having GMPLS is important, especially if there is a need to do dynamic bandwidth allocation, however it is customer-dependent. "When you start digging, it's hard to find large-scale implementations of GMPLS," says Kline.

The Ovum analysts stress that the need for OTN in the core depends on the customer. Content service providers like Google couldn't care less about OTN. "It's really an issue for multi-service providers like BT and AT&T," says Cooperson.

There is a consensus about the need for MPLS in the core. "Different service providers are likely to take different approaches: some might prefer an integrated box and others might not, it depends on their business," she says. "I think MPLS switching is setting up a very interesting competitive dynamic among vendors that focus on IP/MPLS, those that focus on optical, and those that are trying to do both [optical and IP/MPLS]."

Ovum highlights several aspects regarding the DTN-X's claimed performance.

"Assuming it performs as advertised, this should finally give Infinera what it needs to be of real interest to the tier-ones," says Cooperson. "The message of scalability, simplicity, efficiency, and profitability is just what service providers want to hear."

Cooperson also highlights Infinera's approach to optical-electrical-optical conversion and the benefit this could deliver at line speeds greater than 100Gbps.

At present ROADMs are being upgraded to support flexible spectrum channel configurations, also known as gridless. This is to enable future line speeds that will use more spectrum than current 50GHz DWDM channels. Operators want ROADMs that support flexible spectrum requirements, but how to manage a network carrying these variable-width channels has still to be resolved.

"It fits the mold for Verizon's long-haul optical transport platform (LH OTP), especially once MPLS is added"

Ron Kline, Ovum

Infinera's approach is based on conversion to the electrical domain when dropping and regenerating wavelengths such that the issue of flexible channels does not arise or is at least forestalled. This, says Cooperson, could be Infinera's biggest point of differentiation.

"What impresses me is the 500Gbps super-channel using five, 100Gbps carriers and the size of the switch fabric," adds Kline. The 5Tbps switching performance also exceeds that of everyone else: "Alcatel-Lucent is closest with 4Tbps but most range from 1-3Tbps and top out at 3Tbps."

The ease of use is also a big deal. Infinera did very well in marketing rapid turn up: 10 Gig in 10 days for example, says Kline: "It looks like they will be able to do the same here with 100 Gig."

Infonetics Research

Andrew Schmitt, directing analyst, optical

"GMPLS isn't that important, yet."

The DTN-X is a WDM platform which optionally includes a switch fabric for carriers that want it integrated with the transport equipment, says Schmitt. Once MPLS is added, it has the potential to be a full-blown packet-optical system.

"[The announcement is] pretty significant though not unexpected," says Schmitt. "I think the key question is what it costs, and whether the 500G PIC translates into compelling savings."

Having MPLS support is important for some carriers such as XO Communications and Google but not for others.

Schmitt also says GMPLS isn't that important, yet. "Infinera's implementation of regen-rich networks should make their GMPLS implementation workable," he says. "It has been building networks like that for a while."

OTN in the core is still an open debate but any carrier that doesn't have the luxury of a homogeneous data network needs it, he says.

Schmitt has yet to speak with carriers who have used the DTN-X: "I can't comment on claimed performance but like I said, cost is important."

ACG Research

Eve Griliches, managing partner

"Infinera has already introduced the 500G PIC, but the OTN is significant in that it can be used as a standalone OTN switch, and it has the largest capacity out there today"

The DTN-X is an OTN/ WDM platform awaiting label switch router (LSR) functionality, says Griliches: "With the LSR functionality it will be able to do statistical multiplexing for direct router connections."

Infinera has already introduced the 500 Gig PIC, but the OTN switching is significant in that the DTN-X can be used as a standalone OTN switch, and it has the largest capacity out there today. An OTN survey conducted last year by ACG Research found that the switch capacity sweet spot is between 4 and 8Tbps.

Griliches says that LSR-based products are taking time to incorporate WDM and OTN technologies, while it is unclear when the DTN-X will support MPLS to add LSR capabilities. The race is on as to who can integrate everything first, but DWDM and OTN before MPLS is the right direction for most tier-one operators, she says.

Infinera has over eight thousand of its existing DTNs deployed at 85 customers in 50 countries. The scale of the DTN-X will likely broaden Infinera's customer base to include tier-one operators, says Griliches.

ACG Research has heard positive feedback from operators it has spoken to. One stressed that the decreased port count due to the larger OTN cross-connect significantly improves efficiencies. Another operator said it would pick Infinera, adding that the beta version of the 500Gbps PIC is "working beautifully".

Tackling the coming network crunch

"In the end you run out of the ability to transmit more information along a single-mode fibre"

Ian Giles, Phoenix Photonics

The project, dubbed MODE-GAP, is part of the EC's Seventh Framework programme, and includes system vendor Nokia Siemens Networks (NSN), as well as optical component and fibre firms and several universities.

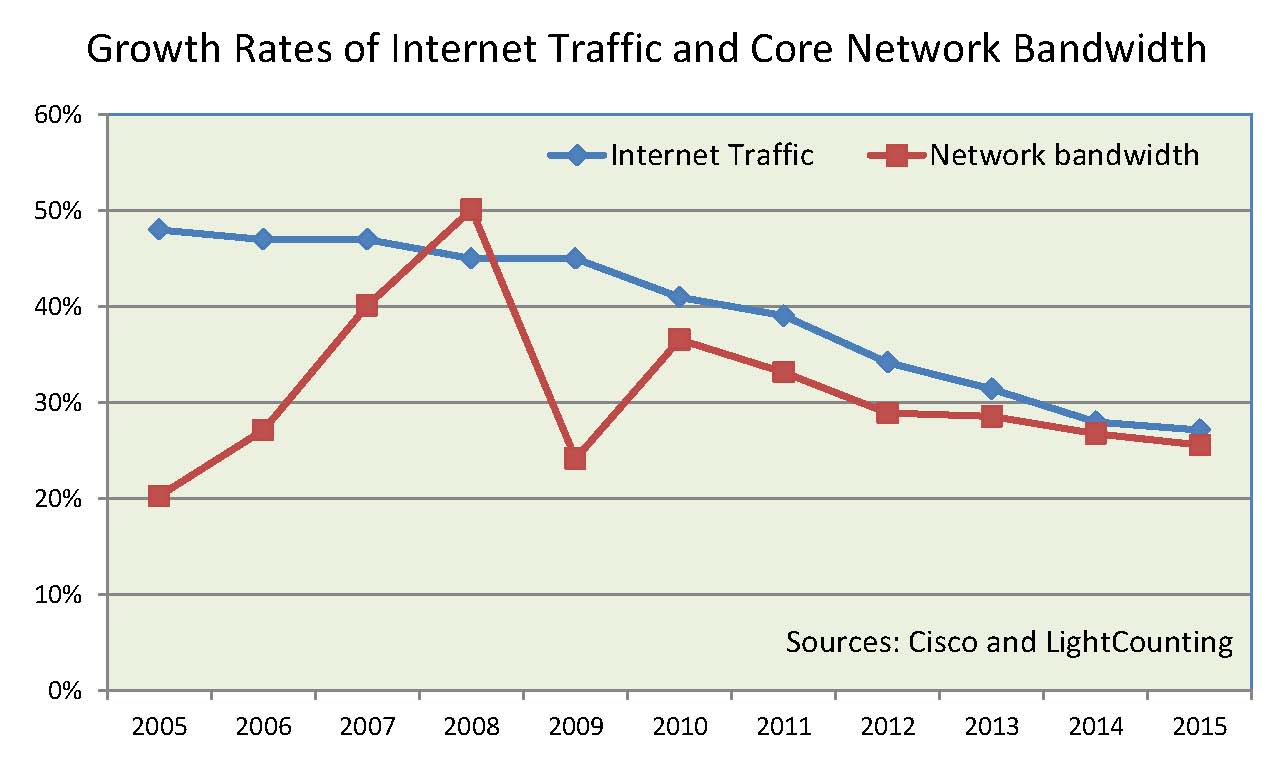

Current 100 Gigabit-per-second (Gbps) dense wavelength division multiplexing (DWDM) systems are able to transmit a total of 10 terabits-per-second of data across a fibre (100 channels, each at 100Gbps). System vendors have said that, with further technology development, 25Tbps will be transported across a fibre.

But IP traffic in the network is growing at over 30% each year. And while techniques are helping to improve overall transmission, the rate of progress is slowing down. A view is growing in the industry that without some radical technological breakthrough, new transmission media will be needed in the next two decades to avoid an inevitable capacity bottleneck.
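Compound growth gives a rough sense of how long the projected headroom lasts. The sketch below assumes, as a simplification, that demand on a given fibre grows at the 30% annual rate quoted for IP traffic. It then asks how many years a move from 10Tbps to 25Tbps would absorb.

```python
import math

# Figures quoted above: roughly 10Tbps per fibre today, 25Tbps projected,
# and IP traffic growing at (at least) 30% a year.
CURRENT_TBPS = 10.0
PROJECTED_TBPS = 25.0
ANNUAL_GROWTH = 0.30


def years_to_absorb(headroom_ratio: float, growth: float) -> float:
    """Years of compound traffic growth that a capacity increase of headroom_ratio covers."""
    return math.log(headroom_ratio) / math.log(1 + growth)


years = years_to_absorb(PROJECTED_TBPS / CURRENT_TBPS, ANNUAL_GROWTH)
print(f"2.5x more capacity covers about {years:.1f} years of 30% growth")
```

On the same assumption, a hundredfold capacity increase covers about 17.5 years. That is in line with the two-decade horizon mentioned above.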

"The Shannon Limit - the amount of information that can be transmitted - depends on the signal-to-noise and the amount of power you can put down a fibre," says Ian Giles, CEO of Phoenix Photonics, a fibre component specialist and one of the companies taking part in the project. "You can enhance transmission capacity by modulation techniques to increase bit rate, WDM and polarisation multiplexing but in the end you run out of the ability to transmit more information along a single-mode fibre."

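Giles's point can be illustrated with the textbook Shannon formula for an additive white Gaussian noise channel, C = B log2(1 + SNR). The sketch below assumes an illustrative 50GHz channel. It ignores fibre non-linearity, which in practice caps the usable launch power. It shows that each doubling of signal power (3dB) adds only about one bit per second per hertz, a logarithmic return.

```python
import math


def shannon_capacity_gbps(bandwidth_ghz: float, snr_db: float) -> float:
    """Shannon capacity C = B * log2(1 + SNR) for an additive white Gaussian noise channel."""
    snr_linear = 10 ** (snr_db / 10)
    return bandwidth_ghz * math.log2(1 + snr_linear)


# Illustrative 50GHz channel: each extra 3dB of signal power adds just under 50Gbps
for snr_db in (10, 13, 16, 19):
    print(f"SNR {snr_db}dB -> {shannon_capacity_gbps(50, snr_db):.0f}Gbps")
```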
This 'network crunch' is what the MODE-GAP project is looking to tackle.

Project details

One of the approaches that will be investigated is exploiting the multiple paths light travels down a multimode fibre to enable the parallel transmission of more than one channel.

The multiple paths light takes when travelling in a multimode fibre disperse the signal. "The proposal we are making is that we take a low-moded fibre and select specific modes for each channel, or a high-moded fibre and select modal groups that are very similar," says Giles. The idea is that by identifying such modes in the multimode fibre, the dispersion for each mode or modal group will be limited.

But implementing such a spatially modulated system is tricky as the modes need to be identified and then have light launched into them. In turn, the modes must be kept apart along the fibre's span.

The project will tackle these challenges as well as use digital signal processing at the output to separate the transmitted channels. The project consortium believes that up to 10 channels could be used per fibre.
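The article does not say which DSP technique the project will use. A common approach for separating coupled spatial channels is multiple-input multiple-output (MIMO) equalisation. The toy sketch below assumes just two modes, a static and perfectly known real-valued mixing matrix `H` (hypothetical values), and simple zero-forcing inversion. Real receivers work on complex optical fields and must track time-varying, dispersive coupling adaptively.

```python
# Toy 2x2 example: two spatial channels (modes) leak into each other along the fibre.
# The receiver measures y = H x; if H is known, x is recovered by inverting H.


def invert_2x2(h):
    """Invert a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = h
    det = a * d - b * c
    if abs(det) < 1e-12:
        raise ValueError("mode-mixing matrix is singular; channels cannot be separated")
    return [[d / det, -b / det], [-c / det, a / det]]


def apply(m, v):
    """Multiply a 2x2 matrix by a length-2 vector."""
    return [m[0][0] * v[0] + m[0][1] * v[1], m[1][0] * v[0] + m[1][1] * v[1]]


H = [[0.9, 0.3],    # hypothetical coupling: part of mode 2 leaks into mode 1
     [0.2, 0.8]]    # and part of mode 1 leaks into mode 2
sent = [1.0, -1.0]  # one symbol on each mode
received = apply(H, sent)
recovered = apply(invert_2x2(H), received)
print("received:", [round(x, 3) for x in received])
print("recovered:", [round(x, 3) for x in recovered])
```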

The second approach the MODE-GAP project will explore involves using specialist or photonic bandgap fibre. "The problem with solid core fibre is that the core will scatter light, and with higher intensity, you start to see non-linear scattering," says Giles. "So there is a limit to how much power you can put down a fibre without introducing these non-linear effects."

Photonic bandgap fibre has an air core that doesn't create scattering. As a result the non-linear threshold is some 100x higher, meaning that more power can be put into the fibre.
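The hedged sketch below puts the 100x figure in context by converting it to decibels. It then assumes, simplistically, that all the extra launch power becomes signal-to-noise ratio on a linear channel, and estimates the Shannon-limit gain per channel from a hypothetical 15dB starting point.

```python
import math

THRESHOLD_RATIO = 100  # non-linear threshold about 100x higher, per the text


def db(ratio: float) -> float:
    """Convert a power ratio to decibels."""
    return 10 * math.log10(ratio)


def spectral_efficiency(snr_db: float) -> float:
    """Shannon limit in b/s/Hz for a linear additive white Gaussian noise channel."""
    return math.log2(1 + 10 ** (snr_db / 10))


extra_db = db(THRESHOLD_RATIO)  # 20dB of additional launch-power headroom
base_snr_db = 15.0              # hypothetical SNR of a solid-core link
before = spectral_efficiency(base_snr_db)
after = spectral_efficiency(base_snr_db + extra_db)
print(f"+{extra_db:.0f}dB: {before:.1f} -> {after:.1f} b/s/Hz ({after / before:.1f}x)")
```

The result is roughly a doubling per channel rather than a hundredfold. That is consistent with Giles's point that capacity is enhanced by increasing both the number of channels and the information sent per channel.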

What next?

The MODE-GAP project is still in its infancy. The goal is to develop a system that allows the multiplexing and demultiplexing of the spatially-separated channels on the fibre. That will be done using multimode fibre but Giles stresses that it could eventually be done using photonic bandgap fibre. "You then enhance capacity: you increase the number of channels, and decrease the non-linearities which means you can increase the amount of information sent per channel," says Giles.

"Up till the spatial modulation part, the system is the same as you have now," he adds. "It is only the spatial modulation part that needs new components." NSN will trial any prototype developed in its test-bed. "They don't want to reinvent their equipment at each end," says Giles.

The project will also look to develop a fibre amplifier that will boost all the fibre's spatially separated channels.

The project's goal is to demonstrate a working system. "The ultimate is to show the hundredfold improvement," says Giles. "We will do that with multiple channel transmission along a single photonic bandgap fibre and higher capacity [data transmission] per channel."

Project partners

In addition to NSN's systems expertise and test-bed, Eblana Photonics will be developing lasers for the project while Phoenix will address the passive components needed to launch and detect specific modes. OFS Fitel is providing the fibre expertise, while the University of Southampton's Optoelectronics Research Centre is leading the project.

The other universities include the COBRA Institute at the Technische Universiteit Eindhoven which has expertise in the processing and transmission of spatial division multiplexed signals, while the Tyndall National Institute of University College Cork is providing system expertise, detectors, transmitters and some of the passive optics and planar waveguide work.

ESPCI ParisTech, working with the University of Southampton, will provide expertise in surface finishes. "The key here is that for the fibres to be low loss, and to maintain the modes in the fibre, they have to have very good inside surfaces," says Giles.

100 Gigabit for the metro

ADVA Optical Networking claims its 100Gbps metro card is an industry first: a direct-detection-based 100 Gigabit-per-second (Gbps) design using four, 28Gbps channels rather than current 10x10Gbps schemes.

"Data centre operators want to make best use of the fibre infrastructure and get lower overall cost, footprint and power consumption"

Jörg-Peter Elbers, ADVA Optical Networking

The card, designed for the FSP 3000 platform, delivers 2.5x greater spectral efficiency than 10Gbps dense WDM (DWDM) systems. The company says the 100Gbps metro card costs half as much as a 100 Gigabit coherent design while requiring half the power and space.

ADVA Optical Networking is using a CFP optical module to implement the 100Gbps metro design. This allows the card to use other CFP-based interfaces such as the IEEE 100 Gigabit Ethernet (GbE) standards. The design also benefits from the economies of scale of the CFP as the module of choice for 100GbE, and from future smaller modules such as the CFP2 and CFP4 being developed as the 100GbE market evolves.

The 100Gbps metro CFP's four, 28Gbps signals are modulated using optical duo-binary. Choosing duo-binary allows cheaper 10Gbps optics to be used, akin to a 4x10Gbps design. Duo-binary is also more resilient to dispersion than standard on-off keying.
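For readers unfamiliar with duo-binary, the sketch below shows the textbook precoded duo-binary scheme, not ADVA Optical Networking's implementation. Each output level is the sum of two adjacent precoded bits, giving three levels. Because of the precoder, the receiver recovers each bit on its own (level 1 means a one). In optical duo-binary these levels drive field amplitudes of -1, 0 and +1. The result is a narrower optical spectrum, which is generally credited for the improved dispersion tolerance.

```python
def duobinary_encode(bits: list[int]) -> list[int]:
    """Precode (d_k = b_k XOR d_{k-1}) then sum adjacent symbols into 3 levels {0, 1, 2}."""
    prev = 0
    levels = []
    for b in bits:
        d = b ^ prev
        levels.append(d + prev)
        prev = d
    return levels


def duobinary_decode(levels: list[int]) -> list[int]:
    """With precoding, each bit is recovered symbol by symbol: level 1 -> 1, levels 0 or 2 -> 0."""
    return [level % 2 for level in levels]


data = [1, 0, 1, 1, 0, 0, 1]
tx = duobinary_encode(data)
print(tx)
print(duobinary_decode(tx) == data)
```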

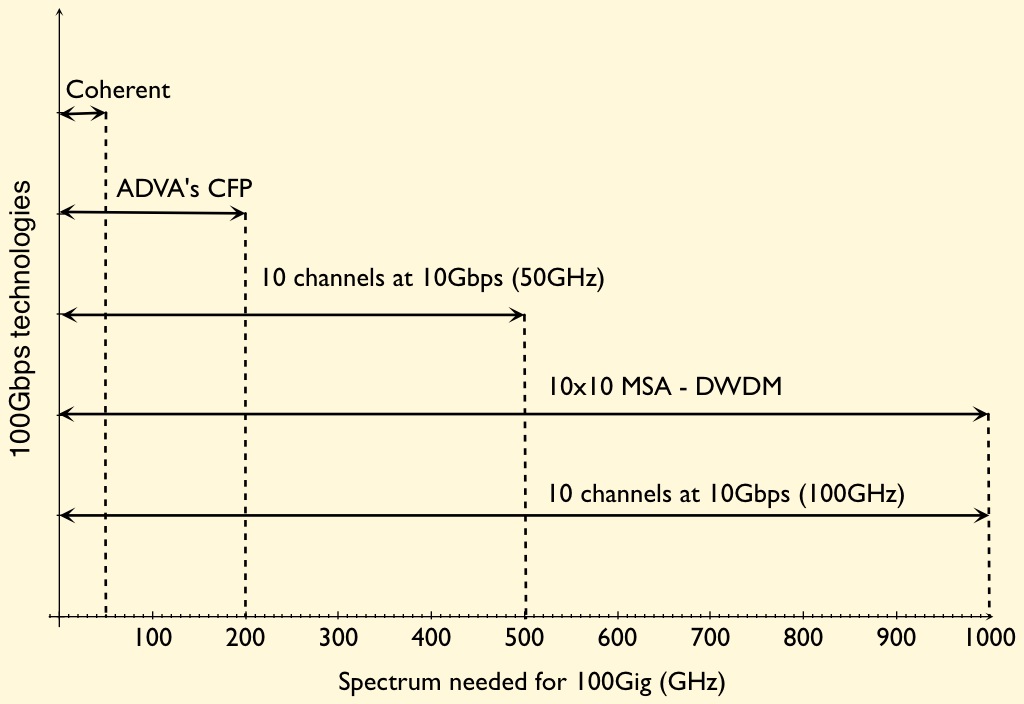

The CFP-based card requires 200GHz of spectrum for each 100Gbps light path. This is 2.5x more spectrally efficient than 10x10Gbps based on 50GHz channel spacings. However, while the design is cheaper, denser and less power hungry than 100Gbps coherent, it has only a quarter of the spectral efficiency of coherent (see chart).
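The spectral efficiency ratios quoted above follow from the spectrum each design needs per 100Gbps. The sketch below uses only the article's figures, including the future duo-binary design (100Gbps in a 100GHz channel) that Elbers mentions.

```python
# Spectrum needed per 100Gbps light path, from the figures quoted in this article
options_ghz = {
    "10x10Gbps DWDM (10 x 50GHz)": 500,
    "4x28Gbps duo-binary CFP": 200,
    "future duo-binary design": 100,
    "100Gbps coherent (one 50GHz channel)": 50,
}

baseline = options_ghz["10x10Gbps DWDM (10 x 50GHz)"]
for name, ghz in options_ghz.items():
    efficiency = 100 / ghz  # b/s/Hz for a 100Gbps payload
    print(f"{name:40s} {efficiency:4.2f} b/s/Hz  {baseline / ghz:4.1f}x vs 10x10G")
```

The CFP design comes out at 2.5x the 10x10Gbps baseline and a quarter of coherent, matching the text.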

Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking, says duo-binary delivers closer channel spacing such that a doubling in spectral density will be possible in a future design (100Gbps in a 100GHz channel). The 100Gbps metro card supports 500km links using dispersion-compensated fibre.

Non-coherent designs for the metro are starting to appear despite 100Gbps optical transport being in its infancy. Besides ADVA Optical Networking's design, a component vendor is promoting a 100Gbps direct detection DWDM design for the metro. The 10x10 MSA has also announced a DWDM extension that will support four and eight 100Gbps channels.

The 100G metro card showing the CFP. Source: ADVA Optical Networking

Metro direct-detection also faces competition from system vendors developing coherent designs tailored for the metro.

System vendors, module makers, optical and IC component companies all believe there is a market for lower cost 100Gbps metro transport. This is backed by keen interest from service providers and large content providers that want cheaper 100Gbps interfaces to connect data centres.

Elbers highlights two such applications that are likely to be the first to use the 100 Gigabit metro card.

One is connecting the data centres of enterprises that use rented fibre. "They have a multitude of interfaces and services - 10GbE, 8 Gigabit Fibre Channel - and they often rent fibre," says Elbers. "They need to get as much capacity as possible to make the fibre rent worthwhile while being constrained on rack space and power."

The second application is to connect 100GbE-enabled IP routers across the metro. Here service providers may not have heavily loaded DWDM networks and can afford to use a 100Gbps metro link rather than the more spectrally efficient, if more expensive, 100Gbps coherent interface. Equally, such links may be less than 500km while coherent is designed for long-haul links, 1000km or greater.
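The two applications suggest a simple rule of thumb for choosing between the metro card and coherent. The function below is a hypothetical sketch, not an ADVA Optical Networking tool. It assumes the quoted 500km reach (on dispersion-compensated fibre) as the only distance threshold and treats "heavily loaded" fibre as a yes/no input.

```python
from dataclasses import dataclass


@dataclass
class Link:
    length_km: float
    fibre_heavily_loaded: bool


def choose_100g_interface(link: Link) -> str:
    """Hypothetical rule of thumb based on the applications described above."""
    if link.length_km > 500:
        return "coherent"  # beyond the metro card's quoted 500km reach
    if link.fibre_heavily_loaded:
        return "coherent"  # spectrum is scarce: 4x the spectral efficiency is worth the cost
    return "direct-detection metro"  # cheaper, less power and space


print(choose_100g_interface(Link(length_km=120, fibre_heavily_loaded=False)))
```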

Elbers says samples of the metro card are available now with volume production beginning at the end of 2011.

The great data rate-reach-capacity tradeoff

Source: Gazettabyte

Optical transmission technology is starting to bump into fundamental limits, resulting in a three-way tradeoff between data rate, reach and channel bandwidth. So says Brandon Collings, JDS Uniphase's CTO for communications and commercial optical products. See the recent Q&A.

This tradeoff will impact the coming transmission speeds of 200, 400 Gigabit-per-second and 1 Terabit-per-second. For each increased data rate, either the channel bandwidth must increase or the reach must decrease or both, says Collings.

Thus a 200Gbps light path can be squeezed into a 50GHz channel in the C-band but its reach will not match that of 100Gbps over a 50GHz channel (shown on the graph as a hashed line). A wider version of 200Gbps could match the reach of 100Gbps, but that would probably need a 75GHz channel, says Collings.

For 400Gbps, the same situation arises suggesting two possible approaches: 400Gbps fitting in a 75GHz channel but with limited reach (for metro) or a 400Gbps signal placed within a 125GHz channel to match the reach of 100Gbps over a 50GHz channel.

Optical transmission technology is starting to bump into fundamental limits resulting in a three-way tradeoff between data rate, reach and channel bandwidth.

"Continue this argument for 1 Terabit as well," says Collings. Here the industry consensus suggests a 200GHz-wide channel will be needed.

Similarly, within this compromise, other options are available such as 400Gbps over a 50GHz channel. But this would have a very limited reach.
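The options Collings describes can be tabulated by spectral efficiency. The sketch below lists only the rate and channel-width pairs quoted above, with reach as he describes it relative to 100Gbps in a 50GHz channel. It is not a reach model. For each data rate, the narrower, more spectrally efficient option is the one with the shorter reach.

```python
# (data rate in Gbps, channel width in GHz, reach as described by Collings)
OPTIONS = [
    (100, 50, "reference: 100G in 50GHz"),
    (200, 50, "shorter than 100G"),
    (200, 75, "matches 100G"),
    (400, 50, "very limited"),
    (400, 75, "limited (metro)"),
    (400, 125, "matches 100G"),
    (1000, 200, "not specified"),
]

# Spectral efficiency in b/s/Hz (Gbps divided by GHz)
efficiency = {(rate, width): rate / width for rate, width, _ in OPTIONS}

for rate, width, reach in OPTIONS:
    print(f"{rate:5d}Gbps in {width:3d}GHz: {efficiency[(rate, width)]:.2f} b/s/Hz, reach {reach}")
```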

Collings does not dismiss the possibility of a technology development which would break this fundamental compromise, but at present this is the situation.

As a result there will likely be multiple formats hitting the market, each matching the reach needed with the minimum channel bandwidth, says Collings.

Rational and innovative times: JDSU's CTO Q&A Part II

"What happens after 100 Gig is going to be very interesting"

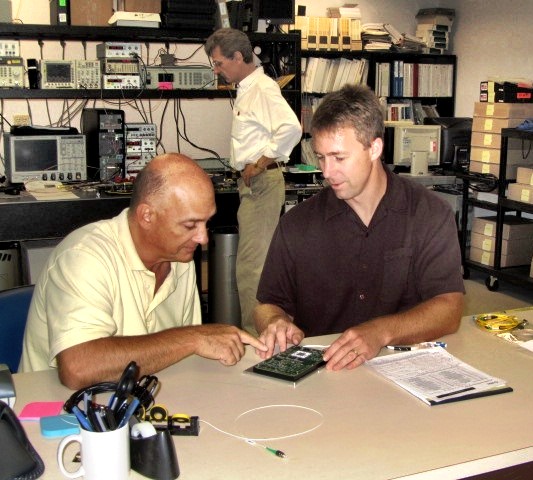

Brandon Collings (right), JDSU

How has JDS Uniphase (JDSU) adapted its R&D following the changes in the optical component industry over the last decade?

JDSU has been a public company for both periods [the optical boom of 1999-2000 and now]. The challenge JDSU faced in those times, when there was a lot of venture capital (VC) money flowing into the system, was that the money was sort of free money for these companies. It created an imbalance in that the money was not tied to revenue, which was a challenge for companies like JDSU that tie R&D spend to revenue. You also have much more flexibility [as a start-up] in setting different price points if you are operating on VC terms.

The situation now is very straightforward, rational and predictable.

There is not a huge army of R&D going on. That lack of R&D does not speed up the industry but what it does do is allow those companies doing R&D - and there is still a significant number - a lot of focus and clarity. It also requires a lot of partnership between us, our customers [equipment makers] and operators. The people above us can't just sit back and pick and choose what they like today from myriad start-ups doing all sorts of crazy things.

We very much appreciate this rational time. Visions can be more easily discussed, things are more predictable and everyone is playing from a similar set of rules.

Given the changes at the research labs of system vendors and operators, is there a risk that insufficient R&D is being done, impeding optical networking's progress?

It is hard to say absolutely not, as fewer people doing things can slow things down. But the work those labs did covered a wide space, including outside of telecom.

There is still a sufficient critical mass of research at places like Alcatel-Lucent Bell Labs, AT&T and BT; there is increasingly work going on in new regions like Asia Pacific, and a lot more in and across Europe. It is also much more focussed - the volume of workers may have decreased but the task still remains in hand.

"There are now design tradeoffs [at speeds higher than 100Gbps] whereas before we went faster for the same distance"

How does JDSU foster innovation and ensure it is focussing on the right areas?

I can't say that we have at JDSU a process that ensures innovation. Innovation is fleeting and mysterious.

We stay very connected to our key customers who are more on the cutting edge. We have very good personal and professional relationships with their key people. We have the same type of relationship with the operators.

I and my team very regularly canvass and have open discussions about what is coming. What does JDSU see? What do you see? What technologies are blossoming? We talk through those sort of things.

That isn't where innovation comes from. But what that can do is sow the seeds for the opportunity for innovation to happen.

We take that information and cycle it through all our technology teams. The guys in the trenches - the material scientists, the free-space optics design guys - we try to educate them with as much of an understanding of the higher-level problems that ultimately their products, or the products they design into, will address.

What we find is that these guys are pretty smart. If you arm them with a wider understanding, you get a much more succinct and powerful innovation than if you try to dictate to a material scientist here is what we need, come back when you are done.

It is a loose approach, there isn't a process, but we have found that the more we educate our keys [key guys] to the wider set of problems and the wider scope of their product segments, the more they understand and the more they can connect their sphere of influence from a technology point of view to a new capability. We grab that and run with it when it makes sense.

It is all about communicating with our customers and understanding the environment and the problem, then spreading that as wide as we can so that the opportunity for innovation is always there. We then nurse it back into our customers.

Turning to technology, you recently announced the integration of a tunable laser into an SFP+, a product you expect to ship in a year. What platforms will want a tunable laser in this smallest pluggable form factor?

The XFP has been on routers and OTN (Optical Transport Network) boxes - anything that has 10 Gig - and those interfaces have been migrated over to SFP+ for compactness and face plate space. There are already packet and OTN devices that use SFP+, and DWDM formats of the SFP+, to do backhaul and metro ring applications. The expectation is that while there are more XFP ports today, the next round of equipment will move to SFP+.

Certainly the Ciscos, Junipers and the packet guys are using tunable XFPs in great volume for IP over DWDM and access networks, but the more telecom-centric players riding OTN links or maybe native Ethernet links over their metro rings are probably the larger volume.

What distance can the tunable SFP+ achieve?

The distances will be pretty much the same as the tunable XFP. We produce that in a number of flavours, whether metro or long-haul. The initial SFP+ will likely be the metro reaches, 80km and things like that.

What is the upper limit of the tunable XFP?

We produce a negative chirp version which can do 80km of uncompensated dispersion, and then we produce a zero chirp which is more indicative of long-haul devices.

In that case the upper limit is more defined by the link engineering and the optical signal-to-noise ratio (OSNR), the extent of the dispersion compensation accuracy and the fibre type. It starts to look and smell like a long-haul lithium niobate transceiver where the distances are limited by link design as much as by the transceiver itself. As for the upper limit, you can push 1000km.
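The sketch below computes the chromatic dispersion accumulated over a span, to give a feel for the 80km figure. It assumes standard single-mode fibre with a typical dispersion of about 17ps/(nm·km) at 1550nm, an assumption rather than a JDSU specification. At 1000km the accumulated dispersion is more than ten times larger. That is why link engineering and dispersion compensation then set the limit.

```python
# Assumes standard single-mode fibre with a typical dispersion of about
# 17 ps/(nm*km) at 1550nm; real values vary with fibre type and wavelength.
DISPERSION_PS_PER_NM_KM = 17.0


def accumulated_dispersion(length_km: float, d: float = DISPERSION_PS_PER_NM_KM) -> float:
    """Total chromatic dispersion (ps/nm) accumulated over an uncompensated span."""
    return length_km * d


for km in (80, 1000):
    print(f"{km:5d}km -> {accumulated_dispersion(km):7.0f} ps/nm")
```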

An XFP module can accommodate 3.5W while an SFP+ is about 1.5W. How have you reduced the power to fit the design into an SFP+?

It may be a generation before we get to that MSA level so we are working with our customers to see what level they can tolerate. We'll have to hit a lot less than 3.5W but it is not clear that we have to hit the SFP+ MSA specification. We are already closer now to 1.5W than 3.5W.

"I can't say that we have at JDSU a process that ensures innovation. Innovation is fleeting and mysterious."

Semiconductors now play a key role in high-speed optical transmission. Will semiconductors take over more roles and become a bigger part of what you do?

Coherent transmission [that uses an ASIC incorporating a digital signal processor (DSP)] is not going away. There is a lot of differentiation at the moment in what happens in that DSP, but I think overall it is going to be a tool the system houses use to get the job done.

If you look at 10 Gig, the big advancement there was FEC [forward error correction] and advanced FEC. In 2003 the situation was a lot like it is today: who has the best FEC was something that was touted.

If you look at coherent technology, it is certainly a different animal but it is a similar situation: that is, the big enabler for 40 and 100 Gig. Coherent is advanced technology, enhanced FEC was advanced technology back then, and over time it turned into a standardised, commoditised piece that is central and ubiquitously used for network links.

Coherent has more diversity in what it can do but you'll see some convergence and commoditisation of the technology. It is not going to replace or overtake the importance of photonics. In my mind they play together intimately; you can't replace the functions of photonics with electronics any time soon.

From a JDSU perspective, we have a lot of work to do because the bulk of the cost, the power and the size is still in the photonics components. The ASIC will come down in power, it will follow Moore's Law, but we will still need to work on all that photonics stuff because it is a significant portion of the power consumption and it is still the highest portion of the cost.

JDSU has made acquisitions in the area of parallel optics. Given there is now more industry activity here, why isn't JDSU more involved in this area?

We have been intermittently active in the parallel optics market.

The reality is that it is a fairly fragmented market: there are a lot of applications, each one with its own requirements and customer base. It is tough to spread one platform product around these applications. That said, parallel optics is now a mainstay for 40 and 100 Gig client [interfaces] and we are extremely active in that area: the 4x10, 4x25 and 12x10G [interfaces]. So that other parallel optics capability is finding its way into the telecom transceivers.

We do stay active in the interconnect space but we are more selective in what we get engaged in. Some of the rules there are very different: the critical characteristics for chip interconnect are very different to transceivers, for example. It may be much better to have on-chip optics versus off-chip optics. Obviously that drives completely different technologies so it is a much more cloudy, fragmented space at the moment.

We are very tied into it and are looking for those proper opportunities where we do have the technologies to fit into the application.

How does JDSU view the issues of 200, 400 Gigs and 1 Terabit optical transmission?

What happens after 100 Gig is going to be very interesting.

Several things have happened. We have used up the 50GHz [DWDM] channel, we can't go faster in the 50GHz channel - that is the first barrier we are bumping into.

Second, we're finding there is a challenge to do electronics well beyond 40 Gigabit. You start to get into electronics that have to operate at much higher rates - analogue-to-digital converters, modulator drivers - you get into a whole different class of devices.

Third, we have used all of our tools: we have used FEC, we are using soft-decision FEC and coherent detection. We are bumping into the OSNR problem, and we don't have any more tools to run line rates that have less signal relative to noise yet somehow recover that with some magic technology, like FEC at 10 Gig, and soft-decision FEC and coherent at 40 and 100 Gig.

This is driving us into a new space where we have to do multi-carrier and bigger channels. It is opening up a lot of flexibility because, well, how wide is that channel? How many carriers do you use? What type of modulation format do you use?

What format you use may dictate the distance you go and, inversely, the width of the channel. We have all these new knobs to play with and they are all tradeoffs: distance versus spectral efficiency in the C-band. The number of carriers will potentially drive the cost because you have to build parallel devices. There are now design tradeoffs, whereas before we simply went faster for the same distance.

We will be seeing a lot of devices and approaches from us and our customers that provide those tradeoffs flexibly so the carriers can do the best they can with what mother nature will allow at this point.

That means transponders that do four carriers: two of them do 200 Gig nicely packed together but only achieve a few hundred kilometres, while a couple of other carriers right next door go a lot further but are a little bit wider, so that density-versus-reach tradeoff is in play. That is what is going to be necessary to get the best of what we can do with the technology.
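The density-versus-reach tradeoff can be made concrete by computing the spectral efficiency of a mixed four-carrier transponder like the one described. This is a minimal sketch. The carrier rates and channel widths (two 200 Gig carriers in 37.5GHz each, two 100 Gig carriers in 50GHz each) are hypothetical values chosen for illustration, not JDSU figures, and reach itself is not modelled.

```python
from dataclasses import dataclass


@dataclass
class Carrier:
    """One optical carrier within a multi-carrier transponder (hypothetical values)."""
    label: str
    rate_gbps: float   # payload bit rate carried by this carrier
    width_ghz: float   # optical spectrum the carrier occupies


def spectral_efficiency(carriers):
    """Return (total rate in Gbps, total width in GHz, bits/s/Hz) for a set of carriers."""
    total_rate = sum(c.rate_gbps for c in carriers)
    total_width = sum(c.width_ghz for c in carriers)
    return total_rate, total_width, total_rate / total_width


# Hypothetical mix: two dense, shorter-reach carriers and two wider, longer-reach ones.
transponder = [
    Carrier("dense-1", 200, 37.5),
    Carrier("dense-2", 200, 37.5),
    Carrier("long-1", 100, 50.0),
    Carrier("long-2", 100, 50.0),
]

for c in transponder:
    print(f"{c.label}: {c.rate_gbps / c.width_ghz:.2f} b/s/Hz")

rate, width, se = spectral_efficiency(transponder)
print(f"total: {rate:.0f} Gbps in {width:.1f} GHz = {se:.2f} b/s/Hz")
```

With these assumed numbers, the tightly packed carriers reach about 5.3 b/s/Hz and the wider ones 2 b/s/Hz. The transponder as a whole lands in between, which is the knob the operator turns when trading density against distance.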

That is the transmission side. On the transport side, the ROADMs and amplifiers have to accommodate these quirky new formats and reach requirements.

We need to get amplifiers to get the noise down. So this is introducing new concepts like Raman and flex[ible] spectrum to get the best we can do with these really challenging requirements like trying to get the most reach with the greatest spectral efficiency.
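Why amplifier noise matters so much can be seen from the common rule-of-thumb OSNR estimate for a chain of identical amplified spans. This is a simplified sketch: it counts only amplified spontaneous emission, assumes identical spans and uses a fixed 58 dB constant. The launch power, noise figures and span loss are hypothetical. It shows why lowering the effective noise figure, which distributed Raman amplification offers, buys margin for denser formats or longer reach.

```python
import math

# Rule-of-thumb constant: -10*log10(h * nu * B_ref / 1 mW) is roughly 58 dB for a
# 1550 nm signal and the customary 0.1 nm (about 12.5 GHz) reference bandwidth.
OSNR_CONSTANT_DB = 58.0


def link_osnr_db(launch_dbm, noise_figure_db, span_loss_db, n_spans):
    """Approximate OSNR (dB, 0.1 nm) after n identical amplified spans.

    Assumes every amplifier exactly compensates its span loss and that amplified
    spontaneous emission is the only impairment (no fibre nonlinearity).
    """
    return (OSNR_CONSTANT_DB + launch_dbm - noise_figure_db
            - span_loss_db - 10 * math.log10(n_spans))


# Hypothetical link: 20 spans of 22 dB loss, 0 dBm launch power per channel.
edfa_only = link_osnr_db(0.0, 5.0, 22.0, 20)
# Hypothetical hybrid Raman/EDFA case: effective noise figure 3 dB lower.
hybrid_raman = link_osnr_db(0.0, 2.0, 22.0, 20)
print(f"EDFA only: {edfa_only:.1f} dB, hybrid Raman: {hybrid_raman:.1f} dB")
```

Because the span count enters as 10·log10(N), each 3 dB recovered this way could instead be spent on roughly doubling the number of spans.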

How do you keep abreast of all these subject areas besides conversations with customers?

It is a challenge, there aren't many companies in this space that are broader than JDSU's optical comms portfolio.

We do have a team and the team has its area of focus, whether it is ROADMs, modulators, transmission gear or optical amplifiers. We segment it that way but it is a loose segmentation so we don't lose ideas crossing boundaries. We try to deal with the breadth that way.

Beyond that, it is about staying connected with the right people at the customer level, having personal relationships so that you can have open discussions.

And then it is knowing your own organisation, knowing who to pull into a nebulous situation that can engage the customer, think on their feet and whiteboard there and then rather than [bringing in] intelligent people that tend to require more of a recipe to do what they are doing.

It is all about how to get the most from each team member and creating those situations where the right things can happen.

For Part I of the Q&A, click here

Q&A with JDSU's CTO

In Part 1 of a Q&A with Gazettabyte, Brandon Collings, JDS Uniphase's CTO for communications and commercial optical products, reflects on the key optical networking developments of the coming decade, how the role of optical component vendors is changing and next-generation ROADMs.

"For transmission components, photonic integration is the name of the game. If you are not doing it, you are not going to be a player"

Brandon Collings, JDSU

Q: What are the key optical networking trends of the next decade?

A: The two key pieces of technology at the photonic layer in the last decade were ROADMs [reconfigurable optical add-drop multiplexers] and the relentless reduction in size, cost and power of 10 Gigabit transponders.

If you look at the next decade, I see the same trends occupying us.

We are seeing a whole other generation of reconfigurable networks - this whole colourless, directionless, flexible spectrum - all this stuff is coming and it is requiring a complete overhaul of the transport network. We have to support Raman [amplifiers] and we need to support more flexible [optical] channel monitors to deal with flexible spectrum.

We have to overhaul every aspect of the transport system: the components, design, capability, usability and the management. It may take a good eight years for the dust to settle on how that all plays out.

The other piece is transmission size, cost and power.

Right now a 40 Gig or a 100 Gig transponder is large, power-hungry and extremely expensive. Ironically they don't look too different to a 10 Gig transponder in 1998 and you see where that has gone.

You have seen our recent announcement [a tunable laser in an SFP+ optical pluggable module]; that whole thing is now tunable, the size of your pinkie and costs a fraction of what it did in 1998.

I expect that same sort of progression to play out for 100 Gig, and we'll start to get into 400 Gig and some flexible devices in between 100 and 400 Gig.

The name of the game is going to be getting size, cost and power down to ensure density keeps going up and the cost-per-bit keeps going down; all that is enabled by the photonic devices themselves.

Is that what will occupy JDSU for the next decade?

This is what will occupy us at the component level. As you go up one level - and this will impact us more indirectly than it will our customers - we are seeing this ramp of capacity, driven by the likes of video, where the willingness to pay-per-bit is dropping through the floor but the cost to deliver that bit is dropping a lot less.

Operators are caught in the middle and they are after efficiency and cost advantages when operating their networks. We are seeing a re-evaluation of the age-old principles in how networks are operated: How they do protection, how they offer redundancy and how they do aggregation.

People are saying: 'Well, the optical layer is actually fairly cheap compared to layers two and three. Let's see if we can't ask more of the somewhat cheaper network and maybe pull some of the complexity and requirements out of the upper layers and make them simpler, to end up with an overall cheaper and easier network to operate.'

That is putting a lot of feature requirements on us at the hardware level to build optical networks that are more capable and do more, as well as on our customers that must make that network easier to operate.

That is a challenge that will be a very interesting area of differentiation. There are so many knobs to turn as you try to build a better delivery system optimised over packets, OTN [Optical Transport Network] and photonics.

Are you noting changes among system vendors to become more vertically integrated?

I've heard whisperings of vendors wanting to figure out how they could be more vertically integrated. That's because: 'Well hey, that could make our products cheaper and we could differentiate'. But I think the reality is moving in the opposite direction.

To build differentiated, compelling products, you have to have expertise, capability and technology control almost all the way down to the materials level. Take for example the tunable XFP; that whole thing is enabled by complete technology ownership of an indium phosphide fab and all the manufacturing that goes around it. That is a herculean effort.

It is tough to say they [system vendors] want to be vertically integrated because to do so effectively you need just a gigantic organisation.

JDSU is vertically integrated. We have an awful lot of technology and a very large manufacturing infrastructure, expertise and know-how. We can produce competitive products because for this particular application we use a PLC [planar lightwave circuit], and for that one, gallium arsenide. We can do this because we spread all this infrastructure, operation and company size across a wide customer base.

Increasingly this is also into adjacent markets like solar, gesture recognition and optical interconnects. These adjacent spaces would not be something that a system vendor would probably want to get into.

The bottom line is that it [the trend] is actually going in the opposite direction because the level, size and scope of the vertical integration would need to be very large and completely non-trivial if system vendors want to be differentiating and compelling. And the business case would not work very well because it would only be for their product line.

"No one says exactly what they will pay for next-gen ROADMs but all can articulate why they want it and what it will do in general terms"

Is this system vendor trend changing the role of optical component players?

At our level of the business, we and our competitors are looking to be more vertically integrated: semiconductors all the way to line cards.

We've proven it with things like our Super Transport Blade that the more you have control over, the more knobs you can turn to create new things when merging multiple functions.

Instead of selling a lot of small black boxes and having the OEMs splice them together, we can integrate those functions and make a more compact and cost-effective solution. But you have to start with the ability to make all those blocks yourself.

Whether it is a line card, a tunable XFP or a 100 Gig module, the more you own and control, the more you can integrate and the more effective your solution will be. This is playing out at the components level because you create more compelling solutions the more functional integration you accomplish.

How do you avoid competing with your customers? If system vendors are just putting cards together, what are they doing? Also, how do you help each vendor differentiate?

It is very true. There are several system vendors that don't build their line cards anymore. They have chosen to do so because they realise that from a design and manufacturing perspective, they don't add much value or even subtract value because we can do more functional integration and they may not be experts in wavelength-selective switch (WSS) construction and various other things.

A fair number of them basically acknowledge that giving these blades to the people who can do them is a better solution for them.

How they differentiate can go two ways.

First, they don't just say: 'Build me a ROADM card.' We work very closely together; these are custom-designed cards for each vendor. They specify what the blade will do and participate intimately in its design. They make their own choices and put in their own secret sauce.

That means we have very strong partnerships with these operations, almost to the extent that we are part of their development organisations.

Collectively, the things above the photonic layer are probably more important than the photonic layer itself. Usability, multiplexing, aggregation, security, all the things that go into the higher levels of a network: this is where system vendors are differentiating.

They can still differentiate at the photonic layer by building strong partnerships with technology engines like JDSU and it allows them to focus more resources at the upper levels where they can differentiate their complete network offering.

"The new generation of reconfigurable networks are not able to reuse anything that is being built today"


What is happening with regard to photonic integration?

For transmission components, photonic integration is the name of the game. If you are not doing it, you are not going to be a player.

If you look at JDSU's tunable [laser] XFP, that is 100% photonic integration. Yes, we build an ASIC to control the device but it is just about getting a little bit extra volume and a little bit more power. The whole thing is about monolithic integration of a tunable laser, the modulator and some power control elements. And that is just 10 Gig.

If you look at 40 Gig, today's modulators are already putting in heavy integration and it is just the first round. These dual-polarisation QPSK modulators, they integrate multiple modulators - one for each polarisation as well as all the polarisation combining functionality, all into one device using waveguide-based integration. Today that is in lithium niobate, which is not a small technology.
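In data terms, a dual-polarisation QPSK modulator carries four bits per symbol period. Two bits set the in-phase and quadrature signs of the X-polarisation field, and two more do the same for Y. The sketch below shows that mapping in software. The Gray-coded bit-to-sign convention is an assumption for illustration, and the sketch says nothing about how the lithium niobate devices themselves are built.

```python
import math

SCALE = 1 / math.sqrt(2)  # normalise each QPSK symbol to unit power


def qpsk(bit_i, bit_q):
    """Gray-coded QPSK: each bit sets the sign of one quadrature (0 -> +1, 1 -> -1)."""
    return complex(1 - 2 * bit_i, 1 - 2 * bit_q) * SCALE


def dp_qpsk_map(bits):
    """Map groups of 4 bits to (X, Y) symbol pairs, one QPSK symbol per polarisation."""
    if len(bits) % 4:
        raise ValueError("DP-QPSK consumes bits in groups of four")
    return [(qpsk(bits[k], bits[k + 1]), qpsk(bits[k + 2], bits[k + 3]))
            for k in range(0, len(bits), 4)]


def dp_qpsk_demap(pairs):
    """Hard-decision inverse: recover the bits from the sign of each quadrature."""
    bits = []
    for x, y in pairs:
        for s in (x, y):
            bits += [int(s.real < 0), int(s.imag < 0)]
    return bits


data = [0, 0, 1, 1, 1, 0, 0, 1]
symbols = dp_qpsk_map(data)   # two symbol periods, each carrying 4 bits
print(symbols)
print(dp_qpsk_demap(symbols) == data)
```

Each symbol period therefore needs one IQ modulator per polarisation plus a polarisation combiner, which is the set of functions being integrated into a single device.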

100 Gig looks similar, it is just a little bit faster and when you go to 400 Gig, you go multi-carrier which means you make multiple copies of this same device.

So getting these things down in size, cost and power means photonic integration. And just the way 10 Gig migrated from lithium niobate down to monolithic indium phosphide, the same path is going to be followed for 40, 100 and 400 Gig.

It may be more complicated than 10 Gig but we are more advanced with our technology.

Operators are asking for advanced ROADM capabilities while system vendors are willing to provide such features but only once operators will pay for them. Meanwhile, optical component vendors must do significant ROADM development work without knowing when they will see a return. How does JDSU manage this situation and is there a way of working smart here?

I don't think there is a terrifically clever way to look at this other than to say that we speak very carefully and closely with our customers.

Work on these next-generation ROADMs has been going on for three or four years now. We also meet operators globally and ask them very similar questions about when, how and to what extent they are interested in these various features [colourless, directionless, contentionless, gridless (flexible spectrum)].

We are a ROADM leader; this is a ROADM question so we'd be making critical decisions if we decided not to invest in this area. We have decided this is going to happen and we have invested very heavily in this space.

It is true; there is not a market there right now.

With anything that is new, if you want to be a market leader you can't enter a market that exists, otherwise you'll be late. So through those discussions with our customers and the trust we have with them, and understanding where their customers and their problems lie, we are confident in that investment.

If you look back at the initial round of ROADMs, the chitchat was the same. When WSSs and ROADMs first came out, the reaction was: 'Wow, these things are really expensive, why would I want this compared to a set of fixed filters which back then cost $100 a pop?'

The commentary on cost was all in that flavour but once they became available and the costs were known, the operators started adopting them because the operators could figure out how they could benefit from the flexibility. Today ROADMs are just about in every network in the world.

We expect the same track to follow. No one is going to say: 'Yes, I’m going to pay twice for this new functionality' because they are being cagey of course.

We are still in the development phase. We are starting to get to the end of that, so the costs and real capabilities - all enabled by the devices we are developing - are becoming clear enough so that our customers can now go to their customers and say: 'Here's what it is, here's what it does and here's what it costs'.

Operators will require time to get comfortable with that and figure out how that will work in their respective networks.

We have seen consistent interest in these next-generation ROADM features. No one says exactly what they will pay for it but all can articulate why they want it and what it will do in general terms.

You say you are starting to get to the end of the development phase of these next-generation ROADMs. What challenges remain?

The new generation of reconfigurable networks are not able to reuse anything that is being built today whether it is from JDSU or Finisar, whether it is MEMS or LCOS (liquid crystal on silicon).

All the devices that are on the shelf today simply are not adequate or you end up with extremely expensive solutions.

This requires us to have a completely new generation of products in the WSS and the multiplexing/demultiplexing space. The devices under development that will perform these functions, which were done by AWGs and today by a 1x9 WSS, look completely different.

They are still WSSs but they use different technologies so without saying exactly what they are and what they do, it is basically a whole new platform of devices.

Can you say when we will know what these look like?

I think the general architecture is fairly well known.

The exact details of the devices and components are still not publicly being talked about but it is the general combination of high-port-count WSSs that support flexible spectrum, fast switching and low loss, and are being used in a route-and-select approach rather than a broadcast-and-select one. That is the node building block.
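A simple loss budget helps show why the route-and-select approach is favoured. In broadcast-and-select, each incoming degree feeds a passive 1:N splitter, so its loss grows with the node's fan-out. Route-and-select replaces the splitter with a second WSS, so the express path pays for two WSS passes regardless of degree count. This is a minimal sketch that assumes an ideal splitter and a hypothetical 6 dB WSS insertion loss; real figures vary by device.

```python
import math


def splitter_loss_db(fan_out, excess_db=0.0):
    """Ideal 1:N power-split loss plus optional excess loss."""
    return 10 * math.log10(fan_out) + excess_db


def express_path_loss_db(architecture, fan_out, wss_loss_db):
    """Input-to-output loss through one ROADM node (simplified, hypothetical model)."""
    if architecture == "broadcast-and-select":
        # Passive splitter on the input degree, WSS on the output degree.
        return splitter_loss_db(fan_out) + wss_loss_db
    if architecture == "route-and-select":
        # WSS on both the input and the output degree; no splitter.
        return 2 * wss_loss_db
    raise ValueError(f"unknown architecture: {architecture}")


for fan_out in (4, 9, 20):
    bs = express_path_loss_db("broadcast-and-select", fan_out, 6.0)
    rs = express_path_loss_db("route-and-select", fan_out, 6.0)
    print(f"fan-out {fan_out:2d}: B&S {bs:.1f} dB, R&S {rs:.1f} dB")
```

Under these assumptions the two architectures are roughly level at a fan-out of four. Route-and-select pulls ahead as port counts rise, which is where high-port-count WSSs come in.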

Then there are the multicast switches and fibre amplifier arrays being built; these make up the colourless, directionless and contentionless multiplexing and demultiplexing.
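A multicast switch can be pictured as M passive 1:N splitters, one per node direction, feeding N independent M:1 selector switches, one per transponder port. Because every input's light reaches every output, each transponder can pick any direction without blocking the others, which makes it contentionless. A coherent receiver's tunable local oscillator then picks out the wavelength, which makes it colourless. The cost is the splitting loss, and that is what the fibre amplifier arrays make up. The class and the 8x16 port count below are hypothetical illustrations, not a description of any vendor's device.

```python
import math


class MulticastSwitch:
    """Hypothetical M x N multicast switch model: split every input, select per output."""

    def __init__(self, inputs, outputs):
        self.inputs = inputs
        self.outputs = outputs
        self.selection = [None] * outputs  # which input each output port listens to

    def connect(self, output, source):
        # Each output has its own selector, so one port's choice never blocks another's.
        if not 0 <= output < self.outputs:
            raise ValueError("no such output")
        if not 0 <= source < self.inputs:
            raise ValueError("no such input")
        self.selection[output] = source

    def listeners(self, source):
        """Output ports currently receiving light from a given input."""
        return [o for o, s in enumerate(self.selection) if s == source]

    def split_loss_db(self, excess_db=0.0):
        # Every input is divided N ways before reaching any selector.
        return 10 * math.log10(self.outputs) + excess_db


mcs = MulticastSwitch(inputs=8, outputs=16)
mcs.connect(0, 3)   # two transponders dropping different wavelengths
mcs.connect(1, 3)   # from the same direction: no contention
mcs.connect(2, 5)
print(mcs.listeners(3), f"{mcs.split_loss_db():.1f} dB split loss")
```

In this model the roughly 12 dB of splitting loss in a 16-way multicast switch is the kind of budget an amplifier array would need to restore.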

That is the general architecture - it seems that that is what everyone is settling on. The devices to support that are what the industry is working on.

For Part II of the Q&A with Brandon Collings, click here

Q&A: Ciena’s CTO on networking and technology

In Part 2 of the Q&A, Steve Alexander, CTO of Ciena, shares his thoughts about the network and technology trends.

Part 2: Networking and technology

"The network must be a lot more dynamic and responsive"

Steve Alexander, Ciena CTO

Q. In the 1990s dense wavelength division multiplexing (DWDM) was the main optical development while in the '00s it was coherent transmission. What's next?

A couple of perspectives.

First, the platforms that we have in place today: III-V semiconductors for photonics and collections of quasi-discrete components around them - ASICs, FPGAs and pluggables - that is the technology we have. We can debate, based on your standpoint, how much indium phosphide integration you have versus how much silicon integration.

Second, the way that networks built in the next three to five years will differentiate themselves will be based on the applications that the carriers, service providers and large enterprises can run on them.

This will be in addition to capacity - capacity is going to make a difference for the end user and you are going to have to have adequate capacity with low enough latency and the right bandwidth attributes to keep your customers. Otherwise they migrate [to other operators], we know that happens.

You are going to start to differentiate based on the applications that the service providers and enterprises can run on those networks. I see the value of networking changing from a hardware-based problem-set to one largely software-based.

I'll give you an analogy: You bought your iPhone, I'll claim, not so much because it is a cool hardware box - which it is - but because of the applications that you can run on it.

The same thing will happen with infrastructure. You will see the convergence of the photonics piece and the Ethernet piece, and you will be able to run applications on top of that network that will do things such as move large amounts of data, encrypt large amounts of data, set up transfers for the cloud, assemble bandwidth together so you can have a good cloud experience for the time you need all that bandwidth and then that bandwidth will go back out, like a fluid, for other people to use.

That is the way the network is going to have to operate in future. The network must be a lot more dynamic and responsive.

How does Ciena view 40 and 100 Gig and in particular the role of coherent and alternative transmission schemes (direct detection, DQPSK)? Nortel Metro Ethernet Networks (MEN) was a strong coherent adherent yet Ciena was developing 100Gbps non-coherent solutions before it acquired MEN.

If you put the clock back a couple of years, where were the classic Ciena bets and what were the classic MEN bets?

We were looking at metro, edge of network, Ethernet, scalable switches, lots of software integration and lots of software intelligence in the way the network operates. We did not bet heavily on the long distance, submarine space and ultra long-haul. We were not very active in 40 Gig, we were going straight from 10 to 100 Gig.

Now look at the bets the MEN folks placed: very strong on coherent and applying it to 40 and 100 Gig, strong programme at 100 Gig, and they were focussed on the long-haul. Well, to do long-haul when you are running into things like polarisation mode dispersion (PMD), you've got to have coherent. That is how you get all those problems out of the network.

Our [Ciena's] first 100 Gig was not focussed on long-haul; it was focussed on how you get across a river to connect data centres.

When you look at putting things together, we ended up stopping our developments that were targeted at competing with MEN's long-haul solutions. They, in many cases, stopped developments coming after our switching, carrier Ethernet and software integration solutions. The integration worked very well because the intent of both companies was the same.

Today, do we have a position? Coherent is the right answer for anything that has to do with physical propagation because it simplifies networks. There are a whole bunch of reasons why coherent is such a game changer.

The reason why the first 40 Gig implementations didn't go so well was cost. When we went from 10 to 40 Gig, the only tool was cranking up the clock rate.

At that time, once you got to 20GHz you were into the world of microwave. You leave printed circuit boards and normal manufacturing and move into a world more like radar. There are machined boxes, micro-coax and a very expensive manufacturing process. That frustrated the desires of the 40 Gig guys to be able to say: Hey, we've got a better cost point than the 10 Gig guys.

Well, with coherent, the fact that I can unlock the bit rate from the baud rate, the data rate from the symbol rate, that is fantastic. I can stay at 10GHz clocks and send four bits per symbol - that is 40Gbps.

My basic clock rate, which determines manufacturing complexity, fabrication complexity and the basic technology, stays with CMOS, which everyone knows is a great place to play. Apply that same magic to 100 Gig. I can send 100Gbps but stay at a 25GHz clock - that is tremendous, that is a huge economic win.
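The arithmetic behind those figures is simple: the line rate is the symbol (baud) rate multiplied by the bits carried per symbol. This sketch assumes dual-polarisation QPSK, the format used in the coherent 40 and 100 Gig systems discussed here, where each symbol carries two bits on each of two polarisations:

```python
def line_rate_gbps(baud_gbd, bits_per_symbol, polarisations=2):
    """Raw line rate = symbol rate x bits per symbol per polarisation x polarisations."""
    return baud_gbd * bits_per_symbol * polarisations


# QPSK carries 2 bits per symbol on each polarisation, so DP-QPSK carries 4 in total.
print(line_rate_gbps(10, 2))   # the 40 Gig case: 10 GBd clocking
print(line_rate_gbps(25, 2))   # the 100 Gig case: 25 GBd clocking
```

These are raw rates. Practical interfaces run at somewhat higher symbol rates than these round numbers to make room for FEC and framing overhead.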

Coherent lets you continue to use the commercial merchant silicon technology base, which is where you want to be. You leverage the year-on-year cost reduction, a world unto itself that is driving the economics, and we can leverage that.

So you get economics with coherent. You get improvement in performance because you simplify the line system - you can pop out the dispersion compensation, and you solve PMD with maths. You also get tunability. I'm using a laser - a local oscillator at the receiver - to measure the incoming laser. I have a tunable receiver that has a great economic cost point and makes the line system simpler.
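'Popping out' the dispersion compensation works because chromatic dispersion is, to a good approximation, a fixed all-pass filter whose phase grows with the square of frequency. Once the coherent receiver has recovered the full optical field, it can undo that filter digitally by applying its complex conjugate. This is a minimal sketch under stated assumptions: a typical standard single-mode fibre value of beta2 of about -21.7 ps²/km, an 80km span, 50GS/s sampling, and a slow pure-Python DFT for clarity. Real DSP ASICs use FFT-based or time-domain equalisers, and PMD compensation, which needs an adaptive 2x2 equaliser, is not shown.

```python
import cmath
import math


def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform (forward, or normalised inverse)."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [sum(x[t] * cmath.exp(sign * 2j * math.pi * k * t / n) for t in range(n))
           for k in range(n)]
    return [v / n for v in out] if inverse else out


def angular_freqs(n, sample_rate_thz):
    """Angular frequency (rad/ps) of each DFT bin, in standard bin order."""
    return [2 * math.pi * (k if k < n // 2 else k - n) * sample_rate_thz / n
            for k in range(n)]


def dispersion_filter(samples, beta2_ps2_per_km, length_km, sample_rate_thz, undo=False):
    """Apply (or, with undo=True, remove) quadratic-phase chromatic dispersion.

    The sign convention is an assumption; the compensator is simply the complex
    conjugate of the assumed fibre response, so the two cancel exactly.
    """
    spectrum = dft(samples)
    sign = 1 if undo else -1
    omegas = angular_freqs(len(samples), sample_rate_thz)
    shaped = [s * cmath.exp(sign * 0.5j * beta2_ps2_per_km * length_km * w * w)
              for s, w in zip(spectrum, omegas)]
    return dft(shaped, inverse=True)


# 32 QPSK symbols at 2 samples/symbol: 25 GBd sampled at 50 GS/s = 0.05 samples/ps.
qpsk = [1 + 1j, -1 + 1j, -1 - 1j, 1 - 1j]
symbols = [qpsk[(7 * k + k // 3) % 4] for k in range(32)]
tx = [s for s in symbols for _ in range(2)]
rx = dispersion_filter(tx, -21.7, 80.0, 0.05)               # fibre (assumed model)
eq = dispersion_filter(rx, -21.7, 80.0, 0.05, undo=True)    # receiver DSP

print("worst error before DSP:", max(abs(a - b) for a, b in zip(rx, tx)))
print("worst error after DSP: ", max(abs(a - b) for a, b in zip(eq, tx)))
```

No optical dispersion-compensating module appears anywhere in this chain. The fibre's distortion is removed purely by arithmetic on the sampled field.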

Coherent is this triple win. It is just a fantastic change in technology.

What is Ciena’s thinking regarding bringing in-house sub-systems/ components (vertical integration), or the idea of partnerships to guarantee supply? One example is Infinera that makes photonic integrated circuits around which it builds systems. Another is Huawei that makes its own PON silicon.

The two examples are good ones.

With Huawei you have to treat them somewhat separately as they have some national intent to build a technology base in China. So they are going to make decisions about where they source components from that are outside the normal economic model.

Anybody in the systems business that has a supply chain periodically goes through the classic make-versus-buy analysis. If I'm buying a module, should I buy the piece-parts and make it? You go through that portion of it. Then you look within the sub-system modules and the piece-parts I'm buying and say: What if I made this myself? It is frequently very hard to say if I had this component fully vertically integrated I'd be better off.

A good question to ask about this is: Could the PC industry have been better if Microsoft owned Intel? Not at all.

You have to step back and say: Where does value get delivered with all these things? A lot of the semiconductor and component pieces were pushed out [by system vendors] because there was no way to get volume, scale and leverage. Unless you corner the market, that is frequently still true. But that doesn't mean you don't go through the make-versus-buy analysis periodically.

Call that the tactical bucket.

The strategic one is much different. It says: There is something out there that is unique and so differentiated, it would change my way of thinking about a system, or an approach or I can solve a problem differently.

"Coherent is this triple win. It is just a fantastic change in technology"

If it is truly strategic and can make a real difference in the marketplace - not a 10% or 20% difference but a 10x improvement - then I think any company is obligated to take a really close look at whether it would be better being brought inside or entering into a good strategic partnership arrangement.

Certainly Ciena evaluates its relationships along these lines.

Can you cite a Ciena example?

Early on, when Ciena started, there was a technology at the time that was differentiated and that was Fibre Bragg Gratings. We made them ourselves. Today you would buy them.

You look at it at points in time. Does it give me differentiation? Or source-of-supply control? Am I at risk? Is the supplier capable of meeting my needs? There are all those pieces to it.

Optical Transport Network (OTN) integrated versus standalone products. Ciena has a standalone model but plans to evolve to an integrated solution. Others have an integrated product, while others still launched a standalone box and have since integrated. Analysts say such strategies confuse the marketplace. Why does Ciena believe its strategy is right?

Some of this gets caught up in semantics.

Why I say that is because we today have boxes that you would say are switches but you can put pluggable coloured optics in. Whether you would call that integrated probably depends more on what the competition calls it.

The place where there is most divergence of opinion is in the network core.

Normally people look at it and say: one big box that does everything would be great - that is the classic God-Box problem. When we look at it - and we have been looking at it on and off for 15 years now - if you try to combine every possible technology, there are always compromises.

The simplest one we can point to now: If you put the highest performance optics into a switch, you sacrifice switch density.

You can build switches today that, because of the density of the switching ASICs, are I/O-port constrained: you can't get enough connectors on the face plate to talk to the switch fabric. That will change with time; there is always ebb and flow. In the past that would not have been true.

If I make those I/O ports datacom pluggables, that is about as dense as I'm going to get. If I make them long-distance coherent optics, I'm not going to get as many because coherent optics take up more space. In some cases, you can end up cutting your port density on the switch fabric by half. That may not be the right answer for your network, depending on how you are using that switch.

We have both technologies in-house, and in certain applications we will combine them. Years ago we put coloured optics on CoreDirector to talk to CoreStream, but that was for specific applications. The reason is that in most networks, people try to optimise switch density and transport capacity, and these are different levers. If you bolt those levers together you often don't get the optimal point.

Any business books you have read that have been particularly useful for your job?

The Innovator's Dilemma (by Clayton Christensen). What is good about it is that it has a couple of constructs that you can use with people so they will understand the problem. I've used some of those concepts and ideas to explain where various industries are, where product lines are, and what is needed to describe things as innovation.

The second one is called: Fad Surfing in the Boardroom (by Eileen Shapiro). It is a history of the various approaches that have been used for managing companies. That is an interesting read as well.

Click here for Part 1 of the Q&A

How ClariPhy aims to win over the system vendors

“We can build 200 million logic gate designs”

Reza Norouzian, ClariPhy

ClariPhy is in the camp that believes that the 100 Gigabit-per-second (Gbps) market is developing faster than people first thought. “What that means is that instead of it [100Gbps] being deployed in large volumes in 2015, it might be 2014,” says Reza Norouzian, vice president of worldwide sales and business development at ClariPhy.

Yet the fabless chip company is also glad it offers a 40Gbps coherent IC as this market continues to ramp while 100Gbps matures and overcomes hurdles common to new technology: The 100Gbps industry has yet to develop a cost-effective solution or a stable component supply that will scale with demand.

Another challenge facing the industry is reducing the power consumption of 100Gbps systems, says Norouzian. The need to remove the heat from a 100Gbps design - the ASIC and other components - is limiting the equipment port density achievable. “If you require three slots to do 100 Gig - whereas before you could use those slots to do 20 or 30 10 Gig lines - you are not achieving the density and economies of scale hoped for,” says Norouzian.

40G and 100G coherent ASICs

ClariPhy has chosen a 40nm CMOS process to implement its 40Gbps coherent chip, the CL4010. But it has since decided to adopt 28nm CMOS for its 100Gbps design - the CL10010 - to integrate features such as soft-decision forward error correction (see New Electronics' article on SD-FEC) and reduce the chip’s power dissipation.

The CL4010 integrates analogue-to-digital and digital-to-analogue converters, a digital signal processor (DSP) and a multiplexer/ demultiplexer on-chip. “Normally the mux is a separate chip and we have integrated that,” says Norouzian.

The first CL4010 samples were delivered to select customers three months ago and the company expects volume production to start by the end of September. The CL4010 also interoperates with Cortina Systems’ optical transport network (OTN) processor family of devices, says the company.

The start-up claims there is strong demand for the CL4010. “When we ask them [operators]: ‘With all the hoopla about 100 Gig, why are you buying all this 40 Gig?’, the answer is that it is a pragmatic solution and one they can ship today,” says Norouzian.

ClariPhy expects 40Gbps volumes to continue to ramp for the next three or four years, partly because of the current high power consumption of 100Gbps. The company says several system vendors are using the CL4010 in addition to optical module customers.

The 28nm 100Gbps CL10010 is a 100 million gate ASIC. ClariPhy acknowledges it will not be first to market with a 100Gbps ASIC but says that, by using the latest CMOS process, it will be well positioned once volume deployments start from 2014.

ClariPhy is already producing a quad-10Gbps chip that implements the maximum likelihood sequence estimation (MLSE) algorithm used for dispersion compensation in enterprise applications. The device covers links of up to 80km (10GBASE-ZR), but its main focus is 10GBASE-LRM (220m+) applications. “Line cards that used to have four times 10Gbps lanes now are moving to 24 and will use six of these chips,” says Norouzian. The device sits on the line card and interfaces with SFP+ or Quad-SFP optical modules.
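The six-chip figure follows directly from the quad-lane design: 24 lanes divided by four lanes per device. Here is a minimal sketch. `chips_needed` is a hypothetical helper, and rounding up assumes that a partly used chip still has to be fitted.

```python
import math

LANES_PER_CHIP = 4  # quad-10Gbps MLSE device

def chips_needed(lanes: int, lanes_per_chip: int = LANES_PER_CHIP) -> int:
    """Quad-lane chips required to serve every 10Gbps lane on a line card.

    Rounds up, assuming a partly used chip still has to be fitted.
    """
    return math.ceil(lanes / lanes_per_chip)

print(chips_needed(4))   # older four-lane card -> 1
print(chips_needed(24))  # newer 24-lane card -> 6
```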

System vendor design wins

The 100Gbps transmission ASIC market may be in its infancy, but it is already highly competitive, with clear supply lines to the system vendors.

Several leading system vendors have decided to develop their own ASICs. Alcatel-Lucent, Ciena, Cisco Systems (with the acquisition of CoreOptics), Huawei and Infinera all have in-house 100Gbps ASIC designs.

System vendors have justified the high cost of developing their own ASICs by the time-to-market advantage it gives them, rather than waiting for 100Gbps optical modules to become available. Norouzian also says that such internally developed 100Gbps line card designs deliver a higher 100Gbps port density than module-based cards.

Alternatively, system vendors can wait for 100Gbps optical modules to become available from the likes of Oclaro or Opnext. Such modules may use merchant silicon from a company such as ClariPhy, or silicon developed in-house, as with Opnext.

System vendors may also buy 100Gbps merchant silicon directly for their own 100Gbps line card designs. Besides ClariPhy, several merchant chip vendors are targeting the coherent marketplace, including MultiPhy and PMC-Sierra, while other firms are known to be developing silicon.

Given such merchant IC competition and the fact that leading system vendors have in-house designs, is the 100Gbps opportunity already limited for ClariPhy?

Norouzian's response is that the company, unlike its competitors, has already supplied 40Gbps coherent chips, proving the company’s mixed signal and DSP expertise. The CL10010 chip is also the first publicly announced 28nm design, he says: “Our standard product will leapfrog first generation and maybe even second generation [100Gbps] system vendor designs.”

The equipment makers' management will have to decide whether to fund the development of their own second-generation ASICs or consider using ClariPhy’s 28nm design.

ClariPhy acknowledges that leading system vendors have their own core 100Gbps intellectual property (IP), so it offers vendors a design service for developing their own custom systems-on-chip. For example, a system vendor could use ClariPhy's design but replace the DSP core with its own hardware block and software.

Source: ClariPhy Communications

Norouzian says that system vendors making 100Gbps ASICs develop their own IP blocks and algorithms, then use companies such as IBM or Fujitsu to implement the design in silicon. ClariPhy offers a similar service and can also supply its own 100Gbps IP as required. “The CL10010 is the platform to demonstrate all that we can do,” says Norouzian. “But some customers [with IP] will get their own derivatives.”

The firm has already made such custom coherent devices using customers’ IP but will not say whether these were 40 or 100Gbps designs.

Market view

ClariPhy claims that operator interest in 40Gbps coherent stems less from its superior reach than from its flexibility when deployed in networks alongside existing 10Gbps wavelengths. “You don't have to worry about [dispersion] compensation along routes,” says Norouzian, adding that coherent technology simplifies deployments in the metro as well as on regional links.

And while ClariPhy’s focus is on coherent systems, the company agrees with other 100Gbps chip specialists such as MultiPhy on the need for 100Gbps direct-detect solutions for distances beyond 40km. “It is very likely that we will do something like that if the market demand was there,” says Norouzian. For now, however, ClariPhy views mid-range 100Gbps applications as a niche opportunity.

Funding

ClariPhy raised US $14 million in June. The biggest investor in this latest round was Nokia Siemens Networks (NSN).

An NSN spokesperson says working with ClariPhy will help the system vendor develop technology beyond 100Gbps. “It also gives us a clear competitive edge in the optical network markets, because ClariPhy’s coherent IC and technology portfolio will enable us to offer differentiated and scalable products,” says the spokesperson.

The funding follows a previous round of $24 million in May 2010, in which the investors included Oclaro. ClariPhy has a long working relationship with the optical components company that dates back to Bookham, which merged with Avanex to form Oclaro.

“At 100Gbps, Oclaro get some amount of exclusivity as a module supplier but there is another module supplier that also gets access to this solution,” says Norouzian. This second module supplier has worked with ClariPhy in developing the design.

ClariPhy will also supply the CL10010 to the system vendors. “There are no limitations for us to work with OEMs,” he says.

The latest investment will fund the company's R&D effort at 100, 200 and 400Gbps and bring the CL4010 to production.

Beyond 100 Gig

The challenge at data rates higher than 100Gbps is implementing ultra-large ASICs: closing timing and laying out vast amounts of digital circuitry. The company has been investing in this area over the last 18 months. “Now we can build 200 million logic gate designs,” says Norouzian.

Moving from 100Gbps to 200Gbps wavelengths will require higher-order modulation, says Norouzian, and this is within the capabilities of ClariPhy's ASIC.

Going to 400Gbps will require using two devices in parallel. One-terabit transmission, however, will be far harder. “Going to one Terabit requires a whole new decade of development,” he says.
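The arithmetic behind these two steps can be sketched as follows. The article does not name the modulation formats, so the sketch makes four illustrative assumptions, none of them ClariPhy figures:

- polarisation-multiplexed QPSK at 100Gbps;
- 16-QAM at 200Gbps;
- an idealised 25 Gbaud net symbol rate, with FEC overhead ignored;
- 200Gbps per device when two devices are paired for 400Gbps.

```python
import math

POLARISATIONS = 2         # dual-polarisation coherent transmission
SYMBOL_RATE_GBAUD = 25.0  # assumed net symbol rate; real links run faster to carry FEC overhead

def line_rate_gbps(constellation_points: int, baud: float = SYMBOL_RATE_GBAUD) -> float:
    """Net bit rate of one dual-polarisation wavelength for an M-point constellation."""
    bits_per_symbol = math.log2(constellation_points)
    return baud * bits_per_symbol * POLARISATIONS

def devices_needed(target_gbps: float, per_device_gbps: float) -> int:
    """Devices operated in parallel to reach a target rate (rounded up)."""
    return math.ceil(target_gbps / per_device_gbps)

qpsk = line_rate_gbps(4)     # 2 bits per symbol per polarisation (assumed 100G format)
qam16 = line_rate_gbps(16)   # 4 bits per symbol per polarisation (assumed 200G format)

print(qpsk, qam16)                  # 100.0 200.0
print(devices_needed(400, qam16))   # 2
```

Holding the symbol rate fixed while doubling the bits per symbol doubles the line rate. The trade-off, well known for higher-order formats, is lower noise tolerance and therefore shorter reach.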

Q&A: Ciena’s CTO on R&D

Gazettabyte spoke with Steve Alexander, CTO of Ciena, about optical technology and R&D. In Part 1 of the Q&A, Alexander shares his thoughts about the practice and challenges of R&D.

Part 1: R&D

Q: Industry R&D has changed in the last decade. The optical boom of 1999-2000 resulted in venture capital (VC) money funding hundreds of start-ups. There were vibrant operator labs while system vendors had tightly-coupled optical system/component teams. Now system and optical component vendors must use hard-earned cash to fund R&D. Given the financial constraints of the optical industry, is sufficient R&D being done?

A: The marketplace in general is still smarting a little from what happened ten years ago. So when someone from the component industry warns, the market looks at it and says: ‘Here we go again’. But a great question to ask is: What is different this time from last time?

What is different this time is broadband apps. It's a great time to be a bandwidth company and that is fundamentally what Ciena is.

The first wave of networking was about connecting locations. This was the wireline phone service. That scales at a certain rate; there are so many locations on the planet and while estimates vary, there are about 500 million locations you want to interconnect.

The next wave is people connecting to people with handheld wireless devices. Well, guess what? There are a whole lot more people than there are places: about 5 billion.

Now you are at the point where machines talk to machines. Again, there are ten times as many machines as there are people: 50 billion things that can talk to each other.

If you sum all these inflection points, you get this phenomenal hockey stick in terms of capacity. That is what is different this time. And capacity, or bandwidth, determines an end-user’s experience in a way that it never did in the past.
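The three waves Alexander describes form a simple tenfold progression. This quick check uses only his round numbers, which he notes are estimates. The variable names are illustrative.

```python
# Approximate endpoint counts Alexander gives for each networking wave.
waves = {
    "locations (wireline)": 500e6,
    "people (wireless)": 5e9,
    "machines (machine-to-machine)": 50e9,
}

counts = list(waves.values())

# Ratio of each wave to the one before it.
growth = [later / earlier for earlier, later in zip(counts, counts[1:])]
total = sum(counts)

print(growth)                                   # [10.0, 10.0]
print(f"{total / 1e9:.1f} billion endpoints")   # 55.5 billion endpoints
```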

Now let's talk R&D.

The R&D model has shifted a lot. There was all this influx of VC money which created lots of new ideas and new entrants. Time has marched on and there are newer ways of doing things.

I'll point you to coherent optics: Why is coherent a good thing? A lot of the R&D in the components space went after things such as polarisation mode dispersion compensation. Lots of companies sprang up, lots of approaches were proposed, all targeted at introducing more photonics complexity to solve the problem. But you can solve that problem with basic maths if you do it with a coherent receiver and digital signal processing. It just completely changes the game. And there are other examples - the emergence of pluggable optical transceivers.
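Alexander's point about “basic maths” can be illustrated with a deliberately simplified model. Assume that the fibre's polarisation effects at a single frequency act as a known, unitary 2x2 Jones matrix that mixes the X and Y polarisations. A coherent receiver recovers both polarisation fields in amplitude and phase, so undoing that mixing is just a multiplication by the matrix's conjugate transpose. Real receivers do not know the matrix in advance. They estimate and track it adaptively across frequency and time (for example, with a butterfly equaliser), so treat this as a sketch of the principle rather than how Ciena or anyone else implements it. The angles and function names are arbitrary.

```python
import cmath
import math

Matrix = list[list[complex]]

def jones_matrix(theta: float, phi: float) -> Matrix:
    """Unitary 2x2 Jones matrix: rotation by theta with a phase offset phi.

    A simplified, frequency-flat stand-in for the fibre's polarisation effects.
    """
    c, s = math.cos(theta), math.sin(theta)
    e = cmath.exp(1j * phi)
    return [[complex(c), -s * e.conjugate()],
            [s * e, complex(c)]]

def conj_transpose(m: Matrix) -> Matrix:
    """Hermitian transpose; for a unitary matrix this is also its inverse."""
    return [[m[j][i].conjugate() for j in range(2)] for i in range(2)]

def apply(m: Matrix, v: list[complex]) -> list[complex]:
    """Multiply the 2x2 matrix m by the two-element field vector v."""
    return [m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]]

# One QPSK symbol on each polarisation (X, Y).
symbols = [(1 + 1j) / math.sqrt(2), (-1 + 1j) / math.sqrt(2)]

channel = jones_matrix(theta=0.6, phi=1.1)
received = apply(channel, symbols)                    # X and Y are now mixed
recovered = apply(conj_transpose(channel), received)  # the DSP undoes the mixing

for sent, got in zip(symbols, recovered):
    print(f"sent {sent:.3f}  recovered {got:.3f}")
```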

So where the R&D goes changes.

Could the industry benefit from more overall R&D, more VC money? Sure it could.

But will we get to the stage where optical problems need to be solved and there are insufficient resources?

There is always that risk.

You have seen a reduction in the large national labs, partially funded by industry and sometimes by government; their role in the marketplace has been reduced dramatically.

Also, hardware-based ventures aren’t as attractive any more. There are some here and there but, by and large, a VC may be weighing a software venture that can reach first revenue in a year or two with tens of millions of dollars against a hardware-based one that might take three to five years and several hundred million dollars to reach first revenue. In that case, the calculus is a problem.

VCs will typically fund more of the smaller [software] ones with the expectation that some of them will meet their objectives.

The specialist companies, which Ciena represents, are fortunate in that we are not trying to be everything to everybody. Ciena is focussed on the sweet spot where the bandwidth of photonics meets the service-delivery attractiveness of Ethernet. We focus our R&D very specifically on places where we think we can drive differentiation.

What you have seen is people focussing their R&D.

There is significant R&D being conducted in Asia Pacific. Engineers’ wages are lower in countries like China, while the scale of R&D there is hugely impressive. How can established companies such as Ciena compete?

For a company homegrown in North America, which is where Ciena originated, you have to play on a world stage. That translates into being willing to step out and be a little uncomfortable at times, going into other territories and using other ideas.

We have the Gurgaon facility in India to develop the capability to take advantage of these new emerging markets. You get the benefit of lower wage rates, but you also get exposure to new markets and different ways of thinking.

The problem statements in certain of these countries are much different. If they are building infrastructure for the first time they'll typically have a different approach than you might have from North America and Western Europe, where you are building infrastructure that must work with the last three or four generations of infrastructure. You get a different approach which you could argue is another view of innovation and creativity.

At the same time, you are mindful of what you put where. You try to line up complexity and risk with the people you think are best at mitigating them; if cost is the issue, you align the work with the workforce that will get it done at the lowest cost. If you absolutely have to hit a release window, you'll approach it differently than if you have some flexibility in time but must hit a specific performance or cost point.

You optimise the selection of where work gets done accordingly.

'I'm not sure there is any one industry that if we looked more like them, we would be better off.'

Is there any sense that Ciena must work smarter because of the scale of competition from other markets that can call on more engineers than you can hope for?

From a Ciena perspective, I wouldn't say it is a new challenge.

Ciena came to market in the mid-90s and the first competitors were behemoths: the Alcatels, Lucents, NECs and Fujitsus. We looked at the resources they had and they were ten times or a hundred times bigger. We have always been the smaller, faster, nimbler start-up.

I'm not sure we view it dramatically differently now other than some of the geographies might have changed.

How do you choose what R&D to perform? Product lines have their own evolutions driven by customer demands but how do you ensure you don't miss important developments?

This is probably the problem in R&D in some cases.

The way I describe it is the white space problem: you sit in a room and you throw up all your ideas, and you throw up all the customer-asks and you stare at them and say: What did we forget? That is the white space: What didn't you remember to put up there?

What you have to do is have an environment, a culture that rewards innovation. It is acknowledging that innovation can come from everywhere and anywhere. It can come off the factory floor, out of R&D, from the CTO, out of sales, customers and marketing. It just happens and you have to have a process by which you rapidly identify ideas, you discuss them, flesh them out and put them into buckets: Is this an incremental extension of the product? Is this taking me into an adjacent space that I hadn't thought of before? Is this going to change the world?

If you are smart about how you do R&D, you purposefully fund a little bit in each. You have to be diligent about making sure that you have got that balance of investment.

Ciena is a select member of AT&T’s Domain programme. How is that influencing your approach to R&D?