NeoPhotonics secures PIC specialist Santur

NeoPhotonics has completed the acquisition of Santur, the tunable laser and photonic integration specialist, boosting the company's annual turnover to a quarter of a billion dollars.

Source: Gazettabyte

The acquisition helps NeoPhotonics become a stronger, vertically integrated transponder supplier. In particular, it broadens NeoPhotonics’ 40 and 100 Gigabit-per-second (Gbps) component portfolio, turns the company into a leading provider of tunable lasers and enhances its photonic integration expertise.

“Our business over a number of years has grown as the importance of photonic integrated circuits and the products deriving from them have grown,” says Tim Jenks, CEO of NeoPhotonics. “We believe it is a critical part of the network architecture today and going forward.”

NeoPhotonics has paid some US $39.2M in cash for Santur, and could pay up to $7.5M more depending on the market performance of Santur’s products over the next year.

NeoPhotonics has largely focussed on telecom but Jenks admits it is broadening its offerings. “Certainly a very significant portion of fibre-optic components are consumed in data and storage, and while historically that has not been a significant part of NeoPhotonics, it is a large and important market overall,” says Jenks.

"It [optical components] portends the future of the technology industry"

Tim Jenks, NeoPhotonics

The company will continue to address telecom but will add products to additional segments, including datacom. In July, the company announced its first CFP module supporting the 40 Gigabit Ethernet (GbE) 40GBASE-LR4 standard. Santur also supplies 40Gbps and 100Gbps 10km transceivers, in QSFP and CFP form factors, respectively.

Santur made its name as a tunable laser supplier and is estimated to have a 50% market share, according to Ovum. More recently it has developed arrays of 10Gbps transmitters. Such photonic integrated circuits (PICs) are used for the 10x10 multi-source agreement (MSA).

The acquisition complements NeoPhotonics’ 40Gbps and 100Gbps integrated indium-phosphide receiver components, enabling the company to provide the various optical components needed for 40 and 100Gbps modules. Santur also has narrow line-width tunable laser technology used at the coherent transmitter and receiver. But Jenks confirms that the company has not announced a transmitter at 28Gbps using this narrow line-width laser.

10x10 MSA

Santur has been a key player in the 10x10 MSA, developed as a low-cost competitor to the IEEE 100 Gigabit Ethernet (GbE) 10km 100GBASE-LR4 and 40km 100GBASE-ER4 standards.

Large content service providers such as Google want cheaper 100GbE interfaces and the 10x10 MSA module, built using 10x10Gbps electrical and optical interfaces, is approximately half the cost of the IEEE interfaces.

"There is an opportunity with the 10x10 MSA," says Jenks. "The 10x10 does not require the gearbox IC, it is therefore lower cost and lower power, and fulfills a need that a 4x25Gig, with a rather immature technology and a requirement for a gearbox IC, does not."

In August the 10x10 MSA announced further specifications: a 10km version of the 10x10 MSA as well as two 40km-reach WDM interfaces: a 4x10x10Gbps and an 8x10x10Gbps. "There are end users that want to use these," says Jenks.
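
The gearbox argument Jenks makes above can be illustrated with a minimal Python sketch. It rests on a simplifying assumption that is not stated in the article: a module needs a gearbox IC whenever its electrical lane layout differs from its optical lane layout. The `Module` class and `needs_gearbox` function are hypothetical names, the lane rates are nominal, and the 10x10Gbps electrical side of the 100GBASE-LR4 module is likewise an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Module:
    """Hypothetical lane layout of a 100 Gigabit client module (nominal rates)."""
    name: str
    electrical_lanes: int   # lanes between the host board and the module
    electrical_gbps: int    # nominal rate per electrical lane
    optical_lanes: int      # lanes on the fibre side
    optical_gbps: int       # nominal rate per optical lane

    def electrical_total(self) -> int:
        return self.electrical_lanes * self.electrical_gbps

    def optical_total(self) -> int:
        return self.optical_lanes * self.optical_gbps


def needs_gearbox(module: Module) -> bool:
    """Simplifying assumption: a gearbox IC is needed whenever the
    electrical and optical lane layouts differ."""
    electrical = (module.electrical_lanes, module.electrical_gbps)
    optical = (module.optical_lanes, module.optical_gbps)
    return electrical != optical


# 10x10 MSA: 10x10Gbps on both sides, as described in the article.
TEN_BY_TEN = Module("10x10 MSA", 10, 10, 10, 10)
# 100GBASE-LR4: 4x25Gbps optics; the 10x10Gbps electrical side is assumed.
LR4 = Module("100GBASE-LR4", 10, 10, 4, 25)

for m in (TEN_BY_TEN, LR4):
    print(m.name, m.optical_total(), "Gbps, gearbox needed:", needs_gearbox(m))
```

Both modules carry the same 100Gbps; only the 4x25Gbps design has mismatched lane layouts, which is the gap the gearbox IC fills.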

Acquisitions

NeoPhotonics has made several acquisitions over the years, including four in 2006 (see chart). But Santur's revenues - some $50m - are larger than the aggregated revenues of all the previous acquisitions.

"I think of acquisitions as being inorganic for maybe two years and after that they are all organic," says Jenks. The acquisitions have helped NeoPhotonics broaden its technologies, strengthen the company's know-how and acquire customers and relationships.

“If someone says what did you do with this product from that company, they are asking the wrong question,” says Jenks. “By the law of averages, some [acquisitions] do better, some do worse but overall it has been quite successful.”

System vendors and vertical integration

Jenks says he is aware of system vendors taking steps to develop components and technology in-house but he does not believe this will change the primary role of the component vendors.

"Equipment vendors are building some things in-house for a near-term cost advantage, better insights into cost of production or better insights in how the technology can go,” says Jenks. “All reasons to have some form of vertical integration.”

But in technology leadership, no one company has a monopoly of talent. As such, vertical integration is a double-edged sword, he says: a company can become quite expert but it can also isolate itself from what the rest of the world is doing.

“The ability for a system vendor to lead is a challenging task,” says Jenks. “For a system vendor to lead, and simultaneously lead in developing their componentry, is a daunting task.”

The world is flat

Jenks, whose background is in mechanical and nuclear engineering, highlights two aspects that strike him about the optical component industry.

One is that telecoms is ubiquitous and because optical components go into telecoms, optical components is a global industry. "The world is very flat in optical components,” he says.

Second, the hurdle to undertaking experiments in optical components is lower than in nuclear engineering, for example, which needs significant capital investment. "Colleges and universities turn out graduates in physics and electrical engineering that are well trained and need a lighter physical plant,” says Jenks. This aspect of the education promotes a globally diverse and rather 'flat' industry.

“When I go to a trade show in China, Europe or the US, I'm running into colleagues from the industry that I know from each country we do business, and that is a lot of countries,” he says.

All this, for Jenks, makes optical components a fascinating industry, one that is on the leading edge of technology and also of industrial trends.

"It [optical components] portends the future of the technology industry: flatter and flatter with more global players and more global competition," says Jenks. “At the moment it is novel in optical components but in a few years' time it won't be unique to optical components.”

NeoPhotonics at a glance

The company segments its revenues into the areas of speed and agility (10-100Gbps products, planar lightwave circuits - ROADMs, arrayed waveguide gratings), access (FTTH, cable TV, wireless backhaul) and SDH and slow-speed DWDM, products designed 3-5 years ago.

Historically these three segments' revenues have been equal but this year the access business has been larger, accounting for 40% of revenues due to China's huge FTTx rollout.

Huawei is NeoPhotonics' largest customer. “They have been as much as half our revenue," says Jenks. And depending on the quarter, Ciena and Alcatel-Lucent have been reported as 10% customers.

100 Gigabit: The Coming Metro Opportunity

Gazettabyte has published a Position Paper on the coming 100 Gigabit metro opportunity. There have been several announcements in recent weeks from system and component vendors addressing 100 Gigabit metro networks.

The 19-page report looks at the status of the 100 Gigabit market, the drivers for 100 Gigabit deployment, the technology options and their merits. The paper then states how 100 Gigabit technologies such as direct-detection point-to-point, direct-detection WDM and coherent will fare in the metro.

Gazettabyte interviewed over 20 operators, system vendors, optical module and component makers for the Position Paper.

These include ADVA Optical Networks, Alcatel-Lucent, Brocade, BT, BTI Systems, Ciena, Cisco Systems, Cyoptics, John D'Ambrosia - chair of IEEE 100 Gig backplane study group, ECI Telecom, Ericsson, Finisar, Huawei, Infinera, Ixia, Juniper Networks, MultiPhy, Nokia Siemens Networks, Oclaro, Opnext, Level 3 Communications, Transmode, Verizon and ZTE.

We look forward to any comments you may have regarding the report, its position and conclusions.

ECOC 2011: Products and market trends

There were several noteworthy announcements at the European Conference on Optical Communications (ECOC) held in Geneva in September. Gazettabyte spoke to Finisar, Oclaro and Opnext about their ECOC announcements and the associated market trends.

100 Gig module

Opnext announced the first 100 Gigabit-per-second (Gbps) transponder at ECOC, a much anticipated industry development.

"Quite a few system vendors .... are looking at 'make-versus-buy' for the next-generation [of 100 Gig]."

Ross Saunders, Opnext

The OTM-100 is a dual-polarisation, quadrature phase-shift keying (DP-QPSK) coherent design that fits into a 5x7-inch module and meets the Optical Internetworking Forum's (OIF) multi-source agreement (MSA). The module's coherent receiver uses a digital signal processor (DSP) developed by NTT Electronics.

"At the moment we are going through the bring-up in the lab," says Ross Saunders, general manager, next-gen transport for Opnext Subsystems.

According to Opnext, system vendors that have their own 100Gbps coherent designs are also interested in the 100Gbps module.

"There are a few developing in-house [100Gbps designs] that are not interested in going for the module solution," says Saunders. "But there is another camp - quite a few system vendors - who have their first-generation solution that are looking at 'make-versus-buy' for the next-generation."

System vendors' first-generation 100Gbps designs use hard-decision forward error correction (FEC). But customers want a 100Gbps design with a reach that gets close to matching that of 10Gbps, 40Gbps DPSK and 40Gbps coherent designs, says Opnext.

"There is demand to go to the next-generation with its higher overhead and soft-decision FEC," says Saunders. "That [soft-decision FEC] buys another 2-3dB of performance so you don't need as many regeneration stages." Translated into distances, the reach using soft-decision FEC is 1500-1600km rather than 800-900km, says Saunders.

Opnext expects to deliver samples to lead customers before the year end.
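
The effect of the longer reach on regeneration stages, which Saunders describes above, can be sketched with a short calculation. This is an illustrative estimate only: the 3000km route is hypothetical, the reach values are the midpoints of the ranges Saunders quotes, and the sketch assumes regeneration sites can be placed wherever they are needed.

```python
import math


def regenerations(route_km: float, reach_km: float) -> int:
    """Number of regeneration sites needed so that no span exceeds reach_km."""
    if route_km <= 0 or reach_km <= 0:
        raise ValueError("route_km and reach_km must be positive")
    spans = math.ceil(route_km / reach_km)
    return max(0, spans - 1)


ROUTE_KM = 3000        # hypothetical long-haul route
HARD_FEC_REACH = 850   # midpoint of the quoted 800-900km
SOFT_FEC_REACH = 1550  # midpoint of the quoted 1500-1600km

print("hard-decision FEC:", regenerations(ROUTE_KM, HARD_FEC_REACH), "regenerations")
print("soft-decision FEC:", regenerations(ROUTE_KM, SOFT_FEC_REACH), "regenerations")
```

On this hypothetical route the hard-decision design needs three regeneration sites and the soft-decision design needs one.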

Meanwhile, Oclaro is also developing a 100Gbps coherent module. "It is on track and we expect to ship in early 2012," says Per Hansen, vice president of product marketing, optical networks solutions at Oclaro.

100 Gig receiver

Oclaro announced an integrated 100Gbps coherent receiver at ECOC.

The company claims the device takes less than half the board area as defined by the OIF. "Board space is at a premium on line cards," says Robert Blum, director of product marketing for Oclaro's photonic components. "If you can increase functionality, that translates to lower cost."

100 Gig indium phosphide integrated receiver. Source: Oclaro

The device has two inputs and four outputs. The inputs are the received 100Gbps optical signal and the local oscillator, and the outputs are from the four balanced detectors.

"The entire 90-degree hybrid mixing and the photo detection are all done in an indium phosphide single chip," says Blum.

40 Gig modules

Oclaro also announced it is shipping its 40Gbps coherent transponder in volume.

"There is a lot of interest from equipment vendors and service providers to use coherent in their networks," says Hansen. "Coherent has advantages in the way it can overcome impairments."

Hansen says coherent will be used in the majority of new network deployments in future: "If you are deploying a network that is geared to 40Gbps and above, people will most likely deploy an all-coherent solution."

One reason why coherent is favoured is that the same technology can be scaled to 100Gbps, 400Gbps and even a Terabit.

Coherent technology, whose DSP is used for dispersion compensation, is also suited for mesh networks where switching wavelengths occurs. The coherent technology can compensate when it encounters new dispersion conditions following the switching.

In contrast, 40Gbps direct-detection modules interest vendors for use in existing networks alongside 2.5Gbps and 10Gbps wavelengths, says Oclaro.

For networks geared to 40Gbps and above, people will most likely deploy an all-coherent solution

Per Hansen, Oclaro

"They can have very high power which can make it difficult for a new [high-speed] channel to live next to them but direct-detection modules are robust for those types of applications," says Hansen. "Where you will see people upgrading their existing networks, they will use DPSK or DQPSK transponders."

But Oclaro says that the split is not that clear-cut: 40Gbps coherent for new builds and direct-detection schemes when used alongside existing 10Gbps wavelengths. "There is a lot of variability in both of these approaches such that you can tailor them to different applications," says Hansen. "In the end, what it will come down to is what the customer is happy with and the price points, more than fundamental technology capabilities."

40G client-side interfaces

Finisar demonstrated at ECOC a serial 40Gbps CFP module that meets the 2km 40GBASE-FR standard.

"This will be the first 40 Gig serial module that is in a pluggable form factor," says Rafik Ward, vice president of marketing at Finisar. Indeed Finisar's CFP is a tri-rate design that also supports the ITU-T OC-768 SONET/SDH very short reach (VSR) and OTU3 standards.

The FR interface is the IEEE's 40 Gigabit Ethernet equivalent of the existing OC-768 VSR interface. The original 300-pin VSR interface has a 16-channel electrical interface, each channel operating at 2.5Gbps, while the CFP module uses 10Gbps electrical channels.

IP routers can now be connected to DWDM platforms using the pluggable module, says Finisar. The pluggable will also enable system vendors to design denser line cards with two or even four CFP interfaces, as well as the option of changing the CFP to support other standards as required.

The tri-rate FR pluggable module's power consumption will be below 8W, says Finisar, which is shipping samples to customers.

Meanwhile, Opnext has announced it is sampling its 40GBASE-LR4, the 10km 40 Gigabit Ethernet interface, in a QSFP module. "It will be readily available by the end of the year," says Jon Anderson, director of technology programme at Opnext.

"The 40GBASE-LR4 [QSFP] will be readily available by the end of the year"

Jon Anderson, Opnext

Tunable laser XFP

Opnext and Oclaro have both announced 10Gbps tunable XFPs at ECOC. Having two new suppliers of tunable XFPs joining JDS Uniphase will increase market competition and reduce the price of the tunable pluggable.

"It really is a replacement for 300-pin transponders," says Blum. "You can now migrate 10Gbps links to a pluggable form factor."

Oclaro's tunable XFP is released for production. Opnext says its tunable XFP will be in volume production by early 2012.

ROADMs get 1x20 WSS

Finisar announced a 1x20 high-port count wavelength selective switch (WSS). The WSS supports a flexible spectrum grid that allows the channel width to be varied in increments of 12.5GHz, enabling future line rates above 100Gbps to be supported.
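
As a rough illustration of what a 12.5GHz increment means in practice, the following Python sketch rounds a signal's spectral width up to the next channel width the grid can provide. The function name and the example bandwidths are hypothetical and are not figures from Finisar.

```python
import math

GRANULARITY_GHZ = 12.5  # channel-width increment quoted for the WSS


def channel_width_ghz(signal_bandwidth_ghz: float) -> float:
    """Smallest channel width, in 12.5GHz steps, that fits the signal."""
    if signal_bandwidth_ghz <= 0:
        raise ValueError("signal_bandwidth_ghz must be positive")
    steps = math.ceil(signal_bandwidth_ghz / GRANULARITY_GHZ)
    return steps * GRANULARITY_GHZ


# Hypothetical signal bandwidths, chosen only to show the rounding.
for bandwidth in (37.5, 50.0, 70.0):
    print(bandwidth, "GHz signal ->", channel_width_ghz(bandwidth), "GHz channel")
```

A hypothetical 70GHz-wide signal, for instance, would be given a 75GHz channel rather than being forced into two fixed 50GHz slots.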

"This [1x20 WSS] has the possibility to enable some pretty interesting applications for next generation - colourless, directionless, contentionless networks," says Ward.

"This [40GBASE-FR] will be the first 40 Gig serial module that is in a pluggable form factor"

Rafik Ward, Finisar.

One common application of the 1x20 WSS is implementing a multi-degree node. The degree refers to the number of points that node branches out to in a mesh network, says Finisar. "The fundamental question is how many ports do you have in that node?" explains Ward.

For example, an 8-degree node communicates with eight other points in the mesh. With a 1x20 WSS, the architecture uses eight of the 20 as express ports - those 8 ports interfacing with other WSSs in the node - while the remaining 12 ports on that 1x20 WSS are used as add and drop ports.
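
The port split in this example can be written as a small Python sketch. It follows the article's accounting, in which one WSS port is used as an express port per degree of the node and the rest are left for add and drop; `split_ports` is a hypothetical helper name.

```python
from typing import Tuple


def split_ports(wss_ports: int, node_degree: int) -> Tuple[int, int]:
    """Return (express_ports, add_drop_ports) for one 1xN WSS in a node."""
    if wss_ports <= 0 or node_degree <= 0:
        raise ValueError("wss_ports and node_degree must be positive")
    if node_degree > wss_ports:
        raise ValueError("node degree exceeds the WSS port count")
    express = node_degree           # one express port per degree
    add_drop = wss_ports - express  # whatever remains serves add and drop
    return express, add_drop


print(split_ports(20, 8))  # the 8-degree node with a 1x20 WSS
print(split_ports(9, 8))   # the same node with a 1x9 WSS, for comparison
```

With the same accounting, a 1x9 WSS in an 8-degree node would leave only one port for add and drop.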

"The advantage of a 1x20 WSS in this case is enabling a large number of express ports and a large number of add ports," says Ward.

A second application is for colourless or tunable multiplexing.

"One of the problems today enabling colourless ROADM operation is that typically the muxes and demuxes used are AWGs," says Ward. Having a tunable laser is all well and good but it becomes hardwired to a specific port because of the arrayed waveguide grating (AWG). "That specific port is configured for that particular wavelength," he says.

To make an 80-channel colourless design that does not require manual intervention, four 1x20 WSSs are placed side-by-side with a 1x4 WSS connecting the four. This is more elegant and compact than using existing 1x9 WSSs, which would require more than twice as many WSS units.
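
The unit count behind this comparison can be checked with a short Python sketch. It assumes each WSS port carries one channel and counts only the first tier of WSSs, the ones that face the transponders; the WSS that combines the first tier is left out of the count. `first_tier_units` is a hypothetical helper name.

```python
import math


def first_tier_units(channels: int, ports_per_wss: int) -> int:
    """WSS units needed to give every channel its own port (first tier only)."""
    if channels <= 0 or ports_per_wss <= 0:
        raise ValueError("channels and ports_per_wss must be positive")
    return math.ceil(channels / ports_per_wss)


CHANNELS = 80

print("1x20 WSS units:", first_tier_units(CHANNELS, 20))
print("1x9 WSS units:", first_tier_units(CHANNELS, 9))
```

Under these assumptions the 1x20 design needs four first-tier units and the 1x9 design needs nine.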

Pump lasers

Oclaro announced two 980nm pump laser products that enable more compact, lower-power amplifier designs.

"Board space is at a premium on line cards"

Robert Blum, Oclaro

One is an uncooled 980nm 500mW pump laser and the second is two 600mW pump lasers in a single package. The dual-pump laser product halves the footprint and requires only a single thermo-electric cooler.

"The power consumption is significantly lower than what it would be for two discrete pump lasers," says Blum. "The 300mW uncooled pump laser doesn't go away but for dual-stage or mid-stage optical amplifiers instead of using multiple [300mW] lasers, you can use a single package."

GPON-on-a-stick

Finisar announced a 'GPON-on-a-stick' SFP module. The result of its acquisition of Broadway Networks in 2010, the SFP-based GPON optical network unit (ONU) enables an Ethernet switch to be connected to a PON. The product is aimed at enterprises as well as large residential premises. The GPON stick complements the company's existing EPON stick.

Further information:

ECOC 2011 Market focus presentations

Rapid progress in optical transport seen at ECOC 2011, Ovum's Karen Liu

Finisar and Capella enter 1×20 WSS market; signals shift, Ovum's Daryl Inniss

The CTO Interviews

To download the ebook, click here.

The book can be read using any ebook reader (the file has an .epub extension) but for best results please use the iBooks app on the iPhone or iPad.

The download file is large: 19M.

The ebook was created using the Book Creator app.

What innovation gives you: Marcus Weldon Q&A Part II

Marcus Weldon discusses network optimisation, the future of optical access, Wikipedia, and why a one-year technology lead is the best a system vendor can hope for, yet that one year can make all the difference. Part II of the Q&A with the corporate CTO of Alcatel-Lucent.

Photo: Denise Panyik-Dale

"The advantage window [of an innovative product] is typically only a year... Knowing that year exists doesn't mean that there is not a tremendous focus on innovation because that year is everything."

Marcus Weldon, Alcatel-Lucent

Q: Where is the scope for a system vendor to differentiate in the network? Even developments like Alcatel-Lucent's lightRadio have been followed by similar announcements.

A: There is potential for innovation and often other vendors say they are innovating in the same way and it looks like everyone is innovating at once. But when you dig down, there are substantial innovations that still exist and persist and give vendors advantage.

The advantage window is typically only a year because of the power of the R&D communities each of us has and the ability to leverage a rich array of components driven by Moore's Law that can cause even a non-optimal design to be effective.

You don't have to be the world's expert to create a design that works, and one that works at a reasonable price point. So there is a toolbox of components and techniques that people have now that allows them to catch up quickly without producing their own components.

"In wireless, historically, when you win, you win for a decade."

Innovation still exists but I believe the advantage - from the time you have it to the time the competition has it - is typically a year, although it varies by domain, whereas perhaps it used to be 3-5 years because you had to design your own components.

Knowing that year exists doesn't mean that there is not a tremendous focus on innovation because that year is everything.

That year gets you mindshare and gets you the early trials in operator labs and networks. And that relationship you build, even if by the time you have completed that cycle your competitors have the same technology, you have a mindshare and an engagement with the potential customer that allows you to win the long-term business.

In wireless, historically, when you win, you win for a decade.

There is still quite a bit of proprietary stuff in wireless networks that makes it easier to keep going with one vendor if that vendor has a product that is still compatible with their needs.

So the whole argument is that if you can innovate and gain a one-year advantage, you can gain a 10-year advantage potentially in some market segments - particularly wireless - for your product sets.

That is why innovation is still important.

What is Alcatel-Lucent's strategic focus? What are the key areas where you are putting your investments?

Clearly lightRadio is a huge one. We have massively scaled our investment in that area, the focus on the lightRadio architecture, the building of those cube arrays and working with operators to move some of the baseband processing into a pooled configuration running in a cloud.

In the optical domain we are streamlining that portfolio and focussing it around a core set of assets. The 1830 product which has the OTN/ WDM (optical transport network/ wavelength division multiplexing) combination switch in it, with 100 Gig moving to 400 Gig. So there is a strategic focus and a highly optimised, leading-edge optical portfolio which is a significant part of our R&D.

Photo: Denise Panyik-Dale

To be honest we had a bit of a mess with an optical portfolio left over from the [Alcatel and Lucent] merger and we had not rationalised it appropriately. We have completed that and have a leading-edge portfolio. If you look at our market share numbers, where we were beginning to fall into second place in optics we have turned that around.

The IP layer, of course, is another area. TiMetra, which is the company we bought and is the basis of our IP portfolio, had $20 million revenues when we bought it and now that is over a billion [dollar] business for us.

That team is really one of the biggest innovation engines in our company. It is doing all the packet processing, all the routing work for the company and has a very efficient R&D team that allows them to move into other areas.

So that is the team that is producing our mobile packet core. It is the team that owns our caching product. It is the team that increasingly owns some interest we have in the data centre space. That team is a big focus and IP and Ethernet expertise in that team is propagating across our portfolio.

In wireline it is 10G PON and the cross-talk cancellation in DSL. Those are the big focusses for us in terms of R&D effort.

On the software applications and services side, we are beginning to focus around new big themes. We have been a little bit all over the place in the applications business but now we have recently redefined what our business is and it is going to have some focussed themes.

Payment is one area which will remain important but payment moving to smart charging of different services is one focus area. Next-generation communications which means IMS [IP Multimedia Subsystem] but also immersive communications - rendering a communications service in the cloud in a virtual environment composed of the end participants - is a big focus.

The thing called customer experience optimisation is a big focus. Here you leverage all the network intelligence, all the device intelligence, all the call centre intelligence and allow the operator to understand whether a user is likely to churn. And it can optimise the network based on the output of that same decision and say, 'Ah, this seems to be an issue, I’m going to optimise the network so that this user is happier'. That is a big focus for us.

We are beginning to be active in machine-to-machine [communication] as well as this concept of cloud networking, which is distributed cloud, stitching together the network and moving resources around in a distributed resource way, as opposed to the centralised, monolithic data centre, the way that other people are focussing on.

Photo: Denise Panyik-Dale

"I also like Wikipedia, I have to say. It is 80-90% right and that is often good enough to help you quickly think through a topic"

How do you stay on top of developments across all these telecom segments? Are there business books you have found useful for your job?

I generally read technology treatises rather than business books. It is a failing of mine.

I also like Wikipedia, I have to say. It is 80-90% right and that is often good enough to help you quickly think through a topic. It gets you where you need to be and then you can go and look further into the detail.

So I would argue that Wikipedia is the secret tool of all CTOs and even product marketing managers and R&D managers.

I am a fan of the Freakonomics books. That is the sort of business book I like to read, looking at how to parse things whether they are true causal relationships or correlations, and how one thing affects another. I find those interesting and they have a business sense to them that explains how incentives motivate people.

I'm very interested in that aspect because I think in a company, the big issue a CTO has is how to influence the rest of a company. One of our roles increasingly combines our CTO and strategy teams under the same leader so we are looking at how to effectively evolve a company using the right set of incentives, and the right sort of technology bases, but you still need to provide an incentive for people to move in that new direction whatever it is you choose.

"TiMetra, which is the company we bought and is the basis of our IP portfolio, had $20 million revenues when we bought it and now that is over a billion [dollar] business for us."

I'm fascinated by how to influence people effectively to believe in your vision. Ultimately they have to more than believe, they have to move towards that, and that will need to involve some sort of incentive scheme for target teams that you assign to get a new project started quickly, which then influences the rest of the company. We have done that a few times.

I don't spend a lot of time reading business books. I spend a lot of time reading technical stuff. I think about how to influence corporate behavior. And I get my financial understanding just reading around work, reading lightly on business topics and talking to colleagues in the strategy department.

My answer would be Wikipedia, Freakonomics and technical treatises. Those are the things I use.

Much work is being done to optimise the network across the IP, Ethernet transport and photonic layers. Once this optimisation has been worked through, what will the network look like?

We were one of the founders of this vision of convergence to the IP-optical layer. Two or three years ago we announced something called the converged backbone transport which we sell as a solution, which is a tight interworking between the IP and optical layers.

Traffic at the optical layer doesn't need to be routed; it is kept at the photonic layer. Only traffic that needs additional treatment is forwarded up to the routing layer, and there is communication back and forth between the two layers.

So, for example, the IP layer has coloured optics and it can be told by the optical layer which wavelength to select in order to send the traffic into the optical layer without the optics having to do optical-electrical-optical regeneration, it can just do optical switching.

We have this intelligent interaction between the optical and IP layer which offloads the IP layer to the optical layer, which is a lower cost-per-bit proposition. But it also informs the IP layer about what colour wavelength or perhaps what VLAN (Virtual LAN) the optical layer expects to see so that the optical layer can more efficiently process the traffic and not have to do packet processing.

This is the interesting part, and this is where the industry is not aligned yet. We do not think that building full IP-functionality into the optical layer or building full optical functionality into an IP layer makes sense. It becomes essentially a 'God Box' and over the years such platforms ended up becoming a Jack of all trades and a master of none, being compromised in every dimension of performance.

They can't packet process at the density you would want, they can't optically switch at the density you would want, and all you have done is pushed two things into one box for the sake of physical appearance and not for any advantage.

What we believe you should do is keep them in separate boxes - they have separate processors, separate control planes and they even operate at different speeds - and have them tightly interworking. So they are communicating traffic information back and forth taking the traffic as much as possible themselves before forwarding to the other box when it is appropriate for it to handle the traffic.

Most operators agree that in the end having two boxes optimised for each of their activities is the right architecture, communicating effectively back and forth and acting on your traffic as a pair.

You didn't mention layer two.

I mentioned VLANs. And layer two does appear in the optical layer because the optical layer has to become savvy enough to understand Ethernet headers, and VLANs are an example of that.

We do not believe that sophisticated packet processing has to appear in the optical layer because if you start doing that, you are building a large part of the router infrastructure - this whole FlexPath processor 400Gbps that we announced in the core of the router. If we move that into the optical layer, the optical layer essentially has the price of the routing layer and that is what you are trying to avoid.

You are trying to use the optical layer for the lower price-per-bit forwarding and the IP layer at the higher price per bit when it needs to be about higher price per bit. The pricing being capex and opex - the total cost of forwarding that bit: the power consumption of the box as well as the cost of the box.

Photo: Denise Panyik-Dale

"TDM-PON always is good enough, meaning it comes in at an attractive price - often more attractive than WDM - and it doesn't require you to rework the outside plant."

What comes after GPON and EPON and their 10 Gigabit PON variants?

Remarkably, as always, just as we thought we were running out of TDM (time-division multiplexing) PON options, there is a lot of work on 40 Gig PON in Bell Labs and other research institutes.

There are schemes that allow you to do 40 Gig TDM-PON. So once again TDM will survive longer than you thought, but there are options being proposed that are hybrids of WDM and TDM.

For example, it is easy to imagine four wavelengths of 10G PON and that is a flavour of 40G PON. In FSAN (Full Service Access Network), they have something called XG-PON2 which is meant to have all the forward-looking technologies in there.

Now they are getting serious about that because they are done with 10G PON to some extent so let's focus on what is next. There are a lot of proposals going into FSAN for combinations of different technologies.

One is pure 40G TDM, another is four wavelengths of 10G, and there are many other hybrids that I've seen going in there. But there is a sense that it is a combination of a TDM and a WDM solution that might make it into standards for 40G, and it might not.

And 'the might not' is always because you have to redo your outside plant a little bit because you need to take the power splitters for TDM and replace them with wavelength splitters. So there is some reluctance by operators to go back outside and upgrade their plants. Very often they say: 'Well, if I can just do TDM again, why don't I do it that way and reuse the infrastructure already deployed'.

That is always the tension between the two.

It is not that WDM ultimately isn't a good option - it probably is - but TDM always is good enough, meaning it comes in at an attractive price - often more attractive than WDM - and it doesn't require you to rework the outside plant.

But at some point there will be a transition where WDM becomes more economically attractive than TDM and does merit going back to your outside plant and changing out the splitters you deployed.

It is not clear how operators will make money from an open network so how will Alcatel-Lucent make money from open application programming interfaces (APIs)?

It is something, in all honesty, we have wrestled with. I think we are coming to a firm view on this.

To start, I'll answer the operator question which is important since if they aren't making money, it is very unlikely we'll be making money.

Operators are beginning to see that open APIs are not just about allowing access to their network to what you could call the over-the-top long-tail of applications, although that is part of it.

Netflix, for example, could be over-the-top but you would not call it long-tail because it has got 23 million subscribers. Long-tail is any web service that the user accesses and that might want to access network resources - it might need location information, or it might want the operator to do billing or quality-of-service treatment.

But it is also [operators allowing access] to their own IT organisations so they can more rapidly develop their own set of core service applications. I think of it as the voice application or video application; they open it to their own IT department which makes it much easier to innovate.

They open it to their partners, and those might be verticals in healthcare, banking or some sort of commerce space where they are going to offer a business service. And it is an easier way for partners to innovate on the network. And then of course it is also open to third parties to innovate.

So operators are beginning to see that it is not just about exposing to the long-tail where it might be hard to imagine any revenue coming, because the business models of those long-tail providers may not even be profitable so how can they pay for something if they are not even profitable?

But for their partners and own IT, it is a no-brainer.

Think of it as the new SDP (service delivery platform) in some ways. It's a web service-type SDP where they expose their own capabilities internally and you can, using a web services approach - which you can think of as a lightweight workflow where you make calls in sequence - you don't have a complex workflow engine that you are using as an orchestrator. They [operators] see that this is a much more efficient way to innovate and build partnerships.

So that makes it interesting to them. That means they will buy a platform that does that. So there is a certain amount of money for Alcatel-Lucent in selling a platform that does that. However the big money is probably not in the selling of that platform, it is in the selling of the network assets that go with it.

There is a business case around that which falls through to the underlying network because the network has capabilities that this layer is exposing. So there is clearly an interest we have in that.

It is very similar to our philosophy about IP TV. We never really owned preeminent IP TV assets. We had middleware that we acquired from Telefonica that we evolved but most of the time we partnered with Microsoft. And the reason we decided to partner was because we saw the real value in pulling through the network and tying into the middleware layer, but not needing to own the middleware layer.

There are people that believe it makes sense to own the exposure layer because it is a point-of-importance to our customers. But in fact a lot of the revenue is probably associated with the network that that layer pulls through.

There is one more part of the API layer that is very interesting.

When you sit in that layer, you see all transactions. And you don't now have to use DPI (deep-packet inspection) to see those transactions - they are actually processing the transactions. Think of the API as a request for a resource by a user for an application. If you sit in that layer, you see all these requests, you can understand the customer needs and the demand patterns much better than having to do DPI.

DPI has bad PR because it seems like it brings something illicit. In the API layer you are doing nothing illicit as you are naturally part of processing the request, so you get to see and understand the traffic in an open and interesting way. So there is a lot of value to that layer.

Can you monetise that? Maybe.

Maybe there is a play in analytics or definition of the customer that allows you to sell an advertising proposition. But certainly it helps you optimise the network because you can understand the traffic flows.

If you have those analytics in the API layer you can dynamically optimise the network which is then another value proposition to better sell the network but also an optimisation engine that runs on top of that network.

So there are lots of pull-through effects for open APIs but there is money associated with the layer itself.

For part one of the Q&A, click here.

Transmode chooses coherent for 100 Gigabit metro

Transmode has detailed its 100 Gigabit metro strategy based on a stackable rack, a concept borrowed from the datacom world.

The Swedish system vendor has adopted coherent detection technology for 100 Gigabit-per-second (Gbps) optical transmission, unlike other recent metro announcements from ADVA Optical Networking and MultiPhy based on 100Gbps direct-detection.

"Metro is a little bit diverse. You see different requirements that you have to adapt to."

Sten Nordell, Transmode

"We are getting requests for this and we think 2012 is when people are going to put in a low number of [100Gbps] links into the metro," says Sten Nordell, CTO at Transmode.

The 100Gbps requirements Transmode is seeing include connecting data centres over various distances. The data centres can be close - tens of kilometers - or hundreds of kilometers apart.

"They [data centre operators] want to get more capacity over longer distances over the fibre they have rented," says Nordell. "That is why we are going down the standards path of coherent technology that gives you that boost in power and distance."

Nordell says that customers typically only want one or two 100Gbps light paths to expand fibre capacity or to connect IP routers over a link already carrying multiple 10Gbps light paths. "Metro is a little bit diverse," he says. "You see different requirements that you have to adapt to."

Rack system approach

Transmode has adopted a stackable approach to its 100Gbps TM-series of chassis. The TM-2000 is a 4U-high dual 100Gbps rack that implements transponder, muxponder or regeneration functions. "We have borrowed from Ethernet switches - you add as you grow," says Nordell.

Up to four TM-2000 are used with one TM-301 or TM-3000 master rack, with the architecture supporting up to 80, 100Gbps wavelengths overall.

"If you have too many ROADMs in the way it is going to hurt you. We have seen that with 40 Gig."

The system also uses daughter boards that support various client-side interfaces while keeping the 100Gbps line-side interface - the most expensive system component - intact. "You can install a muxponder of 10x10Gig modules,” says Nordell. "When an IP router upgrades to a 100 Gig interface, you take out the daughter board and put in a 100 Gig transponder."

Transmode will offer two line-side coherent options, with a reach of 750km or 1,500km. "We want to make sure that customers' metro and long-haul requirements will be covered," says Nordell.

The reach of various 100Gbps technologies for the metro edge, core and regional networks. Source: Gazettabyte

The company chose coherent technology because it is an industry-backed standard. "We can benefit from coherent technology," he says. "If the industry aligns, the volumes of the components come down in price."

Coherent also simplifies the setting up and commissioning of agile photonic networks, especially as more ROADMs are introduced in the metro. "Coherent will help simplify this. All the others are more complex," he says. "Beforehand metro was more point-to-point, now we are seeing more flexibility."

Transmode recently announced it is supplying its systems to Virgin Mobile for mobile backhaul. "That is a metro network with all ROADMs in it," says Nordell. Such networks support multiple paths and that translates to a need for greater reach. "The power budget we need to have in the metro is going up a little bit."

Direct-detection technology was considered by Transmode but it chose coherent as it gives customers a better networking design capability.

Direct detection is also not as spectrally efficient as coherent: 200GHz or 100GHz-wide channels for a 100Gbps signal rather than coherent's 50GHz. "If you have too many ROADMs in the way it is going to hurt you," says Nordell. "We have seen that with 40 Gig."
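The channel widths quoted above translate directly into spectral efficiency, the bit rate carried per unit of optical spectrum. The short Python sketch below only restates that arithmetic; the function name is illustrative and the figures are the ones given in the text.

```python
def spectral_efficiency(bit_rate_gbps, channel_width_ghz):
    """Return spectral efficiency in bit/s/Hz (Gbps divided by GHz)."""
    return bit_rate_gbps / channel_width_ghz


# Channel widths for a 100Gbps signal, as quoted in the article.
options = [
    ("coherent, 50GHz channel", 50),
    ("direct detection, 100GHz channel", 100),
    ("direct detection, 200GHz channel", 200),
]

for label, width_ghz in options:
    efficiency = spectral_efficiency(100, width_ghz)
    print(f"{label}: {efficiency:.1f} bit/s/Hz")
```

On these figures a coherent channel carries two to four times as much traffic in the same slice of spectrum as a direct-detection channel.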

The TM-2000 rack will begin testing in customers' networks at the start of 2012, with limited availability from mid-2012. The platform and daughter boards will be available in volume by year-end 2012.

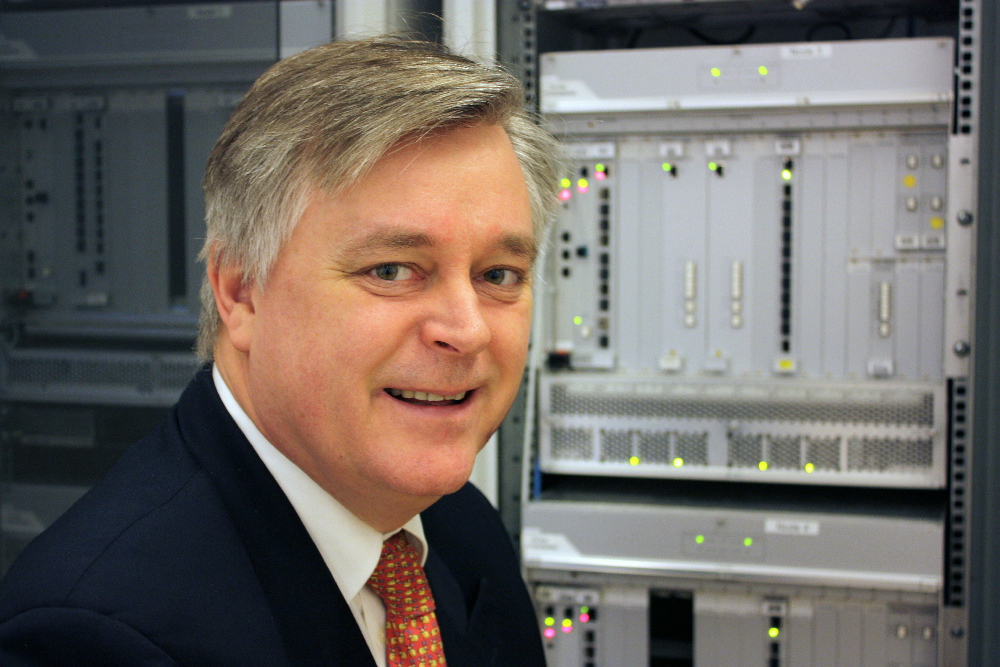

Intelligent networking: Q&A with Alcatel-Lucent's CTO

Alcatel-Lucent's corporate CTO, Marcus Weldon, in a Q&A with Gazettabyte. Here, in Part 1, he talks about the future of the network, why developing in-house ASICs is important and why Bell Labs is researching quantum computing.

Marcus Weldon (left) with Jonathan Segel, executive director in the corporate CTO Group, holding the lightRadio cube. Photo: Denise Panyik-Dale

Q: The last decade has seen the emergence of Asian Pacific players. In Asia, engineers’ wages are lower while the scale of R&D there is hugely impressive. How is Alcatel-Lucent, active across a broad range of telecom segments, ensuring it remains competitive?

A: Obviously we have a Chinese presence ourselves and also in India. It varies by division but probably half of our workforce in R&D is in what you would consider a low-cost country. We are already heavily present in those areas and that speaks to the wage issue.

But we have decided to use the best global talent. This has been a trait of Bell Labs in particular but also of the company. We believe one of our strengths is the global nature of our R&D. We have educational disciplines from different countries, and different expertise and engineering foci etc. Some of the Eastern European nations are very strong in maths, engineering and device design. So if you combine the best of those with the entrepreneurship of the US, you end up with a very strong mix of an R&D population that allows for the greatest degree of innovation.

We have no intention to go further towards a low-cost country model. There was a tendency for that a couple of years ago but we have pulled back as we found that we were losing our innovation potential.

We are happy with the mix we have even though the average salary is higher as a result. And if you take government subsidies into account in European nations, you can get almost the same rate for a European engineer as for a Chinese engineer, as far as Alcatel-Lucent is concerned.

One more thing, Chinese university students, interestingly, work so hard up to getting into university that university is a period where they actually slack off. There are several articles in the media about this. The four years that students spend in university, away from home for the first time, they tend to relax.

Chinese companies were complaining that the quality of engineers out of university was ever decreasing because of what was essentially a slacker generation, they were arguing, of overworked high-school students that relaxed at college. Chinese companies found that they had to retrain these people once employed to bring them to the level needed.

So that is another small effect which you could argue is a benefit of not being in China for some of our R&D.

Alcatel-Lucent's Bell Labs: Can you spotlight noteworthy examples of research work being done?

Certainly the lightRadio cube stuff is pure Bell Labs. The adaptive antenna array design, to give you an example, was done between the US - Bell Labs' Murray Hill - and Stuttgart, so two non-Asian sites at Bell Labs involved in the innovations. These are wideband designs that can operate at any frequencies and are technology agnostic so they can operate for GSM, 3G and LTE (Long Term Evolution).

"We believe that next-generation network intelligence, 10-15 years from now, might rely on quantum computing"

The designs can also form beams so you can be very power-efficient. Power efficiency in the antenna is great as you want to put the power where it is needed and not just have omni (directional) as the default power distribution. You want to form beams where capacity is needed.

That is clearly a big part of what Bell Labs has been focussing on in the wireless domain as well as all the overlaying technologies that allow you to do beam-forming. The power amplifier efficiency, that is another way you lose power and you operate at a more costly operational expense. The magic inside that is another focus of Bell Labs on wireless.

In optics, it is moving from 100 Gig to 400 Gig coherent. We are one of the early innovators in 100 Gig coherent and we are now moving forward to higher-order modulation and 400 Gig.

On the DSL side it is the vectoring/crosstalk cancellation work where we have developed our own ASIC because the market could not meet the need we had. The algorithms ended up producing a component that will be in the first release of our products to maintain a market advantage.

We do see a need for some specialised devices like the FlexPath FP3 network processor, the IPTV product, the OTN (Optical Transport Network) switch that is at the heart of our optical products is our own ASIC, and the vectoring/ crosstalk cancellation engine in our DSL products. Those are the innovations Bell Labs comes up with and very often they lead to our portfolio innovations.

There is also a lot of novel stuff like quantum computing that is on the fringes of what people think telecoms is going to leverage but we are still active in some of those forward-looking disciplines.

We have quite a few researchers working on quantum computing, leveraging some of the material expertise that we have to fabricate novel designs in our lab and then create little quantum computing structures.

Why would quantum computing be useful in telecom?

It is very good for parsing and pattern matching. So when you are doing complex searches or analyses, then quantum computing comes to the fore.

We do believe there will be processing that will benefit from quantum computing constructs to make decisions in ever-increasingly intelligent networks. Quantum computing has certain advantages in terms of its ability to recognise complex states and do complex calculations. We believe that next-generation network intelligence, 10-15 years from now, might rely on quantum computing.

We don't have a clear application in mind other than we believe it is a very important space that we need to be pioneering.

"Operators realise that their real-estate resource - including down to the central office - is not the burden that it appeared to be a couple of years ago but a tremendous asset"

You wrote a recent blog on the future of the network. You mentioned the idea of the emergence of one network with the melding of wireless and wireline, and that this will halve the total cost of ownership. This is impressive but is it enough?

The half number relates to the lightRadio architecture. There are many ingredients in it. The most notable is that traffic growth is accounted for in that halving of the total cost of ownership. We calculated what the likely traffic demand would be going forward: a 30-fold increase in five years.

Based on that growth, we computed the effect of the lightRadio architecture, involving the adaptive antenna arrays, small cells and the move to LTE. If you combine these things and map them onto the traffic demand, the number that comes up is that you can build the network for that traffic demand with those new technologies and still halve the total cost of ownership.

It really is quite a bit more aggressive than it appears because it is taking account of a very significant growth in traffic.
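To give a feel for the 30-fold figure, the following Python sketch converts it into an equivalent yearly growth rate. It assumes, purely for illustration, that traffic compounds at a steady rate each year; the interview does not say how the growth is distributed across the five years.

```python
def annual_growth_factor(total_factor, years):
    """Yearly multiplier that compounds to total_factor over the given years.

    Assumes steady compound growth, which is an illustrative simplification.
    """
    return total_factor ** (1.0 / years)


factor = annual_growth_factor(30, 5)
print(f"Traffic multiplies by about {factor:.2f}x per year")
print(f"Check: {factor:.4f} ** 5 = {factor ** 5:.1f}")
```

Under that assumption, a 30-fold increase in five years means traffic roughly doubling every year.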

Can we build that network and still lower the cost? The answer is yes.

You also say that intelligence will be increasingly distributed in the network, taking advantage of Moore's Law. This raises two questions. First, when does it make sense to make your own ASICs?

When I say ASICs I include FPGAs. FPGAs are your own design just on programmable silicon and normally you evolve that to an ASIC design once you get to the right volumes.

There is a thing called an NRE (non-recurring engineering) cost, a non-refundable engineering cost to produce an ASIC in a fab. So you have to have a certain volume that makes it worthwhile to produce that ASIC, rather than keeping it in an FPGA which is a more expensive component because it is programmable and has excess logic. On the other hand, there is economics that says an FPGA is the right way for sub-10,000 volumes per annum whereas for millions of parts you would do an ASIC.
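The trade-off described here can be sketched as a simple break-even calculation: the ASIC carries a one-off NRE charge but a lower unit price, so it only pays off above a certain volume. The Python below is a minimal illustration; every cost figure in it is hypothetical and chosen only to make the arithmetic easy to follow, not taken from Alcatel-Lucent.

```python
def total_cost(nre, unit_cost, volume):
    """One-off NRE plus per-part cost for a given number of parts."""
    return nre + unit_cost * volume


def break_even_volume(asic_nre, asic_unit_cost, fpga_unit_cost):
    """Volume at which the ASIC's NRE is repaid by its cheaper unit cost."""
    if fpga_unit_cost <= asic_unit_cost:
        raise ValueError("the ASIC must be cheaper per part to ever break even")
    return asic_nre / (fpga_unit_cost - asic_unit_cost)


# Hypothetical figures, for illustration only.
ASIC_NRE = 2_000_000   # one-off cost of taking the design to a fab
ASIC_UNIT_COST = 50    # cost per ASIC
FPGA_UNIT_COST = 250   # cost per FPGA; no NRE is assumed for the FPGA

volume = break_even_volume(ASIC_NRE, ASIC_UNIT_COST, FPGA_UNIT_COST)
print(f"Break-even at {volume:,.0f} parts")

for parts in (5_000, 1_000_000):
    fpga = total_cost(0, FPGA_UNIT_COST, parts)
    asic = total_cost(ASIC_NRE, ASIC_UNIT_COST, parts)
    cheaper = "FPGA" if fpga < asic else "ASIC"
    print(f"{parts:>9,} parts: FPGA ${fpga:,} vs ASIC ${asic:,} -> {cheaper}")
```

With these made-up numbers the FPGA wins at a few thousand parts and the ASIC wins comfortably at a million. The sketch works on total lifetime volume and ignores the power and performance considerations that also drive the decision.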

We work on both those types of designs. And generally, and I think even Huawei would agree with us, a lot of the early innovation is done in FPGAs because you are still playing with the feature set.

Photo: Denise Panyik-Dale

Often there is no standard at that point, there may be preliminary work that is ongoing, so you do the initial innovation pre-standard using FPGAs. You use a DSP or FPGA that can implement a brand new function that no one has thought of, and that is what Bell Labs will do. Then, as it starts becoming of interest to the standard bodies, you have it implemented in a way that tries to follow what the standard will be, and you stay in a FPGA for that process. At some point later, you take a bet that the functionality is fixed and the volume will be high enough, and you move to an ASIC.

So it is fairly commonplace for novel technology to be implemented by the [system] vendors. And only in the end stage when it has become commoditised to move to commercial silicon, meaning a Broadcom or a Marvell.

Also around the novel components we produce there are a whole host of commercial silicon components from Texas Instruments, Broadcom, Marvell, Vitesse and all those others. So we focus on the components where the magic is, where innovation is still high and where you can't produce the same performance from a commercial part. That is where we produce our own FPGAs and ASICs.

Is this trend becoming more prevalent? And if so, is it because of the increasing distribution of intelligence in the network?

I think it is but only partly because of intelligence. The other part is speed. We are reaching the real edges of processing speed and generally the commercial parts are not at that nanometer of [CMOS process] technology that can keep up.

To give an example, our FlexPath processor for the router product we have is on 40nm technology. Generally ASICs are a technology generation behind FPGAs. To get the power footprint and the packet-processing performance we need, you can't do that with commercial components. You can do it in a very high-end FPGA but those devices are generally very expensive because they have extremely low yields. They can cost hundreds or thousands of dollars.

The tendency is to use FPGAs for the initial design but very quickly move to an ASIC because those [FPGA] parts are so rare and expensive; nor do they have the power footprint that you want. So if you are running at very high speeds - 100Gbps, 400Gbps - you run very hot, it is a very costly part and you quickly move to an ASIC.

Because of intelligence [in the network] we need to be making our own parts but again you can implement intelligence in FPGAs. The drive to ASICs is due to power footprint, performance at very high speeds and to some extent protection of intellectual property.

FPGAs can be reverse-engineered so there is some trend to use ASICs to protect against loss of intellectual property to less salubrious members of the industry.

Second, how will intelligence impact the photonic layer in particular?

You have all these dimensions you can trade off each other. There are things like flexible bit-rate optics, flexible modulation schemes to accommodate that, there is the intelligence of soft-decision FEC (forward error correction) where you are squeezing more out of a channel by not just making a hard decision - is it a '0' or a '1' - but giving a hint to the decoder as to whether it is likely to be a '0' or a '1'. And that improves your signal-to-noise ratio which allows you to go further with a given optics.
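The difference between a hard and a soft decision can be shown with a toy example. The Python sketch below uses a three-times repetition code, chosen only because it is the simplest possible case; the FEC used in real 100Gbps optics is far stronger, and the sample values and signal mapping here are assumptions made for illustration.

```python
def hard_decision_decode(samples):
    """Slice each sample to a bit first, then take a majority vote."""
    bits = [0 if sample >= 0 else 1 for sample in samples]
    return 1 if sum(bits) > len(bits) / 2 else 0


def soft_decision_decode(samples):
    """Keep the analogue values as confidence 'hints' and decide on their sum."""
    return 0 if sum(samples) >= 0 else 1


# Assumed mapping: bit 0 is sent as +1.0 and bit 1 as -1.0.
# Suppose a '1' was sent three times (-1, -1, -1) and noise pushed two of
# the samples just above zero while the third stayed strongly negative.
received = [0.1, 0.2, -1.5]

print("hard decision:", hard_decision_decode(received))
print("soft decision:", soft_decision_decode(received))
```

The hard-decision decoder sees two weak '0's outvote one strong '1' and gets the bit wrong. The soft-decision decoder weighs how confident each sample is and recovers the '1'.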

So you have several intelligent elements that you are going to co-ordinate to have an adaptive optical layer.

I do think that is the largest area.

Another area is smart or next-generation ROADMs - we call it colourless, contentionless, and directionless.

There is a sense that as you start distributing resources in the network - cacheing resources and computing resources - there will be far more meshing in the metro network. There will be a need to route traffic optically to locally positioned resources - highly distributed data centre resources - and so there will be more photonic switching of traffic. Think of it as photonic offload to a local resource.

We are increasingly seeing operators realise that their real-estate resource - including down to the central office - is not the burden that it appeared to be a couple of years ago but a tremendous asset if you want to operate a private cloud infrastructure and offer it as a service, as you are closer to the user with lower latency and more guaranteed performance.

So if you think about that infrastructure, with highly distributed processing resources and offloading that at the photonic layer, essentially you can easily recognise that traffic needs to go to that location. You can argue that there will be more photonic switching at the edge because you don't need to route that traffic, it is going to one destination only.

This is an extension of the whole idea of converged backbone architecture we have, with interworking between the IP and optical domains, you don't route traffic that you don't need to route. If you know it is going to a peering point, you can keep that traffic in the optical domain and not send it up through the routing core and have it constantly routed when you know from the start where it is going.

So as you distribute computing and cacheing resources, you would offload in the optical layer rather than attempt to packet process everything.

There are smarts at that level too - photonic switching - as well as the intelligent photonic layer.

For the second part of the Q&A, click here

Is optical components becoming a buyer's market?

"An organisation's gross margins ride on these new products"

Daryl Inniss, Ovum Components.

The global optical component market was down 2% in the second quarter of 2011 at US $1.55 billion, according to Ovum.

The good news is that the market research company is forecasting that modest growth will resume this quarter now that the build-up in component inventory that led to the market contraction has largely been worked through.

But Ovum is warning that there are signs that the continued weak market conditions and fierce competition could lead to sharp price declines even for newer, high-valued products. "An organisation's gross margins ride on these new products," says Daryl Inniss, practice leader, Ovum Components.

Oclaro's CEO on a recent earnings call said he was being asked for price concessions on 40Gbps products. Ovum also says the ROADM and tunable laser XFPs markets are becoming more crowded and competitive.

Inniss stresses that there is no evidence that companies are cutting prices to gain an edge but while he expects volumes will grow, intense pricing pressure should now be expected.

LightCounting points out that the slowdown in sales of optical component and modules in early 2011 has been limited to products that did very well in 2010 or which had long lead times, like wavelength-selective switches for ROADMs and 40Gbps modules. It says there is little, if any, excess inventory of components accumulated across the broader market.

"The telecom transceiver market remained steady in Q1 2011, but it declined further in Q2 mostly due to lower sales of 40Gig client-side modules," says Vladimir Kozlov, CEO of LightCounting. "We expect that by the end of this year, the telecom market segment will be strong again."

Best in a decade

The second quarter market dip follows a period where the optical components industry experienced its strongest yearly growth for a decade. The market reached US $6 billion for the year ending first quarter 2011 - a first since 2001.

“So long as network expansion keeps up with traffic, we are looking at sustainable growth”

Vladimir Kozlov, LightCounting

The six quarters of consecutive market growth up to the second quarter was due partly to the overall health of the telecom industry. The service provider industry - wireless and wireline - grew 6% year-on-year between 2Q10 and 1Q11, to reach $1.82 trillion. In turn, the equipment market, mainly telecom vendors but including the likes of Brocade, grew 15% to $41.4 billion.

Ovum attributes the 28% growth in optical components between 2Q10 and 1Q11 to strong growth in the fibre-to-the-x (FTTx) market as well as new revenues entering the market from datacom players. A third factor was optical equipment vendors over-ordering long lead-time items – such as ROADMs – to secure supply.

“ROADMs did grow nicely but if you look at wavelength-selective switches, it is not such a big market,” says Kozlov. The market research firm says the wavelength-selective switch market was $280 million in 2010.

LightCounting says 10 Gigabit SFP+ optical transceivers were a market highlight in 2010, with volume shipments tripling. Ethernet SFP+ sales alone reached $180 million in 2010, and will grow to $250 million this year.

“The optical component market grew 36% in 2010, and in 2011 we’re projecting it will grow 7%,” says Inniss.

But competition is intense. Finisar may be the market leader but only 4% market share separates the players in second through to sixth place, says Ovum. “It’s a very competitive market and there is no breakaway here,” says Inniss.

Another challenge is the emergence of the Chinese optical component players. The large-scale deployment of FTTx being undertaken by the main three Chinese operators means that there is a huge market opportunity for local optical component and module players. The Chinese market also accounts for half of all 40 Gigabit-per-second shipments, according to Infonetics Research.

“Looking at the western suppliers, everyone is reporting slowdowns and drops in the second quarter [of 2011],” says Kozlov. “Yet from the data we are getting from the Chinese optical component players, they grew 35% in 2010 and are on track for 30% growth this year.”

Another challenge is for firms to fund sufficient R&D. Share prices took a severe hit after the companies issued warnings about second-quarter sales. “The entire optical component market is depressed because of the localised correction,” says Inniss. “It will still grow but because it is so much smaller than 2010, capital markets are bashing the companies.”

Since the stock market is an important source of investment, it may take several years for the market to recover the share price levels at the start of 2011. “It won’t stop investment in technology but there is going to be real hard eyes on each decision that is made,” says Inniss.

The main challenge facing optical component players is not so much technical issues but more the requirement to continually decrease costs. This is not new but neither is it going away, says Inniss.

Positive outlook

Yet the analysts expect market growth to continue.

Inniss points to the growing role of optics for short-distance interfaces: “The I/O (input-output) bandwidth requirements are sufficiently high, whether it is the backplane or chip-to-chip connections, that the market realisation is that optics will play a role.”

Ovum also highlights consumer market developments such as the USB 3.0 interface which will drive the market for active optical cables. “It [the consumer market] is not going to happen tomorrow - meaning 2012 - but it is something that is coming and has the potential to transform the industry,” says Inniss.

“Companies such as Finisar and Avago [Technologies] are becoming more assertive in enforcing their intellectual rights,” says Kozlov. This is a positive development that has been missing in the past: “Protecting your intellectual property ultimately helps you become profitable,” he says.

LightCounting also highlights the need for network investment to keep pace with traffic growth. "So long as network expansion keeps up with traffic, we are looking at sustainable growth,” says Kozlov. See Plotting transceiver shipments versus traffic growth.

This article is based on a piece that appeared in the ECOC 2011 exhibition guide.

MultiPhy boosts 100 Gig direct-detection using digital signal processing

The MP1100Q chip is being aimed at two cost-conscious metro networking requirements: 100 Gigabit point-to-point links and dense wavelength-division multiplexing (DWDM) metro networks.

The MP1100Q as part of a 100 Gig CFP module design. Source: MultiPhy

The 100 Gigabit market is still in its infancy and the technology has so far been used to carry traffic across operators’ core networks. Now 100 Gigabit metro applications are emerging.

Data centre operators want short links that go beyond the IEEE-specified 10km (100GBASE-LR4) and 40km (100GBASE-ER4) reach interfaces, while enterprises are looking to 100 Gigabit-per-second (Gbps) DWDM solutions to boost the capacity and reach of their rented fibre. Existing 100Gbps coherent technologies, designed for long-haul, are too expensive and bulky for the metro.

“There is long-haul and the [IEEE] client interfaces and a huge gap in between,” says Avishay Mor, vice president of product management at MultiPhy.

It is this metro 'gap' that MultiPhy is targeting with its MP1100Q chip. And the fabless chip company's announcement is one of several that have been made in recent weeks.

ADVA Optical Networking has launched a 100Gbps metro line card that uses a direct-detection CFP, while Transmode has detailed a 100Gbps coherent design tailored for the metro. The 10x10 MSA announced in August a 10km interface as well as a 40km WDM design alongside its existing 10x10Gbps MSA that has a 2km reach.

MultiPhy's MP1100Q IC will enable two CFP module designs: a point-to-point module to connect data centres with a reach of up to 80km, and a DWDM design for metro core and regional networks with a reach up to 800km.

"MLSE is recognised as the best solution for mitigating inter-symbol interference."

Design details

The MP1100Q uses a 4x28Gbps direct-detection design, the same approach announced by ADVA Optical Networking for its 100Gbps metro card. But MultiPhy claims that the 100Gbps DWDM CFP module will squeeze the four bands that make up the 100Gbps signal into a 100GHz-wide channel rather than 200GHz, while its IC implements the maximum likelihood sequence estimation (MLSE) algorithm to achieve the 800km reach.

The four optical channels received by a CFP are converted to electrical signals using four receiver optical subassemblies (ROSAs) and sampled using the MP1100Q’s four analogue-to-digital (a/d) converters operating at 28Gbps.

The CFP design using MultiPhy’s chip need only use 10Gbps opto-electronics for the transmit and receive paths. The result is a 100Gbps module with a cost structure based on 4x10Gbps optics.

The lower bill-of-materials impacts performance, however. “When you over-drive these 10Gbps opto-electronics - on the transmit and the receive side - you create what is called inter-symbol interference,” says Neal Neslusan, vice president of sales and marketing at MultiPhy.

Inter-symbol interference is an unwanted effect where the energy of a transmitted bit leaks into neighbouring bits. This increases the bit-error rate and makes the detector's task harder. "The way that we get around it is using MLSE, recognised as the best solution for mitigating inter-symbol interference," says Neslusan.

Unwanted channel effects introduced by the fibre, like chromatic dispersion, also induce inter-symbol interference and are also countered by the MLSE algorithm on the MP1100Q.
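To make the idea concrete, the following is a minimal Python sketch of MLSE implemented with the Viterbi algorithm for a toy two-tap channel. It is an illustration under stated assumptions, not MultiPhy's implementation: the tap values (1.0, 0.6), the on-off-keyed bits, the idle starting state and all function names are hypothetical choices made for the example.

```python
import random

# Assumed toy channel: each received sample is the current bit plus
# 60% of the previous bit (inter-symbol interference). Illustrative only.
TAPS = (1.0, 0.6)


def channel(bits, taps=TAPS, noise_std=0.0, seed=0):
    """Pass on-off-keyed bits through a two-tap ISI channel."""
    rng = random.Random(seed)
    samples, prev = [], 0  # the line is assumed idle (0) before the first bit
    for bit in bits:
        noise = rng.gauss(0.0, noise_std) if noise_std > 0 else 0.0
        samples.append(taps[0] * bit + taps[1] * prev + noise)
        prev = bit
    return samples


def threshold_detect(samples, level=0.5):
    """Bit-by-bit detector that ignores ISI."""
    return [1 if s > level else 0 for s in samples]


def mlse_viterbi(samples, taps=TAPS):
    """Return the bit sequence with the smallest squared error to samples.

    The trellis state is the previous bit, so there are two states.
    """
    inf = float("inf")
    cost = [0.0, inf]   # best metric for paths ending in bit 0 / bit 1
    paths = [[], []]    # surviving path for each state
    for r in samples:
        new_cost = [inf, inf]
        new_paths = [[], []]
        for prev in (0, 1):
            if cost[prev] == inf:
                continue  # state not reachable yet
            for cur in (0, 1):
                expected = taps[0] * cur + taps[1] * prev
                metric = cost[prev] + (r - expected) ** 2
                if metric < new_cost[cur]:
                    new_cost[cur] = metric
                    new_paths[cur] = paths[prev] + [cur]
        cost, paths = new_cost, new_paths
    return paths[0] if cost[0] <= cost[1] else paths[1]


sent = [1, 0, 1, 1, 0, 0, 1, 0]
received = channel(sent)
print("threshold:", threshold_detect(received))
print("MLSE     :", mlse_viterbi(received))
```

In this noiseless toy case the simple threshold detector misreads every 0 that follows a 1, because the leaked energy (0.6) exceeds the 0.5 decision level, while the sequence estimator recovers the sent bits by comparing whole candidate sequences against the received samples.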

MultiPhy is proposing two CFP designs for its chip. One is based on on-off-keying modulation to achieve 80km point-to-point links and requires a 200GHz channel to accommodate the 100Gbps signal. The second uses optical duo-binary modulation to achieve the longer reach and the more spectrally efficient 100GHz spacing.
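The spectral-efficiency difference between the two designs can be worked through with a short Python sketch. The 100Gbps rate and the 200GHz and 100GHz channel widths come from the article; the 4,000GHz figure for usable fibre spectrum is a round-number assumption made purely for illustration, as are the function names.

```python
def spectral_efficiency(bit_rate_gbps, channel_ghz):
    """Bits per second carried per hertz of optical spectrum."""
    return bit_rate_gbps / channel_ghz


def channels_in_band(band_ghz, channel_ghz):
    """Whole channels that fit in a band of the given width."""
    return band_ghz // channel_ghz


ASSUMED_BAND_GHZ = 4000  # hypothetical usable spectrum, not from the article

for name, width_ghz in (("on-off keying", 200), ("optical duo-binary", 100)):
    eff = spectral_efficiency(100, width_ghz)
    count = channels_in_band(ASSUMED_BAND_GHZ, width_ghz)
    print(f"{name}: {eff} bit/s/Hz, {count} channels in the assumed band")
```

Halving the channel width doubles the spectral efficiency, from 0.5 to 1 bit/s/Hz, and so doubles the number of 100Gbps channels that fit in a given amount of spectrum.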

The company says the resulting direct-detection CFP using its IC will cost some US $10,000 compared to an estimated $50,000 for a coherent design. In turn the 100G metro CFP’s power consumption is estimated at 24W whereas a coherent design consumes 70W.
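A few lines of Python put those estimates side by side. The cost and power figures are the estimates quoted above; the per-Gbps normalisation and the variable names are illustrative choices for this sketch.

```python
# Estimates quoted in the article for a 100Gbps CFP module.
direct_detection = {"cost_usd": 10_000, "power_w": 24}
coherent = {"cost_usd": 50_000, "power_w": 70}

RATE_GBPS = 100

cost_ratio = coherent["cost_usd"] / direct_detection["cost_usd"]
power_ratio = coherent["power_w"] / direct_detection["power_w"]

print(f"coherent costs {cost_ratio:.1f}x as much")
print(f"coherent draws {power_ratio:.1f}x the power")

for name, module in (("direct-detection", direct_detection), ("coherent", coherent)):
    usd_per_gbps = module["cost_usd"] / RATE_GBPS
    watts_per_gbps = module["power_w"] / RATE_GBPS
    print(f"{name}: ${usd_per_gbps:.0f} and {watts_per_gbps:.2f}W per Gbps")
```

On these estimates the coherent design costs five times as much and draws roughly three times the power of the direct-detection module.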

MP1100Q samples have been with the company since June, says Mor. First samples will be with customers in the fourth quarter of this year, with general availability starting in early 2012.

If all goes to plan, first CFP module designs using the chip will appear in the second half of 2012, claims MultiPhy.