2012: The year of 100 Gigabit transponders

“The world is moving to coherent, there is no question about that”

Per Hansen, Oclaro

The 100Gbps module expands the company's coherent offerings. Oclaro is already shipping a 40Gbps coherent module. “The world is moving to coherent, there is no question about that,” says Per Hansen, vice president of product marketing, optical networks solutions at Oclaro.

Why is this significant?

Having a selection of 100Gbps long-haul optical modules will aid the uptake of high-capacity links in the network core. Opnext announced in September its OTM-100 100Gbps coherent optical module, in production from April 2012. And at least one other module maker has worked with ADVA Optical Networking to make its 100Gbps module, a non-coherent design.

The 100Gbps coherent optical modules will enable system vendors without their own technology to enter the marketplace. It also presents those system vendors with their own 100Gbps technology - the likes of Alcatel-Lucent, Ciena, Cisco and Huawei - with a dilemma: do they continue to evolve their products or embrace optical modules?

“These system vendors have developed [100Gbps] in-house to have a strategic differentiator," says Hansen. "But with lower volumes you have a higher cost.” The advent of 100Gbps modules diminishes the strategic advantage of in-house technology while enabling system vendors to benefit from cheaper, more broadly available modules, he says.

What has been done

Oclaro is still developing the MI 8000XM module and has yet to reveal the reach performance of the module: “We want to do many more tests before we share,” says Hansen. The module will meet the Optical Internetworking Forum's (OIF) 100Gbps module maximum power consumption limit of 80W, he says.

The OIF 100 Gigabit module architecture

The NEL DSP chip is the same device that Opnext is using for its 100Gbps module. “A partnership agreement and sourcing arrangement with NEL allows us to come to market with what we think is a very good product at the right time,” says Hansen.

The DSP uses soft-decision forward error correction. Opnext has said this adds 2-3dB to the optical performance to achieve a reach of 1500-1600km before regeneration.

In 2010 Oclaro announced it had invested US $7.5 million in ClariPhy Communications as part of the chip company's development of its 100Gbps coherent receiver chip, the CL10010. As part of the agreement, Oclaro will get a degree of exclusivity as a module supplier (at least one other module maker will also benefit).

ClariPhy has said that while it will not be first to market with a 100Gbps ASIC, the CL10010 will be a 28nm CMOS second-generation chip design. To be able to enter the market with a 100Gbps module next year, Oclaro adopted NEL's design which exists now.

Next

Hansen says that the MI 8000XM, which uses a lithium niobate modulator, is designed to achieve maximum reach and optical performance. But future 100Gbps modules will be developed that may use other modulator technologies and be optimised in terms of power or size.

Hansen is also in no doubt that the next speed hike after 100Gbps will be 400Gbps. Like 100Gbps, there will be some early-adopter operators that embrace the technology one or two years before the consensus.

Such a development is still several years away, however, since an industry standard for 400Gbps must first be developed, and that is only expected in 2014.

Alcatel-Lucent adds networking to enhance the cloud

Alcatel-Lucent has developed an architecture that addresses the networking aspects of cloud computing. Dubbed CloudBand, the system will enable operators to deliver network-enhanced cloud services to enterprise customers. Operators can also use CloudBand to deliver their own telecom services.

“As far as we know there is no other system that bridges the gap between the network and the cloud"

Dor Skuler, Alcatel-Lucent

Alcatel-Lucent estimates that moving an operator's services to the cloud will reduce networking costs by 10% while speeding up new service introductions.

“As far as we know there is no other system that bridges the gap between the network and the cloud," says Dor Skuler, vice president of cloud solutions at Alcatel-Lucent.

In an Alcatel-Lucent survey of 3,500 IT decision makers, the biggest issue stopping their adoption of cloud computing was performance. Their issues of concern include service level agreements, customer experience, and ensuring low latency and guaranteed bandwidth.

Using CloudBand, a customer uses a portal to set such cloud parameters as the virtual machine to be used, the hypervisor and the operating system. Users can also set networking parameters such as latency, jitter, guaranteed bandwidth and whether a layer two or layer three VPN is used, for example. The user can even define where data is stored if regulation dictates that the data must reside within the country of origin.

Architecture

CloudBand uses an optimisation algorithm developed at Alcatel-Lucent's Bell Labs. The algorithm takes the requested cloud and networking settings and, knowing the underlying topology, works out the best configuration.

“This is a complex equation to optimise,” says Skuler. “All these resources - all different and in different locations - need to be optimised; the network needs to be optimised, I also have the requirements of the applications and I want to optimise it on price.” Moreover, these parameters change over time.
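The trade-off Skuler describes can be illustrated with a toy sketch in Python. This is not Alcatel-Lucent's algorithm, whose details are not given here. The site names, fields, prices and the simple "cheapest feasible site" rule are all invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Site:
    """A hypothetical cloud node, for example one housed in a central office."""
    name: str
    country: str
    latency_ms: float           # assumed network latency to the customer
    free_bandwidth_mbps: float  # assumed spare guaranteed bandwidth
    price_per_hour: float       # assumed cost of hosting the virtual machine


@dataclass
class Request:
    """Hypothetical portal settings for one service."""
    max_latency_ms: float
    min_bandwidth_mbps: float
    required_country: Optional[str] = None  # data-residency rule, if any


def place(request: Request, sites: List[Site]) -> Optional[Site]:
    """Return the cheapest site that meets every constraint, or None."""
    feasible = [
        s for s in sites
        if s.latency_ms <= request.max_latency_ms
        and s.free_bandwidth_mbps >= request.min_bandwidth_mbps
        and (request.required_country is None
             or s.country == request.required_country)
    ]
    return min(feasible, key=lambda s: s.price_per_hour, default=None)


# Three made-up sites: one large core data centre and two smaller nodes.
sites = [
    Site("core-dc", "DE", latency_ms=25.0, free_bandwidth_mbps=10000, price_per_hour=0.08),
    Site("metro-co", "FR", latency_ms=6.0, free_bandwidth_mbps=1000, price_per_hour=0.12),
    Site("edge-co", "FR", latency_ms=3.0, free_bandwidth_mbps=200, price_per_hour=0.20),
]
request = Request(max_latency_ms=10.0, min_bandwidth_mbps=500, required_country="FR")
chosen = place(request, sites)
print(chosen.name if chosen else "no feasible site")
```

In this made-up case the cheapest site is ruled out by latency and data residency, and the closest site by bandwidth, so the middle option is chosen. A real system would also have to cope with the fact that these parameters change over time.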

"We recommend service providers have tiny clouds that look like one logical cloud yet have different attributes"

According to Alcatel-Lucent, operators have an advantage over traditional cloud service providers in owning and being able to optimise their networks for cloud. Operators also have lots of locations - central offices and exchanges - distributed across the network where they can site cloud nodes.

Having such distributed IT resources benefits the end user by having more localised resources even though it makes the optimisation task of the CloudBand algorithm more complicated. “We recommend service providers have tiny clouds that look like one logical cloud yet have different attributes,” says Skuler.

At the heart of the architecture is the management and orchestration system (See diagram). The system takes the output of the optimisation algorithm, and provisions the cloud resources - moving the virtual machine to a particular site, turning it on, assuring its performance, checking the service level agreement and creating the required billing record.

Once assigned a service is fixed, but in future CloudBand will adapt existing services as new services are set up to ensure continual cloud optimisation.

Benefits

"Not every [telecom] service can be virtualised but overall we believe we can shave 10% out of the cost of the network,” says Skuler.

Alcatel-Lucent has already implemented its application store software, content management applications and digital media for use in the cloud. Skuler says video, IP Multimedia Subsystem (IMS) and the applications that run on the IMS architecture can also be moved to the cloud, while Alcatel-Lucent's lightRadio wireless architecture, announced earlier this year, can pool and virtualise cellular base station resources.

But Skuler says that the real benefit for operators moving services to the cloud is agility: operators will be able to introduce new cloud-based services in days rather than months. This will reduce time-to-revenue and costs while allowing operators to experiment with new services.

CloudBand will be ready for trialling in operators’ labs come January. The system will be available commercially in the first half of 2012.

Next-gen 100 Gigabit short reach optics starts to take shape

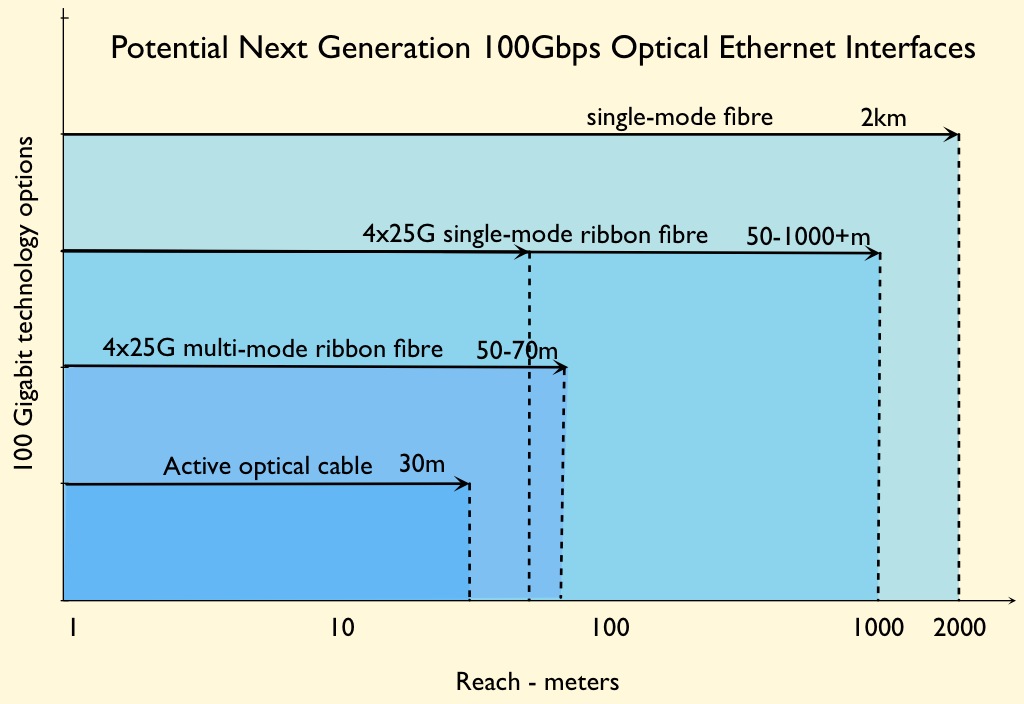

The latest options for 100 Gigabit-per-second (Gbps) interfaces are beginning to take shape following a meeting of the IEEE 802.3 Next Generation 100Gb/s Optical Ethernet Study Group in November.

The interface options being discussed include:

- A parallel multi-mode fibre using a VCSEL with a reach of 50m to 70m. An active optical cable version with a 30m reach, limited by the desired cable length rather than the technology, using silicon photonics or a VCSEL has also been proposed.

- A parallel single-mode fibre using a 1310nm electro-absorption modulated laser (EML) or silicon photonics with a range of 50m to 1000m+.

- A duplex single-mode fibre, using wavelength division multiplexing (WDM) or pulse-amplitude modulation (PAM), an EML or silicon photonics for a 2km reach.

“I think in the end all will be adopted,” says Marek Tlalka, director of marketing at Luxtera. "Users will be able to choose what is most economical."

Jon Anderson, director of technology programme at Opnext, stresses however that these are proposals.

"No decisions were reached by the Study Group on any of these proposals," he says. “The Study Group is only working towards defining objectives for a next-gen 100 Gigabit Ethernet Optics project.” Agreement on technical solutions is outside the scope of the Study Group.

Anderson says there is a general agreement to define a 4x25Gbps multi-mode fibre optical interface. But the issues of reach and multi-mode fibre type (OM3, OM4) are still being studied.

“The Study Group has not reached any agreement on whether a 100GE short reach single-mode objective should be pursued," says Anderson. “Discussions at this point are on reach, power consumption and relative cost of possible solutions with respect to (the 10km) 100GBASE-LR4."

Best blogs, books and apps of 2011?

Also is there a journal or blog site that you have started to read that you have come to value? Apps can also be included.

In the last year I have started to read Ericsson Business Review which I really like. It has impressive forward-looking articles and great infographics. I also like the Telecom Ramblings blog, a valuable resource.

As for apps, I have discovered Zite, an excellent iPad news aggregator. I also now use two fantastic drawing apps: OmniGraphSketcher for graphs and TouchDraw for diagrams. I have used both for reports and for Gazettabyte. Lastly, there is Book Creator, an ebook-making app.

Please comment and share your thoughts.

Luxtera's 100 Gigabit silicon photonics chip

Luxtera has detailed a 4x28 Gigabit optical transceiver chip. The silicon photonics company is aiming the device at embedded applications such as system backplanes and high-performance computing (HPC). The chip is also being used by Molex for 100 Gigabit active optical cables. Molex bought Luxtera's active optical cable business in January 2011.

“Do I want to invest in a copper backplane for a single generation or do I switch over now to optics and have a future-proof three-generation chassis?”

Marek Tlalka, Luxtera

What has been done

To make the optical transceiver, a distributed-feedback (DFB) laser operating at 1490nm is coupled to the silicon photonics CMOS-based chip. One laser only is required to serve the four individually modulated 28Gbps transmit channels, giving the chip a 112Gbps maximum data rate. There are also four receive channels, each using a germanium-based photo-detector that is grown on-chip.

The DFB is the same laser that Luxtera uses for its 4x10Gbps and 4x14Gbps designs. What has been changed is the Mach-Zehnder waveguide-based modulators that must now operate at 28Gbps, and the electronics amplifiers at the receivers. “The chip [at 5mmx6mm] is pretty much the same size as our 4x10 and 4x14 Gig designs,” says Marek Tlalka, director of marketing at Luxtera.

Source: Luxtera

Luxtera is announcing the 100 Gigabit chip which it is sampling to customers. Molex, for example, will package the chip and the laser to make its active optical cable products. Luxtera will package the transceiver chip and laser in a housing as an OptoPHY, a packaged product it already provides at lower speeds. The company will sell the 100Gbps OptoPHY for embedded applications such as system backplanes and HPC.

Applications

The 100GbE transceiver chip is targeted at next-generation backplane applications as well as active optical cables. And it is enterprise vendors that make switches, routers and blade servers that are considering adopting optical backplanes for their next-generation platforms, says Luxtera.

According to Tlalka, system vendors are moving their backplanes from 15Gbps to 28Gbps: “It is pretty obvious that building an electrical backplane at this data rate will be extremely challenging.”

When vendors design a new chassis, they want it to support three generations of line cards. Even if a system vendor develops a 28Gbps copper-based backplane, it will need to go optical when the backplane data rate increases to 40-50Gbps in 2-3 years’ time and 100Gbps when that speed transition occurs. “Do I want to invest in a copper backplane for a single generation or do I switch over now to optics and have a future-proof three-generation chassis?” says Tlalka.

Exascale computers, planned for the second half of the decade and 1000x more powerful than existing supercomputers, are another application area. Here there is a need for 25-28Gbps links between chips, says Tlalka.

System platforms and HPC are ideal candidates for the packaged transceiver chip but longer term Luxtera is eyeing the move of optics inside chips such as ASICs. Such system-on-chip optical integration could include Ethernet switch ICs (See example switch ICs from Broadcom and Intel (Fulcrum)) and network interface cards. Another example highlighted by Tlalka is CPU-memory interfaces.

However such applications are at least five years away and there are significant hurdles to be overcome. These include resolving the business model of such designs as well as the technical challenges of coupling the ASIC to the optics and the associated mechanical design.

Standards

Luxtera's 100Gbps transceiver chip supports a variety of standards.

Operating at 25Gbps per channel, the chip supports 100GbE and Enhanced Data Rate (EDR) Infiniband. The ability to go to 28Gbps per channel means that the transceiver can also support the OTN (optical transport network) standard as well as proprietary backplane protocols that add overhead to the basic 25Gbps data rate.
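The arithmetic behind these figures can be checked with a few lines of Python. The sketch uses only the nominal per-channel rates quoted above; the variable names are illustrative.

```python
LANES = 4
BASE_RATE_GBPS = 25  # nominal per-channel rate quoted for 100GbE and EDR Infiniband
MAX_RATE_GBPS = 28   # maximum per-channel rate quoted for the chip

base_total = LANES * BASE_RATE_GBPS
max_total = LANES * MAX_RATE_GBPS
# Extra capacity per channel available for protocol overhead, as a percentage.
headroom_pct = 100 * (MAX_RATE_GBPS - BASE_RATE_GBPS) / BASE_RATE_GBPS

print(f"{base_total}Gbps nominal, {max_total}Gbps maximum, {headroom_pct:.0f}% headroom")
```

Running at 28Gbps rather than 25Gbps gives each channel 12% headroom, which is the margin available to OTN framing or a proprietary backplane protocol.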

In addition the chip supports the OIF's short reach and very short reach interfaces that define the interface between an ASIC and the optical module.

The chip is also suited for some of the IEEE Next Generation 100Gbps Optical Ethernet Study Group standards now in development. These interfaces will cover a reach of 30m to 2km.

400GbE and HDR Infiniband

Luxtera says that it is working on different channel 'flavours' of 100G. It is also following developments such as Infiniband High Data Rate (HDR) and 400GbE.

HDR will use 40Gbps channels while there is still an industry debate as to whether 400GbE will be implemented using ten channels, each at 40Gbps, or as a 16x25Gbps design.

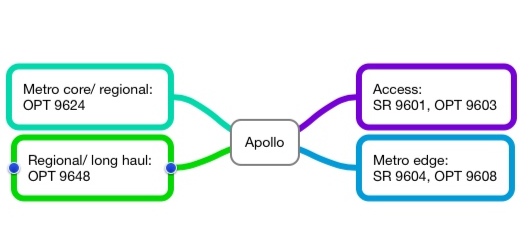

ECI Telecom's Apollo mission

The privately-owned system vendor has launched Apollo, a family of what it calls optimised multi-layer transport platforms.

Event

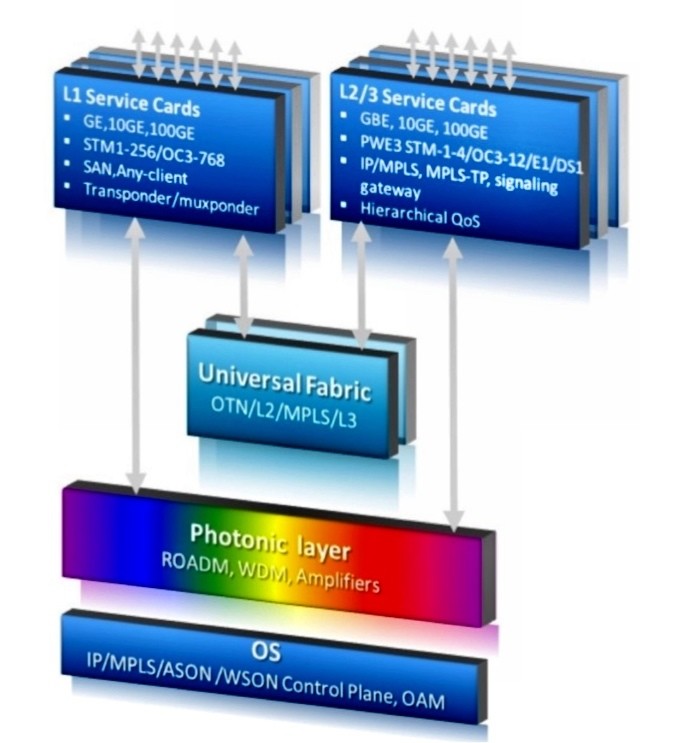

ECI Telecom has launched a family of platforms that combines optical transmission, Ethernet and optical transport network (OTN) switching and IP routing.

The 9600 series platforms, dubbed Apollo, combine the functionality of what until now has required a packet-optical transport system (P-OTS) and a carrier Ethernet switch router (CESR).

The Apollo 9600 series architecture. Source: ECI Telecom

ECI refers to the capabilities of such a combined platform as optimised multi-layer transport (OMLT). Analysts view the platform as a natural evolution of P-OTS rather than a new category of system.

Why is it important?

ECI's Apollo 9600 series is the first to combine dense wavelength-division multiplexing (DWDM) with carrier Ethernet switch routing. It is also one of the first platforms that bring OTN switching to the metro; until now OTN switching has been confined to the network core.

Packet optical transport has a shortfall, namely its limited layer 2 capabilities, says ECI. Apollo addresses this and also adds layer 3 routing, another first.

“In the buying cycle, operators start with optical networking and add carrier Ethernet switch routing,” says Oren Marmur, head of optical networking & CESR lines of Business at ECI Telecom. Now with Apollo, operators can simplify their networks: they don't have to provision, or maintain, two separate platforms.

ECI claims the Apollo platform, with 100 Gigabit-per-second (Gbps) transport and hybrid Ethernet and OTN cards, more than halves the equipment cost compared to using separate ROADM and CESR platforms. The company also says such an Apollo configuration reduces rack space by 38% and power consumption by some 60%.

What has been done

ECI has announced six Apollo platforms that span the access, metro and core networks. The platforms include the SR 9601 and OPT 9603 for metro access and the metro edge SR 9604 and OPT 9608 with four and eight input-output (I/O) cards respectively that support WDM or 100Gbps Ethernet MPLS packet switching. The final two platforms are the OPT 9624 for metro core and the OPT 9648 for regional and long haul, and both can accommodate a terabit universal switch.

Overall Apollo can support 44 or 88 light paths at 10, 40 and 100Gbps, 2-degree and multi-degree colourless, directionless and contentionless ROADMs, OTN and Ethernet switching, and IP/ MPLS and MPLS-TP. "MPLS-TP versus IP/ MPLS is almost a religious issue yet both are valid," says Marmur, who adds that at 40 Gig, ECI will use coherent and direct detection technologies but at 100 Gig it will use only coherent.

The universal fabric of the OPT 9624 and 9648 is cell based - ODUs and packets, not lower-order SONET/SDH traffic. If an operator has any significant amount of SONET/SDH traffic, ECI’s XDM platform or another aggregation box is needed.

The platforms can be configured as CESR platforms, OTN switches, optical transport platforms or combinations of the three.

Analysis

Gazettabyte asked Sterling Perrin, senior analyst at Heavy Reading; Rick Talbot, senior analyst, optical infrastructure at Current Analysis and Dana Cooperson, vice president of the network infrastructure practice at Ovum for their views about the ECI announcement.

Sterling Perrin, Heavy Reading

Apollo has several noteworthy aspects, according to Heavy Reading.

“It is a big announcement for ECI and a big announcement for the industry," says Perrin. “They are doing with the technology some fundamental things that are new.” That said, it remains to be seen how quickly operators will embrace an OMLT-style platform, he says.

Apollo confirms one networking trend - moving the OTN switching fabric into the metro network. So far OTN has been confined largely to the core network. “I know operators are interested but they are still evaluating it,” says Perrin. “But OTN will migrate down from the core to the metro.” Others that have announced such a capability include Ciena and Huawei.

ECI has also put the DWDM transport with the CESR platform. “This is another trend we figured would happen,” he says. “This puts ECI very early, if not first, in doing that function.”

Perrin has his doubts about how quickly the layer 3 functionality added to the platform will be embraced by operators: “What I've seen from the industry is that MPLS-TP will give you that functionality over time as it matures, so this sort of platform may not need the full layer 3 functions.”

The modular nature of the design that allows operators to add the functionality they need helps avoid one issue associated with integrated platforms, paying for functionality that is not needed. And there are cost savings by having a single integrated platform. “You do want to save capex and opex and this is definitely a way to get that done,” says Perrin.

In the network core, the question remains whether packet needs to be combined with the optics. “Metro lends itself more to the integration than the core does,” he says.

ECI’s biggest competitor is probably Huawei and over time also ZTE, says Perrin. ECI has done well in India and other emerging markets that many of the system vendors were ignoring. “Now they have Huawei in the mix, it is definitely tougher,” he says. “This [Apollo] announcement will definitely help them.”

Rick Talbot, Current Analysis

Current Analysis categorises the smaller members of the Apollo family as a packet-optical access (POA) portfolio, playing the same role as Ericsson’s SPO 1400 family and Cisco’s CPT series. The market research firm views the largest two Apollo platforms - the OPT 9624 and OPT 9648 - as packet-optical transport systems.

The Apollo POAs bring protocol-agnostic packet switching to the aggregation network, says Talbot, a rarity in this part of the network. Several vendors offer P-OTS with universal switching fabrics but most do not extend that architecture into the aggregation network, Tellabs with the 7100 Nano OTS being the exception. Also the 9600 series IP/ MPLS and MPLS-TP options are very strong, providing what Cisco and Ericsson call unified MPLS, he says.

For Current Analysis, the significance of the portfolio is that the Apollo family delivers converged packet and time-division multiplexing (TDM) switching in a single switch fabric, and provides an infrastructure that extends from the network core to the access network edge.

The switching fabric provides the greatest efficiency for the ultimate traffic type - packets - while simplifying the network architecture and minimising equipment cost. In turn, the breadth of the portfolio provides a common set of capabilities across an operator’s network, minimising training costs and spares inventory.

As for the specification, the wide range of MPLS features integrated into this product family, its terabit universal switch and its 100Gbps DWDM transport capabilities are impressive, says Talbot.

“The primary gap in the portfolio, and it is hard to fault ECI for this, is that the highest capacity member of the family supports ’only’ 1 Terabit-per-second of switching capacity,” he says. “This is not large enough for a Tier 1 core optical switch.”

ECI must first execute on the production of the Apollo family, but if it does, Talbot believes that ECI will capture the interest of larger and more end-to-end operators in markets it already serves.

ECI will also have positioned itself to capture the attention of many European operators and, if it makes a push there, the North American market. However Talbot believes ECI will still be challenged to capture the attention of Tier 1 operators because of the family’s limited maximum scale.

Dana Cooperson, Ovum

Size and scale breed specialisation, says Cooperson. “Large service providers, including the Tier 1s, won’t be so interested in the OMLT, but they aren’t the target anyway,” she says. Large service providers need plenty of scale when it comes to WDM and CESR functionality, while they also tend to have compartmentalised operations groups. “So an all-in-one product like the OMLT isn’t targeted at them,” she says.

ECI has always done well selling to the Tier 2 and Tier 3 carriers as well as enterprises such as utilities that have carrier-like networks. That is because ECI's modular, packet-based platforms are sized and priced to match such operators' and enterprises’ requirements. “I see the OMLT as a continuation of ECI's positioning of its XDM platform,” she says.

Cooperson says that it can be difficult to position vendors’ switch announcements and that they should do more to explain where they sit. But she stresses that the Apollo 9600 series is very different from Juniper's PTX, for example.

“The PTX is positioned in the core as a lower-cost alternative to core routers, while the OMLT as a CESR or even an OTN switch is meant more for smaller sites,” she says. Also the switch capacities of the smaller Apollo platforms fit with ECI's focus and positioning on smaller customers and smaller sites.

Cooperson also highlights the need for the XDM platform if an operator requires SONET/SDH support but says ECI has alluded to add/drop multiplexer blades as well as packet blades. "The [Apollo] focus is on the packet and photonic bits,” says Cooperson. “ECI did emphasize that the XDM isn’t going anywhere, but we’ll see what happens over time and how much SONET/SDH ECI builds in [if any to the Apollo].”

Next-gen 100 Gigabit optics

Briefing: 100 Gigabit

Part 2: Interview

Gazettabyte spoke to John D'Ambrosia about 100 Gigabit technology

John D'Ambrosia, chair of the IEEE 100 Gig backplane and copper cabling task force

John D'Ambrosia laughs when he says he is the 'father of 100 Gig'.

He spent five years as chair of the IEEE 802.3ba group that created the 40 and 100 Gigabit Ethernet (GbE) standards. Now he is the chair of the IEEE task force looking at 100 Gig backplane and copper cabling. D'Ambrosia is also chair of the Ethernet Alliance and chief Ethernet evangelist in the CTO office of Dell's Force10 Networks.

“People are also starting to talk about moving data operations around the network based on where electricity is cheapest”

"Part of the reason why 100 Gig backplane technology is important is that I don't know anybody that wants a single 100 Gig port off whatever their card is," says D'Ambrosia. "Whether it is a router, line card, whatever you want to call it, they want multiple 100 Gig [interfaces]: 2, 4, 8 - as many as they can."

Earlier this year, there was a call for interest for next-generation 100 Gig optical interfaces, with the goal of reducing the cost and power consumption of 100 Gig interfaces while increasing their port density. "This [next-generation 100 Gig optical interfaces] is going to become very interesting in relation to what is going on in the industry,” he said.

Next-gen 100 Gig

The 10x10 MSA is an industry initiative that is an alternative 100 Gig interface to the IEEE 100 Gigabit Ethernet standards. Members of the 10x10 MSA include Google, Brocade, JDSU, NeoPhotonics (Santur), Enablence, CyOptics, AFOP, MRV, Oplink and Hitachi Cable America.

"Unfortunately, that [10x10 MSA] looks like it could cause potential interop issues,” says D'Ambrosia. That is because the 10x10 MSA has a 10-channel 10 Gigabit-per-second (Gbps) optical interface while the IEEE 100GbE uses a 4x25Gbps optical interface.

The 10x10 interface has a 2km reach and the MSA has since added a 10km variant as well as 4x10x10Gbps and 8x10x10Gbps versions over 40km.

The advent of the 10x10 MSA has led to an industry discussion about shorter-reach IEEE interfaces. "Do we need something below 10km?” says D’Ambrosia.

Reach is always a contentious issue, he says. When the IEEE 802.3ba was choosing the 10km 100GBASE-LR4, there was much debate as to whether it should be 3 or 4km. "I won’t be surprised if you have people looking to see what they can do with the current 100GBASE-LR4 spec: There are things you can do to reduce the power and the cost," he says.

One obvious development to reduce size, cost and power is to remove the gearbox chip. The gearbox IC translates between 10x10Gbps and the 4x25Gbps channels. The chip consumes several watts each way (transmit to receive and vice versa). By adopting a 4x25Gbps input electrical interface, the gearbox chip is no longer needed - the electrical and optical channels will then be matched in speed and channel count. The result is that the 100GbE designs can be put into the upcoming, smaller CFP2 and even smaller CFP4 form factors.

As for other next-gen 100Gbps developments, these will likely include a 4x25Gbps multi-mode fibre specification and a 100 Gig, 2km serial interface, similar to the 40GBASE-FR.

The industry focus, he says, is to reduce the cost, power and size of 100Gbps interfaces rather than develop multiple 100 Gig link interfaces or expand the reach beyond 40km. "We are going to see new systems introduced over the next few years not based on 10 Gig but designed for 25 Gig,” says D’Ambrosia. The ASIC and chip designers are also keen to adopt 25Gbps signalling because they need to increase input-output (I/O) yet have only so many pins on a chip, he says.

D’Ambrosia is also part of an Ethernet bandwidth assessment ad-hoc committee that is part of the IEEE 802.3 work. The group is working with the industry to quantify bandwidth demand. “What you see is a lot of end users talking about needing terabit and a lot of suppliers talking about 400 Gig,” he says. Ultimately, what will determine the next step is what technologies are going to be available and at what cost.

Backplane I/O and switching

Many of the systems D'Ambrosia is seeing use a single 100Gbps port per card. "A single port is a cool thing but is not that useful,” he says. “Frankly, four ports is where things start to become interesting.”

This is where 25Gbps electrical interfaces come into play. "It is not just 25 Gig for chip-to-chip, it is 25 Gig chip-to-module and 25 Gig to the backplane."

Moreover, modules, backplane speeds and switching capacity are all interrelated when designing systems. When designing a 10 Terabit switch, for example, the goal is to reduce the number of traces on a board and the number that pass through the backplane to the switch fabric and other line cards.

Using 10Gbps electrical signals, between 1,200 and 2,000 signals are needed depending on the architecture, says D'Ambrosia. With 25Gbps the interface count reduces to 500-750. “The electrical signal has an impact on the switch capacity,” he says.
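A rough worked example, in Python, puts these counts in context. It divides the 10 Terabit capacity by the signalling rate to get a bare minimum number of signals, then compares that with the ranges D'Ambrosia quotes. The "multiplier" is simply the ratio of the two; what accounts for it in a given architecture is not broken down here, so treat it as an illustration only.

```python
def raw_signals(capacity_gbps: float, signal_rate_gbps: float) -> float:
    """Minimum number of signals needed to carry the capacity, with no overhead."""
    return capacity_gbps / signal_rate_gbps


CAPACITY_GBPS = 10_000  # the 10 Terabit switch example

# Quoted signal-count ranges, keyed by electrical signalling rate in Gbps.
quoted = {10: (1200, 2000), 25: (500, 750)}

for rate, (low, high) in quoted.items():
    raw = raw_signals(CAPACITY_GBPS, rate)
    print(f"{rate}Gbps: {raw:.0f} raw signals, "
          f"quoted {low}-{high}, multiplier {low / raw:.2f}-{high / raw:.2f}")
```

The bare minimum is 1,000 signals at 10Gbps and 400 at 25Gbps. The quoted ranges sit at roughly 1.2x to 2x those figures in both cases, so the saving from 25Gbps signalling comes almost entirely from the faster signal rather than from any change in architecture.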

100 Gig in the data centre

D’Ambrosia stresses that care is needed when discussing data centres as the internet data centres (IDC) of a Google or a Facebook differ greatly from those of enterprises. “In the case of IDCs, those people were saying they needed 100 Gig back in 2006,” he says.

Such mega data centres use tens of thousands of servers connected across a flat switching architecture, unlike traditional data centres that use three layers of aggregated switching. According to D'Ambrosia, such flat architectures can justify using 100Gbps interfaces even when the servers each have only a 1 Gigabit Ethernet interface. And now servers are transitioning to 10GbE interfaces.

“You are going to have to worry about the architecture, you are going to have to worry about the style of data centre and also what the server applications are,” says D'Ambrosia. “People are also starting to talk about moving data operations around the network based on where electricity is cheapest.” Such an approach will require a truly wide, flat architecture, he says.

D'Ambrosia cites the Amsterdam Internet exchange that announced in May its first customer using a 100 Gig service. "We are starting to see this happen,” he says.

One lesson D'Ambrosia has learnt is that there is no clear relationship between what comes in and out of the cloud and what happens within the cloud. Data centres themselves are one such example.

100 Gig direct detection

In recent months lower power, 200km to 800km reach, 100Gbps direct detection interfaces that are cheaper than coherent transmission have been announced by ADVA Optical Networking and MultiPhy. Such interfaces have a role in the network and are of varying interest to telco operators. But these are vendor-specific solutions.

D’Ambrosia stresses the importance of standards such as those of the IEEE, and of the work of the Optical Internetworking Forum (OIF), which has adopted coherent. “I still see customers that want a standards-based solution,” says D'Ambrosia, who adds that while the OIF work is not a standard, it is an interoperability agreement. “It allows everyone to develop the same thing," he says.

There are also other considerations regarding 100 Gig direct-detection besides cost, power and a pluggable form factor. Vendors and operators want to know how many people will be able to source this, he says.

D'Ambrosia says that new systems being developed now will likely be deployed in 2013. Vendors must therefore weigh the attractiveness of any alternative technology against where industry-backed technologies such as coherent and the IEEE standards will be by then.

The industry will adopt a variety of 100Gbps solutions, he says, with particular decisions based on a customer’s cost model, its long term strategy and its network.

For Part 1 - 100 Gig: An operator view click here

Calient brings optical switching to the data centre

Source: Calient

The California-based start-up has been selling its FC 320, a 320-port 3D MEMS-based switch, since 2006. The optical switch is used by Verizon and AT&T at submarine cable landing sites, and by government agencies.

Now Calient has raised US $19.4 million (€13.77M) in its latest funding round to complete the development and manufacturing of a more compact, power efficient version of its optical switch.

The company has upgraded the electronics and software of its MEMS-based optical switch module. This, says Gregory Koss, Calient's senior vice president for products and partners, reduces the power consumption to 20W, a 90% reduction compared to its existing design.

The new switch module is also more compact. Using the module in a new 320-port switch platform more than halves the size: from 17 to 7 rack units.
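
As a rough check on the figures above, the short Python sketch below back-calculates the power draw of the existing design from the quoted 20W and 90% reduction, and works out the rack-space saving in going from 17 to 7 rack units. The function names are illustrative, and the implied figure for the existing design is an inference from the quoted numbers, not something Calient has stated.

```python
def original_value(new_value: float, reduction_fraction: float) -> float:
    """Back-calculate a starting value from the value left after a fractional reduction."""
    return new_value / (1.0 - reduction_fraction)


def fractional_saving(old_value: float, new_value: float) -> float:
    """Return the saving as a fraction of the old value."""
    return (old_value - new_value) / old_value


# Quoted in the article: 20W after a 90% reduction.
implied_old_power_w = original_value(20.0, 0.90)

# Quoted in the article: 17 rack units reduced to 7.
size_saving = fractional_saving(17, 7)

print(f"Implied power of existing design: about {implied_old_power_w:.0f} W")
print(f"Rack-space saving: {size_saving:.1%}")
```

The rack-space saving comes out above 50%, consistent with the claim that the new platform more than halves the size.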

The 3D MEMS optics have not been changed. The MEMS design uses mirrors to form a free-space connection between a fibre input port and any of the 320 output ports. A control system then adjusts the mirrors to maximise the output signal. In all the years Calient has been selling its systems, there has not been a single MEMS failure, says the company.

Calient is also changing its strategy by selling the switch as a module to system vendors. The switch module can be incorporated on a line card, while Calient will work with system vendor partners that want to integrate the module within their own platform designs.

Data centre and cloud

Calient's MEMS-based switch will be used to connect large server clusters in content service providers' 'mega' data centres.

According to Koss, content service providers are interested in using an optical switch to link their server clusters. In a typical configuration, 48 servers are connected to a top-of-rack switch. This top-of-rack switch, via a 10 Gigabit Ethernet link, would be one input to the 320-port optical switch.
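
To give a sense of scale, the Python sketch below estimates how many servers could sit behind one 320-port optical switch in the configuration Koss describes. It assumes, purely for illustration, that every input port carries one top-of-rack uplink; the article does not say how many ports an operator would actually use, so the result is an upper bound. The constant and function names are hypothetical.

```python
SERVERS_PER_TOP_OF_RACK = 48   # from the article
OPTICAL_SWITCH_PORTS = 320     # from the article
UPLINK_RATE_GBPS = 10          # 10 Gigabit Ethernet uplink, from the article


def servers_behind_switch(ports_used: int) -> int:
    """Servers reachable when each used port carries one top-of-rack uplink."""
    return ports_used * SERVERS_PER_TOP_OF_RACK


def aggregate_uplink_gbps(ports_used: int, rate_gbps: int = UPLINK_RATE_GBPS) -> int:
    """Total uplink capacity entering the optical switch."""
    return ports_used * rate_gbps


# Assumption: all 320 ports are used for top-of-rack uplinks (an upper bound).
max_servers = servers_behind_switch(OPTICAL_SWITCH_PORTS)
uplink_10g = aggregate_uplink_gbps(OPTICAL_SWITCH_PORTS)
uplink_100g = aggregate_uplink_gbps(OPTICAL_SWITCH_PORTS, rate_gbps=100)

print(f"Servers behind a fully used switch: {max_servers}")
print(f"Aggregate uplink at 10 Gig: {uplink_10g} Gbps; at 100 Gig: {uplink_100g} Gbps")
```

Because the switch is optical, only the `rate_gbps` argument changes when the uplinks are upgraded; the port count and cabling in the sketch stay the same.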

"[Data centre] operators want a future-proofed network," says Koss. "They don't want to rebuild when links are upgraded from 10 to 40 and then 100 Gig."

Common cabling used in the data centre includes copper and multi-mode fibre, while Calient's design uses single-mode fibre. According to Koss, data centre managers are installing more single-mode fibre: "It is not so much for reach but for bandwidth and for scaling.”

The switch can also be used for what Calient calls cloud networking, to monitor and manage an enterprise's fibres as they enter the data centre.

ROADMs

The switch will also address agile optical networking, to enable colourless, directionless and contentionless ROADMs.

The optical module will be used for the add/drop, alongside rather than replacing the 1x9 or 1x20 WSSs that are used for the pass-through lambdas.

Koss says that the company's main focus in 2012 is addressing the data centre market opportunity but that the switch is of interest to ROADM system vendors. Such a 3D MEMS-based ROADM design will take longer to bring to market.

Further reading:

CALIENT's 3D MEMS Technology Enables Exploding Bandwidth Demands (log-in required to download the White Paper)

100 Gigabit: An operator view

Gazettabyte spoke with BT, Level 3 Communications and Verizon about their 100 Gigabit optical transmission plans and the challenges they see regarding the technology.

Briefing: 100 Gigabit

Part 1: Operators

Operators will use 100 Gigabit-per-second (Gbps) coherent technology for their next-generation core networks. For metro, operators favour coherent and have differing views regarding the alternative, 100Gbps direct-detection schemes. All the operators agree that the 100Gbps interfaces - line-side and client-side - must become cheaper before 100Gbps technology is more widely deployed.

100 Gigabit status

Verizon is already deploying 100Gbps wavelengths in its European and US networks, and will complete its US nationwide 100Gbps backbone in the next two years.

"We are at the stage of building a new-generation network because our current network is quite full," says Steve Gringeri, a principal member of the technical staff at Verizon Business.

The operator first deployed 100Gbps coherent technology in late 2009, linking Paris and Frankfurt. Verizon's focus is on 100Gbps, having deployed a limited amount of 40Gbps technology. "We can also support 40 Gig coherent where it makes sense, based on traffic demands," says Gringeri.

Level 3 Communications and BT, meanwhile, have yet to deploy 100Gbps technology.

"We have not [made any public statements regarding 100 Gig]," says Monisha Merchant, Level 3’s senior director of product management. "We have had trials but nothing formal for our own development." Level 3 started deploying 40Gbps technology in March 2009.

BT expects to deploy new high-speed line rates before the year end. "The first place we are actively pursuing the deployment of initially 40G, but rapidly moving on to 100G, is in the core,” says Steve Hornung, director, transport, timing and synch at BT.

Operators are looking to deploy 100Gbps to meet growing traffic demands.

"If I look at cloud applications, video distribution applications and what we are doing for wireless (Long Term Evolution) - the sum of all the traffic - that is what is putting the strain on the network," says Gringeri.

Verizon is also transitioning its legacy networks onto its core IP-MPLS backbone, requiring the operator to grow its base infrastructure significantly. "When we look at demands there, it is clear that you absolutely need 100 Gig in large parts of the network," says Gringeri.

Level 3 points out that its network between any two cities has been running at much greater capacity than 100Gbps, so the demand has been there for years; the issue is the economics of the technology. "Right now, going to 100Gbps is significantly a higher cost than just deploying 10x 10Gbps," says Level 3's Merchant.

BT's core network comprises 106 nodes: 20 in a fully-meshed inner core, surrounded by an outer 86-node core. The core carries the bulk of BT's IP, business and voice traffic.
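
The size of that inner core can be put in perspective with a little arithmetic. The Python sketch below counts the node pairs in a 20-node full mesh, assuming that "fully-meshed" means every pair of inner-core nodes is directly connected; whether those connections are separate physical routes or logical light paths is not stated in the article. The names are illustrative.

```python
from math import comb

INNER_CORE_NODES = 20   # from the article
OUTER_CORE_NODES = 86   # from the article


def full_mesh_pairs(nodes: int) -> int:
    """Number of distinct node pairs in a full mesh: n * (n - 1) / 2."""
    return comb(nodes, 2)


total_core_nodes = INNER_CORE_NODES + OUTER_CORE_NODES
inner_core_pairs = full_mesh_pairs(INNER_CORE_NODES)

print(f"Total core nodes: {total_core_nodes}")
print(f"Node pairs in the inner-core full mesh: {inner_core_pairs}")
```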

"We are taking specific steps and have business cases developed to deploy 40G and 100G technology: alternative line cards into the same rack," says Hornung.

Coherent and direct detection

Coherent has become the default optical transmission technology for operators' next-generation core networks.

BT says it is a 'no-brainer' that 400Gbps and 1 Terabit-per-second light paths will eventually be deployed in the network to accommodate growing traffic. "Rather than keep all your options open, we need to make the assumption that technology will essentially be coherent going forward because it will be the bandwidth that drives it," says Hornung.

Beyond BT's 106-node core is a backhaul network that links 1,000 points-of-presence (PoPs). It is in this part of the network that BT will consider 40Gbps and perhaps 100Gbps direct-detection technology. "If it [such technology] became commercially available, we would look at the price, the demand and use it, or not, as makes sense," says Hornung. "I would not exclude at this stage looking at any technology that becomes available." Such direct-detection 100Gbps solutions are already being promoted by ADVA Optical Networking and MultiPhy.

However, Verizon believes coherent will also be needed for the metro. "If I look at my metro systems, you have even lower quality amplifiers, and generally worse signal-to-noise," says Gringeri. “Based on the performance required, I have no idea how you are going to implement a solution that isn't coherent."

Even for shorter-reach metro systems - 200 or 300km - Verizon believes coherent will be the implementation, including for expanding existing deployments that carry 10Gbps light paths and use dispersion-compensated fibre.

Level 3 says it is not wedded to a technology but rather a cost point. As a result it will assess a technology if it believes it will address the operator's needs and has a cost performance advantage.

100 Gig deployment stages

The cost of 100Gbps technology remains a key challenge impeding wider deployment. This is not surprising since 100Gbps technology is still immature and systems shipping are first-generation designs.

Operators are willing to pay a premium to deploy 100Gbps light paths at network pinch-points as it is cheaper than lighting a new fibre.

Metro deployments of new technology such as 100Gbps generally occur once the long-haul network has been upgraded. The technology is by then more mature and better suited to the cost-conscious metro.

Applications that will drive metro 100Gbps include linking data centres and enterprises. But Level 3 expects it will be another five years before enterprises move from requesting 10 Gigabit services to 100 Gigabit ones to meet their telecom needs.

Verizon highlights two 100Gbps priorities: high-performance dense WDM systems, and client-side 'grey' (non-WDM) optics used to connect equipment across distances ranging from as little as 100m over ribbon cable to 2km or 10km over single-mode fibre.

"Grey optics are very costly, especially if I’m going to stitch the network and have routers and other client devices and potential long-haul and metro networks, all of these interconnect optics come into play," says Gringeri.

Verizon is a strong proponent of a new 100Gbps serial interface over 2km or 10km. At present there are the 100 Gigabit interface and the 10x10 MSA. However Gringeri says it will be 2-3 years before such a serial interface becomes available. "Getting the price-performance on the grey optics is my number one priority after the DWDM long haul optics," says Gringeri.

Once 100Gbps client-side interfaces do come down in price, operators' PoPs will be used to link other locations in the metro to carry the higher-capacity services, he says.

The final stage of the rollout of 100Gbps will be single point-to-point connections. This is where grey 100Gbps comes in, says Gringeri, based on 40 or 80km optical interfaces.

Source: Gazettabyte

Tackling costs

Operators are confident regarding the vendors’ cost-reduction roadmaps. "We are talking to our clients about second, third, even fourth generation of coherent," says Gringeri. "There are ways of making extremely significant price reductions."

Gringeri points to further photonic integration and reducing the sampling rate of the coherent receiver ASIC's analogue-to-digital converters. "With the DSP [ASIC], you can look to lower the sampling rate," says Gringeri. "A lot of the systems do 2x sampling and you don't need 2x sampling."
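
The effect of the sampling rate Gringeri refers to can be illustrated with simple arithmetic: the converter's sample rate is the oversampling factor multiplied by the symbol rate. The Python sketch below uses an assumed symbol rate of 28 Gbaud and a hypothetical reduced oversampling factor of 1.5; neither figure comes from the article, and both are there only to show the scale of the saving.

```python
def adc_sample_rate_gsps(symbol_rate_gbaud: float, oversampling: float) -> float:
    """ADC sample rate in gigasamples per second for a given oversampling factor."""
    return symbol_rate_gbaud * oversampling


# Assumption for illustration only: the article gives no symbol rate.
ASSUMED_SYMBOL_RATE_GBAUD = 28.0
# Hypothetical reduced oversampling factor; the article says only that 2x is not needed.
REDUCED_OVERSAMPLING = 1.5

rate_at_2x = adc_sample_rate_gsps(ASSUMED_SYMBOL_RATE_GBAUD, 2.0)
rate_reduced = adc_sample_rate_gsps(ASSUMED_SYMBOL_RATE_GBAUD, REDUCED_OVERSAMPLING)
sample_rate_saving = 1.0 - rate_reduced / rate_at_2x

print(f"2x sampling: {rate_at_2x:.0f} GS/s")
print(f"{REDUCED_OVERSAMPLING}x sampling: {rate_reduced:.0f} GS/s")
print(f"Reduction in sample rate: {sample_rate_saving:.0%}")
```

The fractional reduction depends only on the two oversampling factors, so it holds whatever symbol rate is assumed.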

The filtering used for dispersion compensation can also be simpler for shorter-reach spans. "The filter can be shorter - you don't need as many [digital filter] taps," says Gringeri. "There are a lot of optimisations and no one has made them yet."

There is also the move to pluggable CFP modules for the line-side coherent optics and to the CFP2 for client-side 100Gbps interfaces. At present the only line-side 100Gbps pluggable is based on direct detection.

"The CFP is a big package," says Gringeri. "That is not the grey optics package we want in the future, we need to go to a much smaller package long term."

For the line-side there is also the issue of the digital signal processor's (DSP) power consumption. "I think you can fit the optics in but I'm very concerned about the power consumption of the DSP - these DSPs are 50 to 80W in many current designs," says Gringeri.

One obvious solution is to move the DSP out of the module and onto the line card. "Even if they can extend the power number of the CFP, it needs to be 15 to 20W," says Gringeri. "There is an awful lot of work to get where you are today to 15 to 20W."

* Monisha Merchant left Level 3 before the article was published.

Further Reading:

100 Gigabit: The coming metro opportunity - a position paper, click here

Click here for Part 2: Next-gen 100 Gig Optics