Nuage Networks uses SDN to tackle data centre networking bottlenecks

Three planes of the network that host Nuage's Virtualised Services Platform (VSP). Source: Nuage Networks

Alcatel-Lucent has set up Nuage Networks, a business venture addressing networking bottlenecks within and between data centres.

The internal start-up combines staff with networking and IT skills, including web-scale services. "You can't solve new problems with old thinking," says Houman Modarres, senior director product marketing at Nuage Networks. A further advantage of the business model is that Nuage can draw on Alcatel-Lucent's software intellectual property.

"It [the Nuage platform] is a good approach. It should scale well, integrate with the wide area network (WAN) and provide agility"

Joe Skorupa, Gartner

Network bottlenecks

Networking in the data centre connects computing and storage resources. Servers and storage have already largely adopted virtualisation such that networking has now become the bottleneck. Virtual machines on servers running applications can be enabled within seconds or minutes but may have to wait days before network connectivity is established, says Modarres.

Nuage has developed its Virtualised Services Platform (VSP) software, designed to solve two networking constraints.

"We are making the network instantiation automated and instantaneous rather than slow, cumbersome, complex and manual," says Modarres. "And rather than optimise locally, such as parts of the data centre like zones or clusters, we are making it boundless."

"It [the Nuage platform] is a good approach," says Joe Skorupa, vice president distinguished analyst, data centre convergence, at Gartner. "It should scale well, integrate with the wide area network (WAN) and provide agility."

Resources to be connected can now reside anywhere: within the data centre, and between data centres, including connecting the public cloud to an enterprise's own private data centre. Moreover, removing restrictions as to where the resources are located boosts efficiency.

"Even in cloud data centres, server utilisation is 30 percent or less," says Modarres. "And these guys spend about 60 percent of their capital expenditure on servers."

It is not that the hypervisor, used for server virtualisation, is inefficient, stresses Modarres: "It is just that when the network gets in the way, it is not worthwhile to wait for stuff; you become more wasteful in your placement of workloads as their mobility is limited."

"A lot of money is wasted on servers and networking infrastructure because the network is getting in the way"

Houman Modarres, Nuage Networks

SDN and the Virtualised Services Platform

Nuage's Virtualised Services Platform (VSP) uses software-defined networking (SDN) to optimise network connectivity and instantiation for cloud applications.

The VSP comprises three elements:

- the Virtualised Services Directory,

- the Virtualised Services Controller,

- and the Virtual Routing & Switching module.

The elements each reside at a different network layer, as shown (see chart, top).

The top layer, the cloud services management plane, houses the Virtualised Services Directory (VSD). The VSD is a policy and analytics engine that allows the cloud service provider to partition the network for each customer or group of tenants.

"Each of them get their zones for which they can place their applications and put [rules-based] permissions as to whom can use what, and who can talk to whom," says Modarres. "They do that in user-friendly terms like application containers, domains and zones for the different groups."

Domains and zones are how an IT administrator views the data centre, explains Modarres: "They don't need to worry about VLANs, IP addresses, Quality of Service policies and access control lists; the network maps that through its abstraction." The policies defined and implemented by the VSD are then adopted automatically when new users join.

The layer below the cloud services management plane is the data centre control plane. This is where the second platform element, the Virtualised Services Controller (VSC), sits. The VSC is the SDN controller: the control element that communicates with the data plane using the OpenFlow open standard.

The third element, the Virtual Routing & Switching module (VRS), sits in the data path, allowing virtual machines to communicate so that applications can be enabled rapidly. The VRS sits on the hypervisor of each server. When a virtual machine is instantiated, it is detected by the VRS, which polls the SDN controller to see if a policy has already been set up for the tenant and the particular application. If a policy has been set up, the connectivity is immediate. Moreover, this connectivity is not confined to a single data centre zone but extends across the whole data centre and even between data centres.
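The lookup described above can be pictured with a short Python sketch. It is an illustration only, not Nuage's implementation: the class names (`Policy`, `Controller`, `VirtualSwitch`), the method names and the tenant and zone values are all hypothetical, and the real system uses OpenFlow between the controller and the virtual switch rather than a direct function call.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Policy:
    """Hypothetical connectivity policy for one tenant application."""
    tenant: str
    application: str
    zone: str


@dataclass
class Controller:
    """Stand-in for the SDN controller: holds policies defined in the directory."""
    policies: dict = field(default_factory=dict)

    def add_policy(self, policy: Policy) -> None:
        self.policies[(policy.tenant, policy.application)] = policy

    def lookup(self, tenant: str, application: str):
        # Returns None when no policy exists yet for this tenant/application pair.
        return self.policies.get((tenant, application))


@dataclass
class VirtualSwitch:
    """Stand-in for the per-hypervisor switching module."""
    controller: Controller
    connected: dict = field(default_factory=dict)

    def on_vm_started(self, vm_id: str, tenant: str, application: str) -> bool:
        # A new virtual machine was detected: ask the controller for a policy.
        policy = self.controller.lookup(tenant, application)
        if policy is None:
            return False  # no policy yet, so connectivity is not set up
        self.connected[vm_id] = policy.zone
        return True


controller = Controller()
controller.add_policy(Policy("acme", "web", "zone-a"))
switch = VirtualSwitch(controller)
print(switch.on_vm_started("vm-1", "acme", "web"))    # a policy exists
print(switch.on_vm_started("vm-2", "acme", "batch"))  # no policy defined
```

The point of the sketch is that the policy is defined once, ahead of time, so a new virtual machine only triggers a lookup rather than manual network configuration.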

More than one data centre is involved in disaster recovery scenarios, for example. Multiple data centres can also be used to boost overall efficiency, by enabling applications to use spare resources in other data centres as appropriate.

Meanwhile, the linking to an enterprise's own data centre is done using a virtual private network (VPN), bridging a private data centre with the public cloud. "We are the first to do this," says Modarres.

The VSP works with whatever server, hypervisor, networking equipment and cloud management platform is used in a data centre. The SDN controller is based on the same operating system used in Alcatel-Lucent's IP routers, which supports a wealth of protocols. Meanwhile, the virtual switch in the VRS integrates with various hypervisors on the market, ensuring interoperability.

Dimitri Stiliadis, chief architect at Nuage Networks, describes the VSP architecture as a distributed implementation of the functions performed by its router products.

The control plane of the router is effectively moved to the SDN controller. The router's 'line cards' become the virtual switches in the hypervisors. "OpenFlow is the protocol that allows our controller to talk to the line cards," says Stiliadis. "While the border gateway protocol (BGP) is the protocol that allows our controller to talk to other controllers in the rest of the network."

Michael Howard, principal analyst, carrier networks at Infonetics Research, says there are several noteworthy aspects to Nuage's product including the fact that operators participated at the company's launch and that the software is not tied to Alcatel-Lucent's routers but will run over other vendors' equipment.

"It also uses BGP, as other vendors are proposing, to tie together data centres and the carrier WAN," says Howard. "Several big operators say BGP is a good approach to integrate data centres and carrier WANs, including AT&T and Orange."

Nuage says that trials of its VSP began in April. The European and North American trial partners include UK cloud service provider Exponential-e, French telecoms service provider SFR, Canadian telecoms service provider TELUS and US healthcare provider the University of Pittsburgh Medical Center (UPMC). The product will be generally available from mid-2013.

"There are other key use cases targeted for SDN that are not data centre related: content delivery networks, Evolved Packet Core, IP Multimedia Subsystem, service-chaining and cloudbox"

Michael Howard, Infonetics Research

Challenges

The industry analysts highlight that this market is still in its infancy and that challenges remain.

Gartner's Skorupa points out that the data centre orchestration systems still need to be integrated and that there is a need for cheaper, simpler hardware.

"Many vendors have proposed solutions but the market is in its infancy and customer acceptance and adoption is still unknown," says Skorupa.

Infonetics highlights dynamic bandwidth as a key use case for SDNs, in particular between data centres.

"There are other key use cases targeted for SDN that are not data centre related: content delivery networks, Evolved Packet Core, IP Multimedia Subsystem, service-chaining and cloudbox," says Howard.

Cloudbox is a concept being developed by operators where an intelligent general purpose box is placed at a customer's location. The box works in conjunction with server-based network functions delivered via the network, although some application software will also run on the box.

Customers will sign up for different service packages drawn from options such as firewall, intrusion detection system (IDS), parental control and 'turbo button' bandwidth bursting, says Howard. Each customer's traffic is steered by SDN and uses Network Functions Virtualisation - network functions such as a firewall or IDS that formerly resided in individual equipment - such that the services subscribed to by a user are 'chained' using SDN software.
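A rough Python sketch of such service chaining follows. It assumes each virtualised function can be modelled as a function that takes a packet and returns it, possibly marked as dropped. The function names, the packet fields and the filtering rules are hypothetical, chosen only to show how a per-customer chain could be assembled from the services a customer has signed up for.

```python
from typing import Callable, Dict, List

Packet = Dict[str, object]
NetworkFunction = Callable[[Packet], Packet]


def firewall(packet: Packet) -> Packet:
    # Hypothetical rule: drop anything aimed at port 23 (telnet).
    if packet.get("dst_port") == 23:
        return {**packet, "dropped": True}
    return packet


def parental_control(packet: Packet) -> Packet:
    # Hypothetical rule: block a single illustrative host name.
    if packet.get("host") == "blocked.example":
        return {**packet, "dropped": True}
    return packet


CATALOGUE: Dict[str, NetworkFunction] = {
    "firewall": firewall,
    "parental_control": parental_control,
}


def build_chain(subscribed: List[str]) -> NetworkFunction:
    """Compose the subscribed functions, in order, into one per-customer chain."""
    functions = [CATALOGUE[name] for name in subscribed]

    def chain(packet: Packet) -> Packet:
        for function in functions:
            if packet.get("dropped"):
                break  # later functions never see a dropped packet
            packet = function(packet)
        return packet

    return chain


basic = build_chain(["firewall"])
family = build_chain(["firewall", "parental_control"])
packet = {"dst_port": 80, "host": "blocked.example"}
print(basic(packet).get("dropped", False))
print(family(packet).get("dropped", False))
```

The same packet passes through the basic package but is stopped by the family package, because only the latter includes the parental control function in its chain.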

Hybrid integration specialist Kaiam acquires Gemfire

Kaiam Corp. has secured US $16M in C-round funding and completed the acquisition of Gemfire.

"We have a micro-machine technology that allows us to use standard pick-and-place electronic assembly tools, and with our micro-machine, we achieve sub-micron alignment tolerances suitable for single-mode applications"

Byron Trop, Kaiam

With the acquisition, Kaiam gains planar lightwave circuit (PLC) technology and Gemfire's 8-inch wafer fab in Scotland. This is important for the start-up given there are few remaining independent suppliers of PLC technology.

Working with Oplink Communications, Kaiam has also recently demonstrated a 100 Gigabit 10x10 MSA 40km CFP module.

Hybrid integration technology

Kaiam has developed hybrid integration technology that achieves sub-micron alignment yet only requires standard electronic assembly tools.

"With single-mode optics, it is very, very difficult to couple light between components," says Byron Trop, vice president of marketing and sales at Kaiam. "Most of the cost in our industry is associated with aligning components, testing them and making sure everything works."

The company has developed a micro-machine-operated lens that is used to couple optical components. The position of the lens is adjustable such that standard 'pick-and-place' manufacturing equipment with a placement accuracy of 20 microns can be used. "If you set everything [optical components] up in a transceiver with a 20-micron accuracy, nothing would work," says Trop.

Components are added to a silicon breadboard and the micro-machine enables the lens to be moved in three dimensions to achieve sub-micron alignment. "We have the ability to use coarse tools to manipulate the machine, and at the far end of that machine we have a lens that is positioned to sub-micron levels," says Trop. Photo-diodes on a PLC provide the feedback during the active alignment.
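Active alignment of this kind is, in essence, a search that moves the lens until the photo-diode reading peaks. The Python sketch below illustrates the idea with a simple coordinate search. It is not Kaiam's procedure: the Gaussian coupling model, the target offset, the spot size and the step sizes are all assumptions made for illustration.

```python
import math


def photodiode_power(position, target=(3.0, -7.5, 12.0), spot=5.0):
    """Toy feedback signal: Gaussian coupling that peaks when the lens is at `target` (microns)."""
    distance_sq = sum((p - t) ** 2 for p, t in zip(position, target))
    return math.exp(-distance_sq / spot ** 2)


def align(read_power, start=(0.0, 0.0, 0.0), step=10.0, tolerance=0.05):
    """Coordinate search: nudge each axis, keep moves that raise the reading, then halve the step."""
    position = list(start)
    best = read_power(position)
    while step > tolerance:
        improved = False
        for axis in range(3):
            for delta in (step, -step):
                trial = list(position)
                trial[axis] += delta
                power = read_power(trial)
                if power > best:
                    position, best = trial, power
                    improved = True
        if not improved:
            step /= 2.0  # no coarse move helps any more, so refine
    return position, best


position, power = align(photodiode_power)
error = math.dist(position, (3.0, -7.5, 12.0))
print(f"residual error: {error:.3f} microns, coupled power: {power:.4f}")
```

Starting from a placement that is more than ten microns off, well within the 20-micron accuracy of pick-and-place tools, the search ends with a sub-micron residual error in this toy model.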

Another advantage of the technique is that any movement when soldering the micro-machine in position has little impact on the lens alignment. "Any movement that happens following soldering is dampened over the distance to the lens," says Trop. "Therefore, movement during the soldering process has negligible impact on the lens position."

Kaiam buys its lasers and photo-detector components, while a fab makes its micro-machine. Hybrid integration is used to combine the components for its transmitter optical sub-assembly (TOSA) and receiver optical sub-assembly (ROSA) designs, and these are made by contract manufacturers. Kaiam has a strategic partnership with contract manufacturer Sanmina-SCI.

The company believes that simplifying alignment gives module and systems companies greater freedom in the channel-count designs they can adopt. "Hybrid integration, this micro-alignment of optical components, is no longer a big deal," says Trop. "You can start thinking differently."

"We will also do more custom optical modules where somebody is trying to solve a particular problem; maybe they want 16 or 20 lanes of traffic"

For 100 Gigabit modules, companies have adopted 10x10 Gigabit-per-second (Gbps) and 4x28Gbps designs. With the QSFP28 module, for example, vendors have reverted to four channels because of the difficulties in assembly.

"Our message is not more lanes is better," says Trop. "Rather, what is the application and don't consider yourself limited because the alignment of sub-components is a challenge."

With the Gemfire acquisition, Kaiam has its own PLC technology for multiplexing and de-multiplexing multiple 10Gbps and, in future, 25Gbps lanes. "Our belief is that PLC is the best way to go and allows you to expand into larger lane counts," says Trop.

Gemfire also owned intellectual property in the areas of polymer waveguides and semiconductor optical amplifiers.

Products and roadmap

Kaiam sells 40Gbps QSFP TOSAs and ROSAs for 2km, 10km and 40km reaches. The company is now selling its 40km 10x10 MSA TOSA and ROSA demonstrated at the recent OFC/NFOEC show. Trop says that the 40km 10x10 CFP MSA module is of great interest to Internet exchange operators that want low cost, point-to-point links.

"Low cost, highly efficient optical interconnect is going to be important and it is not all at 40km reaches," says Trop. "Much of it is much shorter distances and we believe we have a technology that will enable that."

The company is looking to apply its technology to next-generation optical modules such as the CFP2, CFP4 and QSFP28. "We will also do more custom optical modules where somebody is trying to solve a particular problem; maybe they want 16 or 20 lanes of traffic," says Trop.

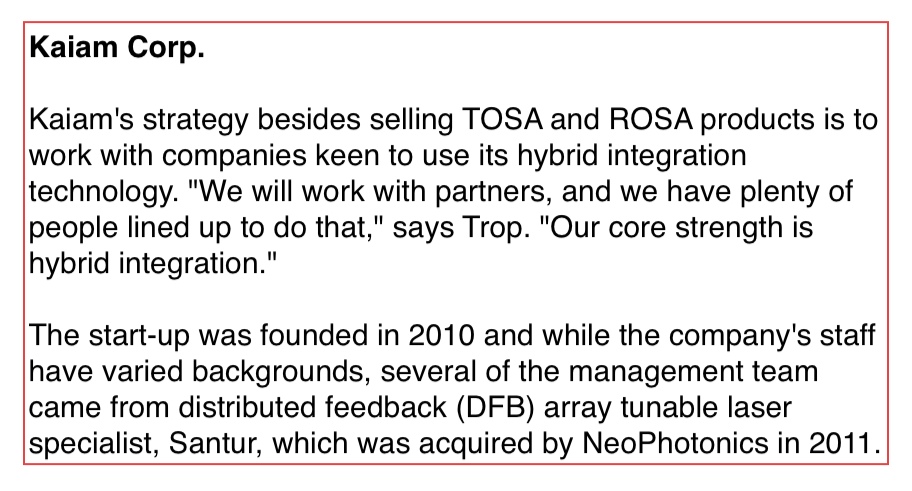

Effdon Networks extends the 10x10 MSA to 80km

Effdon Networks has demonstrated a 100 Gigabit CFP module with an 80km reach; a claimed industry first. The company has also developed the Qbox, a 1 rack unit (1RU) extended reach platform capable of 400-800 Gigabit-per-second (Gbps) with a reach of 80-200km.

Effdon's CFP does not require the use of external DWDM multiplexing/ demultiplexing and can be added directly onto a router. Source: Effdon Networks

Available 100 Gigabit CFP modules have so far achieved 10km. Now, with the Effdon module, an 80km reach has been demonstrated that uses 10Gbps optics and no specialist silicon.

Effdon's design is based on the 10x10 MSA (multi-source agreement). "We have managed to resolve the technology barriers - using several techniques - to get to 80km," says Eitan Efron, CEO of Effdon Networks.

There is no 100 Gigabit standard for 80km. The IEEE has two 100 Gigabit standards: the 10km long reach 100GBASE-LR4 and the 40km extended reach 100GBASE-ER4.

Meanwhile, the 100 Gigabit 10x10 MSA, based on arrays of ten 10 Gigabit lasers and detectors, has three defined reaches: 2km, 10km and 40km. At the recent OFC/NFOEC exhibition, Oplink Communications and hybrid integration specialist Kaiam showed the 10x10 MSA CFP achieving 40km.

Effdon has not detailed how it has achieved 80km but says its designers have a systems background. "All the software that you need for managing wavelength-division multiplexing (WDM) systems is in our device," says Efron. "Basically we have built a system in a module."

These system elements include component expertise and algorithmic know-how. "Algorithms and software; this is the main IP of the company," says Efron. "We are using 40km components and we are getting 80km."

100 Gigabit landscape

Efron says that while there are alternative designs for 100 Gigabit transmission at 80km or more, each has challenges.

A 100Gbps coherent design achieves far greater reaches but is costly and requires a digital signal processor (DSP) receiver ASIC that consumes tens of watts. No coherent design has yet been implemented using a pluggable module.

Alternative CFP-based 100Gbps direct-detection designs based on a 4x28Gbps architecture exist. But their 28Gbps lanes experience greater dispersion, which makes achieving 80km a challenge.

MultiPhy's MP1100Q DSP chip counters dispersion. The chip used in a CFP module achieves a 55km point-to-point reach using on-off keying and 800km for dense WDM metro networks using duo-binary modulation.

Finisar and Oclaro also offer 100Gbps direct detection CFP modules for metro dense WDM using duo-binary modulation but without a receiver DSP. ADVA Optical Networking is one system vendor that has adopted such 100Gbps direct-detect modules. Another company developing a 4x28Gbps direct detect module is Oplink Communications.

But Effdon points out that its point-to-point CFP achieves 80km without using an external DWDM multiplexer and demultiplexer - the multiplexing/demultiplexing of the wavelengths is done within the CFP - or external amplification and dispersion compensation. As a result, the CFP plugs straight into IP routers and data centre switches.

"What they [data centre managers] want is what they have today at 10 Gig: ZR [80km] optical transceivers," says Efron

Market demand

"We see a lot of demand for this [80km] solution," says Efron. The design, based on 10 Gigabit optics, has the advantage of using mature high volume components while 25Gbps component technology is newer and available in far lower volumes.

"This [cost reduction associated with volume] will continue; we see 10 Gig lasers going into servers, base stations, data centre switches and next generation PON," says Efron. "Ten Gigabit optical components will remain in higher volume than 25 Gig in the coming years."

The 10x10 MSA CFP design can also be used to aggregate multiple 10 Gig signals in data centre and access networks. This is an emerging application and is not straightforward for the more compact, 4x25Gbps modules as they require a gearbox lane-translation IC.

Reach extension

Effdon Networks' Qbox platform provides data centre managers with 400-800Gbps capacity while offering a reach of up to 200km. The box is used with data centre equipment that supports CXP or QSFP modules but not the CFP. The 1RU box thus takes interfaces with a reach of several tens of metres and delivers extended transmission.

Qbox supports eight client-side ports - either 40 or 100 Gbps - and four line-facing ports at speeds of 100Gbps or 200Gbps for a reach of 80 to 200km. In future, the platform will deliver 400Gbps line speeds, says Efron.

Samples of the 80km CFP and Qbox are available for selected customers, says Effdon, while general availability of the products will start in the fourth quarter of 2013.

OFC/NFOEC 2013 industry reflections - Final part

Gazettabyte spoke with Jörg-Peter Elbers, vice president, advanced technology at ADVA Optical Networking about the state of the optical industry following the recent OFC/NFOEC exhibition.

"There were many people in the OFC workshops talking about getting rid of pluggability and the cages and getting the stuff mounted on the printed circuit board instead, as a cheaper, more scalable approach"

Jörg-Peter Elbers, ADVA Optical Networking

Q: What was noteworthy at the show?

A: There were three big themes and a couple of additional ones that were evolutionary. The headlines I heard most were software-defined networking (SDN), Network Functions Virtualisation (NFV) and silicon photonics.

Other themes included what needs to be done for next-generation data centres to drive greater capacity interconnect and switching, how we go beyond 100 Gig, and whether flexible grid is required or not.

The consensus is that flex grid is needed if we want to go to 400 Gig and one Terabit. Flex grid gives us the capability to form bigger pipes and get those chunks of signals through the network. But equally it allows not only one interface to transport 400 Gig or 1 Terabit as one chunk of spectrum, but also the possibility to slice and dice the signal so that it can use holes in the network, similar to what radio does.

With the radio spectrum, you allocate slices to establish a communication link. In optics, you have the optical fibre spectrum and you want to get the capacity between Point A and Point B. You look at the spectrum, where the holes [spectrum gaps] are, and then shape the signal - think of it as software-defined optics - to fit into those holes.
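The idea of fitting a signal into spectrum holes can be sketched in Python as a slot-allocation problem. The sketch assumes the fibre spectrum is divided into equal numbered slices and that a demand can either occupy one contiguous gap or be sliced across several. The function names and the occupancy pattern are hypothetical, and real flexible-grid planning must also account for guard bands and optical impairments, which are ignored here.

```python
def free_gaps(occupied, total_slots):
    """Return (start, length) for each run of free slots in a grid of `total_slots` slices."""
    gaps, start = [], None
    for slot in range(total_slots):
        if slot not in occupied:
            if start is None:
                start = slot
        elif start is not None:
            gaps.append((start, slot - start))
            start = None
    if start is not None:
        gaps.append((start, total_slots - start))
    return gaps


def place_signal(occupied, total_slots, needed):
    """Place a demand of `needed` slots: one contiguous gap if possible, otherwise sliced across gaps."""
    gaps = free_gaps(occupied, total_slots)
    for start, length in gaps:
        if length >= needed:
            return [(start, needed)]  # fits as a single chunk of spectrum
    pieces, remaining = [], needed
    for start, length in gaps:
        take = min(length, remaining)
        pieces.append((start, take))
        remaining -= take
        if remaining == 0:
            return pieces  # sliced to fill the holes
    return None  # not enough free spectrum


occupied = {0, 1, 2, 6, 7, 11, 12, 13, 14}
print(free_gaps(occupied, 16))
print(place_signal(occupied, 16, 3))
print(place_signal(occupied, 16, 6))
```

A three-slot demand fits into the first hole as one chunk, whereas a six-slot demand has to be split across two holes, which is the "slice and dice" case.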

There is a lot of SDN activity. People are thinking about what it means, and there were lots of announcements, experiments and demonstrations.

At the same time as OFC/NFOEC, the Open Networking Foundation agreed to found an optical transport work group to come up with OpenFlow extensions for optical transport connectivity. At the show, people were looking into use cases, the respective technology and what is required to make this happen.

SDN starts at the packet layer but there is value in providing big pipes for bandwidth-on-demand. Clearly with cloud computing and cloud data centres, people are moving from a localised model to a cloud one, and this adds merit to the bandwidth-on-demand scenario.

This is probably the biggest use case for extending SDN into the optical domain through an interface that can be virtualised and shared by multiple tenants.

"This is not the end of III-V photonics. There are many III-V players, vertically integrated, that have shown that they can integrate and get compact, high-quality circuits"

Network Functions Virtualisation: Why was that discussed at OFC?

At first glance, it was not obvious. But looking at it in more detail, much of the infrastructure over which those network functions run is optical.

Just take one Network Functions Virtualisation example: the mobile backhaul space. If you look at LTE/ LTE Advanced, there is clearly a push to put in more fibre and more optical infrastructure.

At the same time, you still have a bandwidth crunch. It is very difficult to have enough bandwidth to the antenna to support all the users and give them the quality of experience they expect.

Putting networking functions such as caching at a cell site, deeper within the network, and managing a virtualised session there, is an interesting trend that operators are looking at, and one for which we, with our partnership with Saguna Networks, have shown a solution.

Network functions being virtualised, such as caching, firewalling and wide area network (WAN) optimisation, are higher layer functions. But as you do that, the network infrastructure needs to adapt dynamically.

You need orchestration that combines the control and the co-ordination of the networking functions. This is more IT infrastructure - server-based blades and open-source software.

Then you have SDN underneath, supporting changes in the traffic flow with reconfiguration of the network infrastructure.

There was much discussion about the CFP2 and Cisco's own silicon photonics-based CPAK. Was this the main silicon photonics story at the show?

There is much interest in silicon photonics not only for short reach optical interconnects but more generally, as an alternative to III-V photonics for integrated optical functions.

For light sources and amplification, you still need indium phosphide and you need to think about how to combine the two. But people have shown that even in the core network you can get decent performance at 100 Gig coherent using silicon photonics.

This is an interesting development because such a solution could potentially lower cost, simplify thermal management, and from a fab access and manufacturing perspective, it could be simpler going to a global foundry.

But a word of caution: there is big hype here too. This is not the end of III-V photonics. There are many III-V players, vertically integrated, that have shown that they can integrate and get compact, high-quality circuits.

You mentioned interconnect in the data centre as one evolving theme. What did you mean?

The capacities inside the data centre are growing much faster than the WAN interconnects. That is not surprising because people are trying to do as much as possible in the data centre because WAN interconnect is expensive.

People are looking increasingly at how to integrate the optics and the server hardware more closely. This is moving beyond the concept of pluggables all the way to mounted optics on the board or even on-chip to achieve more density, less power and less cost.

There were many people in the OFC workshops talking about getting rid of pluggability and the cages and getting the stuff mounted on the printed circuit board instead, as a cheaper, more scalable approach.

"Right now we are running 28 Gig on a single wavelength. Clearly with speeds increasing and with these kind of developments [PAM-8, discrete multi-tone], you see that this is not the end"

What did you learn at the show?

There wasn't anything that was radically new. But there were some significant silicon photonics demonstrations. That was the most exciting part for me although I'm not sure I can discuss the demos [due to confidentiality].

Another area we are interested in revolves around the ongoing IEEE work on short reach 100 Gigabit serial interfaces. The original objective was 2km but they have now homed in on 500m.

PAM-8 - pulse amplitude modulation with eight levels - is one of the proposed solutions; another is discrete multi-tone (DMT). [With DMT] using a set of electrical sub-carriers and doing adaptive bit loading means that even with bandwidth-limited components, you can transmit over the required distances. There was a demo at the exhibition from Fujitsu Labs showing DMT over 2km using a 10 Gig transmitter and receiver.
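Adaptive bit loading can be illustrated with the widely used gap approximation, in which each sub-carrier carries roughly floor(log2(1 + SNR/gap)) bits. The Python sketch below applies it to a made-up SNR profile. The 9.8dB gap, the 8-bit cap and the SNR roll-off are assumptions for illustration and say nothing about the parameters used in the Fujitsu Labs demonstration.

```python
import math


def bit_loading(snr_db, gap_db=9.8, max_bits=8):
    """Bits per sub-carrier from the gap approximation: floor(log2(1 + SNR / gap)), capped at max_bits."""
    gap = 10 ** (gap_db / 10)
    bits = []
    for value in snr_db:
        snr = 10 ** (value / 10)
        bits.append(min(max_bits, int(math.log2(1 + snr / gap))))
    return bits


# Hypothetical SNR profile that rolls off with frequency, as a bandwidth-limited component might.
snr_profile_db = [30 - 1.5 * k for k in range(16)]
loading = bit_loading(snr_profile_db)
print(loading)
print(sum(loading), "bits per DMT symbol")
```

Sub-carriers at low frequencies, where the signal-to-noise ratio is high, carry several bits each, while those near the edge of the component's bandwidth carry few or none, which is how DMT makes use of bandwidth-limited parts.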

This is of interest to us as we have a 100 Gigabit direct detection dense WDM solution today and are working on the product evolution.

We use the existing [component/ module] ecosystem for our current direct detect solution. These developments bring up some interesting new thoughts for our next generation.

So you can go beyond 100 Gigabit direct detection?

Right now we are running 28 Gig on a single wavelength. Clearly with speeds increasing and with these kind of developments [PAM-8, DMT], you see that this is not the end.

Part 1: Software-defined networking: A network game-changer

Part 2: OFC/NFOEC 2013 industry reflections

Part 3: OFC/NFOEC 2013 industry reflections

Part 4: OFC/NFOEC industry reflections

NextIO simplifies top of rack switching with I/O virtualisation

NextIO has developed virtualised input/output (I/O) equipment that simplifies switch design in the data centre.

"Our box takes a single virtual NIC, virtualises that and shares that out with all the servers in a rack"

John Fruehe, NextIO

The platform, known as vNET, replaces both Fibre Channel and Ethernet top-of-rack switches in the data centre and is suited for use with small one rack unit (1RU) servers. The platform uses PCI Express (PCIe) to implement I/O virtualisation.

"Where we tend to have the best success [with vNET] is with companies deploying a lot of racks - such as managed service providers, service providers and cloud providers - or are going through some sort of IT transition," says John Fruehe, vice president of outbound marketing at NextIO.

Three layers of Ethernet switches are typically used in the data centre. The top-of-rack switches aggregate traffic from server racks and link to end-of-row, aggregator switches that in turn interface to core switches. "These [core switches] aggregate all the traffic from all the mid-tier [switches]," says Fruehe. "What we are tackling is the top-of-rack stuff; we are not touching end-of-row or the core."

A similar hierarchical architecture is used for storage: a top-of-rack Fibre Channel switch, end-of-row aggregation and a core that connects to the storage area network. NextIO's vNET platform also replaces the Fibre Channel top-of-rack switch.

"We are replacing those two top-of-rack switches - Fibre Channel and Ethernet - with a single device that aggregates both traffic types," says Fruehe.

vNET is described by Fruehe as an extension of the server I/O. "All of our connections are PCI Express, we have a simple PCI Express card that sits in the server, and a PCI Express cable," he says. "To the server, it [vNET] looks like a PCI Express hub with a bunch of I/O cards attached to it." The server does not discern that the I/O cards are shared across multiple servers or that they reside in an external box.

For IT networking staff, the box appears as a switch providing 10 Gigabit Ethernet (GbE) ports to the end-of-row switches, while for storage personnel, the box provides multiple Fibre Channel connections to the end-of-row storage aggregation switch. "Most importantly there is no difference to the software," says Fruehe.

I/O virtualisation

NextIO's technology pools the I/O bandwidth available and splits it to meet the various interface requirements. A server is assigned I/O resources yet it believes it has the resources all to itself. "Our box directs the I/O the same way a hypervisor directs the CPU and memory inside a server for virtualisation," says Fruehe.

Two NextIO boxes are available, supporting up to 30 or up to 15 servers. One has thirty 10 Gigabit-per-second (Gbps) links and the other fifteen 20Gbps links. These are implemented as 30 x4 and 15 x8 PCIe connections, respectively, that connect directly to the servers.

A customer most likely uses two vNET platforms at the top of the rack, the second being used for redundancy. "If a server is connected to two, you have 20 or 40 Gig of aggregate bandwidth," says Fruehe.

NextIO exploits two PCIe standards known as single root I/O virtualisation (SRIOV) and multi-root I/O virtualisation (MRIOV).

SRIOV allows a server to take an I/O connection like a network card, a Fibre Channel card or a drive controller and share it across multiple server virtual machines. MRIOV extends the concept by allowing an I/O controller to be shared by multiple servers. "Think of SRIOV as being the standard inside the box and MRIOV as the standard that allows multiple servers to share the I/O in our vNET box," says Fruehe.

Each server uses only a single PCIe connection to the vNET with the MRIOV's pooling and sharing happening inside the platform.

The vNET platform showing the PCIe connections to the servers, the 10GbE interfaces to the network and the 8 Gig Fibre Channel connections to the storage area networks (SANs). Source: NextIO

Meanwhile, vNET's front panel has eight shared slots. These house Ethernet controllers and/or Fibre Channel controllers, and these are shared across the multiple servers.

In effect, an application running on the server communicates with its operating system to send the traffic over the PCIe bus to the vNET platform, where it is passed to the relevant network interface controller (NIC) or Fibre Channel card.

The NIC encapsulates the data in Ethernet frames before it is sent over the network. The same applies to the host bus adaptor (HBA), which converts the data to be stored to Fibre Channel. "All these things are happening over the PCIe bus natively, and they are handled in different streams," says Fruehe.

In effect, a server takes a single physical NIC and partitions it into multiple virtual NICs for all the virtual machines running on the server. "Our box takes a single virtual NIC, virtualises that and shares that out with all the servers in a rack," says Fruehe. "We are using PCIe as the transport all the way back to the virtual machine and all the way forward to that physical NIC; that is all a PCIe channel."

The result is a high bandwidth, low latency link that is also scalable.

NextIO has a software tool that allows bandwidth to be assigned on the fly. "With vNET, you open up a console and grab a resource and drag it over to a server and in 2-3 seconds you've just provisioned more bandwidth for that server without physically touching anything."

The provisioning is between vNET and the servers. In the case of networking traffic, this is in 10GbE chunks. It is the server's own virtualisation tools that do the partitioning between the various virtual machines.

vNET has an additional four vNET slots - for a total of 12 - for assignment to individual servers. "If you are taking all the I/O cards out of the server, you can use smaller form-factor servers," says Fruehe. But such 1RU servers may not have room for a specific I/O card. Accordingly, the four slots are available to host cards - such as a solid-state drive flash memory or a graphics processing unit accelerator - that may be needed by individual servers.

Operational benefits

There are power and cooling benefits to using the vNET platform: smaller form-factor servers draw less power, while using PCIe results in fewer cables and better air flow.

To understand why fewer cables are needed, consider that a typical server uses a quad 1GbE controller and a dual-ported Fibre Channel controller, resulting in six cables. To have a redundant system, a second set of Ethernet and Fibre Channel cards is used, doubling the cables to a dozen. With 30 servers in a rack, the total is 360 cables.

Using NextIO's vNET, in contrast, only two PCIe cables are required per server or 60 cables in total.
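
The cable arithmetic above can be checked in a few lines of Python. This is an illustrative sketch only; the function and variable names are ours, not NextIO's.

```python
def cables_per_rack(servers: int, cables_per_server: int) -> int:
    """Total cables in a rack, given the cables leaving each server."""
    return servers * cables_per_server


# Conventional server: quad 1GbE (4 cables) plus dual-port Fibre Channel
# (2 cables), with the whole set doubled for redundancy.
conventional_per_server = (4 + 2) * 2

# vNET: two PCIe cables per server, as stated in the article.
vnet_per_server = 2

conventional_total = cables_per_rack(30, conventional_per_server)
vnet_total = cables_per_rack(30, vnet_per_server)
print(conventional_total, vnet_total)
```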

On the front panel, there are eight shared slots and these can all be either dual-port 10GbE cards or dual-port 8 Gig Fibre Channel cards, or a mix of both. This gives a total of 160GbE or 128 Gig of Fibre Channel. NextIO plans to upgrade the platforms to 40GbE interfaces for an overall capacity of 640GbE.
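
The front-panel totals follow from slots x ports x port rate. A minimal sketch, assuming every slot holds the same dual-port card and that the planned 40GbE cards are also dual-port (an assumption that matches the quoted 640GbE figure); the helper name is hypothetical.

```python
def panel_capacity_gbps(slots: int, ports_per_card: int, port_rate_gbps: int) -> int:
    """Aggregate front-panel capacity when every slot holds the same card."""
    return slots * ports_per_card * port_rate_gbps


ethernet_10g = panel_capacity_gbps(8, 2, 10)   # all slots dual-port 10GbE
fibre_channel = panel_capacity_gbps(8, 2, 8)   # all slots dual-port 8 Gig FC
ethernet_40g = panel_capacity_gbps(8, 2, 40)   # assumed dual-port 40GbE cards
print(ethernet_10g, fibre_channel, ethernet_40g)
```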

ROADMs and their evolving amplification needs

Technology briefing: ROADMs and amplifiers

Oclaro announced an add/drop routing platform at the recent OFC/NFOEC show. The company explains how the platform is driving new arrayed amplifier and pumping requirements.

A ROADM comprising amplification, line-interfaces, add/drop routing and transponders. Source: Oclaro

Agile optical networking is at least a decade-old aspiration of the telcos. Such networks promise operational flexibility and must be scalable to accommodate the relentless annual growth in network traffic. Now, technologies such as coherent optical transmission and reconfigurable optical add/drop multiplexers (ROADMs) have reached a maturity to enable the agile, mesh vision.

Coherent optical transmission at 100 Gigabit-per-second (Gbps) has become the base currency for long-haul networks and is moving to the metro. Meanwhile, ROADMs now have such attributes as colourless, directionless and contentionless (CDC). ROADMs are also being future-proofed to support flexible grid, where wavelengths of varying bandwidths are placed across the fibre's spectrum without adhering to a rigid grid.

Colourless and directionless refer to the ROADM's ability to transmit or drop any light path from any direction or degree at any network interface port. Contentionless adds further flexibility by supporting same-colour light paths at an add or a drop.

"You can't add and drop in existing architectures the same colour [light paths at the same wavelength] in different directions, or add the same colour from a given transponder bank," says Bimal Nayar, director, product marketing at Oclaro's optical network solutions business unit. "This is prompting interest in contentionless functionality."

The challenge for optical component makers is to develop cost-effective coherent and CDC-flexgrid ROADM technologies for agile networks. Operators want a core infrastructure with components and functionality that provide an upgrade path beyond 100 Gigabit coherent yet are sufficiently compact and low-power to minimise their operational expenditure.

ROADM architectures

ROADMs sit at the nodes of a mesh network. Four-degree nodes - the node's degree defined as the number of connections or fibre pairs it supports - are common while eight-degree is considered large.

The ROADM passes through light paths destined for other nodes - known as optical bypass - as well as adds or drops wavelengths at the node. Such add/drops can be rerouted traffic or provisioned new services.

Several components make up a ROADM: amplification, line-interfaces, add/drop routing and transponders (see diagram, above).

"With the move to high bit-rate systems, there is a need for low-noise amplification," says Nayar. "This is driving interest in Raman and Raman-EDFA (Erbium-doped fibre amplifier) hybrid amplification."

The line interface cards are used for incoming and outgoing signals in the different directions. Two architectures can be used: broadcast-and-select and route-and-select.

With broadcast-and-select, incoming channels are routed in the various directions using a passive splitter that in effect makes copies of the incoming signal. To route signals in the outgoing direction, a 1xN wavelength-selective switch (WSS) is used. "This configuration works best for low node-degree applications, when you have fewer connections, because the splitter losses are manageable," says Nayar.

For higher-degree node applications, the optical loss using splitters is a barrier. As a result, a WSS is also used for the incoming signals, resulting in the route-and-select architecture.
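
To see why splitter loss grows with node degree, note that an ideal 1xN power splitter divides the light equally, so each output carries 1/N of the input, a loss of 10·log10(N) dB. The sketch below uses only this textbook relation; real splitters add excess loss on top, and the figures are not Oclaro's.

```python
import math


def ideal_splitter_loss_db(n_outputs: int) -> float:
    """Splitting loss of an ideal 1xN power splitter, in dB."""
    if n_outputs < 1:
        raise ValueError("a splitter needs at least one output")
    return 10 * math.log10(n_outputs)


for n in (2, 4, 8, 16):
    print(f"1x{n}: {ideal_splitter_loss_db(n):.1f} dB")
```

Each doubling of the split adds roughly 3dB, which is why a passive splitter that is tolerable at a low-degree node becomes a barrier at higher degrees.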

Signals from the line interface cards connect to the routing platform for the add/drop operations. "Because you have signals from any direction, you need not a 1xN WSS but an LxM one," says Nayar. "But these are complex to design because you need more than one switching plane." Such large LxM WSSes are in development but remain at the R&D stage.

Instead, a multicast switch can be used. These typically are sized 8x12 or 8x16 and are constructed using splitters and switches, either spliced or planar lightwave circuit (PLC) based.

"Because the multicast switch is using splitters, it has high loss," says Nayar. "That loss drives the need for amplification."

Add/drop platform

With an 8-degree-node CDC ROADM design, signals enter and exit from eight different directions. Some of these signals pass through the ROADM in transit to other nodes while others have channels added or dropped.

In the Oclaro design, an 8x16 multicast switch is used. "Using this [multicast switch] approach you are sharing the transponder bank [between the directions]," says Nayar.

The 8-degree node showing the add/drop with two 8x16 multicast switches and the 16-transponder bank. Source: Oclaro

A particular channel is dropped at one of the switch's eight input ports and is amplified before being broadcast to all 16, 1x8 switches interfaced to the 16 transponders.

It is the 16, 1x8 switches that enable contentionless operation where the same 'coloured' channel is dropped to more than one coherent transponder. "In a traditional architecture there would only be one 'red' channel for example dropped as otherwise there would be [wavelength] contention," says Nayar.
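
The contentionless behaviour can be illustrated with a toy model of the drop side: every direction is broadcast to all 16 selectors, and each transponder's 1x8 selector picks one direction. The class, method names and data layout below are hypothetical and greatly simplified; this is not Oclaro's control software.

```python
class MulticastSwitch:
    """Toy model of the drop side of an 8x16 multicast switch.

    Each input direction is split to every transponder; each transponder
    sits behind its own 1x8 selector that picks exactly one direction.
    """

    def __init__(self, directions=8, transponders=16):
        self.directions = directions
        self.transponders = transponders
        self.selected = {}  # transponder index -> chosen direction index

    def select(self, transponder, direction):
        """Point a transponder's 1x8 selector at one input direction."""
        if not 0 <= transponder < self.transponders:
            raise ValueError("unknown transponder")
        if not 0 <= direction < self.directions:
            raise ValueError("unknown direction")
        self.selected[transponder] = direction

    def drop(self, transponder, colour, incoming):
        """Return (direction, colour) if the colour the coherent transponder
        is tuned to arrives from its selected direction, otherwise None."""
        direction = self.selected.get(transponder)
        if direction is None or colour not in incoming.get(direction, set()):
            return None
        return (direction, colour)


# The same 'red' channel arrives from directions 0 and 3.
incoming = {0: {"red", "blue"}, 3: {"red"}}
switch = MulticastSwitch()
switch.select(0, 0)  # transponder 0 listens to direction 0
switch.select(1, 3)  # transponder 1 listens to direction 3
first = switch.drop(0, "red", incoming)
second = switch.drop(1, "red", incoming)
```

Both transponders receive a 'red' channel, each from a different direction, which is the case a traditional add/drop structure cannot serve.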

The issue, says Oclaro, is that as more and more directions are supported, greater amplification is needed. "This is a concern for some, as amplifiers are associated with extra cost," says Nayar.

The amplifiers for the add/drop thus need to be compact and ideally uncooled. By not needing a thermo-electric cooler, for example, the design is cheaper and consumes less power.

The design also needs to be future-proofed. The 8x16 add/drop architecture supports 16 channels. If a 50GHz grid is used, the amplifier needs to deliver the pump power for a 16x50GHz or 800GHz bandwidth. But with the adoption of flexible grid and super-channels, the channel bandwidths will be wider. "The amplifier pumps should be scalable," says Nayar. "As you move to super-channels, you want pumps that are able to deliver the pump power you need to amplify, say, 16 super-channels."

This has resulted in an industry debate among vendors as to the best amplifier pumping scheme for add/drop designs that support CDC and flexible grid.

EDFA pump approaches

Two schemes are being considered. One option is to use a single high-power pump coupled to a variable pump splitter that provides the required pumping to all the amplifiers. The other is to use multiple discrete pumps, with one pump for each EDFA.

Source: Oclaro

In the first arrangement, the high-powered pump is followed by a variable ratio pump splitter module. The need to set different power levels at each amplifier is due to the different possible drop scenarios; one drop port may include all the channels that are fed to the 16 transponders, or each of the eight amplifiers may have two only. In the first case, all the pump power needs to go to the one amplifier; in the second the power is divided equally across all eight.
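
The two drop scenarios can be sketched as a simple allocation problem. The model below assumes, purely for illustration, that each amplifier needs pump power in proportion to the number of channels it carries; the 400mW total is a made-up figure and the function name is hypothetical.

```python
def pump_split(total_pump_mw: float, channels_per_amp: list[int]) -> list[float]:
    """Share one pump's power across amplifiers in proportion to the number
    of channels each amplifier carries (a simplifying assumption)."""
    total_channels = sum(channels_per_amp)
    if total_channels == 0:
        return [0.0] * len(channels_per_amp)
    return [total_pump_mw * n / total_channels for n in channels_per_amp]


# Scenario 1: one drop port carries all 16 channels.
one_port = pump_split(400.0, [16, 0, 0, 0, 0, 0, 0, 0])

# Scenario 2: each of the eight amplifiers carries two channels.
even = pump_split(400.0, [2] * 8)
print(one_port, even)
```

In the first scenario the whole pump goes to one amplifier; in the second it is divided equally across all eight, matching the two cases described above.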

Oclaro says that while the high-power pump/pump-splitter architecture looks more elegant, it has drawbacks. One is that the pump splitter introduces an insertion loss of 2-3dB, so the pump needs up to twice the power solely to overcome the insertion loss.
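
The link between a loss in decibels and the extra pump power needed is the standard conversion factor = 10^(dB/10). A short sketch (the function name is ours):

```python
def loss_db_to_power_factor(loss_db: float) -> float:
    """Factor by which source power must rise to offset a loss given in dB."""
    return 10 ** (loss_db / 10)


for loss in (2.0, 3.0):
    print(f"{loss} dB -> x{loss_db_to_power_factor(loss):.2f}")
```

A 3dB loss corresponds to almost exactly a doubling of the required power, while a 2dB loss needs roughly 1.6 times the power.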

The pump splitter is also controlled using a complex algorithm to set the required individual amp power levels. The splitter, being PLC-based, has a relatively slow switching time - some 1 millisecond. Yet transients that need to be suppressed can have durations of around 50 to 100 microseconds. This requires the addition of fast variable optical attenuators (VOAs) to the design that introduce their own insertion losses.

"This means that you need pumps in excess of 500mW, maybe even 750mW," says Nayar. "And these high-power pumps need to be temperature controlled." The PLC switches of the pump splitter are also temperature controlled.

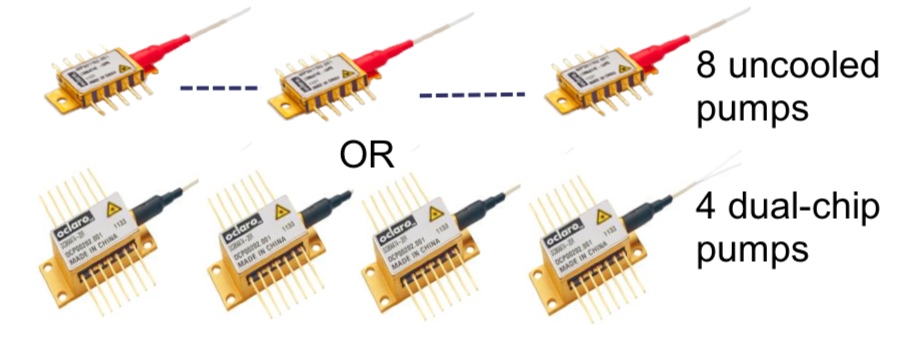

The individual pump-per-amp approach, in contrast, in the form of arrayed amplifiers, is more appealing to implement and is the approach Oclaro is pursuing. These can be eight discrete pumps or four uncooled dual-chip pumps, for the 8-degree 8x16 multicast add/drop example, with each power level individually controlled.

Source: Oclaro

Oclaro says that the economics favour the pump-per-amp architecture. Pumps are coming down in price due to the dramatic price erosion associated with growing volumes. In contrast, the pump splitter module is a specialist, lower-volume device.

"We have been looking at the cost, the reliability and the form factor and have come to the conclusion that a discrete pumping solution is the better approach," says Nayar. "We have looked at some line card examples and we find that we can do, depending on a customer’s requirements, an amplified multicast switch that could be in a single slot."

100 Gigabit and packet optical loom large in the metro

"One hundred Gig metro has become critical in terms of new [operator] wins"

Michael Adams, Ciena

Ciena says operator interest in 100 Gigabit for the metro has been growing significantly.

"One hundred Gig metro has become critical in terms of new [operator] wins," says Michael Adams, vice president of product and technical marketing at Ciena. "Another request is integrated packet switching and OTN (Optical Transport Network) switching to fill those 100 Gig pipes."

The operator CenturyLink announced recently it had selected Ciena's 6500 packet optical transport platform for its network spanning 50 metropolitan regions.

The win is viewed by Ciena as significant given CenturyLink is the third largest telecommunications company in the US and has a global network. "We have already deployed Singapore, London and Hong Kong, and a few select US metropolitans and we are rolling that out across the country," says Adams.

Ciena says CenturyLink wants to offer 1, 10 and 100 Gigabit Ethernet (GbE) services. "In terms of the RFP (request for proposal) process with CenturyLink for next generation metro, the 100 Gigabit wavelength service was key and an important part of the [vendor] selection process."

The vendor offers different line cards based on its WaveLogic 3 coherent chipset depending on a metro or long haul network's specifications. "We firmly believe that 100 Gig coherent in the metro is going to be the way the market moves," says Adams.

At the recent OFC/NFOEC show, Ciena demonstrated WaveLogic 3 based production cards moving between several modulation formats, from binary phase-shift keying (BPSK) to quadrature PSK (QPSK) to 16-QAM (quadrature amplitude modulation).

Ciena showed a 16-QAM-based 400 Gig circuit using two, 200 Gig carriers. "With a flexible grid ROADM, the two [carriers] are pushed together into a spectral grid much less than 100GHz [wide]," says Adams.

The WaveLogic 3 features a transmit digital signal processor (DSP) as well as the receive DSP. "The transmit DSP is key to be able to move the frequencies to much less than 100GHz of spectrum in order to get greater than 20 Terabits [capacity] per fibre," says Adams. "With 88 wavelengths at 100 Gig that is 8.8 Terabits, and with 16-QAM that doubles to 17.6Tbps; we expect at least a [further] 20 percent uplift with the transmit DSP and gridless."
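
Adams's capacity figures can be reproduced step by step. This is a back-of-the-envelope sketch with hypothetical names; the 20 percent uplift is the minimum he quotes, applied here as a simple multiplier.

```python
def fibre_capacity_tbps(wavelengths: int, rate_gbps: float, uplift: float = 0.0) -> float:
    """Total fibre capacity in Tbps, with an optional fractional uplift."""
    return wavelengths * rate_gbps * (1 + uplift) / 1000


qpsk = fibre_capacity_tbps(88, 100)                  # 100 Gig per wavelength
qam16 = fibre_capacity_tbps(88, 200)                 # 16-QAM doubles the rate
qam16_gridless = fibre_capacity_tbps(88, 200, 0.20)  # plus 20 percent uplift
print(round(qpsk, 2), round(qam16, 2), round(qam16_gridless, 2))
```

With the 20 percent uplift the total passes the 20 Terabit mark that Adams refers to.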

Adams says the company will soon detail the reach performance of its 400 Gig technology using 16-QAM.

It is still early in the market regarding operator demand for 400 Gig transmission. "2013 is the year for 100 Gig but customers always want to know that your platform can deliver the next thing," says Adams. "In the metro regional distances, we believe we can get a 50 percent improvement in economics using 16-QAM." That is because WaveLogic 3 can transmit 100GbE or 10x10GbE in a 50GHz channel, or double that - 2x100GbE or 20x10GbE - using 16-QAM modulation.

The system vendor is also one of AT&T's domain programme suppliers. Ciena will not expand on the partnership beyond saying there is close collaboration between the two. "We give them a lot of insight on roadmaps and on technology; they have a lot of opportunity to say where they would like their partner to be investing," says Adams.

Ciena came top in terms of innovation and leadership in a recent Heavy Reading survey of over 100 operators regarding metro packet-optical. Ciena was rated first, followed by Cisco Systems, Alcatel-Lucent and Huawei. "Our solid packet switching [on the 6500] is why CenturyLink chose us," says Adams.

Avago to acquire CyOptics

- Avago to become the second largest optical component player

- Company gains laser and photonic integration technologies

- The goal is to grow data centre and enterprise market share

- CyOptics achieved revenues of $210M in 2012

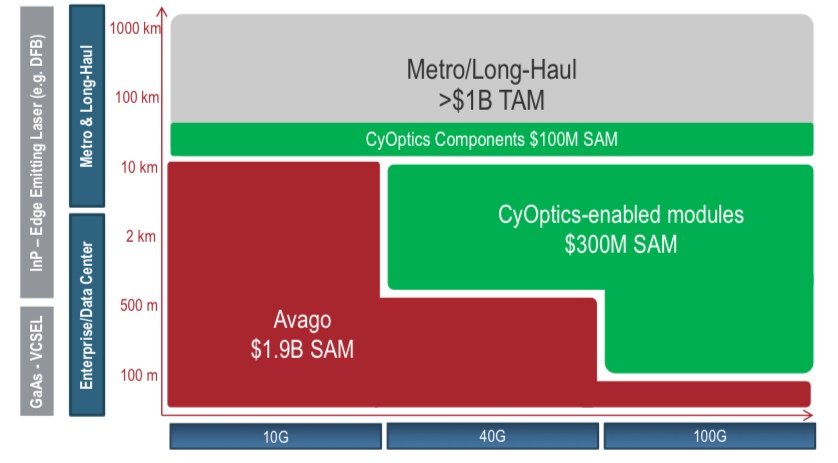

How the acquisition of CyOptics will expand Avago's market opportunities. SAM is the serviceable addressable market and TAM is the total addressable market. Source: Avago

Avago Technologies has announced its plan to acquire optical component player, CyOptics. The value of the acquisition, at US $400M, is nearly double CyOptics' revenues in 2012.

CyOptics' sales were $210M last year, up 21 percent from the previous year. Avago's acquisition will make it the optical component industry's second largest company, behind Finisar, according to market research firm, Ovum. The deal is expected to be completed in the third quarter of the year.

The deal will add indium phosphide and planar lightwave circuit (PLC) technologies to Avago's vertical-cavity surface-emitting laser (VCSEL) and optical transceiver products. In particular, Avago will gain edge laser technology and photonic integration expertise. It will also inherit an advanced automated manufacturing site as well as entry into new markets such as passive optical networking (PON).

Avago stresses its interest in acquiring CyOptics is to bolster its data centre offerings - in particular 40 and 100 Gigabit data centre and enterprise applications - as well as benefit from the growing PON market.

The company has no plans to enter the longer distance optical transmission market beyond supplying optical components.

Significance

Ovum views the acquisition as a shift in strategy. Avago is known as a short distance interconnect supplier based on its VCSEL technology.

"Avago has seen that there are challenges being solely a short-distance supplier, and there are opportunities expanding its portfolio and strategy," says Daryl Inniss, Ovum's vice president and practice leader components.

Such opportunities include larger data centres now being built and their greater use of single-mode fibre that is becoming an attractive alternative to multi-mode as data rates and reach requirements increase.

"Avago's revenues can be lumpy partly because they have a few really large customers," says Inniss.

Another factor motivating the acquisition is that short-distance interconnect is being challenged by silicon photonics. "In the long run silicon photonics is going to win," he says.

What Avago will gain, says Inniss, is one of the best laser suppliers around. And the acquisition will adversely affect other optical module players. "CyOptics is a supplier to several transceiver vendors," says Inniss. "The outlook, two or three years hence, is decreased business as a merchant supplier."

Inniss points out that CyOptics will represent the second laser manufacturer acquisition this year, following NeoPhotonics's acquisition of Lapis Semiconductor which has 40 Gigabit-per-second (Gbps) electro-absorption modulator lasers (EMLs).

These acquisitions will remove two merchant EML suppliers, given that CyOptics is a strong 10Gbps EML player, and lasers are a key technological asset.

See also:

For a 2011 interview with CyOptics' CEO, click here

OFC/NFOEC 2013 product round-up - Part 2

Second and final part

- Custom add/drop integrated platform and a dual 1x20 WSS module

- Coherent receiver with integrated variable optical attenuator

- 100/200 Gigabit coherent CFP and 100 Gigabit CFP2 roadmaps

- Mid-board parallel optics - from 150 to over 600 Gigabit

- 10 Gigabit EPON triplexer

Add/drop platform and wavelength-selective switches

Oclaro announced an add/drop routing platform for next-generation reconfigurable optical add/drop multiplexers (ROADMs). The platform, which supports colourless, directionless, contentionless (CDC) and flexible grid ROADMs, can be tailored to a system vendor's requirements and includes such functions as cross-connect switching, arrayed amplifiers and optical channel monitors.

"If we make the whole thing [add/drop platform], we can integrate in a much better way"

Per Hansen, Oclaro

After working with system vendors on various line card designs, Oclaro realised there are significant benefits to engineering the complete design.

"You end up with a controller controlling other controllers, and boxes that get bolted on top of each other; a fairly unattractive solution," says Per Hansen, vice president of product marketing, optical networks solutions at Oclaro. "If we make the whole thing, we can integrate in a much better way."

The increasingly complex nature of the add/drop card is due to the dynamic features now required. "You have support for CDC and even flexible grid," says Hansen. "You want to have many more features so that you can control it remotely in software."

A consequence of the add/drop's complexity and automation is a need for more amplifiers. "It is a sign that the optics is getting mature; you are integrating more functionality within your equipment and as you do that, you have losses and you need to put amplifiers into your circuits," says Hansen.

Oclaro continues to expand its amplifier component portfolio. At OFC/NFOEC, the company announced dual-chip uncooled pump lasers in the 10-pin butterfly package multi-source agreement (MSA) it announced at ECOC 2012.

"We have two 500mW uncooled pumps in a single package with two fibres, each pump being independently controlled," says Robert Blum, director of product marketing for Oclaro's photonic components unit.

The package occupies half the space and consumes less than half the power compared to two standard discrete thermo-electrically cooled pumps. The dual-chip pump lasers will be available as samples in July 2013.

Oclaro gets requests to design 4- and 8-degree nodes, the degree signifying the number of fibre pairs emanating from a node.

"Depending on what features customers want in terms of amplifiers and optical channel monitors, we can design these all the way down to single-slot cards," says Hansen. Vendors can then upgrade their platforms with enhanced switching and flexibility while using the same form factor card.

Meanwhile, Finisar demonstrated at OFC/NFOEC a module containing two 1x20 liquid-crystal-on-silicon-based wavelength-selective switches (WSSes). The module supports CDC and flexible grid ROADMs. "This two-port module supports the next-generation route-and-select [ROADM] architecture; one [WSS] on the add side and one on the drop side," says Rafik Ward, vice president of marketing at Finisar.

100Gbps line side components

NeoPhotonics has added two products to its 100 Gigabit-per-second (Gbps) coherent transport product line.

The first is a coherent receiver that integrates a variable optical attenuator (VOA). The VOA sits in front of the receiver to screen the dynamic range of the incoming signal. "This is even more important in coherent systems as coherent is different to direct detection in that you do not have to optically filter the channels coming in," says Ferris Lipscomb, vice president of marketing at NeoPhotonics.

"That is the power of photonic integration: you do a new chip with an extra feature and it goes in the same package."

Ferris Lipscomb, NeoPhotonics

In a traditional system, he says, a drop port goes through an arrayed waveguide grating which filters out the other channels. "But with coherent you can tune it like a heterodyne radio," says Lipscomb. "You have a local oscillator that you 'beat' against the signal so that the beat frequency for the channel you are tuned to will be within the bandwidth of the receiver but the beat frequency of the adjacent channel will be outside the bandwidth of the receiver."
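
The selection mechanism Lipscomb describes can be sketched numerically: a channel is detected only if its beat frequency with the local oscillator falls inside the receiver's electrical bandwidth. The 50GHz channel spacing and the 20GHz receiver bandwidth below are illustrative assumptions, not NeoPhotonics figures, and the model ignores everything except the frequency offset.

```python
def channels_in_receiver_band(channel_freqs_ghz, lo_freq_ghz, rx_bandwidth_ghz):
    """Return the channels whose beat frequency with the local oscillator
    falls within the receiver's electrical bandwidth."""
    return [
        f for f in channel_freqs_ghz
        if abs(f - lo_freq_ghz) <= rx_bandwidth_ghz
    ]


# Three unfiltered channels on an assumed 50GHz grid, all reaching the receiver.
channels = [193100, 193150, 193200]

# Tune the local oscillator to the middle channel.
selected = channels_in_receiver_band(channels, lo_freq_ghz=193150, rx_bandwidth_ghz=20)
print(selected)
```

The adjacent channels beat at 50GHz, outside the assumed receiver bandwidth, so only the channel the oscillator is tuned to is recovered.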

It is possible to do colourless ROADM drops where many channels are dropped, and using the local oscillator, the channel of interest is selected. "This means that the power coming in can be more varied than in a traditional case," says Lipscomb, depending on how many other channels are present. Since there can be up to 80 channels falling on the detector, the VOA is needed to control the dynamic range of the signal to protect the receiver.

"Because we use photonic integration to make our integrated coherent receiver, we can put the VOA directly on the chip," says Lipscomb. "That is the power of photonic integration: you do a new chip with an extra feature and it goes in the same package."

The VOA integrated coherent receiver is sampling and will be generally available in the third quarter of 2013.

NeoPhotonics also announced a narrow linewidth tunable laser for coherent systems in a micro integrated tunable laser assembly (micro-ITLA). This is the follow-on, more compact version of the Optical Internetworking Forum's (OIF) ITLA form factor for coherent designs.

While the device is sampling now, Lipscomb points out that it is for next-generation designs such that it is too early for any great demand.

Sumitomo Electric Industries and ClariPhy Communications demonstrated 100Gbps coherent CFP technology at OFC/NFOEC.

ClariPhy has implemented system-on-chip (SoC) analogue-to-digital (ADC) and digital-to-analogue (DAC) converter blocks in 28nm CMOS while Sumitomo has indium phosphide modulator and driver technology as well as an integrated coherent receiver, and an ITLA.

The SoC technology is able to support 100Gbps and 200Gbps using QPSK and 16-QAM formats. The companies say that their collaboration will result in a pluggable CFP module for 100Gbps coherent being available this year.

Market research firm, Ovum, points out that the announcement marks a change in strategy for Sumitomo as it enters the long-distance transmission business.

In another development, Oclaro detailed integrated tunable transmitter and coherent receiver components that promise to enable 100 Gigabit coherent modules in the CFP2 form factor.

The company has combined three functions within the transmitter. It has developed a monolithic tunable laser that does not require an external cavity. "The tunable laser has a high-enough output power that you can tap off a portion of the signal and use it as the local oscillator [for the receiver]," says Blum. Oclaro has also developed a discrete indium-phosphide modulator co-packaged with the laser.

The CFP2 100Gbps coherent pluggable module is likely to have a reach of 80-1,000km, suited to metro and metro regional networks. It will also be used alongside next-generation digital signal processing (DSP) ASICs that will use a more advanced CMOS process, resulting in much lower power consumption.

To be able to meet the 12W power consumption upper limit of the CFP2, the DSP-ASIC will reside on the line card, external to the module. A CFP, however, with its upper power limit of 32W will be able to integrate the DSP-ASIC.

Oclaro expects such a CFP2 module to be available from mid-2014 but there are several hurdles to be overcome.

One is that the next-generation DSP-ASICs will not be available till next year. Another is getting the optics and associated electronics ready. "One challenge is the analogue connector to interface the optics and the DSP," says Blum.

Achieving the CFP2 12W power consumption limit is non-trivial too. "We have data that the transmitter already has a low enough power dissipation," says Blum.

Board-mounted optics

Finisar demonstrated its board-mounted optical assembly (BOA) running at 28Gbps-per-channel. When Finisar first detailed the VCSEL-based parallel optics engine, it operated at 10Gbps.

The mid-board optics, aimed at linking chassis and at board-to-board interconnect, can be used in several configurations: 24 transmit channels, 24 receive channels or as a transceiver - 12 transmit and 12 receive. When operated at full rate, the resulting data rate is 672Gbps (24x28Gbps) simplex.

The BOA is protocol-agnostic operating at several speeds ranging from 10Gbps to 28Gbps. For example 25Gbps supports Ethernet lanes for 100Gbps while 28Gbps is used for Optical Transport Network (OTN) and Fibre Channel. Overall the mid-board optics supports Ethernet, PCI Express, Serial Attached SCSI (SAS), Infiniband, Fibre Channel and proprietary protocols. Finisar has started shipping BOA samples.

Avago detailed samples of higher-speed Atlas optical engine devices based on its 12-channel MicroPod and MiniPod designs. The company has extended the channel speed from 10Gbps to 12.5Gbps and to 14Gbps, giving a total bandwidth of 150Gbps and 168Gbps, respectively.
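
The aggregate figures quoted for the Finisar and Avago parallel optics are simply channel count times per-channel rate, as this small sketch (with a hypothetical helper name) confirms:

```python
def aggregate_gbps(channels: int, rate_gbps: float) -> float:
    """Aggregate bandwidth of a parallel optical engine."""
    return channels * rate_gbps


boa_simplex = aggregate_gbps(24, 28)     # Finisar BOA, 24 channels one way
atlas_12g5 = aggregate_gbps(12, 12.5)    # Avago 12x12.5Gbps engine
atlas_14g = aggregate_gbps(12, 14)       # Avago 12x14Gbps engine
print(boa_simplex, atlas_12g5, atlas_14g)
```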

"There is enough of a market demand for applications up to 12.5Gbps that justifies a separate part number," says Sharon Hall, product line manager for embedded optics at Avago Technologies.

The 12x12.5Gbps optical engines can be used for 100GBASE-SR10 (10x10Gbps) as well as quad data rate (QDR) Infiniband. The extra capacity supports Optical Transport Network (OTN) with its associated overhead bits for telecom. There are also ASIC designs that require 12.5Gbps interfaces to maximise system bandwidth.

The 12x14Gbps supports the Fourteen Data Rate (FDR) Infiniband standard and addresses system vendors that want yet more bandwidth.

The Atlas optical engines support channel data rates from 1Gbps. The 12x12.5Gbps devices have a reach of 100m while for the 12x14Gbps devices it is 50m.

Hall points out that while there is much interest in 25Gbps channel rates, the total system cost can be expensive due to the immaturity of the ICs: "It is going to take a little bit of time." Offering a 14Gbps-per-channel rate can keep the overall system cost lower while meeting bandwidth requirements, she says.

10 Gig EPON

Operators want to increase the split ratio - the number of end users supported by a passive optical network - to lower the overall cost.

A PON reach of 20km is another important requirement for operators, to make best use of their central offices housing the optical line terminal (OLT) that serves PON subscribers.

To meet both requirements, the 10G-EPON standard has a PRX40 specification with a sufficiently high optical link budget. Finisar has announced a 10G-EPON OLT triplexer optical sub-assembly (OSA) that meets the PRX40 specification and can be used within an XFP module, among others.

The OSA triplexer supports 10Gbps and 1Gbps downstream (to the user) and 1Gbps upstream. The two downstream rates are needed as not all subscribers on a PON will transition to a 10G-EPON optical network unit (ONU).

To meet the standard, a triplexer design typically uses an externally modulated laser. Finisar has met the specification using a less complex directly modulated laser. The result is a 10G-EPON triplexer supporting a split ratio of 1:64 and higher, and that meets the 20km reach requirement.

Finisar will sell the OSA to PON transceiver makers with production starting first quarter, 2014. Up till now the company has used its designs for its own PON transceivers.

See also:

OFC/NFOEC 2013 product round-up - Part 1, click here

OFC/NFOEC 2013 industry reflections - Part 4

Gazettabyte asked industry figures for their views after attending the recent OFC/NFOEC show.

"Spatial domain multiplexing has been a hot topic in R&D labs. However, at this year's OFC we found that incumbent and emerging carriers do not have a near-term need for this technology. Those working on spatial domain multiplexing development should adjust their efforts to align with end-users' needs"

T.J. Xia, Verizon

T.J. Xia, distinguished member of technical staff, Verizon

Software-defined networking (SDN) is an important topic. Looking forward, I expect SDN will involve the transport network so that all layers in the network are controlled by a unified controller to enhance network efficiency and enable application-driven networking.

Spatial domain multiplexing has been a hot topic in R&D labs. However, at this year's OFC we found that incumbent and emerging carriers do not have a near-term need for this technology. Those working on spatial domain multiplexing development should adjust their efforts to align with end-users' needs.

Several things are worth watching. Silicon photonics has the potential to drop the cost of optical interfaces dramatically. Low-cost pluggables such as CFP2, CFP4 and QSFP28 will change the cost model of client connections. Also, I expect adaptive, DSP-enabled transmission to enable high spectral efficiencies for all link conditions.

Andrew Schmitt, principal analyst, optical at Infonetics Research

The Cisco CPAK announcement was noteworthy because the amount of attention it generated was wildly out of proportion to the product they presented. They essentially built the CFP2 with slightly better specs.

"It was very disappointing to see how breathless people were about this [CPAK] announcement. When I asked another analyst on a panel if he thought Cisco could out-innovate the entire component industry he said yes, which I think is just ridiculous."

Cisco has successfully exploited the slave labour and capital of the module vendors for over a decade and I don't see why they would suddenly want to be in that business.

The LightWire technology is much better used in other applications than modules, and ultimately the CPAK is most meaningful as a production proof-of-concept. I explored this issue in depth in a research note for clients.

It was very disappointing to see how breathless people were about this announcement. When I asked another analyst on a panel if he thought Cisco could out-innovate the entire component industry he said yes, which I think is just ridiculous.

There were also some indications surrounding CFP2 customers that cast doubt on the near-term adoption of the technology, with suppliers such as Sumitomo Electric deciding to forgo development entirely in favour of CFP4 and/or QSFP.

I think CFP2 ultimately will be successful outside of enterprise and data centre applications but there is not a near-term catalyst for adoption of this format, particularly now that Cisco has bowed out, at least for now.

SDN is a really big deal for data centres and enterprise networking but its applications in most carrier networks will be confined to a few areas related to multi-layer management.

Within carrier networks, I think SDN is ultimately a catalyst for optical vendors to potentially add value to their systems, and a threat to router vendors as it makes bypass architectures easier to implement.

"Pluggable coherent is going to be just huge at OFC/NFOEC 2014"

Optical companies like ADVA Optical Networking, Ciena and Infinera are pushing the envelope here, and how successful optical equipment companies are depends on who their customers are and how hungry these customers are for solutions.

Meanwhile, pluggable coherent is going to be just huge at OFC/NFOEC 2014, followed by QSFP/CFP4 prototyping and, more importantly, production planning and reliability. Everyone is going to use different technologies to get there and it will be interesting to see what works best.

I also think the second half of 2013 will see an increase in deployment of common equipment such as amplifiers and ROADMs.

Magnus Olson, director hardware engineering, Transmode

Two clear trends from the conference, affecting quite different layers of the optical networks, are silicon photonics and SDN.

"If you happen to have an indium phosphide fab, the need for silicon photonics is probably not that urgent. If you don't, now seems very worthwhile to look into silicon photonics"

Silicon photonics, deep down in the physical layer, is now emerging rapidly from basic research to first product realisation. Whereas some module and component companies have barely taken the step from lithium niobate modulators to indium phosphide, others already have advanced indium phosphide photonic integrated circuits (PICs) in place.

If you happen to have an indium phosphide fab, the need for silicon photonics is probably not that urgent. If you don't, now seems very worthwhile to look into silicon photonics.

Silicon photonics is a technology that should help take out the cost of optics for 100 Gigabit and beyond, primarily for short distance, data centre applications.

SDN, on the other hand, continues to mature. There is considerable momentum and lively discussion in the research community as well as within the standardisation bodies that could perhaps help SDN to succeed where Generalized Multi-Protocol Label Switching (GMPLS) failed.

Ongoing industry consolidation has reduced the number of companies to meet and discuss issues with to a reasonable number. The larger optical module vendors all have full portfolios and hence consolidation is likely to slow down for a while. The spirit at the show was quite optimistic, in a very positive, sustainable way.

As for emerging developments, the migration of form factors for 100 Gigabit, from CFP via CFP2 to CFP4 and beyond, is important to monitor and influence from a wavelength-division multiplexing (WDM) vendor point of view.

We should learn from the evolution of the SFP+, originally conceived purely for grey data centre applications. Once the form factor is well established and mature, coloured versions start to appear.

If coloured versions are not properly taken into account from the start of the multi-source agreement (MSA) work, with respect to power classes for example, it is not easy to accommodate tunable dense WDM versions in these form factors. Pluggable optics are crucial for cost as well as flexibility, on both the client side and line side.

Shai Rephaeli, vice president of interconnect products, Mellanox

At OFC, many companies demonstrated 25 Gigabit-per-second (Gbps) prototypes and solutions, both multi-mode and single-mode.

Thus, a healthy ecosystem for 100 Gigabit Ethernet (GbE) and EDR (Enhanced Data Rate) InfiniBand looks to be well aligned with our introduction of new NIC (network interface controller)/HCA (InfiniBand host channel adaptor) and switch systems.

However, a significant increase in power consumption compared to current 10Gbps and 14Gbps products is observed. This requires the industry to focus heavily on power optimisation and thermal solutions.

"One development to watch is 1310nm and 1550nm VCSELs"

Standardisation for 25Gbps single-mode fibre solutions is a big challenge. All the industry leaders have products at some level of development, but each company is driving its own technology. There may be a real interoperability barrier, considering the different technologies: WDM/1310nm, parallel and pulse-amplitude modulation (PAM) which, itself, may have several flavours: 4-level, 8-level and 16-level.

One development to watch is 1310nm and 1550nm VCSELs, which can bring the data centre/multi-mode fibre volume and prices into the mid-reach market. This technology can be important for the new large-scale data centres, requiring connections significantly longer than 100m.

Part 1: Software-defined networking: A network game-changer, click here

Part 2: OFC/NFOEC 2013 industry reflections, click here

Part 3: OFC/NFOEC 2013 industry reflections, click here

Part 5: OFC/NFOEC 2013 industry reflections, click here