Ciena and partners build SDN testbed for carriers

Ciena, working with partners, is building a network to enable the development of software-defined networking (SDN) applied to the wide area network (WAN). The motivation in creating the testbed network is to boost carrier confidence in SDN while aiding its development.

"When you get very serious vice presidents in tier-one carriers saying, 'This [SDN] is the biggest change in my career', there is something to it."

Chris Janz, Ciena

Many software elements will be needed for SDN in the carrier environment, spanning the network through to the back office. Much is made of the benefits SDN will deliver, but it is difficult for operators to gauge SDN's full potential until they transform their networks. Carriers also want the confidence that the industry will deliver the SDN components needed.

To this end, Ciena, along with research and education partners CANARIE, Internet2 and StarLight, is developing the SDN test network. Carriers, research partners and Ciena's R&D team will use the network to experiment with and validate SDN's benefits for packet and optical WANs.

Parts of the SDN network have already been demonstrated and Ciena expects the SDN test environment to be up and running in the next couple of months. Many of the components are in prototype form.

"The goal is to leap to the end point by providing the key parts of the future system for a carrier-style SDN-powered WAN, thereby demonstrating conclusively the macro SDN service cases that people imagine can be delivered," says Chris Janz, vice president of market development at Ciena.

Another aim is to help carriers determine how best to migrate their networks to the future SDN framework.

Testbed

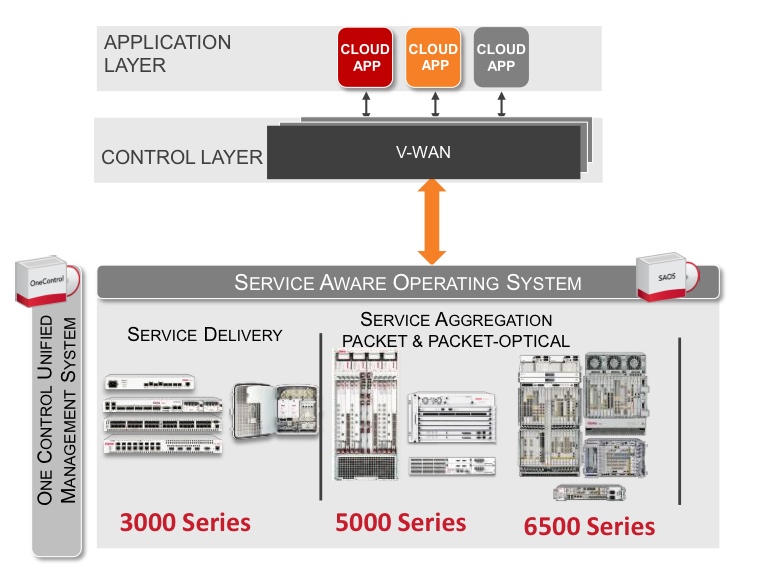

The testbed conforms to the Open Networking Foundation's SDN architecture that comprises three layers. "Two of them are software: business applications talking to a network controller system which drives the physical network," says Janz.

The business application layer includes such systems as customer management, service creation and billing. "What we think of as OSS/BSS and cloud orchestration systems," says Janz. Components such as cloud orchestration systems and portals that simulate customer actions are being contributed by partners to exercise the testbed.

Ciena has chosen OpenFlow, the open standard, to drive the packet and transport layers. "This [SDN] is not the data centre, it is not all packet; it is a model carrier-style network," he says.

The SDN controller is designed to add flexibility and open up the design. "There is a clear spirit in SDN that customers want to take more affirmative control of their competitive destiny," says Janz. "They do not want to be locked into services, features and functions that their vendors deliver to them and their competitors."

Ciena is part of OpenDaylight, the open source SDN controller industry initiative hosted by the Linux Foundation, and its controller work will be included in the testbed. "There is a modular structure with internal interfaces," says Janz. "There is leveraging of some early generation open source components for part of the structure."

The control system is designed to be the heart of what Janz refers to as 'autonomic intelligence' to deliver the sought-after benefits of SDN.

One such benefit is for carriers to contain their capital costs by better filling their networks with traffic - running them 'hotter'. "Can they move their networks from 35 percent average utilisation to 95 percent?" says Janz.
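As a rough illustration of what running a network 'hotter' means, the Python sketch below computes how much more traffic the same installed capacity would carry at the utilisation figures Janz quotes. The function name is hypothetical, and the calculation assumes, simplistically, that carried traffic scales linearly with average utilisation.

```python
def traffic_gain(current_utilisation: float, target_utilisation: float) -> float:
    """Ratio of traffic carried at the target versus the current utilisation.

    Assumes the installed capacity is unchanged and that carried traffic
    scales linearly with average utilisation (a simplification).
    """
    for value in (current_utilisation, target_utilisation):
        if not 0 < value <= 1:
            raise ValueError("utilisation must be a fraction in (0, 1]")
    return target_utilisation / current_utilisation


# Figures quoted in the article: 35 percent today, 95 percent as the goal.
gain = traffic_gain(0.35, 0.95)
print(f"{gain:.2f}x the traffic on the same network")
```

On these assumptions, moving from 35 to 95 percent utilisation lets the same equipment carry roughly 2.7 times the traffic.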

Software-based intelligence as delivered by SDN can dynamically match demand with fulfilment. "You have all the service demands coming into the [SDN] control system, and you have control at that point of the entire configuration and state of the network," says Janz.

Ciena has added real-time analytics software to the controller prototype to aid such optimisation. "It is piece parts like this that prove the postulated benefits of SDN," he says.

The platforms used for the network include 4 Terabit core switches with 400 Gigabit packet blades, and optical and Optical Transport Network (OTN) transport using Ciena's 6500 and 5400 converged packet-optical product families, all configured using OpenFlow.

The 2500km network will connect Ciena’s headquarters in Hanover, Maryland with the company's R&D center in Ottawa. International connectivity is provided by Internet2 through the StarLight International/National Communications Exchange in Chicago and CANARIE, Canada's national optical fiber based advanced R&E network.

"If we look ten years down the road, the whole [software] stack - from the bottom of the network to the top of the back office - will look different to what it does today"

Testbed goals

The initial goal is to implement key SDN services and prove use cases. The open testbed will run indefinitely, says Janz: "SDN will unfold and we view the testbed as a standing platform that will change over time with new software and hardware." In effect, the testbed will implement an end-to-end infrastructure whose state is controlled in fine detail by a centralised controller.

Janz cites mass-customised network-as-a-service (NaaS) as one service SDN-in-the-WAN can enable.

Traditional Ethernet connectivity is a static service where the customer requests a given bandwidth and specifies the end points. "It is a very limited template and once it is locked in, the customer generally can't change it," says Janz.

SDN promises more sophisticated connection services. "Instead of defining just the end points, you can define virtual end points," says Janz. All sorts of parameters can then be specified: bandwidth, latency, availability and the restoration required, and these can be changed with time. Moreover, all can be ordered using an application programming interface (API) to the orchestration system at the customer's site.

"It would enable the customer to have many effective service pipes rather than one big one, and resolve and match each of them to a specific application need or flow," says Janz. The customer can then optimise them as the needs of each changes with time.

The benefits to the operator include better meeting the customer's needs, and an ability to charge across multiple service parameters, not just two. "That should be the path to greater revenue," says Janz.
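To make the idea of a multi-parameter, API-ordered connection concrete, here is a minimal Python sketch of what such an order might look like. Everything in it is hypothetical: the class, field names, end-point labels and values are illustrative assumptions, not Ciena's API or any real orchestration interface.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class ConnectionRequest:
    """Hypothetical order for a connection between two virtual end points."""
    endpoint_a: str
    endpoint_b: str
    bandwidth_mbps: int
    max_latency_ms: float
    availability_pct: float
    restoration: str  # illustrative values: "protected" or "unprotected"

    def __post_init__(self):
        # Basic sanity checks on the chargeable service parameters.
        if self.bandwidth_mbps <= 0:
            raise ValueError("bandwidth must be positive")
        if self.max_latency_ms <= 0:
            raise ValueError("latency bound must be positive")
        if not 0 < self.availability_pct <= 100:
            raise ValueError("availability must be a percentage")


def change_service(request: ConnectionRequest, **changes) -> ConnectionRequest:
    """Return an amended order; the original order is left untouched."""
    return replace(request, **changes)


# One of several service pipes, each matched to a specific application.
backup_pipe = ConnectionRequest("vep-site-1", "vep-site-2", 1000, 20.0, 99.99, "protected")
# The customer later raises the bandwidth while keeping the other parameters.
night_pipe = change_service(backup_pipe, bandwidth_mbps=5000)
print(night_pipe.bandwidth_mbps, backup_pipe.bandwidth_mbps)
```

Each field is a parameter the operator could in principle price separately, which is the contrast with a traditional service defined only by bandwidth and end points.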

The trick is managing such a system. "Can you price it effectively and know that you are targeting maximum revenue? Can you co-manage all these customer changes while respecting the changing service parameters of each?" says Janz. "You need the critical mass of piece parts to show that such a situation is workable and that, hey, I can make more money with a service like that."

60-second interview with Michael Howard

Infonetics Research has interviewed global service providers regarding their plans for software-defined networking (SDN) and network functions virtualisation (NFV). Gazettabyte asked Michael Howard, co-founder and principal analyst, carrier networks, about Infonetics' findings.

"Data centres are simple when compared to carrier networks"

Michael Howard, Infonetics Research

What is it about SDN and NFV - technologies still in their infancy - that already convinces 86 percent of the operators to deploy the technologies in their optical transport networks?

Michael H: Operators are universally drawn to SDN and NFV for two basic reasons:

1. They want to accelerate revenue by reducing the time to new services and applications.

2. They have operational drivers, of which there are also two parts:

- Carriers expect software-defined networks to give them a single view across multiple vendor equipment, network layers and equipment types for mobile backhaul, consumer digital subscriber line (DSL), passive optical network (PON), optical transport, routers, mobile core and Ethernet access. This global view will allow them to provision, monitor and deliver service-level agreements while controlling services, virtual networks and traffic flows in an easier, more flexible and automated way.

- An additional function possible with such a global view across the multi-vendor network is that traffic can be monitored and re-distributed along pathways to make best use of the network. In this way, the network can run 'hotter' and thereby require less equipment, saving capital expenditure (CapEx).

Optical transport networks have a history of being engineered to effect predictable flows on transport arteries and backbones. Many operators have deployed, or have been experimenting with, GMPLS (Generalized Multi-Protocol Label Switching) and vendor control planes. So it is natural for them to want an industry-standard method of deploying an SDN control plane over the usually multi-vendor transport network.

In our conversations - independent of our survey - we find that several operators believe the biggest bang for the SDN buck is to use SDN for a single control plane over multi-layer data - router, Ethernet - and the optical transport network.

"The virtualisation of data centre networks has inspired operators who want to apply the same general principles to their oh-so-much-more complex networks"

Early use of SDN has been in the data centre. How will the technologies benefit networks more generally and optical transport in particular?

SDNs were developed initially to solve the operational problems of un-automated networks. That is to say, slow human labour-intensive network changes required by the automated hypervisor as it moves, adds and changes virtual machines across servers that may be in the same data centre or in multiple data centres.

The virtualisation of data centre networks has inspired operators who want to apply the same general principles to their oh-so-much-more complex networks. Data centres are simple when compared to carrier networks. Data centres are basically large numbers of servers connected by Ethernet LANs and virtual LANs with some router separations of the LANs connecting servers.

"It will be many years before SDNs-NFV will be deployed in major parts of a carrier network"

Service provider networks are a set of many different types of networks including consumer broadband, business virtual private networks, optical transport, access/aggregation Ethernet and router networks, mobile core and mobile backhaul. Each of these comprises multiple layers and almost certainly involves multiple vendor equipment. This explains why operators are starting their SDN-NFV investigations with small network segments which we call 'contained domains'. It will be many years before SDNs-NFV will be deployed in major parts of a carrier network.

You mention small SDN and NFV deployments. What will these early applications look like?

Our survey respondents indicated that intra-datacentre, inter-datacentre, cloud services, and content delivery networks (CDNs) will be the first to be deployed by the end of 2014. Other areas targeted longer term are optical transport, mobile packet core, IP Multimedia Subsystem, and more.

Was there a finding that struck you as significant or surprising?

Yes. A lot of current industry buzz is about optical transport networks, making me think that we'd see SDNs deployed soon. But what we heard from operators is that optical transport networks are further out in their deployment plans. This makes sense in that the Open Networking Foundation working group for transport networks has just recently got their standardisation efforts going, which usually takes a couple of years.

You say that it will be years before large parts or a whole network will be SDN-controlled. What are the main challenges here regarding SDN and will they ever control a whole network?

As I said earlier, carrier networks are complex beasts, and they are carrying revenue-generating services that cannot be risked by deployment of a new set of technologies that make fundamental changes to the way networks operate.

A major problem yet to be resolved or even addressed much by the industry is how to add SDN control planes to the router-controlled network that uses the MPLS control plane. SDN and MPLS control planes must cooperate or be coordinated in some way since they both control the same network equipment - not an easy problem, and probably the thorniest of all the challenges in deploying SDNs and NFV.

The study participants rated CDNs, IP multimedia subsystem (IMS), and virtual routers/security gateways as the main NFV applications. At least two of these segments already use servers so just how impactful will NFV be for operators?

Many operators see that they can deploy NFV in a much simpler way than deploying control plane changes involved with SDNs.

Many network functions have already been virtualised, that is, software-only versions are available, and many more are under development. But these are individual vendor developments, not done according to any industry standards. This means that NFV - network functions run on servers rather than on specialised network equipment like firewalls, intrusion prevention/intrusion detection systems, Evolved Packet Core hardware - is already in motion.

The formalisation of NFV by the carrier-driven ETSI standards group is underway, developing recommendations and standards so that these virtualised network functions can be deployed in a standardised way.

Infonetics interviewed purchase-decision makers at 21 incumbent, competitive and independent wireless operators from EMEA (Europe, Middle East, Africa), Asia Pacific and North America that have evaluated SDN projects or plan to do so. The carriers represent over half (53 percent) of the world's telecom revenue and CapEx.

Ovum on Infinera's Intelligent Transport Network strategy

Infinera announced that TeliaSonera International Carrier (TSIC) is extending the use of its DTN-X to its European network, having already adopted the platform in the US. Infinera has also outlined the next evolution in its networking strategy, dubbed the Intelligent Transport Network.

Dana Cooperson

Gazettabyte asked Dana Cooperson, vice president and practice leader, and Ron Kline, principal analyst, both in the network infrastructure group at market research firm, Ovum, about the announcement and Infinera's outlined strategy.

What has been announced

TSIC is adding Infinera's DTN-X to boost network capacity in Europe and accommodate its own growing IP traffic. TSIC already has deployed 100 Gig technology in its European network, using a Coriant product. The wholesale operator will sell 100 Gig services, activating capacity using the DTN-X's 'instant bandwidth' feature based on already-lit 100 Gig light paths that make up its 500 Gigabit super-channels.

Meanwhile, Infinera has detailed its Intelligent Transport Network strategy. This extends its digital optical network, which performs optical-electrical-optical (OEO) conversion using its 500 Gig photonic integrated circuits (PICs) coupled with OTN (Optical Transport Network) switching, to include additional features. These include multi-layer switching – reconfigurable optical add/drop multiplexers (ROADMs) and MPLS (Multi-Protocol Label Switching) – and PICs with terabit capacity.

Q&A with Dana Cooperson and Ron Kline

Q. What is significant about Infinera's Intelligent Transport Network strategy?

Dana C: Infinera is being more public about its longer-term strategy - to 2020 - which includes evolving from its digital optical network messaging to a network that includes multiple layers and types of switching, and more automation. Infinera is not announcing the availability of any additional functionality now.

Infinera makes much play about its 500 Gig super-channels. More recently it has detailed such platform features as instant bandwidth and Fast Shared Mesh Protection supported in hardware. Are these features giving operators something new and is Infinera gaining market share as a result?

Dana C: Instant Bandwidth provides a way for Infinera’s operator customers to have their cake and eat it. They can install 500 Gig super-channels ahead of demand, and not pay for each 100 Gig sub-channel until they have a need for that bandwidth. It is a simple process at that point to 'turn on' the next 100 Gig worth of bandwidth within the super-channel.

By installing all five 100 Gig channels at once, the operator can simplify operations - lower opex - and allow quicker time-to-revenue without having to take the capex hit until the bandwidth needs materialise. This is an improvement over the DTN platform, which gave customers the 10x10 Gig architecture to let them pre-position bandwidth before the need for it materialised and save on opex, but at the cost of higher up-front capex than was ideal.
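The pay-as-you-activate model can be sketched in a few lines of Python. The class and method names below are hypothetical and do not represent Infinera's software; the sketch only models the bookkeeping described above, with five 100 Gig sub-channels installed up front and each one paid for when it is turned on.

```python
class SuperChannel:
    """Hypothetical model of a pre-installed super-channel activated in steps."""

    def __init__(self, sub_channels: int = 5, gbps_each: int = 100):
        self.sub_channels = sub_channels
        self.gbps_each = gbps_each
        self.activated = 0  # sub-channels turned on (and paid for) so far

    @property
    def installed_gbps(self) -> int:
        """Capacity physically in place from day one."""
        return self.sub_channels * self.gbps_each

    @property
    def paid_gbps(self) -> int:
        """Capacity the operator has activated and therefore paid for."""
        return self.activated * self.gbps_each

    def activate_next(self) -> int:
        """Turn on the next sub-channel and return the new paid capacity."""
        if self.activated == self.sub_channels:
            raise RuntimeError("all sub-channels are already activated")
        self.activated += 1
        return self.paid_gbps


channel = SuperChannel()
channel.activate_next()
channel.activate_next()
print(channel.installed_gbps, channel.paid_gbps)
```

The gap between installed and paid capacity is the bandwidth that has been pre-positioned without the capex hit.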

Talking to TSIC confirms that the added flexibility the DTN-X provides has allowed it to win wholesale business from competitors while tying capex more directly to revenue.

Ron K: Although pay-as-you-go capability is available, analysis of 100 Gig shipments to date indicates most customers are paying for all five up front.

Dana C: I have not directly talked with an Infinera customer that has confirmed the benefit of Fast Shared Mesh Protection, but the feature certainly seems to be of value to customers and prospects. Our research indicates the continued search for better, more efficient mesh protection. Hardware-enabled protection should provide better latency (higher speed).

Ron K: Resiliency and mesh protection are critical requirements if you want to participate in the market. Shared mesh assumes that you have idle protection capacity available in case there is a failure. That is expensive. However, with Infinera’s technology - the PIC and Instant Bandwidth - it is not as difficult.

Restoration is all about speed – how fast can you get the network back up. It is not always milliseconds, sometimes it is half a minute. But during catastrophic failure events such as an earthquake, where a user can lose entire nodes, 30 seconds may not be so bad. Infinera has implemented the switch in hardware, based on a pre-planned map, so it is quicker.

Dana C: As for what impact these capabilities are having on market share, Infinera has climbed to the No.3 player in 100 Gig DWDM in the three quarters since the DTN-X became available.

They’ve jumped back up to No.4 globally in backbone WDM/CPO-T (converged packet optical transport) after sinking to sixth when they were losing share because they were without a viable 40 Gig solution. They made the right call at that time to focus on 100 Gig systems based on the 500 Gig PIC rather than chase 40 Gig. They are both keeping and expanding with existing DTN customers, TSIC being one, and picking up new customers.

Ron Kline

Ron K: They are definitely picking up share. However, I’m not sure if they can sustain it. The reason for the share jump is they are selling 100 Gig, five at a time. Remember, most customers elect to pay for all five. That means future sales will lag because customers have pre-positioned the bandwidth.

Looking at the customers is probably a better indicator: Infinera has some 27 customers, maybe 30 by now, which provide a good embedded base. Still, 27 customers is low compared to Ciena, Alcatel-Lucent, Huawei and even Cisco.

When Infinera first announced the DTN-X in 2011 it talked about how it would add MPLS support. Now outlining its Intelligent Transport Network strategy it has still to announce MPLS support. Do operators not need this network feature yet in such platforms and if not, why?

Dana C: The market is still sorting out exactly what is needed for sub-wavelength switching and where it is needed. Cisco’s and Juniper’s approaches are very different in the routing world - essentially, a lower-cost MPLS blade for the CRS versus a whole new box in the PTX; there is no right way there.

Within packet-aware optical products, the same is true: What is the right level of integration of OTN versus MPLS? It depends on where you are in the network, what that carrier’s service mix is, and how fast the mix is changing.

Many carriers are still struggling with their rigid organisational structures, and how best to manage products that are optical and packet in equal measure. So I don’t think Infinera is late, they are just reacting to their customers’ priorities and doing other things first.

Ron K: This is the $64,000 question: MPLS versus OTN. I’m not sure how it will eventually play out. I am asking service providers now.

OTN is a carrier protocol developed for carriers by carriers (the replacement for SONET/SDH). They will be the ones to use it because they have multi-service networks and need the transparency OTN provides. Google types and cable operators will not use OTN switching - they will lean towards the label-switched path (LSP) route. Even Tier-1 operators who have both types of networks will most likely maintain separation.

"The trick is to optimise around the requirements that net you the biggest total available market and which maximise your strengths and minimise your weaknesses. You can’t be all things to all carriers."

If Infinera has its digital optical network, why is it now also talking about ROADMs? And does having both benefit operators?

Dana C: Yes, having both benefits operators. From discussions with Infinera's customers, it is true that the digital nodes give them flexibility, but they do introduce added cost. For those nodes where customers have little need to add/drop traffic, a ROADM would provide a more cost-efficient alternative to a node that performs OEO for all the traffic. So, with a ROADM option customers would have more control over node design.

Infinera talks about its next-gen PICs that will support a Terabit and more. After nearly a decade of making PICs, how does Ovum view the significance of the technology?

Dana C: While more vendors are doing photonic integration R&D, and some - Huawei comes to mind - have released some PIC-based products, no one has come close to Infinera in what it can do with photonic integration. Speaking with quite a few of Infinera’s customers, they are very happy with the technology, the system, and the support.

Each generation of PIC requires a significant R&D effort, but it does provide differentiation. Infinera has managed to stay focused and implement on time and on spec. I see them as the epitome of a “specialist” vendor. They are of similar size to Coriant and Tellabs, which have seen their fortunes wane, and ADVA Optical Networking. So I would say they are a very good example of what focus and differentiation can do.

Now, is the PIC the only way to approach system architecture? No. As noted before, some Infinera clients have told me that the lack of a ROADM has hurt them in competitive situations, as did the need to pay for all the pre-positioned bandwidth up front (true for the DTN, not the DTN-X).

From my days in product development, I know you have to optimise around a set of requirements, and the trick is to optimise around the requirements that net you the biggest total available market and which maximise your strengths and minimise your weaknesses. You can’t be all things to all carriers.

What is significant about the latest TeliaSonera network win and what does it mean for Coriant?

Dana C: Infinera is announcing an extension of its deployments at TSIC from North America to now include Europe as well. When you ask what this means to Coriant, their incumbent supplier in Europe, the answer is not clear cut. This gives Infinera an expanded hunting licence and it gives Coriant some cause for worry.

TSIC values both vendors and both will have their place in the European network. TSIC plans to use the vendors in different regions.

I am sure TSIC will try and play each off against the other to get the best price. It is looking for more flexibility and some healthy competition.

Apps over packet-optical: Ciena boosts 6500's packet handling

Source: Ciena

Ciena has enhanced its packet-optical equipment portfolio by adding packet support to its flagship 6500 platform.

Cards and software from Ciena's established Carrier Ethernet packet platforms have been added to the 6500, a packet-optical platform that features reconfigurable optical add-drop multiplexing (ROADM), WaveLogic3 coherent transponders, Optical Transport Network (OTN) switching and SONET/SDH aggregation. The system vendor has also developed packet aggregation and switch fabric cards for the 6500.

"You can now use the 6500 for 100 percent packet switching, 100 percent OTN switching, or any mix in between," says Michael Adams, vice president of product and technical marketing at Ciena.

The development is part of a general trend to combine optical and packet to create scalable, manageable networks. It also addresses the operators' growing need for programmable networks to deliver cloud-based services and dynamic bandwidth.

Applications

Ciena has a virtual wide-area network (VWAN) control layer that resides above the networking layer that abstracts the hardware and through which software applications can be executed (see chart).

"We have a scheduler 'app' through the control layer VWAN that allows bandwidth to change between sites, for example," says Adams. "Every night I want to do a backup between these times and I want this much bandwidth as I do it."

Another application is machine-to-machine communication that can be used to link data centres. "If you can virtualise within a data centre, why not virtualise across data centres?" says Adams.

As [servers'] virtual machines move between data centres, the performance of the network becomes key. Ciena has an application programming interface (API) that links to the server's hypervisor that allows machine-to-machine communication to be intercepted to benefit the bandwidth made available for the virtual machine traffic. "We are not doing it today but we have the software to link between two data centres," says Adams.

6500 enhancements

Until now it has been difficult to combine packet with packet optical, requiring different platforms, each with their own management system, says Adams. "It has been hard to take a base station that needs only packet, put the Carrier Ethernet traffic onto a ring [network] and then onto a 100 Gigabit wavelength," he says. "You either built pure packet or used a form of packet optical but it was hard to mix."

Ciena has added hardware and software to the 6500 from its existing packet platforms. The packet platforms are used to deliver Ethernet services and infrastructure and are a $40 million-a-quarter business for Ciena, with over 300,000 network elements deployed.

The service-aware operating system (SAOS), developed for the Ethernet packet platforms, has also been ported onto the 6500's new packet and fabric cards.

With the 6500 running the same software as its packet platforms, service management across the network becomes simpler. "Now, one system can deploy services, and look at performance visualisation between the layers," says Adams.

Ciena's latest hardware cards include blades with 1 and 10 Gigabit-per-second (Gbps) aggregation that operate independently of the 6500's switch fabric. "You don't touch the fabric, just run [them] over a WDM wavelength," says Adams. The stackable blades support 120Gbps to 300Gbps of packet traffic.

Meanwhile, the 6500 switch fabric cards add 600 Gigabit or 1.2 Terabit of packet switching capacity, which will be increased further in future.

"We have got these blades that can be stacked beside each other for resiliency or scale," says Adams. "And if you want to scale those up, there is a [switch] fabric solution."

Further reading:

100 Gigabit and packet optical loom large in the metro

P-OTS 2.0: 60-second interview with Heavy Reading's Sterling Perrin

Classic textbooks and the challenge of ongoing learning

Gazettabyte asked its Facebook followers if there is a textbook they value more than others, and why.

It could be a book from student days or a more recent one, work-related. Also asked were readers' interest and experiences with newer styles of learning - online, and books with interactive elements and accompanying websites. Books here include business and technology titles.

The question regarding textbooks arose after getting a copy of Networked Life: 20 Questions and Answers by Mung Chiang. The book asks and answers such questions as:

- What makes CDMA work?

- How does Google rank web pages?

- What is inside the cloud of iCloud?

This is an undergraduate textbook for a Princeton University course aimed at electrical engineering and computer science students.

Networked Life is very different from traditional textbooks. It stresses real applications and practical examples. Moreover, each question - a chapter in the book - is answered at two levels: a short generally accessible answer and a more rigorous in-depth treatment that includes diagrams, graphs and maths.

Back to Gazettabyte's Facebook followers. Broadbandtrends' Teresa Mastrangelo answers that she has a marketing textbook that she still references: Marketing Management by Philip Kotler. "I even bought a more current version of the same book," she says.

Yuriy Babenko goes for Govind Agrawal's Fiber-Optic Communication Systems, "the bible for all things optical".

Professor Izzat Darwazeh, head of the Communications and Information Systems Group at University College London, cites two titles: Introduction to Communication Systems by Ferrel G. Stremler, and Schilling and Belove's Electronic Circuits: Discrete and Integrated.

"Both books are from my undergraduate days, but still are relevant today and both are very well written and readable," says Darwazeh. "I used them to learn and use them to teach. Both have sufficient detail to understand the subjects, move from absolute beginnings to advanced levels and have plenty of examples and questions at the end of each chapter."

So what textbook do you recommend and why? Would you highlight any business or technology books that are particularly useful and any online learning resources? Please comment.

Further reading:

Website to accompany the Networked Life book

Gazettabyte's recommended books

And if you are involved in continual learning and skill acquisition, here are two suggested titles:

- Hacking your education by Dale Stephens

- The first 20 hours: How to learn anything ... fast by Josh Kaufman

Merits and challenges of optical transmission at 64 Gbaud

u2t Photonics announced recently a balanced detector that supports 64Gbaud. This promises coherent transmission systems with double the data rate. But even if the remaining components - the modulator and DSP-ASIC capable of operating at 64Gbaud - were available, would such an approach make sense?
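The link between symbol rate and data rate can be sketched in a few lines of Python. The function name is hypothetical, and the 32 Gbaud figure used for today's 100 Gig coherent systems is an assumption rather than a number from the announcement; the raw line rate it yields includes forward error correction and framing overhead on top of the 100 Gig payload.

```python
def raw_line_rate_gbps(symbol_rate_gbaud: float, bits_per_symbol: int,
                       polarisations: int = 2) -> float:
    """Raw line rate = symbol rate x bits per symbol x number of polarisations."""
    return symbol_rate_gbaud * bits_per_symbol * polarisations


QPSK_BITS_PER_SYMBOL = 2  # QPSK encodes two bits in each symbol

# Assumed symbol rate for current 100 Gig DP-QPSK (not stated in the article).
today = raw_line_rate_gbps(32, QPSK_BITS_PER_SYMBOL)
# The same modulation format at the 64 Gbaud the new detector supports.
doubled = raw_line_rate_gbps(64, QPSK_BITS_PER_SYMBOL)
print(today, doubled, doubled / today)
```

With the modulation format held constant, doubling the symbol rate doubles the raw line rate, which is the basis for the claim of double the data rate.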

Gazettabyte asked system vendors Transmode and Ciena for their views.

Transmode:

Transmode points out that 100 Gigabit dual-polarisation, quadrature phase-shift keying (DP-QPSK) using coherent detection has several attractive characteristics as a modulation format.

It can be used in the same grid as 10 Gigabit-per-second (Gbps) and 40Gbps signals in the C-band. It also has a similar reach to 10Gbps by achieving a comparable optical signal-to-noise ratio (OSNR). Moreover, it has superior tolerance to chromatic dispersion and polarisation mode dispersion (PMD), enabling easier network design, especially with meshed networking.

The IEEE has started work standardising the follow-on speed of 400 Gigabit. "This is a reasonable step since it will be possible to design optical transmission systems at 400 Gig with reasonable performance and cost," says Ulf Persson, director of network architecture in Transmode's CTO office.

Moving to 100Gbps was a large technology jump that involved advanced technologies such as high-speed analogue-to-digital (A/D) converters and advanced digital signal processing, says Transmode. But it kept the complexity within the optical transceivers which could be used with current optical networks. It also enabled new network designs due to the advanced chromatic dispersion and PMD compensations made possible by the coherent technology and the DSP-ASIC.

For 400Gbps, the transition will be simpler. "Going from 100 Gig to 400 Gig will re-use a lot of the technologies used for 100 Gig coherent," says Magnus Olson, director of hardware engineering.

So even if there will be some challenges with higher-speed components, the main challenge will move from the optical transceivers to the network, he says. That is because whatever modulation format is selected for 400Gbps, it will not be possible to fit that signal into current networks keeping both the current channel plan and the reach.

"From an industry point of view, a metro-centric cost reduction of 100Gbps coherent is currently more important than increasing the bit rate to 400Gbps"

"If you choose a 400 Gigabit single carrier modulation format that fits into a 50 Gig channel spacing, the optical performance will be rather poor, resulting in shorter transmission distances," says Persson. Choosing a modulation format that has a reasonable optical performance will require a wider passband. Inevitably there will be a tradeoff between these two parameters, he says.

This will likely lead to different modulation formats being used at 400 Gig, depending on the network application targeted. Several modulation formats are being investigated, says Transmode, but the two most discussed are:

- 4x100Gbps super-channels modulated with DP-QPSK. This is the same as today's modulation format with the same optical performance as 100Gbps, and requires a channel width of 150GHz.

- 2x200Gbps super-channels, modulated with DP-16-QAM. This will have a passband of about 75GHz. It is also possible to put each of the two channels in separate 50GHz-spaced channels and use existing networks. The effective bandwidth will then be 100GHz for a 400Gbps signal. However, the OSNR performance for this format is about 5-6 dB worse than the 100Gbps super-channels. That equates to about a quarter of the reach at 100Gbps.
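The link between an OSNR penalty and reach can be sketched numerically. This is a minimal sketch, assuming that amplifier noise accumulates linearly with distance so that reach scales inversely with the required OSNR in linear units. That rule of thumb and the function name are illustrative assumptions, not Transmode's model.

```python
def relative_reach(osnr_penalty_db: float) -> float:
    """Reach relative to the reference format for a given OSNR penalty in dB.

    Assumes reach is inversely proportional to the required OSNR (linear units).
    """
    return 10 ** (-osnr_penalty_db / 10)


for penalty_db in (5.0, 6.0):
    share = relative_reach(penalty_db)
    print(f"{penalty_db:.0f} dB penalty -> {share:.2f} of the reference reach")
```

Under this assumption a 6 dB penalty leaves roughly a quarter of the reference reach, in line with the figure quoted for the 200Gbps super-channels.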

As a result, 100Gbps super-channels are more suited to long distance systems while 200Gbps super-channels are applicable to metro/regional systems.

Since 200Gbps super-channels can use standard 50GHz spacing, they can be used in existing metro networks carrying a mix of traffic including 10Gbps and 40Gbps light paths.

"Both 400 Gig alternatives mentioned have a baud rate of about 32 Gig and therefore do not require a 64 Gbaud photo detector," says Olson. "If you want to go to a single channel 400G with 16-QAM or 32-QAM modulation, you will get 64Gbaud or 51Gbaud rate and then you will need the 64 Gig detector."

The single channel formats, however, have worse OSNR performance than 200Gbps super-channels, about 10-12 dB worse than 100Gbps, says Transmode, and have a similar spectral efficiency as 200Gbps super-channels. "So it is not the most likely candidates for 400 Gig," says Olson. "It is therefore unclear for us if this detector will have a use in 400 Gigabit transmission in the near future."

Transmode points out that the state-of-the-art bit rate has traditionally been limited by the available optics. This has kept the baud rate low by using higher order modulation formats that support more bits per symbol to enable existing, affordable technology to be used.

"But the price you have to pay, as you can not fool physics, is shorter reach due to the OSNR penalty," says Persson.

Now the challenges associated with the DSP-ASIC development will be as important as the optics in further boosting capacity.

The bundling of optical carriers into super-channels is an approach that scales well beyond what can be accomplished with improved optics. "Again, we have to pay the price, in this case eating greater portions of the spectrum," says Persson.

Improving the bandwidth of the balanced detector to the extent done by u2t is a very impressive achievement. But the detector alone will not make it into new products; modulators and a faster DSP-ASIC will also be required.

"From an industry point of view, a metro-centric cost reduction of 100Gbps coherent is currently more important than increasing the bit rate to 400Gbps," says Olson. "When 100 Gig coherent costs less than 10x10 Gig, both in dollars and watts, the technology will have matured to again allow for scaling the bit rate, using technology that suits the application best."

Ciena:

How the optical performance changes going from 32Gbaud to 64Gbaud depends largely on how well the DSP-ASIC can mitigate the dispersion penalties that get worse with speed as the duration of a symbol narrows.

"I would also expect a higher sensitivity would be needed also, so another fundamental impact," says Joe Berthold, vice president of network architecture at Ciena. "We have quite a bit or margin with the WaveLogic 3 [DSP-ASIC] for many popular network link distances, so it may not be a big deal."

With a good implementation of a coherent transmission system, the reach is primarily a function of the modulation format. BPSK goes twice as far as QPSK which goes about 4.5 times as far as 16-QAM, says Berthold.

"On fibres without enough dispersion, a higher baud rate will go 25 percent further than the same modulation format at half of that baud rate, due to the nonlinear propagation effects," says Berthold. This is the opposite of what occurred at 10 Gigabit incoherent. On fibres with plenty of local dispersion, the difference becomes marginal, approximately 0.05 dB, according to Ciena.

Regarding how spectral efficiency changes with symbol rate, doubling the baud rate doubles the spectral occupancy, says Berthold, so the benefit of upping the baud rate is that fewer components are needed for a super-channel.

"Of course if the cost of the higher speed components are higher this benefit could be eroded," he says. "So the 200 Gbps signal using DP-QPSK at 64 Gbaud would nominally require 75GHz of spectrum given spectral shaping that we have available in WaveLogic 3, but only require one laser."

Does Ciena have a view as to when 64Gbaud systems will be deployed in the network?

Berthold says this is hard to answer. "It depends on expectations that all elements of the signal path, from modulators and detectors to A/D converters, to DSP circuitry, all work at twice the speed, and you get this speedup for free, or almost."

The question has two parts, he says: When could it be done? And when will it provide a significant cost advantage? "As CMOS geometries narrow, components get faster, but mask sets get much more expensive," says Berthold.

Arista Networks embeds optics to boost 100 Gig port density

Arista Networks' latest 7500E switch is designed to improve the economics of building large-scale cloud networks.

The platform packs 30 Terabit-per-second (Tbps) of switching capacity in an 11 rack unit (RU) chassis, the same chassis as Arista's existing 7500 switch that, when launched in 2010, was described as capable of supporting several generations of switch design.

"The CFP2 is becoming available such that by the end of this year there might be supply for board vendors to think about releasing them in 2014. That is too far off."

Martin Hull, Arista Networks

The 7500E features new switch fabric and line cards. One of the line cards uses board-mounted optics instead of pluggable transceivers. Each of the line card's ports is 'triple speed', supporting 10, 40 or 100 Gigabit Ethernet (GbE). The 7500E platform can be configured with up to 1,152 10GbE, 288 40GbE or 96 100GbE interfaces.

The switch's Extensible Operating System (EOS) also plays a key role in enabling cloud networks. "The EOS software, run on all Arista's switches, enables customers to build, manage, provision and automate these large scale cloud networks," says Martin Hull, senior product manager at Arista Networks.

Applications

Arista, founded in 2004 and launched in 2008, has established itself as a leading switch player for the high-frequency trading market. Yet this is one market that its latest core switch is not being aimed at.

"With the exception of high-frequency trading, the 7500 is applicable to all data centre markets," says Hull. "That it not to say it couldn't be applicable to high-frequency trading but what you generally find is that their networks are not large, and are focussed purely on speed of execution of their transactions." Latency is a key networking performance parameter for trading.

The 7500E is being aimed at Web 2.0 companies and cloud service providers. The Web 2.0 players include large social networking and on-line search companies. Such players have huge data centres with up to 100,000 servers.

The same network architecture can also be scaled down to meet the requirements of large 'Fortune 500' enterprises. "Such companies are being challenged to deliver private cloud at the same competitive price points as the public cloud," says Hull.

The 7500 switches are typically used in a two-tier architecture. For the largest networks, 16 or 32 switches are used on the same switching tier in an arrangement known as a parallel spine.

A common switch architecture for traditional IT applications such as e-mail and e-commerce uses three tiers of switching. These include core switches linked to distribution switches, typically a pair of switches used in a given area, and top-of-rack or access switches connected to each distribution pair.

For newer data centre applications such as social networking, cloud services and search, the computation requirements result in far greater traffic shared on the same tier of switching, referred to as east-west traffic. "What has happened is that the single pair of distribution switches no longer has the capacity to handle all of the traffic in that distribution area," says Hull.

Customers address east-west traffic by throwing more platforms together. Eight or 16 distribution switches are used instead of a pair. "Every access switch is now connected to each one of those 16 distribution switches - we call them spine switches," says Hull.

The resulting two-tier design, comprising access switches and distribution switches, requires that each access switch has significant bandwidth between itself and any other access switch. As a result, many 7500 switches - 16 or 32 - can be used in parallel at the distribution layer.

Source: Arista Networks

"If I'm a Fortune 500 company, however, I don't need 16 of those switches," says Hull. "I can scale down, where four or maybe two [switches] are enough." Arista also offers a smaller 4-slot chassis as well as the 8 slot (11 RU) 7500E platform.

7500E specification

The switch has a capacity of 30Tbps. When the switch is fully configured with 1,152 10GbE ports, it equates to 23Tbps of duplex traffic. The system is designed with redundancy in place.

"We have six fabric cards in each chassis," says Hull, "If I lose one, I still have 25 Terabits [of switching fabric]; enough forwarding capacity to support the full line rates on all those ports." Redundancy also applies to the system's four power supplies. Supplies can fail and the switch will continue to work, says Hull.

The switch can process 14.4 billion 64-byte packets a second. This, says Hull, is another way of stating the switch capacity while confirming it is non-blocking.

The 7500E comes with four line card options: three use pluggable optics while the fourth uses embedded optics, as mentioned, based on 12 10Gbps transmit and 12 10Gbps receive channels (see table).

Using line cards supporting pluggable optics provides the customer the flexibility of using transceivers with various reach options, based on requirements. "But at 100 Gigabit, the limiting factor for customers is the size of the pluggable module," says Hull.

Using a CFP optical module, each card supports four 100Gbps ports only. The newer CFP2 modules will double the number to eight. "The CFP2 is becoming available such that by the end of this year there might be supply for board vendors to think about releasing them in 2014," says Hull. "That is too far off."

Arista's board-mounted optics deliver 12 100GbE ports per line card.

The board-mounted triple-speed ports adhere to the IEEE 100 Gigabit SR10 standard, with a reach of 150m over OM4 fibre. The channels can be used discretely for 10GbE, grouped in four for 40GbE, while at 100GbE they are combined as a set of 10.

"At 100 Gig, the IEEE spec uses 20 out of 24 lanes (10 transmit and 10 receive); we are using all 24," says Hull. "We can do 12 10GbE, we can do three 40GbE, but we can still only do one 100Gbps because we have a little bit left over but not enough to make another whole 100GbE." In turn, the module can be configured as two 40GbE and four 10GbE ports, or 40GbE and eight 10GbE.

Using board-mounted optics reduces the cost of 100Gbps line card ports. A full 96 100GbE switch configuration achieves a cost of $10k/port, while using existing CFP modules the cost is $30k-50k/port, claims Arista.

Arista quotes 10GbE as costing $550 per line card port not including the pluggable transceiver. At 40GbE this scales to $2,200. For 100GbE the $10k/port comprises the scaled-up port cost at 100GbE ($2.2k x 2.5) to which is added the cost of the optics. The power consumption is under 4W/port when the system is fully loaded.
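The per-port cost scaling can be laid out step by step. The sketch below uses only the figures Arista quotes; the optics share it prints is an inference obtained by subtraction from rounded numbers, not a figure stated by the company, and the variable names are illustrative.

```python
COST_10GBE_PORT = 550        # dollars per port, excluding pluggable transceiver
QUOTED_100GBE_PORT = 10_000  # dollars per port, including board-mounted optics

cost_40gbe_port = COST_10GBE_PORT * 4            # four 10Gbps lanes per 40GbE port
scaled_100gbe_port = cost_40gbe_port * 2.5       # the "$2.2k x 2.5" step
implied_optics = QUOTED_100GBE_PORT - scaled_100gbe_port  # inferred, not quoted

print(f"40GbE port: ${cost_40gbe_port:,}")
print(f"100GbE port before optics: ${scaled_100gbe_port:,.0f}")
print(f"Implied optics share per 100GbE port: ${implied_optics:,.0f}")
```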

The company uses merchant chips rather than an in-house ASIC for its switch platform. Can't other vendors develop similar performance systems based on the same ICs? "They could, but it is not easy," says Hull.

The company points out that merchant chip switch vendors use a CMOS process node that is typically a generation ahead of state-of-the-art ASICs. "We have high-performance forwarding engines, six per line card, each a discrete system-on-chip solution," says Hull. "These have the technology to do all the Layer 2 and Layer 3 processing." All these devices on one board talk to all the other chips on the other cards through the fabric.

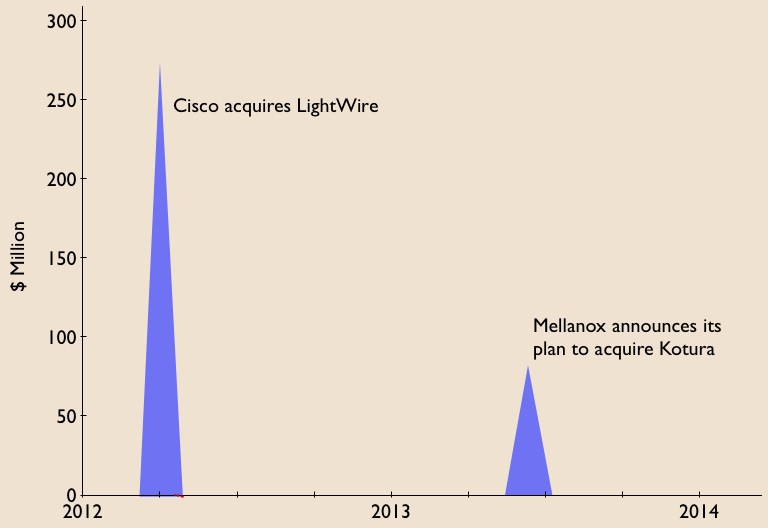

In the last year, equipment makers have decided to bring silicon photonics technology in-house: Cisco Systems has acquired Lightwire while Mellanox Technologies has announced its plan to acquire Kotura.

Arista says it is watching silicon photonics developments with keen interest. "Silicon photonics is very interesting and we are following that," says Hull. "You will see over the next few years that silicon photonics will enable us to continue to add density."

There is a limit to where existing photonics will go, and silicon photonics overcomes some of those limitations, he says.

Extensible Operating System

Arista highlights several characteristics of its switch operating system. The EOS is standards-compliant, self-healing, and supports network virtualisation and software-defined networking (SDN).

The operating system implements such protocols as Border Gateway Protocol (BGP) and spanning tree. "We don't have proprietary protocols," says Hull. "We support VXLAN [Virtual Extensible LAN] an open standards way of doing Layer 2 overlay of [Layer] 3."

EOS is also described as self-healing. The modular operating system is composed of multiple software processes, each process described as an agent. "If you are running a software process and it is killed because it is misbehaving, when it comes back typically its work is lost," says Hull. EOS is self-healing in that should an agent need to be restarted, it can continue with its previous data.

"We have software logic in the system that monitors all the agents to make sure none are misbehaving," says Hull. "If it finds an agent doing stuff that it should not, it stops it, restarts it and the process comes back running with the same data." The data is not packet related, says Hull, rather the state of the network.

The operating system also supports network virtualisation, one aspect being VXLAN. VXLAN is one of the technologies that allows cloud providers to provide a customer with server resources over a logical network when the server hardware can be distributed over several physical networks, says Hull. "Even a VLAN can be considered as network virtualisation but VXLAN is the most topical."

Support for SDN is an inherent part of EOS from its inception, says Hull. "EOS is open - the customers can write scripts, they can write their own C-code, or they can install Linux packages; all can run on our switches." These agents can talk back to the customer's management systems. "They are able to automate the interactions between their systems and our switches using extensions to EOS," he says.

"We encompass most aspects of SDN," says Hull. "We will write new features and new extensions but we do not have to re-architect our OS to provide an SDN feature."

Arista is terse about its switch roadmap.

"Any future product would improve performance - capacity, table sizes, price-per-port and density," says Hull. "And there will be innovation in the platform's software.

u2t Photonics pushes balanced detectors to 70GHz

- u2t's 70GHz balanced detector supports 64Gbaud for test and measurement and R&D

- The company's gallium arsenide modulator and next-generation receiver will enable 100 Gigabit long-haul in a CFP2

"The performance [of gallium arsenide] is very similar to the lithium niobate modulator"

Jens Fiedler, u2t Photonics

u2t Photonics has announced a balanced detector that operates at 70GHz. Such a bandwidth supports 64 Gigabaud (Gbaud), twice the symbol rate of existing 100 Gigabit coherent optical transmission systems.

The German company announced a coherent photo-detector capable of 64Gbaud in 2012 but that had an operating bandwidth of 40GHz. The latest product uses two 70GHz photo-detectors and different packaging to meet the higher bandwidth requirements.

"The achieved performance is a result of R&D work using our experience with 100GHz single photo-detectors and balanced detector technology at a lower speed,” says Jens Fiedler, executive vice president sales and marketing at u2t Photonics.

The monolithically-integrated balanced detector has been sampling since March. The markets for the device are test and measurement systems and research and development (R&D). "It will enable engineers to work on higher-speed interface rates for system development," says Fiedler.

The balanced detector could be used in next-generation transmission systems operating at 64 Gbaud, doubling the current 100 Gigabit-per-second (Gbps) data rate while using the same dual-polarisation, quadrature phase-shift keying (DP-QPSK) architecture.

A 64Gbaud DP-QPSK coherent system would halve the number of super-channels needed for 400Gbps and 1 Terabit transmissions. In turn, using 16-QAM instead of QPSK would further halve the channel count - a single dual-polarisation, 16-QAM at 64Gbaud would deliver 400Gbps, while three channels would deliver 1.2Tbps.
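The relationship between symbol rate, modulation format and data rate can be sketched as follows. The sketch assumes that today's 100Gbps DP-QPSK signal runs at 32Gbaud, so that 100Gbps of payload is carried in 128Gbps of raw line rate, and that the same overhead fraction applies at higher rates. That fixed fraction and the names used are assumptions for illustration.

```python
BITS_PER_SYMBOL = {"QPSK": 2, "16-QAM": 4}
POLARISATIONS = 2
NET_FRACTION = 100 / 128  # assumed: 100Gbps payload within 128Gbps raw at 32Gbaud


def net_rate_gbps(baud_gbaud: float, modulation: str) -> float:
    """Payload rate of a dual-polarisation carrier at the given symbol rate."""
    raw = baud_gbaud * BITS_PER_SYMBOL[modulation] * POLARISATIONS
    return raw * NET_FRACTION


print(net_rate_gbps(32, "QPSK"))        # today's 100 Gigabit carrier
print(net_rate_gbps(64, "QPSK"))        # doubling the baud rate
print(net_rate_gbps(64, "16-QAM"))      # doubling the bits per symbol as well
print(3 * net_rate_gbps(64, "16-QAM"))  # three-carrier super-channel
```

On these assumptions the four cases give 100, 200, 400 and 1,200Gbps respectively, matching the channel counts described above.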

However, for such a system to be deployed commercially the remaining components - the modulator, device drivers and the DSP-ASIC - would need to be able to operate at twice the 32Gbaud rate; something that is still several years out. That said, Fiedler points out that the industry is also investigating baud rates in between 32 Gig and 64 Gig.

Gallium arsenide modulator

u2t acquired gallium arsenide modulator technology in June 2009, enabling the company to offer coherent transmitter as well as receiver components.

At OFC/NFOEC 2013, u2t Photonics published a paper on its high-speed gallium arsenide coherent modulator. The company's design is based on the Mach-Zehnder modulator specification of the Optical Internetworking Forum (OIF) for 100 Gigabit DP-QPSK applications.

The DP-QPSK optical modulation includes a rotator on one arm and a polarisation beam combiner at the output. u2t has decided to support an OIF compatible design with a passive polarisation rotator and combiner which could also be integrated on chip. The resulting coherent modulator is now being tested before being integrated with the free space optics to create a working design.

"The performance [of gallium arsenide] is very similar to the lithium niobate modulator," says Fiedler. "Major system vendors have considered the technology for their use and that is still ongoing."

The gallium arsenide modulator is considerably smaller than the equivalent lithium niobate design. Indeed u2t expects the technology's power and size requirements, along with the company's coherent receiver, to fit within the CFP2 optical module. Such a pluggable 100 Gigabit coherent module would meet long-haul requirements, says Fiedler.

The gallium arsenide modulator can also be used within the existing line-side 100 Gigabit 5x7-inch MSA coherent transponder. Fiedler points out that by meeting the OIF specification, there is no space saving benefit using gallium arsenide since both modulator technologies fit within the same dimensioned package. However, the more integrated gallium arsenide modulator may deliver a cost advantage, he says.

Another benefit of using a gallium arsenide modulator is its optical performance stability with temperature. "It requires some [temperature] control but it is stable," says Fiedler.

Coherent receiver

u2t's current 100Gbps coherent receiver product uses two chips, each comprising the 90-degree hybrid and a balanced detector. "That is our current design and it is selling in volume," says Fiedler. "We are now working on the next version, according to the OIF specification, which is size-reduced."

The resulting single-chip design will cost less and fit within a CFP2 pluggable module.

The receiver might be small enough to fit within the even smaller CFP4 module, concludes Fiedler.

Mellanox to acquire silicon photonics player Kotura

Source: Gazettabyte

Mellanox Technologies has announced its intention to acquire silicon photonics player, Kotura, for $82 million.

The acquisition will enable Mellanox to deliver 100 Gigabit Infiniband and Ethernet interconnect in the coming two years. It will also provide Kotura with the resources needed to bring its 100 Gigabit QSFP to market. Mellanox will also gain Kotura's optical engine for use in active optical cables and new mid-plane platform designs, as well as future higher speed interfaces.

The news is also significant for the optical component industry. Kotura is one of the three established merchant silicon photonics players - the others being Lightwire and Luxtera - that have spent years developing their technologies.

Lightwire was acquired by Cisco Systems in March 2012 for US $271 million and now Mellanox plans to acquire Kotura. The two equipment vendors recognise the value of the technology, bringing it in-house to reduce system interconnect costs and as a long term differentiator for their equipment and ASIC designs. Mellanox, as a silicon photonics player, will compete with Intel, with its own silicon photonics technology, and Cisco Systems.

Kotura has been using its technology to sell telecom products such as variable optical attenuators and multiplexers. The start-up recently announced its 100 Gig QSFP that uses wavelength division multiplexing (WDM) transmitter and receiver chips. The product is to become available in 2014.

In an interview last year, Kotura's CTO, Mehdi Asghari, discussed a roadmap showing how its 100 Gigabit silicon photonics technology could scale to 400 Gigabit and eventually 1.6 Terabit.

"Our devices are capable of running at 40 or 50 Gigabit-per-second (Gbps), depending on the electronics. The electronics is going to limit the speed of our devices. We can very easily see going from four channels at 25Gbps to 16 channels at 25Gbps to provide a 400 Gigabit solution," Asghari told Gazettabyte.

Kotura also discussed how the line rate could be increased to 50Gbps either using a non-return-to-zero (NRZ) line rate or using a multi-level modulation such as pulse amplitude modulation (PAM).

"To get to 1.6 Terabit transceivers, we envisage something running at 40Gbps times 40 channels or 50Gbps times 32 channels. We already have done a single receiver chip demonstrator that has 40 channels, each at 40Gbps," said Asghari.

"These things in silicon are not a big deal. The III-V guys really struggle with yield and cost. But you can envisage scaling to that level of complexity in a silicon platform."

Silicon photonics will not replace existing VCSEL or indium phosphide-based transceiver designs. But there is no doubting silicon photonics is emerging as a key optical technology and the segment is heating up.

While the early start-ups are being acquired, more recent silicon photonics players such as Aurrion, Skorpios Technologies and Teraxion have entered the marketplace. There are also internal developments among equipment players such as Alcatel-Lucent, HP Labs and IBM. Indeed Kotura has worked closely with Oracle (Sun Microsystems).

Further acquisitions of silicon photonic players should be expected as companies start designing next generation, denser systems and adopt 100 Gigabit and faster interfaces.

Equally, established optical component and module companies will likely enter the marketplace, quietly or not so quietly, adding silicon photonics to their technology toolkits when the timing is right.

Trends to watch

Two industry trends are underway regarding silicon photonics.

The first is system vendors wanting to own the technology to reduce their costs while recognising a need to control and understand the technology as they tackle more complex equipment designs.

The other, at first glance a contrarian trend, is the democratisation of silicon photonics.

The technology is slowly passing from the select few to become more generally available for industry use. For this to happen, the relevant design tools need to mature as do third-party fabrication plants that will manufacture the silicon photonics designs.

Appendix:

On June 4th, 2013, Mellanox announced a definitive agreement to acquire chip company IPtronics for $47.5 million as it builds out its in-house technologies for optical interconnect. Click here

Further reading:

Avago to acquire CyOptics, click here

Ethernet access switch chip boosts service support

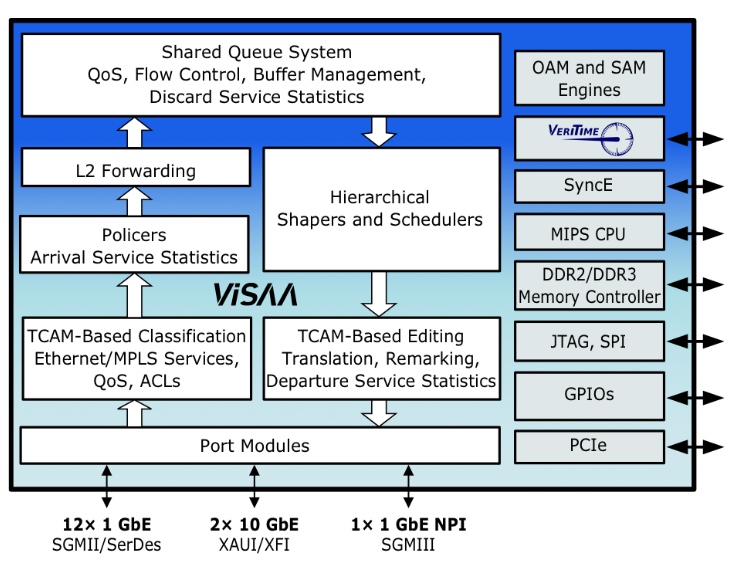

The Serval-2 architecture. Source: Vitesse

Vitesse Semiconductor has detailed its latest Carrier Ethernet access switch for mobile backhaul, cloud and enterprise services.

The Serval-2 chip broadens Vitesse's access switch offerings, adding 10 Gigabit Ethernet (GbE) ports while near-tripling the switching capacity to 32 Gigabit; the Serval-2 has 2x10 GbE and 12 1GbE ports.

The device features Vitesse's service aware architecture (ViSAA) that supports Carrier Ethernet 2.0 (CE 2.0). "We have built a hardware layer into the Ethernet itself which understands and can provision services," says Uday Mudoi, product marketing director at Vitesse.

CE 2.0, developed by the Metro Ethernet Forum (MEF), is designed to address evolving service requirements. The first equipment supporting the technology was certified in January 2013. What CE 2.0 does not do is detail how services are implemented, says Mudoi. Such implementations are the work of the ITU, IETF and IEEE standards bodies with protocols such as Multi-Protocol Label Switching (MPLS)/MPLS-Transport Profile (MPLS-TP) and provider bridging (Q-in-Q). "There is a full set of Carrier Ethernet networking protocols which comes on top of CE 2.0," says Mudoi.

Serval-2 switch

The Serval-2 switch features include 256 Ethernet virtual connections, hierarchical quality of service (QoS), provider bridging, and MPLS/MPLS-TP.

An Ethernet Virtual Connection (EVC) is a logical representation of an Ethernet service, says Vitesse, a connection that an enterprise, data center or cell site uses to send traffic over the WAN.

Multiple EVCs can run on the same physical interface and can be point-to-point, point-to-multipoint, or multipoint-to-multipoint. Each EVC can have a bandwidth profile that specifies the committed information rate (CIR) and excess information rate (EIR) of the traffic transmitted to, or received from, the Ethernet service provider’s network.

The EVC also supports one or more classes of service and measurable QoS performance metrics. Such metrics include frame delay - latency - and frame loss to meet a particular application's performance requirements.

The Serval-2 supports 256x8 class of service (CoS) EVCs, equivalent to over 4,000 bi-directional Ethernet services, says Mudoi.

The Serval-2 also supports per-EVC hierarchical queuing. It allows for 256 bi-directional EVCs with policing, statistics, and QoS guarantees for each CoS and EVC. Hierarchical QoS also enables a mix of any strict or byte-accurate weighting within the EVC, and supports the MEF's dual leaky bucket (DLB) algorithm that shapes traffic per-EVC and per-port.
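How a bandwidth profile with a committed and an excess rate treats frames can be illustrated with a two-bucket marker. This is a simplified, colour-blind sketch: the burst sizes (`cbs_bytes`, `ebs_bytes`), the class name and the green/yellow/red labels are assumptions for illustration, it marks frames rather than shaping them, and it does not represent Vitesse's hardware implementation.

```python
class BandwidthProfile:
    """Two token buckets: one refilled at the CIR, one at the EIR."""

    def __init__(self, cir_bps, cbs_bytes, eir_bps, ebs_bytes):
        self.cir_bps, self.cbs = cir_bps, cbs_bytes
        self.eir_bps, self.ebs = eir_bps, ebs_bytes
        self.committed = float(cbs_bytes)  # both buckets start full
        self.excess = float(ebs_bytes)
        self.last_time = 0.0

    def classify(self, now_s, frame_bytes):
        """Return 'green' (within CIR), 'yellow' (within EIR) or 'red' (drop)."""
        elapsed = now_s - self.last_time
        self.last_time = now_s
        # Refill each bucket in bytes, capped at its burst size
        self.committed = min(self.cbs, self.committed + elapsed * self.cir_bps / 8)
        self.excess = min(self.ebs, self.excess + elapsed * self.eir_bps / 8)
        if frame_bytes <= self.committed:
            self.committed -= frame_bytes
            return "green"
        if frame_bytes <= self.excess:
            self.excess -= frame_bytes
            return "yellow"
        return "red"


profile = BandwidthProfile(cir_bps=8_000_000, cbs_bytes=3000,
                           eir_bps=8_000_000, ebs_bytes=3000)
burst = [profile.classify(0.0, 1500) for _ in range(5)]
later = profile.classify(0.002, 1500)  # 2ms later the committed bucket has refilled
print(burst, later)
```

Keeping one such profile per EVC and class of service is what lets one subscriber's burst be marked or dropped without affecting another subscriber's traffic.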

"Service providers guarantee QoS to subscribers for the services that they buy," says Mudoi. "If each subscriber's traffic - even different applications per-subscriber - is treated using separate queues, then one subscriber's behavior does not impact the QoS of another." Supporting thousands of queues allows service providers to offer thousands of services, each with its own QoS.

Q-in-Q, defined in IEEE 802.1ad, allows for multiple VLAN headers - tags - to be inserted into a frame, says Mudoi, enabling service provider tags and customer tags.
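The stacking of a service provider tag and a customer tag can be shown by building the start of a Q-in-Q frame by hand. This is a minimal sketch: the helper names are illustrative, the MAC addresses are placeholders, and the tag protocol identifiers used are the standard values for an 802.1ad service tag (0x88A8) and an 802.1Q customer tag (0x8100).

```python
import struct

TPID_S_TAG = 0x88A8  # service provider tag (IEEE 802.1ad)
TPID_C_TAG = 0x8100  # customer tag (IEEE 802.1Q)


def vlan_tag(tpid: int, vlan_id: int, priority: int = 0) -> bytes:
    """Return a 4-byte tag: 16-bit TPID, then 3-bit priority, 1-bit DEI, 12-bit VLAN ID."""
    if not 0 <= vlan_id < 4096:
        raise ValueError("VLAN ID must fit in 12 bits")
    if not 0 <= priority < 8:
        raise ValueError("priority must fit in 3 bits")
    tci = (priority << 13) | vlan_id
    return struct.pack("!HH", tpid, tci)


def q_in_q_header(dst_mac: bytes, src_mac: bytes, s_vid: int, c_vid: int,
                  ethertype: int) -> bytes:
    """Ethernet header carrying an outer service tag and an inner customer tag."""
    return (dst_mac + src_mac
            + vlan_tag(TPID_S_TAG, s_vid)
            + vlan_tag(TPID_C_TAG, c_vid)
            + struct.pack("!H", ethertype))


header = q_in_q_header(bytes(6), bytes(6), s_vid=100, c_vid=10, ethertype=0x0800)
print(header.hex())
```

The outer tag identifies the service within the provider's network while the inner tag is carried through unchanged for the customer.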

Meanwhile, MPLS/MPLS-TP directs data from one network node to the next based on short path labels rather than on long network addresses, thereby avoiding complex routing table look-ups. The labels identify virtual links between distant nodes rather than endpoints.

MPLS can encapsulate packets of various network protocols. Serval-2's MPLS-TP supports Label Edge Router (LER) with Ethernet pseudo-wires, Label Switch Router (LSR), and H-VPLS edge functions.

Q-in-Q is considered a basic networking function for enterprise and carrier networks, says Mudoi, while MPLS-TP is a more complex protocol.

Serval-2 also supports service activation and Vitesse's implementation of the IEEE 1588v2 timing standard, dubbed VeriTime.

"Before you provision a service, you need to run a test to make sure that once your service is provisioned, the user gets the required service level agreement," says Mudoi. Serval-2 supports the latest ITU-T Y.1564 service activation standard.

IEEE 1588v2 establishes accurate timing across a packet-based network and is used for such applications as mobile. The Serval-2 also benefits from Intellisec, Vitesse's MACsec Layer 2 security standard implementation (see Vitesse's Intellisec).

"Both [Vitesse's VeriTime IEEE 1588v2 and Intellisec technologies] highly complement what we are doing in ViSAA," says Mudoi.

Availability

Serval-2 samples will be available in the third quarter of 2013. Vitesse expects it will take six months for system qualification such that Ethernet access devices using the chip and carrying live traffic are expected in the first half of 2014.