Verizon on 100G+ optical transmission developments

Source: Gazettabyte

Feature: 100 Gig and Beyond. Part 1:

Verizon's Glenn Wellbrock discusses 100 Gig deployments and higher speed optical channel developments for long haul and metro.

The number of 100 Gigabit wavelengths deployed in the network has continued to grow in 2013.

According to Ovum, 100 Gigabit has become the wavelength of choice for large wavelength-division multiplexing (WDM) systems, with spending on 100 Gigabit now exceeding 40 Gigabit spending. LightCounting forecasts that 40,000 100 Gigabit line cards will be shipped this year, 25,000 of them in the second half of the year alone. Infonetics Research, meanwhile, points out that while 10 Gigabit will remain the highest-volume speed, the most dramatic growth is at 100 Gigabit. By 2016, the majority of spending in long-haul networks will be on 100 Gigabit, it says.

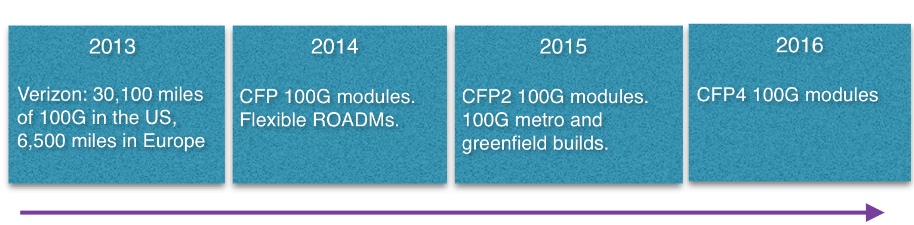

The market research firms' findings align with Verizon's own experience deploying 100 Gigabit. The US operator said in September that it had added 4,800 miles of 100 Gigabit to its global IP network during the first half of 2013, to total 21,400 miles in the US network and 5,100 miles in Europe. Verizon expects to deploy another 8,700 miles of 100 Gigabit in the US and 1,400 miles more in Europe by year end.
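The year-end targets implied by these figures can be tallied with a short sketch. It assumes every planned mile is deployed and uses only the numbers quoted above; the variable and function names are illustrative.

```python
# Miles of 100 Gigabit quoted by Verizon in September 2013.
deployed_mid_2013 = {"US": 21_400, "Europe": 5_100}
planned_second_half = {"US": 8_700, "Europe": 1_400}


def year_end_totals(deployed, planned):
    """Return the per-region total if every planned mile is deployed."""
    return {region: deployed[region] + planned[region] for region in deployed}


totals = year_end_totals(deployed_mid_2013, planned_second_half)
for region, miles in totals.items():
    print(f"{region}: {miles:,} miles")
print(f"Combined: {sum(totals.values()):,} miles")
```

On these assumptions the US network would end the year at 30,100 miles and Europe at 6,500 miles.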

"We expect to hit the targets; we are getting close," says Glenn Wellbrock, director of optical transport network architecture and design at Verizon.

Verizon says several factors are driving the need for greater network capacity, including its FiOS bundled home communication services, Long Term Evolution (LTE) wireless and video traffic. But what triggered Verizon to upgrade its core network to 100 Gig was converging its IP networks and the resulting growth in traffic. "We didn't do a lot of 40 Gig [deployments] in our core MPLS [Multiprotocol Label Switching] network," says Wellbrock.

The cost of 100 Gigabit was another factor: a 100 Gigabit long-haul channel is now cheaper than ten 10 Gig channels. There are also operational benefits to using 100 Gig, such as having fewer wavelengths to manage. "So it is the lower cost-per-bit plus you get all the advantages of having the higher trunk rates," says Wellbrock.

Verizon expects to continue deploying 100 Gigabit. It has a large network and much of the deployment will occur in 2014. "Eventually, we hope to get a bit ahead of the curve and have some [capacity] headroom," says Wellbrock.

Super-channel trials

Operators, working with optical vendors, are trialling super-channels and advanced modulation schemes such as 16-QAM (quadrature amplitude modulation). Such trials involve links carrying data in multiples of 100 Gig: 200 Gig, 400 Gig, even a Terabit.

Super-channels are already carrying live traffic. Infinera's DTN-X system delivers 500 Gig super-channels using quadrature phase-shift keying (QPSK) modulation. Orange has a 400 Gigabit super-channel link between Lyon and Paris. The 400 Gig super-channel comprises two carriers, each carrying 200 Gig using 16-QAM, implemented using Alcatel-Lucent's 1830 photonic service switch platform and its photonic service engine (PSE) DSP-ASIC.

"We could take advantage of 200 Gig or 400 Gig or 500 Gig today," says Wellbrock. "As soon as it is cost effective, you can use it because you can put multiple 100 Gig channels on there and multiplex them."

The issue with 16-QAM, however, is its limited reach using existing fibre and line systems - 500-700km - compared to QPSK's 2,500+ km before regeneration. "It [16-QAM] will only work in a handful of applications - 25 percent, something of this nature," says Wellbrock. This is good for New York to Boston, he says, but not New York to Chicago. "From our end it is pretty simple, it is lowest cost," says Wellbrock. "If we can reduce the cost, we will use it [16-QAM]." However, if the reach requirement cannot be met, the operator will not go to the expense of putting in signal regenerators just to use 16-QAM, he says.

Earlier this year Verizon conducted a trial with Ciena using 16-QAM. The goals were to test 16-QAM alongside live traffic and determine whether the same line card would work at 100 Gig using QPSK and 200 Gig using 16-QAM. "The good thing is you can use the same hardware; it is a firmware setting," says Wellbrock.
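The reach trade-off Wellbrock describes can be sketched as a simple selection rule: use the higher-rate modulation only when the link fits within its unregenerated reach. The code below is a minimal illustration, not Verizon's or Ciena's planning logic. The `Mode` class, `pick_mode` function and the example link lengths are hypothetical; the reach values take the conservative end of the ranges quoted in the article.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Mode:
    name: str
    rate_gbps: int
    reach_km: int  # unregenerated reach assumed for this sketch


# 500 km is the low end of the 500-700 km quoted for 16-QAM;
# 2,500 km is the floor quoted for QPSK.
MODES = [
    Mode("16-QAM", 200, 500),
    Mode("QPSK", 100, 2_500),
]


def pick_mode(link_km: float) -> Optional[Mode]:
    """Return the highest-rate mode whose reach covers the link, else None."""
    feasible = [m for m in MODES if m.reach_km >= link_km]
    return max(feasible, key=lambda m: m.rate_gbps, default=None)


# Hypothetical link lengths, chosen only to illustrate the two cases.
for label, km in [("short regional link", 350), ("long-haul link", 1_300)]:
    mode = pick_mode(km)
    print(f"{label} ({km} km): {mode.name if mode else 'needs regeneration'}")
```

Because the same line card supports both settings, a rule like this would amount to choosing a firmware setting per link rather than choosing hardware.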

100 Gig in the metro

Verizon says there is already sufficient traffic pressure in its metro networks to justify 100 Gig deployments. Some of Verizon's bigger metro locations comprise up to 200 reconfigurable optical add/drop multiplexer (ROADM) nodes. Each node is typically a central office connected to the network via a ROADM, varying from a two-degree to an eight-degree design.

"Not all the 200 nodes would need multiple 100 Gig channels but in the core of the network, there is a significant amount of capacity that needs to be moved around," says Wellbrock. "100 Gig will be used as soon as it is cost-effective."

Unlike long-haul, 100 Gigabit in the metro remains costlier than ten 10 Gig channels. That said, Verizon has deployed metro 100 Gig when absolutely necessary, for example to connect two router locations that require a 100 Gig link. Here Verizon is willing to pay extra for such links.

"By 2015 we are really hoping that the [metro] crossover point will be reached, that 100 Gig will be more cost effective in the metro than ten times 10 [Gig]." Verizon will build a new generation of metro networks based on 100 Gig or 200 Gig or 400 Gig using coherent receivers rather than use existing networks based on conventional 10 Gig links to which 100 Gig is added.

"We feel that 2015 is when we can justify a new, greenfield network and that 100 Gig or versions of that - 200 Gig or 400 Gig - will be cheap enough to make sense."

Data Centres

The build-out of data centres is not a significant factor driving 100 Gig demand. The largest content service providers do use tens of 100 Gigabit wavelengths to link their mega data centres but they typically have their own networks that connect relatively few sites.

"If you have lots of data centres, the traffic itself is more distributed, as are the bandwidth requirements," says Wellbrock.

Verizon has over 220 data centres, most being hosting centres. The data demand between many of the sites is relatively small and is served with 10 Gigabit links. "We are seeing the same thing with most of our customers," says Wellbrock.

Technologies

System vendors continue to develop cheaper line cards to meet the cost-conscious metro requirements. Module developments include smaller 100 Gig 4x5-inch MSA transponders, 100 Gig CFP modules and component developments for line side interfaces that fit within CFP2 and CFP4 modules.

"They are all good," says Wellbrock when asked which of these 100 Gigabit metro technologies are important for the operator. "We would like to get there as soon as possible."

The CFP4 may be available by late 2015 but more likely in 2016, and will reduce significantly the cost of 100 Gig. "We are assuming they are going to be there and basing our timelines on that," he says.

Greater line card port density is another benefit once 100 Gig CFP2 and CFP4 line side modules become available. "Lower power and greater density which is allowing us to get more bandwidth on and off the card," says Wellbrock.

Existing switches and routers are faceplate-constrained: they have more traffic capability than the faceplate can provide. "The CFPs, the way they are today, you can only get four on a card, and a lot of the cards will support twice that much capacity," says Wellbrock.

With the smaller form factor CFP2 and CFP4, 1.2 and 1.6 Terabit cards will become possible from 2015. Another possible development is a 400 Gigabit CFP, which would achieve similar overall capacity gains.
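The faceplate arithmetic behind these figures is straightforward. The sketch below assumes each of today's CFPs carries 100 Gig and that a card's capacity is simply the module count multiplied by the module rate; the function names are illustrative, and the article does not say how many CFP2 or CFP4 modules a given card will actually hold.

```python
PORT_GBPS = 100  # rate of one 100 Gig module


def faceplate_gbps(modules: int, gbps_per_module: int = PORT_GBPS) -> int:
    """Capacity exposed on the faceplate by a number of identical modules."""
    return modules * gbps_per_module


def modules_needed(card_gbps: int, gbps_per_module: int = PORT_GBPS) -> int:
    """Modules required to expose card_gbps, rounded up (ceiling division)."""
    return -(-card_gbps // gbps_per_module)


today = faceplate_gbps(4)       # four CFPs per card today
card_capability = 2 * today     # "twice that much capacity"
print(f"Faceplate today: {today} Gig, card capability: {card_capability} Gig")

for target in (1_200, 1_600):
    print(
        f"{target} Gig card: {modules_needed(target)} x 100 Gig modules "
        f"or {modules_needed(target, 400)} x 400 Gig modules"
    )
```

The gap between 400 Gig on the faceplate and roughly 800 Gig of card capability is what the smaller modules are expected to close.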

Coherent, not just greater capacity

Verizon is looking for greater system integration and continues to encourage industry commonality in optical component building blocks to drive down cost and promote scale.

Indeed Verizon believes that industry developments such as MSAs and standards are working well. Wellbrock prefers standardisation to custom designs like 100 Gigabit direct detection modules or company-specific optical module designs.

Wellbrock stresses the importance of coherent receiver technology not only in enabling higher capacity links but also a dynamic optical layer. The coherent receiver adds value when it comes to colourless, directionless, contentionless (CDC) and flexible grid ROADMs.

"If you are going to have a very cost-effective 100 Gigabit because the ecosystem is working towards similar solutions, then you can say: 'Why don't I add in this agile photonic layer?' and then I can really start to do some next-generation networking things." This is only possible, says Wellbrock, because of the tunable filter offered by a coherent receiver, unlike direct detection technology with its fixed-filter design.

"Today, if you want to move from one channel to the next - wavelength 1 to wavelength 2 - you have to physically move the patch cord to another filter," says Wellbrock. "Now, the [coherent] receiver can simply tune the local oscillator to channel 2; the transmitter is full-band tunable, and now the receiver is full-band tunable as well." This tunability can be enabled remotely rather than requiring an on-site engineer.
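The contrast Wellbrock draws can be pictured with a toy model. This is a sketch under stated assumptions, not any vendor's software: the class names are hypothetical, and the 50 GHz spacing anchored at 193.1 THz follows the ITU-T G.694.1 fixed grid, which the article does not mention and is assumed here purely for illustration.

```python
ANCHOR_THZ = 193.1   # ITU-T G.694.1 grid anchor frequency
SPACING_THZ = 0.05   # 50 GHz fixed-grid spacing (assumed for this sketch)


def grid_frequency_thz(slot: int) -> float:
    """Centre frequency of grid slot `slot` on a 50 GHz grid."""
    return round(ANCHOR_THZ + slot * SPACING_THZ, 3)


class FixedFilterReceiver:
    """Direct detection: the channel is set by the filter port the fibre is patched to."""

    def __init__(self, slot: int):
        self.slot = slot

    def retune(self, slot: int) -> str:
        # Nothing changes in software; someone has to move the patch cord.
        return f"site visit: move patch cord to the filter port for slot {slot}"


class CoherentReceiver:
    """Coherent detection: the local oscillator selects the channel."""

    def __init__(self, slot: int):
        self.lo_thz = grid_frequency_thz(slot)

    def retune(self, slot: int) -> str:
        self.lo_thz = grid_frequency_thz(slot)
        return f"remote: local oscillator set to {self.lo_thz:.2f} THz"


print(FixedFilterReceiver(slot=0).retune(1))
print(CoherentReceiver(slot=0).retune(1))
```

In the coherent case, changing channel is a parameter change that can be issued remotely, which is the property that makes an agile photonic layer practical.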

Such wavelength agility promises greater network optimisation.

"How do we perhaps change some of our sparing policy? How do we change some of our restoration policies so that we can take advantage of that agile photonics layer?" says Wellbrock. "That is something that is only becoming available because of the coherent 100 Gigabit receivers."

Books in 2013 - Part 1

Gazettabyte is asking various industry figures to highlight books they have read this year and recommend, both work-related and more general titles.

Part 1:

Tiejun J. Xia (TJ), Distinguished Member of Technical Staff, Verizon

The work-related title is Optical Fiber Telecommunications, Sixth Edition, by Ivan Kaminow, Tingye Li and Alan E. Willner. This edition, published in 2013, includes almost all the latest development results of optical fibre communications.

My non-work-related book is Fortune: Secrets of Greatness by the editors of Fortune Magazine. While published in 2006, the book still sheds light on the 'secrets' of people with significant accomplishments.

Christopher N. (Nick) Del Regno, Fellow, Verizon

OpenStack Cloud Computing Cookbook, by Kevin Jackson is my work-related title. While we were in the throes of interviewing candidates for our open Cloud product development positions, I figured I had better bone up on some of the technologies.

One of those was OpenStack’s Cloud Computing software. I had seen recommendations for this book and after reading it and using it, I agree. It is a good 'OpenStack for Dummies' book which walks one through quickly setting up an OpenStack-based cloud computing environment. Further, since this is more of a tutorial book, it rightly assumes that the reader would be using some lower-level virtualisation environment (e.g., VirtualBox, etc) in which to run the OpenStack Hypervisor and virtual machines, which makes single-system simulation of a data centre environment even easier.

Lastly, the fact that it is available as a Kindle edition means it can be referenced in a variety of ways in various physical locales. While this book would work for those interested in learning more about OpenStack and virtualisation, it is better suited to those of us who like to get our hands dirty.

My somewhat work-related suggestions include Brilliant Blunders: From Darwin to Einstein – Colossal Mistakes by Great Scientists That Changed Our Understanding of Life and the Universe, by Mario Livio.

I discovered this book while watching Livio’s interview on The Daily Show. I was intrigued by the subject matter, since many of the major discoveries over the past few centuries were accidental (e.g. penicillin, radioactivity, semiconductors, etc). However, this book's focus is on the major mistakes made by some of the greatest minds in history: Darwin, Lord Kelvin, Pauling, Hoyle and Einstein.

It is interesting to consider how often pride unnecessarily blinded some of these scientists to contradictions to their own work. Further, this book reinforces my belief in the importance of collaboration and friendly competition. So many key discoveries have been made throughout history when two seemingly unrelated disciplines compare notes.

Another is Beyond the Black Box: The Forensics of Airplane Crashes, by George Bibel. As a frequent flyer and an aviation buff since childhood, I have always been intrigued by the process of accident investigation. This book offers a good exploration of the crash investigation process, with many case studies of various causes. The book explores the science of the causes and the improvements resulting from various accidents and related investigations. From the use of rounded openings in the skin (as opposed to square windows) to high-temperature alloys in the engines to ways to mitigate the impact of crash forces on the human body, the book is a fascinating journey through the lessons learned and the steps to avoid future lessons.

While enumerating the ways a plane could fail might dissuade some from flying, I found the book reassuring. The application of the scientific method to identifying the cause of, and solution to, airplane crashes has made air travel incredibly safe. In exploring the advances, I’m amazed at the bravery and temerity of early air travelers.

Outside work, my reading includes Doctor Sleep, by Stephen King. It is the sequel to “The Shining”, following the little boy (Dan Torrance) as an adult and Dan’s role reversal, now as the protective mentor of a young child with powerful shining.

I also recommend Joyland (Hard Case Crime), by Stephen King. King tries his hand at writing a hard-case crime novel with great results. Not your typical King…think Stand by Me, Hearts in Atlantis, Shawshank Redemption.

Andrew Schmitt, Principal Analyst, Optical at Infonetics Research

My work-related reading is Research at Google.

Very little signal comes out of Google on what they are doing and what they are buying. But this web page summarises public technical disclosures and has good detail on what they have done.

There are a lot of pretenders in the analyst community who think they know the size and scale of Google's data center business but the reality is this company is sealed up tight in terms of disclosure. I put something together back in 2007 that tried to size 10GbE consumption (5,000 10GbE ports a month) but am the first to admit that getting a handle on the magnitude of their optical networking and enterprise networking business today is a challenge.

Another offending area is software-defined networking (SDN). Pundits like to talk about SDN and how Google implemented the technology in their wide area network but I would wager few have read the documents detailing how it was done. As a result, many people mistakenly assume that because Google did it in their network, other carriers can do the same thing - which is totally false. The paper on their B4 network shows the degree of control and customisation (that few others have) required for its implementation.

I also have to plug a Transmode book on packet-optical networks. It does a really good job of defining what is a widely abused marketing term, but I’m a little biased as I wrote the foreword. It should be released soon.

The non-work-related reading includes Nate Silver’s book: The Signal and the Noise: Why So Many Predictions Fail - but Some Don't. I am enjoying it. I think he approaches the work of analysis the right way. I’m only halfway through but it is a good read so far. The description on Amazon summarises it well.

But some very important books that shaped my thinking are from Nassim Taleb. Fooled by Randomness is by far the best read and most approachable. If you like that then go for The Black Swan. Both are excellent and do a fantastic job of outlining the cognitive biases that can result in poor outcomes. It is philosophy for engineers and you should stop taking market advice from anyone who hasn’t read at least one.

The Steve Jobs biography by Walter Isaacson was widely popular and rightfully so.

A Thread Across the Ocean is a great book about the first trans-Atlantic cable, but that is a great book only for inside folks – don’t talk about it with people outside the industry or you’ll be marked as a nerd.

If you are into crazy infrastructure projects try Colossus about the Hoover Dam and The Path Between the Seas about the Panama Canal. The latter discloses interesting facts like how an entire graduating class of French engineers died trying to build it – no joke.

Lastly, I have to disclose an affinity for some favourite fiction: Brave New World, by Aldous Huxley and The Fountainhead by Ayn Rand.

I could go on.

If anyone reading this likes these books and has more ideas please let me know!

Reporting the optical component & module industry

LightCounting recently published its six-monthly optical market research covering telecom and datacom. Gazettabyte interviewed Vladimir Kozlov, CEO of LightCounting, about the findings.

Q: How would you summarise the state of the optical component and module industry?

VK: At a high level, the telecom market is flat, even hibernating, while datacom is exceeding our expectations. In datacom, it is not only 40 and 100 Gig but 10 Gig is growing faster than anticipated. Shipments of 10 Gigabit Ethernet (GbE) [modules] will exceed 1GbE this year.

The primary reason is data centre connectivity - the 'spine and leaf' switch architecture that requires a lot more connections between the racks and the aggregation switch - that is increasing demand. I suspect it is more than just data centres, however. I wouldn't be surprised if enterprises are adopting 10GbE because it is now inexpensive. Service providers offer Ethernet as an access line and use it for mobile backhaul.

Can you explain what is causing the flat telecom market?

Part of the telecom 'hibernation' story is the rapidly declining SONET/SDH market. The decline has been expected but in fact the market had been growing up till as recently as two years ago. First, 40 Gigabit OC-768 declined and then the second nail in the coffin was the decline in 10 Gig sales: 10GbE is all SFP+ whereas OC-192 SONET/SDH is still in the XFP form factor.

The steady dense WDM module market and the growth in wireless backhaul are compensating for the decline in SONET/SDH market as well as the sharp drop this year in FTTx transceiver and BOSA (bidirectional optical sub assembly) shipments, and there is a big shift from transceivers to BOSAs.

LightCounting highlights strong growth of 100G DWDM in 2013, with some 40,000 line card port shipments expected this year. Yet LightCounting is cautious about 100 Gig deployments. Why the caution?

We have to be cautious, given past history with 10 Gig and 40 Gig rollouts.

If you look at 10 Gig deployments, before the optical bubble (1999-2000) there was huge expected demand before the market returned to normality, supporting real traffic demand. Whatever 10 Gig was installed in 1999-2000 was more than enough till 2005. In 2006 and 2007 10 Gig picked up again, followed by 40 Gig which reached 20,000 ports in 2008. But then the financial crisis occurred and the 40 Gig story was interrupted in 2009, only picking up from 2010 to reach 70,000 ports this year.

So 40 Gig volumes are higher than 100 Gig but we haven't seen any 40 Gig in the metro. And now 100 Gig is messing up the 40G story.

The question in my mind is how much metro is a bottleneck today? There may be certain large cities which already require such deployments but equally there was so much fibre deployed in metropolitan areas back in the bubble. If fibre cost is not an issue, why go into 100 Gig? The operator will use fibre and 10 Gig to make more money.

CenturyLink recently announced its first customer purchasing 100 Gig connections - DigitalGlobe, a company specialising in high-definition mapping technology - which will use 100 Gig connectivity to transfer massive amounts of data between its data centers. This is still a special case, despite the increasing number of data centers around the world.

There is no doubt that 100 Gig will be a must-have technology in the metro and even metro-access networks once 1GbE broadband access lines become ubiquitous and 10 Gig will be widely used in the access-aggregation layer. It is starting to happen.

So 100 Gigabit in the metro will happen; it is just a question of timing. Is it going to be two to three years or 10-15 years? When people forecast they always make a mistake on the timeline because they overestimate the impact of new technology in the short term and underestimate in the long term.

LightCounting highlights strong sales in 10 Gig and 40 Gig within the data centre but not at 100 Gig. Why?

If you look at the spine and leaf architecture, most of the connections are 10 Gig, broken out from 40 Gig optical modules. This will begin to change as native 40GbE ramps in the larger data centres.

If you go to the super-spine that takes data from aggregation to the data centre's core switches, there 100GbE could be used and I'm sure some companies like Google are using 100GbE today. But the numbers are probably three orders of magnitude lower than in the spine and leaf layers. The demand for volume today for 100GbE is not that high, and it also relates to the high price of the modules.

Higher volumes reduce the price but then the complexity and size of the [100 Gig CFP] modules needs to be reduced as well. With 10 Gig, the major [cost reduction] milestone was the transition to a 10 Gig electrical interface. It has to happen with 100 Gig and there will be the transition to a 4x25Gbps electrical interface but it is a big transition. Again, forget about it happening in two-three years but rather a five- to 10-year time frame.

You also point out the failure of the IEEE working group to come up with a 100 GbE solution for the 500m-reach sweet spot. What will be the consequence of this?

The IEEE is talking about 400GbE standards now. Go back to 40GbE, which was only approved some three years ago: the majority of the IEEE was against having 40GbE at all, the objective being to go to 100GbE and skip 40GbE altogether. At the last moment a couple of vendors pushed 40GbE. And look at 40GbE now, it is [deployed] all over the place: the industry is happy, suppliers are happy and customers are happy.

Again look at 40GbE which has a standard at 10km. If you look at what is being shipped today, only 10 percent of 40GBASE-LR4 modules are compliant with the standard. The rest of the volume is 2km parts - substandard devices that use Fabry-Perot instead of DFB (distributed feedback) lasers. The yields are higher and customers love them because they cost one tenth as much. The market has found its own solution.

The same thing could happen at 100 Gig. And then there is Cisco Systems with its own agenda. It has just announced a 40 Gig BiDi connection which is another example of what is possible.

What will LightCounting be watching in 2014?

One primary focus is what wireline revenues service providers will report, particularly additional revenues generated by FTTx services.

AT&T and Verizon reported very good results in Q3 [2013] and I'm wondering if this is the start of a longer trend. If wireline revenues from FTTx pick up, it will give carriers more of an incentive to invest in supporting those services.

AT&T and Verizon customers are willing to pay a little more for faster connectivity today, but it really takes new applications to develop for end-user spending on bandwidth to jump to the next level. Some of these applications are probably emerging, but we do not know what these are yet. I suspect that one reason for Google's offerings of 1Gbps FTTH services to a few communities in the U.S. is to find out what these new applications are, by studying end-user demand.

A related issue is whether deployments of broadband services improve economic growth and by how much. The expectations are high but I would like to see more data on this in 2014.

Optical networking spending up in all regions except Europe

Source data: Ovum

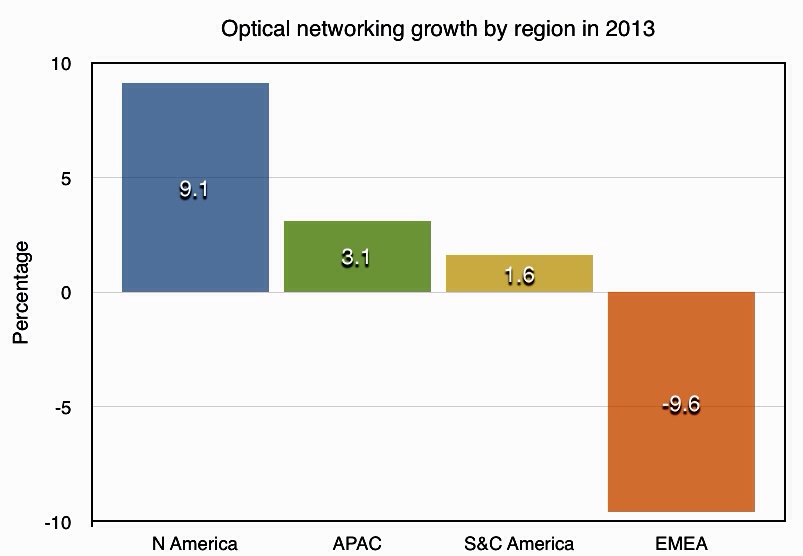

Ovum forecasts that the global optical networking market will grow to US $17.5 billion by 2018, a compound annual growth rate of 3.1 percent.
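To get a feel for what a 3.1 percent compound annual growth rate means, the forecast can be run backwards to an implied starting market size. The article does not state the base year, so the five- and six-year spans below are assumptions for illustration only, and the function name is hypothetical.

```python
def implied_base(final_value: float, cagr: float, years: int) -> float:
    """Starting value that grows to final_value at rate cagr over `years` years."""
    return final_value / (1 + cagr) ** years


FORECAST_2018_BN = 17.5  # US $ billion, from the Ovum forecast
CAGR = 0.031

# Assumed forecast spans; the source does not give the base year.
for years in (5, 6):
    base = implied_base(FORECAST_2018_BN, CAGR, years)
    print(f"{years}-year span: implied base of about ${base:.1f} billion")
```

Either assumption implies a market of roughly US $15 billion at the start of the forecast period, so the growth described is steady rather than dramatic.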

Optical networking spending in North America will be up 9.1 percent in 2013 after two flat years. North American tier-1 service providers and cable operators are investing in the core network to support all traffic types, and 100 Gigabit is being deployed in volume.

In contrast, optical networking sales in EMEA will contract by nearly 10 percent in 2013. “Non-spending in Europe is the major factor in the overall EMEA decline,” says Ian Redpath, principal analyst, network infrastructure at Ovum.

EMEA optical networking spending has been down in four out of the past five years, and the lack of investment is becoming acute, says Ovum. Given that service providers are stretching their existing networks, spending will have to take place eventually to make up for the prolonged period of inactivity.

This year has seen 100 Gigabit become the wavelength of choice for large WDM systems, with sales surging. Spending on 100 Gigabit has now overtaken spending on 40 Gigabit which declined in the first half of the year.

"The major technology trend for this forecast is the ascendancy of 100 Gig, whose sales exceeded 40 Gig revenues in 2Q13," says Redpath.

Further reading:

Ovum: Optical networks forecast: top line steady, 100G surging

The connected vehicle - driving in the cloud

Cars are already more silicon than steel. As makers add LTE high speed broadband, they are destined to become more app than automobile. The possibilities that come with connecting your car to the cloud are scintillating. No wonder Gil Golan, director at General Motors' Advanced Technical Center in Israel, says the automotive industry is at an 'inflection point'.

"If you put LTE to the vehicle ... you are going to open a very wide pipe and you can send to the cloud and get results with almost no latency"

Gil Golan, General Motors

After a century continually improving the engine, suspension and transmission, car makers are now busy embracing technologies outside their traditional skill sets. The result is a period of unprecedented change and innovation.

Gil Golan, director at General Motors' Advanced Technical Center in Israel, cites the use of in-car camera systems to aid driving and parking as an example. "Five years ago almost no vehicle used a camera whereas now increasing numbers have at least one, a fish-eye camera facing backwards." Vehicles offering 360-degree views using five cameras are taking to the road and such numbers will become the norm in the next five years.

The result is that the automotive industry is hiring people with optics and lens expertise, as well as image processing skills to analyse the images and video the cameras produce. "This is just the camera; the vehicle is going to be loaded with electronics," says Golan.

Moore's Law

Semiconductor advances driven by Moore's Law have already changed the automotive industry. "In 2004 the [automotive] industry crossed the point where, on average, we spend more on silicon than on steel," says Golan.

Moore's Law continues to improve processor and memory performance while driving down cost. "Every small system can now be managed or controlled in a better way," says Golan. "With a processor and memory, everything can be more accurate, more functionality can be built in, and it doesn't matter if it is a windscreen wiper or a sophisticated suspension system."

Current high-end vehicles have over 100 microprocessors. In turn, chip makers are developing 100 Megabit and 1 Gigabit Ethernet physical devices, media access controllers and switching silicon for in-vehicle networking to link the car's various electronic control units (ECUs).

The growing number of on-board microprocessors is also reflected in the software within vehicles. According to Golan, the Chevrolet Volt has over 10 million lines of code while the latest Lockheed Martin F-35 fighter has 8.7 million. "These are software vehicles on four wheels," says Golan. Moreover, the design of the Chevy Volt started nearly a decade ago.

Car makers must keep vehicles, crammed with electronics and software, updated despite their far longer life cycles compared to consumer devices such as smartphones.

According to General Motors, each car model has its content sealed every four or five years. A car design sealed today may only come on sale in 2016 after which it will be manufactured for five years and remain on the road for a further decade. "A vehicle sealed today is supposed to be updated and relevant through to 2030," says Golan. "This, in an era where things are changing at an unprecedented pace."
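The life cycle Golan describes can be laid out as a simple timeline. The sketch assumes "today" is 2013, inferred from the dates elsewhere in the article rather than stated by General Motors, and treats the five-year production run and ten-year road life as exact figures.

```python
SEALED_YEAR = 2013        # assumption: "today" in the article
ON_SALE_YEAR = 2016       # from the article
PRODUCTION_YEARS = 5      # manufactured for five years
ROAD_LIFE_YEARS = 10      # on the road for a further decade

end_of_production = ON_SALE_YEAR + PRODUCTION_YEARS
last_on_road = end_of_production + ROAD_LIFE_YEARS
design_age_at_retirement = last_on_road - SEALED_YEAR

print(f"Design sealed:        {SEALED_YEAR}")
print(f"On sale:              {ON_SALE_YEAR}")
print(f"Production ends:      {end_of_production}")
print(f"Last cars retire:     {last_on_road}")
print(f"Design age by then:   {design_age_at_retirement} years")
```

On these assumptions the last vehicles leave the road around 2031, within a year of the 2030 horizon Golan quotes.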

As a result car makers work on ways to keep vehicles updated after the design is complete, during its manufacturing phase, and then when the vehicle is on the road, says Golan.

Industry trends

Two key trends are driving the automotive industry:

- The development of autonomous vehicles

- The connected vehicle

Leading car makers are all working towards the self-driving car. Such cars promise far greater safety and more efficient and economical driving. Such a vehicle will also turn the driver into a passenger, free to do other things. Automated vehicles will need multiple sensors coupled to on-board algorithms and systems that can guide the vehicle in real-time.

Golan says camera sensors are now available that see at night, yet some sensors can perform poorly in certain weather conditions and can be confused by electromagnetic fields - the car is a 'noisy' environment. As a result, multiple sensor types will be needed and their outputs fused to ensure key information is not missed.

"Remember, we are talking about life; this is not computers or mobile handsets," says Golan. "If you put more active safety systems on-board, it means you have to have a very solid read on what is going on around you."

Wireless

Wireless communications will play a key role in vehicles. The most significant development is the advent of the Long Term Evolution (LTE) cellular standard that will bring broadband to the vehicle.

Golan says there are different perimeters within and around the car where wireless will play a role. The first is within the vehicle for wireless communication between devices such as a user's smart phone or tablet and the vehicle's main infotainment unit.

Wireless will also enable ECUs to talk, eliminating wiring inside the vehicle. "Wires are expensive, are heavy and impact the fuel economy, and can be a source for different problems: in the connectors and the wires themselves," says Golan.

A second, wider sphere of communication involves linking the vehicle with the immediate surroundings. This could be other vehicles or the infrastructure such as traffic lights, signs, and buildings. The communication could even be with cyclists and pedestrians carrying cellphones. Such immediate environment communication would use short-range communications, not the cellular network.

Wide-area communication will be performed using LTE. Such communication could also be performed over wireline. "If it is an electric vehicle, you can exchange data while you charge the vehicle," says Golan.

This ability to communicate across the network and connect to the cloud is what excites the car makers.

You can talk to the vehicle and the processing can be performed in the cloud

Cloud and Big Data

"If you put LTE to the vehicle, you are showing your customers that you are committed to bringing the best technology to the vehicle, you are going to open a very wide pipe and you can send to the cloud and get results with almost no latency," says Golan.

LTE also raises the interesting prospect of enabling some of the current processing embedded in the vehicle to be offloaded onto servers. "I can control the vehicle from the cloud," says Golan. "You can talk to the vehicle and the processing can be performed in the cloud."

The processing and capabilities offered in the cloud are orders of magnitude greater than what can be done on the vehicle, says Golan: "The results are going to be by far better than what we are familiar with today."

Clearly, pooling and processing information centrally will offer a broader view than any one vehicle can provide. But just which car processing functions can be offloaded is less clear, especially as a broadband link will always depend on the quality of the cellular coverage.

Safety critical systems will remain onboard, stresses Golan, but some of the infotainment and some of the extra value creation will come wirelessly.

Choosing the LTE operator to use is a key decision for an automotive company. "We have to make sure you [the driver] are on a very good network," says Golan. "The service provider has to show us, prove to us [their network], and in some cases we run basic and sporadic tests with our operator to make sure that we do have the network in place."

Automotive companies see opportunity here.

"When you get into a vehicle, there is a new type of behaviour that we know," says Golan. "We know a lot about your vehicle, we know your behaviour while you are driving: your driving style, what coffee you like to drink and your favourite coffee store, and that you typically fill up when you have a half tank and you go to a certain station."

This knowledge - about the car and the driver's preferences when driving - when combined with the cloud, is a powerful tool, says Golan. Car companies can offer an ecosystem that supports the driver. "We can have everything that you need while in the vehicle, served by General Motors," says Golan. "Let your imagination think about the services because I'm not going to tell you; we have a long list of stuff that we work on."

If we don't see that what we work on creates tremendous value, we drop it

General Motors already owns a 'huge' data centre and, being a global company with a local footprint, will use cloud service providers as required.

So automotive is part of the Big Data story? "Oh, big time," says Golan. "Business analytics is critical for any industry including the automotive industry."

Innovation

Given the opportunities new technologies such as sensors, computing, communication and cloud enable, how do automotive companies remain focussed?

"If we don't see that what we work on creates tremendous value, we drop it," says Golan. "We have no time or resources to spend on spinning wheels."

General Motors has its own venture capital arm to invest in promising companies and spends a lot of time talking to start-ups. "We talk to every possible start-up; if you see them for the first time you would say: 'where is the connection to the automotive industry?'," says Golan. "We talk to everybody on everything."

The company says it will always back ideas. "If some team member comes up with a great idea, it does not matter how thin the company is spread, we will find the resources to support that," says Golan.

General Motors set up its research centre in Israel a decade ago and is the only automotive company to have an advanced development centre there, says Golan. "The management had the foresight to understand that the industry is undergoing mega trends and an entrepreneurial culture - an innovation culture - is critically important for the future of the auto industry."

The company also has development sites in Silicon Valley and several other locations. "This is the pipe that is going to feed you innovation, and to do the critical steps needed towards securing the future of the company," says Golan. "You have to go after the technology."

Further reading:

Google's Original X-Man

The CDFP 400 Gig module

- The CDFP will be a 400 Gig short reach module

- Module will enable 4 Terabit line cards

- Specification will be completed in the next year

A CDFP pluggable multi-source agreement (MSA) has been created to develop a 400 Gigabit module for use in the data centre. "It is a pluggable interface, very similar to the QSFP and CXP [modules]," says Scott Sommers, group product manager at Molex, one of the CDFP MSA members.

Scott Sommers, Molex

The CDFP name stands for 400 (CD in Roman numerals) Form factor Pluggable. The MSA will define the module's mechanical properties and its medium dependent interface (MDI) linking the module to the physical medium. The CDFP will support passive and active copper cable, active optical cable and multi-mode fibre.

"The [MSA member] companies realised the need for a low cost, high density 400 Gig solution and they wanted to get that solution out near term," says Sommers. Avago Technologies, Brocade Communications Systems, IBM, JDSU, Juniper Networks, TE Connectivity along with Molex are the founding members of the MSA.

Specification

Samples of the 400 Gig MSA form factor have already been shown at the ECOC 2013 exhibition held in September 2013, as were some mock active optical cable plugs.

"The width of the receptacle - the width of the active optical cable that plugs into it - is slightly larger than a QSFP, and about the same width as the CFP4," says Sommers. This places the width of the CDFP at around 22mm. The CDFP however will use 16, 25 Gigabit electrical lanes instead of the CFP4's four.

"We anticipate a pitch-to-pitch such that we could get 11 [pluggables] on one side of a printed circuit board, and there is nothing to prohibit someone doing belly-to-belly," says Sommers. Belly-to-belly refers to a double-mount PCB design in which modules are mounted on both sides of the board. Here, 22 CDFPs would achieve a capacity of 8.8 Terabits.
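The capacity figures quoted above follow from simple multiplication. The sketch below reproduces them using only the numbers given in the article (16 lanes at 25 Gigabit, 11 modules per board side); the function and constant names are illustrative and not part of the MSA.

```python
# Figures taken from the article; names are illustrative only.
LANES_PER_MODULE = 16    # electrical lanes per CDFP
LANE_RATE_GBPS = 25      # Gigabit per second per lane
MODULES_PER_SIDE = 11    # pluggables along one side of the PCB


def module_capacity_gbps(lanes: int = LANES_PER_MODULE,
                         lane_rate_gbps: int = LANE_RATE_GBPS) -> int:
    """Aggregate rate of one module: lanes multiplied by the lane rate."""
    return lanes * lane_rate_gbps


def card_capacity_tbps(sides: int = 1,
                       modules_per_side: int = MODULES_PER_SIDE) -> float:
    """Line card capacity in Terabits for a single- or double-sided layout."""
    total_gbps = sides * modules_per_side * module_capacity_gbps()
    return total_gbps / 1000


print(module_capacity_gbps())       # 400 Gigabit per module
print(card_capacity_tbps(sides=1))  # 4.4 Terabit, single-sided
print(card_capacity_tbps(sides=2))  # 8.8 Terabit, belly-to-belly
```

The single-sided result of 4.4 Terabits is consistent with the "4 Terabit line cards" the MSA is said to enable.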

The MSA group has yet to detail the full dimensions of the form factor or specify the power consumption it will accommodate. "The target applications are switch-to-switch connections so we are not targeting the long reach market that require bigger, hotter modules," says Sommers. This suggests a form factor for distances up to 100m and maybe several hundred metres.

The MSA members are working on a single module design and there is no suggestion of future additional CDFP form factors at this stage.

"The aim is to get this [MSA draft specification] out soon, so that people can take this work and expand upon it, maybe at the IEEE or Infiniband," says Sommers. "Within a year, this specification will be out and in the public domain."

Meanwhile, companies are already active on designs using these building blocks. "In a complex MSA like this, there are pieces such as silicon and connectors that all have to work together," says Sommers.

SDN starts to fulfill its network optimisation promise

Infinera, Brocade and ESnet demonstrate the use of software-defined networking to provision and optimise traffic across several networking layers.

Infinera, Brocade and network operator ESnet are claiming a first in demonstrating software-defined networking (SDN) performing network provisioning and optimisation using platforms from more than one vendor.

Mike Capuano, Infinera

The latest collaboration is one of several involving optical vendors that are working to extend SDN to the WAN. ADVA Optical Networking and IBM are working to use SDN to connect data centres, while Ciena and partners have created a test bed to develop SDN technology for the WAN.

The latest lab-based demonstration uses ESnet's circuit reservation platform that requests network resources via an SDN controller. ESnet, the US Department of Energy's Energy Sciences Network, conducts networking R&D and operates a large 100 Gigabit network linking research centres and universities. The SDN controller, the open source Floodlight Project design, oversees the network comprising Brocade's 100 Gigabit MLXe IP router and Infinera's DTN-X platform.

The goal of provisioning and optimising traffic across the routing, switching and optical layers has been a work in progress for over a decade. System vendors have undertaken initiatives such as External Network-Network Interface (ENNI) and multi-domain GMPLS but with limited success. "They have been talked about, experimented with, but have never really made it out of the labs," says Mike Capuano, vice president of corporate marketing at Infinera. "SDN has the opportunity to solve this problem for real."

"In the world of Web 2.0, the general approach is not to sit and wait till standards are done, but to prototype, test, find the gaps, report back, and do it again"

"SDN, and technologies like the OpenFlow protocol, allow all of the resources of the entire network to be abstracted to this higher level control," says Daniel Williams, director of product marketing for data center and service provider routing at Brocade.

Daniel Williams, Brocade

Infinera and ESnet demonstrated OpenFlow provisioning transport resources a year ago. This latest demonstration has OpenFlow provisioning at the packet and optical layers and performing network optimisation. "We have added more carrier-grade capabilities," says Capuano. "Not just provisioning, but now we have topology discovery and network configuration."

“The demonstration is a positive step in the development of SDN because it showcases the multi-layer transport provisioning and management that many operators consider the prime use case for transport SDN,” says Rick Talbot, principal analyst, optical infrastructure at Current Analysis. "The demonstration’s real-time network optimisation is an excellent example of the potential benefits of transport SDN, leveraging SDN to minimise transit traffic carried at the router layer, saving both CapEx and OpEx."

Using such an SDN setup, service providers can request high-bandwidth links to meet specific networking requirements. "There can be a request from a [software] app: 'I need an 80 Gigabit flow for two days from Switzerland to California with a 95ms latency and zero packet loss'," says Capuano. "The fact that the network has the facility to set that service up and deliver on those parameters automatically is a huge saving."
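To make the request in Capuano's example concrete, the sketch below shows how an application might encode it as a JSON document before handing it to a controller. The field names, the start date and the function itself are hypothetical; the article does not describe the actual request format used by ESnet's reservation platform or the SDN controller.

```python
import json
from datetime import datetime, timedelta, timezone


def build_flow_request(source: str, destination: str, bandwidth_gbps: int,
                       duration_days: int, max_latency_ms: int,
                       max_packet_loss: float, start: datetime) -> dict:
    """Build a hypothetical service request; all field names are assumptions."""
    end = start + timedelta(days=duration_days)
    return {
        "source": source,
        "destination": destination,
        "bandwidth_gbps": bandwidth_gbps,
        "start": start.isoformat(),
        "end": end.isoformat(),
        "max_latency_ms": max_latency_ms,
        "max_packet_loss": max_packet_loss,
    }


# The parameters quoted in the article; the start date is arbitrary.
request = build_flow_request(
    source="Switzerland",
    destination="California",
    bandwidth_gbps=80,
    duration_days=2,
    max_latency_ms=95,
    max_packet_loss=0.0,
    start=datetime(2014, 1, 1, tzinfo=timezone.utc),
)

print(json.dumps(request, indent=2))
```

A controller receiving such a document would still have to map it onto router and optical-layer resources, which is the part the demonstration automates.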

Such a link can be established on the same day the request is made, even within minutes. Traditionally, such requests involving the IP and optical layers - and different organisations within a service provider - can take weeks to fulfill, says Infinera.

Current Analysis also highlights another potential benefit of the demonstration: how the control of separate domains - the Infinera wavelength and TDM domain and the Brocade layer 2/3 domain - with a common controller illustrates how SDN can provide end-to-end multi-operator, multi-vendor control of connections.

What next

The Open Networking Foundation (ONF) has an Optical Transport Working Group that is tasked with developing OpenFlow extensions to enable SDN control beyond the packet layer to include optical.

How is the optical layer in the demonstration controlled given the ONF work is unfinished?

"Our solution leverages Web 2.0 protocols like RESTful and JSON integrated into the Open Transport Switch [application] that runs on the DTN-X," says Capuano. "In the world of Web 2.0, the general approach is not to sit and wait till standards are done, but to prototype, test, find the gaps, report back, and do it again."

Further work is needed before the demonstration system is robust enough for commercial deployment.

"This is going to take some time: 2014 is the year of test and trials in the carrier WAN while 2015 is when you will see production deployment," says Capuano. "If service providers are making decisions on what platforms they want to deploy, it is important to choose ones that are going to position them well to move to SDN when the time comes."

ECOC 2013 review - Part 2

- Oclaro's Raman and hybrid amplifier platform for new networks

- MxN wavelength-selective switch from JDSU

- 200 Gigabit multi-vendor coherent demonstration

- Tunable SFP+ designs proliferate

- Finisar extends 40 Gigabit QSFP+ to 40km

- Oclaro tackles wireless backhaul with 2km SFP+ module

Finisar's 40km 40 Gig QSFP+ demo. Source: Finisar

Amplifier market heats up

Oclaro detailed its high performance Raman and hybrid Raman/ Erbium-doped fibre amplifier platform. "The need for this platform is the high-capacity, high channel rates being installed [by operators] and the desire to be scalable - to support 400 Gig and Terabit super-channels in future," says Per Hansen, vice president of product marketing, optical networks solutions at Oclaro.

"Amplifiers are 'hot' again," says Daryl Inniss, vice president and practice leader components at market research firm, Ovum. For the last decade, amplifier vendors have been tasked with reducing the cost of their amplifier designs. "Now there is a need for new solutions that are more expensive," says Inniss. "It is no longer just cost-cutting."

Amplifiers are used in the network backbone to boost the optical signal-to-noise ratio (OSNR). Raman amplification provides significant noise improvement but it is not power efficient so a Raman amplifier is nearly always matched with an Erbium one. "You can think of the Raman as often working as a pre-amp, and the Erbium-doped fibre as the booster stage of the hybrid amplifier," says Hansen. System houses take different approaches: some connect separate amplifiers in the field while others build them on one card, but Raman and Erbium-doped fibre amplifiers are almost always used in tandem, says Hansen.

Oclaro provides Raman units and hybrid units that combine Raman with Erbium-doped fibre. "We can deliver both as a super-module that vendors integrate on their line cards or we can build the whole line card for them," says Hansen.

The Raman amplifier market is way bigger than people have forecast

Since Raman launches a lot of pump power into the fibre, it is vital to have low-loss connections that avoid attenuating the gain. "Raman is a little more sensitive to the quality of the connections and the fibre," says Hansen. Oclaro offers scan diagnostic features that characterise the fibre and determine whether it is safe to turn up the amplification.

"It can analyse the fibre and depending on how much customers want us to do, we can take this to the point that it [the design] can tell you what fibre it is and optimise the pump situation for the fibre," says Hansen. In other cases, the system vendors adopt their own amplifier control.

Oclaro says it is in discussion with customers about implementations. "We are shipping the first products based on this platform," says Hansen.

"[The] Raman [amplifier market] is way bigger than people have forecast," says Inniss. This is due to operators building long distance networks that are scalable to higher data rates. "Coherent transmission is the focal point here, as coherent provides the mechanism to go long distance at high data rates," says Inniss.

Wavelength-selective switches

JDSU discussed its wavelength-selective switch (WSS) products at ECOC. The company has previously detailed its twin 1x20 port WSS, which has moved from development to volume production.

At ECOC, JDSU detailed its work on a twin MxN WSS design. "It is a WSS that instead of being a 1xN - 1x20 or a 1x9 - it is an MxN," says Brandon Collings, chief technology officer, communications and commercial optical products at JDSU. "So it has multiple input and output ports on both sides." Such a design is used for the add and drop multiplexer for colourless and directionless reconfigurable optical add/drop multiplexers (ROADMs).

"People have been able to build colourless and directionless architectures using conventional 1xN WSSes," says Collings. The MxN serves the same functionality but in a single integrated unit, halving the volume and cost for colourless and directionless compared to the current approach.

JDSU says it is also completing the development of a twin multicast switch, the add and drop multiplexer suited to colourless, directionless and contentionless ROADM designs.

200 Gigabit coherent demonstration

ClariPhy Communications, working with NeoPhotonics, Fujitsu Optical Components, u2t Photonics and Inphi, showcased a reference-design demonstration of 200 Gig coherent optical transmission using 16 quadrature amplitude modulation (16-QAM).

For the demonstration, ClariPhy provided the coherent silicon: the digital-to-analogue converter for transmission and the receiver's analogue-to-digital converter and digital signal processing (DSP) used to counter channel transmission impairments. NeoPhotonics provided the lasers for the transmitter and the receiver, u2t Photonics supplied the integrated coherent receiver, Fujitsu Optical Components the lithium niobate nested modulator, and Inphi the quad-modulator driver IC.

ClariPhy is developing a 28nm CMOS Lightspeed chip suited for metro and long-haul coherent transmission. The chip will support 100 and 200 Gigabit-per-second (Gbps) data rates and have an adjustable power consumption tailored to the application. The chip will also be suited for use within a coherent CFP module.

"All the components that we are talking about for 100 Gig are either ready or will soon be ready for 200 and 400 Gig," says Ferris Lipscomb, vice president of marketing at NeoPhotonics. To achieve 400Gbps, two 16-QAM channels can be used.
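The step from 100 to 200 Gig with 16-QAM, and the need for two channels to reach 400Gbps, can be checked with a few lines of arithmetic. The sketch below assumes the 100 Gig channel uses QPSK (four constellation points), as is common in coherent designs, and that the symbol rate is unchanged; neither assumption is stated in the article, and the function names are illustrative.

```python
import math


def bits_per_symbol(constellation_points: int) -> int:
    """Bits carried per symbol: log2 of the number of constellation points."""
    return int(math.log2(constellation_points))


def scaled_rate_gbps(base_rate_gbps: float, base_points: int,
                     new_points: int) -> float:
    """Data rate after changing modulation at an unchanged symbol rate."""
    return base_rate_gbps * bits_per_symbol(new_points) / bits_per_symbol(base_points)


# Assumption: the 100 Gig channel uses QPSK (4 points); 16-QAM has 16 points.
rate_16qam = scaled_rate_gbps(100, base_points=4, new_points=16)
channels_for_400g = math.ceil(400 / rate_16qam)

print(rate_16qam)         # 200.0
print(channels_for_400g)  # 2
```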

The DWDM market for 10 Gig is now starting to plateau

Tunable SFPs

JDSU first released a 10Gbps SFP+ optical module tunable across the C-band in 2012, a design that dissipates up to 2W. The SFP+ MSA, however, calls for a power consumption of no greater than 1.5W. "Our customers had to deal with that higher power dissipation which, in a lot of cases, was doable," says JDSU's Collings.

Robert Blum, Oclaro

JDSU's latest tunable SFP+ design now meets the 1.5W power specification. "This gets into the MSA standard's power dissipation envelope and can now go into every SFP+ socket that is deployed," says Collings. Achieving the power target involved a redesign of the tunable laser. The tunable SFP+ is now sampling and will be generally available in one or two quarters.

Oclaro and Finisar also unveiled tunable SFP+ modules at ECOC 2013. "The design is using the integrated tunable laser and Mach-Zehnder modulator, all on the same chip," says Robert Blum, director of product marketing for Oclaro's photonic components.

Neither Oclaro nor Finisar detailed their SFP+'s power consumption. "The 1.5W is the standard people are trying to achieve and we are quite close to that," says Blum.

Both Oclaro's and Finisar's tunable SFP+ designs are sampling now.

Reducing a 10Gbps tunable transceiver to an SFP+ is, in effect, the end destination on the module roadmap. "The DWDM market for 10 Gig is now starting to plateau," says Rafik Ward, vice president of marketing at Finisar. "From an industry perspective, you will see more and more effort on higher data rates in future."

40G QSFP+ with a 40km reach

Finisar demonstrated a 40Gbps QSFP+ with a reach of 40km. "The QSFP has embedded itself as the form-factor of choice at 40 Gig," says Ward.

Until now there has been the 850nm 40GBASE-SR4 with a 100m reach and the 1310nm 40GBASE-LR4 at 10km. To achieve a 40km QSFP+, Finisar is using four uncooled distributed feedback (DFB) lasers and an avalanche photo-detector (APD) operating using coarse WDM (CWDM) wavelengths spaced around 1310nm. The QSFP+ is being used on client side cards for enterprise and telecom equipment, says Finisar.

Module for wireless backhaul

Oclaro announced an SFP+ that supports the wireless Common Public Radio Interface (CPRI) and Open Base Station Architecture Initiative (OBSAI) standards used to link equipment in a wireless cell's tower and the base station controller.

Until now, optical modules for CPRI have been the 10km 10GBASE-LR4 modules. "You have a relatively expensive device for the last mile which is the most cost sensitive [part of the network]," says Oclaro's Hansen.

Oclaro's 1W SFP+ reduces module cost by using a simpler Fabry-Perot laser, but at the expense of reach, which is limited to 2km. However, this is sufficient for a majority of requirements, says Hansen. The SFP+ supports 2.5Gbps, 3Gbps, 6Gbps and 10Gbps rates. "CPRI has been used mostly at 3 Gig and 6 Gig but there is interest in 10 Gig due to growing mobile data traffic and the adoption of LTE," says Hansen.

The SFP+ module is sampling and will be in volume production by year end.

ECOC 2013 review - Part 1

Gazettabyte surveys some of the notable product announcements made at the recent European Conference on Optical Communication (ECOC) held in London.

Part 1: Highlights

- First CFP4 module demonstration from Finisar

- Acacia Communications unveils first 100 Gig coherent CFP

- Oplink announces a 100 Gig direct detection CFP

- Second-generation coherent components take shape

100 Gigabit pluggables

Finisar used the ECOC exhibition to demonstrate the first CFP4 optical module, the smallest of the CFP MSA family of modules. The first CFP4 supports the 100GBASE-SR4 standard comprising four electrical and four optical channels, each at 25 Gigabit-per-second (Gbps).

The CFP4 is a quarter of the width of the CFP while the CFP2 is about half the CFP's width. The CFP4 thus promises to quadruple the faceplate port density compared to using the CFP. Finisar says the CFP4 does even better, supporting line cards with 3.6 Terabits of capacity.

"It is not just the [CFP4's] width but the length and height that are shorter," says Rafik Ward, vice president of marketing at Finisar. The CFP4s can be aligned in two rows - belly-to-belly - on the card, each row comprising 18 CFP4 modules, to achieve 3.6Tbps.
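The 3.6Tbps figure can be reproduced from the numbers in the text: 18 CFP4 modules per row, two rows, and 100 Gigabit per module (four channels at 25Gbps). A minimal sketch, with illustrative names that are not taken from any specification:

```python
# Figures taken from the article; names are illustrative only.
CHANNELS_PER_CFP4 = 4      # optical (and electrical) channels per module
CHANNEL_RATE_GBPS = 25     # rate of each channel
MODULES_PER_ROW = 18       # CFP4 modules in one row
ROWS = 2                   # belly-to-belly mounting gives two rows


def cfp4_rate_gbps() -> int:
    """Aggregate rate of one CFP4 module."""
    return CHANNELS_PER_CFP4 * CHANNEL_RATE_GBPS


def line_card_capacity_tbps(rows: int = ROWS,
                            modules_per_row: int = MODULES_PER_ROW) -> float:
    """Total line card capacity in Terabits per second."""
    return rows * modules_per_row * cfp4_rate_gbps() / 1000


print(cfp4_rate_gbps())           # 100
print(line_card_capacity_tbps())  # 3.6
```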

We see the CFP4 as a necessity to continue to grow the 100 Gigabit Ethernet market

The CFP4 was always scheduled to follow quickly after the launch of the CFP2, says Ward. But the availability of the CFP4 will be important for the MSA. Data centre switch vendor Arista Networks has said that the CFP2 was late to market, while Cisco Systems has developed the CPAK, its own CFP2 alternative. "We see it [the CFP4] as a necessity to continue to grow the 100 Gigabit Ethernet market," says Ward.

Other 100 Gigabit Ethernet (GbE) variants will follow in the CFP4 form factor such as the LR4 and SR10 and the 10x10GbE breakout variant. This raises the interesting prospect of requiring an “inverse gearbox” chip that will translate between the CFP4's four electrical channels and the SR10's 10 optical channels. "We are going to see a lot of design activity around CFP4 in 2014," says Ward.

Meanwhile, Acacia Communications unveiled the AC-100, the first 100 Gig coherent CFP module for metro and regional networks, that includes a digital signal processor (DSP) system-on-chip.

"The DSP can be programmed for different performance and power levels to achieve a range of distances," says Daryl Inniss, vice president and practice leader components at market research firm, Ovum.

Acacia Communications says a CFP-based coherent design provides carriers and content providers with a 100 Gig metro solution that is more economical than 10 Gig.

Oplink Communications announced a direct detection 100 Gig metro CFP at ECOC. The 4x28Gbps CFP uses MultiPhy's maximum likelihood sequence estimation (MLSE) algorithm implemented using its MP1100Q and MP1101Q ICs. The devices enhance the reach of the module and allow 10 Gig optical components to be used for the receive and transmit paths. "Oplink’s CFP is the first module to come to market with our devices inside," says Neal Neslusan, vice president of marketing and sales at MultiPhy.

There are now at least three vendors selling direct-detection 100Gbps modules, says Neslusan, with Oplink and Menara Networks, which has also announced a CFP product, joining Finisar.

MultiPhy says it is working with several additional companies and that one system vendor will come to market with a product using the company's chips in the coming months. The company is also working on a second-generation direct detection IC design. "We believe there is a compelling roadmap story for direct detection," says Neslusan.

Oclaro announced it is now shipping in volume its 10km 100GBASE-LR4 CFP2 supporting 100GbE and OTU4 (OTN) rates. Oclaro has demonstrated the CFP2 working with a Xilinx Virtex 7 FPGA. "If customers choose that combination of technology, we have already tested it for them and they can rely on those rates [100GbE and OTU4] working," says Per Hansen, vice president of product marketing, optical networks solutions at Oclaro.

JDSU also said that its CFP2 LR4 is nearing completion. "We are getting pretty close to releasing it," says Brandon Collings, chief technology officer, communications and commercial optical products at JDSU.

Ed Murphy, JDSU

The integrated transmit and receive optical sub-assembly (TOSA/ ROSA) designs for the CFP2 LR4 use hybrid integration.

"In this case it is not monolithic integration as it is in the case of the indium phosphide line side modules but hybrid integration taking advantage of our PLC (planar lightwave circuit) technology in combination with arrays of photo detectors or high speed EMLs (externally modulated lasers)," says Murphy.

JDSU has based its module roadmap on the CFP2 TOSA and ROSA designs. The designs are sufficiently integrated to also fit within the QSFP28 and CFP4 modules.

There may be tweaks to the chips to lower the power dissipation, says Murphy, but these will be minor variants on existing parts.

Coherent components

Several component makers discussed their latest compact designs for next-generation coherent transmission line cards and modules.

NeoPhotonics detailed its micro-ITLA, a narrow linewidth tunable laser that occupies less than a third of the area of the existing ITLA design. The company also announced a small form factor intradyne coherent receiver (SFF-ICR), less than half the size of existing integrated coherent receivers (ICRs).

NeoPhotonics supplies components to module and system vendors, and says customer interest in the second-generation coherent components is for higher port count line cards. "Instead of 100 Gig on a line card, you can have 200 or 400 Gigabit on a line card," says Ferris Lipscomb, vice president of marketing at NeoPhotonics. Moving to pluggable module designs will be a follow-on development, but for now, the market is not quite ready, he says.

An integrated coherent transmitter for metro, combining a tunable laser and integrated indium phosphide modulator in a compact package is also offered by NeoPhotonics.

The laser has two outputs - one output is modulated for the transmission while the second output is a local oscillator source feeding the coherent receiver. About half of all 100Gbps designs use such a split laser source, says Lipscomb, rather than two separate lasers, one each for the transmitter and the receiver. "That means that one transponder can only transmit and receive the same wavelength and is a little less flexible but for cost reduction that is what people are doing," says Lipscomb.

Oclaro is now sampling to customers its next-generation indium phosphide-based coherent components. The company, also a supplier of coherent modules, says the components will enable CFP and CFP2 pluggable coherent transceivers. The pluggable modules are suited for use in metro and metro regional networks.

Oclaro's components include an integrated transmitter comprising an indium phosphide laser and modulator, and the SFF-ICR. Oclaro's micro-ITLA is in volume production and has an output power high enough to perform both the transmit and the local oscillator functions. The micro-ITLA is used for line cards, 5x7-inch and 4x5-inch MSA modules, and CFP designs.

u2t Photonics is another company that is developing an SFF-ICR. The company gave a private demonstration at ECOC to its customers of its indium phosphide modulator for use with CFP and CFP2 modules. "We demonstrated technical feasibility; it is a prototype which shows the capability of indium phosphide technology," says Jens Fiedler, executive vice president sales and marketing at u2t Photonics.

u2t Photonics and Finisar both licensed 100Gbps coherent indium phosphide modulator technology developed at the Fraunhofer Heinrich-Hertz-Institute.

There are new coherent DSP chips coming out early next year

u2t also showcased at ECOC its gallium arsenide modulator technology implementing 16-QAM (quadrature amplitude modulation), but the company has yet to announce a product.

JDSU also gave an update on its line side coherent components. It is developing an integrated transmitter - a laser with nested modulators - for coherent applications. "This work is underway as a technology for line side CFP and CFP2 modules," says Ed Murphy, senior director, communications and commercial optical products at JDSU.

The difference between the CFP and CFP2 coherent modules is that the DSP system-on-chip is integrated within the CFP. Acacia's AC-100 CFP is the first example of such a product. For the smaller CFP2, the DSP will reside on the line card.

"There are new DSP [chips] coming out early next year," says Robert Blum, director of product marketing for Oclaro's photonic components. The DSPs will require a power consumption no greater than 20W if the complete design - the DSP and optics - is to comply with the CFP's maximum power rating of 32W.
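The power figures above imply a budget for everything in the module other than the DSP. The sketch below works it out from the two numbers in the text (a 32W CFP limit and a 20W DSP ceiling); the function name is illustrative, and treating the remainder as the allowance for the optics follows the article's split of the design into "the DSP and optics".

```python
CFP_MAX_POWER_W = 32   # CFP maximum power rating quoted in the article
DSP_MAX_POWER_W = 20   # upper bound on DSP power quoted in the article


def remaining_budget_w(module_limit_w: float, dsp_power_w: float) -> float:
    """Power left for the optics once the DSP's share is subtracted."""
    if dsp_power_w > module_limit_w:
        raise ValueError("DSP alone exceeds the module's power rating")
    return module_limit_w - dsp_power_w


print(remaining_budget_w(CFP_MAX_POWER_W, DSP_MAX_POWER_W))  # 12
```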

Pluggable coherent modules promise greater port densities per line card. The modules can also be deployed with traffic demand and, in the case of a fault, can be individually swapped rather than having to replace the line card, says Blum.

JDSU says two factors are driving the metro coherent market. One is the need for lower cost designs to meet metro's cost-sensitive requirements. The second is that the metro distances can use essentially the same devices for 16-QAM to support 200Gbps links as well as 100Gbps. "It is the same modulator structure; maybe a few of the specs are a bit tighter but you can think of it as the same device," says Murphy.
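
Murphy's point that the same devices can serve both rates follows from the modulation arithmetic, sketched below. The sketch assumes dual-polarisation transmission, that the 100Gbps links use QPSK (the usual choice for 100 Gig coherent, though not stated above), and a nominal 25 Gbaud symbol rate that ignores forward-error-correction and framing overhead. The function name is hypothetical.

```python
import math


def net_bit_rate_gbps(symbol_rate_gbaud, constellation_points, polarisations=2):
    """Bit rate = symbol rate x bits per symbol x number of polarisations."""
    bits_per_symbol = int(math.log2(constellation_points))
    return symbol_rate_gbaud * bits_per_symbol * polarisations


# Assumed nominal symbol rate; real links run faster to carry overhead.
SYMBOL_RATE_GBAUD = 25

qpsk_rate = net_bit_rate_gbps(SYMBOL_RATE_GBAUD, 4)     # QPSK: 2 bits per symbol
qam16_rate = net_bit_rate_gbps(SYMBOL_RATE_GBAUD, 16)   # 16-QAM: 4 bits per symbol

print(f"DP-QPSK:   {qpsk_rate} Gbps")
print(f"DP-16-QAM: {qam16_rate} Gbps")
```

Doubling the bits carried per symbol doubles the line rate at the same symbol rate, which is why the modulator can stay essentially the same. The general trade-off is that 16-QAM needs a higher optical signal-to-noise ratio and so covers shorter distances, which suits metro spans.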

System vendors have trialled 200Gbps links but deployments are expected to start from 2014. The deployments will likely use lithium niobate modulators, says Murphy, but will be followed quickly by indium phosphide designs.

NeoPhotonics used ECOC to declare that it has now integrated the semiconductor optical component arm of Lapis Semiconductor, which it acquired for $35.2 million in March 2013.

The unit, known as NeoPhotonics Semiconductor GK, makes drivers and externally modulated lasers. "These are key technologies for high-speed 100 Gig and 400 Gig transmissions, both on the line side and on the client side," says Ferris Lipscomb of NeoPhotonics.

NeoPhotonics, previously a customer of Lapis, decided to acquire the unit and benefit from vertical integration as it expands its 100 Gig and higher-speed coherent portfolio.

Owning the technology has cost and optical performance benefits, says Lipscomb. It enables the integration of a design on one chip, thereby avoiding interfacing issues.

Further reading: Part 2 of this feature.

Alcatel-Lucent dismisses Nokia rumours as it launches NFV ecosystem

Michel Combes, CEO. Photo: Kobi Kantor.

The CEO of Alcatel-Lucent, Michel Combes, has brushed off rumours of a tie-up with Nokia, after reports surfaced last week that Nokia's board was considering the move as a strategy option.

"You will have to ask Nokia," said Combes. "I'm fully focussed on the Shift Plan, it is the right plan [for the company]; I don't want to be distracted by anything else."

Combes was speaking at the opening of Alcatel-Lucent's cloud R&D centre in Kfar Saba, Israel, where the company's internal start-up CloudBand is developing cloud technology for carriers.

Network Functions Virtualisation

CloudBand used the site opening to unveil its CloudBand Ecosystem Program to spur adoption of Network Functions Virtualisation (NFV). NFV is a carrier-led initiative, set up by the European Telecommunications Standards Institute (ETSI), to benefit from the IT model of running applications on virtualised servers.

Carriers want to get away from vendor-specific platforms that are expensive to run and cumbersome to upgrade when new services are needed. Adding a service can take between 18 months and three years, said Dor Skuler, vice president and general manager of the CloudBand business unit. Moreover, such equipment can reside in the network for 15 years. "Most of the [telecom] software is running on CPUs that are 15 years old," said Skuler.

Instead, carriers want vendors to develop software 'network functions' executed on servers. NFV promises a common network infrastructure and reduced costs by exploiting the economies of scale associated with servers. Server volumes dwarf those of dedicated networking equipment, and are regularly upgraded with new CPUs.

Applications running on servers can also be scaled up and down, according to demand, using virtualisation and cloud orchestration techniques already present in the data centre. "This is about to make the network scalable and automated," said Combes.

Alcatel-Lucent stresses that not all networking functions are suited for virtualisation. Optical transport is one example. Another is routing, which requires dedicated silicon for packet processing and traffic management.

CloudBand was set up in 2011. The unit is focussed on the orchestration and automation of distributed cloud computing for carriers. "How do you operationalise cloud which may be distributed across 20 to 30 locations?" said Skuler.

CloudBand says it can add a "cloud node" - IT equipment at an operator's site - and have it up and running three hours after power-up. This requires processes that are fully automated, said Skuler. Also used are algorithms developed at Alcatel-Lucent Bell Labs that determine the best location for distributed cloud resources for a given task. The algorithms load-balance the resources based on an application's requirements.
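
The article does not describe the Bell Labs algorithms, so the following is only a toy sketch of the general idea of requirement-driven placement: among the cloud nodes that meet an application's needs, choose the one with the most spare capacity. The class, field names, units and the greedy rule are all assumptions made for illustration, not CloudBand's method.

```python
from dataclasses import dataclass


@dataclass
class CloudNode:
    # All fields are hypothetical, chosen for illustration.
    name: str
    cpu_free: int       # free CPU cores at this site
    latency_ms: float   # latency from this site to the application's users


def place(nodes, cpu_needed, max_latency_ms):
    """Greedy placement: pick the eligible node with the most free CPU.

    Returns the chosen node after reserving its CPU, or None if nothing fits.
    """
    eligible = [
        n for n in nodes
        if n.cpu_free >= cpu_needed and n.latency_ms <= max_latency_ms
    ]
    if not eligible:
        return None
    chosen = max(eligible, key=lambda n: n.cpu_free)
    chosen.cpu_free -= cpu_needed  # reserve capacity so later placements see it
    return chosen


nodes = [
    CloudNode("site-a", cpu_free=16, latency_ms=5.0),
    CloudNode("site-b", cpu_free=32, latency_ms=40.0),
    CloudNode("site-c", cpu_free=24, latency_ms=8.0),
]

first = place(nodes, cpu_needed=8, max_latency_ms=10.0)
print(first.name if first else "no node fits")
```

Here site-b has the most free CPU but is ruled out by its latency, so the application lands on site-c. A production placement engine would weigh many more factors than these two.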

The distributed cloud technology also benefits from software-defined networking (SDN) technology from Alcatel-Lucent's other internal venture, Nuage Networks. Nuage Networks automates and sets up network connections between data centres. "Just as SDN makes use of virtualisation to give applications more memory and CPU resources in the data centre, Nuage does the same for the network," said Skuler.

Open interfaces are needed for NFV to succeed and avoid the issue of proprietary solutions and vendor lock-in. Alcatel-Lucent's NFV solution needs to support third-party applications, while the company's applications will have to run on other vendors' platforms. To this end, CloudBand has set up an NFV ecosystem for service providers, vendors and developers.

"We have opened up CloudBand to anyone in the industry to test network applications on top of the cloud," said Skuler. "We are the first to do that."

So far, 15 companies have signed up to the CloudBand Ecosystem Program including Deutsche Telekom, Telefonica, Intel and HP.

Technologies such as NFV promise operators a way to regain market traction and avoid the commoditisation of transport, said Combes. Operators can manage their networks more efficiently, and create new business models. For example, operators can sell enterprises network functions such as infrastructure-as-a-service and platform-as-a-service.

Does running software functions on servers not undermine a telecom equipment vendor's primary business? "We are still perceived as a hardware company yet 85 percent of systems is software based," said Combes. Moreover, this is a carrier-driven initiative. "This is where our customers want to go," he said. "You either accept there will be a bit of cannibalisation or run the risk of being cannibalised by IT players or others."

The Shift Plan

Combes has been in place as Alcatel-Lucent's CEO for four months. In that time he has launched the Shift Plan that focusses the company's activities in three broad directions: IP infrastructure including routing and transport, cloud, and ultra-broadband access including wireless (LTE) and wireline (FTTx).

Combes says the goal is to regain the competitiveness Alcatel-Lucent has lost in recent years by improving product innovation, quality of execution and the company's cost structure. Combes has also tackled the balance sheet, refinancing company debt over the summer.

The Shift Plan's target is to get the company back on track by 2015: growing, profitable and industry-leading in the three areas of focus, he said.